| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

113,244

| 17,116,224,153

|

IssuesEvent

|

2021-07-11 12:10:03

|

theHinneh/ha

|

https://api.github.com/repos/theHinneh/ha

|

closed

|

CVE-2019-1010266 (Medium) detected in lodash-3.10.1.tgz

|

security vulnerability

|

## CVE-2019-1010266 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz">https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz</a></p>

<p>Path to dependency file: ha/backend/package.json</p>

<p>Path to vulnerable library: ha/backend/node_modules/ioredis/node_modules/lodash/package.json,ha/backend/node_modules/kafka-node/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- mosca-2.8.3.tgz (Root Library)

- ioredis-1.15.1.tgz

- :x: **lodash-3.10.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/theHinneh/ha/commit/b67d33dd9df9e05b70466e310843976220230240">b67d33dd9df9e05b70466e310843976220230240</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

lodash prior to 4.17.11 is affected by: CWE-400: Uncontrolled Resource Consumption. The impact is: Denial of service. The component is: Date handler. The attack vector is: Attacker provides very long strings, which the library attempts to match using a regular expression. The fixed version is: 4.17.11.

<p>Publish Date: 2019-07-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-1010266>CVE-2019-1010266</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-1010266">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-1010266</a></p>

<p>Release Date: 2019-07-17</p>

<p>Fix Resolution: 4.17.11</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-1010266 (Medium) detected in lodash-3.10.1.tgz - ## CVE-2019-1010266 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz">https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz</a></p>

<p>Path to dependency file: ha/backend/package.json</p>

<p>Path to vulnerable library: ha/backend/node_modules/ioredis/node_modules/lodash/package.json,ha/backend/node_modules/kafka-node/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- mosca-2.8.3.tgz (Root Library)

- ioredis-1.15.1.tgz

- :x: **lodash-3.10.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/theHinneh/ha/commit/b67d33dd9df9e05b70466e310843976220230240">b67d33dd9df9e05b70466e310843976220230240</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

lodash prior to 4.17.11 is affected by: CWE-400: Uncontrolled Resource Consumption. The impact is: Denial of service. The component is: Date handler. The attack vector is: Attacker provides very long strings, which the library attempts to match using a regular expression. The fixed version is: 4.17.11.

<p>Publish Date: 2019-07-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-1010266>CVE-2019-1010266</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-1010266">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-1010266</a></p>

<p>Release Date: 2019-07-17</p>

<p>Fix Resolution: 4.17.11</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz the modern build of lodash modular utilities library home page a href path to dependency file ha backend package json path to vulnerable library ha backend node modules ioredis node modules lodash package json ha backend node modules kafka node node modules lodash package json dependency hierarchy mosca tgz root library ioredis tgz x lodash tgz vulnerable library found in head commit a href found in base branch main vulnerability details lodash prior to is affected by cwe uncontrolled resource consumption the impact is denial of service the component is date handler the attack vector is attacker provides very long strings which the library attempts to match using a regular expression the fixed version is publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

328,578

| 28,125,935,891

|

IssuesEvent

|

2023-03-31 17:42:55

|

MicrosoftDocs/visualstudio-docs

|

https://api.github.com/repos/MicrosoftDocs/visualstudio-docs

|

closed

|

How to target an Azure cloud PC / Windows365 that I have access to

|

doc-bug visual-studio-windows/prod vs-ide-test/tech Pri2

|

How to target an Azure cloud PC / Windows365 that I have access to via Remote Desktop app.

I don't see ways to target the machine, as I have no clue what would be the public name, as it seems to be behind a RDP gateway. Is there ways to proxy the connection via that?

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 90ec9455-ef64-8854-94bc-9be28887a6a4

* Version Independent ID: 9adeea5e-7ba1-065f-5de0-aae9147f9c85

* Content: [Remote Testing in Visual Studio - Visual Studio (Windows)](https://learn.microsoft.com/en-us/visualstudio/test/remote-testing?view=vs-2022)

* Content Source: [docs/test/remote-testing.md](https://github.com/MicrosoftDocs/visualstudio-docs/blob/main/docs/test/remote-testing.md)

* Product: **visual-studio-windows**

* Technology: **vs-ide-test**

* GitHub Login: @Mikejo5000

* Microsoft Alias: **mikejo**

|

1.0

|

How to target an Azure cloud PC / Windows365 that I have access to - How to target an Azure cloud PC / Windows365 that I have access to via Remote Desktop app.

I don't see ways to target the machine, as I have no clue what would be the public name, as it seems to be behind a RDP gateway. Is there ways to proxy the connection via that?

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 90ec9455-ef64-8854-94bc-9be28887a6a4

* Version Independent ID: 9adeea5e-7ba1-065f-5de0-aae9147f9c85

* Content: [Remote Testing in Visual Studio - Visual Studio (Windows)](https://learn.microsoft.com/en-us/visualstudio/test/remote-testing?view=vs-2022)

* Content Source: [docs/test/remote-testing.md](https://github.com/MicrosoftDocs/visualstudio-docs/blob/main/docs/test/remote-testing.md)

* Product: **visual-studio-windows**

* Technology: **vs-ide-test**

* GitHub Login: @Mikejo5000

* Microsoft Alias: **mikejo**

|

non_process

|

how to target an azure cloud pc that i have access to how to target an azure cloud pc that i have access to via remote desktop app i don t see ways to target the machine as i have no clue what would be the public name as it seems to be behind a rdp gateway is there ways to proxy the connection via that document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id version independent id content content source product visual studio windows technology vs ide test github login microsoft alias mikejo

| 0

|

178,296

| 13,772,140,416

|

IssuesEvent

|

2020-10-07 23:41:53

|

rancher/dashboard

|

https://api.github.com/repos/rancher/dashboard

|

closed

|

Schedule is not marked as a mandatory field in a CronJob create page

|

[zube]: To Test kind/bug

|

**On master-head - commit id: `33f755f18`**

- Workloads --> Cron Job --> create

- Schedule is not marked as a mandatory field in a CronJob create page

<img width="950" alt="Screen Shot 2020-09-17 at 3 03 21 PM" src="https://user-images.githubusercontent.com/26032343/93533061-0dc75800-f8f7-11ea-9d0c-0a23c18838da.png">

**Expected Result:**

Cron Job should be marked as a mandatory field

|

1.0

|

Schedule is not marked as a mandatory field in a CronJob create page - **On master-head - commit id: `33f755f18`**

- Workloads --> Cron Job --> create

- Schedule is not marked as a mandatory field in a CronJob create page

<img width="950" alt="Screen Shot 2020-09-17 at 3 03 21 PM" src="https://user-images.githubusercontent.com/26032343/93533061-0dc75800-f8f7-11ea-9d0c-0a23c18838da.png">

**Expected Result:**

Cron Job should be marked as a mandatory field

|

non_process

|

schedule is not marked as a mandatory field in a cronjob create page on master head commit id workloads cron job create schedule is not marked as a mandatory field in a cronjob create page img width alt screen shot at pm src expected result cron job should be marked as a mandatory field

| 0

|

13,513

| 3,343,449,948

|

IssuesEvent

|

2015-11-15 14:24:26

|

bolt/bolt

|

https://api.github.com/repos/bolt/bolt

|

closed

|

Multiple relation fields don't clear when single relation used

|

Blocking release Bug Needs Acceptance Test Needs Unit Test Regression

|

The value(s) fetched in `Bolt\Storage\Field\Type\RelationType::persist()` become `EntityProxy`

|

2.0

|

Multiple relation fields don't clear when single relation used - The value(s) fetched in `Bolt\Storage\Field\Type\RelationType::persist()` become `EntityProxy`

|

non_process

|

multiple relation fields don t clear when single relation used the value s fetched in bolt storage field type relationtype persist become entityproxy

| 0

|

93,588

| 3,906,046,838

|

IssuesEvent

|

2016-04-19 07:04:24

|

Captianrock/android_PV

|

https://api.github.com/repos/Captianrock/android_PV

|

opened

|

Dynamic updates for apps with traces

|

High Priority

|

Dynamically update the list of apps that have been analyzed by the user.

|

1.0

|

Dynamic updates for apps with traces - Dynamically update the list of apps that have been analyzed by the user.

|

non_process

|

dynamic updates for apps with traces dynamically update the list of apps that have been analyzed by the user

| 0

|

733,295

| 25,299,478,315

|

IssuesEvent

|

2022-11-17 09:38:06

|

opendatahub-io/odh-dashboard

|

https://api.github.com/repos/opendatahub-io/odh-dashboard

|

closed

|

[Model Serving]: Support Model Creation in Global View

|

kind/enhancement priority/blocker feature/model-serving

|

### Feature description

Follow up #648

Add the ability to deploy a model in the global view ([Mocks](https://www.sketch.com/s/113593f8-5970-49d6-a352-709b07639127/a/EL8AWlg))

### Describe alternatives you've considered

_No response_

### Anything else?

_No response_

|

1.0

|

[Model Serving]: Support Model Creation in Global View - ### Feature description

Follow up #648

Add the ability to deploy a model in the global view ([Mocks](https://www.sketch.com/s/113593f8-5970-49d6-a352-709b07639127/a/EL8AWlg))

### Describe alternatives you've considered

_No response_

### Anything else?

_No response_

|

non_process

|

support model creation in global view feature description follow up add the ability to deploy a model in the global view describe alternatives you ve considered no response anything else no response

| 0

|

16,661

| 21,728,177,662

|

IssuesEvent

|

2022-05-11 09:32:33

|

camunda/zeebe

|

https://api.github.com/repos/camunda/zeebe

|

closed

|

Extract RestClient creation logic out of the ElasticsearchClient

|

kind/toil team/process-automation area/maintainability

|

**Description**

The `ElasticsearchClient` class creates a new `RestClient` based on user configuration passed via `ElasticsearchExporterConfiguration`. Splitting this out will allow us to test that the rest clients are properly constructed, and also allow us to reuse the same logic to construct high level REST clients for testing.

|

1.0

|

Extract RestClient creation logic out of the ElasticsearchClient - **Description**

The `ElasticsearchClient` class creates a new `RestClient` based on user configuration passed via `ElasticsearchExporterConfiguration`. Splitting this out will allow us to test that the rest clients are properly constructed, and also allow us to reuse the same logic to construct high level REST clients for testing.

|

process

|

extract restclient creation logic out of the elasticsearchclient description the elasticsearchclient class creates a new restclient based on user configuration passed via elasticsearchexporterconfiguration splitting this out will allow us to test that the rest clients are properly constructed and also allow us to reuse the same logic to construct high level rest clients for testing

| 1

|

4,720

| 7,552,846,759

|

IssuesEvent

|

2018-04-19 02:43:42

|

UnbFeelings/unb-feelings-docs

|

https://api.github.com/repos/UnbFeelings/unb-feelings-docs

|

closed

|

[Non-Conformity] Final Report does not exist

|

Processo Qualidade invalid

|

@UnbFeelings/process

Under the criteria defined for the [Audits](https://github.com/UnbFeelings/unb-feelings-GQA/wiki/Crit%C3%A9rios-de-Avalia%C3%A7%C3%A3o-e-T%C3%A9cnicas-de-Auditoria#plano-de-medi%C3%A7%C3%A3o), the Final Report artifact, produced by the [Final Measurement Report](https://github.com/UnbFeelings/unb-feelings-docs/wiki/Processo#317-atividade-relatório-final-de-medição) activity, was audited.

### Description

It was found that source code metrics were not collected or, if they were, they are not described in an artifact as the process proposes.

#### Recommendations

We recommend integrating code analysis tools so that the metrics can be generated automatically, without having to assign this task to a person. However, someone should still be made responsible for preparing the metrics report.

#### Details

**Auditor**: Jonathan Rufino

**Audit Technique**: Checklist

**Type:** Measurement and Analysis

**Deadline:** 23/04/2018

|

1.0

|

[Non-Conformity] Final Report does not exist - @UnbFeelings/process

Under the criteria defined for the [Audits](https://github.com/UnbFeelings/unb-feelings-GQA/wiki/Crit%C3%A9rios-de-Avalia%C3%A7%C3%A3o-e-T%C3%A9cnicas-de-Auditoria#plano-de-medi%C3%A7%C3%A3o), the Final Report artifact, produced by the [Final Measurement Report](https://github.com/UnbFeelings/unb-feelings-docs/wiki/Processo#317-atividade-relatório-final-de-medição) activity, was audited.

### Description

It was found that source code metrics were not collected or, if they were, they are not described in an artifact as the process proposes.

#### Recommendations

We recommend integrating code analysis tools so that the metrics can be generated automatically, without having to assign this task to a person. However, someone should still be made responsible for preparing the metrics report.

#### Details

**Auditor**: Jonathan Rufino

**Audit Technique**: Checklist

**Type:** Measurement and Analysis

**Deadline:** 23/04/2018

|

process

|

final report does not exist unbfeelings process under the criteria defined for the the final report artifact produced by the activity was audited description it was found that source code metrics were not collected or if they were they are not described in an artifact as the process proposes recommendations we recommend integrating code analysis tools so that the metrics can be generated automatically without having to assign this task to a person however someone should still be made responsible for preparing the metrics report details auditor jonathan rufino audit technique checklist type measurement and analysis deadline

| 1

|

3,990

| 6,918,318,910

|

IssuesEvent

|

2017-11-29 11:43:50

|

nlbdev/pipeline

|

https://api.github.com/repos/nlbdev/pipeline

|

closed

|

Acronyms with genitive "s"

|

enhancement pre-processing Priority:1 - Low

|

(norwegian)

*from Trello-board (@matskober):*

Add acronyms with the genitive "s" (e.g. NLBs. In braille there should be a character that marks the boundary between the acronym and the genitive "s" - 56). The list of acronyms was obtained from Mari

|

1.0

|

Acronyms with genitive "s" - (norwegian)

*from Trello-board (@matskober):*

Add acronyms with the genitive "s" (e.g. NLBs. In braille there should be a character that marks the boundary between the acronym and the genitive "s" - 56). The list of acronyms was obtained from Mari

|

process

|

acronyms with genitive s norwegian from trello board matskober add acronyms with the genitive s e g nlbs in braille there should be a character that marks the boundary between the acronym and the genitive s the list of acronyms was obtained from mari

| 1

|

13,650

| 8,306,928,655

|

IssuesEvent

|

2018-09-23 01:11:10

|

VSCodeVim/Vim

|

https://api.github.com/repos/VSCodeVim/Vim

|

closed

|

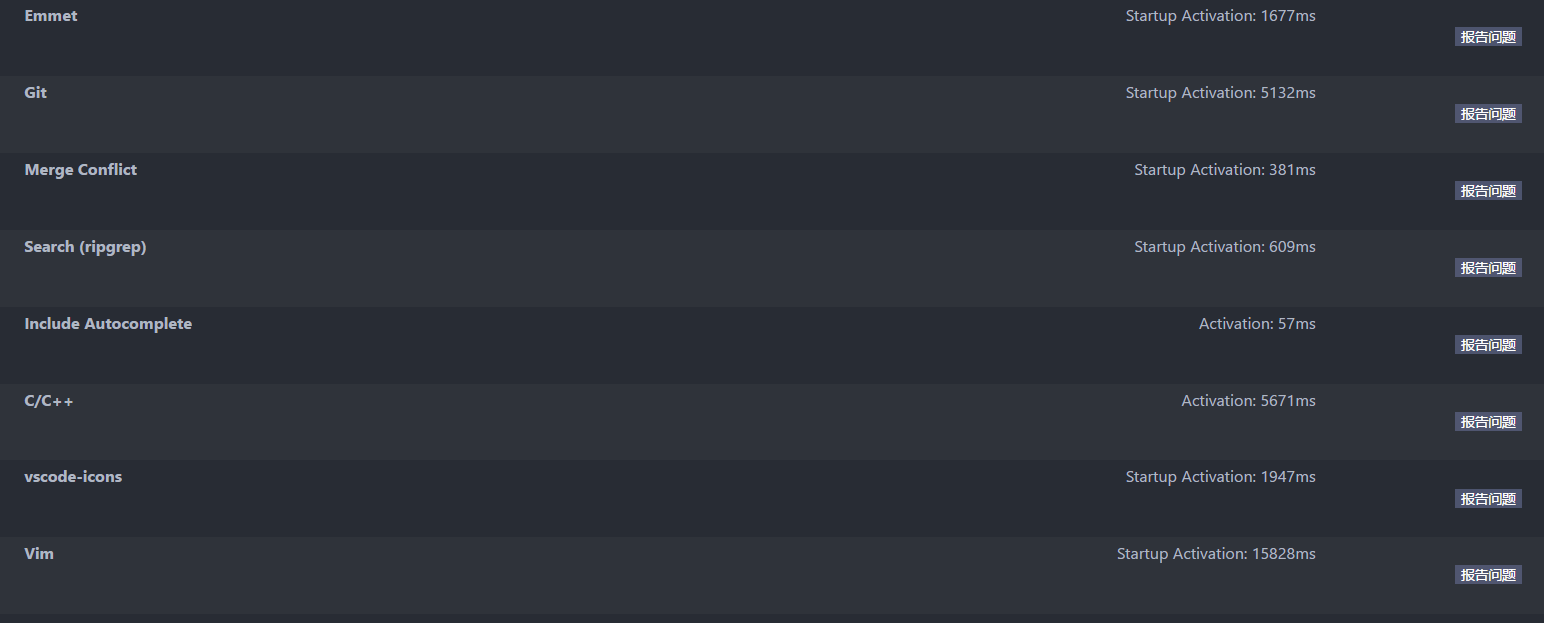

Investigate reducing startup activation time

|

area/performance

|

- Extension Name: vim

- Extension Version: 0.15.7

- OS Version: Windows_NT x64 10.0.15063

- VSCode version: 1.25.1

We have written the needed data into your clipboard. Please paste:

why soooooooooooooooooooo slow???!!!!

|

True

|

Investigate reducing startup activation time - - Extension Name: vim

- Extension Version: 0.15.7

- OS Version: Windows_NT x64 10.0.15063

- VSCode version: 1.25.1

We have written the needed data into your clipboard. Please paste:

why soooooooooooooooooooo slow???!!!!

|

non_process

|

investigate reducing startup activation time extension name vim extension version os version windows nt vscode version we have written the needed data into your clipboard please paste why soooooooooooooooooooo slow???!!!!

| 0

|

218,628

| 16,766,016,398

|

IssuesEvent

|

2021-06-14 08:54:06

|

jakobbossek/ecr3vis

|

https://api.github.com/repos/jakobbossek/ecr3vis

|

opened

|

Use mathjax for HTML output

|

documentation

|

We have many math formulas in the documentation. These are not rendered in the HTML RD files. Consider using [mathjaxr](http://cran.uni-muenster.de/web/packages/mathjaxr/mathjaxr.pdf)

|

1.0

|

Use mathjax for HTML output - We have many math formulas in the documentation. These are not rendered in the HTML RD files. Consider using [mathjaxr](http://cran.uni-muenster.de/web/packages/mathjaxr/mathjaxr.pdf)

|

non_process

|

use mathjax for html output we have many math formulas in the documentation these are not rendered in the html rd files consider using

| 0

|

349,799

| 31,831,824,270

|

IssuesEvent

|

2023-09-14 11:06:06

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

roachprod: implement "Reset" for aws and azure

|

C-enhancement X-stale A-roachprod no-issue-activity T-testeng O-cloudreport

|

Only GCE supports `Reset`--restarting a VM. As a result, some roachtests may be flaky (or incorrect) when executing outside of GCE. E.g., tpcc roachperf uses `Reset` [1] during each iteration of line search to determine an optimal number of warehouses. While the restart after each iteration is technically not required, it reduces noise.

Other examples include failure-injection scenarios, e.g., restarting a VM at random.

[1] https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/tests/tpcc.go#L1111

Epic: CRDB-10428

Jira issue: CRDB-13686

|

1.0

|

roachprod: implement "Reset" for aws and azure - Only GCE supports `Reset`--restarting a VM. As a result, some roachtests may be flaky (or incorrect) when executing outside of GCE. E.g., tpcc roachperf uses `Reset` [1] during each iteration of line search to determine an optimal number of warehouses. While the restart after each iteration is technically not required, it reduces noise.

Other examples include failure-injection scenarios, e.g., restarting a VM at random.

[1] https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/tests/tpcc.go#L1111

Epic: CRDB-10428

Jira issue: CRDB-13686

|

non_process

|

roachprod implement reset for aws and azure only gce supports reset restarting a vm as a result some roachtests may be flaky or incorrect when executing outside of gce e g tpcc roachperf uses reset during each iteration of line search to determine an optimal number of warehouses while the restart after each iteration is technically not required it reduces noise other examples include failure injection scenarios e g restarting a vm at random epic crdb jira issue crdb

| 0

|

19,448

| 25,727,174,600

|

IssuesEvent

|

2022-12-07 17:26:47

|

RobertCraigie/prisma-client-py

|

https://api.github.com/repos/RobertCraigie/prisma-client-py

|

opened

|

Could not connect to the Query Engine due to OSError [Errno 99]

|

bug/2-confirmed kind/bug process/candidate level/intermediate priority/high

|

<!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedocs.io/en/stable/reference/logging/ for how to enable additional logging output.

-->

## Bug description

<!-- A clear and concise description of what the bug is. -->

A user has encountered this error:

```

OSError: [Errno 99] Cannot assign requested address

File "httpcore/_exceptions.py", line 10, in map_exceptions

yield

File "httpcore/backends/sync.py", line 94, in connect_tcp

sock = socket.create_connection(

File "socket.py", line 844, in create_connection

raise err

File "socket.py", line 832, in create_connection

sock.connect(sa)

```

## How to reproduce

<!--

Steps to reproduce the behavior:

1. Go to '...'

2. Change '....'

3. Run '....'

4. See error

-->

Not currently reproducible. Theorized cause is a race condition.

## Expected behavior

<!-- A clear and concise description of what you expected to happen. -->

This should not crash.

|

1.0

|

Could not connect to the Query Engine due to OSError [Errno 99] - <!--

Thanks for helping us improve Prisma Client Python! 🙏 Please follow the sections in the template and provide as much information as possible about your problem, e.g. by enabling additional logging output.

See https://prisma-client-py.readthedocs.io/en/stable/reference/logging/ for how to enable additional logging output.

-->

## Bug description

<!-- A clear and concise description of what the bug is. -->

A user has encountered this error:

```

OSError: [Errno 99] Cannot assign requested address

File "httpcore/_exceptions.py", line 10, in map_exceptions

yield

File "httpcore/backends/sync.py", line 94, in connect_tcp

sock = socket.create_connection(

File "socket.py", line 844, in create_connection

raise err

File "socket.py", line 832, in create_connection

sock.connect(sa)

```

## How to reproduce

<!--

Steps to reproduce the behavior:

1. Go to '...'

2. Change '....'

3. Run '....'

4. See error

-->

Not currently reproducible. Theorized cause is a race condition.

## Expected behavior

<!-- A clear and concise description of what you expected to happen. -->

This should not crash.

|

process

|

could not connect to the query engine due to oserror thanks for helping us improve prisma client python 🙏 please follow the sections in the template and provide as much information as possible about your problem e g by enabling additional logging output see for how to enable additional logging output bug description a user has encountered this error oserror cannot assign requested address file httpcore exceptions py line in map exceptions yield file httpcore backends sync py line in connect tcp sock socket create connection file socket py line in create connection raise err file socket py line in create connection sock connect sa how to reproduce steps to reproduce the behavior go to change run see error not currently reproducible theorized cause is a race condition expected behavior this should not crash

| 1

|

834

| 3,297,247,142

|

IssuesEvent

|

2015-11-02 07:26:24

|

dotnet/corefx

|

https://api.github.com/repos/dotnet/corefx

|

closed

|

System.Diagnostics.Process missing environment variables.

|

System.Diagnostics.Process

|

/cc @Priya91, @pallavit, @joshfree, @stephentoub

I am using Process to run a "Build.cmd", I have `echo %PATH%`, The result which run by C# is empty, at the same time, I tried add the system env vars again, it still not works. However, I double click the "Build.cmd", It can run the correct %PATH%

```

Process = new Process();

WorkingDirectory = FindDirectory(workingDirectory);

var fileName = "cmd.exe";

if (OS.Current != OSType.Windows)

{

fileName = "bash";

}

var arguments = "/c build.cmd";

if (OS.Current != OSType.Windows)

{

arguments = "./build.sh";

}

Process.StartInfo = new ProcessStartInfo

{

FileName = fileName,

Arguments = arguments,

UseShellExecute = false,

RedirectStandardError = true,

RedirectStandardOutput = true,

RedirectStandardInput = true,

WorkingDirectory = WorkingDirectory

};

```

...

```

var sysenv = Environment.GetEnvironmentVariables();

foreach(dynamic ev in sysenv)

{

#if DNXCORE50

if (Process.StartInfo.Environment[ev.Key] != null)

Process.StartInfo.Environment[ev.Key] = Process.StartInfo.Environment[ev.Key].TrimEnd(' ').TrimEnd(';') + ";" + ev.Value;

else

Process.StartInfo.Environment.Add(ev.Key, ev.Value);

#else

if (Process.StartInfo.EnvironmentVariables[ev.Key] != null)

Process.StartInfo.EnvironmentVariables[ev.Key] = Process.StartInfo.EnvironmentVariables[ev.Key].TrimEnd(' ').TrimEnd(';') + ";" + ev.Value;

else

Process.StartInfo.EnvironmentVariables.Add(ev.Key, ev.Value);

#endif

}

```

|

1.0

|

System.Diagnostics.Process missing environment variables. - /cc @Priya91, @pallavit, @joshfree, @stephentoub

I am using Process to run a "Build.cmd", I have `echo %PATH%`, The result which run by C# is empty, at the same time, I tried add the system env vars again, it still not works. However, I double click the "Build.cmd", It can run the correct %PATH%

```

Process = new Process();

WorkingDirectory = FindDirectory(workingDirectory);

var fileName = "cmd.exe";

if (OS.Current != OSType.Windows)

{

fileName = "bash";

}

var arguments = "/c build.cmd";

if (OS.Current != OSType.Windows)

{

arguments = "./build.sh";

}

Process.StartInfo = new ProcessStartInfo

{

FileName = fileName,

Arguments = arguments,

UseShellExecute = false,

RedirectStandardError = true,

RedirectStandardOutput = true,

RedirectStandardInput = true,

WorkingDirectory = WorkingDirectory

};

```

...

```

var sysenv = Environment.GetEnvironmentVariables();

foreach(dynamic ev in sysenv)

{

#if DNXCORE50

if (Process.StartInfo.Environment[ev.Key] != null)

Process.StartInfo.Environment[ev.Key] = Process.StartInfo.Environment[ev.Key].TrimEnd(' ').TrimEnd(';') + ";" + ev.Value;

else

Process.StartInfo.Environment.Add(ev.Key, ev.Value);

#else

if (Process.StartInfo.EnvironmentVariables[ev.Key] != null)

Process.StartInfo.EnvironmentVariables[ev.Key] = Process.StartInfo.EnvironmentVariables[ev.Key].TrimEnd(' ').TrimEnd(';') + ";" + ev.Value;

else

Process.StartInfo.EnvironmentVariables.Add(ev.Key, ev.Value);

#endif

}

```

|

process

|

system diagnostics process missing environment variables cc pallavit joshfree stephentoub i am using process to run a build cmd i have echo path the result which run by c is empty at the same time i tried add the system env vars again it still not works however i double click the build cmd it can run the correct path process new process workingdirectory finddirectory workingdirectory var filename cmd exe if os current ostype windows filename bash var arguments c build cmd if os current ostype windows arguments build sh process startinfo new processstartinfo filename filename arguments arguments useshellexecute false redirectstandarderror true redirectstandardoutput true redirectstandardinput true workingdirectory workingdirectory var sysenv environment getenvironmentvariables foreach dynamic ev in sysenv if if process startinfo environment null process startinfo environment process startinfo environment trimend trimend ev value else process startinfo environment add ev key ev value else if process startinfo environmentvariables null process startinfo environmentvariables process startinfo environmentvariables trimend trimend ev value else process startinfo environmentvariables add ev key ev value endif

| 1

|

14,234

| 17,154,611,056

|

IssuesEvent

|

2021-07-14 04:15:51

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

opened

|

Provision for app upgrades

|

Android P1 Participant datastore Process: Enhancement iOS

|

1. Notify app users if a new version of the mobile app is available on the app stores. The notification should redirect to the app store page.

2. Have a provision to configure forced vs. optional upgrade app behavior. If there is a new app version available, users see a message when they launch or visit the app, asking them to upgrade the app before continuing to use it. This can be a mandatory or optional step depending on a server-side configuration.

3. Users should be able to resume app usage smoothly post app upgrade. Requiring them to sign in again is acceptable if there are server-side changes that necessitate this.
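
The forced vs. optional behavior in item 2 could be sketched roughly as below. This is only an illustration, not the project's implementation: the names `compareVersions`, `upgradeAction`, `latestVersion`, and `forceUpgrade` are hypothetical, and the sketch assumes plain dotted numeric version strings.

```javascript
// Compare two dotted numeric version strings (assumed format, e.g. "1.10.0").
// Returns -1 if a < b, 1 if a > b, 0 if equal.
function compareVersions(a, b) {
    var pa = a.split('.').map(Number);
    var pb = b.split('.').map(Number);
    var len = Math.max(pa.length, pb.length);
    for (var i = 0; i < len; i++) {
        var x = pa[i] || 0; // missing segments count as 0
        var y = pb[i] || 0;
        if (x !== y) {
            return x < y ? -1 : 1;
        }
    }
    return 0;
}

// Decide what the app should do on launch, given the installed version and a
// hypothetical server-side config object { latestVersion, forceUpgrade }.
function upgradeAction(installedVersion, config) {
    if (compareVersions(installedVersion, config.latestVersion) >= 0) {
        return 'none';   // already up to date
    }
    return config.forceUpgrade ? 'force' : 'prompt';
}
```

With this shape, the same client build can show either a blocking or a dismissible message; only the server-side flag changes.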

|

1.0

|

Provision for app upgrades - 1. Notify app users if a new version of the mobile app is available on the app stores. The notification should redirect to the app store page.

2. Have a provision to configure forced vs. optional upgrade app behavior. If there is a new app version available, users see a message when they launch or visit the app, asking them to upgrade the app before continuing to use it. This can be a mandatory or optional step depending on a server-side configuration.

3. Users should be able to resume app usage smoothly post app upgrade. Requiring them to sign in again is acceptable if there are server-side changes that necessitate this.

|

process

|

provision for app upgrades notify app users if a new version of the mobile app is available on the app stores the notification should redirect to the app store page have a provision to configure forced vs optional upgrade app behavior if there is a new app version available users see a message when they launch or visit the app asking them to upgrade the app before continuing to use it this can be a mandatory or optional step depending on a server side configuration users should be able to resume app usage smoothly post app upgrade requiring them to sign in again is acceptable if there are server side changes that necessitate this

| 1

|

502,276

| 14,543,479,091

|

IssuesEvent

|

2020-12-15 16:55:44

|

zulip/zulip-mobile

|

https://api.github.com/repos/zulip/zulip-mobile

|

closed

|

Add mobile support for new `user_avatar_url_field_optional` client capability

|

P1 high-priority a-avatar api migrations

|

To resolve https://github.com/zulip/zulip/pull/15287, we're introducing a new client_capability that should allow the mobile app to have good performance when talking to servers with thousands of long-term-idle users (and email_address_visibility configured; that last bit being relevant mainly in that our previous attempt at solving this problem. `client_gravatar` feature works only with EMAIL_ADDRESS_VISIBILITY_EVERYONE), since the client needs real email addresses to compute gravatar hashes.

One should be able to prototype today against https://github.com/zulip/zulip/pull/15359; it should work aside from having the wrong capability name. I expect that to get cleaned up and this to merge in the next few days.

Tagging as a priority since this issue makes chat.zulip.org a lot slower to load on mobile.

|

1.0

|

Add mobile support for new `user_avatar_url_field_optional` client capability - To resolve https://github.com/zulip/zulip/pull/15287, we're introducing a new client_capability that should allow the mobile app to have good performance when talking to servers with thousands of long-term-idle users (and email_address_visibility configured; that last bit being relevant mainly in that our previous attempt at solving this problem. `client_gravatar` feature works only with EMAIL_ADDRESS_VISIBILITY_EVERYONE), since the client needs real email addresses to compute gravatar hashes.

One should be able to prototype today against https://github.com/zulip/zulip/pull/15359; it should work aside from having the wrong capability name. I expect that to get cleaned up and this to merge in the next few days.

Tagging as a priority since this issue makes chat.zulip.org a lot slower to load on mobile.

|

non_process

|

add mobile support for new user avatar url field optional client capability to resolve we re introducing a new client capability that should allow the mobile app to have good performance when talking to servers with thousands of long term idle users and email address visibility configured that last bit being relevant mainly in that our previous attempt at solving this problem client gravatar feature works only with email address visibility everyone since the client needs real email addresses to compute gravatar hashes one should be able to prototype today against it should work aside from having the wrong capability name i expect that to get cleaned up and this to merge in the next few days tagging as a priority since this issue makes chat zulip org a lot slower to load on mobile

| 0

|

71,943

| 9,545,095,416

|

IssuesEvent

|

2019-05-01 16:02:33

|

CosmiQ/cw-nets

|

https://api.github.com/repos/CosmiQ/cw-nets

|

closed

|

Augmentation docs

|

Difficulty: Easy Priority: High Status: On Hold Type: Documentation

|

After completing augmentation implementation (#35) we need to document it.

Documentation components:

[ ] list of available augmentations

[ ] set of augmentations only compatible with 3-channel imagery

[ ] set of required arguments for each augmentation

[ ] instructions and examples for using the `cw_nets.data.transform` submodule, including yaml config formatting

|

1.0

|

Augmentation docs - After completing augmentation implementation (#35) we need to document it.

Documentation components:

[ ] list of available augmentations

[ ] set of augmentations only compatible with 3-channel imagery

[ ] set of required arguments for each augmentation

[ ] instructions and examples for using the `cw_nets.data.transform` submodule, including yaml config formatting

|

non_process

|

augmentation docs after completing augmentation implementation we need to document it documentation components list of available augmentations set of augmentations only compatible with channel imagery set of required arguments for each augmentation instructions and examples for using the cw nets data transform submodule including yaml config formatting

| 0

|

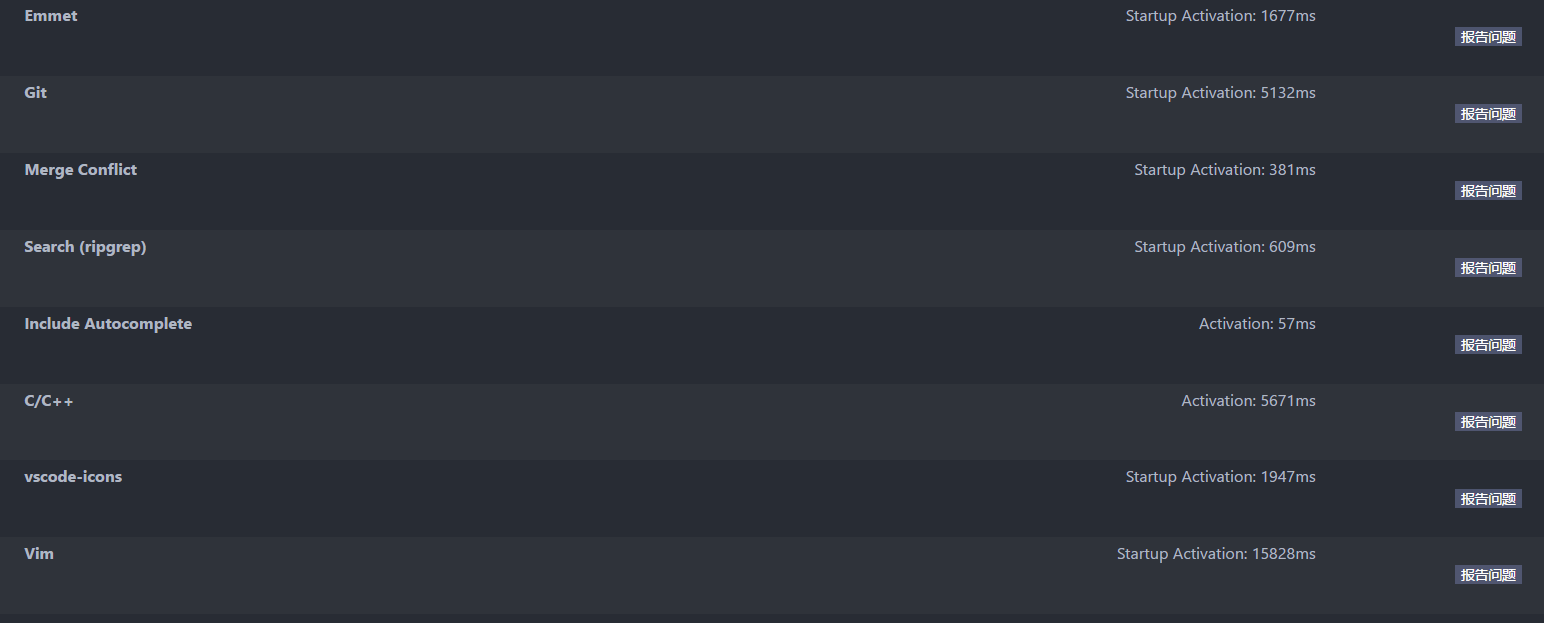

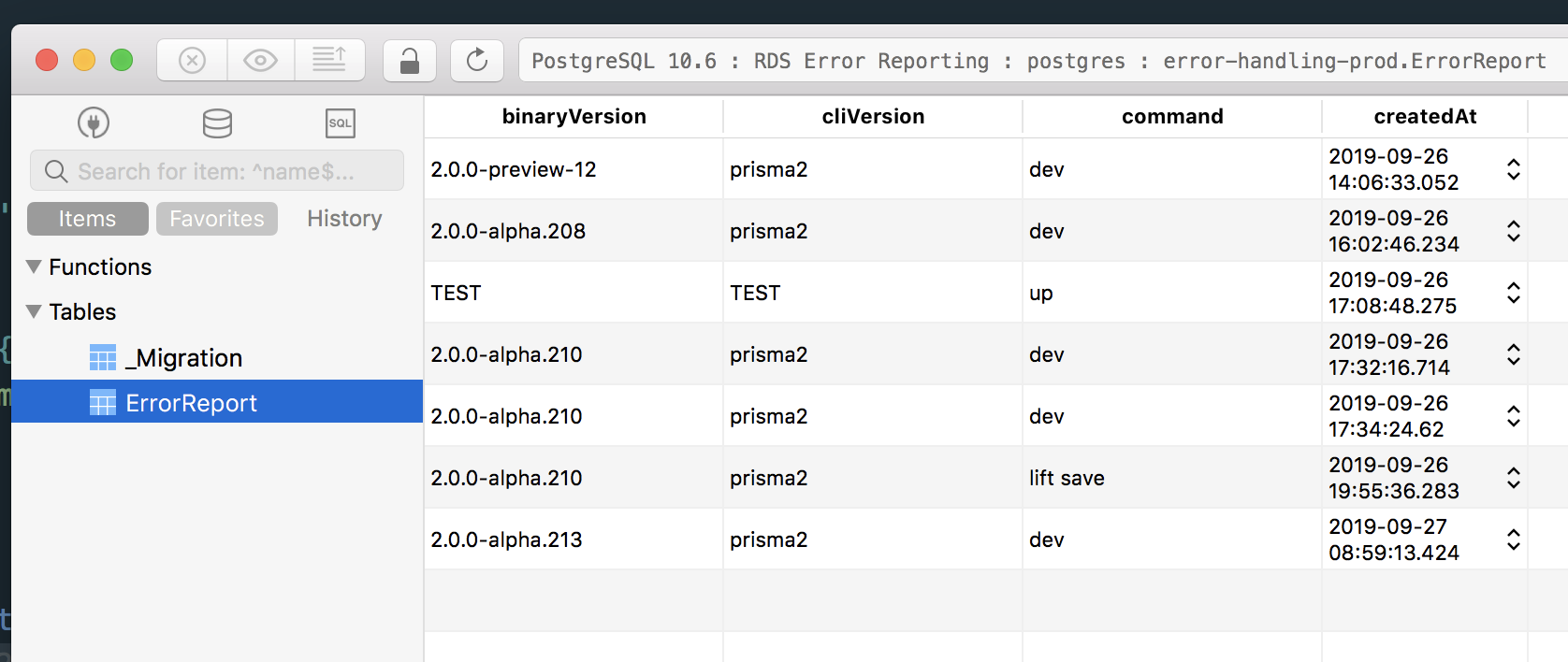

7,359

| 10,509,141,261

|

IssuesEvent

|

2019-09-27 10:14:32

|

prisma/studio

|

https://api.github.com/repos/prisma/studio

|

opened

|

Reload unintuitive

|

kind/improvement process/candidate

|

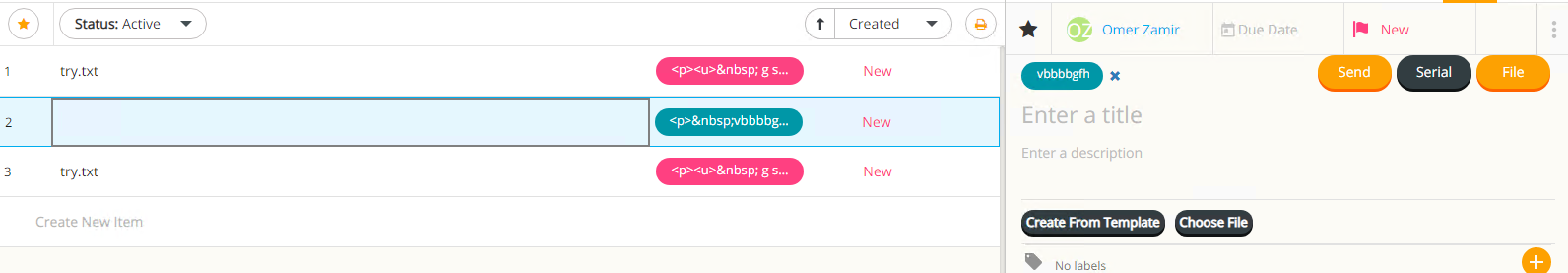

## Reload in Table Plus

## Reload in Studio

As a user, who just wants the data to reload, without knowing that Photon is used under the hood, I don't know, what `Run` means in this context. I just want the UI to reload.

I suggest either calling it `Reload` or replacing it with a reload icon.

|

1.0

|

Reload unintuitive - ## Reload in Table Plus

## Reload in Studio

As a user, who just wants the data to reload, without knowing that Photon is used under the hood, I don't know, what `Run` means in this context. I just want the UI to reload.

I suggest either calling it `Reload` or replacing it with a reload icon.

|

process

|

reload unintuitive reload in table plus reload in studio as a user who just wants the data to reload without knowing that photon is used under the hood i don t know what run means in this context i just want the ui to reload i suggest either calling it reload or replacing it with a reload icon

| 1

|

3,233

| 6,289,280,115

|

IssuesEvent

|

2017-07-19 18:51:21

|

dotnet/corefx

|

https://api.github.com/repos/dotnet/corefx

|

closed

|

System.Diagnostics.Tests.ProcessWaitingTests.WaitForPeerProcess fails with NRE on UAP

|

area-System.Diagnostics.Process bug os-windows-uwp

|

(Test case will be added soon, creating issue so that I can disable that in the PR)

```

ERROR: System.Diagnostics.Tests.ProcessWaitingTests.WaitForPeerProcess [FAIL]

System.AggregateException : One or more errors occurred. (Object reference not set to an instance of an object.) (Object reference not s

et to an instance of an object.)

---- System.NullReferenceException : Object reference not set to an instance of an object.

---- System.NullReferenceException : Object reference not set to an instance of an object.

Stack Trace:

----- Inner Stack Trace #1 (System.NullReferenceException) -----

at System.Diagnostics.Tests.ProcessWaitingTests.WaitForPeerProcess()

----- Inner Stack Trace #2 (System.NullReferenceException) -----

at System.Diagnostics.Tests.ProcessTestBase.Dispose(Boolean disposing)

at System.IO.FileCleanupTestBase.Dispose()

at ReflectionAbstractionExtensions.DisposeTestClass(ITest test, Object testClass, IMessageBus messageBus, ExecutionTimer timer, Can

cellationTokenSource cancellationTokenSource)

```

|

1.0

|

System.Diagnostics.Tests.ProcessWaitingTests.WaitForPeerProcess fails with NRE on UAP - (Test case will be added soon, creating issue so that I can disable that in the PR)

```

ERROR: System.Diagnostics.Tests.ProcessWaitingTests.WaitForPeerProcess [FAIL]

System.AggregateException : One or more errors occurred. (Object reference not set to an instance of an object.) (Object reference not s

et to an instance of an object.)

---- System.NullReferenceException : Object reference not set to an instance of an object.

---- System.NullReferenceException : Object reference not set to an instance of an object.

Stack Trace:

----- Inner Stack Trace #1 (System.NullReferenceException) -----

at System.Diagnostics.Tests.ProcessWaitingTests.WaitForPeerProcess()

----- Inner Stack Trace #2 (System.NullReferenceException) -----

at System.Diagnostics.Tests.ProcessTestBase.Dispose(Boolean disposing)

at System.IO.FileCleanupTestBase.Dispose()

at ReflectionAbstractionExtensions.DisposeTestClass(ITest test, Object testClass, IMessageBus messageBus, ExecutionTimer timer, Can

cellationTokenSource cancellationTokenSource)

```

|

process

|

system diagnostics tests processwaitingtests waitforpeerprocess fails with nre on uap test case will be added soon creating issue so that i can disable that in the pr error system diagnostics tests processwaitingtests waitforpeerprocess system aggregateexception one or more errors occurred object reference not set to an instance of an object object reference not s et to an instance of an object system nullreferenceexception object reference not set to an instance of an object system nullreferenceexception object reference not set to an instance of an object stack trace inner stack trace system nullreferenceexception at system diagnostics tests processwaitingtests waitforpeerprocess inner stack trace system nullreferenceexception at system diagnostics tests processtestbase dispose boolean disposing at system io filecleanuptestbase dispose at reflectionabstractionextensions disposetestclass itest test object testclass imessagebus messagebus executiontimer timer can cellationtokensource cancellationtokensource

| 1

|

125,894

| 17,861,285,972

|

IssuesEvent

|

2021-09-06 01:06:02

|

bsbtd/Teste

|

https://api.github.com/repos/bsbtd/Teste

|

opened

|

CVE-2021-23437 (High) detected in Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl

|

security vulnerability

|

## CVE-2021-23437 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/e0/50/8e78e6f62ffa50d6ca95c281d5a2819bef66d023ac1b723e253de5bda9c5/Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/e0/50/8e78e6f62ffa50d6ca95c281d5a2819bef66d023ac1b723e253de5bda9c5/Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: Teste/pytorch-metric-learning</p>

<p>Path to vulnerable library: Teste/pytorch-metric-learning</p>

<p>

Dependency Hierarchy:

- :x: **Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package pillow from 0 and before 8.3.2 are vulnerable to Regular Expression Denial of Service (ReDoS) via the getrgb function.

<p>Publish Date: 2021-09-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23437>CVE-2021-23437</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://pillow.readthedocs.io/en/stable/releasenotes/8.3.2.html">https://pillow.readthedocs.io/en/stable/releasenotes/8.3.2.html</a></p>

<p>Release Date: 2021-09-03</p>

<p>Fix Resolution: Pillow - 8.3.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-23437 (High) detected in Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl - ## CVE-2021-23437 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl</b></p></summary>

<p>Python Imaging Library (Fork)</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/e0/50/8e78e6f62ffa50d6ca95c281d5a2819bef66d023ac1b723e253de5bda9c5/Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/e0/50/8e78e6f62ffa50d6ca95c281d5a2819bef66d023ac1b723e253de5bda9c5/Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: Teste/pytorch-metric-learning</p>

<p>Path to vulnerable library: Teste/pytorch-metric-learning</p>

<p>

Dependency Hierarchy:

- :x: **Pillow-7.1.2-cp36-cp36m-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package pillow from 0 and before 8.3.2 are vulnerable to Regular Expression Denial of Service (ReDoS) via the getrgb function.

<p>Publish Date: 2021-09-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23437>CVE-2021-23437</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://pillow.readthedocs.io/en/stable/releasenotes/8.3.2.html">https://pillow.readthedocs.io/en/stable/releasenotes/8.3.2.html</a></p>

<p>Release Date: 2021-09-03</p>

<p>Fix Resolution: Pillow - 8.3.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve high detected in pillow whl cve high severity vulnerability vulnerable library pillow whl python imaging library fork library home page a href path to dependency file teste pytorch metric learning path to vulnerable library teste pytorch metric learning dependency hierarchy x pillow whl vulnerable library vulnerability details the package pillow from and before are vulnerable to regular expression denial of service redos via the getrgb function publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution pillow step up your open source security game with whitesource

| 0

|

22,537

| 31,707,807,504

|

IssuesEvent

|

2023-09-09 00:18:23

|

hashgraph/hedera-mirror-node

|

https://api.github.com/repos/hashgraph/hedera-mirror-node

|

opened

|

Can’t scale up a node pool because of a failing scheduling predicate

|

bug process

|

### Description

Seen a couple times where GKE node pools can't scale because of a failing scheduling predicate. Logs indicate it was promtail and node exporter daemonset. These daemonset pods could not get scheduled because other items on the node had higher priority. This would be fine by itself since these pods are not the highest priority, but if it causes the node to not scale then it needs to be addressed.

### Steps to reproduce

Monitor GKE with real life workloads.

### Additional context

_No response_

### Hedera network

other

### Version

0.87.0

### Operating system

None

|

1.0

|

Can’t scale up a node pool because of a failing scheduling predicate - ### Description

Seen a couple times where GKE node pools can't scale because of a failing scheduling predicate. Logs indicate it was promtail and node exporter daemonset. These daemonset pods could not get scheduled because other items on the node had higher priority. This would be fine by itself since these pods are not the highest priority, but if it causes the node to not scale then it needs to be addressed.

### Steps to reproduce

Monitor GKE with real life workloads.

### Additional context

_No response_

### Hedera network

other

### Version

0.87.0

### Operating system

None

|

process

|

can’t scale up a node pool because of a failing scheduling predicate description seen a couple times where gke node pools can t scale because of a failing scheduling predicate logs indicate it was promtail and node exporter daemonset these daemonset pods could not get scheduled because other items on the node had higher priority this would be fine by itself since these pods are not the highest priority but if it causes the node to not scale then it needs to be addressed steps to reproduce monitor gke with real life workloads additional context no response hedera network other version operating system none

| 1

|

212,093

| 16,472,784,182

|

IssuesEvent

|

2021-05-23 18:53:41

|

truecharts/apps

|

https://api.github.com/repos/truecharts/apps

|

closed

|

Adapt for persitance.emptyDir to persistance.emptyDir.enabled

|

documentation enhancement good first issue

|

**Is your feature request related to a problem? Please describe.**

common 4.0.0 gave persistance.emptyDir it's own sub parameter `enabled`

**Describe the solution you'd like**

Adapt current docs and charts accordingly.

|

1.0

|

Adapt for persitance.emptyDir to persistance.emptyDir.enabled - **Is your feature request related to a problem? Please describe.**

common 4.0.0 gave persistance.emptyDir it's own sub parameter `enabled`

**Describe the solution you'd like**

Adapt current docs and charts accordingly.

|

non_process

|

adapt for persitance emptydir to persistance emptydir enabled is your feature request related to a problem please describe common gave persistance emptydir it s own sub parameter enabled describe the solution you d like adapt current docs and charts accordingly

| 0

|

3,714

| 6,732,600,623

|

IssuesEvent

|

2017-10-18 12:10:11

|

lockedata/rcms

|

https://api.github.com/repos/lockedata/rcms

|

opened

|

Manage attendees

|

conference team osem processes

|

## Detailed task

- Monitor sales

- Modify a registration e.g. issue a refund

- Send an email to attendees

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perform the task. Use a 👎 (`:-1:`) reaction to the task if you could not complete it. Add a reply with any comments or feedback.

## Extra Info

- Site: [osem](https://intense-shore-93790.herokuapp.com/)

- System documentation: [osem docs](http://osem.io/)

- Role: Conference team

- Area: Processes

|

1.0

|

Manage attendees - ## Detailed task

- Monitor sales

- Modify a registration e.g. issue a refund

- Send an email to attendees

## Assessing the task

Try to perform the task. Use google and the system documentation to help - part of what we're trying to assess how easy it is for people to work out how to do tasks.

Use a 👍 (`:+1:`) reaction to this task if you were able to perform the task. Use a 👎 (`:-1:`) reaction to the task if you could not complete it. Add a reply with any comments or feedback.

## Extra Info

- Site: [osem](https://intense-shore-93790.herokuapp.com/)

- System documentation: [osem docs](http://osem.io/)

- Role: Conference team

- Area: Processes

|

process

|

manage attendees detailed task monitor sales modify a registration e g issue a refund send an email to attendees assessing the task try to perform the task use google and the system documentation to help part of what we re trying to assess how easy it is for people to work out how to do tasks use a 👍 reaction to this task if you were able to perform the task use a 👎 reaction to the task if you could not complete it add a reply with any comments or feedback extra info site system documentation role conference team area processes

| 1

|

18,530

| 24,552,656,670

|

IssuesEvent

|

2022-10-12 13:43:18

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[iOS] [Offline indicator] My Account > Toggle buttons should not be functional in offline behaviour

|

Bug P1 iOS Process: Fixed Process: Tested dev

|

**Steps:**

1. Install the app

2. Sign in/signup

3. Navigated to My account

4. Switch off the internet

6. Now enable/disable toggle buttons like 'Receive push notifications?' or 'Receive study activity reminders?'

7. Switch on the internet

8. Navigate to different screen and come back to my account

9. Observe buttons enabled/disabled fails to retain

**Actual:** Button functionality fails to retain once navigated from different screen to my account

**Expected:** Toggle buttons should not be functional in offline behaviour

Refer video

https://user-images.githubusercontent.com/60386291/179733053-e6086ff4-ba51-42e4-86a2-6d3f3802a6e2.MOV

|

2.0

|

[iOS] [Offline indicator] My Account > Toggle buttons should not be functional in offline behaviour - **Steps:**

1. Install the app

2. Sign in/signup

3. Navigated to My account

4. Switch off the internet

6. Now enable/disable toggle buttons like 'Receive push notifications?' or 'Receive study activity reminders?'

7. Switch on the internet

8. Navigate to different screen and come back to my account

9. Observe buttons enabled/disabled fails to retain

**Actual:** Button functionality fails to retain once navigated from different screen to my account

**Expected:** Toggle buttons should not be functional in offline behaviour

Refer video

https://user-images.githubusercontent.com/60386291/179733053-e6086ff4-ba51-42e4-86a2-6d3f3802a6e2.MOV

|

process

|

my account toggle buttons should not be functional in offline behaviour steps install the app sign in signup navigated to my account switch off the internet now enable disable toggle buttons like receive push notifications or receive study activity reminders switch on the internet navigate to different screen and come back to my account observe buttons enabled disabled fails to retain actual button functionality fails to retain once navigated from different screen to my account expected toggle buttons should not be functional in offline behaviour refer video

| 1

|

70,963

| 13,564,458,780

|

IssuesEvent

|

2020-09-18 10:04:28

|

Regalis11/Barotrauma

|

https://api.github.com/repos/Regalis11/Barotrauma

|

closed

|

Death desync after moving sub away from spawnpoint

|

Bug Code High prio Networking

|

- [X] I have searched the issue tracker to check if the issue has already been reported.

**Description**

~After respawning in a shuttle on a mission~ After moving away from spawnpoint there will be massive death-related desync. When you die server-side you will be locked in place instead. When you are knocked out you can't `give up` and spectate.

**Steps To Reproduce** (Original, see below for a non-shuttle related one, though this one works too)

1. Host server (dedicated or p2p).

2. Start mission.

3. Kill yourself (for example `kill` command).

4. Respawn in a shuttle (`respawnnow` command).

5. Kill yourself again (`kill` command).

6. Notice that you will die on the server, but you will still appear very alive for some time but can't move or give up.

**Version**

0.10.505.0 - 0.10.5

**Additional information**

I'm pretty sure it did not happen in the previous minor unstable version.

EDIT: Update description, version and steps to reproduce with newly found information.

|

1.0

|

Death desync after moving sub away from spawnpoint - - [X] I have searched the issue tracker to check if the issue has already been reported.

**Description**

~After respawning in a shuttle on a mission~ After moving away from spawnpoint there will be massive death-related desync. When you die server-side you will be locked in place instead. When you are knocked out you can't `give up` and spectate.

**Steps To Reproduce** (Original, see below for a non-shuttle related one, though this one works too)

1. Host server (dedicated or p2p).

2. Start mission.

3. Kill yourself (for example `kill` command).

4. Respawn in a shuttle (`respawnnow` command).

5. Kill yourself again (`kill` command).

6. Notice that you will die on the server, but you will still appear very alive for some time but can't move or give up.

**Version**

0.10.505.0 - 0.10.5

**Additional information**

I'm pretty sure it did not happen in the previous minor unstable version.

EDIT: Update description, version and steps to reproduce with newly found information.

|

non_process

|

death desync after moving sub away from spawnpoint i have searched the issue tracker to check if the issue has already been reported description after respawning in a shuttle on a mission after moving away from spawnpoint there will be massive death related desync when you die server side you will be locked in place instead when you are knocked out you can t give up and spectate steps to reproduce original see below for a non shuttle related one though this one works too host server dedicated or start mission kill yourself for example kill command respawn in a shuttle respawnnow command kill yourself again kill command notice that you will die on the server but you will still appear very alive for some time but can t move or give up version additional information i m pretty sure it did not happen in the previous minor unstable version edit update description version and steps to reproduce with newly found information

| 0

|

17,944

| 5,535,467,504

|

IssuesEvent

|

2017-03-21 17:25:46

|

phetsims/masses-and-springs

|

https://api.github.com/repos/phetsims/masses-and-springs

|

opened

|

Factor out duplicated code in line creation

|

dev:code-review

|

During #36 I saw this code in IndicatorVisibilityControlPanel:

```js

// Lines added for reference in panel

var greenLine = new Line( 0, 0, LINE_LENGTH, 0, {

stroke: 'rgb(93, 191, 142)',

lineDash: [ 6, 2.5 ],

lineWidth: 2.0,

cursor: 'pointer',

tandem: tandem.createTandem( 'greenLine' )

} );

var blueLine = new Line( 0, 0, LINE_LENGTH, 0, {

stroke: 'rgb(65,66,232)',

lineDash: [ 6, 2.5 ],

lineWidth: 2.0,

cursor: 'pointer',

tandem: tandem.createTandem( 'blueLine' )

} );

var redLine = new Line( 0, 0, LINE_LENGTH, 0, {

stroke: 'red',

lineDash: [ 6, 2.5 ],

lineWidth: 2.0,

cursor: 'pointer',

tandem: tandem.createTandem( 'redLine' )

} );

```

I recommend factoring out a function so the lines can be created like this:

```js

var greenLine = createLine('rgb(93, 191, 142)',tandem.createTandem('greenLine'));

```
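
One possible shape for that function is sketched below. To keep the snippet runnable on its own, `Line` and `tandem` are minimal stand-ins for the objects the panel already uses, and the value of `LINE_LENGTH` is an assumption, since it is not shown above.

```javascript
// Stand-in for the Line type used in the panel, only so this sketch runs alone.
function Line( x1, y1, x2, y2, options ) {
  this.x1 = x1;
  this.y1 = y1;
  this.x2 = x2;
  this.y2 = y2;
  this.options = options;
}

// Stand-in tandem; the real one comes from the panel's constructor arguments.
var tandem = {
  createTandem: function( name ) {
    return { name: name };
  }
};

var LINE_LENGTH = 25; // assumed value, not taken from the sim

// Creates a dashed reference line; only the color and tandem vary per line.
function createLine( color, tandem ) {
  return new Line( 0, 0, LINE_LENGTH, 0, {
    stroke: color,
    lineDash: [ 6, 2.5 ],
    lineWidth: 2.0,
    cursor: 'pointer',
    tandem: tandem
  } );
}

var greenLine = createLine( 'rgb(93, 191, 142)', tandem.createTandem( 'greenLine' ) );
var blueLine = createLine( 'rgb(65,66,232)', tandem.createTandem( 'blueLine' ) );
var redLine = createLine( 'red', tandem.createTandem( 'redLine' ) );
```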

|

1.0

|

Factor out duplicated code in line creation - During #36 I saw this code in IndicatorVisibilityControlPanel:

```js

// Lines added for reference in panel

var greenLine = new Line( 0, 0, LINE_LENGTH, 0, {

stroke: 'rgb(93, 191, 142)',

lineDash: [ 6, 2.5 ],

lineWidth: 2.0,

cursor: 'pointer',

tandem: tandem.createTandem( 'greenLine' )

} );

var blueLine = new Line( 0, 0, LINE_LENGTH, 0, {

stroke: 'rgb(65,66,232)',

lineDash: [ 6, 2.5 ],

lineWidth: 2.0,

cursor: 'pointer',

tandem: tandem.createTandem( 'blueLine' )

} );

var redLine = new Line( 0, 0, LINE_LENGTH, 0, {

stroke: 'red',

lineDash: [ 6, 2.5 ],

lineWidth: 2.0,

cursor: 'pointer',

tandem: tandem.createTandem( 'redLine' )

} );

```

I recommend factoring out a function so the lines can be created like this:

```js

var greenLine = createLine('rgb(93, 191, 142)',tandem.createTandem('greenLine'));

```

|

non_process

|

factor out duplicated code in line creation during i saw this code in indicatorvisibilitycontrolpanel js lines added for reference in panel var greenline new line line length stroke rgb linedash linewidth cursor pointer tandem tandem createtandem greenline var blueline new line line length stroke rgb linedash linewidth cursor pointer tandem tandem createtandem blueline var redline new line line length stroke red linedash linewidth cursor pointer tandem tandem createtandem redline i recommend factoring out a function so the lines can be created like this js var greenline createline rgb tandem createtandem greenline

| 0

|

5,382

| 8,211,044,554

|

IssuesEvent

|

2018-09-04 12:45:58

|

linnovate/root

|

https://api.github.com/repos/linnovate/root

|

closed

|

Document: filtering by favorite not working

|

Process bug

|

@abrahamos

open a few documents.

set one of them as a favorite.

click on filtering by favorite.

all the documents still there.

|

1.0

|

Document: filtering by favorite not working - @abrahamos

open a few documents.

set one of them as a favorite.

click on filtering by favorite.

all the documents still there.

|

process

|

document filtering by favorite not working abrahamos open a few documents set one of them as a favorite click on filtering by favorite all the documents still there

| 1

|

2,447

| 5,226,087,836

|

IssuesEvent

|

2017-01-27 20:14:34

|

AnalyticalGraphicsInc/cesium

|

https://api.github.com/repos/AnalyticalGraphicsInc/cesium

|

closed

|

Run WebGL tests in CI

|

dev process enhancement priority

|

As discussed with @mramato offline:

- Replace all read pixels expectations with a function that can have a no-op expectation when the tests are ran with a "no WebGL" flag, e.g.,

``` javascript

expect(scene.renderForSpecs()).toEqual([0, 0, 0, 255]);

```

becomes

``` javascript

scene.expectRenderForSpecs([0, 0, 0, 255]);

```

- Replace the object returned by `getContext` with a mock object with GL functions that are no-ops, `function() {}`, except for `getExtension`, which should return mocked objects for the extensions we care about.

- Likewise, all `gl.get*` functions should be mocked to return reasonable values.

- Run the tests and fix things I forgot.

This should only take a few hours and will be more reliable than [mesa](https://github.com/AnalyticalGraphicsInc/cesium/compare/mesa).
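
A rough sketch of how the mock context and the no-op expectation might fit together. `createMockWebGLContext`, `createExpectRender`, and the `extensions`/`parameters` options are hypothetical names made up for illustration, not existing Cesium functions. It assumes an environment with `Proxy`, so any GL function not listed falls back to a no-op.

``` javascript
// Hypothetical helper: builds a stand-in for the object returned by getContext.
function createMockWebGLContext(options) {
    options = options || {};
    var extensions = options.extensions || {}; // extension name -> mocked extension object
    var parameters = options.parameters || {}; // pname -> value returned by getParameter
    var noop = function() {};

    var overrides = {
        // Only the extensions we care about are mocked; everything else is unsupported.
        getExtension : function(name) {
            return extensions.hasOwnProperty(name) ? extensions[name] : null;
        },
        // gl.get* functions need reasonable values; the caller supplies them.
        getParameter : function(pname) {
            return parameters.hasOwnProperty(pname) ? parameters[pname] : 0;
        },
        getError : function() {
            return 0; // gl.NO_ERROR
        }
    };

    // Any property that is not overridden resolves to a no-op function.
    return new Proxy(overrides, {
        get : function(target, name) {
            return name in target ? target[name] : noop;
        }
    });
}

// Hypothetical helper: the expectation does nothing when the "no WebGL" flag is set.
function createExpectRender(renderForSpecs, webglStub) {
    return function(expectedRgba) {
        if (webglStub) {
            return;
        }
        var actual = renderForSpecs();
        for (var i = 0; i < expectedRgba.length; ++i) {
            if (actual[i] !== expectedRgba[i]) {
                throw new Error('Expected ' + expectedRgba + ' but rendered ' + actual);
            }
        }
    };
}
```

One limitation of this sketch: GL constants such as `gl.TEXTURE_2D` would also resolve to the no-op function, so a real mock would need to define the constants the code reads.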

|

1.0

|

Run WebGL tests in CI - As discussed with @mramato offline:

- Replace all read pixels expectations with a function that can have a no-op expectation when the tests are run with a "no WebGL" flag, e.g.,

``` javascript

expect(scene.renderForSpecs()).toEqual([0, 0, 0, 255]);

```

becomes

``` javascript

scene.expectRenderForSpecs([0, 0, 0, 255]);

```

- Replace the object returned by `getContext` with a mock object with GL functions that are no-ops, `function() {}`, except for `getExtension`, which should return mocked objects for the extensions we care about.

- Likewise, all `gl.get*` functions should be mocked to return reasonable values.

- Run the tests and fix things I forgot.

This should only take a few hours and will be more reliable than [mesa](https://github.com/AnalyticalGraphicsInc/cesium/compare/mesa).

|

process

|

run webgl tests in ci as discussed with mramato offline replace all read pixels expectations with a function that can have a no op expectation when the tests are ran with a no webgl flag e g javascript expect scene renderforspecs toequal becomes javascript scene expectrenderforspecs replace the object returned by getcontext with a mock object with gl functions that are no ops function except for getextension which should return mocked objects for the extensions we care about likewise all gl get functions should be mocked to return reasonable values run the tests and fix things i forgot this should only take a few hours and will be more reliable than

| 1

|

136,522

| 11,049,379,521

|

IssuesEvent

|

2019-12-09 23:32:05

|

MangopearUK/European-Boating-Association--Theme

|

https://api.github.com/repos/MangopearUK/European-Boating-Association--Theme

|

closed

|

Test & audit: EBA subscription rate increase for budget year 2020

|

Testing: second round

|

Page URL: https://eba.eu.com/membership/secretariat-announcements/eba-subscription-rate-increase-for-budget-year-2020/

## Table of contents

- [x] **Task 1:** Perform automated audits _(10 tasks)_

- [x] **Task 2:** Manual standards & accessibility tests _(61 tasks)_

- [x] **Task 3:** Breakpoint testing _(15 tasks)_

- [x] **Task 4:** Re-run automated audits _(10 tasks)_

## 1: Perform automated audits _(10 tasks)_

### Lighthouse:

- [x] Run "Accessibility" audit in lighthouse _(using incognito tab)_

- [x] Run "Performance" audit in lighthouse _(using incognito tab)_

- [x] Run "Best practices" audit in lighthouse _(using incognito tab)_

- [x] Run "SEO" audit in lighthouse _(using incognito tab)_

- [x] Run "PWA" audit in lighthouse _(using incognito tab)_

### Pingdom

- [x] Run full audit of the page's performance in Pingdom

### Browser's console

- [x] Check Chrome's console for errors

### Log results of audits

- [x] Screenshot snapshot of the lighthouse audits

- [x] Upload PDF of detailed lighthouse reports

- [x] Provide a screenshot of any console errors

## 2: Manual standards & accessibility tests _(61 tasks)_

### Forms

- [x] Give all form elements permanently visible labels

- [x] Place labels above form elements

- [x] Mark invalid fields clearly and provide associated error messages

- [x] Make forms as short as possible; offer shortcuts like autocompleting the address using the postcode

- [x] Ensure all form fields have the correct required state

- [x] Provide status and error messages as WAI-ARIA live regions

### Readability of content

- [x] Ensure page has good grammar

- [x] Ensure page content has been spell-checked

- [x] Make sure headings are in logical order

- [x] Ensure the same content is available across different devices and platforms

- [x] Begin long, multi-section documents with a table of contents

### Presentation

- [x] Make sure all content is formatted correctly

- [x] Avoid all-caps text

- [x] Make sure data tables wider than their container can be scrolled horizontally

- [x] Use the same design patterns to solve the same problems

- [x] Do not mark up subheadings/straplines with separate heading elements

### Links & buttons

#### Links

- [x] Check all links to ensure they work

- [x] Check all links to third party websites use `rel="noopener"`

- [x] Make sure the purpose of a link is clearly described: "read more" vs. "read more about accessibility"

- [x] Provide a skip link if necessary

- [x] Underline links — at least in body copy

- [x] Warn users of links that have unusual behaviors, like linking off-site, or loading a new tab (i.e. aria-label)

#### Buttons

- [x] Ensure primary calls to action are easy to recognize and reach

- [x] Provide clear, unambiguous focus styles

- [x] Ensure states (pressed, expanded, invalid, etc) are communicated to assistive software

- [x] Ensure disabled controls are not focusable

- [x] Make sure controls within hidden content are not focusable

- [x] Provide large touch "targets" for interactive elements

- [x] Make controls look like controls; give them strong perceived affordance

- [x] Use well-established, therefore recognizable, icons and symbols

### Assistive technology

- [x] Ensure content is not obscured through zooming

- [x] Support Windows high contrast mode (use images, not background images)

- [x] Provide alternative text for salient images

- [x] Make scrollable elements focusable for keyboard users

- [x] Ensure keyboard focus order is logical regarding visual layout

- [x] Match semantics to behavior for assistive technology users

- [x] Provide a default language and use lang="[ISO code]" for subsections in different languages

- [x] Inform the user when there are important changes to the application state

- [x] Do not hijack standard scrolling behavior

- [x] Do not instate "infinite scroll" by default; provide buttons to load more items

### General accessibility

- [x] Make sure text and background colors contrast sufficiently

- [x] Do not rely on color for differentiation of visual elements

- [x] Avoid images of text — text that cannot be translated, selected, or understood by assistive tech

- [x] Provide a print stylesheet

- [x] Honour requests to remove animation via the prefers-reduced-motion media query

### SEO

- [x] Ensure all pages have appropriate title

- [x] Ensure all pages have meta descriptions

- [x] Make content easier to find and improve search results with structured data [Read more](https://developers.google.com/search/docs/guides/prototype)

- [x] Check whether page should be appearing in sitemap

- [x] Make sure page has Facebook and Twitter large image previews set correctly

- [x] Check canonical links for page

- [x] Mark as cornerstone content?

### Performance

- [x] Ensure all CSS assets are minified and concatenated

- [x] Ensure all JS assets are minified and concatenated

- [x] Ensure all images are compressed

- [x] Where possible, remove redundant code