| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

16,134

| 9,695,410,044

|

IssuesEvent

|

2019-05-24 22:23:29

|

NixOS/nixpkgs

|

https://api.github.com/repos/NixOS/nixpkgs

|

closed

|

prosody stores passwords in plain text

|

1.severity: security 6.topic: nixos 9.needs: module (update)

|

## Issue description

The prosody module does not have an option to set the `authentication` config. Prosody stores passwords in plain text by default.

An option for the `authentication` config should be added with default value `"internal_hashed"`.

To work around this, I added

```

extraConfig = ''

authentication = "internal_hashed"

'';

```

to my config

### Steps to reproduce

Set up prosody, register, check the user file in the data path. It will contain the password in plain text.

|

True

|

prosody stores passwords in plain text - ## Issue description

The prosody module does not have an option to set the `authentication` config. Prosody stores passwords in plain text by default.

An option for the `authentication` config should be added with default value `"internal_hashed"`.

To work around this, I added

```

extraConfig = ''

authentication = "internal_hashed"

'';

```

to my config

### Steps to reproduce

Set up prosody, register, check the user file in the data path. It will contain the password in plain text.

|

non_process

|

prosody stores passwords in plain text issue description the prosody module does not have an option to set the authentication config prosody stores passwords in plain text by default an option for the authentication config should be added with default value internal hashed to work around this i added extraconfig authentication internal hashed to my config steps to reproduce set up prosody register check the user file in the data path it will contain the password in plain text

| 0

|

1,835

| 27,016,945,987

|

IssuesEvent

|

2023-02-10 20:21:55

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

closed

|

[Portable Dashboards] Build API Examples

|

Feature:Dashboard Team:Presentation loe:week impact:high Project:Portable Dashboard

|

### Why do we need examples?

The Portable Dashboards implementation will soon be merged, and with that implementation comes one example of how to use the portable Dashboard, the Dashboards app. When looking at this from the POV of a potential consumer, using the Dashboard app as an example comes with the following limitations.

1. The Dashboard App uses **every** feature of the portable Dashboard. There are no features currently created for solution use-cases, and there are no examples of what a more pared down version of the Portable Dashboard could look like.

2. The Dashboard App and the Dashboard Container are in the same plugin. How do we consume this externally?

3. The API isn't quite documented at the moment.

To answer these, as well as any unknown unknowns, we should build a Portable Dashboards Examples plugin.

### Examples of examples?

This portable dashboards example plugin could potentially contain examples of:

- A totally empty portable dashboard in edit mode.

- A portable dashboard in view mode used to show two or more pre-configured Lens charts. (pass in a hardcoded time range here for the Lens panels to show)

- A portable dashboard with a Unified Search integration which contains a test embeddable that prints out its input as JSON (credit to @nreese for this idea)

- A portable dashboard with a controls integration, which uses the Controls factory (could be exposed publicly from the controls plugin, builder pattern described in https://github.com/elastic/kibana/issues/145429) to build out a hardcoded set of Controls. This could also contain a test embeddable.

- _Optionally_ - Two portable dashboards side by side, both with controls implementations and Lens embeddables, demonstrating how each portable dashboard contains its own state instance.

These are just some ideas, any other examples are welcome! In this process, it is likely that things will need to change on the Dashboard side, which is all fair game!

### Documentation

Additionally, the PR that closes this issue could write more detailed API documentation, and explanations above each example

|

True

|

[Portable Dashboards] Build API Examples - ### Why do we need examples?

The Portable Dashboards implementation will soon be merged, and with that implementation comes one example of how to use the portable Dashboard, the Dashboards app. When looking at this from the POV of a potential consumer, using the Dashboard app as an example comes with the following limitations.

1. The Dashboard App uses **every** feature of the portable Dashboard. There are no features currently created for solution use-cases, and there are no examples of what a more pared down version of the Portable Dashboard could look like.

2. The Dashboard App and the Dashboard Container are in the same plugin. How do we consume this externally?

3. The API isn't quite documented at the moment.

To answer these, as well as any unknown unknowns, we should build a Portable Dashboards Examples plugin.

### Examples of examples?

This portable dashboards example plugin could potentially contain examples of:

- A totally empty portable dashboard in edit mode.

- A portable dashboard in view mode used to show two or more pre-configured Lens charts. (pass in a hardcoded time range here for the Lens panels to show)

- A portable dashboard with a Unified Search integration which contains a test embeddable that prints out its input as JSON (credit to @nreese for this idea)

- A portable dashboard with a controls integration, which uses the Controls factory (could be exposed publicly from the controls plugin, builder pattern described in https://github.com/elastic/kibana/issues/145429) to build out a hardcoded set of Controls. This could also contain a test embeddable.

- _Optionally_ - Two portable dashboards side by side, both with controls implementations and Lens embeddables, demonstrating how each portable dashboard contains its own state instance.

These are just some ideas, any other examples are welcome! In this process, it is likely that things will need to change on the Dashboard side, which is all fair game!

### Documentation

Additionally, the PR that closes this issue could write more detailed API documentation, and explanations above each example

|

non_process

|

build api examples why do we need examples the portable dashboards implementation will soon be merged and with that implementation comes one example of how to use the portable dashboard the dashboards app when looking at this from the pov of a potential consumer using the dashboard app as an example comes with the following limitations the dashboard app uses every feature of the portable dashboard there are no features currently created for solution use cases and there are no examples of what a more pared down version of the portable dashboard could look like the dashboard app and the dashboard container are in the same plugin how do we consume this externally the api isn t quite documented at the moment to answer these as well as any unknown unknowns we should build a portable dashboards examples plugin examples of examples this portable dashboards example plugin could potentially contain examples of a totally empty portable dashboard in edit mode a portable dashboard in view mode used to show two or more pre configured lens charts pass in a hardcoded time range here for the lens panels to show a portable dashboard with a unified search integration which contains a test embeddable that prints out its input as json credit to nreese for this idea a portable dashboard with a controls integration which uses the controls factory could be exposed publicly from the controls plugin builder pattern described in to build out a hardcoded set of controls this could also contain a test embeddable optionally two portable dashboards side by side both with controls implementations and lens embeddables demonstrating how each portable dashboard contains its own state instance these are just some ideas any other examples are welcome in this process it is likely that things will need to change on the dashboard side which is all fair game documentation additionally the pr that closes this issue could write more detailed api documentation and explanations above each example

| 0

|

33,027

| 2,761,502,213

|

IssuesEvent

|

2015-04-28 17:34:47

|

metapolator/metapolator

|

https://api.github.com/repos/metapolator/metapolator

|

closed

|

Specimens: Refactor the sample specimen texts

|

enhancement Priority Medium UI

|

The sample specimen texts can be improved.

They live here:

https://github.com/metapolator/metapolator/blob/gh-pages/purple-pill/js/controllers/specimenController.js#L18-L45

- [ ] Note in src that it is an array of arrays, to make separators

|

1.0

|

Specimens: Refactor the sample specimen texts - The sample specimen texts can be improved.

They live here:

https://github.com/metapolator/metapolator/blob/gh-pages/purple-pill/js/controllers/specimenController.js#L18-L45

- [ ] Note in src that it is an array of arrays, to make separators

|

non_process

|

specimens refactor the sample specimen texts the sample specimen texts can be improved they live here note in src that it is an array of arrays to make separators

| 0

|

40,641

| 5,244,887,098

|

IssuesEvent

|

2017-02-01 01:18:54

|

chihaya/chihaya

|

https://api.github.com/repos/chihaya/chihaya

|

closed

|

net.IP wrong usage

|

component/frontend/http component/frontend/udp component/middleware kind/design

|

Hi

I've noticed that the code relies heavily on the length of `net.IP` variables to differentiate between IPv4 and IPv6, and this is a mistake.

example https://github.com/chihaya/chihaya/blob/master/middleware/hooks.go#L126

Per the doc : https://golang.org/pkg/net/#IP

> Note that in this documentation, referring to an IP address as an IPv4 address or an IPv6 address is a semantic property of the address, not just the length of the byte slice: a 16-byte slice can still be an IPv4 address.

Instead, I suggest using

```go

func IsIPv4(ip net.IP) bool {

return ip.To4() != nil

}

```

and

```go

// According to the documentation, a 16-byte address can

// be either protocol, but an IP returned by net.IP.To4() is

// always IPv4, so if the result is nil we know it is IPv6.

func IsIPv6(ip net.IP) bool {

return len(ip) == net.IPv6len && ip.To4() == nil

}

```

|

1.0

|

net.IP wrong usage - Hi

I've noticed that the code relies heavily on the length of `net.IP` variables to differentiate between IPv4 and IPv6, and this is a mistake.

example https://github.com/chihaya/chihaya/blob/master/middleware/hooks.go#L126

Per the doc : https://golang.org/pkg/net/#IP

> Note that in this documentation, referring to an IP address as an IPv4 address or an IPv6 address is a semantic property of the address, not just the length of the byte slice: a 16-byte slice can still be an IPv4 address.

Instead, I suggest using

```go

func IsIPv4(ip net.IP) bool {

return ip.To4() != nil

}

```

and

```go

// According to the documentation, a 16-byte address can

// be either protocol, but an IP returned by net.IP.To4() is

// always IPv4, so if the result is nil we know it is IPv6.

func IsIPv6(ip net.IP) bool {

return len(ip) == net.IPv6len && ip.To4() == nil

}

```

|

non_process

|

net ip wrong usage hi i ve noticed the code heavily rely on the length of net ip variables to differentiate and protocols and this is a mistake example per the doc note that in this documentation referring to an ip address as an address or an address is a semantic property of the address not just the length of the byte slice a byte slice can still be an address instead i suggest using go func ip net ip bool return ip nil and go according to the documentation bytes addresses can be either protocol but an ip returned by net ip will always be an so if the result is nil we know it is an func ip net ip bool return len ip net ip nil

| 0

|

258,352

| 8,169,611,658

|

IssuesEvent

|

2018-08-27 02:39:53

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

fault during my timer testing

|

bug priority: medium

|

hello

I found a fault during my timer testing.

zephyr version: 1.9.1

code:

```

struct k_timer test_timer, test_timer2;

static void test_timeout_event(os_timer *timer)

{

}

static void test2_timeout_event(os_timer *timer)

{

k_timer_start(&test_timer, K_MSEC(10), K_MSEC(20));

}

void test_timer(void)

{

k_timer_init(&test_timer, test_timeout_event, NULL);

k_timer_init(&test_timer2, test2_timeout_event, NULL);

k_timer_start(&test_timer, K_MSEC(10), K_MSEC(20));

while(1) {

k_timer_start(&test_timer2, K_MSEC(100), 0);

k_sleep(K_MSEC(1000));

}

}

```

analysis:

When timer1 and timer2 expire in the same tick, both are dequeued from _timeout_q onto the expired list.

In the timer2 callback, k_timer_start(&test_timer, K_MSEC(10), K_MSEC(20)) re-inserts timer1 into _timeout_q. After the timer2 callback returns, the expired sys_dlist (in _handle_expired_timeouts()) has changed.

The callbacks of the timers now linked in _timeout_q are then called in order. When the last timeout is reached (_timeout_q itself, which is not actually a timer structure), calling timeout->func triggers a fault.

|

1.0

|

fault during my timer testing - hello

I found a fault during my timer testing.

zephyr version: 1.9.1

code:

```

struct k_timer test_timer, test_timer2;

static void test_timeout_event(os_timer *timer)

{

}

static void test2_timeout_event(os_timer *timer)

{

k_timer_start(&test_timer, K_MSEC(10), K_MSEC(20));

}

void test_timer(void)

{

k_timer_init(&test_timer, test_timeout_event, NULL);

k_timer_init(&test_timer2, test2_timeout_event, NULL);

k_timer_start(&test_timer, K_MSEC(10), K_MSEC(20));

while(1) {

k_timer_start(&test_timer2, K_MSEC(100), 0);

k_sleep(K_MSEC(1000));

}

}

```

analysis:

When timer1 and timer2 expire in the same tick, both are dequeued from _timeout_q onto the expired list.

In the timer2 callback, k_timer_start(&test_timer, K_MSEC(10), K_MSEC(20)) re-inserts timer1 into _timeout_q. After the timer2 callback returns, the expired sys_dlist (in _handle_expired_timeouts()) has changed.

The callbacks of the timers now linked in _timeout_q are then called in order. When the last timeout is reached (_timeout_q itself, which is not actually a timer structure), calling timeout->func triggers a fault.

|

non_process

|

fault during my timer testing hello i found a fault during my timer testing zephyr version code struct k timer test timer test static void test timeout event os timer timer static void timeout event os timer timer k timer start test timer k msec k msec void test timer void k timer init test timer test timeout event null k timer init test timeout event null k timer start test timer k msec k msec while k timer start test k msec k sleep k msec analysis when expired in the same tick will be dequeue from timeout q to expired in callback function k timer start test timer k msec k msec will re insert to timeout q after callback function the expired sys dlist in handle expired timeouts has changed the callback of timer linked in the timeout q will be called in order when run last timeout timeout q which actually is not a timer structure ,run timeout func will trigger a fault

| 0

|

60,790

| 17,023,522,706

|

IssuesEvent

|

2021-07-03 02:27:38

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

XML not well formed in response to a reverse request (city district node)

|

Component: nominatim Priority: major Resolution: fixed Type: defect

|

**[Submitted to the original trac issue database at 10.52am, Friday, 4th December 2009]**

When the City District node is included in the response XML object, this node appears with the name `<city district>`. If you run a parser on this object, you encounter an error because the parser interprets the word `district` as an attribute without a value. It should be `<city_district>`, as in `<country_code>`, or `<citydistrict>` without a space between the two words.

|

1.0

|

XML not well formed in response to a reverse request (city district node) - **[Submitted to the original trac issue database at 10.52am, Friday, 4th December 2009]**

When the City District node is included in the response XML object, this node appears with the name `<city district>`. If you run a parser on this object, you encounter an error because the parser interprets the word `district` as an attribute without a value. It should be `<city_district>`, as in `<country_code>`, or `<citydistrict>` without a space between the two words.

|

non_process

|

xml not well formed in response to a reverse request city district node when the city district node is included in the response xml object this node appears with the name if you run a parser on this object you encounter an error because the parser understands that the word district is an attribute without a value it should be as in or without a space between the two words

| 0

|

2,443

| 5,220,657,532

|

IssuesEvent

|

2017-01-26 22:30:12

|

vuejs/vue-loader

|

https://api.github.com/repos/vuejs/vue-loader

|

closed

|

Multiple loaders do not work properly on .vue files

|

pre-processor

|

Below is a simple loader I wrote that rewrites L("aaaas") calls in .vue files into the form L("aaaas","talk").

It is configured in webpack like this:

`

{

test: /\.vue$/,

loader: 'vue-loader!lang-loader'

}

`

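The loader is used for multi-language handling.

I found that lang-loader runs correctly, and tracing with console.log shows that the string replacement happens as expected.

However, the replacement is missing from the final output. It seems that vue-loader processes only the original file instead of the output of the previous loader.

The loader code is as follows: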

`

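// This loader handles the Region of multi-language files.

// It injects the Region variable into the translation function calls in a file,

// e.g. L("text to translate").

// If that text is defined in a translation region named iptalk,

// it is converted to L("text to translate","iptalk").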

//

var langConfig = require("../src/language/language.config.json")

var loaderUtils = require('loader-utils');

var path = require("path")

// L("dfsdfds")

var langRegExp = new RegExp(/\bL\(\"(.*?)\"\)/g)

// Return the Region that a file belongs to, based on its file name.

function getfileRegion(srcFile){

var resultRegion="main"

for(region in langConfig.regions || {}){

var regPath=path.resolve(__dirname,"../src",langConfig.regions[region])

var ref=path.relative(regPath,srcFile)

if(!(ref==srcFile || ref.slice(0,2)=="..")){

resultRegion=region

break;

}

}

return resultRegion

}

module.exports = function(source) {

var query = loaderUtils.parseQuery(this.query);

var region=getfileRegion(this.resourcePath)

if(region!="main"){

source=source.replace(langRegExp,'L("$1","' + region + '")')

}

return source

};

`

|

1.0

|

Multiple loaders do not work properly on .vue files - Below is a simple loader I wrote that rewrites L("aaaas") calls in .vue files into the form L("aaaas","talk").

It is configured in webpack like this:

`

{

test: /\.vue$/,

loader: 'vue-loader!lang-loader'

}

`

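The loader is used for multi-language handling.

I found that lang-loader runs correctly, and tracing with console.log shows that the string replacement happens as expected.

However, the replacement is missing from the final output. It seems that vue-loader processes only the original file instead of the output of the previous loader.

The loader code is as follows: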

`

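// This loader handles the Region of multi-language files.

// It injects the Region variable into the translation function calls in a file,

// e.g. L("text to translate").

// If that text is defined in a translation region named iptalk,

// it is converted to L("text to translate","iptalk").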

//

var langConfig = require("../src/language/language.config.json")

var loaderUtils = require('loader-utils');

var path = require("path")

// L("dfsdfds")

var langRegExp = new RegExp(/\bL\(\"(.*?)\"\)/g)

// Return the Region that a file belongs to, based on its file name.

function getfileRegion(srcFile){

var resultRegion="main"

for(region in langConfig.regions || {}){

var regPath=path.resolve(__dirname,"../src",langConfig.regions[region])

var ref=path.relative(regPath,srcFile)

if(!(ref==srcFile || ref.slice(0,2)=="..")){

resultRegion=region

break;

}

}

return resultRegion

}

module.exports = function(source) {

var query = loaderUtils.parseQuery(this.query);

var region=getfileRegion(this.resourcePath)

if(region!="main"){

source=source.replace(langRegExp,'L("$1","' + region + '")')

}

return source

};

`

|

process

|

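multiple loaders do not work properly on vue files below is a simple loader i wrote that rewrites l aaaas calls in vue files into the form l aaaas talk it is configured in webpack like this test vue loader vue loader lang loader the loader is used for multi language handling i found that lang loader runs correctly and tracing with console log shows that the string replacement happens as expected however the replacement is missing from the final output it seems that vue loader processes only the original file instead of the output of the previous loader the loader code is as follows this loader handles the region of multi language files it injects the region variable into the translation function calls in a file e g l text to translate if that text is defined in a translation region named iptalk it is converted to l text to translate iptalk var langconfig require src language language config json var loaderutils require loader utils var path require path l dfsdfds var langregexp new regexp bl g return the region that a file belongs to based on its file name function getfileregion srcfile var resultregion main for region in langconfig regions var regpath path resolve dirname src langconfig regions var ref path relative regpath srcfile if ref srcfile ref slice resultregion region break return resultregion module exports function source var query loaderutils parsequery this query var region getfileregion this resourcepath if region main source source replace langregexp l region return source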

| 1

|

17,487

| 23,302,285,420

|

IssuesEvent

|

2022-08-07 13:59:43

|

Battle-s/battle-school-backend

|

https://api.github.com/repos/Battle-s/battle-school-backend

|

opened

|

Set up infrastructure after creating a shared Google account and an AWS account

|

setting :hammer: processing :hourglass_flowing_sand:

|

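## Description

> Describe the issue. It helps to name the assignee as well.

## Checklist

> List the conditions required to close the issue as checkboxes.

- [ ] Shared Google account

- [ ] Shared AWS account

- [ ] ec2 & rds setup

## References

> Add any reference material needed to resolve the issue.

## Related discussion

> If the issue has been discussed, briefly summarize the discussion.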

|

1.0

|

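Set up infrastructure after creating a shared Google account and an AWS account - ## Description

> Describe the issue. It helps to name the assignee as well.

## Checklist

> List the conditions required to close the issue as checkboxes.

- [ ] Shared Google account

- [ ] Shared AWS account

- [ ] ec2 & rds setup

## References

> Add any reference material needed to resolve the issue.

## Related discussion

> If the issue has been discussed, briefly summarize the discussion.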

|

process

|

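set up infrastructure after creating a shared google account and an aws account description describe the issue it helps to name the assignee as well checklist list the conditions required to close the issue as checkboxes shared google account shared aws account rds setup references add any reference material needed to resolve the issue related discussion if the issue has been discussed briefly summarize the discussion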

| 1

|

10,639

| 13,446,133,690

|

IssuesEvent

|

2020-09-08 12:31:31

|

MHRA/products

|

https://api.github.com/repos/MHRA/products

|

closed

|

PARs - Update an existing PAR

|

EPIC - PARs process HIGH PRIORITY :arrow_double_up: STORY :book:

|

### User want

As a Medical Writer in the licensing team

I would like to upload a new version of an existing PAR

so that the latest version is available on products.mhra.gov.uk

(Linked to #397 and #398)

## Acceptance Criteria

### Customer acceptance criteria

- [ ] Medical writers can find an existing PAR which they want to amend

- [ ] Medical writers can upload a new version of that file, which will then be surfaced on products.mhra.gov.uk

- [ ] Medical writers can input the new PAR information into form fields, which will then be surfaced on products.mhra.gov.uk

- [ ] The PAR can be linked to one or multiple products (which will have their own PL number)

- [ ] PAR has product name for each product

- [ ] PAR has active substances for each product

- [ ] PAR has a PLs / NR / THR for each product

- [ ] Medical writers must be logged in to the site with their MHRA account to upload and will be blocked if they aren't

- [ ] Medical writers who are not part of the [TBD - fill in when known] domain group, cannot upload

- [ ] Before submitting, the user is shown a summary of what they are submitting

- [ ] After submitting, the user is presented with: Author, date and time of submission.

### Technical acceptance criteria

- [ ] PAR pdf is in blob storage after upload

- [ ] PAR metadata is attached

- [ ] PAR is in the search index

- [ ] blob/metadata/search index is handled by doc-index-updater

### Data acceptance criteria

### Testing acceptance criteria

**Size**

XL

**Value**

**Effort**

### Exit Criteria met

- [x] Backlog

- [x] Discovery

- [x] DUXD

- [ ] Development

- [ ] Quality Assurance

- [ ] Release and Validate

|

1.0

|

PARs - Update an existing PAR - ### User want

As a Medical Writer in the licensing team

I would like to upload a new version of an existing PAR

so that the latest version is available on products.mhra.gov.uk

(Linked to #397 and #398)

## Acceptance Criteria

### Customer acceptance criteria

- [ ] Medical writers can find an existing PAR which they want to amend

- [ ] Medical writers can upload a new version of that file, which will then be surfaced on products.mhra.gov.uk

- [ ] Medical writers can input the new PAR information into form fields, which will then be surfaced on products.mhra.gov.uk

- [ ] The PAR can be linked to one or multiple products (which will have their own PL number)

- [ ] PAR has product name for each product

- [ ] PAR has active substances for each product

- [ ] PAR has a PLs / NR / THR for each product

- [ ] Medical writers must be logged in to the site with their MHRA account to upload and will be blocked if they aren't

- [ ] Medical writers who are not part of the [TBD - fill in when known] domain group, cannot upload

- [ ] Before submitting, the user is shown a summary of what they are submitting

- [ ] After submitting, the user is presented with: Author, date and time of submission.

### Technical acceptance criteria

- [ ] PAR pdf is in blob storage after upload

- [ ] PAR metadata is attached

- [ ] PAR is in the search index

- [ ] blob/metadata/search index is handled by doc-index-updater

### Data acceptance criteria

### Testing acceptance criteria

**Size**

XL

**Value**

**Effort**

### Exit Criteria met

- [x] Backlog

- [x] Discovery

- [x] DUXD

- [ ] Development

- [ ] Quality Assurance

- [ ] Release and Validate

|

process

|

pars update an existing par user want as a medical writer in the licensing team i would like to upload a new version of an existing par so that the latest version is available on products mhra gov uk linked to and acceptance criteria customer acceptance criteria medical writers can find an existing par which they want to amend medical writers can upload a new version of that file which will then be surfaced on products mhra gov uk medical writers can input the new par information into form fields which will then be surfaced on products mhra gov uk the par can be linked to one or multiple products which will have their own pl number par has product name for each product par has active substances for each product par has a pls nr thr for each product medical writers must be logged in to the site with their mhra account to upload and will be blocked if they aren t medical writers who are not part of the domain group cannot upload before submitting the user is shown a summary of what they are submitting after submitting the user is presented with author date and time of submission technical acceptance criteria par pdf is in blob storage after upload par metadata is attached par is in the search index blob metadata search index is handled by doc index updater data acceptance criteria testing acceptance criteria size xl value effort exit criteria met backlog discovery duxd development quality assurance release and validate

| 1

|

10,798

| 13,609,285,992

|

IssuesEvent

|

2020-09-23 04:49:50

|

googleapis/java-bigtable

|

https://api.github.com/repos/googleapis/java-bigtable

|

closed

|

Dependency Dashboard

|

api: bigtable type: process

|

This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-conformance-tests-0.x -->deps: update dependency com.google.cloud:google-cloud-conformance-tests to v0.0.12

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

1.0

|

Dependency Dashboard - This issue contains a list of Renovate updates and their statuses.

## Open

These updates have all been created already. Click a checkbox below to force a retry/rebase of any.

- [ ] <!-- rebase-branch=renovate/com.google.cloud-google-cloud-conformance-tests-0.x -->deps: update dependency com.google.cloud:google-cloud-conformance-tests to v0.0.12

---

- [ ] <!-- manual job -->Check this box to trigger a request for Renovate to run again on this repository

|

process

|

dependency dashboard this issue contains a list of renovate updates and their statuses open these updates have all been created already click a checkbox below to force a retry rebase of any deps update dependency com google cloud google cloud conformance tests to check this box to trigger a request for renovate to run again on this repository

| 1

|

510,105

| 14,784,996,341

|

IssuesEvent

|

2021-01-12 01:36:48

|

NCAR/GeoCAT

|

https://api.github.com/repos/NCAR/GeoCAT

|

closed

|

fail to install GeoCAT-comp on windows 10

|

bug enhancement medium priority support

|

Hi, I installed Miniconda successfully, but when I run the command "conda create -n geocat -c conda-forge -c ncar geocat-comp", it shows an error. The error output is:

Collecting package metadata (current_repodata.json): done

Solving environment: failed with repodata from current_repodata.json, will retry with next repodata source.

Collecting package metadata (repodata.json): done

Solving environment: |

Found conflicts! Looking for incompatible packages.

This can take several minutes. Press CTRL-C to abort.

Examining @/win-64::__cuda==10.2=0: 50%|████████████████▌ | 1/2 [00:00<0Examining @/win-64::__cuda==10.2=0: 100%|████████████████████████████████failed

UnsatisfiableError: The following specifications were found to be incompatible with your CUDA driver:

- feature:/win-64::__cuda==10.2=0

Your installed CUDA driver is: 10.2

|

1.0

|

fail to install GeoCAT-comp on windows 10 - Hi, I installed Miniconda successfully, but when I run the command "conda create -n geocat -c conda-forge -c ncar geocat-comp", it shows an error. The error output is:

Collecting package metadata (current_repodata.json): done

Solving environment: failed with repodata from current_repodata.json, will retry with next repodata source.

Collecting package metadata (repodata.json): done

Solving environment: |

Found conflicts! Looking for incompatible packages.

This can take several minutes. Press CTRL-C to abort.

Examining @/win-64::__cuda==10.2=0: 50%|████████████████▌ | 1/2 [00:00<0Examining @/win-64::__cuda==10.2=0: 100%|████████████████████████████████failed

UnsatisfiableError: The following specifications were found to be incompatible with your CUDA driver:

- feature:/win-64::__cuda==10.2=0

Your installed CUDA driver is: 10.2

|

non_process

|

fail to install geocat comp on windows hi i had succeed installed miniconda but when input the command “conda create n geocat c conda forge c ncar geocat comp”,it s show a error,the error information is collecting package metadata current repodata json done solving environment failed with repodata from current repodata json will retry with next repodata source collecting package metadata repodata json done solving environment found conflicts looking for incompatible packages this can take several minutes press ctrl c to abort examining win cuda ████████████████▌ win cuda ████████████████████████████████failed unsatisfiableerror the following specifications were found to be incompatible with your cuda driver feature win cuda your installed cuda driver is

| 0

|

814,383

| 30,505,514,840

|

IssuesEvent

|

2023-07-18 16:35:01

|

Automattic/woocommerce-payments

|

https://api.github.com/repos/Automattic/woocommerce-payments

|

closed

|

WooPay shoppers blocked from order success page by a security wall

|

type: bug priority: high impact: high component: WooPay GA Blocker

|

Actual: An authenticated WooPay shopper on a shortcode checkout site who successfully places an order is frequently presented with a security screen and must either log in to the site or verify their email address to access the order thank you page.

Expectation: Under no circumstance should a WooPay user who places an order be presented with a security screen in order to access the thank you page on an order they just placed.

Cross posted to https://github.com/Automattic/woopay/issues/1951, the primary ticket for this issue. Duplicated here for extra visibility and urgency.

|

1.0

|

WooPay shoppers blocked from order success page by a security wall - Actual: An authenticated WooPay shopper on a shortcode checkout site who successfully places an order is frequently presented with a security screen and must either log in to the site or verify their email address to access the order thank you page.

Expectation: Under no circumstance should a WooPay user who places an order be presented with a security screen in order to access the thank you page on an order they just placed.

Cross posted to https://github.com/Automattic/woopay/issues/1951, the primary ticket for this issue. Duplicated here for extra visibility and urgency.

|

non_process

|

woopay shoppers blocked from order success page by a security wall actual an authenticated woopay shopper on a shortcode checkout site when successfully placing an order is frequently presented with a security screen and must either login to the site or verify their email address to access the order thank you page expectation under no circumstance should a woopay user who places an order be presented with a security screen in order to access the thank you page on an order they just placed cross posted to the primary ticket for this issue duplicated here for extra visibility and urgency

| 0

|

21,446

| 29,478,711,157

|

IssuesEvent

|

2023-06-02 02:14:10

|

cypress-io/cypress

|

https://api.github.com/repos/cypress-io/cypress

|

closed

|

Update cypress docker images automatically on release

|

external: docker process: release stage: ready for work type: user experience stale

|

<!-- Is this a question? Don't open an issue. Ask in our chat https://on.cypress.io/chat -->

### Current behavior:

Cypress docker images are behind the cypress release

See the tags for `cypress/included`: https://hub.docker.com/r/cypress/included/tags

Currently the latest is `3.7.0`, but `3.8.0` was released yesterday

<!-- images, stack traces, etc -->

### Desired behavior:

An automated build should be set up to build the new docker images as soon as a release is cut

<!-- A clear concise description of what you want to happen -->

<!--

### Steps to reproduce: (app code and test code)

<!-- Issues without reproducible steps WILL BE CLOSED -->

<!-- You can fork https://github.com/cypress-io/cypress-test-tiny repo, set up a failing test, then tell us the repo/branch to try. -->

### Versions

3.8.0

<!-- Cypress, operating system, browser -->

|

1.0

|

Update cypress docker images automatically on release - <!-- Is this a question? Don't open an issue. Ask in our chat https://on.cypress.io/chat -->

### Current behavior:

Cypress docker images are behind the cypress release

See the tags for `cypress/included`: https://hub.docker.com/r/cypress/included/tags

Currently the latest is `3.7.0`, but `3.8.0` was released yesterday

<!-- images, stack traces, etc -->

### Desired behavior:

An automated build should be set up to build the new docker images as soon as a release is cut

<!-- A clear concise description of what you want to happen -->

<!--

### Steps to reproduce: (app code and test code)

<!-- Issues without reproducible steps WILL BE CLOSED -->

<!-- You can fork https://github.com/cypress-io/cypress-test-tiny repo, set up a failing test, then tell us the repo/branch to try. -->

### Versions

3.8.0

<!-- Cypress, operating system, browser -->

|

process

|

update cypress docker images automatically on release current behavior cypress docker images are behind the cypress release see the tags for cypress included currently the latest is but was released yesterday desired behavior an automated build should be setup to build the new docker images as soon as a release is cut steps to reproduce app code and test code versions

| 1

|

38

| 2,507,191,748

|

IssuesEvent

|

2015-01-12 16:41:03

|

GsDevKit/gsApplicationTools

|

https://api.github.com/repos/GsDevKit/gsApplicationTools

|

closed

|

GemServer>>doBasicTransaction: must be non-re-entrant

|

in process

|

After a discussion with @rjsargent, I've decided that the conflicting goals of

1. running production applications in `manual transaction mode`.

2. allowing folks to debug *gem servers* in `automatic transaction mode`.

3. allowing GemServer>>doBasicTransaction: to be re-entrant.

cannot be achieved cleanly. The basic problem is that it isn't possible to tell when it is **correct** to abort/commit when running in `automatic transaction mode`. Of course, I also don't have a strong case for needing re-entrant GemServer>>doBasicTransaction: calls, so for now they will be non-re-entrant.
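
To illustrate what "non-re-entrant" means here, below is a minimal sketch in Python rather than GemStone Smalltalk. The class and method names (`NonReentrantTransaction`, `do_basic_transaction`) are hypothetical stand-ins, and the actual begin/commit/abort logic is deliberately left out; the sketch only shows a guard that rejects a nested call made from inside a running transaction block.

```python
import threading


class NonReentrantTransaction:
    """Hypothetical guard: refuses nested transaction blocks on the same thread."""

    def __init__(self):
        # Per-thread flag recording whether a transaction block is running.
        self._state = threading.local()

    def do_basic_transaction(self, block):
        if getattr(self._state, "active", False):
            # A nested call would make it unclear who should commit or abort.
            raise RuntimeError("do_basic_transaction is not re-entrant")
        self._state.active = True
        try:
            # A real implementation would begin the transaction before the
            # block and commit or abort after it; that part is omitted here.
            return block()
        finally:
            self._state.active = False
```

With a guard like this, the outermost call is the only place where a commit or abort decision is made, which sidesteps the ambiguity described above.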

|

1.0

|

GemServer>>doBasicTransaction: must be non-re-entrant - After a discussion with @rjsargent, I've decided that the conflicting goals of

1. running production applications in `manual transaction mode`.

2. allowing folks to debug *gem servers* in `automatic transaction mode`.

3. allowing GemServer>>doBasicTransaction: to be re-entrant.

cannot be achieved cleanly. The basic problem is that it isn't possible to tell when it is **correct** to abort/commit when running in `automatic transaction mode`. Of course, I also don't have a strong case for needing re-entrant GemServer>>doBasicTransaction: calls, so for now they will be non-re-entrant.

|

process

|

gemserver dobasictransaction must be non re entrant after a discussion with rjsargent i ve decided that the conflicting goals of running production applications in manual transaction mode allowing folks to debug gem servers in automatic transaction mode allowing gemserver dobasictransaction to be re entrant cannot be achieved cleanly the basic problem is that it isn t possible to tell when it is correct to abort commit when running in automatic transaction mode of course i also don t have a strong case fr needing re entrant gemserver dobasictransaction calls so for now they will be non re entrant

| 1

|

797

| 3,275,514,340

|

IssuesEvent

|

2015-10-26 15:51:13

|

grafeo/grafeo

|

https://api.github.com/repos/grafeo/grafeo

|

opened

|

Drawing Functions: Line

|

Component: Image Processing priority: high

|

- Input Array

- Point1

- Point2

- Color

- Thickness

- LineType: 4-neighbor, 8-neighbor or antialiased

- Shift: Fractional shift bits
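
As a rough starting point for the parameters listed above, here is a minimal sketch in Python, assuming a list-of-rows image and covering only the 8-neighbor line type at thickness 1. The function name `draw_line` and its signature are illustrative and not part of grafeo; thickness, antialiasing and fractional shift bits are not handled.

```python
def draw_line(image, p1, p2, color):
    """Draw an 8-connected line from p1 to p2 (inclusive) with Bresenham's algorithm.

    image is a list of rows indexed as image[y][x]; points are (x, y)
    integer tuples. Pixels that fall outside the image are skipped.
    """
    x0, y0 = p1
    x1, y1 = p2
    dx = abs(x1 - x0)
    dy = -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1  # step direction along x
    sy = 1 if y0 < y1 else -1  # step direction along y
    err = dx + dy              # combined error term for both axes
    height = len(image)
    width = len(image[0]) if height else 0
    while True:
        if 0 <= y0 < height and 0 <= x0 < width:
            image[y0][x0] = color
        if x0 == x1 and y0 == y1:
            break
        e2 = 2 * err
        if e2 >= dy:  # advance along x
            err += dy
            x0 += sx
        if e2 <= dx:  # advance along y
            err += dx
            y0 += sy
    return image
```

A 4-neighbor variant would take only one of the two steps per iteration, and the remaining parameters would build on the same loop.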

|

1.0

|

Drawing Functions: Line - - Input Array

- Point1

- Point2

- Color

- Thickness

- LineType: 4-neighbor, 8-neighbor or antialiased

- Shift: Fractional shift bits

|

process

|

drawing functions line input array color thickness linetype neighbor neighbor or antialiased shift fractional shift bits

| 1

|

69,313

| 14,988,006,185

|

IssuesEvent

|

2021-01-29 00:11:35

|

oragono/oragono

|

https://api.github.com/repos/oragono/oragono

|

opened

|

account verification flows allowing a captcha?

|

help wanted security weird fun stuff

|

It's already possible to implement email and captcha verification externally: build a webapp that can dispatch verification emails, display and verify a captcha, then finally SAREGISTER an account with the ircd on success.

But we might want to have better code support for it within Oragono itself. For example, we could let people initiate the registration process in-band, then the external webapp would check the captcha and do a `SAVERIFY` or something. Or we could serve captchas straight out of Oragono itself.

|

True

|

account verification flows allowing a captcha? - It's already possible to implement email and captcha verification externally: build a webapp that can dispatch verification emails, display and verify a captcha, then finally SAREGISTER an account with the ircd on success.

But we might want to have better code support for it within Oragono itself. For example, we could let people initiate the registration process in-band, then the external webapp would check the captcha and do a `SAVERIFY` or something. Or we could serve captchas straight out of Oragono itself.

|

non_process

|

account verification flows allowing a captcha it s already possible to implement email and captcha verification externally build a webapp that can dispatch verification emails display and verify a captcha then finally saregister an account with the ircd on success but we might want to have better code support for it within oragono itself for example we could let people initiate the registration process in band then the external webapp would check the captcha and do a saverify or something or we could serve captchas straight out of oragono itself

| 0

|

28,966

| 4,454,742,602

|

IssuesEvent

|

2016-08-23 02:33:59

|

mautic/mautic

|

https://api.github.com/repos/mautic/mautic

|

closed

|

Failed e-mail appears as sent in contact timeline

|

Bug Ready To Test

|

What type of report is this:

| Q | A

| ---| ---

| Bug report? | yes

| Feature request? |

| Enhancement? |

## Description:

Failed e-mails to a contact appear in the timeline as sent. I encountered that behaviour as I was sending a manual e-mail to a contact.

I made the test with Amazon SES where you have to configure your allowed sending domains. The SMTP was dropping my mails and I think Mautic does not handle that error correctly.

## If a bug:

| Q | A

| --- | ---

| Mautic version | 2.1.0

| PHP version | 5.6.23-1+deprecated+dontuse+deb.sury.org~trusty+1

### Steps to reproduce:

1. Configure a mail transport that will reject certain sending domains (like Amazon SES)

1. Send a manual e-mail to that contact from the contact detail page

1. Process the mail queue from the cli (php app/console mautic:emails:send)

1. Check the contact page. The lead is marked as bounced (which is wrong, because the sending domain was wrong, not the contact). The email sent to the customer shows up as "sent", which gives the impression that the contact received it.

### Log errors:

The Swift_TransportException bubbles up from the mautic:emails:send command

````

root@web001:/var/www/playground# php app/console mautic:emails:send

[Swift_TransportException]

Expected response code 250 but got code "554", with message "554 Message rejected: Email address is not verified. The following identities failed the check in region EU-WEST-1:

55hubs Administrator <me@nope.com>, me@nope.com

"

mautic:emails:send [--message-limit [MESSAGE-LIMIT]] [--time-limit [TIME-LIMIT]] [--do-not-clear] [--recover-timeout [RECOVER-TIMEOUT]] [--clear-timeout [CLEAR-TIMEOUT]] [-h|--help] [-q|--quiet] [-v|vv|vvv|--verbose] [-V|--version] [--ansi] [--no-ansi] [-n|--no-interaction] [-s|--shell] [--process-isolation] [-e|--env ENV] [--no-debug] [--] <command>

````

|

1.0

|

Failed e-mail appears as sent in contact timeline - What type of report is this:

| Q | A

| ---| ---

| Bug report? | yes

| Feature request? |

| Enhancement? |

## Description:

Failed e-mails to a contact appear in the timeline as sent. I encountered that behaviour as I was sending a manual e-mail to a contact.

I made the test with Amazon SES where you have to configure your allowed sending domains. The SMTP was dropping my mails and I think Mautic does not handle that error correctly.

## If a bug:

| Q | A

| --- | ---

| Mautic version | 2.1.0

| PHP version | 5.6.23-1+deprecated+dontuse+deb.sury.org~trusty+1

### Steps to reproduce:

1. Configure a mail transport that will reject certain sending domains (like Amazon SES)

1. Send a manual e-mail to that contact from the contact detail page

1. Process the mail queue from the cli (php app/console mautic:emails:send)

1. Check the contact page. The lead is marked as bounced (which is wrong, because the sending domain was wrong, not the contact). The email sent to the customer shows up as "sent", which gives the impression that the contact received it.

### Log errors:

The Swift_TransportException bubbles up from the mautic:emails:send command

````

root@web001:/var/www/playground# php app/console mautic:emails:send

[Swift_TransportException]

Expected response code 250 but got code "554", with message "554 Message rejected: Email address is not verified. The following identities failed the check in region EU-WEST-1:

55hubs Administrator <me@nope.com>, me@nope.com

"

mautic:emails:send [--message-limit [MESSAGE-LIMIT]] [--time-limit [TIME-LIMIT]] [--do-not-clear] [--recover-timeout [RECOVER-TIMEOUT]] [--clear-timeout [CLEAR-TIMEOUT]] [-h|--help] [-q|--quiet] [-v|vv|vvv|--verbose] [-V|--version] [--ansi] [--no-ansi] [-n|--no-interaction] [-s|--shell] [--process-isolation] [-e|--env ENV] [--no-debug] [--] <command>

````

|

non_process

|

failed e mail appears as sent in contact timeline what type of report is this q a bug report yes feature request enhancement description failed e mails to a contact appear in the timeline as sent i encountered that behaviour as i was sending a manual e mail to a contact i made the test with amazon ses where you have to configure your allowed sending domains the smtp was dropping my mails and i think mautic does not handle that error correctly if a bug q a mautic version php version deprecated dontuse deb sury org trusty steps to reproduce configure a mail transport that will reject certain sending domains like amazon ses send a manual e mail to that contact from the contact detail page process the mail queue from the cli php app console mautic emails send check the contact page the lead is marked as bounced which is wrong because the sending domain was wrong not the contact the email sent to the customer shows up as sent which give you the feeling that the contact received it log errors the swift transportexception bubbles up from the mautic emails send command root var www playground php app console mautic emails send expected response code but got code with message message rejected email address is not verified the following identities failed the check in region eu west administrator me nope com mautic emails send

| 0

|

9,752

| 12,737,085,857

|

IssuesEvent

|

2020-06-25 18:05:49

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

closed

|

Why System.Diagnostics.Process.MainWindowTitle returns empty string on Linux?

|

area-System.Diagnostics.Process os-linux question

|

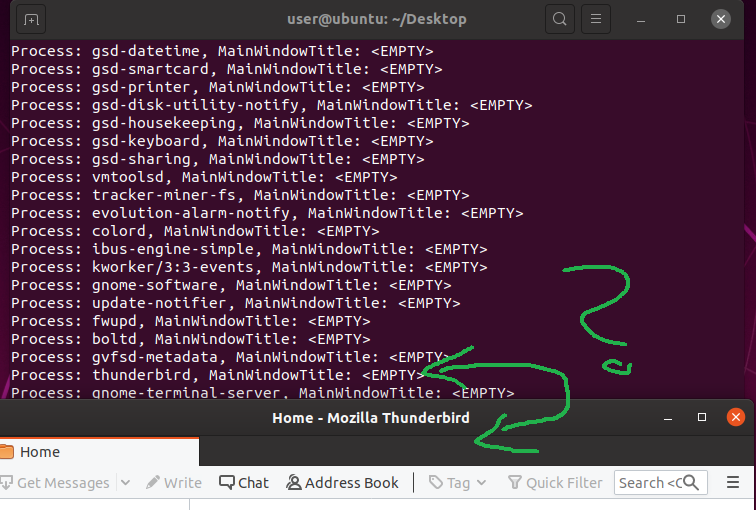

System.Diagnostics.Process.MainWindowTitle is empty for all processes on Linux, even when the application has root rights. Tested on Ubuntu 19.10 64bit with .NET Core 3.1 console application.

## Repro

`sudo ./ConsoleApp2`

```

using System;
using System.Diagnostics;

class Program
{
    static void Main(string[] args)
    {
        // Enumerate every process and print its main window title, if any.
        var procs = Process.GetProcesses();
        foreach (var proc in procs)
        {
            Console.WriteLine($"Process: {proc.ProcessName}, MainWindowTitle: {(string.IsNullOrEmpty(proc.MainWindowTitle) ? "<EMPTY>" : proc.MainWindowTitle)}");
        }
    }
}

```

## Result:

|

1.0

|

Why System.Diagnostics.Process.MainWindowTitle returns empty string on Linux? - System.Diagnostics.Process.MainWindowTitle is empty for all processes on Linux, even when the application has root rights. Tested on Ubuntu 19.10 64bit with .NET Core 3.1 console application.

## Repro

`sudo ./ConsoleApp2`

```

using System;
using System.Diagnostics;

class Program
{
    static void Main(string[] args)
    {
        // Enumerate every process and print its main window title, if any.
        var procs = Process.GetProcesses();
        foreach (var proc in procs)
        {
            Console.WriteLine($"Process: {proc.ProcessName}, MainWindowTitle: {(string.IsNullOrEmpty(proc.MainWindowTitle) ? "<EMPTY>" : proc.MainWindowTitle)}");
        }
    }
}

```

## Result:

|

process

|

why system diagnostics process mainwindowtitle returns empty string on linux system diagnostics process mainwindowtitle is empty for all processes on linux even when the application has root rights tested on ubuntu with net core console application repro sudo class program static void main string args var procs process getprocesses foreach var proc in procs console writeline process proc processname mainwindowtitle string isnullorempty proc mainwindowtitle proc mainwindowtitle result

| 1

|

272,876

| 20,761,837,264

|

IssuesEvent

|

2022-03-15 16:49:12

|

spring-cloud/spring-cloud-release

|

https://api.github.com/repos/spring-cloud/spring-cloud-release

|

closed

|

Add release documentation to combined Spring Cloud documentation

|

documentation

|

The `org.springframework.cloud:spring-cloud-dependencies` POM seems to serve the same purpose as the `io.spring.platform:platform-bom` POM (assisting with ensuring compatible transitive dependencies). I can't find any information on which versions of one work with which versions of the other. Are they meant to be used together or are they not necessarily compatible?

I see in `org.springframework.cloud:spring-cloud-dependencies` that there are a lot of exclusions and most of them look like things that are in `io.spring.platform:platform-bom` but I'm not sure if this is a "best effort" to make them work together or if it's intentional to make sure they do.

This isn't really an issue with the code (maybe the documentation though), but I didn't think this would get a good answer on Stack Overflow so I thought this was the best place to ask.

|

1.0

|

Add release documentation to combined Spring Cloud documentation - The `org.springframework.cloud:spring-cloud-dependencies` POM seems to serve the same purpose as the `io.spring.platform:platform-bom` POM (assisting with ensuring compatible transitive dependencies). I can't find any information on which versions of one work with which versions of the other. Are they meant to be used together or are they not necessarily compatible?

I see in `org.springframework.cloud:spring-cloud-dependencies` that there are a lot of exclusions and most of them look like things that are in `io.spring.platform:platform-bom` but I'm not sure if this is a "best effort" to make them work together or if it's intentional to make sure they do.

This isn't really an issue with the code (maybe the documentation though), but I didn't think this would get a good answer on Stack Overflow so I thought this was the best place to ask.

|

non_process

|

add release documentation to combined spring cloud documentation the org springframework cloud spring cloud dependencies pom seems to serve the same purpose as the io spring platform platform bom pom assisting with ensuring compatible transitive dependencies i can t find any information on which versions of one work with which versions of the other are they meant to be used together or are they not necessarily compatible i see in org springframework cloud spring cloud dependencies that there are a lot of exclusions and most of them look like things that are in io spring platform platform bom but i m not sure if this is a best effort to make them work together or if it s intentional to make sure they do this isn t really an issue with the code maybe the documentation though but i didn t think this would get a good answer on stack overflow so i thought this was the best place to ask

| 0

|

6,517

| 9,604,787,884

|

IssuesEvent

|

2019-05-10 21:07:23

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

How to retrain the model

|

assigned-to-author machine-learning/svc product-question team-data-science-process/subsvc triaged

|

If I update a dataset from my Python script as described here, can I also trigger the model to retrain based on the updated data from my Python script?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: c798198a-6a22-41a3-5bdc-5e4e06446a9b

* Version Independent ID: 3fb2d7ca-27df-87a8-9fdf-99245438f2e9

* Content: [Access datasets with Python client library - Team Data Science Process](https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/python-data-access)

* Content Source: [articles/machine-learning/team-data-science-process/python-data-access.md](https://github.com/Microsoft/azure-docs/blob/master/articles/machine-learning/team-data-science-process/python-data-access.md)

* Service: **machine-learning**

* Sub-service: **team-data-science-process**

* GitHub Login: @marktab

* Microsoft Alias: **tdsp**

|

1.0

|

How to retrain the model - If I update a dataset from my Python script as described here, can I also trigger the model to retrain based on the updated data from my Python script?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: c798198a-6a22-41a3-5bdc-5e4e06446a9b

* Version Independent ID: 3fb2d7ca-27df-87a8-9fdf-99245438f2e9

* Content: [Access datasets with Python client library - Team Data Science Process](https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/python-data-access)

* Content Source: [articles/machine-learning/team-data-science-process/python-data-access.md](https://github.com/Microsoft/azure-docs/blob/master/articles/machine-learning/team-data-science-process/python-data-access.md)

* Service: **machine-learning**

* Sub-service: **team-data-science-process**

* GitHub Login: @marktab

* Microsoft Alias: **tdsp**

|

process

|

how to retrain the model if i update a dataset from my python script as described here can i also trigger the model to retrain based on the updated data from my python script document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service machine learning sub service team data science process github login marktab microsoft alias tdsp

| 1

|

348,822

| 24,924,403,818

|

IssuesEvent

|

2022-10-31 05:33:00

|

Dun-sin/Code-Magic

|

https://api.github.com/repos/Dun-sin/Code-Magic

|

closed

|

[DOCS] add text shadow to the readme

|

documentation good first issue EddieHub:good-first-issue assigned hacktoberfest

|

### Description

All the current generators are on the readme except text shadow.

### Screenshots

_No response_

### Additional information

Tasks:

- [ ] Add a short description of the text shadow generator in the readme

### 👀 Have you checked if this issue has been raised before?

- [X] I checked and didn't find similar issue

### 🏢 Have you read the Contributing Guidelines?

- [X] I have read and understood the rules in the [Contributing Guidelines](https://github.com/Dun-sin/Code-Magic/blob/main/CONTRIBUTING.md)

### Are you willing to work on this issue ?

_No response_

|

1.0

|

[DOCS] add text shadow to the readme - ### Description

All the current generators are on the readme except text shadow.

### Screenshots

_No response_

### Additional information

Tasks:

- [ ] Add a short description of the text shadow generator in the readme

### 👀 Have you checked if this issue has been raised before?

- [X] I checked and didn't find similar issue

### 🏢 Have you read the Contributing Guidelines?

- [X] I have read and understood the rules in the [Contributing Guidelines](https://github.com/Dun-sin/Code-Magic/blob/main/CONTRIBUTING.md)

### Are you willing to work on this issue ?

_No response_

|

non_process

|

add text shadow to the readme description all the current generators are on the readme except text shadow screenshots no response additional information tasks add a short description of the text shadow generator in the readme 👀 have you checked if this issue has been raised before i checked and didn t find similar issue 🏢 have you read the contributing guidelines i have read and understood the rules in the are you willing to work on this issue no response

| 0

|

52,242

| 10,790,734,900

|

IssuesEvent

|

2019-11-05 15:28:52

|

eclipse-theia/theia

|

https://api.github.com/repos/eclipse-theia/theia

|

opened

|

[vscode] builtin extensions not recognized

|

plug-in system vscode

|

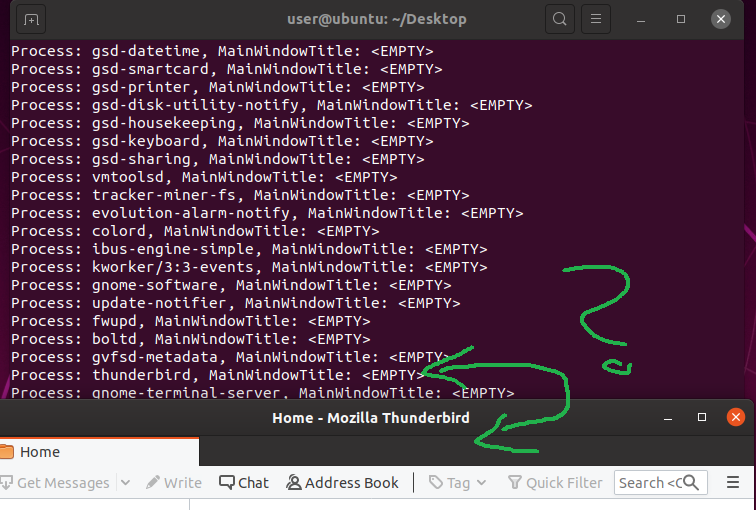

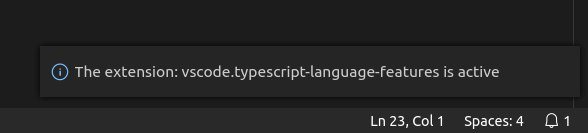

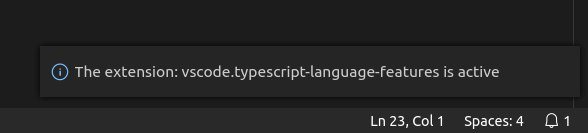

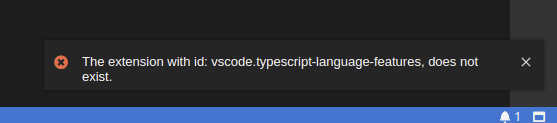

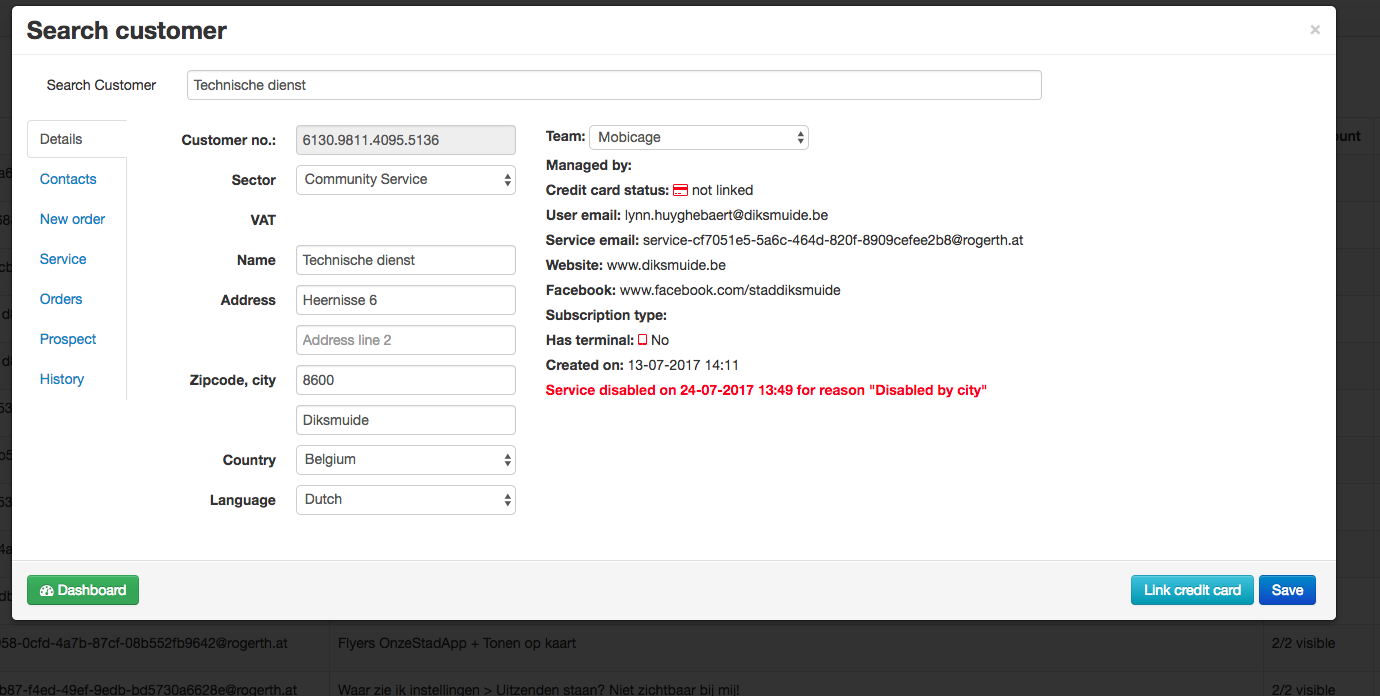

**Description**

I've created a custom VS Code [`plugin`](https://github.com/vince-fugnitto/ts-tools-plugin) which simply verifies that the `vscode-builtin-typescript-language-features` builtin extension correctly works. The plugin itself works perfectly in VS Code while in Theia it fails to find the extension.

**Screenshots**

_VS Code:_

<div align='center'>

</div>

_Theia:_

<div align='center'>

</div>

**Setup**

The following updates were made to the example-browser [package.json](https://github.com/eclipse-theia/theia/blob/master/examples/browser/package.json):

_Additions:_

```json

"@theia/vscode-builtin-typescript": "0.2.1",

"@theia/vscode-builtin-typescript-language-features": "0.2.1",

```

_Deletions:_

```json

"@theia/typescript": "^0.12.0"

```

---

Am I doing something wrong when attempting to consume builtin extensions in Theia?

|

1.0

|

[vscode] builtin extensions not recognized - **Description**

I've created a custom VS Code [`plugin`](https://github.com/vince-fugnitto/ts-tools-plugin) which simply verifies that the `vscode-builtin-typescript-language-features` builtin extension correctly works. The plugin itself works perfectly in VS Code while in Theia it fails to find the extension.

**Screenshots**

_VS Code:_

<div align='center'>

</div>

_Theia:_

<div align='center'>

</div>

**Setup**

The following updates were made to the example-browser [package.json](https://github.com/eclipse-theia/theia/blob/master/examples/browser/package.json):

_Additions:_

```json

"@theia/vscode-builtin-typescript": "0.2.1",

"@theia/vscode-builtin-typescript-language-features": "0.2.1",

```

_Deletions:_

```json

"@theia/typescript": "^0.12.0"

```

---

Am I doing something wrong when attempting to consume builtin extensions in Theia?

|

non_process

|

builtin extensions not recognized description i ve created a custom vs code which simply verifies that the vscode builtin typescript language features builtin extension correctly works the plugin itself works perfectly in vs code while in theia it fails to find the extension screenshots vs code theia setup the following updates were made to the example browser additions json theia vscode builtin typescript theia vscode builtin typescript language features deletions json theia typescript am i doing something wrong when attempting to consume builtin extensions in theia

| 0

|

436,048

| 12,544,557,094

|

IssuesEvent

|

2020-06-05 17:24:07

|

mintproject/mic

|

https://api.github.com/repos/mintproject/mic

|

closed

|

Detection outputs must ignore model configuration files

|

bug easy to fix enhancement medium priority

|

In my test with one input file, mic is constantly adding it as an output file after the execution, which is not correct.

|

1.0

|

Detection outputs must ignore model configuration files - In my test with one input file, mic is constantly adding it as an output file after the execution, which is not correct.

|

non_process

|

detection outputs must ignore model configuration files in my test with one input file mic is constantly adding it as an output file after the execution which is not correct

| 0

|

16,874

| 22,154,176,927

|

IssuesEvent

|

2022-06-03 20:20:40

|

0xffset/rOSt

|

https://api.github.com/repos/0xffset/rOSt

|

opened

|

InterProcess Communication

|

syscalls processes driver

|

We need to have some way of doing IPC.

We can have it synchronous or asynchronous, or both.

We can have it in the RPC style, or message-passing, or something else.

There are many options and we will have to look around and select the best one.

|

1.0

|

InterProcess Communication - We need to have some way of doing IPC.

We can have it synchronous or asynchronous, or both.

We can have it in the RPC style, or message-passing, or something else.

There are many options and we will have to look around and select the best one.

|

process

|

interprocess communication we need to have some way of doing ipc we can have it synchronous or asynchronous or both we can have it in the rpc style or message passing or something else there are many options and we will have to look around and select the best one

| 1

|

93,353

| 19,184,791,522

|

IssuesEvent

|

2021-12-05 01:57:07

|

CSC207-UofT/course-project-group-010

|

https://api.github.com/repos/CSC207-UofT/course-project-group-010

|

closed

|

Misplaced rating value bound check

|

code smell

|

Currently, whether a user-provided rating value is in-bounds is checked in [CourseManager](https://github.com/CSC207-UofT/course-project-group-010/blob/6e75d460d87626a94aa4c54594e901fa8b586628/src/main/java/usecase/CourseManager.java) (lines 59-61).

This feels like a violation of Clean Architecture principles: why should CourseManager care about how Rating is implemented?

**Suggested solution:**

1. CourseManager calls the Rating constructor with the parsed user rating value **in a try-catch block**.

2. Check in-bounds condition in Rating constructor.

a. If in-bounds, create Rating object normally.

b. If out-of-bounds, throw an exception.

3. CourseManager catches the exception if one is thrown and rethrows it up to the command line. Otherwise, proceed normally.
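
The suggested flow can be sketched as follows. This is an illustration in Python rather than the project's Java, and the bounds (`MIN_VALUE`, `MAX_VALUE`), the `rate_course` method and the attribute names are assumptions made for the example, not the project's actual code.

```python
class Rating:
    # Hypothetical bounds; the real limits live in the project's Rating class.
    MIN_VALUE = 0.0
    MAX_VALUE = 100.0

    def __init__(self, value):
        # The entity validates its own invariant (step 2).
        if not (self.MIN_VALUE <= value <= self.MAX_VALUE):
            raise ValueError(
                f"rating {value} is outside [{self.MIN_VALUE}, {self.MAX_VALUE}]"
            )
        self.value = value


class CourseManager:
    def __init__(self):
        self.ratings = []

    def rate_course(self, raw_value):
        try:
            # Step 1: construct the Rating inside a try block.
            rating = Rating(float(raw_value))
        except ValueError as exc:
            # Step 3: rethrow so the command line can report the problem.
            raise ValueError(f"Could not rate course: {exc}") from exc
        self.ratings.append(rating)
        return rating
```

The use case no longer needs to know the valid range; it only needs to know that constructing a Rating can fail.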

|

1.0

|

Misplaced rating value bound check - Currently, whether a user-provided rating value is in-bounds is checked in [CourseManager](https://github.com/CSC207-UofT/course-project-group-010/blob/6e75d460d87626a94aa4c54594e901fa8b586628/src/main/java/usecase/CourseManager.java) (lines 59-61).

This feels like a violation of Clean Architecture principles: why should CourseManager care about how Rating is implemented?

**Suggested solution:**

1. CourseManager calls the Rating constructor with the parsed user rating value **in a try-catch block**.

2. Check in-bounds condition in Rating constructor.

a. If in-bounds, create Rating object normally.

b. If out-of-bounds, throw an exception.

3. CourseManager catches the exception if one is thrown and rethrows it up to the command line. Otherwise, proceed normally.

|

non_process

|

misplaced rating value bound check currently whether a user provided rating value is in bounds is checked in lines this feels like a violation of clean architecture principles why should coursemanager care about how rating is implemented suggested solution coursemanager calls rating constructor with parsed user rating value in a try except block check in bounds condition in rating constructor a if in bounds create rating object normally b if out of bounds throw an exception coursemanager catches the exception if one is thrown and rethrows it up to the command line otherwise proceed normally

| 0

|

16,261

| 20,841,293,609

|

IssuesEvent

|

2022-03-21 00:14:25

|

duxli/duxli-css

|

https://api.github.com/repos/duxli/duxli-css

|

closed

|

Process Improvement: Add Formatter

|

process

|

# Process Improvement: Add Formatter

A formatter is needed for improved code consistency and readability.

We will need formatting for Sass/SCSS, JavaScript/TypeScript, HTML, and Markdown files.

Rules that focus on code quality do not need to be a part of this issue.

## Potential Solutions

There are tools like [Stylelint](https://stylelint.io/) or [ESLint](https://eslint.org/) that have more features.

However, most of these solutions are only intended for a few languages. They also require more configuration.

## Proposed Solution

[Prettier](https://prettier.io/docs/en/index.html) supports basic formatting for all languages that will be included in this project.

It also requires minimal configuration and setup.

|

1.0

|

Process Improvement: Add Formatter - # Process Improvement: Add Formatter

A formatter is needed for improved code consistency and readability.

We will need formatting for Sass/SCSS, JavaScript/TypeScript, HTML, and Markdown files.

Rules that focus on code quality do not need to be a part of this issue.

## Potential Solutions

There are tools like [Stylelint](https://stylelint.io/) or [ESLint](https://eslint.org/) that have more features.

However, most of these solutions are only intended for a few languages. They also require more configuration.

## Proposed Solution

[Prettier](https://prettier.io/docs/en/index.html) supports basic formatting for all languages that will be included in this project.

It also requires minimal configuration and setup.

|

process

|

process improvement add formatter process improvement add formatter a formatter is needed for improved code consistency and readability we will need formatting for sass scss javascript typescript html and markdown files rules that focus on code quality do not need to be a part of this issue potential solutions there are tools like or that have more features however most of these solutions are only intended for a few languages they also require more configuration proposed solution supports basic formatting for all languages that will be included in this project it also requires minimal configuration and setup

| 1

|

19,530

| 25,841,229,914

|

IssuesEvent

|

2022-12-13 00:38:12

|

devssa/onde-codar-em-salvador

|

https://api.github.com/repos/devssa/onde-codar-em-salvador

|

closed

|

IT MANAGER at [IESPsicologia]

|

SALVADOR INFRAESTRUTURA BANCO DE DADOS SERVIDOR PROCESSOS BACKUP HELP WANTED Stale

|

# IT MANAGER

1. **Education:** Completed bachelor's degree (Information Systems or Computer Engineering).

2. **Responsibilities:** Manage the activities of the IT department, covering hardware, systems, databases, servers, internet, backup, and process implementation. Manage the department's team and work processes.

3. **Requirements:** Experience in the role. Leadership experience. Availability to travel on demand.

4. **Salary:** R$4,000.00 + benefits.

5. **Location:** Salvador/BA.

> Interested candidates should send their résumé to andrecoutinho@iespsicologia.com.br or trabalho@iespsicologia.com.br, with the job title "CHEFE DE TI" in the e-mail subject line.

|

1.0

|

IT MANAGER at [IESPsicologia] - # IT MANAGER

1. **Education:** Completed bachelor's degree (Information Systems or Computer Engineering).

2. **Responsibilities:** Manage the activities of the IT department, covering hardware, systems, databases, servers, internet, backup, and process implementation. Manage the department's team and work processes.

3. **Requirements:** Experience in the role. Leadership experience. Availability to travel on demand.

4. **Salary:** R$4,000.00 + benefits.

5. **Location:** Salvador/BA.

> Interested candidates should send their résumé to andrecoutinho@iespsicologia.com.br or trabalho@iespsicologia.com.br, with the job title "CHEFE DE TI" in the e-mail subject line.

|

process

|

it manager at it manager education completed bachelor s degree information systems or computer engineering responsibilities manage the activities of the it department covering hardware systems databases servers internet backup and process implementation manage the department s team and work processes requirements experience in the role leadership experience availability to travel on demand salary r benefits location salvador ba interested candidates should send their résumé to andrecoutinho iespsicologia com br or trabalho iespsicologia com br with the job title chefe de ti in the e mail subject line

| 1

|

132,356

| 18,714,978,246

|

IssuesEvent

|

2021-11-03 02:28:31

|

department-of-veterans-affairs/va.gov-cms

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms

|

opened

|

MVP editorial experience for billing and insurance, register for care, medical records pages, and non-clinical facility services

|

Design Epic Content governance Content forms Needs refining UX writing

|

## Background

### User Story or Problem Statement

This is a subepic for creating an editorial experience for PAOs to manage Top Task pages.

There are a few known issues, and a few unknown opportunities that require some design discovery and thinking.

Some of the issues in this epic block the rollout of these pages.

### Affected users and stakeholders

* Editors at VAMCs

## Design principles

Veteran-centered

- [ ] `Single source of truth`: Increase reliability and consistency of content on VA.gov by providing a single source of truth.

- [ ] `Accessible, plain language`: Provide guardrails and guidelines to ensure content quality.

- [x] `Purposely structured content`: Ensure Content API can deliver content whose meaning matches its structure.

- [x] `Content lifecycle governance`: Produce tools, processes and policies to maintain content quality throughout its lifecycle.

Editor-centered

- [ ] `Purpose-driven`: Create an opportunity to involve the editor community in VA’s mission and content strategy goals.

- [x] `Efficient`: Remove distractions and create clear, straightforward paths to get the job done.

- [ ] `Approachable`: Offer friendly guidance over authoritative instruction.

- [x] `Consistent`: Reduce user’s mental load by allowing them to fall back on pattern recognition to complete tasks.

- [x] `Empowering`: Provide clear information to help editors make decisions about their work.

### CMS Team

Please leave only the team that will do this work selected. If you're not sure, it's fine to leave both selected.

- [ ] `Platform CMS Team`

- [x] `Sitewide CMS Team`

|

1.0

|

MVP editorial experience for billing and insurance, register for care, medical records pages, and non-clinical facility services - ## Background

### User Story or Problem Statement

This is a subepic for creating an editorial experience for PAOs to manage Top Task pages.

There are a few known issues, and a few unknown opportunities that require some design discovery and thinking.

Some of the issues in this epic block the rollout of these pages.

### Affected users and stakeholders

* Editors at VAMCs

## Design principles

Veteran-centered

- [ ] `Single source of truth`: Increase reliability and consistency of content on VA.gov by providing a single source of truth.

- [ ] `Accessible, plain language`: Provide guardrails and guidelines to ensure content quality.

- [x] `Purposely structured content`: Ensure Content API can deliver content whose meaning matches its structure.

- [x] `Content lifecycle governance`: Produce tools, processes and policies to maintain content quality throughout its lifecycle.

Editor-centered

- [ ] `Purpose-driven`: Create an opportunity to involve the editor community in VA’s mission and content strategy goals.

- [x] `Efficient`: Remove distractions and create clear, straightforward paths to get the job done.

- [ ] `Approachable`: Offer friendly guidance over authoritative instruction.

- [x] `Consistent`: Reduce user’s mental load by allowing them to fall back on pattern recognition to complete tasks.

- [x] `Empowering`: Provide clear information to help editors make decisions about their work.

### CMS Team

Please leave only the team that will do this work selected. If you're not sure, it's fine to leave both selected.

- [ ] `Platform CMS Team`

- [x] `Sitewide CMS Team`

|

non_process

|