Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

63,890 | 7,751,126,227 | IssuesEvent | 2018-05-30 16:05:56 | GPIG-Group-C/web-server-ui | https://api.github.com/repos/GPIG-Group-C/web-server-ui | closed | Create additional hidden webpage for triggering demonstration events on the server at key points in the demonstration | Web Server & UI design presentation | There needs to be a way to trigger the earthquake or the start of the data streams. A way to reset this without force closing all applications might also be useful. | 1.0 | Create additional hidden webpage for triggering demonstration events on the server at key points in the demonstration - There needs to be a way to trigger the earthquake or the start of the data streams. A way to reset this without force closing all applications might also be useful. | non_test | create additional hidden webpage for triggering demonstration events on the server at key points in the demonstration there needs to be a way to trigger the earthquake or the start of the data streams a way to reset this without force closing all applications might also be useful | 0 |

70,454 | 7,188,858,931 | IssuesEvent | 2018-02-02 11:44:46 | eclipse/smarthome | https://api.github.com/repos/eclipse/smarthome | opened | [Test failures] HostFragmentSupportTest | Automation Test | ```

Tests run: 2, Failures: 2, Errors: 0, Skipped: 0, Time elapsed: 12.494 sec <<< FAILURE! - in org.eclipse.smarthome.automation.integration.test.HostFragmentSupportTest

asserting that the update of the fragment-host provides the resources correctly(org.eclipse.smarthome.automation.integration.test.HostFragmentSuppo... | 1.0 | [Test failures] HostFragmentSupportTest - ```

Tests run: 2, Failures: 2, Errors: 0, Skipped: 0, Time elapsed: 12.494 sec <<< FAILURE! - in org.eclipse.smarthome.automation.integration.test.HostFragmentSupportTest

asserting that the update of the fragment-host provides the resources correctly(org.eclipse.smarthome.aut... | test | hostfragmentsupporttest tests run failures errors skipped time elapsed sec failure in org eclipse smarthome automation integration test hostfragmentsupporttest asserting that the update of the fragment host provides the resources correctly org eclipse smarthome automation integrati... | 1 |

819,323 | 30,728,857,898 | IssuesEvent | 2023-07-27 22:25:28 | UNopenGIS/7 | https://api.github.com/repos/UNopenGIS/7 | closed | Smart Maps Dojo process 1 | priority/MAY | # Generation 1

The Smart Maps Dojo process is a process to run a sustaining community of practice about Smart Maps. This is a first prototype concept generation.

## 1. 示範 demonstration

the mentor demonstrates smart maps application.

## 2. 解説 explanation

the mentor provides explanation about the fundamental pr... | 1.0 | Smart Maps Dojo process 1 - # Generation 1

The Smart Maps Dojo process is a process to run a sustaining community of practice about Smart Maps. This is a first prototype concept generation.

## 1. 示範 demonstration

the mentor demonstrates smart maps application.

## 2. 解説 explanation

the mentor provides explanat... | non_test | smart maps dojo process generation the smart maps dojo process is a process to run a sustaining community of practice about smart maps this is a first prototype concept generation 示範 demonstration the mentor demonstrates smart maps application 解説 explanation the mentor provides explanat... | 0 |

4,451 | 2,610,094,301 | IssuesEvent | 2015-02-26 18:28:29 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | 深圳红蓝光祛痘效果 | auto-migrated Priority-Medium Type-Defect | ```

深圳红蓝光祛痘效果【深圳韩方科颜全国热线400-869-1818,24小

时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国��

�方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩�

��科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”

健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专��

�治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的�

��痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:14 | 1.0 | 深圳红蓝光祛痘效果 - ```

深圳红蓝光祛痘效果【深圳韩方科颜全国热线400-869-1818,24小

时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国��

�方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩�

��科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”

健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专��

�治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的�

��痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:14 | non_test | 深圳红蓝光祛痘效果 深圳红蓝光祛痘效果【 , 】深圳韩方科颜专业祛痘连锁机构,机构以韩国�� �方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩� ��科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹” 健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专�� �治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的� ��痘。 original issue reported on code google com by szft com on may at | 0 |

269,528 | 23,447,494,777 | IssuesEvent | 2022-08-15 21:17:08 | prysmaticlabs/prysm | https://api.github.com/repos/prysmaticlabs/prysm | closed | Abstracting Time and Tickers from Prysm's Core Implementation | Enhancement Discussion Priority: Low Sync E2E Tests | # 💎 Issue

Thanks @kasey for bringing this up in our conversations.

### Background

Ethereum's consensus protocol, [Gasper](https://arxiv.org/abs/2003.03052), is a synchronous one. This means time is a critical part of its functionality and security. As Prysm implements the [specification](https://github.com/et... | 1.0 | Abstracting Time and Tickers from Prysm's Core Implementation - # 💎 Issue

Thanks @kasey for bringing this up in our conversations.

### Background

Ethereum's consensus protocol, [Gasper](https://arxiv.org/abs/2003.03052), is a synchronous one. This means time is a critical part of its functionality and securit... | test | abstracting time and tickers from prysm s core implementation 💎 issue thanks kasey for bringing this up in our conversations background ethereum s consensus protocol is a synchronous one this means time is a critical part of its functionality and security as prysm implements the we use ti... | 1 |

25,430 | 11,172,290,923 | IssuesEvent | 2019-12-29 04:31:19 | christian-cleberg/lets-debug | https://api.github.com/repos/christian-cleberg/lets-debug | opened | CVE-2019-11358 (Medium) detected in jquery-3.3.1.min.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-3.3.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2019-11358 (Medium) detected in jquery-3.3.1.min.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-3.3.1.min.js</b></p></summary>

<p>JavaScript librar... | non_test | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file tmp ws scm lets debug articles full stack web html path to vulnerable li... | 0 |

118,449 | 9,990,906,948 | IssuesEvent | 2019-07-11 09:50:35 | chameleon-system/chameleon-system | https://api.github.com/repos/chameleon-system/chameleon-system | closed | Error-prone default portal selection | Status: Test Type: Bug | **Describe the bug**

If an action needs an active portal and no portal was set for example actions running in cms backend. The cms tries to get an default portal. Actually its the portal with the lowest id.

Our CMS was delivered with one Portal wit id "1". If you add a new portal with a lower id for example 01dsfs.... | 1.0 | Error-prone default portal selection - **Describe the bug**

If an action needs an active portal and no portal was set for example actions running in cms backend. The cms tries to get an default portal. Actually its the portal with the lowest id.

Our CMS was delivered with one Portal wit id "1". If you add a new por... | test | error prone default portal selection describe the bug if an action needs an active portal and no portal was set for example actions running in cms backend the cms tries to get an default portal actually its the portal with the lowest id our cms was delivered with one portal wit id if you add a new por... | 1 |

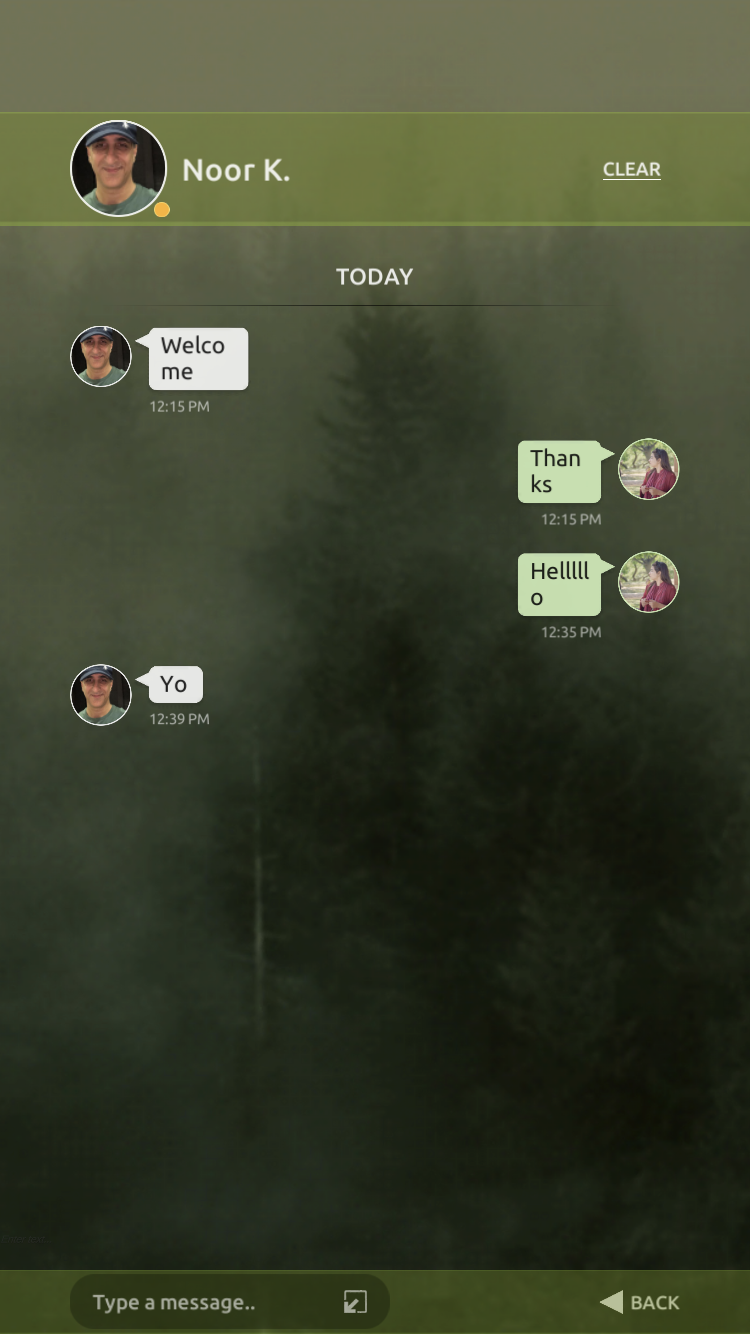

328,757 | 9,999,603,857 | IssuesEvent | 2019-07-12 11:08:21 | turbolabz/transfer-bug-track | https://api.github.com/repos/turbolabz/transfer-bug-track | opened | Chat messages are not showing properly in some iOS devices | Priority: Medium Type: bug | Text is showing in two lines and it shows as half on the board.

| 1.0 | Chat messages are not showing properly in some iOS devices - Text is showing in two lines and it shows as half on the board.

| non_test | chat messages are not showing properly in some ios devices text is showing in two lines and it shows as half on the board | 0 |

87,755 | 17,370,868,556 | IssuesEvent | 2021-07-30 13:51:00 | parallaxsecond/parsec | https://api.github.com/repos/parallaxsecond/parsec | closed | Investigate the strange CodeCov reports | bug code health question testing | Code coverage reporting was out of order for a while, and now that it's working again, the numbers are somewhat strange. You can see the most recent report [here](https://app.codecov.io/gh/parallaxsecond/parsec). The coverage has dropped, despite us adding new tests for various bits of functionality, while some parts o... | 1.0 | Investigate the strange CodeCov reports - Code coverage reporting was out of order for a while, and now that it's working again, the numbers are somewhat strange. You can see the most recent report [here](https://app.codecov.io/gh/parallaxsecond/parsec). The coverage has dropped, despite us adding new tests for various... | non_test | investigate the strange codecov reports code coverage reporting was out of order for a while and now that it s working again the numbers are somewhat strange you can see the most recent report the coverage has dropped despite us adding new tests for various bits of functionality while some parts of the repor... | 0 |

256,185 | 19,402,667,780 | IssuesEvent | 2021-12-19 13:16:42 | canwebe/CatBreeds | https://api.github.com/repos/canwebe/CatBreeds | closed | Add Readme and Information about the project in this repo | documentation | - [ ] Added Readme

- [ ] Added about section in repo | 1.0 | Add Readme and Information about the project in this repo - - [ ] Added Readme

- [ ] Added about section in repo | non_test | add readme and information about the project in this repo added readme added about section in repo | 0 |
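
The derived columns in the row above look reproducible from `title`, `body`, and `label`. The sketch below is an assumption inferred from the visible rows, not the dataset's documented preprocessing: `combine`, `normalize`, and `binary_label` are hypothetical names, and the rules (lowercase, drop tokens that contain a digit, turn punctuation into spaces) do not cover everything seen in other rows, such as the removal of Markdown links.

```python
import re


def combine(title: str, body: str) -> str:
    """Assumed rule for `text_combine`: title and body joined by ' - '."""
    return f"{title} - {body}"


def normalize(text: str) -> str:
    """Assumed rule for `text`: a lowercased, punctuation-free word sequence."""
    text = text.lower()
    # Drop every token that contains a digit (e.g. "2019", "3", "2nodes").
    text = re.sub(r"\b\w*\d\w*\b", " ", text)
    # Replace punctuation and underscores with spaces.
    text = re.sub(r"[^\w\s]|_", " ", text)
    # Collapse runs of whitespace, including newlines.
    return " ".join(text.split())


def binary_label(label: str) -> int:
    """Assumed rule for `binary_label`: 1 for 'test', 0 for 'non_test'."""
    return 1 if label == "test" else 0
```

Applied to the `title` and `body` of row 256,185, `normalize(combine(title, body))` gives the `text` value shown for that row, and `binary_label("non_test")` gives `0`.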

257,005 | 8,131,790,901 | IssuesEvent | 2018-08-18 02:17:45 | alassanecoly/BookmarkMyChampions | https://api.github.com/repos/alassanecoly/BookmarkMyChampions | closed | ✨ Implement PATCH /users/me route | priority: medium 🚧 scope: api scope: authentication scope: routing status: accepted 👍 type: feature ✨ | **Is your feature request related to a problem ? Please describe.**

Current user can update his informations.

**Describe the solution you'd like**

Implement PATCH /users/me route

| 1.0 | ✨ Implement PATCH /users/me route - **Is your feature request related to a problem ? Please describe.**

Current user can update his informations.

**Describe the solution you'd like**

Implement PATCH /users/me route

| non_test | ✨ implement patch users me route is your feature request related to a problem please describe current user can update his informations describe the solution you d like implement patch users me route | 0 |

313,980 | 26,967,520,925 | IssuesEvent | 2023-02-09 00:11:05 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix tensor.test_torch_instance_type | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3977080495/jobs/6817950648" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/3977080495/jobs/6817950648" rel="noopener ... | 1.0 | Fix tensor.test_torch_instance_type - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3977080495/jobs/6817950648" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/3977... | test | fix tensor test torch instance type tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test torch test tensor py test torch instance type e assertionerror e falsifying exam... | 1 |

7,659 | 8,026,932,271 | IssuesEvent | 2018-07-27 07:07:55 | badges/shields | https://api.github.com/repos/badges/shields | closed | Jenkins permission requirements | question service-badge | Hello all,

I've been trying to get a shields badge of my Jenkins build status for [this job](https://ci.gamerking195.com/job/AutoUpdaterAPI). However, it always [displays inaccessible.](https://img.shields.io/jenkins/s/https/ci.gamerking195.com/job/AutoUpdaterAPI.svg) The job has project-based security, with anonym... | 1.0 | Jenkins permission requirements - Hello all,

I've been trying to get a shields badge of my Jenkins build status for [this job](https://ci.gamerking195.com/job/AutoUpdaterAPI). However, it always [displays inaccessible.](https://img.shields.io/jenkins/s/https/ci.gamerking195.com/job/AutoUpdaterAPI.svg) The job has p... | non_test | jenkins permission requirements hello all i ve been trying to get a shields badge of my jenkins build status for however it always the job has project based security with anonymous users being able to discover and read but nothing else things i ve tried adding removing ssl giving anonymous... | 0 |

354,918 | 25,175,215,091 | IssuesEvent | 2022-11-11 08:37:37 | Isaaclhy00/pe | https://api.github.com/repos/Isaaclhy00/pe | opened | Inconsistent grammar | severity.VeryLow type.DocumentationBug |

The listTasks command uses the plural form "Tasks" however the findTask command uses the ... | 1.0 | Inconsistent grammar -

The listTasks command uses the plural form "Tasks" however the fin... | non_test | inconsistent grammar the listtasks command uses the plural form tasks however the findtask command uses the singular form task although both commands may potentially return one or more tasks | 0 |

104,656 | 8,996,685,538 | IssuesEvent | 2019-02-02 03:34:33 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | opened | SSLConfigurationReloaderTests hangs when run locally | :Security/Network >test-failure | This test suite hangs indefinitely when run locally, but I haven't seen CI be impacted yet.

Reproduce with:

```

./gradlew :x-pack:plugin:core:test \

-Dtests.seed=5DB61DD425F081B \

-Dtests.class=org.elasticsearch.xpack.core.ssl.SSLConfigurationReloaderTests \

-Dtests.security.manager=true \

-Dtests.loca... | 1.0 | SSLConfigurationReloaderTests hangs when run locally - This test suite hangs indefinitely when run locally, but I haven't seen CI be impacted yet.

Reproduce with:

```

./gradlew :x-pack:plugin:core:test \

-Dtests.seed=5DB61DD425F081B \

-Dtests.class=org.elasticsearch.xpack.core.ssl.SSLConfigurationReloaderTes... | test | sslconfigurationreloadertests hangs when run locally this test suite hangs indefinitely when run locally but i haven t seen ci be impacted yet reproduce with gradlew x pack plugin core test dtests seed dtests class org elasticsearch xpack core ssl sslconfigurationreloadertests dtest... | 1 |

50,355 | 21,076,589,045 | IssuesEvent | 2022-04-02 08:21:59 | emergenzeHack/ukrainehelp.emergenzehack.info_segnalazioni | https://api.github.com/repos/emergenzeHack/ukrainehelp.emergenzehack.info_segnalazioni | opened | https://www.raiplay.it/benvenuti-bambini Cartoni animati in lingua italiana e ucraina (contenuti gr | Services translation Children | <pre><yamldata>

servicetypes:

materialGoods: false

hospitality: false

transport: false

healthcare: false

Legal: false

translation: true

job: false

psychologicalSupport: false

Children: true

disability: false

women: false

education: false

offerFromWho: Raiplay

title: https://www.raiplay.it/benven... | 1.0 | https://www.raiplay.it/benvenuti-bambini Cartoni animati in lingua italiana e ucraina (contenuti gr - <pre><yamldata>

servicetypes:

materialGoods: false

hospitality: false

transport: false

healthcare: false

Legal: false

translation: true

job: false

psychologicalSupport: false

Children: true

disabil... | non_test | cartoni animati in lingua italiana e ucraina contenuti gr servicetypes materialgoods false hospitality false transport false healthcare false legal false translation true job false psychologicalsupport false children true disability false women false education false offerfr... | 0 |

150,654 | 11,980,044,776 | IssuesEvent | 2020-04-07 08:40:39 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | Sindragosa gauntlet not active | Fix - Tester Confirmed | **What is Happening:** Currently the sindragosa gauntlet does not active, so the door is close and the teleport is not active, you can't get to her.

**What Should happen:** The gauntlet should active when you move to the gauntlet room before sindy.

Not sure if this is reported or not, but since i can't find i... | 1.0 | Sindragosa gauntlet not active - **What is Happening:** Currently the sindragosa gauntlet does not active, so the door is close and the teleport is not active, you can't get to her.

**What Should happen:** The gauntlet should active when you move to the gauntlet room before sindy.

Not sure if this is reported... | test | sindragosa gauntlet not active what is happening currently the sindragosa gauntlet does not active so the door is close and the teleport is not active you can t get to her what should happen the gauntlet should active when you move to the gauntlet room before sindy not sure if this is reported... | 1 |

145,865 | 11,710,898,343 | IssuesEvent | 2020-03-09 02:53:05 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | Fail to acquire lease for blobs in one SAS attached blob container | :beetle: regression :gear: blobs 🧪 testing | **Storage Explorer Version:** 1.12.0

**Build**: [20200305.6](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3529152)

**Branch**: dev/chuye/beta-blob-extension

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04

**Architecture**: ia32/x64

**Regression From:** Previous release(1.12.0)

**Steps to repro... | 1.0 | Fail to acquire lease for blobs in one SAS attached blob container - **Storage Explorer Version:** 1.12.0

**Build**: [20200305.6](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3529152)

**Branch**: dev/chuye/beta-blob-extension

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04

**Architecture**: ia32/x6... | test | fail to acquire lease for blobs in one sas attached blob container storage explorer version build branch dev chuye beta blob extension platform os windows linux ubuntu architecture regression from previous release steps to reproduce expand on... | 1 |

279,733 | 24,251,684,859 | IssuesEvent | 2022-09-27 14:37:48 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | closed | CMS Test Coverage Analysis | Automated testing ⭐️ Sitewide CMS | ## Acceptance Criteria

- [ ] team has a comprehensive list of manual test cases (in va.gov-team repo) to run through

## Implementation notes

- [ ] comprehensive list of custom features we've built that don't have test coverage

- [ ] stories created for any critical features that need automated test coverage | 1.0 | CMS Test Coverage Analysis - ## Acceptance Criteria

- [ ] team has a comprehensive list of manual test cases (in va.gov-team repo) to run through

## Implementation notes

- [ ] comprehensive list of custom features we've built that don't have test coverage

- [ ] stories created for any critical features that need aut... | test | cms test coverage analysis acceptance criteria team has a comprehensive list of manual test cases in va gov team repo to run through implementation notes comprehensive list of custom features we ve built that don t have test coverage stories created for any critical features that need automated... | 1 |

107,054 | 9,201,064,658 | IssuesEvent | 2019-03-07 18:39:11 | scylladb/scylla | https://api.github.com/repos/scylladb/scylla | opened | segfault in sstable::has_correct_non_compound_range_tombstones during repair_disjoint_row_2nodes_diff_shard_count_test | dtest | scylla version e9bc2a7912bcc3539f0a10c436a60069b88d1cab

scylla dtest version scylladb/scylla-dtest@f373388d91b494d398919ace1c54b73bd0a8b4a2

Seen in [dtest-release/50/artifact/logs-release.2/1551952592893_repair_additional_test.RepairAdditionalTest.repair_disjoint_row_2nodes_diff_shard_count_test/node1.log](http://j... | 1.0 | segfault in sstable::has_correct_non_compound_range_tombstones during repair_disjoint_row_2nodes_diff_shard_count_test - scylla version e9bc2a7912bcc3539f0a10c436a60069b88d1cab

scylla dtest version scylladb/scylla-dtest@f373388d91b494d398919ace1c54b73bd0a8b4a2

Seen in [dtest-release/50/artifact/logs-release.2/15519... | test | segfault in sstable has correct non compound range tombstones during repair disjoint row diff shard count test scylla version scylla dtest version scylladb scylla dtest seen in cfpi e logs release scylla void sea... | 1 |

12,421 | 3,269,147,677 | IssuesEvent | 2015-10-23 15:06:16 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Enhanced markdown: Design of Reports page for review with LG | 4 - Acceptance testing Feature Request Needs Design Work UI/UX | @Lesterng please provide details :-) | 1.0 | Enhanced markdown: Design of Reports page for review with LG - @Lesterng please provide details :-) | test | enhanced markdown design of reports page for review with lg lesterng please provide details | 1 |

192,622 | 14,622,909,335 | IssuesEvent | 2020-12-23 01:45:50 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | The public connected blob container is neither auto selected nor opened | :beetle: regression :gear: blobs :heavy_check_mark: merged 🧪 testing | **Storage Explorer Version:** 1.17.0

**Build Number:** 20201222.1

**Branch:** main

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04/ MacOS Catalina

**Architecture:** ia32/x64

**Regression From:** Previous build (20201218.3)

## Steps to Reproduce ##

1. Expand one storage account -> Blob Containers.

2. Create a ... | 1.0 | The public connected blob container is neither auto selected nor opened - **Storage Explorer Version:** 1.17.0

**Build Number:** 20201222.1

**Branch:** main

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04/ MacOS Catalina

**Architecture:** ia32/x64

**Regression From:** Previous build (20201218.3)

## Steps to Rep... | test | the public connected blob container is neither auto selected nor opened storage explorer version build number branch main platform os windows linux ubuntu macos catalina architecture regression from previous build steps to reproduce expand on... | 1 |

492,633 | 14,216,694,348 | IssuesEvent | 2020-11-17 09:20:48 | usc-isi-i2/datamart-api | https://api.github.com/repos/usc-isi-i2/datamart-api | opened | Load anntated datasets from Pam | Priority 1 world-modeler | Our local copy of the dataset files is here:

https://drive.google.com/drive/folders/1etSpJAJth_0xRSil6jSTYbr7su5Pdrmf

Pam's Google shared drive is here:

https://drive.google.com/drive/u/2/folders/13DwbrNaHuDr7ZmFkbYcXIOd1mkcre5S0 | 1.0 | Load anntated datasets from Pam - Our local copy of the dataset files is here:

https://drive.google.com/drive/folders/1etSpJAJth_0xRSil6jSTYbr7su5Pdrmf

Pam's Google shared drive is here:

https://drive.google.com/drive/u/2/folders/13DwbrNaHuDr7ZmFkbYcXIOd1mkcre5S0 | non_test | load anntated datasets from pam our local copy of the dataset files is here pam s google shared drive is here | 0 |

1,540 | 3,041,618,778 | IssuesEvent | 2015-08-07 22:42:23 | npgsql/npgsql | https://api.github.com/repos/npgsql/npgsql | closed | Add SyncDNS connection string parameter | feature performance | Our current connection mechanism resolves DNS with an asynchronous call; this is because the .NET sync DNS API provides no timeout facility, and we're bound by ADO.NET to provide a connection timeout.

We've had several reports of people having trouble with this mechanism, in cases of bursts: the threadpool is exhaus... | True | Add SyncDNS connection string parameter - Our current connection mechanism resolves DNS with an asynchronous call; this is because the .NET sync DNS API provides no timeout facility, and we're bound by ADO.NET to provide a connection timeout.

We've had several reports of people having trouble with this mechanism, in... | non_test | add syncdns connection string parameter our current connection mechanism resolves dns with an asynchronous call this is because the net sync dns api provides no timeout facility and we re bound by ado net to provide a connection timeout we ve had several reports of people having trouble with this mechanism in... | 0 |

300,838 | 25,998,255,450 | IssuesEvent | 2022-12-20 13:24:08 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | kvnemesis: use ReplicationManual | C-enhancement T-testeng | **Describe the problem**

In https://github.com/cockroachdb/cockroach/pull/89477, TestKVNemesisMultiNode was (accidentally) switched to use ReplicationAuto instead of ReplicationManual, meaning that the TestCluster will upreplicate and also have the replicate queue active throughout the run.

We should decide wheth... | 1.0 | kvnemesis: use ReplicationManual - **Describe the problem**

In https://github.com/cockroachdb/cockroach/pull/89477, TestKVNemesisMultiNode was (accidentally) switched to use ReplicationAuto instead of ReplicationManual, meaning that the TestCluster will upreplicate and also have the replicate queue active throughout... | test | kvnemesis use replicationmanual describe the problem in testkvnemesismultinode was accidentally switched to use replicationauto instead of replicationmanual meaning that the testcluster will upreplicate and also have the replicate queue active throughout the run we should decide whether that s desira... | 1 |

780,987 | 27,417,609,706 | IssuesEvent | 2023-03-01 14:45:59 | PrefectHQ/prefect | https://api.github.com/repos/PrefectHQ/prefect | closed | Orion - add search functionality in block selection. | enhancement status:accepted ui priority:medium | ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar request and didn't find it.

- [X] I searched the Prefect documentation for this feature.

### Prefect Version

2.x

### Describe the current behavior

If I define a block as an input for a flow... | 1.0 | Orion - add search functionality in block selection. - ### First check

- [X] I added a descriptive title to this issue.

- [X] I used the GitHub search to find a similar request and didn't find it.

- [X] I searched the Prefect documentation for this feature.

### Prefect Version

2.x

### Describe the current... | non_test | orion add search functionality in block selection first check i added a descriptive title to this issue i used the github search to find a similar request and didn t find it i searched the prefect documentation for this feature prefect version x describe the current behav... | 0 |

11,241 | 8,336,358,246 | IssuesEvent | 2018-09-28 07:30:41 | CoditEU/practical-api-guidelines | https://api.github.com/repos/CoditEU/practical-api-guidelines | closed | Complete the security first paragraph | guidance must-have security | Complete the security first paragraph with more details.

Decide whether any form of authentication / authorization will be part of the first maturity level | True | Complete the security first paragraph - Complete the security first paragraph with more details.

Decide whether any form of authentication / authorization will be part of the first maturity level | non_test | complete the security first paragraph complete the security first paragraph with more details decide whether any form of authentication authorization will be part of the first maturity level | 0 |

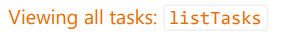

356,505 | 25,176,204,480 | IssuesEvent | 2022-11-11 09:28:53 | t1mzzz/pe | https://api.github.com/repos/t1mzzz/pe | opened | DG - Links to `Main` and `MainApp` are not correct | type.DocumentationBug severity.VeryLow | As seen below, `Main` and `MainApp` contain hyperlinks that should link to `Main.java` and `MainApp.java` of CLInkedIn. However, it still links to AB3s GitHub repository.

<!--session: 1668153883434-430ea50c... | 1.0 | DG - Links to `Main` and `MainApp` are not correct - As seen below, `Main` and `MainApp` contain hyperlinks that should link to `Main.java` and `MainApp.java` of CLInkedIn. However, it still links to AB3s GitHub repository.

| test | Use Pester to write down the tests based on the PS scripts. The result of this task will be the Pester Test Script. This script when executed should generate a NUnit.xml file. Eventually, it will be used by the Azure Pipeline. It should include the TestImage tests and Test Image using Stack Test. | 1.0 | P3 Add Tests Powershell script for Azure Pipeline (Pester Preferred) - Use Pester to write down the tests based on the PS scripts. The result of this task will be the Pester Test Script. This script when executed should generate a NUnit.xml file. Eventually, it will be used by the Azure Pipeline. It should include the ... | test | add tests powershell script for azure pipeline pester preferred use pester to write down the tests based on the ps scripts the result of this task will be the pester test script this script when executed should generate a nunit xml file eventually it will be used by the azure pipeline it should include the t... | 1 |

72,477 | 9,594,851,963 | IssuesEvent | 2019-05-09 14:49:38 | regolith-linux/regolith-desktop | https://api.github.com/repos/regolith-linux/regolith-desktop | closed | Add READMEs to all debian package repos | documentation | The content of readme's should contain:

1. general description of the package.

2. list and describe any dependencies with other Regolith packages.

3. notable configuration if any exists.

4. how to build the package locally and publish changes to a PPA. | 1.0 | Add READMEs to all debian package repos - The content of readme's should contain:

1. general description of the package.

2. list and describe any dependencies with other Regolith packages.

3. notable configuration if any exists.

4. how to build the package locally and publish changes to a PPA. | non_test | add readmes to all debian package repos the content of readme s should contain general description of the package list and describe any dependencies with other regolith packages notable configuration if any exists how to build the package locally and publish changes to a ppa | 0 |

185,070 | 14,292,764,484 | IssuesEvent | 2020-11-24 01:55:31 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | eclipse-iofog/iofog-go-sdk: vendor/k8s.io/gengo/examples/deepcopy-gen/generators/deepcopy_test.go; 10 LoC | fresh test tiny |

Found a possible issue in [eclipse-iofog/iofog-go-sdk](https://www.github.com/eclipse-iofog/iofog-go-sdk) at [vendor/k8s.io/gengo/examples/deepcopy-gen/generators/deepcopy_test.go](https://github.com/eclipse-iofog/iofog-go-sdk/blob/b8ff7f50d1585fd5f2a41ea43fb89c9dd6805c7c/vendor/k8s.io/gengo/examples/deepcopy-gen/gene... | 1.0 | eclipse-iofog/iofog-go-sdk: vendor/k8s.io/gengo/examples/deepcopy-gen/generators/deepcopy_test.go; 10 LoC -

Found a possible issue in [eclipse-iofog/iofog-go-sdk](https://www.github.com/eclipse-iofog/iofog-go-sdk) at [vendor/k8s.io/gengo/examples/deepcopy-gen/generators/deepcopy_test.go](https://github.com/eclipse-iof... | test | eclipse iofog iofog go sdk vendor io gengo examples deepcopy gen generators deepcopy test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the lin... | 1 |

281,374 | 24,388,050,664 | IssuesEvent | 2022-10-04 13:20:10 | celestiaorg/test-infra | https://api.github.com/repos/celestiaorg/test-infra | closed | testground/tests: implement TC-004 | enhancement test testground | After finishing #61 and #62 , we need to

- [ ] Create more composition files reflecting data

- [ ] Measure the sync times from both light and full nodes

Ref:

1. https://github.com/celestiaorg/test-infra/blob/main/docs/test-plans/001-Big-Blocks/test-cases/tc-004-full-light-past.md

2. #1

3. #55 | 2.0 | testground/tests: implement TC-004 - After finishing #61 and #62 , we need to

- [ ] Create more composition files reflecting data

- [ ] Measure the sync times from both light and full nodes

Ref:

1. https://github.com/celestiaorg/test-infra/blob/main/docs/test-plans/001-Big-Blocks/test-cases/tc-004-full-l... | test | testground tests implement tc after finishing and we need to create more composition files reflecting data measure the sync times from both light and full nodes ref | 1 |

122,237 | 10,217,751,368 | IssuesEvent | 2019-08-15 14:23:48 | DBCG/cql_engine | https://api.github.com/repos/DBCG/cql_engine | closed | Unexpected result in Multi Source Query | bug test created | Getting an unexpected output from a multi source query:

define "a":

{

{ code: 1, periods: {Interval[1, 2], Interval[3, 4]} }

}

define "b":

{

{ code: 1, periods: {Interval[1, 2], Interval[3, 4]} }

}

define "Multisource":

from "a" A, "b" B

>> Multisource [10:1] Index... | 1.0 | Unexpected result in Multi Source Query - Getting an unexpected output from a multi source query:

define "a":

{

{ code: 1, periods: {Interval[1, 2], Interval[3, 4]} }

}

define "b":

{

{ code: 1, periods: {Interval[1, 2], Interval[3, 4]} }

}

define "Multisource":

from "... | test | unexpected result in multi source query getting an unexpected output from a multi source query define a code periods interval interval define b code periods interval interval define multisource from a a b b mu... | 1 |

141,646 | 11,429,762,763 | IssuesEvent | 2020-02-04 08:44:39 | proarc/proarc | https://api.github.com/repos/proarc/proarc | closed | Delete the import directory after a successful import | 6 k testování Release-3.5.15 | After a successful import, delete the import folder (proarc_import) | 1.0 | Delete the import directory after a successful import - After a successful import, delete the import folder (proarc_import) | test | delete the import directory after a successful import after a successful import delete the import folder proarc import | 1 |

178,077 | 13,761,077,754 | IssuesEvent | 2020-10-07 07:06:29 | OpenPaaS-Suite/esn-frontend-calendar | https://api.github.com/repos/OpenPaaS-Suite/esn-frontend-calendar | closed | As a user, I want the more important fields to precede the less important fields in the event dialog | QA:Testing enhancement | #### User story summary

As a user, I want the more important fields to precede the less important fields in the event dialog as they are much more frequently used.

#### Where to find the feature

1. Go to Calendar

2. Choose to create a new event or edit an existing event

3. The event dialog should be opened

... | 1.0 | As a user, I want the more important fields to precede the less important fields in the event dialog - #### User story summary

As a user, I want the more important fields to precede the less important fields in the event dialog as they are much more frequently used.

#### Where to find the feature

1. Go to Cale... | test | as a user i want the more important fields to precede the less important fields in the event dialog user story summary as a user i want the more important fields to precede the less important fields in the event dialog as they are much more frequently used where to find the feature go to cale... | 1 |

115,734 | 14,880,521,865 | IssuesEvent | 2021-01-20 09:17:58 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Add editor setting to toggle Breadcrumb UI on/off. | General Interface Needs Design Feedback | At the bottom of the editor there is a white toolbar that lists a breadcrumb-like trail of the currently selected block:

<img width="173" alt="image" src="https://user-images.githubusercontent.com/191598/101187755-c3669500-3622-11eb-8cbe-7be911b77670.png">

The intention of this toolbar is to make it easier to tra... | 1.0 | Add editor setting to toggle Breadcrumb UI on/off. - At the bottom of the editor there is a white toolbar that lists a breadcrumb-like trail of the currently selected block:

<img width="173" alt="image" src="https://user-images.githubusercontent.com/191598/101187755-c3669500-3622-11eb-8cbe-7be911b77670.png">

The ... | non_test | add editor setting to toggle breadcrumb ui on off at the bottom of the editor there is a white toolbar that lists a breadcrumb like trail of the currently selected block img width alt image src the intention of this toolbar is to make it easier to traverse from a child block to its parent s howeve... | 0 |

535,373 | 15,687,219,002 | IssuesEvent | 2021-03-25 13:26:37 | gsbelarus/check-and-cash | https://api.github.com/repos/gsbelarus/check-and-cash | closed | gedemin control center | POSitive:Cash Priority-Normal Severity - Minor | Positive Cash. After payment, the gedemin control center window comes to the foreground. Two clients have run into this problem, presumably after a new user was added. The clients are running different versions of the program.

<!-- A clear and detailed description of what went wrong. -->

<!-- The more information you can provide, the easier we can handle this problem. -->

<!-- Start writing below this line -->

Hello, I was told to make a report with slimefun by... | 1.0 | Slimefun + EcoEnchant Incompatible - <!-- FILL IN THE FORM BELOW -->

## :round_pushpin: Description (REQUIRED)

<!-- A clear and detailed description of what went wrong. -->

<!-- The more information you can provide, the easier we can handle this problem. -->

<!-- Start writing below this line -->

Hello, I was t... | test | slimefun ecoenchant incompatible round pushpin description required hello i was told to make an report with slimefun by a plugin developer name auxilor i m using this plugin call ecoenchants which i will provide the link and wiki below this plugin allows mc servers to have custom enchantme... | 1 |

193,132 | 6,881,893,902 | IssuesEvent | 2017-11-21 00:37:51 | zulip/zulip | https://api.github.com/repos/zulip/zulip | closed | Move `tools/generate-custom-webfont-icon` to run as part of `update-prod-static` | area: tooling priority: high | I've determined that tools/generate-custom-webfont-icon never produces the same output twice (probably there's a timestamp in there). Given that it only takes 300ms to run, I think we want to move this to the update-prod-static / static/generated system. I'll open a follow-up issue.

We should move it to work more li... | 1.0 | Move `tools/generate-custom-webfont-icon` to run as part of `update-prod-static` - I've determined that tools/generate-custom-webfont-icon never produces the same output twice (probably there's a timestamp in there). Given that it only takes 300ms to run, I think we want to move this to the update-prod-static / static/... | non_test | move tools generate custom webfont icon to run as part of update prod static i ve determined that tools generate custom webfont icon never produces the same output twice probably there s a timestamp in there given that it only takes to run i think we want to move this to the update prod static static gene... | 0 |

121,003 | 10,146,264,293 | IssuesEvent | 2019-08-05 07:40:49 | linz/linz-bde-copy | https://api.github.com/repos/linz/linz-bde-copy | closed | Add test for calls with -o switch | Stale testsuite | I noticed that the current `runtests.sh` script never tests calls with the `-o` switch (for output fields).

Since a bug was found when using that switch, I think it is important to add some.

\cc @imincik | 1.0 | Add test for calls with -o switch - I noticed that the current `runtests.sh` script never tests calls with the `-o` switch (for output fields).

Since a bug was found when using that switch, I think it is important to add some.

\cc @imincik | test | add test for calls with o switch i noticed current runtests sh script is not ever testing calls with o switch for output fields as a bug was found in using that switch i think it will be important to add some cc imincik | 1 |

283,885 | 24,569,437,270 | IssuesEvent | 2022-10-13 07:26:52 | longhorn/longhorn | https://api.github.com/repos/longhorn/longhorn | closed | [BUG] Volume attach API not working for RWX volume | kind/bug kind/test | ## Describe the bug

(1) Try to attach an RWX volume to a node through the API. The API responds with status code 200, but the RWX volume is still detached:

HTTP Request:

```

HTTP/1.1 POST /v1/volumes/test-2?action=attach

Host: 54.243.179.156:30007

Accept: application/json

Content-Type: application/json

Content-Length... | 1.0 | [BUG] Volume attach API not working for RWX volume - ## Describe the bug

(1) Try to attach an RWX volume to a node through the API. The API responds with status code 200, but the RWX volume is still detached:

HTTP Request:

```

HTTP/1.1 POST /v1/volumes/test-2?action=attach

Host: 54.243.179.156:30007

Accept: application... | test | volume attach api not working for rwx volume describe the bug try to attach a rwx volume to a node through api the api response status code but the rwx volume is still detached http request http post volumes test action attach host accept application json content typ... | 1 |

299,629 | 25,915,255,859 | IssuesEvent | 2022-12-15 16:50:58 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Credentials expire for a new VPN account when system date is advanced on the client side | bug needs-discussion QA/Yes QA/Test-Plan-Specified OS/Desktop feature/vpn | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Credentials expire for a new VPN account when system date is advanced on the client side - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFIC... | test | credentials expire for a new vpn account when system date is advanced on the client side have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insuffic... | 1 |

283,753 | 30,913,539,427 | IssuesEvent | 2023-08-05 02:10:42 | hshivhare67/kernel_v4.19.72 | https://api.github.com/repos/hshivhare67/kernel_v4.19.72 | reopened | CVE-2019-11884 (Low) detected in linuxlinux-4.19.282 | Mend: dependency security vulnerability | ## CVE-2019-11884 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.ke... | True | CVE-2019-11884 (Low) detected in linuxlinux-4.19.282 - ## CVE-2019-11884 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.282</b></p></summary>

<p>

<p>The Linux Kernel</p... | non_test | cve low detected in linuxlinux cve low severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files vulner... | 0 |

38,949 | 8,559,443,977 | IssuesEvent | 2018-11-08 21:17:33 | kentcdodds/ama | https://api.github.com/repos/kentcdodds/ama | closed | Code sharing between projects, how to manage? | code-help | First of all I would like to thank you for all your insightful videos and your projects such as testing libraries. I am day-by-day extending the test coverage of my project. Thank you, Kent.

I am currently working on a charity project where I have two React/CRA projects which I would like to share code with each... | 1.0 | Code sharing between projects, how to manage? - First of all I would like to thank you for all your insightful videos and your projects such as testing libraries. I am day-by-day extending the test coverage of my project. Thank you, Kent.

I am currently working on a charity project where I have two React/CRA pro... | non_test | code sharing between projects how to manage first of all i would like to thank you for all your insightful videos and your projects such as testing libraries i am day by day extending the test coverage of my project thank you kent i am currently working on a charity project where i have two react cra pro... | 0 |

374,687 | 26,127,914,368 | IssuesEvent | 2022-12-28 21:44:25 | yannellym/JavaBank | https://api.github.com/repos/yannellym/JavaBank | closed | Merge the prompt and verify funcs | documentation enhancement | Could potentially merge the promptuserforAccnumberandpin and verify functions to cut down wet code | 1.0 | Merge the prompt and verify funcs - Could potentially merge the promptuserforAccnumberandpin and verify functions to cut down wet code | non_test | merge the prompt and verify funcs could potentially merge the promptuserforaccnumberandpin and verify functions to cut down wet code | 0 |

9,537 | 3,052,145,382 | IssuesEvent | 2015-08-12 13:18:10 | rssidlowski/Pollution_Source_Tracking | https://api.github.com/repos/rssidlowski/Pollution_Source_Tracking | closed | Link Sample: unable to successfully link sample | bug COBDev Ready for Testing moderate priority | As reported from the PST team: We have found that the Link Sample feature in the PST application does not work correctly. Several staff members have tried over the past few weeks and all have had the same experience. After clicking on the “Link Sample” button the application guides us to choose an existing sample to ... | 1.0 | Link Sample: unable to successfully link sample - As reported from the PST team: We have found that the Link Sample feature in the PST application does not work correctly. Several staff members have tried over the past few weeks and all have had the same experience. After clicking on the “Link Sample” button the appl... | test | link sample unable to successfully link sample as reported from the pst team we have found that the link sample feature in the pst application does not work correctly several staff members have tried over the past few weeks and all have had the same experience after clicking on the “link sample” button the appl... | 1 |

228,299 | 18,169,728,005 | IssuesEvent | 2021-09-27 18:27:54 | microsoft/vscode-jupyter | https://api.github.com/repos/microsoft/vscode-jupyter | opened | Ensure white background is applied to just the plot and not the entire output area for matplot lib plots | testplan-item | Refs: https://github.com/microsoft/vscode-jupyter/issues/7470

- [ ] anyOS

Complexity: 3

[Create Issue](https://github.com/microsoft/vscode-jupyter/issues/new?body=Testing+%237470%0A%0A&assignees=DonJayamanne)

---

Retina display option for Matplotlib does not work as intended

**Testing**

* Install P... | 1.0 | Ensure white background is applied to just the plot and not the entire output area for matplot lib plots - Refs: https://github.com/microsoft/vscode-jupyter/issues/7470

- [ ] anyOS

Complexity: 3

[Create Issue](https://github.com/microsoft/vscode-jupyter/issues/new?body=Testing+%237470%0A%0A&assignees=DonJaya... | test | ensure white background is applied to just the plot and not the entire output area for matplot lib plots refs anyos complexity retina display option for matplotlib does not work as intended testing install python install python jupyter extension change theme to dar... | 1 |

291,045 | 21,913,962,609 | IssuesEvent | 2022-05-21 14:08:49 | SebastianZolkwer/obligatorio-agil2-SebastianZolkwer-MauroWynter-AlanGarfinkel | https://api.github.com/repos/SebastianZolkwer/obligatorio-agil2-SebastianZolkwer-MauroWynter-AlanGarfinkel | opened | Effort log of team members per task | documentation | Part of the TODO for Delivery 2: A detailed record of effort per task and per team member must be kept. | 1.0 | Effort log of team members per task - Part of the TODO for Delivery 2: A detailed record of effort per task and per team member must be kept. | non_test | effort log of team members per task part of the todo for delivery a detailed record of effort per task and per team member must be kept | 0 |

25,638 | 3,953,191,309 | IssuesEvent | 2016-04-29 12:28:57 | codeforboston/cornerwise | https://api.github.com/repos/codeforboston/cornerwise | closed | Project detail view | css design javascript | Containing additional budget details, justification, project description, and associated address(es). | 1.0 | Project detail view - Containing additional budget details, justification, project description, and associated address(es). | non_test | project detail view containing additional budget details justification project description and associated address es | 0 |

349,043 | 31,769,448,792 | IssuesEvent | 2023-09-12 10:48:48 | BookStackApp/BookStack | https://api.github.com/repos/BookStackApp/BookStack | closed | Sorting of books is lost when copying | :bug: Bug :mag: Testing required | ### Describe the Bug

We have created a book as a template in our bookstack and now want to copy it. However, the manual sorting of the book is lost and is sorted automatically (by name?)

### Steps to Reproduce

1. Create a book with a fixed structure

2. hit the copy button

3. structure is lost

### Expected Behavio... | 1.0 | Sorting of books is lost when copying - ### Describe the Bug

We have created a book as a template in our bookstack and now want to copy it. However, the manual sorting of the book is lost and is sorted automatically (by name?)

### Steps to Reproduce

1. Create a book with a fixed structure

2. hit the copy button

3.... | test | sorting of books is lost when copying describe the bug we have created a book as a template in our bookstack and now want to copy it however the manual sorting of the book is lost and is sorted automatically by name steps to reproduce create a book with a fixed strcuture hit the copy button ... | 1 |

86,178 | 24,778,252,670 | IssuesEvent | 2022-10-24 00:34:11 | haskell/cabal | https://api.github.com/repos/haskell/cabal | closed | cabal-install 3.8.1.0 regression: can't control the order of hs-source-dirs anymore | type: bug cabal-install: cmd/build attention: needs-backport 3.8 regression in 3.8 | **Describe the bug**

`cabal-install` apparently seems to try to preprocess *all* modules in `hs-source-dirs`, regardless of whether they are listed in `exposed-modules`, `other-modules` or required for compilation. This can lead to the build of a component failing because preprocessing of a module fails that isn't a... | 1.0 | cabal-install 3.8.1.0 regression: can't control the order of hs-source-dirs anymore - **Describe the bug**

`cabal-install` apparently seems to try to preprocess *all* modules in `hs-source-dirs`, regardless of whether they are listed in `exposed-modules`, `other-modules` or required for compilation. This can lead to... | non_test | cabal install regression can t control the order of hs source dirs anymore describe the bug cabal install apparently seems to try to preprocess all modules in hs source dirs regardless of whether they are listed in exposed modules other modules or required for compilation this can lead to... | 0 |

339,242 | 10,245,029,208 | IssuesEvent | 2019-08-20 11:55:21 | CW-Khristos/scripts | https://api.github.com/repos/CW-Khristos/scripts | closed | Auto_Plan - Domain Environments | Agent / Probe Auto_Plan PRIORITY Protection Plans enhancement needs validation | Auto_Plan needs to be able to join device to domain, create RMMTech Domain Admin, add RMMTech Admin to Domain Admin group, add Domain Admin / RMMTech Admin to Local Admin group, and grant Service Logon rights to RMMTech Domain Admin

For a video review of complete progression through each "Stage" of script, watch thi... | 1.0 | Auto_Plan - Domain Environments - Auto_Plan needs to be able to join device to domain, create RMMTech Domain Admin, add RMMTech Admin to Domain Admin group, add Domain Admin / RMMTech Admin to Local Admin group, and grant Service Logon rights to RMMTech Domain Admin

For a video review of complete progression through... | non_test | auto plan domain environments auto plan needs to be able to join device to domain create rmmtech domain admin add rmmtech admin to domain admin group add domain admin rmmtech admin to local admin group and grant service logon rights to rmmtech domain admin for a video review of complete progression through... | 0 |

328,518 | 28,123,042,671 | IssuesEvent | 2023-03-31 15:27:12 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix jax_lax_operators.test_jax_lax_shift_right_logical | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4570376767/jobs/8067642702" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4570376767/jobs/8067642702" rel="noopener nore... | 1.0 | Fix jax_lax_operators.test_jax_lax_shift_right_logical - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4570376767/jobs/8067642702" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/ac... | test | fix jax lax operators test jax lax shift right logical tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test jax test jax lax operators py test jax lax shift right logical e assertionerror the... | 1 |

10,827 | 3,143,537,186 | IssuesEvent | 2015-09-14 07:47:08 | tripikad/trip2 | https://api.github.com/repos/tripikad/trip2 | closed | Write login and registration tests | simple testing | Basically copy and adjust these tests:

https://github.com/laracasts/Email-Verification-In-Laravel/blob/master/tests/AuthTest.php

See the related video https://laracasts.com/lessons/email-verification-in-laravel

For mail testing, see https://github.com/bertramtruong/mailtrap

| 1.0 | Write login and registration tests - Basically copy and adjust these tests:

https://github.com/laracasts/Email-Verification-In-Laravel/blob/master/tests/AuthTest.php

See the related video https://laracasts.com/lessons/email-verification-in-laravel

For mail testing, see https://github.com/bertramtruong/mailtrap... | test | write login and registration tests basically copy and adjust these tests see the related video for mail testing see | 1 |

275,136 | 23,893,568,914 | IssuesEvent | 2022-09-08 13:18:52 | ARUP-CAS/aiscr-webamcr | https://api.github.com/repos/ARUP-CAS/aiscr-webamcr | closed | Base map - OpenStreetMap | bug / maintanance map TESTED | Unify to the grey variant everywhere (currently it is only in PAS; it is better than the colour one, but having both is unnecessary) | 1.0 | Base map - OpenStreetMap - Unify to the grey variant everywhere (currently it is only in PAS; it is better than the colour one, but having both is unnecessary) | test | base map openstreetmap unify to the grey variant everywhere currently it is only in pas it is better than the colour one but having both is unnecessary | 1 |

321,235 | 27,517,001,212 | IssuesEvent | 2023-03-06 12:40:31 | Plutonomicon/cardano-transaction-lib | https://api.github.com/repos/Plutonomicon/cardano-transaction-lib | opened | E2E test suite: Allow piping the logs to the terminal from browser console | enhancement e2e testing | The config option should be implemented via an env variable in `test/e2e.env`.

`Ctl.Internal.Test.E2E.Feedback.Node` contains code that suppresses the logs until an error is observed. `addLogLine` there could be conditionally replaced with `Effect.Console.log` | 1.0 | E2E test suite: Allow piping the logs to the terminal from browser console - The config option should be implemented via an env variable in `test/e2e.env`.

`Ctl.Internal.Test.E2E.Feedback.Node` contains code that suppresses the logs until an error is observed. `addLogLine` there could be conditionally replaced with ... | test | test suite allow piping the logs to the terminal from browser console the config option should be implemented via an env variable in test env ctl internal test feedback node contains code that suppresses the logs until an error is observed addlogline there could be conditionally replaced with effec... | 1 |

246,636 | 20,888,605,615 | IssuesEvent | 2022-03-23 08:41:35 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | kv/kvserver: TestStoreTxnWaitQueueEnabledOnSplit failed | C-test-failure O-robot S-3 branch-master T-kv | kv/kvserver.TestStoreTxnWaitQueueEnabledOnSplit [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4316130&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4316130&tab=artifacts#/) on master @ [c95e8161b7752b1c9ac6c922070b7b1f2653a40b](https://github.com/cockroachdb/cockr... | 1.0 | kv/kvserver: TestStoreTxnWaitQueueEnabledOnSplit failed - kv/kvserver.TestStoreTxnWaitQueueEnabledOnSplit [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4316130&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4316130&tab=artifacts#/) on master @ [c95e8161b7752b1c9ac6... | test | kv kvserver teststoretxnwaitqueueenabledonsplit failed kv kvserver teststoretxnwaitqueueenabledonsplit with on master run teststoretxnwaitqueueenabledonsplit test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inlin... | 1 |

52,107 | 6,573,155,958 | IssuesEvent | 2017-09-11 07:34:24 | RRZE-Webteam/FAU-Einrichtungen | https://api.github.com/repos/RRZE-Webteam/FAU-Einrichtungen | closed | Mobile navigation: move search and language switcher into the flyout menu? | Design-Entscheidung | Has there already been a discussion about how the search and the language switcher will be implemented in the mobile version?

What if both functions were included in the flyout menu?

At the moment the two functions look a bit lost when the meta area otherwise holds no further buttons... | 1.0 | Mobile navigation: move search and language switcher into the flyout menu? - Has there already been a discussion about how the search and the language switcher will be implemented in the mobile version?

What if both functions were included in the flyout menu?

At the moment the two functions ... | non_test | mobile navigation move search and language switcher into the flyout menu has there already been a discussion about how the search and the language switcher will be implemented in the mobile version what if both functions were included in the flyout menu at the moment the two functions ... | 0 |

362,387 | 25,373,147,733 | IssuesEvent | 2022-11-21 12:08:40 | STMicroelectronics/cmsis_core | https://api.github.com/repos/STMicroelectronics/cmsis_core | closed | ST_README.md outdated | documentation | The compatibility information in the "ST_README.md" file is outdated and should be corrected asap!

It is only listed up to v5.4.0 but v5.6.0 has been released for more than 2 years.

Thanks.

| 1.0 | ST_README.md outdated - The compatibility information in the "ST_README.md" file is outdated and should be corrected asap!

It is only listed up to v5.4.0 but v5.6.0 has been released for more than 2 years.

Thanks.

| non_test | st readme md outdated the compatibility information in the st readme md file is outdated and should be corrected asap it is only listed up to but has been released for more than years thanks | 0 |

131,054 | 10,679,317,343 | IssuesEvent | 2019-10-21 19:01:16 | smartsystemslab-uf/ZynqRobotController | https://api.github.com/repos/smartsystemslab-uf/ZynqRobotController | closed | UART Tests | testing | Need tests for all UART ports available in the system. Presumably, a simple program could be written to take as input a serial port and some data, send the data out over the serial port, and receive the same data on the receiver line of the UART port. | 1.0 | UART Tests - Need tests for all UART ports available in the system. Presumably, a simple program could be written to take as input a serial port and some data, send the data out over the serial port, and receive the same data on the receiver line of the UART port. | test | uart tests need tests for all uart ports available in the system presumably a simple program could be written to take as input a serial port and some data send the data out over the serial port and receive the same data on the receiver line of the uart port | 1 |

177,814 | 13,748,854,042 | IssuesEvent | 2020-10-06 09:38:22 | inveniosoftware/react-invenio-app-ils | https://api.github.com/repos/inveniosoftware/react-invenio-app-ils | closed | Cannot search for multiple aggregations of the same category | bug test-blocker | ### Reproduce

1. Go to frontsite

2. Perform an empty search

3. In "Literature types", tick "Book" and note the number of results

4. Now keep "Book" ticked and tick "Proceeding"; again note the number of results

5. Observe that the results always correspond to the last element you ticked (here, "Proceeding")

#... | 1.0 | Cannot search for multiple aggregations of the same category - ### Reproduce

1. Go to frontsite

2. Perform an empty search

3. In "Literature types", tick "Book" and note the number of results

4. Now keep "Book" ticked and tick "Proceeding"; again note the number of results

5. Observe that the results always corr... | test | cannot search for multiple aggregations of the same category reproduce go to frontsite perform an empty search in literature types tick book and note the number of results now keep book ticked and tick proceeding again note the number of results observe that the results always corr... | 1 |

197,980 | 14,952,220,001 | IssuesEvent | 2021-01-26 15:17:25 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | TestE2ETensorPipe.TestTrainingLoop is Flaky | module: flaky-tests module: rpc module: tensorpipe oncall: distributed triaged | https://app.circleci.com/pipelines/github/pytorch/pytorch/263779/workflows/c1535b0d-74cb-467f-9955-b05adf928ade/jobs/10367433/steps

```

Jan 25 23:17:56 [----------] Global test environment tear-down

Jan 25 23:17:56 [==========] 9 tests from 4 test cases ran. (1651 ms total)

Jan 25 23:17:56 [ PASSED ] 8 tests.

... | 1.0 | TestE2ETensorPipe.TestTrainingLoop is Flaky - https://app.circleci.com/pipelines/github/pytorch/pytorch/263779/workflows/c1535b0d-74cb-467f-9955-b05adf928ade/jobs/10367433/steps

```

Jan 25 23:17:56 [----------] Global test environment tear-down

Jan 25 23:17:56 [==========] 9 tests from 4 test cases ran. (1651 ms t... | test | testtrainingloop is flaky jan global test environment tear down jan tests from test cases ran ms total jan tests jan test listed below jan testtrainingloop var lib jenkins workspace test cpp rpc test tensorpipe cpp ... | 1 |

34,086 | 6,289,089,991 | IssuesEvent | 2017-07-19 18:26:11 | wp-cli/wp-cli | https://api.github.com/repos/wp-cli/wp-cli | opened | Update references to the Package Index | scope:documentation | Now that the [Package Index is being deprecated](https://make.wordpress.org/cli/2017/07/18/feature-development-discussion-recap/), we need to update the existing references:

* [ ] Update Package Index README: https://github.com/wp-cli/package-index#wp-cli-package-index

* [ ] Remove "Package Index" link from website... | 1.0 | Update references to the Package Index - Now that the [Package Index is being deprecated](https://make.wordpress.org/cli/2017/07/18/feature-development-discussion-recap/), we need to update the existing references:

* [ ] Update Package Index README: https://github.com/wp-cli/package-index#wp-cli-package-index

* [ ]... | non_test | update references to the package index now that the we need to update the existing references update package index readme remove package index link from website navigation update package index reference in commands cookbook | 0 |

82,404 | 7,840,682,067 | IssuesEvent | 2018-06-18 17:07:46 | udacity/lesson_feedback_nd113 | https://api.github.com/repos/udacity/lesson_feedback_nd113 | closed | [Neutral]2018-04-14 | 1.0.0 14. Robot Localization test | Lectures should have more detail, I had difficulty in understanding the robot motion and probability moving in the direction of the robot | 1.0 | [Neutral]2018-04-14 - Lectures should have more detail, I had difficulty in understanding the robot motion and probability moving in the direction of the robot | test | lectures should have more detail i had difficulty in understanding the robot motion and probability moving in the direction of the robot | 1 |

205,371 | 15,610,816,729 | IssuesEvent | 2021-03-19 13:38:28 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | [Object] Herb in tree (Redridge Mountains) | Fixed on PTR - Tester Confirmed | What is Happening:

There is herb inside tree and it's inaccessible

What Should happen:

It should be accessible I guess

| 1.0 | [Object] Herb in tree (Redridge Mountains) - What is Happening:

There is herb inside tree and it's inaccessible

What Should happen:

It should be accessible I guess

| test | herb in tree redridge mountains what is happening there is herb inside tree and it s inaccessible what should happen it should be accessible i guess | 1 |

40,009 | 2,862,123,565 | IssuesEvent | 2015-06-04 01:14:40 | kbandla/testrepo | https://api.github.com/repos/kbandla/testrepo | reopened | Record Fragmentation not handled in TLSMultiFactory(buf) | bug imported Priority-Medium | _From [achin...@gmail.com](https://code.google.com/u/115462122534195676742/) on November 12, 2014 20:42:23_

What steps will reproduce the problem? 1. Send data more than 17000 Bytes.

2. The TLSMultiFactory will throw an error in finding the TLS version

3. The number of records returned is 0 What is the expected out... | 1.0 | Record Fragmentation not handled in TLSMultiFactory(buf) - _From [achin...@gmail.com](https://code.google.com/u/115462122534195676742/) on November 12, 2014 20:42:23_

What steps will reproduce the problem? 1. Send data more than 17000 Bytes.

2. The TLSMultiFactory will throw an error in finding the TLS version

3. T... | non_test | record fragmentation not handled in tlsmultifactory buf from on november what steps will reproduce the problem send data more than bytes the tlsmultifactory will throw an error in finding the tls version the number of records returned is what is the expected output what do you se... | 0 |

529,914 | 15,397,648,326 | IssuesEvent | 2021-03-03 22:30:19 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Wrong day names are displayed when using not-Sunday as start of the week and grouping by "Day of week" | .Correctness .Frontend .Regression .Reproduced Customization/i18n Priority:P2 Type:Bug Visualization/ | When grouping by day of the week, the DATA use the correct date but the AXIS LABELS still start with Sunday.

**To Reproduce**

- Start of the week: Monday

- Sample dataset: table Orders

- Filter: "Created at" between 2020-03-02 and 2020-03-03 (Monday and Tuesday)

- Summarize: by Count and group by Created At (b... | 1.0 | Wrong day names are displayed when using not-Sunday as start of the week and grouping by "Day of week" - When grouping by day of the week, the DATA use the correct date but the AXIS LABELS still start with Sunday.

**To Reproduce**

- Start of the week: Monday

- Sample dataset: table Orders

- Filter: "Created at"... | non_test | wrong day names are displayed when using not sunday as start of the week and grouping by day of week when grouping by day of the week the data use the correct date but the axis labels still start with sunday to reproduce start of the week monday sample dataset table orders filter created at ... | 0 |

246,710 | 20,909,992,176 | IssuesEvent | 2022-03-24 08:23:00 | pygame/pygame | https://api.github.com/repos/pygame/pygame | closed | Update freetype version to 2.9.1+ | font freetype Gnu/Linux Windows needs-testing Wheels Difficulty: moderate | I noticed that pygame is about 6 versions (and at least 5 years) behind the latest version of freetype. It looks like there have been some potentially nice changes over the last five years that may improve font rendering for pygame - mainly the addition of ClearType hinting.

I suppose there is a chance it might just... | 1.0 | Update freetype version to 2.9.1+ - I noticed that pygame is about 6 versions (and at least 5 years) behind the latest version of freetype. It looks like there have been some potentially nice changes over the last five years that may improve font rendering for pygame - mainly the addition of ClearType hinting.

I sup... | test | update freetype version to i noticed that pygame is about versions and at least years behind the latest version of freetype it looks like there have been some potentially nice changes over the last five years that may improve font rendering for pygame mainly the addition of cleartype hinting i sup... | 1 |

105,621 | 23,083,640,223 | IssuesEvent | 2022-07-26 09:26:07 | backstage/backstage | https://api.github.com/repos/backstage/backstage | closed | Tech Docs broken header for small to medium devices | bug docs-like-code | ## Expected Behavior

The header should fill all available width.

## Actual Behavior

There is a strange block on top of the header. More easily to see the image than me try to explain:

## Steps to... | 1.0 | Tech Docs broken header for small to medium devices - ## Expected Behavior

The header should fill all available width.

## Actual Behavior

There is a strange block on top of the header. More easily to see the image than me try to explain:

detected in multiple libraries | security vulnerability | ## CVE-2019-16869 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>netty-codec-http-4.0.0.Final.jar</b>, <b>netty-codec-http-4.1.29.Final.jar</b>, <b>netty-codec-http-4.1.22.Final.jar<... | True | CVE-2019-16869 (High) detected in multiple libraries - ## CVE-2019-16869 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>netty-codec-http-4.0.0.Final.jar</b>, <b>netty-codec-http-4.1.... | non_test | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries netty codec http final jar netty codec http final jar netty codec http final jar netty codec http final jar netty codec http final jar netty codec http ... | 0 |

275,447 | 23,917,096,751 | IssuesEvent | 2022-09-09 13:38:29 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: cluster_creation failed | C-test-failure O-robot O-roachtest release-blocker branch-release-22.2 | roachtest.cluster_creation [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6400400?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6400400?buildTab=artifacts#/cluster_creation) on re... | 2.0 | roachtest: cluster_creation failed - roachtest.cluster_creation [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6400400?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6400400?buildT... | test | roachtest cluster creation failed roachtest cluster creation with on release test rangelookups split nodes was skipped due to test runner go test runner go stopper go in provider gce command gcloud exit status attached stack trace stack trace github com... | 1 |

181,907 | 21,664,466,918 | IssuesEvent | 2022-05-07 01:26:46 | Baneeishaque/spring_store_thymeleaf | https://api.github.com/repos/Baneeishaque/spring_store_thymeleaf | closed | WS-2018-0084 (High) detected in sshpk-1.13.1.tgz - autoclosed | security vulnerability | ## WS-2018-0084 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sshpk-1.13.1.tgz</b></p></summary>

<p>A library for finding and using SSH public keys</p>

<p>Library home page: <a href=... | True | WS-2018-0084 (High) detected in sshpk-1.13.1.tgz - autoclosed - ## WS-2018-0084 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sshpk-1.13.1.tgz</b></p></summary>

<p>A library for find... | non_test | ws high detected in sshpk tgz autoclosed ws high severity vulnerability vulnerable library sshpk tgz a library for finding and using ssh public keys library home page a href path to dependency file spring store thymeleaf html site template customer fashi source ... | 0 |

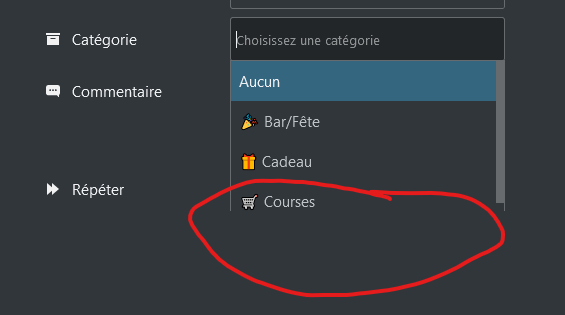

77,639 | 7,582,944,259 | IssuesEvent | 2018-04-25 07:10:33 | mozilla-iam/auth0-custom-lock | https://api.github.com/repos/mozilla-iam/auth0-custom-lock | closed | Cannot login passwordless | bug ready for test | STR:

1. Navigate to mozillians staging

2. Click Log in button

3. Add a non-LDAP email address and click Enter

Expected:

"Send email" screen is shown

Actual:

Spinning spinner is shown continuously

Nextcloud 22.0.0

Cospend 1.3.10

Breeze Dark 22.0.0 | 1.0 | Category doesn't show the full list - Since the last update the category doesn't show the full list when clicking on it :

Nextcloud 22.0.0

Cospend 1.3.10

Breeze Dark 22.0.0 | test | category doesn t show the full list since the last update the category doesn t show the full list when clicking on it nextcloud cospend breeze dark | 1 |

34,078 | 6,288,741,837 | IssuesEvent | 2017-07-19 17:40:10 | LLNL/maestrowf | https://api.github.com/repos/LLNL/maestrowf | closed | Upload sdist to pypi | Documentation High Priority | For the version 1.0.0 release, you should have both the sdist and bdist versions available. | 1.0 | Upload sdist to pypi - For the version 1.0.0 release, you should have both the sdist and bdist versions available. | non_test | upload sdist to pypi for the version release you should have both the sdist and bdist versions available | 0 |

13,591 | 3,349,129,516 | IssuesEvent | 2015-11-17 07:55:16 | start-jsk/jsk_apc | https://api.github.com/repos/start-jsk/jsk_apc | opened | Test recognition nodes | test | - [ ] localization of objects in bin

- [ ] localization of object in hand

- [ ] recognition of object in hand | 1.0 | Test recognition nodes - - [ ] localization of objects in bin

- [ ] localization of object in hand

- [ ] recognition of object in hand | test | test recognition nodes localization of objects in bin localization of object in hand recognition of object in hand | 1 |

298,626 | 25,840,922,914 | IssuesEvent | 2022-12-13 00:15:25 | mehah/otclient | https://api.github.com/repos/mehah/otclient | closed | Bug on target monsters | Priority: Critical Status: Pending Test Type: Bug | ### Priority

Critical

### Area

- [ ] Data

- [X] Source

- [ ] Docker

- [ ] Other

### What happened?

The client is bugging the target when selecting mobs.

### What OS are you seeing the problem on?

... | 1.0 | Bug on target monsters - ### Priority

Critical

### Area

- [ ] Data

- [X] Source

- [ ] Docker

- [ ] Other

### What happened?

The client is bugging the target when selecting mobs.