| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (1 value) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (3 values) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (17 values) | text_combine (string, length 95 to 262k) | label (2 values) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

71,941

| 23,863,515,152

|

IssuesEvent

|

2022-09-07 09:05:11

|

SeleniumHQ/selenium

|

https://api.github.com/repos/SeleniumHQ/selenium

|

opened

|

[🐛 Bug]: '--lang=en' or '--accept-lang=en' option not working with Node.js on Mac OS

|

I-defect needs-triaging

|

### What happened?

Hi! ✌️

I am trying to build an end-to-end web automation framework for my company, and I need to be able to run tests in multiple browsers and in multiple languages.

Current targets are:

- browsers: Chrome and Firefox
- languages: German, English, French, Italian, Spanish (more to come later)

I am building a proof of concept to see whether this can be achieved with either Selenium or Puppeteer.

I am trying to implement a simple scenario:

- Open the Google home page
- Verify that the title of the popup is in the right language

Examples:

```
browser | langISO639 | countryCodeISO3166 | expectedTitle
${'chrome'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
${'chrome'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
${'chrome'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
${'chrome'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
${'firefox'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
${'firefox'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
${'firefox'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
${'firefox'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}

```
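The table pairs an ISO 639 language code with an ISO 3166 country code, while the `intl.accept_languages` preference used in both branches of the repro takes a comma-separated list of language tags (for example `en-GB,en`). A minimal sketch of deriving that list from one table row follows; the helper name `acceptLanguageFor` and the choice to list the region-specific tag before the bare language are assumptions, not part of the original setup.

```js
// Hypothetical helper: builds a comma-separated language list such as
// "en-GB,en" from an ISO 639-1 language code and an ISO 3166-1 country code.
function acceptLanguageFor(lang, country) {
  const language = String(lang).toLowerCase()
  // Without a country, fall back to the bare language tag.
  if (!country) {
    return language
  }
  const region = String(country).toUpperCase()
  // Region-specific tag first, bare language second as a fallback.
  return `${language}-${region},${language}`
}

console.log(acceptLanguageFor('en', 'GB')) // en-GB,en
console.log(acceptLanguageFor('FR', 'fr')) // fr-FR,fr
```

Such a value could be passed as `intl.accept_languages` instead of the bare `lang`; whether that changes which popup Google renders is not verified here.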

My problem is that the English test case always fails, and I've tried every workaround I could find online (GitHub issues, Stack Overflow, ...).

PS: I am using webdriver-manager to run a local Grid, but the test also fails with local chromedriver and geckodriver installations (from npm).

### How can we reproduce the issue?

Node.js test script with Selenium:

```js
const fs = require('fs')
const path = require('path')
const mkdirp = require('mkdirp')
const rimraf = require('rimraf')
const { Builder, until, By } = require('selenium-webdriver')
const Chrome = require('selenium-webdriver/chrome')
const Firefox = require('selenium-webdriver/firefox')
require('chromedriver')
require('geckodriver')

jest.setTimeout(1000 * 60 * 10)

// Builds a remote driver (local Grid) for the given browser with language settings applied.
async function getWebDriver(browser, lang, country) {
  if (browser === 'chrome') {
    const chromeOptions = new Chrome.Options()
      .addArguments(
        `--accept-lang=${lang}-${country}`,
        '--browser-test',
        '--bwsi',
        `--default-country-code=${country}`,
        '--disable-default-apps',
        '--disable-extensions',
        '--disable-gpu',
        '--disable-logging',
        '--disable-web-security',
        '--dom-automation',
        '--enable-automation',
        '--force-headless-for-tests',
        '--guest',
        '--headless',
        '--incognito',
        `--lang=${lang}`,
        '--no-sandbox',
        '--window-size=1440,900',
      )
      .setUserPreferences({ ['intl.accept_languages']: lang, ['translate']: { enabled: true } })

    const driver = new Builder()
      .forBrowser('chrome')
      .setChromeOptions(chromeOptions)
      .usingServer('http://localhost:4444/wd/hub')
      .build()
    await sleep(3)
    return driver
  } else {
    const firefoxOptions = new Firefox.Options()
      .headless()
      .setPreference('intl.accept_languages', lang)

    const driver = new Builder()
      .forBrowser('firefox')
      .setFirefoxOptions(firefoxOptions)
      .usingServer('http://localhost:4444/wd/hub')
      .build()
    await sleep(3)
    return driver
  }
}

// Resolves after the given number of seconds.
async function sleep(seconds) {
  return new Promise((resolve) => {
    setTimeout(() => {
      resolve()
    }, 1000 * seconds)
  })
}

describe.each`
  browser | langISO639 | countryCodeISO3166 | expectedTitle
  ${'chrome'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
  ${'chrome'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
  ${'chrome'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
  ${'chrome'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
  ${'firefox'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
  ${'firefox'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
  ${'firefox'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
  ${'firefox'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
`('open Google on $browser browser with language $langISO639', ({ browser, langISO639, countryCodeISO3166, expectedTitle }) => {
  let envLangBefore = process.env['LANG'] || undefined

  beforeAll(async () => {
    // Note: this runs once per describe.each row, so it also clears screenshots saved by earlier rows.
    await rimraf.sync(path.join(__dirname, 'test-screenshots'))
    await mkdirp.sync(path.join(__dirname, 'test-screenshots'))
    process.env['LANG'] = langISO639
  })

  afterAll(() => {
    process.env['LANG'] = envLangBefore
  })

  let driver

  it('opens web driver instance', async () => {
    driver = await getWebDriver(browser, langISO639, countryCodeISO3166)
  })

  it('reach goole web app', async () => {
    await driver.get('https://www.google.com/')
    await driver.navigate().refresh()
  })

  it('waits for translated text or take a screenshot and throw', async () => {
    try {
      await driver.wait(until.elementLocated(By.xpath(`//h1[text()="${expectedTitle}"]`)), 3000)
    } catch (e) {
      // Save a screenshot of the page as rendered, then rethrow the original error.
      const image = await driver.takeScreenshot()
      fs.writeFileSync(path.join(__dirname, `test-screenshots/${browser}_${langISO639}.png`), image, 'base64')
      throw e
    }
  })

  it('closes web driver instance', async () => {
    await driver.quit()
  })
})

```
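When only the English case fails, it can help to separate "the browser did not pick up the language" from "the page rendered different text". A sketch, assuming the list is read from the page with `await driver.executeScript('return navigator.languages')` in selenium-webdriver (or `page.evaluate(() => navigator.languages)` in Puppeteer), is a pure check like the one below; the function name `checkLanguages` is hypothetical.

```js
// Hypothetical diagnostic: compares the list reported by navigator.languages
// (obtained from the browser, e.g. via driver.executeScript) with the
// language a test case expects.
function checkLanguages(reported, expectedLang) {
  const languages = Array.isArray(reported) ? reported : []
  const primary = languages[0] || ''
  // Compare only the primary language subtag, so "en-GB" matches "en".
  const primaryLang = primary.split('-')[0].toLowerCase()
  return {
    primary,
    matches: primaryLang === String(expectedLang).toLowerCase(),
  }
}

console.log(checkLanguages(['en-GB', 'en'], 'en')) // { primary: 'en-GB', matches: true }
console.log(checkLanguages(['de-DE', 'de'], 'en')) // { primary: 'de-DE', matches: false }
```

Logging this result in the `catch` block next to the screenshot would show whether the failing run actually started with an English language list.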

Node.js test script with Puppeteer:

```js
const fs = require('fs')
const path = require('path')
const mkdirp = require('mkdirp')
const puppeteer = require('puppeteer')
const rimraf = require('rimraf')

jest.setTimeout(1000 * 60 * 10)

// Launches a Chromium instance with the language-related flags applied.
async function getWebDriver(lang, country) {
  const driver = await puppeteer.launch({
    args: [
      `--accept-lang=${lang}-${country}`,
      '--browser-test',
      '--bwsi',
      `--default-country-code=${country}`,
      '--disable-default-apps',
      '--disable-extensions',
      '--disable-gpu',
      '--disable-logging',
      '--disable-web-security',
      '--dom-automation',
      '--enable-automation',
      '--force-headless-for-tests',
      '--guest',
      '--headless',
      '--incognito',
      `--lang=${lang}`,
      '--no-sandbox',
      '--window-size=1440,900',
    ]
  })
  await sleep(3)
  return driver
}

// Resolves after the given number of seconds.
async function sleep(seconds) {
  return new Promise((resolve) => {
    setTimeout(() => {
      resolve()
    }, 1000 * seconds)
  })
}

describe.each`
  langISO639 | countryCodeISO3166 | expectedTitle
  ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
  ${'en'} | ${'GB'} | ${'Before you continue to Google'}
  ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
  ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
`('open Puppeteer with language $langISO639', ({ langISO639, countryCodeISO3166, expectedTitle }) => {
  let envLangBefore = process.env['LANG'] || undefined

  beforeAll(async () => {
    // Note: this runs once per describe.each row, so it also clears screenshots saved by earlier rows.
    await rimraf.sync(path.join(__dirname, 'test-screenshots'))
    await mkdirp.sync(path.join(__dirname, 'test-screenshots'))
    process.env['LANG'] = langISO639
  })

  afterAll(() => {
    process.env['LANG'] = envLangBefore
  })

  /** @type {puppeteer.Browser} */
  let browser

  /** @type {puppeteer.Page} */
  let page

  it('opens web driver instance', async () => {
    browser = await getWebDriver(langISO639, countryCodeISO3166)
    page = await browser.newPage()
  })

  it('reach goole web app', async () => {
    await page.goto('https://www.google.com/')
    await page.reload()
  })

  it('waits for translated text or take a screenshot and throw', async () => {
    try {
      await page.waitForXPath(`//h1[text()="${expectedTitle}"]`)
    } catch (e) {
      // Save a screenshot of the page as rendered, then rethrow the original error.
      await page.screenshot({ path: path.join(__dirname, `test-screenshots/puppeteer_${langISO639}.png`) })
      throw e
    }
  })

  it('closes web driver instance', async () => {
    await browser.close()
  })
})

```
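Besides command-line flags, Puppeteer can set the `Accept-Language` request header per page with `page.setExtraHTTPHeaders`. The sketch below only builds the launch arguments and the header object for one table row; the helper name `localeConfigFor` is hypothetical, and whether Google honours the header for this popup is an assumption the sketch does not verify.

```js
// Hypothetical helper: derives Puppeteer launch args and extra HTTP headers
// for one locale. It does not launch a browser itself.
function localeConfigFor(lang, country) {
  const tag = `${lang}-${country}`
  return {
    // Intended for puppeteer.launch({ args })
    args: [`--lang=${tag}`],
    // Intended for page.setExtraHTTPHeaders(headers), called before page.goto()
    headers: { 'Accept-Language': `${tag},${lang}` },
  }
}

const config = localeConfigFor('en', 'GB')
console.log(config.args) // [ '--lang=en-GB' ]
console.log(config.headers['Accept-Language']) // en-GB,en
```

In the test this would be used as `puppeteer.launch({ args: config.args })`, followed by `await page.setExtraHTTPHeaders(config.headers)` right after `browser.newPage()`.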

Node.js project package.json example:

```json
{
  "name": "sample-en-issue-with-chromium",
  "scripts": {
    "test": "jest"
  },
  "devDependencies": {
    "@types/jest": "^29.0.0",
    "@types/selenium-webdriver": "^4.1.3",
    "eslint": "^8.23.0",
    "eslint-config-node": "^4.1.0",
    "eslint-plugin-import": "^2.26.0",
    "eslint-plugin-jest": "^27.0.1",
    "jest": "^29.0.1",
    "jest-runner-groups": "^2.2.0",
    "webdriver-manager": "^12.1.8"
  },
  "dependencies": {
    "chromedriver": "^104.0.0",
    "geckodriver": "^3.0.2",
    "mkdirp": "^1.0.4",
    "puppeteer": "^17.1.1",
    "rimraf": "^3.0.2",
    "selenium-webdriver": "^4.4.0"
  }
}
```

### Relevant log output

With Selenium:

```shell
FAIL framework/automate-web/WebDriverManager.test.js (45.459 s)
  open Google on chrome browser with language de
    ✓ opens web driver instance (3003 ms)
    ✓ reach goole web app (1587 ms)
    ✓ waits for translated text or take a screenshot and throw (527 ms)
    ✓ closes web driver instance (54 ms)
  open Google on chrome browser with language en
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (1364 ms)
    ✕ waits for translated text or take a screenshot and throw (3506 ms)
    ✓ closes web driver instance (55 ms)
  open Google on chrome browser with language es
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (1371 ms)
    ✓ waits for translated text or take a screenshot and throw (485 ms)
    ✓ closes web driver instance (58 ms)
  open Google on chrome browser with language fr
    ✓ opens web driver instance (3001 ms)
    ✓ reach goole web app (1414 ms)
    ✓ waits for translated text or take a screenshot and throw (490 ms)
    ✓ closes web driver instance (55 ms)
  open Google on firefox browser with language de
    ✓ opens web driver instance (3001 ms)
    ✓ reach goole web app (909 ms)
    ✓ waits for translated text or take a screenshot and throw (22 ms)
    ✓ closes web driver instance (404 ms)
  open Google on firefox browser with language en
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (786 ms)
    ✕ waits for translated text or take a screenshot and throw (3202 ms)
    ✓ closes web driver instance (409 ms)
  open Google on firefox browser with language es
    ✓ opens web driver instance (3001 ms)
    ✓ reach goole web app (2718 ms)
    ✓ waits for translated text or take a screenshot and throw (17 ms)
    ✓ closes web driver instance (408 ms)
  open Google on firefox browser with language fr
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (788 ms)
    ✓ waits for translated text or take a screenshot and throw (19 ms)
    ✓ closes web driver instance (406 ms)

```

With Puppeteer:

```
FAIL framework/automate-web/test-screenshots/Puppeteer-multiple-langs.test.js (63.579 s)
  open Puppeteer with language de
    ✓ opens web driver instance (19901 ms)
    ✓ reach goole web app (852 ms)
    ✓ waits for translated text or take a screenshot and throw (8 ms)
    ✓ closes web driver instance (8 ms)
  open Puppeteer with language en
    ✓ opens web driver instance (3319 ms)
    ✓ reach goole web app (679 ms)
    ✕ waits for translated text or take a screenshot and throw (30071 ms)
    ✓ closes web driver instance (7 ms)
  open Puppeteer with language es
    ✓ opens web driver instance (3324 ms)
    ✓ reach goole web app (825 ms)
    ✓ waits for translated text or take a screenshot and throw (6 ms)
    ✓ closes web driver instance (11 ms)
  open Puppeteer with language fr
    ✓ opens web driver instance (3334 ms)
    ✓ reach goole web app (705 ms)
    ✓ waits for translated text or take a screenshot and throw (7 ms)
    ✓ closes web driver instance (6 ms)
```

### Operating System

macOS Monterey 12.5.1

### Selenium version

"selenium-webdriver": "^4.4.0"

### What are the browser(s) and version(s) where you see this issue?

Firefox 104.0.1, Chrome version as of 2022-09-06

### What are the browser driver(s) and version(s) where you see this issue?

from NPM: {"chromedriver": "^104.0.0", "geckodriver": "^3.0.2"}

### Are you using Selenium Grid?

Yes (via the webdriver-manager npm module), but the issue also occurs without Grid.

|

1.0

|

[🐛 Bug]: '--lang=en' or '--accept-lang=en' option not working with Node.js on Mac OS - ### What happened?

Hi! ✌️

I am trying to build an end-to-end web automation framework for my company, and I need to be able to run tests in multiple browsers and in multiple languages.

Current targets are:

- browsers: Chrome and Firefox
- languages: German, English, French, Italian, Spanish (more to come later)

I am building a proof of concept to see whether this can be achieved with either Selenium or Puppeteer.

I am trying to implement a simple scenario:

- Open the Google home page
- Verify that the title of the popup is in the right language

Examples:

```
browser | langISO639 | countryCodeISO3166 | expectedTitle
${'chrome'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
${'chrome'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
${'chrome'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
${'chrome'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
${'firefox'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
${'firefox'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
${'firefox'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
${'firefox'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}

```

My problem is that the English test case always fails, and I've tried every workaround I could find online (GitHub issues, Stack Overflow, ...).

PS: I am using webdriver-manager to run a local Grid, but the test also fails with local chromedriver and geckodriver installations (from npm).

### How can we reproduce the issue?

Node.js test script with Selenium:

```js
const fs = require('fs')
const path = require('path')
const mkdirp = require('mkdirp')
const rimraf = require('rimraf')
const { Builder, until, By } = require('selenium-webdriver')
const Chrome = require('selenium-webdriver/chrome')
const Firefox = require('selenium-webdriver/firefox')
require('chromedriver')
require('geckodriver')

jest.setTimeout(1000 * 60 * 10)

// Builds a remote driver (local Grid) for the given browser with language settings applied.
async function getWebDriver(browser, lang, country) {
  if (browser === 'chrome') {
    const chromeOptions = new Chrome.Options()
      .addArguments(
        `--accept-lang=${lang}-${country}`,
        '--browser-test',
        '--bwsi',
        `--default-country-code=${country}`,
        '--disable-default-apps',
        '--disable-extensions',
        '--disable-gpu',
        '--disable-logging',
        '--disable-web-security',
        '--dom-automation',
        '--enable-automation',
        '--force-headless-for-tests',
        '--guest',
        '--headless',
        '--incognito',
        `--lang=${lang}`,
        '--no-sandbox',
        '--window-size=1440,900',
      )
      .setUserPreferences({ ['intl.accept_languages']: lang, ['translate']: { enabled: true } })

    const driver = new Builder()
      .forBrowser('chrome')
      .setChromeOptions(chromeOptions)
      .usingServer('http://localhost:4444/wd/hub')
      .build()
    await sleep(3)
    return driver
  } else {
    const firefoxOptions = new Firefox.Options()
      .headless()
      .setPreference('intl.accept_languages', lang)

    const driver = new Builder()
      .forBrowser('firefox')
      .setFirefoxOptions(firefoxOptions)
      .usingServer('http://localhost:4444/wd/hub')
      .build()
    await sleep(3)
    return driver
  }
}

// Resolves after the given number of seconds.
async function sleep(seconds) {
  return new Promise((resolve) => {
    setTimeout(() => {
      resolve()
    }, 1000 * seconds)
  })
}

describe.each`
  browser | langISO639 | countryCodeISO3166 | expectedTitle
  ${'chrome'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
  ${'chrome'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
  ${'chrome'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
  ${'chrome'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
  ${'firefox'} | ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
  ${'firefox'} | ${'en'} | ${'GB'} | ${'Before you continue to Google'}
  ${'firefox'} | ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
  ${'firefox'} | ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
`('open Google on $browser browser with language $langISO639', ({ browser, langISO639, countryCodeISO3166, expectedTitle }) => {
  let envLangBefore = process.env['LANG'] || undefined

  beforeAll(async () => {
    // Note: this runs once per describe.each row, so it also clears screenshots saved by earlier rows.
    await rimraf.sync(path.join(__dirname, 'test-screenshots'))
    await mkdirp.sync(path.join(__dirname, 'test-screenshots'))
    process.env['LANG'] = langISO639
  })

  afterAll(() => {
    process.env['LANG'] = envLangBefore
  })

  let driver

  it('opens web driver instance', async () => {
    driver = await getWebDriver(browser, langISO639, countryCodeISO3166)
  })

  it('reach goole web app', async () => {
    await driver.get('https://www.google.com/')
    await driver.navigate().refresh()
  })

  it('waits for translated text or take a screenshot and throw', async () => {
    try {
      await driver.wait(until.elementLocated(By.xpath(`//h1[text()="${expectedTitle}"]`)), 3000)
    } catch (e) {
      // Save a screenshot of the page as rendered, then rethrow the original error.
      const image = await driver.takeScreenshot()
      fs.writeFileSync(path.join(__dirname, `test-screenshots/${browser}_${langISO639}.png`), image, 'base64')
      throw e
    }
  })

  it('closes web driver instance', async () => {
    await driver.quit()
  })
})

```

Node.js test script with Puppeteer:

```js
const fs = require('fs')
const path = require('path')
const mkdirp = require('mkdirp')
const puppeteer = require('puppeteer')
const rimraf = require('rimraf')

jest.setTimeout(1000 * 60 * 10)

// Launches a Chromium instance with the language-related flags applied.
async function getWebDriver(lang, country) {
  const driver = await puppeteer.launch({
    args: [
      `--accept-lang=${lang}-${country}`,
      '--browser-test',
      '--bwsi',
      `--default-country-code=${country}`,
      '--disable-default-apps',
      '--disable-extensions',
      '--disable-gpu',
      '--disable-logging',
      '--disable-web-security',
      '--dom-automation',
      '--enable-automation',
      '--force-headless-for-tests',
      '--guest',
      '--headless',
      '--incognito',
      `--lang=${lang}`,
      '--no-sandbox',
      '--window-size=1440,900',
    ]
  })
  await sleep(3)
  return driver
}

// Resolves after the given number of seconds.
async function sleep(seconds) {
  return new Promise((resolve) => {
    setTimeout(() => {
      resolve()
    }, 1000 * seconds)
  })
}

describe.each`
  langISO639 | countryCodeISO3166 | expectedTitle
  ${'de'} | ${'DE'} | ${'Bevor Sie zu Google weitergehen'}
  ${'en'} | ${'GB'} | ${'Before you continue to Google'}
  ${'es'} | ${'ES'} | ${'Antes de ir a Google'}
  ${'fr'} | ${'FR'} | ${'Avant d\'accéder à Google'}
`('open Puppeteer with language $langISO639', ({ langISO639, countryCodeISO3166, expectedTitle }) => {
  let envLangBefore = process.env['LANG'] || undefined

  beforeAll(async () => {
    // Note: this runs once per describe.each row, so it also clears screenshots saved by earlier rows.
    await rimraf.sync(path.join(__dirname, 'test-screenshots'))
    await mkdirp.sync(path.join(__dirname, 'test-screenshots'))
    process.env['LANG'] = langISO639
  })

  afterAll(() => {
    process.env['LANG'] = envLangBefore
  })

  /** @type {puppeteer.Browser} */
  let browser

  /** @type {puppeteer.Page} */
  let page

  it('opens web driver instance', async () => {
    browser = await getWebDriver(langISO639, countryCodeISO3166)
    page = await browser.newPage()
  })

  it('reach goole web app', async () => {
    await page.goto('https://www.google.com/')
    await page.reload()
  })

  it('waits for translated text or take a screenshot and throw', async () => {
    try {
      await page.waitForXPath(`//h1[text()="${expectedTitle}"]`)
    } catch (e) {
      // Save a screenshot of the page as rendered, then rethrow the original error.
      await page.screenshot({ path: path.join(__dirname, `test-screenshots/puppeteer_${langISO639}.png`) })
      throw e
    }
  })

  it('closes web driver instance', async () => {
    await browser.close()
  })
})

```

Node.js project package.json example:

```json
{
  "name": "sample-en-issue-with-chromium",
  "scripts": {
    "test": "jest"
  },
  "devDependencies": {
    "@types/jest": "^29.0.0",
    "@types/selenium-webdriver": "^4.1.3",
    "eslint": "^8.23.0",
    "eslint-config-node": "^4.1.0",
    "eslint-plugin-import": "^2.26.0",
    "eslint-plugin-jest": "^27.0.1",
    "jest": "^29.0.1",
    "jest-runner-groups": "^2.2.0",
    "webdriver-manager": "^12.1.8"
  },
  "dependencies": {
    "chromedriver": "^104.0.0",
    "geckodriver": "^3.0.2",
    "mkdirp": "^1.0.4",
    "puppeteer": "^17.1.1",
    "rimraf": "^3.0.2",
    "selenium-webdriver": "^4.4.0"
  }
}
```

### Relevant log output

With Selenium:

```shell
FAIL framework/automate-web/WebDriverManager.test.js (45.459 s)
  open Google on chrome browser with language de
    ✓ opens web driver instance (3003 ms)
    ✓ reach goole web app (1587 ms)
    ✓ waits for translated text or take a screenshot and throw (527 ms)
    ✓ closes web driver instance (54 ms)
  open Google on chrome browser with language en
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (1364 ms)
    ✕ waits for translated text or take a screenshot and throw (3506 ms)
    ✓ closes web driver instance (55 ms)
  open Google on chrome browser with language es
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (1371 ms)
    ✓ waits for translated text or take a screenshot and throw (485 ms)
    ✓ closes web driver instance (58 ms)
  open Google on chrome browser with language fr
    ✓ opens web driver instance (3001 ms)
    ✓ reach goole web app (1414 ms)
    ✓ waits for translated text or take a screenshot and throw (490 ms)
    ✓ closes web driver instance (55 ms)
  open Google on firefox browser with language de
    ✓ opens web driver instance (3001 ms)
    ✓ reach goole web app (909 ms)
    ✓ waits for translated text or take a screenshot and throw (22 ms)
    ✓ closes web driver instance (404 ms)
  open Google on firefox browser with language en
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (786 ms)
    ✕ waits for translated text or take a screenshot and throw (3202 ms)
    ✓ closes web driver instance (409 ms)
  open Google on firefox browser with language es
    ✓ opens web driver instance (3001 ms)
    ✓ reach goole web app (2718 ms)
    ✓ waits for translated text or take a screenshot and throw (17 ms)
    ✓ closes web driver instance (408 ms)
  open Google on firefox browser with language fr
    ✓ opens web driver instance (3002 ms)
    ✓ reach goole web app (788 ms)
    ✓ waits for translated text or take a screenshot and throw (19 ms)
    ✓ closes web driver instance (406 ms)

```

With Puppeteer:

```
FAIL framework/automate-web/test-screenshots/Puppeteer-multiple-langs.test.js (63.579 s)
  open Puppeteer with language de
    ✓ opens web driver instance (19901 ms)
    ✓ reach goole web app (852 ms)
    ✓ waits for translated text or take a screenshot and throw (8 ms)
    ✓ closes web driver instance (8 ms)
  open Puppeteer with language en
    ✓ opens web driver instance (3319 ms)
    ✓ reach goole web app (679 ms)
    ✕ waits for translated text or take a screenshot and throw (30071 ms)
    ✓ closes web driver instance (7 ms)
  open Puppeteer with language es
    ✓ opens web driver instance (3324 ms)
    ✓ reach goole web app (825 ms)
    ✓ waits for translated text or take a screenshot and throw (6 ms)
    ✓ closes web driver instance (11 ms)
  open Puppeteer with language fr
    ✓ opens web driver instance (3334 ms)
    ✓ reach goole web app (705 ms)
    ✓ waits for translated text or take a screenshot and throw (7 ms)
    ✓ closes web driver instance (6 ms)
```

### Operating System

macOS Monterey 12.5.1

### Selenium version

"selenium-webdriver": "^4.4.0"

### What are the browser(s) and version(s) where you see this issue?

Firefox 104.0.1, Chrome version as of 2022-09-06

### What are the browser driver(s) and version(s) where you see this issue?

from NPM: {"chromedriver": "^104.0.0", "geckodriver": "^3.0.2"}

### Are you using Selenium Grid?

Yes (via the webdriver-manager npm module), but the issue also occurs without Grid.

|

non_test

|

lang en or accept lang en option not working with node js on mac os what happened hi ✌️ i try to create a web end to end automation framework for my company and i need to be able to run tests in multiple browsers and in multiple languages current targets are browsers chrome and firefox languages deutsh english french italian spanish more to come later i am doing a proof of concept project to see how it could be achieved with either selenium or puppeteer i am trying to implement a simple scenario open google home page verify in the popup that the title is in the right language examples browser expectedtitle chrome de de bevor sie zu google weitergehen chrome en gb before you continue to google chrome es es antes de ir a google chrome fr fr avant d accéder à google firefox de de bevor sie zu google weitergehen firefox en gb before you continue to google firefox es es antes de ir a google firefox fr fr avant d accéder à google my problem is that english test case is always failed and i ve tried all workarounds i ve found around the web github issues stack overflow ps i am using webdriver manager to run a local grid but it is also failed with chromedriver and geckodriver local installations from npm how can we reproduce the issue shell node js test script with selenium const fs require fs const path require path const mkdirp require mkdirp const rimraf require rimraf const builder until by require selenium webdriver const chrome require selenium webdriver chrome const firefox require selenium webdriver firefox require chromedriver require geckodriver jest settimeout async function getwebdriver browser lang country if browser chrome const chromeoptions new chrome options addarguments accept lang lang country browser test bwsi default country code country disable default apps disable extensions disable gpu disable logging disable web security dom automation enable automation force headless for tests guest headless incognito lang lang no sandbox window size 
setuserpreferences lang enabled true const driver new builder forbrowser chrome setchromeoptions chromeoptions usingserver build await sleep return driver else const firefoxoptions new firefox options headless setpreference intl accept languages lang const driver new builder forbrowser firefox setfirefoxoptions firefoxoptions usingserver build await sleep return driver async function sleep seconds return new promise resolve settimeout resolve seconds describe each browser expectedtitle chrome de de bevor sie zu google weitergehen chrome en gb before you continue to google chrome es es antes de ir a google chrome fr fr avant d accéder à google firefox de de bevor sie zu google weitergehen firefox en gb before you continue to google firefox es es antes de ir a google firefox fr fr avant d accéder à google open google on browser browser with language browser expectedtitle let envlangbefore process env undefined beforeall async await rimraf sync path join dirname test screenshots await mkdirp sync path join dirname test screenshots process env afterall process env envlangbefore let driver it opens web driver instance async driver await getwebdriver browser it reach goole web app async await driver get await driver navigate refresh it waits for translated text or take a screenshot and throw async try await driver wait until elementlocated by xpath catch e const image await driver takescreenshot fs writefilesync path join dirname test screenshots browser png image throw e it closes web driver instance async await driver quit node js test script with puppeteer const fs require fs const path require path const mkdirp require mkdirp const puppeteer require puppeteer const rimraf require rimraf jest settimeout async function getwebdriver lang country const driver await puppeteer launch args accept lang lang country browser test bwsi default country code country disable default apps disable extensions disable gpu disable logging disable web security dom automation enable 
automation force headless for tests guest headless incognito lang lang no sandbox window size await sleep return driver async function sleep seconds return new promise resolve settimeout resolve seconds describe each expectedtitle de de bevor sie zu google weitergehen en gb before you continue to google es es antes de ir a google fr fr avant d accéder à google open puppeteer with language expectedtitle let envlangbefore process env undefined beforeall async await rimraf sync path join dirname test screenshots await mkdirp sync path join dirname test screenshots process env afterall process env envlangbefore type puppeteer browser let browser type puppeteer page let page it opens web driver instance async browser await getwebdriver page await browser newpage it reach goole web app async await page goto await page reload it waits for translated text or take a screenshot and throw async try await page waitforxpath catch e await page screenshot path path join dirname test screenshots puppeteer png throw e it closes web driver instance async await browser close node js project package json example name sample en issue with chromium scripts test jest devdependencies types jest types selenium webdriver eslint eslint config node eslint plugin import eslint plugin jest jest jest runner groups webdriver manager dependencies chromedriver geckodriver mkdirp puppeteer rimraf selenium webdriver relevant log output shell with selenium fail framework automate web webdrivermanager test js s open google on chrome browser with language de ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on chrome browser with language en ✓ opens web driver instance ms ✓ reach goole web app ms ✕ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on chrome browser with language es ✓ opens web driver instance ms ✓ reach goole web app ms ✓ 
waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on chrome browser with language fr ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on firefox browser with language de ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on firefox browser with language en ✓ opens web driver instance ms ✓ reach goole web app ms ✕ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on firefox browser with language es ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open google on firefox browser with language fr ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms with puppeteer fail framework automate web test screenshots puppeteer multiple langs test js s open puppeteer with language de ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open puppeteer with language en ✓ opens web driver instance ms ✓ reach goole web app ms ✕ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open puppeteer with language es ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms open puppeteer with language fr ✓ opens web driver instance ms ✓ reach goole web app ms ✓ waits for translated text or take a screenshot and throw ms ✓ closes web driver instance ms operating system mac os monterey selenium version selenium webdriver what are the browser s and 
version s where you see this issue firefox chrome version as of what are the browser driver s and version s where you see this issue from npm chromedriver geckodriver are you using selenium grid yes with npm module webdriver manager no

| 0

|

267,237

| 20,195,873,226

|

IssuesEvent

|

2022-02-11 10:35:49

|

UST-QuAntiL/qc-atlas

|

https://api.github.com/repos/UST-QuAntiL/qc-atlas

|

opened

|

Finalize Winery Feature

|

documentation enhancement

|

- We require **tests** for the new feature in the QC-Atlas
- Union with the UI
- We require (UI) **documentation** about the feature in our [readthedocs](https://github.com/UST-QuAntiL/quantil-docs)
- Update quantil-docker for **winery** and **env variables**, with an additional profile for winery

|

1.0

|

Finalize Winery Feature - - We require **tests** for the new feature in the QC-Atlas
- Union with the UI
- We require (UI) **documentation** about the feature in our [readthedocs](https://github.com/UST-QuAntiL/quantil-docs)
- Update quantil-docker for **winery** and **env variables**, with an additional profile for winery

|

non_test

|

finalize winery feature we require tests for the new feature in the qc atlas union with ui we require ui documentation about the feature in our quantil docker update for winery and env variables additional profile for winery

| 0

|

321,698

| 27,547,214,481

|

IssuesEvent

|

2023-03-07 12:40:28

|

Cli4d/Testing-GitHub-issues-and-Projects

|

https://api.github.com/repos/Cli4d/Testing-GitHub-issues-and-Projects

|

closed

|

Exploring GitHub projects

|

Test issue

|

I am using a test project to explore GitHub's project features and learn how to utilize them. As mentioned here

https://github.com/Cli4d/Testing-GitHub-issues-and-Projects/blob/0afe2260758d864698c7a1cb922a74fc7146b0c8/README.md?plain=1#L2

I will be exploring the following features:

- [x] Creating a project

- [x] Attaching issues to the project

- [x] Toggling the project's view and layout

- [x] Managing and applying an iteration

- [x] Applying and customizing default workflows

|

1.0

|

Exploring GitHub projects - I am using a test project to explore GitHub's project features and learn how to utilize them. As mentioned here

https://github.com/Cli4d/Testing-GitHub-issues-and-Projects/blob/0afe2260758d864698c7a1cb922a74fc7146b0c8/README.md?plain=1#L2

I will be exploring the following features:

- [x] Creating a project

- [x] Attaching issues to the project

- [x] Toggling the project's view and layout

- [x] Managing and applying an iteration

- [x] Applying and customizing default workflows

|

test

|

exploring github projects i am using a test project to explore github s project features and learn how to utilize them as mentioned here i will be exploring the following features creating a project attaching issues to the project toggling the project s view and layout managing and applying an iteration applying and customizing default workflows

| 1

|

226,356

| 7,518,335,093

|

IssuesEvent

|

2018-04-12 08:05:00

|

aiidateam/aiida_core

|

https://api.github.com/repos/aiidateam/aiida_core

|

closed

|

Allow passing a JobCalculation to the launch free functions

|

priority/nice to have priority/quality-of-life

|

Since the launch free functions (`run`, `submit` and companions) expect a `Process` class as the first argument, if one wants to launch a `JobCalculation` one has to call `JobCalculation.process()` to generate the `JobProcess` class. However, this could just as well be done by the launch function by checking the class of the first argument and this would make it a lot easier for the user

|

2.0

|

Allow passing a JobCalculation to the launch free functions - Since the launch free functions (`run`, `submit` and companions) expect a `Process` class as the first argument, if one wants to launch a `JobCalculation` one has to call `JobCalculation.process()` to generate the `JobProcess` class. However, this could just as well be done by the launch function by checking the class of the first argument and this would make it a lot easier for the user

|

non_test

|

allow passing a jobcalculation to the launch free functions since the launch free functions run submit and companions expect a process class as the first argument if one wants to launch a jobcalculation one has to call jobcalculation process to generate the jobprocess class however this could just as well be done by the launch function by checking the class of the first argument and this would make it a lot easier for the user

| 0

|

456,212

| 13,147,108,154

|

IssuesEvent

|

2020-08-08 13:56:09

|

unoplatform/uno

|

https://api.github.com/repos/unoplatform/uno

|

closed

|

Toggleswitch.Header doesn't work

|

kind/bug priority/backlog

|

<!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately via https://github.com/nventive/Uno/security/

-->

## Current behavior

Assigning a value to a Toggleswitch Header has no effect in anything other than UWP.

<!-- Describe how the issue manifests. -->

## Expected behavior

<!-- Describe what the desired behavior would be. -->

## How to reproduce it (as minimally and precisely as possible)

`<ToggleSwitch Header="Hello World" />`

<!-- Please provide a **MINIMAL REPRO PROJECT** and the **STEPS TO REPRODUCE**-->

## Environment

<!-- For bug reports Check one or more of the following options with "x" -->

Nuget Package: Uno.UI

Package Version(s): 3.0.0-dev.636

Affected platform(s):

- [ ] iOS

- [x] Android

- [x] WebAssembly

- [ ] WebAssembly renderers for Xamarin.Forms

- [ ] macOS

- [ ] Windows

- [ ] Build tasks

- [ ] Solution Templates

Visual Studio:

- [ ] 2017 (version: )

- [x] 2019 (version: 16.6.2)

- [ ] for Mac (version: )

Relevant plugins:

- [ ] Resharper (version: )

## Anything else we need to know?

<!-- We would love to know of any friction, apart from knowledge, that prevented you from sending in a pull-request -->

|

1.0

|

Toggleswitch.Header doesn't work - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately via https://github.com/nventive/Uno/security/

-->

## Current behavior

Assigning a value to a Toggleswitch Header has no effect in anything other than UWP.

<!-- Describe how the issue manifests. -->

## Expected behavior

<!-- Describe what the desired behavior would be. -->

## How to reproduce it (as minimally and precisely as possible)

`<ToggleSwitch Header="Hello World" />`

<!-- Please provide a **MINIMAL REPRO PROJECT** and the **STEPS TO REPRODUCE**-->

## Environment

<!-- For bug reports Check one or more of the following options with "x" -->

Nuget Package: Uno.UI

Package Version(s): 3.0.0-dev.636

Affected platform(s):

- [ ] iOS

- [x] Android

- [x] WebAssembly

- [ ] WebAssembly renderers for Xamarin.Forms

- [ ] macOS

- [ ] Windows

- [ ] Build tasks

- [ ] Solution Templates

Visual Studio:

- [ ] 2017 (version: )

- [x] 2019 (version: 16.6.2)

- [ ] for Mac (version: )

Relevant plugins:

- [ ] Resharper (version: )

## Anything else we need to know?

<!-- We would love to know of any friction, apart from knowledge, that prevented you from sending in a pull-request -->

|

non_test

|

toggleswitch header doesn t work please use this template while reporting a bug and provide as much info as possible not doing so may result in your bug not being addressed in a timely manner thanks if the matter is security related please disclose it privately via current behavior assigning a value to a toggleswitch header has no effect in anything other than uwp expected behavior how to reproduce it as minimally and precisely as possible environment nuget package uno ui package version s dev affected platform s ios android webassembly webassembly renderers for xamarin forms macos windows build tasks solution templates visual studio version version for mac version relevant plugins resharper version anything else we need to know

| 0

|

298,633

| 22,540,893,725

|

IssuesEvent

|

2022-06-26 00:24:48

|

Max-Rodriguez/libastron-js

|

https://api.github.com/repos/Max-Rodriguez/libastron-js

|

closed

|

Created example Astron environment diagram

|

documentation

|

Already completed, issued for progress tracking on the project board.

|

1.0

|

Created example Astron environment diagram - Already completed, issued for progress tracking on the project board.

|

non_test

|

created example astron environment diagram already completed issued for progress tracking on the project board

| 0

|

127,092

| 10,451,833,524

|

IssuesEvent

|

2019-09-19 13:37:28

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

roachtest: disk-stalled/log=false,data=false failed

|

C-test-failure O-roachtest O-robot

|

SHA: https://github.com/cockroachdb/cockroach/commits/c6342c90a7fa4ceb1b674faa47a95e1726d05e79

Parameters:

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present themselves. For example,

# using stress instead of stressrace and passing the '-p' stressflag which

# controls concurrency.

./scripts/gceworker.sh start && ./scripts/gceworker.sh mosh

cd ~/go/src/github.com/cockroachdb/cockroach && \

stdbuf -oL -eL \

make stressrace TESTS=disk-stalled/log=false,data=false PKG=roachtest TESTTIMEOUT=5m STRESSFLAGS='-maxtime 20m -timeout 10m' 2>&1 | tee /tmp/stress.log

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=1496387&tab=artifacts#/disk-stalled/log=false,data=false

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/20190919-1496387/disk-stalled/log=false_data=false/run_1

disk_stall.go:68,disk_stall.go:40,test_runner.go:689: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod install teamcity-1568869602-26-n1cpu4:1 charybdefs returned:

stderr:

stdout:

Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/p/pcre3/libpcre32-3_8.38-3.1_amd64.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/p/pkg-config/pkg-config_0.29.1-0ubuntu1_amd64.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/m/manpages/manpages-dev_4.04-2_all.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/p/python-setuptools/python-setuptools_20.7.0-1_all.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/o/ocl-icd/ocl-icd-libopencl1_2.2.8-1_amd64.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Unable to fetch some archives, maybe run apt-get update or try with --fix-missing?

Error: exit status 100

: exit status 1

```

|

2.0

|

roachtest: disk-stalled/log=false,data=false failed - SHA: https://github.com/cockroachdb/cockroach/commits/c6342c90a7fa4ceb1b674faa47a95e1726d05e79

Parameters:

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present themselves. For example,

# using stress instead of stressrace and passing the '-p' stressflag which

# controls concurrency.

./scripts/gceworker.sh start && ./scripts/gceworker.sh mosh

cd ~/go/src/github.com/cockroachdb/cockroach && \

stdbuf -oL -eL \

make stressrace TESTS=disk-stalled/log=false,data=false PKG=roachtest TESTTIMEOUT=5m STRESSFLAGS='-maxtime 20m -timeout 10m' 2>&1 | tee /tmp/stress.log

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=1496387&tab=artifacts#/disk-stalled/log=false,data=false

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/20190919-1496387/disk-stalled/log=false_data=false/run_1

disk_stall.go:68,disk_stall.go:40,test_runner.go:689: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod install teamcity-1568869602-26-n1cpu4:1 charybdefs returned:

stderr:

stdout:

Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/p/pcre3/libpcre32-3_8.38-3.1_amd64.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/p/pkg-config/pkg-config_0.29.1-0ubuntu1_amd64.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/m/manpages/manpages-dev_4.04-2_all.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/p/python-setuptools/python-setuptools_20.7.0-1_all.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Failed to fetch http://us-central1.gce.archive.ubuntu.com/ubuntu/pool/main/o/ocl-icd/ocl-icd-libopencl1_2.2.8-1_amd64.deb 503 Service Unavailable [IP: 35.184.34.241 80]

E: Unable to fetch some archives, maybe run apt-get update or try with --fix-missing?

Error: exit status 100

: exit status 1

```

|

test

|

roachtest disk stalled log false data false failed sha parameters to repro try don t forget to check out a clean suitable branch and experiment with the stress invocation until the desired results present themselves for example using stress instead of stressrace and passing the p stressflag which controls concurrency scripts gceworker sh start scripts gceworker sh mosh cd go src github com cockroachdb cockroach stdbuf ol el make stressrace tests disk stalled log false data false pkg roachtest testtimeout stressflags maxtime timeout tee tmp stress log failed test the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts disk stalled log false data false run disk stall go disk stall go test runner go home agent work go src github com cockroachdb cockroach bin roachprod install teamcity charybdefs returned stderr stdout service unavailable e failed to fetch service unavailable e failed to fetch service unavailable e failed to fetch service unavailable e failed to fetch service unavailable e failed to fetch service unavailable e unable to fetch some archives maybe run apt get update or try with fix missing error exit status exit status

| 1

|

274,310

| 20,831,080,971

|

IssuesEvent

|

2022-03-19 12:57:14

|

apache/airflow

|

https://api.github.com/repos/apache/airflow

|

opened

|

Ask problems about branching

|

kind:bug kind:documentation

|

### What do you see as an issue?

If I have a dag looks like attached: a branching with two branches, I wonder which branch will be executed first? It seems that they won't be executed at the same time. And how can I control which branch to go first?

<img width="276" alt="image" src="https://user-images.githubusercontent.com/37681002/159121949-0631bf85-05ff-48f1-a4a6-8e02821657a3.png">

### Solving the problem

_No response_

### Anything else

_No response_

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)

|

1.0

|

Ask problems about branching - ### What do you see as an issue?

If I have a dag looks like attached: a branching with two branches, I wonder which branch will be executed first? It seems that they won't be executed at the same time. And how can I control which branch to go first?

<img width="276" alt="image" src="https://user-images.githubusercontent.com/37681002/159121949-0631bf85-05ff-48f1-a4a6-8e02821657a3.png">

### Solving the problem

_No response_

### Anything else

_No response_

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)

|

non_test

|

ask problems about branching what do you see as an issue if i have a dag looks like attached a branching with two branches i wonder which branch will be executed first it seems that they won t be executed at the same time and how can i control which branch to go first img width alt image src solving the problem no response anything else no response are you willing to submit pr yes i am willing to submit a pr code of conduct i agree to follow this project s

| 0

|

260,144

| 22,595,539,794

|

IssuesEvent

|

2022-06-29 02:18:13

|

microsoft/AzureStorageExplorer

|

https://api.github.com/repos/microsoft/AzureStorageExplorer

|

closed

|

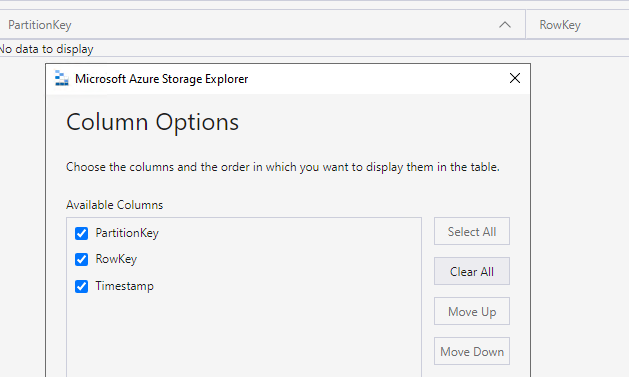

There is an extra option 'Timestamp' in the 'Column Options' dialog for one empty table

|

🧪 testing :gear: tables :beetle: regression

|

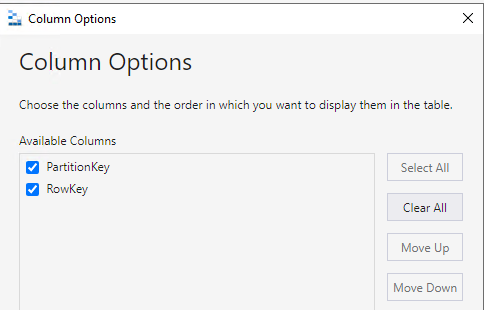

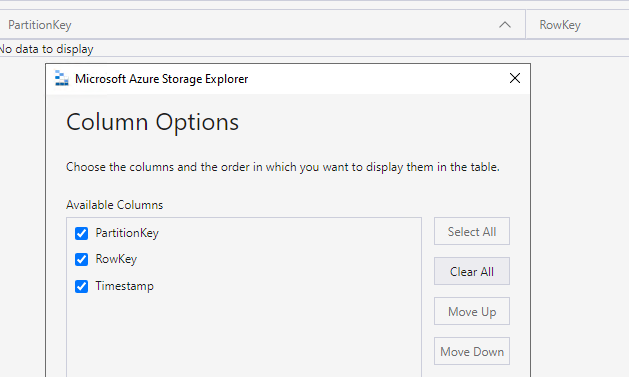

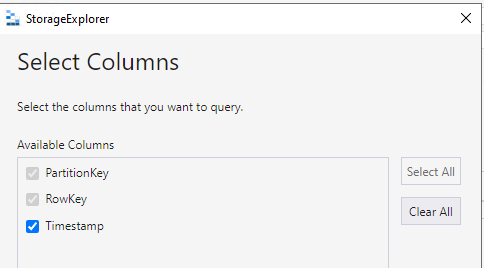

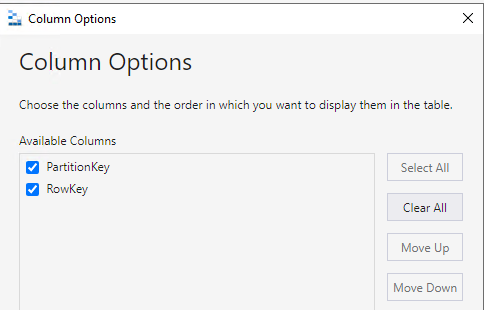

**Storage Explorer Version**: 1.25.0-dev

**Build Number**: 20220622.1

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 20.04/MacOS Monterey 12.4 (Apple M1 Pro)

**Architecture** ia32/x64

**How Found**: From running test cases

**Regression From**: Previous release (1.24.3)

## Steps to Reproduce ##

1. Expand one storage account -> Tables.

2. Create a new table -> Click 'Column Options'.

3. Check there is no extra option 'Timestamp' in the 'Column Options' dialog.

## Expected Experience ##

There is no extra option 'Timestamp' in the 'Column Options' dialog.

## Actual Experience ##

There is an extra option 'Timestamp' in the 'Column Options' dialog.

## Additional Context ##

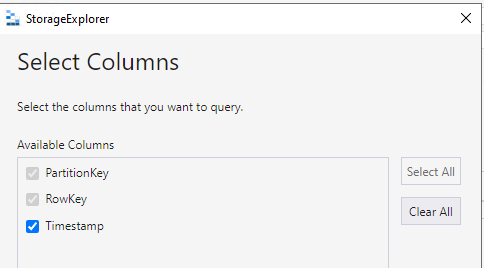

This issue also reproduces for 'Select Columns' dialog.

|

1.0

|

There is an extra option 'Timestamp' in the 'Column Options' dialog for one empty table - **Storage Explorer Version**: 1.25.0-dev

**Build Number**: 20220622.1

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 20.04/MacOS Monterey 12.4 (Apple M1 Pro)

**Architecture** ia32/x64

**How Found**: From running test cases

**Regression From**: Previous release (1.24.3)

## Steps to Reproduce ##

1. Expand one storage account -> Tables.

2. Create a new table -> Click 'Column Options'.

3. Check there is no extra option 'Timestamp' in the 'Column Options' dialog.

## Expected Experience ##

There is no extra option 'Timestamp' in the 'Column Options' dialog.

## Actual Experience ##

There is an extra option 'Timestamp' in the 'Column Options' dialog.

## Additional Context ##

This issue also reproduces for 'Select Columns' dialog.

|

test

|

there is an extra option timestamp in the column options dialog for one empty table storage explorer version dev build number branch main platform os windows linux ubuntu macos monterey apple pro architecture how found from running test cases regression from previous release steps to reproduce expand one storage account tables create a new table click column options check there is no extra option timestamp in the column options dialog expected experience there is no extra option timestamp in the column options dialog actual experience there is an extra option timestamp in the column options dialog additional context this issue also reproduces for select columns dialog

| 1

|

46,875

| 19,533,598,107

|

IssuesEvent

|

2021-12-30 22:51:47

|

meshery/meshery-istio

|

https://api.github.com/repos/meshery/meshery-istio

|

opened

|

[CI] Consolidate end-to-end test workflows into one workflow

|

help wanted service-mesh/istio area/ci area/tests kind/enhancement

|

#### Current Behavior

This adapter has two separate end-to-end test GitHub workflows:

1. https://github.com/meshery/meshery-istio/blob/master/.github/workflows/e2etest-servicemeshinstall.yaml

2. https://github.com/meshery/meshery-istio/blob/master/.github/workflows/e2etest-servicemeshandaddon.yaml

#### Desired Behavior

These two separate workflows need to be combined into a single workflow.

#### Implementation

The resultant workflow filename should be `e2etests.yml`

#### Acceptance Tests

Combined test results should be published to Meshery Docs on PR merge, not on PR open.

---

#### Contributor [Guides](https://docs.meshery.io/project/contributing) and Resources

- 🛠 [Meshery Build & Release Strategy](https://docs.meshery.io/project/build-and-release)

- 📚 [Instructions for contributing to documentation](https://github.com/meshery/meshery/blob/master/CONTRIBUTING.md#documentation-contribution-flow)

- Meshery documentation [site](https://docs.meshery.io/) and [source](https://github.com/meshery/meshery/tree/master/docs)

- 🎨 Wireframes and designs for Meshery UI in [Figma](https://www.figma.com/file/SMP3zxOjZztdOLtgN4dS2W/Meshery-UI)

- 🙋🏾🙋🏼 Questions: [Layer5 Discussion Forum](https://discuss.layer5.io) and [Layer5 Community Slack](http://slack.layer5.io)

|

1.0

|

[CI] Consolidate end-to-end test workflows into one workflow - #### Current Behavior

This adapter has two separate end-to-end test GitHub workflows:

1. https://github.com/meshery/meshery-istio/blob/master/.github/workflows/e2etest-servicemeshinstall.yaml

2. https://github.com/meshery/meshery-istio/blob/master/.github/workflows/e2etest-servicemeshandaddon.yaml

#### Desired Behavior

These two separate workflows need to be combined into a single workflow.

#### Implementation

The resultant workflow filename should be `e2etests.yml`

#### Acceptance Tests

Combined test results should be published to Meshery Docs on PR merge, not on PR open.

---

#### Contributor [Guides](https://docs.meshery.io/project/contributing) and Resources

- 🛠 [Meshery Build & Release Strategy](https://docs.meshery.io/project/build-and-release)

- 📚 [Instructions for contributing to documentation](https://github.com/meshery/meshery/blob/master/CONTRIBUTING.md#documentation-contribution-flow)

- Meshery documentation [site](https://docs.meshery.io/) and [source](https://github.com/meshery/meshery/tree/master/docs)

- 🎨 Wireframes and designs for Meshery UI in [Figma](https://www.figma.com/file/SMP3zxOjZztdOLtgN4dS2W/Meshery-UI)

- 🙋🏾🙋🏼 Questions: [Layer5 Discussion Forum](https://discuss.layer5.io) and [Layer5 Community Slack](http://slack.layer5.io)

|

non_test

|

consolidate end to end test workflows into one workflow current behavior this adapter has two separate end to end test github workflows desired behavior these two separate workflows need to be combined into a single workflow implementation the resultant workflow filename should be yml acceptance tests combined test results should be published to meshery docs on pr merge not on pr open contributor and resources 🛠 📚 meshery documentation and 🎨 wireframes and designs for meshery ui in 🙋🏾🙋🏼 questions and

| 0

|

113,243

| 9,633,423,434

|

IssuesEvent

|

2019-05-15 18:36:13

|

andes/app

|

https://api.github.com/repos/andes/app

|

closed

|

MPI (NV) - Indicar tipo de relación

|

bug test

|

<!--

PASOS PARA REGISTRAR UN ISSUE

_____________________________________________

1) Seleccionar el proyecto al que pertenece (CITAS, RUP, MPI, ...)

2) Seleccionar un label de identificación (bug, feature, enhancement, etc.)

3) Asignar revisores que sean miembros del equipo responsable de solucionar el issue

4) Completar las siguientes secciones:

-->

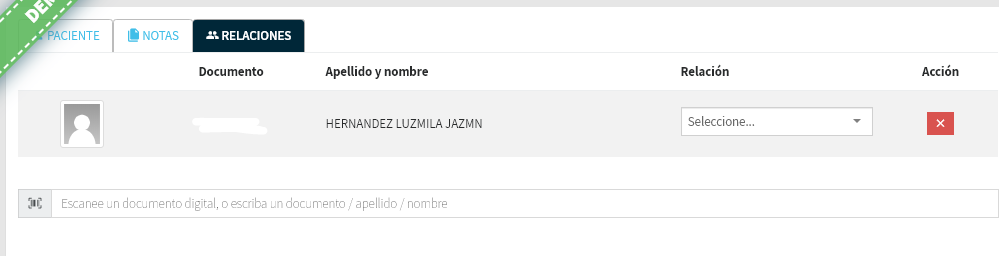

### Comportamiento actual

Al indicar el tipo de relación este dato no queda guardado

### Resultado esperado

una vez guardados los datos del paciente visualizar el tipo de relación ingresado

### Pasos para reproducir el problema

1. a un paciente agregar relación e indicar tipo

2. guardar

3. buscar nuevamente al paciente

4. ver el tipo en la solapa relaciones.

<!-- Agregar captura de pantalla, si fuera relevante -->

<!-- Código relevante

```

(pegar código aquí)

```

-->

|

1.0

|

MPI (NV) - Indicar tipo de relación - <!--

PASOS PARA REGISTRAR UN ISSUE

_____________________________________________

1) Seleccionar el proyecto al que pertenece (CITAS, RUP, MPI, ...)

2) Seleccionar un label de identificación (bug, feature, enhancement, etc.)

3) Asignar revisores que sean miembros del equipo responsable de solucionar el issue

4) Completar las siguientes secciones:

-->

### Comportamiento actual

Al indicar el tipo de relación este dato no queda guardado

### Resultado esperado

una vez guardados los datos del paciente visualizar el tipo de relación ingresado

### Pasos para reproducir el problema

1. a un paciente agregar relación e indicar tipo

2. guardar

3. buscar nuevamente al paciente

4. ver el tipo en la solapa relaciones.

<!-- Agregar captura de pantalla, si fuera relevante -->

<!-- Código relevante

```

(pegar código aquí)

```

-->

|

test

|

mpi nv indicar tipo de relación pasos para registrar un issue seleccionar el proyecto al que pertenece citas rup mpi seleccionar un label de identificación bug feature enhancement etc asignar revisores que sean miembros del equipo responsable de solucionar el issue completar las siguientes secciones comportamiento actual al indicar el tipo de relación este dato no queda guardado resultado esperado una vez guardados los datos del paciente visualizar el tipo de relación ingresado pasos para reproducir el problema a un paciente agregar relación e indicar tipo guardar buscar nuevamente al paciente ver el tipo en la solapa relaciones código relevante pegar código aquí

| 1

|

24,516

| 17,363,098,863

|

IssuesEvent

|

2021-07-30 00:53:21

|

APSIMInitiative/ApsimX

|

https://api.github.com/repos/APSIMInitiative/ApsimX

|

closed

|

Additional functionality needed for economic analysis of beef enterprises

|

CLEM interface/infrastructure

|

Research economists have provided a list of additional functionality needed for full economic analysis

- Price schedules from external data/streams

- Fully customisable category for reporting transaction types

- Breakdown of ruminant purchases and sales by class/pricing groups

- Present amount and/or value in resource balance report

|

1.0

|

Additional functionality needed for economic analysis of beef enterprises - Research economists have provided a list of additional functionality needed for full economic analysis

- Price schedules from external data/streams

- Fully customisable category for reporting transaction types

- Breakdown of ruminant purchases and sales by class/pricing groups

- Present amount and/or value in resource balance report

|

non_test

|

additional functionality needed for economic analysis of beef enterprises research economists have provided a list of additional functionality needed for full economic analysis price schedules from external data streams fully customisable category for reporting transaction types breakdown of ruminant purchases and sales by class pricing groups present amount and or value in resource balance report

| 0

|

284,112

| 8,735,807,612

|

IssuesEvent

|

2018-12-11 17:43:59

|

aowen87/TicketTester

|

https://api.github.com/repos/aowen87/TicketTester

|

closed

|

Cracks Clipper is broken in 2.x

|

bug crash likelihood medium priority reviewed severity high wrong results

|

The CracksClipper operator no longer works, as of 2.0. Greg Burton has need of this functionality, and would like it fixed asap.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 402

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: High

Subject: Cracks Clipper is broken in 2.x

Assigned to: Kathleen Biagas

Category:

Target version: 2.1.1

Author: Kathleen Biagas

Start: 09/22/2010

Due date:

% Done: 0

Estimated time:

Created: 09/22/2010 12:54 pm

Updated: 09/29/2010 02:53 pm

Likelihood: 3 - Occasional

Severity: 4 - Crash / Wrong Results

Found in version: 2.0.0

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

The CracksClipper operator no longer works, as of 2.0. Greg Burton has need of this functionality, and would like it fixed asap.

Comments:

Restored functionality of CracksClipper operator.SVN revisions 12594 (2.1 RC) 12596 (trunk).

|

1.0

|

Cracks Clipper is broken in 2.x - The CracksClipper operator no longer works, as of 2.0. Greg Burton has need of this functionality, and would like it fixed asap.

-----------------------REDMINE MIGRATION-----------------------

This ticket was migrated from Redmine. As such, not all

information was able to be captured in the transition. Below is

a complete record of the original redmine ticket.

Ticket number: 402

Status: Resolved

Project: VisIt

Tracker: Bug

Priority: High

Subject: Cracks Clipper is broken in 2.x

Assigned to: Kathleen Biagas

Category:

Target version: 2.1.1

Author: Kathleen Biagas

Start: 09/22/2010

Due date:

% Done: 0

Estimated time:

Created: 09/22/2010 12:54 pm

Updated: 09/29/2010 02:53 pm

Likelihood: 3 - Occasional

Severity: 4 - Crash / Wrong Results

Found in version: 2.0.0

Impact:

Expected Use:

OS: All

Support Group: Any

Description:

The CracksClipper operator no longer works, as of 2.0. Greg Burton has need of this functionality, and would like it fixed asap.

Comments:

Restored functionality of CracksClipper operator.SVN revisions 12594 (2.1 RC) 12596 (trunk).

|

non_test

|

cracks clipper is broken in x the cracksclipper operator no longer works as of greg burton has need of this functionality and would like it fixed asap redmine migration this ticket was migrated from redmine as such not all information was able to be captured in the transition below is a complete record of the original redmine ticket ticket number status resolved project visit tracker bug priority high subject cracks clipper is broken in x assigned to kathleen biagas category target version author kathleen biagas start due date done estimated time created pm updated pm likelihood occasional severity crash wrong results found in version impact expected use os all support group any description the cracksclipper operator no longer works as of greg burton has need of this functionality and would like it fixed asap comments restored functionality of cracksclipper operator svn revisions rc trunk

| 0

|

207,182

| 23,430,310,760

|

IssuesEvent

|

2022-08-15 01:01:35

|

MidnightBSD/src

|

https://api.github.com/repos/MidnightBSD/src

|

reopened

|

CVE-2020-24370 (Medium) detected in freebsd-srcrelease/12.3.0

|

security vulnerability

|

## CVE-2020-24370 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>freebsd-srcrelease/12.3.0</b></p></summary>

<p>

<p>FreeBSD src tree (read-only mirror)</p>

<p>Library home page: <a href=https://github.com/freebsd/freebsd-src.git>https://github.com/freebsd/freebsd-src.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/MidnightBSD/src/commit/816463d989cc5839c1cca2efb5bf2503408507fb">816463d989cc5839c1cca2efb5bf2503408507fb</a></p>

<p>Found in base branch: <b>stable/2.1</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/ldebug.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/ldebug.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ldebug.c in Lua 5.4.0 allows a negation overflow and segmentation fault in getlocal and setlocal, as demonstrated by getlocal(3,2^31).

<p>Publish Date: 2020-08-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-24370>CVE-2020-24370</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2020-24370">https://nvd.nist.gov/vuln/detail/CVE-2020-24370</a></p>

<p>Release Date: 2020-09-26</p>

<p>Fix Resolution: lua-debuginfo - 5.3.4-12,5.3.4-12;lua-libs - 5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12;lua - 5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12;lua-debugsource - 5.3.4-12,5.3.4-12;lua-libs-debuginfo - 5.3.4-12,5.3.4-12</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-24370 (Medium) detected in freebsd-srcrelease/12.3.0 - ## CVE-2020-24370 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>freebsd-srcrelease/12.3.0</b></p></summary>

<p>

<p>FreeBSD src tree (read-only mirror)</p>

<p>Library home page: <a href=https://github.com/freebsd/freebsd-src.git>https://github.com/freebsd/freebsd-src.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/MidnightBSD/src/commit/816463d989cc5839c1cca2efb5bf2503408507fb">816463d989cc5839c1cca2efb5bf2503408507fb</a></p>

<p>Found in base branch: <b>stable/2.1</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/ldebug.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/ldebug.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ldebug.c in Lua 5.4.0 allows a negation overflow and segmentation fault in getlocal and setlocal, as demonstrated by getlocal(3,2^31).

<p>Publish Date: 2020-08-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-24370>CVE-2020-24370</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2020-24370">https://nvd.nist.gov/vuln/detail/CVE-2020-24370</a></p>

<p>Release Date: 2020-09-26</p>

<p>Fix Resolution: lua-debuginfo - 5.3.4-12,5.3.4-12;lua-libs - 5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12;lua - 5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12,5.3.4-12;lua-debugsource - 5.3.4-12,5.3.4-12;lua-libs-debuginfo - 5.3.4-12,5.3.4-12</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in freebsd srcrelease cve medium severity vulnerability vulnerable library freebsd srcrelease freebsd src tree read only mirror library home page a href found in head commit a href found in base branch stable vulnerable source files ldebug c ldebug c vulnerability details ldebug c in lua allows a negation overflow and segmentation fault in getlocal and setlocal as demonstrated by getlocal publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution lua debuginfo lua libs lua lua debugsource lua libs debuginfo step up your open source security game with mend

| 0

|

13,965

| 5,521,781,081

|

IssuesEvent

|

2017-03-19 18:16:36

|

alexrj/Slic3r

|

https://api.github.com/repos/alexrj/Slic3r

|

closed

|

1.2.7-dev: parts jumping out of sight in 3D plater when moving around

|

Can't Reproduce - Development Build

|

Hi,

i've found a glitch in the 3D plater: when moving around several parts, sometimes the part that was just moved jumps far out of sight, away from the cursor - but can be found again when zooming out quite a bit.

This could possibly overlap with #2728 - anyway, i made a screencast where i just managed to reproduce this effect:

|

1.0

|

1.2.7-dev: parts jumping out of sight in 3D plater when moving around - Hi,

i've found a glitch in the 3D plater: when moving around several parts, sometimes the part that was just moved jumps far out of sight, away from the cursor - but can be found again when zooming out quite a bit.

This could possibly overlap with #2728 - anyway, i made a screencast where i just managed to reproduce this effect:

|

non_test

|

dev parts jumping out of sight in plater when moving around hi i ve found a glitch in the plater when moving around several parts sometimes the part that was just moved jumps far out of sight away from the cursor but can be found again when zooming out quite a bit this could possibly overlap with anyway i made a screencast where i just managed to reproduce this effect

| 0

|

9,388

| 2,902,610,568

|

IssuesEvent

|

2015-06-18 08:17:00

|

ramu2016/SXUMX357FFAWEJHBMNM54FBQ

|

https://api.github.com/repos/ramu2016/SXUMX357FFAWEJHBMNM54FBQ

|

closed

|

f+fZMR2Wh9VQBoBXfX4r/BZ071BepESorIvq4YpDlHQ5s4tJr4TbP8zTpB+5YkZzllhvexG+WP50tfVEFIpVsaFEYJsFf2H6cOCW8JyIU07Hj5JVOxHIbC0HrmoWGW0/ErvdYUeR86gS+oghElVphKwaFY8pOPsID9eHK6Vm1co=

|

design

|