Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

30,113 | 7,163,242,572 | IssuesEvent | 2018-01-29 06:13:53 | cquery-project/cquery | https://api.github.com/repos/cquery-project/cquery | closed | [VSCode] Semantic highlighting does not work for files whose path contains "+" symbol | vscode | Semantic highlighting is working fine in some workspaces, but not at all in others.

In the problematic workspace, I do not see any errors in the output window or the log file, but I do see lines like the following:

```

2018-01-06 19:34:51.858 ( 308.410s) [stdout ] timer.cc:38 0| [e2e] Running $cquery/publishSemanticHighlighting took 260173.259913790ms

```

That duration, if accurate, seems excessively long. Perhaps this is the cause of the problem, and the frontend (I'm using VSCode) is timing out or something? | 1.0 | [VSCode] Semantic highlighting does not work for files whose path contains "+" symbol - Semantic highlighting is working fine in some workspaces, but not at all in others.

In the problematic workspace, I do not see any errors in the output window or the log file, but I do see lines like the following:

```

2018-01-06 19:34:51.858 ( 308.410s) [stdout ] timer.cc:38 0| [e2e] Running $cquery/publishSemanticHighlighting took 260173.259913790ms

```

That duration, if accurate, seems excessively long. Perhaps this is the cause of the problem, and the frontend (I'm using VSCode) is timing out or something? | non_test | semantic highlighting does not work for files whose path contains symbol semantic highlighting is working fine in some workspaces but not at all in others in the problematic workspace i do not see any errors in the output window or the log file but i do see lines like the following timer cc running cquery publishsemantichighlighting took that duration if accurate seems excessively long perhaps this is the cause of the problem and the frontend i m using vscode is timing out or something | 0 |

33,580 | 16,037,617,021 | IssuesEvent | 2021-04-22 00:58:13 | unitystation/unitystation | https://api.github.com/repos/unitystation/unitystation | opened | Garbage reduction and crash fixes bundle B: special, see details | Bounty Type: Performance Type: Refactor |

## Description

**This is a stability related bounty, and is immune to the feature freeze.** This bounty bundle calls for reducing gc, improving performance of the game, and finding and killing crash causes.

This is not a traditional bounty. This bounty need not be assigned, and can be done by anyone, though it is still held to the standard bounty guidelines in the [contribution guide.](https://unitystation.github.io/unitystation/CONTRIBUTING/)

Any contributor may claim a reward for doing the following in a PR:

- substantially reducing gc of a system or method that is utilized often in the game.

- improving performance or speed of a system or method that is utilized often in the game.

- fixing any bug that could cause either the host or clients to crash.

- fixing any bug that could prevent the game from properly launching, or initializing in a state that renders the game unplayable.

**the reward for fulfilling this bounty is set at 25.00 USD.**

This bounty is meant to encourage collective action. We want to get **as many contributors as possible** to work on game performance right now so that we may lift the [feature freeze](https://github.com/unitystation/unitystation/issues/6275) as soon as possible.

To give as many contributors the opportunity to get the reward as possible, **EACH CONTRIBUTOR MAY CLAIM A REWARD FROM THIS BOUNTY ONLY ONCE.**

We have allocated 250.00 USD for this bounty, providing **10** individual rewards to be claimed. Each Pull Request will be evaluated on request by @Bod9001 and @corp-0 as to whether the PR qualifies for a reward from this bounty bundle. If you have any questions about what _might_ qualify for a reward, please ask in the #bounties channel in the [unitystation discord.](https://discord.gg/WcF4cbxr)

We are opening this bounty opportunity retroactively to all Pull Requests merged since the feature freeze was put in place (Tuesday, March 23rd). If you would like to claim on a PR that has been merged, you can request an evaluation in a comment under that PR. | True | Garbage reduction and crash fixes bundle B: special, see details -

## Description

**This is a stability related bounty, and is immune to the feature freeze.** This bounty bundle calls for reducing gc, improving performance of the game, and finding and killing crash causes.

This is not a traditional bounty. This bounty need not be assigned, and can be done by anyone, though it is still held to the standard bounty guidelines in the [contribution guide.](https://unitystation.github.io/unitystation/CONTRIBUTING/)

Any contributor may claim a reward for doing the following in a PR:

- substantially reducing gc of a system or method that is utilized often in the game.

- improving performance or speed of a system or method that is utilized often in the game.

- fixing any bug that could cause either the host or clients to crash.

- fixing any bug that could prevent the game from properly launching, or initializing in a state that renders the game unplayable.

**the reward for fulfilling this bounty is set at 25.00 USD.**

This bounty is meant to encourage collective action. We want to get **as many contributors as possible** to work on game performance right now so that we may lift the [feature freeze](https://github.com/unitystation/unitystation/issues/6275) as soon as possible.

To give as many contributors the opportunity to get the reward as possible, **EACH CONTRIBUTOR MAY CLAIM A REWARD FROM THIS BOUNTY ONLY ONCE.**

We have allocated 250.00 USD for this bounty, providing **10** individual rewards to be claimed. Each Pull Request will be evaluated on request by @Bod9001 and @corp-0 as to whether the PR qualifies for a reward from this bounty bundle. If you have any questions about what _might_ qualify for a reward, please ask in the #bounties channel in the [unitystation discord.](https://discord.gg/WcF4cbxr)

We are opening this bounty opportunity retroactively to all Pull Requests merged since the feature freeze was put in place (Tuesday, March 23rd). If you would like to claim on a PR that has been merged, you can request an evaluation in a comment under that PR. | non_test | garbage reduction and crash fixes bundle b special see details description this is a stability related bounty and is immune to the feature freeze this bounty bundle calls for reducing gc improving performance of the game and finding and killing crash causes this is not a traditional bounty this bounty need not be assigned and can be done by anyone though it is still held to the standard bounty guidelines in the any contributor may claim a reward for doing the following in a pr substantially reducing gc of a system or method that is utilized often in the game improving performance or speed of a system or that is utilized often in the game fixing any bug that could cause either the host or clients to crash fixing any bug that could prevent the game from properly launching or initializing in a state that renders the game unplayable the reward for fulfilling this bounty is set at usd this bounty is meant to encourage collective action we want to get as many contributors as possible to work on game performance right now so that we may lift the as soon as possible to give as many contributors the opportunity to get the reward as possible each contributor may claim a reward from this bounty only once we have allocated usd for this bounty providing individual rewards to be claimed each pull request will be evaluated on request by and corp as to whether the pr qualifies for a reward from this bounty bundle if you have any questions about what might qualify for a reward please ask in the bounties channel in the we are opening this bounty opportunity retroactively to all pull requests merged since the feature freeze was put in place tuesday march if you would like to claim on a pr that has been merged you 
can request an evaluation in a comment under that pr | 0 |

326,349 | 9,955,383,143 | IssuesEvent | 2019-07-05 10:52:55 | mozilla/addons-frontend | https://api.github.com/repos/mozilla/addons-frontend | closed | Storybook: do we really need `core/css/inc/lib.scss` in `stories/index.js`? | priority: p3 storybook type: question | The file `core/css/inc/lib.scss` is already imported in `amo/components/App/styles.scss`, which is required in `stories/index.js`.

Do we really need to add it one more time? | 1.0 | Storybook: do we really need `core/css/inc/lib.scss` in `stories/index.js`? - The file `core/css/inc/lib.scss` is already imported in `amo/components/App/styles.scss`, which is required in `stories/index.js`.

Do we really need to add it one more time? | non_test | storybook do we really need core css inc lib scss in stories index js the file core css inc lib scss is already imported in amo components app styles scss which is required in stories index js do we really need to add it one more time | 0 |

247,646 | 20,987,403,850 | IssuesEvent | 2022-03-29 05:40:25 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: follower-reads/survival=zone/locality=regional/reads=exact-staleness failed | C-test-failure O-robot O-roachtest branch-master release-blocker | roachtest.follower-reads/survival=zone/locality=regional/reads=exact-staleness [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4713654&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4713654&tab=artifacts#/follower-reads/survival=zone/locality=regional/reads=exact-staleness) on master @ [29716850b181718594663889ddb5f479fef7a305](https://github.com/cockroachdb/cockroach/commits/29716850b181718594663889ddb5f479fef7a305):

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /artifacts/follower-reads/survival=zone/locality=regional/reads=exact-staleness/run_1

cluster.go:1868,follower_reads.go:64,test_runner.go:875: one or more parallel execution failure

(1) attached stack trace

-- stack trace:

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).ParallelE

| github.com/cockroachdb/cockroach/pkg/roachprod/install/cluster_synced.go:2042

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).Parallel

| github.com/cockroachdb/cockroach/pkg/roachprod/install/cluster_synced.go:1923

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).Start

| github.com/cockroachdb/cockroach/pkg/roachprod/install/cockroach.go:167

| github.com/cockroachdb/cockroach/pkg/roachprod.Start

| github.com/cockroachdb/cockroach/pkg/roachprod/roachprod.go:660

| main.(*clusterImpl).StartE

| main/pkg/cmd/roachtest/cluster.go:1826

| main.(*clusterImpl).Start

| main/pkg/cmd/roachtest/cluster.go:1867

| github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests.registerFollowerReads.func1.1

| github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/follower_reads.go:64

| main.(*testRunner).runTest.func2

| main/pkg/cmd/roachtest/test_runner.go:875

| runtime.goexit

| GOROOT/src/runtime/asm_amd64.s:1581

Wraps: (2) one or more parallel execution failure

Error types: (1) *withstack.withStack (2) *errutil.leafError

```

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/kv-triage

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*follower-reads/survival=zone/locality=regional/reads=exact-staleness.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: follower-reads/survival=zone/locality=regional/reads=exact-staleness failed - roachtest.follower-reads/survival=zone/locality=regional/reads=exact-staleness [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4713654&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4713654&tab=artifacts#/follower-reads/survival=zone/locality=regional/reads=exact-staleness) on master @ [29716850b181718594663889ddb5f479fef7a305](https://github.com/cockroachdb/cockroach/commits/29716850b181718594663889ddb5f479fef7a305):

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /artifacts/follower-reads/survival=zone/locality=regional/reads=exact-staleness/run_1

cluster.go:1868,follower_reads.go:64,test_runner.go:875: one or more parallel execution failure

(1) attached stack trace

-- stack trace:

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).ParallelE

| github.com/cockroachdb/cockroach/pkg/roachprod/install/cluster_synced.go:2042

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).Parallel

| github.com/cockroachdb/cockroach/pkg/roachprod/install/cluster_synced.go:1923

| github.com/cockroachdb/cockroach/pkg/roachprod/install.(*SyncedCluster).Start

| github.com/cockroachdb/cockroach/pkg/roachprod/install/cockroach.go:167

| github.com/cockroachdb/cockroach/pkg/roachprod.Start

| github.com/cockroachdb/cockroach/pkg/roachprod/roachprod.go:660

| main.(*clusterImpl).StartE

| main/pkg/cmd/roachtest/cluster.go:1826

| main.(*clusterImpl).Start

| main/pkg/cmd/roachtest/cluster.go:1867

| github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests.registerFollowerReads.func1.1

| github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tests/follower_reads.go:64

| main.(*testRunner).runTest.func2

| main/pkg/cmd/roachtest/test_runner.go:875

| runtime.goexit

| GOROOT/src/runtime/asm_amd64.s:1581

Wraps: (2) one or more parallel execution failure

Error types: (1) *withstack.withStack (2) *errutil.leafError

```

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/kv-triage

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*follower-reads/survival=zone/locality=regional/reads=exact-staleness.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| test | roachtest follower reads survival zone locality regional reads exact staleness failed roachtest follower reads survival zone locality regional reads exact staleness with on master the test failed on branch master cloud gce test artifacts and logs in artifacts follower reads survival zone locality regional reads exact staleness run cluster go follower reads go test runner go one or more parallel execution failure attached stack trace stack trace github com cockroachdb cockroach pkg roachprod install syncedcluster parallele github com cockroachdb cockroach pkg roachprod install cluster synced go github com cockroachdb cockroach pkg roachprod install syncedcluster parallel github com cockroachdb cockroach pkg roachprod install cluster synced go github com cockroachdb cockroach pkg roachprod install syncedcluster start github com cockroachdb cockroach pkg roachprod install cockroach go github com cockroachdb cockroach pkg roachprod start github com cockroachdb cockroach pkg roachprod roachprod go main clusterimpl starte main pkg cmd roachtest cluster go main clusterimpl start main pkg cmd roachtest cluster go github com cockroachdb cockroach pkg cmd roachtest tests registerfollowerreads github com cockroachdb cockroach pkg cmd roachtest tests follower reads go main testrunner runtest main pkg cmd roachtest test runner go runtime goexit goroot src runtime asm s wraps one or more parallel execution failure error types withstack withstack errutil leaferror help see see cc cockroachdb kv triage | 1 |

117,369 | 9,932,812,981 | IssuesEvent | 2019-07-02 10:44:17 | jdev-org/ddv-viewer | https://api.github.com/repos/jdev-org/ddv-viewer | closed | Update the customer layer with a CSV file | To test | As a user,

I want to update the map information by importing a CSV file that will replace the old data

So that I can view up-to-date customer information entirely on my own.

The CSV file will replace the old one in its entirety.

A backup of the M-1 file may be kept on the server in another directory or under another name in case of an import error.

The imported file must have the same name as the layer and as the file present on the server. | 1.0 | Update the customer layer with a CSV file - As a user,

I want to update the map information by importing a CSV file that will replace the old data

So that I can view up-to-date customer information entirely on my own.

The CSV file will replace the old one in its entirety.

A backup of the M-1 file may be kept on the server in another directory or under another name in case of an import error.

The imported file must have the same name as the layer and as the file present on the server. | test | update the customer layer with a csv file as a user i want to update the map information by importing a csv file that will replace the old data so that i can view up to date customer information entirely on my own the csv file will replace the old one in its entirety a backup of the m file may be kept on the server in another directory or under another name in case of an import error the imported file must have the same name as the layer and as the file present on the server | 1 |

93,144 | 10,764,544,789 | IssuesEvent | 2019-11-01 08:36:58 | Davidcwh/ped | https://api.github.com/repos/Davidcwh/ped | opened | Incorrect delete/remove command in User Guide | severity.High type.DocumentationBug | User guide states `remove 1` command to delete a food item:

But it is an invalid command:

| 1.0 | Incorrect delete/remove command in User Guide - User guide states `remove 1` command to delete a food item:

But it is an invalid command:

| non_test | incorrect delete remove command in user guide user guide states remove command to delete a food item but it is an invalid command | 0 |

638,686 | 20,734,703,234 | IssuesEvent | 2022-03-14 12:44:09 | ballerina-platform/ballerina-dev-website | https://api.github.com/repos/ballerina-platform/ballerina-dev-website | opened | Integrate the React Engine to B.io | Priority/Highest Area/Backend Type/NewFeature | **Description:**

Integrate the React Engine to B.io.

**Describe your problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| 1.0 | Integrate the React Engine to B.io - **Description:**

Integrate the React Engine to B.io.

**Describe your problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

| non_test | integrate the react engine to b io description integrate the react engine to b io describe your problem s describe your solution s related issues optional suggested labels optional suggested assignees optional | 0 |

167,808 | 13,043,419,089 | IssuesEvent | 2020-07-29 01:30:14 | huggingface/transformers | https://api.github.com/repos/huggingface/transformers | closed | CI: run tests against torch=1.6 | Help wanted Tests | Through github or circleci.

If github actions:

copy `.github/self-scheduled.yml` to `.github/torch_future.yml` and modify the install steps. | 1.0 | CI: run tests against torch=1.6 - Through github or circleci.

If github actions:

copy `.github/self-scheduled.yml` to `.github/torch_future.yml` and modify the install steps. | test | ci run tests against torch through github or circleci if github actions copy github self scheduled yml to github torch future yml and modify the install steps | 1 |

147,721 | 11,802,714,446 | IssuesEvent | 2020-03-18 22:11:49 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Enable web golden tests | a: tests team: flakes ☸ platform-web | The tests fail with "Unknown error loading". For example:

```

02:03 +118 ~33 -1: compiling /tmp/flutter sdk/packages/flutter/test/widgets/list_view_vertical_test.dart [E]

Failed to load "/tmp/flutter sdk/packages/flutter/test/widgets/list_view_vertical_test.dart": Unknown error loading http://localhost:43947/packages/flutter/material.ddc.js

```

Seen in the following, but I've seen it multiple times lately.

https://cirrus-ci.com/task/5055207964147712?command=main#L759

https://cirrus-ci.com/task/5899632894279680?command=main#L2370

/cc @jonahwilliams and @yjbanov who may know what's going on. | 1.0 | Enable web golden tests - The tests fail with "Unknown error loading". For example:

```

02:03 +118 ~33 -1: compiling /tmp/flutter sdk/packages/flutter/test/widgets/list_view_vertical_test.dart [E]

Failed to load "/tmp/flutter sdk/packages/flutter/test/widgets/list_view_vertical_test.dart": Unknown error loading http://localhost:43947/packages/flutter/material.ddc.js

```

Seen in the following, but I've seen it multiple times lately.

https://cirrus-ci.com/task/5055207964147712?command=main#L759

https://cirrus-ci.com/task/5899632894279680?command=main#L2370

/cc @jonahwilliams and @yjbanov who may know what's going on. | test | enable web golden tests the tests fail with unknown error loading for example compiling tmp flutter sdk packages flutter test widgets list view vertical test dart failed to load tmp flutter sdk packages flutter test widgets list view vertical test dart unknown error loading seen in the following but i ve seen it multiple times lately cc jonahwilliams and yjbanov who may know what s going on | 1 |

169,323 | 13,133,762,505 | IssuesEvent | 2020-08-06 21:36:53 | ESCOMP/CTSM | https://api.github.com/repos/ESCOMP/CTSM | closed | Failing ISSP585Clm50BgcCrop test for ARCTICGRIS | type: bug type: tests | ### Brief summary of bug

The test SMS.ne0ARCTICGRISne30x8_ne0ARCTICGRISne30x8_mt12.ISSP585Clm50BgcCrop.cheyenne_intel.clm-clm50cam6LndTuningMode

failed in ctsm1.0.dev105. It's a new test added in, and for some reason I missed the fact that it wasn't passing.

### General bug information

**CTSM version you are using:** ctsm1.0.dev105

**Does this bug cause significantly incorrect results in the model's science?** No

**Configurations affected:** Just this test

### Details of bug

I think the walltime is just too short.

| 1.0 | Failing ISSP585Clm50BgcCrop test for ARCTICGRIS - ### Brief summary of bug

The test SMS.ne0ARCTICGRISne30x8_ne0ARCTICGRISne30x8_mt12.ISSP585Clm50BgcCrop.cheyenne_intel.clm-clm50cam6LndTuningMode

failed in ctsm1.0.dev105. It's a new test added in, and for some reason I missed the fact that it wasn't passing.

### General bug information

**CTSM version you are using:** ctsm1.0.dev105

**Does this bug cause significantly incorrect results in the model's science?** No

**Configurations affected:** Just this test

### Details of bug

I think the walltime is just too short.

| test | failing test for arcticgris brief summary of bug the test sms cheyenne intel clm failed in it s a new test added in and for some reason i missed the fact that it wasn t passing general bug information ctsm version you are using does this bug cause significantly incorrect results in the model s science no configurations affected just this test details of bug i think the walltime is just too short | 1 |

626,667 | 19,830,687,810 | IssuesEvent | 2022-01-20 11:39:04 | GoldenSoftwareLtd/gedemin | https://api.github.com/repos/GoldenSoftwareLtd/gedemin | closed | ККЦ production output report prints incorrect data | Type-Enhancement Priority-Medium Meat | Originally reported on Google Code with ID 2575

```

The "Yield norm" column receives the value from the previous line item;

The "Raw material input" and "Spice input" columns are filled cumulatively, i.e. the value

of the second line item contains the 1st + 2nd values, and so on...

```

Reported by `stasgm` on 2011-09-12 13:27:20

| 1.0 | ККЦ production output report prints incorrect data - Originally reported on Google Code with ID 2575

```

The "Yield norm" column receives the value from the previous line item;

The "Raw material input" and "Spice input" columns are filled cumulatively, i.e. the value

of the second line item contains the 1st + 2nd values, and so on...

```

Reported by `stasgm` on 2011-09-12 13:27:20

| non_test | ккц production output report prints incorrect data originally reported on google code with id the yield norm column receives the value from the previous line item the raw material input and spice input columns are filled cumulatively i e the value of the second line item contains the values and so on reported by stasgm on | 0 |

309,976 | 26,690,439,009 | IssuesEvent | 2023-01-27 04:06:23 | datafuselabs/databend | https://api.github.com/repos/datafuselabs/databend | closed | test: add more sqllogic test for COPY file type | C-testing | **Summary**

There is a bug in the:

https://github.com/datafuselabs/databend/blob/0d32a1936b62ef9d212e6b3177180a1da93a6b97/src/query/ast/src/parser/stage.rs#L75

It should be:

```diff

(TYPE ~ "=" ~ (TSV | CSV | NDJSON | PARQUET | JSON | XML) )

```

We need more SQL logic test to check it. | 1.0 | test: add more sqllogic test for COPY file type - **Summary**

There is a bug in the:

https://github.com/datafuselabs/databend/blob/0d32a1936b62ef9d212e6b3177180a1da93a6b97/src/query/ast/src/parser/stage.rs#L75

It should be:

```diff

(TYPE ~ "=" ~ (TSV | CSV | NDJSON | PARQUET | JSON | XML) )

```

We need more SQL logic test to check it. | test | test add more sqllogic test for copy file type summary there is a bug in the it should be diff type tsv csv ndjson parquet json xml we need more sql logic test to check it | 1 |

88,602 | 10,575,756,063 | IssuesEvent | 2019-10-07 16:21:26 | Varrrro/pay-up | https://api.github.com/repos/Varrrro/pay-up | opened | Write the README with the project description | documentation | Write a short description of the project in the repository's `README.md` file. | 1.0 | Write the README with the project description - Write a short description of the project in the repository's `README.md` file. | non_test | write the readme with the project description write a short description of the project in the repository s readme md file | 0 |

6,134 | 13,771,361,048 | IssuesEvent | 2020-10-07 21:52:14 | DarksunTeam/TaleManager | https://api.github.com/repos/DarksunTeam/TaleManager | closed | Add Campaign | architecture documentation new feature | **Describe your suggestion here**

A button will need to be added to the side menu to open the Campaign screen.

Some adaptations to the data model will also be needed.

**Which part of the system this feature would go in**

Side menu.

**How you would like it**

One more button at the top of the list.

**Purpose**

To make the future call to the campaign screen, where the user will be able to list all of their campaigns.

| 1.0 | Add Campaign - **Describe your suggestion here**

A button will need to be added to the side menu to open the Campaign screen.

Some adaptations to the data model will also be needed.

**Which part of the system this feature would go in**

Side menu.

**How you would like it**

One more button at the top of the list.

**Purpose**

To make the future call to the campaign screen, where the user will be able to list all of their campaigns.

| non_test | add campaign describe your suggestion here a button will need to be added to the side menu to open the campaign screen some adaptations to the data model will also be needed which part of the system this feature would go in side menu how you would like it one more button at the top of the list purpose to make the future call to the campaign screen where the user will be able to list all of their campaigns | 0 |

577,762 | 17,119,075,019 | IssuesEvent | 2021-07-12 00:14:21 | Techtonica/curriculum | https://api.github.com/repos/Techtonica/curriculum | closed | Fix JS testing using mocks lesson | MEDIUM good-first-issue pinned priority | Fix the problems below, in the lesson: https://github.com/techtonica/curriculum/blob/main/testing-and-tdd/mocking-and-abstraction.md

## Problems found in the tutorial:

1. Materials:

Example video (10 min) -> This link goes to www.google.com. It's not an actual link to a video.

2. Other example article(20 min read) -> This article link goes to www.google.com. It does not go to an actual link.

3. **Rewrite the following to help user better understand:**

Challenge

Following example above, try to represent the following scenarios and think about what would happen:

* Call getUser('not-octocat')?

* Change mockObject.id to be 42?

* Change mockObject.name to Techtonica?

**Change the explanation to:**

Challenge

Hope you were able to follow the example in the earlier section. Now, take a look at the following scenarios and see if you can make the changes:

* Call getUser('not-octocat')?

* Change mockObject.id to be 42?

* Change mockObject.name to Techtonica?

4. Well, it's tricky because getTodo is still making an external call to the database which is difficult to handle. -> In this section, it's hard to understand which database the author is referring to. Readers may need more detail on the database and on why making the external call is difficult.

5. our reference TODO project) -> **typo**: our reference TODO project

6. It turns out that when we want to make complex verifications around how a mock is called doing that all manually is a lot of work... that somebody else has done for us. -> **This line is confusing. It needs a simpler explanation of the complex verification.**

8. Independent Practice

It's an interesting task to implement your own mocking and validation code by hand and teaches you a lot of neat tricks. If you're feeling adventurous give that a try!

**The above section is not very helpful and may be redundant. Instead, we can link to example problems to practice on.**

| 1.0 | Fix JS testing using mocks lesson - Fix the problems below, in the lesson: https://github.com/techtonica/curriculum/blob/main/testing-and-tdd/mocking-and-abstraction.md

## Problems found in the tutorial:

1. Materials:

Example video (10 min) -> This link goes to www.google.com. It's not an actual link to a video.

2. Other example article (20 min read) -> This link goes to www.google.com. It does not go to an actual article.

3. **Rewrite the following to help user better understand:**

Challenge

Following example above, try to represent the following scenarios and think about what would happen:

* Call getUser('not-octocat')?

* Change mockObject.id to be 42?

* Change mockObject.name to Techtonica?

**Change the explanation to:**

Challenge

Hope you were able to follow the example in the earlier section. Now, take a look at the following scenarios and see if you can make the changes:

* Call getUser('not-octocat')?

* Change mockObject.id to be 42?

* Change mockObject.name to Techtonica?

4. Well, it's tricky because getTodo is still making an external call to the database which is difficult to handle. -> In this section, it's hard to understand which database the author is referring to. Readers may need more detail on the database and on why making the external call is difficult.

5. our reference TODO project) -> **typo**: our reference TODO project

6. It turns out that when we want to make complex verifications around how a mock is called doing that all manually is a lot of work... that somebody else has done for us. -> **This line is confusing. It needs a simpler explanation of the complex verification.**

8. Independent Practice

It's an interesting task to implement your own mocking and validation code by hand and teaches you a lot of neat tricks. If you're feeling adventurous give that a try!

**The above section is not very helpful and may be redundant. Instead, we can link to example problems to practice on.**

| non_test | fix js testing using mocks lesson fix the problems below in the lesson problems found in the tutorial materials example video min this link goes to its not an actual link to video other example article min read this article link goes to it does not go to an actual link rewrite the following to help user better understand challenge following example above try to represent the following scenarios and think about what would happen call getuser not octocat change mockobject id to be change mockobject name to techtonica change the explanation to challenge hope you were able to follow the example in the earlier section now take a look at the following scenarios and see if you can make the changes call getuser not octocat change mockobject id to be change mockobject name to techtonica well it s tricky because gettodo is still making an external call to the database which is difficult to handle in this section its hard to understand which database the author is pointing to readers may need more detail on the database and why making the external call is difficult and challenging our reference todo project typo our reference todo project it turns out that when we want to make complex verifications around how a mock is called doing that all manually is a lot of work that somebody else has done for us this line is confusing need more simpler language explanation about the complex verification independent practice it s an interesting task to implement your own mocking and validation code by hand and teaches you a lot of neat tricks if you re feeling adventurous give that a try the above section is not very helpful and maybe redundant instead we can give link to example problems to practice on | 0 |

342,083 | 24,728,240,586 | IssuesEvent | 2022-10-20 15:28:42 | nebari-dev/nebari-docs | https://api.github.com/repos/nebari-dev/nebari-docs | closed | [DOC] - Quickstart/Commands page for advanced users | type: enhancement 💅🏼 area: documentation 📖 impact: high | ### Preliminary Checks

- [X] This issue is not a question, feature request, RFC, or anything other than a bug report. Please post those things in GitHub Discussions: https://github.com/nebari-dev/nebari/discussions

### Summary

This page is intended to be a cheat sheet with all the important commands a regular/savvy user will need to quickly get Nebari up and running.

Inspiration:

- https://cli.github.com/manual/index

- https://spacy.io/usage#quickstart

- https://www.gatsbyjs.com/docs/quick-start/ (best)

This page can live in the Getting started section.

### Steps to Resolve this Issue

n/a | 1.0 | [DOC] - Quickstart/Commands page for advanced users - ### Preliminary Checks

- [X] This issue is not a question, feature request, RFC, or anything other than a bug report. Please post those things in GitHub Discussions: https://github.com/nebari-dev/nebari/discussions

### Summary

This page is intended to be a cheat sheet with all the important commands a regular/savvy user will need to quickly get Nebari up and running.

Inspiration:

- https://cli.github.com/manual/index

- https://spacy.io/usage#quickstart

- https://www.gatsbyjs.com/docs/quick-start/ (best)

This page can live in the Getting started section.

### Steps to Resolve this Issue

n/a | non_test | quickstart commands page for advanced users preliminary checks this issue is not a question feature request rfc or anything other than a bug report please post those things in github discussions summary this page is intended to be a cheat sheet with all the important commands a regualr savvy user will need to quickly get nebari up and running inspiration best this page can live in the getting started section steps to resolve this issue n a | 0 |

444,332 | 12,810,151,551 | IssuesEvent | 2020-07-03 17:40:34 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | closed | java client: jobs are getting activated but not coming back to client (intermittent issue) | Impact: Performance Priority: Mid Scope: broker Severity: Mid Status: Ready Type: Bug | **Describe the bug**

I'm noticing that sometimes jobs are activated but not handled by the jobHandler; after the timeout, Zeebe retries the job and it is then processed properly.

In the ES exporter I see the job intent as "ACTIVATED", then after the timeout period "TIMED_OUT", then another "ACTIVATED" record; the job is then properly handled in the jobHandler and finally "COMPLETED".

Since the jobs are activated but time out, I'm guessing it's a client issue rather than a broker issue. The client has activated the job and started working on it but has not sent a response in time, so the broker times out the job and it becomes available for polling again.

We are using the Java client, Maven version 0.22.0-alpha1.

As the intent is "ACTIVATED", I'm guessing the jobPoller activates this job and it is in its jobList. The next step should be to iterate through the list and submit the jobs, which eventually calls the jobHandler's handle method.

> public void onNext(ActivateJobsResponse activateJobsResponse) {

this.activatedJobs += activateJobsResponse.getJobsCount();

activateJobsResponse.getJobsList().stream().map((job) -> {

return new ActivatedJobImpl(this.objectMapper, job);

}).forEach(this.jobConsumer);

}

I have a logger info call as the first line of the handler method, but in this case that line is not printed, and neither is the warning inside the catch block. This means the handle method doesn't even get called.

> private void executeJob(ActivatedJob job, Runnable doneCallback) {

try {

this.handler.handle(this.jobClient, job);

} catch (Exception var10) {

LOG.warn("Worker {} failed to handle job with key {} of type {}, sending fail command to broker", new Object[]{job.getWorker(), job.getKey(), job.getType(), var10});

StringWriter stringWriter = new StringWriter();

PrintWriter printWriter = new PrintWriter(stringWriter);

var10.printStackTrace(printWriter);

String message = stringWriter.toString();

this.jobClient.newFailCommand(job.getKey()).retries(job.getRetries() - 1).errorMessage(message).send();

} finally {

doneCallback.run();

}

}

It seems to me that the thread is hanging somehow, but I'm not sure how to troubleshoot this issue.

**To Reproduce**

This is intermittent. 20% of jobs fail on the first attempt but pass on the second attempt (i.e., after the retry).

**Expected behavior**

Jobs should either fail or pass on the first attempt.

| 1.0 | java client: jobs are getting activated but not coming back to client (intermittent issue) - **Describe the bug**

I'm noticing that sometimes jobs are activated but not handled by the jobHandler; after the timeout, Zeebe retries the job and it is then processed properly.

In the ES exporter I see the job intent as "ACTIVATED", then after the timeout period "TIMED_OUT", then another "ACTIVATED" record; the job is then properly handled in the jobHandler and finally "COMPLETED".

Since the jobs are activated but time out, I'm guessing it's a client issue rather than a broker issue. The client has activated the job and started working on it but has not sent a response in time, so the broker times out the job and it becomes available for polling again.

We are using the Java client, Maven version 0.22.0-alpha1.

As the intent is "ACTIVATED", I'm guessing the jobPoller activates this job and it is in its jobList. The next step should be to iterate through the list and submit the jobs, which eventually calls the jobHandler's handle method.

> public void onNext(ActivateJobsResponse activateJobsResponse) {

this.activatedJobs += activateJobsResponse.getJobsCount();

activateJobsResponse.getJobsList().stream().map((job) -> {

return new ActivatedJobImpl(this.objectMapper, job);

}).forEach(this.jobConsumer);

}

I have a logger info call as the first line of the handler method, but in this case that line is not printed, and neither is the warning inside the catch block. This means the handle method doesn't even get called.

> private void executeJob(ActivatedJob job, Runnable doneCallback) {

try {

this.handler.handle(this.jobClient, job);

} catch (Exception var10) {

LOG.warn("Worker {} failed to handle job with key {} of type {}, sending fail command to broker", new Object[]{job.getWorker(), job.getKey(), job.getType(), var10});

StringWriter stringWriter = new StringWriter();

PrintWriter printWriter = new PrintWriter(stringWriter);

var10.printStackTrace(printWriter);

String message = stringWriter.toString();

this.jobClient.newFailCommand(job.getKey()).retries(job.getRetries() - 1).errorMessage(message).send();

} finally {

doneCallback.run();

}

}

It seems to me that the thread is hanging somehow, but I'm not sure how to troubleshoot this issue.

**To Reproduce**

This is intermittent. 20% of jobs fail on the first attempt but pass on the second attempt (i.e., after the retry).

**Expected behavior**

Jobs should either fail or pass on the first attempt.

| non_test | java client jobs are getting activated but not coming back to client intermittent issue describe the bug i m noticing that sometime jobs are getting activated but not handled through jobhandler and then after timeout zeebe again retries the job and then it properly gets processed on es exporter i m seeing the job intent being activated then after time out period its timed out and another record as activated then properly handled in jobhandler and then completed as the jobs are getting activated but timing out i m guessing its client issue rather than broker client has activated the job and started working on it but has not sent any response within proper time so broker times out the job and the job is ready for polling again we are using java client maven version as the intent is activated i m guessing jobpoller activates this job and it s in its joblist the next thing it should do is to iterate through the list and submit jobs which eventually calls jobhandler s handle method public void onnext activatejobsresponse activatejobsresponse this activatedjobs activatejobsresponse getjobscount activatejobsresponse getjobslist stream map job return new activatedjobimpl this objectmapper job foreach this jobconsumer i ve logger info as the first line in handler method but this line doesn t get printed in this particular case neither the warning gets printed which is inside the catch block this means the handle method dint even gets called private void executejob activatedjob job runnable donecallback try this handler handle this jobclient job catch exception log warn worker failed to handle job with key of type sending fail command to broker new object job getworker job getkey job gettype stringwriter stringwriter new stringwriter printwriter printwriter new printwriter stringwriter printstacktrace printwriter string message stringwriter tostring this jobclient newfailcommand job getkey retries job getretries errormessage message send finally donecallback run 
it seems to me that the thread is getting hanged somehow but not sure how to troubleshoot this particular issue to reproduce this is intermittent jobs fail on first attempt but those again pass in second attempt ie after retry expected behavior jobs should be either fails or passes on first attempt | 0 |

49,736 | 6,038,594,377 | IssuesEvent | 2017-06-09 21:55:43 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | GCE 1.5->1.6 upgrade testing: CronJob - the server could not find the expected resource | kind/flake kind/upgrade-test-failure priority/failing-test sig/apps | @soltysh, please take a look ASAP and let me know if this is a 1.6 blocker or not.

https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-e2e-gce-1.5-1.6-upgrade-cluster/19#k8sio-cronjob-should-not-emit-unexpected-warnings

On GCE, in the 1.5->1.6 upgrade-cluster job (which creates a 1.5 cluster, upgrades to 1.6, then runs 1.5 E2E tests), the CronJob tests are failing with

```

Expected error:

<*errors.StatusError | 0xc422657180>: {

ErrStatus: {

TypeMeta: {Kind: "", APIVersion: ""},

ListMeta: {SelfLink: "", ResourceVersion: ""},

Status: "Failure",

Message: "the server could not find the requested resource",

Reason: "NotFound",

Details: {Name: "", Group: "", Kind: "", Causes: nil, RetryAfterSeconds: 0},

Code: 404,

},

}

the server could not find the requested resource

not to have occurred

```

| 2.0 | GCE 1.5->1.6 upgrade testing: CronJob - the server could not find the expected resource - @soltysh, please take a look ASAP and let me know if this is a 1.6 blocker or not.

https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-e2e-gce-1.5-1.6-upgrade-cluster/19#k8sio-cronjob-should-not-emit-unexpected-warnings

On GCE, in the 1.5->1.6 upgrade-cluster job (which creates a 1.5 cluster, upgrades to 1.6, then runs 1.5 E2E tests), the CronJob tests are failing with

```

Expected error:

<*errors.StatusError | 0xc422657180>: {

ErrStatus: {

TypeMeta: {Kind: "", APIVersion: ""},

ListMeta: {SelfLink: "", ResourceVersion: ""},

Status: "Failure",

Message: "the server could not find the requested resource",

Reason: "NotFound",

Details: {Name: "", Group: "", Kind: "", Causes: nil, RetryAfterSeconds: 0},

Code: 404,

},

}

the server could not find the requested resource

not to have occurred

```

| test | gce upgrade testing cronjob the server could not find the expected resource soltysh please take a look asap and let me know if this is a blocker or not on gce in the upgrade cluster job which creates a cluster upgrades to then runs tests the cronjob tests are failing with expected error errstatus typemeta kind apiversion listmeta selflink resourceversion status failure message the server could not find the requested resource reason notfound details name group kind causes nil retryafterseconds code the server could not find the requested resource not to have occurred | 1 |

287,652 | 8,817,975,661 | IssuesEvent | 2018-12-31 07:36:40 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | hangouts.google.com - site is not usable | browser-firefox priority-critical | <!-- @browser: Firefox 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:64.0) Gecko/20100101 Firefox/64.0 -->

<!-- @reported_with: -->

**URL**: https://hangouts.google.com

**Browser / Version**: Firefox 64.0

**Operating System**: Windows 8

**Tested Another Browser**: Yes

**Problem type**: Site is not usable

**Description**: The Hangouts chat list is empty. This seems to be due to a CSP restriction.

**Steps to Reproduce**:

Just open the page with a Google account that has some chats.

[](https://webcompat.com/uploads/2018/12/c72c5af1-5ca7-4163-9222-a47faa2de671.jpg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

Reported by @arneschween

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | hangouts.google.com - site is not usable - <!-- @browser: Firefox 64.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.2; Win64; x64; rv:64.0) Gecko/20100101 Firefox/64.0 -->

<!-- @reported_with: -->

**URL**: https://hangouts.google.com

**Browser / Version**: Firefox 64.0

**Operating System**: Windows 8

**Tested Another Browser**: Yes

**Problem type**: Site is not usable

**Description**: The Hangouts chat list is empty. This seems to be due to a CSP restriction.

**Steps to Reproduce**:

Just open the page with a Google account that has some chats.

[](https://webcompat.com/uploads/2018/12/c72c5af1-5ca7-4163-9222-a47faa2de671.jpg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

Reported by @arneschween

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | hangouts google com site is not usable url browser version firefox operating system windows tested another browser yes problem type site is not usable description hangouts chats list ist empty seems due to a csp restriction steps to reproduce just open the page with a google account with some chats browser configuration none reported by arneschween from with ❤️ | 0 |

12,313 | 3,265,785,440 | IssuesEvent | 2015-10-22 17:46:39 | mautic/mautic | https://api.github.com/repos/mautic/mautic | closed | REST API for GET Campaign erroring out | Bug Ready To Test | Hello everyone,

I am trying to perform a GET campaign call but am getting the following error. Does anyone have any idea about this issue?

ERROR:

{

"error": {

"message": "Property Mautic\CampaignBundle\Entity\Campaign::$title does not exist",

"code": 0

}

}

REST CALL:

http://192.168.2.185/1.2.0/index.php/api/campaigns/2?access_token=NDYwZZcxZmNjOThmNsEzMjI4ZWRjYjlmMzE5NGI2MTBkMzg4MjAyOTNjNTcyYTllOGRkNjc3NjViNTEyLzdjMg

Error message in log file:

[2015-09-23 11:35:38] mautic.CRITICAL: Uncaught PHP Exception ReflectionException: "Property Mautic\CampaignBundle\Entity\Campaign::$title does not exist" at /var/www/html/1.2.0/vendor/jms/metadata/src/Metadata/PropertyMetadata.php line 40 {"exception":"[object] (ReflectionException(code: 0): Property Mautic\\CampaignBundle\\Entity\\Campaign::$title does not exist at /var/www/html/1.2.0/vendor/jms/metadata/src/Metadata/PropertyMetadata.php:40)"} []

Note: other REST calls such as create lead and get lead work fine. GET Campaign also works with the same request in Mautic 1.1 but not in Mautic 1.2.

Thanks,

Nimesh

| 1.0 | REST API for GET Campaign erroring out - Hello everyone,

I am trying to perform a GET campaign call but am getting the following error. Does anyone have any idea about this issue?

ERROR:

{

"error": {

"message": "Property Mautic\CampaignBundle\Entity\Campaign::$title does not exist",

"code": 0

}

}

REST CALL:

http://192.168.2.185/1.2.0/index.php/api/campaigns/2?access_token=NDYwZZcxZmNjOThmNsEzMjI4ZWRjYjlmMzE5NGI2MTBkMzg4MjAyOTNjNTcyYTllOGRkNjc3NjViNTEyLzdjMg

Error message in log file:

[2015-09-23 11:35:38] mautic.CRITICAL: Uncaught PHP Exception ReflectionException: "Property Mautic\CampaignBundle\Entity\Campaign::$title does not exist" at /var/www/html/1.2.0/vendor/jms/metadata/src/Metadata/PropertyMetadata.php line 40 {"exception":"[object] (ReflectionException(code: 0): Property Mautic\\CampaignBundle\\Entity\\Campaign::$title does not exist at /var/www/html/1.2.0/vendor/jms/metadata/src/Metadata/PropertyMetadata.php:40)"} []

Note: other REST calls such as create lead and get lead work fine. GET Campaign also works with the same request in Mautic 1.1 but not in Mautic 1.2.

Thanks,

Nimesh

| test | rest api for get campaign erroring out hello everyone i am trying to perform get campaign call but getting following error anyone has any idea about this issue error error message property mautic campaignbundle entity campaign title does not exist code rest call error message in log file mautic critical uncaught php exception reflectionexception property mautic campaignbundle entity campaign title does not exist at var www html vendor jms metadata src metadata propertymetadata php line exception reflectionexception code property mautic campaignbundle entity campaign title does not exist at var www html vendor jms metadata src metadata propertymetadata php note other rest calls like create lead get lead etc are working just fine also get campaign is working fine with same request in mautic but not working in mautic thanks nimesh | 1 |

152,883 | 13,486,580,308 | IssuesEvent | 2020-09-11 09:39:35 | abpframework/abp | https://api.github.com/repos/abpframework/abp | closed | Documentation how to define custom filters | documentation | https://docs.abp.io/en/abp/latest/Data-Filtering

Current document is not complete. For example, need to override `ShouldFilterEntity`. Could we provide more details? | 1.0 | Documentation how to define custom filters - https://docs.abp.io/en/abp/latest/Data-Filtering

Current document is not complete. For example, need to override `ShouldFilterEntity`. Could we provide more details? | non_test | documentation how to define custom filters current document is not complete for example need to override shouldfilterentity could we provide more details | 0 |

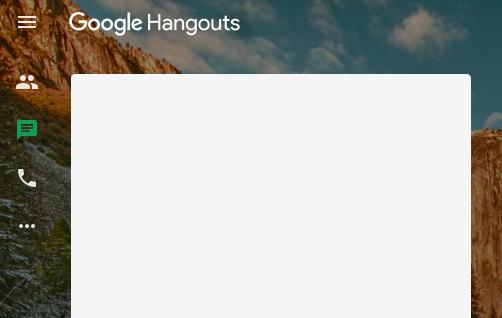

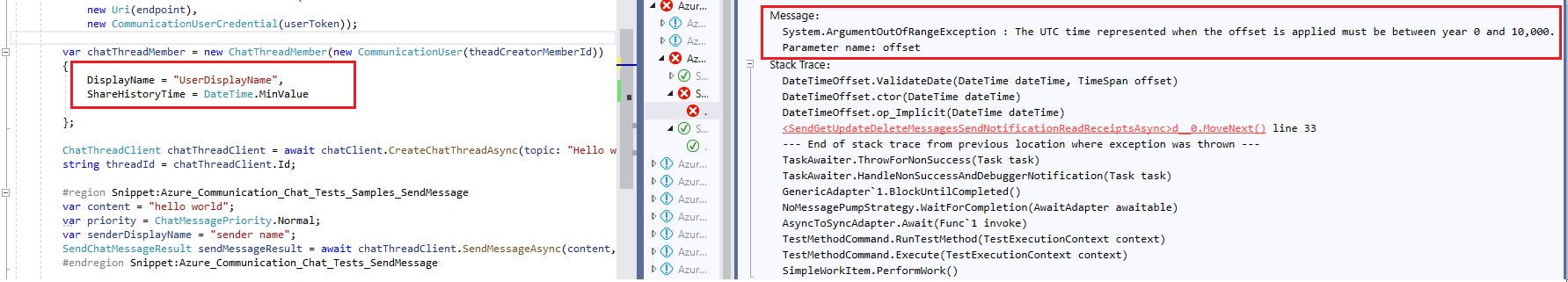

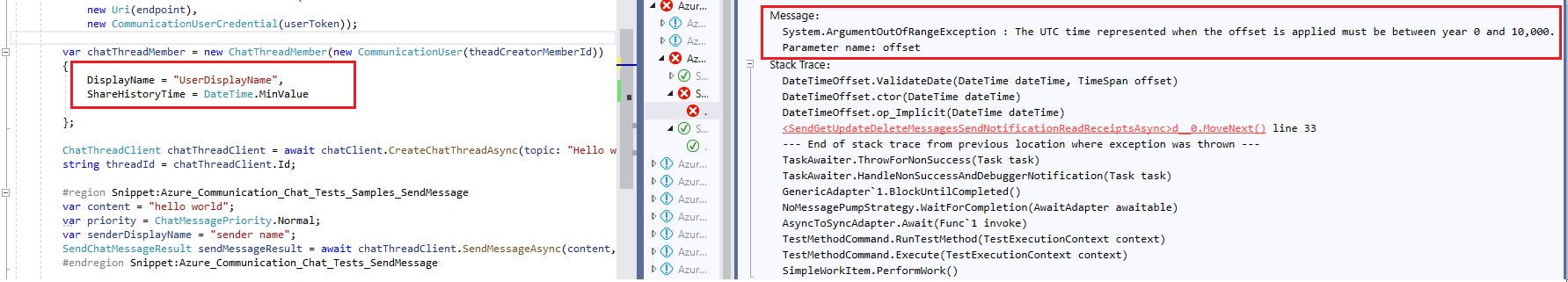

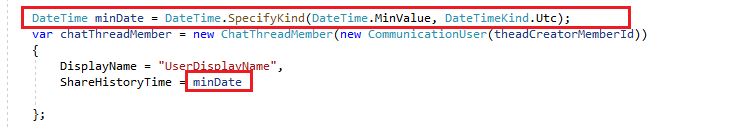

203,125 | 15,350,202,248 | IssuesEvent | 2021-03-01 01:43:14 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | closed | Azure.Communication.Chat Sample issue | Client Communication Docs needs-team-triage test-manual-pass | 1.

Section [link1,](https://github.com/Azure/azure-sdk-for-net/blob/master/sdk/communication/Azure.Communication.Chat/tests/samples/Sample2_MessagingOperations.cs#L33)[link2:](https://github.com/Azure/azure-sdk-for-net/blob/master/sdk/communication/Azure.Communication.Chat/tests/samples/Sample3_MemberOperations.cs#L38)

Suggestion:

Add: `DateTime minDate = DateTime.SpecifyKind(DateTime.MinValue, DateTimeKind.Utc);`

And update `DateTime.MinValue` to `minDate `

@jongio for notification.

| 1.0 | Azure.Communication.Chat Sample issue - 1.

Section [link1,](https://github.com/Azure/azure-sdk-for-net/blob/master/sdk/communication/Azure.Communication.Chat/tests/samples/Sample2_MessagingOperations.cs#L33)[link2:](https://github.com/Azure/azure-sdk-for-net/blob/master/sdk/communication/Azure.Communication.Chat/tests/samples/Sample3_MemberOperations.cs#L38)

Suggestion:

Add: `DateTime minDate = DateTime.SpecifyKind(DateTime.MinValue, DateTimeKind.Utc);`

And update `DateTime.MinValue` to `minDate `

@jongio for notification.

| test | azure communication chat sample issue section suggestion add datetime mindate datetime specifykind datetime minvalue datetimekind utc and update datetime minvalue to mindate jongio for notification | 1 |

152,149 | 12,093,170,369 | IssuesEvent | 2020-04-19 18:31:35 | iqlusioninc/relayer | https://api.github.com/repos/iqlusioninc/relayer | closed | Integration tests | help wanted testing | The current plan is to test the `relayer` package using something like https://github.com/ory/dockertest and a gaia image that allows for passing in addresses to be funded for genesis. Will work on getting this setup. | 1.0 | Integration tests - The current plan is to test the `relayer` package using something like https://github.com/ory/dockertest and a gaia image that allows for passing in addresses to be funded for genesis. Will work on getting this setup. | test | integration tests the current plan is to test the relayer package using something like and a gaia image that allows for passing in addresses to be funded for genesis will work on getting this setup | 1 |

11,290 | 3,197,635,201 | IssuesEvent | 2015-10-01 06:58:24 | uProxy/uproxy | https://api.github.com/repos/uProxy/uproxy | closed | Move freedom mocking out of remote-connection.spec.ts into more obvious file | C:Testing | Right now a number of the tests for uProxy core all use the global storage object, which in unit tests is mocked to use our freedom_mocks.MockStorage class. However, the glue that sets freedom['storage'] to freedom_mocks.MockStorage is in remote-connection.spec.ts, despite it being depended on by all our other unit tests in the core. This is not an obvious place for it; we should move it to a new file that is included in all our tests. We have a similar situation for MockLoggingController and now MockMetrics | 1.0 | Move freedom mocking out of remote-connection.spec.ts into more obvious file - Right now a number of the tests for uProxy core all use the global storage object, which in unit tests is mocked to use our freedom_mocks.MockStorage class. However, the glue that sets freedom['storage'] to freedom_mocks.MockStorage is in remote-connection.spec.ts, despite it being depended on by all our other unit tests in the core. This is not an obvious place for it; we should move it to a new file that is included in all our tests. We have a similar situation for MockLoggingController and now MockMetrics | test | move freedom mocking out of remote connection spec ts into more obvious file right now a number of the tests for uproxy core all use the global storage object which in unit tests is mocked to use our freedom mocks mockstorage class however the glue to sets freedom to freedom mocks mockstorage is in remote connection spec ts despite it being depended on by all our other unit tests in the core this is not an obvious place for it rather we should move it to some new file that is included in all our tests we have a similar situation for mockloggingcontroller and now mockmetrics | 1 |

74,037 | 3,427,465,804 | IssuesEvent | 2015-12-10 01:50:55 | OctopusDeploy/Issues | https://api.github.com/repos/OctopusDeploy/Issues | closed | OctopusDeleteScriptsOnCleanup not working in 3.x | bug in progress priority | A customer reported that in 3.x OctopusDeleteScriptsOnCleanup being set to false no longer leaves the ps scripts behind. The variable still exists in the code, so the execution from calamari must be missing. (I have to assume if it was left out on purpose the variable would have been removed).

Source: http://help.octopusdeploy.com/discussions/problems/43117 | 1.0 | OctopusDeleteScriptsOnCleanup not working in 3.x - A customer reported that in 3.x OctopusDeleteScriptsOnCleanup being set to false no longer leaves the ps scripts behind. The variable still exists in the code, so the execution from calamari must be missing. (I have to assume if it was left out on purpose the variable would have been removed).

Source: http://help.octopusdeploy.com/discussions/problems/43117 | non_test | octopusdeletescriptsoncleanup not working in x a customer reported that in x octopusdeletescriptsoncleanup being set to false no longer leaves the ps scripts behind the variable still exists in the code so the execution from calamari must be missing i have to assume if it was left out on purpose the variable would have been removed source | 0 |

13,102 | 3,310,174,308 | IssuesEvent | 2015-11-05 07:13:39 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Hide head of family from family profile card | 4 - Acceptance testing Feature Request | Continuation of #1422, and similar to #1402, we should hide the "head of family" from the family profile card.

The exception to this is if the "head of family" is **not** in the family list - which is possible if people are reassigned manually. This exception is not likely for the field-test, but theoretically possible. | 1.0 | Hide head of family from family profile card - Continuation of #1422, and similar to #1402, we should hide the "head of family" from the family profile card.

The exception to this is if the "head of family" is **not** in the family list - which is possible if people are reassigned manually. This exception is not likely for the field-test, but theoretically possible. | test | hide head of family from family profile card continuation of and similar to we should hide the head of family from the family profile card the exception to this is if the head of family is not in the family list which is possible if people are reassigned manually this exception is not likely for the field test but theoretically possible | 1 |

4,677 | 7,517,304,707 | IssuesEvent | 2018-04-12 02:48:22 | UnbFeelings/unb-feelings-GQA | https://api.github.com/repos/UnbFeelings/unb-feelings-GQA | closed | Create documentation templates | document process wiki | Identify which documents need templates so that the templates can be created, such as: Audit Results, Checklist Documentation within the audit result, Interview Documentation within the audit result, among others.

[Activity in the process](https://github.com/UnbFeelings/unb-feelings-GQA/wiki/Fluxo-de-Trabalho#17-criar-templates-de-documenta%C3%A7%C3%A3o). | 1.0 | Create documentation templates - Identify which documents need templates so that the templates can be created, such as: Audit Results, Checklist Documentation within the audit result, Interview Documentation within the audit result, among others.

[Activity in the process](https://github.com/UnbFeelings/unb-feelings-GQA/wiki/Fluxo-de-Trabalho#17-criar-templates-de-documenta%C3%A7%C3%A3o). | non_test | create documentation templates identify which documents need templates so that the templates can be created such as audit results checklist documentation within the audit result interview documentation within the audit result among others | 0 |

36,242 | 12,404,341,726 | IssuesEvent | 2020-05-21 15:22:43 | jgeraigery/beaker-notebook | https://api.github.com/repos/jgeraigery/beaker-notebook | opened | CVE-2017-7656 (High) detected in multiple libraries | security vulnerability | ## CVE-2017-7656 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jetty-http-8.1.12.v20130726.jar</b>, <b>jetty-http-8.1.13.v20130916.jar</b>, <b>jetty-server-8.1.13.v20130916.jar</b>, <b>jetty-server-8.1.12.v20130726.jar</b></p></summary>

<p>

<details><summary><b>jetty-http-8.1.12.v20130726.jar</b></p></summary>

<p>Administrative parent pom for Jetty modules</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to dependency file: /tmp/ws-scm/beaker-notebook/shared/build.gradle</p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.12.v20130726/530b3a21d71ac69279bee129869d7eac031e3533/jetty-http-8.1.12.v20130726.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.12.v20130726/530b3a21d71ac69279bee129869d7eac031e3533/jetty-http-8.1.12.v20130726.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-http-8.1.12.v20130726.jar** (Vulnerable Library)

</details>

<details><summary><b>jetty-http-8.1.13.v20130916.jar</b></summary>

<p>Administrative parent pom for Jetty modules</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to dependency file: /tmp/ws-scm/beaker-notebook/plugin/clojure/build.gradle</p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.13.v20130916/6dcf37666815f6d0d90b77a2f5037a9ceaaca968/jetty-http-8.1.13.v20130916.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.13.v20130916/6dcf37666815f6d0d90b77a2f5037a9ceaaca968/jetty-http-8.1.13.v20130916.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-http-8.1.13.v20130916.jar** (Vulnerable Library)

</details>

<details><summary><b>jetty-server-8.1.13.v20130916.jar</b></summary>

<p>The core jetty server artifact.</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.13.v20130916/99d1bf6fb172cecb597f1c029c719c5f878d8405/jetty-server-8.1.13.v20130916.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.13.v20130916/99d1bf6fb172cecb597f1c029c719c5f878d8405/jetty-server-8.1.13.v20130916.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-server-8.1.13.v20130916.jar** (Vulnerable Library)

</details>

<details><summary><b>jetty-server-8.1.12.v20130726.jar</b></summary>

<p>The core jetty server artifact.</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.12.v20130726/e8d89c85edd00680a7b30bf219e6dba181dc4aa1/jetty-server-8.1.12.v20130726.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.12.v20130726/e8d89c85edd00680a7b30bf219e6dba181dc4aa1/jetty-server-8.1.12.v20130726.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-server-8.1.12.v20130726.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/jgeraigery/beaker-notebook/commit/e74341acf643e87bd21b092c7a9e9f6bb96fa7c4">e74341acf643e87bd21b092c7a9e9f6bb96fa7c4</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Eclipse Jetty, versions 9.2.x and older, 9.3.x (all configurations), and 9.4.x (non-default configuration with RFC2616 compliance enabled), HTTP/0.9 is handled poorly. An HTTP/1 style request line (i.e. method space URI space version) that declares a version of HTTP/0.9 was accepted and treated as a 0.9 request. If deployed behind an intermediary that also accepted and passed through the 0.9 version (but did not act on it), then the response sent could be interpreted by the intermediary as HTTP/1 headers. This could be used to poison the cache if the server allowed the origin client to generate arbitrary content in the response.

<p>Publish Date: 2018-06-26</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-7656>CVE-2017-7656</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://www.securitytracker.com/id/1041194">http://www.securitytracker.com/id/1041194</a></p>

<p>Fix Resolution: The vendor has issued a fix (9.4.11.v20180605).

9.2.25.v20180606, 9.3.24.v20180605

The vendor advisory is available at:

http://dev.eclipse.org/mhonarc/lists/jetty-announce/msg00123.html</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"org.eclipse.jetty","packageName":"jetty-http","packageVersion":"8.1.12.v20130726","isTransitiveDependency":true,"dependencyTree":"org.apache.cxf:cxf-bundle-jaxrs:2.7.7;org.eclipse.jetty:jetty-http:8.1.12.v20130726","isMinimumFixVersionAvailable":false},{"packageType":"Java","groupId":"org.eclipse.jetty","packageName":"jetty-http","packageVersion":"8.1.13.v20130916","isTransitiveDependency":true,"dependencyTree":"org.apache.cxf:cxf-bundle-jaxrs:2.7.7;org.eclipse.jetty:jetty-http:8.1.13.v20130916","isMinimumFixVersionAvailable":false},{"packageType":"Java","groupId":"org.eclipse.jetty","packageName":"jetty-server","packageVersion":"8.1.13.v20130916","isTransitiveDependency":true,"dependencyTree":"org.apache.cxf:cxf-bundle-jaxrs:2.7.7;org.eclipse.jetty:jetty-server:8.1.13.v20130916","isMinimumFixVersionAvailable":false},{"packageType":"Java","groupId":"org.eclipse.jetty","packageName":"jetty-server","packageVersion":"8.1.12.v20130726","isTransitiveDependency":true,"dependencyTree":"org.apache.cxf:cxf-bundle-jaxrs:2.7.7;org.eclipse.jetty:jetty-server:8.1.12.v20130726","isMinimumFixVersionAvailable":false}],"vulnerabilityIdentifier":"CVE-2017-7656","vulnerabilityDetails":"In Eclipse Jetty, versions 9.2.x and older, 9.3.x (all configurations), and 9.4.x (non-default configuration with RFC2616 compliance enabled), HTTP/0.9 is handled poorly. An HTTP/1 style request line (i.e. method space URI space version) that declares a version of HTTP/0.9 was accepted and treated as a 0.9 request. If deployed behind an intermediary that also accepted and passed through the 0.9 version (but did not act on it), then the response sent could be interpreted by the intermediary as HTTP/1 headers. 
This could be used to poison the cache if the server allowed the origin client to generate arbitrary content in the response.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-7656","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | CVE-2017-7656 (High) detected in multiple libraries - ## CVE-2017-7656 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jetty-http-8.1.12.v20130726.jar</b>, <b>jetty-http-8.1.13.v20130916.jar</b>, <b>jetty-server-8.1.13.v20130916.jar</b>, <b>jetty-server-8.1.12.v20130726.jar</b></summary>

<p>

<details><summary><b>jetty-http-8.1.12.v20130726.jar</b></summary>

<p>Administrative parent pom for Jetty modules</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to dependency file: /tmp/ws-scm/beaker-notebook/shared/build.gradle</p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.12.v20130726/530b3a21d71ac69279bee129869d7eac031e3533/jetty-http-8.1.12.v20130726.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.12.v20130726/530b3a21d71ac69279bee129869d7eac031e3533/jetty-http-8.1.12.v20130726.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-http-8.1.12.v20130726.jar** (Vulnerable Library)

</details>

<details><summary><b>jetty-http-8.1.13.v20130916.jar</b></summary>

<p>Administrative parent pom for Jetty modules</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to dependency file: /tmp/ws-scm/beaker-notebook/plugin/clojure/build.gradle</p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.13.v20130916/6dcf37666815f6d0d90b77a2f5037a9ceaaca968/jetty-http-8.1.13.v20130916.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-http/8.1.13.v20130916/6dcf37666815f6d0d90b77a2f5037a9ceaaca968/jetty-http-8.1.13.v20130916.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-http-8.1.13.v20130916.jar** (Vulnerable Library)

</details>

<details><summary><b>jetty-server-8.1.13.v20130916.jar</b></summary>

<p>The core jetty server artifact.</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.13.v20130916/99d1bf6fb172cecb597f1c029c719c5f878d8405/jetty-server-8.1.13.v20130916.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.13.v20130916/99d1bf6fb172cecb597f1c029c719c5f878d8405/jetty-server-8.1.13.v20130916.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-server-8.1.13.v20130916.jar** (Vulnerable Library)

</details>

<details><summary><b>jetty-server-8.1.12.v20130726.jar</b></summary>

<p>The core jetty server artifact.</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.eclipse.org/jetty</a></p>

<p>Path to vulnerable library: /root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.12.v20130726/e8d89c85edd00680a7b30bf219e6dba181dc4aa1/jetty-server-8.1.12.v20130726.jar,/root/.gradle/caches/modules-2/files-2.1/org.eclipse.jetty/jetty-server/8.1.12.v20130726/e8d89c85edd00680a7b30bf219e6dba181dc4aa1/jetty-server-8.1.12.v20130726.jar</p>

<p>

Dependency Hierarchy:

- cxf-bundle-jaxrs-2.7.7.jar (Root Library)

- :x: **jetty-server-8.1.12.v20130726.jar** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/jgeraigery/beaker-notebook/commit/e74341acf643e87bd21b092c7a9e9f6bb96fa7c4">e74341acf643e87bd21b092c7a9e9f6bb96fa7c4</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In Eclipse Jetty, versions 9.2.x and older, 9.3.x (all configurations), and 9.4.x (non-default configuration with RFC2616 compliance enabled), HTTP/0.9 is handled poorly. An HTTP/1 style request line (i.e. method space URI space version) that declares a version of HTTP/0.9 was accepted and treated as a 0.9 request. If deployed behind an intermediary that also accepted and passed through the 0.9 version (but did not act on it), then the response sent could be interpreted by the intermediary as HTTP/1 headers. This could be used to poison the cache if the server allowed the origin client to generate arbitrary content in the response.

<p>Publish Date: 2018-06-26</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-7656>CVE-2017-7656</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://www.securitytracker.com/id/1041194">http://www.securitytracker.com/id/1041194</a></p>

<p>Fix Resolution: The vendor has issued a fix (9.4.11.v20180605).

9.2.25.v20180606, 9.3.24.v20180605

The vendor advisory is available at: