Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

398,862 | 27,215,442,148 | IssuesEvent | 2023-02-20 21:09:40 | markrossington/sidewinder-x2-marlin | https://api.github.com/repos/markrossington/sidewinder-x2-marlin | closed | Make steps clearer for non software engineer | documentation | Clean up the steps in the readme.

- Can you double click Python files? And on all systems?

- Better filenames?

Share thoughts if you read this and have any ideas. | 1.0 | Make steps clearer for non software engineer - Clean up the steps in the readme.

- Can you double click Python files? And on all systems?

- Better filenames?

Share thoughts if you read this and have any ideas. | non_test | make steps clearer for non software engineer clean up the steps in the readme can you double click python files and on all systems better filenames share thoughts if you read this and have any ideas | 0 |

303,195 | 26,191,864,618 | IssuesEvent | 2023-01-03 09:49:20 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: costfuzz/rand-tables failed | C-test-failure O-robot O-roachtest branch-master release-blocker | roachtest.costfuzz/rand-tables [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8166107?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8166107?buildTab=artifacts#/costfuzz/rand-tables) on master @ [1d7bd69205c2197ccac33df9e2e6d4ff8c0fdbcf](https://github.com/cockroachdb/cockroach/commits/1d7bd69205c2197ccac33df9e2e6d4ff8c0fdbcf):

```

test artifacts and logs in: /artifacts/costfuzz/rand-tables/run_1

(query_comparison_util.go:158).runOneRoundQueryComparison: pq: Use of partitions requires an enterprise license. Your evaluation license expired on December 30, 2022. If you're interested in getting a new license, please contact subscriptions@cockroachlabs.com and we can help you out.

```

<p>Parameters: <code>ROACHTEST_cloud=gce</code>

, <code>ROACHTEST_cpu=4</code>

, <code>ROACHTEST_encrypted=false</code>

, <code>ROACHTEST_ssd=0</code>

</p>

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/sql-queries

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*costfuzz/rand-tables.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: costfuzz/rand-tables failed - roachtest.costfuzz/rand-tables [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8166107?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8166107?buildTab=artifacts#/costfuzz/rand-tables) on master @ [1d7bd69205c2197ccac33df9e2e6d4ff8c0fdbcf](https://github.com/cockroachdb/cockroach/commits/1d7bd69205c2197ccac33df9e2e6d4ff8c0fdbcf):

```

test artifacts and logs in: /artifacts/costfuzz/rand-tables/run_1

(query_comparison_util.go:158).runOneRoundQueryComparison: pq: Use of partitions requires an enterprise license. Your evaluation license expired on December 30, 2022. If you're interested in getting a new license, please contact subscriptions@cockroachlabs.com and we can help you out.

```

<p>Parameters: <code>ROACHTEST_cloud=gce</code>

, <code>ROACHTEST_cpu=4</code>

, <code>ROACHTEST_encrypted=false</code>

, <code>ROACHTEST_ssd=0</code>

</p>

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/sql-queries

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*costfuzz/rand-tables.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| test | roachtest costfuzz rand tables failed roachtest costfuzz rand tables with on master test artifacts and logs in artifacts costfuzz rand tables run query comparison util go runoneroundquerycomparison pq use of partitions requires an enterprise license your evaluation license expired on december if you re interested in getting a new license please contact subscriptions cockroachlabs com and we can help you out parameters roachtest cloud gce roachtest cpu roachtest encrypted false roachtest ssd help see see cc cockroachdb sql queries | 1 |

689,678 | 23,630,292,367 | IssuesEvent | 2022-08-25 08:45:26 | oceanprotocol/docs | https://api.github.com/repos/oceanprotocol/docs | opened | Postman examples for Provider | Type: Enhancement Priority: Low | It would be nice to have an equivalent of the Aquarius postman examples for provider. This is not high priority at the moment though as there aren't too many people using it. | 1.0 | Postman examples for Provider - It would be nice to have an equivalent of the Aquarius postman examples for provider. This is not high priority at the moment though as there aren't too many people using it. | non_test | postman examples for provider it would be nice to have an equivalent of the aquarius postman examples for provider this is not high priority at the moment though as there aren t too many people using it | 0 |

177,443 | 13,724,383,902 | IssuesEvent | 2020-10-03 14:01:10 | webpack/webpack-cli | https://api.github.com/repos/webpack/webpack-cli | closed | feature: integration of serve package into cli | Feature Tests enhancement | **Is your feature request related to a problem? Please describe.**

Part of roadmap, we would be integrating serve by default into the CLI so that user need not install it to use dev-server from command line.

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like**

- [ ] Robust tests for `serve`'s current features (if needed)

- [ ] Refactor `serve` and add/remove features from it

- [ ] Add tests for the previous step changes (if any)

- [ ] Integrate it with CLI

- [ ] Add integration tests for verifying integration

<!-- A clear and concise description of what you want to happen. -->

**Describe alternatives you've considered**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Additional context**

Roadmap #717

<!-- Add any other context or screenshots about the feature request here. -->

/cc @evilebottnawi | 1.0 | feature: integration of serve package into cli - **Is your feature request related to a problem? Please describe.**

Part of roadmap, we would be integrating serve by default into the CLI so that user need not install it to use dev-server from command line.

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like**

- [ ] Robust tests for `serve`'s current features (if needed)

- [ ] Refactor `serve` and add/remove features from it

- [ ] Add tests for the previous step changes (if any)

- [ ] Integrate it with CLI

- [ ] Add integration tests for verifying integration

<!-- A clear and concise description of what you want to happen. -->

**Describe alternatives you've considered**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Additional context**

Roadmap #717

<!-- Add any other context or screenshots about the feature request here. -->

/cc @evilebottnawi | test | feature integration of serve package into cli is your feature request related to a problem please describe part of roadmap we would be integrating serve by default into the cli so that user need not install it to use dev server from command line describe the solution you d like robust tests for serve s current features if needed refactor serve and add remove features from it add tests for the previous step changes if any integrate it with cli add integration tests for verifying integration describe alternatives you ve considered additional context roadmap cc evilebottnawi | 1 |

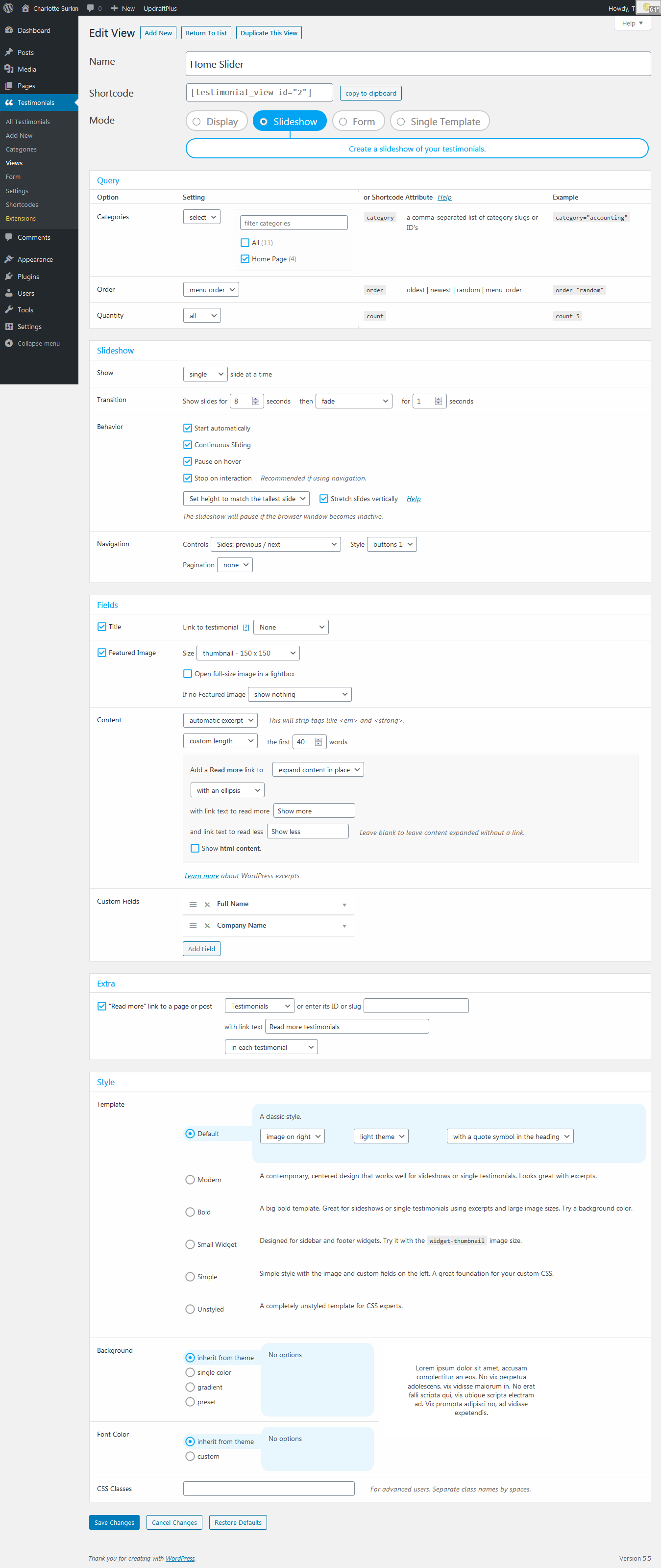

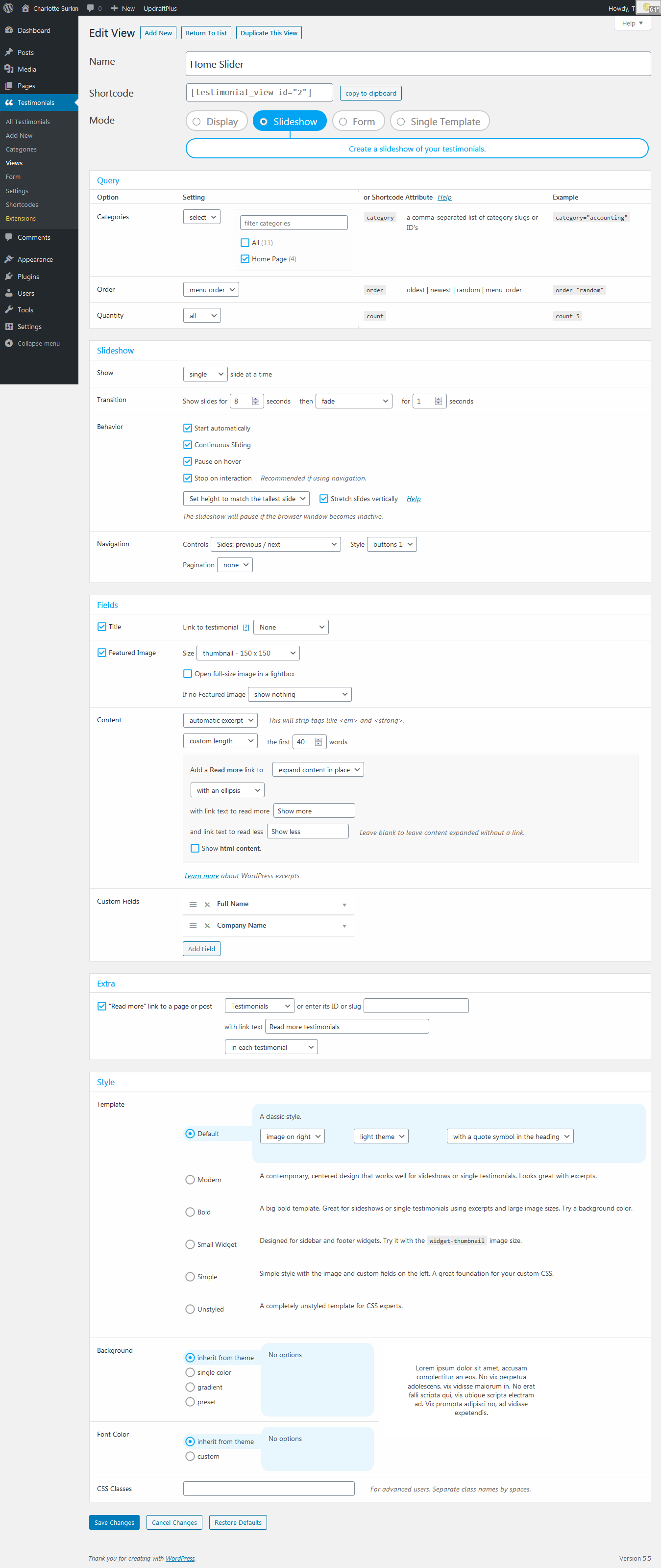

192,383 | 14,615,643,216 | IssuesEvent | 2020-12-22 11:54:39 | WPChill/strong-testimonials | https://api.github.com/repos/WPChill/strong-testimonials | closed | Slideshow doesn’t fully load in Firefox when using anchor link | bug can't replicate tested | **Describe the bug**

I have an anchor link in the main menu that scrolls to the Bio section of the home page that is below the slide show. When I am on the home page and click the anchor link it works as expected. In Firefox (latest version), when I am on another page and use Bio anchor link in the main menu, or if you just directly load the link with the anchor on the end, the home page loads and scrolls , but the slideshow only loads the navigation arrows. When I refresh the page the slide show loads.

**To Reproduce**

Steps to reproduce the behavior:

1. add an anchor link in the main menu that scrolls to the a section of the home page that is below the slideshow

2. then go to another page on the site and click on the anchor in the menu.

3. the slideshow only loads the navigation arrows

4. check in Firefox this behavior

Screenshot with settings:

**Expected behavior**

<!-- You can check these boxes once you've created the issue. -->

* Which browser is affected (or browsers):

- [x] Firefox

<!-- You can check these boxes once you've created the issue. -->

* Which device is affected (or devices):

- [x] Desktop

#### Used versions

* WordPress version: 5.5

* Strong Testimonials version: 2.50.0

https://wordpress.org/support/topic/slide-show-doesnt-fully-load-in-firfox-when-using-anchor-link/

| 1.0 | Slideshow doesn’t fully load in Firefox when using anchor link - **Describe the bug**

I have an anchor link in the main menu that scrolls to the Bio section of the home page that is below the slide show. When I am on the home page and click the anchor link it works as expected. In Firefox (latest version), when I am on another page and use Bio anchor link in the main menu, or if you just directly load the link with the anchor on the end, the home page loads and scrolls , but the slideshow only loads the navigation arrows. When I refresh the page the slide show loads.

**To Reproduce**

Steps to reproduce the behavior:

1. add an anchor link in the main menu that scrolls to the a section of the home page that is below the slideshow

2. then go to another page on the site and click on the anchor in the menu.

3. the slideshow only loads the navigation arrows

4. check in Firefox this behavior

Screenshot with settings:

**Expected behavior**

<!-- You can check these boxes once you've created the issue. -->

* Which browser is affected (or browsers):

- [x] Firefox

<!-- You can check these boxes once you've created the issue. -->

* Which device is affected (or devices):

- [x] Desktop

#### Used versions

* WordPress version: 5.5

* Strong Testimonials version: 2.50.0

https://wordpress.org/support/topic/slide-show-doesnt-fully-load-in-firfox-when-using-anchor-link/

| test | slideshow doesn’t fully load in firefox when using anchor link describe the bug i have an anchor link in the main menu that scrolls to the bio section of the home page that is below the slide show when i am on the home page and click the anchor link it works as expected in firefox latest version when i am on another page and use bio anchor link in the main menu or if you just directly load the link with the anchor on the end the home page loads and scrolls but the slideshow only loads the navigation arrows when i refresh the page the slide show loads to reproduce steps to reproduce the behavior add an anchor link in the main menu that scrolls to the a section of the home page that is below the slideshow then go to another page on the site and click on the anchor in the menu the slideshow only loads the navigation arrows check in firefox this behavior screenshot with settings expected behavior which browser is affected or browsers firefox which device is affected or devices desktop used versions wordpress version strong testimonials version | 1 |

480,947 | 13,878,460,246 | IssuesEvent | 2020-10-17 09:41:24 | buddyboss/buddyboss-platform | https://api.github.com/repos/buddyboss/buddyboss-platform | opened | The Group send invites list itself is not able to load more names when scrolling down | bug priority: medium | **Describe the bug**

The list of members has an issue to load in Group send invites. the list itself is not able to load more names when scrolling down. Works in other browsers and diff locations.

**To Reproduce**

Steps to reproduce the behavior:

See this video

https://www.loom.com/share/5aa75f0fa5194da0b384561e3eca7773

**Expected behavior**

Should able to load more names when scrolling down

**Support ticket links**

https://secure.helpscout.net/conversation/1308180589/103192?folderId=3955985 | 1.0 | The Group send invites list itself is not able to load more names when scrolling down - **Describe the bug**

The list of members has an issue to load in Group send invites. the list itself is not able to load more names when scrolling down. Works in other browsers and diff locations.

**To Reproduce**

Steps to reproduce the behavior:

See this video

https://www.loom.com/share/5aa75f0fa5194da0b384561e3eca7773

**Expected behavior**

Should able to load more names when scrolling down

**Support ticket links**

https://secure.helpscout.net/conversation/1308180589/103192?folderId=3955985 | non_test | the group send invites list itself is not able to load more names when scrolling down describe the bug the list of members has an issue to load in group send invites the list itself is not able to load more names when scrolling down works in other browsers and diff locations to reproduce steps to reproduce the behavior see this video expected behavior should able to load more names when scrolling down support ticket links | 0 |

133,598 | 18,298,975,180 | IssuesEvent | 2021-10-05 23:50:18 | bsbtd/Teste | https://api.github.com/repos/bsbtd/Teste | opened | CVE-2020-11619 (High) detected in jackson-databind-2.9.5.jar | security vulnerability | ## CVE-2020-11619 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: Teste/liferay-portal/modules/etl/talend/talend-runtime/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.5/jackson-databind-2.9.5.jar,/home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.5/jackson-databind-2.9.5.jar,/home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.5/jackson-databind-2.9.5.jar</p>

<p>

Dependency Hierarchy:

- components-api-0.25.3.jar (Root Library)

- daikon-0.27.0.jar

- :x: **jackson-databind-2.9.5.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/bsbtd/Teste/commit/64dde89c50c07496423c4d4a865f2e16b92399ad">64dde89c50c07496423c4d4a865f2e16b92399ad</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to org.springframework.aop.config.MethodLocatingFactoryBean (aka spring-aop).

<p>Publish Date: 2020-04-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11619>CVE-2020-11619</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11619">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11619</a></p>

<p>Release Date: 2020-04-07</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.4</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-11619 (High) detected in jackson-databind-2.9.5.jar - ## CVE-2020-11619 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: Teste/liferay-portal/modules/etl/talend/talend-runtime/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.5/jackson-databind-2.9.5.jar,/home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.5/jackson-databind-2.9.5.jar,/home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.9.5/jackson-databind-2.9.5.jar</p>

<p>

Dependency Hierarchy:

- components-api-0.25.3.jar (Root Library)

- daikon-0.27.0.jar

- :x: **jackson-databind-2.9.5.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/bsbtd/Teste/commit/64dde89c50c07496423c4d4a865f2e16b92399ad">64dde89c50c07496423c4d4a865f2e16b92399ad</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to org.springframework.aop.config.MethodLocatingFactoryBean (aka spring-aop).

<p>Publish Date: 2020-04-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11619>CVE-2020-11619</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11619">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-11619</a></p>

<p>Release Date: 2020-04-07</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.4</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file teste liferay portal modules etl talend talend runtime pom xml path to vulnerable library home wss scanner repository com fasterxml jackson core jackson databind jackson databind jar home wss scanner repository com fasterxml jackson core jackson databind jackson databind jar home wss scanner repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy components api jar root library daikon jar x jackson databind jar vulnerable library found in head commit a href vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to org springframework aop config methodlocatingfactorybean aka spring aop publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind step up your open source security game with whitesource | 0 |

248,231 | 21,003,703,146 | IssuesEvent | 2022-03-29 20:06:33 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Webview integration tests timing out on GitHub CI | webview integration-test-failure | Seeing webview integration tests timeout more often today. This specifically seems to happen on the GitHub CI.

https://github.com/microsoft/vscode/runs/5742978871?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742931259?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742931058?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5741763332?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742831884?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742831969?check_suite_focus=true | 1.0 | Webview integration tests timing out on GitHub CI - Seeing webview integration tests timeout more often today. This specifically seems to happen on the GitHub CI.

https://github.com/microsoft/vscode/runs/5742978871?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742931259?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742931058?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5741763332?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742831884?check_suite_focus=true

https://github.com/microsoft/vscode/runs/5742831969?check_suite_focus=true | test | webview integration tests timing out on github ci seeing webview integration tests timeout more often today this specifically seems to happen on the github ci | 1 |

422,774 | 28,480,287,232 | IssuesEvent | 2023-04-18 01:41:10 | inlab-geo/espresso | https://api.github.com/repos/inlab-geo/espresso | closed | Rationalise docs & readmes | documentation | We currently have a variety of README.md files scattered through the repository, and contributor/developer guides within the docs. This is a future maintenance headache, and I think we already have some inconsistent advice.

I propose that we consolidate all important information into the 'docs', within the contributor and developer guides, and delete it from the README files. Instead, these should contain links to the relevant docs pages.

@jwhhh If you agree, can you do the initial migration of information -- you are better-placed than me to determine which information is still current.

Builds on #107.

UPDATE: also include [FAQ](https://hackmd.io/q-biMWqRSBOV51I9g1BNcQ#Espresso) in this PR. | 1.0 | Rationalise docs & readmes - We currently have a variety of README.md files scattered through the repository, and contributor/developer guides within the docs. This is a future maintenance headache, and I think we already have some inconsistent advice.

I propose that we consolidate all important information into the 'docs', within the contributor and developer guides, and delete it from the README files. Instead, these should contain links to the relevant docs pages.

@jwhhh If you agree, can you do the initial migration of information -- you are better-placed than me to determine which information is still current.

Builds on #107.

UPDATE: also include [FAQ](https://hackmd.io/q-biMWqRSBOV51I9g1BNcQ#Espresso) in this PR. | non_test | rationalise docs readmes we currently have a variety of readme md files scattered through the repository and contributor developer guides within the docs this is a future maintenance headache and i think we already have some inconsistent advice i propose that we consolidate all important information into the docs within the contributor and developer guides and delete it from the readme files instead these should contain links to the relevant docs pages jwhhh if you agree can you do the initial migration of information you are better placed than me to determine which information is still current builds on update also include in this pr | 0 |

2,025 | 2,581,430,053 | IssuesEvent | 2015-02-14 01:50:34 | wp-cli/wp-cli | https://api.github.com/repos/wp-cli/wp-cli | closed | Fix rate-limited requests to Github API | bug scope:testing | In #1535, we added `wp cli update` and the corresponding test coverage. However, Github's API is rate-limited to 60 requests/hour, so it's failing the build quite often:

Previously #1605 | 1.0 | Fix rate-limited requests to Github API - In #1535, we added `wp cli update` and the corresponding test coverage. However, Github's API is rate-limited to 60 requests/hour, so it's failing the build quite often:

Previously #1605 | test | fix rate limited requests to github api in we added wp cli update and the corresponding test coverage however github s api is rate limited to requests hour so it s failing the build quite often previously | 1 |

64,326 | 6,899,657,767 | IssuesEvent | 2017-11-24 14:42:54 | ValveSoftware/steam-for-linux | https://api.github.com/repos/ValveSoftware/steam-for-linux | closed | No List in Small Mode | Need Retest reviewed Steam client | After last update, I can't use small mode because there's no game shown on my list event I had clear the search bar

| 1.0 | No List in Small Mode - After last update, I can't use small mode because there's no game shown on my list event I had clear the search bar

| test | no list in small mode after last update i can t use small mode because there s no game shown on my list event i had clear the search bar | 1 |

251,876 | 21,527,049,186 | IssuesEvent | 2022-04-28 19:34:56 | damccorm/test-migration-target | https://api.github.com/repos/damccorm/test-migration-target | opened | flake: FlinkRunnerTest.testEnsureStdoutStdErrIsRestored | bug P1 test-failures | java.lang.AssertionError:

Expected: (a string containing "System.out: (none)" and a string containing "System.err: (none)")

but: a string containing "System.err: (none)" was "The program plan could not be fetched - the program aborted pre-maturely.

https://ci-beam.apache.org/job/beam_PreCommit_Java_Phrase/4515/

Imported from Jira [BEAM-13708](https://issues.apache.org/jira/browse/BEAM-13708). Original Jira may contain additional context.

Reported by: ibzib. Jira was originally assigned to robertwb. | 1.0 | flake: FlinkRunnerTest.testEnsureStdoutStdErrIsRestored - java.lang.AssertionError:

Expected: (a string containing "System.out: (none)" and a string containing "System.err: (none)")

but: a string containing "System.err: (none)" was "The program plan could not be fetched - the program aborted pre-maturely.

https://ci-beam.apache.org/job/beam_PreCommit_Java_Phrase/4515/

Imported from Jira [BEAM-13708](https://issues.apache.org/jira/browse/BEAM-13708). Original Jira may contain additional context.

Reported by: ibzib. Jira was originally assigned to robertwb. | test | flake flinkrunnertest testensurestdoutstderrisrestored java lang assertionerror expected a string containing system out none and a string containing system err none but a string containing system err none was the program plan could not be fetched the program aborted pre maturely imported from jira original jira may contain additional context reported by ibzib jira was originally assigned to robertwb | 1 |

581,996 | 17,350,082,486 | IssuesEvent | 2021-07-29 07:35:47 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | How to change related posts thumbnail size filter is not working. | [Priority: HIGH] bug | Tutorial URL: https://ampforwp.com/tutorials/article/how-to-change-related-posts-thumbnail-size/

Ticket URL: https://secure.helpscout.net/conversation/1578917856/207264?folderId=1060556

change related posts thumbnail size filter is not working. | 1.0 | How to change related posts thumbnail size filter is not working. - Tutorial URL: https://ampforwp.com/tutorials/article/how-to-change-related-posts-thumbnail-size/

Ticket URL: https://secure.helpscout.net/conversation/1578917856/207264?folderId=1060556

change related posts thumbnail size filter is not working. | non_test | how to change related posts thumbnail size filter is not working tutorial url ticket url change related posts thumbnail size filter is not working | 0 |

747,995 | 26,103,312,566 | IssuesEvent | 2022-12-27 09:59:41 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | chaturbate.com - design is broken | nsfw priority-important browser-focus-geckoview engine-gecko | <!-- @browser: Firefox Mobile 108.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:108.0) Gecko/108.0 Firefox/108.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/115968 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://chaturbate.com

**Browser / Version**: Firefox Mobile 108.0

**Operating System**: Android 11

**Tested Another Browser**: Yes Chrome

**Problem type**: Design is broken

**Description**: Images not loaded

**Steps to Reproduce**:

No images or video. Cleared cache. Restart phone. Checked on two other browsers....same issue. Not working on mobile.

<details>

<summary>View the screenshot</summary>

Screenshot removed - possible explicit content.

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20221208122842</li><li>channel: release</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2022/12/aa3e0e6c-bc13-4fbb-ba92-e3d363ee5704)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | chaturbate.com - design is broken - <!-- @browser: Firefox Mobile 108.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:108.0) Gecko/108.0 Firefox/108.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/115968 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://chaturbate.com

**Browser / Version**: Firefox Mobile 108.0

**Operating System**: Android 11

**Tested Another Browser**: Yes Chrome

**Problem type**: Design is broken

**Description**: Images not loaded

**Steps to Reproduce**:

No images or video. Cleared cache. Restart phone. Checked on two other browsers....same issue. Not working on mobile.

<details>

<summary>View the screenshot</summary>

Screenshot removed - possible explicit content.

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20221208122842</li><li>channel: release</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2022/12/aa3e0e6c-bc13-4fbb-ba92-e3d363ee5704)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | chaturbate com design is broken url browser version firefox mobile operating system android tested another browser yes chrome problem type design is broken description images not loaded steps to reproduce no images or video cleared cache restart phone checked on two other browsers same issue not working on mobile view the screenshot screenshot removed possible explicit content browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel release hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 0 |

29,416 | 4,171,573,608 | IssuesEvent | 2016-06-21 00:19:15 | open-forcefield-group/smarty | https://api.github.com/repos/open-forcefield-group/smarty | opened | Allow binary rather than unary decorators for smarts sampling | design choice | @jchodera , this one will require your attention.

We believe that to allow better exploration we need to allow binary rather than unary construction of SMARTS to sample. I haven't dug in too much to the details of the code on this aspect, but what @cbayly13 is telling us on this end is that he needs to be able to combine "pick a bond order" with "pick an element type or functional group" more easily - i.e. rather than taking a decorator that does both at once, being able to combine two decorators. In other words, instead of a unary decorator, he wants binary decorators (take this action such as a bond, apply it to that chemical group).

So I think he wants to be able to revise this:

```

$(*~[#1]) hydrogen-adjacent

$(*~[#6]) carbon-adjacent

$(*~[#7]) nitrogen-adjacent

$(*~[#8]) oxygen-adjacent

$(*~[#9]) fluorine-adjacent

$(*~[#15]) phosphorous-adjacent

$(*~[#16]) sulfur-adjacent

$(*~[#17]) chlorine-adjacent

$(*~[#35]) bromine-adjacent

$(*~[#53]) iodine-adjacent

```

by replacing it with "pick a bond type" as one operation and "pick a functional group adjacent to it" as a second.
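
The split described above could be sketched roughly as follows. This is only an illustration under stated assumptions, not smarty's actual API: `BOND_TYPES`, `ELEMENTS`, and `binary_decorators` are hypothetical names, and the bond labels are made up for the example. Pairing the "any bond" symbol `~` with each element yields the same SMARTS patterns as the unary list above.

```python
from itertools import product

# Hypothetical tables for illustration; these names are not part of smarty.
BOND_TYPES = {
    "~": "adjacent",       # any bond, as in the unary list above
    "-": "single-bonded",
    "=": "double-bonded",
    "#": "triple-bonded",
}
ELEMENTS = {
    1: "hydrogen", 6: "carbon", 7: "nitrogen", 8: "oxygen", 9: "fluorine",
    15: "phosphorus", 16: "sulfur", 17: "chlorine", 35: "bromine", 53: "iodine",
}


def binary_decorators(bond_types, elements):
    """Yield (smarts, name) pairs by combining one bond choice with one element choice."""
    for (bond, bond_label), (number, element) in product(bond_types.items(), elements.items()):
        yield f"$(*{bond}[#{number}])", f"{element}-{bond_label}"


combined = list(binary_decorators(BOND_TYPES, ELEMENTS))
for smarts, name in combined[:3]:
    print(smarts, name)
```

Note that this sketch enumerates every pairing, including chemically meaningless ones such as a triple bond to hydrogen; restricting which bond types are tried for which elements is a separate concern.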

@cbayly13 , have I summarized properly?

Ultimately, he will also want to optimize how these selections are made under the hood, i.e. trying a single bond basically works with everything, but double or triple bonds only make sense for particular elements/functional groups, etc. This may be an API issue. | 1.0 | Allow binary rather than unary decorators for smarts sampling - @jchodera , this one will require your attention.

We believe that to allow better exploration we need to allow binary rather than unary construction of SMARTS to sample. I haven't dug in too much to the details of the code on this aspect, but what @cbayly13 is telling us on this end is that he needs to be able to combine "pick a bond order" with "pick an element type or functional group" more easily - i.e. rather than taking a decorator that does both at once, being able to combine two decorators. In other words, instead of a unary decorator, he wants binary decorators (take this action such as a bond, apply it to that chemical group).

So I think he wants to be able to revise this:

```

$(*~[#1]) hydrogen-adjacent

$(*~[#6]) carbon-adjacent

$(*~[#7]) nitrogen-adjacent

$(*~[#8]) oxygen-adjacent

$(*~[#9]) fluorine-adjacent

$(*~[#15]) phosphorous-adjacent

$(*~[#16]) sulfur-adjacent

$(*~[#17]) chlorine-adjacent

$(*~[#35]) bromine-adjacent

$(*~[#53]) iodine-adjacent

```

by replacing it with "pick a bond type" as one operation and "pick a functional group adjacent to it" as a second.

@cbayly13 , have I summarized properly?

Ultimately, he will also want to optimize how these selections are made under the hood, i.e. trying a single bond basically works with everything, but double or triple bonds only make sense for particular elements/functional groups, etc. This may be an API issue. | non_test | allow binary rather than unary decorators for smarts sampling jchodera this one will require your attention we believe that to allow better exploration we need to allow binary rather than unary construction of smarts to sample i haven t dug in too much to the details of the code on this aspect but what is telling us on this end is that he needs to be able to combine pick a bond order with pick an element type or functional group more easily i e rather than taking a decorator that does both at once being able to combine two decorators in other words instead of a unary decorator he wants binary decorators take this action such as a bond apply it to that chemical group so i think he wants to be able to revise this hydrogen adjacent carbon adjacent nitrogen adjacent oxygen adjacent fluorine adjacent phosphorous adjacent sulfur adjacent chlorine adjacent bromine adjacent iodine adjacent by replacing it with pick a bond type as one operation and pick a functional group adjacent to it as a second have i summarized properly ultimately he will also want to optimize how these selections are made under the hood i e trying a single bond basically works with everything but double or triple bonds only make sense for particular elements functional groups etc this may be an api issue | 0 |

1,771 | 6,688,399,688 | IssuesEvent | 2017-10-08 14:22:02 | t9md/atom-vim-mode-plus | https://api.github.com/repos/t9md/atom-vim-mode-plus | closed | What vmp will do when outer-vmp command add/modify selection | architecture-improvement documentation | Use this issue as the consolidation place for

- Collaboration issues between vmp's `visual-mode` and selection changes/additions made by outer-vmp commands.

# Examples

- After confirming the find mini-editor (`cmd-f`), it selects the next occurrence of the word, but vmp doesn't auto-enter `visual-mode`; it's odd to see a selection in `normal-mode`.

- When I use a package that opens an editor with some lines initially selected, vmp remains in `normal-mode`, so moving the cursor just clears the selection. I want to start in `visual-mode` in this case.

# Why this happens, and why this is difficult to fix completely.

- vmp has `visual-mode`, and all vmp commands are **mode-aware**: when one creates a selection, it automatically activates `visual-mode`, like `v` and `V`.

- But an outer-vmp command such as `editor:select-line` just modifies the selection, and that's it. It does not auto-enter `visual-mode`.

- `visual-mode` does special things:

- modifies the visible cursor position so that it seems natural to vim users

- preserves the charwise position when shifting to linewise, which is why you can shift from `V` to `v` while keeping the original cursor column.

- So what I want vmp to do is automatically activate `visual-mode` when an outer-vmp command modifies the selection.

- This was once done with the `atom.commands.onWillDispatch` and `atom.commands.onDidDispatch` hooks.

- Good: they are called less frequently than `editor.onDidChangeSelectionRange`.

- Bad: misses selection changes for some commands, e.g. modifying the selection in a promise (it's fired after `editor.onDidChangeSelectionRange`)

- Another approach: use `editor.onDidChangeSelectionRange`.

- Good: no selection change is missed

- Bad: called very frequently. Needs a lock to avoid an infinite loop (modifying the selection in the callback also fires the `onDidChangeSelectionRange` event).
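
The lock mentioned in the last bullet can be sketched in a language-neutral way. The following is a minimal Python model and not Atom or vim-mode-plus code: `FakeEditor`, `on_did_change_selection_range`, and `ModeManager` are hypothetical stand-ins for an editor that emits a selection-change event and for the mode logic that reacts to it. It only shows how a re-entrancy flag keeps the callback's own selection adjustment from triggering itself again.

```python
class FakeEditor:
    """Hypothetical stand-in for an editor that emits selection-change events."""

    def __init__(self):
        self.selection = (0, 0)
        self._callbacks = []

    def on_did_change_selection_range(self, callback):
        self._callbacks.append(callback)

    def set_selection(self, start, end):
        self.selection = (start, end)
        # Every change notifies observers, including changes made by observers.
        for callback in list(self._callbacks):
            callback(self.selection)


class ModeManager:
    """Enters visual mode on outside selection changes, guarded by a lock."""

    def __init__(self, editor):
        self.editor = editor
        self.mode = "normal"
        self.handled = 0
        self._updating = False  # re-entrancy lock
        editor.on_did_change_selection_range(self._on_change)

    def _on_change(self, selection):
        if self._updating:
            return  # the change came from our own adjustment below; ignore it
        start, end = selection
        if start == end:
            self.mode = "normal"
            return
        self._updating = True
        try:
            self.handled += 1
            self.mode = "visual"
            # Normalizing the selection fires the event again; the lock absorbs it.
            self.editor.set_selection(min(start, end), max(start, end))
        finally:
            self._updating = False


editor = FakeEditor()
manager = ModeManager(editor)
editor.set_selection(8, 3)  # simulates an outer-vmp command selecting text
print(manager.mode, editor.selection, manager.handled)
```

Without the `_updating` check, the call to `set_selection` inside the callback would re-enter `_on_change` indefinitely, which is the infinite loop the bullet warns about.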

# Quick check TODO

- `cmd-e`(`find-and-replace:use-selection-as-find-pattern`), `cmd-g`(`find-and-replace:find-next`), works correctly? start `visual-mode`?

- `cmd-f`, input search word then `enter` works correctly? start `visual-mode`?

- with my [try](https://atom.io/packages/try) package, `try:paste` with selection start `visual-mode` in opened editor?

- `cmd-l`(`editor:select-line`) start `visual-linewise-mode`?

- `cmd-d`(`find-and-replace:select-next`) show cursor correctly, start `visual-mode`?

# Issue on this topic

#112

#490

#740

#744

#761

#794

#872

| 1.0 | What vmp will do when outer-vmp command add/modify selection - Use this issue as the consolidation place for

- Collaboration issues between vmp's `visual-mode` and selection changes/additions made by outer-vmp commands.

# Examples

- After confirming the find mini-editor (`cmd-f`), it selects the next occurrence of the word, but vmp doesn't auto-enter `visual-mode`; it's odd to see a selection in `normal-mode`.

- When I use a package that opens an editor with some lines initially selected, vmp remains in `normal-mode`, so moving the cursor just clears the selection. I want to start in `visual-mode` in this case.

# Why this happens, and why this is difficult to fix completely.

- vmp has `visual-mode`, and all vmp commands are **mode-aware**: when one creates a selection, it automatically activates `visual-mode`, like `v` and `V`.

- But an outer-vmp command such as `editor:select-line` just modifies the selection, and that's it. It does not auto-enter `visual-mode`.

- `visual-mode` does special things:

- modifies the visible cursor position so that it seems natural to vim users

- preserves the charwise position when shifting to linewise, which is why you can shift from `V` to `v` while keeping the original cursor column.

- So what I want vmp to do is automatically activate `visual-mode` when an outer-vmp command modifies the selection.

- This was once done with the `atom.commands.onWillDispatch` and `atom.commands.onDidDispatch` hooks.

- Good: they are called less frequently than `editor.onDidChangeSelectionRange`.

- Bad: misses selection changes for some commands, e.g. modifying the selection in a promise (it's fired after `editor.onDidChangeSelectionRange`)

- Another approach: use `editor.onDidChangeSelectionRange`.

- Good: no selection change is missed

- Bad: called very frequently. Needs a lock to avoid an infinite loop (modifying the selection in the callback also fires the `onDidChangeSelectionRange` event).

# Quick check TODO

- `cmd-e`(`find-and-replace:use-selection-as-find-pattern`), `cmd-g`(`find-and-replace:find-next`), works correctly? start `visual-mode`?

- `cmd-f`, input search word then `enter` works correctly? start `visual-mode`?

- with my [try](https://atom.io/packages/try) package, `try:paste` with selection start `visual-mode` in opened editor?

- `cmd-l`(`editor:select-line`) start `visual-linewise-mode`?

- `cmd-d`(`find-and-replace:select-next`) show cursor correctly, start `visual-mode`?

# Issue on this topic

#112

#490

#740

#744

#761

#794

#872

| non_test | what vmp will do when outer vmp command add modify selection use this issue to consolidation place for collaboration issue for vmp s visual mode and selection change addition by outer vmp command examples when confirmed find mini editor cmd f it select next occurrence of word but vmp doesn t auto enter visual mode its odd when i see selection in normal mode when i use package which open editor with initially select some lines vmp remains normal mode so moving cursor just clear selection i want start with visual mode in this case why this happens and why this is difficult to fix completely vmp have visual mode all vmp command is mode aware when it create selection it automatically activate visual mode like v v but outer vmp command just modify selection editor select line that s it not auto enter visual mode visual mode doing special things modify cursor visible position so that it seems natural for vim user preserve charwise position when shifting to linewise that s why you can shift v to v with keeping original cursor column so what i want vmp do is automatically activate visual mode when outer vmp command modify selection once it is done by atom commands onwilldispatch and atom commands ondiddispatch hook good it s called less frequently than editor ondidchangeselectionrange bad miss catching selection change for some command e g modify selection in promise it s fired after editor ondidchangeselectionrange another approach use editor ondidchangeselectionrange good no miss catch for selection change bad called so frequently need lock to avoid infinite loop modifying selection in callback also fire ondidchangeselectionrange event quick check todo cmd e find and replace use selection as find pattern cmd g find and replace find next works correctly start visual mode cmd f input search word then enter works correctly start visual mode with my package try paste with selection start visual mode in opened editor cmd l editor select line start visual linewise 
mode cmd d find and replace select next show cursor correctly start visual mode issue on this topic | 0 |

331,753 | 29,057,865,795 | IssuesEvent | 2023-05-15 00:43:51 | TheRenegadeCoder/sample-programs | https://api.github.com/repos/TheRenegadeCoder/sample-programs | opened | Add Wren Testing | enhancement tests | To request a new language, please fill out the following:

Language name: Wren

Official Language Style Guide: https://wren.io/syntax.html

Official Language Website: https://wren.io/

Official Language Docker Image: https://hub.docker.com/r/esolang/wren

| 1.0 | Add Wren Testing - To request a new language, please fill out the following:

Language name: Wren

Official Language Style Guide: https://wren.io/syntax.html

Official Language Website: https://wren.io/

Official Language Docker Image: https://hub.docker.com/r/esolang/wren

| test | add wren testing to request a new language please fill out the following language name wren official language style guide official language website official language docker image | 1 |

320,349 | 27,432,478,923 | IssuesEvent | 2023-03-02 03:14:01 | brave/brave-ios | https://api.github.com/repos/brave/brave-ios | closed | Manual test run for `1.48.1` on `iPhone` or `iPad` running `iOS 14` | QA Pass - iPhone X QA/Yes release-notes/exclude ipad tests | ## Installer

- [x] Check that installer is close to the size of the last release

- [x] Check the Brave version in About and make sure it is EXACTLY as expected

## Data

- [x] Verify that data from the previous build appears in the updated build as expected (bookmarks, history, etc.)

- [x] Verify that cookies from the previous build are preserved after upgrade

- [x] Verify saved passwords are retained after upgrade

- [x] Verify stats are retained after upgrade

- [x] Verify sync chain created in the previous version is still retained on upgrade

- [x] Verify per-site settings are preserved after upgrade

## App linker

- [x] Long-press on a link in the Twitter app to get the share picker, choose Brave. Verify Brave doesn't crash after opening the link.

## Session storage

- [x] Verify that tabs restore when closed, including active tab

| 1.0 | Manual test run for `1.48.1` on `iPhone` or `iPad` running `iOS 14` - ## Installer

- [x] Check that installer is close to the size of the last release

- [x] Check the Brave version in About and make sure it is EXACTLY as expected

## Data

- [x] Verify that data from the previous build appears in the updated build as expected (bookmarks, history, etc.)

- [x] Verify that cookies from the previous build are preserved after upgrade

- [x] Verify saved passwords are retained after upgrade

- [x] Verify stats are retained after upgrade

- [x] Verify sync chain created in the previous version is still retained on upgrade

- [x] Verify per-site settings are preserved after upgrade

## App linker

- [x] Long-press on a link in the Twitter app to get the share picker, choose Brave. Verify Brave doesn't crash after opening the link.

## Session storage

- [x] Verify that tabs restore when closed, including active tab

| test | manual test run for on iphone or ipad running ios installer check that installer is close to the size of the last release check the brave version in about and make sure it is exactly as expected data verify that data from the previous build appears in the updated build as expected bookmarks history etc verify that cookies from the previous build are preserved after upgrade verify saved passwords are retained after upgrade verify stats are retained after upgrade verify sync chain created in the previous version is still retained on upgrade verify per site settings are preserved after upgrade app linker long press on a link in the twitter app to get the share picker choose brave verify brave doesn t crash after opening the link session storage verify that tabs restore when closed including active tab | 1 |

181,362 | 14,860,950,300 | IssuesEvent | 2021-01-18 21:38:32 | falcosecurity/falco-website | https://api.github.com/repos/falcosecurity/falco-website | closed | GKE Installation page | area/documentation kind/content lifecycle/rotten | While working on https://github.com/falcosecurity/falco/issues/650 - @caquino shared the Terraform they used to deploy Falco on GKE.

What we want to do is to add a documentation page, specific for GKE and specify the installation methods for it, adding this terraform config as a viable option.

<details>

<summary>Here is the terraform definition from the issue.</summary>

```

resource "kubernetes_service_account" "falco_sa" {

metadata {

name = "falco-account"

labels = {

app = "falco"

role = "security"

}

}

automount_service_account_token = true

}

resource "kubernetes_cluster_role" "falco_cr" {

metadata {

name = "falco-cluster-role"

labels = {

app = "falco"

role = "security"

}

}

rule {

api_groups = ["extensions", ""]

resources = ["nodes", "namespaces", "pods", "replicationcontrollers", "replicasets", "services", "daemonsets", "deployments", "events", "configmaps"]

verbs = ["get", "list", "watch"]

}

rule {

non_resource_urls = ["/healthz", "/healthz/*"]

verbs = ["get"]

}

}

resource "kubernetes_cluster_role_binding" "falco_crb" {

metadata {

name = "falco-cluster-role-bind"

labels = {

app = "falco"

role = "security"

}

}

subject {

kind = "ServiceAccount"

name = kubernetes_service_account.falco_sa.metadata.0.name

namespace = "default"

}

role_ref {

kind = "ClusterRole"

name = kubernetes_cluster_role.falco_cr.metadata.0.name

api_group = "rbac.authorization.k8s.io"

}

}

resource "kubernetes_config_map" "falco_cfgmap" {

metadata {

name = "falco-cfgmap"

labels = {

app = "falco"

role = "security"

}

}

data = {

"application_rules.yaml" = file("configs/falco/application_rules.yaml")

"falco_rules.local.yaml" = file("configs/falco/falco_rules.local.yaml")

"falco_rules.yaml" = file("configs/falco/falco_rules.yaml")

"k8s_audit_rules.yaml" = file("configs/falco/k8s_audit_rules.yaml")

"falco.yaml" = file("configs/falco/falco.yaml")

}

}

resource "kubernetes_daemonset" "falco_ds" {

metadata {

name = "falco-daemonset"

labels = {

app = "falco"

role = "security"

}

}

spec {

selector {

match_labels = {

app = "falco"

role = "security"

}

}

template {

metadata {

labels = {

app = "falco"

role = "security"

}

}

spec {

host_network = true

service_account_name = kubernetes_service_account.falco_sa.metadata.0.name

dns_policy = "ClusterFirstWithHostNet"

volume {

name = "docker-socket"

host_path {

path = "/var/run/docker.sock"

}

}

volume {

name = "containerd-socket"

host_path {

path = "/run/containerd/containerd.sock"

}

}

volume {

name = "dev-fs"

host_path {

path = "/dev"

}

}

volume {

name = "proc-fs"

host_path {

path = "/proc"

}

}

volume {

name = "boot-fs"

host_path {

path = "/boot"

}

}

volume {

name = "lib-modules"

host_path {

path = "/lib/modules"

}

}

volume {

name = "usr-fs"

host_path {

path = "/usr"

}

}

volume {

name = "etc-fs"

host_path {

path = "/etc"

}

}

volume {

name = "dshm"

empty_dir {

medium = "Memory"

}

}

volume {

name = "falco-config"

config_map {

name = kubernetes_config_map.falco_cfgmap.metadata.0.name

}

}

container {

name = "falco"

image = "falcosecurity/falco:latest"

args = [

"/usr/bin/falco",

"--cri", "/host/run/containerd/containerd.sock",

"-K", "/var/run/secrets/kubernetes.io/serviceaccount/token",

"-k", "https://$(KUBERNETES_SERVICE_HOST)",

"-pk",

]

security_context {

privileged = true

}

env {

name = "SYSDIG_BPF_PROBE"

value = ""

}

env {

name = "KBUILD_EXTRA_CPPFLAGS"

value = "-DCOS_73_WORKAROUND"

}

volume_mount {

name = "docker-socket"

mount_path = "/host/var/run/docker.sock"

}

volume_mount {

name = "containerd-socket"

mount_path = "/host/run/containerd/containerd.sock"

}

volume_mount {

name = "dev-fs"

mount_path = "/host/dev"

}

volume_mount {

name = "proc-fs"

mount_path = "/host/proc"

read_only = true

}

volume_mount {

name = "boot-fs"

mount_path = "/host/boot"

read_only = true

}

volume_mount {

name = "lib-modules"

mount_path = "/host/lib/modules"

read_only = true

}

volume_mount {

name = "usr-fs"

mount_path = "/host/usr"

read_only = true

}

volume_mount {

name = "etc-fs"

mount_path = "/host/etc"

read_only = true

}

volume_mount {

name = "dshm"

mount_path = "/dev/shm"

}

volume_mount {

name = "falco-config"

mount_path = "/etc/falco"

}

}

}

}

}

}

resource "kubernetes_service" "falco_svc" {

metadata {

name = kubernetes_daemonset.falco_ds.metadata.0.name

labels = {

app = "falco"

role = "security"

}

}

spec {

type = "ClusterIP"

port {

protocol = "TCP"

port = 8765

}

selector = {

app = "falco"

role = "security"

}

}

}

```

</details> | 1.0 | GKE Installation page - While working on https://github.com/falcosecurity/falco/issues/650 - @caquino shared the Terraform they used to deploy Falco on GKE.

What we want to do is to add a documentation page, specific for GKE and specify the installation methods for it, adding this terraform config as a viable option.

<details>

<summary>Here is the terraform definition from the issue.</summary>

```

resource "kubernetes_service_account" "falco_sa" {

metadata {

name = "falco-account"

labels = {

app = "falco"

role = "security"

}

}

automount_service_account_token = true

}

resource "kubernetes_cluster_role" "falco_cr" {

metadata {

name = "falco-cluster-role"

labels = {

app = "falco"

role = "security"

}

}

rule {

api_groups = ["extensions", ""]

resources = ["nodes", "namespaces", "pods", "replicationcontrollers", "replicasets", "services", "daemonsets", "deployments", "events", "configmaps"]

verbs = ["get", "list", "watch"]

}

rule {

non_resource_urls = ["/healthz", "/healthz/*"]

verbs = ["get"]

}

}

resource "kubernetes_cluster_role_binding" "falco_crb" {

metadata {

name = "falco-cluster-role-bind"

labels = {

app = "falco"

role = "security"

}

}

subject {

kind = "ServiceAccount"

name = kubernetes_service_account.falco_sa.metadata.0.name

namespace = "default"

}

role_ref {

kind = "ClusterRole"

name = kubernetes_cluster_role.falco_cr.metadata.0.name

api_group = "rbac.authorization.k8s.io"

}

}

resource "kubernetes_config_map" "falco_cfgmap" {

metadata {

name = "falco-cfgmap"

labels = {

app = "falco"

role = "security"

}

}

data = {

"application_rules.yaml" = file("configs/falco/application_rules.yaml")

"falco_rules.local.yaml" = file("configs/falco/falco_rules.local.yaml")

"falco_rules.yaml" = file("configs/falco/falco_rules.yaml")

"k8s_audit_rules.yaml" = file("configs/falco/k8s_audit_rules.yaml")

"falco.yaml" = file("configs/falco/falco.yaml")

}

}

resource "kubernetes_daemonset" "falco_ds" {

metadata {

name = "falco-daemonset"

labels = {

app = "falco"

role = "security"

}

}

spec {

selector {

match_labels = {

app = "falco"

role = "security"

}

}

template {

metadata {

labels = {

app = "falco"

role = "security"

}

}

spec {

host_network = true

service_account_name = kubernetes_service_account.falco_sa.metadata.0.name

dns_policy = "ClusterFirstWithHostNet"

volume {

name = "docker-socket"

host_path {

path = "/var/run/docker.sock"

}

}

volume {

name = "containerd-socket"

host_path {

path = "/run/containerd/containerd.sock"

}

}

volume {

name = "dev-fs"

host_path {

path = "/dev"

}

}

volume {

name = "proc-fs"

host_path {

path = "/proc"

}

}

volume {

name = "boot-fs"

host_path {

path = "/boot"

}

}

volume {

name = "lib-modules"

host_path {

path = "/lib/modules"

}

}

volume {

name = "usr-fs"

host_path {

path = "/usr"

}

}

volume {

name = "etc-fs"

host_path {

path = "/etc"

}

}

volume {

name = "dshm"

empty_dir {

medium = "Memory"

}

}

volume {

name = "falco-config"

config_map {

name = kubernetes_config_map.falco_cfgmap.metadata.0.name

}

}

container {

name = "falco"

image = "falcosecurity/falco:latest"

args = [

"/usr/bin/falco",

"--cri", "/host/run/containerd/containerd.sock",

"-K", "/var/run/secrets/kubernetes.io/serviceaccount/token",

"-k", "https://$(KUBERNETES_SERVICE_HOST)",

"-pk",

]

security_context {

privileged = true

}

env {

name = "SYSDIG_BPF_PROBE"

value = ""

}

env {

name = "KBUILD_EXTRA_CPPFLAGS"

value = "-DCOS_73_WORKAROUND"

}

volume_mount {

name = "docker-socket"

mount_path = "/host/var/run/docker.sock"

}

volume_mount {

name = "containerd-socket"

mount_path = "/host/run/containerd/containerd.sock"

}

volume_mount {

name = "dev-fs"

mount_path = "/host/dev"

}

volume_mount {

name = "proc-fs"

mount_path = "/host/proc"

read_only = true

}

volume_mount {

name = "boot-fs"

mount_path = "/host/boot"

read_only = true

}

volume_mount {

name = "lib-modules"

mount_path = "/host/lib/modules"

read_only = true

}

volume_mount {

name = "usr-fs"

mount_path = "/host/usr"

read_only = true

}

volume_mount {

name = "etc-fs"

mount_path = "/host/etc"

read_only = true

}

volume_mount {

name = "dshm"

mount_path = "/dev/shm"

}

volume_mount {

name = "falco-config"

mount_path = "/etc/falco"

}

}

}

}

}

}

resource "kubernetes_service" "falco_svc" {

metadata {

name = kubernetes_daemonset.falco_ds.metadata.0.name

labels = {

app = "falco"

role = "security"

}

}

spec {

type = "ClusterIP"

port {

protocol = "TCP"

port = 8765

}

selector = {

app = "falco"

role = "security"

}

}

}

```

</details> | non_test | gke installation page while working on caquino shared the terraform they used to deploy falco on gke what we want to do is to add a documentation page specific for gke and specify the installation methods for it adding this terraform config as a viable option here is the terraform definition from the issue resource kubernetes service account falco sa metadata name falco account labels app falco role security automount service account token true resource kubernetes cluster role falco cr metadata name falco cluster role labels app falco role security rule api groups resources verbs rule non resource urls verbs resource kubernetes cluster role binding falco crb metadata name falco cluster role bind labels app falco role security subject kind serviceaccount name kubernetes service account falco sa metadata name namespace default role ref kind clusterrole name kubernetes cluster role falco cr metadata name api group rbac authorization io resource kubernetes config map falco cfgmap metadata name falco cfgmap labels app falco role security data application rules yaml file configs falco application rules yaml falco rules local yaml file configs falco falco rules local yaml falco rules yaml file configs falco falco rules yaml audit rules yaml file configs falco audit rules yaml falco yaml file configs falco falco yaml resource kubernetes daemonset falco ds metadata name falco daemonset labels app falco role security spec selector match labels app falco role security template metadata labels app falco role security spec host network true service account name kubernetes service account falco sa metadata name dns policy clusterfirstwithhostnet volume name docker socket host path path var run docker socket volume name containerd socket host path path run containerd containerd sock volume name dev fs host path path dev volume name proc fs host path path proc volume name boot fs host path path boot volume name lib modules host path path lib modules volume 
name usr fs host path path usr volume name etc fs host path path etc volume name dshm empty dir medium memory volume name falco config config map name kubernetes config map falco cfgmap metadata name container name falco image falcosecurity falco latest args usr bin falco cri host run containerd containerd sock k var run secrets kubernetes io serviceaccount token k pk security context privileged true env name sysdig bpf probe value env name kbuild extra cppflags value dcos workaround volume mount name docker socket mount path host var run docker sock volume mount name containerd socket mount path host run containerd containerd sock volume mount name dev fs mount path host dev volume mount name proc fs mount path host proc read only true volume mount name boot fs mount path host boot read only true volume mount name lib modules mount path host lib modules read only true volume mount name usr fs mount path host usr read only true volume mount name etc fs mount path host etc read only true volume mount name dshm mount path dev shm volume mount name falco config mount path etc falco resource kubernetes service falco svc metadata name kubernetes daemonset falco ds metadata name labels app falco role security spec type clusterip port protocol tcp port selector app falco role security | 0 |

156,156 | 12,299,030,255 | IssuesEvent | 2020-05-11 11:37:35 | dotnet/winforms | https://api.github.com/repos/dotnet/winforms | opened | Flaky test: `ProfessionalColorTable_ChangeUserPreferences_GetColor_ReturnsExpected` deadlock | test-bug |

**Problem description:**

After #3226 was merged, the `ProfessionalColorTable_ChangeUserPreferences_GetColor_ReturnsExpected` tests started deadlocking in x86 mode, which caused CI builds to fail again.

I managed to reproduce the deadlock locally, though it took a few attempts to do so.

It looks like the test deadlocks on itself; no other user code is executing:

**Expected behavior:**

The tests work as expected.

**Minimal repro:**

The repro is a bit convoluted. Unfortunately, the `build.cmd -test -platform x86` command doesn't appear to work unless `Winforms.sln` is configured for the x86 platform (which breaks other modes). | 1.0 | Flaky test: `ProfessionalColorTable_ChangeUserPreferences_GetColor_ReturnsExpected` deadlock -

**Problem description:**

After #3226 was merged, the `ProfessionalColorTable_ChangeUserPreferences_GetColor_ReturnsExpected` tests started deadlocking in x86 mode, which caused CI builds to fail again.

I managed to reproduce the deadlock locally, though it took a few attempts to do so.

It looks like the test deadlocks on itself; no other user code is executing:

**Expected behavior:**

The tests work as expected.

**Minimal repro:**

The repro is a bit convoluted. Unfortunately, the `build.cmd -test -platform x86` command doesn't appear to work unless `Winforms.sln` is configured for the x86 platform (which breaks other modes). | test | flaky test professionalcolortable changeuserpreferences getcolor returnsexpected deadlock problem description after was merged professionalcolortable changeuserpreferences getcolor returnsexpected tests started deadlocking in mode that caused ci builds to fail again i managed to reproduce the deadlock locally though it took few attempts to do so looks like the test deadlocks on itself there are no other user code executed expected behavior the tests work as expected minimal repro a repro is bit convoluted unfortunately build cmd test platform command doesn t appear to work unless winforms sln is configured for platform which breaks other modes | 1 |

90,495 | 11,405,996,946 | IssuesEvent | 2020-01-31 13:25:31 | pyladiesdf/pyladiesdf_organizacao | https://api.github.com/repos/pyladiesdf/pyladiesdf_organizacao | closed | September agenda | design media | - [ ] Create the month's agenda in Canva

- [ ] Promote it in the Instagram highlights | 1.0 | September agenda - - [ ] Create the month's agenda in Canva

- [ ] Promote it in the Instagram highlights | non_test | september agenda create the month s agenda in canva promote it in the instagram highlights | 0 |

67,189 | 8,099,681,546 | IssuesEvent | 2018-08-11 12:07:25 | bologer/anycomment.io | https://api.github.com/repos/bologer/anycomment.io | closed | Make name & date inline to be more compact | design low priority | Think about how to do this. One option is to have name & date inline. | 1.0 | Make name & date inline to be more compact - Think about how to do this. One option is to have name & date inline. | non_test | make name date inline to be more compact think on how to this could be having name date as inline | 0 |

143,730 | 11,576,517,376 | IssuesEvent | 2020-02-21 12:08:10 | navikt/tiltaksgjennomforing-varsel | https://api.github.com/repos/navikt/tiltaksgjennomforing-varsel | closed | Build of ny-branch-test | deploy ny-branch-test | Comment with

>/deploy ny-branch-test

to deploy to dev-fss.

Commit: | 1.0 | Build of ny-branch-test - Comment with

>/deploy ny-branch-test

to deploy to dev-fss.

Commit: | test | build of ny branch test comment with deploy ny branch test to deploy to dev fss commit | 1 |

181,871 | 14,891,483,604 | IssuesEvent | 2021-01-21 00:53:42 | GameBridgeAI/ts_serialize | https://api.github.com/repos/GameBridgeAI/ts_serialize | closed | [DOCS] - Add documentation for polymorphic class types on a parent class property | documentation | Please add documentation for polymorphic class types on a parent class property. The nested nature makes it a bit unwieldy, and we should give an example.

Example:

```ts

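// Sketch only: `Serializable` as the base class is an assumption;
// the original pseudocode wrote `: Class` and `someClass: Polymorphic`.
class MyClass extends Serializable {
  @SerializeProperty({
    fromJSONStrategy: (json) => polymorphicClassFromJSON<Polymorphic>(Polymorphic, json),
  })
  someClass: Polymorphic;
}

// Abstract parent of the polymorphic variants.
abstract class Polymorphic extends Serializable {
}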

``` | 1.0 | [DOCS] - Add documentation for polymorphic class types on a parent class property - Please add documentation for polymorphic class types on a parent class property. The nested nature makes it a bit unwieldy, and we should give an example.

Example:

```ts

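// Sketch only: `Serializable` as the base class is an assumption;
// the original pseudocode wrote `: Class` and `someClass: Polymorphic`.
class MyClass extends Serializable {
  @SerializeProperty({
    fromJSONStrategy: (json) => polymorphicClassFromJSON<Polymorphic>(Polymorphic, json),
  })
  someClass: Polymorphic;
}

// Abstract parent of the polymorphic variants.
abstract class Polymorphic extends Serializable {
}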

``` | non_test | add documentation for polymorphic class types on a parent class property please add documentation for polymorphic class types on a parent class property the nesting nature makes it a bit unwieldly and we should give an example example ts class myclass class serializeproperty fromjsonstrategy json polymorphicclassfromjson polymorphic json someclass polymorphic abstract class someclass polymorphic | 0 |

641,929 | 20,862,321,480 | IssuesEvent | 2022-03-22 00:55:02 | harvester/harvester | https://api.github.com/repos/harvester/harvester | opened | [BUG] harvester load balancer, IPAM, defaults to `DCHP` and overrides user's selection | bug priority/1 area/dashboard-related | Tracking bug [rancher/dashboard#5438](https://github.com/rancher/dashboard/issues/5438) | 1.0 | [BUG] harvester load balancer, IPAM, defaults to `DCHP` and overrides user's selection - Tracking bug [rancher/dashboard#5438](https://github.com/rancher/dashboard/issues/5438) | non_test | harvester load balancer ipam defaults to dchp and overrides user s selection tracking bug | 0 |

531 | 2,502,322,714 | IssuesEvent | 2015-01-09 07:18:12 | fossology/fossology | https://api.github.com/repos/fossology/fossology | opened | run Stress Testing weekly with latest code | Category: Testing Component: Rank Component: Tester Priority: Normal Status: New Tracker: Bug | ---

Author Name: **larry shi**

Original Redmine Issue: 6987, http://www.fossology.org/issues/6987

Original Date: 2014/05/07

Original Assignee: Dong Ma

---

manually or automatically.

| 2.0 | run Stress Testing weekly with latest code - ---

Author Name: **larry shi**

Original Redmine Issue: 6987, http://www.fossology.org/issues/6987

Original Date: 2014/05/07

Original Assignee: Dong Ma

---

manually or automatically.

| test | run stress testing weekly with latest code author name larry shi original redmine issue original date original assignee dong ma manually or automatically | 1 |

337,761 | 30,261,398,599 | IssuesEvent | 2023-07-07 08:30:01 | adrianlubitz/VVAD | https://api.github.com/repos/adrianlubitz/VVAD | opened | Establish unit tests for python functions | test | So far, there are no unit tests to verify that the functions in use still work after code changes. The goal of this issue is to establish unit tests in multiple steps:

- Commonly used functions

- Functions that must run on clusters/servers

- rarely used functions (or only used in pipelines) | 1.0 | Establish unit tests for python functions - So far, there are no unit tests to verify that the functions in use still work after code changes. The goal of this issue is to establish unit tests in multiple steps:

- Commonly used functions

- Functions that must run on clusters/servers

- rarely used functions (or only used in pipelines) | test | establish unit tests for python functions so far there are no unit tests existent to verify the functionality of the used functions even after code changes goal of this issue is to establish unit tests in multiple steps commonly used functions functions that must run on clusters servers rarely used functions or only used in pipelines | 1 |

254,318 | 8,072,780,669 | IssuesEvent | 2018-08-06 17:03:21 | marbl/MetagenomeScope | https://api.github.com/repos/marbl/MetagenomeScope | closed | Support somehow viewing node metadata during finishing | highpriorityfeature | This lets the user select nodes and inspect them during the finishing process. | 1.0 | Support somehow viewing node metadata during finishing - This lets the user select nodes and inspect them during the finishing process. | non_test | support somehow viewing node metadata during finishing this lets the user select nodes and inspect them during the finishing process | 0 |

158,661 | 12,422,153,478 | IssuesEvent | 2020-05-23 20:31:22 | drafthub/drafthub | https://api.github.com/repos/drafthub/drafthub | opened | not covered code in `core.signals` | help wanted tests | Here is the coverage report from `check.py`

```

$ docker-compose exec web python check.py coverage

Name Stmts Miss Cover Missing

-----------------------------------------------------------------------------

...

drafthub/core/signals.py 10 6 40% 8-14

...

```

And the [report from codecov](https://codecov.io/gh/drafthub/drafthub/src/master/drafthub/core/signals.py)

New tests for this issue must be written in `drafthub/core/tests/test_signals.py` | 1.0 | not covered code in `core.signals` - Here is the coverage report from `check.py`

```

$ docker-compose exec web python check.py coverage

Name Stmts Miss Cover Missing

-----------------------------------------------------------------------------

...

drafthub/core/signals.py 10 6 40% 8-14

...

```

And the [report from codecov](https://codecov.io/gh/drafthub/drafthub/src/master/drafthub/core/signals.py)

New tests for this issue must be written in `drafthub/core/tests/test_signals.py` | test | not covered code in core signals here is the coverage report from check py docker compose exec web python check py coverage name stmts miss cover missing drafthub core signals py and the new tests for this issue must be written in drafthub core tests test signals py | 1 |

3,194 | 2,743,549,033 | IssuesEvent | 2015-04-21 22:24:56 | elastic/curator | https://api.github.com/repos/elastic/curator | closed | Document that forceMerge takes a lot of space | Documentation | Apparently, up to 3x the size of an index. See https://issues.apache.org/jira/browse/LUCENE-6386 | 1.0 | Document that forceMerge takes a lot of space - Apparently, up to 3x the size of an index. See https://issues.apache.org/jira/browse/LUCENE-6386 | non_test | document that forcemerge takes a lot of space apparently up to the size of an index see | 0 |

122,062 | 10,211,588,273 | IssuesEvent | 2019-08-14 17:18:41 | input-output-hk/plutus | https://api.github.com/repos/input-output-hk/plutus | closed | support multiple modes of execution in plc-agda | Metatheory Test | There are currently two possible execution paths in plc-agda: extrinsic reduction via progress and extrinsic CK machine execution.

Add a command line flag to support both:

$ plc-agda --help

plc-agda - a Plutus Core implementation written in Agda

Usage: plc-agda --file FILENAME [--ck]

run a Plutus Core program

Available options:

--file FILENAME Plutus Core source file

--ck Whether to execute using the CK machine

-h,--help Show this help text | 1.0 | support multiple modes of execution in plc-agda - There are currently two possible execution paths in plc-agda: extrinsic reduction via progress and extrinsic CK machine execution.

Add a command line flag to support both:

$ plc-agda --help

plc-agda - a Plutus Core implementation written in Agda

Usage: plc-agda --file FILENAME [--ck]

run a Plutus Core program

Available options:

--file FILENAME Plutus Core source file

--ck Whether to execute using the CK machine