Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

531,709 | 15,503,167,957 | IssuesEvent | 2021-03-11 12:48:41 | FireDynamics/ARTSS | https://api.github.com/repos/FireDynamics/ARTSS | closed | obstacle domain restriction with multiple obstacles does not work | bug effort: high priority: high | - in a case, where obstacles would overlap, the calculation of inner cells would be wrong due to a false number of obstacle cells (du to duplicates in the obstacle list) | 1.0 | obstacle domain restriction with multiple obstacles does not work - - in a case, where obstacles would overlap, the calculation of inner cells would be wrong due to a false number of obstacle cells (du to duplicates in the obstacle list) | non_test | obstacle domain restriction with multiple obstacles does not work in a case where obstacles would overlap the calculation of inner cells would be wrong due to a false number of obstacle cells du to duplicates in the obstacle list | 0 |

129,378 | 10,572,871,167 | IssuesEvent | 2019-10-07 10:34:39 | robotology/gym-ignition | https://api.github.com/repos/robotology/gym-ignition | closed | Develop an example to compare Runtime classes | complexity::medium component::models component::test issue::status::in-progress issue::type::enhancement | At the moment the supported runtimes are the following:

- `GazeboRuntime`

- `PyBulletRuntime` (almost ready in #40)

It would be nice comparing simple environments like the existing CartPole, or an even simpler Pendulum. Without contacts, the two physics engines behaviors should mostly match. | 1.0 | Develop an example to compare Runtime classes - At the moment the supported runtimes are the following:

- `GazeboRuntime`

- `PyBulletRuntime` (almost ready in #40)

It would be nice comparing simple environments like the existing CartPole, or an even simpler Pendulum. Without contacts, the two physics engines behaviors should mostly match. | test | develop an example to compare runtime classes at the moment the supported runtimes are the following gazeboruntime pybulletruntime almost ready in it would be nice comparing simple environments like the existing cartpole or an even simpler pendulum without contacts the two physics engines behaviors should mostly match | 1 |

18,297 | 10,226,923,915 | IssuesEvent | 2019-08-16 19:12:56 | pcrane70/hadoop | https://api.github.com/repos/pcrane70/hadoop | opened | WS-2019-0103 (Medium) detected in handlebars-3.0.7.tgz | security vulnerability | ## WS-2019-0103 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-3.0.7.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-3.0.7.tgz">https://registry.npmjs.org/handlebars/-/handlebars-3.0.7.tgz</a></p>

<p>Path to dependency file: /hadoop/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-ui/src/main/webapp/package.json</p>

<p>Path to vulnerable library: /tmp/git/hadoop/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-ui/src/main/webapp/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- ember-cli-1.13.14.tgz (Root Library)

- broccoli-0.16.8.tgz

- :x: **handlebars-3.0.7.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/pcrane70/hadoop/commit/9996d65feb6ec3d97f72187616daad5418f51db5">9996d65feb6ec3d97f72187616daad5418f51db5</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Handlebars.js before 4.1.0 has Remote Code Execution (RCE)

<p>Publish Date: 2019-05-30

<p>URL: <a href=https://github.com/wycats/handlebars.js/issues/1267#issue-187151586>WS-2019-0103</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/wycats/handlebars.js/commit/edc6220d51139b32c28e51641fadad59a543ae57">https://github.com/wycats/handlebars.js/commit/edc6220d51139b32c28e51641fadad59a543ae57</a></p>

<p>Release Date: 2019-05-30</p>

<p>Fix Resolution: 4.0.13</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"handlebars","packageVersion":"3.0.7","isTransitiveDependency":true,"dependencyTree":"ember-cli:1.13.14;broccoli:0.16.8;handlebars:3.0.7","isMinimumFixVersionAvailable":true,"minimumFixVersion":"4.0.13"}],"vulnerabilityIdentifier":"WS-2019-0103","vulnerabilityDetails":"Handlebars.js before 4.1.0 has Remote Code Execution (RCE)","vulnerabilityUrl":"https://github.com/wycats/handlebars.js/issues/1267#issue-187151586","cvss2Severity":"medium","cvss2Score":"5.5","extraData":{}}</REMEDIATE> --> | True | WS-2019-0103 (Medium) detected in handlebars-3.0.7.tgz - ## WS-2019-0103 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-3.0.7.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-3.0.7.tgz">https://registry.npmjs.org/handlebars/-/handlebars-3.0.7.tgz</a></p>

<p>Path to dependency file: /hadoop/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-ui/src/main/webapp/package.json</p>

<p>Path to vulnerable library: /tmp/git/hadoop/hadoop-yarn-project/hadoop-yarn/hadoop-yarn-ui/src/main/webapp/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- ember-cli-1.13.14.tgz (Root Library)

- broccoli-0.16.8.tgz

- :x: **handlebars-3.0.7.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/pcrane70/hadoop/commit/9996d65feb6ec3d97f72187616daad5418f51db5">9996d65feb6ec3d97f72187616daad5418f51db5</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Handlebars.js before 4.1.0 has Remote Code Execution (RCE)

<p>Publish Date: 2019-05-30

<p>URL: <a href=https://github.com/wycats/handlebars.js/issues/1267#issue-187151586>WS-2019-0103</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/wycats/handlebars.js/commit/edc6220d51139b32c28e51641fadad59a543ae57">https://github.com/wycats/handlebars.js/commit/edc6220d51139b32c28e51641fadad59a543ae57</a></p>

<p>Release Date: 2019-05-30</p>

<p>Fix Resolution: 4.0.13</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"handlebars","packageVersion":"3.0.7","isTransitiveDependency":true,"dependencyTree":"ember-cli:1.13.14;broccoli:0.16.8;handlebars:3.0.7","isMinimumFixVersionAvailable":true,"minimumFixVersion":"4.0.13"}],"vulnerabilityIdentifier":"WS-2019-0103","vulnerabilityDetails":"Handlebars.js before 4.1.0 has Remote Code Execution (RCE)","vulnerabilityUrl":"https://github.com/wycats/handlebars.js/issues/1267#issue-187151586","cvss2Severity":"medium","cvss2Score":"5.5","extraData":{}}</REMEDIATE> --> | non_test | ws medium detected in handlebars tgz ws medium severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file hadoop hadoop yarn project hadoop yarn hadoop yarn ui src main webapp package json path to vulnerable library tmp git hadoop hadoop yarn project hadoop yarn hadoop yarn ui src main webapp node modules handlebars package json dependency hierarchy ember cli tgz root library broccoli tgz x handlebars tgz vulnerable library found in head commit a href vulnerability details handlebars js before has remote code execution rce publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier ws vulnerabilitydetails handlebars js before has remote code execution rce vulnerabilityurl | 0 |

327,620 | 9,978,103,093 | IssuesEvent | 2019-07-09 18:59:37 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | opened | Filler values incorrect for structures | Area/Language Priority/Blocker Type/SpecDeviation | **Description:**

$title.

For example for records with fields with default values:

```ballerina

import ballerina/io;

type Bar record {

string s = "test 1";

};

public function main() {

Bar[] barr = [];

Bar b = { s: "test 2" };

barr[1] = b;

io:println(barr[0].s == "test 1"); // prints false, has empty string

}

```

Object arrays are just filled with null.

`getZeroValue()` methods need to be updated to use the value creator to create these filler values.

| 1.0 | Filler values incorrect for structures - **Description:**

$title.

For example for records with fields with default values:

```ballerina

import ballerina/io;

type Bar record {

string s = "test 1";

};

public function main() {

Bar[] barr = [];

Bar b = { s: "test 2" };

barr[1] = b;

io:println(barr[0].s == "test 1"); // prints false, has empty string

}

```

Object arrays are just filled with null.

`getZeroValue()` methods need to be updated to use the value creator to create these filler values.

| non_test | filler values incorrect for structures description title for example for records with fields with default values ballerina import ballerina io type bar record string s test public function main bar barr bar b s test barr b io println barr s test prints false has empty string object arrays are just filled with null getzerovalue methods need to be updated to use the value creator to create these filler values | 0 |

58,941 | 11,912,123,535 | IssuesEvent | 2020-03-31 09:44:49 | home-assistant/brands | https://api.github.com/repos/home-assistant/brands | closed | Brother Printer is missing brand images | domain-missing has-codeowner has-config-flow |

## The problem

The Brother Printer integration does not have brand images in

this repository.

We recently started this Brands repository, to create a centralized storage of all brand-related images. These images are used on our website and the Home Assistant frontend.

The following images are missing and would ideally be added:

- `src/brother/icon.png`

- `src/brother/logo.png`

- `src/brother/icon@2x.png`

- `src/brother/logo@2x.png`

For image specifications and requirements, please see [README.md](https://github.com/home-assistant/brands/blob/master/README.md).

## Updating the documentation repository

Our documentation repository already has a logo for this integration, however, it does not meet the image requirements of this new Brands repository.

If adding images to this repository, please open up a PR to the documentation repository as well, removing the `logo: brother.png` line from this file:

<https://github.com/home-assistant/home-assistant.io/blob/current/source/_integrations/brother.markdown>

**Note**: The documentation PR needs to be opened against the `current` branch.

**Note2**: Please leave the actual logo file in the documentation repository. It will be cleaned up differently.

## Additional information

For more information about this repository, read the [README.md](https://github.com/home-assistant/brands/blob/master/README.md) file of this repository. It contains information on how this repository works, and image specification and requirements.

## Codeowner mention

Hi there, @bieniu! Mind taking a look at this issue as it is with an integration (brother) you are listed as a [codeowner](https://github.com/home-assistant/core/blob/dev/homeassistant/components/brother/manifest.json) for? Thanks!

Resolving this issue is not limited to codeowners! If you want to help us out, feel free to resolve this issue! Thanks already!

| 1.0 | Brother Printer is missing brand images -

## The problem

The Brother Printer integration does not have brand images in

this repository.

We recently started this Brands repository, to create a centralized storage of all brand-related images. These images are used on our website and the Home Assistant frontend.

The following images are missing and would ideally be added:

- `src/brother/icon.png`

- `src/brother/logo.png`

- `src/brother/icon@2x.png`

- `src/brother/logo@2x.png`

For image specifications and requirements, please see [README.md](https://github.com/home-assistant/brands/blob/master/README.md).

## Updating the documentation repository

Our documentation repository already has a logo for this integration, however, it does not meet the image requirements of this new Brands repository.

If adding images to this repository, please open up a PR to the documentation repository as well, removing the `logo: brother.png` line from this file:

<https://github.com/home-assistant/home-assistant.io/blob/current/source/_integrations/brother.markdown>

**Note**: The documentation PR needs to be opened against the `current` branch.

**Note2**: Please leave the actual logo file in the documentation repository. It will be cleaned up differently.

## Additional information

For more information about this repository, read the [README.md](https://github.com/home-assistant/brands/blob/master/README.md) file of this repository. It contains information on how this repository works, and image specification and requirements.

## Codeowner mention

Hi there, @bieniu! Mind taking a look at this issue as it is with an integration (brother) you are listed as a [codeowner](https://github.com/home-assistant/core/blob/dev/homeassistant/components/brother/manifest.json) for? Thanks!

Resolving this issue is not limited to codeowners! If you want to help us out, feel free to resolve this issue! Thanks already!

| non_test | brother printer is missing brand images the problem the brother printer integration does not have brand images in this repository we recently started this brands repository to create a centralized storage of all brand related images these images are used on our website and the home assistant frontend the following images are missing and would ideally be added src brother icon png src brother logo png src brother icon png src brother logo png for image specifications and requirements please see updating the documentation repository our documentation repository already has a logo for this integration however it does not meet the image requirements of this new brands repository if adding images to this repository please open up a pr to the documentation repository as well removing the logo brother png line from this file note the documentation pr needs to be opened against the current branch please leave the actual logo file in the documentation repository it will be cleaned up differently additional information for more information about this repository read the file of this repository it contains information on how this repository works and image specification and requirements codeowner mention hi there bieniu mind taking a look at this issue as it is with an integration brother you are listed as a for thanks resolving this issue is not limited to codeowners if you want to help us out feel free to resolve this issue thanks already | 0 |

28,399 | 2,701,384,521 | IssuesEvent | 2015-04-05 07:39:52 | cs2103jan2015-t13-2c/main | https://api.github.com/repos/cs2103jan2015-t13-2c/main | closed | (GUI) As a user I can find my way around the program instinctively | Priority.high | so I do not spend time searching for commands | 1.0 | (GUI) As a user I can find my way around the program instinctively - so I do not spend time searching for commands | non_test | gui as a user i can find my way around the program instinctively so i do not spend time searching for commands | 0 |

156,969 | 12,341,953,121 | IssuesEvent | 2020-05-14 23:16:27 | Senetas/SureDrop | https://api.github.com/repos/Senetas/SureDrop | closed | This is a check list | Test Case | *This is the test plan for feature blah*

- [ ] Test item 1

- [ ] Test item 2

- [ ] Test item 3

- [ ] Test item 4 | 1.0 | This is a check list - *This is the test plan for feature blah*

- [ ] Test item 1

- [ ] Test item 2

- [ ] Test item 3

- [ ] Test item 4 | test | this is a check list this is the test plan for feature blah test item test item test item test item | 1 |

5,693 | 3,975,675,948 | IssuesEvent | 2016-05-05 07:13:46 | kolliSuman/issues | https://api.github.com/repos/kolliSuman/issues | closed | QA_Skeleton - Assembling, Identification & labeling_Simulation_p1 | Category: Usability Developed By: VLEAD Release Number: Production Severity: S2 Status: Open | Defect Description :

In the Skeleton-Assembling simulation page of "Skeleton - Assembling, Identification & labeling" experiment, the reset button is missing in the page instead reset button should be displayed on the screen inorder to clear the arranged parts from the positions

Actual Result :

In the Skeleton-Assembling simulation page of "Skeleton - Assembling, Identification & labeling" experiment, the reset button is missing in the page

Environment :

OS: Windows 7, Linux

Browsers: Firefox,Chrome

Bandwidth : 100Mbps

Hardware Configuration:8GBRAM ,

Processor:i5

Test Step Link:

https://github.com/Virtual-Labs/anthropology-iitg/blob/master/test-cases/integration_test-cases/Skeleton%20-%20Assembling%2C%20Identification%20%26%20labeling/Skeleton%20-%20Assembling%2C%20Identification%20%26%20labeling_12_Simulation_p1.org | True | QA_Skeleton - Assembling, Identification & labeling_Simulation_p1 - Defect Description :

In the Skeleton-Assembling simulation page of "Skeleton - Assembling, Identification & labeling" experiment, the reset button is missing in the page instead reset button should be displayed on the screen inorder to clear the arranged parts from the positions

Actual Result :

In the Skeleton-Assembling simulation page of "Skeleton - Assembling, Identification & labeling" experiment, the reset button is missing in the page

Environment :

OS: Windows 7, Linux

Browsers: Firefox,Chrome

Bandwidth : 100Mbps

Hardware Configuration:8GBRAM ,

Processor:i5

Test Step Link:

https://github.com/Virtual-Labs/anthropology-iitg/blob/master/test-cases/integration_test-cases/Skeleton%20-%20Assembling%2C%20Identification%20%26%20labeling/Skeleton%20-%20Assembling%2C%20Identification%20%26%20labeling_12_Simulation_p1.org | non_test | qa skeleton assembling identification labeling simulation defect description in the skeleton assembling simulation page of skeleton assembling identification labeling experiment the reset button is missing in the page instead reset button should be displayed on the screen inorder to clear the arranged parts from the positions actual result in the skeleton assembling simulation page of skeleton assembling identification labeling experiment the reset button is missing in the page environment os windows linux browsers firefox chrome bandwidth hardware configuration processor test step link | 0 |

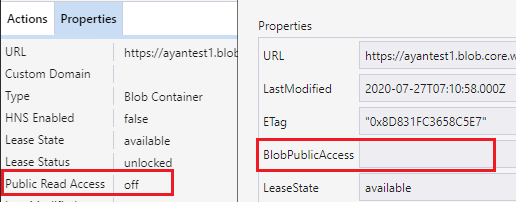

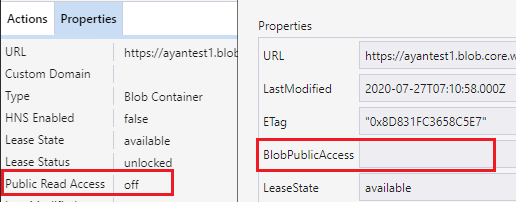

167,363 | 13,023,311,649 | IssuesEvent | 2020-07-27 09:47:18 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | opened | Display 'off' for 'BlobPublicAccess' on blob container's properties dialog when 'Public Read Access' is off | :gear: blobs 🧪 testing | **Storage Explorer Version:** 1.15.0-dev

**Build**: 20200725.1

**Branch**: master

**Platform/OS:** Windows 10/ Linux Ubuntu 18.04/ macOS Catalina

**Architecture**: ia32/x64

**Regression From:** Not a regression

**Steps to reproduce:**

1. Expand one storage account -> Blob Containers.

2. Create one blob container then right click it -> Select 'Set Public Access Level...' -> Select 'No public access' -> Click 'Apply'.

3. Observe the value of ‘Public Read Access’ on Properties panel.

4. Open the blob container's properties dialog -> Observe the value of property 'BlobPublicAccess'.

**Expect Experience:**

Display 'off' instead of blank for 'BlobPublicAccess' on blob container's properties dialog.

**Actual Experience:**

Display blank for 'BlobPublicAccess' on blob container's properties dialog.

| 1.0 | Display 'off' for 'BlobPublicAccess' on blob container's properties dialog when 'Public Read Access' is off - **Storage Explorer Version:** 1.15.0-dev

**Build**: 20200725.1

**Branch**: master

**Platform/OS:** Windows 10/ Linux Ubuntu 18.04/ macOS Catalina

**Architecture**: ia32/x64

**Regression From:** Not a regression

**Steps to reproduce:**

1. Expand one storage account -> Blob Containers.

2. Create one blob container then right click it -> Select 'Set Public Access Level...' -> Select 'No public access' -> Click 'Apply'.

3. Observe the value of ‘Public Read Access’ on Properties panel.

4. Open the blob container's properties dialog -> Observe the value of property 'BlobPublicAccess'.

**Expect Experience:**

Display 'off' instead of blank for 'BlobPublicAccess' on blob container's properties dialog.

**Actual Experience:**

Display blank for 'BlobPublicAccess' on blob container's properties dialog.

| test | display off for blobpublicaccess on blob container s properties dialog when public read access is off storage explorer version dev build branch master platform os windows linux ubuntu macos catalina architecture regression from not a regression steps to reproduce expand one storage account blob containers create one blob container then right click it select set public access level select no public access click apply observe the value of ‘public read access’ on properties panel open the blob container s properties dialog observe the value of property blobpublicaccess expect experience display off instead of blank for blobpublicaccess on blob container s properties dialog actual experience display blank for blobpublicaccess on blob container s properties dialog | 1 |

243,841 | 20,592,767,565 | IssuesEvent | 2022-03-05 03:08:45 | RelativityMC/C2ME-fabric | https://api.github.com/repos/RelativityMC/C2ME-fabric | closed | Alpha 5.96 (1.18) crash | bug need testing | Every so often my server crashes for an unknown reason. I have gotten the same error message:

`Exception in server tick loop` 5 times already in the span of 2 days.

Here are the crash reports:

[crash-2021-12-09_19.02.45-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694586/crash-2021-12-09_19.02.45-server.txt)

[crash-2021-12-09_22.41.21-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694587/crash-2021-12-09_22.41.21-server.txt)

[crash-2021-12-09_19.02.45-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694588/crash-2021-12-09_19.02.45-server.txt)

[crash-2021-12-09_22.41.21-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694589/crash-2021-12-09_22.41.21-server.txt)

[crash-2021-12-10_07.20.19-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694590/crash-2021-12-10_07.20.19-server.txt)

I also have latest.log:

[latest.log](https://github.com/RelativityMC/C2ME-fabric/files/7694595/latest.log)

Any idea what could be causing this? If it's not c2me's fault then please tell me where to post this in order to get it fixed.

Hardware:

i7-7700@4Ghz

32 GB DDR4 2400mhz ram

sata 4TB ssd with 500MB / s read and writes (where the server is on)

Windows 10

12GB of ram allocated to the server

Software:

Minecraft 1.18 fabric server

| 1.0 | Alpha 5.96 (1.18) crash - Every so often my server crashes for an unknown reason. I have gotten the same error message:

`Exception in server tick loop` 5 times already in the span of 2 days.

Here are the crash reports:

[crash-2021-12-09_19.02.45-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694586/crash-2021-12-09_19.02.45-server.txt)

[crash-2021-12-09_22.41.21-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694587/crash-2021-12-09_22.41.21-server.txt)

[crash-2021-12-09_19.02.45-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694588/crash-2021-12-09_19.02.45-server.txt)

[crash-2021-12-09_22.41.21-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694589/crash-2021-12-09_22.41.21-server.txt)

[crash-2021-12-10_07.20.19-server.txt](https://github.com/RelativityMC/C2ME-fabric/files/7694590/crash-2021-12-10_07.20.19-server.txt)

I also have latest.log:

[latest.log](https://github.com/RelativityMC/C2ME-fabric/files/7694595/latest.log)

Any idea what could be causing this? If it's not c2me's fault then please tell me where to post this in order to get it fixed.

Hardware:

i7-7700@4Ghz

32 GB DDR4 2400mhz ram

sata 4TB ssd with 500MB / s read and writes (where the server is on)

Windows 10

12GB of ram allocated to the server

Software:

Minecraft 1.18 fabric server

| test | alpha crash every so often my server crashes for an unknown reason i have gotten the same error message exception in server tick loop times already in the span of days here are the crash reports i also have latest log any idea what could be causing this if it s not s fault then please tell me where to post this in order to get it fixed hardware gb ram sata ssd with s read and writes where the server is on windows of ram allocated to the server software minecraft fabric server | 1 |

24,604 | 17,466,546,622 | IssuesEvent | 2021-08-06 17:44:14 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | Documentation - EKS | operations infrastructure eks | ## Description

Make sure developers understand how we use Kubernetes in our environment.

- How to onboard a new project

- Application Manifest documentation (How to make a simple application manifest, etc)

- CI documentation (what CI needs to output to manifest)

- ArgoCD documentation (logging in, interacting with applications)

- How to use Kubernetes secrets in your application repo (external-secrets, parameter store)

## Background/context/resources

_Any additional context for the reader to know, if applicable_

## Technical notes

_Notes around work that is happening, if applicable_

---

## Tasks

- [ ] _Write documentation_

- [ ] Peer review / Edits

## Definition of Done

- [ ] _Developer documentation for EKS is written and available to developers_

---

### Reminders

- [ ] Please attach your team label and any other appropriate label(s)

- [ ] Please attach the needs grooming tag if needed

- [ ] Please connect to an epic

| 1.0 | Documentation - EKS - ## Description

Make sure developers understand how we use Kubernetes in our environment.

- How to onboard a new project

- Application Manifest documentation (How to make a simple application manifest, etc)

- CI documentation (what CI needs to output to manifest)

- ArgoCD documentation (logging in, interacting with applications)

- How to use Kubernetes secrets in your application repo (external-secrets, parameter store)

## Background/context/resources

_Any additional context for the reader to know, if applicable_

## Technical notes

_Notes around work that is happening, if applicable_

---

## Tasks

- [ ] _Write documentation_

- [ ] Peer review / Edits

## Definition of Done

- [ ] _Developer documentation for EKS is written and available to developers_

---

### Reminders

- [ ] Please attach your team label and any other appropriate label(s)

- [ ] Please attach the needs grooming tag if needed

- [ ] Please connect to an epic

| non_test | documentation eks description make sure developers understand how we use kubernetes in our environment how to onboard a new project application manifest documentation how to make a simple application manifest etc ci documentation what ci needs to output to manifest argocd documentation logging in interacting with applications how to use kubernetes secrets in your application repo external secrets parameter store background context resources any additional context for the reader to know if applicable technical notes notes around work that is happening if applicable tasks write documentation peer review edits definition of done developer documentation for eks is written and available to developers reminders please attach your team label and any other appropriate label s please attach the needs grooming tag if needed please connect to an epic | 0 |

260,797 | 8,214,905,502 | IssuesEvent | 2018-09-05 02:11:01 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.ibm.com - Unable to access the website - Secure Connection Failed error thrown | browser-firefox priority-important severity-critical type-ssl | <!-- @browser: Firefox 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.ibm.com/fr-fr/marketplace/requirements-management

**Browser / Version**: Firefox 63.0

**Operating System**: Windows 10

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: The secured connection is not working on the official website of IBM

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2018/9/3233d215-7854-4d2b-922c-d139f075fca6.jpg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>buildID: 20180830123124</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.all: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>channel: aurora</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.ibm.com - Unable to access the website - Secure Connection Failed error thrown - <!-- @browser: Firefox 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://www.ibm.com/fr-fr/marketplace/requirements-management

**Browser / Version**: Firefox 63.0

**Operating System**: Windows 10

**Tested Another Browser**: No

**Problem type**: Something else

**Description**: The secured connection is not working on the official website of IBM

**Steps to Reproduce**:

[](https://webcompat.com/uploads/2018/9/3233d215-7854-4d2b-922c-d139f075fca6.jpg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>buildID: 20180830123124</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.all: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>channel: aurora</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | unable to access the website secure connection failed error thrown url browser version firefox operating system windows tested another browser no problem type something else description the secured connection is not working on the official website of ibm steps to reproduce browser configuration mixed active content blocked false buildid tracking content blocked false gfx webrender blob images true gfx webrender all false mixed passive content blocked false gfx webrender enabled false image mem shared true channel aurora from with ❤️ | 0 |

234,107 | 19,095,681,890 | IssuesEvent | 2021-11-29 16:26:14 | GuabinaCore/WoWKoi | https://api.github.com/repos/GuabinaCore/WoWKoi | closed | [Tyce] - Spell Skyhorn Strafing Run (213467) for Quest Justice Rains from Above | Quest Object Zone:HIghmountain Test Fix | **Links:**

https://www.wowhead.com/quest=40594/justice-rains-from-above

**What is happening:**

The 1st spell on War Eagle is not working properly: it casts 5-6 times and then says "You have no target".

Also, there are a lot of mobs missing.

VIDEO:

https://youtu.be/lFYFGhkGWxw

**What should happen:**

https://www.youtube.com/watch?v=4TXX-oCxY4Q | 1.0 | [Tyce] - Spell Skyhorn Strafing Run (213467) for Quest Justice Rains from Above - **Links:**

https://www.wowhead.com/quest=40594/justice-rains-from-above

**What is happening:**

The 1st spell on War Eagle is not working properly: it casts 5-6 times and then says "You have no target".

Also, there are a lot of mobs missing.

VIDEO:

https://youtu.be/lFYFGhkGWxw

**What should happen:**

https://www.youtube.com/watch?v=4TXX-oCxY4Q | test | spell skyhorn strafing run for quest justice rains from above links what is happening spell on war eagle is not working properly it will cast times and then it would say you have no target also theres a lot mobs missing video what should happen | 1 |

139,900 | 11,298,713,979 | IssuesEvent | 2020-01-17 09:37:40 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | opened | Pop up a Cloud Explorer window when clicking the 'Refresh All' using the mouse middle key | 🧪 testing | **Storage Explorer Version**: 1.12.0

**Build**: [20200117.2](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3393793)

**Branch**: master

**Platform/OS**: Windows 10/ Linux Ubuntu 18.04/ MacOS High Sierra

**Architecture**: ia32/x64

**Regression From**: Not a regression

**Steps to reproduce:**

1. Open Storage Explorer.

2. Click 'Refresh All' using the mouse middle key.

3. Check the results.

**Expected Experience:**

No window pops up.

**Actual Experience:**

Pop up a Cloud Explorer window.

**More Info:**

This issue also reproduces when clicking 'Collapse All' using the mouse middle key. | 1.0 | Pop up a Cloud Explorer window when clicking the 'Refresh All' using the mouse middle key - **Storage Explorer Version**: 1.12.0

**Build**: [20200117.2](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3393793)

**Branch**: master

**Platform/OS**: Windows 10/ Linux Ubuntu 18.04/ MacOS High Sierra

**Architecture**: ia32/x64

**Regression From**: Not a regression

**Steps to reproduce:**

1. Open Storage Explorer.

2. Click 'Refresh All' using the mouse middle key.

3. Check the results.

**Expected Experience:**

No window pops up.

**Actual Experience:**

Pop up a Cloud Explorer window.

**More Info:**

This issue also reproduces when clicking 'Collapse All' using the mouse middle key. | test | pop up a cloud explorer window when clicking the refresh all using the mouse middle key storage explorer version build branch master platform os windows linux ubuntu macos high sierra architecture regression from not a regression steps to reproduce open storage explorer click refresh all using the mouse middle key check the results expect experience no window pops up actual experience pop up a cloud explorer window more info this issue also reproduces when clicking collapse all using the mouse middle key | 1 |

326,575 | 28,002,429,763 | IssuesEvent | 2023-03-27 13:34:31 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | pull-kubernetes-dependencies is failing | kind/failing-test | ### Which jobs are failing?

pull-kubernetes-dependencies

### Which tests are failing?

verify.Vendor

### Since when has it been failing?

27 March 2023, 15:28 IST (9:58 UTC)

### Testgrid link

https://testgrid.k8s.io/presubmits-kubernetes-blocking#pull-kubernetes-dependencies

### Reason for failure (if possible)

```

Your vendored results are different:

diff -Naupr -x 'AUTHORS*' -x 'CONTRIBUTORS*' vendor/k8s.io/api/go.mod /home/prow/go/src/k8s.io/kubernetes/_tmp/kube-vendor.3Sq7Hh/kubernetes/vendor/k8s.io/api/go.mod

--- vendor/k8s.io/api/go.mod 2023-03-27 10:01:13.097931396 +0000

+++ /home/prow/go/src/k8s.io/kubernetes/_tmp/kube-vendor.3Sq7Hh/kubernetes/vendor/k8s.io/api/go.mod 2023-03-27 10:04:41.397038952 +0000

@@ -21,7 +21,7 @@ require (

github.com/modern-go/concurrent v0.0.0-20180306012644-bacd9c7ef1dd // indirect

github.com/modern-go/reflect2 v1.0.2 // indirect

github.com/pmezard/go-difflib v1.0.0 // indirect

- github.com/rogpeppe/go-internal v1.9.0 // indirect

+ github.com/rogpeppe/go-internal v1.10.0 // indirect

github.com/spf13/pflag v1.0.5 // indirect

golang.org/x/net v0.8.0 // indirect

golang.org/x/text v0.8.0 // indirect

```
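The diff above comes down to one module whose pinned version differs between the checked-in `go.mod` and the regenerated one. As a minimal sketch (this is not the actual `verify.Vendor` logic, and the helper names `parse_requires` and `changed_versions` are hypothetical), the following compares the `require` entries of two `go.mod` snippets and reports the modules whose versions differ. It only handles `path version` entries inside a `require (...)` block.

```python
def parse_requires(go_mod_text):
    """Map module path -> version for every 'path version' entry found."""
    requires = {}
    for raw in go_mod_text.splitlines():
        line = raw.split("//", 1)[0].strip()  # drop trailing '// indirect' comments
        parts = line.split()
        # Treat a line as a require entry only if it is exactly 'path vX...'.
        if len(parts) == 2 and parts[1].startswith("v"):
            requires[parts[0]] = parts[1]
    return requires


def changed_versions(old_text, new_text):
    """Return {module: (old_version, new_version)} for modules pinned differently."""
    old, new = parse_requires(old_text), parse_requires(new_text)
    return {
        mod: (old[mod], new[mod])
        for mod in sorted(old.keys() & new.keys())
        if old[mod] != new[mod]
    }


# Entries copied from the diff in this report.
checked_in = """
github.com/pmezard/go-difflib v1.0.0 // indirect
github.com/rogpeppe/go-internal v1.9.0 // indirect
github.com/spf13/pflag v1.0.5 // indirect
"""
regenerated = """
github.com/pmezard/go-difflib v1.0.0 // indirect
github.com/rogpeppe/go-internal v1.10.0 // indirect
github.com/spf13/pflag v1.0.5 // indirect
"""

print(changed_versions(checked_in, regenerated))
```

Run on the two snippets, this should single out `github.com/rogpeppe/go-internal` as the only module whose pin moved.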

### Anything else we need to know?

_No response_

### Relevant SIG(s)

/sig architecture testing | 1.0 | pull-kubernetes-dependencies is failing - ### Which jobs are failing?

pull-kubernetes-dependencies

### Which tests are failing?

verify.Vendor

### Since when has it been failing?

27 March 2023, 15:28 IST (9:58 UTC)

### Testgrid link

https://testgrid.k8s.io/presubmits-kubernetes-blocking#pull-kubernetes-dependencies

### Reason for failure (if possible)

```

Your vendored results are different:

diff -Naupr -x 'AUTHORS*' -x 'CONTRIBUTORS*' vendor/k8s.io/api/go.mod /home/prow/go/src/k8s.io/kubernetes/_tmp/kube-vendor.3Sq7Hh/kubernetes/vendor/k8s.io/api/go.mod

--- vendor/k8s.io/api/go.mod 2023-03-27 10:01:13.097931396 +0000

+++ /home/prow/go/src/k8s.io/kubernetes/_tmp/kube-vendor.3Sq7Hh/kubernetes/vendor/k8s.io/api/go.mod 2023-03-27 10:04:41.397038952 +0000

@@ -21,7 +21,7 @@ require (

github.com/modern-go/concurrent v0.0.0-20180306012644-bacd9c7ef1dd // indirect

github.com/modern-go/reflect2 v1.0.2 // indirect

github.com/pmezard/go-difflib v1.0.0 // indirect

- github.com/rogpeppe/go-internal v1.9.0 // indirect

+ github.com/rogpeppe/go-internal v1.10.0 // indirect

github.com/spf13/pflag v1.0.5 // indirect

golang.org/x/net v0.8.0 // indirect

golang.org/x/text v0.8.0 // indirect

```

### Anything else we need to know?

_No response_

### Relevant SIG(s)

/sig architecture testing | test | pull kubernetes dependencies is failing which jobs are failing pull kubernetes dependencies which tests are failing verify vendor since when has it been failing march ist utc testgrid link reason for failure if possible your vendored results are different diff naupr x authors x contributors vendor io api go mod home prow go src io kubernetes tmp kube vendor kubernetes vendor io api go mod vendor io api go mod home prow go src io kubernetes tmp kube vendor kubernetes vendor io api go mod require github com modern go concurrent indirect github com modern go indirect github com pmezard go difflib indirect github com rogpeppe go internal indirect github com rogpeppe go internal indirect github com pflag indirect golang org x net indirect golang org x text indirect anything else we need to know no response relevant sig s sig architecture testing | 1 |

160,836 | 20,120,307,424 | IssuesEvent | 2022-02-08 01:06:10 | AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | https://api.github.com/repos/AkshayMukkavilli/Analyzing-the-Significance-of-Structure-in-Amazon-Review-Data-Using-Machine-Learning-Approaches | opened | CVE-2022-23589 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2022-23589 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: /FinalProject/requirements.txt</p>

<p>Path to vulnerable library: /teSource-ArchiveExtractor_8b9e071c-3b11-4aa9-ba60-cdeb60d053b7/20190525011350_65403/20190525011256_depth_0/9/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64/tensorflow-1.13.1.data/purelib/tensorflow</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Tensorflow is an Open Source Machine Learning Framework. Under certain scenarios, Grappler component of TensorFlow can trigger a null pointer dereference. There are 2 places where this can occur, for the same malicious alteration of a `SavedModel` file (fixing the first one would trigger the same dereference in the second place). First, during constant folding, the `GraphDef` might not have the required nodes for the binary operation. If a node is missing, the corresponding `mul_*child` would be null, and the dereference in the subsequent line would be incorrect. We have a similar issue during `IsIdentityConsumingSwitch`. The fix will be included in TensorFlow 2.8.0. We will also cherrypick this commit on TensorFlow 2.7.1, TensorFlow 2.6.3, and TensorFlow 2.5.3, as these are also affected and still in supported range.

<p>Publish Date: 2022-02-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-23589>CVE-2022-23589</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-9px9-73fg-3fqp">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-9px9-73fg-3fqp</a></p>

<p>Release Date: 2022-02-04</p>

<p>Fix Resolution: tensorflow - 2.5.3,2.6.3,2.7.1,2.8.0;tensorflow-cpu - 2.5.3,2.6.3,2.7.1,2.8.0;tensorflow-gpu - 2.5.3,2.6.3,2.7.1,2.8.0</p>

</p>

</details>
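To check a pinned version against the fix resolution above, a minimal sketch follows. It assumes plain `X.Y.Z` version strings (no `rc` or `.post` suffixes) and treats release lines older than 2.5 as unpatched, since the advisory lists no fix for them. `is_patched` and `parse_version` are hypothetical helpers, not part of TensorFlow or WhiteSource.

```python
# First patched release on each supported line, taken from the fix resolution.
FIXED_RELEASES = [(2, 5, 3), (2, 6, 3), (2, 7, 1), (2, 8, 0)]


def parse_version(version):
    """Turn 'X.Y.Z' into a tuple of ints; assumes a plain numeric version."""
    return tuple(int(part) for part in version.split(".")[:3])


def is_patched(version):
    """Return True if the version is at or past the fix for its release line."""
    v = parse_version(version)
    # 2.8.0 and everything newer carries the fix.
    if v >= FIXED_RELEASES[-1]:
        return True
    # Older lines are patched only from their cherry-picked release onward.
    for fixed in FIXED_RELEASES[:-1]:
        if v[:2] == fixed[:2]:
            return v >= fixed
    # Assumption: lines with no listed fix (for example 1.x) stay unpatched.
    return False


print(is_patched("1.13.1"))  # the version flagged in this report
print(is_patched("2.6.3"))
```

Under these assumptions the flagged `1.13.1` wheel is reported as unpatched, so the upgrade target would be one of the versions in the fix resolution.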

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-23589 (Medium) detected in tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2022-23589 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>TensorFlow is an open source machine learning framework for everyone.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl">https://files.pythonhosted.org/packages/d2/ea/ab2c8c0e81bd051cc1180b104c75a865ab0fc66c89be992c4b20bbf6d624/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl</a></p>

<p>Path to dependency file: /FinalProject/requirements.txt</p>

<p>Path to vulnerable library: /teSource-ArchiveExtractor_8b9e071c-3b11-4aa9-ba60-cdeb60d053b7/20190525011350_65403/20190525011256_depth_0/9/tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64/tensorflow-1.13.1.data/purelib/tensorflow</p>

<p>

Dependency Hierarchy:

- :x: **tensorflow-1.13.1-cp27-cp27mu-manylinux1_x86_64.whl** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Tensorflow is an Open Source Machine Learning Framework. Under certain scenarios, Grappler component of TensorFlow can trigger a null pointer dereference. There are 2 places where this can occur, for the same malicious alteration of a `SavedModel` file (fixing the first one would trigger the same dereference in the second place). First, during constant folding, the `GraphDef` might not have the required nodes for the binary operation. If a node is missing, the corresponding `mul_*child` would be null, and the dereference in the subsequent line would be incorrect. We have a similar issue during `IsIdentityConsumingSwitch`. The fix will be included in TensorFlow 2.8.0. We will also cherrypick this commit on TensorFlow 2.7.1, TensorFlow 2.6.3, and TensorFlow 2.5.3, as these are also affected and still in supported range.

<p>Publish Date: 2022-02-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-23589>CVE-2022-23589</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/tensorflow/tensorflow/security/advisories/GHSA-9px9-73fg-3fqp">https://github.com/tensorflow/tensorflow/security/advisories/GHSA-9px9-73fg-3fqp</a></p>

<p>Release Date: 2022-02-04</p>

<p>Fix Resolution: tensorflow - 2.5.3,2.6.3,2.7.1,2.8.0;tensorflow-cpu - 2.5.3,2.6.3,2.7.1,2.8.0;tensorflow-gpu - 2.5.3,2.6.3,2.7.1,2.8.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in tensorflow whl cve medium severity vulnerability vulnerable library tensorflow whl tensorflow is an open source machine learning framework for everyone library home page a href path to dependency file finalproject requirements txt path to vulnerable library tesource archiveextractor depth tensorflow tensorflow data purelib tensorflow dependency hierarchy x tensorflow whl vulnerable library vulnerability details tensorflow is an open source machine learning framework under certain scenarios grappler component of tensorflow can trigger a null pointer dereference there are places where this can occur for the same malicious alteration of a savedmodel file fixing the first one would trigger the same dereference in the second place first during constant folding the graphdef might not have the required nodes for the binary operation if a node is missing the correposning mul child would be null and the dereference in the subsequent line would be incorrect we have a similar issue during isidentityconsumingswitch the fix will be included in tensorflow we will also cherrypick this commit on tensorflow tensorflow and tensorflow as these are also affected and still in supported range publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tensorflow tensorflow cpu tensorflow gpu step up your open source security game with whitesource | 0 |

311,565 | 26,797,833,391 | IssuesEvent | 2023-02-01 13:10:59 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | kv/kvserver: TestReplicateQueueRebalanceMultiStore failed | C-test-failure O-robot branch-master T-kv no-test-failure-activity | kv/kvserver.TestReplicateQueueRebalanceMultiStore [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7609242?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7609242?buildTab=artifacts#/) on master @ [cfb5ae9a96e1770daa4aef1615a46e212b561a84](https://github.com/cockroachdb/cockroach/commits/cfb5ae9a96e1770daa4aef1615a46e212b561a84):

Fatal error:

```

panic: test timed out after 59m55s

```

Stack:

```

goroutine 225834439 [running]:

testing.(*M).startAlarm.func1()

GOROOT/src/testing/testing.go:2036 +0x8e

created by time.goFunc

GOROOT/src/time/sleep.go:176 +0x32

```

<details><summary>Log preceding fatal error</summary>

<p>

```

=== RUN TestReplicateQueueRebalanceMultiStore

test_log_scope.go:161: test logs captured to: /artifacts/tmp/_tmp/33e1d369c27b9c01b2b6009c561815a3/logTestReplicateQueueRebalanceMultiStore360195895

test_log_scope.go:79: use -show-logs to present logs inline

=== RUN TestReplicateQueueRebalanceMultiStore/simple

```

</p>

</details>

<p>Parameters: <code>TAGS=bazel,gss,deadlock</code>

</p>

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

</p>

</details>

/cc @cockroachdb/kv

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestReplicateQueueRebalanceMultiStore.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

Jira issue: CRDB-21654 | 2.0 | kv/kvserver: TestReplicateQueueRebalanceMultiStore failed - kv/kvserver.TestReplicateQueueRebalanceMultiStore [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7609242?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/7609242?buildTab=artifacts#/) on master @ [cfb5ae9a96e1770daa4aef1615a46e212b561a84](https://github.com/cockroachdb/cockroach/commits/cfb5ae9a96e1770daa4aef1615a46e212b561a84):

Fatal error:

```

panic: test timed out after 59m55s

```

Stack:

```

goroutine 225834439 [running]:

testing.(*M).startAlarm.func1()

GOROOT/src/testing/testing.go:2036 +0x8e

created by time.goFunc

GOROOT/src/time/sleep.go:176 +0x32

```

<details><summary>Log preceding fatal error</summary>

<p>

```

=== RUN TestReplicateQueueRebalanceMultiStore

test_log_scope.go:161: test logs captured to: /artifacts/tmp/_tmp/33e1d369c27b9c01b2b6009c561815a3/logTestReplicateQueueRebalanceMultiStore360195895

test_log_scope.go:79: use -show-logs to present logs inline

=== RUN TestReplicateQueueRebalanceMultiStore/simple

```

</p>

</details>

<p>Parameters: <code>TAGS=bazel,gss,deadlock</code>

</p>

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

</p>

</details>

/cc @cockroachdb/kv

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestReplicateQueueRebalanceMultiStore.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

Jira issue: CRDB-21654 | test | kv kvserver testreplicatequeuerebalancemultistore failed kv kvserver testreplicatequeuerebalancemultistore with on master fatal error panic test timed out after stack goroutine testing m startalarm goroot src testing testing go created by time gofunc goroot src time sleep go log preceding fatal error run testreplicatequeuerebalancemultistore test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline run testreplicatequeuerebalancemultistore simple parameters tags bazel gss deadlock help see also cc cockroachdb kv jira issue crdb | 1 |

292,131 | 25,202,769,949 | IssuesEvent | 2022-11-13 10:05:29 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [rom-e2e] rom_e2e_shutdown_exception_asm | Type:Task SW:ROM Milestone:V2 Component:Rom/E2e/Test | **Testpoint name:** [rom_e2e_shutdown_exception_asm](https://cs.opensource.google/opentitan/opentitan/+/master:sw/device/silicon_creator/rom/data/rom_e2e_testplan.hjson?q=rom_e2e_shutdown_exception_asm)

**Contact person:** @alphan

**Description:** Verify that ROM asm exception handler resets the chip.

- Power on with the `CREATOR_SW_CFG_ROM_EXEC_EN` OTP item set to `0`.

- Execution should halt very early in `_rom_start_boot`.

- Connect the debugger and set a breakpoint at `_asm_exception_handler`.

- Note: We need to use a debugger for this test since `mtvec` points to C handlers

when `rom_main()` starts executing.

- Set `pc` to `0x10000000` (start of main SRAM) and execute one machine instruction,

i.e. `stepi`.

- Verify that execution stops at `_asm_exception_handler` since code execution from SRAM is

not enabled.

- Continue and verify that the asm exception handler resets the chip by confirming that

execution halts at `_rom_start_boot`.

| 1.0 | [rom-e2e] rom_e2e_shutdown_exception_asm - **Testpoint name:** [rom_e2e_shutdown_exception_asm](https://cs.opensource.google/opentitan/opentitan/+/master:sw/device/silicon_creator/rom/data/rom_e2e_testplan.hjson?q=rom_e2e_shutdown_exception_asm)

**Contact person:** @alphan

**Description:** Verify that ROM asm exception handler resets the chip.

- Power on with the `CREATOR_SW_CFG_ROM_EXEC_EN` OTP item set to `0`.

- Execution should halt very early in `_rom_start_boot`.

- Connect the debugger and set a breakpoint at `_asm_exception_handler`.

- Note: We need to use a debugger for this test since `mtvec` points to C handlers

when `rom_main()` starts executing.

- Set `pc` to `0x10000000` (start of main SRAM) and execute one machine instruction,

i.e. `stepi`.

- Verify that execution stops at `_asm_exception_handler` since code execution from SRAM is

not enabled.

- Continue and verify that the asm exception handler resets the chip by confirming that

execution halts at `_rom_start_boot`.

| test | rom shutdown exception asm testpoint name contact person alphan description verify that rom asm exception handler resets the chip power on with the creator sw cfg rom exec en otp item set to execution should halt very early in rom start boot connect the debugger and set a breakpoint at asm exception handler note we need to use a debugger for this test since mtvec points to c handlers when rom main starts executing set pc to start of main sram and execute one machine instruction i e stepi verify that execution stops at asm exception handler since code execution from sram is not enabled continue and verify that the asm exception handler resets the chip by confirming that execution halts at rom start boot | 1 |

162,312 | 12,643,204,260 | IssuesEvent | 2020-06-16 09:25:16 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | [Item] Minor Darkmoon Prize - Does not contain items sometimes | Fix - Tester Confirmed | **Links:**

https://www.wow-mania.com/armory/?item=19298

**What is Happening:**

The item does not contain an item sometimes. https://i.imgur.com/DPz3TAV.jpg

**What Should happen:**

The item should always contain an item.

| 1.0 | [Item] Minor Darkmoon Prize - Does not contain items sometimes - **Links:**

https://www.wow-mania.com/armory/?item=19298

**What is Happening:**

The item does not contain an item sometimes. https://i.imgur.com/DPz3TAV.jpg

**What Should happen:**

The item should always contain an item.

| test | minor darkmoon prize does not contain items sometimes links what is happening the item does not contain an item sometimes what should happen item should always conain an item | 1 |

331,196 | 10,061,754,723 | IssuesEvent | 2019-07-22 22:19:45 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | sts.karnataka.gov.in - see bug description | browser-firefox-mobile engine-gecko priority-normal | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

**URL**: https://sts.karnataka.gov.in/SATS/sts.htm#

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android 8.1.0

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: Not able to log out.

**Steps to Reproduce**:

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | sts.karnataka.gov.in - see bug description - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 8.1.0; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: -->

**URL**: https://sts.karnataka.gov.in/SATS/sts.htm#

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android 8.1.0

**Tested Another Browser**: Yes

**Problem type**: Something else

**Description**: Not able to log out.

**Steps to Reproduce**:

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | sts karnataka gov in see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description not able to logout steps to reproduce browser configuration none from with ❤️ | 0 |

144,346 | 11,613,237,414 | IssuesEvent | 2020-02-26 10:22:19 | eclipse/openj9 | https://api.github.com/repos/eclipse/openj9 | opened | JTReg Test Failure : java/lang/invoke/VarHandles/VarHandleTestMethodHandleAccessXXX.java (2) | test failure | Failure link

------------

Follow on from: https://github.com/eclipse/openj9/issues/3940

https://ci.adoptopenjdk.net/user/adam-thorpe/my-views/view/OpenJ9%20sanity%20openjdk/job/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/151/console

- test category: sanity.openjdk

- OS/architecture: jdk11+ all platforms

Optional info

-------------

- intermittent failure (yes|no): no

- regression or new test: regression

Failure output (captured from console output)

---------------------------------------------

I can see there is still some conversation and there are open PRs from the previous issue. I am raising this in case it is not already a known issue.

```

00:38:27 Type=Segmentation error vmState=0x000501ff

00:38:27 J9Generic_Signal_Number=00000018 Signal_Number=0000000b Error_Value=00000000 Signal_Code=00000000

00:38:27 Handler1=000000000228F370 Handler2=0000000002487740

00:38:27 RDI=6E692E656C646E61 RSI=0000000000000003 RAX=0000000000000003 RBX=0000700000BBB160

00:38:27 RCX=0000000000000003 RDX=00000000770E3090 R8=00000000770D0BB0 R9=000000000000000A

00:38:27 R10=00000000037EE921 R11=00006FFFFD3CB63E R12=0000000000007FFF R13=0000000077097F20

00:38:27 R14=0000000000000003 R15=00000000037EE7DC

00:38:27 RIP=0000000002B2B58A GS=0000 FS=0000 RSP=0000700000BB9F88

00:38:27 RFlags=0000000000010202 CS=002B RBP=6E692E656C646E61 ERR=6921A00800000000

00:38:27 TRAPNO=000000000000000D CPU=A008000000000000 FAULTVADDR=00007FFD6921A008

00:38:27 XMM0 000000a5000000c0 (f: 192.000000, d: 3.501293e-312)

00:38:27 XMM1 00ff00ffffffffff (f: 4294967296.000000, d: 7.064164e-304)

00:38:27 XMM2 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 XMM3 00000000770503a0 (f: 1996817280.000000, d: 9.865588e-315)

00:38:27 XMM4 0000000077050ef0 (f: 1996820224.000000, d: 9.865603e-315)

00:38:27 XMM5 0000000077011df0 (f: 1996561920.000000, d: 9.864326e-315)

00:38:27 XMM6 000000007704f430 (f: 1996813312.000000, d: 9.865569e-315)

00:38:27 XMM7 00000000770503a0 (f: 1996817280.000000, d: 9.865588e-315)

00:38:27 XMM8 0000000077050ef0 (f: 1996820224.000000, d: 9.865603e-315)

00:38:27 XMM9 000000d0000000d0 (f: 208.000000, d: 4.413751e-312)

00:38:27 XMM10 0000002000000020 (f: 32.000000, d: 6.790387e-313)

00:38:27 XMM11 8000000080000000 (f: 2147483648.000000, d: -1.060998e-314)

00:38:27 XMM12 0000002000000020 (f: 32.000000, d: 6.790387e-313)

00:38:27 XMM13 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 XMM14 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 XMM15 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 Module=/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdkbinary/j2sdk-image/Contents/Home/lib/compressedrefs/libj9jit29.dylib

00:38:27 Module_base_address=00000000025F8000 Symbol=_ZN15TR_PrexArgument14knowledgeLevelEPS_

00:38:27 Symbol_address=0000000002B2B580

00:38:27

00:38:27 Method_being_compiled=java/lang/invoke/BruteArgumentMoverHandle.invokeExact_thunkArchetype_X(I)I

00:38:27 Target=2_90_20200225_487 (Mac OS X 10.14.5)

00:38:27 CPU=amd64 (4 logical CPUs) (0x200000000 RAM)

00:38:27 ----------- Stack Backtrace -----------

00:38:27 ---------------------------------------

00:38:27 JVMDUMP039I Processing dump event "gpf", detail "" at 2020/02/25 16:36:28 - please wait.

00:38:27 JVMDUMP032I JVM requested System dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/core.20200225.163628.92838.0001.dmp' in response to an event

00:38:27 JVMDUMP012E Error in System dump: The core file created by child process with pid = 92960 was not found. Expected to find core file with name "/cores/core.92960"

00:38:27 JVMDUMP032I JVM requested Java dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/javacore.20200225.163628.92838.0002.txt' in response to an event

00:38:27 JVMDUMP039I Processing dump event "gpf", detail "" at 2020/02/25 16:36:40 - please wait.

00:38:27 JVMDUMP032I JVM requested System dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/core.20200225.163640.92838.0003.dmp' in response to an event

00:38:27 JVMDUMP012E Error in System dump: The core file created by child process with pid = 92969 was not found. Expected to find core file with name "/cores/core.92969"

00:38:27 JVMDUMP032I JVM requested Java dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/javacore.20200225.163640.92838.0004.txt' in response to an event

00:38:27 JVMDUMP010I Java dump written to /Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/javacore.20200225.163628.92838.0002.txt

00:38:27 JVMDUMP032I JVM requested Snap dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/Snap.20200225.163628.92838.0005.trc' in response to an event

00:38:27 JVMDUMP010I Snap dump written to /Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/Snap.20200225.163628.92838.0005.trc

00:38:27 JVMDUMP007I JVM Requesting JIT dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/jitdump.20200225.163628.92838.0006.dmp'

00:38:27 JVMDUMP010I JIT dump written to /Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/jitdump.20200225.163628.92838.0006.dmp

00:38:27 JVMDUMP013I Processed dump event "gpf", detail "".

``` | 1.0 | JTReg Test Failure : java/lang/invoke/VarHandles/VarHandleTestMethodHandleAccessXXX.java (2) - Failure link

------------

Follow on from: https://github.com/eclipse/openj9/issues/3940

https://ci.adoptopenjdk.net/user/adam-thorpe/my-views/view/OpenJ9%20sanity%20openjdk/job/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/151/console

- test category: sanity.openjdk

- OS/architecture: jdk11+ all platforms

Optional info

-------------

- intermittent failure (yes|no): no

- regression or new test: regression

Failure output (captured from console output)

---------------------------------------------

I can see there's still some conversation and open PRs on the previous issue; I just wanted to raise this in case it isn't already a known issue.

```

00:38:27 Type=Segmentation error vmState=0x000501ff

00:38:27 J9Generic_Signal_Number=00000018 Signal_Number=0000000b Error_Value=00000000 Signal_Code=00000000

00:38:27 Handler1=000000000228F370 Handler2=0000000002487740

00:38:27 RDI=6E692E656C646E61 RSI=0000000000000003 RAX=0000000000000003 RBX=0000700000BBB160

00:38:27 RCX=0000000000000003 RDX=00000000770E3090 R8=00000000770D0BB0 R9=000000000000000A

00:38:27 R10=00000000037EE921 R11=00006FFFFD3CB63E R12=0000000000007FFF R13=0000000077097F20

00:38:27 R14=0000000000000003 R15=00000000037EE7DC

00:38:27 RIP=0000000002B2B58A GS=0000 FS=0000 RSP=0000700000BB9F88

00:38:27 RFlags=0000000000010202 CS=002B RBP=6E692E656C646E61 ERR=6921A00800000000

00:38:27 TRAPNO=000000000000000D CPU=A008000000000000 FAULTVADDR=00007FFD6921A008

00:38:27 XMM0 000000a5000000c0 (f: 192.000000, d: 3.501293e-312)

00:38:27 XMM1 00ff00ffffffffff (f: 4294967296.000000, d: 7.064164e-304)

00:38:27 XMM2 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 XMM3 00000000770503a0 (f: 1996817280.000000, d: 9.865588e-315)

00:38:27 XMM4 0000000077050ef0 (f: 1996820224.000000, d: 9.865603e-315)

00:38:27 XMM5 0000000077011df0 (f: 1996561920.000000, d: 9.864326e-315)

00:38:27 XMM6 000000007704f430 (f: 1996813312.000000, d: 9.865569e-315)

00:38:27 XMM7 00000000770503a0 (f: 1996817280.000000, d: 9.865588e-315)

00:38:27 XMM8 0000000077050ef0 (f: 1996820224.000000, d: 9.865603e-315)

00:38:27 XMM9 000000d0000000d0 (f: 208.000000, d: 4.413751e-312)

00:38:27 XMM10 0000002000000020 (f: 32.000000, d: 6.790387e-313)

00:38:27 XMM11 8000000080000000 (f: 2147483648.000000, d: -1.060998e-314)

00:38:27 XMM12 0000002000000020 (f: 32.000000, d: 6.790387e-313)

00:38:27 XMM13 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 XMM14 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 XMM15 0000000000000000 (f: 0.000000, d: 0.000000e+00)

00:38:27 Module=/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdkbinary/j2sdk-image/Contents/Home/lib/compressedrefs/libj9jit29.dylib

00:38:27 Module_base_address=00000000025F8000 Symbol=_ZN15TR_PrexArgument14knowledgeLevelEPS_

00:38:27 Symbol_address=0000000002B2B580

00:38:27

00:38:27 Method_being_compiled=java/lang/invoke/BruteArgumentMoverHandle.invokeExact_thunkArchetype_X(I)I

00:38:27 Target=2_90_20200225_487 (Mac OS X 10.14.5)

00:38:27 CPU=amd64 (4 logical CPUs) (0x200000000 RAM)

00:38:27 ----------- Stack Backtrace -----------

00:38:27 ---------------------------------------

00:38:27 JVMDUMP039I Processing dump event "gpf", detail "" at 2020/02/25 16:36:28 - please wait.

00:38:27 JVMDUMP032I JVM requested System dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/core.20200225.163628.92838.0001.dmp' in response to an event

00:38:27 JVMDUMP012E Error in System dump: The core file created by child process with pid = 92960 was not found. Expected to find core file with name "/cores/core.92960"

00:38:27 JVMDUMP032I JVM requested Java dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/javacore.20200225.163628.92838.0002.txt' in response to an event

00:38:27 JVMDUMP039I Processing dump event "gpf", detail "" at 2020/02/25 16:36:40 - please wait.

00:38:27 JVMDUMP032I JVM requested System dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/core.20200225.163640.92838.0003.dmp' in response to an event

00:38:27 JVMDUMP012E Error in System dump: The core file created by child process with pid = 92969 was not found. Expected to find core file with name "/cores/core.92969"

00:38:27 JVMDUMP032I JVM requested Java dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/javacore.20200225.163640.92838.0004.txt' in response to an event

00:38:27 JVMDUMP010I Java dump written to /Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/javacore.20200225.163628.92838.0002.txt

00:38:27 JVMDUMP032I JVM requested Snap dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/Snap.20200225.163628.92838.0005.trc' in response to an event

00:38:27 JVMDUMP010I Snap dump written to /Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/Snap.20200225.163628.92838.0005.trc

00:38:27 JVMDUMP007I JVM Requesting JIT dump using '/Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/jitdump.20200225.163628.92838.0006.dmp'

00:38:27 JVMDUMP010I JIT dump written to /Users/jenkins/workspace/Test_openjdk11_j9_sanity.openjdk_x86-64_mac/openjdk-tests/TKG/test_output_15826763584317/jdk_lang_0/work/scratch/1/jitdump.20200225.163628.92838.0006.dmp

00:38:27 JVMDUMP013I Processed dump event "gpf", detail "".

``` | test | jtreg test failure java lang invoke varhandles varhandletestmethodhandleaccessxxx java failure link follow on from test category sanity openjdk os architecture all platforms optional info intermittent failure yes no no regression or new test regression failure output captured from console output i can see there s still some conversation and open pr s from the previous issue i just wanted to raise this if this wasn t already a known issue type segmentation error vmstate signal number signal number error value signal code rdi rsi rax rbx rcx rdx rip gs fs rsp rflags cs rbp err trapno cpu faultvaddr f d f d f d f d f d f d f d f d f d f d f d f d f d f d f d f d module users jenkins workspace test sanity openjdk mac openjdkbinary image contents home lib compressedrefs dylib module base address symbol symbol address method being compiled java lang invoke bruteargumentmoverhandle invokeexact thunkarchetype x i i target mac os x cpu logical cpus ram stack backtrace processing dump event gpf detail at please wait jvm requested system dump using users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch core dmp in response to an event error in system dump the core file created by child process with pid was not found expected to find core file with name cores core jvm requested java dump using users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch javacore txt in response to an event processing dump event gpf detail at please wait jvm requested system dump using users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch core dmp in response to an event error in system dump the core file created by child process with pid was not found expected to find core file with name cores core jvm requested java dump using users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch javacore txt in response to 
an event java dump written to users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch javacore txt jvm requested snap dump using users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch snap trc in response to an event snap dump written to users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch snap trc jvm requesting jit dump using users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch jitdump dmp jit dump written to users jenkins workspace test sanity openjdk mac openjdk tests tkg test output jdk lang work scratch jitdump dmp processed dump event gpf detail | 1 |

172,534 | 13,309,418,831 | IssuesEvent | 2020-08-26 03:57:59 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | cdc: panic: semaphore release without acquire | A-cdc C-bug C-test-failure | Test flake?

```

=== RUN TestChangefeedNemeses/sinkless

panic: semaphore release without acquire [recovered]

panic: semaphore release without acquire

goroutine 218102 [running]:

github.com/cockroachdb/cockroach/pkg/util/stop.(*Stopper).Recover(0xc00209c240, 0x5c2ff60, 0xc001494740)

/go/src/github.com/cockroachdb/cockroach/pkg/util/stop/stopper.go:207 +0x11f

panic(0x44e0a40, 0x5b3b180)

/usr/local/go/src/runtime/panic.go:679 +0x1b2

github.com/marusama/semaphore.(*semaphore).Release(0xc003d35e60, 0x1, 0xc001d26000)

/go/src/github.com/cockroachdb/cockroach/vendor/github.com/marusama/semaphore/semaphore.go:170 +0x11d

github.com/cockroachdb/cockroach/pkg/util/limit.(*ConcurrentRequestLimiter).Finish(...)

/go/src/github.com/cockroachdb/cockroach/pkg/util/limit/limiter.go:54

github.com/cockroachdb/cockroach/pkg/kv/kvserver.iteratorWithCloser.Close(0x5c86b40, 0xc001d26000, 0xc009813150)

/go/src/github.com/cockroachdb/cockroach/pkg/kv/kvserver/replica_rangefeed.go:120 +0x3b

github.com/cockroachdb/cockroach/pkg/kv/kvserver/rangefeed.(*registration).maybeRunCatchupScan.func1(0x5c87500, 0xc002a32dc0, 0xc001091ea0, 0x2453900d, 0xed6d61674, 0x0)