Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1)

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

25,070 | 5,119,314,104 | IssuesEvent | 2017-01-08 16:43:29 | PythonNut/virtualbox-remote-snapshots | https://api.github.com/repos/PythonNut/virtualbox-remote-snapshots | closed | Document usage of "local" repositories | documentation priority:low | Since `borg mount` does not exists locally, differential extraction does not exist for local repositories.

Although `virtualbox-remote-snapshots` optimizes specifically for remote devices, local devices (i.e. big hard drives connected locally, while the VMs are stored on SSDs) should work too. | 1.0 | Document usage of "local" repositories - Since `borg mount` does not exists locally, differential extraction does not exist for local repositories.

Although `virtualbox-remote-snapshots` optimizes specifically for remote devices, local devices (i.e. big hard drives connected locally, while the VMs are stored on SSDs) should work too. | non_test | document usage of local repositories since borg mount does not exists locally differential extraction does not exist for local repositories although virtualbox remote snapshots optimizes specifically for remote devices local devices i e big hard drives connected locally while the vms are stored on ssds should work too | 0 |

6,177 | 22,362,922,429 | IssuesEvent | 2022-06-15 22:50:27 | nautobot/nautobot | https://api.github.com/repos/nautobot/nautobot | closed | Option to hide job source | group: automation group: ui-ux | ### As ...

Patti - Platform Admin

### I want ...

A configuration option to entirely disable the Job `Source` tab from the Job execution and Job results pages.

### So that ...

Potentially sensitive code is not exposed to end users of Jobs, and users are not confused by potentially inconsistent Job source based on historical Job results (see #1372)

### I know this is done when...

- I have a global configuration setting (boolean) that controls whether the Job source tab is shown in the UI or not.

- The global configuration settings can be set in the UI (Constance config)

- The setting defaults to `True` for existing installs.

### Optional - Feature groups this request pertains to.

- [X] Automation

- [ ] Circuits

- [ ] DCIM

- [ ] IPAM

- [X] Misc (including Data Sources)

- [ ] Organization

- [ ] Plugins (and other Extensibility)

- [X] Security (Secrets, etc)

- [ ] Image Management

- [X] UI/UX

- [ ] Documentation

- [ ] Other (not directly a platform feature)

### Database Changes

_No response_

### External Dependencies

_No response_ | 1.0 | Option to hide job source - ### As ...

Patti - Platform Admin

### I want ...

A configuration option to entirely disable the Job `Source` tab from the Job execution and Job results pages.

### So that ...

Potentially sensitive code is not exposed to end users of Jobs, and users are not confused by potentially inconsistent Job source based on historical Job results (see #1372)

### I know this is done when...

- I have a global configuration setting (boolean) that controls whether the Job source tab is shown in the UI or not.

- The global configuration settings can be set in the UI (Constance config)

- The setting defaults to `True` for existing installs.

### Optional - Feature groups this request pertains to.

- [X] Automation

- [ ] Circuits

- [ ] DCIM

- [ ] IPAM

- [X] Misc (including Data Sources)

- [ ] Organization

- [ ] Plugins (and other Extensibility)

- [X] Security (Secrets, etc)

- [ ] Image Management

- [X] UI/UX

- [ ] Documentation

- [ ] Other (not directly a platform feature)

### Database Changes

_No response_

### External Dependencies

_No response_ | non_test | option to hide job source as patti platform admin i want a configuration option to entirely disable the job source tab from the job execution and job results pages so that potentially sensitive code is not exposed to end users of jobs and users are not confused by potentially inconsistent job source based on historical job results see i know this is done when i have a global configuration setting boolean that controls whether the job source tab is shown in the ui or not the global configuration settings can be set in the ui constance config the setting defaults to true for existing installs optional feature groups this request pertains to automation circuits dcim ipam misc including data sources organization plugins and other extensibility security secrets etc image management ui ux documentation other not directly a platform feature database changes no response external dependencies no response | 0 |

514,567 | 14,940,979,489 | IssuesEvent | 2021-01-25 19:04:13 | Sequel-Ace/Sequel-Ace | https://api.github.com/repos/Sequel-Ace/Sequel-Ace | closed | Change Complete with Backticks --> Always Complete With Backticks | Feature Request Low priority PR Welcome stale | Please rename the preference "Complete with Backticks" to be "Always Complete With Backticks".

Some people want to use backticks with every identifier. That's rare, but I guess HFWT.

Everybody else that is using autocomplete expects that backticks will be added automatically if required. e.g. if you have a table column named `varchar` for some reason then autocompleting `mytable.var...` should insert backticks when you accept the recommended text.

---

Alternatively there is a case to be made in UX design to always avoid checkboxes. If you subscribe to that idea then I recommend:

"Complete using backticks:"

- ( ) Always

- ( ) Only when required | 1.0 | Change Complete with Backticks --> Always Complete With Backticks - Please rename the preference "Complete with Backticks" to be "Always Complete With Backticks".

Some people want to use backticks with every identifier. That's rare, but I guess HFWT.

Everybody else that is using autocomplete expects that backticks will be added automatically if required. e.g. if you have a table column named `varchar` for some reason then autocompleting `mytable.var...` should insert backticks when you accept the recommended text.

---

Alternatively there is a case to be made in UX design to always avoid checkboxes. If you subscribe to that idea then I recommend:

"Complete using backticks:"

- ( ) Always

- ( ) Only when required | non_test | change complete with backticks always complete with backticks please rename the preference complete with backticks to be always complete with backticks some people want to use backticks with every identifier that s rare but i guess hfwt everybody else that is using autocomplete expects that backticks will be added automatically if required e g if you have a table column named varchar for some reason then autocompleting mytable var should insert backticks when you accept the recommended text alternatively there is a case to be made in ux design to always avoid checkboxes if you subscribe to that idea then i recommend complete using backticks always only when required | 0 |
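
The "Only when required" option in the request above amounts to quoting an identifier only if it cannot be written bare. The following is a minimal Go sketch of that rule. It is not Sequel Ace's code, and `reservedWords`, `isPlainIdentifier` and `quoteIdentifier` are hypothetical names.

The sketch assumes a simplified rule: a bare identifier uses only ASCII letters, digits, `_` or `$`, and is not made up entirely of digits. It also uses only a tiny illustrative subset of MySQL's reserved words. MySQL also allows some non-ASCII characters unquoted, which this sketch conservatively quotes. Backticks inside a name are escaped by doubling them, as MySQL requires.

```go
package main

import (
	"fmt"
	"strings"
)

// reservedWords is a tiny, hypothetical subset of MySQL's reserved-word list,
// for illustration only; a real implementation would use the full list.
var reservedWords = map[string]bool{
	"select": true, "from": true, "where": true, "order": true,
	"group": true, "varchar": true, "key": true, "index": true,
}

// isPlainIdentifier reports whether name may be written without backticks
// under the simplified rule: ASCII letters, digits, '_' or '$', not all digits.
func isPlainIdentifier(name string) bool {
	if name == "" {
		return false
	}
	allDigits := true
	for _, r := range name {
		switch {
		case r >= '0' && r <= '9':
			// digits are allowed anywhere, but not as the whole name
		case r >= 'a' && r <= 'z', r >= 'A' && r <= 'Z', r == '_', r == '$':
			allDigits = false
		default:
			return false // spaces, punctuation, non-ASCII: quote it
		}
	}
	return !allDigits
}

// quoteIdentifier wraps name in backticks when always is true ("Always") or
// when quoting is required ("Only when required"); embedded backticks are doubled.
func quoteIdentifier(name string, always bool) string {
	if !always && isPlainIdentifier(name) && !reservedWords[strings.ToLower(name)] {
		return name
	}
	return "`" + strings.ReplaceAll(name, "`", "``") + "`"
}

func main() {
	fmt.Println(quoteIdentifier("mytable", false))    // mytable
	fmt.Println(quoteIdentifier("varchar", false))    // `varchar`
	fmt.Println(quoteIdentifier("mytable", true))     // `mytable`
	fmt.Println(quoteIdentifier("order date", false)) // `order date`
}
```

Keeping the reserved-word check separate from the character check makes it easy to swap in a complete keyword list without touching the quoting logic.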

320,328 | 9,779,445,200 | IssuesEvent | 2019-06-07 14:31:46 | SatelliteQE/robottelo | https://api.github.com/repos/SatelliteQE/robottelo | closed | Boundary testing for parameters under settings menu | Low Priority RFT UI | TASK- Boundary testing for Settings submenus (not provisioning-specific per say, could possibly be broken out into its own section.

[P1] Provisioning ( Partially done)

[P2] Bootdisk

[P2] Discovered

[P2] Puppet

Assure proper positive and negative testing of each field

Referencing this task with #986 as few sub-tabs are already automated there. | 1.0 | Boundary testing for parameters under settings menu - TASK- Boundary testing for Settings submenus (not provisioning-specific per say, could possibly be broken out into its own section.

[P1] Provisioning ( Partially done)

[P2] Bootdisk

[P2] Discovered

[P2] Puppet

Assure proper positive and negative testing of each field

Referencing this task with #986 as few sub-tabs are already automated there. | non_test | boundary testing for parameters under settings menu task boundary testing for settings submenus not provisioning specific per say could possibly be broken out into its own section provisioning partially done bootdisk discovered puppet assure proper positive and negative testing of each field referencing this task with as few sub tabs are already automated there | 0 |

186,318 | 14,394,660,090 | IssuesEvent | 2020-12-03 01:49:23 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | jinzhongwei/etcd: integration/v3_watch_test.go; 86 LoC | fresh medium test |

Found a possible issue in [jinzhongwei/etcd](https://www.github.com/jinzhongwei/etcd) at [integration/v3_watch_test.go](https://github.com/jinzhongwei/etcd/blob/48567b8b38e6bfb11b6d74df832fd99b1b182ee3/integration/v3_watch_test.go#L206-L291)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements which capture loop variables.

[Click here to see the code in its original context.](https://github.com/jinzhongwei/etcd/blob/48567b8b38e6bfb11b6d74df832fd99b1b182ee3/integration/v3_watch_test.go#L206-L291)

<details>

<summary>Click here to show the 86 line(s) of Go which triggered the analyzer.</summary>

```go
for i, tt := range tests {
	clus := NewClusterV3(t, &ClusterConfig{Size: 3})
	wAPI := toGRPC(clus.RandClient()).Watch
	ctx, cancel := context.WithTimeout(context.Background(), 30*time.Second)
	defer cancel()
	wStream, err := wAPI.Watch(ctx)
	if err != nil {
		t.Fatalf("#%d: wAPI.Watch error: %v", i, err)
	}
	err = wStream.Send(tt.watchRequest)
	if err != nil {
		t.Fatalf("#%d: wStream.Send error: %v", i, err)
	}
	// ensure watcher request created a new watcher
	cresp, err := wStream.Recv()
	if err != nil {
		t.Errorf("#%d: wStream.Recv error: %v", i, err)
		clus.Terminate(t)
		continue
	}
	if !cresp.Created {
		t.Errorf("#%d: did not create watchid, got %+v", i, cresp)
		clus.Terminate(t)
		continue
	}
	if cresp.Canceled {
		t.Errorf("#%d: canceled watcher on create %+v", i, cresp)
		clus.Terminate(t)
		continue
	}
	createdWatchId := cresp.WatchId
	if cresp.Header == nil || cresp.Header.Revision != 1 {
		t.Errorf("#%d: header revision got +%v, wanted revison 1", i, cresp)
		clus.Terminate(t)
		continue
	}
	// asynchronously create keys
	go func() {
		for _, k := range tt.putKeys {
			kvc := toGRPC(clus.RandClient()).KV
			req := &pb.PutRequest{Key: []byte(k), Value: []byte("bar")}
			if _, err := kvc.Put(context.TODO(), req); err != nil {
				t.Fatalf("#%d: couldn't put key (%v)", i, err)
			}
		}
	}()
	// check stream results
	for j, wresp := range tt.wresps {
		resp, err := wStream.Recv()
		if err != nil {
			t.Errorf("#%d.%d: wStream.Recv error: %v", i, j, err)
		}
		if resp.Header == nil {
			t.Fatalf("#%d.%d: unexpected nil resp.Header", i, j)
		}
		if resp.Header.Revision != wresp.Header.Revision {
			t.Errorf("#%d.%d: resp.Header.Revision got = %d, want = %d", i, j, resp.Header.Revision, wresp.Header.Revision)
		}
		if wresp.Created != resp.Created {
			t.Errorf("#%d.%d: resp.Created got = %v, want = %v", i, j, resp.Created, wresp.Created)
		}
		if resp.WatchId != createdWatchId {
			t.Errorf("#%d.%d: resp.WatchId got = %d, want = %d", i, j, resp.WatchId, createdWatchId)
		}
		if !reflect.DeepEqual(resp.Events, wresp.Events) {
			t.Errorf("#%d.%d: resp.Events got = %+v, want = %+v", i, j, resp.Events, wresp.Events)
		}
	}
	rok, nr := waitResponse(wStream, 1*time.Second)
	if !rok {
		t.Errorf("unexpected pb.WatchResponse is received %+v", nr)
	}
	// can't defer because tcp ports will be in use
	clus.Terminate(t)
}
```

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first issue it finds, so please do not limit your consideration to the contents of the below message.

> range-loop variable tt used in defer or goroutine at line 249

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 48567b8b38e6bfb11b6d74df832fd99b1b182ee3

| 1.0 | jinzhongwei/etcd: integration/v3_watch_test.go; 86 LoC -

Found a possible issue in [jinzhongwei/etcd](https://www.github.com/jinzhongwei/etcd) at [integration/v3_watch_test.go](https://github.com/jinzhongwei/etcd/blob/48567b8b38e6bfb11b6d74df832fd99b1b182ee3/integration/v3_watch_test.go#L206-L291)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements which capture loop variables.

[Click here to see the code in its original context.](https://github.com/jinzhongwei/etcd/blob/48567b8b38e6bfb11b6d74df832fd99b1b182ee3/integration/v3_watch_test.go#L206-L291)

<details>

<summary>Click here to show the 86 line(s) of Go which triggered the analyzer.</summary>

```go
for i, tt := range tests {
	clus := NewClusterV3(t, &ClusterConfig{Size: 3})
	wAPI := toGRPC(clus.RandClient()).Watch
	ctx, cancel := context.WithTimeout(context.Background(), 30*time.Second)
	defer cancel()
	wStream, err := wAPI.Watch(ctx)
	if err != nil {
		t.Fatalf("#%d: wAPI.Watch error: %v", i, err)
	}
	err = wStream.Send(tt.watchRequest)
	if err != nil {
		t.Fatalf("#%d: wStream.Send error: %v", i, err)
	}
	// ensure watcher request created a new watcher
	cresp, err := wStream.Recv()
	if err != nil {
		t.Errorf("#%d: wStream.Recv error: %v", i, err)
		clus.Terminate(t)
		continue
	}
	if !cresp.Created {
		t.Errorf("#%d: did not create watchid, got %+v", i, cresp)
		clus.Terminate(t)
		continue
	}
	if cresp.Canceled {
		t.Errorf("#%d: canceled watcher on create %+v", i, cresp)
		clus.Terminate(t)
		continue
	}
	createdWatchId := cresp.WatchId
	if cresp.Header == nil || cresp.Header.Revision != 1 {
		t.Errorf("#%d: header revision got +%v, wanted revison 1", i, cresp)
		clus.Terminate(t)
		continue
	}
	// asynchronously create keys
	go func() {
		for _, k := range tt.putKeys {
			kvc := toGRPC(clus.RandClient()).KV
			req := &pb.PutRequest{Key: []byte(k), Value: []byte("bar")}
			if _, err := kvc.Put(context.TODO(), req); err != nil {
				t.Fatalf("#%d: couldn't put key (%v)", i, err)
			}
		}
	}()
	// check stream results
	for j, wresp := range tt.wresps {
		resp, err := wStream.Recv()
		if err != nil {
			t.Errorf("#%d.%d: wStream.Recv error: %v", i, j, err)
		}
		if resp.Header == nil {
			t.Fatalf("#%d.%d: unexpected nil resp.Header", i, j)
		}
		if resp.Header.Revision != wresp.Header.Revision {
			t.Errorf("#%d.%d: resp.Header.Revision got = %d, want = %d", i, j, resp.Header.Revision, wresp.Header.Revision)
		}
		if wresp.Created != resp.Created {
			t.Errorf("#%d.%d: resp.Created got = %v, want = %v", i, j, resp.Created, wresp.Created)
		}
		if resp.WatchId != createdWatchId {
			t.Errorf("#%d.%d: resp.WatchId got = %d, want = %d", i, j, resp.WatchId, createdWatchId)
		}
		if !reflect.DeepEqual(resp.Events, wresp.Events) {
			t.Errorf("#%d.%d: resp.Events got = %+v, want = %+v", i, j, resp.Events, wresp.Events)
		}
	}
	rok, nr := waitResponse(wStream, 1*time.Second)
	if !rok {
		t.Errorf("unexpected pb.WatchResponse is received %+v", nr)
	}
	// can't defer because tcp ports will be in use
	clus.Terminate(t)
}
```

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first issue it finds, so please do not limit your consideration to the contents of the below message.

> range-loop variable tt used in defer or goroutine at line 249

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 48567b8b38e6bfb11b6d74df832fd99b1b182ee3

| test | jinzhongwei etcd integration watch test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go for i tt range tests clus t clusterconfig size wapi togrpc clus randclient watch ctx cancel context withtimeout context background time second defer cancel wstream err wapi watch ctx if err nil t fatalf d wapi watch error v i err err wstream send tt watchrequest if err nil t fatalf d wstream send error v i err ensure watcher request created a new watcher cresp err wstream recv if err nil t errorf d wstream recv error v i err clus terminate t continue if cresp created t errorf d did not create watchid got v i cresp clus terminate t continue if cresp canceled t errorf d canceled watcher on create v i cresp clus terminate t continue createdwatchid cresp watchid if cresp header nil cresp header revision t errorf d header revision got v wanted revison i cresp clus terminate t continue asynchronously create keys go func for k range tt putkeys kvc togrpc clus randclient kv req pb putrequest key byte k value byte bar if err kvc put context todo req err nil t fatalf d couldn t put key v i err check stream results for j wresp range tt wresps resp err wstream recv if err nil t errorf d d wstream recv error v i j err if resp header nil t fatalf d d unexpected nil resp header i j if resp header revision wresp header revision t errorf d d resp header revision got d want d i j resp header revision wresp header revision if wresp created resp created t errorf d d resp created got v want v i j resp created wresp created if resp watchid createdwatchid t errorf d d resp watchid got d want d i j resp watchid createdwatchid if reflect deepequal resp events wresp events t errorf d d resp events got v want v i j resp events wresp events rok nr waitresponse wstream time second if rok t errorf unexpected 
pb watchresponse is received v nr can t defer because tcp ports will be in use clus terminate t below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message range loop variable tt used in defer or goroutine at line leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id | 1 |

153,300 | 12,139,810,196 | IssuesEvent | 2020-04-23 19:28:19 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: acceptance/bank/node-restart failed | C-test-failure O-roachtest O-robot branch-master release-blocker | [(roachtest).acceptance/bank/node-restart failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1860006&tab=buildLog) on [master@2d8263b1d5e5fb7c9697af1b17d3935bb88ad3d0](https://github.com/cockroachdb/cockroach/commits/2d8263b1d5e5fb7c9697af1b17d3935bb88ad3d0):

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: artifacts/acceptance/bank/node-restart/run_1

bank.go:375,bank.go:490,acceptance.go:84,test_runner.go:753: pq: query execution canceled due to statement timeout

after 31.0s

main.(*bankClient).transferMoney

/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/bank.go:74

main.(*bankState).transferMoney

/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/bank.go:158

runtime.goexit

/usr/local/go/src/runtime/asm_amd64.s:1357

```

<details><summary>More</summary><p>

Artifacts: [/acceptance/bank/node-restart](https://teamcity.cockroachdb.com/viewLog.html?buildId=1860006&tab=artifacts#/acceptance/bank/node-restart)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Aacceptance%2Fbank%2Fnode-restart.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| 2.0 | roachtest: acceptance/bank/node-restart failed - [(roachtest).acceptance/bank/node-restart failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1860006&tab=buildLog) on [master@2d8263b1d5e5fb7c9697af1b17d3935bb88ad3d0](https://github.com/cockroachdb/cockroach/commits/2d8263b1d5e5fb7c9697af1b17d3935bb88ad3d0):

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: artifacts/acceptance/bank/node-restart/run_1

bank.go:375,bank.go:490,acceptance.go:84,test_runner.go:753: pq: query execution canceled due to statement timeout

after 31.0s

main.(*bankClient).transferMoney

/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/bank.go:74

main.(*bankState).transferMoney

/go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/bank.go:158

runtime.goexit

/usr/local/go/src/runtime/asm_amd64.s:1357

```

<details><summary>More</summary><p>

Artifacts: [/acceptance/bank/node-restart](https://teamcity.cockroachdb.com/viewLog.html?buildId=1860006&tab=artifacts#/acceptance/bank/node-restart)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Aacceptance%2Fbank%2Fnode-restart.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| test | roachtest acceptance bank node restart failed on the test failed on branch master cloud gce test artifacts and logs in artifacts acceptance bank node restart run bank go bank go acceptance go test runner go pq query execution canceled due to statement timeout after main bankclient transfermoney go src github com cockroachdb cockroach pkg cmd roachtest bank go main bankstate transfermoney go src github com cockroachdb cockroach pkg cmd roachtest bank go runtime goexit usr local go src runtime asm s more artifacts powered by | 1 |

37,326 | 5,112,442,223 | IssuesEvent | 2017-01-06 11:12:43 | hpi-swt2/wimi-portal | https://api.github.com/repos/hpi-swt2/wimi-portal | closed | Test for handing in / rejecting / accepting time sheet twice | test-needed | What happens if the reject / accept / hand_in POST is sent multiple times? | 1.0 | Test for handing in / rejecting / accepting time sheet twice - What happens if the reject / accept / hand_in POST is sent multiple times? | test | test for handing in rejecting accepting time sheet twice what happens if the reject accept hand in post is sent multiple times | 1 |

123,788 | 10,289,023,059 | IssuesEvent | 2019-08-27 12:18:39 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Homepage amphtml not getting generated when there are no posts added. | NEED FAST REVIEW Need Testing [Priority: HIGH] bug | If we do not create any posts and set up a custom front page, then no amphtml is created on the homepage.

Ref: https://secure.helpscout.net/conversation/911411024/75266?folderId=2322649 | 1.0 | Homepage amphtml not getting generated when there are no posts added. - If we do not create any posts and set up a custom front page, then no amphtml is created on the homepage.

Ref: https://secure.helpscout.net/conversation/911411024/75266?folderId=2322649 | test | homepage amphtml not getting generated when there are no posts added if we do not create any posts and set up a custom front page then no amphtml is created on the homepage ref | 1 |

803,709 | 29,187,124,280 | IssuesEvent | 2023-05-19 16:22:14 | stratosphererl/stratosphere | https://api.github.com/repos/stratosphererl/stratosphere | closed | Make reference to number of users and replays dynamic in home.tsx and about.tsx | priority: high | # Acceptance Criteria

- [ ] When a user views either the home or about page, have the references in the text referring to the number of users and replays on our site display the actual number present on our site

# Estimation of Work

- TBA

# Tasks

For both home.tsx and replay.tsx:

- [ ] Call stats service for number of users and number of replays

- [ ] Dynamically present the two numbers in the text of each page

# Risks

None

# Notes

n/a | 1.0 | Make reference to number of users and replays dynamic in home.tsx and about.tsx - # Acceptance Criteria

- [ ] When a user views either the home or about page, have the references in the text referring to the number of users and replays on our site display the actual number present on our site

# Estimation of Work

- TBA

# Tasks

For both home.tsx and replay.tsx:

- [ ] Call stats service for number of users and number of replays

- [ ] Dynamically present the two numbers in the text of each page

# Risks

None

# Notes

n/a | non_test | make reference to number of users and replays dynamic in home tsx and about tsx acceptance criteria when a user views either the home or about page have the references in the text referring to the number of users and replays on our site display the actual number present on our site estimation of work tba tasks for both home tsx and replay tsx call stats service for number of users and number of replays dynamically present the two numbers in the text of each page risks none notes n a | 0 |

306,159 | 26,441,099,663 | IssuesEvent | 2023-01-16 00:09:50 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | BUG: ValueError converting dense categorical series to sparse when `fill_value` not in series | Bug Sparse Categorical Needs Tests | ### Pandas version checks

- [X] I have checked that this issue has not already been reported.

- [X] I have confirmed this bug exists on the [latest version](https://pandas.pydata.org/docs/whatsnew/index.html) of pandas.

- [X] I have confirmed this bug exists on the main branch of pandas.

### Reproducible Example

```python

import pandas as pd

from pandas import SparseDtype

df = pd.DataFrame([["a", 0],["b", 1], ["b", 2]], columns=["A","B"])

df["A"].astype(SparseDtype("category"))

# or: df["A"].astype(SparseDtype("category", fill_value="not_in_series"))

```

### Issue Description

I am unable to convert a dense categorical series to a sparse one when I leave the `fill_value` at default, or a value which does not exist in the series.

Stacktrace:

<details>

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/IPython/core/formatters.py:706, in PlainTextFormatter.__call__(self, obj)

699 stream = StringIO()

700 printer = pretty.RepresentationPrinter(stream, self.verbose,

701 self.max_width, self.newline,

702 max_seq_length=self.max_seq_length,

703 singleton_pprinters=self.singleton_printers,

704 type_pprinters=self.type_printers,

705 deferred_pprinters=self.deferred_printers)

--> 706 printer.pretty(obj)

707 printer.flush()

708 return stream.getvalue()

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/IPython/lib/pretty.py:410, in RepresentationPrinter.pretty(self, obj)

407 return meth(obj, self, cycle)

408 if cls is not object \

409 and callable(cls.__dict__.get('__repr__')):

--> 410 return _repr_pprint(obj, self, cycle)

412 return _default_pprint(obj, self, cycle)

413 finally:

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/IPython/lib/pretty.py:778, in _repr_pprint(obj, p, cycle)

776 """A pprint that just redirects to the normal repr function."""

777 # Find newlines and replace them with p.break_()

--> 778 output = repr(obj)

779 lines = output.splitlines()

780 with p.group():

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/core/series.py:1550, in Series.__repr__(self)

1548 # pylint: disable=invalid-repr-returned

1549 repr_params = fmt.get_series_repr_params()

-> 1550 return self.to_string(**repr_params)

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/core/series.py:1643, in Series.to_string(self, buf, na_rep, float_format, header, index, length, dtype, name, max_rows, min_rows)

1597 """

1598 Render a string representation of the Series.

1599

(...)

1629 String representation of Series if ``buf=None``, otherwise None.

1630 """

1631 formatter = fmt.SeriesFormatter(

1632 self,

1633 name=name,

(...)

1641 max_rows=max_rows,

1642 )

-> 1643 result = formatter.to_string()

1645 # catch contract violations

1646 if not isinstance(result, str):

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:393, in SeriesFormatter.to_string(self)

390 return f"{type(self.series).__name__}([], {footer})"

392 fmt_index, have_header = self._get_formatted_index()

--> 393 fmt_values = self._get_formatted_values()

395 if self.is_truncated_vertically:

396 n_header_rows = 0

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:377, in SeriesFormatter._get_formatted_values(self)

376 def _get_formatted_values(self) -> list[str]:

--> 377 return format_array(

378 self.tr_series._values,

379 None,

380 float_format=self.float_format,

381 na_rep=self.na_rep,

382 leading_space=self.index,

383 )

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:1326, in format_array(values, formatter, float_format, na_rep, digits, space, justify, decimal, leading_space, quoting)

1311 digits = get_option("display.precision")

1313 fmt_obj = fmt_klass(

1314 values,

1315 digits=digits,

(...)

1323 quoting=quoting,

1324 )

-> 1326 return fmt_obj.get_result()

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:1357, in GenericArrayFormatter.get_result(self)

1356 def get_result(self) -> list[str]:

-> 1357 fmt_values = self._format_strings()

1358 return _make_fixed_width(fmt_values, self.justify)

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:1658, in ExtensionArrayFormatter._format_strings(self)

1656 array = values._internal_get_values()

1657 else:

-> 1658 array = np.asarray(values)

1660 fmt_values = format_array(

1661 array,

1662 formatter,

(...)

1670 quoting=self.quoting,

1671 )

1672 return fmt_values

ValueError: object __array__ method not producing an array

</details>

### Expected Behavior

I expect it to "just work", similar to providing a fill value which does exist in the series, or how it works with other dtypes:

```python

import pandas as pd

from pandas import SparseDtype

df = pd.DataFrame([["a", 0],["b", 1], ["b", 2]], columns=["A","B"])

# works, since "a" is a value present in the series

df["A"].astype(SparseDtype("category", fill_value="a"))

# also works, despite -1 not being present in the series

df["B"].astype(SparseDtype(int, fill_value=-1))

```

### Installed Versions

<details>

INSTALLED VERSIONS

------------------

commit : 8dab54d6573f7186ff0c3b6364d5e4dd635ff3e7

python : 3.10.5.final.0

python-bits : 64

OS : Darwin

OS-release : 21.5.0

Version : Darwin Kernel Version 21.5.0: Tue Apr 26 21:08:37 PDT 2022; root:xnu-8020.121.3~4/RELEASE_ARM64_T6000

machine : arm64

processor : arm

byteorder : little

LC_ALL : None

LANG : None

LOCALE : None.UTF-8

pandas : 1.5.2

numpy : 1.23.5

pytz : 2022.6

dateutil : 2.8.2

setuptools : 58.1.0

pip : 22.3.1

Cython : None

pytest : 7.2.0

hypothesis : None

sphinx : None

blosc : None

feather : None

xlsxwriter : None

lxml.etree : None

html5lib : None

pymysql : None

psycopg2 : None

jinja2 : 3.1.2

IPython : 8.6.0

pandas_datareader: None

bs4 : 4.11.1

bottleneck : None

brotli : None

fastparquet : None

fsspec : None

gcsfs : None

matplotlib : 3.6.2

numba : None

numexpr : None

odfpy : None

openpyxl : None

pandas_gbq : None

pyarrow : 10.0.0

pyreadstat : None

pyxlsb : None

s3fs : None

scipy : 1.9.3

snappy : None

sqlalchemy : None

tables : None

tabulate : None

xarray : None

xlrd : None

xlwt : None

zstandard : None

tzdata : None

</details>

| 1.0 | BUG: ValueError converting dense categorical series to sparse when `fill_value` not in series - ### Pandas version checks

- [X] I have checked that this issue has not already been reported.

- [X] I have confirmed this bug exists on the [latest version](https://pandas.pydata.org/docs/whatsnew/index.html) of pandas.

- [X] I have confirmed this bug exists on the main branch of pandas.

### Reproducible Example

```python

import pandas as pd

from pandas import SparseDtype

df = pd.DataFrame([["a", 0],["b", 1], ["b", 2]], columns=["A","B"])

df["A"].astype(SparseDtype("category"))

# or: df["A"].astype(SparseDtype("category", fill_value="not_in_series"))

```

### Issue Description

I am unable to convert a dense categorical series to a sparse one when I leave the `fill_value` at default, or a value which does not exist in the series.

Stacktrace:

<details>

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/IPython/core/formatters.py:706, in PlainTextFormatter.__call__(self, obj)

699 stream = StringIO()

700 printer = pretty.RepresentationPrinter(stream, self.verbose,

701 self.max_width, self.newline,

702 max_seq_length=self.max_seq_length,

703 singleton_pprinters=self.singleton_printers,

704 type_pprinters=self.type_printers,

705 deferred_pprinters=self.deferred_printers)

--> 706 printer.pretty(obj)

707 printer.flush()

708 return stream.getvalue()

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/IPython/lib/pretty.py:410, in RepresentationPrinter.pretty(self, obj)

407 return meth(obj, self, cycle)

408 if cls is not object \

409 and callable(cls.__dict__.get('__repr__')):

--> 410 return _repr_pprint(obj, self, cycle)

412 return _default_pprint(obj, self, cycle)

413 finally:

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/IPython/lib/pretty.py:778, in _repr_pprint(obj, p, cycle)

776 """A pprint that just redirects to the normal repr function."""

777 # Find newlines and replace them with p.break_()

--> 778 output = repr(obj)

779 lines = output.splitlines()

780 with p.group():

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/core/series.py:1550, in Series.__repr__(self)

1548 # pylint: disable=invalid-repr-returned

1549 repr_params = fmt.get_series_repr_params()

-> 1550 return self.to_string(**repr_params)

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/core/series.py:1643, in Series.to_string(self, buf, na_rep, float_format, header, index, length, dtype, name, max_rows, min_rows)

1597 """

1598 Render a string representation of the Series.

1599

(...)

1629 String representation of Series if ``buf=None``, otherwise None.

1630 """

1631 formatter = fmt.SeriesFormatter(

1632 self,

1633 name=name,

(...)

1641 max_rows=max_rows,

1642 )

-> 1643 result = formatter.to_string()

1645 # catch contract violations

1646 if not isinstance(result, str):

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:393, in SeriesFormatter.to_string(self)

390 return f"{type(self.series).__name__}([], {footer})"

392 fmt_index, have_header = self._get_formatted_index()

--> 393 fmt_values = self._get_formatted_values()

395 if self.is_truncated_vertically:

396 n_header_rows = 0

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:377, in SeriesFormatter._get_formatted_values(self)

376 def _get_formatted_values(self) -> list[str]:

--> 377 return format_array(

378 self.tr_series._values,

379 None,

380 float_format=self.float_format,

381 na_rep=self.na_rep,

382 leading_space=self.index,

383 )

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:1326, in format_array(values, formatter, float_format, na_rep, digits, space, justify, decimal, leading_space, quoting)

1311 digits = get_option("display.precision")

1313 fmt_obj = fmt_klass(

1314 values,

1315 digits=digits,

(...)

1323 quoting=quoting,

1324 )

-> 1326 return fmt_obj.get_result()

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:1357, in GenericArrayFormatter.get_result(self)

1356 def get_result(self) -> list[str]:

-> 1357 fmt_values = self._format_strings()

1358 return _make_fixed_width(fmt_values, self.justify)

File ~/repositories/arff-to-parquet/venv/lib/python3.10/site-packages/pandas/io/formats/format.py:1658, in ExtensionArrayFormatter._format_strings(self)

1656 array = values._internal_get_values()

1657 else:

-> 1658 array = np.asarray(values)

1660 fmt_values = format_array(

1661 array,

1662 formatter,

(...)

1670 quoting=self.quoting,

1671 )

1672 return fmt_values

ValueError: object __array__ method not producing an array

</details>

### Expected Behavior

I expect it to "just work", similar to providing a fill value which does exist in the series, or how it works with other dtypes:

```python

import pandas as pd

from pandas import SparseDtype

df = pd.DataFrame([["a", 0],["b", 1], ["b", 2]], columns=["A","B"])

# works, since "a" is a value present in the series

df["A"].astype(SparseDtype("category", fill_value="a"))

# also works, despite -1 not being present in the series

df["B"].astype(SparseDtype(int, fill_value=-1))

```

### Installed Versions

<details>

INSTALLED VERSIONS

------------------

commit : 8dab54d6573f7186ff0c3b6364d5e4dd635ff3e7

python : 3.10.5.final.0

python-bits : 64

OS : Darwin

OS-release : 21.5.0

Version : Darwin Kernel Version 21.5.0: Tue Apr 26 21:08:37 PDT 2022; root:xnu-8020.121.3~4/RELEASE_ARM64_T6000

machine : arm64

processor : arm

byteorder : little

LC_ALL : None

LANG : None

LOCALE : None.UTF-8

pandas : 1.5.2

numpy : 1.23.5

pytz : 2022.6

dateutil : 2.8.2

setuptools : 58.1.0

pip : 22.3.1

Cython : None

pytest : 7.2.0

hypothesis : None

sphinx : None

blosc : None

feather : None

xlsxwriter : None

lxml.etree : None

html5lib : None

pymysql : None

psycopg2 : None

jinja2 : 3.1.2

IPython : 8.6.0

pandas_datareader: None

bs4 : 4.11.1

bottleneck : None

brotli : None

fastparquet : None

fsspec : None

gcsfs : None

matplotlib : 3.6.2

numba : None

numexpr : None

odfpy : None

openpyxl : None

pandas_gbq : None

pyarrow : 10.0.0

pyreadstat : None

pyxlsb : None

s3fs : None

scipy : 1.9.3

snappy : None

sqlalchemy : None

tables : None

tabulate : None

xarray : None

xlrd : None

xlwt : None

zstandard : None

tzdata : None

</details>

| test | bug valueerror converting dense categorical series to sparse when fill value not in series pandas version checks i have checked that this issue has not already been reported i have confirmed this bug exists on the of pandas i have confirmed this bug exists on the main branch of pandas reproducible example python import pandas as pd from pandas import sparsedtype df pd dataframe columns df astype sparsedtype category or df astype sparsedtype category fill value not in series issue description i am unable to convert a dense categorical series to a sparse one when i leave the fill value at default or a value which does not exist in the series stacktrace valueerror traceback most recent call last file repositories arff to parquet venv lib site packages ipython core formatters py in plaintextformatter call self obj stream stringio printer pretty representationprinter stream self verbose self max width self newline max seq length self max seq length singleton pprinters self singleton printers type pprinters self type printers deferred pprinters self deferred printers printer pretty obj printer flush return stream getvalue file repositories arff to parquet venv lib site packages ipython lib pretty py in representationprinter pretty self obj return meth obj self cycle if cls is not object and callable cls dict get repr return repr pprint obj self cycle return default pprint obj self cycle finally file repositories arff to parquet venv lib site packages ipython lib pretty py in repr pprint obj p cycle a pprint that just redirects to the normal repr function find newlines and replace them with p break output repr obj lines output splitlines with p group file repositories arff to parquet venv lib site packages pandas core series py in series repr self pylint disable invalid repr returned repr params fmt get series repr params return self to string repr params file repositories arff to parquet venv lib site packages pandas core series py in series to string self buf 
na rep float format header index length dtype name max rows min rows render a string representation of the series string representation of series if buf none otherwise none formatter fmt seriesformatter self name name max rows max rows result formatter to string catch contract violations if not isinstance result str file repositories arff to parquet venv lib site packages pandas io formats format py in seriesformatter to string self return f type self series name footer fmt index have header self get formatted index fmt values self get formatted values if self is truncated vertically n header rows file repositories arff to parquet venv lib site packages pandas io formats format py in seriesformatter get formatted values self def get formatted values self list return format array self tr series values none float format self float format na rep self na rep leading space self index file repositories arff to parquet venv lib site packages pandas io formats format py in format array values formatter float format na rep digits space justify decimal leading space quoting digits get option display precision fmt obj fmt klass values digits digits quoting quoting return fmt obj get result file repositories arff to parquet venv lib site packages pandas io formats format py in genericarrayformatter get result self def get result self list fmt values self format strings return make fixed width fmt values self justify file repositories arff to parquet venv lib site packages pandas io formats format py in extensionarrayformatter format strings self array values internal get values else array np asarray values fmt values format array array formatter quoting self quoting return fmt values valueerror object array method not producing an array expected behavior i expect it to just work similar to providing a fill value which does exist in the series or how it works with other dtypes python import pandas as pd from pandas import sparsedtype df pd dataframe columns works since a is a 
value present in the series df astype sparsedtype category fill value a also works despite not being present in the series df astype sparsedtype int fill value installed versions installed versions commit python final python bits os darwin os release version darwin kernel version tue apr pdt root xnu release machine processor arm byteorder little lc all none lang none locale none utf pandas numpy pytz dateutil setuptools pip cython none pytest hypothesis none sphinx none blosc none feather none xlsxwriter none lxml etree none none pymysql none none ipython pandas datareader none bottleneck none brotli none fastparquet none fsspec none gcsfs none matplotlib numba none numexpr none odfpy none openpyxl none pandas gbq none pyarrow pyreadstat none pyxlsb none none scipy snappy none sqlalchemy none tables none tabulate none xarray none xlrd none xlwt none zstandard none tzdata none | 1 |

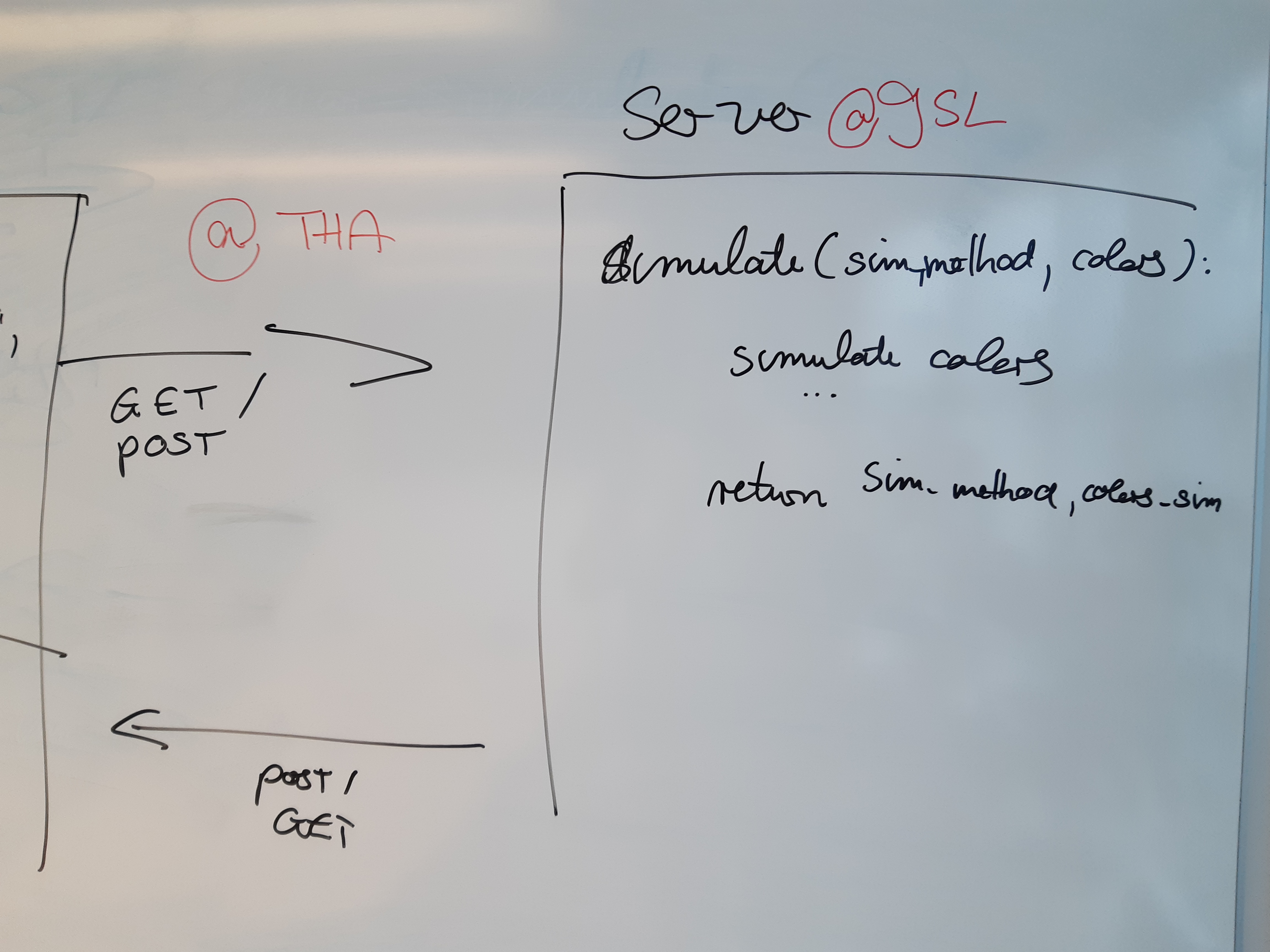

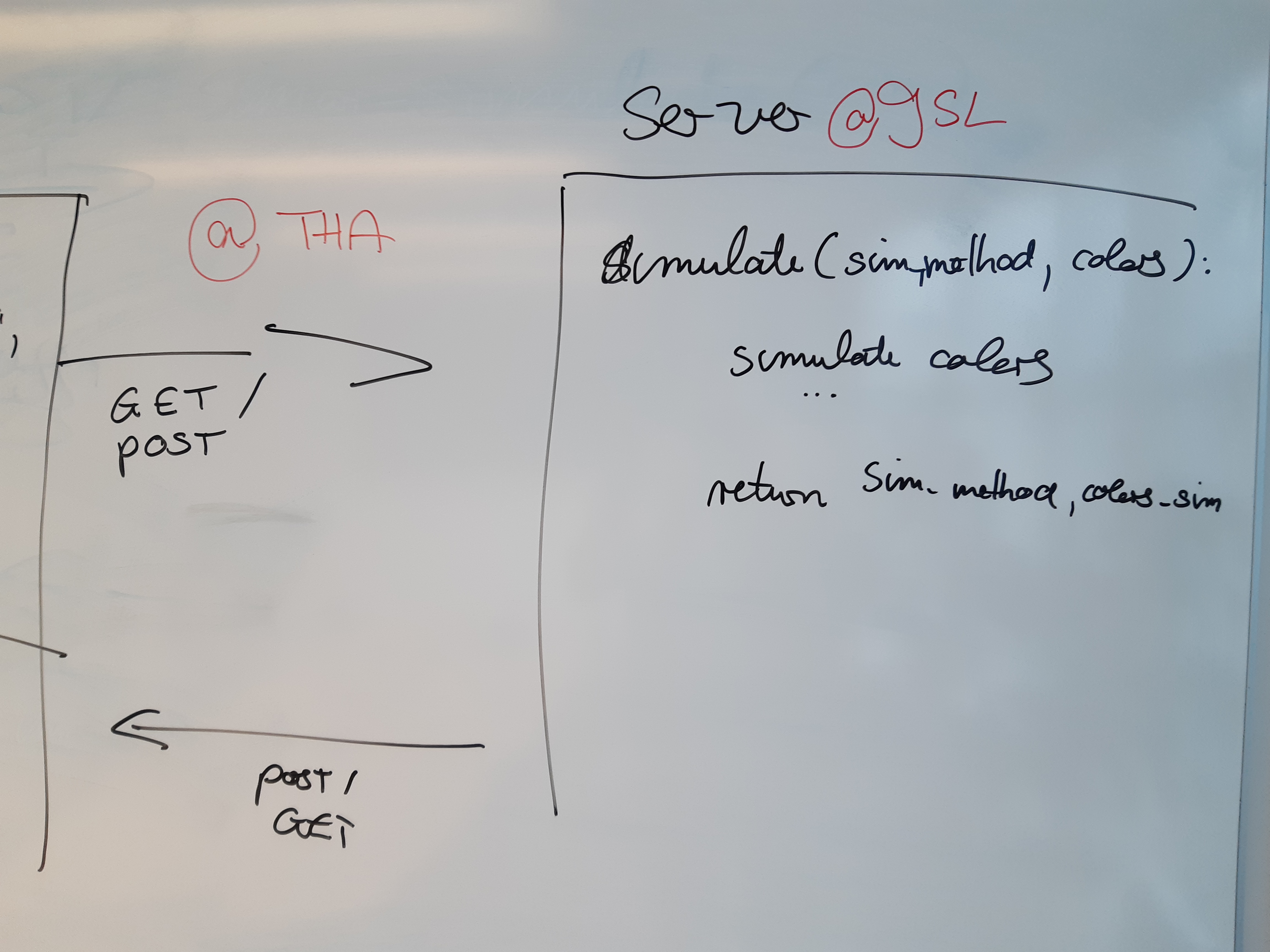

794,935 | 28,055,385,506 | IssuesEvent | 2023-03-29 09:02:31 | NorskRegnesentral/ccc21 | https://api.github.com/repos/NorskRegnesentral/ccc21 | closed | 3. Write function to simulate array with colors | color_deficiency high_priority JSL | Write a function that takes simulation_type and array with original colors as input. Compute simualted colors. Return simulation_type and array with simulation colors.

| 1.0 | 3. Write function to simulate array with colors - Write a function that takes simulation_type and array with original colors as input. Compute simualted colors. Return simulation_type and array with simulation colors.

| non_test | write function to simulate array with colors write a function that takes simulation type and array with original colors as input compute simualted colors return simulation type and array with simulation colors | 0 |

208,084 | 15,874,081,884 | IssuesEvent | 2021-04-09 04:06:05 | wesnoth/wesnoth | https://api.github.com/repos/wesnoth/wesnoth | closed | CI: WML unit tests results make it very hard to find errors | Bug Unit Tests | @CelticMinstrel the WML unit test script's results are currently very confusing. Below is part of the output of the c611128 validation (which passes, none of these are problems). However, this makes it very hard to work out why the CI failed on other runs - there's one other error, somewhere.

```

Running test test_move_fail_6

Error (strict mode, strict_level = 1): wesnoth reported on channel warning replay

20210314 23:50:58 warning replay: Warning: Path data contained something which could not be parsed to a sequence of locations:

config = x = 16,15,14,13,12,11

y = 3,3,3,3,3,bock

FAIL TEST (BROKE STRICT): test_move_fail_6

Running test test_store_unit_defense_deprecated

Error (strict mode, strict_level = 1): wesnoth reported on channel error deprecation

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

FAIL TEST (BROKE STRICT): test_store_unit_defense_deprecated

Running test alice_kills_bob

PASS TEST (VICTORY): alice_kills_bob

Running test bob_kills_alice_on_retal

PASS TEST (VICTORY): bob_kills_alice_on_retal

Running test alice_kills_bob_levelup

PASS TEST (VICTORY): alice_kills_bob_levelup

Running test bob_kills_alice

PASS TEST (VICTORY): bob_kills_alice

Running test alice_kills_bob_on_retal

PASS TEST (VICTORY): alice_kills_bob_on_retal

Running test alice_kills_bob_on_retal_levelup

PASS TEST (VICTORY): alice_kills_bob_on_retal_levelup

Running test test_wml_menu_items_2

Error (strict mode, strict_level = 1): wesnoth reported on channel warning wml

20210314 23:51:08 warning wml: The following conditional test unexpectedly failed:

[variable]

boolean_equals=yes

name="result"

[/variable]

Interpolated to:

[variable]

boolean_equals=yes

name="result"

[/variable]

Note: The variable result currently has the value false.

FAIL TEST: test_wml_menu_items_2

Running test filter_formula_unit_error

Error (strict mode, strict_level = 1): wesnoth reported on channel error scripting/lua

20210314 23:51:09 error scripting/lua: Formula error in formula:1

In formula +

Error: Illegal unary operator: '+'

stack traceback:

[C]: in ?

[C]: in field 'eval_conditional'

lua/wml-flow.lua:17: in local 'cmd'

lua/wml-utils.lua:144: in field 'handle_event_commands'

lua/wml-flow.lua:19: in local 'cmd'

lua/wml-utils.lua:144: in field 'handle_event_commands'

lua/wml-flow.lua:5: in function <lua/wml-flow.lua:4>

FAIL TEST (BROKE STRICT): filter_formula_unit_error

``` | 1.0 | CI: WML unit tests results make it very hard to find errors - @CelticMinstrel the WML unit test script's results are currently very confusing. Below is part of the output of the c611128 validation (which passes, none of these are problems). However, this makes it very hard to work out why the CI failed on other runs - there's one other error, somewhere.

```

Running test test_move_fail_6

Error (strict mode, strict_level = 1): wesnoth reported on channel warning replay

20210314 23:50:58 warning replay: Warning: Path data contained something which could not be parsed to a sequence of locations:

config = x = 16,15,14,13,12,11

y = 3,3,3,3,3,bock

FAIL TEST (BROKE STRICT): test_move_fail_6

Running test test_store_unit_defense_deprecated

Error (strict mode, strict_level = 1): wesnoth reported on channel error deprecation

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

20210314 23:50:59 error deprecation: [store_unit_defense] has been deprecated and will be removed in version 1.17.0.

This function returns the chance to be hit, high values represent bad defenses. Using [store_unit_defense_on] is recommended instead.

FAIL TEST (BROKE STRICT): test_store_unit_defense_deprecated

Running test alice_kills_bob

PASS TEST (VICTORY): alice_kills_bob

Running test bob_kills_alice_on_retal

PASS TEST (VICTORY): bob_kills_alice_on_retal

Running test alice_kills_bob_levelup

PASS TEST (VICTORY): alice_kills_bob_levelup

Running test bob_kills_alice

PASS TEST (VICTORY): bob_kills_alice

Running test alice_kills_bob_on_retal

PASS TEST (VICTORY): alice_kills_bob_on_retal

Running test alice_kills_bob_on_retal_levelup

PASS TEST (VICTORY): alice_kills_bob_on_retal_levelup

Running test test_wml_menu_items_2

Error (strict mode, strict_level = 1): wesnoth reported on channel warning wml

20210314 23:51:08 warning wml: The following conditional test unexpectedly failed:

[variable]

boolean_equals=yes

name="result"

[/variable]

Interpolated to:

[variable]

boolean_equals=yes

name="result"

[/variable]

Note: The variable result currently has the value false.

FAIL TEST: test_wml_menu_items_2

Running test filter_formula_unit_error

Error (strict mode, strict_level = 1): wesnoth reported on channel error scripting/lua

20210314 23:51:09 error scripting/lua: Formula error in formula:1

In formula +

Error: Illegal unary operator: '+'

stack traceback:

[C]: in ?

[C]: in field 'eval_conditional'

lua/wml-flow.lua:17: in local 'cmd'

lua/wml-utils.lua:144: in field 'handle_event_commands'

lua/wml-flow.lua:19: in local 'cmd'

lua/wml-utils.lua:144: in field 'handle_event_commands'

lua/wml-flow.lua:5: in function <lua/wml-flow.lua:4>

FAIL TEST (BROKE STRICT): filter_formula_unit_error

``` | test | ci wml unit tests results make it very hard to find errors celticminstrel the wml unit test script s results are currently very confusing below is part of the output of the validation which passes none of these are problems however this makes it very hard to work out why the ci failed on other runs there s one other error somewhere running test test move fail error strict mode strict level wesnoth reported on channel warning replay warning replay warning path data contained something which could not be parsed to a sequence of locations config x y bock fail test broke strict test move fail running test test store unit defense deprecated error strict mode strict level wesnoth reported on channel error deprecation error deprecation has been deprecated and will be removed in version this function returns the chance to be hit high values represent bad defenses using is recommended instead error deprecation has been deprecated and will be removed in version this function returns the chance to be hit high values represent bad defenses using is recommended instead error deprecation has been deprecated and will be removed in version this function returns the chance to be hit high values represent bad defenses using is recommended instead error deprecation has been deprecated and will be removed in version this function returns the chance to be hit high values represent bad defenses using is recommended instead error deprecation has been deprecated and will be removed in version this function returns the chance to be hit high values represent bad defenses using is recommended instead error deprecation has been deprecated and will be removed in version this function returns the chance to be hit high values represent bad defenses using is recommended instead fail test broke strict test store unit defense deprecated running test alice kills bob pass test victory alice kills bob running test bob kills alice on retal pass test victory bob kills alice on retal running 
test alice kills bob levelup pass test victory alice kills bob levelup running test bob kills alice pass test victory bob kills alice running test alice kills bob on retal pass test victory alice kills bob on retal running test alice kills bob on retal levelup pass test victory alice kills bob on retal levelup running test test wml menu items error strict mode strict level wesnoth reported on channel warning wml warning wml the following conditional test unexpectedly failed boolean equals yes name result interpolated to boolean equals yes name result note the variable result currently has the value false fail test test wml menu items running test filter formula unit error error strict mode strict level wesnoth reported on channel error scripting lua error scripting lua formula error in formula in formula error illegal unary operator stack traceback in in field eval conditional lua wml flow lua in local cmd lua wml utils lua in field handle event commands lua wml flow lua in local cmd lua wml utils lua in field handle event commands lua wml flow lua in function fail test broke strict filter formula unit error | 1 |

599,058 | 18,264,855,086 | IssuesEvent | 2021-10-04 07:10:06 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [BUG] VMs are crashing when writing bulk data to additional volumes | bug area/ui priority/1 | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

Running `dd` to write data to an additional volume causes the VM to crash.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a VM with an additional volume attached. Configure VM Memory limit to 10GB.

2. Run`dd if=/dev/zero of=/dev/sda bs=1M conv=sync` on the volume and wait for 10-20 seconds

3. The VM crashes and is automatically restarted

**Expected behavior**

<!-- A clear and concise description of what you expected to happen. -->

A VM should not crash when writing data to a secondary disc.

**Environment:**

- Harvester ISO version: 0.3.0-preview

**Additional context**

It appears the qemu pod is OOM killed because it exceeds the memory limit of 10GB that Harvester has configured.

```

Sep 16 08:38:09 lpedge01003 kernel: oom-kill:constraint=CONSTRAINT_MEMCG,nodemask=(null),cpuset=06ea4e4c81e3b77d6c6d4d150016783adb9476391a3d713e4ccf0180889a4557,mems_allowed=0-1,oom_memcg=/kubepods/pod4f6371a7-c7ea-416a-837a-a4692d874756,task_memcg=/kubepods/pod4f6371a7-c7ea-416a-837a-a4692d874756/06ea4e4c81e3b77d6c6d4d150016783adb9476391a3d713e4ccf0180889a4557,task=qemu-system-x86,pid=1526,uid=107

Sep 16 08:38:09 lpedge01003 kernel: Memory cgroup out of memory: Killed process 1526 (qemu-system-x86) total-vm:11226804kB, anon-rss:10439204kB, file-rss:24704kB, shmem-rss:4kB

Sep 16 08:38:09 lpedge01003 kernel: oom_reaper: reaped process 1526 (qemu-system-x86), now anon-rss:0kB, file-rss:12kB, shmem-rss:4kB

```

| 1.0 | [BUG] VMs are crashing when writing bulk data to additional volumes - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

Running `dd` to write data to an additional volume causes the VM to crash.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a VM with an additional volume attached. Configure VM Memory limit to 10GB.

2. Run`dd if=/dev/zero of=/dev/sda bs=1M conv=sync` on the volume and wait for 10-20 seconds

3. The VM crashes and is automatically restarted

**Expected behavior**

<!-- A clear and concise description of what you expected to happen. -->

A VM should not crash when writing data to a secondary disc.

**Environment:**

- Harvester ISO version: 0.3.0-preview

**Additional context**

It appears the qemu pod is OOM killed because it exceeds the memory limit of 10GB that Harvester has configured.

```

Sep 16 08:38:09 lpedge01003 kernel: oom-kill:constraint=CONSTRAINT_MEMCG,nodemask=(null),cpuset=06ea4e4c81e3b77d6c6d4d150016783adb9476391a3d713e4ccf0180889a4557,mems_allowed=0-1,oom_memcg=/kubepods/pod4f6371a7-c7ea-416a-837a-a4692d874756,task_memcg=/kubepods/pod4f6371a7-c7ea-416a-837a-a4692d874756/06ea4e4c81e3b77d6c6d4d150016783adb9476391a3d713e4ccf0180889a4557,task=qemu-system-x86,pid=1526,uid=107

Sep 16 08:38:09 lpedge01003 kernel: Memory cgroup out of memory: Killed process 1526 (qemu-system-x86) total-vm:11226804kB, anon-rss:10439204kB, file-rss:24704kB, shmem-rss:4kB

Sep 16 08:38:09 lpedge01003 kernel: oom_reaper: reaped process 1526 (qemu-system-x86), now anon-rss:0kB, file-rss:12kB, shmem-rss:4kB

```

| non_test | vms are crashing when writing bulk data to additional volumes describe the bug running dd to write data to an additional volume causes the vm to crash to reproduce steps to reproduce the behavior create a vm with an additional volume attached configure vm memory limit to run dd if dev zero of dev sda bs conv sync on the volume and wait for seconds the vm crashes and is automatically restarted expected behavior a vm should not crash when writing data to a secondary disc environment harvester iso version preview additional context it appears the qemu pod is oom killed because it exceeds the memory limit of that harvester has configured sep kernel oom kill constraint constraint memcg nodemask null cpuset mems allowed oom memcg kubepods task memcg kubepods task qemu system pid uid sep kernel memory cgroup out of memory killed process qemu system total vm anon rss file rss shmem rss sep kernel oom reaper reaped process qemu system now anon rss file rss shmem rss | 0 |

136,872 | 30,599,416,368 | IssuesEvent | 2023-07-22 06:59:28 | SCIInstitute/ShapeWorks | https://api.github.com/repos/SCIInstitute/ShapeWorks | closed | Parent Issue for Repo Cleanup | Priority: Medium Status: Code Cleanup | This is a parent issue for all issues that pertain to cleaning up the repo:

- [x] #160

- [x] #101

- [x] #171

- [ ] #408

- [ ] #173

- [ ] #1149 | 1.0 | Parent Issue for Repo Cleanup - This is a parent issue for all issues that pertain to cleaning up the repo:

- [x] #160

- [x] #101

- [x] #171

- [ ] #408

- [ ] #173

- [ ] #1149 | non_test | parent issue for repo cleanup this is a parent issue for all issues that pertain to cleaning up the repo | 0 |

329,125 | 10,012,497,822 | IssuesEvent | 2019-07-15 13:20:35 | weaveworks/ignite | https://api.github.com/repos/weaveworks/ignite | closed | missing /etc/resolv.conf | kind/bug priority/important-soon | So far with both the centos and ubuntu weaveworks images for ignite when I start a VM network connectivity seems to be fine, except I cannot lookup any hostnames. Creating a trivial `/etc/resolv.conf` with `nameserver 8.8.8.8` solves this and makes it a lot easier to work inside the VMs.

Docker and Kubernetes are both known to create / manage this file based on the hosts resolver settings and user options, it might be nice if ignite behaved similar to docker here.

Alternatively, if we don't want to do this and want to leave it out of the scope for `ignite`, it will probably be friendlier to new users if the default images ship a simple resolv.conf with at least one nameserver so package management, wget, etc. work. | 1.0 | missing /etc/resolv.conf - So far with both the centos and ubuntu weaveworks images for ignite when I start a VM network connectivity seems to be fine, except I cannot lookup any hostnames. Creating a trivial `/etc/resolv.conf` with `nameserver 8.8.8.8` solves this and makes it a lot easier to work inside the VMs.

Docker and Kubernetes are both known to create / manage this file based on the hosts resolver settings and user options, it might be nice if ignite behaved similar to docker here.

Alternatively, if we don't want to do this and want to leave it out of the scope for `ignite`, it will probably be friendlier to new users if the default images ship a simple resolv.conf with at least one nameserver so package management, wget, etc. work. | non_test | missing etc resolv conf so far with both the centos and ubuntu weaveworks images for ignite when i start a vm network connectivity seems to be fine except i cannot lookup any hostnames creating a trivial etc resolv conf with nameserver solves this and makes it a lot easier to work inside the vms docker and kubernetes are both known to create manage this file based on the hosts resolver settings and user options it might be nice if ignite behaved similar to docker here alternatively if we don t want to do this and want to leave it out of the scope for ignite it will probably be friendlier to new users if the default images ship a simple resolv conf with at least one nameserver so package management wget etc work | 0 |

346,317 | 30,884,496,601 | IssuesEvent | 2023-08-03 20:27:03 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Upgrading from Prisma 5.0.0 -> 5.1.0 results in "TS2321: Excessive stack depth comparing types" error using `mockDeep<PrismaClient>()` | bug/1-unconfirmed kind/bug topic: tests tech/typescript team/client 5.1.0 | ### Bug description

We use the InversifyJS dependency injection framework and TypeScript 5.1.6. When upgrading from Prisma 5.0.0 to 5.1.0 with no other code changes, `tsc` results in the following error:

```

... - error TS2321: Excessive stack depth comparing types 'DeepMockProxy<PrismaClient<PrismaClientOptions, never, DefaultArgs>>' and 'PrismaClient<PrismaClientOptions, unknown, Args_2>'.

31 tc.bind(PrismaClient).toConstantValue(mockDeep<PrismaClient>());

```

Not sure if there are workarounds, but this directly reflects the recommended practice from the Prisma guide on unit testing here: https://www.prisma.io/docs/guides/testing/unit-testing#dependency-injection

### How to reproduce

1. Follow Prisma unit testing guide for dependency testing here: https://www.prisma.io/docs/guides/testing/unit-testing#dependency-injection

2. Use `mockDeep<PrismaClient>()` in a unit test

3. Upgrade to Prisma 5.1.0

4. Run TypeScript (i.e. v5.1.6) typechecker

### Expected behavior

TS compilation and typecheck behavior matches 5.0.0.

### Prisma information

<!-- Do not include your database credentials when sharing your Prisma schema! -->

```prisma

// Add your schema.prisma

```

```ts

const tc = new Container();

tc.bind(PrismaClient).toConstantValue(mockDeep<PrismaClient>());

return tc;

```

### Environment & setup

- OS: macOS and Debian

- Database: PostgreSQL

- Node.js version: 18.6.0

### Prisma Version

```

5.1.0

```

| 1.0 | Upgrading from Prisma 5.0.0 -> 5.1.0 results in "TS2321: Excessive stack depth comparing types" error using `mockDeep<PrismaClient>()` - ### Bug description

We use the InversifyJS dependency injection framework and TypeScript 5.1.6. When upgrading from Prisma 5.0.0 to 5.1.0 with no other code changes, `tsc` results in the following error:

```

... - error TS2321: Excessive stack depth comparing types 'DeepMockProxy<PrismaClient<PrismaClientOptions, never, DefaultArgs>>' and 'PrismaClient<PrismaClientOptions, unknown, Args_2>'.

31 tc.bind(PrismaClient).toConstantValue(mockDeep<PrismaClient>());

```

Not sure if there are workarounds, but this directly reflects the recommended practice from the Prisma guide on unit testing here: https://www.prisma.io/docs/guides/testing/unit-testing#dependency-injection

### How to reproduce

1. Follow Prisma unit testing guide for dependency testing here: https://www.prisma.io/docs/guides/testing/unit-testing#dependency-injection

2. Use `mockDeep<PrismaClient>()` in a unit test

3. Upgrade to Prisma 5.1.0

4. Run TypeScript (i.e. v5.1.6) typechecker

### Expected behavior

TS compilation and typecheck behavior matches 5.0.0.

### Prisma information

<!-- Do not include your database credentials when sharing your Prisma schema! -->

```prisma

// Add your schema.prisma

```

```ts

const tc = new Container();

tc.bind(PrismaClient).toConstantValue(mockDeep<PrismaClient>());

return tc;

```

### Environment & setup

- OS: macOS and Debian

- Database: PostgreSQL

- Node.js version: 18.6.0

### Prisma Version

```

5.1.0

```

| test | upgrading from prisma results in excessive stack depth comparing types error using mockdeep bug description we use the inversifyjs dependency injection framework and typescript when upgrading from prisma to with no other code changes tsc results in the following error error excessive stack depth comparing types deepmockproxy and prismaclient tc bind prismaclient toconstantvalue mockdeep not sure if there are workarounds but this directly reflects the recommended practice from the prisma guide on unit testing here how to reproduce follow prisma unit testing guide for dependency testing here use mockdeep in a unit test upgrade to prisma run typescript i e typechecker expected behavior ts compilation and typecheck behavior matches prisma information prisma add your schema prisma ts const tc new container tc bind prismaclient toconstantvalue mockdeep return tc environment setup os macos and debian database postgresql node js version prisma version | 1 |

351,129 | 10,512,967,822 | IssuesEvent | 2019-09-27 19:17:37 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Advanced Masonry table can fly | Medium Priority | Step to reproduce:

- place Advanced Masonry table

- Move away. It flies

| 1.0 | Advanced Masonry table can fly - Step to reproduce:

- place Advanced Masonry table

- Move away. It flies

| non_test | advanced masonry table can fly step to reproduce place advanced masonry table move away it flies | 0 |

11,656 | 32,007,866,529 | IssuesEvent | 2023-09-21 15:54:13 | Nexus-Mods/NexusMods.App | https://api.github.com/repos/Nexus-Mods/NexusMods.App | closed | Use an extensible solution for IDs instead of Enums for GameFolderType | area-game-support area-code-architecture-design | GameFolderType currently is an Enum defined in the main App, that identifies a small list of common game paths that the main application and other components might need to reference in a game installation agnostic way.

The issue is that some games might have particular paths they need to expose that aren't present in other games.

If a new game needs to add such an identifiable path, it would need to edit the shared Enum.

The problem is that the enum would quickly get polluted with game-specific path IDs that might be interpreted differently by different games.

A different solution is needed, one that doesn't rely on a central shared list of IDs but instead allows each game to define its own list in addition to the common ones.

Many of these paths would likely be useful to other extension components (mod installers, diagnostics, etc.), while the main application may realistically not need to be aware of them.

So it would be convenient for games to be able to define new PathIds without having to change the main app code, while still allowing other components to reference them.

The developer of the extension or component will need to know the static ID of the path it needs and check for its existence in the currently managed game.

This admittedly isn't very elegant, but would allow bypassing visibility of the ID definition.

It can, however, make it harder for developers to find the correct ID to implement for a game.

For example, all Bethesda games have a `Data` folder. To add a new Bethesda game, the developer would need to look up which ID the other games use for `Data` and make sure to reuse it. Any extension developer wanting to reference `Data` would need to look up the exact ID to use. This is somewhat easier with human-readable IDs like strings, and more error-prone with GUIDs, for example.

## Proposal:

Use statically defined GUIDs wrapped in ValueObjects (for Nominal Typing) as GamePathTypes (or GamePathIds)

To define a new GUID statically in Rider, you can press Shift twice, type in GUID, and there is an option to generate a GUID in various formats.

The App defines a default list of common GamePathTypes (game, saves, config, appdata).

Each game then exposes a collection of GamePaths using either the App-defined IDs or custom IDs.

The upside is fixed-size IDs, a very low chance of collision, and the ability for any component to check for a path if it knows the ID, even if the game type that defined it isn't visible from the extension or component.

The downside is that the IDs are not human-readable.

To compensate, the code that references a particular path ID can give the value object instance a descriptive name.

| 1.0 | Use an extensible solution for IDs instead of Enums for GameFolderType - GameFolderType currently is an Enum defined in the main App, that identifies a small list of common game paths that the main application and other components might need to reference in a game installation agnostic way.

The issue is that some games might have particular paths they need to expose that aren't present in other games.

If a new game needs to add such an identifiable path, it would need to edit the shared Enum.

The problem is that the enum would quickly get polluted with game-specific path IDs that might be interpreted differently by different games.

A different solution is needed, one that doesn't rely on a central shared list of IDs but instead allows each game to define its own list in addition to the common ones.

Many of these paths would likely be useful to other extension components (mod installers, diagnostics, etc.), while the main application may realistically not need to be aware of them.

So it would be convenient for games to be able to define new PathIds without having to change the main app code, while still allowing other components to reference them.

The developer of the extension or component will need to know the static ID of the path it needs and check for its existence in the currently managed game.

This admittedly isn't very elegant, but would allow bypassing visibility of the ID definition.

It can, however, make it harder for developers to find the correct ID to implement for a game.

For example, all Bethesda games have a `Data` folder. To add a new Bethesda game, the developer would need to look up which ID the other games use for `Data` and make sure to reuse it. Any extension developer wanting to reference `Data` would need to look up the exact ID to use. This is somewhat easier with human-readable IDs like strings, and more error-prone with GUIDs, for example.

## Proposal:

Use statically defined GUIDs wrapped in ValueObjects (for Nominal Typing) as GamePathTypes (or GamePathIds)

To define a new GUID statically in Rider, you can press Shift twice, type in GUID, and there is an option to generate a GUID in various formats.

The App defines a default list of common GamePathTypes (game, saves, config, appdata).

Each game then exposes a collection of GamePaths using either the App-defined IDs or custom IDs.

The upside is fixed-size IDs, a very low chance of collision, and the ability for any component to check for a path if it knows the ID, even if the game type that defined it isn't visible from the extension or component.

The downside is that the IDs are not human-readable.

To compensate, the code that references a particular path ID can give the value object instance a descriptive name.