Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

262,421 | 22,840,149,849 | IssuesEvent | 2022-07-12 20:52:31 | dapr/components-contrib | https://api.github.com/repos/dapr/components-contrib | opened | InfluxDB binding as stable candidate | area/runtime/binding P1 pinned area/test/certification | ## Describe the proposal

Create certification tests for InfluxDB binding and mark it as stable candidate:

https://docs.dapr.io/reference/components-reference/supported-bindings/influxdb/ | 1.0 | InfluxDB binding as stable candidate - ## Describe the proposal

Create certification tests for InfluxDB binding and mark it as stable candidate:

https://docs.dapr.io/reference/components-reference/supported-bindings/influxdb/ | test | influxdb binding as stable candidate describe the proposal create certification tests for influxdb binding and mark it as stable candidate | 1 |

615,423 | 19,255,072,419 | IssuesEvent | 2021-12-09 10:23:39 | openscd/open-scd | https://api.github.com/repos/openscd/open-scd | closed | DOType or CDC creation helper wizards | Kind: Enhancement Reviewed: Prioritized Priority: Important | As a user of OpenSCD I want to be guided through the process of creating a new `DOType` element. I want the software to tell me which data objects `SDO`, data attributes (DA) are mandatory for the data object.

Two wizards are required here:

1. Choose the common data class (CDC) array automatically from `IEC_61850-7-3_2007B3`. Based on common data class, display possible data objects and data attributes in a selectable list. Mandatory elements shall be preselected.

2. Second wizard is the `sDOTypeWizard`, `dATypeWizard` where the user shall have the possibility to select the type. Types shall be preselected based on the information in `IEC_61850-7-3_2007B3`.

**Features**

- Array of possible common data classes are based on the definition in `IEC_61850-7-3_2007B3`

- Array of possible type in sDOTypeWizard and dATypeWizard are based on the CDC type definition in `IEC_61850-7-3_2007B3` and defined DOTypes, DATypes in the DataTypeTemplates section

- Progress shall be indicated in terms of how many wizards follow

- When canceling the process, no actions shall be performed. This is especially important when canceling in sDOTypeWizard, dATypeWizard | 1.0 | DOType or CDC creation helper wizards - As a user of OpenSCD I want to be guided through the process of creating a new `DOType` element. I want the software to tell me which data objects `SDO`, data attributes (DA) are mandatory for the data object.

Two wizards are required here:

1. Choose the common data class (CDC) array automatically from `IEC_61850-7-3_2007B3`. Based on common data class, display possible data objects and data attributes in a selectable list. Mandatory elements shall be preselected.

2. Second wizard is the `sDOTypeWizard`, `dATypeWizard` where the user shall have the possibility to select the type. Types shall be preselected based on the information in `IEC_61850-7-3_2007B3`.

**Features**

- Array of possible common data classes are based on the definition in `IEC_61850-7-3_2007B3`

- Array of possible type in sDOTypeWizard and dATypeWizard are based on the CDC type definition in `IEC_61850-7-3_2007B3` and defined DOTypes, DATypes in the DataTypeTemplates section

- Progress shall be indicated in terms of how many wizards follow

- When canceling the process, no actions shall be performed. This is especially important when canceling in sDOTypeWizard, dATypeWizard | non_test | dotype or cdc creation helper wizards as a user of openscd i want to be guided through the process of creating a new dotype element i want the software to tell me which data objects sdo data attributes da are mandatory for the data object two wizards are required here choose the common data class cdc array automatically from iec based on common data class display possible data objects and data attributes in a selectable list mandatory elements shall be preselected second wizard is the sdotypewizard datypewizard where the user shall have the possibility to select the type types shall be preselected based on the information in iec features array of possible common data classes are based on the definition in iec array of possible type in sdotypewizard and datypewizard are based on the cdc type definition in iec and defined dotypes datypes in the datatypetemplates section progress shall be indicated in terms of how many wizards follow when canceling the process no actions shall be performed this is especially important when canceling in sdotypewizard datypewizard | 0 |

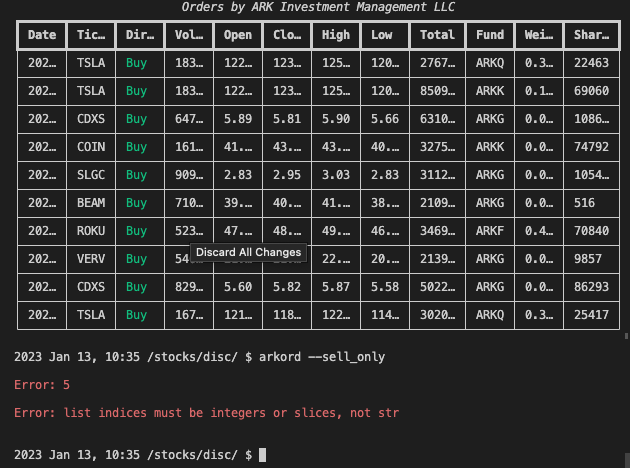

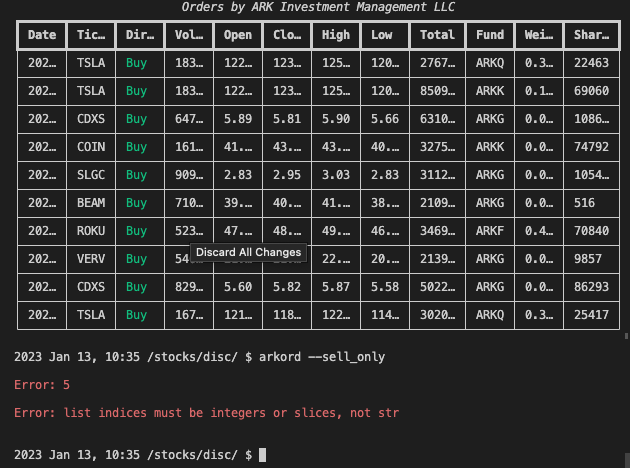

305,755 | 26,409,613,719 | IssuesEvent | 2023-01-13 11:03:02 | OpenBB-finance/OpenBBTerminal | https://api.github.com/repos/OpenBB-finance/OpenBBTerminal | closed | [Bug] stocks/disc/arkord --sell_only | bug tests | **Describe the bug**

A clear and concise description of what the bug is.

`main` branch

`develop` branch

* This unit test file is related to the command and fails when `--record-mode=rewrite`

tests/openbb_terminal/stocks/discovery/test_ark_view.py

**To Reproduce**

Steps(from the start) and commands to reproduce the behavior

**Screenshots**

If applicable, add screenshots to help explain your problem.

If you are running the terminal using the conda version please

rerun the terminal with `python terminal.py --debug`, and then

recreate your issue. Then include a screenshot of the entire

error printout.

**Desktop (please complete the following information):**

- OS: [e.g. Mac Sierra]

- Python version [e.g. 3.6.8]

**Additional context**

Add any other information that you think could be useful for us.

| 1.0 | [Bug] stocks/disc/arkord --sell_only - **Describe the bug**

A clear and concise description of what the bug is.

`main` branch

`develop` branch

* This unit test file is related to the command and fails when `--record-mode=rewrite`

tests/openbb_terminal/stocks/discovery/test_ark_view.py

**To Reproduce**

Steps(from the start) and commands to reproduce the behavior

**Screenshots**

If applicable, add screenshots to help explain your problem.

If you are running the terminal using the conda version please

rerun the terminal with `python terminal.py --debug`, and then

recreate your issue. Then include a screenshot of the entire

error printout.

**Desktop (please complete the following information):**

- OS: [e.g. Mac Sierra]

- Python version [e.g. 3.6.8]

**Additional context**

Add any other information that you think could be useful for us.

| test | stocks disc arkord sell only describe the bug a clear and concise description of what the bug is main branch develop branch this unit test file is related to the command and fails when record mode rewrite tests openbb terminal stocks discovery test ark view py to reproduce steps from the start and commands to reproduce the behavior screenshots if applicable add screenshots to help explain your problem if you are running the terminal using the conda version please rerun the terminal with python terminal py debug and then recreate your issue then include a screenshot of the entire error printout desktop please complete the following information os python version additional context add any other information that you think could be useful for us | 1 |

84,458 | 24,314,143,546 | IssuesEvent | 2022-09-30 03:32:47 | eclipse-openj9/openj9 | https://api.github.com/repos/eclipse-openj9/openj9 | opened | AIX cannot load libfontmanager.so | comp:build test failure | The problem occurs in an extended.openjdk test, but easily duplicated by a simple test case.

```
public class Load {
    public static void main(String[] args) throws Exception {
        System.loadLibrary("fontmanager");
    }
}
```

It occurs on at least jdk11 and jdk17.

jdk17

```
[2022-09-08T13:10:23.577Z] java.lang.UnsatisfiedLinkError: Failed to load library "/home/jenkins/workspace/Test_openjdk17_j9_extended.openjdk_ppc64_aix/openjdkbinary/j2sdk-image/lib/libfontmanager.so"
[2022-09-08T13:10:23.577Z] at java.base/jdk.internal.loader.NativeLibraries.load(Native Method)
```

jdk11

```
Exception in thread "main" java.lang.UnsatisfiedLinkError: fontmanager (rtld: 0712-001 Symbol _ZN2hb8vtable_tI8hb_set_tXadL_Z16hb_set_get_emptyEEXadL_Z16hb_set_referenceEEXadL_Z14hb_set_destroyEEXadL_Z20hb_set_set_user_dataEEXadL_Z20hb_set_get_user_dataEEE7destroyE was referenced
from module /home/jenkins/peter/jdk/lib/libfontmanager.so(), but a runtime definition
of the symbol was not found.)
```

Even on the same machine where the JVM was compiled it doesn't work, with the same error.

Seems like a problem with xlc 16.1.0. I think what's happening is that harfbuzz was updated from 2.8 to 4.4.1 and it no longer works with xlc 16.1.0. | 1.0 | AIX cannot load libfontmanager.so - The problem occurs in an extended.openjdk test, but easily duplicated by a simple test case.

```
public class Load {
    public static void main(String[] args) throws Exception {
        System.loadLibrary("fontmanager");
    }
}
```

It occurs on at least jdk11 and jdk17.

jdk17

```
[2022-09-08T13:10:23.577Z] java.lang.UnsatisfiedLinkError: Failed to load library "/home/jenkins/workspace/Test_openjdk17_j9_extended.openjdk_ppc64_aix/openjdkbinary/j2sdk-image/lib/libfontmanager.so"
[2022-09-08T13:10:23.577Z] at java.base/jdk.internal.loader.NativeLibraries.load(Native Method)
```

jdk11

```
Exception in thread "main" java.lang.UnsatisfiedLinkError: fontmanager (rtld: 0712-001 Symbol _ZN2hb8vtable_tI8hb_set_tXadL_Z16hb_set_get_emptyEEXadL_Z16hb_set_referenceEEXadL_Z14hb_set_destroyEEXadL_Z20hb_set_set_user_dataEEXadL_Z20hb_set_get_user_dataEEE7destroyE was referenced
from module /home/jenkins/peter/jdk/lib/libfontmanager.so(), but a runtime definition
of the symbol was not found.)
```

Even on the same machine where the JVM was compiled it doesn't work, with the same error.

Seems like a problem with xlc 16.1.0. I think what's happening is that harfbuzz was updated from 2.8 to 4.4.1 and it no longer works with xlc 16.1.0. | non_test | aix cannot load libfontmanager so the problem occurs in an extended openjdk test but easily duplicated by a simple test case public class load public static void main string args throws exception system loadlibrary fontmanager it occurs on at least and java lang unsatisfiedlinkerror failed to load library home jenkins workspace test extended openjdk aix openjdkbinary image lib libfontmanager so at java base jdk internal loader nativelibraries load native method exception in thread main java lang unsatisfiedlinkerror fontmanager rtld symbol set txadl set get emptyeexadl set referenceeexadl set destroyeexadl set set user dataeexadl set get user was referenced from module home jenkins peter jdk lib libfontmanager so but a runtime definition of the symbol was not found even on the same machine where the jvm was compiled it doesn t work with the same error seems like a problem with xlc i think what s happening is that harfbuzz was updated from to and it no longer works with xlc | 0 |

183,701 | 14,246,491,454 | IssuesEvent | 2020-11-19 10:08:24 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI] HistoryTemplateEmailMappingsTests.testEmailFields fails | :Core/Features/Watcher >test-failure Team:Core/Features | **Build scan**: https://gradle-enterprise.elastic.co/s/uwm5wrx2u7vrg

**Repro line**:

```
./gradlew ':x-pack:plugin:watcher:internalClusterTest' --tests "org.elasticsearch.xpack.watcher.history.HistoryTemplateEmailMappingsTests.testEmailFields" -Dtests.seed=2E9825D4080F72BF -Dtests.security.manager=true -Dtests.locale=hr-HR -Dtests.timezone=SystemV/EST5EDT -Druntime.java=11
```

**Reproduces locally?**: No

**Applicable branches**: `master`

**Failure history**:

https://build-stats.elastic.co/app/kibana#/discover?_g=(refreshInterval:(pause:!t,value:0),time:(from:now-7d,mode:quick,to:now))&_a=(columns:!(_source),index:b646ed00-7efc-11e8-bf69-63c8ef516157,interval:auto,query:(language:lucene,query:testEmailFields),sort:!(process.time-start,desc))

**Failure excerpt**:

```

[2020-11-16T14:23:38,919][INFO ][o.s.s.s.SMTPServer ] [testEmailFields] SMTP server *:0 starting

[2020-11-16T14:23:38,930][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] before test

[2020-11-16T14:23:38,932][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: setting up test

[2020-11-16T14:23:38,932][INFO ][o.s.s.s.ServerThread ] [[org.subethamail.smtp.server.ServerThread *:39213]{smtpServerLocalSocketAddress=*:39213}] SMTP server *:39213 started

[2020-11-16T14:23:38,933][INFO ][o.e.t.InternalTestCluster] [testEmailFields] Setup InternalTestCluster [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster] with seed [98A0BE4EB87ED660] using [0] dedicated masters, [3] (data) nodes and [0] coord only nodes (master nodes are [auto-managed])

[2020-11-16T14:23:38,945][INFO ][o.e.n.Node ] [testEmailFields] version[8.0.0-SNAPSHOT], pid[246899], build[unknown/unknown/c2864e38fb097bd0e8095aaa716263356a0c3f8a/2020-11-16T05:45:22.776947Z], OS[Linux/4.18.0-193.28.1.el8_2.x86_64/amd64], JVM[Oracle Corporation/OpenJDK 64-Bit Server VM/11.0.2/11.0.2+7]

[2020-11-16T14:23:38,945][INFO ][o.e.n.Node ] [testEmailFields] JVM home [/var/lib/jenkins/.java/openjdk-11.0.2-linux]

[2020-11-16T14:23:38,945][DEPRECATION][o.e.d.n.Node ] [testEmailFields] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="no-jdk" message="no-jdk distributions that do not bundle a JDK are deprecated and will be removed in a future release"

[2020-11-16T14:23:38,946][INFO ][o.e.n.Node ] [testEmailFields] JVM arguments [-Dfile.encoding=UTF8, -Des.scripting.update.ctx_in_params=false, -Des.search.rewrite_sort=true, -Des.set.netty.runtime.available.processors=false, -Des.transport.cname_in_publish_address=true, -Dgradle.dist.lib=/var/lib/jenkins/.gradle/wrapper/dists/gradle-6.6.1-all/ejrtlte9hlw8v6ii20a9584rs/gradle-6.6.1/lib, -Dgradle.user.home=/var/lib/jenkins/.gradle, -Dgradle.worker.jar=/var/lib/jenkins/.gradle/caches/6.6.1/workerMain/gradle-worker.jar, -Dio.netty.noKeySetOptimization=true, -Dio.netty.noUnsafe=true, -Dio.netty.recycler.maxCapacityPerThread=0, -Djava.awt.headless=true, -Djava.locale.providers=SPI,COMPAT, -Djna.nosys=true, -Dorg.gradle.native=false, -Dtests.artifact=watcher, -Dtests.gradle=true, -Dtests.logger.level=WARN, -Dtests.security.manager=true, -Dtests.seed=F1C7AE174070F0E4, -Dtests.task=:x-pack:plugin:watcher:internalClusterTest, --illegal-access=warn, -XX:+HeapDumpOnOutOfMemoryError, -esa, -XX:HeapDumpPath=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/heapdump, -Xms512m, -Xmx512m, -Dfile.encoding=UTF-8, -Djava.io.tmpdir=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/testrun/internalClusterTest/temp, -Duser.country=US, -Duser.language=en, -Duser.variant, -ea]

[2020-11-16T14:23:38,947][WARN ][o.e.n.Node ] [testEmailFields] version [8.0.0-SNAPSHOT] is a pre-release version of Elasticsearch and is not suitable for production

[2020-11-16T14:23:38,947][INFO ][o.e.x.w.t.TimeWarpedWatcher] [testEmailFields] using time warped watchers plugin

[2020-11-16T14:23:38,948][INFO ][o.e.p.PluginsService ] [testEmailFields] no modules loaded

[2020-11-16T14:23:38,948][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.analysis.common.CommonAnalysisPlugin]

[2020-11-16T14:23:38,948][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.node.NodeMocksPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockMustacheScriptEngine$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockScriptService$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$AssertActionNamePlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$TestSeedPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.MockHttpTransport$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.TestGeoShapeFieldMapperPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.store.MockFSIndexStore$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.transport.nio.MockNioTransportPlugin]

[2020-11-16T14:23:38,950][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.datastreams.DataStreamsPlugin]

[2020-11-16T14:23:38,950][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.ilm.IndexLifecycle]

[2020-11-16T14:23:38,950][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.watcher.test.TimeWarpedWatcher]

[2020-11-16T14:23:38,959][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] using [3] data paths, mounts [[/ (/dev/sda2)]], net usable_space [232.4gb], net total_space [349.7gb], types [xfs]

[2020-11-16T14:23:38,963][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] heap size [512mb], compressed ordinary object pointers [true]

[2020-11-16T14:23:38,969][INFO ][o.e.n.Node ] [testEmailFields] node name [node_s0], node ID [5WY1CYTqRbejYfTqT5LK1w], cluster name [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster], roles [master, remote_cluster_client, data_hot, data_content, ingest, data_warm, data, data_cold]

[2020-11-16T14:23:39,052][INFO ][o.e.d.DiscoveryModule ] [testEmailFields] using discovery type [zen] and seed hosts providers [settings, file]

[2020-11-16T14:23:39,133][INFO ][o.e.n.Node ] [testEmailFields] initialized

[2020-11-16T14:23:39,136][INFO ][o.e.n.Node ] [testEmailFields] version[8.0.0-SNAPSHOT], pid[246899], build[unknown/unknown/c2864e38fb097bd0e8095aaa716263356a0c3f8a/2020-11-16T05:45:22.776947Z], OS[Linux/4.18.0-193.28.1.el8_2.x86_64/amd64], JVM[Oracle Corporation/OpenJDK 64-Bit Server VM/11.0.2/11.0.2+7]

[2020-11-16T14:23:39,136][INFO ][o.e.n.Node ] [testEmailFields] JVM home [/var/lib/jenkins/.java/openjdk-11.0.2-linux]

[2020-11-16T14:23:39,137][DEPRECATION][o.e.d.n.Node ] [testEmailFields] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="no-jdk" message="no-jdk distributions that do not bundle a JDK are deprecated and will be removed in a future release"

[2020-11-16T14:23:39,137][INFO ][o.e.n.Node ] [testEmailFields] JVM arguments [-Dfile.encoding=UTF8, -Des.scripting.update.ctx_in_params=false, -Des.search.rewrite_sort=true, -Des.set.netty.runtime.available.processors=false, -Des.transport.cname_in_publish_address=true, -Dgradle.dist.lib=/var/lib/jenkins/.gradle/wrapper/dists/gradle-6.6.1-all/ejrtlte9hlw8v6ii20a9584rs/gradle-6.6.1/lib, -Dgradle.user.home=/var/lib/jenkins/.gradle, -Dgradle.worker.jar=/var/lib/jenkins/.gradle/caches/6.6.1/workerMain/gradle-worker.jar, -Dio.netty.noKeySetOptimization=true, -Dio.netty.noUnsafe=true, -Dio.netty.recycler.maxCapacityPerThread=0, -Djava.awt.headless=true, -Djava.locale.providers=SPI,COMPAT, -Djna.nosys=true, -Dorg.gradle.native=false, -Dtests.artifact=watcher, -Dtests.gradle=true, -Dtests.logger.level=WARN, -Dtests.security.manager=true, -Dtests.seed=F1C7AE174070F0E4, -Dtests.task=:x-pack:plugin:watcher:internalClusterTest, --illegal-access=warn, -XX:+HeapDumpOnOutOfMemoryError, -esa, -XX:HeapDumpPath=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/heapdump, -Xms512m, -Xmx512m, -Dfile.encoding=UTF-8, -Djava.io.tmpdir=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/testrun/internalClusterTest/temp, -Duser.country=US, -Duser.language=en, -Duser.variant, -ea]

[2020-11-16T14:23:39,138][WARN ][o.e.n.Node ] [testEmailFields] version [8.0.0-SNAPSHOT] is a pre-release version of Elasticsearch and is not suitable for production

[2020-11-16T14:23:39,138][INFO ][o.e.x.w.t.TimeWarpedWatcher] [testEmailFields] using time warped watchers plugin

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] no modules loaded

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.analysis.common.CommonAnalysisPlugin]

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.node.NodeMocksPlugin]

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockMustacheScriptEngine$TestPlugin]

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockScriptService$TestPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$AssertActionNamePlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$TestSeedPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.MockHttpTransport$TestPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.TestGeoShapeFieldMapperPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.store.MockFSIndexStore$TestPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.transport.nio.MockNioTransportPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.datastreams.DataStreamsPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.ilm.IndexLifecycle]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.watcher.test.TimeWarpedWatcher]

[2020-11-16T14:23:39,150][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] using [3] data paths, mounts [[/ (/dev/sda2)]], net usable_space [232.4gb], net total_space [349.7gb], types [xfs]

[2020-11-16T14:23:39,150][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] heap size [512mb], compressed ordinary object pointers [true]

[2020-11-16T14:23:39,156][INFO ][o.e.n.Node ] [testEmailFields] node name [node_s1], node ID [VADujvzoSQyhHelIQIPhGA], cluster name [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster], roles [master, remote_cluster_client, data_hot, data_content, ingest, data_warm, data, data_cold]

[2020-11-16T14:23:39,222][INFO ][o.e.d.DiscoveryModule ] [testEmailFields] using discovery type [zen] and seed hosts providers [settings, file]

[2020-11-16T14:23:39,299][INFO ][o.e.n.Node ] [testEmailFields] initialized

[2020-11-16T14:23:39,302][INFO ][o.e.n.Node ] [testEmailFields] version[8.0.0-SNAPSHOT], pid[246899], build[unknown/unknown/c2864e38fb097bd0e8095aaa716263356a0c3f8a/2020-11-16T05:45:22.776947Z], OS[Linux/4.18.0-193.28.1.el8_2.x86_64/amd64], JVM[Oracle Corporation/OpenJDK 64-Bit Server VM/11.0.2/11.0.2+7]

[2020-11-16T14:23:39,303][INFO ][o.e.n.Node ] [testEmailFields] JVM home [/var/lib/jenkins/.java/openjdk-11.0.2-linux]

[2020-11-16T14:23:39,303][DEPRECATION][o.e.d.n.Node ] [testEmailFields] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="no-jdk" message="no-jdk distributions that do not bundle a JDK are deprecated and will be removed in a future release"

[2020-11-16T14:23:39,304][INFO ][o.e.n.Node ] [testEmailFields] JVM arguments [-Dfile.encoding=UTF8, -Des.scripting.update.ctx_in_params=false, -Des.search.rewrite_sort=true, -Des.set.netty.runtime.available.processors=false, -Des.transport.cname_in_publish_address=true, -Dgradle.dist.lib=/var/lib/jenkins/.gradle/wrapper/dists/gradle-6.6.1-all/ejrtlte9hlw8v6ii20a9584rs/gradle-6.6.1/lib, -Dgradle.user.home=/var/lib/jenkins/.gradle, -Dgradle.worker.jar=/var/lib/jenkins/.gradle/caches/6.6.1/workerMain/gradle-worker.jar, -Dio.netty.noKeySetOptimization=true, -Dio.netty.noUnsafe=true, -Dio.netty.recycler.maxCapacityPerThread=0, -Djava.awt.headless=true, -Djava.locale.providers=SPI,COMPAT, -Djna.nosys=true, -Dorg.gradle.native=false, -Dtests.artifact=watcher, -Dtests.gradle=true, -Dtests.logger.level=WARN, -Dtests.security.manager=true, -Dtests.seed=F1C7AE174070F0E4, -Dtests.task=:x-pack:plugin:watcher:internalClusterTest, --illegal-access=warn, -XX:+HeapDumpOnOutOfMemoryError, -esa, -XX:HeapDumpPath=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/heapdump, -Xms512m, -Xmx512m, -Dfile.encoding=UTF-8, -Djava.io.tmpdir=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/testrun/internalClusterTest/temp, -Duser.country=US, -Duser.language=en, -Duser.variant, -ea]

[2020-11-16T14:23:39,304][WARN ][o.e.n.Node ] [testEmailFields] version [8.0.0-SNAPSHOT] is a pre-release version of Elasticsearch and is not suitable for production

[2020-11-16T14:23:39,304][INFO ][o.e.x.w.t.TimeWarpedWatcher] [testEmailFields] using time warped watchers plugin

[2020-11-16T14:23:39,305][INFO ][o.e.p.PluginsService ] [testEmailFields] no modules loaded

[2020-11-16T14:23:39,305][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.analysis.common.CommonAnalysisPlugin]

[2020-11-16T14:23:39,305][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.node.NodeMocksPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockMustacheScriptEngine$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockScriptService$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$AssertActionNamePlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$TestSeedPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.MockHttpTransport$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.TestGeoShapeFieldMapperPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.store.MockFSIndexStore$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.transport.nio.MockNioTransportPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.datastreams.DataStreamsPlugin]

[2020-11-16T14:23:39,307][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.ilm.IndexLifecycle]

[2020-11-16T14:23:39,307][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.watcher.test.TimeWarpedWatcher]

[2020-11-16T14:23:39,316][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] using [3] data paths, mounts [[/ (/dev/sda2)]], net usable_space [232.4gb], net total_space [349.7gb], types [xfs]

[2020-11-16T14:23:39,325][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] heap size [512mb], compressed ordinary object pointers [true]

[2020-11-16T14:23:39,331][INFO ][o.e.n.Node ] [testEmailFields] node name [node_s2], node ID [ssLW2ClgQ1eVaIok0ITx-A], cluster name [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster], roles [master, remote_cluster_client, data_hot, data_content, ingest, data_warm, data, data_cold]

[2020-11-16T14:23:39,389][INFO ][o.e.d.DiscoveryModule ] [testEmailFields] using discovery type [zen] and seed hosts providers [settings, file]

[2020-11-16T14:23:39,451][INFO ][o.e.n.Node ] [testEmailFields] initialized

[2020-11-16T14:23:39,467][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#2]]] starting ...

[2020-11-16T14:23:39,473][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#3]]] starting ...

[2020-11-16T14:23:39,481][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#1]]] starting ...

[2020-11-16T14:23:39,486][INFO ][o.e.t.TransportService ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#2]]] publish_address {127.0.0.1:44717}, bound_addresses {[::1]:43339}, {127.0.0.1:44717}

[2020-11-16T14:23:39,505][INFO ][o.e.t.TransportService ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#1]]] publish_address {127.0.0.1:37883}, bound_addresses {[::1]:37255}, {127.0.0.1:37883}

[2020-11-16T14:23:39,544][INFO ][o.e.t.TransportService ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#3]]] publish_address {127.0.0.1:38021}, bound_addresses {[::1]:39523}, {127.0.0.1:38021}

[2020-11-16T14:23:39,714][INFO ][o.e.c.c.Coordinator ] [node_s0] setting initial configuration to VotingConfiguration{5WY1CYTqRbejYfTqT5LK1w,{bootstrap-placeholder}-node_s1,ssLW2ClgQ1eVaIok0ITx-A}

[2020-11-16T14:23:39,895][INFO ][o.e.c.s.MasterService ] [node_s0] elected-as-master ([2] nodes joined)[{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true} elect leader, {node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true} elect leader, _BECOME_MASTER_TASK_, _FINISH_ELECTION_], term: 1, version: 1, delta: master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:39,993][INFO ][o.e.c.c.CoordinationState] [node_s0] cluster UUID set to [wU0jGhUqSaS_LV4XG_p1Zg]

[2020-11-16T14:23:40,004][INFO ][o.e.c.c.CoordinationState] [node_s2] cluster UUID set to [wU0jGhUqSaS_LV4XG_p1Zg]

[2020-11-16T14:23:40,113][INFO ][o.e.c.s.ClusterApplierService] [node_s2] master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 1, reason: ApplyCommitRequest{term=1, version=1, sourceNode={node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,115][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#3]]] started

[2020-11-16T14:23:40,122][INFO ][o.e.c.s.ClusterApplierService] [node_s0] master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 1, reason: Publication{term=1, version=1}

[2020-11-16T14:23:40,166][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#1]]] started

[2020-11-16T14:23:40,183][INFO ][o.e.c.s.MasterService ] [node_s0] node-join[{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true} join existing leader], term: 1, version: 2, delta: added {{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,218][INFO ][o.e.c.r.a.DiskThresholdMonitor] [node_s0] skipping monitor as a check is already in progress

[2020-11-16T14:23:40,226][INFO ][o.e.c.s.ClusterApplierService] [node_s2] added {{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 2, reason: ApplyCommitRequest{term=1, version=2, sourceNode={node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,282][INFO ][o.e.c.s.ClusterApplierService] [node_s1] master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true},{node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 2, reason: ApplyCommitRequest{term=1, version=2, sourceNode={node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,284][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#2]]] started

[2020-11-16T14:23:40,285][INFO ][o.e.c.s.ClusterApplierService] [node_s0] added {{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 2, reason: Publication{term=1, version=2}

[2020-11-16T14:23:40,439][INFO ][o.e.g.GatewayService ] [node_s0] recovered [0] indices into cluster_state

[2020-11-16T14:23:40,461][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.slm-history] for index patterns [.slm-history-5*]

[2020-11-16T14:23:40,545][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.triggered_watches] for index patterns [.triggered_watches*]

[2020-11-16T14:23:40,634][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.watches] for index patterns [.watches*]

[2020-11-16T14:23:40,744][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.watch-history-14] for index patterns [.watcher-history-14*]

[2020-11-16T14:23:40,838][INFO ][o.e.x.i.a.TransportPutLifecycleAction] [node_s0] adding index lifecycle policy [watch-history-ilm-policy]

[2020-11-16T14:23:40,921][INFO ][o.e.x.i.a.TransportPutLifecycleAction] [node_s0] adding index lifecycle policy [slm-history-ilm-policy]

[2020-11-16T14:23:41,284][INFO ][o.e.l.LicenseService ] [node_s1] license [2860a10f-36d3-4bfd-b78f-c792d06c0567] mode [trial] - valid

[2020-11-16T14:23:41,288][INFO ][o.e.l.LicenseService ] [node_s2] license [2860a10f-36d3-4bfd-b78f-c792d06c0567] mode [trial] - valid

[2020-11-16T14:23:41,331][INFO ][o.e.l.LicenseService ] [node_s0] license [2860a10f-36d3-4bfd-b78f-c792d06c0567] mode [trial] - valid

[2020-11-16T14:23:41,351][WARN ][o.e.c.m.MetadataIndexTemplateService] [node_s0] legacy template [random_index_template] has index patterns [*] matching patterns from existing composable templates [.triggered_watches,.watch-history-14,.slm-history,.watches] with patterns (.triggered_watches => [.triggered_watches*],.watch-history-14 => [.watcher-history-14*],.slm-history => [.slm-history-5*],.watches => [.watches*]); this template [random_index_template] may be ignored in favor of a composable template at index creation time

[2020-11-16T14:23:41,353][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding template [random_index_template] for index patterns [*]

[2020-11-16T14:23:41,432][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: all set up test

[2020-11-16T14:23:41,437][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: freezing time on nodes

[2020-11-16T14:23:41,440][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STARTED], Tuple [v1=node_s2 (0), v2=STARTED], Tuple [v1=node_s1 (0), v2=STARTED]]

[2020-11-16T14:23:41,511][INFO ][o.e.x.w.WatcherService ] [node_s1] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:41,511][INFO ][o.e.x.w.WatcherService ] [node_s2] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:41,512][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s1] watcher has stopped

[2020-11-16T14:23:41,520][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s2] watcher has stopped

[2020-11-16T14:23:41,521][INFO ][o.e.x.w.WatcherService ] [node_s0] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:41,521][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s0] watcher has stopped

[2020-11-16T14:23:41,530][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STOPPED], Tuple [v1=node_s2 (0), v2=STOPPED], Tuple [v1=node_s1 (0), v2=STOPPED]]

[2020-11-16T14:23:41,540][DEPRECATION][o.e.d.c.m.MetadataCreateIndexService] [node_s0] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="index_name_starts_with_dot" message="index name [.watches] starts with a dot '.', in the next major version, index names starting with a dot are reserved for hidden indices and system indices"

[2020-11-16T14:23:41,550][INFO ][o.e.c.m.MetadataCreateIndexService] [node_s0] [.watches] creating index, cause [api], templates [.watches], shards [1]/[0]

[2020-11-16T14:23:41,555][INFO ][o.e.c.r.a.AllocationService] [node_s0] updating number_of_replicas to [1] for indices [.watches]

[2020-11-16T14:23:41,868][DEPRECATION][o.e.d.c.m.MetadataCreateIndexService] [node_s0] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="index_name_starts_with_dot" message="index name [.triggered_watches] starts with a dot '.', in the next major version, index names starting with a dot are reserved for hidden indices and system indices"

[2020-11-16T14:23:41,887][INFO ][o.e.c.m.MetadataCreateIndexService] [node_s0] [.triggered_watches] creating index, cause [api], templates [.triggered_watches], shards [1]/[1]

[2020-11-16T14:23:42,285][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STOPPED], Tuple [v1=node_s2, v2=STOPPED], Tuple [v1=node_s1, v2=STOPPED]]

[2020-11-16T14:23:42,534][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTING], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,544][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTING], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,553][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTING], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,568][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTED], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,608][INFO ][o.e.x.w.WatcherService ] [node_s2] reloading watcher, reason [new local watcher shard allocation ids], cancelled [0] queued tasks

[2020-11-16T14:23:42,619][INFO ][o.e.x.w.WatcherService ] [node_s0] reloading watcher, reason [new local watcher shard allocation ids], cancelled [0] queued tasks

[2020-11-16T14:23:42,636][INFO ][o.e.c.r.a.AllocationService] [node_s0] current.health="GREEN" message="Cluster health status changed from [YELLOW] to [GREEN] (reason: [shards started [[.triggered_watches][0]]])." previous.health="YELLOW" reason="shards started [[.triggered_watches][0]]"

[2020-11-16T14:23:42,803][INFO ][o.s.s.s.SMTPServer ] [testEmailFields] SMTP server *:39213 stopping

[2020-11-16T14:23:42,804][INFO ][o.s.s.s.ServerThread ] [[org.subethamail.smtp.server.ServerThread *:39213]{smtpServerLocalSocketAddress=*:39213}] SMTP server *:39213 stopped

[2020-11-16T14:23:42,805][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [#testEmailFields]: clearing watcher state

[2020-11-16T14:23:42,808][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STARTED], Tuple [v1=node_s2 (0), v2=STARTED], Tuple [v1=node_s1 (0), v2=STARTED]]

[2020-11-16T14:23:42,889][INFO ][o.e.x.w.WatcherService ] [node_s2] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:42,889][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s2] watcher has stopped

[2020-11-16T14:23:42,895][INFO ][o.e.x.w.WatcherService ] [node_s1] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:42,895][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s1] watcher has stopped

[2020-11-16T14:23:42,905][INFO ][o.e.x.w.WatcherService ] [node_s0] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:42,905][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s0] watcher has stopped

[2020-11-16T14:23:42,910][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STOPPED], Tuple [v1=node_s2 (0), v2=STOPPED], Tuple [v1=node_s1 (0), v2=STOPPED]]

[2020-11-16T14:23:42,919][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: cleaning up after test

[2020-11-16T14:23:42,919][INFO ][o.e.t.InternalTestCluster] [testEmailFields] Clearing active scheme time frozen, expected healing time 0s

[2020-11-16T14:23:43,002][INFO ][o.e.c.m.MetadataDeleteIndexService] [node_s0] [.watches/DgNTavZhQqSWESdZgB3WmA] deleting index

[2020-11-16T14:23:43,002][INFO ][o.e.c.m.MetadataDeleteIndexService] [node_s0] [.triggered_watches/NrMycBW_QZGlIMxOa4-pSw] deleting index

[2020-11-16T14:23:43,188][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] removing template [random_index_template]

[2020-11-16T14:23:43,252][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: cleaned up after test

[2020-11-16T14:23:43,252][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] after test

REPRODUCE WITH: ./gradlew ':x-pack:plugin:watcher:internalClusterTest' --tests "org.elasticsearch.xpack.watcher.history.HistoryTemplateEmailMappingsTests.testEmailFields" -Dtests.seed=F1C7AE174070F0E4 -Dtests.security.manager=true -Dtests.locale=pt-BR -Dtests.timezone=Asia/Brunei -Druntime.java=11

```

| 1.0 | [CI] HistoryTemplateEmailMappingsTests.testEmailFields fails - **Build scan**: https://gradle-enterprise.elastic.co/s/uwm5wrx2u7vrg

**Repro line**:

```

./gradlew ':x-pack:plugin:watcher:internalClusterTest' --tests "org.elasticsearch.xpack.watcher.history.HistoryTemplateEmailMappingsTests.testEmailFields" -Dtests.seed=2E9825D4080F72BF -Dtests.security.manager=true -Dtests.locale=hr-HR -Dtests.timezone=SystemV/EST5EDT -Druntime.java=11

```

**Reproduces locally?**: No

**Applicable branches**: `master`

**Failure history**:

https://build-stats.elastic.co/app/kibana#/discover?_g=(refreshInterval:(pause:!t,value:0),time:(from:now-7d,mode:quick,to:now))&_a=(columns:!(_source),index:b646ed00-7efc-11e8-bf69-63c8ef516157,interval:auto,query:(language:lucene,query:testEmailFields),sort:!(process.time-start,desc))

**Failure excerpt**:

```

[2020-11-16T14:23:38,919][INFO ][o.s.s.s.SMTPServer ] [testEmailFields] SMTP server *:0 starting

[2020-11-16T14:23:38,930][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] before test

[2020-11-16T14:23:38,932][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: setting up test

[2020-11-16T14:23:38,932][INFO ][o.s.s.s.ServerThread ] [[org.subethamail.smtp.server.ServerThread *:39213]{smtpServerLocalSocketAddress=*:39213}] SMTP server *:39213 started

[2020-11-16T14:23:38,933][INFO ][o.e.t.InternalTestCluster] [testEmailFields] Setup InternalTestCluster [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster] with seed [98A0BE4EB87ED660] using [0] dedicated masters, [3] (data) nodes and [0] coord only nodes (master nodes are [auto-managed])

[2020-11-16T14:23:38,945][INFO ][o.e.n.Node ] [testEmailFields] version[8.0.0-SNAPSHOT], pid[246899], build[unknown/unknown/c2864e38fb097bd0e8095aaa716263356a0c3f8a/2020-11-16T05:45:22.776947Z], OS[Linux/4.18.0-193.28.1.el8_2.x86_64/amd64], JVM[Oracle Corporation/OpenJDK 64-Bit Server VM/11.0.2/11.0.2+7]

[2020-11-16T14:23:38,945][INFO ][o.e.n.Node ] [testEmailFields] JVM home [/var/lib/jenkins/.java/openjdk-11.0.2-linux]

[2020-11-16T14:23:38,945][DEPRECATION][o.e.d.n.Node ] [testEmailFields] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="no-jdk" message="no-jdk distributions that do not bundle a JDK are deprecated and will be removed in a future release"

[2020-11-16T14:23:38,946][INFO ][o.e.n.Node ] [testEmailFields] JVM arguments [-Dfile.encoding=UTF8, -Des.scripting.update.ctx_in_params=false, -Des.search.rewrite_sort=true, -Des.set.netty.runtime.available.processors=false, -Des.transport.cname_in_publish_address=true, -Dgradle.dist.lib=/var/lib/jenkins/.gradle/wrapper/dists/gradle-6.6.1-all/ejrtlte9hlw8v6ii20a9584rs/gradle-6.6.1/lib, -Dgradle.user.home=/var/lib/jenkins/.gradle, -Dgradle.worker.jar=/var/lib/jenkins/.gradle/caches/6.6.1/workerMain/gradle-worker.jar, -Dio.netty.noKeySetOptimization=true, -Dio.netty.noUnsafe=true, -Dio.netty.recycler.maxCapacityPerThread=0, -Djava.awt.headless=true, -Djava.locale.providers=SPI,COMPAT, -Djna.nosys=true, -Dorg.gradle.native=false, -Dtests.artifact=watcher, -Dtests.gradle=true, -Dtests.logger.level=WARN, -Dtests.security.manager=true, -Dtests.seed=F1C7AE174070F0E4, -Dtests.task=:x-pack:plugin:watcher:internalClusterTest, --illegal-access=warn, -XX:+HeapDumpOnOutOfMemoryError, -esa, -XX:HeapDumpPath=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/heapdump, -Xms512m, -Xmx512m, -Dfile.encoding=UTF-8, -Djava.io.tmpdir=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/testrun/internalClusterTest/temp, -Duser.country=US, -Duser.language=en, -Duser.variant, -ea]

[2020-11-16T14:23:38,947][WARN ][o.e.n.Node ] [testEmailFields] version [8.0.0-SNAPSHOT] is a pre-release version of Elasticsearch and is not suitable for production

[2020-11-16T14:23:38,947][INFO ][o.e.x.w.t.TimeWarpedWatcher] [testEmailFields] using time warped watchers plugin

[2020-11-16T14:23:38,948][INFO ][o.e.p.PluginsService ] [testEmailFields] no modules loaded

[2020-11-16T14:23:38,948][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.analysis.common.CommonAnalysisPlugin]

[2020-11-16T14:23:38,948][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.node.NodeMocksPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockMustacheScriptEngine$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockScriptService$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$AssertActionNamePlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$TestSeedPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.MockHttpTransport$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.TestGeoShapeFieldMapperPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.store.MockFSIndexStore$TestPlugin]

[2020-11-16T14:23:38,949][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.transport.nio.MockNioTransportPlugin]

[2020-11-16T14:23:38,950][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.datastreams.DataStreamsPlugin]

[2020-11-16T14:23:38,950][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.ilm.IndexLifecycle]

[2020-11-16T14:23:38,950][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.watcher.test.TimeWarpedWatcher]

[2020-11-16T14:23:38,959][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] using [3] data paths, mounts [[/ (/dev/sda2)]], net usable_space [232.4gb], net total_space [349.7gb], types [xfs]

[2020-11-16T14:23:38,963][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] heap size [512mb], compressed ordinary object pointers [true]

[2020-11-16T14:23:38,969][INFO ][o.e.n.Node ] [testEmailFields] node name [node_s0], node ID [5WY1CYTqRbejYfTqT5LK1w], cluster name [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster], roles [master, remote_cluster_client, data_hot, data_content, ingest, data_warm, data, data_cold]

[2020-11-16T14:23:39,052][INFO ][o.e.d.DiscoveryModule ] [testEmailFields] using discovery type [zen] and seed hosts providers [settings, file]

[2020-11-16T14:23:39,133][INFO ][o.e.n.Node ] [testEmailFields] initialized

[2020-11-16T14:23:39,136][INFO ][o.e.n.Node ] [testEmailFields] version[8.0.0-SNAPSHOT], pid[246899], build[unknown/unknown/c2864e38fb097bd0e8095aaa716263356a0c3f8a/2020-11-16T05:45:22.776947Z], OS[Linux/4.18.0-193.28.1.el8_2.x86_64/amd64], JVM[Oracle Corporation/OpenJDK 64-Bit Server VM/11.0.2/11.0.2+7]

[2020-11-16T14:23:39,136][INFO ][o.e.n.Node ] [testEmailFields] JVM home [/var/lib/jenkins/.java/openjdk-11.0.2-linux]

[2020-11-16T14:23:39,137][DEPRECATION][o.e.d.n.Node ] [testEmailFields] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="no-jdk" message="no-jdk distributions that do not bundle a JDK are deprecated and will be removed in a future release"

[2020-11-16T14:23:39,137][INFO ][o.e.n.Node ] [testEmailFields] JVM arguments [-Dfile.encoding=UTF8, -Des.scripting.update.ctx_in_params=false, -Des.search.rewrite_sort=true, -Des.set.netty.runtime.available.processors=false, -Des.transport.cname_in_publish_address=true, -Dgradle.dist.lib=/var/lib/jenkins/.gradle/wrapper/dists/gradle-6.6.1-all/ejrtlte9hlw8v6ii20a9584rs/gradle-6.6.1/lib, -Dgradle.user.home=/var/lib/jenkins/.gradle, -Dgradle.worker.jar=/var/lib/jenkins/.gradle/caches/6.6.1/workerMain/gradle-worker.jar, -Dio.netty.noKeySetOptimization=true, -Dio.netty.noUnsafe=true, -Dio.netty.recycler.maxCapacityPerThread=0, -Djava.awt.headless=true, -Djava.locale.providers=SPI,COMPAT, -Djna.nosys=true, -Dorg.gradle.native=false, -Dtests.artifact=watcher, -Dtests.gradle=true, -Dtests.logger.level=WARN, -Dtests.security.manager=true, -Dtests.seed=F1C7AE174070F0E4, -Dtests.task=:x-pack:plugin:watcher:internalClusterTest, --illegal-access=warn, -XX:+HeapDumpOnOutOfMemoryError, -esa, -XX:HeapDumpPath=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/heapdump, -Xms512m, -Xmx512m, -Dfile.encoding=UTF-8, -Djava.io.tmpdir=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/testrun/internalClusterTest/temp, -Duser.country=US, -Duser.language=en, -Duser.variant, -ea]

[2020-11-16T14:23:39,138][WARN ][o.e.n.Node ] [testEmailFields] version [8.0.0-SNAPSHOT] is a pre-release version of Elasticsearch and is not suitable for production

[2020-11-16T14:23:39,138][INFO ][o.e.x.w.t.TimeWarpedWatcher] [testEmailFields] using time warped watchers plugin

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] no modules loaded

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.analysis.common.CommonAnalysisPlugin]

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.node.NodeMocksPlugin]

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockMustacheScriptEngine$TestPlugin]

[2020-11-16T14:23:39,139][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockScriptService$TestPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$AssertActionNamePlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$TestSeedPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.MockHttpTransport$TestPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.TestGeoShapeFieldMapperPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.store.MockFSIndexStore$TestPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.transport.nio.MockNioTransportPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.datastreams.DataStreamsPlugin]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.ilm.IndexLifecycle]

[2020-11-16T14:23:39,140][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.watcher.test.TimeWarpedWatcher]

[2020-11-16T14:23:39,150][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] using [3] data paths, mounts [[/ (/dev/sda2)]], net usable_space [232.4gb], net total_space [349.7gb], types [xfs]

[2020-11-16T14:23:39,150][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] heap size [512mb], compressed ordinary object pointers [true]

[2020-11-16T14:23:39,156][INFO ][o.e.n.Node ] [testEmailFields] node name [node_s1], node ID [VADujvzoSQyhHelIQIPhGA], cluster name [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster], roles [master, remote_cluster_client, data_hot, data_content, ingest, data_warm, data, data_cold]

[2020-11-16T14:23:39,222][INFO ][o.e.d.DiscoveryModule ] [testEmailFields] using discovery type [zen] and seed hosts providers [settings, file]

[2020-11-16T14:23:39,299][INFO ][o.e.n.Node ] [testEmailFields] initialized

[2020-11-16T14:23:39,302][INFO ][o.e.n.Node ] [testEmailFields] version[8.0.0-SNAPSHOT], pid[246899], build[unknown/unknown/c2864e38fb097bd0e8095aaa716263356a0c3f8a/2020-11-16T05:45:22.776947Z], OS[Linux/4.18.0-193.28.1.el8_2.x86_64/amd64], JVM[Oracle Corporation/OpenJDK 64-Bit Server VM/11.0.2/11.0.2+7]

[2020-11-16T14:23:39,303][INFO ][o.e.n.Node ] [testEmailFields] JVM home [/var/lib/jenkins/.java/openjdk-11.0.2-linux]

[2020-11-16T14:23:39,303][DEPRECATION][o.e.d.n.Node ] [testEmailFields] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="no-jdk" message="no-jdk distributions that do not bundle a JDK are deprecated and will be removed in a future release"

[2020-11-16T14:23:39,304][INFO ][o.e.n.Node ] [testEmailFields] JVM arguments [-Dfile.encoding=UTF8, -Des.scripting.update.ctx_in_params=false, -Des.search.rewrite_sort=true, -Des.set.netty.runtime.available.processors=false, -Des.transport.cname_in_publish_address=true, -Dgradle.dist.lib=/var/lib/jenkins/.gradle/wrapper/dists/gradle-6.6.1-all/ejrtlte9hlw8v6ii20a9584rs/gradle-6.6.1/lib, -Dgradle.user.home=/var/lib/jenkins/.gradle, -Dgradle.worker.jar=/var/lib/jenkins/.gradle/caches/6.6.1/workerMain/gradle-worker.jar, -Dio.netty.noKeySetOptimization=true, -Dio.netty.noUnsafe=true, -Dio.netty.recycler.maxCapacityPerThread=0, -Djava.awt.headless=true, -Djava.locale.providers=SPI,COMPAT, -Djna.nosys=true, -Dorg.gradle.native=false, -Dtests.artifact=watcher, -Dtests.gradle=true, -Dtests.logger.level=WARN, -Dtests.security.manager=true, -Dtests.seed=F1C7AE174070F0E4, -Dtests.task=:x-pack:plugin:watcher:internalClusterTest, --illegal-access=warn, -XX:+HeapDumpOnOutOfMemoryError, -esa, -XX:HeapDumpPath=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/heapdump, -Xms512m, -Xmx512m, -Dfile.encoding=UTF-8, -Djava.io.tmpdir=/var/lib/jenkins/workspace/elastic+elasticsearch+master+multijob-unix-compatibility/os/centos-8&&immutable/x-pack/plugin/watcher/build/testrun/internalClusterTest/temp, -Duser.country=US, -Duser.language=en, -Duser.variant, -ea]

[2020-11-16T14:23:39,304][WARN ][o.e.n.Node ] [testEmailFields] version [8.0.0-SNAPSHOT] is a pre-release version of Elasticsearch and is not suitable for production

[2020-11-16T14:23:39,304][INFO ][o.e.x.w.t.TimeWarpedWatcher] [testEmailFields] using time warped watchers plugin

[2020-11-16T14:23:39,305][INFO ][o.e.p.PluginsService ] [testEmailFields] no modules loaded

[2020-11-16T14:23:39,305][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.analysis.common.CommonAnalysisPlugin]

[2020-11-16T14:23:39,305][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.node.NodeMocksPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockMustacheScriptEngine$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.script.MockScriptService$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$AssertActionNamePlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.ESIntegTestCase$TestSeedPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.MockHttpTransport$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.TestGeoShapeFieldMapperPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.test.store.MockFSIndexStore$TestPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.transport.nio.MockNioTransportPlugin]

[2020-11-16T14:23:39,306][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.datastreams.DataStreamsPlugin]

[2020-11-16T14:23:39,307][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.ilm.IndexLifecycle]

[2020-11-16T14:23:39,307][INFO ][o.e.p.PluginsService ] [testEmailFields] loaded plugin [org.elasticsearch.xpack.watcher.test.TimeWarpedWatcher]

[2020-11-16T14:23:39,316][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] using [3] data paths, mounts [[/ (/dev/sda2)]], net usable_space [232.4gb], net total_space [349.7gb], types [xfs]

[2020-11-16T14:23:39,325][INFO ][o.e.e.NodeEnvironment ] [testEmailFields] heap size [512mb], compressed ordinary object pointers [true]

[2020-11-16T14:23:39,331][INFO ][o.e.n.Node ] [testEmailFields] node name [node_s2], node ID [ssLW2ClgQ1eVaIok0ITx-A], cluster name [SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster], roles [master, remote_cluster_client, data_hot, data_content, ingest, data_warm, data, data_cold]

[2020-11-16T14:23:39,389][INFO ][o.e.d.DiscoveryModule ] [testEmailFields] using discovery type [zen] and seed hosts providers [settings, file]

[2020-11-16T14:23:39,451][INFO ][o.e.n.Node ] [testEmailFields] initialized

[2020-11-16T14:23:39,467][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#2]]] starting ...

[2020-11-16T14:23:39,473][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#3]]] starting ...

[2020-11-16T14:23:39,481][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#1]]] starting ...

[2020-11-16T14:23:39,486][INFO ][o.e.t.TransportService ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#2]]] publish_address {127.0.0.1:44717}, bound_addresses {[::1]:43339}, {127.0.0.1:44717}

[2020-11-16T14:23:39,505][INFO ][o.e.t.TransportService ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#1]]] publish_address {127.0.0.1:37883}, bound_addresses {[::1]:37255}, {127.0.0.1:37883}

[2020-11-16T14:23:39,544][INFO ][o.e.t.TransportService ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#3]]] publish_address {127.0.0.1:38021}, bound_addresses {[::1]:39523}, {127.0.0.1:38021}

[2020-11-16T14:23:39,714][INFO ][o.e.c.c.Coordinator ] [node_s0] setting initial configuration to VotingConfiguration{5WY1CYTqRbejYfTqT5LK1w,{bootstrap-placeholder}-node_s1,ssLW2ClgQ1eVaIok0ITx-A}

[2020-11-16T14:23:39,895][INFO ][o.e.c.s.MasterService ] [node_s0] elected-as-master ([2] nodes joined)[{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true} elect leader, {node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true} elect leader, _BECOME_MASTER_TASK_, _FINISH_ELECTION_], term: 1, version: 1, delta: master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:39,993][INFO ][o.e.c.c.CoordinationState] [node_s0] cluster UUID set to [wU0jGhUqSaS_LV4XG_p1Zg]

[2020-11-16T14:23:40,004][INFO ][o.e.c.c.CoordinationState] [node_s2] cluster UUID set to [wU0jGhUqSaS_LV4XG_p1Zg]

[2020-11-16T14:23:40,113][INFO ][o.e.c.s.ClusterApplierService] [node_s2] master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 1, reason: ApplyCommitRequest{term=1, version=1, sourceNode={node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,115][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#3]]] started

[2020-11-16T14:23:40,122][INFO ][o.e.c.s.ClusterApplierService] [node_s0] master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 1, reason: Publication{term=1, version=1}

[2020-11-16T14:23:40,166][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#1]]] started

[2020-11-16T14:23:40,183][INFO ][o.e.c.s.MasterService ] [node_s0] node-join[{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true} join existing leader], term: 1, version: 2, delta: added {{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,218][INFO ][o.e.c.r.a.DiskThresholdMonitor] [node_s0] skipping monitor as a check is already in progress

[2020-11-16T14:23:40,226][INFO ][o.e.c.s.ClusterApplierService] [node_s2] added {{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 2, reason: ApplyCommitRequest{term=1, version=2, sourceNode={node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,282][INFO ][o.e.c.s.ClusterApplierService] [node_s1] master node changed {previous [], current [{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}]}, added {{node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true},{node_s2}{ssLW2ClgQ1eVaIok0ITx-A}{Pd7_nYW2Roq0DxCQdyLOyw}{127.0.0.1}{127.0.0.1:38021}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 2, reason: ApplyCommitRequest{term=1, version=2, sourceNode={node_s0}{5WY1CYTqRbejYfTqT5LK1w}{dilD5u-TTAODUYWqiqEEfQ}{127.0.0.1}{127.0.0.1:37883}{cdhimrsw}{xpack.installed=true}}

[2020-11-16T14:23:40,284][INFO ][o.e.n.Node ] [[test_SUITE-TEST_WORKER_VM=[826]-CLUSTER_SEED=[-7448744538358753696]-HASH=[226EBECF37E]-cluster[T#2]]] started

[2020-11-16T14:23:40,285][INFO ][o.e.c.s.ClusterApplierService] [node_s0] added {{node_s1}{VADujvzoSQyhHelIQIPhGA}{p5u_mAK3RpG62yrbtlzsbw}{127.0.0.1}{127.0.0.1:44717}{cdhimrsw}{xpack.installed=true}}, term: 1, version: 2, reason: Publication{term=1, version=2}

[2020-11-16T14:23:40,439][INFO ][o.e.g.GatewayService ] [node_s0] recovered [0] indices into cluster_state

[2020-11-16T14:23:40,461][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.slm-history] for index patterns [.slm-history-5*]

[2020-11-16T14:23:40,545][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.triggered_watches] for index patterns [.triggered_watches*]

[2020-11-16T14:23:40,634][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.watches] for index patterns [.watches*]

[2020-11-16T14:23:40,744][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding index template [.watch-history-14] for index patterns [.watcher-history-14*]

[2020-11-16T14:23:40,838][INFO ][o.e.x.i.a.TransportPutLifecycleAction] [node_s0] adding index lifecycle policy [watch-history-ilm-policy]

[2020-11-16T14:23:40,921][INFO ][o.e.x.i.a.TransportPutLifecycleAction] [node_s0] adding index lifecycle policy [slm-history-ilm-policy]

[2020-11-16T14:23:41,284][INFO ][o.e.l.LicenseService ] [node_s1] license [2860a10f-36d3-4bfd-b78f-c792d06c0567] mode [trial] - valid

[2020-11-16T14:23:41,288][INFO ][o.e.l.LicenseService ] [node_s2] license [2860a10f-36d3-4bfd-b78f-c792d06c0567] mode [trial] - valid

[2020-11-16T14:23:41,331][INFO ][o.e.l.LicenseService ] [node_s0] license [2860a10f-36d3-4bfd-b78f-c792d06c0567] mode [trial] - valid

[2020-11-16T14:23:41,351][WARN ][o.e.c.m.MetadataIndexTemplateService] [node_s0] legacy template [random_index_template] has index patterns [*] matching patterns from existing composable templates [.triggered_watches,.watch-history-14,.slm-history,.watches] with patterns (.triggered_watches => [.triggered_watches*],.watch-history-14 => [.watcher-history-14*],.slm-history => [.slm-history-5*],.watches => [.watches*]); this template [random_index_template] may be ignored in favor of a composable template at index creation time

[2020-11-16T14:23:41,353][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] adding template [random_index_template] for index patterns [*]

[2020-11-16T14:23:41,432][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: all set up test

[2020-11-16T14:23:41,437][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: freezing time on nodes

[2020-11-16T14:23:41,440][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STARTED], Tuple [v1=node_s2 (0), v2=STARTED], Tuple [v1=node_s1 (0), v2=STARTED]]

[2020-11-16T14:23:41,511][INFO ][o.e.x.w.WatcherService ] [node_s1] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:41,511][INFO ][o.e.x.w.WatcherService ] [node_s2] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:41,512][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s1] watcher has stopped

[2020-11-16T14:23:41,520][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s2] watcher has stopped

[2020-11-16T14:23:41,521][INFO ][o.e.x.w.WatcherService ] [node_s0] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:41,521][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s0] watcher has stopped

[2020-11-16T14:23:41,530][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STOPPED], Tuple [v1=node_s2 (0), v2=STOPPED], Tuple [v1=node_s1 (0), v2=STOPPED]]

[2020-11-16T14:23:41,540][DEPRECATION][o.e.d.c.m.MetadataCreateIndexService] [node_s0] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="index_name_starts_with_dot" message="index name [.watches] starts with a dot '.', in the next major version, index names starting with a dot are reserved for hidden indices and system indices"

[2020-11-16T14:23:41,550][INFO ][o.e.c.m.MetadataCreateIndexService] [node_s0] [.watches] creating index, cause [api], templates [.watches], shards [1]/[0]

[2020-11-16T14:23:41,555][INFO ][o.e.c.r.a.AllocationService] [node_s0] updating number_of_replicas to [1] for indices [.watches]

[2020-11-16T14:23:41,868][DEPRECATION][o.e.d.c.m.MetadataCreateIndexService] [node_s0] data_stream.dataset="deprecation.elasticsearch" data_stream.namespace="default" data_stream.type="logs" ecs.version="1.6" key="index_name_starts_with_dot" message="index name [.triggered_watches] starts with a dot '.', in the next major version, index names starting with a dot are reserved for hidden indices and system indices"

[2020-11-16T14:23:41,887][INFO ][o.e.c.m.MetadataCreateIndexService] [node_s0] [.triggered_watches] creating index, cause [api], templates [.triggered_watches], shards [1]/[1]

[2020-11-16T14:23:42,285][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STOPPED], Tuple [v1=node_s2, v2=STOPPED], Tuple [v1=node_s1, v2=STOPPED]]

[2020-11-16T14:23:42,534][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTING], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,544][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTING], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,553][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTING], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,568][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to start watcher, current states [Tuple [v1=node_s0, v2=STARTED], Tuple [v1=node_s2, v2=STARTED], Tuple [v1=node_s1, v2=STARTED]]

[2020-11-16T14:23:42,608][INFO ][o.e.x.w.WatcherService ] [node_s2] reloading watcher, reason [new local watcher shard allocation ids], cancelled [0] queued tasks

[2020-11-16T14:23:42,619][INFO ][o.e.x.w.WatcherService ] [node_s0] reloading watcher, reason [new local watcher shard allocation ids], cancelled [0] queued tasks

[2020-11-16T14:23:42,636][INFO ][o.e.c.r.a.AllocationService] [node_s0] current.health="GREEN" message="Cluster health status changed from [YELLOW] to [GREEN] (reason: [shards started [[.triggered_watches][0]]])." previous.health="YELLOW" reason="shards started [[.triggered_watches][0]]"

[2020-11-16T14:23:42,803][INFO ][o.s.s.s.SMTPServer ] [testEmailFields] SMTP server *:39213 stopping

[2020-11-16T14:23:42,804][INFO ][o.s.s.s.ServerThread ] [[org.subethamail.smtp.server.ServerThread *:39213]{smtpServerLocalSocketAddress=*:39213}] SMTP server *:39213 stopped

[2020-11-16T14:23:42,805][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [#testEmailFields]: clearing watcher state

[2020-11-16T14:23:42,808][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STARTED], Tuple [v1=node_s2 (0), v2=STARTED], Tuple [v1=node_s1 (0), v2=STARTED]]

[2020-11-16T14:23:42,889][INFO ][o.e.x.w.WatcherService ] [node_s2] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:42,889][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s2] watcher has stopped

[2020-11-16T14:23:42,895][INFO ][o.e.x.w.WatcherService ] [node_s1] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:42,895][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s1] watcher has stopped

[2020-11-16T14:23:42,905][INFO ][o.e.x.w.WatcherService ] [node_s0] stopping watch service, reason [watcher manually marked to shutdown by cluster state update]

[2020-11-16T14:23:42,905][INFO ][o.e.x.w.WatcherLifeCycleService] [node_s0] watcher has stopped

[2020-11-16T14:23:42,910][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] waiting to stop watcher, current states [Tuple [v1=node_s0 (0), v2=STOPPED], Tuple [v1=node_s2 (0), v2=STOPPED], Tuple [v1=node_s1 (0), v2=STOPPED]]

[2020-11-16T14:23:42,919][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: cleaning up after test

[2020-11-16T14:23:42,919][INFO ][o.e.t.InternalTestCluster] [testEmailFields] Clearing active scheme time frozen, expected healing time 0s

[2020-11-16T14:23:43,002][INFO ][o.e.c.m.MetadataDeleteIndexService] [node_s0] [.watches/DgNTavZhQqSWESdZgB3WmA] deleting index

[2020-11-16T14:23:43,002][INFO ][o.e.c.m.MetadataDeleteIndexService] [node_s0] [.triggered_watches/NrMycBW_QZGlIMxOa4-pSw] deleting index

[2020-11-16T14:23:43,188][INFO ][o.e.c.m.MetadataIndexTemplateService] [node_s0] removing template [random_index_template]

[2020-11-16T14:23:43,252][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] [HistoryTemplateEmailMappingsTests#testEmailFields]: cleaned up after test

[2020-11-16T14:23:43,252][INFO ][o.e.x.w.h.HistoryTemplateEmailMappingsTests] [testEmailFields] after test