Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

83,838 | 7,881,890,907 | IssuesEvent | 2018-06-26 20:37:27 | nasa-gibs/worldview | https://api.github.com/repos/nasa-gibs/worldview | closed | Bring in start/end dates from GC docs into layer descriptions | testing | Ben took an initial look at this: WV-1797

Here is a list of changes discussed on 11/14/17

- [x] Change process_temporal_value to build an array of all dates and add to wv.json.

i.e. this:

<Value>2011-09-30/2011-11-25/P1D</Value>

<Value>2011-11-27/2011-11-27/P1D</Value>

<Value>2011-11-29/2012-01-22/P1D</Value>

Becomes:

September 30th, 2011 - November 25th, 2011

November 27th, 2011 - November 27th, 2011

November 29th, 2011 - January 22nd, 2012

- [x] Display date range at top

- [x] Format date to be '24 November 2015' style

- [x] Add indicator to top date range to tell user there are gaps in that range.

- [x] Display exact dates at bottom in a list.

- [x] Remove titles from markdown files

- [x] Add date range to the layer list hover state when no data is available:

i.e. this -> "No data on selected date for this layer"

to this -> "Data available between 27 May 2001 - 3 March 2008"

-----------------------------------------------------------------------

Phase 2:

Link the bottom dates to the timeline | 1.0 | Bring in start/end dates from GC docs into layer descriptions - Ben took an initial look at this: WV-1797

Here is a list of changes discussed on 11/14/17

- [x] Change process_temporal_value to build an array of all dates and add to wv.json.

i.e. this:

<Value>2011-09-30/2011-11-25/P1D</Value>

<Value>2011-11-27/2011-11-27/P1D</Value>

<Value>2011-11-29/2012-01-22/P1D</Value>

Becomes:

September 30th, 2011 - November 25th, 2011

November 27th, 2011 - November 27th, 2011

November 29th, 2011 - January 22nd, 2012

- [x] Display date range at top

- [x] Format date to be '24 November 2015' style

- [x] Add indicator to top date range to tell user there are gaps in that range.

- [x] Display exact dates at bottom in a list.

- [x] Remove titles from markdown files

- [x] Add date range to the layer list hover state when no data is available:

i.e. this -> "No data on selected date for this layer"

to this -> "Data available between 27 May 2001 - 3 March 2008"

-----------------------------------------------------------------------

Phase 2:

Link the bottom dates to the timeline | test | bring in start end dates from gc docs into layer descriptions ben took an initial look at this wv here is a list of changes discussed on change process temporal value to build an array of all dates and add to wv json i e this becomes september november november november novemeber january display date range at top format date to be november style add indicator to top date range to tell user there are gaps in that range display exact dates at bottom in a list remove titles from markdown files add date range to the layer list hover state when no data is available i e this no data on selected date for this layer to this data available between may march phase link the bottom dates to the timeline | 1 |

127,426 | 10,469,446,701 | IssuesEvent | 2019-09-22 20:45:53 | RPTools/maptool | https://api.github.com/repos/RPTools/maptool | closed | GM tokens FOW revealed to the players on auto reveal | bug claimed tested | Full history

http://forums.rptools.net/viewtopic.php?f=3&p=269873#p269873

Maptool 1.4.1.8

a/ start a server with "auto reveal on move" option selected, connect a client to it

b/ empty map (visible to player) with FOW enabled and one token assigned to server/GM ownership

c/ hit CTRL+e CTRL+f and move the token around from the server

FOW is revealed to the player. | 1.0 | GM tokens FOW revealed to the players on auto reveal - Full history

http://forums.rptools.net/viewtopic.php?f=3&p=269873#p269873

Maptool 1.4.1.8

a/ start a server with "auto reveal on move" option selected, connect a client to it

b/ empty map (visible to player) with FOW enabled and one token assigned to server/GM ownership

c/ hit CTRL+e CTRL+f and move the token around from the server

FOW is revealed to the player. | test | gm tokens fow revealed to the players on auto reveal full history maptool a start a server with auto reveal on move option selected connect a client to it b empty map visible to player with fow enabled and one token assigned to server gm ownership c hit ctrl e ctrl f and move the token around from the server fow is revealed to the player | 1 |

311,523 | 26,795,870,338 | IssuesEvent | 2023-02-01 11:51:22 | akademia-envelo-3/moovelo-front | https://api.github.com/repos/akademia-envelo-3/moovelo-front | closed | [Fe] [U] User - browses groups | question User Frontend SP4 test-ok | ### Story

The user can browse groups

### Additional information

- The user browses groups fetched from the /groups endpoint; each entry shows a title, a short description, and a button to join the group

### Link to mockups:

https://www.figma.com/file/VwoFt9OUGvu0j4IABdGZHs/Moovelo?node-id=613026%3A6275&t=jnOwK4MYswdWwiEY-0

### Acceptance criteria:

- [ ] The list of groups is displayed

| 1.0 | [Fe] [U] User - browses groups - ### Story

The user can browse groups

### Additional information

- The user browses groups fetched from the /groups endpoint; each entry shows a title, a short description, and a button to join the group

### Link to mockups:

https://www.figma.com/file/VwoFt9OUGvu0j4IABdGZHs/Moovelo?node-id=613026%3A6275&t=jnOwK4MYswdWwiEY-0

### Acceptance criteria:

- [ ] The list of groups is displayed

| test | user browses groups story the user can browse groups additional information the user browses groups fetched from the groups endpoint each entry shows a title a short description and a button to join the group link to mockups acceptance criteria the list of groups is displayed | 1 |

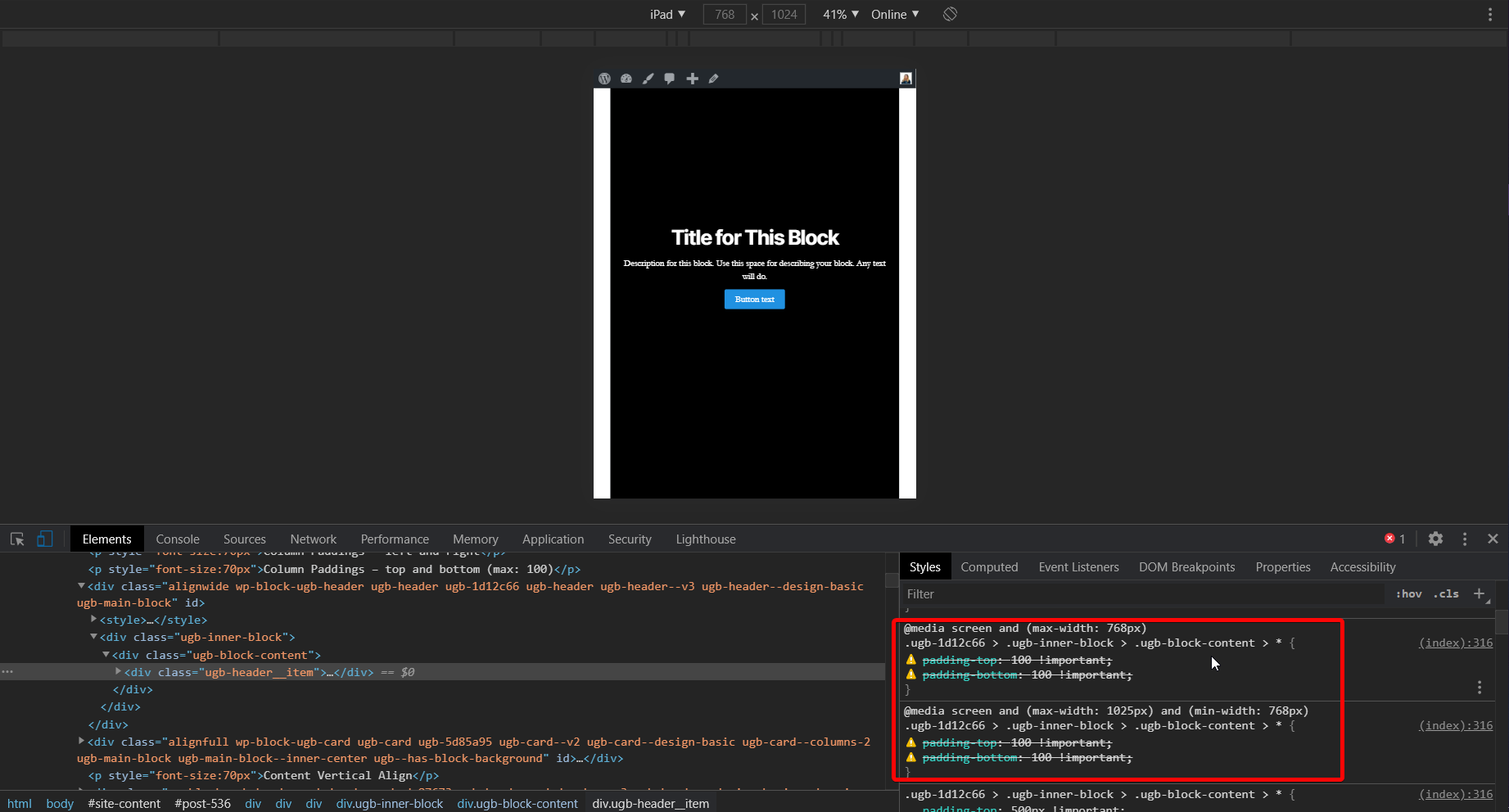

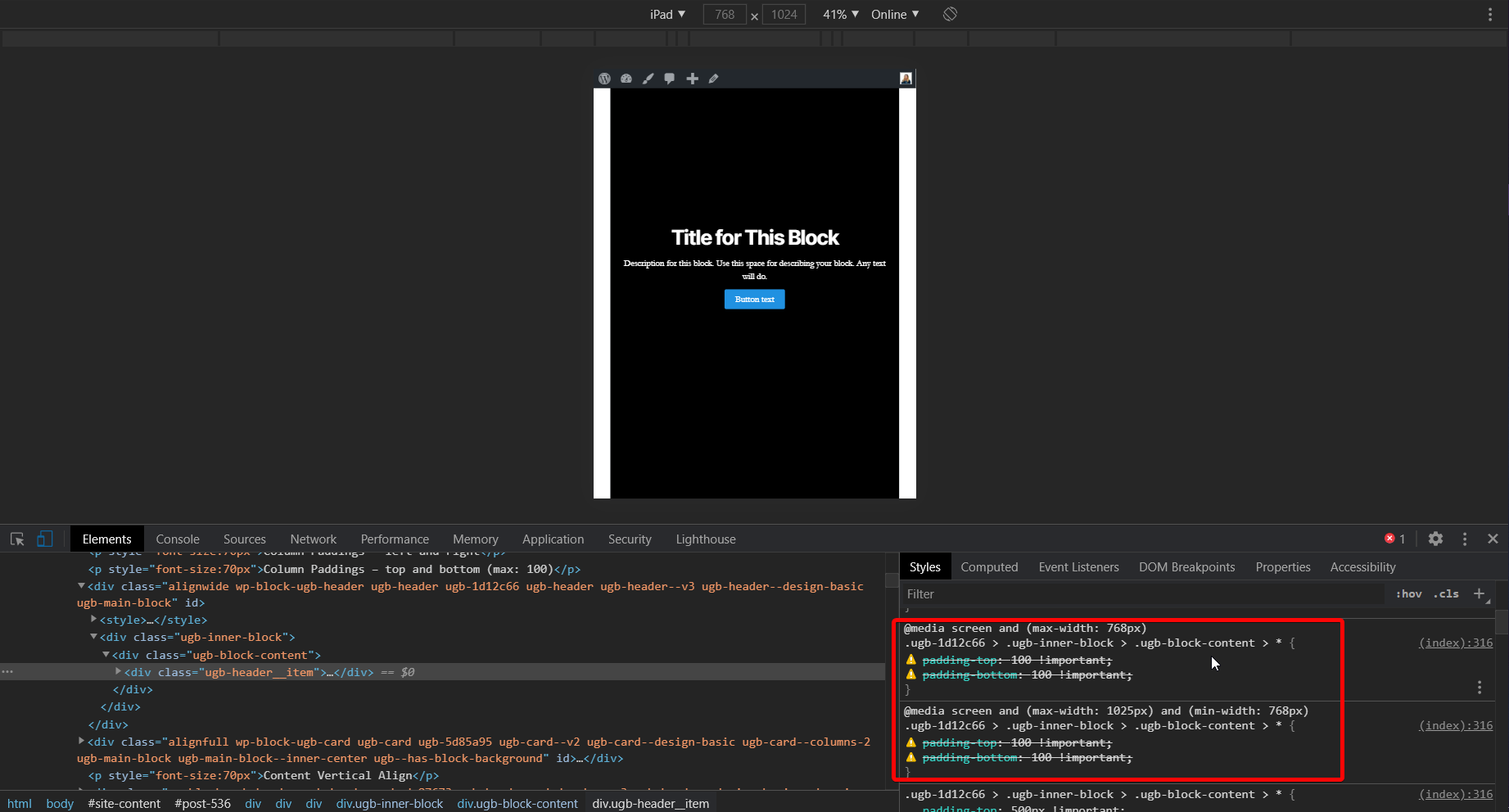

275,437 | 23,915,751,058 | IssuesEvent | 2022-09-09 12:33:10 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Navigation block: New menu list view inconsistent | [Type] Bug Needs Testing [Block] Navigation | ### Description

When I "create new menu" the links in the list view aren't updated:

<img width="1440" alt="Screenshot 2022-08-01 at 14 35 40" src="https://user-images.githubusercontent.com/275961/182159970-003dafbc-3672-4491-abe9-42a16a0fb8a7.png">

<img width="1440" alt="Screenshot 2022-08-01 at 14 35 31" src="https://user-images.githubusercontent.com/275961/182159981-99eca27e-90aa-42cd-af2d-4d7c0b784f5d.png">

### Step-by-step reproduction instructions

In a block theme with a navigation block,

- Open the list view so that you can see the list of items in the navigation block

- Click on "Create new menu" in the nav block

- Confirm that you still see the old nav items in the list view

### Screenshots, screen recording, code snippet

_No response_

### Environment info

_No response_

### Please confirm that you have searched existing issues in the repo.

Yes

### Please confirm that you have tested with all plugins deactivated except Gutenberg.

Yes | 1.0 | Navigation block: New menu list view inconsistent - ### Description

When I "create new menu" the links in the list view aren't updated:

<img width="1440" alt="Screenshot 2022-08-01 at 14 35 40" src="https://user-images.githubusercontent.com/275961/182159970-003dafbc-3672-4491-abe9-42a16a0fb8a7.png">

<img width="1440" alt="Screenshot 2022-08-01 at 14 35 31" src="https://user-images.githubusercontent.com/275961/182159981-99eca27e-90aa-42cd-af2d-4d7c0b784f5d.png">

### Step-by-step reproduction instructions

In a block theme with a navigation block,

- Open the list view so that you can see the list of items in the navigation block

- Click on "Create new menu" in the nav block

- Confirm that you still see the old nav items in the list view

### Screenshots, screen recording, code snippet

_No response_

### Environment info

_No response_

### Please confirm that you have searched existing issues in the repo.

Yes

### Please confirm that you have tested with all plugins deactivated except Gutenberg.

Yes | test | navigation block new menu list view inconsistent description when i create new menu the links in the list view aren t updated img width alt screenshot at src img width alt screenshot at src step by step reproduction instructions in a block theme with a navigation block open the list view so that you can see the list of items in the navigation block click on create new menu in the nav block confirm that you still see the old nav items in the list view screenshots screen recording code snippet no response environment info no response please confirm that you have searched existing issues in the repo yes please confirm that you have tested with all plugins deactivated except gutenberg yes | 1 |

444,145 | 12,807,021,989 | IssuesEvent | 2020-07-03 10:35:22 | MEN-Mikro-Elektronik/13MD05-90 | https://api.github.com/repos/MEN-Mikro-Elektronik/13MD05-90 | opened | Global variable G_freeUsrBufList not protected for context switches / SMP | bug high priority | # Internal PR

MAIN_PR006854 F75P IOP - F215 frequently lose frame in multi-thread receive mode

# Background

This problem was observed with the [MSCAN driver](https://github.com/MEN-Mikro-Elektronik/13Z015-06) but can probably be reproduced with any other driver.

# Description of the problem

The application is executed on a multi-core CPU. Two different threads access two different devices (can_1 and can_2).

In the application we observe lost frames (this is not supposed to happen, because the two interfaces are connected to the same CAN bus and shall receive the same number of frames).

# Workaround

The user application shall protect all the mscan_xxx() API calls with a semaphore.

# Correction

The correction applied in PR #203 fixes the issue with our current test setup.

# Work to do

1. Reproduce the problem and define a test setup for this problem (if needed I can provide some test SW for this)

2. Check and confirm if the correction in PR #203 is enough

3. Make some stress tests

| 1.0 | Global variable G_freeUsrBufList not protected for context switches / SMP - # Internal PR

MAIN_PR006854 F75P IOP - F215 frequently lose frame in multi-thread receive mode

# Background

This problem was observed with the [MSCAN driver](https://github.com/MEN-Mikro-Elektronik/13Z015-06) but can probably be reproduced with any other driver.

# Description of the problem

The application is executed on a multi-core CPU. Two different threads access two different devices (can_1 and can_2).

In the application we observe lost frames (this is not supposed to happen, because the two interfaces are connected to the same CAN bus and shall receive the same number of frames).

# Workaround

The user application shall protect all the mscan_xxx() API calls with a semaphore.

# Correction

The correction applied in PR #203 fixes the issue with our current test setup.

# Work to do

1. Reproduce the problem and define a test setup for this problem (if needed I can provide some test SW for this)

2. Check and confirm if the correction in PR #203 is enough

3. Make some stress tests

| non_test | global variable g freeusrbuflist not protected for context switches smp internal pr main iop frequently lose frame in multi thread receive mode background this problem was observed with the but can probably be reproduce with any other driver description of the problem the application is executed on multi core cpu two different threads access two different devices can and can in the application we observe frame lost this is not suppose to happen because the two interfaces are connected on the same can bus and shall receive the same number of frames workaround the user application shall protect all the mscan xxx api calls with semaphore correction the correction applied in pr fix the issue with our current test setup work to do reproduce the problem and define a test setup for this problem if needed i can provide some test sw for this check and confirm if the correction in pr is enough make some stress tests | 0 |

352,508 | 32,073,727,261 | IssuesEvent | 2023-09-25 09:38:11 | input-output-hk/cardano-ledger | https://api.github.com/repos/input-output-hk/cardano-ledger | closed | Add tests to ensure Conway DCerts decoding/encoding is backwards-compatible | :detective: testing | The `EncCBOR` and `DecCBOR` instances for `ConwayDCert` should be backwards compatible with `ShelleyDCert`, meaning that it should be possible to decode a Shelley era certificate in Conway era (with the exception of MIR certificates). We should add tests to check that this is indeed the case. | 1.0 | Add tests to ensure Conway DCerts decoding/encoding is backwards-compatible - The `EncCBOR` and `DecCBOR` instances for `ConwayDCert` should be backwards compatible with `ShelleyDCert`, meaning that it should be possible to decode a Shelley era certificate in Conway era (with the exception of MIR certificates). We should add tests to check that this is indeed the case. | test | add tests to ensure conway dcerts decoding encoding is backwards compatible the enccbor and deccbor instances for conwaydcert should be backwards compatible with shelleydcert meaning that it should be possible to decode a shelley era certificate in conway era with the exception of mir certificates we should add tests to check that this is indeed the case | 1 |

138,222 | 11,195,226,699 | IssuesEvent | 2020-01-03 05:22:09 | jojapoppa/fedoragold-wallet-electron | https://api.github.com/repos/jojapoppa/fedoragold-wallet-electron | closed | Transaction history list issues | bug completed - in testing in next release | The transaction history list needs debugging and cleanup. This is mostly already completed, and just needs testing prior to next release. | 1.0 | Transaction history list issues - The transaction history list needs debugging and cleanup. This is mostly already completed, and just needs testing prior to next release. | test | transaction history list issues the transaction history list needs debugging and cleanup this is mostly already completed and just needs testing prior to next release | 1 |

8,363 | 2,982,207,920 | IssuesEvent | 2015-07-17 09:28:26 | owncloud/client | https://api.github.com/repos/owncloud/client | closed | [OS X] [Multi account] Fix integration with OSX-style settings dialog | approved by qa bug ReadyToTest sev2-high | * [x] Crash on account delete

* [x] UI glitch on account add

* [x] Possibly more wrong? | 1.0 | [OS X] [Multi account] Fix integration with OSX-style settings dialog - * [x] Crash on account delete

* [x] UI glitch on account add

* [x] Possibly more wrong? | test | fix integration with osx style settings dialog crash on account delete ui glitch on account add possibly more wrong | 1 |

998 | 4,776,213,976 | IssuesEvent | 2016-10-27 13:08:10 | SpartanRefactoring/Spartanizer | https://api.github.com/repos/SpartanRefactoring/Spartanizer | closed | Tutorial/Travis: sign-up | Architecture Folks GUI/IT Quality assurance Teaching | @orimarco lets write it together.

* Step 1: enter [https://travis-ci.org/SpartanRefactoring/Spartanizer](https://travis-ci.org/SpartanRefactoring/Spartanizer)

* Step 2: click on `Sign in with GitHub`

* Step 3: Profit $$$. | 1.0 | Tutorial/Travis: sign-up - @orimarco lets write it together.

* Step 1: enter [https://travis-ci.org/SpartanRefactoring/Spartanizer](https://travis-ci.org/SpartanRefactoring/Spartanizer)

* Step 2: click on `Sign in with GitHub`

* Step 3: Profit $$$. | non_test | tutorial travis sign up orimarco lets write it together step enter step click on sign in with github step profit | 0 |

432,574 | 12,495,287,346 | IssuesEvent | 2020-06-01 12:55:41 | hochschule-darmstadt/openartbrowser | https://api.github.com/repos/hochschule-darmstadt/openartbrowser | closed | Movement overview timeline on start page | User Interface feature high priority | **Is your feature request related to a problem? Please describe.**

The user has no overview of the different movements and their chronological order.

**Why do you want this feature? What goals should be achieved**

Teach users the context of time and art movements.

**Describe the solution you'd like**

Adding an overview timeline containing (the most important) movements which are not a sub-movement to the start page.

This information can be acquired by the wikidata dataset. An empty *is part of* field indicates

that the movement is not a sub-movement itself.

In the future it might be possible to add more movements (even sub-movements). But this depends on how this will blow up the start page.

**Describe acceptance criteria**

A timeline on the start page shows a few different important movements in chronological order.

**Describe alternatives you've considered**

None

**What effects does your proposed solution have**

We need to crawl more fields of the wikidata dataset and change the model.

*This issue is closely coupled to issue #65*.

Therefore the newly required data should be equal for both features.

**Additional context**

| 1.0 | Movement overview timeline on start page - **Is your feature request related to a problem? Please describe.**

The user has no overview of the different movements and their chronological order.

**Why do you want this feature? What goals should be achieved**

Teach users the context of time and art movements.

**Describe the solution you'd like**

Adding an overview timeline containing (the most important) movements which are not a sub-movement to the start page.

This information can be acquired by the wikidata dataset. An empty *is part of* field indicates

that the movement is not a sub-movement itself.

In the future it might be possible to add more movements (even sub-movements). But this depends on how this will blow up the start page.

**Describe acceptance criteria**

A timeline on the start page shows a few different important movements in chronological order.

**Describe alternatives you've considered**

None

**What effects does your proposed solution have**

We need to crawl more fields of the wikidata dataset and change the model.

*This issue is closely coupled to issue #65*.

Therefore the newly required data should be equal for both features.

**Additional context**

| non_test | movement overview timeline on start page is your feature request related to a problem please describe the user has no overview of the different movements an their chronological order why do you want this feature what goals should be achieved teach users the context of time and art movements describe the solution you d like adding an overview timeline containing the most important movements which are not a sub movement to the start page this information can be acquired by the wikidata dataset an empty is part of field indicates that the movement is not a sub movement itself in the future it might be possible to add more movements even sub movements but this depends on how this will blow up the start page describe acceptance criteria a timeline on the start page shows a few different important movements in chronological order describe alternatives you ve considered none what effects does your proposed solution have we need to crawl more fields of the wikidata dataset and change the model this issue is closely coupled to issue therefore the newly required data should be equal for both features additional context | 0 |

281,731 | 24,415,182,586 | IssuesEvent | 2022-10-05 15:18:37 | spring-projects/spring-framework | https://api.github.com/repos/spring-projects/spring-framework | opened | Simplify TestRuntimeHintsRegistrar API | in: test type: enhancement theme: aot | The `TestRuntimeHintsRegistrar` currently combines `MergedContextConfiguration` and test classes. However, it appears that only `spring-test` internals have a need for registering hints based on the `MergedContextConfiguration`. For example, Spring Boot's AOT testing support has not had such a need.

In light of that, we should simplify the `TestRuntimeHintsRegistrar` API so that it only focuses on test classes, and we should move the hint registration code specific to `MergedContextConfiguration` to an internal mechanism. | 1.0 | Simplify TestRuntimeHintsRegistrar API - The `TestRuntimeHintsRegistrar` currently combines `MergedContextConfiguration` and test classes. However, it appears that only `spring-test` internals have a need for registering hints based on the `MergedContextConfiguration`. For example, Spring Boot's AOT testing support has not had such a need.

In light of that, we should simplify the `TestRuntimeHintsRegistrar` API so that it only focuses on test classes, and we should move the hint registration code specific to `MergedContextConfiguration` to an internal mechanism. | test | simplify testruntimehintsregistrar api the testruntimehintsregistrar currently combines mergedcontextconfiguration and test classes however it appears that only spring test internals have a need for registering hints based on the mergedcontextconfiguration for example spring boot s aot testing support has not had such a need in light of that we should simplify the testruntimehintsregistrar api so that it only focuses on test classes and we should move the hint registration code specific to mergedcontextconfiguration to an internal mechanism | 1 |

280,651 | 24,320,894,936 | IssuesEvent | 2022-09-30 10:37:25 | modicio/modicio | https://api.github.com/repos/modicio/modicio | opened | Testbase Implementation | KPtest | Create a Unit- & Integration Testbase on top of #1 covering important functionality, focusing on assembly and disassembly of unfolding hierarchies. | 1.0 | Testbase Implementation - Create a Unit- & Integration Testbase on top of #1 covering important functionality, focusing on assembly and disassembly of unfolding hierarchies. | test | testbase implementation create a unit integration testbase on top of covering important functionality focusing on assembly and disassembly of unfolding hierarchies | 1 |

45,143 | 23,926,526,859 | IssuesEvent | 2022-09-10 00:02:33 | pytorch/TensorRT | https://api.github.com/repos/pytorch/TensorRT | closed | ❓ [Question] Why BERT Base is slower w/ Torch-TensorRT than native PyTorch? | question No Activity performance | ## ❓ Question

<!-- Your question -->

I'm trying to optimize Hugging Face's BERT Base uncased model using Torch-TensorRT. The code works after disabling full compilation (`require_full_compilation=False`), and the average latency is ~10ms on a T4. However, it is slower than the native PyTorch implementation (~6ms on a T4). In contrast, running the same model with `trtexec` takes only ~4ms. So, for BERT Base, it's 2.5x slower than TensorRT. I wonder if this is expected?

Here's the full code:

```
from transformers import BertModel, BertTokenizer, BertConfig
import torch
import time

enc = BertTokenizer.from_pretrained("./bert-base-uncased")

# Tokenizing input text
text = "[CLS] Who was Jim Henson ? [SEP] Jim Henson was a puppeteer [SEP]"
tokenized_text = enc.tokenize(text)

# Masking one of the input tokens
masked_index = 8
tokenized_text[masked_index] = '[MASK]'
indexed_tokens = enc.convert_tokens_to_ids(tokenized_text)
segments_ids = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1]

# Creating a dummy input
tokens_tensor = torch.tensor([indexed_tokens]).to(torch.int32).cuda()
segments_tensors = torch.tensor([segments_ids]).to(torch.int32).cuda()
dummy_input = [tokens_tensor, segments_tensors]
dummy_input_shapes = [list(v.size()) for v in dummy_input]

# Initializing the model with the torchscript flag
# Flag set to True even though it is not necessary as this model does not have an LM Head.
config = BertConfig(vocab_size_or_config_json_file=32000, hidden_size=768,
    num_hidden_layers=12, num_attention_heads=12, intermediate_size=3072, torchscript=True)

# Instantiating the model
model = BertModel(config)

# The model needs to be in evaluation mode
model.eval()

# If you are instantiating the model with `from_pretrained` you can also easily set the TorchScript flag
model = BertModel.from_pretrained("./bert-base-uncased", torchscript=True)
model = model.eval().cuda()

# Creating the trace
traced_model = torch.jit.trace(model, dummy_input)

import torch_tensorrt

compile_settings = {
    "require_full_compilation": False,
    "truncate_long_and_double": True,
    "torch_executed_ops": ["aten::Int"]
}

optimized_model = torch_tensorrt.compile(traced_model, inputs=dummy_input, **compile_settings)

def benchmark(model, input):
    # Warming up
    for _ in range(10):
        model(*input)

    inference_count = 1000

    # inference test
    start = time.time()
    for _ in range(inference_count):
        model(*input)
    end = time.time()

    print(f"use {(end-start)/inference_count*1000} ms each inference")
    print(f"{inference_count/(end-start)} step/s")

print("before compile")
benchmark(traced_model, dummy_input)

print("after compile")
benchmark(optimized_model, dummy_input)
```

So, my question is why it is slower than native PyTorch, and how do I fine-tune it?

## What you have already tried

<!-- A clear and concise description of what you have already done. -->

I've checked out the log from Torch-TensorRT, looks like the model is partitioned into 3 parts, separated by `at::Int` op, and looks like Int op is [hard to implement](https://github.com/NVIDIA/Torch-TensorRT/issues/513).

Next, I profiled the inference process with Nsight System, here's the screenshot:

It is expected to see 3 divided segments, however, there are 2 things that caught my attention:

1. Why segment 0 is slower than pure TensorRT? Is it due to over complicated conversion?

2. Why the `cudaMemcpyAsync` took so long? Shouldn't it only return the `last_hidden_state` tensor?

## Environment

> Build information about Torch-TensorRT can be found by turning on debug messages

- PyTorch Version (e.g., 1.0): 1.10

- CPU Architecture:

- OS (e.g., Linux): Ubuntu 18.04

- How you installed PyTorch (`conda`, `pip`, `libtorch`, source): pip

- Build command you used (if compiling from source): python setup.py develop

- Are you using local sources or building from archives: local sources

- Python version: 3.6.9

- CUDA version: 10.2

- GPU models and configuration: T4

- Any other relevant information:

## Additional context

<!-- Add any other context about the problem here. -->

| True | ❓ [Question] Why BERT Base is slower w/ Torch-TensorRT than native PyTorch? - ## ❓ Question

<!-- Your question -->

I'm trying to optimize Hugging Face's BERT Base uncased model using Torch-TensorRT. The code works after disabling full compilation (`require_full_compilation=False`), and the average latency is ~10ms on a T4. However, it is slower than the native PyTorch implementation (~6ms on a T4). In contrast, running the same model with `trtexec` takes only ~4ms. So, for BERT Base, it's 2.5x slower than TensorRT. I wonder if this is expected?

Here's the full code:

```
from transformers import BertModel, BertTokenizer, BertConfig
import torch
import time

enc = BertTokenizer.from_pretrained("./bert-base-uncased")

# Tokenizing input text
text = "[CLS] Who was Jim Henson ? [SEP] Jim Henson was a puppeteer [SEP]"
tokenized_text = enc.tokenize(text)

# Masking one of the input tokens
masked_index = 8
tokenized_text[masked_index] = '[MASK]'
indexed_tokens = enc.convert_tokens_to_ids(tokenized_text)
segments_ids = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1]

# Creating a dummy input
tokens_tensor = torch.tensor([indexed_tokens]).to(torch.int32).cuda()
segments_tensors = torch.tensor([segments_ids]).to(torch.int32).cuda()
dummy_input = [tokens_tensor, segments_tensors]
dummy_input_shapes = [list(v.size()) for v in dummy_input]

# Initializing the model with the torchscript flag
# Flag set to True even though it is not necessary as this model does not have an LM Head.
config = BertConfig(vocab_size_or_config_json_file=32000, hidden_size=768,
    num_hidden_layers=12, num_attention_heads=12, intermediate_size=3072, torchscript=True)

# Instantiating the model
model = BertModel(config)

# The model needs to be in evaluation mode
model.eval()

# If you are instantiating the model with `from_pretrained` you can also easily set the TorchScript flag
model = BertModel.from_pretrained("./bert-base-uncased", torchscript=True)
model = model.eval().cuda()

# Creating the trace
traced_model = torch.jit.trace(model, dummy_input)

import torch_tensorrt

compile_settings = {
    "require_full_compilation": False,
    "truncate_long_and_double": True,
    "torch_executed_ops": ["aten::Int"]
}

optimized_model = torch_tensorrt.compile(traced_model, inputs=dummy_input, **compile_settings)

def benchmark(model, input):
    # Warming up
    for _ in range(10):
        model(*input)

    inference_count = 1000

    # inference test
    start = time.time()
    for _ in range(inference_count):
        model(*input)
    end = time.time()

    print(f"use {(end-start)/inference_count*1000} ms each inference")
    print(f"{inference_count/(end-start)} step/s")

print("before compile")
benchmark(traced_model, dummy_input)

print("after compile")
benchmark(optimized_model, dummy_input)
```

So, my question is why it is slower than native PyTorch, and how do I fine-tune it?

## What you have already tried

<!-- A clear and concise description of what you have already done. -->

I've checked out the log from Torch-TensorRT, looks like the model is partitioned into 3 parts, separated by `at::Int` op, and looks like Int op is [hard to implement](https://github.com/NVIDIA/Torch-TensorRT/issues/513).

Next, I profiled the inference process with Nsight System, here's the screenshot:

It is expected to see 3 divided segments, however, there are 2 things that caught my attention:

1. Why segment 0 is slower than pure TensorRT? Is it due to over complicated conversion?

2. Why the `cudaMemcpyAsync` took so long? Shouldn't it only return the `last_hidden_state` tensor?

## Environment

> Build information about Torch-TensorRT can be found by turning on debug messages

- PyTorch Version (e.g., 1.0): 1.10

- CPU Architecture:

- OS (e.g., Linux): Ubuntu 18.04

- How you installed PyTorch (`conda`, `pip`, `libtorch`, source): pip

- Build command you used (if compiling from source): python setup.py develop

- Are you using local sources or building from archives: local sources

- Python version: 3.6.9

- CUDA version: 10.2

- GPU models and configuration: T4

- Any other relevant information:

## Additional context

<!-- Add any other context about the problem here. -->

| non_test | ❓ why bert base is slower w torch tensorrt than native pytorch ❓ question i m trying to optimize hugging face s bert base uncased model using torch tensorrt the code works after disabling full compilation require full compilation false and the avg latency is on however it it slower than native pytorch implementation on in contrast running the same model with trtexec only takes so for bert base it s slower than tensorrt i wonder if this is expected here s the full code from transformers import bertmodel berttokenizer bertconfig import torch import time enc berttokenizer from pretrained bert base uncased tokenizing input text text who was jim henson jim henson was a puppeteer tokenized text enc tokenize text masking one of the input tokens masked index tokenized text indexed tokens enc convert tokens to ids tokenized text segments ids creating a dummy input tokens tensor torch tensor to torch cuda segments tensors torch tensor to torch cuda dummy input dummy input shapes initializing the model with the torchscript flag flag set to true even though it is not necessary as this model does not have an lm head config bertconfig vocab size or config json file hidden size num hidden layers num attention heads intermediate size torchscript true instantiating the model model bertmodel config the model needs to be in evaluation mode model eval if you are instantiating the model with from pretrained you can also easily set the torchscript flag model bertmodel from pretrained bert base uncased torchscript true model model eval cuda creating the trace traced model torch jit trace model dummy input import torch tensorrt compile settings require full compilation false truncate long and double true torch executed ops optimized model torch tensorrt compile traced model inputs dummy input compile settings def benchmark model input warming up for in range model input inference count inference test start time time for in range inference count model input end time time print 
f use end start inference count ms each inference print f inference count end start step s print before compile benchmark traced model dummy input print after compile benchmark optimized model dummy input so my question is why it is slower than native pytorch and how do i fine tune it what you have already tried i ve checked out the log from torch tensorrt looks like the model is partitioned into parts separated by at int op and looks like int op is next i profiled the inference process with nsight system here s the screenshot it is expected to see divided segments however there are things that caught my attention why segment is slower than pure tensorrt is it due to over complicated conversion why the cudamemcpyasync took so long shouldn t it only return the last hidden state tensor environment build information about torch tensorrt can be found by turning on debug messages pytorch version e g cpu architecture os e g linux ubuntu how you installed pytorch conda pip libtorch source pip build command you used if compiling from source python setup py develop are you using local sources or building from archives local sources python version cuda version gpu models and configuration any other relevant information additional context | 0 |

231,044 | 7,622,968,114 | IssuesEvent | 2018-05-03 13:49:28 | larray-project/larray | https://api.github.com/repos/larray-project/larray | closed | Session.summary should include non-LArray objects | difficulty: low enhancement priority: high work in progress | @gdementen should ``Session.summary`` also include Group objects? | 1.0 | Session.summary should include non-LArray objects - @gdementen should ``Session.summary`` also include Group objects? | non_test | session summary should include non larray objects gdementen should session summary also include group objects | 0 |

7,105 | 10,441,062,259 | IssuesEvent | 2019-09-18 09:59:32 | dan-solli/ottra | https://api.github.com/repos/dan-solli/ottra | closed | [User Story] Equipment | Requirement client server | As a user, I want to register actual objects so that I can keep track of where they are and for things-to-do to refer to them, so I know what I need to pick up to be able to complete a task.

- If they are consumables, i.e., there are only a certain number of AA batteries, I want the system to keep track of the amount and suggest when I should replenish them.

- Some equipment may have actions. I am thinking dishwasher (run), washing machine (run), or vacuum cleaner (change bag).

- - An action may create a task to be completed, e.g. "Create task to change the bag" for a vacuum cleaner.

- - If I am to run a washing machine program, I want to be able to select the appropriate program because different programs may warrant different detergents, and they probably will run at variable lengths. For time management, this is important support.

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | [User Story] Equipment - As a user, I want to register actual objects so that I can keep track of where they are and for things-to-do to refer to them, so I know what I need to pick up to be able to complete a task.

- If they are consumables, i.e., there are only a certain number of AA batteries, I want the system to keep track of the amount and suggest when I should replenish them.

- Some equipment may have actions. I am thinking dishwasher (run), washing machine (run), or vacuum cleaner (change bag).

- - An action may create a task to be completed, e.g. "Create task to change the bag" for a vacuum cleaner.

- - If I am to run a washing machine program, I want to be able to select the appropriate program because different programs may warrant different detergents, and they probably will run at variable lengths. For time management, this is important support.

**Additional context**

Add any other context or screenshots about the feature request here.

| non_test | equipment as a user i want to register actual objects so that i can keep track of where they are and for things to do to refer to them so i know what i need to pick up to be able to complete a task if they are consumables ie there are only a certain number of aa batteries i want the system to keep track of the amount and suggest when i should replenish them equipment some may have actions i am thinking dish washer run or washing machine run or vacuum cleaner change bag an action may create a task to be completed ie create task to change the bag for a vacuum cleaner if i am to run a washing machine program i want to be able to select the appropriate program because different programs may warrant different detergents and they probably will run at variable lengths for time management this is important support additional context add any other context or screenshots about the feature request here | 0 |

170,662 | 13,196,932,686 | IssuesEvent | 2020-08-13 21:44:01 | NVIDIA/spark-rapids | https://api.github.com/repos/NVIDIA/spark-rapids | closed | [FEA] Add some AQE-specific tests to the PySpark test suite | feature request test | **Is your feature request related to a problem? Please describe.**

We currently do not have any PySpark tests that use Adaptive Query Execution (AQE).

**Describe the solution you'd like**

We should add some AQE tests to the PySpark test suite so that we catch regressions.

**Describe alternatives you've considered**

N/A

**Additional context**

N/A

| 1.0 | [FEA] Add some AQE-specific tests to the PySpark test suite - **Is your feature request related to a problem? Please describe.**

We currently do not have any PySpark tests that use Adaptive Query Execution (AQE).

**Describe the solution you'd like**

We should add some AQE tests to the PySpark test suite so that we catch regressions.

**Describe alternatives you've considered**

N/A

**Additional context**

N/A

| test | add some aqe specific tests to the pyspark test suite is your feature request related to a problem please describe we currently do not have any pyspark tests that use adaptive query execution aqe describe the solution you d like we should add some aqe tests to the pyspark test suite so that we catch regressions describe alternatives you ve considered n a additional context n a | 1 |

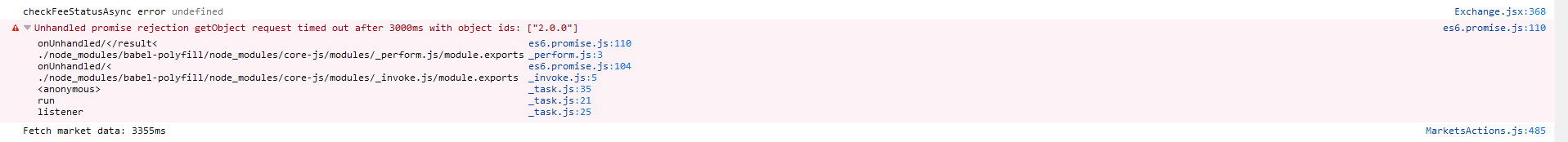

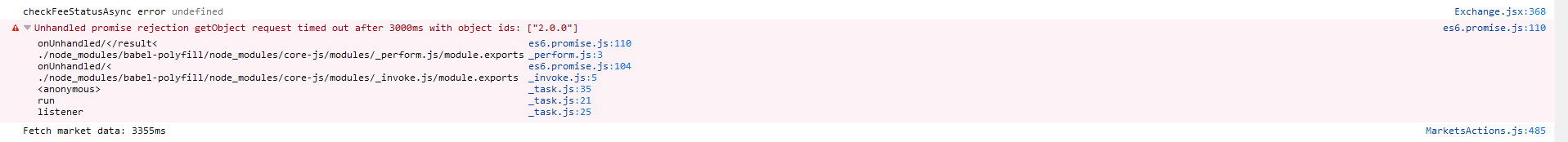

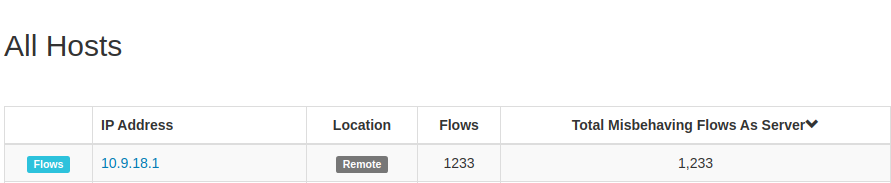

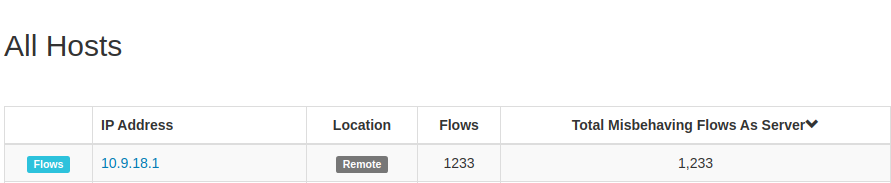

30,125 | 8,482,768,197 | IssuesEvent | 2018-10-25 19:30:51 | bitshares/bitshares-ui | https://api.github.com/repos/bitshares/bitshares-ui | closed | Exchange getObject ["2.0.0"] timed out | [1c] Task [3] Bug [4d] Critical Priority [5b] Small [6] Staging Build | When loading the exchange for the first time you may have a request timed out for object "2.0.0" resulting in the exchange page not loading the rest of the section due to missing `feeStatus`.

**Possible solution today**

One workaround is to switch to a different node, which sends the data again and will most likely succeed. The page then loads as expected.

| 1.0 | Exchange getObject ["2.0.0"] timed out - When loading the exchange for the first time you may have a request timed out for object "2.0.0" resulting in the exchange page not loading the rest of the section due to missing `feeStatus`.

**Possible solution today**

One workaround is to switch to a different node, which sends the data again and will most likely succeed. The page then loads as expected.

| non_test | exchange getobject timed out when loading the exchange for the first time you may have a request timed out for object resulting in the exchange page not loading the rest of the section due to missing feestatus possible solution today one way is to change node which will send the data again and most likely have a success with it the page will then load as expected | 0 |

116,353 | 24,902,791,788 | IssuesEvent | 2022-10-28 23:36:42 | alefragnani/vscode-read-only-indicator | https://api.github.com/repos/alefragnani/vscode-read-only-indicator | closed | [FEATURE] - Be aware/evaluate how to integrate with the new read-only indicator on editor tabs | enhancement depends on vscode api adoption | <!-- Please search existing issues to avoid creating duplicates. -->

<!-- Describe the feature you'd like. -->

Keep watching how https://github.com/microsoft/vscode/issues/130526 evolves.

- Will it be just an indicator in editor tabs?

- Will they provide an API? | 1.0 | [FEATURE] - Be aware/evaluate how to integrate with the new read-only indicator on editor tabs - <!-- Please search existing issues to avoid creating duplicates. -->

<!-- Describe the feature you'd like. -->

Keep watching how https://github.com/microsoft/vscode/issues/130526 evolves.

- Will it be just an indicator in editor tabs?

- Will they provide an API? | non_test | be aware evaluate how to integrate with the new read only indicator on editor tabs keep looking how will evolve will it be just an indicator in editor tabs will they provide an api | 0 |

1,000 | 3,287,541,117 | IssuesEvent | 2015-10-29 10:57:27 | CartoDB/cartodb | https://api.github.com/repos/CartoDB/cartodb | closed | When importing through IMPORT API viz privacy is not set as dataset privacy is set on upload | 0 - Backlog Data-services | According to the referenced commit:

https://github.com/CartoDB/cartodb/pull/5022

"the privacy of the viz is the same as whatever the privacy param was set to."

I can't see that behavior on the latest version. Setting a dataset's privacy does work but when create_vis is set to "true" on the import api the resulting visualization is always set as private regardless of the privacy setting specified through the IMPORT API call. | 1.0 | When importing through IMPORT API viz privacy is not set as dataset privacy is set on upload - According to the referenced commit:

https://github.com/CartoDB/cartodb/pull/5022

"the privacy of the viz is the same as whatever the privacy param was set to."

I can't see that behavior on the latest version. Setting a dataset's privacy does work but when create_vis is set to "true" on the import api the resulting visualization is always set as private regardless of the privacy setting specified through the IMPORT API call. | non_test | when importing through import api viz privacy is not set as dataset privacy is set on upload according to the referenced commit the privacy of the viz is the same as whatever the privacy param was set to i can t see that behavior on the latest version setting a dataset s privacy does work but when create vis is set to true on the import api the resulting visualization is always set as private regardless of the privacy setting specified through the import api call | 0 |

54,504 | 6,393,284,018 | IssuesEvent | 2017-08-04 06:53:55 | frappe/erpnext | https://api.github.com/repos/frappe/erpnext | closed | Tests related to Calculations | testing | - [x] Multi currency

- [x] Multi UOM

- [x] Discount on item

- [x] Discount on total

- [x] Tax

- [x] Item wise Tax

- [x] Change Price List

- [x] Shipping Rule

- [x] Serialized Item

- [x] Batched Item | 1.0 | Tests related to Calculations - - [x] Multi currency

- [x] Multi UOM

- [x] Discount on item

- [x] Discount on total

- [x] Tax

- [x] Item wise Tax

- [x] Change Price List

- [x] Shipping Rule

- [x] Serialized Item

- [x] Batched Item | test | tests related to calculations multi currency multi uom discount on item discount on total tax item wise tax change price list shipping rule serialized item batched item | 1 |

368,101 | 10,866,193,077 | IssuesEvent | 2019-11-14 20:40:25 | pbek/QOwnNotes | https://api.github.com/repos/pbek/QOwnNotes | closed | Highlighting of trailing spaces | enhancement priority-low | Trailing spaces will be highlighted in the note editor in the next release. | 1.0 | Highlighting of trailing spaces - Trailing spaces will be highlighted in the note editor in the next release. | non_test | highlighting of trailing spaces trailing spaces will be highlighted in the note editor in the next release | 0 |

267,060 | 28,492,727,820 | IssuesEvent | 2023-04-18 12:22:36 | nidhi7598/OPENSSL_1.0.2_G2.5_CVE-2023-0215 | https://api.github.com/repos/nidhi7598/OPENSSL_1.0.2_G2.5_CVE-2023-0215 | opened | CVE-2016-2842 (High) detected in opensslOpenSSL_1_0_2 | Mend: dependency security vulnerability | ## CVE-2016-2842 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opensslOpenSSL_1_0_2</b></p></summary>

<p>

<p>TLS/SSL and crypto library</p>

<p>Library home page: <a href=https://github.com/openssl/openssl.git>https://github.com/openssl/openssl.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/OPENSSL_1.0.2_G2.5_CVE-2023-0215/commit/323924e277e1e2abec63ff28af8b21c235925c51">323924e277e1e2abec63ff28af8b21c235925c51</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/crypto/bio/b_print.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The doapr_outch function in crypto/bio/b_print.c in OpenSSL 1.0.1 before 1.0.1s and 1.0.2 before 1.0.2g does not verify that a certain memory allocation succeeds, which allows remote attackers to cause a denial of service (out-of-bounds write or memory consumption) or possibly have unspecified other impact via a long string, as demonstrated by a large amount of ASN.1 data, a different vulnerability than CVE-2016-0799.

<p>Publish Date: 2016-03-03

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2016-2842>CVE-2016-2842</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-2842">https://nvd.nist.gov/vuln/detail/CVE-2016-2842</a></p>

<p>Release Date: 2016-03-03</p>

<p>Fix Resolution: 1.0.1s,1.0.2g</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2016-2842 (High) detected in opensslOpenSSL_1_0_2 - ## CVE-2016-2842 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opensslOpenSSL_1_0_2</b></p></summary>

<p>

<p>TLS/SSL and crypto library</p>

<p>Library home page: <a href=https://github.com/openssl/openssl.git>https://github.com/openssl/openssl.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/OPENSSL_1.0.2_G2.5_CVE-2023-0215/commit/323924e277e1e2abec63ff28af8b21c235925c51">323924e277e1e2abec63ff28af8b21c235925c51</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/crypto/bio/b_print.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The doapr_outch function in crypto/bio/b_print.c in OpenSSL 1.0.1 before 1.0.1s and 1.0.2 before 1.0.2g does not verify that a certain memory allocation succeeds, which allows remote attackers to cause a denial of service (out-of-bounds write or memory consumption) or possibly have unspecified other impact via a long string, as demonstrated by a large amount of ASN.1 data, a different vulnerability than CVE-2016-0799.

<p>Publish Date: 2016-03-03

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2016-2842>CVE-2016-2842</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-2842">https://nvd.nist.gov/vuln/detail/CVE-2016-2842</a></p>

<p>Release Date: 2016-03-03</p>

<p>Fix Resolution: 1.0.1s,1.0.2g</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in opensslopenssl cve high severity vulnerability vulnerable library opensslopenssl tls ssl and crypto library library home page a href found in head commit a href found in base branch main vulnerable source files crypto bio b print c vulnerability details the doapr outch function in crypto bio b print c in openssl before and before does not verify that a certain memory allocation succeeds which allows remote attackers to cause a denial of service out of bounds write or memory consumption or possibly have unspecified other impact via a long string as demonstrated by a large amount of asn data a different vulnerability than cve publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

2,488 | 2,691,825,413 | IssuesEvent | 2015-04-01 00:34:54 | linkedin/dustjs | https://api.github.com/repos/linkedin/dustjs | closed | Tutorial or docs on asynchronous streaming interface (w/ Express) | documentation | ### Use Case

I'm working on an app using Node/Express and Dust. We're making a bunch of API calls in the server using Express, and then rendering the view with the data at the end. In doing so, however long it takes for the API calls to complete, that's how long it takes for the page to load. This isn't ideal, of course. Instead, we want to asynchronously pipe the data into the view from the server as it's loaded.

### The Problem

I'm trying to figure out how to use the Dust streaming interface. I haven't found much information on how to implement the Dust streaming interface, specifically with a Node/Express framework. All of the docs I've seen only provide small code snippets of how bits and pieces work, but none provide the entire context.

Could you guys create docs, or code samples, of how to implement this? Is there a 'Hello World' of the Dust streaming interface?

| 1.0 | Tutorial or docs on asynchronous streaming interface (w/ Express) - ### Use Case

I'm working on an app using Node/Express and Dust. We're making a bunch of API calls in the server using Express, and then rendering the view with the data at the end. In doing so, however long it takes for the API calls to complete, that's how long it takes for the page to load. This isn't ideal, of course. Instead, we want to asynchronously pipe the data into the view from the server as it's loaded.

### The Problem

I'm trying to figure out how to use the Dust streaming interface. I haven't found much information on how to implement the Dust streaming interface, specifically with a Node/Express framework. All of the docs I've seen only provide small code snippets of how bits and pieces work, but none provide the entire context.

Could you guys create docs, or code samples, of how to implement this? Is there a 'Hello World' of the Dust streaming interface?

| non_test | tutorial or docs on asynchronous streaming interface w express use case i m working on an app using node express and dust we re making a bunch of api calls in the server using express and then rendering the view with the data at the end in doing so however long it takes for the api calls to complete that s how long it takes for the page to load this isn t ideal of course instead we want to asynchronously pipe the data into the view from the server as it s loaded the problem i m trying to figure out how to use the dust streaming interface i haven t found much information on how to implement the dust streaming interface specifically with a node express framework all of the docs i ve seen only provide small code snippets of how bits and pieces work but none provide the entire context could you guys create docs or code samples of how to implement this is there a hello world of the dust streaming interface | 0 |

151,539 | 12,042,552,391 | IssuesEvent | 2020-04-14 10:46:46 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | Dahlia's Tears | Fix - Tester Confirmed | Starting off, I'd like to point out I am using the most current launcher for WoW Mania and my version is 2.6.0

I submitted a GM's Ticket and was aided by Divigrac who confirmed the issues and requested I submit a bug report.

[Convo with Divigrac.txt](https://github.com/WoWManiaUK/Redemption/files/4472613/Convo.with.Divigrac.txt)

**Links:**

Quest: [Dahlia's Tears](https://www.wowhead.com/quest=13078/dahlias-tears)

**What is Happening:**

The Ruby Keepers are severely bugged. Their pats are insanely small or they are getting stuck in the terrain. Bringing mobs to kill as per the questline is not causing the Keepers to aggro or trigger. We actually had to drag a mob directly underneath a Keeper's feet just for the dragon to aggro and attack the mob. However, instead of burning the mob as per the quest scripts, the Keepers simply attack them and the quest item, [Dahlia's Tears](https://www.wowhead.com/item=43084/dahlias-tears), finally spawns.

**What Should happen:**

There should be only a few of the Keepers flying about. Once a mob is brought underneath them, the Keeper is supposed to burn it with fire, which spawns the quest item.

| 1.0 | Dahlia's Tears - Starting off, I'd like to point out I am using the most current launcher for WoW Mania and my version is 2.6.0

I submitted a GM's Ticket and was aided by Divigrac who confirmed the issues and requested I submit a bug report.

[Convo with Divigrac.txt](https://github.com/WoWManiaUK/Redemption/files/4472613/Convo.with.Divigrac.txt)

**Links:**

Quest: [Dahlia's Tears](https://www.wowhead.com/quest=13078/dahlias-tears)

**What is Happening:**

The Ruby Keepers are severely bugged. Their pats are insanely small or they are getting stuck in the terrain. Bringing mobs to kill as per the questline is not causing the Keepers to aggro or trigger. We actually had to drag a mob directly underneath a Keeper's feet just for the dragon to aggro and attack the mob. However, instead of burning the mob as per the quest scripts, the Keepers simply attack them and the quest item, [Dahlia's Tears](https://www.wowhead.com/item=43084/dahlias-tears), finally spawns.

**What Should happen:**

There should be only a few of the Keepers flying about. Once a mob is brought underneath them, the Keeper is supposed to burn it with fire, which spawns the quest item.

| test | dahlia s tears starting off i d like to point out i am using the most current launcher for wow mania and my version is i submitted a gm s ticket and was aided by divigrac who confirmed the issues and requested i submit a bug report links quest what is happening the ruby keepers are severely bugged their pats are insanely small or they are getting stuck in to the terrain bringing mobs to kill as per the questline is not causing the keepers to aggro or trigger we actually had to drag a mob to directly underneath its feet just for the dragon to aggro and attack the mob however instead of burning the mob as per the quest scripts the keepers simply attack them and the quest item finally spawns what should happen there should be only a few of the keepers flying about once a mob is brought underneath them the keeper is supposed to burn them with fire and spawning the quest item | 1 |

317,302 | 27,226,109,840 | IssuesEvent | 2023-02-21 09:49:52 | KirilStrezikozin/BakeMaster-Blender-Addon | https://api.github.com/repos/KirilStrezikozin/BakeMaster-Blender-Addon | closed | REQUEST: Set up a texture set and select a map to sync all settings with | enhancement solved close on release needs testing | **This feature request is:**

- [x] not a duplicate

- [x] implemented

**Is your feature request related to a problem? Please describe.**

_atokim from BlenderMarket:_

Making resolution settings for texture sets and automatically applying them to baked maps is much faster and easier than doing it for every texture in every "baking job". It is logical to use the bake settings for each map for all texture sets automatically - there is no need to configure them individually each time - this is not a frequent case in my practice and in this case I will simply create a separate bake session.

**Describe the solution you'd like to be implemented**

Add functionality that will allow the user to set up a texture set and select a map to sync all settings with.

| 1.0 | REQUEST: Set up a texture set and select a map to sync all settings with - **This feature request is:**

- [x] not a duplicate

- [x] implemented

**Is your feature request related to a problem? Please describe.**

_atokim from BlenderMarket:_

Making resolution settings for texture sets and automatically applying them to baked maps is much faster and easier than doing it for every texture in every "baking job". It is logical to use the bake settings for each map for all texture sets automatically - there is no need to configure them individually each time - this is not a frequent case in my practice and in this case I will simply create a separate bake session.

**Describe the solution you'd like to be implemented**

Add functionality that will allow the user to set up a texture set and select a map to sync all settings with.

| test | request set up a texture set and select a map to sync all settings with this feature request is not a duplicate implemented is your feature request related to a problem please describe atokim from blendermarket making resolution settings for texture sets and automatically applying them to baked maps is much faster and easier than doing it for every texture in every baking job it is logical to use the bake settings for each map for all texture sets automatically there is no need to configure them individually each time this is not a frequent case in my practice and in this case i will simply create a separate bake session describe the solution you d like to be implemented add functionality that will allow the user to set up a texture set and select a map to sync all settings with | 1 |

406,446 | 11,893,285,551 | IssuesEvent | 2020-03-29 10:49:01 | bryntum/support | https://api.github.com/repos/bryntum/support | closed | Task incorrectly rendered after duration change | bug high-priority resolved | Go to advanced demo

- Edit Install Apache task, set duration to 0 days

- Edit the same again, set duration to 10 days

- Task doesn't render properly and percent complete is incorrect

https://www.bryntum.com/forum/viewtopic.php?f=52&t=13504

<img width="1178" alt="Screenshot 2020-03-18 at 16 49 41" src="https://user-images.githubusercontent.com/57486733/76968323-c3f6b880-6939-11ea-8a5d-511906443c82.png">

Or run in console:

```

gantt.taskStore.getById(11).setDuration(0)

gantt.taskStore.getById(11).setDuration(10)

``` | 1.0 | Task incorrectly rendered after duration change - Go to advanced demo

- Edit Install Apache task, set duration to 0 days

- Edit the same again, set duration to 10 days

- Task doesn't render properly and percent complete is incorrect

https://www.bryntum.com/forum/viewtopic.php?f=52&t=13504

<img width="1178" alt="Screenshot 2020-03-18 at 16 49 41" src="https://user-images.githubusercontent.com/57486733/76968323-c3f6b880-6939-11ea-8a5d-511906443c82.png">

Or run in console:

```

gantt.taskStore.getById(11).setDuration(0)

gantt.taskStore.getById(11).setDuration(10)

``` | non_test | task incorrectly rendered after duration change go to advanced demo edit install apache task set duration to days edit the same again set duration to days task doesn t render properly and percent complete is incorrect img width alt screenshot at src or run in console gantt taskstore getbyid setduration gantt taskstore getbyid setduration | 0 |

11,476 | 3,204,653,720 | IssuesEvent | 2015-10-03 10:00:53 | imixs/imixs-workflow | https://api.github.com/repos/imixs/imixs-workflow | closed | WorkflowSchedulerService - change timer object | bug testing | currently an error occurs when updating timer details | 1.0 | WorkflowSchedulerService - change timer object - currently an error occurs when updating timer details | test | workflowschedulerservice change timer object currently an error occurs when updating timer details | 1 |

261,820 | 22,774,010,104 | IssuesEvent | 2022-07-08 12:51:11 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | opened | Generalization test for the Institutional information - Access link tag - Tabuleiro | generalization test development | DoD: Run the generalization test of the validator for the Institutional information - Access link tag for the municipality of Tabuleiro. | 1.0 | Generalization test for the Institutional information - Access link tag - Tabuleiro - DoD: Run the generalization test of the validator for the Institutional information - Access link tag for the municipality of Tabuleiro. | test | generalization test for the institutional information access link tag tabuleiro dod run the generalization test of the validator for the institutional information access link tag for the municipality of tabuleiro | 1 |

76,791 | 21,570,441,625 | IssuesEvent | 2022-05-02 07:29:08 | Polymer/tools | https://api.github.com/repos/Polymer/tools | closed | Build process breaks with RxJs : this._r-- > 0 | Package: build wontfix | ### Description

Our project uses RxJs.

The command line `polymer build` seems to work ok. In the Gulp file, we are currently using exactly this:

https://github.com/PolymerElements/generator-polymer-init-custom-build/blob/master/generators/app/gulpfile.js

And we also tested with updated NPM modules all around `"polymer-build": "^0.7.0"`

the rx.lite.min file contains a section of code that is this:

`c.prototype.next = function (a) { this._r-- > 0 && (this._o.onNext(a), this._r <= 0 && this._o.onCompleted()) }`

Either the `polymerBuild.PolymerProject(JSON).sources()` or the `mergeStream()` method is converting `this._r-- > 0` to `this._r==\x3e0&&`

If I change `this._r-- > 0` to `(this._r-- > 0)` the build works.
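
For anyone verifying the workaround, here is a minimal sketch. It is a stand-in for the minified method, not the actual RxJS source, and `TakeOriginal`, `TakeParenthesized` and `run` are hypothetical names introduced only for illustration. It suggests that wrapping the comparison in parentheses leaves the runtime behavior unchanged, so the edit should be safe to apply while the build step is investigated.

```javascript
// Hypothetical stand-in for the minified observer: `_r` counts remaining items.
function TakeOriginal(count) {
  this._r = count;
  this.out = [];
  this.done = false;
}
TakeOriginal.prototype.next = function (a) {
  // Same shape as the expression quoted from rx.lite.min.js.
  this._r-- > 0 && (this.out.push(a), this._r <= 0 && (this.done = true));
};

function TakeParenthesized(count) {
  this._r = count;
  this.out = [];
  this.done = false;
}
TakeParenthesized.prototype.next = function (a) {
  // Workaround form: the comparison is wrapped in parentheses.
  (this._r-- > 0) && (this.out.push(a), this._r <= 0 && (this.done = true));
};

// Feed the same values through either constructor and collect the final state.
function run(Ctor, count, values) {
  const taker = new Ctor(count);
  values.forEach(function (v) { taker.next(v); });
  return { out: taker.out, done: taker.done, remaining: taker._r };
}

const original = run(TakeOriginal, 2, [1, 2, 3]);
const parenthesized = run(TakeParenthesized, 2, [1, 2, 3]);
console.log(JSON.stringify(original) === JSON.stringify(parenthesized)); // prints true
```

Both forms take the first two values and then complete, because the postfix decrement binds tighter than `>` with or without the extra parentheses.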

Please keep in mind that this is without any splitHtml or rejoinHtml methods or any other inline transformations other than sources(), dependencies() and mergeStream()

### Versions & Environment

- polymer-build: 0.7.0

- node: v6.9.2

- Operating System: Mac 10.12.3

#### Steps to Reproduce

`bower install rxjs`

`<script src="../bower_components/rxjs/dist/rx.lite.min.js"></script>`

Run gulp script from:

https://github.com/PolymerElements/generator-polymer-init-custom-build/blob/master/generators/app/gulpfile.js

#### Expected Results

Working project with `this._r-- > 0` somewhere inside.

#### Actual Results

SyntaxError: Invalid or unexpected token: `this._r==\x3e0&&`

| 1.0 | Build process breaks with RxJs : this._r-- > 0 - ### Description

Our project uses RxJs.

The command line `polymer build` seems to work ok. In the Gulp file, we are using this exactly currently:

https://github.com/PolymerElements/generator-polymer-init-custom-build/blob/master/generators/app/gulpfile.js

And we also tested with updated NPM modules all around `"polymer-build": "^0.7.0"`

The `rx.lite.min.js` file contains this section of code:

`c.prototype.next = function (a) { this._r-- > 0 && (this._o.onNext(a), this._r <= 0 && this._o.onCompleted()) }`

Either the `polymerBuild.PolymerProject(JSON).sources()` or the `mergeStream()` method is converting `this._r-- > 0` to `this._r==\x3e0&&`

If I change `this._r-- > 0` to `(this._r-- > 0)` the build works.

Please keep in mind that this is without any splitHtml or rejoinHtml methods or any other inline transformations other than sources(), dependencies() and mergeStream()

### Versions & Environment

- polymer-build: 0.7.0

- node: v6.9.2

- Operating System: Mac 10.12.3

#### Steps to Reproduce

`bower install rxjs`

`<script src="../bower_components/rxjs/dist/rx.lite.min.js"></script>`

Run gulp script from:

https://github.com/PolymerElements/generator-polymer-init-custom-build/blob/master/generators/app/gulpfile.js

#### Expected Results

Working project with `this._r-- > 0` somewhere inside.

#### Actual Results

SyntaxError: Invalid or unexpected token: `this._r==\x3e0&&`

| non_test | build process breaks with rxjs this r description our project uses rxjs the command line polymer build seems to work ok in the gulp file we are using this exactly currently and we also tested with updated npm modules all around polymer build the rx lite min file contains a section of code that is this c prototype next function a this r this o onnext a this r this o oncompleted either the polymerbuild polymerproject json sources or the mergestream method is converting this r to this r if i change this r to this r the build works please keep in mind that this is without any splithtml or rejoinhtml methods or any other inline transformations other than sources dependencies and mergestream versions environment polymer build node operating system mac steps to reproduce bower install rxjs run gulp script from expected results working project with this r somewhere inside actual results syntaxerror invalid or unexpected token this r | 0 |

215,905 | 16,721,542,773 | IssuesEvent | 2021-06-10 07:56:55 | SAPDocuments/Issues | https://api.github.com/repos/SAPDocuments/Issues | closed | Perform Actions On Cards To Support Your Business Case | High-Prio SCPTest-2105A SCPTest-mcard SCPTest-trial1 SCPTest-trial2 SCPTest-trial3 | **Tutorials: https://developers.sap.com/tutorials/cp-mobile-cards-booster-starter.html**

Steps:

**Step 3: View supplier contact card on your mobile device

Step 4: View sales order approval card on your mobile device**

--------------------------

**Issue:**

Contact Card and Sales Order Approval Card are auto-subscribed, so please remove the steps to subscribe to these cards in Step 3 and Step 4.

Instead add a note that if the cards are not visible, then we have to unsubscribe and subscribe to these cards again and then do a pull refresh for the cards to be visible.

Best Regards,

Priyanka | 5.0 | Perform Actions On Cards To Support Your Business Case - **Tutorials: https://developers.sap.com/tutorials/cp-mobile-cards-booster-starter.html**

Steps:

**Step 3: View supplier contact card on your mobile device

Step 4: View sales order approval card on your mobile device**

--------------------------

**Issue:**

Contact Card and Sales Order Approval Card are auto-subscribed, so please remove the steps to subscribe to these cards in Step 3 and Step 4.

Instead add a note that if the cards are not visible, then we have to unsubscribe and subscribe to these cards again and then do a pull refresh for the cards to be visible.

Best Regards,

Priyanka | test | perform actions on cards to support your business case tutorials steps step view supplier contact card on your mobile device step view sales order approval card on your mobile device issue contact card and sales order approval card are auto subscribed so please remove the steps to subscribe to these cards in step and step instead add a note that if the cards are not visible then we have to unsubscribe and subscribe to these cards again and then do a pull refresh for the cards to be visible best regards priyanka | 1 |

23,449 | 4,018,888,593 | IssuesEvent | 2016-05-16 12:54:24 | CyclopsMC/EvilCraft | https://api.github.com/repos/CyclopsMC/EvilCraft | opened | Bare Cap/Brush/Rod without modifiers | alpha-testing enhancement | When shift-right clicking a bare cap without modifiers, it reads "Modifiers:" but doesn't list anything.

It would be prettier if it read "no modifiers found" or something along those lines | 1.0 | Bare Cap/Brush/Rod without modifiers - When shift-right clicking a bare cap without modifiers, it reads "Modifiers:" but doesn't list anything.

It would be prettier if it read "no modifiers found" or something along that line | test | bare cap brush rod without modifiers when shift right clicking a bare cap without modifiers reads modifiers but doesn t list anything it would be prettier if it read no modifiers found or something along that line | 1 |

276,396 | 23,990,991,284 | IssuesEvent | 2022-09-14 01:03:54 | hvac/hvac | https://api.github.com/repos/hvac/hvac | closed | TestOIDC Failure in Vault 1.9.0 | help wanted jwt/oidc test failures | Vault 1.9.0 introduced the following error in test_oidc.py. Creating an issue to track the resolution of this failure.

```

FAILED tests/integration_tests/api/auth_methods/test_oidc.py::TestOIDC::test_oidc_authorization_url_request_0_success - hvac.exceptions.InvalidRequest: cannot find key "oidc-test-key", on post https://localhost:8200/v1/identity/oidc/role/hvac-oidc-test

``` | 1.0 | TestOIDC Failure in Vault 1.9.0 - Vault 1.9.0 introduced the following error in test_oidc.py. Creating an issue to track the resolution of this failure.

```

FAILED tests/integration_tests/api/auth_methods/test_oidc.py::TestOIDC::test_oidc_authorization_url_request_0_success - hvac.exceptions.InvalidRequest: cannot find key "oidc-test-key", on post https://localhost:8200/v1/identity/oidc/role/hvac-oidc-test

``` | test | testoidc failure in vault vault introduced the following error in test oidc py creating an issue to track the resolution of this failure failed tests integration tests api auth methods test oidc py testoidc test oidc authorization url request success hvac exceptions invalidrequest cannot find key oidc test key on post | 1 |

642,526 | 20,906,464,227 | IssuesEvent | 2022-03-24 03:08:18 | o3de/o3de | https://api.github.com/repos/o3de/o3de | closed | [Material Editor] A crash happened when clicking a material file in Asset Browser within Editor to open it in the Material Editor. | kind/bug sig/graphics-audio priority/critical feature/graphics/tools |

**Describe the bug**


**Steps to reproduce**

Steps to reproduce the behavior:

1. Open O3DE.sln with Visual Studio 2019.

2. Set Editor as startup project.

3. Press F5 to start debug Editor.

4. When Editor is ready, click on 'Tools -> Material Editor' to open Material Editor.

5. Wait for a few seconds, then Material Editor is ready to work.

6. Browse files in Asset Browser within Editor, click a material file to view its content in Material Editor.

7. Check Process View in Visual Studio 2019, a second Material Editor process is running and failing to exit.

8. See error in Visual Studio 2019.

**Expected behavior**

The second Material Editor process should exit after its job is done.

**Actual behavior**

The second Material Editor process fails to exit normally. It crashes at the end of `~AtomToolsApplication()` in AtomToolsApplication.cpp.

**Assets required**

NA

**Screenshots/Video**

NA

**Found in Branch**

development

main

stabilization/2111RTE

**Desktop/Device (please complete the following information):**

- Device: [PC]

- OS: [Windows]

- Version [10]

- CPU [Intel I9-9900k]

- GPU [NVidia RTX 3090]

- Memory [32GB]

**Additional context**

Add any other context about the problem here. | 1.0 | [Material Editor] A crash happened when clicking a material file in Asset Browser within Editor to open it in the Material Editor. -

**Describe the bug**


**Steps to reproduce**

Steps to reproduce the behavior:

1. Open O3DE.sln with Visual Studio 2019.

2. Set Editor as startup project.

3. Press F5 to start debug Editor.

4. When Editor is ready, click on 'Tools -> Material Editor' to open Material Editor.

5. Wait for a few seconds, then Material Editor is ready to work.

6. Browse files in Asset Browser within Editor, click a material file to view its content in Material Editor.

7. Check Process View in Visual Studio 2019, a second Material Editor process is running and failing to exit.

8. See error in Visual Studio 2019.

**Expected behavior**

The second Material Editor process should exit after its job is done.

**Actual behavior**

The second Material Editor process fails to exit normally. It crashes at the end of `~AtomToolsApplication()` in AtomToolsApplication.cpp.

**Assets required**

NA

**Screenshots/Video**

NA

**Found in Branch**

development

main

stabilization/2111RTE

**Desktop/Device (please complete the following information):**

- Device: [PC]

- OS: [Windows]

- Version [10]

- CPU [Intel I9-9900k]

- GPU [NVidia RTX 3090]

- Memory [32GB]

**Additional context**