Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6,650 | 2,855,530,019 | IssuesEvent | 2015-06-02 10:01:51 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Outgoing sample messages aren't resolved to contact names | 3 - Acceptance testing Bug | When the sample messages load, the incoming messages are resolved to contact names but the outgoing messages aren't. This results in two message threads instead of one (one for incoming, one for outgoing). This doesn't seem to affect message threads for contacts that I added on my own, only sample data. The reason why this is an issue is because when you start up the app and run the Messages tour, it doesn't run all the way through unless there is a message thread with both sent and received messages. | 1.0 | Outgoing sample messages aren't resolved to contact names - When the sample messages load, the incoming messages are resolved to contact names but the outgoing messages aren't. This results in two message threads instead of one (one for incoming, one for outgoing). This doesn't seem to affect message threads for contacts that I added on my own, only sample data. The reason why this is an issue is because when you start up the app and run the Messages tour, it doesn't run all the way through unless there is a message thread with both sent and received messages. | test | outgoing sample messages aren t resolved to contact names when the sample messages load the incoming messages are resolved to contact names but the outgoing messages aren t this results in two message threads instead of one one for incoming one for outgoing this doesn t seem to affect message threads for contacts that i added on my own only sample data the reason why this is an issue is because when you start up the app and run the messages tour it doesn t run all the way through unless there is a message thread with both sent and received messages | 1 |

227,188 | 18,053,441,245 | IssuesEvent | 2021-09-20 03:13:28 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.pfc.test.sanity_base.SV_FWD_01A_B JUnit | Test_9999 logicmoo.pfc.test.sanity_base unit_test SV_FWD_01A_B Failing | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif sv_fwd_01a_b.pfc)

ISSUE: https://github.com/logicmoo/logicmoo_workspace/issues/

EDIT: https://github.com/logicmoo/logicmoo_workspace/edit/master/packs_sys/pfc/t/sanity_base/sv_fwd_01a_b.pfc

JENKINS: https://jenkins.logicmoo.org/job/logicmoo_workspace/lastBuild/testReport/logicmoo.pfc.test.sanity_base/SV_FWD_01A_B/logicmoo_pfc_test_sanity_base_SV_FWD_01A_B_JUnit/

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+label%3ASV_FWD_01A_B

```

%~ init_phase(after_load)

%~ init_phase(restore_state)

%

running('/var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base/sv_fwd_01a_b.pfc'),

%~ this_test_might_need( :-( use_module( library(logicmoo_plarkc))))

:- set_fileAssertMt(header_sane).

%~ set_fileAssertMt(header_sane)

/*~

%~ set_fileAssertMt(header_sane)

~*/

:- expects_dialect(pfc).

arity(inChairZ,1).

prologSingleValued(inChairZ).

prologSingleValuedInArg(inChairZ,1).

singleValuedInArgAX(inChairZ, 1, 1).

:- ain( inChairZ(aZa)).

:- (ain( inChairZ(bYb))).

:- listing(inChairZ/1).

%~ skipped( listing( inChairZ/1))

%~ /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base/sv_fwd_01a_b.pfc:50

%~ unused(no_junit_results)

%~ test_completed_exit(0)

```

totalTime=1.000

FAILED: /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-junit-minor -k sv_fwd_01a_b.pfc (returned 0) Add_LABELS='' Rem_LABELS='Skipped,Skipped,Errors,Warnings,Overtime,Skipped,Skipped'

| 3.0 | logicmoo.pfc.test.sanity_base.SV_FWD_01A_B JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif sv_fwd_01a_b.pfc)

ISSUE: https://github.com/logicmoo/logicmoo_workspace/issues/

EDIT: https://github.com/logicmoo/logicmoo_workspace/edit/master/packs_sys/pfc/t/sanity_base/sv_fwd_01a_b.pfc

JENKINS: https://jenkins.logicmoo.org/job/logicmoo_workspace/lastBuild/testReport/logicmoo.pfc.test.sanity_base/SV_FWD_01A_B/logicmoo_pfc_test_sanity_base_SV_FWD_01A_B_JUnit/

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+label%3ASV_FWD_01A_B

```

%~ init_phase(after_load)

%~ init_phase(restore_state)

%

running('/var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base/sv_fwd_01a_b.pfc'),

%~ this_test_might_need( :-( use_module( library(logicmoo_plarkc))))

:- set_fileAssertMt(header_sane).

%~ set_fileAssertMt(header_sane)

/*~

%~ set_fileAssertMt(header_sane)

~*/

:- expects_dialect(pfc).

arity(inChairZ,1).

prologSingleValued(inChairZ).

prologSingleValuedInArg(inChairZ,1).

singleValuedInArgAX(inChairZ, 1, 1).

:- ain( inChairZ(aZa)).

:- (ain( inChairZ(bYb))).

:- listing(inChairZ/1).

%~ skipped( listing( inChairZ/1))

%~ /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base/sv_fwd_01a_b.pfc:50

%~ unused(no_junit_results)

%~ test_completed_exit(0)

```

totalTime=1.000

FAILED: /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-junit-minor -k sv_fwd_01a_b.pfc (returned 0) Add_LABELS='' Rem_LABELS='Skipped,Skipped,Errors,Warnings,Overtime,Skipped,Skipped'

| test | logicmoo pfc test sanity base sv fwd b junit cd var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base swipl x var lib jenkins workspace logicmoo workspace bin lmoo clif sv fwd b pfc issue edit jenkins issue search init phase after load init phase restore state running var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base sv fwd b pfc this test might need use module library logicmoo plarkc set fileassertmt header sane set fileassertmt header sane set fileassertmt header sane expects dialect pfc arity inchairz prologsinglevalued inchairz prologsinglevaluedinarg inchairz singlevaluedinargax inchairz ain inchairz aza ain inchairz byb listing inchairz skipped listing inchairz var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base sv fwd b pfc unused no junit results test completed exit totaltime failed var lib jenkins workspace logicmoo workspace bin lmoo junit minor k sv fwd b pfc returned add labels rem labels skipped skipped errors warnings overtime skipped skipped | 1 |

304,473 | 26,279,562,338 | IssuesEvent | 2023-01-07 06:04:36 | serai-dex/serai | https://api.github.com/repos/serai-dex/serai | opened | Fingerprinting of monero-serai by receiving funds | untested monero | Will monero-serai scan (or refuse to scan) TXs wallet2 won't? We need to review torsion handling, TX extra encoding support and more.

I can immediately cite monero-serai ignores secondary outputs, for a larger amount, when the burning bug is exploited. wallet2 will credit the difference, if the original output hasn't already been spent. This is a safety issue I won't budge on. | 1.0 | Fingerprinting of monero-serai by receiving funds - Will monero-serai scan (or refuse to scan) TXs wallet2 won't? We need to review torsion handling, TX extra encoding support and more.

I can immediately cite monero-serai ignores secondary outputs, for a larger amount, when the burning bug is exploited. wallet2 will credit the difference, if the original output hasn't already been spent. This is a safety issue I won't budge on. | test | fingerprinting of monero serai by receiving funds will monero serai scan or refuse to scan txs won t we need to review torsion handling tx extra encoding support and more i can immediately cite monero serai ignores secondary outputs for a larger amount when the burning bug is exploited will credit the difference if the original output hasn t already been spent this is a safety issue i won t budge on | 1 |

130,988 | 27,804,493,105 | IssuesEvent | 2023-03-17 18:32:39 | apache/camel-karavan | https://api.github.com/repos/apache/camel-karavan | closed | [vs-code] Integrate with VS Code AtlasMap | enhancement wontfix usability designer vs-code | several scenarii in mind:

- when a data transformation exists, clicking on it opens the AtlasMap editor and allows editing it

- when adding a data transformation, ask for the name/path(?) then open the AtlasMap editor

using VS Code AtlasMap commands to open files named .adm will work (done by VS Code language Support and VS Code Designer for Camel)

Advantages: can leverage the existing VS Code AtlasMap and should simplify integration compared to having Karavan embed AtlasMap

Drawback: this will be specific to VS Code | 1.0 | [vs-code] Integrate with VS Code AtlasMap - several scenarii in mind:

- when a data transformation exists, clicking on it opens the AtlasMap editor and allows editing it

- when adding a data transformation, ask for the name/path(?) then open the AtlasMap editor

using VS Code AtlasMap commands to open files named .adm will work (done by VS Code language Support and VS Code Designer for Camel)

Advantages: can leverage the existing VS Code AtlasMap and should simplify integration compared to having Karavan embed AtlasMap

Drawback: this will be specific to VS Code | non_test | integrate with vs code atlasmap several scenarii in mind when a data transformation exists clicking on it is opening the atlasmap editor and allows editing it when adding a data transformation ask for the name path then open the atlasmap editor using vs code atlasmap commands to open files named adm will work done by vs code language support and vs code designer for camel advantages can leverage existing vs code atlasmap and should simplfiy integration compared to have karavan embedding atlasmap drawback this will be specific to vs code | 0 |

9,569 | 4,546,835,156 | IssuesEvent | 2016-09-12 00:47:26 | VOREStation/VOREStation | https://api.github.com/repos/VOREStation/VOREStation | closed | Saddlebags broken | Pri: 1-Minor Type: Bug Type: Sprite Works in latest build | #### Brief description of the issue

Saddlebags appear to be broken on horse tails.

#### What you expected to happen

The saddlebags are supposed to work on horse-taur/taur type characters.

#### What actually happened

It sticks out ahead of the character as though it were a backpack on a normal player.

#### Steps to reproduce

Strap a saddlepack onto a character with the horse tail.

#### Additional info:

- **Server Revision**: Found using the "Show Server Revision" verb under the OOC tab.

- **Anything else you may wish to add** (Location if it's a mapping issue, etc)

| 1.0 | Saddlebags broken - #### Brief description of the issue

Saddlebags appear to be broken on horse tails.

#### What you expected to happen

The saddlebags are supposed to work on horse-taur/taur type characters.

#### What actually happened

It sticks out ahead of the character as though it were a backpack on a normal player.

#### Steps to reproduce

Strap a saddlepack onto a character with the horse tail.

#### Additional info:

- **Server Revision**: Found using the "Show Server Revision" verb under the OOC tab.

- **Anything else you may wish to add** (Location if it's a mapping issue, etc)

| non_test | saddlebags broken brief description of the issue saddlebags appear to be broken on horse tails what you expected to happen the saddlebags are supposed to work on horse taur taur type characters what actually happened it sticks out ahead of the character as though it were a backpack on a normal player steps to reproduce strap a saddlepack onto a character with the horse tail additional info server revision found using the show server revision verb under the ooc tab anything else you may wish to add location if it s a mapping issue etc | 0 |

317,082 | 27,210,989,553 | IssuesEvent | 2023-02-20 16:30:54 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | closed | Reviewdog tests seem broken. | Automated testing CMS Team Quality Assurance | ## Description

```

Run reviewdog/action-eslint@d3395027ea2cfc5cf8f460b1ea939b6c86fea656

Run $GITHUB_ACTION_PATH/script.sh

🐶 Installing reviewdog ... https://github.com/reviewdog/reviewdog

Running `npm install` to install eslint ...

npm WARN deprecated querystring@0.2.0: The querystring API is considered Legacy. new code should use the URLSearchParams API instead.

npm WARN deprecated @stylelint/postcss-markdown@0.36.2: Use the original unforked package instead: postcss-markdown

npm WARN deprecated gherkin@5.1.0: This package is now published under @cucumber/gherkin

npm WARN deprecated cucumber-expressions@6.6.2: This package is now published under @cucumber/cucumber-expressions

npm WARN deprecated cucumber-expressions@5.0.18: This package is now published under @cucumber/cucumber-expressions

npm WARN deprecated cucumber@4.2.1: Cucumber is publishing new releases under @cucumber/cucumber

npm WARN deprecated core-js@2.6.9: core-js@<3.23.3 is no longer maintained and not recommended for usage due to the number of issues. Because of the V8 engine whims, feature detection in old core-js versions could cause a slowdown up to 100x even if nothing is polyfilled. Some versions have web compatibility issues. Please, upgrade your dependencies to the actual version of core-js.

npm WARN deprecated core-js-pure@3.8.1: core-js-pure@<3.23.3 is no longer maintained and not recommended for usage due to the number of issues. Because of the V8 engine whims, feature detection in old core-js versions could cause a slowdown up to 100x even if nothing is polyfilled. Some versions have web compatibility issues. Please, upgrade your dependencies to the actual version of core-js-pure.

added 1054 packages, and audited 1055 packages in 26s

132 packages are looking for funding

run `npm fund` for details

4 high severity vulnerabilities

To address issues that do not require attention, run:

npm audit fix

To address all issues (including breaking changes), run:

npm audit fix --force

Run `npm audit` for details.

/home/runner/work/_actions/reviewdog/action-eslint/d3395027ea2cfc5cf8f460b1ea939b6c86fea656/script.sh: 20: Unknown: not found

eslint version:

Running eslint with reviewdog 🐶 ...

reviewdog: This GitHub token doesn't have write permission of Review API [1],

so reviewdog will report results via logging command [2] and create annotations similar to

github-pr-check reporter as a fallback.

[1]: https://docs.github.com/en/actions/reference/events-that-trigger-workflows#pull_request_target,

[2]: https://help.github.com/en/actions/automating-your-workflow-with-github-actions/development-tools-for-github-actions#logging-commands

/home/runner/work/_actions/reviewdog/action-eslint/d3395027ea2cfc5cf8f460b1ea939b6c86fea656/script.sh: 23: Unknown: not found

reviewdog: parse error: failed to unmarshal rdjson (DiagnosticResult): proto: syntax error (line 1:1): unexpected token

Error: Process completed with exit code 1.

```

## Acceptance Criteria

- [ ] Testable_Outcome_X

- [ ] Testable_Outcome_Y

- [ ] Testable_Outcome_Z

- [ ] Requires design review

### Team

Please check the team(s) that will do this work.

- [ ] `CMS Team`

- [ ] `Public Websites`

- [ ] `Facilities`

- [ ] `User support`

| 1.0 | Reviewdog tests seem broken. - ## Description

```

Run reviewdog/action-eslint@d3395027ea2cfc5cf8f460b1ea939b6c86fea656

Run $GITHUB_ACTION_PATH/script.sh

🐶 Installing reviewdog ... https://github.com/reviewdog/reviewdog

Running `npm install` to install eslint ...

npm WARN deprecated querystring@0.2.0: The querystring API is considered Legacy. new code should use the URLSearchParams API instead.

npm WARN deprecated @stylelint/postcss-markdown@0.36.2: Use the original unforked package instead: postcss-markdown

npm WARN deprecated gherkin@5.1.0: This package is now published under @cucumber/gherkin

npm WARN deprecated cucumber-expressions@6.6.2: This package is now published under @cucumber/cucumber-expressions

npm WARN deprecated cucumber-expressions@5.0.18: This package is now published under @cucumber/cucumber-expressions

npm WARN deprecated cucumber@4.2.1: Cucumber is publishing new releases under @cucumber/cucumber

npm WARN deprecated core-js@2.6.9: core-js@<3.23.3 is no longer maintained and not recommended for usage due to the number of issues. Because of the V8 engine whims, feature detection in old core-js versions could cause a slowdown up to 100x even if nothing is polyfilled. Some versions have web compatibility issues. Please, upgrade your dependencies to the actual version of core-js.

npm WARN deprecated core-js-pure@3.8.1: core-js-pure@<3.23.3 is no longer maintained and not recommended for usage due to the number of issues. Because of the V8 engine whims, feature detection in old core-js versions could cause a slowdown up to 100x even if nothing is polyfilled. Some versions have web compatibility issues. Please, upgrade your dependencies to the actual version of core-js-pure.

added 1054 packages, and audited 1055 packages in 26s

132 packages are looking for funding

run `npm fund` for details

4 high severity vulnerabilities

To address issues that do not require attention, run:

npm audit fix

To address all issues (including breaking changes), run:

npm audit fix --force

Run `npm audit` for details.

/home/runner/work/_actions/reviewdog/action-eslint/d3395027ea2cfc5cf8f460b1ea939b6c86fea656/script.sh: 20: Unknown: not found

eslint version:

Running eslint with reviewdog 🐶 ...

reviewdog: This GitHub token doesn't have write permission of Review API [1],

so reviewdog will report results via logging command [2] and create annotations similar to

github-pr-check reporter as a fallback.

[1]: https://docs.github.com/en/actions/reference/events-that-trigger-workflows#pull_request_target,

[2]: https://help.github.com/en/actions/automating-your-workflow-with-github-actions/development-tools-for-github-actions#logging-commands

/home/runner/work/_actions/reviewdog/action-eslint/d3395027ea2cfc5cf8f460b1ea939b6c86fea656/script.sh: 23: Unknown: not found

reviewdog: parse error: failed to unmarshal rdjson (DiagnosticResult): proto: syntax error (line 1:1): unexpected token

Error: Process completed with exit code 1.

```

## Acceptance Criteria

- [ ] Testable_Outcome_X

- [ ] Testable_Outcome_Y

- [ ] Testable_Outcome_Z

- [ ] Requires design review

### Team

Please check the team(s) that will do this work.

- [ ] `CMS Team`

- [ ] `Public Websites`

- [ ] `Facilities`

- [ ] `User support`

| test | reviewdog tests seem broken description run reviewdog action eslint run github action path script sh 🐶 installing reviewdog running npm install to install eslint npm warn deprecated querystring the querystring api is considered legacy new code should use the urlsearchparams api instead npm warn deprecated stylelint postcss markdown use the original unforked package instead postcss markdown npm warn deprecated gherkin this package is now published under cucumber gherkin npm warn deprecated cucumber expressions this package is now published under cucumber cucumber expressions npm warn deprecated cucumber expressions this package is now published under cucumber cucumber expressions npm warn deprecated cucumber cucumber is publishing new releases under cucumber cucumber npm warn deprecated core js core js is no longer maintained and not recommended for usage due to the number of issues because of the engine whims feature detection in old core js versions could cause a slowdown up to even if nothing is polyfilled some versions have web compatibility issues please upgrade your dependencies to the actual version of core js npm warn deprecated core js pure core js pure is no longer maintained and not recommended for usage due to the number of issues because of the engine whims feature detection in old core js versions could cause a slowdown up to even if nothing is polyfilled some versions have web compatibility issues please upgrade your dependencies to the actual version of core js pure added packages and audited packages in packages are looking for funding run npm fund for details high severity vulnerabilities to address issues that do not require attention run npm audit fix to address all issues including breaking changes run npm audit fix force run npm audit for details home runner work actions reviewdog action eslint unknown not found eslint version running eslint with reviewdog 🐶 reviewdog this github token doesn t have write permission of review api so 
reviewdog will report results via logging command and create annotations similar to github pr check reporter as a fallback home runner work actions reviewdog action eslint unknown not found reviewdog parse error failed to unmarshal rdjson diagnosticresult proto syntax error line unexpected token error process completed with exit code acceptance criteria testable outcome x testable outcome y testable outcome z requires design review team please check the team s that will do this work cms team public websites facilities user support | 1 |

65,096 | 16,104,179,348 | IssuesEvent | 2021-04-27 13:09:06 | xamarin/xamarin-android | https://api.github.com/repos/xamarin/xamarin-android | closed | [NET6] libxamarin-debug-app-helper.so should not be packaged for Release builds | Area: App+Library Build | `libxamarin-debug-app-helper.so` is a helper DSO used by Debug builds only, however `dotnet build -c Release` for NET6 places it in the apk:

```shell

net6-samples/HelloMaui/bin/Release/net6.0-android $ zipinfo com.microsoft.hellomaui-Signed.apk | grep helper

-rwxr-xr-x 6.3 unx 37104 b- defX 21-Apr-20 16:45 lib/arm64-v8a/libxamarin-debug-app-helper.so

-rwxr-xr-x 6.3 unx 31796 b- defX 21-Apr-20 16:45 lib/x86/libxamarin-debug-app-helper.so

```

The DSO comes from the `microsoft.android.runtime.android-{ARCH}` nuget packages which contains all the native libraries from Xamarin.Android, for both Release and Debug builds. | 1.0 | [NET6] libxamarin-debug-app-helper.so should not be packaged for Release builds - `libxamarin-debug-app-helper.so` is a helper DSO used by Debug builds only, however `dotnet build -c Release` for NET6 places it in the apk:

```shell

net6-samples/HelloMaui/bin/Release/net6.0-android $ zipinfo com.microsoft.hellomaui-Signed.apk | grep helper

-rwxr-xr-x 6.3 unx 37104 b- defX 21-Apr-20 16:45 lib/arm64-v8a/libxamarin-debug-app-helper.so

-rwxr-xr-x 6.3 unx 31796 b- defX 21-Apr-20 16:45 lib/x86/libxamarin-debug-app-helper.so

```

The DSO comes from the `microsoft.android.runtime.android-{ARCH}` nuget packages which contains all the native libraries from Xamarin.Android, for both Release and Debug builds. | non_test | libxamarin debug app helper so should not be packaged for release builds libxamarin debug app helper so is a helper dso used by debug builds only however dotnet build c release for places it in the apk shell samples hellomaui bin release android zipinfo com microsoft hellomaui signed apk grep helper rwxr xr x unx b defx apr lib libxamarin debug app helper so rwxr xr x unx b defx apr lib libxamarin debug app helper so the dso comes from the microsoft android runtime android arch nuget packages which contains all the native libraries from xamarin android for both release and debug builds | 0 |

55,294 | 6,469,084,952 | IssuesEvent | 2017-08-17 04:05:04 | fossasia/phimpme-android | https://api.github.com/repos/fossasia/phimpme-android | closed | Espresso Test for Single Media Activity | Testing | **Actual Behaviour**

There is no espresso test for Single Media Activity.

**Expected Behaviour**

Add espresso test for Single Media Activity

**Would you like to work on the issue?**

Yes.

| 1.0 | Espresso Test for Single Media Activity - **Actual Behaviour**

There is no espresso test for Single Media Activity.

**Expected Behaviour**

Add espresso test for Single Media Activity

**Would you like to work on the issue?**

Yes.

| test | espresso test for single media activity actual behaviour there is no espresso test for single media activity expected behaviour add espresso test for single media activity would you like to work on the issue yes | 1 |

144,550 | 5,542,422,263 | IssuesEvent | 2017-03-22 14:59:40 | lucy-marko/centrepoint | https://api.github.com/repos/lucy-marko/centrepoint | closed | Enhanced implementation of request status | enhancement epic in-progress priority-2 | - [x] Show open / closed / in-progress status

- [x] Show other visual cues (e.g. bold text for open request)

- [x] Ability to assign admins (including themselves) to specific request

- [x] Ability to close a request (with 'are you sure' message) | 1.0 | Enhanced implementation of request status - - [x] Show open / closed / in-progress status

- [x] Show other visual cues (e.g. bold text for open request)

- [x] Ability to assign admins (including themselves) to specific request

- [x] Ability to close a request (with 'are you sure' message) | non_test | enhanced implementation of request status show open closed in progress status show other visual cues e g bold text for open request ability to assign admins including themselves to specific request ability to close a request with are you sure message | 0 |

353,296 | 25,111,019,888 | IssuesEvent | 2022-11-08 20:32:18 | aws/aws-sdk-js-v3 | https://api.github.com/repos/aws/aws-sdk-js-v3 | opened | AWS sdk is not following semantic versioning and not documented as such | documentation needs-triage | ### Describe the issue

There has been a lot of churn with the aws sdk in our application. We've had to perform emergency fixes several times over the last 2 weeks. I came to find out through a comment in [an issue](https://github.com/aws/aws-sdk-js-v3/issues/4122#issuecomment-1306559498) that this SDK does not follow semantic versioning. The Node.js ecosystem relies on adhering to [semantic versioning to build trust](https://docs.npmjs.com/about-semantic-versioning). It's implicitly understood that 3rd party packages follow semantic versioning. It would be wonderful if there was an obvious warning in the README that this library, which is used by over [56.7k applications](https://github.com/aws/aws-sdk-js-v3/network/dependents), does not adhere to semantic versioning so users can properly mitigate this risk.

The project I work on, the [New Relic Node.js agent](https://github.com/newrelic/node-newrelic), is in a difficult position. We are an agent, which means we build instrumentation for the AWS sdk. It's harder for us to lock down versions because it affects our customers when they upgrade past the version we support. It's untenable to ship a new version of our [aws-sdk instrumentation](https://github.com/newrelic/node-newrelic-aws-sdk) on every release as the AWS SDK seems to release daily.

### Links

https://github.com/aws/aws-sdk-js-v3/blob/main/README.md | 1.0 | AWS sdk is not following semantic versioning and not documented as such - ### Describe the issue

There has been a lot of churn with the aws sdk in our application. We've had to perform emergency fixes several times over the last 2 weeks. I came to find out through a comment in [an issue](https://github.com/aws/aws-sdk-js-v3/issues/4122#issuecomment-1306559498) that this SDK does not follow semantic versioning. The Node.js ecosystem relies on adhering to [semantic versioning to build trust](https://docs.npmjs.com/about-semantic-versioning). It's implicitly understood that 3rd party packages follow semantic versioning. It would be wonderful if there was an obvious warning in the README that this library, which is used by over [56.7k applications](https://github.com/aws/aws-sdk-js-v3/network/dependents), does not adhere to semantic versioning so users can properly mitigate this risk.

The project I work on, the [New Relic Node.js agent](https://github.com/newrelic/node-newrelic), is in a difficult position. We are an agent, which means we build instrumentation for the AWS sdk. It's harder for us to lock down versions because it affects our customers when they upgrade past the version we support. It's untenable to ship a new version of our [aws-sdk instrumentation](https://github.com/newrelic/node-newrelic-aws-sdk) on every release as the AWS SDK seems to release daily.

### Links

https://github.com/aws/aws-sdk-js-v3/blob/main/README.md | non_test | aws sdk is not following semantic versioning and not documented as such describe the issue there has been a lot of churn with the aws sdk in our application we ve had to perform emergency fixes several times over the last weeks i come to find out through a comment in an issue that this sdk does not follow semantic versioning the node js ecosystem relies on adhering to it s implicitly understood that party packages follow semantic versioning it would be wonderful if there was an obvious warning in the readme that this library which is used by over does not adhere to semantic versioning so users can properly mitigate this risk the project i work on is in a difficult position we are an agent which means we build instrumentation for the aws sdk it s harder for us to lock down versions because it affects our customers when they upgrade past the version we support it s untenable to ship a new version of our on every release as the aws sdk seems to release daily links | 0 |

197,656 | 14,937,950,643 | IssuesEvent | 2021-01-25 15:13:21 | golang/go | https://api.github.com/repos/golang/go | closed | os: spurious TestDirFS failures due to directory mtime skew on Windows | NeedsInvestigation OS-Windows Testing release-blocker | https://storage.googleapis.com/go-build-log/f31194a9/windows-386-2008_061172ea.log:

```

--- FAIL: TestDirFS (0.35s)

os_test.go:2690: TestFS found errors:

testdata/simple: mismatch:

entry.Info() = simple IsDir=true Mode=drwxrwxrwx Size=0 ModTime=2020-11-16 02:32:02.0111083 +0000 GMT

file.Stat() = simple IsDir=true Mode=drwxrwxrwx Size=0 ModTime=2020-11-16 02:32:02.012085 +0000 GMT

FAIL

FAIL os 2.635s

```

Marking as release-blocker for Go 1.16 because `DirFS` is a new API.

CC @rsc @robpike @alexbrainman @networkimprov @zx2c4 | 1.0 | os: spurious TestDirFS failures due to directory mtime skew on Windows - https://storage.googleapis.com/go-build-log/f31194a9/windows-386-2008_061172ea.log:

```

--- FAIL: TestDirFS (0.35s)

os_test.go:2690: TestFS found errors:

testdata/simple: mismatch:

entry.Info() = simple IsDir=true Mode=drwxrwxrwx Size=0 ModTime=2020-11-16 02:32:02.0111083 +0000 GMT

file.Stat() = simple IsDir=true Mode=drwxrwxrwx Size=0 ModTime=2020-11-16 02:32:02.012085 +0000 GMT

FAIL

FAIL os 2.635s

```

Marking as release-blocker for Go 1.16 because `DirFS` is a new API.

CC @rsc @robpike @alexbrainman @networkimprov @zx2c4 | test | os spurious testdirfs failures due to directory mtime skew on windows fail testdirfs os test go testfs found errors testdata simple mismatch entry info simple isdir true mode drwxrwxrwx size modtime gmt file stat simple isdir true mode drwxrwxrwx size modtime gmt fail fail os marking as release blocker for go because dirfs is a new api cc rsc robpike alexbrainman networkimprov | 1 |

120,928 | 25,895,505,510 | IssuesEvent | 2022-12-14 22:01:07 | WebDevStudios/custom-post-type-ui | https://api.github.com/repos/WebDevStudios/custom-post-type-ui | closed | Default `$data['cpt_labels']` to empty array if empty or not array for both post types and taxonomies. | Code QA | Much like we do at https://github.com/WebDevStudios/custom-post-type-ui/blob/1.13.2/inc/post-types.php#L2025-L2031 for some other data points. | 1.0 | Default `$data['cpt_labels']` to empty array if empty or not array for both post types and taxonomies. - Much like we do at https://github.com/WebDevStudios/custom-post-type-ui/blob/1.13.2/inc/post-types.php#L2025-L2031 for some other data points. | non_test | default data to empty array if empty or not array for both post types and taxonomies much like we do at for some other data points | 0 |

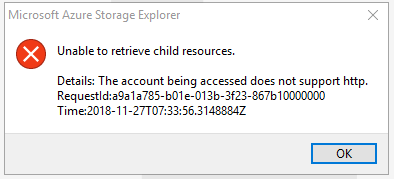

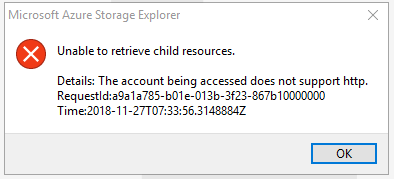

97,484 | 8,657,569,049 | IssuesEvent | 2018-11-27 21:43:04 | Microsoft/AzureStorageExplorer | https://api.github.com/repos/Microsoft/AzureStorageExplorer | closed | Unable to expand services nodes for attached accounts with name and key using HTTP | :gear: attach :white_check_mark: won't fix testing | Storage Explorer Version: 1.5.0

Platform/OS Version: Windows 10/ MacOS High Sierra/ Linux Ubuntu 16.04

Architecture: ia32

Build Number: 20181127.2

Commit: 71a17300

Regression From: Not a regression

#### Steps to Reproduce: ####

1. Choose one GPV2 account -> Attach it with key and name.

**Key**: raUzzv+OExzxlz0G6h7m4PvpETc3DZhVZicmZF2Zs9RfYHnp7Ggcs3H42PyK2L/qvupsX1K813iI8lyVSWY6Aw==

**Account Name**: lclyan

2. Check "Use HTTP" on 'Connect with Name and Key' dialog when adding the account info -> 'Connect'.

3. Expand 'Local & Attached' -> Storage Accounts -> The attached account -> Blob Containers node.

#### Expected Experience: ####

Blob Containers node expanded well.

#### Actual Experience: ####

Unable to expand the Blob Containers node and pop up an error dialog.

**More Info:**

1. This issue reproduces for File Shares/ Queues/Tables node under the same account.

2. This issue reproduces for StorageV1 & Premium & Blob storage account.

3. This issue doesn't reproduce for Classic account.

| 1.0 | Unable to expand services nodes for attached accounts with name and key using HTTP - Storage Explorer Version: 1.5.0

Platform/OS Version: Windows 10/ MacOS High Sierra/ Linux Ubuntu 16.04

Architecture: ia32

Build Number: 20181127.2

Commit: 71a17300

Regression From: Not a regression

#### Steps to Reproduce: ####

1. Choose one GPV2 account -> Attach it with key and name.

**Key**: raUzzv+OExzxlz0G6h7m4PvpETc3DZhVZicmZF2Zs9RfYHnp7Ggcs3H42PyK2L/qvupsX1K813iI8lyVSWY6Aw==

**Account Name**: lclyan

2. Check "Use HTTP" on 'Connect with Name and Key' dialog when adding the account info -> 'Connect'.

3. Expand 'Local & Attached' -> Storage Accounts -> The attached account -> Blob Containers node.

#### Expected Experience: ####

Blob Containers node expanded well.

#### Actual Experience: ####

Unable to expand the Blob Containers node and pop up an error dialog.

**More Info:**

1. This issue reproduces for File Shares/ Queues/Tables node under the same account.

2. This issue reproduces for StorageV1 & Premium & Blob storage account.

3. This issue doesn't reproduce for Classic account.

| test | unable to expand services nodes for attached accounts with name and key using http storage explorer version platform os version windows macos high sierra linux ubuntu architecture build number commit regression from not a regression steps to reproduce choose one account attach it with key and name key rauzzv account name lclyan check use http on connect with name and key dialog when adding the account info connect expand local attached storage accounts the attached account blob containers node expected experience blob containers node expanded well actual experience unable to expand the blob containers node and pop up an error dialog more info this issue reproduces for file shares queues tables node under the same account this issue reproduces for premium blob storage account this issue doesn t reproduce for classic account | 1 |

126,456 | 10,423,429,511 | IssuesEvent | 2019-09-16 11:24:54 | GetTerminus/terminus-ui | https://api.github.com/repos/GetTerminus/terminus-ui | closed | Add additional allowed video mime types to the file upload component | Focus: component Target: latest Type: feature | ### Is your feature request related to a problem? Please describe.

Product would like to allow additional video file types to the creative upload component.

### Describe the solution you'd like.

For the following file types to be added to `TsFileAcceptedMimeTypes`:

• FLV (`video/x-flv`)

• WebM (`video/webm`)

• MOV (`video/quicktime`)

• MPEG (`video/mpeg`)

### Describe alternatives you've considered

Wishing as hard as I can, and then wishing a little harder!

### Additional context

Han shot first.

| 1.0 | Add additional allowed video mime types to the file upload component - ### Is your feature request related to a problem? Please describe.

Product would like to allow additional video file types to the creative upload component.

### Describe the solution you'd like.

For the following file types to be added to `TsFileAcceptedMimeTypes`:

• FLV (`video/x-flv`)

• WebM (`video/webm`)

• MOV (`video/quicktime`)

• MPEG (`video/mpeg`)

### Describe alternatives you've considered

Wishing as hard as I can, and then wishing a little harder!

### Additional context

Han shot first.

| test | add additional allowed video mime types to the file upload component is your feature request related to a problem please describe product would like to allow additional video file types to the creative upload component describe the solution you d like for the following file types to be added to tsfileacceptedmimetypes • flv video x flv • webm video webm • mov video quicktime • mpeg video mpeg describe alternatives you ve considered wishing as hard as i can and then wishing a little harder additional context han shot first | 1 |

577,171 | 17,104,675,738 | IssuesEvent | 2021-07-09 15:52:53 | bcgov/entity | https://api.github.com/repos/bcgov/entity | opened | 0789965 B.C. LTD. BC0789965 Alteration to a Benefit Company | ENTITY OPS Priority1 |

#### ServiceNow incident: INC0099791

#### Contact information

Staff Name: Kathy Langlois

Staff Email: Kathy.Langlois@gov.bc.ca

#### Description

Can you please open a ticket with the lab to file an Alteration to a Benefit Company. Request includes change to Articles to include Benefit statement.

Affiliation info (mandatory - BC Registries account name or BCOL a/c): 330190 Company email (mandatory): trippon@comoxlaw.ca

Company phone (optional):250-339-7977

Filing date (date/time received at Registries. If email, time email received. If mail, time scanned document received): July 8, 2021 at 3:12 PM

Effective date (if different from filing date - ie. Future effective): n/a

Name request # (optional): n/a

Phone number or e-mail from Name request: 250-339-7977

Receipt info:

Method (Routing Slip or BCOL): BCOL

BCOL Account number or Route slip #:

BCOL DAT (if BCOL): C1019483

Folio (optional): n/a

Amount ($100 for immediate or $200 for future effective): $100.00

Request includes change to Articles to include Benefit statement.

[https://app.zenhub.com/files/157936592/2f9280b0-323a-4d1e-b8eb-1c63f2be2738/download](https://app.zenhub.com/files/157936592/2f9280b0-323a-4d1e-b8eb-1c63f2be2738/download)

DEV TASKS:

- [ ] use jupyter notebooks to file LTD > BEN

- [ ] Dev inform BAs that it has been filed and paid

- [ ] BAs review in SOFI or bcregistry.ca

- [ ] IF BEN > COLIN, BA downloads the alteration outputs

- [ ] BAs tell dev to unaffiliate the entity

- [ ] Devs unaffiliate the entity from the account

- [ ] BAs inform staff that they can proceed with their steps

#### Tasks

- [x] When ticket has been created, post the ticket in RocketChat '#Operations Tasks' channel

- [x] Add **entity** or **relationships** label to zenhub ticket

- [x] Add 'Priority1' label to zenhub ticket

- [x] Assign zenhub ticket to milestone: current, and place in pipeline: sprint backlog

- [x] Reply All to IT Ops email and provide zenhub ticket number opened and which team it was assigned to

- [ ] Dev/BAs to complete work & close zenhub ticket

- [ ] Author of zenhub ticket to mark ServiceNow ticket as resolved or ask IT Ops to do so

| 1.0 | 0789965 B.C. LTD. BC0789965 Alteration to a Benefit Company -

#### ServiceNow incident: INC0099791

#### Contact information

Staff Name: Kathy Langlois

Staff Email: Kathy.Langlois@gov.bc.ca

#### Description

Can you please open a ticket with the lab to file an Alteration to a Benefit Company. Request includes change to Articles to include Benefit statement.

Affiliation info (mandatory - BC Registries account name or BCOL a/c): 330190 Company email (mandatory): trippon@comoxlaw.ca

Company phone (optional):250-339-7977

Filing date (date/time received at Registries. If email, time email received. If mail, time scanned document received): July 8, 2021 at 3:12 PM

Effective date (if different from filing date - ie. Future effective): n/a

Name request # (optional): n/a

Phone number or e-mail from Name request: 250-339-7977

Receipt info:

Method (Routing Slip or BCOL): BCOL

BCOL Account number or Route slip #:

BCOL DAT (if BCOL): C1019483

Folio (optional): n/a

Amount ($100 for immediate or $200 for future effective): $100.00

Request includes change to Articles to include Benefit statement.

[https://app.zenhub.com/files/157936592/2f9280b0-323a-4d1e-b8eb-1c63f2be2738/download](https://app.zenhub.com/files/157936592/2f9280b0-323a-4d1e-b8eb-1c63f2be2738/download)

DEV TASKS:

- [ ] use jupyter notebooks to file LTD > BEN

- [ ] Dev inform BAs that it has been filed and paid

- [ ] BAs review in SOFI or bcregistry.ca

- [ ] IF BEN > COLIN, BA downloads the alteration outputs

- [ ] BAs tell dev to unaffiliate the entity

- [ ] Devs unaffiliate the entity from the account

- [ ] BAs inform staff that they can proceed with their steps

#### Tasks

- [x] When ticket has been created, post the ticket in RocketChat '#Operations Tasks' channel

- [x] Add **entity** or **relationships** label to zenhub ticket

- [x] Add 'Priority1' label to zenhub ticket

- [x] Assign zenhub ticket to milestone: current, and place in pipeline: sprint backlog

- [x] Reply All to IT Ops email and provide zenhub ticket number opened and which team it was assigned to

- [ ] Dev/BAs to complete work & close zenhub ticket

- [ ] Author of zenhub ticket to mark ServiceNow ticket as resolved or ask IT Ops to do so

| non_test | b c ltd alteration to a benefit company servicenow incident contact information staff name kathy langlois staff email kathy langlois gov bc ca description can you please open a ticket with the lab to file an alteration to a benefit company request includes change to articles to include benefit statement affiliation info mandatory bc registries account name or bcol a c company email mandatory trippon comoxlaw ca company phone optional filing date date time received at registries if email time email received if mail time scanned document received july at pm effective date if different from filing date ie future effective n a name request optional n a phone number or e mail from name request receipt info method routing slip or bcol bcol bcol account number or route slip bcol dat if bcol folio optional n a amount for immediate or for future effective request includes change to articles to include benefit statement dev tasks use jupyter notebooks to file ltd ben dev inform bas that it has been filed and paid bas review in sofi or bcregistry ca if ben colin ba downloads the alteration outputs bas tell dev to unaffiliate the entity devs unaffiliate the entity from the account bas inform staff that they can proceed with their steps tasks when ticket has been created post the ticket in rocketchat operations tasks channel add entity or relationships label to zenhub ticket add label to zenhub ticket assign zenhub ticket to milestone current and place in pipeline sprint backlog reply all to it ops email and provide zenhub ticket number opened and which team it was assigned to dev bas to complete work close zenhub ticket author of zenhub ticket to mark servicenow ticket as resolved or ask it ops to do so | 0 |

45,132 | 23,923,190,064 | IssuesEvent | 2022-09-09 19:09:00 | yds12/mexe | https://api.github.com/repos/yds12/mexe | closed | Check impact of `smallvec` | performance | Run benchmarks replacing `Vec` by the one in the `smallvec` crate, and see if it's worth it to add a dependency. | True | Check impact of `smallvec` - Run benchmarks replacing `Vec` by the one in the `smallvec` crate, and see if it's worth it to add a dependency. | non_test | check impact of smallvec run benchmarks replacing vec by the one in the smallvec crate and see if it s worth it to add a dependency | 0 |

160,417 | 12,510,872,801 | IssuesEvent | 2020-06-02 19:28:34 | PowerShell/PowerShell | https://api.github.com/repos/PowerShell/PowerShell | closed | Restore markdownlint tests | Area-Test Issue-Question | `markdownlint` tests were removed in #10163 due to a security issue in a dependancy ([CVE-2019-10746](https://snyk.io/vuln/SNYK-JS-MIXINDEEP-450212)).

According to [snyk](https://snyk.io/test/npm/markdownlint/), `markdownlint` has no currently known vulnerabilities, so we should restore these tests.

| 1.0 | Restore markdownlint tests - `markdownlint` tests were removed in #10163 due to a security issue in a dependancy ([CVE-2019-10746](https://snyk.io/vuln/SNYK-JS-MIXINDEEP-450212)).

According to [snyk](https://snyk.io/test/npm/markdownlint/), `markdownlint` has no currently known vulnerabilities, so we should restore these tests.

| test | restore markdownlint tests markdownlint tests were removed in due to a security issue in a dependancy according to markdownlint has no currently known vulnerabilities so we should restore these tests | 1 |

205,667 | 15,987,760,150 | IssuesEvent | 2021-04-19 01:38:44 | AsthMattic/Parallax | https://api.github.com/repos/AsthMattic/Parallax | closed | Add STL's for tracker to GitHub | documentation | The STL's need to be uploaded so that anyone who wants to make their own tracker can download and print them. | 1.0 | Add STL's for tracker to GitHub - The STL's need to be uploaded so that anyone who wants to make their own tracker can download and print them. | non_test | add stl s for tracker to github the stl s need to be uploaded so that anyone who wants to make their own tracker can download and print them | 0 |

94,871 | 8,526,472,134 | IssuesEvent | 2018-11-02 16:20:58 | SME-Issues/issues | https://api.github.com/repos/SME-Issues/issues | closed | Intent Errors (5004) - 01/11/2018 | NLP Api pulse_tests | |Expression|Result|

|---|---|

| _did i bill delta ltd for july_ | expected intent to be `query_invoices` but found `query_payment` |

| _Do I need to chase ABC Ltd?_ | expected intent to be `query_invoices` but found `query_payment` |

| _do i need to pay anyone_ | expected intent to be `query_invoices` but found `query_payment` |

| _Do I need to pay anyone at the moment?_ | expected intent to be `query_invoices` but found `query_payment` |

| _give me all the bills I have to pay by next Tues_ | expected intent to be `query_invoices` but found `query_payment` |

| _give me all the invoices that are owed_ | expected intent to be `query_invoices` but found `query_payment` |

| _give me the bills i need to pay next week_ | expected intent to be `query_invoices` but found `query_payment` |

| _how much do i have to pay HMRC this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _how much do i have to pay on Tues_ | expected intent to be `query_invoices` but found `query_payment` |

| _how much is owed on the johnson ring pulls account_ | expected intent to be `query_invoices` but found `query_payment` |

| _is carl overdue in paying this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _show me the transactions on wigglycom's account_ | expected intent to be `query_invoices` but found `query_payment` |

| _Tell me who I need to chase for payments?_ | expected intent to be `query_invoices` but found `query_payment` |

| _What do I need to pay Tau Ltd?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what do i need to pay this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _what does HSBC owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what is the biggest one I owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what is the biggest one owed to me?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what should i expect to have to pay out this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _what supplier invoices need to be paid_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will cybg owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will CYGB owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will cygb owe?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will hsbc owe?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will i have to pay my landlord this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _What will I have to pay next month?_ | expected intent to be `query_invoices` but found `query_payment` |

| _when do i have to pay people_ | expected intent to be `query_invoices` but found `query_payment` |

| _when do i need to pay people?_ | expected intent to be `query_invoices` but found `query_payment` |

| _When should I get the money in from ABC ltd?_ | expected intent to be `query_invoices` but found `query_payment` |

| _when will i have to pay my bills_ | expected intent to be `query_invoices` but found `query_payment` |

| _which bills need to be paid this week_ | expected intent to be `query_invoices` but found `query_payment` |

| _which is the oldest one I owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _which is the oldest one owed to me?_ | expected intent to be `query_invoices` but found `query_payment` |

| _which supplier invoices need to be paid_ | expected intent to be `query_invoices` but found `query_payment` |

| _who do i need to pay_ | expected intent to be `query_invoices` but found `query_payment` |

| _who do i need to pay this month?_ | expected intent to be `query_invoices` but found `query_payment` |

| _who hasn't paid me yet?_ | expected intent to be `query_invoices` but found `query_payment` |

| _who needs to pay me_ | expected intent to be `query_invoices` but found `query_payment` |

| _what do i need to pay and what should i be paid_ | Multiple failures or warnings in test:

1) Test needs updating

2) expected intent to be `query_invoices` but found `query_payment`

|

| 1.0 | Intent Errors (5004) - 01/11/2018 - |Expression|Result|

|---|---|

| _did i bill delta ltd for july_ | expected intent to be `query_invoices` but found `query_payment` |

| _Do I need to chase ABC Ltd?_ | expected intent to be `query_invoices` but found `query_payment` |

| _do i need to pay anyone_ | expected intent to be `query_invoices` but found `query_payment` |

| _Do I need to pay anyone at the moment?_ | expected intent to be `query_invoices` but found `query_payment` |

| _give me all the bills I have to pay by next Tues_ | expected intent to be `query_invoices` but found `query_payment` |

| _give me all the invoices that are owed_ | expected intent to be `query_invoices` but found `query_payment` |

| _give me the bills i need to pay next week_ | expected intent to be `query_invoices` but found `query_payment` |

| _how much do i have to pay HMRC this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _how much do i have to pay on Tues_ | expected intent to be `query_invoices` but found `query_payment` |

| _how much is owed on the johnson ring pulls account_ | expected intent to be `query_invoices` but found `query_payment` |

| _is carl overdue in paying this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _show me the transactions on wigglycom's account_ | expected intent to be `query_invoices` but found `query_payment` |

| _Tell me who I need to chase for payments?_ | expected intent to be `query_invoices` but found `query_payment` |

| _What do I need to pay Tau Ltd?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what do i need to pay this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _what does HSBC owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what is the biggest one I owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what is the biggest one owed to me?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what should i expect to have to pay out this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _what supplier invoices need to be paid_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will cybg owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will CYGB owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will cygb owe?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will hsbc owe?_ | expected intent to be `query_invoices` but found `query_payment` |

| _what will i have to pay my landlord this month_ | expected intent to be `query_invoices` but found `query_payment` |

| _What will I have to pay next month?_ | expected intent to be `query_invoices` but found `query_payment` |

| _when do i have to pay people_ | expected intent to be `query_invoices` but found `query_payment` |

| _when do i need to pay people?_ | expected intent to be `query_invoices` but found `query_payment` |

| _When should I get the money in from ABC ltd?_ | expected intent to be `query_invoices` but found `query_payment` |

| _when will i have to pay my bills_ | expected intent to be `query_invoices` but found `query_payment` |

| _which bills need to be paid this week_ | expected intent to be `query_invoices` but found `query_payment` |

| _which is the oldest one I owe_ | expected intent to be `query_invoices` but found `query_payment` |

| _which is the oldest one owed to me?_ | expected intent to be `query_invoices` but found `query_payment` |

| _which supplier invoices need to be paid_ | expected intent to be `query_invoices` but found `query_payment` |

| _who do i need to pay_ | expected intent to be `query_invoices` but found `query_payment` |

| _who do i need to pay this month?_ | expected intent to be `query_invoices` but found `query_payment` |

| _who hasn't paid me yet?_ | expected intent to be `query_invoices` but found `query_payment` |

| _who needs to pay me_ | expected intent to be `query_invoices` but found `query_payment` |

| _what do i need to pay and what should i be paid_ | Multiple failures or warnings in test:

1) Test needs updating

2) expected intent to be `query_invoices` but found `query_payment`

|

| test | intent errors expression result did i bill delta ltd for july expected intent to be query invoices but found query payment do i need to chase abc ltd expected intent to be query invoices but found query payment do i need to pay anyone expected intent to be query invoices but found query payment do i need to pay anyone at the moment expected intent to be query invoices but found query payment give me all the bills i have to pay by next tues expected intent to be query invoices but found query payment give me all the invoices that are owed expected intent to be query invoices but found query payment give me the bills i need to pay next week expected intent to be query invoices but found query payment how much do i have to pay hmrc this month expected intent to be query invoices but found query payment how much do i have to pay on tues expected intent to be query invoices but found query payment how much is owed on the johnson ring pulls account expected intent to be query invoices but found query payment is carl overdue in paying this month expected intent to be query invoices but found query payment show me the transactions on wigglycom s account expected intent to be query invoices but found query payment tell me who i need to chase for payments expected intent to be query invoices but found query payment what do i need to pay tau ltd expected intent to be query invoices but found query payment what do i need to pay this month expected intent to be query invoices but found query payment what does hsbc owe expected intent to be query invoices but found query payment what is the biggest one i owe expected intent to be query invoices but found query payment what is the biggest one owed to me expected intent to be query invoices but found query payment what should i expect to have to pay out this month expected intent to be query invoices but found query payment what supplier invoices need to be paid expected intent to be query invoices but found query payment 
what will cybg owe expected intent to be query invoices but found query payment what will cygb owe expected intent to be query invoices but found query payment what will cygb owe expected intent to be query invoices but found query payment what will hsbc owe expected intent to be query invoices but found query payment what will i have to pay my landlord this month expected intent to be query invoices but found query payment what will i have to pay next month expected intent to be query invoices but found query payment when do i have to pay people expected intent to be query invoices but found query payment when do i need to pay people expected intent to be query invoices but found query payment when should i get the money in from abc ltd expected intent to be query invoices but found query payment when will i have to pay my bills expected intent to be query invoices but found query payment which bills need to be paid this week expected intent to be query invoices but found query payment which is the oldest one i owe expected intent to be query invoices but found query payment which is the oldest one owed to me expected intent to be query invoices but found query payment which supplier invoices need to be paid expected intent to be query invoices but found query payment who do i need to pay expected intent to be query invoices but found query payment who do i need to pay this month expected intent to be query invoices but found query payment who hasn t paid me yet expected intent to be query invoices but found query payment who needs to pay me expected intent to be query invoices but found query payment what do i need to pay and what should i be paid multiple failures or warnings in test test needs updating expected intent to be query invoices but found query payment | 1 |

76,125 | 26,254,298,160 | IssuesEvent | 2023-01-05 22:31:10 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: Adding np.nan to list changes pvalue | defect | ### Describe your issue.

Not sure if this counts as a bug but it seems like unintended functionality. Adding np.nan's to lists changes the pvalues when they should probably be ignored.

### Reproducing Code Example

```python

l1 = [1, 2, 3]

l2 = [0, 1, 2]

print(scipy.stats.ranksums(l1, l2))

RanksumsResult(statistic=1.091089451179962, pvalue=0.27523352407483426)

l1 = [1, 2, 3] + [np.nan]*100

l2 = [0, 1, 2] + [np.nan]*100

print(scipy.stats.ranksums(l1, l2))

RanksumsResult(statistic=11.343152467935827, pvalue=8.02022721910526e-30)

```

### Error message

```shell

No error message

```

### SciPy/NumPy/Python version information

1.7.3, 1.21.0, sys.version_info(major=3, minor=9, micro=9, releaselevel='final', serial=0) | 1.0 | BUG: Adding np.nan to list changes pvalue - ### Describe your issue.

Not sure if this counts as a bug but it seems like unintended functionality. Adding np.nan's to lists changes the pvalues when they should probably be ignored.

### Reproducing Code Example

```python

l1 = [1, 2, 3]

l2 = [0, 1, 2]

print(scipy.stats.ranksums(l1, l2))

RanksumsResult(statistic=1.091089451179962, pvalue=0.27523352407483426)

l1 = [1, 2, 3] + [np.nan]*100

l2 = [0, 1, 2] + [np.nan]*100

print(scipy.stats.ranksums(l1, l2))

RanksumsResult(statistic=11.343152467935827, pvalue=8.02022721910526e-30)

```

### Error message

```shell

No error message

```

### SciPy/NumPy/Python version information

1.7.3, 1.21.0, sys.version_info(major=3, minor=9, micro=9, releaselevel='final', serial=0) | non_test | bug adding np nan to list changes pvalue describe your issue not sure if this counts as a bug but it seems like unintended functionality adding np nan s to lists changes the pvalues when they should probably be ignored reproducing code example python print scipy stats ranksums ranksumsresult statistic pvalue print scipy stats ranksums ranksumsresult statistic pvalue error message shell no error message scipy numpy python version information sys version info major minor micro releaselevel final serial | 0 |
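
A possible workaround for the report above is to drop the NaN entries before running the test. The sketch below is a minimal, standard-library-only illustration of that idea, not SciPy's implementation: the helper names `drop_nans`, `average_ranks`, and `rank_sum_test` are hypothetical, and the statistic is assumed to be the usual normal approximation of the Wilcoxon rank-sum test with average ranks for ties and no tie correction in the variance.

```python
import math


def drop_nans(values):
    """Return the values with every NaN entry removed."""
    return [v for v in values if not math.isnan(v)]


def average_ranks(values):
    """Map each distinct value to its 1-based rank, averaging over ties."""
    ordered = sorted(values)
    ranks = {}
    i = 0
    while i < len(ordered):
        j = i
        while j < len(ordered) and ordered[j] == ordered[i]:
            j += 1
        # 0-based positions i..j-1 share one value; their ranks are i+1..j.
        ranks[ordered[i]] = (i + 1 + j) / 2
        i = j
    return ranks


def rank_sum_test(x, y):
    """Two-sided rank-sum test (normal approximation), ignoring NaNs."""
    x, y = drop_nans(x), drop_nans(y)
    n1, n2 = len(x), len(y)
    ranks = average_ranks(x + y)
    rank_sum_x = sum(ranks[v] for v in x)
    expected = n1 * (n1 + n2 + 1) / 2
    std_dev = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (rank_sum_x - expected) / std_dev
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


nan = float("nan")
z_clean, p_clean = rank_sum_test([1, 2, 3], [0, 1, 2])
z_padded, p_padded = rank_sum_test([1, 2, 3] + [nan] * 100,
                                   [0, 1, 2] + [nan] * 100)
print(z_clean, p_clean)
print(z_padded, p_padded)
```

With the NaNs filtered out, the padded lists give the same statistic and p-value as the clean lists. The same `drop_nans` step could be applied to each list before passing it to `scipy.stats.ranksums`.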

97,971 | 8,673,895,115 | IssuesEvent | 2018-11-30 04:53:14 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | closed | FXLabs Testing : ApiV1EnvsIdGetPathParamIdMysqlSqlInjectionTimebound | FXLabs Testing | Project : FXLabs Testing

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=[SESSION=YTM3ZjdiZGMtNGQyMi00MDUwLWI4ZTMtYTkwNDE4M2I2NjBm; Path=/; HttpOnly], Content-Type=[application/json;charset=UTF-8], Transfer-Encoding=[chunked], Date=[Fri, 30 Nov 2018 04:51:34 GMT]}

Endpoint : http://13.56.210.25/api/v1/api/v1/envs/

Request :

Response :

{

"timestamp" : "2018-11-30T04:51:35.326+0000",

"status" : 404,

"error" : "Not Found",

"message" : "No message available",

"path" : "/api/v1/api/v1/envs/"

}

Logs :

Assertion [@ResponseTime < 7000 OR @ResponseTime > 10000] resolved-to [491 < 7000 OR 491 > 10000] result [Passed]Assertion [@StatusCode != 404] resolved-to [404 != 404] result [Failed]

--- FX Bot --- | 1.0 | FXLabs Testing : ApiV1EnvsIdGetPathParamIdMysqlSqlInjectionTimebound - Project : FXLabs Testing

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=[SESSION=YTM3ZjdiZGMtNGQyMi00MDUwLWI4ZTMtYTkwNDE4M2I2NjBm; Path=/; HttpOnly], Content-Type=[application/json;charset=UTF-8], Transfer-Encoding=[chunked], Date=[Fri, 30 Nov 2018 04:51:34 GMT]}

Endpoint : http://13.56.210.25/api/v1/api/v1/envs/

Request :

Response :

{

"timestamp" : "2018-11-30T04:51:35.326+0000",

"status" : 404,

"error" : "Not Found",

"message" : "No message available",

"path" : "/api/v1/api/v1/envs/"

}

Logs :

Assertion [@ResponseTime < 7000 OR @ResponseTime > 10000] resolved-to [491 < 7000 OR 491 > 10000] result [Passed]Assertion [@StatusCode != 404] resolved-to [404 != 404] result [Failed]

--- FX Bot --- | test | fxlabs testing project fxlabs testing job uat env uat region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint request response timestamp status error not found message no message available path api api envs logs assertion resolved to result assertion resolved to result fx bot | 1 |

116,494 | 9,854,174,798 | IssuesEvent | 2019-06-19 16:13:50 | input-output-hk/rust-cardano | https://api.github.com/repos/input-output-hk/rust-cardano | closed | CircleCI: add minimal rust compiler version job too | D - medium Testing | We need to make sure we support a minimal compiler version in the circle ci jobs.

the minimal version should be `1.31.0` (edition: 2018). | 1.0 | CircleCI: add minimal rust compiler version job too - We need to make sure we support a minimal compiler version in the circle ci jobs.

the minimal version should be `1.31.0` (edition: 2018). | test | circleci add minimal rust compiler version job too we need to make sure we support a minimal compiler version in the circle ci jobs the minimal version should be edition | 1 |

130,370 | 10,607,340,851 | IssuesEvent | 2019-10-11 03:21:12 | rsx-labs/aide-frontend | https://api.github.com/repos/rsx-labs/aide-frontend | closed | Create Project label consistency | Bug For QA Testing | **Describe the bug**

1. Change 'Select Category' to 'Select category' using lowercase on the word category

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Version (please complete the following information):**

- Version 2.6 | 1.0 | Create Project label consistency - **Describe the bug**

1. Change 'Select Category' to 'Select category' using lowercase on the word category

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Version (please complete the following information):**

- Version 2.6 | test | create project label consistency describe the bug change select category to select category using lowercase on the word category screenshots if applicable add screenshots to help explain your problem version please complete the following information version | 1 |

156,304 | 19,847,808,646 | IssuesEvent | 2022-01-21 08:54:06 | qiangmao/axios | https://api.github.com/repos/qiangmao/axios | opened | CVE-2017-16032 (Medium) detected in brace-expansion-1.1.6.tgz | security vulnerability | ## CVE-2017-16032 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>brace-expansion-1.1.6.tgz</b></p></summary>

<p>Brace expansion as known from sh/bash</p>

<p>Library home page: <a href="https://registry.npmjs.org/brace-expansion/-/brace-expansion-1.1.6.tgz">https://registry.npmjs.org/brace-expansion/-/brace-expansion-1.1.6.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/nyc/node_modules/brace-expansion/package.json</p>

<p>

Dependency Hierarchy:

- grunt-contrib-nodeunit-1.0.0.tgz (Root Library)

- nodeunit-0.9.5.tgz

- tap-7.1.2.tgz

- nyc-7.1.0.tgz

- glob-7.0.5.tgz

- minimatch-3.0.2.tgz

- :x: **brace-expansion-1.1.6.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/qiangmao/axios/commit/91ceb6046aaa22e9934ed13ea5acba9c988c490c">91ceb6046aaa22e9934ed13ea5acba9c988c490c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

brace-expansion before 1.1.7 are vulnerable to a regular expression denial of service.

<p>Publish Date: 2020-07-21

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-16032>CVE-2017-16032</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/338">https://www.npmjs.com/advisories/338</a></p>

<p>Release Date: 2020-07-21</p>

<p>Fix Resolution: v1.1.7</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2017-16032 (Medium) detected in brace-expansion-1.1.6.tgz - ## CVE-2017-16032 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>brace-expansion-1.1.6.tgz</b></p></summary>

<p>Brace expansion as known from sh/bash</p>

<p>Library home page: <a href="https://registry.npmjs.org/brace-expansion/-/brace-expansion-1.1.6.tgz">https://registry.npmjs.org/brace-expansion/-/brace-expansion-1.1.6.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/nyc/node_modules/brace-expansion/package.json</p>

<p>

Dependency Hierarchy:

- grunt-contrib-nodeunit-1.0.0.tgz (Root Library)

- nodeunit-0.9.5.tgz

- tap-7.1.2.tgz

- nyc-7.1.0.tgz

- glob-7.0.5.tgz

- minimatch-3.0.2.tgz

- :x: **brace-expansion-1.1.6.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/qiangmao/axios/commit/91ceb6046aaa22e9934ed13ea5acba9c988c490c">91ceb6046aaa22e9934ed13ea5acba9c988c490c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

brace-expansion before 1.1.7 are vulnerable to a regular expression denial of service.

<p>Publish Date: 2020-07-21

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2017-16032>CVE-2017-16032</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/338">https://www.npmjs.com/advisories/338</a></p>

<p>Release Date: 2020-07-21</p>

<p>Fix Resolution: v1.1.7</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in brace expansion tgz cve medium severity vulnerability vulnerable library brace expansion tgz brace expansion as known from sh bash library home page a href path to dependency file package json path to vulnerable library node modules nyc node modules brace expansion package json dependency hierarchy grunt contrib nodeunit tgz root library nodeunit tgz tap tgz nyc tgz glob tgz minimatch tgz x brace expansion tgz vulnerable library found in head commit a href found in base branch master vulnerability details brace expansion before are vulnerable to a regular expression denial of service publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity high privileges required low user interaction required scope unchanged impact metrics confidentiality impact low integrity impact low availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

115,242 | 9,784,974,630 | IssuesEvent | 2019-06-09 01:12:03 | fergusfrl/Recog | https://api.github.com/repos/fergusfrl/Recog | opened | More robust Unit tests | testing | The test suite does not currently cover 3 major use cases:

1. error states. eg: `Dir 'x' already exists`

2. unit level of the `handlers` file

3. unit level of the `templates` file. Jest + CSS is P1. React file template is P2

| 1.0 | More robust Unit tests - The test suite does not currently cover 3 major use cases:

1. error states. eg: `Dir 'x' already exists`

2. unit level of the `handlers` file

3. unit level of the `templates` file. Jest + CSS is P1. React file template is P2

| test | more robust unit tests the test suite does not currently cover major use cases error states eg dir x already exists unit level of the handlers file unit level of the templates file jest css is react file template is | 1 |

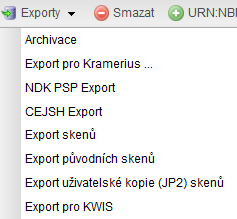

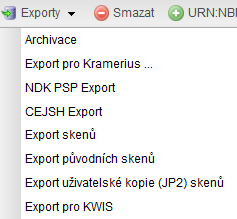

248,984 | 21,092,681,672 | IssuesEvent | 2022-04-04 07:20:17 | proarc/proarc-client | https://api.github.com/repos/proarc/proarc-client | closed | Adjust the ordering in the Export dropdown | 1 chyba 6 k testování 7 návrh na zavření 6c otestováno: KNAV | Please adjust the ordering in the Export dropdown to match the core.

Right now Skeny (Scans) comes up first. That is exported only in exceptional cases.

| 2.0 | Adjust the ordering in the Export dropdown - Please adjust the ordering in the Export dropdown to match the core.

Right now Skeny (Scans) comes up first. That is exported only in exceptional cases.

| test | adjust the ordering in the export dropdown please adjust the ordering in the export dropdown to match the core right now skeny scans comes up first that is exported only in exceptional cases | 1 |

326,657 | 28,010,048,049 | IssuesEvent | 2023-03-27 17:56:19 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | pkg/server: `TestDrain` is flakey | C-bug skipped-test GA-blocker T-kv branch-release-23.1 | **Describe the problem**

<img width="759" alt="Screen Shot 2022-08-26 at 5 53 10 PM" src="https://user-images.githubusercontent.com/6658984/187007842-633f91a6-66eb-45da-b9b2-7c9beecb902a.png">

TestDrain has been flaking a lot today.

```

Failed

=== RUN TestDrain

test_log_scope.go:161: test logs captured to: /artifacts/tmp/_tmp/ab5462d2cb3989e2a33018db970f23de/logTestDrain523638963

test_log_scope.go:79: use -show-logs to present logs inline

drain_test.go:254: expected remaining false, got true

panic.go:500: -- test log scope end --

--- FAIL: TestDrain (74.31s)

```

Jira issue: CRDB-19064 | 1.0 | pkg/server: `TestDrain` is flakey - **Describe the problem**

<img width="759" alt="Screen Shot 2022-08-26 at 5 53 10 PM" src="https://user-images.githubusercontent.com/6658984/187007842-633f91a6-66eb-45da-b9b2-7c9beecb902a.png">

TestDrain has been flaking a lot today.

```

Failed

=== RUN TestDrain

test_log_scope.go:161: test logs captured to: /artifacts/tmp/_tmp/ab5462d2cb3989e2a33018db970f23de/logTestDrain523638963

test_log_scope.go:79: use -show-logs to present logs inline