Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

26,605 | 4,235,943,958 | IssuesEvent | 2016-07-05 16:45:15 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | stacktrace dumping shell logic is causing test flakes | area/tests kind/test-flake priority/P2 | the stack trace dumping logic is actually causing excess evaluations of commands, which means logic that loops while expecting a certain result never sees that result.

in this case, we loop trying to create a key. we expect to see "key created" come back, once the app is available and responsive.

Instead, during one of the failed POST attempts (because the app isn't up yet) the stack trace logic gets invoked, and the stack trace logic itself ends up evaluating the curl command and doing another POST. That stack trace logic gets the "key created" response, which is useless.

Then the main loop continues and tries the POST again, but now it gets "key updated" and fails the test.

see bash debug output here:

https://gist.github.com/bparees/5cd82c622dbfc9e6725653102110bd64

the test logic starts in

end-to-end/core.sh:46 (POST to create key 1337)

then enters the retry loop at

hack/util.sh:283 and ends up in the stack trace logic as a result of the eval on line 284.

If you look at the gist output, you can see we enter the stack trace logic at line 11 due to an RC=7 from curl.

then at lines 39-41 of the gist you can see the curl command gets evaluated (still within the stack trace handling logic) and gets the "key created" response back.

i assume the issue is faulty quoting on line 39 where the eval within the stack trace handling logic occurs.
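
the failure mode can be sketched outside of bash. this is a minimal Ruby simulation, not the actual `hack/util.sh` code: `FakeApp`, `retry_until_ok`, `buggy_handler` and `safe_handler` are all hypothetical names, and the app is assumed to refuse exactly one request before coming up. the buggy handler re-runs the failed command while reporting it, so the retry loop only ever sees "key updated".

```ruby
# Hypothetical stand-in for the app under test: refuses the first
# `refusals` requests, then creates the key on the first success and
# reports an update on every later request.
class FakeApp
  def initialize(refusals)
    @refusals = refusals
    @store = {}
  end

  def post(key, value)
    if @refusals > 0
      @refusals -= 1
      raise IOError, "connection refused"
    end
    existed = @store.key?(key)
    @store[key] = value
    existed ? "key updated" : "key created"
  end
end

# Retry loop: runs the command until it succeeds, calling on_failure
# with the command and a description after each failed attempt.
def retry_until_ok(command, description, on_failure, attempts: 5)
  attempts.times do
    begin
      return command.call
    rescue IOError
      on_failure.call(command, description)
    end
  end
  nil
end

# Buggy handler: while reporting the failure it evaluates the command
# again, so the side effect (the POST) happens one extra time.
buggy_handler = lambda do |command, _description|
  begin
    command.call
  rescue IOError
    nil
  end
end

# Safer handler: only uses the description and never runs the command.
safe_handler = ->(_command, description) { description }

app = FakeApp.new(1)
buggy_result = retry_until_ok(-> { app.post("1337", "v") }, "POST key 1337", buggy_handler)

other_app = FakeApp.new(1)
safe_result = retry_until_ok(-> { other_app.post("1337", "v") }, "POST key 1337", safe_handler)

puts "buggy handler: #{buggy_result}"
puts "safe handler:  #{safe_result}"
```

in this sketch the extra evaluation inside the handler consumes the "key created" response, which matches the sequence seen in the gist.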

| 2.0 | stacktrace dumping shell logic is causing test flakes - the stack trace dumping logic is actually causing excess evaluations of commands, which means logic that loops while expecting a certain result never sees that result.

in this case, we loop trying to create a key. we expect to see "key created" come back, once the app is available and responsive.

Instead, during one of the failed POST attempts (because the app isn't up yet) the stack trace logic gets invoked, and the stack trace logic itself ends up evaluating the curl command and doing another POST. That stack trace logic gets the "key created" response, which is useless.

Then the main loop continues and tries the POST again, but now it gets "key updated" and fails the test.

see bash debug output here:

https://gist.github.com/bparees/5cd82c622dbfc9e6725653102110bd64

the test logic starts in

end-to-end/core.sh:46 (POST to create key 1337)

then enters the retry loop at

hack/util.sh:283 and ends up in the stack trace logic as a result of the eval on line 284.

If you look at the gist output, you can see we enter the stack trace logic at line 11 due to an RC=7 from curl.

then at lines 39-41 of the gist you can see the curl command gets evaluated (still within the stack trace handling logic) and gets the "key created" response back.

i assume the issue is faulty quoting on line 39 where the eval within the stack trace handling logic occurs.

| test | stacktrace dumping shell logic is causing test flakes the stack trace dumping logic is actually causing excess evaluations of commands which means logic that loops while expect a certain result never sees that result in this case we loop trying to create a key we expect to see key created come back once the app is available and responsive instead during one of the failed post attempts because the app isn t up yet the stack trace logic gets invoked and the stack trace logic itself ends up evaluating the curl command and doing another post that stack trace logic gets the key created response which is useless then the main loop continues and tries the post again but now it gets key updated and fails the test see bash debug output here the test logic starts in end to end core sh post to create key then enters the retry loop at hack util sh and ends up in the stack trace logic as a result of the eval on line if you look at the gist output you can see we enter the stack trace logic at line due to an rc from curl then line line of the gist you can see the curl command gets evaluated still within the stack trace handling logic and gets the key created response back i assume the issue is faulty quoting on line where the eval within the stack trace handling logic occurs | 1 |

21,696 | 3,916,251,286 | IssuesEvent | 2016-04-21 00:23:38 | elastic/logstash | https://api.github.com/repos/elastic/logstash | closed | [WiP] Testing how-to docs, RSpec features listing | docs ruby style guide tests-infra work in progress | ### Background

Testing a pipeline like Logstash can be hard. There have been a lot of improvements and simplifications to Logstash testing, but one of the few things still missing is a good document that people can refer to in order to learn how to write tests. This issue aims to be a place to discuss and show how to write great tests for Logstash using the latest features of RSpec 3. Once we're happy with the content here, the objective is to generate a wiki page where everyone can go.

## RSpec 3.x features

This section gives an overview of the different RSpec features that might be useful when planning your test suites.

### rspec-core

The rspec-core provides the structure for writing executable examples of how your code should behave.

#### describe, context and examples

```ruby
describe "something" do
  context "in one context" do
    it "does one thing" do
    end
  end

  context "in another context" do
    it "does another thing" do
    end
  end
end
```

#### Subjects

```ruby
describe "something" do
  context "in one context" do
    it "does one thing" do
    end
  end

  context "in another context" do
    it "does another thing" do
    end
  end
end
```

#### let

```ruby
$count = 0

describe "let" do
  let(:count) { $count += 1 }

  it "memoizes the value" do
    expect(count).to eq(1)
    expect(count).to eq(1)
  end

  it "is not cached across examples" do
    expect(count).to eq(2)
  end
end
```

#### Other important features

* hooks (before, around and after)

* filters

* metadata

* formatters

### rspec-mocks

A test-double framework for rspec with support for method stubs, fakes, and message expectations on generated test-doubles and real objects alike

#### Allow and expect

```ruby
describe "allow" do
  it "returns nil from allowed messages" do
    dbl = double("Some Collaborator")
    allow(dbl).to receive(:foo)
    expect(dbl.foo).to be_nil
  end
end
```

#### Constraints

```ruby

expect(...).to receive(...).once

expect(...).to receive(...).twice

expect(...).to receive(...).exactly(n).times

expect(...).to receive(...).at_least(:once)

expect(...).to receive(...).at_least(:twice)

expect(...).to receive(...).at_least(n).times

expect(...).to receive(...).at_most(:once)

expect(...).to receive(...).at_most(:twice)

expect(...).to receive(...).at_most(n).times

```

#### Spies

```ruby
describe "have_received" do
  it "passes when the message has been received" do
    invitation = spy('invitation')
    invitation.deliver
    expect(invitation).to have_received(:deliver)
  end
end
```

#### Dealing with legacy code

```ruby

allow_any_instance_of(Widget).to receive(:name).and_return("Wibble")

```

```ruby

expect_any_instance_of(Widget).to receive(:name).and_return("Wobble")

```

### rspec-expectations

Collection of assertions that lets you express expected outcomes on an object in an example.

#### Equality matchers

```ruby
describe "a string" do
  it "is equal to another string of the same value" do
    expect("this string").to eq("this string")
  end

  it "is not equal to another string of a different value" do
    expect("this string").not_to eq("a different string")
  end
end
```

#### Comparison matchers

```ruby
describe 18 do
  it { is_expected.to be < 20 }
  it { is_expected.to be > 15 }
  it { is_expected.to be <= 19 }
  it { is_expected.to be >= 17 }

  # deliberate failures
  it { is_expected.to be < 15 }
  it { is_expected.to be > 20 }
  it { is_expected.to be <= 17 }
  it { is_expected.to be >= 19 }
end
```

#### Predicate matchers

```ruby

# calls 7.zero?

expect(7).not_to be_zero

# calls [].empty?

expect([]).to be_empty

# calls x.multiple_of?(3)

expect(x).to be_multiple_of(3)

```

#### Type matchers

```ruby

expect(obj).to be_a_kind_of(type)

expect([1, 3, 5]).to all( be_odd )

expect(obj).to be_truthy

expect(obj).to be_nil

expect(area_of_circle).to be_within(0.1).of(28.3)

expect { Counter.increment }.to change{Counter.count}.from(0).to(1)

expect([1, 2, 3]).to contain_exactly(2, 3, 1)

expect(1..10).to cover(5) #@ruby-1.9

expect("this string").to end_with "string"

expect(obj).to exist

expect { raise StandardError }.to raise_error

expect(10).to satisfy { |v| v % 5 == 0 }

expect(obj).to respond_to(:foo)

specify { expect { |b| MyClass.yield_once_with(1, &b) }.to yield_control }

specify { expect { print('foo') }.to output.to_stdout }

```

#### Custom matchers

#### Composing matchers

## Best practices for RSpec and for testing in general

### Use context

```ruby
context 'when logged in' do
  it { is_expected.to respond_with 200 }
end

context 'when logged out' do
  it { is_expected.to respond_with 401 }
end
```

### Have short and clean descriptions

```ruby
context 'when not valid' do
  it { is_expected.to respond_with 422 }
end
```

becomes

```ruby

when not valid

it should respond with 422

```

### One assertion per test

```ruby
context "when a resource is exposed" do
  it { is_expected.to respond_with_content_type(:json) }
  it { is_expected.to assign_to(:resource) }
end
```

### Use subjects

```ruby
subject(:hero) { Hero.first }

it "carries a sword" do
  expect(hero.equipment).to include "sword"
end
```

### Use let and let!

```ruby
describe '#type_id' do
  let(:resource) { FactoryGirl.create :device }
  let(:type) { Type.find resource.type_id }

  it 'sets the type_id field' do
    expect(resource.type_id).to equal(type.id)
  end
end
```

### Mock and stub but with caution!

```ruby
# simulate a not found resource
context "when not found" do
  before {
    allow(Resource).to receive(:where).
      with(created_from: params[:id]).
      and_return(false)
  }

  it { is_expected.to respond_with 404 }
end
```

### Use descriptive factories

```ruby
config = <<-CONFIG
  filter {
    mutate { add_field => { "always" => "awesome" } }
    if [foo] == "bar" {
      mutate { add_field => { "hello" => "world" } }
    } else if [bar] == "baz" {
      mutate { add_field => { "fancy" => "pants" } }
    } else {
      mutate { add_field => { "free" => "hugs" } }
    }
  }
CONFIG
```

### Your tests should be independent

...

### Structure your test layout

... | 1.0 | [WiP] Testing how-to docs, RSpec features listing - ### Background

Testing a pipeline like Logstash can be hard. There have been a lot of improvements and simplifications to Logstash testing, but one of the few things still missing is a good document that people can refer to in order to learn how to write tests. This issue aims to be a place to discuss and show how to write great tests for Logstash using the latest features of RSpec 3. Once we're happy with the content here, the objective is to generate a wiki page where everyone can go.

## RSpec 3.x features

This section gives an overview of the different RSpec features that might be useful when planning your test suites.

### rspec-core

The rspec-core provides the structure for writing executable examples of how your code should behave.

#### describe, context and examples

```ruby

describe "something" do

context "in one context" do

it "does one thing" do

end

end

context "in another context" do

it "does another thing" do

end

end

end

```

#### Subjects

```ruby

describe "something" do

context "in one context" do

it "does one thing" do

end

end

context "in another context" do

it "does another thing" do

end

end

end

```

#### let

```ruby

$count = 0

describe "let" do

let(:count) { $count += 1 }

it "memoizes the value" do

expect(count).to eq(1)

expect(count).to eq(1)

end

it "is not cached across examples" do

expect(count).to eq(2)

end

end

```

#### Other important features

* hooks (before, around and after)

* filters

* metadata

* formatters

### rspec-mocks

A test-double framework for rspec with support for method stubs, fakes, and message expectations on generated test-doubles and real objects alike

#### Allow and expect

```ruby

describe "allow" do

it "returns nil from allowed messages" do

dbl = double("Some Collaborator")

allow(dbl).to receive(:foo)

expect(dbl.foo).to be_nil

end

end

```

#### Constraints

```ruby

expect(...).to receive(...).once

expect(...).to receive(...).twice

expect(...).to receive(...).exactly(n).times

expect(...).to receive(...).at_least(:once)

expect(...).to receive(...).at_least(:twice)

expect(...).to receive(...).at_least(n).times

expect(...).to receive(...).at_most(:once)

expect(...).to receive(...).at_most(:twice)

expect(...).to receive(...).at_most(n).times

```

#### Spies

```ruby

describe "have_received" do

it "passes when the message has been received" do

invitation = spy('invitation')

invitation.deliver

expect(invitation).to have_received(:deliver)

end

end

```

#### Dealing with legacy code

```ruby

allow_any_instance_of(Widget).to receive(:name).and_return("Wibble")

```

```ruby

expect_any_instance_of(Widget).to receive(:name).and_return("Wobble")

```

### rspec-expectations

Collection of assertions that lets you express expected outcomes on an object in an example.

#### Equality matchers

```ruby

describe "a string" do

it "is equal to another string of the same value" do

expect("this string").to eq("this string")

end

it "is not equal to another string of a different value" do

expect("this string").not_to eq("a different string")

end

end

```

#### Comparison matchers

```ruby

describe 18 do

it { is_expected.to be < 20 }

it { is_expected.to be > 15 }

it { is_expected.to be <= 19 }

it { is_expected.to be >= 17 }

# deliberate failures

it { is_expected.to be < 15 }

it { is_expected.to be > 20 }

it { is_expected.to be <= 17 }

it { is_expected.to be >= 19 }

end

```

#### Predicate matchers

```ruby

# calls 7.zero?

expect(7).not_to be_zero

# calls [].empty?

expect([]).to be_empty

# calls x.multiple_of?(3)

expect(x).to be_multiple_of(3)

```

#### Type matchers

```ruby

expect(obj).to be_a_kind_of(type)

expect([1, 3, 5]).to all( be_odd )

expect(obj).to be_truthy

expect(obj).to be_nil

expect(area_of_circle).to be_within(0.1).of(28.3)

expect { Counter.increment }.to change{Counter.count}.from(0).to(1)

expect([1, 2, 3]).to contain_exactly(2, 3, 1)

expect(1..10).to cover(5) #@ruby-1.9

expect("this string").to end_with "string"

expect(obj).to exist

expect { raise StandardError }.to raise_error

expect(10).to satisfy { |v| v % 5 == 0 }

expect(obj).to respond_to(:foo)

specify { expect { |b| MyClass.yield_once_with(1, &b) }.to yield_control }

specify { expect { print('foo') }.to output.to_stdout }

```

#### Custom matchers

#### Composing matchers

## Best practices for RSpec and for testing in general

### Use context

```ruby

context 'when logged in' do

it { is_expected.to respond_with 200 }

end

context 'when logged out' do

it { is_expected.to respond_with 401 }

end

```

### Have short and clean descriptions

```ruby

context 'when not valid' do

it { is_expected.to respond_with 422 }

end

```

becomes

```ruby

when not valid

it should respond with 422

```

### One assertion per test

```ruby

context "when a resource is exposed" do

it { is_expected.to respond_with_content_type(:json) }

it { is_expected.to assign_to(:resource) }

end

```

### Use subjects

```ruby

subject(:hero) { Hero.first }

it "carries a sword" do

expect(hero.equipment).to include "sword"

end

```

### Use let and let!

```ruby

describe '#type_id' do

let(:resource) { FactoryGirl.create :device }

let(:type) { Type.find resource.type_id }

it 'sets the type_id field' do

expect(resource.type_id).to equal(type.id)

end

end

```

### Mock and stub but with caution!

```ruby

# simulate a not found resource

context "when not found" do

before { allow(Resource).to receive(:where).

with(created_from: params[:id]).

and_return(false)

}

it { is_expected.to respond_with 404 }

end

```

### Use descriptive factories

```ruby

config = <<-CONFIG

filter {

mutate { add_field => { "always" => "awesome" } }

if [foo] == "bar" {

mutate { add_field => { "hello" => "world" } }

} else if [bar] == "baz" {

mutate { add_field => { "fancy" => "pants" } }

} else {

mutate { add_field => { "free" => "hugs" } }

}

}

CONFIG

```

### Your tests should be independent

...

### Structure your test layout

... | test | testing how to docs rspec features listing background testing a pipeline like logstash might become a hard process there has been a lots of improvements and simplifications for the testing of logstash however one of the few things missing is a good document where people can refere in order to know how to write test this issue aim to be a place to discuss and show how to write great test for logstash using the last features of after we re happy with the content in here the objective is to generate a wiki page where everyone can go rspec x features in this section we ll do an overview of the different rspec features and might be useful for you when planning your tests suites rspec core the rspec core provides the structure for writing executable examples of how your code should behave describe context and examples ruby describe something do context in one context do it does one thing do end end context in another context do it does another thing do end end end subjects ruby describe something do context in one context do it does one thing do end end context in another context do it does another thing do end end end let ruby count describe let do let count count it memoizes the value do expect count to eq expect count to eq end it is not cached across examples do expect count to eq end end other important features hooks before around and after filters metadata formaters rspec mocks a test double framework for rspec with support for method stubs fakes and message expectations on generated test doubles and real objects alike allow and expect ruby describe allow do it returns nil from allowed messages do dbl double some collaborator allow dbl to receive foo expect dbl foo to be nil end end constraints ruby expect to receive once expect to receive twice expect to receive exactly n times expect to receive at least once expect to receive at least twice expect to receive at least n times expect to receive at most once expect to receive at most twice expect to 
receive at most n times spies ruby describe have received do it passes when the message has been received do invitation spy invitation invitation deliver expect invitation to have received deliver end end dealing with legacy code ruby allow any instance of widget to receive name and return wibble ruby expect any instance of widget to receive name and return wobble rspec expectations collection of assertions that lets you express expected outcomes on an object in an example equality matchers ruby describe a string do it is equal to another string of the same value do expect this string to eq this string end it is not equal to another string of a different value do expect this string not to eq a different string end end comparison matchers ruby describe do it is expected to be it is expected to be it is expected to be it is expected to be deliberate failures it is expected to be it is expected to be it is expected to be it is expected to be end predicate matchers ruby calls zero expect not to be zero calls empty expect to be empty calls x multiple of expect x to be multiple of type matchers ruby expect obj to be a kind of type expect to all be odd expect obj to be truthy expect obj to be nil expect area of circle to be within of expect counter increment to change counter count from to expect to contain exactly expect to cover ruby expect this string to end with string expect obj to exist expect raise standarderror to raise error expect to satisfy v v expect obj to respond to foo specify expect b myclass yield once with b to yield control specify expect print foo to output to stdout custom matchers composing matchers best practices for rspec but also general for testing use context ruby context when logged in do it is expected to respond with end context when logged out do it is expected to respond with end have short and clean descriptions ruby context when not valid do it is expected to respond with end becomes ruby when not valid it should respond with one 
assertion per test ruby context when a resource is exposed do it is expected to respond with content type json it is expected to assign to resource end use subjects ruby subject hero hero first it carries a sword do expect hero equipment to include sword end use let and let ruby describe type id do let resource factorygirl create device let type type find resource type id it sets the type id field do expect resource type id to equal type id end end mock and stub but with caution ruby simulate a not found resource context when not found do before allow resource to receive where with created from params and return false it is expected to respond with end use descriptive factories ruby config config filter mutate add field always awesome if bar mutate add field hello world else if baz mutate add field fancy pants else mutate add field free hugs config your test want to be independent structure your test layout | 1 |

315,449 | 27,074,586,532 | IssuesEvent | 2023-02-14 09:41:45 | gradido/gradido | https://api.github.com/repos/gradido/gradido | closed | 🔧 [Refactor] linting of end-to-end test code | refactor test | ## 🔧 Refactor ticket

The end-to-end test code should have the same linting rules set as the rest of the repository.

| 1.0 | 🔧 [Refactor] linting of end-to-end test code - ## 🔧 Refactor ticket

The end-to-end test code should have the same linting rules set as the rest of the repository.

| test | 🔧 linting of end to end test code 🔧 refactor ticket the end to end test code should have the same linting rules set as the rest of the repository | 1 |

173,587 | 13,431,560,385 | IssuesEvent | 2020-09-07 07:11:45 | h-makoto0212/googleFormToKintone | https://api.github.com/repos/h-makoto0212/googleFormToKintone | closed | Destroy test | test | # Task

Verify that `kintoneManager.destroy()` works correctly

# Steps

- Create `destroy.js`

- Register `destroy.js` and `kintoneManager.js` in GoogleApplicationScript and run them

- Confirm that the specified kintone record has been deleted | 1.0 | Destroy test - # Task

Verify that `kintoneManager.destroy()` works correctly

# Steps

- Create `destroy.js`

- Register `destroy.js` and `kintoneManager.js` in GoogleApplicationScript and run them

- Confirm that the specified kintone record has been deleted | test | destroy test task verify that kintonemanager destroy works correctly steps create destroy js register destroy js and kintonemanager js in googleapplicationscript and run them confirm that the specified kintone record has been deleted | 1 |

306,637 | 26,485,584,311 | IssuesEvent | 2023-01-17 17:44:07 | dart-lang/co19 | https://api.github.com/repos/dart-lang/co19 | closed | LanguageFeatures/Patterns/map_A04_t02 | bad-test | This test uses instances of class `C` as keys in the maps. Class `C` has a user-defined `operator ==`, but it doesn't define matching `hashCode`, violating the following contract:

https://github.com/dart-lang/sdk/blob/9a7d8857ec81590f9c940f1e15c6923e3ab3453a/sdk/lib/core/object.dart#L65-L71

So, map lookup for `c1` is not guaranteed to find an element which was put into the map with the key `c2` (c1 == c2, but most likely c1.hashCode != c2.hashCode).
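
The same contract can be illustrated outside of Dart. The sketch below is a Ruby analogue, not the co19 test itself: Ruby's `Hash` relies on `hash` together with `eql?` in a comparable way, and `KeyWithoutHash` and `KeyWithHash` are hypothetical names introduced only for this illustration.

```ruby
# Hypothetical key class: overrides equality but keeps the default,
# identity-based #hash, so equal keys usually hash differently.
class KeyWithoutHash
  attr_reader :id

  def initialize(id)
    @id = id
  end

  def ==(other)
    other.is_a?(KeyWithoutHash) && id == other.id
  end
  alias eql? ==
end

# Same class with a #hash that agrees with equality.
class KeyWithHash < KeyWithoutHash
  def hash
    id.hash
  end
end

c1 = KeyWithoutHash.new(1)
c2 = KeyWithoutHash.new(1)
broken_map = { c2 => "value" }
# c1 == c2 is true, yet this lookup is not guaranteed to find the entry.
broken_lookup = broken_map[c1]

k1 = KeyWithHash.new(1)
k2 = KeyWithHash.new(1)
fixed_map = { k2 => "value" }
# Equal keys now hash equally, so the lookup finds the entry.
fixed_lookup = fixed_map[k1]

puts "without matching hash: #{broken_lookup.inspect}"
puts "with matching hash:    #{fixed_lookup.inspect}"
```

Only the second lookup is reliable. The first one depends on how the two identity-based hash values happen to compare, which is the kind of unspecified behavior the test should not depend on.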

https://github.com/dart-lang/co19/blob/780034a9476ea8e9de6d2eecf33e6e0f02a2c220/LanguageFeatures/Patterns/map_A04_t02.dart#L44-L55

https://github.com/dart-lang/co19/blob/780034a9476ea8e9de6d2eecf33e6e0f02a2c220/LanguageFeatures/Patterns/map_A04_t02.dart#L98

https://github.com/dart-lang/co19/blob/780034a9476ea8e9de6d2eecf33e6e0f02a2c220/LanguageFeatures/Patterns/map_A04_t02.dart#L63 | 1.0 | LanguageFeatures/Patterns/map_A04_t02 - This test uses instances of class `C` as keys in the maps. Class `C` has a user-defined `operator ==`, but it doesn't define matching `hashCode`, violating the following contract:

https://github.com/dart-lang/sdk/blob/9a7d8857ec81590f9c940f1e15c6923e3ab3453a/sdk/lib/core/object.dart#L65-L71

So, map lookup for `c1` is not guaranteed to find an element which was put into the map with the key `c2` (c1 == c2, but most likely c1.hashCode != c2.hashCode).

https://github.com/dart-lang/co19/blob/780034a9476ea8e9de6d2eecf33e6e0f02a2c220/LanguageFeatures/Patterns/map_A04_t02.dart#L44-L55

https://github.com/dart-lang/co19/blob/780034a9476ea8e9de6d2eecf33e6e0f02a2c220/LanguageFeatures/Patterns/map_A04_t02.dart#L98

https://github.com/dart-lang/co19/blob/780034a9476ea8e9de6d2eecf33e6e0f02a2c220/LanguageFeatures/Patterns/map_A04_t02.dart#L63 | test | languagefeatures patterns map this test uses instances of class c as keys in the maps class c has a user defined operator but it doesn t define matching hashcode violating the following contract so map lookup for is not guaranteed to find an element which was put into the map with the key but most likely hashcode hashcode | 1 |

490,925 | 14,142,167,987 | IssuesEvent | 2020-11-10 13:43:46 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | opened | [0.9.2 develop-109] Add information about minimum value for Craft resource/time multiplier | Category: UI Priority: Low | here:

and here:

| 1.0 | [0.9.2 develop-109] Add information about minimum value for Craft resource/time multiplier - here:

and here:

| non_test | add information about minimum value for craft resource time multiplier here and here | 0 |

317,611 | 27,247,920,486 | IssuesEvent | 2023-02-22 04:43:59 | harvester/harvester | https://api.github.com/repos/harvester/harvester | opened | [BUG] The preset namespace on resource edit page is not correct | kind/bug area/ui priority/2 severity/3 reproduce/always not-require/test-plan | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

**To Reproduce**

Steps to reproduce the behavior:

1. Go to create a Secret in `cattle-logging-system`.

2. Go to Volume create page.

3. The current namespace is `cattle-logging-system`

**Expected behavior**

<!-- A clear and concise description of what you expected to happen. -->

Volumes cannot be created in `cattle-logging-system`, so we should improve the filter logic of the namespace dropdown component.

**Support bundle**

<!--

You can generate a support bundle in the bottom of Harvester UI (https://docs.harvesterhci.io/v1.0/troubleshooting/harvester/#generate-a-support-bundle). It includes logs and configurations that help diagnose the issue.

Tokens, passwords, and secrets are automatically removed from support bundles. If you feel it's not appropriate to share the bundle files publicly, please consider:

- Wait for a developer to reach you and provide the bundle file by any secure methods.

- Join our Slack community (https://rancher-users.slack.com/archives/C01GKHKAG0K) to provide the bundle.

- Send the bundle to harvester-support-bundle@suse.com with the correct issue ID. -->

**Environment**

- Harvester ISO version:

- Underlying Infrastructure (e.g. Baremetal with Dell PowerEdge R630):

**Additional context**

Add any other context about the problem here.

| 1.0 | [BUG] The preset namespace on resource edit page is not correct - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

**To Reproduce**

Steps to reproduce the behavior:

1. Go to create a Secret in `cattle-logging-system`.

2. Go to Volume create page.

3. The current namespace is `cattle-logging-system`

**Expected behavior**

<!-- A clear and concise description of what you expected to happen. -->

Volumes cannot be created in `cattle-logging-system`, so we should improve the filter logic of the namespace dropdown component.

**Support bundle**

<!--

You can generate a support bundle in the bottom of Harvester UI (https://docs.harvesterhci.io/v1.0/troubleshooting/harvester/#generate-a-support-bundle). It includes logs and configurations that help diagnose the issue.

Tokens, passwords, and secrets are automatically removed from support bundles. If you feel it's not appropriate to share the bundle files publicly, please consider:

- Wait for a developer to reach you and provide the bundle file by any secure methods.

- Join our Slack community (https://rancher-users.slack.com/archives/C01GKHKAG0K) to provide the bundle.

- Send the bundle to harvester-support-bundle@suse.com with the correct issue ID. -->

**Environment**

- Harvester ISO version:

- Underlying Infrastructure (e.g. Baremetal with Dell PowerEdge R630):

**Additional context**

Add any other context about the problem here.

| test | the preset namespace on resource edit page is not correct describe the bug to reproduce steps to reproduce the behavior go to create a secret in cattle logging system go to volume create page the current namespace is cattle logging system expected behavior we can not create volume in cattle logging system we should improve the filter logic of namespace dropdown component support bundle you can generate a support bundle in the bottom of harvester ui it includes logs and configurations that help diagnose the issue tokens passwords and secrets are automatically removed from support bundles if you feel it s not appropriate to share the bundle files publicly please consider wait for a developer to reach you and provide the bundle file by any secure methods join our slack community to provide the bundle send the bundle to harvester support bundle suse com with the correct issue id environment harvester iso version underlying infrastructure e g baremetal with dell poweredge additional context add any other context about the problem here | 1 |

284,387 | 24,595,259,833 | IssuesEvent | 2022-10-14 07:45:56 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | v4 Beta 2 Win64 - Windows Desktop exported EXE Does Not Run On Linux WINE? | bug platform:windows platform:linuxbsd topic:porting needs testing crash | ### Godot version

v4.0.beta2.official [f8745f2f7]

### System information

Windows 11 Pro 64Bit/Linux Mint 21 Cinnamon 64Bit

### Issue description

Hi,

Version v4.0.beta2.official [f8745f2f7] does not build a Windows Desktop EXE that runs on Linux WINE version 7.x.

Can this be fixed in Beta 3?

Jesse

### Steps to reproduce

Run Godot v4.0.beta2.official [f8745f2f7]

On Windows 11 Pro 64Bit

Then export game to Windows Desktop EXE executable.

Copy the game folder to a Linux machine and run it in WINE 6.x+.

WINE crashes:

________________________________________________________________

```

Unhandled exception: page fault on read access to 0x0000000000000060 in 64-bit code (0x000000014262bbb7).

Register dump:

rip:000000014262bbb7 rsp:000000000081f260 rbp:000000000081f8f0 eflags:00010206 ( R- -- I - -P- )

rax:0000000000000000 rbx:0000000009da85e0 rcx:0000000000000000 rdx:0000000009d40000

rsi:0000000142599690 rdi:0000000009da85f8 r8:0000000009da89f0 r9:0000000000000000 r10:0000000009f60000

r11:0000000009da8b78 r12:000000000081f320 r13:000000000081f310 r14:0000000009da85e8 r15:0000000009da89f0

Stack dump:

0x000000000081f260: 000000000081f348 000000017002a77b

0x000000000081f270: 0000000000000002 00000001429862ef

0x000000000081f280: 000000000081f338 000000014000cf99

0x000000000081f290: 0000000000000001 00000001c8dd5c5e

0x000000000081f2a0: 0000000000000002 0000000000000002

0x000000000081f2b0: 0000000000000002 0000000000000002

0x000000000081f2c0: 000000000081f328 00000001429b2f44

0x000000000081f2d0: 000000000081f8f0 000000000081f9b0

0x000000000081f2e0: 0000000143709fa0 000000000081f990

0x000000000081f2f0: 000000000081f970 00000001c8dd5c84

0x000000000081f300: 0000000009da8470 0000000000000000

0x000000000081f310: 0000000000000000 0000000000000005

Backtrace:

=>0 0x000000014262bbb7 EntryPoint+0x262a6f7() in tetristory (0x000000000081f8f0)

1 0x0000000142df67b9 EntryPoint+0x2df52f9() in tetristory (0x000000000081f8f0)

2 0x000000014006c9b6 EntryPoint+0x6b4f6() in tetristory (0x000000000085b320)

3 0x00000001430ef0d5 EntryPoint+0x30edc15() in tetristory (0x0000000000000001)

4 0x00000001400013c1 in tetristory (+0x13c1) (0x0000000000000001)

5 0x00000001400014d6 EntryPoint+0x16() in tetristory (0x0000000000000000)

6 0x000000007b62c599 ActivateActCtx+0x20a19() in kernel32 (0x0000000000000000)

7 0x0000000170059d53 A_SHAFinal+0x37e63() in ntdll (0x0000000000000000)

0x000000014262bbb7 tetristory+0x262bbb7: movq 0x0000000000000060(%r9),%rcx

Modules:

Module Address Debug info Name (36 modules)

PE 000000007a850000-000000007a854000 Deferred opengl32

PE 000000007b000000-000000007b0d5000 Deferred kernelbase

PE 000000007b600000-000000007b812000 Export kernel32

PE 0000000140000000-0000000143e03000 Export tetristory

PE 0000000170000000-000000017009a000 Export ntdll

PE 00000001c69e0000-00000001c72fc000 Deferred shell32

PE 00000001c8b40000-00000001c8b60000 Deferred msacm32

PE 00000001c8db0000-00000001c8e46000 Deferred msvcrt

PE 00000001d7cb0000-00000001d7cc1000 Deferred wsock32

PE 00000001ec2b0000-00000001ec2d5000 Deferred ws2_32

PE 00000001f51e0000-00000001f51f0000 Deferred hid

PE 000000021a7e0000-000000021a855000 Deferred setupapi

PE 0000000231ae0000-0000000231b62000 Deferred rpcrt4

PE 000000023d820000-000000023da6a000 Deferred user32

PE 0000000240030000-000000024005e000 Deferred iphlpapi

PE 000000025d740000-000000025d74e000 Deferred dwmapi

PE 000000026b4c0000-000000026b53b000 Deferred gdi32

PE 000000028dfa0000-000000028dfac000 Deferred nsi

PE 000000029cfc0000-000000029cfd6000 Deferred dnsapi

PE 00000002bb750000-00000002bb890000 Deferred comctl32

PE 00000002d4d40000-00000002d4d56000 Deferred bcrypt

PE 00000002e3540000-00000002e3591000 Deferred shlwapi

PE 00000002e8f10000-00000002e902b000 Deferred ole32

PE 00000002f1fa0000-00000002f1fad000 Deferred version

PE 00000002f2930000-00000002f293c000 Deferred avrt

PE 0000000308050000-000000030809b000 Deferred dinput8

PE 000000030a950000-000000030a9c1000 Deferred dwrite

PE 00000003126f0000-0000000312709000 Deferred shcore

PE 0000000327020000-0000000327072000 Deferred combase

PE 000000032a700000-000000032a729000 Deferred sechost

PE 0000000330260000-000000033029f000 Deferred advapi32

PE 0000000375610000-0000000375648000 Deferred win32u

PE 00000003af670000-00000003af72f000 Deferred ucrtbase

PE 00000003afd00000-00000003afd1a000 Deferred imm32

PE 00000003b8f00000-00000003b8fc1000 Deferred winmm

PE 00007f11f4080000-00007f11f4084000 Deferred winex11

Threads:

process tid prio (all id:s are in hex)

00000038 services.exe

0000003c 0

00000040 0

0000004c 0

00000050 0

00000074 0

00000098 0

000000b0 0

000000d4 0

000000d8 0

00000044 winedevice.exe

00000048 0

00000054 0

00000058 0

0000005c 0

00000060 0

000000bc 0

00000064 winedevice.exe

00000068 0

00000078 0

0000007c 0

00000080 0

00000084 0

00000088 0

0000008c 0

0000006c explorer.exe

00000070 0

000000c0 0

000000c4 0

00000090 plugplay.exe

00000094 0

0000009c 0

000000a0 0

000000a4 0

000000a8 svchost.exe

000000ac 0

000000b4 0

000000b8 0

000000cc rpcss.exe

000000d0 0

000000dc 0

000000e0 0

000000e4 0

000000e8 0

000000ec 0

000000f0 0

000000fc (D) Z:\home\jlp\Desktop\TS3-G4-Win64\TetriStory.exe

00000100 0 <==

00000104 0

00000108 0

0000010c 0

00000110 0

00000114 0

00000118 0

0000011c 0

00000120 0

00000124 0

00000128 0

0000012c 0

00000130 0

00000134 0

00000138 0

0000013c 0

00000140 0

00000144 0

00000150 0

System information:

Wine build: wine-7.0

Platform: x86_64

Version: Windows 7

Host system: Linux

Host version: 5.15.0-50-generic

```

________________________________________________________________

### Minimal reproduction project

https://github.com/BetaMaxHero/GDScript_Godot_4_T-Story | 1.0 | v4 Beta 2 Win64 - Windows Desktop exported EXE Does Not Run On Linux WINE? - ### Godot version

v4.0.beta2.official [f8745f2f7]

### System information

Windows 11 Pro 64Bit/Linux Mint 21 Cinnamon 64Bit

### Issue description

Hi,

Version: v4.0.beta2.official [f8745f2f7] does not build

a Windows Desktop EXE that runs on Linux WINE version 7.x ?

Can this be fixed in Beta 3 ?

Jesse

### Steps to reproduce

Run Godot v4.0.beta2.official [f8745f2f7]

On Windows 11 Pro 64Bit

Then export game to Windows Desktop EXE executable.

Copy the game folder to a Linux machine and run it in WINE 6.x+.

WINE crashes:

________________________________________________________________

```

Unhandled exception: page fault on read access to 0x0000000000000060 in 64-bit code (0x000000014262bbb7).

Register dump:

rip:000000014262bbb7 rsp:000000000081f260 rbp:000000000081f8f0 eflags:00010206 ( R- -- I - -P- )

rax:0000000000000000 rbx:0000000009da85e0 rcx:0000000000000000 rdx:0000000009d40000

rsi:0000000142599690 rdi:0000000009da85f8 r8:0000000009da89f0 r9:0000000000000000 r10:0000000009f60000

r11:0000000009da8b78 r12:000000000081f320 r13:000000000081f310 r14:0000000009da85e8 r15:0000000009da89f0

Stack dump:

0x000000000081f260: 000000000081f348 000000017002a77b

0x000000000081f270: 0000000000000002 00000001429862ef

0x000000000081f280: 000000000081f338 000000014000cf99

0x000000000081f290: 0000000000000001 00000001c8dd5c5e

0x000000000081f2a0: 0000000000000002 0000000000000002

0x000000000081f2b0: 0000000000000002 0000000000000002

0x000000000081f2c0: 000000000081f328 00000001429b2f44

0x000000000081f2d0: 000000000081f8f0 000000000081f9b0

0x000000000081f2e0: 0000000143709fa0 000000000081f990

0x000000000081f2f0: 000000000081f970 00000001c8dd5c84

0x000000000081f300: 0000000009da8470 0000000000000000

0x000000000081f310: 0000000000000000 0000000000000005

Backtrace:

=>0 0x000000014262bbb7 EntryPoint+0x262a6f7() in tetristory (0x000000000081f8f0)

1 0x0000000142df67b9 EntryPoint+0x2df52f9() in tetristory (0x000000000081f8f0)

2 0x000000014006c9b6 EntryPoint+0x6b4f6() in tetristory (0x000000000085b320)

3 0x00000001430ef0d5 EntryPoint+0x30edc15() in tetristory (0x0000000000000001)

4 0x00000001400013c1 in tetristory (+0x13c1) (0x0000000000000001)

5 0x00000001400014d6 EntryPoint+0x16() in tetristory (0x0000000000000000)

6 0x000000007b62c599 ActivateActCtx+0x20a19() in kernel32 (0x0000000000000000)

7 0x0000000170059d53 A_SHAFinal+0x37e63() in ntdll (0x0000000000000000)

0x000000014262bbb7 tetristory+0x262bbb7: movq 0x0000000000000060(%r9),%rcx

Modules:

Module Address Debug info Name (36 modules)

PE 000000007a850000-000000007a854000 Deferred opengl32

PE 000000007b000000-000000007b0d5000 Deferred kernelbase

PE 000000007b600000-000000007b812000 Export kernel32

PE 0000000140000000-0000000143e03000 Export tetristory

PE 0000000170000000-000000017009a000 Export ntdll

PE 00000001c69e0000-00000001c72fc000 Deferred shell32

PE 00000001c8b40000-00000001c8b60000 Deferred msacm32

PE 00000001c8db0000-00000001c8e46000 Deferred msvcrt

PE 00000001d7cb0000-00000001d7cc1000 Deferred wsock32

PE 00000001ec2b0000-00000001ec2d5000 Deferred ws2_32

PE 00000001f51e0000-00000001f51f0000 Deferred hid

PE 000000021a7e0000-000000021a855000 Deferred setupapi

PE 0000000231ae0000-0000000231b62000 Deferred rpcrt4

PE 000000023d820000-000000023da6a000 Deferred user32

PE 0000000240030000-000000024005e000 Deferred iphlpapi

PE 000000025d740000-000000025d74e000 Deferred dwmapi

PE 000000026b4c0000-000000026b53b000 Deferred gdi32

PE 000000028dfa0000-000000028dfac000 Deferred nsi

PE 000000029cfc0000-000000029cfd6000 Deferred dnsapi

PE 00000002bb750000-00000002bb890000 Deferred comctl32

PE 00000002d4d40000-00000002d4d56000 Deferred bcrypt

PE 00000002e3540000-00000002e3591000 Deferred shlwapi

PE 00000002e8f10000-00000002e902b000 Deferred ole32

PE 00000002f1fa0000-00000002f1fad000 Deferred version

PE 00000002f2930000-00000002f293c000 Deferred avrt

PE 0000000308050000-000000030809b000 Deferred dinput8

PE 000000030a950000-000000030a9c1000 Deferred dwrite

PE 00000003126f0000-0000000312709000 Deferred shcore

PE 0000000327020000-0000000327072000 Deferred combase

PE 000000032a700000-000000032a729000 Deferred sechost

PE 0000000330260000-000000033029f000 Deferred advapi32

PE 0000000375610000-0000000375648000 Deferred win32u

PE 00000003af670000-00000003af72f000 Deferred ucrtbase

PE 00000003afd00000-00000003afd1a000 Deferred imm32

PE 00000003b8f00000-00000003b8fc1000 Deferred winmm

PE 00007f11f4080000-00007f11f4084000 Deferred winex11

Threads:

process tid prio (all id:s are in hex)

00000038 services.exe

0000003c 0

00000040 0

0000004c 0

00000050 0

00000074 0

00000098 0

000000b0 0

000000d4 0

000000d8 0

00000044 winedevice.exe

00000048 0

00000054 0

00000058 0

0000005c 0

00000060 0

000000bc 0

00000064 winedevice.exe

00000068 0

00000078 0

0000007c 0

00000080 0

00000084 0

00000088 0

0000008c 0

0000006c explorer.exe

00000070 0

000000c0 0

000000c4 0

00000090 plugplay.exe

00000094 0

0000009c 0

000000a0 0

000000a4 0

000000a8 svchost.exe

000000ac 0

000000b4 0

000000b8 0

000000cc rpcss.exe

000000d0 0

000000dc 0

000000e0 0

000000e4 0

000000e8 0

000000ec 0

000000f0 0

000000fc (D) Z:\home\jlp\Desktop\TS3-G4-Win64\TetriStory.exe

00000100 0 <==

00000104 0

00000108 0

0000010c 0

00000110 0

00000114 0

00000118 0

0000011c 0

00000120 0

00000124 0

00000128 0

0000012c 0

00000130 0

00000134 0

00000138 0

0000013c 0

00000140 0

00000144 0

00000150 0

System information:

Wine build: wine-7.0

Platform: x86_64

Version: Windows 7

Host system: Linux

Host version: 5.15.0-50-generic

```

________________________________________________________________

### Minimal reproduction project

https://github.com/BetaMaxHero/GDScript_Godot_4_T-Story | test | beta windows desktop exported exe does not run on linux wine godot version official system information windows pro linux mint cinnamon issue description hi version official does not build a windows desktop exe that runs on linux wine version x can this be fixed in beta jesse steps to reproduce run godot official on windows pro then export game to windows desktop exe executable copy game folder to a linux and run in wine x wine crashes unhandled exception page fault on read access to in bit code register dump rip rsp rbp eflags r i p rax rbx rcx rdx rsi rdi stack dump backtrace entrypoint in tetristory entrypoint in tetristory entrypoint in tetristory entrypoint in tetristory in tetristory entrypoint in tetristory activateactctx in a shafinal in ntdll tetristory movq rcx modules module address debug info name modules pe deferred pe deferred kernelbase pe export pe export tetristory pe export ntdll pe deferred pe deferred pe deferred msvcrt pe deferred pe deferred pe deferred hid pe deferred setupapi pe deferred pe deferred pe deferred iphlpapi pe deferred dwmapi pe deferred pe deferred nsi pe deferred dnsapi pe deferred pe deferred bcrypt pe deferred shlwapi pe deferred pe deferred version pe deferred avrt pe deferred pe deferred dwrite pe deferred shcore pe deferred combase pe deferred sechost pe deferred pe deferred pe deferred ucrtbase pe deferred pe deferred winmm pe deferred threads process tid prio all id s are in hex services exe winedevice exe winedevice exe explorer exe plugplay exe svchost exe rpcss exe d z home jlp desktop tetristory exe system information wine build wine platform version windows host system linux host version generic minimal reproduction project | 1 |

40,161 | 5,280,250,523 | IssuesEvent | 2017-02-07 13:45:37 | leoponti/dufry | https://api.github.com/repos/leoponti/dufry | closed | System - Administration - generate Packs Excel - PRIORITY | Argentina VOLVER A TESTEAR | Hi, I am attaching the document. It fails when trying to build sheet 2

[excel pack_enviar.docx](https://github.com/leoponti/dufry/files/729697/excel.pack_enviar.docx)

| 1.0 | System - Administration - generate Packs Excel - PRIORITY - Hi, I am attaching the document. It fails when trying to build sheet 2

[excel pack_enviar.docx](https://github.com/leoponti/dufry/files/729697/excel.pack_enviar.docx)

| test | sist administracion gnerarr excel de packs prioridad hola te adjunto el documento esta fallando al intentar armar la hoja | 1 |

316,625 | 27,171,434,103 | IssuesEvent | 2023-02-17 19:50:52 | iho-ohi/S-101_Portrayal-Catalogue | https://api.github.com/repos/iho-ohi/S-101_Portrayal-Catalogue | closed | symbol SOUNDSC2 | enhancement test PC 1.1.0 | The symbol SOUNDSC2 for low accurate soundings inferior or equal to the safety depth does not have the correct color.

Proposal : In the definition of the symbol SOUNDSC2 Change the color SNDG1 to SNDG2. | 1.0 | symbol SOUNDSC2 - The symbol SOUNDSC2 for low accurate soundings inferior or equal to the safety depth does not have the correct color.

Proposal : In the definition of the symbol SOUNDSC2 Change the color SNDG1 to SNDG2. | test | symbol the symbol for low accurate soundings inferior or equal to the safety depth does not have the correct color proposal in the definition of the symbol change the color to | 1 |

264 | 5,105,283,853 | IssuesEvent | 2017-01-05 06:30:29 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | [FVT]Failed to run rmvm when setup SN in x86_64 for rh7.3 and rh6.8 | component:automation priority:high | Using the latest daily build to install the SN, the test case failed when running the command below.

```

RUN:if [ "x86_64" != "ppc64" -a "kvm" != "ipmi" ];then if [[ "dir:///var/lib/libvirt/images/" =~ "phy" ]]; then rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03; else rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03 -s 20G; fi;fi

[if [ "x86_64" != "ppc64" -a "kvm" != "ipmi" ];then if [[ "dir:///var/lib/libvirt/images/" =~ "phy" ]]; then rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03; else rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03 -s 20G; fi;fi] Running Time:1 sec

RETURN rc = 1

OUTPUT:

c910f04x18v03: Error: Cannot remove guest vm, no such vm found

```

| 1.0 | [FVT]Failed to run rmvm when setup SN in x86_64 for rh7.3 and rh6.8 - Using the latest daily build to install the SN, the test case failed when running the command below.

```

RUN:if [ "x86_64" != "ppc64" -a "kvm" != "ipmi" ];then if [[ "dir:///var/lib/libvirt/images/" =~ "phy" ]]; then rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03; else rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03 -s 20G; fi;fi

[if [ "x86_64" != "ppc64" -a "kvm" != "ipmi" ];then if [[ "dir:///var/lib/libvirt/images/" =~ "phy" ]]; then rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03; else rmvm c910f04x18v03 -f -p && mkvm c910f04x18v03 -s 20G; fi;fi] Running Time:1 sec

RETURN rc = 1

OUTPUT:

c910f04x18v03: Error: Cannot remove guest vm, no such vm found

```

| non_test | failed to run rmvm when setup sn in for and using latest daily build to install sn when run below command case failed run if then if then rmvm f p mkvm else rmvm f p mkvm s fi fi then if then rmvm f p mkvm else rmvm f p mkvm s fi fi running time sec return rc output error cannot remove guest vm no such vm found | 0 |

13,353 | 8,197,867,932 | IssuesEvent | 2018-08-31 14:40:29 | hyperapp/hyperapp | https://api.github.com/repos/hyperapp/hyperapp | closed | Rewrite patch algo / improve diffing performance | Feature Performance | I want to rewrite patch to incorporate some prefix/suffix trimming techniques as described in the first link posted below.

Hyperapp unkeyed and keyed fares relatively well according to [js-framework-benchmarks](https://github.com/krausest/js-framework-benchmark) (arguably the best benchmark out there and by far), but we could do a bit better and still get away with our 1 KB proposition.

- https://github.com/hyperapp/hyperapp/issues/216#issuecomment-314051357

- https://github.com/yelouafi/petit-dom/blob/master/src/vdom.js

| True | Rewrite patch algo / improve diffing performance - I want to rewrite patch to incorporate some prefix/suffix trimming techniques as described in the first link posted below.

Hyperapp unkeyed and keyed fares relatively well according to [js-framework-benchmarks](https://github.com/krausest/js-framework-benchmark) (arguably the best benchmark out there and by far), but we could do a bit better and still get away with our 1 KB proposition.

- https://github.com/hyperapp/hyperapp/issues/216#issuecomment-314051357

- https://github.com/yelouafi/petit-dom/blob/master/src/vdom.js

| non_test | rewrite patch algo improve diffing performance i want to rewrite patch to incorporate some prefix suffix trimming techniques as described in the first link posted below hyperapp unkeyed and keyed fares relatively well according to arguably the best benchmark out there and by far but we could do a bit better and still get away with our kb proposition | 0 |
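
The prefix/suffix trimming mentioned in this issue can be sketched roughly as follows. This is only an illustration in Python, not Hyperapp's or petit-dom's actual code: the function name `trim_common_ends` and the use of plain lists of keys in place of virtual nodes are assumptions made for the example.

```python
def trim_common_ends(old, new):
    """Skip the shared prefix and suffix of two child lists.

    Hypothetical helper: `old` and `new` stand in for lists of node keys.
    Returns the index where the lists first differ, plus the middle
    slices that a full diff would still need to reconcile.
    """
    start = 0
    old_end, new_end = len(old), len(new)

    # Walk forward while both lists agree (common prefix).
    while start < old_end and start < new_end and old[start] == new[start]:
        start += 1

    # Walk backward while both lists agree (common suffix),
    # never crossing into the prefix already consumed.
    while old_end > start and new_end > start and old[old_end - 1] == new[new_end - 1]:
        old_end -= 1
        new_end -= 1

    return start, old[start:old_end], new[start:new_end]


# Only the middle element differs, so only it is left to diff.
print(trim_common_ends(["a", "b", "c", "d"], ["a", "x", "c", "d"]))
```

When most updates touch a small region of a long list, trimming first means the more expensive keyed reconciliation only runs on the short middle slices.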

181,180 | 14,855,647,085 | IssuesEvent | 2021-01-18 13:04:58 | poloz-lab/PADIVAR-Hardware | https://api.github.com/repos/poloz-lab/PADIVAR-Hardware | closed | waitingForConnection in ServerSocket has no return field | documentation | waitingForConnection function in ServerSocket class has no \return field in the doxygen documentation but it returns a ClientSocket object. | 1.0 | waitingForConnection in ServerSocket has no return field - waitingForConnection function in ServerSocket class has no \return field in the doxygen documentation but it returns a ClientSocket object. | non_test | waitingforconnection in serversocket has no return field waitingforconnection function in serversocket class has no return field in the doxygen documentation but it returns a clientsocket object | 0 |

15 | 2,490,249,351 | IssuesEvent | 2015-01-02 11:25:50 | tomchristie/mkdocs | https://api.github.com/repos/tomchristie/mkdocs | opened | Document all configuration options | Documentation | Some of the [configuration options](https://github.com/tomchristie/mkdocs/blob/0.11.1/mkdocs/config.py#L10) are not documented.

- `copyright`

- `google_analytics` (only mentioned in the release-notes)

- `repo_name`

- `extra_css` (only mentioned in the release-notes)

- `extra_javascript` (only mentioned in the release-notes)

- `include_nav`

- `include_next_prev`

- `include_search` - but this isn't valid once #222 lands

- `include_sitemap` - this feature doesn't exist yet. | 1.0 | Document all configuration options - Some of the [configuration options](https://github.com/tomchristie/mkdocs/blob/0.11.1/mkdocs/config.py#L10) are not documented.

- `copyright`

- `google_analytics` (only mentioned in the release-notes)

- `repo_name`

- `extra_css` (only mentioned in the release-notes)

- `extra_javascript` (only mentioned in the release-notes)

- `include_nav`

- `include_next_prev`

- `include_search` - but this isn't valid once #222 lands

- `include_sitemap` - this feature doesn't exist yet. | non_test | document all configuration options some of the are not documented copyright google analytics only mentioned in the release notes repo name extra css only mentioned in the release notes extra javascript only mentioned in the release notes include nav include next prev include search but this isn t valid once lands include sitemap this feature doesn t exist next | 0 |

94,627 | 8,507,087,940 | IssuesEvent | 2018-10-30 18:08:52 | beesEX/be | https://api.github.com/repos/beesEX/be | closed | [be/test] test controller to reset all test data POST /test/reset | BE test | Summary:

Create a controller with a handler function mapped to POST /test/reset that resets all test data in MongoDB and prepares new test data for a new test session.

TODO:

- Create `src/test/test.controller.js` with a handler function `reset` mapped to **POST /test/reset**. It removes all documents from the following collections: **orders**, **trades**, **transactions**, **ohlcv1m**, **ohlcv5m**, **ohlcv60m**, and then deposits 100000 BTC and 650000000 USDT for the calling user. **POST /test/reset** is a secured route. Return 200 if everything goes fine. | 1.0 | [be/test] test controller to reset all test data POST /test/reset - Summary:

Create a controller with a handler function mapped to POST /test/reset that resets all test data in MongoDB and prepares new test data for a new test session.

TODO:

- Create `src/test/test.controller.js` with a handler function `reset` mapped to **POST /test/reset**. It removes all documents from the following collections: **orders**, **trades**, **transactions**, **ohlcv1m**, **ohlcv5m**, **ohlcv60m**, and then deposits 100000 BTC and 650000000 USDT for the calling user. **POST /test/reset** is a secured route. Return 200 if everything goes fine. | test | test controller to reset all test data post test reset summary create a controller with a handler function mapped on post test reset to reset all test data in mongodb and prepair new test data for new test session todo create src test test controller js with handler function reset mapped on post test reset which removes all documents of following collections orders trades transactions and deposits for the called user btc and usdt post test reset is a secured route return if everthing goes fine | 1 |

298,140 | 25,792,784,135 | IssuesEvent | 2022-12-10 08:18:57 | neondatabase/neon | https://api.github.com/repos/neondatabase/neon | closed | test_remote_storage_backup_and_restore is flaky: Regex pattern 'tenant xxx already exists, state: Broken' does not match 'tenant xxx already exists, state: Attaching'. | t/bug a/test/flaky | ```

2022-12-09T16:10:58.5890037Z =================================== FAILURES ===================================

2022-12-09T16:10:58.5890555Z _______________ test_remote_storage_backup_and_restore[real_s3] ________________

2022-12-09T16:10:58.5892441Z [gw3] linux -- Python 3.9.2 /github/home/.cache/pypoetry/virtualenvs/neon-_pxWMzVK-py3.9/bin/python

2022-12-09T16:10:58.5893018Z test_runner/fixtures/neon_fixtures.py:1064: in verbose_error

2022-12-09T16:10:58.5893388Z res.raise_for_status()

2022-12-09T16:10:58.5894122Z /github/home/.cache/pypoetry/virtualenvs/neon-_pxWMzVK-py3.9/lib/python3.9/site-packages/requests/models.py:1021: in raise_for_status

2022-12-09T16:10:58.5894630Z raise HTTPError(http_error_msg, response=self)

2022-12-09T16:10:58.5895108Z E requests.exceptions.HTTPError: 500 Server Error: Internal Server Error for url: http://localhost:18077/v1/tenant/f741b9055abf7c666671447f1356d84c/attach

2022-12-09T16:10:58.5895405Z

2022-12-09T16:10:58.5895568Z The above exception was the direct cause of the following exception:

2022-12-09T16:10:58.5896020Z test_runner/regress/test_remote_storage.py:142: in test_remote_storage_backup_and_restore

2022-12-09T16:10:58.5896464Z client.tenant_attach(tenant_id)

2022-12-09T16:10:58.5896799Z test_runner/fixtures/neon_fixtures.py:1118: in tenant_attach

2022-12-09T16:10:58.5897128Z self.verbose_error(res)

2022-12-09T16:10:58.5897502Z test_runner/fixtures/neon_fixtures.py:1070: in verbose_error

2022-12-09T16:10:58.5897932Z raise PageserverApiException(msg) from e

2022-12-09T16:10:58.5898408Z E fixtures.neon_fixtures.PageserverApiException: tenant f741b9055abf7c666671447f1356d84c already exists, state: Attaching

2022-12-09T16:10:58.5901840Z

2022-12-09T16:10:58.5902075Z During handling of the above exception, another exception occurred:

2022-12-09T16:10:58.5902584Z test_runner/regress/test_remote_storage.py:142: in test_remote_storage_backup_and_restore

2022-12-09T16:10:58.5903018Z client.tenant_attach(tenant_id)

2022-12-09T16:10:58.5903932Z E AssertionError: Regex pattern 'tenant f741b9055abf7c666671447f1356d84c already exists, state: Broken' does not match 'tenant f741b9055abf7c666671447f1356d84c already exists, state: Attaching'.

```

https://github.com/neondatabase/neon/actions/runs/3658686712/jobs/6184058795 | 1.0 | test_remote_storage_backup_and_restore is flaky: Regex pattern 'tenant xxx already exists, state: Broken' does not match 'tenant xxx already exists, state: Attaching'. - ```

2022-12-09T16:10:58.5890037Z =================================== FAILURES ===================================

2022-12-09T16:10:58.5890555Z _______________ test_remote_storage_backup_and_restore[real_s3] ________________

2022-12-09T16:10:58.5892441Z [gw3] linux -- Python 3.9.2 /github/home/.cache/pypoetry/virtualenvs/neon-_pxWMzVK-py3.9/bin/python

2022-12-09T16:10:58.5893018Z test_runner/fixtures/neon_fixtures.py:1064: in verbose_error

2022-12-09T16:10:58.5893388Z res.raise_for_status()

2022-12-09T16:10:58.5894122Z /github/home/.cache/pypoetry/virtualenvs/neon-_pxWMzVK-py3.9/lib/python3.9/site-packages/requests/models.py:1021: in raise_for_status

2022-12-09T16:10:58.5894630Z raise HTTPError(http_error_msg, response=self)

2022-12-09T16:10:58.5895108Z E requests.exceptions.HTTPError: 500 Server Error: Internal Server Error for url: http://localhost:18077/v1/tenant/f741b9055abf7c666671447f1356d84c/attach

2022-12-09T16:10:58.5895405Z

2022-12-09T16:10:58.5895568Z The above exception was the direct cause of the following exception:

2022-12-09T16:10:58.5896020Z test_runner/regress/test_remote_storage.py:142: in test_remote_storage_backup_and_restore

2022-12-09T16:10:58.5896464Z client.tenant_attach(tenant_id)

2022-12-09T16:10:58.5896799Z test_runner/fixtures/neon_fixtures.py:1118: in tenant_attach

2022-12-09T16:10:58.5897128Z self.verbose_error(res)

2022-12-09T16:10:58.5897502Z test_runner/fixtures/neon_fixtures.py:1070: in verbose_error

2022-12-09T16:10:58.5897932Z raise PageserverApiException(msg) from e

2022-12-09T16:10:58.5898408Z E fixtures.neon_fixtures.PageserverApiException: tenant f741b9055abf7c666671447f1356d84c already exists, state: Attaching

2022-12-09T16:10:58.5901840Z

2022-12-09T16:10:58.5902075Z During handling of the above exception, another exception occurred:

2022-12-09T16:10:58.5902584Z test_runner/regress/test_remote_storage.py:142: in test_remote_storage_backup_and_restore

2022-12-09T16:10:58.5903018Z client.tenant_attach(tenant_id)

2022-12-09T16:10:58.5903932Z E AssertionError: Regex pattern 'tenant f741b9055abf7c666671447f1356d84c already exists, state: Broken' does not match 'tenant f741b9055abf7c666671447f1356d84c already exists, state: Attaching'.

```

https://github.com/neondatabase/neon/actions/runs/3658686712/jobs/6184058795 | test | test remote storage backup and restore is flaky regex pattern tenant xxx already exists state broken does not match tenant xxx already exists state attaching failures test remote storage backup and restore linux python github home cache pypoetry virtualenvs neon pxwmzvk bin python test runner fixtures neon fixtures py in verbose error res raise for status github home cache pypoetry virtualenvs neon pxwmzvk lib site packages requests models py in raise for status raise httperror http error msg response self e requests exceptions httperror server error internal server error for url the above exception was the direct cause of the following exception test runner regress test remote storage py in test remote storage backup and restore client tenant attach tenant id test runner fixtures neon fixtures py in tenant attach self verbose error res test runner fixtures neon fixtures py in verbose error raise pageserverapiexception msg from e e fixtures neon fixtures pageserverapiexception tenant already exists state attaching during handling of the above exception another exception occurred test runner regress test remote storage py in test remote storage backup and restore client tenant attach tenant id e assertionerror regex pattern tenant already exists state broken does not match tenant already exists state attaching | 1 |
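
The mismatch in the log above can be reproduced without the test harness. The sketch below uses only `re.search`, which is how `pytest.raises(match=...)` checks messages. The `tolerant` pattern is an assumption for illustration: whether the test should accept `Attaching`, or instead wait for the tenant to reach `Broken`, is a decision for the test authors and is not settled by this log.

```python
import re

tenant_id = "f741b9055abf7c666671447f1356d84c"

# Pattern the test expects, and the message the pageserver actually returned.
expected = f"tenant {tenant_id} already exists, state: Broken"
actual = f"tenant {tenant_id} already exists, state: Attaching"

# The strict pattern does not match while the tenant is still attaching.
strict_match = re.search(expected, actual)

# Hypothetical tolerant pattern that accepts either state.
tolerant = rf"tenant {tenant_id} already exists, state: (Broken|Attaching)"
tolerant_match = re.search(tolerant, actual)

print(strict_match is None, tolerant_match is not None)
```

The flakiness is consistent with a race: the second attach call can arrive before the tenant has moved from `Attaching` to `Broken`.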

296,180 | 25,535,111,535 | IssuesEvent | 2022-11-29 11:21:39 | vmware-tanzu/tanzu-framework | https://api.github.com/repos/vmware-tanzu/tanzu-framework | closed | Improve the runtime for the CI check 'Main' | area/testing kind/feature needs-triage area/dx | **Describe the feature request**

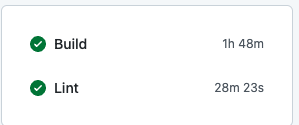

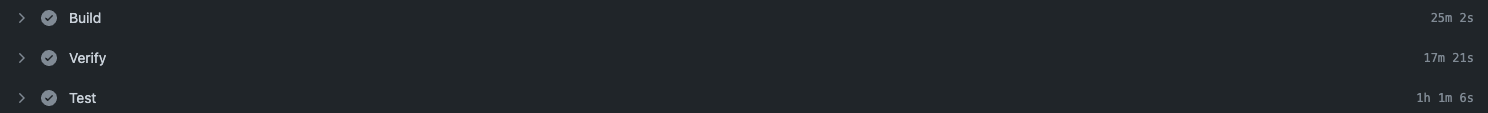

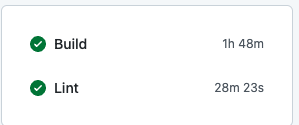

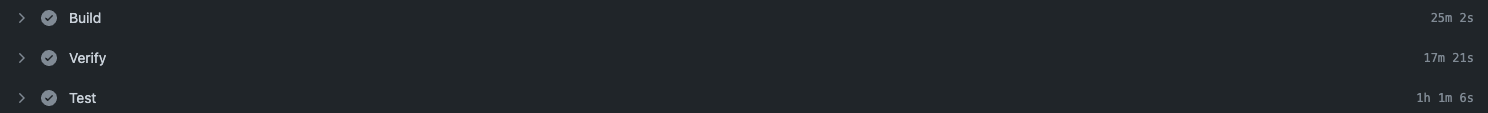

Successful completion of 'Main' github workflow ranges from ~ 1h 30m to ~1h 50m.

We run two parallel jobs in this workflow:

In build step, following steps are linear and takes time:

These steps can be run in parallel.

The lint step is running in parallel anyway.

Proposal:

Split the four operations into separate GitHub workflows for better reporting and signals.

**Describe alternative(s) you've considered**

**Affected product area (please put an X in all that apply)**

- ( ) APIs

- ( ) Addons

- ( ) CLI

- ( ) Docs

- ( ) IAM

- ( ) Installation

- ( ) Plugin

- ( ) Security

- ( ) Test and Release

- ( ) User Experience

- (x) Developer Experience

**Additional context**

| 1.0 | Improve the runtime for the CI check 'Main' - **Describe the feature request**

Successful completion of 'Main' github workflow ranges from ~ 1h 30m to ~1h 50m.

We run two parallel jobs in this workflow:

In the build step, the following steps run sequentially and take time:

These steps can be run in parallel.

The lint step is running in parallel anyway.

Proposal:

Split the four operations into separate GitHub workflows for better reporting and signals.

**Describe alternative(s) you've considered**

**Affected product area (please put an X in all that apply)**

- ( ) APIs

- ( ) Addons

- ( ) CLI

- ( ) Docs

- ( ) IAM

- ( ) Installation

- ( ) Plugin

- ( ) Security

- ( ) Test and Release

- ( ) User Experience

- (x) Developer Experience

**Additional context**

| test | improve the runtime for the ci check main describe the feature request successful completion of main github workflow ranges from to we run two parallel jobs in this workflow in build step following steps are linear and takes time these steps can be run in parallel the lint step is running in parallel anyway proposal run all the operations into different github workflows altogether for better reporting and signals describe alternative s you ve considered affected product area please put an x in all that apply apis addons cli docs iam installation plugin security test and release user experience x developer experience additional context | 1 |

48,809 | 5,970,768,143 | IssuesEvent | 2017-05-30 23:48:51 | u01jmg3/ics-parser | https://api.github.com/repos/u01jmg3/ics-parser | closed | Monthly RRULE - CET/CEST | to-be-tested | ### Description of the Issue:

I'm getting hours that are not correct taking into account CEST/CET.

Below I'm passing an event that starts at `090500`, but when I request the data I get `08:05` back for winter days and `07:05` back for summer days. I'm not seeing this in daily events, for example, that span CEST/CET months.

### Steps to Reproduce:

iCal:

```

BEGIN:VEVENT

DTSTART;TZID=Europe/Brussels:20170101CET090500

DTEND;TZID=Europe/Brussels:20170101CET172500

RRULE:BYSETPOS=-2;BYDAY=MO;FREQ=MONTHLY;UNTIL=20170529CEST235959

UID:foo

END:VEVENT

```

Edit:

- Apparently monthly recurring `BYMONTHDAY` _does_ return correct data, `BYDAY` _does not_.

- To clarify the hour is incorrect when the dtstart is in CET/CEST and you request the hours in a CEST/CET day, so for example the days in January/February/March will be ok, from April on, you'll get an hour offset

- Yearly suffers from the same issues that monthly was facing, it appears to also move up a week? By my knowledge there's no RRULE for yearly that could use that sort of iteration.

My fix: https://github.com/weconnectdata/ics-parser/commit/d59cb319306b2ec3e8fb3f7982d3dc6a9fb715be (L1107 and the following lines are the most important; the rest was linting. The hard-coded `|| true` was debug code and was removed in the commit after that.) This seems to fix the CET/CEST problem, but I'm not sure it covers every single case. | 1.0 | Monthly RRULE - CET/CEST - ### Description of the Issue:

I'm getting hours that are not correct taking into account CEST/CET.

Below I'm passing an event that starts at `090500`, but when I request the data I get `08:05` back for winter days and `07:05` back for summer days. I don't see this with, for example, daily events that span both CET and CEST months.

### Steps to Reproduce:

iCal:

```

BEGIN:VEVENT

DTSTART;TZID=Europe/Brussels:20170101CET090500

DTEND;TZID=Europe/Brussels:20170101CET172500

RRULE:BYSETPOS=-2;BYDAY=MO;FREQ=MONTHLY;UNTIL=20170529CEST235959

UID:foo

END:VEVENT

```

Edit:

- Apparently monthly recurring `BYMONTHDAY` _does_ return correct data, `BYDAY` _does not_.

- To clarify: the hour is incorrect when the `DTSTART` is in CET and you request an occurrence on a CEST day (or vice versa). For example, the days in January/February/March will be OK, but from April on you'll get an hour offset.

- Yearly suffers from the same issue that monthly was facing; it also appears to move up a week? To my knowledge there's no yearly RRULE that could use that sort of iteration.

My fix: https://github.com/weconnectdata/ics-parser/commit/d59cb319306b2ec3e8fb3f7982d3dc6a9fb715be (L1107 and following lines are the most important, the rest was linting. The hard coded `|| true` was debug code and was removed in the commit after that. This seems to fix the problem about CET/CEST, but I'm not sure this covers every single case. | test | monthly rrule cet cest description of the issue i m getting hours that are not correct taking into account cest cet below i m passing an event that start at but when i request the data i get back for winter days and back for summer days i m not seeing this in daily events for example that span cest cet months steps to reproduce ical begin vevent dtstart tzid europe brussels dtend tzid europe brussels rrule bysetpos byday mo freq monthly until uid foo end vevent edit apparently monthly recurring bymonthday does return correct data byday does not to clarify the hour is incorrect when the dtstart is in cet cest and you request the hours in a cest cet day so for example the days in january february march will be ok from april on you ll get an hour offset yearly suffers from the same issues that monthly was facing it appears to also move up a week by my knowledge there s no rrule for yearly that could use that sort of iteration my fix and following lines are the most important the rest was linting the hard coded true was debug code and was removed in the commit after that this seems to fix the problem about cet cest but i m not sure this covers every single case | 1 |

148,655 | 13,242,865,503 | IssuesEvent | 2020-08-19 10:26:52 | olifolkerd/tabulator | https://api.github.com/repos/olifolkerd/tabulator | closed | Docs: yarn command to install Tabulator is not correct | Documentation | **Website Page**

http://tabulator.info/docs/4.7/install

**Describe the issue**

The documentation says to run `yarn install tabulator-tables` to get Tabulator via the yarn package manager, but this fails on the latest yarn (v1.22.4). The correct command is `yarn add tabulator-tables`.

```

$ docker run --rm -it node:14-alpine /bin/ash

# Prepare /tmp/project/package.json

/ # mkdir /tmp/project

/ # cd /tmp/project

/tmp/project # npm init -y

# Run yarn install tabulator-tables

/tmp/project # yarn install tabulator-tables

yarn install v1.22.4

info No lockfile found.

error `install` has been replaced with `add` to add new dependencies. Run "yarn add tabulator-tables" instead.

info Visit https://yarnpkg.com/en/docs/cli/install for documentation about this command.

# Run yarn add tabulator-tables

/tmp/project # yarn add tabulator-tables

yarn add v1.22.4

info No lockfile found.

[1/4] Resolving packages...

[2/4] Fetching packages...

[3/4] Linking dependencies...

[4/4] Building fresh packages...

success Saved lockfile.

success Saved 1 new dependency.

info Direct dependencies

└─ tabulator-tables@4.7.2

info All dependencies

└─ tabulator-tables@4.7.2

``` | 1.0 | Docs: yarn command to install Tabulator is not correct - **Website Page**

http://tabulator.info/docs/4.7/install

**Describe the issue**

The documentation says to run `yarn install tabulator-tables` to get Tabulator via the yarn package manager, but this fails on the latest yarn (v1.22.4). The correct command is `yarn add tabulator-tables`.

```

$ docker run --rm -it node:14-alpine /bin/ash

# Prepare /tmp/project/package.json

/ # mkdir /tmp/project

/ # cd /tmp/project

/tmp/project # npm init -y

# Run yarn install tabulator-tables

/tmp/project # yarn install tabulator-tables

yarn install v1.22.4

info No lockfile found.

error `install` has been replaced with `add` to add new dependencies. Run "yarn add tabulator-tables" instead.

info Visit https://yarnpkg.com/en/docs/cli/install for documentation about this command.

# Run yarn add tabulator-tables

/tmp/project # yarn add tabulator-tables

yarn add v1.22.4

info No lockfile found.

[1/4] Resolving packages...

[2/4] Fetching packages...

[3/4] Linking dependencies...

[4/4] Building fresh packages...

success Saved lockfile.

success Saved 1 new dependency.

info Direct dependencies

└─ tabulator-tables@4.7.2

info All dependencies

└─ tabulator-tables@4.7.2

``` | non_test | docs yarn command to install tabulator is not correct website page describe the issue the document tells to run yarn install tabulator tables to get tabulator via the yarn package manager but it won t work on the latest yarn the correct command is yarn add tabulator tables docker run rm it node alpine bin ash prepare tmp project package json mkdir tmp project cd tmp project tmp project npm init y run yarn install tabulator tables tmp project yarn install tabulator tables yarn install info no lockfile found error install has been replaced with add to add new dependencies run yarn add tabulator tables instead info visit for documentation about this command run yarn add tabulator tables tmp project yarn add tabulator tables yarn add info no lockfile found resolving packages fetching packages linking dependencies building fresh packages success saved lockfile success saved new dependency info direct dependencies └─ tabulator tables info all dependencies └─ tabulator tables | 0 |

109,066 | 4,369,668,285 | IssuesEvent | 2016-08-04 01:17:55 | twosigma/beaker-notebook | https://api.github.com/repos/twosigma/beaker-notebook | closed | reorganize cell menus | Bug Priority High User Interface | <img width="303" alt="screen shot 2016-07-18 at 11 01 16 pm" src="https://cloud.githubusercontent.com/assets/963093/16937213/ef164860-4d3a-11e6-9f04-d4822b73c29a.png">

to

Initialization Cell

Lock Cell

Word wrap

Options...

-horizontal-rule-

Cut

Paste (append after)

Publish...

-horizontal-rule-

Run

Show input cell

Show output cell

Move up

Move down

Delete

| 1.0 | reorganize cell menus - <img width="303" alt="screen shot 2016-07-18 at 11 01 16 pm" src="https://cloud.githubusercontent.com/assets/963093/16937213/ef164860-4d3a-11e6-9f04-d4822b73c29a.png">

to

Initialization Cell

Lock Cell

Word wrap

Options...

-horizontal-rule-

Cut

Paste (append after)

Publish...

-horizontal-rule-

Run

Show input cell

Show output cell

Move up

Move down

Delete

| non_test | reorganize cell menus img width alt screen shot at pm src to initialization cell lock cell word wrap options horizontal rule cut paste append after publish horizontal rule run show input cell show output cell move up move down delete | 0 |

382,589 | 11,308,695,762 | IssuesEvent | 2020-01-19 07:55:29 | xournalpp/xournalpp | https://api.github.com/repos/xournalpp/xournalpp | opened | Mouse wheel scrolling does not work with touch workaround enabled | Input bug difficulty:easy priority: medium | **Affects versions :**

- OS: Solus Linux

- X11

- GTK 3.24

- Version of Xournal++: 1.1.0+dev

**Describe the bug**

When the "Touch Workaround" is enabled, scrolling with the mouse wheel does not work when the cursor is above the canvas. Scrolling _does_ work when the cursor is above the scrollbar.

**To Reproduce**

Steps to reproduce the behavior:

1. Open a blank document

2. Attempt to scroll with mouse wheel while above the canvas.

**Expected behavior**

Scrolling works.

**Screenshots of Problem**

N/A

**Additional context**

This could be fixed by listening for mouse wheel events on the main widget when "Touch Workaround" is enabled.

| 1.0 | Mouse wheel scrolling does not work with touch workaround enabled - **Affects versions :**

- OS: Solus Linux

- X11

- GTK 3.24

- Version of Xournal++: 1.1.0+dev

**Describe the bug**

When the "Touch Workaround" is enabled, scrolling with the mouse wheel does not work when the cursor is above the canvas. Scrolling _does_ work when the cursor is above the scrollbar.

**To Reproduce**

Steps to reproduce the behavior:

1. Open a blank document

2. Attempt to scroll with mouse wheel while above the canvas.

**Expected behavior**

Scrolling works.

**Screenshots of Problem**

N/A

**Additional context**

This could be fixed by listening for mouse wheel events on the main widget when "Touch Workaround" is enabled.