Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

146,402 | 11,735,164,658 | IssuesEvent | 2020-03-11 10:37:57 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: kv0bench/nodes=20/cpu=8/sequential failed | C-test-failure O-roachtest O-robot branch-release-19.2 release-blocker | [(roachtest).kv0bench/nodes=20/cpu=8/sequential failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1721939&tab=buildLog) on [release-19.2@6fab3dc4cb79e95bd8b301eb399220e5331dbf7d](https://github.com/cockroachdb/cockroach/commits/6fab3dc4cb79e95bd8b301eb399220e5331dbf7d):

```

The test failed on branch=release-19.2, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/20200201-1721939/kv0bench/nodes=20/cpu=8/sequential/run_1

cluster.go:1927,kvbench.go:220,search.go:43,search.go:173,kvbench.go:330,kvbench.go:87,test_runner.go:734: error with attached stack trace:

main.execCmd

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:406

main.(*cluster).WipeE

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:1917

main.(*cluster).Wipe

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:1926

main.runKVBench.func1

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kvbench.go:220

github.com/cockroachdb/cockroach/pkg/util/search.searchWithSearcher

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/util/search/search.go:43

github.com/cockroachdb/cockroach/pkg/util/search.(*lineSearcher).Search

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/util/search/search.go:173

main.runKVBench

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kvbench.go:330

main.registerKVBenchSpec.func1

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kvbench.go:87

main.(*testRunner).runTest.func2

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:734

runtime.goexit

/usr/local/go/src/runtime/asm_amd64.s:1357

- error with embedded safe details: %s returned:

stderr:

%s

stdout:

%s

-- arg 1: <string>

-- arg 2: <string>

-- arg 3: <string>

- /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod wipe teamcity-1721939-1580542446-68-n21cpu8:1-20 returned:

stderr:

stdout:

teamcity-1721939-1580542446-68-n21cpu8: stopping and waiting

teamcity-1721939-1580542446-68-n21cpu8: wiping

4: exit status 255:

I200201 12:16:03.112635 1 cluster_synced.go:1635 command failed:

- exit status 1

```

<details><summary>More</summary><p>

Artifacts: [/kv0bench/nodes=20/cpu=8/sequential](https://teamcity.cockroachdb.com/viewLog.html?buildId=1721939&tab=artifacts#/kv0bench/nodes=20/cpu=8/sequential)

Related:

- #44330 roachtest: kv0bench/nodes=10/cpu=8/shards=20/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-release-19.1](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-release-19.1)

- #44109 roachtest: kv0bench/nodes=10/cpu=8/shards=20/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master)

- #43551 roachtest: kv0bench/nodes=20/cpu=8/shards=80/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master)

- #43519 roachtest: kv0bench/nodes=20/cpu=8/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Akv0bench%2Fnodes%3D20%2Fcpu%3D8%2Fsequential.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| 2.0 | roachtest: kv0bench/nodes=20/cpu=8/sequential failed - [(roachtest).kv0bench/nodes=20/cpu=8/sequential failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=1721939&tab=buildLog) on [release-19.2@6fab3dc4cb79e95bd8b301eb399220e5331dbf7d](https://github.com/cockroachdb/cockroach/commits/6fab3dc4cb79e95bd8b301eb399220e5331dbf7d):

```

The test failed on branch=release-19.2, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/20200201-1721939/kv0bench/nodes=20/cpu=8/sequential/run_1

cluster.go:1927,kvbench.go:220,search.go:43,search.go:173,kvbench.go:330,kvbench.go:87,test_runner.go:734: error with attached stack trace:

main.execCmd

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:406

main.(*cluster).WipeE

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:1917

main.(*cluster).Wipe

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:1926

main.runKVBench.func1

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kvbench.go:220

github.com/cockroachdb/cockroach/pkg/util/search.searchWithSearcher

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/util/search/search.go:43

github.com/cockroachdb/cockroach/pkg/util/search.(*lineSearcher).Search

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/util/search/search.go:173

main.runKVBench

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kvbench.go:330

main.registerKVBenchSpec.func1

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kvbench.go:87

main.(*testRunner).runTest.func2

/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:734

runtime.goexit

/usr/local/go/src/runtime/asm_amd64.s:1357

- error with embedded safe details: %s returned:

stderr:

%s

stdout:

%s

-- arg 1: <string>

-- arg 2: <string>

-- arg 3: <string>

- /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod wipe teamcity-1721939-1580542446-68-n21cpu8:1-20 returned:

stderr:

stdout:

teamcity-1721939-1580542446-68-n21cpu8: stopping and waiting

teamcity-1721939-1580542446-68-n21cpu8: wiping

4: exit status 255:

I200201 12:16:03.112635 1 cluster_synced.go:1635 command failed:

- exit status 1

```

<details><summary>More</summary><p>

Artifacts: [/kv0bench/nodes=20/cpu=8/sequential](https://teamcity.cockroachdb.com/viewLog.html?buildId=1721939&tab=artifacts#/kv0bench/nodes=20/cpu=8/sequential)

Related:

- #44330 roachtest: kv0bench/nodes=10/cpu=8/shards=20/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-release-19.1](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-release-19.1)

- #44109 roachtest: kv0bench/nodes=10/cpu=8/shards=20/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master)

- #43551 roachtest: kv0bench/nodes=20/cpu=8/shards=80/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master)

- #43519 roachtest: kv0bench/nodes=20/cpu=8/sequential failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Akv0bench%2Fnodes%3D20%2Fcpu%3D8%2Fsequential.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| test | roachtest nodes cpu sequential failed on the test failed on branch release cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts nodes cpu sequential run cluster go kvbench go search go search go kvbench go kvbench go test runner go error with attached stack trace main execcmd home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main cluster wipee home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main cluster wipe home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main runkvbench home agent work go src github com cockroachdb cockroach pkg cmd roachtest kvbench go github com cockroachdb cockroach pkg util search searchwithsearcher home agent work go src github com cockroachdb cockroach pkg util search search go github com cockroachdb cockroach pkg util search linesearcher search home agent work go src github com cockroachdb cockroach pkg util search search go main runkvbench home agent work go src github com cockroachdb cockroach pkg cmd roachtest kvbench go main registerkvbenchspec home agent work go src github com cockroachdb cockroach pkg cmd roachtest kvbench go main testrunner runtest home agent work go src github com cockroachdb cockroach pkg cmd roachtest test runner go runtime goexit usr local go src runtime asm s error with embedded safe details s returned stderr s stdout s arg arg arg home agent work go src github com cockroachdb cockroach bin roachprod wipe teamcity returned stderr stdout teamcity stopping and waiting teamcity wiping exit status cluster synced go command failed exit status more artifacts related roachtest nodes cpu shards sequential failed roachtest nodes cpu shards sequential failed roachtest nodes cpu shards sequential failed roachtest nodes cpu sequential failed powered by | 1 |

24,443 | 4,082,165,144 | IssuesEvent | 2016-05-31 11:50:47 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Reorganize unit tests of functions, category "General", chunk 4 | Component: Fitting Component: Framework Misc: Maintenance Quality: Unit Tests | This is one chunk of #16267.

Move the test cases that use the algorithm Fit to the unit test file of Fit (FitTest). Add tests to test the function itself when necessary. Tidy up the includes wherever possible, as for example in #16470.

Functions included in this chunk:

- ThermalNeutronBk2BkExpSigma,

- ThermalNeutronDtoTOFFunction,

- UserFunction,

- UserFunctionMD,

- VesuvioResolution,

- Voigt

| 1.0 | Reorganize unit tests of functions, category "General", chunk 4 - This is one chunk of #16267.

Move the test cases that use the algorithm Fit to the unit test file of Fit (FitTest). Add tests to test the function itself when necessary. Tidy up the includes wherever possible, as for example in #16470.

Functions included in this chunk:

- ThermalNeutronBk2BkExpSigma,

- ThermalNeutronDtoTOFFunction,

- UserFunction,

- UserFunctionMD,

- VesuvioResolution,

- Voigt

| test | reorganize unit tests of functions category general chunk this is one chunk of move the test cases that use the algorithm fit to the unit test file of fit fittest add tests to test the function itself when necessary tidy up the includes wherever possible as for example in functions included in this chunk thermalneutrondtotoffunction userfunction userfunctionmd vesuvioresolution voigt | 1 |

43,394 | 7,044,452,926 | IssuesEvent | 2018-01-01 01:17:18 | jessesquires/JSQDataSourcesKit | https://api.github.com/repos/jessesquires/JSQDataSourcesKit | closed | Update changelog for TableEditingController (#67) | documentation | Update changelog for TableEditingController (#67) for the 6.1.0 release | 1.0 | Update changelog for TableEditingController (#67) - Update changelog for TableEditingController (#67) for the 6.1.0 release | non_test | update changelog for tableeditingcontroller update changelog for tableeditingcontroller for the release | 0 |

140,240 | 18,900,637,928 | IssuesEvent | 2021-11-16 00:14:08 | pustovitDmytro/code-chronicle | https://api.github.com/repos/pustovitDmytro/code-chronicle | opened | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js | security vulnerability | ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js</a></p>

<p>Path to dependency file: code-chronicle/node_modules/redeyed/examples/browser/index.html</p>

<p>Path to vulnerable library: /node_modules/redeyed/examples/browser/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.8.1.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/pustovitDmytro/code-chronicle/commit/6e512d3b26c0461e41894cae6558b35a24e5b20e">6e512d3b26c0461e41894cae6558b35a24e5b20e</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440">https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0;jquery-rails - 4.4.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js</a></p>

<p>Path to dependency file: code-chronicle/node_modules/redeyed/examples/browser/index.html</p>

<p>Path to vulnerable library: /node_modules/redeyed/examples/browser/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.8.1.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/pustovitDmytro/code-chronicle/commit/6e512d3b26c0461e41894cae6558b35a24e5b20e">6e512d3b26c0461e41894cae6558b35a24e5b20e</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440">https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0;jquery-rails - 4.4.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file code chronicle node modules redeyed examples browser index html path to vulnerable library node modules redeyed examples browser index html dependency hierarchy x jquery min js vulnerable library found in head commit a href found in base branch master vulnerability details in jquery versions greater than or equal to and before passing html containing elements from untrusted sources even after sanitizing it to one of jquery s dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery jquery rails step up your open source security game with whitesource | 0 |
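
The affected range quoted in the advisory above (jQuery 1.0.3 up to, but not including, 3.5.0) can be checked mechanically. The following is a minimal sketch, assuming plain `major.minor.patch` version strings with no pre-release suffixes. `parseVersion`, `compareVersions`, and `isAffectedJquery` are hypothetical helper names for illustration; they are not part of jQuery or of the scanner that filed the issue.

```javascript
// Parse "1.8.1" into [1, 8, 1]; missing parts default to 0.
function parseVersion(version) {
  const parts = version.split(".").map((p) => parseInt(p, 10));
  while (parts.length < 3) parts.push(0);
  return parts;
}

// Return -1, 0, or 1 when a is lower than, equal to, or higher than b.
function compareVersions(a, b) {
  const pa = parseVersion(a);
  const pb = parseVersion(b);
  for (let i = 0; i < 3; i++) {
    if (pa[i] !== pb[i]) return pa[i] < pb[i] ? -1 : 1;
  }
  return 0;
}

// Affected range taken from the advisory text: >= 1.0.3 and < 3.5.0.
function isAffectedJquery(version) {
  return (
    compareVersions(version, "1.0.3") >= 0 &&
    compareVersions(version, "3.5.0") < 0
  );
}

console.log(isAffectedJquery("1.8.1")); // true: the version flagged in this issue
console.log(isAffectedJquery("3.5.0")); // false: the listed fix resolution
```

Comparing the parts numerically rather than as strings matters here: a string comparison would rank "1.10.0" below "1.9.9".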

218,551 | 16,762,853,207 | IssuesEvent | 2021-06-14 03:23:42 | bee-queue/bee-queue | https://api.github.com/repos/bee-queue/bee-queue | reopened | In no way job is ever retried | needs-documentation | Code to reproduce

```javascript

const Queue = require("bee-queue");

const queue = new Queue("http-sender");

const job = queue.createJob({

timestamp: Date.now(),

});

job.backoff("exponential", 1000);

job.retries(3);

job.save();

job.on("succeeded", (result) => {

console.log(`${job.id}: sent`);

});

queue.process((job, done) => {

console.log(`Processing job ${job.id}`);

return done(new Error("test"));

});

queue.on("failed", (job, err) => {

console.log(`Job ${job.id} failed with error ${err.message}`);

});

queue.on("retrying", (job, err) => {

console.log(

`Job ${job.id} failed with error ${err.message} but is being retried!`

);

});

``` | 1.0 | In no way job is ever retried - Code to reproduce

```javascript

const Queue = require("bee-queue");

const queue = new Queue("http-sender");

const job = queue.createJob({

timestamp: Date.now(),

});

job.backoff("exponential", 1000);

job.retries(3);

job.save();

job.on("succeeded", (result) => {

console.log(`${job.id}: sent`);

});

queue.process((job, done) => {

console.log(`Processing job ${job.id}`);

return done(new Error("test"));

});

queue.on("failed", (job, err) => {

console.log(`Job ${job.id} failed with error ${err.message}`);

});

queue.on("retrying", (job, err) => {

console.log(

`Job ${job.id} failed with error ${err.message} but is being retried!`

);

});

``` | non_test | in no way job is ever retried code to reproduce javascript const queue require bee queue const queue new queue http sender const job queue createjob timestamp date now job backoff exponential job retries job save job on succeeded result console log job id sent queue process job done console log processing job job id return done new error test queue on failed job err console log job job id failed with error err message queue on retrying job err console log job job id failed with error err message but is being retried | 0 |
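
For reference, the retry schedule that the reproduction above asks for can be written out explicitly. This is a sketch under the assumption that an "exponential" strategy starts at the configured delay and doubles after each failed attempt. `exponentialBackoffDelays` is a hypothetical helper, not a bee-queue function, and it does not talk to Redis.

```javascript
// Hypothetical helper: list the wait before each retry when the delay
// starts at `initialDelayMs` and doubles after every failed attempt.
function exponentialBackoffDelays(initialDelayMs, retries) {
  const delays = [];
  let delay = initialDelayMs;
  for (let attempt = 0; attempt < retries; attempt++) {
    delays.push(delay);
    delay *= 2; // assumption: doubling, as "exponential" suggests
  }
  return delays;
}

// Values taken from the reproduction: backoff("exponential", 1000), retries(3).
console.log(exponentialBackoffDelays(1000, 3)); // [ 1000, 2000, 4000 ]
```

Under that assumption, the job would be expected to run again roughly 1, 2, and 4 seconds after successive failures. bee-queue's documentation also describes an `activateDelayedJobs` queue setting in connection with delayed retries; whether its absence explains this report is not confirmed here.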

131,355 | 18,244,682,905 | IssuesEvent | 2021-10-01 16:46:23 | ibm-skills-network/eslint-config-apset | https://api.github.com/repos/ibm-skills-network/eslint-config-apset | opened | CVE-2021-23337 (High) detected in lodash-4.17.15.tgz | security vulnerability | ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-4.17.15.tgz">https://registry.npmjs.org/lodash/-/lodash-4.17.15.tgz</a></p>

<p>Path to dependency file: eslint-config-apset/package.json</p>

<p>Path to vulnerable library: eslint-config-apset/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- eslint-plugin-flowtype-3.13.0.tgz (Root Library)

- :x: **lodash-4.17.15.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ibm-skills-network/eslint-config-apset/commit/f87e56dd9ce1995d4e58eef9e03128254285c8d3">f87e56dd9ce1995d4e58eef9e03128254285c8d3</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Lodash versions prior to 4.17.21 are vulnerable to Command Injection via the template function.

<p>Publish Date: 2021-02-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23337>CVE-2021-23337</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.2</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/lodash/lodash/commit/3469357cff396a26c363f8c1b5a91dde28ba4b1c">https://github.com/lodash/lodash/commit/3469357cff396a26c363f8c1b5a91dde28ba4b1c</a></p>

<p>Release Date: 2021-02-15</p>

<p>Fix Resolution: lodash - 4.17.21</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-23337 (High) detected in lodash-4.17.15.tgz - ## CVE-2021-23337 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.15.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-4.17.15.tgz">https://registry.npmjs.org/lodash/-/lodash-4.17.15.tgz</a></p>

<p>Path to dependency file: eslint-config-apset/package.json</p>

<p>Path to vulnerable library: eslint-config-apset/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- eslint-plugin-flowtype-3.13.0.tgz (Root Library)

- :x: **lodash-4.17.15.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ibm-skills-network/eslint-config-apset/commit/f87e56dd9ce1995d4e58eef9e03128254285c8d3">f87e56dd9ce1995d4e58eef9e03128254285c8d3</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Lodash versions prior to 4.17.21 are vulnerable to Command Injection via the template function.

<p>Publish Date: 2021-02-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23337>CVE-2021-23337</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.2</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/lodash/lodash/commit/3469357cff396a26c363f8c1b5a91dde28ba4b1c">https://github.com/lodash/lodash/commit/3469357cff396a26c363f8c1b5a91dde28ba4b1c</a></p>

<p>Release Date: 2021-02-15</p>

<p>Fix Resolution: lodash - 4.17.21</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in lodash tgz cve high severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file eslint config apset package json path to vulnerable library eslint config apset node modules lodash package json dependency hierarchy eslint plugin flowtype tgz root library x lodash tgz vulnerable library found in head commit a href found in base branch master vulnerability details lodash versions prior to are vulnerable to command injection via the template function publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required high user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution lodash step up your open source security game with whitesource | 0 |

210,379 | 23,754,648,287 | IssuesEvent | 2022-09-01 01:04:37 | venkateshreddypala/enrollee-service | https://api.github.com/repos/venkateshreddypala/enrollee-service | opened | CVE-2022-25857 (High) detected in snakeyaml-1.26.jar | security vulnerability | ## CVE-2022-25857 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.26.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/yaml/snakeyaml/1.26/snakeyaml-1.26.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-data-mongodb-2.4.0-SNAPSHOT.jar (Root Library)

- spring-boot-starter-2.4.0-SNAPSHOT.jar

- :x: **snakeyaml-1.26.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package org.yaml:snakeyaml, in versions from 0 up to but not including 1.31, is vulnerable to Denial of Service (DoS) due to a missing nested depth limitation for collections.

<p>Publish Date: 2022-08-30

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-25857>CVE-2022-25857</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25857">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25857</a></p>

<p>Release Date: 2022-08-30</p>

<p>Fix Resolution: org.yaml:snakeyaml:1.31</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-25857 (High) detected in snakeyaml-1.26.jar - ## CVE-2022-25857 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.26.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/yaml/snakeyaml/1.26/snakeyaml-1.26.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-data-mongodb-2.4.0-SNAPSHOT.jar (Root Library)

- spring-boot-starter-2.4.0-SNAPSHOT.jar

- :x: **snakeyaml-1.26.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package org.yaml:snakeyaml from 0 and before 1.31 are vulnerable to Denial of Service (DoS) due missing to nested depth limitation for collections.

<p>Publish Date: 2022-08-30

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-25857>CVE-2022-25857</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25857">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-25857</a></p>

<p>Release Date: 2022-08-30</p>

<p>Fix Resolution: org.yaml:snakeyaml:1.31</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in snakeyaml jar cve high severity vulnerability vulnerable library snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file pom xml path to vulnerable library home wss scanner repository org yaml snakeyaml snakeyaml jar dependency hierarchy spring boot starter data mongodb snapshot jar root library spring boot starter snapshot jar x snakeyaml jar vulnerable library found in base branch master vulnerability details the package org yaml snakeyaml from and before are vulnerable to denial of service dos due missing to nested depth limitation for collections publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org yaml snakeyaml step up your open source security game with mend | 0 |

250,400 | 21,263,544,524 | IssuesEvent | 2022-04-13 07:44:10 | hzi-braunschweig/SORMAS-Project | https://api.github.com/repos/hzi-braunschweig/SORMAS-Project | closed | Add loggers to reflect user steps at BDD level into automation framework | testing task e2e-tests | Currently the framework has logging only at the core level: actions performed on webelements.

For a better debuging, please update the BDD methods and add steps to reflect the actions of the user in order to determine failing steps and debug more easy further issues.

Please don't implement other logging libraries, use the existing one, and add fix to expose the logging file into allure report. | 2.0 | Add loggers to reflect user steps at BDD level into automation framework - Currently the framework has logging only at the core level: actions performed on webelements.

For a better debuging, please update the BDD methods and add steps to reflect the actions of the user in order to determine failing steps and debug more easy further issues.

Please don't implement other logging libraries, use the existing one, and add fix to expose the logging file into allure report. | test | add loggers to reflect user steps at bdd level into automation framework currently the framework has logging only at the core level actions performed on webelements for a better debuging please update the bdd methods and add steps to reflect the actions of the user in order to determine failing steps and debug more easy further issues please don t implement other logging libraries use the existing one and add fix to expose the logging file into allure report | 1 |

630,188 | 20,100,184,328 | IssuesEvent | 2022-02-07 02:24:43 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | m.youtube.com - Scrolling not responding when watching videos in landscape mode | browser-firefox-mobile priority-normal severity-important engine-gecko | <!-- @browser: Firefox Mobile 98.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 12; Mobile; rv:98.0) Gecko/98.0 Firefox/98.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/99010 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://m.youtube.com/watch?v=UnFOv0tdEmk&t=2s

**Browser / Version**: Firefox Mobile 98.0

**Operating System**: Android 12

**Tested Another Browser**: Yes Other

**Problem type**: Something else

**Description**: video is cut off even before going full screen, scrolling does not work, and I cant even reach full screen button, this happens on several but not all videos on youtube

**Steps to Reproduce**:

Ok so I am watching videos on youtube in landscape mode, most work fine, but several end up being too zoomed in, cutting the edges of the video and that is even before I hit fullscreen, I can't even scroll the video page and have to use android back button to escape, I am not sure why it only happens on some videos. Expected wantrd behaviour is for whole video to be viewable, and scrolling to work, in any orientation, and for full screen to not cut a single pixel off any edge of all videos, I suspect youtube is messing with firefox again intentionally on android, to make people use their app. Firefox on desktop works fine on all videos.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2022/2/8dc7f486-2e6e-4914-beba-676da9c6dd0a.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20220130093554</li><li>channel: nightly</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2022/2/a039d424-dc30-4a92-8b58-f67bf3cf1c7d)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | m.youtube.com - Scrolling not responding when watching videos in landscape mode - <!-- @browser: Firefox Mobile 98.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 12; Mobile; rv:98.0) Gecko/98.0 Firefox/98.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/99010 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://m.youtube.com/watch?v=UnFOv0tdEmk&t=2s

**Browser / Version**: Firefox Mobile 98.0

**Operating System**: Android 12

**Tested Another Browser**: Yes Other

**Problem type**: Something else

**Description**: video is cut off even before going full screen, scrolling does not work, and I cant even reach full screen button, this happens on several but not all videos on youtube

**Steps to Reproduce**:

Ok so I am watching videos on youtube in landscape mode, most work fine, but several end up being too zoomed in, cutting the edges of the video and that is even before I hit fullscreen, I can't even scroll the video page and have to use android back button to escape, I am not sure why it only happens on some videos. Expected wantrd behaviour is for whole video to be viewable, and scrolling to work, in any orientation, and for full screen to not cut a single pixel off any edge of all videos, I suspect youtube is messing with firefox again intentionally on android, to make people use their app. Firefox on desktop works fine on all videos.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2022/2/8dc7f486-2e6e-4914-beba-676da9c6dd0a.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20220130093554</li><li>channel: nightly</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2022/2/a039d424-dc30-4a92-8b58-f67bf3cf1c7d)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | m youtube com scrolling not responding when watching videos in landscape mode url browser version firefox mobile operating system android tested another browser yes other problem type something else description video is cut off even before going full screen scrolling does not work and i cant even reach full screen button this happens on several but not all videos on youtube steps to reproduce ok so i am watching videos on youtube in landscape mode most work fine but several end up being too zoomed in cutting the edges of the video and that is even before i hit fullscreen i can t even scroll the video page and have to use android back button to escape i am not sure why it only happens on some videos expected wantrd behaviour is for whole video to be viewable and scrolling to work in any orientation and for full screen to not cut a single pixel off any edge of all videos i suspect youtube is messing with firefox again intentionally on android to make people use their app firefox on desktop works fine on all videos view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel nightly hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 0 |

417,147 | 12,156,140,335 | IssuesEvent | 2020-04-25 16:04:56 | code4romania/stam-acasa | https://api.github.com/repos/code4romania/stam-acasa | closed | Alte persoane în grijă details should only show for account holder | enhancement front-end medium-priority | The `Alte persoane în grijă:` info if now available in the main account holder profile details, but also in the `alte persoane` profile details.

I think it would be best to hide them for `alte persoane`. | 1.0 | Alte persoane în grijă details should only show for account holder - The `Alte persoane în grijă:` info if now available in the main account holder profile details, but also in the `alte persoane` profile details.

I think it would be best to hide them for `alte persoane`. | non_test | alte persoane în grijă details should only show for account holder the alte persoane în grijă info if now available in the main account holder profile details but also in the alte persoane profile details i think it would be best to hide them for alte persoane | 0 |

173,829 | 13,446,433,516 | IssuesEvent | 2020-09-08 12:57:36 | citusdata/citus | https://api.github.com/repos/citusdata/citus | opened | Remove almost duplicate tests | regression tests | We have some tests that are almost copy-pasted for testing mx structure as well. With mx we have some more commands as we connect to the workers but most of the queries are the same. It is hard to maintain two test files. With some conditions (such as is_mx) in the test file, we can have a single file, of course with an alternative output. However this would have the advantage that whenever we update the single file, we would also see the effect on the mx side (as the alternative output will change). With the current structure it is possible to forget to update one of the test files.

See: https://github.com/citusdata/citus/pull/4133#discussion_r484422432 | 1.0 | Remove almost duplicate tests - We have some tests that are almost copy-pasted for testing mx structure as well. With mx we have some more commands as we connect to the workers but most of the queries are the same. It is hard to maintain two test files. With some conditions (such as is_mx) in the test file, we can have a single file, of course with an alternative output. However this would have the advantage that whenever we update the single file, we would also see the effect on the mx side (as the alternative output will change). With the current structure it is possible to forget to update one of the test files.

See: https://github.com/citusdata/citus/pull/4133#discussion_r484422432 | test | remove almost duplicate tests we have some tests that are almost copy pasted for testing mx structure as well with mx we have some more commands as we connect to the workers but most of the queries are the same it is hard to maintain two test files with some conditions such as is mx in the test file we can have a single file of course with an alternative output however this would have the advantage that whenever we update the single file we would also see the effect on the mx side as the alternative output will change with the current structure it is possible to forget to update one of the test files see | 1 |

14,312 | 8,554,640,033 | IssuesEvent | 2018-11-08 07:20:20 | ckfinder/ckfinder | https://api.github.com/repos/ckfinder/ckfinder | closed | Very slow to load when folder contains many directories | Performance UI Tweak | CkFinder 3 hangs for a long time (1-2 minutes) when the image folder contains thousands of directories. CkFinder was much quicker. | True | Very slow to load when folder contains many directories - CkFinder 3 hangs for a long time (1-2 minutes) when the image folder contains thousands of directories. CkFinder was much quicker. | non_test | very slow to load when folder contains many directories ckfinder hangs for a long time minutes when the image folder contains thousands of directories ckfinder was much quicker | 0 |

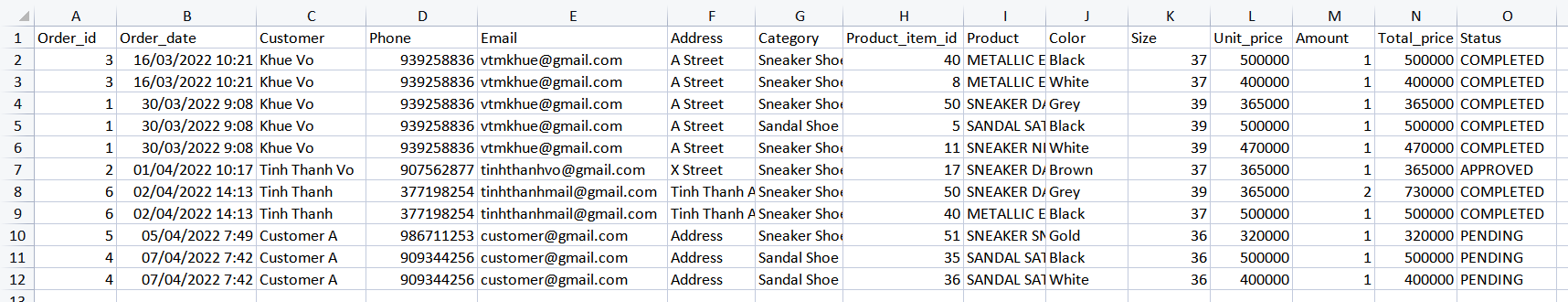

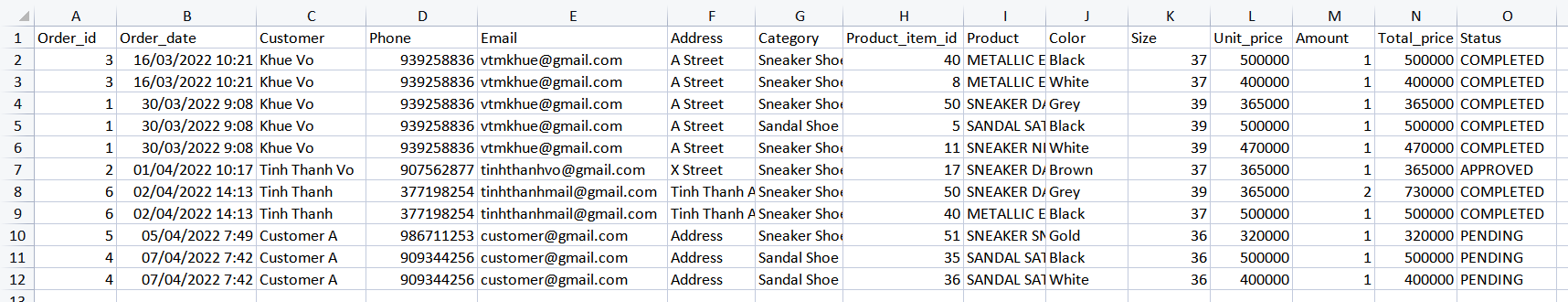

249,576 | 21,178,273,432 | IssuesEvent | 2022-04-08 04:09:50 | tinhthanhvo/api-symfony-unlock | https://api.github.com/repos/tinhthanhvo/api-symfony-unlock | closed | [Report] Export Order info with CSV extension | Testing | This feature support Admin export Order data

### Flow

- Handle data input: Filter condition

- Return output data

### Input (Payload & token)

1. Payload

- fileName (optional): Specific CSV file name

- status (optional): Order status

- fromDate (optional, format: Y-m-d): Start date (to get data)

- toDate (optional, format: Y-m-d): End date (to get data)

2. Token

### Output

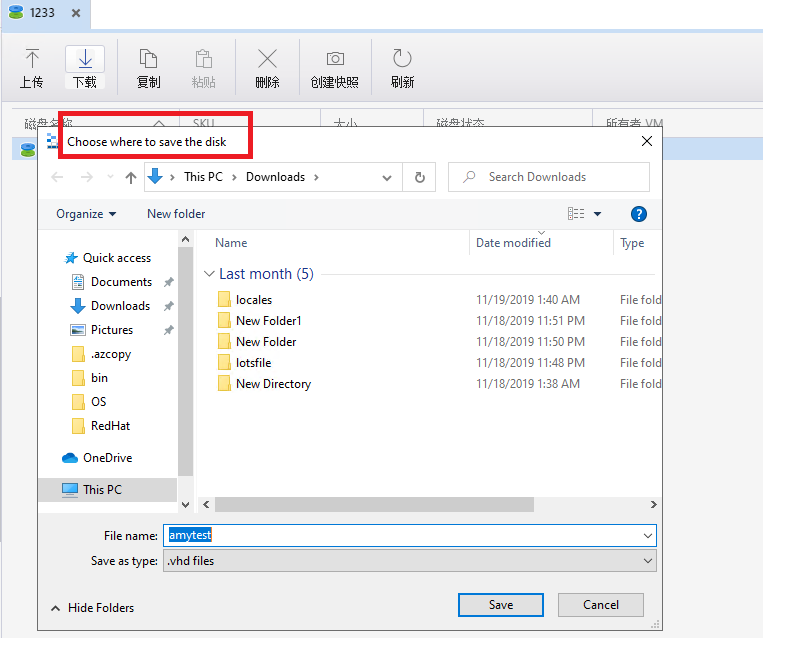

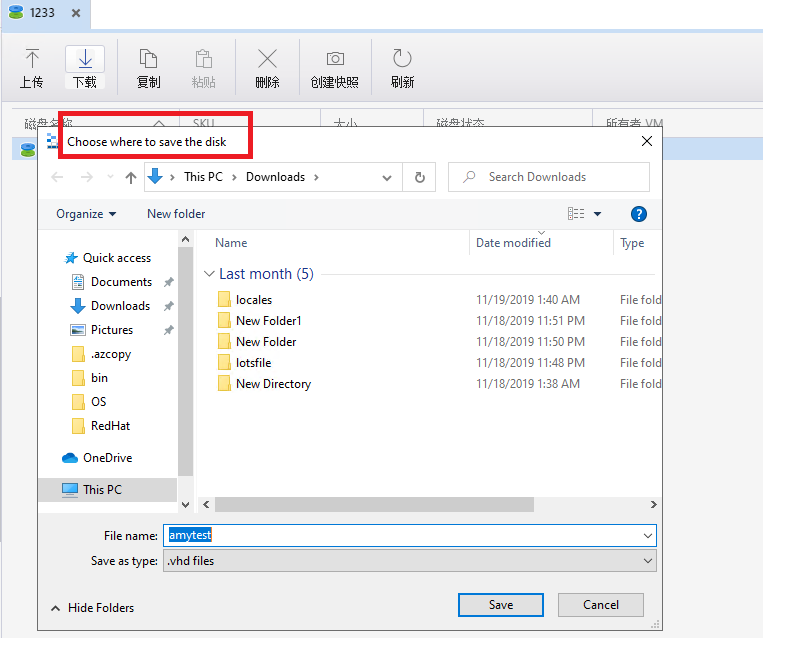

- success: String message with CSV file (format like image below)

- error: String message

### Validation

1. fileName

- Only contain alphabet letter and length cannot be more than 30 characters

2. status

- Value only [1, 2, 3, 4] correspond with [Pending, Approved, Canceled, Completed]

3. fromDate

- Format Y-m-d

- Date value cannot be greater than today

4. toDate

- Format Y-m-d

- Date value cannot be smaller than fromDate | 1.0 | [Report] Export Order info with CSV extension - This feature support Admin export Order data

### Flow

- Handle data input: Filter condition

- Return output data

### Input (Payload & token)

1. Payload

- fileName (optional): Specific CSV file name

- status (optional): Order status

- fromDate (optional, format: Y-m-d): Start date (to get data)

- toDate (optional, format: Y-m-d): End date (to get data)

2. Token

### Output

- success: String message with CSV file (format like image below)

- error: String message

### Validation

1. fileName

- Only contain alphabet letter and length cannot be more than 30 characters

2. status

- Value only [1, 2, 3, 4] correspond with [Pending, Approved, Canceled, Completed]

3. fromDate

- Format Y-m-d

- Date value cannot be greater than today

4. toDate

- Format Y-m-d

- Date value cannot be smaller than fromDate | test | export order info with csv extension this feature support admin export order data flow handle data input filter condition return output data input payload token payload filename optional specific csv file name status optional order status fromdate optional format y m d start date to get data todate optional format y m d end date to get data token output success string message with csv file format like image below error string message validation filename only contain alphabet letter and length cannot be more than characters status value only correspond with fromdate format y m d date value cannot be greater than today todate format y m d date value cannot be smaller than fromdate | 1 |

163,739 | 25,866,679,176 | IssuesEvent | 2022-12-13 21:34:18 | EscolaDeSaudePublica/DesignLab | https://api.github.com/repos/EscolaDeSaudePublica/DesignLab | closed | Atualização de Conteúdo | site Felicilab | Métodos | Site PROJ: Felicilab Prioridade Design: Alta | ## **Objetivo**

**Como** designer

**Quero** atualizar o novo site do Felicilab com conteúdos desenvolvidos pelas narrativas

**Para** lançá-lo até o fim do defeso eleitoral

## **Contexto**

O novo site do Felicilab está em sua fase final de desenvolvimento. Com isso, novas seções foram pensadas e o conteúdo de cada uma delas está em desenvolvimento pelo time de narrativas. Assim que cada conteúdo for entregue, a seção do site precisa ser atualizada e disponibilizada.

## **Escopo**

Métodos ( @ericabpinho )

Metodologias ágeis (ver no guia do colaborador, mas resumir consideravelmente). Breve história da board integrada. SCRUM/papeis. Cerimônias. Modelo híbrido/assíncrono - nossa construção (até o momento).

Ver o texto/modelo do Rani.

| 1.0 | Atualização de Conteúdo | site Felicilab | Métodos - ## **Objetivo**

**Como** designer

**Quero** atualizar o novo site do Felicilab com conteúdos desenvolvidos pelas narrativas

**Para** lançá-lo até o fim do defeso eleitoral

## **Contexto**

O novo site do Felicilab está em sua fase final de desenvolvimento. Com isso, novas seções foram pensadas e o conteúdo de cada uma delas está em desenvolvimento pelo time de narrativas. Assim que cada conteúdo for entregue, a seção do site precisa ser atualizada e disponibilizada.

## **Escopo**

Métodos ( @ericabpinho )

Metodologias ágeis (ver no guia do colaborador, mas resumir consideravelmente). Breve história da board integrada. SCRUM/papeis. Cerimônias. Modelo híbrido/assíncrono - nossa construção (até o momento).

Ver o texto/modelo do Rani.

| non_test | atualização de conteúdo site felicilab métodos objetivo como designer quero atualizar o novo site do felicilab com conteúdos desenvolvidos pelas narrativas para lançá lo até o fim do defeso eleitoral contexto o novo site do felicilab está em sua fase final de desenvolvimento com isso novas seções foram pensadas e o conteúdo de cada uma delas está em desenvolvimento pelo time de narrativas assim que cada conteúdo for entregue a seção do site precisa ser atualizada e disponibilizada escopo métodos ericabpinho metodologias ágeis ver no guia do colaborador mas resumir consideravelmente breve história da board integrada scrum papeis cerimônias modelo híbrido assíncrono nossa construção até o momento ver o texto modelo do rani | 0 |

341,124 | 30,567,461,865 | IssuesEvent | 2023-07-20 18:57:41 | darbaidzeavto/ci_final_exam_test | https://api.github.com/repos/darbaidzeavto/ci_final_exam_test | opened | fe35c89 failed unit tests. | ci-pytest | Automatically generated message

fe35c899c3e3cfff4bcf57cf67cc41921e591ed9 failed unit tests.

first bad commit for pytest was cdd9ed4c690b17c376a77c386ba5401d46ba3482

Pytest report: https://darbaidzeavto.github.io/ci_final_report/fe35c899c3e3cfff4bcf57cf67cc41921e591ed9-1689879459/pytest.html

Black report: https://darbaidzeavto.github.io/ci_final_report/fe35c899c3e3cfff4bcf57cf67cc41921e591ed9-1689879459/black.html

| 1.0 | fe35c89 failed unit tests. - Automatically generated message

fe35c899c3e3cfff4bcf57cf67cc41921e591ed9 failed unit tests.

first bad commit for pytest was cdd9ed4c690b17c376a77c386ba5401d46ba3482

Pytest report: https://darbaidzeavto.github.io/ci_final_report/fe35c899c3e3cfff4bcf57cf67cc41921e591ed9-1689879459/pytest.html

Black report: https://darbaidzeavto.github.io/ci_final_report/fe35c899c3e3cfff4bcf57cf67cc41921e591ed9-1689879459/black.html

| test | failed unit tests automatically generated message failed unit tests first bad commit for pytest was pytest report black report | 1 |

168,858 | 14,174,326,232 | IssuesEvent | 2020-11-12 19:43:41 | OpenMined/OM-Welcome-Package | https://api.github.com/repos/OpenMined/OM-Welcome-Package | opened | Can't Access PySyft Tutorials | Type: Documentation :books: | ## Description

When I click on the PySyft Tutorials link, It gives me a 404 Page Not Found Error

## Screenshots

| 1.0 | Can't Access PySyft Tutorials - ## Description

When I click on the PySyft Tutorials link, It gives me a 404 Page Not Found Error

## Screenshots

| non_test | can t access pysyft tutorials description when i click on the pysyft tutorials link it gives me a page not found error screenshots | 0 |

2,320 | 2,525,203,644 | IssuesEvent | 2015-01-20 22:53:06 | AtlasOfLivingAustralia/biocache-service | https://api.github.com/repos/AtlasOfLivingAustralia/biocache-service | opened | Rationalise i18n mappings | enhancement priority-medium status-accepted type-enhancement | _From @djtfmartin on August 19, 2014 13:20_

*migrated from:* https://code.google.com/p/ala/issues/detail?id=697

*date:* Thu Jun 12 18:33:27 2014

*author:* moyesyside

---

1. Take out duplicate from messages.properties & download.properties (with download.properties being the primary source).

2. Take out all references to EL and CL layers from both.

3. Support darwin core fields in downloads with a dwcHeaders=true request params - leaving the default as is.

4. Add the description into the /search/grouped/facets service.

5. Move i18n properties in message.properties into download.properties (e.g. [http://biocache.ala.org.au/ws/index/fields](http://biocache.ala.org.au/ws/index/fields))

6. If dwcHeaders=true, then use cl### field for headers for sampled fields.

7. Provide dwc.***** mapping in download.properties for index fields and include a dwcTerm field in /index/fields and /search/grouped/facets

8. Add sorts values (index or count).

9. Rename download.properties to fields.properties

10. Consider merging message.properties and download.properties and adding doco.

_Copied from original issue: AtlasOfLivingAustralia/biocache-hubs#83_ | 1.0 | Rationalise i18n mappings - _From @djtfmartin on August 19, 2014 13:20_

*migrated from:* https://code.google.com/p/ala/issues/detail?id=697

*date:* Thu Jun 12 18:33:27 2014

*author:* moyesyside

---

1. Take out duplicate from messages.properties & download.properties (with download.properties being the primary source).

2. Take out all references to EL and CL layers from both.

3. Support darwin core fields in downloads with a dwcHeaders=true request params - leaving the default as is.

4. Add the description into the /search/grouped/facets service.

5. Move i18n properties in message.properties into download.properties (e.g. [http://biocache.ala.org.au/ws/index/fields](http://biocache.ala.org.au/ws/index/fields))

6. If dwcHeaders=true, then use cl### field for headers for sampled fields.

7. Provide dwc.***** mapping in download.properties for index fields and include a dwcTerm field in /index/fields and /search/grouped/facets

8. Add sorts values (index or count).

9. Rename download.properties to fields.properties

10. Consider merging message.properties and download.properties and adding doco.

_Copied from original issue: AtlasOfLivingAustralia/biocache-hubs#83_ | non_test | rationalise mappings from djtfmartin on august migrated from date thu jun author moyesyside take out duplicate from messages properties download properties with download properties being the primary source take out all references to el and cl layers from both support darwin core fields in downloads with a dwcheaders true request params leaving the default as is add the description into the search grouped facets service move properties in message properties into download properties e g if dwcheaders true then use cl field for headers for sampled fields provide dwc mapping in download properties for index fields and include a dwcterm field in index fields and search grouped facets add sorts values index or count rename download properties to fields properties consider merging message properties and download properties and adding doco copied from original issue atlasoflivingaustralia biocache hubs | 0 |

250,769 | 21,335,179,204 | IssuesEvent | 2022-04-18 13:47:14 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | opened | [Flaky Test] Inserts the filtered hello world block even when filter added after block registration | [Type] Flaky Test | <!-- __META_DATA__:{"failedTimes":1,"totalCommits":1,"baseCommit":"33a5d514892c254760b5fceb17e72a9faefa1218"} -->

**Flaky test detected. This is an auto-generated issue by GitHub Actions. Please do NOT edit this manually.**

## Test title

Inserts the filtered hello world block even when filter added after block registration

## Test path

`/home/runner/work/gutenberg/gutenberg/test/e2e/specs/editor/plugins/block-api.spec.js`

## Flaky rate (_estimated_)

`1 / 2` runs

## Errors

| 1.0 | [Flaky Test] Inserts the filtered hello world block even when filter added after block registration - <!-- __META_DATA__:{"failedTimes":1,"totalCommits":1,"baseCommit":"33a5d514892c254760b5fceb17e72a9faefa1218"} -->

**Flaky test detected. This is an auto-generated issue by GitHub Actions. Please do NOT edit this manually.**

## Test title

Inserts the filtered hello world block even when filter added after block registration

## Test path

`/home/runner/work/gutenberg/gutenberg/test/e2e/specs/editor/plugins/block-api.spec.js`

## Flaky rate (_estimated_)

`1 / 2` runs

## Errors

| test | inserts the filtered hello world block even when filter added after block registration flaky test detected this is an auto generated issue by github actions please do not edit this manually test title inserts the filtered hello world block even when filter added after block registration test path home runner work gutenberg gutenberg test specs editor plugins block api spec js flaky rate estimated runs errors | 1 |

324,861 | 27,826,108,036 | IssuesEvent | 2023-03-19 19:37:01 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | kvcoord: flake in TestMultiRangeScanReverseScanInconsistent | C-test-failure A-kv-transactions A-kv skipped-test GA-blocker T-kv branch-release-23.1 | ```

$ ./dev test --stress //pkg/kv/kvclient/kvcoord --filter=TestMultiRangeScanReverseScanInconsistent

...

--- FAIL: TestMultiRangeScanReverseScanInconsistent (1.52s)

test_log_scope.go:161: test logs captured to: /tmp/_tmp/e1d742d7ffb97ae3adc81944eac18765/logTestMultiRangeScanReverseScanInconsistent3519145941

test_log_scope.go:79: use -show-logs to present logs inline

--- FAIL: TestMultiRangeScanReverseScanInconsistent/INCONSISTENT (0.70s)

dist_sender_server_test.go:1160: 0: expected 1 row; got 0

[]

dist_sender_server_test.go:1168: -- test log scope end --

FAIL

```

Also found here: https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_UnitTests_BazelUnitTests/7504394?buildTab=overview&showRootCauses=false&expandBuildProblemsSection=true&expandBuildTestsSection=true&expandBuildChangesSection=true&expandBuildDeploymentsSection=true#%2Ftmp

cc @nvanbenschoten for triage

Jira issue: CRDB-21459 | 2.0 | kvcoord: flake in TestMultiRangeScanReverseScanInconsistent - ```

$ ./dev test --stress //pkg/kv/kvclient/kvcoord --filter=TestMultiRangeScanReverseScanInconsistent

...

--- FAIL: TestMultiRangeScanReverseScanInconsistent (1.52s)

test_log_scope.go:161: test logs captured to: /tmp/_tmp/e1d742d7ffb97ae3adc81944eac18765/logTestMultiRangeScanReverseScanInconsistent3519145941

test_log_scope.go:79: use -show-logs to present logs inline

--- FAIL: TestMultiRangeScanReverseScanInconsistent/INCONSISTENT (0.70s)

dist_sender_server_test.go:1160: 0: expected 1 row; got 0

[]

dist_sender_server_test.go:1168: -- test log scope end --

FAIL

```

Also found here: https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_UnitTests_BazelUnitTests/7504394?buildTab=overview&showRootCauses=false&expandBuildProblemsSection=true&expandBuildTestsSection=true&expandBuildChangesSection=true&expandBuildDeploymentsSection=true#%2Ftmp

cc @nvanbenschoten for triage

Jira issue: CRDB-21459 | test | kvcoord flake in testmultirangescanreversescaninconsistent dev test stress pkg kv kvclient kvcoord filter testmultirangescanreversescaninconsistent fail testmultirangescanreversescaninconsistent test log scope go test logs captured to tmp tmp test log scope go use show logs to present logs inline fail testmultirangescanreversescaninconsistent inconsistent dist sender server test go expected row got dist sender server test go test log scope end fail also found here cc nvanbenschoten for triage jira issue crdb | 1 |

199,371 | 22,693,317,141 | IssuesEvent | 2022-07-05 01:12:12 | attesch/swapi | https://api.github.com/repos/attesch/swapi | closed | CVE-2018-16984 (Medium) detected in Django-1.7.4-py2.py3-none-any.whl - autoclosed | security vulnerability | ## CVE-2018-16984 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.7.4-py2.py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development and clean, pragmatic design.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/c9/1e/66f185ca0d4d0ca11b94caeac96a33a13954963a8b563b67d11f50bfeee7/Django-1.7.4-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/c9/1e/66f185ca0d4d0ca11b94caeac96a33a13954963a8b563b67d11f50bfeee7/Django-1.7.4-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /swapi/requirements.txt</p>

<p>Path to vulnerable library: teSource-ArchiveExtractor_cd0131d4-2ba0-4601-beec-b5f2a7e3636b/20190620051939_56417/20190620051812_depth_0/13/Django-2.2.2.tar/Django-2.2.2/django</p>

<p>

Dependency Hierarchy:

- :x: **Django-1.7.4-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/attesch/swapi/commit/4f94ce0b66c94cc8d0908d27fdc9d39faa534139">4f94ce0b66c94cc8d0908d27fdc9d39faa534139</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in Django 2.1 before 2.1.2, in which unprivileged users can read the password hashes of arbitrary accounts. The read-only password widget used by the Django Admin to display an obfuscated password hash was bypassed if a user has only the "view" permission (new in Django 2.1), resulting in display of the entire password hash to those users. This may result in a vulnerability for sites with legacy user accounts using insecure hashes.

<p>Publish Date: 2018-10-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-16984>CVE-2018-16984</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2018-16984">https://nvd.nist.gov/vuln/detail/CVE-2018-16984</a></p>

<p>Release Date: 2018-10-02</p>

<p>Fix Resolution: 2.1.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2018-16984 (Medium) detected in Django-1.7.4-py2.py3-none-any.whl - autoclosed - ## CVE-2018-16984 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.7.4-py2.py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development and clean, pragmatic design.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/c9/1e/66f185ca0d4d0ca11b94caeac96a33a13954963a8b563b67d11f50bfeee7/Django-1.7.4-py2.py3-none-any.whl">https://files.pythonhosted.org/packages/c9/1e/66f185ca0d4d0ca11b94caeac96a33a13954963a8b563b67d11f50bfeee7/Django-1.7.4-py2.py3-none-any.whl</a></p>

<p>Path to dependency file: /swapi/requirements.txt</p>

<p>Path to vulnerable library: teSource-ArchiveExtractor_cd0131d4-2ba0-4601-beec-b5f2a7e3636b/20190620051939_56417/20190620051812_depth_0/13/Django-2.2.2.tar/Django-2.2.2/django</p>

<p>

Dependency Hierarchy:

- :x: **Django-1.7.4-py2.py3-none-any.whl** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/attesch/swapi/commit/4f94ce0b66c94cc8d0908d27fdc9d39faa534139">4f94ce0b66c94cc8d0908d27fdc9d39faa534139</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in Django 2.1 before 2.1.2, in which unprivileged users can read the password hashes of arbitrary accounts. The read-only password widget used by the Django Admin to display an obfuscated password hash was bypassed if a user has only the "view" permission (new in Django 2.1), resulting in display of the entire password hash to those users. This may result in a vulnerability for sites with legacy user accounts using insecure hashes.

<p>Publish Date: 2018-10-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-16984>CVE-2018-16984</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: High

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2018-16984">https://nvd.nist.gov/vuln/detail/CVE-2018-16984</a></p>

<p>Release Date: 2018-10-02</p>

<p>Fix Resolution: 2.1.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in django none any whl autoclosed cve medium severity vulnerability vulnerable library django none any whl a high level python web framework that encourages rapid development and clean pragmatic design library home page a href path to dependency file swapi requirements txt path to vulnerable library tesource archiveextractor beec depth django tar django django dependency hierarchy x django none any whl vulnerable library found in head commit a href vulnerability details an issue was discovered in django before in which unprivileged users can read the password hashes of arbitrary accounts the read only password widget used by the django admin to display an obfuscated password hash was bypassed if a user has only the view permission new in django resulting in display of the entire password hash to those users this may result in a vulnerability for sites with legacy user accounts using insecure hashes publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required high user interaction none scope unchanged impact metrics confidentiality impact high integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |
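
The advisory text above gives the affected range as "Django 2.1 before 2.1.2", while the flagged library is Django-1.7.4. The range can be expressed as a small version check. This is a minimal sketch: the function names `parse_version` and `is_affected_by_cve_2018_16984` are hypothetical and not part of Django or WhiteSource, and it assumes plain dotted numeric versions (no pre-release suffixes such as `rc1`).

```python
def parse_version(version: str) -> tuple:
    """Turn a dotted numeric version such as '2.1.1' into a tuple of ints.

    Assumption: only plain numeric components are handled; a suffix like
    'rc1' raises ValueError.
    """
    return tuple(int(part) for part in version.split("."))


def is_affected_by_cve_2018_16984(version: str) -> bool:
    """Return True if the version falls in the range quoted in the advisory.

    The range used here is 'Django 2.1 before 2.1.2', taken from the
    vulnerability details in the issue body.
    """
    parsed = parse_version(version)
    # Tuple comparison is lexicographic, so (2, 1) <= (2, 1, 1) < (2, 1, 2).
    return (2, 1) <= parsed < (2, 1, 2)


if __name__ == "__main__":
    for candidate in ("1.7.4", "2.1", "2.1.1", "2.1.2", "2.2.2"):
        print(candidate, is_affected_by_cve_2018_16984(candidate))
```

Under this reading of the range, the 1.7.4 wheel named in the report is not flagged by the check, which may be worth keeping in mind when triaging the alert.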

118,749 | 10,007,255,431 | IssuesEvent | 2019-07-14 09:05:55 | inf3rno/patterns | https://api.github.com/repos/inf3rno/patterns | opened | Alt(ernatives) by patterns | do:2 - test do:3 - implement do:4 - document | I already use this in #27 I believe. It is already designed, I just need to test and document it. | 1.0 | Alt(ernatives) by patterns - I already use this in #27 I believe. It is already designed, I just need to test and document it. | test | alt ernatives by patterns i already use this in i believe it is already designed i just need to test and document it | 1 |

193,163 | 6,882,072,762 | IssuesEvent | 2017-11-21 01:41:47 | Marri/glowfic | https://api.github.com/repos/Marri/glowfic | opened | Audits for character & icon replace | 2. high priority 9. hard dev enhancement | Currently we don't track the state prior to the replace, which means we can lose data if someone accidentally does a replace (especially to something used in the same thread, so we can't just readily disentangle it on a thread-by-thread basis).

This is also a prerequisite to moderators getting the ability to do character / icon replace, since we want to be able to track that nothing silly has gone on (and revert it if a user makes a compelling case for it) – #3.

It would also lead into us allowing users to see their recent replacements, and revert them as necessary, or perhaps display it on the relevant reply histories. | 1.0 | Audits for character & icon replace - Currently we don't track the state prior to the replace, which means we can lose data if someone accidentally does a replace (especially to something used in the same thread, so we can't just readily disentangle it on a thread-by-thread basis).

This is also a prerequisite to moderators getting the ability to do character / icon replace, since we want to be able to track that nothing silly has gone on (and revert it if a user makes a compelling case for it) – #3.

It would also lead into us allowing users to see their recent replacements, and revert them as necessary, or perhaps display it on the relevant reply histories. | non_test | audits for character icon replace currently we don t track the state prior to the replace which means we can lose data if someone accidentally does a replace especially to something used in the same thread so we can t just readily disentangle it on a thread by thread basis this is also a prerequisite to moderators getting the ability to do character icon replace since we want to be able to track that nothing silly has gone on and revert it if a user makes a compelling case for it – it would also lead into us allowing users to see their recent replacements and revert them as necessary or perhaps display it on the relevant reply histories | 0 |

203,595 | 15,376,218,272 | IssuesEvent | 2021-03-02 15:44:55 | avandvik/masters-thesis | https://api.github.com/repos/avandvik/masters-thesis | closed | Write tests for Evaluator | test | - Test isFeasibleLoad

- Test isFeasibleDuration

- Test instInMoreThanOneSequence

- Test isIllegalPattern (extract it into a submethod first) | 1.0 | Write tests for Evaluator - - Test isFeasibleLoad

- Test isFeasibleDuration

- Test instInMoreThanOneSequence

- Test isIllegalPattern (extract it into a submethod first) | test | write tests for evaluator test isfeasibleload test isfeasibleduration test instinmorethanonesequence test isillegalpattern extract it into a submethod first | 1 |

106,337 | 13,262,453,231 | IssuesEvent | 2020-08-20 21:49:34 | elastic/eui | https://api.github.com/repos/elastic/eui | opened | Replace highlight.js as the engine for EuiCodeBlock | assign:designer | Although popular, highlight.js is pretty slow and getting a little old in its methodology. I often see it fail in complex syntax blocks and it'd be nice if we had something that provided virtualization out of the gate.

I'd like to look into replacing it with [react-syntax-highlighter](https://github.com/react-syntax-highlighter/react-syntax-highlighter) which is backed by Prism JS (which I have some love and familiarity with) and comes with virtualization (which would close https://github.com/elastic/eui/issues/1208).

I can give this a shot myself, but please give a yell if anything looks out of sort from that dependency. The bulk of the work I think will be transferring our styling over. | 1.0 | Replace highlight.js as the engine for EuiCodeBlock - Although popular, highlight.js is pretty slow and getting a little old in its methodology. I often see it fail in complex syntax blocks and it'd be nice if we had something that provided virtualization out of the gate.

I'd like to look into replacing it with [react-syntax-highlighter](https://github.com/react-syntax-highlighter/react-syntax-highlighter) which is backed by Prism JS (which I have some love and familiarity with) and comes with virtualization (which would close https://github.com/elastic/eui/issues/1208).