Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

155,842 | 12,279,731,903 | IssuesEvent | 2020-05-08 12:49:42 | DiSSCo/ELViS | https://api.github.com/repos/DiSSCo/ELViS | closed | Change institute logo for Luomus | MVP ELViS - Hotfix 2 bug enhancement resolved to test | Instead of University of Helsinki logo, use Luomus logo for the Finnish Museum of Natural History Luomus institution page: https://elvis-accept.pictura-hosting.nl/institutions/grid.507626.0

| 1.0 | Change institute logo for Luomus - Instead of University of Helsinki logo, use Luomus logo for the Finnish Museum of Natural History Luomus institution page: https://elvis-accept.pictura-hosting.nl/institutions/grid.507626.0

| test | change institute logo for luomus instead of university of helsinki logo use luomus logo for the finnish museum of natural history luomus institution page | 1 |

110,546 | 4,428,593,592 | IssuesEvent | 2016-08-17 03:22:02 | empirical-org/Empirical-Core | https://api.github.com/repos/empirical-org/Empirical-Core | opened | Users cannot save new passwords | Priority: ★ | When a user edits a password in my account, it does not save. Test with username: Teacher, password: Demo. It'd be nice if there was a better interaction once it saves as well. For example, the activity planner text switches from "Save" to "Saved". | 1.0 | Users cannot save new passwords - When a user edits a password in my account, it does not save. Test with username: Teacher, password: Demo. It'd be nice if there was a better interaction once it saves as well. For example, the activity planner text switches from "Save" to "Saved". | non_test | users cannot save new passwords when a user edits a password in my account it does not save test with username teacher password demo it d be nice if there was a better interaction once it saves as well for example the activity planner text switches from save to saved | 0 |

219,982 | 7,348,714,979 | IssuesEvent | 2018-03-08 07:55:41 | pmem/issues | https://api.github.com/repos/pmem/issues | closed | Test: util_file_create/TEST0W: SETUP (all\pmem\nondebug) fails | Exposure: Low OS: Windows Priority: 4 low Type: Bug | Found on 0067d81c59f6fa7c7088aecfd630a7d95a444c3a

Output in attached file:

[log_file.log](https://github.com/pmem/issues/files/1770774/log_file.log)

| 1.0 | Test: util_file_create/TEST0W: SETUP (all\pmem\nondebug) fails - Found on 0067d81c59f6fa7c7088aecfd630a7d95a444c3a

Output in attached file:

[log_file.log](https://github.com/pmem/issues/files/1770774/log_file.log)

| non_test | test util file create setup all pmem nondebug fails found on output in attached file | 0 |

55,516 | 6,480,978,727 | IssuesEvent | 2017-08-18 14:37:19 | Transkribus/TWI-mc | https://api.github.com/repos/Transkribus/TWI-mc | closed | A bread crumb | enhancement ready to test | To improve communication of context within the collection structure and also navigation. | 1.0 | A bread crumb - To improve communication of context within the collection structure and also navigation. | test | a bread crumb to improve communication of context within the collection structure and also navigation | 1 |

113,286 | 9,635,016,155 | IssuesEvent | 2019-05-15 23:11:01 | MichaIng/DietPi | https://api.github.com/repos/MichaIng/DietPi | closed | APT | Error while reinstalling SABnzbd pre-reqs | Bug :beetle: Solution available :clinking_glasses: Testing/testers required :arrow_down_small: | #### Details:

- Date | Tue 14 May 14:16:29 AEST 2019

- Bug report | N/A

- DietPi version | v6.23.3 (MichaIng/master)

- Img creator | DietPi Core Team

- Pre-image | Meveric

- SBC device | Odroid XU3/XU4/HC1/HC2 (armv7l) (index=11)

- Kernel version | #1 SMP PREEMPT Thu Apr 5 12:46:33 UTC 2018

- Distro | stretch (index=4)

- Command | G_AGI par2 p7zip-full libffi-dev libssl-dev

- Exit code | 100

- Software title | DietPi-Software

#### Steps to reproduce:

<!-- Explain how to reproduce the issue -->

1. Error found when updating to 6.23.3; and also when running: dietpi-software reinstall 139

2. ...

#### Expected behaviour:

<!-- What SHOULD be happening? -->

- Updating SABnzbd to latest version

#### Actual behaviour:

<!-- What IS happening? -->

- Exits with error

#### Extra details:

<!-- Please post any extra details that might help solve the issue -->

- ...

#### Additional logs:

```

Log file contents:

E: Unable to correct problems, you have held broken packages.

```

| 2.0 | APT | Error while reinstalling SABnzbd pre-reqs - #### Details:

- Date | Tue 14 May 14:16:29 AEST 2019

- Bug report | N/A

- DietPi version | v6.23.3 (MichaIng/master)

- Img creator | DietPi Core Team

- Pre-image | Meveric

- SBC device | Odroid XU3/XU4/HC1/HC2 (armv7l) (index=11)

- Kernel version | #1 SMP PREEMPT Thu Apr 5 12:46:33 UTC 2018

- Distro | stretch (index=4)

- Command | G_AGI par2 p7zip-full libffi-dev libssl-dev

- Exit code | 100

- Software title | DietPi-Software

#### Steps to reproduce:

<!-- Explain how to reproduce the issue -->

1. Error found when updating to 6.23.3; and also when running: dietpi-software reinstall 139

2. ...

#### Expected behaviour:

<!-- What SHOULD be happening? -->

- Updating SABnzbd to latest version

#### Actual behaviour:

<!-- What IS happening? -->

- Exits with error

#### Extra details:

<!-- Please post any extra details that might help solve the issue -->

- ...

#### Additional logs:

```

Log file contents:

E: Unable to correct problems, you have held broken packages.

```

| test | apt error while reinstalling sabnzbd pre reqs details date tue may aest bug report n a dietpi version michaing master img creator dietpi core team pre image meveric sbc device odroid index kernel version smp preempt thu apr utc distro stretch index command g agi full libffi dev libssl dev exit code software title dietpi software steps to reproduce error found when updating to and also when running dietpi software reinstall expected behaviour updating sabnzbd to latest version actual behaviour exits with error extra details additional logs log file contents e unable to correct problems you have held broken packages | 1 |

143,253 | 5,512,563,185 | IssuesEvent | 2017-03-17 09:48:43 | CS2103JAN2017-T11-B2/main | https://api.github.com/repos/CS2103JAN2017-T11-B2/main | closed | Add 'undo' command to undo most recent modifying action | priority.medium status.complete type.task | Give user ability to run 'undo', which undoes the effects of the last command that modified the todo list. This includes add, delete, and edit commands. | 1.0 | Add 'undo' command to undo most recent modifying action - Give user ability to run 'undo', which undoes the effects of the last command that modified the todo list. This includes add, delete, and edit commands. | non_test | add undo command to undo most recent modifying action give user ability to run undo which undoes the effects of the last command that modified the todo list this includes add delete and edit commands | 0 |

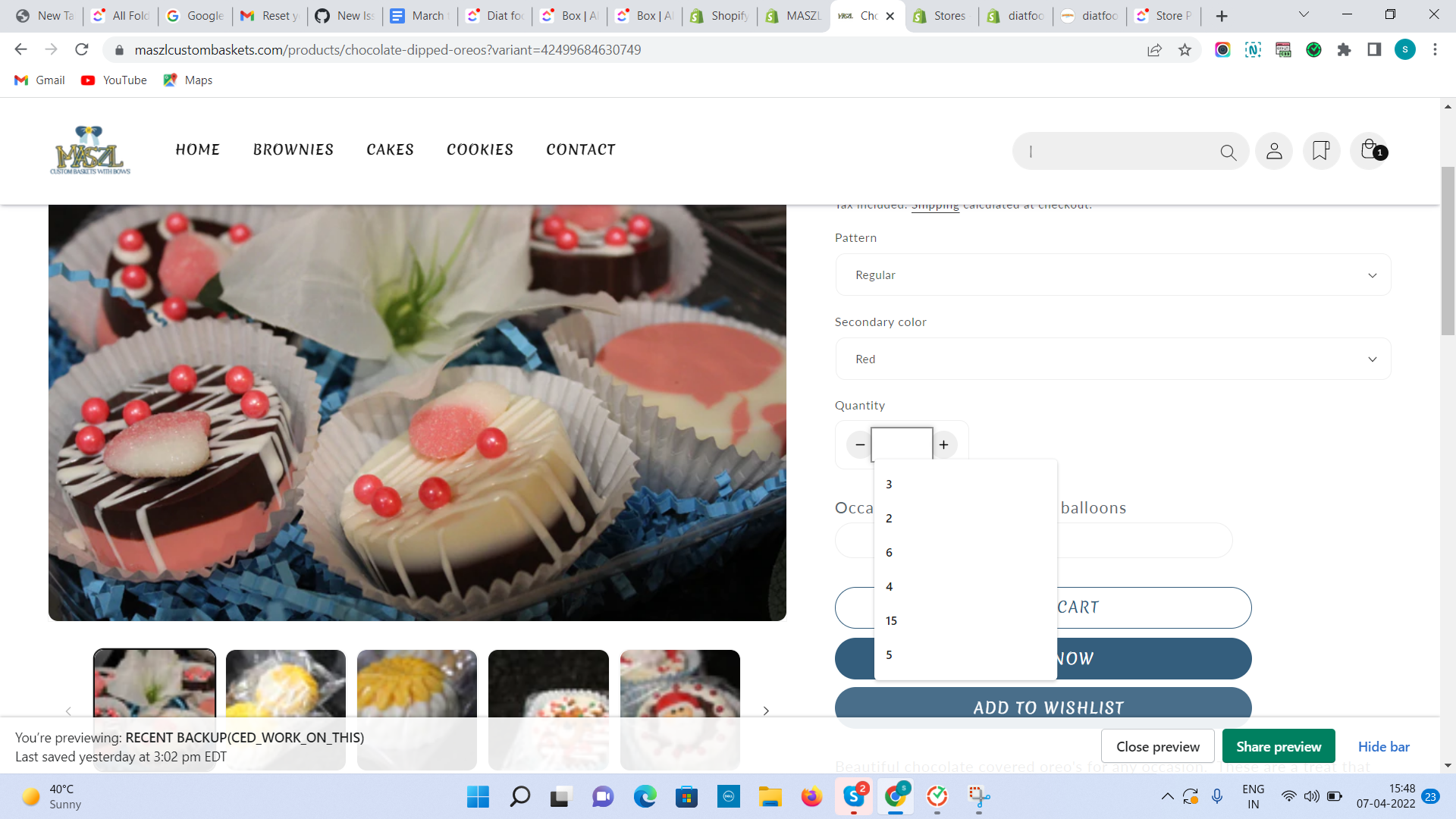

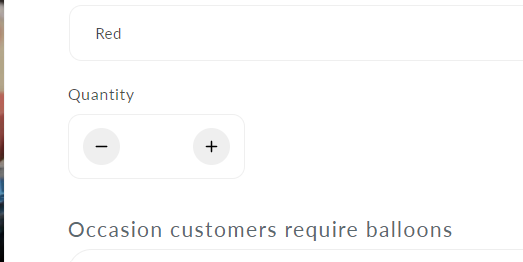

249,586 | 21,178,723,218 | IssuesEvent | 2022-04-08 05:01:34 | stores-cedcommerce/Internal--Shaka-Store-Built-Redesign---12-April22 | https://api.github.com/repos/stores-cedcommerce/Internal--Shaka-Store-Built-Redesign---12-April22 | closed | product page, the quantity input field is coming blank. | Product page Ready to test fixed Desktop | **Actual result:**

1: product page, the quantity input field is coming blank.

2: The border is coming when we click on the input field then the border is coming it .( suggestion )

**Expected result:**

The empty input field is coming when we deleting the quantity. | 1.0 | product page, the quantity input field is coming blank. - **Actual result:**

1: product page, the quantity input field is coming blank.

2: The border is coming when we click on the input field then the border is coming it .( suggestion )

**Expected result:**

The empty input field is coming when we deleting the quantity. | test | product page the quantity input field is coming blank actual result product page the quantity input field is coming blank the border is coming when we click on the input field then the border is coming it suggestion expected result the empty input field is coming when we deleting the quantity | 1 |

21,236 | 3,875,683,488 | IssuesEvent | 2016-04-12 02:45:35 | rancher/os | https://api.github.com/repos/rancher/os | closed | ros os upgrade does not count on local image | status/to-test | I would like to test upgrade. So I build an image and which tagged as rancher/os:v0.4.4-dev. I load this image into system-docker as below

<pre>

[rancher@rancher ~]$ sudo system-docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

rancher/os v0.4.4-dev df84018a6caa 30 minutes ago 193.6 MB

rancher/os v0.4.3 eed7c8ab50fd 3 days ago 193.1 MB

rancher/os-preload v0.4.3 983c005fe53f 3 days ago 25.65 MB

rancher/os-console v0.4.3 d9b2845438df 3 days ago 25.66 MB

rancher/os-udev v0.4.3 8dc9eee7501f 2 weeks ago 25.65 MB

rancher/os-syslog v0.4.3 987960440665 2 weeks ago 25.65 MB

rancher/os-statescript v0.4.3 24355446800e 2 weeks ago 25.65 MB

rancher/os-state v0.4.3 0fe21afc3049 2 weeks ago 25.65 MB

rancher/os-ntp v0.4.3 3e2d57d4ae21 2 weeks ago 25.65 MB

rancher/os-network v0.4.3 5288f2eb944e 2 weeks ago 25.65 MB

rancher/os-docker v0.4.3 6a4e2f959df2 2 weeks ago 25.65 MB

rancher/os-cloudinit v0.4.3 5c47e775e016 2 weeks ago 25.65 MB

rancher/os-autoformat v0.4.3 00182a66713c 2 weeks ago 25.65 MB

rancher/os-acpid v0.4.3 0fa901101944 2 weeks ago 25.65 MB

</pre>

I then run upgrade:

<pre>

[rancher@rancher ~]$ sudo ros os upgrade -i rancher/os:v0.4.4-dev

INFO[0000] Project [once]: Starting project

INFO[0000] [0/1] [os-upgrade]: Starting

INFO[0000] Rebuilding os-upgrade

INFO[0000] [1/1] [os-upgrade]: Started

INFO[0000] Project [once]: Project started

Pulling repository docker.io/rancher/os

ERRO[0008] Failed to pull image rancher/os:v0.4.4-dev: Tag v0.4.4-dev not found in repository docker.io/rancher/os

FATA[0008] Tag v0.4.4-dev not found in repository docker.io/rancher/os

</pre>

I could not say this is a bug. But I really want to see upgrade command respect local images, which should great convenient for testing/developing purpose, what do you think?

| 1.0 | ros os upgrade does not count on local image - I would like to test upgrade. So I build an image and which tagged as rancher/os:v0.4.4-dev. I load this image into system-docker as below

<pre>

[rancher@rancher ~]$ sudo system-docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

rancher/os v0.4.4-dev df84018a6caa 30 minutes ago 193.6 MB

rancher/os v0.4.3 eed7c8ab50fd 3 days ago 193.1 MB

rancher/os-preload v0.4.3 983c005fe53f 3 days ago 25.65 MB

rancher/os-console v0.4.3 d9b2845438df 3 days ago 25.66 MB

rancher/os-udev v0.4.3 8dc9eee7501f 2 weeks ago 25.65 MB

rancher/os-syslog v0.4.3 987960440665 2 weeks ago 25.65 MB

rancher/os-statescript v0.4.3 24355446800e 2 weeks ago 25.65 MB

rancher/os-state v0.4.3 0fe21afc3049 2 weeks ago 25.65 MB

rancher/os-ntp v0.4.3 3e2d57d4ae21 2 weeks ago 25.65 MB

rancher/os-network v0.4.3 5288f2eb944e 2 weeks ago 25.65 MB

rancher/os-docker v0.4.3 6a4e2f959df2 2 weeks ago 25.65 MB

rancher/os-cloudinit v0.4.3 5c47e775e016 2 weeks ago 25.65 MB

rancher/os-autoformat v0.4.3 00182a66713c 2 weeks ago 25.65 MB

rancher/os-acpid v0.4.3 0fa901101944 2 weeks ago 25.65 MB

</pre>

I then run upgrade:

<pre>

[rancher@rancher ~]$ sudo ros os upgrade -i rancher/os:v0.4.4-dev

INFO[0000] Project [once]: Starting project

INFO[0000] [0/1] [os-upgrade]: Starting

INFO[0000] Rebuilding os-upgrade

INFO[0000] [1/1] [os-upgrade]: Started

INFO[0000] Project [once]: Project started

Pulling repository docker.io/rancher/os

ERRO[0008] Failed to pull image rancher/os:v0.4.4-dev: Tag v0.4.4-dev not found in repository docker.io/rancher/os

FATA[0008] Tag v0.4.4-dev not found in repository docker.io/rancher/os

</pre>

I could not say this is a bug. But I really want to see upgrade command respect local images, which should great convenient for testing/developing purpose, what do you think?

| test | ros os upgrade does not count on local image i would like to test upgrade so i build an image and which tagged as rancher os dev i load this image into system docker as below sudo system docker images repository tag image id created size rancher os dev minutes ago mb rancher os days ago mb rancher os preload days ago mb rancher os console days ago mb rancher os udev weeks ago mb rancher os syslog weeks ago mb rancher os statescript weeks ago mb rancher os state weeks ago mb rancher os ntp weeks ago mb rancher os network weeks ago mb rancher os docker weeks ago mb rancher os cloudinit weeks ago mb rancher os autoformat weeks ago mb rancher os acpid weeks ago mb i then run upgrade sudo ros os upgrade i rancher os dev info project starting project info starting info rebuilding os upgrade info started info project project started pulling repository docker io rancher os erro failed to pull image rancher os dev tag dev not found in repository docker io rancher os fata tag dev not found in repository docker io rancher os i could not say this is a bug but i really want to see upgrade command respect local images which should great convenient for testing developing purpose what do you think | 1 |

244,809 | 20,718,138,474 | IssuesEvent | 2022-03-13 00:26:55 | fortran-lang/minpack | https://api.github.com/repos/fortran-lang/minpack | closed | Modernize examples | tests refactoring | The current tests are not actually testing anything other than running the examples. They provide no way to check the results other than by manual inspection, which makes them not reliable as regression tests.

Required steps:

- split the objective functions in separate callbacks rather than one big select case via a common block variable

- have a resource module to hold the objective functions and initializers to avoid code duplication

- actually test the outcome of the examples is correct up to a given tolerance | 1.0 | Modernize examples - The current tests are not actually testing anything other than running the examples. They provide no way to check the results other than by manual inspection, which makes them not reliable as regression tests.

Required steps:

- split the objective functions in separate callbacks rather than one big select case via a common block variable

- have a resource module to hold the objective functions and initializers to avoid code duplication

- actually test the outcome of the examples is correct up to a given tolerance | test | modernize examples the current tests are not actually testing anything other than running the examples they provide no way to check the results other than by manual inspection which makes them not reliable as regression tests required steps split the objective functions in separate callbacks rather than one big select case via a common block variable have a resource module to hold the objective functions and initializers to avoid code duplication actually test the outcome of the examples is correct up to a given tolerance | 1 |

171,629 | 20,984,377,547 | IssuesEvent | 2022-03-29 00:22:11 | senditagile/yodub.com | https://api.github.com/repos/senditagile/yodub.com | opened | secret discovered - "data/template/config.json - f438d1c5d8aaa822fbe180a5c5d3a7ac0e175938a75c139aa97bc384b70ddd71" | security security-risk: low trufflehog | New Finding Alert

To Forever Suppress This Finding From Alerting add the SHA256 to suppressions-trufflehog3 file, to suppress all findings for this commit, add the commit hash instead. See https://github.com/netlify/security-netlify-trufflehog3#suppression_file_path

--Repo: senditagile/yodub.com

--Date: "2022-03-28T20:19:36-04:00"

--Path: "data/template/config.json"

--Branch: "main"

--Commit: "cbbba2c6fb589031f1fb22de292d2d1637e5d75b"

--Commit Message: "Removes babelrc"

--Line Number: "25"

--Severity: "LOW"

--Reason: "Generic Secret" - "generic.secret"

--String Discovered: "Secret\": \"2ff6331da9e53f9a91bcc991d38d550c85026714\""

--SHA256: f438d1c5d8aaa822fbe180a5c5d3a7ac0e175938a75c139aa97bc384b70ddd71

| True | secret discovered - "data/template/config.json - f438d1c5d8aaa822fbe180a5c5d3a7ac0e175938a75c139aa97bc384b70ddd71" - New Finding Alert

To Forever Suppress This Finding From Alerting add the SHA256 to suppressions-trufflehog3 file, to suppress all findings for this commit, add the commit hash instead. See https://github.com/netlify/security-netlify-trufflehog3#suppression_file_path

--Repo: senditagile/yodub.com

--Date: "2022-03-28T20:19:36-04:00"

--Path: "data/template/config.json"

--Branch: "main"

--Commit: "cbbba2c6fb589031f1fb22de292d2d1637e5d75b"

--Commit Message: "Removes babelrc"

--Line Number: "25"

--Severity: "LOW"

--Reason: "Generic Secret" - "generic.secret"

--String Discovered: "Secret\": \"2ff6331da9e53f9a91bcc991d38d550c85026714\""

--SHA256: f438d1c5d8aaa822fbe180a5c5d3a7ac0e175938a75c139aa97bc384b70ddd71

| non_test | secret discovered data template config json new finding alert to forever suppress this finding from alerting add the to suppressions file to suppress all findings for this commit add the commit hash instead see repo senditagile yodub com date path data template config json branch main commit commit message removes babelrc line number severity low reason generic secret generic secret string discovered secret | 0 |

68,509 | 21,675,997,916 | IssuesEvent | 2022-05-08 18:18:59 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: | I-defect needs-triaging | ### What happened?

I'm noticing flaky session not created issue in my personal test framework. I have a Selenium Grid running with the help of docker-compose. I run my tests on gitlab CI.

Mostly it works fine but suddenly starts to fail. Sometime even a small change NOT related to selenium Gird in my code creates this issue.

To demostrate this - I'm sharing my Gitlab Repo link and under CI - you can see that I tried running the same codebase (without any changes) and it failed first and then successfully ran. If you check the logs you will notice the below error ->

I have tried using selenium grid 4.1.3 and 4.1.4

```

org.openqa.selenium.SessionNotCreatedException: Could not start a new session. Possible causes are invalid address of the remote server or browser start-up failure.

Build info: version: '4.1.3', revision: '7b1ebf28ef'

System info: host: '5a85d554d8f3', ip: '172.19.0.5', os.name: 'Linux', os.arch: 'amd64', os.version: '5.4.109+', java.version: '1.8.0_212'

Driver info: org.openqa.selenium.remote.RemoteWebDriver

Command: [null, newSession {capabilities=[Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}}], desiredCapabilities=Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}, name: UI Regression}}]

Capabilities {}

at org.openqa.selenium.remote.RemoteWebDriver.execute(RemoteWebDriver.java:585)

at org.openqa.selenium.remote.RemoteWebDriver.startSession(RemoteWebDriver.java:248)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:164)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:146)

at functional.BaseTest.getRemoteDriver(BaseTest.java:113)

```

### How can we reproduce the issue?

```shell

Below is my project link -

https://gitlab.com/suryajit7/my-blog-topics

```

### Relevant log output

```shell

org.openqa.selenium.SessionNotCreatedException: Could not start a new session. Possible causes are invalid address of the remote server or browser start-up failure.

Build info: version: '4.1.3', revision: '7b1ebf28ef'

System info: host: '5a85d554d8f3', ip: '172.19.0.5', os.name: 'Linux', os.arch: 'amd64', os.version: '5.4.109+', java.version: '1.8.0_212'

Driver info: org.openqa.selenium.remote.RemoteWebDriver

Command: [null, newSession {capabilities=[Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}}], desiredCapabilities=Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}, name: UI Regression}}]

Capabilities {}

at org.openqa.selenium.remote.RemoteWebDriver.execute(RemoteWebDriver.java:585)

at org.openqa.selenium.remote.RemoteWebDriver.startSession(RemoteWebDriver.java:248)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:164)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:146)

at functional.BaseTest.getRemoteDriver(BaseTest.java:113)

at functional.BaseTest.beforeClassSetup(BaseTest.java:88)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.testng.internal.invokers.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:135)

at org.testng.internal.invokers.MethodInvocationHelper.invokeMethodConsideringTimeout(MethodInvocationHelper.java:65)

at org.testng.internal.invokers.ConfigInvoker.invokeConfigurationMethod(ConfigInvoker.java:381)

at org.testng.internal.invokers.ConfigInvoker.invokeConfigurations(ConfigInvoker.java:319)

at org.testng.internal.invokers.TestMethodWorker.invokeBeforeClassMethods(TestMethodWorker.java:178)

at org.testng.internal.invokers.TestMethodWorker.run(TestMethodWorker.java:122)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.io.UncheckedIOException: java.net.ConnectException: Connection refused: hub/172.19.0.2:4444

at org.openqa.selenium.remote.http.netty.NettyHttpHandler.makeCall(NettyHttpHandler.java:80)

at org.openqa.selenium.remote.http.AddSeleniumUserAgent.lambda$apply$0(AddSeleniumUserAgent.java:42)

at org.openqa.selenium.remote.http.Filter.lambda$andFinally$1(Filter.java:56)

at org.openqa.selenium.remote.http.netty.NettyHttpHandler.execute(NettyHttpHandler.java:51)

at org.openqa.selenium.remote.http.AddSeleniumUserAgent.lambda$apply$0(AddSeleniumUserAgent.java:42)

at org.openqa.selenium.remote.http.Filter.lambda$andFinally$1(Filter.java:56)

at org.openqa.selenium.remote.http.netty.NettyClient.execute(NettyClient.java:124)

at org.openqa.selenium.remote.tracing.TracedHttpClient.execute(TracedHttpClient.java:55)

at org.openqa.selenium.remote.ProtocolHandshake.createSession(ProtocolHandshake.java:102)

at org.openqa.selenium.remote.ProtocolHandshake.createSession(ProtocolHandshake.java:84)

at org.openqa.selenium.remote.ProtocolHandshake.createSession(ProtocolHandshake.java:62)

at org.openqa.selenium.remote.HttpCommandExecutor.execute(HttpCommandExecutor.java:156)

at org.openqa.selenium.remote.TracedCommandExecutor.execute(TracedCommandExecutor.java:51)

at org.openqa.selenium.remote.RemoteWebDriver.execute(RemoteWebDriver.java:567)

... 18 more

Caused by: java.net.ConnectException: Connection refused: hub/172.19.0.2:4444

at org.asynchttpclient.netty.channel.NettyConnectListener.onFailure(NettyConnectListener.java:179)

at org.asynchttpclient.netty.channel.NettyChannelConnector$1.onFailure(NettyChannelConnector.java:108)

at org.asynchttpclient.netty.SimpleChannelFutureListener.operationComplete(SimpleChannelFutureListener.java:28)

at org.asynchttpclient.netty.SimpleChannelFutureListener.operationComplete(SimpleChannelFutureListener.java:20)

at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:578)

at io.netty.util.concurrent.DefaultPromise.notifyListeners0(DefaultPromise.java:571)

at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:550)

at io.netty.util.concurrent.DefaultPromise.notifyListeners(DefaultPromise.java:491)

at io.netty.util.concurrent.DefaultPromise.setValue0(DefaultPromise.java:616)

at io.netty.util.concurrent.DefaultPromise.setFailure0(DefaultPromise.java:609)

at io.netty.util.concurrent.DefaultPromise.tryFailure(DefaultPromise.java:117)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.fulfillConnectPromise(AbstractNioChannel.java:321)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:337)

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:710)

at io.netty.channel.nio.NioEventLoop.processSelectedKeysOptimized(NioEventLoop.java:658)

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:584)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:496)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

... 1 more

Caused by: io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: hub/172.19.0.2:4444

Caused by: java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at io.netty.channel.socket.nio.NioSocketChannel.doFinishConnect(NioSocketChannel.java:330)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:334)

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:710)

at io.netty.channel.nio.NioEventLoop.processSelectedKeysOptimized(NioEventLoop.java:658)

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:584)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:496)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

```

### Operating System

Windows 10

### Selenium version

Selenium Grid with nodechrome = 4.1.3

### What are the browser(s) and version(s) where you see this issue?

Chrome latest version 100

### What are the browser driver(s) and version(s) where you see this issue?

Selenium Grid with nodechrome = 4.1.3

### Are you using Selenium Grid?

Yes - Selenium Grid 4.1.3 with docker-compose | 1.0 | [🐛 Bug]: - ### What happened?

I'm noticing flaky session not created issue in my personal test framework. I have a Selenium Grid running with the help of docker-compose. I run my tests on gitlab CI.

Mostly it works fine but suddenly starts to fail. Sometime even a small change NOT related to selenium Gird in my code creates this issue.

To demostrate this - I'm sharing my Gitlab Repo link and under CI - you can see that I tried running the same codebase (without any changes) and it failed first and then successfully ran. If you check the logs you will notice the below error ->

I have tried using selenium grid 4.1.3 and 4.1.4

```

org.openqa.selenium.SessionNotCreatedException: Could not start a new session. Possible causes are invalid address of the remote server or browser start-up failure.

Build info: version: '4.1.3', revision: '7b1ebf28ef'

System info: host: '5a85d554d8f3', ip: '172.19.0.5', os.name: 'Linux', os.arch: 'amd64', os.version: '5.4.109+', java.version: '1.8.0_212'

Driver info: org.openqa.selenium.remote.RemoteWebDriver

Command: [null, newSession {capabilities=[Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}}], desiredCapabilities=Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}, name: UI Regression}}]

Capabilities {}

at org.openqa.selenium.remote.RemoteWebDriver.execute(RemoteWebDriver.java:585)

at org.openqa.selenium.remote.RemoteWebDriver.startSession(RemoteWebDriver.java:248)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:164)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:146)

at functional.BaseTest.getRemoteDriver(BaseTest.java:113)

```

### How can we reproduce the issue?

```shell

Below is my project link -

https://gitlab.com/suryajit7/my-blog-topics

```

### Relevant log output

```shell

org.openqa.selenium.SessionNotCreatedException: Could not start a new session. Possible causes are invalid address of the remote server or browser start-up failure.

Build info: version: '4.1.3', revision: '7b1ebf28ef'

System info: host: '5a85d554d8f3', ip: '172.19.0.5', os.name: 'Linux', os.arch: 'amd64', os.version: '5.4.109+', java.version: '1.8.0_212'

Driver info: org.openqa.selenium.remote.RemoteWebDriver

Command: [null, newSession {capabilities=[Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}}], desiredCapabilities=Capabilities {browserName: chrome, goog:chromeOptions: {args: [], extensions: []}, name: UI Regression}}]

Capabilities {}

at org.openqa.selenium.remote.RemoteWebDriver.execute(RemoteWebDriver.java:585)

at org.openqa.selenium.remote.RemoteWebDriver.startSession(RemoteWebDriver.java:248)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:164)

at org.openqa.selenium.remote.RemoteWebDriver.<init>(RemoteWebDriver.java:146)

at functional.BaseTest.getRemoteDriver(BaseTest.java:113)

at functional.BaseTest.beforeClassSetup(BaseTest.java:88)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.testng.internal.invokers.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:135)

at org.testng.internal.invokers.MethodInvocationHelper.invokeMethodConsideringTimeout(MethodInvocationHelper.java:65)

at org.testng.internal.invokers.ConfigInvoker.invokeConfigurationMethod(ConfigInvoker.java:381)

at org.testng.internal.invokers.ConfigInvoker.invokeConfigurations(ConfigInvoker.java:319)

at org.testng.internal.invokers.TestMethodWorker.invokeBeforeClassMethods(TestMethodWorker.java:178)

at org.testng.internal.invokers.TestMethodWorker.run(TestMethodWorker.java:122)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.io.UncheckedIOException: java.net.ConnectException: Connection refused: hub/172.19.0.2:4444

at org.openqa.selenium.remote.http.netty.NettyHttpHandler.makeCall(NettyHttpHandler.java:80)

at org.openqa.selenium.remote.http.AddSeleniumUserAgent.lambda$apply$0(AddSeleniumUserAgent.java:42)

at org.openqa.selenium.remote.http.Filter.lambda$andFinally$1(Filter.java:56)

at org.openqa.selenium.remote.http.netty.NettyHttpHandler.execute(NettyHttpHandler.java:51)

at org.openqa.selenium.remote.http.AddSeleniumUserAgent.lambda$apply$0(AddSeleniumUserAgent.java:42)

at org.openqa.selenium.remote.http.Filter.lambda$andFinally$1(Filter.java:56)

at org.openqa.selenium.remote.http.netty.NettyClient.execute(NettyClient.java:124)

at org.openqa.selenium.remote.tracing.TracedHttpClient.execute(TracedHttpClient.java:55)

at org.openqa.selenium.remote.ProtocolHandshake.createSession(ProtocolHandshake.java:102)

at org.openqa.selenium.remote.ProtocolHandshake.createSession(ProtocolHandshake.java:84)

at org.openqa.selenium.remote.ProtocolHandshake.createSession(ProtocolHandshake.java:62)

at org.openqa.selenium.remote.HttpCommandExecutor.execute(HttpCommandExecutor.java:156)

at org.openqa.selenium.remote.TracedCommandExecutor.execute(TracedCommandExecutor.java:51)

at org.openqa.selenium.remote.RemoteWebDriver.execute(RemoteWebDriver.java:567)

... 18 more

Caused by: java.net.ConnectException: Connection refused: hub/172.19.0.2:4444

at org.asynchttpclient.netty.channel.NettyConnectListener.onFailure(NettyConnectListener.java:179)

at org.asynchttpclient.netty.channel.NettyChannelConnector$1.onFailure(NettyChannelConnector.java:108)

at org.asynchttpclient.netty.SimpleChannelFutureListener.operationComplete(SimpleChannelFutureListener.java:28)

at org.asynchttpclient.netty.SimpleChannelFutureListener.operationComplete(SimpleChannelFutureListener.java:20)

at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:578)

at io.netty.util.concurrent.DefaultPromise.notifyListeners0(DefaultPromise.java:571)

at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:550)

at io.netty.util.concurrent.DefaultPromise.notifyListeners(DefaultPromise.java:491)

at io.netty.util.concurrent.DefaultPromise.setValue0(DefaultPromise.java:616)

at io.netty.util.concurrent.DefaultPromise.setFailure0(DefaultPromise.java:609)

at io.netty.util.concurrent.DefaultPromise.tryFailure(DefaultPromise.java:117)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.fulfillConnectPromise(AbstractNioChannel.java:321)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:337)

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:710)

at io.netty.channel.nio.NioEventLoop.processSelectedKeysOptimized(NioEventLoop.java:658)

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:584)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:496)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

... 1 more

Caused by: io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: hub/172.19.0.2:4444

Caused by: java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at io.netty.channel.socket.nio.NioSocketChannel.doFinishConnect(NioSocketChannel.java:330)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:334)

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:710)

at io.netty.channel.nio.NioEventLoop.processSelectedKeysOptimized(NioEventLoop.java:658)

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:584)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:496)

at io.netty.util.concurrent.SingleThreadEventExecutor$4.run(SingleThreadEventExecutor.java:986)

at io.netty.util.internal.ThreadExecutorMap$2.run(ThreadExecutorMap.java:74)

at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)

at java.lang.Thread.run(Thread.java:748)

```

### Operating System

Windows 10

### Selenium version

Selenium Grid with nodechrome = 4.1.3

### What are the browser(s) and version(s) where you see this issue?

Chrome latest version 100

### What are the browser driver(s) and version(s) where you see this issue?

Selenium Grid with nodechrome = 4.1.3

### Are you using Selenium Grid?

Yes - Selenium Grid 4.1.3 with docker-compose | non_test | what happened i m noticing flaky session not created issue in my personal test framework i have a selenium grid running with the help of docker compose i run my tests on gitlab ci mostly it works fine but suddenly starts to fail sometime even a small change not related to selenium gird in my code creates this issue to demostrate this i m sharing my gitlab repo link and under ci you can see that i tried running the same codebase without any changes and it failed first and then successfully ran if you check the logs you will notice the below error i have tried using selenium grid and org openqa selenium sessionnotcreatedexception could not start a new session possible causes are invalid address of the remote server or browser start up failure build info version revision system info host ip os name linux os arch os version java version driver info org openqa selenium remote remotewebdriver command extensions desiredcapabilities capabilities browsername chrome goog chromeoptions args extensions name ui regression capabilities at org openqa selenium remote remotewebdriver execute remotewebdriver java at org openqa selenium remote remotewebdriver startsession remotewebdriver java at org openqa selenium remote remotewebdriver remotewebdriver java at org openqa selenium remote remotewebdriver remotewebdriver java at functional basetest getremotedriver basetest java how can we reproduce the issue shell below is my project link relevant log output shell org openqa selenium sessionnotcreatedexception could not start a new session possible causes are invalid address of the remote server or browser start up failure build info version revision system info host ip os name linux os arch os version java version driver info org openqa selenium remote remotewebdriver command extensions desiredcapabilities capabilities browsername chrome goog chromeoptions args extensions name ui regression capabilities at org openqa selenium 
remote remotewebdriver execute remotewebdriver java at org openqa selenium remote remotewebdriver startsession remotewebdriver java at org openqa selenium remote remotewebdriver remotewebdriver java at org openqa selenium remote remotewebdriver remotewebdriver java at functional basetest getremotedriver basetest java at functional basetest beforeclasssetup basetest java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org testng internal invokers methodinvocationhelper invokemethod methodinvocationhelper java at org testng internal invokers methodinvocationhelper invokemethodconsideringtimeout methodinvocationhelper java at org testng internal invokers configinvoker invokeconfigurationmethod configinvoker java at org testng internal invokers configinvoker invokeconfigurations configinvoker java at org testng internal invokers testmethodworker invokebeforeclassmethods testmethodworker java at org testng internal invokers testmethodworker run testmethodworker java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java caused by java io uncheckedioexception java net connectexception connection refused hub at org openqa selenium remote http netty nettyhttphandler makecall nettyhttphandler java at org openqa selenium remote http addseleniumuseragent lambda apply addseleniumuseragent java at org openqa selenium remote http filter lambda andfinally filter java at org openqa selenium remote http netty nettyhttphandler execute nettyhttphandler java at org openqa selenium remote http addseleniumuseragent lambda apply addseleniumuseragent java at org openqa selenium remote http filter lambda andfinally filter java at org openqa 
selenium remote http netty nettyclient execute nettyclient java at org openqa selenium remote tracing tracedhttpclient execute tracedhttpclient java at org openqa selenium remote protocolhandshake createsession protocolhandshake java at org openqa selenium remote protocolhandshake createsession protocolhandshake java at org openqa selenium remote protocolhandshake createsession protocolhandshake java at org openqa selenium remote httpcommandexecutor execute httpcommandexecutor java at org openqa selenium remote tracedcommandexecutor execute tracedcommandexecutor java at org openqa selenium remote remotewebdriver execute remotewebdriver java more caused by java net connectexception connection refused hub at org asynchttpclient netty channel nettyconnectlistener onfailure nettyconnectlistener java at org asynchttpclient netty channel nettychannelconnector onfailure nettychannelconnector java at org asynchttpclient netty simplechannelfuturelistener operationcomplete simplechannelfuturelistener java at org asynchttpclient netty simplechannelfuturelistener operationcomplete simplechannelfuturelistener java at io netty util concurrent defaultpromise defaultpromise java at io netty util concurrent defaultpromise defaultpromise java at io netty util concurrent defaultpromise notifylistenersnow defaultpromise java at io netty util concurrent defaultpromise notifylisteners defaultpromise java at io netty util concurrent defaultpromise defaultpromise java at io netty util concurrent defaultpromise defaultpromise java at io netty util concurrent defaultpromise tryfailure defaultpromise java at io netty channel nio abstractniochannel abstractniounsafe fulfillconnectpromise abstractniochannel java at io netty channel nio abstractniochannel abstractniounsafe finishconnect abstractniochannel java at io netty channel nio nioeventloop processselectedkey nioeventloop java at io netty channel nio nioeventloop processselectedkeysoptimized nioeventloop java at io netty channel nio 
nioeventloop processselectedkeys nioeventloop java at io netty channel nio nioeventloop run nioeventloop java at io netty util concurrent singlethreadeventexecutor run singlethreadeventexecutor java at io netty util internal threadexecutormap run threadexecutormap java at io netty util concurrent fastthreadlocalrunnable run fastthreadlocalrunnable java more caused by io netty channel abstractchannel annotatedconnectexception connection refused hub caused by java net connectexception connection refused at sun nio ch socketchannelimpl checkconnect native method at sun nio ch socketchannelimpl finishconnect socketchannelimpl java at io netty channel socket nio niosocketchannel dofinishconnect niosocketchannel java at io netty channel nio abstractniochannel abstractniounsafe finishconnect abstractniochannel java at io netty channel nio nioeventloop processselectedkey nioeventloop java at io netty channel nio nioeventloop processselectedkeysoptimized nioeventloop java at io netty channel nio nioeventloop processselectedkeys nioeventloop java at io netty channel nio nioeventloop run nioeventloop java at io netty util concurrent singlethreadeventexecutor run singlethreadeventexecutor java at io netty util internal threadexecutormap run threadexecutormap java at io netty util concurrent fastthreadlocalrunnable run fastthreadlocalrunnable java at java lang thread run thread java operating system windows selenium version selenium grid with nodechrome what are the browser s and version s where you see this issue chrome latest version what are the browser driver s and version s where you see this issue selenium grid with nodechrome are you using selenium grid yes selenium grid with docker compose | 0 |

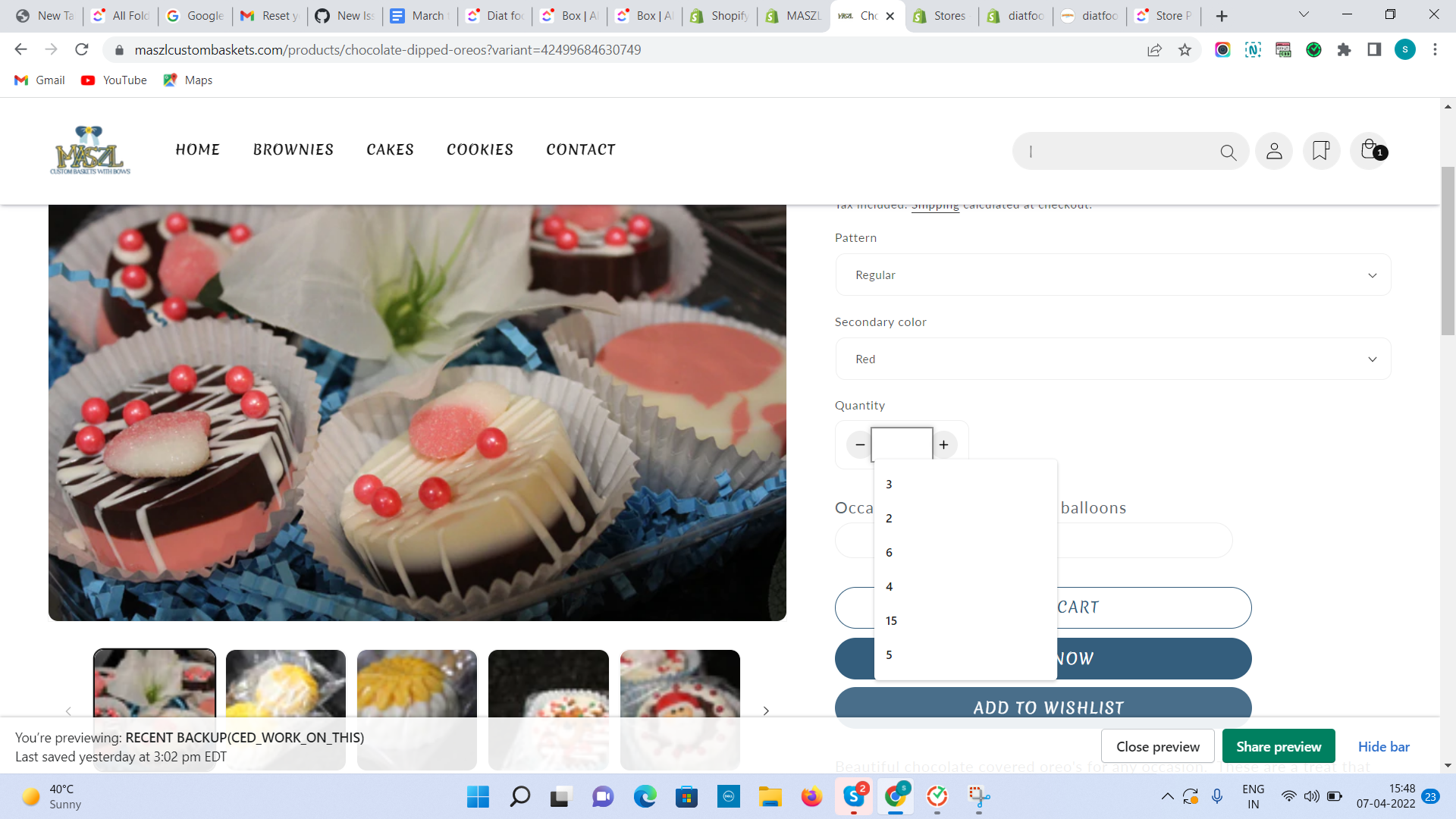

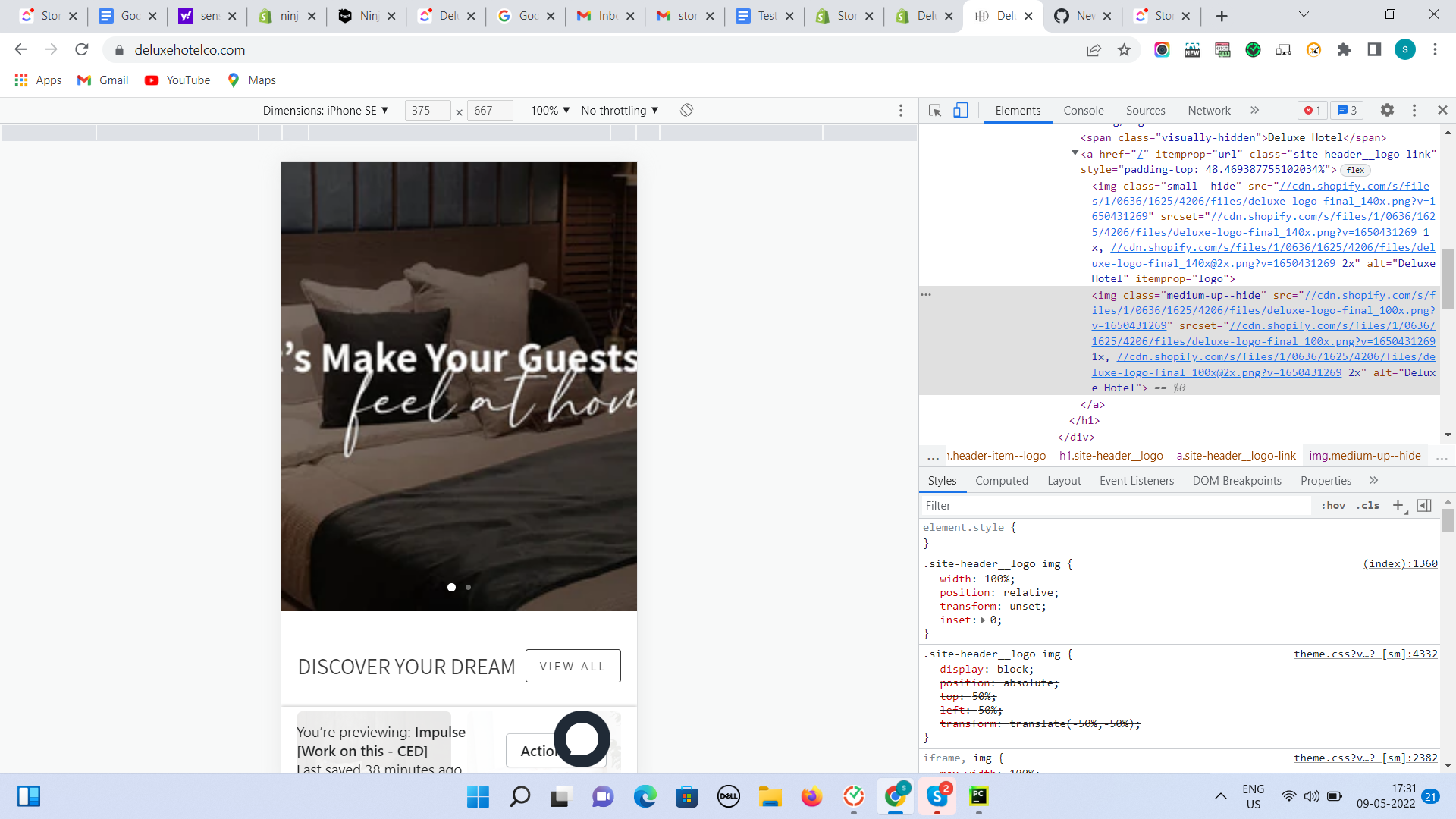

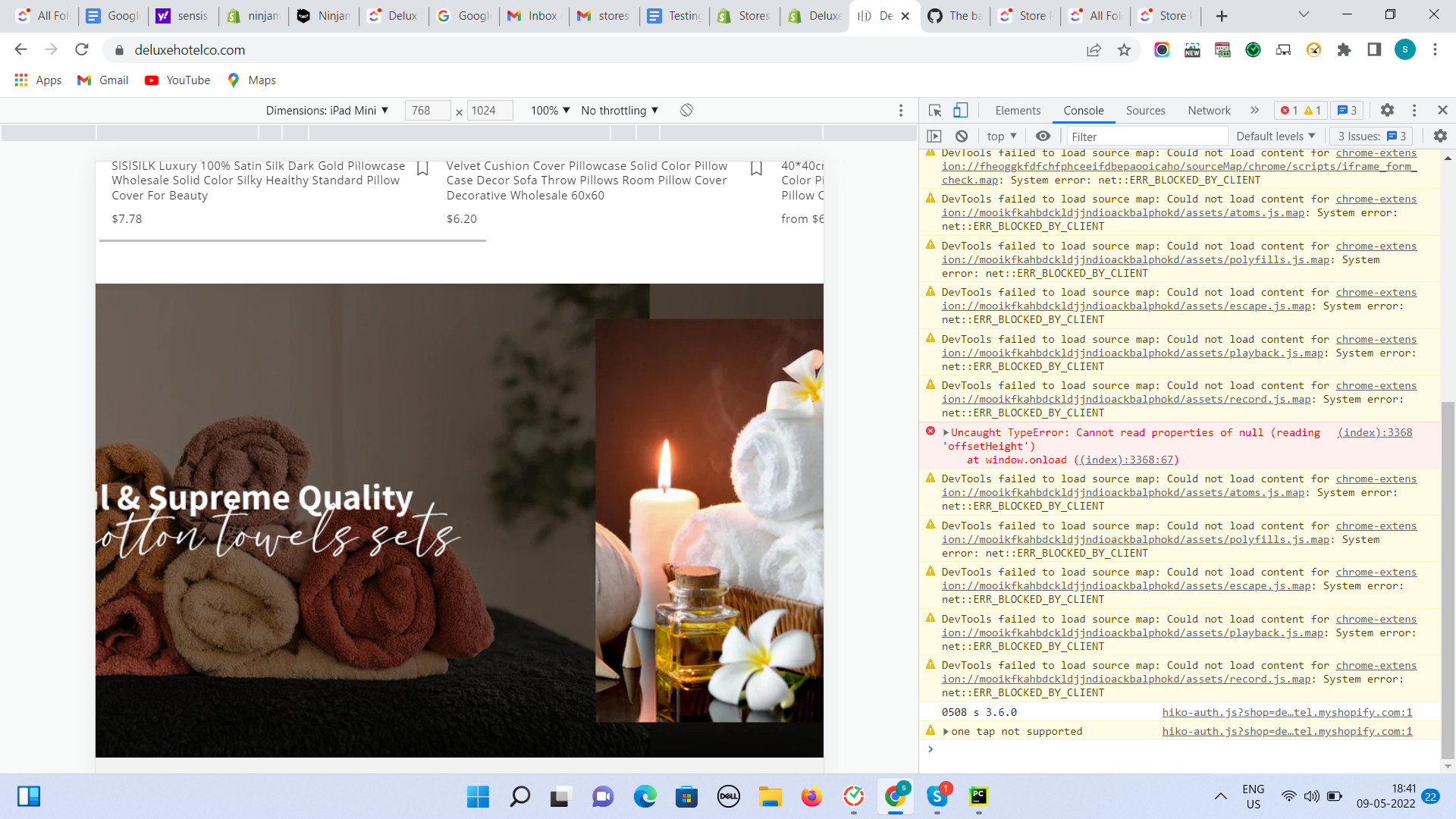

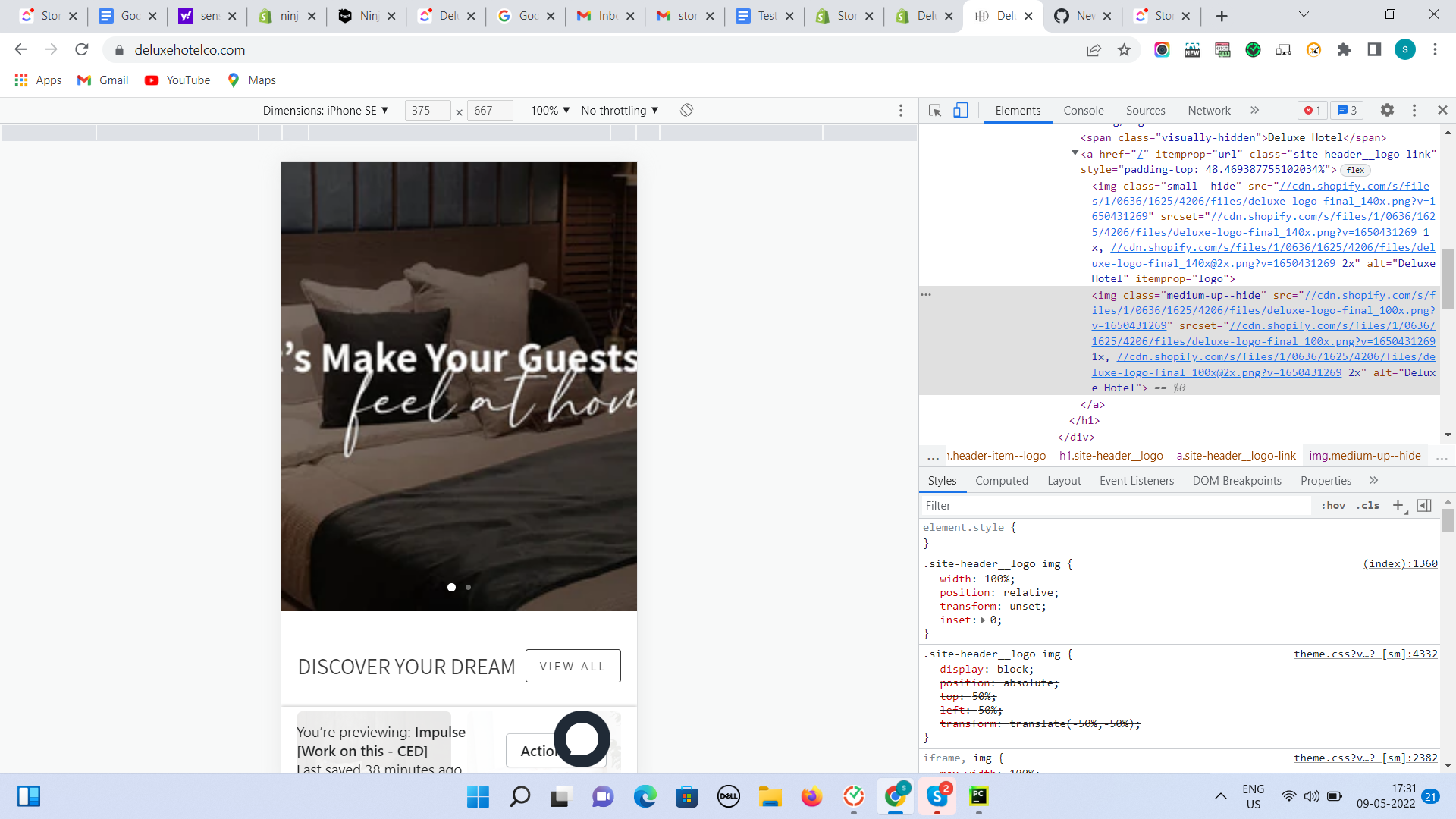

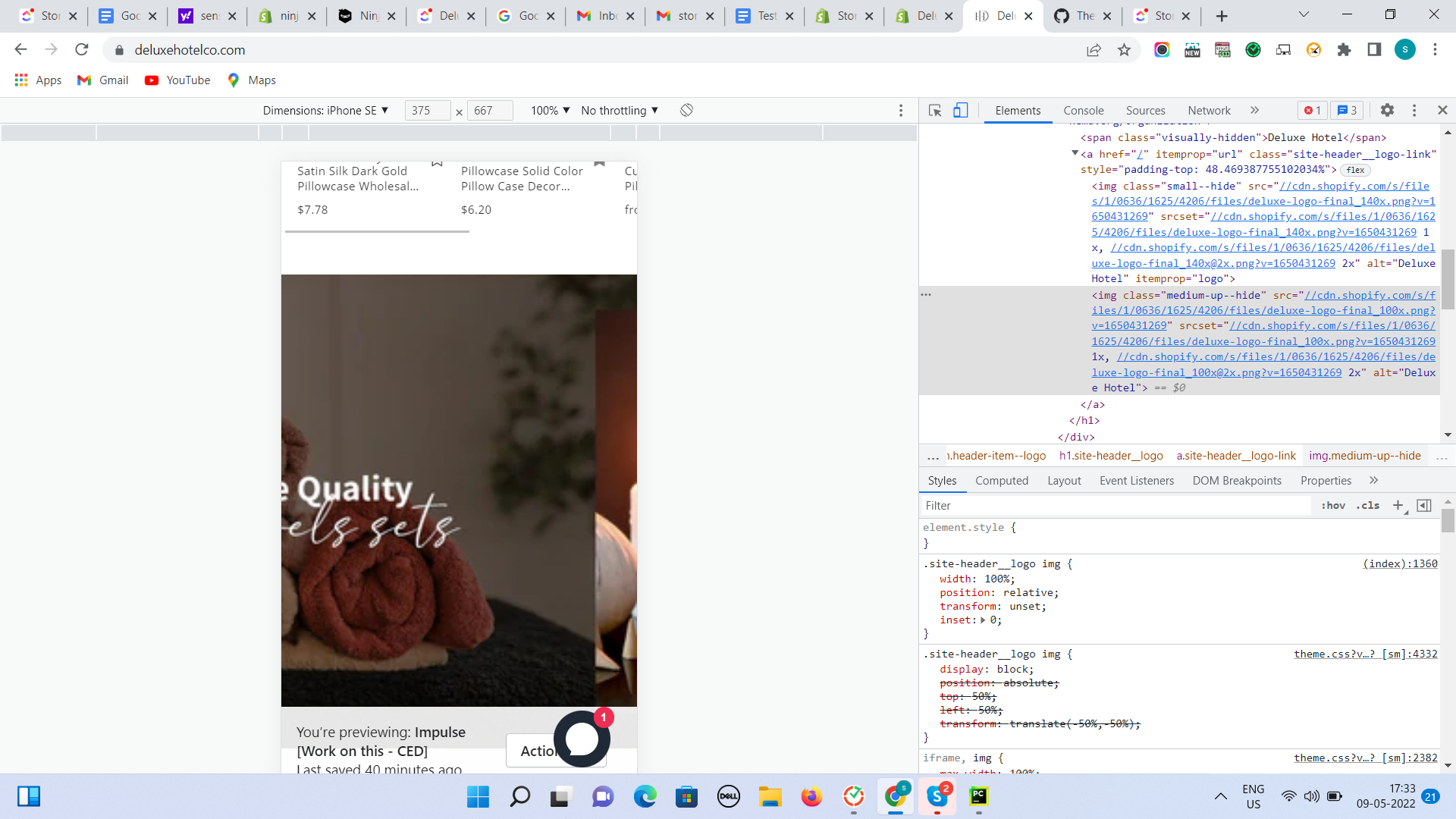

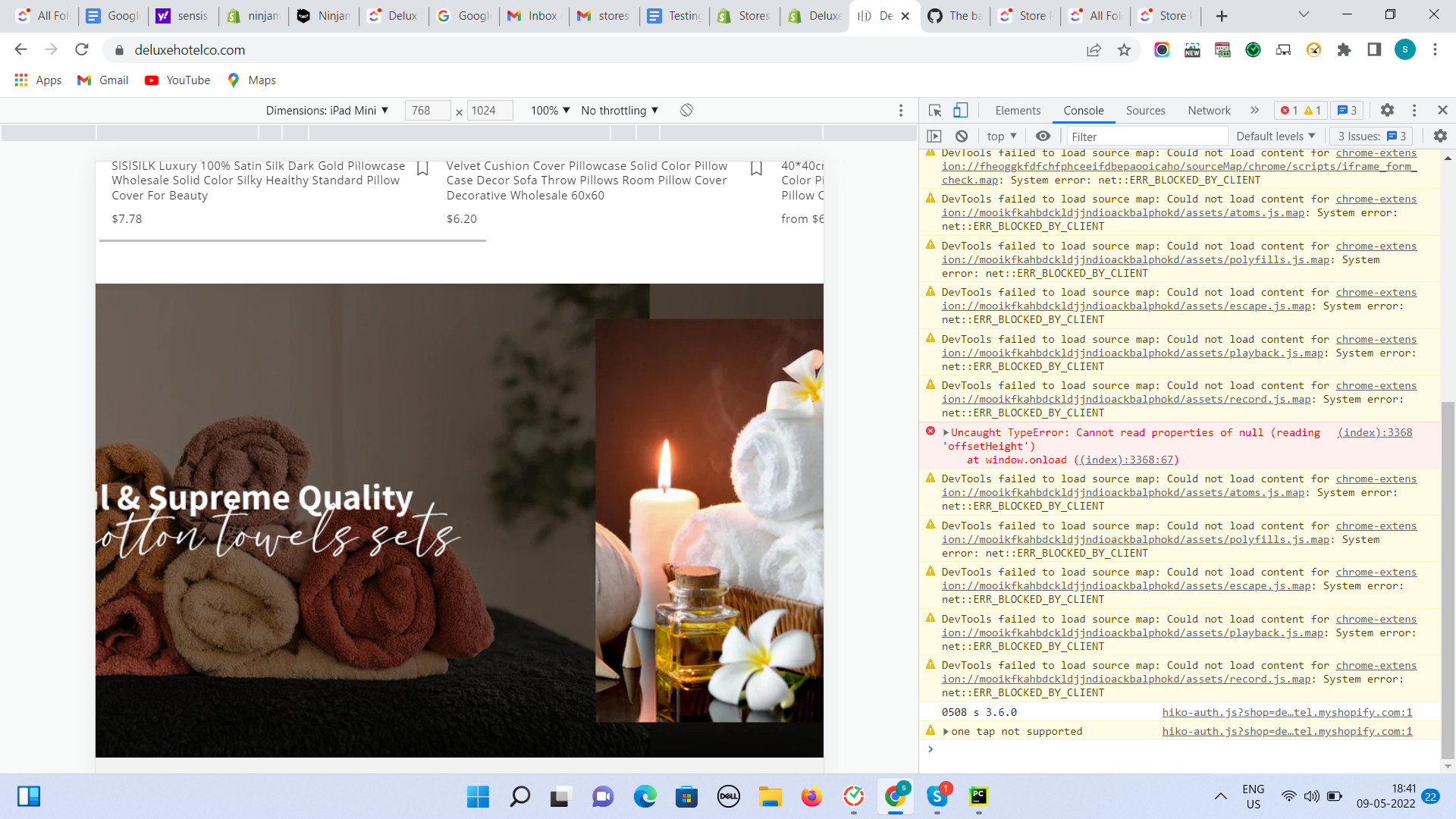

254,618 | 21,800,780,603 | IssuesEvent | 2022-05-16 04:48:55 | stores-cedcommerce/Deluxe-Hotel-LCC-Store-Redesign | https://api.github.com/repos/stores-cedcommerce/Deluxe-Hotel-LCC-Store-Redesign | closed | The banner text is cropping. | Tab Mobile Ready to test fixed homepage | **Actual result:**

The banner text is cropping.

**Expected result:**

The text of the banner should not be cropped.

| 1.0 | The banner text is cropping. - **Actual result:**

The banner text is cropping.

**Expected result:**

The text of the banner should not be cropped.

| test | the banner text is cropping actual result the banner text is cropping expected result the text of the banner should not be cropped | 1 |

465,159 | 13,357,861,078 | IssuesEvent | 2020-08-31 10:35:22 | input-output-hk/ouroboros-network | https://api.github.com/repos/input-output-hk/ouroboros-network | closed | Incorrect trimming of the Shelley Ledger View History | bug consensus priority high shelley ledger integration | The `LedgerViewHistory` maintained by the Shelley `LedgerState` is trimmed after a snapshot of the old ledger view history is made.

Call stack:

https://github.com/input-output-hk/ouroboros-network/blob/0934a3cb1e24ecbfcbab4e40522d99ffcd60feaf/ouroboros-consensus-shelley/src/Ouroboros/Consensus/Shelley/Ledger/History.hs#L70

https://github.com/input-output-hk/ouroboros-network/blob/0934a3cb1e24ecbfcbab4e40522d99ffcd60feaf/ouroboros-consensus/src/Ouroboros/Consensus/Ledger/History.hs#L103

This last module, `Ouroboros.Consensus.Ledger.History` is reused by the Byron ledger to maintain a history of the delegation state (= the Byron ledger view).

In `Ouroboros.Consensus.Ledger.History.trim`, snapshots older than "the earliest slot we might roll back to" are trimmed. This "earliest slot ..." is defined as "now - `2k`". This is correct for Byron, but not for Shelley! For Shelley, it should be "now - `3k/f`". This means that the effective rollback in the Shelley era is much shorter than it should be. | 1.0 | Incorrect trimming of the Shelley Ledger View History - The `LedgerViewHistory` maintained by the Shelley `LedgerState` is trimmed after a snapshot of the old ledger view history is made.

Call stack:

https://github.com/input-output-hk/ouroboros-network/blob/0934a3cb1e24ecbfcbab4e40522d99ffcd60feaf/ouroboros-consensus-shelley/src/Ouroboros/Consensus/Shelley/Ledger/History.hs#L70

https://github.com/input-output-hk/ouroboros-network/blob/0934a3cb1e24ecbfcbab4e40522d99ffcd60feaf/ouroboros-consensus/src/Ouroboros/Consensus/Ledger/History.hs#L103

This last module, `Ouroboros.Consensus.Ledger.History` is reused by the Byron ledger to maintain a history of the delegation state (= the Byron ledger view).

In `Ouroboros.Consensus.Ledger.History.trim`, snapshots older than "the earliest slot we might roll back to" are trimmed. This "earliest slot ..." is defined as "now - `2k`". This is correct for Byron, but not for Shelley! For Shelley, it should be "now - `3k/f`". This means that the effective rollback in the Shelley era is much shorter than it should be. | non_test | incorrect trimming of the shelley ledger view history the ledgerviewhistory maintained by the shelley ledgerstate is trimmed after a snapshot of the old ledger view history is made call stack this last module ouroboros consensus ledger history is reused by the byron ledger to maintain a history of the delegation state the byron ledger view in ouroboros consensus ledger history trim snapshots older than the earliest slot we might roll back to are trimmed this earliest slot is defined as now this is correct for byron but not for shelley for shelley it should be now f this means that the effective rollback in the shelley era is much shorter than it should be | 0 |
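
To give a rough sense of the gap described in the issue above, here is a minimal Python sketch comparing the two rollback windows, "now - `2k`" and "now - `3k/f`". The values `k = 2160` and `f = 1/20` are illustrative assumptions that do not appear in the issue, and the function names are hypothetical; this is not the Haskell code in `Ouroboros.Consensus.Ledger.History`.

```python
from fractions import Fraction


def byron_window(k: int) -> int:
    """Number of slots to retain under the 'now - 2k' rule (Byron)."""
    return 2 * k


def shelley_window(k: int, f: Fraction) -> int:
    """Number of slots to retain under the 'now - 3k/f' rule (Shelley)."""
    return int(Fraction(3 * k) / f)


# Assumed, illustrative parameters (not taken from the issue).
K = 2160
F = Fraction(1, 20)

BYRON = byron_window(K)        # 4320 slots
SHELLEY = shelley_window(K, F)  # 129600 slots

# With these assumed values, trimming at 'now - 2k' keeps a window
# 30 times shorter than the 'now - 3k/f' window.
RATIO = SHELLEY // BYRON       # 30
```

Under these assumptions, a history trimmed with the Byron rule would discard snapshots that the Shelley rule still needs, which matches the issue's point that the effective rollback is much shorter than intended.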

221,232 | 17,314,524,094 | IssuesEvent | 2021-07-27 02:58:03 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | opened | Only download one folder's blobs when trying to download all blobs under flat list mode | :gear: blobs 🧪 testing | **Storage Explorer Version**: 1.21.0-dev

**Build Number**: 20210727.2

**Branch**: main

**Platform/OS**: Windows 10/ Linux Ubuntu 20.04/ MacOS Big Sur 11.4

**Architecture**: ia32/x64

**How Found**: Exploratory testing

**Regression From**: Not a regression

## Steps to Reproduce ##

1. Expand one Non-ADLS Gen2 storage account -> Blob Containers.

2. Create a blob container -> Create a new folder then upload blobs to it.

3. Back to the root -> Create another folder -> Upload blobs to it.

4. Click 'Show View Options' -> Select 'Flat'.

5. Hide view options panel -> Select all items -> Click 'Download'.

6. Check whether all the blobs are downloaded.

## Expected Experience ##

All blobs are downloaded.

## Actual Experience ##

Only the blobs under one folder are downloaded. | 1.0 | Only download one folder's blobs when trying to download all blobs under flat list mode - **Storage Explorer Version**: 1.21.0-dev

**Build Number**: 20210727.2

**Branch**: main

**Platform/OS**: Windows 10/ Linux Ubuntu 20.04/ MacOS Big Sur 11.4

**Architecture**: ia32/x64

**How Found**: Exploratory testing

**Regression From**: Not a regression

## Steps to Reproduce ##

1. Expand one Non-ADLS Gen2 storage account -> Blob Containers.

2. Create a blob container -> Create a new folder then upload blobs to it.

3. Back to the root -> Create another folder -> Upload blobs to it.

4. Click 'Show View Options' -> Select 'Flat'.

5. Hide view options panel -> Select all items -> Click 'Download'.

6. Check whether all the blobs are downloaded.

## Expected Experience ##

All blobs are downloaded.

## Actual Experience ##

Only the blobs under one folder are downloaded. | test | only download one folder s blobs when trying to download all blobs under flat list mode storage explorer version dev build number branch main platform os windows linux ubuntu macos big sur architecture how found exploratory testing regression from not a regression steps to reproduce expand one non adls storage account blob containers create a blob container create a new folder then upload blobs to it back to the root create another folder upload blobs to it click show view options select flat hide view options panel select all items click download check whether all the blobs are downloaded expected experience all blobs are downloaded actual experience only the blobs under one folder are downloaded | 1 |

351,163 | 31,986,894,019 | IssuesEvent | 2023-09-21 00:35:39 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix tensor.test_torch___lt__ | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-failure-red></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

| 1.0 | Fix tensor.test_torch___lt__ - | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-failure-red></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/6254778160/job/16982944534"><img src=https://img.shields.io/badge/-success-success></a>

| test | fix tensor test torch lt numpy a href src jax a href src tensorflow a href src torch a href src paddle a href src | 1 |

49,301 | 26,090,480,455 | IssuesEvent | 2022-12-26 10:37:20 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Task]: POC dataTree split | Performance Task Evaluated Value | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### SubTasks

main issue https://github.com/appsmithorg/appsmith/issues/11351 | True | [Task]: POC dataTree split - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### SubTasks

main issue https://github.com/appsmithorg/appsmith/issues/11351 | non_test | poc datatree split is there an existing issue for this i have searched the existing issues subtasks main issue | 0 |

613,431 | 19,090,136,495 | IssuesEvent | 2021-11-29 11:08:35 | SAP/xsk | https://api.github.com/repos/SAP/xsk | closed | [Migration] Enable selection of multiple Delivery Units | core priority-medium usability tooling | ### Background

Complete XS classic applications might consist of multiple delivery units (DUs) so the migration wizard should allow for the selection of multiple DUs at once.

### Target

In the migration wizard, when the list of DUs is shown, change the view so that the user can select multiple DUs at the same time - i.e. a dropdown with multiselect.

All DUs must be migrated in the same workspace.

**Failure behavior**

If migration of 1 DU fails it should be removed and the process should continue to the next one | 1.0 | [Migration] Enable selection of multiple Delivery Units - ### Background

Complete XS classic applications might consist of multiple delivery units (DUs) so the migration wizard should allow for the selection of multiple DUs at once.

### Target

In the migration wizard, when the list of DUs is shown, change the view so that the user can select multiple DUs at the same time - i.e. a dropdown with multiselect.

All DUs must be migrated in the same workspace.

**Failure behavior**

If migration of 1 DU fails it should be removed and the process should continue to the next one | non_test | enable selection of multiple delivery units background complete xs classic applications might consist of multiple delivery units dus so the migration wizard should allow for the selection of multiple dus at once target in the migration wizard when the list of dus is show change the view so that the user can select multiple dus at the same time ie dropdown with multiselect all dus must be migrated in the same workspace failure behavior if migration of du fails it should be removed and the process should continue to the next one | 0 |

29,732 | 4,535,329,469 | IssuesEvent | 2016-09-08 17:00:04 | mozilla/fxa-content-server | https://api.github.com/repos/mozilla/fxa-content-server | opened | force_auth error hidden as soon as it's displayed | tests ❤❤❤ |

This is causing test bustage on latest. | 1.0 | force_auth error hidden as soon as it's displayed -

This is causing test bustage on latest. | test | force auth error hidden as soon as it s displayed this is causing test bustage on latest | 1 |

97,170 | 8,650,556,531 | IssuesEvent | 2018-11-26 22:59:16 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Not able to launch kubectl shell from UI , gets stuck in "connecting.." | kind/bug-qa status/resolved status/to-test version/2.0 | Rancher server version - v2.1.2-rc13

Steps to reproduce the problem:

Create a 1 node DO cluster.

Try to launch kubectl shell from UI using the "launch kubectl" option.

Kubectl shell gets stuck in "connecting.."

Note - This issue is not seen when testing with v2.1.2-rc12 | 1.0 | Not able to launch kubectl shell from UI , gets stuck in "connecting.." - Rancher server version - v2.1.2-rc13

Steps to reproduce the problem:

Create a 1 node DO cluster.

Try to launch kubectl shell from UI using the "launch kubectl" option.

Kubectl shell gets stuck in "connecting.."

Note - This issue is not seen when testing with v2.1.2-rc12 | test | not able to launch kubectl shell from ui gets stuck in connecting rancher server version steps to reproduce the problem create a node do cluster try to launch kubectl shell from ui using the launch kubectl option kubectl shell gets stuck in connecting note this issue is not seen when testing with | 1 |

38,043 | 5,164,904,938 | IssuesEvent | 2017-01-17 12:01:58 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | CardinalityEstimatorAdvancedTest.testCardinalityEstimatorSpawnNodeInParallel | Team: Core Type: Test-Failure | ```

java.lang.AssertionError: CountDownLatch failed to complete within 120 seconds , count left: 1

at org.junit.Assert.fail(Assert.java:88)

at org.junit.Assert.assertTrue(Assert.java:41)

at com.hazelcast.test.HazelcastTestSupport.assertOpenEventually(HazelcastTestSupport.java:812)

at com.hazelcast.test.HazelcastTestSupport.assertOpenEventually(HazelcastTestSupport.java:805)

at com.hazelcast.test.HazelcastTestSupport.assertOpenEventually(HazelcastTestSupport.java:797)

at com.hazelcast.cardinality.CardinalityEstimatorAdvancedTest.testCardinalityEstimatorSpawnNodeInParallel(CardinalityEstimatorAdvancedTest.java:104)

```

https://hazelcast-l337.ci.cloudbees.com/view/Hazelcast/job/Hazelcast-3.x-OracleJDK1.6/com.hazelcast$hazelcast/1069/testReport/junit/com.hazelcast.cardinality/CardinalityEstimatorAdvancedTest/testCardinalityEstimatorSpawnNodeInParallel/

https://hazelcast-l337.ci.cloudbees.com/view/Hazelcast/job/Hazelcast-3.x-OracleJDK8/com.hazelcast$hazelcast/963/testReport/junit/com.hazelcast.cardinality/CardinalityEstimatorAdvancedTest/testCardinalityEstimatorSpawnNodeInParallel/

| 1.0 | CardinalityEstimatorAdvancedTest.testCardinalityEstimatorSpawnNodeInParallel - ```

java.lang.AssertionError: CountDownLatch failed to complete within 120 seconds , count left: 1

at org.junit.Assert.fail(Assert.java:88)

at org.junit.Assert.assertTrue(Assert.java:41)

at com.hazelcast.test.HazelcastTestSupport.assertOpenEventually(HazelcastTestSupport.java:812)

at com.hazelcast.test.HazelcastTestSupport.assertOpenEventually(HazelcastTestSupport.java:805)

at com.hazelcast.test.HazelcastTestSupport.assertOpenEventually(HazelcastTestSupport.java:797)

at com.hazelcast.cardinality.CardinalityEstimatorAdvancedTest.testCardinalityEstimatorSpawnNodeInParallel(CardinalityEstimatorAdvancedTest.java:104)

```

https://hazelcast-l337.ci.cloudbees.com/view/Hazelcast/job/Hazelcast-3.x-OracleJDK1.6/com.hazelcast$hazelcast/1069/testReport/junit/com.hazelcast.cardinality/CardinalityEstimatorAdvancedTest/testCardinalityEstimatorSpawnNodeInParallel/

https://hazelcast-l337.ci.cloudbees.com/view/Hazelcast/job/Hazelcast-3.x-OracleJDK8/com.hazelcast$hazelcast/963/testReport/junit/com.hazelcast.cardinality/CardinalityEstimatorAdvancedTest/testCardinalityEstimatorSpawnNodeInParallel/

| test | cardinalityestimatoradvancedtest testcardinalityestimatorspawnnodeinparallel java lang assertionerror countdownlatch failed to complete within seconds count left at org junit assert fail assert java at org junit assert asserttrue assert java at com hazelcast test hazelcasttestsupport assertopeneventually hazelcasttestsupport java at com hazelcast test hazelcasttestsupport assertopeneventually hazelcasttestsupport java at com hazelcast test hazelcasttestsupport assertopeneventually hazelcasttestsupport java at com hazelcast cardinality cardinalityestimatoradvancedtest testcardinalityestimatorspawnnodeinparallel cardinalityestimatoradvancedtest java | 1 |

193,051 | 15,365,877,652 | IssuesEvent | 2021-03-02 00:25:44 | MicTott/FrozenPy | https://api.github.com/repos/MicTott/FrozenPy | closed | Need to add extinction example | documentation | Title. Need to complete documentation and examples so lab can transition easily | 1.0 | Need to add extinction example - Title. Need to complete documentation and examples so lab can transition easily | non_test | need to add extinction example title need to complete documentation and examples so lab can transition easily | 0 |

742,703 | 25,866,786,406 | IssuesEvent | 2022-12-13 21:39:59 | IBMa/equal-access | https://api.github.com/repos/IBMa/equal-access | closed | Clean up mdx template | Bug engine priority-3 (low) | The mdx template (accessibility-checker-engine/help/a_rule_help_template.mdx) has a number of spaces on empty lines, which are causing odd formatting glitches.

| 1.0 | Clean up mdx template - The mdx template (accessibility-checker-engine/help/a_rule_help_template.mdx) has a number of spaces on empty lines, which are causing odd formatting glitches.

| non_test | clean up mdx template the mdx template accessibility checker engine help a rule help template mdx has a number of spaces on empty lines which are causing odd formatting glitches | 0 |

344,111 | 10,340,036,500 | IssuesEvent | 2019-09-03 20:50:43 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | k_uptime_get_32() does not behave as documented | bug has-pr priority: high | It was recently pointed out on slack that `k_uptime_get_32()` does not return `The lower 32-bits of the elapsed time since the system booted, in milliseconds.`

It returns instead the number of milliseconds corresponding to the low 32 bits of the tick counter.

```

u32_t z_impl_k_uptime_get_32(void)

{

return __ticks_to_ms(z_tick_get_32());

}

```

The values are significantly different when you look at a 32-bit rollover of the tick clock:

```

At 10000 Hz ticks and 2^32 +/- 50:

t0 = 0x0000ffffffce = 4294967246 ticks => t0m = 429496724 ms

t1 = 0x000100000032 = 4294967346 ticks => t1m = 429496734 ms

t1-t0 = 100 ticks = 10 ms

and t1m-t0m = 10 ms

tt0 = 0x0000ffffffce = 4294967246 ticks => tt0m = 429496724 ms

tt1 = 0x000000000032 = 50 ticks => tt1m = 5 ms

tt1-tt0 = 100 ticks = 10 ms

but tt1m-tt0m = 3865470577 ms

```

This explains https://github.com/zephyrproject-rtos/zephyr/pull/17155#discussion_r309013463 which had claimed that the current implementation followed an algorithm that I noted was incorrect. Apparently it does. | 1.0 | k_uptime_get_32() does not behave as documented - It was recently pointed out on slack that `k_uptime_get_32()` does not return `The lower 32-bits of the elapsed time since the system booted, in milliseconds.`

It returns instead the number of milliseconds corresponding to the low 32 bits of the tick counter.

```

u32_t z_impl_k_uptime_get_32(void)

{

return __ticks_to_ms(z_tick_get_32());

}

```

The values are significantly different when you look at a 32-bit rollover of the tick clock:

```

At 10000 Hz ticks and 2^32 +/- 50:

t0 = 0x0000ffffffce = 4294967246 ticks => t0m = 429496724 ms

t1 = 0x000100000032 = 4294967346 ticks => t1m = 429496734 ms

t1-t0 = 100 ticks = 10 ms

and t1m-t0m = 10 ms

tt0 = 0x0000ffffffce = 4294967246 ticks => tt0m = 429496724 ms

tt1 = 0x000000000032 = 50 ticks => tt1m = 5 ms

tt1-tt0 = 100 ticks = 10 ms

but tt1m-tt0m = 3865470577 ms

```

This explains https://github.com/zephyrproject-rtos/zephyr/pull/17155#discussion_r309013463 which had claimed that the current implementation followed an algorithm that I noted was incorrect. Apparently it does. | non_test | k uptime get does not behave as documented it was recently pointed out on slack that k uptime get does not return the lower bits of the elapsed time since the system booted in milliseconds it returns instead the number of milliseconds corresponding to the low bits of the tick counter t z impl k uptime get void return ticks to ms z tick get the values are significantly different when you look at a bit rollover of the tick clock at hz ticks and ticks ms ticks ms ticks ms and ms ticks ms ticks ms ticks ms but ms this explains which had claimed that the current implementation followed an algorithm that i noted was incorrect apparently it does | 0 |
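
The rollover arithmetic in the issue above can be reproduced with a short Python sketch. It assumes the 10000 Hz tick rate from the issue's example and a simplified integer conversion `ticks * 1000 // rate`; the helper names are hypothetical and this is not Zephyr's actual `__ticks_to_ms` implementation.

```python
MASK32 = 0xFFFFFFFF
TICKS_PER_SEC = 10_000  # tick rate assumed from the issue's example


def ticks_to_ms(ticks: int) -> int:
    """Simplified tick-to-millisecond conversion (an assumption)."""
    return ticks * 1000 // TICKS_PER_SEC


def uptime_ms_truncate_then_convert(ticks64: int) -> int:
    """Behaviour described in the issue: keep the low 32 bits of the
    tick counter first, then convert that value to milliseconds."""
    return ticks_to_ms(ticks64 & MASK32)


def uptime_ms_convert_then_truncate(ticks64: int) -> int:
    """Documented behaviour: convert the full tick count to milliseconds,
    then keep the low 32 bits of the result."""
    return ticks_to_ms(ticks64) & MASK32


def elapsed32(later: int, earlier: int) -> int:
    """Unsigned 32-bit difference, as a caller using u32_t would compute."""
    return (later - earlier) & MASK32


# Two samples 100 ticks (10 ms) apart, straddling the 32-bit tick rollover.
t0 = 2**32 - 50
t1 = 2**32 + 50

wrong = elapsed32(uptime_ms_truncate_then_convert(t1),
                  uptime_ms_truncate_then_convert(t0))
right = elapsed32(uptime_ms_convert_then_truncate(t1),
                  uptime_ms_convert_then_truncate(t0))

print(wrong)  # 3865470577
print(right)  # 10
```

Truncating before converting makes the millisecond value wrap at a point that is not a power of two, so the usual unsigned subtraction no longer yields the true elapsed time; converting first keeps the wrap at 2^32 ms.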

52,624 | 10,885,288,185 | IssuesEvent | 2019-11-18 10:06:01 | rapid-eth/rapid-adventures | https://api.github.com/repos/rapid-eth/rapid-adventures | opened | [Component/Page] QuestPagePrimary | code | The QuestPagePrimary component will manage several of the Quest Views: QuestSearch, QuestCreateModal, etc...

- [ ] <QuestSearch />

- [ ] <QuestCreateModal />

## Component(s)

### QuestSearch

- [ ] Display Quest Search

- [ ] Provide View Selection (Card/List)

### QuestCreateModal

- [ ] Use `react-portal-system`

- [ ] Render `<FormQuestCreate />` | 1.0 | [Component/Page] QuestPagePrimary - The QuestPagePrimary component will manage several of the Quest Views: QuestSearch, QuestCreateModal, etc...

- [ ] <QuestSearch />

- [ ] <QuestCreateModal />

## Component(s)

### QuestSearch

- [ ] Display Quest Search

- [ ] Provide View Selection (Card/List)

### QuestCreateModal

- [ ] Use `react-portal-system`

- [ ] Render `<FormQuestCreate />` | non_test | questpageprimary the questpageprimary component will manage several of the quest views questsearch questcreatemodal etc component s questsearch display quest search provide view selection card list questcreatemodal use react portal system render | 0 |

151,175 | 12,016,494,964 | IssuesEvent | 2020-04-10 16:13:30 | mathjax/MathJax | https://api.github.com/repos/mathjax/MathJax | closed | using "tex-...-full.js" still needs extensions being loaded and added ... | Feature Request Fixed Merged Test Needed v3 | The following minimal test code doesn't typeset the equations:

```

<!DOCTYPE html>

<head>

<meta charset="utf-8">

<meta http-equiv="x-ua-compatible" content="ie=edge">

<meta name="viewport" content="width=device-width">

<title>MathJax v3 with TeX input and SVG output</title>

<script>

MathJax = {

tex: {

inlineMath: [['$', '$']],

tagFormat: {

number: function(n){

return String(n).replace(/0/g,"00");

}

}

},

svg: {fontCache: 'global'}

};

</script>

<script id="MathJax-script" async src="tex-svg-full.js"></script>

</head>

<body>

When $a \ne 0$, there are two solutions to $ax^2 + bx + c = 0$ and they are

$$x = {-b \pm \sqrt{b^2-4ac} \over 2a}.$$

</body>

</html>

```

The problem is resolved if one adds the extension, loads it in the loader, and adds it as a package in the MathJax config; but aren't the "**...-full.js**" packages expected to be single-file solutions? | 1.0 | using "tex-...-full.js" still needs extensions being loaded and added ... - The following minimal test code doesn't typeset the equations:

```

<!DOCTYPE html>

<head>

<meta charset="utf-8">

<meta http-equiv="x-ua-compatible" content="ie=edge">

<meta name="viewport" content="width=device-width">

<title>MathJax v3 with TeX input and SVG output</title>

<script>

MathJax = {

tex: {

inlineMath: [['$', '$']],

tagFormat: {

number: function(n){

return String(n).replace(/0/g,"00");

}

}

},

svg: {fontCache: 'global'}

};

</script>

<script id="MathJax-script" async src="tex-svg-full.js"></script>

</head>

<body>

When $a \ne 0$, there are two solutions to $ax^2 + bx + c = 0$ and they are

$$x = {-b \pm \sqrt{b^2-4ac} \over 2a}.$$

</body>

</html>

```

The problem is resolved if one adds the extension, loads it in the loader, and adds it as a package in the MathJax config; but aren't the "**...-full.js**" packages expected to be single-file solutions? | test | using tex full js still needs extensions being loaded and added the following minimal test code doesn t typeset the equations mathjax with tex input and svg output mathjax tex inlinemath tagformat number function n return string n replace g svg fontcache global when a ne there are two solutions to ax bx c and they are x b pm sqrt b over the problem is resolved if one adds the extension loads it inside the loader and adds it as a package to the config setup of mathjax but isn t full js package expected to be single file solutions | 1 |

138,518 | 20,601,782,161 | IssuesEvent | 2022-03-06 11:28:39 | xieyuschen/grillen | https://api.github.com/repos/xieyuschen/grillen | opened | How to choose format of config file? | TechDesign | As a code generation project, grillen wants users to provide their setting files that define the action of their business. Here

# Which format of config file should we use?

There are many config file formats, such as `JSON` and `YAML`. Since `YAML` can also express `JSON` documents, I think we can use YAML as the format of the config file.

## How to represent API in the config file?

We can use YAML to describe the API. As OpenAPI suggests, an API can be represented in a YAML file; see https://swagger.io/docs/specification/basic-structure/

| 1.0 | How to choose format of config file? - As a code generation project, grillen wants users to provide their setting files that define the action of their business. Here

# Which format of config file should we use?

There are many config file formats, such as `JSON` and `YAML`. Since `YAML` can also express `JSON` documents, I think we can use YAML as the format of the config file.

## How to represent API in the config file?

We can use YAML to describe the API. As OpenAPI suggests, an API can be represented in a YAML file; see https://swagger.io/docs/specification/basic-structure/

| non_test | how to choose format of config file as a code generation project grillen wants users to provide their setting files that define the action of their business here which format of config file should we use there are many formats of config files such as json yaml and so on as yaml can support json so i think i can use yaml here as the format of the config file how to represent api in the config file can use yaml format to present api docs as openapi suggests to us we can use yaml file to represent api link is here | 0 |

132,488 | 10,757,036,798 | IssuesEvent | 2019-10-31 12:30:40 | elastic/beats | https://api.github.com/repos/elastic/beats | opened | Add integration test in test_base to cover the limits for default fields | :Testing libbeat | Elasticsearch `default_fields` has a default limit of 1024 fields. The limit is not enforced when we create the template, but it surfaces when we query Elasticsearch through Kibana.

Recently master was broken because new fields were added and we didn't detect it right away.

@Andrewkroh has fixed the issues and removed unnecessary fields and added a test to make sure we don't go over.

But we are on borrowed time here until we change our template strategy, because we are at 939 fields now. The unit test will allow us to be notified up front, but we should add a new integration test to make sure that the default value in Elasticsearch has not changed to something else.

Scenario:

- Add a test to test_base.py so all the existing beats can run it.

- Start the beats

- Install the template

- Do an Elasticsearch query to see if we still have the problem.
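
The limit check behind this scenario can be sketched in Python. This is only an illustration under stated assumptions: the helper name `check_default_field_count` is hypothetical, the template is a plain dict rather than a response from a running Elasticsearch, and the `settings.index.query.default_field` path is assumed. It does not replace the integration test described above, which needs to query a live cluster.

```python
DEFAULT_FIELD_LIMIT = 1024  # default limit quoted in this issue


def check_default_field_count(template: dict, limit: int = DEFAULT_FIELD_LIMIT) -> int:
    """Return the number of default fields in a template dict.

    Hypothetical helper: assumes the list of default fields lives at
    settings.index.query.default_field. Raises ValueError when the
    list is longer than the limit.
    """
    fields = (
        template.get("settings", {})
        .get("index", {})
        .get("query", {})
        .get("default_field", [])
    )
    if len(fields) > limit:
        raise ValueError(
            f"{len(fields)} default fields exceed the limit of {limit}"
        )
    return len(fields)


# Made-up template with 939 fields, the count mentioned in this issue.
sample_template = {
    "settings": {
        "index": {
            "query": {"default_field": [f"field.{i}" for i in range(939)]}
        }
    }
}

count = check_default_field_count(sample_template)
print(count)  # 939
```

A real integration test would additionally run a query against Elasticsearch after the template is installed, so that a change in the server-side default is caught as well.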

See https://github.com/elastic/beats/issues/14262 for a description of the behavior when it fails. | 1.0 | Add integration test in test_base to cover the limits for default fields - Elasticsearch `default_fields` has a default limit of 1024 fields. The limit is not enforced when we create the template, but it surfaces when we query Elasticsearch through Kibana.

Recently master was broken because new fields were added and we didn't detect it right away.

@Andrewkroh has fixed the issues and removed unnecessary fields and added a test to make sure we don't go over.

But we are on borrowed time here until we change our template strategy, because we are at 939 fields now. The unit test will allow us to be notified up front, but we should add a new integration test to make sure that the default value in Elasticsearch has not changed to something else.

Scenario:

- Add a test to test_base.py so all the existing beats can run it.

- Start the beats

- Install the template

- Do an Elasticsearch query to see if we still have the problem.

See https://github.com/elastic/beats/issues/14262 for a description of the behavior when it fails. | test | add integration test in test base to cover the limits for default fields elasticsearch default fields has a default limit of fields the limit is not enforced when we created the template but it will be returned when we query elasticsearch through kibana recently master was broken because new fields were added and we didn t detect it right away andrewkroh has fixed the issues and removed unnecessary fields and added a test to make sure we don t go over but we are on borrowed time here until we change our template strategy because we are at field now the unit test will allow to be notified up front but we should add a new integration test to make sure that the default value of elasticsearch is not changed to something else scenario add a test to test based py so all the existing beats can run it start the beats install the template do an elasticsearch query to see if we still have the problem see for a description of the behavior when it fails | 1 |

194,132 | 22,261,863,142 | IssuesEvent | 2022-06-10 01:46:13 | kapseliboi/WeiPay | https://api.github.com/repos/kapseliboi/WeiPay | closed | WS-2018-0625 (High) detected in xmlbuilder-4.0.0.tgz, xmlbuilder-8.2.2.tgz - autoclosed | security vulnerability | ## WS-2018-0625 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>xmlbuilder-4.0.0.tgz</b>, <b>xmlbuilder-8.2.2.tgz</b></p></summary>

<p>

<details><summary><b>xmlbuilder-4.0.0.tgz</b></p></summary>

<p>An XML builder for node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/xmlbuilder/-/xmlbuilder-4.0.0.tgz">https://registry.npmjs.org/xmlbuilder/-/xmlbuilder-4.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/xmlbuilder/package.json</p>

<p>

Dependency Hierarchy:

- react-native-0.55.4.tgz (Root Library)

- plist-1.2.0.tgz

- :x: **xmlbuilder-4.0.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>xmlbuilder-8.2.2.tgz</b></p></summary>

<p>An XML builder for node.js</p>

<p>Library home page: <a href="https://registry.npmjs.org/xmlbuilder/-/xmlbuilder-8.2.2.tgz">https://registry.npmjs.org/xmlbuilder/-/xmlbuilder-8.2.2.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/simple-plist/node_modules/xmlbuilder/package.json</p>

<p>

Dependency Hierarchy:

- react-native-0.55.4.tgz (Root Library)

- xcode-0.9.3.tgz

- simple-plist-0.2.1.tgz

- plist-2.0.1.tgz

- :x: **xmlbuilder-8.2.2.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>stable</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package xmlbuilder-js before 9.0.5 is vulnerable to denial of service due to a regular expression issue.

<p>Publish Date: 2018-02-08

<p>URL: <a href=https://github.com/oozcitak/xmlbuilder-js/commit/bbf929a8a54f0d012bdc44cbe622fdeda2509230>WS-2018-0625</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/oozcitak/xmlbuilder-js/commit/bbf929a8a54f0d012bdc44cbe622fdeda2509230">https://github.com/oozcitak/xmlbuilder-js/commit/bbf929a8a54f0d012bdc44cbe622fdeda2509230</a></p>

<p>Release Date: 2018-02-08</p>

<p>Fix Resolution (xmlbuilder): 9.0.5</p>

<p>Direct dependency fix Resolution (react-native): 0.59.0-rc.0</p><p>Fix Resolution (xmlbuilder): 9.0.5</p>

<p>Direct dependency fix Resolution (react-native): 0.59.0-rc.0</p>

</p>

</details>

<p></p>

***