Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

215,570 | 16,679,154,648 | IssuesEvent | 2021-06-07 20:26:23 | uoForms/App-CANBeWell | https://api.github.com/repos/uoForms/App-CANBeWell | closed | 'Family Planning' missing under Sexual and reproductive health in TM | Follow up with client To be tested | Its because of the missing age for 'Family planning'- (Transmasculine) in the excel sheet. | 1.0 | 'Family Planning' missing under Sexual and reproductive health in TM - Its because of the missing age for 'Family planning'- (Transmasculine) in the excel sheet. | test | family planning missing under sexual and reproductive health in tm its because of the missing age for family planning transmasculine in the excel sheet | 1 |

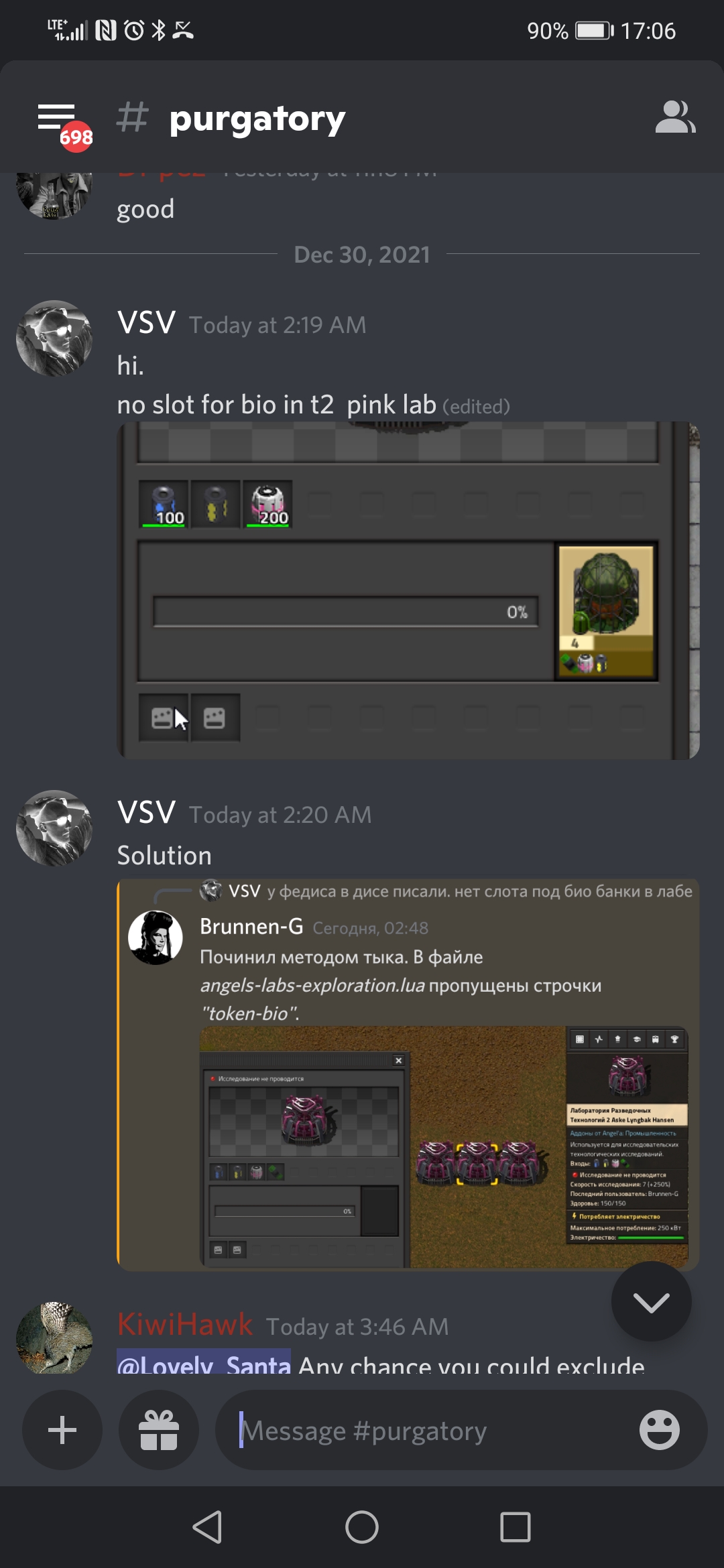

237,164 | 19,596,634,074 | IssuesEvent | 2022-01-05 18:40:45 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | closed | No slot for bio-token in T2 exploration lab | Impact: Bug Angels Industries (Technology mode) Angels Unit Tests |

**Describe the bug**

No bio-token slot in science overhaul lab

| 1.0 | No slot for bio-token in T2 exploration lab -

**Describe the bug**

No bio-token slot in science overhaul lab

| test | no slot for bio token in exploration lab describe the bug no bio token slot in science overhaul lab | 1 |

315,964 | 27,122,711,764 | IssuesEvent | 2023-02-16 00:58:39 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_to_cpu_blocking_by_default (__main__.TestCuda) | module: cuda triaged module: flaky-tests skipped | Platforms: rocm

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_to_cpu_blocking_by_default&suite=TestCuda) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/11365293941).

Over the past 3 hours, it has been determined flaky in 4 workflow(s) with 4 failures and 4 successes.

**Debugging instructions (after clicking on the recent samples link):**

DO NOT ASSUME THINGS ARE OKAY IF THE CI IS GREEN. We now shield flaky tests from developers so CI will thus be green but it will be harder to parse the logs.

To find relevant log snippets:

1. Click on the workflow logs linked above

2. Click on the Test step of the job so that it is expanded. Otherwise, the grepping will not work.

3. Grep for `test_to_cpu_blocking_by_default`

4. There should be several instances run (as flaky tests are rerun in CI) from which you can study the logs.

Test file path: `test_cuda.py` | 1.0 | DISABLED test_to_cpu_blocking_by_default (__main__.TestCuda) - Platforms: rocm

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_to_cpu_blocking_by_default&suite=TestCuda) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/11365293941).

Over the past 3 hours, it has been determined flaky in 4 workflow(s) with 4 failures and 4 successes.

**Debugging instructions (after clicking on the recent samples link):**

DO NOT ASSUME THINGS ARE OKAY IF THE CI IS GREEN. We now shield flaky tests from developers so CI will thus be green but it will be harder to parse the logs.

To find relevant log snippets:

1. Click on the workflow logs linked above

2. Click on the Test step of the job so that it is expanded. Otherwise, the grepping will not work.

3. Grep for `test_to_cpu_blocking_by_default`

4. There should be several instances run (as flaky tests are rerun in CI) from which you can study the logs.

Test file path: `test_cuda.py` | test | disabled test to cpu blocking by default main testcuda platforms rocm this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging instructions after clicking on the recent samples link do not assume things are okay if the ci is green we now shield flaky tests from developers so ci will thus be green but it will be harder to parse the logs to find relevant log snippets click on the workflow logs linked above click on the test step of the job so that it is expanded otherwise the grepping will not work grep for test to cpu blocking by default there should be several instances run as flaky tests are rerun in ci from which you can study the logs test file path test cuda py | 1 |

19,497 | 3,774,165,269 | IssuesEvent | 2016-03-17 07:49:56 | dojo/core | https://api.github.com/repos/dojo/core | closed | Issue with location of helpers... | bug - test case | It appears some of the changes made to Intern (specifically how it deals with dependencies) have caused issues with the location of some files and the [CI is failing](https://travis-ci.org/dojo/core/builds/116074773#L225).

```

Error: Failed to load module leadfoot/helpers/pollUntil from /home/travis/build/dojo/core/leadfoot/helpers/pollUntil.js (parent: tests/functional/text/textPlugin)

at reportModuleLoadError <node_modules/dojo-loader/loader.ts:122:44>

at loadCallback <node_modules/dojo-loader/loader.ts:884:7>

at executeModule <node_modules/dojo-loader/loader.ts:632:52>

at <node_modules/dojo-loader/loader.ts:620:10>

at Array.map <native>

at executeModule <node_modules/dojo-loader/loader.ts:614:43>

at <node_modules/dojo-loader/loader.ts:620:10>

at Array.map <native>

at executeModule <node_modules/dojo-loader/loader.ts:614:43>

at <node_modules/dojo-loader/loader.ts:620:10>

``` | 1.0 | Issue with location of helpers... - It appears some of the changes made to Intern (specifically how it deals with dependencies) have caused issues with the location of some files and the [CI is failing](https://travis-ci.org/dojo/core/builds/116074773#L225).

```

Error: Failed to load module leadfoot/helpers/pollUntil from /home/travis/build/dojo/core/leadfoot/helpers/pollUntil.js (parent: tests/functional/text/textPlugin)

at reportModuleLoadError <node_modules/dojo-loader/loader.ts:122:44>

at loadCallback <node_modules/dojo-loader/loader.ts:884:7>

at executeModule <node_modules/dojo-loader/loader.ts:632:52>

at <node_modules/dojo-loader/loader.ts:620:10>

at Array.map <native>

at executeModule <node_modules/dojo-loader/loader.ts:614:43>

at <node_modules/dojo-loader/loader.ts:620:10>

at Array.map <native>

at executeModule <node_modules/dojo-loader/loader.ts:614:43>

at <node_modules/dojo-loader/loader.ts:620:10>

``` | test | issue with location of helpers it appears some of the changes made to intern specifically how it deals with dependencies have caused issues with the location of some files and the error failed to load module leadfoot helpers polluntil from home travis build dojo core leadfoot helpers polluntil js parent tests functional text textplugin at reportmoduleloaderror at loadcallback at executemodule at at array map at executemodule at at array map at executemodule at | 1 |

397,681 | 11,731,288,149 | IssuesEvent | 2020-03-10 23:37:49 | IS-AgroSmart/AgroSmart-Web | https://api.github.com/repos/IS-AgroSmart/AgroSmart-Web | opened | Refactor Flight datepicker to use BootstrapVue's native dialog | enhancement low-priority | NewFlight.vue currently uses a plain b-form-input with type="date" to select the Flight date. https://bootstrap-vue.js.org/docs/components/form-datepicker is a control with better format and, in theory, the same functionality.

Expected work:

- [ ] Swap [the date input control](https://github.com/IS-AgroSmart/AgroSmart-Web/blob/281e17d495ae1587f7f70ad221667b05c050e79e/frontend/src/components/NewFlight.vue#L9) for `<b-form-datepicker id="input-2" v-model="form.date"></b-form-datepicker>`

- [ ] The new control should work correctly with the form submission. | 1.0 | Refactor Flight datepicker to use BootstrapVue's native dialog - NewFlight.vue currently uses a plain b-form-input with type="date" to select the Flight date. https://bootstrap-vue.js.org/docs/components/form-datepicker is a control with better format and, in theory, the same functionality.

Expected work:

- [ ] Swap [the date input control](https://github.com/IS-AgroSmart/AgroSmart-Web/blob/281e17d495ae1587f7f70ad221667b05c050e79e/frontend/src/components/NewFlight.vue#L9) for `<b-form-datepicker id="input-2" v-model="form.date"></b-form-datepicker>`

- [ ] The new control should work correctly with the form submission. | non_test | refactor flight datepicker to use bootstrapvue s native dialog newflight vue currently uses a plain b form input with type date to select the flight date is a control with better format and in theory the same functionality expected work swap for the new control should work correctly with the form submission | 0 |

248,102 | 18,858,033,735 | IssuesEvent | 2021-11-12 09:18:38 | kaushikkrdy/pe | https://api.github.com/repos/kaushikkrdy/pe | opened | Marking contact as called user story missing | severity.Low type.DocumentationBug | Marking contact as called exists as a feature in UG and code, but not present in DG User Stories

<!--session: 1636703758671-33d508eb-996f-4ff9-b08f-08310e28f766-->

<!--Version: Web v3.4.1--> | 1.0 | Marking contact as called user story missing - Marking contact as called exists as a feature in UG and code, but not present in DG User Stories

<!--session: 1636703758671-33d508eb-996f-4ff9-b08f-08310e28f766-->

<!--Version: Web v3.4.1--> | non_test | marking contact as called user story missing marking contact as called exists as a feature in ug and code but not present in dg user stories | 0 |

37,945 | 12,510,814,472 | IssuesEvent | 2020-06-02 19:21:51 | autoai-org/AID | https://api.github.com/repos/autoai-org/AID | closed | WS-2019-0367 (Medium) detected in angular-1.4.2.min.js | security vulnerability wontfix | ## WS-2019-0367 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>angular-1.4.2.min.js</b></p></summary>

<p>AngularJS is an MVC framework for building web applications. The core features include HTML enhanced with custom component and data-binding capabilities, dependency injection and strong focus on simplicity, testability, maintainability and boiler-plate reduction.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/angular.js/1.4.2/angular.min.js">https://cdnjs.cloudflare.com/ajax/libs/angular.js/1.4.2/angular.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/AID/docs/node_modules/autocomplete.js/test/playground_angular.html</p>

<p>Path to vulnerable library: /AID/docs/node_modules/autocomplete.js/test/playground_angular.html,/AID/docs/node_modules/autocomplete.js/examples/basic_angular.html</p>

<p>

Dependency Hierarchy:

- :x: **angular-1.4.2.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/autoai-org/AID/commit/708c0f68e8540b044f2c44c3ef7e7aff2e34dfef">708c0f68e8540b044f2c44c3ef7e7aff2e34dfef</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype Pollution vulnerability found in Angular before 1.7.9.

<p>Publish Date: 2020-01-08

<p>URL: <a href=https://github.com/RetireJS/retire.js/commit/f07a7557d3fc1c26b86fe11a5b33cb1b8f3dcf2f>WS-2019-0367</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/angular/angular.js/blob/master/CHANGELOG.md#179-pollution-eradication-2019-11-19">https://github.com/angular/angular.js/blob/master/CHANGELOG.md#179-pollution-eradication-2019-11-19</a></p>

<p>Release Date: 2020-01-08</p>

<p>Fix Resolution: angular - 1.7.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | WS-2019-0367 (Medium) detected in angular-1.4.2.min.js - ## WS-2019-0367 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>angular-1.4.2.min.js</b></p></summary>

<p>AngularJS is an MVC framework for building web applications. The core features include HTML enhanced with custom component and data-binding capabilities, dependency injection and strong focus on simplicity, testability, maintainability and boiler-plate reduction.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/angular.js/1.4.2/angular.min.js">https://cdnjs.cloudflare.com/ajax/libs/angular.js/1.4.2/angular.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/AID/docs/node_modules/autocomplete.js/test/playground_angular.html</p>

<p>Path to vulnerable library: /AID/docs/node_modules/autocomplete.js/test/playground_angular.html,/AID/docs/node_modules/autocomplete.js/examples/basic_angular.html</p>

<p>

Dependency Hierarchy:

- :x: **angular-1.4.2.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/autoai-org/AID/commit/708c0f68e8540b044f2c44c3ef7e7aff2e34dfef">708c0f68e8540b044f2c44c3ef7e7aff2e34dfef</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype Pollution vulnerability found in Angular before 1.7.9.

<p>Publish Date: 2020-01-08

<p>URL: <a href=https://github.com/RetireJS/retire.js/commit/f07a7557d3fc1c26b86fe11a5b33cb1b8f3dcf2f>WS-2019-0367</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/angular/angular.js/blob/master/CHANGELOG.md#179-pollution-eradication-2019-11-19">https://github.com/angular/angular.js/blob/master/CHANGELOG.md#179-pollution-eradication-2019-11-19</a></p>

<p>Release Date: 2020-01-08</p>

<p>Fix Resolution: angular - 1.7.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | ws medium detected in angular min js ws medium severity vulnerability vulnerable library angular min js angularjs is an mvc framework for building web applications the core features include html enhanced with custom component and data binding capabilities dependency injection and strong focus on simplicity testability maintainability and boiler plate reduction library home page a href path to dependency file tmp ws scm aid docs node modules autocomplete js test playground angular html path to vulnerable library aid docs node modules autocomplete js test playground angular html aid docs node modules autocomplete js examples basic angular html dependency hierarchy x angular min js vulnerable library found in head commit a href vulnerability details prototype pollution vulnerability found in angular before publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution angular step up your open source security game with whitesource | 0 |

42,204 | 17,082,734,911 | IssuesEvent | 2021-07-08 07:57:15 | NewTec-GmbH/ZumoComSystem | https://api.github.com/repos/NewTec-GmbH/ZumoComSystem | closed | Implement InitAPMode | SystemServices | **Acceptance Criteria**

- [ ] Architecture designed

- [ ] AP is spawned when WiFiKey is pressed during boot-up

- [ ] AP is spawned when there are no network credentials available for AP mode

- [ ] Transferred data is encrypted

- [ ] IP clients cannot communicate with each other

- [ ] Uses default SSID and PSK

- [ ] DHCP service available

- [ ] DNS service available

- [ ] ComPlatform reachable at "https://complatform.local" | 1.0 | Implement InitAPMode - **Acceptance Criteria**

- [ ] Architecture designed

- [ ] AP is spawned when WiFiKey is pressed during boot-up

- [ ] AP is spawned when there are no network credentials available for AP mode

- [ ] Transferred data is encrypted

- [ ] IP clients cannot communicate with each other

- [ ] Uses default SSID and PSK

- [ ] DHCP service available

- [ ] DNS service available

- [ ] ComPlatform reachable at "https://complatform.local" | non_test | implement initapmode acceptance criteria architecture designed ap is spawned when wifikey is pressed during boot up ap is spawned when there are no network credentials available for ap mode transferred data is encrypted ip clients cannot communicate with each other uses default ssid and psk dhcp service available dns service available complatform reachable at | 0 |

234,465 | 19,184,124,741 | IssuesEvent | 2021-12-04 22:54:51 | Hamlib/Hamlib | https://api.github.com/repos/Hamlib/Hamlib | closed | Kenwood mode set problems | bug needs test critical WSJTX JTDX | TS590S is setting mode when mode does not need to be set causing lots of relay chatter when transmitting with WSJTX.

| 1.0 | Kenwood mode set problems - TS590S is setting mode when mode does not need to be set causing lots of relay chatter when transmitting with WSJTX.

| test | kenwood mode set problems is setting mode when mode does not need to be set causing lots of relay chatter when transmitting with wsjtx | 1 |

31,790 | 5,997,050,905 | IssuesEvent | 2017-06-03 19:58:37 | networkupstools/nut | https://api.github.com/repos/networkupstools/nut | opened | Developer manual: fix typo and formatting in subdriver commands | documentation | http://buildbot.networkupstools.org/~buildbot/docker-debian-jessie/docs/latest//docs/developer-guide.chunked/ar01s04.html#_writing_a_subdriver has a command that ends in `auto` - should be `-a auto`. Also fix formatting of surrounding paragraphs with commands. | 1.0 | Developer manual: fix typo and formatting in subdriver commands - http://buildbot.networkupstools.org/~buildbot/docker-debian-jessie/docs/latest//docs/developer-guide.chunked/ar01s04.html#_writing_a_subdriver has a command that ends in `auto` - should be `-a auto`. Also fix formatting of surrounding paragraphs with commands. | non_test | developer manual fix typo and formatting in subdriver commands has a command that ends in auto should be a auto also fix formatting of surrounding paragraphs with commands | 0 |

59,606 | 17,023,174,963 | IssuesEvent | 2021-07-03 00:42:49 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Name finder loses cities west of Greenwich | Component: namefinder Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 1.39pm, Friday, 24th August 2007]**

-------- Original Message --------

Subject: Re: [OSM-dev] Name finder for the main OSM page?

Date: Fri, 24 Aug 2007 00:21:06 +0100

From: Tom Hughes <tom@compton.nu>

To: dev@openstreetmap.org

I've been playing with this using the three queries that your new

version uses and I've found a couple of issues...

The "towns near" and "places near" queries generally behave fairly

sensibly, and give things near "requestedplace" which has the lat

and lon I supplied.

The "cities near" query does not do this however, and gives me all

sorts of wierd results which are relative to other places and not

the lat and lon I gave. My test case of 51.76,0.0 which is just north

of London gives me Bristol as the first result!

A second problem is that it doesn't cope with wrapping around the

zero meridian - as you can probably guess from that test case I live

about half a mile or so from the meridian and I find that if I'm to

the east of it I only find towns to the east and vice verse when I'm

to the west of it.

| 1.0 | Name finder loses cities west of Greenwich - **[Submitted to the original trac issue database at 1.39pm, Friday, 24th August 2007]**

-------- Original Message --------

Subject: Re: [OSM-dev] Name finder for the main OSM page?

Date: Fri, 24 Aug 2007 00:21:06 +0100

From: Tom Hughes <tom@compton.nu>

To: dev@openstreetmap.org

I've been playing with this using the three queries that your new

version uses and I've found a couple of issues...

The "towns near" and "places near" queries generally behave fairly

sensibly, and give things near "requestedplace" which has the lat

and lon I supplied.

The "cities near" query does not do this however, and gives me all

sorts of wierd results which are relative to other places and not

the lat and lon I gave. My test case of 51.76,0.0 which is just north

of London gives me Bristol as the first result!

A second problem is that it doesn't cope with wrapping around the

zero meridian - as you can probably guess from that test case I live

about half a mile or so from the meridian and I find that if I'm to

the east of it I only find towns to the east and vice verse when I'm

to the west of it.

| non_test | name finder loses cities west of greenwich original message subject re name finder for the main osm page date fri aug from tom hughes to dev openstreetmap org i ve been playing with this using the three queries that your new version uses and i ve found a couple of issues the towns near and places near queries generally behave fairly sensibly and give things near requestedplace which has the lat and lon i supplied the cities near query does not do this however and gives me all sorts of wierd results which are relative to other places and not the lat and lon i gave my test case of which is just north of london gives me bristol as the first result a second problem is that it doesn t cope with wrapping around the zero meridian as you can probably guess from that test case i live about half a mile or so from the meridian and i find that if i m to the east of it i only find towns to the east and vice verse when i m to the west of it | 0 |

65,889 | 6,977,748,798 | IssuesEvent | 2017-12-12 15:32:01 | GoogleCloudPlatform/google-cloud-eclipse | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-eclipse | closed | Does Kokoro only build on googler commits? | high priority testing | Quickly reviewing my PR queue, I don't see any build from Kokoro. Yet I have seen Kokoro builds on PRs from @chanseokoh and @elharo. We should verify that Kokoro is doing builds for external contributors. | 1.0 | Does Kokoro only build on googler commits? - Quickly reviewing my PR queue, I don't see any build from Kokoro. Yet I have seen Kokoro builds on PRs from @chanseokoh and @elharo. We should verify that Kokoro is doing builds for external contributors. | test | does kokoro only build on googler commits quickly reviewing my pr queue i don t see any build from kokoro yet i have seen kokoro builds on prs from chanseokoh and elharo we should verify that kokoro is doing builds for external contributors | 1 |

74,505 | 7,431,472,167 | IssuesEvent | 2018-03-25 15:03:06 | phil-r/react-native-looped-carousel | https://api.github.com/repos/phil-r/react-native-looped-carousel | closed | Fix test error | tests | Tests work, but here is an error in the output:

```

console.error node_modules/fbjs/lib/warning.js:33

Warning: Can only update a mounted or mounting component. This usually means you called setState, replaceState, or forceUpdate on an unmounted component. This is a no-op.

Please check the code for the Carousel component.

``` | 1.0 | Fix test error - Tests work, but here is an error in the output:

```

console.error node_modules/fbjs/lib/warning.js:33

Warning: Can only update a mounted or mounting component. This usually means you called setState, replaceState, or forceUpdate on an unmounted component. This is a no-op.

Please check the code for the Carousel component.

``` | test | fix test error tests work but here is an error in the output console error node modules fbjs lib warning js warning can only update a mounted or mounting component this usually means you called setstate replacestate or forceupdate on an unmounted component this is a no op please check the code for the carousel component | 1 |

82,885 | 7,855,097,180 | IssuesEvent | 2018-06-20 23:36:22 | att/ast | https://api.github.com/repos/att/ast | opened | Switch (or add) one of the Travis environments to 32-bits | compatibility enhancement testing | I've been focusing this past week on making ksh free of compiler lint warnings. It now builds with no compiler warnings on macOS, OpenSuse, and FreeBSD. On Ubuntu 32-bit there is this warning:

```

../src/cmd/ksh93/edit/edit.c: In function ‘sh_ioctl’:

../src/cmd/ksh93/edit/edit.c:1383:22: warning: cast from pointer to integer of different size [-Wpointer-to-int-cast]

Sflong_t l = (Sflong_t)val;

^

```

We should add, or switch one of the existing, Travis test environments to 32-bits to help catch code that incorrectly assumes the length of a `long int` is the same as a pointer. | 1.0 | Switch (or add) one of the Travis environments to 32-bits - I've been focusing this past week on making ksh free of compiler lint warnings. It now builds with no compiler warnings on macOS, OpenSuse, and FreeBSD. On Ubuntu 32-bit there is this warning:

```

../src/cmd/ksh93/edit/edit.c: In function ‘sh_ioctl’:

../src/cmd/ksh93/edit/edit.c:1383:22: warning: cast from pointer to integer of different size [-Wpointer-to-int-cast]

Sflong_t l = (Sflong_t)val;

^

```

We should add, or switch one of the existing, Travis test environments to 32-bits to help catch code that incorrectly assumes the length of a `long int` is the same as a pointer. | test | switch or add one of the travis environments to bits i ve been focusing this past week on making ksh free of compiler lint warnings it now builds with no compiler warnings on macos opensuse and freebsd on ubuntu bit there is this warning src cmd edit edit c in function ‘sh ioctl’ src cmd edit edit c warning cast from pointer to integer of different size sflong t l sflong t val we should add or switch one of the existing travis test environments to bits to help catch code that incorrectly assumes the length of a long int is the same as a pointer | 1 |

19,192 | 10,335,227,139 | IssuesEvent | 2019-09-03 10:04:42 | anhnongdan/Spark1.6_Problems | https://api.github.com/repos/anhnongdan/Spark1.6_Problems | closed | Repartition with and without caching | In/Out Performance Platform best-practice enhancement | **Context: many 'where' look up on a big DF, the result has just 345 records but take 9k steps to calc.**

```

start_d = 20171001

if "hist_df" in locals():
    hist_df.unpersist()

hist_df = sqlContext.read.parquet("{}/{}/update_date={}".format(WARE_HOUSE_PATH, HIS, start_d))
hist_df.repartition(100, 'sub_encrypted_phone_number')
#smpl = df.where(df.sub_encrypted_phone_number == 0)

if 'sampled_calls' in locals():
    sampled_calls.unpersist()

sampled_calls = sqlContext.createDataFrame([] ,hist_df.schema, samplingRatio=0)

# append call list of visited pn and return cont_list as Pandas DF
def read_connections(pn, df):
    print 'reading connection ...'
    global sampled_calls
    # g is a graph data structure
    # how can I improve this where?
    calls_list = df.where('sub_encrypted_phone_number = {}'.format(pn))
    sampled_calls = sampled_calls.unionAll(calls_list)
    # avoid counting g here
    #print g.count()
    #return g
    return calls_list

'''
taboo_list is a python list
df is the df of big tc_call_history tables
'''
def snowball_sampling(center, df, max_depth = 1, current_depth = 0, taboo_list = []):
    print center, current_depth, max_depth, taboo_list
    if current_depth == max_depth:
        print 'out of depth'
        return taboo_list
    if center in taboo_list and len(taboo_list)>0:
        # Visited this person -- exit
        return taboo_list
    else:
        # New person! Don't visit again
        taboo_list.append(center)
    calls_list = read_connections(center, df)
    #print 'fetched list: ', g.count()
    #print g.count()
    # This command seems slow
    cont_list = calls_list.select('contact_encrypted_phone_number').distinct().toPandas()
    for index, call in cont_list.iterrows():
        # Iterate through all friends of the central node, and
        # recursively call snowball sampling
        taboo_list = snowball_sampling(call['contact_encrypted_phone_number'], df, current_depth = current_depth + 1,
                                       max_depth = max_depth, taboo_list = taboo_list)
    return taboo_list

def run_ss(pn, df, max_depth = 1):
    #print smpl.show()
    snowball_sampling(pn, df, max_depth)

```

| True | Repartition with and without caching - **Context: many 'where' look up on a big DF, the result has just 345 records but take 9k steps to calc.**

```

start_d = 20171001

if "hist_df" in locals():
    hist_df.unpersist()

hist_df = sqlContext.read.parquet("{}/{}/update_date={}".format(WARE_HOUSE_PATH, HIS, start_d))
hist_df.repartition(100, 'sub_encrypted_phone_number')
#smpl = df.where(df.sub_encrypted_phone_number == 0)

if 'sampled_calls' in locals():
    sampled_calls.unpersist()

sampled_calls = sqlContext.createDataFrame([] ,hist_df.schema, samplingRatio=0)

# append call list of visited pn and return cont_list as Pandas DF
def read_connections(pn, df):
    print 'reading connection ...'
    global sampled_calls
    # g is a graph data structure
    # how can I improve this where?
    calls_list = df.where('sub_encrypted_phone_number = {}'.format(pn))
    sampled_calls = sampled_calls.unionAll(calls_list)
    # avoid counting g here
    #print g.count()
    #return g
    return calls_list

'''
taboo_list is a python list
df is the df of big tc_call_history tables
'''
def snowball_sampling(center, df, max_depth = 1, current_depth = 0, taboo_list = []):
    print center, current_depth, max_depth, taboo_list
    if current_depth == max_depth:
        print 'out of depth'
        return taboo_list
    if center in taboo_list and len(taboo_list)>0:
        # Visited this person -- exit
        return taboo_list
    else:
        # New person! Don't visit again
        taboo_list.append(center)
    calls_list = read_connections(center, df)
    #print 'fetched list: ', g.count()
    #print g.count()
    # This command seems slow
    cont_list = calls_list.select('contact_encrypted_phone_number').distinct().toPandas()
    for index, call in cont_list.iterrows():
        # Iterate through all friends of the central node, and
        # recursively call snowball sampling
        taboo_list = snowball_sampling(call['contact_encrypted_phone_number'], df, current_depth = current_depth + 1,
                                       max_depth = max_depth, taboo_list = taboo_list)
    return taboo_list

def run_ss(pn, df, max_depth = 1):
    #print smpl.show()
    snowball_sampling(pn, df, max_depth)

```

| non_test | repartition with and without caching context many where look up on a big df the result has just records but take steps to calc start d if hist df in locals hist df unpersist hist df sqlcontext read parquet update date format ware house path his start d hist df repartition sub encrypted phone number smpl df where df sub encrypted phone number if sampled calls in locals sampled calls unpersist sampled calls sqlcontext createdataframe hist df schema samplingratio append call list of visited pn and return cont list as pandas df def read connections pn df print reading connection global sampled calls g is a graph data structure how can i improve this where calls list df where sub encrypted phone number format pn sampled calls sampled calls unionall calls list avoid counting g here print g count return g return calls list taboo list is a python list df is the df of big tc call history tables def snowball sampling center df max depth current depth taboo list print center current depth max depth taboo list if current depth max depth print out of depth return taboo list if center in taboo list and len taboo list visited this person exit return taboo list else new person don t visit again taboo list append center calls list read connections center df print fetched list g count print g count this command seems slow cont list calls list select contact encrypted phone number distinct topandas for index call in cont list iterrows iterate through all friends of the central node and recursively call snowball sampling taboo list snowball sampling call df current depth current depth max depth max depth taboo list taboo list return taboo list def run ss pn df max depth print smpl show snowball sampling pn df max depth | 0 |

78,890 | 7,680,924,717 | IssuesEvent | 2018-05-16 04:45:30 | adobe/brackets | https://api.github.com/repos/adobe/brackets | closed | [Brackets auto-update Mac] Update scenario fails on Mac. | Testing | ### Description

Update scenario fails on Mac.

### Steps to Reproduce

1. Launch brackets 1.13.

2. Click on Update Notification Button.

3. Click on Get it now button.

4. Click on Restart button.

5. Brackets gets updated.

6. Launch brackets.

7. Red colored update info bar comes up on launching brackets after it has been updated.

This bar does not display any message.

**Expected behavior:** Brackets should get updated to 1.14 and launch.

**Actual behavior:** Update to 1.14 fails as download failed.

### Versions

Mac 10.13

Release 1.13 build 1.13.0-17639 | 1.0 | [Brackets auto-update Mac] Update scenario fails on Mac. - ### Description

Update scenario fails on Mac.

### Steps to Reproduce

1. Launch brackets 1.13.

2. Click on Update Notification Button.

3. Click on Get it now button.

4. Click on Restart button.

5. Brackets gets updated.

6. Launch brackets.

7. Red colored update info bar comes up on launching brackets after it has been updated.

This bar does not display any message.

**Expected behavior:** Brackets should get updated to 1.14 and launch.

**Actual behavior:** Update to 1.14 fails as download failed.

### Versions

Mac 10.13

Release 1.13 build 1.13.0-17639 | test | update scenario fails on mac description update scenario fails on mac steps to reproduce launch brackets click on update notification button click on get it now button click on restart button brackets gets updated launch brackets red colored update info bar comes up on launching brackets after it has been updated this bar does not display any message expected behavior brackets should get updated to and launch actual behavior update to fails as download failed versions mac release build | 1 |

68,027 | 3,283,808,137 | IssuesEvent | 2015-10-28 14:22:45 | leeensminger/OED_Wetlands | https://api.github.com/repos/leeensminger/OED_Wetlands | closed | Delineation - Inspections - Cannot edit inspection where Inspection Date = current system date | bug - high priority | Within the Inspections tab of an asset, the system disables Editing when the Inspection Date is the same as the current system date.

To recreate:

1. Create New Inspection, with inspection date of today

2. Click Save

3. Click the feature record in the Feature Manager to refresh

4. The Inspection fields and associated Photo tab are disabled

| 1.0 | Delineation - Inspections - Cannot edit inspection where Inspection Date = current system date - Within the Inspections tab of an asset, the system disables Editing when the Inspection Date is the same as the current system date.

To recreate:

1. Create New Inspection, with inspection date of today

2. Click Save

3. Click the feature record in the Feature Manager to refresh

4. The Inspection fields and associated Photo tab are disabled

| non_test | delineation inspections cannot edit inspection where inspection date current system date within the inspections tab of an asset the system disables editing when the inspection date is the same as the current system date to recreate create new inspection with inspection date of today click save click the feature record in the feature manager to refresh the inspection fields and associated photo tab are disabled | 0 |

120,846 | 10,136,797,415 | IssuesEvent | 2019-08-02 13:51:15 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [ML] DataFrameTaskFailedStateIT.testForceStartFailedTransform failure | :ml :ml/Data Frame >test-failure | Build link:

https://elasticsearch-ci.elastic.co/job/elastic+elasticsearch+master+multijob+fast+part2/394/console

Reproduce with:

```

./gradlew :x-pack:plugin:data-frame:qa:single-node-tests:integTestRunner --tests "org.elasticsearch.xpack.dataframe.integration.DataFrameTaskFailedStateIT.testForceStartFailedTransform" -Dtests.seed=2FFED17786A4BC97 -Dtests.security.manager=true -Dtests.locale=ar-LB -Dtests.timezone=Africa/Gaborone -Dcompiler.java=12 -Druntime.java=11

```

Failure:

```

java.lang.AssertionError:

Expected: <1>

but: was <2>

at __randomizedtesting.SeedInfo.seed([2FFED17786A4BC97:3E73F40F72853770]:0)

at org.hamcrest.MatcherAssert.assertThat(MatcherAssert.java:18)

at org.junit.Assert.assertThat(Assert.java:956)

at org.junit.Assert.assertThat(Assert.java:923)

at org.elasticsearch.xpack.dataframe.integration.DataFrameTaskFailedStateIT.testForceStartFailedTransform(DataFrameTaskFailedStateIT.java:111)

```

I could not reproduce locally.

It seems that somehow the test may get to have 2 index failures rather than 1. | 1.0 | [ML] DataFrameTaskFailedStateIT.testForceStartFailedTransform failure - Build link:

https://elasticsearch-ci.elastic.co/job/elastic+elasticsearch+master+multijob+fast+part2/394/console

Reproduce with:

```

./gradlew :x-pack:plugin:data-frame:qa:single-node-tests:integTestRunner --tests "org.elasticsearch.xpack.dataframe.integration.DataFrameTaskFailedStateIT.testForceStartFailedTransform" -Dtests.seed=2FFED17786A4BC97 -Dtests.security.manager=true -Dtests.locale=ar-LB -Dtests.timezone=Africa/Gaborone -Dcompiler.java=12 -Druntime.java=11

```

Failure:

```

java.lang.AssertionError:

Expected: <1>

but: was <2>

at __randomizedtesting.SeedInfo.seed([2FFED17786A4BC97:3E73F40F72853770]:0)

at org.hamcrest.MatcherAssert.assertThat(MatcherAssert.java:18)

at org.junit.Assert.assertThat(Assert.java:956)

at org.junit.Assert.assertThat(Assert.java:923)

at org.elasticsearch.xpack.dataframe.integration.DataFrameTaskFailedStateIT.testForceStartFailedTransform(DataFrameTaskFailedStateIT.java:111)

```

I could not reproduce locally.

It seems that somehow the test may get to have 2 index failures rather than 1. | test | dataframetaskfailedstateit testforcestartfailedtransform failure build link reproduce with gradlew x pack plugin data frame qa single node tests integtestrunner tests org elasticsearch xpack dataframe integration dataframetaskfailedstateit testforcestartfailedtransform dtests seed dtests security manager true dtests locale ar lb dtests timezone africa gaborone dcompiler java druntime java failure java lang assertionerror expected but was at randomizedtesting seedinfo seed at org hamcrest matcherassert assertthat matcherassert java at org junit assert assertthat assert java at org junit assert assertthat assert java at org elasticsearch xpack dataframe integration dataframetaskfailedstateit testforcestartfailedtransform dataframetaskfailedstateit java i could not reproduce locally it seems that somehow the test may get to have index failures rather than | 1 |

9,742 | 3,069,226,046 | IssuesEvent | 2015-08-18 19:25:58 | Darthpbal/homeTests | https://api.github.com/repos/Darthpbal/homeTests | closed | Who are the primary users of the app | test | ## gameplan

Read through some of the code that would identify an owner department

Read through some of the database tables that I have to identify an owner.

**report** back with my findings. | 1.0 | Who are the primary users of the app - ## gameplan

Read through some of the code that would identify an owner department

Read through some of the database tables that I have to identify an owner.

**report** back with my findings. | test | who are the primary users of the app gameplan read through some of the code that would identify an owner department read through some of the database tables that i have to identify an owner report back with my findings | 1 |

97,094 | 8,644,293,707 | IssuesEvent | 2018-11-26 01:52:06 | vmware/vic | https://api.github.com/repos/vmware/vic | closed | [Scenario]Update 5-4-High-Availability steps | component/test/scenario source/scenario | 5-4-High-Availability is not stable and sometimes fails. The root cause is that our test scripts do not check which host the tested container VM is on. When the container VM is on the same ESXi host as the VCH and the scripts trigger an ESXi shutdown, the container VM has not yet been moved to another host, so calling docker rm <container> fails with 'Error response from daemon: Server error from portlayer: Couldn't remove container.The ESX host is temporarily disconnected.' Details are in https://github.com/vmware/vic/issues/6667

At the test-case layer, we will check whether the tested container VM is on the same host as the VCH; if it is, the scripts will retry a few times, waiting for the container VM to move to another available host, and then call docker rm <container id>. | 1.0 | [Scenario]Update 5-4-High-Availability steps - 5-4-High-Availability is not stable and sometimes fails. The root cause is that our test scripts do not check which host the tested container VM is on. When the container VM is on the same ESXi host as the VCH and the scripts trigger an ESXi shutdown, the container VM has not yet been moved to another host, so calling docker rm <container> fails with 'Error response from daemon: Server error from portlayer: Couldn't remove container.The ESX host is temporarily disconnected.' Details are in https://github.com/vmware/vic/issues/6667

At the test-case layer, we will check whether the tested container VM is on the same host as the VCH; if it is, the scripts will retry a few times, waiting for the container VM to move to another available host, and then call docker rm <container id>. | test | update high availability steps high availability is not stable and sometimes fails the root cause is that our test scripts do not check which host the tested container vm is on when the container vm is on the same esxi host as the vch and the scripts trigger an esxi shutdown the container vm has not yet been moved to another host so calling docker rm fails with error response from daemon server error from portlayer couldn t remove container the esx host is temporarily disconnected details are in at the test case layer we will check whether the tested container vm is on the same host as the vch if it is the scripts will retry a few times waiting for the container vm to move to another available host and then call docker rm | 1 |

122,504 | 10,225,324,477 | IssuesEvent | 2019-08-16 14:54:00 | ValveSoftware/steam-for-linux | https://api.github.com/repos/ValveSoftware/steam-for-linux | closed | Bluetooth module is detected as a gamepad in Overlord games | Need Retest reviewed | CPU : Intel I7 3820 3.6GHz

GPU : Nvidia Gtx 1070 Gigabyte G1 gaming (drivers 367.35)

RAM : 16GB DDR 2133MHz

DM : Gnome 3.20

Bluetooth : Atheros Communications, Inc. AR3011 Bluetooth

- Steam client version: 1468023329 (Latest Stable)

- Distribution (e.g. Ubuntu): Archlinux

- Opted into Steam client beta?: No

- Have you checked for system updates?: Yes

I played Overlord, which was released on Steam for Linux yesterday. When Bluetooth is enabled, the game camera moves by itself. It seems that Steam detects the Bluetooth module as a Steam controller.

Virtual Programming helped me with a workaround for their game, but it would be nice to have this resolved in Steam.

I linked the issue on the VP GitHub: https://github.com/virtual-programming/overlord-linux/issues/6

Here is my log file from the Overlord game; it could be useful.

[eon.txt](https://github.com/ValveSoftware/steam-for-linux/files/385972/eon.txt)

1. Enable Bluetooth module

2. Launch the Overlord or Overlord II game with Steam

3. Start a new game or load a game

4. The camera moves by itself while playing

Thank you very much

| 1.0 | Bluetooth module is detected as a gamepad in Overlord games - CPU : Intel I7 3820 3.6GHz

GPU : Nvidia Gtx 1070 Gigabyte G1 gaming (drivers 367.35)

RAM : 16GB DDR 2133MHz

DM : Gnome 3.20

Bluetooth : Atheros Communications, Inc. AR3011 Bluetooth

- Steam client version: 1468023329 (Latest Stable)

- Distribution (e.g. Ubuntu): Archlinux

- Opted into Steam client beta?: No

- Have you checked for system updates?: Yes

I played Overlord, which was released on Steam for Linux yesterday. When Bluetooth is enabled, the game camera moves by itself. It seems that Steam detects the Bluetooth module as a Steam controller.

Virtual Programming helped me with a workaround for their game, but it would be nice to have this resolved in Steam.

I linked the issue on the VP GitHub: https://github.com/virtual-programming/overlord-linux/issues/6

Here is my log file from the Overlord game; it could be useful.

[eon.txt](https://github.com/ValveSoftware/steam-for-linux/files/385972/eon.txt)

1. Enable Bluetooth module

2. Launch the Overlord or Overlord II game with Steam

3. Start a new game or load a game

4. The camera moves by itself while playing

Thank you very much

| test | bluetooth module is detected as a gamepad in overlord games cpu intel gpu nvidia gtx gigabyte gaming drivers ram ddr dm gnome bluetooth atheros communications inc bluetooth steam client version latest stable distribution e g ubuntu archlinux opted into steam client beta no have you checked for system updates yes i played overlord which was released on steam for linux yesterday when bluetooth is enabled the game camera moves by itself it seems that steam detects the bluetooth module as a steam controller virtual programming helped me with a workaround for their game but it would be nice to have this resolved in steam i linked the issue on the vp github here is my log file from the overlord game it could be useful enable bluetooth module launch the overlord or overlord ii game with steam start a new game or load a game the camera moves by itself while playing thank you very much | 1 |

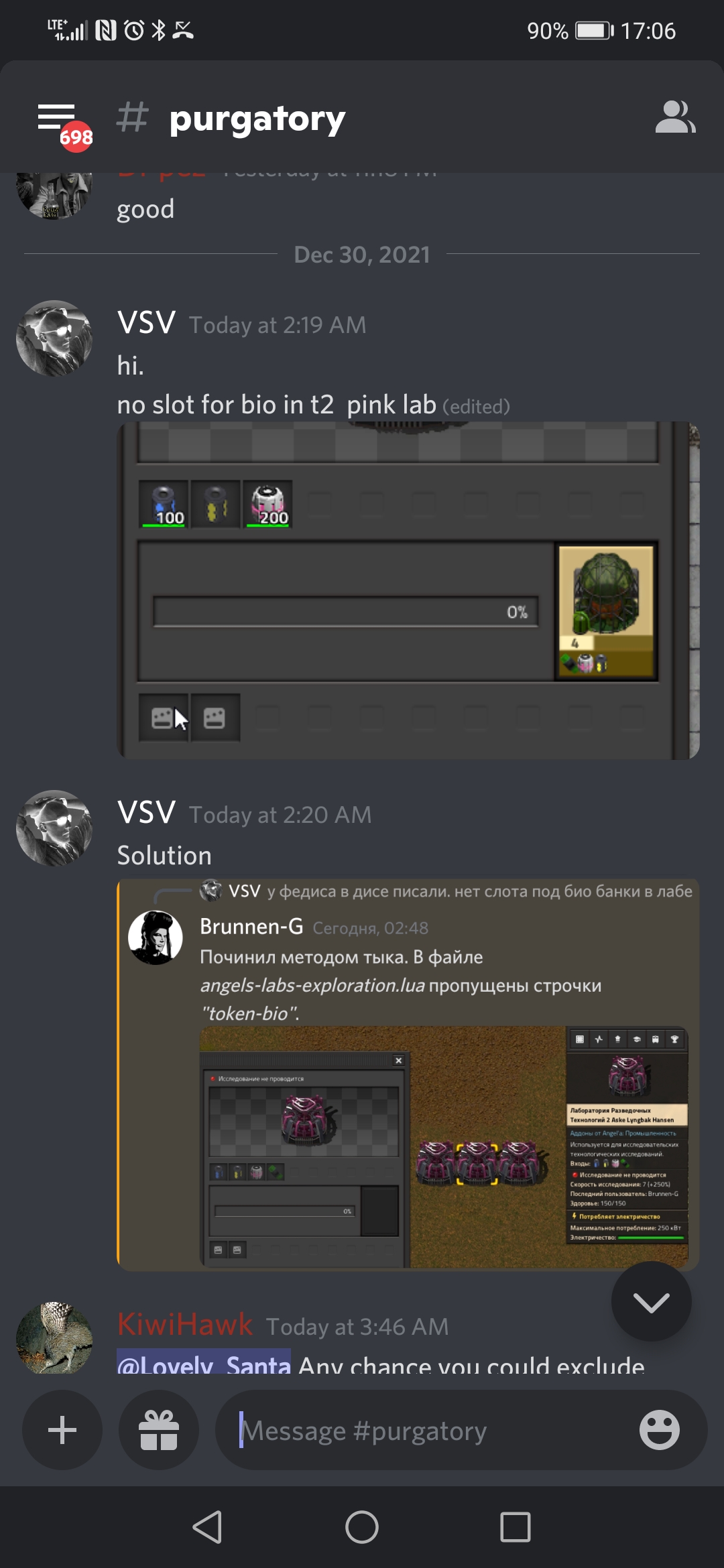

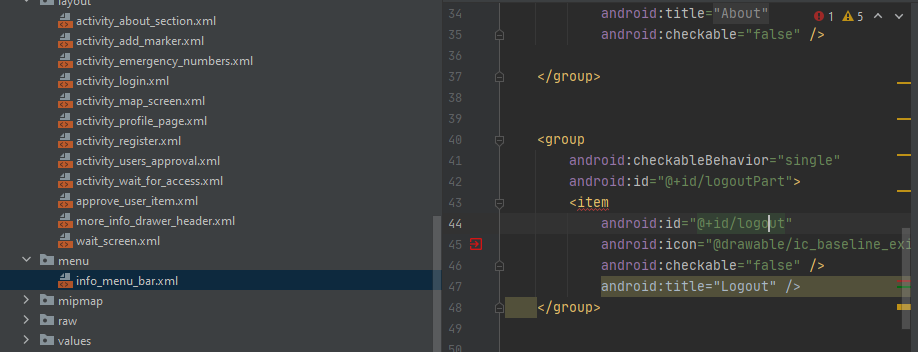

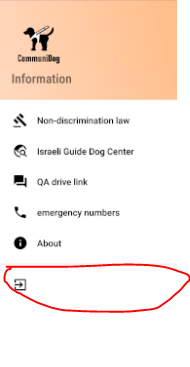

568,836 | 16,989,590,401 | IssuesEvent | 2021-06-30 18:34:08 | IdoSagiv/CommuniDog | https://api.github.com/repos/IdoSagiv/CommuniDog | closed | Logout button doesn't show correctly: XML tag not closed correctly | Mid Priority bug | The logout button doesn't show correctly because its XML tag is not closed correctly.

Picture of the bug:

It's in info_menu_bar.xml under the menu directory.

Environment:

* emulator Pixel 2

* Android API 29 | 1.0 | Logout button doesn't show correctly: XML tag not closed correctly - The logout button doesn't show correctly because its XML tag is not closed correctly.

Picture of the bug:

It's in info_menu_bar.xml under the menu directory.

Environment:

* emulator Pixel 2

* Android API 29 | non_test | logout button doesn t show correctly xml tag not closed correctly the logout button doesn t show correctly because its xml tag is not closed correctly picture of the bug it s in info menu bar xml under the menu directory environment emulator pixel android api | 0 |

39,050 | 5,215,678,234 | IssuesEvent | 2017-01-26 06:50:38 | mjs7231/python-plexapi | https://api.github.com/repos/mjs7231/python-plexapi | closed | Automated testing | testing | I'd like to incorporate some automated tests into the project, especially for the code submitted for #7

In the past, I've used **py.test**, but I'd be open to another framework if you are partial to one.

| 1.0 | Automated testing - I'd like to incorporate some automated tests into the project, especially for the code submitted for #7

In the past, I've used **py.test**, but I'd be open to another framework if you are partial to one.

| test | automated testing i d like to incorporate some automated tests into the project especially for the code submitted for in the past i ve used py test but i d be open to another framework if you are partial to one | 1 |

89,445 | 8,203,896,154 | IssuesEvent | 2018-09-03 02:36:24 | backdrop/backdrop-issues | https://api.github.com/repos/backdrop/backdrop-issues | closed | [UX] Bring back node preview | pr - reviewed & tested by the community status - has pull request type - feature request | We removed node edit previews early on, but haven't agreed on how to re-introduce it. I think this is expected functionality.

Let's decide how to bring it back.

---

~PR: https://github.com/backdrop/backdrop/pull/2174~

PR: https://github.com/backdrop/backdrop/pull/2290 | 1.0 | [UX] Bring back node preview - We removed node edit previews early on, but haven't agreed on how to re-introduce it. I think this is expected functionality.

Let's decide how to bring it back.

---

~PR: https://github.com/backdrop/backdrop/pull/2174~

PR: https://github.com/backdrop/backdrop/pull/2290 | test | bring back node preview we removed node edit previews early on but haven t agreed on how to re introduce it i think this is expected functionality let s decide how to bring it back pr pr | 1 |

259,405 | 22,472,679,375 | IssuesEvent | 2022-06-22 09:28:11 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | Worker reconciling Rafter occasionally fails with context deadline timeout | kind/failing-test release blocker | **Description**

See occurrences:

- https://storage.googleapis.com/kyma-prow-logs/logs/kyma-upgrade-gardener-kyma2-to-main-reconciler-main/1528707388486455296/build-log.txt

- https://storage.googleapis.com/kyma-prow-logs/pr-logs/pull/kyma-project_kyma/14334/pre-main-kyma-gardener-azure-alpha-prod/1531219067166265344/build-log.txt

**Expected result**

Worker installing/updating rafter deployment should finish within the desired context timeout

**Actual result**

Fails to finish (gets stuck) and times out

| 1.0 | Worker reconciling Rafter occasionally fails with context deadline timeout - **Description**

See occurrences:

- https://storage.googleapis.com/kyma-prow-logs/logs/kyma-upgrade-gardener-kyma2-to-main-reconciler-main/1528707388486455296/build-log.txt

- https://storage.googleapis.com/kyma-prow-logs/pr-logs/pull/kyma-project_kyma/14334/pre-main-kyma-gardener-azure-alpha-prod/1531219067166265344/build-log.txt

**Expected result**

Worker installing/updating rafter deployment should finish within the desired context timeout

**Actual result**

Fails to finish (gets stuck) and times out

| test | worker reconciling rafter occasionally fails with context deadline timeout description see occurrences expected result worker installing updating rafter deployment should finish within the desired context timeout actual result fails to finish gets stuck and times out | 1 |

586,748 | 17,596,197,968 | IssuesEvent | 2021-08-17 05:39:47 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | Message under API application quota | Type/Improvement Priority/Normal | ### Describe your problem(s)

<!-- Describe why you think this project needs this feature -->

When creating a new application and choosing a quota for a token, the message under the dropdown field is

"....**Assign API request quota per access token**. Allocated quota will be shared among all the subscribed APIs of the application...."

I received feedback that this message is confusing. It suggests that EACH token will have the chosen quota, but in reality the quota is shared among all tokens.

Can we change the message or explain it differently to avoid any confusion?

| 1.0 | Message under API application quota - ### Describe your problem(s)

<!-- Describe why you think this project needs this feature -->

When creating a new application and choosing a quota for a token, the message under the dropdown field is

"....**Assign API request quota per access token**. Allocated quota will be shared among all the subscribed APIs of the application...."

I received feedback that this message is confusing. It suggests that EACH token will have the chosen quota, but in reality the quota is shared among all tokens.

Can we change the message or explain it differently to avoid any confusion?

| non_test | message under api application quota describe your problem s when creating a new application and choosing a quota for a token the message under the dropdown field is assign api request quota per access token allocated quota will be shared among all the subscribed apis of the application i received feedback that this message is confusing it suggests that each token will have the chosen quota but in reality the quota is shared among all tokens can we change the message or explain it differently to avoid any confusion | 0 |

398,635 | 27,205,364,246 | IssuesEvent | 2023-02-20 12:41:53 | feelpp/book.feelpp.org | https://api.github.com/repos/feelpp/book.feelpp.org | closed | All pyfeel++ toolboxes examples point to "Fluid" | domain:documentation no-issue-activity | Could you provide examples for toolboxes other than "Fluid"?

Or at least change the links in the docs... | 1.0 | All pyfeel++ toolboxes examples point to "Fluid" - Could you provide examples for toolboxes other than "Fluid"?

Or at least change the links in the docs... | non_test | all pyfeel toolboxes examples point to fluid could you provide examples for toolboxes other than fluid or at least change the links in the docs | 0 |

449,004 | 12,961,903,651 | IssuesEvent | 2020-07-20 16:19:39 | monarch-initiative/mondo | https://api.github.com/repos/monarch-initiative/mondo | closed | Large drop in monogenic diseases after DO-MOndo mapping | high priority obsolete |

The following 210 genes used to be annotated to "monogenic disease"

https://www.pombase.org/results/from/id/707b3611-0b71-4ebd-9ee1-9326e44d76c6

Possibly not all are, but most do appear to be from spot checking

For example

SPAC31A2.05c | mis4 | cohesin loading factor (adherin) Mis4/Scc2

SPBC776.13 | cnd1 | condensin complex non-SMC subunit Cnd1

SPCC306.03c | cnd2 | condensin complex non-SMC subunit Cnd2

all have causative mutations for Cornelia de Lange syndrome

ark1 | aurora-B kinase Ark1

spermatogenic failure 5 (DOID:0070183) -has_material_basis_in mutation in the AURKC gene on chromosome 19q13

alg14 |

SPAC5D6.06c | alg14 | UDP-GlcNAc transferase associated protein Alg14

congenital myasthenic syndrome 15

compound heterozygous mutation in the ALG14 gene on chromosome 1p21.

SPAC18G6.10 | lem2 | LEM domain nuclear inner membrane protein Heh1/Lem2

cataract 46 juvenile-onset (DOID:0110243)

A cataract that has_material_basis_in homozygous mutation in the LEMD2 gene on chromosome 6p21.

| 1.0 | Large drop in monogenic diseases after DO-MOndo mapping -

The following 210 genes used to be annotated to "monogenic disease"

https://www.pombase.org/results/from/id/707b3611-0b71-4ebd-9ee1-9326e44d76c6

Possibly not all are, but most do appear to be from spot checking

For example

SPAC31A2.05c | mis4 | cohesin loading factor (adherin) Mis4/Scc2

SPBC776.13 | cnd1 | condensin complex non-SMC subunit Cnd1

SPCC306.03c | cnd2 | condensin complex non-SMC subunit Cnd2

all have causative mutations for Cornelia de Lange syndrome

ark1 | aurora-B kinase Ark1

spermatogenic failure 5 (DOID:0070183) -has_material_basis_in mutation in the AURKC gene on chromosome 19q13

alg14 |

SPAC5D6.06c | alg14 | UDP-GlcNAc transferase associated protein Alg14

congenital myasthenic syndrome 15

compound heterozygous mutation in the ALG14 gene on chromosome 1p21.

SPAC18G6.10 | lem2 | LEM domain nuclear inner membrane protein Heh1/Lem2

cataract 46 juvenile-onset (DOID:0110243)

A cataract that has_material_basis_in homozygous mutation in the LEMD2 gene on chromosome 6p21.

| non_test | large drop in monogenic diseases after do mondo mapping the following genes used to be annotated to monogenic disease possibly not all are but most do appear to be from spot checking for example cohesin loading factor adherin condensin complex non smc subunit condensin complex non smc subunit all have causative mutations for cornelia de lange syndrome aurora b kinase spermatogenic failure doid has material basis in mutation in the aurkc gene on chromosome udp glcnac transferase associated protein congenital myasthenic syndrome compound heterozygous mutation in the gene on chromosome lem domain nuclear inner membrane protein cataract juvenile onset doid a cataract that has material basis in homozygous mutation in the gene on chromosome | 0 |

232,150 | 18,847,409,815 | IssuesEvent | 2021-11-11 16:24:08 | ContinualAI/avalanche | https://api.github.com/repos/ContinualAI/avalanche | closed | Creation of a new environment failed for Python 3.9 | test Continuous integration | Here are the differences between the last working environment and the new one that I tried to run:

```

9c9

< - absl-py=0.13.0=pyhd8ed1ab_0

---

> - absl-py=0.15.0=pyhd8ed1ab_0

16c16

< - brotlipy=0.7.0=py39h3811e60_1001

---

> - brotlipy=0.7.0=py39h3811e60_1003

18,22c18,22

< - c-ares=1.17.2=h7f98852_0

< - ca-certificates=2021.5.30=ha878542_0

< - cachetools=4.2.2=pyhd8ed1ab_0

< - certifi=2021.5.30=py39hf3d152e_0

< - cffi=1.14.6=py39he32792d_0

---

> - c-ares=1.18.1=h7f98852_0

> - ca-certificates=2021.10.8=ha878542_0

> - cachetools=4.2.4=pyhd8ed1ab_0

> - certifi=2021.10.8=py39hf3d152e_1

> - cffi=1.14.6=py39h4bc2ebd_2

24c24

< - click=8.0.1=py39hf3d152e_0

---

> - click=8.0.3=py39hf3d152e_1

27,30c27,30

< - cpuonly=1.0=0

< - cryptography=3.4.7=py39hbca0aa6_0

< - cycler=0.10.0=py_2

< - cython=0.29.24=py39he80948d_0

---

> - cpuonly=2.0=0

> - cryptography=35.0.0=py39h95dcef6_1

> - cycler=0.11.0=pyhd8ed1ab_0

> - cython=0.29.24=py39he80948d_1

32d31

< - dbus=1.13.6=he372182_0

34d32

< - expat=2.4.1=h9c3ff4c_0

36,40c34,37

< - fontconfig=2.13.1=he4413a7_1000

< - freetype=2.10.4=h5ab3b9f_0

< - gitdb=4.0.7=pyhd8ed1ab_0

< - gitpython=3.1.18=pyhd8ed1ab_0

< - glib=2.69.1=h5202010_0

---

> - freetype=2.11.0=h70c0345_0

> - giflib=5.2.1=h7b6447c_0

> - gitdb=4.0.9=pyhd8ed1ab_0

> - gitpython=3.1.24=pyhd8ed1ab_0

44c41

< - google-auth-oauthlib=0.4.5=pyhd8ed1ab_0

---

> - google-auth-oauthlib=0.4.6=pyhd8ed1ab_0

48,51c45

< - grpcio=1.38.1=py39hff7568b_0

< - gst-plugins-base=1.14.0=hbbd80ab_1

< - gstreamer=1.14.0=h28cd5cc_2

< - icu=58.2=hf484d3e_1000

---

> - grpcio=1.41.1=py39hff7568b_1

53,57c47,51

< - importlib-metadata=4.7.1=py39hf3d152e_1

< - intel-openmp=2021.3.0=h06a4308_3350

< - joblib=1.0.1=pyhd8ed1ab_0

< - jpeg=9b=h024ee3a_2

< - kiwisolver=1.3.2=py39h1a9c180_0

---

> - importlib-metadata=4.8.1=py39hf3d152e_1

> - intel-openmp=2021.4.0=h06a4308_3561

> - joblib=1.1.0=pyhd8ed1ab_0

> - jpeg=9d=h7f8727e_0

> - kiwisolver=1.3.2=py39h1a9c180_1

61,67c55,61

< - libblas=3.9.0=11_linux64_mkl

< - libcblas=3.9.0=11_linux64_mkl

< - libffi=3.3=h58526e2_2

< - libgcc-ng=11.1.0=hc902ee8_8

< - libgfortran-ng=11.1.0=h69a702a_8

< - libgfortran5=11.1.0=h6c583b3_8

< - libgomp=11.1.0=hc902ee8_8

---

> - libblas=3.9.0=12_linux64_mkl

> - libcblas=3.9.0=12_linux64_mkl

> - libffi=3.4.2=h9c3ff4c_4

> - libgcc-ng=11.2.0=h1d223b6_11

> - libgfortran-ng=11.2.0=h69a702a_11

> - libgfortran5=11.2.0=h5c6108e_11

> - libgomp=11.2.0=h1d223b6_11

70c64

< - liblapack=3.9.0=11_linux64_mkl

---

> - liblapack=3.9.0=12_linux64_mkl

72,73c66,67

< - libprotobuf=3.17.2=h780b84a_1

< - libstdcxx-ng=11.1.0=h56837e0_8

---

> - libprotobuf=3.19.1=h780b84a_0

> - libstdcxx-ng=11.2.0=he4da1e4_11

77d70

< - libuuid=2.32.1=h7f98852_1000

78a72

> - libwebp=1.2.0=h89dd481_0

80,81c74

< - libxcb=1.13=h7f98852_1003

< - libxml2=2.9.9=h13577e0_2

---

> - libzlib=1.2.11=h36c2ea0_1013

84,87c77,83

< - matplotlib=3.4.3=py39hf3d152e_0

< - matplotlib-base=3.4.3=py39h2fa2bec_0

< - mkl=2021.3.0=h06a4308_520

< - multidict=5.1.0=py39h3811e60_1

---

> - matplotlib=3.3.2=0

> - matplotlib-base=3.3.2=py39h98787fa_1

> - mkl=2021.4.0=h06a4308_640

> - mkl-service=2.4.0=py39h7f8727e_0

> - mkl_fft=1.3.1=py39hd3c417c_0

> - mkl_random=1.2.2=py39h51133e4_0

> - multidict=5.2.0=py39h3811e60_1

90,91c86,87

< - ninja=1.10.2=hff7bd54_1

< - numpy=1.21.2=py39hdbf815f_0

---

> - numpy=1.21.2=py39h20f2e39_0

> - numpy-base=1.21.2=py39h79a1101_0

94c90

< - olefile=0.46=py_0

---

> - olefile=0.46=pyhd3eb1b0_0

96,97c92

< - openjpeg=2.4.0=h3ad879b_0

< - openssl=1.1.1k=h7f98852_1

---

> - openssl=1.1.1l=h7f98852_0

99,105c94,98

< - pcre=8.45=h9c3ff4c_0

< - pillow=8.3.1=py39h2c7a002_0

< - pip=21.2.4=pyhd8ed1ab_0

< - promise=2.3=py39hf3d152e_3

< - protobuf=3.17.2=py39he80948d_0

< - psutil=5.8.0=py39h3811e60_1

< - pthread-stubs=0.4=h36c2ea0_1001

---

> - pillow=8.4.0=py39h5aabda8_0

> - pip=21.3.1=pyhd8ed1ab_0

> - promise=2.3=py39hf3d152e_4

> - protobuf=3.19.1=py39he80948d_1

> - psutil=5.8.0=py39h3811e60_2

110,115c103,107

< - pyjwt=2.1.0=pyhd8ed1ab_0

< - pyopenssl=20.0.1=pyhd8ed1ab_0

< - pyparsing=2.4.7=pyh9f0ad1d_0

< - pyqt=5.9.2=py39h2531618_6

< - pysocks=1.7.1=py39hf3d152e_3

< - python=3.9.6=h49503c6_1_cpython

---

> - pyjwt=2.3.0=pyhd8ed1ab_0

> - pyopenssl=21.0.0=pyhd8ed1ab_0

> - pyparsing=3.0.4=pyhd8ed1ab_0

> - pysocks=1.7.1=py39hf3d152e_4

> - python=3.9.7=hb7a2778_3_cpython

118c110,111

< - pytorch=1.9.0=py3.9_cpu_0

---

> - pytorch=1.10.0=py3.9_cpu_0

> - pytorch-mutex=1.0=cpu

120,122c113,114

< - pyyaml=5.4.1=py39h3811e60_1

< - qt=5.9.7=h5867ecd_1

< - quadprog=0.1.8=py39h1a9c180_2

---

> - pyyaml=6.0=py39h3811e60_2

> - quadprog=0.1.10=py39h1a9c180_0

127c119

< - scikit-learn=0.24.2=py39h4dfa638_1

---

> - scikit-learn=1.0.1=py39h7c5d8c9_1

129,130c121,122

< - sentry-sdk=1.3.1=pyhd8ed1ab_0

< - setuptools=57.4.0=py39hf3d152e_0

---

> - sentry-sdk=1.4.3=pyhd8ed1ab_0

> - setuptools=58.5.2=py39hf3d152e_0

132,133c124

< - sip=4.19.13=py39h2531618_0

< - six=1.16.0=pyh6c4a22f_0

---

> - six=1.16.0=pyhd3eb1b0_0

135c126

< - sqlite=3.36.0=h9cd32fc_0

---

> - sqlite=3.36.0=h9cd32fc_2

140,148c131,139

< - threadpoolctl=2.2.0=pyh8a188c0_0

< - tk=8.6.11=h21135ba_0

< - torchvision=0.10.0=py39_cpu

< - tornado=6.1=py39h3811e60_1

< - tqdm=4.62.2=pyhd8ed1ab_0

< - typing_extensions=3.10.0.0=pyh06a4308_0

< - tzdata=2021a=he74cb21_1

< - urllib3=1.26.6=pyhd8ed1ab_0

< - wandb=0.11.2=pyhd8ed1ab_0

---

> - threadpoolctl=3.0.0=pyh8a188c0_0

> - tk=8.6.11=h27826a3_1

> - torchvision=0.11.1=py39_cpu

> - tornado=6.1=py39h3811e60_2

> - tqdm=4.62.3=pyhd8ed1ab_0

> - typing_extensions=3.10.0.2=pyh06a4308_0

> - tzdata=2021e=he74cb21_0

> - urllib3=1.26.7=pyhd8ed1ab_0

> - wandb=0.12.1=pyhd8ed1ab_0

152,153d142

< - xorg-libxau=1.0.9=h7f98852_0

< - xorg-libxdmcp=1.1.3=h7f98852_0

156,158c145,147

< - yarl=1.6.3=py39h3811e60_2

< - zipp=3.5.0=pyhd8ed1ab_0

< - zlib=1.2.11=h516909a_1010

---

> - yarl=1.7.0=py39h3811e60_0

> - zipp=3.6.0=pyhd8ed1ab_0

> - zlib=1.2.11=h36c2ea0_1013

161,163c150,156

< - filelock==3.0.12

< - gdown==3.13.0

< - pytorchcv==0.0.66

---

> - beautifulsoup4==4.10.0

> - cloudpickle==2.0.0

> - filelock==3.3.2

> - gdown==4.2.0

> - gym==0.21.0

> - pytorchcv==0.0.67

> - soupsieve==2.3

``` | 1.0 | Creation of a new environment failed for Python 3.9 - Here are the differences between the last working environment and the new one that I tried to run:

```

9c9

< - absl-py=0.13.0=pyhd8ed1ab_0

---

> - absl-py=0.15.0=pyhd8ed1ab_0

16c16

< - brotlipy=0.7.0=py39h3811e60_1001

---

> - brotlipy=0.7.0=py39h3811e60_1003

18,22c18,22

< - c-ares=1.17.2=h7f98852_0

< - ca-certificates=2021.5.30=ha878542_0

< - cachetools=4.2.2=pyhd8ed1ab_0

< - certifi=2021.5.30=py39hf3d152e_0

< - cffi=1.14.6=py39he32792d_0

---

> - c-ares=1.18.1=h7f98852_0

> - ca-certificates=2021.10.8=ha878542_0

> - cachetools=4.2.4=pyhd8ed1ab_0

> - certifi=2021.10.8=py39hf3d152e_1

> - cffi=1.14.6=py39h4bc2ebd_2

24c24

< - click=8.0.1=py39hf3d152e_0

---

> - click=8.0.3=py39hf3d152e_1

27,30c27,30

< - cpuonly=1.0=0

< - cryptography=3.4.7=py39hbca0aa6_0

< - cycler=0.10.0=py_2

< - cython=0.29.24=py39he80948d_0

---

> - cpuonly=2.0=0

> - cryptography=35.0.0=py39h95dcef6_1

> - cycler=0.11.0=pyhd8ed1ab_0

> - cython=0.29.24=py39he80948d_1

32d31

< - dbus=1.13.6=he372182_0

34d32

< - expat=2.4.1=h9c3ff4c_0

36,40c34,37

< - fontconfig=2.13.1=he4413a7_1000

< - freetype=2.10.4=h5ab3b9f_0

< - gitdb=4.0.7=pyhd8ed1ab_0

< - gitpython=3.1.18=pyhd8ed1ab_0

< - glib=2.69.1=h5202010_0

---

> - freetype=2.11.0=h70c0345_0

> - giflib=5.2.1=h7b6447c_0

> - gitdb=4.0.9=pyhd8ed1ab_0

> - gitpython=3.1.24=pyhd8ed1ab_0

44c41

< - google-auth-oauthlib=0.4.5=pyhd8ed1ab_0

---

> - google-auth-oauthlib=0.4.6=pyhd8ed1ab_0

48,51c45

< - grpcio=1.38.1=py39hff7568b_0

< - gst-plugins-base=1.14.0=hbbd80ab_1

< - gstreamer=1.14.0=h28cd5cc_2

< - icu=58.2=hf484d3e_1000

---

> - grpcio=1.41.1=py39hff7568b_1

53,57c47,51

< - importlib-metadata=4.7.1=py39hf3d152e_1

< - intel-openmp=2021.3.0=h06a4308_3350

< - joblib=1.0.1=pyhd8ed1ab_0

< - jpeg=9b=h024ee3a_2

< - kiwisolver=1.3.2=py39h1a9c180_0

---

> - importlib-metadata=4.8.1=py39hf3d152e_1

> - intel-openmp=2021.4.0=h06a4308_3561

> - joblib=1.1.0=pyhd8ed1ab_0

> - jpeg=9d=h7f8727e_0

> - kiwisolver=1.3.2=py39h1a9c180_1

61,67c55,61

< - libblas=3.9.0=11_linux64_mkl

< - libcblas=3.9.0=11_linux64_mkl

< - libffi=3.3=h58526e2_2

< - libgcc-ng=11.1.0=hc902ee8_8

< - libgfortran-ng=11.1.0=h69a702a_8

< - libgfortran5=11.1.0=h6c583b3_8

< - libgomp=11.1.0=hc902ee8_8

---

> - libblas=3.9.0=12_linux64_mkl

> - libcblas=3.9.0=12_linux64_mkl

> - libffi=3.4.2=h9c3ff4c_4

> - libgcc-ng=11.2.0=h1d223b6_11

> - libgfortran-ng=11.2.0=h69a702a_11

> - libgfortran5=11.2.0=h5c6108e_11

> - libgomp=11.2.0=h1d223b6_11

70c64

< - liblapack=3.9.0=11_linux64_mkl

---

> - liblapack=3.9.0=12_linux64_mkl

72,73c66,67

< - libprotobuf=3.17.2=h780b84a_1

< - libstdcxx-ng=11.1.0=h56837e0_8

---

> - libprotobuf=3.19.1=h780b84a_0

> - libstdcxx-ng=11.2.0=he4da1e4_11

77d70

< - libuuid=2.32.1=h7f98852_1000

78a72

> - libwebp=1.2.0=h89dd481_0

80,81c74

< - libxcb=1.13=h7f98852_1003

< - libxml2=2.9.9=h13577e0_2

---

> - libzlib=1.2.11=h36c2ea0_1013

84,87c77,83

< - matplotlib=3.4.3=py39hf3d152e_0

< - matplotlib-base=3.4.3=py39h2fa2bec_0

< - mkl=2021.3.0=h06a4308_520

< - multidict=5.1.0=py39h3811e60_1

---

> - matplotlib=3.3.2=0

> - matplotlib-base=3.3.2=py39h98787fa_1

> - mkl=2021.4.0=h06a4308_640

> - mkl-service=2.4.0=py39h7f8727e_0

> - mkl_fft=1.3.1=py39hd3c417c_0

> - mkl_random=1.2.2=py39h51133e4_0

> - multidict=5.2.0=py39h3811e60_1

90,91c86,87

< - ninja=1.10.2=hff7bd54_1

< - numpy=1.21.2=py39hdbf815f_0

---

> - numpy=1.21.2=py39h20f2e39_0

> - numpy-base=1.21.2=py39h79a1101_0

94c90

< - olefile=0.46=py_0

---

> - olefile=0.46=pyhd3eb1b0_0

96,97c92

< - openjpeg=2.4.0=h3ad879b_0

< - openssl=1.1.1k=h7f98852_1

---

> - openssl=1.1.1l=h7f98852_0

99,105c94,98

< - pcre=8.45=h9c3ff4c_0

< - pillow=8.3.1=py39h2c7a002_0

< - pip=21.2.4=pyhd8ed1ab_0

< - promise=2.3=py39hf3d152e_3

< - protobuf=3.17.2=py39he80948d_0

< - psutil=5.8.0=py39h3811e60_1

< - pthread-stubs=0.4=h36c2ea0_1001

---

> - pillow=8.4.0=py39h5aabda8_0

> - pip=21.3.1=pyhd8ed1ab_0

> - promise=2.3=py39hf3d152e_4

> - protobuf=3.19.1=py39he80948d_1

> - psutil=5.8.0=py39h3811e60_2

110,115c103,107

< - pyjwt=2.1.0=pyhd8ed1ab_0

< - pyopenssl=20.0.1=pyhd8ed1ab_0

< - pyparsing=2.4.7=pyh9f0ad1d_0

< - pyqt=5.9.2=py39h2531618_6

< - pysocks=1.7.1=py39hf3d152e_3

< - python=3.9.6=h49503c6_1_cpython

---

> - pyjwt=2.3.0=pyhd8ed1ab_0

> - pyopenssl=21.0.0=pyhd8ed1ab_0

> - pyparsing=3.0.4=pyhd8ed1ab_0

> - pysocks=1.7.1=py39hf3d152e_4

> - python=3.9.7=hb7a2778_3_cpython

118c110,111

< - pytorch=1.9.0=py3.9_cpu_0

---

> - pytorch=1.10.0=py3.9_cpu_0

> - pytorch-mutex=1.0=cpu

120,122c113,114

< - pyyaml=5.4.1=py39h3811e60_1

< - qt=5.9.7=h5867ecd_1

< - quadprog=0.1.8=py39h1a9c180_2

---

> - pyyaml=6.0=py39h3811e60_2

> - quadprog=0.1.10=py39h1a9c180_0

127c119

< - scikit-learn=0.24.2=py39h4dfa638_1

---

> - scikit-learn=1.0.1=py39h7c5d8c9_1

129,130c121,122

< - sentry-sdk=1.3.1=pyhd8ed1ab_0

< - setuptools=57.4.0=py39hf3d152e_0

---

> - sentry-sdk=1.4.3=pyhd8ed1ab_0

> - setuptools=58.5.2=py39hf3d152e_0

132,133c124

< - sip=4.19.13=py39h2531618_0

< - six=1.16.0=pyh6c4a22f_0

---

> - six=1.16.0=pyhd3eb1b0_0

135c126

< - sqlite=3.36.0=h9cd32fc_0

---

> - sqlite=3.36.0=h9cd32fc_2

140,148c131,139

< - threadpoolctl=2.2.0=pyh8a188c0_0

< - tk=8.6.11=h21135ba_0

< - torchvision=0.10.0=py39_cpu

< - tornado=6.1=py39h3811e60_1

< - tqdm=4.62.2=pyhd8ed1ab_0

< - typing_extensions=3.10.0.0=pyh06a4308_0

< - tzdata=2021a=he74cb21_1

< - urllib3=1.26.6=pyhd8ed1ab_0

< - wandb=0.11.2=pyhd8ed1ab_0

---

> - threadpoolctl=3.0.0=pyh8a188c0_0

> - tk=8.6.11=h27826a3_1

> - torchvision=0.11.1=py39_cpu

> - tornado=6.1=py39h3811e60_2

> - tqdm=4.62.3=pyhd8ed1ab_0

> - typing_extensions=3.10.0.2=pyh06a4308_0

> - tzdata=2021e=he74cb21_0

> - urllib3=1.26.7=pyhd8ed1ab_0

> - wandb=0.12.1=pyhd8ed1ab_0

152,153d142

< - xorg-libxau=1.0.9=h7f98852_0

< - xorg-libxdmcp=1.1.3=h7f98852_0

156,158c145,147

< - yarl=1.6.3=py39h3811e60_2

< - zipp=3.5.0=pyhd8ed1ab_0

< - zlib=1.2.11=h516909a_1010

---

> - yarl=1.7.0=py39h3811e60_0

> - zipp=3.6.0=pyhd8ed1ab_0

> - zlib=1.2.11=h36c2ea0_1013

161,163c150,156

< - filelock==3.0.12

< - gdown==3.13.0

< - pytorchcv==0.0.66

---

> - beautifulsoup4==4.10.0

> - cloudpickle==2.0.0

> - filelock==3.3.2

> - gdown==4.2.0

> - gym==0.21.0

> - pytorchcv==0.0.67

> - soupsieve==2.3

``` | test | creation of a new envirnment failed for python here are the differences between the last working environment and the new one that i tried to run absl py absl py brotlipy brotlipy c ares ca certificates cachetools certifi cffi c ares ca certificates cachetools certifi cffi click click cpuonly cryptography cycler py cython cpuonly cryptography cycler cython dbus expat fontconfig freetype gitdb gitpython glib freetype giflib gitdb gitpython google auth oauthlib google auth oauthlib grpcio gst plugins base gstreamer icu grpcio importlib metadata intel openmp joblib jpeg kiwisolver importlib metadata intel openmp joblib jpeg kiwisolver libblas mkl libcblas mkl libffi libgcc ng libgfortran ng libgomp libblas mkl libcblas mkl libffi libgcc ng libgfortran ng libgomp liblapack mkl liblapack mkl libprotobuf libstdcxx ng libprotobuf libstdcxx ng libuuid libwebp libxcb libzlib matplotlib matplotlib base mkl multidict matplotlib matplotlib base mkl mkl service mkl fft mkl random multidict ninja numpy numpy numpy base olefile py olefile openjpeg openssl openssl pcre pillow pip promise protobuf psutil pthread stubs pillow pip promise protobuf psutil pyjwt pyopenssl pyparsing pyqt pysocks python cpython pyjwt pyopenssl pyparsing pysocks python cpython pytorch cpu pytorch cpu pytorch mutex cpu pyyaml qt quadprog pyyaml quadprog scikit learn scikit learn sentry sdk setuptools sentry sdk setuptools sip six six sqlite sqlite threadpoolctl tk torchvision cpu tornado tqdm typing extensions tzdata wandb threadpoolctl tk torchvision cpu tornado tqdm typing extensions tzdata wandb xorg libxau xorg libxdmcp yarl zipp zlib yarl zipp zlib filelock gdown pytorchcv cloudpickle filelock gdown gym pytorchcv soupsieve | 1 |

73,610 | 7,346,013,008 | IssuesEvent | 2018-03-07 19:17:15 | istio/test-infra | https://api.github.com/repos/istio/test-infra | closed | Update pipeline artifacts to also include docker images | test-infra | Artifacts link is defined here

https://github.com/istio/test-infra/blob/master/src/org/istio/testutils/GitUtilities.groovy#L152

It should be updated to list all docker images created and published. | 1.0 | Update pipeline artifacts to also include docker images - Artifacts link is defined here

https://github.com/istio/test-infra/blob/master/src/org/istio/testutils/GitUtilities.groovy#L152

It should be updated to list all docker images created and published. | test | update pipeline artifacts to also include docker images artifacts link is defined here it should be updated to list all docker images created and published | 1 |

192,657 | 6,876,394,460 | IssuesEvent | 2017-11-20 00:04:49 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | opened | Add new columns to oneacct that require some processing | Category: CLI Priority: Normal Status: Pending Tracker: Backlog | ---

Author Name: **Carlos Martín** (Carlos Martín)

Original Redmine Issue: 1491, https://dev.opennebula.org/issues/1491

Original Date: 2012-09-21

---

For example, the running time.

Requested by "Jan Benadik":http://lists.opennebula.org/pipermail/users-opennebula.org/2012-September/020320.html

| 1.0 | Add new columns to oneacct that require some processing - ---

Author Name: **Carlos Martín** (Carlos Martín)

Original Redmine Issue: 1491, https://dev.opennebula.org/issues/1491

Original Date: 2012-09-21

---

For example, the running time.

Requested by "Jan Benadik":http://lists.opennebula.org/pipermail/users-opennebula.org/2012-September/020320.html

| non_test | add new columns to oneacct that require some processing author name carlos martín carlos martín original redmine issue original date for example the running time requested by jan benadik | 0 |

8,474 | 22,615,319,146 | IssuesEvent | 2022-06-29 21:19:34 | Quran-Journey/backend | https://api.github.com/repos/Quran-Journey/backend | opened | Create db Schema for backend | architecture | Include all of the tables that are laid out in our ERD. You can see the ERD in the README.md | 1.0 | Create db Schema for backend - Include all of the tables that are laid out in our ERD. You can see the ERD in the README.md | non_test | create db schema for backend include all of the tables that are laid out in our erd you can see the erd in the readme md | 0 |

56,279 | 3,078,753,124 | IssuesEvent | 2015-08-21 12:36:06 | pavel-pimenov/flylinkdc-r5xx | https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx | opened | Crash when removing a request to download a user's file list via DHT from the Download Queue | bug Component-Logic imported Priority-High | _From [reaor...@gmail.com](https://code.google.com/u/102418317896447533964/) on August 29, 2011 18:59:00_