Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

496,804 | 14,355,386,991 | IssuesEvent | 2020-11-30 10:01:58 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | closed | Add checksum to AppendEntries request | Impact: Data Priority: High Scope: broker Status: Needs Review Type: Maintenance | **Description**

We should add checksums to AppendEntries requests in order to ensure that followers don't write corrupted entries. | 1.0 | Add checksum to AppendEntries request - **Description**

We should add checksums to AppendEntries requests in order to ensure that followers don't write corrupted entries. | non_test | add checksum to appendentries request description we should add checksums to appendentries requests in order to ensure that followers don t write corrupted entries | 0 |

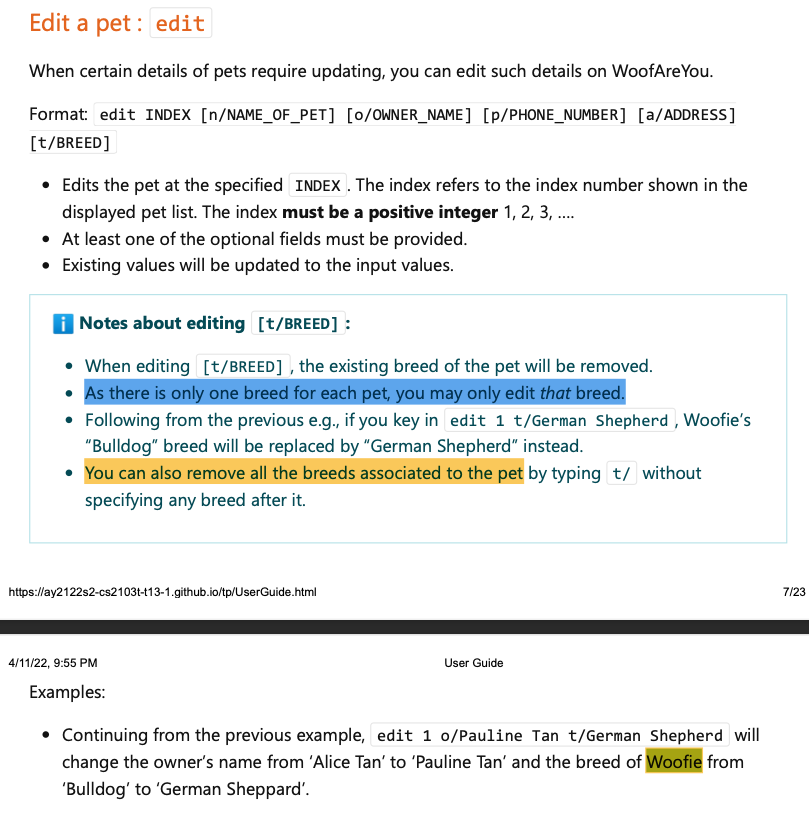

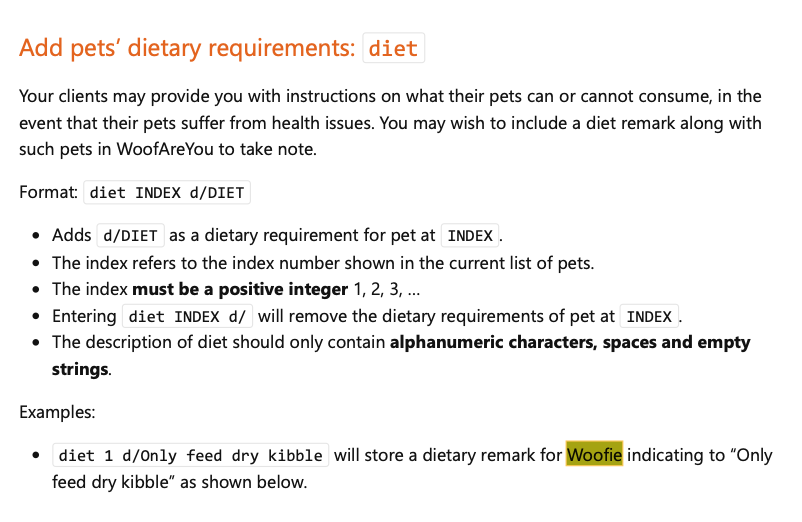

351,327 | 25,022,488,342 | IssuesEvent | 2022-11-04 03:06:29 | AY2223S1-CS2103T-W15-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-W15-2/tp | closed | [PE-D][Tester B] Unexpected behavior for addO command | documentation priority.High | **Steps to produce:**

1. Enter addO id/abc a/John Doe o/2000000 as suggested by the UG.

2. Enter add0 id/1 a/Betsy Crowe o/20 as suggested by the UG.

**Expected:**

1. Added 2 new orders

**Actual:**

1. Received "Invalid Command" for first command.

2. Received "Unknown Command" for the second.

<!--session: 1666944217695-35af3a60-58e4-4a68-9c43-9e45d7bd947a-->

<!--Version: Web v3.4.4-->

-------------

Labels: `type.DocumentationBug` `severity.Low`

original: wweqg/ped#5 | 1.0 | [PE-D][Tester B] Unexpected behavior for addO command - **Steps to produce:**

1. Enter addO id/abc a/John Doe o/2000000 as suggested by the UG.

2. Enter add0 id/1 a/Betsy Crowe o/20 as suggested by the UG.

**Expected:**

1. Added 2 new orders

**Actual:**

1. Received "Invalid Command" for first command.

2. Received "Unknown Command" for the second.

<!--session: 1666944217695-35af3a60-58e4-4a68-9c43-9e45d7bd947a-->

<!--Version: Web v3.4.4-->

-------------

Labels: `type.DocumentationBug` `severity.Low`

original: wweqg/ped#5 | non_test | unexpected behavior for addo command steps to produce enter addo id abc a john doe o as suggested by the ug enter id a betsy crowe o as suggested by the ug expected added new orders actual received invalid command for first command received unknown command for the second labels type documentationbug severity low original wweqg ped | 0 |

28,018 | 4,350,480,181 | IssuesEvent | 2016-07-31 08:49:12 | EasyRPG/Player | https://api.github.com/repos/EasyRPG/Player | closed | 3DS crash on Moby Housekeeper | 3DS Crash Duplicate Testcase available | __Name of the game__: Moby Housekeeper

__Player platform__: 3DS, .cia version (Edit: Using the continuous build)

__Describe the issue in detail and how to reproduce it__: Load the game Moby Housekeeper from https://rpgmaker.net/games/23/ . Play it on a 3DS (I used the English translated RTP--using a Japanese RTP is problematic because of the FAT32 on the SD card). Start a new game, see the E rating screen, then it either crashes or locks up. It does not do this on a PC.

[easyrpg_log.txt](https://github.com/EasyRPG/Player/files/391667/easyrpg_log.txt)

| 1.0 | 3DS crash on Moby Housekeeper - __Name of the game__: Moby Housekeeper

__Player platform__: 3DS, .cia version (Edit: Using the continuous build)

__Describe the issue in detail and how to reproduce it__: Load the game Moby Housekeeper from https://rpgmaker.net/games/23/ . Play it on a 3DS (I used the English translated RTP--using a Japanese RTP is problematic because of the FAT32 on the SD card). Start a new game, see the E rating screen, then it either crashes or locks up. It does not do this on a PC.

[easyrpg_log.txt](https://github.com/EasyRPG/Player/files/391667/easyrpg_log.txt)

| test | crash on moby housekeeper name of the game moby housekeeper player platform cia version edit using the continuous build describe the issue in detail and how to reproduce it load the game moby housekeeper from play it on a i used the english translated rtp using a japanese rtp is problematic because of the on the sd card start a new game see the e rating screen then it either crashes or locks up it does not do this on a pc | 1 |

155,415 | 12,255,038,045 | IssuesEvent | 2020-05-06 09:30:51 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Failures on Allow `NoSuchMethodError.withInvocation` to accept any `Invocation`. | area-test gardening | There are new test failures on [Allow `NoSuchMethodError.withInvocation` to accept any `Invocation`.](https://github.com/dart-lang/sdk/commit/2ca3555c44ac7b98bb366e19ff891c4b4c4940e7).

The tests

```

standalone_2/no_such_method_error_with_invocation_test RuntimeError (expected Pass)

```

are failing on configurations

```

dartkp-obfuscate-linux-release-x64

```

This test should either be modified to work with obfuscation or SkipByDesign'd in the status file. | 1.0 | Failures on Allow `NoSuchMethodError.withInvocation` to accept any `Invocation`. - There are new test failures on [Allow `NoSuchMethodError.withInvocation` to accept any `Invocation`.](https://github.com/dart-lang/sdk/commit/2ca3555c44ac7b98bb366e19ff891c4b4c4940e7).

The tests

```

standalone_2/no_such_method_error_with_invocation_test RuntimeError (expected Pass)

```

are failing on configurations

```

dartkp-obfuscate-linux-release-x64

```

This test should either be modified to work with obfuscation or SkipByDesign'd in the status file. | test | failures on allow nosuchmethoderror withinvocation to accept any invocation there are new test failures on the tests standalone no such method error with invocation test runtimeerror expected pass are failing on configurations dartkp obfuscate linux release this test should either be modified to work with obfuscation or skipbydesign d in the status file | 1 |

246,943 | 20,945,962,468 | IssuesEvent | 2022-03-26 00:08:50 | microsoft/msquic | https://api.github.com/repos/microsoft/msquic | opened | Server Initial Read Key not discarded on Compatible Version Negotiation | Bug: Core Area: Core Bug: Test/Tool | ### Describe the bug

The QUIC version 2 draft says that a server which upgrades a client to Version 2 via Compatible Version Negotiation (CVN)

> The server MUST NOT discard its original version Initial receive keys until it successfully processes a packet with the negotiated version.

[Source](https://www.ietf.org/archive/id/draft-ietf-quic-v2-01.html#section-4.1-5)

This was not implemented yet, and is difficult to test

### Affected OS

- [ ] All

- [ ] Windows Server 2022

- [ ] Windows 11

- [ ] Windows Insider Preview (specify affected build below)

- [ ] Ubuntu

- [ ] Debian

- [ ] Other (specify below)

### Additional OS information

_No response_

### MsQuic version

main

### Steps taken to reproduce bug

1. Client sends an Initial packet with all the crypto payload in it. Client VersionInfo starts with Version 1, but supports Version 2.

2. Server processes client Initial packet and uses CVN to upgrade client to Version 2. Server discards both read and write initial keys for Version 1, and replaces them with Initial keys for Version 2.

3. Client sends a second Initial packet with padding(??), before processing the Server's flight.

### Expected behavior

The Server should still be able to read the Client's second Initial packet until the Client acknowledges the CVN by sending a Handshake flight with the new version.

### Actual outcome

Server fails to decrypt client's second Initial packet and drops it.

### Additional details

_No response_ | 1.0 | Server Initial Read Key not discarded on Compatible Version Negotiation - ### Describe the bug

The QUIC version 2 draft says that a server which upgrades a client to Version 2 via Compatible Version Negotiation (CVN)

> The server MUST NOT discard its original version Initial receive keys until it successfully processes a packet with the negotiated version.

[Source](https://www.ietf.org/archive/id/draft-ietf-quic-v2-01.html#section-4.1-5)

This was not implemented yet, and is difficult to test

### Affected OS

- [ ] All

- [ ] Windows Server 2022

- [ ] Windows 11

- [ ] Windows Insider Preview (specify affected build below)

- [ ] Ubuntu

- [ ] Debian

- [ ] Other (specify below)

### Additional OS information

_No response_

### MsQuic version

main

### Steps taken to reproduce bug

1. Client sends an Initial packet with all the crypto payload in it. Client VersionInfo starts with Version 1, but supports Version 2.

2. Server processes client Initial packet and uses CVN to upgrade client to Version 2. Server discards both read and write initial keys for Version 1, and replaces them with Initial keys for Version 2.

3. Client sends a second Initial packet with padding(??), before processing the Server's flight.

### Expected behavior

The Server should still be able to read the Client's second Initial packet until the Client acknowledges the CVN by sending a Handshake flight with the new version.

### Actual outcome

Server fails to decrypt client's second Initial packet and drops it.

### Additional details

_No response_ | test | server initial read key not discarded on compatible version negotiation describe the bug the quic version draft says that a server which upgrades a client to version via compatible version negotiation cvn the server must not discard its original version initial receive keys until it successfully processes a packet with the negotiated version this was not implemented yet and is difficult to test affected os all windows server windows windows insider preview specify affected build below ubuntu debian other specify below additional os information no response msquic version main steps taken to reproduce bug client sends an initial packet with all the crypto payload in it client versioninfo starts with version but supports version server processes client initial packet and uses cvn to upgrade client to version server discards both read and write initial keys for version and replaces them with initial keys for version client sends a second initial packet with padding before processing the server s flight expected behavior the server should still be able to read the client s second initial packet until the client acknowledges the cvn by sending a handshake flight with the new version actual outcome server fails to decrypt client s second initial packet and drops it additional details no response | 1 |

3,152 | 4,105,936,007 | IssuesEvent | 2016-06-06 05:58:05 | KhronosGroup/glslang | https://api.github.com/repos/KhronosGroup/glslang | closed | On windows, debug and release builds are installed to the same location with the same file names | Infrastructure | This makes it problematic to embed this project's libraries into a larger CMake project that needs to build in both debug and release. | 1.0 | On windows, debug and release builds are installed to the same location with the same file names - This makes it problematic to embed this project's libraries into a larger CMake project that needs to build in both debug and release. | non_test | on windows debug and release builds are installed to the same location with the same file names this makes it problematic to embed this project s libraries into a larger cmake project that needs to build in both debug and release | 0 |

63,574 | 6,850,513,045 | IssuesEvent | 2017-11-14 03:43:58 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | teamcity: failed tests on 20019: Jepsen/Jepsen: JepsenBank: JepsenBank/split, Jepsen/Jepsen: JepsenBank-multitable: JepsenBank-multitable/start-kill-2, Jepsen/Jepsen: JepsenSets: JepsenSets/subcritical-skews+start-kill-2 | Robot test-failure | The following tests appear to have failed:

[#408054](https://teamcity.cockroachdb.com/viewLog.html?buildId=408054):

```

--- FAIL: Jepsen/Jepsen: JepsenBank: JepsenBank/split (130.492s)

None

--- FAIL: Jepsen/Jepsen: JepsenBank-multitable: JepsenBank-multitable/start-kill-2 (467.398s)

None

--- FAIL: Jepsen/Jepsen: JepsenSets: JepsenSets/subcritical-skews+start-kill-2 (129.548s)

None

```

Please assign, take a look and update the issue accordingly.

| 1.0 | teamcity: failed tests on 20019: Jepsen/Jepsen: JepsenBank: JepsenBank/split, Jepsen/Jepsen: JepsenBank-multitable: JepsenBank-multitable/start-kill-2, Jepsen/Jepsen: JepsenSets: JepsenSets/subcritical-skews+start-kill-2 - The following tests appear to have failed:

[#408054](https://teamcity.cockroachdb.com/viewLog.html?buildId=408054):

```

--- FAIL: Jepsen/Jepsen: JepsenBank: JepsenBank/split (130.492s)

None

--- FAIL: Jepsen/Jepsen: JepsenBank-multitable: JepsenBank-multitable/start-kill-2 (467.398s)

None

--- FAIL: Jepsen/Jepsen: JepsenSets: JepsenSets/subcritical-skews+start-kill-2 (129.548s)

None

```

Please assign, take a look and update the issue accordingly.

| test | teamcity failed tests on jepsen jepsen jepsenbank jepsenbank split jepsen jepsen jepsenbank multitable jepsenbank multitable start kill jepsen jepsen jepsensets jepsensets subcritical skews start kill the following tests appear to have failed fail jepsen jepsen jepsenbank jepsenbank split none fail jepsen jepsen jepsenbank multitable jepsenbank multitable start kill none fail jepsen jepsen jepsensets jepsensets subcritical skews start kill none please assign take a look and update the issue accordingly | 1 |

302,764 | 26,161,119,176 | IssuesEvent | 2022-12-31 14:51:22 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: unoptimized-query-oracle/disable-rules=half failed | C-test-failure O-robot O-roachtest branch-release-22.2 | roachtest.unoptimized-query-oracle/disable-rules=half [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8147282?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8147282?buildTab=artifacts#/unoptimized-query-oracle/disable-rules=half) on release-22.2 @ [07a53a36601e9ca5fcffcff55f69b43c6dfbf1c1](https://github.com/cockroachdb/cockroach/commits/07a53a36601e9ca5fcffcff55f69b43c6dfbf1c1):

```

test artifacts and logs in: /artifacts/unoptimized-query-oracle/disable-rules=half/run_1

(test_impl.go:286).Fatal: pq: Use of partitions requires an enterprise license. Your evaluation license expired on December 30, 2022. If you're interested in getting a new license, please contact subscriptions@cockroachlabs.com and we can help you out.

```

<p>Parameters: <code>ROACHTEST_cloud=gce</code>

, <code>ROACHTEST_cpu=4</code>

, <code>ROACHTEST_encrypted=false</code>

, <code>ROACHTEST_fs=ext4</code>

, <code>ROACHTEST_localSSD=true</code>

, <code>ROACHTEST_ssd=0</code>

</p>

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #94557 roachtest: unoptimized-query-oracle/disable-rules=half/seed-multi-region failed [C-test-failure O-roachtest O-robot T-sql-queries branch-master release-blocker]

</p>

</details>

/cc @cockroachdb/sql-queries

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*unoptimized-query-oracle/disable-rules=half.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: unoptimized-query-oracle/disable-rules=half failed - roachtest.unoptimized-query-oracle/disable-rules=half [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8147282?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/8147282?buildTab=artifacts#/unoptimized-query-oracle/disable-rules=half) on release-22.2 @ [07a53a36601e9ca5fcffcff55f69b43c6dfbf1c1](https://github.com/cockroachdb/cockroach/commits/07a53a36601e9ca5fcffcff55f69b43c6dfbf1c1):

```

test artifacts and logs in: /artifacts/unoptimized-query-oracle/disable-rules=half/run_1

(test_impl.go:286).Fatal: pq: Use of partitions requires an enterprise license. Your evaluation license expired on December 30, 2022. If you're interested in getting a new license, please contact subscriptions@cockroachlabs.com and we can help you out.

```

<p>Parameters: <code>ROACHTEST_cloud=gce</code>

, <code>ROACHTEST_cpu=4</code>

, <code>ROACHTEST_encrypted=false</code>

, <code>ROACHTEST_fs=ext4</code>

, <code>ROACHTEST_localSSD=true</code>

, <code>ROACHTEST_ssd=0</code>

</p>

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #94557 roachtest: unoptimized-query-oracle/disable-rules=half/seed-multi-region failed [C-test-failure O-roachtest O-robot T-sql-queries branch-master release-blocker]

</p>

</details>

/cc @cockroachdb/sql-queries

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*unoptimized-query-oracle/disable-rules=half.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| test | roachtest unoptimized query oracle disable rules half failed roachtest unoptimized query oracle disable rules half with on release test artifacts and logs in artifacts unoptimized query oracle disable rules half run test impl go fatal pq use of partitions requires an enterprise license your evaluation license expired on december if you re interested in getting a new license please contact subscriptions cockroachlabs com and we can help you out parameters roachtest cloud gce roachtest cpu roachtest encrypted false roachtest fs roachtest localssd true roachtest ssd help see see same failure on other branches roachtest unoptimized query oracle disable rules half seed multi region failed cc cockroachdb sql queries | 1 |

205,241 | 15,598,147,127 | IssuesEvent | 2021-03-18 17:44:44 | scikit-hep/pyhf | https://api.github.com/repos/scikit-hep/pyhf | closed | Regression in sbottom regression tests | bug tests | # Description

As of 2021-03-11 there is a regression in `tests/test_regression.py` that is arising from the following change (as noted by comapring outputs from the minimum supported dependencies workflow

```

$ diff pass_requirements.txt fail_requirements.txt

84,85c84,85

< prompt-toolkit 3.0.16

< protobuf 3.15.5

---

> prompt-toolkit 3.0.17

> protobuf 3.15.6

```

# Expected Behavior

CI passes

# Actual Behavior

```pytb

=================================== FAILURES ===================================

_______________________ test_sbottom_regionA_1300_205_60 _______________________

sbottom_likelihoods_download = <tarfile.TarFile object at 0x7fd1b420add0>

get_json_from_tarfile = <function get_json_from_tarfile.<locals>._get_json_from_tarfile at 0x7fd1d05925f0>

def test_sbottom_regionA_1300_205_60(

sbottom_likelihoods_download, get_json_from_tarfile

):

sbottom_regionA_bkgonly_json = get_json_from_tarfile(

sbottom_likelihoods_download, "RegionA/BkgOnly.json"

)

sbottom_regionA_1300_205_60_patch_json = get_json_from_tarfile(

sbottom_likelihoods_download, "RegionA/patch.sbottom_1300_205_60.json"

)

CLs_obs, CLs_exp = calculate_CLs(

sbottom_regionA_bkgonly_json, sbottom_regionA_1300_205_60_patch_json

)

assert CLs_obs == pytest.approx(0.24443627759085326, rel=1e-5)

> assert np.all(

np.isclose(

np.array(CLs_exp),

np.array(

[

0.09022509053507759,

0.1937839194960632,

0.38432344933992,

0.6557757334303531,

0.8910420971601081,

]

),

rtol=1e-5,

)

)

E assert False

E + where False = <function all at 0x7fd2c139d0e0>(array([False, True, True, True, True]))

E + where <function all at 0x7fd2c139d0e0> = np.all

E + and array([False, True, True, True, True]) = <function isclose at 0x7fd2c13af4d0>(array([0.09022391, 0.19378211, 0.38432119, 0.65577379, 0.89104121]), array([0.09022509, 0.19378392, 0.38432345, 0.65577573, 0.8910421 ]), rtol=1e-05)

E + where <function isclose at 0x7fd2c13af4d0> = np.isclose

E + and array([0.09022391, 0.19378211, 0.38432119, 0.65577379, 0.89104121]) = <built-in function array>([array(0.09022391), array(0.19378211), array(0.38432119), array(0.65577379), array(0.89104121)])

E + where <built-in function array> = np.array

E + and array([0.09022509, 0.19378392, 0.38432345, 0.65577573, 0.8910421 ]) = <built-in function array>([0.09022509053507759, 0.1937839194960632, 0.38432344933992, 0.6557757334303531, 0.8910420971601081])

E + where <built-in function array> = np.array

tests/test_regression.py:47: AssertionError

```

# Steps to Reproduce

Run CI

# Checklist

- [x] Run `git fetch` to get the most up to date version of `master`

- [x] Searched through existing Issues to confirm this is not a duplicate issue

- [x] Filled out the Description, Expected Behavior, Actual Behavior, and Steps to Reproduce sections above or have edited/removed them in a way that fully describes the issue

| 1.0 | Regression in sbottom regression tests - # Description

As of 2021-03-11 there is a regression in `tests/test_regression.py` that is arising from the following change (as noted by comapring outputs from the minimum supported dependencies workflow

```

$ diff pass_requirements.txt fail_requirements.txt

84,85c84,85

< prompt-toolkit 3.0.16

< protobuf 3.15.5

---

> prompt-toolkit 3.0.17

> protobuf 3.15.6

```

# Expected Behavior

CI passes

# Actual Behavior

```pytb

=================================== FAILURES ===================================

_______________________ test_sbottom_regionA_1300_205_60 _______________________

sbottom_likelihoods_download = <tarfile.TarFile object at 0x7fd1b420add0>

get_json_from_tarfile = <function get_json_from_tarfile.<locals>._get_json_from_tarfile at 0x7fd1d05925f0>

def test_sbottom_regionA_1300_205_60(

sbottom_likelihoods_download, get_json_from_tarfile

):

sbottom_regionA_bkgonly_json = get_json_from_tarfile(

sbottom_likelihoods_download, "RegionA/BkgOnly.json"

)

sbottom_regionA_1300_205_60_patch_json = get_json_from_tarfile(

sbottom_likelihoods_download, "RegionA/patch.sbottom_1300_205_60.json"

)

CLs_obs, CLs_exp = calculate_CLs(

sbottom_regionA_bkgonly_json, sbottom_regionA_1300_205_60_patch_json

)

assert CLs_obs == pytest.approx(0.24443627759085326, rel=1e-5)

> assert np.all(

np.isclose(

np.array(CLs_exp),

np.array(

[

0.09022509053507759,

0.1937839194960632,

0.38432344933992,

0.6557757334303531,

0.8910420971601081,

]

),

rtol=1e-5,

)

)

E assert False

E + where False = <function all at 0x7fd2c139d0e0>(array([False, True, True, True, True]))

E + where <function all at 0x7fd2c139d0e0> = np.all

E + and array([False, True, True, True, True]) = <function isclose at 0x7fd2c13af4d0>(array([0.09022391, 0.19378211, 0.38432119, 0.65577379, 0.89104121]), array([0.09022509, 0.19378392, 0.38432345, 0.65577573, 0.8910421 ]), rtol=1e-05)

E + where <function isclose at 0x7fd2c13af4d0> = np.isclose

E + and array([0.09022391, 0.19378211, 0.38432119, 0.65577379, 0.89104121]) = <built-in function array>([array(0.09022391), array(0.19378211), array(0.38432119), array(0.65577379), array(0.89104121)])

E + where <built-in function array> = np.array

E + and array([0.09022509, 0.19378392, 0.38432345, 0.65577573, 0.8910421 ]) = <built-in function array>([0.09022509053507759, 0.1937839194960632, 0.38432344933992, 0.6557757334303531, 0.8910420971601081])

E + where <built-in function array> = np.array

tests/test_regression.py:47: AssertionError

```

# Steps to Reproduce

Run CI

# Checklist

- [x] Run `git fetch` to get the most up to date version of `master`

- [x] Searched through existing Issues to confirm this is not a duplicate issue

- [x] Filled out the Description, Expected Behavior, Actual Behavior, and Steps to Reproduce sections above or have edited/removed them in a way that fully describes the issue

| test | regression in sbottom regression tests description as of there is a regression in tests test regression py that is arising from the following change as noted by comapring outputs from the minimum supported dependencies workflow diff pass requirements txt fail requirements txt prompt toolkit protobuf prompt toolkit protobuf expected behavior ci passes actual behavior pytb failures test sbottom regiona sbottom likelihoods download get json from tarfile get json from tarfile at def test sbottom regiona sbottom likelihoods download get json from tarfile sbottom regiona bkgonly json get json from tarfile sbottom likelihoods download regiona bkgonly json sbottom regiona patch json get json from tarfile sbottom likelihoods download regiona patch sbottom json cls obs cls exp calculate cls sbottom regiona bkgonly json sbottom regiona patch json assert cls obs pytest approx rel assert np all np isclose np array cls exp np array rtol e assert false e where false array e where np all e and array array array rtol e where np isclose e and array e where np array e and array e where np array tests test regression py assertionerror steps to reproduce run ci checklist run git fetch to get the most up to date version of master searched through existing issues to confirm this is not a duplicate issue filled out the description expected behavior actual behavior and steps to reproduce sections above or have edited removed them in a way that fully describes the issue | 1 |

38,105 | 5,166,527,367 | IssuesEvent | 2017-01-17 16:26:11 | glitchassassin/lackey | https://api.github.com/repos/glitchassassin/lackey | closed | TypeError: bytes or integer address expected instead of str instance | bug fix in testing | Hello, i'm using python 3.5.x, and windows 7 x64.

When i use this piece of code:

```

import lackey

pattern = lackey.Pattern(r'C:\Users\rainman\Documents\cam\my.png')

screen = lackey.Screen()

screen.click(pattern) # or screen.find(patter) or screen.capture()

```

Error happens:

```

hdc = self._gdi32.CreateDCA(ctypes.c_char_p(device_name), 0, 0, 0)

TypeError: bytes or integer address expected instead of str instance

``` | 1.0 | TypeError: bytes or integer address expected instead of str instance - Hello, i'm using python 3.5.x, and windows 7 x64.

When i use this piece of code:

```

import lackey

pattern = lackey.Pattern(r'C:\Users\rainman\Documents\cam\my.png')

screen = lackey.Screen()

screen.click(pattern) # or screen.find(patter) or screen.capture()

```

Error happens:

```

hdc = self._gdi32.CreateDCA(ctypes.c_char_p(device_name), 0, 0, 0)

TypeError: bytes or integer address expected instead of str instance

``` | test | typeerror bytes or integer address expected instead of str instance hello i m using python x and windows when i use this piece of code import lackey pattern lackey pattern r c users rainman documents cam my png screen lackey screen screen click pattern or screen find patter or screen capture error happens hdc self createdca ctypes c char p device name typeerror bytes or integer address expected instead of str instance | 1 |

805,895 | 29,736,098,320 | IssuesEvent | 2023-06-14 01:07:38 | longhorn/longhorn | https://api.github.com/repos/longhorn/longhorn | closed | [BUG] After migration of Longhorn from Rancher old UI to dashboard, the csi-plugin doesn't update | kind/bug reproduce/always priority/0 area/csi severity/4 | ## Describe the bug

Do a migration from Rancher old UI to dashboard using https://longhorn.io/kb/how-to-migrate-longhorn-chart-installed-in-old-rancher-ui-to-the-chart-in-new-rancher-ui/, the csi-plugin doesn't update and remains with the longhornio image instead of Rancher mirrored image.

## To Reproduce

Steps to reproduce the behavior:

1. Set up a cluster of Kubernetes 1.20.

1. Access old rancher UI by navigating to `<your-rancher-url>/g`.

1. Install Longhorn 1.0.2.

1. Create/attach some volumes. Create a few recurring snapshot/backup job that run every minutes.

1. Upgrade Longhorn to v1.2.4.

1. Migrate Longhorn to new chart in new rancher UI https://longhorn.io/kb/how-to-migrate-longhorn-chart-installed-in-old-rancher-ui-to-the-chart-in-new-rancher-ui/.

1. Check the csi plugin image

## Expected behavior

The CSI plugin image should also be updated to rancher mirrored image

## Log or Support bundle

<img width="1537" alt="Screen Shot 2022-09-01 at 3 10 54 PM" src="https://user-images.githubusercontent.com/60111667/188244752-1451fbf6-8b1c-4662-993c-1f7be0179dbb.png">

## Environment

- Longhorn version: v1.2.4

- Installation method (e.g. Rancher Catalog App/Helm/Kubectl): Rancher App

- Kubernetes distro (e.g. RKE/K3s/EKS/OpenShift) and version: v1.20

| 1.0 | [BUG] After migration of Longhorn from Rancher old UI to dashboard, the csi-plugin doesn't update - ## Describe the bug

Do a migration from Rancher old UI to dashboard using https://longhorn.io/kb/how-to-migrate-longhorn-chart-installed-in-old-rancher-ui-to-the-chart-in-new-rancher-ui/, the csi-plugin doesn't update and remains with the longhornio image instead of Rancher mirrored image.

## To Reproduce

Steps to reproduce the behavior:

1. Set up a cluster of Kubernetes 1.20.

1. Access old rancher UI by navigating to `<your-rancher-url>/g`.

1. Install Longhorn 1.0.2.

1. Create/attach some volumes. Create a few recurring snapshot/backup job that run every minutes.

1. Upgrade Longhorn to v1.2.4.

1. Migrate Longhorn to new chart in new rancher UI https://longhorn.io/kb/how-to-migrate-longhorn-chart-installed-in-old-rancher-ui-to-the-chart-in-new-rancher-ui/.

1. Check the csi plugin image

## Expected behavior

The CSI plugin image should also be updated to rancher mirrored image

## Log or Support bundle

<img width="1537" alt="Screen Shot 2022-09-01 at 3 10 54 PM" src="https://user-images.githubusercontent.com/60111667/188244752-1451fbf6-8b1c-4662-993c-1f7be0179dbb.png">

## Environment

- Longhorn version: v1.2.4

- Installation method (e.g. Rancher Catalog App/Helm/Kubectl): Rancher App

- Kubernetes distro (e.g. RKE/K3s/EKS/OpenShift) and version: v1.20

| non_test | after migration of longhorn from rancher old ui to dashboard the csi plugin doesn t update describe the bug do a migration from rancher old ui to dashboard using the csi plugin doesn t update and remains with the longhornio image instead of rancher mirrored image to reproduce steps to reproduce the behavior set up a cluster of kubernetes access old rancher ui by navigating to g install longhorn create attach some volumes create a few recurring snapshot backup job that run every minutes upgrade longhorn to migrate longhorn to new chart in new rancher ui check the csi plugin image expected behavior the csi plugin image should also be updated to rancher mirrored image log or support bundle img width alt screen shot at pm src environment longhorn version installation method e g rancher catalog app helm kubectl rancher app kubernetes distro e g rke eks openshift and version | 0 |

680,390 | 23,268,643,182 | IssuesEvent | 2022-08-04 20:07:13 | intel/cve-bin-tool | https://api.github.com/repos/intel/cve-bin-tool | closed | Feature request: Filters for component view (HTML reports) | enhancement higher priority | Feature request I received by email:

>In component view – it will be great to have opportunity to filter what is: New, Confirmed, Mitigated, Unexplored, Ignored.

| 1.0 | Feature request: Filters for component view (HTML reports) - Feature request I received by email:

>In component view – it will be great to have opportunity to filter what is: New, Confirmed, Mitigated, Unexplored, Ignored.

| non_test | feature request filters for component view html reports feature request i received by email in component view – it will be great to have opportunity to filter what is new confirmed mitigated unexplored ignored | 0 |

332,206 | 29,190,661,874 | IssuesEvent | 2023-05-19 19:38:25 | ValveSoftware/steam-for-linux | https://api.github.com/repos/ValveSoftware/steam-for-linux | closed | Steam guard in big picture cannot enter code no input field | Big Picture Need Retest | #### Your system information

* Steam client version (build number or date):

Steam beta

* Distribution (e.g. Ubuntu):

Gamer-os / arch

* Opted into Steam client beta?: [Yes/No]

Yes

* Have you checked for system updates?: [Yes/No]

Yes

#### Please describe your issue in as much detail as possible:

Describe what you _expected_ should happen and what _did_ happen. Please link any large code pastes as a [Github Gist](https://gist.github.com/)

I removed my Steam Machine from the trusted devices so that I would have to enter the Steam Guard code again.

Unfortunately, I cannot enter the code because no input field is rendered in Big Picture Mode.

#### Steps for reproducing this issue:

1. Remove the Steam Machine from the trusted devices.

2. On the next system start, a pop-up says "you need to enter validation code" and an email is sent.

3. After pressing "OK", nothing is rendered: no input field, just a blue background with Select and Done at the bottom.

| 1.0 | Steam guard in big picture cannot enter code no input field - #### Your system information

* Steam client version (build number or date):

Steam beta

* Distribution (e.g. Ubuntu):

Gamer-os / arch

* Opted into Steam client beta?: [Yes/No]

Yes

* Have you checked for system updates?: [Yes/No]

Yes

#### Please describe your issue in as much detail as possible:

Describe what you _expected_ should happen and what _did_ happen. Please link any large code pastes as a [Github Gist](https://gist.github.com/)

I removed my Steam Machine from the trusted devices so that I would have to enter the Steam Guard code again.

Unfortunately, I cannot enter the code because no input field is rendered in Big Picture Mode.

#### Steps for reproducing this issue:

1. Remove the Steam Machine from the trusted devices.

2. On the next system start, a pop-up says "you need to enter validation code" and an email is sent.

3. After pressing "OK", nothing is rendered: no input field, just a blue background with Select and Done at the bottom.

| test | steam guard in big picture cannot enter code no imput field your system information steam client version build number or date steam beta distribution e g ubuntu gamer os arch opted into steam client beta yes have you checked for system updates yes please describe your issue in as much detail as possible describe what you expected should happen and what did happen please link any large code pastes as a i removed my steam machine from the trusted devices so that i have to enter the steam guard code again unfortunately i cannot enter the code as no input field is rendered in bpm steps for reproducing this issue remove steam machine from trusted devices next system start shows pop up you need to enter validation code mail gets sent after pressing ok there is nothing rendered no input field just blue background and select and done at the bottom | 1 |

176,431 | 13,641,547,029 | IssuesEvent | 2020-09-25 14:18:52 | tracim/tracim | https://api.github.com/repos/tracim/tracim | closed | Keep only chosen TLMs in notification panel | add to changelog frontend manually tested | ## Feature description and goals

For now, every TLM is displayed as a notification in Tracim. After some integration tests, our goal is to keep only the notifications that we believe will be important for the user.

We didn't try filtering them sooner because we want to try this first to avoid writing unnecessary code.

TLM types/categories to remove from the notification display:

- [X] ~~TLMs whose author is the logged user~~ done in https://github.com/tracim/tracim/issues/3523

- [X] create i18n text for notifications that are not handled yet

- [x] check that the Portuguese translation works well on small screens

- [X] add a .ini parameter which is the list of TLMs which won't generate a notification

- [X] add this parameter to the tracim config API so that frontend has access to it

- [X] choose an icon and add wording for all current notifications

- [X] implement in frontend the mechanism which ignores blacklisted TLMs

- [X] keep the current mechanism which shows unknown notifications as "raw notifications"

- [x] Display an INFO flash message "Only an administrator can see this user's profile" on a notification related to a user

- [x] Display a WARNING flash message "This notification does not have an associated content" for the default "unhandled" notification if it has no content

Default blacklist (configuration web.notifications.excluded in development.ini):

- user.created

- user.modified

- user.deleted

- user.undeleted

- workspace.modified

- workspace.deleted

- workspace.undeleted

- workspace_member.modified

- content.modified

### Current TLMs that are processed notifications

- workspace_member.created

- with a difference between "added you to" and "added {{user}} to"

- content.comment.created

- content.(file/html-document/thread/folder.)created

- content.(file/html-document/thread/folder.)modified

- with a difference between status update and the other modification

- mention.created

- with a difference between mention in a document and in a comment

### Current TLMs that are notifications but not processed, i.e. don't have a specific text and icon

- user.created

- user.modified

- user.deleted

- user.undeleted

- workspace.created

- workspace.modified

- workspace.deleted

- workspace.undeleted

- workspace_member.modified

- workspace_member.deleted

- content.(file/html-document/thread/folder.)deleted

- content.(file/html-document/thread/folder.)undeleted

### Current TLMs that are not notifications

- TLMs whose author is the logged user

- content.comment.modified

- content.comment.deleted

- content.comment.undeleted

## Discussed

Which notifications should we filter out?

_18-09-2020_ Decision: https://github.com/tracim/tracim/issues/3477#issuecomment-694749770

## Translations

||English|French|Portuguese|

|-|-|-|-|

|**user.created**| {{author}} created {{user}}'s profile | {{author}} a créé le profil de {{user}} | {{author}} criou o perfil de {{user}} |

|**user.modified**| {{author}} updated {{user}}'s profile | {{author}} a mis à jour le profil de {{user}} | {{author}} actualizou o perfil de {{user}} |

|**user.deleted**| {{author}} deleted {{user}}'s profile | {{author}} a supprimé le profil de {{user}} | {{author}} eliminou o perfil de {{user}} |

|**user.undeleted**| {{author}} restored {{user}}'s profile | {{author}} a restauré le profil de {{user}} | {{author}} restaurou o perfil de {{user}} |

|**workspace.created**| {{author}} created the space {{space}} | {{author}} a créé l'espace {{space}} | {{author}} criou o espaço {{space}} |

|**workspace.modified**| {{author}} updated the space {{space}} | {{author}} a mis à jour l'espace {{space}} | {{author}} actualizou o espaço {{space}} |

|**workspace.deleted**| {{author}} deleted the space {{space}} | {{author}} a supprimé l'espace {{space}} | {{author}} eliminou o espaço {{space}} |

|**workspace.undeleted**| {{author}} restored the space {{space}} | {{author}} a restauré l'espace {{space}} | {{author}} restaurou o espaço {{space}} |

|**workspace_member.modified**| {{author}} updated member {{member}} in {{space}} | {{author}} a mis à jour le membre {{member}} dans {{space}} | {{author}} actualizou o membro {{member}} no espaço {{space}} |

|**workspace_member.deleted**| {{author}} deleted member {{member}} from {{space}} | {{author}} a supprimé le membre {{member}} de {{space}} | {{author}} eliminou o membro {{member}} de {{space}} |

|**content.deleted**| {{author}} deleted {{content}} from {{space}} | {{author}} a supprimé {{content}} de {{space}} | {{author}} eliminou {{content}} de {{space}} |

|**content.undeleted**| {{author}} restored {{content}} in {{space}} | {{author}} a restauré {{content}} dans {{space}} | {{author}} restaurou {{content}} no espaço {{space}} |

## Icons

||Icon|

|-|-|

|**user.created**|user-plus|

|**user.modified**|user + history (use ComposedIcon component)|

|**user.deleted**|user-times|

|**user.undeleted**|user + undo (use ComposedIcon component)|

|**workspace.created**|university + plus (use ComposedIcon component)|

|**workspace.modified**|university + history (use ComposedIcon component)|

|**workspace.deleted**|university + times (use ComposedIcon component)|

|**workspace.undeleted**|university + undo (use ComposedIcon component)|

|**workspace_member.modified**|user-o + history (use ComposedIcon component)|

|**workspace_member.deleted**|user-o + times (use ComposedIcon component)|

|**content.(file/html-document/thread/folder.)deleted**|file-o + times (use ComposedIcon component)|

|**content.(file/html-document/thread/folder.)undeleted**|file-o + undo (use ComposedIcon component)|

## Redirections

||Redirect to|

|-|-|

|**user.created**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**user.modified**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**user.deleted**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**user.undeleted**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**workspace.created**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace.modified**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace.deleted**|`/ui`|

|**workspace.undeleted**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace_member.modified**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace_member.deleted**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**content.deleted**|`/ui/workspaces/{{workspace_id}}/contents/{{content_type}}/{{content_id}}`|

|**content.undeleted**|`/ui/workspaces/{{workspace_id}}/contents/{{content_type}}/{{content_id}}`|

___

_2020-09-16: added the item about text strings to write_

_2020-09-21: added translations, icons and redirections_ | 1.0 | Keep only chosen TLMs in notification panel - ## Feature description and goals

For now, every TLM is displayed as a notification in Tracim. After some integration tests, our goal is to keep only the notifications that we believe will be important for the user.

We didn't try filtering them sooner because we want to try this first to avoid writing unnecessary code.

TLM types/categories to remove from the notification display:

- [X] ~~TLMs whose author is the logged user~~ done in https://github.com/tracim/tracim/issues/3523

- [X] create i18n text for notifications that are not handled yet

- [x] check that the Portuguese translation works well on small screens

- [X] add a .ini parameter which is the list of TLMs which won't generate a notification

- [X] add this parameter to the tracim config API so that frontend has access to it

- [X] choose an icon and add wording for all current notifications

- [X] implement in frontend the mechanism which ignores blacklisted TLMs

- [X] keep the current mechanism which shows unknown notifications as "raw notifications"

- [x] Display an INFO flash message "Only an administrator can see this user's profile" on a notification related to a user

- [x] Display a WARNING flash message "This notification does not have an associated content" for the default "unhandled" notification if it has no content

Default blacklist (configuration web.notifications.excluded in development.ini):

- user.created

- user.modified

- user.deleted

- user.undeleted

- workspace.modified

- workspace.deleted

- workspace.undeleted

- workspace_member.modified

- content.modified

### Current TLMs that are processed notifications

- workspace_member.created

- with a difference between "added you to" and "added {{user}} to"

- content.comment.created

- content.(file/html-document/thread/folder.)created

- content.(file/html-document/thread/folder.)modified

- with a difference between status update and the other modification

- mention.created

- with a difference between mention in a document and in a comment

### Current TLMs that are notifications but not processed, i.e. don't have a specific text and icon

- user.created

- user.modified

- user.deleted

- user.undeleted

- workspace.created

- workspace.modified

- workspace.deleted

- workspace.undeleted

- workspace_member.modified

- workspace_member.deleted

- content.(file/html-document/thread/folder.)deleted

- content.(file/html-document/thread/folder.)undeleted

### Current TLMs that are not notifications

- TLMs whose author is the logged user

- content.comment.modified

- content.comment.deleted

- content.comment.undeleted

## Discussed

Which notifications should we filter out?

_18-09-2020_ Decision: https://github.com/tracim/tracim/issues/3477#issuecomment-694749770

## Translations

||English|French|Portuguese|

|-|-|-|-|

|**user.created**| {{author}} created {{user}}'s profile | {{author}} a créé le profil de {{user}} | {{author}} criou o perfil de {{user}} |

|**user.modified**| {{author}} updated {{user}}'s profile | {{author}} a mis à jour le profil de {{user}} | {{author}} actualizou o perfil de {{user}} |

|**user.deleted**| {{author}} deleted {{user}}'s profile | {{author}} a supprimé le profil de {{user}} | {{author}} eliminou o perfil de {{user}} |

|**user.undeleted**| {{author}} restored {{user}}'s profile | {{author}} a restauré le profil de {{user}} | {{author}} restaurou o perfil de {{user}} |

|**workspace.created**| {{author}} created the space {{space}} | {{author}} a créé l'espace {{space}} | {{author}} criou o espaço {{space}} |

|**workspace.modified**| {{author}} updated the space {{space}} | {{author}} a mis à jour l'espace {{space}} | {{author}} actualizou o espaço {{space}} |

|**workspace.deleted**| {{author}} deleted the space {{space}} | {{author}} a supprimé l'espace {{space}} | {{author}} eliminou o espaço {{space}} |

|**workspace.undeleted**| {{author}} restored the space {{space}} | {{author}} a restauré l'espace {{space}} | {{author}} restaurou o espaço {{space}} |

|**workspace_member.modified**| {{author}} updated member {{member}} in {{space}} | {{author}} a mis à jour le membre {{member}} dans {{space}} | {{author}} actualizou o membro {{member}} no espaço {{space}} |

|**workspace_member.deleted**| {{author}} deleted member {{member}} from {{space}} | {{author}} a supprimé le membre {{member}} de {{space}} | {{author}} eliminou o membro {{member}} de {{space}} |

|**content.deleted**| {{author}} deleted {{content}} from {{space}} | {{author}} a supprimé {{content}} de {{space}} | {{author}} eliminou {{content}} de {{space}} |

|**content.undeleted**| {{author}} restored {{content}} in {{space}} | {{author}} a restauré {{content}} dans {{space}} | {{author}} restaurou {{content}} no espaço {{space}} |

## Icons

||Icon|

|-|-|

|**user.created**|user-plus|

|**user.modified**|user + history (use ComposedIcon component)|

|**user.deleted**|user-times|

|**user.undeleted**|user + undo (use ComposedIcon component)|

|**workspace.created**|university + plus (use ComposedIcon component)|

|**workspace.modified**|university + history (use ComposedIcon component)|

|**workspace.deleted**|university + times (use ComposedIcon component)|

|**workspace.undeleted**|university + undo (use ComposedIcon component)|

|**workspace_member.modified**|user-o + history (use ComposedIcon component)|

|**workspace_member.deleted**|user-o + times (use ComposedIcon component)|

|**content.(file/html-document/thread/folder.)deleted**|file-o + times (use ComposedIcon component)|

|**content.(file/html-document/thread/folder.)undeleted**|file-o + undo (use ComposedIcon component)|

## Redirections

||Redirect to|

|-|-|

|**user.created**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**user.modified**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**user.deleted**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**user.undeleted**|if logged user is admin `/ui/admin/user/{{user_id}}` else `/ui`|

|**workspace.created**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace.modified**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace.deleted**|`/ui`|

|**workspace.undeleted**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace_member.modified**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**workspace_member.deleted**|`/ui/workspaces/{{workspace_id}}/dashboard`|

|**content.deleted**|`/ui/workspaces/{{workspace_id}}/contents/{{content_type}}/{{content_id}}`|

|**content.undeleted**|`/ui/workspaces/{{workspace_id}}/contents/{{content_type}}/{{content_id}}`|

___

_2020-09-16: added the item about text strings to write_

_2020-09-21: added translations, icons and redirections_ | test | keep only chosen tlms in notification panel feature description and goals for now every tlm is displayed as a notification in tracim after some integration tests our goal is to keep only the notifications that we believe will be important for the user we didn t try filtering them sooner because we want to try this first to avoid writing unnecessary code tlm types categories to remove from the notification display tlms whose author is the logged user done in create text for notifications that are not handled yet check that the portuguese translation works well on small screens add a ini parameter which is the list of tlms which won t generate a notification add this parameter to the tracim config api so that frontend has access to it choose an icon and add wording for all current notifications implement in frontend the mechanism which ignores blacklisted tlms keep the current mechanism which shows unknown notifications as raw notifications display an info flash message only an administrator can see this user s profile will be displayed on a notification related to a user display a warning flash message this notification does not have an associated content will be displayed for the default unhandled notification if it does not have any content default blacklist configuration web notifications excluded in development ini user created user modified user deleted user undeleted workspace modified workspace deleted workspace undeleted workspace member modified content modified current tlms that are processed notifications workspace member created with a difference between added you to and added user to content comment created content file html document thread folder created content file html document thread folder modified with a difference between status update and the other modification mention created with a difference between mention in a document and in a comment current tlms that are notifications but 
not processed i e don t have a specific text and icon user created user modified user deleted user undeleted workspace created workspace modified workspace deleted workspace undeleted workspace member modified workspace member deleted content file html document thread folder deleted content file html document thread folder undeleted current tlms that are not notifications tlms whose author is the logged user content comment modified content comment deleted content comment undeleted discussed which notifications should we filter out decision translations english french portuguese user created author created user s profile author a créé le profil de user author criou o perfil de user user modified author updated user s profile author a mis à jour le profil de user author actualizou o perfil de user user deleted author deleted user s profile author a supprimé le profil de user author eliminou o perfil de user user undeleted author restored user s profile author a restauré le profil de user author restaurou o perfil de user workspace created author created the space space author a créé l espace space author criou o espaço space workspace modified author updated the space space author a mis à jour l espace space author actualizou o espaço space workspace deleted author deleted the space space author a supprimé l espace space author eliminou o espaço space workspace undeleted author restored the space space author a restauré l espace space author restaurou o espaço space workspace member modified author updated member member in space author a mis à jour le membre member dans space author actualizou o membro member no espaço space workspace member deleted author deleted member member from space author a supprimé le membre member de space author eliminou o membro member de space content deleted author deleted content from space author a supprimé content de space author eliminou content de space content undeleted author restored content in space author a restauré content 
dans space author restaurou content no espaço space icons icon user created user plus user modified user history use composedicon component user deleted user times user undeleted user undo use composedicon component workspace created university plus use composedicon component workspace modified university history use composedicon component workspace deleted university times use composedicon component workspace undeleted university undo use composedicon component workspace member modified user o history use composedicon component workspace member deleted user o times use composedicon component content file html document thread folder deleted file o times use composedicon component content file html document thread folder undeleted file o undo use composedicon component redirections redirect to user created if logged user is admin ui admin user user id else ui user modified if logged user is admin ui admin user user id else ui user deleted if logged user is admin ui admin user user id else ui user undeleted if logged user is admin ui admin user user id else ui workspace created ui workspaces workspace id dashboard workspace modified ui workspaces workspace id dashboard workspace deleted ui workspace undeleted ui workspaces workspace id dashboard workspace member modified ui workspaces workspace id dashboard workspace member deleted ui workspaces workspace id dashboard content deleted ui workspaces workspace id contents content type content id content undeleted ui workspaces workspace id contents content type content id added the idem about text string to write added translations icons and redirections | 1 |

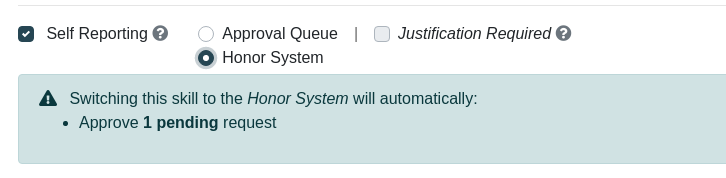

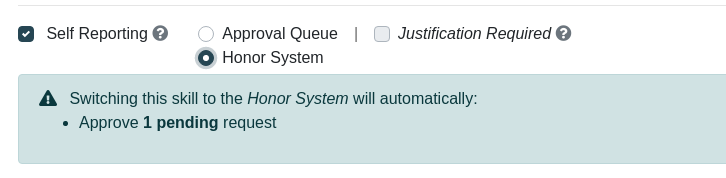

285,491 | 24,670,299,789 | IssuesEvent | 2022-10-18 13:21:16 | NationalSecurityAgency/skills-service | https://api.github.com/repos/NationalSecurityAgency/skills-service | closed | Changing a Skill from Approval Required to Honor System incorrectly reports pending requests | bug test | If a self report approval required skill is edited and changed to honor system, the edit skill dialog incorrectly reports previously approved requests as pending requests that will be automatically approved.

To replicate

1. create a self report approval required skill

2. request points for that skill

3. approve the requested points (ensure that there are no other pending requests for that skill)

4. edit the skill and change from approval required to honor system

| 1.0 | Changing a Skill from Approval Required to Honor System incorrectly reports pending requests - If a self report approval required skill is edited and changed to honor system, the edit skill dialog incorrectly reports previously approved requests as pending requests that will be automatically approved.

To replicate

1. create a self report approval required skill

2. request points for that skill

3. approve the requested points (ensure that there are no other pending requests for that skill)

4. edit the skill and change from approval required to honor system

| test | changing a skill from approval required to honor system incorrectly reports pending requests if a self report approval required skill is edited and changed to honor system the edit skill dialog incorrectly reports previously approved requests as pending requests that will be automatically approved to replicate create a self report approval required skill request points for that skill approve the requested points ensure that there are no other pending requests for that skill edit the skill and change from approval required to honor system | 1 |

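1,809 | 3,129,977,994 | IssuesEvent | 2015-09-09 06:27:18 | SpriteStudio/SS5PlayerForUnity | https://api.github.com/repos/SpriteStudio/SS5PlayerForUnity | closed | Consolidate the shader files into one | improvement performance | Since Unity 4.3, BlendFunc can be specified dynamically, so it looks like the shaders can be consolidated into one.

As a result, there will be one material per texture.

This won't reduce draw calls, but it should bring the following benefits:

- Smaller asset size

- When you want to operate on a material (e.g. setting a color), you only need to keep track of a single material.

Reference:

http://answers.unity3d.com/questions/161945/is-it-possible-to-change-blend-mode-in-shader-at-r.html | True | Consolidate the shader files into one - Since Unity 4.3, BlendFunc can be specified dynamically, so it looks like the shaders can be consolidated into one.

As a result, there will be one material per texture.

This won't reduce draw calls, but it should bring the following benefits:

- Smaller asset size

- When you want to operate on a material (e.g. setting a color), you only need to keep track of a single material.

Reference: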

http://answers.unity3d.com/questions/161945/is-it-possible-to-change-blend-mode-in-shader-at-r.html | non_test | から blendfunc 。 結果として、 : 。 drawcall 減少には寄与しないが、以下のメリットがあるだろう。 アセットサイズの削減 カラー指定などマテリアルに対する操作を行いたい時、 。 参考 | 0 |

258,316 | 22,302,314,362 | IssuesEvent | 2022-06-13 09:49:16 | perlang-org/perlang | https://api.github.com/repos/perlang-org/perlang | closed | Add data-driven tests for binary operators | tests | #230 added data-driven tests for comparison operators. Similar work could be done elsewhere as well:

### Data driven tests with test data for all types currently supported in the language

- [x] `Addition` and `Subtraction`: #317

- [x] `AdditionAssignment` and `SubtractionAssignment`: #319

- [x] `Exponential`: #323

- [x] `Modulo`: #324

- [x] `Multiplication` and `Division`: #318

- [x] `Equal` and `NotEqual`: #320

- [x] `ShiftLeft` and `ShiftRight`: #325

- [x] `Less` and `LessEqual`: #321

- [x] `Greater` and `GreaterEqual`: #322

### Non-binary operators which would also be good to cover at some point

- [ ] `PostfixDecrement`

- [ ] `PostfixIncrement`

| 1.0 | Add data-driven tests for binary operators - #230 added data-driven tests for comparison operators. Similar work could be done elsewhere as well:

### Data driven tests with test data for all types currently supported in the language

- [x] `Addition` and `Subtraction`: #317

- [x] `AdditionAssignment` and `SubtractionAssignment`: #319

- [x] `Exponential`: #323

- [x] `Modulo`: #324

- [x] `Multiplication` and `Division`: #318

- [x] `Equal` and `NotEqual`: #320

- [x] `ShiftLeft` and `ShiftRight`: #325

- [x] `Less` and `LessEqual`: #321

- [x] `Greater` and `GreaterEqual`: #322

### Non-binary operators which would also be good to cover at some point

- [ ] `PostfixDecrement`

- [ ] `PostfixIncrement`

| test | add data driven tests for binary operators added data driven tests for comparison operators similar work could be done elsewhere as well data driven tests with test data for all types currently supported in the language addition and subtraction additionassignment and subtractionassignment exponential modulo multiplication and division equal and notequal shiftleft and shiftright less and lessequal greater and greaterequal non binary operators which would also be good to cover at some point postfixdecrement postfixincrement | 1 |

438,952 | 12,664,052,252 | IssuesEvent | 2020-06-18 03:21:55 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Add support for &, |, ^, <<, >>, >>> operators in compound-assignment statement | Component/Parser Priority/High Type/NewFeature | Add support for &, |, ^, <<, >>, >>> operators for

compound-assignment-stmt := lvexpr CompoundAssignmentOperator action-or-expr ;

CompoundAssignmentOperator := BinaryOperator =

BinaryOperator := + | - | * | / | & | | | ^ | << | >> | >>>

in incremental parser | 1.0 | Add support for &, |, ^, <<, >>, >>> operators in compound-assignment statement - Add support for &, |, ^, <<, >>, >>> operators for

compound-assignment-stmt := lvexpr CompoundAssignmentOperator action-or-expr ;

CompoundAssignmentOperator := BinaryOperator =

BinaryOperator := + | - | * | / | & | | | ^ | << | >> | >>>

in incremental parser | non_test | add support for operators in compound assignment statement add support for operators for compound assignment stmt lvexpr compoundassignmentoperator action or expr compoundassignmentoperator binaryoperator binaryoperator in incremental parser | 0 |

87,530 | 8,093,476,264 | IssuesEvent | 2018-08-10 01:06:45 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: tpccbench/nodes=3/cpu=4 failed on master | C-test-failure O-robot | SHA: https://github.com/cockroachdb/cockroach/commits/bf76db84cb64dc90f65d8b2e129c75028127cda2

Parameters:

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=822738&tab=buildLog

```

test.go:494,cluster.go:1095,tpcc.go:563,tpcc.go:234: unexpected node event: 1: dead

``` | 1.0 | roachtest: tpccbench/nodes=3/cpu=4 failed on master - SHA: https://github.com/cockroachdb/cockroach/commits/bf76db84cb64dc90f65d8b2e129c75028127cda2

Parameters:

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=822738&tab=buildLog

```

test.go:494,cluster.go:1095,tpcc.go:563,tpcc.go:234: unexpected node event: 1: dead

``` | test | roachtest tpccbench nodes cpu failed on master sha parameters failed test test go cluster go tpcc go tpcc go unexpected node event dead | 1 |

321,285 | 27,520,241,665 | IssuesEvent | 2023-03-06 14:36:33 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix tensor.test_torch_instance_sort | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

| 1.0 | Fix tensor.test_torch_instance_sort - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

| test | fix tensor test torch instance sort tensorflow img src torch img src numpy img src jax img src | 1 |

604,803 | 18,719,223,727 | IssuesEvent | 2021-11-03 09:50:56 | google/ExoPlayer | https://api.github.com/repos/google/ExoPlayer | closed | ENDED event is not fired by ExoPlayer when seeking after pausing media | bug low priority | Hi,

Observed in the demo app, version 2.7.0.

We try to seek to the end after pausing this media (HLS - Apple 16x9, which has multiple audio tracks):

https://devstreaming-cdn.apple.com/videos/streaming/examples/bipbop_16x9/bipbop_16x9_variant.m3u8

but the ENDED event is not fired.

For the HLS - ID3 Metadata media, the ENDED event is fired in the same scenario (pause, then seek to the end):

http://devimages.apple.com/samplecode/adDemo/ad.m3u8 (HLS ID3 Metadata)

What could be the reason?

Thanks,

Gilad. | 1.0 | ENDED event is not fired by ExoPlayer if seek after pause media - Hi,

Observed on Demo app 2.7.0

We try to seek to END after selecting pause on this media ( HLS - Apple 16x9) (This media is multi audio)

https://devstreaming-cdn.apple.com/videos/streaming/examples/bipbop_16x9/bipbop_16x9_variant.m3u8

But Ended event is not fired

For (HLS - ID3 Metadata) media the ended is fired for same scenario when pause and then seek to END

http://devimages.apple.com/samplecode/adDemo/ad.m3u8 (HLS ID3 Metadata)

What can be the reason?

10x

Gilad. | non_test | ended event is not fired by exoplayer if seek after pause media hi observed on demo app we try to seek to end after selecting pause on this media hls apple this media is multi audio but ended event is not fired for hls metadata media the ended is fired for same scenario when pause and then seek to end hls metadata what can be the reason gilad | 0 |

66,457 | 7,001,039,136 | IssuesEvent | 2017-12-18 08:41:11 | chamilo/chamilo-lms | https://api.github.com/repos/chamilo/chamilo-lms | closed | Error al corregir ejercicios con preguntas de combinación exacta | Requires testing/validation | ### Current behavior / Resultado actual / Résultat actuel

En un ejercicio con preguntas de combinación exacta, cuando el profesor accede al resultado de un alumno, las calificaciones mostradas son erróneas y aparecen preguntas como incorrectas aunque no lo sean.

Tanto en la lista de intentos como cuando el alumno accede a ver su intento la puntuación se muestra bien.

### Expected behavior / Resultado esperado / Résultat attendu

El profesor debería ver las preguntas con las respuestas que ha dado el alumno, y no es asi

### Steps to reproduce / Pasos para reproducir / Étapes pour reproduire

-Crear un ejercicio con preguntas de combinación exacta, con un par de opciones como correctas.

-Acceder como alumno, realizar el ejercicio correctamente.

-Acceder como profesor a revisar el intento, aparecen preguntas incorrectas.

Se puede probar aqui: https://11.chamilo.org/main/exercise/exercise_report.php?cidReq=123333&id_session=0&gidReq=0&gradebook=0&origin=&exerciseId=736

### Chamilo Version / Versión de Chamilo / Version de Chamilo

1.11.x

| 1.0 | Error al corregir ejercicios con preguntas de combinación exacta - ### Current behavior / Resultado actual / Résultat actuel

En un ejercicio con preguntas de combinación exacta, cuando el profesor accede al resultado de un alumno, las calificaciones mostradas son erróneas y aparecen preguntas como incorrectas aunque no lo sean.

Tanto en la lista de intentos como cuando el alumno accede a ver su intento la puntuación se muestra bien.

### Expected behavior / Resultado esperado / Résultat attendu

El profesor debería ver las preguntas con las respuestas que ha dado el alumno, y no es asi

### Steps to reproduce / Pasos para reproducir / Étapes pour reproduire

-Crear un ejercicio con preguntas de combinación exacta, con un par de opciones como correctas.

-Acceder como alumno, realizar el ejercicio correctamente.

-Acceder como profesor a revisar el intento, aparecen preguntas incorrectas.

Se puede probar aqui: https://11.chamilo.org/main/exercise/exercise_report.php?cidReq=123333&id_session=0&gidReq=0&gradebook=0&origin=&exerciseId=736

### Chamilo Version / Versión de Chamilo / Version de Chamilo

1.11.x

| test | error al corregir ejercicios con preguntas de combinación exacta current behavior resultado actual résultat actuel en un ejercicio con preguntas de combinación exacta cuando el profesor accede al resultado de un alumno las calificaciones mostradas son erróneas y aparecen preguntas como incorrectas aunque no lo sean tanto en la lista de intentos como cuando el alumno accede a ver su intento la puntuación se muestra bien expected behavior resultado esperado résultat attendu el profesor debería ver las preguntas con las respuestas que ha dado el alumno y no es asi steps to reproduce pasos para reproducir étapes pour reproduire crear un ejercicio con preguntas de combinación exacta con un par de opciones como correctas acceder como alumno realizar el ejercicio correctamente acceder como profesor a revisar el intento aparecen preguntas incorrectas se puede probar aqui chamilo version versión de chamilo version de chamilo x | 1 |

211,559 | 23,833,151,826 | IssuesEvent | 2022-09-06 01:08:29 | RG4421/atlasdb | https://api.github.com/repos/RG4421/atlasdb | opened | CVE-2022-38749 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2022-38749 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.23.jar</b>, <b>snakeyaml-1.24.jar</b>, <b>snakeyaml-1.26.jar</b></p></summary>

<p>

<details><summary><b>snakeyaml-1.23.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /lock-impl/build.gradle</p>

<p>Path to vulnerable library: /20210226193332_TENYLC/downloadResource_RSJUCV/20210226194911/snakeyaml-1.23.jar</p>

<p>

Dependency Hierarchy:

- :x: **snakeyaml-1.23.jar** (Vulnerable Library)

</details>

<details><summary><b>snakeyaml-1.24.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to vulnerable library: /timelock-api/build/conjureCompiler/lib/snakeyaml-1.24.jar</p>

<p>

Dependency Hierarchy:

- :x: **snakeyaml-1.24.jar** (Vulnerable Library)

</details>

<details><summary><b>snakeyaml-1.26.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /atlasdb-service/build.gradle</p>

<p>Path to vulnerable library: /timelock-api/build/conjureJava/lib/snakeyaml-1.26.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/org.yaml/snakeyaml/1.26/a78a8747147d2c5807683e76ec2b633e95c14fe9/snakeyaml-1.26.jar,/canner/.gradle/caches/modules-2/files-2.1/org.yaml/snakeyaml/1.26/a78a8747147d2c5807683e76ec2b633e95c14fe9/snakeyaml-1.26.jar</p>

<p>

Dependency Hierarchy:

- :x: **snakeyaml-1.26.jar** (Vulnerable Library)

</details>

<p>Found in base branch: <b>develop</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Using snakeYAML to parse untrusted YAML files may be vulnerable to Denial of Service attacks (DOS). If the parser is running on user supplied input, an attacker may supply content that causes the parser to crash by stackoverflow.

<p>Publish Date: 2022-09-05

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-38749>CVE-2022-38749</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bitbucket.org/snakeyaml/snakeyaml/issues/525/got-stackoverflowerror-for-many-open">https://bitbucket.org/snakeyaml/snakeyaml/issues/525/got-stackoverflowerror-for-many-open</a></p>

<p>Release Date: 2022-09-05</p>

<p>Fix Resolution: org.yaml:snakeyaml:1.31</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue | True | CVE-2022-38749 (Medium) detected in multiple libraries - ## CVE-2022-38749 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.23.jar</b>, <b>snakeyaml-1.24.jar</b>, <b>snakeyaml-1.26.jar</b></p></summary>

<p>

<details><summary><b>snakeyaml-1.23.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /lock-impl/build.gradle</p>

<p>Path to vulnerable library: /20210226193332_TENYLC/downloadResource_RSJUCV/20210226194911/snakeyaml-1.23.jar</p>

<p>

Dependency Hierarchy:

- :x: **snakeyaml-1.23.jar** (Vulnerable Library)

</details>

<details><summary><b>snakeyaml-1.24.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to vulnerable library: /timelock-api/build/conjureCompiler/lib/snakeyaml-1.24.jar</p>

<p>

Dependency Hierarchy:

- :x: **snakeyaml-1.24.jar** (Vulnerable Library)

</details>

<details><summary><b>snakeyaml-1.26.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http://www.snakeyaml.org">http://www.snakeyaml.org</a></p>

<p>Path to dependency file: /atlasdb-service/build.gradle</p>

<p>Path to vulnerable library: /timelock-api/build/conjureJava/lib/snakeyaml-1.26.jar,/home/wss-scanner/.gradle/caches/modules-2/files-2.1/org.yaml/snakeyaml/1.26/a78a8747147d2c5807683e76ec2b633e95c14fe9/snakeyaml-1.26.jar,/canner/.gradle/caches/modules-2/files-2.1/org.yaml/snakeyaml/1.26/a78a8747147d2c5807683e76ec2b633e95c14fe9/snakeyaml-1.26.jar</p>

<p>

Dependency Hierarchy:

- :x: **snakeyaml-1.26.jar** (Vulnerable Library)

</details>

<p>Found in base branch: <b>develop</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Using snakeYAML to parse untrusted YAML files may be vulnerable to Denial of Service attacks (DOS). If the parser is running on user supplied input, an attacker may supply content that causes the parser to crash by stackoverflow.

<p>Publish Date: 2022-09-05

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-38749>CVE-2022-38749</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged