Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

145,143 | 22,613,887,568 | IssuesEvent | 2022-06-29 19:50:02 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | opened | Create master list of possible MPV features | Workgroup: DTS Service: Product Need: 1-Must Have Product: Moped Type: Design | Based on the Design Discovery research (#9510) conducted in late June 2022, Rebecca will create a universal list of potential features for the Mobility Project Viewer (#1106). | 1.0 | Create master list of possible MPV features - Based on the Design Discovery research (#9510) conducted in late June 2022, Rebecca will create a universal list of potential features for the Mobility Project Viewer (#1106). | non_test | create master list of possible mpv features based on the design discovery research conducted in late june rebecca will create a universal list of potential features for the mobility project viewer | 0 |

826,565 | 31,654,088,884 | IssuesEvent | 2023-09-07 02:30:18 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [deployer] Deployer throws NPE on startup with Springframework log level `info` | bug priority: medium CI validate | ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [ ] The issue is in the latest released 4.2.x

- [X] The issue is in the latest released 4.1.x

- [ ] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

Deployer throws NPE on s... | 1.0 | [deployer] Deployer throws NPE on startup with Springframework log level `info` - ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [ ] The issue is in the latest released 4.2.x

- [X] The issue is in the latest released 4.1.x

- [ ] The issue is in the latest released 4.0.x

- [ ] The issue... | non_test | deployer throws npe on startup with springframework log level info duplicates i have searched the existing issues latest version the issue is in the latest released x the issue is in the latest released x the issue is in the latest released x the issue is in the latest r... | 0 |

164,285 | 12,795,627,802 | IssuesEvent | 2020-07-02 09:03:59 | ComputationalRadiationPhysics/picongpu | https://api.github.com/repos/ComputationalRadiationPhysics/picongpu | opened | PML with uneven domains is not output and check pointed correctly | affects latest release bug component: plugin | The logic of writing and reading of PML fields relies on the domains being of equal size. It equally concerns output and checkpointing.

Here is the code that assumed it: [1](https://github.com/ComputationalRadiationPhysics/picongpu/blob/fe322db49e69b09258691c4aa781769470fe693d/include/picongpu/plugins/hdf5/writer/... | 1.0 | PML with uneven domains is not output and check pointed correctly - The logic of writing and reading of PML fields relies on the domains being of equal size. It equally concerns output and checkpointing.

Here is the code that assumed it: [1](https://github.com/ComputationalRadiationPhysics/picongpu/blob/fe322db49e... | test | pml with uneven domains is not output and check pointed correctly the logic of writing and reading of pml fields relies on the domains being of equal size it equally concerns output and checkpointing here is the code that assumed it and similar for the other two output plugins the error is that while... | 1 |

208,554 | 15,894,514,477 | IssuesEvent | 2021-04-11 10:26:58 | tech256/jobs | https://api.github.com/repos/tech256/jobs | closed | Intermediate Program Analysts (#1620600) | Active Clearance Required Hiring Testing/Quality Assurance stale | Team PeopleTec is currently seeking multiple Intermediate Program Analysts to support our efforts on MDA's TEAMS NEXT contract.

Required Skills/Experience:

Capable of providing expertise to relevant program analytical principles and practices for developmental and operational programs.

Capable of pr... | 1.0 | Intermediate Program Analysts (#1620600) - Team PeopleTec is currently seeking multiple Intermediate Program Analysts to support our efforts on MDA's TEAMS NEXT contract.

Required Skills/Experience:

Capable of providing expertise to relevant program analytical principles and practices for developmental a... | test | intermediate program analysts team peopletec is currently seeking multiple intermediate program analysts to support our efforts on mda s teams next contract required skills experience capable of providing expertise to relevant program analytical principles and practices for developmental and ope... | 1 |

253,187 | 27,300,467,086 | IssuesEvent | 2023-02-24 01:11:30 | panasalap/linux-4.19.72_1 | https://api.github.com/repos/panasalap/linux-4.19.72_1 | opened | CVE-2023-0179 (High) detected in linux-yoctov5.4.51 | security vulnerability | ## CVE-2023-0179 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.y... | True | CVE-2023-0179 (High) detected in linux-yoctov5.4.51 - ## CVE-2023-0179 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded ... | non_test | cve high detected in linux cve high severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in base branch master vulnerable source files net netfilter nft payload c net netfilter ... | 0 |

94,252 | 8,481,826,493 | IssuesEvent | 2018-10-25 16:45:21 | freedomofpress/securedrop-ux | https://api.github.com/repos/freedomofpress/securedrop-ux | closed | SecureDrop Client Error Messaging Inventory | Alpha Workstation NEED Thing we need to test with users | Need to know what user actions will trigger error messages, and when, in [SD Client](https://github.com/freedomofpress/securedrop-client).

Also need to know what user actions will need to be taken to rectify problems, to develop content. @eloquence volunteered to take a first stab at messaging content. @redshiftzer... | 1.0 | SecureDrop Client Error Messaging Inventory - Need to know what user actions will trigger error messages, and when, in [SD Client](https://github.com/freedomofpress/securedrop-client).

Also need to know what user actions will need to be taken to rectify problems, to develop content. @eloquence volunteered to take a... | test | securedrop client error messaging inventory need to know what user actions will trigger error messages and when in also need to know what user actions will need to be taken to rectify problems to develop content eloquence volunteered to take a first stab at messaging content redshiftzero volunteered to... | 1 |

26,434 | 4,226,219,022 | IssuesEvent | 2016-07-02 09:42:00 | ubiquits/ubiquits | https://api.github.com/repos/ubiquits/ubiquits | closed | Travis CI is picking up ts 1.8.x not @next which means there are a lot out errors output. | comp: testing effort1: easy (hour) priority3: required severity5: regression type: bug | Maybe intermittent, follow up on https://travis-ci.org/ubiquits/ubiquits/builds/141821325 when complete. | 1.0 | Travis CI is picking up ts 1.8.x not @next which means there are a lot out errors output. - Maybe intermittent, follow up on https://travis-ci.org/ubiquits/ubiquits/builds/141821325 when complete. | test | travis ci is picking up ts x not next which means there are a lot out errors output maybe intermittent follow up on when complete | 1 |

184,949 | 14,291,131,339 | IssuesEvent | 2020-11-23 22:06:40 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | wptide/wptide: cmd/phpcs-server/main_test.go; 11 LoC | small test |

Found a possible issue in [wptide/wptide](https://www.github.com/wptide/wptide) at [cmd/phpcs-server/main_test.go](https://github.com/wptide/wptide/blob/24ecc5d569823e858eb1414ce5386b67d3f31d46/cmd/phpcs-server/main_test.go#L58-L68)

The below snippet of Go code triggered static analysis which searches for goroutines ... | 1.0 | wptide/wptide: cmd/phpcs-server/main_test.go; 11 LoC -

Found a possible issue in [wptide/wptide](https://www.github.com/wptide/wptide) at [cmd/phpcs-server/main_test.go](https://github.com/wptide/wptide/blob/24ecc5d569823e858eb1414ce5386b67d3f31d46/cmd/phpcs-server/main_test.go#L58-L68)

The below snippet of Go code t... | test | wptide wptide cmd phpcs server main test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go f... | 1 |

13,599 | 3,754,825,934 | IssuesEvent | 2016-03-12 07:21:17 | Rdatatable/data.table | https://api.github.com/repos/Rdatatable/data.table | closed | [R-Forge #5643] ?data.table should clear up the difference with data.frame on subset on logical column | documentation | Submitted by: Arun ; Assigned to: Nobody; [R-Forge link](https://r-forge.r-project.org/tracker/index.php?func=detail&aid=5643&group_id=240&atid=5356)

From here:

http://r.789695.n4.nabble.com/Subsetting-with-logical-td4689054.html

The documentation says currently (in ?data.table): "integer and logical vectors wo... | 1.0 | [R-Forge #5643] ?data.table should clear up the difference with data.frame on subset on logical column - Submitted by: Arun ; Assigned to: Nobody; [R-Forge link](https://r-forge.r-project.org/tracker/index.php?func=detail&aid=5643&group_id=240&atid=5356)

From here:

http://r.789695.n4.nabble.com/Subsetting-with-lo... | non_test | data table should clear up the difference with data frame on subset on logical column submitted by arun assigned to nobody from here the documentation says currently in data table integer and logical vectors work the same way they do in data frame other than nas in logical i are treate... | 0 |

123,236 | 17,772,192,742 | IssuesEvent | 2021-08-30 14:50:25 | kapseliboi/sqlpad | https://api.github.com/repos/kapseliboi/sqlpad | opened | CVE-2021-32796 (Medium) detected in xmldom-0.5.0.tgz, xmldom-0.6.0.tgz | security vulnerability | ## CVE-2021-32796 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>xmldom-0.5.0.tgz</b>, <b>xmldom-0.6.0.tgz</b></p></summary>

<p>

<details><summary><b>xmldom-0.5.0.tgz</b></p></sum... | True | CVE-2021-32796 (Medium) detected in xmldom-0.5.0.tgz, xmldom-0.6.0.tgz - ## CVE-2021-32796 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>xmldom-0.5.0.tgz</b>, <b>xmldom-0.6.0.tgz<... | non_test | cve medium detected in xmldom tgz xmldom tgz cve medium severity vulnerability vulnerable libraries xmldom tgz xmldom tgz xmldom tgz a pure javascript standard based xml dom level core domparser and xmlserializer module library home page ... | 0 |

302,610 | 26,154,295,012 | IssuesEvent | 2022-12-30 18:46:05 | cancervariants/therapy-normalization | https://api.github.com/repos/cancervariants/therapy-normalization | closed | Use Mock classes for testing | test | (where possible)

https://docs.pytest.org/en/stable/monkeypatch.html?highlight=mocking#monkeypatching-returned-objects-building-mock-classes

* It's probably fine to pull most of the 'mock' db layer out of the existing normalization tests, as long as there's a way to catch update requests before they alter a local ... | 1.0 | Use Mock classes for testing - (where possible)

https://docs.pytest.org/en/stable/monkeypatch.html?highlight=mocking#monkeypatching-returned-objects-building-mock-classes

* It's probably fine to pull most of the 'mock' db layer out of the existing normalization tests, as long as there's a way to catch update requ... | test | use mock classes for testing where possible it s probably fine to pull most of the mock db layer out of the existing normalization tests as long as there s a way to catch update requests before they alter a local db validate them and prevent them from making any changes | 1 |

233,716 | 17,875,740,309 | IssuesEvent | 2021-09-07 03:12:04 | PyTorchLightning/lightning-bolts | https://api.github.com/repos/PyTorchLightning/lightning-bolts | closed | Different pip installation command on the official website and doc site | documentation | On the front page of the [lightning website](https://www.pytorchlightning.ai), the installation guide says use `pip install pytorch-lightning` to install pytorch lightning, which results in subsequent failure from attempts to import pl_bolts as instructed by tutorials. While on the documentation site, the installation ... | 1.0 | Different pip installation command on the official website and doc site - On the front page of the [lightning website](https://www.pytorchlightning.ai), the installation guide says use `pip install pytorch-lightning` to install pytorch lightning, which results in subsequent failure from attempts to import pl_bolts as i... | non_test | different pip installation command on the official website and doc site on the front page of the the installation guide says use pip install pytorch lightning to install pytorch lightning which results in subsequent failure from attempts to import pl bolts as instructed by tutorials while on the documentation... | 0 |

109,681 | 4,402,603,480 | IssuesEvent | 2016-08-11 02:10:56 | CorWatts/fasterscale | https://api.github.com/repos/CorWatts/fasterscale | closed | Add an FAQ section | enhancement medium priority small | Answer common questions and provide links to specific questions at relevant parts of the app. | 1.0 | Add an FAQ section - Answer common questions and provide links to specific questions at relevant parts of the app. | non_test | add an faq section answer common questions and provide links to specific questions at relevant parts of the app | 0 |

102,671 | 16,577,869,478 | IssuesEvent | 2021-05-31 07:49:59 | AlexRogalskiy/github-action-random-proverb | https://api.github.com/repos/AlexRogalskiy/github-action-random-proverb | opened | CVE-2021-33502 (High) detected in normalize-url-6.0.0.tgz | security vulnerability | ## CVE-2021-33502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>normalize-url-6.0.0.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs... | True | CVE-2021-33502 (High) detected in normalize-url-6.0.0.tgz - ## CVE-2021-33502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>normalize-url-6.0.0.tgz</b></p></summary>

<p>Normalize a U... | non_test | cve high detected in normalize url tgz cve high severity vulnerability vulnerable library normalize url tgz normalize a url library home page a href path to dependency file github action random proverb package json path to vulnerable library github action random prov... | 0 |

315,129 | 27,047,221,152 | IssuesEvent | 2023-02-13 10:37:30 | stargate/stargate | https://api.github.com/repos/stargate/stargate | opened | Avoid using profiles in REST integration tests | test | There are two integration tests in REST that test the same thing based on the properties:

* RestApiV2QCqlDisabledIT

* RestApiV2QCqlIT

The second one specifies a profile that sets `stargate.rest.cql.disabled=false`.

However, although this works on the integration tests, it fails when we are using the integrati... | 1.0 | Avoid using profiles in REST integration tests - There are two integration tests in REST that test the same thing based on the properties:

* RestApiV2QCqlDisabledIT

* RestApiV2QCqlIT

The second one specifies a profile that sets `stargate.rest.cql.disabled=false`.

However, although this works on the integratio... | test | avoid using profiles in rest integration tests there are two integration tests in rest that test the same thing based on the properties the second one specifies a profile that sets stargate rest cql disabled false however although this works on the integration tests it fails when we are using ... | 1 |

43,157 | 7,028,752,787 | IssuesEvent | 2017-12-25 13:33:24 | androguard/androguard | https://api.github.com/repos/androguard/androguard | closed | Deprecated documentation | documentation | I can't find the `get_AndroidManifest()` method in the apk.py script that is mentioned in the documentation. Maybe the documentation is deprecated?

APK class can't call `get_AndroidManifest()` method that is listed in the [documentation](http://androguard.readthedocs.io/en/latest/api/androguard.core.bytecodes.html#... | 1.0 | Deprecated documentation - I can't find the `get_AndroidManifest()` method in the apk.py script that is mentioned in the documentation. Maybe the documentation is deprecated?

APK class can't call `get_AndroidManifest()` method that is listed in the [documentation](http://androguard.readthedocs.io/en/latest/api/and... | non_test | deprecated documentation i can t find the get androidmanifest method in the apk py script that is mentioned in the documentation maybe the documentation is deprecated apk class can t call get androidmanifest method that is listed in the androguard version python version operati... | 0 |

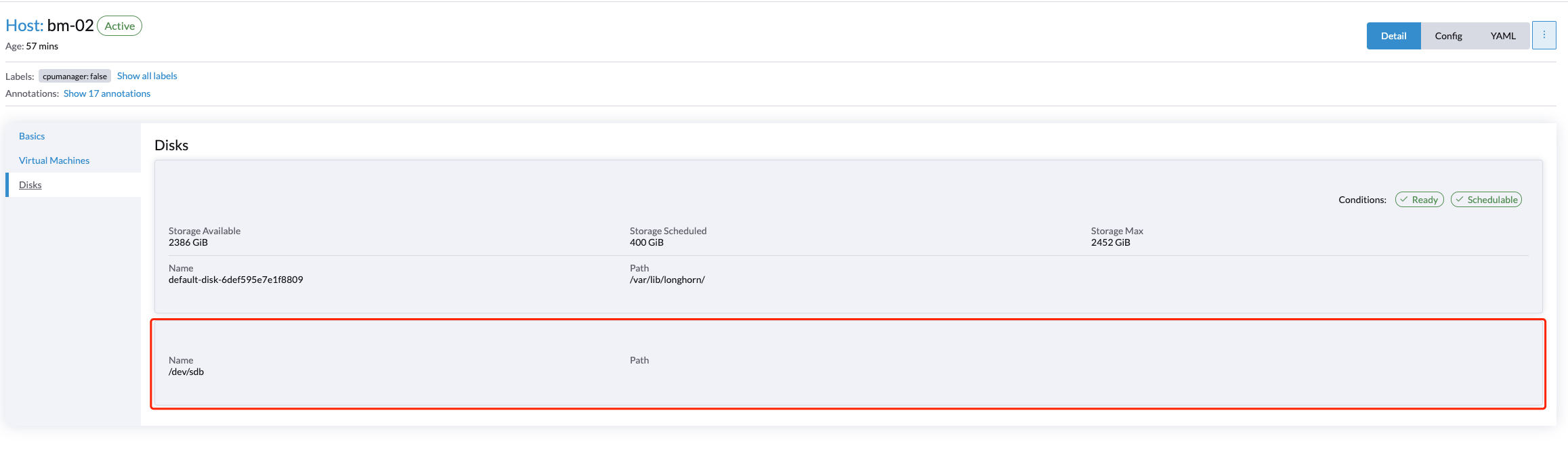

596,920 | 18,150,460,501 | IssuesEvent | 2021-09-26 07:08:49 | harvester/harvester | https://api.github.com/repos/harvester/harvester | opened | [BUG] A force-formatted disk was not added as an additional data volume | bug priority/1 area/node-disk-manager | **Describe the bug**

A force-formatted disk was not added as an additional data volume successfully.

**To Reproduce**

Steps to reproduce the behavior:

1. Select an additional disk that already has a par... | 1.0 | [BUG] A force-formatted disk was not added as an additional data volume - **Describe the bug**

A force-formatted disk was not added as an additional data volume successfully.

**To Reproduce**

Steps to re... | non_test | a force formatted disk was not added as an additional data volume describe the bug a force formatted disk was not added as an additional data volume successfully to reproduce steps to reproduce the behavior select an additional disk that already has a partition and filesystem type set t... | 0 |

162,768 | 12,690,388,857 | IssuesEvent | 2020-06-21 11:53:05 | Oldes/Rebol-issues | https://api.github.com/repos/Oldes/Rebol-issues | closed | READ/string crashes for UCS4 ("UTF-32") LE/BE files with a BOM | Oldes.resolved Ren.important Status.important Test.written Type.bug | _Submitted by:_ **abolka**

See the example code.

``` rebol

>> read %fixtures/umlauts-utf32le.txt

== #{FFFE0000E4000000F6000000FC0000000A000000}

>> read/string %fixtures/umlauts-utf32le.txt

(R3 crashes) ;; Expected: "äöü^/"

--

>> read %fixtures/umlauts-utf32be.txt

== #{0000FEFF000000E4000000F6000000FC0000000A}

>... | 1.0 | READ/string crashes for UCS4 ("UTF-32") LE/BE files with a BOM - _Submitted by:_ **abolka**

See the example code.

``` rebol

>> read %fixtures/umlauts-utf32le.txt

== #{FFFE0000E4000000F6000000FC0000000A000000}

>> read/string %fixtures/umlauts-utf32le.txt

(R3 crashes) ;; Expected: "äöü^/"

--

>> read %fixtures/umla... | test | read string crashes for utf le be files with a bom submitted by abolka see the example code rebol read fixtures umlauts txt read string fixtures umlauts txt crashes expected äöü read fixtures umlauts txt read fixtures umlauts txt cr... | 1 |

641,747 | 20,833,630,199 | IssuesEvent | 2022-03-19 21:15:23 | SoftwareEngineeringGroup3-3/recipe-app-backend | https://api.github.com/repos/SoftwareEngineeringGroup3-3/recipe-app-backend | closed | [BACKEND] [TESTING] Add better tests for API/Ingredients endpoint | enhancement priority:high | Add more comprehensive tests for API/Ingredients endpoint which will test the actual response instead of just the validation functions by themselves. | 1.0 | [BACKEND] [TESTING] Add better tests for API/Ingredients endpoint - Add more comprehensive tests for API/Ingredients endpoint which will test the actual response instead of just the validation functions by themselves. | non_test | add better tests for api ingredients endpoint add more comprehensive tests for api ingredients endpoint which will test the actual response instead of just the validation functions by themselves | 0 |

288,398 | 21,702,890,081 | IssuesEvent | 2022-05-10 06:54:25 | GenericMappingTools/pygmt | https://api.github.com/repos/GenericMappingTools/pygmt | closed | Point of view in docstrings | question documentation | Some docstrings for PyGMT and GMT modules use plural first person when describing what the function does (e.g "we create labels" in `grd2cpt`, "we will write a GeoTiff image" in `grdimage`). Personally, I'm not a fan of this, as there is no "we" doing the operations; it's PyGMT/GMT that is carrying them out. While I do... | 1.0 | Point of view in docstrings - Some docstrings for PyGMT and GMT modules use plural first person when describing what the function does (e.g "we create labels" in `grd2cpt`, "we will write a GeoTiff image" in `grdimage`). Personally, I'm not a fan of this, as there is no "we" doing the operations; it's PyGMT/GMT that is... | non_test | point of view in docstrings some docstrings for pygmt and gmt modules use plural first person when describing what the function does e g we create labels in we will write a geotiff image in grdimage personally i m not a fan of this as there is no we doing the operations it s pygmt gmt that is carry... | 0 |

15,065 | 9,466,171,018 | IssuesEvent | 2019-04-18 03:09:12 | PennyLin1127/Remediate | https://api.github.com/repos/PennyLin1127/Remediate | opened | CVE-2018-14719 High Severity Vulnerability detected by WhiteSource | security vulnerability | ## CVE-2018-14719 - High Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core... | True | CVE-2018-14719 High Severity Vulnerability detected by WhiteSource - ## CVE-2018-14719 - High Severity Vulnerability

<details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.3.jar</b></p></summa... | non_test | cve high severity vulnerability detected by whitesource cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file remediate webgoat we... | 0 |

587,629 | 17,627,309,281 | IssuesEvent | 2021-08-19 00:28:22 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | enc424j600 driver unusable/broken on stm32l552 | bug priority: low Stale | **Describe the bug**

Testing with the dumb_http_server_mt sample with an STM32 nucleo board and enc424j600 ethernet results in an unusable application which outputs lots of error

**To Reproduce**

Steps to reproduce the behavior:

1. Configure ethernet for nucleo_l552ze_q with following:

```

&spi1 {

pinctrl-0 =... | 1.0 | enc424j600 driver unusable/broken on stm32l552 - **Describe the bug**

Testing with the dumb_http_server_mt sample with an STM32 nucleo board and enc424j600 ethernet results in an unusable application which outputs lots of error

**To Reproduce**

Steps to reproduce the behavior:

1. Configure ethernet for nucleo_l55... | non_test | driver unusable broken on describe the bug testing with the dumb http server mt sample with an nucleo board and ethernet results in an unusable application which outputs lots of error to reproduce steps to reproduce the behavior configure ethernet for nucleo q with following ... | 0 |

6,253 | 7,543,678,655 | IssuesEvent | 2018-04-17 16:08:00 | ga4gh/dockstore | https://api.github.com/repos/ga4gh/dockstore | closed | Base command is optional, post CWL 1.0 | bug cli gui web service | ## Feature Request

### Desired behaviour

It should probably be discouraged, but `baseCommand` is optional.

Ensure that tools with no baseCommand validate as valid CWL.

For now, workaround by providing `baseCommand: []` | 1.0 | Base command is optional, post CWL 1.0 - ## Feature Request

### Desired behaviour

It should probably be discouraged, but `baseCommand` is optional.

Ensure that tools with no baseCommand validate as valid CWL.

For now, workaround by providing `baseCommand: []` | non_test | base command is optional post cwl feature request desired behaviour it should probably be discouraged but basecommand is optional ensure that tools with no basecommand validate as valid cwl for now workaround by providing basecommand | 0 |

238,602 | 26,140,610,638 | IssuesEvent | 2022-12-29 17:52:07 | vectordotdev/vector | https://api.github.com/repos/vectordotdev/vector | closed | [RUSTSEC-2020-0095]: difference is unmaintained | domain: security meta: blocked domain: deps | Added to `deny.toml` in https://github.com/timberio/vector/pull/6226

```

┌─ /home/kirill/tmp/vector-deny/Cargo.lock:139:1

│

139 │ difference 2.0.0 registry+https://github.com/rust-lang/crates.io-index

│ ---------------------------------------------------------------------- unmaintained advisory detec... | True | [RUSTSEC-2020-0095]: difference is unmaintained - Added to `deny.toml` in https://github.com/timberio/vector/pull/6226

```

┌─ /home/kirill/tmp/vector-deny/Cargo.lock:139:1

│

139 │ difference 2.0.0 registry+https://github.com/rust-lang/crates.io-index

│ ------------------------------------------------... | non_test | difference is unmaintained added to deny toml in ┌─ home kirill tmp vector deny cargo lock │ │ difference registry │ unmaintained advisory detected │ id rustsec advisory ... | 0 |

10,480 | 8,066,788,420 | IssuesEvent | 2018-08-04 20:14:52 | kenger-dk/DAW | https://api.github.com/repos/kenger-dk/DAW | closed | Error pages | enhancement security | Make nice error pages and handle all errors this way, no .net error pages.

Error logging? | True | Error pages - Make nice error pages and handle all errors this way, no .net error pages.

Error logging? | non_test | error pages make nice error pages and handle all errors this way no net error pages error logging | 0 |

61,491 | 15,014,694,337 | IssuesEvent | 2021-02-01 07:05:43 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [SB] Consent > Auto-created consent document > Reduce the font size of the title | Bug P2 Process: Fixed Process: Tested dev Study builder | SB > Consent > Auto-created consent document > Reduce the font size of the titles of the consent sections in the auto-created document. They should proportional to the font size of the section's content.

with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3087613&tab=artifacts#/tpcc/w=100/nodes=3/chaos=true) on master @ [ec36bc66f5147a8703719a9c890be12dd0c08945](https://github.... | 2.0 | roachtest: tpcc/w=100/nodes=3/chaos=true failed - roachtest.tpcc/w=100/nodes=3/chaos=true [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3087613&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3087613&tab=artifacts#/tpcc/w=100/nodes=3/chaos=true) on master @ [ec36bc6... | test | roachtest tpcc w nodes chaos true failed roachtest tpcc w nodes chaos true with on master neworder orderstatus ... | 1 |

104,970 | 9,013,378,685 | IssuesEvent | 2019-02-05 19:20:42 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | DateMathExpressionResolverTests fails on master | :Core/Infra/Core >test-failure | Three tests have started failing over the weekend, I believe it has to do with the end of the year approaching. Not sure if the failures may be related to datetime changes. They happen only in master:

```

org.elasticsearch.cluster.metadata.DateMathExpressionResolverTests testExpression_CustomFormat

org.elasticsear... | 1.0 | DateMathExpressionResolverTests fails on master - Three tests have started failing over the weekend, I believe it has to do with the end of the year approaching. Not sure if the failures may be related to datetime changes. They happen only in master:

```

org.elasticsearch.cluster.metadata.DateMathExpressionResolver... | test | datemathexpressionresolvertests fails on master three tests have started failing over the weekend i believe it has to do with the end of the year approaching not sure if the failures may be related to datetime changes they happen only in master org elasticsearch cluster metadata datemathexpressionresolver... | 1 |

199,522 | 15,046,434,732 | IssuesEvent | 2021-02-03 07:23:04 | celo-org/celo-monorepo | https://api.github.com/repos/celo-org/celo-monorepo | opened | [FLAKEY TEST] cli-test -> cli -> account metadata cmds -> Modifying the metadata file -> account:create-metadata cmd | FLAKEY cli cli-test | Discovered at commit 8b36d4953b957afbad2a67384d3dbf1a2dc438c7

Attempt No. 1:

Error: thrown: "Exceeded timeout of 10000 ms for a test.

Use jest.setTimeout(newTimeout) to increase the timeout value, if this is a long-running test."

at describe (/home/circleci/app/packages/cli/src/commands/account/claims.test.ts:43:... | 1.0 | [FLAKEY TEST] cli-test -> cli -> account metadata cmds -> Modifying the metadata file -> account:create-metadata cmd - Discovered at commit 8b36d4953b957afbad2a67384d3dbf1a2dc438c7

Attempt No. 1:

Error: thrown: "Exceeded timeout of 10000 ms for a test.

Use jest.setTimeout(newTimeout) to increase the timeout value, if... | test | cli test cli account metadata cmds modifying the metadata file account create metadata cmd discovered at commit attempt no error thrown exceeded timeout of ms for a test use jest settimeout newtimeout to increase the timeout value if this is a long running test at describe home c... | 1 |

90,796 | 8,272,319,541 | IssuesEvent | 2018-09-16 18:53:59 | hyperledger/composer | https://api.github.com/repos/hyperledger/composer | closed | New BusCard round trip scenarios required | integration test playground protractor qa top10 | With the inbound changes relating to business network deployment, it will be necessary to modify the existing e2e test that tests the integration with the runtime/fabric/playground.

This is because is will not be possible to use the existing inclusion of an npmrc file in the runtime package

## Required Scenarios

... | 1.0 | New BusCard round trip scenarios required - With the inbound changes relating to business network deployment, it will be necessary to modify the existing e2e test that tests the integration with the runtime/fabric/playground.

This is because is will not be possible to use the existing inclusion of an npmrc file in t... | test | new buscard round trip scenarios required with the inbound changes relating to business network deployment it will be necessary to modify the existing test that tests the integration with the runtime fabric playground this is because is will not be possible to use the existing inclusion of an npmrc file in the... | 1 |

181,000 | 14,849,358,170 | IssuesEvent | 2021-01-18 00:58:45 | davtorcue/decide | https://api.github.com/repos/davtorcue/decide | closed | Decide Presentation Video | Accepted New documentation priority:high rol: ALL | Video to present the Decide project:

- What changes we have made.

- What tools we have used.

- How we have used those tools.

- Examples. | 1.0 | Decide Presentation Video - Video to present the Decide project:

- What changes we have made.

- What tools we have used.

- How we have used those tools.

- Examples. | non_test | decide presentation video video to present the decide project what changes we have made what tools we have used how we have used those tools examples | 0 |

168,387 | 13,082,840,495 | IssuesEvent | 2020-08-01 15:50:17 | ForgottenGlory/Living-Skyrim-2 | https://api.github.com/repos/ForgottenGlory/Living-Skyrim-2 | closed | vampire lord cape inside body | bug help wanted need testers question | **If you are reporting a crash to desktop, please attach your NET Script Framework crash log. This can be found in MO2's Overwrite folder.

If possible, please also attach a copy of your most recent save before the issue occurred.**

Please check back on your bug request periodically. I will ask for more details so... | 1.0 | vampire lord cape inside body - **If you are reporting a crash to desktop, please attach your NET Script Framework crash log. This can be found in MO2's Overwrite folder.

If possible, please also attach a copy of your most recent save before the issue occurred.**

Please check back on your bug request periodically... | test | vampire lord cape inside body if you are reporting a crash to desktop please attach your net script framework crash log this can be found in s overwrite folder if possible please also attach a copy of your most recent save before the issue occurred please check back on your bug request periodically ... | 1 |

149,457 | 13,281,628,955 | IssuesEvent | 2020-08-23 18:18:28 | No-Budget-Science-Hack-Week-2020/miguezometro | https://api.github.com/repos/No-Budget-Science-Hack-Week-2020/miguezometro | closed | Transfer documentation from Google Docs to permanent documentation | documentation help wanted nbs hack week 2020 | One important thing is to keep your contributions and discussions available for posterity.

One option is to put them right here on GitHub, either in the repository Wiki or as text documents.

Examples of good things to document are:

- [x] Literature references used

- [x] Minutes and dis... | 1.0 | Transfer documentation from Google Docs to permanent documentation - One important thing is to keep your contributions and discussions available for posterity.

One option is to put them right here on GitHub, either in the repository Wiki or as text documents.

Examples of good things to document... | non_test | transfer documentation from google docs to permanent documentation one important thing is to keep your contributions and discussions available for posterity one option is to put them right here on github either in the repository wiki or as text documents examples of good things to document... | 0 |

173,792 | 13,443,846,713 | IssuesEvent | 2020-09-08 08:57:19 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Consider incorporating cbor official spec suite | area-System.Security test enhancement | CBOR has an official spec test suite which validates handful of corner cases: https://github.com/cbor/test-vectors.

In order to fully incorporate that test data as is in `System.Formats.Cbor`'s test project, it would require implementing RFC 7049 [<kbd>**§6 - Diagnostic Notation**</kbd>](https://tools.ietf.org/html/... | 1.0 | Consider incorporating cbor official spec suite - CBOR has an official spec test suite which validates handful of corner cases: https://github.com/cbor/test-vectors.

In order to fully incorporate that test data as is in `System.Formats.Cbor`'s test project, it would require implementing RFC 7049 [<kbd>**§6 - Diagnos... | test | consider incorporating cbor official spec suite cbor has an official spec test suite which validates handful of corner cases in order to fully incorporate that test data as is in system formats cbor s test project it would require implementing rfc diagnostic notation api will also help comparing the do... | 1 |

20,979 | 6,971,776,529 | IssuesEvent | 2017-12-11 15:04:52 | cnr-ibf-pa/hbp-bsp-issues | https://api.github.com/repos/cnr-ibf-pa/hbp-bsp-issues | opened | Usage of '/home/jupyter' instead `/home/jovyan` | Critical Type_BUG UC_HippoCellModel_Rebuild | ### Expected behavior

No error

### Actual Behavior

OSError: [Errno 2] No such file or directory, line 62:

```

<ipython-input-7-cdc45f67112e> in on_w3_clicked(change)

60 outputHTML.layout.display='none'

61 plotly.offline.init_notebook_mode()

---> 62 os.chdir("/home/jupyter/BPOPTANAL... | 1.0 | Usage of '/home/jupyter' instead `/home/jovyan` - ### Expected behavior

No error

### Actual Behavior

OSError: [Errno 2] No such file or directory, line 62:

```

<ipython-input-7-cdc45f67112e> in on_w3_clicked(change)

60 outputHTML.layout.display='none'

61 plotly.offline.init_notebook_mo... | non_test | usage of home jupyter instead home jovyan expected behavior no error actual behavior oserror no such file or directory line in on clicked change outputhtml layout display none plotly offline init notebook mode os chdir home jupyter ... | 0 |

3,971 | 2,698,759,729 | IssuesEvent | 2015-04-03 10:41:43 | bedita/bedita | https://api.github.com/repos/bedita/bedita | closed | Inconsistency in new Section creation form | Module - Publications Priority - Low Status - Test | When attempting to create a new Section, there are some minor issues in view that seem to depend on which is the default tree location of the Section being created.

@rzecchini | 1.0 | Inconsistency in new Section creation form - When attempting to create a new Section, there are some minor issues in view that seem to depend on which is the default tree location of the Section being created.

@rzecchini | test | inconsistency in new section creation form when attempting to create a new section there are some minor issues in view that seem to depend on which is the default tree location of the section being created rzecchini | 1 |

227,933 | 18,111,032,901 | IssuesEvent | 2021-09-23 04:01:06 | E3SM-Project/scream | https://api.github.com/repos/E3SM-Project/scream | opened | Erratic testing behavior on weaver | bug testing priority:high | There seem to be issues with testing on weaver. Mainly, there are two sub-issues.

1. The nightly testing sometimes reports an internal compiler error, when building `atmosphere_microphysics.cpp`. This behavior has been recorded when running on weaver1 and weaver4 compute nodes, but did not happen on weaver2 (no data... | 1.0 | Erratic testing behavior on weaver - There seem to be issues with testing on weaver. Mainly, there are two sub-issues.

1. The nightly testing sometimes reports an internal compiler error, when building `atmosphere_microphysics.cpp`. This behavior has been recorded when running on weaver1 and weaver4 compute nodes, bu... | test | erratic testing behavior on weaver there seem to be issues with testing on weaver mainly there are two sub issues the nightly testing sometimes reports an internal compiler error when building atmosphere microphysics cpp this behavior has been recorded when running on and compute nodes but did not ha... | 1 |

20,734 | 6,923,265,095 | IssuesEvent | 2017-11-30 08:18:56 | spack/spack | https://api.github.com/repos/spack/spack | closed | cgal 4.9.1 checksum wrong. | build-error | Tried to install a package and the `cgal` chosen by concretisation wasn't installed as the checksum was wrong:

```sh

spack install gcc@7.2.0

spack load gcc@7.2.0

spack compilers find

spack unload gcc@7.2.0

spack install openfoam-com@1706 ^openmpi@3.0 fabrics=psm2 schedulers=sge

...

ChecksumError: ChecksumErro... | 1.0 | cgal 4.9.1 checksum wrong. - Tried to install a package and the `cgal` chosen by concretisation wasn't installed as the checksum was wrong:

```sh

spack install gcc@7.2.0

spack load gcc@7.2.0

spack compilers find

spack unload gcc@7.2.0

spack install openfoam-com@1706 ^openmpi@3.0 fabrics=psm2 schedulers=sge

...... | non_test | cgal checksum wrong tried to install a package and the cgal chosen by concretisation wasn t installed as the checksum was wrong sh spack install gcc spack load gcc spack compilers find spack unload gcc spack install openfoam com openmpi fabrics schedulers sge chec... | 0 |

92,242 | 8,356,584,793 | IssuesEvent | 2018-10-02 18:55:28 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | stats are not updated on private window when default tab stats update | QA/Test-Plan-Specified QA/Yes priority/P5 | ## Test Plan

See https://github.com/brave/brave-core/pull/540

## Description

1. open a default window and a private window both on new tab page

2. visit wired.com on the default window

3. watch that stats on the private tab page didn't update

First reported by @simonhong and confirmed by @yrliou. | 1.0 | stats are not updated on private window when default tab stats update - ## Test Plan

See https://github.com/brave/brave-core/pull/540

## Description

1. open a default window and a private window both on new tab page

2. visit wired.com on the default window

3. watch that stats on the private tab page didn't updat... | test | stats are not updated on private window when default tab stats update test plan see description open a default window and a private window both on new tab page visit wired com on the default window watch that stats on the private tab page didn t update first reported by simonhong and confi... | 1 |

151,458 | 12,036,765,331 | IssuesEvent | 2020-04-13 20:26:47 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack Jest Tests.x-pack/plugins/endpoint/public/applications/endpoint/store/policy_list - policy list store concerns it sets `isLoading` when `userPaginatedPolicyListTable` | Feature:Endpoint Team:Endpoint Data Visibility Team:Endpoint Management Team:Endpoint Response failed-test | A test failed on a tracked branch

```

Error: expect(received).toBe(expected) // Object.is equality

Expected: false

Received: true

at Object.test (/var/lib/jenkins/workspace/elastic+kibana+master/kibana/x-pack/plugins/endpoint/public/applications/endpoint/store/policy_list/index.test.ts:52:41)

```

First failure: ... | 1.0 | Failing test: X-Pack Jest Tests.x-pack/plugins/endpoint/public/applications/endpoint/store/policy_list - policy list store concerns it sets `isLoading` when `userPaginatedPolicyListTable` - A test failed on a tracked branch

```

Error: expect(received).toBe(expected) // Object.is equality

Expected: false

Received: tru... | test | failing test x pack jest tests x pack plugins endpoint public applications endpoint store policy list policy list store concerns it sets isloading when userpaginatedpolicylisttable a test failed on a tracked branch error expect received tobe expected object is equality expected false received tru... | 1 |

304,450 | 23,066,881,635 | IssuesEvent | 2022-07-25 14:35:50 | NDragneelL9/the-undermine-bot | https://api.github.com/repos/NDragneelL9/the-undermine-bot | closed | Documentation | documentation | Write SSD documentation.

What should be inside Readme file:

- [x] Table of content

- [x] About application (or goal of the project)

- [x] Requirements (both functional and non-functional)

- [x] Glossary

- [x] Stakeholders

- [x] Design section with diagrams

- [x] Architecture with different viewpoints

- [... | 1.0 | Documentation - Write SSD documentation.

What should be inside Readme file:

- [x] Table of content

- [x] About application (or goal of the project)

- [x] Requirements (both functional and non-functional)

- [x] Glossary

- [x] Stakeholders

- [x] Design section with diagrams

- [x] Architecture with different... | non_test | documentation write ssd documentation what should be inside readme file table of content about application or goal of the project requirements both functional and non functional glossary stakeholders design section with diagrams architecture with different viewpoints ... | 0 |

11,594 | 3,211,062,903 | IssuesEvent | 2015-10-06 08:38:41 | CoderDojo/community-platform | https://api.github.com/repos/CoderDojo/community-platform | closed | Show number of events a user has attended | backlog Ready For Testing | In the manage users section show the number of events the user has attended for that Dojo.

Requested by myself | 1.0 | Show number of events a user has attended - In the manage users section show the number of events the user has attended for that Dojo.

Requested by myself | test | show number of events a user has attended in the manage users section show the number of events the user has attended for that dojo requested by myself | 1 |

71,052 | 9,477,307,224 | IssuesEvent | 2019-04-19 18:09:11 | golang/go | https://api.github.com/repos/golang/go | closed | cmd/go/internal/modfetch: document known bug in `isVendoredPackage` | Documentation NeedsFix Unfortunate modules | ### What version of Go are you using (`go version`)?

<pre>

$ go version

go version go1.12.1 linux/amd64

</pre>

### Does this issue reproduce with the latest release?

Yes

### What operating system and processor architecture are you using (`go env`)?

<details><summary><code>go env</code> Output</summary><... | 1.0 | cmd/go/internal/modfetch: document known bug in `isVendoredPackage` - ### What version of Go are you using (`go version`)?

<pre>

$ go version

go version go1.12.1 linux/amd64

</pre>

### Does this issue reproduce with the latest release?

Yes

### What operating system and processor architecture are you using ... | non_test | cmd go internal modfetch document known bug in isvendoredpackage what version of go are you using go version go version go version linux does this issue reproduce with the latest release yes what operating system and processor architecture are you using go env ... | 0 |

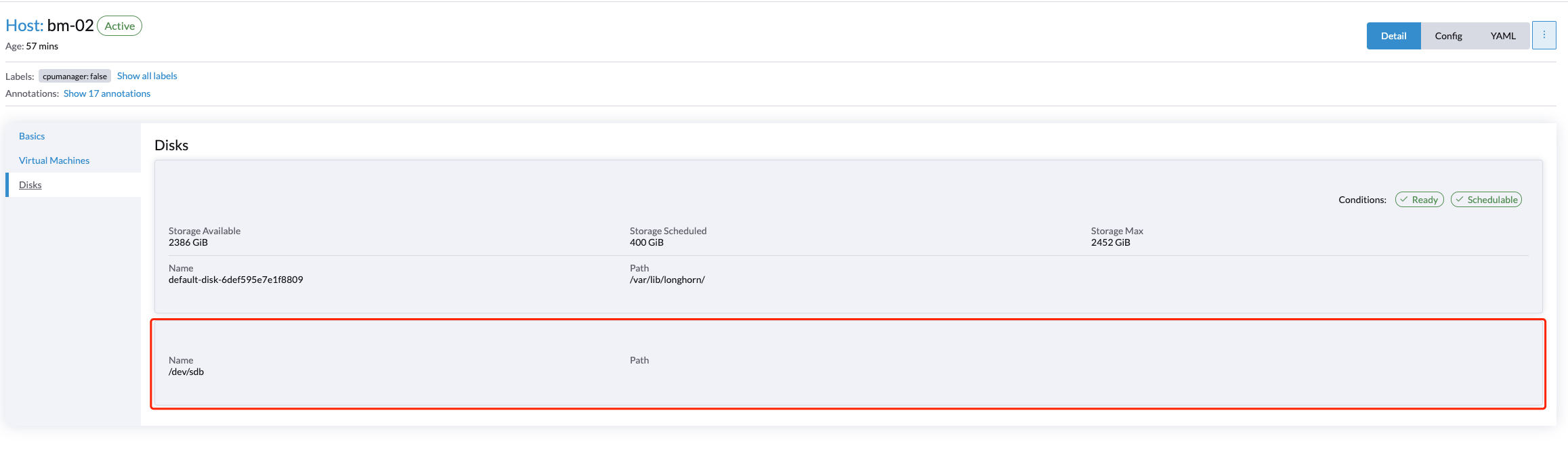

93,703 | 8,442,029,726 | IssuesEvent | 2018-10-18 12:06:52 | Kademi/kademi-dev | https://api.github.com/repos/Kademi/kademi-dev | closed | KCom2: browse by price | Ready to Test - Dev bug | I do not quite understand how the "Browse by price" section works

Why does it display only 5 products with such high prices and ignore those with lower ones

Also, I do not quite understand why it shows, say, 490 to 990 i... | 1.0 | KCom2: browse by price - I do not quite understand how the "Browse by price" section works

Why does it display only 5 products with such high prices and ignore those with lower ones

Also, I do not quite understand why... | test | browse by price i do not quite understand how the browse by price section works why does it display only products with such high prices and ignore those with lower ones also i do not quite understand why it shows say to instead of current user have no discounts | 1 |

143,697 | 5,521,861,213 | IssuesEvent | 2017-03-19 18:56:01 | JosefAssad/SeMaWi | https://api.github.com/repos/JosefAssad/SeMaWi | closed | Analyses | enhancement high priority balk | We often perform analyses for other centres involving sensitive personal data. There will be greater documentation requirements for such analyses in the future, so we are considering creating an analysis category.

Props:

Recipient of analysis | 1.0 | Analyses - We often perform analyses for other centres involving sensitive personal data. There will be greater documentation requirements for such analyses in the future, so we are considering creating an analysis category.

Props:

Recipient of analysis | non_test | analyses we often perform analyses for other centres involving sensitive personal data there will be greater documentation requirements for such analyses in the future so we are considering creating an analysis category props recipient of analysis | 0 |

4,604 | 6,725,362,957 | IssuesEvent | 2017-10-17 04:54:42 | jhipster/generator-jhipster | https://api.github.com/repos/jhipster/generator-jhipster | closed | UAA: can't access to administration pages | microservice uaa | ##### **Overview of the issue**

When using UAA option, I can't access to the administration pages

That's why the build for UAA + Protractor tests failed since more than 1 week:

- https://travis-ci.org/hipster-labs/jhipster-travis-build/builds/284099556

- https://travis-ci.org/hipster-labs/jhipster-travis-build/... | 1.0 | UAA: can't access to administration pages - ##### **Overview of the issue**

When using UAA option, I can't access to the administration pages

That's why the build for UAA + Protractor tests failed since more than 1 week:

- https://travis-ci.org/hipster-labs/jhipster-travis-build/builds/284099556

- https://travi... | non_test | uaa can t access to administration pages overview of the issue when using uaa option i can t access to the administration pages that s why the build for uaa protractor tests failed since more than week it works for but it s broken in current master reproduce the ... | 0 |

288,451 | 21,705,753,106 | IssuesEvent | 2022-05-10 09:26:15 | meugenom/markdown-ts-compiler | https://api.github.com/repos/meugenom/markdown-ts-compiler | closed | Design as an external module | documentation | It's very important to write code as an external module with index.html as an entry point and src.js

We need research about external modules | 1.0 | Design as an external module - It's very important to write code as an external module with index.html as an entry point and src.js

We need research about external modules | non_test | design as an external module it s very important to write code as an external module with index html as an entry point and src js we need research about external modules | 0 |

111,385 | 9,529,560,002 | IssuesEvent | 2019-04-29 11:40:26 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | the test(s) from issue 25579 should be ported to NLL run-pass tests | E-needstest NLL-fixed-by-NLL | The work on reviewing the diagnostic differences between AST-borrowck and NLL leads me to conclude that NLL is (probably) correctly accepting the regression test we added for #25579

Namely this:

https://github.com/rust-lang/rust/blob/653da4fd006c97625247acd7e076d0782cdc149b/src/test/ui/issues/issue-25579.rs#L16-L... | 1.0 | the test(s) from issue 25579 should be ported to NLL run-pass tests - The work on reviewing the diagnostic differences between AST-borrowck and NLL leads me to conclude that NLL is (probably) correctly accepting the regression test we added for #25579

Namely this:

https://github.com/rust-lang/rust/blob/653da4fd00... | test | the test s from issue should be ported to nll run pass tests the work on reviewing the diagnostic differences between ast borrowck and nll leads me to conclude that nll is probably correctly accepting the regression test we added for namely this we should make the original test be ast borrowck onl... | 1 |

55,525 | 23,493,560,261 | IssuesEvent | 2022-08-17 21:25:53 | quesst-technologies/qst-admin-status-all | https://api.github.com/repos/quesst-technologies/qst-admin-status-all | opened | 🛑 Email Service is down | status email-service | In [`2284d85`](https://github.com/quesst-technologies/qst-admin-status-all/commit/2284d8519da01714a5674a59c3a31d83f33e0c48

), Email Service (https://quessttechnologies.com/email/healthcheck) was **down**:

- HTTP code: 0

- Response time: 0 ms

| 1.0 | 🛑 Email Service is down - In [`2284d85`](https://github.com/quesst-technologies/qst-admin-status-all/commit/2284d8519da01714a5674a59c3a31d83f33e0c48

), Email Service (https://quessttechnologies.com/email/healthcheck) was **down**:

- HTTP code: 0

- Response time: 0 ms

| non_test | 🛑 email service is down in email service was down http code response time ms | 0 |

178,010 | 29,481,382,356 | IssuesEvent | 2023-06-02 06:05:49 | CryptKeeperZK/crypt-keeper-extension | https://api.github.com/repos/CryptKeeperZK/crypt-keeper-extension | closed | Support multiple root keys for different identities | 🖼️ ui logic 🖌️ ui design 🌱 new feature ⚙️ scripts | As a user I'd like to create CK accounts and create identities using different addresses.

- [x] Add array of root keys to key storage

- [x] Support backup of multiple keys

- [x] Specify ck account for identity creation and list view

- [x] Integrate wallet connection with ck (web3-react)

- [x] Rewrite connect to ... | 1.0 | Support multiple root keys for different identities - As a user I'd like to create CK accounts and create identities using different addresses.

- [x] Add array of root keys to key storage

- [x] Support backup of multiple keys

- [x] Specify ck account for identity creation and list view

- [x] Integrate wallet conn... | non_test | support multiple root keys for different identities as a user i d like to create ck accounts and create identities using different addresses add array of root keys to key storage support backup of multiple keys specify ck account for identity creation and list view integrate wallet connection w... | 0 |

335,042 | 30,006,802,817 | IssuesEvent | 2023-06-26 12:58:51 | enthought/traits | https://api.github.com/repos/enthought/traits | opened | Tests fail with latest Traits and TraitsUI from PyPI | type: bug component: test suite | I'm seeing a test failure with the latest Traits and TraitsUI from PyPI, on Python 3.11. (I haven't tested with other Python versions, but it likely affects those, too.)

Steps to reproduce:

- Create a new Python 3.11 venv, and activate it.

- Run `pip install traits traitsui`

- Run `python -m unittest discover -... | 1.0 | Tests fail with latest Traits and TraitsUI from PyPI - I'm seeing a test failure with the latest Traits and TraitsUI from PyPI, on Python 3.11. (I haven't tested with other Python versions, but it likely affects those, too.)

Steps to reproduce:

- Create a new Python 3.11 venv, and activate it.

- Run `pip install... | test | tests fail with latest traits and traitsui from pypi i m seeing a test failure with the latest traits and traitsui from pypi on python i haven t tested with other python versions but it likely affects those too steps to reproduce create a new python venv and activate it run pip install t... | 1 |

452,700 | 13,058,295,177 | IssuesEvent | 2020-07-30 08:48:28 | siteorigin/so-widgets-bundle | https://api.github.com/repos/siteorigin/so-widgets-bundle | closed | Contact Form: Ensure compatibility with Akismet | bug priority-2 | Via Twitter:

@SiteOrigin

: looks like your Contact Form plugin has a bug that impairs its spam-fighting abilities: when sending data to the Akismet API, content should be sent as `comment_content`, not `comment_text`. We've handled it, for now, so your users get better results. | 1.0 | Contact Form: Ensure compatibility with Akismet - Via Twitter:

@SiteOrigin

: looks like your Contact Form plugin has a bug that impairs its spam-fighting abilities: when sending data to the Akismet API, content should be sent as `comment_content`, not `comment_text`. We've handled it, for now, so your users get be... | non_test | contact form ensure compatibility with akismet via twitter siteorigin looks like your contact form plugin has a bug that impairs its spam fighting abilities when sending data to the akismet api content should be sent as comment content not comment text we ve handled it for now so your users get be... | 0 |

5,354 | 8,181,944,071 | IssuesEvent | 2018-08-29 02:01:21 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | closed | [Monitoring] cannot be used with Python 3.7 (Pandas) | api: monitoring testing type: process | Pandas/Cython doesn't currently support 3.7. Once it does we need to enable 3.7 testing on Monitoring.

Collecting pandas>=0.17.1 (from google-cloud-monitoring==0.29.0)

Using cached https://files.pythonhosted.org/packages/08/01/803834bc8a4e708aedebb133095a88a4dad9f45bbaf5ad777d2bea543c7e/pandas-0.22.0.tar.gz

C... | 1.0 | [Monitoring] cannot be used with Python 3.7 (Pandas) - Pandas/Cython doesn't currently support 3.7. Once it does we need to enable 3.7 testing on Monitoring.

Collecting pandas>=0.17.1 (from google-cloud-monitoring==0.29.0)

Using cached https://files.pythonhosted.org/packages/08/01/803834bc8a4e708aedebb133095a88a... | non_test | cannot be used with python pandas pandas cython doesn t currently support once it does we need to enable testing on monitoring collecting pandas from google cloud monitoring using cached could not find a version that satisfies the requirement cython from versions no ... | 0 |

124,086 | 12,224,507,308 | IssuesEvent | 2020-05-02 23:01:02 | czammar/MNO_finalproject | https://api.github.com/repos/czammar/MNO_finalproject | closed | 1.a Data extraction from Yahoo Finance | documentation enhancement | Create a function that extracts the stock prices of 50 companies for the last 5 years:

Industry: *Energy* - SASE:2222, NYSE:XOM, NYSE:CVX, ENXTAM:RDSA, NSEI:RELIANCE

Industry: *Real Estate* - NYSE:AMT, NYSE:CCI, NYSE:PLD, NasdaqGS:QEIX, NYSE:DLR

Industry: *Materials* - NYSE:LIN, ASX:BHP, LSE:RIO, ENXTPA:AI... | 1.0 | 1.a Data extraction from Yahoo Finance - Create a function that extracts the stock prices of 50 companies for the last 5 years:

Industry: *Energy* - SASE:2222, NYSE:XOM, NYSE:CVX, ENXTAM:RDSA, NSEI:RELIANCE

Industry: *Real Estate* - NYSE:AMT, NYSE:CCI, NYSE:PLD, NasdaqGS:QEIX, NYSE:DLR

Industry: *... | non_test | a data extraction from yahoo finance create a function that extracts the stock prices of companies for the last years industry energy sase nyse xom nyse cvx enxtam rdsa nsei reliance industry real estate nyse amt nyse cci nyse pld nasdaqgs qeix nyse dlr industry mate... | 0 |

114,163 | 9,691,256,215 | IssuesEvent | 2019-05-24 10:41:01 | pybamm-team/PyBaMM | https://api.github.com/repos/pybamm-team/PyBaMM | closed | Shape test | testing | Add a method `test_shape` to the Finite Volume class that checks, when discretising, that we can evaluate the shape of a discretised object without raising any errors.

Should help to catch bugs earlier. | 1.0 | Shape test - Add a method `test_shape` to the Finite Volume class that checks, when discretising, that we can evaluate the shape of a discretised object without raising any errors.

Should help to catch bugs earlier. | test | shape test add a method test shape to the finite volume class that checks when discretising that we can evaluate the shape of a discretised object without raising any errors should help to catch bugs earlier | 1 |

823,261 | 30,962,746,335 | IssuesEvent | 2023-08-08 06:04:58 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | 9to5google.com - see bug description | browser-firefox priority-normal engine-gecko | <!-- @browser: Firefox 117.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:109.0) Gecko/20100101 Firefox/117.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/125512 -->

**URL**: https://9to5google.com/

**Browser / Version**: Firefox 117... | 1.0 | 9to5google.com - see bug description - <!-- @browser: Firefox 117.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:109.0) Gecko/20100101 Firefox/117.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/125512 -->

**URL**: https://9to5google.c... | non_test | com see bug description url browser version firefox operating system windows tested another browser yes chrome problem type something else description dark mode doesn t work and poles doesn t work in the new ish design it works fine in for example chrome and bra... | 0 |

314,042 | 26,972,252,869 | IssuesEvent | 2023-02-09 06:24:55 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | Add a technique similar to GWP-ASan | feature testing | **Describe the solution you'd like**

Small random subset of memory allocations should be protected by guard pages.

GWP-ASan (is in fact almost unrelated to ASan) is not a specific tool but a technique that requires a small change in memory allocator. https://llvm.org/docs/GwpAsan.html

The only point is to enable... | 1.0 | Add a technique similar to GWP-ASan - **Describe the solution you'd like**

Small random subset of memory allocations should be protected by guard pages.

GWP-ASan (is in fact almost unrelated to ASan) is not a specific tool but a technique that requires a small change in memory allocator. https://llvm.org/docs/GwpAs... | test | add a technique similar to gwp asan describe the solution you d like small random subset of memory allocations should be protected by guard pages gwp asan is in fact almost unrelated to asan is not a specific tool but a technique that requires a small change in memory allocator the only point is to e... | 1 |

64,565 | 15,949,182,673 | IssuesEvent | 2021-04-15 07:04:15 | nextcloud/desktop | https://api.github.com/repos/nextcloud/desktop | closed | CMake Can’t Find Included SQLite3 on macOS | bug building os: :apple: macOS | ## How to use GitHub

* Please use the 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to show that you are affected by the same issue.

* Please don't comment if you have no relevant information to add. It's just extra noise for everyone subscribed to this issue.... | 1.0 | CMake Can’t Find Included SQLite3 on macOS - ## How to use GitHub

* Please use the 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to show that you are affected by the same issue.

* Please don't comment if you have no relevant information to add. It's just extra... | non_test | cmake can’t find included on macos how to use github please use the 👍 to show that you are affected by the same issue please don t comment if you have no relevant information to add it s just extra noise for everyone subscribed to this issue subscribe to receive notifications on status change ... | 0 |

59,901 | 6,666,153,595 | IssuesEvent | 2017-10-03 06:49:35 | RaRe-Technologies/gensim | https://api.github.com/repos/RaRe-Technologies/gensim | closed | use AppVeyor to test on Windows and upload wheels | medium testing wishlist | AppVeyor is free for open source projects:

http://www.appveyor.com/

| 1.0 | use AppVeyor to test on Windows and upload wheels - AppVeyor is free for open source projects:

http://www.appveyor.com/

| test | use appveyor to test on windows and upload wheels appveyor is free for open source projects | 1 |

176,649 | 28,130,863,341 | IssuesEvent | 2023-03-31 22:53:06 | pulumi/pulumi-cloudflare | https://api.github.com/repos/pulumi/pulumi-cloudflare | closed | Importing zone doesn't work properly | kind/bug resolution/by-design | ### What happened?

I've imported a zone that already exists, but during preview, the resource is not shown at all (with the `import` info, for example).

### Steps to reproduce

e.g.

```

new cloudflare.Zone("myzone", {

zone: "example.com",

plan: "free",

},

{

id: "<the zon... | 1.0 | Importing zone doesn't work properly - ### What happened?

I've imported a zone that already exists, but during preview, the resource is not shown at all (with the `import` info, for example).

### Steps to reproduce

e.g.

```

new cloudflare.Zone("myzone", {

zone: "example.com",

plan: "free... | non_test | importing zone doesn t work properly what happened i ve imported a zone that already exists but during preview the resource is not shown at all with the import info for example steps to reproduce e g new cloudflare zone myzone zone example com plan free... | 0 |

96,939 | 12,193,394,297 | IssuesEvent | 2020-04-29 14:21:00 | USDA-FSA/fsa-style | https://api.github.com/repos/USDA-FSA/fsa-style | closed | Apply Level to relevant existing components | P3 source: internal FBCSS type: design type: feature request | The new "Level" component (#385) will naturally lend itself to many other "composite-like" components.

* Evaluate which components can be updated/amended with it

* Deprecate variations/examples as necessary, etc. | 1.0 | Apply Level to relevant existing components - The new "Level" component (#385) will naturally lend itself to many other "composite-like" components.

* Evaluate which components can be updated/amended with it

* Deprecate variations/examples as necessary, etc. | non_test | apply level to relevant existing components the new level component will naturally lend itself to many other composite like components evaluate which components can be updated amended with it deprecate variations examples as necessary etc | 0 |

557,979 | 16,523,688,862 | IssuesEvent | 2021-05-26 17:14:10 | chef/chef | https://api.github.com/repos/chef/chef | closed | Chef::DataBagItem.save does not properly validate names with periods | Focus: knife Priority: Low Status: Good First Issue Type: Bug | BUG:

Databag item ID can not contain period " . " when created through `knife databag` commands or via `chef manage` UI.

However, they can be created from inside the recipe via code with `Chef::DataBagItem.new`; `raw_data` & `.save`

Once the Databag Item is created via the recipe, the item can be edited via the GUI b... | 1.0 | Chef::DataBagItem.save does not properly validate names with periods - BUG:

Databag item ID can not contain period " . " when created through `knife databag` commands or via `chef manage` UI.

However, they can be created from inside the recipe via code with `Chef::DataBagItem.new`; `raw_data` & `.save`

Once the Datab... | non_test | chef databagitem save does not properly validate names with periods bug databag item id can not contain period when created through knife databag commands or via chef manage ui however they can be created from inside the recipe via code with chef databagitem new raw data save once the datab... | 0 |

26,583 | 4,234,992,459 | IssuesEvent | 2016-07-05 13:55:39 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | closed | Manual tests for Ubuntu 0.11.0 RC3 | tests | 1. [x] Check that installer is close to the size of last release.

2. [x] Check Brave, electron, and libchromiumcontent version in About and make sure it is EXACTLY as expected.

## About pages

1. [x] Test that about:bookmarks loads bookmarks

2. [x] Test that about:downloads loads downloads

3. [x] Test that abou... | 1.0 | Manual tests for Ubuntu 0.11.0 RC3 - 1. [x] Check that installer is close to the size of last release.

2. [x] Check Brave, electron, and libchromiumcontent version in About and make sure it is EXACTLY as expected.

## About pages

1. [x] Test that about:bookmarks loads bookmarks

2. [x] Test that about:downloads l... | test | manual tests for ubuntu check that installer is close to the size of last release check brave electron and libchromiumcontent version in about and make sure it is exactly as expected about pages test that about bookmarks loads bookmarks test that about downloads loads downlo... | 1 |

557,848 | 16,520,787,876 | IssuesEvent | 2021-05-26 14:22:09 | ARMmbed/mbed-os | https://api.github.com/repos/ARMmbed/mbed-os | closed | High-ish SPI clock speed breaks STM async SPI | devices: st priority: untriaged | <!--

************************************** WARNING **************************************

The ciarcom bot parses this header automatically. Any deviation from the

template may cause the bot to automatically correct this header or may result in a

warning message, requesting updates.

Please e... | 1.0 | High-ish SPI clock speed breaks STM async SPI - <!--

************************************** WARNING **************************************

The ciarcom bot parses this header automatically. Any deviation from the

template may cause the bot to automatically correct this header or may result in a

war... | non_test | high ish spi clock speed breaks stm async spi warning the ciarcom bot parses this header automatically any deviation from the template may cause the bot to automatically correct this header or may result in a war... | 0 |

438,639 | 30,653,611,853 | IssuesEvent | 2023-07-25 10:32:11 | josdem/status-catcher | https://api.github.com/repos/josdem/status-catcher | closed | Add project documentation | documentation enhancement in progress | As a **user**, I want to add a `readme` file **so that** I can have documentation about this project.

**Acceptance Criteria**

Documentation with this information:

- Project software requirements

- Build instructions

- Execute instructions

- Run tests instructions

- Run

- References | 1.0 | Add project documentation - As a **user**, I want to add a `readme` file **so that** I can have documentation about this project.

**Acceptance Criteria**

Documentation with this information:

- Project software requirements

- Build instructions

- Execute instructions

- Run tests instructions

- Run

- Referenc... | non_test | add project documentation as a user i want to add a readme file so that i can have documentation about this project acceptance criteria documentation with this information project software requirements build instructions execute instructions run tests instructions run referenc... | 0 |

158,965 | 6,038,188,488 | IssuesEvent | 2017-06-09 20:43:52 | DCLP/dclpxsltbox | https://api.github.com/repos/DCLP/dclpxsltbox | closed | unmerged commits on deprecated "dclp" branch of DCLP/idp.data repository | priority: high task | While working on #177, I discovered that @kirchnerf and @anagnosis have made 5 commits to [the "dclp" branch of DCLP/idp.data](https://github.com/DCLP/idp.data/tree/dclp) since 31 March that have not been merged to [the "master" branch](https://github.com/DCLP/idp.data/tree/master). The "dclp" branch was deprecated in ... | 1.0 | unmerged commits on deprecated "dclp" branch of DCLP/idp.data repository - While working on #177, I discovered that @kirchnerf and @anagnosis have made 5 commits to [the "dclp" branch of DCLP/idp.data](https://github.com/DCLP/idp.data/tree/dclp) since 31 March that have not been merged to [the "master" branch](https://... | non_test | unmerged commits on deprecated dclp branch of dclp idp data repository while working on i discovered that kirchnerf and anagnosis have made commits to since march that have not been merged to the dclp branch was deprecated in february this change was coordinated with heidelberg but we failed t... | 0 |

134,211 | 12,577,570,645 | IssuesEvent | 2020-06-09 09:45:21 | GoogleCloudPlatform/serverless-photosharing-workshop | https://api.github.com/repos/GoogleCloudPlatform/serverless-photosharing-workshop | closed | Add cleanup steps at the end of each codelab | documentation enhancement | Once users have gone through each codelab, we could add an extra section where we mention steps to get rid of all the resources, to avoid recurring costs. | 1.0 | Add cleanup steps at the end of each codelab - Once users have gone through each codelab, we could add an extra section where we mention steps to get rid of all the resources, to avoid recurring costs. | non_test | add cleanup steps at the end of each codelab once users have gone through each codelab we could add an extra section where we mention steps to get rid of all the resources to avoid recurring costs | 0 |

359,094 | 25,219,324,297 | IssuesEvent | 2022-11-14 11:32:55 | nicolasdaudin/pulpito | https://api.github.com/repos/nicolasdaudin/pulpito | closed | Clean up the code | documentation enhancement api ux |

- [x] Check whether there is an "automated" clean code indicator

- [x] Re-read the Clean Code rules https://medium.com/swlh/clean-code-4-rules-of-simple-design-f86b066ee43d and https://gist.github.com/wojteklu/73c6914cc446146b8b533c0988cf8d29

- [x] Clean up the code and make it cleaner.

- [x] in the matchingIt... | 1.0 | Clean up the code -

- [x] Check whether there is an "automated" clean code indicator

- [x] Re-read the Clean Code rules https://medium.com/swlh/clean-code-4-rules-of-simple-design-f86b066ee43d and https://gist.github.com/wojteklu/73c6914cc446146b8b533c0988cf8d29

- [x] Clean up the code and make it cleaner.

- [x]... | non_test | clean up the code check whether there is an automated clean code indicator re read the clean code rules and clean up the code and make it cleaner in the matchingitem addeventlistener click function e we could perhaps clean up use a closure no switch to import at ... | 0 |

200,909 | 15,164,531,226 | IssuesEvent | 2021-02-12 13:52:27 | arturo-lang/arturo | https://api.github.com/repos/arturo-lang/arturo | closed | [Collections\extend] verify functionality | library todo unit-test | [Collections\extend] verify functionality

https://github.com/arturo-lang/arturo/blob/aba9af1045a5008bf4669dbe3203164d79d82963/src/library/Collections.nim#L240

```text

else: discard

# TODO(Collections\extend) verify functionality

# labels: library, unit-test

builtin "extend",

alia... | 1.0 | [Collections\extend] verify functionality - [Collections\extend] verify functionality

https://github.com/arturo-lang/arturo/blob/aba9af1045a5008bf4669dbe3203164d79d82963/src/library/Collections.nim#L240

```text

else: discard

# TODO(Collections\extend) verify functionality

# labels: library, ... | test | verify functionality verify functionality text else discard todo collections extend verify functionality labels library unit test builtin extend alias unaliased rule prefixprecedence | 1 |

292,897 | 25,249,252,294 | IssuesEvent | 2022-11-15 13:32:29 | rancher/dashboard | https://api.github.com/repos/rancher/dashboard | closed | In namespace selection dropdown, only "Create a New Namespace" should be blue | kind/bug [zube]: To Test | I opened this issue based on Kenneth's feedback from a demo.

Currently, all list items in the namespace dropdown are blue:

<img width="780" alt="Screen Shot 2022-07-19 at 6 32 41 PM" src="https://user-images.githubusercontent.com/20599230/179876449-b59782ff-8491-41df-87e7-15cc6731dadd.png">

But if only the "Crea... | 1.0 | In namespace selection dropdown, only "Create a New Namespace" should be blue - I opened this issue based on Kenneth's feedback from a demo.

Currently, all list items in the namespace dropdown are blue:

<img width="780" alt="Screen Shot 2022-07-19 at 6 32 41 PM" src="https://user-images.githubusercontent.com/205992... | test | in namespace selection dropdown only create a new namespace should be blue i opened this issue based on kenneth s feedback from a demo currently all list items in the namespace dropdown are blue img width alt screen shot at pm src but if only the create a new namespace was blue an... | 1 |

20,288 | 13,792,660,530 | IssuesEvent | 2020-10-09 13:54:53 | bkochuna/ners570f20-Lab06 | https://api.github.com/repos/bkochuna/ners570f20-Lab06 | closed | Choose a programming language(s) | infrastructure | Prior to getting too far into the project, the team should decide on using one or more of C, C++, or Fortran for implementation of the code.

This issue is about having that discussion to decide which language(s) to use. | 1.0 | Choose a programming language(s) - Prior to getting too far into the project, the team should decide on using one or more of C, C++, or Fortran for implementation of the code.

This issue is about having that discussion to decide which language(s) to use. | non_test | choose a programming language s prior to getting too far into the project the team should decide on using one or more of c c or fortran for implementation of the code this issue is about having that discussion to decide which language s to use | 0 |

196,252 | 6,926,210,583 | IssuesEvent | 2017-11-30 18:22:10 | minio/minio | https://api.github.com/repos/minio/minio | closed | Minio behind nginx | priority: medium | I have set up minio using [these instructions](https://github.com/minio/minio-service/tree/master/linux-systemd) and have proxied it behind nginx as per [this guide](https://github.com/minio/cookbook/blob/master/docs/setup-nginx-proxy-with-minio.md#non-root-configuration) using this block:

```

location ~^/ix35-s3... | 1.0 | Minio behind nginx - I have set up minio using [these instructions](https://github.com/minio/minio-service/tree/master/linux-systemd) and have proxied it behind nginx as per [this guide](https://github.com/minio/cookbook/blob/master/docs/setup-nginx-proxy-with-minio.md#non-root-configuration) using this block:

```

... | non_test | minio behind nginx i have set up minio using and have proxied it behind nginx as per using this block location proxy buffering off proxy set header host http host proxy pass but when i access hostname i get a nosuchbucket error what dd i do w... | 0 |