Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
16,835 | 10,573,292,688 | IssuesEvent | 2019-10-07 11:37:53 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | azurerm_virtual_machine_scale_set.scaleset: diffs didn't match during apply | bug service/vmss | _This issue was originally opened by @agolomoodysaada as hashicorp/terraform#17291. It was migrated here as a result of the [provider split](https://www.hashicorp.com/blog/upcoming-provider-changes-in-terraform-0-10/). The original body of the issue is below._ <hr> ``` Terraform Version: 0.11.3 Resource ID:... | 1.0 | azurerm_virtual_machine_scale_set.scaleset: diffs didn't match during apply - _This issue was originally opened by @agolomoodysaada as hashicorp/terraform#17291. It was migrated here as a result of the [provider split](https://www.hashicorp.com/blog/upcoming-provider-changes-in-terraform-0-10/). The original body of th... | non_test | azurerm virtual machine scale set scaleset diffs didn t match during apply this issue was originally opened by agolomoodysaada as hashicorp terraform it was migrated here as a result of the the original body of the issue is below terraform version resource id azurerm virtual mach... | 0 |
65,335 | 6,955,906,281 | IssuesEvent | 2017-12-07 09:37:56 | CLARIAH/wp5_mediasuite | https://api.github.com/repos/CLARIAH/wp5_mediasuite | closed | Improve "detailed resource viewer"(s) and the result list | Done & tested! Function: result list Importance: medium MS-Component-function MSv2 Work: interface | ==summary of this request: 1. Delete eye icon 2. Make description-metadata viewable only if you click on an arrow icon (expand-collapse) that shows the information-metadata that currently pops-up when people click on eye icon) == In the search result list of the Comparative search and Multi-layered Single collectio... | 1.0 | Improve "detailed resource viewer"(s) and the result list - ==summary of this request: 1. Delete eye icon 2. Make description-metadata viewable only if you click on an arrow icon (expand-collapse) that shows the information-metadata that currently pops-up when people click on eye icon) == In the search result list ... | test | improve detailed resource viewer s and the result list summary of this request delete eye icon make description metadata viewable only if you click on an arrow icon expand collapse that shows the information metadata that currently pops up when people click on eye icon in the search result list ... | 1 |
204,794 | 23,280,869,233 | IssuesEvent | 2022-08-05 11:57:24 | MendDemo-josh/IdentityServer4 | https://api.github.com/repos/MendDemo-josh/IdentityServer4 | opened | bootstrap-3.3.6.min.js: 6 vulnerabilities (highest severity is: 6.1) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.6.min.js</b></p></summary> <p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p> <p>Library home page... | True | bootstrap-3.3.6.min.js: 6 vulnerabilities (highest severity is: 6.1) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-3.3.6.min.js</b></p></summary> <p>The most popular front-end framework for developing... | non_test | bootstrap min js vulnerabilities highest severity is vulnerable library bootstrap min js the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to vulnerable library samples clients src mvchybridbackch... | 0 |
310,497 | 26,719,178,215 | IssuesEvent | 2023-01-28 23:06:53 | PalisadoesFoundation/talawa-api | https://api.github.com/repos/PalisadoesFoundation/talawa-api | closed | Test: src/lib/resolvers/index.ts | good first issue unapproved points 01 test | - Please coordinate **issue assignment** and **PR reviews** with the contributors listed in this issue https://github.com/PalisadoesFoundation/talawa/issues/359 The Talawa-API code base needs to be 100% reliable. This means we need to have 100% test code coverage. - Tests need to be written for file `src/lib/reso... | 1.0 | Test: src/lib/resolvers/index.ts - - Please coordinate **issue assignment** and **PR reviews** with the contributors listed in this issue https://github.com/PalisadoesFoundation/talawa/issues/359 The Talawa-API code base needs to be 100% reliable. This means we need to have 100% test code coverage. - Tests need ... | test | test src lib resolvers index ts please coordinate issue assignment and pr reviews with the contributors listed in this issue the talawa api code base needs to be reliable this means we need to have test code coverage tests need to be written for file src lib resolvers index ts we wi... | 1 |
802,209 | 28,781,234,562 | IssuesEvent | 2023-05-02 00:59:58 | Dotori-app/Dotori-iOS | https://api.github.com/repos/Dotori-app/Dotori-iOS | closed | Then을 Configure로 변경 | ⚙ Setting 2️⃣Priority: Medium ⚡️ Simple | ### Describe 직접 구현해놓은 Then을 https://github.com/GSM-MSG/Configure 를 사용하도록 바꿉니다 ### Additional _No response_ | 1.0 | Then을 Configure로 변경 - ### Describe 직접 구현해놓은 Then을 https://github.com/GSM-MSG/Configure 를 사용하도록 바꿉니다 ### Additional _No response_ | non_test | then을 configure로 변경 describe 직접 구현해놓은 then을 를 사용하도록 바꿉니다 additional no response | 0 |
95,285 | 16,084,499,655 | IssuesEvent | 2021-04-26 09:35:37 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | opened | migrate away from ffmpeg_3 | 1.severity: security | `ffmpeg_3` has many open vulnerabilities (see #94003 and #120372). There seems to be no effort to add patches for these, so we should drop `ffmpeg_3` or at least mark it as insecure. In https://github.com/NixOS/nixpkgs/pull/89264, `ffmpeg_3` was made the de facto default by making every package that depends on `ffmpeg... | True | migrate away from ffmpeg_3 - `ffmpeg_3` has many open vulnerabilities (see #94003 and #120372). There seems to be no effort to add patches for these, so we should drop `ffmpeg_3` or at least mark it as insecure. In https://github.com/NixOS/nixpkgs/pull/89264, `ffmpeg_3` was made the de facto default by making every pa... | non_test | migrate away from ffmpeg ffmpeg has many open vulnerabilities see and there seems to be no effort to add patches for these so we should drop ffmpeg or at least mark it as insecure in ffmpeg was made the de facto default by making every package that depends on ffmpeg depend on ffmpeg ... | 0 |
180,010 | 13,915,349,995 | IssuesEvent | 2020-10-21 00:23:32 | nicorithner/rails_engine | https://api.github.com/repos/nicorithner/rails_engine | closed | Relationships | API Tests database | These endpoints should show related records. The relationship endpoints you should expose are: - [ ] GET /api/v1/merchants/:id/items - return all items associated with a merchant. - [ ] GET /api/v1/items/:id/merchants - return the merchant associated with an item | 1.0 | Relationships - These endpoints should show related records. The relationship endpoints you should expose are: - [ ] GET /api/v1/merchants/:id/items - return all items associated with a merchant. - [ ] GET /api/v1/items/:id/merchants - return the merchant associated with an item | test | relationships these endpoints should show related records the relationship endpoints you should expose are get api merchants id items return all items associated with a merchant get api items id merchants return the merchant associated with an item | 1 |
331,275 | 28,807,832,013 | IssuesEvent | 2023-05-03 00:14:01 | NCAR/ucomp-pipeline | https://api.github.com/repos/NCAR/ucomp-pipeline | closed | Fix incorrect FLTFILE1 and MFLTEXT1 values | bug needs testing | For the run for 20220901 with intermediate products, the following information about the flats used for `20220901.182014.ucomp.1074.l1.3.fts` was: FLTFILE1= '20220901.173314.67.ucomp.530.l0.fts' / name of raw flat file used FLTEXTS1= '2,8,14,20' / ext in 20220901.173314.67.ucomp.530.l0.fts used ... | 1.0 | Fix incorrect FLTFILE1 and MFLTEXT1 values - For the run for 20220901 with intermediate products, the following information about the flats used for `20220901.182014.ucomp.1074.l1.3.fts` was: FLTFILE1= '20220901.173314.67.ucomp.530.l0.fts' / name of raw flat file used FLTEXTS1= '2,8,14,20' / ext in... | test | fix incorrect and values for the run for with intermediate products the following information about the flats used for ucomp fts was ucomp fts name of raw flat file used ext in ucomp fts used ext in ucomp f... | 1 |
79,007 | 9,815,804,579 | IssuesEvent | 2019-06-13 13:26:43 | xi-editor/xi-editor | https://api.github.com/repos/xi-editor/xi-editor | closed | Displaying spaces, tabs (and other usually not drawn characters?) | feature request needs design | It would be really nice if Xi supported drawing tabs, spaces etc. I've (sort of) implemeted support for this in gxi by replacings spaces with a dot during rendering, but this comes with some problems (namely that I have to carefully space them to not mess up the cursor position and that theming them doesn't work proper... | 1.0 | Displaying spaces, tabs (and other usually not drawn characters?) - It would be really nice if Xi supported drawing tabs, spaces etc. I've (sort of) implemeted support for this in gxi by replacings spaces with a dot during rendering, but this comes with some problems (namely that I have to carefully space them to not m... | non_test | displaying spaces tabs and other usually not drawn characters it would be really nice if xi supported drawing tabs spaces etc i ve sort of implemeted support for this in gxi by replacings spaces with a dot during rendering but this comes with some problems namely that i have to carefully space them to not m... | 0 |
111,450 | 4,473,202,714 | IssuesEvent | 2016-08-26 02:22:56 | GandaG/fomod-designer | https://api.github.com/repos/GandaG/fomod-designer | closed | Refresh rate changed in settings but not taking affect | bug mid priority | Per request, opening a new ticket. Changed the Preview Refresh Rate from On Property Editing to On Node Select, but the preview window is still updated with each change in the property editor. A restart of the utility did not change behavior. | 1.0 | Refresh rate changed in settings but not taking affect - Per request, opening a new ticket. Changed the Preview Refresh Rate from On Property Editing to On Node Select, but the preview window is still updated with each change in the property editor. A restart of the utility did not change behavior. | non_test | refresh rate changed in settings but not taking affect per request opening a new ticket changed the preview refresh rate from on property editing to on node select but the preview window is still updated with each change in the property editor a restart of the utility did not change behavior | 0 |
82,315 | 10,240,479,025 | IssuesEvent | 2019-08-19 20:54:45 | GoogleChrome/devsummit | https://api.github.com/repos/GoogleChrome/devsummit | opened | Schedule page design v2 | 💀 design | - [ ] current session - [ ] current time - [ ] past session - [ ] sessions with stream links any other interesting states or functionality we want to strive for? | 1.0 | Schedule page design v2 - - [ ] current session - [ ] current time - [ ] past session - [ ] sessions with stream links any other interesting states or functionality we want to strive for? | non_test | schedule page design current session current time past session sessions with stream links any other interesting states or functionality we want to strive for | 0 |
86,635 | 8,042,427,538 | IssuesEvent | 2018-07-31 08:07:02 | alibaba/pouch | https://api.github.com/repos/alibaba/pouch | closed | [help wanted] add unit-test for modifyContainerNamespaceOptions | areas/test | ### Ⅰ. Issue Description Add unit-test for `modifyContainerNamespaceOptions` method which locate on cri/v1alpha1/cri_utils.go. You can take [env_test.go](https://github.com/alibaba/pouch/blob/master/apis/opts/env_test.go) for reference. ### Ⅱ. Describe what happened ### Ⅲ. Describe what you expected to happe... | 1.0 | [help wanted] add unit-test for modifyContainerNamespaceOptions - ### Ⅰ. Issue Description Add unit-test for `modifyContainerNamespaceOptions` method which locate on cri/v1alpha1/cri_utils.go. You can take [env_test.go](https://github.com/alibaba/pouch/blob/master/apis/opts/env_test.go) for reference. ### Ⅱ. Desc... | test | add unit test for modifycontainernamespaceoptions ⅰ issue description add unit test for modifycontainernamespaceoptions method which locate on cri cri utils go you can take for reference ⅱ describe what happened ⅲ describe what you expected to happen ⅳ how to reproduce ... | 1 |
101,877 | 8,806,665,464 | IssuesEvent | 2018-12-27 05:46:23 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | closed | testing 3 : ApiV1AbacProjectProjectidAddAbacpositiveRulesGetPathParamProjectidNullValue | testing 3 | Project : testing 3 Job : Default Env : Default Region : US_WEST Result : fail Status Code : 200 Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-... | 1.0 | testing 3 : ApiV1AbacProjectProjectidAddAbacpositiveRulesGetPathParamProjectidNullValue - Project : testing 3 Job : Default Env : Default Region : US_WEST Result : fail Status Code : 200 Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store,... | test | testing project testing job default env default region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint ... | 1 |
723,990 | 24,914,971,831 | IssuesEvent | 2022-10-30 09:39:55 | apache/hudi | https://api.github.com/repos/apache/hudi | closed | [SUPPORT] Executor OOM upserting 20M records from Kafka | performance priority:major | **Describe the problem you faced** While upserting Mongo oplogs from Kafka to Blob, facing Executor OOM **Environment Description** * Hudi version : 0.9.0 * Spark version : 2.4.4 * Hive version : 3.1.2 * Hadoop version : 2.7.3 * Storage (HDFS/S3/GCS..) : Azure Blob * Running on Docker? (yes/no)... | 1.0 | [SUPPORT] Executor OOM upserting 20M records from Kafka - **Describe the problem you faced** While upserting Mongo oplogs from Kafka to Blob, facing Executor OOM **Environment Description** * Hudi version : 0.9.0 * Spark version : 2.4.4 * Hive version : 3.1.2 * Hadoop version : 2.7.3 * Storage (HD... | non_test | executor oom upserting records from kafka describe the problem you faced while upserting mongo oplogs from kafka to blob facing executor oom environment description hudi version spark version hive version hadoop version storage hdfs gcs ... | 0 |
314,795 | 9,603,170,428 | IssuesEvent | 2019-05-10 16:18:31 | threefoldtech/jumpscaleX | https://api.github.com/repos/threefoldtech/jumpscaleX | opened | jsx tfchain client lists available outputs that are actually spent | priority_critical type_bug | need to investigate why and fix it | 1.0 | jsx tfchain client lists available outputs that are actually spent - need to investigate why and fix it | non_test | jsx tfchain client lists available outputs that are actually spent need to investigate why and fix it | 0 |
15,694 | 3,481,393,577 | IssuesEvent | 2015-12-29 15:51:52 | slivne/try_git | https://api.github.com/repos/slivne/try_git | opened | repair : repair_while_new_node_is_added_test | dtest repair | Check that repair is accompileshed while new node is added 1. Create a cluster of 2 nodes with rf=2 2. Stop node 2 3. Insert data 4. Start node 2 6. Start repair 7. Create a new node and start it | 1.0 | repair : repair_while_new_node_is_added_test - Check that repair is accompileshed while new node is added 1. Create a cluster of 2 nodes with rf=2 2. Stop node 2 3. Insert data 4. Start node 2 6. Start repair 7. Create a new node and start it | test | repair repair while new node is added test check that repair is accompileshed while new node is added create a cluster of nodes with rf stop node insert data start node start repair create a new node and start it | 1 |
344,255 | 10,341,954,801 | IssuesEvent | 2019-09-04 04:30:28 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.pubgmobile.com - A zoomed in version of the site is displayed | browser-fenix engine-gecko priority-normal severity-important | <!-- @browser: Firefox Mobile 68.0 --> <!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 --> <!-- @reported_with: --> <!-- @extra_labels: browser-fenix --> **URL**: https://www.pubgmobile.com/act/a20180515iggamepc/ **Browser / Version**: Firefox Mobile 68.0 **Operating System**: Andro... | 1.0 | www.pubgmobile.com - A zoomed in version of the site is displayed - <!-- @browser: Firefox Mobile 68.0 --> <!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 --> <!-- @reported_with: --> <!-- @extra_labels: browser-fenix --> **URL**: https://www.pubgmobile.com/act/a20180515iggamepc/ **... | non_test | a zoomed in version of the site is displayed url browser version firefox mobile operating system android tested another browser yes problem type design is broken description site isn t displaying properly steps to reproduce browser configuration non... | 0 |
301,974 | 26,113,956,637 | IssuesEvent | 2022-12-28 01:54:41 | atsushieno/android-audio-plugin-framework | https://api.github.com/repos/atsushieno/android-audio-plugin-framework | closed | privatize Binder instance within connection manager and provide proxy instead | bug testing | Currently `AAPClientContext` in binder-client-as-plugin acquires `AIBinder* binder` from plugin factory at `aap_client_as_plugin_new()` and instantiate a strongly typed proxy (class created by AIDL) locally. The proxy instance is then destroyed when the `AAPClientContext` is destroyed. It worked when there is only o... | 1.0 | privatize Binder instance within connection manager and provide proxy instead - Currently `AAPClientContext` in binder-client-as-plugin acquires `AIBinder* binder` from plugin factory at `aap_client_as_plugin_new()` and instantiate a strongly typed proxy (class created by AIDL) locally. The proxy instance is then destr... | test | privatize binder instance within connection manager and provide proxy instead currently aapclientcontext in binder client as plugin acquires aibinder binder from plugin factory at aap client as plugin new and instantiate a strongly typed proxy class created by aidl locally the proxy instance is then destr... | 1 |
104,431 | 8,972,329,529 | IssuesEvent | 2019-01-29 17:59:44 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | visualize app pie chart other bucket should apply correct filter on other bucket | :KibanaApp :Visualizations failed-test high test triaged | └- ✖ fail: "visualize app pie chart other bucket should apply correct filter on other bucket" 23:58:17 │ pie chart 23:58:17 │ other bucket 23:58:17 │ should apply correct filter on other bucket: 23:58:17 │ 23:58:17 │ ... | 2.0 | visualize app pie chart other bucket should apply correct filter on other bucket - └- ✖ fail: "visualize app pie chart other bucket should apply correct filter on other bucket" 23:58:17 │ pie chart 23:58:17 │ other bucket 23:58:17 │ should apply cor... | test | visualize app pie chart other bucket should apply correct filter on other bucket └ ✖ fail visualize app pie chart other bucket should apply correct filter on other bucket │ pie chart │ other bucket │ should apply correct filt... | 1 |
198,001 | 14,953,091,376 | IssuesEvent | 2021-01-26 16:16:53 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Failing test: Jest Tests.src/core/server/http - Cookie based SessionStorage #get() reads from session storage | failed-test | A test failed on a tracked branch ``` Error: connect ECONNRESET 127.0.0.1:37795 at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1146:16) ``` First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+7.11/234/) <!-- kibanaCiData = {"failed-test":{"test.class":"Jest Tests.src/core/serve... | 1.0 | Failing test: Jest Tests.src/core/server/http - Cookie based SessionStorage #get() reads from session storage - A test failed on a tracked branch ``` Error: connect ECONNRESET 127.0.0.1:37795 at TCPConnectWrap.afterConnect [as oncomplete] (net.js:1146:16) ``` First failure: [Jenkins Build](https://kibana-ci.elast... | test | failing test jest tests src core server http cookie based sessionstorage get reads from session storage a test failed on a tracked branch error connect econnreset at tcpconnectwrap afterconnect net js first failure | 1 |
151,925 | 12,065,932,399 | IssuesEvent | 2020-04-16 10:50:28 | dso-toolkit/dso-toolkit | https://api.github.com/repos/dso-toolkit/dso-toolkit | closed | Ontwerp Inloggen en Machtiging discussie | question status:testable | Vanwege nieuwe functionaliteit krijgen de inlogpagina's een herontwerp. Deze is gebaseerd op het inlog systeem van de belasting dienst(besproken met belastingdienst). In een branchrelease is een prototype gemaakt https://dso-toolkit.nl/_489-Machtigen-Inloggen-Herontwerp/components/detail/machtigen.html De hoof... | 1.0 | Ontwerp Inloggen en Machtiging discussie - Vanwege nieuwe functionaliteit krijgen de inlogpagina's een herontwerp. Deze is gebaseerd op het inlog systeem van de belasting dienst(besproken met belastingdienst). In een branchrelease is een prototype gemaakt https://dso-toolkit.nl/_489-Machtigen-Inloggen-Herontwerp/... | test | ontwerp inloggen en machtiging discussie vanwege nieuwe functionaliteit krijgen de inlogpagina s een herontwerp deze is gebaseerd op het inlog systeem van de belasting dienst besproken met belastingdienst in een branchrelease is een prototype gemaakt de hoofdvragen over deze ontwerpen zijn is dit... | 1 |
322,056 | 23,887,362,734 | IssuesEvent | 2022-09-08 08:44:59 | IBM-Cloud/terraform-provider-ibm | https://api.github.com/repos/IBM-Cloud/terraform-provider-ibm | opened | Confusing wording in resource description | documentation | <!--- Please keep this note for the community ---> ### Community Note * Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request * Please do not l... | 1.0 | Confusing wording in resource description - <!--- Please keep this note for the community ---> ### Community Note * Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainer... | non_test | confusing wording in resource description community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate ext... | 0 |
189,098 | 14,485,047,560 | IssuesEvent | 2020-12-10 17:05:55 | compare-ci/admin | https://api.github.com/repos/compare-ci/admin | closed | Automated test 1607619864.7172809 | Test | This is a tracking issue for the automated tests being run. Test id: `automated-test-1607619864.7172809` \|[python-sum](https://github.com/compare-ci/python-sum/pull/1386)\|Pull Created\|Check Start\|Check End\|Total\|Check\| \|-\|-\|-\|-\|-\|-\| \|CircleCI Checks\|17:04:29\|17:04:30\|17:05:30\|0:01:01\|0:01:00\| \|Travis CI\|17:04:29\|17:05:... | 1.0 | Automated test 1607619864.7172809 - This is a tracking issue for the automated tests being run. Test id: `automated-test-1607619864.7172809` \|[python-sum](https://github.com/compare-ci/python-sum/pull/1386)\|Pull Created\|Check Start\|Check End\|Total\|Check\| \|-\|-\|-\|-\|-\|-\| \|CircleCI Checks\|17:04:29\|17:04:30\|17:05:30\|0:01:01... | test | automated test this is a tracking issue for the automated tests being run test id automated test created check start check end total check circleci checks travis ci github actions azure pip... | 1 |
22,042 | 3,932,149,294 | IssuesEvent | 2016-04-25 14:53:03 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | closed | Test API: Add support for internal links in previewHtml | testplan-item | Test plan item for #3676 @jrieken Please complete... | 1.0 | Test API: Add support for internal links in previewHtml - Test plan item for #3676 @jrieken Please complete... | test | test api add support for internal links in previewhtml test plan item for jrieken please complete | 1 |
51,775 | 10,723,770,774 | IssuesEvent | 2019-10-27 21:01:10 | comphack/comp_hack | https://api.github.com/repos/comphack/comp_hack | closed | Parallel Boss Defeat Bug | bug code | (On the Re:Imagine server if this matters at all) At seemingly random times since the latest content release, every single one of my/my demons abilities will just seem to stop working. I can click a skill, click a demon to summon/desummon, anything. It will look like it worked but it does absolutely nothing except let... | 1.0 | Parallel Boss Defeat Bug - (On the Re:Imagine server if this matters at all) At seemingly random times since the latest content release, every single one of my/my demons abilities will just seem to stop working. I can click a skill, click a demon to summon/desummon, anything. It will look like it worked but it does ab... | non_test | parallel boss defeat bug on the re imagine server if this matters at all at seemingly random times since the latest content release every single one of my my demons abilities will just seem to stop working i can click a skill click a demon to summon desummon anything it will look like it worked but it does ab... | 0 |
133,151 | 10,797,904,757 | IssuesEvent | 2019-11-06 08:58:03 | whatTool/Validation | https://api.github.com/repos/whatTool/Validation | closed | Option to add requirements in __construct() | TODO Test solution | Add option for requirement withing construct so $v->requirements($rules) isn't needed. | 1.0 | Option to add requirements in __construct() - Add option for requirement withing construct so $v->requirements($rules) isn't needed. | test | option to add requirements in construct add option for requirement withing construct so v requirements rules isn t needed | 1 |
47,283 | 2,974,618,168 | IssuesEvent | 2015-07-15 02:26:54 | palantir/tslint | https://api.github.com/repos/palantir/tslint | closed | class-name rule fails on default class export | Bug ES6+ Syntax High Priority | this code breaks on the "class-name" rule: ```ts export default class { ... } ``` | 1.0 | class-name rule fails on default class export - this code breaks on the "class-name" rule: ```ts export default class { ... } ``` | non_test | class name rule fails on default class export this code breaks on the class name rule ts export default class | 0 |
14,803 | 3,422,590,344 | IssuesEvent | 2015-12-08 23:50:29 | radare/radare2 | https://api.github.com/repos/radare/radare2 | reopened | Broken search when mapping a file in an address where it collides with its header | bug test-required | **UDPATE**: Detailed description of this bug is in this comment: https://github.com/radare/radare2/issues/3788#issuecomment-163042481 Checkout latest radare2-regressions repo and `cd` to: ``` $ cd radare2-regressions/bins/vsf $ r2 ./c64-rambo2-rom.vsf ``` It has 3 sections, by default the last section is en... | 1.0 | Broken search when mapping a file in an address where it collides with its header - **UDPATE**: Detailed description of this bug is in this comment: https://github.com/radare/radare2/issues/3788#issuecomment-163042481 Checkout latest radare2-regressions repo and `cd` to: ``` $ cd radare2-regressions/bins/vsf $ ... | test | broken search when mapping a file in an address where it collides with its header udpate detailed description of this bug is in this comment checkout latest regressions repo and cd to cd regressions bins vsf rom vsf it has sections by default the last section is enabl... | 1 |
176,499 | 13,644,106,504 | IssuesEvent | 2020-09-25 18:18:07 | softmatterlab/Braph-2.0-Matlab | https://api.github.com/repos/softmatterlab/Braph-2.0-Matlab | closed | Add Average Overlapping In-Strength and Average Overlapping Out-Strength | measure test | - [x] OverlappingInStrengthAv.m - [x] test_OverlappingInStrengthAv.m - [x] OverlappingOutStrengthAv.m - [x] test_OverlappingOutStrengthAv.m Branch from develop. | 1.0 | Add Average Overlapping In-Strength and Average Overlapping Out-Strength - - [x] OverlappingInStrengthAv.m - [x] test_OverlappingInStrengthAv.m - [x] OverlappingOutStrengthAv.m - [x] test_OverlappingOutStrengthAv.m Branch from develop. | test | add average overlapping in strength and average overlapping out strength overlappinginstrengthav m test overlappinginstrengthav m overlappingoutstrengthav m test overlappingoutstrengthav m branch from develop | 1 |
65,624 | 6,970,858,863 | IssuesEvent | 2017-12-11 11:53:32 | joserogerio/promocaldasSite | https://api.github.com/repos/joserogerio/promocaldasSite | closed | Validar CNPJ | melhoramento test | Validar CNPJ ao cadastrar e atualizar empresa, pois se não tiver um CNPJ válido irá dar erro ao gerar boleto. | 1.0 | Validar CNPJ - Validar CNPJ ao cadastrar e atualizar empresa, pois se não tiver um CNPJ válido irá dar erro ao gerar boleto. | test | validar cnpj validar cnpj ao cadastrar e atualizar empresa pois se não tiver um cnpj válido irá dar erro ao gerar boleto | 1 |
15,413 | 9,549,226,551 | IssuesEvent | 2019-05-02 08:32:12 | talent-wins/devjobv2 | https://api.github.com/repos/talent-wins/devjobv2 | closed | CVE-2015-9251 Medium Severity Vulnerability detected by WhiteSource | security vulnerability | ## CVE-2015-9251 - Medium Severity Vulnerability <details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.1.min.js</b></p></summary> <p>JavaScript library for DOM operations</p> <p>Library home page: <a... | True | CVE-2015-9251 Medium Severity Vulnerability detected by WhiteSource - ## CVE-2015-9251 - Medium Severity Vulnerability <details><summary><img src='https://www.whitesourcesoftware.com/wp-content/uploads/2018/10/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.1.min.js</b></p></summary> ... | non_test | cve medium severity vulnerability detected by whitesource cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file public admin layout js plugins codemirror mode slim index html ... | 0 |
121,219 | 10,153,985,647 | IssuesEvent | 2019-08-06 06:46:51 | OWASP/NodeGoat | https://api.github.com/repos/OWASP/NodeGoat | opened | Improve Cypress script | enhancement help wanted priority: HIGH testing | ### Context - This is part of `release-1.5` #148 - Critical task ### Tasks - [ ] Improve the speed of the e2e tests (almost 8 minutes now). Maybe videos are generated (ci/local envs) - [ ] Add test that are missing (fast check) - [ ] Remove unnecessary/repetitive tests - [ ] Improve the [command `dbReset`](h... | 1.0 | Improve Cypress script - ### Context - This is part of `release-1.5` #148 - Critical task ### Tasks - [ ] Improve the speed of the e2e tests (almost 8 minutes now). Maybe videos are generated (ci/local envs) - [ ] Add test that are missing (fast check) - [ ] Remove unnecessary/repetitive tests - [ ] Improve... | test | improve cypress script context this is part of release critical task tasks improve the speed of the tests almost minutes now maybe videos are generated ci local envs add test that are missing fast check remove unnecessary repetitive tests improve the mis... | 1 |
626,568 | 19,828,128,112 | IssuesEvent | 2022-01-20 09:10:00 | canonical-web-and-design/snapcraft.io | https://api.github.com/repos/canonical-web-and-design/snapcraft.io | closed | Closing and reopening the "Add member" panel in brand store doesn't clear roles | Priority: High | - Try to add a new member

- Close and reopen the new member panel

- The roles are still populated

- Filling in the email address doesn't enable the save button until there is a change in the roles | 1.0 | Closing and reopening the "Add member" panel in brand store doesn't clear roles - - Try to add a new member

- Close and reopen the new member panel

- The roles are still populated

- Filling in the email address doesn't enable the save button until there is a change in the roles | non_test | closing and reopening the add member panel in brand store doesn t clear roles try to add a new member close and reopen the new member panel the roles are still populated filling in the email address doesn t enable the save button until there is a change in the roles | 0 |

218,168 | 24,351,807,075 | IssuesEvent | 2022-10-03 01:21:28 | snowdensb/jpo-ode | https://api.github.com/repos/snowdensb/jpo-ode | opened | CVE-2022-42004 (Medium) detected in jackson-databind-2.12.3.jar | security vulnerability | ## CVE-2022-42004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.12.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core strea... | True | CVE-2022-42004 (Medium) detected in jackson-databind-2.12.3.jar - ## CVE-2022-42004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.12.3.jar</b></p></summary>

<p>G... | non_test | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file jpo ode common pom xml p... | 0 |

341,992 | 30,607,076,344 | IssuesEvent | 2023-07-23 06:07:29 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix tensor.test_torch_special_gt | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5634798880"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5629743168/job/15255044367"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="h... | 1.0 | Fix tensor.test_torch_special_gt - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5634798880"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5629743168/job/15255044367"><img src=https://img.shields.io/badge/-succ... | test | fix tensor test torch special gt tensorflow a href src jax a href src numpy a href src torch a href src paddle a href src | 1 |

183,979 | 14,964,705,406 | IssuesEvent | 2021-01-27 12:22:23 | alphagov/govuk-frontend | https://api.github.com/repos/alphagov/govuk-frontend | opened | Update macros content to specify when you use `text` or `html` | awaiting triage documentation | ## What

While working on cookie banner macros, I got some feedback that could apply to all other macros content.

A reviewer asked why users should choose either `text` or `html`, as we hadn't specified this in the cookie content. I then learned that if users ever attempt to pass both `text` and `html`, `html` will... | 1.0 | Update macros content to specify when you use `text` or `html` - ## What

While working on cookie banner macros, I got some feedback that could apply to all other macros content.

A reviewer asked why users should choose either `text` or `html`, as we hadn't specified this in the cookie content. I then learned that i... | non_test | update macros content to specify when you use text or html what while working on cookie banner macros i got some feedback that could apply to all other macros content a reviewer asked why users should choose either text or html as we hadn t specified this in the cookie content i then learned that i... | 0 |

188,097 | 14,438,559,633 | IssuesEvent | 2020-12-07 13:13:25 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | yasya1100/RnJvbSAyMTgyYmIwOTdkYzVjYWNmNTU2Zjg5ZTRlN2QyY2ZkZDk2ODgyMjM3IE1vbiBTZXAgMTcgMDA6MDA6MDAgMjAwMQpGcm9t: src/sync/atomic/atomic_test.go; 21 LoC | fresh small test |

Found a possible issue in [yasya1100/RnJvbSAyMTgyYmIwOTdkYzVjYWNmNTU2Zjg5ZTRlN2QyY2ZkZDk2ODgyMjM3IE1vbiBTZXAgMTcgMDA6MDA6MDAgMjAwMQpGcm9t](https://www.github.com/yasya1100/RnJvbSAyMTgyYmIwOTdkYzVjYWNmNTU2Zjg5ZTRlN2QyY2ZkZDk2ODgyMjM3IE1vbiBTZXAgMTcgMDA6MDA6MDAgMjAwMQpGcm9t) at [src/sync/atomic/atomic_test.go](https://g... | 1.0 | yasya1100/RnJvbSAyMTgyYmIwOTdkYzVjYWNmNTU2Zjg5ZTRlN2QyY2ZkZDk2ODgyMjM3IE1vbiBTZXAgMTcgMDA6MDA6MDAgMjAwMQpGcm9t: src/sync/atomic/atomic_test.go; 21 LoC -

Found a possible issue in [yasya1100/RnJvbSAyMTgyYmIwOTdkYzVjYWNmNTU2Zjg5ZTRlN2QyY2ZkZDk2ODgyMjM3IE1vbiBTZXAgMTcgMDA6MDA6MDAgMjAwMQpGcm9t](https://www.github.com/yasy... | test | src sync atomic atomic test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message range loop variab... | 1 |

52,331 | 3,022,642,565 | IssuesEvent | 2015-07-31 21:39:25 | information-artifact-ontology/IAO | https://api.github.com/repos/information-artifact-ontology/IAO | opened | synonym | imported Priority-Medium Type-Term | _From [dosu...@gmail.com](https://code.google.com/u/102674886352087815907/) on March 10, 2011 09:53:44_

*label for the new term*

synonym

*Background*

This is intended as an IAO mapping for the OBO tag synonym.

This tag is used to relate ontology terms to the language that people use. But that language is... | 1.0 | synonym - _From [dosu...@gmail.com](https://code.google.com/u/102674886352087815907/) on March 10, 2011 09:53:44_

*label for the new term*

synonym

*Background*

This is intended as an IAO mapping for the OBO tag synonym.

This tag is used to relate ontology terms to the language that people use. But that l... | non_test | synonym from on march label for the new term synonym background this is intended as an iao mapping for the obo tag synonym this tag is used to relate ontology terms to the language that people use but that language is not always precise words do not come with necessary and suffi... | 0 |

348,295 | 24,911,104,075 | IssuesEvent | 2022-10-29 21:43:06 | altair-viz/altair | https://api.github.com/repos/altair-viz/altair | closed | Add dict to allow a mapping from nominal names to what's displayed in a legend (or similar) | vega-lite-related documentation | I'd like to have a little flexibility to specify a mapping between a nominal data type and what's actually displayed. For instance, I might have:

```

color=Color('first_names:N',

legend=Legend(title='First Names'),

),

```

but my `first_names` are `tom`, `dick`, and `harry` ... | 1.0 | Add dict to allow a mapping from nominal names to what's displayed in a legend (or similar) - I'd like to have a little flexibility to specify a mapping between a nominal data type and what's actually displayed. For instance, I might have:

```

color=Color('first_names:N',

legend=Legend(title='F... | non_test | add dict to allow a mapping from nominal names to what s displayed in a legend or similar i d like to have a little flexibility to specify a mapping between a nominal data type and what s actually displayed for instance i might have color color first names n legend legend title f... | 0 |

4,433 | 7,308,529,931 | IssuesEvent | 2018-02-28 08:42:48 | UKHomeOffice/dq-aws-transition | https://api.github.com/repos/UKHomeOffice/dq-aws-transition | closed | Configure Maytech Mock Connectivity from NotProd Ingest Linux Server | DQ Data Ingest DQ Tranche 1 Production SSM processing | Private Key Migration

- [x] Private Key for Linux Ingest NotProd (/home/SSM/.ssh/id_rsa) for Mock Maytech Server

Configure NotProd ssh_remote_* parameters in sftp_oag_client_maytech.py

- [x] ssh_remote_host: <mock SFTP server>

- [x] ssh_remote_user: <mock oag user>

- [x] ssh_remote_key: /home/SSM/.ssh/id_rsa | 1.0 | Configure Maytech Mock Connectivity from NotProd Ingest Linux Server - Private Key Migration

- [x] Private Key for Linux Ingest NotProd (/home/SSM/.ssh/id_rsa) for Mock Maytech Server

Configure NotProd ssh_remote_* parameters in sftp_oag_client_maytech.py

- [x] ssh_remote_host: <mock SFTP server>

- [x] ssh_remo... | non_test | configure maytech mock connectivity from notprod ingest linux server private key migration private key for linux ingest notprod home ssm ssh id rsa for mock maytech server configure notprod ssh remote parameters in sftp oag client maytech py ssh remote host ssh remote user ssh rem... | 0 |

259,978 | 22,581,532,141 | IssuesEvent | 2022-06-28 12:05:58 | 4team-final/client | https://api.github.com/repos/4team-final/client | closed | feat: 로그인 client-server 연결 및 토큰 관리 | 🙂 FEAT 📜 TEST | ## 💡 Issue

server-client 로그인 서비스 연동 및 쿠키와 리덕스를 통한 토큰 관리

## 📝 todo

- [x] 로그인 server-client 연결

- [x] 로그인 테스트

- [x] Access / Refresh 토큰 분할 관리 구현

- [x] 토큰 저장 테스트

| 1.0 | feat: 로그인 client-server 연결 및 토큰 관리 - ## 💡 Issue

server-client 로그인 서비스 연동 및 쿠키와 리덕스를 통한 토큰 관리

## 📝 todo

- [x] 로그인 server-client 연결

- [x] 로그인 테스트

- [x] Access / Refresh 토큰 분할 관리 구현

- [x] 토큰 저장 테스트

| test | feat 로그인 client server 연결 및 토큰 관리 💡 issue server client 로그인 서비스 연동 및 쿠키와 리덕스를 통한 토큰 관리 📝 todo 로그인 server client 연결 로그인 테스트 access refresh 토큰 분할 관리 구현 토큰 저장 테스트 | 1 |

198,384 | 22,634,541,229 | IssuesEvent | 2022-06-30 17:34:24 | devonfw/devon4j | https://api.github.com/repos/devonfw/devon4j | closed | Add fido2 support | enhancement security | The standards fido2 and webauthn are the new hot topics revolutionizing authentication getting rid of passwords:

https://fidoalliance.org/fido2/

We should add a module/starter for devon4j supporting this OOTB. | True | Add fido2 support - The standards fido2 and webauthn are the new hot topics revolutionizing authentication getting rid of passwords:

https://fidoalliance.org/fido2/

We should add a module/starter for devon4j supporting this OOTB. | non_test | add support the standards and webauthn are the new hot topics revolutionizing authentication getting rid of passwords we should add a module starter for supporting this ootb | 0 |

215,112 | 16,637,042,828 | IssuesEvent | 2021-06-04 01:11:23 | Azure/azure-sdk-for-js | https://api.github.com/repos/Azure/azure-sdk-for-js | closed | Azure Ai Text Analytics Readme Issue | Client Cognitive - Text Analytics Docs bug test-manual-pass | 1.

Section [link](https://github.com/Azure/azure-sdk-for-js/tree/master/sdk/textanalytics/ai-text-analytics#recognize-pii-entities):

Reason:

The ` TextAnalyticsApiKeyCredential ` is not in the ` @az... | 1.0 | Azure Ai Text Analytics Readme Issue - 1.

Section [link](https://github.com/Azure/azure-sdk-for-js/tree/master/sdk/textanalytics/ai-text-analytics#recognize-pii-entities):

Reason:

The ` TextAnalytic... | test | azure ai text analytics readme issue section reason the textanalyticsapikeycredential is not in the azure ai text analytics suggestion update to const textanalyticsclient azurekeycredential require azure ai text analytics const client new textanalyticsclien... | 1 |

358,297 | 25,186,142,155 | IssuesEvent | 2022-11-11 18:10:25 | r3bl-org/r3bl_rs_utils | https://api.github.com/repos/r3bl-org/r3bl_rs_utils | opened | Add docs for algorithms | documentation enhancement | 1. Layout algorithm

- Why not use a DOM like tree?

- Pros / cons of using a stack instead of a tree?

- Cons: can't look up nodes by id?

- Pros: less memory consumption?

- How do algorithms for non-binary tree match up w/ stack based ones for eg: layout pass, tree walking, etc?

3. Tree walking w/ mem... | 1.0 | Add docs for algorithms - 1. Layout algorithm

- Why not use a DOM like tree?

- Pros / cons of using a stack instead of a tree?

- Cons: can't look up nodes by id?

- Pros: less memory consumption?

- How do algorithms for non-binary tree match up w/ stack based ones for eg: layout pass, tree walking, et... | non_test | add docs for algorithms layout algorithm why not use a dom like tree pros cons of using a stack instead of a tree cons can t look up nodes by id pros less memory consumption how do algorithms for non binary tree match up w stack based ones for eg layout pass tree walking et... | 0 |

23,840 | 11,963,082,045 | IssuesEvent | 2020-04-05 14:43:36 | badges/shields | https://api.github.com/repos/badges/shields | closed | Badge request: POEditor | good first issue service-badge | :clipboard: **Description**

Showing translation progress per language, from the online platform l10n platform <https://poeditor.com>.

Right now, I make my own static badges for this. For example:

| 1.0 | Hide radio button for thumbnail image on submit a listing page - We want this radio button to be hidden so it does not cause confusion for users.

| non_test | hide radio button for thumbnail image on submit a listing page we want this radio button to be hidden so it does not cause confusion for users | 0 |

94,929 | 10,861,875,563 | IssuesEvent | 2019-11-14 12:04:23 | CHEF-KOCH/Windows-10-hardening | https://api.github.com/repos/CHEF-KOCH/Windows-10-hardening | opened | LoLBins | Documentation Script | ## Overview

LOLBins are on my to-do list for a very long time. The only reliable solution I can find is to block the critical files such as powershell.exe, regedit, etc. and/or restrict their internet access.

## Problems

While this is problematic (you might wanna actually work with those things) there should be a... | 1.0 | LoLBins - ## Overview

LOLBins are on my to-do list for a very long time. The only reliable solution I can find is to block the critical files such as powershell.exe, regedit, etc. and/or restrict their internet access.

## Problems

While this is problematic (you might wanna actually work with those things) there s... | non_test | lolbins overview lolbins are on my to do list for a very long time the only reliable solution i can find is to block the critical files such as powershell exe regedit etc and or restrict their internet access problems while this is problematic you might wanna actually work with those things there s... | 0 |

9,509 | 3,051,267,054 | IssuesEvent | 2015-08-12 07:18:25 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | enketo date-picker is missing its styles | 3 - Acceptance testing | * date picker needs class `enketo` applied to inherit the styles correctly

* when this fix has been made, date-picker `z-index` can be a substyle of `.enketo` | 1.0 | enketo date-picker is missing its styles - * date picker needs class `enketo` applied to inherit the styles correctly

* when this fix has been made, date-picker `z-index` can be a substyle of `.enketo` | test | enketo date picker is missing its styles date picker needs class enketo applied to inherit the styles correctly when this fix has been made date picker z index can be a substyle of enketo | 1 |

43,538 | 11,255,290,736 | IssuesEvent | 2020-01-12 08:01:54 | minecraft-dev/MinecraftDev | https://api.github.com/repos/minecraft-dev/MinecraftDev | closed | Gradle error after creating project | build: gradle status: stale status: waiting reply | Please include the following information in all issues:

* Minecraft Development for IntelliJ plugin version: 1.2.23.1

* IntelliJ version: 2019.2.1 Ultimate

* Operating System: Windows 10

* Target platforms: Forge 1.12.2-14.23.5.2838

When I create a new project and run the gradle sync this error comes:

Unable ... | 1.0 | Gradle error after creating project - Please include the following information in all issues:

* Minecraft Development for IntelliJ plugin version: 1.2.23.1

* IntelliJ version: 2019.2.1 Ultimate

* Operating System: Windows 10

* Target platforms: Forge 1.12.2-14.23.5.2838

When I create a new project and run the ... | non_test | gradle error after creating project please include the following information in all issues minecraft development for intellij plugin version intellij version ultimate operating system windows target platforms forge when i create a new project and run the gradle sync... | 0 |

190,325 | 14,542,428,648 | IssuesEvent | 2020-12-15 15:42:13 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | sourcegraph/go-vcs: vcs/diff_test.go; 59 LoC | fresh medium test |

Found a possible issue in [sourcegraph/go-vcs](https://www.github.com/sourcegraph/go-vcs) at [vcs/diff_test.go](https://github.com/sourcegraph/go-vcs/blob/d784c9520ccdd19883f59efd0a2ae4441f576582/vcs/diff_test.go#L284-L342)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyz... | 1.0 | sourcegraph/go-vcs: vcs/diff_test.go; 59 LoC -

Found a possible issue in [sourcegraph/go-vcs](https://www.github.com/sourcegraph/go-vcs) at [vcs/diff_test.go](https://github.com/sourcegraph/go-vcs/blob/d784c9520ccdd19883f59efd0a2ae4441f576582/vcs/diff_test.go#L284-L342)

Below is the message reported by the analyzer f... | test | sourcegraph go vcs vcs diff test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message range loop varia... | 1 |

286,102 | 24,719,346,904 | IssuesEvent | 2022-10-20 09:28:21 | matrix-org/dendrite | https://api.github.com/repos/matrix-org/dendrite | closed | CS peek sytest flakes about 10% of the time with db deadlock in sqlite | tests | Sytest is from https://github.com/matrix-org/sytest/pull/944/files (in this instance, it's the test to check that peeking by alias works, but it can happen to any of them)

It looks like this when it jams:

```

[server]: time="2020-09-07T22:43:25.649467000Z" level=info msg="Sent event to roomserver" func="SendEven... | 1.0 | CS peek sytest flakes about 10% of the time with db deadlock in sqlite - Sytest is from https://github.com/matrix-org/sytest/pull/944/files (in this instance, it's the test to check that peeking by alias works, but it can happen to any of them)

It looks like this when it jams:

```

[server]: time="2020-09-07T22:4... | test | cs peek sytest flakes about of the time with db deadlock in sqlite sytest is from in this instance it s the test to check that peeking by alias works but it can happen to any of them it looks like this when it jams time level info msg sent event to roomserver func sendevent n ... | 1 |

134,013 | 12,559,840,738 | IssuesEvent | 2020-06-07 20:12:39 | gcm1001/TFG-CeniehAriadne | https://api.github.com/repos/gcm1001/TFG-CeniehAriadne | opened | Actualizar memoria y anexos | documentation | ## Memoria

**Actualizar** los puntos:

- 1 Introducción.

- 2 Objetivos del proyecto.

- 3 Conceptos teóricos.

- 4 Técnicas y herramientas.

**Empezar** los puntos:

- 5 Aspectos relevantes del desarrollo del proyecto

## Anexos

**Actualizar** los puntos:

A. Plan proyecto.

**Empezar** los puntos:

- E Manual... | 1.0 | Actualizar memoria y anexos - ## Memoria

**Actualizar** los puntos:

- 1 Introducción.

- 2 Objetivos del proyecto.

- 3 Conceptos teóricos.

- 4 Técnicas y herramientas.

**Empezar** los puntos:

- 5 Aspectos relevantes del desarrollo del proyecto

## Anexos

**Actualizar** los puntos:

A. Plan proyecto.

**Emp... | non_test | actualizar memoria y anexos memoria actualizar los puntos introducción objetivos del proyecto conceptos teóricos técnicas y herramientas empezar los puntos aspectos relevantes del desarrollo del proyecto anexos actualizar los puntos a plan proyecto emp... | 0 |

343,431 | 30,665,580,890 | IssuesEvent | 2023-07-25 18:01:03 | opensearch-project/dashboards-visualizations | https://api.github.com/repos/opensearch-project/dashboards-visualizations | closed | [AUTOCUT] Integration Test failed for ganttChartDashboards: 2.9.0 tar distribution | untriaged autocut integ-test-failure v2.9.0 | The integration test failed at distribution level for component ganttChartDashboards<br>Version: 2.9.0<br>Distribution: tar<br>Architecture: arm64<br>Platform: linux<br><br>Please check the logs: https://build.ci.opensearch.org/job/integ-test-opensearch-dashboards/3691/display/redirect<br><br> * Steps to reproduce: See... | 1.0 | [AUTOCUT] Integration Test failed for ganttChartDashboards: 2.9.0 tar distribution - The integration test failed at distribution level for component ganttChartDashboards<br>Version: 2.9.0<br>Distribution: tar<br>Architecture: arm64<br>Platform: linux<br><br>Please check the logs: https://build.ci.opensearch.org/job/int... | test | integration test failed for ganttchartdashboards tar distribution the integration test failed at distribution level for component ganttchartdashboards version distribution tar architecture platform linux please check the logs steps to reproduce see see all log files if applicabl... | 1 |

55,768 | 14,020,550,292 | IssuesEvent | 2020-10-29 19:51:49 | anyulled/react-skeleton | https://api.github.com/repos/anyulled/react-skeleton | opened | CVE-2020-7751 (Medium) detected in pathval-1.1.0.tgz | security vulnerability | ## CVE-2020-7751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>pathval-1.1.0.tgz</b></p></summary>

<p>Object value retrieval given a string path</p>

<p>Library home page: <a href="... | True | CVE-2020-7751 (Medium) detected in pathval-1.1.0.tgz - ## CVE-2020-7751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>pathval-1.1.0.tgz</b></p></summary>

<p>Object value retrieval ... | non_test | cve medium detected in pathval tgz cve medium severity vulnerability vulnerable library pathval tgz object value retrieval given a string path library home page a href path to dependency file react skeleton client package json path to vulnerable library react skeleto... | 0 |

182,867 | 14,169,139,667 | IssuesEvent | 2020-11-12 12:48:22 | cnigfr/PCRS | https://api.github.com/repos/cnigfr/PCRS | closed | Jeu de test : nombreux namespaces définis sans nécessité | jeux tests | Dans le jeu de tests JeuxTestv2.gml des espaces de nommages non nécessaires sont définis de nombreuses fois.

`

<featureMember xmlns:xs="http://www.w3.org/2001/XMLSchema" xmlns:fn="http://www.w3.org/2005/xpath-functions" xmlns:pcrs="http://cnig.gouv.fr/pcrs">

`

Les espaces de nommage xmlns:xs="http://www.w3.org/... | 1.0 | Jeu de test : nombreux namespaces définis sans nécessité - Dans le jeu de tests JeuxTestv2.gml des espaces de nommages non nécessaires sont définis de nombreuses fois.

`

<featureMember xmlns:xs="http://www.w3.org/2001/XMLSchema" xmlns:fn="http://www.w3.org/2005/xpath-functions" xmlns:pcrs="http://cnig.gouv.fr/pcrs"... | test | jeu de test nombreux namespaces définis sans nécessité dans le jeu de tests gml des espaces de nommages non nécessaires sont définis de nombreuses fois featuremember xmlns xs xmlns fn xmlns pcrs les espaces de nommage xmlns xs et xmlns fn ne sont pas utilisés ils n’ont pas besoin d’... | 1 |

299,011 | 25,875,251,508 | IssuesEvent | 2022-12-14 07:19:51 | zephyrproject-rtos/test_results | https://api.github.com/repos/zephyrproject-rtos/test_results | closed |

tests-ci : portability: posix: eventfd_basic.newlib.posix_api test No Console Output(Timeout)

| bug area: Tests |

**Describe the bug**

eventfd_basic.newlib.posix_api test is No Console Output(Timeout) on zephyr-v3.2.0-2490-ga1b4896efe46 on mimxrt1170_evk_cm7

see logs for details

**To Reproduce**

1.

```

scripts/twister --device-testing --device-serial /dev/ttyACM0 -p mimxrt1170_evk_cm7 --sub-tes... | 1.0 |

tests-ci : portability: posix: eventfd_basic.newlib.posix_api test No Console Output(Timeout)

-

**Describe the bug**

eventfd_basic.newlib.posix_api test is No Console Output(Timeout) on zephyr-v3.2.0-2490-ga1b4896efe46 on mimxrt1170_evk_cm7

see logs for details

**To Reproduce**

1.

... | test | tests ci portability posix eventfd basic newlib posix api test no console output timeout describe the bug eventfd basic newlib posix api test is no console output timeout on zephyr on evk see logs for details to reproduce scripts twister d... | 1 |

216,747 | 16,814,905,774 | IssuesEvent | 2021-06-17 05:55:46 | datafuselabs/datafuse | https://api.github.com/repos/datafuselabs/datafuse | closed | [tests] Tests support error code match rather than error text. | easy-task feature testing | **Summary**

Tests support error code match rather than error text.

```

SELECT x, number FROM system.numbers LIMIT 1; -- { serverError 47 }

```

ClickHouse: https://github.com/ClickHouse/ClickHouse/blob/master/tests/queries/0_stateless/00002_system_numbers.sql#L9-L14

| 1.0 | [tests] Tests support error code match rather than error text. - **Summary**

Tests support error code match rather than error text.

```

SELECT x, number FROM system.numbers LIMIT 1; -- { serverError 47 }

```

ClickHouse: https://github.com/ClickHouse/ClickHouse/blob/master/tests/queries/0_stateless/00002_syst... | test | tests support error code match rather than error text summary tests support error code match rather than error text select x number from system numbers limit servererror clickhouse | 1 |

90,923 | 8,287,392,222 | IssuesEvent | 2018-09-19 08:44:51 | Mojo1917/LocBase | https://api.github.com/repos/Mojo1917/LocBase | closed | Database-Umschaltung cleverer machen | Feature zum Test für Matthias | Lass uns oben, beim Data-Base-Umschalten eine ähnliche Logik wie bei dem (neuen) Filterbutton anweden:

Gehen wir davon aus, dass es 5 Datenbanken gibt und ich mich in der 2. befinde. Dann kann ich horizontal nach rechts und links wischen. In gewissen Abständen switche ich (nur den Titel) zu den anderen Datenbanekn, ... | 1.0 | Database-Umschaltung cleverer machen - Lass uns oben, beim Data-Base-Umschalten eine ähnliche Logik wie bei dem (neuen) Filterbutton anweden:

Gehen wir davon aus, dass es 5 Datenbanken gibt und ich mich in der 2. befinde. Dann kann ich horizontal nach rechts und links wischen. In gewissen Abständen switche ich (nur... | test | database umschaltung cleverer machen lass uns oben beim data base umschalten eine ähnliche logik wie bei dem neuen filterbutton anweden gehen wir davon aus dass es datenbanken gibt und ich mich in der befinde dann kann ich horizontal nach rechts und links wischen in gewissen abständen switche ich nur... | 1 |

678,279 | 23,191,254,704 | IssuesEvent | 2022-08-01 12:51:49 | cheminfo/nmrium | https://api.github.com/repos/cheminfo/nmrium | closed | Rename workspace | enhancement Priority | Process 1D workspace is probably useless and we can rename it to '1D multiple spectra analysis' (I don't think we need to repeat in the menu 'workspace'. Just keep it for the first item (Default workspace)).

Here are the active tools / panels :

).

Here are the active tools / panels :

.

## Why

Our Drive contains a doc about [what should, and should not, go to the working group](https://docs.google.com/document/d/1xL-0WBl2hct1eiIBbW7rY6xExf... | 1.0 | Update working group page with content about submissions - ## What

Add content about submissions to [Community page about the working group](https://design-system.service.gov.uk/community/design-system-working-group/).

## Why

Our Drive contains a doc about [what should, and should not, go to the working group](htt... | non_test | update working group page with content about submissions what add content about submissions to why our drive contains a doc about it s only had a few viewers since a prior team member created it if this info were visible publicly it could tell more users about the scope of the working group ... | 0 |

288,138 | 24,882,768,787 | IssuesEvent | 2022-10-28 03:47:11 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Teste de generalizacao para a tag Orçamento - Execução - Capela Nova | generalization test development template - Memory (66) tag - Orçamento subtag - Execução | DoD: Realizar o teste de Generalização do validador da tag Orçamento - Execução para o Município de Capela Nova. | 1.0 | Teste de generalizacao para a tag Orçamento - Execução - Capela Nova - DoD: Realizar o teste de Generalização do validador da tag Orçamento - Execução para o Município de Capela Nova. | test | teste de generalizacao para a tag orçamento execução capela nova dod realizar o teste de generalização do validador da tag orçamento execução para o município de capela nova | 1 |

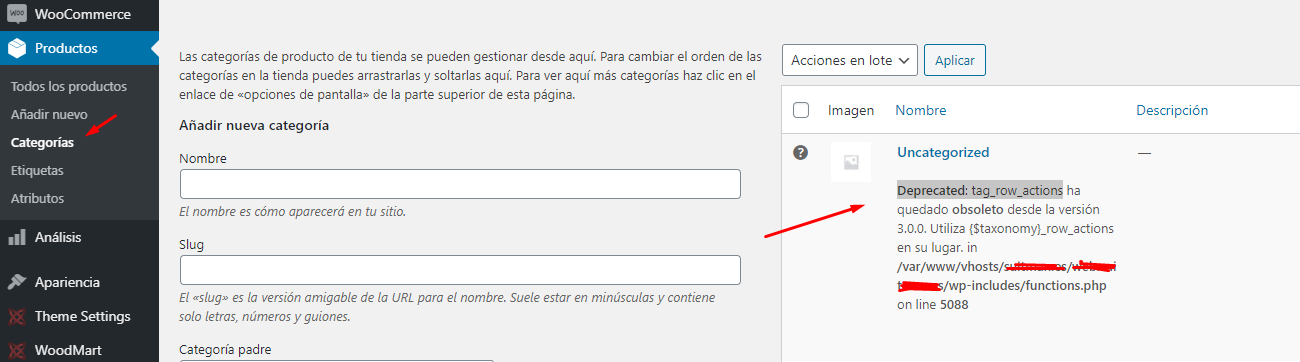

421,371 | 12,256,179,867 | IssuesEvent | 2020-05-06 11:36:14 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Deprecated: tag_row_actions after update to WooCommerce 4.0 | community effort: [S] module: cache priority: low type: bug |

This occurs when Wp-rocket 3.5.2 is active

Deprecated: tag_row_actions has been deprecated since version 3.0.0. Use {$ taxonomy} _row_actions instead.

**Describe the bug**

A message displayed from... | 1.0 | Deprecated: tag_row_actions after update to WooCommerce 4.0 -

This occurs when Wp-rocket 3.5.2 is active

Deprecated: tag_row_actions has been deprecated since version 3.0.0. Use {$ taxonomy} _row_acti... | non_test | deprecated tag row actions after update to woocommerce this occurs when wp rocket is active deprecated tag row actions has been deprecated since version use taxonomy row actions instead describe the bug a message displayed from a function listed as deprecated to reproduc... | 0 |

163,121 | 12,704,638,757 | IssuesEvent | 2020-06-23 02:04:41 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | Code deficiencies of various kinds and severities | area/test-infra kind/feature lifecycle/frozen sig/testing | Hi,

I ran [staticcheck](https://github.com/dominikh/go-staticcheck) on Kubernetes at commit 2110f72, filtered out benign issues and false positives and assembled the following report, grouped by category and sorted by severity in descending order. I hope this report is useful to you.

# defer mu.Lock() (SA2003)

... | 2.0 | Code deficiencies of various kinds and severities - Hi,

I ran [staticcheck](https://github.com/dominikh/go-staticcheck) on Kubernetes at commit 2110f72, filtered out benign issues and false positives and assembled the following report, grouped by category and sorted by severity in descending order. I hope this repor... | test | code deficiencies of various kinds and severities hi i ran on kubernetes at commit filtered out benign issues and false positives and assembled the following report grouped by category and sorted by severity in descending order i hope this report is useful to you defer mu lock the follow... | 1 |

121,495 | 10,170,396,014 | IssuesEvent | 2019-08-08 05:07:58 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed test: TestStoreMetrics | C-test-failure O-robot | The following tests appear to have failed on master (test): TestStoreMetrics

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestStoreMetrics).

[#1428229](https://teamcity.cockroachdb.com/viewLog.html?buildId=1428229):

```

TestStoreMetrics

.../client_me... | 1.0 | teamcity: failed test: TestStoreMetrics - The following tests appear to have failed on master (test): TestStoreMetrics

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestStoreMetrics).

[#1428229](https://teamcity.cockroachdb.com/viewLog.html?buildId=1428... | test | teamcity failed test teststoremetrics the following tests appear to have failed on master test teststoremetrics you may want to check teststoremetrics client metrics test go succeedssoon expected intent count to be zero was storage client metrics test go succeedssoon ... | 1 |

703,572 | 24,166,352,709 | IssuesEvent | 2022-09-22 15:19:23 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.bankrate.com - Missaligned buttons overlapping page elemtents | browser-firefox priority-normal severity-minor engine-gecko | <!-- @browser: Firefox 91.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; rv:91.0) Gecko/20100101 Firefox/91.0 -->

<!-- @reported_with: unknown -->

**URL**: https://www.bankrate.com/retirement/calculators/roth-ira-plan-calculator/

**Browser / Version**: Firefox Nightly 95.0a1 (2021-10-08) (64-bit)

**Opera... | 1.0 | www.bankrate.com - Missaligned buttons overlapping page elemtents - <!-- @browser: Firefox 91.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; rv:91.0) Gecko/20100101 Firefox/91.0 -->

<!-- @reported_with: unknown -->

**URL**: https://www.bankrate.com/retirement/calculators/roth-ira-plan-calculator/

**Browse... | non_test | missaligned buttons overlapping page elemtents url browser version firefox nightly bit operating system windows tested another browser yes chrome problem type design is broken description items are overlapped steps to reproduce buttons... | 0 |

185 | 2,573,331,948 | IssuesEvent | 2015-02-11 08:56:03 | molgenis/molgenis | https://api.github.com/repos/molgenis/molgenis | opened | Inactive user can still request a new password (which doesn't work) | enhancement security | To reproduce:

Set a user (for example admin) to active = 0.

Request a new password at login.

A new password is sent to to the admin email address.

Password will not be valid.

A message telling the user the account is inactive should be shown. | True | Inactive user can still request a new password (which doesn't work) - To reproduce:

Set a user (for example admin) to active = 0.

Request a new password at login.

A new password is sent to to the admin email address.

Password will not be valid.

A message telling the user the account is inactive should be shown. | non_test | inactive user can still request a new password which doesn t work to reproduce set a user for example admin to active request a new password at login a new password is sent to to the admin email address password will not be valid a message telling the user the account is inactive should be shown | 0 |

137,591 | 11,145,975,180 | IssuesEvent | 2019-12-23 08:23:50 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Test failure ClientRegressionWithRealNetworkTest.testConnectionCountAfterClientReconnect_memberHostname_clientHostname | Source: Internal Team: Client Type: Test-Failure | http://jenkins.hazelcast.com/job/Hazelcast-pr-builder/5127/testReport/junit/com.hazelcast.client/ClientRegressionWithRealNetworkTest/testConnectionCountAfterClientReconnect_memberHostname_clientHostname/

```

Error Message

expected:<1> but was:<0>

Stacktrace

java.lang.AssertionError: expected:<1> but was:<0>

at... | 1.0 | Test failure ClientRegressionWithRealNetworkTest.testConnectionCountAfterClientReconnect_memberHostname_clientHostname - http://jenkins.hazelcast.com/job/Hazelcast-pr-builder/5127/testReport/junit/com.hazelcast.client/ClientRegressionWithRealNetworkTest/testConnectionCountAfterClientReconnect_memberHostname_clientHostn... | test | test failure clientregressionwithrealnetworktest testconnectioncountafterclientreconnect memberhostname clienthostname error message expected but was stacktrace java lang assertionerror expected but was at com hazelcast client clientregressionwithrealnetworktest testconnectioncountafterclientr... | 1 |

143,594 | 11,570,268,295 | IssuesEvent | 2020-02-20 19:11:32 | cdnjs/cdnjs | https://api.github.com/repos/cdnjs/cdnjs | closed | [Test] Make sure libs are under ajax/libs | :bulb: Help wanted :sunglasses: Nice to Have :traffic_light: Test | Sometimes contributor put the libraries at the wrong place - `ajax/lib`, then the currently test process will totally miss that library and just let the test pass, I didn't come up with a great solution yet, but maybe we can just make sure there is no other files than `libs` under `ajax`.

<bountysource-plugin>

--... | 1.0 | [Test] Make sure libs are under ajax/libs - Sometimes contributor put the libraries at the wrong place - `ajax/lib`, then the currently test process will totally miss that library and just let the test pass, I didn't come up with a great solution yet, but maybe we can just make sure there is no other files than `libs` ... | test | make sure libs are under ajax libs sometimes contributor put the libraries at the wrong place ajax lib then the currently test process will totally miss that library and just let the test pass i didn t come up with a great solution yet but maybe we can just make sure there is no other files than libs under... | 1 |

39,551 | 5,102,008,430 | IssuesEvent | 2017-01-04 17:00:43 | fsr-itse/1327 | https://api.github.com/repos/fsr-itse/1327 | opened | show last level of navigation in breadcrumbs | [P] nice to have [T] design | Currently the last level is not shown in the breadcrumbs. It should be shown if the breadcrumbs itself are shown (so on a page on the main level breadcrumbs should still be shown) | 1.0 | show last level of navigation in breadcrumbs - Currently the last level is not shown in the breadcrumbs. It should be shown if the breadcrumbs itself are shown (so on a page on the main level breadcrumbs should still be shown) | non_test | show last level of navigation in breadcrumbs currently the last level is not shown in the breadcrumbs it should be shown if the breadcrumbs itself are shown so on a page on the main level breadcrumbs should still be shown | 0 |

61,410 | 14,986,142,635 | IssuesEvent | 2021-01-28 20:51:30 | codereport/jsource | https://api.github.com/repos/codereport/jsource | closed | More folder restructuring | HIGH PRIORITY ci / build good first issue | Add the new folders:

* `words`

* `debugging` (:star: note **debugging** not **debug**)

* `format`

* `parsing`

* `representations`

* `xenos`

With the following files:

```

./wc.c:4:/* Words: Control Words */

./ws.c:4:/* Words: Spelling ... | 1.0 | More folder restructuring - Add the new folders:

* `words`

* `debugging` (:star: note **debugging** not **debug**)

* `format`

* `parsing`

* `representations`

* `xenos`

With the following files:

```

./wc.c:4:/* Words: Control Words */

./ws.c:4:/* Words... | non_test | more folder restructuring add the new folders words debugging star note debugging not debug format parsing representations xenos with the following files wc c words control words ws c words... | 0 |

77,721 | 7,600,914,691 | IssuesEvent | 2018-04-28 07:51:20 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Operation cannot be fulfilled on namespaces (extended image ecosystem tests failure) | kind/test-flake priority/P1 | "Operation cannot be fulfilled on namespaces "<namespace>": The system is ensuring all content is removed from this namespace. Upon completion, this namespace will automatically be purged by the system.

https://ci.openshift.redhat.com/jenkins/job/test_branch_origin_extended_image_ecosystem/458/ | 1.0 | Operation cannot be fulfilled on namespaces (extended image ecosystem tests failure) - "Operation cannot be fulfilled on namespaces "<namespace>": The system is ensuring all content is removed from this namespace. Upon completion, this namespace will automatically be purged by the system.

https://ci.openshift.redha... | test | operation cannot be fulfilled on namespaces extended image ecosystem tests failure operation cannot be fulfilled on namespaces the system is ensuring all content is removed from this namespace upon completion this namespace will automatically be purged by the system | 1 |

81,141 | 7,768,458,863 | IssuesEvent | 2018-06-03 18:14:42 | cerberustesting/cerberus-source | https://api.github.com/repos/cerberustesting/cerberus-source | closed | Allways Execute a step at the end of test case execution | Perim : ENGINETransversal Perim : GUITest Prio : 1 high+ |

Add possibility to always execute a step at the end of test case execution :

- [x] Add a new check box on a step `Force this step if Testcase is not OK`

- [x] Add possibility to create a `PostTesting` Testcase link to an application. This `PostTesting` Testcase will executed at the end of a TestCase. If `Force t... | 1.0 | Allways Execute a step at the end of test case execution -

Add possibility to always execute a step at the end of test case execution :

- [x] Add a new check box on a step `Force this step if Testcase is not OK`

- [x] Add possibility to create a `PostTesting` Testcase link to an application. This `PostTesting` T... | test | allways execute a step at the end of test case execution add possibility to always execute a step at the end of test case execution add a new check box on a step force this step if testcase is not ok add possibility to create a posttesting testcase link to an application this posttesting testc... | 1 |

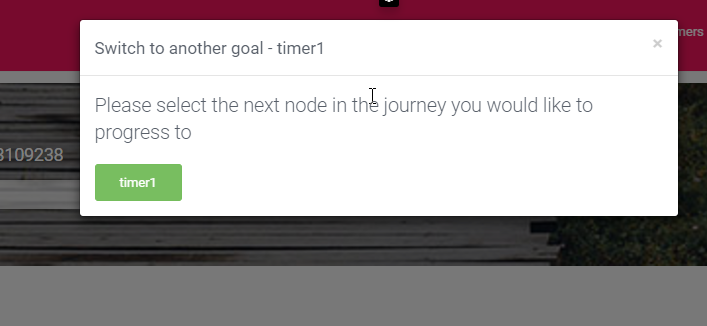

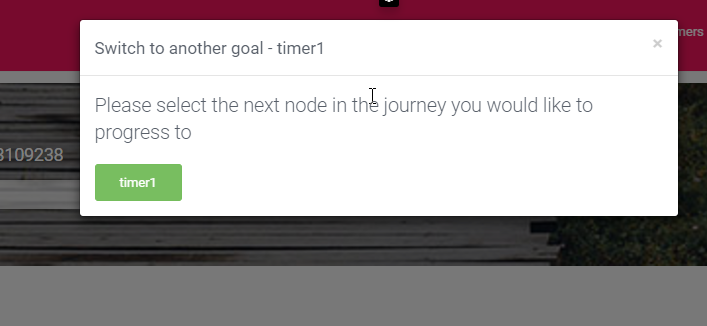

51,711 | 6,194,013,069 | IssuesEvent | 2017-07-05 08:51:29 | Kademi/kademi-dev | https://api.github.com/repos/Kademi/kademi-dev | closed | leadman theme - Switch to another goal Popup: wrong message | Ready to Test - Dev | https://github.com/Kademi/kademi-dev/issues/3431

profile to login: http://lantest1.admin.kademi-prod.com/manageUsers/74549

lead: http://hattonfake001.kademi-prod.com/leads/117242/

current, this popup's s... | 1.0 | leadman theme - Switch to another goal Popup: wrong message - https://github.com/Kademi/kademi-dev/issues/3431

profile to login: http://lantest1.admin.kademi-prod.com/manageUsers/74549

lead: http://hatton... | test | leadman theme switch to another goal popup wrong message profile to login lead current this popup s showing message of next node function | 1 |

19,380 | 3,769,105,730 | IssuesEvent | 2016-03-16 09:16:58 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | closed | [Localization] Extensions icon hover tooltip not localized | v-test | - VSCode Version: 0.10.12-alpha --locale=fr (or any locale)

- OS Version: Windows 10

Steps to Reproduce:

1. Launch a localized VS Code instance

2. Hover over the extensions icon in the bottom left

3. "Extensions" tooltip is not localized

- OS Version: Windows 10

Steps to Reproduce:

1. Launch a localized VS Code instance

2. Hover over the extensions icon in the bottom left

3. "Extensions" tooltip is not localized

![extens... | test | extensions icon hover tooltip not localized vscode version alpha locale fr or any locale os version windows steps to reproduce launch a localized vs code instance hover over the extensions icon in the bottom left extensions tooltip is not localized | 1 |

26,723 | 4,240,986,134 | IssuesEvent | 2016-07-06 15:02:57 | rlf/uSkyBlock | https://api.github.com/repos/rlf/uSkyBlock | closed | Island party bug | S duplicate T tested awaiting reporter | _Please paste the output from `/usb version` below_

```

Name: uSkyBlock

Version: 2.6.12

Description: Ultimate SkyBlock v2.6.12-9e2d30-413

Language: cs (cs)

------------------------------

Server: CraftBukkit git-Spigot-c3e4052-1953f52 (MC: 1.10)

------------------------------

Vault 1.5.6-b...

```

Name: uSkyBlock

Version: 2.6.12

Description: Ultimate SkyBlock v2.6.12-9e2d30-413

Language: cs (cs)

------------------------------