Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

260,731 | 22,644,334,977 | IssuesEvent | 2022-07-01 07:10:25 | zkSNACKs/WalletWasabi | https://api.github.com/repos/zkSNACKs/WalletWasabi | closed | CoinJoinClient double started in Manual mode | ww2 testing | ### General Description

A clear and concise description of what the bug is.

### How To Reproduce?

Let the client run and wait until one successful round in Manual CoinJoin mode.

When the round finishes, both of these lines are triggered:

https://github.com/zkSNACKs/WalletWasabi/blob/d634fa58386ec56f92d72f7d65cd019e48998042/WalletWasabi.Fluent/ViewModels/Wallets/CoinJoinStateViewModel.cs#L248

https://github.com/zkSNACKs/WalletWasabi/blob/d634fa58386ec56f92d72f7d65cd019e48998042/WalletWasabi.Fluent/ViewModels/Wallets/CoinJoinStateViewModel.cs#L253

### Logs

```

2022-05-19 15:52:07.461 [54] INFO AliceClient.SignTransactionAsync (237) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2), Alice (cbf126da-df1d-c0e5-10cb-0d5310f40a33): Posted a signature.

2022-05-19 15:52:07.464 [54] DEBUG CoinJoinClient.ProceedWithSigningStateAsync (669) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2): Alices(3) have signed the coinjoin tx.

2022-05-19 15:52:10.203 [49] DEBUG CoinJoinClient.StartRoundAsync (218) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2): Broadcasted. Coinjoin TxId: (c3c82cc4ebe577cf141273a0972d85c70ff218a8863af0749695d7f6375556c7)

2022-05-19 15:52:10.210 [49] DEBUG CoinJoinClient.LogCoinJoinSummary (425) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2):

Input total: +0.00325266 Eff: +0.00299598 NetwFee: +0.00025668 CoordFee: 0.00

Outpu total: +0.00272690 Eff: +0.00245782 NetwFee: +0.00026908

Total diff : +0.00052576

Effec diff : +0.00053816

Total fee : +0.00052576

2022-05-19 15:52:20.628 [46] DEBUG CoinJoinManager.HandleCoinJoinCommandsAsync (144) Wallet (Random Wallet): Cannot start coinjoin, bacause it is already running.

2022-05-19 15:52:20.652 [21] INFO CoinJoinManager.HandleCoinJoinFinalizationAsync (306) Wallet (Random Wallet): CoinJoinClient finished!

2022-05-19 15:52:37.124 [19] DEBUG CoinJoinManager.HandleCoinJoinCommandsAsync (198) Wallet (Random Wallet): Coinjoin client started, auto-coinjoin: 'False' overridePlebStop:'True'.

2022-05-19 15:52:42.586 [6] DEBUG CoinJoinClient.CreateRegisterAndConfirmCoinsAsync (289) Round (ed826cce3d4b385f847dc12160f32cb46bc9f982ec45c0440fa22ec112d99bae): Input registration started - it will end in: 00:01:01.

2022-05-19 15:52:44.308 [52] DEBUG WabiSabiHttpApiClient.SendWithRetriesAsync (89) Received a response for RegisterInput in 0.01s.

2022-05-19 15:52:44.326 [52] INFO AliceClient.RegisterInputAsync (98) Round (ed826cce3d4b385f847dc12160f32cb46bc9f982ec45c0440fa22ec112d99bae), Alice (313f9064-9ded-0170-d2c6-9312f71d8ffb): Registered 77f39ee5efbc2c935334d02500323f2dcd50693a4827dffc950d89baa1279142-0.

2022-05-19 15:52:46.083 [50] DEBUG WabiSabiHttpApiClient.SendWithRetriesAsync (89) Received a response for RegisterInput in 0.01s.

2022-05-19 15:52:46.103 [50] INFO AliceClient.RegisterInputAsync (98) Round (ed826cce3d4b385f847dc12160f32cb46bc9f982ec45c0440fa22ec112d99bae), Alice (da2eba0f-fae2-36e1-aba5-dc6ff21d02c1): Registered deca4c5ba3309a4a19cae20f65fd34a4641db6a730a17271355377c280c2d42c-1.

```

| 1.0 | CoinJoinClient double started in Manual mode - ### General Description

A clear and concise description of what the bug is.

### How To Reproduce?

Let the client run and wait until one successful round in Manual CoinJoin mode.

When the round finishes, both of these lines are triggered:

https://github.com/zkSNACKs/WalletWasabi/blob/d634fa58386ec56f92d72f7d65cd019e48998042/WalletWasabi.Fluent/ViewModels/Wallets/CoinJoinStateViewModel.cs#L248

https://github.com/zkSNACKs/WalletWasabi/blob/d634fa58386ec56f92d72f7d65cd019e48998042/WalletWasabi.Fluent/ViewModels/Wallets/CoinJoinStateViewModel.cs#L253

### Logs

```

2022-05-19 15:52:07.461 [54] INFO AliceClient.SignTransactionAsync (237) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2), Alice (cbf126da-df1d-c0e5-10cb-0d5310f40a33): Posted a signature.

2022-05-19 15:52:07.464 [54] DEBUG CoinJoinClient.ProceedWithSigningStateAsync (669) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2): Alices(3) have signed the coinjoin tx.

2022-05-19 15:52:10.203 [49] DEBUG CoinJoinClient.StartRoundAsync (218) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2): Broadcasted. Coinjoin TxId: (c3c82cc4ebe577cf141273a0972d85c70ff218a8863af0749695d7f6375556c7)

2022-05-19 15:52:10.210 [49] DEBUG CoinJoinClient.LogCoinJoinSummary (425) Round (e55e776f07f15550d03f34a75d4b1de59fe363bdf40cf0cdc31094d165dd93c2):

Input total: +0.00325266 Eff: +0.00299598 NetwFee: +0.00025668 CoordFee: 0.00

Outpu total: +0.00272690 Eff: +0.00245782 NetwFee: +0.00026908

Total diff : +0.00052576

Effec diff : +0.00053816

Total fee : +0.00052576

2022-05-19 15:52:20.628 [46] DEBUG CoinJoinManager.HandleCoinJoinCommandsAsync (144) Wallet (Random Wallet): Cannot start coinjoin, bacause it is already running.

2022-05-19 15:52:20.652 [21] INFO CoinJoinManager.HandleCoinJoinFinalizationAsync (306) Wallet (Random Wallet): CoinJoinClient finished!

2022-05-19 15:52:37.124 [19] DEBUG CoinJoinManager.HandleCoinJoinCommandsAsync (198) Wallet (Random Wallet): Coinjoin client started, auto-coinjoin: 'False' overridePlebStop:'True'.

2022-05-19 15:52:42.586 [6] DEBUG CoinJoinClient.CreateRegisterAndConfirmCoinsAsync (289) Round (ed826cce3d4b385f847dc12160f32cb46bc9f982ec45c0440fa22ec112d99bae): Input registration started - it will end in: 00:01:01.

2022-05-19 15:52:44.308 [52] DEBUG WabiSabiHttpApiClient.SendWithRetriesAsync (89) Received a response for RegisterInput in 0.01s.

2022-05-19 15:52:44.326 [52] INFO AliceClient.RegisterInputAsync (98) Round (ed826cce3d4b385f847dc12160f32cb46bc9f982ec45c0440fa22ec112d99bae), Alice (313f9064-9ded-0170-d2c6-9312f71d8ffb): Registered 77f39ee5efbc2c935334d02500323f2dcd50693a4827dffc950d89baa1279142-0.

2022-05-19 15:52:46.083 [50] DEBUG WabiSabiHttpApiClient.SendWithRetriesAsync (89) Received a response for RegisterInput in 0.01s.

2022-05-19 15:52:46.103 [50] INFO AliceClient.RegisterInputAsync (98) Round (ed826cce3d4b385f847dc12160f32cb46bc9f982ec45c0440fa22ec112d99bae), Alice (da2eba0f-fae2-36e1-aba5-dc6ff21d02c1): Registered deca4c5ba3309a4a19cae20f65fd34a4641db6a730a17271355377c280c2d42c-1.

```

| test | coinjoinclient double started in manual mode general description a clear and concise description of what the bug is how to reproduce let the client run and wait until one successful round in manual coinjoin mode when the round finished both of these lines will be triggered logs info aliceclient signtransactionasync round alice posted a signature debug coinjoinclient proceedwithsigningstateasync round alices have signed the coinjoin tx debug coinjoinclient startroundasync round broadcasted coinjoin txid debug coinjoinclient logcoinjoinsummary round input total eff netwfee coordfee outpu total eff netwfee total diff effec diff total fee debug coinjoinmanager handlecoinjoincommandsasync wallet random wallet cannot start coinjoin bacause it is already running info coinjoinmanager handlecoinjoinfinalizationasync wallet random wallet coinjoinclient finished debug coinjoinmanager handlecoinjoincommandsasync wallet random wallet coinjoin client started auto coinjoin false overrideplebstop true debug coinjoinclient createregisterandconfirmcoinsasync round input registration started it will end in debug wabisabihttpapiclient sendwithretriesasync received a response for registerinput in info aliceclient registerinputasync round alice registered debug wabisabihttpapiclient sendwithretriesasync received a response for registerinput in info aliceclient registerinputasync round alice registered | 1 |

211,617 | 16,329,769,648 | IssuesEvent | 2021-05-12 07:45:48 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: tpcc/headroom/n4cpu16 failed | C-test-failure O-roachtest O-robot branch-release-20.1 release-blocker | [(roachtest).tpcc/headroom/n4cpu16 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2949856&tab=buildLog) on [release-20.1@3ae6b59f0ea417486c3c41f85ea5baff3b5a00df](https://github.com/cockroachdb/cockroach/commits/3ae6b59f0ea417486c3c41f85ea5baff3b5a00df):

```

| 2883.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2883.0s 0 0.0 182.0 0.0 0.0 0.0 0.0 payment

| 2883.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

| 2884.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 delivery

| 2884.0s 0 0.0 181.1 0.0 0.0 0.0 0.0 newOrder

| 2884.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2884.0s 0 0.0 181.9 0.0 0.0 0.0 0.0 payment

| 2884.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

| _elapsed___errors__ops/sec(inst)___ops/sec(cum)__p50(ms)__p95(ms)__p99(ms)_pMax(ms)

| 2885.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 delivery

| 2885.0s 0 0.0 181.0 0.0 0.0 0.0 0.0 newOrder

| 2885.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2885.0s 0 0.0 181.8 0.0 0.0 0.0 0.0 payment

| 2885.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

| 2886.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 delivery

| 2886.0s 0 0.0 181.0 0.0 0.0 0.0 0.0 newOrder

| 2886.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2886.0s 0 0.0 181.8 0.0 0.0 0.0 0.0 payment

| 2886.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

Wraps: (5) exit status 20

Error types: (1) *withstack.withStack (2) *safedetails.withSafeDetails (3) *errutil.withMessage (4) *main.withCommandDetails (5) *exec.ExitError

cluster.go:2629,tpcc.go:174,tpcc.go:238,test_runner.go:749: monitor failure: monitor task failed: t.Fatal() was called

(1) attached stack trace

| main.(*monitor).WaitE

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2617

| main.(*monitor).Wait

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2625

| main.runTPCC

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tpcc.go:174

| main.registerTPCC.func1

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tpcc.go:238

| main.(*testRunner).runTest.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:749

Wraps: (2) monitor failure

Wraps: (3) attached stack trace

| main.(*monitor).wait.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2673

Wraps: (4) monitor task failed

Wraps: (5) attached stack trace

| main.init

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2587

| runtime.doInit

| /usr/local/go/src/runtime/proc.go:5652

| runtime.main

| /usr/local/go/src/runtime/proc.go:191

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (6) t.Fatal() was called

Error types: (1) *withstack.withStack (2) *errutil.withMessage (3) *withstack.withStack (4) *errutil.withMessage (5) *withstack.withStack (6) *errors.errorString

```

<details><summary>More</summary><p>

Artifacts: [/tpcc/headroom/n4cpu16](https://teamcity.cockroachdb.com/viewLog.html?buildId=2949856&tab=artifacts#/tpcc/headroom/n4cpu16)

Related:

- #64624 roachtest: tpcc/headroom/n4cpu16 failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-release-20.2](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-release-20.2) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Atpcc%2Fheadroom%2Fn4cpu16.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| 2.0 | roachtest: tpcc/headroom/n4cpu16 failed - [(roachtest).tpcc/headroom/n4cpu16 failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2949856&tab=buildLog) on [release-20.1@3ae6b59f0ea417486c3c41f85ea5baff3b5a00df](https://github.com/cockroachdb/cockroach/commits/3ae6b59f0ea417486c3c41f85ea5baff3b5a00df):

```

| 2883.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2883.0s 0 0.0 182.0 0.0 0.0 0.0 0.0 payment

| 2883.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

| 2884.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 delivery

| 2884.0s 0 0.0 181.1 0.0 0.0 0.0 0.0 newOrder

| 2884.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2884.0s 0 0.0 181.9 0.0 0.0 0.0 0.0 payment

| 2884.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

| _elapsed___errors__ops/sec(inst)___ops/sec(cum)__p50(ms)__p95(ms)__p99(ms)_pMax(ms)

| 2885.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 delivery

| 2885.0s 0 0.0 181.0 0.0 0.0 0.0 0.0 newOrder

| 2885.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2885.0s 0 0.0 181.8 0.0 0.0 0.0 0.0 payment

| 2885.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

| 2886.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 delivery

| 2886.0s 0 0.0 181.0 0.0 0.0 0.0 0.0 newOrder

| 2886.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 orderStatus

| 2886.0s 0 0.0 181.8 0.0 0.0 0.0 0.0 payment

| 2886.0s 0 0.0 18.2 0.0 0.0 0.0 0.0 stockLevel

Wraps: (5) exit status 20

Error types: (1) *withstack.withStack (2) *safedetails.withSafeDetails (3) *errutil.withMessage (4) *main.withCommandDetails (5) *exec.ExitError

cluster.go:2629,tpcc.go:174,tpcc.go:238,test_runner.go:749: monitor failure: monitor task failed: t.Fatal() was called

(1) attached stack trace

| main.(*monitor).WaitE

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2617

| main.(*monitor).Wait

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2625

| main.runTPCC

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tpcc.go:174

| main.registerTPCC.func1

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/tpcc.go:238

| main.(*testRunner).runTest.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:749

Wraps: (2) monitor failure

Wraps: (3) attached stack trace

| main.(*monitor).wait.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2673

Wraps: (4) monitor task failed

Wraps: (5) attached stack trace

| main.init

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2587

| runtime.doInit

| /usr/local/go/src/runtime/proc.go:5652

| runtime.main

| /usr/local/go/src/runtime/proc.go:191

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (6) t.Fatal() was called

Error types: (1) *withstack.withStack (2) *errutil.withMessage (3) *withstack.withStack (4) *errutil.withMessage (5) *withstack.withStack (6) *errors.errorString

```

<details><summary>More</summary><p>

Artifacts: [/tpcc/headroom/n4cpu16](https://teamcity.cockroachdb.com/viewLog.html?buildId=2949856&tab=artifacts#/tpcc/headroom/n4cpu16)

Related:

- #64624 roachtest: tpcc/headroom/n4cpu16 failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-release-20.2](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-release-20.2) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Atpcc%2Fheadroom%2Fn4cpu16.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| test | roachtest tpcc headroom failed on orderstatus payment stocklevel delivery neworder orderstatus payment stocklevel elapsed errors ops sec inst ops sec cum ms ms ms pmax ms delivery neworder orderstatus payment stocklevel delivery neworder orderstatus payment stocklevel wraps exit status error types withstack withstack safedetails withsafedetails errutil withmessage main withcommanddetails exec exiterror cluster go tpcc go tpcc go test runner go monitor failure monitor task failed t fatal was called attached stack trace main monitor waite home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main monitor wait home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main runtpcc home agent work go src github com cockroachdb cockroach pkg cmd roachtest tpcc go main registertpcc home agent work go src github com cockroachdb cockroach pkg cmd roachtest tpcc go main testrunner runtest home agent work go src github com cockroachdb cockroach pkg cmd roachtest test runner go wraps monitor failure wraps attached stack trace main monitor wait home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go wraps monitor task failed wraps attached stack trace main init home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go runtime doinit usr local go src runtime proc go runtime main usr local go src runtime proc go runtime goexit usr local go src runtime asm s wraps t fatal was called error types withstack withstack errutil withmessage withstack withstack errutil withmessage withstack withstack errors errorstring more artifacts related roachtest tpcc headroom failed powered by | 1 |

668,643 | 22,592,327,609 | IssuesEvent | 2022-06-28 21:14:12 | Ijwu/Archipelago.HollowKnight | https://api.github.com/repos/Ijwu/Archipelago.HollowKnight | closed | Stag stations don't cost Geo, but should | enhancement mid priority | StagStation placement handler should place vanilla cost for the Stag station and force the player to have to buy it to unlock it. | 1.0 | Stag stations don't cost Geo, but should - StagStation placement handler should place vanilla cost for the Stag station and force the player to have to buy it to unlock it. | non_test | stag stations don t cost geo but should stagstation placement handler should place vanilla cost for the stag station and force the player to have to buy it to unlock it | 0 |

27,533 | 4,320,627,453 | IssuesEvent | 2016-07-25 06:30:37 | fossasia/engelsystem | https://api.github.com/repos/fossasia/engelsystem | opened | Writing test for Travis-CI | testing | We need to set up Travis to run tests for our system. We need to write test codes for model and view, that Travis will run, and script that will allow travis to set up database and run other commands for tests | 1.0 | Writing test for Travis-CI - We need to set up Travis to run tests for our system. We need to write test codes for model and view, that Travis will run, and script that will allow travis to set up database and run other commands for tests | test | writing test for travis ci we need to set up travis to run tests for our system we need to write test codes for model and view that travis will run and script that will allow travis to set up database and run other commands for tests | 1 |

270,016 | 23,484,582,541 | IssuesEvent | 2022-08-17 13:29:36 | instadeepai/Mava | https://api.github.com/repos/instadeepai/Mava | closed | [TEST] Jax separate networks step | test | ### What do you want to test?

Unit test for the `MAPGWithTrustRegionStepSeparateNetworks` component of the Jax PPO implementation that makes use of separate critic and policy networks.

### Outline of test structure

* Unit tests

* Test components and hooks

### Definition of done

Passing checks, cover all hooks, edge cases considered

### Mandatory checklist before making a PR

* [ ] The success criteria laid down in “Definition of done” are met.

* [ ] Test code is documented - docstrings for methods and classes, static types for arguments.

* [ ] Documentation is updated - README, CONTRIBUTING, or other documentation. | 1.0 | [TEST] Jax separate networks step - ### What do you want to test?

Unit test for the `MAPGWithTrustRegionStepSeparateNetworks` component of the Jax PPO implementation that makes use of separate critic and policy networks.

### Outline of test structure

* Unit tests

* Test components and hooks

### Definition of done

Passing checks, cover all hooks, edge cases considered

### Mandatory checklist before making a PR

* [ ] The success criteria laid down in “Definition of done” are met.

* [ ] Test code is documented - docstrings for methods and classes, static types for arguments.

* [ ] Documentation is updated - README, CONTRIBUTING, or other documentation. | test | jax separate networks step what do you want to test unit test for the mapgwithtrustregionstepseparatenetworks component of the jax ppo implementation that makes use of separate critic and policy netowrks outline of test structure unit tests test components and hooks definition of done passing checks cover all hooks edge cases considered mandatory checklist before making a pr the success criteria laid down in “definition of done” are met test code is documented docstrings for methods and classes static types for arguments documentation is updated readme contributing or other documentation | 1 |

9,625 | 8,056,467,992 | IssuesEvent | 2018-08-02 12:49:29 | eslint/eslint | https://api.github.com/repos/eslint/eslint | opened | Internal consistent-docs-url rule crashes if meta.docs isn't present | accepted bug infrastructure rule | <!--

ESLint adheres to the [JS Foundation Code of Conduct](https://js.foundation/community/code-of-conduct).

This template is for bug reports. If you are here for another reason, please see below:

1. To propose a new rule: https://eslint.org/docs/developer-guide/contributing/new-rules

2. To request a rule change: https://eslint.org/docs/developer-guide/contributing/rule-changes

3. To request a change that is not a bug fix, rule change, or new rule: https://eslint.org/docs/developer-guide/contributing/changes

4. If you have any questions, please stop by our chatroom: https://gitter.im/eslint/eslint

Note that leaving sections blank will make it difficult for us to troubleshoot and we may have to close the issue.

-->

**Tell us about your environment**

* **ESLint Version:** master

* **Node Version:** n/a

* **npm Version:** n/a

**What parser (default, Babel-ESLint, etc.) are you using?**

Default

**Please show your full configuration:**

According to the internal ESLint config around rules:

```yml

rules:

rulesdir/consistent-docs-url: "error"

```

**What did you do? Please include the actual source code causing the issue, as well as the command that you used to run ESLint.**

<!-- Paste the source code below: -->

```js

module.exports = {

meta: {}

};

```

<!-- Paste the command you used to run ESLint: -->

```bash

npm run lint

```

**What did you expect to happen?**

Either a lint error about the lack of `meta.docs` (or `meta.docs.url`), or nothing if that is covered by another rule.

**What actually happened? Please include the actual, raw output from ESLint.**

ESLint crashes, with an error message from `context.report` that the node location must be passed if the node is not passed.

--------

I believe the problem is in [this area](https://github.com/eslint/eslint/blob/master/tools/internal-rules/consistent-docs-url.js#L48-L57), where we check for the absence of `metaDocsUrl` but don't check that `metaDocs` is non-null before sending it to `context.report`. | 1.0 | Internal consistent-docs-url rule crashes if meta.docs isn't present - <!--

ESLint adheres to the [JS Foundation Code of Conduct](https://js.foundation/community/code-of-conduct).

This template is for bug reports. If you are here for another reason, please see below:

1. To propose a new rule: https://eslint.org/docs/developer-guide/contributing/new-rules

2. To request a rule change: https://eslint.org/docs/developer-guide/contributing/rule-changes

3. To request a change that is not a bug fix, rule change, or new rule: https://eslint.org/docs/developer-guide/contributing/changes

4. If you have any questions, please stop by our chatroom: https://gitter.im/eslint/eslint

Note that leaving sections blank will make it difficult for us to troubleshoot and we may have to close the issue.

-->

**Tell us about your environment**

* **ESLint Version:** master

* **Node Version:** n/a

* **npm Version:** n/a

**What parser (default, Babel-ESLint, etc.) are you using?**

Default

**Please show your full configuration:**

According to the internal ESLint config around rules:

```yml

rules:

rulesdir/consistent-docs-url: "error"

```

**What did you do? Please include the actual source code causing the issue, as well as the command that you used to run ESLint.**

<!-- Paste the source code below: -->

```js

module.exports = {

meta: {}

};

```

<!-- Paste the command you used to run ESLint: -->

```bash

npm run lint

```

**What did you expect to happen?**

Either a lint error about the lack of `meta.docs` (or `meta.docs.url`), or nothing if that is covered by another rule.

**What actually happened? Please include the actual, raw output from ESLint.**

ESLint crashes, with an error message from `context.report` that the node location must be passed if the node is not passed.

--------

I believe the problem is in [this area](https://github.com/eslint/eslint/blob/master/tools/internal-rules/consistent-docs-url.js#L48-L57), where we check for the absence of `metaDocsUrl` but don't check that `metaDocs` is non-null before sending it to `context.report`. | non_test | internal consistent docs url rule crashes if meta docs isn t present eslint adheres to the this template is for bug reports if you are here for another reason please see below to propose a new rule to request a rule change to request a change that is not a bug fix rule change or new rule if you have any questions please stop by our chatroom note that leaving sections blank will make it difficult for us to troubleshoot and we may have to close the issue tell us about your environment eslint version master node version n a npm version n a what parser default babel eslint etc are you using default please show your full configuration according to the internal eslint config around rules yml rules rulesdir consistent docs url error what did you do please include the actual source code causing the issue as well as the command that you used to run eslint js module exports meta bash npm run lint what did you expect to happen either a lint error about the lack of meta docs or meta docs url or nothing if that is covered by another rule what actually happened please include the actual raw output from eslint eslint crashes with an error message from context report that the node location must be passed if the node is not passed i believe the problem is in where we check for the absence of metadocsurl but don t check that metadocs is non null before sending it to context report | 0 |

75,577 | 7,477,582,706 | IssuesEvent | 2018-04-04 08:47:23 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | [cluster] MembershipFailureTest_withTCP.secondMastershipClaimByYounger_shouldRetry_when_firstMastershipClaimByElder_accepted | Team: Core Type: Test-Failure | ```

java.lang.AssertionError: expected:<[127.0.0.1]:5702> but was:<[127.0.0.1]:5701>

at org.junit.Assert.fail(Assert.java:88)

at org.junit.Assert.failNotEquals(Assert.java:834)

at org.junit.Assert.assertEquals(Assert.java:118)

at org.junit.Assert.assertEquals(Assert.java:144)

at com.hazelcast.test.HazelcastTestSupport.assertMasterAddress(HazelcastTestSupport.java:924)

at com.hazelcast.test.HazelcastTestSupport$14.run(HazelcastTestSupport.java:934)

at com.hazelcast.test.HazelcastTestSupport.assertTrueEventually(HazelcastTestSupport.java:1066)

at com.hazelcast.test.HazelcastTestSupport.assertTrueEventually(HazelcastTestSupport.java:1083)

at com.hazelcast.test.HazelcastTestSupport.assertMasterAddressEventually(HazelcastTestSupport.java:929)

at com.hazelcast.internal.cluster.impl.MembershipFailureTest.secondMastershipClaimByYounger_shouldRetry_when_firstMastershipClaimByElder_accepted(MembershipFailureTest.java:642)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:50)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:47)

at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

at com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:105)

at com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:97)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.lang.Thread.run(Thread.java:745)

```

https://hazelcast-l337.ci.cloudbees.com/view/Sonar/job/Hazelcast-3.x-sonar/com.hazelcast$hazelcast/1563/testReport/junit/com.hazelcast.internal.cluster.impl/MembershipFailureTest_withTCP/secondMastershipClaimByYounger_shouldRetry_when_firstMastershipClaimByElder_accepted/ | 1.0 | [cluster] MembershipFailureTest_withTCP.secondMastershipClaimByYounger_shouldRetry_when_firstMastershipClaimByElder_accepted - ```

java.lang.AssertionError: expected:<[127.0.0.1]:5702> but was:<[127.0.0.1]:5701>

at org.junit.Assert.fail(Assert.java:88)

at org.junit.Assert.failNotEquals(Assert.java:834)

at org.junit.Assert.assertEquals(Assert.java:118)

at org.junit.Assert.assertEquals(Assert.java:144)

at com.hazelcast.test.HazelcastTestSupport.assertMasterAddress(HazelcastTestSupport.java:924)

at com.hazelcast.test.HazelcastTestSupport$14.run(HazelcastTestSupport.java:934)

at com.hazelcast.test.HazelcastTestSupport.assertTrueEventually(HazelcastTestSupport.java:1066)

at com.hazelcast.test.HazelcastTestSupport.assertTrueEventually(HazelcastTestSupport.java:1083)

at com.hazelcast.test.HazelcastTestSupport.assertMasterAddressEventually(HazelcastTestSupport.java:929)

at com.hazelcast.internal.cluster.impl.MembershipFailureTest.secondMastershipClaimByYounger_shouldRetry_when_firstMastershipClaimByElder_accepted(MembershipFailureTest.java:642)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:50)

at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:47)

at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

at com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:105)

at com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:97)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.lang.Thread.run(Thread.java:745)

```

https://hazelcast-l337.ci.cloudbees.com/view/Sonar/job/Hazelcast-3.x-sonar/com.hazelcast$hazelcast/1563/testReport/junit/com.hazelcast.internal.cluster.impl/MembershipFailureTest_withTCP/secondMastershipClaimByYounger_shouldRetry_when_firstMastershipClaimByElder_accepted/ | test | membershipfailuretest withtcp secondmastershipclaimbyyounger shouldretry when firstmastershipclaimbyelder accepted java lang assertionerror expected but was at org junit assert fail assert java at org junit assert failnotequals assert java at org junit assert assertequals assert java at org junit assert assertequals assert java at com hazelcast test hazelcasttestsupport assertmasteraddress hazelcasttestsupport java at com hazelcast test hazelcasttestsupport run hazelcasttestsupport java at com hazelcast test hazelcasttestsupport asserttrueeventually hazelcasttestsupport java at com hazelcast test hazelcasttestsupport asserttrueeventually hazelcasttestsupport java at com hazelcast test hazelcasttestsupport assertmasteraddresseventually hazelcasttestsupport java at com hazelcast internal cluster impl membershipfailuretest secondmastershipclaimbyyounger shouldretry when firstmastershipclaimbyelder accepted membershipfailuretest java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org junit runners model frameworkmethod runreflectivecall frameworkmethod java at org junit internal runners model reflectivecallable run reflectivecallable java at org junit runners model frameworkmethod invokeexplosively frameworkmethod java at org junit internal runners statements invokemethod evaluate invokemethod java at com hazelcast test failontimeoutstatement callablestatement call failontimeoutstatement java at com hazelcast test failontimeoutstatement callablestatement call 
failontimeoutstatement java at java util concurrent futuretask run futuretask java at java lang thread run thread java | 1 |
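
The stack trace above passes through `assertTrueEventually` and `assertMasterAddressEventually`, whose names suggest an assertion that is retried until a deadline. A minimal Python sketch of that polling pattern follows; the function name, defaults, and behavior are assumptions for illustration and are not taken from Hazelcast's `HazelcastTestSupport`.

```python
import time


def assert_true_eventually(check, timeout_s=5.0, interval_s=0.05):
    """Re-run `check` until it stops raising AssertionError or the timeout expires.

    Hypothetical helper: `check` is any zero-argument callable containing asserts.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            check()
            return  # the assertion finally held
        except AssertionError:
            if time.monotonic() >= deadline:
                raise  # surface the last failure, as in the trace above
            time.sleep(interval_s)
```

With a helper like this, a failure such as `expected:<[127.0.0.1]:5702> but was:<[127.0.0.1]:5701>` means the condition never became true within the allowed time, not merely that it was false on the first check.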

61,569 | 17,023,728,265 | IssuesEvent | 2021-07-03 03:31:27 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Oauth Authorize access page doesn't give the currently logged in user | Component: website Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 5.29pm, Friday, 1st July 2011]**

It would be useful to show the name of the currently logged-in user on the OAuth authorization screen, so that you can confirm you are granting access to the correct account in case the wrong user happens to be logged in, for example because someone else has used your machine or you have multiple accounts. | 1.0 | Oauth Authorize access page doesn't give the currently logged in user - **[Submitted to the original trac issue database at 5.29pm, Friday, 1st July 2011]**

It would be useful to show the name of the currently logged-in user on the OAuth authorization screen, so that you can confirm you are granting access to the correct account in case the wrong user happens to be logged in, for example because someone else has used your machine or you have multiple accounts. | non_test | oauth authorize access page doesn t give the currently logged in user it would be useful to give the name of the currently logged in user to the oauth auth screen so that you can confirm that you are giving access to the correct account just in case the wrong user happens to be logged in for example someone else has used your machine or you have multiple account | 0 |

324,787 | 24,016,854,327 | IssuesEvent | 2022-09-15 02:10:55 | DanCampos12/DivDados | https://api.github.com/repos/DanCampos12/DivDados | closed | 3rd version - Software Solution Proposal. | documentation enhancement | Correction of the Software Solution Proposal based on the latest review. | 1.0 | 3rd version - Software Solution Proposal. - Correction of the Software Solution Proposal based on the latest review. | non_test | version software solution proposal correction of the software solution proposal based on the latest review | 0 |

29,513 | 4,506,189,454 | IssuesEvent | 2016-09-02 02:08:34 | raveloda/Coatl-Aerospace | https://api.github.com/repos/raveloda/Coatl-Aerospace | closed | Test RemoteTech antenna Configs | testing work in progress | See #5

General balancing pass on the antenna stats based on stock and RT configs. This should include consideration for the new antennas being added.

- [x] Test komodo's configs (screwed up by Github formatting) | 1.0 | Test RemoteTech antenna Configs - See #5

General balancing pass on the antenna stats based on stock and RT configs. This should include consideration for the new antennas being added.

- [x] Test komodo's configs (screwed up by Github formatting) | test | test remotetech antenna configs see general balancing pass on the antenna stats based on stock and rt configs this should include consideration for the new antenna beings added test komodo s configs screwed up by github formatting | 1 |

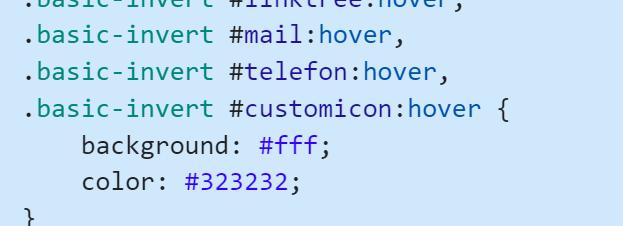

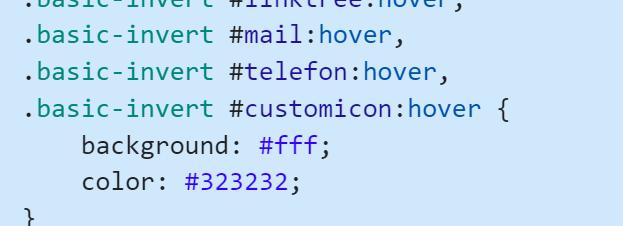

710,972 | 24,445,791,830 | IssuesEvent | 2022-10-06 17:50:46 | MCFabian/social-media-widget | https://api.github.com/repos/MCFabian/social-media-widget | closed | Bug report: hover style for phone does not work -> wrong id in new ui (gen.js/style.css) | bug high priority | Since the new UI update and the new gen.js, the dynamic id generated by the gen.js script has changed from telefon to phone.

So either change the CSS (all styles) or change the id given by the gen.js script.

| 1.0 | Bug report: hover style for phone does not work -> wrong id in new ui (gen.js/style.css) - Since the new UI update and the new gen.js, the dynamic id generated by the gen.js script has changed from telefon to phone.

So either change the CSS (all styles) or change the id given by the gen.js script.

| non_test | bug report hover style for phone does not work wrong id in new ui gen js style css since the new ui update and the new gen js the dynamic id from the gen js script as changed from telefon to phone so change the css all styles or change the give id from the gen js script | 0 |

785,757 | 27,624,232,421 | IssuesEvent | 2023-03-10 04:36:48 | AY2223S2-CS2113-W12-1/tp | https://api.github.com/repos/AY2223S2-CS2113-W12-1/tp | opened | Create a remove appointment feature. | type.Story priority.High | As a user, I am able to remove appointments if necessary so that the appointment list is not clogged up. | 1.0 | Create a remove appointment feature. - As a user, I am able to remove appointments if necessary so that the appointment list is not clogged up. | non_test | create a remove appointment feature as a user i am able to remove appointments if necessary so that the appointment list is not clogged up | 0 |

805,554 | 29,524,826,258 | IssuesEvent | 2023-06-05 06:51:38 | telerik/kendo-react | https://api.github.com/repos/telerik/kendo-react | opened | Heatmap renders wrong colors if there are negative values | bug pkg:charts Priority 1 SEV: Medium | When the Heatmap has negative values, the default logic for generating the colors fails and it is using the range from 0 to the max positive value:

- https://stackblitz.com/edit/react-n6g4fp?file=app%2Fmain.jsx

The expected behavior is to have the colors range from the min value to the max value.

A temporary workaround is to define a custom "color" for the series:

- https://stackblitz.com/edit/react-c5hpwv?file=app%2Fmake-data-objects.js,app%2Fmain.jsx | 1.0 | Heatmap renders wrong colors if there are negative values - When the Heatmap has negative values, the default logic for generating the colors fails and it is using the range from 0 to the max positive value:

- https://stackblitz.com/edit/react-n6g4fp?file=app%2Fmain.jsx

The expected behavior is to have the colors range from the min value to the max value.

A temporary workaround is to define a custom "color" for the series:

- https://stackblitz.com/edit/react-c5hpwv?file=app%2Fmake-data-objects.js,app%2Fmain.jsx | non_test | heatmap renders wrong colors if there are negative values when the heatmap has negative values the default logic for generating the colors fails and it is using the range from to the max positive value the expected behavior is to have the colors range from the min value to the max value a temporary workaround is to define a custom color for the series | 0 |
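
The difference between the reported and the expected color scale can be sketched as a normalization step. This is an illustrative Python sketch under assumed names (`normalize`, the sample `values`); it is not the Kendo React implementation, which the report does not show.

```python
def normalize(value, lo, hi):
    """Map `value` to the interval [0, 1] over [lo, hi], clamping out-of-range input."""
    if hi == lo:
        return 0.0  # degenerate range: every value gets the same color
    t = (value - lo) / (hi - lo)
    return max(0.0, min(1.0, t))


values = [-10, 0, 5, 10]  # hypothetical heatmap data with a negative entry

# Reported behavior: the scale runs from 0 to the maximum, so negatives collapse to 0.
zero_based = [normalize(v, 0, max(values)) for v in values]

# Expected behavior: the scale runs from the minimum to the maximum.
min_based = [normalize(v, min(values), max(values)) for v in values]
```

Under the zero-based scale, `-10` and `0` map to the same position and therefore the same color, which matches the symptom described in the report.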

646,134 | 21,038,523,355 | IssuesEvent | 2022-03-31 10:04:42 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | reopened | appengine.standard_python3.warmup.main_test: test_index failed | priority: p1 type: bug api: appengine samples flakybot: issue flakybot: flaky | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 3b6d797140afa6ca900a1e38a554795bfe800368

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/7e7321f5-302f-441b-b01b-0047fd05b625), [Sponge](http://sponge2/7e7321f5-302f-441b-b01b-0047fd05b625)

status: failed

<details><summary>Test output</summary><br><pre>Traceback (most recent call last):

File "/workspace/appengine/standard_python3/warmup/main_test.py", line 22, in test_index

r = client.get('/')

File "/workspace/appengine/standard_python3/warmup/.nox/py-3-7/lib/python3.7/site-packages/werkzeug/test.py", line 1134, in get

return self.open(*args, **kw)

File "/workspace/appengine/standard_python3/warmup/.nox/py-3-7/lib/python3.7/site-packages/flask/testing.py", line 220, in open

follow_redirects=follow_redirects,

File "/workspace/appengine/standard_python3/warmup/.nox/py-3-7/lib/python3.7/site-packages/werkzeug/test.py", line 1081, in open

builder = EnvironBuilder(*args, **kwargs)

TypeError: __init__() got an unexpected keyword argument 'as_tuple'</pre></details> | 1.0 | appengine.standard_python3.warmup.main_test: test_index failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 3b6d797140afa6ca900a1e38a554795bfe800368

buildURL: [Build Status](https://source.cloud.google.com/results/invocations/7e7321f5-302f-441b-b01b-0047fd05b625), [Sponge](http://sponge2/7e7321f5-302f-441b-b01b-0047fd05b625)

status: failed

<details><summary>Test output</summary><br><pre>Traceback (most recent call last):

File "/workspace/appengine/standard_python3/warmup/main_test.py", line 22, in test_index

r = client.get('/')

File "/workspace/appengine/standard_python3/warmup/.nox/py-3-7/lib/python3.7/site-packages/werkzeug/test.py", line 1134, in get

return self.open(*args, **kw)

File "/workspace/appengine/standard_python3/warmup/.nox/py-3-7/lib/python3.7/site-packages/flask/testing.py", line 220, in open

follow_redirects=follow_redirects,

File "/workspace/appengine/standard_python3/warmup/.nox/py-3-7/lib/python3.7/site-packages/werkzeug/test.py", line 1081, in open

builder = EnvironBuilder(*args, **kwargs)

TypeError: __init__() got an unexpected keyword argument 'as_tuple'</pre></details> | non_test | appengine standard warmup main test test index failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed test output traceback most recent call last file workspace appengine standard warmup main test py line in test index r client get file workspace appengine standard warmup nox py lib site packages werkzeug test py line in get return self open args kw file workspace appengine standard warmup nox py lib site packages flask testing py line in open follow redirects follow redirects file workspace appengine standard warmup nox py lib site packages werkzeug test py line in open builder environbuilder args kwargs typeerror init got an unexpected keyword argument as tuple | 0 |
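
The traceback above shows a keyword argument being forwarded through `open(*args, **kw)` to a constructor that does not accept it. The sketch below reproduces that mechanism with hypothetical stand-in classes; it does not import Flask or Werkzeug. This error pattern is commonly associated with a Flask release that still forwards `as_tuple` running against a Werkzeug release that no longer accepts it, but the report does not state the installed versions, so treat that as an assumption.

```python
class EnvironBuilder:
    """Hypothetical stand-in for a builder whose signature no longer has `as_tuple`."""

    def __init__(self, path="/", method="GET"):
        self.path = path
        self.method = method


def client_open(*args, **kwargs):
    # Everything the caller passes is forwarded unchanged, so a stale keyword
    # from an older caller reaches the constructor and is rejected there.
    return EnvironBuilder(*args, **kwargs)


try:
    client_open("/", as_tuple=False)  # caller written against the old signature
    message = ""
except TypeError as exc:
    message = str(exc)
```

In a situation like this, the usual remedy is to align the versions of the two packages rather than to change the test itself.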

29,772 | 5,887,120,004 | IssuesEvent | 2017-05-17 06:16:15 | catmaid/CATMAID | https://api.github.com/repos/catmaid/CATMAID | closed | Node radius can only be edited in XY view | status: done type: defect | In orthogonal views the mouse movements don't register properly. | 1.0 | Node radius can only be edited in XY view - In orthogonal views the mouse movements don't register properly. | non_test | node radius can only be edited in xy view in orthogonal views the mouse movements don t register properly | 0 |

280,234 | 24,286,131,074 | IssuesEvent | 2022-09-28 22:27:01 | nrwl/nx | https://api.github.com/repos/nrwl/nx | closed | Jest resolves the whole tree structure of all exported elements in a index.ts file | type: bug scope: testing tools | ## Current Behavior

We have created a local module in the Nx libs folder and import two elements from this lib in one of our test files. When we run the test, Jest resolves the whole tree structure of all exported elements in the lib's index.ts file.

## Expected Behavior

Jest should not resolve the whole tree structure of all exported elements, but only resolve it for elements imported in the test file.

## Steps to Reproduce

Here is a github [repository](https://github.com/jcabannes/nx-angular-ngxs-jest) which reproduces the bug if you run `npm test` command

### Failure Logs

```

FAIL my-app apps/my-app/src/app/tests/test-app.spec.ts

● Test suite failed to run

TypeError: Cannot read properties of undefined (reading 'child')

8 | imports: [

9 | TestModule.forRoot(

> 10 | EnvServiceProvider.useFactory().parent.child

| ^

11 | ? {

12 | id: 'test',

13 | }

at Object.<anonymous> (../../libs/features/src/app/shared/auth/auth.module.ts:10:45)

at Object.<anonymous> (../../libs/features/src/app/core/core.module.ts:4:1)

at Object.<anonymous> (../../libs/features/src/app/app.module.ts:6:1)

at Object.<anonymous> (../../libs/features/src/index.ts:6:1)

at Object.<anonymous> (src/app/tests/test-app.spec.ts:2:1)

```

Error comes from the metadata of an ngModule imported by an exported module in our lib.

### Environment

```

Node : 16.15.0

OS : linux x64

yarn : 1.22.19

nx : 14.1.7

@nrwl/angular : 14.1.7

@nrwl/cypress : 14.1.7

@nrwl/detox : Not Found

@nrwl/devkit : 14.1.7

@nrwl/eslint-plugin-nx : 14.1.7

@nrwl/express : Not Found

@nrwl/jest : 14.1.7

@nrwl/js : Not Found

@nrwl/linter : 14.1.7

@nrwl/nest : Not Found

@nrwl/next : Not Found

@nrwl/node : Not Found

@nrwl/nx-cloud : Not Found

@nrwl/nx-plugin : Not Found

@nrwl/react : Not Found

@nrwl/react-native : Not Found

@nrwl/schematics : Not Found

@nrwl/storybook : 14.1.7

@nrwl/web : Not Found

@nrwl/workspace : 14.1.7

typescript : 4.6.4

rxjs : 7.5.5

---------------------------------------

Community plugins:

```

| 1.0 | Jest resolves the whole tree structure of all exported elements in a index.ts file - ## Current Behavior

We have created a local module in the Nx libs folder and import two elements from this lib in one of our test files. When we run the test, Jest resolves the whole tree structure of all exported elements in the lib's index.ts file.

## Expected Behavior

Jest should not resolve the whole tree structure of all exported elements, but only resolve it for elements imported in the test file.

## Steps to Reproduce

Here is a github [repository](https://github.com/jcabannes/nx-angular-ngxs-jest) which reproduces the bug if you run `npm test` command

### Failure Logs

```

FAIL my-app apps/my-app/src/app/tests/test-app.spec.ts

● Test suite failed to run

TypeError: Cannot read properties of undefined (reading 'child')

8 | imports: [

9 | TestModule.forRoot(

> 10 | EnvServiceProvider.useFactory().parent.child

| ^

11 | ? {

12 | id: 'test',

13 | }

at Object.<anonymous> (../../libs/features/src/app/shared/auth/auth.module.ts:10:45)

at Object.<anonymous> (../../libs/features/src/app/core/core.module.ts:4:1)

at Object.<anonymous> (../../libs/features/src/app/app.module.ts:6:1)

at Object.<anonymous> (../../libs/features/src/index.ts:6:1)

at Object.<anonymous> (src/app/tests/test-app.spec.ts:2:1)

```

Error comes from the metadata of an ngModule imported by an exported module in our lib.

### Environment

```

Node : 16.15.0

OS : linux x64

yarn : 1.22.19

nx : 14.1.7

@nrwl/angular : 14.1.7

@nrwl/cypress : 14.1.7

@nrwl/detox : Not Found

@nrwl/devkit : 14.1.7

@nrwl/eslint-plugin-nx : 14.1.7

@nrwl/express : Not Found

@nrwl/jest : 14.1.7

@nrwl/js : Not Found

@nrwl/linter : 14.1.7

@nrwl/nest : Not Found

@nrwl/next : Not Found

@nrwl/node : Not Found

@nrwl/nx-cloud : Not Found

@nrwl/nx-plugin : Not Found

@nrwl/react : Not Found

@nrwl/react-native : Not Found

@nrwl/schematics : Not Found

@nrwl/storybook : 14.1.7

@nrwl/web : Not Found

@nrwl/workspace : 14.1.7

typescript : 4.6.4

rxjs : 7.5.5

---------------------------------------

Community plugins:

```

| test | jest resolves the whole tree structure of all exported elements in a index ts file current behavior we have created a local module in nx libs folder we import two elements from this lib in one of our test file when we run the test jest is resolving the whole tree structure of all exported elements in the lib index ts file expected behavior jest should not resolve the whole tree structure of all exported elements but only resolve it for elements imported in the test file steps to reproduce here is a github which reproduces the bug if you run npm test command failure logs fail my app apps my app src app tests test app spec ts ● test suite failed to run typeerror cannot read properties of undefined reading child imports testmodule forroot envserviceprovider usefactory parent child id test at object libs features src app shared auth auth module ts at object libs features src app core core module ts at object libs features src app app module ts at object libs features src index ts at object src app tests test app spec ts error comes from the metadata of an ngmodule imported by an exported module in our lib environment node os linux yarn nx nrwl angular nrwl cypress nrwl detox not found nrwl devkit nrwl eslint plugin nx nrwl express not found nrwl jest nrwl js not found nrwl linter nrwl nest not found nrwl next not found nrwl node not found nrwl nx cloud not found nrwl nx plugin not found nrwl react not found nrwl react native not found nrwl schematics not found nrwl storybook nrwl web not found nrwl workspace typescript rxjs community plugins | 1 |

84,608 | 7,928,729,769 | IssuesEvent | 2018-07-06 12:48:34 | ArkEcosystem/core | https://api.github.com/repos/ArkEcosystem/core | opened | Unify tests | development tests | Currently we don't have a unified way to test all the packages: some of them are using `core-test-utils` or have received more love than others.

- [ ] Unify tests (share more tools between packages and use a standard way of mocking, expecting, etc.) | 1.0 | Unify tests - Currently we don't have a unified way to test all the packages: some of them are using `core-test-utils` or have received more love than others.

- [ ] Unify tests (share more tools between packages and use a standard way of mocking, expecting, etc.) | test | unify tests currently we don t have a unified way to test all the packages some of them are using core test utils or have received more love than others unify tests share more tools between packages and use a standard way of mocking expecting etc | 1 |

130,992 | 10,677,680,524 | IssuesEvent | 2019-10-21 15:50:09 | cigumo/krli | https://api.github.com/repos/cigumo/krli | closed | Armor buff aura and Dante's Relics of Power | kr2 needs testing | In the clip, Dante got Relics of Power lvl 3.

https://streamable.com/cb42p

the buff aura is off and the armor/resistance is lowered 100% by dante. However, the enemy still has armor/resistance when it had none before the buff.

| 1.0 | Armor buff aura and Dante's Relics of Power - In the clip, Dante got Relics of Power lvl 3.

https://streamable.com/cb42p

the buff aura is off and the armor/resistance is lowered 100% by dante. However, the enemy still has armor/resistance when it had none before the buff.

| test | armor buff aura and dante s relics of power in the clip dante got relics of power lvl the buff aura is off and the armor resistance is lowered by dante however the enemy still has armor resistance when it had none before the buff | 1 |

163,861 | 12,748,503,798 | IssuesEvent | 2020-06-26 20:16:44 | QuantConnect/Lean | https://api.github.com/repos/QuantConnect/Lean | closed | Add regression algorithm trading during extended market hours | good first issue testing up for grabs | <!--- This template provides sections for bugs and features. Please delete any irrelevant sections before submitting -->

#### Expected Behavior

<!--- Required. Describe the behavior you expect to see for your case. -->

- Lean has a regression test algorithm that explicitly and actively trades during extended market hours, with the objective of testing extended-market-hours data and Lean's behavior when using it.

#### Actual Behavior

<!--- Required. Describe the actual behavior for your case. -->

- There is no such regression algorithm

#### Potential Solution

<!--- Optional. Describe any potential solutions and/or thoughts as to what may be causing the difference between expected and actual behavior. -->

- Implement C#/Py

- Algorithm should use data already present in the repo. See https://github.com/QuantConnect/Lean/tree/master/Data/equity/usa/minute

#### Reproducing the Problem

<!--- Required for Bugs. Describe how to reproduce the problem. This can be via a failing unit test or a simplified algorithm that reliably demonstrates this issue. -->

N/A

#### System Information

<!--- Required for Bugs. Include any system specific information, such as OS. -->

N/A

#### Checklist

<!--- Confirm that you've provided all the required information. -->

<!--- Required fields --->

- [x] I have completely filled out this template

- [x] I have confirmed that this issue exists on the current `master` branch

- [x] I have confirmed that this is not a duplicate issue by searching [issues](https://github.com/QuantConnect/Lean/issues)

<!--- Required for Bugs, feature request can delete the line below. -->

- [x] I have provided detailed steps to reproduce the issue

<!--- Template inspired by https://github.com/stevemao/github-issue-templates --> | 1.0 | Add regression algorithm trading during extended market hours - <!--- This template provides sections for bugs and features. Please delete any irrelevant sections before submitting -->

#### Expected Behavior

<!--- Required. Describe the behavior you expect to see for your case. -->

- Lean has a regression test algorithm that explicitly and actively trades during extended market hours, with the objective of testing extended-market-hours data and Lean's behavior when using it.

#### Actual Behavior

<!--- Required. Describe the actual behavior for your case. -->

- There is no such regression algorithm

#### Potential Solution

<!--- Optional. Describe any potential solutions and/or thoughts as to what may be causing the difference between expected and actual behavior. -->

- Implement C#/Py

- Algorithm should use data already present in the repo. See https://github.com/QuantConnect/Lean/tree/master/Data/equity/usa/minute

#### Reproducing the Problem

<!--- Required for Bugs. Describe how to reproduce the problem. This can be via a failing unit test or a simplified algorithm that reliably demonstrates this issue. -->

N/A

#### System Information

<!--- Required for Bugs. Include any system specific information, such as OS. -->

N/A

#### Checklist

<!--- Confirm that you've provided all the required information. -->

<!--- Required fields --->

- [x] I have completely filled out this template

- [x] I have confirmed that this issue exists on the current `master` branch

- [x] I have confirmed that this is not a duplicate issue by searching [issues](https://github.com/QuantConnect/Lean/issues)

<!--- Required for Bugs, feature request can delete the line below. -->

- [x] I have provided detailed steps to reproduce the issue

<!--- Template inspired by https://github.com/stevemao/github-issue-templates --> | test | add regression algorithm trading during extended market hours expected behavior lean has a regression test algorithm that explicitly and actively trades during extended market hours with the objective of testing extended market hours data and leans behavior using it actual behavior there is no such regression algorithm potential solution implement c py algorithm should use data already present in the repo see reproducing the problem n a system information n a checklist i have completely filled out this template i have confirmed that this issue exists on the current master branch i have confirmed that this is not a duplicate issue by searching i have provided detailed steps to reproduce the issue | 1 |

7,774 | 5,198,951,570 | IssuesEvent | 2017-01-23 19:32:37 | Starcounter/Starcounter | https://api.github.com/repos/Starcounter/Starcounter | closed | Using shortnames in queries: problem with ambiguous names and mapped database views | usability | Now that we are moving to several smaller apps running together, each with its own mapped view of the database, the likelihood of more than one database type having the same shortname increases.

This poses a problem, since it is really easy to break a working app by starting another one that happens to have the same shortname for a database type in its mapped model.

This has been mentioned before, but only briefly in shorter discussions, so I am adding it as an issue so we can discuss it and come up with a solution.

As far as I can see, there are not many options for solving this. I can come up with two:

- The shortnames are resolved per application, so that each app can use its local database views with shortnames. I am not sure whether this is even possible to implement.

- Require all database types to be specified with their fully qualified names in queries. This would be the simplest solution and would make certain that all queries work. The downside is, of course, that you have to write the full name, which can be quite long.

@malx122, @warpech, @miyconst, @dan31, @k-rus, @Starcounter-Jack

| True | Using shortnames in queries: problem with ambiguous names and mapped database views - Now that we are moving to several smaller apps running together, each with its own mapped view of the database, the likelihood of more than one database type having the same shortname increases.

This poses a problem, since it is really easy to break a working app by starting another one that happens to have the same shortname for a database type in its mapped model.

This has been mentioned before, but only briefly in shorter discussions, so I am adding it as an issue so we can discuss it and come up with a solution.

As far as I can see, there are not many options for solving this. I can come up with two:

- The shortnames are resolved per application, so that each app can use its local database views with shortnames. I am not sure whether this is even possible to implement.

- Require all database types to be specified with their fully qualified names in queries. This would be the simplest solution and would make certain that all queries work. The downside is, of course, that you have to write the full name, which can be quite long.

@malx122, @warpech, @miyconst, @dan31, @k-rus, @Starcounter-Jack

| non_test | using shortnames in queries problem with ambiguous names and mapped database views when we are now moving to having several smaller apps running together that have their own mapped views of the database the likelyhood of having more then one databasetype with the same shortname increases this poses a problem since it is really easy to break an working app by starting another one that happen to have the same shortname for a dabasetype in their mapped model this has been mentioned before but only briefly in shorter discussions so i add it as an issue so we can discuss and come up with a solution as far as i can see there is not too many different options to solve this i can come up with two the shortnames are resolved by application so that each app have there local database views usable with shortnames not sure if this is even possible to implement require all database types to be specified with fully qualified name in queries this will be the simplest solution and will make it certain that all queries will work the downside is of course that you have to write the fullname which can be quite long warpech miyconst k rus starcounter jack | 0 |
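
The ambiguity described in this issue can be illustrated with a small resolver that accepts a shortname only when exactly one fully qualified type matches it. This is a hedged Python sketch; `build_index`, `resolve`, `AmbiguousShortNameError`, and the type names are hypothetical and do not reflect Starcounter's query engine.

```python
from collections import defaultdict


class AmbiguousShortNameError(LookupError):
    """Raised when a shortname matches more than one fully qualified type."""


def build_index(full_names):
    """Group fully qualified type names by their last dotted segment."""
    index = defaultdict(list)
    for full in full_names:
        index[full.rsplit(".", 1)[-1]].append(full)
    return index


def resolve(name, index):
    """Return the fully qualified name for `name`, refusing ambiguous shortnames."""
    if "." in name:
        return name  # treated as already fully qualified; existence is not checked
    candidates = index.get(name, [])
    if len(candidates) == 1:
        return candidates[0]
    if not candidates:
        raise LookupError(f"unknown type: {name}")
    raise AmbiguousShortNameError(f"{name} matches {sorted(candidates)}")


# Two apps each map a `Person` type; only `Order` has a unique shortname.
index = build_index(["AppA.Model.Person", "AppB.Model.Person", "AppA.Model.Order"])
```

Here `resolve("Order", index)` succeeds, while `resolve("Person", index)` fails as soon as the second app is loaded, which is the breakage the issue describes. Writing `AppB.Model.Person` in full sidesteps the lookup entirely, at the cost of longer queries.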

40,091 | 10,450,689,814 | IssuesEvent | 2019-09-19 11:08:48 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | Support the Ubuntu Cross Toolchain | area: Build System feature request | **Is your feature request related to a problem? Please describe.**

It would be helpful for many Ubuntu users, and also for continuous integration (CI), to be able to use Ubuntu's `gcc-arm-none-eabi` cross-compiler for building zephyr for arm microcontrollers.

In particular, many CI loops use Ubuntu's cross-compiler because it is so easy to install from Ubuntu's default package repository in many containerized and non-containerized build environments.

It's as easy as `apt-get update && apt install -y gcc-arm-none-eabi`.

Currently, I believe that only `gnuarmemb` and `crosstool-ng` toolchains are supported. In both cases, it's possible that downloading & installing them might require significantly more time and bandwidth than installing the Ubuntu toolchain. In particular, Travis CI hosts their own Ubuntu package repository, and so it would be very quick to install from that source.

**Describe the solution you'd like**

Support for Ubuntu's toolchain along with a small bit of documentation on how to install it in the "3rd Party Toolchains" section.

**Describe alternatives you've considered**

I've used the gnuarmemb toolchain, but it does add some overhead to my CI process. | 1.0 | Support the Ubuntu Cross Toolchain - **Is your feature request related to a problem? Please describe.**

It would be helpful for many Ubuntu users, and also for continuous integration (CI), to be able to use Ubuntu's `gcc-arm-none-eabi` cross-compiler for building zephyr for arm microcontrollers.

In particular, many CI loops use Ubuntu's cross-compiler because it is so easy to install from Ubuntu's default package repository in many containerized and non-containerized build environments.

It's as easy as `apt-get update && apt install -y gcc-arm-none-eabi`.

Currently, I believe that only `gnuarmemb` and `crosstool-ng` toolchains are supported. In both cases, it's possible that downloading & installing them might require significantly more time and bandwidth than installing the Ubuntu toolchain. In particular, Travis CI hosts their own Ubuntu package repository, and so it would be very quick to install from that source.

**Describe the solution you'd like**

Support for Ubuntu's toolchain along with a small bit of documentation on how to install it in the "3rd Party Toolchains" section.

**Describe alternatives you've considered**

I've used the gnuarmemb toolchain, but it does add some overhead to my CI process. | non_test | support the ubuntu cross toolchain is your feature request related to a problem please describe it would be helpful for many ubuntu users and also for continuous integration ci to be able to use ubuntu s gcc arm none eabi cross compiler for building zephyr for arm microcontrollers in particular many ci loops use ubuntu s cross compiler because it is so easy to install from ubuntu s default package repository in many containerized and non containerized build environments it s as easy as apt get update apt install y gcc arm none eabi currently i believe that only gnuarmemb and crosstool ng toolchains are supported in both cases it s possible that downloading installing them might require significantly more time and bandwidth than installing the ubuntu toolchain in particular travis ci hosts their own ubuntu package repository and so it would be very quick to install from that source describe the solution you d like support for ubuntu s toolchain along with a small bit of documentation on how to install it in the party toolchains section describe alternatives you ve considered i ve used the gnuarmemb toolchain but it does add some overhead to my ci process | 0 |

278,067 | 24,121,807,008 | IssuesEvent | 2022-09-20 19:26:27 | mozilla-mobile/mobile-test-eng | https://api.github.com/repos/mozilla-mobile/mobile-test-eng | closed | [META] Deprecate old GCP / Firebase resources | infra:ui-test maintenance META | DESCRIPTION

Our team should take responsibility for deprecating old mobile GCP projects.

NOTES

- This would likely only be the former focus-android project and any projects used for Rocket (Firefox Lite) and Amazon

- Re-confirm w/ Stefan before decommissioning Rocket projects

PROJECTS-TO-BE-DEL

1. moz-fx-mobile-firebase-testlab

2. moz-demo-project

3. moz-demo-project2

4. moz-firefox-ios?

5. moz-l10n-screenshots

6. moz-firefox-tv

MISC

1. Remove all old keys

2. Set (and document!) data retention threshold for Focus-android

3. Revisit 9 mo data retention for fenix - is 3? 6? mos of Firebase data enough? | 1.0 | [META] Deprecate old GCP / Firebase resources - DESCRIPTION

Our team should take responsibility for deprecating old mobile GCP projects.

NOTES

- This would likely only be the former focus-android project and any projects used for Rocket (Firefox Lite) and Amazon

- Re-confirm w/ Stefan before decommissioning Rocket projects

PROJECTS-TO-BE-DEL

1. moz-fx-mobile-firebase-testlab

2. moz-demo-project

3. moz-demo-project2

4. moz-firefox-ios?

5. moz-l10n-screenshots

6. moz-firefox-tv

MISC

1. Remove all old keys

2. Set (and document!) data retention threshold for Focus-android

3. Revisit 9 mo data retention for fenix - is 3? 6? mos of Firebase data enough? | test | deprecate old gcp firebase resources description our team should take responsibility for deprecating and mobile gcp projects notes this would likely only be former focus android project any projects used for rocket firefox lite and amazon re confirm w stefan before decommissioning rocket projects projects to be del moz fx mobile firebase testlab moz demo project moz demo moz firefox ios moz screenshots moz firefox tv misc remove all old keys set and document data retention threshold for focus android revisit mo data retention for fenix is mos of firebase data enough | 1 |

123,315 | 10,263,919,298 | IssuesEvent | 2019-08-22 15:16:19 | eclipse/openj9 | https://api.github.com/repos/eclipse/openj9 | closed | TestJcmd_0 | comp:vm test failure | All extended testing.

```

FAILED: testJcmdHelps

java.lang.AssertionError: Help text corrupt: [Usage : jcmd <vmid> <arguments>, , -J : supply arguments to the Java VM running jcmd, -l : list JVM processes on the local machine, -h : print this help message, , <vmid> : Attach API VM ID as shown in jps or other Attach API-based tools, , arguments:, help : print the list of diagnostic commands, help <command> : print help for the specific command, <command> [command arguments] : command from the list returned by "help", , list JVM processes on the local machine. Default behavior when no options are specified., , NOTE: this utility may significantly affect the performance of the target JVM., The available diagnostic commands are determined by, the target VM and may vary between VMs.] expected [true] but found [false]

at org.testng.Assert.fail(Assert.java:96)

at org.testng.Assert.failNotEquals(Assert.java:776)

at org.testng.Assert.assertTrue(Assert.java:44)

at org.openj9.test.attachAPI.TestJcmd.testJcmdHelps(TestJcmd.java:87)

``` | 1.0 | TestJcmd_0 - All extended testing.

```

FAILED: testJcmdHelps

java.lang.AssertionError: Help text corrupt: [Usage : jcmd <vmid> <arguments>, , -J : supply arguments to the Java VM running jcmd, -l : list JVM processes on the local machine, -h : print this help message, , <vmid> : Attach API VM ID as shown in jps or other Attach API-based tools, , arguments:, help : print the list of diagnostic commands, help <command> : print help for the specific command, <command> [command arguments] : command from the list returned by "help", , list JVM processes on the local machine. Default behavior when no options are specified., , NOTE: this utility may significantly affect the performance of the target JVM., The available diagnostic commands are determined by, the target VM and may vary between VMs.] expected [true] but found [false]

at org.testng.Assert.fail(Assert.java:96)

at org.testng.Assert.failNotEquals(Assert.java:776)

at org.testng.Assert.assertTrue(Assert.java:44)

at org.openj9.test.attachAPI.TestJcmd.testJcmdHelps(TestJcmd.java:87)

``` | test | testjcmd all extended testing failed testjcmdhelps java lang assertionerror help text corrupt command from the list returned by help list jvm processes on the local machine default behavior when no options are specified note this utility may significantly affect the performance of the target jvm the available diagnostic commands are determined by the target vm and may vary between vms expected but found at org testng assert fail assert java at org testng assert failnotequals assert java at org testng assert asserttrue assert java at org test attachapi testjcmd testjcmdhelps testjcmd java | 1 |

48,174 | 5,948,045,218 | IssuesEvent | 2017-05-26 10:07:58 | LDMW/app | https://api.github.com/repos/LDMW/app | closed | Feedback page inputs go into spreadsheet | please-test T1d T4h technical | As a London Minds admin, I would like to be able to see the feedback on the application (captured in the Feedback Page #131) in a quick and easy way so that I can digest the information.

## Acceptance Criteria

+ [x] When a user enters data in the feedback page #131, upon pressing the submit button, that data is visible in a google spreadsheet. | 1.0 | Feedback page inputs go into spreadsheet - As a London Minds admin, I would like to be able to see the feedback on the application (captured in the Feedback Page #131) in a quick and easy way so that I can digest the information.

## Acceptance Criteria

+ [x] When a user enters data in the feedback page #131, upon pressing the submit button, that data is visible in a google spreadsheet. | test | feedback page inputs go into spreadsheet as a london minds admin i would like to be able to see the feedback on the application captured in the feedback page in a quick and easy way so that i can digest the information acceptance criteria when a user enters data in the feedback page upon pressing the submit button that data is visible in a google spreadsheet | 1 |

57,561 | 6,550,730,953 | IssuesEvent | 2017-09-05 12:21:06 | EyeSeeTea/QAApp | https://api.github.com/repos/EyeSeeTea/QAApp | closed | bb and regular version of HNQIS on same device | testing | It seems that it is no longer possible to keep both apps (bb and regular version as downloaded from gPlay) on a device any more. When we have downloaded bb#59, we have been asked to remove the other version of HNQIS from the device.

This wasn't happening before. Reporting this in case this is something you want to fix (?). | 1.0 | bb and regular version of HNQIS on same device - It seems that it is no longer possible to keep both apps (bb and regular version as downloaded from gPlay) on a device any more. When we have downloaded bb#59, we have been asked to remove the other version of HNQIS from the device.

This wasn't happening before. Reporting this in case this is something you want to fix (?). | test | bb and regular version of hnqis on same device it seems that it is no longer possible to keep both apps bb and regular version as downloaded from gplay on a device any more when we have downloaded bb we have been asked to remove the other version of hnqis from the device this wasn t happening before reporting this in case this is something you want to fix | 1 |

361,058 | 25,323,319,251 | IssuesEvent | 2022-11-18 06:53:31 | andrewcargill/johans_eco_timber | https://api.github.com/repos/andrewcargill/johans_eco_timber | opened | Optimal image sizes | documentation | As an Owner, I want the site to perform well so that users are not distracted by slow-loading pages

Criteria:

All oversized images should be resized

All images should use a good compression format

All images should show on all screen sizes

| 1.0 | Optimal image sizes - As an Owner, I want the site to perform well so that users are not distracted by slow-loading pages

Criteria:

All oversized images should be resized

All images should use a good compression format

All images should show on all screen sizes

| non_test | optimal image sizes as a owner i want the site to perform well so that users are not distracted by slow loading pages criteria all oversized images should be resized all images should use a good compression format all images should show on all screen sizes | 0 |

342,393 | 30,619,424,117 | IssuesEvent | 2023-07-24 07:09:47 | iamlogand/republic-of-rome-online | https://api.github.com/repos/iamlogand/republic-of-rome-online | closed | Unit test for an API call | Testing | Add a unit test to the backend using Django’s test-execution framework and REST framework's test classes. | 1.0 | Unit test for an API call - Add a unit test to the backend using Django’s test-execution framework and REST framework's test classes. | test | unit test for an api call add a unit test to the backend using django’s test execution framework and rest framework s test classes | 1 |

609,869 | 18,889,625,055 | IssuesEvent | 2021-11-15 11:45:16 | enviroCar/enviroCar-app | https://api.github.com/repos/enviroCar/enviroCar-app | closed | Toggle button in Adapter Selection does not change state on deny | bug 3 - Done Priority - 3 - Low | **Describe the bug**

When trying to enable Bluetooth from the app, the toggle button does not change its state to off if we click Deny or anywhere else on the screen.

**To Reproduce**

Steps to reproduce the behavior:

1. Click on OBD selection in the Dashboard fragment.

2. Click on toggle button.

3. Click on deny or anywhere on screen.

4. The toggle button switches to the ON state, whereas it should stay in the OFF state if Deny is clicked or nothing is selected.

**Expected behavior**

Reset the toggle button to the OFF state if no selection is made or Deny is clicked. We could also add an ON/OFF label instead of a plain toggle button.

| 1.0 | Toggle button in Adapter Selection does not change state on deny - **Describe the bug**

When trying to enable Bluetooth from the app, the toggle button does not change its state to off if we click Deny or anywhere else on the screen.

**To Reproduce**

Steps to reproduce the behavior:

1. Click on OBD selection in the Dashboard fragment.

2. Click on toggle button.

3. Click on deny or anywhere on screen.

4. The toggle button switches to the ON state, whereas it should stay in the OFF state if Deny is clicked or nothing is selected.

**Expected behavior**

Reset the toggle button to the OFF state if no selection is made or Deny is clicked. We could also add an ON/OFF label instead of a plain toggle button.

| non_test | toggle button in adapter selection does not change state on deny describe the bug on trying to enable bluetooth from the app the toggle button does not change it state to off if we click on deny on anywhere on screen to reproduce steps to reproduce the behavior click on obd selection in dasboard fragment click on toggle button click on deny or anywhere on screen toggle button switches to on state rather it should be in off state if deny or nothing is selected expected behavior reset toggle button to off state if no selection is made or deny is clicked also we can add on off instead of simple toggle button | 0 |

323,886 | 23,971,747,744 | IssuesEvent | 2022-09-13 08:20:39 | wirDesign-communication-AG/wirHub-doc | https://api.github.com/repos/wirDesign-communication-AG/wirHub-doc | closed | v2.4.2 | documentation | Expected release: 12.09.

## Bug

- [x] #336

- [x] #338

- [x] #339

- [x] #341

- [x] #343