Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

384,036 | 26,573,492,130 | IssuesEvent | 2023-01-21 13:45:40 | supabase/supabase | https://api.github.com/repos/supabase/supabase | closed | JWT generator in self-hosted docs is broken - cannot connect to default project. | documentation auth | ### Discussed in https://github.com/supabase/supabase/discussions/8402

(I removed the original discussion, all the bug hunting was irrelevant)

I think the JWT token generator in the self-hosted docs is broken.

tokens generated by it are not accepted and lead to "cannot connect to default project"

AFAICT it come... | 1.0 | JWT generator in self-hosted docs is broken - cannot connect to default project. - ### Discussed in https://github.com/supabase/supabase/discussions/8402

(I removed the original discussion, all the bug hunting was irrelevant)

I think the JWT token generator in the self-hosted docs is broken.

tokens generated by it... | non_test | jwt generator in self hosted docs is broken cannot connect to default project discussed in i removed the original discussion all the bug hunting was irrelevant i think the jwt token generator in the self hosted docs is broken tokens generated by it are not accepted and lead to cannot connect to def... | 0 |

114,037 | 9,672,301,454 | IssuesEvent | 2019-05-22 02:50:47 | flutter/flutter | https://api.github.com/repos/flutter/flutter | opened | flutter_tools test/commands/create_test.dart takes over 6 minutes | a: tests tool | We really need to make this test more efficient somehow. Is it doing something redundant? Something we can cache? (But without making it less hermetic.) | 1.0 | flutter_tools test/commands/create_test.dart takes over 6 minutes - We really need to make this test more efficient somehow. Is it doing something redundant? Something we can cache? (But without making it less hermetic.) | test | flutter tools test commands create test dart takes over minutes we really need to make this test more efficient somehow is it doing something redundant something we can cache but without making it less hermetic | 1 |

47,212 | 6,044,996,167 | IssuesEvent | 2017-06-12 08:02:07 | python-trio/trio | https://api.github.com/repos/python-trio/trio | closed | Design: higher-level stream abstractions | design discussion | There's a gesture towards moving beyond concrete objects like sockets and pipes in the `trio._streams` interfaces. I'm not sure how much of this belongs in trio proper (as opposed to a library on top), but I think even a simple bit of convention might go a long way. What should this look like?

Prior art: [Twisted en... | 1.0 | Design: higher-level stream abstractions - There's a gesture towards moving beyond concrete objects like sockets and pipes in the `trio._streams` interfaces. I'm not sure how much of this belongs in trio proper (as opposed to a library on top), but I think even a simple bit of convention might go a long way. What shoul... | non_test | design higher level stream abstractions there s a gesture towards moving beyond concrete objects like sockets and pipes in the trio streams interfaces i m not sure how much of this belongs in trio proper as opposed to a library on top but i think even a simple bit of convention might go a long way what shoul... | 0 |

209,986 | 16,074,428,010 | IssuesEvent | 2021-04-25 04:10:53 | pingcap/br | https://api.github.com/repos/pingcap/br | opened | `br_tikv_outage` sometimes got stuck | component/test type/bug | Please answer these questions before submitting your issue. Thanks!

1. What did you do?

If possible, provide a recipe for reproducing the error.

run `br_tikv_outage`

2. What did you expect to see?

test passed.

3. What did you see instead?

Sometimes, if one TiKV leave permanently, there would be some regi... | 1.0 | `br_tikv_outage` sometimes got stuck - Please answer these questions before submitting your issue. Thanks!

1. What did you do?

If possible, provide a recipe for reproducing the error.

run `br_tikv_outage`

2. What did you expect to see?

test passed.

3. What did you see instead?

Sometimes, if one TiKV leav... | test | br tikv outage sometimes got stuck please answer these questions before submitting your issue thanks what did you do if possible provide a recipe for reproducing the error run br tikv outage what did you expect to see test passed what did you see instead sometimes if one tikv leav... | 1 |

77,643 | 7,583,052,484 | IssuesEvent | 2018-04-25 07:34:07 | red/red | https://api.github.com/repos/red/red | closed | GUI Console: Cursor misaligned when using input or when using ask with an empty string | GUI status.built status.tested type.bug | ### Expected behavior

`input` goes to the beginning of the new line before capturing input,

### Actual behavior

Cursor position is lined up with the end of the previous line, awaiting input.

### Steps to reproduce the problem

Type `input` then Enter, or `input`, then any string, then Enter.

`ask` with an empty st... | 1.0 | GUI Console: Cursor misaligned when using input or when using ask with an empty string - ### Expected behavior

`input` goes to the beginning of the new line before capturing input,

### Actual behavior

Cursor position is lined up with the end of the previous line, awaiting input.

### Steps to reproduce the problem

... | test | gui console cursor misaligned when using input or when using ask with an empty string expected behavior input goes to the beginning of the new line before capturing input actual behavior cursor position is lined up with the end of the previous line awaiting input steps to reproduce the problem ... | 1 |

23,328 | 3,793,333,647 | IssuesEvent | 2016-03-22 13:36:37 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | CNAME on a name directly under the zone-apex leads to DNSSEC SERVFAIL | defect rec | Commandline: `./pdns/pdns_recursor --daemon=no --loglevel=9 --trace --local-port=5300 --local-address=127.0.0.1 --socket-dir=. --dnssec=validate`

Considering the following records in a zone (where the NS and SOA are added by the registrar):

```

a.lieter.nl. IN CNAME b.lieter.nl.

b.lieter.nl. IN A 127.0.0.53

... | 1.0 | CNAME on a name directly under the zone-apex leads to DNSSEC SERVFAIL - Commandline: `./pdns/pdns_recursor --daemon=no --loglevel=9 --trace --local-port=5300 --local-address=127.0.0.1 --socket-dir=. --dnssec=validate`

Considering the following records in a zone (where the NS and SOA are added by the registrar):

`... | non_test | cname on a name directly under the zone apex leads to dnssec servfail commandline pdns pdns recursor daemon no loglevel trace local port local address socket dir dnssec validate considering the following records in a zone where the ns and soa are added by the registrar a... | 0 |

41,269 | 5,345,738,286 | IssuesEvent | 2017-02-17 17:44:28 | hacsoc/the_jolly_advisor | https://api.github.com/repos/hacsoc/the_jolly_advisor | closed | Search by keyword feature should be case-insensitive | beginner-friendly bug help wanted testing | ```

Scenario: Search by keyword in a course description # features/course_explorer.feature:24

When I search for courses by a keyword # features/step_definitions/course_explorer_steps.rb:59

Then I see only classes with that keyword in the name # features/step_definitions/course_explorer_ste... | 1.0 | Search by keyword feature should be case-insensitive - ```

Scenario: Search by keyword in a course description # features/course_explorer.feature:24

When I search for courses by a keyword # features/step_definitions/course_explorer_steps.rb:59

Then I see only classes with that keyword in t... | test | search by keyword feature should be case insensitive scenario search by keyword in a course description features course explorer feature when i search for courses by a keyword features step definitions course explorer steps rb then i see only classes with that keyword in the... | 1 |

74,926 | 25,409,328,255 | IssuesEvent | 2022-11-22 17:35:10 | FreeRADIUS/freeradius-server | https://api.github.com/repos/FreeRADIUS/freeradius-server | closed | Segmentation fault Freeradius 3.2.1 Robust proxy | defect v3.2.x | ### What type of defect/bug is this?

Crash or memory corruption (segv, abort, etc...)

### How can the issue be reproduced?

When try to send accounting package to robust proxy radius crash with Segmentation fault

### Log output from the FreeRADIUS daemon

```shell

freeradius -Xxxxxx

Tue Nov 22 21:31:19 2022 : Debu... | 1.0 | Segmentation fault Freeradius 3.2.1 Robust proxy - ### What type of defect/bug is this?

Crash or memory corruption (segv, abort, etc...)

### How can the issue be reproduced?

When try to send accounting package to robust proxy radius crash with Segmentation fault

### Log output from the FreeRADIUS daemon

```shell

... | non_test | segmentation fault freeradius robust proxy what type of defect bug is this crash or memory corruption segv abort etc how can the issue be reproduced when try to send accounting package to robust proxy radius crash with segmentation fault log output from the freeradius daemon shell ... | 0 |

159,144 | 6,041,203,894 | IssuesEvent | 2017-06-10 21:49:57 | svof/svof | https://api.github.com/repos/svof/svof | closed | Wrong definition of `a_darkyellow` | bug low priority simple difficulty up for grabs | > Sent By: Lynara On 2017-03-20 00:54:22

A_darkyellow, a color made by svo, is wrong - it should be {179,179,0}. It is {0,179,0}. Which is a_darkgreen. | 1.0 | Wrong definition of `a_darkyellow` - > Sent By: Lynara On 2017-03-20 00:54:22

A_darkyellow, a color made by svo, is wrong - it should be {179,179,0}. It is {0,179,0}. Which is a_darkgreen. | non_test | wrong definition of a darkyellow sent by lynara on a darkyellow a color made by svo is wrong it should be it is which is a darkgreen | 0 |

211,385 | 7,200,716,061 | IssuesEvent | 2018-02-05 19:59:22 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Server 0.7.0.0 - Consistent Server Crashing. | High Priority | [ServerCrash.txt](https://github.com/StrangeLoopGames/EcoIssues/files/1692641/ServerCrash.txt)

It seems to be roughly every half an hour that the server is online that it'll crash with the error log that has been attached above. This may be a Mono issue but I just thought I'd report the error log so someone could ha... | 1.0 | Server 0.7.0.0 - Consistent Server Crashing. - [ServerCrash.txt](https://github.com/StrangeLoopGames/EcoIssues/files/1692641/ServerCrash.txt)

It seems to be roughly every half an hour that the server is online that it'll crash with the error log that has been attached above. This may be a Mono issue but I just thoug... | non_test | server consistent server crashing it seems to be roughly every half an hour that the server is online that it ll crash with the error log that has been attached above this may be a mono issue but i just thought i d report the error log so someone could have a look over it | 0 |

49,923 | 13,187,292,663 | IssuesEvent | 2020-08-13 02:57:12 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [dataclasses] SuperDST pulse width (again) (Trac #2296) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2296">https://code.icecube.wisc.edu/ticket/2296</a>, reported by david.schultz and owned by david.schultz</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-06-04T16:18:43",

"description": "Apparently if... | 1.0 | [dataclasses] SuperDST pulse width (again) (Trac #2296) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2296">https://code.icecube.wisc.edu/ticket/2296</a>, reported by david.schultz and owned by david.schultz</em></summary>

<p>

```json

{

"status": "closed",

"changetime":... | non_test | superdst pulse width again trac migrated from json status closed changetime description apparently if the pulses to merge are at the end of the pulse list in superdst the merge does not happen example n n n n n n nlooks like this is because... | 0 |

692,181 | 23,725,271,268 | IssuesEvent | 2022-08-30 18:58:21 | cds-snc/notification-planning | https://api.github.com/repos/cds-snc/notification-planning | closed | (File Upload) Multiple Labels. | Accessiblity | Accessibilité Medium Priority | Priorité moyenne | # File Upload, Fields

Visibility: 2

Evaluation: Does not support

Success Criteria: 3.3.2: Labels or Instructions

Needs design:

### Description

For file upload inputs, there appear to be three <label> elements associated with the input. This may cause confusion for an AT and may result in the label not being reported... | 1.0 | (File Upload) Multiple Labels. - # File Upload, Fields

Visibility: 2

Evaluation: Does not support

Success Criteria: 3.3.2: Labels or Instructions

Needs design:

### Description

For file upload inputs, there appear to be three <label> elements associated with the input. This may cause confusion for an AT and may resul... | non_test | file upload multiple labels file upload fields visibility evaluation does not support success criteria labels or instructions needs design description for file upload inputs there appear to be three elements associated with the input this may cause confusion for an at and may result in t... | 0 |

73,569 | 7,344,404,262 | IssuesEvent | 2018-03-07 14:35:48 | kubeflow/kubeflow | https://api.github.com/repos/kubeflow/kubeflow | closed | E2E Testing For Kubeflow. | testing | We need to setup continuous E2E testing for google/kubeflow

- [ ] Create a basic an E2E test

- [ ] Deploy and verify JupyterHub is working

- [X] Deploy and verify TfJob is working

- [ ] Verify TfServing is working

- [X] Setup prow for google/kubeflow

- [X] Setup presubmit tests

- [ ] S... | 1.0 | E2E Testing For Kubeflow. - We need to setup continuous E2E testing for google/kubeflow

- [ ] Create a basic an E2E test

- [ ] Deploy and verify JupyterHub is working

- [X] Deploy and verify TfJob is working

- [ ] Verify TfServing is working

- [X] Setup prow for google/kubeflow

- [X] Setup p... | test | testing for kubeflow we need to setup continuous testing for google kubeflow create a basic an test deploy and verify jupyterhub is working deploy and verify tfjob is working verify tfserving is working setup prow for google kubeflow setup presubmit tests ... | 1 |

95,885 | 8,580,121,351 | IssuesEvent | 2018-11-13 11:02:05 | elastic/beats | https://api.github.com/repos/elastic/beats | reopened | [Metricbeat] Flaky test_couchbase.Test.test_couchbase_0_bucket | :Testing Metricbeat flaky-test | link: https://beats-ci.elastic.co/job/elastic+beats+pull-request+multijob-linux/5782/beat=metricbeat,label=ubuntu/testReport/junit/test_couchbase/Test/test_couchbase_0_bucket/

platform: linux

```

Element counts were not equal:

First has 1, Second has 0: 'couchbase'

First has 0, Second has 1: u'error'

----------... | 2.0 | [Metricbeat] Flaky test_couchbase.Test.test_couchbase_0_bucket - link: https://beats-ci.elastic.co/job/elastic+beats+pull-request+multijob-linux/5782/beat=metricbeat,label=ubuntu/testReport/junit/test_couchbase/Test/test_couchbase_0_bucket/

platform: linux

```

Element counts were not equal:

First has 1, Second has ... | test | flaky test couchbase test test couchbase bucket link platform linux element counts were not equal first has second has couchbase first has second has u error begin captured stdout u beat u hostname u u name u u versi... | 1 |

161,894 | 13,879,643,500 | IssuesEvent | 2020-10-17 15:20:30 | DnanaDev/Covid19-India-Analysis-and-Forecasting | https://api.github.com/repos/DnanaDev/Covid19-India-Analysis-and-Forecasting | closed | Multi-Step Forecast when using Lagged features | bug documentation | The models use multiple lagged features of the target from t-1 to t-7. This is a problem at inference time. Suppose you need to predict 2 days into the future. Two possible solutions exists:

1. Direct approach - Predict for the next day. Use all the data to train a new model. predict for the next day.

2. Recurs... | 1.0 | Multi-Step Forecast when using Lagged features - The models use multiple lagged features of the target from t-1 to t-7. This is a problem at inference time. Suppose you need to predict 2 days into the future. Two possible solutions exists:

1. Direct approach - Predict for the next day. Use all the data to train a n... | non_test | multi step forecast when using lagged features the models use multiple lagged features of the target from t to t this is a problem at inference time suppose you need to predict days into the future two possible solutions exists direct approach predict for the next day use all the data to train a n... | 0 |

233,133 | 18,950,393,657 | IssuesEvent | 2021-11-18 14:39:17 | geosolutions-it/geonode | https://api.github.com/repos/geosolutions-it/geonode | closed | Tests for the release of GN 3.3.0 | Epic Testing | @ElenaGallo we are ready to run a full test of https://development.demo.geonode.org/ in view of the release of GN 3.3.0 next week.

Issues:

- mobile

- [x] [video](https://studio.cucumber.io/projects/291214/test-runs/605303/folder-snapshots/6156164/scenario-snapshots/21581429/test-snapshots/29431160) https://gi... | 1.0 | Tests for the release of GN 3.3.0 - @ElenaGallo we are ready to run a full test of https://development.demo.geonode.org/ in view of the release of GN 3.3.0 next week.

Issues:

- mobile

- [x] [video](https://studio.cucumber.io/projects/291214/test-runs/605303/folder-snapshots/6156164/scenario-snapshots/21581429... | test | tests for the release of gn elenagallo we are ready to run a full test of in view of the release of gn next week issues mobile can t reproduce download add thumbnails from save as and ... | 1 |

311,401 | 9,532,633,643 | IssuesEvent | 2019-04-29 19:06:36 | dojot/dojot | https://api.github.com/repos/dojot/dojot | opened | [Flowbroker] Publishing on device using device out node causes persister failure | Priority:Critical Team:Backend Type:Bug | Publishing on device using device out node causes persister failure.

```

persister_1 | Exception in thread Persister:

persister_1 | Traceback (most recent call last):

persister_1 | File "/usr/local/lib/python3.6/threading.py", line 916, in _bootstrap_inner

... | 1.0 | [Flowbroker] Publishing on device using device out node causes persister failure - Publishing on device using device out node causes persister failure.

```

persister_1 | Exception in thread Persister:

persister_1 | Traceback (most recent call last):

persister_1 ... | non_test | publishing on device using device out node causes persister failure publishing on device using device out node causes persister failure persister exception in thread persister persister traceback most recent call last persister file ... | 0 |

46,089 | 9,882,746,911 | IssuesEvent | 2019-06-24 17:39:04 | MicrosoftDocs/live-share | https://api.github.com/repos/MicrosoftDocs/live-share | closed | [VS Code] Removing Terminal: Cannot read property 'terminalId' of undefined | area: terminal external logs attached vscode | <!--

For Visual Studio problems/feedback, please use the "Report a Problem..." feature built into the tool. See https://aka.ms/vsls-vsproblem.

For VS Code issues, attach verbose logs as follows:

1. Press F1 (or Ctrl-Shift-P), type "export logs" and run the "Live Share: Export Logs" command.

2. Drag and drop the ... | 1.0 | [VS Code] Removing Terminal: Cannot read property 'terminalId' of undefined - <!--

For Visual Studio problems/feedback, please use the "Report a Problem..." feature built into the tool. See https://aka.ms/vsls-vsproblem.

For VS Code issues, attach verbose logs as follows:

1. Press F1 (or Ctrl-Shift-P), type "expo... | non_test | removing terminal cannot read property terminalid of undefined for visual studio problems feedback please use the report a problem feature built into the tool see for vs code issues attach verbose logs as follows press or ctrl shift p type export logs and run the live share expo... | 0 |

56,377 | 6,518,056,414 | IssuesEvent | 2017-08-28 05:51:27 | ThaDafinser/ZfcDatagrid | https://api.github.com/repos/ThaDafinser/ZfcDatagrid | closed | Cannot inject custom formatter | Verify/test needed | Though a column can use translation setting the `setTranslationEnabled()` method to true I need to translate my value inside a custom formatter.

This is more of _Question_ than an _Issue_.

Since this is my _DI_ attempt I tried to inject the `viewRenderer` into my custom formatter inside my `module.config.php`:

``` p... | 1.0 | Cannot inject custom formatter - Though a column can use translation setting the `setTranslationEnabled()` method to true I need to translate my value inside a custom formatter.

This is more of _Question_ than an _Issue_.

Since this is my _DI_ attempt I tried to inject the `viewRenderer` into my custom formatter insi... | test | cannot inject custom formatter though a column can use translation setting the settranslationenabled method to true i need to translate my value inside a custom formatter this is more of question than an issue since this is my di attempt i tried to inject the viewrenderer into my custom formatter insi... | 1 |

114,051 | 24,536,725,494 | IssuesEvent | 2022-10-11 21:32:43 | quiqueck/BCLib | https://api.github.com/repos/quiqueck/BCLib | closed | [Bug] Double plants don't have drop for top block | 🔥 bug 🎉 Dev Code | ### What happened?

BaseDoublePlantBlock don't have a drop list for top block:

```java

@Override

public List<ItemStack> getDrops(BlockState state, LootContext.Builder builder) {

if (state.getValue(TOP)) {

return Lists.newArrayList();

}

ItemStack tool = builder.getPar... | 1.0 | [Bug] Double plants don't have drop for top block - ### What happened?

BaseDoublePlantBlock don't have a drop list for top block:

```java

@Override

public List<ItemStack> getDrops(BlockState state, LootContext.Builder builder) {

if (state.getValue(TOP)) {

return Lists.newArrayList();

... | non_test | double plants don t have drop for top block what happened basedoubleplantblock don t have a drop list for top block java override public list getdrops blockstate state lootcontext builder builder if state getvalue top return lists newarraylist ... | 0 |

57,490 | 3,082,696,986 | IssuesEvent | 2015-08-24 00:11:25 | magro/memcached-session-manager | https://api.github.com/repos/magro/memcached-session-manager | closed | When the jvmRoute contains a dash (-) sessions are not saved in memcached | bug imported Milestone-1.4.1 Priority-Medium | _From [rainer.j...@kippdata.de](https://code.google.com/u/102517300929192813948/) on March 16, 2011 16:59:57_

SessionIdFormat uses a pattern "[^-.]+-[^.]+(\\.[\\w-]+)?" to test for valid session ids.

I had a memcached called "n1" and a jvmRoute tc7-a (and tc7-b) which leads to session ids like ...-n1.tc7-a. Unfortu... | 1.0 | When the jvmRoute contains a dash (-) sessions are not saved in memcached - _From [rainer.j...@kippdata.de](https://code.google.com/u/102517300929192813948/) on March 16, 2011 16:59:57_

SessionIdFormat uses a pattern "[^-.]+-[^.]+(\\.[\\w-]+)?" to test for valid session ids.

I had a memcached called "n1" and a jvmR... | non_test | when the jvmroute contains a dash sessions are not saved in memcached from on march sessionidformat uses a pattern to test for valid session ids i had a memcached called and a jvmroute a and b which leads to session ids like a unfortunately a does not m... | 0 |

196,613 | 14,881,729,116 | IssuesEvent | 2021-01-20 10:53:24 | rancher/harvester | https://api.github.com/repos/rancher/harvester | closed | [BUG] UI incorrectly states that data will be deleted from the volume when a data disk is removed from a VM | area/ui bug to-test | **Describe the bug**

If you attach and mount a volume to a VM and then put data on it, when you delete that volume from a VM, you get a message stating that the data on the volume will be removed. However, if you mount that volume on a different VM, that data is available (as I would have expected it to be).

**To ... | 1.0 | [BUG] UI incorrectly states that data will be deleted from the volume when a data disk is removed from a VM - **Describe the bug**

If you attach and mount a volume to a VM and then put data on it, when you delete that volume from a VM, you get a message stating that the data on the volume will be removed. However, if... | test | ui incorrectly states that data will be deleted from the volume when a data disk is removed from a vm describe the bug if you attach and mount a volume to a vm and then put data on it when you delete that volume from a vm you get a message stating that the data on the volume will be removed however if you... | 1 |

735,322 | 25,389,446,681 | IssuesEvent | 2022-11-22 01:58:36 | tomm3hgunn/Jayhawk-Go | https://api.github.com/repos/tomm3hgunn/Jayhawk-Go | closed | Display Moneyline in matches.html | feature high priority | Similar to the currently implemented Spreads Pane, display the necessary data for Moneyline in the Moneyline pane. Inside the matches.html, look for the comment PANE 1 to see how the Spreads data was displayed. Do your work under the PANE 3 comment. The format of the row may need to be modified as the Moneyline data ma... | 1.0 | Display Moneyline in matches.html - Similar to the currently implemented Spreads Pane, display the necessary data for Moneyline in the Moneyline pane. Inside the matches.html, look for the comment PANE 1 to see how the Spreads data was displayed. Do your work under the PANE 3 comment. The format of the row may need to ... | non_test | display moneyline in matches html similar to the currently implemented spreads pane display the necessary data for moneyline in the moneyline pane inside the matches html look for the comment pane to see how the spreads data was displayed do your work under the pane comment the format of the row may need to ... | 0 |

236,702 | 26,046,799,999 | IssuesEvent | 2022-12-22 15:02:59 | Gal-Doron/private-gradle-github | https://api.github.com/repos/Gal-Doron/private-gradle-github | opened | CVE-2021-44228 (High) detected in log4j-core-2.13.1.jar | security vulnerability | ## CVE-2021-44228 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.13.1.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Library home page: <a href="https://... | True | CVE-2021-44228 (High) detected in log4j-core-2.13.1.jar - ## CVE-2021-44228 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.13.1.jar</b></p></summary>

<p>The Apache Log4j ... | non_test | cve high detected in core jar cve high severity vulnerability vulnerable library core jar the apache implementation library home page a href path to dependency file build gradle path to vulnerable library home wss scanner gradle caches modules files or... | 0 |

123,607 | 10,276,745,218 | IssuesEvent | 2019-08-24 20:16:48 | wasmjit-omr/wasmjit-omr | https://api.github.com/repos/wasmjit-omr/wasmjit-omr | opened | Find a better way to prevent JIT compilation of certain functions | testing | Currently, there are several tests that need to ensure that certain functions are run in the interpreter, e.g. the stack trace tests. Currently, these tests make use of the unsupported `memory.size` opcode to cause the function to not be JIT compiled. Unfortunately, this solution will only work until we run out of opco... | 1.0 | Find a better way to prevent JIT compilation of certain functions - Currently, there are several tests that need to ensure that certain functions are run in the interpreter, e.g. the stack trace tests. Currently, these tests make use of the unsupported `memory.size` opcode to cause the function to not be JIT compiled. ... | test | find a better way to prevent jit compilation of certain functions currently there are several tests that need to ensure that certain functions are run in the interpreter e g the stack trace tests currently these tests make use of the unsupported memory size opcode to cause the function to not be jit compiled ... | 1 |

15,795 | 3,483,013,999 | IssuesEvent | 2015-12-30 07:12:34 | sadikovi/octohaven | https://api.github.com/repos/sadikovi/octohaven | closed | [OCTO-46] Scheduling more items than half of the pool size | bug test | Scheduler bug when scheduling more items than half of the pool size results in fetching the same items, therefore, others are not updated properly. For example,

```

job1 -> CREATED

job2 -> CREATED

job3 -> CREATED

job4 -> CREATED

```

After fetching two jobs, you will be fetching those 2 jobs only, so the rest ... | 1.0 | [OCTO-46] Scheduling more items than half of the pool size - Scheduler bug when scheduling more items than half of the pool size results in fetching the same items, therefore, others are not updated properly. For example,

```

job1 -> CREATED

job2 -> CREATED

job3 -> CREATED

job4 -> CREATED

```

After fetching t... | test | scheduling more items than half of the pool size scheduler bug when scheduling more items than half of the pool size results in fetching the same items therefore others are not updated properly for example created created created created after fetching two jobs you will be... | 1 |

14,929 | 3,436,348,400 | IssuesEvent | 2015-12-12 09:32:23 | akvo/akvo-caddisfly | https://api.github.com/repos/akvo/akvo-caddisfly | closed | Use 'level' quality parameter | Strip test | to make sure the calibration card image is straight enough, we can use a 'level' quality parameter.

Alternatively, we could show a 'level' indic... | 1.0 | Use 'level' quality parameter - to make sure the calibration card image is straight enough, we can use a 'level' quality parameter.

Alternativel... | test | use level quality parameter to make sure the calibration card image is straight enough we can use a level quality parameter alternatively we could show a level indicator with an arrow the arrow shown is the direction in which the user needs to move the camera the arrow to show can be determin... | 1 |

165,734 | 20,617,066,761 | IssuesEvent | 2022-03-07 14:12:52 | ioana-nicolae/terraform-tests | https://api.github.com/repos/ioana-nicolae/terraform-tests | opened | CVE-2021-45105 (Medium) detected in log4j-core-2.12.1.jar | security vulnerability | ## CVE-2021-45105 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.12.1.jar</b></p></summary>

<p>The Apache Log4j Implementation</p>

<p>Library home page: <a href="https:... | True | CVE-2021-45105 (Medium) detected in log4j-core-2.12.1.jar - ## CVE-2021-45105 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-core-2.12.1.jar</b></p></summary>

<p>The Apache Lo... | non_test | cve medium detected in core jar cve medium severity vulnerability vulnerable library core jar the apache implementation library home page a href path to dependency file pom xml path to vulnerable library itory org apache logging core core ja... | 0 |

154,100 | 12,193,066,618 | IssuesEvent | 2020-04-29 13:53:32 | bitcoin/bitcoin | https://api.github.com/repos/bitcoin/bitcoin | opened | Run functional tests from make check | Brainstorming Feature Tests | We have a bunch of tests:

* unit tests

* util tests written in python

* bench runner

* subtree tests

All of them are run when you type `make check`.

However, for running the functional tests, one has to type `./test/functional/test_runner.py`

I think some people asked for the functional tests to be run a... | 1.0 | Run functional tests from make check - We have a bunch of tests:

* unit tests

* util tests written in python

* bench runner

* subtree tests

All of them are run when you type `make check`.

However, for running the functional tests, one has to type `./test/functional/test_runner.py`

I think some people ask... | test | run functional tests from make check we have a bunch of tests unit tests util tests written in python bench runner subtree tests all of them are run when you type make check however for running the functional tests one has to type test functional test runner py i think some people ask... | 1 |

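317,989 | 27,276,938,344 | IssuesEvent | 2023-02-23 06:33:16 | TencentBlueKing/bk-ci | https://api.github.com/repos/TencentBlueKing/bk-ci | closed | [R&D Store] When a plugin private-configuration field value is displayed in plaintext, focusing the edit box should not clear it | for gray for test kind/enhancement tested kind/version/sample streams/tested streams/for test streams/for gray streams/done sample/passed | When a plugin private-configuration field value is displayed in plaintext, focusing the edit box should not clear it, so that users can modify the configuration or copy the content

<img width="400" alt="image" src="https://user-images.githubusercontent.com/54432927/215701103-e122b895-4f57-4307-b993-84f6286ad393.png"> | 4.0 | [R&D Store] When a plugin private-configuration field value is displayed in plaintext, focusing the edit box should not clear it - When a plugin private-configuration field value is displayed in plaintext, focusing the edit box should not clear it, so that users can modify the configuration or copy the content

<img width="400" alt="image" src="https://user-images.githubusercontent.com/54432927/215701103-e122b895-4f57-4307-b993-84f6286ad393.png"> | test | r d store when a plugin private configuration field value is displayed in plaintext focusing the edit box should not clear it when a plugin private configuration field value is displayed in plaintext focusing the edit box should not clear it so that users can modify the configuration or copy the content img width alt image src | 1 |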

334,808 | 29,992,362,854 | IssuesEvent | 2023-06-26 00:07:16 | flojoy-io/studio | https://api.github.com/repos/flojoy-io/studio | closed | open & run every example app in the `apps` repo. validate that run results display in the CTRL panel | CI & test automation | To Do:

open & run every example app in the app’s repo. validate that run results display in the CTRL panel

- need more automation

- may need to add mocking, if so - convert to new tasks | 1.0 | open & run every example app in the `apps` repo. validate that run results display in the CTRL panel - To Do:

open & run every example app in the app’s repo. validate that run results display in the CTRL panel

- need more automation

- may need to add mocking, if so - convert to new tasks | test | open run every example app in the apps repo validate that run results display in the ctrl panel to do open run every example app in the app’s repo validate that run results display in the ctrl panel need more automation may need to add mocking if so convert to new tasks | 1 |

758,473 | 26,556,911,009 | IssuesEvent | 2023-01-20 12:50:27 | owid/owid-grapher | https://api.github.com/repos/owid/owid-grapher | closed | Project: Port Wordpress pages content to ArchieML JSON | site priority 2 - important | We want to move fully to the new google docs based authoring flow but we have a lot of content still in Wordpress. This project is about automatically moving wordpress pages into google docs/ArchieML.

A substantial fraction of components that we have in Wordpress do not exist yet in google docs. Part of this project... | 1.0 | Project: Port Wordpress pages content to ArchieML JSON - We want to move fully to the new google docs based authoring flow but we have a lot of content still in Wordpress. This project is about automatically moving wordpress pages into google docs/ArchieML.

A substantial fraction of components that we have in Wordpr... | non_test | project port wordpress pages content to archieml json we want to move fully to the new google docs based authoring flow but we have a lot of content still in wordpress this project is about automatically moving wordpress pages into google docs archieml a substantial fraction of components that we have in wordpr... | 0 |

46,593 | 5,824,148,869 | IssuesEvent | 2017-05-07 10:07:33 | TechnionYP5777/Leonidas-FTW | https://api.github.com/repos/TechnionYP5777/Leonidas-FTW | closed | Find a way to automatically test GUI | Quality assurance Testing | @AnnaBel7 @amirsagiv83 do you know any convenient ways to do this? Who do you think this issue is most relevant for? | 1.0 | Find a way to automatically test GUI - @AnnaBel7 @amirsagiv83 do you know any convenient ways to do this? Who do you think this issue is most relevant for? | test | find a way to automatically test gui do you know any convenient ways to do this who do you think this issue is most relevant for | 1 |

300,420 | 25,967,521,597 | IssuesEvent | 2022-12-19 08:28:24 | betagouv/preuve-covoiturage | https://api.github.com/repos/betagouv/preuve-covoiturage | closed | Certificate - typo in the ‘Contributions passager' table, | BUG ATTESTATION Stale | I received feedback about the certificates: we let a typo slip through.

In the ‘Contributions passager' section, the last column of the table is labelled ‘Gains’, but it actually shows the 'Reste à chargé’.

=> So should we rename the ‘gains’ column to ‘coûts’?

<img width="809" alt="Capture d’écran 20... | 1.0 | Certificate - typo in the ‘Contributions passager' table, - I received feedback about the certificates: we let a typo slip through.

In the ‘Contributions passager' section, the last column of the table is labelled ‘Gains’, but it actually shows the 'Reste à chargé’.

=> So should we rename the column... | test | certificate typo in the ‘contributions passager table i received feedback about the certificates we let a typo slip through in the ‘contributions passager section the last column of the table is labelled ‘gains’ but it actually shows the reste à chargé’ so should we rename the column... | 1 |

400,009 | 27,265,488,584 | IssuesEvent | 2023-02-22 17:41:44 | OpenC3/cosmos | https://api.github.com/repos/OpenC3/cosmos | closed | Even when specifying OPENC3_TAG=5.1.1 in .env , the latest beta version is built (OpenC3/COSMOS repo) | documentation | This is more a cautionary warning than a bug I believe...

I have to use the "OpenC3/cosmos" instead of the reccomended "OpenC3/cosmos-project" repo, because I want to create the Ethernet To Serial bridge connection, solved and documented in several other posts at BallAerospace/COSMOS.

When cloning that repo, it'... | 1.0 | Even when specifying OPENC3_TAG=5.1.1 in .env , the latest beta version is built (OpenC3/COSMOS repo) - This is more a cautionary warning than a bug I believe...

I have to use the "OpenC3/cosmos" instead of the reccomended "OpenC3/cosmos-project" repo, because I want to create the Ethernet To Serial bridge connecti... | non_test | even when specifying tag in env the latest beta version is built cosmos repo this is more a cautionary warning than a bug i believe i have to use the cosmos instead of the reccomended cosmos project repo because i want to create the ethernet to serial bridge connection solved and docum... | 0 |

22,729 | 4,833,882,240 | IssuesEvent | 2016-11-08 12:36:06 | TalatCikikci/Fall2016Swe573_HealthTracker | https://api.github.com/repos/TalatCikikci/Fall2016Swe573_HealthTracker | opened | Create the Project Plan | documentation in progress to-do | A project plan needs to be created to track the progress, assess the risks and deliver the project in a structured manner. | 1.0 | Create the Project Plan - A project plan needs to be created to track the progress, assess the risks and deliver the project in a structured manner. | non_test | create the project plan a project plan needs to be created to track the progress assess the risks and deliver the project in a structured manner | 0 |

43,485 | 5,541,520,190 | IssuesEvent | 2017-03-22 13:05:27 | openbmc/openbmc-test-automation | https://api.github.com/repos/openbmc/openbmc-test-automation | closed | Add support for 'fieldReplaceable' and 'cacheable' | Test | https://github.com/openbmc/openbmc/issues/1099 - This is equivalent to what deepak had dropped the changes.

Please feel free to estimate. | 1.0 | Add support for 'fieldReplaceable' and 'cacheable' - https://github.com/openbmc/openbmc/issues/1099 - This is equivalent to what deepak had dropped the changes.

Please feel free to estimate. | test | add support for fieldreplaceable and cacheable this is equivalent to what deepak had dropped the changes please feel free to estimate | 1 |

74,508 | 15,350,273,111 | IssuesEvent | 2021-03-01 01:55:49 | bitbar/test-samples | https://api.github.com/repos/bitbar/test-samples | closed | CVE-2017-5929 (High) detected in logback-core-1.1.7.jar, logback-classic-1.1.7.jar | closing security vulnerability | ## CVE-2017-5929 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>logback-core-1.1.7.jar</b>, <b>logback-classic-1.1.7.jar</b></p></summary>

<p>

<details><summary><b>logback-core-1.1.... | True | CVE-2017-5929 (High) detected in logback-core-1.1.7.jar, logback-classic-1.1.7.jar - ## CVE-2017-5929 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>logback-core-1.1.7.jar</b>, <b>lo... | non_test | cve high detected in logback core jar logback classic jar cve high severity vulnerability vulnerable libraries logback core jar logback classic jar logback core jar logback core module library home page a href path to dependency file test ... | 0 |

782,644 | 27,501,918,586 | IssuesEvent | 2023-03-05 19:44:52 | AUBGTheHUB/spa-website-2022 | https://api.github.com/repos/AUBGTheHUB/spa-website-2022 | closed | Add participants to respective teams | high priority api ADMIN PANEL | ## Brief description:

Create a new team if the one entered in the form doesn't exist, otherwise, add that participant to their team in the DB.

## How to achieve it:

Check if TeamNoTeam is true or false:

If True -> Check if the team exists if not create it. If it exists add the participant to it.

Else -> Add parti... | 1.0 | Add participants to respective teams - ## Brief description:

Create a new team if the one entered in the form doesn't exist, otherwise, add that participant to their team in the DB.

## How to achieve it:

Check if TeamNoTeam is true or false:

If True -> Check if the team exists if not create it. If it exists add th... | non_test | add participants to respective teams brief description create a new team if the one entered in the form doesn t exist otherwise add that participant to their team in the db how to achieve it check if teamnoteam is true or false if true check if the team exists if not create it if it exists add th... | 0 |

241,909 | 18,499,714,041 | IssuesEvent | 2021-10-19 12:37:25 | chartjs/Chart.js | https://api.github.com/repos/chartjs/Chart.js | closed | Cant run example "Time Scale - Max Span" | type: documentation | Documentation Is:

<!-- Please place an x (no spaces!) in all [ ] that apply -->

- [ ] Missing or needed

- [x] Confusing

- [ ] Not Sure?

### Please Explain in Detail...

I try run example from https://www.chartjs.org/docs/latest/samples/scales/time-max-span.html but geting error `Uncaught Error: This method i... | 1.0 | Cant run example "Time Scale - Max Span" - Documentation Is:

<!-- Please place an x (no spaces!) in all [ ] that apply -->

- [ ] Missing or needed

- [x] Confusing

- [ ] Not Sure?

### Please Explain in Detail...

I try run example from https://www.chartjs.org/docs/latest/samples/scales/time-max-span.html but ... | non_test | cant run example time scale max span documentation is missing or needed confusing not sure please explain in detail i try run example from but geting error uncaught error this method is not implemented check that a complete date adapter is provided your proposal fo... | 0 |

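142,463 | 13,025,386,263 | IssuesEvent | 2020-07-27 13:28:11 | mash-up-kr/Dionysos-Backend | https://api.github.com/repos/mash-up-kr/Dionysos-Backend | opened | Summarize the sprint meeting items. | documentation | ## Purpose

This issue is for summarizing the sprint meeting items.

## Task details

- [ ] Collect the meeting agenda items

- [ ] Set up the monthly sprint

- [ ] Set up the weekly sprint

## Notes

.

| 1.0 | Summarize the sprint meeting items. - ## Purpose

This issue is for summarizing the sprint meeting items.

## Task details

- [ ] Collect the meeting agenda items

- [ ] Set up the monthly sprint

- [ ] Set up the weekly sprint

## Notes

.

| non_test | summarize the sprint meeting items purpose this issue is for summarizing the sprint meeting items task details collect the meeting agenda items set up the monthly sprint set up the weekly sprint notes | 0 |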

57,590 | 6,551,473,222 | IssuesEvent | 2017-09-05 14:52:12 | pburns96/Revature-VenderBender | https://api.github.com/repos/pburns96/Revature-VenderBender | closed | As a manager, I would like to be able to add CDs and LPs | High Priority Testing | Task List

----------

-Be able to add a new CD and LP to the database

-Be able to view the newly added CD and LP? | 1.0 | As a manager, I would like to be able to add CDs and LPs - Task List

----------

-Be able to add a new CD and LP to the database

-Be able to view the newly added CD and LP? | test | as a manager i would like to be able to add cds and lps task list be able to add a new cd and lp to the database be able to view the newly added cd and lp | 1 |

10,337 | 3,103,601,336 | IssuesEvent | 2015-08-31 11:06:02 | sohelvali/Test-Git-Issue | https://api.github.com/repos/sohelvali/Test-Git-Issue | closed | Bactrim label Warnings&Precautions section contains unrelated sections | test Label | See highlights in the comparison in Warnings/Precautions section. Looks like it contains 3 unrelated sections under the actual warnings and precautions section. Some of them are also captured in their own sections separately as well. Why are they duplicated here? Can this be fixed? The issue is with the label in the da... | 1.0 | Bactrim label Warnings&Precautions section contains unrelated sections - See highlights in the comparison in Warnings/Precautions section. Looks like it contains 3 unrelated sections under the actual warnings and precautions section. Some of them are also captured in their own sections separately as well. Why are they ... | test | bactrim label warnings precautions section contains unrelated sections see highlights in the comparison in warnings precautions section looks like it contains unrelated sections under the actual warnings and precautions section some of them are also captured in their own sections separately as well why are they ... | 1 |

117,341 | 9,924,160,967 | IssuesEvent | 2019-07-01 09:03:21 | openshift/odo | https://api.github.com/repos/openshift/odo | closed | Add/improve test specs | area/testing priority/High | [kind/Enhancement]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the chat and talk to us if you have a question rather than a bug or feature request.

The chat room is at: https://chat.openshift.io/developers/channels/odo

Thanks for understanding, and for contribu... | 1.0 | Add/improve test specs - [kind/Enhancement]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the chat and talk to us if you have a question rather than a bug or feature request.

The chat room is at: https://chat.openshift.io/developers/channels/odo

Thanks for unders... | test | add improve test specs welcome we kindly ask you to fill out the issue template below use the chat and talk to us if you have a question rather than a bug or feature request the chat room is at thanks for understanding and for contributing to the project which ... | 1 |

356,392 | 25,176,178,829 | IssuesEvent | 2022-11-11 09:27:35 | cliftonfelix/pe | https://api.github.com/repos/cliftonfelix/pe | opened | INDEX Constraints for View | type.DocumentationBug severity.Low |

Eventhough it's already stated in the Placeholders section, I would still need to k... | 1.0 | INDEX Constraints for View -

Eventhough it's already stated in the Placeholders sec... | non_test | index constraints for view eventhough it s already stated in the placeholders section i would still need to know the constraints of index under view command i wouldn t want to be bothered to go back and forth to see what is the constraint especially if the ug is pages long | 0 |

22,005 | 4,763,942,442 | IssuesEvent | 2016-10-25 15:40:45 | PrismLibrary/Prism | https://api.github.com/repos/PrismLibrary/Prism | closed | Inconsistent rendering of doc headers | documentation | On first sight the title in both markdown files are the same (# followed by spaced followed by title)

https://raw.githubusercontent.com/PrismLibrary/Prism/master/docs/WPF/04-Modules.md

https://raw.githubusercontent.com/PrismLibrary/Prism/master/docs/WPF/05-Implementing-MVVM.md

But rendering is different, 04 fails ... | 1.0 | Inconsistent rendering of doc headers - On first sight the title in both markdown files are the same (# followed by spaced followed by title)

https://raw.githubusercontent.com/PrismLibrary/Prism/master/docs/WPF/04-Modules.md

https://raw.githubusercontent.com/PrismLibrary/Prism/master/docs/WPF/05-Implementing-MVVM.md

... | non_test | inconsistent rendering of doc headers on first sight the title in both markdown files are the same followed by spaced followed by title but rendering is different fails and is correct any specialists around who spot the issue | 0 |

40,745 | 5,314,904,060 | IssuesEvent | 2017-02-13 16:06:18 | openbmc/openbmc-test-automation | https://api.github.com/repos/openbmc/openbmc-test-automation | opened | [Code Update] Fix MTD device block 5 check to avoid failure from new kernel changes | Test | Executing command 'df -h | grep -v /dev/mtdblock5 | cut -c 52-54 | grep 100 | wc -l'

This will fail since the initramfs block is going away..

This needs to be fixed https://github.com/openbmc/openbmc-test-automation/blob/master/extended/code_update/update_bmc.robot#L42

"Check BMC File System Performance" | 1.0 | [Code Update] Fix MTD device block 5 check to avoid failure from new kernel changes - Executing command 'df -h | grep -v /dev/mtdblock5 | cut -c 52-54 | grep 100 | wc -l'

This will fail since the initramfs block is going away..

This needs to be fixed https://github.com/openbmc/openbmc-test-automation/blob/master... | test | fix mtd device block check to avoid failure from new kernel changes executing command df h grep v dev cut c grep wc l this will fail since the initramfs block is going away this needs to be fixed check bmc file system performance | 1 |

327,735 | 28,080,572,939 | IssuesEvent | 2023-03-30 05:54:02 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | pkg/ccl/logictestccl/tests/multiregion-9node-3region-3azs-no-los/multiregion-9node-3region-3azs-no-los_test: TestCCLLogic_regional_by_row_hash_sharded_index failed | C-test-failure O-robot branch-master | pkg/ccl/logictestccl/tests/multiregion-9node-3region-3azs-no-los/multiregion-9node-3region-3azs-no-los_test.TestCCLLogic_regional_by_row_hash_sharded_index [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/9330243?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com... | 1.0 | pkg/ccl/logictestccl/tests/multiregion-9node-3region-3azs-no-los/multiregion-9node-3region-3azs-no-los_test: TestCCLLogic_regional_by_row_hash_sharded_index failed - pkg/ccl/logictestccl/tests/multiregion-9node-3region-3azs-no-los/multiregion-9node-3region-3azs-no-los_test.TestCCLLogic_regional_by_row_hash_sharded_inde... | test | pkg ccl logictestccl tests multiregion no los multiregion no los test testccllogic regional by row hash sharded index failed pkg ccl logictestccl tests multiregion no los multiregion no los test testccllogic regional by row hash sharded index with on master run testc... | 1 |

437,386 | 30,596,400,705 | IssuesEvent | 2023-07-21 22:47:58 | ClimateImpactLab/dodola | https://api.github.com/repos/ClimateImpactLab/dodola | closed | Add label for code source to container images | documentation enhancement help wanted | We should put a label in the Dockerfile to add a URL to the code repository.

Basically add this

```

LABEL org.opencontainers.image.source="https://github.com/ClimateImpactLab/dodola"

```

to `Dockerfile`. | 1.0 | Add label for code source to container images - We should put a label in the Dockerfile to add a URL to the code repository.

Basically add this

```

LABEL org.opencontainers.image.source="https://github.com/ClimateImpactLab/dodola"

```

to `Dockerfile`. | non_test | add label for code source to container images we should put a label in the dockerfile to add a url to the code repository basically add this label org opencontainers image source to dockerfile | 0 |

179,540 | 14,705,249,814 | IssuesEvent | 2021-01-04 17:48:29 | pcdshub/happi | https://api.github.com/repos/pcdshub/happi | opened | Document container entrypoints | Documentation | ## Current Behavior

Undocumented?

## Expected Behavior

Downstream package documentation for how to add entrypoints does not appear to exist. It seems like it would fit in here: https://pcdshub.github.io/happi/containers.html

Copying in my comment to #191, which may be a reasonable outline for the start of a d... | 1.0 | Document container entrypoints - ## Current Behavior

Undocumented?

## Expected Behavior

Downstream package documentation for how to add entrypoints does not appear to exist. It seems like it would fit in here: https://pcdshub.github.io/happi/containers.html

Copying in my comment to #191, which may be a reason... | non_test | document container entrypoints current behavior undocumented expected behavior downstream package documentation for how to add entrypoints does not appear to exist it seems like it would fit in here copying in my comment to which may be a reasonable outline for the start of a document f... | 0 |

11,534 | 4,237,484,255 | IssuesEvent | 2016-07-05 22:00:26 | SleepyTrousers/EnderIO | https://api.github.com/repos/SleepyTrousers/EnderIO | closed | 1.10.2 Crash on Respawn | 1.9 Code Complete Logfile Missing Report Incomplete | I died, when I respawned the game locked up and crashed. At the time my friend was running a soul binder. LAN World: http://pastebin.com/GB8ETX6w

| 1.0 | 1.10.2 Crash on Respawn - I died, when I respawned the game locked up and crashed. At the time my friend was running a soul binder. LAN World: http://pastebin.com/GB8ETX6w

| non_test | crash on respawn i died when i respawned the game locked up and crashed at the time my friend was running a soul binder lan world | 0 |

14,966 | 5,028,931,929 | IssuesEvent | 2016-12-15 19:40:11 | certbot/certbot | https://api.github.com/repos/certbot/certbot | opened | Refactor IDisplay interface | code health refactoring ui / ux | As of writing this, no third party plugins are using it. It's getting a little gross with tons of arguments being passed in, many of which aren't relevant anymore with the removal of `dialog` and lots of `*args` and `**kwargs` due to differences in `FileDisplay` and `NoninteractiveDisplay`. We should clean all this up ... | 1.0 | Refactor IDisplay interface - As of writing this, no third party plugins are using it. It's getting a little gross with tons of arguments being passed in, many of which aren't relevant anymore with the removal of `dialog` and lots of `*args` and `**kwargs` due to differences in `FileDisplay` and `NoninteractiveDisplay`... | non_test | refactor idisplay interface as of writing this no third party plugins are using it it s getting a little gross with tons of arguments being passed in many of which aren t relevant anymore with the removal of dialog and lots of args and kwargs due to differences in filedisplay and noninteractivedisplay ... | 0 |

88,530 | 3,778,680,946 | IssuesEvent | 2016-03-18 02:17:29 | phetsims/scenery | https://api.github.com/repos/phetsims/scenery | opened | Don't draw not-yet-loaded image elements in Canvas | priority:2-high type:bug | See https://github.com/phetsims/function-builder/issues/15

We'll want a better guard here:

```js

paintCanvas: function( wrapper, node ) {

if ( node._image ) {

wrapper.context.drawImage( node._image, 0, 0 );

}

},

``` | 1.0 | Don't draw not-yet-loaded image elements in Canvas - See https://github.com/phetsims/function-builder/issues/15

We'll want a better guard here:

```js

paintCanvas: function( wrapper, node ) {

if ( node._image ) {

wrapper.context.drawImage( node._image, 0, 0 );

}

},

``` | non_test | don t draw not yet loaded image elements in canvas see we ll want a better guard here js paintcanvas function wrapper node if node image wrapper context drawimage node image | 0 |
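
For the scenery row above, which asks for "a better guard" before drawing an image into a Canvas, one possible shape of that guard is sketched here. This is only an illustration under stated assumptions: `isDrawable` is a hypothetical helper name that does not come from the scenery codebase, and the sketch assumes `node._image` is either an `HTMLImageElement`-like object (exposing the standard `complete` and `naturalWidth` properties) or a canvas-like object with `width` and `height`.

```javascript
// Hypothetical helper (not part of scenery): returns true only when the
// image-like object looks safe to pass to context.drawImage().
function isDrawable( image ) {
  if ( !image ) {
    return false;
  }

  // HTMLImageElement-like: `complete` becomes true once loading has finished
  // (successfully or not); naturalWidth stays 0 when no pixel data is available.
  if ( typeof image.complete === 'boolean' ) {
    return image.complete && image.naturalWidth > 0;
  }

  // Canvas-like source (assumption): drawable once it has non-zero dimensions.
  return image.width > 0 && image.height > 0;
}

// The paint routine quoted in the issue, with the stricter guard swapped in.
function paintCanvas( wrapper, node ) {
  if ( isDrawable( node._image ) ) {
    wrapper.context.drawImage( node._image, 0, 0 );
  }
}
```

With a guard like this, an image that is still loading is skipped for the current paint; the caller would still need to trigger a repaint once the image's load event fires, which is outside what this sketch covers.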

224,604 | 17,760,998,435 | IssuesEvent | 2021-08-29 17:41:48 | BenCodez/VotingPlugin | https://api.github.com/repos/BenCodez/VotingPlugin | closed | The command removepoints does not longer work | Needs Testing Possible Bug | **Versions**

6.6.1

**Describe the bug**

When I do /adminvote User <user> RemovePoints <points>, it does not decrease the points count.

**To Reproduce**

Use the command removepoints

**Expected behavior**

It should decrease the points count

**Screenshots/Configs**

detected in xstream-1.3.1.jar - autoclosed | security vulnerability | ## CVE-2022-40154 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.3.1.jar</b></p></summary>

<p></p>

<p>Path to vulnerable library: /gameoflife-web/tools/jmeter/lib/xstream-1.... | True | CVE-2022-40154 (High) detected in xstream-1.3.1.jar - autoclosed - ## CVE-2022-40154 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.3.1.jar</b></p></summary>

<p></p>

<p>Path... | non_test | cve high detected in xstream jar autoclosed cve high severity vulnerability vulnerable library xstream jar path to vulnerable library gameoflife web tools jmeter lib xstream jar dependency hierarchy x xstream jar vulnerable library found in b... | 0 |

82,387 | 15,894,717,842 | IssuesEvent | 2021-04-11 11:18:19 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | closed | Insights charts sometimes have dots, sometimes don't | bug team/code-insights webapp |

Seems to be some kind of race condition in the charting lib (recharts) | 1.0 | Insights charts sometimes have dots, sometimes don't -

Seems to be some kind of race condition in the charting lib (recharts) | non_test | insights charts sometimes have dots sometimes don t seems to be some kind of race condition in the charting lib recharts | 0 |

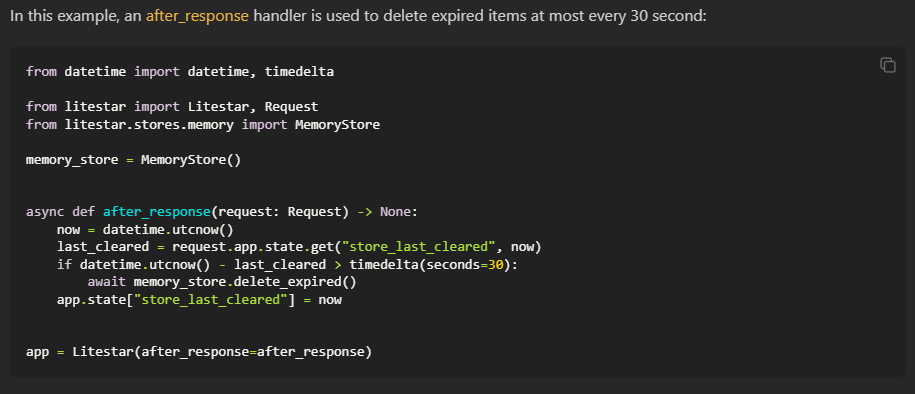

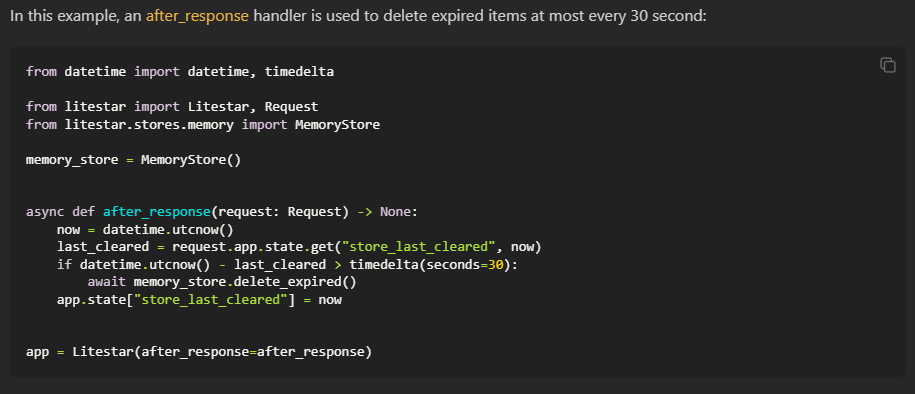

422,324 | 28,434,754,069 | IssuesEvent | 2023-04-15 06:52:58 | litestar-org/litestar | https://api.github.com/repos/litestar-org/litestar | closed | Docs: Issue in "Stores/Deleting expired values" section | documentation | ### Summary

Hi guys, I found this bit pretty confusing:

As I understand, `after_response` function will be called after every awaited response , not "at most every 30 second"

| 1.0 | Docs: Issue in "Stores/Deleting expired values" section - ### Summary

Hi guys, I found this bit pretty confusing:

As I understand, `after_response` function will be called afte... | non_test | docs issue in stores deleting expired values section summary hi guys i found this bit pretty confusing as i understand after response function will be called after every awaited response not at most every second | 0 |

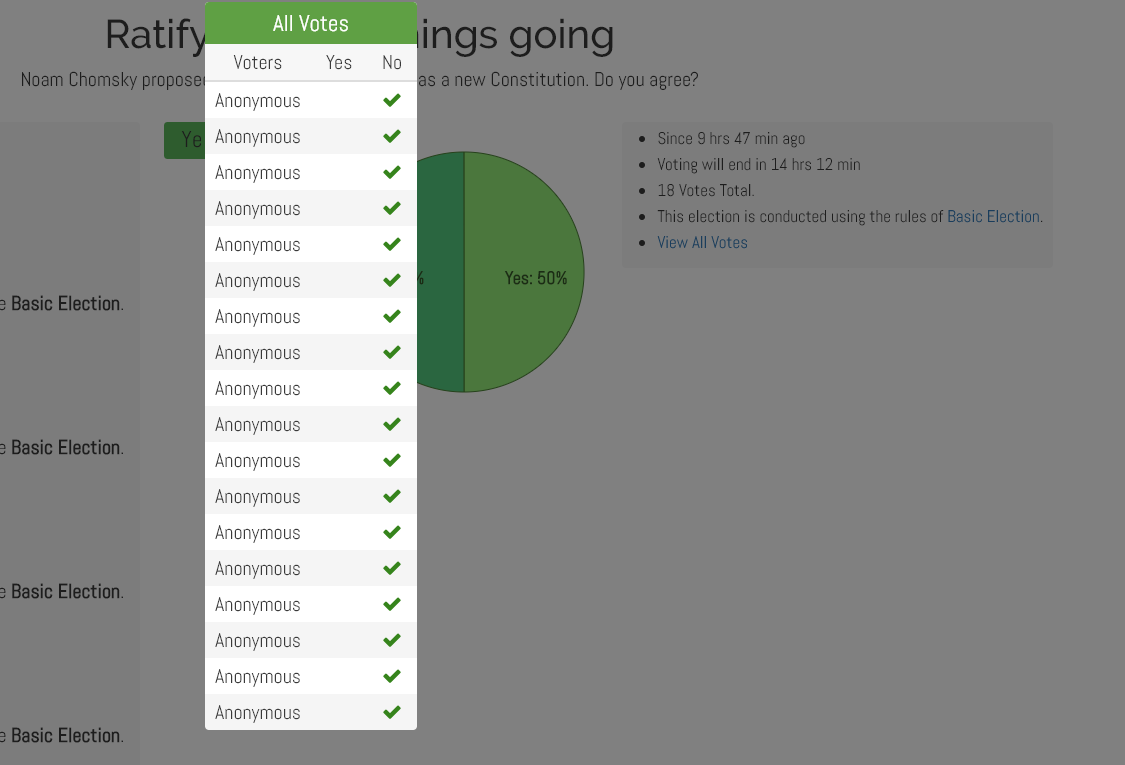

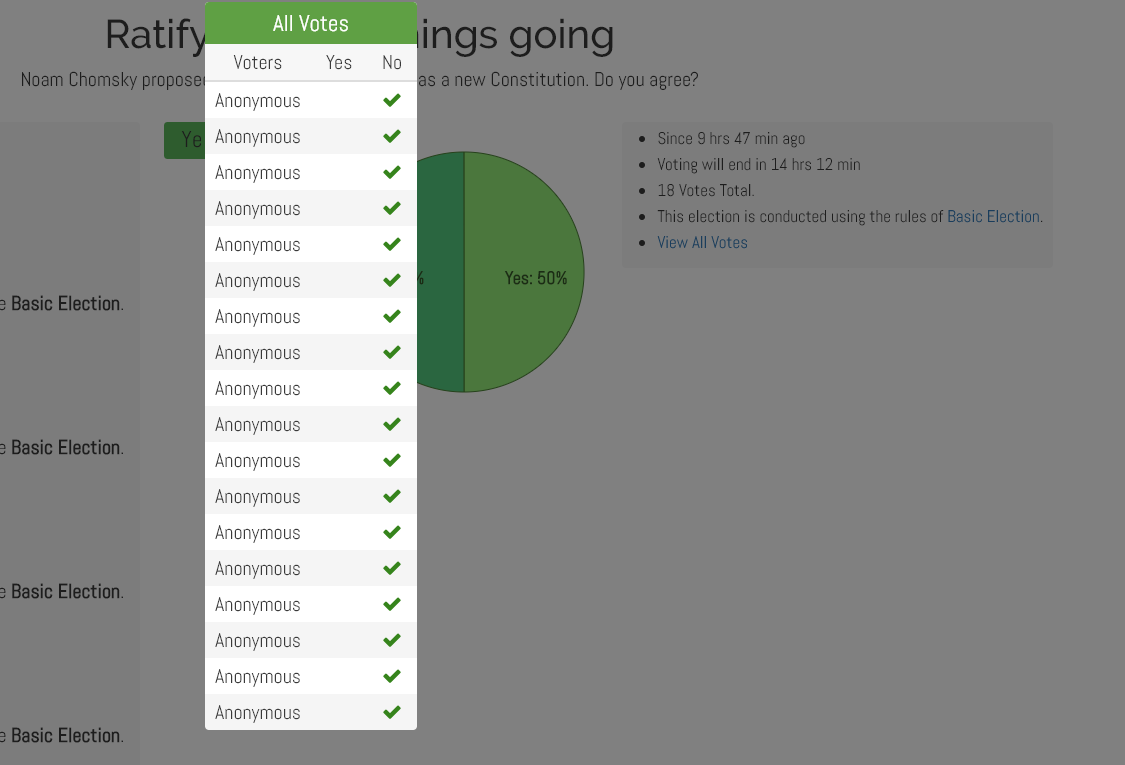

460,839 | 13,218,923,522 | IssuesEvent | 2020-08-17 09:34:19 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0.0 beta staging-stable-3] view vote link doesn't show the correct vote in Web view | Category: Elections Website Priority: Medium Status: Fixed |

As you can see it show that everyone voted No but when you see real result (in background of my image) you can see it's 50%/50%. | 1.0 | [0.9.0.0 beta staging-stable-3] view vote link doesn't show the correct vote in Web view -

As you can see it show that everyone voted No but when you see real result (in background of my image) you can see it... | non_test | view vote link doesn t show the correct vote in web view as you can see it show that everyone voted no but when you see real result in background of my image you can see it s | 0 |

85,947 | 16,767,898,641 | IssuesEvent | 2021-06-14 11:16:56 | GeekMasher/advanced-security-compliance | https://api.github.com/repos/GeekMasher/advanced-security-compliance | closed | SLA / Time to Remediate Policy as Code | codescanning dependabot enhancement licensing secretscanning | ### Description

It would be awesome to define a "time to remediate" or SLA (service-level agreement) policy that only brings up an alert if certain criteria is meet. By default, this mode should not be present and can be enabled by the policy.

**Example Scenario**

- Dependabot opens a High security issue

- I ... | 1.0 | SLA / Time to Remediate Policy as Code - ### Description

It would be awesome to define a "time to remediate" or SLA (service-level agreement) policy that only brings up an alert if certain criteria is meet. By default, this mode should not be present and can be enabled by the policy.

**Example Scenario**

- Dep... | non_test | sla time to remediate policy as code description it would be awesome to define a time to remediate or sla service level agreement policy that only brings up an alert if certain criteria is meet by default this mode should not be present and can be enabled by the policy example scenario dep... | 0 |

41,776 | 5,396,561,989 | IssuesEvent | 2017-02-27 12:07:53 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | opened | ci-kubernetes-e2e-gci-gke: broken test run | kind/flake priority/P2 team/test-infra | https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-e2e-gci-gke/5270/

Multiple broken tests:

Failed: [k8s.io] [HPA] Horizontal pod autoscaling (scale resource: CPU) [k8s.io] ReplicationController light Should scale from 1 pod to 2 pods {Kubernetes e2e suite}

```

/go/src/k8s.io/kubernetes/_o... | 1.0 | ci-kubernetes-e2e-gci-gke: broken test run - https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-e2e-gci-gke/5270/

Multiple broken tests:

Failed: [k8s.io] [HPA] Horizontal pod autoscaling (scale resource: CPU) [k8s.io] ReplicationController light Should scale from 1 pod to 2 pods {Kubernetes... | test | ci kubernetes gci gke broken test run multiple broken tests failed horizontal pod autoscaling scale resource cpu replicationcontroller light should scale from pod to pods kubernetes suite go src io kubernetes output dockerized go src io kubernetes test horizontal pod autoscaling ... | 1 |

175,355 | 13,547,620,118 | IssuesEvent | 2020-09-17 04:38:28 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Running Editor tests prints out unnecessary outputs | Area-IDE Test | This is probably an issue with how loggers are removed or registered.

```

------ Test started: Assembly: Roslyn.Services.Editor2.UnitTests.dll ------

Output from Microsoft.CodeAnalysis.Editor.UnitTests.FindReferences.FindReferencesTests.TestNamedType_CaseSensitivity:

Looking for a cached skeleton assembly for... | 1.0 | Running Editor tests prints out unnecessary outputs - This is probably an issue with how loggers are removed or registered.

```

------ Test started: Assembly: Roslyn.Services.Editor2.UnitTests.dll ------

Output from Microsoft.CodeAnalysis.Editor.UnitTests.FindReferences.FindReferencesTests.TestNamedType_CaseSens... | test | running editor tests prints out unnecessary outputs this is probably an issue with how loggers are removed or registered test started assembly roslyn services unittests dll output from microsoft codeanalysis editor unittests findreferences findreferencestests testnamedtype casesensitivit... | 1 |

9,154 | 2,615,134,066 | IssuesEvent | 2015-03-01 06:05:12 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | closed | Maps Engine API library throws IllegalArgumentException when creating a GeoJsonGeometryCollection | auto-migrated Priority-Medium Type-Defect | ```

Version of google-api-java-client? 1.18.0-rc

Java environment? OpenJDK 1.7.0

Describe the problem.

I'm having an issue when running code against the generated mapsengine library,

rev5 from Maven, 1.18.0-rc. I've attached the Jar I used, which was downloaded

from here:

http://search.maven.org/#artifactdetai... | 1.0 | Maps Engine API library throws IllegalArgumentException when creating a GeoJsonGeometryCollection - ```

Version of google-api-java-client? 1.18.0-rc

Java environment? OpenJDK 1.7.0

Describe the problem.

I'm having an issue when running code against the generated mapsengine library,

rev5 from Maven, 1.18.0-rc. I'... | non_test | maps engine api library throws illegalargumentexception when creating a geojsongeometrycollection version of google api java client rc java environment openjdk describe the problem i m having an issue when running code against the generated mapsengine library from maven rc i ve at... | 0 |

45,502 | 7,187,924,567 | IssuesEvent | 2018-02-02 08:06:52 | FriendsOfPHP/PHP-CS-Fixer | https://api.github.com/repos/FriendsOfPHP/PHP-CS-Fixer | closed | Fixers should be forced to have a rationale | documentation question | I would like to start a discussion about the need for every single fixer to be documented with its rationale. This should then appear in the `describe` command, and in the documentation (`README.rst`).

For now all fixers must have a summary and may have a description. The description usually explains in detail _... | 1.0 | Fixers should be forced to have a rationale - I would like to start a discussion about the need for every single fixer to be documented with its rationale. This should then appear in the `describe` command, and in the documentation (`README.rst`).

For now all fixers must have a summary and may have a description.... | non_test | fixers should be forced to have a rationale i would like to start a discussion about the need for every single fixers to be documented with their rationale this should then appear in the describe command and in the documentation readme rst for now all fixers must have a summary and may have a description ... | 0 |

32,160 | 4,755,000,622 | IssuesEvent | 2016-10-24 09:22:04 | Alfresco/alfresco-ng2-components | https://api.github.com/repos/Alfresco/alfresco-ng2-components | closed | Task service level agreement - Analytics component | automated test required comp: analytics New Feature | Reproduce the Task service level agreement graph of Activiti. | 1.0 | Task service level agreement - Analytics component - Reproduce the Task service level agreement graph of Activiti. | test | task service level agreement analytics component reproduce the task service level agreement graph of activiti | 1 |

224,189 | 17,670,093,333 | IssuesEvent | 2021-08-23 04:05:08 | apple/servicetalk | https://api.github.com/repos/apple/servicetalk | reopened | ClientClosureRaceTest.testPipelinedPosts test failure | flaky tests | https://ci.servicetalk.io/job/servicetalk-java11-prb/379/testReport/junit/io.servicetalk.http.netty/ClientClosureRaceTest/testPipelinedPosts/

```

Error Message

java.util.concurrent.ExecutionException: io.netty.channel.socket.ChannelOutputShutdownException: Channel output shutdown

Stacktrace

java.util.concurr... | 1.0 | ClientClosureRaceTest.testPipelinedPosts test failure - https://ci.servicetalk.io/job/servicetalk-java11-prb/379/testReport/junit/io.servicetalk.http.netty/ClientClosureRaceTest/testPipelinedPosts/

```

Error Message

java.util.concurrent.ExecutionException: io.netty.channel.socket.ChannelOutputShutdownException: ... | test | clientclosureracetest testpipelinedposts test failure error message java util concurrent executionexception io netty channel socket channeloutputshutdownexception channel output shutdown stacktrace java util concurrent executionexception io netty channel socket channeloutputshutdownexception chan... | 1 |

75,037 | 7,458,796,623 | IssuesEvent | 2018-03-30 12:22:54 | ballerina-lang/testerina | https://api.github.com/repos/ballerina-lang/testerina | closed | [Blocker] Cannot access the values in a catch block of a try-catch | component/testerina | 1. I have a function like the one below, which I test using Testerina

```

public function scheduledTaskAppointment (string cron) (string msg) {

string appTid;

function () returns (error) onTriggerFunction;

function (error) onErrorFunction;

onTriggerFunction = cleanupOTP;

onErrorFunction = cleanupErr... | 1.0 | [Blocker] Cannot access the values in a catch block of a try-catch - 1. I have a function like below which I test using testarina

```

public function scheduledTaskAppointment (string cron) (string msg) {

string appTid;

function () returns (error) onTriggerFunction;

function (error) onErrorFunction;

... | test | cannot access the values in a catch block of a try catch i have a function like below which i test using testarina public function scheduledtaskappointment string cron string msg string apptid function returns error ontriggerfunction function error onerrorfunction ontr... | 1 |

193,334 | 6,884,071,103 | IssuesEvent | 2017-11-21 11:38:53 | Sharavanth/ho-app | https://api.github.com/repos/Sharavanth/ho-app | opened | Format common colors and common variables based on individual components in Core-lib. | Low Priority React UI: core-lib | Formats colors.js and variables.js files based on the individual components in core-lib.

| 1.0 | Format common colors and common variables based on individual components in Core-lib. - Formats colors.js and variables.js files based on the individual components in core-lib.

| non_test | format common colors and common variables based on individual components in core lib formats colors js and variables js files based on the individual components in core lib | 0 |

406,379 | 27,561,559,852 | IssuesEvent | 2023-03-07 22:32:39 | llvm/llvm-project | https://api.github.com/repos/llvm/llvm-project | closed | libc apparently superfluous option for SCUDO | documentation libc | In the website [documentation](https://libc.llvm.org/full_host_build.html) for building libc, this command is given to configure it properly.

```shell

cmake ../llvm \

-G Ninja \ # Generator

-DLLVM_ENABLE_PROJECTS="clang;libc;lld;compiler-rt" \ # Enabled projects

-DCMAKE_BUILD_TYPE=<Debug|Release> \... | 1.0 | libc apparently superfluous option for SCUDO - In the website [documentation](https://libc.llvm.org/full_host_build.html) for building libc, this command is given to configure it properly.

```shell

cmake ../llvm \

-G Ninja \ # Generator

-DLLVM_ENABLE_PROJECTS="clang;libc;lld;compiler-rt" \ # Enabled pro... | non_test | libc apparently superfluous option for scudo in the website for building libc this command is given to configure it properly shell cmake llvm g ninja generator dllvm enable projects clang libc lld compiler rt enabled projects dcmake build type select build type ... | 0 |

46,651 | 13,055,955,125 | IssuesEvent | 2020-07-30 03:13:35 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | [lilliput] destructor must be noexcept in c++11 (Trac #1666) | Incomplete Migration Migrated from Trac combo reconstruction defect | Migrated from https://code.icecube.wisc.edu/ticket/1666

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:10",

"description": "Don't call log_fatal in a destructor.\n\nWarning:\n{{{\nfrom\n /home/dschultz/Documents/combo/trunk/src/lilliput/private/test/PSSTestModul\n e.h:4,\nfrom /home/d... | 1.0 | [lilliput] destructor must be noexecpt in c++11 (Trac #1666) - Migrated from https://code.icecube.wisc.edu/ticket/1666

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:10",

"description": "Don't call log_fatal in a destructor.\n\nWarning:\n{{{\nfrom\n /home/dschultz/Documents/combo/trunk/s... | non_test | destructor must be noexecpt in c trac migrated from json status closed changetime description don t call log fatal in a destructor n nwarning n nfrom n home dschultz documents combo trunk src lilliput private test psstestmodul n e h nfrom home d... | 0 |

807 | 3,285,422,387 | IssuesEvent | 2015-10-28 20:32:42 | GsDevKit/zinc | https://api.github.com/repos/GsDevKit/zinc | reopened | Timeout passed to a ZnClient is ignored while making the connection via the underlying socket | inprocess | ### Suppose the following situation:

- Create a ZnClient

- Pass in an explicit timeout (e.g., 3 seconds)

- Pass in a host/port combination that does not exist

### Expected behavior: an error should be thrown after 3 seconds.

What happens: the user has to wait for the (default) timeout of GsSocket; the timeout pa... | 1.0 | Timeout passed to a ZnClient is ignored while making the connection via the underlying socket - ### Suppose the following situation:

- Create a ZnClient

- Pass in an explicit timeout (e.g., 3 seconds)

- Pass in a host/port combination that does not exist

### Expected behavior: an error should be thrown after 3 se... | non_test | timeout passed to a znclient is ignored while making the connection via the underlying socket suppose the following situation create a znclient pass in an explicit timeout e g seconds pass in a host port combination that does not exist expected behavior an error should be thrown after se... | 0 |

452,043 | 32,048,890,520 | IssuesEvent | 2023-09-23 10:09:06 | hedyorg/hedy | https://api.github.com/repos/hedyorg/hedy | closed | [DOCUMENTATION] Pygame markdown | documentation | **For team Pygame:**

1. Create `pygame.md` for documentation.

This should make it possible for other contributors to understand what we've implemented during our project.

2. It's important to note what we've **done** and what we've **NOT done** so far.

3. Mention the technical implementation.

| 1.0 | [DOCUMENTATION] Pygame markdown - **For team Pygame:**

1. Create `pygame.md` for documentation.

This should make it possible for other contributors to understand what we've implemented during our project.

2. It's important to note what we've **done** and what we've **NOT done** so far.

3. Mention the technical... | non_test | pygame markdown for team pygame create pygame md for documentation this should make it possible for other contributors to understand what we ve implemented during our project it s important to note what we ve done and what we ve not done so far mention the technical implementatio... | 0 |

17,008 | 9,962,799,374 | IssuesEvent | 2019-07-07 17:36:27 | brewpi-remix/brewpi-script-rmx | https://api.github.com/repos/brewpi-remix/brewpi-script-rmx | closed | BEERSOCKET is 777 | security | BEERSOCKET is 777, as we expand capabilities, this opens a pretty big security issue. | True | BEERSOCKET is 777 - BEERSOCKET is 777, as we expand capabilities, this opens a pretty big security issue. | non_test | beersocket is beersocket is as we expand capabilities this opens a pretty big security issue | 0 |

342,441 | 10,317,112,006 | IssuesEvent | 2019-08-30 11:51:25 | garden-io/garden | https://api.github.com/repos/garden-io/garden | closed | Command names not displaying in garden --help in PowerShell | bug good first issue priority:low | No idea why, but it seems the color we use for the command names in our help message doesn't display in PowerShell:

https://www.dropbox.com/s/nxcr92o7zfnwcrf/Screenshot%202018-09-16%2016.45.56.png?dl=0

Side-note: I'm not sure the single letter aliases make much sense for top-level commands, and some of them are cle... | 1.0 | Command names not displaying in garden --help in PowerShell - No idea why, but it seems the color we use for the command names in our help message don't display in PowerShell:

https://www.dropbox.com/s/nxcr92o7zfnwcrf/Screenshot%202018-09-16%2016.45.56.png?dl=0

Side-note: I'm not sure the single letter aliases ma... | non_test | command names not displaying in garden help in powershell no idea why but it seems the color we use for the command names in our help message don t display in powershell side note i m not sure the single letter aliases make much sense for top level commands and some of them are clearly wrong r is an ... | 0 |

307,921 | 26,570,274,479 | IssuesEvent | 2023-01-21 03:31:07 | QubesOS/updates-status | https://api.github.com/repos/QubesOS/updates-status | closed | manager v4.1.28-1 (r4.2) | r4.2-host-cur-test r4.2-vm-bullseye-cur-test r4.2-vm-bookworm-cur-test r4.2-vm-fc37-cur-test r4.2-vm-fc36-cur-test r4.2-vm-centos-stream8-cur-test | Update of manager to v4.1.28-1 for Qubes r4.2, see comments below for details and build status.

From commit: https://github.com/QubesOS/qubes-manager/commit/c63e3257997fdde9e8192cddf4d4d588b8fa6ad9