Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 value) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 values) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 values) | text_combine (string, length 95 to 262k) | label (string, 2 values) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

102,192 | 16,548,284,026 | IssuesEvent | 2021-05-28 04:35:54 | samq-ghdemo/Java-Demo | https://api.github.com/repos/samq-ghdemo/Java-Demo | opened | CVE-2017-3589 (Low) detected in mysql-connector-java-5.1.25.jar | security vulnerability | ## CVE-2017-3589 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.25.jar</b></p></summary>

<p>MySQL JDBC Type 4 driver</p>

<p>Library home page: <a href="http://... | True | CVE-2017-3589 (Low) detected in mysql-connector-java-5.1.25.jar - ## CVE-2017-3589 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mysql-connector-java-5.1.25.jar</b></p></summary>

<p>M... | non_test | cve low detected in mysql connector java jar cve low severity vulnerability vulnerable library mysql connector java jar mysql jdbc type driver library home page a href path to dependency file java demo pom xml path to vulnerable library canner repository mysql m... | 0 |

33,685 | 4,848,973,578 | IssuesEvent | 2016-11-10 19:04:34 | radare/radare2 | https://api.github.com/repos/radare/radare2 | closed | Commands are sensitive to double-whitespace | has-test | Some commands will not work correctly with multiple spaces instead of one, but will not report an error/warning either. For example:

```

// this works

f foobar=0x1000

// this does nothing

f foobar=0x1000

```

version 0.10.6

| 1.0 | Commands are sensitive to double-whitespace - Some commands will not work correctly with multiple spaces instead of one, but will not report an error/warning either. For example:

```

// this works

f foobar=0x1000

// this does nothing

f foobar=0x1000

```

version 0.10.6

| test | commands are sensitive to double whitespace some commands will not work correctly with multiple spaces instead of one but will not report an error warning either for example this works f foobar this does nothing f foobar version | 1 |

454,401 | 13,100,218,753 | IssuesEvent | 2020-08-03 23:48:11 | GoogleCloudPlatform/stackdriver-sandbox | https://api.github.com/repos/GoogleCloudPlatform/stackdriver-sandbox | opened | Credential check fail when accessing the storage bucket | priority: p2 type: bug | Some users may encounter a credential check failure when accessing the storage bucket in Terraform initialization. They need to do "gcloud auth application-default login". We need to put a try-catch block around the initialization. | 1.0 | Credential check fail when accessing the storage bucket - Some users may encounter a credential check failure when accessing the storage bucket in Terraform initialization. They need to do "gcloud auth application-default login". We need to put a try-catch block around the initialization. | non_test | credential check fail when accessing the storage bucket some users may encounter a credential check failure when accessing the storage bucket in terraform initialization they need to do gcloud auth application default login we need to put a try catch block around the initialization | 0 |

5,715 | 2,790,522,000 | IssuesEvent | 2015-05-09 09:27:17 | Dalmirog/OctoPosh | https://api.github.com/repos/Dalmirog/OctoPosh | reopened | Improve tests for Get-* cmdlets | Testing | They should be more Unit-test-like and consider scenarios like:

- Get-* should not get a resource that doesn't exist

- Get-* [specific names] should only get resources with those names, and nothing extra

- Get-* with date filters should return results within the correct date ranges

- Get-* with version filte... | 1.0 | Improve tests for Get-* cmdlets - They should be more Unit-test-like and consider scenarios like:

- Get-* should not get a resource that doesn't exist

- Get-* [specific names] should only get resources with those names, and nothing extra

- Get-* with date filters should return results within the correct date ... | test | improve tests for get cmdlets they should be more unit test like and consider scenarios like get should not get a resource that doesnt exist get should only get resources with those names and nothing extra get with date filters should return results with between the correct date ranges get ... | 1 |

325,821 | 27,964,388,929 | IssuesEvent | 2023-03-24 18:08:47 | Satellite-im/testing-uplink | https://api.github.com/repos/Satellite-im/testing-uplink | opened | UI Tests - Settings Developer - Save Logs In a File | test Settings | Logs should save in a file when User toggles on Save Logs In A File | 1.0 | UI Tests - Settings Developer - Save Logs In a File - Logs should save in a file when User toggles on Save Logs In A File | test | ui tests settings developer save logs in a file logs should save in a file when user toggles on save logs in a file | 1 |

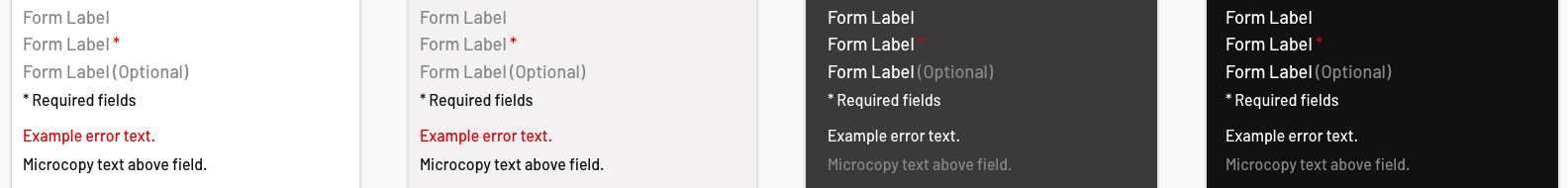

92,837 | 11,714,795,081 | IssuesEvent | 2020-03-09 13:03:47 | EightShapes/esds-library | https://api.github.com/repos/EightShapes/esds-library | closed | Required Field Indicator [Design Asset] | Component [Design Asset] | ### Design Starting Point

This is the key for how forms denote required fields, which will usually be positioned below the title of the form.

### Must Have

* Setti... | 1.0 | Required Field Indicator [Design Asset] - ### Design Starting Point

This is the key for how forms denote required fields, which will usually be positioned below the title of the form.

- finish last clip

- resume by clicking or typing space or `flowplayer(0).resume()` in the console

yields:

```

flowplayer.min.js:6 Uncaught (in promise) DOMException: The play() request was interrupted by a new load request

```

Which descr... | 1.0 | [Chrome] playlist: error on resume when last clip is in finished state - https://flowplayer.org/standalone/playlist/javascript.html (no plugins)

- finish last clip

- resume by clicking or typing space or `flowplayer(0).resume()` in the console

yields:

```

flowplayer.min.js:6 Uncaught (in promise) DOMException: T... | test | playlist error on resume when last clip is in finished state no plugins finish last clip resume by clicking or typing space or flowplayer resume in the console yields flowplayer min js uncaught in promise domexception the play request was interrupted by a new load request wh... | 1 |

141,798 | 11,437,609,410 | IssuesEvent | 2020-02-05 00:30:18 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Go panic in NodeController every 1 second | [zube]: To Test internal | <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a public issue in GitHub. You may (but are not required to) use the GPG ke... | 1.0 | Go panic in NodeController every 1 second - <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a public issue in GitHub. You ... | test | go panic in nodecontroller every second please search for existing issues first then read to see what we expect in an issue for security issues please email security rancher com instead of posting a public issue in github you may but are not required to use the gpg key located on keybase wh... | 1 |

49,887 | 6,044,810,449 | IssuesEvent | 2017-06-12 07:24:52 | pixelhumain/co2 | https://api.github.com/repos/pixelhumain/co2 | closed | URL creation | to test | Creating a URL from an organization:

1\ JS error when loading the popup

2\ Error when validating the form at saveurl

2\ Error when validating the form at saveurl

* [The API definition](https://github.com/elafros/elafros/tree/master/pkg/apis/ela)

The [conformance t... | 1.0 | Create PR check to verify API changes reflected in conformance tests - /area API

/area test-and-release

/kind dev

/kind doc

## Expected Behavior

Whenever changes are made to the API, in either:

* [The spec doc](https://github.com/elafros/elafros/blob/master/docs/spec/spec.md)

* [The API definition](https://git... | test | create pr check to verify api changes reflected in conformance tests area api area test and release kind dev kind doc expected behavior whenever changes are made to the api in either the should probably be updated as well when a pr is submitted that changes either of those a... | 1 |

107,919 | 9,248,944,446 | IssuesEvent | 2019-03-15 08:03:44 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | closed | Get odo tests as part of OpenShift testgrid | kind/testing priority/High state/Ready | [kind/Feature]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the chat and talk to us if you have a question rather than a bug or feature request.

The chat room is at: https://chat.openshift.io/developers/channels/odo

Thanks for understanding, and for contributi... | 1.0 | Get odo tests as part of OpenShift testgrid - [kind/Feature]

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the chat and talk to us if you have a question rather than a bug or feature request.

The chat room is at: https://chat.openshift.io/developers/channels/odo

... | test | get odo tests as part of openshift testgrid welcome we kindly ask you to fill out the issue template below use the chat and talk to us if you have a question rather than a bug or feature request the chat room is at thanks for understanding and for contributing to the proje... | 1 |

61,558 | 3,147,475,762 | IssuesEvent | 2015-09-15 08:22:12 | handsontable/handsontable | https://api.github.com/repos/handsontable/handsontable | closed | Broken scrolling on the grouping example. | Bug Plugin: drag to scroll Priority: normal | Scrolling with shift + mouse wheel can cause sheet to go off the screen.

http://docs.handsontable.com/0.16.0/demo-grouping-and-ungrouping.html?_ga=1.181662767.981730759.1437017583

It also works here

http://docs.handsontable.com/0.16.0/demo-scrollbars.html

http://imgur.com/v4GWthl

has some new type hints included. This results in quite a few mypy issues that will need looking at. I'm setting this as high priority as I don't think we want to start pinning versions of commonly used libraries like xarray. | 1.0 | New xarray and mypy issues - The latest version of xarray (2022.6.0) has some new type hints included. This results in quite a few mypy issues that will need looking at. I'm setting this as high priority as I don't think we want to start pinning versions of commonly used libraries like xarray. | non_test | new xarray and mypy issues the latest version of xarray has some new type hints included this results in quite a few mypy issues that will need looking at i m setting this as high priority as i don t think we want to start pinning versions of commonly used libraries like xarray | 0 |

103,291 | 4,166,283,881 | IssuesEvent | 2016-06-20 01:40:07 | nvs/gem | https://api.github.com/repos/nvs/gem | opened | Introduce a pause before 'starting' | Area: JASS Priority: Later Status: Not Started Type: Enhancement | This would help some slower computers 'settle', as well as giving players an indication of the game actually starting. This issue is most noticeable when hosted via HCL, and would help with #79. | 1.0 | Introduce a pause before 'starting' - This would help some slower computers 'settle', as well as giving players an indication of the game actually starting. This issue is most noticeable when hosted via HCL, and would help with #79. | non_test | introduce a pause before starting this would help some slower computers settle as well as giving players an indication of the game actually starting this issue is most noticeable when hosted via hcl and would help with | 0 |

19,170 | 5,814,941,387 | IssuesEvent | 2017-05-05 06:42:59 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | Massive loading times after update to 3.7? | No Code Attached Yet | Hey there,

I updated two pages to Joomla 3.7 and noticed that both of them now take up to 8 seconds to load. Does anyone else have that problem? | 1.0 | Massive loading times after update to 3.7? - Hey there,

I updated two pages to Joomla 3.7 and noticed that both of them now take up to 8 seconds to load. Does anyone else have that problem? | non_test | massive loading times after update to hey there i updated two pages now to joomla and notices that both of them got a high loading time up to seconds to get the page loaded does anyone else have that problem | 0 |

308,389 | 26,603,901,914 | IssuesEvent | 2023-01-23 17:43:39 | kedacore/keda | https://api.github.com/repos/kedacore/keda | opened | Add e2e test for Openstack Metrics Scaler | help wanted good first issue testing | ### Proposal

https://github.com/kedacore/keda/tree/main/tests

### Use-Case

_No response_

### Is this a feature you are interested in implementing yourself?

No

### Anything else?

_No response_ | 1.0 | Add e2e test for Openstack Metrics Scaler - ### Proposal

https://github.com/kedacore/keda/tree/main/tests

### Use-Case

_No response_

### Is this a feature you are interested in implementing yourself?

No

### Anything else?

_No response_ | test | add test for openstack metrics scaler proposal use case no response is this a feature you are interested in implementing yourself no anything else no response | 1 |

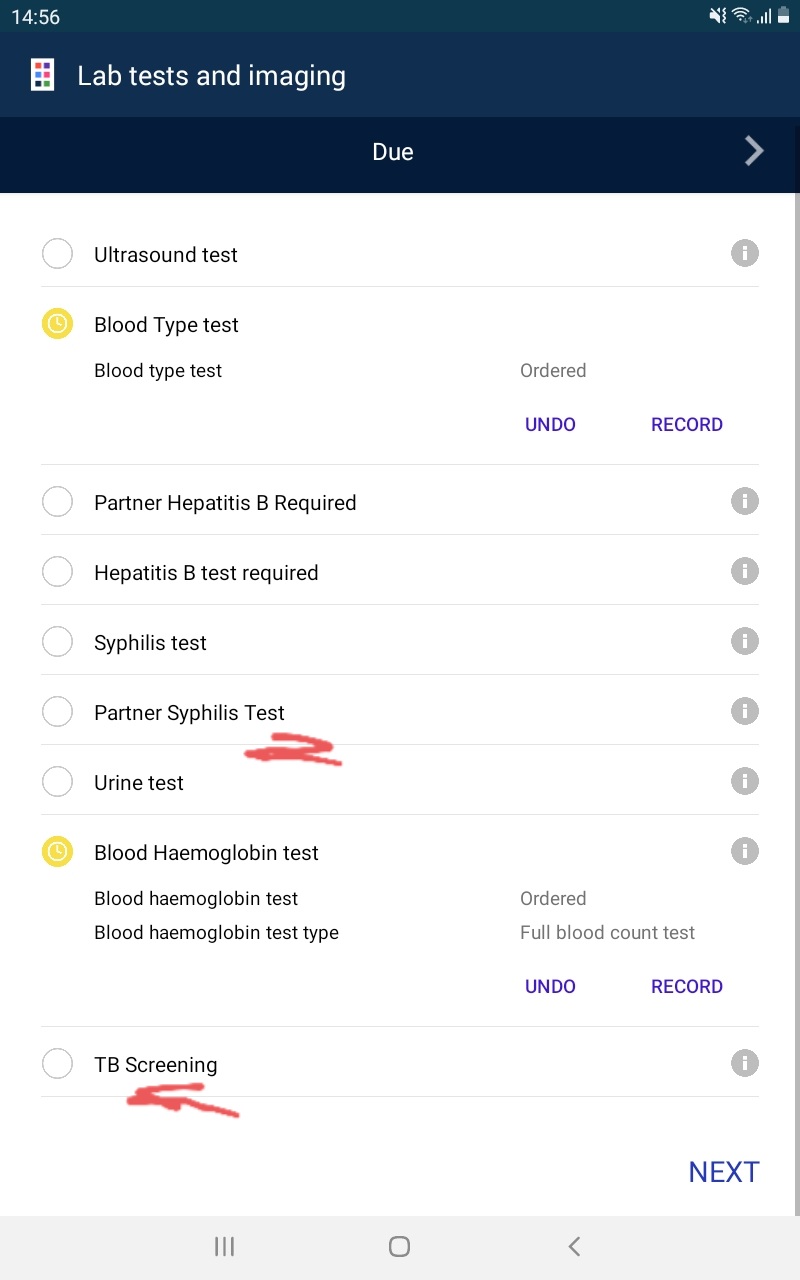

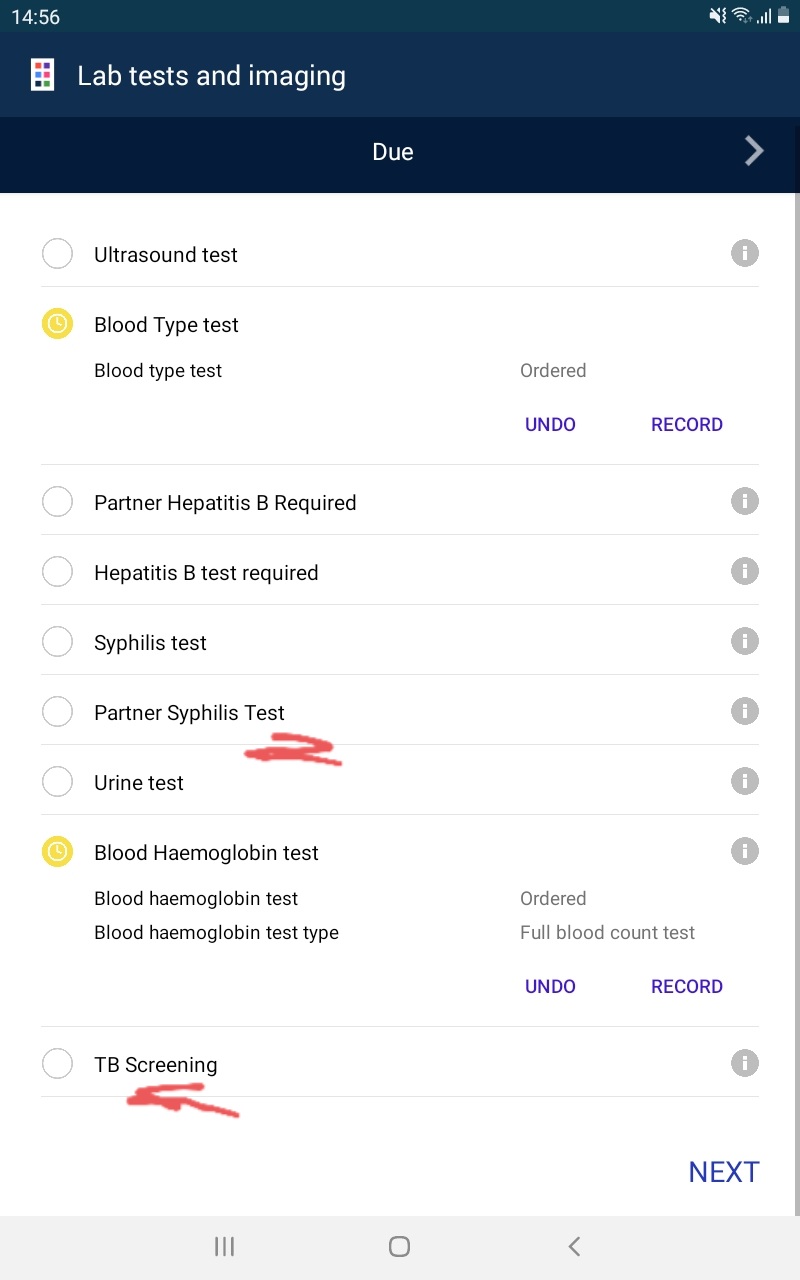

233,117 | 18,949,430,268 | IssuesEvent | 2021-11-18 13:48:00 | BlueCodeSystems/who-anc | https://api.github.com/repos/BlueCodeSystems/who-anc | closed | Use lower case for first letters for the words "Test" and "Screening" for consistency. | Tests v1.0.0-beta.4 |

| 1.0 | Use lower case for first letters for the words "Test" and "Screening" for consistency. -

| test | use lower case for first letters for the words test and screening for consistency | 1 |

209,490 | 16,024,515,182 | IssuesEvent | 2021-04-21 07:21:07 | CarlosRayon/symfony | https://api.github.com/repos/CarlosRayon/symfony | opened | Methodologies | Testing General | - Follow the same directory structure as the project, under a test directory.

- Create one test class for each class of the main project

- The class name will be the same as the class under test, with the word _Test_ appended in camelCase

(ejemploController.php -> ejemploControllerTest.php).

- The fun... | 1.0 | Methodologies - - Follow the same directory structure as the project, under a test directory.

- Create one test class for each class of the main project

- The class name will be the same as the class under test, with the word _Test_ appended in camelCase

(ejemploController.php -> ejemploControllerTest.ph... | test | methodologies follow the same directory structure as the project under a test directory create one test class for each class of the main project the class name will be the same as the class under test with the word test appended in camelcase ejemplocontroller php ejemplocontrollertest ph... | 1 |

110,100 | 9,430,499,397 | IssuesEvent | 2019-04-12 09:10:45 | goharbor/harbor | https://api.github.com/repos/goharbor/harbor | closed | Should add retry in keyword <Add Labels To Tag> | area/ci area/test automation | In keyword <Add Labels To Tag>, one of the steps is to click the repository name; there should be a retry when attempting to go into the repository.

https://jenkins11.svc.eng.vmware.com/job/harbor_nightly_result_publisher/2095/robot/report/log.html

vagrant@ubuntu-focal:~/Ocean-Data-Map-Project/oceannavigator/frontend$ yarn install

yarn install v1.22.10

[1/4] Resolving packages...

[2/4] Fetching packages...

info fsevents@2.3.2: The platform "linux" is incompatible with this module.

info "fsevents@2.3.2" is an optional dependency and failed co... | 1.0 | Issue with building the React JS modules for mainline - ```

(navigator) vagrant@ubuntu-focal:~/Ocean-Data-Map-Project/oceannavigator/frontend$ yarn install

yarn install v1.22.10

[1/4] Resolving packages...

[2/4] Fetching packages...

info fsevents@2.3.2: The platform "linux" is incompatible with this module.

info ... | non_test | issue with building the react js modules for mainline navigator vagrant ubuntu focal ocean data map project oceannavigator frontend yarn install yarn install resolving packages fetching packages info fsevents the platform linux is incompatible with this module info fsevents ... | 0 |

53,962 | 6,353,950,271 | IssuesEvent | 2017-07-29 04:05:46 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Investigate flaky sequential/test-fs-readfile-tostring-fail.js on smartos16-64 | fs smartos test | * **Version**: master

* **Platform**: smartos

* **Subsystem**: fs

Example: https://ci.nodejs.org/job/node-test-commit-smartos/7481/nodes=smartos16-64/console

```

not ok 1385 sequential/test-fs-readfile-tostring-fail

---

duration_ms: 0.729

severity: fail

stack: |-

/home/iojs/build/workspace/nod... | 1.0 | Investigate flaky sequential/test-fs-readfile-tostring-fail.js on smartos16-64 - * **Version**: master

* **Platform**: smartos

* **Subsystem**: fs

Example: https://ci.nodejs.org/job/node-test-commit-smartos/7481/nodes=smartos16-64/console

```

not ok 1385 sequential/test-fs-readfile-tostring-fail

---

dura... | test | investigate flaky sequential test fs readfile tostring fail js on version master platform smartos subsystem fs example not ok sequential test fs readfile tostring fail duration ms severity fail stack home iojs build workspace node test commit ... | 1 |

7,560 | 18,245,610,188 | IssuesEvent | 2021-10-01 17:58:13 | arduino/arduino-cli | https://api.github.com/repos/arduino/arduino-cli | closed | Crash after execute "fatal error: unexpected signal during runtime execution" (macOS 11.6) | os: macos architecture: arm64 conclusion: resolved type: imperfection | ## Bug Report

### Current behavior

`arduino-cli` (tag 0.19.1) built with go 1.17.1. Crashes immediately after executing a binary file:

Crash after executing the binary (built with go 1.17.1):

```console

$ arduino-cli

fatal error: unexpected signal during runtime execution

[signal SIGSEGV: segmentation viol... | 1.0 | Crash after execute "fatal error: unexpected signal during runtime execution" (macOS 11.6) - ## Bug Report

### Current behavior

`arduino-cli` (tag 0.19.1) built with go 1.17.1. Crashes immediately after executing a binary file:

Crash after executing the binary (built with go 1.17.1):

```console

$ arduino-cli... | non_test | crash after execute fatal error unexpected signal during runtime execution macos bug report current behavior arduino cli tag built with go crashes immediately after executing a binary file crash after execution binary builded with go console arduino cli fa... | 0 |

268,822 | 23,396,737,046 | IssuesEvent | 2022-08-12 01:03:12 | nim-lang/Nim | https://api.github.com/repos/nim-lang/Nim | closed | Bug with effect system and forward declarations | Effect system works_but_needs_test_case | ```nim

type

SafeFn = proc (): void {. raises: [] }

proc ok() {. raises: [] .} = discard

proc fail() {. raises: [] .}

let f1 : SafeFn = ok

let f2 : SafeFn = fail # Error: type mismatch: got (proc ()) but expected 'SafeFn = proc (){.closure.}'

# .raise effect is 'can raise any'

... | 1.0 | Bug with effect system and forward declarations - ```nim

type

SafeFn = proc (): void {. raises: [] }

proc ok() {. raises: [] .} = discard

proc fail() {. raises: [] .}

let f1 : SafeFn = ok

let f2 : SafeFn = fail # Error: type mismatch: got (proc ()) but expected 'SafeFn = proc (){.closure.}'

... | test | bug with effect system and forward declarations nim type safefn proc void raises proc ok raises discard proc fail raises let safefn ok let safefn fail error type mismatch got proc but expected safefn proc closure ... | 1 |

183,649 | 21,775,132,577 | IssuesEvent | 2022-05-13 13:07:46 | ssobue/redis-demo | https://api.github.com/repos/ssobue/redis-demo | closed | CVE-2021-42550 (Medium) detected in logback-classic-1.2.3.jar | security vulnerability | ## CVE-2021-42550 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.3.jar</b></p></summary>

<p>logback-classic module</p>

<p>Library home page: <a href="http://logb... | True | CVE-2021-42550 (Medium) detected in logback-classic-1.2.3.jar - ## CVE-2021-42550 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>logback-classic-1.2.3.jar</b></p></summary>

<p>logba... | non_test | cve medium detected in logback classic jar cve medium severity vulnerability vulnerable library logback classic jar logback classic module library home page a href path to dependency file redis demo pom xml path to vulnerable library home wss scanner repository c... | 0 |

120,214 | 10,109,722,207 | IssuesEvent | 2019-07-30 08:41:03 | khartec/waltz | https://api.github.com/repos/khartec/waltz | closed | Auth Source fails on Person page | bug fixed (test & close) | ```

ServiceBroker::loadData - AuthSourcesStore.findAuthSources: Internal Server Error - Exception: Cannot make generic selector for kind: PERSON /

``` | 1.0 | Auth Source fails on Person page - ```

ServiceBroker::loadData - AuthSourcesStore.findAuthSources: Internal Server Error - Exception: Cannot make generic selector for kind: PERSON /

``` | test | auth source fails on person page servicebroker loaddata authsourcesstore findauthsources internal server error exception cannot make generic selector for kind person | 1 |

344,589 | 30,751,786,665 | IssuesEvent | 2023-07-28 20:01:06 | saltstack/salt | https://api.github.com/repos/saltstack/salt | opened | [Increase Test Coverage] Batch 16 | Tests | Increase the code coverage percent on the following files to at least 80%.

Please be aware that currently the percentage might be inaccurate if the module uses salt due to #64696

File | Percent

salt/modules/junos.py 67

salt/modules/postgres.py 76

salt/_compat.py 64

salt/cloud/clouds/gce.py 18

salt/_logging/impl.py 70

| 1.0 | [Increase Test Coverage] Batch 16 - Increase the code coverage percent on the following files to at least 80%.

Please be aware that currently the percentage might be inaccurate if the module uses salt due to #64696

File | Percent

salt/modules/junos.py 67

salt/modules/postgres.py 76

salt/_compat.py 64

salt/cloud/clouds... | test | batch increase the code coverage percent on the following files to at least please be aware that currently the percentage might be inaccurate if the module uses salt due to file percent salt modules junos py salt modules postgres py salt compat py salt cloud clouds gce py salt logging impl py ... | 1 |

334,069 | 29,820,345,671 | IssuesEvent | 2023-06-17 01:31:37 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix manipulation.test_flipud | Sub Task Ivy API Experimental Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4561754220/jobs/8048085832" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4561754220/jobs/8048085832" rel="noopener ... | 1.0 | Fix manipulation.test_flipud - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4561754220/jobs/8048085832" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4561754220/... | test | fix manipulation test flipud tensorflow img src torch img src numpy img src jax img src | 1 |

98,106 | 20,611,770,876 | IssuesEvent | 2022-03-07 09:21:14 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.1] Schedule tasks error reporting | No Code Attached Yet | ### Steps to reproduce the issue

Create a new image size action

Do NOT select a path

Save and run a test

### Expected result

Error message that the image path does not exist

### Actual result

... | 1.0 | [4.1] Schedule tasks error reporting - ### Steps to reproduce the issue

Create a new image size action

Do NOT select a path

Save and run a test

### Expected result

Error message that the image path does not exist

| 1.0 | Following Tags Back-end Logic - Users should be able to follow tags based on their interested areas.

Implement the back-end functionality of following a tag for a user. Write the dao, service and controller class functions. It would be better if users can follow multiple tags at once. (similar to adding tags to herita... | non_test | following tags back end logic users should be able to follow tags based on their interested areas implement the back end functionality of following a tag for a user write the dao service and controller class functions it would be better if users can follow multiple tags at once similar to adding tags to herita... | 0 |

285,499 | 24,671,170,002 | IssuesEvent | 2022-10-18 13:52:23 | aldefouw/redcap_cypress | https://api.github.com/repos/aldefouw/redcap_cypress | reopened | Design Forms using Data Dictionary & Online Designer | Core Functionality Test Script Feature | File Location:

https://github.com/aldefouw/redcap_cypress/blob/v11.1.29/cypress/features/core/pre-requisite/design_forms.feature

Task:

Write the test specs in Gherkin DSL following manual test script as guide

Contact Adam De Fouw ([aldefouw@medicine.wisc.edu](mailto:aldefouw@medicine.wisc.edu)) with any questio... | 1.0 | Design Forms using Data Dictionary & Online Designer - File Location:

https://github.com/aldefouw/redcap_cypress/blob/v11.1.29/cypress/features/core/pre-requisite/design_forms.feature

Task:

Write the test specs in Gherkin DSL following manual test script as guide

Contact Adam De Fouw ([aldefouw@medicine.wisc.ed... | test | design forms using data dictionary online designer file location task write the test specs in gherkin dsl following manual test script as guide contact adam de fouw mailto aldefouw medicine wisc edu with any questions | 1 |

33,267 | 4,820,388,615 | IssuesEvent | 2016-11-04 22:39:48 | infiniteautomation/ma-core-public | https://api.github.com/repos/infiniteautomation/ma-core-public | closed | Persistent Data Source Throttle Threshold Setting | Enhancement Ready for Testing | Add system settings and help for the threshold.

| 1.0 | Persistent Data Source Throttle Threshold Setting - Add system settings and help for the threshold.

| test | persistent data source throttle threshold setting add system settings and help for the threshold | 1 |

303,482 | 26,212,654,306 | IssuesEvent | 2023-01-04 08:16:03 | WPChill/download-monitor | https://api.github.com/repos/WPChill/download-monitor | closed | prices bigger than 1000 throw an error with PayPal gateway | Bug needs testing | Error with prices bigger than 1000 on checkout.

https://github.com/WPChill/download-monitor/blob/4.7.70/src/Shop/Checkout/PaymentGateway/PayPal/Api/NumericValidator.php#L22

The `if` condition is TRUE because the formatted price 1,234.00 is not numeric.

Formatted price is set here -> https://github.com/WPChill/download-monitor/blob/4.7.70/src/Sh... | 1.0 | prices bigger than 1000 throw an error with PayPal gateway - Error with prices bigger than 1000 on checkout.

https://github.com/WPChill/download-monitor/blob/4.7.70/src/Shop/Checkout/PaymentGateway/PayPal/Api/NumericValidator.php#L22

The `if` condition is TRUE because the formatted price 1,234.00 is not numeric.

Formatted price is set here -> h... | test | prices bigger than throw an error with paypal gateway error with prices bigger than on checkout the if is true because is not numeric formatted price is set here | 1 |

135,530 | 11,010,062,856 | IssuesEvent | 2019-12-04 13:55:43 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack API Integration Tests.x-pack/test/api_integration/apis/apm/feature_controls·ts - apis APM apm feature controls APIs can be accessed by global_all user | Team:apm failed-test | A test failed on a tracked branch

```

Error: Endpoint: POST /api/apm/settings/agent-configuration/search

Status code: 404

Response: Not found

expected 404 to equal 200

at executeRequests (test/api_integration/apis/apm/feature_controls.ts:216:15)

```

First failure: [Jenkin... | 1.0 | Failing test: X-Pack API Integration Tests.x-pack/test/api_integration/apis/apm/feature_controls·ts - apis APM apm feature controls APIs can be accessed by global_all user - A test failed on a tracked branch

```

Error: Endpoint: POST /api/apm/settings/agent-configuration/search

Status code: 404

... | test | failing test x pack api integration tests x pack test api integration apis apm feature controls·ts apis apm apm feature controls apis can be accessed by global all user a test failed on a tracked branch error endpoint post api apm settings agent configuration search status code ... | 1 |

240,629 | 18,363,131,946 | IssuesEvent | 2021-10-09 15:28:17 | girlscript/winter-of-contributing | https://api.github.com/repos/girlscript/winter-of-contributing | closed | C-CPP DSA: Bubble Sorting but printing all the passes | documentation GWOC21 DSA Assigned C/CPP | ### Description

This code implements bubble sort and prints the output of every pass over the elements.

### Domain

C/CPP

### Type of Contribution

Documentation

### Code of Conduct

- [X] I follow [Contributing Guidelines](https://github.com/girlscript/winter... | 1.0 | C-CPP DSA: Bubble Sorting but printing all the passes - ### Description

This code implements bubble sort and prints the output of every pass over the elements.

### Domain

C/CPP

### Type of Contribution

Documentation

### Code of Conduct

- [X] I follow [Cont... | non_test | c cpp dsa bubble sorting but printing all the passes description well this code will be representing the implementation of bubble sort and printing output of all the passes that occured between the elements domain c cpp type of contribution documentation code of conduct i follow ... | 0 |

136,505 | 12,717,075,730 | IssuesEvent | 2020-06-24 03:58:24 | gardener-attic/issues-foo | https://api.github.com/repos/gardener-attic/issues-foo | closed | A | component/dashboard component/documentation component/gardener kind/bug kind/post-mortem kind/regression os/garden-linux os/suse-chost platform/alicloud platform/aws platform/azure platform/converged-cloud platform/gcp priority/normal topology/shoot | ## Which cluster is affected?

https://dashboard.garden.dev.k8s.ondemand.com/namespace/garden/shoots/aws/

## What happened?

## What you expected to happen?

## When did it happen or started to happen?

<!-- Please provide start time in UTC OR relative time in hours from now, so that we can pull the proper l... | 1.0 | A - ## Which cluster is affected?

https://dashboard.garden.dev.k8s.ondemand.com/namespace/garden/shoots/aws/

## What happened?

## What you expected to happen?

## When did it happen or started to happen?

<!-- Please provide start time in UTC OR relative time in hours from now, so that we can pull the prop... | non_test | a which cluster is affected what happened what you expected to happen when did it happen or started to happen absolute relative how would we reproduce it concisely and precisely anything else we need to know help us categorise this issue for faster ... | 0 |

135,997 | 11,032,485,525 | IssuesEvent | 2019-12-06 20:18:02 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | opened | P3A - Open TOR window response value isn't displayed correctly in Upgraded profile | QA/Test-Plan-Specified QA/Yes bug | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | P3A - Open TOR window response value isn't displayed correctly in Upgraded profile - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT I... | test | open tor window response value isn t displayed correctly in upgraded profile have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient inf... | 1 |

429,744 | 12,427,009,404 | IssuesEvent | 2020-05-25 00:28:20 | eclipse-ee4j/glassfish | https://api.github.com/repos/eclipse-ee4j/glassfish | closed | New annotation @TimedProbe to probe the beginning and end of a method and calculate total time | Component: monitoring ERR: Assignee Priority: Major Stale Type: New Feature | Commonly needed is how much time is spent in a method. Create a new @TimedProbe which does this automatically. | 1.0 | New annotation @TimedProbe to probe the beginning and end of a method and calculate total time - Commonly needed is how much time is spent in a method. Create a new @TimedProbe which does this automatically. | non_test | new annotation timedprobe to probe the beginning and end of a method and calculate total time commonly needed is how much time is spent in a method create a new timedprobe which does this automatically | 0 |
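The `@TimedProbe` request above describes probing the start and end of a method and computing the elapsed time. As a rough analogy only, the same idea can be sketched as a Python decorator. This is not the GlassFish monitoring API; `timed_probe`, `last_elapsed`, and `work` are hypothetical names chosen for illustration.

```python
import functools
import time


def timed_probe(func):
    """Record how long each call to `func` takes (hypothetical analogy, not GlassFish code)."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()  # "begin" probe
        try:
            return func(*args, **kwargs)
        finally:
            # "end" probe: runs even if the wrapped function raises
            wrapper.last_elapsed = time.perf_counter() - start

    wrapper.last_elapsed = None  # no call has been timed yet
    return wrapper


@timed_probe
def work(n):
    return sum(range(n))


if __name__ == "__main__":
    work(1_000_000)
    print(f"work took {work.last_elapsed:.6f} s")
```

Using `try`/`finally` means the elapsed time is recorded whether the method returns normally or raises, which matches the "beginning and end" wording of the request.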

63,081 | 14,656,666,922 | IssuesEvent | 2020-12-28 13:56:13 | fu1771695yongxie/learnGitBranching | https://api.github.com/repos/fu1771695yongxie/learnGitBranching | opened | CVE-2015-9251 (Medium) detected in jquery-1.12.4.js | security vulnerability | ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.12.4.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https:... | True | CVE-2015-9251 (Medium) detected in jquery-1.12.4.js - ## CVE-2015-9251 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.12.4.js</b></p></summary>

<p>JavaScript library for DO... | non_test | cve medium detected in jquery js cve medium severity vulnerability vulnerable library jquery js javascript library for dom operations library home page a href path to dependency file learngitbranching node modules jquery ui demos effect removeclass html path to vulner... | 0 |

752,129 | 26,274,311,237 | IssuesEvent | 2023-01-06 20:15:34 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio-ui] Tree Nav loses state when background refresh | bug priority: high validate | ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [X] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

Receiving socket events while your tree nav is expanded past its default results in losing the expanded state

### Steps ... | 1.0 | [studio-ui] Tree Nav loses state when background refresh - ### Duplicates

- [X] I have searched the existing issues

### Latest version

- [X] The issue is in the latest released 4.0.x

- [ ] The issue is in the latest released 3.1.x

### Describe the issue

Receiving socket events while your tree nav is expanded past i... | non_test | tree nav loses state when background refresh duplicates i have searched the existing issues latest version the issue is in the latest released x the issue is in the latest released x describe the issue receiving socket events while you tree nav is expanded past its default resul... | 0 |

40,128 | 9,852,416,338 | IssuesEvent | 2019-06-19 12:49:40 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Possibly wrong MOD implementation in ACCESS and TERADATA | C: DB: Access C: DB: Teradata C: Functionality E: Enterprise Edition E: Professional Edition P: Medium T: Defect | When generating modulo expressions in `ACCESS` and `TERADATA`, we're currently not wrapping the expression in parentheses:

```sql

a MOD b

```

This could potentially lead to operator precedence issues when combined with operators of higher precedence | 1.0 | Possibly wrong MOD implementation in ACCESS and TERADATA - When generating modulo expressions in `ACCESS` and `TERADATA`, we're currently not wrapping the expression in parentheses:

```sql

a MOD b

```

This could potentially lead to operator precedence issues when combined with operators of higher precedence | non_test | possibly wrong mod implementation in access and teradata when generating modulo expressions in access and teradata we re currently not wrapping the expression in parentheses sql a mod b this could potentially lead to operator precedence issues when combined with operators of higher precedence | 0 |
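The jOOQ record above notes that an unparenthesized `a MOD b` may interact badly with operators of higher precedence. The following Python sketch only illustrates that concern with string rendering; it is not jOOQ code, and `render_mod` and `render_product` are hypothetical helpers. It assumes a dialect in which `*` binds more tightly than `MOD`.

```python
def render_mod(left, right, parenthesize=True):
    """Render a modulo expression, optionally wrapped in parentheses (hypothetical helper)."""
    expr = f"{left} MOD {right}"
    return f"({expr})" if parenthesize else expr


def render_product(factor, operand):
    """Render a multiplication around an already rendered operand (hypothetical helper)."""
    return f"{factor} * {operand}"


if __name__ == "__main__":
    # Without parentheses, a dialect where `*` binds tighter than MOD
    # would read this as (2 * a) MOD b rather than 2 * (a MOD b).
    print(render_product("2", render_mod("a", "b", parenthesize=False)))
    # With parentheses the intended grouping is explicit.
    print(render_product("2", render_mod("a", "b")))
```

Always wrapping the rendered modulo expression costs a pair of parentheses but removes any dependence on the dialect's precedence table.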

148,924 | 11,872,135,752 | IssuesEvent | 2020-03-26 15:22:53 | infinispan/infinispan-operator | https://api.github.com/repos/infinispan/infinispan-operator | closed | Unable to run some of the e2e tests against OpenShift | bug test | Some of the e2e tests do not work with OpenShift (tested with 4.2 and 4.3). The issue started appearing after the PR migrating to Operator SDK 0.15.2 was merged (#293).

So far this applies to all the `*Update` tests. The request to change the parameter won't reach OpenShift.

This issue is probably caused by Kubernetes ... | 1.0 | Unable to run some of the e2e tests against OpenShift - Some of the e2e tests do not work with OpenShift (tested with 4.2 and 4.3). The issue started appearing after the PR migrating to Operator SDK 0.15.2 was merged (#293).

So far this applies for all the `*Update` tests. Request to change the parameter won't hit the Op... | test | unable to run some of the tests against openshift some of the tests do no work with openshift tested with and the issue started appearing after migration to operator sdk pr was merged so far this applies for all the update tests request to change the parameter won t hit the openshift... | 1 |

666,260 | 22,348,140,570 | IssuesEvent | 2022-06-15 09:35:59 | PCSX2/pcsx2 | https://api.github.com/repos/PCSX2/pcsx2 | closed | [BUG]: Gran Turismo 4 - Bad Edges on Split Time Vehicle Icons | Bug GS: Hardware Regression GS: Texture Cache High Priority | ### Describe the Bug

On any race, with any vehicle, the vehicle icon which appears while showing split times will have a line on the bottom and right edges. The icon is not supposed to have any boundaries, and is supposed to be transparent except for the vehicle picture on it.

Bug was introduced by 1.7.2126 and pre... | 1.0 | [BUG]: Gran Turismo 4 - Bad Edges on Split Time Vehicle Icons - ### Describe the Bug

On any race, with any vehicle, the vehicle icon which appears while showing split times will have a line on the bottom and right edges. The icon is not supposed to have any boundaries, and is supposed to be transparent except for the ... | non_test | gran turismo bad edges on split time vehicle icons describe the bug on any race with any vehicle the vehicle icon which appears while showing split times will have a line on the bottom and right edges the icon is not supposed to have any boundaries and is supposed to be transparent except for the vehi... | 0 |

45,839 | 13,055,754,994 | IssuesEvent | 2020-07-30 02:38:18 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | omkey inherits from i3Frame object (Trac #117) | IceTray Incomplete Migration Migrated from Trac defect | Migrated from https://code.icecube.wisc.edu/ticket/117

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:56",

"description": "where did this come from?",

"reporter": "troy",

"cc": "",

"resolution": "wont or cant fix",

"_ts": "1416713876900096",

"component": "IceTray", ... | 1.0 | omkey inherits from i3Frame object (Trac #117) - Migrated from https://code.icecube.wisc.edu/ticket/117

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:56",

"description": "where did this come from?",

"reporter": "troy",

"cc": "",

"resolution": "wont or cant fix",

"_ts": ... | non_test | omkey inherits from object trac migrated from json status closed changetime description where did this come from reporter troy cc resolution wont or cant fix ts component icetray summary omkey ... | 0 |

91,210 | 8,300,600,604 | IssuesEvent | 2018-09-21 08:39:00 | swarmcity/SwarmCityDapp | https://api.github.com/repos/swarmcity/SwarmCityDapp | closed | on iphone button enter swarm city doesn't appear | blocking bug ready to test | # Location

/mykeys

# Expected behavior

when creating new account on iphone, after checking the box to use the private key to be the backup, a button appears "enter swarm.city"

# Actual behavior

when creating new account on iphone, after checking the box to use the private key to be the backup, the button appea... | 1.0 | on iphone button enter swarm city doesn't appear - # Location

/mykeys

# Expected behavior

when creating new account on iphone, after checking the box to use the private key to be the backup, a button appears "enter swarm.city"

# Actual behavior

when creating new account on iphone, after checking the box to use... | test | on iphone button enter swarm city doesn t appear location mykeys expected behavior when creating new account on iphone after checking the box to use the private key to be the backup a button appears enter swarm city actual behavior when creating new account on iphone after checking the box to use... | 1 |

338,309 | 30,291,860,715 | IssuesEvent | 2023-07-09 11:36:12 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix blas_and_lapack_ops.test_torch_ger | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5499384391/jobs/10021539111"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5499384391/jobs/10021539111"><img src=https://img.shields.io/badge/-success-success><... | 1.0 | Fix blas_and_lapack_ops.test_torch_ger - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5499384391/jobs/10021539111"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5499384391/jobs/10021539111"><img src=https:... | test | fix blas and lapack ops test torch ger tensorflow a href src jax a href src numpy a href src torch a href src paddle a href src | 1 |

222,656 | 17,466,766,700 | IssuesEvent | 2021-08-06 18:02:25 | paritytech/polkadot | https://api.github.com/repos/paritytech/polkadot | closed | Approval Voting unit/integration hybrid tests | F4-tests | In order to adequately test approval voting beyond simple unit tests that validate the behavior of the subsystem, we should create multiple instances of the approval voting subsystem and play them against each other. | 1.0 | Approval Voting unit/integration hybrid tests - In order to adequately test approval voting beyond simple unit tests that validate the behavior of the subsystem, we should create multiple instances of the approval voting subsystem and play them against each other. | test | approval voting unit integration hybrid tests in order to adequately test approval voting beyond simple unit tests that validate the behavior of the subsystem we should create multiple instances of the approval voting subsystem and play them against each other | 1 |

157,690 | 12,389,088,909 | IssuesEvent | 2020-05-20 08:27:22 | moment/moment | https://api.github.com/repos/moment/moment | closed | 2 tests failed. locale:gu:calendar day (1107.6) locale:x-pseudo:calendar day (2655.6) | DST Unit Test Failed | ### Client info

```

Date String : Mon Mar 12 2018 14:40:57 GMT-0700 (Pacific Daylight Time)

Locale String : 3/12/2018, 2:40:57 PM

Offset : 420

User Agent : Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/64.0.3282.186 Safari/537.36

Moment Version: 2.21.0

```

... | 1.0 | 2 tests failed. locale:gu:calendar day (1107.6) locale:x-pseudo:calendar day (2655.6) - ### Client info

```

Date String : Mon Mar 12 2018 14:40:57 GMT-0700 (Pacific Daylight Time)

Locale String : 3/12/2018, 2:40:57 PM

Offset : 420

User Agent : Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.... | test | tests failed locale gu calendar day locale x pseudo calendar day client info date string mon mar gmt pacific daylight time locale string pm offset user agent mozilla windows nt applewebkit khtml like gecko chrome ... | 1 |

615,698 | 19,273,306,644 | IssuesEvent | 2021-12-10 08:55:23 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | logging: memory leak at high frequency logging | type: bug api: logging priority: p2 lang: go | We have an issue when using the go logging library. We are experiencing a very high memory consumption, potentially a leak, when writing logs at a high frequency.

We first observed this issue in one of our Go services hosted in Cloud Run. When receiving a high amount of requests (6000rps+) we can see the memory utilis... | 1.0 | logging: memory leak at high frequency logging - We have an issue when using the go logging library. We are experiencing a very high memory consumption, potentially a leak, when writing logs at a high frequency.

We first observed this issue in one of our Go services hosted in Cloud Run. When receiving a high amount of... | non_test | logging memory leak at high frequency logging we have an issue when using the go logging library we are experiencing a very high memory consumption potentially a leak when writing logs at a high frequency we first observed this issue in one of our go services hosted in cloud run when receiving a high amount of... | 0 |

4,814 | 2,875,502,626 | IssuesEvent | 2015-06-09 08:36:24 | bpmn-io/bpmn-js | https://api.github.com/repos/bpmn-io/bpmn-js | closed | Investigate: Document our APIs in a user friendly way | documentation in progress | Adding a (self) hosted solution with up to date documentation.

[**ReadMe.io**](https://readme.io/) could be a great option since it's a well built, easy to use system and free for open source.

### Primary Use Case

Users should learn about our public API, i.e. the [Overlays](https://github.com/bpmn-io/diagram-j... | 1.0 | Investigate: Document our APIs in a user friendly way - Adding a (self) hosted solution with up to date documentation.

[**ReadMe.io**](https://readme.io/) could be a great option since it's a well built, easy to use system and free for open source.

### Primary Use Case

Users should learn about our public API, ... | non_test | investigate document our apis in a user friendly way adding a self hosted solution with up to date documentation could be a great option since it s a well built easy to use system and free for open source primary use case users should learn about our public api i e the service or the main... | 0 |

410,250 | 11,985,432,924 | IssuesEvent | 2020-04-07 17:29:51 | IpsumCapra/project-3-4 | https://api.github.com/repos/IpsumCapra/project-3-4 | closed | US-B2 - As a user, I want to be able to quickly choose the amount I want to withdraw. | 3 ATM High priority! MUST UI User Story |

<h1>Acceptance Criteria</h1>

<ul>

<li>UI software is present, and running on the Raspberry PI.</li>

<li>The Raspberry PI and monitor can communicate correctly.</li>

<li>ATM is functional.</li>

<li>User can interface with ATM terminal.</li>

<li>ATM keypad is communicating with other hardware correc... | 1.0 | US-B2 - As a user, I want to be able to quickly choose the amount I want to withdraw. -

<h1>Acceptance Criteria</h1>

<ul>

<li>UI software is present, and running on the Raspberry PI.</li>

<li>The Raspberry PI and monitor can communicate correctly.</li>

<li>ATM is functional.</li>

<li>User can interfac... | non_test | us as a user i want to be able to quickly choose the amount i want to withdraw acceptance criteria ui software is present and running on the raspberry pi the raspberry pi and monitor can communicate correctly atm is functional user can interface with atm terminal atm keyp... | 0 |

87,937 | 8,127,236,613 | IssuesEvent | 2018-08-17 07:15:36 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | cli: Example_csv_tsv_quoting failed under stress | C-test-failure O-robot X-duplicate | SHA: https://github.com/cockroachdb/cockroach/commits/eccb4a127dd519375d87d5ffd9f6394c37c3a427

Parameters:

```

TAGS=

GOFLAGS=

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=842992&tab=buildLog

```

W180817 05:15:21.657719 1 server/status/runtime.go:294 [n?] Could not parse build timestamp: p... | 1.0 | cli: Example_csv_tsv_quoting failed under stress - SHA: https://github.com/cockroachdb/cockroach/commits/eccb4a127dd519375d87d5ffd9f6394c37c3a427

Parameters:

```

TAGS=

GOFLAGS=

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=842992&tab=buildLog

```

W180817 05:15:21.657719 1 server/status/runt... | test | cli example csv tsv quoting failed under stress sha parameters tags goflags failed test server status runtime go could not parse build timestamp parsing time as cannot parse as server server go monitoring forward clock jumps based o... | 1 |

103,855 | 16,610,450,370 | IssuesEvent | 2021-06-02 10:48:58 | Thanraj/OpenSSL_ | https://api.github.com/repos/Thanraj/OpenSSL_ | opened | CVE-2015-1787 (Low) detected in opensslOpenSSL_1_0_2 | security vulnerability | ## CVE-2015-1787 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opensslOpenSSL_1_0_2</b></p></summary>

<p>

<p>TLS/SSL and crypto library</p>

<p>Library home page: <a href=https://githu... | True | CVE-2015-1787 (Low) detected in opensslOpenSSL_1_0_2 - ## CVE-2015-1787 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>opensslOpenSSL_1_0_2</b></p></summary>

<p>

<p>TLS/SSL and crypto ... | non_test | cve low detected in opensslopenssl cve low severity vulnerability vulnerable library opensslopenssl tls ssl and crypto library library home page a href found in head commit a href found in base branch master vulnerable source files o... | 0 |

69,517 | 14,992,608,921 | IssuesEvent | 2021-01-29 10:06:27 | KorAP/Kalamar | https://api.github.com/repos/KorAP/Kalamar | closed | Establish Content Security Policy | security | Before integrating the widget plugin mechanism in Kalamar, the server should establish strict rules to limit security risks. Currently there are some violations of basic JS and CSS inline rules, that could easily be fixed. | True | Establish Content Security Policy - Before integrating the widget plugin mechanism in Kalamar, the server should establish strict rules to limit security risks. Currently there are some violations of basic JS and CSS inline rules, that could easily be fixed. | non_test | establish content security policy before integrating the widget plugin mechanism in kalamar the server should establish strict rules to limit security risks currently there are some violations of basic js and css inline rules that could easily be fixed | 0 |

241,367 | 20,118,266,167 | IssuesEvent | 2022-02-07 22:06:02 | eclipse-openj9/openj9 | https://api.github.com/repos/eclipse-openj9/openj9 | closed | JDK11 MacOS jdk_imageio_0_FAILED - AWTError: WindowServer is not available & others | comp:vm test failure os:macos | Failure link

------------

From an internal build `Test_openjdk11_j9_extended.openjdk_x86-64_mac/1`

```

05:23:03 openjdk version "11.0.11" 2021-04-20

05:23:03 OpenJDK Runtime Environment AdoptOpenJDK (build 11.0.11+4)

05:23:03 Eclipse OpenJ9 VM AdoptOpenJDK (build master-f021812fb, JRE 11 Mac OS X amd64-64-Bi... | 1.0 | JDK11 MacOS jdk_imageio_0_FAILED - AWTError: WindowServer is not available & others - Failure link

------------

From an internal build `Test_openjdk11_j9_extended.openjdk_x86-64_mac/1`

```

05:23:03 openjdk version "11.0.11" 2021-04-20

05:23:03 OpenJDK Runtime Environment AdoptOpenJDK (build 11.0.11+4)

05:23:... | test | macos jdk imageio failed awterror windowserver is not available others failure link from an internal build test extended openjdk mac openjdk version openjdk runtime environment adoptopenjdk build eclipse vm adoptopenjdk bui... | 1 |

145,288 | 11,683,459,254 | IssuesEvent | 2020-03-05 03:27:35 | creativecommons/cc-chooser | https://api.github.com/repos/creativecommons/cc-chooser | opened | Add unit and e2e tests for the LicenseCopy component | good first issue help wanted test-coverage | Unit and e2e tests need to be written for the LicenseCopy component. Unit tests are done with [Jest](https://jestjs.io/), and e2e tests are done with [nightwatch](https://nightwatchjs.org/).

Please remember to test the following things:

- That individual parts of the component are present when appropriate. (unit an... | 1.0 | Add unit and e2e tests for the LicenseCopy component - Unit and e2e tests need to be written for the LicenseCopy component. Unit tests are done with [Jest](https://jestjs.io/), and e2e tests are done with [nightwatch](https://nightwatchjs.org/).

Please remember to test the following things:

- That individual parts ... | test | add unit and tests for the licensecopy component unit and tests need to be written for the licensecopy component unit tests are done with and tests are done with please remember to test the following things that individual parts of the component are present when appropriate unit and that... | 1 |

75,471 | 9,855,940,053 | IssuesEvent | 2019-06-19 20:42:24 | ofpinewood/http-exceptions | https://api.github.com/repos/ofpinewood/http-exceptions | closed | Update documentation and sample project | documentation | Update the documentation for the projects and add more sample code to the sample project. | 1.0 | Update documentation and sample project - Update the documentation for the projects and add more sample code to the sample project. | non_test | update documentation and sample project update the documentation for the projects and add more sample code to the sample project | 0 |

349,467 | 31,806,832,428 | IssuesEvent | 2023-09-13 14:24:43 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: cdc/pubsub-sink failed | C-test-failure O-robot O-roachtest branch-master release-blocker T-cdc | roachtest.cdc/pubsub-sink [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11734739?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11734739?buildTab=artifacts#/cdc/pubsub-sink) on ma... | 2.0 | roachtest: cdc/pubsub-sink failed - roachtest.cdc/pubsub-sink [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11734739?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11734739?buildT... | test | roachtest cdc pubsub sink failed roachtest cdc pubsub sink with on master latency verifier go assertvalid latency never dropped to acceptable steady level monitor go wait monitor failure monitor user task failed t fatal was called test artifacts and logs in artifacts cdc pubsub... | 1 |

292,469 | 25,216,141,519 | IssuesEvent | 2022-11-14 09:18:07 | Joystream/pioneer | https://api.github.com/repos/Joystream/pioneer | closed | Set Working Group Lead Reward | enhancement scope:proposals qa-task qa-tests-failed | [ ] Set Working Group Lead Reward

- Same as when updating reward of a worker in group with given inputs, except signer check | 1.0 | Set Working Group Lead Reward - [ ] Set Working Group Lead Reward

- Same as when updating reward of a worker in group with given inputs, except signer check | test | set working group lead reward set working group lead reward same as when updating reward of a worker in group with given inputs except signer check | 1 |

568,767 | 16,988,328,393 | IssuesEvent | 2021-06-30 16:55:03 | npm/cli | https://api.github.com/repos/npm/cli | closed | [BUG] progress=false is ignored on npm 7 | Bug Priority 2 Release 7.x | <!--

Note: Please search to see if an issue already exists for your problem: https://github.com/npm/cli/issues

-->

### Current Behavior:

Have an .npmrc with progress=false for aesthetic reasons.

On npm6 that disables the progress bar, on npm7 it does not although the docs say it still should.

This is my full... | 1.0 | [BUG] progress=false is ignored on npm 7 - <!--

Note: Please search to see if an issue already exists for your problem: https://github.com/npm/cli/issues

-->

### Current Behavior:

Have an .npmrc with progress=false for aesthetic reasons.

On npm6 that disables the progress bar, on npm7 it does not although the do... | non_test | progress false is ignored on npm note please search to see if an issue already exists for your problem current behavior have an npmrc with progress false for aesthetic reasons on that disables the progress bar on it does not although the docs say it still should this is my ful... | 0 |

321,146 | 27,508,935,704 | IssuesEvent | 2023-03-06 07:07:08 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | ccl/serverccl: TestServerControllerHTTP failed | C-test-failure O-robot branch-master | ccl/serverccl.TestServerControllerHTTP [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8933299?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8933299?buildTab=artifacts#/) on master @ [14b43be03c1c246765be17... | 1.0 | ccl/serverccl: TestServerControllerHTTP failed - ccl/serverccl.TestServerControllerHTTP [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8933299?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/8933299?buildTab... | test | ccl serverccl testservercontrollerhttp failed ccl serverccl testservercontrollerhttp with on master run testservercontrollerhttp test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline server controller test ... | 1 |

105,759 | 9,100,592,774 | IssuesEvent | 2019-02-20 08:58:48 | intermine/intermine | https://api.github.com/repos/intermine/intermine | closed | OMIM - failed on bad line | data in-progress please-test | ```

Caused by: java.lang.RuntimeException: bad line: '[Beta-glycopyranoside tasting], 617956 (3) {Alcohol dependence, susceptibility to}, 103780 (3) TAS2R16, T2R16, BGLPT 604867 7q31.32'

at org.intermine.bio.dataconversion.OmimConverter.processMorbidMapFile(OmimConverter.java:168)

at org.i... | 1.0 | OMIM - failed on bad line - ```

Caused by: java.lang.RuntimeException: bad line: '[Beta-glycopyranoside tasting], 617956 (3) {Alcohol dependence, susceptibility to}, 103780 (3) TAS2R16, T2R16, BGLPT 604867 7q31.32'

at org.intermine.bio.dataconversion.OmimConverter.processMorbidMapFile(OmimConverter... | test | omim failed on bad line caused by java lang runtimeexception bad line alcohol dependence susceptibility to bglpt at org intermine bio dataconversion omimconverter processmorbidmapfile omimconverter java at org intermine bio dataconversion omim... | 1 |

93,021 | 8,391,895,924 | IssuesEvent | 2018-10-09 16:05:18 | kabirbaidhya/boss | https://api.github.com/repos/kabirbaidhya/boss | closed | Write tests for boss api and utils | good first issue hacktoberfest help wanted test | Write tests for:

* Modules and functions under `boss.api`

* Code under `boss.util`

* Any other code that requires tests | 1.0 | Write tests for boss api and utils - Write tests for:

* Modules and functions under `boss.api`

* Code under `boss.util`

* Any other code that requires tests | test | write tests for boss api and utils write tests for modules and functions under boss api code under boss util any other code that requires tests | 1 |

487,676 | 14,050,375,076 | IssuesEvent | 2020-11-02 11:39:48 | drashland/dmm | https://api.github.com/repos/drashland/dmm | opened | Support https://raw.githubusercontent.com | Priority: Low Type: Enhancement | ## Summary

What:

Alongside supporting deno.land and x.nest.land, add support for https://raw.githubusercontent

Why:

Mainly, so we can use dmm to update dependencies pulled from the services repo, but it does have its own good use

## Acceptance Criteria

Below is a list of tasks that must be completed ... | 1.0 | Support https://raw.githubusercontent.com - ## Summary

What:

Alongside supporting deno.land and x.nest.land, add support for https://raw.githubusercontent

Why:

Mainly, so we can use dmm to update dependencies pulled from the services repo, but it does have its own good use

## Acceptance Criteria

Belo... | non_test | support summary what alongside supporting deno land and x nest land add support for why mainly so we can use dmm to update dependencies pulled from the services repo but it does have it s own good use acceptance criteria below is a list of tasks that must be completed before this issu... | 0 |

147,712 | 23,260,569,060 | IssuesEvent | 2022-08-04 13:11:33 | excalidraw/excalidraw | https://api.github.com/repos/excalidraw/excalidraw | closed | Flip horizontal does not work on linear elements since the redesign (#5501). Flip vertical works. | bug arrow-redesign | ERROR: type should be string, got "\r\nhttps://user-images.githubusercontent.com/14358394/182805730-1ad74bca-da46-4383-b03b-6d89f2a68ae8.mp4\r\n\r\n" | 1.0 | Flip horizontal does not work on linear elements since the redesign (#5501). Flip vertical works. -

https://user-images.githubusercontent.com/14358394/182805730-1ad74bca-da46-4383-b03b-6d89f2a68ae8.mp4

| non_test | flip horizontal does not work on linear elements since the redesign flip vertical works | 0 |

293,471 | 22,059,411,895 | IssuesEvent | 2022-05-30 15:49:52 | ms-club-sliit/ms-meeting-manager | https://api.github.com/repos/ms-club-sliit/ms-meeting-manager | opened | README for the repository | documentation help wanted | Need to create a Readme file for this project. The readme file should contain the following information.

* Technologies used in the project

* How to run the project

* How to contribute to the project

* Recent contributors

* CI/ CD pipeline status | 1.0 | README for the repository - Need to create a Readme file for this project. The readme file should contains the following information.

* Technologies that used in the project

* How to run the project

* How to contribute the project

* Recent contributors

* CI/ CD pipeline status | non_test | readme for the repository need to create a readme file for this project the readme file should contains the following information technologies that used in the project how to run the project how to contribute the project recent contributors ci cd pipeline status | 0 |

120,167 | 12,060,612,333 | IssuesEvent | 2020-04-15 21:34:48 | deathlyrage/pot-demo-bugs | https://api.github.com/repos/deathlyrage/pot-demo-bugs | closed | Camera spawns in Trees (stuck) and Stuck Daspleto cannot move | documentation needs testing | Spawned in to try AI mode and find my camera unable to move after having been spawned inside the crown of a tree

as well as daspletosaurus being stuck between a rock and a tree the moment it spawned in

... | 1.0 | Camera spawns in Trees (stuck) and Stuck Daspleto cannot move - Spawned in to try AI mode and find my camera unable to move after having been spawned inside the crown of a tree

as well as daspletosaurus ... | non_test | camera spawns in trees stuck and stuck daspleto cannot move spawned in to try ai mode and find my camera unable to move after having been spawned inside the crown of a tree as well as daspletosaurus being stuck between a rock and a tree the moment it spawned in | 0 |

811,365 | 30,285,275,602 | IssuesEvent | 2023-07-08 15:43:33 | SuffolkLITLab/ALKiln | https://api.github.com/repos/SuffolkLITLab/ALKiln | closed | Restore multi-language tests set by env vars | priority | As per user request, restore running language tests and write internal tests for such (though I'm not sure how we check that the right tests have been run). These should primarily be triggered manually through the workflow dispatch, though allowing an env var for them might still be useful to carry over from previous b... | 1.0 | Restore multi-language tests set by env vars - As per user request, restore running language tests and write internal tests for such (though I'm not sure how we check that the right tests have been run). These should primarily be triggered manually through the workflow dispatch, though allowing an env var for them migh... | non_test | restore multi language tests set by env vars as per user request restore running language tests and write internal tests for such though i m not sure how we check that the right tests have been run these should primarily be triggered manually through the workflow dispatch though allowing an env var for them migh... | 0 |

821,730 | 30,833,468,475 | IssuesEvent | 2023-08-02 05:02:22 | GSM-MSG/SMS-Android | https://api.github.com/repos/GSM-MSG/SMS-Android | opened | Show the snack bar when a screen capture is detected. | 0️⃣ Priority: Critical ✨ Feature | ### Describe

Show a snackbar when a screen capture is detected.

### Additional

_No response_ | 1.0 | Show the snack bar when a screen capture is detected. - ### Describe

Show a snackbar when a screen capture is detected.

### Additional

_No response_ | non_test | show the snack bar when a screen capture is detected describe show a snackbar when a screen capture is detected additional no response | 0 |

610,596 | 18,911,904,600 | IssuesEvent | 2021-11-16 14:53:38 | googleapis/google-api-dotnet-client | https://api.github.com/repos/googleapis/google-api-dotnet-client | closed | How to Create Custom Dimension and Custom metrics in google analytics | type: question priority: p2 api: analytics | We tried the steps below to create custom dimensions and custom metrics from code.

1) We installed the NuGet package with "Install-Package Google.Apis.Analytics.v3 -Version 1.55.0.1679" in the solution and enabled the Google Analytics APIs in the "Google Analytics Account".

2) We have created service account for google a... | 1.0 | How to Create Custom Dimension and Custom metrics in google analytics - We have tried below steps to create Custom Dimension and Custom metrics from code side.

1) We have installed Nuget package "Install-Package Google.Apis.Analytics.v3 -Version 1.55.0.1679" into solution and Enabled Google Analytics API's in "Googl... | non_test | how to create custom dimension and custom metrics in google analytics we have tried below steps to create custom dimension and custom metrics from code side we have installed nuget package install package google apis analytics version into solution and enabled google analytics api s in google ana... | 0 |

65,592 | 12,625,364,989 | IssuesEvent | 2020-06-14 11:32:14 | intellij-rust/intellij-rust | https://api.github.com/repos/intellij-rust/intellij-rust | opened | No E0368/E0369 for binary operations | feature subsystem::code insight | <!--

Hello and thank you for the issue!

If you would like to report a bug, we have added some points below that you can fill out.

Feel free to remove all the irrelevant text to request a new feature.

-->

## Environment

* **IntelliJ Rust plugin version:** 0.2.125.3158-202-nightly

* **Rust toolchain version:**... | 1.0 | No E0368/E0369 for binary operations - <!--

Hello and thank you for the issue!

If you would like to report a bug, we have added some points below that you can fill out.

Feel free to remove all the irrelevant text to request a new feature.

-->

## Environment

* **IntelliJ Rust plugin version:** 0.2.125.3158-202... | non_test | no for binary operations hello and thank you for the issue if you would like to report a bug we have added some points below that you can fill out feel free to remove all the irrelevant text to request a new feature environment intellij rust plugin version nightly r... | 0 |

20,861 | 6,114,254,682 | IssuesEvent | 2017-06-22 00:24:09 | ganeti/ganeti | https://api.github.com/repos/ganeti/ganeti | closed | burnin instructions & possible bug | imported_from_google_code Status:Invalid | Originally reported on Google Code with ID 106.

```

What software version are you running? Please provide the output of "gnt-cluster --version" and "gnt-cluster version".

<b>What distribution are you using?</b>

gnt-cluster (ganeti) 2.1.1

software version 2.1.1

internode protocol: 30

configuration format: 20100... | 1.0 | burnin instructions & possible bug - Originally reported on Google Code with ID 106.

```

What software version are you running? Please provide the output of "gnt-cluster --version" and "gnt-cluster version".

<b>What distribution are you using?</b>

gnt-cluster (ganeti) 2.1.1

software version 2.1.1

internode prot... | non_test | burnin instructions possible bug originally reported of google code with id what software version are you running please provide the output of gnt cluster version and gnt cluster version what distribution are you using gnt cluster ganeti software version internode protocol ... | 0 |

87,624 | 25,165,008,263 | IssuesEvent | 2022-11-10 20:00:17 | libjxl/libjxl | https://api.github.com/repos/libjxl/libjxl | closed | StoreInterleaved: 2 3 4 | building/portability unrelated to 1.0 highway | **Describe the bug**

in order to compile `main` branch I had to comment out all lines with `StoreInterleaved2` `StoreInterleaved3` `StoreInterleaved4`

(in `dec_group_jpeg.cc` and `stage_write.cc`)

**To Reproduce**

try to compile `main` branch

**Expected behavior**

`main` branch compiles successfully

**Envi... | 1.0 | StoreInterleaved: 2 3 4 - **Describe the bug**

in order to compile `main` branch I had to comment out all lines with `StoreInterleaved2` `StoreInterleaved3` `StoreInterleaved4`

(in `dec_group_jpeg.cc` and `stage_write.cc`)

**To Reproduce**

try to compile `main` branch

**Expected behavior**

`main` branch compi... | non_test | storeinterleaved describe the bug in order to compile main branch i had to comment out all lines with in dec group jpeg cc and stage write cc to reproduce try to compile main branch expected behavior main branch compiles successfully environment os gent... | 0 |

41,907 | 2,869,088,009 | IssuesEvent | 2015-06-05 23:14:12 | dart-lang/polymer-dart | https://api.github.com/repos/dart-lang/polymer-dart | opened | Provide a way to easily trace async stack traces | bug Priority-Medium | <a href="https://github.com/sigmundch"><img src="https://avatars.githubusercontent.com/u/2049220?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [sigmundch](https://github.com/sigmundch)**

_Originally opened as dart-lang/sdk#20322_

----

maybe a toggle UI like the one we have for logger? | 1.0 | Provide a way to easily trace async stack traces - <a href="https://github.com/sigmundch"><img src="https://avatars.githubusercontent.com/u/2049220?v=3" align="left" width="96" height="96" hspace="10"></img></a> **Issue by [sigmundch](https://github.com/sigmundch)**

_Originally opened as dart-lang/sdk#20322_

----

mayb... | non_test | provide a way to easily trace async stack traces issue by originally opened as dart lang sdk maybe a toggle ui like the one we have for logger | 0 |

323,802 | 27,753,378,396 | IssuesEvent | 2023-03-15 23:04:15 | sanktjodel/cctest1 | https://api.github.com/repos/sanktjodel/cctest1 | opened | Fix "method_complexity" issue in Signing.java | test1 test2'"><h1>tt | Method `signRequest` has a Cognitive Complexity of 34 (exceeds 5 allowed). Consider refactoring.

https://codeclimate.com/github/sanktjodel/cctest1/Signing.java#issue_64124ec1a9c49c0001000012 | 2.0 | Fix "method_complexity" issue in Signing.java - Method `signRequest` has a Cognitive Complexity of 34 (exceeds 5 allowed). Consider refactoring.

https://codeclimate.com/github/sanktjodel/cctest1/Signing.java#issue_64124ec1a9c49c0001000012 | test | fix method complexity issue in signing java method signrequest has a cognitive complexity of exceeds allowed consider refactoring | 1 |

266,972 | 8,377,573,801 | IssuesEvent | 2018-10-06 02:53:54 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Horti crashes on subsequent upgrade | Priority: 1 - High Status: 5 - Ready Type: Bug horticulturalist | Each time an app (api or sentinel) is updated, horti creates a symlink to the previous running version (called `old`).

During subsequent upgrades, the existing `old` symlink is not removed before horti attempts to write it again, resulting in the following fatal error (which crashes horti):

```

horti:debug Primary ddoc w... | 1.0 | Horti crashes on subsequent upgrade - Each time an app (api or sentinel) is updated, horti creates a symlink to the previous running version (called `old`).

When doing multiple upgrades, this `old` symlink is not removed prior to attempting to write it again resulting in the following fatal error (which crashes horti)... | non_test | horti crashes on subsequent upgrade each time an app api or sentinel is updated horti creates a symlink to the previous running version called old when doing multiple upgrades this old symlink is not removed prior to attempting to write it again resulting in the following fatal error which crashes horti ... | 0 |

158,570 | 6,031,907,622 | IssuesEvent | 2017-06-09 01:09:57 | chartjs/Chart.js | https://api.github.com/repos/chartjs/Chart.js | closed | First and last bars display problem | Category: Bug Help wanted Inactive: duplicate Priority: p1 Time Scale | I have the following issue:

The first bar is not fully displayed and the last one is not displayed at all.

Any solution to this? | 1.0 | First and last bars display problem - I have the following issue:

The first bar is not fully displayed and the last one is not displayed at all.

Any solution to this? | non_test | first and last bars display problem i have the following issue the first bar is not fully displayed and the last one is hidden at all any solution to this | 0 |

488,037 | 14,073,876,166 | IssuesEvent | 2020-11-04 06:05:17 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.rawstory.com - video or audio doesn't play | browser-fenix engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox Mobile 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:83.0) Gecko/83.0 Firefox/83.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61027 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.rawstory... | 1.0 | www.rawstory.com - video or audio doesn't play - <!-- @browser: Firefox Mobile 83.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:83.0) Gecko/83.0 Firefox/83.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61027 -->