Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

350,688 | 31,931,944,540 | IssuesEvent | 2023-09-19 08:02:28 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix math.test_tensorflow_reduce_logsumexp | TensorFlow Frontend Sub Task Failing Test | | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6194295732/job/16817071183"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6194295732/job/16817071183"><img src=https://img.shields.io/badge/-success-success></a>

|t... | 1.0 | Fix math.test_tensorflow_reduce_logsumexp - | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6194295732/job/16817071183"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6194295732/job/16817071183"><img src=https://im... | test | fix math test tensorflow reduce logsumexp numpy a href src jax a href src tensorflow a href src torch a href src paddle a href src | 1 |

133,868 | 10,865,389,282 | IssuesEvent | 2019-11-14 18:53:38 | rancher/rke | https://api.github.com/repos/rancher/rke | closed | full-cluster-state configmap is not updated on certificate rotation | [zube]: To Test kind/bug team/ca | **RKE version:**

v0.3.2

**Steps to Reproduce:**

Create a cluster with 3 nodes each with all roles, run `rke up`, and run `rke cert rotate`.

**Results:**

The cluster state which is stored in the local `cluster.rkestate` file and the one stored in the configmap `full-cluster-state` are not identical.

Check ... | 1.0 | full-cluster-state configmap is not updated on certificate rotation - **RKE version:**

v0.3.2

**Steps to Reproduce:**

Create a cluster with 3 nodes each with all roles, run `rke up`, and run `rke cert rotate`.

**Results:**

The cluster state which is stored in the local `cluster.rkestate` file and the one sto... | test | full cluster state configmap is not updated on certificate rotation rke version steps to reproduce create a cluster with nodes each with all roles run rke up and run rke cert rotate results the cluster state which is stored in the local cluster rkestate file and the one stor... | 1 |

85,990 | 8,015,708,744 | IssuesEvent | 2018-07-25 10:55:23 | telstra/open-kilda | https://api.github.com/repos/telstra/open-kilda | opened | [atdd-staging] Find a way to implement 'fail-fast' behavior for atdd-staging scenarios | area/testing | We need to implement 'fail-fast' behavior (tests execution stops after the first test failure) in the atdd-staging module because in most cases when one test failed, something is wrong with the test environment and there is no sense to run other scenarios because of a low percentage of their success. | 1.0 | [atdd-staging] Find a way to implement 'fail-fast' behavior for atdd-staging scenarios - We need to implement 'fail-fast' behavior (tests execution stops after the first test failure) in the atdd-staging module because in most cases when one test failed, something is wrong with the test environment and there is no sen... | test | find a way to implement fail fast behavior for atdd staging scenarios we need to implement fail fast behavior tests execution stops after the first test failure in the atdd staging module because in most cases when one test failed something is wrong with the test environment and there is no sense to run oth... | 1 |

266,989 | 23,271,758,416 | IssuesEvent | 2022-08-05 00:25:48 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | DNS Configmap tests sometimes panics in ipv6 envs | sig/network kind/flake sig/testing needs-triage | ### Which jobs are flaking?

We've seen this line of [test/e2e/network/dns_configmap.go:301](https://github.com/kubernetes/kubernetes/blob/master/test/e2e/network/dns_configmap.go#L301) panic from time to time. It is caused by an index out of bounds. The array length check on that line seems to be incorrect.

We see ... | 1.0 | DNS Configmap tests sometimes panics in ipv6 envs - ### Which jobs are flaking?

We've seen this line of [test/e2e/network/dns_configmap.go:301](https://github.com/kubernetes/kubernetes/blob/master/test/e2e/network/dns_configmap.go#L301) panic from time to time. It is caused by an index out of bounds. The array length ... | test | dns configmap tests sometimes panics in envs which jobs are flaking we ve seen this line of panic from time to time it is caused by an index out of bounds the array length check on that line seems to be incorrect we see this flakiness when running in or dualstack primary environments we do n... | 1 |

111,939 | 14,173,251,690 | IssuesEvent | 2020-11-12 18:04:02 | flutter/flutter | https://api.github.com/repos/flutter/flutter | reopened | ModalBottomSheet with isScrollControlled doesn't respect SafeArea | a: layout f: material design found in release: 1.20 framework has reproducible steps | ## Steps to Reproduce

1. Create a modal bottom sheet with Scaffold as its child

2. Wrap the Scaffold into a SafeArea widget

3. Add a Text widget in Scaffold's body

4. Run the application on a device with notch

5. Open the modal bottom sheet and notice that the text is under the status bar

## Code example

```... | 1.0 | ModalBottomSheet with isScrollControlled doesn't respect SafeArea - ## Steps to Reproduce

1. Create a modal bottom sheet with Scaffold as its child

2. Wrap the Scaffold into a SafeArea widget

3. Add a Text widget in Scaffold's body

4. Run the application on a device with notch

5. Open the modal bottom sheet and ... | non_test | modalbottomsheet with isscrollcontrolled doesn t respect safearea steps to reproduce create a modal bottom sheet with scaffold as its child wrap the scaffold into a safearea widget add a text widget in scaffold s body run the application on a device with notch open the modal bottom sheet and ... | 0 |

163,047 | 12,702,228,873 | IssuesEvent | 2020-06-22 19:45:59 | nicolargo/glances | https://api.github.com/repos/nicolargo/glances | closed | Bug: [fs] plugin needs to reflect user disk space usage | needs test | #### Bug description

[python-pystache](https://aur.archlinux.org/packages/python-pystache/) is required for the mustache templating.

When I start glances with 2 mins refresh time, I want to execute an action that alerts me when my disk usage is above 90%.

What I see is if I use the "%" character, everything breaks... | 1.0 | Bug: [fs] plugin needs to reflect user disk space usage - #### Bug description

[python-pystache](https://aur.archlinux.org/packages/python-pystache/) is required for the mustache templating.

When I start glances with 2 mins refresh time, I want to execute an action that alerts me when my disk usage is above 90%.

W... | test | bug plugin needs to reflect user disk space usage bug description is required for the mustache templating when i start glances with mins refresh time i want to execute an action that alerts me when my disk usage is above what i see is if i use the character everything breaks with error ... | 1 |

343,589 | 24,775,544,886 | IssuesEvent | 2022-10-23 17:34:18 | Azure/NoOpsAccelerator | https://api.github.com/repos/Azure/NoOpsAccelerator | closed | Walkthrough for AKS Workload doesn't work | bug documentation good first issue | **Describe the bug**

Following the walkthrough below doesn't result in a successful deployment as there are prerequisites that aren't called out in the README.md like the need for an AKS Service Principal.

**To Reproduce**

Follow the steps in [Deploy the Workload](https://github.com/Azure/NoOpsAccelerator/tree/mai... | 1.0 | Walkthrough for AKS Workload doesn't work - **Describe the bug**

Following the walkthrough below doesn't result in a successful deployment as there are prerequisites that aren't called out in the README.md like the need for an AKS Service Principal.

**To Reproduce**

Follow the steps in [Deploy the Workload](https:... | non_test | walkthrough for aks workload doesn t work describe the bug following the walkthrough below doesn t result in a successful deployment as there are prerequisites that aren t called out in the readme md like the need for an aks service principal to reproduce follow the steps in and watch for the red st... | 0 |

220,347 | 17,190,015,686 | IssuesEvent | 2021-07-16 09:34:34 | Vividh25/Sign-Up-Flow | https://api.github.com/repos/Vividh25/Sign-Up-Flow | closed | Sign Up Page - Submit Button - Tests | Testing | - [x] Test to check whether the button gets disabled if the user has not filled the fields properly.

- [x] Test to check if the button is disabled for 2-3 seconds after pressing.

- [x] Test to check if the button leads to the OTP page. | 1.0 | Sign Up Page - Submit Button - Tests - - [x] Test to check whether the button gets disabled if the user has not filled the fields properly.

- [x] Test to check if the button is disabled for 2-3 seconds after pressing.

- [x] Test to check if the button leads to the OTP page. | test | sign up page submit button tests test to check whether the button gets disabled if the user has not filled the fields properly test to check if the button is disabled for seconds after pressing test to check if the button leads to the otp page | 1 |

111,428 | 11,732,360,311 | IssuesEvent | 2020-03-11 03:23:38 | UBC-MDS/pypuck | https://api.github.com/repos/UBC-MDS/pypuck | closed | Adhere to PEP-8 Styleguide | documentation | Ensure all `.py` files adhere to the PEP-8 style guide using the Flake8 package. | 1.0 | Adhere to PEP-8 Styleguide - Ensure all `.py` files adhere to the PEP-8 style guide using the Flake8 package. | non_test | adhere to pep styleguide ensure all py files adhere to the pep style guide using the package | 0 |
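
The row above is short enough to show how the derived columns appear to relate to the raw ones. The following is a minimal sketch, not the dataset's actual preprocessing: the function names `combine`, `binary_label`, and `rough_normalize` are hypothetical, and the rules are inferred only from the rows visible here. In particular, `rough_normalize` reproduces the `text` value of this one row (up to whitespace); other rows also show bracketed tags and link targets being dropped, which this sketch does not attempt.

```python
import re


def combine(title: str, body: str) -> str:
    # text_combine looks like "<title> - <body>" in the rows shown (assumption).
    return f"{title} - {body}"


def binary_label(label: str) -> int:
    # Rows labelled "test" carry 1, rows labelled "non_test" carry 0.
    return 1 if label == "test" else 0


def rough_normalize(text: str) -> str:
    # Guess at the cleaning step: split on non-alphanumerics,
    # drop any token that contains a digit, lowercase the rest.
    words = re.sub(r"[^0-9A-Za-z]+", " ", text).split()
    return " ".join(w.lower() for w in words if not any(c.isdigit() for c in w))


title = "Adhere to PEP-8 Styleguide"
body = "Ensure all `.py` files adhere to the PEP-8 style guide using the Flake8 package."
combined = combine(title, body)

print(combined)
print(rough_normalize(combined))
print(binary_label("non_test"))
```

Note that dropping digit-bearing tokens removes `Flake8` entirely, which matches the trailing "using the package" in this row's `text` column.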

223,291 | 17,110,212,812 | IssuesEvent | 2021-07-10 05:57:49 | svelte-jp/svelte-site-jp | https://api.github.com/repos/svelte-jp/svelte-site-jp | closed | [Docs] Translation of 04-compile-time varsReport | documentation translation | ## Target document

- Target file

- [Added portion of content/docs/ja/04-compile-time.ja.md](https://github.com/svelte-jp/svelte-site-jp/pull/460/files#diff-e3093898533feb897c17352992e386e61d5c7c18fd7a53376e1562aff1ee4f05)

- Note: only line 70 needs translation

## Want to translate / want it translated

- Want it translated

## Remarks

None in particular | 1.0 | [Docs] Translation of 04-compile-time varsReport - ## Target document

- Target file

- [Added portion of content/docs/ja/04-compile-time.ja.md](https://github.com/svelte-jp/svelte-site-jp/pull/460/files#diff-e3093898533feb897c17352992e386e61d5c7c18fd7a53376e1562aff1ee4f05)

- Note: only line 70 needs translation

## Want to translate / want it translated

- Want it translated

## Remarks

None in particular | non_test | translation of compile time varsreport target document target file note only line needs translation want to translate want it translated want it translated remarks none in particular | 0 |

119,716 | 10,062,046,453 | IssuesEvent | 2019-07-22 23:25:25 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | Golems can put the bloodchiller on their belt slot and can't get it out | Bug Tested/Reproduced | ## Reproduction:

Be a golem, put the bloodchiller on your belt slot, never be able to get it back.

(The bloodchiller is that one xenobio crossbreed)

| 1.0 | Golems can put the bloodchiller on their belt slot and can't get it out - ## Reproduction:

Be a golem, put the bloodchiller on your belt slot, never be able to get it back.

(The bloodchiller is that one xenobio crossbreed)

| test | golems can put the bloodchiller on their belt slot and can t get it out reproduction be a golem put the bloodchiller on your belt slot never be able to get it back the bloodchiller is that one xenobio crossbreed | 1 |

9,185 | 8,554,065,337 | IssuesEvent | 2018-11-08 04:05:08 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Number in strictFilter Value results in "no good match" | cognitive-services/svc cxp product-question triaged | we sync the qna maker with an internal system. so we added a metadata syncitemid with a number as value, e.g. "name":"syncitemid", "value":"123"

after we added this metadata to all our questions in the qnamaker, we were getting always "no good match" result from the qna REST service - with and without using a strictFi... | 1.0 | Number in strictFilter Value results in "no good match" - we sync the qna maker with an internal system. so we added a metadata syncitemid with a number as value, e.g. "name":"syncitemid", "value":"123"

after we added this metadata to all our questions in the qnamaker, we were getting always "no good match" result fro... | non_test | number in strictfilter value results in no good match we sync the qna maker with an internal system so we added a metadata syncitemid with a number as value e g name syncitemid value after we added this metadata to all our questions in the qnamaker we were getting always no good match result from ... | 0 |

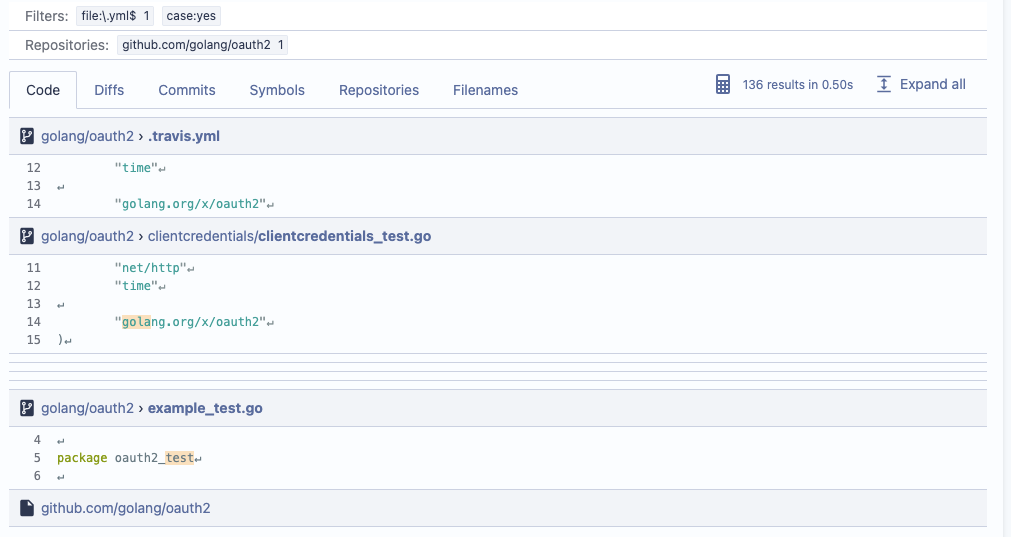

184,979 | 14,291,794,689 | IssuesEvent | 2020-11-23 23:28:04 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | Storybook Tests: only the first code excerpt is loaded in search results | bug team/search testing | In storybook tests which show search results (both for GraphQL and streaming search), only the first code excerpt is loading:

After debugging, I found out that the code excerpt thinks it's not visible. Th... | 1.0 | Storybook Tests: only the first code excerpt is loaded in search results - In storybook tests which show search results (both for GraphQL and streaming search), only the first code excerpt is loading:

Aft... | test | storybook tests only the first code excerpt is loaded in search results in storybook tests which show search results both for graphql and streaming search only the first code excerpt is loading after debugging i found out that the code excerpt thinks it s not visible the library we are using to dete... | 1 |

49,057 | 13,438,311,579 | IssuesEvent | 2020-09-07 17:45:49 | AOSC-Dev/aosc-os-abbs | https://api.github.com/repos/AOSC-Dev/aosc-os-abbs | closed | sane-backends: security update to 1.0.30 | security to-stable upgrade | <!-- Please remove items do not apply. -->

**CVE IDs:** CVE-2020-12861, CVE-2020-12865

**Other security advisory IDs:** RHSA-2020:2902-01

**Description:**

This release fixes several security related issues and a build issue.

### Backends

- `epson2`: fixes CVE-2020-12867 (GHSL-2020-075) and several memor... | True | sane-backends: security update to 1.0.30 - <!-- Please remove items do not apply. -->

**CVE IDs:** CVE-2020-12861, CVE-2020-12865

**Other security advisory IDs:** RHSA-2020:2902-01

**Description:**

This release fixes several security related issues and a build issue.

### Backends

- `epson2`: fixes CVE-2... | non_test | sane backends security update to cve ids cve cve other security advisory ids rhsa description this release fixes several security related issues and a build issue backends fixes cve ghsl and several memory management issues found while ad... | 0 |

222,867 | 17,497,869,716 | IssuesEvent | 2021-08-10 04:47:47 | spacemeshos/go-spacemesh | https://api.github.com/repos/spacemeshos/go-spacemesh | closed | Hare inbound gossip message processing delays | bug Hare Protocol BLOCKER testnet concurrency | ## Description

Nodes are taking as long as a minute to receive inbound Hare gossip messages and report them as valid (after which they're gossiped on to other peers).

I can't tell exactly what's going on under the hood without full debug logs. It could be an issue with the Hare broker priority queue, message valida... | 1.0 | Hare inbound gossip message processing delays - ## Description

Nodes are taking as long as a minute to receive inbound Hare gossip messages and report them as valid (after which they're gossiped on to other peers).

I can't tell exactly what's going on under the hood without full debug logs. It could be an issue wit... | test | hare inbound gossip message processing delays description nodes are taking as long as a minute to receive inbound hare gossip messages and report them as valid after which they re gossiped on to other peers i can t tell exactly what s going on under the hood without full debug logs it could be an issue wit... | 1 |

831,219 | 32,041,546,945 | IssuesEvent | 2023-09-22 19:49:33 | FlutterFlow/flutterflow-issues | https://api.github.com/repos/FlutterFlow/flutterflow-issues | closed | JSON Paths Bugs - Filtering Expressions and paths that select elements from arrays and more | status: confirmed priority: medium | ### Has your issue been reported?

- [X] I have searched the existing issues and confirm it has not been reported.

- [X] I give permission for members of the FlutterFlow team to access and test my project for the sole purpose of investigating this issue.

### Current Behavior

I have 6 new bugs that showed up today and... | 1.0 | JSON Paths Bugs - Filtering Expressions and paths that select elements from arrays and more - ### Has your issue been reported?

- [X] I have searched the existing issues and confirm it has not been reported.

- [X] I give permission for members of the FlutterFlow team to access and test my project for the sole purpose ... | non_test | json paths bugs filtering expressions and paths that select elements from arrays and more has your issue been reported i have searched the existing issues and confirm it has not been reported i give permission for members of the flutterflow team to access and test my project for the sole purpose of i... | 0 |

107,099 | 9,201,888,678 | IssuesEvent | 2019-03-07 20:51:50 | open-apparel-registry/open-apparel-registry | https://api.github.com/repos/open-apparel-registry/open-apparel-registry | closed | Show country name instead of code on the list detail page | tested/verified | ## Overview

We standardize on using the 2-character code internally, but the uploaded lists almost always use the full country name. Show the full country name on the list detail page.

### Describe the solution you'd like

* Replace the "Country Code" column with "Country" | 1.0 | Show country name instead of code on the list detail page - ## Overview

We standardize on using the 2-character code internally, but the uploaded lists almost always use the full country name. Show the full country name on the list detail page.

### Describe the solution you'd like

* Replace the "Country Code" ... | test | show country name instead of code on the list detail page overview we standardize on using the character code internally but the uploaded lists almost always use the full country name show the full country name on the list detail page describe the solution you d like replace the country code ... | 1 |

11,193 | 28,367,208,403 | IssuesEvent | 2023-04-12 14:35:41 | OpenCTI-Platform/connectors | https://api.github.com/repos/OpenCTI-Platform/connectors | closed | Modularization of relation refs | feature solved architecture | ## Information

* Linked to [issue 3012](https://github.com/OpenCTI-Platform/opencti/issues/3012)

## Bug resolution

* handle multiple x-opencti-linked-ref | 1.0 | Modularization of relation refs - ## Information

* Linked to [issue 3012](https://github.com/OpenCTI-Platform/opencti/issues/3012)

## Bug resolution

* handle multiple x-opencti-linked-ref | non_test | modularization of relation refs information linked to bug resolution handle multiple x opencti linked ref | 0 |

416,459 | 28,083,307,005 | IssuesEvent | 2023-03-30 08:06:45 | magang-mknows/cs | https://api.github.com/repos/magang-mknows/cs | closed | Week 1 : Base Component | Card | documentation enhancement | - [ ] Base Style Card

- [ ] Props for Text, Custom Size, Custom Icon or Image

- [ ] Custom Title on Card

- [ ] Custom Description on Card | 1.0 | Week 1 : Base Component | Card - - [ ] Base Style Card

- [ ] Props for Text, Custom Size, Custom Icon or Image

- [ ] Custom Title on Card

- [ ] Custom Description on Card | non_test | week base component card base style card props for text custom size custom icon or image custom title on card custom description on card | 0 |

8,552 | 6,568,937,497 | IssuesEvent | 2017-09-09 00:12:06 | opensim-org/opensim-core | https://api.github.com/repos/opensim-org/opensim-core | opened | Model::scale() calls initSystem() three times, which is expensive | Performance | It does not seem like it should be necessary to destroy and rebuild the underlying computational system 3 times when scaling a Model.

`Model::scale()` calls `SimbodyEngine::scale()` [here](https://github.com/opensim-org/opensim-core/blob/master/OpenSim/Simulation/Model/Model.cpp#L1478); `initSystem()` is called :one... | True | Model::scale() calls initSystem() three times, which is expensive - It does not seem like it should be necessary to destroy and rebuild the underlying computational system 3 times when scaling a Model.

`Model::scale()` calls `SimbodyEngine::scale()` [here](https://github.com/opensim-org/opensim-core/blob/master/Open... | non_test | model scale calls initsystem three times which is expensive it does not seem like it should be necessary to destroy and rebuild the underlying computational system times when scaling a model model scale calls simbodyengine scale initsystem is called one and two on returning ... | 0 |

315,942 | 27,120,697,592 | IssuesEvent | 2023-02-15 22:35:08 | UWB-Biocomputing/Graphitti | https://api.github.com/repos/UWB-Biocomputing/Graphitti | closed | Generated unit test executables are not included in .gitignore | testing serialization | The generated unit test executables for serialization and deserialization are not included in `.gitignore` so `git` identifies them as untracked files. | 1.0 | Generated unit test executables are not included in .gitignore - The generated unit test executables for serialization and deserialization are not included in `.gitignore` so `git` identifies them as untracked files. | test | generated unit test executables are not included in gitignore the generated unit test executables for serialization and deserialization are not included in gitignore so git identifies them as untracked files | 1 |

185,029 | 6,718,398,329 | IssuesEvent | 2017-10-15 12:23:28 | johndeverall/BehaviourCoder | https://api.github.com/repos/johndeverall/BehaviourCoder | closed | A bunch of errors appear in the log when creating and cancelling trials / restarting sessions etc. | Priority: Critical Type: Bug | Here is an example of some. I haven't worked out exact replication steps yet. I think this is likely actually two defects.

```

'Exception in thread "AWT-EventQueue-0" java.lang.NullPointerException

at de.bochumuniruhr.psy.bio.behaviourcoder.Main$5.actionPerformed(Main.java:399)

at javax.swing.AbstractB... | 1.0 | A bunch of errors appear in the log when creating and cancelling trials / restarting sessions etc. - Here is an example of some. I haven't worked out exact replication steps yet. I think this is likely actually two defects.

```

'Exception in thread "AWT-EventQueue-0" java.lang.NullPointerException

at de.bochum... | non_test | a bunch of errors appear in the log when creating and cancelling trials restarting sessions etc here is an example of some i haven t worked out exact replication steps yet i think this is likely actually two defects exception in thread awt eventqueue java lang nullpointerexception at de bochum... | 0 |

14,588 | 25,198,659,451 | IssuesEvent | 2022-11-12 21:13:37 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | Failed to pick up right Node.js version in sub folder | type:bug status:requirements priority-5-triage | ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

_No response_

### If you're self-hosting Renovate, tell us what version of... | 1.0 | Failed to pick up right Node.js version in sub folder - ### How are you running Renovate?

Mend Renovate hosted app on github.com

### If you're self-hosting Renovate, tell us what version of Renovate you run.

_No response_

### If you're self-hosting Renovate, select which platform you are using.

_No response_

### ... | non_test | failed to pick up right node js version in sub folder how are you running renovate mend renovate hosted app on github com if you re self hosting renovate tell us what version of renovate you run no response if you re self hosting renovate select which platform you are using no response ... | 0 |

98,086 | 20,606,533,697 | IssuesEvent | 2022-03-07 01:25:47 | inventree/InvenTree | https://api.github.com/repos/inventree/InvenTree | closed | [BUG] App QR scanner fails with server version 0.6 | bug barcode app | **Describe the bug**

Barcode / QR code scanner in iPhone App does not work. App version 0.5.6 and Inventree version 0.6.1

**Steps to Reproduce**

Steps to reproduce the behavior:

1. Installed a fresh docker version of 0.6.1.

2. Added a part category and a single new part and displayed the QR code for tha... | 1.0 | [BUG] App QR scanner fails with server version 0.6 - **Describe the bug**

Barcode / QR code scanner in iPhone App does not work. App version 0.5.6 and Inventree version 0.6.1

**Steps to Reproduce**

Steps to reproduce the behavior:

1. Installed a fresh docker version of 0.6.1.

2. Added a part category an... | non_test | app qr scanner fails with server version describe the bug barcode qr code scanner in iphone app does not work app version and inventree version steps to reproduce steps to reproduce the behavior installed a fresh docker version of added a part category and a ... | 0 |

41,726 | 5,394,725,052 | IssuesEvent | 2017-02-27 05:05:30 | Microsoft/vsts-tasks | https://api.github.com/repos/Microsoft/vsts-tasks | closed | PublishTestResults .ts version handles wildcards differently than the .ps1 version | Area: Test | I have build definitions for a cross platform project that includes running googletest unit tests in both Linux and windows. I have the junit style xml test results on each of them being generated in the staging directory (e.g. BuildAgent/_work/1/a). On our windows build platform, **/TEST-*.xml works fine, and the te... | 1.0 | PublishTestResults .ts version handles wildcards differently than the .ps1 version - I have build definitions for a cross platform project that includes running googletest unit tests in both Linux and windows. I have the junit style xml test results on each of them being generated in the staging directory (e.g. BuildA... | test | publishtestresults ts version handles wildcards differently than the version i have build definitions for a cross platform project that includes running googletest unit tests in both linux and windows i have the junit style xml test results on each of them being generated in the staging directory e g buildage... | 1 |

704,127 | 24,186,698,283 | IssuesEvent | 2022-09-23 13:52:53 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | bigcountypreps.com - Desktop layout displayed instead of mobile layout | os-ios browser-firefox-ios priority-normal type-trackingprotection severity-critical action-needssitepatch | <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/74605 -->

**URL**: https:... | 1.0 | bigcountypreps.com - Desktop layout displayed instead of mobile layout - <!-- @browser: Firefox iOS 33.1 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU OS 14_4_2 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/33.1 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_ur... | non_test | bigcountypreps com desktop layout displayed instead of mobile layout url browser version firefox ios operating system ios tested another browser yes safari problem type design is broken description items are overlapped steps to reproduce can’t explore for... | 0 |

332,744 | 29,491,864,091 | IssuesEvent | 2023-06-02 14:01:30 | dudykr/stc | https://api.github.com/repos/dudykr/stc | closed | Fix unit test: `tests/pass-only/conformance/internalModules/DeclarationMerging/ModuleAndEnumWithSameNameAndCommonRoot/.1.ts` | tsc-unit-test |

Related test input: https://github.com/dudykr/stc/blob/main/crates/stc_ts_file_analyzer/tests/pass-only/conformance/internalModules/DeclarationMerging/ModuleAndEnumWithSameNameAndCommonRoot/.1.ts

Test file name: `tests/pass-only/conformance/internalModules/DeclarationMerging/ModuleAndEnumWithSam... | 1.0 | Fix unit test: `tests/pass-only/conformance/internalModules/DeclarationMerging/ModuleAndEnumWithSameNameAndCommonRoot/.1.ts` -

Related test input: https://github.com/dudykr/stc/blob/main/crates/stc_ts_file_analyzer/tests/pass-only/conformance/internalModules/DeclarationMerging/ModuleAndEnumWithS... | test | fix unit test tests pass only conformance internalmodules declarationmerging moduleandenumwithsamenameandcommonroot ts related test input test file name tests pass only conformance internalmodules declarationmerging moduleandenumwithsamenameandcommonroot ts i may e... | 1 |

266,906 | 28,480,261,605 | IssuesEvent | 2023-04-18 01:39:00 | artsking/linux-4.19.72 | https://api.github.com/repos/artsking/linux-4.19.72 | opened | CVE-2023-30772 (Medium) detected in linux-yoctov5.4.51 | Mend: dependency security vulnerability | ## CVE-2023-30772 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://gi... | True | CVE-2023-30772 (Medium) detected in linux-yoctov5.4.51 - ## CVE-2023-30772 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Emb... | non_test | cve medium detected in linux cve medium severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in head commit a href found in base branch master vulnerable source files drivers pow... | 0 |

5,527 | 2,945,354,555 | IssuesEvent | 2015-07-03 12:24:03 | netty/netty | https://api.github.com/repos/netty/netty | closed | Example code in ByteBuf 4.0 javadoc uses 3.x API | documentation | https://netty.io/4.0/api/io/netty/buffer/ByteBuf.html

// Iterates the readable bytes of a buffer.

ByteBuf buffer = ...;

while (buffer.readable()) {

System.out.println(buffer.readByte());

}

But readable() was changed to isReadable() in 4.0 | 1.0 | Example code in ByteBuf 4.0 javadoc uses 3.x API - https://netty.io/4.0/api/io/netty/buffer/ByteBuf.html

// Iterates the readable bytes of a buffer.

ByteBuf buffer = ...;

while (buffer.readable()) {

System.out.println(buffer.readByte());

}

But readable() was changed to isReadable() in 4.0 | non_test | example code in bytebuf javadoc uses x api iterates the readable bytes of a buffer bytebuf buffer while buffer readable system out println buffer readbyte but readable was changed to isreadable in | 0 |

351,051 | 31,933,580,443 | IssuesEvent | 2023-09-19 09:01:44 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix activations.test_tensorflow_relu | TensorFlow Frontend Sub Task Failing Test | | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/6200560441/job/16835488067"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6200560441/job/16835488067"><img src=https://img.shields.io/badge/-success-success></a>

|ja... | 1.0 | Fix activations.test_tensorflow_relu - | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/6200560441/job/16835488067"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6200560441/job/16835488067"><img src=https://img.shie... | test | fix activations test tensorflow relu paddle a href src numpy a href src jax a href src tensorflow a href src torch a href src | 1 |

66,159 | 12,729,129,570 | IssuesEvent | 2020-06-25 04:54:10 | nopSolutions/nopCommerce | https://api.github.com/repos/nopSolutions/nopCommerce | opened | Add database type mapping for int64/long | refactoring / source code | There is no type mapping in MigrationManager.cs for long type.

A line should be added to _typeMapping :

[typeof(long)] = c => c.AsInt64(),

Source: https://www.nopcommerce.com/en/boards/topic/82849/43-missing-database-type-mapping-for-int64long | 1.0 | Add database type mapping for int64/long - There is no type mapping in MigrationManager.cs for long type.

A line should be added to _typeMapping :

[typeof(long)] = c => c.AsInt64(),

Source: https://www.nopcommerce.com/en/boards/topic/82849/43-missing-database-type-mapping-for-int64long | non_test | add database type mapping for long there is no type mapping in migrationmanager cs for long type a line should be added to typemapping c c source | 0 |

746,752 | 26,043,821,852 | IssuesEvent | 2022-12-22 12:46:59 | telerik/kendo-ui-core | https://api.github.com/repos/telerik/kendo-ui-core | closed | Wrong dropdown width when set through list.width | Bug C: DropDownList SEV: Low jQuery Priority 5 FP: Planned | ### Bug report

When the width of the DropDownList is set using the list.width() and the DropDownList gets opened there is a slight delay in expanding the popup width.

### Reproduction of the problem

1. Open the [Dojo](https://dojo.telerik.com/@NeliKondova/EHobUqOV) and open the DropDownList

### Current behavi... | 1.0 | Wrong dropdown width when set through list.width - ### Bug report

When the width of the DropDownList is set using the list.width() and the DropDownList gets opened there is a slight delay in expanding the popup width.

### Reproduction of the problem

1. Open the [Dojo](https://dojo.telerik.com/@NeliKondova/EHobUq... | non_test | wrong dropdown width when set through list width bug report when the width of the dropdownlist is set using the list width and the dropdownlist gets opened there is a slight delay in expanding the popup width reproduction of the problem open the and open the dropdownlist current behavi... | 0 |

69,488 | 7,135,858,897 | IssuesEvent | 2018-01-23 03:25:47 | neuropoly/spinalcordtoolbox | https://api.github.com/repos/neuropoly/spinalcordtoolbox | closed | Minor verbose issues during testing | fix:minor testing | Issue visible on [Travis](https://travis-ci.org/neuropoly/spinalcordtoolbox/jobs/330619681).

~~~

Checking sct_get_centerline........................./home/travis/build/neuropoly/spinalcordtoolbox/python/lib/python2.7/site-packages/matplotlib/font_manager.py:273: UserWarning: Matplotlib is building the font cache us... | 1.0 | Minor verbose issues during testing - Issue visible on [Travis](https://travis-ci.org/neuropoly/spinalcordtoolbox/jobs/330619681).

~~~

Checking sct_get_centerline........................./home/travis/build/neuropoly/spinalcordtoolbox/python/lib/python2.7/site-packages/matplotlib/font_manager.py:273: UserWarning: Ma... | test | minor verbose issues during testing issue visible on checking sct get centerline home travis build neuropoly spinalcordtoolbox python lib site packages matplotlib font manager py userwarning matplotlib is building the font cache using fc list this may take a moment wa... | 1 |

8,692 | 3,779,507,633 | IssuesEvent | 2016-03-18 08:42:48 | OData/odata.net | https://api.github.com/repos/OData/odata.net | closed | Strongname validation failure in a community build | 3 - Resolved (code ready) | Enlist the ODataLib repo in a newly installed VS2013 computer, open Microsoft.OData.Lite.sln, build and run unit tests.

Unit tests failed with strong name validation.

To reduce the friction of community contributions:

* Skip strong name validation with a myget package for non-official build. Refer to the WebApi r... | 1.0 | Strongname validation failure in a community build - Enlist the ODataLib repo in a newly installed VS2013 computer, open Microsoft.OData.Lite.sln, build and run unit tests.

Unit tests failed with strong name validation.

To reduce the friction of community contributions:

* Skip strong name validation with a myget p... | non_test | strongname validation failure in a community build enlist the odatalib repo in a newly installed computer open microsoft odata lite sln build and run unit tests unit tests failed with strong name validation to reduce the friction of community contributions skip strong name validation with a myget packag... | 0 |

274,899 | 23,877,721,407 | IssuesEvent | 2022-09-07 20:49:56 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [test-triage] partner_flash_ctrl | Component:TestTriage | ### Hierarchy of regression failure

Block level

### Failure Description

```

* `Error-[XMRE] Cross-module reference resolution error` has 1 failures:

* Test default has 1 failures.

* default\

Line 1809, in log /workspaces/repo/scratch/master/flash_ctrl-sim-vcs/default/build.log

... | 1.0 | [test-triage] partner_flash_ctrl - ### Hierarchy of regression failure

Block level

### Failure Description

```

* `Error-[XMRE] Cross-module reference resolution error` has 1 failures:

* Test default has 1 failures.

* default\

Line 1809, in log /workspaces/repo/scratch/master/flash_ctrl-sim-... | test | partner flash ctrl hierarchy of regression failure block level failure description error cross module reference resolution error has failures test default has failures default line in log workspaces repo scratch master flash ctrl sim vcs default build lo... | 1 |

54,294 | 6,378,172,767 | IssuesEvent | 2017-08-02 12:03:29 | astropy/astropy | https://api.github.com/repos/astropy/astropy | closed | FAILURES: TestUtilMode.test_mode_pil_image -- conda update astropy | Close? Duplicate io.fits testing | I have updated my astropy version running

```

> conda update astropy

```

Then, to test the installed version of astropy I ran the function astropy.test() and I got this error:

```

========================================================================== FAILURES ===============================================... | 1.0 | FAILURES: TestUtilMode.test_mode_pil_image -- conda update astropy - I have updated my astropy version running

```

> conda update astropy

```

Then, to test the installed version of astropy I ran the function astropy.test() and I got this error:

```

==============================================================... | test | failures testutilmode test mode pil image conda update astropy i have updated my astropy version running conda update astropy then to test the installed version of astropy i ran the function astropy test and i got this error ... | 1 |

75,472 | 7,473,148,080 | IssuesEvent | 2018-04-03 14:37:31 | EnMasseProject/enmasse | https://api.github.com/repos/EnMasseProject/enmasse | opened | system-tests: new test for auto scaleup after manual scaledown | component/systemtests test development | 1. create plan for queue which consumes 0.5 broker resource

2. create queue1, queue2 create queue3, queue4

4. send 1000 messages into each of the queues above

5. manually scale broker StatefulSet to 1 pod

6. wait until the StatefulSet is automatically scaled up to 2 pods

7. try to receive messages from all queues above... | 2.0 | system-tests: new test for auto scaleup after manual scaledown - 1. create plan for queue which consumes 0.5 broker resource

2. create queue1, queue2 create queue3, queue4

4. send 1000 messages into each of queues above

5. manually scale broker StatefulSet to 1 pod

6. wait until StatefulSet will be automatically sc... | test | system tests new test for auto scaleup after manual scaledown create plan for queue which consume broker resource create create send messages into each of queues above manually scale broker statefulset to pod wait until statefulset will be automatically scaled up to pods t... | 1 |

61,267 | 6,731,480,977 | IssuesEvent | 2017-10-18 07:48:25 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | OL Feature Info marker disappear on second click | bug OL3 Priority: High Tested | FeatureInfo with OpenLayers map changes marker on second click.

### Steps to reproduce:

- Open an OpenLayers map (with at least one layer)

- Enable feature info tool

- Click on the map. You see the marker

- Click again in another point. Now you see a red circle instead of marker

| 1.0 | OL Feature Info marker disappear on second click - FeatureInfo with OpenLayers map changes marker on second click.

### Steps to reproduce:

- Open an OpenLayers map (with at least one layer)

- Enable feature info tool

- Click on the map. You see the marker

- Click again in another point. Now you see a red c... | test | ol feature info marker disappear on second click featureinfo with openlayers map changes marker on second click steps to reproduce open an openlayers map with at least one layer enable feature info tool click on the map you see the marker click again in another point now you see a red c... | 1 |

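35,413 | 4,974,743,936 | IssuesEvent | 2016-12-06 08:05:42 | wangding/courses | https://api.github.com/repos/wangding/courses | closed | 13.3 Submit black-box test design approach | Testing Learning | Program under test: ProcessOn file and folder management

Obtain the "requirements specification" for the program under test by using and exploring it online, paying attention to two questions:

What features does file management provide;

What features does folder management provide;

Design tool: ProcessOn online mind map

Design methods: equivalence classes, boundary values, etc.

Task requirements: use the mind-mapping tool to design black-box test cases for the program under test, and publish the finished mind map;

In the updated issue description, paste the URL of the mind map as published on ProcessOn.

| 1.0 | 13.3 Submit black-box test design approach - Program under test: ProcessOn file and folder management

Obtain the "requirements specification" for the program under test by using and exploring it online, paying attention to two questions:

What features does file management provide;

What features does folder management provide;

Design tool: ProcessOn online mind map

Design methods: equivalence classes, boundary values, etc.

Task requirements: use the mind-mapping tool to design black-box test cases for the program under test, and publish the finished mind map;

In the updated issue description, paste the URL of the mind map as published on ProcessOn.

| test | submit black box test design approach program under test processon file and folder management obtain the requirements specification for the program under test by using and exploring it online paying attention to two questions what features does file management provide what features does folder management provide design tool processon online mind map design methods equivalence classes boundary values etc task requirements use the mind mapping tool to design black box test cases for the program under test and publish the finished mind map in the updated issue description paste the url of the mind map as published on processon | 1 |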

340,672 | 30,535,373,816 | IssuesEvent | 2023-07-19 16:56:58 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix paddle_tensor.test_paddle_instance_bitwise_xor | Sub Task Failing Test Paddle Frontend | | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5599074213"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5600789863"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com... | 1.0 | Fix paddle_tensor.test_paddle_instance_bitwise_xor - | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5599074213"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5600789863"><img src=https://img.shields.io/badge/-suc... | test | fix paddle tensor test paddle instance bitwise xor numpy a href src jax a href src torch a href src | 1 |

7,877 | 2,938,761,815 | IssuesEvent | 2015-07-01 12:56:55 | stan-dev/stan | https://api.github.com/repos/stan-dev/stan | closed | unnormalized min/max tests failing for I/O on Intel compilers | Bug testing | There's a normalization issue with the icpc compiler, which Ben Goodrich tracked down:

Message 0:

I now have an icpc version 15. I am getting a test failure on this branch at

```

[ RUN ] io_dump.reader_big_doubles

unknown file: Failure

C++ exception with description "data b value 2.22507e-308 beyond num... | 1.0 | unnormalized min/max tests failing for I/O on Intel compilers - There's a normalization issue with the icpc compiler, which Ben Goodrich tracked down:

Message 0:

I now have an icpc version 15. I am getting a test failure on this branch at

```

[ RUN ] io_dump.reader_big_doubles

unknown file: Failure

C++ ... | test | unnormalized min max tests failing for i o on intel compilers there s a normalization issue with the icpc compiler which ben goodrich tracked down message i now have an icpc version i am getting a test failure on this branch at io dump reader big doubles unknown file failure c exception wi... | 1 |

365,056 | 25,518,963,824 | IssuesEvent | 2022-11-28 18:44:01 | cloudflare/cloudflare-docs | https://api.github.com/repos/cloudflare/cloudflare-docs | closed | Add a note to mTLS page if using a custom Root CA | documentation content:edit | ### Which Cloudflare product does this pertain to?

SSL

### Existing documentation URL(s)

https://developers.cloudflare.com/ssl/client-certificates/enable-mtls/

### Section that requires update

Footnote addition.

### What needs to change?

The chapter doesn't differentiate cases when using a Cloudflare managed CA ... | 1.0 | Add a note to mTLS page if using a custom Root CA - ### Which Cloudflare product does this pertain to?

SSL

### Existing documentation URL(s)

https://developers.cloudflare.com/ssl/client-certificates/enable-mtls/

### Section that requires update

Footnote addition.

### What needs to change?

The chapter doesn't dif... | non_test | add a note to mtls page if using a custom root ca which cloudflare product does this pertain to ssl existing documentation url s section that requires update footnote addition what needs to change the chapter doesn t differentiate cases when using a cloudflare managed ca or a custom ca w... | 0 |

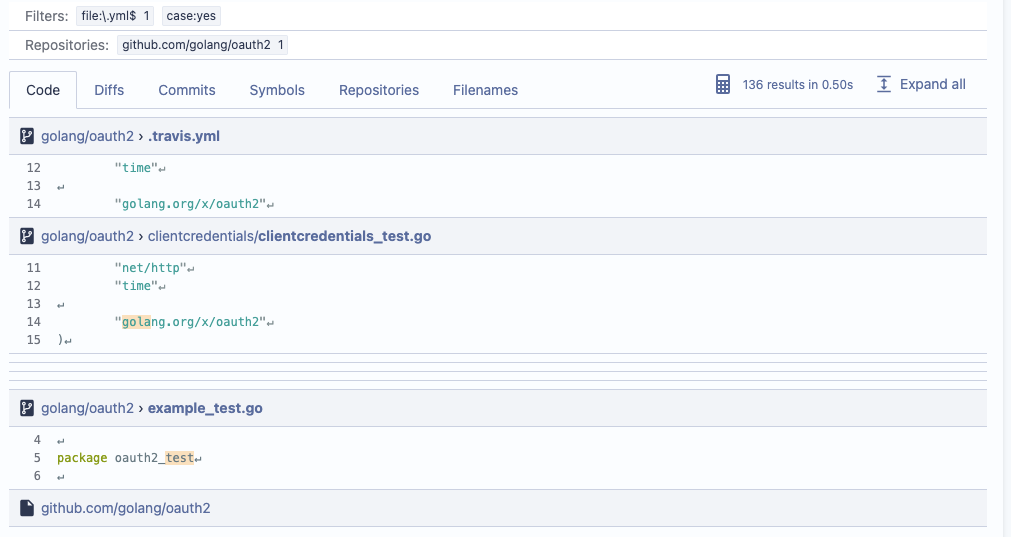

248,107 | 7,927,518,245 | IssuesEvent | 2018-07-06 08:20:19 | canonical-websites/www.ubuntu.com | https://api.github.com/repos/canonical-websites/www.ubuntu.com | closed | Global nav - Enterprise dropdown- Kubernetes links are not correct - DEV | Priority: High | please check the [copydoc](https://docs.google.com/document/d/1YBdQvLuqEpEQr_QqyycxMhZr4OaLapMlNJyYKCmPJsQ/edit)

| 1.0 | Global nav - Enterprise dropdown- Kubernetes links are not correct - DEV - please check the [copydoc](https://docs.google.com/document/d/1YBdQvLuqEpEQr_QqyycxMhZr4OaLapMlNJyYKCmPJsQ/edit)

: TestHashJoinerAgainstProcessor

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestHashJoinerAgainstProcessor).

[#1563297](https://teamcity.cockroachdb.com/viewLog.html?buildId=1563297):

```

Te... | 1.0 | teamcity: failed test: TestHashJoinerAgainstProcessor - The following tests appear to have failed on master (test): TestHashJoinerAgainstProcessor

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestHashJoinerAgainstProcessor).

[#1563297](https://teamcity... | test | teamcity failed test testhashjoineragainstprocessor the following tests appear to have failed on master test testhashjoineragainstprocessor you may want to check testhashjoineragainstprocessor fail test testhashjoineragainstprocessor stdout join type full outer ... | 1 |

243,172 | 26,277,952,563 | IssuesEvent | 2023-01-07 01:35:11 | venkateshreddypala/post-it-a4 | https://api.github.com/repos/venkateshreddypala/post-it-a4 | opened | WS-2018-0650 (High) detected in useragent-2.1.13.tgz | security vulnerability | ## WS-2018-0650 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>useragent-2.1.13.tgz</b></p></summary>

<p>Fastest, most accurate & effecient user agent string parser, uses Browserscope... | True | WS-2018-0650 (High) detected in useragent-2.1.13.tgz - ## WS-2018-0650 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>useragent-2.1.13.tgz</b></p></summary>

<p>Fastest, most accurate ... | non_test | ws high detected in useragent tgz ws high severity vulnerability vulnerable library useragent tgz fastest most accurate effecient user agent string parser uses browserscope s research for parsing library home page a href path to dependency file post it package js... | 0 |

18,914 | 10,265,318,741 | IssuesEvent | 2019-08-22 18:33:10 | flutter/devtools | https://api.github.com/repos/flutter/devtools | opened | CPU profiler start and end times should be able to be configured in user code | performance page | In order to profile a single method or sequence of events, a user should be able to specify start and end times in their code.

Maybe there is something in the dart:developer API that the user can use to do this. Then, when recording a CPU profile, we can pull samples from the interval specified by the user-triggered... | True | CPU profiler start and end times should be able to be configured in user code - In order to profile a single method or sequence of events, a user should be able to specify start and end times in their code.

Maybe there is something in the dart:developer API that the user can use to do this. Then, when recording a CP... | non_test | cpu profiler start and end times should be able to be configured in user code in order to profile a single method or sequence of events a user should be able to specify start and end times in their code maybe there is something in the dart developer api that the user can use to do this then when recording a cp... | 0 |

198,697 | 14,993,286,350 | IssuesEvent | 2021-01-29 11:05:56 | strictdoc-project/strictdoc | https://api.github.com/repos/strictdoc-project/strictdoc | opened | Enable testing on older Linux distributions | testing | One user has reported problems on Ubuntu 16, at least two issues:

1) Having troubles when installing Poetry.

2) Problem installing `xlsxwriter`.

| 1.0 | Enable testing on older Linux distributions - One user has reported problems on Ubuntu 16, at least two issues:

1) Having troubles when installing Poetry.

2) Problem installing `xlsxwriter`.

| test | enable testing on older linux distributions one user has reported problems on ubuntu at least two issues having troubles when installing poetry problem installing xlsxwriter | 1 |

22,870 | 3,974,194,831 | IssuesEvent | 2016-05-04 21:16:12 | PulpQE/pulp-smash | https://api.github.com/repos/PulpQE/pulp-smash | closed | Add new test for "Uploading the same Content Unit twice" | high priority test case | As per this [issue](https://pulp.plan.io/issues/1406), there is a new regression in Pulp 2.8 Beta when one tries to upload the same content (ie. rpms, puppet modules, etc) to an existing repository. We need a new automated test that does the following:

* Create a new feed-less repository

* Manually import a valid c... | 1.0 | Add new test for "Uploading the same Content Unit twice" - As per this [issue](https://pulp.plan.io/issues/1406), there is a new regression in Pulp 2.8 Beta when one tries to upload the same content (ie. rpms, puppet modules, etc) to an existing repository. We need a new automated test that does the following:

* Cre... | test | add new test for uploading the same content unit twice as per this there is a new regression in pulp beta when one tries to upload the same content ie rpms puppet modules etc to an existing repository we need a new automated test that does the following create a new feed less repository manua... | 1 |

140,200 | 11,305,151,601 | IssuesEvent | 2020-01-18 02:51:35 | aristanetworks/atd-public | https://api.github.com/repos/aristanetworks/atd-public | opened | Update login script for latest | DC-Latest Topo bug labvm | Need to update the topology type check for media labs from:

`if topology == ‘datacenter’`

To:

`if ‘datacenter’ in topology `

| 1.0 | Update login script for latest - Need to update the topology type check for media labs from:

`if topology == ‘datacenter’`

To:

`if ‘datacenter’ in topology `

| test | update login script for latest need to update the topology type check for media labs from if topology ‘datacenter’ to if ‘datacenter’ in topology | 1 |
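The change requested in this issue swaps an exact comparison for a substring test. A minimal Python sketch of the difference follows; the helper name and the sample topology names are hypothetical, chosen only for illustration, and are not taken from the atd-public login script.

```python
def is_datacenter_topology(topology: str) -> bool:
    """Return True for any topology whose name contains 'datacenter'."""
    return "datacenter" in topology


# Hypothetical topology names, for illustration only.
for name in ("datacenter", "datacenter-latest", "routing"):
    exact = name == "datacenter"
    print(f"{name!r}: exact match={exact}, substring match={is_datacenter_topology(name)}")
```

The substring form also accepts suffixed variants of the name, which the equality check rejects. It will equally accept any other name that happens to contain the word, so it is only appropriate when that is intended.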

290,721 | 25,089,658,721 | IssuesEvent | 2022-11-08 04:30:37 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Godot allowing adding files and folders with special characters (etc / \ : * " |) | bug platform:windows topic:editor needs testing | ### Godot version

v3.4.4 stable official

### System information

Windows 10, Git and Github Desktop

### Issue description

When creating new files, scenes, or scripts, Godot allows special characters in their names, which breaks version control (you can't commit)

I expect Godot to reject special characters in file names

#... | 1.0 | Godot allowing adding files and folders with special characters (etc / \ : * " |) - ### Godot version

v3.4.4 stable official

### System information

Windows 10, Git and Github Desktop

### Issue description

When creating a new file/scenes/scripts, Godot allows special characters which let your VCS to broke... | test | godot allowing adding files and folders with special characters etc godot version stable official system information windows git and github desktop issue description when creating a new file scenes scripts godot allows special characters which let your vcs to broke ... | 1 |
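The check this issue asks for can be sketched as a small name validator. The Python below is an illustration only and is not Godot's implementation: the function names are hypothetical, and the character set is the one Windows rejects in file and folder names, which includes the characters listed in the issue title.

```python
# Characters that Windows does not allow in file or folder names.
WINDOWS_FORBIDDEN = set('<>:"/\\|?*')


def invalid_filename_chars(name: str) -> list:
    """Return the forbidden characters found in `name`, in order of first appearance."""
    found = []
    for ch in name:
        if ch in WINDOWS_FORBIDDEN and ch not in found:
            found.append(ch)
    return found


def is_portable_filename(name: str) -> bool:
    """A name is accepted only if it is non-empty and has no forbidden characters."""
    return bool(name) and not invalid_filename_chars(name)


# Hypothetical file names, for illustration only.
for candidate in ("player.tscn", "my:scene*.tscn", "a|b.gd"):
    print(candidate, is_portable_filename(candidate), invalid_filename_chars(candidate))
```

Rejecting the name at creation time, before anything is written to disk, keeps the problem from reaching the version control system at all.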

484,706 | 13,943,983,667 | IssuesEvent | 2020-10-23 00:39:51 | elementary/stylesheet | https://api.github.com/repos/elementary/stylesheet | closed | Extend the Gtk.STYLE_CLASS_FLAT to Gtk.ActionBars too | Bitesize Priority: Wishlist Status: Confirmed | This new Flat class could also be used in Gtk.ActionBars, since those are also part of the window controls, and could have the option to be styled as Flat similarly to Gtk.HeaderBars.

Here's a relevant CSS to get started to adding this:

```css

actionbar,

.action-bar {

border-top-color: transparent;

back... | 1.0 | Extend the Gtk.STYLE_CLASS_FLAT to Gtk.ActionBars too - This new Flat class could also be used in Gtk.ActionBars, since those are also part of the window controls, and could have the option to be styled as Flat similarly to Gtk.HeaderBars.

Here's a relevant CSS to get started to adding this:

```css

actionbar,

.ac... | non_test | extend the gtk style class flat to gtk actionbars too this new flat class could also be used in gtk actionbars since those are also part of the window controls and could have the option to be styled as flat similarly to gtk headerbars here s a relevant css to get started to adding this css actionbar ac... | 0 |

125,341 | 12,258,094,406 | IssuesEvent | 2020-05-06 14:38:06 | project-koku/koku-ui | https://api.github.com/repos/project-koku/koku-ui | closed | Update Azure Sources UI Wizard Instructions | cost model documentation | ## User Story

As a user configuring an Azure source I want to include only the necessary permissions so that we create a minimal footprint.

## Impacts

- Sources UI Wizard

## Assumptions

- See https://github.com/project-koku/koku/issues/1768 for details on what was wrong

- The change is essentially implementi... | 1.0 | Update Azure Sources UI Wizard Instructions - ## User Story

As a user configuring an Azure source I want to include only the necessary permissions so that we create a minimal footprint.

## Impacts

- Sources UI Wizard

## Assumptions

- See https://github.com/project-koku/koku/issues/1768 for details on what was... | non_test | update azure sources ui wizard instructions user story as a user configuring an azure source i want to include only the necessary permissions so that we create a minimal footprint impacts sources ui wizard assumptions see for details on what was wrong the change is essentially implementin... | 0 |

21,487 | 6,157,181,079 | IssuesEvent | 2017-06-28 18:22:48 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | typo in the Flutter Codelab | dev: docs - codelab | There is a minor typo in the Flutter Codelab. "property" is typed twice, see the screenshot.

| 1.0 | typo in the Flutter Codelab - There is a minor typo in the Flutter Codelab. "property" is typed twice, see the screenshot.

| non_test | typo in the flutter codelab there is a minor typo in the flutter codelab property is typed twice see the screenshot | 0 |

75,393 | 7,470,314,856 | IssuesEvent | 2018-04-03 04:10:08 | s-newman/skitter | https://api.github.com/repos/s-newman/skitter | closed | PyLint Rating | required test | All python code should have a 10/10 rating from PyLint. An automated test should be created to perform this check. | 1.0 | PyLint Rating - All python code should have a 10/10 rating from PyLint. An automated test should be created to perform this check. | test | pylint rating all python code should have a rating from pylint an automated test should be created to perform this check | 1 |
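One way to build the automated check is to read the score from PyLint's report text and fail when it is below 10. The sketch below assumes the report contains a summary line of the form "Your code has been rated at 9.50/10"; the function names are hypothetical, and how the report text is captured (for example, by running PyLint in a CI step) is left out.

```python
import re

# Matches the score in a summary line such as "rated at 9.50/10".
_RATING_RE = re.compile(r"rated at (-?\d+(?:\.\d+)?)/10")


def parse_pylint_score(output: str) -> float:
    """Extract the first rating found in PyLint's report text."""
    match = _RATING_RE.search(output)
    if match is None:
        raise ValueError("no PyLint rating line found in the output")
    return float(match.group(1))


def check_perfect_score(output: str) -> None:
    """Raise AssertionError unless the reported rating is 10/10."""
    score = parse_pylint_score(output)
    if score < 10.0:
        raise AssertionError(f"PyLint rating is {score:.2f}/10, expected 10.00/10")


# Sample report line, written by hand for illustration.
sample = "Your code has been rated at 10.00/10 (previous run: 9.50/10, +0.50)"
check_perfect_score(sample)
print(parse_pylint_score(sample))
```

The regular expression takes the first "rated at" match, so the "previous run" score that may follow on the same line is ignored.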

292,157 | 25,204,293,183 | IssuesEvent | 2022-11-13 13:47:09 | TeamFogFog/FogFog-Server-Upptime | https://api.github.com/repos/TeamFogFog/FogFog-Server-Upptime | closed | 🛑 Test 용 FogFog Server - DEV is down | status test-fog-fog-server-dev | In [`ff76110`](https://github.com/TeamFogFog/FogFog-Server-Upptime/commit/ff761102b62075e6c21ce5269b0423aacfa963b2

), Test 용 FogFog Server - DEV (https://klsjflskjdfljdslkfjsdlkjfl.com) was **down**:

- HTTP code: 0

- Response time: 0 ms

| 1.0 | 🛑 Test 용 FogFog Server - DEV is down - In [`ff76110`](https://github.com/TeamFogFog/FogFog-Server-Upptime/commit/ff761102b62075e6c21ce5269b0423aacfa963b2

), Test 용 FogFog Server - DEV (https://klsjflskjdfljdslkfjsdlkjfl.com) was **down**:

- HTTP code: 0

- Response time: 0 ms

| test | 🛑 test 용 fogfog server dev is down in test 용 fogfog server dev was down http code response time ms | 1 |

457,645 | 13,159,648,046 | IssuesEvent | 2020-08-10 16:11:08 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | opened | UI: Filter collection search by repository | priority/high status/blocked status/new type/enhancement | - [ ] On the search page provide a filter for community, Red Hat certified, and private content

- [ ] On collection details, pull the detail from the correct repository

Subtask of #154 | 1.0 | UI: Filter collection search by repository - - [ ] On the search page provide a filter for community, Red Hat certified, and private content

- [ ] On collection details, pull the detail from the correct repository

Subtask of #154 | non_test | ui filter collection search by repository on the search page provide a filter for community red hat certified and private content on collection details pull the detail from the correct repository subtask of | 0 |

41,253 | 5,345,354,943 | IssuesEvent | 2017-02-17 16:45:30 | TheScienceMuseum/collectionsonline | https://api.github.com/repos/TheScienceMuseum/collectionsonline | closed | Fields not appearing on archive documents | bug please-test priority-2 T3h | We seem to have lost a couple of fields from Archive document pages (despite them being in the index). See also https://github.com/TheScienceMuseum/collectionsonline/issues/747

- **Date(s)** (at top of page):`lifecycle.creation.date`

- **Extent**:`measurements.dimensions`

CO: https://collection.sciencemuseum.org.uk... | 1.0 | Fields not appearing on archive documents - We seem to have lost a couple of fields from Archive document pages (despite them being in the index). See also https://github.com/TheScienceMuseum/collectionsonline/issues/747

- **Date(s)** (at top of page):`lifecycle.creation.date`

- **Extent**:`measurements.dimensions`

... | test | fields not appearing on archive documents we seem have lost a couple of fields from archive document pages despite them being in the index see also date s at top of page lifecycle creation date extent measurements dimensions co archive site lifecycle creati... | 1 |

58,032 | 6,565,754,102 | IssuesEvent | 2017-09-08 09:36:58 | RepoCamp/teapot | https://api.github.com/repos/RepoCamp/teapot | opened | Fix warning in Travis build | Component: Testing Type: Enhancement | ```

Warning: the running version of Bundler (1.15.1) is older than the version that created the lockfile (1.15.4). We suggest you upgrade to the latest version of Bundler by running `gem install bundler`.

``` | 1.0 | Fix warning in Travis build - ```

Warning: the running version of Bundler (1.15.1) is older than the version that created the lockfile (1.15.4). We suggest you upgrade to the latest version of Bundler by running `gem install bundler`.

``` | test | fix warning in travis build warning the running version of bundler is older than the version that created the lockfile we suggest you upgrade to the latest version of bundler by running gem install bundler | 1 |

695,858 | 23,874,239,294 | IssuesEvent | 2022-09-07 17:25:36 | Rusi91/basisdokument | https://api.github.com/repos/Rusi91/basisdokument | closed | [Outline items] Delete outline items | high priority user story | _As a user, I want to be able to delete outline items so that they are no longer displayed._ (Not anymore once the status is final)

**Related issue:** #63

- [x] Add delete icon

- [x] Add edit icon

- [x] A confirmation pop-up should appear before deleting.

- [x] After deleting, ... | 1.0 | [Outline items] Delete outline items - _As a user, I want to be able to delete outline items so that they are no longer displayed._ (Not anymore once the status is final)

**Related issue:** #63

- [x] Add delete icon

- [x] Add edit icon

- [x] Before deleting, a pop-up should appear for ... | non_test | outline items delete outline items as a user i want to be able to delete outline items so that they are no longer displayed not anymore once the status is final related issue add delete icon add edit icon before deleting a confirmation pop up should appear ... | 0 |

124,548 | 4,927,116,523 | IssuesEvent | 2016-11-26 15:10:11 | GeoDiver/R-Core | https://api.github.com/repos/GeoDiver/R-Core | closed | Streamline gage analysis Script | Low Priority | Download_GEO.R L72-78

```R

entrez.gene.id <- featureData[, 'ENTREZ_GENE_ID']

go.bio <- featureData[, 'Gene Ontology Biological Process']

go.cell <- featureData[, 'Gene Ontology Cellular Component']

go.mol <- featureData[, 'Gene Ontology Molecular Function']

gene.titles <- featureData[... | 1.0 | Streamline gage analysis Script - Download_GEO.R L72-78

```R

entrez.gene.id <- featureData[, 'ENTREZ_GENE_ID']

go.bio <- featureData[, 'Gene Ontology Biological Process']

go.cell <- featureData[, 'Gene Ontology Cellular Component']

go.mol <- featureData[, 'Gene Ontology Molecular Functio... | non_test | streamline gage analysis script download geo r r entrez gene id featuredata go bio featuredata go cell featuredata go mol featuredata gene titles featuredata genes data frame gene names entrez gene id gene titles go bio ... | 0 |

69,420 | 30,277,788,903 | IssuesEvent | 2023-07-07 21:36:02 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | closed | Add netpol to devops-xray namespace | *team/ security* *team/ ops and shared services* | **Describe the issue**

The Compliance Operator has identified that some namespaces don't have any NetworkPolicies and is encouraging us to add them.

**Additional context**

https://github.com/bcgov/how-to-workshops/tree/master/labs/netpol-quickstart

**Definition of done**

Add KNP to `devops-xray` namespace in GOLD, GO... | 1.0 | Add netpol to devops-xray namespace - **Describe the issue**

The Compliance Operator has identified that some namespaces don't have any NetworkPolicies and is encouraging us to add them.

**Additional context**

https://github.com/bcgov/how-to-workshops/tree/master/labs/netpol-quickstart

**Definition of done**

Add KNP ... | non_test | add netpol to devops xray namespace describe the issue the compliance operator has identified that some namespaces don t have any networkpolicies and is encouraging us to add them additional context definition of done add knp to devops xray namespace in gold golddr and emerald clusters | 0 |

60,666 | 8,453,584,200 | IssuesEvent | 2018-10-20 17:02:34 | KratosMultiphysics/Kratos | https://api.github.com/repos/KratosMultiphysics/Kratos | closed | [Tutorials] Tutorials must be updated with the new Model | Documentation Invalid | Now with the merge of the new Model tutorials must be updated in order to continue working | 1.0 | [Tutorials] Tutorials must be updated with the new Model - Now with the merge of the new Model tutorials must be updated in order to continue working | non_test | tutorials must be updated with the new model now with the merge of the new model tutorials must be updated in order to continue working | 0 |

137,927 | 11,167,750,685 | IssuesEvent | 2019-12-27 18:30:55 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | UI - Support creating storage class for local persistent volumes | [zube]: To Test kind/enhancement team/cn team/ui | <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a public issue in GitHub. You may (but are not required to) use the GPG ke... | 1.0 | UI - Support creating storage class for local persistent volumes - <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a publi... | test | ui support creating storage class for local persistent volumes please search for existing issues first then read to see what we expect in an issue for security issues please email security rancher com instead of posting a public issue in github you may but are not required to use the gpg key located o... | 1 |

165,227 | 12,833,695,670 | IssuesEvent | 2020-07-07 09:44:25 | bebbo/gcc | https://api.github.com/repos/bebbo/gcc | closed | ScummVM error if fbbb is on. | bug please test | item variable will be too high if fbbb is on -> error.

https://github.com/mheyer32/scummvm-amigaos3/blob/29b08bf2c4e34f8b7b9a621474ca5841a45f07a3/engines/agos/items.cpp#L385

Happens with Simon1 (OCS/Amiga) demo.

| 1.0 | ScummVM error if fbbb is on. - item variable will be too high if fbbb is on -> error.

https://github.com/mheyer32/scummvm-amigaos3/blob/29b08bf2c4e34f8b7b9a621474ca5841a45f07a3/engines/agos/items.cpp#L385

Happens with Simon1 (OCS/Amiga) demo.

| test | scummvm error if fbbb is on item variable will be too high if fbbb is on error happens with ocs amiga demo | 1 |

93,141 | 8,401,721,779 | IssuesEvent | 2018-10-11 02:37:46 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed test: TestDistSQLDrainingHosts | C-test-failure O-robot | The following tests appear to have failed on master (testrace): TestDistSQLDrainingHosts

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestDistSQLDrainingHosts).

[#957642](https://teamcity.cockroachdb.com/viewLog.html?buildId=957642):

```

TestDistSQLD... | 1.0 | teamcity: failed test: TestDistSQLDrainingHosts - The following tests appear to have failed on master (testrace): TestDistSQLDrainingHosts

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestDistSQLDrainingHosts).

[#957642](https://teamcity.cockroachdb.co... | test | teamcity failed test testdistsqldraininghosts the following tests appear to have failed on master testrace testdistsqldraininghosts you may want to check testdistsqldraininghosts sary migrations have run server server go serving sql connections server server upda... | 1 |

130,304 | 5,113,919,866 | IssuesEvent | 2017-01-06 16:47:34 | hpi-swt2/wimi-portal | https://api.github.com/repos/hpi-swt2/wimi-portal | closed | Allow filtering on time_sheets#index | enhancement priority-3 | Just like on `projects#index`, it should be possible to filter and search time sheets on the `time_sheets#index` page. The functionality should reside in the sidebar.

| 1.0 | Allow filtering on time_sheets#index - Just like on `projects#index`, it should be possible to filter and search time sheets on the `time_sheets#index` page. The functionality should reside in the sidebar.

detected in handlebars-4.4.5.tgz | security vulnerability | ## CVE-2021-23383 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.4.5.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ... | True | CVE-2021-23383 (High) detected in handlebars-4.4.5.tgz - ## CVE-2021-23383 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.4.5.tgz</b></p></summary>

<p>Handlebars provides... | non_test | cve high detected in handlebars tgz cve high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file dagger brow... | 0 |

345,813 | 30,845,086,894 | IssuesEvent | 2023-08-02 13:15:53 | ita-social-projects/Space2Study-Client-mvp | https://api.github.com/repos/ita-social-projects/Space2Study-Client-mvp | closed | (SP: 1) Write unit test for "AccordionWithImage" component | FrontEnd part Unit test | ### Component unit test

Unit test for "AccordionWithImage" component

Scenario descriptions:

- [x] Imitate user click on title and it should open content of it

[Link to component](https://github.com/ita-social-projects/Space2Study-Client-mvp/blob/develop/tests/unit/components/accordion-with-image/AccordionWithImage.s... | 1.0 | (SP: 1) Write unit test for "AccordionWithImage" component - ### Component unit test

Unit test for "AccordionWithImage" component

Scenario descriptions:

- [x] Imitate user click on title and it should open content of it

[Link to component](https://github.com/ita-social-projects/Space2Study-Client-mvp/blob/develop/te... | test | sp write unit test for accordionwithimage component component unit test unit test for accordionwithimage component scenaries descriptions imitate user click on title and it should open content of it current coverage | 1 |

21,907 | 3,925,949,297 | IssuesEvent | 2016-04-22 21:03:05 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | SslStream tests: timeout | 2 - In Progress System.Net test bug | http://dotnet-ci.cloudapp.net/job/dotnet_corefx_windows_release_prtest/6135/console

```

System.Net.Security.Tests.SslStreamStreamToStreamTest.SslStream_StreamToStream_Authentication_Success [FAIL]

Handshake completed in the allotted time

Expected: True

Actual: False

Stack Trace:

... | 1.0 | SslStream tests: timeout - http://dotnet-ci.cloudapp.net/job/dotnet_corefx_windows_release_prtest/6135/console

```

System.Net.Security.Tests.SslStreamStreamToStreamTest.SslStream_StreamToStream_Authentication_Success [FAIL]

Handshake completed in the allotted time

Expected: True

Actual: F... | test | sslstream tests timeout system net security tests sslstreamstreamtostreamtest sslstream streamtostream authentication success handshake completed in the allotted time expected true actual false stack trace d j workspace dotnet corefx windows release prt... | 1 |

42,937 | 17,373,019,825 | IssuesEvent | 2021-07-30 16:27:18 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Missing storage URL's for azurefiles StorageClass | Pri1 assigned-to-author container-service/svc doc-enhancement triaged |

Hello,

We have limited egress connectivity for our AKS clusters, and during operations we found that this document is missing the URLs for Azure storage services that are required when the cluster requests a PVC:

```

Mounting arguments: -t cifs -o file_mode=0777,dir_mode=0777,vers=3.0,actimeo=30,mfsymlinks,<masked> //f6728f91dc4234cbcaf7b2c.... | 1.0 | Missing storage URL's for azurefiles StorageClass -

Hello,

We have limited egress connectivity for our AKS clusters, and during operations we found that this document is missing the URLs for Azure storage services that are required when the cluster requests a PVC:

```

Mounting arguments: -t cifs -o file_mode=0777,dir_mode=0777,vers=3.0,actim... | non_test | missing storage url s for azurefiles storageclass hello we have limited egress connectivity for aks clusters and during operations we figured out that this document missing url for azure storage services if pvc requested by cluster mounting arguments t cifs o file mode dir mode vers actimeo m... | 0 |

2,032 | 4,162,623,308 | IssuesEvent | 2016-06-17 21:08:57 | htwg-cloud-application-development/static-code-analytics-application | https://api.github.com/repos/htwg-cloud-application-development/static-code-analytics-application | closed | Implement logging and save log output into logfiles | all-microservices |

example:

*In your class*

```java

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

public class MyClass{

private static final Logger LOG = LoggerFactory.getLogger(MyClass.class);

...

```

*use*

```java

LOG.debug("check repositoryUrl: " + repositoryUrl);

LOG.error(....);

...

```

*In applicat... | 1.0 | Implement logging and save log output into logfiles -

example:

*In your class*

```java

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

public class MyClass{

private static final Logger LOG = LoggerFactory.getLogger(MyClass.class);

...

```

*use*

```java

LOG.debug("check repositoryUrl: " + reposi... | non_test | implement logging and save log output into logfiles example in your class import org logger import org loggerfactory public class myclass private static final logger log loggerfactory getlogger myclass class use log debug check repositoryurl repositoryurl ... | 0 |

336,425 | 30,193,204,701 | IssuesEvent | 2023-07-04 17:27:00 | MohistMC/Mohist | https://api.github.com/repos/MohistMC/Mohist | closed | [1.16.5] Guardvillagers Ticking entity crash | 1.16.5 Needs Testing | **Minecraft Version :** 1.16.5

**Mohist Version :** 707

**Operating System :** Ubuntu 20

**Concerned mod / plugin** : Guardvillagers

**Logs :** https://haste.mohistmc.com/garegigovo.coffeescript

**Steps to Reproduce :**

1. I don’t know, it works correctly and didn’t give such failures before, apparently... | 1.0 | [1.16.5] Guardvillagers Ticking entity crash - **Minecraft Version :** 1.16.5

**Mohist Version :** 707

**Operating System :** Ubuntu 20

**Concerned mod / plugin** : Guardvillagers

**Logs :** https://haste.mohistmc.com/garegigovo.coffeescript

**Steps to Reproduce :**

1. I don’t know, it works correctly a... | test | guardvillagers ticking entity crash minecraft version mohist version operating system unbuntu concerned mod plugin guardvillagers logs steps to reproduce i don’t know it works correctly and didn’t give such failures before apparently something in... | 1 |

197,997 | 14,953,083,623 | IssuesEvent | 2021-01-26 16:16:22 | pints-team/pints | https://api.github.com/repos/pints-team/pints | closed | Add value-based (numerical) tests for all samplers / optimisers | unit-testing | E.g.

- Seed

- Run 100 iterations

- Check that there's sufficient change within those iterations (and reduce n if possible)

- Store output, either in CSV or in code

- Compare

This would be _in addition to_ functional testing, and would be slightly annoying because you'd need to update the stored results any ti... | 1.0 | Add value-based (numerical) tests for all samplers / optimisers - E.g.

- Seed

- Run 100 iterations

- Check that there's sufficient change within those iterations (and reduce n if possible)

- Store output, either in CSV or in code

- Compare

This would be _in addition to_ functional testing, and would be slight... | test | add value based numerical tests for all samplers optimisers e g seed run iterations check that there s sufficient change within those iterations and reduce n if possible store output either in csv or in code compare this would be in addition to functional testing and would be slightly... | 1 |