Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

295,875 | 25,511,927,323 | IssuesEvent | 2022-11-28 13:44:31 | neondatabase/neon | https://api.github.com/repos/neondatabase/neon | closed | `test_isolation` is flacky | t/bug a/test a/test/flaky | `debug` version of the test fails frequently.

Recent failure Allure report: https://neon-github-public-dev.s3.amazonaws.com/reports/main/debug/3206518630/index.html#suites/158be07438eb5188d40b466b6acfaeb3/605ca28f08b88e15/ | 2.0 | `test_isolation` is flacky - `debug` version of the test fails frequently.

Recent failure Allure report: https://neon-github-public-dev.s3.amazonaws.com/reports/main/debug/3206518630/index.html#suites/158be07438eb5188d40b466b6acfaeb3/605ca28f08b88e15/ | test | test isolation is flacky debug version of the test fails frequently recent failure allure report | 1 |

248,103 | 20,995,872,960 | IssuesEvent | 2022-03-29 13:28:01 | elastic/beats | https://api.github.com/repos/elastic/beats | closed | [Metricbeat] Add integration tests for AWS | enhancement Metricbeat :Testing [zube]: Backlog Team:Platforms size/L | We want to improve our coverage with integration tests for aws module using real AWS services. Similar to terraform scenario in Filebeat for ELBs, we can create scenarios for deploying and destroying testing services in AWS using Terraform for individual metricsets.

There are aws module in Metricbeat and aws autodis... | 1.0 | [Metricbeat] Add integration tests for AWS - We want to improve our coverage with integration tests for aws module using real AWS services. Similar to terraform scenario in Filebeat for ELBs, we can create scenarios for deploying and destroying testing services in AWS using Terraform for individual metricsets.

There... | test | add integration tests for aws we want to improve our coverage with integration tests for aws module using real aws services similar to terraform scenario in filebeat for elbs we can create scenarios for deploying and destroying testing services in aws using terraform for individual metricsets there are aws mo... | 1 |

204,820 | 15,555,629,928 | IssuesEvent | 2021-03-16 06:28:44 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: kv/splits/nodes=3/quiesce=true failed | C-test-failure O-roachtest O-robot branch-release-21.1 release-blocker | [(roachtest).kv/splits/nodes=3/quiesce=true failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2780761&tab=buildLog) on [release-21.1@4a47e0e305cbdabf963f896c1cb571a28b34e63d](https://github.com/cockroachdb/cockroach/commits/4a47e0e305cbdabf963f896c1cb571a28b34e63d):

```

Wraps: (4) secondary error attachm... | 2.0 | roachtest: kv/splits/nodes=3/quiesce=true failed - [(roachtest).kv/splits/nodes=3/quiesce=true failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2780761&tab=buildLog) on [release-21.1@4a47e0e305cbdabf963f896c1cb571a28b34e63d](https://github.com/cockroachdb/cockroach/commits/4a47e0e305cbdabf963f896c1cb571a28... | test | roachtest kv splits nodes quiesce true failed on wraps secondary error attachment signal killed signal killed error types exec exiterror wraps context canceled error types withstack withstack errutil withprefix main withcommanddetails ... | 1 |

77,716 | 14,910,639,871 | IssuesEvent | 2021-01-22 09:52:48 | firecracker-microvm/firecracker | https://api.github.com/repos/firecracker-microvm/firecracker | closed | [Code improvement] deduplicate literal HTTP responses in tests | Codebase: Refactoring Contribute: Good First Issue Contribute: Help Wanted | There are many tests with literal hardcoded HTTP responses that bloat the code. Some of them even have data embedded in them, making those tests hard to maintain.

Example possible deduplication:

in https://github.com/firecracker-microvm/firecracker/blob/e8200f3c3eaba014220e447e8d426c8cf8607eec/src/api_server/src/... | 1.0 | [Code improvement] deduplicate literal HTTP responses in tests - There are many tests with literal hardcoded HTTP responses that bloat the code. Some of them even have data embedded in them, making those tests hard to maintain.

Example possible deduplication:

in https://github.com/firecracker-microvm/firecracker/... | non_test | deduplicate literal http responses in tests there are many tests with literal hardcoded http responses that bloat the code some of them even have data embedded in them making those tests hard to maintain example possible deduplication in diff let expected response format ... | 0 |

807,500 | 30,005,856,254 | IssuesEvent | 2023-06-26 12:24:42 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | app.meandu.com - Firefox is not a supported browser | browser-firefox priority-normal severity-critical type-unsupported action-needssitepatch engine-gecko needsinfo-raul diagnosis-priority-p1 | <!-- @browser: Firefox 112.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:109.0) Gecko/20100101 Firefox/112.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/121875 -->

**URL**: https://app.meandu.com

**Browser / Version**: Firefox 112.0

**Opera... | 2.0 | app.meandu.com - Firefox is not a supported browser - <!-- @browser: Firefox 112.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:109.0) Gecko/20100101 Firefox/112.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/121875 -->

**URL**: https://app.me... | non_test | app meandu com firefox is not a supported browser url browser version firefox operating system windows tested another browser yes safari problem type site is not usable description browser unsupported steps to reproduce the page doesn t load and says the brows... | 0 |

265,942 | 23,211,551,551 | IssuesEvent | 2022-08-02 10:34:48 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | closed | Intermittent UI test failure - < SettingsAddonsTest. noCrashWithAddonInstalledTest > | eng:intermittent-test eng:ui-test | ### Firebase Test Run: [Firebase link](https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/6331410488741407859/executions/bs.d3c4f02ab026c4a3/testcases/1/test-cases)

### Stacktrace:

androidx.test.espresso.IdlingResourceTimeoutException: Wait for [SessionLoadedIdlingR... | 2.0 | Intermittent UI test failure - < SettingsAddonsTest. noCrashWithAddonInstalledTest > - ### Firebase Test Run: [Firebase link](https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/6331410488741407859/executions/bs.d3c4f02ab026c4a3/testcases/1/test-cases)

### Stacktrace... | test | intermittent ui test failure firebase test run stacktrace androidx test espresso idlingresourcetimeoutexception wait for to become idle timed out at dalvik system vmstack getthreadstacktrace native method at java lang thread getstacktrace thread java at androidx test espresso base defaultf... | 1 |

95,624 | 19,723,565,916 | IssuesEvent | 2022-01-13 17:36:03 | philres/catfishq | https://api.github.com/repos/philres/catfishq | opened | Use numpy to compute q-scores more efficiently | code-review | https://github.com/philres/catfishq/blob/4c42039d8b7c4d9009b0668399672f6d87aa3177/catfishq/cat_fastq.py#L36

1) Check if pysam returns numpy arrays. If it does use numpy to compute probabilities from phred scores more efficiently

2) Alternative: cython implementation of q-score computation | 1.0 | Use numpy to compute q-scores more efficiently - https://github.com/philres/catfishq/blob/4c42039d8b7c4d9009b0668399672f6d87aa3177/catfishq/cat_fastq.py#L36

1) Check if pysam returns numpy arrays. If it does use numpy to compute probabilities from phred scores more efficiently

2) Alternative: cython implementatio... | non_test | use numpy to compute q scores more efficiently check if pysam returns numpy arrays if it does use numpy to compute probabilities from phred scores more efficiently alternative cython implementation of q score computation | 0 |

385,778 | 26,653,572,714 | IssuesEvent | 2023-01-25 15:18:46 | ll7/paf22 | https://api.github.com/repos/ll7/paf22 | closed | [Feature]: Implement filter for the GPS sensor | documentation enhancement Acting Perception additionally | ### Description

The current implementation of the GPS signal is too noisy for the lateral control algorithms.

A filter of some sort needs to be implemented.

With current methods (simple average) not working, a dedicated effort should be made to the processing of the sensor data.

### Definition of Done

- sensor dat... | 1.0 | [Feature]: Implement filter for the GPS sensor - ### Description

The current implementation of the GPS signal is too noisy for the lateral control algorithms.

A filter of some sort needs to be implemented.

With current methods (simple average) not working, a dedicated effort should be made to the processing of the s... | non_test | implement filter for the gps sensor description the current implementation of the gps signal is too noisy for the lateral control algorithms a filter of some sort needs to be implemented with current methods simple average not working a dedicated effort should be made to the processing of the sensor da... | 0 |

539,179 | 15,784,761,236 | IssuesEvent | 2021-04-01 15:30:40 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | Quest-Deviate-Hides-From-Nalpak-in-Wailing-Caverns-not-available-to-alliance | 1-19 ChromieCraft Generic Confirmed DB Fix included Priority - Low | Originally reported https://github.com/chromiecraft/chromiecraft/issues/209

##### ISSUE: FACTION SIDE:

<!--

________________________________________________________________________________________________________________________________________

_____________________________________________________________________... | 1.0 | Quest-Deviate-Hides-From-Nalpak-in-Wailing-Caverns-not-available-to-alliance - Originally reported https://github.com/chromiecraft/chromiecraft/issues/209

##### ISSUE: FACTION SIDE:

<!--

________________________________________________________________________________________________________________________________... | non_test | quest deviate hides from nalpak in wailing caverns not available to alliance originally reported issue faction side ... | 0 |

100,732 | 12,556,582,600 | IssuesEvent | 2020-06-07 10:13:10 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | ENH: Dropping outliers | API Design Enhancement Numeric | Create a new function to remove outliers.

http://stackoverflow.com/questions/23199796/detect-and-exclude-outliers-in-pandas-dataframe

I find myself using the code from SO quite often to remove outliers in a particular column when preprocessing data and it seems this is a common issue. It would be nice to have a f... | 1.0 | ENH: Dropping outliers - Create a new function to remove outliers.

http://stackoverflow.com/questions/23199796/detect-and-exclude-outliers-in-pandas-dataframe

I find myself using the code from SO quite often to remove outliers in a particular column when preprocessing data and it seems this is a common issue. It ... | non_test | enh dropping outliers create a new function to remove outliers i find myself using the code from so quite often to remove outliers in a particular column when preprocessing data and it seems this is a common issue it would be nice to have a function that operates on a series to do this automatically ... | 0 |

246,974 | 20,948,146,451 | IssuesEvent | 2022-03-26 06:54:49 | Uuvana-Studios/longvinter-windows-client | https://api.github.com/repos/Uuvana-Studios/longvinter-windows-client | closed | Unmovable Infinite Storage Bug | bug need more info Not Tested | The Infinite Storage Bug when picked up will drop around 4-9 maybe more storage before disappearing leaving stacks of chests on the floor creating basically an infinite amount of storage chests.

The Unmovable Storage Bug when collecting or picking an empty storage it would do the same with the Infinite Storage Bug b... | 1.0 | Unmovable Infinite Storage Bug - The Infinite Storage Bug when picked up will drop around 4-9 maybe more storage before disappearing leaving stacks of chests on the floor creating basically an infinite amount of storage chests.

The Unmovable Storage Bug when collecting or picking an empty storage it would do the sam... | test | unmovable infinite storage bug the infinite storage bug when picked up will drop around maybe more storage before disappearing leaving stacks of chests on the floor creating basically an infinite amount of storage chests the unmovable storage bug when collecting or picking an empty storage it would do the sam... | 1 |

1,305 | 3,550,790,801 | IssuesEvent | 2016-01-20 23:29:40 | brata-hsdc/brata.masterserver | https://api.github.com/repos/brata-hsdc/brata.masterserver | closed | Reg Code | ms-piservice priority:1-drop-everything state:2-in-work | We need the master server to accept the registration url with the passcode as the last element of the path and use that to look up the team as well as respond with the reg_code they should use. There is an open issue @ellerychan is working for how the passcode and reg codes are generated so this issue will only put in... | 1.0 | Reg Code - We need the master server to accept the registration url with the passcode as the last element of the path and use that to look up the team as well as respond with the reg_code they should use. There is an open issue @ellerychan is working for how the passcode and reg codes are generated so this issue will ... | non_test | reg code we need the master server to accept the registration url with the passcode as the last element of the path and use that to look up the team as well as respond with the reg code they should use there is an open issue ellerychan is working for how the passcode and reg codes are generated so this issue will ... | 0 |

21,634 | 3,535,336,093 | IssuesEvent | 2016-01-16 12:31:58 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | Reading from backup does not update access data | Team: Core Type: Defect | When **MapConfig** option *"readBackupData"* is set to true then "get" operations do not trigger the update of access timestamps and hit count. This way the entries might get evicted even though they are frequently used.

Cause of this issue is in *com.hazelcast.map.impl.DefaultRecordStore#readBackupData* method. | 1.0 | Reading from backup does not update access data - When **MapConfig** option *"readBackupData"* is set to true then "get" operations do not trigger the update of access timestamps and hit count. This way the entries might get evicted even though they are frequently used.

Cause of this issue is in *com.hazelcast.map.... | non_test | reading from backup does not update access data when mapconfig option readbackupdata is set to true then get operations do not trigger the update of access timestamps and hit count this way the entries might get evicted even though they are frequently used cause of this issue is in com hazelcast map ... | 0 |

696,338 | 23,897,921,778 | IssuesEvent | 2022-09-08 16:07:19 | ooni/probe | https://api.github.com/repos/ooni/probe | opened | Add copy in Test Options screen to mention that test settings apply to both manual and automated runs | ooni/probe-mobile priority/high ooni/probe-desktop copy | As a follow-up to https://github.com/ooni/probe/issues/2265 and https://github.com/ooni/probe/issues/2266, we need to communicate to users that the **test settings** that they configure (e.g. disabling WhatsApp and Psiphon tests) via the Test Options settings of the OONI Probe app **apply to both manual and automated r... | 1.0 | Add copy in Test Options screen to mention that test settings apply to both manual and automated runs - As a follow-up to https://github.com/ooni/probe/issues/2265 and https://github.com/ooni/probe/issues/2266, we need to communicate to users that the **test settings** that they configure (e.g. disabling WhatsApp and ... | non_test | add copy in test options screen to mention that test settings apply to both manual and automated runs as a follow up to and we need to communicate to users that the test settings that they configure e g disabling whatsapp and psiphon tests via the test options settings of the ooni probe app apply to bot... | 0 |

386,126 | 11,432,451,687 | IssuesEvent | 2020-02-04 14:06:30 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Inconclusive or false positive triage should re-enabled auto-approval | component: scanners priority: p3 triaged | ### Describe the problem and steps to reproduce it:

When a version that is held for manual review gets triaged as _inconclusive_ or _false positive_, we should re-enable auto-approval it.

### What happened?

Clicking on _inconclusive_ or _false positive_ does not auto-approve the held version.

### What did y... | 1.0 | Inconclusive or false positive triage should re-enabled auto-approval - ### Describe the problem and steps to reproduce it:

When a version that is held for manual review gets triaged as _inconclusive_ or _false positive_, we should re-enable auto-approval it.

### What happened?

Clicking on _inconclusive_ or _f... | non_test | inconclusive or false positive triage should re enabled auto approval describe the problem and steps to reproduce it when a version that is held for manual review gets triaged as inconclusive or false positive we should re enable auto approval it what happened clicking on inconclusive or f... | 0 |

101,597 | 8,791,282,789 | IssuesEvent | 2018-12-21 12:05:26 | SME-Issues/issues | https://api.github.com/repos/SME-Issues/issues | closed | Compound Query Tests Invoice None - 21/12/18 11:01 - 5004 | NLP Api PETEDEV pulse_tests | **Compound Query Tests Invoice None**

- Total: 24

- Passed: 19

- **Pass: 11 (73%)**

- Not Understood: 9

- Error (not understood): 0

- Failed but Understood: 4 (27%)

| 1.0 | Compound Query Tests Invoice None - 21/12/18 11:01 - 5004 - **Compound Query Tests Invoice None**

- Total: 24

- Passed: 19

- **Pass: 11 (73%)**

- Not Understood: 9

- Error (not understood): 0

- Failed but Understood: 4 (27%)

| test | compound query tests invoice none compound query tests invoice none total passed pass not understood error not understood failed but understood | 1 |

38,315 | 5,173,562,276 | IssuesEvent | 2017-01-18 16:22:47 | ngageoint/hootenanny-ui | https://api.github.com/repos/ngageoint/hootenanny-ui | closed | Issue with Cookie Cutter Conflation | Category: Test Identified During Regression Test Status: Ready for Test Type: Bug | Attempted the following method:

**Cookie Cutter & Horizontal**

For this example we’ll need to create two custom translations, one for the DC Street Centerline Data* described in and a second simple translation to ensure that the OSM highway data for DC maintains the correct osm tags.

Ingest DC Street datasets... | 3.0 | Issue with Cookie Cutter Conflation - Attempted the following method:

**Cookie Cutter & Horizontal**

For this example we’ll need to create two custom translations, one for the DC Street Centerline Data* described in and a second simple translation to ensure that the OSM highway data for DC maintains the correct os... | test | issue with cookie cutter conflation attempted the following method cookie cutter horizontal for this example we’ll need to create two custom translations one for the dc street centerline data described in and a second simple translation to ensure that the osm highway data for dc maintains the correct os... | 1 |

346,698 | 10,418,690,931 | IssuesEvent | 2019-09-15 10:51:18 | SunwellTracker/issues | https://api.github.com/repos/SunwellTracker/issues | closed | Autoattacking trough pillar los on all arenas | Fixed & Implemented High Priority Map | Decription: ^ Title

How it works: ^ Title

How it should work: U shouldn't be able to attack trough los rofl.

Source (you should point out proofs of your report, please give us some source): | 1.0 | Autoattacking trough pillar los on all arenas - Decription: ^ Title

How it works: ^ Title

How it should work: U shouldn't be able to attack trough los rofl.

Source (you should point out proofs of your report, please give us some source): | non_test | autoattacking trough pillar los on all arenas decription title how it works title how it should work u shouldn t be able to attack trough los rofl source you should point out proofs of your report please give us some source | 0 |

159,520 | 12,478,329,528 | IssuesEvent | 2020-05-29 16:20:44 | dbrownukk/EFD_v2 | https://api.github.com/repos/dbrownukk/EFD_v2 | closed | Unable to upload interview spreadsheet | For Testing bug | Instance: EFD_HM

App: OIHM

Module: HH

HH: Old Mother Riley

1. in Module Study, click 'AnOIHM Study' to invoke detail

1. Click 'Template Spreadsheet' to generate interview spreadsheet template view

1. Fill in the template (see attached)

1. In HH module, click 'New' to create a new HH

1. Upload the filled in Template sp... | 1.0 | Unable to upload interview spreadsheet - Instance: EFD_HM

App: OIHM

Module: HH

HH: Old Mother Riley

1. in Module Study, click 'AnOIHM Study' to invoke detail

1. Click 'Template Spreadsheet' to generate interview spreadsheet template view

1. Fill in the template (see attached)

1. In HH module, click 'New' to create a n... | test | unable to upload interview spreadsheet instance efd hm app oihm module hh hh old mother riley in module study click anoihm study to invoke detail click template spreadsheet to generate interview spreadsheet template view fill in the template see attached in hh module click new to create a n... | 1 |

212,193 | 7,229,198,706 | IssuesEvent | 2018-02-11 17:35:23 | Asgaros/asgaros-forum | https://api.github.com/repos/Asgaros/asgaros-forum | closed | Moving Forums | Feature Priority: High | It would be great to have an admin tool to move topics from one Category to another. | 1.0 | Moving Forums - It would be great to have an admin tool to move topics from one Category to another. | non_test | moving forums it would be great to have an admin tool to move topics from one category to another | 0 |

34,613 | 4,934,217,013 | IssuesEvent | 2016-11-28 18:27:05 | Metaswitch/clearwater-etcd | https://api.github.com/repos/Metaswitch/clearwater-etcd | opened | Running upload_shared_config too early causes problems | bug cat:system-test low-priority | #### Symptoms

I've spun up a deployment and hit a couple of problems running upload_shared_config, I think because I ran it too early.

1) I fell the wrong side of [this check](https://github.com/Metaswitch/clearwater-etcd/blob/bdfc0ee2a90bb1fd3d0f0d8dc19fbc63bcc2341c/clearwater-config-manager.root/usr/share/clearwa... | 1.0 | Running upload_shared_config too early causes problems - #### Symptoms

I've spun up a deployment and hit a couple of problems running upload_shared_config, I think because I ran it too early.

1) I fell the wrong side of [this check](https://github.com/Metaswitch/clearwater-etcd/blob/bdfc0ee2a90bb1fd3d0f0d8dc19fbc63... | test | running upload shared config too early causes problems symptoms i ve spun up a deployment and hit a couple of problems running upload shared config i think because i ran it too early i fell the wrong side of obviously this is wad but how long am i supposed to wait i hit a crash ... | 1 |

134,018 | 10,878,544,487 | IssuesEvent | 2019-11-16 18:23:45 | CARTAvis/carta-backend-ICD-test | https://api.github.com/repos/CARTAvis/carta-backend-ICD-test | closed | [Backend ICD test] image zoom and pan | test implementation | Test cases doc:

https://docs.google.com/document/d/1h098N2xCB4Rburjm8G9XTcN3MqfMjVhwt_Qrpy-69Os/edit#heading=h.umgtuzlazls4

Test cases in the doc:

- (backlog) IMAGE_ZOOM_PAN

Relevant messages:

- SET_IMAGE_VIEW

- RASTER_IMAGE_DATA | 1.0 | [Backend ICD test] image zoom and pan - Test cases doc:

https://docs.google.com/document/d/1h098N2xCB4Rburjm8G9XTcN3MqfMjVhwt_Qrpy-69Os/edit#heading=h.umgtuzlazls4

Test cases in the doc:

- (backlog) IMAGE_ZOOM_PAN

Relevant messages:

- SET_IMAGE_VIEW

- RASTER_IMAGE_DATA | test | image zoom and pan test cases doc test cases in the doc backlog image zoom pan relevant messages set image view raster image data | 1 |

203,486 | 15,371,490,222 | IssuesEvent | 2021-03-02 10:04:44 | G-Node/WinGIN | https://api.github.com/repos/G-Node/WinGIN | opened | WinGIN is not closing corectly | needs testing | When restarting or shutting down PC (with fast SSD), the WinGIN will show error and does not close correctly.

| 1.0 | WinGIN is not closing corectly - When restarting or shutting down PC (with fast SSD), the WinGIN will show error and does not close correctly.

| test | wingin is not closing corectly when restarting or shutting down pc with fast ssd the wingin will show error and does not close correctly | 1 |

167,839 | 13,044,597,824 | IssuesEvent | 2020-07-29 05:12:17 | blockstack/stacks-blockchain | https://api.github.com/repos/blockstack/stacks-blockchain | closed | miner panicked at 'attempt to subtract with overflow' | bug help wanted testnet | ## Describe the bug

Was running a miner for over 24h (nearly 48 hours I think), and the miner panicked with:

```

DEBUG [1594297336.457] [src/chainstate/stacks/index/storage.rs:1080] [ThreadId(24214)] Flush: identifier of self is 8637

DEBUG [1594297336.457] [src/chainstate/burn/db/burndb.rs:680] [ThreadId(24214)] In... | 1.0 | miner panicked at 'attempt to subtract with overflow' - ## Describe the bug

Was running a miner for over 24h (nearly 48 hours I think), and the miner panicked with:

```

DEBUG [1594297336.457] [src/chainstate/stacks/index/storage.rs:1080] [ThreadId(24214)] Flush: identifier of self is 8637

DEBUG [1594297336.457] [sr... | test | miner panicked at attempt to subtract with overflow describe the bug was running a miner for over nearly hours i think and the miner panicked with debug flush identifier of self is debug insert block snapshot state for block debug accepted leader key register ... | 1 |

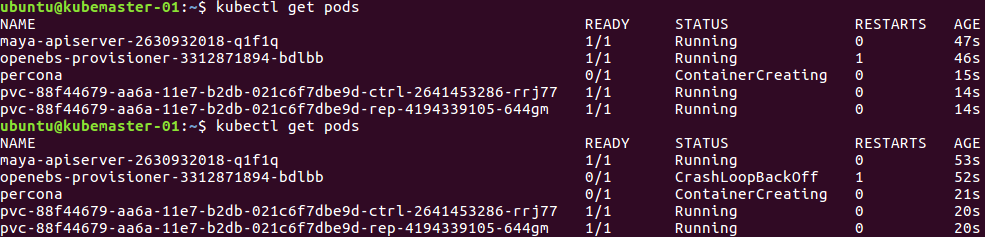

187,344 | 6,756,324,863 | IssuesEvent | 2017-10-24 06:29:48 | openebs/openebs | https://api.github.com/repos/openebs/openebs | closed | openebs-provisioner get crashed after launching percona pods. | area/volume/provisioning kind/bug priority/0 | BUG REPORT

**What happened**:

* I tried to launch percona and just after creation of pvc and percona pod openebs-provisoner pod went crashloopbackoff. Here is the screenshot.

**What I did?**

i d... | 1.0 | openebs-provisioner get crashed after launching percona pods. - BUG REPORT

**What happened**:

* I tried to launch percona and just after creation of pvc and percona pod openebs-provisoner pod went crashloopbackoff. Here is the screenshot.

doesn't work anymore:

```

sub options_categories {

my $self = shift;

my $result = $self->form->roles;

my @roles = map { $_->{name} => $_->{name} } @{$result} if ($result);

return ('' => '', @roles);

}

```

This one work:

```

sub options_categories {

... | 1.0 | Role selection isn't working on Firewall SSO and Scan. - This code (in Firewall_SSO.pm) doesn't work anymore:

```

sub options_categories {

my $self = shift;

my $result = $self->form->roles;

my @roles = map { $_->{name} => $_->{name} } @{$result} if ($result);

return ('' => '', @roles);

}

```... | non_test | role selection isn t working on firewall sso and scan this code in firewall sso pm doesn t work anymore sub options categories my self shift my result self form roles my roles map name name result if result return roles ... | 0 |

11,191 | 2,641,732,933 | IssuesEvent | 2015-03-11 19:25:35 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | slides: a simpler template | Priority-Medium Slides Type-Defect | Original [issue 93](https://code.google.com/p/html5rocks/issues/detail?id=93) created by chrsmith on 2010-07-28T21:49:22.000Z:

Reported by KaiYanNju, Apr 30, 2010

It's coolest slider I have seen.

So I think a template to make html5-slide is useful.

The tempate should not have too many features,a video,a text and a ... | 1.0 | slides: a simpler template - Original [issue 93](https://code.google.com/p/html5rocks/issues/detail?id=93) created by chrsmith on 2010-07-28T21:49:22.000Z:

Reported by KaiYanNju, Apr 30, 2010

It's coolest slider I have seen.

So I think a template to make html5-slide is useful.

The tempate should not have too many f... | non_test | slides a simpler template original created by chrsmith on reported by kaiyannju apr it s coolest slider i have seen so i think a template to make slide is useful the tempate should not have too many features a video a text and a mousic is enough and i can do this work if you like ... | 0 |

284,519 | 30,913,640,762 | IssuesEvent | 2023-08-05 02:28:33 | Nivaskumark/kernel_v4.19.72_old | https://api.github.com/repos/Nivaskumark/kernel_v4.19.72_old | reopened | CVE-2021-3483 (High) detected in linux-yoctov5.4.51 | Mend: dependency security vulnerability | ## CVE-2021-3483 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded kernel</p>

<p>Library home page: <a href=https://git.y... | True | CVE-2021-3483 (High) detected in linux-yoctov5.4.51 - ## CVE-2021-3483 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yoctov5.4.51</b></p></summary>

<p>

<p>Yocto Linux Embedded ... | non_test | cve high detected in linux cve high severity vulnerability vulnerable library linux yocto linux embedded kernel library home page a href found in head commit a href found in base branch master vulnerable source files drivers firewir... | 0 |

75,919 | 9,358,263,637 | IssuesEvent | 2019-04-02 01:37:29 | isenseDev/MYR | https://api.github.com/repos/isenseDev/MYR | closed | Remove display components from containers | Frontend Redesign enhancement | Use containers for binding and move the display components into screens | 1.0 | Remove display components from containers - Use containers for binding and move the display components into screens | non_test | remove display components from containers use containers for binding and move the display components into screens | 0 |

282,681 | 30,889,402,969 | IssuesEvent | 2023-08-04 02:40:16 | madhans23/linux-4.1.15 | https://api.github.com/repos/madhans23/linux-4.1.15 | reopened | CVE-2023-1281 (High) detected in linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## CVE-2023-1281 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page... | True | CVE-2023-1281 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2023-1281 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwri... | non_test | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in base branch master vulnerable source files net sched cls tc... | 0 |

37,146 | 18,156,515,534 | IssuesEvent | 2021-09-27 02:52:30 | verilator/verilator | https://api.github.com/repos/verilator/verilator | closed | Support profile guided optimization of mtasks | resolution: fixed area: performance type: feature-non-IEEE | I've nearly finished implementing profile guided optimization (PGO) of the multithreaded mtasks.

Details of using this will be captured in the documentation.

This issue collects some performance notes.

Using verilated_ext_test t_cores_swerv_cmark.pl and --threads 4

Best of 5 runs with --threads 4: 25.31 sec... | True | Support profile guided optimization of mtasks - I've nearly finished implementing profile guided optimization (PGO) of the multithreaded mtasks.

Details of using this will be captured in the documentation.

This issue collects some performance notes.

Using verilated_ext_test t_cores_swerv_cmark.pl and --threads... | non_test | support profile guided optimization of mtasks i ve nearly finished implementing profile guided optimization pgo of the multithreaded mtasks details of using this will be captured in the documentation this issue collects some performance notes using verilated ext test t cores swerv cmark pl and threads... | 0 |

150,968 | 11,995,487,402 | IssuesEvent | 2020-04-08 15:16:48 | wasabee-project/Wasabee-IITC | https://api.github.com/repos/wasabee-project/Wasabee-IITC | closed | Split languages into individual JSON files | In Testing | Crystalwizard (ENL L16 AZ), [06.04.20 15:23]

I have a concern btw - each language has so many keys that translations.json is going to get huge, fast. should we be thinking about multiple translations files?

deviousness (Scot 🐢 D/FW Ԙ13), [06.04.20 15:24]

Yes.

Crystalwizard (ENL L16 AZ), [06.04.20 15:24]

okay ... | 1.0 | Split languages into individual JSON files - Crystalwizard (ENL L16 AZ), [06.04.20 15:23]

I have a concern btw - each language has so many keys that translations.json is going to get huge, fast. should we be thinking about multiple translations files?

deviousness (Scot 🐢 D/FW Ԙ13), [06.04.20 15:24]

Yes.

Crysta... | test | split languages into individual json files crystalwizard enl az i have a concern btw each language has so many keys that translations json is going to get huge fast should we be thinking about multiple translations files deviousness scot 🐢 d fw yes crystalwizard enl az okay so how... | 1 |

212,617 | 16,469,603,498 | IssuesEvent | 2021-05-23 06:20:05 | theimpossibleastronaut/rmw | https://api.github.com/repos/theimpossibleastronaut/rmw | opened | [MacOS] Sometimes the mkdir test fails | bug osx test | The rmw_mkdir() test *sometimes* fails on MacOS. I've recently noticed it about 1 out of 6 times when I push to the master branch.

```

FAIL: test_utils

================

Assertion failed: (rmw_mkdir (dir, S_IRWXU) == 0), function test_rmw_mkdir, file ../../test/test_utils.c, line 27.

FAIL test_utils (exit s... | 1.0 | [MacOS] Sometimes the mkdir test fails - The rmw_mkdir() test *sometimes* fails on MacOS. I've recently noticed it about 1 out of 6 times when I push to the master branch.

```

FAIL: test_utils

================

Assertion failed: (rmw_mkdir (dir, S_IRWXU) == 0), function test_rmw_mkdir, file ../../test/test_uti... | test | sometimes the mkdir test fails the rmw mkdir test sometimes fails on macos i ve recently noticed it about out of times when i push to the master branch fail test utils assertion failed rmw mkdir dir s irwxu function test rmw mkdir file test test utils c ... | 1 |

214,957 | 16,619,909,483 | IssuesEvent | 2021-06-02 22:24:10 | galaxyproject/galaxy | https://api.github.com/repos/galaxyproject/galaxy | closed | pytest ignores tests grouped within a class | area/testing | Currently, pytest will ignore tests grouped in classes. This was done [here](https://github.com/galaxyproject/galaxy/pull/6722/commits/721a4656d941a5462a4db046d192ef81cd1a23c1) to get rid of pytest warnings issued when pytest attempted to collect tests from a class with a name matching the test collection pattern (`Te... | 1.0 | pytest ignores tests grouped within a class - Currently, pytest will ignore tests grouped in classes. This was done [here](https://github.com/galaxyproject/galaxy/pull/6722/commits/721a4656d941a5462a4db046d192ef81cd1a23c1) to get rid of pytest warnings issued when pytest attempted to collect tests from a class with a ... | test | pytest ignores tests grouped within a class currently pytest will ignore tests grouped in classes this was done to get rid of pytest warnings issued when pytest attempted to collect tests from a class with a name matching the test collection pattern test but also with a constructor which causes the class... | 1 |

649,924 | 21,330,399,354 | IssuesEvent | 2022-04-18 07:31:06 | ballerina-platform/ballerina-dev-website | https://api.github.com/repos/ballerina-platform/ballerina-dev-website | closed | Add a Mandatory Checkbox to Avoid Breaking URL Changes | Priority/High Type/Improvement Points/0.5 | **Description:**

We need to add a mandatory checkbox to the PR template so that it can be done to verify if redirections are properly added when implementing breaking URL changes.

**Describe your problem(s)**

**Describe your solution(s)**

**Related Issues (optional):**

<!-- Any related issues such as sub tas... | 1.0 | Add a Mandatory Checkbox to Avoid Breaking URL Changes - **Description:**

We need to add a mandatory checkbox to the PR template so that it can be done to verify if redirections are properly added when implementing breaking URL changes.

**Describe your problem(s)**

**Describe your solution(s)**

**Related Issu... | non_test | add a mandatory checkbox to avoid breaking url changes description we need to add a mandatory checkbox to the pr template so that it can be done to verify if redirections are properly added when implementing breaking url changes describe your problem s describe your solution s related issu... | 0 |

56,025 | 6,498,833,857 | IssuesEvent | 2017-08-22 19:00:02 | mozilla-mobile/focus-android | https://api.github.com/repos/mozilla-mobile/focus-android | closed | Have an option to bypass Strict Mode on UITests | needs triage testing | Some API/simulator version combinations cause the Focus app to crash early when strict mode is enabled. For example, Nexus 9 fails locally, but for some reason BB Nexus 9 setup currently runs tests with no issues. If there is a way to turn off strict mode (i.e. command-line parameter) then we won't have to worry abo... | 1.0 | Have an option to bypass Strict Mode on UITests - Some API/simulator version combinations cause the Focus app to crash early when strict mode is enabled. For example, Nexus 9 fails locally, but for some reason BB Nexus 9 setup currently runs tests with no issues. If there is a way to turn off strict mode (i.e. comma... | test | have an option to bypass strict mode on uitests some api simulator version combinations cause the focus app to crash early when strict mode is enabled for example nexus fails locally but for some reason bb nexus setup currently runs tests with no issues if there is a way to turn off strict mode i e comma... | 1 |

345,252 | 30,793,164,790 | IssuesEvent | 2023-07-31 17:42:56 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | FE | Profile | Contact Information | Fix / remedy failed unit tests for useProfileTransaction hook | bug frontend authenticated-experience profile testing contact-information | ## Issue Description

The CI/CD Github action runs have been failing periodically due to unit tests that the team is responsible for.

Specifically the unit tests for the `useProfileTransaction` hook. Not really sure why those passed for a long time and now are failing, but the hook really isn't used outside of experim... | 1.0 | FE | Profile | Contact Information | Fix / remedy failed unit tests for useProfileTransaction hook - ## Issue Description

The CI/CD Github action runs have been failing periodically due to unit tests that the team is responsible for.

Specifically the unit tests for the `useProfileTransaction` hook. Not really sure wh... | test | fe profile contact information fix remedy failed unit tests for useprofiletransaction hook issue description the ci cd github action runs have been failing periodically due to unit tests that the team is responsible for specifically the unit tests for the useprofiletransaction hook not really sure wh... | 1 |

126,330 | 10,419,066,756 | IssuesEvent | 2019-09-15 13:55:11 | zio/zio | https://api.github.com/repos/zio/zio | closed | ZIO Test: Explore Alternatives for Bringing Back Old MockRandom Functionality | tests | Originally `MockRandom` had a very simple implementation along the lines of `MockConsole` where you would “feed” data into `MockRandom` and then when you called methods that relied on `Random` you would just get back the data you fed in. As we built out ZIO Test we upgraded `MockRandom` to a full blown purely functiona... | 1.0 | ZIO Test: Explore Alternatives for Bringing Back Old MockRandom Functionality - Originally `MockRandom` had a very simple implementation along the lines of `MockConsole` where you would “feed” data into `MockRandom` and then when you called methods that relied on `Random` you would just get back the data you fed in. As... | test | zio test explore alternatives for bringing back old mockrandom functionality originally mockrandom had a very simple implementation along the lines of mockconsole where you would “feed” data into mockrandom and then when you called methods that relied on random you would just get back the data you fed in as... | 1 |

78,347 | 7,626,046,135 | IssuesEvent | 2018-05-04 00:36:26 | eclipse/openj9 | https://api.github.com/repos/eclipse/openj9 | closed | Test cannot directly copy and run cmd that is printed in console | comp:test prio:medium | Running test in build, we will get console output as following:

```

===============================================

Running test memoryCategories_0 ...

===============================================

test with NoOptions

{ mkdir -p "/tmp/bld_380527/memoryCategories_0"; \

cd "/tmp/bld_380527/memoryCategories_0";... | 1.0 | Test cannot directly copy and run cmd that is printed in console - Running test in build, we will get console output as following:

```

===============================================

Running test memoryCategories_0 ...

===============================================

test with NoOptions

{ mkdir -p "/tmp/bld_380527... | test | test cannot directly copy and run cmd that is printed in console running test in build we will get console output as following running test memorycategories test with nooptions mkdir p tmp bld memo... | 1 |

285,972 | 24,711,348,793 | IssuesEvent | 2022-10-20 01:15:32 | harvester/harvester | https://api.github.com/repos/harvester/harvester | closed | [FEATURE] Logging | kind/enhancement priority/0 highlight require/HEP Epic not-require/test-plan | 1. We need to provide a way for users to view the system-related logs.

2. We need the ability to export the system-related logs to outside the cluster in case the user wants to collect and analyse it later.

To elaborate:

1. We need to export Harvester logs to a central location. We should support what Rancher logg... | 1.0 | [FEATURE] Logging - 1. We need to provide a way for users to view the system-related logs.

2. We need the ability to export the system-related logs to outside the cluster in case the user wants to collect and analyse it later.

To elaborate:

1. We need to export Harvester logs to a central location. We should suppo... | test | logging we need to provide a way for users to view the system related logs we need the ability to export the system related logs to outside the cluster in case the user wants to collect and analyse it later to elaborate we need to export harvester logs to a central location we should support what ... | 1 |

246,619 | 18,848,997,227 | IssuesEvent | 2021-11-11 18:13:30 | kubernetes-sigs/aws-ebs-csi-driver | https://api.github.com/repos/kubernetes-sigs/aws-ebs-csi-driver | closed | Improve documentation around extraTags argument | lifecycle/rotten kind/documentation | /kind bug

**What happened?** There is no example of how to set it. The arg help text shows that it's like key=value,key=value (comma-separated) but this isn't good enough because users are not interacting with the binary as a CLI, they are using it as a Pod.

Plus I had to check code the difference between extraTa... | 1.0 | Improve documentation around extraTags argument - /kind bug

**What happened?** There is no example of how to set it. The arg help text shows that it's like key=value,key=value (comma-separated) but this isn't good enough because users are not interacting with the binary as a CLI, they are using it as a Pod.

Plus ... | non_test | improve documentation around extratags argument kind bug what happened there is no example of how to set it the arg help text shows that it s like key value key value comma separated but this isn t good enough because users are not interacting with the binary as a cli they are using it as a pod plus ... | 0 |

269,835 | 23,470,677,692 | IssuesEvent | 2022-08-16 21:27:35 | rusqlite/rusqlite | https://api.github.com/repos/rusqlite/rusqlite | closed | Add tests for 32-bit systems. | testing / ci | One impediment here is that the bundled bindings include tests that only work on 64-bit systems (because they include hard-coded constants).

Probably worth removing those tests when generating the bundled bindings and including them for non-bundled cases (e.g. buildtime_bindgen). | 1.0 | Add tests for 32-bit systems. - One impediment here is that the bundled bindings include tests that only work on 64-bit systems (because they include hard-coded constants).

Probably worth removing those tests when generating the bundled bindings and including them for non-bundled cases (e.g. buildtime_bindgen). | test | add tests for bit systems one impediment here is that the bundled bindings include tests that only work on bit systems because they include hard coded constants probably worth removing those tests when generating the bundled bindings and including them for non bundled cases e g buildtime bindgen | 1 |

224,261 | 17,685,507,516 | IssuesEvent | 2021-08-24 00:31:04 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: sqlsmith/setup=empty/setting=no-mutations failed | C-test-failure O-robot O-roachtest branch-master T-sql-queries E-quick-win | roachtest.sqlsmith/setup=empty/setting=no-mutations [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3248647&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3248647&tab=artifacts#/sqlsmith/setup=empty/setting=no-mutations) on master @ [31af9e32a55a166166e9ba9c5327b7cd8... | 2.0 | roachtest: sqlsmith/setup=empty/setting=no-mutations failed - roachtest.sqlsmith/setup=empty/setting=no-mutations [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3248647&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3248647&tab=artifacts#/sqlsmith/setup=empty/settin... | test | roachtest sqlsmith setup empty setting no mutations failed roachtest sqlsmith setup empty setting no mutations with on master the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts sqlsmith setup empty setting n... | 1 |

23,797 | 16,598,187,136 | IssuesEvent | 2021-06-01 15:44:38 | OpenHistoricalMap/issues | https://api.github.com/repos/OpenHistoricalMap/issues | closed | Move Overpass into the OHM infrastructure | infrastructure nominatim overpass | **What's your idea for a cool feature that would help you use OHM better.**

It turns out... Overpass is too important not to have in our infrastructure. Especially when Nominatim has a dependency on Overpass.

**Current workarounds**

Hosting Overpass externally, but that makes it too tricky to make mods, fix what'... | 1.0 | Move Overpass into the OHM infrastructure - **What's your idea for a cool feature that would help you use OHM better.**

It turns out... Overpass is too important not to have in our infrastructure. Especially when Nominatim has a dependency on Overpass.

**Current workarounds**

Hosting Overpass externally, but that... | non_test | move overpass into the ohm infrastructure what s your idea for a cool feature that would help you use ohm better it turns out overpass is too important not to have in our infrastructure especially when nominatim has a dependency on overpass current workarounds hosting overpass externally but that... | 0 |

108,583 | 9,311,087,177 | IssuesEvent | 2019-03-25 20:27:59 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Add integration test to assert that HEAD can use state created by latest release | area/testing help wanted kind/feature lifecycle/frozen priority/important-soon | <!-- Thanks for filing an issue! Before hitting the button, please answer these questions.-->

**Is this a BUG REPORT or FEATURE REQUEST?** (choose one): Feature request

The 0.12.0 to 0.12.1 release required a recreate of the minikube VM because of localkube DNS changes. This caused a lot of confusion for users u... | 1.0 | Add integration test to assert that HEAD can use state created by latest release - <!-- Thanks for filing an issue! Before hitting the button, please answer these questions.-->

**Is this a BUG REPORT or FEATURE REQUEST?** (choose one): Feature request

The 0.12.0 to 0.12.1 release required a recreate of the miniku... | test | add integration test to assert that head can use state created by latest release is this a bug report or feature request choose one feature request the to release required a recreate of the minikube vm because of localkube dns changes this caused a lot of confusion for users upgrading ... | 1 |

77,819 | 14,920,607,849 | IssuesEvent | 2021-01-23 05:44:40 | 4Moyede/HexatonClass-01 | https://api.github.com/repos/4Moyede/HexatonClass-01 | closed | [Python] Choosing the best variable names | Python Code | **Commit** : commit number

**Content** : Describe your question

```python

PFilter = int(input("Enter the desired price: "))

NFilter = input("Enter the neighborhood: ")

RFilter = int(input("Enter the minimum number of reviews: "))

airbnb_filter = airbnb_db[(airbnb_db["price"]>PFilter) & (airbnb_db["neighborhood"] == NFilter) & (airbnb_db["reviews"] > RFilter)]

... | 1.0 | [Python] Choosing the best variable names - **Commit** : commit number

**Content** : Describe your question

```python

PFilter = int(input("Enter the desired price: "))

NFilter = input("Enter the neighborhood: ")

RFilter = int(input("Enter the minimum number of reviews: "))

airbnb_filter = airbnb_db[(airbnb_db["price"]>PFilter) & (airbnb_db["neighborhood"] == NFilter) & (airbnb_db["r... | non_test | choosing the best variable names commit commit number content describe your question python pfilter int input enter the desired price nfilter input enter the neighborhood rfilter int input enter the minimum number of reviews airbnb filter airbnb db pfilter airbnb db nfilter airbnb db rfilter print start filtering job ... | 0 |

52,669 | 6,264,687,957 | IssuesEvent | 2017-07-16 10:41:06 | go-siris/siris | https://api.github.com/repos/go-siris/siris | closed | Faster Json Renderer | branch for tests available feature request | The default Iris Json renderer uses encode/json.

For fast Json Rendering (and also parsing) there is https://github.com/mailru/easyjson.

We should add an easy option to use easyjson. | 1.0 | Faster Json Renderer - The default Iris Json renderer uses encode/json.

For fast Json Rendering (and also parsing) there is https://github.com/mailru/easyjson.

We should add an easy option to use easyjson. | test | faster json renderer the default iris json renderer uses encode json for fast json rendering and also parsing there is we should add an easy option to use easyjson | 1 |

105,121 | 11,432,961,799 | IssuesEvent | 2020-02-04 14:56:19 | carbon-design-system/ibm-dotcom-library | https://api.github.com/repos/carbon-design-system/ibm-dotcom-library | closed | Abstract migration: Feature card | Airtable Done Sprint Must Have documentation package: patterns web simplification | ### user story

As a QA tester using a Windows PC, I need a way to access the design specs from my Windows PC so that I can conduct QA tests.

### Additional Information

- Prod QA testing issue (#1213)

### Acceptance criteria

- [x] Upload the design spec file(s) to the [Layout patterns Abstract project](https://share... | 1.0 | Abstract migration: Feature card - ### user story

As a QA tester using a Windows PC, I need a way to access the design specs from my Windows PC so that I can conduct QA tests.

### Additional Information

- Prod QA testing issue (#1213)

### Acceptance criteria

- [x] Upload the design spec file(s) to the [Layout patt... | non_test | abstract migration feature card user story as a qa tester using a windows pc i need a way to access the design specs from my windows pc so that i can conduct qa tests additional information prod qa testing issue acceptance criteria upload the design spec file s to the a collect... | 0 |

274,235 | 8,558,793,215 | IssuesEvent | 2018-11-08 19:18:45 | ansible/galaxy | https://api.github.com/repos/ansible/galaxy | closed | Can't disable namespaces with - or . in their names. | area/backend area/frontend priority/medium type/bug | Legacy namespaces with forbidden characters such as `-` throw `Error! Name can only contain [A-Za-z0-9_] (ansible-galaxy) ` when trying to disable them. | 1.0 | Can't disable namespaces with - or . in their names. - Legacy namespaces with forbidden characters such as `-` throw `Error! Name can only contain [A-Za-z0-9_] (ansible-galaxy) ` when trying to disable them. | non_test | can t disable namespaces with or in their names legacy namespaces with forbidden characters such as throw error name can only contain ansible galaxy when trying to disable them | 0 |

178,107 | 29,498,857,461 | IssuesEvent | 2023-06-02 19:34:13 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | Design feature to opt-out from MHV on VA.gov | ux appointments-product-design | ## Task Description

Add a way for Veterans to temporarily opt out of using Appointments on MHV on VA.gov and return to MHV.gov Liferay.

## Notes and References

- This will only show for Appointments until we have Cerner data displaying (phase 1b). After that we'd remove it

- [Here's an example](https://dsva.slack.com/... | 1.0 | Design feature to opt-out from MHV on VA.gov - ## Task Description

Add a way for Veterans to temporarily opt out of using Appointments on MHV on VA.gov and return to MHV.gov Liferay.

## Notes and References

- This will only show for Appointments until we have Cerner data displaying (phase 1b). After that we'd remove i... | non_test | design feature to opt out from mhv on va gov task description add a way for veterans to temporarily opt out of using appointments on mhv on va gov and return to mhv gov liferay notes and references this will only show for appointments until we have cerner data displaying phase after that we d remove it... | 0 |

283,056 | 30,889,554,281 | IssuesEvent | 2023-08-04 02:54:04 | maddyCode23/linux-4.1.15 | https://api.github.com/repos/maddyCode23/linux-4.1.15 | reopened | CVE-2019-11478 (High) detected in linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## CVE-2019-11478 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home pag... | True | CVE-2019-11478 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2019-11478 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartw... | non_test | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files... | 0 |

74,706 | 7,438,679,132 | IssuesEvent | 2018-03-27 01:52:47 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | closed | [fvt] regression for new rflash (python version) | component:test type:feature | what to do:

* [ ] overall regression for new ``rflash`` (python version) against ``openbmc``

**Start to test at the third week of 2.13.10 sprint 2** | 1.0 | [fvt] regression for new rflash (python version) - what to do:

* [ ] overall regression for new ``rflash`` (python version) against ``openbmc``

**Start to test at the third week of 2.13.10 sprint 2** | test | regression for new rflash python version what to do overall regression for new rflash python version against openbmc start to test at the third week of sprint | 1 |

199,249 | 6,987,294,721 | IssuesEvent | 2017-12-14 08:40:35 | qutebrowser/qutebrowser | https://api.github.com/repos/qutebrowser/qutebrowser | closed | Completion window moves horizontally | bug: behavior component: completion component: ui priority: 2 - low | How to reproduce:

- Open any page.

- Press o (to show the completion window).

- Pan left/right, you will see it shakes (bad).

How to not reproduce:

- Open any page.

- Press :.

- Pan left/right, you will see it not shaking (good).

qutebrowser v1.0.4

Git commit: a137a29cc (2017-12-03 22:32:17... | 1.0 | Completion window moves horizontally - How to reproduce:

- Open any page.

- Press o (to show the completion window).

- Pan left/right, you will see it shakes (bad).

How to not reproduce:

- Open any page.

- Press :.

- Pan left/right, you will see it not shaking (good).

qutebrowser v1.0.4

Git... | non_test | completion window moves horizontally how to reproduce open any page press o to show the completion window pan left right you will see it shakes bad how to not reproduce open any page press pan left right you will see it not shaking good qutebrowser git ... | 0 |

96,351 | 8,607,204,562 | IssuesEvent | 2018-11-17 19:54:57 | couchbase/couchbase-lite-core | https://api.github.com/repos/couchbase/couchbase-lite-core | closed | C4Database leaked in "Pull Overflowed Rev Tree" test | unit-test-failure 👎 | Unit tests occasionally fail with a leaked C4Database (and litecore::DataFile::Shared) instance. This happens only rarely when I run tests, but very frequently on the Jenkins Mac builder.

[Excerpt of test log](https://gist.github.com/snej/5e571818e771fe50babe170d2e68f889) | 1.0 | C4Database leaked in "Pull Overflowed Rev Tree" test - Unit tests occasionally fail with a leaked C4Database (and litecore::DataFile::Shared) instance. This happens only rarely when I run tests, but very frequently on the Jenkins Mac builder.

[Excerpt of test log](https://gist.github.com/snej/5e571818e771fe50babe170... | test | leaked in pull overflowed rev tree test unit tests occasionally fail with a leaked and litecore datafile shared instance this happens only rarely when i run tests but very frequently on the jenkins mac builder | 1 |

648,691 | 21,192,058,360 | IssuesEvent | 2022-04-08 18:37:49 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | opened | User is taken to the Community channel directly after logging in | bug Communities priority 1: high | # Bug Report

## Description

User is taken to the Community channel directly after logging in.

## Steps to reproduce

- Launch app and create a new Community and a few Community channels

- Add a couple of known contacts to this Community

- Sign Out and Quit

- Remove Data folder

- Launch app again and sig... | 1.0 | User is taken to the Community channel directly after logging in - # Bug Report

## Description

User is taken to the Community channel directly after logging in.

## Steps to reproduce

- Launch app and create a new Community and a few Community channels

- Add a couple of known contacts to this Community

- ... | non_test | user is taken to the community channel directly after logging in bug report description user is taken to the community channel directly after logging in steps to reproduce launch app and create a new community and a few community channels add a couple of known contacts to this community ... | 0 |

203,298 | 23,140,944,538 | IssuesEvent | 2022-07-28 18:25:42 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [Security Solution] Unable to create rule if timestamp fallback toggle button not selected | bug Team:Detections and Resp Team: SecuritySolution Team:Detection Alerts v8.4.0 | **Describe the bug**

Unable to create rule if timestamp fallback toggle button not selected

**Build info**

```

VERSION : main

COMMIT: bcbef78b4dfaebe40f334fcaa5cef94fda9acc29

```

**Preconditions**

1. Kibana should be running

**Steps to Reproduce**

1. Navigate to security > rule page

2. Click on creat... | True | [Security Solution] Unable to create rule if timestamp fallback toggle button not selected - **Describe the bug**

Unable to create rule if timestamp fallback toggle button not selected

**Build info**

```

VERSION : main

COMMIT: bcbef78b4dfaebe40f334fcaa5cef94fda9acc29

```

**Preconditions**

1. Kibana should... | non_test | unable to create rule if timestamp fallback toggle button not selected describe the bug unable to create rule if timestamp fallback toggle button not selected build info version main commit preconditions kibana should be running steps to reproduce navigate to sec... | 0 |

291,076 | 25,119,535,773 | IssuesEvent | 2022-11-09 06:44:24 | o1-labs/snarkyjs | https://api.github.com/repos/o1-labs/snarkyjs | closed | Dex: Token tests | logic-testing | Tests for Creating, Minting, Burning, Transferring, and Putting Preconditions/Assertions on Tokens | 1.0 | Dex: Token tests - Tests for Creating, Minting, Burning, Transferring, and Putting Preconditions/Assertions on Tokens | test | dex token tests tests for creating minting burning transferring and putting preconditions assertions on tokens | 1 |

237,623 | 19,661,701,309 | IssuesEvent | 2022-01-10 17:40:58 | Topl/Bifrost | https://api.github.com/repos/Topl/Bifrost | closed | Domain-Specific Language | testing feature | Writing out a template of functions that can be used in Javascript contracts that directly translate to specific sequences of transactions

┆Issue is synchronized with this [Jira Epic](https://topl.atlassian.net/browse/CORE-915) by [Unito](https://www.unito.io)

| 1.0 | Domain-Specific Language - Writing out a template of functions that can be used in Javascript contracts that directly translate to specific sequences of transactions

┆Issue is synchronized with this [Jira Epic](https://topl.atlassian.net/browse/CORE-915) by [Unito](https://www.unito.io)

| test | domain specific language writing out a template of functions that can be used in javascript contracts that directly translate to specific sequences of transactions ┆issue is synchronized with this by | 1 |

742,902 | 25,877,143,795 | IssuesEvent | 2022-12-14 08:45:13 | wso2/api-manager | https://api.github.com/repos/wso2/api-manager | opened | Log Tracing is not working as expected in OpenTracing | Type/Bug Priority/Normal Component/APIM 4.x.x | ### Description

When you enable log tracing as mentioned in [1], the log file will not contain correct information. It only prints something like, `n14:11:48,992 [-] [PassThroughMessageProcessor-1] TRACE `

### Steps to Reproduce

Follow the steps in [1]

### Affected Component

APIM

### Version

4.2.0-Pre-Alpha

###... | 1.0 | Log Tracing is not working as expected in OpenTracing - ### Description

When you enable log tracing as mentioned in [1], the log file will not contain correct information. It only prints something like, `n14:11:48,992 [-] [PassThroughMessageProcessor-1] TRACE `

### Steps to Reproduce

Follow the steps in [1]

### Aff... | non_test | log tracing is not working as expected in opentracing description when you enable log tracing as mentioned in the log file will not contain correct information it only prints something like trace steps to reproduce follow the steps in affected component apim version ... | 0 |

129,554 | 5,098,705,892 | IssuesEvent | 2017-01-04 03:17:59 | PolarisSS13/Polaris | https://api.github.com/repos/PolarisSS13/Polaris | closed | Blindness, Confusion and Blurry Eyes do not modify chance to hit for some attacks | Oversight Priority: High | #### Brief description of the issue

Blindness from any source, including genetics, having no eyes, and being flashed does not add the intended 75% to hit malus. #2134

Confusion and blurred vision from flashes also seems to have no effect on chance to hit.

#### What you expected to happen

Someone with no eyes to have ... | 1.0 | Blindness, Confusion and Blurry Eyes do not modify chance to hit for some attacks - #### Brief description of the issue

Blindness from any source, including genetics, having no eyes, and being flashed does not add the intended 75% to hit malus. #2134

Confusion and blurred vision from flashes also seems to have no effe... | non_test | blindness confusion and blurry eyes do not modify chance to hit for some attacks brief description of the issue blindness from any source including genetics having no eyes and being flashed does not add the intended to hit malus confusion and blurred vision from flashes also seems to have no effect o... | 0 |

6,115 | 5,286,679,483 | IssuesEvent | 2017-02-08 10:02:38 | MadOgre/angular-rest | https://api.github.com/repos/MadOgre/angular-rest | opened | Needs to be optimized for lazy API loading | performance related | Currently the root component is loading all existing articles on the system.

Maybe implement a delta update system by last modified date. | True | Needs to be optimized for lazy API loading - Currently the root component is loading all existing articles on the system.

Maybe implement a delta update system by last modified date. | non_test | needs to be optimized for lazy api loading currently the root component is loading all existing articles on the system maybe implement a delta update system by last modified date | 0 |

200,438 | 22,773,751,101 | IssuesEvent | 2022-07-08 12:37:12 | ignatandrei/AspNetCoreImageTagHelper | https://api.github.com/repos/ignatandrei/AspNetCoreImageTagHelper | opened | CVE-2016-10735 (Medium) detected in bootstrap-3.3.6.min.js, bootstrap-3.3.6.js | security vulnerability | ## CVE-2016-10735 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.6.min.js</b>, <b>bootstrap-3.3.6.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.6.min.j... | True | CVE-2016-10735 (Medium) detected in bootstrap-3.3.6.min.js, bootstrap-3.3.6.js - ## CVE-2016-10735 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.6.min.js</b>, <b>boo... | non_test | cve medium detected in bootstrap min js bootstrap js cve medium severity vulnerability vulnerable libraries bootstrap min js bootstrap js bootstrap min js the most popular front end framework for developing responsive mobile first projects on the ... | 0 |

3,415 | 2,763,271,488 | IssuesEvent | 2015-04-29 08:05:14 | apinf/api-umbrella-dashboard | https://api.github.com/repos/apinf/api-umbrella-dashboard | closed | Create communication plan document | Documentation | Create a document describing tools and procedures for team communication, review process, etc. | 1.0 | Create communication plan document - Create a document describing tools and procedures for team communication, review process, etc. | non_test | create communication plan document create a document describing tools and procedures for team communication review process etc | 0 |

47,722 | 13,066,142,182 | IssuesEvent | 2020-07-30 21:04:45 | googlefonts/noto-fonts | https://api.github.com/repos/googlefonts/noto-fonts | closed | Noto Nastaliq ک final and isolated? shape | FoundIn-1.x Script-Urdu Type-Defect | ```

What steps will reproduce the problem?

The 'kaaf' which is used at this moment produces the miniature kaaf symbol

together with the letter in the final and stand-alone positions. This occurs in

some words while in others it doesn't!

What is the expected output? What do you see instead?

In Urdu it is never used, t... | 1.0 | Noto Nastaliq ک final and isolated? shape - ```

What steps will reproduce the problem?

The 'kaaf' which is used at this moment produces the miniature kaaf symbol

together with the letter in the final and stand-alone positions. This occurs in

some words while in others it doesn't!

What is the expected output? What do ... | non_test | noto nastaliq ک final and isolated shape what steps will reproduce the problem the kaaf which is used at this moment produces the miniature kaaf symbol together with the letter in the final and stand alone positions this occurs in some words while in others it doesn t what is the expected output what do ... | 0 |

213,689 | 16,532,610,895 | IssuesEvent | 2021-05-27 08:04:34 | LeisyVasquez/EcoCol | https://api.github.com/repos/LeisyVasquez/EcoCol | closed | Prototype in Miro | documentation | According to the documentation, the only thing still missing is the space for **reporting page errors**; it will be added to the prototype if it ends up being implemented.

[https://miro.com/welcomeonboard/Zs1alv6K7tog1rKS8nBVAGiCorznevAsc4JHSbodoT797AmxSIABO73fLFsCMswk](Miro)

| 1.0 | Prototype in Miro - According to the documentation, the only thing still missing is the space for **reporting page errors**; it will be added to the prototype if it ends up being implemented.

[https://miro.com/welcomeonboard/Zs1alv6K7tog1rKS8nBVAGiCorznevAsc4JHSbodoT797AmxSIABO73fLFsCMswk](Miro)

| non_test | prototype in miro according to the documentation the only thing still missing is the space for reporting page errors it will be added to the prototype if it ends up being implemented miro | 0 |

293,620 | 25,311,384,262 | IssuesEvent | 2022-11-17 17:43:34 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | closed | Verify engine's API behavior | team/qa type/manual-testing role/qa-runtime-terror subteam/qa-rainbow target/5.0.0 | | Target version | Related issue | Related PR/dev branch |

|--------------------|--------------------|-----------------|

| 5.0 | https://github.com/wazuh/wazuh-qa/issues/3533 | https://github.com/wazuh/wazuh/issues/11334 |

<!-- Important: No section may be l... | 1.0 | Verify engine's API behavior - | Target version | Related issue | Related PR/dev branch |

|--------------------|--------------------|-----------------|

| 5.0 | https://github.com/wazuh/wazuh-qa/issues/3533 | https://github.com/wazuh/wazuh/issues/11334 |

<!--... | test | verify engine s api behavior target version related issue related pr dev branch description since the team is reworking the engine we need to cover this new ... | 1 |

117,247 | 25,079,276,995 | IssuesEvent | 2022-11-07 17:51:33 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | insights: migrate backfill_completed_at | team/code-insights backend | Deprecate the old background routine and migrate backfill_completed_at to use the new stateful backfiller | 1.0 | insights: migrate backfill_completed_at - Deprecate the old background routine and migrate backfill_completed_at to use the new stateful backfiller | non_test | insights migrate backfill completed at deprecate the old background routine and migrate backfill completed at to use the new stateful backfiller | 0 |

172,853 | 13,349,283,505 | IssuesEvent | 2020-08-29 23:42:59 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: sqlsmith/setup=tpcc/setting=default failed | C-test-failure O-roachtest O-robot branch-master release-blocker | [(roachtest).sqlsmith/setup=tpcc/setting=default failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2231078&tab=buildLog) on [master@8b91062f9351d18f9104aff567cb152df162021e](https://github.com/cockroachdb/cockroach/commits/8b91062f9351d18f9104aff567cb152df162021e):

```

The test failed on branch=master, clo... | 2.0 | roachtest: sqlsmith/setup=tpcc/setting=default failed - [(roachtest).sqlsmith/setup=tpcc/setting=default failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2231078&tab=buildLog) on [master@8b91062f9351d18f9104aff567cb152df162021e](https://github.com/cockroachdb/cockroach/commits/8b91062f9351d18f9104aff567cb1... | test | roachtest sqlsmith setup tpcc setting default failed on the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts sqlsmith setup tpcc setting default run sqlsmith go sqlsmith go test runner go error pq interna... | 1 |

52,469 | 6,258,118,753 | IssuesEvent | 2017-07-14 14:52:25 | openSUSE/umoci | https://api.github.com/repos/openSUSE/umoci | closed | tests: remove need for Docker | test/integration test/unit | Usage of Docker is a bit of an anti-pattern IMO, because it actually makes it harder to run the tests in environments where you don't have Docker (such as inside `rpmbuild`). Luckily the bats tests aren't *too* specific to Docker.

* [ ] Make a nice way of getting the right version of our various testing dependencies... | 2.0 | tests: remove need for Docker - Usage of Docker is a bit of an anti-pattern IMO, because it actually makes it harder to run the tests in environments where you don't have Docker (such as inside `rpmbuild`). Luckily the bats tests aren't *too* specific to Docker.

* [ ] Make a nice way of getting the right version of ... | test | tests remove need for docker usage of docker is a bit of an anti pattern imo because it actually makes it harder to run the tests in environments where you don t have docker such as inside rpmbuild luckily the bats tests aren t too specific to docker make a nice way of getting the right version of ou... | 1 |

189,904 | 14,527,517,813 | IssuesEvent | 2020-12-14 15:26:49 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | closed | Test Failure: com.ibm.ws.ejbcontainer.session.passivation.tests.StatefulTimeoutTest.testXMLOnly_EE8_FEATURES | in:EJB Container team:Blizzard test bug | testXMLOnly_EE8_FEATURES:junit.framework.AssertionFailedError: 2020-11-30-19:48:51:577 The response did not contain "[SUCCESS]". Full output is:"

ERROR: Caught exception attempting to call test method testXMLOnly on servlet com.ibm.ws.ejbcontainer.session.passivation.statefulTimeout.web.StatefulTimeoutServlet

java.l... | 1.0 | Test Failure: com.ibm.ws.ejbcontainer.session.passivation.tests.StatefulTimeoutTest.testXMLOnly_EE8_FEATURES - testXMLOnly_EE8_FEATURES:junit.framework.AssertionFailedError: 2020-11-30-19:48:51:577 The response did not contain "[SUCCESS]". Full output is:"

ERROR: Caught exception attempting to call test method testXM... | test | test failure com ibm ws ejbcontainer session passivation tests statefultimeouttest testxmlonly features testxmlonly features junit framework assertionfailederror the response did not contain full output is error caught exception attempting to call test method testxmlonly on servlet com i... | 1 |

286,061 | 21,562,447,779 | IssuesEvent | 2022-05-01 11:16:24 | rollerderby/scoreboard | https://api.github.com/repos/rollerderby/scoreboard | closed | Announce v5.0.0 and forward feedback on some issues reported on Facebook | documentation | **CRG 5.0.0 has been [released](https://github.com/rollerderby/scoreboard/releases/tag/v5.0.0).** This should be announced in the Facebook group.

Also when preparing the release I went through the public Facebook group to see if any feedback on the beta was posted there and found a couple of posts where I think I ca... | 1.0 | Announce v5.0.0 and forward feedback on some issues reported on Facebook - **CRG 5.0.0 has been [released](https://github.com/rollerderby/scoreboard/releases/tag/v5.0.0).** This should be announced in the Facebook group.

Also when preparing the release I went through the public Facebook group to see if any feedback ... | non_test | announce and forward feedback on some issues reported on facebook crg has been this should be announced in the facebook group also when preparing the release i went through the public facebook group to see if any feedback on the beta was posted there and found a couple of posts where i think i c... | 0 |