Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

298,639 | 9,200,620,674 | IssuesEvent | 2019-03-07 17:29:32 | hannesschulze/optimizer | https://api.github.com/repos/hannesschulze/optimizer | closed | Window is too large after update | Priority: High | I think it's due to French translations. Here is a screenshot:

Maybe you can adjust this a little bit. | 1.0 | Window is too large after update - I think it's due to French translations. Here is a screenshot:

Maybe you can adjust this a little bit. | non_test | window is too large after update i think it s due to french translations here is a screenshot maybe you can adjust this a little bit | 0 |

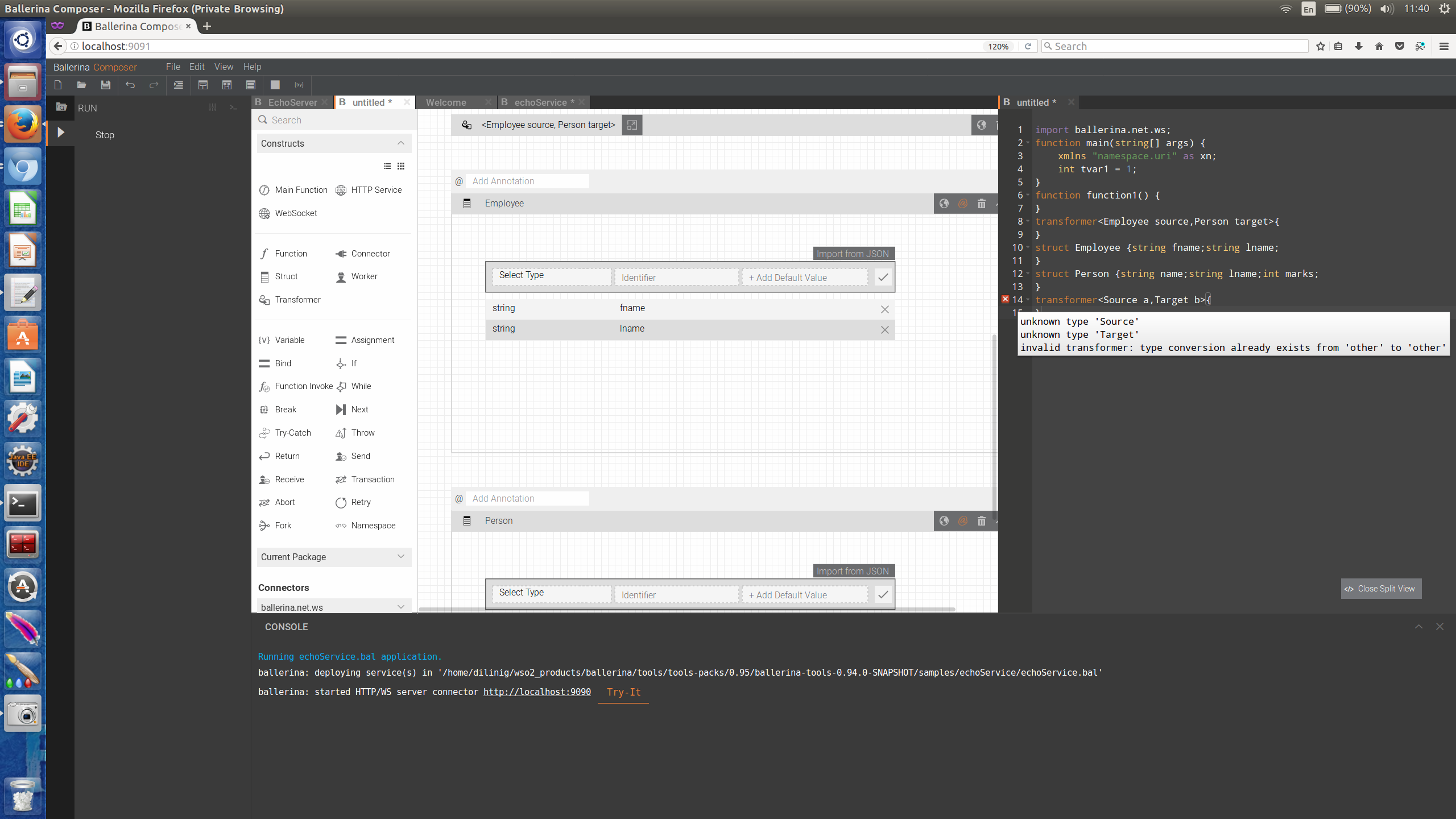

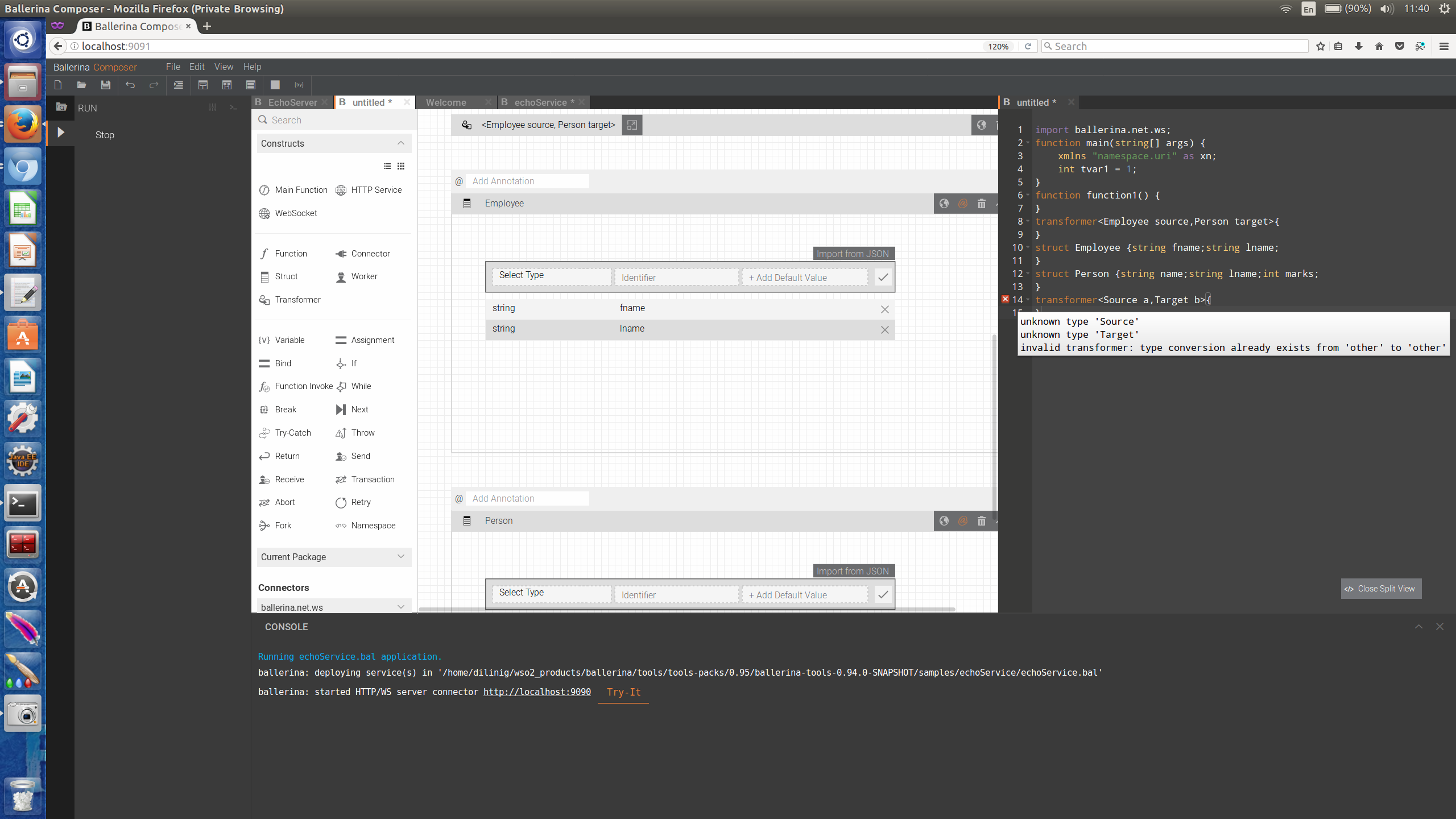

190,478 | 6,818,869,390 | IssuesEvent | 2017-11-07 08:02:20 | ballerinalang/composer | https://api.github.com/repos/ballerinalang/composer | closed | Error messages are not meaningful in transform | 0.95 Priority/High Severity/Minor Type/Bug | Error message that is coming as soon as a transform is added is not meaningful

"type conversion is already exists from other to other"

| 1.0 | Error messages are not meaningful in transform - Error message that is coming as soon as a transform is added is not meaningful

"type conversion is already exists from other to other"

| non_test | error messages are not meaningful in transform error message that is coming as soon as a transform is added is not meaningful type conversion is already exists from other to other | 0 |

168,859 | 6,388,306,447 | IssuesEvent | 2017-08-03 15:19:25 | javaee/glassfish | https://api.github.com/repos/javaee/glassfish | closed | Add log message in server.log when skipping resource validation | Component: deployment Component: logging Priority: Minor Type: Task | When the jvm property `-Ddeployment.resource.validation=false` is set, we want to log a message in the server.log notifying that resource validation is being skipped. | 1.0 | Add log message in server.log when skipping resource validation - When the jvm property `-Ddeployment.resource.validation=false` is set, we want to log a message in the server.log notifying that resource validation is being skipped. | non_test | add log message in server log when skipping resource validation when the jvm property ddeployment resource validation false is set we want to log a message in the server log notifying that resource validation is being skipped | 0 |

71,100 | 30,815,405,444 | IssuesEvent | 2023-08-01 13:11:53 | MicrosoftDocs/powerbi-docs | https://api.github.com/repos/MicrosoftDocs/powerbi-docs | closed | Q&A Tooling note | assigned-to-author in-progress doc-enhancement powerbi/svc backlog powerbi-service/subsvc Pri2 Quick | Since the Note regarding Q&A Tooling only currently support Import connections applies to all Tooling, not just the Teach Q&A feature, should the note move from the Teach Q&A Limitations section to the Tooling Limitations section? I chose to leave this feedback rather than change it myself in case there was something I... | 1.0 | Q&A Tooling note - Since the Note regarding Q&A Tooling only currently support Import connections applies to all Tooling, not just the Teach Q&A feature, should the note move from the Teach Q&A Limitations section to the Tooling Limitations section? I chose to leave this feedback rather than change it myself in case th... | non_test | q a tooling note since the note regarding q a tooling only currently support import connections applies to all tooling not just the teach q a feature should the note move from the teach q a limitations section to the tooling limitations section i chose to leave this feedback rather than change it myself in case th... | 0 |

405,284 | 27,511,268,745 | IssuesEvent | 2023-03-06 09:00:17 | iotaledger/wallet.rs | https://api.github.com/repos/iotaledger/wallet.rs | closed | Fix clone command | dx-documentation | ## Description

The git clone command in i.e. [Clone the Repository](https://wiki.iota.org/shimmer/wallet.rs/how_tos/run_how_tos/#clone-the-repository) does not work, it should be `git clone https://github.com/iotaledger/wallet.rs.git`.

## Are you planning to do it yourself in a pull request?

Yes.

| 1.0 | Fix clone command - ## Description

The git clone command in i.e. [Clone the Repository](https://wiki.iota.org/shimmer/wallet.rs/how_tos/run_how_tos/#clone-the-repository) does not work, it should be `git clone https://github.com/iotaledger/wallet.rs.git`.

## Are you planning to do it yourself in a pull request?

... | non_test | fix clone command description the git clone command in i e does not work it should be git clone are you planning to do it yourself in a pull request yes | 0 |

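448,125 | 31,768,918,750 | IssuesEvent | 2023-09-12 10:28:43 | ArtalkJS/Artalk | https://api.github.com/repos/ArtalkJS/Artalk | closed | How to use environment variables with the 2.6.0 Docker version | documentation | I saw that the 2.6.0 update adds support for environment variables, but I searched the documentation and found no description of that support.

# Example

The `artalk.example.zh-CN.yml` file contains

```

# Server address

host: "0.0.0.0"

# Server port

port: 23366

# Encryption key

app_key: ""

# Debug mode

debug: false

# Language ["en", "zh-CN", "zh-TW", "jp"]

locale: "zh-CN"

# Time zone

timezone: "Asia/Shanghai"

# Default site name

site_default: "默认站点"

```

What are the environment variables?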

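... | 1.0 | How to use environment variables with the 2.6.0 Docker version - I saw that the 2.6.0 update adds support for environment variables, but I searched the documentation and found no description of that support.

# Example

The `artalk.example.zh-CN.yml` file contains

```

# Server address

host: "0.0.0.0"

# Server port

port: 23366

# Encryption key

app_key: ""

# Debug mode

debug: false

# Language ["en", "zh-CN", "zh-TW", "jp"]

locale: "zh-CN"

# Time zone

timezone: "Asia/Shanghai"

# Default site name

site_default...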

site_default... | non_test | docker ,看到支持环境变量,我找了文档,没有发现支持环境变量说明。 示例 artalk example zh cn yml 文件有 服务器地址 host 服务器端口 port 加密密钥 app key 调试模式 debug false 语言 locale zh cn 时间区域 timezone asia shanghai 默认站点名 site default 默认站点 环境变量是什么? docker compose yml ... | 0 |

278,951 | 24,187,463,894 | IssuesEvent | 2022-09-23 14:27:19 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Failing test: Jest Tests.x-pack/plugins/index_lifecycle_management/__jest__/client_integration/edit_policy/form_validation - <EditPolicy /> policy name validation doesn't allow policy name starting with underscore | failed-test | A test failed on a tracked branch

```

Error: expect(received).toEqual(expected) // deep equality

- Expected - 3

+ Received + 1

- Array [

- "A policy name cannot start with an underscore.",

- ]

+ Array []

at Object.expectMessages (/var/lib/buildkite-agent/builds/kb-n2-4-spot-0891049732a0e254/elastic/kibana-on... | 1.0 | Failing test: Jest Tests.x-pack/plugins/index_lifecycle_management/__jest__/client_integration/edit_policy/form_validation - <EditPolicy /> policy name validation doesn't allow policy name starting with underscore - A test failed on a tracked branch

```

Error: expect(received).toEqual(expected) // deep equality

- Exp... | test | failing test jest tests x pack plugins index lifecycle management jest client integration edit policy form validation policy name validation doesn t allow policy name starting with underscore a test failed on a tracked branch error expect received toequal expected deep equality expected ... | 1 |

248,400 | 21,016,580,010 | IssuesEvent | 2022-03-30 11:36:12 | stores-cedcommerce/Dezymart-Store-ReDesign | https://api.github.com/repos/stores-cedcommerce/Dezymart-Store-ReDesign | closed | Product page | Product page Mobile Issue Inprogress Ready to test Fixed | **The actual result:**

1: When we click on the thumbnail images then the border is not coming over the images of thumbnail.

**The url :** https://8jul4942fz5ea8w7-56773443766.shopifypreview.com/products/knee-heating-pads-wrap-for-men-and-women-hot-compress-therapy-thermal-pads-for-cramps-and-arthritis-pain-relief

*... | 1.0 | Product page - **The actual result:**

1: When we click on the thumbnail images then the border is not coming over the images of thumbnail.

**The url :** https://8jul4942fz5ea8w7-56773443766.shopifypreview.com/products/knee-heating-pads-wrap-for-men-and-women-hot-compress-therapy-thermal-pads-for-cramps-and-arthritis... | test | product page the actual result when we click on the thumbnail images then the border is not coming over the images of thumbnail the url the issue the sliders arrows are not visible properly in the thumbnail images expected result the border have to come when we click... | 1 |

38,911 | 5,204,519,853 | IssuesEvent | 2017-01-24 15:45:41 | publiclab/plots2 | https://api.github.com/repos/publiclab/plots2 | closed | Add code coverage to plots2 | in progress testing | Its time that we did some analytics on our code. Add code coverage and test coverage to plots2. Trying in two different services - [codeclimate](https://codeclimate.com) and [coveralls](http://coveralls.io/) | 1.0 | Add code coverage to plots2 - Its time that we did some analytics on our code. Add code coverage and test coverage to plots2. Trying in two different services - [codeclimate](https://codeclimate.com) and [coveralls](http://coveralls.io/) | test | add code coverage to its time that we did some analytics on our code add code coverage and test coverage to trying in two different services and | 1 |

312,470 | 26,866,372,672 | IssuesEvent | 2023-02-04 00:34:30 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [QUALIDADE] [SENIOR] [SALVADOR] [HOME OFFICE] Analista de Qualidade Sr | home office na [CAPGEMINI] | SALVADOR HOME OFFICE JAVA SENIOR GIT MOBILE SELENIUM APPIUM JENKINS QUALIDADE ECLIPSE QUALIDADE DE SOFTWARE ANDROID STUDIO HELP WANTED PIPELINE TESTES DE API Stale | <!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

========================... | 1.0 | [QUALIDADE] [SENIOR] [SALVADOR] [HOME OFFICE] Analista de Qualidade Sr | home office na [CAPGEMINI] - <!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `... | test | analista de qualidade sr home office na please only post if the job is for salvador and neighboring cities use desenvolvedor front end instead of front end developer o example desenvolvedor front end na ... | 1 |

76,963 | 7,550,124,254 | IssuesEvent | 2018-04-18 15:58:08 | EyeSeeTea/QAApp | https://api.github.com/repos/EyeSeeTea/QAApp | closed | 1.2.9: Improve, incorrect calculation of date of next assessment | buddybug question testing type - bug type - maintenance | Feedback from clussiana@psi.org : Incorrect calculation of date of next assessment. This is related to #255, too.

| Item | Value |

| --- | --- |

| Cr... | 1.0 | 1.2.9: Improve, incorrect calculation of date of next assessment - Feedback from clussiana@psi.org : Incorrect calculation of date of next assessment. This is related to #255, too.

about which groups had co-views higher than 3 of the group the student is currently looking at | 1.0 | Students will get recommendations on what groups they could join - The web layer will pull information gained from the api (which took info from the model) about which groups had co-views higher than 3 of the group the student is currently looking at | non_test | students will get recommendations on what groups they could join the web layer will pull information gained from the api which took info from the model about which groups had co views higher than of the group the student is currently looking at | 0 |

206,258 | 16,023,862,435 | IssuesEvent | 2021-04-21 06:19:49 | caos/zitadel | https://api.github.com/repos/caos/zitadel | closed | [Admin UX] Explain Management Roles | documentation enhancement help wanted | **Describe the bug**

There's no description of the management roles either in the Management Console or the documentation.

**Expected behavior**

Explain what each role does. Best in UI & Docs. | 1.0 | [Admin UX] Explain Management Roles - **Describe the bug**

There's no description of the management roles either in the Management Console or the documentation.

**Expected behavior**

Explain what each role does. Best in UI & Docs. | non_test | explain management roles describe the bug there s no description of the management roles either in the management console or the documentation expected behavior explain what each role does best in ui docs | 0 |

63,736 | 6,883,697,767 | IssuesEvent | 2017-11-21 10:18:24 | DEIB-GECO/GMQL | https://api.github.com/repos/DEIB-GECO/GMQL | closed | MAP wrong number of output samples | test Urgent | I run the following query:

INPUT_1 = SELECT(region: chr== chr2) dataset_1;

INPUT_2 = SELECT(region: chr== chr2) dataset_2;

RES = MAP(avg_score AS AVG(score)) INPUT_1 INPUT_2 ;

MATERIALIZE RES INTO MAP_EXAMPLE_1;

The input_1 has 1 sample, the input_2 has 3 but the res of the map is 6 samples (instead of 3!). I encl... | 1.0 | MAP wrong number of output samples - I run the following query:

INPUT_1 = SELECT(region: chr== chr2) dataset_1;

INPUT_2 = SELECT(region: chr== chr2) dataset_2;

RES = MAP(avg_score AS AVG(score)) INPUT_1 INPUT_2 ;

MATERIALIZE RES INTO MAP_EXAMPLE_1;

The input_1 has 1 sample, the input_2 has 3 but the res of the map... | test | map wrong number of output samples i run the following query input select region chr dataset input select region chr dataset res map avg score as avg score input input materialize res into map example the input has sample the input has but the res of the map is ... | 1 |

494,269 | 14,247,613,240 | IssuesEvent | 2020-11-19 11:42:56 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.amazon.com - see bug description | browser-focus-geckoview engine-gecko ml-needsdiagnosis-false priority-critical | <!-- @browser: Firefox Mobile 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:81.0) Gecko/81.0 Firefox/81.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/62099 -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://www.amazon.com/ap/reg... | 1.0 | www.amazon.com - see bug description - <!-- @browser: Firefox Mobile 81.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 7.0; Mobile; rv:81.0) Gecko/81.0 Firefox/81.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/62099 -->

<!-- @extra_labels: browser-focus-geckoview -->

... | non_test | see bug description url browser version firefox mobile operating system android tested another browser yes other problem type something else description when i am creating a account it is showing an internal error steps to reproduce i want to create a ne... | 0 |

205,359 | 15,610,412,999 | IssuesEvent | 2021-03-19 13:10:00 | Azure/azure-sdk-for-python | https://api.github.com/repos/Azure/azure-sdk-for-python | closed | URL encodings for recordings can make a request unfindable by recording infrastructure | EngSys feature-request test enhancement | If a key has a `:`, `/`, `?`, `=`, etc. within the key, the scrubber needs to look for the key in both an encoded and non-encoded format. It is probably best to change the scrubber.register_name_pair to register an additional pairing with a url encoded version of the key. | 1.0 | URL encodings for recordings can make a request unfindable by recording infrastructure - If a key has a `:`, `/`, `?`, `=`, etc. within the key, the scrubber needs to look for the key in both an encoded and non-encoded format. It is probably best to change the scrubber.register_name_pair to register an additional pairi... | test | url encodings for recordings can make a request unfindable by recording infrastructure if a key has a etc within the key the scrubber needs to look for the key in both an encoded and non encoded format it is probably best to change the scrubber register name pair to register an additional pairi... | 1 |
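The suggestion in the row above (register a second pairing with a URL-encoded version of the key) could look roughly like the sketch below. This is only an illustration under stated assumptions: `NameScrubber` is a hypothetical stand-in written for this example, not the actual scrubber class from the Azure SDK test tooling, and only the method name `register_name_pair` is taken from the issue text.

```python
from urllib.parse import quote


class NameScrubber:
    """Hypothetical stand-in for a recording scrubber (not the real SDK class)."""

    def __init__(self):
        # Ordered list of (real value, replacement) pairs.
        self._pairs = []

    def register_name_pair(self, old, new):
        # Always register the raw pair.
        self._pairs.append((old, new))
        # Also register a percent-encoded variant when encoding changes the key,
        # so keys containing ':', '/', '?', '=' are found inside recorded URLs.
        encoded_old = quote(old, safe="")
        if encoded_old != old:
            self._pairs.append((encoded_old, quote(new, safe="")))

    def scrub(self, text):
        # Apply every registered replacement to the recorded text.
        for old, new in self._pairs:
            text = text.replace(old, new)
        return text


scrubber = NameScrubber()
scrubber.register_name_pair("a/b:c?d=e", "fake-key")
```

With this shape, callers keep registering one pair per secret, and the encoded form is handled in one place rather than at every call site.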

102,336 | 4,154,325,169 | IssuesEvent | 2016-06-16 11:09:45 | alexhultman/uWebSockets | https://api.github.com/repos/alexhultman/uWebSockets | closed | Implement permessage-deflate | enhancement high priority | This is a popular extension and is needed to pass Autobahn fully. | 1.0 | Implement permessage-deflate - This is a popular extension and is needed to pass Autobahn fully. | non_test | implement permessage deflate this is a popular extension and is needed to pass autobahn fully | 0 |

300,083 | 25,944,726,261 | IssuesEvent | 2022-12-16 22:40:46 | hashicorp/terraform-provider-google | https://api.github.com/repos/hashicorp/terraform-provider-google | opened | Failing test(s): TestAccDNSRecordSet_routingPolicy | test failure | <!--- This is a template for reporting test failures on nightly builds. It should only be used by core contributors who have access to our CI/CD results. --->

<!-- i.e. "Consistently since X date" or "X% failure in MONTH" -->

Failure rate: 100% since 2022-12-06

<!-- List all impacted tests for searchability. The... | 1.0 | Failing test(s): TestAccDNSRecordSet_routingPolicy - <!--- This is a template for reporting test failures on nightly builds. It should only be used by core contributors who have access to our CI/CD results. --->

<!-- i.e. "Consistently since X date" or "X% failure in MONTH" -->

Failure rate: 100% since 2022-12-06

... | test | failing test s testaccdnsrecordset routingpolicy failure rate since impacted tests testaccdnsrecordset routingpolicy nightly builds message error error creating dns recordset googleapi error routing policies referencing internal load balancers cannot be a... | 1 |

287,858 | 24,868,543,618 | IssuesEvent | 2022-10-27 13:43:32 | openrocket/openrocket | https://api.github.com/repos/openrocket/openrocket | closed | Write unit tests for software updater | good first issue Unit testing | These unit tests should verify all the different scenarios for the software updater checker: if there's a newer release available, if you have a newer release than the official release, if you have the same release, if you have a bogus release etc. | 1.0 | Write unit tests for software updater - These unit tests should verify all the different scenarios for the software updater checker: if there's a newer release available, if you have a newer release than the official release, if you have the same release, if you have a bogus release etc. | test | write unit tests for software updater these unit tests should verify all the different scenarios for the software updater checker if there s a newer release available if you have a newer release than the official release if you have the same release if you have a bogus release etc | 1 |
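The scenarios listed in the row above (newer release available, local build ahead of the official release, same release, bogus release) can be sketched as a small comparison function that such tests would exercise. This is a minimal sketch, not OpenRocket's updater: the names `UpdateStatus`, `parse_version`, and `check_for_update` are hypothetical, and it assumes purely numeric `major.minor[.patch]` version strings.

```python
import re
from enum import Enum


class UpdateStatus(Enum):
    NEWER_AVAILABLE = "newer release available"
    AHEAD_OF_RELEASE = "installed build is newer than the official release"
    UP_TO_DATE = "same release"
    INVALID = "unparseable (bogus) version"


def parse_version(tag):
    """Parse 'major.minor[.patch]' into a tuple of ints, or None if bogus."""
    match = re.fullmatch(r"(\d+)\.(\d+)(?:\.(\d+))?", tag.strip())
    if match is None:
        return None
    # A missing patch component is treated as 0.
    return tuple(int(part) if part else 0 for part in match.groups())


def check_for_update(installed, latest):
    """Classify the installed version against the latest official release."""
    installed_v = parse_version(installed)
    latest_v = parse_version(latest)
    if installed_v is None or latest_v is None:
        return UpdateStatus.INVALID
    if installed_v < latest_v:
        return UpdateStatus.NEWER_AVAILABLE
    if installed_v > latest_v:
        return UpdateStatus.AHEAD_OF_RELEASE
    return UpdateStatus.UP_TO_DATE
```

Each scenario then maps to one assertion, which keeps the tests independent of any network call to a release server.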

312,873 | 26,882,967,903 | IssuesEvent | 2023-02-05 21:16:48 | IntellectualSites/PlotSquared | https://api.github.com/repos/IntellectualSites/PlotSquared | opened | PlayerQuitEvent | Requires Testing | ### Server Implementation

Paper

### Server Version

1.18.2

### Describe the bug

When you kick / ban / disconnect a player, it will show this error in console.

https://pastebin.com/QLPPVP3L

https://pastebin.com/W1t1yNY5

### To Reproduce

By kicking / banning / disconnecting, the error happens

https://pastebin.... | 1.0 | PlayerQuitEvent - ### Server Implementation

Paper

### Server Version

1.18.2

### Describe the bug

When you kick / ban / disconnect a player, it will show this error in console.

https://pastebin.com/QLPPVP3L

https://pastebin.com/W1t1yNY5

### To Reproduce

By kicking / banning / disconnecting, the error happens

... | test | playerquitevent server implementation paper server version describe the bug when you kick ban disconnect a player it will show this error in console to reproduce by kicking banning disconnecting the error happens expected behaviour not getting errors scree... | 1 |

159,395 | 12,474,935,618 | IssuesEvent | 2020-05-29 10:34:33 | redhat-developer/service-binding-operator | https://api.github.com/repos/redhat-developer/service-binding-operator | opened | Add tests for Empty Service Selector scenario | unit-test | ## Motivation

Unit tests for [empty service selector scenario](https://github.com/redhat-developer/service-binding-operator/blob/master/pkg/controller/servicebindingrequest/reconciler.go#L131) are missing which is needed.

| 1.0 | Add tests for Empty Service Selector scenario - ## Motivation

Unit tests for [empty service selector scenario](https://github.com/redhat-developer/service-binding-operator/blob/master/pkg/controller/servicebindingrequest/reconciler.go#L131) are missing which is needed.

| test | add tests for empty service selector scenario motivation unit tests for are missing which is needed | 1 |

743,122 | 25,888,000,322 | IssuesEvent | 2022-12-14 15:50:14 | SETI/pds-oops | https://api.github.com/repos/SETI/pds-oops | opened | Remove / deprecate PolynimialFOV and RadialFOV classes | A-Cleanup Effort 3 Easy B-OOPS Priority 5 Minor | These classes will be superseded by PolyFOV and BarrelFOV (see issue #26). Once they are adequately validated, these current classes can be removed. | 1.0 | Remove / deprecate PolynimialFOV and RadialFOV classes - These classes will be superseded by PolyFOV and BarrelFOV (see issue #26). Once they are adequately validated, these current classes can be removed. | non_test | remove deprecate polynimialfov and radialfov classes these classes will be superseded by polyfov and barrelfov see issue once they are adequately validated these current classes can be removed | 0 |

303,448 | 26,208,021,678 | IssuesEvent | 2023-01-04 01:44:25 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional_with_es_ssl/apps/discover/search_source_alert·ts - Discover alerting Search source Alert should show time field validation error | failed-test Team:DataDiscovery | A test failed on a tracked branch

```

TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="esQueryAlertExpressionError"])

Wait timed out after 10281ms

at /var/lib/buildkite-agent/builds/kb-n2-4-spot-67b254da5c2dae6f/elastic/kibana-on-merge/kibana/node_modules/selenium-webdriver/lib/web... | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional_with_es_ssl/apps/discover/search_source_alert·ts - Discover alerting Search source Alert should show time field validation error - A test failed on a tracked branch

```

TimeoutError: Waiting for element to be located By(css selector, [data-test-sub... | test | failing test chrome x pack ui functional tests x pack test functional with es ssl apps discover search source alert·ts discover alerting search source alert should show time field validation error a test failed on a tracked branch timeouterror waiting for element to be located by css selector wait timed ... | 1 |

585,770 | 17,533,554,005 | IssuesEvent | 2021-08-12 02:20:29 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Unable to install grpcio on Alpine Linux distro | kind/bug priority/P2 | <!--

PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

For questions that specifically need to be answered by gRPC t... | 1.0 | Unable to install grpcio on Alpine Linux distro - <!--

PLEASE DO NOT POST A QUESTION HERE.

This form is for bug reports and feature requests ONLY!

For general questions and troubleshooting, please ask/look for answers at StackOverflow, with "grpc" tag: https://stackoverflow.com/questions/tagged/grpc

For questio... | non_test | unable to install grpcio on alpine linux distro please do not post a question here this form is for bug reports and feature requests only for general questions and troubleshooting please ask look for answers at stackoverflow with grpc tag for questions that specifically need to be answered by gr... | 0 |

59,446 | 24,769,314,750 | IssuesEvent | 2022-10-22 23:51:06 | covid-projections/act-now-links-service | https://api.github.com/repos/covid-projections/act-now-links-service | closed | `/getShareLinkUrl` requests being blocked due to (an assumed) CORS issue | bug links-service | Requests to the links service began failing unexpectedly (without any changes as far as I can d) due to a CORS issue.

Requests succeed in [CORS testers](https://cors-test.codehappy.dev/?url=https%3A%2F%2Fus-central1-act-now-links-dev.cloudfunctions.net%2Fapi%2FgetShareLinkUrl%2Fhttp%3A%2F%2Fhackathon-september-2022-... | 1.0 | `/getShareLinkUrl` requests being blocked due to (an assumed) CORS issue - Requests to the links service began failing unexpectedly (without any changes as far as I can d) due to a CORS issue.

Requests succeed in [CORS testers](https://cors-test.codehappy.dev/?url=https%3A%2F%2Fus-central1-act-now-links-dev.cloudfun... | non_test | getsharelinkurl requests being blocked due to an assumed cors issue requests to the links service began failing unexpectedly without any changes as far as i can d due to a cors issue requests succeed in example of blocked failed request note the permenantly moved response afaik t... | 0 |

546,159 | 16,005,290,590 | IssuesEvent | 2021-04-20 01:33:23 | membermatters/MemberMatters | https://api.github.com/repos/membermatters/MemberMatters | closed | Long login sessions | bug high priority | It would be great to have long sticky login sessions so I don't have to auth every time I open the portal. | 1.0 | Long login sessions - It would be great to have long sticky login sessions so I don't have to auth every time I open the portal. | non_test | long login sessions it would be great to have long sticky login sessions so i don t have to auth every time i open the portal | 0 |

36,698 | 5,077,609,370 | IssuesEvent | 2016-12-28 10:55:54 | cyphar/umoci | https://api.github.com/repos/cyphar/umoci | opened | test: increase coverage | test/unit | I'd prefer if we can hit a unit test coverage of ~80%. Currently it's not enough testing IMO. The problem is that there's a lot of error paths that we will not be able to hit. | 1.0 | test: increase coverage - I'd prefer if we can hit a unit test coverage of ~80%. Currently it's not enough testing IMO. The problem is that there's a lot of error paths that we will not be able to hit. | test | test increase coverage i d prefer if we can hit a unit test coverage of currently it s not enough testing imo the problem is that there s a lot of error paths that we will not be able to hit | 1 |

112,231 | 9,558,240,545 | IssuesEvent | 2019-05-03 13:46:07 | saltstack/salt | https://api.github.com/repos/saltstack/salt | closed | unit.utils.test_vmware.DisconnectTestCase.test_disconnect_raise_vim_fault | 2019.2.1 Test Failure | 2019.2.1 failed [salt-fedora-29-py3](https://jenkinsci.saltstack.com/job/2019.2.1/job/salt-fedora-29-py3/11/testReport/junit/unit.utils.test_vmware/DisconnectTestCase/test_disconnect_raise_vim_fault)

---

<module 'salt.utils.vmware' from '/tmp/kitchen/testing/salt/utils/vmware.py'> does not have the attribute 'Disco... | 1.0 | unit.utils.test_vmware.DisconnectTestCase.test_disconnect_raise_vim_fault - 2019.2.1 failed [salt-fedora-29-py3](https://jenkinsci.saltstack.com/job/2019.2.1/job/salt-fedora-29-py3/11/testReport/junit/unit.utils.test_vmware/DisconnectTestCase/test_disconnect_raise_vim_fault)

---

<module 'salt.utils.vmware' from '/t... | test | unit utils test vmware disconnecttestcase test disconnect raise vim fault failed does not have the attribute disconnect traceback most recent call last file tmp kitchen testing tests unit utils test vmware py line in test disconnect raise vim fault with patch salt ut... | 1 |

23,450 | 11,966,016,681 | IssuesEvent | 2020-04-06 01:50:19 | rapidsai/cudf | https://api.github.com/repos/rapidsai/cudf | closed | [BUG] Reading huge csv by chunks is too slow | Performance bug cuIO | **Bug description**

I want to read an 8.4G CSV file with 100 millions lines. To do that, I'm reading a million lines by step using the _nrows_ and _skiprows_ argument of _cudf.read_csv_. But, each iteration take at least 42 seconds.

I'm doing a batching process:

* read a chunk of the csv file and create a datafram... | True | [BUG] Reading huge csv by chunks is too slow - **Bug description**

I want to read an 8.4G CSV file with 100 millions lines. To do that, I'm reading a million lines by step using the _nrows_ and _skiprows_ argument of _cudf.read_csv_. But, each iteration take at least 42 seconds.

I'm doing a batching process:

* rea... | non_test | reading huge csv by chunks is too slow bug description i want to read an csv file with millions lines to do that i m reading a million lines by step using the nrows and skiprows argument of cudf read csv but each iteration take at least seconds i m doing a batching process read a chun... | 0 |

189,620 | 14,516,718,556 | IssuesEvent | 2020-12-13 16:56:45 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | iwind/GoIM: src/github.com/iwind/TeaMQ/nets/server_test.go; 9 LoC | fresh test tiny |

Found a possible issue in [iwind/GoIM](https://www.github.com/iwind/GoIM) at [src/github.com/iwind/TeaMQ/nets/server_test.go](https://github.com/iwind/GoIM/blob/4644e1d7bc38a64e43d0ce0d311729ea0c2e975b/src/github.com/iwind/TeaMQ/nets/server_test.go#L29-L37)

Below is the message reported by the analyzer for this snipp... | 1.0 | iwind/GoIM: src/github.com/iwind/TeaMQ/nets/server_test.go; 9 LoC -

Found a possible issue in [iwind/GoIM](https://www.github.com/iwind/GoIM) at [src/github.com/iwind/TeaMQ/nets/server_test.go](https://github.com/iwind/GoIM/blob/4644e1d7bc38a64e43d0ce0d311729ea0c2e975b/src/github.com/iwind/TeaMQ/nets/server_test.go#L2... | test | iwind goim src github com iwind teamq nets server test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below messag... | 1 |

48,586 | 20,195,418,594 | IssuesEvent | 2022-02-11 10:12:24 | BBVA-Openweb/uptime-services | https://api.github.com/repos/BBVA-Openweb/uptime-services | closed | 🛑 Openweb Service (PLAY) - Analytics is down | status openweb-service-play-analytics | In [`58f785a`](https://github.com/BBVA-Openweb/uptime-services/commit/58f785a5bbbaff4e2c1759d27f1da0069ebe0fd4

), Openweb Service (PLAY) - Analytics ($OPENWEB_HEALTH_PLAY/analytics) was **down**:

- HTTP code: 0

- Response time: 0 ms

| 1.0 | 🛑 Openweb Service (PLAY) - Analytics is down - In [`58f785a`](https://github.com/BBVA-Openweb/uptime-services/commit/58f785a5bbbaff4e2c1759d27f1da0069ebe0fd4

), Openweb Service (PLAY) - Analytics ($OPENWEB_HEALTH_PLAY/analytics) was **down**:

- HTTP code: 0

- Response time: 0 ms

| non_test | 🛑 openweb service play analytics is down in openweb service play analytics openweb health play analytics was down http code response time ms | 0 |

265,745 | 23,194,734,646 | IssuesEvent | 2022-08-01 15:23:28 | ChainSafe/lodestar | https://api.github.com/repos/ChainSafe/lodestar | closed | Lodestar produced block with invalid attestation during Sepolia instability | scope-testnet-debugging | Post merge Sepolia has 2 unstable epochs

- the first merge slot was https://sepolia.beaconcha.in/block/115193

- Up until slot 115232, we don't see any of the config issue related validators missing a single slot. 115233 however is missed by lodestar and subsequently 115234&115235 are also missed by Besu and Nimbus.... | 1.0 | Lodestar produced block with invalid attestation during Sepolia instability - Post merge Sepolia has 2 unstable epochs

- the first merge slot was https://sepolia.beaconcha.in/block/115193

- Up until slot 115232, we don't see any of the config issue related validators missing a single slot. 115233 however is missed ... | test | lodestar produced block with invalid attestation during sepolia instability post merge sepolia has unstable epochs the first merge slot was up until slot we don t see any of the config issue related validators missing a single slot however is missed by lodestar and subsequently are also missed b... | 1 |

135,266 | 10,968,030,717 | IssuesEvent | 2019-11-28 10:44:18 | DiscordFederation/Erin | https://api.github.com/repos/DiscordFederation/Erin | closed | Improve test coverage | tests | This is not related to #8. Some simple tests can be added to de-coupled methods and functions.

**Task list**:

- [x] Verify `glia init` retrieves proper files

- [x] Ensure [`glia.cli`](https://github.com/DiscordFederation/Glia/tree/124eca51f8dc8485b17411a2c33ebf58e51339a3/glia/cli) commands work

- [x] Test [`glia.c... | 1.0 | Improve test coverage - This is not related to #8. Some simple tests can be added to de-coupled methods and functions.

**Task list**:

- [x] Verify `glia init` retrieves proper files

- [x] Ensure [`glia.cli`](https://github.com/DiscordFederation/Glia/tree/124eca51f8dc8485b17411a2c33ebf58e51339a3/glia/cli) commands ... | test | improve test coverage this is not related to some simple tests can be added to de coupled methods and functions task list verify glia init retrieves proper files ensure commands work test | 1 |

268,712 | 23,391,427,840 | IssuesEvent | 2022-08-11 18:14:04 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Notebook Editor Event - onDidChangeVisibleNotebookEditors on two editor groups | integration-test-failure notebook | https://github.com/microsoft/vscode/runs/4541821400?check_suite_focus=true#step:18:365

```

1) Notebook Editor

Notebook Editor Event - onDidChangeVisibleNotebookEditors on two editor groups:

Error: Timeout of 60000ms exceeded. For async tests and hooks, ensure "done()" is called; if returning a Promise... | 1.0 | Notebook Editor Event - onDidChangeVisibleNotebookEditors on two editor groups - https://github.com/microsoft/vscode/runs/4541821400?check_suite_focus=true#step:18:365

```

1) Notebook Editor

Notebook Editor Event - onDidChangeVisibleNotebookEditors on two editor groups:

Error: Timeout of 60000ms excee... | test | notebook editor event ondidchangevisiblenotebookeditors on two editor groups notebook editor notebook editor event ondidchangevisiblenotebookeditors on two editor groups error timeout of exceeded for async tests and hooks ensure done is called if returning a promise ensure i... | 1 |

292,983 | 25,256,045,079 | IssuesEvent | 2022-11-15 18:09:40 | hashicorp/terraform-provider-google | https://api.github.com/repos/hashicorp/terraform-provider-google | closed | Failing test(s): TestAccApigeeInstance_apigeeInstanceServiceAttachmentBasicTestExample (permadiff causing recreate) | size/xs test failure crosslinked | <!--- This is a template for reporting test failures on nightly builds. It should only be used by core contributors who have access to our CI/CD results. --->

<!-- i.e. "Consistently since X date" or "X% failure in MONTH" -->

Failure rate: 100% since June 11 2022

<!-- List all impacted tests for searchability. T... | 1.0 | Failing test(s): TestAccApigeeInstance_apigeeInstanceServiceAttachmentBasicTestExample (permadiff causing recreate) - <!--- This is a template for reporting test failures on nightly builds. It should only be used by core contributors who have access to our CI/CD results. --->

<!-- i.e. "Consistently since X date" or... | test | failing test s testaccapigeeinstance apigeeinstanceserviceattachmentbasictestexample permadiff causing recreate failure rate since june impacted tests testaccapigeeinstance apigeeinstanceserviceattachmentbasictestexample nightly builds message terraform will pe... | 1 |

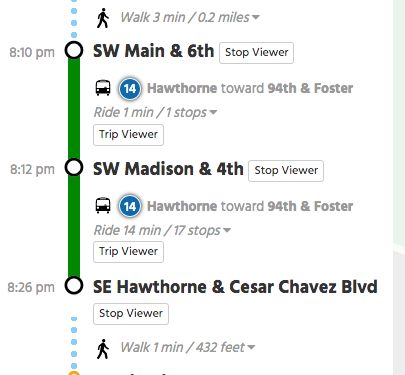

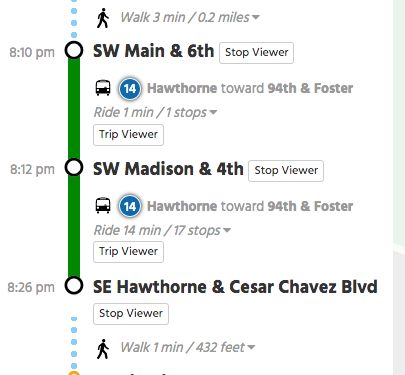

210,550 | 7,190,812,049 | IssuesEvent | 2018-02-02 18:36:47 | conveyal/trimet-mod-otp | https://api.github.com/repos/conveyal/trimet-mod-otp | closed | UI: For legs with one stop (like on an interline), don't list the stops on the "Ride 1 min, 1 stops" drop down. | high priority | If a leg only has 1 stop, don't show that "Ride 1 min, 1 stops" drop down.

NOTE: I don't think we really need the separate Trip Viewer button and view (at minimum will need a way to turn that off as a ... | 1.0 | UI: For legs with one stop (like on an interline), don't list the stops on the "Ride 1 min, 1 stops" drop down. - If a leg only has 1 stop, don't show that "Ride 1 min, 1 stops" drop down.

NOTE: I don'... | non_test | ui for legs with one stop like on an interline don t list the stops on the ride min stops drop down if a leg only has stop don t show that ride min stops drop down note i don t think we really need the separate trip viewer button and view at minimum will need a way to turn that off... | 0 |

134,695 | 10,927,183,003 | IssuesEvent | 2019-11-22 16:09:44 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | [Flaky Test] diffResources test failling in ci-kubernetes-e2e-gci-gce-ingress | kind/failing-test kind/flake priority/important-soon sig/network | <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

ci-kubernetes-e2e-gci-gce-ingress

**Which test(s) are failing**:

diffResources

**Since when has it been failing**:

Failing since 8/8 at around 3pm PDT.

**Testgrid link**:

h... | 1.0 | [Flaky Test] diffResources test failling in ci-kubernetes-e2e-gci-gce-ingress - <!-- Please only use this template for submitting reports about failing tests in Kubernetes CI jobs -->

**Which jobs are failing**:

ci-kubernetes-e2e-gci-gce-ingress

**Which test(s) are failing**:

diffResources

**Since when has i... | test | diffresources test failling in ci kubernetes gci gce ingress which jobs are failing ci kubernetes gci gce ingress which test s are failing diffresources since when has it been failing failing since at around pdt testgrid link reason for failure ... | 1 |

88,604 | 17,615,062,698 | IssuesEvent | 2021-08-18 08:42:28 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4] No "real" error page, always a "page not found" | No Code Attached Yet | ### Steps to reproduce the issue

generate a 403 or php fatal issue in Joomla 4

### Expected result

403/Error page

### Actual result

"The requested page can't be found." (which is factually incorrect)

<img width="994" alt="Screenshot 2021-08-17 at 22 49 51" src="https://user-images.githubusercontent.c... | 1.0 | [4] No "real" error page, always a "page not found" - ### Steps to reproduce the issue

generate a 403 or php fatal issue in Joomla 4

### Expected result

403/Error page

### Actual result

"The requested page can't be found." (which is factually incorrect)

<img width="994" alt="Screenshot 2021-08-17 at ... | non_test | no real error page always a page not found steps to reproduce the issue generate a or php fatal issue in joomla expected result error page actual result the requested page can t be found which is factually incorrect img width alt screenshot at src ... | 0 |

498,169 | 14,402,361,842 | IssuesEvent | 2020-12-03 14:49:21 | mlr-org/mlr3tuning | https://api.github.com/repos/mlr-org/mlr3tuning | opened | Avoid storing learner with TuneToken and search space in ObjectiveTuning | Priority: Medium | If a learner with `TuneToken` is supplied, a search space is generated in `TuningInstanceSingleCrit$initialize()`, `TuningInstanceMultiCrit$initialize()` and `AutoTuner$initialize()`. We decided that we remove `TuneToken`s from learners before they are stored in `ObjectiveTuning`. They are not needed anymore because th... | 1.0 | Avoid storing learner with TuneToken and search space in ObjectiveTuning - If a learner with `TuneToken` is supplied, a search space is generated in `TuningInstanceSingleCrit$initialize()`, `TuningInstanceMultiCrit$initialize()` and `AutoTuner$initialize()`. We decided that we remove `TuneToken`s from learners before t... | non_test | avoid storing learner with tunetoken and search space in objectivetuning if a learner with tunetoken is supplied a search space is generated in tuninginstancesinglecrit initialize tuninginstancemulticrit initialize and autotuner initialize we decided that we remove tunetoken s from learners before t... | 0 |

74,820 | 7,446,189,605 | IssuesEvent | 2018-03-28 08:19:07 | datahq/datahub-qa | https://api.github.com/repos/datahq/datahub-qa | closed | Search works unexpected with connective words (the, in, on, etc) | Severity: Minor Tested: Success | Connective words and articles words make search results invalid :(

This is incorrect. I think we should exclude such words from the filter conditions.

## How to reproduce

#### http://datahub.io/search?q=Mauna+Loa

- 2 have 'Mauna Loa' in the title

- 1 have 'Mauna Loa' in the Readme

This is correct

#### ht... | 1.0 | Search works unexpected with connective words (the, in, on, etc) - Connective words and articles words make search results invalid :(

This is incorrect. I think we should exclude such words from the filter conditions.

## How to reproduce

#### http://datahub.io/search?q=Mauna+Loa

- 2 have 'Mauna Loa' in the titl... | test | search works unexpected with connective words the in on etc connective words and articles words make search results invalid this is incorrect i think we should exclude such words from the filter conditions how to reproduce have mauna loa in the title have mauna loa in the read... | 1 |

98,447 | 8,677,510,990 | IssuesEvent | 2018-11-30 16:58:54 | SME-Issues/issues | https://api.github.com/repos/SME-Issues/issues | closed | Test Summary - 30/11/2018 - 5004 | NLP Api pulse_tests | ### Intent

- **Intent Errors: 3** (#1479)

### Canonical

- Query Invoice Tests Canonical (250): **91%** pass (212), 20 failed understood (#1477)

### Comprehension

- Query Invoice Tests Comprehension Partial (22): **42%** pass (8), 11 failed understood (#1478)

| 1.0 | Test Summary - 30/11/2018 - 5004 - ### Intent

- **Intent Errors: 3** (#1479)

### Canonical

- Query Invoice Tests Canonical (250): **91%** pass (212), 20 failed understood (#1477)

### Comprehension

- Query Invoice Tests Comprehension Partial (22): **42%** pass (8), 11 failed understood (#1478)

| test | test summary intent intent errors canonical query invoice tests canonical pass failed understood comprehension query invoice tests comprehension partial pass failed understood | 1 |

35,300 | 17,019,788,201 | IssuesEvent | 2021-07-02 17:01:07 | LiveSplit/LiveSplitOne | https://api.github.com/repos/LiveSplit/LiveSplitOne | closed | Inline main JS bundle into HTML | enhancement performance suitable for contributions | We should look into a way to inline the main JavaScript bundle into the HTML. If you look at the request waterfall, you can see that we first request the HTML as usual, then the bundle.js and then that one requests all the other resources in parallel. The first chunk however is very minimal, so the browser shouldn't ne... | True | Inline main JS bundle into HTML - We should look into a way to inline the main JavaScript bundle into the HTML. If you look at the request waterfall, you can see that we first request the HTML as usual, then the bundle.js and then that one requests all the other resources in parallel. The first chunk however is very mi... | non_test | inline main js bundle into html we should look into a way to inline the main javascript bundle into the html if you look at the request waterfall you can see that we first request the html as usual then the bundle js and then that one requests all the other resources in parallel the first chunk however is very mi... | 0 |

264,151 | 8,305,870,794 | IssuesEvent | 2018-09-22 12:29:25 | Bro-Time/Bro-Time-Server | https://api.github.com/repos/Bro-Time/Bro-Time-Server | closed | BroBit Dropping in-chat | priority: medium | Every 4-7 minutes, 1-3 BroBits will drop in the chat and users should be able to pick them up using !pick, or other commands (will be listed below). This system will only be put in #hangout.

# Functionality:

- Randomize # of minutes after a message is sent

15% - 4 minutes after a message is sent

25% - 5 minutes a... | 1.0 | BroBit Dropping in-chat - Every 4-7 minutes, 1-3 BroBits will drop in the chat and users should be able to pick them up using !pick, or other commands (will be listed below). This system will only be put in #hangout.

# Functionality:

- Randomize # of minutes after a message is sent

15% - 4 minutes after a message ... | non_test | brobit dropping in chat every minutes brobits will drop in the chat and users should be able to pick them up using pick or other commands will be listed below this system will only be put in hangout functionality randomize of minutes after a message is sent minutes after a message i... | 0 |

314,576 | 27,012,059,758 | IssuesEvent | 2023-02-10 16:08:39 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix raw_ops.test_tensorflow_Sigmoid | TensorFlow Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4012329973/jobs/6890687587" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4012329973/jobs/6890687587" rel="noopener ... | 1.0 | Fix raw_ops.test_tensorflow_Sigmoid - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4012329973/jobs/6890687587" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4012... | test | fix raw ops test tensorflow sigmoid tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test tensorflow test raw ops py test tensorflow sigmoid e runtimeerror sigmoid cpu not implemented for h... | 1 |

44,735 | 5,642,133,135 | IssuesEvent | 2017-04-06 20:24:29 | camile024/tsam | https://api.github.com/repos/camile024/tsam | closed | File removal - testing | Fixed (if closed)/Fixed in next update (if open) needs testing | Old file removal needs testing if works properly (the system call in main.c) | 1.0 | File removal - testing - Old file removal needs testing if works properly (the system call in main.c) | test | file removal testing old file removal needs testing if works properly the system call in main c | 1 |

640,998 | 20,814,537,327 | IssuesEvent | 2022-03-18 08:46:51 | ASE-Projekte-WS-2021/ase-ws-21-unser-horsaal | https://api.github.com/repos/ASE-Projekte-WS-2021/ase-ws-21-unser-horsaal | closed | (PROFIL) Profildaten ändern | Medium Priority | - [x] Als Student:in möchte ich im Profil meine Email, Passwort und Nutzername ändern können, um das Profil meinen Bedürfnissen anpassen zu können. | 1.0 | (PROFIL) Profildaten ändern - - [x] Als Student:in möchte ich im Profil meine Email, Passwort und Nutzername ändern können, um das Profil meinen Bedürfnissen anpassen zu können. | non_test | profil profildaten ändern als student in möchte ich im profil meine email passwort und nutzername ändern können um das profil meinen bedürfnissen anpassen zu können | 0 |

93,399 | 15,886,055,909 | IssuesEvent | 2021-04-09 21:45:42 | garymsegal-ws-org/dev-example-places | https://api.github.com/repos/garymsegal-ws-org/dev-example-places | opened | CVE-2021-25122 (High) detected in tomcat-embed-core-9.0.35.jar, tomcat-embed-core-9.0.36.jar | security vulnerability | ## CVE-2021-25122 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tomcat-embed-core-9.0.35.jar</b>, <b>tomcat-embed-core-9.0.36.jar</b></p></summary>

<p>

<details><summary><b>tomcat-... | True | CVE-2021-25122 (High) detected in tomcat-embed-core-9.0.35.jar, tomcat-embed-core-9.0.36.jar - ## CVE-2021-25122 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tomcat-embed-core-9.0.... | non_test | cve high detected in tomcat embed core jar tomcat embed core jar cve high severity vulnerability vulnerable libraries tomcat embed core jar tomcat embed core jar tomcat embed core jar core tomcat implementation library home page a href path... | 0 |

63,282 | 15,553,537,784 | IssuesEvent | 2021-03-16 01:41:21 | JacobUsgaard/FlameSymbol | https://api.github.com/repos/JacobUsgaard/FlameSymbol | opened | Add Release github action | build | When the time comes, there should be a github actions for when a release is created | 1.0 | Add Release github action - When the time comes, there should be a github actions for when a release is created | non_test | add release github action when the time comes there should be a github actions for when a release is created | 0 |

184,079 | 14,270,127,182 | IssuesEvent | 2020-11-21 05:07:26 | adamconnelly/Thrift.Net | https://api.github.com/repos/adamconnelly/Thrift.Net | closed | Testing and code coverage strategy | component/testing | Having a well thought out and really solid automated testing strategy is crucial for the project to allow us to grow and accept contributions from more contributors, as well as being able to rapidly release changes as soon as they are made. At the moment we've got unit tests running and code coverage results published ... | 1.0 | Testing and code coverage strategy - Having a well thought out and really solid automated testing strategy is crucial for the project to allow us to grow and accept contributions from more contributors, as well as being able to rapidly release changes as soon as they are made. At the moment we've got unit tests running... | test | testing and code coverage strategy having a well thought out and really solid automated testing strategy is crucial for the project to allow us to grow and accept contributions from more contributors as well as being able to rapidly release changes as soon as they are made at the moment we ve got unit tests running... | 1 |

50,468 | 21,111,618,108 | IssuesEvent | 2022-04-05 02:43:12 | dotnet/fsharp | https://api.github.com/repos/dotnet/fsharp | closed | Something is referring to 3 strings in the FSharpPackage that cannot be found | Area-LangService Area-Setup Feature Improvement | My ActivityLog states the following:

``` XML

-<entry>

<record>622</record>

<time>2019/02/19 00:11:32.518</time>

<type>Warning</type>

<source>VisualStudio</source>

<description>Performance warning: String load failed. Pkg:{871D2A70-12A2-4E42-9440-425DD92A4116} (FSharpPackage) LANG:0409 ID:6000 </description>

<... | 1.0 | Something is referring to 3 strings in the FSharpPackage that cannot be found - My ActivityLog states the following:

``` XML

-<entry>

<record>622</record>

<time>2019/02/19 00:11:32.518</time>

<type>Warning</type>

<source>VisualStudio</source>

<description>Performance warning: String load failed. Pkg:{871D2A70-... | non_test | something is referring to strings in the fsharppackage that cannot be found my activitylog states the following xml warning visualstudio performance warning string load failed pkg fsharppackage lang id warning visual... | 0 |

248,224 | 21,003,413,753 | IssuesEvent | 2022-03-29 19:48:29 | pulp/pulpcore | https://api.github.com/repos/pulp/pulpcore | closed | Test task child/parent tracking | Tests Finished? | Author: @dralley (dalley)

Redmine Issue: 6431, https://pulp.plan.io/issues/6431

---

Task parentage functionality was added without tests, because it is difficult to test via the standard means. Task parent/child relationships can only be set up through the plugin API, so the only way to test this would be:

* man... | 1.0 | Test task child/parent tracking - Author: @dralley (dalley)

Redmine Issue: 6431, https://pulp.plan.io/issues/6431

---

Task parentage functionality was added without tests, because it is difficult to test via the standard means. Task parent/child relationships can only be set up through the plugin API, so the only w... | test | test task child parent tracking author dralley dalley redmine issue task parentage functionality was added without tests because it is difficult to test via the standard means task parent child relationships can only be set up through the plugin api so the only way to test this would be man... | 1 |

103,672 | 8,925,772,015 | IssuesEvent | 2019-01-22 00:37:53 | linkerd/linkerd2 | https://api.github.com/repos/linkerd/linkerd2 | closed | Proxy: Intermittent inbound_tcp test failure | area/proxy area/test priority/P2 wontfix | ```

running 5 tests

test outbound_times_out ... ignored

server h1 error: invalid HTTP version specified

ERROR:conduit_proxy::map_err: turning service error into 500: Inner(Upstream(Inner(Inner(Inner(Error { kind: Inner(Error { kind: Proto(FRAME_SIZE_ERROR) }) })))))

test outbound_uses_orig_dst_if_not_local_svc ...... | 1.0 | Proxy: Intermittent inbound_tcp test failure - ```

running 5 tests

test outbound_times_out ... ignored

server h1 error: invalid HTTP version specified

ERROR:conduit_proxy::map_err: turning service error into 500: Inner(Upstream(Inner(Inner(Inner(Error { kind: Inner(Error { kind: Proto(FRAME_SIZE_ERROR) }) })))))

t... | test | proxy intermittent inbound tcp test failure running tests test outbound times out ignored server error invalid http version specified error conduit proxy map err turning service error into inner upstream inner inner inner error kind inner error kind proto frame size error test... | 1 |

11,860 | 18,276,674,529 | IssuesEvent | 2021-10-04 19:43:28 | CMPUT301F21T09/BudgetProjectName | https://api.github.com/repos/CMPUT301F21T09/BudgetProjectName | closed | RE-HE #10: User should be able to make a habit event | user requirement | :exclamation: This requirement is based off of a user story that requires more investigation with the client

A user should be able to make a habit event as a marker for completing a specific habit they own on a given day.

A habit event should only be able to be created on today (confirm with client if creating habi... | 1.0 | RE-HE #10: User should be able to make a habit event - :exclamation: This requirement is based off of a user story that requires more investigation with the client

A user should be able to make a habit event as a marker for completing a specific habit they own on a given day.

A habit event should only be able to be... | non_test | re he user should be able to make a habit event exclamation this requirement is based off of a user story that requires more investigation with the client a user should be able to make a habit event as a marker for completing a specific habit they own on a given day a habit event should only be able to be ... | 0 |

164,602 | 12,809,119,461 | IssuesEvent | 2020-07-03 14:57:50 | aliasrobotics/RVD | https://api.github.com/repos/aliasrobotics/RVD | closed | RVD#3116: CWE-134 (format), If format strings can be influenced by an attacker, they can be exploi... @ vers/boards/tap-v1/sdio.c:80 | CWE-134 bug components software flawfinder flawfinder_level_4 mitigated robot component: PX4 static analysis testing triage version: v1.8.0 | ```yaml

id: 3116

title: 'RVD#3116: CWE-134 (format), If format strings can be influenced by an attacker,

they can be exploi... @ vers/boards/tap-v1/sdio.c:80'

type: bug

description: If format strings can be influenced by an attacker, they can be exploited

(CWE-134). Use a constant for the format specification. . Ha... | 1.0 | RVD#3116: CWE-134 (format), If format strings can be influenced by an attacker, they can be exploi... @ vers/boards/tap-v1/sdio.c:80 - ```yaml

id: 3116

title: 'RVD#3116: CWE-134 (format), If format strings can be influenced by an attacker,

they can be exploi... @ vers/boards/tap-v1/sdio.c:80'

type: bug

description: I... | test | rvd cwe format if format strings can be influenced by an attacker they can be exploi vers boards tap sdio c yaml id title rvd cwe format if format strings can be influenced by an attacker they can be exploi vers boards tap sdio c type bug description if format strings ... | 1 |

246,176 | 26,600,345,026 | IssuesEvent | 2023-01-23 15:21:15 | lukebrogan-mend/django.nV | https://api.github.com/repos/lukebrogan-mend/django.nV | closed | CVE-2016-2513 (Low) detected in Django-1.8.3-py2.py3-none-any.whl - autoclosed | security vulnerability | ## CVE-2016-2513 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.8.3-py2.py3-none-any.whl</b></p></summary>

<p>A high-level Python Web framework that encourages rapid developme... | True | CVE-2016-2513 (Low) detected in Django-1.8.3-py2.py3-none-any.whl - autoclosed - ## CVE-2016-2513 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.8.3-py2.py3-none-any.whl</b></p... | non_test | cve low detected in django none any whl autoclosed cve low severity vulnerability vulnerable library django none any whl a high level python web framework that encourages rapid development and clean pragmatic design library home page a href path to dependency... | 0 |

98,764 | 8,685,445,805 | IssuesEvent | 2018-12-03 07:46:28 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | closed | FX Testing 3 : ApiV1OrgsIdGetPathParamIdNullValue | FX Testing 3 | Project : FX Testing 3

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cooki... | 1.0 | FX Testing 3 : ApiV1OrgsIdGetPathParamIdNullValue - Project : FX Testing 3

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-ca... | test | fx testing project fx testing job uat env uat region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint r... | 1 |

444,753 | 31,145,262,547 | IssuesEvent | 2023-08-16 05:45:52 | ErlisI/TTP-CAPSTONE | https://api.github.com/repos/ErlisI/TTP-CAPSTONE | closed | As a Business Owner | documentation | As a Business Owner, I often find myself overwhelmed with the numerous tasks involved in managing my restaurant efficiently. I need a comprehensive restaurant management app that can help me streamline and centralize all aspects of my business in one place. | 1.0 | As a Business Owner - As a Business Owner, I often find myself overwhelmed with the numerous tasks involved in managing my restaurant efficiently. I need a comprehensive restaurant management app that can help me streamline and centralize all aspects of my business in one place. | non_test | as a business owner as a business owner i often find myself overwhelmed with the numerous tasks involved in managing my restaurant efficiently i need a comprehensive restaurant management app that can help me streamline and centralize all aspects of my business in one place | 0 |

60,652 | 14,576,715,089 | IssuesEvent | 2020-12-18 00:09:15 | h2oai/wave | https://api.github.com/repos/h2oai/wave | closed | Propagate OIDC refresh token to Python client. | feature security | `Access` token is already accessible in Python client. For us to be able to access DAI and MLOps we need also `refresh` token.

## Goal

Propagate `refresh` token the same way as `access` token to Python client. It should be present in `q.auth.refresh_token` if OIDC enabled. | True | Propagate OIDC refresh token to Python client. - `Access` token is already accessible in Python client. For us to be able to access DAI and MLOps we need also `refresh` token.

## Goal

Propagate `refresh` token the same way as `access` token to Python client. It should be present in `q.auth.refresh_token` if OIDC en... | non_test | propagate oidc refresh token to python client access token is already accessible in python client for us to be able to access dai and mlops we need also refresh token goal propagate refresh token the same way as access token to python client it should be present in q auth refresh token if oidc en... | 0 |

280,014 | 24,273,735,161 | IssuesEvent | 2022-09-28 12:25:09 | elastic/kibana | https://api.github.com/repos/elastic/kibana | reopened | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/ml/data_frame_analytics/regression_creation·ts - machine learning data frame analytics regression creation electrical grid stability navigates through the wizard and sets all needed fields | :ml failed-test | A test failed on a tracked branch

```

Error: mlAnalyticsCreateJobWizardTrainingPercentSlider slider value should be '20' (got '10')

at Assertion.assert (/dev/shm/workspace/parallel/7/kibana/packages/kbn-expect/expect.js:100:11)

at Assertion.eql (/dev/shm/workspace/parallel/7/kibana/packages/kbn-expect/expect.j... | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/ml/data_frame_analytics/regression_creation·ts - machine learning data frame analytics regression creation electrical grid stability navigates through the wizard and sets all needed fields - A test failed on a tracked branch

```

Error: mlAnal... | test | failing test chrome x pack ui functional tests x pack test functional apps ml data frame analytics regression creation·ts machine learning data frame analytics regression creation electrical grid stability navigates through the wizard and sets all needed fields a test failed on a tracked branch error mlanal... | 1 |

136,903 | 11,092,251,898 | IssuesEvent | 2019-12-15 17:43:40 | ayumi-cloud/oc-security-module | https://api.github.com/repos/ayumi-cloud/oc-security-module | closed | Add blank Ahrefs Crawler records to whitelist | Add to Whitelist FINSIHED Firewall Definitions Priority: Medium Testing - Passed enhancement | ### Enhancement idea

- [x] Add blank Ahrefs Crawler records to whitelist.

Fields | Details

---|---

ISP | OVH SAS

Type | Data Center/Web Hosting/Transit

Hostname | ip-xxx.xxx.xxx.xxx.a.ahrefs.com

Domain | ovh.com

Country | France

City | Roubaix, Hauts-de-France

Note: Has two different bots one for crawli... | 1.0 | Add blank Ahrefs Crawler records to whitelist - ### Enhancement idea

- [x] Add blank Ahrefs Crawler records to whitelist.

Fields | Details

---|---

ISP | OVH SAS

Type | Data Center/Web Hosting/Transit

Hostname | ip-xxx.xxx.xxx.xxx.a.ahrefs.com

Domain | ovh.com

Country | France

City | Roubaix, Hauts-de-Franc... | test | add blank ahrefs crawler records to whitelist enhancement idea add blank ahrefs crawler records to whitelist fields details isp ovh sas type data center web hosting transit hostname ip xxx xxx xxx xxx a ahrefs com domain ovh com country france city roubaix hauts de france ... | 1 |

12,960 | 15,214,359,164 | IssuesEvent | 2021-02-17 13:06:32 | BauhausLuftfahrt/PAXelerate | https://api.github.com/repos/BauhausLuftfahrt/PAXelerate | closed | Check functionalities of local EMFStore | compatibilty | - import and export of models

- versionizing

- exchange

see #88 for potential error

| True | Check functionalities of local EMFStore - - import and export of models

- versionizing

- exchange

see #88 for potential error

| non_test | check functionalities of local emfstore import and export of models versionizing exchange see for potential error | 0 |

212,912 | 16,504,091,636 | IssuesEvent | 2021-05-25 17:05:05 | Accenture/AmpliGraph | https://api.github.com/repos/Accenture/AmpliGraph | closed | Update docs of BCE loss | quality & documentation | **Background and Context**

In the constructor of the BCE loss, we need to add details of label smoothing and label weighting under loss params. It is missing currently

**Description**

| 1.0 | Update docs of BCE loss - **Background and Context**

In the constructor of the BCE loss, we need to add details of label smoothing and label weighting under loss params. It is missing currently

**Description**

| non_test | update docs of bce loss background and context in the constructor of the bce loss we need to add details of label smoothing and label weighting under loss params it is missing currently description | 0 |

250,753 | 7,987,206,817 | IssuesEvent | 2018-07-19 06:52:38 | ess-dmsc/forward-epics-to-kafka | https://api.github.com/repos/ess-dmsc/forward-epics-to-kafka | closed | Is a scalar value message really 112 bytes? | high priority | If the statistics reported by the Forwarder are correct then a scalar value pv update message is 112 bytes. This seems very large. | 1.0 | Is a scalar value message really 112 bytes? - If the statistics reported by the Forwarder are correct then a scalar value pv update message is 112 bytes. This seems very large. | non_test | is a scalar value message really bytes if the statistics reported by the forwarder are correct then a scalar value pv update message is bytes this seems very large | 0 |

104,320 | 13,055,539,797 | IssuesEvent | 2020-07-30 01:59:54 | alice-i-cecile/Fonts-of-Power | https://api.github.com/repos/alice-i-cecile/Fonts-of-Power | closed | Try to fix interaction between prismatic and weapon swapping | balance design | Really really sad to lose all your power.

Maybe one prismatic affix per piece of gear?? | 1.0 | Try to fix interaction between prismatic and weapon swapping - Really really sad to lose all your power.

Maybe one prismatic affix per piece of gear?? | non_test | try to fix interaction between prismatic and weapon swapping really really sad to lose all your power maybe one prismatic affix per piece of gear | 0 |

161,024 | 12,529,897,772 | IssuesEvent | 2020-06-04 12:10:53 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | storage: TestInOrderDelivery failed under stress | C-test-failure O-robot branch-master | SHA: https://github.com/cockroachdb/cockroach/commits/cf4d9a46193b9fc2c63b7adf89b3b0b5c84adb2b

Parameters:

```

TAGS=

GOFLAGS=

```

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present themselves. For example,

# using stres... | 1.0 | storage: TestInOrderDelivery failed under stress - SHA: https://github.com/cockroachdb/cockroach/commits/cf4d9a46193b9fc2c63b7adf89b3b0b5c84adb2b

Parameters:

```

TAGS=

GOFLAGS=

```

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired res... | test | storage testinorderdelivery failed under stress sha parameters tags goflags to repro try don t forget to check out a clean suitable branch and experiment with the stress invocation until the desired results present themselves for example using stress instead of stressrace and passing t... | 1 |

125,219 | 17,835,974,710 | IssuesEvent | 2021-09-03 01:09:38 | varkalaramalingam/test-drone-build | https://api.github.com/repos/varkalaramalingam/test-drone-build | opened | CVE-2021-37712 (High) detected in tar-6.1.0.tgz | security vulnerability | ## CVE-2021-37712 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-6.1.0.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/ta... | True | CVE-2021-37712 (High) detected in tar-6.1.0.tgz - ## CVE-2021-37712 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-6.1.0.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home ... | non_test | cve high detected in tar tgz cve high severity vulnerability vulnerable library tar tgz tar for node library home page a href path to dependency file test drone build package json path to vulnerable library test drone build node modules tar package json dependenc... | 0 |

88,220 | 8,135,671,588 | IssuesEvent | 2018-08-20 04:38:54 | istio/istio | https://api.github.com/repos/istio/istio | closed | istio/tools/setup_run and update_all out of date | area/perf and scalability area/test and release stale | Both scripts contain references to bazel artifacts.

| 1.0 | istio/tools/setup_run and update_all out of date - Both scripts contain references to bazel artifacts.

| test | istio tools setup run and update all out of date both scripts contain references to bazel artifacts | 1 |

549,186 | 16,087,457,148 | IssuesEvent | 2021-04-26 13:03:15 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.gob.mx - site is not usable | browser-firefox engine-gecko ml-needsdiagnosis-false os-linux priority-normal | <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/71469 -->

**URL**: https://www.gob.mx/curp/

**Browser / Version**: Firefox 78.0

**Operating... | 1.0 | www.gob.mx - site is not usable - <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (X11; Linux x86_64; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/71469 -->

**URL**: https://www.gob.mx/curp/

**Browser / V... | non_test | site is not usable url browser version firefox operating system linux tested another browser yes chrome problem type site is not usable description buttons or links not working steps to reproduce no descarga el pdf de el curp solo se queda pensando ... | 0 |

140,617 | 11,353,617,075 | IssuesEvent | 2020-01-24 15:54:36 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Test: backup and hot-exit | testplan-item | Refs: https://github.com/Microsoft/vscode/issues/84672

- [ ] anyOS

- [ ] anyOS

Complexity: 3

This milestone, backup / hot-exit was rewritten to work with custom editors. This test plan item is to verify there are no regressions for existing text based editors.

Steps:

* work with dirty files and dirty unti... | 1.0 | Test: backup and hot-exit - Refs: https://github.com/Microsoft/vscode/issues/84672

- [ ] anyOS

- [ ] anyOS

Complexity: 3

This milestone, backup / hot-exit was rewritten to work with custom editors. This test plan item is to verify there are no regressions for existing text based editors.

Steps:

* work wit... | test | test backup and hot exit refs anyos anyos complexity this milestone backup hot exit was rewritten to work with custom editors this test plan item is to verify there are no regressions for existing text based editors steps work with dirty files and dirty untitled editors with de... | 1 |

611,814 | 18,981,955,674 | IssuesEvent | 2021-11-21 02:52:40 | code-ready/crc | https://api.github.com/repos/code-ready/crc | closed | [BUG] `crc start` exits with "Failed to update cluster ID" | kind/bug priority/minor status/stale | ### General information

* OS: Linux

* Hypervisor: KVM

## CRC version

master with a 4.7.11 bundle

### Steps to reproduce

1. `crc start -b ~/Downloads/crc_libvirt_4.7.11.crcbundle --log-level debug -p ~/pull-secret.txt`

It happened only once for me.

### Expected

A Working cluster

### A... | 1.0 | [BUG] `crc start` exits with "Failed to update cluster ID" - ### General information

* OS: Linux

* Hypervisor: KVM

## CRC version

master with a 4.7.11 bundle

### Steps to reproduce

1. `crc start -b ~/Downloads/crc_libvirt_4.7.11.crcbundle --log-level debug -p ~/pull-secret.txt`

It happened onl... | non_test | crc start exits with failed to update cluster id general information os linux hypervisor kvm crc version master with a bundle steps to reproduce crc start b downloads crc libvirt crcbundle log level debug p pull secret txt it happened only once... | 0 |

414,885 | 28,008,530,294 | IssuesEvent | 2023-03-27 16:50:39 | dtcenter/METplotpy | https://api.github.com/repos/dtcenter/METplotpy | closed | Enhance the Release Notes by adding dropdown menus | type: task priority: low component: documentation requestor: METplus Team | Please use [Sphinx Design for Dropdown menus](https://sphinx-design.readthedocs.io/en/latest/dropdowns.html). This will allow for searches of material hidden within dropdown menus.

Changes will need to be made to the below files:

1. config.py

add 'sphinx_design' to the "extensions =" section. (note the und... | 1.0 | Enhance the Release Notes by adding dropdown menus - Please use [Sphinx Design for Dropdown menus](https://sphinx-design.readthedocs.io/en/latest/dropdowns.html). This will allow for searches of material hidden within dropdown menus.

Changes will need to be made to the below files:

1. config.py

add 'sphinx_... | non_test | enhance the release notes by adding dropdown menus please use this will allow for searches of material hidden within dropdown menus changes will need to be made to the below files config py add sphinx design to the extensions section note the underscore docs requirements txt fil... | 0 |

89,689 | 8,212,041,766 | IssuesEvent | 2018-09-04 15:16:25 | nasa-gibs/worldview | https://api.github.com/repos/nasa-gibs/worldview | closed | Zoomed out button allows you to 'click through' to map | bug testing | **Describe the bug**

Zooming out all the way using Zoom - button allows you to 'click through' to map once button is disabled

**To Reproduce**

Steps to reproduce the behavior:

1. Click zoom - button until it is disabled

2. The click now 'clicks through' the disabled button and moves the map

**Expected beha... | 1.0 | Zoomed out button allows you to 'click through' to map - **Describe the bug**

Zooming out all the way using Zoom - button allows you to 'click through' to map once button is disabled

**To Reproduce**

Steps to reproduce the behavior:

1. Click zoom - button until it is disabled

2. The click now 'clicks through'... | test | zoomed out button allows you to click through to map describe the bug zooming out all the way using zoom button allows you to click through to map once button is disabled to reproduce steps to reproduce the behavior click zoom button until it is disabled the click now clicks through ... | 1 |

350,742 | 31,932,003,497 | IssuesEvent | 2023-09-19 08:04:42 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix tensor.test_tensorflow__rfloordiv__ | TensorFlow Frontend Sub Task Failing Test | | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6230808265/job/16911378107"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6230808265/job/16911378107"><img src=https://img.shields.io/badge/-success-success></a>

|tenso... | 1.0 | Fix tensor.test_tensorflow__rfloordiv__ - | | |

|---|---|

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6230808265/job/16911378107"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6230808265/job/16911378107"><img src=https://img.shie... | test | fix tensor test tensorflow rfloordiv numpy a href src jax a href src tensorflow a href src torch a href src paddle a href src | 1 |

178,045 | 13,759,000,373 | IssuesEvent | 2020-10-07 01:43:20 | heremaps/xyz-spaces-python | https://api.github.com/repos/heremaps/xyz-spaces-python | closed | Test Spaces functionalities | no-issue-activity test | Please test below functionalities:

- All methods of the **Space** class - [API Reference](https://xyz-spaces-python.readthedocs.io/en/latest/xyzspaces.spaces.html) and [Example Notebook](https://github.com/heremaps/xyz-spaces-python/blob/master/docs/notebooks/spaces_class_example.ipynb)

- Performance test for me... | 1.0 | Test Spaces functionalities - Please test below functionalities: