Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

65,990 | 7,948,303,982 | IssuesEvent | 2018-07-11 07:39:00 | unee-t/frontend | https://api.github.com/repos/unee-t/frontend | closed | No possibility to create a case for a unit which has no case in the list (Ex: empty list of cases) | critical design/ux enhancement production | # The problem:

If a user has no open cases, he/she will see an empty page with no clear call to action

@kiatlim can you think of a solution to solve that?

We could send the user to a different page... | 1.0 | No possibility to create a case for a unit which has no case in the list (Ex: empty list of cases) - # The problem:

If a user has no open cases, he/she will see an empty page with no clear call to action

... | non_test | no possibility to create a case for a unit which has no case in the list ex empty list of cases the problem if a user has no open cases he she will see an empty page with no clear call to action kiatlim can you think of a solution to solve that we could send the user to a different page the... | 0 |

160,889 | 25,250,472,808 | IssuesEvent | 2022-11-15 14:20:41 | Sage/carbon | https://api.github.com/repos/Sage/carbon | closed | Change background color for FlatTableCell | Enhancement triage Design System Review Required flat-table | ### Desired behaviour

FlatTableCell should have prop background-color

### Current behaviour

FlatTableCell don't have prop background-color

### Suggested Solution

_No response_

### CodeSandbox or Storybook URL

_No response_

### Anything else we should know?

_No response_

### Confidentiality

- [X] I confirm th... | 1.0 | Change background color for FlatTableCell - ### Desired behaviour

FlatTableCell should have prop background-color

### Current behaviour

FlatTableCell don't have prop background-color

### Suggested Solution

_No response_

### CodeSandbox or Storybook URL

_No response_

### Anything else we should know?

_No respon... | non_test | change background color for flattablecell desired behaviour flattablecell should have prop background color current behaviour flattablecell don t have prop background color suggested solution no response codesandbox or storybook url no response anything else we should know no respon... | 0 |

19,947 | 10,564,176,528 | IssuesEvent | 2019-10-04 23:55:29 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Flutter SSL Memory Leaks | customer: gold dependency: dart engine severe: performance |

## Steps to Reproduce

1. Start Flutter app.

2. Push several pages the Navigator

3. Pop several pages.

4. In Xcode use Debug Memory Graph to capture memory graph

5. In memory filter the leaks.

## leaks

<img width="1341" alt="ssl_leaks" src="https://user-images.githubusercontent.com/2551915/43937206-ce03e7ea... | True | Flutter SSL Memory Leaks -

## Steps to Reproduce

1. Start Flutter app.

2. Push several pages the Navigator

3. Pop several pages.

4. In Xcode use Debug Memory Graph to capture memory graph

5. In memory filter the leaks.

## leaks

<img width="1341" alt="ssl_leaks" src="https://user-images.githubusercontent.co... | non_test | flutter ssl memory leaks steps to reproduce start flutter app push several pages the navigator pop several pages in xcode use debug memory graph to capture memory graph in memory filter the leaks leaks img width alt ssl leaks src run flutter analyz... | 0 |

263,119 | 23,036,618,286 | IssuesEvent | 2022-07-22 19:34:19 | OvercastCommunity/public-competitive | https://api.github.com/repos/OvercastCommunity/public-competitive | closed | 5CP - 5v5 - Backstreets | contest | ### Checklist

Check what applies to you. *Add an X between the brackets or click the checkboxes when you have submitted the issue.*

- [X] I have [pruned](https://pgm.dev/docs/guides/packaging/pruning-chunks) the map

- [X] I have agreed with assigning the [CC BY-SA 4.0 license](https://creativecommons.org/licenses/by... | 1.0 | 5CP - 5v5 - Backstreets - ### Checklist

Check what applies to you. *Add an X between the brackets or click the checkboxes when you have submitted the issue.*

- [X] I have [pruned](https://pgm.dev/docs/guides/packaging/pruning-chunks) the map

- [X] I have agreed with assigning the [CC BY-SA 4.0 license](https://crea... | test | backstreets checklist check what applies to you add an x between the brackets or click the checkboxes when you have submitted the issue i have the map i have agreed with assigning the to this map i have provided an xml file i have uploaded the map zip file to a file shari... | 1 |

109,062 | 16,827,998,683 | IssuesEvent | 2021-06-17 21:32:11 | kevins01/Java3 | https://api.github.com/repos/kevins01/Java3 | opened | CVE-2020-8840 (High) detected in jackson-databind-2.8.8.jar | security vulnerability | ## CVE-2020-8840 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.8.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming... | True | CVE-2020-8840 (High) detected in jackson-databind-2.8.8.jar - ## CVE-2020-8840 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.8.jar</b></p></summary>

<p>General d... | non_test | cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vulnerable ... | 0 |

78,798 | 9,796,465,630 | IssuesEvent | 2019-06-11 07:42:38 | wazuh/wazuh-kibana-app | https://api.github.com/repos/wazuh/wazuh-kibana-app | closed | Adapt menu directive design to Kibana 7.x new style | UI/UX design frontend | Hi team,

**Description**

Since Kibana 7, our menu directive looks weird, the design breaks all the views.

Here is how it looks with the default theme:

And here is how it looks with the dark theme... | 1.0 | Adapt menu directive design to Kibana 7.x new style - Hi team,

**Description**

Since Kibana 7, our menu directive looks weird, the design breaks all the views.

Here is how it looks with the default theme:

### Platforms affected

... | 1.0 | Fix C++ Exercise documentation - ### Expected behavior

- EX01 explains what is expected of hyphenated words.

- EX02 is consistent with its use of area.

### Actual behavior

- Unclear and inconsistent.

### Steps to reproduce the behavior

1. Visit [page](https://www.mantidproject.org/New_Starter_C%2B%2B_introdu... | non_test | fix c exercise documentation expected behavior explains what is expected of hyphenated words is consistent with its use of area actual behavior unclear and inconsistent steps to reproduce the behavior visit platforms affected all | 0 |

92,547 | 8,367,598,446 | IssuesEvent | 2018-10-04 12:43:48 | ValveSoftware/csgo-osx-linux | https://api.github.com/repos/ValveSoftware/csgo-osx-linux | closed | Multicore rendering not working with Linux | Linux Need Retest | #### Your system information

Computer information:

Manufacturer: Unknown

Model: Unknown

Type: Laptop

No touchscreen detected

Processor:

CPU manufacturer: GenuineIntel

Processor brand: Intel(R) Core(TM) i3-3217U CPU @ 1.80GHz

Famille du pr... | 1.0 | Multicore rendering not working with Linux - #### Your system information

Computer information:

Manufacturer: Unknown

Model: Unknown

Type: Laptop

No touchscreen detected

Processor:

CPU manufacturer: GenuineIntel

Processor brand: Intel(R) Core(... | test | multicore rendering not working with linux your system information computer information manufacturer unknown model unknown type laptop no touchscreen detected processor cpu manufacturer genuineintel processor brand intel r core ... | 1 |

14,714 | 8,676,256,816 | IssuesEvent | 2018-11-30 13:35:29 | typelead/eta | https://api.github.com/repos/typelead/eta | opened | Allocate a dedicated thread to detect deadlocks in the runtime system | performance rts | In certain extreme cases, it is possible for the runtime system to deadlock and lightweight threads to stall. An example of an extreme case is when all of the runtime system allocated threads are all blocked on a Java FFI call. In such a case, it is beneficial to have a separate, dedicated thread that monitors the prog... | True | Allocate a dedicated thread to detect deadlocks in the runtime system - In certain extreme cases, it is possible for the runtime system to deadlock and lightweight threads to stall. An example of an extreme case is when all of the runtime system allocated threads are all blocked on a Java FFI call. In such a case, it i... | non_test | allocate a dedicated thread to detect deadlocks in the runtime system in certain extreme cases it is possible for the runtime system to deadlock and lightweight threads to stall an example of an extreme case is when all of the runtime system allocated threads are all blocked on a java ffi call in such a case it i... | 0 |

248,170 | 21,000,965,458 | IssuesEvent | 2022-03-29 17:24:12 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [test-failed]: Chrome UI Functional Tests1.test/functional/apps/home/_welcome·ts - homepage app Welcome interstitial is displayed on a fresh on-prem install | failed-test test-cloud | **Version: 7.17.2**

**Class: Chrome UI Functional Tests1.test/functional/apps/home/_welcome·ts**

**Stack Trace:**

```

TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="homeWelcomeInterstitial"])

Wait timed out after 10126ms

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tes... | 2.0 | [test-failed]: Chrome UI Functional Tests1.test/functional/apps/home/_welcome·ts - homepage app Welcome interstitial is displayed on a fresh on-prem install - **Version: 7.17.2**

**Class: Chrome UI Functional Tests1.test/functional/apps/home/_welcome·ts**

**Stack Trace:**

```

TimeoutError: Waiting for element to be lo... | test | chrome ui functional test functional apps home welcome·ts homepage app welcome interstitial is displayed on a fresh on prem install version class chrome ui functional test functional apps home welcome·ts stack trace timeouterror waiting for element to be located by css selector ... | 1 |

104,420 | 8,972,309,518 | IssuesEvent | 2019-01-29 17:56:39 | nasa-gibs/worldview | https://api.github.com/repos/nasa-gibs/worldview | closed | Add cssnext, a postcss plugin, to the build process | enhancement good first issue help wanted testing | ### Description

Right now we are using these `postcss` plugins to build our CSS: `postcssImport`, `autoprefixer` and `cssnano`.

I suggest we add the [cssnext](http://cssnext.io/) plugin to this pipeline. `cssnext` will allow us to use features found in SASS and LESS without having to write in a separate style sh... | 1.0 | Add cssnext, a postcss plugin, to the build process - ### Description

Right now we are using these `postcss` plugins to build our CSS: `postcssImport`, `autoprefixer` and `cssnano`.

I suggest we add the [cssnext](http://cssnext.io/) plugin to this pipeline. `cssnext` will allow us to use features found in SASS a... | test | add cssnext a postcss plugin to the build process description right now we are using these postcss plugins to build our css postcssimport autoprefixer and cssnano i suggest we add the plugin to this pipeline cssnext will allow us to use features found in sass and less without having to ... | 1 |

57,229 | 8,166,082,940 | IssuesEvent | 2018-08-25 04:00:33 | zcash/zcash | https://api.github.com/repos/zcash/zcash | opened | Clean up RPC help messages to have consistent naming of shielded funds | RPC interface documentation | Re: "private funds" or "shielded funds"?

from comment

https://github.com/zcash/zcash/pull/3436#discussion_r212747800 | 1.0 | Clean up RPC help messages to have consistent naming of shielded funds - Re: "private funds" or "shielded funds"?

from comment

https://github.com/zcash/zcash/pull/3436#discussion_r212747800 | non_test | clean up rpc help messages to have consistent naming of shielded funds re private funds or shielded funds from comment | 0 |

394,332 | 11,641,537,277 | IssuesEvent | 2020-02-29 03:16:23 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | closed | skaffold dev - deployment fails, but no events | area/dev kind/question priority/p2 | <!--

Issues without logs and details are more complicated to fix.

Please help us by filling the template below!

-->

### Expected behavior

I can see following message after "skaffold dev"

````bash

0/3 nodes are available: 3 node(s) didn't find available persistent volumes to bind.

````

### Actual behavior... | 1.0 | skaffold dev - deployment fails, but no events - <!--

Issues without logs and details are more complicated to fix.

Please help us by filling the template below!

-->

### Expected behavior

I can see following message after "skaffold dev"

````bash

0/3 nodes are available: 3 node(s) didn't find available persi... | non_test | skaffold dev deployment fails but no events issues without logs and details are more complicated to fix please help us by filling the template below expected behavior i can see following message after skaffold dev bash nodes are available node s didn t find available persi... | 0 |

212,864 | 16,485,811,938 | IssuesEvent | 2021-05-24 17:47:05 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Add validatePodmanEnv subtest for crio runtime to TestFunctional | area/testing co/runtime/crio kind/feature lifecycle/rotten priority/important-longterm | This goes hand in hand with validateDockerEnv which currently runs for the docker runtime | 1.0 | Add validatePodmanEnv subtest for crio runtime to TestFunctional - This goes hand in hand with validateDockerEnv which currently runs for the docker runtime | test | add validatepodmanenv subtest for crio runtime to testfunctional this goes hand in hand with validatedockerenv which currently runs for the docker runtime | 1 |

262,287 | 22,829,445,191 | IssuesEvent | 2022-07-12 11:37:25 | Bouboule-Corp/thurii-mobile-kotlin | https://api.github.com/repos/Bouboule-Corp/thurii-mobile-kotlin | opened | upgrade coverage % | enhancement missing test | - [ ] DoubleAuth folder

- [ ] EmailRegistration folder

- [ ] HomePage folder

- [ ] Login folder

- [ ] LoginSignInMenu folder

- [ ] Registration folder

- [ ] Settings folder

- [ ] MainActivity.kt file | 1.0 | upgrade coverage % - - [ ] DoubleAuth folder

- [ ] EmailRegistration folder

- [ ] HomePage folder

- [ ] Login folder

- [ ] LoginSignInMenu folder

- [ ] Registration folder

- [ ] Settings folder

- [ ] MainActivity.kt file | test | upgrade coverage doubleauth folder emailregistration folder homepage folder login folder loginsigninmenu folder registration folder settings folder mainactivity kt file | 1 |

105,778 | 13,216,931,175 | IssuesEvent | 2020-08-17 05:31:00 | nextcloud/server | https://api.github.com/repos/nextcloud/server | closed | Internal / private link | client: 💻 desktop client: 🤖🍏 mobile design feature: sharing high overview | - [x] Android @tobiasKaminsky

- [x] Desktop @camilasan

- [x] iOS @marinofaggiana

- [x] Server @nextcloud/server-triage

There is a feature request to have the internal/private link (clipboard on the right) also on android.

also on android.

detected in multiple libraries | security vulnerability | ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-10.1.0.tgz</b>, <b>yargs-parser-13.1.1.tgz</b>, <b>yargs-parser-5.0.0.tgz</b></p></summary>

<p>

<detai... | True | CVE-2020-7608 (Medium) detected in multiple libraries - ## CVE-2020-7608 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>yargs-parser-10.1.0.tgz</b>, <b>yargs-parser-13.1.1.tgz</b>,... | non_test | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries yargs parser tgz yargs parser tgz yargs parser tgz yargs parser tgz the mighty option parser used by yargs library home page a href path to depende... | 0 |

123,670 | 10,278,931,168 | IssuesEvent | 2019-08-25 18:29:47 | dexpenses/dexpenses-extract | https://api.github.com/repos/dexpenses/dexpenses-extract | opened | Implement test receipt normal/goe-cafe-del-sol | enhancement test-data | Receipt to implement:

| 1.0 | Implement test receipt normal/goe-cafe-del-sol - Receipt to implement:

| test | implement test receipt normal goe cafe del sol receipt to implement normal goe cafe del sol | 1 |

327,659 | 28,075,599,473 | IssuesEvent | 2023-03-29 23:09:37 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix creation.test_native_array | Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4520842325/jobs/7962215017" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4520842325/jobs/7962215017" rel="noopener nore... | 1.0 | Fix creation.test_native_array - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4520842325/jobs/7962215017" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4520842325/jo... | test | fix creation test native array tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test functional test core test creation py test native array e assertionerror there are no parameters in the inputs to connect ... | 1 |

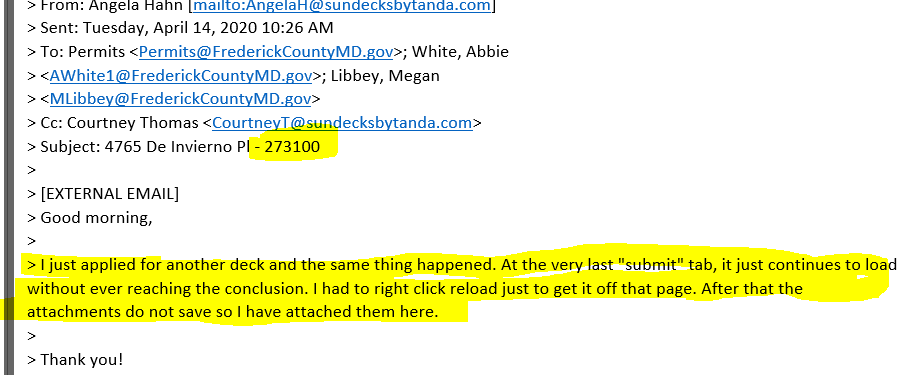

165,911 | 12,884,028,752 | IssuesEvent | 2020-07-13 01:24:10 | dhenry-KCI/FredCo-Post-Go-Live- | https://api.github.com/repos/dhenry-KCI/FredCo-Post-Go-Live- | closed | R4C - Permit & Planning Application Submission Issues - Extreme Ticket #14547500 | High Priority Ready for Testing in TEST | A customer has had consistent issues when trying to submit her ResUse - Deck permit via the R4C portal. See the applicant's description of her issue below.

It appears as though the application submits succ... | 2.0 | R4C - Permit & Planning Application Submission Issues - Extreme Ticket #14547500 - A customer has had consistent issues when trying to submit her ResUse - Deck permit via the R4C portal. See the applicant's description of her issue below.

and slow (network/verifier), so that some peer queries can b... | True | Simplify initial network setup so that peer queries can be answered sooner - ## Motivation

Ziggurat's testing report on Zebra `v1.0.0-beta.8` discovered that Zebra ignores inbound peer queries during network setup.

### Designs

There are a few ways we can resolve this issue:

1. Split `Inbound` initialisation i... | non_test | simplify initial network setup so that peer queries can be answered sooner motivation ziggurat s testing report on zebra beta discovered that zebra ignores inbound peer queries during network setup designs there are a few ways we can resolve this issue split inbound initialisation in... | 0 |

17,828 | 23,768,850,174 | IssuesEvent | 2022-09-01 14:45:36 | ArneBinder/pie-utils | https://api.github.com/repos/ArneBinder/pie-utils | closed | create a partition via regex | document processor | Implement a document processor that creates a partition via a regex split pattern. This should take advantage from [previous implementation](https://github.com/ArneBinder/pytorch-ie-sam-template/blob/main/src/document_processors/partition.py). This should also collect the distribution of the lengths of the parts (parti... | 1.0 | create a partition via regex - Implement a document processor that creates a partition via a regex split pattern. This should take advantage from [previous implementation](https://github.com/ArneBinder/pytorch-ie-sam-template/blob/main/src/document_processors/partition.py). This should also collect the distribution of ... | non_test | create a partition via regex implement a document processor that creates a partition via a regex split pattern this should take advantage from this should also collect the distribution of the lengths of the parts partition entries and the full texts to compare against and also the number of parts per documen... | 0 |

180,697 | 30,549,230,807 | IssuesEvent | 2023-07-20 07:19:52 | DeveloperAcademy-POSTECH/MC3-Team8-Aing | https://api.github.com/repos/DeveloperAcademy-POSTECH/MC3-Team8-Aing | closed | [Design] Create camera view layout | Design 클리프 | ## 📸 Issue

<!-- Please briefly describe the issue -->

- Create the camera view layout

- Build the entire camera view page in UIKit, then wrap it with Representable

## 📝 To-do

<!-- Please describe the work to be done -->

- [x] Place components

- [x] Set up Auto Layout and constraints

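| 1.0 | [Design] Create camera view layout - ## 📸 Issue

<!-- Please briefly describe the issue -->

- Create the camera view layout

- Build the entire camera view page in UIKit, then wrap it with Representable

## 📝 To-do

<!-- Please describe the work to be done -->

- [x] Place components

- [x] Set up Auto Layout and constraints

| non_test | create camera view layout 📸 issue create the camera view layout build the entire camera view page in uikit then wrap it with representable 📝 to do place components set up auto layout and constraints | 0 |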

249,964 | 21,219,486,395 | IssuesEvent | 2022-04-11 10:30:26 | LimeChain/hashport-validator | https://api.github.com/repos/LimeChain/hashport-validator | closed | Unit tests for app/process/handler/nft/transfer/handler.go | unit tests | - Implement unit tests for **app/process/handler/nft/transfer/handler.go** | 1.0 | Unit tests for app/process/handler/nft/transfer/handler.go - - Implement unit tests for **app/process/handler/nft/transfer/handler.go** | test | unit tests for app process handler nft transfer handler go implement unit tests for app process handler nft transfer handler go | 1 |

293,285 | 25,281,668,496 | IssuesEvent | 2022-11-16 16:11:45 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | opened | Add request analysisd configuration test | team/qa test/integration type/test-development status/not-tracked subteam/qa-main | | Target version | Related issue |

|--------------------|--------------------|

| 4.4 | #3112 |

<!-- Important: No section may be left blank. If not, delete it directly (in principle only "Configurations" and "Considerations" could be left blank in case of not... | 2.0 | Add request analysisd configuration test - | Target version | Related issue |

|--------------------|--------------------|

| 4.4 | #3112 |

<!-- Important: No section may be left blank. If not, delete it directly (in principle only "Configurations" and "Conside... | test | add request analysisd configuration test target version related issue description we need to add a test that checks if the analysisd configuration requested with the api is the expecte... | 1 |

41,168 | 16,652,475,851 | IssuesEvent | 2021-06-05 00:09:14 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | az webapp identity assign fails with `NoneType` object is not callable | Managed Identity Service Attention Web Apps | ### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az webapp identity assign`

**Errors:**

```

The command failed with an unexpected error. Here is the traceback:

'NoneType' object is not callable

Traceback (most recent call last):

File "D:\a\1\s\bui... | 1.0 | az webapp identity assign fails with `NoneType` object is not callable - ### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az webapp identity assign`

**Errors:**

```

The command failed with an unexpected error. Here is the traceback:

'NoneType' object ... | non_test | az webapp identity assign fails with nonetype object is not callable this is autogenerated please review and update as needed describe the bug command name az webapp identity assign errors the command failed with an unexpected error here is the traceback nonetype object ... | 0 |

443,754 | 12,799,098,009 | IssuesEvent | 2020-07-02 14:53:35 | TheRandomLabs/RandomPatches | https://api.github.com/repos/TheRandomLabs/RandomPatches | closed | [MC1.12.2 - 1.18.1.1] TickNextTick error still occurs, world crashing | bug help wanted low priority | ---- Minecraft Crash Report ----

WARNING: coremods are present:

RandomPatches (randompatches-1.12.2-1.18.1.1.jar)

SplashAnimationCoremod (SplashAnimation-0.2.1.jar)

BedPatch (bedpatch-2.2-1.12.2.jar)

Do not report to Forge! (If you haven't disabled the FoamFix coremod, try disabling it in the config! Not... | 1.0 | [MC1.12.2 - 1.18.1.1] TickNextTick error still occurs, world crashing - ---- Minecraft Crash Report ----

WARNING: coremods are present:

RandomPatches (randompatches-1.12.2-1.18.1.1.jar)

SplashAnimationCoremod (SplashAnimation-0.2.1.jar)

BedPatch (bedpatch-2.2-1.12.2.jar)

Do not report to Forge! (If you h... | non_test | ticknexttick error still occurs world crashing minecraft crash report warning coremods are present randompatches randompatches jar splashanimationcoremod splashanimation jar bedpatch bedpatch jar do not report to forge if you haven t disabled the foa... | 0 |

91,338 | 8,303,331,136 | IssuesEvent | 2018-09-21 17:11:33 | udacity/lesson_feedback_nd113 | https://api.github.com/repos/udacity/lesson_feedback_nd113 | closed | [Negative]2018-08-15 | 1.0.0 19. Matrices and Transformation of State test | No Solutions to coding parts\, this just makes it much harder for people with basic programming skills to understand the work that is going on. | 1.0 | [Negative]2018-08-15 - No Solutions to coding parts\, this just makes it much harder for people with basic programming skills to understand the work that is going on. | test | no solutions to coding parts this just makes it much harder for people with basic programming skills to understand the work that is going on | 1 |

289,388 | 24,984,971,069 | IssuesEvent | 2022-11-02 14:28:20 | MetaMask/metamask-extension | https://api.github.com/repos/MetaMask/metamask-extension | closed | e2e tests for editing and deleting contacts | area-testSuite stedmap team extension client | We need to expand the functionality covered by our `test/e2e/tests/address-book.spec.js`

Currently, there are tests for adding a contact and sending to a contact.

We need to add tests for editing an already added contact and deleting an already added contact.

We should be able to use the `'address-entry'` fixt... | 1.0 | e2e tests for editing and deleting contacts - We need to expand the functionality covered by our `test/e2e/tests/address-book.spec.js`

Currently, there are tests for adding a contact and sending to a contact.

We need to add tests for editing an already added contact and deleting an already added contact.

We sh... | test | tests for editing and deleting contacts we need to expand the functionality covered by our test tests address book spec js currently there are tests for adding a contact and sending to a contact we need to add tests for editing an already added contact and deleting an already added contact we should... | 1 |

710,498 | 24,420,506,484 | IssuesEvent | 2022-10-05 19:51:06 | chaotic-aur/packages | https://api.github.com/repos/chaotic-aur/packages | closed | [Request] corepaint | request:new-pkg priority:low | ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/corepaint

### Utility this package has for you

A paint app from the C Suite

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a great amount.

### Do you consider the package to be useful for ... | 1.0 | [Request] corepaint - ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/corepaint

### Utility this package has for you

A paint app from the C Suite

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a great amount.

### Do you consider the pac... | non_test | corepaint link to the package s in the aur utility this package has for you a paint app from the c suite do you consider the package s to be useful for every chaotic aur user no but for a great amount do you consider the package to be useful for feature testing preview ... | 0 |

322,264 | 27,592,482,815 | IssuesEvent | 2023-03-09 02:07:12 | dotnet/machinelearning-modelbuilder | https://api.github.com/repos/dotnet/machinelearning-modelbuilder | opened | All scenarios: "Next step" button on the Environment page is disabled, hence we cannot continue the next steps. | Priority:0 Reported by: Test | **System Information (please complete the following information):**

Windows OS: Windows-11-Enterprise-22H2

ML.Net Model Builder 2022: 17.14.4.2315802 (Main Build)

Microsoft Visual Studio Enterprise: 2022(17.4.5)

.Net: 6.0

**Describe the bug**

- On which step of the process did you run into an issue:

"Next ste... | 1.0 | All scenarios: "Next step" button on the Environment page is disabled, hence we cannot continue the next steps. - **System Information (please complete the following information):**

Windows OS: Windows-11-Enterprise-22H2

ML.Net Model Builder 2022: 17.14.4.2315802 (Main Build)

Microsoft Visual Studio Enterprise: 2022... | test | all scenarios next step button on the environment page is disabled hence we cannot continue the next steps system information please complete the following information windows os windows enterprise ml net model builder main build microsoft visual studio enterprise net ... | 1 |

226,016 | 17,934,973,441 | IssuesEvent | 2021-09-10 14:16:06 | eclipse-openj9/openj9 | https://api.github.com/repos/eclipse-openj9/openj9 | opened | Crash vmState=0x000509ff | comp:jit test failure segfault | https://openj9-jenkins.osuosl.org/job/Test_openjdk11_j9_extended.functional_x86-64_mac_Nightly_testList_0/90/

testDDRExt_Class_0 (NoOptions)

vmState [0x509ff]: {J9VMSTATE_JIT} {localValuePropagation}

https://openj9-artifactory.osuosl.org/artifactory/ci-openj9/Test/Test_openjdk11_j9_extended.functional_x86-64_mac_N... | 1.0 | Crash vmState=0x000509ff - https://openj9-jenkins.osuosl.org/job/Test_openjdk11_j9_extended.functional_x86-64_mac_Nightly_testList_0/90/

testDDRExt_Class_0 (NoOptions)

vmState [0x509ff]: {J9VMSTATE_JIT} {localValuePropagation}

https://openj9-artifactory.osuosl.org/artifactory/ci-openj9/Test/Test_openjdk11_j9_exten... | test | crash vmstate testddrext class nooptions vmstate jit localvaluepropagation tck run tests ddrext running the ddr extension test unhandled exception type segmentation error vmstate signal number signal number error value signal code ... | 1 |

122,844 | 26,174,200,028 | IssuesEvent | 2023-01-02 07:17:56 | arduino/arduino-language-server | https://api.github.com/repos/arduino/arduino-language-server | opened | Temporary files are not cleaned up | topic: code type: imperfection | ### Describe the problem

Arduino Language Server and [**clangd**](https://clangd.llvm.org/) create some temporary files:

- Name format: `arduino-language-server2131811926/`

- Arduino CLI sketch build folder

- Name format: `system-includes-0f3fe3.clangd`

- Created by **clangd**

- Name format: `preamble-4df... | 1.0 | Temporary files are not cleaned up - ### Describe the problem

Arduino Language Server and [**clangd**](https://clangd.llvm.org/) create some temporary files:

- Name format: `arduino-language-server2131811926/`

- Arduino CLI sketch build folder

- Name format: `system-includes-0f3fe3.clangd`

- Created by **c... | non_test | temporary files are not cleaned up describe the problem arduino language server and create some temporary files name format arduino language arduino cli sketch build folder name format system includes clangd created by clangd name format preamble pch created b... | 0 |

165,517 | 26,183,988,518 | IssuesEvent | 2023-01-02 20:09:28 | flutter/website | https://api.github.com/repos/flutter/website | opened | Migrate to Bootstrap 5 | infrastructure design p3-low blocked e2-days e3-weeks | ### Describe the problem

Bootstrap 5 is the current release of Bootstrap, replacing Bootstrap 4. We use it heavily across the site and we want to make sure we stay up to date. This will also allow us to eventually drop jQuery since Bootstrap 5 no longer uses it. Beyond that, this will also make a dark mode slightly easie... | 1.0 | Migrate to Bootstrap 5 - ### Describe the problem

Bootstrap 5 is the current release of Bootstrap, replacing Bootstrap 4. We use it heavily across the site and we want to make sure we stay up to date. This will also allow us to eventually drop jQuery since Bootstrap 5 no longer uses it. Beyond that, this will also make a... | non_test | migrate to bootstrap describe the problem bootstrap is the current release of bootstrap replacing bootstrap we use it heavily across the site and we want to make sure we stay up to date this will also allow us to eventually drop jquery since bootstrap no longer uses it beyond that this will also make a... | 0 |

25,343 | 4,154,841,619 | IssuesEvent | 2016-06-16 13:10:25 | WormBase/website | https://api.github.com/repos/WormBase/website | closed | Searching for a peptide ID returns irrelevant results | Under testing Webteam | E.g. searching for BM38054 (Bm2, isoform b) yields a selection of other results but not the protein we are interested in: http://www.wormbase.org/search/protein/BM38054

In some cases, e.g. CN06574, results are displayed for a completely different species.

These identifiers are found elsewhere on the web, e.g. Ens... | 1.0 | Searching for a peptide ID returns irrelevant results - E.g. searching for BM38054 (Bm2, isoform b) yields a selection of other results but not the protein we are interested in: http://www.wormbase.org/search/protein/BM38054

In some cases, e.g. CN06574, results are displayed for a completely different species.

Th... | test | searching for a peptide id returns irrelevant results e g searching for isoform b yields a selection of other results but not the protein we are interested in in some cases e g results are displayed for a completely different species these identifiers are found elsewhere on the web e g ensemb... | 1 |

38,385 | 5,184,451,948 | IssuesEvent | 2017-01-20 06:14:39 | Automattic/jetpack | https://api.github.com/repos/Automattic/jetpack | closed | Unit Tests: add PHP 7.1 | Unit Tests [Type] Enhancement [Type] Good First Bug | It would be nice to add PHP 7.1 to our list of Unit Tests, as it includes a few breaking changes from PHP7, and is getting more and more popular with site owners. | 1.0 | Unit Tests: add PHP 7.1 - It would be nice to add PHP 7.1 to our list of Unit Tests, as it includes a few breaking changes from PHP7, and is getting more and more popular with site owners. | test | unit tests add php it would be nice to add php to our list of unit tests as it includes a few breaking changes from and is getting more and more popular with site owners | 1 |

645 | 2,577,795,466 | IssuesEvent | 2015-02-12 19:10:56 | chrsmith/google-styleguide | https://api.github.com/repos/chrsmith/google-styleguide | opened | No word about standard date format in lispguide.xml, how about http://www.w3.org/TR/NOTE-datetime | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.

https://google-styleguide.googlecode.com/svn/trunk/lispguide.xml?showone=Attenti

on_Required#Attention_Required

2.

3.

What is the expected output? What do you see instead?

I would expect the "Google Common Lisp Style Guide" to specify the format dates

in comments should... | 1.0 | No word about standard date format in lispguide.xml, how about http://www.w3.org/TR/NOTE-datetime - ```

What steps will reproduce the problem?

1.

https://google-styleguide.googlecode.com/svn/trunk/lispguide.xml?showone=Attenti

on_Required#Attention_Required

2.

3.

What is the expected output? What do you see instead?

... | non_test | no word about standard date format in lispguide xml how about what steps will reproduce the problem on required attention required what is the expected output what do you see instead i would expect the google common lisp style guide to specify the format dates in comments should be in my s... | 0 |

225,373 | 17,856,339,225 | IssuesEvent | 2021-09-05 04:59:34 | ObliqueNET/Server | https://api.github.com/repos/ObliqueNET/Server | closed | Plot issues | needs testing landlord discussion needed | I and the ppl in my village are all mayors so we all have the same ability. Last night we had someone join our town and we have been made aware of this issue. Ppl in my town/village are experiencing this same issue. We cannot create, claim, delete, or edit plots that we had set up for new joins. I tried to figure it out... | 1.0 | Plot issues - I and the ppl in my village are all mayors so we all have the same ability. Last night we had someone join our town and we have been made aware of this issue. Ppl in my town/village are experiencing this same issue. We cannot create, claim, delete, or edit plots that we had set up for new joins. I tried to... | test | plot issues i and the ppl in my village are all mayors so we all have the same ability last night we had someone join our town and we have been made aware of this issue ppl in my town village are experiencing this same issue we cannot create claim delete or edit plots that we had set up for new joins i tried to... | 1 |

37,381 | 5,114,751,386 | IssuesEvent | 2017-01-06 19:31:34 | tomMoral/loky | https://api.github.com/repos/tomMoral/loky | closed | Check crash happening after the executor has been GC'ed | testing | Add a variant of `test_processes_terminate_on_executor_gc` with all possible crashes. | 1.0 | Check crash happening after the executor has been GC'ed - Add a variant of `test_processes_terminate_on_executor_gc` with all possible crashes. | test | check crash happening after the executor has been gc ed add a variant of test processes terminate on executor gc with all possible crashes | 1 |

3,283 | 2,666,552,562 | IssuesEvent | 2015-03-21 17:50:00 | contao-community-alliance/dc-general | https://api.github.com/repos/contao-community-alliance/dc-general | closed | [develop] TinyMCE does not work | bug testing | With Contao 3.3.5 I had the problem that TinyMCE was not displayed. At the following location https://github.com/contao-community-alliance/dc-general/blob/f0bd490f0d24b6a34a1140ab81b67b93e98606f3/src/ContaoCommunityAlliance/DcGeneral/Contao/View/Contao2BackendView/ContaoWidgetManager.php#L343

I then inserted this... | 1.0 | [develop] TinyMCE does not work - With Contao 3.3.5 I had the problem that TinyMCE was not displayed. At the following location https://github.com/contao-community-alliance/dc-general/blob/f0bd490f0d24b6a34a1140ab81b67b93e98606f3/src/ContaoCommunityAlliance/DcGeneral/Contao/View/Contao2BackendView... | test | tinymce does not work with contao i had the problem that tinymce was not displayed at the following location i then inserted this selector propertyid after that it works | 1 |

212,377 | 23,882,526,099 | IssuesEvent | 2022-09-08 03:32:02 | Azure/AKS | https://api.github.com/repos/Azure/AKS | opened | Node Access (SSH Refinement) - Phase 3 update SSH key | security feature-request | With this feature, we allow users to update the SSH key permanently for all existing AKS nodepools. | True | Node Access (SSH Refinement) - Phase 3 update SSH key - With this feature, we allow users to update the SSH key permanently for all existing AKS nodepools. | non_test | node access ssh refinement phase update ssh key with this feature we allow users to update the ssh key permanently for all existing aks nodepools | 0 |

10,618 | 3,131,030,604 | IssuesEvent | 2015-09-09 12:57:00 | nmaguirre/eiffel-subtitle-converter | https://api.github.com/repos/nmaguirre/eiffel-subtitle-converter | opened | Missing unit tests for routine adjust_stop_frame.MICRODVD_SUBTITLE_ITEM | enhancement testing | Routine adjust_stop_frame from class MICRODVD_SUBTITLE_ITEM has no unit tests to assess its behaviour. At least one unit "positive" and one "negative" unit test must be provided for this routine. In this context, 'positive' is a test that evaluates the correct behaviour of the routine when right arguments are passed, w... | 1.0 | Missing unit tests for routine adjust_stop_frame.MICRODVD_SUBTITLE_ITEM - Routine adjust_stop_frame from class MICRODVD_SUBTITLE_ITEM has no unit tests to assess its behaviour. At least one unit "positive" and one "negative" unit test must be provided for this routine. In this context, 'positive' is a test that evaluat... | test | missing unit tests for routine adjust stop frame microdvd subtitle item routine adjust stop frame from class microdvd subtitle item has no unit tests to assess its behaviour at least one unit positive and one negative unit test must be provided for this routine in this context positive is a test that evaluat... | 1 |

201,027 | 22,946,647,439 | IssuesEvent | 2022-07-19 01:06:16 | liorzilberg/swagger-parser | https://api.github.com/repos/liorzilberg/swagger-parser | opened | CVE-2020-10650 (Medium) detected in jackson-databind-2.9.5.jar | security vulnerability | ## CVE-2020-10650 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2020-10650 (Medium) detected in jackson-databind-2.9.5.jar - ## CVE-2020-10650 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.5.jar</b></p></summary>

<p>Gen... | non_test | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml path to vulnerab... | 0 |

257,591 | 22,196,845,830 | IssuesEvent | 2022-06-07 07:45:03 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | sql/catalog/lease: TestLeaseTxnDeadlineExtension failed | C-test-failure O-robot branch-master | sql/catalog/lease.TestLeaseTxnDeadlineExtension [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/5396090?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/5396090?buildTab=artifacts#/) on master @ [8344e69de4174... | 1.0 | sql/catalog/lease: TestLeaseTxnDeadlineExtension failed - sql/catalog/lease.TestLeaseTxnDeadlineExtension [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBazel/5396090?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_StressBaze... | test | sql catalog lease testleasetxndeadlineextension failed sql catalog lease testleasetxndeadlineextension with on master run testleasetxndeadlineextension validate lease txn deadline ext parameters tags bazel gss help see also cc cockroachdb sql schema ... | 1 |

51,199 | 6,150,831,797 | IssuesEvent | 2017-06-27 23:57:22 | mozilla/activity-stream | https://api.github.com/repos/mozilla/activity-stream | closed | The "active" property in experiments has an unclear meaning | Chore P2 Test Pilot | Right now it's unclear what we are using the `"active"` field on experiments for -- this came up in a discussion with @emtwo. In practice we use it for "archiving" old experiments that may not even be referenced in the code, whereas for experiments that are not yet running, we tend to just set the `"threshold"` val... | 1.0 | The "active" property in experiments has an unclear meaning - Right now it's unclear what we are using the `"active"` field on experiments for -- this came up in a discussion with @emtwo. In practice we use it for "archiving" old experiments that may not even be referenced in the code, whereas for experiments that ... | test | the active property in experiments has an unclear meaning right now it s unclear what we are using the active field on experiments for this came up in a discussion with emtwo in practice we use it for archiving old experiments that may not even be referenced in the code whereas for experiments that ... | 1 |

116,700 | 24,969,120,743 | IssuesEvent | 2022-11-01 22:28:19 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Add a reference to System.Linq automatically when LINQ is used | Area-IDE Feature Request IDE-CodeStyle | _This issue has been moved from [a ticket on Developer Community](https://developercommunity.visualstudio.com/content/idea/1035070/add-a-reference-to-systemlinq-automatically-when-l.html)._

---

VS2019 has brought in an extremely helpful feature; if you use an item (for example an attribute) that's in a non-referenced ... | 1.0 | Add a reference to System.Linq automatically when LINQ is used - _This issue has been moved from [a ticket on Developer Community](https://developercommunity.visualstudio.com/content/idea/1035070/add-a-reference-to-systemlinq-automatically-when-l.html)._

---

VS2019 has brought in an extremely helpful feature; if you u... | non_test | add a reference to system linq automatically when linq is used this issue has been moved from has brought in an extremely helpful feature if you use an item for example an attribute that s in a non referenced namespace the approprtiate using statement is automatically added it would be great if vs... | 0 |

341,247 | 30,575,803,242 | IssuesEvent | 2023-07-21 05:15:52 | ChainSafe/gossamer | https://api.github.com/repos/ChainSafe/gossamer | closed | Add `go test -race` workflows | tests Type: Feature | ## Task summary

<!-- A clear and concise description of what the task is. -->

- We don't run tests with -race automatically in CI.

- There is only `test-state-race` which tests only for dot/state. We have data race tests in `dot/sync` and `lib/blocktree`, but they are not running with -race at the moment.

So, ad... | 1.0 | Add `go test -race` workflows - ## Task summary

<!-- A clear and concise description of what the task is. -->

- We don't run tests with -race automatically in CI.

- There is only `test-state-race` which tests only for dot/state. We have data race tests in `dot/sync` and `lib/blocktree`, but they are not running wit... | test | add go test race workflows task summary we don t run tests with race automatically in ci there is only test state race which tests only for dot state we have data race tests in dot sync and lib blocktree but they are not running with race at the moment so add ci workflows to run data r... | 1 |

305 | 2,736,749,196 | IssuesEvent | 2015-04-19 18:49:31 | ChelseaStats/issues | https://api.github.com/repos/ChelseaStats/issues | closed | football_league April 17 2015 at 11:05AM | to process tweet | <blockquote class="twitter-tweet">

<p>So which of the three shortlisted players do you think should win the prize at the <a href="http://u.thechels.uk/1b9vjTj">#FLAwards</a> on Sunday evening? <a href="http://u.thechels.uk/1IloIiN">http://u.thechels.uk/1b9vjTk</a></p>

— The Football League (@football_league) <a h... | 1.0 | football_league April 17 2015 at 11:05AM - <blockquote class="twitter-tweet">

<p>So which of the three shortlisted players do you think should win the prize at the <a href="http://u.thechels.uk/1b9vjTj">#FLAwards</a> on Sunday evening? <a href="http://u.thechels.uk/1IloIiN">http://u.thechels.uk/1b9vjTk</a></p>

— ... | non_test | football league april at so which of the three shortlisted players do you think should win the prize at the a href on sunday evening a href mdash the football league football league april at via twitter | 0 |

311,190 | 26,774,544,825 | IssuesEvent | 2023-01-31 16:14:38 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Latest Posts and More Block | [Type] Enhancement [Status] Needs More Info [Status] Stale [Block] Latest Posts | In my organization, it has been the policy to always include basic information about any partner supplying the original information contained in a news posting. To that end, we have always included a "More..." block in our posts. On our home page, we would like the Latest Posts block to display information in complete ... | 1.0 | Latest Posts and More Block - In my organization, it has been the policy to always include basic information about any partner supplying the original information contained in a news posting. To that end, we have always included a "More..." block in our posts. On our home page, we would like the Latest Posts block to di... | test | latest posts and more block in my organization it has been the policy to always include basic information about any partner supplying the original information contained in a news posting to that end we have always included a more block in our posts on our home page we would like the latest posts block to di... | 1 |

177,724 | 13,744,802,381 | IssuesEvent | 2020-10-06 01:00:39 | codewars/codewars-runner-cli | https://api.github.com/repos/codewars/codewars-runner-cli | closed | Add test support for SASS/SCSS | request/language request/test-framework | I've been recently experimenting with a test framework for SASS called true. It would be nice to have it included in the Codewars environment.

Characteristics:

* Open Source ( BSD-3 License )

* node module

* can be called from mocha as `var sassTrue = require('sass-true');`

| 1.0 | Add test support for SASS/SCSS - I've been recently experimenting with a test framework for SASS called true. It would be nice to have it included in the Codewars environment.

Characteristics:

* Open Source ( BSD-3 License )

* node module

* can be called from mocha as `var sassTrue = require('sass-true');`

| test | add test support for sass scss i ve been recently experimenting with a test framework for sass called true it would be nice to have it included in the codewars environment characteristics open source bsd license node module can be called from mocha as var sasstrue require sass true | 1 |

490,862 | 14,141,082,710 | IssuesEvent | 2020-11-10 12:10:41 | Puzzlepart/prosjektportalen365 | https://api.github.com/repos/Puzzlepart/prosjektportalen365 | closed | Enable project extensions by default when creating new project | Complexity: medium Priority: low enhancement | **Describe the solution you'd like**

Boolean to enable/disable project extensions by default. Identical to PP2.

**Additional context**

| 1.0 | Enable project extensions by default when creating new project - **Describe the solution you'd like**

Boolean to enable/disable project extensions by default. Identical to PP2.

**Additional context**

| non_test | enable project extensions by default when creating new project describe the solution you d like boolean to enable disable project extensions by default identical to additional context | 0 |

147,613 | 5,642,827,396 | IssuesEvent | 2017-04-06 22:08:53 | Azure/acs-engine | https://api.github.com/repos/Azure/acs-engine | closed | acstgen: kubernetes: consider switching to `kubeadm` | orchestrator/k8s priority/P2 | Filing this for ongoing and future discussion/consideration.

This could dramatically reduce the amount of code we maintain to deploy Kubernetes.

Advantages:

- removing 90+% of the yaml we have in the repo for Kubernetes (cloud-config, certs, etc)

- not having to worry about drift between upstream addons and our addon... | 1.0 | acstgen: kubernetes: consider switching to `kubeadm` - Filing this for ongoing and future discussion/consideration.

This could dramatically reduce the amount of code we maintain to deploy Kubernetes.

Advantages:

- removing 90+% of the yaml we have in the repo for Kubernetes (cloud-config, certs, etc)

- not having to ... | non_test | acstgen kubernetes consider switching to kubeadm filing this for ongoing and future discussion consideration this could dramatically reduce the amount of code we maintain to deploy kubernetes advantages removing of the yaml we have in the repo for kubernetes cloud config certs etc not having to w... | 0 |

248,267 | 18,858,055,516 | IssuesEvent | 2021-11-12 09:20:06 | frederickpek/pe | https://api.github.com/repos/frederickpek/pe | opened | Developer Guide: Use Cases not numbered | type.DocumentationBug severity.Medium | This can be very confusing for developer trying to follow the use cases, especially when inclusions are involved.<br>

<img width="684" alt="isyro" src="https://user-images.githubusercontent.com/105806953/180877945-ebd97228-68d9-4b86-a9cc-f826d3d6a2c0.PNG"> | 1.0 | [prof] Variable in function prefix - The function name in the prefix of an expression answer field is not recognized (&f& does not read the variable f)

<img width="684" alt="isyro" src="https://user-images.githubusercontent.com/105806953/180877945-ebd97228-68d9-4b86-a9cc-f826d3d6a2c0.PNG"> | test | variable in function prefix the function name in the prefix of an expression answer field is not recognized f does not read the variable f img width alt isyro src | 1 |

168,013 | 13,055,646,267 | IssuesEvent | 2020-07-30 02:18:58 | vmware-tanzu/antrea | https://api.github.com/repos/vmware-tanzu/antrea | opened | [e2e] t.Error for cleanup functions | area/test/e2e good first issue kind/feature | **Describe the problem/challenge you have**

Suggested by @antoninbas , it is better to use `t.Error` instead of `t.Fatal` in cleanup functions.

**Describe the solution you'd like**

Reconsider the usage of `t.Error` and `t.Fatal` in e2e tests.

| 1.0 | [e2e] t.Error for cleanup functions - **Describe the problem/challenge you have**

Suggested by @antoninbas , it is better to use `t.Error` instead of `t.Fatal` in cleanup functions.

**Describe the solution you'd like**

Reconsider the usage of `t.Error` and `t.Fatal` in e2e tests.

| test | t error for cleanup functions describe the problem challenge you have suggested by antoninbas it is better to use t error instead of t fatal in cleanup functions describe the solution you d like reconsider the usage of t error and t fatal in tests | 1 |

102,974 | 8,872,401,074 | IssuesEvent | 2019-01-11 15:20:14 | paritytech/substrate | https://api.github.com/repos/paritytech/substrate | opened | Restore integration tests | F4-tests Q3-medium | Integration tests introduced in #805 were lost somewhere when moving to aura. Would be nice to re-introduce them. | 1.0 | Restore integration tests - Integration tests introduced in #805 were lost somewhere when moving to aura. Would be nice to re-introduce them. | test | restore integration tests integration tests introduced in were lost somewhere when moving to aura would be nice to re introduce them | 1 |

290,725 | 25,090,126,260 | IssuesEvent | 2022-11-08 05:09:50 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | [Bug]: Report Generation Failure for ballerinax/postgresql with the New Testerina Framework | Type/Bug Team/DevTools Area/TestFramework Reason/EngineeringMistake | ### Description

With the new testerina framework, the postgres module test report generation fails with the following output.

```

[fail] testInOutParameterArray:

Timestamp timezone range array does not match.

expected: <pos... | 1.0 | [Bug]: Report Generation Failure for ballerinax/postgresql with the New Testerina Framework - ### Description

With the new testerina framework, the postgres module test report generation fails with the following output.

```

[fail] testInOutParameterArray:

Timestamp timezone ... | test | report generation failure for ballerinax postgresql with the new testerina framework description with the new testerina framework the postgres module test report generation fails with the following output testinoutparameterarray timestamp timezone range arr... | 1 |

77,323 | 14,784,856,191 | IssuesEvent | 2021-01-12 01:15:09 | mangonaise/braincache | https://api.github.com/repos/mangonaise/braincache | opened | Gameplay logic is unnecessarily bundled together with screen-switching logic. | nasty code | This is an issue with the implementation and not a bug.

Currently the gameplay logic is in App, alongside the more general app logic such as switching screens (e.g. home screen, game over screen) and saving high scores.

The gameplay logic should be separated into its own component. | 1.0 | Gameplay logic is unnecessarily bundled together with screen-switching logic. - This is an issue with the implementation and not a bug.

Currently the gameplay logic is in App, alongside the more general app logic such as switching screens (e.g. home screen, game over screen) and saving high scores.

The gameplay ... | non_test | gameplay logic is unnecessarily bundled together with screen switching logic this is an issue with the implementation and not a bug currently the gameplay logic is in app alongside the more general app logic such as switching screens e g home screen game over screen and saving high scores the gameplay ... | 0 |

80,465 | 7,748,559,145 | IssuesEvent | 2018-05-30 08:42:42 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: largerange/splits/size=10GiB,nodes=3 failed on release-2.0 | C-test-failure O-robot | SHA: https://github.com/cockroachdb/cockroach/commits/32b7aa635af34c5b150abba9df1cd51a5fafe804

Parameters:

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=686347&tab=buildLog

```

cluster.go:678,large_range.go:74,large_range.go:45: /home/agent/work/.go/bin/roachprod start teamcity-686347-largerang... | 1.0 | roachtest: largerange/splits/size=10GiB,nodes=3 failed on release-2.0 - SHA: https://github.com/cockroachdb/cockroach/commits/32b7aa635af34c5b150abba9df1cd51a5fafe804

Parameters:

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=686347&tab=buildLog

```

cluster.go:678,large_range.go:74,large_range.g... | test | roachtest largerange splits size nodes failed on release sha parameters failed test cluster go large range go large range go home agent work go bin roachprod start teamcity largerange splits size nodes encrypt exit status | 1 |

75,602 | 14,495,891,053 | IssuesEvent | 2020-12-11 11:54:48 | IgniteUI/igniteui-angular-samples | https://api.github.com/repos/IgniteUI/igniteui-angular-samples | closed | Calendar angular samples are not loaded in code view | code-view status: in-review status: resolved | - [x] Both samples under Views section are not loaded in Code View:

1. Open https://www.infragistics.com/products/ignite-ui-angular/angular/components/calendar#views

2. See the result:

The "View in fu... | 1.0 | Calendar angular samples are not loaded in code view - - [x] Both samples under Views section are not loaded in Code View:

1. Open https://www.infragistics.com/products/ignite-ui-angular/angular/components/calendar#views

2. See the result:

``` | 1.0 | com.hazelcast.jet.impl.connector.StreamJmsPTest.when_topic - ```Error Message

expected:<2db03d3c-ee91-45a4-bac2-ee4d13e79731> but was:<null>

Stacktrace

java.lang.AssertionError: expected:<2db03d3c-ee91-45a4-bac2-ee4d13e79731> but was:<null>

at com.hazelcast.jet.impl.connector.StreamJmsPTest.when_topic(StreamJmsPT... | test | com hazelcast jet impl connector streamjmsptest when topic error message expected but was stacktrace java lang assertionerror expected but was at com hazelcast jet impl connector streamjmsptest when topic streamjmsptest java | 1 |

240,904 | 26,256,533,649 | IssuesEvent | 2023-01-06 01:34:48 | farooqmir/React-Redux-Demonstration-with-api | https://api.github.com/repos/farooqmir/React-Redux-Demonstration-with-api | opened | CVE-2021-3803 (High) detected in nth-check-1.0.1.tgz | security vulnerability | ## CVE-2021-3803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nth-check-1.0.1.tgz</b></p></summary>

<p>performant nth-check parser & compiler</p>

<p>Library home page: <a href="http... | True | CVE-2021-3803 (High) detected in nth-check-1.0.1.tgz - ## CVE-2021-3803 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nth-check-1.0.1.tgz</b></p></summary>

<p>performant nth-check pa... | non_test | cve high detected in nth check tgz cve high severity vulnerability vulnerable library nth check tgz performant nth check parser compiler library home page a href path to dependency file react redux demonstration with api package json path to vulnerable library no... | 0 |

278,712 | 24,169,612,972 | IssuesEvent | 2022-09-22 17:57:15 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | [BUG] Local cluster v2prov non-functional when feature flag multi-cluster-management=false | kind/bug [zube]: To Test area/harvester team/area2 | **Rancher Server Setup**

- Rancher version: v2.6.7

- Installation option (Docker install/Helm Chart): Helm

- If Helm Chart, Kubernetes Cluster and version (RKE1, RKE2, k3s, EKS, etc): v1.22.12+rke2r1

**User Information**

- What is the role of the user logged in? Admin

**Describe the bug**

When starting rk... | 1.0 | [BUG] Local cluster v2prov non-functional when feature flag multi-cluster-management=false - **Rancher Server Setup**

- Rancher version: v2.6.7

- Installation option (Docker install/Helm Chart): Helm

- If Helm Chart, Kubernetes Cluster and version (RKE1, RKE2, k3s, EKS, etc): v1.22.12+rke2r1

**User Information... | test | local cluster non functional when feature flag multi cluster management false rancher server setup rancher version installation option docker install helm chart helm if helm chart kubernetes cluster and version eks etc user information what is the role of ... | 1 |

190,095 | 6,808,665,191 | IssuesEvent | 2017-11-04 06:28:38 | ballerinalang/plugin-intellij | https://api.github.com/repos/ballerinalang/plugin-intellij | closed | Quick doc support not working | Priority/Highest Type/Improvement | Quick doc support is not working currently because the doc package was moved to the built-in package. | 1.0 | Quick doc support not working - Quick doc support is not working currently because the doc package was moved to the built-in package. | non_test | quick doc support not working quick doc support is not working currently because the doc package was moved to the built in package | 0 |

66,460 | 7,001,065,264 | IssuesEvent | 2017-12-18 08:48:04 | NativeScript/nativescript-cli | https://api.github.com/repos/NativeScript/nativescript-cli | closed | tns debug ios: nativescript inspector doesn't open on High Sierra and Xcode 9.2 | bug debug iOS Ready For Test | ### Tell us about the problem

When run `tns debug ios` it tries to open Nativescript Inspector and immediately closes it and throws an error.

### Which platform(s) does your issue occur on?

iOS

### Please provide the following version numbers that your issue occurs with:

- CLI: 3.3.1

- Cross-platform modules:... | 1.0 | tns debug ios: nativescript inspector doesn't open on High Sierra and Xcode 9.2 - ### Tell us about the problem

When run `tns debug ios` it tries to open Nativescript Inspector and immediately closes it and throws an error.

### Which platform(s) does your issue occur on?

iOS

### Please provide the following ver... | test | tns debug ios nativescript inspector doesn t open on high sierra and xcode tell us about the problem when run tns debug ios it tries to open nativescript inspector and immediately closes it and throws an error which platform s does your issue occur on ios please provide the following ver... | 1 |

19,374 | 10,349,849,701 | IssuesEvent | 2019-09-05 00:11:12 | uniquelyparticular/sync-moltin-to-algolia | https://api.github.com/repos/uniquelyparticular/sync-moltin-to-algolia | opened | CVE-2018-20834 (High) detected in tar-2.2.1.tgz | security vulnerability | ## CVE-2018-20834 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-2.2.1.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/ta... | True | CVE-2018-20834 (High) detected in tar-2.2.1.tgz - ## CVE-2018-20834 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-2.2.1.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home ... | non_test | cve high detected in tar tgz cve high severity vulnerability vulnerable library tar tgz tar for node library home page a href path to dependency file sync moltin to algolia package json path to vulnerable library tmp git sync moltin to algolia node modules npm node... | 0 |

237,657 | 19,663,719,931 | IssuesEvent | 2022-01-10 19:52:45 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | closed | Intermittent UI test failure - <Classname.testName> | needs:triage eng:ui-test | Firefox 95.2.0 android 7.1.2

The logic for working with inserts and bookmarks is terribly inconvenient. In version 68, I opened a bookmark in the current tab if a new tab was not needed. Now the bookmark is forced to open in a new tab and I have to go to the tab bar to remove the unnecessary one 😖 are you kidding me?... | 1.0 | Intermittent UI test failure - <Classname.testName> - Firefox 95.2.0 android 7.1.2

The logic for working with inserts and bookmarks is terribly inconvenient. In version 68, I opened a bookmark in the current tab if a new tab was not needed. Now the bookmark is forced to open in a new tab and I have to go to the tab ba... | test | intermittent ui test failure firefox android the logic for working with inserts and bookmarks is terribly inconvenient in version i opened a bookmark in the current tab if a new tab was not needed now the bookmark is forced to open in a new tab and i have to go to the tab bar to remove the unnec... | 1 |

364,790 | 25,502,573,017 | IssuesEvent | 2022-11-28 06:19:50 | oleksandrblazhko/ai203-tokarev | https://api.github.com/repos/oleksandrblazhko/ai203-tokarev | closed | CW5 | documentation | **Task 1**

[Link](https://github.com/oleksandrblazhko/ai203-tokarev/blob/main/2-SoftwareDesign/2.7-PlantUML/UML-Activity.md)

|TC id|Description of test scenario steps|Description of expected results|

|-|-|-|

|TC1.1|Preconditions: the payment has not yet been confirmed</br>Steps: </br>The user confirms the payment, the payment... | 1.0 | CW5 - **Task 1**

[Link](https://github.com/oleksandrblazhko/ai203-tokarev/blob/main/2-SoftwareDesign/2.7-PlantUML/UML-Activity.md)

|TC id|Description of test scenario steps|Description of expected results|

|-|-|-|

|TC1.1|Preconditions: the payment has not yet been confirmed</br>Steps: </br>The user confirms the payment, ... | non_test | task tc id description of test scenario steps description of expected results preconditions the payment has not yet been confirmed steps the user confirms the payment the payment is successful information that the payment was processed successfully is saved preconditions the payment has not yet been confirmed steps ... | 0 |

108,490 | 9,309,115,791 | IssuesEvent | 2019-03-25 15:50:06 | containership/cluster-manager | https://api.github.com/repos/containership/cluster-manager | closed | Travis should use a Docker cache for builds | component/test type/optimization | ### Description

#### Why should this feature be added?

Travis needs to use a Docker cache for builds. Currently builds are painfully slow.

#### How would you like the feature to work?

I think we should just push `latest` on each successful build and then use `--cache-from`. [Here's a more thorough explanati... | 1.0 | Travis should use a Docker cache for builds - ### Description

#### Why should this feature be added?

Travis needs to use a Docker cache for builds. Currently builds are painfully slow.

#### How would you like the feature to work?

I think we should just push `latest` on each successful build and then use `--... | test | travis should use a docker cache for builds description why should this feature be added travis needs to use a docker cache for builds currently builds are painfully slow how would you like the feature to work i think we should just push latest on each successful build and then use ... | 1 |

363,458 | 10,741,404,638 | IssuesEvent | 2019-10-29 20:10:25 | rubrikinc/rubrik-sdk-for-powershell | https://api.github.com/repos/rubrikinc/rubrik-sdk-for-powershell | closed | Force PrimaryClusterId to lowercase | area-rcdm exp-intermediate kind-enhancement kind-feature priority-p3 | When trying to filter on PrimaryClusterId using local, the text must be entered in lowercase. Entering any uppercase text (IE Local) causes the API to return nothing

**Describe the solution you'd like**

Would like to see this support uppercase to fall more inline with how PowerShell handles case.

Can be done ... | 1.0 | Force PrimaryClusterId to lowercase - When trying to filter on PrimaryClusterId using local, the text must be entered in lowercase. Entering any uppercase text (IE Local) causes the API to return nothing

**Describe the solution you'd like**

Would like to see this support uppercase to fall more inline with how Po... | non_test | force primaryclusterid to lowercase when trying to filter on primaryclusterid using local the text must be entered in lowercase entering any uppercase text ie local causes the api to return nothing describe the solution you d like would like to see this support uppercase to fall more inline with how po... | 0 |

404,775 | 11,863,017,367 | IssuesEvent | 2020-03-25 18:56:33 | teamforus/forus | https://api.github.com/repos/teamforus/forus | closed | checking if IBAN is companies IBAN | Difficulty: Medium Priority: Could have Scope: Medium Status: Refinement Needed enhancement project-31 | ## Main assignee: @

## Context/goal:

With this tool we can verify that an IBAN number is the IBAN of the company that signed up.

https://www.cm.com/nl-nl/producten/toegang/iban-verificatie/ | 1.0 | checking if IBAN is companies IBAN - ## Main assignee: @

## Context/goal:

With this tool we can verify that an IBAN number is the IBAN of the company that signed up.

https://www.cm.com/nl-nl/producten/toegang/iban-verificatie/ | non_test | checking if iban is companies iban main assignee context goal with this tool we can verify that an iban number is the iban of the company that signed up | 0 |

141,137 | 11,395,376,581 | IssuesEvent | 2020-01-30 11:17:06 | microsoft/ptvsd | https://api.github.com/repos/microsoft/ptvsd | closed | attach_by_socket tests fail sporadically on MacOS + Python 3.6 | Test-issue | When it happens, it's usually several dozen tests failing in the same run for a given Python version. The only common theme is the attach method - the tests themselves are completely unrelated. | 1.0 | attach_by_socket tests fail sporadically on MacOS + Python 3.6 - When it happens, it's usually several dozen tests failing in the same run for a given Python version. The only common theme is the attach method - the tests themselves are completely unrelated. | test | attach by socket tests fail sporadically on macos python when it happens it s usually several dozen tests failing in the same run for a given python version the only common theme is the attach method the tests themselves are completely unrelated | 1 |

156,369 | 12,307,684,666 | IssuesEvent | 2020-05-12 05:23:02 | kubernetes/test-infra | https://api.github.com/repos/kubernetes/test-infra | closed | Using kubetest : unable to make the pods run | area/kubetest kind/bug triage/needs-information | <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!-->

**When I use kubetest to take e2e test . It occurs something wrong!**

```bash

Apr 24 17:45:31.061: INFO: Density Pods: 219 out of 1320... | 1.0 | Using kubetest : unable to make the pods run - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!-->

**When I use kubetest to take e2e test . It occurs something wrong!**

```bash

Apr 24 17... | test | using kubetest unable to make the pods run when i use kubetest to take test it occurs something wrong bash apr info density pods out of created running pending waiting inactive terminating unknown runningbutnotready apr info density pods o... | 1 |

223,824 | 17,633,505,102 | IssuesEvent | 2021-08-19 10:58:33 | icyphy/lingua-franca | https://api.github.com/repos/icyphy/lingua-franca | opened | Errors reported via ErrorReporter during validation do not cause test failure | bug compiler testing | There is a strange bug where the C++ test [WidthGivenByCode.lf](https://github.com/icyphy/lingua-franca/blob/master/test/Cpp/src/multiport/WidthGivenByCode.lf) passes in our test runs, but does not compile when invoking lfc manually. lfc reports the following error:

```

lfc: error: Cannot infer width.

--> multiport... | 1.0 | Errors reported via ErrorReporter during validation do not cause test failure - There is a strange bug where the C++ test [WidthGivenByCode.lf](https://github.com/icyphy/lingua-franca/blob/master/test/Cpp/src/multiport/WidthGivenByCode.lf) passes in our test runs, but does not compile when invoking lfc manually. lfc re... | test | errors reported via errorreporter during validation do not cause test failure there is a strange bug where the c test passes in our test runs but does not compile when invoking lfc manually lfc reports the following error lfc error cannot infer width multiport widthgivenbycode lf ... | 1 |

15,823 | 5,188,133,655 | IssuesEvent | 2017-01-20 18:59:38 | phetsims/unit-rates | https://api.github.com/repos/phetsims/unit-rates | closed | items on shelf and scale don't have a pointer cursor | dev:code-review type:bug type:wontfix | Code review #52.

There's no indication that the items on the shelf or scale are draggable, because they don't show the pointer cursor when you mouse over them. It's not until you actually start dragging that the cursor changes.

@arouinfar Is this a bug or intended behavior?

| 1.0 | items on shelf and scale don't have a pointer cursor - Code review #52.

There's no indication that the items on the shelf or scale are draggable, because they don't show the pointer cursor when you mouse over them. It's not until you actually start dragging that the cursor changes.

@arouinfar Is this a bug or inten... | non_test | items on shelf and scale don t have a pointer cursor code review there s no indication that the items on the shelf or scale are draggable because they don t show the pointer cursor when you mouse over them it s not until you actually start dragging that the cursor changes arouinfar is this a bug or intend... | 0 |

268,614 | 23,383,998,002 | IssuesEvent | 2022-08-11 12:14:37 | MTES-MCT/histologe | https://api.github.com/repos/MTES-MCT/histologe | closed | [FO - Report creation] - owner info | A tester Contenu Manquant | On test version 447

When creating a report, if the declarant is the occupant, the owner's name field should be mandatory, but if it is left empty, no informational message about the error is shown (in Chrome):

:

AppleWebKit/537.36 (KHTML, like Gecko) Chrome/104.0.0.0 Safari/537.36 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/108904 -->

**URL**: https://usa.embassy.gov.au/pa... | 2.0 | usa.embassy.gov.au - Link arrows are displayed misaligned - <!-- @browser: Firefox 64bit 103.0.2 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/104.0.0.0 Safari/537.36 -->

<!-- @reported_with: unknown -->