Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

308,778 | 26,631,368,497 | IssuesEvent | 2023-01-24 18:06:46 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/maps/group2/index·js - maps app "before all" hook in "maps app" | failed-test | A test failed on a tracked branch

```

Error: 400 resp: ''

req: {

transitional: {

silentJSONParsing: true,

forcedJSONParsing: true,

clarifyTimeoutError: false

},

adapter: [Function: httpAdapter],

transformRequest: [ [Function: transformRequest] ],

transformResponse: [ [Function: transformResponse]... | 1.0 | Failing test: Chrome X-Pack UI Functional Tests.x-pack/test/functional/apps/maps/group2/index·js - maps app "before all" hook in "maps app" - A test failed on a tracked branch

```

Error: 400 resp: ''

req: {

transitional: {

silentJSONParsing: true,

forcedJSONParsing: true,

clarifyTimeoutError: false

},

... | test | failing test chrome x pack ui functional tests x pack test functional apps maps index·js maps app before all hook in maps app a test failed on a tracked branch error resp req transitional silentjsonparsing true forcedjsonparsing true clarifytimeouterror false adapt... | 1 |

440,508 | 30,748,258,562 | IssuesEvent | 2023-07-28 16:47:15 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | [DOCS] Missing values are now supported in Decision Trees | Documentation module:tree | v1.3 of scikit-learn introduced some missing value support as evident in the same documentation [file](https://scikit-learn.org/stable/modules/tree.html#tree-missing-value-support) later on but it still states in the beginning of the dos that missing values are ["not supported in this module"](https://github.com/scikit... | 1.0 | [DOCS] Missing values are now supported in Decision Trees - v1.3 of scikit-learn introduced some missing value support as evident in the same documentation [file](https://scikit-learn.org/stable/modules/tree.html#tree-missing-value-support) later on but it still states in the beginning of the dos that missing values ar... | non_test | missing values are now supported in decision trees of scikit learn introduced some missing value support as evident in the same documentation later on but it still states in the beginning of the dos that missing values are i think it would be fine to just remove that sentence or reference the section ex... | 0 |

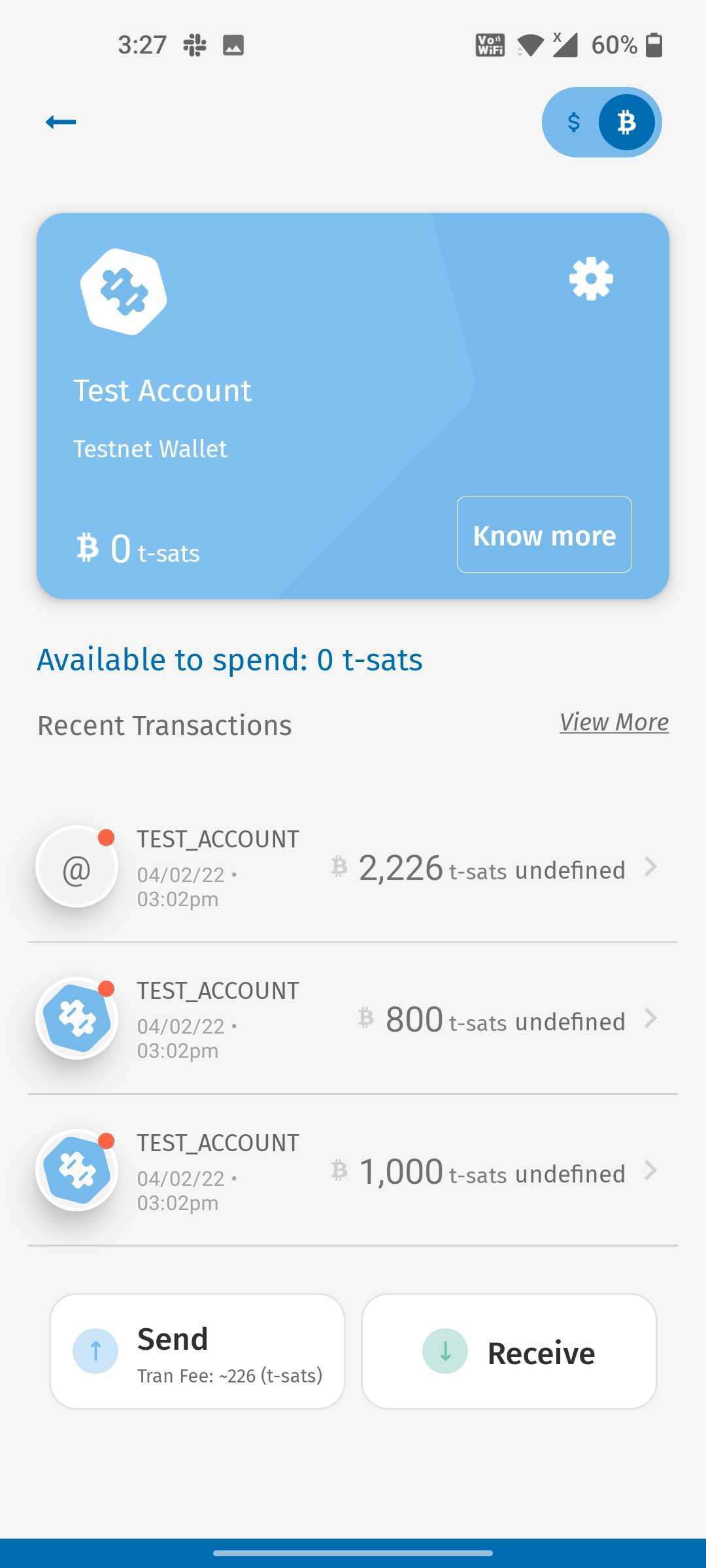

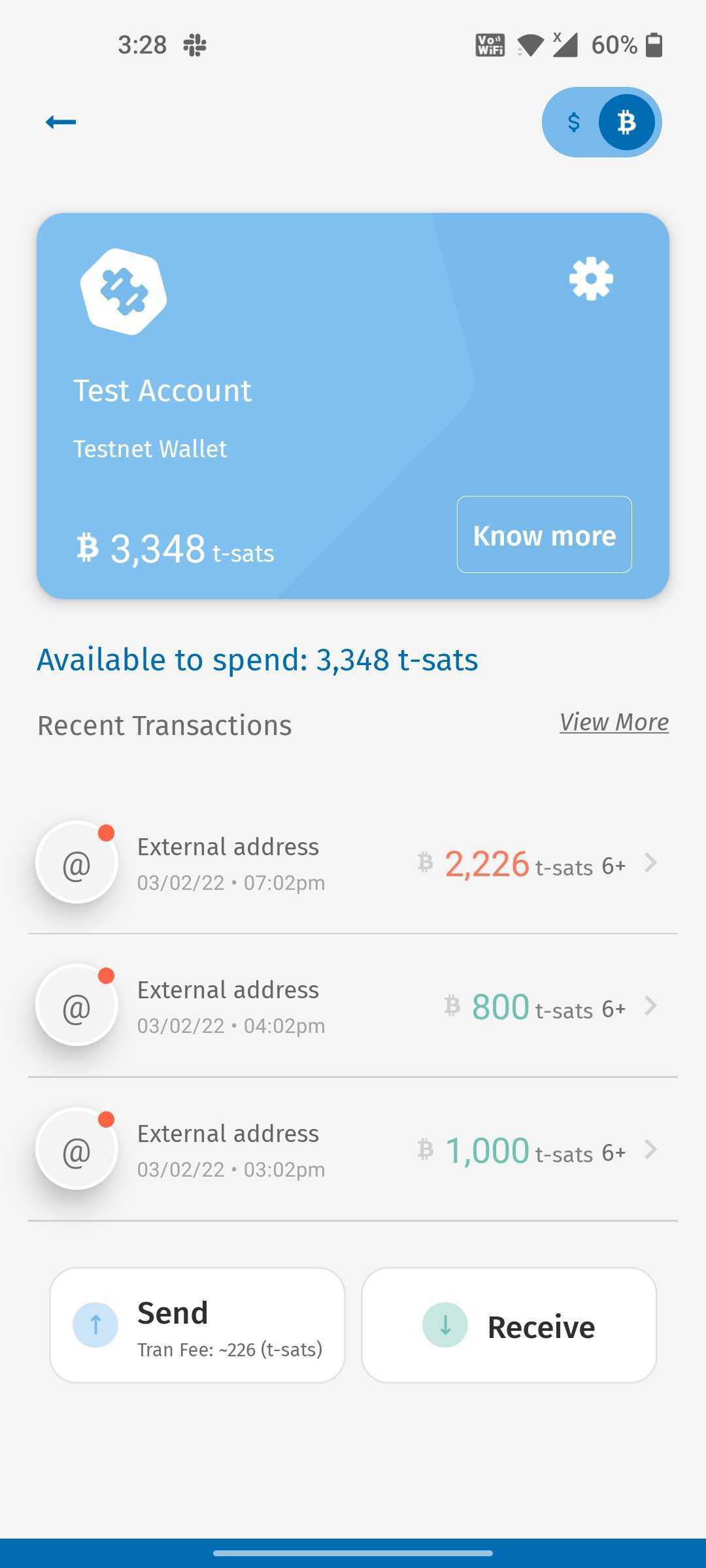

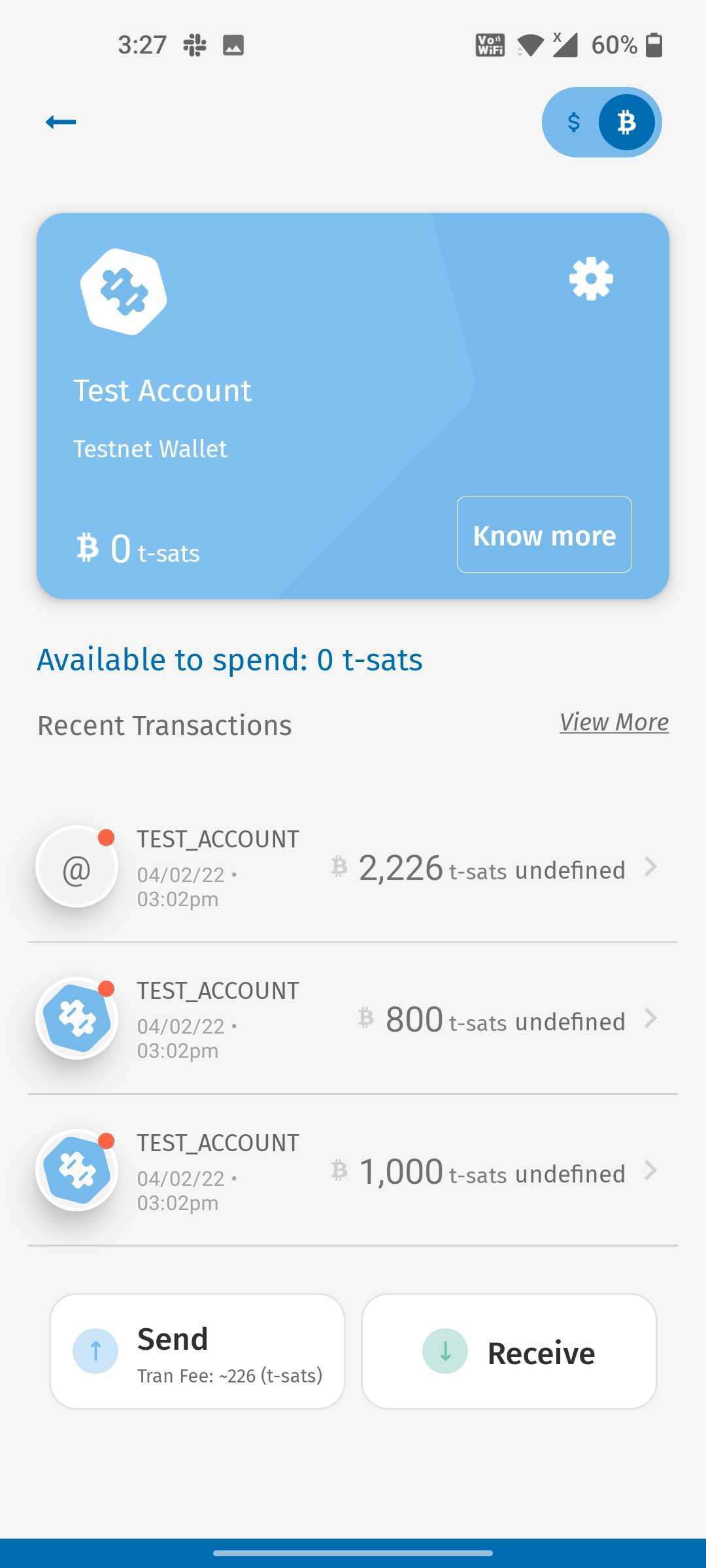

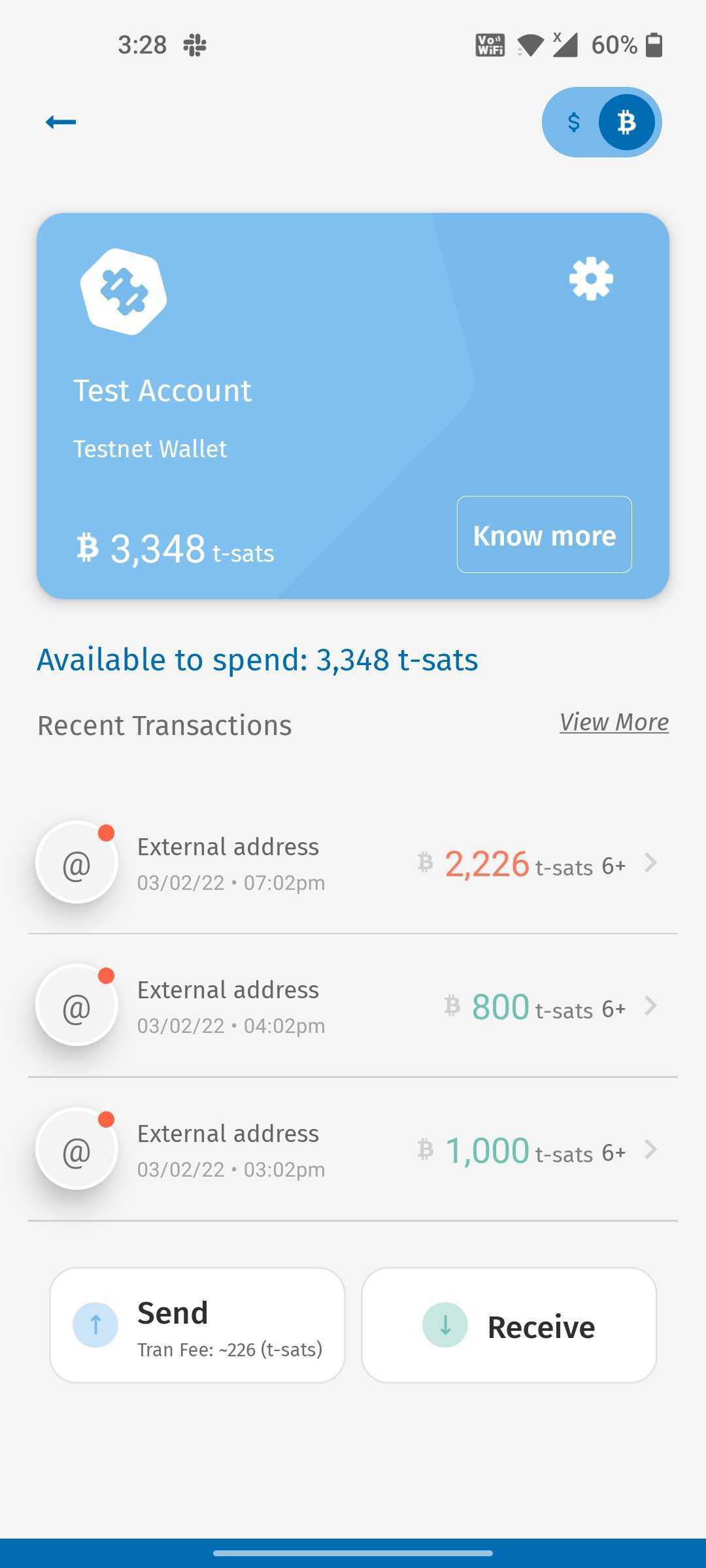

241,230 | 20,110,765,039 | IssuesEvent | 2022-02-07 14:54:04 | bithyve/hexa | https://api.github.com/repos/bithyve/hexa | closed | Post restore:Test account issue. | fixed Test Account 2.0.70 |

- Post recovery When I open the test account, the ... | 1.0 | Post restore:Test account issue. -

- Post recovery... | test | post restore test account issue post recovery when i open the test account the ui was different and confirmation was undefined on refresh the ui was different | 1 |

115,195 | 9,783,620,131 | IssuesEvent | 2019-06-08 12:01:06 | imixs/imixs-workflow | https://api.github.com/repos/imixs/imixs-workflow | closed | SchedulerService must not throw application exceptions | bug testing | The bean: SchedulerService has timeout method onTimeout which must not throw application exceptions | 1.0 | SchedulerService must not throw application exceptions - The bean: SchedulerService has timeout method onTimeout which must not throw application exceptions | test | schedulerservice must not throw application exceptions the bean schedulerservice has timeout method ontimeout which must not throw application exceptions | 1 |

248,020 | 20,989,772,430 | IssuesEvent | 2022-03-29 08:15:32 | OpenSID/OpenSID | https://api.github.com/repos/OpenSID/OpenSID | closed | [Premium V22.03-Rev02] Setelah Impor Database melalui PhpMyadmin, Database berubah bukan menjadi Collation utf8_general_ci | bug tester-22.03 | ### Jelaskan error yg dialami

1. Impor Data_contoh_awal ataupun dari data base backup melalui PHPMYADMIN, Setelah di Impor Database berubah bukan menjadi Collation utf8_general_ci.

2. Hal ini terjadi baik impor di locolhost maupun impor pada hosting

3. Pada hosting setelah impor database, ujicoba loading->website ... | 1.0 | [Premium V22.03-Rev02] Setelah Impor Database melalui PhpMyadmin, Database berubah bukan menjadi Collation utf8_general_ci - ### Jelaskan error yg dialami

1. Impor Data_contoh_awal ataupun dari data base backup melalui PHPMYADMIN, Setelah di Impor Database berubah bukan menjadi Collation utf8_general_ci.

2. Hal in... | test | setelah impor database melalui phpmyadmin database berubah bukan menjadi collation general ci jelaskan error yg dialami impor data contoh awal ataupun dari data base backup melalui phpmyadmin setelah di impor database berubah bukan menjadi collation general ci hal ini terjadi baik impor di loc... | 1 |

185,963 | 14,394,532,873 | IssuesEvent | 2020-12-03 01:31:00 | github-vet/rangeclosure-findings | https://api.github.com/repos/github-vet/rangeclosure-findings | closed | deferpanic/gorump: 1.7/go/src/time/format_test.go; 8 LoC | fresh test tiny |

Found a possible issue in [deferpanic/gorump](https://www.github.com/deferpanic/gorump) at [1.7/go/src/time/format_test.go](https://github.com/deferpanic/gorump/blob/313ecc2ef408fbfd85123cdfcf448042787b53ea/1.7/go/src/time/format_test.go#L186-L193)

Below is the message reported by the analyzer for this snippet of cod... | 1.0 | deferpanic/gorump: 1.7/go/src/time/format_test.go; 8 LoC -

Found a possible issue in [deferpanic/gorump](https://www.github.com/deferpanic/gorump) at [1.7/go/src/time/format_test.go](https://github.com/deferpanic/gorump/blob/313ecc2ef408fbfd85123cdfcf448042787b53ea/1.7/go/src/time/format_test.go#L186-L193)

Below is t... | test | deferpanic gorump go src time format test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message fun... | 1 |

289,817 | 25,015,920,752 | IssuesEvent | 2022-11-03 18:48:00 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | //sw/device/tests:entropy_src_ast_rng_req_test_sim_verilator Times out waiting for entropy | Type:Bug Component:TestTriage | Timed out after 1000 usec

I00000 test_rom.c:133] Version: earlgrey_silver_release_v5-8368-g62844d571, Build Date: 2022-11-02 10:54:46

I00001 test_rom.c:235] Test ROM complete, jumping to flash (addr: 20000480)!

I00000 ottf_main.c:126] Running sw/device/tests/entropy_src_ast_rng_req_test.c

E00001 e... | 1.0 | //sw/device/tests:entropy_src_ast_rng_req_test_sim_verilator Times out waiting for entropy - Timed out after 1000 usec

I00000 test_rom.c:133] Version: earlgrey_silver_release_v5-8368-g62844d571, Build Date: 2022-11-02 10:54:46

I00001 test_rom.c:235] Test ROM complete, jumping to flash (addr: 20000480)!

... | test | sw device tests entropy src ast rng req test sim verilator times out waiting for entropy timed out after usec test rom c version earlgrey silver release build date m m test rom c test rom complete jumping to flash addr m m ottf main c running sw device tests entro... | 1 |

206,931 | 7,123,013,195 | IssuesEvent | 2018-01-19 14:04:02 | fxi/map-x-mgl | https://api.github.com/repos/fxi/map-x-mgl | closed | Layout tweak: adding mapx logo to the app | Priority 1 | Can the new mapx logo be added to the mapx app engine. Can the logo actually have a pop up tag called training and can it link to the training pages on the main landing site ? | 1.0 | Layout tweak: adding mapx logo to the app - Can the new mapx logo be added to the mapx app engine. Can the logo actually have a pop up tag called training and can it link to the training pages on the main landing site ? | non_test | layout tweak adding mapx logo to the app can the new mapx logo be added to the mapx app engine can the logo actually have a pop up tag called training and can it link to the training pages on the main landing site | 0 |

67,681 | 7,057,564,497 | IssuesEvent | 2018-01-04 16:53:28 | JuliaGraphs/LightGraphs.jl | https://api.github.com/repos/JuliaGraphs/LightGraphs.jl | closed | Nonbacktracking matrix eigenvalues are sometime NaN | CI / tests wontfix | Sometimes when you compute the eigenvalues of the Nonbacktracking matrix the leading eigenvalue comes out as NaN. This is happening on the explicitly computed matrix and happens on both 0.5 and 0.6. I need to investigate for changes to eigs that make it more common on 0.6. | 1.0 | Nonbacktracking matrix eigenvalues are sometime NaN - Sometimes when you compute the eigenvalues of the Nonbacktracking matrix the leading eigenvalue comes out as NaN. This is happening on the explicitly computed matrix and happens on both 0.5 and 0.6. I need to investigate for changes to eigs that make it more common ... | test | nonbacktracking matrix eigenvalues are sometime nan sometimes when you compute the eigenvalues of the nonbacktracking matrix the leading eigenvalue comes out as nan this is happening on the explicitly computed matrix and happens on both and i need to investigate for changes to eigs that make it more common ... | 1 |

427,315 | 12,393,982,397 | IssuesEvent | 2020-05-20 16:13:30 | googleapis/elixir-google-api | https://api.github.com/repos/googleapis/elixir-google-api | closed | Synthesis failed for SQLAdmin | autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate SQLAdmin. :broken_heart:

Here's the output from running `synth.py`:

```

led to remove deps/parse_trans/ebin/parse_trans.app: Permission denied

warning: failed to remove deps/parse_trans/ebin/parse_trans_mod.beam: Permission denied

warning: failed to remove deps/parse_trans/ebin/pa... | 1.0 | Synthesis failed for SQLAdmin - Hello! Autosynth couldn't regenerate SQLAdmin. :broken_heart:

Here's the output from running `synth.py`:

```

led to remove deps/parse_trans/ebin/parse_trans.app: Permission denied

warning: failed to remove deps/parse_trans/ebin/parse_trans_mod.beam: Permission denied

warning: failed to... | non_test | synthesis failed for sqladmin hello autosynth couldn t regenerate sqladmin broken heart here s the output from running synth py led to remove deps parse trans ebin parse trans app permission denied warning failed to remove deps parse trans ebin parse trans mod beam permission denied warning failed to... | 0 |

33,847 | 9,206,177,855 | IssuesEvent | 2019-03-08 12:58:20 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | Build error in 1.0.0 (linguist problem in French translation), Qt 4.5.0 beta1 | Category: Build/Install Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **vince -** (vince -)

Original Redmine Issue: 1470, https://issues.qgis.org/issues/1470

Original Assignee: nobody -

---

I am currently trying to port Qgis to Macports. I have fetched the 1.0.0 source code as found on download.osgeo.org/qgis/src, and I use the prerelease of Qt 4.5.0 (not yet posted... | 1.0 | Build error in 1.0.0 (linguist problem in French translation), Qt 4.5.0 beta1 - ---

Author Name: **vince -** (vince -)

Original Redmine Issue: 1470, https://issues.qgis.org/issues/1470

Original Assignee: nobody -

---

I am currently trying to port Qgis to Macports. I have fetched the 1.0.0 source code as found on d... | non_test | build error in linguist problem in french translation qt author name vince vince original redmine issue original assignee nobody i am currently trying to port qgis to macports i have fetched the source code as found on download osgeo org qgis src and i use the... | 0 |

5,460 | 2,576,490,341 | IssuesEvent | 2015-02-12 10:29:12 | KIZI/EasyMiner-EasyMinerCenter | https://api.github.com/repos/KIZI/EasyMiner-EasyMinerCenter | closed | Chyba importu csv s "id" | priority: high state: commited type: bug | Pokud csv obsahuje sloupec ID, dojde k pádu importu (ani se nevytvoří příslušná tabulka)...

Zároveň kontrolovat unikátnost názvu sloupců | 1.0 | Chyba importu csv s "id" - Pokud csv obsahuje sloupec ID, dojde k pádu importu (ani se nevytvoří příslušná tabulka)...

Zároveň kontrolovat unikátnost názvu sloupců | non_test | chyba importu csv s id pokud csv obsahuje sloupec id dojde k pádu importu ani se nevytvoří příslušná tabulka zároveň kontrolovat unikátnost názvu sloupců | 0 |

218,267 | 16,981,410,793 | IssuesEvent | 2021-06-30 09:19:33 | moby/moby | https://api.github.com/repos/moby/moby | opened | Flaky test: libnetwork TestCreateParallel (arm64) | area/networking area/testing kind/bug | logs: https://ci-next.docker.com/public/blue/rest/organizations/jenkins/pipelines/moby/branches/PR-42576/runs/1/nodes/295/log/?start=0

Seen failing on https://github.com/moby/moby/pull/42576

```

=== RUN TestCreateParallel

time="2021-06-29T04:56:20Z" level=warning msg="bridge store not initialized. kv object d... | 1.0 | Flaky test: libnetwork TestCreateParallel (arm64) - logs: https://ci-next.docker.com/public/blue/rest/organizations/jenkins/pipelines/moby/branches/PR-42576/runs/1/nodes/295/log/?start=0

Seen failing on https://github.com/moby/moby/pull/42576

```

=== RUN TestCreateParallel

time="2021-06-29T04:56:20Z" level=wa... | test | flaky test libnetwork testcreateparallel logs seen failing on run testcreateparallel time level warning msg bridge store not initialized kv object docker network bridge is not added to the store time level warning msg bridge store not initialized kv obj... | 1 |

6,849 | 10,040,321,111 | IssuesEvent | 2019-07-18 19:39:26 | westmary48/le-voyage- | https://api.github.com/repos/westmary48/le-voyage- | closed | Single Trip | CRUD MVP requirement | ## User Story

As a user, when view my home page, I should see a link called view memory and when I click the link, I should be taken to a page that has my single memory information.

## Acceptance Criteria

**WHEN** I look at the home page

**THEN** I should see a link on each of my cards that says view memory

**AN... | 1.0 | Single Trip - ## User Story

As a user, when view my home page, I should see a link called view memory and when I click the link, I should be taken to a page that has my single memory information.

## Acceptance Criteria

**WHEN** I look at the home page

**THEN** I should see a link on each of my cards that says vie... | non_test | single trip user story as a user when view my home page i should see a link called view memory and when i click the link i should be taken to a page that has my single memory information acceptance criteria when i look at the home page then i should see a link on each of my cards that says vie... | 0 |

135,714 | 30,351,332,914 | IssuesEvent | 2023-07-11 19:13:10 | creativecommons/cc-resource-archive | https://api.github.com/repos/creativecommons/cc-resource-archive | closed | Unnecessary <a> tags in resource page | 🟧 priority: high 🏁 status: ready for work 🛠 goal: fix 💻 aspect: code | ## Description

Unnecessary <a> tags are used in all the resource pages.

## Reproduction

1. open docs folder

2. then navigate to _site folder

3. all the index.html files in each folder contains this error ( specifically while rendering the title for the page )

4. See error.

5. This is also seen in the resource.... | 1.0 | Unnecessary <a> tags in resource page - ## Description

Unnecessary <a> tags are used in all the resource pages.

## Reproduction

1. open docs folder

2. then navigate to _site folder

3. all the index.html files in each folder contains this error ( specifically while rendering the title for the page )

4. See error... | non_test | unnecessary tags in resource page description unnecessary tags are used in all the resource pages reproduction open docs folder then navigate to site folder all the index html files in each folder contains this error specifically while rendering the title for the page see error ... | 0 |

60,987 | 6,720,332,610 | IssuesEvent | 2017-10-16 07:21:19 | zalando/zalenium | https://api.github.com/repos/zalando/zalenium | closed | Kubernetes GKE support | waiting-retest | Running kubernetes version 1.6.6 on GKE and I'm having trouble getting started with zalenium.

Manifest:

```

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: zalenium

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: RoleBinding

metadata:

name: zalenium

subjects:

- kind: ServiceAccount... | 1.0 | Kubernetes GKE support - Running kubernetes version 1.6.6 on GKE and I'm having trouble getting started with zalenium.

Manifest:

```

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: zalenium

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: RoleBinding

metadata:

name: zalenium

subjects... | test | kubernetes gke support running kubernetes version on gke and i m having trouble getting started with zalenium manifest apiversion kind serviceaccount metadata name zalenium apiversion rbac authorization io kind rolebinding metadata name zalenium subjects kind... | 1 |

25,764 | 5,198,035,376 | IssuesEvent | 2017-01-23 17:03:15 | arquillian/arquillian-cube | https://api.github.com/repos/arquillian/arquillian-cube | closed | Refactor ftest-containerobject project | bug documentation enhancement | ##### Issue Overview

Refactor `ftest-containerobject` so instead of using Ping Pong container which is not a real example of when to use Container Objects pattern and change it to use an FTP server (https://github.com/m-creations/docker-openwrt-ftp) with Apache Commons (https://commons.apache.org/proper/commons-net/... | 1.0 | Refactor ftest-containerobject project - ##### Issue Overview

Refactor `ftest-containerobject` so instead of using Ping Pong container which is not a real example of when to use Container Objects pattern and change it to use an FTP server (https://github.com/m-creations/docker-openwrt-ftp) with Apache Commons (https... | non_test | refactor ftest containerobject project issue overview refactor ftest containerobject so instead of using ping pong container which is not a real example of when to use container objects pattern and change it to use an ftp server with apache commons expected behaviour use ftp which is a mo... | 0 |

101,946 | 31,771,595,658 | IssuesEvent | 2023-09-12 12:09:47 | llvm/llvm-project | https://api.github.com/repos/llvm/llvm-project | closed | Failed Profile-i386 tests on new Windows 32bit MSVC buildbot | clang bugzilla build-problem obsolete platform:windows | | | |

| --- | --- |

| Bugzilla Link | [47759](https://llvm.org/bz47759) |

| Version | trunk |

| OS | Windows 2000 |

| CC | @zygoloid |

## Extended Description

When setting up a new 32bit WIndows buildbot with MSVC, I noticed a few of the instrprof tests are failing. The buildbot is currently in staging as I want to... | 1.0 | Failed Profile-i386 tests on new Windows 32bit MSVC buildbot - | | |

| --- | --- |

| Bugzilla Link | [47759](https://llvm.org/bz47759) |

| Version | trunk |

| OS | Windows 2000 |

| CC | @zygoloid |

## Extended Description

When setting up a new 32bit WIndows buildbot with MSVC, I noticed a few of the instrprof tests... | non_test | failed profile tests on new windows msvc buildbot bugzilla link version trunk os windows cc zygoloid extended description when setting up a new windows buildbot with msvc i noticed a few of the instrprof tests are failing the buildbot is currently in s... | 0 |

216,947 | 16,675,268,121 | IssuesEvent | 2021-06-07 15:27:28 | bounswe/2021SpringGroup10 | https://api.github.com/repos/bounswe/2021SpringGroup10 | closed | Creation of RAM table template for the Milestone | Everybody Priority: High Status: Needs Review Status: Pending Type: Communication Type: Documentation Type: Wiki | We should show the task explicitly. The main headings are Wiki Documentation, Communication, Requirements, User Scenarios and Mockups, UML Diagrams, Project Plan, and RAM. | 1.0 | Creation of RAM table template for the Milestone - We should show the task explicitly. The main headings are Wiki Documentation, Communication, Requirements, User Scenarios and Mockups, UML Diagrams, Project Plan, and RAM. | non_test | creation of ram table template for the milestone we should show the task explicitly the main headings are wiki documentation communication requirements user scenarios and mockups uml diagrams project plan and ram | 0 |

93,620 | 8,439,255,578 | IssuesEvent | 2018-10-18 00:47:22 | kubeflow/testing | https://api.github.com/repos/kubeflow/testing | closed | NFS share is out of space. | area/testing priority/p1 |

```

W

+ SRC_DIR=/mnt/test-data-volume/kubeflow-presubmit-kfctl-1776-268c7f3-3833-549e/src

+ mkdir -p /src/kubeflow

+ git clone https://github.com/kubeflow/kubeflow.git /mnt/test-data-volume/kubeflow-presubmit-kfctl-1776-268c7f3-3833-549e/src/kubeflow/kubeflow

Cloning into '/mnt/test-data-volume/kubeflow-presubmi... | 1.0 | NFS share is out of space. -

```

W

+ SRC_DIR=/mnt/test-data-volume/kubeflow-presubmit-kfctl-1776-268c7f3-3833-549e/src

+ mkdir -p /src/kubeflow

+ git clone https://github.com/kubeflow/kubeflow.git /mnt/test-data-volume/kubeflow-presubmit-kfctl-1776-268c7f3-3833-549e/src/kubeflow/kubeflow

Cloning into '/mnt/test-... | test | nfs share is out of space w src dir mnt test data volume kubeflow presubmit kfctl src mkdir p src kubeflow git clone mnt test data volume kubeflow presubmit kfctl src kubeflow kubeflow cloning into mnt test data volume kubeflow presubmit kfctl src kubeflow kubeflow ... | 1 |

20,002 | 11,355,895,127 | IssuesEvent | 2020-01-24 21:11:39 | edgexfoundry/edgex-go | https://api.github.com/repos/edgexfoundry/edgex-go | opened | Update OpenAPI Docs for V2 API -- Generic Errors | core-services f2f-geneva support-services | Remove ErrorEnvelope (batch endpoint DTO -- wraps use-case DTO).

Add ErrorResponse (use-case DTO).

Add ErrorResponse to all relevant use-case-request paths.

Related conversation:

| 2.0 | Update OpenAPI Docs for V2 API -- Generic Errors - Remove ErrorEnvelope (batch endpoint DTO -- wraps use-case DTO).

Add ErrorResponse (use-case DTO).

Add ErrorResponse to all relevant use-case-request paths.

Related conversation:

vs. ingame IRC and /say Command | bugMinor need to be tested | (Ingame) IRC chat msg's and /say msg's show up additional times equal to the number of times that item was used.

Relogging resets the "use count" to 0.

Needs to be tested if can be fixed by IRC relay change.

Bug does not affect normal ingame player msg's. So chatting woth other players ingame is flawless. | 1.0 | Remote Accessor (id:7048) vs. ingame IRC and /say Command - (Ingame) IRC chat msg's and /say msg's show up additional times equal to the number of times that item was used.

Relogging resets the "use count" to 0.

Needs to be tested if can be fixed by IRC relay change.

Bug does not affect normal ingame player msg'... | test | remote accessor id vs ingame irc and say command ingame irc chat msg s and say msg s show up additional times equal to the number of times that item was used relogging resets the use count to needs to be tested if can be fixed by irc relay change bug does not affect normal ingame player msg s ... | 1 |

745,963 | 26,008,164,419 | IssuesEvent | 2022-12-20 21:39:19 | robotframework/robotframework | https://api.github.com/repos/robotframework/robotframework | closed | Bug in `--reportbackgroundcolor` documentation in the User Guide | bug priority: medium | _Observation_: User guide and robot mismatching in #setting-background-colors section

_User Guide_: If you specify three colors, the first one will be used when all the tests pass, the second when all tests have been skipped, and the last when there are any failures. (pass:skip:fail)

_Robot Command Line Help_: '-... | 1.0 | Bug in `--reportbackgroundcolor` documentation in the User Guide - _Observation_: User guide and robot mismatching in #setting-background-colors section

_User Guide_: If you specify three colors, the first one will be used when all the tests pass, the second when all tests have been skipped, and the last when there ... | non_test | bug in reportbackgroundcolor documentation in the user guide observation user guide and robot mismatching in setting background colors section user guide if you specify three colors the first one will be used when all the tests pass the second when all tests have been skipped and the last when there ... | 0 |

177,829 | 29,170,712,408 | IssuesEvent | 2023-05-19 01:20:08 | wpumacay/renderer | https://api.github.com/repos/wpumacay/renderer | closed | Shader Manager Implementation | component: python component: shaders component: assets priority: high type: design type: documentation type: feature | # Description

This tracks the implementation of the `shader_manager` singleton, used to both `create` and `store` shaders in a easier way. The rationale is that `shaders` will be considered as assets, and this manager will be in charge of easily create them and share the ownership of this shaders with user code that... | 1.0 | Shader Manager Implementation - # Description

This tracks the implementation of the `shader_manager` singleton, used to both `create` and `store` shaders in a easier way. The rationale is that `shaders` will be considered as assets, and this manager will be in charge of easily create them and share the ownership of ... | non_test | shader manager implementation description this tracks the implementation of the shader manager singleton used to both create and store shaders in a easier way the rationale is that shaders will be considered as assets and this manager will be in charge of easily create them and share the ownership of ... | 0 |

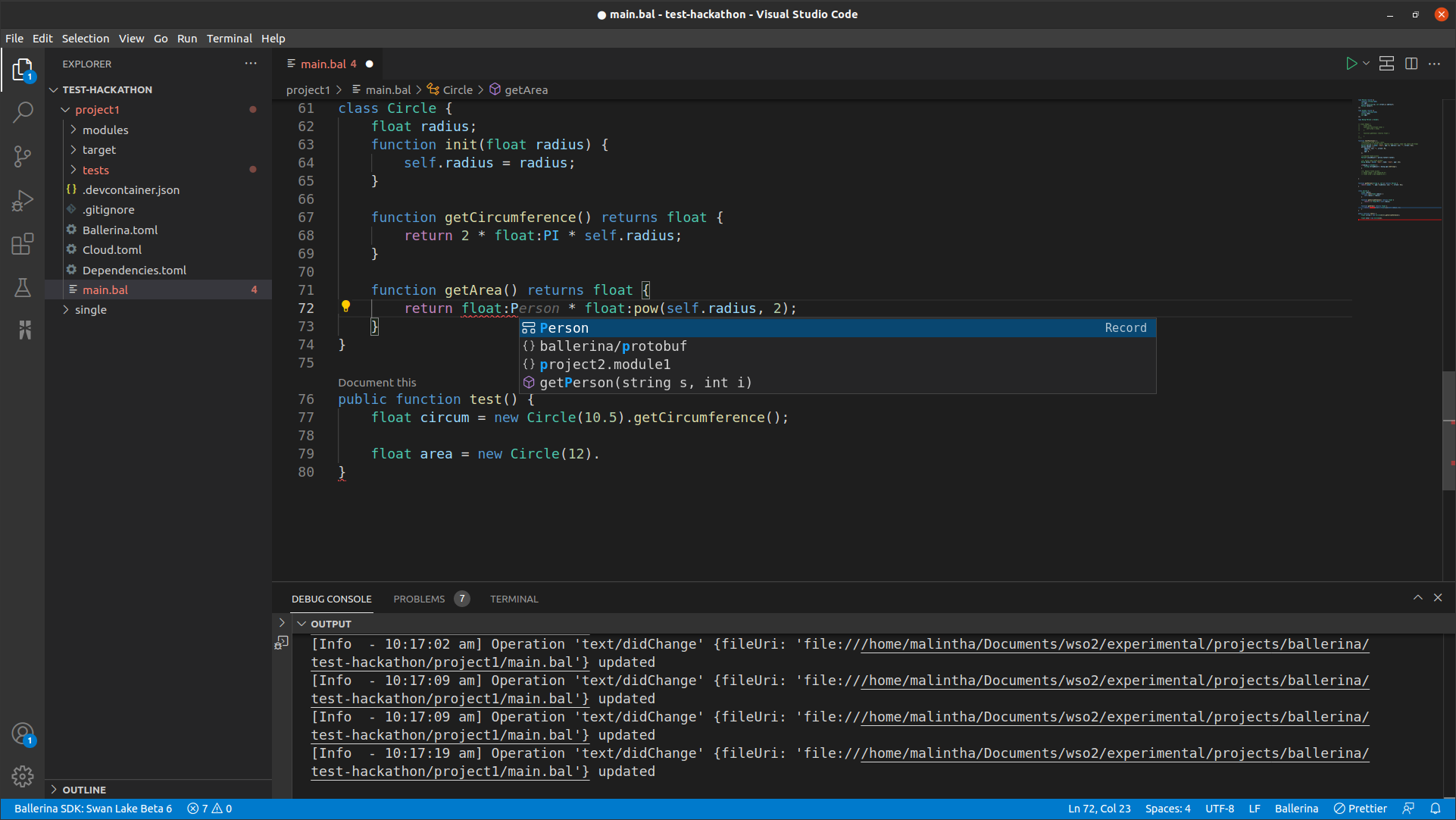

236,717 | 19,569,640,259 | IssuesEvent | 2022-01-04 08:14:59 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | No completion support for Qualified name reference | Type/Bug Priority/High Team/LanguageServer Area/Completion GA-Test-Hackathon | **Description:**

Consider the following capture.

Visible symbols in the float module is not suggested as depicted above.

**Steps to reproduce:**

```ballerina

class Circle {

float radius;

... | 1.0 | No completion support for Qualified name reference - **Description:**

Consider the following capture.

Visible symbols in the float module is not suggested as depicted above.

**Steps to reproduce:*... | test | no completion support for qualified name reference description consider the following capture visible symbols in the float module is not suggested as depicted above steps to reproduce ballerina class circle float radius function init float radius self radius r... | 1 |

93,084 | 10,764,499,396 | IssuesEvent | 2019-11-01 08:28:39 | Kzrthikz/ped | https://api.github.com/repos/Kzrthikz/ped | opened | Optional inputs are confusing. | severity.Medium type.DocumentationBug | From the user guide, optional user inputs are not clear. Could consider using [CAPS] to show its an optional user input. This is especially confusing for filter feature as to what is defined by "FIELD", "QUANTIFIER" and "VALUE".

| 1.0 | Optional inputs are confusing. - From the user guide, optional user inputs are not clear. Could consider using [CAPS] to show its an optional user input. This is especially confusing for filter feature as to what is defined by "FIELD", "QUANTIFIER" and "VALUE".

| non_test | optional inputs are confusing from the user guide optional user inputs are not clear could consider using to show its an optional user input this is especially confusing for filter feature as to what is defined by field quantifier and value | 0 |

50,690 | 6,107,374,474 | IssuesEvent | 2017-06-21 07:56:12 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | closed | Disable fetching background images on test runs | automated-tests suggestion | **Describe the issue you encountered:** Create an option to disable fetching background images on test runs.

https://github.com/brave/browser-laptop/issues/6503#issuecomment-304769664

> I notice `npm run test` often fails due to timeout because images are not fetched in time.

Per @bsclifton;

> another option: w... | 1.0 | Disable fetching background images on test runs - **Describe the issue you encountered:** Create an option to disable fetching background images on test runs.

https://github.com/brave/browser-laptop/issues/6503#issuecomment-304769664

> I notice `npm run test` often fails due to timeout because images are not fetche... | test | disable fetching background images on test runs describe the issue you encountered create an option to disable fetching background images on test runs i notice npm run test often fails due to timeout because images are not fetched in time per bsclifton another option we could default that se... | 1 |

589,217 | 17,692,463,131 | IssuesEvent | 2021-08-24 11:46:11 | kirbydesign/designsystem | https://api.github.com/repos/kirbydesign/designsystem | closed | [Housekeeping] Keep a CHANGELOG.md | NOT Tech refined housekeeping priority 2 | **Short description of housekeeping request**

Maintain a changelog next to the code instead of only in the cookbook site named CHANGELOG.md This is a pretty standard location for changelogs, thus making it easier for people unfamiliar with Kirby Design System to see changes between versions at a glance. The format c... | 1.0 | [Housekeeping] Keep a CHANGELOG.md - **Short description of housekeeping request**

Maintain a changelog next to the code instead of only in the cookbook site named CHANGELOG.md This is a pretty standard location for changelogs, thus making it easier for people unfamiliar with Kirby Design System to see changes betwe... | non_test | keep a changelog md short description of housekeeping request maintain a changelog next to the code instead of only in the cookbook site named changelog md this is a pretty standard location for changelogs thus making it easier for people unfamiliar with kirby design system to see changes between versions a... | 0 |

85,998 | 15,755,314,366 | IssuesEvent | 2021-03-31 01:33:29 | heltondoria/event-system | https://api.github.com/repos/heltondoria/event-system | opened | CVE-2020-7751 (High) detected in pathval-1.1.0.tgz | security vulnerability | ## CVE-2020-7751 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>pathval-1.1.0.tgz</b></p></summary>

<p>Object value retrieval given a string path</p>

<p>Library home page: <a href="ht... | True | CVE-2020-7751 (High) detected in pathval-1.1.0.tgz - ## CVE-2020-7751 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>pathval-1.1.0.tgz</b></p></summary>

<p>Object value retrieval give... | non_test | cve high detected in pathval tgz cve high severity vulnerability vulnerable library pathval tgz object value retrieval given a string path library home page a href path to dependency file event system package json path to vulnerable library event system node modules... | 0 |

37,122 | 5,103,110,167 | IssuesEvent | 2017-01-04 20:21:52 | dhermes/bezier | https://api.github.com/repos/dhermes/bezier | opened | Implemented Speedups in C/C++/Fortran | hygiene perf testing | e.g. for `Surface.evaluate_cartesian`.

To provide test coverage for these extensions, can [use `gcov`][1]

[1]: http://stackoverflow.com/questions/5144603/shared-library-coverage-test-with-gcov-linux-fortran | 1.0 | Implemented Speedups in C/C++/Fortran - e.g. for `Surface.evaluate_cartesian`.

To provide test coverage for these extensions, can [use `gcov`][1]

[1]: http://stackoverflow.com/questions/5144603/shared-library-coverage-test-with-gcov-linux-fortran | test | implemented speedups in c c fortran e g for surface evaluate cartesian to provide test coverage for these extensions can | 1 |

256,861 | 22,107,069,652 | IssuesEvent | 2022-06-01 17:50:29 | owid/covid-19-data | https://api.github.com/repos/owid/covid-19-data | opened | Ending our COVID-19 testing data updates | dom:testing announcement | As of 23 June 2022, we will no longer add new data points to our COVID-19 testing dataset. We will continue updates of all other metrics in our COVID-19 dataset.

You can read more [in this discussion](https://github.com/owid/covid-19-data/discussions/2667) | 1.0 | Ending our COVID-19 testing data updates - As of 23 June 2022, we will no longer add new data points to our COVID-19 testing dataset. We will continue updates of all other metrics in our COVID-19 dataset.

You can read more [in this discussion](https://github.com/owid/covid-19-data/discussions/2667) | test | ending our covid testing data updates as of june we will no longer add new data points to our covid testing dataset we will continue updates of all other metrics in our covid dataset you can read more | 1 |

211,634 | 16,330,998,344 | IssuesEvent | 2021-05-12 09:12:22 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | opened | No error info displays in the 'Error Details' dialog after clicking the 'Details...' next to a promote version failed activity log | 🧪 testing | **Storage Explorer Version**: 1.20.0-dev

**Build Number**: 20210512.2

**Branch**: main

**Platform/OS**: Windows 10/ Linux Ubuntu 18.04/ MacOS Big Sur 11.3

**Architecture**: ia32/x64

**How found**: exploratory testing

**Regression From**: Not a regression

## Steps to Reproduce ##

1. Expand the storage account ... | 1.0 | No error info displays in the 'Error Details' dialog after clicking the 'Details...' next to a promote version failed activity log - **Storage Explorer Version**: 1.20.0-dev

**Build Number**: 20210512.2

**Branch**: main

**Platform/OS**: Windows 10/ Linux Ubuntu 18.04/ MacOS Big Sur 11.3

**Architecture**: ia32/x64

... | test | no error info displays in the error details dialog after clicking the details next to a promote version failed activity log storage explorer version dev build number branch main platform os windows linux ubuntu macos big sur architecture how found ex... | 1 |

30,922 | 2,729,656,404 | IssuesEvent | 2015-04-16 09:59:29 | jkall/qgis-midvatten-plugin | https://api.github.com/repos/jkall/qgis-midvatten-plugin | opened | allow obsid duplicates by introducing another ID as primary key | enhancement Priority-High | Introduce a unique observation id that is independent of obsid and name. This will allow the existence of duplicates among obsid.

Major code revisions are needed. Several security checks are needed during imports and also, when obsid duplicates are found, user interaction to distinguish between observations of sa... | 1.0 | allow obsid duplicates by introducing another ID as primary key - Introduce a unique observation id that is independent of obsid and name. This will allow the existence of duplicates among obsid.

Major code revisions are needed. Several security checks are needed during imports and also, when obsid duplicates ar... | non_test | allow obsid duplicates by introducing another id as primary key introduce a unique observation id that is independent of obsid and name this will allow the existence of duplicates among obsid major code revisions are needed several security checks are needed during imports and also when obsid duplicates ar... | 0 |

179,704 | 13,895,971,515 | IssuesEvent | 2020-10-19 16:31:49 | WuriGuinea/mosip-guinea-ref-impl | https://api.github.com/repos/WuriGuinea/mosip-guinea-ref-impl | closed | Additional address details-i.e. "complément d'adresse" not populated in notification email and/or INU card | In progress Priority 1 Test | See Row 1131 in Master templates,

$!additionalAddressDetails_fra | 1.0 | Additional address details-i.e. "complément d'adresse" not populated in notification email and/or INU card - See Row 1131 in Master templates,

$!additionalAddressDetails_fra | test | additional address details i e complément d adresse not populated in notification email and or inu card see row in master templates additionaladdressdetails fra | 1 |

339,046 | 10,241,019,185 | IssuesEvent | 2019-08-19 22:33:33 | kubernetes-sigs/cluster-api-provider-aws | https://api.github.com/repos/kubernetes-sigs/cluster-api-provider-aws | closed | service of type LoadBalancer stuck in pending, missing tag on subnets | kind/bug lifecycle/active priority/important-soon | /kind bug

**What steps did you take and what happened:**

deployed CAPA v0.3.7 successfully

`kubectl run test1 --image=nginx`

`kubectl expose deploy test1 --port=80 --type=LoadBalancer`

```

E0813 18:59:18.479595 1 service_controller.go:219] error processing service default/test1 (will retry): failed to en... | 1.0 | service of type LoadBalancer stuck in pending, missing tag on subnets - /kind bug

**What steps did you take and what happened:**

deployed CAPA v0.3.7 successfully

`kubectl run test1 --image=nginx`

`kubectl expose deploy test1 --port=80 --type=LoadBalancer`

```

E0813 18:59:18.479595 1 service_controller.g... | non_test | service of type loadbalancer stuck in pending missing tag on subnets kind bug what steps did you take and what happened deployed capa successfully kubectl run image nginx kubectl expose deploy port type loadbalancer service controller go error processing ... | 0 |
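The title of the row above points at a missing tag on the subnets. A minimal sketch of how such a check could look is given here. It assumes the tag key follows the `kubernetes.io/cluster/<cluster-name>` convention used by the AWS cloud provider; the truncated issue text does not name the key, and the `subnets_missing_cluster_tag` helper and the subnet dictionaries are hypothetical.

```python
def subnets_missing_cluster_tag(subnets, cluster_name):
    """Return the ids of subnets that lack the cluster tag.

    Assumption: the expected tag key is kubernetes.io/cluster/<cluster_name>.
    Each subnet is a plain dict with an "id" and an optional "tags" mapping.
    """
    key = f"kubernetes.io/cluster/{cluster_name}"
    return [s["id"] for s in subnets if key not in s.get("tags", {})]


# Hypothetical input data, for illustration only.
example_subnets = [
    {"id": "subnet-a", "tags": {"kubernetes.io/cluster/demo": "shared"}},
    {"id": "subnet-b", "tags": {}},
]
untagged = subnets_missing_cluster_tag(example_subnets, "demo")
```

In a real cluster the subnet list would come from the cloud API rather than from a literal; the sketch only shows the comparison itself.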

227,232 | 18,054,175,771 | IssuesEvent | 2021-09-20 05:09:48 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.pfc.test.sanity_base.ATTVAR_04 JUnit | Test_9999 logicmoo.pfc.test.sanity_base unit_test ATTVAR_04 Passing | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif attvar_04.pl)

% ISSUE: https://github.com/logicmoo/logicmoo_workspace/issues/

% EDIT: https://github.com/lo... | 3.0 | logicmoo.pfc.test.sanity_base.ATTVAR_04 JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/pfc/t/sanity_base ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s swipl -x /var/lib/jenkins/workspace/logicmoo_workspace/bin/lmoo-clif attvar_04.pl)

% ISSUE: https://github.com/logicmoo/logicmoo_... | test | logicmoo pfc test sanity base attvar junit cd var lib jenkins workspace logicmoo workspace packs sys pfc t sanity base timeout foreground preserve status s sigkill k swipl x var lib jenkins workspace logicmoo workspace bin lmoo clif attvar pl issue edit jenkins issue search ... | 1 |

40,911 | 2,868,949,721 | IssuesEvent | 2015-06-05 22:08:45 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Make the pub shell script work from within the repo | enhancement Fixed Priority-Medium | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#10928_

----

The shell script under sdk/bin/ for running pub only w... | 1.0 | Make the pub shell script work from within the repo - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#10928_

----

... | non_test | make the pub shell script work from within the repo issue by originally opened as dart lang sdk the shell script under sdk bin for running pub only works in the built sdk not in the source repo it should support both for reference the script handles this | 0 |

170,788 | 27,015,556,558 | IssuesEvent | 2023-02-10 19:01:22 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Tuple Declaration Hiding | Area-IDE Feature Request Developer Community Need Design Review | New code editor suggestion. I love Tuples but they elongate the method declaration. The suggestion is to allow a toggle to collapse them. For example...

```

private static (bool showHelp, bool deleteExistingFiles, string inputFolder, string outputFolder, bool pauseCommandWindow, List<string> Fil... | 1.0 | Tuple Declaration Hiding - New code editor suggestion. I love Tuples but they elongate the method declaration. The suggestion is to allow a toggle to collapse them. For example...

```

private static (bool showHelp, bool deleteExistingFiles, string inputFolder, string outputFolder, bool pauseCommandWin... | non_test | tuple declaration hiding new code editor suggestion i love tuples but they elongate the method declaration the suggestion is to allow a toggle to collapse them for example private static bool showhelp bool deleteexistingfiles string inputfolder string outputfolder bool pausecommandwin... | 0 |

273,208 | 23,738,083,294 | IssuesEvent | 2022-08-31 09:52:26 | pingcap/tidb | https://api.github.com/repos/pingcap/tidb | closed | unstable test in the TestNowAndUTCTimestamp | type/bug component/test component/expression severity/minor | ## Bug Report

Please answer these questions before submitting your issue. Thanks!

### 1. Minimal reproduce step (Required)

```

=== RUN TestNowAndUTCTimestamp

builtin_time_test.go:849:

Error Trace: /home/jenkins/.tidb/tmp/04446c229c5a73c16deb3edddcb4db34/sandbox/processwrapper-sandbox/5550/e... | 1.0 | unstable test in the TestNowAndUTCTimestamp - ## Bug Report

Please answer these questions before submitting your issue. Thanks!

### 1. Minimal reproduce step (Required)

```

=== RUN TestNowAndUTCTimestamp

builtin_time_test.go:849:

Error Trace: /home/jenkins/.tidb/tmp/04446c229c5a73c16deb3edd... | test | unstable test in the testnowandutctimestamp bug report please answer these questions before submitting your issue thanks minimal reproduce step required run testnowandutctimestamp builtin time test go error trace home jenkins tidb tmp sandbox processwrapper s... | 1 |

776,903 | 27,264,758,444 | IssuesEvent | 2023-02-22 17:11:07 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | opened | SC-7(11): Boundary Protection | Restrict Incoming Communications Traffic | Priority: P2 Class: Technical ITSG-33 Suggested Assignment: IT Projects Control: SC-7 | # Control Definition

BOUNDARY PROTECTION | RESTRICT INCOMING COMMUNICATIONS TRAFFIC

The information system only allows incoming communications from [Assignment: organization-defined authorized sources] routed to [Assignment: organization-defined authorized destinations].

# Class

Technical

# Supplemental Guidance

Thi... | 1.0 | SC-7(11): Boundary Protection | Restrict Incoming Communications Traffic - # Control Definition

BOUNDARY PROTECTION | RESTRICT INCOMING COMMUNICATIONS TRAFFIC

The information system only allows incoming communications from [Assignment: organization-defined authorized sources] routed to [Assignment: organization-define... | non_test | sc boundary protection restrict incoming communications traffic control definition boundary protection restrict incoming communications traffic the information system only allows incoming communications from routed to class technical supplemental guidance this control enhancement provides dete... | 0 |

284,620 | 24,611,193,486 | IssuesEvent | 2022-10-14 21:42:52 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | opened | DISABLED test_binary_op_scalar_fastpath__foreach_mul_cuda_bfloat16 (__main__.TestForeachCUDA) | triaged module: flaky-tests skipped module: mta | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_binary_op_scalar_fastpath__foreach_mul_cuda_bfloat16&suite=TestForeachCUDA) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/8897924861).

Over the pas... | 1.0 | DISABLED test_binary_op_scalar_fastpath__foreach_mul_cuda_bfloat16 (__main__.TestForeachCUDA) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_binary_op_scalar_fastpath__foreach_mul_cuda_bfloat16&suite=TestForeachCUDA) and the most... | test | disabled test binary op scalar fastpath foreach mul cuda main testforeachcuda platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has been determined flaky in workflow s with failures and successes debugging instruc... | 1 |

579,586 | 17,195,248,357 | IssuesEvent | 2021-07-16 16:21:22 | magento/magento2 | https://api.github.com/repos/magento/magento2 | opened | Update laminas/laminas-code composer dependency to version 4.4.2 | Priority: P2 Project: Platform Health | Update laminas/laminas-code composer dependency to version 4.4.2 | 1.0 | Update laminas/laminas-code composer dependency to version 4.4.2 - Update laminas/laminas-code composer dependency to version 4.4.2 | non_test | update laminas laminas code composer dependency to version update laminas laminas code composer dependency to version | 0 |

529,021 | 15,378,998,290 | IssuesEvent | 2021-03-02 19:03:52 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | closed | Can collection carousel display be sorted by # of editions or availability? | Lead: @mekarpeles Needs: Community Discussion Priority: 3 Type: Question | ### Question

Can collection carousel display be sorted by # of editions or availability?

### Additional context

For example, if the results of a query include public domain, lending library, and print-disabled, the ability to sort or filter on the type best suited for the collection would be helpful. See https://o... | 1.0 | Can collection carousel display be sorted by # of editions or availability? - ### Question

Can collection carousel display be sorted by # of editions or availability?

### Additional context

For example, if the results of a query include public domain, lending library, and print-disabled, the ability to sort or fil... | non_test | can collection carousel display be sorted by of editions or availability question can collection carousel display be sorted by of editions or availability additional context for example if the results of a query include public domain lending library and print disabled the ability to sort or fil... | 0 |

318,229 | 27,296,533,454 | IssuesEvent | 2023-02-23 20:52:55 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Generalization test for the tag Servidores - Dados dos Servidores - Luminárias | generalization test development | DoD: Run the generalization test of the validator for the tag Servidores - Dados dos Servidores for the municipality of Luminárias. | 1.0 | Generalization test for the tag Servidores - Dados dos Servidores - Luminárias - DoD: Run the generalization test of the validator for the tag Servidores - Dados dos Servidores for the municipality of Luminárias. | test | generalization test for the tag servidores dados dos servidores luminárias dod run the generalization test of the validator for the tag servidores dados dos servidores for the municipality of luminárias | 1 |

21,422 | 3,899,075,999 | IssuesEvent | 2016-04-17 14:22:44 | BobbyDarkbean/consumer-producer-solutions | https://api.github.com/repos/BobbyDarkbean/consumer-producer-solutions | closed | CP-20: add test extension | enhancement implementation prototype test | Server module mock extension.

Implement interfaces: IRequestDecoder, IReplyEncoder, ISocketController, IConnectionTaskChart, IConnectionTaskFactory and provide concrete tasks. | 1.0 | CP-20: add test extension - Server module mock extension.

Implement interfaces: IRequestDecoder, IReplyEncoder, ISocketController, IConnectionTaskChart, IConnectionTaskFactory and provide concrete tasks. | test | cp add test extension server module mock extension implement interfaces irequestdecoder ireplyencoder isocketcontroller iconnectiontaskchart iconnectiontaskfactory and provide concrete tasks | 1 |

66,796 | 7,018,622,796 | IssuesEvent | 2017-12-21 14:25:23 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed tests on master: Examples-ORMs/TestSQLAlchemy, Examples-ORMs/TestSQLAlchemy/FirstRun, Examples-ORMs/TestSQLAlchemy/SecondRun, Examples-ORMs/TestSQLAlchemy/SecondRun/RetrieveFromAPIAfterRestart, Examples-ORMs/TestSQLAlchemy/SecondRun/RetrieveFromAPIAfterRestart/Order, Examples-ORMs/TestSQLAlchemy/Second... | Robot test-failure | The following tests appear to have failed:

[#451827](https://teamcity.cockroachdb.com/viewLog.html?buildId=451827):

```

--- FAIL: Examples-ORMs/TestSQLAlchemy (183.020s)

None

--- FAIL: Examples-ORMs/TestSQLAlchemy/FirstRun (180.770s)

main_test.go:160: Get http://localhost:6543/ping: dial tcp 127.0.0.1:6543: getsocko... | 1.0 | teamcity: failed tests on master: Examples-ORMs/TestSQLAlchemy, Examples-ORMs/TestSQLAlchemy/FirstRun, Examples-ORMs/TestSQLAlchemy/SecondRun, Examples-ORMs/TestSQLAlchemy/SecondRun/RetrieveFromAPIAfterRestart, Examples-ORMs/TestSQLAlchemy/SecondRun/RetrieveFromAPIAfterRestart/Order, Examples-ORMs/TestSQLAlchemy/Second... | test | teamcity failed tests on master examples orms testsqlalchemy examples orms testsqlalchemy firstrun examples orms testsqlalchemy secondrun examples orms testsqlalchemy secondrun retrievefromapiafterrestart examples orms testsqlalchemy secondrun retrievefromapiafterrestart order examples orms testsqlalchemy second... | 1 |

322,961 | 27,656,502,593 | IssuesEvent | 2023-03-12 01:57:36 | frigid14/stationware | https://api.github.com/repos/frigid14/stationware | closed | Dying between challenges permakills you | bug priority: before playtest difficult | Uhhh uhmm uhmmm.

I don't really know a good solution tbqh.

The main issue is that the respawning uses the challenge's players, which doesn't include dead people.

The main issue is not making this cbt when trying to administrate. | 1.0 | Dying between challenges permakills you - Uhhh uhmm uhmmm.

I don't really know a good solution tbqh.

The main issue is that the respawning uses the challenge's players, which doesn't include dead people.

The main issue is not making this cbt when trying to administrate. | test | dying between challenges permakills you uhhh uhmm uhmmm i don t really know a good solution tbqh the main issue is that the respawning uses the challenge s players which doesn t include dead people the main issue is not making this cbt when trying to administrate | 1 |

221,604 | 17,360,218,221 | IssuesEvent | 2021-07-29 19:28:13 | urapadmin/kiosk | https://api.github.com/repos/urapadmin/kiosk | closed | new config key: visibility/collected_material_show_weight | filemaker test-stage | just because we also have show_quantity | 1.0 | new config key: visibility/collected_material_show_weight - just because we also have show_quantity | test | new config key visibility collected material show weight just because we also have show quantity | 1 |

34,562 | 7,844,000,100 | IssuesEvent | 2018-06-19 08:19:15 | An-Sar/PrimalCore | https://api.github.com/repos/An-Sar/PrimalCore | closed | Major lag on every new world | Code Review World Gen duplicate | Everytime I create a new world, the world generates normally, but after a few minutes it starts to lag a lot, eventually leading to Minecraft stopping responding.

https://paste.ee/p/OPsLu | 1.0 | Major lag on every new world - Everytime I create a new world, the world generates normally, but after a few minutes it starts to lag a lot, eventually leading to Minecraft stopping responding.

https://paste.ee/p/OPsLu | non_test | major lag on every new world everytime i create a new world the world generates normally but after a few minutes it starts to lag a lot eventually leading to minecraft stopping responding | 0 |

305,539 | 26,391,904,774 | IssuesEvent | 2023-01-12 16:15:59 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Fix miscellaneous_ops.test_torch_tril_indices | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3897647796/jobs/6655531507" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/3897647796/jobs/6655531507" rel="noopener nore... | 1.0 | Fix miscellaneous_ops.test_torch_tril_indices - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/3897647796/jobs/6655531507" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/run... | test | fix miscellaneous ops test torch tril indices tensorflow img src torch img src numpy img src jax img src not found not found not found not found not found not found not found not found | 1 |

116,387 | 9,850,857,245 | IssuesEvent | 2019-06-19 09:08:27 | zonemaster/zonemaster | https://api.github.com/repos/zonemaster/zonemaster | closed | Add test case that on queries with OPT | test spec | If a name server gets a query with an OPT section, it should respond with an OPT section, unless it does not support OPT, in which case it should respond with FORMERR.

Create a Test Case that checks this.

| 1.0 | Add test case that on queries with OPT - If a name server gets a query with an OPT section, it should respond with an OPT section, unless it does not support OPT, in which case it should respond with FORMERR.

Create a Test Case that checks this.

| test | add test case that on queries with opt if a name server gets a query with an opt section it should respond with an opt section unless it does not support opt in which case it should respond with formerr create a test case that checks this | 1 |
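The rule described in the row above could be sketched as a small classification function. This is a hedged illustration, not the Zonemaster implementation: the `check_opt_response` name and the returned strings are hypothetical, and the only external fact used is that FORMERR is DNS RCODE 1.

```python
FORMERR = 1  # DNS RCODE 1 (format error)


def check_opt_response(response_has_opt: bool, rcode: int) -> str:
    """Classify the reply to a query that carried an OPT section.

    Hypothetical outcomes:
      "pass"          - the server answered with an OPT section
      "pass_no_edns"  - no OPT section, but FORMERR signals no OPT support
      "fail"          - no OPT section and no FORMERR
    """
    if response_has_opt:
        return "pass"
    if rcode == FORMERR:
        return "pass_no_edns"
    return "fail"
```

A test case built on this would send one query with an OPT section to each name server and feed the observed reply into the function.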

204,001 | 15,397,418,349 | IssuesEvent | 2021-03-03 22:07:18 | Vivid-Project/frontend | https://api.github.com/repos/Vivid-Project/frontend | closed | FE End to End testing | Frontend Testing | - [ ] dashboard to add new dream form

- [ ] add a new dream form to dashboard

- [ ] dashboard to all dream view

- [ ] all dreams view to dashboard

- [ ] dashboard to charts view

- [ ] charts view to dashboard

- [ ] dashboard search to results

- [ ] results to dashboard | 1.0 | FE End to End testing - - [ ] dashboard to add new dream form

- [ ] add a new dream form to dashboard

- [ ] dashboard to all dream view

- [ ] all dreams view to dashboard

- [ ] dashboard to charts view

- [ ] charts view to dashboard

- [ ] dashboard search to results

- [ ] results to dashboard | test | fe end to end testing dashboard to add new dream form add a new dream form to dashboard dashboard to all dream view all dreams view to dashboard dashboard to charts view charts view to dashboard dashboard search to results results to dashboard | 1 |

671,729 | 22,773,569,134 | IssuesEvent | 2022-07-08 12:27:15 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | reopened | Request of BILTerrainProvider layer are not using proxy url | bug Priority: High Accepted C027-COMUNE_FI-2021-SUPPORT 3D | ## Description

<!-- Add here a few sentences describing the bug. -->

The implementation of WMSLayer and TerrainLayer in cesium are providing a configuration for proxy not supported by the BILTerrainProvider class and for this reason the proxy url is never applied. Currently BILTerrainProvider accept proxy as root o... | 1.0 | Request of BILTerrainProvider layer are not using proxy url - ## Description

<!-- Add here a few sentences describing the bug. -->

The implementation of WMSLayer and TerrainLayer in cesium are providing a configuration for proxy not supported by the BILTerrainProvider class and for this reason the proxy url is neve... | non_test | request of bilterrainprovider layer are not using proxy url description the implementation of wmslayer and terrainlayer in cesium are providing a configuration for proxy not supported by the bilterrainprovider class and for this reason the proxy url is never applied currently bilterrainprovider accept proxy... | 0 |

220,805 | 17,261,950,456 | IssuesEvent | 2021-07-22 08:52:41 | FlowCrypt/flowcrypt-android | https://api.github.com/repos/FlowCrypt/flowcrypt-android | opened | Test decryption errors after moving to use PGPainless for the message decryption | android_testing | It would be nice to have such tests. I think it's very important to prevent security bugs (for example after upgrading PGPainless to a newer version). We should be sure that a received content is parsed to expected `blocks` and Android shows the expected UI. | 1.0 | Test decryption errors after moving to use PGPainless for the message decryption - It would be nice to have such tests. I think it's very important to prevent security bugs (for example after upgrading PGPainless to a newer version). We should be sure that a received content is parsed to expected `blocks` and Android s... | test | test decryption errors after moving to use pgpainless for the message decryption it would be nice to have such tests i think it s very important to prevent security bugs for example after upgrading pgpainless to a newer version we should be sure that a received content is parsed to expected blocks and android s... | 1 |

140,280 | 5,399,734,518 | IssuesEvent | 2017-02-27 20:12:12 | GRIS-UdeM/SpatGRIS | https://api.github.com/repos/GRIS-UdeM/SpatGRIS | opened | DP Buffer size Incorrect | bug High priority |

Note that in DP, the plugin believes it is working at 2048, but in reality DP is at 512.

This crashes DP when in 12*12 or 16*16 mode.

| 1.0 | DP Buffer size Incorrect -

Note that in DP, the plugin believes it is working at 2048, but in reality DP is at 512.

This crashes DP when in 12*12 or 16*16 mode.

| non_test | dp buffer size incorrect note that in dp the plugin believes it is working at but in reality dp is at this crashes dp when in or mode | 0 |

250,442 | 21,299,718,629 | IssuesEvent | 2022-04-15 00:20:29 | NMGRL/pychron | https://api.github.com/repos/NMGRL/pychron | closed | Add ability to move label on isochron | Enhancement Testing Required Data Presentation | When plotting highly radiogenic data, labels for step commonly overlap spatially. Add ability to move labels for graphic clarity. | 1.0 | Add ability to move label on isochron - When plotting highly radiogenic data, labels for step commonly overlap spatially. Add ability to move labels for graphic clarity. | test | add ability to move label on isochron when plotting highly radiogenic data labels for step commonly overlap spatially add ability to move labels for graphic clarity | 1 |

211,898 | 16,464,257,410 | IssuesEvent | 2021-05-22 04:31:01 | neuropsychology/NeuroKit | https://api.github.com/repos/neuropsychology/NeuroKit | closed | Docs: Example gallery | documentation :scroll: inactive 👻 | Would be nice to have an example gallery such as [here](https://sphinx-nbexamples.readthedocs.io/en/latest/examples/index.html).

Other example: http://visbrain.org/index.html | 1.0 | Docs: Example gallery - Would be nice to have an example gallery such as [here](https://sphinx-nbexamples.readthedocs.io/en/latest/examples/index.html).

Other example: http://visbrain.org/index.html | non_test | docs example gallery would be nice to have an example gallery such as other example | 0 |

178,441 | 6,608,821,410 | IssuesEvent | 2017-09-19 12:33:59 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | opened | CI coverage of pre-built drake visualizer | priority: medium team: kitware type: continuous integration | Drake's CI should offer coverage of pre-built drake visualizer -- for example, that it launches without faults, that it runs for a few seconds without crashing, that it is able to locate data resources. It is not unusual for users to experience problems running this software, so we should have CI coverage to point to ... | 1.0 | CI coverage of pre-built drake visualizer - Drake's CI should offer coverage of pre-built drake visualizer -- for example, that it launches without faults, that it runs for a few seconds without crashing, that it is able to locate data resources. It is not unusual for users to experience problems running this software... | non_test | ci coverage of pre built drake visualizer drake s ci should offer coverage of pre built drake visualizer for example that it launches without faults that it runs for a few seconds without crashing that it is able to locate data resources it is not unusual for users to experience problems running this software... | 0 |

22,182 | 3,940,716,154 | IssuesEvent | 2016-04-27 02:44:25 | extnet/Ext.NET | https://api.github.com/repos/extnet/Ext.NET | closed | GridView's DisableSelection="false" doesn't disable selection | 3.x fixed-in-latest-extjs sencha | http://forums.ext.net/showthread.php?53651

http://www.sencha.com/forum/showthread.php?298197

**Update:** This is allegedly fixed since ExtJS 5.1.2. | 1.0 | GridView's DisableSelection="false" doesn't disable selection - http://forums.ext.net/showthread.php?53651

http://www.sencha.com/forum/showthread.php?298197

**Update:** This is allegedly fixed since ExtJS 5.1.2. | test | gridview s disableselection false doesn t disable selection update this is allegedly fixed since extjs | 1 |

52,859 | 6,283,865,739 | IssuesEvent | 2017-07-19 05:42:18 | intel-analytics/BigDL | https://api.github.com/repos/intel-analytics/BigDL | opened | Pip install python bigdl need sudo | 0.2 release test high priority | If I run the install commands in doc

```

pip install --upgrade pip

pip install BigDL==0.2.0.dev3 # for Python 2.7

```

It will throw exception

```

Downloading/unpacking pip from https://pypi.python.org/packages/b6/ac/7015eb97dc749283ffdec1c3a88ddb8ae03b8fad0f0e611408f196358da3/pip-9.0.1-py2.py3-none-any.w... | 1.0 | Pip install python bigdl need sudo - If I run the install commands in doc

```

pip install --upgrade pip

pip install BigDL==0.2.0.dev3 # for Python 2.7

```

It will throw exception

```

Downloading/unpacking pip from https://pypi.python.org/packages/b6/ac/7015eb97dc749283ffdec1c3a88ddb8ae03b8fad0f0e611408f1... | test | pip install python bigdl need sudo if i run the install commands in doc pip install upgrade pip pip install bigdl for python it will throw exception downloading unpacking pip from downloading pip none any whl downloaded installing collected packag... | 1 |

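80,923 | 7,761,168,756 | IssuesEvent | 2018-06-01 08:59:33 | Nineclown/The-Convenient-ATM | https://api.github.com/repos/Nineclown/The-Convenient-ATM | closed | Definition: Feature to request reissue of a lost card | Failed Test pass | The meaning of "reissue" needs to be defined more precisely.

It must be clear whether reissuing adds the card back to the card list or is simply the act of requesting a reissue.

T220: Feature to request reissue of a lost card

https://jaehyun379.testrail.io/index.php?/tests/view/220 | 1.0 | Definition: Feature to request reissue of a lost card - The meaning of "reissue" needs to be defined more precisely.

It must be clear whether reissuing adds the card back to the card list or is simply the act of requesting a reissue.

T220: Feature to request reissue of a lost card

https://jaehyun379.testrail.io/index.php?/tests/view/220 | test | definition feature to request reissue of a lost card the meaning of reissue needs to be defined more precisely it must be clear whether reissuing adds the card back to the card list or is simply the act of requesting a reissue feature to request reissue of a lost card | 1 |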

431,992 | 12,487,403,901 | IssuesEvent | 2020-05-31 08:53:23 | on3iro/aeons-end-randomizer | https://api.github.com/repos/on3iro/aeons-end-randomizer | closed | RFE: Language translation | Priority: High feature | ### Is your feature request related to a problem? Please describe.

I'm a French player using the French core box. It takes too much time to find a card because the names don't match.

### Describe the solution you'd like

As additional detail, I would like to say that some French players are mixing language content (even i... | 1.0 | RFE: Language translation - ### Is your feature request related to a problem? Please describe.

I'm a French player using the French core box. It takes too much time to find a card because the names don't match.

### Describe the solution you'd like

As additional detail, I would like to say that some French players are mix... | non_test | rfe language translation is your feature request related to a problem please describe i m a french player using the french core box it takes too much time to find a card because the names don t match describe the solution you d like as additional detail i would like to say that some french players are mix... | 0 |

296,559 | 25,559,179,603 | IssuesEvent | 2022-11-30 09:28:34 | wazuh/wazuh | https://api.github.com/repos/wazuh/wazuh | opened | Release 4.4.0 - Alpha 1 - E2E UX tests - Monitoring Docker | module/docker type/test/manual team/framework release test/4.4.0 | The following issue aims to run the specified test for the current release candidate, report the results, and open new issues for any encountered errors.

## Test information

| | |

|-------------------------|-----------------------------------------... | 2.0 | Release 4.4.0 - Alpha 1 - E2E UX tests - Monitoring Docker - The following issue aims to run the specified test for the current release candidate, report the results, and open new issues for any encountered errors.

## Test information

| | |

|------... | test | release alpha ux tests monitoring docker the following issue aims to run the specified test for the current release candidate report the results and open new issues for any encountered errors test information ... | 1 |

325,211 | 27,856,522,096 | IssuesEvent | 2023-03-20 23:41:26 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Test: find in output by default | testplan-item | Refs: https://github.com/microsoft/vscode/issues/174969

- [ ] anyOS

- [ ] anyOS

Complexity: 3

Authors: @rebornix, @amunger

---

This month we made "Find in Output" option turned on by default, this means when you open a notebook, we will try to render cell outputs on idle and when you cmd+f to open th... | 1.0 | Test: find in output by default - Refs: https://github.com/microsoft/vscode/issues/174969

- [ ] anyOS

- [ ] anyOS

Complexity: 3

Authors: @rebornix, @amunger

---

This month we made "Find in Output" option turned on by default, this means when you open a notebook, we will try to render cell outputs on ... | test | test find in output by default refs anyos anyos complexity authors rebornix amunger this month we made find in output option turned on by default this means when you open a notebook we will try to render cell outputs on idle and when you cmd f to open the find widget we ... | 1 |

91,374 | 8,303,852,620 | IssuesEvent | 2018-09-21 19:00:26 | tschottdorf/cockroach | https://api.github.com/repos/tschottdorf/cockroach | closed | teamcity: failed test: test/TestImportPgDump, t, - | C-test-failure O-robot | The following tests appear to have failed on release-banana.

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+test/TestImportPgDump, t, -).

[#864629](https://teamcity.cockroachdb.com/viewLog.html?buildId=864629):

```

e---- FAIL: test/TestImportPgDump (0.0... | 1.0 | teamcity: failed test: test/TestImportPgDump, t, - - The following tests appear to have failed on release-banana.

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+test/TestImportPgDump, t, -).

[#864629](https://teamcity.cockroachdb.com/viewLog.html?buildId=... | test | teamcity failed test test testimportpgdump t the following tests appear to have failed on release banana you may want to check t e fail test testimportpgdump test ended in panic stdout server status runtime go could not parse build timestamp p... | 1 |

253,980 | 27,338,744,700 | IssuesEvent | 2023-02-26 14:41:41 | asaf-mend-test/lvp-is-amazing | https://api.github.com/repos/asaf-mend-test/lvp-is-amazing | closed | bootstrap-4.0.0-beta.tgz: 2 vulnerabilities (highest severity is: 6.1) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-4.0.0-beta.tgz</b></p></summary>

<p>The most popular front-end framework for developing responsive, mobile first projects on the web.</p>

<p>Library home pa... | True | bootstrap-4.0.0-beta.tgz: 2 vulnerabilities (highest severity is: 6.1) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>bootstrap-4.0.0-beta.tgz</b></p></summary>

<p>The most popular front-end framewo... | non_test | bootstrap beta tgz vulnerabilities highest severity is autoclosed vulnerable library bootstrap beta tgz the most popular front end framework for developing responsive mobile first projects on the web library home page a href path to dependency file package json path t... | 0 |

93,350 | 15,885,816,705 | IssuesEvent | 2021-04-09 21:16:02 | turkdevops/grafana | https://api.github.com/repos/turkdevops/grafana | opened | WS-2019-0427 (Medium) detected in elliptic-6.5.1.tgz | security vulnerability | ## WS-2019-0427 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.5.1.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.org/... | True | WS-2019-0427 (Medium) detected in elliptic-6.5.1.tgz - ## WS-2019-0427 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.5.1.tgz</b></p></summary>

<p>EC cryptography</p>

<p>... | non_test | ws medium detected in elliptic tgz ws medium severity vulnerability vulnerable library elliptic tgz ec cryptography library home page a href path to dependency file grafana node modules elliptic package json path to vulnerable library grafana node modules elliptic pa... | 0 |

319,442 | 27,373,692,977 | IssuesEvent | 2023-02-28 02:57:01 | void-linux/void-packages | https://api.github.com/repos/void-linux/void-packages | opened | vscode doesn't apply non-English Display Language | bug needs-testing | ### Is this a new report?

Yes

### System Info

Void newest

### Package(s) Affected

vscode-1.75.1_1

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

https://github.com/microsoft/vscode/issues/154417

### Expected behaviour

could apply display language other than engli... | 1.0 | vscode doesn't apply non-English Display Language - ### Is this a new report?

Yes

### System Info

Void newest

### Package(s) Affected

vscode-1.75.1_1

### Does a report exist for this bug with the project's home (upstream) and/or another distro?

https://github.com/microsoft/vscode/issues/154417

### Expected beha... | test | vscode doesn t apply non english display language is this a new report yes system info void newest package s affected vscode does a report exist for this bug with the project s home upstream and or another distro expected behaviour could apply display language other than en... | 1 |

1,262 | 5,353,855,504 | IssuesEvent | 2017-02-20 07:54:14 | espeak-ng/espeak-ng | https://api.github.com/repos/espeak-ng/espeak-ng | closed | Merge the android branch into master. | maintainability portability resolved/fixed | Now that espeak-ng has diverged from espeak, it makes sense to have the android branch merged into the main development line. This will make it easier to maintain the Android support in the future and keep it up-to-date.

- [x] Merge the android code into the master branch.

- [x] Fix building the JNI and libespeak-n... | True | Merge the android branch into master. - Now that espeak-ng has diverged from espeak, it makes sense to have the android branch merged into the main development line. This will make it easier to maintain the Android support in the future and keep it up-to-date.

- [x] Merge the android code into the master branch.

- ... | non_test | merge the android branch into master now that espeak ng has diverged from espeak it makes sense to have the android branch merged into the main development line this will make it easier to maintain the android support in the future and keep it up to date merge the android code into the master branch ... | 0 |

28,958 | 11,706,038,472 | IssuesEvent | 2020-03-07 19:32:11 | vlaship/spark-streaming | https://api.github.com/repos/vlaship/spark-streaming | opened | CVE-2019-14892 (Medium) detected in jackson-databind-2.6.5.jar | security vulnerability | ## CVE-2019-14892 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.5.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2019-14892 (Medium) detected in jackson-databind-2.6.5.jar - ## CVE-2019-14892 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.6.5.jar</b></p></summary>

<p>Gen... | non_test | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm spark streaming... | 0 |

136,307 | 30,519,700,595 | IssuesEvent | 2023-07-19 07:11:05 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | apache-tvm 0.14.dev54 has 9 GuardDog issues | guarddog code-execution exec-base64 | https://pypi.org/project/apache-tvm

https://inspector.pypi.io/project/apache-tvm

```{

"dependency": "apache-tvm",

"version": "0.14.dev54",

"result": {

"issues": 9,

"errors": {},

"results": {

"exec-base64": [

{

"location": "tvm/3rdparty/dmlc-core/tracker/dmlc_tracker/launcher.py... | 1.0 | apache-tvm 0.14.dev54 has 9 GuardDog issues - https://pypi.org/project/apache-tvm

https://inspector.pypi.io/project/apache-tvm

```{

"dependency": "apache-tvm",

"version": "0.14.dev54",

"result": {

"issues": 9,

"errors": {},

"results": {

"exec-base64": [

{

"location": "tvm/3rdpa... | non_test | apache tvm has guarddog issues dependency apache tvm version result issues errors results exec location tvm dmlc core tracker dmlc tracker launcher py tvm dmlc core tracker dmlc tracker launcher py ... | 0 |

105,654 | 23,088,899,758 | IssuesEvent | 2022-07-26 13:45:18 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | closed | worker: potentially use AWS's default credential provider instead of static | estimate/1d team/batchers user-code-execution | Use AWS default credential chain provider rather than just the [current static provider](https://github.com/sourcegraph/sourcegraph/blob/ce2005995a8126960451c125605605dd54696f49/enterprise/cmd/worker/internal/executorqueue/aws_reporter.go#L96).

In https://github.com/sourcegraph/accounts/issues/565 's case, it could... | 1.0 | worker: potentially use AWS's default credential provider instead of static - Use AWS default credential chain provider rather than just the [current static provider](https://github.com/sourcegraph/sourcegraph/blob/ce2005995a8126960451c125605605dd54696f49/enterprise/cmd/worker/internal/executorqueue/aws_reporter.go#L96... | non_test | worker potentially use aws s default credential provider instead of static use aws default credential chain provider rather than just the in s case it could eliminate the need to inject aws keys via env vars | 0 |

198,389 | 14,977,593,353 | IssuesEvent | 2021-01-28 09:42:45 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | At least Java 11 is required to build elasticsearch gradle tools | >test-failure | <!--

Please fill out the following information, and ensure you have attempted

to reproduce locally

-->

**Build scan**:

**Repro line**:

Build file 'elasticsearch/buildSrc/build.gradle' line: 61

**Reproduces locally?**:

**Applicable branches**:

master

**Failure history**:

<!--