| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

695,040

| 23,841,474,340

|

IssuesEvent

|

2022-09-06 10:35:42

|

status-im/status-desktop

|

https://api.github.com/repos/status-im/status-desktop

|

opened

|

Can't show pinned messages anymore

|

bug priority 2: medium E:Communities

|

When a channel has pinned messages, there's a tiny "pin" icon in the chat toolbar.

That icon is clickable, and opens a dialog with all pinned messages.

Clicking the pin icon doesn't do anything anymore. In fact there's a QML error:

```sh

qrc:/app/AppLayouts/Chat/views/ChatHeaderContentView.qml:290: ReferenceError: messageStore is not defined

```

|

1.0

|

Can't show pinned messages anymore - When a channel has pinned messages, there's a tiny "pin" icon in the chat toolbar.

That icon is clickable, and opens a dialog with all pinned messages.

Clicking the pin icon doesn't do anything anymore. In fact there's a QML error:

```sh

qrc:/app/AppLayouts/Chat/views/ChatHeaderContentView.qml:290: ReferenceError: messageStore is not defined

```

|

non_test

|

can t show pinned messages anymore when a channel has pinned messages there s a tiny pin icon in the chat toolbar that icon is clickable and opens a dialog with all pinned messages clicking the pin icon doesn t do anything anymore in fact there s a qml error sh qrc app applayouts chat views chatheadercontentview qml referenceerror messagestore is not defined

| 0

|

177,064

| 28,315,077,475

|

IssuesEvent

|

2023-04-10 18:51:23

|

phetsims/my-solar-system

|

https://api.github.com/repos/phetsims/my-solar-system

|

closed

|

Z order of bodies and Panel.

|

design:general

|

For https://github.com/phetsims/qa/issues/927

Test device

Dell XPS 15

Operating System

Windows 10

Browser

Chrome

Problem description

Bodies hidden behind panels

This has most likely been discussed during a design meeting, but it may be worth revisiting.

PhET has a handful of simulations where the "play-area" occupies the entire screen.

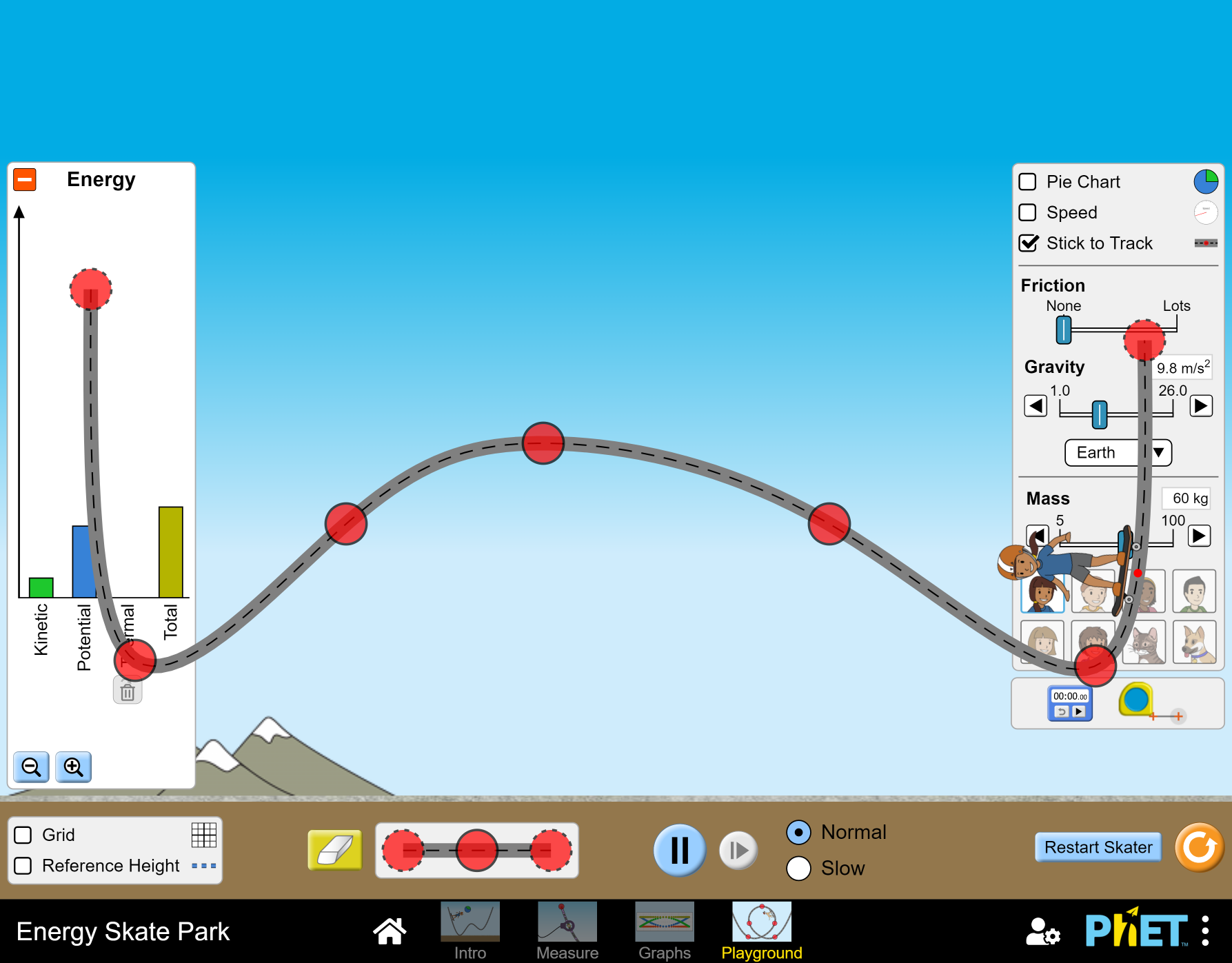

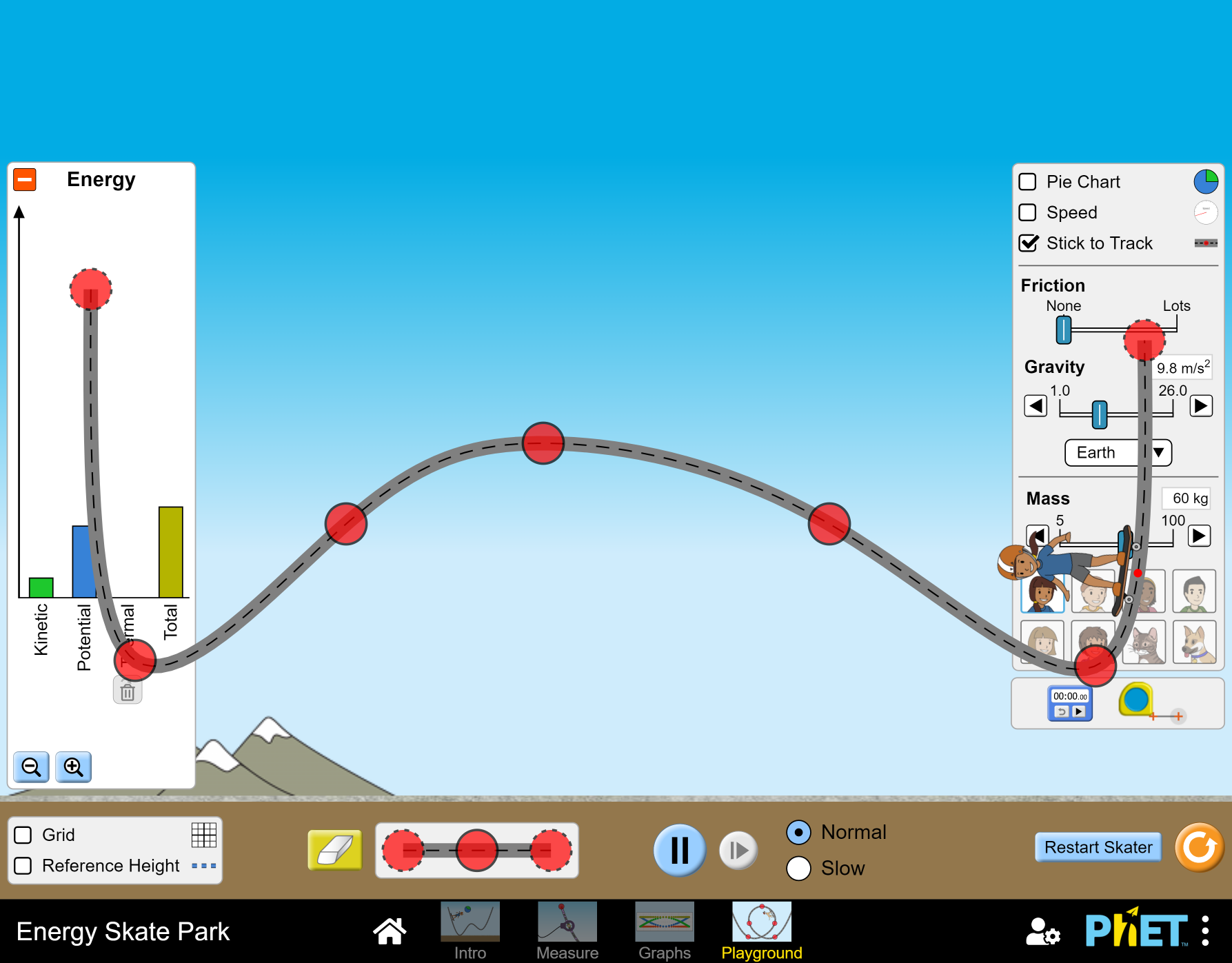

- Energy Skate Park

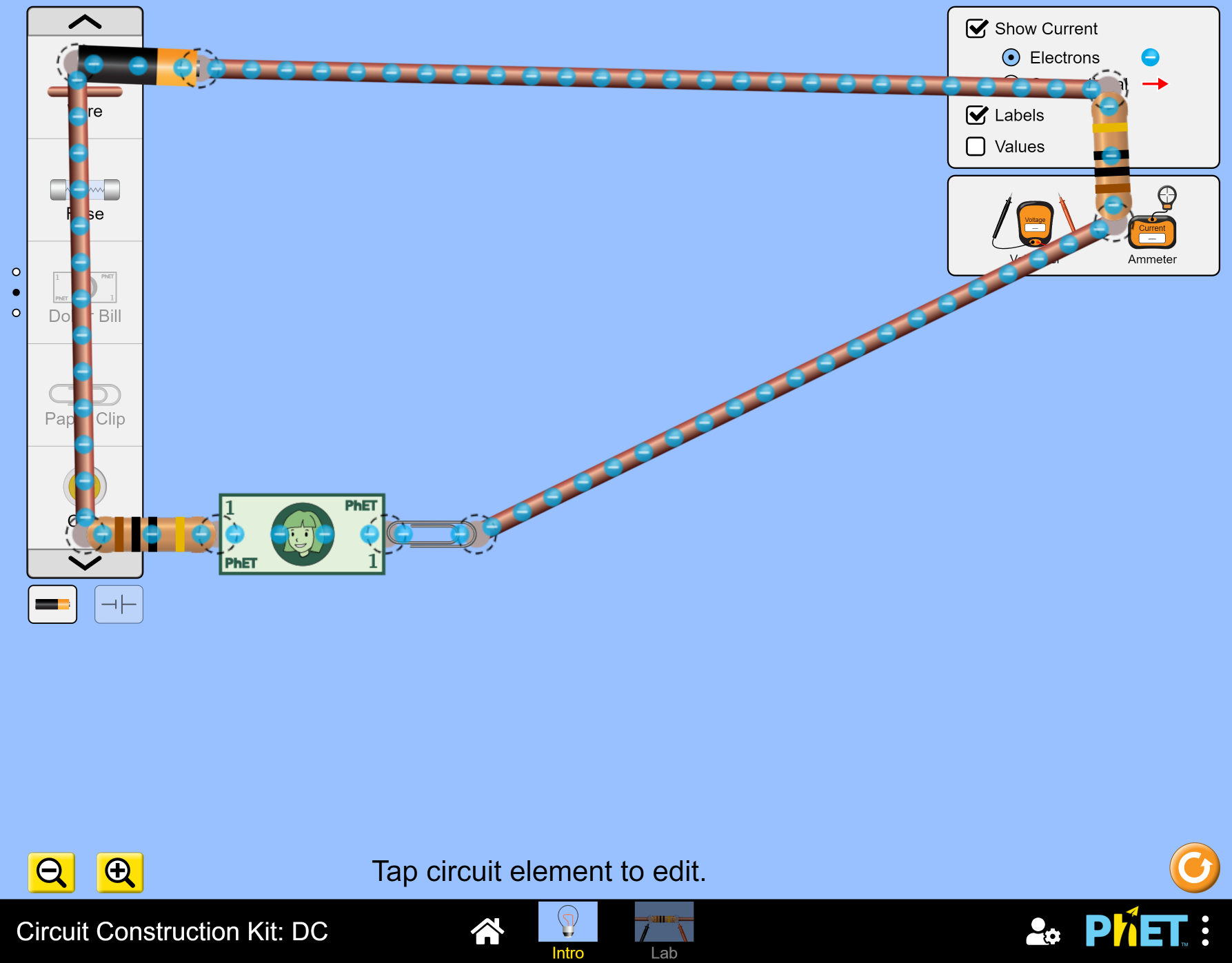

- CCK

- Charges And Fields

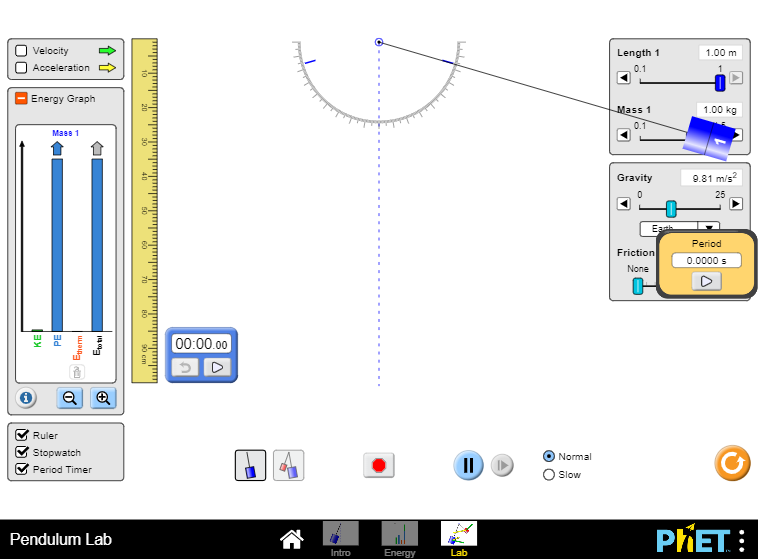

- Pendulum Lab

- Gravity and Orbits

In most cases, we let the user drag objects over the panel. There don't seem to be any ill effects associated with having objects on top of a panel.

Gravity and Orbits is rather the exception in that respect, as it attempts to limit the user's ability to drag an object over the panel, and the velocity vectors appear behind the panels, which I find odd. I personally prefer to have the object that is being dragged on top in the z-order.

This is related to https://github.com/phetsims/my-solar-system/issues/129 but is a different issue.

This is not a bug per se, so feel free to close if you feel strongly about your design choice.

|

1.0

|

Z order of bodies and Panel. - For https://github.com/phetsims/qa/issues/927

Test device

Dell XPS 15

Operating System

Windows 10

Browser

Chrome

Problem description

Bodies hidden behind panels

This has most likely been discussed during a design meeting, but it may be worth revisiting.

PhET has a handful of simulations where the "play-area" occupies the entire screen.

- Energy Skate Park

- CCK

- Charges And Fields

- Pendulum Lab

- Gravity and Orbits

In most cases, we let the user drag objects over the panel. There don't seem to be any ill effects associated with having objects on top of a panel.

Gravity and Orbits is rather the exception in that respect, as it attempts to limit the user's ability to drag an object over the panel, and the velocity vectors appear behind the panels, which I find odd. I personally prefer to have the object that is being dragged on top in the z-order.

This is related to https://github.com/phetsims/my-solar-system/issues/129 but is a different issue.

This is not a bug per se, so feel free to close if you feel strongly about your design choice.

|

non_test

|

z order of bodies and panel for test device dell xps operating system windows browser chrome problem description bodies hidden behind panels this has been mostly likely discussed during a design meeting but i may be worth revisiting phet has a handful of simulation where the play area occupies the entire screen energy skate park cck charges and fields pendulum lab gravity and orbits in most cases we let the user drag objects over the panel there doesn t seem any ill effects associated with having objects on top of a panel gravity and orbits is rather the exception in that respect as it attempts to limit the ability of a user to dragged an object over the panel and the velocity vectors appear behind the panels which i find odd i personally prefer to have the object that is dragged on top on the z order this is related to but yet different this is not a bug per say so feel free to close if you feel strongly about your design choice

| 0

|

182,927

| 31,029,350,023

|

IssuesEvent

|

2023-08-10 11:22:42

|

Shimpei-GANGAN/create-nuxt3-app

|

https://api.github.com/repos/Shimpei-GANGAN/create-nuxt3-app

|

closed

|

[Task] Set up the Nuxt3 environment

|

🏰 design / consider

|

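## Description

- Set up the Nuxt3 environment and add the following packages:

- Vitest

- ESLint

- Prettier

## Notes

- For UI libraries, templates will have to be created in a separate branch or a separate repository. Ideally it would work like this:

- create-nuxt3-app/chakraui

- create-nuxt3-app/vuetify

- It would be handy if these could be installed with the `nuxi --template` feature.

## TODO

- [ ] Set up the Nuxt3 environment

- [ ] Vitest: split out into #4

- [x] ESLint and the ESLint module

- [x] Prettier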

|

1.0

|

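[Task] Set up the Nuxt3 environment - ## Description

- Set up the Nuxt3 environment and add the following packages:

- Vitest

- ESLint

- Prettier

## Notes

- For UI libraries, templates will have to be created in a separate branch or a separate repository. Ideally it would work like this:

- create-nuxt3-app/chakraui

- create-nuxt3-app/vuetify

- It would be handy if these could be installed with the `nuxi --template` feature.

## TODO

- [ ] Set up the Nuxt3 environment

- [ ] Vitest: split out into #4

- [x] ESLint and the ESLint module

- [x] Prettier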

|

non_test

|

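task description set up the environment and add the following packages vitest eslint prettier notes for ui libraries templates will have to be created in a separate branch or a separate repository ideally it would work like this create app chakraui create app vuetify it would be handy if these could be installed with the nuxi template feature todo set up the environment vitest split out into eslint and the eslint module prettier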

| 0

|

293,688

| 25,317,132,881

|

IssuesEvent

|

2022-11-17 22:47:56

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

tests: drivers: gpio_basic_api and gpio_api_1pin: convert to new ztest API

|

Enhancement area: GPIO area: Tests

|

**Is your enhancement proposal related to a problem? Please describe.**

#46960 adds support for the new ztest API to `tests/drivers/gpio/gpio_get_direction/`, but the other gpio driver tests are still using the old ztest API.

**Describe the solution you'd like**

Should probably migrate gpio driver tests to the new ztest API.

**Describe alternatives you've considered**

@mnkp - feel free to delegate to @henrikbrixandersen or myself if you are too busy.

**Additional context**

Should list the change in https://github.com/zephyrproject-rtos/zephyr/issues/47002 when complete.

|

1.0

|

tests: drivers: gpio_basic_api and gpio_api_1pin: convert to new ztest API - **Is your enhancement proposal related to a problem? Please describe.**

#46960 adds support for the new ztest API to `tests/drivers/gpio/gpio_get_direction/`, but the other gpio driver tests are still using the old ztest API.

**Describe the solution you'd like**

Should probably migrate gpio driver tests to the new ztest API.

**Describe alternatives you've considered**

@mnkp - feel free to delegate to @henrikbrixandersen or myself if you are too busy.

**Additional context**

Should list the change in https://github.com/zephyrproject-rtos/zephyr/issues/47002 when complete.

|

test

|

tests drivers gpio basic api and gpio api convert to new ztest api is your enhancement proposal related to a problem please describe adds support for the new ztest api to tests drivers gpio gpio get direction but the other gpio driver tests are still using the old ztest api describe the solution you d like should probably migrate gpio driver tests to the new ztest api describe alternatives you ve considered mnkp feel free to delegate to henrikbrixandersen or myself if you are too busy additional context should list the change in when complete

| 1

|

162,612

| 12,682,992,080

|

IssuesEvent

|

2020-06-19 18:40:03

|

brimsec/brim

|

https://api.github.com/repos/brimsec/brim

|

closed

|

reload is flaky

|

bug test

|

[This test run](https://github.com/brimsec/brim/runs/777908057?check_suite_focus=true) failed in Spectron `app.browserWindow.reload()`. We know this is so because it's written inside an `appStep`, and that `appStep` never completed.

```

[2020-06-16 19:44:14.955 debug]: Starting step "app reload"

[2020-06-16 19:44:16.769 error]: handleError: Test hit exception: unknown error: cannot determine loading status

from unknown error: unhandled inspector error: {"code":-32000,"message":"Inspected target navigated or closed"}

```

Looks related to or actually is https://github.com/electron-userland/spectron/issues/493 .

|

1.0

|

reload is flaky - [This test run](https://github.com/brimsec/brim/runs/777908057?check_suite_focus=true) failed in Spectron `app.browserWindow.reload()`. We know this is so because it's written inside an `appStep`, and that `appStep` never completed.

```

[2020-06-16 19:44:14.955 debug]: Starting step "app reload"

[2020-06-16 19:44:16.769 error]: handleError: Test hit exception: unknown error: cannot determine loading status

from unknown error: unhandled inspector error: {"code":-32000,"message":"Inspected target navigated or closed"}

```

Looks related to or actually is https://github.com/electron-userland/spectron/issues/493 .

|

test

|

reload is flaky failed in spectron app browserwindow reload we know this is so because it s written inside an appstep and that appstep never completed starting step app reload handleerror test hit exception unknown error cannot determine loading status from unknown error unhandled inspector error code message inspected target navigated or closed looks related to or actually is

| 1

|

228,558

| 25,219,056,714

|

IssuesEvent

|

2022-11-14 11:20:26

|

freedomofpress/dangerzone

|

https://api.github.com/repos/freedomofpress/dangerzone

|

closed

|

Failed CLI execution produces an empty "safe" document

|

bug security

|

## Description

Running the Dangerzone CLI in a Linux environment that does not have Podman properly configured throws an error during document conversion. A side effect of this error is that it produces an *empty* `*-safe.pdf` document.

## Steps to Reproduce

OS: Ubuntu 22.04

Release: 0.3.2

* Ensure you have access to the Dangerzone CLI (run `dangerzone-cli --help`).

* Make sure that `podman images ls` fails. You can temporarily move the `/usr/bin/podman` binary to somewhere else, for instance.

* Have an example PDF document ready.

* Run `dangerzone-cli test.pdf` within that container, where `test.pdf` is your example PDF.

* List the contents of your directory. You should see a `test-safe.pdf` there.

## Expected Behavior

Fail the execution without creating a safe document.
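
One way to get this behavior is to write the converted output to a temporary file and only move it to its final `*-safe.pdf` name once the conversion has succeeded. The following is a minimal sketch of that idea, not Dangerzone's actual code: `write_safe_document` and the `convert` callable are hypothetical names standing in for the real conversion step.

```python
import os
import tempfile


def write_safe_document(convert, output_path):
    """Run convert(tmp_file) and publish output_path only if it succeeds.

    `convert` is a hypothetical callable that writes the converted
    document to the binary file object it is given.
    """
    out_dir = os.path.dirname(os.path.abspath(output_path))
    # Create the temporary file next to the target so the final rename
    # stays on the same filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=out_dir, suffix=".tmp")
    try:
        with os.fdopen(fd, "wb") as tmp_file:
            convert(tmp_file)
        # Reached only when the conversion did not raise.
        os.replace(tmp_path, output_path)
    except BaseException:
        # Conversion failed: remove the partial file and re-raise, so no
        # empty "safe" document is left behind.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
```

With this shape, a failure inside `convert` (for example because the container runtime is missing) propagates to the caller and leaves no output file in the directory.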

|

True

|

Failed CLI execution produces an empty "safe" document - ## Description

Running the Dangerzone CLI in a Linux environment that does not have Podman properly configured throws an error during document conversion. A side effect of this error is that it produces an *empty* `*-safe.pdf` document.

## Steps to Reproduce

OS: Ubuntu 22.04

Release: 0.3.2

* Ensure you have access to the Dangerzone CLI (run `dangerzone-cli --help`).

* Make sure that `podman images ls` fails. You can temporarily move the `/usr/bin/podman` binary to somewhere else, for instance.

* Have an example PDF document ready.

* Run `dangerzone-cli test.pdf` within that container, where `test.pdf` is your example PDF.

* List the contents of your directory. You should see a `test-safe.pdf` there.

## Expected Behavior

Fail the execution without creating a safe document.

|

non_test

|

failed cli execution produces an empty safe document description running dangerzone cli on a linux environment that does not have podman properly configured throws an error during document conversion a side effect of this error is that it produces an empty safe pdf document steps to reproduce os ubuntu release ensure you have access to the dangerzone cli run dangerzone cli help make sure that podman images ls fails you can temporarily move the usr bin podman binary to somewhere else for instance have an example pdf document ready run dangerzone cli test pdf within that container where test pdf is your example pdf list the contents of your directory you should see a test safe pdf there expected behavior fail the execution without creating a safe document

| 0

|

35,712

| 5,003,854,973

|

IssuesEvent

|

2016-12-12 02:01:15

|

FaradayRF/FaradayRF-Hardware

|

https://api.github.com/repos/FaradayRF/FaradayRF-Hardware

|

opened

|

First Batch Rev D1 Build

|

Testing

|

# First Batch Boards

We've already brought up the first of twelve boards on #46, so here are the next 11! The build order for these units will be the same as for the first: SMA connector, GPS, and finally Power/MOSFET connectors. None of them will initially get a JTAG connector, but they may eventually. Finally, I will use prebuilt binaries to update the firmware of the CC430 via USB.

|

1.0

|

First Batch Rev D1 Build - # First Batch Boards

We've already brought up the first of twelve boards on #46, so here are the next 11! The build order for these units will be the same as for the first: SMA connector, GPS, and finally Power/MOSFET connectors. None of them will initially get a JTAG connector, but they may eventually. Finally, I will use prebuilt binaries to update the firmware of the CC430 via USB.

|

test

|

first batch rev build first batch boards we ve already brought up the first of twelve boards on so here are the next order of building these units will be the same as the first being sma connector gps and finally power mosfet connectors none of them will initially get a jtag connector but they may eventually finally i will use prebuilt binaries to update the firmware of the via usb

| 1

|

86,089

| 15,755,328,070

|

IssuesEvent

|

2021-03-31 01:34:54

|

ysmanohar/DashBoard

|

https://api.github.com/repos/ysmanohar/DashBoard

|

opened

|

WS-2019-0425 (Medium) detected in multiple libraries

|

security vulnerability

|

## WS-2019-0425 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>mocha-2.5.3.tgz</b>, <b>mocha-3.5.3.tgz</b>, <b>mocha-1.21.4.tgz</b>, <b>mocha-1.21.5.tgz</b></p></summary>

<p>

<details><summary><b>mocha-2.5.3.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-2.5.3.tgz">https://registry.npmjs.org/mocha/-/mocha-2.5.3.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/chai/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/async/node_modules/mocha/package.json,DashBoard/bower_components/async/node_modules/mocha/package.json,DashBoard/bower_components/async/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mocha-2.5.3.tgz** (Vulnerable Library)

</details>

<details><summary><b>mocha-3.5.3.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-3.5.3.tgz">https://registry.npmjs.org/mocha/-/mocha-3.5.3.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/es6-promise/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/es6-promise/node_modules/mocha/package.json,DashBoard/bower_components/es6-promise/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mocha-3.5.3.tgz** (Vulnerable Library)

</details>

<details><summary><b>mocha-1.21.4.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-1.21.4.tgz">https://registry.npmjs.org/mocha/-/mocha-1.21.4.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/sinon-chai/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/sinon-chai/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mocha-1.21.4.tgz** (Vulnerable Library)

</details>

<details><summary><b>mocha-1.21.5.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-1.21.5.tgz">https://registry.npmjs.org/mocha/-/mocha-1.21.5.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/es6-promise/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/es6-promise/node_modules/promises-aplus-tests-phantom/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- promises-aplus-tests-phantom-2.1.0-revise.tgz (Root Library)

- :x: **mocha-1.21.5.tgz** (Vulnerable Library)

</details>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Mocha is vulnerable to ReDoS attack. If the stack trace in utils.js begins with a large error message, and full-trace is not enabled, utils.stackTraceFilter() will take exponential run time.

<p>Publish Date: 2019-01-24

<p>URL: <a href=https://github.com/mochajs/mocha/commit/1a43d8b11a64e4e85fe2a61aed91c259bbbac559>WS-2019-0425</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="v6.0.0">v6.0.0</a></p>

<p>Release Date: 2020-05-07</p>

<p>Fix Resolution: https://github.com/mochajs/mocha/commit/1a43d8b11a64e4e85fe2a61aed91c259bbbac559</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

WS-2019-0425 (Medium) detected in multiple libraries - ## WS-2019-0425 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>mocha-2.5.3.tgz</b>, <b>mocha-3.5.3.tgz</b>, <b>mocha-1.21.4.tgz</b>, <b>mocha-1.21.5.tgz</b></p></summary>

<p>

<details><summary><b>mocha-2.5.3.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-2.5.3.tgz">https://registry.npmjs.org/mocha/-/mocha-2.5.3.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/chai/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/async/node_modules/mocha/package.json,DashBoard/bower_components/async/node_modules/mocha/package.json,DashBoard/bower_components/async/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mocha-2.5.3.tgz** (Vulnerable Library)

</details>

<details><summary><b>mocha-3.5.3.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-3.5.3.tgz">https://registry.npmjs.org/mocha/-/mocha-3.5.3.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/es6-promise/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/es6-promise/node_modules/mocha/package.json,DashBoard/bower_components/es6-promise/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mocha-3.5.3.tgz** (Vulnerable Library)

</details>

<details><summary><b>mocha-1.21.4.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-1.21.4.tgz">https://registry.npmjs.org/mocha/-/mocha-1.21.4.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/sinon-chai/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/sinon-chai/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- :x: **mocha-1.21.4.tgz** (Vulnerable Library)

</details>

<details><summary><b>mocha-1.21.5.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-1.21.5.tgz">https://registry.npmjs.org/mocha/-/mocha-1.21.5.tgz</a></p>

<p>Path to dependency file: /DashBoard/bower_components/es6-promise/package.json</p>

<p>Path to vulnerable library: DashBoard/bower_components/es6-promise/node_modules/promises-aplus-tests-phantom/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- promises-aplus-tests-phantom-2.1.0-revise.tgz (Root Library)

- :x: **mocha-1.21.5.tgz** (Vulnerable Library)

</details>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Mocha is vulnerable to ReDoS attack. If the stack trace in utils.js begins with a large error message, and full-trace is not enabled, utils.stackTraceFilter() will take exponential run time.

<p>Publish Date: 2019-01-24

<p>URL: <a href=https://github.com/mochajs/mocha/commit/1a43d8b11a64e4e85fe2a61aed91c259bbbac559>WS-2019-0425</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="v6.0.0">v6.0.0</a></p>

<p>Release Date: 2020-05-07</p>

<p>Fix Resolution: https://github.com/mochajs/mocha/commit/1a43d8b11a64e4e85fe2a61aed91c259bbbac559</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

ws medium detected in multiple libraries ws medium severity vulnerability vulnerable libraries mocha tgz mocha tgz mocha tgz mocha tgz mocha tgz simple flexible fun test framework library home page a href path to dependency file dashboard bower components chai package json path to vulnerable library dashboard bower components async node modules mocha package json dashboard bower components async node modules mocha package json dashboard bower components async node modules mocha package json dependency hierarchy x mocha tgz vulnerable library mocha tgz simple flexible fun test framework library home page a href path to dependency file dashboard bower components promise package json path to vulnerable library dashboard bower components promise node modules mocha package json dashboard bower components promise node modules mocha package json dependency hierarchy x mocha tgz vulnerable library mocha tgz simple flexible fun test framework library home page a href path to dependency file dashboard bower components sinon chai package json path to vulnerable library dashboard bower components sinon chai node modules mocha package json dependency hierarchy x mocha tgz vulnerable library mocha tgz simple flexible fun test framework library home page a href path to dependency file dashboard bower components promise package json path to vulnerable library dashboard bower components promise node modules promises aplus tests phantom node modules mocha package json dependency hierarchy promises aplus tests phantom revise tgz root library x mocha tgz vulnerable library vulnerability details mocha is vulnerable to redos attack if the stack trace in utils js begins with a large error message and full trace is not enabled utils stacktracefilter will take exponential run time publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics 
confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin release date fix resolution step up your open source security game with whitesource

| 0

|

754,464

| 26,389,716,175

|

IssuesEvent

|

2023-01-12 14:52:25

|

l7mp/stunner

|

https://api.github.com/repos/l7mp/stunner

|

opened

|

Milestone v1.14: Performance: Per-allocation CPU load-balancing

|

priority: low type: enhancement

|

This issue is to plan & discuss the performance optimizations that should go into v1.14.

**Problem:** Currently STUNner UDP performance is limited to about 100-200 kpps per UDP listener (i.e., per UDP Gateway/listener in the Kubernetes Gateway API terminology). This is because we allocate a single `net.PacketConn` per UDP listener, which is then [drained by a single CPU thread/go-routine](https://github.com/l7mp/turn/blob/7bd80d5f800480042e404d1d49e5a6d20377127e/server.go#L68). This means that all client allocations made via that listener will share the same CPU thread and there is no way to load-balance client allocations across CPUs; i.e., each listener is restricted to a single CPU. If STUNner is exposed via a single UDP listener (the most common setting) then it will be restricted to about 1200-1500 mcore.

**Notes:**

- **This is not a problem in Kubernetes:** instead of vertical scaling (let a single STUNner instance use as many CPUs as available), Kubernetes defaults to horizontal scaling; if a single `stunnerd` pod is a bottleneck we simply fire up more (e.g., using HPA). In fact, the single-CPU restriction makes HPA *simpler* since the CPU triggers are easier to set (e.g., we have to scale out when the average CPU load approaches 1000 mcores); when the application can vertically scale to some arbitrary number of CPUs by itself we never know how to fix the CPU trigger for HPA (this is when vertical scaling interferes with horizontal scaling). Eventually we'll have as many pods as CPU cores and Kubernetes will readily load-balance client connections across our pods. This makes us wonder whether to solve the vertical scaling problem *at all*, since there is very little use of such a feature in Kubernetes.

- **The single-CPU restriction applies per UDP listener:** if STUNner is exposed via multiple UDP TURN listeners then each listener will receive a separate CPU thread.

- **This limitation applies to UDP only:** for TCP, TLS and DTLS the TURN sockets are connected back to the client and therefore [a separate CPU thread/go-routine is created for each allocation](https://github.com/l7mp/turn/blob/7bd80d5f800480042e404d1d49e5a6d20377127e/server.go#L96).

**Solution:** The plan is to create a separate `net.Conn` for each UDP allocation, by (1) sharing the same listener server address using `REUSEADDR/REUSEPORT`, (2) connecting each per-allocation connection back to the client (this will turn the `net.PacketConn` into a connected `net.Conn`), and (3) firing up a separate read-loop/go-routine per each allocation/socket. Extreme care must be taken though in implementing this: if we blindly create a new socket per received UDP packet then a simple UDP portscan will DoS the TURN listener.
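
Steps (1) and (2) can be illustrated at the socket level. The sketch below is in Python rather than Go and is not STUNner or pion/turn code; `open_reuseport_udp` and `open_allocation_socket` are hypothetical helper names, and it assumes a platform where `SO_REUSEPORT` is available (e.g., Linux). Whether datagrams from the connected client are then steered to the per-allocation socket depends on the operating system and should be verified on the target platform.

```python
import socket


def open_reuseport_udp(addr):
    """Bind a UDP socket to addr with address/port sharing enabled."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    # SO_REUSEPORT lets several sockets bind the same address and port.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEPORT, 1)
    sock.bind(addr)
    return sock


def open_allocation_socket(listener_addr, client_addr):
    """Open a second socket on the listener address, connected to one client.

    connect() on a UDP socket only records the peer address; it turns the
    unconnected socket into a connected one without sending any packet.
    """
    sock = open_reuseport_udp(listener_addr)
    sock.connect(client_addr)
    return sock
```

Each socket returned by `open_allocation_socket` could then be drained by its own read loop, which corresponds to step (3).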

**Plan:**

1. Move the creation of per-allocation connections to after the client has authenticated with the server, e.g., [when the TURN allocation request has been successfully processed](https://github.com/l7mp/turn/blob/7bd80d5f800480042e404d1d49e5a6d20377127e/internal/server/turn.go#L151). Note that this still allows a client with a valid credential to DoS the server, so we need to impose a quota on per-client connections.

2. Implement per-client quotas as per RFC8656, Section 7.2, "Receiving an Allocate Request", point 10:

> At any point, the server MAY choose to reject the request with a 486 (Allocation Quota Reached) error if it feels the client is trying to exceed some locally defined allocation quota. The server is free to define this allocation quota any way it wishes, but it SHOULD define it based on the username used to authenticate the request and not on the client's transport address.

3. Expose the client quota via `turn.ServerConfig`. Possibly also expose a setting to let users opt in to per-allocation CPU load-balancing.

4. Test and upstream.
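
The per-client quota from point 2 could be tracked along these lines. This is a minimal sketch in Python, not the planned Go implementation: `AllocationQuota` and its methods are hypothetical names, and the only detail taken from the RFC text quoted above is the 486 error code and keying the quota on the username.

```python
from collections import defaultdict

ALLOCATION_QUOTA_REACHED = 486  # error code from the RFC 8656 text quoted above


class AllocationQuota:
    """Hypothetical per-username allocation counter."""

    def __init__(self, max_per_user):
        self.max_per_user = max_per_user
        self._active = defaultdict(int)  # username -> live allocations

    def try_allocate(self, username):
        """Return None on success, or 486 if the user is at quota."""
        if self._active[username] >= self.max_per_user:
            return ALLOCATION_QUOTA_REACHED
        self._active[username] += 1
        return None

    def release(self, username):
        """Give back one allocation slot when an allocation is deleted."""
        if self._active[username] > 0:
            self._active[username] -= 1
```

Keying the counter on the authenticated username rather than on the client's transport address follows the SHOULD in the quoted RFC text; a real server would also need locking if allocations are handled concurrently.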

Feedback appreciated.

|

1.0

|

Milestone v1.14: Performance: Per-allocation CPU load-balancing - This issue is to plan & discuss the performance optimizations that should go into v1.14.

**Problem:** Currently STUNner UDP performance is limited to about 100-200 kpps per UDP listener (i.e., per UDP Gateway/listener in the Kubernetes Gateway API terminology). This is because we allocate a single `net.PacketConn` per UDP listener, which is then [drained by a single CPU thread/go-routine](https://github.com/l7mp/turn/blob/7bd80d5f800480042e404d1d49e5a6d20377127e/server.go#L68). This means that all client allocations made via that listener will share the same CPU thread and there is no way to load-balance client allocations across CPUs; i.e., each listener is restricted to a single CPU. If STUNner is exposed via a single UDP listener (the most common setting) then it will be restricted to about 1200-1500 mcore.

**Notes:**

- **This is not a problem in Kubernetes:** instead of vertical scaling (let a single STUNner instance use as many CPUs as available), Kubernetes defaults to horizontal scaling; if a single `stunnerd` pod is a bottleneck we simply fire up more (e.g., using HPA). In fact, the single-CPU restriction makes HPA *simpler* since the CPU triggers are easier to set (e.g., we have to scale out when the average CPU load approaches 1000 mcores); when the application can vertically scale to some arbitrary number of CPUs by itself we never know how to fix the CPU trigger for HPA (this is when vertical scaling interferes with horizontal scaling). Eventually we'll have as many pods as CPU cores and Kubernetes will readily load-balance client connections across our pods. This makes us wonder whether to solve the vertical scaling problem *at all*, since there is very little use of such a feature in Kubernetes.

- **The single-CPU restriction applies per UDP listener:** if STUNner is exposed via multiple UDP TURN listeners then each listener will receive a separate CPU thread.

- **This limitation applies to UDP only:** for TCP, TLS and DTLS the TURN sockets are connected back to the client and therefore [a separate CPU thread/go-routine is created for each allocation](https://github.com/l7mp/turn/blob/7bd80d5f800480042e404d1d49e5a6d20377127e/server.go#L96).

**Solution:** The plan is to create a separate `net.Conn` for each UDP allocation, by (1) sharing the same listener server address using `REUSEADDR/REUSEPORT`, (2) connecting each per-allocation connection back to the client (this will turn the `net.PacketConn` into a connected `net.Conn`), and (3) firing up a separate read-loop/go-routine per each allocation/socket. Extreme care must be taken though in implementing this: if we blindly create a new socket per received UDP packet then a simple UDP portscan will DoS the TURN listener.

**Plan:**

1. Defer the creation of per-allocation connections until after the client has authenticated with the server, e.g., [when the TURN allocation request has been successfully processed](https://github.com/l7mp/turn/blob/7bd80d5f800480042e404d1d49e5a6d20377127e/internal/server/turn.go#L151). Note that this still allows a client with a valid credential to DoS the server, so we need to impose a quota on per-client connections.

2. Implement per-client quotas as per RFC8656, Section 7.2., "Receiving an Allocate Request", point 10:

> At any point, the server MAY choose to reject the request with a 486 (Allocation Quota Reached) error if it feels the client is trying to exceed some locally defined allocation quota. The server is free to define this allocation quota any way it wishes, but it SHOULD define it based on the username used to authenticate the request and not on the client's transport address.

3. Expose the client quota via `turn.ServerConfig`. Possibly also expose a setting to let users opt in to per-allocation CPU load-balancing.

4. Test and upstream.

Feedback appreciated.

|

non_test

|

milestone performance per allocation cpu load balancing this issue is to plan discuss the performance optimizations that should go into problem currently stunner udp performance is limited at about kpps per udp listener i e per udp gateway listener in the kubernetes gateway api terminology this is because we allocate a single net packetconn per udp listener which is then this means that all client allocations made via that listener will share the same cpu thread and there is no way to load balance client allocations across cpus i e each listener is restricted to a single cpu if stunner is exposed via a single udp listener the most common setting then it will be restricted to about mcore notes this is not a problem in kubernetes instead of vertical scaling let a single stunner instance use as many cpus as available kubernetes defaults to horizontal scaling if a single stunnerd pod is a bottleneck we simply fire up more e g using hpa in fact the single cpu restriction makes hpa simpler since the cpu triggers are easier to set e g we have to scale out when when the average cpu load approaches mcores when the application can vertically scale to some arbitrary number of cpus by itself we never know how to fix the cpu trigger for hpa this is when vertical scaling interferes with horizonmtal scaling eventually we ll have as many pods as cpu cores and kubernetes will readily load balance client connections across our pods this makes us wonder whether to solve the vertical scaling problem at all since there is very little use of such a feature in kubernetes the single cpu restriction apples per udp listener if stunner is exposed via multiple udp turn listeners then each listener will receive a separate cpu thread this limitation applies to udp only for tcp tls and dtls the turn sockets are connected back to the client and therefore solution the plan is to create a separate net conn for each udp allocation by sharing the same listener server address using reuseaddr reuseport 
connecting each per allocation connection back to the client this will turn the net packetconn into a connected net conn and firing up a separate read loop go routine per each allocation socket extreme care must be taken though in implementing this if we blindly create a new socket per received udp packet then a simple udp portscan will dos the turn listener plan move the creation of per allocation connection creation after the client has authenticated with the server e g note that this still allows a client with a valid credential to dos the server so we need to quota per client connections implement per client quotas as per section receiving an allocate request point at any point the server may choose to reject the request with a allocation quota reached error if it feels the client is trying to exceed some locally defined allocation quota the server is free to define this allocation quota any way it wishes but it should define it based on the username used to authenticate the request and not on the client s transport address expose the client quota via turn serverconfig possibly also expose a setting to let users to opt in to per allocation cpu load balancing test and upstream feedback appreciated

| 0

|

42,357

| 5,435,081,906

|

IssuesEvent

|

2017-03-05 14:01:06

|

openbmc/openbmc-test-automation

|

https://api.github.com/repos/openbmc/openbmc-test-automation

|

closed

|

[Automation] BMC boot count

|

feature Test

|

Lately we have been seeing phantom resets during code update, so we want to add logic to track the boot count. According to the developers, either uptime or the btime field in /proc/stat can serve this purpose.

We need to derive logic from it to keep the boot count.
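
One possible way to derive a boot count from `btime` is sketched below. It is an assumption-laden illustration, not the project's implementation: `ParseBootTime`, `BootCounter`, and the `Tolerance` field are hypothetical names, and the sketch assumes the test harness periodically fetches the contents of /proc/stat from the BMC. A change in `btime` larger than the tolerance is treated as a reboot; the tolerance exists because `btime` may shift slightly when the system clock is adjusted.

```go
package main

import (
	"errors"
	"strconv"
	"strings"
)

// ParseBootTime extracts the btime value (boot time in seconds since
// the epoch) from the contents of /proc/stat.
func ParseBootTime(procStat string) (int64, error) {
	for _, line := range strings.Split(procStat, "\n") {
		fields := strings.Fields(line)
		if len(fields) == 2 && fields[0] == "btime" {
			return strconv.ParseInt(fields[1], 10, 64)
		}
	}
	return 0, errors.New("btime not found")
}

// BootCounter is a hypothetical helper that counts reboots seen
// across successive btime samples.
type BootCounter struct {
	Tolerance int64 // allowed btime jitter in seconds (an assumption)
	last      int64
	seen      bool
	count     int
}

// Observe records a btime sample and returns the number of reboots
// detected since the first sample.
func (c *BootCounter) Observe(btime int64) int {
	if !c.seen {
		// The first sample only establishes the baseline.
		c.last, c.seen = btime, true
		return c.count
	}
	diff := btime - c.last
	if diff < 0 {
		diff = -diff
	}
	if diff > c.Tolerance {
		// btime moved by more than the jitter allowance: a reboot.
		c.count++
		c.last = btime
	}
	return c.count
}
```

A test would sample before and after the code update and fail if the counter shows more reboots than the update is expected to trigger.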

|

1.0

|

[Automation] BMC boot count - Lately we have been seeing phantom resets during code update, so we want to add logic to track the boot count. According to the developers, either uptime or the btime field in /proc/stat can serve this purpose.

We need to derive logic from it to keep the boot count.

|

test

|

bmc boot count we are seeing lately phantom reset in code update so we want to add logic to find way to track the boot count look like as per dev folks either uptime or proc stat btime makes up for it need to derived logic from it to keep the boot count

| 1

|

224,147

| 24,769,703,913

|

IssuesEvent

|

2022-10-23 01:12:09

|

snykiotcubedev/arangodb-3.7.6

|

https://api.github.com/repos/snykiotcubedev/arangodb-3.7.6

|

reopened

|

CVE-2018-11694 (Medium) detected in node-sass-4.14.1.tgz

|

security vulnerability

|

## CVE-2018-11694 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz</a></p>

<p>

Dependency Hierarchy:

- :x: **node-sass-4.14.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/snykiotcubedev/arangodb-3.7.6/commit/fce8f85f1c2f070c8e6a8e76d17210a2117d3833">fce8f85f1c2f070c8e6a8e76d17210a2117d3833</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.4. A NULL pointer dereference was found in the function Sass::Functions::selector_append which could be leveraged by an attacker to cause a denial of service (application crash) or possibly have unspecified other impact.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-11694>CVE-2018-11694</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2018-06-04</p>

<p>Fix Resolution: 5.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-11694 (Medium) detected in node-sass-4.14.1.tgz - ## CVE-2018-11694 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz</a></p>

<p>

Dependency Hierarchy:

- :x: **node-sass-4.14.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/snykiotcubedev/arangodb-3.7.6/commit/fce8f85f1c2f070c8e6a8e76d17210a2117d3833">fce8f85f1c2f070c8e6a8e76d17210a2117d3833</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.4. A NULL pointer dereference was found in the function Sass::Functions::selector_append which could be leveraged by an attacker to cause a denial of service (application crash) or possibly have unspecified other impact.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-11694>CVE-2018-11694</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2018-06-04</p>

<p>Fix Resolution: 5.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in node sass tgz cve medium severity vulnerability vulnerable library node sass tgz wrapper around libsass library home page a href dependency hierarchy x node sass tgz vulnerable library found in head commit a href found in base branch main vulnerability details an issue was discovered in libsass through a null pointer dereference was found in the function sass functions selector append which could be leveraged by an attacker to cause a denial of service application crash or possibly have unspecified other impact publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact low for more information on scores click a href suggested fix type upgrade version release date fix resolution step up your open source security game with mend

| 0

|

142

| 2,494,758,213

|

IssuesEvent

|

2015-01-06 01:11:45

|

yaobinshi/test1

|

https://api.github.com/repos/yaobinshi/test1

|

opened

|

upload from server did not work

|

Category: UI Component: Rank Component: Tester Priority: High Status: Closed Tracker: Bug

|

---

Author Name: **larry shi**

Original Redmine Issue: 87, http://www.fossology.org/issues/87

Original Date: 2011/12/16

Original Assignee: Mary Laser

---

upload from server did not work

cause: fosscp_agent no longer exists.

how to fix this issue

my suggestion is:

upload file with cp2foss directly.

Make sense?

|

1.0

|

upload from server did not work - ---

Author Name: **larry shi**

Original Redmine Issue: 87, http://www.fossology.org/issues/87

Original Date: 2011/12/16

Original Assignee: Mary Laser

---

upload from server did not work

cause: fosscp_agent no longer exists.

how to fix this issue

my suggestion is:

upload file with cp2foss directly.

Make sense?

|

test

|

upload from server did not work author name larry shi original redmine issue original date original assignee mary laser upload from server did not work cause fosscp agent is passed away how to fix this issue my suggestion is upload file with directly make sense

| 1

|

224,241

| 17,673,813,941

|

IssuesEvent

|

2021-08-23 09:45:03

|

spring-projects/spring-framework

|

https://api.github.com/repos/spring-projects/spring-framework

|

closed

|

Introduce `ExceptionCollector` testing utility

|

in: test type: enhancement

|

In order to support _soft assertions_ in #26917 and #26969, we need common support for tracking multiple failures and generating a single `AssertionError` containing those failures as suppressed exceptions.

|

1.0

|

Introduce `ExceptionCollector` testing utility - In order to support _soft assertions_ in #26917 and #26969, we need common support for tracking multiple failures and generating a single `AssertionError` containing those failures as suppressed exceptions.

|

test

|

introduce exceptioncollector testing utility in order to support soft assertions in and we need common support for tracking multiple failures and generating a single assertionerror containing those failures as suppressed exceptions

| 1

|

327,150

| 28,045,873,642

|

IssuesEvent

|

2023-03-28 22:50:12

|

finos/waltz

|

https://api.github.com/repos/finos/waltz

|

closed

|

Search: searching for a guid throws an error

|

bug small change fixed (test & close)

|

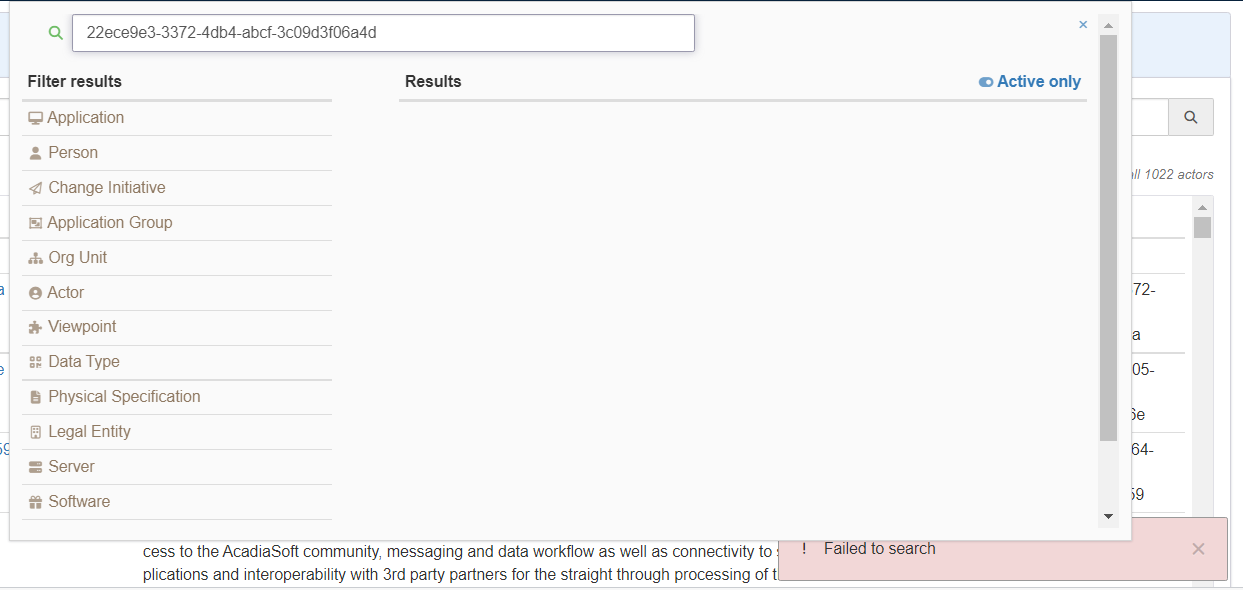

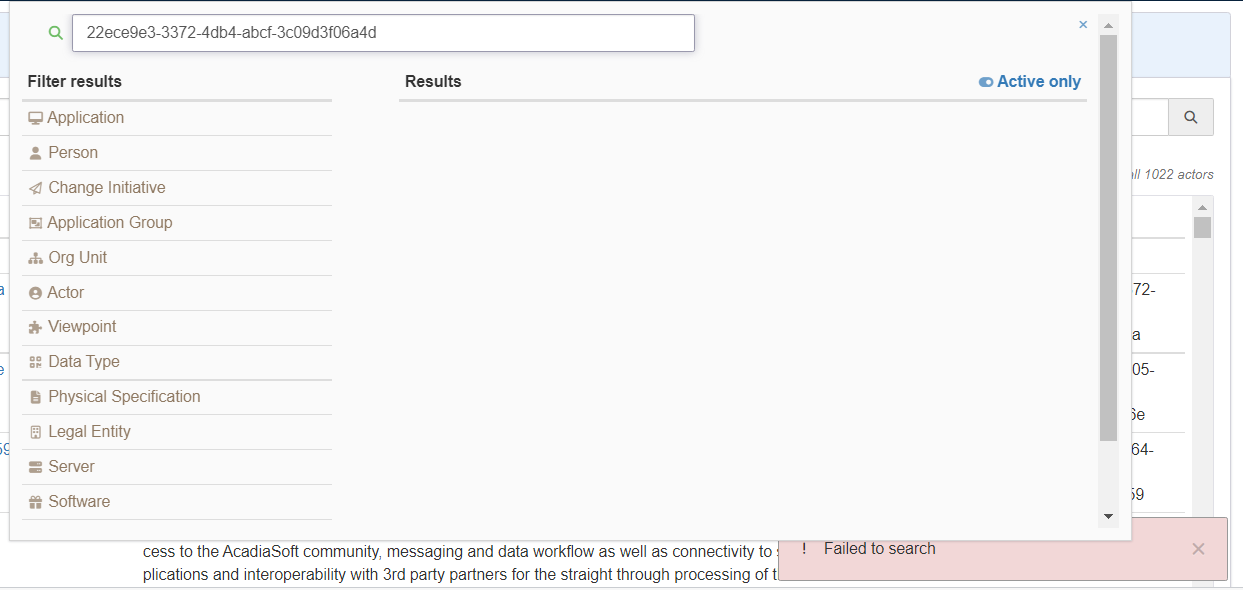

### Description

An actor was registered with a name/external-id as a guid. Searching for that guid causes an error to be displayed.

Not sure that the actor had anything to do with it. May have just been the first time we noticed it.

### Waltz Version

1.47.1

### Steps to Reproduce

Search for guid

### Expected Result

Entity should be found (or no hits returned).

### Actual Result

Error message:

|

1.0

|

Search: searching for a guid throws an error - ### Description

An actor was registered with a name/external-id as a guid. Searching for that guid causes an error to be displayed.

Not sure that the actor had anything to do with it. May have just been the first time we noticed it.

### Waltz Version

1.47.1

### Steps to Reproduce

Search for guid

### Expected Result

Entity should be found (or no hits returned).

### Actual Result

Error message:

|

test

|

search searching for a guid throws an error description an actor was registered with a name external id as a guid searching for that guid causes an error to be displayed not sure that the actor had anything to do with it may have just been the first time we noticed it waltz version steps to reproduce search for guid expected result entity should be found or no hits returned actual result error message

| 1

|

135,750

| 11,016,601,106

|

IssuesEvent

|

2019-12-05 06:00:05

|

microsoft/AzureStorageExplorer

|

https://api.github.com/repos/microsoft/AzureStorageExplorer

|

closed

|

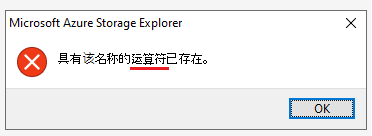

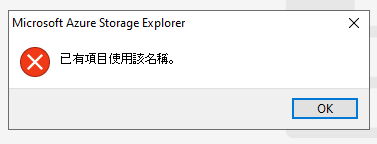

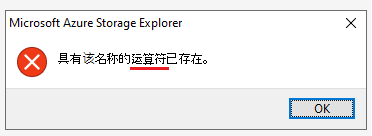

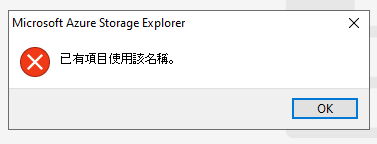

Update '运算符' to '文件共享' on the prompt dialog when renaming a file share with an existing name

|

:gear: blobs :gear: files 🌐 localization 🧪 testing

|

**Storage Explorer Version:** 1.11.0

**Build:** [20191105.2](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3213388)

**Branch:** rel/1.11.0

**Platform/OS:** Windows 10/ Linux Ubuntu 19.04/macOS High Sierra

**Language:** Chinese(zh-CN)

**Architecture:** ia32/x64

**Regression From:** Not a regression

**Steps to reproduce:**

1. Launch Storage Explorer.

2. Open 'Settings' -> Application (Regional Settings) -> Select 'Chinese (simplified)' -> Restart Storage Explorer.

3. Expand one storage account -> File Shares.

4. Create two file shares -> Rename one file share using another file share's name.

5. Check the prompt dialog.

**Expect Experience:**

Show '具有该名称的**文件共享**已存在' on the dialog.

**Actual Experience:**

Show '具有该名称的**运算符**已存在' on the dialog.

**More Info:**

1. This issue also reproduces for one blob container. (Update '运算符' to 'blob 容器')

2. The prompt dialog shows like below in Chinese (traditional).

|

1.0

|

Update '运算符' to '文件共享' on the prompt dialog when renaming a file share with an existing name - **Storage Explorer Version:** 1.11.0

**Build:** [20191105.2](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3213388)

**Branch:** rel/1.11.0

**Platform/OS:** Windows 10/ Linux Ubuntu 19.04/macOS High Sierra

**Language:** Chinese(zh-CN)

**Architecture:** ia32/x64

**Regression From:** Not a regression

**Steps to reproduce:**

1. Launch Storage Explorer.

2. Open 'Settings' -> Application (Regional Settings) -> Select 'Chinese (simplified)' -> Restart Storage Explorer.

3. Expand one storage account -> File Shares.

4. Create two file shares -> Rename one file share using another file share's name.

5. Check the prompt dialog.

**Expect Experience:**

Show '具有该名称的**文件共享**已存在' on the dialog.

**Actual Experience:**

Show '具有该名称的**运算符**已存在' on the dialog.

**More Info:**

1. This issue also reproduces for one blob container. (Update '运算符' to 'blob 容器')

2. The prompt dialog shows like below in Chinese (traditional).

|

test

|

update 运算符 to 文件共享 on the prompt dialog when renaming a file share with an exist name storage explorer version build branch rel platform os windows linux ubuntu macos high sierra language chinese zh cn architecture regression from not a regression steps to reproduce launch storage explorer open settings application regional settings select chinese simplified restart storage explorer expand one storage account file shares create two file shares rename one file share using another file share s name check the prompt dialog expect experience show 具有该名称的 文件共享 已存在 on the dialog actual experience show 具有该名称的 运算符 已存在 on the dialog more info this issue also reproduces for one blob container update 运算符 to blob 容器 the prompt dialog shows like below in chinese traditional

| 1

|

99,712

| 8,709,144,773

|

IssuesEvent

|

2018-12-06 13:08:17

|

NKCR-INPROVE/evidence.periodik

|

https://api.github.com/repos/NKCR-INPROVE/evidence.periodik

|

closed

|

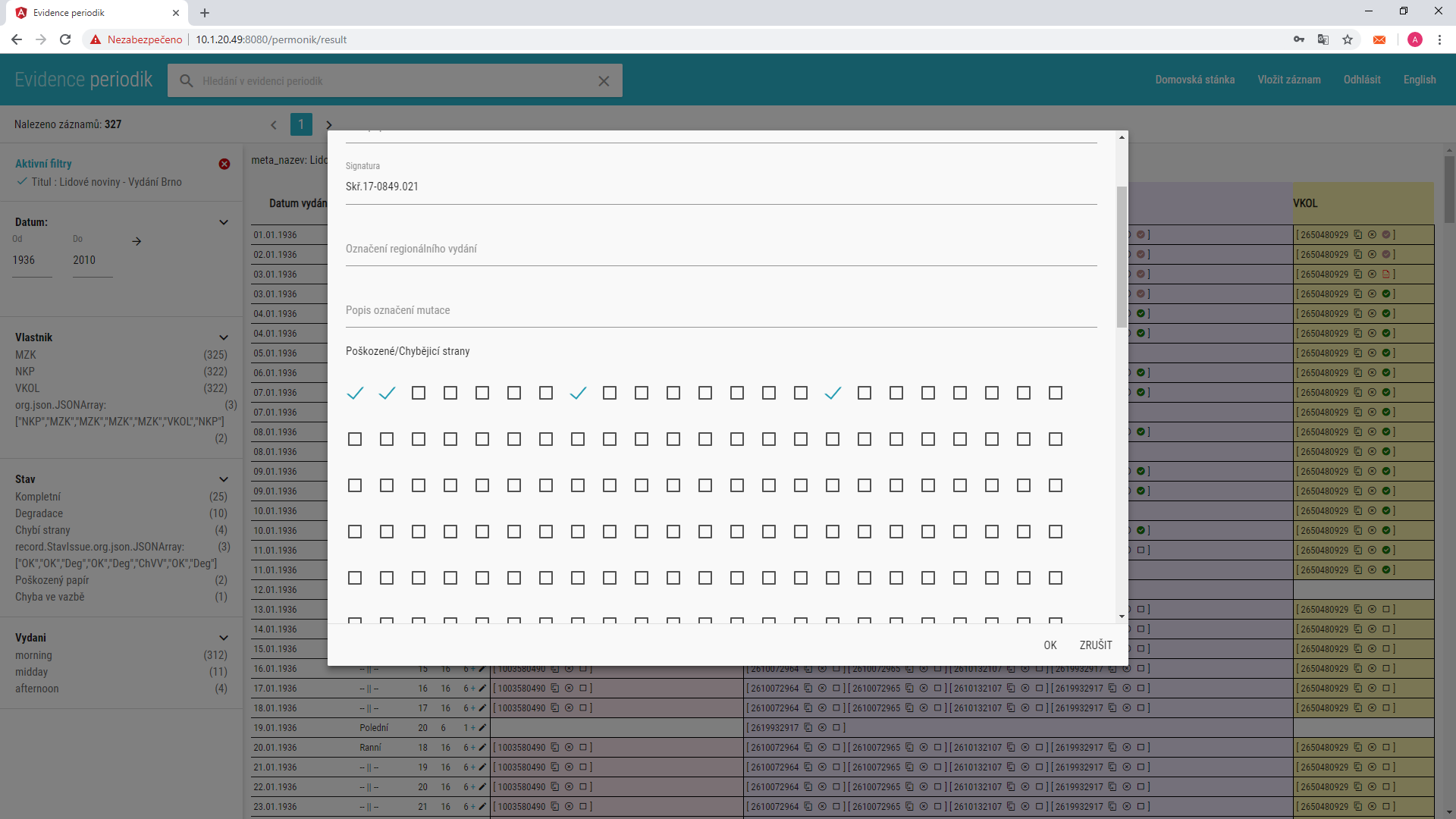

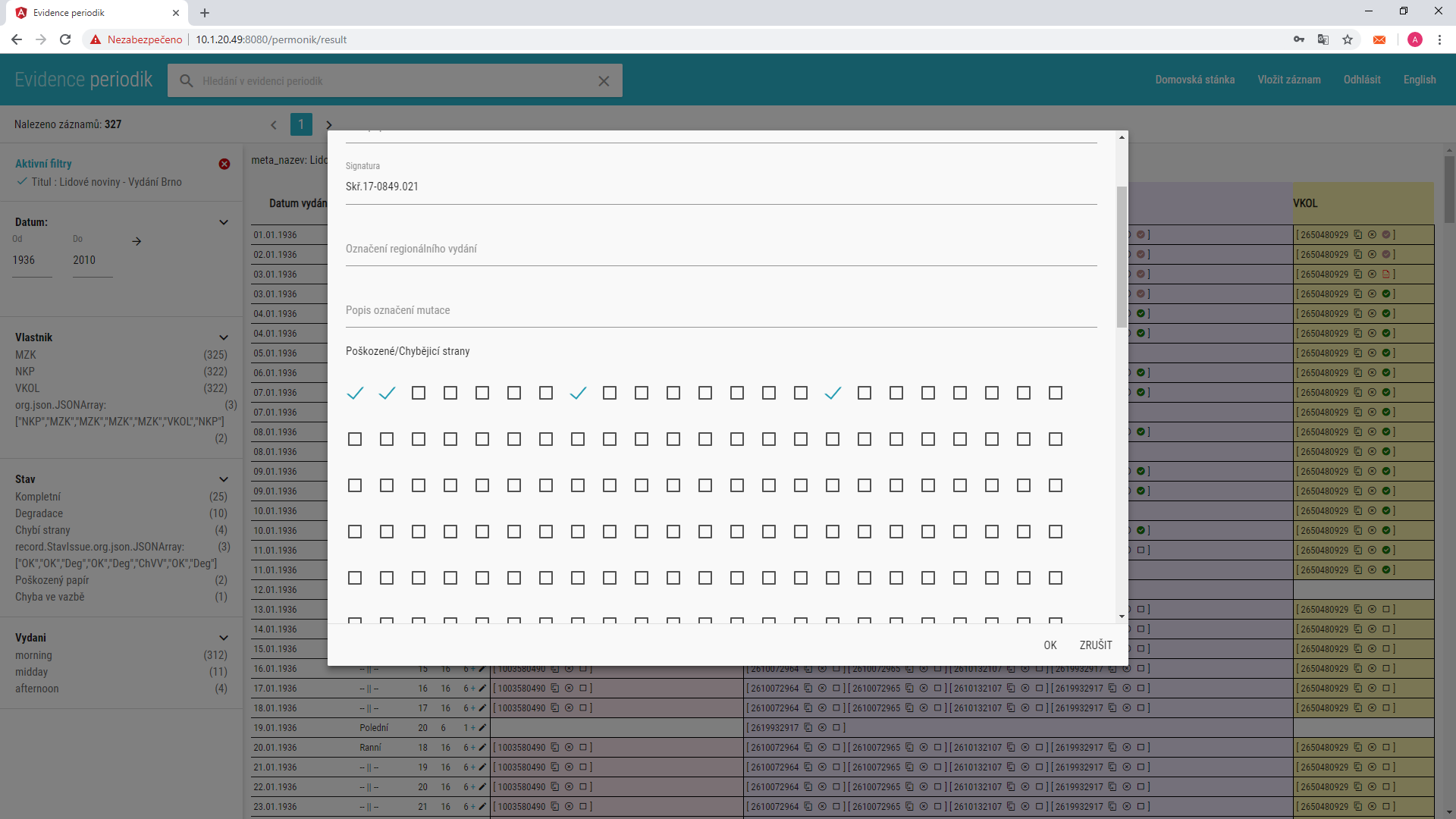

Page count when editing a copy

|

ready for test

|

For issue no. 1 we adjusted and specified the page count; afterwards, when editing the copy, the checkboxes for selecting pages are displayed incorrectly.

|

1.0

|

Page count when editing a copy - For issue no. 1 we adjusted and specified the page count; afterwards, when editing the copy, the checkboxes for selecting pages are displayed incorrectly.

|

test

|

page count when editing a copy for issue no we adjusted and specified the page count afterwards when editing the copy the checkboxes for selecting pages are displayed incorrectly

| 1

|

281,103

| 30,872,817,016

|

IssuesEvent

|

2023-08-03 12:32:25

|

hinoshiba/news

|

https://api.github.com/repos/hinoshiba/news

|

closed

|

[SecurityWeek] Ransomware Attacks on Industrial Organizations Doubled in Past Year: Report

|

SecurityWeek Stale

|

The number of ransomware attacks targeting industrial organizations and infrastructure has doubled since the second quarter of 2022, according to Dragos.

The post [Ransomware Attacks on Industrial Organizations Doubled in Past Year: Report](https://www.securityweek.com/ransomware-attacks-on-industrial-organizations-doubled-in-past-year-report/) appeared first on [SecurityWeek](https://www.securityweek.com).

<https://www.securityweek.com/ransomware-attacks-on-industrial-organizations-doubled-in-past-year-report/>

|

True

|

[SecurityWeek] Ransomware Attacks on Industrial Organizations Doubled in Past Year: Report -

The number of ransomware attacks targeting industrial organizations and infrastructure has doubled since the second quarter of 2022, according to Dragos.

The post [Ransomware Attacks on Industrial Organizations Doubled in Past Year: Report](https://www.securityweek.com/ransomware-attacks-on-industrial-organizations-doubled-in-past-year-report/) appeared first on [SecurityWeek](https://www.securityweek.com).

<https://www.securityweek.com/ransomware-attacks-on-industrial-organizations-doubled-in-past-year-report/>

|

non_test

|

ransomware attacks on industrial organizations doubled in past year report the number of ransomware attacks targeting industrial organizations and infrastructure has doubled since the second quarter of according to dragos the post appeared first on

| 0

|

10,543

| 6,794,477,718

|

IssuesEvent

|

2017-11-01 12:20:46

|

Elgg/Elgg

|

https://api.github.com/repos/Elgg/Elgg

|

closed

|

Linkify headers in group modules

|

easy ui usability

|

E.g. Link "Group blog". Replace the "view all" link with the "Write a blog post" link in that position.

|

True

|

Linkify headers in group modules - E.g. Link "Group blog". Replace the "view all" link with the "Write a blog post" link in that position.

|

non_test

|

linkify headers in group modules e g link group blog replace the view all link with the write a blog post link in that position

| 0

|

113,741

| 17,150,895,063

|

IssuesEvent

|

2021-07-13 20:26:56

|

snowdensb/braindump

|

https://api.github.com/repos/snowdensb/braindump

|

opened

|

CVE-2020-8203 (High) detected in lodash-4.16.6.tgz, lodash-1.0.2.tgz

|

security vulnerability

|

## CVE-2020-8203 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.16.6.tgz</b>, <b>lodash-1.0.2.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.16.6.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-4.16.6.tgz">https://registry.npmjs.org/lodash/-/lodash-4.16.6.tgz</a></p>

<p>Path to dependency file: braindump/package.json</p>

<p>Path to vulnerable library: braindump/node_modules/lodash</p>

<p>

Dependency Hierarchy:

- gulp-sass-2.3.2.tgz (Root Library)

- node-sass-3.12.1.tgz

- sass-graph-2.1.2.tgz

- :x: **lodash-4.16.6.tgz** (Vulnerable Library)

</details>

<details><summary><b>lodash-1.0.2.tgz</b></p></summary>

<p>A utility library delivering consistency, customization, performance, and extras.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-1.0.2.tgz">https://registry.npmjs.org/lodash/-/lodash-1.0.2.tgz</a></p>

<p>Path to dependency file: braindump/package.json</p>

<p>Path to vulnerable library: braindump/node_modules/lodash</p>

<p>

Dependency Hierarchy:

- gulp-3.9.1.tgz (Root Library)

- vinyl-fs-0.3.14.tgz

- glob-watcher-0.0.6.tgz

- gaze-0.5.2.tgz

- globule-0.1.0.tgz

- :x: **lodash-1.0.2.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/snowdensb/braindump/commit/815ae0afebcf867f02143f3ab9cf88b1d4dacdec">815ae0afebcf867f02143f3ab9cf88b1d4dacdec</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype pollution attack when using _.zipObjectDeep in lodash before 4.17.20.

<p>Publish Date: 2020-07-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8203>CVE-2020-8203</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1523">https://www.npmjs.com/advisories/1523</a></p>

<p>Release Date: 2020-10-21</p>

<p>Fix Resolution: lodash - 4.17.19</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"lodash","packageVersion":"4.16.6","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"gulp-sass:2.3.2;node-sass:3.12.1;sass-graph:2.1.2;lodash:4.16.6","isMinimumFixVersionAvailable":true,"minimumFixVersion":"lodash - 4.17.19"},{"packageType":"javascript/Node.js","packageName":"lodash","packageVersion":"1.0.2","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"gulp:3.9.1;vinyl-fs:0.3.14;glob-watcher:0.0.6;gaze:0.5.2;globule:0.1.0;lodash:1.0.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"lodash - 4.17.19"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2020-8203","vulnerabilityDetails":"Prototype pollution attack when using _.zipObjectDeep in lodash before 4.17.20.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8203","cvss3Severity":"high","cvss3Score":"7.4","cvss3Metrics":{"A":"High","AC":"High","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2020-8203 (High) detected in lodash-4.16.6.tgz, lodash-1.0.2.tgz - ## CVE-2020-8203 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-4.16.6.tgz</b>, <b>lodash-1.0.2.tgz</b></p></summary>

<p>

<details><summary><b>lodash-4.16.6.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-4.16.6.tgz">https://registry.npmjs.org/lodash/-/lodash-4.16.6.tgz</a></p>

<p>Path to dependency file: braindump/package.json</p>

<p>Path to vulnerable library: braindump/node_modules/lodash</p>

<p>

Dependency Hierarchy:

- gulp-sass-2.3.2.tgz (Root Library)

- node-sass-3.12.1.tgz

- sass-graph-2.1.2.tgz

- :x: **lodash-4.16.6.tgz** (Vulnerable Library)

</details>

<details><summary><b>lodash-1.0.2.tgz</b></p></summary>

<p>A utility library delivering consistency, customization, performance, and extras.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-1.0.2.tgz">https://registry.npmjs.org/lodash/-/lodash-1.0.2.tgz</a></p>

<p>Path to dependency file: braindump/package.json</p>

<p>Path to vulnerable library: braindump/node_modules/lodash</p>

<p>

Dependency Hierarchy:

- gulp-3.9.1.tgz (Root Library)

- vinyl-fs-0.3.14.tgz

- glob-watcher-0.0.6.tgz

- gaze-0.5.2.tgz

- globule-0.1.0.tgz

- :x: **lodash-1.0.2.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/snowdensb/braindump/commit/815ae0afebcf867f02143f3ab9cf88b1d4dacdec">815ae0afebcf867f02143f3ab9cf88b1d4dacdec</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Prototype pollution attack when using _.zipObjectDeep in lodash before 4.17.20.

<p>Publish Date: 2020-07-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8203>CVE-2020-8203</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1523">https://www.npmjs.com/advisories/1523</a></p>

<p>Release Date: 2020-10-21</p>

<p>Fix Resolution: lodash - 4.17.19</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"lodash","packageVersion":"4.16.6","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"gulp-sass:2.3.2;node-sass:3.12.1;sass-graph:2.1.2;lodash:4.16.6","isMinimumFixVersionAvailable":true,"minimumFixVersion":"lodash - 4.17.19"},{"packageType":"javascript/Node.js","packageName":"lodash","packageVersion":"1.0.2","packageFilePaths":["/package.json"],"isTransitiveDependency":true,"dependencyTree":"gulp:3.9.1;vinyl-fs:0.3.14;glob-watcher:0.0.6;gaze:0.5.2;globule:0.1.0;lodash:1.0.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"lodash - 4.17.19"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2020-8203","vulnerabilityDetails":"Prototype pollution attack when using _.zipObjectDeep in lodash before 4.17.20.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8203","cvss3Severity":"high","cvss3Score":"7.4","cvss3Metrics":{"A":"High","AC":"High","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

non_test

|

cve high detected in lodash tgz lodash tgz cve high severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash modular utilities library home page a href path to dependency file braindump package json path to vulnerable library braindump node modules lodash dependency hierarchy gulp sass tgz root library node sass tgz sass graph tgz x lodash tgz vulnerable library lodash tgz a utility library delivering consistency customization performance and extras library home page a href path to dependency file braindump package json path to vulnerable library braindump node modules lodash dependency hierarchy gulp tgz root library vinyl fs tgz glob watcher tgz gaze tgz globule tgz x lodash tgz vulnerable library found in head commit a href found in base branch master vulnerability details prototype pollution attack when using zipobjectdeep in lodash before publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution lodash isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree gulp sass node sass sass graph lodash isminimumfixversionavailable true minimumfixversion lodash packagetype javascript node js packagename lodash packageversion packagefilepaths istransitivedependency true dependencytree gulp vinyl fs glob watcher gaze globule lodash isminimumfixversionavailable true minimumfixversion lodash basebranches vulnerabilityidentifier cve vulnerabilitydetails prototype pollution attack when using zipobjectdeep in lodash before vulnerabilityurl

| 0

|

70,812

| 15,111,824,712

|

IssuesEvent

|

2021-02-08 20:58:46

|

department-of-veterans-affairs/va.gov-team

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-team

|

opened

|

Remove contexts from CircleCI until we have the ability to limit context access by security groups

|

devops operations security

|

## Description

Contexts in CircleCI can currently only be created with the default 'All members' group, which gives access to all members of the GitHub org 'department-of-veterans-affairs'. Access can be limited by security groups within the CircleCI interface, based on GitHub teams within the org; however, configuring those settings requires administrative privileges on the org.

It appears that the only workaround for the time being is to manage the AWS and GitHub tokens by putting them in the per-project environment variable settings. This means that if we change these credentials, we have to change them in _all_ of the projects, but this is safer than keeping them in a context.

## Background/context/resources

https://dsva.slack.com/archives/C01CJV0L9PS/p1612651359029600

## Technical notes

_Notes around work that is happening, if applicable_

---

## Tasks

For each project:

- [ ] Determine what contexts a job needs (defined in the workflows section of the config.yml)

- [ ] Determine what permissions are conferred by those contexts

- [ ] Create environment variables in the project that replicate the credentials that would have been in the context

Once all have been done:

- [ ] Delete the global contexts
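
The first per-project task above (finding which contexts each job requests) could be sketched roughly as follows. This is a minimal illustration, not tooling used by the team: it assumes the project's `config.yml` has already been parsed into a Python dict by some YAML parser, and the function name `contexts_by_job` and the sample job and context names are hypothetical.

```python
from collections import defaultdict


def contexts_by_job(config):
    """Map each workflow job name to the sorted list of contexts it requests.

    `config` is assumed to be a CircleCI config.yml already parsed into a dict.
    """
    found = defaultdict(set)
    for workflow in config.get("workflows", {}).values():
        # The workflows section may also hold a bare "version: 2" entry.
        if not isinstance(workflow, dict):
            continue
        for entry in workflow.get("jobs", []):
            if isinstance(entry, str):
                # A job listed by name only requests no contexts.
                found[entry].update([])
                continue
            for job_name, params in entry.items():
                requested = (params or {}).get("context", [])
                # "context" may be a single name or a list of names.
                if isinstance(requested, str):
                    requested = [requested]
                found[job_name].update(requested)
    return {job: sorted(names) for job, names in found.items()}


# Hypothetical parsed config, for illustration only.
example_config = {
    "workflows": {
        "version": 2,
        "main": {
            "jobs": [
                "test",
                {"build": {"context": "aws"}},
                {"deploy": {"context": ["github", "aws"], "requires": ["build"]}},
            ]
        },
    }
}

if __name__ == "__main__":
    for job, names in contexts_by_job(example_config).items():
        print(job, names)
```

The resulting map gives a per-job list of contexts to audit before the equivalent credentials are recreated as project environment variables.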

## Definition of Done

- [ ] AWS credentials and GitHub credentials will no longer be stored in the global CircleCI Organizational contexts.

---

### Reminders

- [ ] Please attach your team label and any other appropriate label(s)

- [ ] Please attach the needs grooming tag if needed

- [ ] Please connect to an epic

|

True

|

Remove contexts from CircleCI until we have the ability to limit context access by security groups - ## Description

Contexts in CircleCI can currently only be created with the default 'All members' group, which gives access to all members of the GitHub org 'department-of-veterans-affairs'. Access can be limited by security groups within the CircleCI interface, based on GitHub teams within the org; however, configuring those settings requires administrative privileges on the org.

It appears that the only workaround for the time being is to manage the AWS and GitHub tokens by putting them in the per-project environment variable settings. This means that if we change these credentials, we have to change them in _all_ of the projects, but this is safer than keeping them in a context.

## Background/context/resources

https://dsva.slack.com/archives/C01CJV0L9PS/p1612651359029600

## Technical notes

_Notes around work that is happening, if applicable_

---

## Tasks

For each project:

- [ ] Determine what contexts a job needs (defined in the workflows section of the config.yml)

- [ ] Determine what permissions are conferred by those contexts

- [ ] Create environment variables in the project that replicate the credentials that would have been in the context

Once all have been done:

- [ ] Delete the global contexts

## Definition of Done

- [ ] AWS credentials and GitHub credentials will no longer be stored in the global CircleCI Organizational contexts.

---

### Reminders

- [ ] Please attach your team label and any other appropriate label(s)

- [ ] Please attach the needs grooming tag if needed

- [ ] Please connect to an epic

|

non_test

|

remove contexts from circleci until we have the ability to limit context access by security groups description contexts in circleci are currently only able to be created with a default all members group which gives access to all members of the github org department of veterans affairs this can be limited by security groups within the circleci interface based on github teams within the org however configuring those settings requires administrative privileges on the org it appears that the only workaround for the time being is to manage the aws and github tokens by putting them in the per project env settings this means that if we change these credentials we have to change them in all of the projects but this is safer than keeping them in a context background context resources technical notes notes around work that is happening if applicable tasks for each project determine what contexts a job needs defined in the workflows section of the config yml determine what permissions are conferred by those contexts create environmental variables in the project that replicate the credentials that would have been in the context once all have been done delete the global contexts definition of done aws credentials and github credentials will no longer be stored in the global circleci organizational contexts reminders please attach your team label and any other appropriate label s please attach the needs grooming tag if needed please connect to an epic

| 0

|

36,484

| 5,060,658,526

|

IssuesEvent

|

2016-12-22 12:53:33

|

SpamExperts/SpamPAD

|

https://api.github.com/repos/SpamExperts/SpamPAD

|

closed

|

Add replacement for Plugin::URIEval

|

enhancement Plugin Testing

|

- [Plugin::URIEval](http://svn.apache.org/repos/asf/spamassassin/tags/sa-update_3.3.0_20070730085137/lib/Mail/SpamAssassin/Plugin/URIEval.pm)

- check_for_http_redirector

- check_https_ip_mismatch

- check_uri_truncated

Most of these can probably already be done with the URIDetail plugin.

|

1.0

|

Add replacement for Plugin::URIEval - - [Plugin::URIEval](http://svn.apache.org/repos/asf/spamassassin/tags/sa-update_3.3.0_20070730085137/lib/Mail/SpamAssassin/Plugin/URIEval.pm)

- check_for_http_redirector

- check_https_ip_mismatch

- check_uri_truncated

Most of these can probably already be done with the URIDetail plugin.

|

test

|

add replacement for plugin urieval check for http redirector check https ip mismatch check uri truncated most of these can probably be already done with the uridetail plugin

| 1

|

7,316

| 6,826,670,745

|

IssuesEvent

|

2017-11-08 14:50:57

|

drud/ddev

|

https://api.github.com/repos/drud/ddev

|

closed

|

[Meta] Handle or resolve Windows Compatibility Issues like hosts file/privilege escalation

|

incubate needs decision security

|

This is a follow-up to https://github.com/drud/ddev/issues/196#issuecomment-302441130, specifically with respect to the following:

> Windows Compatibility: Addressing areas where linux/macOS assumptions fail (/etc/hosts, linux commands, .exe naming convention requirements on Windows, etc.).

* Currently we check for the existence of the sudo command and, if it is found, use it to escalate privileges to add an entry to the /etc/hosts file. This technique is not valid on native Windows, as sudo is not available and privilege escalation is done in other ways.

* The *purpose* of the privilege escalation is to edit the hosts file, which is at /Windows/System32/drivers/etc/hosts instead of /etc/hosts. It's our belief that the hosts management library we're using can actually handle this on Windows if we have a good privilege escalation technique.
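
To make the two points above concrete, here is a minimal Python sketch of the platform split. It is not ddev's code (ddev is not written in Python); the helper names `hosts_file_path` and `can_escalate_with_sudo` are hypothetical, and the sketch assumes that the Windows directory is given by the `SystemRoot` environment variable and that sudo is never usable on native Windows.

```python
import os
import platform
import shutil


def hosts_file_path(system, environ=None):
    """Return the hosts file location for a platform.system() style name."""
    env = os.environ if environ is None else environ
    if system == "Windows":
        # Assumption: SystemRoot points at the Windows directory.
        root = env.get("SystemRoot", r"C:\Windows")
        return root + r"\System32\drivers\etc\hosts"
    return "/etc/hosts"


def can_escalate_with_sudo(system):
    """True only where the sudo-based approach described above can work."""
    # Assumption: treat native Windows as never having a usable sudo.
    return system != "Windows" and shutil.which("sudo") is not None


if __name__ == "__main__":
    current = platform.system()
    print(hosts_file_path(current), can_escalate_with_sudo(current))
```

A caller would pick the hosts path first and only then decide how to escalate, so a Windows-specific escalation technique can be added without touching the path logic.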

## Related source links or issues:

* Parent issue: https://github.com/drud/ddev/issues/196#issuecomment-302441130

* Discussion of providing a wildcard DNS entry to serve this function: https://github.com/drud/ddev/issues/175

|

True

|

[Meta] Handle or resolve Windows Compatibility Issues like hosts file/privilege escalation - This is a follow-up to https://github.com/drud/ddev/issues/196#issuecomment-302441130, specifically with respect to the following:

> Windows Compatibility: Addressing areas where linux/macOS assumptions fail (/etc/hosts, linux commands, .exe naming convention requirements on Windows, etc.).

* Currently we check for the existence of the sudo command and, if it is found, use it to escalate privileges to add an entry to the /etc/hosts file. This technique is not valid on native Windows, as sudo is not available and privilege escalation is done in other ways.