Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

76,552

| 26,485,877,213

|

IssuesEvent

|

2023-01-17 17:58:34

|

idaholab/HERON

|

https://api.github.com/repos/idaholab/HERON

|

opened

|

[DEFECT] Static Histories Does Not Work Fully in Debug Dispatch Mode.

|

defect

|

--------

Defect Description

--------

**Describe the defect**

##### What did you expect to see happen?

To be able to use static histories and debug mode

##### What did you see instead?

There are some errors concerning _ROM_Cluster not being found

Also we must deal with reducing the project time despite the CSV containing more years of data. For example, setting macro_steps = 1 and keeping 20 years of data in your CSV causes an error.

##### Do you have a suggested fix for the development team?

**Describe how to Reproduce**

Steps to reproduce the behavior:

1.

2.

3.

4.

**Screenshots and Input Files**

Please attach the input file(s) that generate this error. The simpler the input, the faster we can find the issue.

**Platform (please complete the following information):**

- OS: [e.g. iOS]

- Version: [e.g. 22]

- Dependencies Installation: [CONDA or PIP]

----------------

For Change Control Board: Issue Review

----------------

This review should occur before any development is performed as a response to this issue.

- [ ] 1. Is it tagged with a type: defect or task?

- [ ] 2. Is it tagged with a priority: critical, normal or minor?

- [ ] 3. If it will impact requirements or requirements tests, is it tagged with requirements?

- [ ] 4. If it is a defect, can it cause wrong results for users? If so an email needs to be sent to the users.

- [ ] 5. Is a rationale provided? (Such as explaining why the improvement is needed or why current code is wrong.)

-------

For Change Control Board: Issue Closure

-------

This review should occur when the issue is imminently going to be closed.

- [ ] 1. If the issue is a defect, is the defect fixed?

- [ ] 2. If the issue is a defect, is the defect tested for in the regression test system? (If not explain why not.)

- [ ] 3. If the issue can impact users, has an email to the users group been written (the email should specify if the defect impacts stable or master)?

- [ ] 4. If the issue is a defect, does it impact the latest release branch? If yes, is there any issue tagged with release (create if needed)?

- [ ] 5. If the issue is being closed without a pull request, has an explanation of why it is being closed been provided?

|

1.0

|

[DEFECT] Static Histories Does Not Work Fully in Debug Dispatch Mode. - --------

Defect Description

--------

**Describe the defect**

##### What did you expect to see happen?

To be able to use static histories and debug mode

##### What did you see instead?

There are some errors concerning _ROM_Cluster not being found

Also we must deal with reducing the project time despite the CSV containing more years of data. For example, setting macro_steps = 1 and keeping 20 years of data in your CSV causes an error.

##### Do you have a suggested fix for the development team?

**Describe how to Reproduce**

Steps to reproduce the behavior:

1.

2.

3.

4.

**Screenshots and Input Files**

Please attach the input file(s) that generate this error. The simpler the input, the faster we can find the issue.

**Platform (please complete the following information):**

- OS: [e.g. iOS]

- Version: [e.g. 22]

- Dependencies Installation: [CONDA or PIP]

----------------

For Change Control Board: Issue Review

----------------

This review should occur before any development is performed as a response to this issue.

- [ ] 1. Is it tagged with a type: defect or task?

- [ ] 2. Is it tagged with a priority: critical, normal or minor?

- [ ] 3. If it will impact requirements or requirements tests, is it tagged with requirements?

- [ ] 4. If it is a defect, can it cause wrong results for users? If so an email needs to be sent to the users.

- [ ] 5. Is a rationale provided? (Such as explaining why the improvement is needed or why current code is wrong.)

-------

For Change Control Board: Issue Closure

-------

This review should occur when the issue is imminently going to be closed.

- [ ] 1. If the issue is a defect, is the defect fixed?

- [ ] 2. If the issue is a defect, is the defect tested for in the regression test system? (If not explain why not.)

- [ ] 3. If the issue can impact users, has an email to the users group been written (the email should specify if the defect impacts stable or master)?

- [ ] 4. If the issue is a defect, does it impact the latest release branch? If yes, is there any issue tagged with release (create if needed)?

- [ ] 5. If the issue is being closed without a pull request, has an explanation of why it is being closed been provided?

|

non_test

|

static histories does not work fully in debug dispatch mode defect description describe the defect what did you expect to see happen to be able to use static histories and debug mode what did you see instead there are some errors concerning rom cluster not being found also we must deal with reducing the project time despite the csv containing more years of data for example setting macro steps and keeping years of data in your csv causes an error do you have a suggested fix for the development team describe how to reproduce steps to reproduce the behavior screenshots and input files please attach the input file s that generate this error the simpler the input the faster we can find the issue platform please complete the following information os version dependencies installation for change control board issue review this review should occur before any development is performed as a response to this issue is it tagged with a type defect or task is it tagged with a priority critical normal or minor if it will impact requirements or requirements tests is it tagged with requirements if it is a defect can it cause wrong results for users if so an email needs to be sent to the users is a rationale provided such as explaining why the improvement is needed or why current code is wrong for change control board issue closure this review should occur when the issue is imminently going to be closed if the issue is a defect is the defect fixed if the issue is a defect is the defect tested for in the regression test system if not explain why not if the issue can impact users has an email to the users group been written the email should specify if the defect impacts stable or master if the issue is a defect does it impact the latest release branch if yes is there any issue tagged with release create if needed if the issue is being closed without a pull request has an explanation of why it is being closed been provided

| 0

|

33,212

| 4,818,575,574

|

IssuesEvent

|

2016-11-04 16:44:07

|

mapbox/mapbox-gl-native

|

https://api.github.com/repos/mapbox/mapbox-gl-native

|

closed

|

icon alignment differences in tests

|

tests

|

Moved from https://github.com/mapbox/mapbox-gl-js/issues/1569 now that this happens only in native.

icon-offset/literal:

http://mapbox.s3.amazonaws.com/mapbox-gl-native/render-tests/8219.6/index.html

|

1.0

|

icon alignment differences in tests - Moved from https://github.com/mapbox/mapbox-gl-js/issues/1569 now that this happens only in native.

icon-offset/literal:

http://mapbox.s3.amazonaws.com/mapbox-gl-native/render-tests/8219.6/index.html

|

test

|

icon alignment differences in tests moved from now that this happens only in native icon offset literal

| 1

|

28,451

| 2,702,711,624

|

IssuesEvent

|

2015-04-06 11:31:03

|

OCHA-DAP/hdx-ckan

|

https://api.github.com/repos/OCHA-DAP/hdx-ckan

|

closed

|

Custom Org Admin Page: Change default value for custom styling to our standard main nav style

|

Custom org page Priority-Medium

|

Use this LESS code as the default:

@hdxLogoUrl: "@{imagePath}/homepage-new/logo-beta.svg";

@headerUserBackgroundColor: @darkGrayColor;

@headerNavBackgroundColor: @whiteColor;

@headerNavBorderColor: @lightGrayColor;

@headerNavSearchBorderColor: @grayColor;

@toolbarBackgroundColor: @extraLightGrayColor;

Alternatively, we may want to hide this field since the decision was made to not allow customization of the main nav.

(do not remove the functionality :) )

|

1.0

|

Custom Org Admin Page: Change default value for custom styling to our standard main nav style - Use this LESS code as the default:

@hdxLogoUrl: "@{imagePath}/homepage-new/logo-beta.svg";

@headerUserBackgroundColor: @darkGrayColor;

@headerNavBackgroundColor: @whiteColor;

@headerNavBorderColor: @lightGrayColor;

@headerNavSearchBorderColor: @grayColor;

@toolbarBackgroundColor: @extraLightGrayColor;

Alternatively, we may want to hide this field since the decision was made to not allow customization of the main nav.

(do not remove the functionality :) )

|

non_test

|

custom org admin page change default value for custom styling to our standard main nav style use this less code as default hdxlogourl imagepath homepage new logo beta svg headeruserbackgroundcolor darkgraycolor headernavbackgroundcolor whitecolor headernavbordercolor lightgraycolor headernavsearchbordercolor graycolor toolbarbackgroundcolor extralightgraycolor alternatively we may want to hide this field since the decision was made to not allow customization of the main nav do not remove the functionality

| 0

|

344,597

| 30,751,816,649

|

IssuesEvent

|

2023-07-28 20:03:09

|

rancher/rancher

|

https://api.github.com/repos/rancher/rancher

|

closed

|

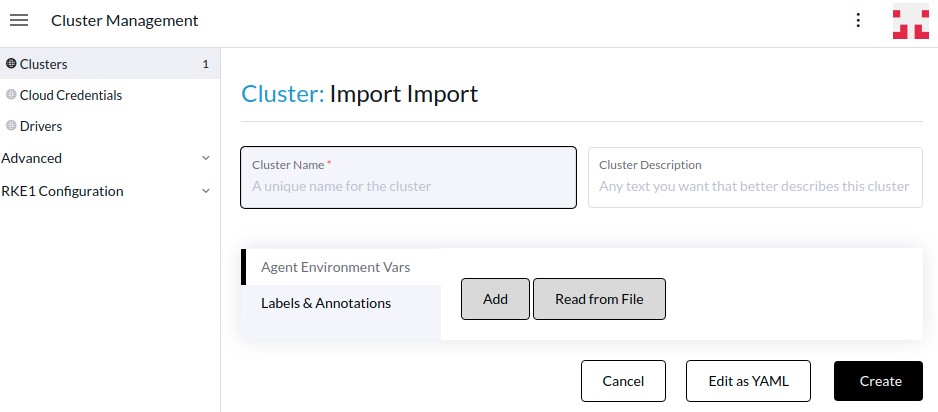

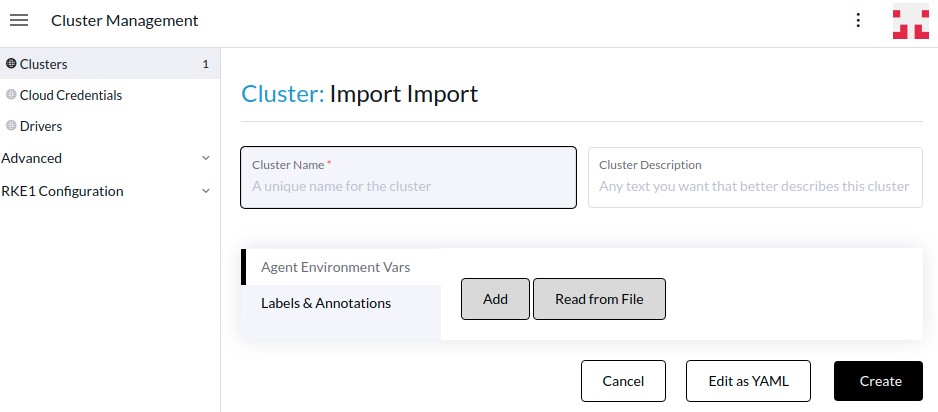

[Backport v2.7.6] Node gets kicked out of Cluster after snapshots are restored.

|

kind/bug internal [zube]: To Test QA/S area/provisioning-v2 team/area2 regression JIRA

|

This is a backport issue for https://github.com/rancher/rancher/issues/42201, automatically created via rancherbot by @Sahota1225

Original issue description:

<!--------- For bugs and general issues --------->

**Setup**

Rancher version: 2.7.5

Downstream cluster: Custom cluster

Nodes: 3

CNI: Cilium

Kubernetes version: v1.25.7+rke2r1

**Describe the bug**

When restoring a snapshot on a custom cluster a node gets deleted from the cluster.

**To Reproduce**

1. Deploy a fresh RKE2 custom cluster

2. Take a snapshot

3. Restore snapshot

4. repeat steps 2-3 until the bug is hit. (usually 2 tries)

**Result**

A worker node gets deleted from the cluster.

**Expected Result**

All nodes remain in the cluster and the restore occurs properly.

**Screenshots**

<img width="1100" alt="image" src="https://github.com/rancher/dashboard/assets/136753565/3f0a0484-0733-4582-b47f-ede6da8053a7">

**Additional context**

Tried to reproduce this on 2.7.4 but was unable to do so after 5-10 restores

SURE-6669

|

1.0

|

[Backport v2.7.6] Node gets kicked out of Cluster after snapshots are restored. - This is a backport issue for https://github.com/rancher/rancher/issues/42201, automatically created via rancherbot by @Sahota1225

Original issue description:

<!--------- For bugs and general issues --------->

**Setup**

Rancher version: 2.7.5

Downstream cluster: Custom cluster

Nodes: 3

CNI: Cilium

Kubernetes version: v1.25.7+rke2r1

**Describe the bug**

When restoring a snapshot on a custom cluster a node gets deleted from the cluster.

**To Reproduce**

1. Deploy a fresh RKE2 custom cluster

2. Take a snapshot

3. Restore snapshot

4. repeat steps 2-3 until the bug is hit. (usually 2 tries)

**Result**

A worker node gets deleted from the cluster.

**Expected Result**

All nodes remain in the cluster and the restore occurs properly.

**Screenshots**

<img width="1100" alt="image" src="https://github.com/rancher/dashboard/assets/136753565/3f0a0484-0733-4582-b47f-ede6da8053a7">

**Additional context**

Tried to reproduce this on 2.7.4 but was unable to do so after 5-10 restores

SURE-6669

|

test

|

node gets kicked out of cluster after snapshots are restored this is a backport issue for automatically created via rancherbot by original issue description setup rancher version downstream cluster custom cluster nodes cni cilium kubernetes version describe the bug when restoring a snapshot on a custom cluster a node gets deleted from the cluster to reproduce deploy a fresh custom cluster take a snapshot restore snapshot repeat steps until the bug is hit usually tries result a worker node gets deleted from the cluster expected result all nodes remain in the cluster and the restore occurs properly screenshots img width alt image src additional context tried to reproduce this on but was unable to do so after restores sure

| 1

|

208,172

| 7,136,419,281

|

IssuesEvent

|

2018-01-23 07:00:49

|

wso2/testgrid

|

https://api.github.com/repos/wso2/testgrid

|

closed

|

Fix undefined error when navigating to test log view in web app

|

Priority/High Severity/Major Type/Bug

|

**Description:**

When navigating to the web app the following error is displayed.

```

react-dom.production.min.js:164 TypeError: Cannot read property 'infraParameters' of undefined

at t.value (TestRunView.js:116)

at l (react-dom.production.min.js:130)

at beginWork (react-dom.production.min.js:133)

at o (react-dom.production.min.js:161)

at s (react-dom.production.min.js:161)

at a (react-dom.production.min.js:162)

at C (react-dom.production.min.js:169)

at w (react-dom.production.min.js:168)

at p (react-dom.production.min.js:167)

at f (react-dom.production.min.js:165)

l @ react-dom.production.min.js:164

TestRunView.js:116 Uncaught (in promise) TypeError: Cannot read property 'infraParameters' of undefined

at t.value (TestRunView.js:116)

at l (react-dom.production.min.js:130)

at beginWork (react-dom.production.min.js:133)

at o (react-dom.production.min.js:161)

at s (react-dom.production.min.js:161)

at a (react-dom.production.min.js:162)

at C (react-dom.production.min.js:169)

at w (react-dom.production.min.js:168)

at p (react-dom.production.min.js:167)

at f (react-dom.production.min.js:165)

Failed to load resource: the server responded with a status of 404 ()

```

|

1.0

|

Fix undefined error when navigating to test log view in web app - **Description:**

When navigating to the web app the following error is displayed.

```

react-dom.production.min.js:164 TypeError: Cannot read property 'infraParameters' of undefined

at t.value (TestRunView.js:116)

at l (react-dom.production.min.js:130)

at beginWork (react-dom.production.min.js:133)

at o (react-dom.production.min.js:161)

at s (react-dom.production.min.js:161)

at a (react-dom.production.min.js:162)

at C (react-dom.production.min.js:169)

at w (react-dom.production.min.js:168)

at p (react-dom.production.min.js:167)

at f (react-dom.production.min.js:165)

l @ react-dom.production.min.js:164

TestRunView.js:116 Uncaught (in promise) TypeError: Cannot read property 'infraParameters' of undefined

at t.value (TestRunView.js:116)

at l (react-dom.production.min.js:130)

at beginWork (react-dom.production.min.js:133)

at o (react-dom.production.min.js:161)

at s (react-dom.production.min.js:161)

at a (react-dom.production.min.js:162)

at C (react-dom.production.min.js:169)

at w (react-dom.production.min.js:168)

at p (react-dom.production.min.js:167)

at f (react-dom.production.min.js:165)

Failed to load resource: the server responded with a status of 404 ()

```

|

non_test

|

fix undefined error when navigating to test log view in web app description when navigating to the web app the following error is displayed react dom production min js typeerror cannot read property infraparameters of undefined at t value testrunview js at l react dom production min js at beginwork react dom production min js at o react dom production min js at s react dom production min js at a react dom production min js at c react dom production min js at w react dom production min js at p react dom production min js at f react dom production min js l react dom production min js testrunview js uncaught in promise typeerror cannot read property infraparameters of undefined at t value testrunview js at l react dom production min js at beginwork react dom production min js at o react dom production min js at s react dom production min js at a react dom production min js at c react dom production min js at w react dom production min js at p react dom production min js at f react dom production min js failed to load resource the server responded with a status of

| 0

|

62,665

| 3,192,939,217

|

IssuesEvent

|

2015-09-30 00:18:43

|

fusioninventory/fusioninventory-for-glpi

|

https://api.github.com/repos/fusioninventory/fusioninventory-for-glpi

|

closed

|

Use device template when create device

|

Component: For junior contributor Priority: Normal Status: Closed Tracker: Feature

|

---

Author Name: **David Durieux** (@ddurieux)

Original Redmine Issue: 985, http://forge.fusioninventory.org/issues/985

Original Date: 2011-06-27

---

Example for computer, create a computer with a template.

Manage it in rules import equipment:

Add action : "Template for new item" "is" "template xxxx"

When only device is created, we can use the template

|

1.0

|

Use device template when create device - ---

Author Name: **David Durieux** (@ddurieux)

Original Redmine Issue: 985, http://forge.fusioninventory.org/issues/985

Original Date: 2011-06-27

---

Example for computer, create a computer with a template.

Manage it in rules import equipment:

Add action : "Template for new item" "is" "template xxxx"

When only device is created, we can use the template

|

non_test

|

use device template when create device author name david durieux ddurieux original redmine issue original date example for computer create a computer with a template manage it in rules import equipment add action template for new item is template xxxx when only device is created we can use the template

| 0

|

98,108

| 8,674,304,094

|

IssuesEvent

|

2018-11-30 07:00:56

|

humera987/FXLabs-Test-Automation

|

https://api.github.com/repos/humera987/FXLabs-Test-Automation

|

reopened

|

FXLabs Testing 30 : ApiV1RunsIdTestSuiteResponsesGetPathParamIdMysqlSqlInjectionTimebound

|

FXLabs Testing 30

|

Project : FXLabs Testing 30

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=[SESSION=YzgwMDExZjItZjU1NS00ZmFmLWE5ZmUtOTk1NWY2YjJlYTc4; Path=/; HttpOnly], Content-Type=[application/json;charset=UTF-8], Transfer-Encoding=[chunked], Date=[Fri, 30 Nov 2018 06:46:17 GMT]}

Endpoint : http://13.56.210.25/api/v1/api/v1/runs//test-suite-responses

Request :

Response :

{

"timestamp" : "2018-11-30T06:46:18.335+0000",

"status" : 404,

"error" : "Not Found",

"message" : "No message available",

"path" : "/api/v1/api/v1/runs/test-suite-responses"

}

Logs :

Assertion [@ResponseTime < 7000 OR @ResponseTime > 10000] resolved-to [546 < 7000 OR 546 > 10000] result [Passed]

Assertion [@StatusCode != 404] resolved-to [404 != 404] result [Failed]

--- FX Bot ---

|

1.0

|

FXLabs Testing 30 : ApiV1RunsIdTestSuiteResponsesGetPathParamIdMysqlSqlInjectionTimebound - Project : FXLabs Testing 30

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=[SESSION=YzgwMDExZjItZjU1NS00ZmFmLWE5ZmUtOTk1NWY2YjJlYTc4; Path=/; HttpOnly], Content-Type=[application/json;charset=UTF-8], Transfer-Encoding=[chunked], Date=[Fri, 30 Nov 2018 06:46:17 GMT]}

Endpoint : http://13.56.210.25/api/v1/api/v1/runs//test-suite-responses

Request :

Response :

{

"timestamp" : "2018-11-30T06:46:18.335+0000",

"status" : 404,

"error" : "Not Found",

"message" : "No message available",

"path" : "/api/v1/api/v1/runs/test-suite-responses"

}

Logs :

Assertion [@ResponseTime < 7000 OR @ResponseTime > 10000] resolved-to [546 < 7000 OR 546 > 10000] result [Passed]

Assertion [@StatusCode != 404] resolved-to [404 != 404] result [Failed]

--- FX Bot ---

|

test

|

fxlabs testing project fxlabs testing job uat env uat region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint request response timestamp status error not found message no message available path api api runs test suite responses logs assertion resolved to result assertion resolved to result fx bot

| 1

|

6,577

| 2,610,257,149

|

IssuesEvent

|

2015-02-26 19:22:05

|

chrsmith/dsdsdaadf

|

https://api.github.com/repos/chrsmith/dsdsdaadf

|

opened

|

How many sessions does laser acne removal in Shenzhen take

|

auto-migrated Priority-Medium Type-Defect

|

```

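How many sessions does laser acne removal in Shenzhen take? [Shenzhen Hanfang Keyan (韩方科颜) national hotline 400-869-1818, 24-hour QQ 4008691818]

Shenzhen Hanfang Keyan is a professional acne-removal chain. The organization [...] Korean secret formula: Hanfang Keyan, an authoritative therapeutic product with a national cosmetics approval number, an excellent acne-removal [...].

The Hanfang Keyan professional acne-removal chain uses a Korean secret formula together with professional "no-rebound" healthy acne-removal technology, combined with an advanced "advanced deluxe colored-light" device, pioneering [...] professional treatment of comedones and acne under a signed cure guarantee, and has successfully removed the pimples on many customers' [...].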

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:39

|

1.0

|

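How many sessions does laser acne removal in Shenzhen take - ```

How many sessions does laser acne removal in Shenzhen take? [Shenzhen Hanfang Keyan (韩方科颜) national hotline 400-869-1818, 24-hour QQ 4008691818]

Shenzhen Hanfang Keyan is a professional acne-removal chain. The organization [...] Korean secret formula: Hanfang Keyan, an authoritative therapeutic product with a national cosmetics approval number, an excellent acne-removal [...].

The Hanfang Keyan professional acne-removal chain uses a Korean secret formula together with professional "no-rebound" healthy acne-removal technology, combined with an advanced "advanced deluxe colored-light" device, pioneering [...] professional treatment of comedones and acne under a signed cure guarantee, and has successfully removed the pimples on many customers' [...].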

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:39

|

non_test

|

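how many sessions does laser acne removal in shenzhen take how many sessions does laser acne removal in shenzhen take shenzhen hanfang keyan is a professional acne removal chain the organization korean secret formula hanfang keyan an authoritative therapeutic product with a national cosmetics approval number an excellent acne removal the hanfang keyan professional acne removal chain uses a korean secret formula together with professional no rebound healthy acne removal technology combined with an advanced advanced deluxe colored light device pioneering professional treatment of comedones and acne under a signed cure guarantee and has successfully removed the pimples on many customers original issue reported on code google com by szft com on may at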

| 0

|

673,365

| 22,959,840,374

|

IssuesEvent

|

2022-07-19 14:33:07

|

kubernetes/ingress-nginx

|

https://api.github.com/repos/kubernetes/ingress-nginx

|

closed

|

Affinity setting affinity-canary-behavior: "legacy" doesn't fully restore old behavior

|

kind/bug lifecycle/rotten needs-triage needs-priority

|

**NGINX Ingress controller version**

v1.1.0

**Kubernetes version** (use `kubectl version`):

v1.21.2

**Environment**:

- **Cloud provider or hardware configuration**: AKS

- **OS** (e.g. from /etc/os-release): ubuntu

- **Kernel** (e.g. `uname -a`): 5.4.0-1062-azure

- **How was the ingress-nginx-controller installed**:

`kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.1.0/deploy/static/provider/cloud/deploy.yaml`

**What happened**:

We recently upgraded nginx, which changed how the canary feature behaves together with cookie session affinity. Previously, the canary ignored the session cookie completely: even if the user was already affinitized to a primary pod, sending the header defined by canary-by-header or the cookie defined by canary-by-cookie sent the user to the canary side.

We use the canary feature mainly for internal testing, and additionally for selected users (we set the cookie for them), so we depended on this behavior.

With the new release and affinity-canary-behavior: "legacy", a user who is already affinitized to a primary pod has the canary header/cookie completely ignored until the session cookie is deleted or expires. This is a breaking change for us, because directing the user to the canary side now requires manually deleting the session cookie.

**What you expected to happen**:

Setting affinity-canary-behavior: "legacy" restores the old behavior, in which the session cookie was completely ignored by the canary feature, so with the correct canary header/cookie the user goes to the canary side even if already affinitized to a primary pod.

From the looks of it, the change is caused by https://github.com/kubernetes/ingress-nginx/pull/7371/files#diff-1057b4fc96d635cc08eabd1301c6a4ab3bf3272ceb86667020ecc56d94d6c195R189, which doesn't check whether the legacy behavior is in use.

**How to reproduce it**:

Create a canary deployment for the echoserver that uses session affinity with the legacy flag and canary.

```

apiVersion: apps/v1
kind: Deployment
metadata:
  name: http-svc
spec:
  replicas: 1
  selector:
    matchLabels:
      app: http-svc
  template:
    metadata:
      labels:
        app: http-svc
    spec:
      containers:
      - name: http-svc
        image: k8s.gcr.io/e2e-test-images/echoserver:2.3
        ports:
        - containerPort: 8080
        env:
          - name: NODE_NAME
            valueFrom:
              fieldRef:
                fieldPath: spec.nodeName
          - name: POD_NAME
            valueFrom:
              fieldRef:
                fieldPath: metadata.name
          - name: POD_NAMESPACE
            valueFrom:
              fieldRef:
                fieldPath: metadata.namespace
          - name: POD_IP
            valueFrom:
              fieldRef:
                fieldPath: status.podIP
---
apiVersion: v1
kind: Service
metadata:
  name: http-svc
  labels:
    app: http-svc
spec:
  ports:
  - port: 80
    targetPort: 8080
    protocol: TCP
    name: http
  selector:
    app: http-svc
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-test
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/affinity: "cookie"
    nginx.ingress.kubernetes.io/session-cookie-name: "route"
    nginx.ingress.kubernetes.io/session-cookie-expires: "172800"
    nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"
    nginx.ingress.kubernetes.io/affinity-canary-behavior: "legacy"
spec:
  rules:
  - host: stickyingress.example.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: http-svc
            port:
              number: 80
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: http-svc-canary
spec:
  replicas: 1
  selector:
    matchLabels:
      app: http-svc-canary
  template:
    metadata:
      labels:
        app: http-svc-canary
    spec:
      containers:
      - name: http-svc-canary
        image: k8s.gcr.io/e2e-test-images/echoserver:2.3
        ports:
        - containerPort: 8080
        env:
          - name: NODE_NAME
            valueFrom:
              fieldRef:
                fieldPath: spec.nodeName
          - name: POD_NAME
            valueFrom:
              fieldRef:
                fieldPath: metadata.name
          - name: POD_NAMESPACE
            valueFrom:
              fieldRef:
                fieldPath: metadata.namespace
          - name: POD_IP
            valueFrom:
              fieldRef:
                fieldPath: status.podIP
---
apiVersion: v1
kind: Service
metadata:
  name: http-svc-canary
  labels:
    app: http-svc-canary
spec:
  ports:
  - port: 80
    targetPort: 8080
    protocol: TCP
    name: http
  selector:
    app: http-svc-canary
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-test-canary
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "canary"
    nginx.ingress.kubernetes.io/canary-by-cookie: "canary"
spec:
  rules:
  - host: stickyingress.example.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: http-svc-canary
            port:
              number: 80

```

Make a request to get the session cookie (using the IP of the nginx service here):

`curl -k -I https://51.136.77.203 -H "Host: stickyingress.example.com"`

`set-cookie: route=1645002633.401.440.174114|db2969d6c9db73733a7d888a6bd51c15; Expires=Fri, 18-Feb-22 09:10:32 GMT; Max-Age=172800; Path=/; Secure; HttpOnly`

Make a request with the session cookie and the canary header. This should hit the canary, but the header is ignored:

`curl -k https://51.136.77.203 -H "Host: stickyingress.example.com" --cookie "route=1645000503.171.439.345197|db2969d6c9db73733a7d888a6bd51c15" -H "canary: always"`

`Hostname: http-svc-58dcbd68c4-lpkk7`

Make a request without the session cookie:

`curl -k https://51.136.77.203 -H "Host: stickyingress.example.com" -H "canary: always"`

`Hostname: http-svc-canary-5bbccbc7cd-c6s6p`

|

1.0

|

Affinity setting affinity-canary-behavior: "legacy" doesn't fully restore old behavior - **NGINX Ingress controller version**

v1.1.0

**Kubernetes version** (use `kubectl version`):

v1.21.2

**Environment**:

- **Cloud provider or hardware configuration**: AKS

- **OS** (e.g. from /etc/os-release): ubuntu

- **Kernel** (e.g. `uname -a`): 5.4.0-1062-azure

- **How was the ingress-nginx-controller installed**:

`kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.1.0/deploy/static/provider/cloud/deploy.yaml`

**What happened**:

We recently upgraded nginx, which changed how the canary feature behaves together with cookie session affinity. Previously, the canary ignored the session cookie completely: even if the user was already affinitized to a primary pod, sending the header defined by canary-by-header or the cookie defined by canary-by-cookie sent the user to the canary side.

We use the canary feature mainly for internal testing, and additionally for selected users (we set the cookie for them), so we depended on this behavior.

With the new release and affinity-canary-behavior: "legacy", a user who is already affinitized to a primary pod has the canary header/cookie completely ignored until the session cookie is deleted or expires. This is a breaking change for us, because directing the user to the canary side now requires manually deleting the session cookie.

**What you expected to happen**:

Setting affinity-canary-behavior: "legacy" restores the old behavior, in which the session cookie was completely ignored by the canary feature, so with the correct canary header/cookie the user goes to the canary side even if already affinitized to a primary pod.

From the looks of it, the change is caused by https://github.com/kubernetes/ingress-nginx/pull/7371/files#diff-1057b4fc96d635cc08eabd1301c6a4ab3bf3272ceb86667020ecc56d94d6c195R189, which doesn't check whether the legacy behavior is in use.

**How to reproduce it**:

Create a canary deployment for the echoserver that uses session affinity with the legacy flag and canary.

```

apiVersion: apps/v1
kind: Deployment
metadata:
  name: http-svc
spec:
  replicas: 1
  selector:
    matchLabels:
      app: http-svc
  template:
    metadata:
      labels:
        app: http-svc
    spec:
      containers:
      - name: http-svc
        image: k8s.gcr.io/e2e-test-images/echoserver:2.3
        ports:
        - containerPort: 8080
        env:
          - name: NODE_NAME
            valueFrom:
              fieldRef:
                fieldPath: spec.nodeName
          - name: POD_NAME
            valueFrom:
              fieldRef:
                fieldPath: metadata.name
          - name: POD_NAMESPACE
            valueFrom:
              fieldRef:
                fieldPath: metadata.namespace
          - name: POD_IP
            valueFrom:
              fieldRef:
                fieldPath: status.podIP
---
apiVersion: v1
kind: Service
metadata:
  name: http-svc
  labels:
    app: http-svc
spec:
  ports:
  - port: 80
    targetPort: 8080
    protocol: TCP
    name: http
  selector:
    app: http-svc
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-test
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/affinity: "cookie"
    nginx.ingress.kubernetes.io/session-cookie-name: "route"
    nginx.ingress.kubernetes.io/session-cookie-expires: "172800"
    nginx.ingress.kubernetes.io/session-cookie-max-age: "172800"
    nginx.ingress.kubernetes.io/affinity-canary-behavior: "legacy"
spec:
  rules:
  - host: stickyingress.example.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: http-svc
            port:
              number: 80
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: http-svc-canary
spec:
  replicas: 1
  selector:
    matchLabels:
      app: http-svc-canary
  template:
    metadata:
      labels:
        app: http-svc-canary
    spec:
      containers:
      - name: http-svc-canary
        image: k8s.gcr.io/e2e-test-images/echoserver:2.3
        ports:
        - containerPort: 8080
        env:
          - name: NODE_NAME
            valueFrom:
              fieldRef:
                fieldPath: spec.nodeName
          - name: POD_NAME
            valueFrom:
              fieldRef:
                fieldPath: metadata.name
          - name: POD_NAMESPACE
            valueFrom:
              fieldRef:
                fieldPath: metadata.namespace
          - name: POD_IP
            valueFrom:
              fieldRef:
                fieldPath: status.podIP
---
apiVersion: v1
kind: Service
metadata:
  name: http-svc-canary
  labels:
    app: http-svc-canary
spec:
  ports:
  - port: 80
    targetPort: 8080
    protocol: TCP
    name: http
  selector:
    app: http-svc-canary
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx-test-canary
  annotations:
    kubernetes.io/ingress.class: "nginx"
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "canary"
    nginx.ingress.kubernetes.io/canary-by-cookie: "canary"
spec:
  rules:
  - host: stickyingress.example.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: http-svc-canary
            port:
              number: 80

```

Make a request to get the session cookie (using the IP of the nginx service here):

`curl -k -I https://51.136.77.203 -H "Host: stickyingress.example.com"`

`set-cookie: route=1645002633.401.440.174114|db2969d6c9db73733a7d888a6bd51c15; Expires=Fri, 18-Feb-22 09:10:32 GMT; Max-Age=172800; Path=/; Secure; HttpOnly`

Make a request using the session cookie and the canary header. This should hit the canary, but the header is ignored:

`curl -k https://51.136.77.203 -H "Host: stickyingress.example.com" --cookie "route=1645000503.171.439.345197|db2969d6c9db73733a7d888a6bd51c15" -H "canary: always"`

`Hostname: http-svc-58dcbd68c4-lpkk7`

Make a request without the session cookie:

`curl -k https://51.136.77.203 -H "Host: stickyingress.example.com" -H "canary: always"`

`Hostname: http-svc-canary-5bbccbc7cd-c6s6p`
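The difference between the expected and the observed routing can be summarized in a small model. This is only a sketch of the decision as described in this report, not the controller's actual code: `expected_legacy_backend` and `observed_backend` are hypothetical names, the header and cookie names are taken from the manifests above, and only the `always` value is modeled (weights and `never` are left out).

```python
from typing import Mapping

CANARY_HEADER = "canary"   # value of the canary-by-header annotation above
CANARY_COOKIE = "canary"   # value of the canary-by-cookie annotation above
AFFINITY_COOKIE = "route"  # value of the session-cookie-name annotation above


def wants_canary(headers: Mapping[str, str], cookies: Mapping[str, str]) -> bool:
    """True when the request explicitly opts in to the canary."""
    return headers.get(CANARY_HEADER) == "always" or cookies.get(CANARY_COOKIE) == "always"


def expected_legacy_backend(headers: Mapping[str, str], cookies: Mapping[str, str]) -> str:
    """Expected 'legacy' behavior: the affinity cookie plays no part in the canary decision."""
    return "canary" if wants_canary(headers, cookies) else "primary"


def observed_backend(headers: Mapping[str, str], cookies: Mapping[str, str]) -> str:
    """Observed behavior: a session cookie issued by a primary pod pins the request there."""
    if AFFINITY_COOKIE in cookies:
        # Assumption: the cookie was handed out by a primary pod, as in the reproduction.
        return "primary"
    return expected_legacy_backend(headers, cookies)
```

With `headers={"canary": "always"}` and a `route` cookie present, the first function picks the canary while the second stays on the primary, which matches the two `Hostname` results shown above.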

|

non_test

|

affinity setting affinity canary behavior legacy doesn t fully restore old behavior nginx ingress controller version kubernetes version use kubectl version environment cloud provider or hardware configuration aks os e g from etc os release ubuntu kernel e g uname a azure how was the ingress nginx controller installed kubectl apply f what happened recently upgraded nginx which changed the behavior of how the canary feature behaves together with cookie sessions affinity in the past the session cookie was completely ignored by the canary which means even if the user was already affinitized to a primary pod using the header defined by canary by header or the cookie defined by canary by cookie the user would go to the canary side as we use the canary feature mainly for internal testing and additionally for selected users we set the cookie for them we depended on this behavior with the new release using affinity canary behavior legacy if the user is already affinitized to a primary pod it will completely ignore the canary header cookie until the session cookie is deleted expired which is a breaking change for us as it now requires manual deletion of the session cookie to direct the user to the canary side what you expected to happen using the affinity canary behavior legacy restores the old behavior when the session cookie was completely ignored by the canary feature so with the correct canary header cookie the user goes to the canary side even if already affinitized to a primary pod form the looks of it the change is caused by which doesn t consider if the legacy behavior is used how to reproduce it create a canary deployment for the echoserver that uses session affinity with the legacy flag and canary apiversion apps kind deployment metadata name http svc spec replicas selector matchlabels app http svc template metadata labels app http svc spec containers name http svc image gcr io test images echoserver ports containerport env name node name valuefrom fieldref 
fieldpath spec nodename name pod name valuefrom fieldref fieldpath metadata name name pod namespace valuefrom fieldref fieldpath metadata namespace name pod ip valuefrom fieldref fieldpath status podip apiversion kind service metadata name http svc labels app http svc spec ports port targetport protocol tcp name http selector app http svc apiversion networking io kind ingress metadata name nginx test annotations kubernetes io ingress class nginx nginx ingress kubernetes io affinity cookie nginx ingress kubernetes io session cookie name route nginx ingress kubernetes io session cookie expires nginx ingress kubernetes io session cookie max age nginx ingress kubernetes io affinity canary behavior legacy spec rules host stickyingress example com http paths path pathtype prefix backend service name http svc port number apiversion apps kind deployment metadata name http svc canary spec replicas selector matchlabels app http svc canary template metadata labels app http svc canary spec containers name http svc canary image gcr io test images echoserver ports containerport env name node name valuefrom fieldref fieldpath spec nodename name pod name valuefrom fieldref fieldpath metadata name name pod namespace valuefrom fieldref fieldpath metadata namespace name pod ip valuefrom fieldref fieldpath status podip apiversion kind service metadata name http svc canary labels app http svc canary spec ports port targetport protocol tcp name http selector app http svc canary apiversion networking io kind ingress metadata name nginx test canary annotations kubernetes io ingress class nginx nginx ingress kubernetes io canary true nginx ingress kubernetes io canary by header canary nginx ingress kubernetes io canary by cookie canary spec rules host stickyingress example com http paths path pathtype prefix backend service name http svc canary port number make a request to get the session cookie using the ip of the nginx service here curl k i h host stickyingress example com set cookie 
route expires fri feb gmt max age path secure httponly using the session cookie and canary header make a request this should hit the canary but the header is ignored curl k h host stickyingress example com cookie route h canary always hostname http svc make a request without the session cookie curl k h host stickyingress example com h canary always hostname http svc canary

| 0

|

7,215

| 4,820,744,018

|

IssuesEvent

|

2016-11-05 00:48:49

|

VirtualDisgrace/opencollar

|

https://api.github.com/repos/VirtualDisgrace/opencollar

|

closed

|

Restart stopped relays with reboot command

|

completed enhancement usability

|

We already have a command to restart all scripts (settings excluded) to get the collar refreshed without losing any settings.

`prefix reboot`

If the RLV relay (oc_relay) has somehow been crashed by a badly spamming object, this sadly does not get the relay working again.

So, let's add a `llSetScriptState("oc_relay",TRUE)` to the reboot command in case the relay crashed earlier.

This way there is no need for a manual reset; a single command does it without the hassle of resetting all scripts, which resets the settings as well and often also requires relogging without RLV, etc.

|

True

|

Restart stopped relays with reboot command - We already have a command to restart all scripts (settings excluded) to get the collar refreshed without losing any settings.

`prefix reboot`

If the RLV relay (oc_relay) has somehow been crashed by a badly spamming object, this sadly does not get the relay working again.

So, let's add a `llSetScriptState("oc_relay",TRUE)` to the reboot command in case the relay crashed earlier.

This way there is no need for a manual reset; a single command does it without the hassle of resetting all scripts, which resets the settings as well and often also requires relogging without RLV, etc.

|

non_test

|

restart stopped relays with reboot command we have already a command to restart all scripts settings excluded to get the collar refreshed without losing any settings prefix reboot in a case where the rlv relay oc relay got crashed by a very bad spamming object somehow this sadly does not get the relay back to work so lets add a llsetscriptstate oc relay true to the reboot command in case the relay was crashed before this way there is no need to reset manually but a command can do it with hassle and possible resetting all scripts which ends in setting reset as well and often needs also a relog into non rlv etc etc

| 0

|

676,969

| 23,144,870,194

|

IssuesEvent

|

2022-07-28 22:52:46

|

apcountryman/picolibrary

|

https://api.github.com/repos/apcountryman/picolibrary

|

closed

|

Add not connected generic error

|

priority-normal status-awaiting_review type-enhancement

|

Add not connected generic error (`::picolibrary::Generic_Error::NOT_CONNECTED`).

|

1.0

|

Add not connected generic error - Add not connected generic error (`::picolibrary::Generic_Error::NOT_CONNECTED`).

|

non_test

|

add not connected generic error add operation timeout generic error picolibrary generic error not connected

| 0

|

340,337

| 24,650,288,408

|

IssuesEvent

|

2022-10-17 18:02:38

|

PyFPDF/fpdf2

|

https://api.github.com/repos/PyFPDF/fpdf2

|

closed

|

Doc: provide an example on how to combine usages of PyPDF2 & fpdf2

|

documentation good first issue up-for-grabs hacktoberfest

|

We already have a page about [editing existing PDFs using `pdfrw` & `fpdf2`](https://pyfpdf.github.io/fpdf2/ExistingPDFs.html) in our documentation, and another one about [combining `borb` & `fpdf2`](https://pyfpdf.github.io/fpdf2/borb.html).

Given that [PyPDF2](https://github.com/py-pdf/PyPDF2) is a lot more popular than `pdfrw`, we could provide another documentation page about combining `PyPDF2` & `fpdf2`.

Practically, we could:

* copy `docs/ExistingPDFs.md` into `docs/CombineWithPyPDF2.md`, a new documentation page describing how to

- open a PDF file with `PyPDF2` and edit it with `fpdf2`

- create a PDF document with `fpdf2` and edit it with `PyPDF2`

* rename `docs/ExistingPDFs.md` into `docs/CombineWithPdfrw.md`

* rename `docs/borb.md` into `docs/CombineWithBorb.md`

|

1.0

|

Doc: provide an example on how to combine usages of PyPDF2 & fpdf2 - We already have a page about [editing existing PDFs using `pdfrw` & `fpdf2`](https://pyfpdf.github.io/fpdf2/ExistingPDFs.html) in our documentation, and another one about [combining `borb` & `fpdf2`](https://pyfpdf.github.io/fpdf2/borb.html).

Given that [PyPDF2](https://github.com/py-pdf/PyPDF2) is a lot more popular than `pdfrw`, we could provide another documentation page about combining `PyPDF2` & `fpdf2`.

Practically, we could:

* copy `docs/ExistingPDFs.md` into `docs/CombineWithPyPDF2.md`, a new documentation page describing how to

- open a PDF file with `PyPDF2` and edit it with `fpdf2`

- create a PDF document with `fpdf2` and edit it with `PyPDF2`

* rename `docs/ExistingPDFs.md` into `docs/CombineWithPdfrw.md`

* rename `docs/borb.md` into `docs/CombineWithBorb.md`

|

non_test

|

doc provide an example on how to combine usages of we already have a page about in our documentation and another one about given that is a lot more popular that pdfrw we could provide another documentation page about combining practically we could copy docs existingpdfs md into docs md a new documentation page describing how to open a pdf file with and edit it with create a pdf document and edit it with rename docs existingpdfs md into docs combinewithpdfrw md rename docs borb md into docs combinewithborb md

| 0

|

18,423

| 5,631,669,895

|

IssuesEvent

|

2017-04-05 15:00:54

|

TEAMMATES/teammates

|

https://api.github.com/repos/TEAMMATES/teammates

|

closed

|

Typos in CourseRoster, TeamEvalResult and FieldValidator

|

a-CodeQuality d.FirstTimers p.Low

|

Detailed CheckStyle report:

https://htmlpreview.github.io/?https://github.com/xpdavid/CS2103R-Report/blob/master/codingStandard/spelling/main.html

CourseRoster.java

`Stuent` is not a word according to the provided dictionary.

``` java

public CourseRoster(List<StudentAttributes> students, List<InstructorAttributes> instructors) {
    populateStuentListByEmail(students);
    populateInstructorListByEmail(instructors);
}

```

TeamEvalResult.java

Should be camel case sumOfPerceived

``` java

double sumOfperceived = sum(filteredPerceived);

double sumOfActual = sum(filteredSanitizedActual);

```

FieldValidator.java

`Invalidty` is not a word according to the provided dictionary.

``` java

public String getInvalidityInfoForTimeForVisibilityStartAndResultsPublish(Date visibilityStart,
                                                                          Date resultsPublish) {
    return getInvalidtyInfoForFirstTimeIsBeforeSecondTime(visibilityStart, resultsPublish,
            SESSION_VISIBLE_TIME_FIELD_NAME, RESULTS_VISIBLE_TIME_FIELD_NAME);
}

```

|

1.0

|

Typos in CourseRoster, TeamEvalResult and FieldValidator - Detailed CheckStyle report:

https://htmlpreview.github.io/?https://github.com/xpdavid/CS2103R-Report/blob/master/codingStandard/spelling/main.html

CourseRoster.java

`Stuent` is not a word according to the provided dictionary.

``` java

public CourseRoster(List<StudentAttributes> students, List<InstructorAttributes> instructors) {
    populateStuentListByEmail(students);
    populateInstructorListByEmail(instructors);
}

```

TeamEvalResult.java

Should be camel case sumOfPerceived

``` java

double sumOfperceived = sum(filteredPerceived);

double sumOfActual = sum(filteredSanitizedActual);

```

FieldValidator.java

`Invalidty` is not a word according to the provided dictionary.

``` java

public String getInvalidityInfoForTimeForVisibilityStartAndResultsPublish(Date visibilityStart,
                                                                          Date resultsPublish) {
    return getInvalidtyInfoForFirstTimeIsBeforeSecondTime(visibilityStart, resultsPublish,
            SESSION_VISIBLE_TIME_FIELD_NAME, RESULTS_VISIBLE_TIME_FIELD_NAME);
}

```

|

non_test

|

typos in courseroster teamevalresult and fieldvalidator detail checkstyle report courseroster java stuent is not a word according to provided dictionary java public courseroster list students list instructors populatestuentlistbyemail students populateinstructorlistbyemail instructors teamevalresult java should be camel case sumofperceived java double sumofperceived sum filteredperceived double sumofactual sum filteredsanitizedactual fieldvalidator java invalidty is not a word according to provided dictionary java public string getinvalidityinfofortimeforvisibilitystartandresultspublish date visibilitystart date resultspublish return getinvalidtyinfoforfirsttimeisbeforesecondtime visibilitystart resultspublish session visible time field name results visible time field name

| 0

|

160,914

| 13,803,338,829

|

IssuesEvent

|

2020-10-11 02:21:32

|

kevtan/CUDA

|

https://api.github.com/repos/kevtan/CUDA

|

opened

|

CUDA binaries

|

documentation

|

I know that `nvcc` is, to some extent, just a wrapper around a typical C/C++ compiler like `gcc`. This means that the structure of the object files it generates must be similar. So, what exactly is _different_ about CUDA binaries? Are there special sections? What are their meanings?

|

1.0

|

CUDA binaries - I know that `nvcc` is, to some extent, just a wrapper around a typical C/C++ compiler like `gcc`. This means that the structure of the object files it generates must be similar. So, what exactly is _different_ about CUDA binaries? Are there special sections? What are their meanings?

|

non_test

|

cuda binaries i know that the nvcc is to some extent just a wrapper around a typical c c compiler like gcc this means that the structure of the object files that are generated must be similar so what exactly is different about cuda binaries are there special sections what are their meanings

| 0

|

4,046

| 2,610,086,508

|

IssuesEvent

|

2015-02-26 18:26:17

|

chrsmith/dsdsdaadf

|

https://api.github.com/repos/chrsmith/dsdsdaadf

|

opened

|

Cost of acne removal in Shenzhen

|

auto-migrated Priority-Medium Type-Defect

|

```

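Cost of acne removal in Shenzhen [Shenzhen Hanfang Keyan national hotline 400-869-1818, 24-hour
QQ4008691818] Shenzhen Hanfang Keyan is a professional acne-removal chain. The chain relies on a
Korean secret formula, Hanfang Keyan, an authoritative therapeutic product with a national
cosmetics approval number and a fine acne remedy. The Hanfang Keyan professional acne-removal
chain combines the Korean secret formula with professional "no-rebound" healthy acne-removal
technology and an advanced "deluxe colored-light" device, pioneering contract-guaranteed
professional treatment of pimples and acne in China, and has successfully cleared the acne from
many customers' faces.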

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:12

|

1.0

|

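Cost of acne removal in Shenzhen - ```

Cost of acne removal in Shenzhen [Shenzhen Hanfang Keyan national hotline 400-869-1818, 24-hour
QQ4008691818] Shenzhen Hanfang Keyan is a professional acne-removal chain. The chain relies on a
Korean secret formula, Hanfang Keyan, an authoritative therapeutic product with a national
cosmetics approval number and a fine acne remedy. The Hanfang Keyan professional acne-removal
chain combines the Korean secret formula with professional "no-rebound" healthy acne-removal
technology and an advanced "deluxe colored-light" device, pioneering contract-guaranteed
professional treatment of pimples and acne in China, and has successfully cleared the acne from
many customers' faces.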

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:12

|

non_test

|

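cost of acne removal in shenzhen cost of acne removal in shenzhen shenzhen hanfang keyan national hotline hour shenzhen hanfang keyan is a professional acne removal chain the chain relies on a korean secret formula hanfang keyan an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy the hanfang keyan professional acne removal chain combines the korean secret formula with professional no rebound healthy acne removal technology and an advanced deluxe colored light device pioneering contract guaranteed professional treatment of pimples and acne in china and has successfully cleared the acne from many customers faces original issue reported on code google com by szft com on may at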

| 0

|

11,694

| 3,218,645,572

|

IssuesEvent

|

2015-10-08 03:21:36

|

SpongePowered/Sponge

|

https://api.github.com/repos/SpongePowered/Sponge

|

closed

|

PositionOutOfBoundsException

|

bug needs testing

|

I have had this crash twice now: http://pastebin.com/2ErVndLE

I know it's not the latest Sponge version, but as I am not exactly sure what caused it, I can't really reproduce it.

I have now updated my Sponge version and will tell you if the crash persists.

Maybe you can figure something out from that stack trace :)

As far as I can tell, none of my plugins are involved.

|

1.0

|

PositionOutOfBoundsException - I have had this crash twice now: http://pastebin.com/2ErVndLE

I know it's not the latest Sponge version, but as I am not exactly sure what caused it, I can't really reproduce it.

I have now updated my Sponge version and will tell you if the crash persists.

Maybe you can figure something out from that stack trace :)

As far as I can tell, none of my plugins are involved.

|

test

|

positionoutofboundsexception i had this crash now twice i know its not the latest sponge version but as i am not exactly sure what it caused i cant reproduce it really i updated my sponge version now and i ll tell you if the crash persists maybe you can figure something with that stacktrace as far as i can tell none of my plugins are involved

| 1

|

391,028

| 11,567,635,847

|

IssuesEvent

|

2020-02-20 14:38:14

|

bryntum/support

|

https://api.github.com/repos/bryntum/support

|

closed

|

Gantt React: Trial runtime error with development server

|

bug forum high-priority react

|

Reported here

https://www.bryntum.com/forum/viewtopic.php?f=52&t=13384

The Gantt React JavaScript demos fail to run in the browser with

```

npm install

npm run start

```

A runtime error occurs:

```

TypeError [ERR_INVALID_ARG_TYPE]: The "path" argument must be of type string. Received type undefined

...

```

Build with `npm run start` works fine.

|

1.0

|

Gantt React: Trial runtime error with development server - Reported here

https://www.bryntum.com/forum/viewtopic.php?f=52&t=13384

The Gantt React JavaScript demos fail to run in the browser with

```

npm install

npm run start

```

A runtime error occurs:

```

TypeError [ERR_INVALID_ARG_TYPE]: The "path" argument must be of type string. Received type undefined

...

```

Build with `npm run start` works fine.

|

non_test

|

gantt react trial runtime error with development server reported here gantt react javascript demos fail to run in browser with npm install npm run start runtime error occurs typeerror the path argument must be of type string received type undefined build with npm run start works fine

| 0

|

24,487

| 23,827,960,544

|

IssuesEvent

|

2022-09-05 16:35:53

|

ultorg/public_issues

|

https://api.github.com/repos/ultorg/public_issues

|

closed

|

Improve the UI around creation and organization of perspectives

|

usability

|

Currently, one of the very first things a new Ultorg user tends to encounter is the following annoying dialog box:

<img width="277" alt="newpersp" src="https://user-images.githubusercontent.com/886243/166390247-1361106e-4461-4446-b518-e692b8116d7d.png">

The default behavior should probably be changed so that the default action when double-clicking a new database table is to create a new perspective based on that database table. On the other hand, we don't want to pollute the folder hierarchy with dozens of new perspectives. Some UI design work is needed here to work out the best behavior.

The current UI also does not prompt the user where to save new perspectives, or what to name them, leading to a large number of perspectives with names like "Courses (2)", "Courses (3)" etc. gathering in the root folder by default:

<img width="210" alt="Folders" src="https://user-images.githubusercontent.com/886243/166390507-a1d57d39-0cdd-49a7-9795-968aa7f252ff.png">

Furthermore, most users have not discovered that it is possible to create user-defined folders of data sources and perspectives in the Folders hierarchy. (Currently requires right-clicking the parent folder and clicking "New Folder".)

These related usability problems will be fixed in a future Ultorg version.

|

True

|

Improve the UI around creation and organization of perspectives - Currently, one of the very first things a new Ultorg user tends to encounter is the following annoying dialog box:

<img width="277" alt="newpersp" src="https://user-images.githubusercontent.com/886243/166390247-1361106e-4461-4446-b518-e692b8116d7d.png">

The default behavior should probably be changed so that the default action when double-clicking a new database table is to create a new perspective based on that database table. On the other hand, we don't want to pollute the folder hierarchy with dozens of new perspectives. Some UI design work is needed here to work out the best behavior.

The current UI also does not prompt the user where to save new perspectives, or what to name them, leading to a large number of perspectives with names like "Courses (2)", "Courses (3)" etc. gathering in the root folder by default:

<img width="210" alt="Folders" src="https://user-images.githubusercontent.com/886243/166390507-a1d57d39-0cdd-49a7-9795-968aa7f252ff.png">

Furthermore, most users have not discovered that it is possible to create user-defined folders of data sources and perspectives in the Folders hierarchy. (Currently requires right-clicking the parent folder and clicking "New Folder".)

These related usability problems will be fixed in a future Ultorg version.

|

non_test

|

improve the ui around creation and organization of perspectives currently one of the very first things a new ultorg user tends to encounter is the following annoying dialog box img width alt newpersp src the default behavior should probably be changed so that the default action when double clicking a new database table is to create a new perspective based on that database table on the other hand we don t want to pollute the folder hierarchy with dozens of new perspectives some ui design work is needed here to work out the best behavior the current ui also does not prompt the user where to save new perspectives or what to name them leading to a large number of perspectives with names like courses courses etc gathering in the root folder by default img width alt folders src furthermore most users have not discovered that it is possible to create user defined folders of data sources and perspectives in the folders hierarchy currently requires right clicking the parent folder and clicking new folder these related usability problems will be fixed in a future ultorg version

| 0

|

49,010

| 10,314,354,937

|

IssuesEvent

|

2019-08-30 03:07:10

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

closed

|

Failing test: Firefox XPack UI Functional Tests.x-pack/test/functional/apps/code/file_tree·ts - Code File Tree Click file/directory on the file tree

|

Team:Code failed-test skipped-test

|

A test failed on a tracked branch

```

Error: retry.tryForTime timeout: Error: expected false to be truthy

at Assertion.assert (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/packages/kbn-expect/expect.js:100:11)

at Assertion.ok (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/packages/kbn-expect/expect.js:119:8)

at ok (test/functional/apps/code/file_tree.ts:108:11)

at process._tickCallback (internal/process/next_tick.js:68:7)

at lastError (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/test/common/services/retry/retry_for_success.ts:28:9)

at onFailure (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/test/common/services/retry/retry_for_success.ts:68:13)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+master/JOB=x-pack-firefoxSmoke,node=linux-immutable/1611/)

<!-- kibanaCiData = {"failed-test":{"test.class":"Firefox XPack UI Functional Tests.x-pack/test/functional/apps/code/file_tree·ts","test.name":"Code File Tree Click file/directory on the file tree","test.failCount":5}} -->

|

1.0

|

Failing test: Firefox XPack UI Functional Tests.x-pack/test/functional/apps/code/file_tree·ts - Code File Tree Click file/directory on the file tree - A test failed on a tracked branch

```

Error: retry.tryForTime timeout: Error: expected false to be truthy

at Assertion.assert (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/packages/kbn-expect/expect.js:100:11)

at Assertion.ok (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/packages/kbn-expect/expect.js:119:8)

at ok (test/functional/apps/code/file_tree.ts:108:11)

at process._tickCallback (internal/process/next_tick.js:68:7)

at lastError (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/test/common/services/retry/retry_for_success.ts:28:9)

at onFailure (/var/lib/jenkins/workspace/elastic+kibana+master/JOB/x-pack-firefoxSmoke/node/linux-immutable/kibana/test/common/services/retry/retry_for_success.ts:68:13)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+master/JOB=x-pack-firefoxSmoke,node=linux-immutable/1611/)

<!-- kibanaCiData = {"failed-test":{"test.class":"Firefox XPack UI Functional Tests.x-pack/test/functional/apps/code/file_tree·ts","test.name":"Code File Tree Click file/directory on the file tree","test.failCount":5}} -->

|

non_test

|

failing test firefox xpack ui functional tests x pack test functional apps code file tree·ts code file tree click file directory on the file tree a test failed on a tracked branch error retry tryfortime timeout error expected false to be truthy at assertion assert var lib jenkins workspace elastic kibana master job x pack firefoxsmoke node linux immutable kibana packages kbn expect expect js at assertion ok var lib jenkins workspace elastic kibana master job x pack firefoxsmoke node linux immutable kibana packages kbn expect expect js at ok test functional apps code file tree ts at process tickcallback internal process next tick js at lasterror var lib jenkins workspace elastic kibana master job x pack firefoxsmoke node linux immutable kibana test common services retry retry for success ts at onfailure var lib jenkins workspace elastic kibana master job x pack firefoxsmoke node linux immutable kibana test common services retry retry for success ts first failure

| 0

|

253,694

| 27,300,796,536

|

IssuesEvent

|

2023-02-24 01:38:48

|

panasalap/linux-4.19.72_1

|

https://api.github.com/repos/panasalap/linux-4.19.72_1

|

closed

|

CVE-2021-38300 (High) detected in kernelv4.19.76 - autoclosed

|

security vulnerability

|

## CVE-2021-38300 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kernelv4.19.76</b></p></summary>

<p>

<p>Our patched kernel sources. This repository is generated from https://github.com/openSUSE/kernel-source</p>

<p>Library home page: <a href=https://github.com/openSUSE/kernel.git>https://github.com/openSUSE/kernel.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/panasalap/linux-4.19.72/commit/c5a08fe8179013aad614165d792bc5b436591df6">c5a08fe8179013aad614165d792bc5b436591df6</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/arch/mips/net/bpf_jit.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/arch/mips/net/bpf_jit.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

arch/mips/net/bpf_jit.c in the Linux kernel before 5.4.10 can generate undesirable machine code when transforming unprivileged cBPF programs, allowing execution of arbitrary code within the kernel context. This occurs because conditional branches can exceed the 128 KB limit of the MIPS architecture.

<p>Publish Date: 2021-09-20

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-38300>CVE-2021-38300</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2021-38300">https://www.linuxkernelcves.com/cves/CVE-2021-38300</a></p>

<p>Release Date: 2021-09-20</p>

<p>Fix Resolution: v4.14.251,v4.19.211,v5.4.153,v5.10.71,v5.14.10,v5.15-rc4</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)
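The 128 KB limit mentioned in the vulnerability details follows from how MIPS encodes conditional branches: the offset is a signed 16-bit count of 4-byte instructions, taken relative to the instruction after the branch. The range check can be sketched as follows; `fits_mips_branch` is a hypothetical helper for illustration only, not code from the kernel JIT or from the fix.

```python
INSTRUCTION_SIZE = 4  # every classic MIPS instruction is 4 bytes wide
OFFSET_BITS = 16      # width of the signed branch offset field


def fits_mips_branch(branch_pc: int, target_pc: int) -> bool:
    """Return True if a conditional branch at branch_pc can reach target_pc."""
    # The offset is measured from the instruction that follows the branch.
    offset_bytes = target_pc - (branch_pc + INSTRUCTION_SIZE)
    if offset_bytes % INSTRUCTION_SIZE != 0:
        raise ValueError("branch target must be instruction-aligned")
    offset_words = offset_bytes // INSTRUCTION_SIZE
    lowest = -(1 << (OFFSET_BITS - 1))       # -32768 instructions = -128 KB
    highest = (1 << (OFFSET_BITS - 1)) - 1   # +32767 instructions, just under +128 KB
    return lowest <= offset_words <= highest
```

A code generator that emits a conditional branch without a check of this kind can silently truncate the offset, so the branch lands somewhere other than the intended target.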

|

True

|

CVE-2021-38300 (High) detected in kernelv4.19.76 - autoclosed - ## CVE-2021-38300 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kernelv4.19.76</b></p></summary>

<p>

<p>Our patched kernel sources. This repository is generated from https://github.com/openSUSE/kernel-source</p>

<p>Library home page: <a href=https://github.com/openSUSE/kernel.git>https://github.com/openSUSE/kernel.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/panasalap/linux-4.19.72/commit/c5a08fe8179013aad614165d792bc5b436591df6">c5a08fe8179013aad614165d792bc5b436591df6</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/arch/mips/net/bpf_jit.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/arch/mips/net/bpf_jit.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

arch/mips/net/bpf_jit.c in the Linux kernel before 5.4.10 can generate undesirable machine code when transforming unprivileged cBPF programs, allowing execution of arbitrary code within the kernel context. This occurs because conditional branches can exceed the 128 KB limit of the MIPS architecture.

<p>Publish Date: 2021-09-20

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-38300>CVE-2021-38300</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2021-38300">https://www.linuxkernelcves.com/cves/CVE-2021-38300</a></p>

<p>Release Date: 2021-09-20</p>

<p>Fix Resolution: v4.14.251,v4.19.211,v5.4.153,v5.10.71,v5.14.10,v5.15-rc4</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in autoclosed cve high severity vulnerability vulnerable library our patched kernel sources this repository is generated from library home page a href found in head commit a href found in base branch master vulnerable source files arch mips net bpf jit c arch mips net bpf jit c vulnerability details arch mips net bpf jit c in the linux kernel before can generate undesirable machine code when transforming unprivileged cbpf programs allowing execution of arbitrary code within the kernel context this occurs because conditional branches can exceed the kb limit of the mips architecture publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

54,429

| 6,387,430,860

|

IssuesEvent

|

2017-08-03 13:39:55

|

QubesOS/updates-status

|

https://api.github.com/repos/QubesOS/updates-status

|

closed

|

artwork v4.0.0 (r4.0)

|

r4.0-dom0-testing

|

Update of artwork to v4.0.0 for Qubes r4.0; see comments below for details.

Built from: https://github.com/QubesOS/qubes-artwork/commit/93b71b6fd75db319aaa7452e6e089985601e40d5

[Changes since previous version](https://github.com/QubesOS/qubes-artwork/compare/v3.2.0...v4.0.0):

QubesOS/qubes-artwork@93b71b6 version 4.0.0

QubesOS/qubes-artwork@cfc2a53 travis: update for Qubes 4.0

QubesOS/qubes-artwork@50500b0 Switch mkpadlock script to python 3, adjust build deps

QubesOS/qubes-artwork@067f0ed travis: drop debootstrap workaround

QubesOS/qubes-artwork@9780a95 Renamed imgconverter module

Referenced issues:

If you're the release manager, you can issue a GPG-inline signed command:

* `Upload artwork 93b71b6fd75db319aaa7452e6e089985601e40d5 r4.0 current repo` (available 7 days from now)

* `Upload artwork 93b71b6fd75db319aaa7452e6e089985601e40d5 r4.0 current (dists) repo`; you can choose a subset of distributions, such as `vm-fc24 vm-fc25` (available 7 days from now)

* `Upload artwork 93b71b6fd75db319aaa7452e6e089985601e40d5 r4.0 security-testing repo`

The above commands will work only if the packages in the current-testing repository were built from the given commit (i.e. no new version has superseded it).

|

1.0

|

artwork v4.0.0 (r4.0) - Update of artwork to v4.0.0 for Qubes r4.0; see comments below for details.

Built from: https://github.com/QubesOS/qubes-artwork/commit/93b71b6fd75db319aaa7452e6e089985601e40d5

[Changes since previous version](https://github.com/QubesOS/qubes-artwork/compare/v3.2.0...v4.0.0):

QubesOS/qubes-artwork@93b71b6 version 4.0.0

QubesOS/qubes-artwork@cfc2a53 travis: update for Qubes 4.0

QubesOS/qubes-artwork@50500b0 Switch mkpadlock script to python 3, adjust build deps

QubesOS/qubes-artwork@067f0ed travis: drop debootstrap workaround

QubesOS/qubes-artwork@9780a95 Renamed imgconverter module

Referenced issues:

If you're the release manager, you can issue a GPG-inline signed command:

* `Upload artwork 93b71b6fd75db319aaa7452e6e089985601e40d5 r4.0 current repo` (available 7 days from now)

* `Upload artwork 93b71b6fd75db319aaa7452e6e089985601e40d5 r4.0 current (dists) repo`; you can choose a subset of distributions, such as `vm-fc24 vm-fc25` (available 7 days from now)

* `Upload artwork 93b71b6fd75db319aaa7452e6e089985601e40d5 r4.0 security-testing repo`

The above commands will work only if the packages in the current-testing repository were built from the given commit (i.e. no new version has superseded it).

|

test

|

artwork update of artwork to for qubes see comments below for details built from qubesos qubes artwork version qubesos qubes artwork travis update for qubes qubesos qubes artwork switch mkpadlock script to python adjust build deps qubesos qubes artwork travis drop debootstrap workaround qubesos qubes artwork renamed imgconverter module referenced issues if you re release manager you can issue gpg inline signed command upload artwork current repo available days from now upload artwork current dists repo you can choose subset of distributions like vm vm available days from now upload artwork security testing repo above commands will work only if packages in current testing repository were built from given commit i e no new version superseded it

| 1

|

241,458

| 20,142,853,302

|

IssuesEvent

|

2022-02-09 02:18:28

|

kubernetes/minikube

|

https://api.github.com/repos/kubernetes/minikube

|

reopened

|

Frequent test failures of `TestOffline`

|

priority/backlog kind/failing-test

|

This test has high flake rates for the following environments:

|Environment|Flake Rate (%)|

|---|---|

|[Docker_Linux_containerd](https://storage.googleapis.com/minikube-flake-rate/flake_chart.html?env=Docker_Linux_containerd&test=TestOffline)|27.59|

|

1.0

|

Frequent test failures of `TestOffline` - This test has high flake rates for the following environments:

|Environment|Flake Rate (%)|

|---|---|

|[Docker_Linux_containerd](https://storage.googleapis.com/minikube-flake-rate/flake_chart.html?env=Docker_Linux_containerd&test=TestOffline)|27.59|

|

test

|

frequent test failures of testoffline this test has high flake rates for the following environments environment flake rate

| 1

|

81,098

| 7,767,726,502

|

IssuesEvent

|

2018-06-03 10:06:30

|

NucleusPowered/Nucleus

|

https://api.github.com/repos/NucleusPowered/Nucleus

|

closed

|

Unable to delete nucleus-created world

|

needs testing pending information

|

Hello,