| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

3,706

| 2,685,500,691

|

IssuesEvent

|

2015-03-30 01:48:11

|

danielpclark/PolyBelongsTo

|

https://api.github.com/repos/danielpclark/PolyBelongsTo

|

closed

|

Optimize deep duplication method (unaffecting code?).

|

provide additional tests refactor

|

This doesn't affect the current test during the circular record test:

```ruby

unless singleton_record.include?(item_to_build_on)

```

https://github.com/danielpclark/PolyBelongsTo/blob/master/lib/poly_belongs_to/dup.rb#L25

|

1.0

|

Optimize deep duplication method (unaffecting code?). - This doesn't affect the current test during the circular record test:

```ruby

unless singleton_record.include?(item_to_build_on)

```

https://github.com/danielpclark/PolyBelongsTo/blob/master/lib/poly_belongs_to/dup.rb#L25

|

test

|

optimize deep duplication method unaffecting code this doesn t affect the current test during the circular record test ruby unless singleton record include item to build on

| 1

|

83,302

| 7,868,228,729

|

IssuesEvent

|

2018-06-23 18:49:11

|

brave/browser-laptop

|

https://api.github.com/repos/brave/browser-laptop

|

closed

|

URLbar paste-and-search should be disabled till Tor connection is successfully created

|

OS/Windows QA/test-plan-specified bug feature/tor priority/P3 release-notes/exclude

|

<!--

Have you searched for similar issues? We have received a lot of feedback and bug reports that we have closed as duplicates. Before submitting this issue, please visit our community site for common ones: https://community.brave.com/c/common-issues

-->

### Description

URLbar should be disabled till Tor connection is successfully created

### Test plan / Steps to Reproduce

<!--

Please add a series of steps to reproduce the problem. See https://stackoverflow.com/help/mcve for in depth information on how to create a minimal, complete, and verifiable example.

-->

1. Clean install 0.23.14

2. Launch browser and open a new tor private tab

3. While connection is being established, right click on URL bar and paste and search

4. Tries to load search while TOR connection is being created

5. Connection fails, doesn't load search result and throws about:error page

6. Open a new tor tab doesn't connect but log says `Bootstrap 100% Done`

**Actual result:**

Paste and search from context menu in URL while establishing tor connection breaks flow

https://youtu.be/RugK4-4MFVs

Tor Log shows

```

Jun 22 16:36:49.000 [notice] Have tried resolving or connecting to address '[scrubbed]' at 3 different places. Giving up.

```

**Expected result:**

URL bar should just show connection info and be in read only state while Tor connection is being established. If unsuccessful, clicking on `Disable Tor` button should activate URL bar

**Reproduces how often:**

100%

### Brave Version

**about:brave info:**

Brave | 0.23.14

-- | --

V8 | 6.7.288.46

rev | f4da855

Muon | 7.1.1

OS Release | 10.0.17134

Update Channel | Beta

OS Architecture | x64

OS Platform | Microsoft Windows

Node.js | 7.9.0

Brave Sync | v1.4.2

libchromiumcontent | 67.0.3396.87

**Reproducible on current live release:**

N/A

### Additional Information

cc: @kjozwiak @LaurenWags @btlechowski @GeetaSarvadnya

|

1.0

|

URLbar paste-and-search should be disabled till Tor connection is successfully created - <!--

Have you searched for similar issues? We have received a lot of feedback and bug reports that we have closed as duplicates. Before submitting this issue, please visit our community site for common ones: https://community.brave.com/c/common-issues

-->

### Description

URLbar should be disabled till Tor connection is successfully created

### Test plan / Steps to Reproduce

<!--

Please add a series of steps to reproduce the problem. See https://stackoverflow.com/help/mcve for in depth information on how to create a minimal, complete, and verifiable example.

-->

1. Clean install 0.23.14

2. Launch browser and open a new tor private tab

3. While connection is being established, right click on URL bar and paste and search

4. Tries to load search while TOR connection is being created

5. Connection fails, doesn't load search result and throws about:error page

6. Open a new tor tab doesn't connect but log says `Bootstrap 100% Done`

**Actual result:**

Paste and search from context menu in URL while establishing tor connection breaks flow

https://youtu.be/RugK4-4MFVs

Tor Log shows

```

Jun 22 16:36:49.000 [notice] Have tried resolving or connecting to address '[scrubbed]' at 3 different places. Giving up.

```

**Expected result:**

URL bar should just show connection info and be in read only state while Tor connection is being established. If unsuccessful, clicking on `Disable Tor` button should activate URL bar

**Reproduces how often:**

100%

### Brave Version

**about:brave info:**

Brave | 0.23.14

-- | --

V8 | 6.7.288.46

rev | f4da855

Muon | 7.1.1

OS Release | 10.0.17134

Update Channel | Beta

OS Architecture | x64

OS Platform | Microsoft Windows

Node.js | 7.9.0

Brave Sync | v1.4.2

libchromiumcontent | 67.0.3396.87

**Reproducible on current live release:**

N/A

### Additional Information

cc: @kjozwiak @LaurenWags @btlechowski @GeetaSarvadnya

|

test

|

urlbar paste and search should be disabled till tor connection is successfully created have you searched for similar issues we have received a lot of feedback and bug reports that we have closed as duplicates before submitting this issue please visit our community site for common ones description urlbar should be disabled till tor connection is successfully created test plan steps to reproduce please add a series of steps to reproduce the problem see for in depth information on how to create a minimal complete and verifiable example clean install launch browser and open a new tor private tab while connection is being established right click on url bar and paste and search tries to load search while tor connection is being created connection fails doesn t load search result and throws about error page open a new tor tab doesn t connect but log says bootstrap done actual result paste and search from context menu in url while establishing tor connection breaks flow tor log shows jun have tried resolving or connecting to address at different places giving up expected result url bar should just show connection info and be in read only state while tor connection is being established if unsuccessful clicking on disable tor button should activate url bar reproduces how often brave version about brave info brave rev muon os release update channel beta os architecture os platform microsoft windows node js brave sync libchromiumcontent reproducible on current live release n a additional information cc kjozwiak laurenwags btlechowski geetasarvadnya

| 1

|

57,163

| 6,539,617,661

|

IssuesEvent

|

2017-09-01 12:11:05

|

opensistemas-hub/osbrain

|

https://api.github.com/repos/opensistemas-hub/osbrain

|

opened

|

Be a bit more lenient in test_timer.py

|

test

|

https://travis-ci.org/opensistemas-hub/osbrain/jobs/270799690

Instead of sleeping for 0.9, sleep for 0.5?

See if there are other timer tests that could have the same problem.

|

1.0

|

Be a bit more lenient in test_timer.py - https://travis-ci.org/opensistemas-hub/osbrain/jobs/270799690

Instead of sleeping for 0.9, sleep for 0.5?

See if there are other timer tests that could have the same problem.

|

test

|

be a bit more lenient in test timer py instead of sleeping for sleep for see if there are other timer tests that could have the same problem

| 1

|

319,466

| 27,374,728,196

|

IssuesEvent

|

2023-02-28 04:22:05

|

prgrms-web-devcourse/Team-JJINSA-HyperLink-BE

|

https://api.github.com/repos/prgrms-web-devcourse/Team-JJINSA-HyperLink-BE

|

closed

|

Update code for Content and Creator table changes

|

Test Fix

|

- Update code for the category FK column added to the content and creator tables (test code, entity constructors)

|

1.0

|

Update code for Content and Creator table changes - - Update code for the category FK column added to the content and creator tables (test code, entity constructors)

|

test

|

update code for content and creator table changes update code for the category fk column added to the content and creator tables test code entity constructors

| 1

|

239,142

| 19,823,492,047

|

IssuesEvent

|

2022-01-20 01:57:57

|

elastic/elasticsearch

|

https://api.github.com/repos/elastic/elasticsearch

|

opened

|

[CI] Security Index upgrade failure during FullClusterRestart

|

:Core/Infra/Core >test-failure

|

The failing test is FullClusterRestartIT testApiKeySuperuser

This happens on my PR CI and related to my change.

But the underlying reason seems to be upgrade failure of security system index. Cluster log shows a NPE https://gradle-enterprise.elastic.co/s/wgya6tyniu7y2/console-log#L2024 for `AliasMetadata#isHidden` invocation and comparison.

I suspect it has something to do with #79512 and recent enforce of 7.last for 8.x upgrade.

**Build scan:**

https://gradle-enterprise.elastic.co/s/wgya6tyniu7y2/tests/:x-pack:qa:full-cluster-restart:v7.8.1%23upgradedClusterTest/org.elasticsearch.xpack.restart.FullClusterRestartIT/testApiKeySuperuser

**Reproduction line:**

`./gradlew ':x-pack:qa:full-cluster-restart:v7.8.1#upgradedClusterTest' -Dtests.class="org.elasticsearch.xpack.restart.FullClusterRestartIT" -Dtests.method="testApiKeySuperuser" -Dtests.seed=704325F309264EE2 -Dtests.bwc=true -Dtests.locale=fi -Dtests.timezone=America/Boise -Druntime.java=17`

**Applicable branches:**

master

**Reproduces locally?:**

Yes

**Failure history:**

https://gradle-enterprise.elastic.co/scans/tests?tests.container=org.elasticsearch.xpack.restart.FullClusterRestartIT&tests.test=testApiKeySuperuser

**Failure excerpt:**

```

org.elasticsearch.client.WarningFailureException: method [GET], host [http://127.0.0.1:42205], URI [.security/_search], status line [HTTP/1.1 200 OK]

{"took":2,"timed_out":false,"_shards":{"total":1,"successful":1,"skipped":0,"failed":0},"hits":{"total":{"value":4,"relation":"eq"},"max_score":1.0,"hits":[{"_index":".security-7","_id":"user-api_key_super_creator","_score":1.0,"_source":{"username":"api_key_super_creator","password":"$2a$10$OsBKVjcqgvziPdsewcOcmufqeJNjplujZ8NYFW6sjL2VXdVfTgpMe","roles":["superuser","monitoring_user"],"full_name":null,"email":null,"metadata":null,"enabled":true,"type":"user"}},{"_index":".security-7","_id":"_mHGdH4B2CIXTYmcz0_9","_score":1.0,"_source":{"doc_type":"api_key","creation_time":1642636693479,"expiration_time":null,"api_key_invalidated":false,"api_key_hash":"{PBKDF2}10000$v4R2re60QvaorlE+Cwl0MRq2uIE0rABYIrmBSMAxs4U=$yDhHqMHGzXtVgb4CPnGAdtKRMxP+nzpP0L4zdT45pPw=","role_descriptors":{},"limited_by_role_descriptors":{"superuser":{"cluster":["all"],"indices":[{"names":["*"],"privileges":["all"],"allow_restricted_indices":true}],"applications":[{"application":"*","privileges":["*"],"resources":["*"]}],"run_as":["*"],"metadata":{"_reserved":true},"type":"role"},"monitoring_user":{"cluster":["cluster:monitor/main","cluster:monitor/xpack/info","cluster:monitor/remote/info"],"indices":[{"names":[".monitoring-*"],"privileges":["read","read_cross_cluster"],"allow_restricted_indices":false}],"applications":[{"application":"kibana-*","privileges":["reserved_monitoring"],"resources":["*"]}],"run_as":[],"metadata":{"_reserved":true},"type":"role"}},"name":"super_legacy_key","version":7080199,"creator":{"principal":"api_key_super_creator","metadata":{},"realm":"default_native","realm_type":"native"}}},{"_index":".security-7","_id":"9aTGdH4B8Erq23pH0QLn","_score":1.0,"_source":{

"doc_type": "foo"

}},{"_index":".security-7","_id":"AGHGdH4B2CIXTYmc1FBS","_score":1.0,"_source":{"doc_type":"api_key","creation_time":1642636694588,"expiration_time":null,"api_key_invalidated":false,"api_key_hash":"{PBKDF2}10000$Uj+vKfhGVDJ+0dM+YBDyJIsC2hSFGXCqojoMQ6Ens9E=$/8X0Dup+8cwgm1L5d5zNHtUPoDG1fV1WCPhcPu1p4yg=","role_descriptors":{"r":{"cluster":["all"],"indices":[{"names":["*"],"privileges":["all"],"allow_restricted_indices":false}],"applications":[],"run_as":[],"metadata":{},"type":"role"}},"limited_by_role_descriptors":{"_es_test_root":{"cluster":["ALL"],"indices":[{"names":["*"],"privileges":["ALL"],"allow_restricted_indices":true}],"applications":[{"application":"*","privileges":["*"],"resources":["*"]}],"run_as":["*"],"metadata":{},"type":"role"}},"name":"key-1","version":7080199,"creator":{"principal":"test_user","metadata":{},"realm":"default_file","realm_type":"file"}}}]}}

at __randomizedtesting.SeedInfo.seed([704325F309264EE2:478E48673AE6CF31]:0)

at org.elasticsearch.client.RestClient.convertResponse(RestClient.java:342)

at org.elasticsearch.client.RestClient.performRequest(RestClient.java:312)

at org.elasticsearch.client.RestClient.performRequest(RestClient.java:287)

at org.elasticsearch.xpack.restart.FullClusterRestartIT.testApiKeySuperuser(FullClusterRestartIT.java:421)

at jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(NativeMethodAccessorImpl.java:-2)

at jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:77)

at jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:568)

at com.carrotsearch.randomizedtesting.RandomizedRunner.invoke(RandomizedRunner.java:1758)

at com.carrotsearch.randomizedtesting.RandomizedRunner$8.evaluate(RandomizedRunner.java:946)

at com.carrotsearch.randomizedtesting.RandomizedRunner$9.evaluate(RandomizedRunner.java:982)

at com.carrotsearch.randomizedtesting.RandomizedRunner$10.evaluate(RandomizedRunner.java:996)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at org.apache.lucene.util.TestRuleSetupTeardownChained$1.evaluate(TestRuleSetupTeardownChained.java:44)

at org.apache.lucene.util.AbstractBeforeAfterRule$1.evaluate(AbstractBeforeAfterRule.java:43)

at org.apache.lucene.util.TestRuleThreadAndTestName$1.evaluate(TestRuleThreadAndTestName.java:45)

at org.apache.lucene.util.TestRuleIgnoreAfterMaxFailures$1.evaluate(TestRuleIgnoreAfterMaxFailures.java:60)

at org.apache.lucene.util.TestRuleMarkFailure$1.evaluate(TestRuleMarkFailure.java:44)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at com.carrotsearch.randomizedtesting.ThreadLeakControl$StatementRunner.run(ThreadLeakControl.java:375)

at com.carrotsearch.randomizedtesting.ThreadLeakControl.forkTimeoutingTask(ThreadLeakControl.java:824)

at com.carrotsearch.randomizedtesting.ThreadLeakControl$3.evaluate(ThreadLeakControl.java:475)

at com.carrotsearch.randomizedtesting.RandomizedRunner.runSingleTest(RandomizedRunner.java:955)

at com.carrotsearch.randomizedtesting.RandomizedRunner$5.evaluate(RandomizedRunner.java:840)

at com.carrotsearch.randomizedtesting.RandomizedRunner$6.evaluate(RandomizedRunner.java:891)

at com.carrotsearch.randomizedtesting.RandomizedRunner$7.evaluate(RandomizedRunner.java:902)

at org.apache.lucene.util.AbstractBeforeAfterRule$1.evaluate(AbstractBeforeAfterRule.java:43)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at org.apache.lucene.util.TestRuleStoreClassName$1.evaluate(TestRuleStoreClassName.java:38)

at com.carrotsearch.randomizedtesting.rules.NoShadowingOrOverridesOnMethodsRule$1.evaluate(NoShadowingOrOverridesOnMethodsRule.java:40)

at com.carrotsearch.randomizedtesting.rules.NoShadowingOrOverridesOnMethodsRule$1.evaluate(NoShadowingOrOverridesOnMethodsRule.java:40)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at org.apache.lucene.util.TestRuleAssertionsRequired$1.evaluate(TestRuleAssertionsRequired.java:53)

at org.apache.lucene.util.AbstractBeforeAfterRule$1.evaluate(AbstractBeforeAfterRule.java:43)

at org.apache.lucene.util.TestRuleMarkFailure$1.evaluate(TestRuleMarkFailure.java:44)

at org.apache.lucene.util.TestRuleIgnoreAfterMaxFailures$1.evaluate(TestRuleIgnoreAfterMaxFailures.java:60)

at org.apache.lucene.util.TestRuleIgnoreTestSuites$1.evaluate(TestRuleIgnoreTestSuites.java:47)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at com.carrotsearch.randomizedtesting.ThreadLeakControl$StatementRunner.run(ThreadLeakControl.java:375)

at com.carrotsearch.randomizedtesting.ThreadLeakControl.lambda$forkTimeoutingTask$0(ThreadLeakControl.java:831)

at java.lang.Thread.run(Thread.java:833)

```

|

1.0

|

[CI] Security Index upgrade failure during FullClusterRestart - The failing test is FullClusterRestartIT testApiKeySuperuser

This happens on my PR CI and related to my change.

But the underlying reason seems to be upgrade failure of security system index. Cluster log shows a NPE https://gradle-enterprise.elastic.co/s/wgya6tyniu7y2/console-log#L2024 for `AliasMetadata#isHidden` invocation and comparison.

I suspect it has something to do with #79512 and recent enforce of 7.last for 8.x upgrade.

**Build scan:**

https://gradle-enterprise.elastic.co/s/wgya6tyniu7y2/tests/:x-pack:qa:full-cluster-restart:v7.8.1%23upgradedClusterTest/org.elasticsearch.xpack.restart.FullClusterRestartIT/testApiKeySuperuser

**Reproduction line:**

`./gradlew ':x-pack:qa:full-cluster-restart:v7.8.1#upgradedClusterTest' -Dtests.class="org.elasticsearch.xpack.restart.FullClusterRestartIT" -Dtests.method="testApiKeySuperuser" -Dtests.seed=704325F309264EE2 -Dtests.bwc=true -Dtests.locale=fi -Dtests.timezone=America/Boise -Druntime.java=17`

**Applicable branches:**

master

**Reproduces locally?:**

Yes

**Failure history:**

https://gradle-enterprise.elastic.co/scans/tests?tests.container=org.elasticsearch.xpack.restart.FullClusterRestartIT&tests.test=testApiKeySuperuser

**Failure excerpt:**

```

org.elasticsearch.client.WarningFailureException: method [GET], host [http://127.0.0.1:42205], URI [.security/_search], status line [HTTP/1.1 200 OK]

{"took":2,"timed_out":false,"_shards":{"total":1,"successful":1,"skipped":0,"failed":0},"hits":{"total":{"value":4,"relation":"eq"},"max_score":1.0,"hits":[{"_index":".security-7","_id":"user-api_key_super_creator","_score":1.0,"_source":{"username":"api_key_super_creator","password":"$2a$10$OsBKVjcqgvziPdsewcOcmufqeJNjplujZ8NYFW6sjL2VXdVfTgpMe","roles":["superuser","monitoring_user"],"full_name":null,"email":null,"metadata":null,"enabled":true,"type":"user"}},{"_index":".security-7","_id":"_mHGdH4B2CIXTYmcz0_9","_score":1.0,"_source":{"doc_type":"api_key","creation_time":1642636693479,"expiration_time":null,"api_key_invalidated":false,"api_key_hash":"{PBKDF2}10000$v4R2re60QvaorlE+Cwl0MRq2uIE0rABYIrmBSMAxs4U=$yDhHqMHGzXtVgb4CPnGAdtKRMxP+nzpP0L4zdT45pPw=","role_descriptors":{},"limited_by_role_descriptors":{"superuser":{"cluster":["all"],"indices":[{"names":["*"],"privileges":["all"],"allow_restricted_indices":true}],"applications":[{"application":"*","privileges":["*"],"resources":["*"]}],"run_as":["*"],"metadata":{"_reserved":true},"type":"role"},"monitoring_user":{"cluster":["cluster:monitor/main","cluster:monitor/xpack/info","cluster:monitor/remote/info"],"indices":[{"names":[".monitoring-*"],"privileges":["read","read_cross_cluster"],"allow_restricted_indices":false}],"applications":[{"application":"kibana-*","privileges":["reserved_monitoring"],"resources":["*"]}],"run_as":[],"metadata":{"_reserved":true},"type":"role"}},"name":"super_legacy_key","version":7080199,"creator":{"principal":"api_key_super_creator","metadata":{},"realm":"default_native","realm_type":"native"}}},{"_index":".security-7","_id":"9aTGdH4B8Erq23pH0QLn","_score":1.0,"_source":{

"doc_type": "foo"

}},{"_index":".security-7","_id":"AGHGdH4B2CIXTYmc1FBS","_score":1.0,"_source":{"doc_type":"api_key","creation_time":1642636694588,"expiration_time":null,"api_key_invalidated":false,"api_key_hash":"{PBKDF2}10000$Uj+vKfhGVDJ+0dM+YBDyJIsC2hSFGXCqojoMQ6Ens9E=$/8X0Dup+8cwgm1L5d5zNHtUPoDG1fV1WCPhcPu1p4yg=","role_descriptors":{"r":{"cluster":["all"],"indices":[{"names":["*"],"privileges":["all"],"allow_restricted_indices":false}],"applications":[],"run_as":[],"metadata":{},"type":"role"}},"limited_by_role_descriptors":{"_es_test_root":{"cluster":["ALL"],"indices":[{"names":["*"],"privileges":["ALL"],"allow_restricted_indices":true}],"applications":[{"application":"*","privileges":["*"],"resources":["*"]}],"run_as":["*"],"metadata":{},"type":"role"}},"name":"key-1","version":7080199,"creator":{"principal":"test_user","metadata":{},"realm":"default_file","realm_type":"file"}}}]}}

at __randomizedtesting.SeedInfo.seed([704325F309264EE2:478E48673AE6CF31]:0)

at org.elasticsearch.client.RestClient.convertResponse(RestClient.java:342)

at org.elasticsearch.client.RestClient.performRequest(RestClient.java:312)

at org.elasticsearch.client.RestClient.performRequest(RestClient.java:287)

at org.elasticsearch.xpack.restart.FullClusterRestartIT.testApiKeySuperuser(FullClusterRestartIT.java:421)

at jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(NativeMethodAccessorImpl.java:-2)

at jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:77)

at jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:568)

at com.carrotsearch.randomizedtesting.RandomizedRunner.invoke(RandomizedRunner.java:1758)

at com.carrotsearch.randomizedtesting.RandomizedRunner$8.evaluate(RandomizedRunner.java:946)

at com.carrotsearch.randomizedtesting.RandomizedRunner$9.evaluate(RandomizedRunner.java:982)

at com.carrotsearch.randomizedtesting.RandomizedRunner$10.evaluate(RandomizedRunner.java:996)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at org.apache.lucene.util.TestRuleSetupTeardownChained$1.evaluate(TestRuleSetupTeardownChained.java:44)

at org.apache.lucene.util.AbstractBeforeAfterRule$1.evaluate(AbstractBeforeAfterRule.java:43)

at org.apache.lucene.util.TestRuleThreadAndTestName$1.evaluate(TestRuleThreadAndTestName.java:45)

at org.apache.lucene.util.TestRuleIgnoreAfterMaxFailures$1.evaluate(TestRuleIgnoreAfterMaxFailures.java:60)

at org.apache.lucene.util.TestRuleMarkFailure$1.evaluate(TestRuleMarkFailure.java:44)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at com.carrotsearch.randomizedtesting.ThreadLeakControl$StatementRunner.run(ThreadLeakControl.java:375)

at com.carrotsearch.randomizedtesting.ThreadLeakControl.forkTimeoutingTask(ThreadLeakControl.java:824)

at com.carrotsearch.randomizedtesting.ThreadLeakControl$3.evaluate(ThreadLeakControl.java:475)

at com.carrotsearch.randomizedtesting.RandomizedRunner.runSingleTest(RandomizedRunner.java:955)

at com.carrotsearch.randomizedtesting.RandomizedRunner$5.evaluate(RandomizedRunner.java:840)

at com.carrotsearch.randomizedtesting.RandomizedRunner$6.evaluate(RandomizedRunner.java:891)

at com.carrotsearch.randomizedtesting.RandomizedRunner$7.evaluate(RandomizedRunner.java:902)

at org.apache.lucene.util.AbstractBeforeAfterRule$1.evaluate(AbstractBeforeAfterRule.java:43)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at org.apache.lucene.util.TestRuleStoreClassName$1.evaluate(TestRuleStoreClassName.java:38)

at com.carrotsearch.randomizedtesting.rules.NoShadowingOrOverridesOnMethodsRule$1.evaluate(NoShadowingOrOverridesOnMethodsRule.java:40)

at com.carrotsearch.randomizedtesting.rules.NoShadowingOrOverridesOnMethodsRule$1.evaluate(NoShadowingOrOverridesOnMethodsRule.java:40)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at org.apache.lucene.util.TestRuleAssertionsRequired$1.evaluate(TestRuleAssertionsRequired.java:53)

at org.apache.lucene.util.AbstractBeforeAfterRule$1.evaluate(AbstractBeforeAfterRule.java:43)

at org.apache.lucene.util.TestRuleMarkFailure$1.evaluate(TestRuleMarkFailure.java:44)

at org.apache.lucene.util.TestRuleIgnoreAfterMaxFailures$1.evaluate(TestRuleIgnoreAfterMaxFailures.java:60)

at org.apache.lucene.util.TestRuleIgnoreTestSuites$1.evaluate(TestRuleIgnoreTestSuites.java:47)

at com.carrotsearch.randomizedtesting.rules.StatementAdapter.evaluate(StatementAdapter.java:36)

at com.carrotsearch.randomizedtesting.ThreadLeakControl$StatementRunner.run(ThreadLeakControl.java:375)

at com.carrotsearch.randomizedtesting.ThreadLeakControl.lambda$forkTimeoutingTask$0(ThreadLeakControl.java:831)

at java.lang.Thread.run(Thread.java:833)

```

|

test

|

security index upgrade failure during fullclusterrestart the failing test is fullclusterrestartit testapikeysuperuser this happens on my pr ci and related to my change but the underlying reason seems to be upgrade failure of security system index cluster log shows a npe for aliasmetadata ishidden invocation and comparison i suspect it has something to do with and recent enforce of last for x upgrade build scan reproduction line gradlew x pack qa full cluster restart upgradedclustertest dtests class org elasticsearch xpack restart fullclusterrestartit dtests method testapikeysuperuser dtests seed dtests bwc true dtests locale fi dtests timezone america boise druntime java applicable branches master reproduces locally yes failure history failure excerpt org elasticsearch client warningfailureexception method host uri status line took timed out false shards total successful skipped failed hits total value relation eq max score hits full name null email null metadata null enabled true type user index security id score source doc type api key creation time expiration time null api key invalidated false api key hash role descriptors limited by role descriptors superuser cluster indices privileges allow restricted indices true applications resources run as metadata reserved true type role monitoring user cluster indices privileges allow restricted indices false applications resources run as metadata reserved true type role name super legacy key version creator principal api key super creator metadata realm default native realm type native index security id score source doc type foo index security id score source doc type api key creation time expiration time null api key invalidated false api key hash uj vkfhgvdj role descriptors r cluster indices privileges allow restricted indices false applications run as metadata type role limited by role descriptors es test root cluster indices privileges allow restricted indices true applications resources run as metadata type role 
name key version creator principal test user metadata realm default file realm type file at randomizedtesting seedinfo seed at org elasticsearch client restclient convertresponse restclient java at org elasticsearch client restclient performrequest restclient java at org elasticsearch client restclient performrequest restclient java at org elasticsearch xpack restart fullclusterrestartit testapikeysuperuser fullclusterrestartit java at jdk internal reflect nativemethodaccessorimpl nativemethodaccessorimpl java at jdk internal reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at jdk internal reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at com carrotsearch randomizedtesting randomizedrunner invoke randomizedrunner java at com carrotsearch randomizedtesting randomizedrunner evaluate randomizedrunner java at com carrotsearch randomizedtesting randomizedrunner evaluate randomizedrunner java at com carrotsearch randomizedtesting randomizedrunner evaluate randomizedrunner java at com carrotsearch randomizedtesting rules statementadapter evaluate statementadapter java at org apache lucene util testrulesetupteardownchained evaluate testrulesetupteardownchained java at org apache lucene util abstractbeforeafterrule evaluate abstractbeforeafterrule java at org apache lucene util testrulethreadandtestname evaluate testrulethreadandtestname java at org apache lucene util testruleignoreaftermaxfailures evaluate testruleignoreaftermaxfailures java at org apache lucene util testrulemarkfailure evaluate testrulemarkfailure java at com carrotsearch randomizedtesting rules statementadapter evaluate statementadapter java at com carrotsearch randomizedtesting threadleakcontrol statementrunner run threadleakcontrol java at com carrotsearch randomizedtesting threadleakcontrol forktimeoutingtask threadleakcontrol java at com carrotsearch randomizedtesting threadleakcontrol evaluate 
threadleakcontrol java at com carrotsearch randomizedtesting randomizedrunner runsingletest randomizedrunner java at com carrotsearch randomizedtesting randomizedrunner evaluate randomizedrunner java at com carrotsearch randomizedtesting randomizedrunner evaluate randomizedrunner java at com carrotsearch randomizedtesting randomizedrunner evaluate randomizedrunner java at org apache lucene util abstractbeforeafterrule evaluate abstractbeforeafterrule java at com carrotsearch randomizedtesting rules statementadapter evaluate statementadapter java at org apache lucene util testrulestoreclassname evaluate testrulestoreclassname java at com carrotsearch randomizedtesting rules noshadowingoroverridesonmethodsrule evaluate noshadowingoroverridesonmethodsrule java at com carrotsearch randomizedtesting rules noshadowingoroverridesonmethodsrule evaluate noshadowingoroverridesonmethodsrule java at com carrotsearch randomizedtesting rules statementadapter evaluate statementadapter java at com carrotsearch randomizedtesting rules statementadapter evaluate statementadapter java at org apache lucene util testruleassertionsrequired evaluate testruleassertionsrequired java at org apache lucene util abstractbeforeafterrule evaluate abstractbeforeafterrule java at org apache lucene util testrulemarkfailure evaluate testrulemarkfailure java at org apache lucene util testruleignoreaftermaxfailures evaluate testruleignoreaftermaxfailures java at org apache lucene util testruleignoretestsuites evaluate testruleignoretestsuites java at com carrotsearch randomizedtesting rules statementadapter evaluate statementadapter java at com carrotsearch randomizedtesting threadleakcontrol statementrunner run threadleakcontrol java at com carrotsearch randomizedtesting threadleakcontrol lambda forktimeoutingtask threadleakcontrol java at java lang thread run thread java

| 1

|

97,904

| 8,673,146,917

|

IssuesEvent

|

2018-11-30 00:59:22

|

rancher/rke

|

https://api.github.com/repos/rancher/rke

|

closed

|

Potential performance bottleneck when syncing node labels and taints on large environments

|

kind/bug status/resolved status/to-test

|

**RKE version:**

0.1.10

**Docker version: (`docker version`,`docker info` preferred)**

```

Client:

Version: 17.09.0-ce

API version: 1.32

Go version: go1.8.3

Git commit: afdb6d4

Built: Tue Sep 26 22:42:18 2017

OS/Arch: linux/amd64

Server:

Version: 17.09.0-ce

API version: 1.32 (minimum version 1.12)

Go version: go1.8.3

Git commit: afdb6d4

Built: Tue Sep 26 22:40:56 2017

OS/Arch: linux/amd64

Experimental: false

```

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)**

Ubuntu 16.04.1

```

$ uname -r

4.13.0-21-generic

```

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)**

Bare-metal

**cluster.yml file:**

Available upon request

**Steps to Reproduce:**

- run rke up on a large environment (700+ nodes)

**Results:**

The step `Syncing nodes Labels and Taints` takes over 15 minutes and appears to be applying labels serially (one node at a time). Ran the command `kubectl get nodes | grep worker | wc -l` several times and saw the number of `worker` labels being applied was increasing gradually.

From the logs:

```

time="2018-10-15T02:52:47Z" level=info msg="[sync] Syncing nodes Labels and Taints"

time="2018-10-15T03:11:37Z" level=info msg="[sync] Successfully synced nodes Labels and Taints"

```

As a performance improvement, consider labeling nodes in parallel.
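A minimal sketch of what labeling in parallel could look like, using a bounded worker pool. This is not RKE's code (RKE is written in Go). `apply_labels` is a hypothetical stand-in for the per-node API call, and the label key and worker count are illustrative assumptions.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def apply_labels(node, labels):
    """Hypothetical stand-in for one API call that labels a single node."""
    # A real implementation would talk to the Kubernetes API here.
    return node, dict(labels)


def sync_labels_parallel(nodes, labels, max_workers=16):
    """Apply the same labels to every node using a bounded worker pool."""
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(apply_labels, node, labels) for node in nodes]
        for future in as_completed(futures):
            node, applied = future.result()  # re-raises any per-node failure
            results[node] = applied
    return results


# Illustrative input: 700 worker nodes, as in the environment described above.
nodes = [f"worker-{i}" for i in range(700)]
synced = sync_labels_parallel(nodes, {"node-role.kubernetes.io/worker": "true"})
```

Capping `max_workers` keeps the number of concurrent requests to the API server bounded. The right value would depend on the cluster and is left as an assumption here.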

|

1.0

|

Potential performance bottleneck when syncing node labels and taints on large environments - **RKE version:**

0.1.10

**Docker version: (`docker version`,`docker info` preferred)**

```

Client:

Version: 17.09.0-ce

API version: 1.32

Go version: go1.8.3

Git commit: afdb6d4

Built: Tue Sep 26 22:42:18 2017

OS/Arch: linux/amd64

Server:

Version: 17.09.0-ce

API version: 1.32 (minimum version 1.12)

Go version: go1.8.3

Git commit: afdb6d4

Built: Tue Sep 26 22:40:56 2017

OS/Arch: linux/amd64

Experimental: false

```

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)**

Ubuntu 16.04.1

```

$ uname -r

4.13.0-21-generic

```

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)**

Bare-metal

**cluster.yml file:**

Available upon request

**Steps to Reproduce:**

- run rke up on a large environment (700+ nodes)

**Results:**

The step `Syncing nodes Labels and Taints` takes over 15 minutes and appears to be applying labels serially (one node at a time). Ran the command `kubectl get nodes | grep worker | wc -l` several times and saw the number of `worker` labels being applied was increasing gradually.

From the logs:

```

time="2018-10-15T02:52:47Z" level=info msg="[sync] Syncing nodes Labels and Taints"

time="2018-10-15T03:11:37Z" level=info msg="[sync] Successfully synced nodes Labels and Taints"

```

As a performance improvement, consider labeling nodes in parallel.

|

test

|

potential performance bottleneck when syncing node labels and taints on large environments rke version docker version docker version docker info preferred client version ce api version go version git commit built tue sep os arch linux server version ce api version minimum version go version git commit built tue sep os arch linux experimental false operating system and kernel cat etc os release uname r preferred ubuntu uname r generic type provider of hosts virtualbox bare metal aws gce do bare metal cluster yml file available upon request steps to reproduce run rke up on a large environment nodes results the step syncing nodes labels and taints takes over minutes and appears to be applying labels serially one node at a time ran the command kubectl get nodes grep worker wc l several times and saw the number of worker labels being applied was increasing gradually from the logs time level info msg syncing nodes labels and taints time level info msg successfully synced nodes labels and taints as a performance improvement consider labeling nodes in parallel

| 1

|

43,634

| 13,026,129,986

|

IssuesEvent

|

2020-07-27 14:33:12

|

NixOS/nixpkgs

|

https://api.github.com/repos/NixOS/nixpkgs

|

opened

|

Vulnerability roundup 90: monero-0.16.0.1: 1 advisory [5.5]

|

1.severity: security

|

[search](https://search.nix.gsc.io/?q=monero&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=monero+in%3Apath&type=Code)

* [ ] [CVE-2020-6861](https://nvd.nist.gov/vuln/detail/CVE-2020-6861) CVSSv3=5.5 (nixos-unstable)

Scanned versions: nixos-unstable: 28fce082c8c. May contain false positives.

Cc @ehmry

Cc @rnhmjoj

|

True

|

Vulnerability roundup 90: monero-0.16.0.1: 1 advisory [5.5] - [search](https://search.nix.gsc.io/?q=monero&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=monero+in%3Apath&type=Code)

* [ ] [CVE-2020-6861](https://nvd.nist.gov/vuln/detail/CVE-2020-6861) CVSSv3=5.5 (nixos-unstable)

Scanned versions: nixos-unstable: 28fce082c8c. May contain false positives.

Cc @ehmry

Cc @rnhmjoj

|

non_test

|

vulnerability roundup monero advisory nixos unstable scanned versions nixos unstable may contain false positives cc ehmry cc rnhmjoj

| 0

|

52,154

| 3,021,925,068

|

IssuesEvent

|

2015-07-31 17:22:59

|

creatorsschool/Encoden

|

https://api.github.com/repos/creatorsschool/Encoden

|

closed

|

create Home/Dashboard link for user when logged

|

Priority 3

|

Create a link to the home page or dashboard so that a logged-in user can return to their homepage.

To be implemented after creating the login validation feature with Rails and after changing the users table to accommodate teacher and student users.

|

1.0

|

create Home/Dashboard link for user when logged - Create a link to the home page or dashboard so that a logged-in user can return to their homepage.

To be implemented after creating the login validation feature with Rails and after changing the users table to accommodate teacher and student users.

|

non_test

|

create home dashboard link for user when logged create a link for the home or dashboard for the user to be able to return to his homepage when it s logged in to be implemented after creating the feautre of login validation with rails and after changing users table to accomodate the teacher and students users

| 0

|

288,256

| 31,861,220,568

|

IssuesEvent

|

2023-09-15 11:03:49

|

nidhi7598/linux-v4.19.72_CVE-2022-3564

|

https://api.github.com/repos/nidhi7598/linux-v4.19.72_CVE-2022-3564

|

opened

|

CVE-2020-10711 (Medium) detected in linuxlinux-4.19.294

|

Mend: dependency security vulnerability

|

## CVE-2020-10711 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-v4.19.72_CVE-2022-3564/commit/9ffee08efa44c7887e2babb8f304df0fa1094efb">9ffee08efa44c7887e2babb8f304df0fa1094efb</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/netlabel/netlabel_kapi.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/netlabel/netlabel_kapi.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A NULL pointer dereference flaw was found in the Linux kernel's SELinux subsystem in versions before 5.7. This flaw occurs while importing the Commercial IP Security Option (CIPSO) protocol's category bitmap into the SELinux extensible bitmap via the 'ebitmap_netlbl_import' routine. While processing the CIPSO restricted bitmap tag in the 'cipso_v4_parsetag_rbm' routine, it sets the security attribute to indicate that the category bitmap is present, even if it has not been allocated. This issue leads to a NULL pointer dereference issue while importing the same category bitmap into SELinux. This flaw allows a remote network user to crash the system kernel, resulting in a denial of service.

<p>Publish Date: 2020-05-22

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2020-10711>CVE-2020-10711</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2020-10711">https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2020-10711</a></p>

<p>Release Date: 2020-05-22</p>

<p>Fix Resolution: v5.7-rc6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-10711 (Medium) detected in linuxlinux-4.19.294 - ## CVE-2020-10711 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-v4.19.72_CVE-2022-3564/commit/9ffee08efa44c7887e2babb8f304df0fa1094efb">9ffee08efa44c7887e2babb8f304df0fa1094efb</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/netlabel/netlabel_kapi.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/netlabel/netlabel_kapi.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A NULL pointer dereference flaw was found in the Linux kernel's SELinux subsystem in versions before 5.7. This flaw occurs while importing the Commercial IP Security Option (CIPSO) protocol's category bitmap into the SELinux extensible bitmap via the 'ebitmap_netlbl_import' routine. While processing the CIPSO restricted bitmap tag in the 'cipso_v4_parsetag_rbm' routine, it sets the security attribute to indicate that the category bitmap is present, even if it has not been allocated. This issue leads to a NULL pointer dereference issue while importing the same category bitmap into SELinux. This flaw allows a remote network user to crash the system kernel, resulting in a denial of service.

<p>Publish Date: 2020-05-22

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2020-10711>CVE-2020-10711</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2020-10711">https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2020-10711</a></p>

<p>Release Date: 2020-05-22</p>

<p>Fix Resolution: v5.7-rc6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files net netlabel netlabel kapi c net netlabel netlabel kapi c vulnerability details a null pointer dereference flaw was found in the linux kernel s selinux subsystem in versions before this flaw occurs while importing the commercial ip security option cipso protocol s category bitmap into the selinux extensible bitmap via the ebitmap netlbl import routine while processing the cipso restricted bitmap tag in the cipso parsetag rbm routine it sets the security attribute to indicate that the category bitmap is present even if it has not been allocated this issue leads to a null pointer dereference issue while importing the same category bitmap into selinux this flaw allows a remote network user to crash the system kernel resulting in a denial of service publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

179,605

| 13,890,929,988

|

IssuesEvent

|

2020-10-19 09:56:37

|

dailykit/dailyos

|

https://api.github.com/repos/dailykit/dailyos

|

closed

|

Module opening Crashing

|

Highest TestQuality bug

|

#### Precondition

1. CRM app should be allowed to be used by test user.

#### Steps to Reproduce:

| Step | Action | Expected | Status |

| -------- | -------- | -------- | -------- |

| 1| Double Click on CRM app| CRM app opens successfully in active state| Pass |

| 2| Click on Coupons Module| A new tab should open with Coupon Listing<br><ol><br><li>Should show Coupon Name</li><br><li>Should show its reward value</li><br></ol>| Fail |

#### Actual Results:

For this reason, the test failed.

|

1.0

|

Module opening Crashing - #### Precondition

1. CRM app should be allowed to be used by test user.

#### Steps to Reproduce:

| Step | Action | Expected | Status |

| -------- | -------- | -------- | -------- |

| 1| Double Click on CRM app| CRM app opens successfully in active state| Pass |

| 2| Click on Coupons Module| A new tab should open with Coupon Listing<br><ol><br><li>Should show Coupon Name</li><br><li>Should show its reward value</li><br></ol>| Fail |

#### Actual Results:

For this reason, the test failed.

|

test

|

module opening crashing precondition crm app should be allowed to be used by test user steps to reproduce step action expected status double click on crm app crm app opens successfully in active state pass click on coupons module a new tab should open with coupon listing should show coupon name should show it s reward value fail actual results for this reason the test failed

| 1

|

61,998

| 6,772,642,827

|

IssuesEvent

|

2017-10-27 00:11:56

|

istio/istio

|

https://api.github.com/repos/istio/istio

|

closed

|

bug in release-0.2 bot setup

|

automated-release test-infra

|

The bot keeps trying to merge, and succeeds in merging, auth changes into release-0.2 even though there have been no such changes

https://github.com/istio/istio/commits/release-0.2 has 2 that went through

for instance

https://github.com/istio/istio/commit/6b3c74568fe241dfca8f8d479a6b345d519591d8

https://github.com/istio/auth/tree/release-0.2

last change was 12 days ago

cc @ayj who is trying to make a 0.2 release and will need to make sure the SHAs/tags are all from the actual release-0.2 branches unlike what is now in istio/istio release-0.2 for CA

|

1.0

|

bug in release-0.2 bot setup - The bot keeps trying to merge, and succeeds in merging, auth changes into release-0.2 even though there have been no such changes

https://github.com/istio/istio/commits/release-0.2 has 2 that went through

for instance

https://github.com/istio/istio/commit/6b3c74568fe241dfca8f8d479a6b345d519591d8

https://github.com/istio/auth/tree/release-0.2

last change was 12 days ago

cc @ayj who is trying to make a 0.2 release and will need to make sure the SHAs/tags are all from the actual release-0.2 branches unlike what is now in istio/istio release-0.2 for CA

|

test

|

bug in release bot setup the bot keep trying to and successfully merging auth changes into release while there has been no such changes has that went through for instance last change was days ago cc ayj who is trying to make a release and will need to make sure the shas tags are all from the actual release branches unlike what is now in istio istio release for ca

| 1

|

534,643

| 15,631,604,035

|

IssuesEvent

|

2021-03-22 05:17:07

|

kubesphere/kubesphere

|

https://api.github.com/repos/kubesphere/kubesphere

|

closed

|

The status of a new pipeline should not be displayed as 'Warning'

|

area/devops kind/bug priority/medium

|

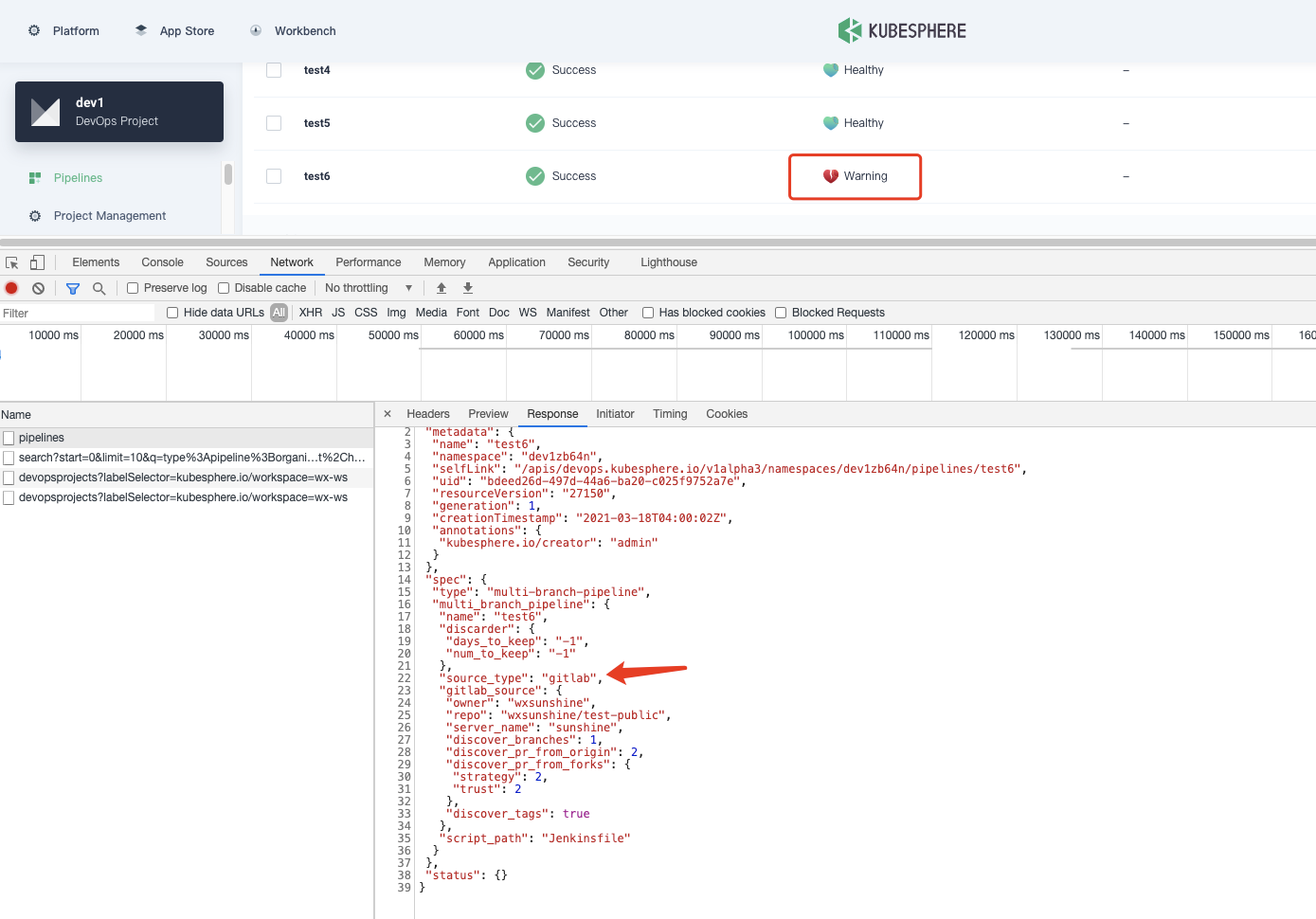

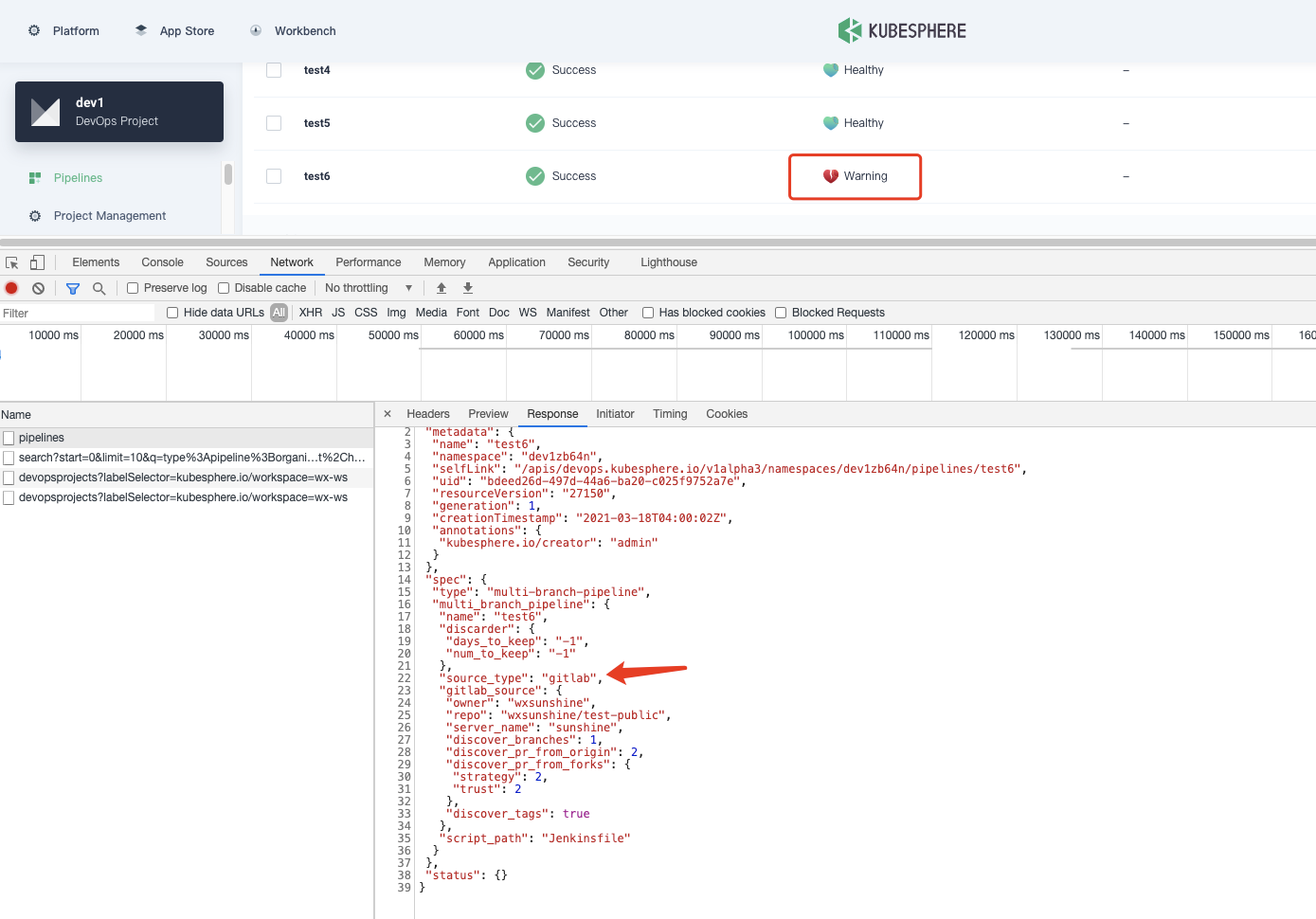

**Describe the Bug**

**Versions Used**

KubeSphere: `dev:latest`

**Preset conditions**

There is a devops project 'dev1'

**How To Reproduce**

Steps to reproduce the behavior:

1. Go to devops project 'dev1'

2. Create a new pipeline based on gitlab

3. View status of the new pipeline

**Expected behavior**

The status of a new pipeline is 'Healthy'

**Actual behavior**

The status of a new pipeline is 'Warning'

/priority medium

/area devops

/cc @kubesphere/sig-devops

/kind bug

/milestone 3.1.0

|

1.0

|

The status of a new pipeline should not be displayed as 'Warning' - **Describe the Bug**

**Versions Used**

KubeSphere: `dev:latest`

**Preset conditions**

There is a devops project 'dev1'

**How To Reproduce**

Steps to reproduce the behavior:

1. Go to devops project 'dev1'

2. Create a new pipeline based on gitlab

3. View status of the new pipeline

**Expected behavior**

The status of a new pipeline is 'Healthy'

**Actual behavior**

The status of a new pipeline is 'Warning'

/priority medium

/area devops

/cc @kubesphere/sig-devops

/kind bug

/milestone 3.1.0

|

non_test

|

the status of a new pipeline should not be displayed as warning describe the bug versions used kubesphere dev latest preset conditions there is a devops project how to reproduce steps to reproduce the behavior go to devops project create a new pipeline based on gitlab view status of the new pipeline expected behavior the status of a new pipeline is healthy actual behavior the status of a new pipeline is warning priority medium area devops cc kubesphere sig devops kind bug milestone

| 0

|

252,138

| 18,990,451,263

|

IssuesEvent

|

2021-11-22 06:22:37

|

ztsv-av/spellbook

|

https://api.github.com/repos/ztsv-av/spellbook

|

closed

|

Unionize Formatting for README's

|

documentation

|

Come back to me after the important stuff is done. We will go through

- character limit per line

- header blank space management

- standard for item lists and other `.md` elements

Files to go through:

- [x] `./README.md`

- [x] `object_detection/README.md`

- [x] `projects/README.md`

- [x] `birdclef-2021/README.md`

- [x] `covid19/README.md`

- [x] `exoplanet_hunting/README.md`

|

1.0

|

Unionize Formatting for README's - Come back to me after the important stuff is done. We will go through

- character limit per line

- header blank space management

- standard for item lists and other `.md` elements

Files to go through:

- [x] `./README.md`

- [x] `object_detection/README.md`

- [x] `projects/README.md`

- [x] `birdclef-2021/README.md`

- [x] `covid19/README.md`

- [x] `exoplanet_hunting/README.md`

|

non_test

|

unionize formatting for readme s come back to me after the important stuff is done we will go through character limit per line header blank space management standard for item lists and other md elements files to go through readme md object detection readme md projects readme md birdclef readme md readme md exoplanet hunting readme md

| 0

|

39,836

| 5,252,143,632

|

IssuesEvent

|

2017-02-02 02:46:18

|

semperfiwebdesign/all-in-one-seo-pack

|

https://api.github.com/repos/semperfiwebdesign/all-in-one-seo-pack

|

closed

|

Uncaught exception ‘BadMethodCallException’ generate_htaccess_blocklist

|

Bug Needs Testing Priority - High

|

Reported here - https://wordpress.org/support/topic/uncaught-exception-badmethodcallexception-generate_htaccess_blocklist/

User states that when updating to WordPress v4.7.2 they get a white screen and this error in the debug log:

Method generate_htaccess_blocklist doesn’t exist’ in /path/to/wordpress/wp-content/plugins/all-in-one-seo-pack/admin/aioseop_module_class.php:52

User is running nginx.

|

1.0

|

Uncaught exception ‘BadMethodCallException’ generate_htaccess_blocklist - Reported here - https://wordpress.org/support/topic/uncaught-exception-badmethodcallexception-generate_htaccess_blocklist/

User states that when updating to WordPress v4.7.2 they get a white screen and this error in the debug log:

Method generate_htaccess_blocklist doesn’t exist’ in /path/to/wordpress/wp-content/plugins/all-in-one-seo-pack/admin/aioseop_module_class.php:52

User is running nginx.

|

test

|

uncaught exception ‘badmethodcallexception’ generate htaccess blocklist reported here user states that when updating to wordpress they get a white screen and this error in the debug log method generate htaccess blocklist doesn’t exist’ in path to wordpress wp content plugins all in one seo pack admin aioseop module class php user is running nginx

| 1

|

245,871

| 20,799,820,173

|

IssuesEvent

|

2022-03-17 12:56:09

|

compare-ci/admin

|

https://api.github.com/repos/compare-ci/admin

|

closed

|

Automated test 1647521697.166569

|

Test

|

This is a tracking issue for the automated tests being run. Test id: `automated-test-1647521697.166569`

|[python-sum](https://github.com/compare-ci/python-sum/pull/2366)|Pull Created|Check Start|Check End|Total|Check|

|-|-|-|-|-|-|

|CircleCI Checks|12:55:07|12:55:08|12:55:12|0:00:05|0:00:04|

|GitHub Actions|12:55:07|12:55:23|12:55:25|0:00:18|0:00:02|

|Azure Pipelines|12:55:07|12:55:24|12:55:36|0:00:29|0:00:12|

|[node-sum](https://github.com/compare-ci/node-sum/pull/2343)|Pull Created|Check Start|Check End|Total|Check|

|-|-|-|-|-|-|

|CircleCI Checks|12:55:15|12:55:16|12:55:29|0:00:14|0:00:13|

|GitHub Actions|12:55:15|12:55:34|12:55:53|0:00:38|0:00:19|
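The `Total` and `Check` columns appear to be derived from the three timestamps: `Total` as `Check End` minus `Pull Created`, and `Check` as `Check End` minus `Check Start`. That reading is an assumption inferred from the numbers, not something the issue states. A small sketch that reproduces the Azure Pipelines row under that assumption (the `durations` helper is hypothetical):

```python
from datetime import datetime, timedelta

FMT = "%H:%M:%S"


def durations(pull_created, check_start, check_end):
    """Return (total, check) durations for one row of the table."""
    created = datetime.strptime(pull_created, FMT)
    start = datetime.strptime(check_start, FMT)
    end = datetime.strptime(check_end, FMT)
    # Total: from pull request creation until the check finished.
    # Check: time spent in the check itself.
    return end - created, end - start


# Azure Pipelines row of the python-sum table.
total, check = durations("12:55:07", "12:55:24", "12:55:36")
```

The gap between the two values (17 seconds here) would then be the queueing delay before the check started.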

|

1.0

|

Automated test 1647521697.166569 - This is a tracking issue for the automated tests being run. Test id: `automated-test-1647521697.166569`

|[python-sum](https://github.com/compare-ci/python-sum/pull/2366)|Pull Created|Check Start|Check End|Total|Check|

|-|-|-|-|-|-|

|CircleCI Checks|12:55:07|12:55:08|12:55:12|0:00:05|0:00:04|

|GitHub Actions|12:55:07|12:55:23|12:55:25|0:00:18|0:00:02|

|Azure Pipelines|12:55:07|12:55:24|12:55:36|0:00:29|0:00:12|

|[node-sum](https://github.com/compare-ci/node-sum/pull/2343)|Pull Created|Check Start|Check End|Total|Check|

|-|-|-|-|-|-|

|CircleCI Checks|12:55:15|12:55:16|12:55:29|0:00:14|0:00:13|

|GitHub Actions|12:55:15|12:55:34|12:55:53|0:00:38|0:00:19|

|

test

|

automated test this is a tracking issue for the automated tests being run test id automated test created check start check end total check circleci checks github actions azure pipelines created check start check end total check circleci checks github actions

| 1

|

50,701

| 6,107,775,456

|

IssuesEvent

|

2017-06-21 08:56:28

|

Microsoft/vscode

|

https://api.github.com/repos/Microsoft/vscode

|

opened

|

Test: multi root explorer

|

multi-root testplan-item

|

- [ ] win

- [ ] mac

- [ ] linux

Complexity: 4

Refs: https://github.com/Microsoft/vscode/pull/29030

This milestone we started work on the multi-root experience; it is currently only available on Insiders. Verify:

* Quickly check that the single root experience is the same as before

* You can nicely transition from single root to multi root

* View state is preserved in the explorer between restarts (focus, expand state, opened editor)

* You can drag and drop in the explorer (also between different roots)

* All the context menu actions make sense (check the ones for the roots)

* Deleting, renaming, adding file should work as before

* Check the case when you have the same folder opened twice (try to rename / delete something in that folder, both parents should get updated)

* File events: TODO@isidor

* files.exclude: TODO@isidor

* Be creative in trying to break the explorer

@bpasero feel free to edit

|

1.0

|

Test: multi root explorer - - [ ] win

- [ ] mac

- [ ] linux

Complexity: 4

Refs: https://github.com/Microsoft/vscode/pull/29030

This milestone we started work on the multi-root experience; it is currently only available on Insiders. Verify:

* Quickly check that the single root experience is the same as before

* You can nicely transition from single root to multi root

* View state is preserved in the explorer between restarts (focus, expand state, opened editor)

* You can drag and drop in the explorer (also between different roots)

* All the context menu actions make sense (check the ones for the roots)

* Deleting, renaming, adding file should work as before

* Check the case when you have the same folder opened twice (try to rename / delete something in that folder, both parents should get updated)

* File events: TODO@isidor

* files.exclude: TODO@isidor

* Be creative in trying to break the explorer

@bpasero feel free to edit

|

test

|

test multi root explorer win mac linux complexity refs this milestone we have started to work on the multi root experience currently this is only available on insiders verify quickly check that the single root experience is the same as before you can nicely transition from single root to multi root view state is preserved in the explorer between restarts focus expand state opened editor you can drag and drop in the explorer also between different roots all the context menu actions make sense check the ones for the roots deleting renaming adding file should work as before check the case when you have the same folder opened twice try to rename delete something in that folder both parents should get updated file events todo isidor files exclude todo isidor be creative in trying to break the explorer bpasero feel free to edit

| 1

|

214,775

| 16,611,426,869

|

IssuesEvent

|

2021-06-02 12:01:42

|

microsoft/vscode

|

https://api.github.com/repos/microsoft/vscode

|

closed

|

Smoke Test

|

testplan-item

|

- [x] Windows @bpasero

- [x] macOS @Tyriar

- [x] Linux @JacksonKearl

Complexity: 2

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23125033%0A%0A)

---

**NOTE:** Desktop & Web tests MUST run with `--build` argument

**NOTE:** Desktop tests MUST run with `--stable-build` argument additionally

Documentation: https://github.com/Microsoft/vscode/blob/main/test/smoke/README.md#run.

If the automated tests fail, create an issue for that and run the tests manually: https://github.com/microsoft/vscode/wiki/Smoke-Test

|

1.0

|

Smoke Test - - [x] Windows @bpasero

- [x] macOS @Tyriar

- [x] Linux @JacksonKearl

Complexity: 2

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23125033%0A%0A)

---

**NOTE:** Desktop & Web tests MUST run with `--build` argument

**NOTE:** Desktop tests MUST run with `--stable-build` argument additionally

Documentation: https://github.com/Microsoft/vscode/blob/main/test/smoke/README.md#run.

If the automated tests fail, create an issue for that and run the tests manually: https://github.com/microsoft/vscode/wiki/Smoke-Test

|

test

|

smoke test windows bpasero macos tyriar linux jacksonkearl complexity note desktop web tests must run with build argument note desktop tests must run with stable build argument additionally documentation if the automated tests fail create and issue for that and run the tests manually

| 1

|

365,489

| 25,538,664,094

|

IssuesEvent

|

2022-11-29 13:52:46

|

department-of-veterans-affairs/va.gov-cms

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms

|

opened

|

Create a document with some good ELI5 information on the Queue API.

|

Needs refining documentation ⭐️ Sitewide CMS

|

## Description

I feel like the Queue API is underutilized, and part of the reason might be a lack of good ELI5-level documentation that makes it clear how simple and easy to use the Queue API really is. It'd be nice to provide some, especially since we find ourselves writing scripts to perform automated changes across tens of thousands of nodes.

## Acceptance Criteria

- [ ] A README exists within the repo documenting how to use the Queue API.

- [ ] We've attempted to improve the Drupal.org documentation on the Queue API.

|

1.0

|

Create a document with some good ELI5 information on the Queue API. - ## Description

I feel like the Queue API is underutilized, and part of the reason might be a lack of good ELI5-level documentation that makes it clear how simple and easy to use the Queue API really is. It'd be nice to provide some, especially since we find ourselves writing scripts to perform automated changes across tens of thousands of nodes.

## Acceptance Criteria

- [ ] A README exists within the repo documenting how to use the Queue API.

- [ ] We've attempted to improve the Drupal.org documentation on the Queue API.

|

non_test

|

create a document with some good information on the queue api description i feel like the queue api is underutilized and part of the reason might be a lack of good level documentation that makes it clear how simple and easy to use the queue api really is it d be nice to provide some especially since we find ourselves writing scripts to perform automated changes across tens of thousands of nodes acceptance criteria a readme exists within the repo documenting how to use the queue api we ve attempted to improve the drupal org documentation on the queue api

| 0

|

36,200

| 17,533,062,065

|

IssuesEvent

|

2021-08-12 01:28:33

|

eclipse/eclipse.jdt.ls

|

https://api.github.com/repos/eclipse/eclipse.jdt.ls

|

closed

|

completion performance: calculating constantValue is expensive

|

performance

|

When code completion suggests constant fields, it will resolve their values directly and display them in the label section. See the screenshot.

It turns out this is an expensive operation. Especially when a type contains many constant fields, resolving them all during code completion will significantly reduce performance. See the profiling result, resolving constant value for the fields of `org.eclipse.jdt.internal.compiler.ast.ASTNode` will cost 45% CPU time of the language server.

And if I set the CompletionHandler.completion as the call tree root, you can see that resolving constant field will cost more than 90% CPU time of the completion handler.

**Suggestion**: We can remove the constant value from the label part so as to avoid the expensive calculations. But keep its value in javadoc in case the user wants to see its value.
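A rough sketch of the idea behind the suggestion, not the actual jdt.ls implementation (which is Java): build the label without touching the constant value, and compute the value only when a single item is resolved for its documentation. All names here (`ConstantFieldItem`, `value_resolver`, `expensive_lookup`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ConstantFieldItem:
    """Hypothetical completion item for a constant field."""
    name: str
    type_name: str
    # Expensive computation, deferred until the item is resolved.
    value_resolver: Callable[[], str] = field(repr=False)
    documentation: Optional[str] = None

    @property
    def label(self) -> str:
        # Cheap: never touches the constant value.
        return f"{self.name} : {self.type_name}"

    def resolve(self) -> "ConstantFieldItem":
        # Called only for the item the user actually inspects.
        if self.documentation is None:
            self.documentation = f"Value: {self.value_resolver()}"
        return self


calls = []


def expensive_lookup() -> str:
    calls.append(1)  # count how often the costly path runs
    return "0x0001"


items = [ConstantFieldItem(f"Bit{i}", "int", expensive_lookup) for i in range(1, 4)]
labels = [item.label for item in items]  # listing completions: no lookups
resolved = items[0].resolve()            # resolving one item: one lookup
```

With this shape, listing many constant fields costs nothing extra, and the lookup runs once per item that is actually inspected.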

|

True

|

completion performance: calculating constantValue is expensive - When code completion suggests constant fields, it will resolve their values directly and display them in the label section. See the screenshot.

This turns out to be an expensive operation, especially when a type contains many constant fields: resolving them all during code completion significantly reduces performance. In the profiling result, resolving constant values for the fields of `org.eclipse.jdt.internal.compiler.ast.ASTNode` costs 45% of the language server's CPU time.

If I set `CompletionHandler.completion` as the call tree root, resolving constant fields accounts for more than 90% of the completion handler's CPU time.

**Suggestion**: We can remove the constant value from the label to avoid the expensive calculation, but keep it in the Javadoc in case the user wants to see it.

|

non_test

|

completion performance calculating constantvalue is expensive when code completion suggests constant fields it will resolve their values directly and display them in the label section see the screenshot it turns out this is an expensive operation especially when a type contains many constant fields resolving them all during code completion will significantly reduce performance see the profiling result resolving constant value for the fields of org eclipse jdt internal compiler ast astnode will cost cpu time of the language server and if i set the completionhandler completion as the call tree root you can see that resolving constant field will cost more than cpu time of the completion handler suggestion we can remove the constant value from the label part so as to avoid the expensive calculations but keep its value in javadoc in case the user wants to see its value

| 0

|

159,468

| 6,046,631,917

|

IssuesEvent

|

2017-06-12 12:39:35

|

mborzenkov/Read-Later-List

|

https://api.github.com/repos/mborzenkov/Read-Later-List

|

opened

|

Improve ContentProvider with AbstractThreadedSyncAdapter

|

Priority: High Type: Maintenance

|

AbstractThreadedSyncAdapter:

- https://developer.android.com/training/sync-adapters/creating-sync-adapter.html

- https://developer.android.com/training/efficient-downloads/index.html

|

1.0

|

Improve ContentProvider with AbstractThreadedSyncAdapter - AbstractThreadedSyncAdapter:

- https://developer.android.com/training/sync-adapters/creating-sync-adapter.html

- https://developer.android.com/training/efficient-downloads/index.html

|

non_test

|

improve contentprovider with abstractthreadedsyncadapter abstractthreadedsyncadapter

| 0

|

96,846

| 16,167,131,283

|

IssuesEvent

|

2021-05-01 18:27:07

|

Ryan-Oneil/oneil-industries-website

|

https://api.github.com/repos/Ryan-Oneil/oneil-industries-website

|

closed

|

CVE-2021-23368 (Medium) detected in postcss-7.0.21.tgz, postcss-7.0.35.tgz

|

security vulnerability

|

## CVE-2021-23368 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-7.0.21.tgz</b>, <b>postcss-7.0.35.tgz</b></p></summary>

<p>

<details><summary><b>postcss-7.0.21.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz</a></p>

<p>Path to dependency file: oneil-industries-website/frontend/package.json</p>

<p>Path to vulnerable library: oneil-industries-website/frontend/node_modules/resolve-url-loader/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.4.tgz (Root Library)

- resolve-url-loader-3.1.2.tgz

- :x: **postcss-7.0.21.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-7.0.35.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz</a></p>

<p>Path to dependency file: oneil-industries-website/frontend/package.json</p>

<p>Path to vulnerable library: oneil-industries-website/frontend/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.4.tgz (Root Library)

- css-loader-3.4.2.tgz

- :x: **postcss-7.0.35.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/Ryan-Oneil/oneil-industries-website/commit/eefbb36da29b795d82b2f6a32f4ea94c8bc821f8">eefbb36da29b795d82b2f6a32f4ea94c8bc821f8</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package postcss from version 7.0.0 and before 8.2.10 is vulnerable to Regular Expression Denial of Service (ReDoS) during source map parsing.

<p>Publish Date: 2021-04-12</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23368>CVE-2021-23368</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23368">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-23368</a></p>

<p>Release Date: 2021-04-12</p>

<p>Fix Resolution: postcss - 8.2.10</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-23368 (Medium) detected in postcss-7.0.21.tgz, postcss-7.0.35.tgz - ## CVE-2021-23368 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-7.0.21.tgz</b>, <b>postcss-7.0.35.tgz</b></p></summary>

<p>

<details><summary><b>postcss-7.0.21.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.21.tgz</a></p>

<p>Path to dependency file: oneil-industries-website/frontend/package.json</p>

<p>Path to vulnerable library: oneil-industries-website/frontend/node_modules/resolve-url-loader/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.4.tgz (Root Library)

- resolve-url-loader-3.1.2.tgz

- :x: **postcss-7.0.21.tgz** (Vulnerable Library)

</details>

<details><summary><b>postcss-7.0.35.tgz</b></p></summary>

<p>Tool for transforming styles with JS plugins</p>

<p>Library home page: <a href="https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz">https://registry.npmjs.org/postcss/-/postcss-7.0.35.tgz</a></p>

<p>Path to dependency file: oneil-industries-website/frontend/package.json</p>

<p>Path to vulnerable library: oneil-industries-website/frontend/node_modules/postcss/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.4.4.tgz (Root Library)

- css-loader-3.4.2.tgz

- :x: **postcss-7.0.35.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/Ryan-Oneil/oneil-industries-website/commit/eefbb36da29b795d82b2f6a32f4ea94c8bc821f8">eefbb36da29b795d82b2f6a32f4ea94c8bc821f8</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The package postcss from version 7.0.0 and before 8.2.10 is vulnerable to Regular Expression Denial of Service (ReDoS) during source map parsing.

<p>Publish Date: 2021-04-12</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23368>CVE-2021-23368</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>