| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

102,800

| 12,823,497,572

|

IssuesEvent

|

2020-07-06 11:50:56

|

Altinn/altinn-studio

|

https://api.github.com/repos/Altinn/altinn-studio

|

closed

|

Setting app parameters in Studio designer

|

Epic area/app-parameters kind/analysis solution/studio/designer status/draft status/won't fix

|

## Description

EPIC for app-parameter issues in MVP3

Lorem ipsum

## In scope

> What's in scope of this analysis?

Lorem ipsum

## Out of scope

> What's **out** of scope for this analysis?

Lorem ipsum

## Constraints

> Constraints or requirements (technical or functional) that affect this analysis.

Lorem ipsum

## Analysis

Lorem ipsum

## Conclusion

> Short summary of the proposed solution.

## Tasks

- [ ] Is this issue labeled with a correct area label?

- [ ] QA has been done

|

1.0

|

Setting app parameters in Studio designer - ## Description

EPIC for app-parameter issues in MVP3

Lorem ipsum

## In scope

> What's in scope of this analysis?

Lorem ipsum

## Out of scope

> What's **out** of scope for this analysis?

Lorem ipsum

## Constraints

> Constraints or requirements (technical or functional) that affect this analysis.

Lorem ipsum

## Analysis

Lorem ipsum

## Conclusion

> Short summary of the proposed solution.

## Tasks

- [ ] Is this issue labeled with a correct area label?

- [ ] QA has been done

|

non_test

|

setting app parameters in studio designer description epic for app parameter issues in lorem ipsum in scope what s in scope of this analysis lorem ipsum out of scope what s out of scope for this analysis lorem ipsum constraints constraints or requirements technical or functional that affects this analysis lorem ipsum analysis lorem ipsum conclusion short summary of the proposed solution tasks is this issue labeled with a correct area label qa has been done

| 0

|

629,981

| 20,073,272,862

|

IssuesEvent

|

2022-02-04 09:48:48

|

metabase/metabase

|

https://api.github.com/repos/metabase/metabase

|

closed

|

Custom columns not appearing in result set when selecting subset of fields

|

Type:Bug Priority:P1 .Frontend .Reproduced

|

Create a question on the sample dataset on Orders, and create a custom column called "adjective" whose formula is `case([Total] > 100, "expensive", "cheap")`

The custom column appears in the results when all fields on Orders are selected, but does not appear when only a subset of fields is selected:

NOTE: the most important branch is `release-x.42.x`. It is possible this bug does not manifest in `master`. We will need an e2e test once we have narrowed down and identified the cause.

|

1.0

|

Custom columns not appearing in result set when selecting subset of fields - Create a question on the sample dataset on Orders, and create a custom column called "adjective" whose formula is `case([Total] > 100, "expensive", "cheap")`

The custom column appears in the results when all fields on Orders are selected, but does not appear when only a subset of fields is selected:

NOTE: the most important branch is `release-x.42.x`. It is possible this bug does not manifest in `master`. We will need an e2e test once we have narrowed down and identified the cause.

|

non_test

|

custom columns not appearing in result set when selecting subset of fields create a question on the sample dataset on orders and create a custom column called adjective who s formula is case expensive cheap the custom column appears in the results when all fields on orders are selected but does not appear when only a subset of fields are selected note most important is release x x it is possible this bug does not manifest in master will need a test when we have narrowed and identified

| 0

|

291,405

| 25,144,718,758

|

IssuesEvent

|

2022-11-10 03:40:24

|

ZcashFoundation/zebra

|

https://api.github.com/repos/ZcashFoundation/zebra

|

closed

|

Get transactions from the non-finalized state in the send transactions test

|

C-bug S-needs-triage P-Medium :zap: I-slow C-testing A-rpc

|

## Motivation

Currently, the send transactions test is very slow, because it:

- copies the entire Zebra finalized state directory

- syncs to the tip

- gets transactions from the copied finalized state

- runs the test on the old finalized state

This is ok for now, but it might become a problem if the state gets much bigger, or we need to modify that test a lot.

### Designs

Instead, the test could:

- use the original Zebra cached state directory

- sync to the tip

- get transactions from at least 3 blocks in the non-finalized state via JSON-RPC

- run the test on the updated finalized state (which won't have all those non-finalized transactions)
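
As a rough illustration of the third step above, here is a minimal sketch of the JSON-RPC requests the test could send to fetch the last few blocks near the tip. It is written in Python for brevity rather than in the project's own language. It assumes the node accepts a zcashd-style `getblock` call with the height passed as a string and verbosity `1`; the helper name `recent_block_requests` and the request `id` values are made up for this example.

```python
import json


def recent_block_requests(tip_height, count=3):
    """Build JSON-RPC 2.0 `getblock` requests for the `count` blocks ending at the tip.

    Hypothetical helper: it only builds request payloads and sends nothing.
    """
    if count < 1 or tip_height - count + 1 < 0:
        raise ValueError("count must be positive and not exceed the chain height")
    requests = []
    for offset in range(count):
        height = tip_height - offset
        requests.append({
            "jsonrpc": "2.0",
            "id": offset,
            "method": "getblock",
            # Assumed zcashd-style parameters: height as a string, verbosity 1.
            "params": [str(height), 1],
        })
    return requests


if __name__ == "__main__":
    # Print the payloads for the three blocks ending at an example tip height.
    for request in recent_block_requests(1_000_000):
        print(json.dumps(request))
```

Each payload would still have to be POSTed to the node's RPC endpoint, and the transaction IDs in the responses resolved to full transactions in a follow-up call.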

|

1.0

|

Get transactions from the non-finalized state in the send transactions test - ## Motivation

Currently, the send transactions test is very slow, because it:

- copies the entire Zebra finalized state directory

- syncs to the tip

- gets transactions from the copied finalized state

- runs the test on the old finalized state

This is ok for now, but it might become a problem if the state gets much bigger, or we need to modify that test a lot.

### Designs

Instead, the test could:

- use the original Zebra cached state directory

- sync to the tip

- get transactions from at least 3 blocks in the non-finalized state via JSON-RPC

- run the test on the updated finalized state (which won't have all those non-finalized transactions)

|

test

|

get transactions from the non finalized state in the send transactions test motivation currently the send transactions test is very slow because it copies the entire zebra finalized state directory syncs to the tip gets transactions from the copied finalized state runs the test on the old finalized state this is ok for now but it might become a problem if the state gets much bigger or we need to modify that test a lot designs instead the test could use the original zebra cached state directory sync to the tip get transactions from at least blocks in the non finalized state via json rpc run the test on the updated finalized state which won t have all those non finalized transactions

| 1

|

165,209

| 20,574,342,649

|

IssuesEvent

|

2022-03-04 01:46:43

|

slothymonk/reviewing-a-pull-request

|

https://api.github.com/repos/slothymonk/reviewing-a-pull-request

|

closed

|

CVE-2020-7595 (High) detected in nokogiri-1.10.3.gem - autoclosed

|

security vulnerability

|

## CVE-2020-7595 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.3.gem</b></p></summary>

<p>Nokogiri (鋸) is an HTML, XML, SAX, and Reader parser. Among

Nokogiri's many features is the ability to search documents via XPath

or CSS3 selectors.</p>

<p>Library home page: <a href="https://rubygems.org/gems/nokogiri-1.10.3.gem">https://rubygems.org/gems/nokogiri-1.10.3.gem</a></p>

<p>Path to dependency file: /reviewing-a-pull-request/Gemfile.lock</p>

<p>Path to vulnerable library: /var/lib/gems/2.3.0/cache/nokogiri-1.10.3.gem</p>

<p>

Dependency Hierarchy:

- github-pages-198.gem (Root Library)

- jekyll-mentions-1.4.1.gem

- html-pipeline-2.11.0.gem

- :x: **nokogiri-1.10.3.gem** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

xmlStringLenDecodeEntities in parser.c in libxml2 2.9.10 has an infinite loop in a certain end-of-file situation.

<p>Publish Date: 2020-01-21

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-7595>CVE-2020-7595</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://security.gentoo.org/glsa/202010-04">https://security.gentoo.org/glsa/202010-04</a></p>

<p>Fix Resolution: All libxml2 users should upgrade to the latest version # emerge --sync

# emerge --ask --oneshot --verbose >=dev-libs/libxml2-2.9.10 >= </p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-7595 (High) detected in nokogiri-1.10.3.gem - autoclosed - ## CVE-2020-7595 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.3.gem</b></p></summary>

<p>Nokogiri (鋸) is an HTML, XML, SAX, and Reader parser. Among

Nokogiri's many features is the ability to search documents via XPath

or CSS3 selectors.</p>

<p>Library home page: <a href="https://rubygems.org/gems/nokogiri-1.10.3.gem">https://rubygems.org/gems/nokogiri-1.10.3.gem</a></p>

<p>Path to dependency file: /reviewing-a-pull-request/Gemfile.lock</p>

<p>Path to vulnerable library: /var/lib/gems/2.3.0/cache/nokogiri-1.10.3.gem</p>

<p>

Dependency Hierarchy:

- github-pages-198.gem (Root Library)

- jekyll-mentions-1.4.1.gem

- html-pipeline-2.11.0.gem

- :x: **nokogiri-1.10.3.gem** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

xmlStringLenDecodeEntities in parser.c in libxml2 2.9.10 has an infinite loop in a certain end-of-file situation.

<p>Publish Date: 2020-01-21

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-7595>CVE-2020-7595</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://security.gentoo.org/glsa/202010-04">https://security.gentoo.org/glsa/202010-04</a></p>

<p>Fix Resolution: All libxml2 users should upgrade to the latest version # emerge --sync

# emerge --ask --oneshot --verbose >=dev-libs/libxml2-2.9.10 >= </p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in nokogiri gem autoclosed cve high severity vulnerability vulnerable library nokogiri gem nokogiri 鋸 is an html xml sax and reader parser among nokogiri s many features is the ability to search documents via xpath or selectors library home page a href path to dependency file reviewing a pull request gemfile lock path to vulnerable library var lib gems cache nokogiri gem dependency hierarchy github pages gem root library jekyll mentions gem html pipeline gem x nokogiri gem vulnerable library vulnerability details xmlstringlendecodeentities in parser c in has an infinite loop in a certain end of file situation publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href fix resolution all users should upgrade to the latest version emerge sync emerge ask oneshot verbose dev libs step up your open source security game with whitesource

| 0

|

213,242

| 16,507,340,314

|

IssuesEvent

|

2021-05-25 21:06:45

|

NillerMedDild/Enigmatica6

|

https://api.github.com/repos/NillerMedDild/Enigmatica6

|

closed

|

Twilight Forest

|

Status: Ready For Testing Suggestion

|

**CurseForge Link**

https://www.curseforge.com/minecraft/mc-mods/the-twilight-forest

**Mod description**

I feel like the description is pretty much known at this point :p

**Why would you like the mod added?**

Even though it's not finished, I believe the mod can bring a new approach to the modpack with its new data-based spawning. You guys can give the Twilight Forest worthwhile loot in the final castle, such as Antimatter, Polonium, Totems of Undying, Machine Casings, and Artifacts: anything that would make actually progressing through the Twilight Forest worthwhile. You could even buff the bosses so the fights are a lot more challenging.

Hope it makes it into the pack :)

|

1.0

|

Twilight Forest -

**CurseForge Link**

https://www.curseforge.com/minecraft/mc-mods/the-twilight-forest

**Mod description**

I feel like the description is pretty much known at this point :p

**Why would you like the mod added?**

Even though it's not finished, I believe the mod can bring a new approach to the modpack with its new data-based spawning. You guys can give the Twilight Forest worthwhile loot in the final castle, such as Antimatter, Polonium, Totems of Undying, Machine Casings, and Artifacts: anything that would make actually progressing through the Twilight Forest worthwhile. You could even buff the bosses so the fights are a lot more challenging.

Hope it makes it into the pack :)

|

test

|

twilight forest curseforge link mod description i feel like the description is pretty much known at this point p why would you like the mod added even though its not finished i believe the mod can actually have a new approach to the modpack with its new databased spawning you guys can make the twilight forest have worth while loot in the final castle such as antimatter polonium totems of undying machine casing artifacts anything that would make actually progressing through the twilight forest worth whole you guys could even buff the bosses up so it makes the fights alot more challenging hope it makes it into the pack

| 1

|

257,772

| 22,209,160,830

|

IssuesEvent

|

2022-06-07 17:28:44

|

Hamlib/Hamlib

|

https://api.github.com/repos/Hamlib/Hamlib

|

closed

|

FT-991 rig split behavior

|

bug needs test fixed

|

Here are the results with rigctl Hamlib 4.5~git Wed Jun 01 22:20:14 2022 +0000 SHA=ce1d86:

- This driver looks more stable than the other ones. I saw neither absurd frequencies nor unwanted changes to the band stack memories.

- Only the effect that 0 Hz is displayed briefly with every transmission is still there. I therefore took a closer look. This happens as follows:

- During normal non-split operation, the top line of my FT-991's display shows the rig QRG (usually VFO A), and the second line from the top shows the Clarifier setting. Thus, this line usually shows: "CLAR 0 Hz".

- When Split Operation is set to "Rig", split mode is activated on my FT-991. This changes the second line from the top to, for example, "SPLIT VFO B 50.31150"

- Here is the problem: when transmitting with Split Operation = Rig, the display shows "CLAR 0 Hz" again for about 0.5 seconds each time and then switches back to "SPLIT VFO B 50.31150". (The “0 Hz” comes from the Clarifier setting.) This means that, for whatever reason, split mode must be briefly disabled and then enabled again during each transmission. This is the bug.

|

1.0

|

FT-991 rig split behavior - Here are the results with rigctl Hamlib 4.5~git Wed Jun 01 22:20:14 2022 +0000 SHA=ce1d86:

- This driver looks more stable than the other ones. I saw neither absurd frequencies nor unwanted changes to the band stack memories.

- Only the effect that 0 Hz is displayed briefly with every transmission is still there. I therefore took a closer look. This happens as follows:

- During normal non-split operation, the top line of my FT-991's display shows the rig QRG (usually VFO A), and the second line from the top shows the Clarifier setting. Thus, this line usually shows: "CLAR 0 Hz".

- When Split Operation is set to "Rig", split mode is activated on my FT-991. This changes the second line from the top to, for example, "SPLIT VFO B 50.31150"

- Here is the problem: when transmitting with Split Operation = Rig, the display shows "CLAR 0 Hz" again for about 0.5 seconds each time and then switches back to "SPLIT VFO B 50.31150". (The “0 Hz” comes from the Clarifier setting.) This means that, for whatever reason, split mode must be briefly disabled and then enabled again during each transmission. This is the bug.

|

test

|

ft rig split behavior here are the results with rigctl hamlib git wed jun sha this driver looks more stable than the other ones i saw neither absurd frequencies nor unwanted changes to the band stack memories only the effect that hz is displayed briefly with every transmission is still there i therefore took a closer look this happens as follows during normal non split operation the top line of my ft s display shows the rig qrg usually vfo a and in the second top line clarifier setting is displayed thus usually this line shows clar hz when split operation is set to rig at my ft the split mode is activated this changes the second top line for example to split vfo b now it comes when transmitting with split operation rig each time it comes for about seconds again clar hz and then display switches back to split vfo b the “ hz” comes from the clarifier setting this means that – for whatever reason – split mode must be briefly disabled and the enabled again as said during each transmission this is the bug

| 1

|

103,714

| 8,940,773,727

|

IssuesEvent

|

2019-01-24 01:11:55

|

apache/incubator-mxnet

|

https://api.github.com/repos/apache/incubator-mxnet

|

closed

|

ARM QEMU test in CI failed unrelated PR

|

ARM CI Question Test

|

## Description

Test with ARM QEMU fails with some kind of network interruption...

This makes me wonder about these network issues where dependencies fail to download... should we put in a retry function, so that we don't have to restart our PRs when there's a transient error?

## Error

```

runtime_functions.py: 2018-11-26 03:47:02,687 ['run_ut_py3_qemu']

⢎⡑ ⣰⡀ ⢀⣀ ⡀⣀ ⣰⡀ ⠄ ⣀⡀ ⢀⡀ ⡎⢱ ⣏⡉ ⡷⢾ ⡇⢸

⠢⠜ ⠘⠤ ⠣⠼ ⠏ ⠘⠤ ⠇ ⠇⠸ ⣑⡺ ⠣⠪ ⠧⠤ ⠇⠸ ⠣⠜

runtime_functions.py: 2018-11-26 03:47:02,765 Starting VM, ssh port redirected to localhost:2222 (inside docker, not exposed by default)

runtime_functions.py: 2018-11-26 03:47:02,765 Starting in non-interactive mode. Terminal output is disabled.

runtime_functions.py: 2018-11-26 03:47:02,766 waiting for ssh to be open in the VM (timeout 300s)

runtime_functions.py: 2018-11-26 03:47:46,729 wait_ssh_open: port 127.0.0.1:2222 is open and ssh is ready

runtime_functions.py: 2018-11-26 03:47:46,729 VM is online and SSH is up

runtime_functions.py: 2018-11-26 03:47:46,729 Provisioning the VM with artifacts and sources

ssh_exchange_identification: read: Connection reset by peer

rsync: safe_write failed to write 4 bytes to socket [sender]: Broken pipe (32)

rsync error: unexplained error (code 255) at io.c(320) [sender=3.1.1]

runtime_functions.py: 2018-11-26 03:47:46,916 Shutdown via ssh

ssh_exchange_identification: read: Connection reset by peer

Traceback (most recent call last):

File "./runtime_functions.py", line 66, in run_ut_py3_qemu

qemu_provision(vm.ssh_port)

File "/work/vmcontrol.py", line 186, in qemu_provision

qemu_rsync(ssh_port, x, 'mxnet_dist/')

File "/work/vmcontrol.py", line 175, in qemu_rsync

check_call(['rsync', '-e', 'ssh -o StrictHostKeyChecking=no -p{}'.format(ssh_port), '-a', local_path, 'qemu@localhost:{}'.format(remote_path)])

File "/usr/lib/python3.5/subprocess.py", line 581, in check_call

raise CalledProcessError(retcode, cmd)

subprocess.CalledProcessError: Command '['rsync', '-e', 'ssh -o StrictHostKeyChecking=no -p2222', '-a', '/work/mxnet/build/mxnet-1.4.0-py2.py3-none-any.whl', 'qemu@localhost:mxnet_dist/']' returned non-zero exit status 255

```
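
The retry idea raised in the description could look something like the sketch below. This is only an illustration, not the project's actual CI code: `check_call_with_retry`, its parameters, and the exponential backoff policy are all assumptions made for this example. The `runner` argument exists only so the helper can be exercised without running a real command.

```python
import subprocess
import time


def check_call_with_retry(cmd, attempts=3, delay_s=1.0, runner=subprocess.check_call):
    """Run `cmd`, retrying on non-zero exit status with exponential backoff.

    Hypothetical helper: re-raises the last CalledProcessError once
    `attempts` tries have all failed.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return runner(cmd)
        except subprocess.CalledProcessError:
            if attempt == attempts:
                raise  # out of retries: surface the original failure
            # Wait delay_s, then 2*delay_s, then 4*delay_s, ... between tries.
            time.sleep(delay_s * 2 ** (attempt - 1))
```

A call such as the `check_call([...])` in `qemu_rsync` from the traceback could then be wrapped as `check_call_with_retry([...])`. Whether retrying is appropriate depends on the command being safe to run more than once.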

|

1.0

|

ARM QEMU test in CI failed unrelated PR - ## Description

Test with ARM QEMU fails with some kind of network interruption...

This makes me wonder about these network issues where dependencies fail to download... should we put in a retry function, so that we don't have to restart our PRs when there's a transient error?

## Error

```

runtime_functions.py: 2018-11-26 03:47:02,687 ['run_ut_py3_qemu']

⢎⡑ ⣰⡀ ⢀⣀ ⡀⣀ ⣰⡀ ⠄ ⣀⡀ ⢀⡀ ⡎⢱ ⣏⡉ ⡷⢾ ⡇⢸

⠢⠜ ⠘⠤ ⠣⠼ ⠏ ⠘⠤ ⠇ ⠇⠸ ⣑⡺ ⠣⠪ ⠧⠤ ⠇⠸ ⠣⠜

runtime_functions.py: 2018-11-26 03:47:02,765 Starting VM, ssh port redirected to localhost:2222 (inside docker, not exposed by default)

runtime_functions.py: 2018-11-26 03:47:02,765 Starting in non-interactive mode. Terminal output is disabled.

runtime_functions.py: 2018-11-26 03:47:02,766 waiting for ssh to be open in the VM (timeout 300s)

runtime_functions.py: 2018-11-26 03:47:46,729 wait_ssh_open: port 127.0.0.1:2222 is open and ssh is ready

runtime_functions.py: 2018-11-26 03:47:46,729 VM is online and SSH is up

runtime_functions.py: 2018-11-26 03:47:46,729 Provisioning the VM with artifacts and sources

ssh_exchange_identification: read: Connection reset by peer

rsync: safe_write failed to write 4 bytes to socket [sender]: Broken pipe (32)

rsync error: unexplained error (code 255) at io.c(320) [sender=3.1.1]

runtime_functions.py: 2018-11-26 03:47:46,916 Shutdown via ssh

ssh_exchange_identification: read: Connection reset by peer

Traceback (most recent call last):

File "./runtime_functions.py", line 66, in run_ut_py3_qemu

qemu_provision(vm.ssh_port)

File "/work/vmcontrol.py", line 186, in qemu_provision

qemu_rsync(ssh_port, x, 'mxnet_dist/')

File "/work/vmcontrol.py", line 175, in qemu_rsync

check_call(['rsync', '-e', 'ssh -o StrictHostKeyChecking=no -p{}'.format(ssh_port), '-a', local_path, 'qemu@localhost:{}'.format(remote_path)])

File "/usr/lib/python3.5/subprocess.py", line 581, in check_call

raise CalledProcessError(retcode, cmd)

subprocess.CalledProcessError: Command '['rsync', '-e', 'ssh -o StrictHostKeyChecking=no -p2222', '-a', '/work/mxnet/build/mxnet-1.4.0-py2.py3-none-any.whl', 'qemu@localhost:mxnet_dist/']' returned non-zero exit status 255

```

|

test

|

arm qemu test in ci failed unrelated pr description test with arm qemu fails with some kind of network interruption makes wonder about these network issues where dependencies fail to download should we put in a retry function so that we don t have to restart our prs when there s a transient error error runtime functions py ⢎⡑ ⣰⡀ ⢀⣀ ⡀⣀ ⣰⡀ ⠄ ⣀⡀ ⢀⡀ ⡎⢱ ⣏⡉ ⡷⢾ ⡇⢸ ⠢⠜ ⠘⠤ ⠣⠼ ⠏ ⠘⠤ ⠇ ⠇⠸ ⣑⡺ ⠣⠪ ⠧⠤ ⠇⠸ ⠣⠜ runtime functions py starting vm ssh port redirected to localhost inside docker not exposed by default runtime functions py starting in non interactive mode terminal output is disabled runtime functions py waiting for ssh to be open in the vm timeout runtime functions py wait ssh open port is open and ssh is ready runtime functions py vm is online and ssh is up runtime functions py provisioning the vm with artifacts and sources ssh exchange identification read connection reset by peer rsync safe write failed to write bytes to socket broken pipe rsync error unexplained error code at io c runtime functions py shutdown via ssh ssh exchange identification read connection reset by peer traceback most recent call last file runtime functions py line in run ut qemu qemu provision vm ssh port file work vmcontrol py line in qemu provision qemu rsync ssh port x mxnet dist file work vmcontrol py line in qemu rsync check call file usr lib subprocess py line in check call raise calledprocesserror retcode cmd subprocess calledprocesserror command returned non zero exit status

| 1

|

275,713

| 23,932,453,382

|

IssuesEvent

|

2022-09-10 18:59:15

|

hajimehoshi/ebiten

|

https://api.github.com/repos/hajimehoshi/ebiten

|

closed

|

Test on Windows Server

|

os:windows test

|

OpenGL version might be unexpectedly old. DirectX should be used anyway?

Related #739

|

1.0

|

Test on Windows Server - OpenGL version might be unexpectedly old. DirectX should be used anyway?

Related #739

|

test

|

test on windows server opengl version might be unexpectedly old directx should be used anyway related

| 1

|

469,610

| 13,521,961,975

|

IssuesEvent

|

2020-09-15 07:51:33

|

buddyboss/buddyboss-platform

|

https://api.github.com/repos/buddyboss/buddyboss-platform

|

opened

|

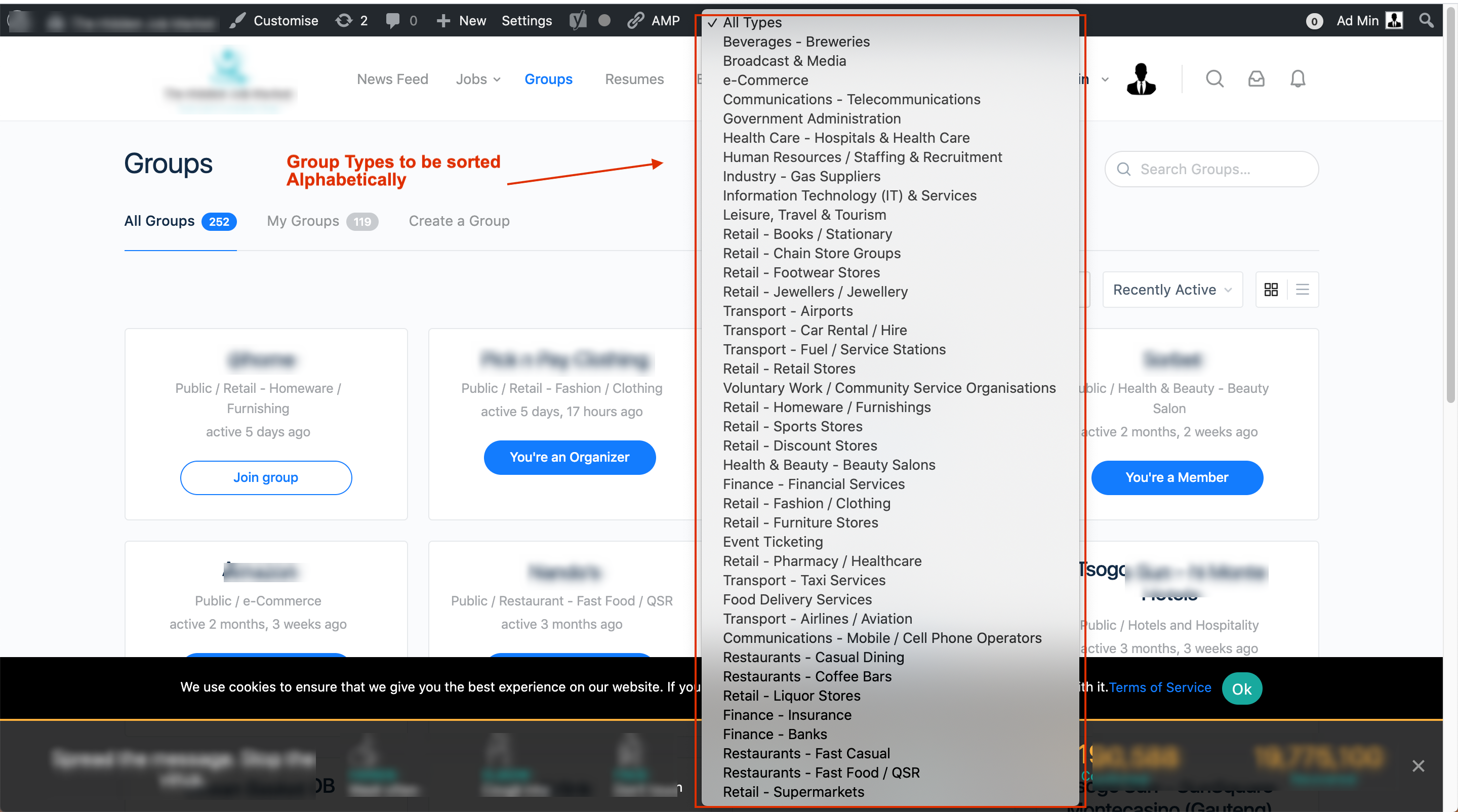

Sort Group Types Alphabetically

|

feature: enhancement priority: medium

|

**Is your feature request related to a problem? Please describe.**

When there are several group types, the group type dropdown is not user friendly; some users have a hard time finding the group type they are looking for.

**Describe the solution you'd like**

Be able to sort the Group Type filter in the Group directory so that the user can easily find and select a group type.

**Screenshot**

**Support ticket links**

https://secure.helpscout.net/conversation/1273251749/97145

|

1.0

|

Sort Group Types Alphabetically - **Is your feature request related to a problem? Please describe.**

When there are several group types, the group type dropdown is not user friendly; some users have a hard time finding the group type they are looking for.

**Describe the solution you'd like**

Be able to sort the Group Type filter in the Group directory so that the user can easily find and select a group type.

**Screenshot**

**Support ticket links**

https://secure.helpscout.net/conversation/1273251749/97145

|

non_test

|

sort group types alphabetically is your feature request related to a problem please describe when there are several group types the group type dropdown is not user friendly some users are having some hard time to find the group type they are looking for describe the solution you d like be able to sort the group type filter in the group directory so that the user can easily find and select a group type screenshot support ticket links

| 0

|

461,818

| 13,236,670,967

|

IssuesEvent

|

2020-08-18 20:13:28

|

amici-ursi/redbear

|

https://api.github.com/repos/amici-ursi/redbear

|

closed

|

cog / redbear / config: implement member_commands, personal_commands, muted_members in Config

|

enhancement high priority

|

These were previously stored in pdsettings.p and we should use the new system. This needs to be in place for many other features to work.

#3

|

1.0

|

cog / redbear / config: implement member_commands, personal_commands, muted_members in Config - These were previously stored in pdsettings.p and we should use the new system. This needs to be in place for many other features to work.

#3

|

non_test

|

cog redbear config implement member commands personal commands muted members in config these were previously stored in pdsettings p and we should use the new system this needs to be in place for many other features to work

| 0

|

87,293

| 8,071,411,828

|

IssuesEvent

|

2018-08-06 13:07:27

|

timogoudzwaard/arcatering-app

|

https://api.github.com/repos/timogoudzwaard/arcatering-app

|

closed

|

Add tests to Register component

|

unit test

|

Test if...

- the component is able to render

- child components render

- fields render correctly

- the onLoading function renders the correct field

- error handling works

|

1.0

|

Add tests to Register component - Test if...

- the component is able to render

- child components render

- fields render correctly

- the onLoading function renders the correct field

- error handling works

|

test

|

add tests to register component test if the component is able to render child components render fields render correctly the onloading function renders the correct field error handling works

| 1

|

190,271

| 14,540,368,737

|

IssuesEvent

|

2020-12-15 13:13:43

|

ubtue/DatenProbleme

|

https://api.github.com/repos/ubtue/DatenProbleme

|

closed

|

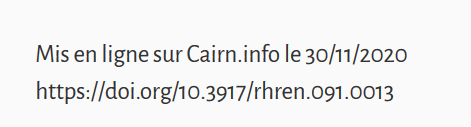

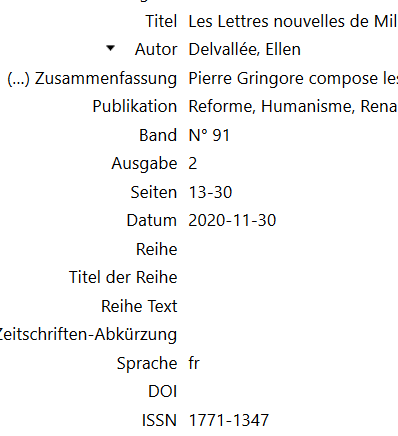

ISSN 1771-1347 | Reforme, Humanisme, Renaissance | DOI

|

Fehlerquelle: Translator Zotero_SEMI-AUTO ready for testing

|

https://www.cairn.info/revue-reforme-humanisme-renaissance-2020-2-page-13.htm

There is a DOI at the bottom of the page. It is not captured.

|

1.0

|

ISSN 1771-1347 | Reforme, Humanisme, Renaissance | DOI - https://www.cairn.info/revue-reforme-humanisme-renaissance-2020-2-page-13.htm

There is a DOI at the bottom of the page. It is not captured.

|

test

|

issn reforme humanisme renaissance doi there is a doi at the bottom of the page it is not captured

| 1

|

136,606

| 11,053,754,821

|

IssuesEvent

|

2019-12-10 12:04:46

|

brave/brave-ios

|

https://api.github.com/repos/brave/brave-ios

|

closed

|

Unit Tests: Data Sync Classes

|

Epic: CI/Tests QA/No enhancement release-notes/exclude

|

All core data models have unit tests already.

The only untested classes in Data framework are those related to data sync.

|

1.0

|

Unit Tests: Data Sync Classes - All core data models have unit tests already.

The only untested classes in Data framework are those related to data sync.

|

test

|

unit tests data sync classes all core data models have unit tests already the only untested classes in data framework are those related to data sync

| 1

|

18,402

| 3,389,374,628

|

IssuesEvent

|

2015-11-30 01:15:51

|

jgirald/ES2015C

|

https://api.github.com/repos/jgirald/ES2015C

|

closed

|

Persian Soldier Animation Sounds (secondary actions)

|

Animation Character Design Medium Priority Persian Sound Team A

|

### Description

* Create sounds for the character animations.

* Add the sounds to the Persian Soldier animations:

* Die

* Creation

### Acceptance criteria

Animation sounds ready for use in integration.

### Estimated effort:

1/2 hr

|

1.0

|

Persian Soldier Animation Sounds (secondary actions) - ### Description

* Create sounds for the character animations.

* Add the sounds to the Persian Soldier animations:

* Die

* Creation

### Acceptance criteria

Animation sounds ready for use in integration.

### Estimated effort:

1/2 hr

|

non_test

|

persian soldier animation sounds secondary actions description create sounds for the character animations add the sounds to the persian soldier animations die creation acceptance criteria animation sounds ready for use in integration estimated effort hr

| 0

|

19,268

| 6,694,890,040

|

IssuesEvent

|

2017-10-10 05:18:14

|

commontk/CTK

|

https://api.github.com/repos/commontk/CTK

|

closed

|

Building problem with qt5-ctk

|

Build System

|

Hello,

I would like to build the qt5-ctk library with cmake using QT 5.4.0 with mingw32.

I changed the version in ctkMacroSetupQt.cmake to "5" with

set(CTK_QT_VERSION "5" CACHE STRING "Expected Qt version")

but I get the same Error Message when trying with 4 (which is also installed).

Do you have any idea what could cause this?

This is the output of cmake:

CMake Error at CMake/ctkMacroSetupQt.cmake:74 (message):

error: Qt4 was not found on your system. You probably need to set the

QT_QMAKE_EXECUTABLE variable

Call Stack (most recent call first):

CMakeLists.txt:398 (ctkMacroSetupQt)

-- Found unsuitable Qt version "5.4.0" from C:/Qt/5.4/mingw491_32/bin/qmake.exe

-- Configuring incomplete, errors occurred!

See also "C:/Users/rsalinas/Documents/Software/HelloCmake/CTK-qt5/CTK-superbuild/CMakeFiles/CMakeOutput.log".

Thanks a lot,

Ricardo Salinas

|

1.0

|

Building problem with qt5-ctk - Hello,

I would like to build the qt5-ctk library with cmake using QT 5.4.0 with mingw32.

I changed the version in ctkMacroSetupQt.cmake to "5" with

set(CTK_QT_VERSION "5" CACHE STRING "Expected Qt version")

but I get the same Error Message when trying with 4 (which is also installed).

Do you have any idea what could cause this?

This is the output of cmake:

CMake Error at CMake/ctkMacroSetupQt.cmake:74 (message):

error: Qt4 was not found on your system. You probably need to set the

QT_QMAKE_EXECUTABLE variable

Call Stack (most recent call first):

CMakeLists.txt:398 (ctkMacroSetupQt)

-- Found unsuitable Qt version "5.4.0" from C:/Qt/5.4/mingw491_32/bin/qmake.exe

-- Configuring incomplete, errors occurred!

See also "C:/Users/rsalinas/Documents/Software/HelloCmake/CTK-qt5/CTK-superbuild/CMakeFiles/CMakeOutput.log".

Thanks a lot,

Ricardo Salinas

|

non_test

|

building problem with ctk hello i would like to build the ctk library with cmake using qt with i changed the version in ctkmacrosetupqt cmake to with set ctk qt version cache string expected qt version but i get the same error message when trying with which is also installed do you have any idea what this could cause this is the output of cmake cmake error at cmake ctkmacrosetupqt cmake message error was not found on your system you probably need to set the qt qmake executable variable call stack most recent call first cmakelists txt ctkmacrosetupqt found unsuitable qt version from c qt bin qmake exe configuring incomplete errors occurred see also c users rsalinas documents software hellocmake ctk ctk superbuild cmakefiles cmakeoutput log thanks a lot ricardo salinas

| 0

|

74,200

| 7,389,001,283

|

IssuesEvent

|

2018-03-16 06:30:17

|

brave/browser-laptop

|

https://api.github.com/repos/brave/browser-laptop

|

closed

|

Refactor needed because of deprecation of `did-get-response-details`

|

QA/test-plan-specified muon refactoring release-notes/exclude

|

## Test plan

1. Launch Brave and enable payments

2. Open 3 new tabs

3. Switch between the 3 tabs and load a unique site in each

4. Switch between the tabs and wait a few seconds, to build a history

5. Visit the payments screen. Visit time should be recorded properly

## Description

During the Chromium 65 upgrade, the emitting of `did-get-response-details` was removed in https://github.com/brave/muon/commit/00d729f2585964027deff728a1ef3b32f7f21d65

Unless replacement code is found, this code will not execute

|

1.0

|

Refactor needed because of deprecation of `did-get-response-details` - ## Test plan

1. Launch Brave and enable payments

2. Open 3 new tabs

3. Switch between the 3 tabs and load a unique site in each

4. Switch between the tabs and wait a few seconds, to build a history

5. Visit the payments screen. Visit time should be recorded properly

## Description

During the Chromium 65 upgrade, the emitting of `did-get-response-details` was removed in https://github.com/brave/muon/commit/00d729f2585964027deff728a1ef3b32f7f21d65

Unless replacement code is found, this code will not execute

|

test

|

refactor needed because of deprecation of did get response details test plan launch brave and enable payments open new tabs switch between the tabs and load a unique site in each switch between the tabs and wait a few seconds to build a history visit the payments screen visit time should be recorded properly description when doing chromium upgrade the emitting of did get response details was removed with unless replacement code is found this code will not execute

| 1

|

51,620

| 6,187,180,136

|

IssuesEvent

|

2017-07-04 06:35:13

|

Microsoft/vsts-tasks

|

https://api.github.com/repos/Microsoft/vsts-tasks

|

closed

|

TFS 2015 Release Cannot Get “Run Functional Tests” Task to Work on Multiple Machines

|

Area: Test

|

On-prem TFS 2015 Update 3.

I have multiple machines (different Operating Systems) that I want to run my tests on. I'm having issues getting this simple flow to work successfully. Here's what I've tried:

1. The Deploy Test Agent task on multiple machines is successful.

2. If I put multiple machines in one "Run Functional Tests" task, it will execute the test on ONE of those machines in step 1 only (and will complete successfully if this is the first task). Logs here: [ReleaseLogs_57.zip](https://github.com/Microsoft/vsts-tasks/files/1077767/ReleaseLogs_57.zip)

3. If I set up 2 separate tasks, one for each machine, the 1st task will execute successfully, but as seen in bullet 2, the test is run on ANY ONE of the machines in step 1 (NOT the specific one specified for the task). In the example attached, the 1st task is set up to run on Win7, but the test was actually executed on the Win8 machine.

Then the 2nd task (which is set up to run against the Win10 machine) will not complete, no matter what machine or test I put in it. Logs for this scenario attached:

[ReleaseLogs_60.zip](https://github.com/Microsoft/vsts-tasks/files/1078061/ReleaseLogs_60.zip)

It seems that the PS script(s) for this task is broken in our environment. Here's the zip file of the entire "tasks" folder for your reference.

[tasks.zip](https://github.com/Microsoft/vsts-tasks/files/1080899/tasks.zip)

Thanks!

|

1.0

|

TFS 2015 Release Cannot Get “Run Functional Tests” Task to Work on Multiple Machines - On-prem TFS 2015 Update 3.

I have multiple machines (different Operating Systems) that I want to run my tests on. I'm having issues getting this simple flow to work successfully. Here's what I've tried:

1. The Deploy Test Agent task on multiple machines is successful.

2. If I put multiple machines in one "Run Functional Tests" task, it will execute the test on ONE of those machines in step 1 only (and will complete successfully if this is the first task). Logs here: [ReleaseLogs_57.zip](https://github.com/Microsoft/vsts-tasks/files/1077767/ReleaseLogs_57.zip)

3. If I set up 2 separate tasks, one for each machine, the 1st task will execute successfully, but as seen in bullet 2, the test is run on ANY ONE of the machines in step 1 (NOT the specific one specified for the task). In the example attached, the 1st task is set up to run on Win7, but the test was actually executed on the Win8 machine.

Then the 2nd task (which is set up to run against the Win10 machine) will not complete, no matter what machine or test I put in it. Logs for this scenario attached:

[ReleaseLogs_60.zip](https://github.com/Microsoft/vsts-tasks/files/1078061/ReleaseLogs_60.zip)

It seems that the PS script(s) for this task is broken in our environment. Here's the zip file of the entire "tasks" folder for your reference.

[tasks.zip](https://github.com/Microsoft/vsts-tasks/files/1080899/tasks.zip)

Thanks!

|

test

|

tfs release cannot get “run functional tests” task to work on multiple machines on prem tfs update i have multiple machines different operating systems that i want to run my tests on i m having issues getting this simple flow to work successfully here s what i ve tried deploy test agent task on multiple machines are successful if i put multiple machines in one run functional tests task it will execute the test one one of those machines in step only and will complete successful if this is the first task logs here if i set up separate tasks one for each machine the task will execute successfully but as seen in bullet the test is run on any one of the machines in step not the specific one specified for the task in the example attached the task is set up to run on but the test was actually executed on the machine then the task which is set up to run against the machine will not complete no matter what machine or test i put in it logs for this scenario attached it seems that the ps script s for this task is broken in our environment here s the zip file of the entire tasks folder for your reference thanks

| 1

|

11,007

| 8,869,683,574

|

IssuesEvent

|

2019-01-11 06:39:47

|

hashmapinc/Tempus

|

https://api.github.com/repos/hashmapinc/Tempus

|

closed

|

Create an NLB for Tempus via the ingress controller

|

Complete bug infrastructure/issue

|

Blocked by #359

Child of #361

Create a layer 4 load balancer for tempus manually (until it is officially supported by K8s). This will attach to the ingress controller

|

1.0

|

Create an NLB for Tempus via the ingress controller - Blocked by #359

Child of #361

Create a layer 4 load balancer for tempus manually (until it is officially supported by K8s). This will attach to the ingress controller

|

non_test

|

create an nlb for tempus via the ingress controller blocked by child of create a layer load balancer for tempus manually until it is officially supported by this will attach to the ingress controller

| 0

|

326,614

| 28,006,698,916

|

IssuesEvent

|

2023-03-27 15:41:14

|

nucleus-security/Test-repo

|

https://api.github.com/repos/nucleus-security/Test-repo

|

opened

|

Nucleus: [Critical] - 440041

|

Test

|

Source: QUALYS

Description: CentOS has released security update for kernel to fix the vulnerabilities. Affected Products: centos 6

Impact: This vulnerability could be exploited to gain complete access to sensitive information. Malicious users could also use this vulnerability to change all the contents or configuration on the system. Additionally this vulnerability can also be used to cause a complete denial of service and could render the resource completely unavailable.

Target:

Asset name: 192.168.56.103 - IP: 192.168.56.103

Asset name: 192.168.56.131 - IP: 192.168.56.131

Solution: To resolve this issue, upgrade to the latest packages which contain a patch. Refer to CentOS advisory centos 6 (https://lists.centos.org/pipermail/centos-announce/2018-May/022827.html) for updates and patch information.

Patch:

Following are links for downloading patches to fix the vulnerabilities:

CESA-2018:1319: centos 6 (https://lists.centos.org/pipermail/centos-announce/2018-May/022827.html)

References:

QID: 440041

CVE: CVE-2017-5754, CVE-2018-8897, CVE-2017-7645, CVE-2017-8824, CVE-2017-13166, CVE-2017-18017, CVE-2017-1000410

Category: CentOS

PCI Flagged: yes

Vendor References: CESA-2018:1319 centos 6

Bugtraq IDs: 102101, 102378, 97950, 102056, 104071, 102367, 99843, 106128

Severity: Critical

Exploitable: Yes

Date Discovered: 2023-03-12 08:04:44

Please see https://nucleus-qa1.nucleussec.com/nucleus/public/app/index.php?sso=b3JnX2lkJTNEMSUyNmRvbWFpbiUzRG51Y2xldXNzZWMuY29t#vuln/1000028/NDQwMDQx/UVVBTFlT/VnVsbi1Db21wbGlhbmNl/false/MTAwMDAyOA--/c3VtbWFyeQ--/false/MjAyMy0wMy0xMiAwODowNDo0NA-- for more information on these vulnerabilities

Issue was manually created by Nucleus user: Selenium user

|

1.0

|

Nucleus: [Critical] - 440041 - Source: QUALYS

Description: CentOS has released security update for kernel to fix the vulnerabilities. Affected Products: centos 6

Impact: This vulnerability could be exploited to gain complete access to sensitive information. Malicious users could also use this vulnerability to change all the contents or configuration on the system. Additionally this vulnerability can also be used to cause a complete denial of service and could render the resource completely unavailable.

Target:

Asset name: 192.168.56.103 - IP: 192.168.56.103

Asset name: 192.168.56.131 - IP: 192.168.56.131

Solution: To resolve this issue, upgrade to the latest packages which contain a patch. Refer to CentOS advisory centos 6 (https://lists.centos.org/pipermail/centos-announce/2018-May/022827.html) for updates and patch information.

Patch:

Following are links for downloading patches to fix the vulnerabilities:

CESA-2018:1319: centos 6 (https://lists.centos.org/pipermail/centos-announce/2018-May/022827.html)

References:

QID: 440041

CVE: CVE-2017-5754, CVE-2018-8897, CVE-2017-7645, CVE-2017-8824, CVE-2017-13166, CVE-2017-18017, CVE-2017-1000410

Category: CentOS

PCI Flagged: yes

Vendor References: CESA-2018:1319 centos 6

Bugtraq IDs: 102101, 102378, 97950, 102056, 104071, 102367, 99843, 106128

Severity: Critical

Exploitable: Yes

Date Discovered: 2023-03-12 08:04:44

Please see https://nucleus-qa1.nucleussec.com/nucleus/public/app/index.php?sso=b3JnX2lkJTNEMSUyNmRvbWFpbiUzRG51Y2xldXNzZWMuY29t#vuln/1000028/NDQwMDQx/UVVBTFlT/VnVsbi1Db21wbGlhbmNl/false/MTAwMDAyOA--/c3VtbWFyeQ--/false/MjAyMy0wMy0xMiAwODowNDo0NA-- for more information on these vulnerabilities

Issue was manually created by Nucleus user: Selenium user

|

test

|

nucleus source qualys description centos has released security update for kernel to fix the vulnerabilities affected products centos impact this vulnerability could be exploited to gain complete access to sensitive information malicious users could also use this vulnerability to change all the contents or configuration on the system additionally this vulnerability can also be used to cause a complete denial of service and could render the resource completely unavailable target asset name ip asset name ip solution to resolve this issue upgrade to the latest packages which contain a patch refer to centos advisory centos for updates and patch information patch following are links for downloading patches to fix the vulnerabilities cesa centos references qid cve cve cve cve cve cve cve cve category centos pci flagged yes vendor references cesa centos bugtraq ids severity critical exploitable yes date discovered please see for more information on these vulnerabilities issue was manually created by nucleus user selenium user

| 1

|

248,728

| 7,935,327,738

|

IssuesEvent

|

2018-07-09 04:19:44

|

minio/minio-py

|

https://api.github.com/repos/minio/minio-py

|

closed

|

fput_object: AWS S3 multipart upload fails

|

priority: medium

|

minio version: 4.0.2

## Reproduce Steps

The bug happened on our system, and some minimal steps would be this (not tested, sorry):

```python

from minio import Minio

minio_client = Minio(...) # setup to run against AWS S3

amz_meta_data = {'x_amz_meta-sha256': 'foo'}

minio_client.fput_object(bucket_name='our-bucket-name',

object_name='large_file.jpeg',

file_path='/path/to/large_file.jpeg',

content_type='image/jpeg',

metadata=amz_meta_data)

```

## Current Behaviour

An `InvalidArgument: InvalidArgument: message: Invalid Argument` exception is thrown, the upload is not finished.

## Possible Solution

I debugged this, and what apparently happens is that an AWS S3 multipart upload is initiated, which consists of (among other things) an [Initiate Multipart Upload](https://docs.aws.amazon.com/AmazonS3/latest/API/mpUploadInitiate.html) and an [Upload Part](https://docs.aws.amazon.com/AmazonS3/latest/API/mpUploadUploadPart.html) request.

The initiate multipart request runs through, but the upload part request has a 400 error response:

```

<Error><Code>InvalidArgument</Code><Message>Metadata cannot be specified in this context.</Message><ArgumentName>x-amz-meta-sha256</ArgumentName><ArgumentValue>1f/29/1f29c29fb3fde0d6f9a211d74872c1b0b267d09e83e6a18bfb7b1a4d71c0352e

```

Some googling found [this answer on the AWS forum](https://forums.aws.amazon.com/thread.jspa?threadID=223994), where it is pointed out that you shouldn't pass metadata to the upload part request, only to the initiate multipart upload request.

Therefore, I suspect the solution is to adjust the minio code accordingly.
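
A minimal sketch of that idea, not the actual minio-py implementation: the helper name `split_multipart_headers` and the header dictionaries are hypothetical, and the only assumption taken from the error above is that `x-amz-meta-*` headers are rejected on the upload part request. All other headers are passed through unchanged in this sketch.

```python
# Hypothetical helper, not part of minio-py: it only illustrates keeping
# user metadata on the initiate request and dropping it from part requests.
METADATA_PREFIX = "x-amz-meta-"


def split_multipart_headers(headers):
    """Return (initiate_headers, part_headers) for a multipart upload."""
    # The initiate multipart upload request keeps every header, including metadata.
    initiate_headers = dict(headers)
    # Each upload part request gets the same headers minus the user metadata.
    part_headers = {
        name: value
        for name, value in headers.items()
        if not name.lower().startswith(METADATA_PREFIX)
    }
    return initiate_headers, part_headers


headers = {
    "Content-Type": "image/jpeg",
    "x-amz-meta-sha256": "foo",
}
initiate_headers, part_headers = split_multipart_headers(headers)
print(initiate_headers)
print(part_headers)
```

With a split like this, the metadata would be sent once when the upload is initiated and never repeated on the individual parts.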

|

1.0

|

fput_object: AWS S3 multipart upload fails - minio version: 4.0.2

## Reproduce Steps

The bug happened on our system, and some minimal steps would be this (not tested, sorry):

```python

from minio import Minio

minio_client = Minio(...) # setup to run against AWS S3

amz_meta_data = {'x_amz_meta-sha256': 'foo'}

minio_client.fput_object(bucket_name='our-bucket-name',

object_name='large_file.jpeg',

file_path='/path/to/large_file.jpeg',

content_type='image/jpeg',

metadata=amz_meta_data)

```

## Current Behaviour

An `InvalidArgument: InvalidArgument: message: Invalid Argument` exception is thrown, the upload is not finished.

## Possible Solution

I debugged this, and what apparently happens is that an AWS S3 multipart upload is initiated, which consists of (among other things) an [Initiate Multipart Upload](https://docs.aws.amazon.com/AmazonS3/latest/API/mpUploadInitiate.html) and an [Upload Part](https://docs.aws.amazon.com/AmazonS3/latest/API/mpUploadUploadPart.html) request.

The initiate multipart request runs through, but the upload part request has a 400 error response:

```

<Error><Code>InvalidArgument</Code><Message>Metadata cannot be specified in this context.</Message><ArgumentName>x-amz-meta-sha256</ArgumentName><ArgumentValue>1f/29/1f29c29fb3fde0d6f9a211d74872c1b0b267d09e83e6a18bfb7b1a4d71c0352e

```

Some googling found [this answer on the AWS forum](https://forums.aws.amazon.com/thread.jspa?threadID=223994), where it is pointed out that you shouldn't pass metadata to the upload part request, only to the initiate multipart upload request.

Therefore, I suspect the solution is to adjust the minio code accordingly.

|

non_test

|

fput object aws multipart upload fails minio version reproduce steps the bug happened on our system and some minimal steps would be this not tested sorry python from minio import minio minio client minio setup to run against aws amz meta data x amz meta foo minio client fput object bucket name our bucket name object name large file jpeg file path path to large file jpeg content type image jpeg metadata amz meta data current behaviour an invalidargument invalidargument message invalid argument exception is thrown the upload is not finished possible solution i debugged this and what happens is apparently that an aws multipart upload is initiated which consists of among other things a and an request the initiate multipart request runs through but the upload part request has a error response invalidargument metadata cannot be specified in this context x amz meta some googling found where it is pointed out that you shouldn t pass metadata to the upload part request only to the initiate multipart upload request therefore i suspect the solution is to adjust the minio code accordingly

| 0

|

53,561

| 13,179,468,393

|

IssuesEvent

|

2020-08-12 10:59:52

|

rockyFierro/WEBPT19_TEAM_BUILDER

|

https://api.github.com/repos/rockyFierro/WEBPT19_TEAM_BUILDER

|

closed

|

FINAL STRETCH

|

build your form form submission functionality stretch

|

#### More Stretch Problems

After finishing your required elements, you can push your work further. These goals may or may not be things you have learned in this module but they build on the material you just studied. Time allowing, stretch your limits and see if you can deliver on the following optional goals:

- [ ] Follow the steps above to edit members. This is difficult to do, and the architecture is tough. But it is a great skill to practice! Pay attention to the implementation details, and to the architecture. There are many ways to accomplish this. When you finish, can you think of another way?

- [ ] Build another layer of your App so that you can keep track of multiple teams, each with their own encapsulated list of team members.

- [ ] Look into the various strategies around form validation. What happens if you try to enter a number as a team member's name? Does your App allow for that? Should it? What happens if you try to enter a function as the value of one of your fields? How could this be dangerous? How might you prevent it?

- [x] Style the forms. There are some subtle browser defaults for input tags that might need to be overwritten based on their state (active, focus, hover, etc.). Keep those CSS skills sharp.

|

1.0

|

FINAL STRETCH -

#### More Stretch Problems

After finishing your required elements, you can push your work further. These goals may or may not be things you have learned in this module but they build on the material you just studied. Time allowing, stretch your limits and see if you can deliver on the following optional goals:

- [ ] Follow the steps above to edit members. This is difficult to do, and the architecture is tough. But it is a great skill to practice! Pay attention to the implementation details, and to the architecture. There are many ways to accomplish this. When you finish, can you think of another way?

- [ ] Build another layer of your App so that you can keep track of multiple teams, each with their own encapsulated list of team members.

- [ ] Look into the various strategies around form validation. What happens if you try to enter a number as a team member's name? Does your App allow for that? Should it? What happens if you try to enter a function as the value of one of your fields? How could this be dangerous? How might you prevent it?

- [x] Style the forms. There are some subtle browser defaults for input tags that might need to be overwritten based on their state (active, focus, hover, etc.). Keep those CSS skills sharp.

|

non_test

|

final stretch more stretch problems after finishing your required elements you can push your work further these goals may or may not be things you have learned in this module but they build on the material you just studied time allowing stretch your limits and see if you can deliver on the following optional goals follow the steps above to edit members this is difficult to do and the architecture is tough but it is a great skill to practice pay attention the the implementation details and to the architecture there are many ways to accomplish this when you finish can you think of another way build another layer of your app so that you can keep track of multiple teams each with their own encapsulated list of team members look into the various strategies around form validation what happens if you try to enter a number as a team members name does your app allow for that should it what happens if you try and enter a function as the value to one of your fields how could this be dangerous how might you prevent it style the forms there are some subtle browser defaults for input tags that might need to be overwritten based on their state active focus hover etc keep those css skill sharp

| 0

|

714,413

| 24,560,688,545

|

IssuesEvent

|

2022-10-12 19:58:28

|

Poobslag/turbofat

|

https://api.github.com/repos/Poobslag/turbofat

|

opened

|

"insert line" level effect should be able to insert entire boxes

|

priority-4

|

Currently, line inserts are handled one-at-a-time which means 3x3 boxes can't be inserted -- only a series of 3x1 boxes. It would be better if line inserts could insert a bunch of rows at once, and insert an entire 3x3 box.
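
A rough sketch of what a multi-row insert could look like, written in Python purely for illustration and not taken from the game's code. It assumes the playfield is a list of rows ordered top to bottom with a fixed height; `insert_rows` and the string-based rows are hypothetical.

```python
def insert_rows(playfield, y, new_rows):
    """Insert several rows at once so the last new row ends up at index y.

    Rows at or above index y are pushed up by len(new_rows); rows pushed
    off the top are discarded so the playfield keeps its height.
    """
    combined = playfield[: y + 1] + list(new_rows) + playfield[y + 1 :]
    return combined[len(new_rows):]


playfield = ["...", "...", "...", "#.#", "###"]
box = ["ABC", "DEF", "GHI"]  # one 3x3 box, inserted as a single unit
result = insert_rows(playfield, 4, box)
print(result)
```

Because all three rows arrive in one call, the box can be treated as a single 3x3 unit instead of three independent 3x1 rows.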

|

1.0

|

"insert line" level effect should be able to insert entire boxes - Currently, line inserts are handled one-at-a-time which means 3x3 boxes can't be inserted -- only a series of 3x1 boxes. It would be better if line inserts could insert a bunch of rows at once, and insert an entire 3x3 box.

|

non_test

|

insert line level effect should be able to insert entire boxes currently line inserts are handled one at a time which means boxes can t be inserted only a series of boxes it would be better if line inserts could insert a bunch of rows at once and insert an entire box

| 0

|

69,631

| 9,310,932,174

|

IssuesEvent

|

2019-03-25 20:03:49

|

exercism/javascript

|

https://api.github.com/repos/exercism/javascript

|

closed

|

Suggestion: prefer eslint syntax over style

|

chore documentation

|

With [more](https://github.com/prettier/prettier) and [more](https://github.com/xojs/xo) tools becoming available to automatically format the code base to a certain set of style rules (and [more comprehensive](https://github.com/prettier/prettier-eslint/issues/101) than `eslint --fix`), I'm also more inclined to stop teaching style preference, as indicated by mentor notes, mentor guidance docs and discussions on Slack.

## The current state

Currently, the javascript `package.json` sets a _very restrictive_ code style (`airbnb`) and I *don't* think this:

- helps the student understand the language

- helps the student get fluent in a language

- helps the mentor mentoring as downloading exercises can result in wibbly wobblies all over the place

We don't instruct or enforce these rules (https://github.com/exercism/javascript/issues/44#issuecomment-416760562) strictly, but I see them in mentoring comments and most IDEs will run these _automagically_ if present.

## Recommendation

I therefore recommend dropping down to [`eslint:recommended`](https://eslint.org/docs/rules/) or, if we must have a non-company style guide, using [standard](https://github.com/standard/eslint-config-standard) with semicolon rules disabled (people should decide about ASI themselves -- I don't think pushing people who know about ASI helps anyone, and TS doesn't have them either).

If this is something we want, I think doing it sooner rather than later is good and will speed up #480 greatly. The eslintignore list is still quite long and I don't think that's particularly helpful.

As a final note, I think we should _better_ communicate to the students to run `npm/yarn install` and then `npm/yarn test`. I also suggest running `test` followed by `lint` so that someone is only bugged once the test succeeds, instead of using a `"pretest": "lint"`:

```json

{

"scripts": {

"test": "npm run test:jest && npm run test:lint",

"test:jest": "...",

"test:lint": "..."

}

}

```

|

1.0

|

Suggestion: prefer eslint syntax over style - With [more](https://github.com/prettier/prettier) and [more](https://github.com/xojs/xo) tools becoming available to automatically format the code base to a certain set of style rules (and [more comprehensive](https://github.com/prettier/prettier-eslint/issues/101) than `eslint --fix`), I'm also more inclined to stop teaching style preference, as indicated by mentor notes, mentor guidance docs and discussions on Slack.

## The current state

Currently, the javascript `package.json` sets a _very restrictive_ code style (`airbnb`) and I *don't* think this:

- helps the student understand the language

- helps the student get fluent in a language

- helps the mentor mentoring as downloading exercises can result in wibbly wobblies all over the place

We don't instruct or enforce these rules (https://github.com/exercism/javascript/issues/44#issuecomment-416760562) strictly, but I see them in mentoring comments and most IDEs will run these _automagically_ if present.

## Recommendation

I therefore recommend dropping down to [`eslint:recommended`](https://eslint.org/docs/rules/) or, if we must have a non-company style guide, using [standard](https://github.com/standard/eslint-config-standard) with semicolon rules disabled (people should decide about ASI themselves -- I don't think pushing people who know about ASI helps anyone, and TS doesn't have them either).

If this is something we want, I think doing it sooner rather than later is good and will speed up #480 greatly. The eslintignore list is still quite long and I don't think that's particularly helpful.

As a final note, I think we should _better_ communicate to the students to run `npm/yarn install` and then `npm/yarn test`. I also suggest running `test` followed by `lint` so that someone is only bugged once the test succeeds, instead of using a `"pretest": "lint"`:

```json

{

"scripts": {

"test": "npm run test:jest && npm run test:lint",

"test:jest": "...",

"test:lint": "..."

}

}

```

|

non_test

|

suggestion prefer eslint syntax over style with and tools becoming available to automatically format the code base to a certain set of style rules and than eslint fix i m also more inclined to stop teaching style preference as indicated by mentor notes mentor guidance docs and discussions on slack the current state currently the javavscript package json sets a very restrictive code style airbnb and i don t think this helps the student understand the language helps the student get fluent in a language helps the mentor mentoring as downloading exercises can result in wibbly wobblies all over the place we don t instruct or enforce these rules strictly but i seem them in mentoring comments and most ide s will run these automagically if present recommendation i therefore recommend to drop down to eslint recommended or if we must have a non company style guide use with semicolon rules disabled people should decide about asi themselves i don t think pushing people who know about asi helps anyone and ts doesn t have them either if this is something we want i think doing it sooner rather than later is good and will speed up greatly the eslintignore list is still quite long and i don t think tháts particularly helpful as a final note i think we should better communicate to the students to run npm yarn install and then npm yarn test i also suggest running test followed by lint so that someone is only bugged once the test succeeds instead of using a pretest lint json scripts test test jest test lint test jest test lint

| 0

|

10,828

| 27,424,080,674

|

IssuesEvent

|

2023-03-01 18:51:49

|

Azure/azure-sdk

|

https://api.github.com/repos/Azure/azure-sdk

|

closed

|

Board Review: Azure IoT Models Repository Client (Python)

|

architecture board-review

|

## Contacts and Timeline

* Responsible service team: Azure IoT Portal & UX

* Main contacts:

* Developer: carter.tinney@microsoft.com

* Product Manager: ricardo.minguez@microsoft.com

* Dev Lead: paymaun.heidari@microsoft.com

* Expected code complete date: March 17 2020

* Expected release date: (Unknown - This is a preview version of a package)

## About the Service

* Link to documentation introducing/describing the service: N/A

* Link to the service REST APIs: N/A

* Link to GitHub issue for previous review sessions, if applicable: N/A

## About the client library

* Name of the client library: Azure Iot Models Repository (azure-iot-modelsrepository)

* Languages for this review: Python

## Artifacts required (per language)

### Python

* APIView Link: https://apiview.dev/Assemblies/Review/d043acebf7b7407fb4533d9814d1e334

* Link to Champion Scenarios/Quickstart samples: [Link to samples](https://github.com/cartertinney/azure-sdk-for-python/tree/master/sdk/iot/azure-iot-modelsrepository/samples)

* PR: https://github.com/Azure/azure-sdk-for-python/pull/17180

|

1.0

|

Board Review: Azure IoT Models Repository Client (Python) - ## Contacts and Timeline

* Responsible service team: Azure IoT Portal & UX

* Main contacts:

* Developer: carter.tinney@microsoft.com

* Product Manager: ricardo.minguez@microsoft.com

* Dev Lead: paymaun.heidari@microsoft.com

* Expected code complete date: March 17 2020

* Expected release date: (Unknown - This is a preview version of a package)

## About the Service

* Link to documentation introducing/describing the service: N/A

* Link to the service REST APIs: N/A

* Link to GitHub issue for previous review sessions, if applicable: N/A

## About the client library

* Name of the client library: Azure IoT Models Repository (azure-iot-modelsrepository)

* Languages for this review: Python

## Artifacts required (per language)

### Python

* APIView Link: https://apiview.dev/Assemblies/Review/d043acebf7b7407fb4533d9814d1e334

* Link to Champion Scenarios/Quickstart samples: [Link to samples](https://github.com/cartertinney/azure-sdk-for-python/tree/master/sdk/iot/azure-iot-modelsrepository/samples)

* PR: https://github.com/Azure/azure-sdk-for-python/pull/17180

|

non_test

|

board review azure iot models repository client python contacts and timeline responsible service team azure iot portal ux main contacts developer carter tinney microsoft com product manager ricardo minguez microsoft com dev lead paymaun heidari microsoft com expected code complete date march expected release date unknown this is a preview version of a package about the service link to documentation introducing describing the service n a link to the service rest apis n a link to github issue for previous review sessions if applicable n a about the client library name of the client library azure iot models repository azure iot modelsrepository languages for this review python artifacts required per language python apiview link link to champion scenarios quickstart samples pr

| 0

|

328,957

| 28,142,904,746

|

IssuesEvent

|

2023-04-02 06:05:56

|

gama-platform/gama

|

https://api.github.com/repos/gama-platform/gama

|

closed

|

Meta-data for required plugin (or invisible pragma) of GAML syntax

|

🤗 Enhancement 👍 Fix to be tested

|

**Is your request related to a problem? Please describe.**

While trying to install plugins/features dynamically, I have trouble finding the right plugin that provides a given extension of the GAML syntax.

**Describe the improvement you'd like**

For example, with a standard GAMA installation, when we edit a model that uses extension syntax (R, netcdf, Gaming, launchpad, ...), a syntax error occurs ("xxx was not declared"), but we cannot tell which plugin or plugins need to be installed.

**Describe alternatives you've considered**

The error should include some information, such as "declared in ..... plugin", or even offer a quick fix that leads to the installation of that plugin.

|

1.0

|

Meta-data for required plugin (or invisible pragma) of GAML syntax - **Is your request related to a problem? Please describe.**

While trying to install plugins/features dynamically, I have trouble finding the right plugin that provides a given extension of the GAML syntax.

**Describe the improvement you'd like**

For example, with a standard GAMA installation, when we edit a model that uses extension syntax (R, netcdf, Gaming, launchpad, ...), a syntax error occurs ("xxx was not declared"), but we cannot tell which plugin or plugins need to be installed.

**Describe alternatives you've considered**

The error should include some information, such as "declared in ..... plugin", or even offer a quick fix that leads to the installation of that plugin.

|

test

|

meta data for required plugin or invisible pragma of gaml syntax is your request related to a problem please describe trying to have dynamic installation plugins features i have problem with finding the right plugin that provide the extensions of gaml syntax describe the improvement you d like for example with a standard gama when we edit the model that use extensions syntax r netcdf gaming launchpad an error syntax occurs xxx was not declared but we cant know which is are the plugins need to be installed describe alternatives you ve considered some information should have as declared in plugin or even a quick fix with lead to the installation of that plugin

| 1

|

350,310

| 24,978,250,267

|

IssuesEvent

|

2022-11-02 09:39:05

|

AY2223S1-CS2103T-W13-1/tp

|

https://api.github.com/repos/AY2223S1-CS2103T-W13-1/tp

|

closed

|

[PE-D][Tester C] For unparticipate command suggestion

|

bug duplicate fixable bug.documentationbug

|

The unparticipate command accepts a component that does not exist for a student: the command appears to execute (the input is cleared), but nothing happens. Maybe this case should be stated in the user guide?

<!--session: 1666945157096-9798dbfe-290b-4667-b806-b70f2831affe-->

<!--Version: Web v3.4.4-->

-------------

Labels: `type.DocumentationBug` `severity.VeryLow`

original: maxng17/ped#11

|

1.0

|

[PE-D][Tester C] For unparticipate command suggestion - The unparticipate command accepts a component that does not exist for a student: the command appears to execute (the input is cleared), but nothing happens. Maybe this case should be stated in the user guide?

<!--session: 1666945157096-9798dbfe-290b-4667-b806-b70f2831affe-->

<!--Version: Web v3.4.4-->

-------------

Labels: `type.DocumentationBug` `severity.VeryLow`

original: maxng17/ped#11

|

non_test

|

for unparticipate command suggestion for unparticipate command it works when you try to pass in a component that does not exist for a student command is executed since the input goes away however just that nothing occurs maybe should state such a case in the user guide labels type documentationbug severity verylow original ped

| 0

|

100,420

| 11,194,945,872

|

IssuesEvent

|

2020-01-03 03:47:22

|

mgp25/Instagram-API

|

https://api.github.com/repos/mgp25/Instagram-API

|

closed

|

Update Wiki

|

documentation

|

## Prerequisites

- You will be asked some questions and requested to provide some information, please read them **carefully** and answer completely.

- Put an `x` into all the boxes [ ] relevant to your issue (like so [x]).

- Use the *Preview* tab to see what your issue will actually look like, before sending it.

- Understand that we will *CLOSE* (without answering) *all* issues related to `challenge_required`, `checkpoint_required`, `feedback_required` or `sentry_block`. They've already been answered in the Wiki and *countless* closed tickets in the past!

- Do not post screenshots of error messages or code.

---

### Before submitting an issue make sure you have:

- [x] [Searched](https://github.com/mgp25/Instagram-API/search?type=Issues) the bugtracker for similar issues including **closed** ones

- [x] [Read the FAQ](https://github.com/mgp25/Instagram-API/wiki/FAQ)

- [x] [Read the wiki](https://github.com/mgp25/Instagram-API/wiki)

- [x] [Reviewed the examples](https://github.com/mgp25/Instagram-API/tree/master/examples)

- [x] [Installed the api using ``composer``](https://github.com/mgp25/Instagram-API#installation)

- [x] [Using latest API release](https://github.com/mgp25/Instagram-API/releases)

### Purpose of your issue?

- [ ] Bug report (encountered problems/errors)

- [ ] Feature request (request for new functionality)

- [ ] Question

- [x] Other

---

https://github.com/mgp25/Instagram-API/wiki#instagram-direct

This section has outdated information

`$ig->direct->sendPost($recipients, $mediaId);`

This method takes three compulsory parameters; the third one is missing from the wiki example.

The third parameter is called options:

```

$options = [

'media_type' => 'video', // compulsory; a photo can also be sent as 'video' and it will be sent normally

'text' => 'wow 22', // optional, if you want to send some message with media.

];

```

`$ig->direct->sendPost($recipients, $mediaId, $options);`

|

1.0

|

Update Wiki - ## Prerequisites

- You will be asked some questions and requested to provide some information, please read them **carefully** and answer completely.

- Put an `x` into all the boxes [ ] relevant to your issue (like so [x]).

- Use the *Preview* tab to see what your issue will actually look like, before sending it.

- Understand that we will *CLOSE* (without answering) *all* issues related to `challenge_required`, `checkpoint_required`, `feedback_required` or `sentry_block`. They've already been answered in the Wiki and *countless* closed tickets in the past!

- Do not post screenshots of error messages or code.

---

### Before submitting an issue make sure you have:

- [x] [Searched](https://github.com/mgp25/Instagram-API/search?type=Issues) the bugtracker for similar issues including **closed** ones

- [x] [Read the FAQ](https://github.com/mgp25/Instagram-API/wiki/FAQ)

- [x] [Read the wiki](https://github.com/mgp25/Instagram-API/wiki)

- [x] [Reviewed the examples](https://github.com/mgp25/Instagram-API/tree/master/examples)

- [x] [Installed the api using ``composer``](https://github.com/mgp25/Instagram-API#installation)

- [x] [Using latest API release](https://github.com/mgp25/Instagram-API/releases)

### Purpose of your issue?

- [ ] Bug report (encountered problems/errors)

- [ ] Feature request (request for new functionality)

- [ ] Question

- [x] Other

---