| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

132,971

| 10,775,689,060

|

IssuesEvent

|

2019-11-03 15:54:51

|

dbeaver/dbeaver

|

https://api.github.com/repos/dbeaver/dbeaver

|

closed

|

NPE appears after changing active datasource [D8]

|

TestQuality bug xp:minor

|

#### System information:

- Operating system (distribution) and version win 10 x64

- DBeaver version 6.2.4

#### Describe the problem you're observing:

Npe appears when I switch in SQL editor from current DB (e.g. hana) to another (e.g. PostgreSQL) and back to first DB (hana). The issue has no affection for UI

#### Steps to reproduce, if exist:

1. Execute some script

2. Change datasource to another (e.g. Hana - PostgreSQL)

3. Try to execute script - get error that item doesn't exist

4. Switch back to the previous DB - try to execute the script. - The script is successfully executed, but there is NPE in Error log

[npe_changedatasource.log](https://github.com/dbeaver/dbeaver/files/3798444/npe_changedatasource.log)

#### Include any warning/errors/backtraces from the logs

<!-- Please, find the short guide how to find logs here: https://github.com/dbeaver/dbeaver/wiki/Log-files -->

|

1.0

|

NPE appears after changing active datasource [D8] - #### System information:

- Operating system (distribution) and version win 10 x64

- DBeaver version 6.2.4

#### Describe the problem you're observing:

Npe appears when I switch in SQL editor from current DB (e.g. hana) to another (e.g. PostgreSQL) and back to first DB (hana). The issue has no affection for UI

#### Steps to reproduce, if exist:

1. Execute some script

2. Change datasource to another (e.g. Hana - PostgreSQL)

3. Try to execute script - get error that item doesn't exist

4. Switch back to the previous DB - try to execute the script. - The script is successfully executed, but there is NPE in Error log

[npe_changedatasource.log](https://github.com/dbeaver/dbeaver/files/3798444/npe_changedatasource.log)

#### Include any warning/errors/backtraces from the logs

<!-- Please, find the short guide how to find logs here: https://github.com/dbeaver/dbeaver/wiki/Log-files -->

|

test

|

npe appears after changing active datasource system information operating system distribution and version win dbeaver version describe the problem you re observing npe appears when i switch in sql editor from current db e g hana to another e g postgresql and back to first db hana the issue has no affection for ui steps to reproduce if exist execute some script change datasource to another e g hana postgresql try to execute script get error that item doesn t exist switch back to the previous db try to execute the script the script is successfully executed but there is npe in error log include any warning errors backtraces from the logs

| 1

|

234,474

| 7,721,547,570

|

IssuesEvent

|

2018-05-24 06:01:51

|

FirecraftMC/FirecraftCore

|

https://api.github.com/repos/FirecraftMC/FirecraftCore

|

closed

|

Fully Implement Subranks

|

enhancement low-priority

|

NOTES: Staff will have limited donor permissions, however they will be able to use the commands/permissions from any donor rank they have bought

|

1.0

|

Fully Implement Subranks - NOTES: Staff will have limited donor permissions, however they will be able to use the commands/permissions from any donor rank they have bought

|

non_test

|

fully implement subranks notes staff will have limited donor permissions however they will be able to use the commands permissions from any donor rank they have bought

| 0

|

20,603

| 30,606,711,756

|

IssuesEvent

|

2023-07-23 04:52:14

|

mebjas/html5-qrcode

|

https://api.github.com/repos/mebjas/html5-qrcode

|

opened

|

Compatibility - [OS] [Browser] - [What is not working]

|

compatibility

|

**Describe the bug**

A clear and concise description of what the bug is.

- What is not working and what is expected.

**Describe the browser:**

- OS: [e.g. iOS, Android, MacOs or Windows]

- Browser [e.g. chrome, safari, edge, firefox]

- Version [e.g. 22]

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Additional context**

Add any other context about the problem here.

|

True

|

Compatibility - [OS] [Browser] - [What is not working] - **Describe the bug**

A clear and concise description of what the bug is.

- What is not working and what is expected.

**Describe the browser:**

- OS: [e.g. iOS, Android, MacOs or Windows]

- Browser [e.g. chrome, safari, edge, firefox]

- Version [e.g. 22]

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Additional context**

Add any other context about the problem here.

|

non_test

|

compatibility describe the bug a clear and concise description of what the bug is what is not working and what is expected describe the browser os browser version screenshots if applicable add screenshots to help explain your problem additional context add any other context about the problem here

| 0

|

5,992

| 2,800,962,290

|

IssuesEvent

|

2015-05-13 13:28:32

|

acemod/ACE3

|

https://api.github.com/repos/acemod/ACE3

|

closed

|

Game Crashing with Medical Modules

|

needs testing

|

ACE3 Version: 3.x.x

**Mods:**

* @cba_a3

* @ace3

**Placed ACE3 Modules:**

* *Add the list of modules you have placed on the map. Use 'None' if the error occurs without using any modules.*

Advanced Medical Settings

Medical Settings

(Tried with everything except BLUFOR tracking and the Set Medical modules earlier with same issues)

**Description:**

*Add a detailed description of the error. This makes it easier for us to fix the issue.*

When loading into a mission the bar gets about 70% through and then the following error pops up:

Bad version 68 in p3d file 'z\ace\addons\medical\data\bandage.p3d

**Steps to reproduce:**

* *Add the steps needed to reproduce the issue.*

Im guessing run Arma with CBA and ACE3 place down the two modules about, set the difficulty to advanced and enable advanced wounds.

**Where did the issue occur?**

*A possible answer might be "Multiplayer", "Singleplayer"*

Singleplayer

**RPT log file:**

*Add a link (pastebin.com) to the client or server RPT file.*

http://pastebin.com/gcFZpkkW

|

1.0

|

Game Crashing with Medical Modules - ACE3 Version: 3.x.x

**Mods:**

* @cba_a3

* @ace3

**Placed ACE3 Modules:**

* *Add the list of modules you have placed on the map. Use 'None' if the error occurs without using any modules.*

Advanced Medical Settings

Medical Settings

(Tried with everything except BLUFOR tracking and the Set Medical modules earlier with same issues)

**Description:**

*Add a detailed description of the error. This makes it easier for us to fix the issue.*

When loading into a mission the bar gets about 70% through and then the following error pops up:

Bad version 68 in p3d file 'z\ace\addons\medical\data\bandage.p3d

**Steps to reproduce:**

* *Add the steps needed to reproduce the issue.*

Im guessing run Arma with CBA and ACE3 place down the two modules about, set the difficulty to advanced and enable advanced wounds.

**Where did the issue occur?**

*A possible answer might be "Multiplayer", "Singleplayer"*

Singleplayer

**RPT log file:**

*Add a link (pastebin.com) to the client or server RPT file.*

http://pastebin.com/gcFZpkkW

|

test

|

game crashing with medical modules version x x mods cba placed modules add the list of modules you have placed on the map use none if the error occurs without using any modules advanced medical settings medical settings tried with everything except blufor tracking and the set medical modules earlier with same issues description add a detailed description of the error this makes it easier for us to fix the issue when loading into a mission the bar gets about through and then the following error pops up bad version in file z ace addons medical data bandage steps to reproduce add the steps needed to reproduce the issue im guessing run arma with cba and place down the two modules about set the difficulty to advanced and enable advanced wounds where did the issue occur a possible answer might be multiplayer singleplayer singleplayer rpt log file add a link pastebin com to the client or server rpt file

| 1

|

54,404

| 6,385,613,941

|

IssuesEvent

|

2017-08-03 09:00:52

|

SatelliteQE/robottelo

|

https://api.github.com/repos/SatelliteQE/robottelo

|

opened

|

Failure's on tests.foreman.ui.test_organization due to different pages for two screnarios

|

6.3 High test-failure

|

Same as described in #4592 but for Organization.

|

1.0

|

Failure's on tests.foreman.ui.test_organization due to different pages for two screnarios - Same as described in #4592 but for Organization.

|

test

|

failure s on tests foreman ui test organization due to different pages for two screnarios same as described in but for organization

| 1

|

297,411

| 25,729,159,087

|

IssuesEvent

|

2022-12-07 18:51:33

|

opensearch-project/OpenSearch

|

https://api.github.com/repos/opensearch-project/OpenSearch

|

closed

|

[BUG] org.opensearch.repositories.s3.RepositoryS3ClientYamlTestSuiteIT/test {yaml=repository_s3/20_repository_permanent_credentials/Snapshot and Restore with repository-s3 using permanent credentials} flaky

|

bug flaky-test

|

org.opensearch.repositories.s3.RepositoryS3ClientYamlTestSuiteIT/test {yaml=repository_s3/20_repository_permanent_credentials/Snapshot and Restore with repository-s3 using permanent credentials}

https://build.ci.opensearch.org/job/gradle-check/6782/

https://build.ci.opensearch.org/job/gradle-check/6779/

https://build.ci.opensearch.org/job/gradle-check/6778/

https://build.ci.opensearch.org/job/gradle-check/6766/

https://build.ci.opensearch.org/job/gradle-check/6751/

https://build.ci.opensearch.org/job/gradle-check/6750/

|

1.0

|

[BUG] org.opensearch.repositories.s3.RepositoryS3ClientYamlTestSuiteIT/test {yaml=repository_s3/20_repository_permanent_credentials/Snapshot and Restore with repository-s3 using permanent credentials} flaky - org.opensearch.repositories.s3.RepositoryS3ClientYamlTestSuiteIT/test {yaml=repository_s3/20_repository_permanent_credentials/Snapshot and Restore with repository-s3 using permanent credentials}

https://build.ci.opensearch.org/job/gradle-check/6782/

https://build.ci.opensearch.org/job/gradle-check/6779/

https://build.ci.opensearch.org/job/gradle-check/6778/

https://build.ci.opensearch.org/job/gradle-check/6766/

https://build.ci.opensearch.org/job/gradle-check/6751/

https://build.ci.opensearch.org/job/gradle-check/6750/

|

test

|

org opensearch repositories test yaml repository repository permanent credentials snapshot and restore with repository using permanent credentials flaky org opensearch repositories test yaml repository repository permanent credentials snapshot and restore with repository using permanent credentials

| 1

|

166,005

| 14,017,473,112

|

IssuesEvent

|

2020-10-29 15:41:44

|

tskit-dev/tskit

|

https://api.github.com/repos/tskit-dev/tskit

|

opened

|

Track documentation views as metric

|

Infrastructure and tools documentation

|

In this [blog post](https://blog.dask.org/2020/01/14/estimating-users) Matthew Rocklin talks about how they estimate the number of users Dask has. The number of unique IPs looking at the documentation seems like quite a good metric to me.

Can we do something similar for tskit-dev related projects? (Since we're moving away from RTD?)

|

1.0

|

Track documentation views as metric - In this [blog post](https://blog.dask.org/2020/01/14/estimating-users) Matthew Rocklin talks about how they estimate the number of users Dask has. The number of unique IPs looking at the documentation seems like quite a good metric to me.

Can we do something similar for tskit-dev related projects? (Since we're moving away from RTD?)

|

non_test

|

track documentation views as metric in this matthew rocklin talks about how they estimate the number of users dask has the number of unique ips looking at the documentation seems like quite a good metric to me can we do something similar for tskit dev related projects since we re moving away from rtd

| 0

|

20,100

| 4,495,115,771

|

IssuesEvent

|

2016-08-31 09:02:33

|

radare/radare2

|

https://api.github.com/repos/radare/radare2

|

closed

|

`afu` command undocumented

|

anal documentation

|

While looking how to resize a function that radare/anal incorrectly delimited, I accidentally stumbled on an `afu` command that is handled in cmd_anal_fcn, but it is not documented. What is it supposed to do?

|

1.0

|

`afu` command undocumented - While looking how to resize a function that radare/anal incorrectly delimited, I accidentally stumbled on an `afu` command that is handled in cmd_anal_fcn, but it is not documented. What is it supposed to do?

|

non_test

|

afu command undocumented while looking how to resize a function that radare anal incorrectly delimited i accidentally stumbled on an afu command that is handled in cmd anal fcn but it is not documented what is it supposed to do

| 0

|

333,502

| 29,669,509,681

|

IssuesEvent

|

2023-06-11 08:19:06

|

cse110-sp23-group23/Zoltar

|

https://api.github.com/repos/cse110-sp23-group23/Zoltar

|

opened

|

Mute button test

|

End to End Tests

|

Requirement Description:

- Toggle mute button test

Any key challenges:

-

Steps to Implementing:

- visuals.test.js

Other:

-

|

1.0

|

Mute button test - Requirement Description:

- Toggle mute button test

Any key challenges:

-

Steps to Implementing:

- visuals.test.js

Other:

-

|

test

|

mute button test requirement description toggle mute button test any key challenges steps to implementing visuals test js other

| 1

|

85,209

| 16,615,102,835

|

IssuesEvent

|

2021-06-02 15:44:24

|

WISE-Community/WISE-Client

|

https://api.github.com/repos/WISE-Community/WISE-Client

|

closed

|

Separate authoring-tool into node/project-authoring

|

2 points Code Quality

|

Separate authoring-tool.module.ts into node-authoring.module.ts and project-authoring.module.ts

|

1.0

|

Separate authoring-tool into node/project-authoring - Separate authoring-tool.module.ts into node-authoring.module.ts and project-authoring.module.ts

|

non_test

|

separate authoring tool into node project authoring separate authoring tool module ts into node authoring module ts and project authoring module ts

| 0

|

19,984

| 5,961,561,208

|

IssuesEvent

|

2017-05-29 17:59:34

|

ggez/ggez

|

https://api.github.com/repos/ggez/ggez

|

closed

|

Drawing is *horribly* slow on Windows

|

enhancement help wanted [CODE]

|

Like, what on Linux or Mac runs at 130 fps instead runs at 6 fps.

Good example program: https://github.com/icefoxen/ggj2017 at least as of commit 851f83782d0e4dd05cb6633fd4a4e1ea9f2d1376

I blame SDL2. Still! Should get to the bottom of it one way or another.

Tested on:

* Intel core i5 laptop with NVidia 5100M GPU running Windows 10

* Intel core i5 laptop with NVidia 425M GPU running Windows 7

|

1.0

|

Drawing is *horribly* slow on Windows - Like, what on Linux or Mac runs at 130 fps instead runs at 6 fps.

Good example program: https://github.com/icefoxen/ggj2017 at least as of commit 851f83782d0e4dd05cb6633fd4a4e1ea9f2d1376

I blame SDL2. Still! Should get to the bottom of it one way or another.

Tested on:

* Intel core i5 laptop with NVidia 5100M GPU running Windows 10

* Intel core i5 laptop with NVidia 425M GPU running Windows 7

|

non_test

|

drawing is horribly slow on windows like what on linux or mac runs at fps instead runs at fps good example program at least as of commit i blame still should get to the bottom of it one way or another tested on intel core laptop with nvidia gpu running windows intel core laptop with nvidia gpu running windows

| 0

|

11,186

| 3,185,520,441

|

IssuesEvent

|

2015-09-28 05:46:53

|

e-government-ua/i

|

https://api.github.com/repos/e-government-ua/i

|

closed

|

Configure Tomcat in Neatbeans

|

active test

|

https://github.com/e-government-ua/i/wiki/%D0%A3%D1%81%D1%82%D0%B0%D0%BD%D0%BE%D0%B2%D0%BA%D0%B0-%D0%B8-%D0%BD%D0%B0%D1%81%D1%82%D1%80%D0%BE%D0%B9%D0%BA%D0%B0-%D1%81%D0%B5%D1%80%D0%B2%D0%B5%D1%80%D0%B0-Tomcat-%28backend%29

On the page "Installing and configuring the Tomcat server (backend)", add a description to item 3, "Configure Tomcat in Neatbeans".

|

1.0

|

Configure Tomcat in Neatbeans - https://github.com/e-government-ua/i/wiki/%D0%A3%D1%81%D1%82%D0%B0%D0%BD%D0%BE%D0%B2%D0%BA%D0%B0-%D0%B8-%D0%BD%D0%B0%D1%81%D1%82%D1%80%D0%BE%D0%B9%D0%BA%D0%B0-%D1%81%D0%B5%D1%80%D0%B2%D0%B5%D1%80%D0%B0-Tomcat-%28backend%29

On the page "Installing and configuring the Tomcat server (backend)", add a description to item 3, "Configure Tomcat in Neatbeans".

|

test

|

configure tomcat in neatbeans on the page installing and configuring the tomcat server backend add a description to item configure tomcat in neatbeans

| 1

|

441,018

| 12,707,019,744

|

IssuesEvent

|

2020-06-23 08:13:36

|

luna/luna

|

https://api.github.com/repos/luna/luna

|

closed

|

Safe/Unsafe FFI Semantics

|

Category: Compiler Category: Semantics Category: Tooling Difficulty: Core Contributor Priority: High Type: Enhancement

|

Opening up some bikeshedding on the names for our safety annotations in Luna. The parser currently deals with the following:

- `safe`: The FFI call can be executed on any thread.

- `unsafe`: The FFI call must be executed on one thread.

Chatting has also proposed `moveable` and `pinned` respectively, which I like a lot.

I will implement whatever changes to the parser are necessary based on the results of the discussion here.

|

1.0

|

Safe/Unsafe FFI Semantics - Opening up some bikeshedding on the names for our safety annotations in Luna. The parser currently deals with the following:

- `safe`: The FFI call can be executed on any thread.

- `unsafe`: The FFI call must be executed on one thread.

Chatting has also proposed `moveable` and `pinned` respectively, which I like a lot.

I will implement whatever changes to the parser are necessary based on the results of the discussion here.

|

non_test

|

safe unsafe ffi semantics opening up some bikeshedding on the names for our safety annotations in luna the parser currently deals with the following safe the ffi call can be executed on any thread unsafe the ffi call must be executed on one thread chatting has also proposed moveable and pinned respectively which i like a lot i will implement whatever changes to the parser are necessary based on the results of the discussion here

| 0

|

3,422

| 2,671,941,248

|

IssuesEvent

|

2015-03-24 10:53:45

|

rssidlowski/Pollution_Source_Tracking

|

https://api.github.com/repos/rssidlowski/Pollution_Source_Tracking

|

closed

|

Zoom value after sample selected using identify feature

|

COBDev Ready for Testing enhancement moderate priority

|

From COB personnel:

There appears to be a default zoom value that the map goes to after a sample is selected from the identify feature. In some cases where there are several clusters of separate sample points the map view will zoom out beyond the ability to distinguish the clusters. Is there a way to leave the zoom value as it was prior to sample selection?

Before sample is selected:

After selecting sample.

|

1.0

|

Zoom value after sample selected using identify feature - From COB personnel:

There appears to be a default zoom value that the map goes to after a sample is selected from the identify feature. In some cases where there are several clusters of separate sample points the map view will zoom out beyond the ability to distinguish the clusters. Is there a way to leave the zoom value as it was prior to sample selection?

Before sample is selected:

After selecting sample.

|

test

|

zoom value after sample selected using identify feature from cob personnel there appears to be a default zoom value that the map goes to after a sample is selected from the identify feature in some cases where there are several clusters of separate sample points the map view will zoom out beyond the ability to distinguish the clusters is there a way to leave the zoom value as it was prior to sample selection before sample is selected after selecting sample

| 1

|

75,600

| 7,478,228,751

|

IssuesEvent

|

2018-04-04 10:54:05

|

FreeRDP/FreeRDP

|

https://api.github.com/repos/FreeRDP/FreeRDP

|

closed

|

Serial redirection reserved names `COM1-9`

|

client fixed-waiting-test wayland x11

|

hi,

i've dowloaded sources from github and build deb with dpkg-buildpackage. Unfortunately i can't use option /serial with that build (if i omit it it works ok). here's my connection line:

```/opt/freerdp-nightly/bin/xfreerdp

/serial:COM3,/dev/ttyS0 /printer:'laser' /clipboard /kbd:0x00000415

/load-balance-info:'TSV://MS Terminal Services Plugin.1.ATD' /cert-tofu

/size:'90%' /auto-reconnect /v:XXXXXX /u:YYYY

[16:06:28:457] [25852:25853] [INFO][com.freerdp.client.common.cmdline] - loading channelEx rdpdr

[16:06:28:459] [25852:25853] [INFO][com.freerdp.client.common.cmdline] - loading channelEx rdpsnd

[16:06:28:460] [25852:25853] [INFO][com.freerdp.client.common.cmdline] - loading channelEx cliprdr

Password:

[16:06:33:933] [25852:25853] [INFO][com.freerdp.gdi] - Local framebuffer format PIXEL_FORMAT_BGRX32

[16:06:33:935] [25852:25853] [INFO][com.freerdp.gdi] - Remote framebuffer format PIXEL_FORMAT_RGB16

[16:06:33:960] [25852:25853] [INFO][com.winpr.clipboard] - initialized POSIX local file subsystem

[16:06:33:968] [25852:25858] [INFO][com.freerdp.channels.rdpdr.client] - Loading device service serial [COM3] (static)

[16:06:33:970] [25852:25858] [ERROR][com.freerdp.channels.serial.client] - DefineCommDevice failed!

[16:06:33:971] [25852:25858] [ERROR][com.freerdp.channels.rdpdr.client] - devman_load_device_service failed with error 1359!

[16:06:33:973] [25852:25858] [ERROR][com.freerdp.channels.rdpdr.client] - rdpdr_process_connect failed with error 1359!

[16:06:33:974] [25852:25853] [ERROR][com.freerdp.core] - rdpdr_virtual_channel_client_thread reported an error. Error was 1359

[16:06:33:975] [25852:25853] [INFO][com.freerdp.client.x11] - Network disconnect!

[16:06:33:975] [25852:25853] [INFO][com.freerdp.client.x11] - Attempting reconnect (1 of 20)

[16:06:33:196] [25852:25853] [ERROR][com.freerdp.core] - rdpdr_virtual_channel_client_thread reported an error. Error was 1359

[16:06:33:197] [25852:25853] [INFO][com.freerdp.client.x11] - Network disconnect!

[16:06:33:198] [25852:25853] [INFO][com.freerdp.client.x11] - Attempting reconnect (1 of 20)

[16:06:33:199] [25852:25861] [INFO][com.freerdp.channels.rdpdr.client] - Loading device service serial [COM3] (static)

[16:06:33:200] [25852:25861] [ERROR][com.freerdp.channels.serial.client] - DefineCommDevice failed!

[16:06:33:200] [25852:25861] [ERROR][com.freerdp.channels.rdpdr.client] - devman_load_device_service failed with error 1359!

[16:06:33:201] [25852:25861] [ERROR][com.freerdp.channels.rdpdr.client] - rdpdr_process_connect failed with error 1359!

ASAN:SIGSEGV

=================================================================

==25852==ERROR: AddressSanitizer: SEGV on unknown address 0x000000000000

(pc 0x7fe4635318da bp 0x7fe451e80650 sp 0x7fe451e80640 T1)

#0 0x7fe4635318d9 in MessagePipe_PostQuit (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0xc08d9)

#1 0x7fe4643a63e3 (/opt/freerdp-nightly/bin/../lib/libfreerdp-client2.so.2+0xb93e3)

#2 0x7fe463eed9a4 (/opt/freerdp-nightly/bin/../lib/libfreerdp2.so.2+0x1499a4)

#3 0x7fe463f02ebf (/opt/freerdp-nightly/bin/../lib/libfreerdp2.so.2+0x15eebf)

#4 0x443528 (/opt/freerdp-nightly/bin/xfreerdp+0x443528)

#5 0x7fe463578a31 (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0x107a31)

#6 0x7fe462e916b9 in start_thread (/lib/x86_64-linux-gnu/libpthread.so.0+0x76b9)

#7 0x7fe4631ae41c in clone (/lib/x86_64-linux-gnu/libc.so.6+0x10741c)

AddressSanitizer can not provide additional info.

SUMMARY: AddressSanitizer: SEGV ??:0 MessagePipe_PostQuit

Thread T1 created by T0 here:

#0 0x7fe465a91253 in pthread_create (/usr/lib/x86_64-linux-gnu/libasan.so.2+0x36253)

#1 0x7fe4635786c4 (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0x1076c4)

#2 0x7fe463578ed7 in CreateThread (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0x107ed7)

#3 0x43dc7f (/opt/freerdp-nightly/bin/xfreerdp+0x43dc7f)

#4 0x407985 (/opt/freerdp-nightly/bin/xfreerdp+0x407985)

#5 0x7fe4630c782f in __libc_start_main (/lib/x86_64-linux-gnu/libc.so.6+0x2082f)

==25852==ABORTING```

|

1.0

|

Serial redirection reserved names `COM1-9` - hi,

i've dowloaded sources from github and build deb with dpkg-buildpackage. Unfortunately i can't use option /serial with that build (if i omit it it works ok). here's my connection line:

```/opt/freerdp-nightly/bin/xfreerdp

/serial:COM3,/dev/ttyS0 /printer:'laser' /clipboard /kbd:0x00000415

/load-balance-info:'TSV://MS Terminal Services Plugin.1.ATD' /cert-tofu

/size:'90%' /auto-reconnect /v:XXXXXX /u:YYYY

[16:06:28:457] [25852:25853] [INFO][com.freerdp.client.common.cmdline] - loading channelEx rdpdr

[16:06:28:459] [25852:25853] [INFO][com.freerdp.client.common.cmdline] - loading channelEx rdpsnd

[16:06:28:460] [25852:25853] [INFO][com.freerdp.client.common.cmdline] - loading channelEx cliprdr

Password:

[16:06:33:933] [25852:25853] [INFO][com.freerdp.gdi] - Local framebuffer format PIXEL_FORMAT_BGRX32

[16:06:33:935] [25852:25853] [INFO][com.freerdp.gdi] - Remote framebuffer format PIXEL_FORMAT_RGB16

[16:06:33:960] [25852:25853] [INFO][com.winpr.clipboard] - initialized POSIX local file subsystem

[16:06:33:968] [25852:25858] [INFO][com.freerdp.channels.rdpdr.client] - Loading device service serial [COM3] (static)

[16:06:33:970] [25852:25858] [ERROR][com.freerdp.channels.serial.client] - DefineCommDevice failed!

[16:06:33:971] [25852:25858] [ERROR][com.freerdp.channels.rdpdr.client] - devman_load_device_service failed with error 1359!

[16:06:33:973] [25852:25858] [ERROR][com.freerdp.channels.rdpdr.client] - rdpdr_process_connect failed with error 1359!

[16:06:33:974] [25852:25853] [ERROR][com.freerdp.core] - rdpdr_virtual_channel_client_thread reported an error. Error was 1359

[16:06:33:975] [25852:25853] [INFO][com.freerdp.client.x11] - Network disconnect!

[16:06:33:975] [25852:25853] [INFO][com.freerdp.client.x11] - Attempting reconnect (1 of 20)

[16:06:33:196] [25852:25853] [ERROR][com.freerdp.core] - rdpdr_virtual_channel_client_thread reported an error. Error was 1359

[16:06:33:197] [25852:25853] [INFO][com.freerdp.client.x11] - Network disconnect!

[16:06:33:198] [25852:25853] [INFO][com.freerdp.client.x11] - Attempting reconnect (1 of 20)

[16:06:33:199] [25852:25861] [INFO][com.freerdp.channels.rdpdr.client] - Loading device service serial [COM3] (static)

[16:06:33:200] [25852:25861] [ERROR][com.freerdp.channels.serial.client] - DefineCommDevice failed!

[16:06:33:200] [25852:25861] [ERROR][com.freerdp.channels.rdpdr.client] - devman_load_device_service failed with error 1359!

[16:06:33:201] [25852:25861] [ERROR][com.freerdp.channels.rdpdr.client] - rdpdr_process_connect failed with error 1359!

ASAN:SIGSEGV

=================================================================

==25852==ERROR: AddressSanitizer: SEGV on unknown address 0x000000000000

(pc 0x7fe4635318da bp 0x7fe451e80650 sp 0x7fe451e80640 T1)

#0 0x7fe4635318d9 in MessagePipe_PostQuit (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0xc08d9)

#1 0x7fe4643a63e3 (/opt/freerdp-nightly/bin/../lib/libfreerdp-client2.so.2+0xb93e3)

#2 0x7fe463eed9a4 (/opt/freerdp-nightly/bin/../lib/libfreerdp2.so.2+0x1499a4)

#3 0x7fe463f02ebf (/opt/freerdp-nightly/bin/../lib/libfreerdp2.so.2+0x15eebf)

#4 0x443528 (/opt/freerdp-nightly/bin/xfreerdp+0x443528)

#5 0x7fe463578a31 (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0x107a31)

#6 0x7fe462e916b9 in start_thread (/lib/x86_64-linux-gnu/libpthread.so.0+0x76b9)

#7 0x7fe4631ae41c in clone (/lib/x86_64-linux-gnu/libc.so.6+0x10741c)

AddressSanitizer can not provide additional info.

SUMMARY: AddressSanitizer: SEGV ??:0 MessagePipe_PostQuit

Thread T1 created by T0 here:

#0 0x7fe465a91253 in pthread_create (/usr/lib/x86_64-linux-gnu/libasan.so.2+0x36253)

#1 0x7fe4635786c4 (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0x1076c4)

#2 0x7fe463578ed7 in CreateThread (/opt/freerdp-nightly/bin/../lib/libwinpr2.so.2+0x107ed7)

#3 0x43dc7f (/opt/freerdp-nightly/bin/xfreerdp+0x43dc7f)

#4 0x407985 (/opt/freerdp-nightly/bin/xfreerdp+0x407985)

#5 0x7fe4630c782f in __libc_start_main (/lib/x86_64-linux-gnu/libc.so.6+0x2082f)

==25852==ABORTING```

|

test

|

serial redirection reserved names hi i ve dowloaded sources from github and build deb with dpkg buildpackage unfortunately i can t use option serial with that build if i omit it it works ok here s my connection line opt freerdp nightly bin xfreerdp serial dev printer laser clipboard kbd load balance info tsv ms terminal services plugin atd cert tofu size auto reconnect v xxxxxx u yyyy loading channelex rdpdr loading channelex rdpsnd loading channelex cliprdr password local framebuffer format pixel format remote framebuffer format pixel format initialized posix local file subsystem loading device service serial static definecommdevice failed devman load device service failed with error rdpdr process connect failed with error rdpdr virtual channel client thread reported an error error was network disconnect attempting reconnect of rdpdr virtual channel client thread reported an error error was network disconnect attempting reconnect of loading device service serial static definecommdevice failed devman load device service failed with error rdpdr process connect failed with error asan sigsegv error addresssanitizer segv on unknown address pc bp sp in messagepipe postquit opt freerdp nightly bin lib so opt freerdp nightly bin lib libfreerdp so opt freerdp nightly bin lib so opt freerdp nightly bin lib so opt freerdp nightly bin xfreerdp opt freerdp nightly bin lib so in start thread lib linux gnu libpthread so in clone lib linux gnu libc so addresssanitizer can not provide additional info summary addresssanitizer segv messagepipe postquit thread created by here in pthread create usr lib linux gnu libasan so opt freerdp nightly bin lib so in createthread opt freerdp nightly bin lib so opt freerdp nightly bin xfreerdp opt freerdp nightly bin xfreerdp in libc start main lib linux gnu libc so aborting

| 1

|

414,458

| 27,987,912,849

|

IssuesEvent

|

2023-03-26 22:11:49

|

Witiko/markdown

|

https://api.github.com/repos/Witiko/markdown

|

closed

|

Write TUGboat 44:1 article about attributes and attribute contexts

|

documentation

|

In Markdown 2.22.0, we will support attribute context renderers for [headings][1], [bracketed spans and fenced divs][2], and [links, images, code spans, and fenced code][3]. We should write a TUGboat article that introduces the concept of attributes and attribute contexts and show how coders can style attributes with the Markdown package.

[1]: https://github.com/Witiko/markdown/issues/91

[2]: https://github.com/Witiko/markdown/issues/126

[3]: https://github.com/Witiko/markdown/issues/123

[4]: https://github.com/Witiko/markdown/issues/232

|

1.0

|

Write TUGboat 44:1 article about attributes and attribute contexts - In Markdown 2.22.0, we will support attribute context renderers for [headings][1], [bracketed spans and fenced divs][2], and [links, images, code spans, and fenced code][3]. We should write a TUGboat article that introduces the concept of attributes and attribute contexts and show how coders can style attributes with the Markdown package.

[1]: https://github.com/Witiko/markdown/issues/91

[2]: https://github.com/Witiko/markdown/issues/126

[3]: https://github.com/Witiko/markdown/issues/123

[4]: https://github.com/Witiko/markdown/issues/232

|

non_test

|

write tugboat article about attributes and attribute contexts in markdown we will support attribute context renderers for and we should write a tugboat article that introduces the concept of attributes and attribute contexts and show how coders can style attributes with the markdown package

| 0

|

17,660

| 3,633,109,909

|

IssuesEvent

|

2016-02-11 13:15:32

|

AtomLinter/linter-javac

|

https://api.github.com/repos/AtomLinter/linter-javac

|

closed

|

spawn E2BIG when linting

|

bug untested

|

I am getting spawn E2BIG errors when saving .java files in Atom.

It might have something to do with the number of files in the project folder, as opening a window that includes only a single folder from the project does not produce the error.
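A possible explanation, sketched under stated assumptions: `E2BIG` is the error the OS returns when the argument list handed to a new process is too large, which would fit a linter that puts every source file in the project on the `javac` command line. Whether linter-javac builds its command this way is an assumption, not something confirmed here. `javac` does accept `@argfile` arguments, so a tool could fall back to an argument file once the list gets long. The function names and the 128 KiB budget below are hypothetical.

```python
import os
import tempfile

# Hypothetical budget; the real limit is OS-specific (see `getconf ARG_MAX`)
# and is shared with the environment variables of the new process.
ARG_BUDGET_BYTES = 128 * 1024


def argv_size(args):
    """Approximate bytes the argument list occupies (each arg plus a NUL)."""
    return sum(len(os.fsencode(a)) + 1 for a in args)


def build_javac_command(source_files, budget=ARG_BUDGET_BYTES):
    """Return (command, argfile_path); argfile_path is None when not needed."""
    base = ["javac", "-Xlint:all"]
    if argv_size(base + source_files) <= budget:
        return base + source_files, None
    # Too long for the command line: write one quoted path per line to a file
    # and pass it as @file. Backslashes are doubled inside the quotes.
    fd, path = tempfile.mkstemp(suffix=".args", text=True)
    with os.fdopen(fd, "w") as handle:
        for name in source_files:
            handle.write('"%s"\n' % name.replace("\\", "\\\\"))
    return base + ["@" + path], path
```

The caller is expected to delete the argument file after the process exits. Paths containing a double quote are not handled by this sketch.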

#### Steps

* Make changes to an open .java file

* Save file

#### Expected:

Atom updates linter display without error

#### Actual:

No linting, following error displayed:

<img width="453" alt="screen shot 2015-11-19 at 1 55 28 pm" src="https://cloud.githubusercontent.com/assets/870130/11282529/6164fe5a-8ec5-11e5-9306-616b7a5f0ec0.png">

#### Setup:

Atom 1.2.3

Mac OS X 10.11.1

|

1.0

|

spawn E2BIG when linting - I am getting spawn E2BIG errors when saving .java files in Atom.

It might have something to do with the number of files in the project folder, as opening a window that includes only a single folder from the project does not produce the error.

#### Steps

* Make changes to an open .java file

* Save file

#### Expected:

Atom updates linter display without error

#### Actual:

No linting, following error displayed:

<img width="453" alt="screen shot 2015-11-19 at 1 55 28 pm" src="https://cloud.githubusercontent.com/assets/870130/11282529/6164fe5a-8ec5-11e5-9306-616b7a5f0ec0.png">

#### Setup:

Atom 1.2.3

Mac OS X 10.11.1

|

test

|

spawn when linting i am getting spawn errors when saving java files in atom it might have something to do with the number of files in the project folder as open a window including only a single folder from the project does not produce the error steps make changes to an open java file save file expected atom updates linter display without error actual no linting following error displayed img width alt screen shot at pm src setup atom mac os x

| 1

|

251,236

| 21,448,222,178

|

IssuesEvent

|

2022-04-25 08:43:43

|

hazelcast/hazelcast-csharp-client

|

https://api.github.com/repos/hazelcast/hazelcast-csharp-client

|

closed

|

DNS Host name test [API-1247]

|

Type: Test Failure Type: Backport Priority: Normal Jira State: Active

|

Implement and validate

`com.hazelcast.client.ClientRegressionWithRealNetworkTest.testConnectWithDNSHostnames`

Backport from https://github.com/hazelcast/hazelcast/pull/11368

|

1.0

|

DNS Host name test [API-1247] - Implement and validate

`com.hazelcast.client.ClientRegressionWithRealNetworkTest.testConnectWithDNSHostnames`

Backport from https://github.com/hazelcast/hazelcast/pull/11368

|

test

|

dns host name test implement and validate com hazelcast client clientregressionwithrealnetworktest testconnectwithdnshostnames backport from

| 1

|

64,047

| 26,598,039,628

|

IssuesEvent

|

2023-01-23 13:56:10

|

flexion/ef-cms

|

https://api.github.com/repos/flexion/ef-cms

|

closed

|

Court: Designated Service Person

|

Delay Ship Indefinitely - No (5) Service on Parties

|

As the Court, in order to comply with Rule 21(b)(1)(D)(2), I need to designate a service party for Petitioner and designate a service party for Respondent.

## Pre-Conditions:

* Case has been created.

## Acceptance Criteria:

* There must always be a designated service person for each party.

* If petitioner is unrepresented, they are the designated service party

* The first attorney on each side (Petitioner and Respondent) should be the default designated service person for that party

* If petitioner has more than 1 counsel, a Court user needs to be able to change/designate which of Petitioner's counsel is the designated service person for Petitioner

* If respondent has more than 1 counsel, a Court user needs to be able to change/designate which of Respondent's counsel is the designated service person for Respondent
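
The acceptance criteria above can be read as a small resolution rule. The following is a minimal sketch of that rule, not the project's implementation: the `Party` class and its field names are hypothetical, and applying the "unrepresented" fallback to both sides is an assumption, since the criteria only state it for the petitioner.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Party:
    name: str                                   # the party itself
    counsel: List[str] = field(default_factory=list)
    designated: Optional[str] = None            # explicit choice by a Court user

    def service_person(self) -> str:
        """Always resolves to exactly one designated service person."""
        if not self.counsel:
            return self.name                    # unrepresented: the party itself
        if self.designated in self.counsel:
            return self.designated              # Court user's explicit designation
        return self.counsel[0]                  # default: first attorney on that side

    def designate(self, attorney: str) -> None:
        """Court user changes the designee; must be counsel for this party."""
        if attorney not in self.counsel:
            raise ValueError(f"{attorney} is not counsel for {self.name}")
        self.designated = attorney
```

Because `service_person` falls back to the first attorney whenever the stored designation is no longer on the counsel list, removing an attorney never leaves a party without a designated service person.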

|

1.0

|

Court: Designated Service Person - As the Court, in order to comply with Rule 21(b)(1)(D)(2), I need to designate a service party for Petitioner and designate a service party for Respondent.

## Pre-Conditions:

* Case has been created.

## Acceptance Criteria:

* There must always be a designated service person for each party.

* If petitioner is unrepresented, they are the designated service party

* The first attorney on each side (Petitioner and Respondent) should be the default designated service person for that party

* If petitioner has more than 1 counsel, a Court user needs to be able to change/designate which of Petitioner's counsel is the designated service person for Petitioner

* If respondent has more than 1 counsel, a Court user needs to be able to change/designate which of Respondent's counsel is the designated service person for Respondent

|

non_test

|

court designated service person as the court in order to comply with rule b d i need to designate a service party for petitioner and designate a service party for respondent pre conditions case has been created acceptance criteria there must always be a designated service person for each party if petitioner is unrepresented they are the designated service party the first attorney on each side petitioner and respondent should be the default designated service person for that party if petitioner has more than counsel a court user needs to be able to change designate which of petitioner s counsel is the designated service person for petitioner if respondent has more than counsel a court user needs to be able to change designated which respondent s counsel is the designated service person for respondent

| 0

|

56,562

| 3,080,253,843

|

IssuesEvent

|

2015-08-21 20:59:08

|

pavel-pimenov/flylinkdc-r5xx

|

https://api.github.com/repos/pavel-pimenov/flylinkdc-r5xx

|

closed

|

Crash when using the quick setup wizard.

|

bug imported Priority-High

|

_From [kotyar...@gmail.com](https://code.google.com/u/110049176879914219675/) on May 25, 2012 05:57:59_

r501 build 9474

Unhandled exception at 0x75a09673:

Code 0xe06d7363

FlylinkDC++ r501 build 9474 startup on machine with:

Number of processors: 2.

Page size: 4096 Bytes.

Processor type: x86.

Memory config:

There is 49 percent of memory in use.

There are 2047 MB total of physical memory.

There are 1036 MB free of physical memory.

Running in Windows native (NT version 6.1).

Current system state:

Memory config:

There is 55 percent of memory in use.

There are 2,00 GB total of physical memory.

There are 912,99 MB free of physical memory.

Processor frequency: 2000,02 MHz

On Win7, clean install; it crashed three times during first-time setup, at the IP address detection step.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=759_

|

1.0

|

Crash when using the quick setup wizard. - _From [kotyar...@gmail.com](https://code.google.com/u/110049176879914219675/) on May 25, 2012 05:57:59_

r501 build 9474

Unhandled exception at 0x75a09673:

Code 0xe06d7363

FlylinkDC++ r501 build 9474 startup on machine with:

Number of processors: 2.

Page size: 4096 Bytes.

Processor type: x86.

Memory config:

There is 49 percent of memory in use.

There are 2047 MB total of physical memory.

There are 1036 MB free of physical memory.

Running in Windows native (NT version 6.1).

Current system state:

Memory config:

There is 55 percent of memory in use.

There are 2,00 GB total of physical memory.

There are 912,99 MB free of physical memory.

Processor frequency: 2000,02 MHz

On Win7, clean install; it crashed three times during first-time setup, at the IP address detection step.

_Original issue: http://code.google.com/p/flylinkdc/issues/detail?id=759_

|

non_test

|

crash when using the quick setup wizard from on may build unhandled exception at code flylinkdc build startup on machine with number of processors page size bytes processor type memory config there is percent of memory in use there are mb total of physical memory there are mb free of physical memory running in windows native nt version current system state memory config there is percent of memory in use there are gb total of physical memory there are mb free of physical memory processor frequency mhz on clean install it crashed three times during first time setup at the ip address detection step original issue

| 0

|

86,001

| 16,795,665,324

|

IssuesEvent

|

2021-06-16 02:52:41

|

GameDev-One/BattleofRhiannonNetwork

|

https://api.github.com/repos/GameDev-One/BattleofRhiannonNetwork

|

closed

|

Enemy does not react when being damaged

|

Art Code Design

|

The enemy does not react to being hit.

Recommend having some sort of recoil and flash VFX to indicate that damage was taken.

|

1.0

|

Enemy does not react when being damaged - The enemy does not react to being hit.

Recommend having some sort of recoil and flash VFX to indicate that damage was taken.

|

non_test

|

enemy does not react when being damaged the enemy does not react to being hit recommend having some sort of recoil and flash vfx to indicate that damage was taken

| 0

|

593,828

| 18,017,942,112

|

IssuesEvent

|

2021-09-16 15:46:27

|

pnxenopoulos/csgo

|

https://api.github.com/repos/pnxenopoulos/csgo

|

closed

|

Competitive Rank

|

Feature Request Low Priority

|

I would like to add the rank of each player when I get the parsed JSON file. I asked the author of demoinfocs-golang whether this is implemented, and he said that it is already there. [Code](https://pkg.go.dev/github.com/markus-wa/demoinfocs-golang/v2@v2.8.1/pkg/demoinfocs/events#RankUpdate)

**Describe the solution you'd like**

Maybe at the end of the array the relevant rank information for each player

|

1.0

|

Competitive Rank - I would like to add the rank of each player when I get the parsed JSON file. I asked the author of demoinfocs-golang whether this is implemented, and he said that it is already there. [Code](https://pkg.go.dev/github.com/markus-wa/demoinfocs-golang/v2@v2.8.1/pkg/demoinfocs/events#RankUpdate)

**Describe the solution you'd like**

Maybe at the end of the array the relevant rank information for each player

|

non_test

|

competitive rank i would like to add the ranks of each player when i get the parsed json file i asked the author of the demoinfocs golang if this is implemented and he said that it is already there describe the solution you d like maybe at the end of the array the relevant rank information for each player

| 0

|

714,665

| 24,569,654,396

|

IssuesEvent

|

2022-10-13 07:37:24

|

AlphaWallet/alpha-wallet-ios

|

https://api.github.com/repos/AlphaWallet/alpha-wallet-ios

|

opened

|

Can't connect using WalletConnect for minting with DevCon ticket attestation

|

High Priority

|

1. Visit the attestation URL in macOS Safari

2. In the same session, click "NFTs" in the top navigation bar

3. Click "Mint Arbitrum One NFT"

4. Don't click "Mint NFT"

5. Click "WALLET CONNECT" (it doesn't seem to matter which chain is enabled because the actionsheet to start the session doesn't appear at all for me)

6. Try to connect

Don't click "Mint NFT" at any time :)

It times out when I tried it a few times. Also have tried this:

A. It works most of the time on Android AlphaWallet. Fails a few times

B. It works most of the time on another wallet app. Fails a few times

C. It worked with https://test.walletconnect.org the one time I tried (and I accidentally minted 😭 a token on Polygon)

D. AlphaWallet iOS works if I connect to http://example.walletconnect.org

E. It doesn't work even if I comment out the timeout and wait

|

1.0

|

Can't connect using WalletConnect for minting with DevCon ticket attestation - 1. Visit the attestation URL in macOS Safari

2. In the same session, click "NFTs" in the top navigation bar

3. Click "Mint Arbitrum One NFT"

4. Don't click "Mint NFT"

5. Click "WALLET CONNECT" (it doesn't seem to matter which chain is enabled because the actionsheet to start the session doesn't appear at all for me)

6. Try to connect

Don't click "Mint NFT" at any time :)

It times out when I tried it a few times. Also have tried this:

A. It works most of the time on Android AlphaWallet. Fails a few times

B. It works most of the time on another wallet app. Fails a few times

C. It worked with https://test.walletconnect.org the one time I tried (and I accidentally minted 😭 a token on Polygon)

D. AlphaWallet iOS works if I connect to http://example.walletconnect.org

E. It doesn't work even if I comment out the timeout and wait

|

non_test

|

can t connect using walletconnect for minting with devcon ticket attestation visit the attestation url in macos safari in the same session click nfts in the top navigation bar click mint arbitrum one nft dot click mint nft click wallet connect it doesn t seem to matter which chain is enabled because the actionsheet to start the session doesn t appear at all for me try to connect don t click mint nft at any time it times out when i tried it a few times also have tried this a it works most of the time on android alphawallet fails a few times b it works most of the time on another wallet app fails a few times c it works the one time i tried and accidentally mint 😭 a token on polygon d alphawallet ios works if i connect to e it doesn t work even if i comment out the timeout and wait

| 0

|

46,624

| 24,631,476,104

|

IssuesEvent

|

2022-10-17 02:44:52

|

HypothesisWorks/hypothesis

|

https://api.github.com/repos/HypothesisWorks/hypothesis

|

opened

|

Efficient strategy for `st.text(...).filter(str.isidentifier)`

|

enhancement performance

|

As a follow-up to https://github.com/HypothesisWorks/hypothesis/issues/2693#issuecomment-823710924 and #3134, I'd like to return an efficient strategy for `st.text(...).filter(str.isidentifier)`.

Adapting https://github.com/Zac-HD/hypothesmith/blob/85358991f8498db489569e81ac9dc9049c75773f/src/hypothesmith/syntactic.py#L39-L56 should make this pretty easy, even with the slight complication of incorporating the restrictions of the `alphabet=` strategy.

We could optionally add similar support for the other `str.isX` methods, which mostly impose simpler constraints and could be satisfied more efficiently by replacing the `self.elements` strategy (e.g. `str.isspace` requires all-whitespace chars), and for some adding the filter on at the end (e.g. `str.isupper` is satisfied if all cased characters in the string are uppercase and there is at least one cased character)
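
As a rough illustration of "construct instead of filter", here is a minimal sketch. It is not the linked hypothesmith code and not Hypothesis internals: the stdlib `random` module stands in for the strategy's draw machinery, and `split_alphabet` and `draw_identifier` are hypothetical names. It relies on `str.isidentifier` checking the first character and the remaining characters separately, so the alphabet can be partitioned once up front. Like the filter it replaces, it does not exclude keywords.

```python
import random
import string


def split_alphabet(alphabet):
    """Partition an alphabet into valid first chars and valid later chars."""
    start = [c for c in alphabet if c.isidentifier()]
    # Prefixing a known-good start char tests whether c may appear later on.
    cont = [c for c in alphabet if ("a" + c).isidentifier()]
    return start, cont


def draw_identifier(rng, alphabet, max_size=10):
    """Build an identifier directly instead of generating text and filtering."""
    start, cont = split_alphabet(alphabet)
    if not start:
        raise ValueError("alphabet contains no valid identifier start character")
    size = rng.randint(1, max_size)
    return rng.choice(start) + "".join(rng.choice(cont) for _ in range(size - 1))
```

Every start character is also a valid continuation character, so `cont` is never empty when `start` is non-empty. An alphabet with no usable start character is reported immediately rather than discovered through repeated filter rejections.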

|

True

|

Efficient strategy for `st.text(...).filter(str.isidentifier)` - As a follow-up to https://github.com/HypothesisWorks/hypothesis/issues/2693#issuecomment-823710924 and #3134, I'd like to return an efficient strategy for `st.text(...).filter(str.isidentifier)`.

Adapting https://github.com/Zac-HD/hypothesmith/blob/85358991f8498db489569e81ac9dc9049c75773f/src/hypothesmith/syntactic.py#L39-L56 should make this pretty easy, even with the slight complication of incorporating the restrictions of the `alphabet=` strategy.

We could optionally add similar support for the other `str.isX` methods, which mostly impose simpler constraints and could be satisfied more efficiently by replacing the `self.elements` strategy (e.g. `str.isspace` requires all-whitespace chars), and for some adding the filter on at the end (e.g. `str.isupper` is satisfied if all cased characters in the string are uppercase and there is at least one cased character)

|

non_test

|

efficient strategy for st text filter str isidentifier as a follow up to and i d like to return an efficient strategy for st text filter str isidentifier adapting should make this pretty easy even with the slight complication of incorporating the restrictions of the alphabet strategy we could optionally add similar support for the other str isx methods which mostly impose simpler constraints and could be satisfied more efficiently by replacing the self elements strategy e g str isspace requires all whitespace chars and for some adding the filter on at the end e g str isupper is satisfied if all cased characters in the string are uppercase and there is at least one cased character

| 0

|

512,208

| 14,890,271,512

|

IssuesEvent

|

2021-01-20 22:45:50

|

woocommerce/woocommerce-admin

|

https://api.github.com/repos/woocommerce/woocommerce-admin

|

opened

|

[1.9.0-rc.1] Home Screen Fatal

|

priority: critical

|

When starting the tasks list, an uninitialised `trackedCompletedTasks` prop is returned as `false` instead of an array causing a fatal error on the home screen.

https://github.com/woocommerce/woocommerce-admin/blob/41970b8fcdd859a44aa5d15fe112e76e8c593e00/client/header/activity-panel/index.js#L312

<img width="1659" alt="Screen Shot 2021-01-21 at 11 42 20 AM" src="https://user-images.githubusercontent.com/1922453/105250046-f3232980-5bdd-11eb-9248-e4da49bcdad6.png">

Looks like this code was added in https://github.com/woocommerce/woocommerce-admin/pull/5826 cc @louwie17

## To Reproduce

* New site, click on skip onboarding details

* Go to WooCommerce->home

* Click on “Add my products”

* Click on WooCommerce->home again

* Click on “Add my products” again.

* See blank screen

|

1.0

|

[1.9.0-rc.1] Home Screen Fatal - When starting the tasks list, an uninitialised `trackedCompletedTasks` prop is returned as `false` instead of an array causing a fatal error on the home screen.

https://github.com/woocommerce/woocommerce-admin/blob/41970b8fcdd859a44aa5d15fe112e76e8c593e00/client/header/activity-panel/index.js#L312

<img width="1659" alt="Screen Shot 2021-01-21 at 11 42 20 AM" src="https://user-images.githubusercontent.com/1922453/105250046-f3232980-5bdd-11eb-9248-e4da49bcdad6.png">

Looks like this code was added in https://github.com/woocommerce/woocommerce-admin/pull/5826 cc @louwie17

## To Reproduce

* New site, click on skip onboarding details

* Go to WooCommerce->home

* Click on “Add my products”

* Click on WooCommerce->home again

* Click on “Add my products” again.

* See blank screen

|

non_test

|

home screen fatal when starting the tasks list an uninitialised trackedcompletedtasks prop is returned as false instead of an array causing a fatal error on the home screen img width alt screen shot at am src looks like this code was added in cc to reproduce new site click on skip onboarding details go to woocommerce home click on “add my products” click on woocommerce home again click on “add my products” again see blank screen

| 0

|

139,666

| 11,275,602,444

|

IssuesEvent

|

2020-01-14 21:08:53

|

aliasrobotics/RVD

|

https://api.github.com/repos/aliasrobotics/RVD

|

opened

|

(error) Resource leak

|

bug cppcheck static analysis testing triage

|

```yaml

{

"system": "src/industrial_calibration/industrial_extrinsic_cal/src/nodes/wrist_cal_srv.cpp",

"type": "bug",

"exploitation": {

"description": "",

"exploitation-image": "",

"exploitation-vector": ""

},

"keywords": [

"cppcheck",

"static analysis",

"testing",

"triage",

"bug"

],

"mitigation": {

"description": "",

"pull-request": "",

"date-mitigation": ""

},

"flaw": {

"architectural-location": "N/A",

"reported-by-relationship": "automatic",

"phase": "testing",

"reported-by": "Alias Robotics",

"reproducibility": "always",

"specificity": "N/A",

"languages": "None",

"detected-by-method": "testing static",

"application": "N/A",

"subsystem": "N/A",

"trace": "",

"package": "N/A",

"reproduction": "See artifacts below (if available)",

"date-detected": "2020-01-14 (21:08)",

"detected-by": "Alias Robotics",

"date-reported": "2020-01-14 (21:08)",

"reproduction-image": "gitlab.com/aliasrobotics/offensive/alurity/pipelines/active/pipeline_ros_industrial/-/jobs/403099637/artifacts/download",

"issue": ""

},

"cve": "None",

"description": "[src/industrial_calibration/industrial_extrinsic_cal/src/nodes/wrist_cal_srv.cpp:450]: (error) Resource leak: fp",

"severity": {

"cvss-vector": "",

"rvss-score": 0,

"rvss-vector": "",

"cvss-score": 0,

"severity-description": ""

},

"title": "(error) Resource leak",

"cwe": "None",

"id": 1,

"vendor": null,

"links": ""

}

```

|

1.0

|

(error) Resource leak - ```yaml

{

"system": "src/industrial_calibration/industrial_extrinsic_cal/src/nodes/wrist_cal_srv.cpp",

"type": "bug",

"exploitation": {

"description": "",

"exploitation-image": "",

"exploitation-vector": ""

},

"keywords": [

"cppcheck",

"static analysis",

"testing",

"triage",

"bug"

],

"mitigation": {

"description": "",

"pull-request": "",

"date-mitigation": ""

},

"flaw": {

"architectural-location": "N/A",

"reported-by-relationship": "automatic",

"phase": "testing",

"reported-by": "Alias Robotics",

"reproducibility": "always",

"specificity": "N/A",

"languages": "None",

"detected-by-method": "testing static",

"application": "N/A",

"subsystem": "N/A",

"trace": "",

"package": "N/A",

"reproduction": "See artifacts below (if available)",

"date-detected": "2020-01-14 (21:08)",

"detected-by": "Alias Robotics",

"date-reported": "2020-01-14 (21:08)",

"reproduction-image": "gitlab.com/aliasrobotics/offensive/alurity/pipelines/active/pipeline_ros_industrial/-/jobs/403099637/artifacts/download",

"issue": ""

},

"cve": "None",

"description": "[src/industrial_calibration/industrial_extrinsic_cal/src/nodes/wrist_cal_srv.cpp:450]: (error) Resource leak: fp",

"severity": {

"cvss-vector": "",

"rvss-score": 0,

"rvss-vector": "",

"cvss-score": 0,

"severity-description": ""

},

"title": "(error) Resource leak",

"cwe": "None",

"id": 1,

"vendor": null,

"links": ""

}

```

|

test

|

error resource leak yaml system src industrial calibration industrial extrinsic cal src nodes wrist cal srv cpp type bug exploitation description exploitation image exploitation vector keywords cppcheck static analysis testing triage bug mitigation description pull request date mitigation flaw architectural location n a reported by relationship automatic phase testing reported by alias robotics reproducibility always specificity n a languages none detected by method testing static application n a subsystem n a trace package n a reproduction see artifacts below if available date detected detected by alias robotics date reported reproduction image gitlab com aliasrobotics offensive alurity pipelines active pipeline ros industrial jobs artifacts download issue cve none description error resource leak fp severity cvss vector rvss score rvss vector cvss score severity description title error resource leak cwe none id vendor null links

| 1

|

302,552

| 9,276,184,782

|

IssuesEvent

|

2019-03-20 01:46:50

|

evscott/Rambl

|

https://api.github.com/repos/evscott/Rambl

|

closed

|

Basic global styling

|

Low priority

|

Add basic global styles including:

- header tags

- p tags

- links

- background colours and Bootstrap colour override

- generic flex wrapping container

|

1.0

|

Basic global styling - Add basic global styles including:

- header tags

- p tags

- links

- background colours and Bootstrap colour override

- generic flex wrapping container

|

non_test

|

basic global styling add basic global styles including header tags p tags links background colours and bootstrap colour override generic flex wrapping container

| 0

|

148,669

| 13,243,593,871

|

IssuesEvent

|

2020-08-19 11:43:40

|

freenas/documentation

|

https://api.github.com/repos/freenas/documentation

|

closed

|

TrueNAS Core 12: Cloud Sync post-script

|

documentation

|

I'm missing some details about the post-script. For example, is it possible to pass the return code (or other variables) from the task to this post-script?

Source: https://www.truenas.com/docs/hub/tasks/scheduled/cloudsync/

|

1.0

|

TrueNAS Core 12: Cloud Sync post-script - I'm missing some details about the post-script. For example, is it possible to pass the return code (or other variables) from the task to this post-script?

Source: https://www.truenas.com/docs/hub/tasks/scheduled/cloudsync/

|

non_test

|

truenas core cloud sync post script i m missing some details for post script is for example possible pass return code or another vars from task to this post script source

| 0

|

225,071

| 17,791,753,399

|

IssuesEvent

|

2021-08-31 17:00:32

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

opened

|

FR: Record results of OpInfo reference tests and detect when numerics of an operator change

|

feature module: tests triaged

|

This would help identify the extent of changes to the operator set and require engineers to appreciate the scope and impact of their change before committing it.
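
One way to picture the request is a recorded baseline that later runs are compared against. The sketch below is only an illustration under stated assumptions: it uses plain Python scalars and `math` functions rather than tensors, does not touch the real OpInfo machinery, and the names `record_baseline` and `changed_inputs` are hypothetical.

```python
import json
import math


def record_baseline(op, inputs, path):
    """Store op's outputs for fixed inputs as the reference for later runs."""
    with open(path, "w") as handle:
        json.dump({repr(x): op(x) for x in inputs}, handle)


def changed_inputs(op, inputs, path, rel_tol=1e-9, abs_tol=0.0):
    """Return the inputs whose current output differs from the baseline."""
    with open(path) as handle:
        baseline = json.load(handle)
    return [
        x for x in inputs
        if not math.isclose(op(x), baseline[repr(x)], rel_tol=rel_tol, abs_tol=abs_tol)
    ]
```

A non-empty result from `changed_inputs` would be the signal that an operator's numerics moved, which a CI job could surface to the author before the change lands.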

|

1.0

|

FR: Record results of OpInfo reference tests and detect when numerics of an operator change - This would help identify the extent of changes to the operator set and require engineers to appreciate the scope and impact of their change before committing it.

|

test

|

fr record results of opinfo reference tests and detect when numerics of an operator change this would help identify the extent of changes to the operator set and require engineers appreciate the scope and impact of their change before committing it

| 1

|

273,884

| 8,554,221,030

|

IssuesEvent

|

2018-11-08 05:04:52

|

buttercup/buttercup-desktop

|

https://api.github.com/repos/buttercup/buttercup-desktop

|

closed

|

Cannot scroll at the column of entry

|

Effort: Medium Platform: Mac Priority: High Status: Available Type: Bug

|

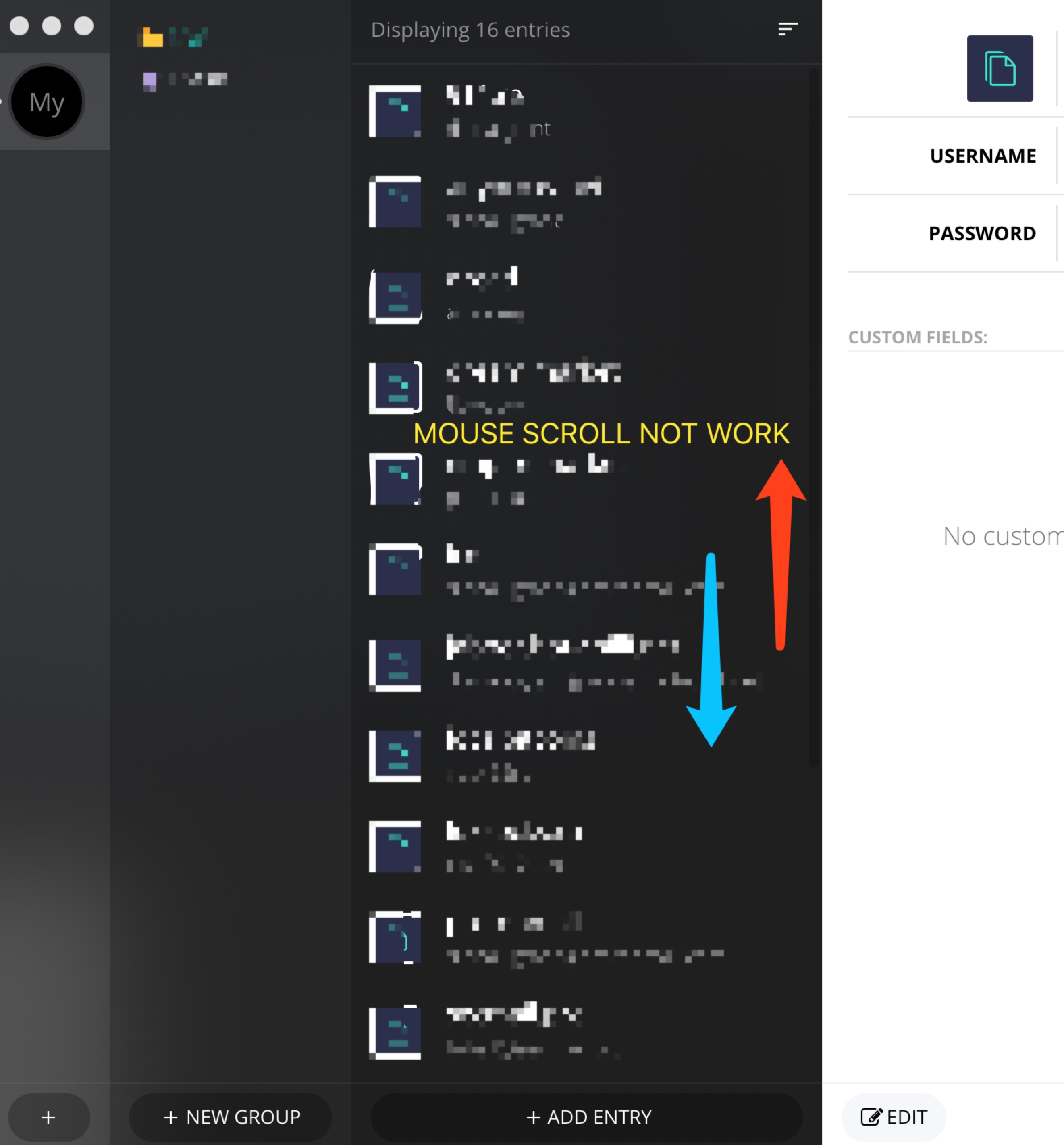

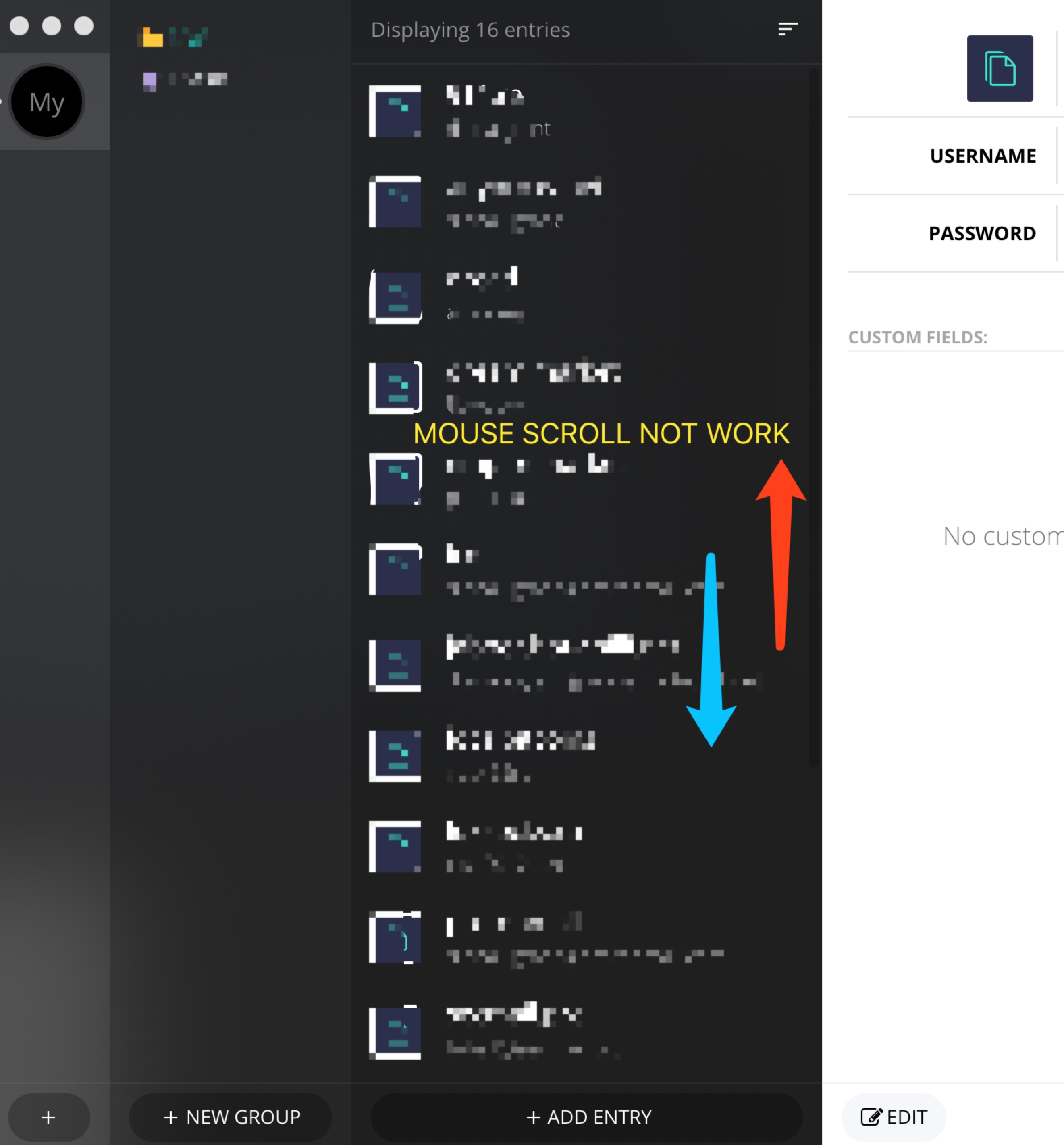

OS: macOS 10.14

BC: 1.10.3

When the number of entries is greater than can be displayed in the window, the mouse cannot scroll up or down through the entries. Scrolling with the trackpad works.

|

1.0

|

Cannot scroll at the column of entry - OS: macOS 10.14

BC: 1.10.3

When the number of entries is greater than can be displayed in the window, the mouse cannot scroll up or down through the entries. Scrolling with the trackpad works.

|

non_test

|

cannot scroll at the column of entry os macos bc when the number of entries is greater than the one can be displayed in a window mouse cannot scroll up down the entries but using trackpad is ok

| 0

|

17,911

| 3,645,879,566

|

IssuesEvent

|

2016-02-15 16:23:52

|

backbee/backbee-standard

|

https://api.github.com/repos/backbee/backbee-standard

|

closed

|

[PAGE] ask user to validate modification before putting online a page that never has been validate from creation

|

bug To test

|

scenario

Create a page

Give a name and select a layout

make modifications on this new page

Go to the page tab and select "online"

Save status

In front office the page modifications are not made

Solution :

automatically validate new page creation and modification before putting online

|

1.0

|

[PAGE] ask user to validate modification before putting online a page that never has been validate from creation - scenario

Create a page

Give a name and select a layout

make modifications on this new page

Go to the page tab and select "online"

Save status

In front office the page modifications are not made

Solution :

automatically validate new page creation and modification before putting online

|

test

|

ask user to validate modification before putting online a page that never has been validate from creation scenario create a page give a name and select a layout make modifications on this new page go to the page tab and select online save status in front office the page modifications are not made solution automatically validate new page creation and modifcation before putting online

| 1

|

147,286

| 19,512,669,958

|

IssuesEvent

|

2021-12-29 02:56:27

|

ChoeMinji/deno-1.5.0

|

https://api.github.com/repos/ChoeMinji/deno-1.5.0

|

closed

|

CVE-2021-38191 (Medium) detected in tokio-0.2.22.crate - autoclosed

|

security vulnerability

|

## CVE-2021-38191 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tokio-0.2.22.crate</b></p></summary>

<p>An event-driven, non-blocking I/O platform for writing asynchronous I/O

backed applications.

</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/tokio/0.2.22/download">https://crates.io/api/v1/crates/tokio/0.2.22/download</a></p>

<p>

Dependency Hierarchy:

- test_plugin-0.0.1 (Root Library)

- test_util-0.1.0

- :x: **tokio-0.2.22.crate** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ChoeMinji/deno-1.5.0/commit/6bd9a93e55faf7abd43040d83fa5bb6fcbd55f5c">6bd9a93e55faf7abd43040d83fa5bb6fcbd55f5c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in the tokio crate before 1.8.1 for Rust. Upon a JoinHandle::abort, a Task may be dropped in the wrong thread.

<p>Publish Date: 2021-08-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-38191>CVE-2021-38191</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://rustsec.org/advisories/RUSTSEC-2021-0072.html">https://rustsec.org/advisories/RUSTSEC-2021-0072.html</a></p>

<p>Release Date: 2021-08-08</p>

<p>Fix Resolution: tokio - 1.5.1,1.6.3,1.7.2, 1.8.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-38191 (Medium) detected in tokio-0.2.22.crate - autoclosed - ## CVE-2021-38191 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tokio-0.2.22.crate</b></p></summary>

<p>An event-driven, non-blocking I/O platform for writing asynchronous I/O

backed applications.

</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/tokio/0.2.22/download">https://crates.io/api/v1/crates/tokio/0.2.22/download</a></p>

<p>

Dependency Hierarchy:

- test_plugin-0.0.1 (Root Library)

- test_util-0.1.0

- :x: **tokio-0.2.22.crate** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ChoeMinji/deno-1.5.0/commit/6bd9a93e55faf7abd43040d83fa5bb6fcbd55f5c">6bd9a93e55faf7abd43040d83fa5bb6fcbd55f5c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in the tokio crate before 1.8.1 for Rust. Upon a JoinHandle::abort, a Task may be dropped in the wrong thread.

<p>Publish Date: 2021-08-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-38191>CVE-2021-38191</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://rustsec.org/advisories/RUSTSEC-2021-0072.html">https://rustsec.org/advisories/RUSTSEC-2021-0072.html</a></p>

<p>Release Date: 2021-08-08</p>

<p>Fix Resolution: tokio - 1.5.1,1.6.3,1.7.2, 1.8.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in tokio crate autoclosed cve medium severity vulnerability vulnerable library tokio crate an event driven non blocking i o platform for writing asynchronous i o backed applications library home page a href dependency hierarchy test plugin root library test util x tokio crate vulnerable library found in head commit a href found in base branch master vulnerability details an issue was discovered in the tokio crate before for rust upon a joinhandle abort a task may be dropped in the wrong thread publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution tokio step up your open source security game with whitesource

| 0

|

561,557

| 16,619,271,748

|

IssuesEvent

|

2021-06-02 21:18:01

|

googleapis/rules_gapic

|

https://api.github.com/repos/googleapis/rules_gapic

|

opened

|

feat: do not automatically generate gapic_library targets for protos that don't have a service

|

priority: p3 type: feature request

|

We currently generate gapic_library targets in the BUILD file for all protos, including protos that only contain messages (i.e. do not contain a service). We do not need to do that for protos that do not contain services.

We could either parse the proto itself for a service, or look at the yaml file for APIs being referenced to determine whether gapic_library targets need to be included.
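
As a rough illustration of the first option (checking the proto itself for a service), here is a minimal Python sketch. It is not how rules_gapic does or would implement this; the function name `proto_declares_service` is hypothetical, and it uses a regular expression rather than a real proto parser, so it only approximates the grammar.

```python
import re

# Matches a `service Name {` declaration at the start of a line.
_SERVICE_RE = re.compile(r"^\s*service\s+[A-Za-z_]\w*\s*\{", re.MULTILINE)
_BLOCK_COMMENT_RE = re.compile(r"/\*.*?\*/", re.DOTALL)
_LINE_COMMENT_RE = re.compile(r"//[^\n]*")


def proto_declares_service(proto_source: str) -> bool:
    """Return True if the .proto text appears to declare at least one service.

    Hypothetical helper: comments are stripped first so that a commented-out
    service is not counted. A `//` inside a string literal is also stripped
    to end of line, which is acceptable for this check but not for general parsing.
    """
    stripped = _BLOCK_COMMENT_RE.sub("", proto_source)
    stripped = _LINE_COMMENT_RE.sub("", stripped)
    return _SERVICE_RE.search(stripped) is not None
```

A generator could call this on each proto's contents and skip emitting a gapic_library target when it returns False. A production version would more likely rely on the proto descriptor or the service yaml, as suggested above.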

|

1.0

|

feat: do not automatically generate gapic_library targets for protos that don't have a service - We currently generate gapic_library targets in the BUILD file for all protos, including protos that only contain messages (i.e. do not contain a service). We do not need to do that for protos that do not contain services.

We could either parse the proto itself for a service, or look at the yaml file for APIs being referenced to determine whether gapic_library targets need to be included.

|

non_test

|

feat do not automatically generate gapic library targets for protos that don t have a service we currently generate gapic library targets in the build file for all protos including protos that only contain messages e g do not contain a service we do not need to do that for protos that do not contain services we could either parse the proto itself for a service or look at the yaml file for apis being referenced to determine whether gapic library targets need to be included

| 0

|

165,099

| 20,574,159,560

|

IssuesEvent

|

2022-03-04 01:26:35

|

renfei/renfei-java-sdk

|

https://api.github.com/repos/renfei/renfei-java-sdk

|

opened

|

WS-2022-0089 (High) detected in nokogiri-1.11.5.gem

|

security vulnerability

|

## WS-2022-0089 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.11.5.gem</b></p></summary>

<p>Nokogiri (鋸) makes it easy and painless to work with XML and HTML from Ruby. It provides a

sensible, easy-to-understand API for reading, writing, modifying, and querying documents. It is

fast and standards-compliant by relying on native parsers like libxml2 (C) and xerces (Java).

</p>

<p>Library home page: <a href="https://rubygems.org/gems/nokogiri-1.11.5.gem">https://rubygems.org/gems/nokogiri-1.11.5.gem</a></p>

<p>

Dependency Hierarchy:

- w3c_validators-1.3.5.gem (Root Library)

- :x: **nokogiri-1.11.5.gem** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Nokogiri before version 1.13.2 is vulnerable.

<p>Publish Date: 2022-03-01

<p>URL: <a href=https://github.com/sparklemotion/nokogiri/commit/472913378794b8cae21751b0777205e7c0606a95>WS-2022-0089</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
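
For readers who want to check the 8.8 figure, the following Python sketch recomputes the base score from the metrics listed above using the CVSS v3.0 base score equations. It is an illustration only, not WhiteSource's tooling: the function and table names are made up for this example, and it handles only the Scope: Unchanged case reported here.

```python
import math

# Metric weights from the CVSS v3.0 specification (Scope: Unchanged values for PR).
AV = {"Network": 0.85, "Adjacent": 0.62, "Local": 0.55, "Physical": 0.2}
AC = {"Low": 0.77, "High": 0.44}
PR = {"None": 0.85, "Low": 0.62, "High": 0.27}
UI = {"None": 0.85, "Required": 0.62}
CIA = {"High": 0.56, "Low": 0.22, "None": 0.0}


def roundup(value: float) -> float:
    """Round up to one decimal place, as the v3.0 spec describes.

    CVSS v3.1 defines a more careful rounding to avoid floating-point
    surprises; this simple form is enough for the vectors shown here.
    """
    return math.ceil(value * 10) / 10


def base_score_scope_unchanged(av, ac, pr, ui, c, i, a) -> float:
    """Hypothetical helper: CVSS v3.0 base score when Scope is Unchanged."""
    iss = 1 - (1 - CIA[c]) * (1 - CIA[i]) * (1 - CIA[a])
    impact = 6.42 * iss
    exploitability = 8.22 * AV[av] * AC[ac] * PR[pr] * UI[ui]
    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


# Metrics from this report: Network / Low / None / Required, C/I/A all High.
print(base_score_scope_unchanged(
    "Network", "Low", "None", "Required", "High", "High", "High"))  # 8.8
```

Here impact is 6.42 × 0.914816 ≈ 5.873 and exploitability is ≈ 2.835, so the sum ≈ 8.708 rounds up to 8.8.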

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sparklemotion/nokogiri/security/advisories/GHSA-fq42-c5rg-92c2">https://github.com/sparklemotion/nokogiri/security/advisories/GHSA-fq42-c5rg-92c2</a></p>

<p>Release Date: 2022-03-01</p>

<p>Fix Resolution: nokogiri - v1.13.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

WS-2022-0089 (High) detected in nokogiri-1.11.5.gem - ## WS-2022-0089 - High Severity Vulnerability