| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

224,624

| 17,762,281,746

|

IssuesEvent

|

2021-08-29 22:56:01

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

TEST: audit uses of maybeWarnsRegex

|

module: tests triaged

|

Follow-on for #47624, which created an `assertWarnsOnceRegex` context manager to test C-level `TORCH_WARN_ONCE`. All the places that currently use `maybeWarnsRegex` should be switched to `assertWarnsOnceRegex`, and any untested `TORCH_WARN_ONCE` code should be covered by a test.

cc @mruberry @VitalyFedyunin @walterddr

|

1.0

|

TEST: audit uses of maybeWarnsRegex - Follow-on for #47624, which created an `assertWarnsOnceRegex` context manager to test C-level `TORCH_WARN_ONCE`. All the places that currently use `maybeWarnsRegex` should be switched to `assertWarnsOnceRegex`, and any untested `TORCH_WARN_ONCE` code should be covered by a test.

cc @mruberry @VitalyFedyunin @walterddr

|

test

|

test audit uses of maybewarnsregex follow on for which created an assertwarnsonceregex context manager to test c level torch warn once all the places that currently use maybewarnsregex should be replaced with the assrtwarnsonceregex and any untested torch warn once code should be covered by a test cc mruberry vitalyfedyunin walterddr

| 1

|

265,789

| 23,198,241,726

|

IssuesEvent

|

2022-08-01 18:36:36

|

metrico/qryn

|

https://api.github.com/repos/metrico/qryn

|

closed

|

qryn managed alert doesn't get triggered.

|

help wanted needs testing

|

Hello again and good day to you.

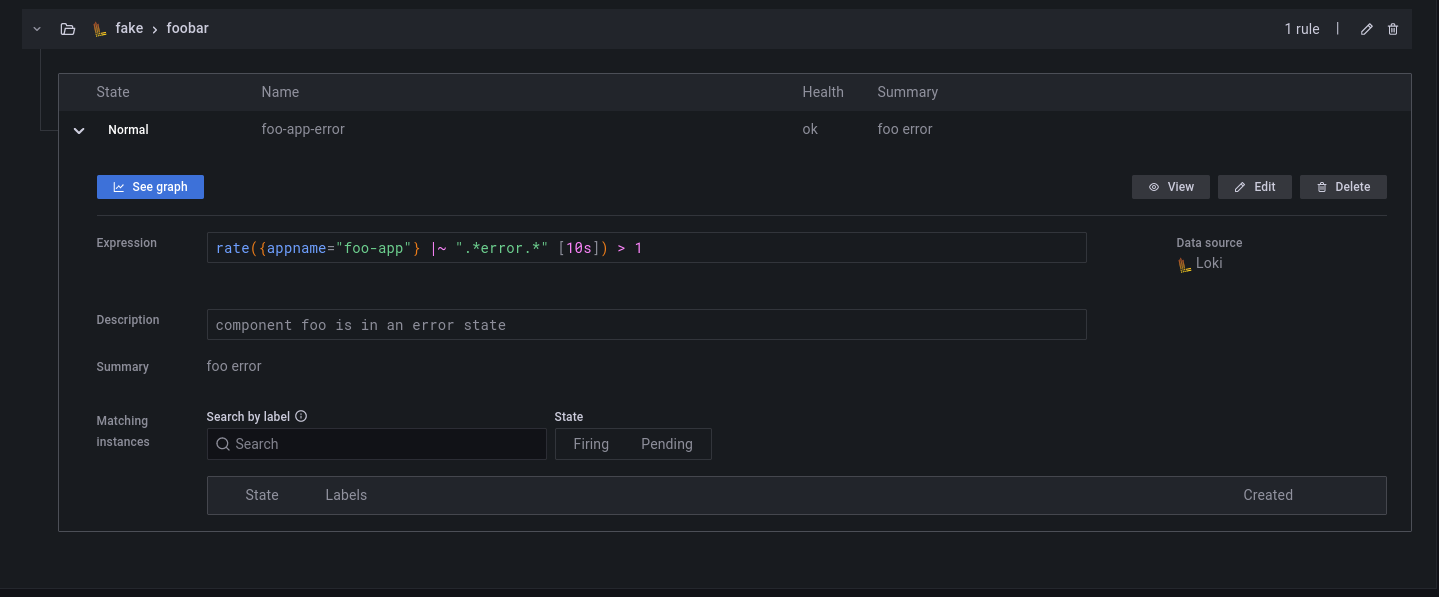

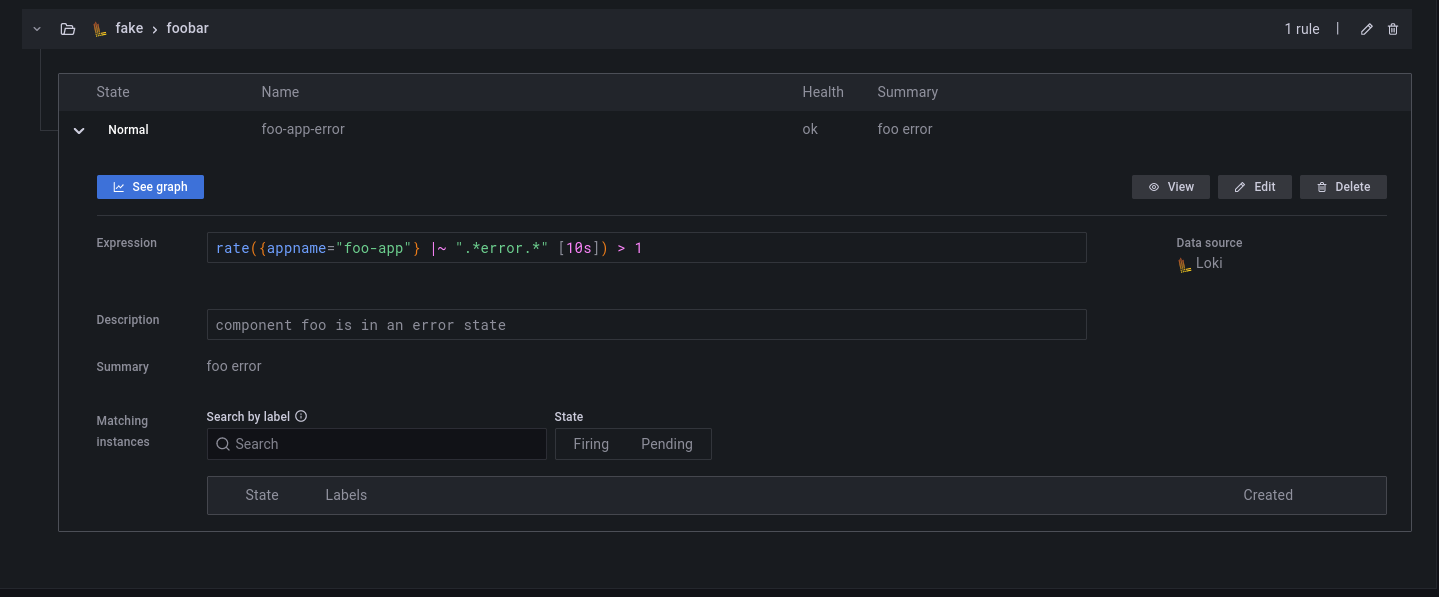

I've created an alerting rule managed by `qryn` via [this guide](https://github.com/metrico/qryn/wiki/Ruler---Alerts#-qryn-ruler--alert-manager) and it looks like this:

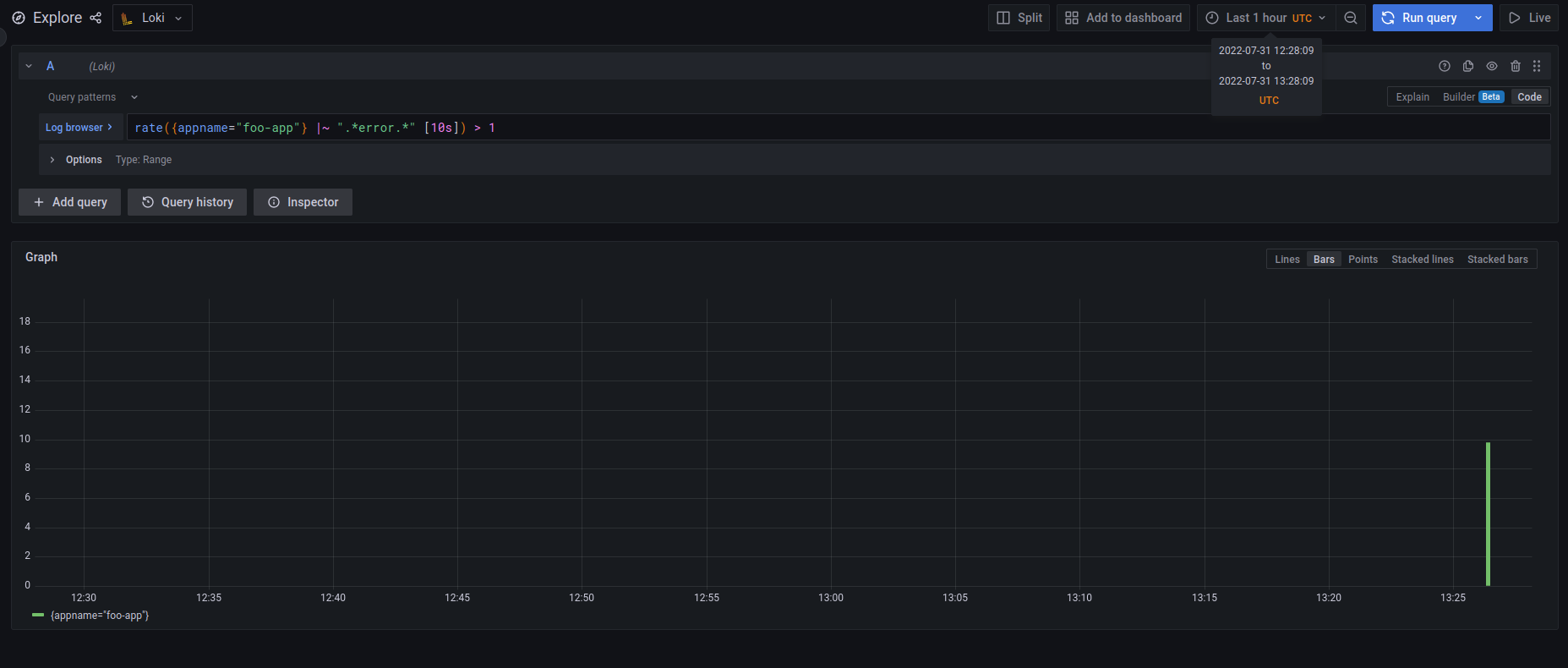

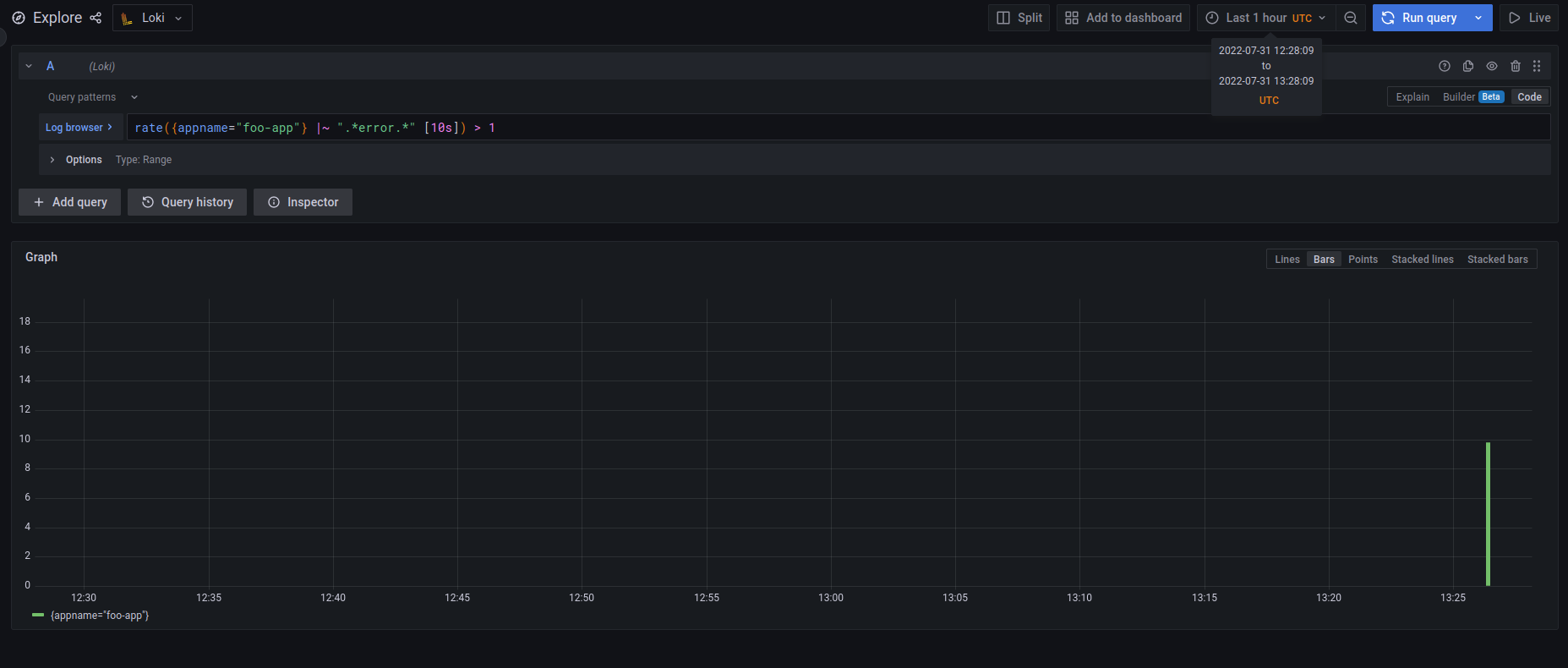

The problem is that when I tested the rule by manually inserting some logs that match the condition, the alert didn't change state from normal to pending and then to firing, even though clicking on the graph shows that the condition was definitely matched, as can be seen in the picture below:

I've tested some other expressions and log queries but the state doesn't change at all. As a matter of fact, the `normal` string in the first picture looks a bit weird since it doesn't have the green box around it.

In addition, I tried to see if the rule is actually inserted into qryn and it was:

```bash
curl -i -XGET -H "Content-Type: application/json" http://<SOME_IP>:<SOME_PORT>/api/prom/rules

HTTP/1.1 200 OK
vary: Origin
access-control-allow-origin: *
content-type: yaml
content-length: 288
Date: Sun, 31 Jul 2022 13:36:20 GMT
Connection: keep-alive
Keep-Alive: timeout=5

fake:
  - interval: 1s
    name: foobar
    rules:
      - alert: foo-app-error
        expr: rate({appname="foo-app"} |~ ".*error.*" [10s]) > 1
        for: 1m
        annotations:
          description: component foo is in an error state
          summary: foo error
        labels: {}
```

My Grafana notifications and routing are configured correctly, in fact, I tried the same expression but with a Grafana managed alerting rule and it worked just fine.

- Am I doing it right? Maybe I've misconfigured something (I'm open to sharing more information if needed.)

- Isn't there any other way to insert and add `qryn` managed alerting rules other than Grafana GUI and the API? (not related to this issue just asking).

|

1.0

|

qryn managed alert doesn't get triggered. - Hello again and good day to you.

I've created an alerting rule managed by `qryn` via [this guide](https://github.com/metrico/qryn/wiki/Ruler---Alerts#-qryn-ruler--alert-manager) and it looks like this:

The problem is that when I tested the rule by manually inserting some logs that match the condition, the alert didn't change state from normal to pending and then to firing, even though clicking on the graph shows that the condition was definitely matched, as can be seen in the picture below:

I've tested some other expressions and log queries but the state doesn't change at all. As a matter of fact, the `normal` string in the first picture looks a bit weird since it doesn't have the green box around it.

In addition, I tried to see if the rule is actually inserted into qryn and it was:

```bash
curl -i -XGET -H "Content-Type: application/json" http://<SOME_IP>:<SOME_PORT>/api/prom/rules

HTTP/1.1 200 OK
vary: Origin
access-control-allow-origin: *
content-type: yaml
content-length: 288
Date: Sun, 31 Jul 2022 13:36:20 GMT
Connection: keep-alive
Keep-Alive: timeout=5

fake:
  - interval: 1s
    name: foobar
    rules:
      - alert: foo-app-error
        expr: rate({appname="foo-app"} |~ ".*error.*" [10s]) > 1
        for: 1m
        annotations:
          description: component foo is in an error state
          summary: foo error
        labels: {}
```

My Grafana notifications and routing are configured correctly, in fact, I tried the same expression but with a Grafana managed alerting rule and it worked just fine.

- Am I doing it right? Maybe I've misconfigured something (I'm open to sharing more information if needed.)

- Isn't there any other way to insert and add `qryn` managed alerting rules other than Grafana GUI and the API? (not related to this issue just asking).

|

test

|

qryn managed alert doesn t get triggered hello again and good day to you i ve created an alerting rule managed by qryn via and it looks like this the problem is when i wanted to test it by manually inserting some logs to match the condition the alert didn t change state from normal to pending and then firing even though when you click on the graph it shows that it has definitely matched the condition as it can be seen in the below picture i ve tested some other expressions and log queries but the state doesn t change at all as a matter of fact the normal string in the first picture looks a bit weird since it doesn t have the green box around it in addition i tried to see if the rule is actually inserted into qryn and it was bash curl i xget h content type application json http ok vary origin access control allow origin content type yaml content length date sun jul gmt connection keep alive keep alive timeout fake interval name foobar rules alert foo app error expr rate appname foo app error for annotations description component foo is in an error state summary foo error labels my grafana notifications and routing are configured correctly in fact i tried the same expression but with a grafana managed alerting rule and it worked just fine am i doing it right maybe i ve misconfigured something i m open to sharing more information if needed isn t there any other way to insert and add qryn managed alerting rules other than grafana gui and the api not related to this issue just asking

| 1

|

678,769

| 23,210,135,937

|

IssuesEvent

|

2022-08-02 09:24:50

|

testomatio/app

|

https://api.github.com/repos/testomatio/app

|

closed

|

Add the ability to choose where to send the results of the wound run

|

enhancement reporting ci\cd priority medium

|

**Is your feature request related to a problem? Please describe.**

Sometimes tests fail not because they are bad but because there are problems on the test bench. In that case, when you retrieve the failed tests, it is not always necessary to create a new run, which clogs the run table.

**Describe the solution you'd like**

Add the ability to choose where to send the results of the wound run: either create a new run or reuse the same one.

|

1.0

|

Add the ability to choose where to send the results of the wound run - **Is your feature request related to a problem? Please describe.**

Sometimes tests fail not because they are bad but because there are problems on the test bench. In that case, when you retrieve the failed tests, it is not always necessary to create a new run, which clogs the run table.

**Describe the solution you'd like**

Add the ability to choose where to send the results of the wound run: either create a new run or reuse the same one.

|

non_test

|

add the ability to choose where to send the results of the wound run is your feature request related to a problem please describe sometimes the tests fail not because they are bad but because there are problems on the test bench in this regard when you retrieve tests that fail it is not always necessary to create a new run clogging the run table describe the solution you d like add the ability or choice of where to send the results of the wound whether to create a new use the same

| 0

|

81,832

| 7,805,226,455

|

IssuesEvent

|

2018-06-11 10:05:55

|

ODIQueensland/data-curator

|

https://api.github.com/repos/ODIQueensland/data-curator

|

closed

|

UAT v0.17.0

|

i:User-Acceptance-Test

|

Sponsor to user acceptance test Data Curator

- review [acceptance tests](https://app.cucumber.pro/projects/data-curator/documents/branch/master)

- [download](https://github.com/ODIQueensland/data-curator/releases), install, and test Data Curator

- [report issues](https://github.com/ODIQueensland/data-curator/issues/new?template=bug.md&labels=problem:Bug&assignee=Stephen-Gates)

cc: @louisjasek

|

1.0

|

UAT v0.17.0 - Sponsor to user acceptance test Data Curator

- review [acceptance tests](https://app.cucumber.pro/projects/data-curator/documents/branch/master)

- [download](https://github.com/ODIQueensland/data-curator/releases), install, and test Data Curator

- [report issues](https://github.com/ODIQueensland/data-curator/issues/new?template=bug.md&labels=problem:Bug&assignee=Stephen-Gates)

cc: @louisjasek

|

test

|

uat sponsor to user acceptance test data curator review install and test data curator cc louisjasek

| 1

|

237,745

| 19,671,112,662

|

IssuesEvent

|

2022-01-11 07:23:10

|

purefun/today-i-learned

|

https://api.github.com/repos/purefun/today-i-learned

|

closed

|

t.Helper() for assertion helper function

|

golang testing

|

Calling `t.Helper()` makes a failure report the line number of the `assertCorrectMessage` caller instead of the line of the `t.Errorf` call inside the helper.

```go
func TestHello(t *testing.T) {
	assertCorrectMessage := func(t testing.TB, got, want string) {
		t.Helper()
		if got != want {
			t.Errorf("got %q want %q", got, want)
		}
	}

	// test 1
	t.Run("saying hello to people", func(t *testing.T) {
		got := Hello("Chris")
		want := "Hello, Chris"
		assertCorrectMessage(t, got, want)
	})

	// test 2
	t.Run("empty string defaults to 'World'", func(t *testing.T) {
		got := Hello("")
		want := "Hello, World"
		assertCorrectMessage(t, got, want)
	})
}
```

|

1.0

|

t.Helper() for assertion helper function - Calling `t.Helper()` makes a failure report the line number of the `assertCorrectMessage` caller instead of the line of the `t.Errorf` call inside the helper.

```go
func TestHello(t *testing.T) {
	assertCorrectMessage := func(t testing.TB, got, want string) {
		t.Helper()
		if got != want {
			t.Errorf("got %q want %q", got, want)
		}
	}

	// test 1
	t.Run("saying hello to people", func(t *testing.T) {
		got := Hello("Chris")
		want := "Hello, Chris"
		assertCorrectMessage(t, got, want)
	})

	// test 2
	t.Run("empty string defaults to 'World'", func(t *testing.T) {
		got := Hello("")
		want := "Hello, World"
		assertCorrectMessage(t, got, want)
	})
}
```

|

test

|

t helper for assertion helper function t helper will report assertcorrectmessage callers line number instead of t errorf s go func testhello t testing t assertcorrectmessage func t testing tb got want string t helper if got want t errorf got q want q got want test t run saying hello to people func t testing t got hello chris want hello chris assertcorrectmessage t got want test t run empty string defaults to world func t testing t got hello want hello world assertcorrectmessage t got want

| 1

|

679,934

| 23,250,913,267

|

IssuesEvent

|

2022-08-04 03:33:34

|

MuntashirAkon/AppManager

|

https://api.github.com/repos/MuntashirAkon/AppManager

|

closed

|

Support for market://search

|

Feature Priority: 3 Status: Accepted

|

Add support for `market://search?q=<package-name>` so that people can access searching facility directly from the launcher's search engine (if they support it).

|

1.0

|

Support for market://search - Add support for `market://search?q=<package-name>` so that people can access searching facility directly from the launcher's search engine (if they support it).

|

non_test

|

support for market search add support for market search q so that people can access searching facility directly from the launcher s search engine if they support it

| 0

|

298,720

| 25,851,638,687

|

IssuesEvent

|

2022-12-13 10:47:23

|

mozilla-mobile/fenix

|

https://api.github.com/repos/mozilla-mobile/fenix

|

opened

|

[UITests] Track ignored tests from #27262

|

eng:disabled-test eng:ui-test

|

In https://github.com/mozilla-mobile/fenix/pull/27262 we disabled some failing tests after changing the interaction with the homescreen from `SearchDialogFragment`.

Failing tests were ignored with:

`@Ignore("Failing after changing SearchDialog homescreen interaction. See: https://github.com/mozilla-mobile/fenix/issues/??")`

cc @AndiAJ @sv-ohorvath

|

2.0

|

[UITests] Track ignored tests from #27262 - In https://github.com/mozilla-mobile/fenix/pull/27262 we disabled some failing tests after changing the interaction with the homescreen from `SearchDialogFragment`.

Failing tests were ignored with:

`@Ignore("Failing after changing SearchDialog homescreen interaction. See: https://github.com/mozilla-mobile/fenix/issues/??")`

cc @AndiAJ @sv-ohorvath

|

test

|

track ignored tests from in we disabled some failing tests after changing the interaction with the homescreen from searchdialogfragment failing tests were ignored with ignore failing after changing searchdialog homescreen interaction see cc andiaj sv ohorvath

| 1

|

84,709

| 7,930,151,671

|

IssuesEvent

|

2018-07-06 17:39:42

|

brave/browser-laptop

|

https://api.github.com/repos/brave/browser-laptop

|

closed

|

Lazy load Tor instead of at startup

|

QA/test-plan-specified feature/tor release-notes/include release/blocking

|

## Test plan

See https://github.com/brave/browser-laptop/pull/14668

### Description

Currently Tor is always loaded at startup, but not everyone wants Tor.

This negatively affects startup time and also takes away the option of not using Tor, which is especially important for corporate users.

### Steps to Reproduce

1. npm start

**Actual result:**

You can see Tor logging

**Expected result:**

You only see Tor logging once the first private tab is opened.

**Reproduces how often:**

Always at startup

### Brave Version

**about:brave info:**

Brave: 0.23.19

V8: 6.7.288.46

rev: 178c3fb

Muon: 7.1.3

OS Release: 17.5.0

Update Channel: Release

OS Architecture: x64

OS Platform: macOS

Node.js: 7.9.0

Tor: 0.3.3.7 (git-035a35178c92da94)

Brave Sync: v1.4.2

libchromiumcontent: 67.0.3396.87

**Reproducible on current live release:**

Yes

### Additional Information

<!--

Any additional information, related issues, extra QA steps, configuration or data that might be necessary to reproduce the issue.

-->

|

1.0

|

Lazy load Tor instead of at startup - ## Test plan

See https://github.com/brave/browser-laptop/pull/14668

### Description

Currently Tor is always loaded at startup, but not everyone wants Tor.

This negatively affects startup time and also takes away the option of not using Tor, which is especially important for corporate users.

### Steps to Reproduce

1. npm start

**Actual result:**

You can see Tor logging

**Expected result:**

You only see Tor logging once the first private tab is opened.

**Reproduces how often:**

Always at startup

### Brave Version

**about:brave info:**

Brave: 0.23.19

V8: 6.7.288.46

rev: 178c3fb

Muon: 7.1.3

OS Release: 17.5.0

Update Channel: Release

OS Architecture: x64

OS Platform: macOS

Node.js: 7.9.0

Tor: 0.3.3.7 (git-035a35178c92da94)

Brave Sync: v1.4.2

libchromiumcontent: 67.0.3396.87

**Reproducible on current live release:**

Yes

### Additional Information

<!--

Any additional information, related issues, extra QA steps, configuration or data that might be necessary to reproduce the issue.

-->

|

test

|

lazy load tor instead of at startup test plan see description currently tor is loaded always at startup but not everyone wants tor this negatively affects startup time and also bypasses the ability to not use tor which is important especially for corporate users steps to reproduce npm start actual result you can see tor logging expected result you only see tor logging once the first private tab is opened reproduces how often always at startup brave version about brave info brave rev muon os release update channel release os architecture os platform macos node js tor git brave sync libchromiumcontent reproducible on current live release yes additional information any additional information related issues extra qa steps configuration or data that might be necessary to reproduce the issue

| 1

|

591,335

| 17,837,203,340

|

IssuesEvent

|

2021-09-03 04:04:56

|

bleachbit/bleachbit

|

https://api.github.com/repos/bleachbit/bleachbit

|

closed

|

Persistent error when deleting (Windows Defender backups)

|

bug priority:high

|

Bleachbit 4.0.0 on Windows 10.

I receive the following errors when executing a clean; no errors show up during preview.

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpasbase.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpasdlta.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpavbase.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpavdlta.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpengine.dll

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpengine.lkg

|

1.0

|

Persistent error when deleting (Windows Defender backups) - Bleachbit 4.0.0 on Windows 10.

I receive the following errors when executing a clean; no errors show up during preview.

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpasbase.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpasdlta.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpavbase.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpavdlta.vdm

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpengine.dll

[WinError 5] Access is denied.: Command to delete C:\ProgramData\Microsoft\Windows Defender\Definition Updates\Backup\mpengine.lkg

|

non_test

|

persistent error when deleting windows defender backups bleachbit on windows receive the following error during execution of clean no errors show up during preview access is denied command to delete c programdata microsoft windows defender definition updates backup mpasbase vdm access is denied command to delete c programdata microsoft windows defender definition updates backup mpasdlta vdm access is denied command to delete c programdata microsoft windows defender definition updates backup mpavbase vdm access is denied command to delete c programdata microsoft windows defender definition updates backup mpavdlta vdm access is denied command to delete c programdata microsoft windows defender definition updates backup mpengine dll access is denied command to delete c programdata microsoft windows defender definition updates backup mpengine lkg

| 0

|

291,784

| 25,175,086,746

|

IssuesEvent

|

2022-11-11 08:30:42

|

apache/pulsar

|

https://api.github.com/repos/apache/pulsar

|

closed

|

Intermittent test failure: DispatcherBlockConsumerTest.testConsumerBlockingWithUnAckedMessagesAndRedelivery

|

type/bug help-wanted component/test triage/week-43 lifecycle/stale

|

[build](https://builds.apache.org/job/pulsar-pull-request/org.apache.pulsar$pulsar-broker/681/testReport/junit/org.apache.pulsar.client.api/DispatcherBlockConsumerTest/testConsumerBlockingWithUnAckedMessagesAndRedelivery/)

```

Error Message

expected [true] but found [false]

Stacktrace

java.lang.AssertionError: expected [true] but found [false]

at org.testng.Assert.fail(Assert.java:94)

at org.testng.Assert.failNotEquals(Assert.java:494)

at org.testng.Assert.assertTrue(Assert.java:42)

at org.testng.Assert.assertTrue(Assert.java:52)

at org.apache.pulsar.client.api.DispatcherBlockConsumerTest.testConsumerBlockingWithUnAckedMessagesAndRedelivery(DispatcherBlockConsumerTest.java:288)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.testng.internal.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:84)

at org.testng.internal.InvokeMethodRunnable.runOne(InvokeMethodRunnable.java:46)

at org.testng.internal.InvokeMethodRunnable.run(InvokeMethodRunnable.java:37)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

```

|

1.0

|

Intermittent test failure: DispatcherBlockConsumerTest.testConsumerBlockingWithUnAckedMessagesAndRedelivery - [build](https://builds.apache.org/job/pulsar-pull-request/org.apache.pulsar$pulsar-broker/681/testReport/junit/org.apache.pulsar.client.api/DispatcherBlockConsumerTest/testConsumerBlockingWithUnAckedMessagesAndRedelivery/)

```

Error Message

expected [true] but found [false]

Stacktrace

java.lang.AssertionError: expected [true] but found [false]

at org.testng.Assert.fail(Assert.java:94)

at org.testng.Assert.failNotEquals(Assert.java:494)

at org.testng.Assert.assertTrue(Assert.java:42)

at org.testng.Assert.assertTrue(Assert.java:52)

at org.apache.pulsar.client.api.DispatcherBlockConsumerTest.testConsumerBlockingWithUnAckedMessagesAndRedelivery(DispatcherBlockConsumerTest.java:288)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.testng.internal.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:84)

at org.testng.internal.InvokeMethodRunnable.runOne(InvokeMethodRunnable.java:46)

at org.testng.internal.InvokeMethodRunnable.run(InvokeMethodRunnable.java:37)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

```

|

test

|

intermittent test failure dispatcherblockconsumertest testconsumerblockingwithunackedmessagesandredelivery error message expected but found stacktrace java lang assertionerror expected but found at org testng assert fail assert java at org testng assert failnotequals assert java at org testng assert asserttrue assert java at org testng assert asserttrue assert java at org apache pulsar client api dispatcherblockconsumertest testconsumerblockingwithunackedmessagesandredelivery dispatcherblockconsumertest java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org testng internal methodinvocationhelper invokemethod methodinvocationhelper java at org testng internal invokemethodrunnable runone invokemethodrunnable java at org testng internal invokemethodrunnable run invokemethodrunnable java at java util concurrent executors runnableadapter call executors java at java util concurrent futuretask run futuretask java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java

| 1

|

218,102

| 16,943,819,826

|

IssuesEvent

|

2021-06-28 01:55:11

|

alibaba/nacos

|

https://api.github.com/repos/alibaba/nacos

|

closed

|

Add unit tests for package com.alibaba.nacos.client.utils in nacos 2.0

|

area/Test

|

This is a sub-issue of [ISSUE #5011]

|

1.0

|

Add unit tests for package com.alibaba.nacos.client.utils in nacos 2.0 - This is a sub-issue of [ISSUE #5011]

|

test

|

add unit tests for package com alibaba nacos client utils in nacos this is a sub issue of

| 1

|

251,782

| 21,522,977,098

|

IssuesEvent

|

2022-04-28 15:40:54

|

erikpl/SDG-ontology-visualizer

|

https://api.github.com/repos/erikpl/SDG-ontology-visualizer

|

reopened

|

Make usertest

|

i18n testing rollover

|

- [ ] Make testable Figma prototype

- [ ] Decide type of user test

- [ ] Final changes before user test 07/04/22

|

1.0

|

Make usertest - - [ ] Make testable Figma prototype

- [ ] Decide type of user test

- [ ] Final changes before user test 07/04/22

|

test

|

make usertest make testable figma prototype decide type of user test final changes before user test

| 1

|

256,891

| 22,108,942,353

|

IssuesEvent

|

2022-06-01 19:23:23

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

roachtest: jepsen/g2/start-stop-2 failed

|

C-test-failure O-robot O-roachtest branch-master release-blocker T-kv

|

roachtest.jepsen/g2/start-stop-2 [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/5336174?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/5336174?buildTab=artifacts#/jepsen/g2/start-stop-2) on master @ [1cea73c8a18623949b81705eb5f75179e6cd8d86](https://github.com/cockroachdb/cockroach/commits/1cea73c8a18623949b81705eb5f75179e6cd8d86):

```

| initialize submodules in the clone

| -j, --jobs <n> number of submodules cloned in parallel

| --template <template-directory>

| directory from which templates will be used

| --reference <repo> reference repository

| --reference-if-able <repo>

| reference repository

| --dissociate use --reference only while cloning

| -o, --origin <name> use <name> instead of 'origin' to track upstream

| -b, --branch <branch>

| checkout <branch> instead of the remote's HEAD

| -u, --upload-pack <path>

| path to git-upload-pack on the remote

| --depth <depth> create a shallow clone of that depth

| --shallow-since <time>

| create a shallow clone since a specific time

| --shallow-exclude <revision>

| deepen history of shallow clone, excluding rev

| --single-branch clone only one branch, HEAD or --branch

| --no-tags don't clone any tags, and make later fetches not to follow them

| --shallow-submodules any cloned submodules will be shallow

| --separate-git-dir <gitdir>

| separate git dir from working tree

| -c, --config <key=value>

| set config inside the new repository

| --server-option <server-specific>

| option to transmit

| -4, --ipv4 use IPv4 addresses only

| -6, --ipv6 use IPv6 addresses only

| --filter <args> object filtering

| --remote-submodules any cloned submodules will use their remote-tracking branch

| --sparse initialize sparse-checkout file to include only files at root

|

|

| stdout:

Wraps: (6) COMMAND_PROBLEM

Wraps: (7) Node 6. Command with error:

| ``````

| bash -e -c '

| if ! test -d /mnt/data1/jepsen; then

| git clone -b tc-nightly --depth 1 https://github.com/cockroachdb/jepsen /mnt/data1/jepsen --add safe.directory /mnt/data1/jepsen

| else

| cd /mnt/data1/jepsen

| git fetch origin

| git checkout origin/tc-nightly

| fi

| '

| ``````

Wraps: (8) exit status 129

Error types: (1) *withstack.withStack (2) *errutil.withPrefix (3) *withstack.withStack (4) *errutil.withPrefix (5) *cluster.WithCommandDetails (6) errors.Cmd (7) *hintdetail.withDetail (8) *exec.ExitError

```

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/kv-triage

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*jepsen/g2/start-stop-2.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

|

2.0

|

roachtest: jepsen/g2/start-stop-2 failed - roachtest.jepsen/g2/start-stop-2 [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/5336174?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/5336174?buildTab=artifacts#/jepsen/g2/start-stop-2) on master @ [1cea73c8a18623949b81705eb5f75179e6cd8d86](https://github.com/cockroachdb/cockroach/commits/1cea73c8a18623949b81705eb5f75179e6cd8d86):

```

| initialize submodules in the clone

| -j, --jobs <n> number of submodules cloned in parallel

| --template <template-directory>

| directory from which templates will be used

| --reference <repo> reference repository

| --reference-if-able <repo>

| reference repository

| --dissociate use --reference only while cloning

| -o, --origin <name> use <name> instead of 'origin' to track upstream

| -b, --branch <branch>

| checkout <branch> instead of the remote's HEAD

| -u, --upload-pack <path>

| path to git-upload-pack on the remote

| --depth <depth> create a shallow clone of that depth

| --shallow-since <time>

| create a shallow clone since a specific time

| --shallow-exclude <revision>

| deepen history of shallow clone, excluding rev

| --single-branch clone only one branch, HEAD or --branch

| --no-tags don't clone any tags, and make later fetches not to follow them

| --shallow-submodules any cloned submodules will be shallow

| --separate-git-dir <gitdir>

| separate git dir from working tree

| -c, --config <key=value>

| set config inside the new repository

| --server-option <server-specific>

| option to transmit

| -4, --ipv4 use IPv4 addresses only

| -6, --ipv6 use IPv6 addresses only

| --filter <args> object filtering

| --remote-submodules any cloned submodules will use their remote-tracking branch

| --sparse initialize sparse-checkout file to include only files at root

|

|

| stdout:

Wraps: (6) COMMAND_PROBLEM

Wraps: (7) Node 6. Command with error:

| ``````

| bash -e -c '

| if ! test -d /mnt/data1/jepsen; then

| git clone -b tc-nightly --depth 1 https://github.com/cockroachdb/jepsen /mnt/data1/jepsen --add safe.directory /mnt/data1/jepsen

| else

| cd /mnt/data1/jepsen

| git fetch origin

| git checkout origin/tc-nightly

| fi

| '

| ``````

Wraps: (8) exit status 129

Error types: (1) *withstack.withStack (2) *errutil.withPrefix (3) *withstack.withStack (4) *errutil.withPrefix (5) *cluster.WithCommandDetails (6) errors.Cmd (7) *hintdetail.withDetail (8) *exec.ExitError

```
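A possible reading of the failure above, offered as a sketch rather than a confirmed diagnosis: exit status 129 is what git returns for a usage error, and the `git clone` usage dump in the log fits that. `--add` and `safe.directory` are not `git clone` options, so the trailing `--add safe.directory /mnt/data1/jepsen` looks like the tail of a separate `git config` call that was fused onto the clone line. The version below assumes that intent. The function name `sync_jepsen` and the three variables are hypothetical; the path, branch, and URL are copied from the log.

```shell
#!/bin/sh
# Sketch only: assumes the stray flags were meant to be a separate
# `git config --global --add safe.directory <dir>` invocation.
set -e

JEPSEN_DIR="/mnt/data1/jepsen"                       # path from the log
JEPSEN_REPO="https://github.com/cockroachdb/jepsen"  # URL from the log
JEPSEN_BRANCH="tc-nightly"                           # branch from the log

sync_jepsen() {
  # Register the directory as safe first, so it applies to both branches below.
  # Note: --add appends a new entry on every run.
  git config --global --add safe.directory "$JEPSEN_DIR"

  if ! test -d "$JEPSEN_DIR"; then
    # Fresh node: shallow clone of the nightly branch.
    git clone -b "$JEPSEN_BRANCH" --depth 1 "$JEPSEN_REPO" "$JEPSEN_DIR"
  else
    # Existing checkout: refresh and move to the remote branch tip.
    git -C "$JEPSEN_DIR" fetch origin
    git -C "$JEPSEN_DIR" checkout "origin/$JEPSEN_BRANCH"
  fi
}
```

Calling `sync_jepsen` on the node would then run the clone or the fetch as two ordinary git commands, with no extra arguments passed to `git clone`.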

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/kv-triage

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*jepsen/g2/start-stop-2.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

|

test

|

roachtest jepsen start stop failed roachtest jepsen start stop with on master initialize submodules in the clone j jobs number of submodules cloned in parallel template directory from which templates will be used reference reference repository reference if able reference repository dissociate use reference only while cloning o origin use instead of origin to track upstream b branch checkout instead of the remote s head u upload pack path to git upload pack on the remote depth create a shallow clone of that depth shallow since create a shallow clone since a specific time shallow exclude deepen history of shallow clone excluding rev single branch clone only one branch head or branch no tags don t clone any tags and make later fetches not to follow them shallow submodules any cloned submodules will be shallow separate git dir separate git dir from working tree c config set config inside the new repository server option option to transmit use addresses only use addresses only filter object filtering remote submodules any cloned submodules will use their remote tracking branch sparse initialize sparse checkout file to include only files at root stdout wraps command problem wraps node command with error bash e c if test d mnt jepsen then git clone b tc nightly depth mnt jepsen add safe directory mnt jepsen else cd mnt jepsen git fetch origin git checkout origin tc nightly fi wraps exit status error types withstack withstack errutil withprefix withstack withstack errutil withprefix cluster withcommanddetails errors cmd hintdetail withdetail exec exiterror help see see cc cockroachdb kv triage

| 1

|

73,218

| 31,991,628,354

|

IssuesEvent

|

2023-09-21 06:22:58

|

elastic/integrations

|

https://api.github.com/repos/elastic/integrations

|

closed

|

CockroachDb TSDB Enablement

|

Team:Service-Integrations

|

## Test Environment Setup

- [x] Creation of CockroachDb Test Environment.

## Datastream : Status

- [x] Add dimension fields

- https://github.com/elastic/integrations/pull/5479

- [x] #6728

- https://github.com/elastic/integrations/pull/7429

#### Verification and validation

- [x] Verification of data in visualisation after enabling TSDB flag in kibana

- [x] Verification of the count of documents (before & after TSDB enablement) in Discover Interface

- [x] Verify if field mapping is correct in the data stream template.

## Issues

- https://github.com/elastic/kibana/issues/155004. [Blocker for metric_type]

Enable TSDB by default: https://github.com/elastic/integrations/pull/6774

|

1.0

|

CockroachDb TSDB Enablement - ## Test Environment Setup

- [x] Creation of CockroachDb Test Environment.

## Datastream : Status

- [x] Add dimension fields

- https://github.com/elastic/integrations/pull/5479

- [x] #6728

- https://github.com/elastic/integrations/pull/7429

#### Verification and validation

- [x] Verification of data in visualisation after enabling TSDB flag in kibana

- [x] Verification of the count of documents (before & after TSDB enablement) in Discover Interface

- [x] Verify if field mapping is correct in the data stream template.

## Issues

- https://github.com/elastic/kibana/issues/155004. [Blocker for metric_type]

Enable TSDB by default: https://github.com/elastic/integrations/pull/6774

|

non_test

|

cockroachdb tsdb enablement test environment setup creation of cockroachdb test environment datastream status add dimension fields verification and validation verification of data in visualisation after enabling tsdb flag in kibana verification of the count of documents before after tsdb enablement in discover interface verify if field mapping is correct in the data stream template issues enable tsdb by default

| 0

|

267,116

| 8,379,269,718

|

IssuesEvent

|

2018-10-06 23:18:00

|

otrv4/pidgin-otrng

|

https://api.github.com/repos/otrv4/pidgin-otrng

|

closed

|

Managing persistent values

|

high priority needs clarification question

|

Some ideas:

- if the private key file is deleted, the client profile, prekey profile and prekey messages should be regenerated as well.

- if the forging key file is deleted, the client profile and (maybe) prekey profile should be regenerated as well.

- if the shared prekey file is deleted, the prekey profile should be regenerated as well.

- if the prekey messages are deleted, should we delete the published ones as well?

|

1.0

|

Managing persistent values - Some ideas:

- if the private key file is deleted, the client profile, prekey profile and prekey messages should be regenerated as well.

- if the forging key file is deleted, the client profile and (maybe) prekey profile should be regenerated as well.

- if the shared prekey file is deleted, the prekey profile should be regenerated as well.

- if the prekey messages are deleted, should we delete the published ones as well?

|

non_test

|

managing persistent values some ideas if the private key file is deleted the client profile prekey profile and prekey messages should be regenerated as well if the forging key file is deleted the client profile and maybe prekey profile should be regenerated as well if the shared prekey file is deleted the prekey profile should be regenerated as well if the prekey messages are deleted should we deleted the published ones as well

| 0

|

177,323

| 13,691,903,361

|

IssuesEvent

|

2020-09-30 16:08:29

|

phetsims/QA

|

https://api.github.com/repos/phetsims/QA

|

closed

|

RC test: Energy Forms and Changes 1.4.0-rc.2

|

QA:rc-test

|

<!---

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

~~ PhET Release Candidate Test Template ~~

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

Notes and Instructions for Developers:

1. Comments indicate whether something can be omitted or edited.

2. Please check the comments before trying to omit or edit something.

3. Please don't rearrange the sections.

-->

@KatieWoe, @arouinfar, @ariel-phet, @kathy-phet, energy-forms-and-changes/1.4.0-rc.2 is ready for RC testing. The phet-io version of this release will be shared with a client. The publication due date is October 1st. This is the 2nd release from the 1.4 release branch, but the code changes since the previous release were quite significant, so a full retest is needed. There are also several fixed issues that should be checked (these are listed in two of the sections below). Please document issues in https://github.com/phetsims/energy-forms-and-changes/issues and link to this issue.

Assigning to @ariel-phet for prioritization.

<!---

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

// Section 1: General RC Testing [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED]

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

-->

<details>

<summary><b>General RC Test</b></summary>

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>What to Test</h3>

- Click every single button.

- Test all possible forms of input.

- Test all mouse/trackpad inputs.

- Test all touchscreen inputs.

- If there is sound, make sure it works.

- Make sure you can't lose anything.

- Play with the sim normally.

- Try to break the sim.

- Test all query parameters on all platforms. (See [QA Book](https://github.com/phetsims/QA/blob/master/doc/qa-book.md)

for a list of query parameters.)

- Download HTML on Chrome and iOS.

- Make sure the iFrame version of the simulation is working as intended on all platforms.

- Make sure the XHTML version of the simulation is working as intended on all platforms.

- Complete the test matrix.

- Don't forget to make sure the sim works with Legends of Learning.

- Test the Game Up harness on at least one platform.

- Check [this](https://docs.google.com/spreadsheets/d/1umIAmhn89WN1nzcHKhYJcv-n3Oj6ps1wITc-CjWYytE/edit#gid=0) LoL

spreadsheet and notify AR or AM if it is not there.

- If this is rc.2 please do a memory test.

- When making an issue, check to see if it was in a previously published version

- Try to include version numbers for browsers

- If there is a console available, check for errors and include them in the Problem Description.

- As an RC begins and ends, check the sim repo. If there is a maintenance issue, check it and notify developers if

there is a problem.

<!--- [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED] -->

<h3>Issues to Verify</h3>

- [x] [Water temp has delayed reset on second screen](https://github.com/phetsims/energy-forms-and-changes/issues/343)

- [x] [Water energy chunks boil far too early when biker runs out of food](https://github.com/phetsims/energy-forms-and-changes/issues/346)

- [x] [Energy chunks don't appear in falling water if faucet is off](https://github.com/phetsims/energy-forms-and-changes/issues/347)

These issues should have the "status:ready-for-qa" label. Check these issues off and close them if they are fixed.

Otherwise, post a comment in the issue saying that it wasn't fixed and link back to this issue. If the label is

"status:ready-for-review" or "status:fixed-pending-deploy" then assign back to the developer when done, even if fixed.

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>Link(s)</h3>

- **[Simulation](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet/energy-forms-and-changes_all_phet.html)**

- **[iFrame](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet/energy-forms-and-changes_all_iframe_phet.html)**

- **[XHTML](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet/xhtml/energy-forms-and-changes_all.xhtml)**

- **[Test Matrix](https://docs.google.com/spreadsheets/d/19WAm2BOsEg1f8XCLo-PT8eQsGgb3uR9Npt6obPrMiA4/edit#gid=1313829856)**

- **[Legends of Learning Harness](https://developers.legendsoflearning.com/public-harness/index.html?startPayload=%7B%22languageCode%22%3A%22en%22%7D)**

<hr>

</details>

<!---

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

// Section 2: PhET-iO RC Test [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED]

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

-->

<details>

<summary><b>PhET-iO RC Test</b></summary>

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>What to Test</h3>

- Make sure that public files do not have password protection. Use a private browser for this.

- Make sure that private files do have password protection. Use a private browser for this.

- Make sure standalone sim is working properly.

- Make sure the wrapper index is working properly.

- Make sure each wrapper is working properly.

- Launch the simulation in Studio with ?stringTest=xss and make sure the sim doesn't navigate to YouTube.

- For newer PhET-iO wrapper indices, save the "basic example of a functional wrapper" as a .html file and open it. Make

sure the simulation loads without crashing or throwing errors.

- For an update or maintenance release please check the backwards compatibility of the playback wrapper.

[Here's the link to the previous wrapper.](link)

- Load the login wrapper just to make sure it works. Do so by adding this link from the sim deployed root:

```

/wrappers/login/?wrapper=record&validationRule=validateDigits&&numberOfDigits=5&promptText=ENTER_A_5_DIGIT_NUMBER

```

Further instructions in QA Book

- Conduct a recording test to Metacog; further instructions are in the QA Book. Do this for iPadOS + Safari and one other random platform.

- Conduct a memory test on the standalone sim wrapper.

<!--- [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED] -->

<h3>Focus and Special Instructions</h3>

Please pay close attention to loading/setting state (hitting "launch" in Studio), especially on the second screen. Because of some implementation decisions, the loaded state may not match perfectly for all cases, but please bring any behavior that is unexpected to my attention. I think @KatieWoe is getting pretty familiar with what to expect for state on this sim, but feel free to Slack me any time with questions, or make an issue. Thanks!

<!--- [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED] -->

<h3>Issues to Verify</h3>

- [x] [Tea kettle energy chunk preloading](https://github.com/phetsims/energy-forms-and-changes/issues/336)

- [x] [Second screen state](https://github.com/phetsims/energy-forms-and-changes/issues/306)

- [x] [Feed Me button is pressed when launched](https://github.com/phetsims/energy-forms-and-changes/issues/335)

- [x] [Hiding Buttons on Second Screen Hides Connected Element as Well](https://github.com/phetsims/energy-forms-and-changes/issues/348)

- [x] [screen 1 blocks and beakers emitted chunks don't work in state](https://github.com/phetsims/energy-forms-and-changes/issues/361)

- [x] [Studio launch doesn't restore EnergyChunk state like the state wrapper](https://github.com/phetsims/energy-forms-and-changes/issues/362)

- [x] [Memory leak in the view when setting state](https://github.com/phetsims/energy-forms-and-changes/issues/368)

- [x] [Water from faucet can have gaps and other odd-looking behavior](https://github.com/phetsims/energy-forms-and-changes/issues/369)

These issues should have the "status:ready-for-qa" label. Check these issues off and close them if they are fixed.

Otherwise, post a comment in the issue saying that it wasn't fixed and link back to this issue. If the label is

"status:ready-for-review" or "status:fixed-pending-deploy" then assign back to the developer when done, even if fixed.

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>Link(s)</h3>

- **[Wrapper Index](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet-io/)**

- **[Test Matrix](https://docs.google.com/spreadsheets/d/17FYYm5Halt8VVi4vMIsjGsrtELD317S5f0zNUdWqt-A/edit#gid=1474718953)**

<hr>

</details>

<!---

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

// Section 4: FAQs for QA Members [DO NOT OMIT, DO NOT EDIT]

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

-->

<details>

<summary><b>FAQs for QA Members</b></summary>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>There are multiple tests in this issue... Which test should I do first?</i></summary>

Test in order! Test the first thing first, the second thing second, and so on.

</details>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>How should I format my issue?</i></summary>

Here's a template for making issues:

<b>Test Device</b>

blah

<b>Operating System</b>

blah

<b>Browser</b>

blah

<b>Problem Description</b>

blah

<b>Steps to Reproduce</b>

blah

<b>Visuals</b>

blah

<details>

<summary><b>Troubleshooting Information</b></summary>

blah

</details>

</details>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>Who should I assign?</i></summary>

We typically assign the developer who opened the issue in the QA repository.

</details>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>My question isn't in here... What should I do?</i></summary>

You should:

1. Consult the [QA Book](https://github.com/phetsims/QA/blob/master/doc/qa-book.md).

2. Google it.

3. Ask Katie.

4. Ask a developer.

5. Google it again.

6. Cry.

</details>

<br>

<hr>

</details>

|

1.0

|

RC test: Energy Forms and Changes 1.4.0-rc.2 - <!---

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

~~ PhET Release Candidate Test Template ~~

~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

Notes and Instructions for Developers:

1. Comments indicate whether something can be omitted or edited.

2. Please check the comments before trying to omit or edit something.

3. Please don't rearrange the sections.

-->

@KatieWoe, @arouinfar, @ariel-phet, @kathy-phet, energy-forms-and-changes/1.4.0-rc.2 is ready for RC testing. The phet-io version of this release will be shared with a client. The publication due date is October 1st. This is the 2nd release from the 1.4 release branch, but the code changes since the previous release were quite significant, so a full retest is needed. There are also several fixed issues that should be checked (these are listed in two of the sections below). Please document issues in https://github.com/phetsims/energy-forms-and-changes/issues and link to this issue.

Assigning to @ariel-phet for prioritization.

<!---

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

// Section 1: General RC Testing [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED]

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

-->

<details>

<summary><b>General RC Test</b></summary>

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>What to Test</h3>

- Click every single button.

- Test all possible forms of input.

- Test all mouse/trackpad inputs.

- Test all touchscreen inputs.

- If there is sound, make sure it works.

- Make sure you can't lose anything.

- Play with the sim normally.

- Try to break the sim.

- Test all query parameters on all platforms. (See [QA Book](https://github.com/phetsims/QA/blob/master/doc/qa-book.md)

for a list of query parameters.)

- Download HTML on Chrome and iOS.

- Make sure the iFrame version of the simulation is working as intended on all platforms.

- Make sure the XHTML version of the simulation is working as intended on all platforms.

- Complete the test matrix.

- Don't forget to make sure the sim works with Legends of Learning.

- Test the Game Up harness on at least one platform.

- Check [this](https://docs.google.com/spreadsheets/d/1umIAmhn89WN1nzcHKhYJcv-n3Oj6ps1wITc-CjWYytE/edit#gid=0) LoL

spreadsheet and notify AR or AM if it is not there.

- If this is rc.2 please do a memory test.

- When making an issue, check to see if it was in a previously published version

- Try to include version numbers for browsers

- If there is a console available, check for errors and include them in the Problem Description.

- As an RC begins and ends, check the sim repo. If there is a maintenance issue, check it and notify developers if

there is a problem.

<!--- [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED] -->

<h3>Issues to Verify</h3>

- [x] [Water temp has delayed reset on second screen](https://github.com/phetsims/energy-forms-and-changes/issues/343)

- [x] [Water energy chunks boil far too early when biker runs out of food](https://github.com/phetsims/energy-forms-and-changes/issues/346)

- [x] [Energy chunks don't appear in falling water if faucet is off](https://github.com/phetsims/energy-forms-and-changes/issues/347)

These issues should have the "status:ready-for-qa" label. Check these issues off and close them if they are fixed.

Otherwise, post a comment in the issue saying that it wasn't fixed and link back to this issue. If the label is

"status:ready-for-review" or "status:fixed-pending-deploy" then assign back to the developer when done, even if fixed.

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>Link(s)</h3>

- **[Simulation](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet/energy-forms-and-changes_all_phet.html)**

- **[iFrame](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet/energy-forms-and-changes_all_iframe_phet.html)**

- **[XHTML](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet/xhtml/energy-forms-and-changes_all.xhtml)**

- **[Test Matrix](https://docs.google.com/spreadsheets/d/19WAm2BOsEg1f8XCLo-PT8eQsGgb3uR9Npt6obPrMiA4/edit#gid=1313829856)**

- **[Legends of Learning Harness](https://developers.legendsoflearning.com/public-harness/index.html?startPayload=%7B%22languageCode%22%3A%22en%22%7D)**

<hr>

</details>

<!---

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

// Section 2: PhET-iO RC Test [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED]

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

-->

<details>

<summary><b>PhET-iO RC Test</b></summary>

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>What to Test</h3>

- Make sure that public files do not have password protection. Use a private browser for this.

- Make sure that private files do have password protection. Use a private browser for this.

- Make sure standalone sim is working properly.

- Make sure the wrapper index is working properly.

- Make sure each wrapper is working properly.

- Launch the simulation in Studio with ?stringTest=xss and make sure the sim doesn't navigate to YouTube.

- For newer PhET-iO wrapper indices, save the "basic example of a functional wrapper" as a .html file and open it. Make

sure the simulation loads without crashing or throwing errors.

- For an update or maintenance release please check the backwards compatibility of the playback wrapper.

[Here's the link to the previous wrapper.](link)

- Load the login wrapper just to make sure it works. Do so by adding this link from the sim deployed root:

```

/wrappers/login/?wrapper=record&validationRule=validateDigits&&numberOfDigits=5&promptText=ENTER_A_5_DIGIT_NUMBER

```

Further instructions in QA Book

- Conduct a recording test to Metacog; further instructions are in the QA Book. Do this for iPadOS + Safari and one other random platform.

- Conduct a memory test on the standalone sim wrapper.

<!--- [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED] -->

<h3>Focus and Special Instructions</h3>

Please pay close attention to loading/setting state (hitting "launch" in Studio), especially on the second screen. Because of some implementation decisions, the loaded state may not match perfectly for all cases, but please bring any behavior that is unexpected to my attention. I think @KatieWoe is getting pretty familiar with what to expect for state on this sim, but feel free to Slack me any time with questions, or make an issue. Thanks!

<!--- [CAN BE OMITTED, SHOULD BE EDITED IF NOT OMITTED] -->

<h3>Issues to Verify</h3>

- [x] [Tea kettle energy chunk preloading](https://github.com/phetsims/energy-forms-and-changes/issues/336)

- [x] [Second screen state](https://github.com/phetsims/energy-forms-and-changes/issues/306)

- [x] [Feed Me button is pressed when launched](https://github.com/phetsims/energy-forms-and-changes/issues/335)

- [x] [Hiding Buttons on Second Screen Hides Connected Element as Well](https://github.com/phetsims/energy-forms-and-changes/issues/348)

- [x] [screen 1 blocks and beakers emitted chunks don't work in state](https://github.com/phetsims/energy-forms-and-changes/issues/361)

- [x] [Studio launch doesn't restore EnergyChunk state like the state wrapper](https://github.com/phetsims/energy-forms-and-changes/issues/362)

- [x] [Memory leak in the view when setting state](https://github.com/phetsims/energy-forms-and-changes/issues/368)

- [x] [Water from faucet can have gaps and other odd-looking behavior](https://github.com/phetsims/energy-forms-and-changes/issues/369)

These issues should have the "status:ready-for-qa" label. Check these issues off and close them if they are fixed.

Otherwise, post a comment in the issue saying that it wasn't fixed and link back to this issue. If the label is

"status:ready-for-review" or "status:fixed-pending-deploy" then assign back to the developer when done, even if fixed.

<!--- [DO NOT OMIT, CAN BE EDITED] -->

<h3>Link(s)</h3>

- **[Wrapper Index](https://phet-dev.colorado.edu/html/energy-forms-and-changes/1.4.0-rc.2/phet-io/)**

- **[Test Matrix](https://docs.google.com/spreadsheets/d/17FYYm5Halt8VVi4vMIsjGsrtELD317S5f0zNUdWqt-A/edit#gid=1474718953)**

<hr>

</details>

<!---

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

// Section 4: FAQs for QA Members [DO NOT OMIT, DO NOT EDIT]

////////////////////////////////////////////////////////////////////////////////////////////////////////////////////////

-->

<details>

<summary><b>FAQs for QA Members</b></summary>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>There are multiple tests in this issue... Which test should I do first?</i></summary>

Test in order! Test the first thing first, the second thing second, and so on.

</details>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>How should I format my issue?</i></summary>

Here's a template for making issues:

<b>Test Device</b>

blah

<b>Operating System</b>

blah

<b>Browser</b>

blah

<b>Problem Description</b>

blah

<b>Steps to Reproduce</b>

blah

<b>Visuals</b>

blah

<details>

<summary><b>Troubleshooting Information</b></summary>

blah

</details>

</details>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>Who should I assign?</i></summary>

We typically assign the developer who opened the issue in the QA repository.

</details>

<br>

<!--- [DO NOT OMIT, DO NOT EDIT] -->

<details>

<summary><i>My question isn't in here... What should I do?</i></summary>

You should:

1. Consult the [QA Book](https://github.com/phetsims/QA/blob/master/doc/qa-book.md).

2. Google it.

3. Ask Katie.

4. Ask a developer.

5. Google it again.

6. Cry.

</details>

<br>

<hr>

</details>

|

test

|

rc test energy forms and changes rc phet release candidate test template notes and instructions for developers comments indicate whether something can be omitted or edited please check the comments before trying to omit or edit something please don t rearrange the sections katiewoe arouinfar ariel phet kathy phet energy forms and changes rc is ready for rc testing the phet io version of this release will be shared with a client the publication due date is october this is the release from the release branch but the code changes since the previous release were quite significant so a full retest is needed there are also several fixed issues that should be checked these are listed in two of the sections below please document issues in and link to this issue assigning to ariel phet for prioritization section general rc testing general rc test what to test click every single button test all possible forms of input test all mouse trackpad inputs test all touchscreen inputs if there is sound make sure it works make sure you can t lose anything play with the sim normally try to break the sim test all query parameters on all platforms see for a list of query parameters download html on chrome and ios make sure the iframe version of the simulation is working as intended on all platforms make sure the xhtml version of the simulation is working as intended on all platforms complete the test matrix don t forget to make sure the sim works with legends of learning test the game up harness on at least one platform check lol spreadsheet and notify ar or am if it not there if this is rc please do a memory test when making an issue check to see if it was in a previously published version try to include version numbers for browsers if there is a console available check for errors and include them in the problem description as an rc begins and ends check the sim repo if there is a maintenance issue check it and notify developers if there is a problem issues to verify these issues should 
have the status ready for qa label check these issues off and close them if they are fixed otherwise post a comment in the issue saying that it wasn t fixed and link back to this issue if the label is status ready for review or status fixed pending deploy then assign back to the developer when done even if fixed link s section phet io rc test phet io rc test what to test make sure that public files do not have password protection use a private browser for this make sure that private files do have password protection use a private browser for this make sure standalone sim is working properly make sure the wrapper index is working properly make sure each wrapper is working properly launch the simulation in studio with stringtest xss and make sure the sim doesn t navigate to youtube for newer phet io wrapper indices save the basic example of a functional wrapper as a html file and open it make sure the simulation loads without crashing or throwing errors for an update or maintenance release please check the backwards compatibility of the playback wrapper link load the login wrapper just to make sure it works do so by adding this link from the sim deployed root wrappers login wrapper record validationrule validatedigits numberofdigits prompttext enter a digit number further instructions in qa book conduct a recording test to metacog further instructions in the qa book do this for ipados safari and one other random platform conduct a memory test on the stand alone sim wrapper can be omitted should be edited if not omitted focus and special instructions please pay close attention to loading setting state hitting launch in studio especially on the second screen because of some implementation decisions the loaded state may not match perfectly for all cases but please bring any behavior that is unexpected to my attention i think katiewoe is getting pretty familiar with what to expect for state on this sim but feel free to slack me any time with questions or make an issue 
thanks issues to verify these issues should have the status ready for qa label check these issues off and close them if they are fixed otherwise post a comment in the issue saying that it wasn t fixed and link back to this issue if the label is status ready for review or status fixed pending deploy then assign back to the developer when done even if fixed link s section faqs for qa members faqs for qa members there are multiple tests in this issue which test should i do first test in order test the first thing first the second thing second and so on how should i format my issue here s a template for making issues test device blah operating system blah browser blah problem description blah steps to reproduce blah visuals blah troubleshooting information blah who should i assign we typically assign the developer who opened the issue in the qa repository my question isn t in here what should i do you should consult the google it ask katie ask a developer google it again cry

| 1

|

204,292

| 15,437,247,358

|

IssuesEvent

|

2021-03-07 16:01:27

|

commercialhaskell/stackage

|

https://api.github.com/repos/commercialhaskell/stackage

|

opened

|

sydtest-yesod-0.0.0.0 fails to compile with GHC 9.0.1

|

failure: compile failure: test-suite

|

```

Preprocessing test suite 'sydtest-yesod-blog-example-test' for sydtest-yesod-0.0.0.0..

Building test suite 'sydtest-yesod-blog-example-test' for sydtest-yesod-0.0.0.0..

[1 of 4] Compiling Example.Blog

/var/stackage/work/unpack-dir/unpacked/sydtest-yesod-0.0.0.0-c5b28e6fe56216c6c1eba08800cd2468c81dcd17dc72dfbe6db820d0fc0da888/blog-example/Example/Blog.hs:27:1: error:

Generating Persistent entities now requires the following language extensions:

DataKinds

FlexibleInstances

Please enable the extensions by copy/pasting these lines into the top of your file:

{-# LANGUAGE DataKinds #-}

{-# LANGUAGE FlexibleInstances #-}

|

27 | share

| ^^^^^...

```

Will skip testing for now

CC @NorfairKing

|

1.0

|

sydtest-yesod-0.0.0.0 fails to compile with GHC 9.0.1 - ```

Preprocessing test suite 'sydtest-yesod-blog-example-test' for sydtest-yesod-0.0.0.0..

Building test suite 'sydtest-yesod-blog-example-test' for sydtest-yesod-0.0.0.0..

[1 of 4] Compiling Example.Blog

/var/stackage/work/unpack-dir/unpacked/sydtest-yesod-0.0.0.0-c5b28e6fe56216c6c1eba08800cd2468c81dcd17dc72dfbe6db820d0fc0da888/blog-example/Example/Blog.hs:27:1: error:

Generating Persistent entities now requires the following language extensions:

DataKinds

FlexibleInstances

Please enable the extensions by copy/pasting these lines into the top of your file:

{-# LANGUAGE DataKinds #-}

{-# LANGUAGE FlexibleInstances #-}

|

27 | share

| ^^^^^...

```

Will skip testing for now

CC @NorfairKing

|

test

|

sydtest yesod fails to compile with ghc preprocessing test suite sydtest yesod blog example test for sydtest yesod building test suite sydtest yesod blog example test for sydtest yesod compiling example blog var stackage work unpack dir unpacked sydtest yesod blog example example blog hs error generating persistent entities now requires the following language extensions datakinds flexibleinstances please enable the extensions by copy pasting these lines into the top of your file language datakinds language flexibleinstances share will skip testing for now cc norfairking

| 1

|

22,761

| 11,782,783,473

|

IssuesEvent

|

2020-03-17 03:09:53

|

Azure/azure-cli

|

https://api.github.com/repos/Azure/azure-cli

|

closed

|

az acr import with --source and --registry is confusing.

|

Container Registry Service Attention

|

**Describe the bug**

az acr import

**To Reproduce**

`az acr import -h` has `--source` and `--registry` parameters. The help section doesn't document that `-r` and `--source` with registry is mutually exclusive (i.e. both can't specify registry/loginserver name). This causes confusion and bad user experience for cx, running into error state not knowing what's the issue. The error message should also be refined to include the issue above when user actually runs into that condition.

Our [official doc](https://docs.microsoft.com/en-us/azure/container-registry/container-registry-import-images#import-from-a-registry-in-a-different-subscription) does say: `Notice that the --source parameter specifies only the source repository and image name, not the registry login server name.`

**Expected behavior**

Proper help and error message.

**Environment summary**

```

Windows-10-10.0.18362-SP0

Python 3.6.6

Shell: powershell.exe

azure-cli 2.0.70 *

```

|

1.0

|

az acr import with --source and --registry is confusing. - **Describe the bug**

az acr import

**To Reproduce**

`az acr import -h` has `--source` and `--registry` parameters. The help section doesn't document that `-r` and `--source` with registry is mutually exclusive (i.e. both can't specify registry/loginserver name). This causes confusion and bad user experience for cx, running into error state not knowing what's the issue. The error message should also be refined to include the issue above when user actually runs into that condition.

Our [official doc](https://docs.microsoft.com/en-us/azure/container-registry/container-registry-import-images#import-from-a-registry-in-a-different-subscription) does say: `Notice that the --source parameter specifies only the source repository and image name, not the registry login server name.`

**Expected behavior**

Proper help and error message.

**Environment summary**

```

Windows-10-10.0.18362-SP0

Python 3.6.6

Shell: powershell.exe

azure-cli 2.0.70 *

```

|

non_test

|

az acr import with source and registry is confusing describe the bug az acr import to reproduce az acr import h has source and registry parameters the help section doesn t document that r and source with registry is mutually exclusive i e both can t specify registry loginserver name this causes confusion and bad user experience for cx running into error state not knowing what s the issue the error message should also be refined to include the issue above when user actually runs into that condition our does say notice that the source parameter specifies only the source repository and image name not the registry login server name expected behavior proper help and error message environment summary windows python shell powershell exe azure cli

| 0

|

74,050

| 7,373,768,097

|

IssuesEvent

|

2018-03-13 18:12:49

|

grpc/grpc

|

https://api.github.com/repos/grpc/grpc

|

opened

|

Bazel Debug build failure

|

infra/bazel test failures

|

Relevant log:

```

+ mkdir -p /tmpfs/src/keystore

+ cp /tmpfs/src/gfile/GrpcTesting-d0eeee2db331.json /tmpfs/src/keystore/4321_grpc-testing-service

++ mktemp -d

+ temp_dir=/tmp/tmp.qfu09RtbtH

+ ln -f /tmpfs/src/gfile/bazel-canary /tmp/tmp.qfu09RtbtH/bazel

ln: failed to create hard link '/tmp/tmp.qfu09RtbtH/bazel' => '/tmpfs/src/gfile/bazel-canary': Invalid cross-device link

```

|

1.0

|

Bazel Debug build failure - Relevant log:

```

+ mkdir -p /tmpfs/src/keystore

+ cp /tmpfs/src/gfile/GrpcTesting-d0eeee2db331.json /tmpfs/src/keystore/4321_grpc-testing-service

++ mktemp -d

+ temp_dir=/tmp/tmp.qfu09RtbtH

+ ln -f /tmpfs/src/gfile/bazel-canary /tmp/tmp.qfu09RtbtH/bazel

ln: failed to create hard link '/tmp/tmp.qfu09RtbtH/bazel' => '/tmpfs/src/gfile/bazel-canary': Invalid cross-device link

```

|

test

|

bazel debug build failure relevant log mkdir p tmpfs src keystore cp tmpfs src gfile grpctesting json tmpfs src keystore grpc testing service mktemp d temp dir tmp tmp ln f tmpfs src gfile bazel canary tmp tmp bazel ln failed to create hard link tmp tmp bazel tmpfs src gfile bazel canary invalid cross device link

| 1

|

27,714

| 4,326,942,649

|

IssuesEvent

|

2016-07-26 08:42:28

|

NishantUpadhyay-BTC/BLISS-Issue-Tracking

|

https://api.github.com/repos/NishantUpadhyay-BTC/BLISS-Issue-Tracking

|

closed

|

#1397 Guest UI: Personal Retreat Availability Page: Text change

|

Change Request Deployed to Test

|

Also, please add to the descriptive text:

"Begin your reservation by providing the following information (* = required):"

|

1.0

|

#1397 Guest UI: Personal Retreat Availability Page: Text change - Also, please add to the descriptive text:

"Begin your reservation by providing the following information (* = required):"

|

test

|

guest ui personal retreat availability page text change also please add to the descriptive text begin your reservation by providing the following information required

| 1

|

312,012

| 26,831,607,157

|

IssuesEvent

|

2023-02-02 16:22:22

|

Flowminder/FlowKit

|

https://api.github.com/repos/Flowminder/FlowKit

|

closed

|

FlowAuth test timeout

|

bug FlowAuth tests P-Next

|

As the permission space for FlowAPI increases, the FlowAuth end-to-end test is becoming increasingly shaky and slow.

**Product**

FlowAPI test suite

**Version**

1.5 +

**To Reproduce**

Run `flowauth_end_to_end` after adding new query types

**Expected behaviour**

Test ends in a timely manner (within half an hour), but does not cause failure due to timeout.

**Additional context**

The quick fix is to increase the timeout for Cypress, but this obviously isn't sustainable - do we need to reexamine the testing framework for flowauth?

|

1.0

|

FlowAuth test timeout - As the permission space for FlowAPI increases, the FlowAuth end-to-end test is becoming increasingly shaky and slow.

**Product**

FlowAPI test suite

**Version**

1.5 +

**To Reproduce**

Run `flowauth_end_to_end` after adding new query types

**Expected behaviour**

Test ends in a timely manner (within half an hour), but does not cause failure due to timeout.

**Additional context**

The quick fix is to increase the timeout for Cypress, but this obviously isn't sustainable - do we need to reexamine the testing framework for flowauth?

|