| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

38,972

| 5,206,467,320

|

IssuesEvent

|

2017-01-24 20:42:51

|

c2corg/v6_ui

|

https://api.github.com/repos/c2corg/v6_ui

|

closed

|

On route, links to material article are not migrated

|

Association fixed and ready for testing

|

**on v5**, route http://www.camptocamp.org/routes/784566/fr/la-pyramide-traversee-toit-pyramide-petite-traversee

The route has a connection, in material section, to the article "material" http://www.camptocamp.org/articles/185384/fr/le-contenu-du-sac-alpinisme-rocheux-de-f-a-ad

**on v6** the article exists

https://www.demov6.camptocamp.org/articles/185384/fr/le-contenu-du-sac-alpinisme-rocheux-de-f-a-ad

but the route has no connection to the article

https://www.demov6.camptocamp.org/routes/784566/fr/la-pyramide-traversee-toit-pyramide-petite-traversee, only "sangles" was migrated

|

1.0

|

On route, links to material article are not migrated - **on v5**, route http://www.camptocamp.org/routes/784566/fr/la-pyramide-traversee-toit-pyramide-petite-traversee

The route has a connection, in material section, to the article "material" http://www.camptocamp.org/articles/185384/fr/le-contenu-du-sac-alpinisme-rocheux-de-f-a-ad

**on v6** the article exists

https://www.demov6.camptocamp.org/articles/185384/fr/le-contenu-du-sac-alpinisme-rocheux-de-f-a-ad

but the route has no connection to the article

https://www.demov6.camptocamp.org/routes/784566/fr/la-pyramide-traversee-toit-pyramide-petite-traversee, only "sangles" was migrated

|

test

|

on route links to material article are not migrated on route the route has a connection in material section to the article material on the article exists but the route has no connection to the article only sangles was migrated

| 1

|

59,761

| 6,662,864,283

|

IssuesEvent

|

2017-10-02 14:32:53

|

openbmc/openbmc-test-automation

|

https://api.github.com/repos/openbmc/openbmc-test-automation

|

closed

|

Code update : Reboot BMC during PNOR activation in progress

|

Test

|

- [x] Upload PNOR

- [x] Start activation and reset BMC

- [x] Verify the PNOR failed

|

1.0

|

Code update : Reboot BMC during PNOR activation in progress - - [x] Upload PNOR

- [x] Start activation and reset BMC

- [x] Verify the PNOR failed

|

test

|

code update reboot bmc during pnor activation in progress upload pnor start activation and reset bmc verify the pnor failed

| 1

|

16,487

| 3,535,009,529

|

IssuesEvent

|

2016-01-16 05:35:36

|

WormBase/website

|

https://api.github.com/repos/WormBase/website

|

closed

|

Great site, thank you! But:

pop-up window with seq...

|

bug HelpDesk source: offline chat UI-data display Under testing

|

*Help Desk query collected when no chat operators were online. Follow up required.*

Great site, thank you! But:

pop-up window with sequences cuts area on the right side, and there is no way to scroll, so that 3-4 letters on the right are not visible.

Ubuntu 14.04 LTS 64-bit

Chrome Version 47.0.2526.106 (64-bit)

**Reported by:** Leon******** (leon******************)

**Submitted from:** <a target="_blank" href="http://www.wormbase.org/http://www.wormbase.org/species/c_elegans/gene/WBGene00009706#0b1-9ed2f68c457gha3-10">http://www.wormbase.org/species/c_elegans/gene/WBGene00009706#0b1-9ed2f68c457gha3-10</a>

**Browser:** Chrome 47.0.2526.106

|

1.0

|

Great site, thank you! But:

pop-up window with seq... -

*Help Desk query collected when no chat operators were online. Follow up required.*

Great site, thank you! But:

pop-up window with sequences cuts area on the right side, and there is no way to scroll, so that 3-4 letters on the right are not visible.

Ubuntu 14.04 LTS 64-bit

Chrome Version 47.0.2526.106 (64-bit)

**Reported by:** Leon******** (leon******************)

**Submitted from:** <a target="_blank" href="http://www.wormbase.org/http://www.wormbase.org/species/c_elegans/gene/WBGene00009706#0b1-9ed2f68c457gha3-10">http://www.wormbase.org/species/c_elegans/gene/WBGene00009706#0b1-9ed2f68c457gha3-10</a>

**Browser:** Chrome 47.0.2526.106

|

test

|

great site thank you but pop up window with seq help desk query collected when no chat operators were online follow up required great site thank you but pop up window with sequences cuts area on the right side and there is no way to scroll so that letters on the right are not visible ubuntu lts bit chrome version bit reported by leon leon submitted from a target blank href browser chrome

| 1

|

64,323

| 26,688,867,370

|

IssuesEvent

|

2023-01-27 01:30:49

|

cityofaustin/atd-data-tech

|

https://api.github.com/repos/cityofaustin/atd-data-tech

|

closed

|

Release VZ v1.30.0 (Iguana Cir)

|

Service: Dev Workgroup: VZ Product: Vision Zero Crash Data System Product: Vision Zero Viewer

|

**To-do's for upcoming release**

- [x] Schedule release party - @patrickm02L

- [ ] Advise users of downtime - @patrickm02L

- [x] Create a [release PR](https://github.com/cityofaustin/atd-vz-data/pull/1165) - @mddilley

- [x] Propose + vote on release names - @patrickm02L

- [x] Refine [release notes](https://github.com/cityofaustin/atd-vz-data/releases/tag/v1.30.0) @patrickm02L

- [x] Bump VZ **staging** version to `v1.31.0` and VZ production to `v1.30.0`- @mddilley

- [ ] Send out release notes - @patrickm02L

|

1.0

|

Release VZ v1.30.0 (Iguana Cir) - **To-do's for upcoming release**

- [x] Schedule release party - @patrickm02L

- [ ] Advise users of downtime - @patrickm02L

- [x] Create a [release PR](https://github.com/cityofaustin/atd-vz-data/pull/1165) - @mddilley

- [x] Propose + vote on release names - @patrickm02L

- [x] Refine [release notes](https://github.com/cityofaustin/atd-vz-data/releases/tag/v1.30.0) @patrickm02L

- [x] Bump VZ **staging** version to `v1.31.0` and VZ production to `v1.30.0`- @mddilley

- [ ] Send out release notes - @patrickm02L

|

non_test

|

release vz iguana cir to do s for upcoming release schedule release party advise users of downtime create a mddilley propose vote on release names refine bump vz staging version to and vz production to mddilley send out release notes

| 0

|

65,855

| 6,976,578,121

|

IssuesEvent

|

2017-12-12 11:35:25

|

LiskHQ/lisk

|

https://api.github.com/repos/LiskHQ/lisk

|

opened

|

Fix test/unit/logic

|

*medium test

|

Parent: #972

Adjust `test/unit/logic` in order to work with new database schema.

- [ ] account.js

- [ ] block.js

- [ ] blockReward.js

- [ ] dapp.js

- [ ] delegate.js

- [ ] inTransfer.js

- [ ] multisignature.js

- [ ] outTransfer.js

- [ ] peer.js

- [ ] peers.js

- [ ] signature.js

- [ ] transaction.js

- [ ] transactionPool.js

- [ ] transactions/pool.js

- [ ] transfer.js

- [ ] vote.js

|

1.0

|

Fix test/unit/logic - Parent: #972

Adjust `test/unit/logic` in order to work with new database schema.

- [ ] account.js

- [ ] block.js

- [ ] blockReward.js

- [ ] dapp.js

- [ ] delegate.js

- [ ] inTransfer.js

- [ ] multisignature.js

- [ ] outTransfer.js

- [ ] peer.js

- [ ] peers.js

- [ ] signature.js

- [ ] transaction.js

- [ ] transactionPool.js

- [ ] transactions/pool.js

- [ ] transfer.js

- [ ] vote.js

|

test

|

fix test unit logic parent adjust test unit logic in order to work with new database schema account js block js blockreward js dapp js delegate js intransfer js multisignature js outtransfer js peer js peers js signature js transaction js transactionpool js transactions pool js transfer js vote js

| 1

|

244,376

| 26,392,712,068

|

IssuesEvent

|

2023-01-12 16:49:49

|

some-natalie/kubernoodles

|

https://api.github.com/repos/some-natalie/kubernoodles

|

closed

|

[SECURITY] - Cert Manager Mandatory for Openshift 4.X

|

security

|

## Describe the problem

A clear and concise description of the problem.

We are trying to setup ARC on Openshift 4.X. Can we install the ARC without using the cert-manager for openshift 4.X?

|

True

|

[SECURITY] - Cert Manager Mandatory for Openshift 4.X - ## Describe the problem

A clear and concise description of the problem.

We are trying to setup ARC on Openshift 4.X. Can we install the ARC without using the cert-manager for openshift 4.X?

|

non_test

|

cert manager mandatory for openshift x describe the problem a clear and concise description of the problem we are trying to setup arc on openshift x can we install the arc without using the cert manager for openshift x

| 0

|

67,614

| 14,881,915,698

|

IssuesEvent

|

2021-01-20 11:08:40

|

jimbob88/wheelers-wort-works

|

https://api.github.com/repos/jimbob88/wheelers-wort-works

|

opened

|

CVE-2020-11022 (Medium) detected in jquery-1.12.4.min.js

|

security vulnerability

|

## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.12.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.12.4/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.12.4/jquery.min.js</a></p>

<p>Path to dependency file: wheelers-wort-works/docs/_layouts/default.html</p>

<p>Path to vulnerable library: wheelers-wort-works/docs/_layouts/default.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.12.4.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/jimbob88/wheelers-wort-works/commit/25796e4e26d9fe24b168a4b755e433c8f35c9b2a">25796e4e26d9fe24b168a4b755e433c8f35c9b2a</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.2 and before 3.5.0, passing HTML from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11022>CVE-2020-11022</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/">https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jQuery - 3.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-11022 (Medium) detected in jquery-1.12.4.min.js - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.12.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.12.4/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.12.4/jquery.min.js</a></p>

<p>Path to dependency file: wheelers-wort-works/docs/_layouts/default.html</p>

<p>Path to vulnerable library: wheelers-wort-works/docs/_layouts/default.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.12.4.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/jimbob88/wheelers-wort-works/commit/25796e4e26d9fe24b168a4b755e433c8f35c9b2a">25796e4e26d9fe24b168a4b755e433c8f35c9b2a</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.2 and before 3.5.0, passing HTML from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11022>CVE-2020-11022</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/">https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jQuery - 3.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file wheelers wort works docs layouts default html path to vulnerable library wheelers wort works docs layouts default html dependency hierarchy x jquery min js vulnerable library found in head commit a href found in base branch master vulnerability details in jquery versions greater than or equal to and before passing html from untrusted sources even after sanitizing it to one of jquery s dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery step up your open source security game with whitesource

| 0

|

75,468

| 7,472,902,962

|

IssuesEvent

|

2018-04-03 14:00:11

|

easy-software-ufal/annotations_repos

|

https://api.github.com/repos/easy-software-ufal/annotations_repos

|

opened

|

concordion/concordion ExpectedToFail annotation causes ClassCastException in RunResultsCache.addResults()

|

ADANCX bug faulty impl. test

|

Issue: `https://github.com/concordion/concordion/issues/188`

PR: `https://github.com/concordion/concordion/pull/190`

Fix: `https://github.com/concordion/concordion/commit/b211a763d51eaa22b535a671359a0fb8a314e8ec`

|

1.0

|

concordion/concordion ExpectedToFail annotation causes ClassCastException in RunResultsCache.addResults() - Issue: `https://github.com/concordion/concordion/issues/188`

PR: `https://github.com/concordion/concordion/pull/190`

Fix: `https://github.com/concordion/concordion/commit/b211a763d51eaa22b535a671359a0fb8a314e8ec`

|

test

|

concordion concordion expectedtofail annotation causes classcastexception in runresultscache addresults issue pr fix

| 1

|

612,097

| 18,990,770,689

|

IssuesEvent

|

2021-11-22 06:55:22

|

grafana/grafana

|

https://api.github.com/repos/grafana/grafana

|

closed

|

Unexpected cursor snapping to the previous text box (Firefox only)

|

type/bug priority/important-soon datasource/Prometheus area/frontend area/panel/data

|

<!--

Please use this template to create your bug report. By providing as much info as possible you help us understand the issue, reproduce it and resolve it for you quicker. Therefor take a couple of extra minutes to make sure you have provided all info needed.

PROTIP: record your screen and attach it as a gif to showcase the issue.

* Questions should be posted to: https://community.grafana.com

* Use query inspector to troubleshoot issues: https://bit.ly/2XNF6YS

* How to record and attach gif: https://bit.ly/2Mi8T6K

-->

**What happened**:

When clicking from one text box to another, the cursor unexpectedly jumps to the beginning of the first text box.

**What you expected to happen**:

Clicking the second text box would move the cursor to the second text box.

**How to reproduce it (as minimally and precisely as possible)**:

Make two queries (nonempty). Edit text in the first one. Click the second one. The cursor will jump to the beginning of the first one, instead of jumping to where you click.

**Anything else we need to know?**:

**Environment**:

- Grafana version: Grafana v7.1.1 (3039f9c3bd)

- Data source type & version: Prometheus, official docker, 2.19.3

- OS Grafana is installed on: Official docker

- User OS & Browser: Mac OSX 10.15.5, Firefox 79.0

- Grafana plugins: None

- Others:

|

1.0

|

Unexpected cursor snapping to the previous text box (Firefox only) - <!--

Please use this template to create your bug report. By providing as much info as possible you help us understand the issue, reproduce it and resolve it for you quicker. Therefor take a couple of extra minutes to make sure you have provided all info needed.

PROTIP: record your screen and attach it as a gif to showcase the issue.

* Questions should be posted to: https://community.grafana.com

* Use query inspector to troubleshoot issues: https://bit.ly/2XNF6YS

* How to record and attach gif: https://bit.ly/2Mi8T6K

-->

**What happened**:

When clicking from one text box to another, the cursor unexpectedly jumps to the beginning of the first text box.

**What you expected to happen**:

Clicking the second text box would move the cursor to the second text box.

**How to reproduce it (as minimally and precisely as possible)**:

Make two queries (nonempty). Edit text in the first one. Click the second one. The cursor will jump to the beginning of the first one, instead of jumping to where you click.

**Anything else we need to know?**:

**Environment**:

- Grafana version: Grafana v7.1.1 (3039f9c3bd)

- Data source type & version: Prometheus, official docker, 2.19.3

- OS Grafana is installed on: Official docker

- User OS & Browser: Mac OSX 10.15.5, Firefox 79.0

- Grafana plugins: None

- Others:

|

non_test

|

unexpected cursor snapping to the previous text box firefox only please use this template to create your bug report by providing as much info as possible you help us understand the issue reproduce it and resolve it for you quicker therefor take a couple of extra minutes to make sure you have provided all info needed protip record your screen and attach it as a gif to showcase the issue questions should be posted to use query inspector to troubleshoot issues how to record and attach gif what happened when clicking from one text box to another the cursor unexpectedly jumps to the beginning of the first text box what you expected to happen clicking the second text box would move the cursor to the second text box how to reproduce it as minimally and precisely as possible make two queries nonempty edit text in the first one click the second one the cursor will jump to the beginning of the first one instead of jumping to where you click anything else we need to know environment grafana version grafana data source type version prometheus official docker os grafana is installed on official docker user os browser mac osx firefox grafana plugins none others

| 0

|

216,478

| 16,766,301,703

|

IssuesEvent

|

2021-06-14 09:13:42

|

harens/checkdigit

|

https://api.github.com/repos/harens/checkdigit

|

opened

|

Separate build tests from linting tests

|

tests

|

Similar to [seaport's tests](https://github.com/harens/seaport/tree/master/scripts).

This can hopefully not only speed up the tests, but also make it easier to see where they've failed.

|

1.0

|

Separate build tests from linting tests - Similar to [seaport's tests](https://github.com/harens/seaport/tree/master/scripts).

This can hopefully not only speed up the tests, but also make it easier to see where they've failed.

|

test

|

separate build tests from linting tests similar to this can hopefully not only speed up the tests but also make it easier to see where they ve failed

| 1

|

19,340

| 3,188,821,872

|

IssuesEvent

|

2015-09-29 00:10:29

|

aBitNomadic/shimeji-ee

|

https://api.github.com/repos/aBitNomadic/shimeji-ee

|

closed

|

shimejis wont appear but the taskbar icon does

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. opening shimeji application

2. choosing any character

3. loading program

What is the expected output? What do you see instead?

i should see the shimeji's drop onto my screen, but instead nothing appears yet

the icon is on the taskbar

What version of the product are you using? On what operating system?

1.0.3, windows 8, most recent java,

Please provide any additional information below.

i did everything right to set it up, i even helped my friend put it on their

computers the same way (they have windows 7) and it worked for theirs but not

mine, and i've tried searching for help videos for shimeji on windows 8 but no

matter what way i try to open it, it still doesn't work. :(

```

Original issue reported on code.google.com by `angelica...@gmail.com` on 25 Mar 2015 at 2:34

|

1.0

|

shimejis wont appear but the taskbar icon does - ```

What steps will reproduce the problem?

1. opening shimeji application

2. choosing any character

3. loading program

What is the expected output? What do you see instead?

i should see the shimeji's drop onto my screen, but instead nothing appears yet

the icon is on the taskbar

What version of the product are you using? On what operating system?

1.0.3, windows 8, most recent java,

Please provide any additional information below.

i did everything right to set it up, i even helped my friend put it on their

computers the same way (they have windows 7) and it worked for theirs but not

mine, and i've tried searching for help videos for shimeji on windows 8 but no

matter what way i try to open it, it still doesn't work. :(

```

Original issue reported on code.google.com by `angelica...@gmail.com` on 25 Mar 2015 at 2:34

|

non_test

|

shimejis wont appear but the taskbar icon does what steps will reproduce the problem opening shimeji application choosing any character loading program what is the expected output what do you see instead i should see the shimeji s drop onto my screen but instead nothing appears yet the icon is on the taskbar what version of the product are you using on what operating system windows most recent java please provide any additional information below i did everything right to set it up i even helped my friend put it on their computers the same way they have windows and it worked for theirs but not mine and i ve tried searching for help videos for shimeji on windows but no matter what way i try to open it it still doesn t work original issue reported on code google com by angelica gmail com on mar at

| 0

|

173,992

| 13,452,068,208

|

IssuesEvent

|

2020-09-08 21:24:08

|

killian-mahe/shareyourproject

|

https://api.github.com/repos/killian-mahe/shareyourproject

|

closed

|

Add technologies

|

controller database orm p:low test

|

Add technologies in the app (for a user and a project).

- [x] Controller

- [x] Factory

- [x] Relationships in model and migrations

- [x] Tests

|

1.0

|

Add technologies - Add technologies in the app (for a user and a project).

- [x] Controller

- [x] Factory

- [x] Relationships in model and migrations

- [x] Tests

|

test

|

add technologies add technologies in the app for a user and a project controller factory relationships in model and migrations tests

| 1

|

46,947

| 11,936,006,342

|

IssuesEvent

|

2020-04-02 09:34:06

|

hashicorp/packer

|

https://api.github.com/repos/hashicorp/packer

|

closed

|

Packer Qemu builder does not support newest network devices

|

bug builder/qemu community-supported plugin

|

#### Overview of the Issue

https://www.packer.io/docs/builders/qemu.html#net_device

net_device supports a list which is not the same as `/usr/libexec/qemu-kvm` in version 2.12.0 :

```

Network devices:

name "e1000", bus PCI, desc "Intel Gigabit Ethernet"

name "e1000-82540em", bus PCI, desc "Intel Gigabit Ethernet"

name "e1000e", bus PCI, desc "Intel 82574L GbE Controller"

name "rtl8139", bus PCI

name "virtio-net-device", bus virtio-bus

name "virtio-net-pci", bus PCI, alias "virtio-net"

```

its missing `virtio-net-device`.

#### Reproduction Steps

To get the list of available devices :

```

/usr/libexec/qemu-kvm --device help

```

### Packer version

Packer 1.4.3

|

1.0

|

Packer Qemu builder does not support newest network devices - #### Overview of the Issue

https://www.packer.io/docs/builders/qemu.html#net_device

net_device supports a list which is not the same as `/usr/libexec/qemu-kvm` in version 2.12.0 :

```

Network devices:

name "e1000", bus PCI, desc "Intel Gigabit Ethernet"

name "e1000-82540em", bus PCI, desc "Intel Gigabit Ethernet"

name "e1000e", bus PCI, desc "Intel 82574L GbE Controller"

name "rtl8139", bus PCI

name "virtio-net-device", bus virtio-bus

name "virtio-net-pci", bus PCI, alias "virtio-net"

```

its missing `virtio-net-device`.

#### Reproduction Steps

To get the list of available devices :

```

/usr/libexec/qemu-kvm --device help

```

### Packer version

Packer 1.4.3

|

non_test

|

packer qemu builder does not support newest network devices overview of the issue net device supports a list which is not the same as usr libexec qemu kvm in version network devices name bus pci desc intel gigabit ethernet name bus pci desc intel gigabit ethernet name bus pci desc intel gbe controller name bus pci name virtio net device bus virtio bus name virtio net pci bus pci alias virtio net its missing virtio net device reproduction steps to get the list of available devices usr libexec qemu kvm device help packer version packer

| 0

|

347,903

| 31,332,828,316

|

IssuesEvent

|

2023-08-24 02:01:34

|

3d-gussner/Prusa-Firmware

|

https://api.github.com/repos/3d-gussner/Prusa-Firmware

|

closed

|

📑Report: Retraction test results

|

Test-report stale-issue

|

# [Update 5 June 2020]: Report moved to [Prusa3d-Test-Object](https://github.com/prusa3d/Prusa3D-Test-Objects/issues/11) please don't comment anymore here.

Please report here your Retraction test results.

Use the 3mf file below and try to pint it with PETG or some other material that tends to be stringy.

[Container_50x20_NoCore_v2.zip](https://github.com/3d-gussner/Prusa-Firmware/files/4585431/Container_50x20_NoCore_v2.zip)

- Printer: MK2.5, MK2.5s, MK3, MK3s

- MMU: MMU1, MMU2, MMU2s

- Firmware version printer and MMU

- Bad to good scale : 0 - 10

Feel free to write additional information you want to share.

I will try to update following table so everyone can see what results we got from the community.

|Printer | MMU | PFW | MFW |K value| Quality | User |

| ------ | ------- | ---------- | ---- | ------- | --------- | -------- |

| MK3s | N/A | 3.8.1 | N/A |66| 2 | Average |

| MK3s | N/A | 3.9.0-RC3 | N/A |66| 0 | Average |

| MK3s | N/A | 3.9.0-RC3 | N/A |0.11| 9 | Average |

| | | | | | | | | | |

| MK3s | N/A | 3.8.1 | N/A |66| 2 | Vossberger |

| MK3s | N/A| 3.9.0-RC3 | N/A |66| 0 | Vossberger |

| MK3s | N/A | 3.9.0-RC3 | N/A |0.11| 9| Vossberger |

|

1.0

|

📑Report: Retraction test results - # [Update 5 June 2020]: Report moved to [Prusa3d-Test-Object](https://github.com/prusa3d/Prusa3D-Test-Objects/issues/11) please don't comment anymore here.

Please report here your Retraction test results.

Use the 3mf file below and try to pint it with PETG or some other material that tends to be stringy.

[Container_50x20_NoCore_v2.zip](https://github.com/3d-gussner/Prusa-Firmware/files/4585431/Container_50x20_NoCore_v2.zip)

- Printer: MK2.5, MK2.5s, MK3, MK3s

- MMU: MMU1, MMU2, MMU2s

- Firmware version printer and MMU

- Bad to good scale : 0 - 10

Feel free to write additional information you want to share.

I will try to update following table so everyone can see what results we got from the community.

|Printer | MMU | PFW | MFW |K value| Quality | User |

| ------ | ------- | ---------- | ---- | ------- | --------- | -------- |

| MK3s | N/A | 3.8.1 | N/A |66| 2 | Average |

| MK3s | N/A | 3.9.0-RC3 | N/A |66| 0 | Average |

| MK3s | N/A | 3.9.0-RC3 | N/A |0.11| 9 | Average |

| | | | | | | | | | |

| MK3s | N/A | 3.8.1 | N/A |66| 2 | Vossberger |

| MK3s | N/A| 3.9.0-RC3 | N/A |66| 0 | Vossberger |

| MK3s | N/A | 3.9.0-RC3 | N/A |0.11| 9| Vossberger |

|

test

|

📑report retraction test results report moved to please don t comment anymore here please report here your retraction test results use the file below and try to pint it with petg or some other material that tends to be stringy printer mmu firmware version printer and mmu bad to good scale feel free to write additional information you want to share i will try to update following table so everyone can see what results we got from the community printer mmu pfw mfw k value quality user n a n a average n a n a average n a n a average n a n a vossberger n a n a vossberger n a n a vossberger

| 1

|

7,468

| 2,905,093,147

|

IssuesEvent

|

2015-06-18 21:35:29

|

sosol/sosol

|

https://api.github.com/repos/sosol/sosol

|

opened

|

Fix SAML for JRuby 1.7.20+

|

testing

|

Tests pass under JRuby 1.7.19, but changing to 1.7.20 or 1.7.20.1 results in a slew of `Java::JavaLang::NullPointerException`s in `RubySamlTest`.

|

1.0

|

Fix SAML for JRuby 1.7.20+ - Tests pass under JRuby 1.7.19, but changing to 1.7.20 or 1.7.20.1 results in a slew of `Java::JavaLang::NullPointerException`s in `RubySamlTest`.

|

test

|

fix saml for jruby tests pass under jruby but changing to or results in a slew of java javalang nullpointerexception s in rubysamltest

| 1

|

202,593

| 15,835,788,230

|

IssuesEvent

|

2021-04-06 18:27:35

|

Spidy-Coder/Forest_Fire_Prevention

|

https://api.github.com/repos/Spidy-Coder/Forest_Fire_Prevention

|

opened

|

Front-end required for this project

|

documentation enhancement

|

This project is yet under _**construction.**_

I will be adding a front-end web page which will demonstrate this **_project using Flask_** for real-time application .

This is very important part of our machine learning model.

`⭐star` this repository for getting updates and further changes made in this project.

|

1.0

|

Front-end required for this project - This project is yet under _**construction.**_

I will be adding a front-end web page which will demonstrate this **_project using Flask_** for real-time application .

This is very important part of our machine learning model.

`⭐star` this repository for getting updates and further changes made in this project.

|

non_test

|

front end required for this project this project is yet under construction i will be adding a front end web page which will demonstrate this project using flask for real time application this is very important part of our machine learning model ⭐star this repository for getting updates and further changes made in this project

| 0

|

73,790

| 7,358,916,906

|

IssuesEvent

|

2018-03-10 00:30:31

|

medic/medic-webapp

|

https://api.github.com/repos/medic/medic-webapp

|

closed

|

Add support for death reporting workflow

|

Contacts Priority: 2 - Medium Status: 4 - Acceptance testing Type: Feature

|

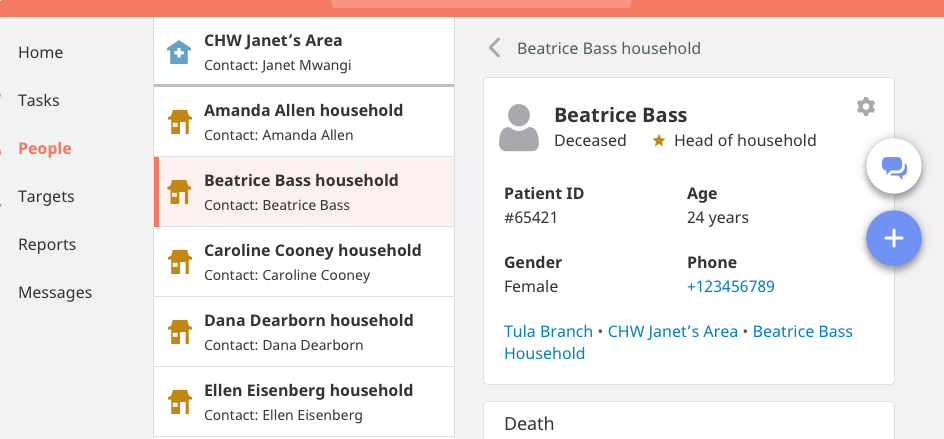

This issue adds support for a death reporting and confirmation workflow involving CHWs and managers. We need to support reporting death as well as reversing a death.

The basic workflow for reporting a death is as follows:

- CHW reports a death in the community via SMS or an app form

- This death report triggers a task for the manager in the app OR sends her an SMS notification

- This task opens a second form (death confirmation) that the manager fills out and submits OR the manager submits a death confirmation form via SMS

- If the manager confirms the death, the person's profile updates to show that they are deceased

- NOTE: The manager can also fill in the death confirmation form directly (in app or via SMS) and the person's profile updates to show that they are deceased

The basic workflow for reversing a death is as follows:

- CHW requests a correction via SMS or an app form

- This request correction form triggers a task for the manager in the app OR sends her an SMS notification

- This task opens an undo death form that the manager submits OR the manager submits an undo death form via SMS

- If the manager confirms that the death should be reversed, the person's profile updates to show that they are alive

- NOTE: The manager can also directly undo the death (in app or via SMS), which should update the profile to show that the person is alive

[Design spec is here](https://docs.google.com/document/d/1EKmOdip2cebl_BbIJNbl1kTbG3rYxaxVYmI_mL5y9M8/edit#)

Summary of UI changes (these are applied once a death is confirmed). See the design spec for more info:

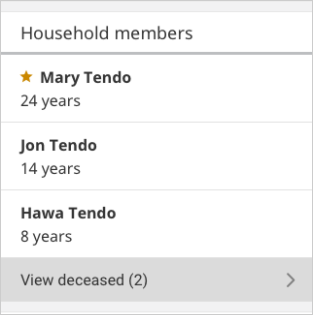

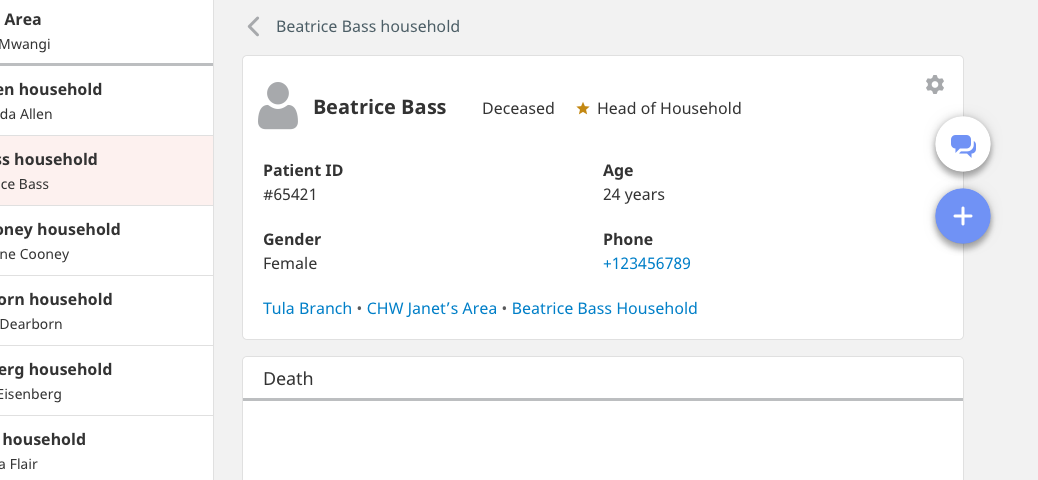

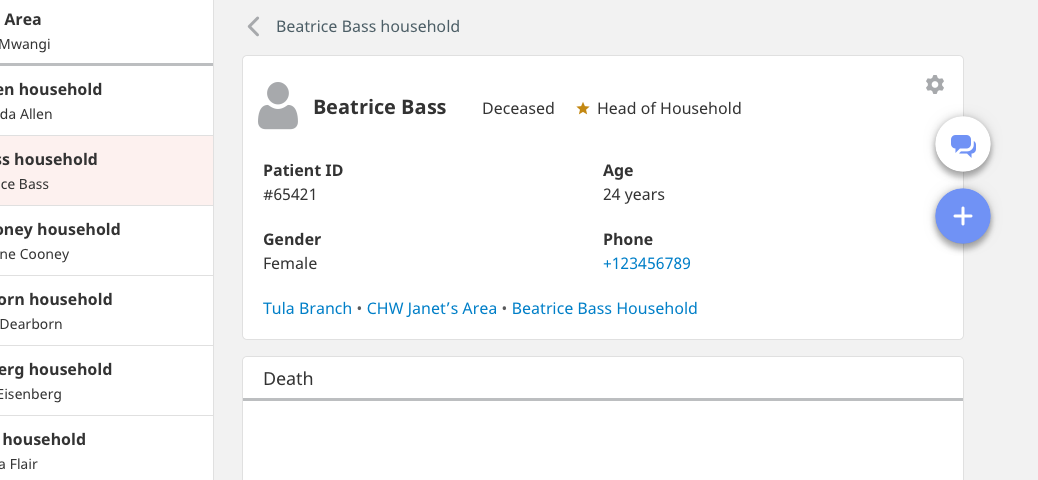

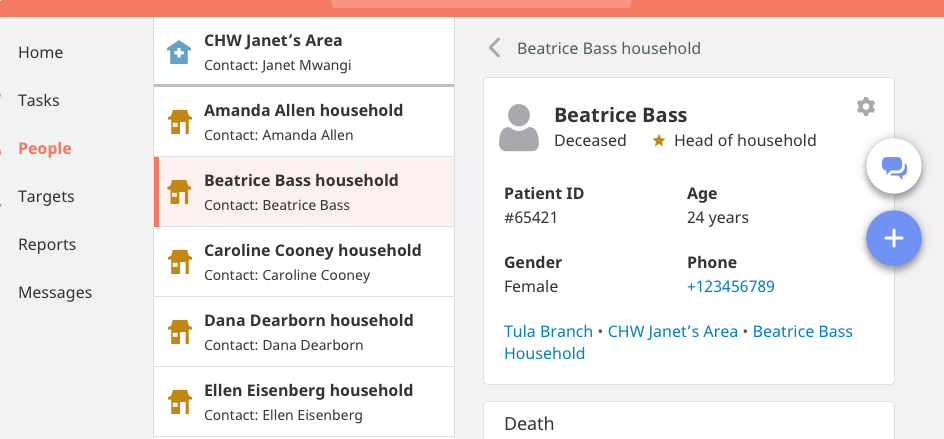

- [x] person's icon changes from pink to gray (screenshot 1)

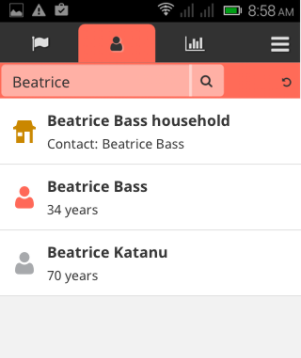

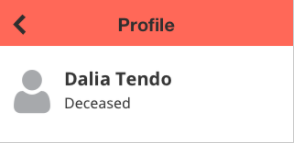

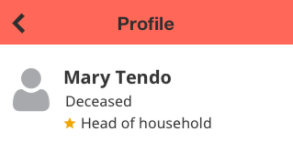

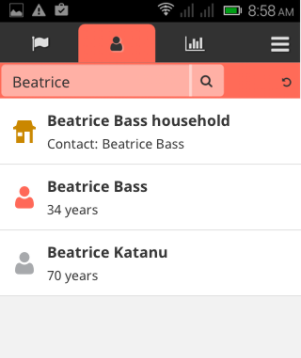

- [x] the word "Deceased" is added either next to or immediately below the person's name on their profile (screenshot 1 & 2 are mobile view, on desktop the word "Deceased" should be immediately next to the person's name, see screenshots 6 & 7)

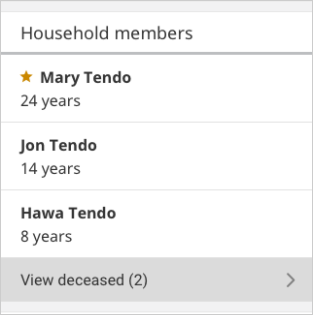

- [x] on the family page, a new row appears at the end of the list of family members that says "View deceased" (screenshot 3)

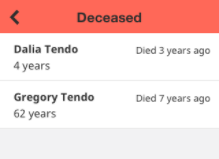

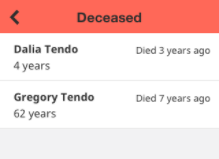

- [x] when you click on "View deceased", you are taken to a detail page that lists the deceased family members. These are the same as the content rows on the family page except that they show the age at the time of death and have a relative date of death on the right, e.g. "Died 3 years ago" (screenshot 4)

- [x] in search, deceased people should have gray icons and show up at the bottom of the list (screenshot 5)

**Screenshot 1**

**Screenshot 2**

**Screenshot 3**

**Screenshot 4**

**Screenshot 5**

**Screenshot 6**

**Screenshot 7**

|

1.0

|

Add support for death reporting workflow - This issue adds support for a death reporting and confirmation workflow involving CHWs and managers. We need to support reporting death as well as reversing a death.

The basic workflow for reporting a death is as follows:

- CHW reports a death in the community via SMS or an app form

- This death report triggers a task for the manager in the app OR sends her an SMS notification

- This task opens a second form (death confirmation) that the manager fills out and submits OR the manager submits a death confirmation form via SMS

- If the manager confirms the death, the person's profile updates to show that they are deceased

- NOTE: The manager can also fill in the death confirmation form directly (in app or via SMS) and the person's profile updates to show that they are deceased

The basic workflow for reversing a death is as follows:

- CHW requests a correction via SMS or an app form

- This request correction form triggers a task for the manager in the app OR sends her an SMS notification

- This task opens an undo death form that the manager submits OR the manager submits an undo death form via SMS

- If the manager confirms that the death should be reversed, the person's profile updates to show that they are alive

- NOTE: The manager can also directly undo the death (in app or via SMS), which should update the profile to show that the person is alive

[Design spec is here](https://docs.google.com/document/d/1EKmOdip2cebl_BbIJNbl1kTbG3rYxaxVYmI_mL5y9M8/edit#)

Summary of UI changes (these are applied once a death is confirmed). See the design spec for more info:

- [x] person's icon changes from pink to gray (screenshot 1)

- [x] the word "Deceased" is added either next to or immediately below the person's name on their profile (screenshot 1 & 2 are mobile view, on desktop the word "Deceased" should be immediately next to the person's name, see screenshots 6 & 7)

- [x] on the family page, a new row appears at the end of the list of family members that says "View deceased" (screenshot 3)

- [x] when you click on "View deceased", you are taken to a detail page that lists the deceased family members. These are the same as the content rows on the family page except that they show the age at the time of death and have a relative date of death on the right, e.g. "Died 3 years ago" (screenshot 4)

- [x] in search, deceased people should have gray icons and show up at the bottom of the list (screenshot 5)

**Screenshot 1**

**Screenshot 2**

**Screenshot 3**

**Screenshot 4**

**Screenshot 5**

**Screenshot 6**

**Screenshot 7**

|

test

|

add support for death reporting workflow this issue adds support for a death reporting and confirmation workflow involving chws and managers we need to support reporting death as well as reversing a death the basic workflow for reporting a death is as follows chw reports a death in the community via sms or an app form this death report triggers a task for the manager in the app or sends her an sms notification this task opens a second form death confirmation that the manager fills out and submits or the manager submits a death confirmation form via sms if the manager confirms the death the person s profile updates to show that they are deceased note the manager can also fill in the death confirmation form directly in app or via sms and the person s profile updates to show that they are deceased the basic workflow for reversing a death is as follows chw requests a correction via sms or an app form this request correction form triggers a task for the manager in the app or sends her an sms notification this task opens an undo death form that the manager submits or the manager submits an undo death form via sms if the manager confirms that the death should be reversed the person s profile updates to show that they are alive note the manager can also directly undo the death in app or via sms which should update the profile to show that the person is alive summary of ui changes these are applied once a death is confirmed see the design spec for more info person s icon changes from pink to gray screenshot the word deceased is added either next to or immediately below the person s name on their profile screenshot are mobile view on desktop the word deceased should be immediately next to the person s name see screenshots on the family page a new row appears at the end of the list of family members that says view deceased screenshot when you click on view deceased you are taken to a detail page that lists the deceased family members these are the same as the content rows on 
the family page except that they show the age at the time of death and have a relative date of death on the right e g died years ago screenshot in search deceased people should have gray icons and show up at the bottom of the list screenshot screenshot screenshot screenshot screenshot screenshot screenshot screenshot

| 1

|

300,607

| 22,688,608,217

|

IssuesEvent

|

2022-07-04 16:39:25

|

DickinsonCollege/FarmData2

|

https://api.github.com/repos/DickinsonCollege/FarmData2

|

closed

|

FD2 Example Module Readme Clarifications

|

documentation enhancement

|

In the README.md file in farmdata2_modules/fd2_tabs/fd2_example a few points could be clarified:

- Make it more clear that the `xyz` in the document is a place-holder for any module. Maybe just an (e.g. fd2_example or fd2_barn_kit) or something like that.

- In step 2 make it more clear that just a new block like the one shown needs to be added to the function. Currently the //... placeholders are in the wrong spots and its not quite intuitive what they mean anyway. So finding some way to clarify that just the block shown needs to be copied and edited would be good.

- Possibly move the section about creating a main tab to the bottom as that will be an unusual operation.

- Make clear that clearing cache is a console command.

- Make clear that clearing cache only has to happen if .module is modified not when .html is modified.

|

1.0

|

FD2 Example Module Readme Clarifications - In the README.md file in farmdata2_modules/fd2_tabs/fd2_example a few points could be clarified:

- Make it more clear that the `xyz` in the document is a place-holder for any module. Maybe just an (e.g. fd2_example or fd2_barn_kit) or something like that.

- In step 2 make it more clear that just a new block like the one shown needs to be added to the function. Currently the //... placeholders are in the wrong spots and its not quite intuitive what they mean anyway. So finding some way to clarify that just the block shown needs to be copied and edited would be good.

- Possibly move the section about creating a main tab to the bottom as that will be an unusual operation.

- Make clear that clearing cache is a console command.

- Make clear that clearing cache only has to happen if .module is modified not when .html is modified.

|

non_test

|

example module readme clarifications in the readme md file in modules tabs example a few points could be clarified make it more clear that the xyz in the document is a place holder for any module maybe just an e g example or barn kit or something like that in step make it more clear that just a new block like the one shown needs to be added to the function currently the placeholders are in the wrong spots and its not quite intuitive what they mean anyway so finding some way to clarify that just the block shown needs to be copied and edited would be good possibly move the section about creating a main tab to the bottom as that will be an unusual operation make clear that clearing cache is a console command make clear that clearing cache only has to happen if module is modified not when html is modified

| 0

|

204,177

| 23,218,806,906

|

IssuesEvent

|

2022-08-02 16:10:53

|

pravega/pravega

|

https://api.github.com/repos/pravega/pravega

|

opened

|

Update versions of gson, Apache Portable Runtime and Jackson-databind

|

area/security

|

**Describe the bug**

We need to update versions of the following dependencies in order to address vulnerabilities(CVEs) found by running container image scans.

| Library | Current Version | CVEs found |
| --- | --- | --- |
| APR | 1.6.5 | CVE-2017-12613 |
| com.google.code.gson | 2.8.6 | CVE-2022-25647 |
| com.fasterxml.jackson.core | 2.13.2.2 | CVE-2020-36518 |

**Problem location**

`gradle.properties`

**Solution**

Bump up the above libraries to higher versions.

|

True

|

Update versions of gson, Apache Portable Runtime and Jackson-databind - **Describe the bug**

We need to update versions of the following dependencies in order to address vulnerabilities(CVEs) found by running container image scans.

| Library | Current Version | CVEs found |
| --- | --- | --- |
| APR | 1.6.5 | CVE-2017-12613 |
| com.google.code.gson | 2.8.6 | CVE-2022-25647 |
| com.fasterxml.jackson.core | 2.13.2.2 | CVE-2020-36518 |

**Problem location**

`gradle.properties`

**Solution**

Bump up the above libraries to higher versions.

|

non_test

|

update versions of gson apache portable runtime and jackson databind describe the bug we need to update versions of the following dependencies in order to address vulnerabilities cves found by running container image scans library current version cves found apr cve com google code gson cve com fasterxml jackson core cve problem location gradle properties solution bump up the above libraries to higher versions

| 0

|

227,517

| 25,081,127,399

|

IssuesEvent

|

2022-11-07 19:24:14

|

JMD60260/fetchmeaband

|

https://api.github.com/repos/JMD60260/fetchmeaband

|

closed

|

CVE-2018-19838 (Medium) detected in libsass3.3.6, node-sass-3.13.1.tgz

|

security vulnerability

|

## CVE-2018-19838 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>libsass3.3.6</b>, <b>node-sass-3.13.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-3.13.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-3.13.1.tgz">https://registry.npmjs.org/node-sass/-/node-sass-3.13.1.tgz</a></p>

<p>Path to dependency file: /public/vendor/owl.carousel/package.json</p>

<p>Path to vulnerable library: /public/vendor/owl.carousel/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- grunt-sass-1.2.1.tgz (Root Library)

- :x: **node-sass-3.13.1.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass prior to 3.5.5, functions inside ast.cpp for IMPLEMENT_AST_OPERATORS expansion allow attackers to cause a denial-of-service resulting from stack consumption via a crafted sass file, as demonstrated by recursive calls involving clone(), cloneChildren(), and copy().

<p>Publish Date: 2018-12-04

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2018-19838>CVE-2018-19838</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2018-12-04</p>

<p>Fix Resolution (node-sass): 5.0.0</p>

<p>Direct dependency fix Resolution (grunt-sass): 3.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-19838 (Medium) detected in libsass3.3.6, node-sass-3.13.1.tgz - ## CVE-2018-19838 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>libsass3.3.6</b>, <b>node-sass-3.13.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-3.13.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-3.13.1.tgz">https://registry.npmjs.org/node-sass/-/node-sass-3.13.1.tgz</a></p>

<p>Path to dependency file: /public/vendor/owl.carousel/package.json</p>

<p>Path to vulnerable library: /public/vendor/owl.carousel/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- grunt-sass-1.2.1.tgz (Root Library)

- :x: **node-sass-3.13.1.tgz** (Vulnerable Library)

</details>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass prior to 3.5.5, functions inside ast.cpp for IMPLEMENT_AST_OPERATORS expansion allow attackers to cause a denial-of-service resulting from stack consumption via a crafted sass file, as demonstrated by recursive calls involving clone(), cloneChildren(), and copy().

<p>Publish Date: 2018-12-04

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2018-19838>CVE-2018-19838</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2018-12-04</p>

<p>Fix Resolution (node-sass): 5.0.0</p>

<p>Direct dependency fix Resolution (grunt-sass): 3.0.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in node sass tgz cve medium severity vulnerability vulnerable libraries node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file public vendor owl carousel package json path to vulnerable library public vendor owl carousel node modules node sass package json dependency hierarchy grunt sass tgz root library x node sass tgz vulnerable library found in base branch master vulnerability details in libsass prior to functions inside ast cpp for implement ast operators expansion allow attackers to cause a denial of service resulting from stack consumption via a crafted sass file as demonstrated by recursive calls involving clone clonechildren and copy publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution node sass direct dependency fix resolution grunt sass step up your open source security game with mend

| 0

|

278,250

| 30,702,242,140

|

IssuesEvent

|

2023-07-27 01:14:12

|

snykiotcubedev/arangodb-3.7.6

|

https://api.github.com/repos/snykiotcubedev/arangodb-3.7.6

|

opened

|

CVE-2023-3079 (High) detected in v88.3.47, v88.3.47

|

Mend: dependency security vulnerability

|

## CVE-2023-3079 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>v88.3.47</b>, <b>v88.3.47</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

Type confusion in V8 in Google Chrome prior to 114.0.5735.110 allowed a remote attacker to potentially exploit heap corruption via a crafted HTML page. (Chromium security severity: High)

<p>Publish Date: 2023-06-05

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-3079>CVE-2023-3079</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://chromereleases.googleblog.com/2023/06/stable-channel-update-for-desktop.html">https://chromereleases.googleblog.com/2023/06/stable-channel-update-for-desktop.html</a></p>

<p>Release Date: 2023-06-05</p>

<p>Fix Resolution: 114.0.5735.110</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2023-3079 (High) detected in v88.3.47, v88.3.47 - ## CVE-2023-3079 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>v88.3.47</b>, <b>v88.3.47</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

Type confusion in V8 in Google Chrome prior to 114.0.5735.110 allowed a remote attacker to potentially exploit heap corruption via a crafted HTML page. (Chromium security severity: High)

<p>Publish Date: 2023-06-05

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-3079>CVE-2023-3079</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://chromereleases.googleblog.com/2023/06/stable-channel-update-for-desktop.html">https://chromereleases.googleblog.com/2023/06/stable-channel-update-for-desktop.html</a></p>

<p>Release Date: 2023-06-05</p>

<p>Fix Resolution: 114.0.5735.110</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in cve high severity vulnerability vulnerable libraries vulnerability details type confusion in in google chrome prior to allowed a remote attacker to potentially exploit heap corruption via a crafted html page chromium security severity high publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

120,708

| 10,132,219,378

|

IssuesEvent

|

2019-08-01 21:41:59

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

roachtest: jepsen/g2/majority-ring-start-kill-2 failed

|

C-test-failure O-roachtest O-robot

|

SHA: https://github.com/cockroachdb/cockroach/commits/da56c792e968574b8f1d9ef3fdb45d56a530221a

Parameters:

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present themselves. For example,

# using stress instead of stressrace and passing the '-p' stressflag which

# controls concurrency.

./scripts/gceworker.sh start && ./scripts/gceworker.sh mosh

cd ~/go/src/github.com/cockroachdb/cockroach && \

stdbuf -oL -eL \

make stressrace TESTS=jepsen/g2/majority-ring-start-kill-2 PKG=roachtest TESTTIMEOUT=5m STRESSFLAGS='-maxtime 20m -timeout 10m' 2>&1 | tee /tmp/stress.log

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=1415578&tab=buildLog

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/20190801-1415578/jepsen/g2/majority-ring-start-kill-2/run_1

jepsen.go:264,jepsen.go:325,test_runner.go:691: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-1564640260-44-n6cpu4:6 -- bash -e -c "\

cd /mnt/data1/jepsen/cockroachdb && set -eo pipefail && \

~/lein run test \

--tarball file://${PWD}/cockroach.tgz \

--username ${USER} \

--ssh-private-key ~/.ssh/id_rsa \

--os ubuntu \

--time-limit 300 \

--concurrency 30 \

--recovery-time 25 \

--test-count 1 \

-n 10.128.0.86 -n 10.128.0.76 -n 10.128.0.59 -n 10.128.0.55 -n 10.128.0.45 \

--test g2 --nemesis majority-ring --nemesis2 start-kill-2 \

> invoke.log 2>&1 \

" returned:

stderr:

stdout:

Error: exit status 255

: exit status 1

```

|

2.0

|

roachtest: jepsen/g2/majority-ring-start-kill-2 failed - SHA: https://github.com/cockroachdb/cockroach/commits/da56c792e968574b8f1d9ef3fdb45d56a530221a

Parameters:

To repro, try:

```

# Don't forget to check out a clean suitable branch and experiment with the

# stress invocation until the desired results present themselves. For example,

# using stress instead of stressrace and passing the '-p' stressflag which

# controls concurrency.

./scripts/gceworker.sh start && ./scripts/gceworker.sh mosh

cd ~/go/src/github.com/cockroachdb/cockroach && \

stdbuf -oL -eL \

make stressrace TESTS=jepsen/g2/majority-ring-start-kill-2 PKG=roachtest TESTTIMEOUT=5m STRESSFLAGS='-maxtime 20m -timeout 10m' 2>&1 | tee /tmp/stress.log

```

Failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=1415578&tab=buildLog

```

The test failed on branch=master, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/20190801-1415578/jepsen/g2/majority-ring-start-kill-2/run_1

jepsen.go:264,jepsen.go:325,test_runner.go:691: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-1564640260-44-n6cpu4:6 -- bash -e -c "\

cd /mnt/data1/jepsen/cockroachdb && set -eo pipefail && \

~/lein run test \

--tarball file://${PWD}/cockroach.tgz \

--username ${USER} \

--ssh-private-key ~/.ssh/id_rsa \

--os ubuntu \

--time-limit 300 \

--concurrency 30 \

--recovery-time 25 \

--test-count 1 \

-n 10.128.0.86 -n 10.128.0.76 -n 10.128.0.59 -n 10.128.0.55 -n 10.128.0.45 \

--test g2 --nemesis majority-ring --nemesis2 start-kill-2 \

> invoke.log 2>&1 \

" returned:

stderr:

stdout:

Error: exit status 255

: exit status 1

```

|

test

|

roachtest jepsen majority ring start kill failed sha parameters to repro try don t forget to check out a clean suitable branch and experiment with the stress invocation until the desired results present themselves for example using stress instead of stressrace and passing the p stressflag which controls concurrency scripts gceworker sh start scripts gceworker sh mosh cd go src github com cockroachdb cockroach stdbuf ol el make stressrace tests jepsen majority ring start kill pkg roachtest testtimeout stressflags maxtime timeout tee tmp stress log failed test the test failed on branch master cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts jepsen majority ring start kill run jepsen go jepsen go test runner go home agent work go src github com cockroachdb cockroach bin roachprod run teamcity bash e c cd mnt jepsen cockroachdb set eo pipefail lein run test tarball file pwd cockroach tgz username user ssh private key ssh id rsa os ubuntu time limit concurrency recovery time test count n n n n n test nemesis majority ring start kill invoke log returned stderr stdout error exit status exit status

| 1

|

481,840

| 13,892,890,748

|

IssuesEvent

|

2020-10-19 12:50:17

|

space-wizards/space-station-14

|

https://api.github.com/repos/space-wizards/space-station-14

|

closed

|

The implementation of excited groups is broken

|

Feature: Atmospherics Priority: 1-high

|

Yo, your implementation of excited groups, like all repos using tg LINDA past this pr https://github.com/tgstation/tgstation/pull/19189, is broken.

Because excited groups break down as soon as a turf within them is removed from active, this line breaks the core purpose of excited groups, slowly growing to represent the "processing" turfs in an area, then settling to equalize atmos diffs. https://github.com/space-wizards/space-station-14/blob/master/Content.Server/Atmos/TileAtmosphere.cs#L741

I'm not sure how relevant this is to your codebase, as you have monstermos's equalization to fill somewhat the same role, but I thought you'd want to know.

I've implemented a hellfix here: https://github.com/tgstation/tgstation/pull/52493, but it's a bit bloated (Isn't finished yet), and I only do what I do because I need to lower the active turf count as much as I can. In your case, removing the portion of the line that deals with timers, while it would make planetary turfs a lot laggier, will solve the issue. Or just remove excited groups, or keep them as they function now, as low group size settlers.

|

1.0

|

The implementation of excited groups is broken - Yo, your implementation of excited groups, like all repos using tg LINDA past this pr https://github.com/tgstation/tgstation/pull/19189, is broken.

Because excited groups break down as soon as a turf within them is removed from active, this line breaks the core purpose of excited groups, slowly growing to represent the "processing" turfs in an area, then settling to equalize atmos diffs. https://github.com/space-wizards/space-station-14/blob/master/Content.Server/Atmos/TileAtmosphere.cs#L741

I'm not sure how relevant this is to your codebase, as you have monstermos's equalization to fill somewhat the same role, but I thought you'd want to know.

I've implemented a hellfix here: https://github.com/tgstation/tgstation/pull/52493, but it's a bit bloated (and isn't finished yet), and I only do what I do because I need to lower the active turf count as much as I can. In your case, removing the portion of the line that deals with timers will solve the issue, though it would make planetary turfs a lot laggier. Alternatively, just remove excited groups, or keep them as they function now, as low group size settlers.

|

non_test

|

the implementation of excited groups is broken yo your implementation of excited groups like all repos using tg linda past this pr is broken because excited groups break down as soon as a turf within them is removed from active this line breaks the core purpose of excited groups slowly growing to represent the processing turfs in an area then settling to equalize atmos diffs i m not sure how relevant this is to your codebase as you have monstermos s equalization to fill somewhat the same role but i thought you d want to know i ve implemented a hellfix here but it s a bit bloated isn t finished yet and i only do what i do because i need to lower the active turf count as much as i can in your case removing the portion of the line that deals with timers while it would make planetary turfs a lot laggier will solve the issue or just remove excited groups or keep them as they function now as low group size settlers

| 0

|

326,548

| 28,000,264,548

|

IssuesEvent

|

2023-03-27 11:14:53

|

wazuh/wazuh-qa

|

https://api.github.com/repos/wazuh/wazuh-qa

|

closed

|

Research Engine's Metrics module

|

team/qa research role/qa-runtime-terror subteam/qa-rainbow qa-planning target/5.0.0 level/task type/test

|

# Description

The objective of this issue is to investigate and design a testing plan for the development issue: [Engine - Metrics module](https://github.com/wazuh/wazuh/issues/15988)

# Planning stage

- [x] Research the applied change.

- [x] Research if we have a test for this case.

- [x] Define the test cases. Identify the base cases, and then the rest of the tests as tier 2.

- [x] Define whether it is necessary to test systems, integration, or E2E. Create the corresponding issues.

|

1.0

|

Research Engine's Metrics module - # Description

The objective of this issue is to investigate and design a testing plan for the development issue: [Engine - Metrics module](https://github.com/wazuh/wazuh/issues/15988)

# Planning stage

- [x] Research the applied change.

- [x] Research if we have a test for this case.

- [x] Define the test cases. Identify the base cases, and then the rest of the tests as tier 2.

- [x] Define whether it is necessary to test systems, integration, or E2E. Create the corresponding issues.

|

test

|

research engine s metrics module description the objective of this issue is to investigate and design a testing plan for the development issue planning stage research the applied change research if we have a test for this case define the test cases identify the base cases and then the rest of the tests as tier define whether it is necessary to test systems integration or create the corresponding issues

| 1

|

197,725

| 14,940,686,710

|

IssuesEvent

|

2021-01-25 18:38:23

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

opened

|

Failing test: Jest Tests.src/setup_node_env - NodeVersionValidator should run the script WITH error

|

failed-test

|

A test failed on a tracked branch

```

Error: thrown: "Exceeded timeout of 5000 ms for a test.

Use jest.setTimeout(newTimeout) to increase the timeout value, if this is a long-running test."

at /dev/shm/workspace/parallel/4/kibana/src/setup_node_env/node_version_validator.test.js:16:3

at _dispatchDescribe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:67:26)

at describe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:30:5)

at Object.<anonymous> (/dev/shm/workspace/parallel/4/kibana/src/setup_node_env/node_version_validator.test.js:15:1)

at Runtime._execModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:1299:24)

at Runtime._loadModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:898:12)

at Runtime.requireModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:746:10)

at jestAdapter (/dev/shm/workspace/kibana/node_modules/jest-circus/build/legacy-code-todo-rewrite/jestAdapter.js:106:13)

at processTicksAndRejections (internal/process/task_queues.js:93:5)

at runTestInternal (/dev/shm/workspace/kibana/node_modules/jest-runner/build/runTest.js:380:16)

at runTest (/dev/shm/workspace/kibana/node_modules/jest-runner/build/runTest.js:472:34)

at Object.worker (/dev/shm/workspace/kibana/node_modules/jest-runner/build/testWorker.js:133:12)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+master/11402/)

<!-- kibanaCiData = {"failed-test":{"test.class":"Jest Tests.src/setup_node_env","test.name":"NodeVersionValidator should run the script WITH error","test.failCount":1}} -->

|

1.0

|

Failing test: Jest Tests.src/setup_node_env - NodeVersionValidator should run the script WITH error - A test failed on a tracked branch

```

Error: thrown: "Exceeded timeout of 5000 ms for a test.

Use jest.setTimeout(newTimeout) to increase the timeout value, if this is a long-running test."

at /dev/shm/workspace/parallel/4/kibana/src/setup_node_env/node_version_validator.test.js:16:3

at _dispatchDescribe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:67:26)

at describe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:30:5)

at Object.<anonymous> (/dev/shm/workspace/parallel/4/kibana/src/setup_node_env/node_version_validator.test.js:15:1)

at Runtime._execModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:1299:24)

at Runtime._loadModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:898:12)

at Runtime.requireModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:746:10)

at jestAdapter (/dev/shm/workspace/kibana/node_modules/jest-circus/build/legacy-code-todo-rewrite/jestAdapter.js:106:13)

at processTicksAndRejections (internal/process/task_queues.js:93:5)

at runTestInternal (/dev/shm/workspace/kibana/node_modules/jest-runner/build/runTest.js:380:16)

at runTest (/dev/shm/workspace/kibana/node_modules/jest-runner/build/runTest.js:472:34)

at Object.worker (/dev/shm/workspace/kibana/node_modules/jest-runner/build/testWorker.js:133:12)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+master/11402/)

<!-- kibanaCiData = {"failed-test":{"test.class":"Jest Tests.src/setup_node_env","test.name":"NodeVersionValidator should run the script WITH error","test.failCount":1}} -->

|

test

|

failing test jest tests src setup node env nodeversionvalidator should run the script with error a test failed on a tracked branch error thrown exceeded timeout of ms for a test use jest settimeout newtimeout to increase the timeout value if this is a long running test at dev shm workspace parallel kibana src setup node env node version validator test js at dispatchdescribe dev shm workspace kibana node modules jest circus build index js at describe dev shm workspace kibana node modules jest circus build index js at object dev shm workspace parallel kibana src setup node env node version validator test js at runtime execmodule dev shm workspace kibana node modules jest runtime build index js at runtime loadmodule dev shm workspace kibana node modules jest runtime build index js at runtime requiremodule dev shm workspace kibana node modules jest runtime build index js at jestadapter dev shm workspace kibana node modules jest circus build legacy code todo rewrite jestadapter js at processticksandrejections internal process task queues js at runtestinternal dev shm workspace kibana node modules jest runner build runtest js at runtest dev shm workspace kibana node modules jest runner build runtest js at object worker dev shm workspace kibana node modules jest runner build testworker js first failure

| 1

|

427,575

| 29,830,297,005

|

IssuesEvent