Unnamed: 0 int64 3 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 742 | labels stringlengths 4 431 | body stringlengths 5 239k | index stringclasses 10 values | text_combine stringlengths 96 240k | label stringclasses 2 values | text stringlengths 96 200k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

32,270 | 6,756,696,827 | IssuesEvent | 2017-10-24 08:10:12 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | MegaMenu doesn't compile with TypeScript 2.4 | confirmed defect | **I'm submitting a ...**

```

[X] bug report

```

**Test case**

You can use the following demo app as test case:

https://github.com/ova2/angular-development-with-primeng/tree/master/chapter7/megamenu

**Current behavior**

If you run the showcase for the MegaMenu with TypeScript 2.4 or run the demo app linked above, you will get a compilation error like

```

Type '{ label: string; items: { label: string; }[]; }[]' has no properties in common with type 'MenuItem'.

```

**Expected behavior**

There should be no compilation error, as when compiling with TypeScript 2.3.

**Minimal reproduction of the problem with instructions**

* Install the above app

* or install the current master of PrimeNG and change the requirements in package.json to Angular 4.3, Angular-Cli 1.3, TypeScript 2.4 (lower Angular/Cli versions require TypeScript 2.3, so you need to test with Angular 4.3 and Cli 1.3)

* Run `npm install` and `npm start`, then check the MegaMenu

* **Angular version:** 4.3.3

* **PrimeNG version:** 4.1.2

* **Browser:** all

* **Language:** TypeScript 2.4

* **Node (for AoT issues):** 8.1.4

* **Analysis of the problem:**

In the `MenuItem` interface, the `items` property is declared with type `MenuItem[]`. But in the MegaMenu, `items` can also hold arrays of arrays of MenuItems, not just arrays of MenuItems.

In TypeScript 2.4, it’s now an error to assign anything to a weak type when there’s no overlap in properties (see [here](https://www.typescriptlang.org/docs/handbook/release-notes/typescript-2-4.html#weak-type-detection)).

Note that in the `MenuItem` interface all properties are marked as optional. Therefore, this is considered a "weak type".

* **Proposed solution:**

The `items` property in the `MenuItem` interface should be defined as follows:

```

items?: MenuItem[]|MenuItem[][];

```

| 1.0 | MegaMenu doesn't compile with TypeScript 2.4 - **I'm submitting a ...**

```

[X] bug report

```

**Test case**

You can use the following demo app as test case:

https://github.com/ova2/angular-development-with-primeng/tree/master/chapter7/megamenu

**Current behavior**

If you run the showcase for the MegaMenu with TypeScript 2.4 or run the demo app linked above, you will get a compilation error like

```

Type '{ label: string; items: { label: string; }[]; }[]' has no properties in common with type 'MenuItem'.

```

**Expected behavior**

There should be no compilation error, as when compiling with TypeScript 2.3.

**Minimal reproduction of the problem with instructions**

* Install the above app

* or install the current master of PrimeNG and change the requirements in package.json to Angular 4.3, Angular-Cli 1.3, TypeScript 2.4 (lower Angular/Cli versions require TypeScript 2.3, so you need to test with Angular 4.3 and Cli 1.3)

* Run `npm install` and `npm start`, then check the MegaMenu

* **Angular version:** 4.3.3

* **PrimeNG version:** 4.1.2

* **Browser:** all

* **Language:** TypeScript 2.4

* **Node (for AoT issues):** 8.1.4

* **Analysis of the problem:**

In the `MenuItem` interface, the `items` property is declared with type `MenuItem[]`. But in the MegaMenu, `items` can also hold arrays of arrays of MenuItems, not just arrays of MenuItems.

In TypeScript 2.4, it’s now an error to assign anything to a weak type when there’s no overlap in properties (see [here](https://www.typescriptlang.org/docs/handbook/release-notes/typescript-2-4.html#weak-type-detection)).

Note that in the `MenuItem` interface all properties are marked as optional. Therefore, this is considered a "weak type".

* **Proposed solution:**

The `items` property in the `MenuItem` interface should be defined as follows:

```

items?: MenuItem[]|MenuItem[][];

```

| non_usab | megamenu doesn t compile with typescript i m submitting a bug report test case you can use the following demo app as test case current behavior if you run the showcase for the megamenu with typescript or run the demo app linked above you will get a compilation error like type label string items label string has no properties in common with type menuitem expected behavior there should be no compilation error as when compiling with typescript minimal reproduction of the problem with instructions install the above app or install the current master of primeng and change the requirements in package json to angular angular cli typescript lower angular cli versions require typescript so you need to test with angular and cli run npm install and npm start and check the megamenu angular version primeng version browser all language typescript node for aot issues analysis of the problem in the menuitem interface the items property is defined as of type menuitem but in the megamenu you can have arrays of arrays of menuitems as items not just arrays of menuitems in typescript it’s now an error to assign anything to a weak type when there’s no overlap in properties see note that in the menuitem interface all properties are marked as optional therefore this is considered a weak type proposed solution the items property in the menutitem interface should be defined as follows items menuitem menuitem | 0 |
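The MegaMenu report above turns on TypeScript 2.4's weak type detection. As a rough sketch only: the `MenuItem` below is a hypothetical, trimmed-down stand-in (not PrimeNG's real declaration), and `columnCount` is a helper invented here for illustration. It shows how the proposed union type lets nested MegaMenu columns type-check.

```typescript
// Hypothetical stand-in for the interface discussed in the issue.
// Every property is optional, which is what makes it a "weak type".
interface MenuItem {
  label?: string;
  icon?: string;
  // Widened as proposed in the issue. With plain `MenuItem[]`, each inner
  // column array below would be checked against `MenuItem`, share no
  // properties with it, and be rejected by TypeScript 2.4 and later.
  items?: MenuItem[] | MenuItem[][];
}

// MegaMenu-style data: `items` is a list of columns,
// and each column is itself a list of MenuItems.
const megaMenuItems: MenuItem[] = [
  {
    label: "TV",
    items: [
      [{ label: "TV 1", items: [{ label: "TV 1.1" }] }],
      [{ label: "TV 2", items: [{ label: "TV 2.1" }] }],
    ],
  },
];

// Illustrative helper: count how many entries of `items` are columns
// (arrays) rather than plain MenuItems.
function columnCount(item: MenuItem): number {
  let count = 0;
  for (const entry of item.items || []) {
    if (Array.isArray(entry)) {
      count++;
    }
  }
  return count;
}
```

A flat menu such as `{ label: "File", items: [{ label: "New" }] }` still satisfies the same interface, so the widening should not affect components that only use one level of nesting.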

8,566 | 5,825,824,655 | IssuesEvent | 2017-05-08 00:51:39 | bronzehedwick/chrisdeluca | https://api.github.com/repos/bronzehedwick/chrisdeluca | closed | Add more links to navigation | usability | The main navigation should have the following links:

* Now

* Contact

* Sections (this is categories. Maybe rename?)

* RSS

In addition, there should be a form to subscribe to email notifications on site updates.

Depends on #61 #60 #59 #57 | True | Add more links to navigation - The main navigation should have the following links:

* Now

* Contact

* Sections (this is categories. Maybe rename?)

* RSS

In addition, there should be a form to subscribe to email notifications on site updates.

Depends on #61 #60 #59 #57 | usab | add more links to navigation the main navigation should have the following links now contact sections this is categories maybe rename rss in addition there should be a form to subscribe to email notifications on site updates depends on | 1 |

325,413 | 27,876,174,470 | IssuesEvent | 2023-03-21 16:09:19 | airbytehq/airbyte | https://api.github.com/repos/airbytehq/airbyte | closed | E2E stream table tests - stream details panel | team/platform-move area/frontend ui/connection ui/tests e2e-testing-tool | ## Tell us about the problem you're trying to solve

Add E2E tests for stream details panel functionality in the new stream table

### Tasks

- [x] Check panel opening (this case should be covered in https://github.com/airbytehq/airbyte/issues/22640)

- [x] Check stream details info: sync state, namespace (if it exists), stream name, chosen sync mode

- [x] Check the number of displayed fields (depends on the test data we will use)

- [x] Check that the user is able to scroll a long list of fields and that the desired field is visible

- [x] Check that each field has info: source field name, data type, Cursor (optional), PK (optional), destination field name

- [x] Check that the user is able to select only one cursor value (appropriate sync mode should be chosen)

- [x] Check that the user is NOT able to select a cursor value if it's source-defined (appropriate sync mode should be chosen)

- [x] Check that the user is able to select multiple PK values (appropriate sync mode should be chosen)

- [x] Check that the user is NOT able to select a PK value if it's source-defined (appropriate sync mode should be chosen)

| 2.0 | E2E stream table tests - stream details panel - ## Tell us about the problem you're trying to solve

Add E2E tests for stream details panel functionality in the new stream table

### Tasks

- [x] Check panel opening (this case should be covered in https://github.com/airbytehq/airbyte/issues/22640)

- [x] Check stream details info: sync state, namespace (if it exists), stream name, chosen sync mode

- [x] Check the number of displayed fields (depends on the test data we will use)

- [x] Check that the user is able to scroll a long list of fields and that the desired field is visible

- [x] Check that each field has info: source field name, data type, Cursor (optional), PK (optional), destination field name

- [x] Check that the user is able to select only one cursor value (appropriate sync mode should be chosen)

- [x] Check that the user is NOT able to select a cursor value if it's source-defined (appropriate sync mode should be chosen)

- [x] Check that the user is able to select multiple PK values (appropriate sync mode should be chosen)

- [x] Check that the user is NOT able to select a PK value if it's source-defined (appropriate sync mode should be chosen)

| non_usab | stream table tests stream details panel tell us about the problem you re trying to solve add tests for stream details panel functionality in the new stream table tasks check panel opening this case should be covered in check stream details info sync state namespace if exist stream name chosen sync mode check amount of displayed fields depends on tests data we will use check that user is able to scroll a long list of fields scrolling and desired field is visible check that each field has info source field name data type cursor optional pk optional destination field name check that user is able to select only one cursor value appropriate sync mode should be chosen check that user is not able to select only one cursor value if it s source defined appropriate sync mode should be chosen check that user is able to select only multiple pk values appropriate sync mode should be chosen check that user is not able to select pk value if it s source defined appropriate sync mode should be chosen | 0 |

269,063 | 28,959,988,826 | IssuesEvent | 2023-05-10 01:06:12 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | reopened | CVE-2011-2699 (High) detected in linuxv3.0 | Mend: dependency security vulnerability | ## CVE-2011-2699 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/verygreen/linux.git>https://github.com/verygreen/linux.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/dpteam/RK3188_TABLET/commit/0c501f5a0fd72c7b2ac82904235363bd44fd8f9e">0c501f5a0fd72c7b2ac82904235363bd44fd8f9e</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/include/net/inetpeer.h</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The IPv6 implementation in the Linux kernel before 3.1 does not generate Fragment Identification values separately for each destination, which makes it easier for remote attackers to cause a denial of service (disrupted networking) by predicting these values and sending crafted packets.

<p>Publish Date: 2012-05-24

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2011-2699>CVE-2011-2699</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2011-2699">https://nvd.nist.gov/vuln/detail/CVE-2011-2699</a></p>

<p>Release Date: 2012-05-24</p>

<p>Fix Resolution: 3.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2011-2699 (High) detected in linuxv3.0 - ## CVE-2011-2699 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/verygreen/linux.git>https://github.com/verygreen/linux.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/dpteam/RK3188_TABLET/commit/0c501f5a0fd72c7b2ac82904235363bd44fd8f9e">0c501f5a0fd72c7b2ac82904235363bd44fd8f9e</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/include/net/inetpeer.h</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The IPv6 implementation in the Linux kernel before 3.1 does not generate Fragment Identification values separately for each destination, which makes it easier for remote attackers to cause a denial of service (disrupted networking) by predicting these values and sending crafted packets.

<p>Publish Date: 2012-05-24

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2011-2699>CVE-2011-2699</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2011-2699">https://nvd.nist.gov/vuln/detail/CVE-2011-2699</a></p>

<p>Release Date: 2012-05-24</p>

<p>Fix Resolution: 3.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files include net inetpeer h vulnerability details the implementation in the linux kernel before does not generate fragment identification values separately for each destination which makes it easier for remote attackers to cause a denial of service disrupted networking by predicting these values and sending crafted packets publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

117,536 | 17,496,281,368 | IssuesEvent | 2021-08-10 01:00:40 | billmcchesney1/vulnerable-rust | https://api.github.com/repos/billmcchesney1/vulnerable-rust | opened | CVE-2020-36471 (Medium) detected in generator-0.6.21.crate | security vulnerability | ## CVE-2020-36471 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>generator-0.6.21.crate</b></p></summary>

<p>Stackfull Generator Library in Rust</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/generator/0.6.21/download">https://crates.io/api/v1/crates/generator/0.6.21/download</a></p>

<p>

Dependency Hierarchy:

- hyper-0.13.5.crate (Root Library)

- tokio-0.2.21.crate

- bytes-0.5.5.crate

- loom-0.3.4.crate

- :x: **generator-0.6.21.crate** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in the generator crate before 0.7.0 for Rust. It does not ensure that a function (for yielding values) has Send bounds.

<p>Publish Date: 2021-08-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36471>CVE-2020-36471</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://rustsec.org/advisories/RUSTSEC-2020-0151.html">https://rustsec.org/advisories/RUSTSEC-2020-0151.html</a></p>

<p>Release Date: 2021-08-08</p>

<p>Fix Resolution: 0.7.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Crate","packageName":"generator","packageVersion":"0.6.21","packageFilePaths":[],"isTransitiveDependency":true,"dependencyTree":"hyper:0.13.5;tokio:0.2.21;bytes:0.5.5;loom:0.3.4;generator:0.6.21","isMinimumFixVersionAvailable":true,"minimumFixVersion":"0.7.0"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2020-36471","vulnerabilityDetails":"An issue was discovered in the generator crate before 0.7.0 for Rust. It does not ensure that a function (for yielding values) has Send bounds.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36471","cvss3Severity":"medium","cvss3Score":"5.5","cvss3Metrics":{"A":"N/A","AC":"N/A","PR":"N/A","S":"N/A","C":"N/A","UI":"N/A","AV":"N/A","I":"N/A"},"extraData":{}}</REMEDIATE> --> | True | CVE-2020-36471 (Medium) detected in generator-0.6.21.crate - ## CVE-2020-36471 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>generator-0.6.21.crate</b></p></summary>

<p>Stackfull Generator Library in Rust</p>

<p>Library home page: <a href="https://crates.io/api/v1/crates/generator/0.6.21/download">https://crates.io/api/v1/crates/generator/0.6.21/download</a></p>

<p>

Dependency Hierarchy:

- hyper-0.13.5.crate (Root Library)

- tokio-0.2.21.crate

- bytes-0.5.5.crate

- loom-0.3.4.crate

- :x: **generator-0.6.21.crate** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in the generator crate before 0.7.0 for Rust. It does not ensure that a function (for yielding values) has Send bounds.

<p>Publish Date: 2021-08-08

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36471>CVE-2020-36471</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://rustsec.org/advisories/RUSTSEC-2020-0151.html">https://rustsec.org/advisories/RUSTSEC-2020-0151.html</a></p>

<p>Release Date: 2021-08-08</p>

<p>Fix Resolution: 0.7.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Crate","packageName":"generator","packageVersion":"0.6.21","packageFilePaths":[],"isTransitiveDependency":true,"dependencyTree":"hyper:0.13.5;tokio:0.2.21;bytes:0.5.5;loom:0.3.4;generator:0.6.21","isMinimumFixVersionAvailable":true,"minimumFixVersion":"0.7.0"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2020-36471","vulnerabilityDetails":"An issue was discovered in the generator crate before 0.7.0 for Rust. It does not ensure that a function (for yielding values) has Send bounds.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36471","cvss3Severity":"medium","cvss3Score":"5.5","cvss3Metrics":{"A":"N/A","AC":"N/A","PR":"N/A","S":"N/A","C":"N/A","UI":"N/A","AV":"N/A","I":"N/A"},"extraData":{}}</REMEDIATE> --> | non_usab | cve medium detected in generator crate cve medium severity vulnerability vulnerable library generator crate stackfull generator library in rust library home page a href dependency hierarchy hyper crate root library tokio crate bytes crate loom crate x generator crate vulnerable library found in base branch master vulnerability details an issue was discovered in the generator crate before for rust it does not ensure that a function for yielding values has send bounds publish date url a href cvss score details base score metrics exploitability metrics attack vector n a attack complexity n a privileges required n a user interaction n a scope n a impact metrics confidentiality impact n a integrity impact n a availability impact n a for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree hyper tokio bytes loom generator isminimumfixversionavailable true minimumfixversion basebranches 
vulnerabilityidentifier cve vulnerabilitydetails an issue was discovered in the generator crate before for rust it does not ensure that a function for yielding values has send bounds vulnerabilityurl | 0 |

371,169 | 25,939,851,565 | IssuesEvent | 2022-12-16 17:16:26 | projf/projf-explore | https://api.github.com/repos/projf/projf-explore | closed | Feedback on endianness | documentation | On page https://projectf.io/posts/numbers-in-verilog/ you mention:

> Say you’ve got a bit-endian ...

You probably mean big

> ... byte from I2C and want to convert it to little-endian. Alas, you can’t mix big and little-endian vectors, so the following won’t work:

I would pay attention to endianness in this context. In my experience endianness is mostly discussed for data consisting of multiple bytes. I think you are talking about the endianness of bits, which seems to be called *bit endianness* ([example](https://stackoverflow.com/questions/6043483/why-bit-endianness-is-an-issue-in-bitfields)).

---

Another issue I stumbled upon:

> wire [0:7] i2c_byte; // 8-bit wire (big-endian)

> reg [7:0] le_byte; // 8-bit reg (little-endian)

> always_ff @(posedge clk) le_byte <= i2c_byte; // Won't work :(

I tried:

```verilog

`define LED_COUNT 16

module led_sw_all_reversed(

input [`LED_COUNT-1:0] sw,

output [0:`LED_COUNT-1] led // reversed bit-endianness

);

assign led = sw;

endmodule

```

Which worked fine using Vivado on my hardware with 16 switches and LEDs. Last but not least, I am new to Verilog.

PS: 😎 way of teaching FPGAs using graphics! | 1.0 | Feedback on endianness - On page https://projectf.io/posts/numbers-in-verilog/ you mention:

> Say you’ve got a bit-endian ...

You probably mean big

> ... byte from I2C and want to convert it to little-endian. Alas, you can’t mix big and little-endian vectors, so the following won’t work:

I would pay attention to endianness in this context. In my experience endianness is mostly discussed for data consisting of multiple bytes. I think you are talking about the endianness of bits, which seems to be called *bit endianness* ([example](https://stackoverflow.com/questions/6043483/why-bit-endianness-is-an-issue-in-bitfields)).

---

Another issue I stumbled upon:

> wire [0:7] i2c_byte; // 8-bit wire (big-endian)

> reg [7:0] le_byte; // 8-bit reg (little-endian)

> always_ff @(posedge clk) le_byte <= i2c_byte; // Won't work :(

I tried:

```verilog

`define LED_COUNT 16

module led_sw_all_reversed(

input [`LED_COUNT-1:0] sw,

output [0:`LED_COUNT-1] led // reversed bit-endianness

);

assign led = sw;

endmodule

```

Which worked fine using Vivado on my hardware with 16 switches and LEDs. Last but not least, I am new to Verilog.

PS: 😎 way of teaching FPGAs using graphics! | non_usab | feedback on endianness on page you mention say you’ve got a bit endian you probably mean big byte from and want to convert it to little endian alas you can’t mix big and little endian vectors so the following won’t work i would pay attention to endianness in this context in my experience endianness is mostly known in data consisting of multiple bytes i think you are talking about the endianness of bits which seem to be called bit endianness another issue i stumbled upon wire byte bit wire big endian reg le byte bit reg little endian always ff posedge clk le byte byte won t work i tried verilog define led count module led sw all reversed input sw output led reversed bit endianness assign led sw endmodule which worked fine using vivado on my hardware with switches and leds last but not least i am new to verilog ps 😎 way of teaching fpgas using graphics | 0 |
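One possible reading of why `assign led = sw;` worked in the report above: whole-vector assignment in Verilog pairs bits by position (leftmost to leftmost), and the declared range only decides which index names each position. The sketch below is a toy model of that reading, written in TypeScript rather than Verilog; the `Vec` type and its helpers are invented for illustration and assume equal widths.

```typescript
// Toy model of a Verilog vector: bits are stored left to right as declared,
// so bits[0] is always the leftmost (most significant) bit.
interface Vec {
  left: number;  // index of the leftmost bit, e.g. 15 in [15:0]
  right: number; // index of the rightmost bit, e.g. 0 in [15:0]
  bits: number[];
}

// Create an all-zero vector for a declaration such as [15:0] or [0:15].
function declare(left: number, right: number): Vec {
  const width = Math.abs(left - right) + 1;
  const bits: number[] = [];
  for (let i = 0; i < width; i++) {
    bits.push(0);
  }
  return { left, right, bits };
}

// Translate a declared index into a left-to-right storage position.
function position(v: Vec, index: number): number {
  return v.left >= v.right ? v.left - index : index - v.left;
}

function setBit(v: Vec, index: number, value: number): void {
  v.bits[position(v, index)] = value;
}

function getBit(v: Vec, index: number): number {
  return v.bits[position(v, index)];
}

// Assumed model of `assign dst = src;` for equal widths:
// copy by position, ignoring how each side numbers its bits.
function assign(dst: Vec, src: Vec): void {
  dst.bits = src.bits.slice();
}

const sw = declare(15, 0);  // models: input  [15:0] sw
const led = declare(0, 15); // models: output [0:15] led
setBit(sw, 15, 1);          // flip the switch wired to sw[15]
assign(led, sw);
```

Under this model the value keeps its significance while the index labels are mirrored, so the bit set at `sw[15]` is read back as `led[0]`. That would match the reversed LED order implied by the module name `led_sw_all_reversed`.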

6,746 | 4,534,191,130 | IssuesEvent | 2016-09-08 14:01:03 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 28206321: iMessage extension auto rotation is completely broken | classification:ui/usability reproducible:always status:open | #### Description

Steps to Reproduce:

- Open the IceCreamBuilder sample iMessage extension.

- Expand

- Rotate from portrait to landscape

- Collapse

Looks like in the attached screenshot.

-

Product Version: 10.0 GM

Created: 2016-09-08T13:47:18.862640

Originated: 2016-09-08T15:47:00

Open Radar Link: http://www.openradar.me/28206321 | True | 28206321: iMessage extension auto rotation is completely broken - #### Description

Steps to Reproduce:

- Open the IceCreamBuilder sample iMessage extension.

- Expand

- Rotate from portrait to landscape

- Collapse

Looks like in the attached screenshot.

-

Product Version: 10.0 GM

Created: 2016-09-08T13:47:18.862640

Originated: 2016-09-08T15:47:00

Open Radar Link: http://www.openradar.me/28206321 | usab | imessage extension auto rotation is completely broken description steps to reproduce open the icecreambuilder sample imessage extension expand rotate from portrait to landscape collapse looks like in the attached screenshot product version gm created originated open radar link | 1 |

21,947 | 18,149,869,788 | IssuesEvent | 2021-09-26 04:37:42 | tailscale/tailscale | https://api.github.com/repos/tailscale/tailscale | closed | Synology DSM6 x86_64 package (1.14.3) stops working after 1 minute | L1 Very few P3 Can't get started T6 Major usability OS-synology | Hello,

I installed the new package 1.14.3 (tailscale-x86_64-1.14.3-008-dsm6.spk) over the existing 1.12.1 version on my DS3617xs. That worked, the NAS showed as "online" on the Tailscale website, and after approx. 1 minute the status went to "offline". I checked the NAS and the package was stopped. I restarted the package, and it worked for roughly 1 minute again.

Please let me know if I can provide any logs. I deleted it and installed 1.12.1 again.

Thank you!

<img src="https://frontapp.com/assets/img/favicons/favicon-32x32.png" height="16" width="16" alt="Front logo" /> [Front conversations](https://app.frontapp.com/open/top_3kykx) | True | Synology DSM6 x86_64 package (1.14.3) stops working after 1 minute - Hello,

I installed the new package 1.14.3 (tailscale-x86_64-1.14.3-008-dsm6.spk) over the existing 1.12.1 version on my DS3617xs. That worked, the NAS showed as "online" on the Tailscale website, and after approx. 1 minute the status went to "offline". I checked the NAS and the package was stopped. I restarted the package, and it worked for roughly 1 minute again.

Please let me know if I can provide any logs. I deleted it and installed 1.12.1 again.

Thank you!

<img src="https://frontapp.com/assets/img/favicons/favicon-32x32.png" height="16" width="16" alt="Front logo" /> [Front conversations](https://app.frontapp.com/open/top_3kykx) | usab | synology package stops working after minute hello i installed the new package tailscale spk over the existing version on my that worked nas showed as online on the tailscale website and after approx minuted the status went to offline i checked the nas and the package was stopped restarted the package and it worked for roughly minute again please let me can provide any logs i deleted it and installed again thank you | 1 |

24,794 | 12,403,651,756 | IssuesEvent | 2020-05-21 14:16:14 | tgstation/tgstation-server | https://api.github.com/repos/tgstation/tgstation-server | closed | Database Commit step of Automatic Deployments can hang for... 30 MINUTES?!? (MySql/Linux only maybe) | Area: Jobs Backlog Database Issue Help Wanted Performance Reproduction Required | VORE-station experiences this regularly. The deployment process runs perfectly fine, except committing the compile job to the database can hang for 30 minutes. This not only makes the chat bots liars when they say `Deployment Complete`, it's obviously bad for bitcoin.

This doesn't seem to happen with manual deployments even though they follow the same code path. | True | Database Commit step of Automatic Deployments can hang for... 30 MINUTES?!? (MySql/Linux only maybe) - VORE-station experiences this regularly. The deployment process runs perfectly fine, except that committing the compile job to the database can hang for 30 minutes. This not only makes the chat bots liars when they say `Deployment Complete`, it's obviously bad for bitcoin.

This doesn't seem to happen with manual deployments even though they follow the same code path. | non_usab | database commit step of automatic deployments can hang for minutes mysql linux only maybe vore station experiences this regularly the deployment process runs perfectly fine except commiting the compile job to the database can hang for minutes this not only makes the chat bots liars when they say deployment complete it s obviously bad for bitcoin this doesn t seem to happen with manual deployments even though they follow the same code path | 0 |

15,877 | 3,488,321,709 | IssuesEvent | 2016-01-02 21:11:10 | SemanticMediaWiki/SemanticMediaWiki | https://api.github.com/repos/SemanticMediaWiki/SemanticMediaWiki | opened | Setting multiple values #set/#subobject using `|` | question requires test wikidocu missing | [0] wrote "with some old templates of mine I found out that ... can be used to store multiple values for one property. Since I did not find any mention of it as I wanted to confirm this"

```

{{#set:

|property1=value1|value2|value3

|property2=value1|value2|value3

...

}}

```

Above is codified in [1] but we are missing an integration test [2, 3] and it would be great if someone could send a PR to cover this in order to avoid any regression in future.
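
To make the behaviour described above concrete, here is a minimal Python sketch of how pipe-separated values could be grouped per property. It is not SMW's actual implementation (that is the PHP code linked as [1]); the helper name `group_set_parameters` and the splitting rule are assumptions made purely for illustration.

```python
def group_set_parameters(raw):
    """Group '|'-separated #set parameters into {property: [values]}.

    Hypothetical helper: a segment containing '=' starts a new property;
    a segment without '=' is treated as one more value of the previous one.
    """
    grouped = {}
    current = None
    for segment in raw.split("|"):
        segment = segment.strip()
        if not segment:
            continue  # skip the empty piece before the first '|'
        if "=" in segment:
            current, value = (part.strip() for part in segment.split("=", 1))
            grouped.setdefault(current, []).append(value)
        elif current is not None:
            grouped[current].append(segment)
    return grouped


params = "|property1=value1|value2|value3 |property2=value1|value2|value3"
print(group_set_parameters(params))
```

A value that itself contains `=` would be misread as a new property by this sketch, which is one of the edge cases an integration test could pin down.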

[0] https://www.semantic-mediawiki.org/wiki/Thread:Help_talk:Setting_values/Setting_multiple_values_in_one_turn

[1] https://github.com/SemanticMediaWiki/SemanticMediaWiki/blob/master/src/ParserParameterProcessor.php#L205-L212

[2] https://github.com/SemanticMediaWiki/SemanticMediaWiki/blob/master/tests/phpunit/Integration/ByJsonScript/README.md

[3] https://github.com/SemanticMediaWiki/SemanticMediaWiki/tree/master/tests#write-integration-tests-using-json-script | 1.0 | Setting multiple values #set/#subobject using `|` - [0] wrote "with some old templates of mine I found out that ... can be used to store multiple values for one property. Since I did not find any mention of it as I wanted to confirm this"

```

{{#set:

|property1=value1|value2|value3

|property2=value1|value2|value3

...

}}

```

Above is codified in [1] but we are missing an integration test [2, 3] and it would be great if someone could send a PR to cover this in order to avoid any regression in future.

[0] https://www.semantic-mediawiki.org/wiki/Thread:Help_talk:Setting_values/Setting_multiple_values_in_one_turn

[1] https://github.com/SemanticMediaWiki/SemanticMediaWiki/blob/master/src/ParserParameterProcessor.php#L205-L212

[2] https://github.com/SemanticMediaWiki/SemanticMediaWiki/blob/master/tests/phpunit/Integration/ByJsonScript/README.md

[3] https://github.com/SemanticMediaWiki/SemanticMediaWiki/tree/master/tests#write-integration-tests-using-json-script | non_usab | setting multiple values set subobject using wrote with some old templates of mine i found out that can be used to store multiple values for one property since i did not find any mention of it as i wanted to confirm this set above is codified in but we are missing an integration test and it would be great if someone could send a pr to cover this in order to avoid any regression in future | 0 |

16,937 | 11,495,617,675 | IssuesEvent | 2020-02-12 05:29:26 | the-tale/the-tale | https://api.github.com/repos/the-tale/the-tale | closed | Guilds: change or remove the limit on the guild description length | comp_general cont_community cont_usability est_simple good first issue type_improvement | It is currently too short. Handle it the same way as the Keeper/hero descriptions? | True | Guilds: change or remove the limit on the guild description length - It is currently too short. Handle it the same way as the Keeper/hero descriptions? | usab | guilds change or remove the limit on the guild description length it is currently too short handle it the same way as the keeper hero descriptions | 1 |

23,113 | 6,369,372,045 | IssuesEvent | 2017-08-01 11:42:36 | Yoast/wordpress-seo | https://api.github.com/repos/Yoast/wordpress-seo | closed | Updated Premium page with My Yoast integration | needs-code-review | To work with the upcoming My Yoast, the Premium page in the plugin needs some changes.

- [x] Remove the `Licenses` tab completely (this functionality will be merged into the `Extensions` tab)

- [x] On the `Extensions` tab, add a second status label to all plugins shown:

- [x] When you don't own a plugin, show the `Buy` button with a link that says `More Information`.

- [x] When you have a plugin installed, show the `INSTALLED` label and the `NOT ACTIVATED` label, with a link to My Yoast that says `Activate your license on My Yoast >>`.

- [x] When the plugin is installed and activated in My Yoast, show the `INSTALLED` and `ACTIVATED` labels with a link that says `Manage this license on My Yoast >>`

- [x] Change the warning when a plugin is not activated to `Warning! You have not yet activated [PLUGIN NAME] in My Yoast. If you want to do so now, click here. Otherwise, you will not receive updates or support.` Which links to My Yoast.

- [x] Remove the page title and tab navigation at the top.

- [x] Increase the spacing between the upsell checklists and the buy button/status labels.

Fixes #6561. | 1.0 | Updated Premium page with My Yoast integration - To work with the upcoming My Yoast, the Premium page in the plugin needs some changes.

- [x] Remove the `Licenses` tab completely (this functionality will be merged into the `Extensions` tab)

- [x] On the `Extensions` tab, add a second status label to all plugins shown:

- [x] When you don't own a plugin, show the `Buy` button with a link that says `More Information`.

- [x] When you have a plugin installed, show the `INSTALLED` label and the `NOT ACTIVATED` label, with a link to My Yoast that says `Activate your license on My Yoast >>`.

- [x] When the plugin is installed and activated in My Yoast, show the `INSTALLED` and `ACTIVATED` labels with a link that says `Manage this license on My Yoast >>`

- [x] Change the warning when a plugin is not activated to `Warning! You have not yet activated [PLUGIN NAME] in My Yoast. If you want to do so now, click here. Otherwise, you will not receive updates or support.` Which links to My Yoast.

- [x] Remove the page title and tab navigation at the top.

- [x] Increase the spacing between the upsell checklists and the buy button/status labels.

Fixes #6561. | non_usab | updated premium page with my yoast integration to work with the upcoming my yoast the premium page in the plugin needs some changes remove the licenses tab completely this functionality will be merged into the extensions tab on the extensions tab add a second status label to all plugins shown when you don t own a plugin show the buy button with a link that says more information when you have a plugin installed show the installed label and the not activated label with a link to my yoast that says activate your license on my yoast when the plugin is installed and activated in my yoast show the installed and activated labels with a link that says manage this license on my yoast change the warning when a plugin is not activated to warning you have not yet activated in my yoast if you want to do so now click here otherwise you will not receive updates or support which links to my yoast remove the page title and tab navigation at the top increase the spacing between the upsell checklists and the buy button status labels fixes | 0 |

45,678 | 7,195,418,698 | IssuesEvent | 2018-02-04 16:58:10 | golang/go | https://api.github.com/repos/golang/go | opened | strconv: Unquote example looks like a unit test instead of an example | Documentation NeedsFix help wanted | The Unquote example (https://golang.org/pkg/strconv/#Unquote) looks like a unit test instead of an example.

That is a sea of backslashes and quotes. I think we could make a more readable example.

| 1.0 | strconv: Unquote example looks like a unit test instead of an example - The Unquote example (https://golang.org/pkg/strconv/#Unquote) looks like a unit test instead of an example.

That is a sea of backslashes and quotes. I think we could make a more readable example.

| non_usab | strconv unquote example looks like a unit test instead of an example the unquote example looks like a unit test instead of an example that is a sea of backslashes and quotes i think we could make a more readable example | 0 |

465,265 | 13,369,622,130 | IssuesEvent | 2020-09-01 09:06:00 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | manabi-gakushu.benesse.ne.jp - site is not usable | browser-firefox engine-gecko priority-normal | <!-- @browser: Firefox 82.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:82.0) Gecko/20100101 Firefox/82.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/57348 -->

**URL**: https://manabi-gakushu.benesse.ne.jp/gakushu/typing/nihongonyuryoku.html

**Browser / Version**: Firefox 82.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Site is not usable

**Description**: Unable to type

**Steps to Reproduce**:

Typing the " - " key does not work.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/8/50ab0f6d-d6ef-4297-b9a3-c997f39c6fd5.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200828153126</li><li>channel: nightly</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/8/765ca612-fb1f-4421-a199-e5a9b85ab6e6)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | manabi-gakushu.benesse.ne.jp - site is not usable - <!-- @browser: Firefox 82.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:82.0) Gecko/20100101 Firefox/82.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/57348 -->

**URL**: https://manabi-gakushu.benesse.ne.jp/gakushu/typing/nihongonyuryoku.html

**Browser / Version**: Firefox 82.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Site is not usable

**Description**: Unable to type

**Steps to Reproduce**:

Typing the " - " key does not work.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2020/8/50ab0f6d-d6ef-4297-b9a3-c997f39c6fd5.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200828153126</li><li>channel: nightly</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2020/8/765ca612-fb1f-4421-a199-e5a9b85ab6e6)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_usab | manabi gakushu benesse ne jp site is not usable url browser version firefox operating system windows tested another browser yes edge problem type site is not usable description unable to type steps to reproduce does not work type key view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel nightly hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 0 |

82,662 | 16,011,575,303 | IssuesEvent | 2021-04-20 11:17:23 | wazuh/wazuh | https://api.github.com/repos/wazuh/wazuh | closed | <program_name> extracting an empty string | ruleset threatintel threatintel/decoders threatintel/rules | ```

Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun)

**Phase 1: Completed pre-decoding.

full event: 'Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun)'

timestamp: 'Nov 20 06:35:00'

hostname: 'PUNGDC-FDCS-DIST_SF'

program_name: ''

log: '(root) CMD ( /usr/libexec/atrun)'

**Phase 2: Completed decoding.

No decoder matched.

```

As the output above shows, pre-decoding gives program_name an empty string rather than (null). I tried a prematch entry, but the decoder was not matched.

The only way to get it to work was to use an empty program_name, like this:

```

<decoder name="Junos-dist-switch-info4">

<program_name></program_name>

</decoder>

<decoder name="Junos-dist-info-cron">

<parent>Junos-dist-switch-info4</parent>

<regex>(\(\S+\)) \S+ (\(\s+\S+\))</regex>

<order>cron.user, cron.action</order>

</decoder>

```

The result is:

```

Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun)

**Phase 1: Completed pre-decoding.

full event: 'Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun) '

timestamp: 'Nov 20 06:35:00'

hostname: 'PUNGDC-FDCS-DIST_SF'

program_name: ''

log: '(root) CMD ( /usr/libexec/atrun) '

**Phase 2: Completed decoding.

decoder: 'Junos-dist-switch-info4'

cron.user: '(root)'

cron.action: '( /usr/libexec/atrun)'

```

This may cause many conflicts, especially if we have logs from many different sources where program_name is extracted as an empty string, as in this case.
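
As a quick sanity check of the child decoder's pattern, here is a small Python sketch that applies the same expression to the log portion of the event. Note the assumptions: Wazuh uses its own regex engine, so Python's `re` is only a stand-in, and it happens to read the tokens in this particular pattern the same way. The function name `decode_cron` is hypothetical.

```python
import re

# Pattern copied from the <regex> element of the child decoder.
CRON_PATTERN = re.compile(r"(\(\S+\)) \S+ (\(\s+\S+\))")


def decode_cron(log):
    """Return the two captured fields, named as in the <order> element."""
    match = CRON_PATTERN.search(log)
    if match is None:
        return None
    return dict(zip(("cron.user", "cron.action"), match.groups()))


print(decode_cron("(root) CMD ( /usr/libexec/atrun)"))
```

The two captured groups correspond to the `cron.user` and `cron.action` values shown in the decoding output above, so the pattern itself is not the problem. The open question is only how the parent decoder gets selected when program_name is empty.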

| 1.0 | <program_name> extracting an empty string - ```

Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun)

**Phase 1: Completed pre-decoding.

full event: 'Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun)'

timestamp: 'Nov 20 06:35:00'

hostname: 'PUNGDC-FDCS-DIST_SF'

program_name: ''

log: '(root) CMD ( /usr/libexec/atrun)'

**Phase 2: Completed decoding.

No decoder matched.

```

As the output above shows, pre-decoding gives program_name an empty string rather than (null). I tried a prematch entry, but the decoder was not matched.

The only way to get it to work was to use an empty program_name, like this:

```

<decoder name="Junos-dist-switch-info4">

<program_name></program_name>

</decoder>

<decoder name="Junos-dist-info-cron">

<parent>Junos-dist-switch-info4</parent>

<regex>(\(\S+\)) \S+ (\(\s+\S+\))</regex>

<order>cron.user, cron.action</order>

</decoder>

```

The result is:

```

Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun)

**Phase 1: Completed pre-decoding.

full event: 'Nov 20 06:35:00 PUNGDC-FDCS-DIST_SF : (root) CMD ( /usr/libexec/atrun) '

timestamp: 'Nov 20 06:35:00'

hostname: 'PUNGDC-FDCS-DIST_SF'

program_name: ''

log: '(root) CMD ( /usr/libexec/atrun) '

**Phase 2: Completed decoding.

decoder: 'Junos-dist-switch-info4'

cron.user: '(root)'

cron.action: '( /usr/libexec/atrun)'

```

This may cause many conflicts, especially if we have logs from many different sources where program_name is extracted as an empty string, as in this case.

| non_usab | extracting an empty string nov pungdc fdcs dist sf root cmd usr libexec atrun phase completed pre decoding full event nov pungdc fdcs dist sf root cmd usr libexec atrun timestamp nov hostname pungdc fdcs dist sf program name log root cmd usr libexec atrun phase completed decoding no decoder matched as you can notice below the result for that log gives program name an empty string and not null i ve tried with a pre match entry but the decoder hasn t been detected the only way to get it work was to use the program name in that way junos dist switch s s s s cron user cron action the result is nov pungdc fdcs dist sf root cmd usr libexec atrun phase completed pre decoding full event nov pungdc fdcs dist sf root cmd usr libexec atrun timestamp nov hostname pungdc fdcs dist sf program name log root cmd usr libexec atrun phase completed decoding decoder junos dist switch cron user root cron action usr libexec atrun that may make many conflicts especially if we have many logs from different sources where the program name is extracted as an empty string like in this case | 0 |

9,461 | 6,307,560,505 | IssuesEvent | 2017-07-22 02:30:55 | coreos/bugs | https://api.github.com/repos/coreos/bugs | closed | iptables kernel panic | area/usability component/kernel kind/bug team/os | # Issue Report #

Bug

## Bug ##

When iptables-restore is used to perform replacements of very large tables, `ipt_alloc_initial_table` hangs, and when it does, the Linux kernel panics.

The following screenshot was captured from the console of a virtual machine, but the issue also occurs on bare-metal machines.

### Container Linux Version ###

```

cat /etc/os-release

NAME=CoreOS

ID=coreos

VERSION=1185.5.0

VERSION_ID=1185.5.0

BUILD_ID=2016-12-07-0937

PRETTY_NAME="CoreOS 1185.5.0 (MoreOS)"

ANSI_COLOR="1;32"

HOME_URL="https://coreos.com/"

BUG_REPORT_URL="https://github.com/coreos/bugs/issues"

cat /proc/version

Linux version 4.7.3-coreos-r3 (jenkins@jenkins-os-executor-1.c.coreos-gce-testing.internal) (gcc version 4.9.3 (Gentoo Hardened 4.9.3 p1.5, pie-0.6.4) ) #1 SMP Wed Dec 7 09:29:55 UTC 2016

```

### Environment ###

replicated on:

bare metal machines.

also on virtual machines.

occurs when iptables is run directly on the host machine.

also occurs when iptables is executed from within a docker container.

### Expected Behavior ###

a kernel panic does not occur

### Actual Behavior ###

linux kernel panics

### Reproduction Steps ###

1. generate an iptables ruleset with lots and lots of rules.

2. run `iptables-restore -T nat --noflush --counters saved.log`

3. the kernel will panic

or here's a shell script:

```

# with sudo:

~ # for i in {1..100000}; do iptables-save -t nat > heh.txt; lines=$(cat heh.txt | wc -l); echo -n "#$i - saved $lines rules... "; iptables-restore -T nat --noflush --counters heh.txt ; date +%s; done

#1 - saved 259 rules... 1490325227

#2 - saved 262 rules... 1490325227

#3 - saved 268 rules... 1490325227

#4 - saved 280 rules... 1490325227

#5 - saved 304 rules... 1490325227

#6 - saved 352 rules... 1490325227

#7 - saved 448 rules... 1490325227

#8 - saved 640 rules... 1490325227

#9 - saved 1024 rules... 1490325227

#10 - saved 1792 rules... 1490325227

#11 - saved 3328 rules... 1490325228 <------------- NOTE: it starts to get sluggish here

#12 - saved 6400 rules... 1490325230

#13 - saved 12544 rules... 1490325237

#14 - saved 24832 rules... <------------- Can't exceed 32768?

```
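
The rule counts printed by the loop above follow a simple doubling pattern, which the Python sketch below checks. The constants 256 and 3 are fitted to the logged numbers, not taken from iptables. The likely explanation is an assumption: `--noflush` re-appends the saved rules on every pass, so part of the table doubles each time.

```python
def saved_rule_count(iteration, fixed_lines=256, initial_extra=3):
    """Empirical fit: a fixed part plus a part that doubles every iteration."""
    return fixed_lines + initial_extra * 2 ** (iteration - 1)


# Counts copied from the log output above (iterations 1 to 14).
observed = [259, 262, 268, 280, 304, 352, 448, 640,
            1024, 1792, 3328, 6400, 12544, 24832]

for i, count in enumerate(observed, start=1):
    assert saved_rule_count(i) == count

# Size the save file would reach on iteration 15 if the growth continued.
print(saved_rule_count(15))
```

If the fit holds, the next save would contain 49408 lines, which is past the 32768 figure the last log line asks about and is where the run stops.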

| True | iptables kernel panic - # Issue Report #

Bug

## Bug ##

When iptables-restore is used to perform replacements of very large tables, `ipt_alloc_initial_table` hangs, and when it does, the Linux kernel panics.

The following screenshot was captured from the console of a virtual machine, but the issue also occurs on bare-metal machines.

### Container Linux Version ###

```

cat /etc/os-release

NAME=CoreOS

ID=coreos

VERSION=1185.5.0

VERSION_ID=1185.5.0

BUILD_ID=2016-12-07-0937

PRETTY_NAME="CoreOS 1185.5.0 (MoreOS)"

ANSI_COLOR="1;32"

HOME_URL="https://coreos.com/"

BUG_REPORT_URL="https://github.com/coreos/bugs/issues"

cat /proc/version

Linux version 4.7.3-coreos-r3 (jenkins@jenkins-os-executor-1.c.coreos-gce-testing.internal) (gcc version 4.9.3 (Gentoo Hardened 4.9.3 p1.5, pie-0.6.4) ) #1 SMP Wed Dec 7 09:29:55 UTC 2016

```

### Environment ###

replicated on:

bare metal machines.

also on virtual machines.

occurs when iptables is run directly on the host machine.

also occurs when iptables is executed from within a docker container.

### Expected Behavior ###

a kernel panic does not occur

### Actual Behavior ###

linux kernel panics

### Reproduction Steps ###

1. generate an iptables ruleset with lots and lots of rules.

2. run `iptables-restore -T nat --noflush --counters saved.log`

3. the kernel will panic

or here's a shell script:

```

# with sudo:

~ # for i in {1..100000}; do iptables-save -t nat > heh.txt; lines=$(cat heh.txt | wc -l); echo -n "#$i - saved $lines rules... "; iptables-restore -T nat --noflush --counters heh.txt ; date +%s; done

#1 - saved 259 rules... 1490325227

#2 - saved 262 rules... 1490325227

#3 - saved 268 rules... 1490325227

#4 - saved 280 rules... 1490325227

#5 - saved 304 rules... 1490325227

#6 - saved 352 rules... 1490325227

#7 - saved 448 rules... 1490325227

#8 - saved 640 rules... 1490325227

#9 - saved 1024 rules... 1490325227

#10 - saved 1792 rules... 1490325227

#11 - saved 3328 rules... 1490325228 <------------- NOTE: it starts to get sluggish here

#12 - saved 6400 rules... 1490325230

#13 - saved 12544 rules... 1490325237

#14 - saved 24832 rules... <------------- Can't exceed 32768?

```

| usab | iptables kernel panic issue report bug bug when iptables restore is used to perform replacements of very large tables ipt alloc initial table hangs and when it does the linux kernel panics the following screenshot was captured from the console of a virtual machine but the issue also occurs on bare metal machines container linux version cat etc os release name coreos id coreos version version id build id pretty name coreos moreos ansi color home url bug report url cat proc version linux version coreos jenkins jenkins os executor c coreos gce testing internal gcc version gentoo hardened pie smp wed dec utc environment replicated on bare metal machines also on virtual machines occurs when iptables directly on the host machine also occurs when iptables is executed from within a docker container expected behavior a kernel panic does not occur actual behavior linux kernel panics reproduction steps generate an iptables ruleset with lots and lots of rules run iptables restore t nat noflush counters saved log the kernel will panic or here s a shell script with sudo for i in do iptables save t nat heh txt lines cat heh txt wc l echo n i saved lines rules iptables restore t nat noflush counters heh txt date s done saved rules saved rules saved rules saved rules saved rules saved rules saved rules saved rules saved rules saved rules saved rules note it starts to get sluggish here saved rules saved rules saved rules can t exceed | 1 |

5,104 | 3,900,324,251 | IssuesEvent | 2016-04-18 05:02:10 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 12551140: Smart Banners require an App Store installation | classification:ui/usability reproducible:always status:open | #### Description

Summary:

iOS 6 Smart Banners only work if the device has an App Store installation of the app in question. To-be-released products, and even Xcode builds of existing products, are not recognized by the smart banner machinery.

Steps to Reproduce:

0) Uninstall any copies of the app to be tested

1) Build and run your smart-banner-supporting app from Xcode

2) Go to your website with smart banner metadata

3) Note the VIEW button, which takes you to the App Store, rather than OPEN

4) Install the app from the App Store

5) Repeat 1)

Expected Results:

Expect smart banners to work all the time.

Actual Results:

Smart banners ONLY work after step 5 — specifically after building from Xcode on top of an App Store installation.

Regression:

iOS 6.0 (10A403)

Notes:

As far as I can tell, this makes Smart Banner testing completely impossible for pre-1.0 App Store apps. Similarly painful for existing products because the tester now needs the Xcode project and codesign / provisioning. This is a nonstarter for end-user beta testing, but even in many workplaces a QA team may not have source access. IPA installations from the Xcode Organizer do not work. Third-party OTA tools like TestFlight and Hockey are similarly out of luck.

Please revise this behavior to work with Debug and Ad-Hoc builds. This should ultimately be linked to the App's Bundle ID on the device side:

1) Safari reads the app-id parameter from the meta tag

2) Safari contacts the App Store for the related bundle ID

3) Safari asks the system if an app with said bundle ID exists

These steps can simply be used as a fallback for the existing mechanism, rather than replacing it completely.

-

Product Version: 10A403

Created: 2012-10-22T21:31:55.921885

Originated: 2012-10-22T00:00:00

Open Radar Link: http://www.openradar.me/12551140 | True | 12551140: Smart Banners require an App Store installation - #### Description

Summary:

iOS 6 Smart Banners only work if the device has an App Store installation of the app in question. To-be-released products, and even Xcode builds of existing products, are not recognized by the smart banner machinery.

Steps to Reproduce:

0) Uninstall any copies of the app to be tested

1) Build and run your smart-banner-supporting app from Xcode

2) Go to your website with smart banner metadata

3) Note the VIEW button, which takes you to the App Store, rather than OPEN

4) Install the app from the App Store

5) Repeat 1)

Expected Results:

Expect smart banners to work all the time.

Actual Results:

Smart banners ONLY work after step 5 — specifically after building from Xcode on top of an App Store installation.

Regression:

iOS 6.0 (10A403)

Notes:

As far as I can tell, this makes Smart Banner testing completely impossible for pre-1.0 App Store apps. Similarly painful for existing products because the tester now needs the Xcode project and codesign / provisioning. This is a nonstarter for end-user beta testing, but even in many workplaces a QA team may not have source access. IPA installations from the Xcode Organizer do not work. Third-party OTA tools like TestFlight and Hockey are similarly out of luck.

Please revise this behavior to work with Debug and Ad-Hoc builds. This should ultimately be linked to the App's Bundle ID on the device side:

1) Safari reads the app-id parameter from the meta tag

2) Safari contacts the App Store for the related bundle ID

3) Safari asks the system if an app with said bundle ID exists

These steps can simply be used as a fallback for the existing mechanism, rather than replacing it completely.

-

Product Version: 10A403

Created: 2012-10-22T21:31:55.921885

Originated: 2012-10-22T00:00:00

Open Radar Link: http://www.openradar.me/12551140 | usab | smart banners require an app store installation description summary ios smart banners only work if the device has an app store installation of the app in question to be released products and even xcode builds of existing products are not recognized by the smart banner machinery steps to reproduce uninstall any copies of the app to be tested build and run your smart banner supporting app from xcode go to your website with smart banner metadata note the view button which takes you to the app store rather than open install the app from the app store repeat expected results expect smart banners to work all the time actual results smart banners only work after step — specifically after building from xcode on top of an app store installation regression ios notes as far as i can tell this makes smart banner testing completely impossible for pre app store apps similarly painful for existing products because the tester now needs the xcode project and codesign provisioning this is a nonstarter for end user beta testing but even in many workplaces a qa team may not have source access ipa installations from the xcode organizer do not work third party ota tools like testflight and hockey are similarly out of luck please revise this behavior to work with debug and ad hoc builds this should ultimately be linked to the app s bundle id on the device side safari reads the app id parameter from the meta tag safari contacts the app store for the related bundle id safari asks the system if an app with said bundle id exists these steps can simply be used as a fallback against the existing current mechanism rather than replacing it completely product version created originated open radar link | 1 |

34,362 | 12,269,416,894 | IssuesEvent | 2020-05-07 14:02:52 | logzio/jmx2logzio | https://api.github.com/repos/logzio/jmx2logzio | closed | CVE-2020-9547 (Medium) detected in jackson-databind-2.9.10.2.jar | security vulnerability | ## CVE-2020-9547 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /tmp/ws-scm/jmx2logzio/pom.xml</p>

<p>Path to vulnerable library: downloadResource_c762fbf8-ee9b-4076-944f-b22607c0cecb/20200210155926/jackson-databind-2.9.10.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.9.10.2.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to com.ibatis.sqlmap.engine.transaction.jta.JtaTransactionConfig (aka ibatis-sqlmap).

<p>Publish Date: 2020-03-02

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9547>CVE-2020-9547</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547</a></p>

<p>Release Date: 2020-03-02</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.10.3</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.9.10.2","isTransitiveDependency":false,"dependencyTree":"com.fasterxml.jackson.core:jackson-databind:2.9.10.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.10.3"}],"vulnerabilityIdentifier":"CVE-2020-9547","vulnerabilityDetails":"FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to com.ibatis.sqlmap.engine.transaction.jta.JtaTransactionConfig (aka ibatis-sqlmap).","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9547","cvss2Severity":"medium","cvss2Score":"5.0","extraData":{}}</REMEDIATE> --> | True | CVE-2020-9547 (Medium) detected in jackson-databind-2.9.10.2.jar - ## CVE-2020-9547 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /tmp/ws-scm/jmx2logzio/pom.xml</p>

<p>Path to vulnerable library: downloadResource_c762fbf8-ee9b-4076-944f-b22607c0cecb/20200210155926/jackson-databind-2.9.10.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.9.10.2.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to com.ibatis.sqlmap.engine.transaction.jta.JtaTransactionConfig (aka ibatis-sqlmap).

<p>Publish Date: 2020-03-02</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9547>CVE-2020-9547</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>5.0</b>)</summary>

<p>

Base Score Metrics not available</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-9547</a></p>

<p>Release Date: 2020-03-02</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.10.3</p>

</p>

</details>

<p></p>

***

<!-- REMEDIATE-OPEN-PR-START -->

- [ ] Check this box to open an automated fix PR

<!-- REMEDIATE-OPEN-PR-END -->

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"com.fasterxml.jackson.core","packageName":"jackson-databind","packageVersion":"2.9.10.2","isTransitiveDependency":false,"dependencyTree":"com.fasterxml.jackson.core:jackson-databind:2.9.10.2","isMinimumFixVersionAvailable":true,"minimumFixVersion":"com.fasterxml.jackson.core:jackson-databind:2.10.3"}],"vulnerabilityIdentifier":"CVE-2020-9547","vulnerabilityDetails":"FasterXML jackson-databind 2.x before 2.9.10.4 mishandles the interaction between serialization gadgets and typing, related to com.ibatis.sqlmap.engine.transaction.jta.JtaTransactionConfig (aka ibatis-sqlmap).","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-9547","cvss2Severity":"medium","cvss2Score":"5.0","extraData":{}}</REMEDIATE> --> | non_usab | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm pom xml path to vulnerable library downloadresource jackson databind jar dependency hierarchy x jackson databind jar vulnerable library vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to com ibatis sqlmap engine transaction jta jtatransactionconfig aka ibatis sqlmap publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind check this box to open an automated fix pr isopenpronvulnerability false ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to com ibatis 
sqlmap engine transaction jta jtatransactionconfig aka ibatis sqlmap vulnerabilityurl | 0 |

11,020 | 7,027,700,807 | IssuesEvent | 2017-12-25 01:42:33 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | "F" key Viewport focus problem | bug junior job topic:editor usability | version -> http://godot3builds.digitecnology.com/builds/2017-12-06.12:52:48.a8ae46e14/godot-v3.0-osx.fat.zip

**Issue description:**

When I press the F key, the wrong viewport becomes active.

**Steps to reproduce:**

https://www.youtube.com/watch?v=y5K5xv7UBuo&feature=youtu.be

| True | "F" key Viewport focus problem - version -> http://godot3builds.digitecnology.com/builds/2017-12-06.12:52:48.a8ae46e14/godot-v3.0-osx.fat.zip

**Issue description:**

When I press the F key, the wrong viewport becomes active.

**Steps to reproduce:**

https://www.youtube.com/watch?v=y5K5xv7UBuo&feature=youtu.be

| usab | f key viewport focus problem version issue description when i press f key wrong viewport is active steps to reproduce | 1 |

24,613 | 24,033,901,064 | IssuesEvent | 2022-09-15 17:17:02 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | opened | pulumi new project already exists error does not respect the default org | kind/bug impact/usability | [internal slack convo](https://pulumi.slack.com/archives/C9SGX9QA1/p1663254061919969)

repro steps

- create a project in your individual account with name X

- set your default org to an org that does not have a project with the name X

- use pulumi new

- when it asks for a project name, set it to X

- receive an error saying that project already exists | True | pulumi new project already exists error does not respect the default org - [internal slack convo](https://pulumi.slack.com/archives/C9SGX9QA1/p1663254061919969)

repro steps

- create a project in your individual account with name X

- set your default org to an org that does not have a project with the name X

- use pulumi new

- when it asks for a project name, set it to X

- receive an error saying that project already exists | usab | pulumi new project already exists error does not respect the default org repro steps create a project in your individual account with name x set your default org to an org that does not have a project with the name x use pulumi new when it asks for a project name set it to x receive an error saying that project already exists | 1 |

8,769 | 5,957,741,646 | IssuesEvent | 2017-05-29 04:21:36 | Virtual-Labs/circular-dichronism-spectroscopy-iiith | https://api.github.com/repos/Virtual-Labs/circular-dichronism-spectroscopy-iiith | opened | QA_Circular Dichroism Spectroscopy_Introduction_Target Audiance_Spelling-mistakes | Category:Usability Developed by: VLEAD Open-Edx Severity:S2 Severity:S3 | Defect Description :

The "Target Audience" subsection of this lab's Introduction section contains spelling mistakes.

Actual Result:

The "Target Audience" subsection of this lab's Introduction section contains spelling mistakes.

Environment :

OS: Windows 7, Ubuntu 16.04, CentOS 6

Browsers: Firefox 42.0, Chrome 47.0, Chromium 45.0

Bandwidth: 100 Mbps

Hardware Configuration: 8 GB RAM

Processor: i5

Attachments:

| True | QA_Circular Dichroism Spectroscopy_Introduction_Target Audiance_Spelling-mistakes - Defect Description :

The "Target Audience" subsection of this lab's Introduction section contains spelling mistakes.

Actual Result:

The "Target Audience" subsection of this lab's Introduction section contains spelling mistakes.

Environment :

OS: Windows 7, Ubuntu 16.04, CentOS 6

Browsers: Firefox 42.0, Chrome 47.0, Chromium 45.0

Bandwidth: 100 Mbps

Hardware Configuration: 8 GB RAM

Processor: i5

Attachments:

| usab | qa circular dichroism spectroscopy introduction target audiance spelling mistakes defect description in the sub section target audience of introduction section of this lab found spelling mistakes actual result in the sub section target audience of introduction section of this lab found spelling mistakes environment os windows ubuntu centos browsers firefox chrome chromium bandwidth hardware configuration processor attachments | 1 |

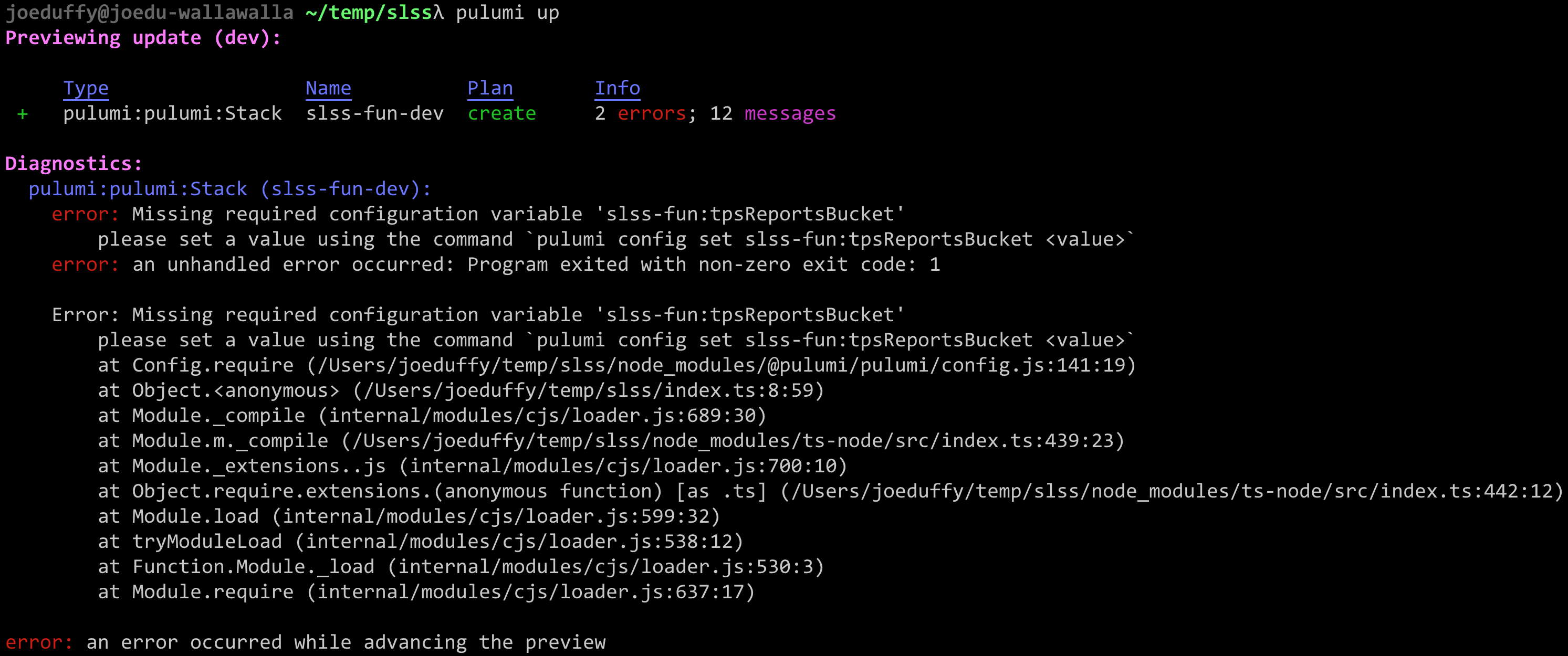

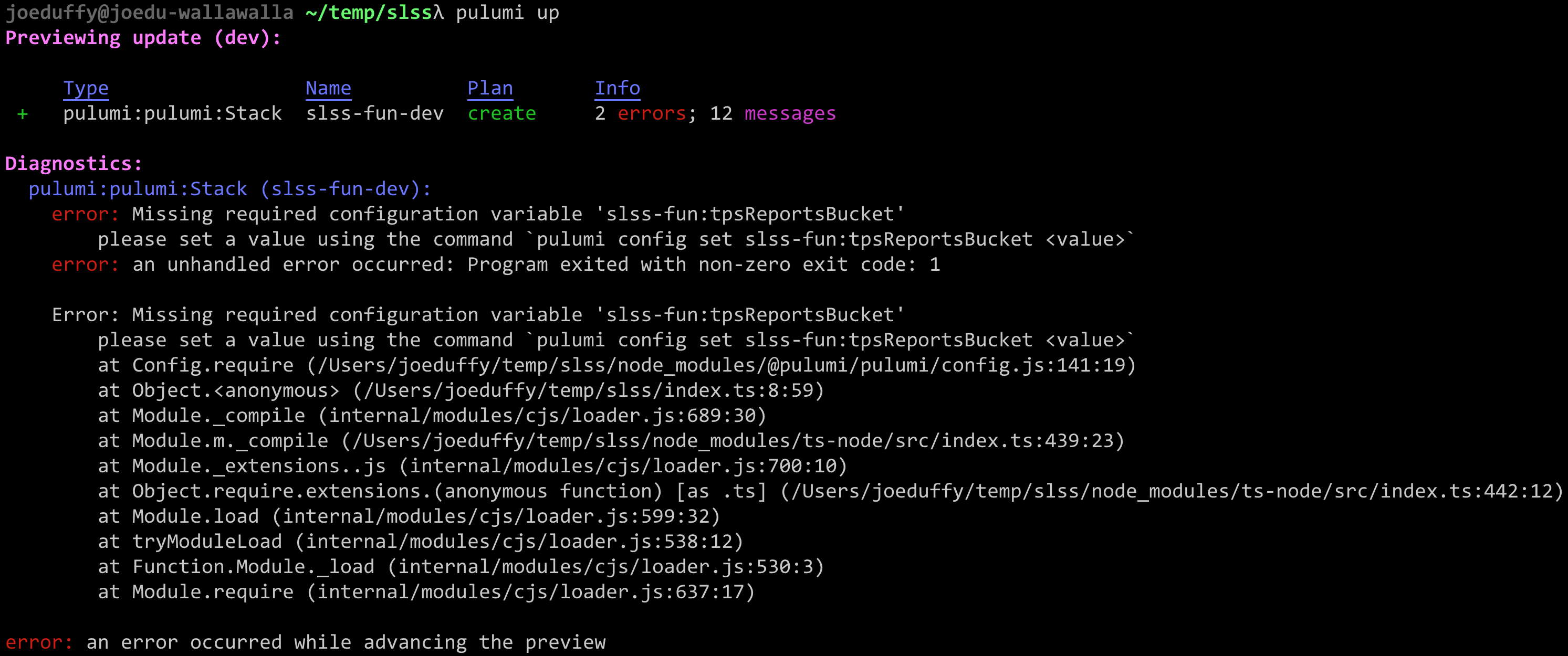

14,444 | 9,194,935,033 | IssuesEvent | 2019-03-07 00:16:25 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | opened | RunErrors aren't concisely reported anymore | area/cli impact/usability kind/bug | It used to be the case that `RunError`s led to concise error reporting, including omission of the stack trace. The idea here was that these errors want to instruct the user to do something differently -- like run `pulumi config set ...`, and that the extra noise of a stack trace is simply confusing.

It appears this behavior has regressed semi-recently:

| True | RunErrors aren't concisely reported anymore - It used to be the case that `RunError`s led to concise error reporting, including omission of the stack trace. The idea here was that these errors want to instruct the user to do something differently -- like run `pulumi config set ...`, and that the extra noise of a stack trace is simply confusing.

It appears this behavior has regressed semi-recently:

| usab | runerrors aren t concisely reported anymore it used to be the case that runerror s led to concise error reporting including omission of the stack trace the idea here was that these errors want to instruct the user to do something differently like run pulumi config set and that the extra noise of a stack trace is simply confusing it appears this behavior has regressed semi recently | 1 |

272,800 | 29,795,090,404 | IssuesEvent | 2023-06-16 01:10:11 | billmcchesney1/hadoop | https://api.github.com/repos/billmcchesney1/hadoop | closed | WS-2017-0234 (Medium) detected in jquery.dataTables-1.10.7.min.js - autoclosed | Mend: dependency security vulnerability | ## WS-2017-0234 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery.dataTables-1.10.7.min.js</b></summary>

<p>DataTables enhances HTML tables with the ability to sort, filter and page the data in the table very easily. It provides a comprehensive API and set of configuration options, allowing you to consume data from virtually any data source.</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/datatables/1.10.7/js/jquery.dataTables.min.js">https://cdnjs.cloudflare.com/ajax/libs/datatables/1.10.7/js/jquery.dataTables.min.js</a></p>

<p>Path to vulnerable library: /hadoop-hdfs-project/hadoop-hdfs/target/webapps/static/jquery.dataTables.min.js,/hadoop-hdfs-project/hadoop-hdfs/target/test-classes/webapps/static/jquery.dataTables.min.js,/hadoop-hdfs-project/hadoop-hdfs/src/main/webapps/static/jquery.dataTables.min.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery.dataTables-1.10.7.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/billmcchesney1/hadoop/commit/6dcd8400219941dcbd7fb0f6b980cc2c6a2a6b0a">6dcd8400219941dcbd7fb0f6b980cc2c6a2a6b0a</a></p>

<p>Found in base branch: <b>trunk</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

Affected versions of the package are vulnerable to Cross-site Scripting (XSS).

<p>Publish Date: 2015-11-06</p>

<p>URL: <a href=https://github.com/DataTables/DataTables/commit/6f67df2d21f9858ec40a6e9565c3a653cdb691a6>WS-2017-0234</a></p>

</p>