Unnamed: 0 int64 3 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 742 | labels stringlengths 4 431 | body stringlengths 5 239k | index stringclasses 10 values | text_combine stringlengths 96 240k | label stringclasses 2 values | text stringlengths 96 200k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

6,432 | 4,286,462,340 | IssuesEvent | 2016-07-16 04:32:00 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | Crafting menu now annoying to use. | Bug tgui Usability | Each items part is now overly large and the crafting menu now feels overly clunky to and annoying to use. And a few times when I tried to go to the foods subsection, the crafting menu just decided to stop working and crashed. | True | Crafting menu now annoying to use. - Each items part is now overly large and the crafting menu now feels overly clunky to and annoying to use. And a few times when I tried to go to the foods subsection, the crafting menu just decided to stop working and crashed. | usab | crafting menu now annoying to use each items part is now overly large and the crafting menu now feels overly clunky to and annoying to use and a few times when i tried to go to the foods subsection the crafting menu just decided to stop working and crashed | 1 |

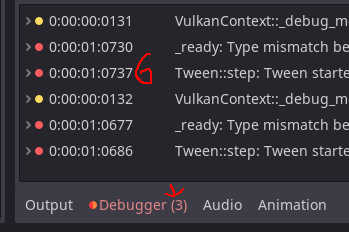

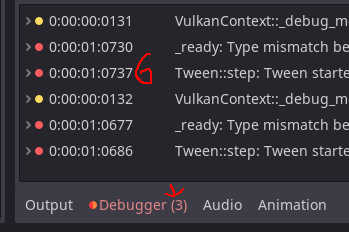

12,494 | 7,919,284,169 | IssuesEvent | 2018-07-04 16:07:04 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Godot is randomly unable to write to config files and file cache | bug platform:windows topic:editor usability | Godot 3.0 beta 1 and master and 2.1.4

Windows 10 64 bits

Every so often, seemingly at random, I see error messages saying Godot is unable to write to some config file or file cache, such as `Unable to write to file <path>, file in use, locked or lacking permissions`.

Example here, I got this one 1 second after opening a scene:

Another one with file cache, when I use Ctrl+S:

| True | Godot is randomly unable to write to config files and file cache - Godot 3.0 beta 1 and master and 2.1.4

Windows 10 64 bits

Every so often, seemingly at random, I see error messages saying Godot is unable to write to some config file or file cache, such as `Unable to write to file <path>, file in use, locked or lacking permissions`.

Example here, I got this one 1 second after opening a scene:

Another one with file cache, when I use Ctrl+S:

| usab | godot is randomly unable to write to config files and file cache godot beta and master and windows bits every so often under pretty random occasions i see error messages in which i see godot is unable to write to some config file or file cache things like unable to write to file file in use locked or lacking permissions example here i got this one second after opening a scene another one with file cache when i use ctrl s | 1 |

314,742 | 9,602,223,001 | IssuesEvent | 2019-05-10 14:07:08 | openpracticelibrary/openpracticelibrary | https://api.github.com/repos/openpracticelibrary/openpracticelibrary | closed | Adding a new practice when your author/perspective is not merged will fail the build | bug priority-high technical enhancement | **What is this about**

The CMS workflow creates a new author on a separate branch, so if you create a new practice in parallel, its build will fail until the author is merged, because a page _has_ to be associated with a given primary author.

**The solution**

1. better way to add new author to avoid this race condition

or

2. better error handling/ guard in the Hugo template. | 1.0 | Adding a new practice when your author/perspective is not merged will fail the build - **What is this about**

The CMS workflow creates a new author on a separate branch, so if you create a new practice in parallel, its build will fail until the author is merged, because a page _has_ to be associated with a given primary author.

**The solution**

1. better way to add new author to avoid this race condition

or

2. better error handling/ guard in the Hugo template. | non_usab | adding a new practice when your author perspective is not merged will fail the build what is this about the cms workflow will create a new author on a separate branch so if you in parallel create a new practice the build will fail for this until the author is merged because a page has to be associated with a given primary author the solution better way to add new author to avoid this race condition or better error handling guard in the hugo template | 0 |

16,868 | 11,439,942,222 | IssuesEvent | 2020-02-05 08:36:50 | clarity-h2020/marketplace | https://api.github.com/repos/clarity-h2020/marketplace | opened | References Screen Usability Issues | BB: Marketplace Usability bug | > You can reference here existing projects which use your offer as a use case. You can write a short description about the project and the use of the offer in the text field. If there is no project on the marketplace to reference yet you can add a project here [**here**](https://marketplace-dev.myclimateservices.eu/project/add).

Following [the link](https://marketplace-dev.myclimateservices.eu/project/add) `https://marketplace-dev.myclimateservices.eu/project/add` behind the second *here* results in **404 Page not found**. | True | References Screen Usability Issues - > You can reference here existing projects which use your offer as a use case. You can write a short description about the project and the use of the offer in the text field. If there is no project on the marketplace to reference yet you can add a project here [**here**](https://marketplace-dev.myclimateservices.eu/project/add).

Following [the link](https://marketplace-dev.myclimateservices.eu/project/add) `https://marketplace-dev.myclimateservices.eu/project/add` behind the second *here* results in **404 Page not found**. | usab | references screen usability issues you can reference here existing projects which use your offer as a use case you can write a short description about the project and the use of the offer in the text field if there is no project on the marketplace to reference yet you can add a project here following behind the second here results in page not found | 1 |

19,843 | 14,640,903,802 | IssuesEvent | 2020-12-25 04:24:12 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | shareProcessNamespace will cause the container no response, and restart docker will waiting for "Loading containers" | kind/bug lifecycle/rotten sig/node sig/usability | <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately via https://kubernetes.io/security/

-->

**What happened**:

With `shareProcessNamespace` enabled, the container stops responding, and restarting Docker hangs at "Loading containers".

**What you expected to happen**:

The container keeps responding, and restarting Docker works without problems.

**How to reproduce it (as minimally and precisely as possible)**:

1. Create nginx pod as follows:

```yaml
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nginx
spec:
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
      name: nginx
    spec:
      shareProcessNamespace: true
      nodeName: master1
      containers:
      - image: registry.icp.com:5000/library/common/nginx-amd64:1.17.5
        name: nginx
```

2. Find the `nginx: master process nginx -g daemon off` process, and kill it

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-699575b7df-2bxfd 1/1 Running 0 7s 10.151.161.19 master1 <none> <none>

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker ps | grep nginx-699575b7df-2bxfd

6e2231761cbc 540a289bab6c "nginx -g 'daemon of…" 14 seconds ago Up 12 seconds k8s_nginx_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

849f76682e0a registry.icp.com:5000/library/cke/kubernetes/pause-amd64:3.1 "/pause" 17 seconds ago Up 16 seconds k8s_POD_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

root@xuewei81-tgquxn9spy-master-0:~/xjs#

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker inspect 6e2231761cbc | grep -i pid

"Pid": 2119,

"PidMode": "container:849f76682e0a322d0109250d0d5eb3767248f18c2ee7457593729339fb400eef",

"PidsLimit": 0,

root@xuewei81-tgquxn9spy-master-0:~/xjs# ps -ef | grep 2119 | grep -v color

root 2119 2093 0 14:31 ? 00:00:00 nginx: master process nginx -g daemon off;

systemd+ 2152 2119 0 14:31 ? 00:00:00 nginx: worker process

```

Then the parent of process 2152 becomes `/pause`:

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# kill -9 2119

root@xuewei81-tgquxn9spy-master-0:~/xjs#

root@xuewei81-tgquxn9spy-master-0:~/xjs# ps -f 2152

UID PID PPID C STIME TTY STAT TIME CMD

systemd+ 2152 1897 0 14:31 ? S 0:00 nginx: worker process

root@xuewei81-tgquxn9spy-master-0:~/xjs# ps -f 1897

UID PID PPID C STIME TTY STAT TIME CMD

root 1897 1863 0 14:31 ? Ss 0:00 /pause

root@xuewei81-tgquxn9spy-master-0:~/xjs#

```

3. At this point, `docker inspect`, `docker exec`, and `docker logs` all hang with no response:

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker ps | grep nginx-699575b7df-2bxfd

6e2231761cbc 540a289bab6c "nginx -g 'daemon of…" About a minute ago Up About a minute k8s_nginx_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

849f76682e0a registry.icp.com:5000/library/cke/kubernetes/pause-amd64:3.1 "/pause" About a minute ago Up About a minute k8s_POD_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker inspect 6e2231761cbc

^C

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker exec -it 6e2231761cbc sh

^C

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker logs 6e2231761cbc

^C

```

4. Restarting Docker then hangs at "Loading containers":

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# systemctl restart docker

Job for docker.service failed because a timeout was exceeded.

See "systemctl status docker.service" and "journalctl -xe" for details.

Jun 17 14:33:55 xuewei81-tgquxn9spy-master-0 dockerd[2814]: time="2020-06-17T14:33:55.525517831+08:00" level=info msg="Loading containers: start."

Jun 17 14:34:55 xuewei81-tgquxn9spy-master-0 systemd[1]: docker.service: Start operation timed out. Terminating.

Jun 17 14:34:55 xuewei81-tgquxn9spy-master-0 dockerd[2814]: time="2020-06-17T14:34:55.503401172+08:00" level=info msg="Processing signal 'terminated'"

```

5. Docker restarts successfully only after I kill the `containerd-shim` process:

```shell

root@xuewei81-tgquxn9spy-master-0:~# ps -ef | grep 6e2231761cbc | grep containerd-shim

root 2093 14254 0 14:31 ? 00:00:00 containerd-shim -namespace moby -workdir /var/lib/containerd/io.containerd.runtime.v1.linux/moby/6e2231761cbc4b6ceff36dbcc4cfae67530377616aed47ba34cf0d057f37301d -address /run/containerd/containerd.sock -containerd-binary /usr/bin/containerd -runtime-root /var/run/docker/runtime-runc -systemd-cgroup

root@xuewei81-tgquxn9spy-master-0:~# kill -9 2093

root@xuewei81-tgquxn9spy-master-0:~#

root@xuewei81-tgquxn9spy-master-0:~# journalctl -u docker -f

-- Logs begin at Wed 2020-01-01 11:46:34 CST. --

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.054660644+08:00" level=warning msg="Your kernel does not support cgroup rt runtime"

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.054673960+08:00" level=warning msg="Your kernel does not support cgroup blkio weight"

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.054684371+08:00" level=warning msg="Your kernel does not support cgroup blkio weight_device"

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.055271704+08:00" level=info msg="Loading containers: start."

Jun 17 14:35:37 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:37.806790934+08:00" level=info msg="There are old running containers, the network config will not take affect"

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.038308094+08:00" level=info msg="Loading containers: done."

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.178666784+08:00" level=info msg="Docker daemon" commit=0dd43dd graphdriver(s)=overlay2 version=18.09.8

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.178815965+08:00" level=info msg="Daemon has completed initialization"

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.258160782+08:00" level=info msg="API listen on /var/run/docker.sock"

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 systemd[1]: Started Docker Application Container Engine.

```

**Anything else we need to know?**:

**Environment**:

- Kubernetes version (use `kubectl version`):

1.14.3

- Cloud provider or hardware configuration:

- OS (e.g: `cat /etc/os-release`):

```shell

root@xuewei81-tgquxn9spy-master-0:~# cat /etc/os-release

NAME="Ubuntu"

VERSION="18.04.3 LTS (Bionic Beaver)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 18.04.3 LTS"

VERSION_ID="18.04"

HOME_URL="https://www.ubuntu.com/"

SUPPORT_URL="https://help.ubuntu.com/"

BUG_REPORT_URL="https://bugs.launchpad.net/ubuntu/"

PRIVACY_POLICY_URL="https://www.ubuntu.com/legal/terms-and-policies/privacy-policy"

VERSION_CODENAME=bionic

UBUNTU_CODENAME=bionic

```

- Kernel (e.g. `uname -a`):

```shell

root@xuewei81-tgquxn9spy-master-0:~# uname -a

Linux xuewei81-tgquxn9spy-master-0 5.0.0-29-generic #31~18.04.1-Ubuntu SMP Thu Sep 12 18:29:21 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

```

- Install tools:

- Network plugin and version (if this is a network-related bug):

- Others:

My docker version is:

```shell

root@xuewei81-tgquxn9spy-master-0:~# docker version

Client:

Version: 18.09.8

API version: 1.39

Go version: go1.10.8

Git commit: 0dd43dd87f

Built: Wed Jul 17 17:41:19 2019

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 18.09.8

API version: 1.39 (minimum version 1.12)

Go version: go1.10.8

Git commit: 0dd43dd

Built: Wed Jul 17 17:07:25 2019

OS/Arch: linux/amd64

Experimental: false

```

| True | shareProcessNamespace will cause the container no response, and restart docker will waiting for "Loading containers" - <!-- Please use this template while reporting a bug and provide as much info as possible. Not doing so may result in your bug not being addressed in a timely manner. Thanks!

If the matter is security related, please disclose it privately via https://kubernetes.io/security/

-->

**What happened**:

With `shareProcessNamespace` enabled, the container stops responding, and restarting Docker hangs at "Loading containers".

**What you expected to happen**:

The container keeps responding, and restarting Docker works without problems.

**How to reproduce it (as minimally and precisely as possible)**:

1. Create nginx pod as follows:

```yaml
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  labels:
    app: nginx
  name: nginx
spec:
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
      name: nginx
    spec:
      shareProcessNamespace: true
      nodeName: master1
      containers:
      - image: registry.icp.com:5000/library/common/nginx-amd64:1.17.5
        name: nginx
```

2. Find the `nginx: master process nginx -g daemon off` process, and kill it

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# kubectl get pod -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-699575b7df-2bxfd 1/1 Running 0 7s 10.151.161.19 master1 <none> <none>

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker ps | grep nginx-699575b7df-2bxfd

6e2231761cbc 540a289bab6c "nginx -g 'daemon of…" 14 seconds ago Up 12 seconds k8s_nginx_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

849f76682e0a registry.icp.com:5000/library/cke/kubernetes/pause-amd64:3.1 "/pause" 17 seconds ago Up 16 seconds k8s_POD_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

root@xuewei81-tgquxn9spy-master-0:~/xjs#

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker inspect 6e2231761cbc | grep -i pid

"Pid": 2119,

"PidMode": "container:849f76682e0a322d0109250d0d5eb3767248f18c2ee7457593729339fb400eef",

"PidsLimit": 0,

root@xuewei81-tgquxn9spy-master-0:~/xjs# ps -ef | grep 2119 | grep -v color

root 2119 2093 0 14:31 ? 00:00:00 nginx: master process nginx -g daemon off;

systemd+ 2152 2119 0 14:31 ? 00:00:00 nginx: worker process

```

Then the parent of process 2152 becomes `/pause`:

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# kill -9 2119

root@xuewei81-tgquxn9spy-master-0:~/xjs#

root@xuewei81-tgquxn9spy-master-0:~/xjs# ps -f 2152

UID PID PPID C STIME TTY STAT TIME CMD

systemd+ 2152 1897 0 14:31 ? S 0:00 nginx: worker process

root@xuewei81-tgquxn9spy-master-0:~/xjs# ps -f 1897

UID PID PPID C STIME TTY STAT TIME CMD

root 1897 1863 0 14:31 ? Ss 0:00 /pause

root@xuewei81-tgquxn9spy-master-0:~/xjs#

```

3. At this point, `docker inspect`, `docker exec`, and `docker logs` all hang with no response:

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker ps | grep nginx-699575b7df-2bxfd

6e2231761cbc 540a289bab6c "nginx -g 'daemon of…" About a minute ago Up About a minute k8s_nginx_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

849f76682e0a registry.icp.com:5000/library/cke/kubernetes/pause-amd64:3.1 "/pause" About a minute ago Up About a minute k8s_POD_nginx-699575b7df-2bxfd_default_24c7f7b4-b064-11ea-94b0-fa163e279982_0

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker inspect 6e2231761cbc

^C

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker exec -it 6e2231761cbc sh

^C

root@xuewei81-tgquxn9spy-master-0:~/xjs# docker logs 6e2231761cbc

^C

```

4. Restarting Docker then hangs at "Loading containers":

```shell

root@xuewei81-tgquxn9spy-master-0:~/xjs# systemctl restart docker

Job for docker.service failed because a timeout was exceeded.

See "systemctl status docker.service" and "journalctl -xe" for details.

Jun 17 14:33:55 xuewei81-tgquxn9spy-master-0 dockerd[2814]: time="2020-06-17T14:33:55.525517831+08:00" level=info msg="Loading containers: start."

Jun 17 14:34:55 xuewei81-tgquxn9spy-master-0 systemd[1]: docker.service: Start operation timed out. Terminating.

Jun 17 14:34:55 xuewei81-tgquxn9spy-master-0 dockerd[2814]: time="2020-06-17T14:34:55.503401172+08:00" level=info msg="Processing signal 'terminated'"

```

5. Docker restarts successfully only after I kill the `containerd-shim` process:

```shell

root@xuewei81-tgquxn9spy-master-0:~# ps -ef | grep 6e2231761cbc | grep containerd-shim

root 2093 14254 0 14:31 ? 00:00:00 containerd-shim -namespace moby -workdir /var/lib/containerd/io.containerd.runtime.v1.linux/moby/6e2231761cbc4b6ceff36dbcc4cfae67530377616aed47ba34cf0d057f37301d -address /run/containerd/containerd.sock -containerd-binary /usr/bin/containerd -runtime-root /var/run/docker/runtime-runc -systemd-cgroup

root@xuewei81-tgquxn9spy-master-0:~# kill -9 2093

root@xuewei81-tgquxn9spy-master-0:~#

root@xuewei81-tgquxn9spy-master-0:~# journalctl -u docker -f

-- Logs begin at Wed 2020-01-01 11:46:34 CST. --

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.054660644+08:00" level=warning msg="Your kernel does not support cgroup rt runtime"

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.054673960+08:00" level=warning msg="Your kernel does not support cgroup blkio weight"

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.054684371+08:00" level=warning msg="Your kernel does not support cgroup blkio weight_device"

Jun 17 14:35:36 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:36.055271704+08:00" level=info msg="Loading containers: start."

Jun 17 14:35:37 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:37.806790934+08:00" level=info msg="There are old running containers, the network config will not take affect"

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.038308094+08:00" level=info msg="Loading containers: done."

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.178666784+08:00" level=info msg="Docker daemon" commit=0dd43dd graphdriver(s)=overlay2 version=18.09.8

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.178815965+08:00" level=info msg="Daemon has completed initialization"

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 dockerd[3409]: time="2020-06-17T14:35:39.258160782+08:00" level=info msg="API listen on /var/run/docker.sock"

Jun 17 14:35:39 xuewei81-tgquxn9spy-master-0 systemd[1]: Started Docker Application Container Engine.

```

**Anything else we need to know?**:

**Environment**:

- Kubernetes version (use `kubectl version`):

1.14.3

- Cloud provider or hardware configuration:

- OS (e.g: `cat /etc/os-release`):

```shell

root@xuewei81-tgquxn9spy-master-0:~# cat /etc/os-release

NAME="Ubuntu"

VERSION="18.04.3 LTS (Bionic Beaver)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 18.04.3 LTS"

VERSION_ID="18.04"

HOME_URL="https://www.ubuntu.com/"

SUPPORT_URL="https://help.ubuntu.com/"

BUG_REPORT_URL="https://bugs.launchpad.net/ubuntu/"

PRIVACY_POLICY_URL="https://www.ubuntu.com/legal/terms-and-policies/privacy-policy"

VERSION_CODENAME=bionic

UBUNTU_CODENAME=bionic

```

- Kernel (e.g. `uname -a`):

```shell

root@xuewei81-tgquxn9spy-master-0:~# uname -a

Linux xuewei81-tgquxn9spy-master-0 5.0.0-29-generic #31~18.04.1-Ubuntu SMP Thu Sep 12 18:29:21 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux

```

- Install tools:

- Network plugin and version (if this is a network-related bug):

- Others:

My docker version is:

```shell

root@xuewei81-tgquxn9spy-master-0:~# docker version

Client:

Version: 18.09.8

API version: 1.39

Go version: go1.10.8

Git commit: 0dd43dd87f

Built: Wed Jul 17 17:41:19 2019

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 18.09.8

API version: 1.39 (minimum version 1.12)

Go version: go1.10.8

Git commit: 0dd43dd

Built: Wed Jul 17 17:07:25 2019

OS/Arch: linux/amd64

Experimental: false

```

| usab | shareprocessnamespace will cause the container no response and restart docker will waiting for loading containers please use this template while reporting a bug and provide as much info as possible not doing so may result in your bug not being addressed in a timely manner thanks if the matter is security related please disclose it privately via what happened shareprocessnamespace will cause the container no response and restart docker will waiting for loading containers what you expected to happen container has response and restart docker no problem how to reproduce it as minimally and precisely as possible create nginx pod as follows yaml apiversion extensions kind deployment metadata labels app nginx name nginx spec selector matchlabels app nginx template metadata labels app nginx name nginx spec shareprocessnamespace true nodename containers image registry icp com library common nginx name nginx find the nginx master process nginx g daemon off process and kill it shell root master xjs kubectl get pod owide name ready status restarts age ip node nominated node readiness gates nginx running root master xjs docker ps grep nginx nginx g daemon of… seconds ago up seconds nginx nginx default registry icp com library cke kubernetes pause pause seconds ago up seconds pod nginx default root master xjs root master xjs docker inspect grep i pid pid pidmode container pidslimit root master xjs ps ef grep grep v color root nginx master process nginx g daemon off systemd nginx worker process then the s parent process will become pause shell root master xjs kill root master xjs root master xjs ps f uid pid ppid c stime tty stat time cmd systemd s nginx worker process root master xjs ps f uid pid ppid c stime tty stat time cmd root ss pause root master xjs at this moment the commands docker inspect or docker exec or docker logs will no response shell root master xjs docker ps grep nginx nginx g daemon of… about a minute ago up about a minute nginx nginx default registry 
icp com library cke kubernetes pause pause about a minute ago up about a minute pod nginx default root master xjs docker inspect c root master xjs docker exec it sh c root master xjs docker logs c and it will wait for loading containers if restart docker shell root master xjs systemctl restart docker job for docker service failed because a timeout was exceeded see systemctl status docker service and journalctl xe for details jun master dockerd time level info msg loading containers start jun master systemd docker service start operation timed out terminating jun master dockerd time level info msg processing signal terminated the docker will restart successfully only i kill the containerd shim process shell root master ps ef grep grep containerd shim root containerd shim namespace moby workdir var lib containerd io containerd runtime linux moby address run containerd containerd sock containerd binary usr bin containerd runtime root var run docker runtime runc systemd cgroup root master kill root master root master journalctl u docker f logs begin at wed cst jun master dockerd time level warning msg your kernel does not support cgroup rt runtime jun master dockerd time level warning msg your kernel does not support cgroup blkio weight jun master dockerd time level warning msg your kernel does not support cgroup blkio weight device jun master dockerd time level info msg loading containers start jun master dockerd time level info msg there are old running containers the network config will not take affect jun master dockerd time level info msg loading containers done jun master dockerd time level info msg docker daemon commit graphdriver s version jun master dockerd time level info msg daemon has completed initialization jun master dockerd time level info msg api listen on var run docker sock jun master systemd started docker application container engine anything else we need to know environment kubernetes version use kubectl version cloud provider or hardware 
configuration os e g cat etc os release shell root master cat etc os release name ubuntu version lts bionic beaver id ubuntu id like debian pretty name ubuntu lts version id home url support url bug report url privacy policy url version codename bionic ubuntu codename bionic kernel e g uname a shell root master uname a linux master generic ubuntu smp thu sep utc gnu linux install tools network plugin and version if this is a network related bug others my docker version is shell root master docker version client version api version go version git commit built wed jul os arch linux experimental false server docker engine community engine version api version minimum version go version git commit built wed jul os arch linux experimental false | 1 |

4,444 | 6,617,239,869 | IssuesEvent | 2017-09-21 00:11:03 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | Catalog configuration file changes | area/catalog-service kind/enhancement status/resolved status/to-test | 1. Support `template.yml` in addition to `config.yml`

2. Support `icon.extension` and `icon-example.extension` in addition to `catalogIcon.extension` and `catalogIcon-example.extension`

3. Support `compose.yml` in addition to `docker-compose.yml`. It should support all keys in `docker-compose.yml` and `rancher-compose.yml`.

4. Support `template-version.yml` in addition to `rancher-compose.yml`. `template-version.yml` should only contain the keys in the `.catalog` section of `rancher-compose.yml`, but does not require the `.catalog`.

5. Add in `default_version` in `template.yml`, which should replace `version`.

6. Some keys in `template.yml/config.yml` use camel case rather than underscore separated. Both should be supported. | 1.0 | Catalog configuration file changes - 1. Support `template.yml` in addition to `config.yml`

2. Support `icon.extension` and `icon-example.extension` in addition to `catalogIcon.extension` and `catalogIcon-example.extension`

3. Support `compose.yml` in addition to `docker-compose.yml`. It should support all keys in `docker-compose.yml` and `rancher-compose.yml`.

4. Support `template-version.yml` in addition to `rancher-compose.yml`. `template-version.yml` should only contain the keys in the `.catalog` section of `rancher-compose.yml`, but does not require the `.catalog`.

5. Add in `default_version` in `template.yml`, which should replace `version`.

6. Some keys in `template.yml/config.yml` use camel case rather than underscore separated. Both should be supported. | non_usab | catalog configuration file changes support template yml in addition to config yml support icon extension and icon example extension in addition to catalogicon extension and catalogicon example extension support compose yml in addition to docker compose yml it should support all keys in docker compose yml and rancher compose yml support template version yml in addition to rancher compose yml template version yml should only contain the keys in the catalog section of rancher compose yml but does not require the catalog add in default version in template yml which should replace version some keys in template yml config yml use camel case rather than underscore separated both should be supported | 0 |

599,556 | 18,276,921,350 | IssuesEvent | 2021-10-04 20:02:22 | dtcenter/METplotpy | https://api.github.com/repos/dtcenter/METplotpy | opened | Revision series for MODE-TD | type: bug priority: high alert: NEED ACCOUNT KEY alert: NEED MORE DEFINITION alert: NEED PROJECT ASSIGNMENT METplotpy: Plots |

## Describe the Problem ##

MODE-TD Revision box plot is empty. Need to fix it and match the Rscript version.

This is the XML:

[plot_20211004_134424.xml.txt](https://github.com/dtcenter/METplotpy/files/7280968/plot_20211004_134424.xml.txt)

### Expected Behavior ###

Python and Rscript MODE-TD Revision plots should be similar

### Environment ###

Describe your runtime environment:

*1. Machine: (e.g. HPC name, Linux Workstation, Mac Laptop)*

*2. OS: (e.g. RedHat Linux, MacOS)*

*3. Software version number(s)*

### To Reproduce ###

Describe the steps to reproduce the behavior:

*1. Go to '...'*

*2. Click on '....'*

*3. Scroll down to '....'*

*4. See error*

*Post relevant sample data following these instructions:*

*https://dtcenter.org/community-code/model-evaluation-tools-met/met-help-desk#ftp*

### Relevant Deadlines ###

*List relevant project deadlines here or state NONE.*

### Funding Source ###

*Define the source of funding and account keys here or state NONE.*

## Define the Metadata ##

### Assignee ###

- [ ] Select **engineer(s)** or **no engineer** required

- [ ] Select **scientist(s)** or **no scientist** required

### Labels ###

- [ ] Select **component(s)**

- [ ] Select **priority**

- [ ] Select **requestor(s)**

### Projects and Milestone ###

- [ ] Select **Organization** level **Project** for support of the current coordinated release

- [ ] Select **Repository** level **Project** for development toward the next official release or add **alert: NEED PROJECT ASSIGNMENT** label

- [ ] Select **Milestone** as the next bugfix version

## Define Related Issue(s) ##

Consider the impact to the other METplus components.

- [ ] [METplus](https://github.com/dtcenter/METplus/issues/new/choose), [MET](https://github.com/dtcenter/MET/issues/new/choose), [METdatadb](https://github.com/dtcenter/METdatadb/issues/new/choose), [METviewer](https://github.com/dtcenter/METviewer/issues/new/choose), [METexpress](https://github.com/dtcenter/METexpress/issues/new/choose), [METcalcpy](https://github.com/dtcenter/METcalcpy/issues/new/choose), [METplotpy](https://github.com/dtcenter/METplotpy/issues/new/choose)

## Bugfix Checklist ##

See the [METplus Workflow](https://metplus.readthedocs.io/en/latest/Contributors_Guide/github_workflow.html) for details.

- [ ] Complete the issue definition above, including the **Time Estimate** and **Funding Source**.

- [ ] Fork this repository or create a branch of **main_\<Version>**.

Branch name: `bugfix_<Issue Number>_main_<Version>_<Description>`

- [ ] Fix the bug and test your changes.

- [ ] Add/update log messages for easier debugging.

- [ ] Add/update unit tests.

- [ ] Add/update documentation.

- [ ] Push local changes to GitHub.

- [ ] Submit a pull request to merge into **main_\<Version>**.

Pull request: `bugfix <Issue Number> main_<Version> <Description>`

- [ ] Define the pull request metadata, as permissions allow.

Select: **Reviewer(s)** and **Linked issues**

Select: **Organization** level software support **Project** for the current coordinated release

Select: **Milestone** as the next bugfix version

- [ ] Iterate until the reviewer(s) accept and merge your changes.

- [ ] Delete your fork or branch.

- [ ] Complete the steps above to fix the bug on the **develop** branch.

Branch name: `bugfix_<Issue Number>_develop_<Description>`

Pull request: `bugfix <Issue Number> develop <Description>`

Select: **Reviewer(s)** and **Linked issues**

Select: **Repository** level development cycle **Project** for the next official release

Select: **Milestone** as the next official version

- [ ] Close this issue.

| 1.0 | Revision series for MODE-TD -

## Describe the Problem ##

MODE-TD Revision box plot is empty. Need to fix it and match the Rscript version.

This is the XML:

[plot_20211004_134424.xml.txt](https://github.com/dtcenter/METplotpy/files/7280968/plot_20211004_134424.xml.txt)

### Expected Behavior ###

Python and Rscript MODE-TD Revision plots should be similar

### Environment ###

Describe your runtime environment:

*1. Machine: (e.g. HPC name, Linux Workstation, Mac Laptop)*

*2. OS: (e.g. RedHat Linux, MacOS)*

*3. Software version number(s)*

### To Reproduce ###

Describe the steps to reproduce the behavior:

*1. Go to '...'*

*2. Click on '....'*

*3. Scroll down to '....'*

*4. See error*

*Post relevant sample data following these instructions:*

*https://dtcenter.org/community-code/model-evaluation-tools-met/met-help-desk#ftp*

### Relevant Deadlines ###

*List relevant project deadlines here or state NONE.*

### Funding Source ###

*Define the source of funding and account keys here or state NONE.*

## Define the Metadata ##

### Assignee ###

- [ ] Select **engineer(s)** or **no engineer** required

- [ ] Select **scientist(s)** or **no scientist** required

### Labels ###

- [ ] Select **component(s)**

- [ ] Select **priority**

- [ ] Select **requestor(s)**

### Projects and Milestone ###

- [ ] Select **Organization** level **Project** for support of the current coordinated release

- [ ] Select **Repository** level **Project** for development toward the next official release or add **alert: NEED PROJECT ASSIGNMENT** label

- [ ] Select **Milestone** as the next bugfix version

## Define Related Issue(s) ##

Consider the impact to the other METplus components.

- [ ] [METplus](https://github.com/dtcenter/METplus/issues/new/choose), [MET](https://github.com/dtcenter/MET/issues/new/choose), [METdatadb](https://github.com/dtcenter/METdatadb/issues/new/choose), [METviewer](https://github.com/dtcenter/METviewer/issues/new/choose), [METexpress](https://github.com/dtcenter/METexpress/issues/new/choose), [METcalcpy](https://github.com/dtcenter/METcalcpy/issues/new/choose), [METplotpy](https://github.com/dtcenter/METplotpy/issues/new/choose)

## Bugfix Checklist ##

See the [METplus Workflow](https://metplus.readthedocs.io/en/latest/Contributors_Guide/github_workflow.html) for details.

- [ ] Complete the issue definition above, including the **Time Estimate** and **Funding Source**.

- [ ] Fork this repository or create a branch of **main_\<Version>**.

Branch name: `bugfix_<Issue Number>_main_<Version>_<Description>`

- [ ] Fix the bug and test your changes.

- [ ] Add/update log messages for easier debugging.

- [ ] Add/update unit tests.

- [ ] Add/update documentation.

- [ ] Push local changes to GitHub.

- [ ] Submit a pull request to merge into **main_\<Version>**.

Pull request: `bugfix <Issue Number> main_<Version> <Description>`

- [ ] Define the pull request metadata, as permissions allow.

Select: **Reviewer(s)** and **Linked issues**

Select: **Organization** level software support **Project** for the current coordinated release

Select: **Milestone** as the next bugfix version

- [ ] Iterate until the reviewer(s) accept and merge your changes.

- [ ] Delete your fork or branch.

- [ ] Complete the steps above to fix the bug on the **develop** branch.

Branch name: `bugfix_<Issue Number>_develop_<Description>`

Pull request: `bugfix <Issue Number> develop <Description>`

Select: **Reviewer(s)** and **Linked issues**

Select: **Repository** level development cycle **Project** for the next official release

Select: **Milestone** as the next official version

- [ ] Close this issue.

| non_usab | revision series for mode td describe the problem mode td revision box plot is empty need to fix it and match the rscript version this is the xml expected behavior python and rscript mode td revision plots should be similar environment describe your runtime environment machine e g hpc name linux workstation mac laptop os e g redhat linux macos software version number s to reproduce describe the steps to reproduce the behavior go to click on scroll down to see error post relevant sample data following these instructions relevant deadlines list relevant project deadlines here or state none funding source define the source of funding and account keys here or state none define the metadata assignee select engineer s or no engineer required select scientist s or no scientist required labels select component s select priority select requestor s projects and milestone select organization level project for support of the current coordinated release select repository level project for development toward the next official release or add alert need project assignment label select milestone as the next bugfix version define related issue s consider the impact to the other metplus components bugfix checklist see the for details complete the issue definition above including the time estimate and funding source fork this repository or create a branch of main branch name bugfix main fix the bug and test your changes add update log messages for easier debugging add update unit tests add update documentation push local changes to github submit a pull request to merge into main pull request bugfix main define the pull request metadata as permissions allow select reviewer s and linked issues select organization level software support project for the current coordinated release select milestone as the next bugfix version iterate until the reviewer s accept and merge your changes delete your fork or branch complete the steps above to fix the bug on the develop branch branch 
name bugfix develop pull request bugfix develop select reviewer s and linked issues select repository level development cycle project for the next official release select milestone as the next official version close this issue | 0 |

4,812 | 3,896,645,276 | IssuesEvent | 2016-04-16 00:02:35 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 16729740: Jonathan Morgan's user directory is hardcoded in Automator in Instruments 5.1.1 (55045) | classification:ui/usability reproducible:always status:open | #### Description

Summary:

When trying to run a default, unmodified Automator script, I get the following error:

Path not found '/Users/jonathan_morgan/Library/Developer/Xcode/DerivedData/lkj-efnmzqijwdkcixghgjaokmivpnnx/Build/Products/Release-iphonesimulator/lkj.app/lkj'

Steps to Reproduce:

1. Open Instruments.

2. Click Automation.

3. Click Choose.

4. Click Play arrow at bottom of Automator window.

5. Get error message in an untitled dialog that only has an OK button.

Expected Results:

I expect the default script to execute without the error.

-

Product Version: Instruments 5.1.1 (55045) Xcode Version 5.1.1 (5B1008) OS X 10.9.2 Build 13C1021

Created: 2014-04-25T21:25:04.465577

Originated: 2014-04-25T00:00:00

Open Radar Link: http://www.openradar.me/16729740 | True | 16729740: Jonathan Morgan's user directory is hardcoded in Automator in Instruments 5.1.1 (55045) - #### Description

Summary:

When trying to run a default, unmodified Automator script, I get the following error:

Path not found '/Users/jonathan_morgan/Library/Developer/Xcode/DerivedData/lkj-efnmzqijwdkcixghgjaokmivpnnx/Build/Products/Release-iphonesimulator/lkj.app/lkj'

Steps to Reproduce:

1. Open Instruments.

2. Click Automation.

3. Click Choose.

4. Click Play arrow at bottom of Automator window.

5. Get error message in an untitled dialog that only has an OK button.

Expected Results:

I expect the default script to execute without the error.

-

Product Version: Instruments 5.1.1 (55045) Xcode Version 5.1.1 (5B1008) OS X 10.9.2 Build 13C1021

Created: 2014-04-25T21:25:04.465577

Originated: 2014-04-25T00:00:00

Open Radar Link: http://www.openradar.me/16729740 | usab | jonathan morgan s user directory is hardcoded in automator in instruments description summary when trying to run a default unmodified automator script i get the following error path not found users jonathan morgan library developer xcode deriveddata lkj efnmzqijwdkcixghgjaokmivpnnx build products release iphonesimulator lkj app lkj steps to reproduce open instruments click automation click choose click play arrow at bottom of automator window get error message in an untitled dialog that only has an ok button expected results i expect the default script to execute without the error product version instruments xcode version os x build created originated open radar link | 1 |

125,245 | 12,254,814,701 | IssuesEvent | 2020-05-06 09:07:57 | crate/crate-docs-theme | https://api.github.com/repos/crate/crate-docs-theme | opened | formatting test | documentation | ### Documentation feedback

<!--Please do not edit or remove the following information -->

- Page title: Crate Docs Theme

- Page URL: https://crate.io/docs/fake/en/latest/index.rst

- Source file: https://github.com/crate/crate-docs-theme/blob/master/docs/index.rst

- DocID: 6a992d55

---

just testing the formatting

| 1.0 | formatting test - ### Documentation feedback

<!--Please do not edit or remove the following information -->

- Page title: Crate Docs Theme

- Page URL: https://crate.io/docs/fake/en/latest/index.rst

- Source file: https://github.com/crate/crate-docs-theme/blob/master/docs/index.rst

- DocID: 6a992d55

---

just testing the formatting

| non_usab | formatting test documentation feedback page title crate docs theme page url source file docid just testing the formatting | 0 |

6,911 | 6,661,674,233 | IssuesEvent | 2017-10-02 09:39:39 | datawire/telepresence | https://api.github.com/repos/datawire/telepresence | closed | Unable to start telepresence 0.67 on arch linux | bug infrastructure | ### What were you trying to do?

Start telepresence to access services deployed on local minikube.

Telepresence is installed from AUR package: https://aur.archlinux.org/packages/telepresence/

Not sure what additional info could help, please ask if you need something.

### What did you expect to happen?

Telepresence started

### What happened instead?

It crashed

### Automatically included information

Command line: `['/usr/bin/telepresence', '--logfile', '/tmp/telepresence.log']`

Version: `0.67`

Python version: `3.6.2 (default, Jul 20 2017, 03:52:27)

[GCC 7.1.1 20170630]`

kubectl version: `Client Version: v1.7.6`

oc version: `(error: [Errno 2] No such file or directory: 'oc')`

OS: `Linux vstepchik_macpro 4.12.13-1-ARCH #1 SMP PREEMPT Fri Sep 15 06:36:43 UTC 2017 x86_64 GNU/Linux`

Traceback:

```

Traceback (most recent call last):

File "/usr/bin/telepresence", line 257, in call_f

return f(*args, **kwargs)

File "/usr/bin/telepresence", line 2350, in go

runner, args

File "/usr/bin/telepresence", line 1516, in start_proxy

processes, socks_port, ssh = connect(runner, remote_info, args)

File "/usr/bin/telepresence", line 1099, in connect

bufsize=0,

File "/usr/bin/telepresence", line 404, in popen

return self.launch_command(track, *args, **kwargs)

File "/usr/bin/telepresence", line 367, in launch_command

stderr=self.logfile

File "/usr/lib/python3.6/subprocess.py", line 707, in __init__

restore_signals, start_new_session)

File "/usr/lib/python3.6/subprocess.py", line 1333, in _execute_child

raise child_exception_type(errno_num, err_msg)

FileNotFoundError: [Errno 2] No such file or directory: 'stamp-telepresence'

```

Logs:

```

kube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

18.4 TL | [58] captured.

18.6 TL | [59] Capturing: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

18.8 TL | [59] captured.

19.0 TL | [60] Capturing: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

19.2 TL | [60] captured.

19.4 TL | [61] Capturing: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

19.5 TL | [61] captured.

19.5 TL | [62] Launching: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'logs', '-f', 'telepresence-1506520541-048964-25778-1327857849-08rns', '--container', 'telepresence-1506520541-048964-25778'],)...

```

| 1.0 | Unable to start telepresence 0.67 on arch linux - ### What were you trying to do?

Start telepresence to access services deployed on local minikube.

Telepresence is installed from AUR package: https://aur.archlinux.org/packages/telepresence/

Not sure what additional info could help, please ask if you need something.

### What did you expect to happen?

Telepresence started

### What happened instead?

It crashed

### Automatically included information

Command line: `['/usr/bin/telepresence', '--logfile', '/tmp/telepresence.log']`

Version: `0.67`

Python version: `3.6.2 (default, Jul 20 2017, 03:52:27)

[GCC 7.1.1 20170630]`

kubectl version: `Client Version: v1.7.6`

oc version: `(error: [Errno 2] No such file or directory: 'oc')`

OS: `Linux vstepchik_macpro 4.12.13-1-ARCH #1 SMP PREEMPT Fri Sep 15 06:36:43 UTC 2017 x86_64 GNU/Linux`

Traceback:

```

Traceback (most recent call last):

File "/usr/bin/telepresence", line 257, in call_f

return f(*args, **kwargs)

File "/usr/bin/telepresence", line 2350, in go

runner, args

File "/usr/bin/telepresence", line 1516, in start_proxy

processes, socks_port, ssh = connect(runner, remote_info, args)

File "/usr/bin/telepresence", line 1099, in connect

bufsize=0,

File "/usr/bin/telepresence", line 404, in popen

return self.launch_command(track, *args, **kwargs)

File "/usr/bin/telepresence", line 367, in launch_command

stderr=self.logfile

File "/usr/lib/python3.6/subprocess.py", line 707, in __init__

restore_signals, start_new_session)

File "/usr/lib/python3.6/subprocess.py", line 1333, in _execute_child

raise child_exception_type(errno_num, err_msg)

FileNotFoundError: [Errno 2] No such file or directory: 'stamp-telepresence'

```

Logs:

```

kube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

18.4 TL | [58] captured.

18.6 TL | [59] Capturing: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

18.8 TL | [59] captured.

19.0 TL | [60] Capturing: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

19.2 TL | [60] captured.

19.4 TL | [61] Capturing: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'get', 'pod', 'telepresence-1506520541-048964-25778-1327857849-08rns', '-o', 'json'],)...

19.5 TL | [61] captured.

19.5 TL | [62] Launching: (['kubectl', '--context', 'minikube', '--namespace', 'default', 'logs', '-f', 'telepresence-1506520541-048964-25778-1327857849-08rns', '--container', 'telepresence-1506520541-048964-25778'],)...

```

| non_usab | unable to start telepresence on arch linux what were you trying to do start telepresence to access services deployed on local minikube telepresence is installed from aur package not sure what additional info could help please ask if you need something what did you expect to happen telepresence started what happened instead it crashed automatically included information command line version python version default jul kubectl version client version oc version error no such file or directory oc os linux vstepchik macpro arch smp preempt fri sep utc gnu linux traceback traceback most recent call last file usr bin telepresence line in call f return f args kwargs file usr bin telepresence line in go runner args file usr bin telepresence line in start proxy processes socks port ssh connect runner remote info args file usr bin telepresence line in connect bufsize file usr bin telepresence line in popen return self launch command track args kwargs file usr bin telepresence line in launch command stderr self logfile file usr lib subprocess py line in init restore signals start new session file usr lib subprocess py line in execute child raise child exception type errno num err msg filenotfounderror no such file or directory stamp telepresence logs kube namespace default get pod telepresence o json tl captured tl capturing tl captured tl capturing tl captured tl capturing tl captured tl launching | 0 |
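
The traceback in the record above ends in a `FileNotFoundError` for `stamp-telepresence`, which suggests a helper executable was not found on `PATH`. As a hedged illustration (not Telepresence's own code), a launcher could check for its helpers before spawning them and report a clearer message. The function name `find_missing_executables` is hypothetical, and the list of names is taken from the report only as an example.

```python
import shutil


def find_missing_executables(names):
    """Return the subset of names that cannot be resolved on PATH."""
    return [name for name in names if shutil.which(name) is None]


# Example check before launching any subprocess.
missing = find_missing_executables(["stamp-telepresence", "kubectl"])
if missing:
    print("Missing executables on PATH: " + ", ".join(missing))
```

Checking up front turns an opaque `subprocess` failure into a message that names the missing program, which is easier for packagers to act on.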

289,861 | 25,018,386,916 | IssuesEvent | 2022-11-03 21:05:27 | psu-libraries/researcher-metadata | https://api.github.com/repos/psu-libraries/researcher-metadata | opened | Make "Proxies" link in menu more descriptive | 2022-user-testing | In user testing, we got the suggestion to make the "Proxies" link in the menu more descriptive. | 1.0 | Make "Proxies" link in menu more descriptive - In user testing, we got the suggestion to make the "Proxies" link in the menu more descriptive. | non_usab | make proxies link in menu more descriptive in user testing we got the suggestion to make the proxies link in the menu more descriptive | 0 |

400,023 | 11,765,751,624 | IssuesEvent | 2020-03-14 18:51:16 | ayumi-cloud/oc-security-module | https://api.github.com/repos/ayumi-cloud/oc-security-module | opened | Add automatic protection for 'error_log' files being created | Add to Blacklist Firewall Priority: Low enhancement in-progress | ### Enhancement idea

- [ ] Add automatic protection for 'error_log' files being created.

| 1.0 | Add automatic protection for 'error_log' files being created - ### Enhancement idea

- [ ] Add automatic protection for 'error_log' files being created.

| non_usab | add automatic protection for error log files being created enhancement idea add automatic protection for error log files being created | 0 |

10,338 | 6,671,093,801 | IssuesEvent | 2017-10-04 04:49:48 | loconomics/loconomics | https://api.github.com/repos/loconomics/loconomics | closed | Meet 3.2.1 - On Focus | C: Usability F: Accessbility | ## Summary

Provides that user interface components do not initiate a change of context when receiving focus

**Conformance Level:** A

**Existing 508 Corresponding Provision:** 1194.21(l) and .22(n) | True | Meet 3.2.1 - On Focus - ## Summary

Provides that user interface components do not initiate a change of context when receiving focus

**Conformance Level:** A

**Existing 508 Corresponding Provision:** 1194.21(l) and .22(n) | usab | meet on focus summary provides that user interface components do not initiate a change of context when receiving focus conformance level a existing corresponding provision l and n | 1 |

14,727 | 9,441,110,130 | IssuesEvent | 2019-04-14 23:03:03 | factbox/factbox | https://api.github.com/repos/factbox/factbox | closed | Form errors without special style | usability | When an invalid form is submitted, the errors that are shown are not styled. | True | Form errors without special style - When an invalid form is submitted, the errors that are shown are not styled. | usab | form errors without special style when an invalid form is submitted the errors that are shown are not styled | 1 |

143,924 | 22,204,414,814 | IssuesEvent | 2022-06-07 13:50:38 | deke207/turn-it-around-dev | https://api.github.com/repos/deke207/turn-it-around-dev | closed | Theme development | design dev | **Description**

Develop a theme based on approved mockups.

- Content pages

* Homepage

* Resource Directory

* Resource Membership

* Resource Submissions

* Student Section

* Shop

* Coaching Sessions

* About Us

* News (blog roll)

* Events

* Contact Us

**Resources**

[Approved mocks](https://greatdesigns.me)

| 1.0 | Theme development - **Description**

Develop a theme based on approved mockups.

- Content pages

* Homepage

* Resource Directory

* Resource Membership

* Resource Submissions

* Student Section

* Shop

* Coaching Sessions

* About Us

* News (blog roll)

* Events

* Contact Us

**Resources**

[Approved mocks](https://greatdesigns.me)

| non_usab | theme development description develop a theme based on approved mockups content pages homepage resource directory resource membership resource submissions student section shop coaching sessions about us news blog roll events contact us resources | 0 |

22,369 | 19,186,340,105 | IssuesEvent | 2021-12-05 09:02:04 | bgo-bioimagerie/platformmanager | https://api.github.com/repos/bgo-bioimagerie/platformmanager | closed | Helpdesk: clean spam tickets with delay | enhancement usability | When setting a ticket as spam, do not delete it, just set status to spam (to avoid errors).

In ui, allow access to spam status to allow revert to other status

On pfm-helpdesk process, clean spam tagged tickets only if update time > x hours. | True | Helpdesk: clean spam tickets with delay - When setting a ticket as spam, do not delete it, just set status to spam (to avoid errors).

In ui, allow access to spam status to allow revert to other status

On pfm-helpdesk process, clean spam tagged tickets only if update time > x hours. | usab | helpdesk clean spam tickets with delay when setting a ticket as spam do not delete it just set status to spam to avoid errors in ui allow access to spam status to allow revert to other status on pfm helpdesk process clean spam tagged tickets only if update time x hours | 1 |
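
The delayed cleanup described in the record above (remove spam-tagged tickets only once their update time is older than x hours) can be sketched as a simple filter. This is an illustrative Python sketch under stated assumptions, not the project's code: the ticket dictionaries and the field names `id`, `status`, and `updated_at` are hypothetical.

```python
from datetime import datetime, timedelta


def spam_tickets_to_delete(tickets, now, min_age_hours):
    """Return ids of spam tickets whose last update is older than the delay."""
    cutoff = now - timedelta(hours=min_age_hours)
    return [
        t["id"]
        for t in tickets
        # Only spam tickets qualify, and only once the delay has elapsed,
        # so a ticket marked as spam by mistake can still be reverted.
        if t["status"] == "spam" and t["updated_at"] < cutoff
    ]
```

Passing `now` in as an argument, rather than reading the clock inside the function, keeps the rule easy to test.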

131,476 | 10,697,197,367 | IssuesEvent | 2019-10-23 16:02:12 | fedora-python/tox-current-env | https://api.github.com/repos/fedora-python/tox-current-env | closed | Allow parallel test execution of integration tests | enhancement tests | Be it pytest-xdist or `tox --parallel`/`detox`, the current integration tests will fight over the `tests/.tox` directory. We should adapt the test suite to use temporary directories and copy files into them. | 1.0 | Allow parallel test execution of integration tests - Be it pytest-xdist or `tox --parallel`/`detox`, the current integration tests will fight over the `tests/.tox` directory. We should adapt the test suite to use temporary directories and copy files into them. | non_usab | allow parallel test execution of integration tests be it pytest xdist or tox parallel detox the current integration tests will fight over the tests tox directory we should adapt the test suite to use temporary directories and copy files into them | 0 |
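
The suggestion in the record above (use temporary directories and copy files into them) might look roughly like the following. This is a minimal sketch using only the standard library, not the test suite's actual code; `make_isolated_project` and the directory prefix are hypothetical names. With pytest, the built-in `tmp_path` fixture could serve the same purpose.

```python
import shutil
import tempfile
from pathlib import Path


def make_isolated_project(fixtures_dir):
    """Copy the fixture files into a fresh temporary directory and return its path."""
    # Each call gets its own directory, so parallel runs never share a .tox dir.
    workdir = Path(tempfile.mkdtemp(prefix="tox-current-env-test-"))
    project = workdir / "project"
    shutil.copytree(fixtures_dir, project)
    return project
```

Each test would then run `tox` with the returned directory as its working directory, so any `.tox` directory is created inside the private copy.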

2,772 | 3,163,731,398 | IssuesEvent | 2015-09-20 15:51:26 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 22773990: No way to disable picture in picture (PiP) when starting another video | classification:ui usability reproducible:always status:open | #### Description

Summary:

It seems that when using picture in picture, there's no way to programmatically stop it. For example, when launching a video (AVPlayerViewController) in my app, I want to stop PiP from another app.

Steps to Reproduce:

1. Play video

2. Start picture in picture

3. Play another video

Expected Results:

Existing picture in picture would stop

Actual Results:

Picture in picture plays over the top of the new video.

Version:

iOS 9.0 (simulator)

Notes:

Even if it wasn't automated, a way to stop the video programmatically on a kind of sharedInstance of AVPictureInPictureController would be good.

Configuration:

iPad Air

Attachments:

'Simulator Screen Shot 20 Sep 2015, 16.09.59.png' was successfully uploaded.

-

Product Version: 9.0

Created: 2015-09-20T15:13:49.554590

Originated: 2015-09-20T00:00:00

Open Radar Link: http://www.openradar.me/22773990 | True | 22773990: No way to disable picture in picture (PiP) when starting another video - #### Description

Summary:

It seems that when using picture in picture, there's no way to programmatically stop it. For example, when launching a video (AVPlayerViewController) in my app, I want to stop PiP from another app.

Steps to Reproduce:

1. Play video

2. Start picture in picture

3. Play another video

Expected Results:

Existing picture in picture would stop

Actual Results:

Picture in picture plays over the top of the new video.

Version:

iOS 9.0 (simulator)

Notes:

Even if it wasn't automated, a way to stop the video programmatically on a kind of sharedInstance of AVPictureInPictureController would be good.

Configuration:

iPad Air

Attachments:

'Simulator Screen Shot 20 Sep 2015, 16.09.59.png' was successfully uploaded.

-

Product Version: 9.0

Created: 2015-09-20T15:13:49.554590

Originated: 2015-09-20T00:00:00

Open Radar Link: http://www.openradar.me/22773990 | usab | no way to disable picture in picture pip when starting another video description summary it seems that when using picture in picture there s no way to programmatically stop it for example when launching a video avplayerviewcontroller in my app i want to stop pip from another app steps to reproduce play video start picture in picture play another video expected results existing picture in picture would stop actual results picture in picture plays over the top of the new video version ios simulator notes even if it wasn t automated a way to stop the video programmatically on a kind of sharedinstance of avpictureinpicturecontroller would be good configuration ipad air attachments simulator screen shot sep png was successfully uploaded product version created originated open radar link | 1 |

8,491 | 5,756,732,748 | IssuesEvent | 2017-04-26 00:47:43 | unfoldingWord-dev/translationCore | https://api.github.com/repos/unfoldingWord-dev/translationCore | closed | When an edit is removed the user should not be required to indicate a reason | duplicate Usability | If the text is changed back to the original the reason for the change should not be required. It may make sense to indicate in the file system that the previous edit was deleted. Eventually we may want to have an undo button that would allow the user to roll back through the edits one by one. | True | When an edit is removed the user should not be required to indicate a reason - If the text is changed back to the original the reason for the change should not be required. It may make sense to indicate in the file system that the previous edit was deleted. Eventually we may want to have an undo button that would allow the user to roll back through the edits one by one. | usab | when an edit is removed the user should not be required to indicate a reason if the text is changed back to the original the reason for the change should not be required it may make sense to indicate in the file system that the previous edit was deleted eventually we may want to have an undo button that would allow the user to roll back through the edits one by one | 1 |

53,475 | 3,040,580,422 | IssuesEvent | 2015-08-07 16:08:21 | OpenBEL/bel.rb | https://api.github.com/repos/OpenBEL/bel.rb | closed | Installation in JRuby | high priority | JRuby does not support C extensions which bel.rb contains (i.e. BEL Script, C-based parser).

If running in JRuby we should do the following:

- Do not attempt to load libbel C extension.

- Provide alternative implementations for APIs relying on C extension (lib/parser, lib/completion).

- Strip C extension code from gem.

- Push a java architecture gem. | 1.0 | Installation in JRuby - JRuby does not support C extensions which bel.rb contains (i.e. BEL Script, C-based parser).

If running in JRuby we should do the following:

- Do not attempt to load libbel C extension.

- Provide alternative implementations for APIs relying on C extension (lib/parser, lib/completion).

- Strip C extension code from gem.

- Push a java architecture gem. | non_usab | installation in jruby jruby does not support c extensions which bel rb contains i e bel script c based parser if running in jruby we should do the following do not attempt to load libbel c extension provide alternative implementations for apis relying on c extension lib parser lib completion strip c extension code from gem push a java architecture gem | 0 |

13,428 | 8,454,558,811 | IssuesEvent | 2018-10-21 04:52:45 | MarkBind/markbind | https://api.github.com/repos/MarkBind/markbind | closed | Support custom keywords | a-AuthorUsability c.Feature p.Medium | Extension of #428

With the current implementation of keywords, users are forced to include these keywords in the text if they want them to be tagged to a heading.

Allow the option of having keywords invisibly tagged to a heading, for example:

```

# Using a Java IDE

<span class="keyword hidden">Eclipse</span>

<span class="keyword hidden">Netbeans</span>

<span class="keyword hidden">IntelliJ</span>

``` | True | Support custom keywords - Extension of #428

With the current implementation of keywords, users are forced to include these keywords in the text if they want them to be tagged to a heading.

Allow the option of having keywords invisibly tagged to a heading, for example:

```

# Using a Java IDE

<span class="keyword hidden">Eclipse</span>

<span class="keyword hidden">Netbeans</span>

<span class="keyword hidden">IntelliJ</span>

``` | usab | support custom keywords extension of with the current implementation of keywords users are forced to include these keywords in the text if they want them to be tagged to a heading allow the option of having keywords invisibly tagged to a heading for example using a java ide eclipse netbeans intellij | 1 |

140,082 | 18,893,691,003 | IssuesEvent | 2021-11-15 15:41:39 | Zolyn/vuepress-plugin-waline | https://api.github.com/repos/Zolyn/vuepress-plugin-waline | closed | CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz | security vulnerability | ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that converts the chalked (ANSI) text to HTML.</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz">https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz</a></p>

<p>Path to dependency file: vuepress-plugin-waline/package.json</p>

<p>Path to vulnerable library: vuepress-plugin-waline/node_modules/ansi-html/package.json</p>

<p>

Dependency Hierarchy:

- minivaline-5.1.7.tgz (Root Library)

- webpack-dev-server-4.0.0-beta.3.tgz

- :x: **ansi-html-0.0.7.tgz** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects all versions of package ansi-html. If an attacker provides a malicious string, it will get stuck processing the input for an extremely long time.

<p>Publish Date: 2021-08-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23424>CVE-2021-23424</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz - ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that converts the chalked (ANSI) text to HTML.</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz">https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz</a></p>

<p>Path to dependency file: vuepress-plugin-waline/package.json</p>

<p>Path to vulnerable library: vuepress-plugin-waline/node_modules/ansi-html/package.json</p>

<p>

Dependency Hierarchy:

- minivaline-5.1.7.tgz (Root Library)

- webpack-dev-server-4.0.0-beta.3.tgz

- :x: **ansi-html-0.0.7.tgz** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects all versions of package ansi-html. If an attacker provides a malicious string, it will get stuck processing the input for an extremely long time.

<p>Publish Date: 2021-08-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23424>CVE-2021-23424</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | cve high detected in ansi html tgz cve high severity vulnerability vulnerable library ansi html tgz an elegant lib that converts the chalked ansi text to html library home page a href path to dependency file vuepress plugin waline package json path to vulnerable library vuepress plugin waline node modules ansi html package json dependency hierarchy minivaline tgz root library webpack dev server beta tgz x ansi html tgz vulnerable library found in base branch main vulnerability details this affects all versions of package ansi html if an attacker provides a malicious string it will get stuck processing the input for an extremely long time publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href step up your open source security game with whitesource | 0 |

38,948 | 5,019,772,397 | IssuesEvent | 2016-12-14 12:56:24 | ELENA-LANG/elena-lang | https://api.github.com/repos/ELENA-LANG/elena-lang | closed | Open argument list : assigning | Design Idea Discussion | Open argument list should support setAt operator.

This would greatly help dynamic programming in Tape, because it would allow direct manipulation of the tape stack.

| 1.0 | Open argument list : assigning - Open argument list should support setAt operator.

This would greatly help dynamic programming in Tape, because it would allow direct manipulation of the tape stack.

| non_usab | open argument list assigning open argument list should support setat operator this will extremely help dynamic programming in tape because direct manipulation with the tape stack will be possible | 0 |

15,742 | 10,269,938,422 | IssuesEvent | 2019-08-23 10:14:29 | glam-lab/degender-the-web | https://api.github.com/repos/glam-lab/degender-the-web | closed | Try moving the DGtW header to the bottom of the window | enhancement question usability wontfix | **Is your feature request related to a problem? Please describe.**

While it's very visible, the DGtW header often covers up important functions at the top of the window. Its interactions with site components are quite unpredictable, as well.

**Describe the solution you'd like**

Try putting the DGtW header at the bottom of the window instead of the top, similar to the "cookies" div on StackOverflow.com:

Conveniently, this screenshot is from a discussion of what I'm proposing: https://stackoverflow.com/questions/31942227/stick-div-to-bottom-of-browser-window

One challenge is how multiple such headers might stack. Hiding the cookie div would be a problem. | True | Try moving the DGtW header to the bottom of the window - **Is your feature request related to a problem? Please describe.**

While it's very visible, the DGtW header often covers up important functions at the top of the window. Its interactions with site components are quite unpredictable, as well.

**Describe the solution you'd like**

Try putting the DGtW header at the bottom of the window instead of the top, similar to the "cookies" div on StackOverflow.com:

Conveniently, this screenshot is from a discussion of what I'm proposing: https://stackoverflow.com/questions/31942227/stick-div-to-bottom-of-browser-window

One challenge is how multiple such headers might stack. Hiding the cookie div would be a problem. | usab | try moving the dgtw header to the bottom of the window is your feature request related to a problem please describe while it s very visible the dgtw header often covers up important functions at the top of the window its interactions with site components are quite unpredictable as well describe the solution you d like try putting the dgtw header at the bottom of the window instead of the top similar to the cookies div on stackoverflow com conveniently this screen shot is from a discussion of what i m proposing one challenge is how multiple such headers might stack hiding the cookie div would be a problem | 1 |

18,546 | 13,032,433,518 | IssuesEvent | 2020-07-28 04:15:25 | OBOFoundry/OBOFoundry.github.io | https://api.github.com/repos/OBOFoundry/OBOFoundry.github.io | closed | Scrolling down headers | usability feature website | Is there a way to have the headers follow as you scroll down, or to repeat them in the middle?

| True | Scrolling down headers - Is there a way to have the headers follow as you scroll down, or to repeat them in the middle?

| usab | scrolling down headers is there a way to have headers following as you scroll down or repeat them in the middle | 1 |

35,023 | 4,622,933,364 | IssuesEvent | 2016-09-27 09:18:06 | vector-im/vector-android | https://api.github.com/repos/vector-im/vector-android | opened | Media picker: Switching camera button and exit button are not very visible | design P1 | Related to https://github.com/vector-im/vector-ios/issues/610.

A solution is required for android too | 1.0 | Media picker: Switching camera button and exit button are not very visible - Related to https://github.com/vector-im/vector-ios/issues/610.

A solution is required for android too | non_usab | media picker switching camera button and exit button are not very visible related to a solution is required for android too | 0 |

9,189 | 6,155,148,277 | IssuesEvent | 2017-06-28 14:14:08 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Cant hide CanvasModulate | enhancement topic:editor usability | It seems there is a problem with all CanvasItem options in CanvasModulate. The 'Visible' checkbox doesn't work, and neither do any of the other options there. To hide it, you basically have to delete it.

| True | Cant hide CanvasModulate - It seems there is a problem with all CanvasItem options in CanvasModulate. The 'Visible' checkbox doesn't work, and neither do any of the other options there. To hide it, you basically have to delete it.

| usab | cant hide canvasmodulate it seems like there is some problem with all canvasitem options in canvasmodulate visible checkbox doesn t work so as all other options there in order to hide it you need to delete it basically | 1 |

12,115 | 7,703,362,145 | IssuesEvent | 2018-05-21 08:07:31 | github/VisualStudio | https://api.github.com/repos/github/VisualStudio | opened | Scrolling is broken in PRs with lots of changed files. | usability | When looking at the details for a large PR, the horizontal scroll bar is shown at the bottom of the scrollable area, meaning that one has to scroll right to the bottom in order to scroll horizontally. This makes navigating this panel very difficult:

| True | Scrolling is broken in PRs with lots of changed files. - When looking at the details for a large PR, the horizontal scroll bar is shown at the bottom of the scrollable area, meaning that one has to scroll right to the bottom in order to scroll horizontally. This makes navigating this panel very difficult:

| usab | scrolling is broken in prs with lots of changed files when looking at the details for a large pr the horizontal scroll bar is shown at the bottom of the scrollable area meaning that one has to scroll right to the bottom in order to scroll horizontally this makes navigating this panel very difficult | 1 |

260,678 | 27,784,696,408 | IssuesEvent | 2023-03-17 01:29:32 | michaeldotson/home-inventory-vue-app | https://api.github.com/repos/michaeldotson/home-inventory-vue-app | opened | CVE-2022-38900 (High) detected in decode-uri-component-0.2.0.tgz | Mend: dependency security vulnerability | ## CVE-2022-38900 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>decode-uri-component-0.2.0.tgz</b></p></summary>

<p>A better decodeURIComponent</p>