repo_name (string, lengths 9 to 75) | topic (string, 30 classes) | issue_number (int64, 1 to 203k) | title (string, lengths 1 to 976) | body (string, lengths 0 to 254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, lengths 38 to 105) | labels (list, lengths 0 to 9) | user_login (string, lengths 1 to 39) | comments_count (int64, 0 to 452) |
|---|---|---|---|---|---|---|---|---|---|---|---|

ni1o1/transbigdata | data-visualization | 79 | Error: cannot import name 'TopologicalError' from 'shapely.geos' | Error on import: cannot import name 'TopologicalError' from 'shapely.geos' (D:\Anaconda\envs\TransBigData\lib\site-packages\shapely\geos.py)

Why does this happen? Is my shapely version installed incorrectly? I let conda install everything automatically, though. | closed | 2023-07-31T03:50:39Z | 2024-01-25T11:47:20Z | https://github.com/ni1o1/transbigdata/issues/79 | [] | Dennissy23 | 1 |

ShishirPatil/gorilla | api | 494 | Question about AST evaluation for Java | Hello, I am testing my own model. The test set is java. There is an example:

The output of my model is` {'invokemethod007_runIt': {'args': ['suspend', 'log'], 'out': 'debugLog'}}`. When I execute the code, it seems that the code forces all the parameter values to be of type string: ` {'invokemethod007_runIt': {'args... | closed | 2024-07-01T09:37:22Z | 2024-10-16T07:35:58Z | https://github.com/ShishirPatil/gorilla/issues/494 | [

"BFCL-General"

] | GeniusYx | 3 |

slackapi/bolt-python | fastapi | 981 | What is the difference between ts and event_ts VERSUS ts and thread_ts? | (Describe your issue and goal here)

What is the difference between ts and event_ts VERSUS ts and thread_ts?

For instance when a thread is replied to, an event of the following type is generated:

```python

{

"type": "reaction_added",

"user": "xxx",

"reaction": "happy-face",

"item": {"type":... | closed | 2023-11-01T06:43:37Z | 2023-11-01T08:53:30Z | https://github.com/slackapi/bolt-python/issues/981 | [

"question"

] | WhyIsItSoHardToPickAUsername | 1 |

babysor/MockingBird | deep-learning | 271 | Why does audio and mel-spectrogram preprocessing fail with an error: python encoder_preprocess.py <datasets_root> | The dataset consists of 0 utterances, 0 mel frames, 0 audio timesteps (0.00 hours).

Traceback (most recent call last):

File "pre.py", line 74, in <module>

preprocess_dataset(**vars(args))

File "E:\PythonProject\MockingBird\synthesizer\preprocess.py", line 88, in preprocess_dataset

print("Max input leng... | open | 2021-12-14T16:03:18Z | 2023-06-27T09:03:17Z | https://github.com/babysor/MockingBird/issues/271 | [] | LiangChenStart | 7 |

kennethreitz/responder | flask | 48 | Unable to reach subsequently defined routes | I have thrown together a quick app, and I think I discovered a bug with memoization of the does_match method in routes. The behavior I was seeing was that only the first registered route would pass the 'does_match' function, even though the route had been registered; commenting out the @memoize decorator seemed to fix ... | closed | 2018-10-15T11:09:02Z | 2018-10-15T19:51:12Z | https://github.com/kennethreitz/responder/issues/48 | [] | nmunro | 1 |

koaning/scikit-lego | scikit-learn | 38 | documentation on github pages | locally it seems to run just fine

but github seems to not be rendering it appropriately | closed | 2019-03-20T06:07:24Z | 2019-03-20T06:25:08Z | https://github.com/koaning/scikit-lego/issues/38 | [] | koaning | 2 |

flairNLP/flair | nlp | 3,015 | connection timeout | getting connection timeout error while trying to download 'en-sentiment' model.

<img width="984" alt="image" src="https://user-images.githubusercontent.com/6858237/206390383-6d3e754d-b5ca-4916-aa93-72f07a351442.png">

| closed | 2022-12-08T07:54:53Z | 2023-09-27T10:48:14Z | https://github.com/flairNLP/flair/issues/3015 | [] | amod99 | 7 |

aeon-toolkit/aeon | scikit-learn | 2,015 | [DOC] Failed Example | ### Describe the issue linked to the documentation

Hi. In the "getting started" documentation [here](https://www.aeon-toolkit.org/en/stable/getting_started.html) there is an example for **Pipelines for aeon estimators**. This example throws the following error

```

ValueError: Multivariate data not supported by Box... | closed | 2024-08-27T14:19:28Z | 2024-11-28T11:18:31Z | https://github.com/aeon-toolkit/aeon/issues/2015 | [

"documentation"

] | twobitunicorn | 2 |

roboflow/supervision | machine-learning | 831 | Using my video to run speed | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

Hi, I used my video to run the speed [speed_estimation](https://github.com/roboflow/supervision/tree/develop/examples/speed_estimation) code.

I did... | closed | 2024-02-01T07:34:15Z | 2024-02-01T09:30:04Z | https://github.com/roboflow/supervision/issues/831 | [

"question"

] | althonmp | 4 |

plotly/dash | data-visualization | 2,851 | Dash 2.17.0 prevents some generated App Studio apps from running | https://github.com/plotly/notebook-to-app/actions/runs/8974757424/job/24647808759#step:9:1283

We've reverted to 2.16.1 for the time being. | closed | 2024-05-06T20:27:02Z | 2024-07-26T13:45:34Z | https://github.com/plotly/dash/issues/2851 | [

"P2"

] | hatched | 2 |

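2noise/ChatTTS | python | 914 | How should polyphonic characters be handled? For example, my generated speech contains 仔; the model reads it as zai by default, but I want it read as zi. How do I configure this? | How should polyphonic characters be handled? For example, my generated speech contains 仔; the model reads it as zai by default, but I want it read as zi. How do I configure this? | closed | 2025-03-11T01:15:02Z | 2025-03-12T13:54:39Z | https://github.com/2noise/ChatTTS/issues/914 | [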

"documentation"

] | tjh123321 | 1 |

jschneier/django-storages | django | 1,190 | wrong usage of LibCloudStorage._get_object in LibCloudStorage._read | I bumped into this bug when I was trying to make **LibCloudStorage** work with django's **ManifestFilesMixin** but that doesn't matter and it should be fixed regardless.

exact version of what it is now:

```

def _get_object(self, name):

"""Get object by its name. [Return None if object not found"""

clean_... | closed | 2022-10-27T14:42:55Z | 2023-02-16T15:14:11Z | https://github.com/jschneier/django-storages/issues/1190 | [] | engAmirEng | 0 |

deezer/spleeter | deep-learning | 195 | [Discussion] Ideas to improve deep learning on a particular music style | Newbie here. First approach to Git, Python/PiP and commands.

I'm really interested in the development of this tool and the use of it. My main focus is to separate stems in a particular field of music: jazzfunk. I'm not fully aware of how neural networks learn themselves and evolve. So, I need basic info to clarify ... | open | 2019-12-23T16:48:42Z | 2020-01-07T13:12:55Z | https://github.com/deezer/spleeter/issues/195 | [

"question"

] | antojsan | 2 |

ets-labs/python-dependency-injector | asyncio | 750 | Fix Closing dependency resolution |

There's a PR that came up four months ago. [PR LINK](https://github.com/ets-labs/python-dependency-injector/pull/711)

I think the problem has been solved, why hasn't that PR been merged for 4 months? | open | 2023-09-26T06:52:57Z | 2023-09-26T06:52:57Z | https://github.com/ets-labs/python-dependency-injector/issues/750 | [] | HyungJunKimB | 0 |

modin-project/modin | data-science | 7,445 | Metrics interface for collecting modin frontend telemetry | Within Snowflake Pandas we want to start understanding interactive workloads as seen by the end user in modin. Since we are looking at how to balance/change the underlying engine these statistics cannot be collected from our engine plugin alone. This interface should allow us to collect this data without overriding the... | open | 2025-02-17T23:53:21Z | 2025-03-15T19:49:56Z | https://github.com/modin-project/modin/issues/7445 | [

"new feature/request 💬",

"P2"

] | sfc-gh-jkew | 1 |

microsoft/nni | deep-learning | 5,688 | How to use a customized assessor in NNI? | Describe the issue:

How to use a customized assessor in NNI?

I can run my experiment when using built-in assessors like Medianstop. But when I want to customize my own assessor, it starts having problems.

I learned from this: https://nni.readthedocs.io/en/stable/hpo/custom_algorithm.html

and set the config.yml as the m... | open | 2023-09-30T14:51:48Z | 2023-10-13T20:42:40Z | https://github.com/microsoft/nni/issues/5688 | [] | skyling0299 | 1 |

dynaconf/dynaconf | fastapi | 614 | [RFC] merge strategies/deep merge strategies for lazy objects | **Is your feature request related to a problem? Please describe.**

when performing deep merges, dynaconf always eagerly evaluates lazy objects

this can end badly in particular if one wants to configure dynaconf for usage with a loader based on config data

**Describe the solution you'd like**

have a merge strate... | closed | 2021-07-12T19:15:23Z | 2024-01-08T11:00:21Z | https://github.com/dynaconf/dynaconf/issues/614 | [

"wontfix",

"Not a Bug",

"RFC"

] | RonnyPfannschmidt | 1 |

plotly/dash-table | dash | 830 | Cell with dropdown does not allow for backspace | When editing the value of a cell with a dropdown after double clicking, the value can only be appended with more characters. If a typo was made when filtering the dropdown, pressing the backspace key doesn't do anything and you must click outside the cell to clear the input. However, if you double click on a cell witho... | open | 2020-09-23T18:45:01Z | 2020-09-23T18:45:01Z | https://github.com/plotly/dash-table/issues/830 | [] | blozano824 | 0 |

horovod/horovod | pytorch | 3,923 | fail to build horovod 0.28.0 from the source with gcc 12 due to gloo issue | **Environment:**

1. Framework: tensorflow 2.12.0, pytorch 2.0.1

2. Framework version:

3. Horovod version: 0.28.0

4. MPI version:

5. CUDA version: 12.1.1

6. NCCL version: 2.17.1

7. Python version: 3.11

8. Spark / PySpark version:

9. Ray version:

10. OS and version: ArchLinux

11. GCC version: 12.3.0

12. CMak... | closed | 2023-05-12T15:15:13Z | 2023-05-24T16:52:41Z | https://github.com/horovod/horovod/issues/3923 | [

"bug"

] | hubutui | 3 |

ageitgey/face_recognition | python | 863 | Unable To install face_recognition | * face_recognition version: Latest

* Python version: 3

* Operating System: Raspberry Pi 3

### Description

When I entered the command:

```

sudo pip install face_recognition

```

I got:

```

pi@raspberrypi:~ $ pip install face_recognition

Looking in indexes: https://pypi.org/simple, https://www.piwheels.... | closed | 2019-06-25T06:40:19Z | 2019-06-25T07:11:12Z | https://github.com/ageitgey/face_recognition/issues/863 | [] | bytesByHarsh | 7 |

ContextLab/hypertools | data-visualization | 155 | saving a DataGeometry object | After performing an analysis and visualizing the result, we want to save out the `geo` so that it can be shared or loaded in at a later time. After a little research, here are a few options:

+ `pickle` - this is the simplest way to save out an object. the downside is that its not an efficient way to store large ar... | closed | 2017-10-09T12:52:51Z | 2017-10-09T19:57:13Z | https://github.com/ContextLab/hypertools/issues/155 | [] | andrewheusser | 6 |
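The `pickle` option listed above can be sketched as a simple round trip. This is only a minimal illustration: the plain dict `geo_state` is a hypothetical stand-in for the object's state, not the actual hypertools `DataGeometry` class, and the helper names `save_state` and `load_state` are assumptions made for this example.

```python
import io
import pickle


def save_state(state, fileobj):
    # Serialize the state with the highest pickle protocol available.
    pickle.dump(state, fileobj, protocol=pickle.HIGHEST_PROTOCOL)


def load_state(fileobj):
    # Only unpickle data that comes from a source you trust.
    return pickle.load(fileobj)


# Hypothetical state: some data plus the settings used to produce the plot.
geo_state = {"data": [[0.0, 1.0], [2.0, 3.0]], "reduce": "PCA"}

buffer = io.BytesIO()          # an in-memory file; a real file opened in "wb"/"rb" mode works the same way
save_state(geo_state, buffer)
buffer.seek(0)
restored = load_state(buffer)
```

The round trip preserves nested Python containers as they are, which is what makes this the simplest option; the storage-efficiency concern raised in the issue is not addressed by this sketch.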

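521xueweihan/HelloGitHub | python | 2,416 | [Open-source self-recommendation] GitRec - a browser extension that enhances GitHub repository recommendations | ## Recommended project

- Project URL: https://github.com/gorse-io/gitrec

- Category: JS (frontend), Python (backend)

- Project title: GitRec

- Project description: a browser extension that enhances GitHub repository recommendations

- Highlights: the GitRec browser extension inserts recommendations into GitHub web pages

1. Replaces GitHub's official recommended repositories. The GitRec extension can replace the officially recommended repositories on the GitHub home page with recommendations generated by GitRec; this can be toggled in the settings.

2. Generates similar-repository recommendations for popular repositories. If a repository has more than 100 stars, GitRec shows repositories similar to it in the bottom-right corner.

- Sample code: (optional)

- Screenshots: (optional) g... | closed | 2022-11-05T15:19:50Z | 2024-01-24T08:15:15Z | https://github.com/521xueweihan/HelloGitHub/issues/2416 | [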

"Python 项目"

] | zhenghaoz | 0 |

LAION-AI/Open-Assistant | python | 3,251 | Unable to train model (Loss is 0.000000) | I am trying to fine tune the LLM(OpenAssistant/oasst-sft-4-pythia-12b-epoch-3.5) with my data.

My code

```

import torch

from transformers import LineByLineTextDataset, DataCollatorForLanguageModeling

from transformers import Trainer, TrainingArguments

from transformers import AutoTokenizer, AutoModelForCausal... | closed | 2023-05-29T09:05:55Z | 2023-06-07T18:15:31Z | https://github.com/LAION-AI/Open-Assistant/issues/3251 | [] | ban1989ban | 2 |

pandas-dev/pandas | pandas | 60,923 | BUG: `series.reindex(mi)` behaves different for series with Index and MultiIndex | ### Pandas version checks

- [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the [latest version](https://pandas.pydata.org/docs/whatsnew/index.html) of pandas.

- [x] I have confirmed this bug exists on the [main branch](https://pandas.pydata.org/docs/dev/ge... | open | 2025-02-13T01:25:54Z | 2025-03-06T21:59:54Z | https://github.com/pandas-dev/pandas/issues/60923 | [

"Bug",

"MultiIndex",

"Index"

] | ssche | 6 |

pydantic/pydantic | pydantic | 11,055 | field annotation not respected by mypy when updated by a decorator | ### Initial Checks

- [X] I confirm that I'm using Pydantic V2

### Description

This decorator successfully makes the field optional (no runtime errors from this code), but mypy throws saying it is required. I'm unclear where the issue is between mypy, pydantic, pydantic mypy plugin, python, and this code.

Th... | closed | 2024-12-05T20:12:20Z | 2024-12-06T12:36:52Z | https://github.com/pydantic/pydantic/issues/11055 | [

"bug V2",

"pending"

] | BarrettStephen | 4 |

fastapi/sqlmodel | sqlalchemy | 495 | How to reference a foreign key in a table that is in a different (postgres) schema | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X... | closed | 2022-11-11T11:58:12Z | 2024-04-15T10:15:36Z | https://github.com/fastapi/sqlmodel/issues/495 | [

"question"

] | ivyleavedtoadflax | 4 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,701 | Could not connect to deb.globaleaks.org | ### What version of GlobaLeaks are you using?

4.13.13

### What browser(s) are you seeing the problem on?

_No response_

### What operating system(s) are you seeing the problem on?

Linux

### Describe the issue

We keep getting this error during the installation process

Failed to fetch http://deb.globaleaks.org/ja... | closed | 2023-10-13T09:25:03Z | 2023-10-13T10:21:39Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3701 | [

"T: Bug",

"Triage"

] | alexspanos-wide | 2 |

dask/dask | numpy | 11,494 | `align_partitions` creates mismatched partitions. | **Describe the issue**:

The `divisions` attribute doesn't match between data frames even after applying `align_partitions` on them.

**Minimal Complete Verifiable Example**:

```python

import numpy as np

from distributed import Client, LocalCluster

from dask import dataframe as dd

from dask.dataframe.multi... | closed | 2024-11-05T12:26:04Z | 2024-11-05T13:53:43Z | https://github.com/dask/dask/issues/11494 | [

"needs triage"

] | trivialfis | 6 |

gradio-app/gradio | machine-learning | 10,256 | Freeze Panel | Hi everyone, how are you? I would like to know if there is any way to create a frozen panel with some gradio component, similar to what we do in Excel, so that I can fix a section of components above and below as I scroll the main vertical scroll bar, the components below just scroll.

| closed | 2024-12-26T21:37:50Z | 2024-12-27T16:24:45Z | https://github.com/gradio-app/gradio/issues/10256 | [] | elismasilva | 2 |

recommenders-team/recommenders | machine-learning | 1,256 | [FEATURE] remove TF warning messages in all TF notebooks | ### Description

<!--- Describe your expected feature in detail -->

```

tf.get_logger().setLevel('ERROR') # only show error messages

```

### Expected behavior with the suggested feature

<!--- For example: -->

<!--- *Adding algorithm xxx will help people understand more about xxx use case scenarios. -->

##... | closed | 2020-12-04T12:45:21Z | 2021-01-18T16:42:36Z | https://github.com/recommenders-team/recommenders/issues/1256 | [

"enhancement"

] | miguelgfierro | 0 |
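The one-line suggestion in the issue above relies on `tf.get_logger()`. As a dependency-free sketch, and assuming TensorFlow's Python logger is the standard `logging` logger named `"tensorflow"`, roughly the same effect can be obtained with the standard library alone:

```python
import logging

# Assumption: this is the same logger object that tf.get_logger() would return.
tf_logger = logging.getLogger("tensorflow")

# Only show messages at ERROR level or above; warnings and info are dropped.
tf_logger.setLevel(logging.ERROR)

warnings_enabled = tf_logger.isEnabledFor(logging.WARNING)
errors_enabled = tf_logger.isEnabledFor(logging.ERROR)
```

This only affects messages that go through the Python logger; output produced by the native runtime is controlled separately and is not covered by this sketch.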

TencentARC/GFPGAN | deep-learning | 505 | Ytrded | ![Uploading replicate-prediction-ffu2e6aw4barxliwxu23ydgbnu.png…]()

| open | 2024-02-03T15:05:42Z | 2024-02-03T15:05:42Z | https://github.com/TencentARC/GFPGAN/issues/505 | [] | habiom | 0 |

microsoft/nni | machine-learning | 5,274 | MNIST Kubeflow Example Starts the Worker Pod then Set Status to Error | **Describe the issue**: My Issue is after executing the following command `nnictl create --config nni/examples/trials/mnist-tfv1/config_kubeflow.yml` it starts the experiment successfully. And it sends the TFJob to Kubeflow and kubeflow starts a working pod that gets the image msranni/nni:latest and then starts running... | closed | 2022-12-08T13:08:26Z | 2023-02-20T08:42:11Z | https://github.com/microsoft/nni/issues/5274 | [] | MHGanainy | 4 |

piskvorky/gensim | data-science | 2,585 | Having issue with encoding. | #### Problem description

I am trying to process a large corpus but in preprocess_string( ) it returns an error shown below

```

Traceback (most recent call last):

File "D:/Projects/docs_handler/data_preprocessing.py", line 60, in <module>

for temp in batch(iterator,1000):

File "D:/Projects/docs_handler/dat... | closed | 2019-08-28T08:23:02Z | 2019-08-28T13:57:50Z | https://github.com/piskvorky/gensim/issues/2585 | [] | gauravkoradiya | 2 |

lucidrains/vit-pytorch | computer-vision | 18 | Why only use the first patch? Thanks | I don't understand in line 124 of vit_pytorch.py:

`x = self.to_cls_token(x[:, 0])`

If the first dimension of x is batch, then the 2nd dimension 0 should be patch, as the dimension of x should be [batch, patch, feature]. Does it mean only the first patch is used? Could anybody help me on this? Thanks a lot. | closed | 2020-10-20T19:26:01Z | 2020-10-21T19:54:13Z | https://github.com/lucidrains/vit-pytorch/issues/18 | [] | junyongyou | 3 |
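The indexing question above can be illustrated with a small sketch. It assumes, as in the standard ViT design, that a class token is prepended to the patch sequence before the transformer runs; the code around line 124 is not shown here, so this is an assumption rather than a description of that file. Plain nested lists stand in for tensors, and `prepend_cls` and `take_first_token` are hypothetical helpers.

```python
def prepend_cls(patches, cls_token):
    # patches: [batch][patch][feature]; cls_token: [feature]
    # Put one copy of the class token in front of every sample's patch sequence.
    return [[list(cls_token)] + [list(p) for p in sample] for sample in patches]


def take_first_token(x):
    # Equivalent of x[:, 0] on a [batch, tokens, feature] tensor.
    return [sample[0] for sample in x]


patches = [
    [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]],   # sample 0: three patches
    [[4.0, 4.0], [5.0, 5.0], [6.0, 6.0]],   # sample 1: three patches
]
cls_token = [0.5, -0.5]

x = prepend_cls(patches, cls_token)   # sequence length grows from 3 to 4
first = take_first_token(x)           # selects the class token, not patch 0
```

Under that assumption, position 0 along the second dimension holds the class token, so `x[:, 0]` would not discard the other patches: after the attention layers, that token is expected to carry information gathered from all of them.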

freqtrade/freqtrade | python | 10,717 | FreqUI backtesting visualize history error no data found | <!--

Have you searched for similar issues before posting it?

**Yes**

If you have discovered a bug in the bot, please [search the issue tracker](https://github.com/freqtrade/freqtrade/issues?q=is%3Aissue).

If it hasn't been reported, please create a new issue.

Please do not use bug reports to request new feat... | closed | 2024-09-27T09:59:33Z | 2024-09-27T12:13:35Z | https://github.com/freqtrade/freqtrade/issues/10717 | [

"Question"

] | jtong99 | 2 |

areed1192/interactive-broker-python-api | rest-api | 20 | issue "no module name IBW" error | <img width="259" alt="Screen Shot 2020-12-20 at 9 53 17 AM" src="https://user-images.githubusercontent.com/76019147/102720452-4c2f3300-42a9-11eb-9dd8-62d9fd1b951f.png">

I'm trying to figure out the issue but am having a hard time. I have an IB account. I created the config and ran Java 8 installed with brew (on a Mac).

any help? | closed | 2020-12-20T17:55:20Z | 2021-03-04T02:29:14Z | https://github.com/areed1192/interactive-broker-python-api/issues/20 | [] | gra88hopper | 0 |

biolab/orange3 | scikit-learn | 6,879 | Python Script: example in widget help page causes warning | **What's wrong?**

The suggested code for the Zoo example in the Python Script help page causes a warning: "Direct calls to Table's constructor are deprecated and will be removed. Replace this call with Table.from_table":

**What's the solution?**

Rewrite the example code so that it conforms to the latest version o... | open | 2024-08-21T13:27:31Z | 2024-09-06T07:18:23Z | https://github.com/biolab/orange3/issues/6879 | [

"bug report"

] | wvdvegte | 0 |

gevent/gevent | asyncio | 1,794 | How to use asgiref sync_to_async using a patched version of gevent.threadpool.ThreadPoolExecutor? | * gevent version: Please note how you installed it: From source, from

most recent on pypi

* Python version: Please be as specific as possible. For example,

"cPython 3.8.10 downloaded from python.org"

* Operating System: Please be as specific as possible. For example,

... | closed | 2021-05-29T02:27:49Z | 2021-05-31T10:43:29Z | https://github.com/gevent/gevent/issues/1794 | [] | allen-munsch | 2 |

lanpa/tensorboardX | numpy | 594 | Source archives contain byte-compiled .pyc files for Python 2.7 | **Describe the bug**

The source archives of the package as hosted on PyPI include 20 .pyc files byte compiled for Python 2.7 (`0x03f3` magic value):

```

$ file tensorboardX/proto/__init__.pyc

tensorboardX/proto/__init__.pyc: python 2.7 byte-compiled

```

**Expected behavior**

The source archives for the pac... | closed | 2020-06-30T22:07:44Z | 2020-07-03T12:55:31Z | https://github.com/lanpa/tensorboardX/issues/594 | [] | scdub | 1 |
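The `0x03f3` magic value mentioned above can be checked programmatically. The sketch below assumes the CPython 2.7 magic number 62211, stored little-endian and followed by `\r\n`; the helper names and the sample headers are made up for illustration and are not taken from the actual archive.

```python
PY27_MAGIC = b"\x03\xf3\r\n"  # 62211 as two little-endian bytes, then CR LF


def is_py27_bytecode(header):
    """Return True if the first four bytes match the CPython 2.7 .pyc magic."""
    return header[:4] == PY27_MAGIC


def find_py27_pyc(named_headers):
    # named_headers: iterable of (filename, first_bytes_of_file) pairs
    return [
        name
        for name, head in named_headers
        if name.endswith(".pyc") and is_py27_bytecode(head)
    ]


# Hypothetical inputs: one 2.7-style .pyc, one source file, one .pyc with a different magic.
sample = [
    ("tensorboardX/proto/__init__.pyc", b"\x03\xf3\r\n\x00\x00\x00\x00"),
    ("tensorboardX/proto/__init__.py", b"# source"),
    ("other.pyc", b"\x42\x0d\r\n\x00\x00\x00\x00"),
]
flagged = find_py27_pyc(sample)
```

In practice the `(filename, first_bytes)` pairs could be gathered by reading the first four bytes of each member of the source archive.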

CorentinJ/Real-Time-Voice-Cloning | deep-learning | 1,068 | choppy stretched out audio | My spectrogram looks kinda weird and the audio sounds like heavily synthesised choppy vocals. Did I install anything wrong?

<img width="664" alt="Screenshot 2022-05-23 at 07 08 02" src="https://user-images.githubusercontent.com/71672036/169755394-e387d753-f4ce-46a3-8553-bafcec526580.png">

| open | 2022-05-23T06:17:29Z | 2022-05-25T20:16:48Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1068 | [] | zemigm | 1 |

quokkaproject/quokka | flask | 637 | admin: actions | Enable more actions

https://github.com/rochacbruno/quokka_ng/issues/34 | open | 2018-02-07T01:56:32Z | 2018-02-07T01:56:33Z | https://github.com/quokkaproject/quokka/issues/637 | [

"1.0.0",

"hacktoberfest"

] | rochacbruno | 0 |

vitalik/django-ninja | django | 355 | [BUG] Reverse url names are not auto generated | **Describe the bug**

Reverse resolution of urls described on https://django-ninja.rest-framework.com/tutorial/urls/ does not work for me. By inspecting the generated resolver, I discovered, that views that do not explicitly specify `url_name` do not have a name generated at all. View function name is not used.

**Ve... | closed | 2022-02-09T14:01:11Z | 2022-06-26T16:29:26Z | https://github.com/vitalik/django-ninja/issues/355 | [] | stinovlas | 2 |

yeongpin/cursor-free-vip | automation | 189 | BUG | ℹ️ Checking Config File...

❌ Config File Not Found: C:\Users\Arda YaÅŸdiken (2)\AppData\Roaming\Cursor\User\globalStorage\storage.json

character encoding error | closed | 2025-03-11T01:51:55Z | 2025-03-11T08:52:32Z | https://github.com/yeongpin/cursor-free-vip/issues/189 | [

"bug",

"Completed"

] | kkatree | 1 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 501 | result is empty for any url from domain http://www.mckinsey.com | **Describe the bug**

All URLs from domain is returning empty result

**To Reproduce**

Domain: http://www.mckinsey.com

URLs tested and not working:

https://www.mckinsey.com/features/mckinsey-center-for-future-mobility/our-insights/autonomous-vehicles-moving-forward-perspectives-from-industry-leaders

https://ww... | closed | 2024-08-01T19:42:28Z | 2024-08-03T20:55:49Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/501 | [] | regismvargas | 3 |

strawberry-graphql/strawberry | asyncio | 2,832 | Sanic can't set cookies: get_context returning a TemporalResponse | ## Describe the Bug

Ref.: https://strawberry.rocks/docs/integrations/sanic#get_context

I am trying to set cookies in the response as shown here:

https://strawberry.rocks/docs/integrations/asgi#setting-response-headers

```python

class MyGraphQLView(GraphQLView):

async def get_context(self, request: Reque... | open | 2023-06-09T13:35:18Z | 2025-03-20T15:56:13Z | https://github.com/strawberry-graphql/strawberry/issues/2832 | [

"bug"

] | wedobetter | 5 |

uriyyo/fastapi-pagination | fastapi | 585 | create custom Page | Hi, I'm using beanie as ODM. Result of query is like it:

```code

{

"data": [

{

"_id": "641c538ff9188f91c76a8b66",

"created_at": "2023-03-23T13:26:39.289000",

"name": "string",

"description": "Welcome to Biscotte restaurant! Restaurant Biscotte offers a cuisine based on fresh, quality products, often local, or... | closed | 2023-03-24T17:38:33Z | 2023-03-24T18:20:06Z | https://github.com/uriyyo/fastapi-pagination/issues/585 | [] | opaniagu | 1 |

feder-cr/Jobs_Applier_AI_Agent_AIHawk | automation | 976 | [DOCS]: Docs update Job portals code refactoring and plugin. | ### Affected documentation section

_No response_

### Documentation improvement description

ADR for Job portals code refactoring and plugin.

updating Readme, plugin creation instructions and any other relevant docs

### Why is this change necessary?

_No response_

### Additional context

_No respons... | closed | 2024-12-01T12:42:29Z | 2024-12-19T02:03:46Z | https://github.com/feder-cr/Jobs_Applier_AI_Agent_AIHawk/issues/976 | [

"documentation",

"stale"

] | surapuramakhil | 2 |

pywinauto/pywinauto | automation | 1,099 | Python code freezes on exit with pywinauto and tkinter. Python.exe created in crashdump folder | ## Expected Behavior

## Actual Behavior

Python freezes and crashes.

## Steps to Reproduce the Problem

from tkinter import Tk

import tkinter.messagebox

import pywinauto

from pywinauto.keyboard import SendKeys

def show_msg(window_title, window_message):

root = Tk()

root.attributes('-alpha', 0.... | open | 2021-07-20T05:50:44Z | 2021-07-20T09:44:49Z | https://github.com/pywinauto/pywinauto/issues/1099 | [

"duplicate"

] | Botways | 1 |

xlwings/xlwings | automation | 2,265 | test_markdown.py fails due XLWINGS_LICENSE_KEY_SECRET being none | #### OS (e.g. Windows 10 or macOS Sierra)

macOS Ventura

#### Versions of xlwings, Excel and Python (e.g. 0.11.8, Office 365, Python 3.7)

xlwings - Latest,

Python 3.11

Office 365

#### Describe your issue (incl. Traceback!)

```python

pytest tests/test_markdown.py

```

```python

tests/test_markdown.py:8: ... | closed | 2023-05-24T08:10:56Z | 2023-05-25T09:35:06Z | https://github.com/xlwings/xlwings/issues/2265 | [] | Jeroendevr | 3 |

thtrieu/darkflow | tensorflow | 661 | Darknet YOLO is 4 bytes off for tiny-yolo and not working for tiny-yolo-voc | I was trying to use the tiny-yolo.weights for my project and I'm sure that I'm using the correct corresponding cfg file however it keeps on giving me this error:

Parsing ./cfg/tiny-yolo.cfg

Parsing cfg/tiny-yolo.cfg

Loading bin/tiny-yolo.weights ...

Traceback (most recent call last):

File "/Users/user/anacond... | open | 2018-03-24T15:48:37Z | 2019-04-03T17:28:14Z | https://github.com/thtrieu/darkflow/issues/661 | [] | MithilV | 6 |

jschneier/django-storages | django | 790 | . | . | closed | 2019-11-17T15:24:35Z | 2019-11-17T19:04:54Z | https://github.com/jschneier/django-storages/issues/790 | [] | niccolomineo | 0 |

horovod/horovod | pytorch | 3,955 | Segmentation fault error | **Environment:**

1. Framework: TensorFlow

2. Framework version: 1.15.0

3. Horovod version:0.19.5

4. MPI version:4.0.0

5. CUDA version:10.0

6. Python version:3.6.8

**Bug report:**

When I was running the example at https://github.com/horovod/horovod/blob/master/examples/tensorflow/tensorflow_word2vec.py it ra... | closed | 2023-07-04T05:53:59Z | 2023-08-31T10:38:29Z | https://github.com/horovod/horovod/issues/3955 | [

"bug"

] | etoilestar | 2 |

serengil/deepface | deep-learning | 768 | please find exception stacktrace - using arcface as model | ```

return DeepFace.find(img_path=img_path, db_path=config.tdes_images_location, align=align,

File "/home/akhil/PycharmProjects/TDES-analytics/venv/lib/python3.10/site-packages/deepface/DeepFace.py", line 488, in find

img_objs = functions.extract_faces(

File "/home/akhil/PycharmProjects/TDES-analytics/ve... | closed | 2023-06-03T19:17:18Z | 2023-10-15T21:32:30Z | https://github.com/serengil/deepface/issues/768 | [

"question"

] | surapuramakhil | 3 |

Yorko/mlcourse.ai | numpy | 776 | Issue on page /book/topic04/topic4_linear_models_part5_valid_learning_curves.html | The first validation curve is missing

| closed | 2024-08-30T12:07:28Z | 2025-01-06T15:49:43Z | https://github.com/Yorko/mlcourse.ai/issues/776 | [] | ssukhgit | 1 |

bmoscon/cryptofeed | asyncio | 418 | Dynamically adding feeds | **Is your feature request related to a problem? Please describe.**

I'm running cryptofeed in a separate thread, and I would like to dynamically subscribe to feeds from the main thread.

Is this already supported?

**Describe the solution you'd like**

Potentially, the `add_feed` function should be callable from anot... | closed | 2021-02-14T19:09:11Z | 2021-04-01T22:18:59Z | https://github.com/bmoscon/cryptofeed/issues/418 | [

"Feature Request"

] | thisiscam | 10 |
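The setup described in the issue above, an event loop running in a separate thread with work submitted from the main thread, can be sketched with the standard library alone. This is not a statement about what cryptofeed supports: `subscribe` is a hypothetical placeholder for whatever adding a feed involves, and only `asyncio.run_coroutine_threadsafe` and `loop.call_soon_threadsafe` are real asyncio calls.

```python
import asyncio
import threading


def start_loop_in_thread():
    # Create a fresh event loop and run it forever in a background thread.
    loop = asyncio.new_event_loop()
    thread = threading.Thread(target=loop.run_forever, daemon=True)
    thread.start()
    return loop, thread


async def subscribe(name, registry):
    # Hypothetical placeholder: record the feed name on the loop's own thread.
    registry.append(name)
    return name


loop, thread = start_loop_in_thread()
feeds = []

# From the main thread: hand the coroutine to the loop running elsewhere
# and block until it has finished.
future = asyncio.run_coroutine_threadsafe(subscribe("BTC-USD", feeds), loop)
result = future.result(timeout=5)

# Shut down cleanly: stop the loop from outside, wait for the thread, close the loop.
loop.call_soon_threadsafe(loop.stop)
thread.join(timeout=5)
loop.close()
```

The point of the pattern is that the loop's state is only touched on the loop's thread; calling loop-bound code directly from another thread is generally unsafe in asyncio.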

graphql-python/graphene-django | django | 1,273 | Consider supporting promise-based dataloaders in v3 | I know graphql-core dropped support for promises, but the author seems to think promise-support can be added via hooks like middleware and execution context (see response to my identical issue in https://github.com/graphql-python/graphql-core/issues/148).

Since most people using syrusakbary's promise library are pr... | closed | 2021-11-25T22:08:40Z | 2021-11-25T22:12:11Z | https://github.com/graphql-python/graphene-django/issues/1273 | [

"✨enhancement"

] | AlexCLeduc | 1 |

mwouts/itables | jupyter | 32 | Conversion to HTML shows only header line | When running `jupyter nbconvert --to html test.ipynb`

to convert the example script from the website (see below), the resulting html page only shows the header of table but no contents.

When inspecting the HTML code, the header is in plain HTML while the data are in a JavaScript. However, they don't show in any of ... | closed | 2021-12-22T15:27:48Z | 2021-12-22T19:18:18Z | https://github.com/mwouts/itables/issues/32 | [] | axel-loewe | 3 |

jmcnamara/XlsxWriter | pandas | 721 | 'invert_if_negative' doesn't work | Maybe I’m not using the 'invert_if_negative' attribute correctly, but I don’t see any changes when applying it.

```

import xlsxwriter

workbook = xlsxwriter.Workbook('chart_column.xlsx')

worksheet = workbook.add_worksheet()

chart = workbook.add_chart({'type': 'column'})

worksheet.write_column('A1', [1, 2,... | closed | 2020-05-29T18:04:39Z | 2020-05-29T19:18:01Z | https://github.com/jmcnamara/XlsxWriter/issues/721 | [

"question"

] | VladislavN | 1 |

healthchecks/healthchecks | django | 376 | [feature request] ability to specify paused ping handling through API | Thanks for implementing https://github.com/healthchecks/healthchecks/issues/369!

Would it be possible to have that feature be able to be specified through the API when creating a check? That way I can add it to the healthcheck manager script to solve the problem described in https://github.com/healthchecks/healthche... | closed | 2020-06-06T23:10:24Z | 2020-09-09T20:49:59Z | https://github.com/healthchecks/healthchecks/issues/376 | [] | caleb15 | 4 |

pywinauto/pywinauto | automation | 656 | Does pywinauto support other .NET objects like Infragistics? | Hi,

I have a WPF application that is full of customized objects such as Infragistics controls, tabRibbon, an embedded browser ...

Can pywinauto support it? | open | 2019-01-16T09:04:55Z | 2019-01-18T11:36:01Z | https://github.com/pywinauto/pywinauto/issues/656 | [

"question"

] | michaazran | 2 |

mwaskom/seaborn | matplotlib | 3,639 | catplot with numeric hue and hue_order: empty legend handles | Tested with Seaborn 0.13.2, pandas 2.2.1

When the hue values are numeric, `hue_order` isn't respected in the plot. The legend does respect the order, but with empty legend handles.

```python

import seaborn as sns

import pandas as pd

tips = sns.load_dataset('tips')

sns.catplot(tips, kind='box', x='time', y='ti... | open | 2024-02-27T07:19:45Z | 2024-03-01T17:52:27Z | https://github.com/mwaskom/seaborn/issues/3639 | [] | jhncls | 2 |

hyperspy/hyperspy | data-visualization | 3,056 | Incompatibility with a new | #### Describe the bug

Cannot load data with the new version of pint (released three days ago)

#### To Reproduce

Steps to reproduce the behavior:

```python

import hyperspy.api as hs

s = hs.load('data.hspy')

### Wild error appears

```

#### Expected behavior

pint.unit has disappeared in the new pint release.... | closed | 2022-10-28T14:31:05Z | 2022-10-29T10:43:57Z | https://github.com/hyperspy/hyperspy/issues/3056 | [

"type: bug"

] | LMSC-NTappy | 2 |

eralchemy/eralchemy | sqlalchemy | 80 | KeyError: '_data' | With

- Python 3.9.2

- sqlalchemy 1.4.0

I get this error:

```

Traceback (most recent call last):

File "/home/julian/src/bruce-leads/.venv/lib/python3.9/site-packages/sqlalchemy/sql/base.py", line 1104, in __getattr__

return self._index[key]

KeyError: '_data'

The above exception was the direct caus... | closed | 2021-03-19T10:38:58Z | 2024-07-07T10:02:17Z | https://github.com/eralchemy/eralchemy/issues/80 | [] | julian-r | 13 |

albumentations-team/albumentations | deep-learning | 2,392 | [New feature] Add apply_to_images to AutoContrast | open | 2025-03-11T00:58:31Z | 2025-03-11T00:58:38Z | https://github.com/albumentations-team/albumentations/issues/2392 | [

"enhancement",

"good first issue"

] | ternaus | 0 | |

plotly/plotly.py | plotly | 4,131 | plotly-express fig.update_traces(root_color="black") has no effect | Graph renders with default charcoal root colour irrespective of colour set in update_traces(root_color='some color')

| closed | 2023-03-29T08:59:52Z | 2023-04-01T14:02:13Z | https://github.com/plotly/plotly.py/issues/4131 | [] | ouryperd | 2 |

coqui-ai/TTS | pytorch | 2,555 | [Bug] RuntimeError: min(): Expected reduction dim to be specified for input.numel() == 0. Specify the reduction dim with the 'dim' argument. | ### Describe the bug

I am training a voice cloning model using VITS. My dataset is in LJSpeech Format. I am trying to train Indian English model straight from character with Phonemizer = False. The training runs for 35-40 epochs and then abruptly stops. Sometimes it runs for even longer, like 15k steps and then stop... | closed | 2023-04-25T11:52:05Z | 2024-01-23T15:28:31Z | https://github.com/coqui-ai/TTS/issues/2555 | [

"bug",

"wontfix"

] | offside609 | 13 |

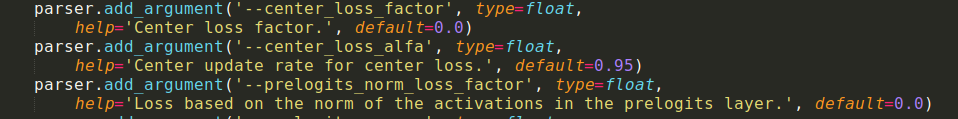

davidsandberg/facenet | computer-vision | 1,161 | center_loss_factor and prelogits_norm_loss_factor during softmax training | In train_softmax.py, the default value of center_loss_factor and prelogits_norm_loss_factor are both 0.0. Is it the setting we should use to train facenet from scratch?

| open | 2020-06-24T04:41:18Z | 2020-06-24T04:41:18Z | https://github.com/davidsandberg/facenet/issues/1161 | [] | w19787 | 0 |

tflearn/tflearn | tensorflow | 1,137 | tensorflow.python.framework.errors_impl.FailedPreconditionError | How can I fix this error?

please help

<

import tflearn

import numpy as np

import tensorflow as tf

tf.reset_default_graph()

q_inputs = tf.placeholder(tf.float32, [None, 1, 8])

net, hidden_states_1 = tflearn.lstm(q_inputs, 10, return_seq=True, return_state=True, scope="lstm_1", reuse=False)

q_values = tflearn... | open | 2019-10-11T08:04:53Z | 2019-10-11T08:04:53Z | https://github.com/tflearn/tflearn/issues/1137 | [] | Eroslon | 0 |

pytorch/pytorch | numpy | 149,799 | bug in pytorch/torch/nn/parameter: | ### 🐛 Describe the bug

```python

class UninitializedBuffer(UninitializedTensorMixin, torch.Tensor):

r"""A buffer that is not initialized.

Uninitialized Buffer is a a special case of :class:`torch.Tensor`

where the shape of the data is still unknown.

Unlike a :class:`torch.Tensor`, uninitialized para... | closed | 2025-03-22T08:46:52Z | 2025-03-24T16:21:31Z | https://github.com/pytorch/pytorch/issues/149799 | [] | said-ml | 1 |

vitalik/django-ninja | pydantic | 1,319 | Content Negotiation | Is it possible to return a different response based on the "Accept" header or a query param e.g. ?format=json-ld

| open | 2024-10-16T08:03:35Z | 2024-10-17T05:25:00Z | https://github.com/vitalik/django-ninja/issues/1319 | [] | MiltosD | 5 |
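
A minimal sketch of the selection logic the question above describes, assuming an explicit `?format=` value should win over the `Accept` header. `pick_media_type` and the `SUPPORTED` mapping are hypothetical names for illustration and are not part of django-ninja; how the chosen media type is wired into a view is left open.

```python
from typing import Optional

# Hypothetical mapping from ?format= keys to media types (not a django-ninja API).
SUPPORTED = {
    "json": "application/json",
    "json-ld": "application/ld+json",
}


def pick_media_type(accept_header: str, format_param: Optional[str] = None) -> str:
    """Pick a response media type; an explicit ?format= value wins over Accept."""
    if format_param in SUPPORTED:
        return SUPPORTED[format_param]

    # Parse "type/subtype;q=0.8" entries into (quality, media_type) pairs.
    candidates = []
    for part in accept_header.split(","):
        fields = [field.strip() for field in part.split(";")]
        media_type = fields[0]
        if not media_type:
            continue
        quality = 1.0  # default quality when no q parameter is given
        for field in fields[1:]:
            if field.startswith("q="):
                try:
                    quality = float(field[2:])
                except ValueError:
                    quality = 0.0
        candidates.append((quality, media_type))

    # Highest quality first; sorted() is stable, so ties keep header order.
    for quality, media_type in sorted(candidates, key=lambda c: -c[0]):
        if quality > 0 and media_type in SUPPORTED.values():
            return media_type
    return SUPPORTED["json"]  # fall back to plain JSON
```

For example, `pick_media_type("*/*", "json-ld")` selects `application/ld+json` because the query parameter takes priority in this sketch.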

minivision-ai/photo2cartoon | computer-vision | 45 | The lights of faces may cause bad results. | I trained the model with the given dataset, but found that some faces with light on them get bad results at the edge of the face. | closed | 2020-10-19T05:57:07Z | 2020-11-19T07:38:33Z | https://github.com/minivision-ai/photo2cartoon/issues/45 | [] | CodingMice | 1 |

RobertCraigie/prisma-client-py | asyncio | 3 | Model aliases can clash | ## Problem

For example, a model defines two relational fields, the first named "categories" that references a "CustomCategories" model and the second named "posts" that references a "Post" model and in the "Post" model a relational field named "categories" is defined that references a "Categories" model. This setup wi... | closed | 2021-01-13T07:29:01Z | 2021-06-18T13:55:47Z | https://github.com/RobertCraigie/prisma-client-py/issues/3 | [

"bug/2-confirmed",

"kind/bug"

] | RobertCraigie | 0 |

miguelgrinberg/Flask-Migrate | flask | 410 | Is There a way to ignore a model not to generate op.create_table for the model? | I'm using multiple databases with MySQL and BigQuery by declaring the binds

```

SQLALCHEMY_BINDS = {

'bigquery': BIGQUERY_URI,

}

```

```

app = Flask(__name__)

from models import db, migrate

db.init_app(app)

migrate.init_app(app=app, db=db)

```

the bigquery table is already created but when I exec... | closed | 2021-06-02T11:18:37Z | 2021-06-03T01:58:56Z | https://github.com/miguelgrinberg/Flask-Migrate/issues/410 | [

"question"

] | mz-ericlee | 2 |

supabase/supabase-py | fastapi | 525 | Bulk Delete by array of UUID strings | **Describe the bug**

Unable to delete using a .filter('in', array_of_ids)

**To Reproduce**

Steps to reproduce the behavior:

1. Create a supabase python client (mine is under)

`self.commons['supabase']`

2. Have an array of uuids represented as strings ex:

`vector_ids = ["aed10938-65db-4678-8438-cf7684eaefd7", "... | closed | 2023-08-24T01:57:14Z | 2024-07-06T12:01:43Z | https://github.com/supabase/supabase-py/issues/525 | [

"enhancement"

] | levi-katarok | 2 |

iterative/dvc | machine-learning | 9,913 | `repro`: does not run stage if params are removed from dvc.yaml (in some cases) | # Bug Report

## Description

DVC runs a stage if params are changed, but in some cases the stage is considered unchanged if params are completely removed from `dvc.yaml`.

If params are changed in params.yaml, everything works as expected (stage is run).

I have not tested how DVC behaves if dependencies a... | closed | 2023-09-05T11:47:02Z | 2023-09-08T14:58:51Z | https://github.com/iterative/dvc/issues/9913 | [

"awaiting response",

"A: pipelines",

"A: params"

] | lumbric | 4 |

schemathesis/schemathesis | graphql | 1,933 | [BUG] Error in exception handling | ### Checklist

- [x] I checked the [FAQ section](https://schemathesis.readthedocs.io/en/stable/faq.html#frequently-asked-questions) of the documentation

- [x] I looked for similar issues in the [issue tracker](https://github.com/schemathesis/schemathesis/issues)

- [x] I am using the latest version of Schemathesis

... | closed | 2023-12-04T10:38:38Z | 2023-12-04T12:21:10Z | https://github.com/schemathesis/schemathesis/issues/1933 | [

"Priority: Critical",

"Type: Bug"

] | navruzm | 3 |

getsentry/sentry | django | 87,181 | Add details on differences between auto vs manual setup of Cocoa SDK | When configuring an iOS SDK, there are two options: `Auto` and `Manual`.

If you choose `Auto`, it summarizes the steps taken like this:

> The Sentry wizard will automatically patch your application:

>

> - Install the Sentry SDK via Swift Package Manager or Cocoapods

> - Update your AppDelegate or SwiftUI App Initialize... | open | 2025-03-17T15:18:20Z | 2025-03-17T15:18:20Z | https://github.com/getsentry/sentry/issues/87181 | [

"Platform: Cocoa",

"Type: Improvement"

] | philprime | 0 |

home-assistant/core | python | 140,491 | ONVIF - No registered handler for event from XX:XX:XX:XX:XX:XX - IPCAM C9F0SEZ0N0P2L0 | ### The problem

Warning for No registered handler for event

Happens after restarting HA and from time to time while HA is up and running, with no particular trigger as far as I have seen.

The camera streams correctly though, but I've got thousands of those warnings in the log.

This is a Chinese cam, called IPCAM C9F0... | open | 2025-03-13T08:21:59Z | 2025-03-15T15:24:18Z | https://github.com/home-assistant/core/issues/140491 | [

"integration: onvif"

] | tslpre | 7 |

explosion/spaCy | data-science | 12,590 | Incorrect lemma for "taxes" | ## Description of issue

I'm not sure if this should be an accepted side-effect of the model or not so I apologize in advance if this is not considered a bug. When obtaining the lemma for "taxes", it returns "taxis".

## How to reproduce the behaviour

```

import spacy

nlp = spacy.load("en_core_web_sm")

nlp.... | closed | 2023-05-02T17:54:35Z | 2023-06-08T00:02:17Z | https://github.com/explosion/spaCy/issues/12590 | [

"lang / en",

"feat / lemmatizer",

"resolved"

] | jaleskovec | 3 |

geopandas/geopandas | pandas | 2,654 | BUG: GeoDataFrame.to_parquet writes geometry in EWKB instead of ISO WKB required by spec | - [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest version of geopandas.

- [x] (optional) I have confirmed this bug exists on the main branch of geopandas.

---

#### Code Sample, a copy-pastable example

```python

import geopandas

ge... | closed | 2022-11-23T16:12:10Z | 2023-02-11T10:36:10Z | https://github.com/geopandas/geopandas/issues/2654 | [

"bug"

] | himikof | 3 |
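
For readers unfamiliar with the two encodings named in the title above, here is a small sketch of how they differ for a point with a Z coordinate. It assumes little-endian output and uses only the standard `struct` module; the helper names are illustrative and are not geopandas or shapely functions.

```python
import struct

# Flag bits EWKB (the PostGIS extension) sets in the geometry type word.
EWKB_Z = 0x80000000
EWKB_M = 0x40000000
EWKB_SRID = 0x20000000

WKB_POINT = 1  # base geometry type code for a point


def point_z_iso(x: float, y: float, z: float) -> bytes:
    """Little-endian ISO WKB for a 3D point: type code is 1000 + 1 = 1001."""
    return struct.pack("<BIddd", 1, 1000 + WKB_POINT, x, y, z)


def point_z_ewkb(x: float, y: float, z: float) -> bytes:
    """Little-endian EWKB for a 3D point: Z is signalled by a high flag bit."""
    return struct.pack("<BIddd", 1, WKB_POINT | EWKB_Z, x, y, z)


def wkb_flavor(data: bytes) -> str:
    """Classify a WKB header as 'ewkb', 'iso', or 'plain' (2D, same in both)."""
    byte_order = "<" if data[0] == 1 else ">"
    (type_word,) = struct.unpack(byte_order + "I", data[1:5])
    if type_word & (EWKB_Z | EWKB_M | EWKB_SRID):
        return "ewkb"
    if type_word >= 1000:
        return "iso"
    return "plain"
```

Only the 4-byte type word differs between the two outputs; the coordinate payload is identical, which is why a reader that expects ISO type codes can fail on otherwise valid bytes.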

miguelgrinberg/Flask-Migrate | flask | 252 | sqlalchemy.exc.OperationalError: when trying to migrate existing database | Hi Miguel,

I'm having issues migrating a sqlite database (dev version, production is mysql). I needed a change on String length. The migration script is created as follows:

`def upgrade():

# ### commands auto generated by Alembic - please adjust! ###

op.alter_column('answer', 'answer',

e... | closed | 2019-02-04T20:58:25Z | 2021-07-09T13:31:10Z | https://github.com/miguelgrinberg/Flask-Migrate/issues/252 | [

"question"

] | git-bone | 6 |

lanpa/tensorboardX | numpy | 716 | Loosening protobuf version limit breaking downstream packages | **Describe the bug**

The recent removal of the protobuf version limit (https://github.com/lanpa/tensorboardX/pull/712) has breaking implications for downstream packages dependent on tensorboardX and protobuf<3.20.

**Minimal runnable code to reproduce the behavior**

```

$ pip install tensorboardX==2.6.2

... | closed | 2023-08-01T17:12:23Z | 2023-08-23T17:16:22Z | https://github.com/lanpa/tensorboardX/issues/716 | [] | psfoley | 7 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 383 | Do we have a output parser to get a certain format output | **Is your feature request related to a problem? Please describe.**

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear an... | closed | 2024-06-14T18:37:05Z | 2024-06-14T18:43:54Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/383 | [] | Vikrant-Khedkar | 1 |

rougier/from-python-to-numpy | numpy | 108 | anatomy of an array: multidimensional array start and stop from view | At the end of the section "Anatomy of an array" you give an example of how given a view of a multidimensional array we can infer the start:stop:step structure of each index. I have compiled the code and it works fine. But there is a line of code that I have trouble following:

`offset_stop = (np.byte_bounds(view)[-1] -... | open | 2023-01-12T20:59:02Z | 2023-02-14T10:00:08Z | https://github.com/rougier/from-python-to-numpy/issues/108 | [] | empeirikos | 5 |

flairNLP/flair | nlp | 2,773 | Fine-tuning or extended training of target language in NER few-shot transfer? | Firstly, I would like to thank everyone contributing to this easy-to-use, well structured and open-source framework.

I am currently writing my bachelor thesis and have been using the flair framework daily over the last few weeks, since I am trying out different languages in zero and few-shot transfer in order to figure out... | closed | 2022-05-16T16:45:02Z | 2022-11-01T15:04:45Z | https://github.com/flairNLP/flair/issues/2773 | [

"question",

"wontfix"

] | i-partalas | 4 |

PaddlePaddle/ERNIE | nlp | 800 | Error when reproducing ERNIE-GEN on the Persona-chat dataset | When I run

```

MODEL="base" # base or large or large_430g

TASK="personachat" # cnndm, coqa, gigaword, squad_qg or persona-chat

sh run_seq2seq.sh ./configs/${MODEL}/${TASK}_conf

```

This is the output of the log file

```

----------- Configuration Arguments -----------

current_node_ip: 127.0.1.1

log_prefix:

node_id: 0

node_ips: 1... | closed | 2022-04-29T08:47:09Z | 2022-07-14T07:41:14Z | https://github.com/PaddlePaddle/ERNIE/issues/800 | [

"wontfix"

] | xiang-xiang-zhu | 3 |

thunlp/OpenPrompt | nlp | 116 | Library does not allow to extract on mask | There is no way to extract the mask prediction... [extract_at_mask](https://thunlp.github.io/OpenPrompt/modules/base.html?highlight=mask#openprompt.pipeline_base.PromptForClassification.extract_at_mask) is only implemented for `PromptForClassification`, which only outputs the prediction of the classification layer...

... | closed | 2022-02-12T15:34:25Z | 2022-03-31T02:17:27Z | https://github.com/thunlp/OpenPrompt/issues/116 | [] | gmihaila | 2 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 16,664 | [Feature Request]: Added notification sound | ### Is there an existing issue for this?

- [X] I have searched the existing issues and checked the recent builds/commits

### What would your feature do ?

Not do, does. Not sending up as a pull request, more fyi if anyone wants the feature and how to do it.

Does:

Upon completion of image generation, or, completio... | open | 2024-11-18T01:46:09Z | 2024-11-19T05:59:47Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/16664 | [

"enhancement"

] | hackedpassword | 3 |

errbotio/errbot | automation | 1,692 | Package installation issues | Hey everyone,

I would really appreciate some help with getting errbot to connect to a mattermost-preview server running locally

**Issue description**

I have a mattermost-preview server successfully running on a docker image with an account set up

I'm having trouble with cryptography and openssl.

I get this err... | open | 2024-05-09T01:47:06Z | 2024-05-09T01:47:34Z | https://github.com/errbotio/errbot/issues/1692 | [

"type: support/question"

] | Marcusg33 | 0 |

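hankcs/HanLP | nlp | 700 | In Traditional-to-Simplified conversion, “陷阱” is translated as “猫腻” | <!--

The notes and version number are required; otherwise there will be no reply. To get a reply quickly, please fill in the template carefully. Thank you for your cooperation.

-->

## Notes

Please confirm the following:

* I have carefully read the following documents and found no answer:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have searched via [Google](https://www.google.com/#newwindow=1&q=HanLP) and the [issue section search... | closed | 2017-11-29T09:10:01Z | 2017-12-02T03:17:07Z | https://github.com/hankcs/HanLP/issues/700 | [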

"improvement"

] | lucifering | 2 |

postmanlabs/httpbin | api | 291 | Would it be possible to add a "/timeout" endpoint to httpbin? | My use case is building a client that understands the unreliability of distributed systems, and attempts backoffs, retries and other stuff. Having an endpoint which intentionally never returns a response or similarly represents the unreliability here would be really useful. Let me know if I can provide more detail.

| closed | 2016-06-16T10:59:19Z | 2018-04-26T17:51:10Z | https://github.com/postmanlabs/httpbin/issues/291 | [] | fables-tales | 3 |
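
The kind of client the request above has in mind could look roughly like the following sketch, assuming timeouts surface as `TimeoutError` and that exponential backoff is the retry policy. `call_with_retries` and its parameters are hypothetical names for illustration; no httpbin endpoint is assumed, and the `sleep` argument is injectable so the logic can be exercised without waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retries(
    operation: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying on TimeoutError with exponential backoff."""
    for attempt in range(attempts):
        try:
            return operation()
        except TimeoutError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the last timeout
            # Delay doubles on each failed attempt: 0.5s, 1s, 2s, ...
            sleep(base_delay * (2 ** attempt))
    # Only reachable when attempts < 1, since the loop never ran.
    raise ValueError("attempts must be at least 1")
```

An endpoint that deliberately never responds would be one way to trigger the `TimeoutError` branch from a real HTTP call wrapped in `operation`.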

sqlalchemy/alembic | sqlalchemy | 550 | consider rationale for ModifyTableOps emitting "pass" for no operations | I was using alembic's rewrite feature to ignore comment changes (temporarily, not important for the issue):

```python

@writer.rewrites(ops.AlterColumnOp)

def rewrite_alter_column(context, revision, op):

op.modify_comment = False

if not op.has_changes():

return []

return op

```

the resul... | closed | 2019-04-01T13:05:40Z | 2019-09-17T23:09:11Z | https://github.com/sqlalchemy/alembic/issues/550 | [

"bug",

"autogenerate - rendering"

] | RazerM | 3 |

man-group/arctic | pandas | 228 | Mongo fail-over during append can leave a Version in an inconsistent state | We can trigger this assertion:

```

...

File "/app/AHL/packages/ahl.tickdownsample/1.16.0-py2.7/app/AHL/ahl.tickdownsample/lib/python2.7/site-packages/ahl.mongo-1.297.0-py2.7-linux-x86_64.egg/ahl/mongo/mongoose/store/version_store.py", line 105, in append

return super(VersionStore, self).append(symbol, data, meta... | closed | 2016-09-19T17:22:37Z | 2016-09-20T18:38:17Z | https://github.com/man-group/arctic/issues/228 | [

"bug"

] | jamesblackburn | 3 |

vitalik/django-ninja | pydantic | 921 | [BUG] Package generates invalid openapi.json files | **Describe the bug**

The `openapi.json` file states that it is compliant with version 3.0.2, but it uses features that are only valid in 3.1.0.

Pydantic docs [say](https://docs.pydantic.dev/latest/why/): "Pydantic generates [JSON Schema version 2020-12](https://json-schema.org/draft/2020-12/release-notes.html), the... | closed | 2023-11-13T23:02:48Z | 2023-11-16T16:35:46Z | https://github.com/vitalik/django-ninja/issues/921 | [] | scott-8 | 0 |

awesto/django-shop | django | 719 | Failed building wheel for rcssmin | ----------------------------------------

Failed building wheel for hiredis

Running setup.py clean for hiredis

Running setup.py bdist_wheel for rcssmin ... error

Complete output from command /home/c013ra/Desktop/django-shop/myenv/bin/python3 -u -c "import setuptools, tokenize;__file__='/tmp/pip-install-24x5v... | closed | 2018-04-01T05:12:16Z | 2018-11-08T16:43:20Z | https://github.com/awesto/django-shop/issues/719 | [] | ghost | 3 |

google-research/bert | tensorflow | 580 | Model Hyper Parameters to change after pretraining on the custom dataset | I ran the BERT pretraining code on a custom dataset and now I want to know which arguments I should change based on the pretrained model. Of the three arguments (vocab_file, config_file, init_checkpoint), the only one I have changed is init_checkpoint, which I have set to the latest checkpoint creat... | closed | 2019-04-15T09:27:05Z | 2019-04-16T10:20:47Z | https://github.com/google-research/bert/issues/580 | [] | aswin-giridhar | 1 |

lundberg/respx | pytest | 90 | Big overhead when mocking 149 urls | I'm currently migrating from aiohttp/aioresponses to httpx/respx, and seeing a large regression in test times. An integration test where I mock 149 urls which took 0.5s with aioresponses now takes 2.2s with respx. `build_request` seems to be the major culprit. I've created a flamegraph with py-spy ([full svg as gist](h... | closed | 2020-10-09T10:39:37Z | 2020-10-15T08:43:25Z | https://github.com/lundberg/respx/issues/90 | [] | konstin | 10 |

fastapi/sqlmodel | fastapi | 107 | Return a Column class for relationship attributes that require it | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X] I al... | open | 2021-09-20T16:53:55Z | 2024-05-28T20:25:13Z | https://github.com/fastapi/sqlmodel/issues/107 | [

"feature"

] | ohmeow | 3 |

marshmallow-code/flask-marshmallow | sqlalchemy | 128 | `__init__() got an unexpected keyword argument 'ordered'` when subclassing mm.ModelSchema | This worked fine with marshmallow 2 but started failing with marshmallow 3rc4, on Python 2.7.15

```python

from flask_marshmallow import Marshmallow

from flask_marshmallow.sqla import SchemaOpts

from marshmallow_sqlalchemy import ModelConverter

class MyModelConverter(ModelConverter):

pass # usually i do... | closed | 2019-03-14T16:23:41Z | 2019-03-14T16:26:54Z | https://github.com/marshmallow-code/flask-marshmallow/issues/128 | [] | ThiefMaster | 1 |

schenkd/nginx-ui | flask | 30 | flask auth? | Do I need to implement authorization based on flask? This will protect inexperienced users from unauthorized access by default.

| closed | 2020-07-05T15:29:08Z | 2022-11-18T21:27:09Z | https://github.com/schenkd/nginx-ui/issues/30 | [] | foozzi | 4 |
