repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

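Johnserf-Seed/TikTokDownload | api | 555 | Please add a feature to batch-download videos from banned Douyin accounts | For banned accounts, the download links currently return 403, but the videos can be downloaded after logging in to the banned account. | open | 2023-09-21T15:17:53Z | 2023-12-26T11:59:13Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/555 | [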

"不修复(wontfix)"

] | allcdn | 2 |

google-research/bert | nlp | 839 | Windows fatal exception: access violation | When I was running the MRPC example, the following code raised a fatal error:

`with tf.io.gfile.GFile(FLAGS.input_meta_data_path, 'rb') as reader:
    input_meta_data = json.loads(reader.read().decode('utf-8'))
` | open | 2019-09-05T02:14:55Z | 2022-08-11T04:36:36Z | https://github.com/google-research/bert/issues/839 | [] | BruceLee66 | 2 |

openapi-generators/openapi-python-client | rest-api | 525 | Add support for basic auth | **Is your feature request related to a problem? Please describe.**

OpenAPI 3 supports expressing basic auth support: https://swagger.io/docs/specification/authentication/basic-authentication/

While basic auth is often not ideal for production, during development basic auth can be quite handy. Currently it is not ... | closed | 2021-10-25T19:09:40Z | 2023-08-13T01:56:52Z | https://github.com/openapi-generators/openapi-python-client/issues/525 | [

"✨ enhancement",

"🍭 OpenAPI Compliance"

] | johnthagen | 0 |

alteryx/featuretools | scikit-learn | 2,755 | Is it possible to support Azure Synapse as a SQL dialect? | All of my business's data is in Azure SQL Synapse.

Is it possible to support this in Featuretools? Otherwise I can't use the package due to infrastructure constraints.

| open | 2024-10-07T09:20:04Z | 2024-10-10T09:13:23Z | https://github.com/alteryx/featuretools/issues/2755 | [

"new feature"

] | Fish-Soup | 1 |

seleniumbase/SeleniumBase | web-scraping | 3,226 | SeleniumBase Freezing when initializing in debugger | I'm having a problem initializing the Driver. When I run it through the VS Code debugger right after installing the SeleniumBase library, it runs normally once. However, when I run it through the debugger again, it freezes when creating the Driver with `uc=True`. Without that parameter, it runs normally.

disclaimer: it runs... | closed | 2024-10-27T02:40:27Z | 2024-10-27T03:49:34Z | https://github.com/seleniumbase/SeleniumBase/issues/3226 | [

"invalid usage",

"external",

"UC Mode / CDP Mode"

] | TheHolsback | 1 |

MaartenGr/BERTopic | nlp | 1,130 | LookupError in fit_transform | Hello,

Running `fit_transform` gave me the following output.

> LookupError Traceback (most recent call last) Cell In[14], line 1 ----> 1 topics, probabilities = model.fit_transform(cleaned_articles) File ~\AppData\Local\Packages\PythonSoftwareFoundation.Python.3.10_qbz5n2kfra8p0\LocalCache\local-packages\Python310\s... | closed | 2023-03-27T21:27:19Z | 2023-05-23T09:25:53Z | https://github.com/MaartenGr/BERTopic/issues/1130 | [] | PanosP | 4 |

rougier/from-python-to-numpy | numpy | 48 | Typos 3.3 | > Thus, if you need fancy indexing, it's better to keep a copy of **your** fancy index (especially if it was complex to compute it) and to work with it:

...

> If you are unsure if the result of **your** indexing is a view or a copy, you can check what is the base of your result. If it is None, then you result is ... | closed | 2017-01-26T17:06:17Z | 2017-01-26T19:47:40Z | https://github.com/rougier/from-python-to-numpy/issues/48 | [] | pylang | 1 |

google-research/bert | tensorflow | 557 | masked_lm_accuracy is low at 0.51, but next_sentence_accuracy is high at 0.93 | How can this be explained?

My training set is about 1M lines, and I ran 50,000 steps with a batch size of 32. | closed | 2019-04-06T03:11:54Z | 2019-10-24T11:56:38Z | https://github.com/google-research/bert/issues/557 | [] | SeekPoint | 4 |

google-research/bert | tensorflow | 1,250 | Where is the pre-trained model tf_examples.tfrecord? | I tried to run this command:

python run_pretraining.py \
  --input_file=/tmp/tf_examples.tfrecord \
  --output_dir=/tmp/pretraining_output \
  --do_train=True \
  --do_eval=True \
  --bert_config_file=$BERT_BASE_DIR/bert_config.json \
  --init_checkpoint=$BERT_BASE_DIR/bert_model.ckpt \
  --train_batch_size=32 \

... | open | 2021-08-04T10:26:50Z | 2022-06-05T09:05:49Z | https://github.com/google-research/bert/issues/1250 | [] | nicejava | 1 |

vitalik/django-ninja | rest-api | 1,027 | Use `class Meta` or `class Config` | The documentation states that a `ModelSchema` should be defined like this:

```python
from django.contrib.auth.models import User
from ninja import ModelSchema

class UserSchema(ModelSchema):
    class Meta:
        model = User
        fields = ['id', 'username', 'first_name', 'last_name']
```

However, I am c... | open | 2023-12-22T17:14:02Z | 2023-12-26T18:14:08Z | https://github.com/vitalik/django-ninja/issues/1027 | [] | alexwolf22 | 1 |

coqui-ai/TTS | python | 3,233 | [Bug] In tokenizer.py (line 180), _abbreviations is missing the "zh-cn" option, which leaves the validation dataset blank. Please check | ### Describe the bug

In tokenizer.py (line 180), _abbreviations is missing the "zh-cn" option, which leaves the validation dataset blank.

### To Reproduce

python ..\TTS\recipes\ljspeech\xtts_v2\train_gpt_xtts.py

### Expected behavior

mo... | closed | 2023-11-16T02:20:16Z | 2023-11-21T21:24:18Z | https://github.com/coqui-ai/TTS/issues/3233 | [

"bug"

] | jackyin68 | 5 |

NullArray/AutoSploit | automation | 818 | Divided by zero exception88 | Error: Attempted to divide by zero.88 | closed | 2019-04-19T16:01:10Z | 2019-04-19T16:37:33Z | https://github.com/NullArray/AutoSploit/issues/818 | [] | AutosploitReporter | 0 |

jupyter/nbgrader | jupyter | 995 | Released assignments are still there after nbgrader db assignment remove | OS: Ubuntu 16.04.4 LTS

nbgrader version 0.5.4

jupyterhub version 0.9.0

jupyter notebook version 5.5.0

Expected behavior:

When I use the command `nbgrader db assignment remove <assignment_name>`, it should remove all the relevant files and references.

Actual behavior:

When I use the command `nbgrader db assignmen... | open | 2018-07-14T14:15:23Z | 2022-06-23T10:21:11Z | https://github.com/jupyter/nbgrader/issues/995 | [

"enhancement",

"documentation"

] | maryamdev | 3 |

huggingface/transformers | python | 36,640 | [Feature Request]: refactor _update_causal_mask to a public utility | ### Feature request

refactor _update_causal_mask to a public utility

### Motivation

After this PR https://github.com/huggingface/transformers/pull/35235/files#diff-06392bad3b9e97be9ade60d4ac46f73b6809388f4d507c2ba1384ab872711c51

all the attention implementations have already been refactored to use ALL_ATTENTION_FUNCTIONS and people... | open | 2025-03-11T07:14:39Z | 2025-03-12T15:13:09Z | https://github.com/huggingface/transformers/issues/36640 | [

"Feature request"

] | Irvingwangjr | 2 |

proplot-dev/proplot | data-visualization | 391 | When subplotting, all lines are plotted only in the last image plot | In very basic example code, I plot an image on each axis. Additionally, for each axis, I plot some lines with `coco.plot.showAnns(anns)`, which in turn calls `pplt.pyplot.plot()` several times.

For some reason, instead of lines being plotted on each image, all of them are plotted only on the last one.

| closed | 2022-09-23T15:46:02Z | 2023-03-03T22:45:39Z | https://github.com/proplot-dev/proplot/issues/391 | [

"support"

] | Robotatron | 5 |

kynan/nbstripout | jupyter | 10 | ions | | closed | 2016-02-04T04:53:54Z | 2016-02-15T21:51:18Z | https://github.com/kynan/nbstripout/issues/10 | [

"resolution:invalid"

] | mforbes | 0 |

piskvorky/gensim | nlp | 3,001 | save_word2vec_format TypeError when specifying count in KeyedVectors initialization | #### Problem description

When KeyedVectors is initialized with preallocation (by passing `count`), the model cannot be saved with `save_word2vec_format`.

This rules out preallocating the model and then filling it iteratively with `add_vector`; without preallocation, filling it iteratively would incur a big performance hit.

#### Steps/code/corpus to reproduce

Minimal example:

```pyth... | open | 2020-11-19T09:35:55Z | 2022-07-04T08:25:54Z | https://github.com/piskvorky/gensim/issues/3001 | [] | Iseratho | 4 |

python-restx/flask-restx | api | 179 | How to define a generic class in my return class | The unified return object we defined in the project is a generic object. How should I express it in Swagger? The generic class is as follows:

`from typing import TypeVar, Generic

from flask import jsonify

T = TypeVar('T')

class ResponseResult(Generic[T]):

errorCode: str

errorMessage: str

errorTyp... | open | 2020-07-21T12:32:41Z | 2020-07-21T12:32:41Z | https://github.com/python-restx/flask-restx/issues/179 | [

"question"

] | somta | 0 |

TheKevJames/coveralls-python | pytest | 251 | Update 3.0.0 Release Notes | Thank you for the great work on this package.

While running a build today on GitHub Actions, the submission to coveralls.io failed with a `422 Client Error: Unprocessable Entity` error.

The release notes appear to have a typo (they say to set `service-name` instead of `service`) in the command to ov... | closed | 2021-01-12T13:17:55Z | 2021-01-15T21:25:27Z | https://github.com/TheKevJames/coveralls-python/issues/251 | [] | davidmezzetti | 4 |

pydantic/pydantic-ai | pydantic | 205 | logfire included in base install | Hey, I just wanted to start off by saying thank you for making this amazing package. Contrary to the installation docs (see https://ai.pydantic.dev/install/), it looks like `logfire-api` is installed with the base version of `pydantic-ai`.

```

matthewlemay@Matthews-Laptop-2 ~/G/brugge (main)> uv add pydantic-ai

R... | closed | 2024-12-10T18:15:32Z | 2024-12-10T19:12:26Z | https://github.com/pydantic/pydantic-ai/issues/205 | [

"question"

] | mplemay | 2 |

davidsandberg/facenet | tensorflow | 281 | How to get the model in .pb format? The model I got from facenet_train_classifier.py is in .ckpt format | | closed | 2017-05-18T03:43:28Z | 2017-11-21T18:56:07Z | https://github.com/davidsandberg/facenet/issues/281 | [] | bingjilin | 5 |

jina-ai/serve | fastapi | 5,554 | Specify openapi_url to customize openapi.json serving | Hi, is there a way to specify a custom location for the openapi.json, similar to what FastAPI allows? Any help would be appreciated. Often you want to use different gateway permissions for your Swagger docs served on /docs, and it's handy to have all assets served there.

```

app = FastAPI(

title="..",

... | closed | 2022-12-22T08:31:56Z | 2022-12-22T15:55:30Z | https://github.com/jina-ai/serve/issues/5554 | [] | masc-it | 1 |

mwaskom/seaborn | matplotlib | 3,644 | histogram with fixed binwidth - unexpected results for last column | When creating a simple histogram with a binwidth of 1, I was surprised that the last two values were merged into a single column.

`fig = sns.histplot([0,1,1,1,3,4,6,7], binwidth=1)`

Similarly, the last bar in the o... | closed | 2024-02-29T14:05:24Z | 2024-03-01T12:26:25Z | https://github.com/mwaskom/seaborn/issues/3644 | [] | KathSe1984 | 4 |
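The merged last bar in the seaborn report above is consistent with NumPy's `np.histogram` binning rule, in which every bin is half-open except the last, which also includes its right edge. The sketch below is a plain-Python reimplementation of that rule; the helper `bin_counts` is hypothetical (not a seaborn or NumPy function), and it assumes the edges run from the data minimum to the maximum in steps of `binwidth`. With edges 0 through 7, both 6 and 7 land in the final bin, while edges shifted by half a unit give each integer its own bin. Seaborn's `histplot(..., discrete=True)` draws integer-centered bars in a similar spirit.

```python
def bin_counts(data, edges):
    """Count values per bin using NumPy's histogram convention:
    every bin is half-open [left, right) except the last, which is
    closed [left, right], so the maximum value is still counted."""
    counts = [0] * (len(edges) - 1)
    last = len(counts) - 1
    for x in data:
        for i in range(len(counts)):
            left, right = edges[i], edges[i + 1]
            if left <= x < right or (i == last and x == right):
                counts[i] += 1
                break
    return counts


data = [0, 1, 1, 1, 3, 4, 6, 7]

# Edges from min to max in steps of 1: 0, 1, ..., 7 -> 7 bins.
# 6 and 7 both fall into the closed last bin [6, 7].
int_edges = list(range(min(data), max(data) + 1))
print(bin_counts(data, int_edges))   # [1, 3, 0, 1, 1, 0, 2]

# Edges centered on integers: -0.5, 0.5, ..., 7.5 -> 8 bins,
# one per integer value, so 6 and 7 are counted separately.
half_edges = [k - 0.5 for k in range(min(data), max(data) + 2)]
print(bin_counts(data, half_edges))  # [1, 3, 0, 1, 1, 0, 1, 1]
```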

zappa/Zappa | django | 661 | [Migrated] `exclude` setting doesn't work with packages installed as editable (from GitHub, etc.) | Originally from: https://github.com/Miserlou/Zappa/issues/1680 by [jnoortheen](https://github.com/jnoortheen)

<!--- Provide a general summary of the issue in the Title above -->

## Context

I installed `lambda-packages` from its `git` repo URL, but this causes it to end up in the final zip package. As I have ins... | closed | 2021-02-20T12:32:35Z | 2024-04-13T17:36:41Z | https://github.com/zappa/Zappa/issues/661 | [

"no-activity",

"auto-closed"

] | jneves | 2 |

remsky/Kokoro-FastAPI | fastapi | 105 | add timestamps for each word | I would like to have timestamps for each word in the generated text-to-speech output. This would improve the accuracy of syncing the audio with other media.

I could also submit this as a PR if I get some guidance.

| closed | 2025-01-31T12:52:53Z | 2025-02-02T09:59:20Z | https://github.com/remsky/Kokoro-FastAPI/issues/105 | [

"enhancement"

] | merouanezouaid | 1 |

RobertCraigie/prisma-client-py | asyncio | 424 | Can't create nested relation | ## Bug description

When trying to create entity `Foo` with relation `bar` and without relation `baz` it errors with:

```

{

"errors": [

{

"error": 'Error in query graph construction: QueryParserError(QueryParserError { path: QueryPath { segments: ["Mutation", "createOneFoo", "data"] }, ... | closed | 2022-06-11T20:19:29Z | 2022-06-26T09:41:46Z | https://github.com/RobertCraigie/prisma-client-py/issues/424 | [

"kind/question"

] | iddan | 4 |

vimalloc/flask-jwt-extended | flask | 148 | Should be able to unset access and refresh token cookies independently. | I am looking specifically to be able to unset access token cookies without unsetting refresh token cookies.

My reason for this is that I am handling JWTs before dispatching to the view function (I have written a JWT session extension), and I would like to return a 401 when I receive an expired token and then remove o... | closed | 2018-05-05T06:15:00Z | 2018-05-05T17:47:46Z | https://github.com/vimalloc/flask-jwt-extended/issues/148 | [] | matthewstory | 1 |

ultralytics/yolov5 | pytorch | 13,167 | How can a model trained on Ultralytics HUB perform inference prediction on the test set? | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I have successfully trained several models on Ultralytics HUB, and now I want to make the f... | closed | 2024-07-05T02:39:14Z | 2024-10-20T19:49:32Z | https://github.com/ultralytics/yolov5/issues/13167 | [

"question",

"Stale"

] | Aq114 | 3 |

huggingface/datasets | numpy | 7,357 | Python process aborted with GIL issue when using image dataset | ### Describe the bug

The issue is visible only with the latest `datasets==3.2.0`.

When using an image dataset, the Python process is aborted right before exiting, with the following error:

```

Fatal Python error: PyGILState_Release: thread state 0x7fa1f409ade0 must be current when releasing

Python runtime state: f... | open | 2025-01-06T11:29:30Z | 2025-03-08T15:59:36Z | https://github.com/huggingface/datasets/issues/7357 | [] | AlexKoff88 | 1 |

Teemu/pytest-sugar | pytest | 173 | Keep consistency in the output | Right now, when a test fails the output looks like this:

```

...

Results (2.34s):

5 passed

1 failed

- path/to/your_test.py:666 test_something_wrong

```

The way the failing test is displayed (i.e. `PATH:LINE TEST`) is not the *natural* way pytest displays *test paths* (i.e. `PATH::TEST`), an... | open | 2019-03-26T11:08:47Z | 2020-08-25T18:28:34Z | https://github.com/Teemu/pytest-sugar/issues/173 | [

"question"

] | azmeuk | 11 |

jonaswinkler/paperless-ng | django | 1,323 | [FEATURE] Make it possible to change the Tesseract language individually for (re-)OCR | CURRENT SITUATION:

When setting up paperless-ng, there is only one place in the config to set the Tesseract languages to use.

As I have tons of scientific documents in many different languages, my current config is...

`PAPERLESS_OCR_LANGUAGE=bul+cat+chi_sim+dan+deu+eng+est+fin+fra+ita+jpn+kor+lat+lav+nld+nor+osd+p... | open | 2021-09-17T11:15:43Z | 2021-09-17T11:15:43Z | https://github.com/jonaswinkler/paperless-ng/issues/1323 | [] | bwakkie | 0 |

supabase/supabase-py | fastapi | 192 | Can't query float values | When querying the database with gte, lte, gt, or lt on float or np.float32 values, it throws an APIError.

Error message:

> APIError: {'message': 'invalid input syntax for type real: ""4.6079545""', 'code': '22P02', 'details': None, 'hint': None}

The fields I tried querying are of the float4 and float8 data types.

**Additio... | closed | 2022-04-18T20:24:18Z | 2022-05-01T11:54:48Z | https://github.com/supabase/supabase-py/issues/192 | [

"bug"

] | Sharaddition | 8 |

matterport/Mask_RCNN | tensorflow | 2,254 | The server has only CPUs and no GPU; how can I use multiple CPUs to run the program? | The server has 48 CPUs, but when I run the code, I find that only one CPU is used. How can I use all 48 CPUs? | open | 2020-06-24T03:28:57Z | 2020-06-24T04:08:43Z | https://github.com/matterport/Mask_RCNN/issues/2254 | [] | Romuns-Nicole | 0 |

pytest-dev/pytest-xdist | pytest | 521 | xdist master freezes if socketserver worker calls pytest.exit() | - test_exit.py

```
import pytest

def test_a():
    pass

def test_b():
    pytest.exit('system state unrecoverable, destroy pytest session.')
```

- socketserver https://bitbucket.org/hpk42/execnet/raw/2af991418160/execnet/script/socketserver.py

- expected: `pytest -d --tx socket=localhost:8888 test_ex... | open | 2020-04-22T05:26:13Z | 2020-04-27T00:16:54Z | https://github.com/pytest-dev/pytest-xdist/issues/521 | [] | cielavenir | 1 |

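yihong0618/running_page | data-visualization | 574 | Sync Actions cannot generate the analysis SVG | When updating data, it reports that the update completed, but the latest Total SVG image is not generated. The output is as follows: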

```

Run python run_page/gen_svg.py --from-db --title "Ryan's Running" --type github --athlete "Ryan" --special-distance 10 --special-distance2 20 --special-color yellow --special-color2 red --output assets/github.svg --use-localtime --min-distance 0.5

All tracks: 28

... | closed | 2023-12-17T15:47:08Z | 2023-12-18T00:57:11Z | https://github.com/yihong0618/running_page/issues/574 | [] | 85Ryan | 0 |

graphql-python/graphene-django | graphql | 1,523 | API mutation for Google Translate | Hi all, is there any way to make a mutation request to Google Translate? | closed | 2024-05-22T05:36:41Z | 2024-05-22T16:04:41Z | https://github.com/graphql-python/graphene-django/issues/1523 | [

"🐛bug"

] | abdulhafeez1724 | 1 |

httpie/cli | rest-api | 1,608 | Installing the httpie-edgegrid plugin fails | ## Checklist

- [x] I've searched for similar issues.

- [x] I'm using the latest version of HTTPie.

---

## Minimal reproduction code and steps

1. Execute `httpie cli plugins install httpie-edgegrid`

## Current result

```

Installing httpie-edgegrid...

Collecting httpie-edgegrid

Using cached httpie... | closed | 2024-11-04T17:36:34Z | 2024-11-04T20:42:15Z | https://github.com/httpie/cli/issues/1608 | [

"bug",

"new"

] | glenthomas | 2 |

wagtail/wagtail | django | 12,584 | Eliminate use of .listing styles on group permission tables | ### Issue Summary

Under Settings -> Groups -> Page permissions in Wagtail 6.3, the page chooser is squeezed into an undersized table cell, causing it to wrap after every letter.

This appears to have been introduced as a result of t... | open | 2024-11-15T15:12:37Z | 2024-12-14T04:16:12Z | https://github.com/wagtail/wagtail/issues/12584 | [

"type:Cleanup/Optimisation",

"component:Frontend"

] | gasman | 2 |

plotly/dash-bio | dash | 135 | Make setup.py require dash | Really just a suggestion, but I believe it would be nice to have setup.py require dash by default:

https://github.com/plotly/dash-bio/blob/bd5ce53c4529ce3e0c783cb61eb6eab7b298dd93/setup.py#L11-L20

That way, when the user installs dash-bio, they simply need to create a venv and run:

```

pip install dash-bio

```

... | closed | 2019-01-25T20:01:16Z | 2019-06-11T14:54:04Z | https://github.com/plotly/dash-bio/issues/135 | [] | xhluca | 19 |

plotly/dash | jupyter | 2,917 | When using keyword arguments in a background callback with Celery, no_update does not work | **Describe your context**

```

dash 2.17.1

dash-ag-grid 2.4.0

dash-auth-external 1.2.1

dash-auth0-oauth 0.1.5

dash-bootstrap-components 1.6.0

dash-core-components 2.0.0

dash-cytoscape 1.0.0

dash-extensions 0.0.71

dash-html-components ... | open | 2024-07-09T09:49:24Z | 2024-08-13T19:54:35Z | https://github.com/plotly/dash/issues/2917 | [

"bug",

"P3"

] | JeongMinSik | 0 |

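Evil0ctal/Douyin_TikTok_Download_API | web-scraping | 450 | How to replace the cookie in Docker | There are two things I don't quite understand!

First, how do I replace the cookie inside Docker?

I watched the video; you were using a local Python install, where the cookie can be replaced directly. How do I do it in Docker?

Second, how do I use this API in a script? I'm new to APIs, so I'm asking! | closed | 2024-07-14T15:58:10Z | 2024-07-25T18:07:36Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/450 | [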

"enhancement"

] | xilib | 3 |

encode/databases | asyncio | 550 | Support for UNIX domain socket for MySQL | Issue similar to #422, but for MySQL connection strings.

In short, the `unix_socket` option is not passed to the underlying `aiomysql` or `asyncmy` backends, which results in a failed connection to the database.

Originally described by [coryvirok](https://github.com/coryvirok) in #239 pull request (fixes only `aiomysql` bac... | closed | 2023-05-09T22:21:13Z | 2023-07-12T01:12:10Z | https://github.com/encode/databases/issues/550 | [] | wojtasiq | 0 |
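One way to picture the missing piece in the `encode/databases` report above is a helper that turns the connection URL into driver keyword arguments and forwards the `unix_socket` query option instead of dropping it. This is a hypothetical, standard-library-only sketch, not the library's actual backend code; the keyword names (`db`, `unix_socket`) are assumed to follow aiomysql/PyMySQL conventions and may differ for asyncmy.

```python
from urllib.parse import parse_qs, unquote, urlsplit


def mysql_connect_kwargs(url):
    """Translate a mysql:// URL into keyword arguments for a driver's
    connect() call, forwarding the `unix_socket` query option.

    Hypothetical helper: key names assume aiomysql/PyMySQL conventions."""
    parts = urlsplit(url)
    kwargs = {
        "host": parts.hostname or "localhost",
        "port": parts.port or 3306,
        "user": unquote(parts.username) if parts.username else None,
        "password": unquote(parts.password) if parts.password else None,
        "db": parts.path.lstrip("/") or None,
    }
    options = parse_qs(parts.query)
    if "unix_socket" in options:
        # Forward the socket path instead of silently dropping it.
        kwargs["unix_socket"] = options["unix_socket"][0]
    # Leave unset values out so the driver's own defaults apply.
    return {key: value for key, value in kwargs.items() if value is not None}


kwargs = mysql_connect_kwargs(
    "mysql://app_user:s3cret@localhost/app?unix_socket=/var/run/mysqld/mysqld.sock"
)
print(kwargs["unix_socket"])  # /var/run/mysqld/mysqld.sock
```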

Guovin/iptv-api | api | 878 | [Bug]: Question about IPv6 results | ### Don't skip these steps

- [x] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

- [x] I am sure that this is a runtime error and will not submit any problems unrelated to this project... | closed | 2025-01-26T07:02:00Z | 2025-01-26T07:13:18Z | https://github.com/Guovin/iptv-api/issues/878 | [

"invalid"

] | GSD-3726 | 3 |

CorentinJ/Real-Time-Voice-Cloning | python | 928 | This project needs a maintainer | This is blue-fish. Unfortunately, I cannot participate in open source anymore. It has been my pleasure to contribute to this project. I wish you all the best! | closed | 2021-12-01T09:31:19Z | 2021-12-28T12:34:18Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/928 | [] | ghost | 2 |

serengil/deepface | deep-learning | 1,248 | What about IQA | ### Description

First of all, thanks for your work. Would it be possible to add face image quality assessment (IQA) models to the pipeline?

Alternatively, could anyone recommend a repo to explore? I tried PyIQA but was not satisfied.

### Additional Info

_No response_ | closed | 2024-06-02T21:12:57Z | 2024-06-03T09:49:45Z | https://github.com/serengil/deepface/issues/1248 | [

"enhancement"

] | wauxhall | 1 |

ResidentMario/missingno | data-visualization | 170 | Heatmap ValueError:could not convert string to float: '--' | I'm trying missingno.heatmap on the NYPD Motor Vehicle Collisions Dataset.

`import pandas`

`import missingno`

`df = pandas.read_csv('Motor_Vehicle_Collisions_-_Crashes_20240322.csv')`

`missingno.heatmap(df)`

Then this error occurred:

`ValueError Traceback (most recent call last)

Cel... | open | 2024-03-24T18:17:37Z | 2024-05-14T18:32:58Z | https://github.com/ResidentMario/missingno/issues/170 | [] | dvcchamhocvcl | 4 |

sinaptik-ai/pandas-ai | pandas | 1,459 | I use FastAPI + pandasai to serve data-generated images, but as the number of requests increases, many figures are not closed automatically. Is there a way to close these figures? |

I use FastAPI + pandasai to serve data-generated images, but as the number of requests increases, many figures are not closed automatically. Is there a way to close these figures? Please help!

| closed | 2024-12-09T08:39:25Z | 2024-12-12T20:27:03Z | https://github.com/sinaptik-ai/pandas-ai/issues/1459 | [] | lwdnxu | 2 |

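521xueweihan/HelloGitHub | python | 2,098 | [Open-source self-recommendation] Yank Note - a Markdown note-taking app for programmers | ## Project recommendation

- Project URL: https://github.com/purocean/yn

- Category: JS

- Planned future updates:

- Add a plugin center: publish some features as plugins, which also makes it easy for users to share plugins

- Add a reverse index: structure document information so it is easier to search and to use programmatically

- Improve document linking: show which documents a document references and which documents reference it

- Project description:

Yank Note is a local Markdown note-taking app for programmers. It supports embedding runnable code blocks, mind maps, and various diagrams (Drawio, Mermaid, Plantuml) in documents, as well as plugin extensions and rolling back to earlier versions of a document.

- Why I recommend it:

Yank Note is very well suited to pro... | closed | 2022-02-10T06:55:32Z | 2022-02-28T02:03:13Z | https://github.com/521xueweihan/HelloGitHub/issues/2098 | [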

"已发布",

"JavaScript 项目"

] | purocean | 3 |

streamlit/streamlit | deep-learning | 9,917 | Use index value instead of row position in `session_state` of `st.data_editor` | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [X] I added a very descriptive title to this issue.

- [X] I have provided sufficient information below to help reproduce this issue.

### Summary

The index number in the edited and delete row... | open | 2024-11-24T22:42:16Z | 2024-11-25T14:03:21Z | https://github.com/streamlit/streamlit/issues/9917 | [

"type:enhancement",

"feature:st.data_editor"

] | mhupfauer | 3 |

paperless-ngx/paperless-ngx | django | 7,634 | [BUG] Google invoice throws with "File type application/octet-stream not supported" | ### Description

Google Invoice is not parsed.

Direct upload and automatic mail scan both fail.

Error message:

File type application/octet-stream not supported

Importing Google Invoices in the past was never a problem. So either Google Invoice changed something in their PDF, or there is a regression bug in pa... | closed | 2024-09-05T22:24:33Z | 2024-10-08T03:10:27Z | https://github.com/paperless-ngx/paperless-ngx/issues/7634 | [

"not a bug"

] | draptik | 7 |

strawberry-graphql/strawberry | graphql | 2,828 | Allow codegen to process multiple queries | <!--- Provide a general summary of the changes you want in the title above. -->

<!--- This template is entirely optional and can be removed, but is here to help both you and us. -->

<!--- Anything on lines wrapped in comments like these will not show up in the final text. -->

## Feature Request Type

- [ ] Cor... | closed | 2023-06-08T14:56:07Z | 2025-03-20T15:56:12Z | https://github.com/strawberry-graphql/strawberry/issues/2828 | [] | mgilson | 2 |

Kinto/kinto | api | 2,643 | Run some tests in GitHub Actions | I think it would be wise to have at least one set of CI validation coming from GitHub Actions, so that we have two sources of code quality, especially for times like now where open source builds on Travis are delayed for several hours. (As of writing, GHA are finishing within 10 minutes of a PR being opened, whereas Tr... | closed | 2020-10-26T23:47:37Z | 2021-10-15T15:54:44Z | https://github.com/Kinto/kinto/issues/2643 | [] | dstaley | 6 |

pydantic/pydantic-ai | pydantic | 699 | Why not use abstract classes to define agents? | First off, congratulations on the project! Since there’s no dedicated discussions tab, I decided to open an issue instead.

All mainstream frameworks seem to use the same approach for defining agents: passing arguments to the agent class constructor.

```python

@dataclass

class SupportDependencies:

customer_id: int... | closed | 2025-01-16T10:59:37Z | 2025-02-07T14:07:08Z | https://github.com/pydantic/pydantic-ai/issues/699 | [

"question",

"Stale"

] | lucasmsoares96 | 7 |

JaidedAI/EasyOCR | machine-learning | 992 | EasyOCR fails when the image is very tall, like a long WeChat screenshot containing several smaller screenshots | | open | 2023-04-17T04:07:42Z | 2023-04-17T04:07:42Z | https://github.com/JaidedAI/EasyOCR/issues/992 | [] | crazyn2 | 0 |

plotly/dash | flask | 2,539 | Wrong typing on Dash init | Types in the docstring or `__init__` of the Dash class should be valid types for type checkers.

Right now it has `boolean` instead of `bool`, `string` instead of `str`, and an amalgamation of types joined with `or` where it should be a `Union`. | open | 2023-05-24T14:11:18Z | 2024-08-13T19:33:11Z | https://github.com/plotly/dash/issues/2539 | [

"bug",

"P3"

] | T4rk1n | 1 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 4,024 | Can't send mails with internal SMTP server | ### What version of GlobaLeaks are you using?

Globaleaks 4.14.8 hosted on Debian 6.1.76-1

### What browser(s) are you seeing the problem on?

All

### What operating system(s) are you seeing the problem on?

Windows, macOS

### Describe the issue

We tried to configure mail notifications using our internal SMTP serve... | open | 2024-03-18T15:15:14Z | 2024-03-18T16:46:23Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/4024 | [

"T: Bug",

"Triage"

] | diegodipalma | 1 |

home-assistant/core | asyncio | 140,462 | Smartthings integration broken and cannot be fixed. Getting 400 error trying to get token? | ### The problem

Since I updated to 2025.3.2, this error shows up and does not resolve. I thought it might resolve itself after waiting a while, but a whole day later it still isn't fixed.

Anyone have ... | closed | 2025-03-12T17:55:39Z | 2025-03-13T15:14:52Z | https://github.com/home-assistant/core/issues/140462 | [

"integration: smartthings"

] | zainag | 10 |

jazzband/django-oauth-toolkit | django | 902 | redirect_uri is limited to 255 characters | **Describe the bug**

A `redirect_uri` longer than 255 characters cannot be handled. [RFC 7230](https://tools.ietf.org/html/rfc7230#section-3.1.1) recommends that HTTP senders and recipients support request-line lengths of at least 8000 octets.

> It is RECOMMENDED that all HTTP senders and recipients support, at a minimum, request-line ... | closed | 2020-12-11T08:49:37Z | 2020-12-18T10:20:06Z | https://github.com/jazzband/django-oauth-toolkit/issues/902 | [

"bug"

] | shaddeus | 10 |

tflearn/tflearn | data-science | 776 | Will there be a weighted_cross_entropy_with_logits? | | open | 2017-05-29T09:46:35Z | 2017-06-06T15:40:52Z | https://github.com/tflearn/tflearn/issues/776 | [] | noeagles | 1 |

activeloopai/deeplake | tensorflow | 2,630 | [BUG] | ### Severity

P0 - Critical breaking issue or missing functionality

### Current Behavior

Hello,

I am trying to deploy an app on Google Cloud Functions and I have a dependency issue caused by deeplake. I am using version 3.7.1, and the dependency issue comes from ERROR: Cannot install -r requirements.txt (line 1... | closed | 2023-09-29T19:06:58Z | 2024-09-24T17:09:31Z | https://github.com/activeloopai/deeplake/issues/2630 | [

"bug"

] | kabsikabs | 2 |

tqdm/tqdm | pandas | 1,484 | reversed(tqdm(some_list)) does not yield a reversed list | - [x] I have marked all applicable categories:

+ [ ] exception-raising bug

+ [ ] visual output bug

- [x] I have visited the [source website], and in particular

read the [known issues]

- [x] I have searched through the [issue tracker] for duplicates

- [x] I have mentioned version numbers, operating syste... | open | 2023-07-23T17:56:34Z | 2023-07-23T19:28:53Z | https://github.com/tqdm/tqdm/issues/1484 | [] | harmenwassenaar | 0 |

gradio-app/gradio | machine-learning | 10,399 | TabbedInterface does not work with Chatbot defined in ChatInterface | ### Describe the bug

When defining a `Chatbot` in `ChatInterface`, the `TabbedInterface` does not render it properly.

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python

import gradio as gr

def chat():

return "Hello"

chat_ui = gr.ChatInter... | open | 2025-01-21T17:11:08Z | 2025-01-22T17:19:36Z | https://github.com/gradio-app/gradio/issues/10399 | [

"bug"

] | arnaldog12 | 2 |

junyanz/pytorch-CycleGAN-and-pix2pix | computer-vision | 898 | Generator samples from other dataset | I trained a CycleGAN with default parameters (but with images loaded at 128x128 without cropping) for 200 epochs. The generated images are poor, but that's not the point. The strange thing is that sometimes the generator samples modified images from the destination dataset. Have you ever seen this problem? Do you have any idea w... | closed | 2020-01-11T15:42:17Z | 2020-01-15T18:48:37Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/898 | [] | domef | 3 |

betodealmeida/shillelagh | sqlalchemy | 482 | More backends | Let's write some more backends!

- [X] apsw

- [X] Postgres (multicorn2)

- [ ] duckdb

- [ ] sqlglot | open | 2024-11-01T14:51:25Z | 2024-11-01T14:51:42Z | https://github.com/betodealmeida/shillelagh/issues/482 | [

"enhancement",

"help wanted",

"developer"

] | betodealmeida | 0 |

pandas-dev/pandas | data-science | 60,467 | QST: Is Using pandas.test() Equivalent to Running pytest Directly? | ### Research

- [X] I have searched the [[pandas] tag](https://stackoverflow.com/questions/tagged/pandas) on StackOverflow for similar questions.

- [X] I have asked my usage related question on [StackOverflow](https://stackoverflow.com).

### Link to question on StackOverflow

https://stackoverflow.com/questions/7924... | closed | 2024-12-02T13:18:15Z | 2024-12-02T18:23:34Z | https://github.com/pandas-dev/pandas/issues/60467 | [

"Usage Question",

"Needs Triage"

] | angiolye24 | 1 |

aws/aws-sdk-pandas | pandas | 2,509 | Exponential backoff | **Is your idea related to a problem? Please describe.**

When using awswrangler with millions of calls to databases, I constantly receive throttling errors. I have had to write an exponential backoff decorator in Python and create wrappers for all of the wrangler functions I am using.

**Describe the solution y... | closed | 2023-11-01T13:20:03Z | 2023-11-15T15:04:18Z | https://github.com/aws/aws-sdk-pandas/issues/2509 | [

"enhancement"

] | awspiv | 2 |

ansible/ansible | python | 84,660 | meta: end_role only works for a single server on a group | ### Summary

When a role does a "meta: end_role" with a "when" clause only the first server on a group honors the end_role statement.

### Issue Type

Bug Report

### Component Name

ansible.builtin.meta

### Ansible Version

```console

$ ansible --version

ansible [core 2.18.2]

config file = /etc/ansible/ansible.cfg

... | closed | 2025-02-03T17:02:12Z | 2025-02-25T14:00:05Z | https://github.com/ansible/ansible/issues/84660 | [

"module",

"bug",

"has_pr",

"affects_2.18"

] | Sirtea | 1 |

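waditu/tushare | pandas | 1,393 | document bug in hsgt_top10: the documentation for the Shanghai/Shenzhen Stock Connect top 10 traded stocks is wrong |

Shanghai/Shenzhen Stock Connect top 10 traded stocks: https://tushare.pro/document/2?doc_id=48

change | float | price change (amount)

The documentation describes this field as the price change amount, but it is actually the percentage change.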

| open | 2020-07-13T12:28:35Z | 2020-07-13T12:28:35Z | https://github.com/waditu/tushare/issues/1393 | [] | zergscut2017 | 0 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 457 | "OSError: [WinError 193] %1 is not a valid Win32 application" on attempt to run python scripts | I'm set up with an Anaconda environment for Python 3.7.7

Running on a 64-bit Windows 10 system with an AMD processor.

Installed PYTorch and ffmpeg through `conda install pytorch torchvision cudatoolkit=10.2 -c pytorch` and `conda install ffmpeg` (unsure if that may introduce some difference), and the rest through the... | closed | 2020-07-28T20:43:30Z | 2020-08-07T07:43:34Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/457 | [] | EnergeticSpaceCore | 9 |

PokeAPI/pokeapi | graphql | 670 | GraphQL API seems to be down? | <!--

Thanks for contributing to the PokéAPI project. To make sure we're effective, please check the following:

- Make sure your issue hasn't already been submitted on the issues tab. (It has search functionality!)

- If your issue is one of outdated API data, please note that we get our data from [veekun](https://g... | closed | 2021-11-18T10:13:32Z | 2022-09-07T08:23:35Z | https://github.com/PokeAPI/pokeapi/issues/670 | [

"graphql"

] | MiroStW | 9 |

matplotlib/mplfinance | matplotlib | 124 | how to addplot with different lengths? | I have plotted the normal data,

but there are some add_plots that are either shorter or longer which I want to plot on top of the main plot, now it complains about their length,

```

File "....Python\Python38\lib\site-packages\matplotlib\axes\_base.py", line 269, in _xy_from_xy

raise ValueError("x and y must hav... | closed | 2020-05-04T22:24:02Z | 2021-08-05T01:10:53Z | https://github.com/matplotlib/mplfinance/issues/124 | [

"question"

] | allahyarzadeh | 7 |

JaidedAI/EasyOCR | pytorch | 473 | The file cannot be found by running after packaging with pyinstaller | I don't know if there are any friends like me. After packing with pyinstaller, I couldn't find the file. The specific error report is as follows:

```python

Traceback (most recent call last):

File "main.py", line 363, in main

File "captcha/easy_ocr.py", line 46, in easy_ocr

File "easyocr/easyocr.py", line 1... | closed | 2021-06-29T10:17:11Z | 2022-11-11T23:06:36Z | https://github.com/JaidedAI/EasyOCR/issues/473 | [] | yqchilde | 9 |

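yihong0618/running_page | data-visualization | 334 | Problem exporting Joyrun (悦跑圈) data | Hello, following the documented method I successfully exported my Joyrun data and recorded the uid and sid, but as soon as I log in to the Joyrun app (I tried both verification-code and WeChat login) the sid becomes invalid and I can no longer fetch data.

So the problem now is that I can only have one of two things: staying logged in on the mobile app, or exporting Joyrun data on a daily schedule. Am I doing something wrong, or is there some other cause?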

| closed | 2022-11-03T06:22:33Z | 2022-11-03T07:16:31Z | https://github.com/yihong0618/running_page/issues/334 | [] | w749 | 3 |

horovod/horovod | pytorch | 3,494 | [Elastic] Deadlock when a node dies gracefully and # survivors < min_np | **Environment:**

1. Framework: PyTorch

2. Framework version: 1.10

3. Horovod version: latest

**Bug report:**

The deadlock scenario:

- We currently have `n = min_np` nodes running

- One or more nodes **_gracefully_** dies, resulting in the number of active nodes `n < min_np`

- For the surviving nodes, this is ... | open | 2022-03-25T15:24:56Z | 2022-11-12T03:08:48Z | https://github.com/horovod/horovod/issues/3494 | [

"bug"

] | ASDen | 1 |

sinaptik-ai/pandas-ai | data-science | 1,405 | conversational failed | ### System Info

OS version: windows 10

Python version: 3.12.7

The current version of pandasai being used: 2.3.0

### 🐛 Describe the bug

It seems the pipeline is not able to categorize correctly the conversation:

Here the config code for the Agent:

`config = {"llm":llm,"verbose": True, "direct_sql": False... | closed | 2024-10-21T17:17:55Z | 2025-01-28T16:01:46Z | https://github.com/sinaptik-ai/pandas-ai/issues/1405 | [

"bug"

] | HAL9KKK | 3 |

modin-project/modin | pandas | 6,620 | TypeError: bins argument only works with numeric data. | When running the Modin tests with the following way I see the error.

```bash

MODIN_CPUS=44 MODIN_ENGINE=ray python -m pytest modin/pandas/test/test_general.py

FAILED modin/pandas/test/test_general.py::test_value_counts[True-3-False] - TypeError: bins argument only works with numeric data.

``` | closed | 2023-10-02T14:15:42Z | 2023-10-18T09:57:27Z | https://github.com/modin-project/modin/issues/6620 | [

"bug 🦗",

"P2"

] | YarShev | 2 |

MaartenGr/BERTopic | nlp | 2,296 | Duplicate Document Entries in Documents from get_document_info(corpus) | Hello!

I am working on analyzing research funding trends using data collected from NIH ExPORTER.

My team and I have run into a problem where there are no duplicate documents in our raw data, as in there are not repeated ABSTRACT, APPLICATION_ID, or PI_names, but documents extracted from the BERTopic results using get_... | open | 2025-02-25T04:41:02Z | 2025-03-04T12:18:24Z | https://github.com/MaartenGr/BERTopic/issues/2296 | [] | zerubael | 5 |

flairNLP/flair | nlp | 2,997 | TARS Zero Shot Classifier Predictions | Here is the example code to use TARS Zero Shot Classifier

```

from flair.models import TARSClassifier

from flair.data import Sentence

# 1. Load our pre-trained TARS model for English

tars = TARSClassifier.load('tars-base')

# 2. Prepare a test sentence

sentence = Sentence("I am so glad you liked it!")

# 3.... | closed | 2022-11-24T07:37:38Z | 2023-05-21T15:36:59Z | https://github.com/flairNLP/flair/issues/2997 | [

"bug",

"wontfix"

] | ghost | 2 |

Lightning-AI/pytorch-lightning | data-science | 19,574 | Constructor arguments in init_args get instantiated while parsing arguments of LightningModule | ### Bug description

I have a model and one can specify the layers as arguments of the model's constructor, for example, one can set a different normalization layer to the boring model below with a command like ```model = BoringNN(norm_layer=nn.InstanceNorm1d)```:

BoringNN:

```

class BoringNN(nn.Module) :

d... | closed | 2024-03-05T11:28:01Z | 2024-04-23T08:07:36Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19574 | [

"question",

"lightningcli"

] | tommycwh | 12 |

nolar/kopf | asyncio | 877 | RuntimeError: Session is closed in kopf.testing.KopfRunner | ### Long story short

I encounter the following error a majority of the time when running python kubernetes queries inside the kopf.testing.KopfRunner context. Occasionally, it will succeed, but most of the time it fails as shown below.

Error:

```

self = <aiohttp.client.ClientSession object at 0x12258f8e0>, m... | open | 2021-12-24T21:36:17Z | 2022-03-13T01:33:00Z | https://github.com/nolar/kopf/issues/877 | [

"bug"

] | retr0h | 3 |

huggingface/transformers | tensorflow | 36,579 | AutoModel failed with empty tensor error | ### System Info

Copy-and-paste the text below in your GitHub issue and FILL OUT the two last points.

- `transformers` version: 4.50.0.dev0

- Platform: Linux-4.18.0-553.16.1.el8_10.x86_64-x86_64-with-glibc2.35

- Python version: 3.10.12

- Huggingface_hub version: 0.28.1

- Safetensors version: 0.5.2

- Accelerate version... | closed | 2025-03-06T07:57:25Z | 2025-03-13T17:18:16Z | https://github.com/huggingface/transformers/issues/36579 | [

"bug"

] | jiqing-feng | 1 |

AirtestProject/Airtest | automation | 1,066 | When running automation scripts in PyCharm, can the phone screen be mirrored in real time? | When running automation scripts in PyCharm, can the phone screen be mirrored in real time? | open | 2022-07-08T10:27:24Z | 2022-07-08T10:27:24Z | https://github.com/AirtestProject/Airtest/issues/1066 | [] | Ray-W-u | 0 |

jupyterhub/jupyterhub-deploy-docker | jupyter | 80 | Use Lab as default route | I would like to use jupyterlab as the default application. Although, it is properly installed and reachable (by manually navigating to the /lab endpoint), /tree (jupyterhub) is still the default route. I found some old posts (e.g., #26 ) and there is even an [example](https://github.com/jupyterhub/jupyterhub-deploy-doc... | closed | 2019-02-05T12:47:49Z | 2022-12-05T00:52:46Z | https://github.com/jupyterhub/jupyterhub-deploy-docker/issues/80 | [] | inkrement | 5 |

explosion/spaCy | data-science | 11,949 | PhraseMatcher not matching correctly on attr when tokenization is customized | I have an example where I have `$` in my infixes tokenization rules. However then the `PhraseMatcher` fails to match on `LOWER` attr

## How to reproduce the behaviour

```python

import spacy

from spacy.matcher import PhraseMatcher

from spacy.util import compile_infix_regex

nlp = spacy.load("blank:en")

nlp.tok... | closed | 2022-12-08T10:18:54Z | 2022-12-08T12:47:36Z | https://github.com/explosion/spaCy/issues/11949 | [] | NixBiks | 2 |

joerick/pyinstrument | django | 347 | Timeline view is difficult to scroll around on | I like the new timeline view, but the scrolling behavior is a little wonky. I think it needs to be much harder to zoom in and out. Ideally it would lock either horizontal scrolling or zooming, so that you can scroll the timeline without zooming at the same time. Maybe it should also require more of a vertical scroll to... | open | 2024-10-14T19:00:35Z | 2024-10-15T20:40:16Z | https://github.com/joerick/pyinstrument/issues/347 | [] | asmeurer | 2 |

CPJKU/madmom | numpy | 91 | add convenience methods to MIDIFile to add notes, set tempo and time signature | It would be nice to have some convenience methods to:

- add notes

- set tempo

- set time signature

of a `MIDIFile`, the method should take both take input given in seconds or beats.

These methods should be added to `MIDIFile` since the events need to be put into a track, but the tempo and time signature events can be... | closed | 2016-02-18T12:20:57Z | 2018-03-04T11:55:08Z | https://github.com/CPJKU/madmom/issues/91 | [

"enhancement",

"feature request"

] | superbock | 1 |

clovaai/donut | nlp | 48 | Different input resolution throws error | Following is the error we get when we try to pass an input size of 512\*2,512\*3:

Are different input resolution/sizes are not supported currently?

Traceback (most recent call last):

File "train.py", line 149, in <module>

train(config)

File "train.py", line 57, in train

model_module = DonutModelPLModu... | closed | 2022-09-11T04:23:52Z | 2024-06-18T22:56:55Z | https://github.com/clovaai/donut/issues/48 | [] | Souvic | 3 |

snarfed/granary | rest-api | 143 | Access source URLs in retweets? | I've recently downloaded my tweets from Twitter, and am trying to use Granary to convert them into static HTML files.

I'm basically doing this:

```

posts = json.loads(open(sys.argv[1], 'r').read())

for post in posts:

decoded = twitter.Twitter('token', 'secret', 'smerrill').tweet_to_object(post)

print de... | closed | 2018-04-01T21:35:56Z | 2018-04-02T13:47:29Z | https://github.com/snarfed/granary/issues/143 | [] | skpy | 4 |

ultrafunkamsterdam/undetected-chromedriver | automation | 1,394 | i only can pass cloudflare with devtool opened | anyone have a solution to get around it, it just rotates | open | 2023-07-14T09:22:11Z | 2023-07-17T03:42:32Z | https://github.com/ultrafunkamsterdam/undetected-chromedriver/issues/1394 | [] | NCLnclNCL | 5 |

lorien/grab | web-scraping | 370 | Segmentation fault 11 | Segmentation fault 11 error occurs when i call "go" method of Grab class in mac OS | closed | 2018-12-24T13:51:23Z | 2022-02-25T07:56:47Z | https://github.com/lorien/grab/issues/370 | [] | akoikelov | 2 |

airtai/faststream | asyncio | 1,100 | Bug: FastAPI 0.106.0 broke the integration | **Describe the bug**

Installing fastapi version 0.106.0 and above break tests.

**How to reproduce**

```sh

pip install fastapi==0.106.0

pytest tests

``` | closed | 2023-12-26T20:54:30Z | 2023-12-27T18:46:50Z | https://github.com/airtai/faststream/issues/1100 | [

"bug"

] | davorrunje | 0 |

plotly/dash | jupyter | 2,569 | Dash crashes when I deploy it with Gunicorn | **Describe your context**

- replace the result of `pip list | grep dash` below

```

dash 2.10.2

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

```

**Describe the bug**

I am trying to deploy a dash app to production using Nginx and Ubuntu 22.04, also I am ... | open | 2023-06-18T20:57:34Z | 2024-08-13T19:34:22Z | https://github.com/plotly/dash/issues/2569 | [

"bug",

"P3"

] | manumartinm | 2 |

mars-project/mars | pandas | 2,899 | [BUG] mars storage put too much shuffle meta to data manager and supervisor | <!--

Thank you for your contribution!

Please review https://github.com/mars-project/mars/blob/master/CONTRIBUTING.rst before opening an issue.

-->

**Describe the bug**

A clear and concise description of what the bug is.

**To Reproduce**

To help us reproducing this bug, please provide information below:

1.... | closed | 2022-04-02T06:43:28Z | 2022-04-09T12:01:38Z | https://github.com/mars-project/mars/issues/2899 | [

"type: bug",

"mod: meta service"

] | chaokunyang | 5 |

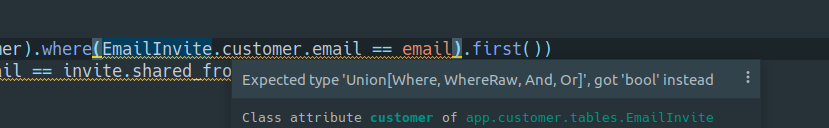

piccolo-orm/piccolo | fastapi | 436 | IDE type hints expects "Where", "WhereRaw", "And", "Or" | My editor doesn't like this statement, since it evaluates to a boolean type, which doesn't match the type signature `Combinable = t.Union["Where", "WhereRaw", "And", "Or"]`

... | open | 2022-02-16T09:13:48Z | 2025-01-21T21:35:40Z | https://github.com/piccolo-orm/piccolo/issues/436 | [] | trondhindenes | 13 |

saulpw/visidata | pandas | 2,703 | Create a plugin list somewhere | Is there a collection of plugins somewhere? I couldn't find a list.

It might be helpful if there was at least an "awesome"-style list cataloging what's out there. | open | 2025-02-09T20:17:19Z | 2025-02-12T03:49:25Z | https://github.com/saulpw/visidata/issues/2703 | [

"question"

] | deliciouslytyped | 3 |

getsentry/sentry | django | 87,279 | Filtering Transaction > Summary > Spans by releases returns no results | ### Environment

SaaS (https://sentry.io/)

### Steps to Reproduce

1. Navigate to `Transaction > Summary > Spans`.

2. Attempt to filter search by a well known release # with corresponding events.

### Expected Result

Span operations are shown that occur within the given release.

### Actual Result

Results seem to al... | open | 2025-03-18T15:15:15Z | 2025-03-18T15:17:11Z | https://github.com/getsentry/sentry/issues/87279 | [

"Bug"

] | bcoe | 1 |

hatchet-dev/hatchet | fastapi | 539 | docs: Kubernetes Quickstart examples are incomplete | The example workers found at https://docs.hatchet.run/self-hosting/kubernetes#run-your-first-worker do not work without major changes. It would be really useful for users to know which configuration properties they have to set in order to get it working.

In my case, the following config worked (typescript example):... | closed | 2024-05-29T15:13:07Z | 2024-06-14T13:58:36Z | https://github.com/hatchet-dev/hatchet/issues/539 | [] | kosmoz | 1 |

pytorch/vision | machine-learning | 8,390 | AttributeError: module 'torchvision.transforms' has no attribute 'v2' | ### 🐛 Describe the bug

I am getting the following error:

> AttributeError: module 'torchvision.transforms' has no attribute 'v2'

### Versions

I am using the following versions:

```python

torch version: 2.2.2, torchvision version: 0.17.2

``` | closed | 2024-04-20T12:46:43Z | 2024-04-29T09:42:37Z | https://github.com/pytorch/vision/issues/8390 | [] | ImahnShekhzadeh | 1 |

ranaroussi/yfinance | pandas | 1,288 | Debian - yfinance - ERROR: Could not build wheels for cryptography, which are required to inatall pyproject.toml-based projects | ### Still think it's a bug? YES, definitely.

- Info about your system:

- yfinance version - UNABLE TO INSTALL **ANY** yfinance version using pip3 install yfinance

Python: 3.7.3

platform: Linux-4.19.0-22-686-i686-with-debian-10.13

pip: n/a

setuptools: 65.6.3

setuptools_rust: 1.5.2

rustc: 1.66.0 (69f9c33d7... | closed | 2023-01-10T21:55:10Z | 2023-01-15T18:24:05Z | https://github.com/ranaroussi/yfinance/issues/1288 | [] | SymbReprUnlim | 14 |

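littlecodersh/ItChat | api | 965 | itchat drives memory usage to 100% within a day | I run multiple WeChat instances with itchat, and within a day the server's memory usage reaches 100%. Is this caused by the large amount of friend and group information? How can I turn off receiving this information? I only need to stay logged in and be able to send messages. I would appreciate an answer from someone experienced. | open | 2022-07-18T04:28:20Z | 2022-07-18T04:29:21Z | https://github.com/littlecodersh/ItChat/issues/965 | [] | cheduiwang | 2 |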
