repo_name (string, length 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, length 1-976) | body (string, length 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38-105) | labels (list, length 0-9) | user_login (string, length 1-39) | comments_count (int64, 0-452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

skypilot-org/skypilot | data-science | 4,981 | [GCP] Provisioning fails with TPU API error despite TPU not even used (and not wanted) | Skypilot 0.8

As you can see in the log, it successfully gets an L4 instance but still decides to contact the TPU API for reasons I don't understand; then it fails with an error because TPU is deactivated, and we definitely don't want to use it. The log is slightly cleaned:

> I 03-18 13:07:46 optimizer.py:752] Estimated cost: $0.8... | closed | 2025-03-18T15:19:33Z | 2025-03-19T18:31:50Z | https://github.com/skypilot-org/skypilot/issues/4981 | [] | shiosai | 3 |

huggingface/datasets | pytorch | 7,267 | Source installation fails on Macintosh with python 3.10 | ### Describe the bug

Hi,

Decord is a dev dependency that has not been maintained for a couple of years.

It does not have an ARM package available, which makes it uninstallable on non-Intel Macs.

My suggestion is to move to eva-decord (https://github.com/georgia-tech-db/eva-decord), which doesn't have this problem.

Happy to... | open | 2024-10-31T10:18:45Z | 2024-11-04T22:18:06Z | https://github.com/huggingface/datasets/issues/7267 | [] | mayankagarwals | 1 |

twtrubiks/django-rest-framework-tutorial | rest-api | 2 | Question about def get_days_since_created(self, obj) | Sorry, I'm new to Python, and there's a concept here I don't quite understand:

class MusicSerializer(serializers.ModelSerializer):

    days_since_created = serializers.SerializerMethodField()

    def get_days_since_created(self, obj):

        return (now() - obj.created).days

My understanding is that class MusicSerializer inherits from serializers.ModelSerializer, and then it uses its method get_days_since_c... | open | 2018-04-27T12:56:03Z | 2018-05-08T02:53:18Z | https://github.com/twtrubiks/django-rest-framework-tutorial/issues/2 | [] | ekils | 1 |
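In Django REST Framework, a `SerializerMethodField` named `days_since_created` is filled in by default by calling the serializer method `get_days_since_created`. The object being serialized is passed in as `obj`, and the field locates the method purely by that naming convention. The plain-Python sketch below imitates that lookup with `getattr` so it runs without Django. It is a simplified illustration, not DRF's actual code. `MethodField`, `ToySerializer`, and the `Music` dataclass are hypothetical stand-ins.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


class MethodField:
    """Hypothetical stand-in for a method field: it only remembers which method to call."""

    def __init__(self, method_name=None):
        self.method_name = method_name


class ToySerializer:
    """Hypothetical mini-serializer imitating how method fields are resolved."""

    def to_representation(self, obj):
        data = {}
        # Only fields declared directly on the concrete serializer class are considered.
        for name, attr in vars(type(self)).items():
            if isinstance(attr, MethodField):
                # Default convention: field "x" is computed by the method "get_x".
                method = getattr(self, attr.method_name or f"get_{name}")
                data[name] = method(obj)
        return data


@dataclass
class Music:
    created: datetime


class MusicSerializer(ToySerializer):
    days_since_created = MethodField()

    def get_days_since_created(self, obj):
        # obj is the instance being serialized.
        return (datetime.now() - obj.created).days


song = Music(created=datetime.now() - timedelta(days=3, hours=1))
print(MusicSerializer().to_representation(song))  # {'days_since_created': 3}
```

The subclass only has to define a method with the matching name. The base class's serialization loop does the calling.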

TencentARC/GFPGAN | deep-learning | 374 | TencentARC demo not working | The TencentARC demo is not working; please fix your TencentARC site. | open | 2023-05-08T02:45:38Z | 2023-05-08T02:45:38Z | https://github.com/TencentARC/GFPGAN/issues/374 | [] | jgfuj | 0 |

nvbn/thefuck | python | 1,071 | alias fuck="fuck -y" causes error when sourcing .zshrc | <!-- If you have any issue with The Fuck, sorry about that, but we will do what we

can to fix that. Actually, maybe we already have, so first thing to do is to

update The Fuck and see if the bug is still there. -->

<!-- If it is (sorry again), check if the problem has not already been reported and

if not, just op... | closed | 2020-03-27T16:36:12Z | 2020-03-29T14:05:56Z | https://github.com/nvbn/thefuck/issues/1071 | [] | orthodoX | 6 |

piskvorky/gensim | data-science | 3,081 | Memory leaks when using doc_topics in LdaSeqModel | <!--

**IMPORTANT**:

- Use the [Gensim mailing list](https://groups.google.com/forum/#!forum/gensim) to ask general or usage questions. Github issues are only for bug reports.

- Check [Recipes&FAQ](https://github.com/RaRe-Technologies/gensim/wiki/Recipes-&-FAQ) first for common answers.

Github bug reports that d... | open | 2021-03-18T15:29:10Z | 2021-03-18T17:46:06Z | https://github.com/piskvorky/gensim/issues/3081 | [] | nikchha | 0 |

GibbsConsulting/django-plotly-dash | plotly | 81 | Install from requirements.txt | This is an awesome project and I appreciate your work!

When installing with `pip install -r requirements.txt`, the install fails because django-plotly-dash's setup.py contains the line `import django_plotly_dash as dpd`, which requires dash, django, etc. to already be installed.

See point 6. https://packaging.python.org/guide... | closed | 2018-12-06T16:40:21Z | 2018-12-08T21:24:35Z | https://github.com/GibbsConsulting/django-plotly-dash/issues/81 | [

"bug",

"good first issue"

] | VenturaFranklin | 2 |
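One common way to avoid importing the package from its own `setup.py` is to read the version string from a file as plain text. This is one of the options on the Python Packaging User Guide's page about single-sourcing the package version. The sketch below assumes the version is stored on a line like `__version__ = "x.y.z"`. `parse_version`, `read_version`, and the file path in the comment are hypothetical, not django-plotly-dash's actual code.

```python
import re
from pathlib import Path

# Matches a line such as: __version__ = "1.2.3"  (single or double quotes)
_VERSION_RE = re.compile(r"""^__version__\s*=\s*['"]([^'"]+)['"]""", re.MULTILINE)


def parse_version(text):
    """Return the __version__ value found in source text, without executing it."""
    match = _VERSION_RE.search(text)
    if match is None:
        raise RuntimeError("no __version__ assignment found")
    return match.group(1)


def read_version(path):
    """Read a file and extract its __version__ string."""
    return parse_version(Path(path).read_text(encoding="utf-8"))


# In setup.py (hypothetical path):
# setup(name="django-plotly-dash", version=read_version("django_plotly_dash/version.py"), ...)
```

Because the file is only read as text, `setup.py` no longer needs dash or Django to be importable at install time.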

TheAlgorithms/Python | python | 12,046 | add quicksort under divide and conquer | ### Feature description

I would like to add the quicksort algorithm under divide and conquer. | closed | 2024-10-14T00:42:53Z | 2024-10-16T02:52:02Z | https://github.com/TheAlgorithms/Python/issues/12046 | [

"enhancement"

] | OmMahajan29 | 1 |
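For reference, divide-and-conquer quicksort works in three steps. It picks a pivot and splits the elements into those smaller than, equal to, and larger than the pivot. It then sorts the outer parts recursively and concatenates the results. The sketch below is a simple, non-in-place version for illustration only. It is not code from the repository, and the function name is illustrative.

```python
def quick_sort(items):
    """Sort a list with divide-and-conquer quicksort, returning a new list."""
    if len(items) <= 1:
        return list(items)
    pivot = items[len(items) // 2]  # divide: use the middle element as pivot
    smaller = [x for x in items if x < pivot]
    equal = [x for x in items if x == pivot]
    larger = [x for x in items if x > pivot]
    # conquer: sort each side recursively, then combine
    return quick_sort(smaller) + equal + quick_sort(larger)


print(quick_sort([3, 6, 1, 8, 2, 9, 2]))  # [1, 2, 2, 3, 6, 8, 9]
```

An in-place partitioning variant (Lomuto or Hoare) avoids the extra lists at the cost of slightly more intricate index handling.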

noirbizarre/flask-restplus | flask | 626 | Enforce the order of functions | How do I enforce the order of functions under a certain method? Even if I use Order Preservation, as described in the link below, the order of functions seems random.

https://flask-restplus.readthedocs.io/en/stable/quickstart.html?highlight=order

Thanks!

Isaac | open | 2019-04-12T15:49:50Z | 2019-04-12T15:49:50Z | https://github.com/noirbizarre/flask-restplus/issues/626 | [] | iwainstein | 0 |

automl/auto-sklearn | scikit-learn | 952 | Pipeline Export | Is there a way to export the best pipeline into python code and save to a file? | closed | 2020-09-17T19:56:03Z | 2021-11-17T10:51:41Z | https://github.com/automl/auto-sklearn/issues/952 | [

"question"

] | amybachir | 6 |

huggingface/datasets | deep-learning | 7,072 | nm | closed | 2024-07-25T17:03:24Z | 2024-07-25T20:36:11Z | https://github.com/huggingface/datasets/issues/7072 | [] | brettdavies | 0 | |

BeanieODM/beanie | pydantic | 599 | [BUG] Updating documents with a frozen BaseModel as field raises TypeError | **Describe the bug**

Relating a document X to document Y using `Link[]`, with document Y having a `pydantic.BaseModel` field that has `class Config: frozen = True`, will trigger the error `TypeError: <field> is immutable` in Pydantic.

**To Reproduce**

**edit** please check my comment below, there is an easier way... | closed | 2023-06-22T13:52:41Z | 2023-08-24T14:43:31Z | https://github.com/BeanieODM/beanie/issues/599 | [] | Mark90 | 6 |

pytorch/pytorch | deep-learning | 149,495 | DISABLED AotInductorTest.FreeInactiveConstantBufferCuda (build.bin.test_aoti_inference) | Platforms: inductor

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=AotInductorTest.FreeInactiveConstantBufferCuda&suite=build.bin.test_aoti_inference&limit=100) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/3901216756... | open | 2025-03-19T09:43:10Z | 2025-03-21T09:41:37Z | https://github.com/pytorch/pytorch/issues/149495 | [

"module: flaky-tests",

"skipped",

"oncall: pt2",

"oncall: export",

"module: aotinductor"

] | pytorch-bot[bot] | 11 |

KaiyangZhou/deep-person-reid | computer-vision | 259 | Can't train with GPU | With PyTorch 1.3.1, OSNet can't train on the GPU: it reports CUDA out of memory, but the GPU is not occupied. Bug? | closed | 2019-11-20T08:09:24Z | 2020-05-18T10:09:51Z | https://github.com/KaiyangZhou/deep-person-reid/issues/259 | [] | lovekittynine | 9 |

roboflow/supervision | deep-learning | 1,694 | Crash when filtering empty detections: xyxy shape (0, 0, 4). | Reproduction code:

```python

import supervision as sv

import numpy as np

CLASSES = [0, 1, 2]

prediction = sv.Detections.empty()

prediction = prediction[np.isin(prediction["class_name"], CLASSES)]

```

Error:

```

Traceback (most recent call last):

File "/Users/linasko/.settler_workspace/pr/supervis... | closed | 2024-11-28T11:31:18Z | 2024-12-04T10:15:33Z | https://github.com/roboflow/supervision/issues/1694 | [

"bug"

] | LinasKo | 0 |

odoo/odoo | python | 202,652 | [16.0] stock: _find_delivery_ids_by_lot is hanging (recursive calls) | ### Odoo Version

- [x] 16.0

- [ ] 17.0

- [ ] 18.0

- [ ] Other (specify)

### Steps to Reproduce

Steps to reproduce:

- Go to Inventory > Products > Variant Product

- Select a Product that is managed with lots

- Click on button "on hand" stock quantity

- Then double-click on a "Lot/Serial number" column value

Then it ... | open | 2025-03-20T09:52:54Z | 2025-03-20T10:09:34Z | https://github.com/odoo/odoo/issues/202652 | [] | matmicro | 1 |

jschneier/django-storages | django | 860 | Non-seekable streams can not be uploaded to S3 | I have a large (1gb or so) csv file that I want admin users to be able to upload that will then be processed by an offline task. Unfortunately, uploading that file to Django isn't straightforward - because Nginx has reasonable limits to prevent bad users from uploading massive files.

Long story short, I want users t... | closed | 2020-03-13T11:39:17Z | 2021-09-19T00:17:40Z | https://github.com/jschneier/django-storages/issues/860 | [] | jarshwah | 8 |

ageitgey/face_recognition | python | 705 | face_encodings make the code slow | * face_recognition version: 1.2.3

* Python version: 3.7

* Operating System: win10

cpu is i5-7300HQ

### Description

When detecting faces from the camera, face_encodings makes cv2.imshow() display frames slowly.

How can I make the code faster?

I tried model='cnn'; it is also slow.

I test t... | open | 2018-12-15T09:35:50Z | 2018-12-25T05:34:57Z | https://github.com/ageitgey/face_recognition/issues/705 | [] | guozhaojian | 2 |

onnx/onnx | pytorch | 6,359 | flash-attention onnx export. | When using Flash-Attention version 2.6.3, there is an issue with the ONNX file saved using torch.onnx.export.

code:

import sys

import torch

qkv=torch.load("/home/qkv.pth")

from modeling_intern_vit import FlashAttention

falsh=FlashAttention().eval().cuda()

out=falsh(qkv.cpu().cuda())

with torch.no_grad():... | closed | 2024-09-10T05:41:26Z | 2024-09-13T14:01:15Z | https://github.com/onnx/onnx/issues/6359 | [

"question"

] | scuizhibin | 3 |

lepture/authlib | django | 283 | authlib 0.15.1 does not send authorization header | **Describe the bug**

authlib 0.15.1 does not seem to send authentication token with the request

**Error Stacks**

with authlib 0.15.1

```

TRACE [2020-10-15 15:08:46] httpx._config - load_verify_locations cafile=/home/bartosz/.pyenv/versions/3.8.3/envs/quetz-heroku-test/lib/python3.8/site-packages/certifi/cac... | closed | 2020-10-15T13:28:57Z | 2020-10-18T06:40:07Z | https://github.com/lepture/authlib/issues/283 | [

"bug",

"client",

"httpx"

] | btel | 9 |

junyanz/pytorch-CycleGAN-and-pix2pix | computer-vision | 1,275 | After starting training, the model doesn't progress and I only see "learning rate 0.0002000 -> 0.0002000" | I tried to start training the model, but it doesn't progress and stays at the same point for a long time. Could you tell me the reason?

My GPU is an RTX 5000 (16 GB), with batch size 1.

| open | 2021-04-22T08:59:35Z | 2021-12-08T21:07:31Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1275 | [] | KnightWin123 | 7 |

axnsan12/drf-yasg | django | 727 | Undocumented TypeError: NetworkError when attempting to fetch resource. | I have an API, and when I try a request from the Swagger UI I get

But if I send the request from the browser, I get status 200 (OK). | closed | 2021-07-21T08:45:45Z | 2021-07-21T13:31:32Z | https://github.com/axnsan12/drf-yasg/issues/727 | [] | Barolina | 1 |

zihangdai/xlnet | tensorflow | 295 | XLNet, Transformer-XL attention score function problem | The function tf.einsum('ibnd,jbnd->ijbn', head_q, head_k), used to obtain attention scores in XLNet and Transformer-XL, doesn't seem to find correlations between all words. Can anyone explain the calculation? For example, if you have a 2×2 tensor [i, am], [a, boy]: i is i, a; am is am, boy; a is i, a; boy only calculates am, boy ... | open | 2023-11-07T02:15:40Z | 2023-11-07T02:15:40Z | https://github.com/zihangdai/xlnet/issues/295 | [] | wonjunchoi-arc | 0 |
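The subscript string `'ibnd,jbnd->ijbn'` pairs every query position `i` with every key position `j`, for each batch element `b` and head `n`, and sums over the shared head dimension `d`. The result therefore holds a score for every (i, j) pair, not only for matching positions. The plain-Python loop below spells out that contraction on nested lists laid out as `[position][batch][head][d_head]`. It mirrors einsum's semantics rather than XLNet's actual implementation, and `attention_scores` is a hypothetical helper name.

```python
def attention_scores(head_q, head_k):
    """score[i][j][b][n] = sum over d of head_q[i][b][n][d] * head_k[j][b][n][d].

    Equivalent to einsum('ibnd,jbnd->ijbn', head_q, head_k) on nested lists.
    """
    qlen, klen = len(head_q), len(head_k)
    bsz, n_head = len(head_q[0]), len(head_q[0][0])
    scores = [[[[0.0] * n_head for _ in range(bsz)] for _ in range(klen)] for _ in range(qlen)]
    for i in range(qlen):          # every query position...
        for j in range(klen):      # ...is scored against every key position
            for b in range(bsz):
                for n in range(n_head):
                    scores[i][j][b][n] = sum(
                        q * k for q, k in zip(head_q[i][b][n], head_k[j][b][n])
                    )
    return scores


# Two positions, batch size 1, one head, d_head = 2.
q = [[[[1.0, 0.0]]], [[[0.0, 1.0]]]]
k = [[[[2.0, 3.0]]], [[[4.0, 5.0]]]]
s = attention_scores(q, k)
print([[s[i][j][0][0] for j in range(2)] for i in range(2)])  # [[2.0, 4.0], [3.0, 5.0]]
```

Each row of the printed matrix is one query position scored against both key positions. Attention masks, if any, are applied to these scores afterwards, not by the einsum itself.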

JaidedAI/EasyOCR | pytorch | 1,209 | supported optimizer engine for optimization in training | @rkcosmos I just saw the list of optimizers currently supported by EasyOCR for training a custom model. Is there any reason we only have these two optimizers? If not, I can help by making a PR that adds more optimizers for more robust optimization of EasyOCR models.

https://github.com/JaidedAI/EasyOCR/blob/c999505ef6... | open | 2024-02-03T08:17:47Z | 2024-02-03T08:17:47Z | https://github.com/JaidedAI/EasyOCR/issues/1209 | [] | pavaris-pm | 0 |

piskvorky/gensim | data-science | 3,543 | The model architecture of word2vec | Excuse me, I'd like to know the model architecture of gensim.models.Word2Vec (CBOW).

It seems that the architecture is not as simple as an embedding layer, a hidden (linear) layer, and a softmax. | closed | 2024-07-10T08:18:09Z | 2024-07-10T19:01:13Z | https://github.com/piskvorky/gensim/issues/3543 | [] | LiuBurger | 1 |

microsoft/nni | pytorch | 5,483 | TypeError: __new__() missing 1 required positional argument: 'task' | **Describe the issue**: Hello, developers. I'm a newbie just getting started learning NAS. When I run the tutorial file in the notebook, I get an error. I hope you can help me solve this problem:

File "search_2.py", line 59, in <module>

max_epochs=5)

File "D:\anaconda3\envs\venv_copy\lib\site-packages\nn... | closed | 2023-03-28T01:18:25Z | 2023-03-29T02:38:04Z | https://github.com/microsoft/nni/issues/5483 | [] | zzfer490 | 3 |

jazzband/django-oauth-toolkit | django | 1,074 | Add missing migration caused by #1020 | Discovered by @max-wittig:

```sh

poetry run python manage.py makemigrations --check --dry-run

Migrations for 'oauth2_provider':

/Users/max/Library/Caches/pypoetry/virtualenvs/codeapps-wUwe-wZH-py3.10/lib/python3.10/site-packages/oauth2_provider/migrations/0006_alter_application_client_secret.py

- Alter field... | closed | 2022-01-07T20:00:27Z | 2022-01-07T23:03:08Z | https://github.com/jazzband/django-oauth-toolkit/issues/1074 | [

"bug"

] | n2ygk | 1 |

horovod/horovod | deep-learning | 3,698 | reducescatter() and grouped_reducescatter() crash for scalar inputs | `hvd.reducescatter(3.14)` currently leads to a C++ assertion failure in debug builds or a segmentation fault in release builds.

There should either be a clear error message or it should behave similarly to `hvd.reducescatter([3.14])`, which returns a one-element tensor on the root rank and an empty tensor on the ot... | closed | 2022-09-12T18:00:01Z | 2022-09-20T06:30:14Z | https://github.com/horovod/horovod/issues/3698 | [

"bug"

] | maxhgerlach | 0 |

collerek/ormar | pydantic | 343 | Foreign Key as int | Hello, your library is great, but it has one big drawback: the output of foreign keys in GET requests. It is very inconvenient that in POST requests you send an int but receive a dict. Is it possible to add a `<field_id>` field next to your key, as in SQLAlchemy, so that requests and responses are the same?

I c... | closed | 2021-09-12T10:51:43Z | 2021-09-14T20:21:57Z | https://github.com/collerek/ormar/issues/343 | [

"enhancement"

] | artel1992 | 1 |

gradio-app/gradio | data-science | 10,144 | autofocus in multimodal chatbot takes focus away from textbox in additional_inputs | ### Describe the bug

When `multimodal=True` is set, when I try to type something in the textbox in `additional_inputs` of `gr.ChatInterface`, the focus shifts to the main textbox right after pressing the first key. This doesn't happen with `multimodal=False`.

### Have you searched existing issues? 🔎

- [X] I have s... | closed | 2024-12-06T06:07:05Z | 2024-12-11T14:16:22Z | https://github.com/gradio-app/gradio/issues/10144 | [

"bug"

] | hysts | 1 |

benbusby/whoogle-search | flask | 523 | App crashes on fly.io deployment. | The Docker image is deployed successfully and the landing page loads, but when a search request is sent, the app crashes and returns a 502 error.

I think that due to the limited RAM availability the app cras... | closed | 2021-11-04T21:07:13Z | 2021-11-07T18:26:10Z | https://github.com/benbusby/whoogle-search/issues/523 | [] | doloresjose | 1 |

K3D-tools/K3D-jupyter | jupyter | 40 | Use instancing to render glyphs more efficiently | I see in `vectors.js` that you do:

```

useHead ? new THREE.Geometry().copy(singleConeGeometry) : null,

```

i.e. you make a copy of the cone head for each glyph.

A possible optimization is to use instanced rendering, avoiding duplication of the glyph model.

https://threejs.org/docs/#api/core/Instan... | closed | 2017-05-31T07:05:41Z | 2017-05-31T08:41:54Z | https://github.com/K3D-tools/K3D-jupyter/issues/40 | [] | martinal | 2 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 288 | during Encoder train, MemoryError happened sometimes | Hi,

below is log:

Average execution time over 10 steps:

Blocking, waiting for batch (threaded) (10/10): mean: 8459ms std: 16934ms

Data to cuda (10/10): mean: 8ms std: 6ms

Forward pass (10/10): mean: 7ms std: 3ms

Loss (10/10): ... | closed | 2020-02-21T02:28:33Z | 2020-08-13T06:11:55Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/288 | [] | DatanIMU | 2 |

pytest-dev/pytest-cov | pytest | 77 | pytest-cov makes test fail by putting a temporary file into cwd | I have tests that check whether some specific files have been extracted into the cwd.

They fail when using pytest-cov because a .coverage\* file gets created there.

Is there any way to avoid this?

| closed | 2015-08-08T01:10:07Z | 2015-10-02T18:31:55Z | https://github.com/pytest-dev/pytest-cov/issues/77 | [

"bug"

] | ThomasWaldmann | 11 |

cleanlab/cleanlab | data-science | 383 | CI: check docs for newly added source code files | - [ ] Add CI check that documentation index pages on docs.cleanlab.ai include new source code files which have been added in a new commit. Otherwise somebody may push commit with new source code files, but the documentation for them will never appear on docs.cleanlab.ai.

Ideally we also need to edit less docs/ files a... | open | 2022-08-31T09:11:19Z | 2024-12-25T20:09:56Z | https://github.com/cleanlab/cleanlab/issues/383 | [

"enhancement",

"good first issue",

"help-wanted"

] | jwmueller | 2 |
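A CI check like the one described could compare the modules under the package directory against the names mentioned in the docs source files. The sketch below rests on several assumptions: the package is a directory of `.py` files, and the docs are reStructuredText files that mention modules by dotted name. The `find_undocumented_modules` helper, the directory layout, and the substring-matching rule are all hypothetical, not cleanlab's actual docs setup.

```python
from pathlib import Path


def find_undocumented_modules(package_dir, docs_dir):
    """List dotted module names under package_dir that no docs_dir/**/*.rst file mentions."""
    package_dir, docs_dir = Path(package_dir), Path(docs_dir)
    docs_text = "\n".join(
        p.read_text(encoding="utf-8") for p in sorted(docs_dir.rglob("*.rst"))
    )
    missing = []
    for py_file in sorted(package_dir.rglob("*.py")):
        if py_file.name.startswith("_"):  # skip __init__.py and private modules
            continue
        rel = py_file.relative_to(package_dir.parent).with_suffix("")
        dotted = ".".join(rel.parts)  # e.g. "mypkg/sub/mod.py" -> "mypkg.sub.mod"
        # Plain substring check: a name that is a prefix of a documented name
        # (e.g. "pkg.a" vs "pkg.ab") would wrongly count as documented.
        if dotted not in docs_text:
            missing.append(dotted)
    return missing
```

A CI step could call this with the package and docs directories and fail the job whenever the returned list is non-empty.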

idealo/image-super-resolution | computer-vision | 218 | Can't run Dockerfile.gpu. AttributeError: module 'google.protobuf.internal.containers' has no attribute 'MutableMapping' | Running command

```

docker run -v $(pwd)/data/:/home/isr/data -v $(pwd)/weights/:/home/isr/weights -v $(pwd)/config.yml:/home/isr/config.yml -it isr -p -d -c config.yml

```

Gets error message

```
Traceback (most recent call last):

File "/home/isr/ISR/assistant.py", line 90, in <module>

prediction=args['pred... | open | 2021-11-08T22:39:39Z | 2022-02-13T17:53:46Z | https://github.com/idealo/image-super-resolution/issues/218 | [] | zelkourban | 0 |

proplot-dev/proplot | data-visualization | 322 | Compatible with norm colorbar ticks in matplotlib3.5+ | ### Description

The colorbar ticks for manual levels are wrong in matplotlib 3.5+.

### Steps to reproduce

```python

import proplot as pplt

import matplotlib

import numpy as np

crf_bounds = np.array([0, 0.7, 0.75, 0.8, 0.85, 0.9, 0.95, 0.97, 0.98, 0.99, 1.0])

norm = matplotlib.colors.BoundaryNorm(boun... | closed | 2022-01-14T14:16:40Z | 2022-01-15T02:43:15Z | https://github.com/proplot-dev/proplot/issues/322 | [

"bug",

"external issue"

] | zxdawn | 6 |

pydata/pandas-datareader | pandas | 655 | Cannot import name 'StringIO' from 'pandas.compat' | Conflict with Pandas 0.25.0 and pandas-datareader 0.7.0.

On Python 3.7.3

import pandas_datareader as pdr

raises exception:

/usr/local/lib/python3.7/dist-packages/pandas_datareader/base.py in <module>

9 from pandas import read_csv, concat

10 from pandas.io.common import urlencode

---> 11 from panda... | closed | 2019-07-21T12:03:56Z | 2019-09-09T06:43:24Z | https://github.com/pydata/pandas-datareader/issues/655 | [] | coulanuk | 14 |
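`pandas.compat.StringIO` was a Python 2/3 compatibility shim, which on Python 3 was an alias of `io.StringIO`. pandas 0.25.0 no longer provides it, so pandas-datareader 0.7.0 fails at import time. The cleaner fix is a pandas-datareader release compatible with your pandas version. For your own code that relied on the alias, a fallback import like this sketch works across pandas versions.

```python
try:
    # Older pandas exposed this shim; pandas 0.25.0 removed it.
    from pandas.compat import StringIO
except ImportError:  # also covers pandas not being installed at all
    # On Python 3 the shim was io.StringIO, so use it directly.
    from io import StringIO

buffer = StringIO("a,b\n1,2\n")
print(buffer.read().splitlines())  # ['a,b', '1,2']
```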

man-group/arctic | pandas | 692 | Fix flaky integration test: test_multiprocessing_safety | This test seems to be quite flaky; I have seen it fail half the time. I will check whether there is an underlying issue or whether the test itself is just flaky.

LOG:

@pytest.mark.timeout(600)

def test_multiprocessing_safety(mongo_host, library_name):

# Create/initialize library at the parent process, t... | closed | 2019-01-14T14:32:03Z | 2019-01-30T16:28:00Z | https://github.com/man-group/arctic/issues/692 | [] | shashank88 | 5 |

microsoft/nlp-recipes | nlp | 186 | [BUG] Problem when activating conda with an ADO pipeline on a DSVM | ### Description

<!--- Describe your bug in detail -->

When trying to execute the cpu tests https://github.com/microsoft/nlp/blob/staging/tests/ci/cpu_unit_tests_linux.yml, the conda env can't be activated. In the [documentation](https://docs.microsoft.com/en-us/azure/devops/pipelines/languages/anaconda?view=azure-dev... | closed | 2019-07-23T12:56:26Z | 2019-07-23T16:28:33Z | https://github.com/microsoft/nlp-recipes/issues/186 | [

"bug"

] | miguelgfierro | 5 |

aleju/imgaug | deep-learning | 27 | Image Translation | I am not sure how to ask this question properly, so bear with me.

I am going to **try** to use an illustrative example:

1. Consider a door on its hinges. Let's say the door opens away from you (so that you have to push the door open rather than pull it towards you). Picture the door rotating on its hinge away from you... | closed | 2017-04-01T18:05:19Z | 2017-04-05T18:04:27Z | https://github.com/aleju/imgaug/issues/27 | [] | pGit1 | 4 |

coqui-ai/TTS | pytorch | 2,522 | [Feature request] | <!-- Welcome to the 🐸TTS project!

We are excited to see your interest, and appreciate your support! --->

**🚀 Feature Description**

Hi there,

Is there a chance you could make an angrier and, especially, louder-sounding voice?

<!--A clear and concise description of what the problem is. Ex. I'm always frustrated whe... | closed | 2023-04-14T20:05:11Z | 2023-04-21T09:59:06Z | https://github.com/coqui-ai/TTS/issues/2522 | [

"feature request"

] | nicenice134 | 0 |

onnx/onnx | scikit-learn | 6,432 | export T5 model to onnx | # Bug Report

Hello,

I am using the https://huggingface.co/Ahmad/parsT5-base model.

I want to export it to ONNX using `python -m transformers.onnx --model="Ahmad/parsT5-base" onnx/`,

but I get this error:

```

/usr/local/lib/python3.10/dist-packages/torchvision/io/image.py:13: UserWarning: Failed to load image Python e... | closed | 2024-10-08T21:08:39Z | 2024-10-08T21:51:41Z | https://github.com/onnx/onnx/issues/6432 | [] | arefekh | 1 |

CTFd/CTFd | flask | 2,687 | Timed Release Challenges | I have wanted to implement this for some time.

Challenges that automatically release themselves at a specific time. | open | 2024-12-30T19:59:53Z | 2024-12-30T19:59:54Z | https://github.com/CTFd/CTFd/issues/2687 | [] | ColdHeat | 0 |

wandb/wandb | tensorflow | 8,623 | [Bug]: wandb fails to log power on MI300x GPUs | ### Describe the bug

When extracting power consumption from `rocm-smi -a --json`, wandb tries to match the field [`Average Graphics Package Power (W)`](https://github.com/wandb/wandb/blob/3f841abaa6d9f4ca814f61901b3f344938a59c3c/core/pkg/monitor/gpu_amd.go#L279). However, some newer AMD GPUs (e.g., MI300x) instead rep... | closed | 2024-10-15T18:29:14Z | 2024-10-17T22:54:58Z | https://github.com/wandb/wandb/issues/8623 | [

"ty:bug",

"a:sdk",

"c:sdk:system-metrics"

] | ntenenz | 3 |

nl8590687/ASRT_SpeechRecognition | tensorflow | 19 | How to recognize dialects | For example, southern Chinese dialects: what do I need to do to recognize them? | closed | 2018-06-12T08:48:46Z | 2022-07-05T06:31:50Z | https://github.com/nl8590687/ASRT_SpeechRecognition/issues/19 | [] | a2741432 | 3 |

developmentseed/lonboard | data-visualization | 762 | Animate `ColumnLayer` | **Is your feature request related to a problem? Please describe.**

I'm currently using lonboard to animate geospatial data with the `TripsLayer` and it's working great. I'd like to extend this to animate statistics about point locations using the `ColumnLayer`, but it doesn't currently support animation.

**Describe th... | open | 2025-02-25T06:44:31Z | 2025-03-05T16:06:24Z | https://github.com/developmentseed/lonboard/issues/762 | [] | Jake-Moss | 1 |

OpenInterpreter/open-interpreter | python | 610 | Unsupported Language: powershell , Win11 | ### Describe the bug

I get this error whenever any of the models outputs a PowerShell script.

```

Traceback (most recent call last):

File

"C:\Users\Dave\AppData\Local\Programs\Python\Python310\lib\site-packages\interpreter\code_interpreters\create_code_i

nterpreter.py", line 8, in create_code_interpreter

... | closed | 2023-10-09T19:44:48Z | 2023-10-11T18:26:26Z | https://github.com/OpenInterpreter/open-interpreter/issues/610 | [

"Bug"

] | DaveChini | 2 |

python-gitlab/python-gitlab | api | 2,706 | Wiki subpage moved up when updated | ## Description of the problem, including code/CLI snippet

Hi, I'm using the library inside a Python script, and when I save a wiki subpage, it is moved up.

```python

gwuc_page: ProjectWiki = glp.wikis.get('my/slug')

# do some work

gwuc_page.save()

```

## Expected Behavior

The page is saved.

## Actu... | closed | 2023-10-26T16:40:40Z | 2025-03-03T01:48:37Z | https://github.com/python-gitlab/python-gitlab/issues/2706 | [

"upstream"

] | trotro | 7 |

tfranzel/drf-spectacular | rest-api | 1,145 | Typing issue when `extend_schema` contained in dict literal | **Describe the bug**

I have a module where I maintain all `extend_schema` definitions like so:

```python

from drf_spectacular.utils import extend_schema

a = extend_schema(summary="a")

b = extend_schema(summary="b")

f = {"a": a, "b": b}

```

Checking types gives following errors:

```

main.py:6: error:... | closed | 2024-01-18T18:09:47Z | 2024-07-31T20:54:40Z | https://github.com/tfranzel/drf-spectacular/issues/1145 | [] | realsuayip | 2 |

open-mmlab/mmdetection | pytorch | 11,989 | Can parallel inference be used in dino detection? | Can parallel inference be used in dino detection? I based it on image_demo.py and tried using the batch_size parameter, but it didn't work | open | 2024-10-10T06:34:31Z | 2024-10-10T06:34:46Z | https://github.com/open-mmlab/mmdetection/issues/11989 | [] | zhoulin2545210131 | 0 |

seleniumbase/SeleniumBase | web-scraping | 2,563 | Possibility of integrating SeleniumBase in .NET? | I normally use the standard Selenium library, but when I needed Google automation, or on sites behind Cloudflare, only SB worked for me.

However, I mainly write for .NET Framework, using Visual Basic as the programming language.

Wrapping it with PyInstaller looks like a workaround for the moment, but it is harder to control.

Are there any SB binding... | closed | 2024-03-04T13:05:35Z | 2025-03-06T03:03:12Z | https://github.com/seleniumbase/SeleniumBase/issues/2563 | [

"external",

"UC Mode / CDP Mode"

] | graysuit | 4 |

pallets/flask | flask | 5,275 | Pallets | <!--

Replace this comment with a description of what the feature should do.

Include details such as links to relevant specs or previous discussions.

-->

<!--

Replace this comment with an example of the problem which this feature

would resolve. Is this problem solvable without changes to Flask, such

as by subclassing o... | closed | 2023-10-02T07:19:26Z | 2023-10-17T00:05:47Z | https://github.com/pallets/flask/issues/5275 | [] | markjpT | 0 |

huggingface/datasets | pandas | 6,729 | Support zipfiles that span multiple disks? | See https://huggingface.co/datasets/PhilEO-community/PhilEO-downstream

The dataset viewer gives the following error:

```

Error code: ConfigNamesError

Exception: BadZipFile

Message: zipfiles that span multiple disks are not supported

Traceback: Traceback (most recent call last):

F... | closed | 2024-03-11T21:07:41Z | 2024-06-26T05:08:59Z | https://github.com/huggingface/datasets/issues/6729 | [

"enhancement",

"question"

] | severo | 6 |

iperov/DeepFaceLab | machine-learning | 835 | New bug of train.sh? | I followed the Linux installation instructions. There is a numpy package in my conda environment, but when I ran train.sh it still told me "no module named numpy".

I am doing DL work, so I have been using numpy for a long time.

Why did this happen?

Thanks! | closed | 2020-07-15T12:30:07Z | 2020-07-24T07:45:42Z | https://github.com/iperov/DeepFaceLab/issues/835 | [] | Joevaen | 6 |

bmoscon/cryptofeed | asyncio | 95 | book delta support in backends | Support book deltas in the backends as storing complete books for some of the larger exchanges is somewhat unfeasible due to the volume of data (4000+ levels per side, 100s of updates a second). | closed | 2019-05-20T22:45:39Z | 2019-05-23T22:22:05Z | https://github.com/bmoscon/cryptofeed/issues/95 | [] | bmoscon | 1 |
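Storing deltas instead of full snapshots works because the full book can always be rebuilt by replaying the deltas onto the last stored snapshot. A minimal sketch of that replay follows. It assumes a hypothetical delta format of `(side, price, size)` tuples, where a size of 0 removes the price level. This is not cryptofeed's actual data format.

```python
def apply_deltas(book, deltas):
    """Apply (side, price, size) updates to a {'bid': {...}, 'ask': {...}} book in place."""
    for side, price, size in deltas:
        levels = book[side]
        if size == 0:
            levels.pop(price, None)  # a zero size deletes the price level
        else:
            levels[price] = size     # otherwise insert or overwrite the level
    return book


snapshot = {"bid": {100.0: 2.0, 99.5: 1.0}, "ask": {100.5: 3.0}}
apply_deltas(snapshot, [("bid", 99.5, 0), ("ask", 101.0, 0.5), ("bid", 100.0, 1.5)])
print(snapshot)  # {'bid': {100.0: 1.5}, 'ask': {100.5: 3.0, 101.0: 0.5}}
```

A backend would then persist the occasional snapshot plus the stream of small delta records, instead of thousands of levels per update.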

autogluon/autogluon | data-science | 4,808 | [BUG] [timeseries] TimeSeriesPredictor.feature_importance outputting 0 when covariate is used by regressor | **Bug Report Checklist**

<!-- Please ensure at least one of the following to help the developers troubleshoot the problem: -->

- [x] I provided code that demonstrates a minimal reproducible example. <!-- Ideal, especially via source install -->

- [x] I confirmed bug exists on the latest mainline of AutoGluon via sour... | closed | 2025-01-17T08:09:53Z | 2025-01-29T16:31:16Z | https://github.com/autogluon/autogluon/issues/4808 | [

"bug",

"module: timeseries"

] | canerturkmen | 1 |

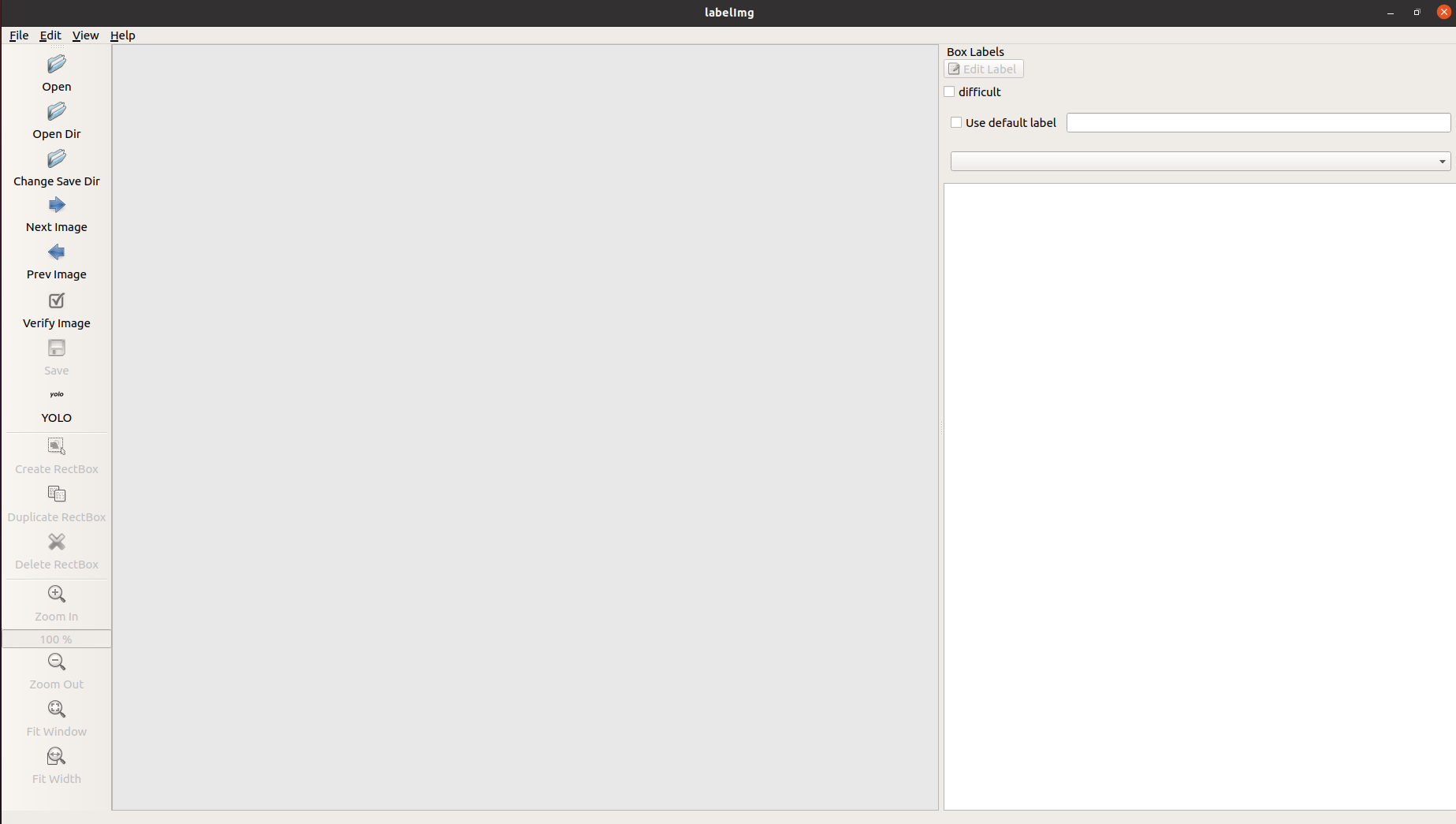

HumanSignal/labelImg | deep-learning | 706 | File explorer is missing for no reason | Hello, I was using labelimg and suddenly the file explorer is missing.

What do I have to do to see this explorer again?

Thanks in advance

- **OS:** Ubuntu 20.04

- **PyQt version:** Python3.8

| closed | 2021-02-11T15:23:15Z | 2021-03-25T02:06:53Z | https://github.com/HumanSignal/labelImg/issues/706 | [] | hdnh2006 | 3 |

rougier/numpy-100 | numpy | 8 | examples 32 and 33 are identical | and this one is really cool BTW!

| closed | 2016-03-08T23:03:33Z | 2016-03-09T06:02:21Z | https://github.com/rougier/numpy-100/issues/8 | [] | ev-br | 1 |

mouredev/Hello-Python | fastapi | 422 | 全球网上赌博最佳靠谱选择靠谱的游戏下载APP平台推荐 | 十大赌博靠谱网络平台娱乐游戏网址:376838.com

游戏开户经理 薇:xiaolu460570 飞机:lc15688正规实体平台-创联娱乐实体网赌平台-创联游戏联盟实体网赌平台推荐-十大亚洲博彩娱乐-亚洲赌博平台线上开户-线上赌博/APP-十大实体赌博平台推荐-实体网络赌博平台-实体博彩娱乐创联国际-创联娱乐-创联游戏-全球十大网赌正规平台在线推荐! 推荐十大赌博靠谱平台-十大赌博靠谱信誉的平台 国际 东南亚线上网赌平台 合法网上赌场,中国网上合法赌场,最好的网上赌场 网赌十大快3平台 - 十大网赌平台推荐- 网赌最好最大平台-十大最好的网赌平台 十大靠谱网赌平台- 网上赌搏网站十大排行 全球最大网赌正规平台: 十大,资金安全有... | closed | 2025-03-02T07:33:33Z | 2025-03-02T11:12:18Z | https://github.com/mouredev/Hello-Python/issues/422 | [] | 376838 | 0 |

PablocFonseca/streamlit-aggrid | streamlit | 132 | Component error: when using double forward slash in JsCode | I encountered an error when using AgGrid with `allow_unsafe_jscode` set to `True` and passing `cellRenderer` for one of the columns:

```

Component Error

`` literal not terminated before end of script

```

I am constructing a custom anchor tag based on `params.value` and using `https://` there was resulting in er... | closed | 2022-08-16T09:56:09Z | 2022-08-27T19:55:14Z | https://github.com/PablocFonseca/streamlit-aggrid/issues/132 | [] | DharmeshPandav | 1 |

google-research/bert | nlp | 385 | Does `<s>` represent whitespace in the Chinese pretrained vocabulary? | Because the Chinese pretrained vocab does not include all English words, I split English words into characters. How, then, do I represent whitespace between English words? | open | 2019-01-22T09:17:56Z | 2021-06-29T06:30:07Z | https://github.com/google-research/bert/issues/385 | [] | lorashen | 1 |

statsmodels/statsmodels | data-science | 8,918 | How can I use version 0.15.0? | I'd like to use the fact that SARIMAX in 0.15.0 supports a maxiter argument. When will 0.15.0 be released? In the meantime, is there a way for me to use the code in 0.15.0? | closed | 2023-06-19T17:16:13Z | 2023-10-27T09:57:28Z | https://github.com/statsmodels/statsmodels/issues/8918 | [

"comp-tsa-statespace",

"question"

] | dblim | 1 |

FlareSolverr/FlareSolverr | api | 1,227 | TimeOut during solving | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I have read the Dis... | closed | 2024-06-21T16:33:41Z | 2024-06-21T19:22:24Z | https://github.com/FlareSolverr/FlareSolverr/issues/1227 | [

"duplicate"

] | bozoweed | 7 |

seleniumbase/SeleniumBase | pytest | 2,481 | Getting "selenium.common.exceptions.WebDriverException: Message: disconnected: Unable to receive message from renderer" in Driver(uc=True) | I am getting `"selenium.common.exceptions.WebDriverException: Message: disconnected: Unable to receive message from renderer"` in `Driver(uc=True)`

A Google search suggests adding `--no-sandbox` and `--disable-dev-shm-usage`, but I can see they are already added in [line](https://github.com/seleniumbase/SeleniumBase/bl... | closed | 2024-02-11T06:28:14Z | 2024-11-05T16:40:58Z | https://github.com/seleniumbase/SeleniumBase/issues/2481 | [

"duplicate",

"invalid usage",

"UC Mode / CDP Mode"

] | iamumairayub | 11 |

Esri/arcgis-python-api | jupyter | 1,755 | Error displaying widget: model not found for arcgis 2.1.0 | **Describe the bug**

When installing arcgis=2.1.0, jupyterlab=2 and nodejs=18.16.0=ha637b67_1, get an "error displaying widget: model not found" error when trying to display a map.

**To Reproduce**

Steps to reproduce the behavior:

```python

#I have the esri channel in my conda channel list

conda create -n arcgis2... | closed | 2024-02-06T20:49:13Z | 2024-02-09T12:45:36Z | https://github.com/Esri/arcgis-python-api/issues/1755 | [

"As-Designed"

] | timhaverland-noaa | 3 |

NullArray/AutoSploit | automation | 664 | Unhandled Exception (d2d3bf199) | Autosploit version: `3.1`

OS information: `Linux-4.17.0-kali3-amd64-x86_64-with-Kali-kali-rolling-kali-rolling`

Running context: `autosploit.py`

Error message: `object of type 'NoneType' has no len()`

Error traceback:

```

Traceback (most recent call):

File "/root/Autosploit/autosploit/main.py", line 116, in main

t... | closed | 2019-04-16T19:16:59Z | 2019-04-17T18:33:03Z | https://github.com/NullArray/AutoSploit/issues/664 | [] | AutosploitReporter | 0 |

aimhubio/aim | data-visualization | 3,181 | RuntimeError: dictionary keys changed during iteration | ## 🐛 Bug

When using ray.tune for hyperparameter optimization and invoking the AimLoggerCallback, the following error occurs:

```

Traceback (most recent call last):

File "/root/data/mamba-reid/tst.py", line 92, in <module>

results = tuner.fit()

^^^^^^^^^^^

File "/root/data/anaconda3/lib/pyt... | closed | 2024-07-08T01:07:41Z | 2024-12-05T13:58:25Z | https://github.com/aimhubio/aim/issues/3181 | [

"type / bug",

"help wanted"

] | GuHongyang | 2 |

pytorch/pytorch | machine-learning | 149,349 | DISABLED test_unshard_async (__main__.TestFullyShardUnshardMultiProcess) | Platforms: inductor

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_unshard_async&suite=TestFullyShardUnshardMultiProcess&limit=100) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/38913895101).

Over the past 3 hou... | open | 2025-03-17T21:40:56Z | 2025-03-24T18:47:44Z | https://github.com/pytorch/pytorch/issues/149349 | [

"oncall: distributed",

"triaged",

"module: flaky-tests",

"skipped",

"module: fsdp",

"oncall: pt2"

] | pytorch-bot[bot] | 2 |

donnemartin/system-design-primer | python | 278 | Step 2: the links for the "review the scalability articles" step don't open | P.S.: I'm already getting around the Great Firewall | open | 2019-05-08T11:19:47Z | 2019-09-11T06:20:26Z | https://github.com/donnemartin/system-design-primer/issues/278 | [

"needs-review",

"response-needed"

] | baitian77 | 5 |

CorentinJ/Real-Time-Voice-Cloning | python | 1,026 | synthesizer.pt size changes after fine-tuning | Hey,

I'm fine-tuning the synthesizer model on my own dataset, which I organized in the LibriSpeech hierarchy (dataSet -> Speaker_id -> segment_id -> audio.txt and audio.flac).

However, after one epoch, the size of the saved synthesizer model (synthesizer.pt) has changed, causing the program to not use this model an... | open | 2022-02-24T15:05:17Z | 2022-02-24T15:05:17Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1026 | [] | matnshrn | 0 |

WZMIAOMIAO/deep-learning-for-image-processing | pytorch | 501 | feature_pyramid_network.py | When I run the feature_pyramid_network.py module, I get an error and don't know how to fix it.

Error output:

from .roi_head import RoIHeads

ImportError: attempted relative import with no known parent package | closed | 2022-03-26T07:56:45Z | 2022-03-29T08:34:04Z | https://github.com/WZMIAOMIAO/deep-learning-for-image-processing/issues/501 | [] | pace0120 | 1 |

NullArray/AutoSploit | automation | 539 | Unhandled Exception (a21f12a70) | Autosploit version: `3.0`

OS information: `Linux-3.10.0-957.5.1.el7.x86_64-x86_64-with-centos-7.6.1810-Core`

Running context: `autosploit.py -a -q ***`

Error message: `'access_token'`

Error traceback:

```

Traceback (most recent call):

File "/dev/snd/Autosploit/autosploit/main.py", line 110, in main

AutoSploitParse... | closed | 2019-03-05T18:35:52Z | 2019-03-06T01:26:27Z | https://github.com/NullArray/AutoSploit/issues/539 | [] | AutosploitReporter | 0 |

coqui-ai/TTS | deep-learning | 3,143 | [Bug] AttributeError: 'NoneType' object has no attribute 'load_wav' when using tts_with_vc_to_file | ### Describe the bug

Fix #3108 breaks `tts_with_vc_to_file` at least with VITS.

See: https://github.com/coqui-ai/TTS/blob/6fef4f9067c0647258e0cd1d2998716565f59330/TTS/api.py#L463

By changing the line from:

`self.tts_to_file(text=text, speaker=None, language=language, file_path=fp.name,speaker_wav=speaker_wav)... | closed | 2023-11-05T21:22:48Z | 2023-11-24T11:26:39Z | https://github.com/coqui-ai/TTS/issues/3143 | [

"bug"

] | pprobst | 4 |

tartiflette/tartiflette | graphql | 114 | Should execute only the specified operation | A GraphQL request containing multiple operation definitions shouldn't execute all of them, only the one specified (cf. [GraphQL spec](https://facebook.github.io/graphql/June2018/#ExecuteRequest())):

* **Tartiflette version:** 0.3.6

* **Python version:** 3.6.1

* **Executed in docker:** No

* **Is a regression fro... | closed | 2019-02-05T17:07:40Z | 2019-02-06T12:55:07Z | https://github.com/tartiflette/tartiflette/issues/114 | [

"bug"

] | Maximilien-R | 0 |

biolab/orange3 | scikit-learn | 6,609 | Widget help function no longer working | <!--

Thanks for taking the time to report a bug!

If you're raising an issue about an add-on (i.e., installed via Options > Add-ons), raise an issue in the relevant add-on's issue tracker instead. See: https://github.com/biolab?q=orange3

To fix the bug, we need to be able to reproduce it. Please answer the following... | closed | 2023-10-25T13:44:18Z | 2024-01-26T11:20:17Z | https://github.com/biolab/orange3/issues/6609 | [

"bug report"

] | wvdvegte | 8 |

qubvel-org/segmentation_models.pytorch | computer-vision | 762 | SegFormer | Kindly requesting you add segformer please :) | closed | 2023-05-19T10:12:24Z | 2023-05-21T01:30:59Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/762 | [] | chefkrym | 3 |

noirbizarre/flask-restplus | api | 523 | wrong swagger.json path | http://dev.py.haizb.cn/gifts/1/

This is my development test server, and my code and documentation are there.

As you can see, swagger.json can't be loaded because its absolute path is http://127.0.0.1:10000/gifts/1/swagger.json.

I use nginx as a proxy in front of uWSGI.

My nginx.conf looks like this:

> location / {

proxy_pas... | closed | 2018-09-13T14:44:18Z | 2019-03-27T09:34:38Z | https://github.com/noirbizarre/flask-restplus/issues/523 | [

"duplicate",

"documentation"

] | dengqianyi | 3 |

thp/urlwatch | automation | 347 | Cache database redesign | I've been thinking about tackling the following issues:

* Support job specific check intervals #148, #171

* Find the latest entry of a job accurately #335

* Avoid cache entries duplication #326

* Export site change/version history #53, #170

I believe a redesign of the cache database can help with all these issue... | closed | 2019-01-16T05:23:33Z | 2020-07-29T20:25:05Z | https://github.com/thp/urlwatch/issues/347 | [] | cfbao | 2 |

ansible/ansible | python | 84,127 | include_tasks in a block unintentionally inherits block tags | ### Summary

When specifying tags: always in a block, the include_tasks task within the block unintentionally inherits the tags: always. This causes tasks that are not supposed to run (based on tag filtering) to be executed, as seen in the example below. Specifically, when the playbook is run with the tag tag1, tasks w... | closed | 2024-10-16T07:15:14Z | 2024-10-31T13:00:02Z | https://github.com/ansible/ansible/issues/84127 | [

"module",

"bug",

"affects_2.15"

] | HIROYUKI-ONODERA1 | 7 |

gee-community/geemap | jupyter | 1,336 | Add Landsat Collection 2 cloud masking | https://code.earthengine.google.com/?as_external&scriptPath=Examples%3ACloud%20Masking%2FLandsat457%20Surface%20Reflectance | closed | 2022-11-19T04:28:00Z | 2023-04-17T14:01:18Z | https://github.com/gee-community/geemap/issues/1336 | [

"Feature Request"

] | giswqs | 0 |

aws/aws-sdk-pandas | pandas | 2,220 | wr.s3.to_parquet fails while writing data from Excel file | ### Describe the bug

We are reading an Excel file using

`df=wr.s3.read_excel("path")`

and then immediately writing it as Parquet:

`wr.s3.to_parquet(df, "path")`

This fails in Glue 4.0 with the following error:

```

File "<stdin>", line 1, in <module>

File "/opt/amazon/python3.9-ray... | closed | 2023-04-24T07:18:13Z | 2023-05-15T21:26:37Z | https://github.com/aws/aws-sdk-pandas/issues/2220 | [

"bug"

] | kondisettyravi | 7 |

psf/black | python | 4,098 | `hug_parens_with_braces_and_square_brackets`: Recursively hugging parens decrease readability | This is feedback related to the `hug_parens_with_braces_and_square_brackets` preview style.

**Describe the style change**

Only collapse the first set of parens rather than all parens recursively. Having too many opening/closing parens on a single line makes it harder to distinguish the individual parentheses (es... | open | 2023-12-10T23:53:54Z | 2024-11-25T09:39:59Z | https://github.com/psf/black/issues/4098 | [

"T: style",

"C: preview style"

] | MichaReiser | 1 |

jmcnamara/XlsxWriter | pandas | 592 | Feature Request: Print legend series array to know what to 'delete_series': [?,?] | Hello, I was wondering if there is a quick and easy way to print out or access the legend series that are available to delete.

Right now I feel blind when deleting series with only 'delete_series': [?,?] | closed | 2019-01-10T22:19:48Z | 2019-01-11T15:59:08Z | https://github.com/jmcnamara/XlsxWriter/issues/592 | [

"question"

] | NikoTumi | 2 |

AUTOMATIC1111/stable-diffusion-webui | pytorch | 16,248 | [Feature Request]: clarify in the README to set webui.sh to executable | ### Is there an existing issue for this?

- [X] I have searched the existing issues and checked the recent builds/commits

### What would your feature do ?

I know it sounds basic, but beginners get confused. Suggested README step: "3) Set webui.sh to executable and run".

### Proposed workflow

.

### Additional information

_No response_ | open | 2024-07-22T12:10:58Z | 2024-07-23T05:14:51Z | https://github.com/AUTOMATIC1111/stable-diffusion-webui/issues/16248 | [

"enhancement"

] | RustoMCSpit | 1 |

browser-use/browser-use | python | 640 | Empty <a> tags being sent to llm | ### Bug Description

Currently, empty <a> tags are being sent to llm and this is causing two issues:

1. it is increasing unnecessary cost by using more tokens.

2. it is confusing the llm.

### Reproduction Steps

NA

### Code Sample

```python

NA

```

### Version

0.1.36

### LLM Model

Other (specify in description)

... | closed | 2025-02-09T11:48:23Z | 2025-02-12T06:36:50Z | https://github.com/browser-use/browser-use/issues/640 | [

"bug"

] | PaperBoardOfficial | 0 |

flairNLP/flair | nlp | 2,686 | Replace print with logging in model training |

**Is your feature/enhancement request related to a problem? Please describe.**

When using the ModelTrainer, everything is printed out through a logger, except for when the learning rate is reduced. This is just a normal print to stdout. See picture:

<img width="983" alt="Screenshot 2022-03-23 at 20 22 46" src="... | closed | 2022-03-23T19:30:48Z | 2022-09-09T02:02:39Z | https://github.com/flairNLP/flair/issues/2686 | [

"wontfix"

] | HallerPatrick | 2 |

pyeve/eve | flask | 1,332 | Incorrect values deserialization | ### Description

Given a resource schema that uses an `anyof` in a `list`, when parsing a value in a field, Eve tries to apply all the possible deserializations but if several deserializations are possible it will end up parsing it using an invalid deserializator.

### Example

Using this resource definition:

... | closed | 2019-11-28T16:05:20Z | 2020-06-06T11:42:04Z | https://github.com/pyeve/eve/issues/1332 | [

"stale"

] | jordeu | 1 |

sebastianruder/NLP-progress | machine-learning | 412 | Incomparable results in the WMT 2014 EN-DE table for machine translation | I noticed that some of the results reported in the WMT 2014 EN-DE table are obtained by models trained on data from newer WMT datasets (but they report results on newstest2014), e.g., Edunov et al. (2018) uses WMT’18 and Wu et al. (2019) uses WMT’16 for training.

The few results on WMT 2014 EN-FR that I checked were ... | open | 2020-01-29T09:54:16Z | 2020-02-02T17:03:14Z | https://github.com/sebastianruder/NLP-progress/issues/412 | [] | rihardsk | 1 |

pydantic/pydantic | pydantic | 10,654 | Custom classes serialization schema not evaluated when specified as an `extra` field / Circular reference error when serializing custom passthrough class | ### Initial Checks

- [X] I confirm that I'm using Pydantic V2

## Update:

Please see the comment here for the current status of this issue: https://github.com/pydantic/pydantic/issues/10654#issuecomment-2423475533

tl;dr:

the bug: one needs to manually define `__pydantic_serializer__` on custom classes in a... | open | 2024-10-18T06:57:06Z | 2024-10-19T02:04:32Z | https://github.com/pydantic/pydantic/issues/10654 | [

"bug V2",

"pending"

] | sneakers-the-rat | 2 |

yzhao062/pyod | data-science | 119 | SO_GAAL and MO_GAAL decision_function mistake | In the original paper "Generative adversarial active learning for unsupervised outlier detection", the outlier score is defined as OS(x) = 1 - D(x) (**Page 7, Algorithm 1**). However, pyod's implementation uses D(x) as the outlier score, so I hope this mistake can be fixed. | open | 2019-06-26T04:43:04Z | 2019-06-27T22:48:05Z | https://github.com/yzhao062/pyod/issues/119 | [] | WangXuhongCN | 2 |

pytest-dev/pytest-django | pytest | 1,041 | Python 3.11 support | Are there any plans to add support for Python 3.11? Or is it supported already?

If everything already works, I'm not sure anything needs to be done other than updating the `readme.md` and `tox.ini` files. | closed | 2023-01-16T06:22:36Z | 2023-01-16T16:52:31Z | https://github.com/pytest-dev/pytest-django/issues/1041 | [] | jacksund | 2 |

mwaskom/seaborn | pandas | 3,379 | AttributeError: 'DataFrame' object has no attribute 'get' | It appears that seaborn somewhat supports `polars.DataFrame` objects, such as:

```

iris = pl.read_csv("data/iris.csv")

p = sns.displot(

data=iris,

x="sepal_width",

hue="species",

col="species",

height=3,

aspect=1,

alpha=1

)

```

...but not fully:

```

p = sns.catplot(

... | closed | 2023-06-06T19:15:32Z | 2023-06-06T22:44:53Z | https://github.com/mwaskom/seaborn/issues/3379 | [] | nick-youngblut | 2 |

pydantic/bump-pydantic | pydantic | 167 | I have a big pile of improvements to upstream | I upgraded a large private codebase to Pydantic 2, and in the process I made a bunch of improvements to bump-pydantic. See [this branch](https://github.com/camillol/bump-pydantic/tree/camillo/public), and the [list of commits](https://github.com/pydantic/bump-pydantic/compare/main...camillol:bump-pydantic:camillo/publi... | open | 2024-06-04T22:59:14Z | 2024-06-20T11:42:35Z | https://github.com/pydantic/bump-pydantic/issues/167 | [] | camillol | 2 |

faif/python-patterns | python | 390 | Shortened URL is spam now | https://github.com/faif/python-patterns/blob/79d12755010a33a5195d5475a2c8853cda674c29/patterns/creational/factory.py#L15

Just wanted to point out that this link is now spam which tries to fire a WhatsApp message on your behalf... I'm assuming this is the URL shortening company up to some hijinks? Google recommends B... | closed | 2022-05-31T16:16:16Z | 2022-05-31T17:33:22Z | https://github.com/faif/python-patterns/issues/390 | [] | rachtsingh | 2 |

comfyanonymous/ComfyUI | pytorch | 6,820 | problem | ### Expected Behavior

fg

### Actual Behavior

fd

### Steps to Reproduce

fd

### Debug Logs

```powershell

fd

```

### Other

fd | open | 2025-02-15T16:26:25Z | 2025-02-15T16:26:25Z | https://github.com/comfyanonymous/ComfyUI/issues/6820 | [

"Potential Bug"

] | szymektm | 0 |

activeloopai/deeplake | data-science | 2,663 | [BUG] ds.visualize cannot work offline in jupyter notebook with local dataset | ### Severity

P1 - Urgent, but non-breaking

### Current Behavior

I tried ds.visualize in a Jupyter notebook with a local dataset, and it failed to visualize the dataset.

It reported a failure to connect to... | closed | 2023-10-18T04:03:10Z | 2023-10-18T19:23:00Z | https://github.com/activeloopai/deeplake/issues/2663 | [

"bug"

] | alphabet-lgtm | 7 |

onnx/onnx | deep-learning | 6,649 | [Feature request] Can SpaceToDepth also add mode attribute? | ### System information

ONNX 1.17

### What is the problem that this feature solves?

The current SpaceToDepth op (https://github.com/onnx/onnx/blob/main/docs/Operators.md#spacetodepth) has no attribute for selecting DCR or CRD mode and only supports CRD in its computation.

But the DepthToSpace Op https://github.com/onnx/onn... | open | 2025-01-22T03:33:51Z | 2025-02-20T03:56:36Z | https://github.com/onnx/onnx/issues/6649 | [

"module: spec"

] | vera121 | 0 |

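hankcs/HanLP | nlp | 567 | CoreDictionary contains the word "机收", so "手机收邮件" ("receive email on a mobile phone") is segmented as "手 机收 邮件" | <!--

This is HanLP's issue template, used to standardize how questions are asked. We did not originally intend to restrict everyone with a rigid format, but the issue tracker had become rather chaotic. Sometimes it took a long exchange to discover that the reporter was using an old version or had modified the code, wasting valuable time on both sides. So we use a standard template here to keep things uniform; we apologize for any inconvenience. Apart from the Notes section, the other parts may be adjusted as appropriate to your situation.

-->

## Notes

Please confirm the following:

* I have carefully read the following documents and did not find an answer in any of them:

 - [Home page documentation](https://github.com/hankcs/HanLP)

 - [wiki](https://github.com/hankcs/HanLP/wiki)

 - [FAQ](htt... | closed | 2017-06-22T12:43:28Z | 2017-06-22T14:08:04Z | https://github.com/hankcs/HanLP/issues/567 | [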

"improvement"

] | sjturan1 | 1 |

docarray/docarray | fastapi | 1,040 | v2: filter query language | # Context

We need to implement the filter query language equivalent to docarray v1: https://docarray.jina.ai/fundamentals/documentarray/find/#query-by-conditions

One of the goals is to keep compatibility with Jina filtering in topology: https://docs.jina.ai/concepts/flow/add-conditioning/

Under the hood the framew... | closed | 2023-01-19T13:30:29Z | 2023-01-27T13:38:33Z | https://github.com/docarray/docarray/issues/1040 | [] | samsja | 1 |
