repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

slackapi/python-slack-sdk | asyncio | 1,612 | websockets adapter fails to check the current session with error "'ClientConnection' object has no attribute 'closed'" | (Filling out the following details about bugs will help us solve your issue sooner.)

### Reproducible in:

```bash

pip freeze | grep slack

python --version

sw_vers && uname -v # or `ver`

```

#### The Slack SDK version

```

slack_bolt==1.21.3

slack_sdk==3.33.5

```

#### Python runtime version

```

... | closed | 2024-12-11T18:31:53Z | 2024-12-13T00:03:05Z | https://github.com/slackapi/python-slack-sdk/issues/1612 | [

"bug",

"Version: 3x",

"socket-mode",

"area:async"

] | ericli-splunk | 2 |

huggingface/datasets | pandas | 7,337 | One or several metadata.jsonl were found, but not in the same directory or in a parent directory of | ### Describe the bug

ImageFolder with metadata.jsonl error. I downloaded liuhaotian/LLaVA-CC3M-Pretrain-595K locally from Hugging Face. According to the tutorial in https://huggingface.co/docs/datasets/image_dataset#image-captioning, only put images.zip and metadata.jsonl containing information in the same folder. How... | open | 2024-12-17T12:58:43Z | 2025-01-03T15:28:13Z | https://github.com/huggingface/datasets/issues/7337 | [] | mst272 | 1 |

aiortc/aiortc | asyncio | 1,190 | Consent to Send Failure using examples on Edge (Chrome) | I'am consistently getting Consent to Send Failures after the video has been streaming over webrtc for 10-20 seconds on Edge (Chrome). Regular Chrome and Safari (iPhone) work great.

### Steps to reproduce

1. Download or clone the examples directory.

2. Utilize the webcam example.

3. Execute `pip install aiortc... | closed | 2024-11-14T02:28:55Z | 2025-02-01T09:34:21Z | https://github.com/aiortc/aiortc/issues/1190 | [] | JoshuaHintze | 0 |

saleor/saleor | graphql | 17,280 | `mime-support` is not available now | `mime-support` is not available now

https://github.com/saleor/saleor/blob/438595033c608219a88583ae91d50f2e2415e558/Dockerfile#L34C3-L34C15

Please check https://wiki.debian.org/mime-support

> The mime-support package ([final version number: 3.66](http://snapshot.debian.org/package/mime-support/3.66/)) was split into [... | open | 2025-01-21T17:27:47Z | 2025-01-21T17:27:47Z | https://github.com/saleor/saleor/issues/17280 | [] | http600 | 0 |

erdewit/ib_insync | asyncio | 92 | Assertation error on connection | I run ib_insync on an anaconda installation (python 3.6) on Debian.

```

from ib_insync import *

ib = IB()

ib.connect('ip_here', portno, clientId=12)

```

I then get the following error:

```

--------------------------------------------------------------------------

AssertionError ... | closed | 2018-08-16T13:37:39Z | 2018-08-17T07:34:53Z | https://github.com/erdewit/ib_insync/issues/92 | [] | tfrojd | 2 |

roboflow/supervision | pytorch | 1,451 | How does line zone get triggered? | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

Hi, I am trying to under how the line zone get triggered.

Let's say I set the triggering_anchors as BOTTOM_LEFT. Does xy of the bottom_left c... | closed | 2024-08-15T11:17:47Z | 2024-08-15T11:25:06Z | https://github.com/roboflow/supervision/issues/1451 | [

"question"

] | abichoi | 0 |

pyqtgraph/pyqtgraph | numpy | 2,272 | png export issue | <!-- In the following, please describe your issue in detail! -->

I was trying to run the Demo to export .png file. Then I found the picture was deficiency.

<!-- If some of the sections do not apply, just remove them. -->

### Short description

<!-- This should summarize the issue. -->

![EB8EFB3E-8672-4373-AD90-78... | open | 2022-04-26T10:08:35Z | 2022-05-04T16:13:15Z | https://github.com/pyqtgraph/pyqtgraph/issues/2272 | [

"exporters"

] | owenbearPython | 1 |

huggingface/transformers | pytorch | 36,145 | Problems with Training ModernBERT | ### System Info

- `transformers` version: 4.48.3

- Platform: Linux-6.8.0-52-generic-x86_64-with-glibc2.35

- Python version: 3.12.9

- Huggingface_hub version: 0.28.1

- Safetensors version: 0.5.2

- Accelerate version: 1.3.0

- Accelerate config: not found

- PyTorch version (GPU?): 2.6.0+cu126 (True)

- Tensorflow version... | closed | 2025-02-12T04:04:50Z | 2025-02-14T04:21:22Z | https://github.com/huggingface/transformers/issues/36145 | [

"bug"

] | hyunjongkimmath | 4 |

noirbizarre/flask-restplus | flask | 498 | api.model doesnt accept null values | flask-restplus version: 0.11.0

Input model doesnt access null values from the payload, looking into flask-restplus code found that jsonschema definition type is not designed for that , right not it just accepts the data type i.e.,, either integer or string etc it should have been [<datatype>,"null"] , attached is the ... | open | 2018-07-23T15:43:10Z | 2018-07-23T15:43:10Z | https://github.com/noirbizarre/flask-restplus/issues/498 | [] | boyaps | 0 |

ymcui/Chinese-BERT-wwm | nlp | 54 | 有roberta large版本的下载地址吗 | @ymcui | closed | 2019-10-14T09:27:47Z | 2019-10-15T00:26:09Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/54 | [] | xiongma | 2 |

kornia/kornia | computer-vision | 2,813 | Improve the `ImageSequential` docs | now that i see this one, i think it's worth somewhere to update the docs properly explaining when to use AuggmentationSequential vs ImageSequential

_Originally posted by @edgarriba in https://github.com/kornia/kornia/pull/2799#discussion_r1488008322_

worth discuss the advantages, examples, etc for e... | open | 2024-02-23T00:53:33Z | 2024-02-28T13:35:26Z | https://github.com/kornia/kornia/issues/2813 | [

"help wanted",

"good first issue",

"docs :books:"

] | johnnv1 | 1 |

marcomusy/vedo | numpy | 198 | k3d: TraitError: colors has wrong size: 4000000 (1000000 required) | Copying and paste the example https://github.com/marcomusy/vedo/blob/master/examples/basic/manypoints.py does not work (is that supposed to work in a jupyter notebook?). By the way, is the k3d backend supporting transparency?

```

"""Colorize a large cloud of 1M points by passing

colors and transparencies in the form... | open | 2020-08-24T14:41:14Z | 2020-11-11T06:47:57Z | https://github.com/marcomusy/vedo/issues/198 | [] | rikigigi | 2 |

QuivrHQ/quivr | api | 3,015 | Supabase local creation of new users not working | New version of supabase. Need to update config.toml | closed | 2024-08-16T09:06:23Z | 2024-08-16T09:07:13Z | https://github.com/QuivrHQ/quivr/issues/3015 | [] | StanGirard | 1 |

fastapi/sqlmodel | sqlalchemy | 202 | Showing Warning | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X... | closed | 2021-12-24T03:07:54Z | 2022-01-02T05:22:21Z | https://github.com/fastapi/sqlmodel/issues/202 | [

"question"

] | abhint | 2 |

serengil/deepface | deep-learning | 923 | Use an interface for basemodels and detectors | We have many different base model, detectors and extended models. We can define an interface and inherit it in existing models. This will help maintainers to add new models in the future because required methods can be found in that interface easily. | closed | 2023-12-17T15:16:42Z | 2024-01-20T20:37:35Z | https://github.com/serengil/deepface/issues/923 | [

"enhancement"

] | serengil | 1 |

joerick/pyinstrument | django | 76 | Can't see any data regarding any raised exception | Thanks for this lib.

I tried to raise an exception manually to see if I can check it in the trace result, it looks like it doesn't show anything regarding that exception. it just says an error occurred, am I missing something?

Here's the configuration I'm using:

```python

@app.before_request

def before_request... | closed | 2019-12-19T07:44:13Z | 2021-04-03T22:27:21Z | https://github.com/joerick/pyinstrument/issues/76 | [] | AbdoDabbas | 2 |

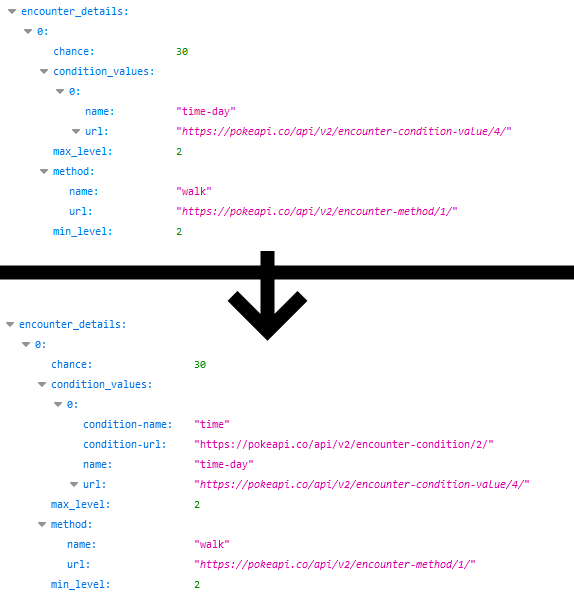

PokeAPI/pokeapi | graphql | 584 | Add encounter condition to encounter details | It would be useful for sorting and to avoid querying the encounter-condition-value

| closed | 2021-03-07T13:33:53Z | 2021-03-08T19:04:43Z | https://github.com/PokeAPI/pokeapi/issues/584 | [] | SimplyBLGDev | 1 |

jina-ai/clip-as-service | pytorch | 239 | BERT for Information Retrieval (Feature Request) | Hi @hanxiao

Thanks for sharing this amazing work! I am really amazed by this super scalable architecture! I did the query to related query similarity. It works quite Ok with a custom build BERT model for my content corpus (which is much different than wiki corpus). I am trying to see if i can use BERT for query to do... | open | 2019-02-18T03:56:27Z | 2020-02-14T02:25:13Z | https://github.com/jina-ai/clip-as-service/issues/239 | [] | sujithjoseph | 2 |

Avaiga/taipy | data-visualization | 1,739 | Get geolocation from clicking on a map | ### Description

The goal would be to get the lon/lat of a point when clicking anywhere on a map.

This was asked by a user [here](https://discord.com/channels/1125797687476887563/1279625158394511360/1280344356066431037)

### Acceptance Criteria

- [ ] Ensure new code is unit tested, and check code coverage is ... | closed | 2024-09-03T08:02:02Z | 2024-09-05T07:47:22Z | https://github.com/Avaiga/taipy/issues/1739 | [

"🖰 GUI",

"🟩 Priority: Low",

"✨New feature"

] | FlorianJacta | 3 |

aiortc/aiortc | asyncio | 1,188 | Getting Bundled Media in the SessionDescription to Work with Pion | We're trying to get `aiortc` talking with our [Pion](https://github.com/pion/webrtc) WebRTC API. We get errors from Pion because `aiortc` constructs different `ice-ufrag` and `ice-pwd` for every m-line, even though a bundle is configured.

The solution is to have consistent `ice-ufrag` and `ice-pwd` for each media/a... | open | 2024-11-10T14:22:20Z | 2025-01-31T03:37:02Z | https://github.com/aiortc/aiortc/issues/1188 | [] | quintenrosseel | 3 |

tfranzel/drf-spectacular | rest-api | 459 | Private endpoints or hide schema? | Is there any way to hide the schema generation for a view all together? I know you can set exclude=True for specific http methods, but can it be done for an entire url? | closed | 2021-07-15T18:34:28Z | 2021-07-15T19:00:24Z | https://github.com/tfranzel/drf-spectacular/issues/459 | [] | li-darren | 4 |

zappa/Zappa | django | 489 | [Migrated] Refactor Let's Encrypt implementation to use available packages [proposed code] | Originally from: https://github.com/Miserlou/Zappa/issues/1300 by [rgov](https://github.com/rgov)

The Let's Encrypt integration works by invoking the `openssl` command line tool, creating various temporary files, and communicating with the Let's Encrypt certificate authority API directly.

The Python package that Le... | closed | 2021-02-20T09:43:23Z | 2024-04-13T16:36:18Z | https://github.com/zappa/Zappa/issues/489 | [

"no-activity",

"auto-closed"

] | jneves | 2 |

unionai-oss/pandera | pandas | 1,205 | Static type hint error on class pandera DataFrame | - [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest version of pandera.

- [x] (optional) I have confirmed this bug exists on the master branch of pandera.

Currently, the type hint for the static method of the class pandera DataFrame in pandera.ty... | closed | 2023-05-31T11:05:05Z | 2023-06-28T10:55:32Z | https://github.com/unionai-oss/pandera/issues/1205 | [

"bug"

] | manel-ab | 3 |

openapi-generators/openapi-python-client | rest-api | 721 | TypeError when model default value is a list | **Describe the bug**

When generating models for an API where a default argument is a list of enums, a TypeError is raised [on this line](https://github.com/openapi-generators/openapi-python-client/blob/main/openapi_python_client/parser/properties/__init__.py#L463):

return f"{prop.class_info.name}.{inverse_value... | open | 2023-01-23T15:51:03Z | 2023-01-23T15:51:03Z | https://github.com/openapi-generators/openapi-python-client/issues/721 | [

"🐞bug"

] | maxbergmark | 0 |

mljar/mercury | jupyter | 27 | Error when uploading file | I'm getting this error when uploading a file through the widget

Forbidden: /api/v1/fp/process/ | closed | 2022-01-26T04:10:35Z | 2022-01-27T03:39:38Z | https://github.com/mljar/mercury/issues/27 | [] | ismaelc | 4 |

holoviz/panel | jupyter | 6,887 | `pn.widgets.Tabulator`: Hover effect not disabled with `selectable=False` | https://github.com/holoviz/panel/blob/6f63f81c827d197a6f367f86fa1eff98b257c116/panel/models/tabulator.ts#L657C69-L657C72

Why NaN? It seems that it doesn't let `selectable=False` as in

```

pn.widgets.Tabulator(df, show_index=False, disabled=True, selectable=False)

```

properly apply to the Tabulator JavaScrip... | open | 2024-06-01T20:52:14Z | 2025-02-20T15:04:43Z | https://github.com/holoviz/panel/issues/6887 | [

"component: tabulator"

] | earshinov | 0 |

stanfordnlp/stanza | nlp | 729 | [QUESTION] Converting CoreNLP ParseTree to nltk.Tree | How do I convert the constituency parseTree generated from the code below to [`nltk.Tree`](https://www.nltk.org/_modules/nltk/tree.html)?

```python

from stanza.server import CoreNLPClient

with CoreNLPClient(annotators=["parse"], timeout=30000, memory="16G") as client:

ann = client.annotate("Is there such ... | closed | 2021-06-24T13:07:20Z | 2021-06-24T14:55:29Z | https://github.com/stanfordnlp/stanza/issues/729 | [

"question"

] | hardianlawi | 3 |

keras-team/keras | pytorch | 20,103 | Module not found errors 3.4.1 | Not useful. After I updated my libraries in Anaconda, all of my Keras codes started to give dramatic errors. I can import the Keras, but can not use it! I re-installed but the situation is same. I don't know how the dependencies or methods changed, but you should consider how people are using these. Now I have to insta... | closed | 2024-08-09T11:35:27Z | 2025-01-17T01:59:25Z | https://github.com/keras-team/keras/issues/20103 | [

"type:support",

"stat:awaiting response from contributor",

"stale"

] | O-Memis | 14 |

chatopera/Synonyms | nlp | 142 | 请问该库是需要联网才能工作吗 | ## 概述

你好,由于数据安全的问题,请问该库需要一直联网才能工作吗?还是在下载完词库文件后,就不需要联网?

<!-- 其它相关事项,或通过其它方式联系我们:https://www.chatopera.com/mail.html -->

| open | 2024-05-17T10:31:02Z | 2024-05-17T10:31:43Z | https://github.com/chatopera/Synonyms/issues/142 | [] | Dengshunge | 1 |

lepture/authlib | flask | 218 | Potential compliance-fix issue with Zoom refresh token headers | **Is your feature request related to a problem? Please describe.**

The Zoom refresh token process requires a header like so:

https://marketplace.zoom.us/docs/guides/auth/oauth#refreshing

```

Authorization | The string "Basic" with your Client ID and Client Secret with a colon : in between, Base64 Encoded. For... | closed | 2020-04-20T20:14:49Z | 2020-05-07T12:55:21Z | https://github.com/lepture/authlib/issues/218 | [] | wgwz | 4 |

saulpw/visidata | pandas | 1,714 | Replay of command log aborts erratically | **Small description**

When replaying a (probably fairly long) command log file against a (probably fairly extensive) data set, VisiData encourters errors sporadically and non-reproducably, triggering the replay to be aborted. Interestingly, this does not seem to be a problem if the same command log file is run in b... | closed | 2023-02-02T11:11:54Z | 2023-03-03T07:11:09Z | https://github.com/saulpw/visidata/issues/1714 | [

"bug",

"fixed"

] | tdussa | 9 |

proplot-dev/proplot | data-visualization | 261 | Linear ticks for LogLocator | ### Description

The official tutorial adds the ticks for LogLocator like this: `xlocator='log', xminorlocator='logminor'`.

But, if I manually set the ticks to linear one, it doesn't work well.

### Steps to reproduce

```python

fig, axs = plot.subplots(share=0)

axs.format(xlim=(1, 18), xlocator='log', xmino... | closed | 2021-07-13T08:48:38Z | 2021-07-13T21:47:31Z | https://github.com/proplot-dev/proplot/issues/261 | [

"already fixed"

] | zxdawn | 1 |

MaartenGr/BERTopic | nlp | 1,768 | how to get ctf-idf formula inputs for top-10 topic words? | Hello Maarten,

Thank you for creating and maintaining BERTopic, it is an incredibly useful tool in my current work!

I want to ask if it is possible to obtain input components for ctf-idf formula for each of the top 10 words returned per topic. That is I would like to have actual values of tf_{x,c} , f_{x}, and A for... | open | 2024-01-23T18:18:52Z | 2024-01-26T15:22:22Z | https://github.com/MaartenGr/BERTopic/issues/1768 | [] | vpolkovn | 1 |

hankcs/HanLP | nlp | 718 | 训练最新中文wiki问题 | <!--

注意事项和版本号必填,否则不回复。若希望尽快得到回复,请按模板认真填写,谢谢合作。

-->

## 注意事项

请确认下列注意事项:

* 我已仔细阅读下列文档,都没有找到答案:

- [首页文档](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [常见问题](https://github.com/hankcs/HanLP/wiki/FAQ)

* 我已经通过[Google](https://www.google.com/#newwindow=1&q=HanLP)和[issue区检... | closed | 2017-12-18T11:57:06Z | 2017-12-19T00:29:48Z | https://github.com/hankcs/HanLP/issues/718 | [

"bug"

] | zhengzhuangjie | 1 |

d2l-ai/d2l-en | pytorch | 2,478 | Chapter 15.4. Pretraining word2vec: AttributeError: Can't pickle local object 'load_data_ptb.<locals>.PTBDataset' | AttributeError: Can't pickle local object 'load_data_ptb.<locals>.PTBDataset'

can anyone help with this error? | open | 2023-04-30T20:01:53Z | 2023-07-12T03:00:55Z | https://github.com/d2l-ai/d2l-en/issues/2478 | [] | keyuchen21 | 2 |

zappa/Zappa | flask | 420 | [Migrated] API Gateway caching and query parameters | Originally from: https://github.com/Miserlou/Zappa/issues/1081 by [tspecht](https://github.com/tspecht)

I'm currently trying to configure the caching on API Gateway side to reduce the load of my database. The endpoint I'm trying to configure is using a query parameter to pass-in the term the user typed into the search... | closed | 2021-02-20T08:32:40Z | 2024-04-13T15:37:38Z | https://github.com/zappa/Zappa/issues/420 | [

"help wanted",

"hacktoberfest",

"no-activity",

"auto-closed"

] | jneves | 2 |

wkentaro/labelme | deep-learning | 1,300 | When I click on Create AI-polygon the program crashes and flashes back | ### Provide environment information

I'm using version v5.3.0a0 (Labelme.exe) in releases on windows, which should be able to run standalone without the python environment

### What OS are you using?

Windows 10 22H2 19042.3086

### Describe the Bug

When I click on Create AI-polygon the program crashes and flashes bac... | open | 2023-07-12T04:58:28Z | 2024-11-06T17:32:45Z | https://github.com/wkentaro/labelme/issues/1300 | [

"issue::bug"

] | jaycecd | 15 |

jumpserver/jumpserver | django | 14,384 | [Bug] 查询sql表格数据值,查询出来结果,无法复制,不能复制及其不方便二次查询数据 | ### 产品版本

v4.3.0

### 版本类型

- [X] 社区版

- [ ] 企业版

- [ ] 企业试用版

### 安装方式

- [ ] 在线安装 (一键命令安装)

- [X] 离线包安装

- [ ] All-in-One

- [ ] 1Panel

- [ ] Kubernetes

- [ ] 源码安装

### 环境信息

1、系统:Rocky Linux release 9.2 (Blue Onyx)

2、内核:5.14.0-284.30.1.el9_2.x86_64

3、初次安装v4.1.0,使用脚本jmsctl.sh upgrade 依次离线升级成v4.3.0

4、jumpserver连接的是自己搭建的... | open | 2024-10-31T02:48:25Z | 2025-01-25T09:37:09Z | https://github.com/jumpserver/jumpserver/issues/14384 | [

"🐛 Bug",

"📦 z~release:Version TBD",

"📝 Recorded"

] | czwHNB | 2 |

pytest-dev/pytest-html | pytest | 55 | IDE specific auto-generated files need to be in gitignore. | In the recent times many IDEs have become popular for Python development. Among them are Jetbrain Community's [Pycharm](https://www.jetbrains.com/pycharm/) and [PyDev](http://www.pydev.org/), an IDE extended from Eclipse for Python development.

Upon opening a project within, these IDEs generate certain files and direc... | closed | 2016-07-03T03:53:51Z | 2016-07-04T10:39:21Z | https://github.com/pytest-dev/pytest-html/issues/55 | [] | kdexd | 1 |

plotly/dash-table | dash | 411 | Pagination - move current_page out of pagination_settings? | `current_page` is more state than configuration - it would be nice to be able to both create `pagination_settings` without involving `current_page`, and to subscribe to `current_page` in a callback independent of `pagination_settings`. I might also move `pagination_mode` to be `pagination_settings.mode` while we're at ... | closed | 2019-04-21T20:20:53Z | 2019-06-25T14:26:04Z | https://github.com/plotly/dash-table/issues/411 | [] | alexcjohnson | 2 |

huggingface/pytorch-image-models | pytorch | 2,355 | [BUG] mobilenetv4_conv RuntimeError: one of the variables needed for gradient computation has been modified by an inplace operation | **Describe the bug**

I tried to train model with 'mobilenetv4_conv_large.e600_r384_in1k' as backbone and got this error. Other models train without any problems.

```

/home/xxxxxx/.local/lib/python3.12/site-packages/torch/autograd/graph.py:825: UserWarning: Error detected in ReluBackward0. Traceback of forward ca... | closed | 2024-12-04T09:24:04Z | 2024-12-05T05:29:34Z | https://github.com/huggingface/pytorch-image-models/issues/2355 | [

"bug"

] | MichaelMonashev | 3 |

ageitgey/face_recognition | python | 1,130 | dlib is using GPU but the cnn model is still taking too much, something is off somewhere on my setup. | * face_recognition version: 1.2.3

* Python version: 3.6.9

* Operating System:

Ubuntu 18.04.4 LTS

Kernel Version: 4.9.140-tegra

CUDA 10.2.89

### Description

Hi.

I've been using the face_recognition library for some time under jetson nano Jetpack 4.3, having a performance of around 500 ms per frame on a 128... | open | 2020-05-01T15:23:27Z | 2020-06-16T08:14:09Z | https://github.com/ageitgey/face_recognition/issues/1130 | [] | drakorg | 5 |

plotly/dash | jupyter | 2,877 | [Feature Request] Add `outputs` and `outputs_list` to `window.dash_clientside.callback_context` | For dash's client-side callbacks, adding `outputs` and `outputs_list` for `window.dash_clientside.callback_context` will improve operational freedom in many scenarios. Currently, only the following information can be obtained in the client-side callbacks:

data_src faceset extract and 5) data_dst faceset extract | THIS IS NOT TECH SUPPORT FOR NEWBIE FAKERS

POST ONLY ISSUES RELATED TO BUGS OR CODE

## Expected behavior

Successfully run 4) data_src faceset extract.bat

## Actual behavior

Choose one or several GPU idxs (separated by comma).

[CPU] : CPU

[0] : GeForce GTX 1050 Ti

[0] Which GPU indexes to choose? :

... | closed | 2020-07-28T02:54:04Z | 2020-07-29T05:10:39Z | https://github.com/iperov/DeepFaceLab/issues/842 | [] | Danielfoit | 3 |

unionai-oss/pandera | pandas | 930 | Best way to integrate parsing logic | ### Question

What is the best way to integrate data cleaning/parsing logic with Pandera? I have included an example use case and my current solution below, but looking for feedback on other approaches etc.

### Scenario

Let's say I have a dataframe, like this:

```

name, phone_number

"user1", "+11231231234"

"u... | open | 2022-08-31T02:36:04Z | 2022-08-31T02:36:04Z | https://github.com/unionai-oss/pandera/issues/930 | [

"question"

] | pwithams | 0 |

gradio-app/gradio | data-visualization | 10,731 | The reply text rendered by the streaming chatbot page is incomplete | ### Describe the bug

The reply text rendered by the streaming chatbot page is incomplete and missing from the content of response_message in yield response_message, state

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python

import gradio as gr

yi... | closed | 2025-03-05T07:11:51Z | 2025-03-07T14:27:22Z | https://github.com/gradio-app/gradio/issues/10731 | [

"bug",

"needs repro"

] | wuxianyess | 8 |

ploomber/ploomber | jupyter | 172 | Jupyter extension improvements | * Better error log messages (task does not exist, dag failed to initialize, etc) in notebook and console

* Option to parse spec on file load vs jupyter start

| closed | 2020-07-06T13:46:28Z | 2020-07-08T06:18:25Z | https://github.com/ploomber/ploomber/issues/172 | [] | edublancas | 2 |

huggingface/datasets | tensorflow | 7,129 | Inconsistent output in documentation example: `num_classes` not displayed in `ClassLabel` output | In the documentation for [ClassLabel](https://huggingface.co/docs/datasets/v2.21.0/en/package_reference/main_classes#datasets.ClassLabel), there is an example of usage with the following code:

````

from datasets import Features

features = Features({'label': ClassLabel(num_classes=3, names=['bad', 'ok', 'good'])})

... | closed | 2024-08-28T12:27:48Z | 2024-12-06T11:32:02Z | https://github.com/huggingface/datasets/issues/7129 | [] | sergiopaniego | 0 |

fastapi/sqlmodel | pydantic | 466 | Alembic migration generated always set enum nullable to true | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [X... | closed | 2022-10-11T03:35:15Z | 2023-11-09T00:10:17Z | https://github.com/fastapi/sqlmodel/issues/466 | [

"question",

"answered",

"investigate"

] | yixiongngvsys | 4 |

Evil0ctal/Douyin_TikTok_Download_API | api | 23 | 国际Tiktok 下载的是720p, 可以下1080p 吗? | 国际Tiktok 下载的是720p, 可以下1080p 吗? | closed | 2022-05-05T16:50:45Z | 2022-11-09T21:10:24Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/23 | [

"Fixed"

] | EddyN8 | 13 |

nerfstudio-project/nerfstudio | computer-vision | 2,663 | How can I enter the camera view after rotating a camera | After I rotate a camera using the rotate widget, how can I then enter that rotated camera view? | open | 2023-12-09T21:41:30Z | 2023-12-09T21:41:30Z | https://github.com/nerfstudio-project/nerfstudio/issues/2663 | [] | ecations | 0 |

plotly/dash | data-science | 2,567 | [BUG] Pinning werkzeug<2.3.0 causing issues with file watcher for hot reloader. |

**Describe your context**

- replace the result of `pip list | grep dash` below

```

dash 2.10.2

dash-bootstrap-components 1.4.1

dash-core-components 2.0.0

dash-daq 0.5.0

dash-html-components 2.0.0

dash-table 5.0.0

```

- if frontend ... | closed | 2023-06-16T10:54:53Z | 2024-07-25T13:16:15Z | https://github.com/plotly/dash/issues/2567 | [] | schlich | 4 |

python-restx/flask-restx | api | 191 | Custom swagger filename | Currently, when trying to use multiple blueprints with different restx Api's but with the same url_prefix, restx will default to using the same swagger.json file (since it is located in the folder). This makes eg Api(bp1, doc='/docs/internal') and Api(bp2, doc='docs/external') show the same swagger.json file, with only... | open | 2020-08-06T09:31:31Z | 2021-09-08T21:19:09Z | https://github.com/python-restx/flask-restx/issues/191 | [

"enhancement"

] | ProgHaj | 5 |

jonra1993/fastapi-alembic-sqlmodel-async | sqlalchemy | 79 | New routes not reflecting in docs | Hi, I need to create new routes to add various utilities for example `/calculate_focrmula_1`. Even though I am adding rotes in `app.py` and creating new endpoint it is not reflecting. | closed | 2023-08-11T01:43:19Z | 2023-08-11T05:42:58Z | https://github.com/jonra1993/fastapi-alembic-sqlmodel-async/issues/79 | [] | ranjeetds | 0 |

gradio-app/gradio | machine-learning | 10,856 | Save the history to a json file or a database and load it after restart gradio, using a chatinterface with save_history=True | - [x] I have searched to see if a similar issue already exists.

**Is your feature request related to a problem? Please describe.**

I created a chatbot program using the component chatinterface for gradio. I added the parameter save_history=True and it works fine. It creates the option to create a new conversation, ... | closed | 2025-03-21T18:05:26Z | 2025-03-23T21:30:46Z | https://github.com/gradio-app/gradio/issues/10856 | [] | clebermarq | 1 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,280 | raise^H^H^H^H^H^H automatically proxy when column is reused in new values | ### Discussed in https://github.com/sqlalchemy/sqlalchemy/discussions/10278

| closed | 2023-08-25T14:39:33Z | 2023-08-30T15:02:03Z | https://github.com/sqlalchemy/sqlalchemy/issues/10280 | [

"bug",

"sql",

"near-term release"

] | zzzeek | 2 |

eriklindernoren/ML-From-Scratch | machine-learning | 102 | Project dependencies may have API risk issues | Hi, In **ML-From-Scratch**, inappropriate dependency versioning constraints can cause risks.

Below are the dependencies and version constraints that the project is using

```

matplotlib

numpy

sklearn

pandas

cvxopt

scipy

progressbar33

terminaltables

gym

```

The version constraint **==** will introduce ... | open | 2022-10-26T02:13:37Z | 2022-10-26T02:13:37Z | https://github.com/eriklindernoren/ML-From-Scratch/issues/102 | [] | PyDeps | 0 |

ageitgey/face_recognition | machine-learning | 1,547 | Windows installation | * face_recognition version: 1.3.0

* Python version: 3.12

* Operating System: windows 11

I trying to built face recognition application, I had successfully installed opencv-python, dlib, face_recognition libraries but after this when I tried to run the file I was asked to install the face_recognition_models and I d... | open | 2024-01-07T06:51:57Z | 2025-03-13T13:13:21Z | https://github.com/ageitgey/face_recognition/issues/1547 | [] | SATHISHK108 | 12 |

plotly/dash | data-visualization | 2,711 | [BUG] Import error with typing_extensions == 4.9.0 | Thank you so much for helping improve the quality of Dash!

We do our best to catch bugs during the release process, but we rely on your help to find the ones that slip through.

**Describe your context**

python 3.9.7

Linux

typing_extensions == 4.9.0

```

dash 2.14.0

dash-core-component... | closed | 2023-12-14T10:36:38Z | 2023-12-14T16:04:35Z | https://github.com/plotly/dash/issues/2711 | [] | abetatos | 3 |

scikit-image/scikit-image | computer-vision | 7,087 | TypeError: No matching signature found in watershed | Hi,

I am a new user, I encountered the problem with watershed of the package. Here are the errors:

```

TypeError Traceback (most recent call last)

Input In [1], in <cell line: 52>()

49 markers[resized_im >= upper_cutoff] = 2

50 print (markers)

---> 52 segmentation = ... | closed | 2023-08-07T05:11:48Z | 2024-02-21T13:05:53Z | https://github.com/scikit-image/scikit-image/issues/7087 | [

":people_hugging: Support"

] | weibei43 | 7 |

pytest-dev/pytest-mock | pytest | 252 | When pytest-mock installed "assert_not_called" has an exception when assertion fails | # Description

Hi,

I am trying to use MagicMock's `assert_not_called_with` method. When I have `pytest-mock` installed and the assertion is failing, I see this message: `During handling of the above exception, another exception occurred:`

This might be undesired because there is too much output while trying to ... | closed | 2021-08-09T18:43:00Z | 2022-01-28T12:31:35Z | https://github.com/pytest-dev/pytest-mock/issues/252 | [

"enhancement",

"help wanted"

] | ecs-jnguyen | 5 |

pyg-team/pytorch_geometric | deep-learning | 9,709 | add_random_edge triggers type error | ### 🐛 Describe the bug

Hi,

```python

import torch

from torch_geometric.utils import add_random_edge

edge_index = torch.tensor([[0, 0, 0, 0, 1, 1, 1, 1, 2, 2, 2, 3, 3, 3, 4, 4, 4, 4],

[1, 2, 3, 4, 0, 2, 3, 4, 0, 1, 4, 0, 1, 4, 0, 1, 2, 3]])

edge_index, added_edges = add_random_e... | open | 2024-10-14T19:37:36Z | 2024-10-14T19:37:36Z | https://github.com/pyg-team/pytorch_geometric/issues/9709 | [

"bug"

] | Zero-Yi | 0 |

miguelgrinberg/python-socketio | asyncio | 831 | python-socketio sio.emit missing / automatically disconnects | **Describe the bug**

I am doing an emit with a json as data but it automatically disconnects me

sio.emit('createC', json)

error: Disconnected from "myservername:port"

**Logs**

Dec 15 18:42:04 b1 python3[13906]: AQkJ_fz9NXpxSkucAAAA: Received packet PONG data

Dec 15 18:42:29 b1 python3[13906]: AQkJ_fz9NXpxSkuc... | closed | 2021-12-16T00:44:15Z | 2022-07-16T11:11:15Z | https://github.com/miguelgrinberg/python-socketio/issues/831 | [

"question"

] | skorvus | 2 |

flairNLP/flair | nlp | 3,434 | [Question]: | ### Question

I am working on a Sequence Labelling problem using the [FLAIR module](https://github.com/flairNLP/flair).

I have dummy e-commerce data with 3 different types of entities and each entity has approx ~1K sub-entities. Training Data (size ~200K) is synthetically created with a combination of 3K labels.

... | open | 2024-03-27T09:16:17Z | 2024-03-27T09:16:17Z | https://github.com/flairNLP/flair/issues/3434 | [

"question"

] | keshavgarg139 | 0 |

polarsource/polar | fastapi | 4,789 | Customer portal is empty | ### Description

<!-- A brief description with a link to the page on the site where you found the issue. -->

When I open a customer portal, it just shows an empty page

### Current Behavior

<!-- A brief description of the current behavior of the issue. -->

- Go to a customers

- Click on a customer

- Click Ge... | closed | 2025-01-06T03:12:06Z | 2025-01-06T12:48:45Z | https://github.com/polarsource/polar/issues/4789 | [

"bug"

] | rotimi-best | 2 |

deepfakes/faceswap | deep-learning | 1,281 | Error occured during extraction | *Note: For general usage questions and help, please use either our [FaceSwap Forum](https://faceswap.dev/forum)

or [FaceSwap Discord server](https://discord.gg/FC54sYg). General usage questions are liable to be closed without

response.*

**Crash reports MUST be included when reporting bugs.**

**Describe the bug... | closed | 2022-11-07T00:23:51Z | 2022-11-10T13:15:17Z | https://github.com/deepfakes/faceswap/issues/1281 | [] | DonOhhhh | 1 |

jacobgil/pytorch-grad-cam | computer-vision | 322 | batch size for cam results | For batch size N > 1, I used to set targets = [ClassifierOutputTarget(cls)] * N to generate the grayscale_cam with output [N, H, W]

But not sure why, I tried again today and it doesn't work anymore. the grayscale_cam output is always [1, H, W]

The input tensor is [N, C, H, W]

Could you please check?

In compute... | closed | 2022-08-30T03:57:31Z | 2023-02-27T01:17:51Z | https://github.com/jacobgil/pytorch-grad-cam/issues/322 | [] | YangjiaqiDig | 9 |

mitmproxy/mitmproxy | python | 6,467 | Mitmproxy with authentication does not work with Maven | #### Problem Description

I have configured mitmproxy in my localhost with credentials in ~/.mitmproxy/config.yaml file. When I try to use maven deployment with the mitmproxy set-up. It hangs.

Maven CLI command

```

./mvn deploy:deploy-file -Durl=https://maven.pkg.github.com/Thevakumar-Luheerathan/module-ballerina-... | open | 2023-11-07T07:40:47Z | 2023-11-08T14:15:12Z | https://github.com/mitmproxy/mitmproxy/issues/6467 | [

"kind/triage"

] | Thevakumar-Luheerathan | 1 |

yt-dlp/yt-dlp | python | 11,786 | How to get all video links from Facebook page and Instagram? | ### DO NOT REMOVE OR SKIP THE ISSUE TEMPLATE

- [X] I understand that I will be **blocked** if I *intentionally* remove or skip any mandatory\* field

### Checklist

- [X] I'm asking a question and **not** reporting a bug or requesting a feature

- [X] I've looked through the [README](https://github.com/yt-dlp/yt-dlp#re... | closed | 2024-12-11T03:37:04Z | 2024-12-15T04:04:42Z | https://github.com/yt-dlp/yt-dlp/issues/11786 | [

"question"

] | seaklin83546 | 1 |

huggingface/transformers | tensorflow | 36,277 | The output tensor's data type is not torch.long when the input text is empty. | ### System Info

- `transformers` version: 4.48.1

- Platform: Linux-5.15.0-130-generic-x86_64-with-glibc2.35

- Python version: 3.12.8

- Huggingface_hub version: 0.27.1

- Safetensors version: 0.5.2

- Accelerate version: 1.3.0

- Accelerate config: not found

- PyTorch version (GPU?): 2.5.1+cu124 (True)

- Tensorflow versi... | open | 2025-02-19T09:43:29Z | 2025-03-04T14:54:19Z | https://github.com/huggingface/transformers/issues/36277 | [

"bug"

] | wangzhen0518 | 7 |

miguelgrinberg/flasky | flask | 111 | A problem when use "git push" | Hello, Miguel.

When I use "git push heroku master", the problem happends:

`Total 504 (delta 274), reused 501 (delta 273)

remote: Compressing source files... done.

remote: Building source:

remote:

remote: -----> Using set buildpack heroku/python

remote:

remote: ! Push rejected, failed to detect set buildpack hero... | closed | 2016-01-28T16:45:45Z | 2017-01-23T10:08:50Z | https://github.com/miguelgrinberg/flasky/issues/111 | [

"question"

] | xpleaf | 8 |

robotframework/robotframework | automation | 5,032 | Collections: No default value shown in documentation for `Get/Pop From Dictionary` | When Checking the Documentation of keywords which uses `NOT_SET` as default , It shows "mandatory argument missing" which is wrong.

Though the functionality of the keyword is working fine.

https://robotframework.slack.com/archives/C3C28F9DF/p1705692610282559

API and hopefully you can find a more up-to-date sources better than the current one, thanks @ExpDev07 ❤️ | open | 2020-03-16T07:06:39Z | 2020-03-21T13:38:15Z | https://github.com/ExpDev07/coronavirus-tracker-api/issues/52 | [

"feedback"

] | mugetsu | 1 |

indico/indico | sqlalchemy | 6,706 | Configurable event types | **Is your feature request related to a problem? Please describe.**

Our organization supports only two types of events.

**Describe the solution you'd like**

Make available event types configurable.

**Describe alternatives you've considered**

Currently we patch the Indico installation, since the types are hard coded in... | open | 2025-01-22T07:12:15Z | 2025-01-22T08:11:31Z | https://github.com/indico/indico/issues/6706 | [

"enhancement"

] | Reis-A-CIT | 2 |

agronholm/anyio | asyncio | 863 | Quadratic traceback-growth in TaskGroup nesting-level on asyncio backend | ### Things to check first

- [x] I have searched the existing issues and didn't find my bug already reported there

- [x] I have checked that my bug is still present in the latest release

### AnyIO version

4.8.0

### Python version

3.11

### What happened?

Since python implicitly adds a context linking the current... | closed | 2025-01-28T16:02:18Z | 2025-01-29T19:39:44Z | https://github.com/agronholm/anyio/issues/863 | [

"bug"

] | tapetersen | 0 |

ray-project/ray | tensorflow | 51,010 | [distributed debugger] exception in regular remote worker function leading to access violation when debugger connects | ### What happened + What you expected to happen

In my setup we are using a Ray serve deployment with fast API and starlette middleware

To test the use use of the distributed debugger, I created a class that has remote static member functions that are decorated with Ray remote but it is not an actor.

The deployment ha... | open | 2025-03-01T00:58:27Z | 2025-03-06T20:31:48Z | https://github.com/ray-project/ray/issues/51010 | [

"bug",

"triage",

"serve"

] | kotoroshinoto | 1 |

Esri/arcgis-python-api | jupyter | 1,475 | [Question]: How do I load a previously generated model in TextClassifier? | I have used arcgis learn.text to import TextClassifier in order for creating a Machine learning module. Now I want to use the same model in Streamlitfor creating an interface for re-use and displaying the predictions.

Code for the app I am creating:

```

import streamlit as st

import os

from arcgis.learn.text i... | closed | 2023-02-27T09:56:30Z | 2023-04-21T08:24:02Z | https://github.com/Esri/arcgis-python-api/issues/1475 | [

"bug",

"question",

"learn"

] | Daremitsu1 | 4 |

voila-dashboards/voila | jupyter | 903 | Uncaught ReferenceError: IPython is not defined | Hi,

when i try to render a simple notebook

```

import IPython.display

IPython.display.HTML('''

<script type="text/javascript">

IPython.notebook.kernel.execute("foo=11")

</script>

''')

```

in the JS console Voila comes back with

Uncaught ReferenceError: IPython is not defined

voil... | closed | 2021-06-12T19:35:02Z | 2021-09-13T18:07:17Z | https://github.com/voila-dashboards/voila/issues/903 | [] | VitoKovacic | 3 |

ageitgey/face_recognition | machine-learning | 679 | cv with knn | How do you combine knn with cv | open | 2018-11-18T07:50:45Z | 2018-11-18T15:10:19Z | https://github.com/ageitgey/face_recognition/issues/679 | [] | nhangox22 | 1 |

supabase/supabase-py | fastapi | 57 | Current upload does not support inclusion of mime-type | Our current upload/update methods do not include the mime-type. As such, when we upload photos to storage and download them again they don't render properly.

The current fix was proposed by John on the discord channel. We should integrate it in so that Users can download/use photos.

```

multipart_data = M... | closed | 2021-10-09T23:53:49Z | 2021-10-30T21:28:39Z | https://github.com/supabase/supabase-py/issues/57 | [

"bug",

"good first issue",

"hacktoberfest"

] | J0 | 7 |

iperov/DeepFaceLab | machine-learning | 5,629 | Дип фейк | open | 2023-02-24T01:50:14Z | 2023-06-08T20:03:38Z | https://github.com/iperov/DeepFaceLab/issues/5629 | [] | Johny-tech-creator | 4 | |

PokeAPI/pokeapi | api | 347 | Documentation API endpoints missing trailing forward slash | #### api/v2/pokemon/{id or name} should be api/v2/pokemon/{id or name}/

Some HTTP client doesn't follow redirect by default so this could potentially cause issues? And it's probably better if the user requests the correct endpoints in the first place instead of following redirects | closed | 2018-08-29T03:24:45Z | 2018-09-22T03:02:17Z | https://github.com/PokeAPI/pokeapi/issues/347 | [] | tien | 2 |

onnx/onnx | machine-learning | 6,100 | ERROR: Could not build wheels for onnx which use PEP 517 and cannot be installed directly | # Bug Report

### Is the issue related to model conversion?

When I install onnx using pip, and run `pip install onnx`, I failed!

### Describe the bug

when I use pip install Onnx ,and run `pip install onnx==1.14.1`, I failed!

it print:

```

Looking in indexes: https://pypi.tuna.tsinghua.edu.cn/simple

Collecti... | closed | 2024-04-25T09:49:28Z | 2024-04-28T18:11:55Z | https://github.com/onnx/onnx/issues/6100 | [

"question",

"topic: build"

] | qd986692950 | 2 |

huggingface/transformers | python | 36,317 | DS3 zero3_save_16bit_model is not compatible with resume_from_checkpoint | ### System Info

- `transformers` version: 4.48.3

- Platform: Linux-5.4.0-148-generic-x86_64-with-glibc2.35

- Python version: 3.11.10

- Huggingface_hub version: 0.28.1

- Safetensors version: 0.5.2

- Accelerate version: 1.3.0

- Accelerate config: not found

- PyTorch version (GPU?): 2.5.1+cu124 (True)

- Tensorflow ver... | open | 2025-02-21T05:56:30Z | 2025-03-23T08:03:25Z | https://github.com/huggingface/transformers/issues/36317 | [

"bug"

] | starmpcc | 1 |

ansible/awx | automation | 15,124 | RFE: Implement Maximum Execution Limit for Scheduled Jobs | ### Please confirm the following

- [X] I agree to follow this project's [code of conduct](https://docs.ansible.com/ansible/latest/community/code_of_conduct.html).

- [X] I have checked the [current issues](https://github.com/ansible/awx/issues) for duplicates.

- [X] I understand that AWX is open source software provide... | closed | 2024-04-22T12:22:55Z | 2024-04-22T12:23:25Z | https://github.com/ansible/awx/issues/15124 | [

"type:enhancement",

"component:api",

"component:ui",

"component:awx_collection",

"needs_triage",

"community"

] | demystifyingk8s | 0 |

reloadware/reloadium | pandas | 181 | [Feature Request] Frame Transaction | Frame transaction is to ensure the mutable data got revert back during reloading frame or dropping it. This usefull when you have a function that mutate a list or dictionary and need to reload for some changes.

```py

my_list = [1,2]

def addition(target_list):

target_list.append(3)

print("Reload here")... | open | 2024-02-19T20:25:51Z | 2024-02-19T20:25:51Z | https://github.com/reloadware/reloadium/issues/181 | [] | MeGaNeKoS | 0 |

aminalaee/sqladmin | asyncio | 380 | get_model_objects is not using list_query (export data) | ### Checklist

- [X] The bug is reproducible against the latest release or `master`.

- [X] There are no similar issues or pull requests to fix it yet.

### Describe the bug

Currently the exported data is using the generic `select()` query, and not the `list_query`.

See https://github.com/aminalaee/sqladmin/blob/mai... | closed | 2022-11-16T11:31:57Z | 2022-11-17T11:31:54Z | https://github.com/aminalaee/sqladmin/issues/380 | [] | villqrd | 1 |

tox-dev/tox | automation | 3,045 | referenced deps from an environment defined by an optional combinator are not pulled in | ## Issue

<!-- Describe what's the expected behaviour and what you're observing. -->

Suppose we have two environments defined by the below:

```

[testenv:lint{,-ci}]

deps =

flake8

flake8-print

flake8-black

ci: flake8-junit-report

commands =

!ci: flake8

ci: flake8 --output-file flake8.txt --ex... | open | 2023-06-19T21:26:22Z | 2024-03-05T22:16:15Z | https://github.com/tox-dev/tox/issues/3045 | [

"bug:minor",

"help:wanted"

] | hans2520 | 1 |

hootnot/oanda-api-v20 | rest-api | 208 | Extend the pricing endpoints | 2 new endpoints were introduced

- /v3/accounts/{accountID}/candles/latest

- /v3/accounts/{accountID}/instruments/{instrument}/candles

The request classes for those endpoints will be added to oandapyv20.endpoints.pricing | open | 2023-12-11T10:54:02Z | 2023-12-11T14:53:08Z | https://github.com/hootnot/oanda-api-v20/issues/208 | [

"enhancement"

] | hootnot | 0 |

frappe/frappe | rest-api | 31,451 | Workflow bug | - Creating any work flow then choosing workflow builder

- the builder shows mistakes in stalkholders | open | 2025-02-27T16:18:36Z | 2025-02-27T16:18:36Z | https://github.com/frappe/frappe/issues/31451 | [

"bug"

] | m-aglan | 0 |

chiphuyen/stanford-tensorflow-tutorials | tensorflow | 133 | chatbot outputs array of numbers ? | ```

> hi

[[-0.05387566 -2.2077456 -0.1335546 ... -0.3521466 0.15176542

0.4837527 ]]

> how are you?

[[-0.05443239 -2.2417731 -0.1325449 ... -0.35882115 0.15338464

0.49410236]]

> say something

[[-0.05385711 -2.2218084 -0.13375734 ... -0.3538314 0.15226512

0.48862678]]

``` | open | 2018-08-26T20:34:47Z | 2019-12-10T06:49:13Z | https://github.com/chiphuyen/stanford-tensorflow-tutorials/issues/133 | [] | Marwan-Mostafa7 | 3 |

skypilot-org/skypilot | data-science | 4,952 | [Dependency] SkyPilot installed with `uv venv` does not work correctly with gcloud installed with wget on MacOS | To reproduce:

1. `uv venv ~/sky-env --python 3.10 --seed`

2. `source ~/sky-env/bin/activate`

3. `wget https://dl.google.com/dl/cloudsdk/channels/rapid/downloads/google-cloud-cli-darwin-arm.tar.gz; extract google-cloud-cli-darwin-arm.tar.gz; ./google-cloud-sdk/install.sh`

4. `sky check gcp` shows: `gcloud --version` fai... | open | 2025-03-14T02:14:06Z | 2025-03-17T21:43:23Z | https://github.com/skypilot-org/skypilot/issues/4952 | [] | Michaelvll | 1 |

gee-community/geemap | streamlit | 610 | Output point locations from geemap.extract_values_to_points doesn’t match input | I am using geemap.extract_values_to_points to extract pixel values under points from a Shapefile (EPSG: 4326) and outputting to a Shapefile. This is the raster image I’m using:

ee.Image("projects/soilgrids-isric/clay_mean")

When I checked the results in QGIS the points in the output Shapefile did not align with th... | closed | 2021-08-06T16:19:51Z | 2022-05-28T17:28:40Z | https://github.com/gee-community/geemap/issues/610 | [

"bug"

] | nedhorning | 5 |

Nekmo/amazon-dash | dash | 114 | First press does not work | Put an `x` into all the boxes [ ] relevant to your *issue* (like this: `[x]`)

### What is the purpose of your *issue*?

- [ ] Bug report (encountered problems with amazon-dash)

- [ ] Feature request (request for a new functionality)

- [ ] Question

- [ ] Other

### Guideline for bug reports

You can delete this ... | closed | 2019-01-12T17:57:26Z | 2019-05-15T18:17:54Z | https://github.com/Nekmo/amazon-dash/issues/114 | [] | mcgurdan | 7 |

pinry/pinry | django | 293 | Official Apple M1 Arm Processor Support | I know some form of Arm support was [recently added](https://github.com/pinry/pinry/pull/248) but I think it only supports the Raspberry Pi platform.

I got a warning (not a blocking error) when I ran this on the new M1 processor Macbook Pro. The app still launches so it isn't a huge deal but I thought I'd mention i... | closed | 2021-08-11T22:50:04Z | 2021-09-02T03:15:07Z | https://github.com/pinry/pinry/issues/293 | [] | dakotahp | 1 |

gradio-app/gradio | deep-learning | 10,267 | gradio 5.0 unable to load javascript file | ### Describe the bug

if I provide JavaScript code in a variable, it is executed perfectly well but when I put the same code in a file "app.js" and then pass the file path in `js` parameter in `Blocks`, it doesn't work. I have added the code in reproduction below. if the same code is put in a file, the block will be ... | open | 2024-12-30T15:09:28Z | 2024-12-30T16:19:48Z | https://github.com/gradio-app/gradio/issues/10267 | [

"bug"

] | git-hamza | 2 |

gradio-app/gradio | deep-learning | 10,605 | Automatically adjust the page | ### Describe the bug

Does Gradio support automatic page adjustment for different devices, such as mobile phones and computers?

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python

import gradio as gr

```

### Screenshot

_No response_

### Logs

... | closed | 2025-02-17T13:57:40Z | 2025-02-18T01:07:12Z | https://github.com/gradio-app/gradio/issues/10605 | [

"bug"

] | nvliajia | 1 |

hatchet-dev/hatchet | fastapi | 474 | SSL error: Failed to connect to all addresses; last error: UNKNOWN: ipv4:127.0.0.1:7 | I am running from docker-compose.yml and got this error while trying to run the worker:

```

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "src/worker/main.py", line 5, in start

worker.register_workflow(ProcessingWorkflow()) # type: ignore

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^... | closed | 2024-05-09T13:00:11Z | 2024-05-09T13:40:54Z | https://github.com/hatchet-dev/hatchet/issues/474 | [] | simjak | 1 |

aidlearning/AidLearning-FrameWork | jupyter | 105 | My computer can't connect it via ssh though i upload my id_rsa and id_rsa.pub |

the ip address and the usrname are all right

i don't know why is that?

| closed | 2020-05-14T02:57:33Z | 2020-05-15T13:24:27Z | https://github.com/aidlearning/AidLearning-FrameWork/issues/105 | [] | QYHcrossover | 2 |

keras-team/keras | pytorch | 20,603 | Request for multi backend support for timeseries data loading | Hi,

I wonder is it possible for you to implement keras.utils.timeseries_dataset_from_array() method by other backends (e.g. JAX)?

it would be nice to not have to add TF dependency just because of this module.

https://github.com/keras-team/keras/blob/v3.7.0/keras/src/utils/timeseries_dataset_utils.py#L7 | closed | 2024-12-06T08:35:40Z | 2025-01-21T07:02:07Z | https://github.com/keras-team/keras/issues/20603 | [

"type:support",

"stat:awaiting response from contributor"

] | linomi | 4 |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.