repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

jupyter-book/jupyter-book | jupyter | 2,304 | Aligning figures in grid to the bottom. | I am trying to put 2 figures side-by-side.

I am currently trying the `grid` directive for this.

```

:::::{grid} 2

::::{grid-item}

:::{figure} assets/fish_swimming.svg

:height: 350px

Caption 1

:::

::::

::::{grid-item}

:::{figure} assets/stanford_bunny.svg

:height: 225px

Caption 2

:::

::::

:::::

```

This shows the figures as attached:

<img width="743" alt="Image" src="https://github.com/user-attachments/assets/8a6c1af9-6a81-43c4-8265-a78189f7c866" />

How do I bottom align the figures?

Is there a better way to produce subfigures so that I can have proper referencing of subfigures as Fig1a and Fig1b etc. I know the figure directive can be used for that but I want a two column figure style with both bottom aligned. | open | 2025-01-22T16:58:02Z | 2025-01-22T16:58:18Z | https://github.com/jupyter-book/jupyter-book/issues/2304 | [] | atharvaaalok | 0 |

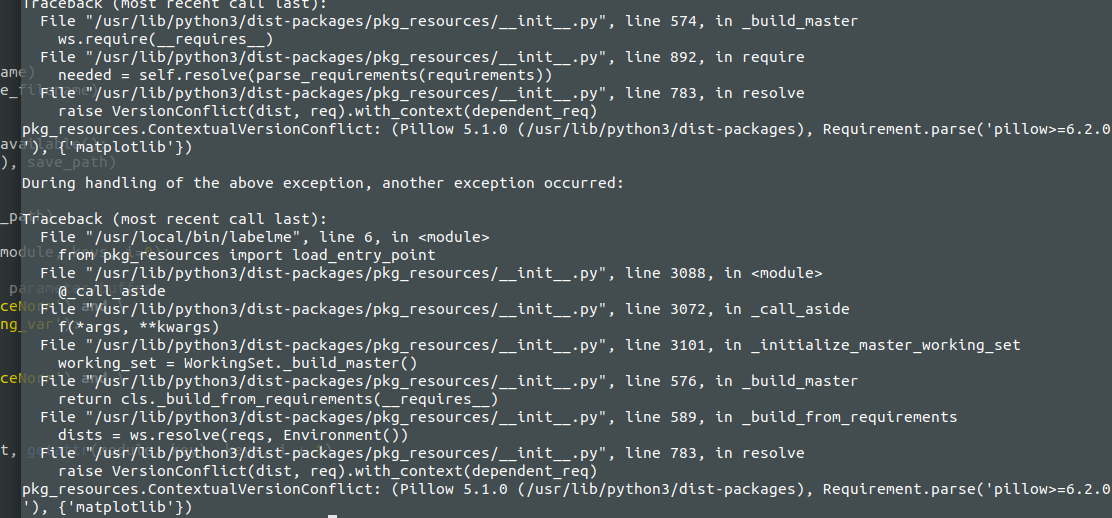

wkentaro/labelme | computer-vision | 792 | Ubuntu 18 , not able to launch issue | File "/usr/lib/python3/dist-packages/pkg_resources/__init__.py", line 783, in resolve

raise VersionConflict(dist, req).with_context(dependent_req)

pkg_resources.ContextualVersionConflict: (Pillow 5.1.0 (/usr/lib/python3/dist-packages), Requirement.parse('pillow>=6.2.0'), {'matplotlib'})

| closed | 2020-10-26T11:41:04Z | 2020-12-11T08:22:23Z | https://github.com/wkentaro/labelme/issues/792 | [] | sreshu | 1 |

Evil0ctal/Douyin_TikTok_Download_API | fastapi | 539 | fetch_user_post 接口只能读取第一页数据? | 获取用户帖子列表的接口只能获取第一页的数据吗?这个作者有687个视频

**`https://douyin.wtf/api/tiktok/web/fetch_user_post?secUid=MS4wLjABAAAAWtC4Km0mPiqpO8CM4JnOTG7sTMqs6ionh6AWF9sFb1dVtKiafyCwNz10DGf2UFk8&cursor=1&count=35&coverFormat=2`**

`{

code: 200,

router: "/api/tiktok/web/fetch_user_post",

data: {

cursor: "1",

extra: {

fatal_item_ids: [ ],

logid: "20250118172756C056BC4051BAEA68F65D",

now: 1737221277000

},

hasMore: false,

log_pb: {

impr_id: "20250118172756C056BC4051BAEA68F65D"

},

statusCode: 0,

status_code: 0,

status_msg: ""

}

}` | closed | 2025-01-17T05:58:49Z | 2025-01-21T04:29:39Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/539 | [

"BUG",

"enhancement"

] | Bruse-Lee | 1 |

piskvorky/gensim | machine-learning | 3,162 | Doc2Vec: when we have string tags, build_vocab with update removes previous index | <!--

**IMPORTANT**:

- Use the [Gensim mailing list](https://groups.google.com/forum/#!forum/gensim) to ask general or usage questions. Github issues are only for bug reports.

- Check [Recipes&FAQ](https://github.com/RaRe-Technologies/gensim/wiki/Recipes-&-FAQ) first for common answers.

Github bug reports that do not include relevant information and context will be closed without an answer. Thanks!

-->

#### Problem description

I'm trying to resume training my Doc2Vec model with string tags, but `model.build_vocab` removes all previous index from `model.dv`.

#### Steps/code/corpus to reproduce

A simple example to reproduce this:

```python

import string

from gensim.test.utils import common_texts

from gensim.models.doc2vec import Doc2Vec, TaggedDocument

documents = [TaggedDocument(doc, [tag]) for tag, doc in zip(string.ascii_lowercase, common_texts)]

documents1 = documents[:6]

documents2 = documents[6:]

model = Doc2Vec(vector_size=5, window=2, min_count=1)

model.build_vocab(documents1)

model.train(documents1, total_examples=len(documents1), epochs=5)

model.save('model')

model = Doc2Vec.load('model')

print('Vector count after train:', len(model.dv))

print('Keys:', model.dv.index_to_key)

model.build_vocab(documents2, update=True)

model.train(documents2, total_examples=model.corpus_count, epochs=model.epochs)

print('Vector count after update:', len(model.dv))

print('Keys:', model.dv.index_to_key)

model.save('model')

model = Doc2Vec.load('model')

print('Vector count after load:', len(model.dv))

print('Keys:', model.dv.index_to_key)

```

Output:

```

Vector count after train: 6

Keys: ['a', 'b', 'c', 'd', 'e', 'f']

Vector count after update: 3

Keys: ['g', 'h', 'i']

Vector count after load: 3

Keys: ['g', 'h', 'i']

```

And we have an interesting behavior:

```python

print('b' in model.dv)

# True

print(model.dv['b'])

# [ 0.00524729 -0.19762747 -0.10339681 -0.19433555 0.04022206]

```

The tag seems still exists in the model after updating, but `len` and `index_to_key` do not show this.

At the same time the code with int tags works correctly (it seems to me):

```python

documents = [TaggedDocument(doc, [tag]) for tag, doc in enumerate(common_texts)]

documents1 = documents[:6]

documents2 = documents[6:]

...

```

```

Vector count after train: 6

Keys: [0, 1, 2, 3, 4, 5]

Vector count after update: 9

Keys: [0, 1, 2, 3, 4, 5, 6, 7, 8]

Vector count after load: 9

Keys: [0, 1, 2, 3, 4, 5, 6, 7, 8]

```

#### Versions

```

Windows-10-10.0.19041-SP0

Python 3.9.0 (tags/v3.9.0:9cf6752, Oct 5 2020, 15:34:40) [MSC v.1927 64 bit (AMD64)]

Bits 64

NumPy 1.20.3

SciPy 1.6.1

gensim 4.0.1

FAST_VERSION 0

```

| closed | 2021-06-04T05:54:12Z | 2022-03-17T20:46:48Z | https://github.com/piskvorky/gensim/issues/3162 | [] | espdev | 13 |

python-visualization/folium | data-visualization | 1,813 | Make the Map pickable | **Is your feature request related to a problem? Please describe.**

As an extension of https://github.com/python-visualization/branca/pull/99, I want to be able to cache a map for an application, to switch quickly between them.

**Describe the solution you'd like**

I proposed a first solution in the following PR : https://github.com/python-visualization/folium/pull/1812

The solution is partial and would greatly appreciate any help, as I feel a pickable Map could be helpful in many ways.

**Additional context**

Map before correction :

Map after correction

**Implementation**

See https://github.com/python-visualization/folium/pull/1812

| closed | 2023-10-06T14:45:17Z | 2023-10-16T12:07:50Z | https://github.com/python-visualization/folium/issues/1813 | [

"enhancement"

] | BastienGauthier | 8 |

deepspeedai/DeepSpeed | pytorch | 6,639 | No module named 'op_builder' | deepspeed-0.15.2

AMD 5600x

Rtx 4060Ti

ERROR:

ModuleNotFoundError: No module named 'op_builder'

try install it ,but :

pip install op-builder

ERROR: Could not find a version that satisfies the requirement op-builder (from versions: none)

ERROR: No matching distribution found for op-builder

help!!! | closed | 2024-10-18T04:02:44Z | 2024-11-05T23:31:42Z | https://github.com/deepspeedai/DeepSpeed/issues/6639 | [

"windows"

] | hujiquan | 8 |

ranaroussi/yfinance | pandas | 1,976 | Currency data for statements | ### Describe bug

Currency data for financial statements

For some stocks, like BP.L, the share price data is GBp whereas the financial statements are in USD.

The currency of GBp is available in fast_info but the currency (USD) for the financial statements does not appear to be available.

Am I missing something?

### Simple code that reproduces your problem

import yfinance as yf

ticker = yf.Ticker("BP.L")

ticker.info['currency']

### Debug log

not a bug

### Bad data proof

ticker.info['currency']

'GBp'

### `yfinance` version

0.2.40

### Python version

_No response_

### Operating system

_No response_ | closed | 2024-07-04T19:29:24Z | 2024-07-05T20:11:48Z | https://github.com/ranaroussi/yfinance/issues/1976 | [] | mking007 | 3 |

JaidedAI/EasyOCR | deep-learning | 1,324 | Fine-tuned CRAFT model works much slower on CPU than default one. | I fine-tuned CRAFT model according to this guide: https://github.com/JaidedAI/EasyOCR/tree/master/trainer/craft

But this model works 5 times slower than default model 'craft_mlt_25k' on some server CPUs (on some CPUs speeds are same). What can it be? Is 'craft_mlt_25k' quantized in some way? | open | 2024-10-18T09:21:37Z | 2024-12-09T02:27:33Z | https://github.com/JaidedAI/EasyOCR/issues/1324 | [] | romanvelichkin | 1 |

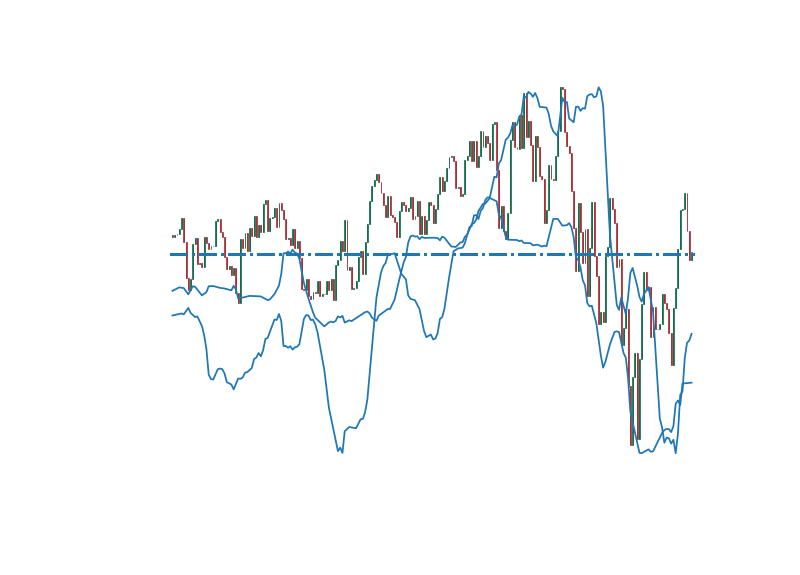

matplotlib/matplotlib | data-science | 28,872 | [Bug]: Why is there an offset between grey bars and width of arrows in upper limits (reproducible data and code provided) | ### Bug summary

For the image shown below, which shows upper limits in red arrows between two variables X and Y and on the right side there is Z axis showing value of grey bars, why is their an offset between grey bars and width of arrows.

### Code for reproduction

```Python

def plot(Xmin, Xmax):

datafile = '' # Placeholder for the data file path

try:

data = np.loadtxt(datafile)

xmin = data[:, 0]

xmax = data[:, 1]

yvalue = data[:, 2]

yerror = data[:, 3]

zvalue = data[:, 4]

upperBound = data[:, 5]

# Compute the midpoint of the x-axis

x = np.sqrt(xmin * xmax)

xerr = np.array([x - xmin, xmax - x])

# Compute y-axis values

y = x**2 * yvalue / (xmax - xmin)

yerr = x**2 * yerror / (xmax - xmin)

y_ul = x**2 * upperBound / (xmax - xmin)

y_ulerr = np.array([0.5 * y_ul, [0] * len(y)])

# Create the plot

fig, ax = plt.subplots()

# Plot the data points where z > 9

ax.errorbar(x[zvalue > 9], y[zvalue > 9], xerr=xerr[:, zvalue > 9], yerr=yerr[zvalue > 9],

fmt='.k', color='blue', label='Detected', markersize=12)

# Plot upper limits where z < 9, in red

ax.errorbar(x[zvalue < 9], y_ul[zvalue < 9], xerr=xerr[:, zvalue < 9], yerr=y_ulerr[:, zvalue < 9],

fmt='.k', color='red', uplims=True, label='Upper Limit', markersize=12)

# Set axis limits and scales

ax.set_xlim(a,b)

ax.set_ylim(c, d)

ax.set_xscale('log')

ax.set_yscale('log')

# Set axis labels with bold font

ax.set_xlabel(r'X [units]', fontweight='bold')

ax.set_ylabel(r'Y [units]', fontweight='bold', fontsize=9)

# Plot z-values on a secondary axis

ax2 = ax.twinx()

ax2.bar(x, zvalue, width=(xmax - xmin), color='gray', edgecolor='gray', alpha=0.5)

ax2.set_ylim(bottom=0)

ax2.set_xscale('log')

ax2.set_ylabel('Z value', fontweight='bold')

# Return the file paths (placeholders)

return png_file, pdf_file

except Exception as e:

print(f"An error occurred: {e}")

```

### Actual outcome

When we use x = np.sqrt(xmin * xmax) which is the geometrical mean their is an offset between grey bars and width of arrows in upper limits. But when we use arithmetic mean x = 0.5 (xmin + xmax) the upper limit error bars are alligned with the grey bars. Why is it not alligning using geometric mean. The data to reproduce the above plot is at https://github.com/siddhantmannaiith/data/blob/main/data.dat where the first two columns are xmin and xmax. If you need any furthur details to reproduce the above plot please let me know.

### Expected outcome

In expected outcome the upper limits should align with the grey bars which happens when we use arithmetic mean but does not happen with geometric mean. I have provided the data at https://github.com/siddhantmannaiith/data/blob/main/data.dat to replicate the above plot. First two columns are xmin and xmax.

### Additional information

I have provided the data at https://github.com/siddhantmannaiith/data/blob/main/data.dat to replicate the above plot. First two columns are xmin and xmax.

### Operating system

Ubuntu

### Matplotlib Version

3.7.1

### Matplotlib Backend

_No response_

### Python version

_No response_

### Jupyter version

_No response_

### Installation

pip | closed | 2024-09-24T07:03:15Z | 2024-09-24T13:48:56Z | https://github.com/matplotlib/matplotlib/issues/28872 | [

"status: needs clarification",

"status: needs revision"

] | siddhantmannaiith | 1 |

ARM-DOE/pyart | data-visualization | 1,333 | NEXRAD Non-Reflectivity Values | Building on the discussion here - https://openradar.discourse.group/t/reflectivity-and-velocity-resolution-from-aws/97

The main question is whether we are parsing NEXRAD Level 2 files with the proper digital resolution.

Reflectivity should be at 8 bit resolution (every 0.5 dBZ), but other fields such as radial velocity and spectrum width should be higher? With differences in values on the order of 0.1 m/s, etc.

@dopplershift do you know where to find this information so we can verify we are treating this properly? Or do you know if the current implementation in MetPy/Py-ART handle this properly? | closed | 2022-11-22T15:49:15Z | 2022-11-22T19:28:04Z | https://github.com/ARM-DOE/pyart/issues/1333 | [] | mgrover1 | 2 |

aminalaee/sqladmin | sqlalchemy | 152 | Many to many field setup error | ### Checklist

- [X] The bug is reproducible against the latest release or `master`.

- [X] There are no similar issues or pull requests to fix it yet.

### Describe the bug

I am trying to update m2m field in form but i am getting error `"sqlalchemy.exc.InvalidRequestError: Can't attach instance another instance with key is already present in this session"`

### Steps to reproduce the bug

_No response_

### Expected behavior

_No response_

### Actual behavior

_No response_

### Debugging material

_No response_

### Environment

Macos , python 3.9

### Additional context

_No response_ | closed | 2022-05-14T16:16:25Z | 2024-06-15T13:33:42Z | https://github.com/aminalaee/sqladmin/issues/152 | [] | dasaderto | 10 |

pallets-eco/flask-sqlalchemy | flask | 512 | NoForeignKeysError with polymorphism and schemas | ```python

from flask import Flask

from flask_sqlalchemy import SQLAlchemy

app = Flask(__name__)

app.config['SQLALCHEMY_TRACK_MODIFICATIONS'] = False

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql:///test'

db = SQLAlchemy(app)

class Item(db.Model):

__tablename__ = 'items'

__table_args__ = {'schema': 'foo'} # this causes the error

__mapper_args__ = {'polymorphic_on': 'type', 'polymorphic_identity': None}

id = db.Column(db.Integer, primary_key=True)

type = db.Column(db.Integer, nullable=False)

parent_id = db.Column(db.ForeignKey('foo.items.id'), index=True, nullable=True)

children = db.relationship('Item', backref=db.backref('parent', remote_side=[id]))

class SubItem(Item):

__mapper_args__ = {

'polymorphic_identity': 1

}

```

traceback:

```pythontraceback

Traceback (most recent call last):

File "flasksatest.py", line 21, in <module>

class SubItem(Item):

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/flask_sqlalchemy/__init__.py", line 602, in __init__

DeclarativeMeta.__init__(self, name, bases, d)

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/ext/declarative/api.py", line 64, in __init__

_as_declarative(cls, classname, cls.__dict__)

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/ext/declarative/base.py", line 88, in _as_declarative

_MapperConfig.setup_mapping(cls, classname, dict_)

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/ext/declarative/base.py", line 103, in setup_mapping

cfg_cls(cls_, classname, dict_)

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/ext/declarative/base.py", line 135, in __init__

self._early_mapping()

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/ext/declarative/base.py", line 138, in _early_mapping

self.map()

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/ext/declarative/base.py", line 534, in map

**self.mapper_args

File "<string>", line 2, in mapper

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/orm/mapper.py", line 671, in __init__

self._configure_inheritance()

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/orm/mapper.py", line 978, in _configure_inheritance

self.local_table)

File "<string>", line 2, in join_condition

File "/home/adrian/dev/indico/env/lib/python2.7/site-packages/sqlalchemy/sql/selectable.py", line 979, in _join_condition

(a.description, b.description, hint))

sqlalchemy.exc.NoForeignKeysError: Can't find any foreign key relationships between 'items' and 'items'.

```

It works fine if I do not use a custom schema. | closed | 2017-06-27T09:44:42Z | 2020-12-05T20:46:22Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/512 | [

"tablename"

] | ThiefMaster | 3 |

amidaware/tacticalrmm | django | 954 | Server Side Checks | **Is your feature request related to a problem? Please describe.**

We sometimes need to monitor externally hosted services and cannot install an agent

**Describe the solution you'd like**

I would really like if the RMM server itself could carry out some very basic checks similar to the agents. i.e. ping checks, website availability checks (check for status 200) etc. This would be helpful as sometimes we monitor externally hosted services such as web servers, mail servers and VPN gateways where we cannot install an agent

Love this software! Keep up the good work! | closed | 2022-01-25T19:46:00Z | 2022-01-27T11:38:57Z | https://github.com/amidaware/tacticalrmm/issues/954 | [] | daveclev12 | 4 |

pydata/bottleneck | numpy | 9 | Writing beyond the range of an array | The low-level functions nanstd_3d_int32_axis1 and nanstd_3d_int64_axis1, called by bottleneck.nanstd() for 3d input, wrote beyond the memory owned by the output array if arr.shape[1] == 0 and arr.shape[0] > arr.shape[2], where arr is the input array.

Thanks to Christoph Gohlke for finding an example to demonstrate the bug.

| closed | 2011-03-08T20:24:41Z | 2011-03-08T20:51:45Z | https://github.com/pydata/bottleneck/issues/9 | [

"bug"

] | kwgoodman | 1 |

Textualize/rich | python | 2,825 | [REQUEST] Tweak default colors for RichHandler | Hello, first of all, thank you for rich! I use it in pretty much all my projects.

I have a _very minor_ suggestion regarding the _default_ colors for logging levels.

I know we can customize them using [themes](https://rich.readthedocs.io/en/latest/style.html#style-themes) (and I already do!).

I use mostly info/warning/error levels for logging, and depending on the terminal used, _warnings and errors_ render almost identically.

When googling "Python colored logs", the top solutions (in my case) use yellow-ish for warnings and red for errors.

Granted, many of the top results use `coloredlogs`, but in any case I see:

* [stack overflow top answer](https://stackoverflow.com/questions/384076/how-can-i-color-python-logging-output)

* [PyPI coloredlogs](https://pypi.org/project/coloredlogs/)

* [a blog post](https://alexandra-zaharia.github.io/posts/make-your-own-custom-color-formatter-with-python-logging/)

* [another blog post](https://betterstack.com/community/questions/how-to-color-python-logging-output/)

* [PyPI colorlog](https://pypi.org/project/colorlog/)

So I was wondering if you would be willing to tweak the default `'logging.level.warning'` to something closer to yellow, to be more in line with this, and give a bit more distinction to warnings and errors.

Anyway, I am perfectly happy with customization through themes!

| closed | 2023-02-22T14:39:27Z | 2024-07-01T10:43:43Z | https://github.com/Textualize/rich/issues/2825 | [

"accepted"

] | alexprengere | 3 |

modin-project/modin | data-science | 7,350 | Possible issue with `dropna(how="all")` not deleting data from partition on ray. | When processing a large dataframe with modin running on ray, if I had previously dropped invalid rows, it runs into an issue by accessing data from the new dataframe (after dropna).

It looks like the data is not released from ray, or maybe modin `dropna` operation is not removing it properly.

It works fine if I run an operation where modin defaults to pandas.

# EXAMPLE:

```

import modin.pandas as pd

data = [

{"record": 1, "data_set": [0,0,0,0], "index": 1},

{"record": 2, "data_set": [0,0,0,0], "index": 2},

{"record": 3, "data_set": [0,0,0,0], "index": 3},

{"record": 4, "data_set": [0,0,0,0], "index": 4},

{"record": 5, "data_set": [0,0,0,0], "index": 5},

{"record": 6, "data_set": [0,0,0,0], "index": 6},

{"record": 7, "data_set": [0,0,0,0], "index": 7},

{"record": 8, "data_set": [0,0,0,0], "index": 8},

{"record": 9, "data_set": [0,0,0,0], "index": 9},

{"record": 10, "data_set": [0,0,0,0], "index": 10},

] * 10000

modin_df = pd.DataFrame(data)

# process and remove unwanted rows

# imagine this as a more complex than just filtering by index

modin_df = modin_df.apply(lambda x: x if x["index"] < 5 else None, axis=1).dropna(how="all")

# try to access data_set column

# imagine this as a more complex processing job

modin_df.apply(lambda x: x["data_set"], axis=1)

```

# ERROR:

<details>

```python-traceback

{

"name": "RayTaskError(KeyError)",

"message": "ray::_apply_func() (pid=946, ip=10.169.23.29)

At least one of the input arguments for this task could not be computed:

ray.exceptions.RayTaskError: ray::_deploy_ray_func() (pid=942, ip=10.169.23.29)

File \"pandas/_libs/index.pyx\", line 138, in pandas._libs.index.IndexEngine.get_loc

File \"pandas/_libs/index.pyx\", line 165, in pandas._libs.index.IndexEngine.get_loc

File \"pandas/_libs/hashtable_class_helper.pxi\", line 5745, in pandas._libs.hashtable.PyObjectHashTable.get_item

File \"pandas/_libs/hashtable_class_helper.pxi\", line 5753, in pandas._libs.hashtable.PyObjectHashTable.get_item

KeyError: 'data_set'

The above exception was the direct cause of the following exception:

ray::_deploy_ray_func() (pid=942, ip=10.169.23.29)

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/execution/ray/implementations/pandas_on_ray/partitioning/virtual_partition.py\", line 313, in _deploy_ray_func

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/partitioning/axis_partition.py\", line 419, in deploy_axis_func

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/dataframe/dataframe.py\", line 1788, in _tree_reduce_func

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/storage_formats/pandas/query_compiler.py\", line 3084, in <lambda>

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/frame.py\", line 9568, in apply

return op.apply().__finalize__(self, method=\"apply\")

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/apply.py\", line 764, in apply

return self.apply_standard()

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/apply.py\", line 891, in apply_standard

results, res_index = self.apply_series_generator()

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/apply.py\", line 907, in apply_series_generator

results[i] = self.f(v)

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/utils.py\", line 611, in wrapper

File \"/var/folders/lz/4cs_fypj0ld8x6kyk9rbkl400000gn/T/ipykernel_24081/3890645143.py\", line 24, in <lambda>

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/series.py\", line 981, in __getitem__

return self._get_value(key)

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/series.py\", line 1089, in _get_value

loc = self.index.get_loc(label)

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/indexes/base.py\", line 3804, in get_loc

raise KeyError(key) from err

KeyError: 'data_set'",

"stack": "---------------------------------------------------------------------------

RayTaskError(KeyError) Traceback (most recent call last)

Cell In[79], line 24

20 modin_df = modin_df.apply(lambda x: x if x[\"index\"] < 5 else None, axis=1).dropna(how=\"all\")

22 # try to access data_set column

23 # imagine this as a more complex processing job

---> 24 modin_df.apply(lambda x: x[\"data_set\"], axis=1)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/logging/logger_decorator.py:128, in enable_logging.<locals>.decorator.<locals>.run_and_log(*args, **kwargs)

113 \"\"\"

114 Compute function with logging if Modin logging is enabled.

115

(...)

125 Any

126 \"\"\"

127 if LogMode.get() == \"disable\":

--> 128 return obj(*args, **kwargs)

130 logger = get_logger()

131 logger_level = getattr(logger, log_level)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/pandas/dataframe.py:419, in DataFrame.apply(self, func, axis, raw, result_type, args, **kwargs)

416 else:

417 output_type = DataFrame

--> 419 return output_type(query_compiler=query_compiler)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/logging/logger_decorator.py:128, in enable_logging.<locals>.decorator.<locals>.run_and_log(*args, **kwargs)

113 \"\"\"

114 Compute function with logging if Modin logging is enabled.

115

(...)

125 Any

126 \"\"\"

127 if LogMode.get() == \"disable\":

--> 128 return obj(*args, **kwargs)

130 logger = get_logger()

131 logger_level = getattr(logger, log_level)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/pandas/series.py:144, in Series.__init__(self, data, index, dtype, name, copy, fastpath, query_compiler)

130 name = data.name

132 query_compiler = from_pandas(

133 pandas.DataFrame(

134 pandas.Series(

(...)

142 )

143 )._query_compiler

--> 144 self._query_compiler = query_compiler.columnarize()

145 if name is not None:

146 self.name = name

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/logging/logger_decorator.py:128, in enable_logging.<locals>.decorator.<locals>.run_and_log(*args, **kwargs)

113 \"\"\"

114 Compute function with logging if Modin logging is enabled.

115

(...)

125 Any

126 \"\"\"

127 if LogMode.get() == \"disable\":

--> 128 return obj(*args, **kwargs)

130 logger = get_logger()

131 logger_level = getattr(logger, log_level)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/storage_formats/base/query_compiler.py:1236, in BaseQueryCompiler.columnarize(self)

1232 if self._shape_hint == \"column\":

1233 return self

1235 if len(self.columns) != 1 or (

-> 1236 len(self.index) == 1 and self.index[0] == MODIN_UNNAMED_SERIES_LABEL

1237 ):

1238 return self.transpose()

1239 return self

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/storage_formats/pandas/query_compiler.py:87, in _get_axis.<locals>.<lambda>(self)

74 \"\"\"

75 Build index labels getter of the specified axis.

76

(...)

84 callable(PandasQueryCompiler) -> pandas.Index

85 \"\"\"

86 if axis == 0:

---> 87 return lambda self: self._modin_frame.index

88 else:

89 return lambda self: self._modin_frame.columns

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/dataframe/dataframe.py:522, in PandasDataframe._get_index(self)

520 index, row_lengths = self._index_cache.get(return_lengths=True)

521 else:

--> 522 index, row_lengths = self._compute_axis_labels_and_lengths(0)

523 self.set_index_cache(index)

524 if self._row_lengths_cache is None:

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/logging/logger_decorator.py:128, in enable_logging.<locals>.decorator.<locals>.run_and_log(*args, **kwargs)

113 \"\"\"

114 Compute function with logging if Modin logging is enabled.

115

(...)

125 Any

126 \"\"\"

127 if LogMode.get() == \"disable\":

--> 128 return obj(*args, **kwargs)

130 logger = get_logger()

131 logger_level = getattr(logger, log_level)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/dataframe/dataframe.py:626, in PandasDataframe._compute_axis_labels_and_lengths(self, axis, partitions)

624 if partitions is None:

625 partitions = self._partitions

--> 626 new_index, internal_idx = self._partition_mgr_cls.get_indices(axis, partitions)

627 return new_index, list(map(len, internal_idx))

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/logging/logger_decorator.py:128, in enable_logging.<locals>.decorator.<locals>.run_and_log(*args, **kwargs)

113 \"\"\"

114 Compute function with logging if Modin logging is enabled.

115

(...)

125 Any

126 \"\"\"

127 if LogMode.get() == \"disable\":

--> 128 return obj(*args, **kwargs)

130 logger = get_logger()

131 logger_level = getattr(logger, log_level)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/partitioning/partition_manager.py:933, in PandasDataframePartitionManager.get_indices(cls, axis, partitions, index_func)

931 if len(target):

932 new_idx = [idx.apply(func) for idx in target[0]]

--> 933 new_idx = cls.get_objects_from_partitions(new_idx)

934 else:

935 new_idx = [pandas.Index([])]

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/logging/logger_decorator.py:128, in enable_logging.<locals>.decorator.<locals>.run_and_log(*args, **kwargs)

113 \"\"\"

114 Compute function with logging if Modin logging is enabled.

115

(...)

125 Any

126 \"\"\"

127 if LogMode.get() == \"disable\":

--> 128 return obj(*args, **kwargs)

130 logger = get_logger()

131 logger_level = getattr(logger, log_level)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/partitioning/partition_manager.py:874, in PandasDataframePartitionManager.get_objects_from_partitions(cls, partitions)

870 partitions[idx] = part.force_materialization()

871 assert all(

872 [len(partition.list_of_blocks) == 1 for partition in partitions]

873 ), \"Implementation assumes that each partition contains a single block.\"

--> 874 return cls._execution_wrapper.materialize(

875 [partition.list_of_blocks[0] for partition in partitions]

876 )

877 return [partition.get() for partition in partitions]

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/execution/ray/common/engine_wrapper.py:92, in RayWrapper.materialize(cls, obj_id)

77 @classmethod

78 def materialize(cls, obj_id):

79 \"\"\"

80 Get the value of object from the Plasma store.

81

(...)

90 Whatever was identified by `obj_id`.

91 \"\"\"

---> 92 return ray.get(obj_id)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/ray/_private/auto_init_hook.py:21, in wrap_auto_init.<locals>.auto_init_wrapper(*args, **kwargs)

18 @wraps(fn)

19 def auto_init_wrapper(*args, **kwargs):

20 auto_init_ray()

---> 21 return fn(*args, **kwargs)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/ray/_private/client_mode_hook.py:102, in client_mode_hook.<locals>.wrapper(*args, **kwargs)

98 if client_mode_should_convert():

99 # Legacy code

100 # we only convert init function if RAY_CLIENT_MODE=1

101 if func.__name__ != \"init\" or is_client_mode_enabled_by_default:

--> 102 return getattr(ray, func.__name__)(*args, **kwargs)

103 return func(*args, **kwargs)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/ray/util/client/api.py:42, in _ClientAPI.get(self, vals, timeout)

35 def get(self, vals, *, timeout=None):

36 \"\"\"get is the hook stub passed on to replace `ray.get`

37

38 Args:

39 vals: [Client]ObjectRef or list of these refs to retrieve.

40 timeout: Optional timeout in milliseconds

41 \"\"\"

---> 42 return self.worker.get(vals, timeout=timeout)

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/ray/util/client/worker.py:433, in Worker.get(self, vals, timeout)

431 op_timeout = max_blocking_operation_time

432 try:

--> 433 res = self._get(to_get, op_timeout)

434 break

435 except GetTimeoutError:

File ~/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/ray/util/client/worker.py:461, in Worker._get(self, ref, timeout)

459 logger.exception(\"Failed to deserialize {}\".format(chunk.error))

460 raise

--> 461 raise err

462 if chunk.total_size > OBJECT_TRANSFER_WARNING_SIZE and log_once(

463 \"client_object_transfer_size_warning\"

464 ):

465 size_gb = chunk.total_size / 2**30

RayTaskError(KeyError): ray::_apply_func() (pid=946, ip=10.169.23.29)

At least one of the input arguments for this task could not be computed:

ray.exceptions.RayTaskError: ray::_deploy_ray_func() (pid=942, ip=10.169.23.29)

File \"pandas/_libs/index.pyx\", line 138, in pandas._libs.index.IndexEngine.get_loc

File \"pandas/_libs/index.pyx\", line 165, in pandas._libs.index.IndexEngine.get_loc

File \"pandas/_libs/hashtable_class_helper.pxi\", line 5745, in pandas._libs.hashtable.PyObjectHashTable.get_item

File \"pandas/_libs/hashtable_class_helper.pxi\", line 5753, in pandas._libs.hashtable.PyObjectHashTable.get_item

KeyError: 'data_set'

The above exception was the direct cause of the following exception:

ray::_deploy_ray_func() (pid=942, ip=10.169.23.29)

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/execution/ray/implementations/pandas_on_ray/partitioning/virtual_partition.py\", line 313, in _deploy_ray_func

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/partitioning/axis_partition.py\", line 419, in deploy_axis_func

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/dataframe/pandas/dataframe/dataframe.py\", line 1788, in _tree_reduce_func

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/core/storage_formats/pandas/query_compiler.py\", line 3084, in <lambda>

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/frame.py\", line 9568, in apply

return op.apply().__finalize__(self, method=\"apply\")

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/apply.py\", line 764, in apply

return self.apply_standard()

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/apply.py\", line 891, in apply_standard

results, res_index = self.apply_series_generator()

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/apply.py\", line 907, in apply_series_generator

results[i] = self.f(v)

File \"/Users/brunoj/.pyenv/versions/3.9.18/envs/che/lib/python3.9/site-packages/modin/utils.py\", line 611, in wrapper

File \"/var/folders/lz/4cs_fypj0ld8x6kyk9rbkl400000gn/T/ipykernel_24081/3890645143.py\", line 24, in <lambda>

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/series.py\", line 981, in __getitem__

return self._get_value(key)

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/series.py\", line 1089, in _get_value

loc = self.index.get_loc(label)

File \"/home/ray/anaconda3/lib/python3.9/site-packages/pandas/core/indexes/base.py\", line 3804, in get_loc

raise KeyError(key) from err

KeyError: 'data_set'"

}

```

</details>

# INSTALLED VERSION

```

UserWarning: Setuptools is replacing distutils.

INSTALLED VERSIONS

------------------

commit : f5f9ae993ba5ed26461d3c9d26fbefecab88ee69

python : 3.9.18.final.0

python-bits : 64

OS : Darwin

OS-release : 23.5.0

Version : Darwin Kernel Version 23.5.0: Wed May 1 20:12:58 PDT 2024; root:xnu-10063.121.3~5/RELEASE_ARM64_T6000

machine : arm64

processor : arm

byteorder : little

LC_ALL : None

LANG : None

LOCALE : None.UTF-8

Modin dependencies

------------------

modin : 0.31.0+5.gf5f9ae99

ray : 2.23.0

dask : 2024.7.1

distributed : 2024.7.1

pandas dependencies

-------------------

pandas : 2.2.2

numpy : 1.26.4

pytz : 2024.1

dateutil : 2.9.0.post0

setuptools : 69.5.1

pip : 24.0

Cython : None

pytest : None

hypothesis : None

sphinx : None

blosc : None

feather : None

xlsxwriter : None

lxml.etree : None

html5lib : None

pymysql : None

psycopg2 : None

jinja2 : 3.1.4

IPython : 8.18.1

pandas_datareader : None

adbc-driver-postgresql: None

adbc-driver-sqlite : None

bs4 : 4.12.3

bottleneck : None

dataframe-api-compat : None

fastparquet : None

fsspec : 2024.6.1

gcsfs : 2024.6.1

matplotlib : 3.9.1

numba : None

numexpr : None

odfpy : None

openpyxl : None

pandas_gbq : 0.23.1

pyarrow : 14.0.2

pyreadstat : None

python-calamine : None

pyxlsb : None

s3fs : 2024.6.1

scipy : 1.13.1

sqlalchemy : None

tables : None

tabulate : 0.9.0

xarray : None

xlrd : None

zstandard : None

tzdata : 2024.1

qtpy : None

pyqt5 : None

``` | open | 2024-07-23T11:05:03Z | 2024-07-25T21:22:44Z | https://github.com/modin-project/modin/issues/7350 | [

"bug 🦗",

"P0"

] | brunojensen | 1 |

serengil/deepface | machine-learning | 882 | Why is the euclidean distance calculated that way and not using np.linalign.norm? | Just curios.

| closed | 2023-11-02T19:31:27Z | 2023-11-02T22:29:47Z | https://github.com/serengil/deepface/issues/882 | [

"question"

] | ghost | 1 |

vimalloc/flask-jwt-extended | flask | 57 | Version Logs? | @vimalloc Should we have a log of changes to clarify if newer releases might break previous versions? | closed | 2017-06-15T14:06:16Z | 2017-07-02T18:58:59Z | https://github.com/vimalloc/flask-jwt-extended/issues/57 | [] | rlam3 | 4 |

unionai-oss/pandera | pandas | 1,759 | Pass additional `Check` kwargs into `register_check_method` | **Is your feature request related to a problem? Please describe.**

Adding a custom error message to an inline custom check works now due to you're recent commits, because you can pass an error argument to the `Check` object. Thank you by the way. I could have missed it, but is there a way to extend that ability class based custom checks? I assumed maybe `register_check_method` or `Field` would take additional arguments for the init of the Check object, but they don't seem to.

**Describe the solution you'd like**

I was able to hack something together, but I'm not really qualified to muck around or contribute to a project like this. Even though I'd love to.

```python

def register_check_method( # pylint:disable=too-many-branches

check_fn=None,

*,

statistics: Optional[List[str]] = None,

supported_types: Optional[Union[type, Tuple, List]] = None,

check_type: Union[CheckType, str] = "vectorized",

strategy=None,

**kwargs # + ln 142

):

```

```python

if check_fn is None:

return partial(

register_check_method,

statistics=statistics,

supported_types=supported_types,

check_type=check_type,

strategy=strategy,

**kwargs # + ln 233

)

```

```python

if check_fn is None:

return partial(

register_check_method,

statistics=statistics,

supported_types=supported_types,

check_type=check_type,

strategy=strategy,

**kwargs # + ln 233

)

```

```python

def validate_check_kwargs(check_kwargs):

check_kwargs = check_kwargs | kwargs # + ln 259

msg = (

f"'{check_fn.__name__} has check_type={check_type}. "

"Providing the following arguments will have no effect: "

"{}. Remove these arguments to avoid this warning."

)

```

...

| open | 2024-07-20T19:07:11Z | 2025-01-05T20:15:42Z | https://github.com/unionai-oss/pandera/issues/1759 | [

"enhancement"

] | typkrft | 1 |

dunossauro/fastapi-do-zero | sqlalchemy | 175 | Repositório do Paulo Cesar Peixoto (PC) | | Link do projeto | Seu @ no git | Comentário (opcional) |

| --- | --- | --- |

| [fast_zero_api](https://github.com/peixoto-pc/fast_api_zero) | [@peixoto-pc ](https://github.com/peixoto-pc)| Implementação do material do curso sem alterações | | closed | 2024-06-14T21:57:08Z | 2024-06-15T00:55:29Z | https://github.com/dunossauro/fastapi-do-zero/issues/175 | [] | peixoto-pc | 1 |

graphql-python/graphene-sqlalchemy | sqlalchemy | 211 | AssertionError: Found different types with the same name in the schema | I have two Classes Products and SalableProducts in my Models (SalableProducts inherits from Products so it has every field of it's database), in my Schema here is what i did

```python

class Product(SQLAlchemyObjectType):

class Meta:

model = ProductModel

interfaces = (relay.Node, )

class ProductConnections(relay.Connection):

class Meta:

node = Product

```

```python

class SalableProduct(SQLAlchemyObjectType):

class Meta:

model = SalableProductModel

interfaces = (relay.Node, )

class SalableProductConnections(relay.Connection):

class Meta:

node = SalableProduct

```

and here is my Query class :

```python

class Query(graphene.ObjectType):

node = relay.Node.Field()

all_products = SQLAlchemyConnectionField(ProductConnections)

all_salable_products = SQLAlchemyConnectionField(SalableProductConnections)

```

When i run my server i got this error :

AssertionError: Found different types with the same name in the schema: product_status, product_status. | open | 2019-04-29T11:04:01Z | 2022-07-18T21:24:07Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/211 | [] | Rafik-Belkadi | 19 |

clovaai/donut | computer-vision | 221 | donut-base-finetuned-cord-v2 Demo not working properly in Gradio Space web demo | There is a run time error when the demo link is launched. I think there might be some dependency issue for models build on CORD dataset for document parsing. | open | 2023-07-02T03:01:20Z | 2023-11-25T07:48:35Z | https://github.com/clovaai/donut/issues/221 | [] | being-invincible | 1 |

Colin-b/pytest_httpx | pytest | 40 | Support for httpx > 0.17.x | `httpx` released a [new version](https://github.com/encode/httpx/releases/tag/0.18.0). Currently the `httpx` is limited to [`0.17.*`](https://github.com/Colin-b/pytest_httpx/blob/develop/setup.py#L41).

It would be nice if `pytest-httpx` is updated.

Thanks | closed | 2021-04-27T15:42:14Z | 2021-05-04T18:28:42Z | https://github.com/Colin-b/pytest_httpx/issues/40 | [

"enhancement"

] | fabaff | 3 |

streamlit/streamlit | deep-learning | 10,673 | st.data_editor: Pasting a copied range fails when the bottom-right cell is empty or None | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

When copying a selected range of cells from st.data_editor and pasting it into another part of the table, the paste operation does not work if the bottom-right cell of the copied selection contains either an empty string ("") or None.

**Conditions**

Copying a range of cells works as expected.

However, when pasting the copied content into another part of the table, nothing happens if the bottom-right cell of the copied selection is empty ("") or None.

Sample table:

Copying a selected range of cells from st.data_editor:

Pasting it into another part of the table does not work:

### Reproducible Code Example

[](https://issues.streamlitapp.com/?issue=gh-10673)

```Python

import streamlit as st

import pandas as pd

samples = {

"col1": ["test11", "test12", "test13"],

"col2": ["test21", "", "test23"],

"col3": ["test31", "test32", None]

}

df = pd.DataFrame(samples)

editor = st.data_editor(df, num_rows="dynamic")

```

### Steps To Reproduce

1. Run the sample code.

2. Select a range of cells, e.g., from row 1, column 1 (test11) to row 2, column 2 ("").

3. Copy the selected range (Ctrl+C).

4. Try pasting it into row 2, column 1 (Ctrl+V).

5. Observe that the paste operation does not work.

### Expected Behavior

The selected range should be pasted successfully, regardless of whether the bottom-right cell is empty ("") or None.

### Current Behavior

Copying fails when the bottom-right cell of the selection is empty ("") or None.

### Is this a regression?

- [ ] Yes, this used to work in a previous version.

### Debug info

- Streamlit version: 1.43.0

- Python version: 3.11.0

- Operating System: Windows11

- Browser: Chrome

### Additional Information

_No response_ | open | 2025-03-07T05:57:07Z | 2025-03-14T02:55:26Z | https://github.com/streamlit/streamlit/issues/10673 | [

"type:bug",

"status:confirmed",

"priority:P3",

"feature:st.data_editor"

] | hirokika | 4 |

KaiyangZhou/deep-person-reid | computer-vision | 563 | torchreid | It's not 'torchreid.utils', it should be 'torchreid.reid.utils' | open | 2023-11-10T11:34:07Z | 2023-11-10T11:34:07Z | https://github.com/KaiyangZhou/deep-person-reid/issues/563 | [] | motherflunker | 0 |

strawberry-graphql/strawberry | graphql | 3,431 | Should we hide fields that starts with `_` by default? | We have strawberry.Private to hide field, but I was wondering if we should automatically hide fields that start with a underscore, since it is a common convention in Python to use it for "private" fields.

What do you all think? | open | 2024-04-02T11:55:00Z | 2025-03-20T15:56:39Z | https://github.com/strawberry-graphql/strawberry/issues/3431 | [] | patrick91 | 0 |

modin-project/modin | pandas | 7,385 | FEAT: Add type annotations to frontend methods | **Is your feature request related to a problem? Please describe.**

Many frontend methods are missing type annotations on parameters or return types, which are necessary for downstream extension libraries to generate annotations in documentation. | open | 2024-09-05T22:08:38Z | 2024-09-05T22:08:52Z | https://github.com/modin-project/modin/issues/7385 | [

"P3",

"Interfaces and abstractions"

] | noloerino | 0 |

deezer/spleeter | deep-learning | 161 | [Discussion] No GPU stress | Curious as to why activity monitor on Windows tells me that my GPU is barely used during `spleeter-gpu`.

I know for a fact that `spleeter-gpu` is running because my spleeter sessions complete A LOT faster now compared to when I run `spleeter-cpu`.

Usage: 3%

Dedicated GPU-Memory: 0,7 / 8,0 GB

GPU-Memory: 0,8 / 16,0 GB

Shared GPU-Memory: 0,1 / 8,0 GB

Why is this happening?

How can I take advantage of this?

Could on-board graphics be involved here? | closed | 2019-12-05T04:16:41Z | 2019-12-18T15:03:51Z | https://github.com/deezer/spleeter/issues/161 | [

"question"

] | aidv | 2 |

airtai/faststream | asyncio | 1,059 | Feature: subscribers should be resilient to segmentation faults | Thank you for FastStream, I really enjoy the use of pydantic here :smiley:

**Is your feature request related to a problem? Please describe.**

Segmentation faults can happen in the process of handling a message, when involving a library causing the segmentation fault (I don't think it is possible to cause a segmentation fault with native Python code).

When a segmentation fault occurs in the FastStream application which consumes the message, the application stops and is not restarted (that is for faststream[rabbit]==0.3.6; for 0.2.5, the application was hanging defunct). The message is not processed nor redirected to a dead letter queue, for example (in the case of a RabbitMQ cluster).

**Describe the solution you'd like**

I suggest that the message causing the segmentation fault does not stop the application, which would react the same way as if the message had raised an error/exception: the message is rejected and the subscriber keeps on consuming the next message.

**Feature code example**

I published this project to demonstrate how a segmentation fault stops the subscriber application: https://github.com/lucsorel/sigseg-faststream.

**Describe alternatives you've considered**

Being resilient to segmentation faults might involve handling each message in a sub-process for the main process to be resilient to segmentation faults. | closed | 2023-12-15T16:11:37Z | 2024-07-09T15:47:33Z | https://github.com/airtai/faststream/issues/1059 | [

"enhancement"

] | lucsorel | 10 |

PaddlePaddle/models | computer-vision | 5,368 | 下载不了pix2pix模型 | https://paddle-gan-models.bj.bcebos.com/pix2pix_G.tar.gz

https://www.paddlepaddle.org.cn/modelbasedetail/pix2pix | open | 2021-11-10T02:03:24Z | 2024-02-26T05:08:29Z | https://github.com/PaddlePaddle/models/issues/5368 | [] | zhenzi0322 | 0 |

ageitgey/face_recognition | python | 1,341 | Landmark detection is pretty slow :( | * face_recognition version: 1.3.0

* Python version: 3.9.5

* Operating System: Mac OS 10.14.6

I am detecting face landmarks. Mostly nose bridge in order to crop the images later. I found it pretty slow to do it. About 6 seconds per image.

Is there a way to speed up the process ? Can I look only for the nose bridge landmarks somehow ? Would that be faster ? Also file size might be a problem ?

Any help is appreciated !

`from PIL import Image, ImageDraw

import face_recognition

# Load the jpg file into a numpy array

image = face_recognition.load_image_file("test.jpg")

# Find all facial features in all the faces in the image

face_landmarks_list = face_recognition.face_landmarks(image)

print("I found {} face(s) in this photograph.".format(len(face_landmarks_list)))

# Create a PIL imagedraw object so we can draw on the picture

pil_image = Image.fromarray(image)

d = ImageDraw.Draw(pil_image)

for face_landmarks in face_landmarks_list:

# Print the location of each facial feature in this image

for facial_feature in face_landmarks.keys():

print("The {} in this face has the following points: {}".format(facial_feature, face_landmarks[facial_feature]))

# Let's trace out each facial feature in the image with a line!

for facial_feature in face_landmarks.keys():

d.line(face_landmarks[facial_feature], width=2)

# Show the picturew

pil_image.show()

cv2.imwrite('result.png', test)` | open | 2021-07-09T11:12:31Z | 2022-07-01T19:11:19Z | https://github.com/ageitgey/face_recognition/issues/1341 | [] | schwarzwals | 4 |

quokkaproject/quokka | flask | 663 | jinja2.exceptions.UndefinedError: 'theme' is undefined | when i create a block or page, and try to view i get:

```

2018-06-12 11:51:20,001 - werkzeug - ERROR - Error on request:

Traceback (most recent call last):

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/werkzeug/serving.py", line 270, in run_wsgi

execute(self.server.app)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/werkzeug/serving.py", line 258, in execute

application_iter = app(environ, start_response)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/flask/app.py", line 2309, in __call__

return self.wsgi_app(environ, start_response)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/flask/app.py", line 2295, in wsgi_app

response = self.handle_exception(e)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/flask/app.py", line 1748, in handle_exception

return self.finalize_request(handler(e), from_error_handler=True)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/quokka/core/error_handlers.py", line 54, in server_error_page

return render_template("errors/server_error.html"), 500

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/flask/templating.py", line 135, in render_template

context, ctx.app)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/flask/templating.py", line 117, in _render

rv = template.render(context)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/jinja2/asyncsupport.py", line 76, in render

return original_render(self, *args, **kwargs)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/jinja2/environment.py", line 1008, in render

return self.environment.handle_exception(exc_info, True)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/jinja2/environment.py", line 780, in handle_exception

reraise(exc_type, exc_value, tb)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/jinja2/_compat.py", line 37, in reraise

raise value.with_traceback(tb)

File "/Users/kyle/git/blog/venv/lib/python3.6/site-packages/quokka/templates/errors/server_error.html", line 2, in top-level template code

{% extends theme("base.html") %}

jinja2.exceptions.UndefinedError: 'theme' is undefined

``` | closed | 2018-06-12T18:53:57Z | 2018-07-23T20:16:48Z | https://github.com/quokkaproject/quokka/issues/663 | [] | jstacoder | 1 |

geopandas/geopandas | pandas | 2,478 | BUG: AttributeError about datetimelike values when reading file with Fiona engine | - [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest version of geopandas (on the date of issue, last version is 0.11.0).

- [ ] (optional) I have confirmed this bug exists on the main branch of geopandas.

---

**Note**: Please read [this guide](https://matthewrocklin.com/blog/work/2018/02/28/minimal-bug-reports) detailing how to provide the necessary information for us to reproduce your bug.

#### Code Sample, a copy-pastable example

```python

import geopandas as gpd

path_to_file = <path to the file attached>

gdf = gpd.read_file(path_to_file)

```

#### Problem description

The attached file contains date-like columns. With the last version of geopandas I'm not able to read it anymore, as it raises an AttributeError `AttributeError: Can only use .dt accessor with datetimelike values`.

It was not the case with previous version of geopandas. The date-like columns were identified as object columns but at least no error were raised.

If I switch the engine from Fiona to Pyogrio to read the file, there is no error raised and all columns are well detected as datetime columns.

#### Expected Output

Have all columns parsed with no error raised. Here the output I obtained with engine Pyogrio instead of Fiona:

```

>>> gdf.dtypes

cleabs object

nature object

nature_detaillee object

toponyme object

statut_du_toponyme object

fictif bool

etat_de_l_objet object

date_creation datetime64[ns]

date_modification datetime64[ns]

date_d_apparition datetime64[ns]

date_de_confirmation datetime64[ns]

sources object

identifiants_sources object

precision_planimetrique float64

geometry geometry

```

#### Output of ``geopandas.show_versions()``

<details>

<pre>

SYSTEM INFO

-----------

python : 3.8.10 (default, Mar 15 2022, 12:22:08) [GCC 9.4.0]

executable : my_env/bin/python

machine : Linux-5.13.0-51-generic-x86_64-with-glibc2.29

GEOS, GDAL, PROJ INFO

---------------------

GEOS : None

GEOS lib : None

GDAL : 3.4.1

GDAL data dir: my_env/lib/python3.8/site-packages/fiona/gdal_data

PROJ : 8.2.0

PROJ data dir: my_env/lib/python3.8/site-packages/pyproj/proj_dir/share/proj

PYTHON DEPENDENCIES

-------------------

geopandas : 0.11.0

pandas : 1.4.3

fiona : 1.8.21

numpy : 1.23.0

shapely : 1.8.2

rtree : None

pyproj : 3.3.1

matplotlib : None

mapclassify: None

geopy : None

psycopg2 : None

geoalchemy2: None

pyarrow : None

pygeos : None

</pre>

</details>

Attached file: [grosfi.ch/GzMKuv5CHgu](https://www.grosfichiers.com/GzMKuv5CHgu) | closed | 2022-06-27T14:32:37Z | 2022-07-24T09:10:04Z | https://github.com/geopandas/geopandas/issues/2478 | [

"regression"

] | paumillet | 3 |

hankcs/HanLP | nlp | 594 | 在提取关键词的过程中,根据词性过滤得出的关键词 | 在提取关键词的过程中,我想根据词性过滤,只留下词性为名词的关键词。

我的想法是在分词的步骤中,就按照词性去过滤,只留下名词,但是在标准分词源码部分,并没有找到关于词性的代码,hankcs能否指点一下小弟呢?不胜感激

| closed | 2017-07-31T10:30:37Z | 2020-01-01T11:08:34Z | https://github.com/hankcs/HanLP/issues/594 | [

"ignored"

] | cpeixin | 4 |

yunjey/pytorch-tutorial | pytorch | 62 | Evaluation mode in Resnet | I have a question about the evaluation mode. I found that in the resnet tutorial, the network is not set to evaluation through resnet.eval(). Will this affect the testing accuracy? Thanks! | closed | 2017-09-20T08:59:55Z | 2017-10-12T04:59:22Z | https://github.com/yunjey/pytorch-tutorial/issues/62 | [] | zhangmozhe | 1 |

milesmcc/shynet | django | 73 | You were added to awesome-humane-tech | This is just a FYI issue to notify that you were added to the curated awesome-humane-tech in the 'Analytics' category, and - if you like that - are now entitled to wear our badge:

[](https://github.com/humanetech-community/awesome-humane-tech)

By adding this to the README:

```markdown

[](https://github.com/humanetech-community/awesome-humane-tech)

```

https://github.com/humanetech-community/awesome-humane-tech | closed | 2020-08-15T06:25:15Z | 2020-08-15T15:53:15Z | https://github.com/milesmcc/shynet/issues/73 | [

"meta"

] | aschrijver | 1 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 544 | Scrapegraph returns relative path URLs instead of absolute path **Possible Bug?** | **Describe the bug**

When using gpt4o as the llm and scraping a webpage to return a list of links, sometimes the paths returned are :

- relative paths (OR)

- full path with an incorrect prefix/domain usually "http://example.com"

The behaviour was consistent until 3 days ago i.e. it always returned full paths on a large dataset as well. Since then, I had to uninstall Scrapegraph and reinstall the library and that's when this issue started popping up.

**Expected behavior**

For example : asking to scrape a website `www.some-actual-website.com` and return a list of webpages that contain information about the contact details of the company, used to consistently/always return a json like :

```

{"list_of_urls": "['www.some-actual-website.com/about','www.some-actual-website.com/contact-us']"}

```

However, now I get either :

```

{"list_of_urls": "['https://example.com/about', 'https://example.com/contact-us']"}

```

OR

```

{"list_of_urls": "['/about','/contact-us']"}

```

I'm curious , shouldn't the list of URLs being parsed/scraped be a straightforward output? Is the final output always produced by the LLM?

**Desktop (please complete the following information):**

- Ubuntu 22.04

- Chromium Browser with Playwright

| closed | 2024-08-13T10:28:49Z | 2024-09-12T14:14:34Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/544 | [] | sandeepchittilla | 12 |

s3rius/FastAPI-template | asyncio | 219 | taskiq scheduler does not run ... | I followed the documentation for Taskiq [here](https://taskiq-python.github.io/available-components/schedule-sources.html#redisschedulesource) to set up scheduler in my tkq.py file, like following:

```

result_backend = RedisAsyncResultBackend(

redis_url=str(settings.redis_url.with_path("/1")),

)

broker = ListQueueBroker(

str(settings.redis_url.with_path("/1")),

).with_result_backend(result_backend)

scheduler = TaskiqScheduler(broker=broker, sources=[LabelScheduleSource(broker)])

```

And I created an example task:

```

@broker.task(schedule=[{"cron": "*/1 * * * *", "cron_offset": None, "time": None, "args": [10], "kwargs": {}, "labels": {}}])

async def heavy_task(a: int) -> int:

if broker.is_worker_process:

logger.info("heavy_task: {} is in worker process!!!", a)

else:

logger.info("heavy_task: {} NOT in worker process", a)

return 100 + a

```

In the docker-compose.yml file, I start the broker and scheduler like so:

```

taskiq-worker:

<<: *main_app

labels: []

command:

- taskiq

- worker

- market_insights.tkq:scheduler && market_insights.tkq:broker

```

However, the taskiq scheduler does not seem to do anything. I guess I must be missing something. Can some experts help? Thanks | closed | 2024-07-17T04:39:18Z | 2024-07-20T21:13:29Z | https://github.com/s3rius/FastAPI-template/issues/219 | [] | rcholic | 3 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,654 | Deleting the information provided by the whistleblower without deleting all the report | ### Proposal

Our proposal is to add a new feature that allows the recipient to deleat the information provided by the whistleblower without deleating all the report, so that the recipient can keep the communication with the whistleblower through the comments. This way, for exemple, whistleblowers can be informed that their denunciations have not been accepted, while we accomplish the rule of deletting the information after decidding whether to start or not an investigation (rule that we mention below).

### Motivation and context

* The current functionalities of the platform permit deleating the report. But, by doing that, it also deletes the possibility of keeping the communication through the comments with the whistleblower.

* The Spanish transposition law of the Directive (EU) 2019/1937 of the European Parliament and of the Council, prescribes that the data provided by the whistleblower can be kept in the information system only for the time necessary to decide on the appropriateness of starting an investigation (article 32.3 Ley 2/2023, de 20 de febrero). | open | 2023-09-25T06:57:18Z | 2023-09-27T11:45:34Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3654 | [

"T: Feature"

] | jowis | 5 |

junyanz/pytorch-CycleGAN-and-pix2pix | pytorch | 1,113 | exporting Pix2Pix to onnx | Hello,

PyTorch complains that I used DataParallel to train the model and that it can't be exported because of it

so I have to remove that info somehow. But I can't figure out how to do it

I tried this [workaround](https://stackoverflow.com/questions/44230907/keyerror-unexpected-key-module-encoder-embedding-weight-in-state-dict) :

I'm using a modified test.py script in Google Colab

```

import os

from options.test_options import TestOptions

from data import create_dataset

from models import create_model

from util.visualizer import save_images

from util import html

import torch

if name == 'main':

opt = TestOptions().parse() # get test options

# hard-code some parameters for test

opt.num_threads = 0 # test code only supports num_threads =

opt.batch_size = 1 # test code only supports batch_size = 1

opt.serial_batches = True # disable data shuffling; comment this line if results on randomly chosen images are needed.

opt.no_flip = True # no flip; comment this line if results on flipped images are needed.

opt.display_id = -1 # no visdom display; the test code saves the results to a HTML file.

dataset = create_dataset(opt) # create a dataset given opt.dataset_mode and other options

model = create_model(opt) # create a model given opt.model and other options

model.setup(opt) # regular setup: load and print networks; create schedulers

# original saved file with DataParallel

state_dict = torch.load('/content/drive/My Drive/Training Data/checkpoints/human2cat_pix2pix/latest_net_G.pth')

# create new OrderedDict that does not contain module.

from collections import OrderedDict

new_state_dict = OrderedDict()

for k, v in state_dict.items():

name = k[7:] # remove module.

new_state_dict[name] = v

# load params

model.netG.load_state_dict(new_state_dict)

dummy = torch.randn(10, 3, 256, 256)

torch.onnx.export(model.netG, dummy, './out.onnx')

```

But I get `RuntimeError: Error(s) in loading state_dict for DataParallel:`

Do you have any suggestions for how to export to ONNB with a model trained with DataParallel?

thanks | open | 2020-08-03T10:01:22Z | 2022-09-16T08:21:58Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1113 | [] | ReallyRad | 4 |

nerfstudio-project/nerfstudio | computer-vision | 3,184 | License of the gsplat | can you share the lic of the gsplat model ? | closed | 2024-05-31T03:49:02Z | 2024-05-31T05:47:27Z | https://github.com/nerfstudio-project/nerfstudio/issues/3184 | [] | sumanttyagi | 4 |

newpanjing/simpleui | django | 63 | 与django-import-export集成的时候遇到的兼容性问题 | **bug描述**

简单的描述下遇到的bug:

按照simpleui_demo的用了django_import_export,然后导入导出的图标跟过滤搜索的这些重叠了

建议是否可以增加配置把过滤搜索这些框下降一行吗?

**重现步骤**

1.

2.

3.

**环境**

Django==2.2.1

django-import-export==1.2.0

django-simpleui==2.1

**其他描述**

| closed | 2019-05-24T07:27:50Z | 2020-03-08T03:06:09Z | https://github.com/newpanjing/simpleui/issues/63 | [

"bug"

] | pandadriver | 4 |

awesto/django-shop | django | 112 | Bug in template samples | The current templates do not reflect the ManyToMany model between categories and products.

Is there an other way to submit patches, rather than Email?

diff --git a/shop/templates/shop/product_detail.html b/shop/templates/shop/product_detail.html

index 70d4ade..323b061 100644

--- a/shop/templates/shop/product_detail.html

+++ b/shop/templates/shop/product_detail.html

@@ -10,8 +10,8 @@

{{object.unit_price}}<br />

-{% if object.category %}

-{{object.category.name}}

+{% if object.categories %}

+{% for cat in object.categories.all %} {{ cat.name }} {% endfor %}

{% else %}

(Product is at root category)

{% endif %}

diff --git a/shop/templates/shop/product_list.html b/shop/templates/shop/product_list.html

index c7314c0..6439c39 100644

--- a/shop/templates/shop/product_list.html

+++ b/shop/templates/shop/product_list.html

@@ -13,8 +13,8 @@

{{object.unit_price}}<br />

-{% if object.category %}

-{{object.category.name}}<br />

+{% if object.categories %}

+{% for cat in object.categories.all %} {{ cat.name }}<br /> {% endfor %}

{% else %}

(Product is at root category)<br />

{% endif %}

| closed | 2011-11-01T10:00:21Z | 2016-02-02T14:09:11Z | https://github.com/awesto/django-shop/issues/112 | [] | jrief | 2 |

FactoryBoy/factory_boy | django | 465 | Model returned from .create() doesn't have an id | I might be missing something, but the docs say that `create()` returns a saved model, but if I simply do `UserFactory.create().id` I get back `None`, yet if I do `user = UserFactory.create(); user.save()` then the `user.id` is actually set. | closed | 2018-04-05T00:01:04Z | 2018-05-05T00:07:49Z | https://github.com/FactoryBoy/factory_boy/issues/465 | [

"Q&A"

] | darthdeus | 3 |

Sanster/IOPaint | pytorch | 348 | 1 Click Installer : AttributeError: 'LaMa' object has no attribute 'is_local_sd_model' | I was using the cleaner fine but when I tried to boot it up today it throws this error on all models. Ran config to see if there were any updates but no luck. Some help would be appreciated.

```

[2023-07-18 17:13:08,790] ERROR in app: Exception on /inpaint [POST]

Traceback (most recent call last):

File "S:\lama-cleaner\installer\lib\site-packages\flask\app.py", line 2528, in wsgi_app

response = self.full_dispatch_request()

File "S:\lama-cleaner\installer\lib\site-packages\flask\app.py", line 1825, in full_dispatch_request

rv = self.handle_user_exception(e)

File "S:\lama-cleaner\installer\lib\site-packages\flask_cors\extension.py", line 176, in wrapped_function

return cors_after_request(app.make_response(f(*args, **kwargs)))

File "S:\lama-cleaner\installer\lib\site-packages\flask\app.py", line 1823, in full_dispatch_request

rv = self.dispatch_request()

File "S:\lama-cleaner\installer\lib\site-packages\flask\app.py", line 1799, in dispatch_request

return self.ensure_sync(self.view_functions[rule.endpoint])(**view_args)

File "S:\lama-cleaner\installer\lib\site-packages\lama_cleaner\server.py", line 291, in process

res_np_img = model(image, mask, config)

File "S:\lama-cleaner\installer\lib\site-packages\lama_cleaner\model_manager.py", line 63, in __call__

self.switch_controlnet_method(control_method=config.controlnet_method)

File "S:\lama-cleaner\installer\lib\site-packages\lama_cleaner\model_manager.py", line 88, in switch_controlnet_method

if self.model.is_local_sd_model:

AttributeError: 'LaMa' object has no attribute 'is_local_sd_model'

127.0.0.1 - - [18/Jul/2023 17:13:08] "POST /inpaint HTTP/1.1" 500 -

``` | closed | 2023-07-18T07:15:20Z | 2023-07-18T13:45:53Z | https://github.com/Sanster/IOPaint/issues/348 | [] | Acephalia | 1 |

benlubas/molten-nvim | jupyter | 260 | [Feature Request] Text-Objects for jupytext "py:percent" format | [Jupytext](https://jupytext.readthedocs.io/en/latest/index.html) is a versatile tool for converting between Jupyter notebooks (`.ipynb`) and Python scripts (`.py`) and vice versa. It provides support for various formats, including the ["percent" format](https://jupytext.readthedocs.io/en/latest/formats-scripts.html#the-percent-format), which adds notebook cell metadata as comments in Python scripts.

It would be useful if `molten.nvim` included a custom text object to represent Jupyter notebook cells in Python scripts using this "percent" format.

#### Use Case

For example, consider the following Python script converted from a Jupyter notebook with three cells:

```python

# %% [markdown] # ┓

# This is a multiline. # ┠ first cell (markdown type)

# Markdown cell # ┃

# ┛

# %% [markdown] # ┓

# Another Markdown cell # ┠ second cell (markdown type)

# ┃

# ┛

# %% # ┓

# This is a code cell # ┠ third cell (code type)

class A(): # ┃

def one(): # ┃

return 1 # ┃

# ┃

def two(): # ┃

return 2 # ┛

```

The custom text object would identify and operate on these cell structures. This would allow users to:

1. Navigate between Jupyter cells easily (e.g., move to the next/previous cell).

2. Evaluate cells individually or sequentially using `MoltenEvaluateOperator`.

3. Bind familiar key mappings like `Shift-Enter` or `Ctrl-Enter` to run the current Jupyter cell and move to the next one, emulating the experience of running cells in a Jupyter notebook.

#### Proposed Solution

- Implement a custom text object to recognize Jupyter cells in Python scripts using the "percent" format.

- Add support for evaluating these cells through `MoltenEvaluateOperator`.

- Optionally, provide default key mappings for running and navigating cells (`Shift-Enter`/`Ctrl-Enter`).

#### Benefits

This feature would enhance the experience of working with Python scripts derived from Jupyter notebooks. | closed | 2024-12-04T19:05:56Z | 2024-12-04T22:16:47Z | https://github.com/benlubas/molten-nvim/issues/260 | [

"enhancement"

] | S1M0N38 | 1 |

jupyter/nbgrader | jupyter | 1,326 | assignment_dir issues | The `c.Exchange.assignment_dir` config setting is not behaving as expected when fetching assignments through the web interface (assignment list). We have a Jupyterhub setup where the notebooks of the users are placed in `~/Jupyter`:

in `jupyterhub_config.py`:

`c.Spawner.notebook_dir = '~/Jupyter'`

Additionally, we use following settings in `nbgrader_config.py` of the normal users:

```

c = get_config()

c.CourseDirectory.course_id = "somecourse"

c.Exchange.path_includes_course = True

```

### not setting `c.Exchange.assignment_dir`

When fetching assignments though the web interface, the files are placed in the home directory of users, instead of in `~/Jupyter`

e.g. `~/somecourse/ps1` instead of `~/Jupyter/somecourse/ps1`

### setting `c.Exchange.assignment_dir` to a the `Spawner.notebook_dir`

Files are placed in `~/Jupyter/Jupyter/somecourse/ps1` rather than `~/Jupyter/somecourse/ps1`

### setting `c.Exchange.assignment_dir` to a relative path

e.g. `c.Exchange.assignment_dir = 'foo'`

Files are placed in `~/foo/ps1` instead of `~/Jupyter/foo`

### setting `c.Exchange.assignment_dir` to an absolute path

We initially solved the issue by setting

```c.Exchange.assignment_dir = os.path.expanduser("~/Jupyter")```

This places files in `~/Jupyter/somecourse/ps1` as expected.

However, this introduces a bug with the `(view feedback)` links. They now link to:

`https://hostname/jupyter/user/someuser/tree/home/someuser/Jupyter/somecourse/ps1/feedback/2020-04-14%2018:01:49.392273%20UTC`

Note the absolute path following `tree`

Where we should have

`https://hostname/jupyter/user/someuser/tree/somecourse/ps1/feedback/2020-04-14%2018:01:49.392273%20UTC`

(removal of `home/someuser/Jupyter/`)

Note this does not happen with the links to the notebooks within a fetched course. The paths used to generate the links are relative in:

```

course_id: "somecourse"

assignment_id: "ps1"

status: "fetched"

path: "somecourse/ps1"

notebooks: [

{notebook_id: "problem1", path: "somecourse/ps1/problem1.ipynb"},

…]

```

The paths listed for feedback are absolute in `local_feedback_path: /home/someuser/Jupyter/somecourse/ps1/feedback/...`

### Recap

I think there are two issues: 1) inconsistencies in how `c.Exchange.assignment_dir` is handled, and 2) the path to feedback files should be handled the same way the path to notebooks are handled in the assignment list (see also https://github.com/jupyter/nbgrader/issues/1317 ).

### `nbgrader --version`

```

Python version 3.7.3 (default, Jun 25 2019, 16:36:57)

[GCC 5.5.0]

nbgrader version 0.6.1

```

### `jupyterhub --version` (if used with JupyterHub)

```

1.0.0

```

### `jupyter notebook --version`

```

5.7.8

```

| open | 2020-04-15T10:50:53Z | 2020-04-15T12:58:20Z | https://github.com/jupyter/nbgrader/issues/1326 | [] | bomma | 0 |

pandas-dev/pandas | python | 60,560 | BUG: inconsistent return types from __getitem__ vs iteration | ### Pandas version checks

- [X] I have checked that this issue has not already been reported.

- [X] I have confirmed this bug exists on the [latest version](https://pandas.pydata.org/docs/whatsnew/index.html) of pandas.

- [X] I have confirmed this bug exists on the [main branch](https://pandas.pydata.org/docs/dev/getting_started/install.html#installing-the-development-version-of-pandas) of pandas.

### Reproducible Example

```python

import numpy as np

import pandas as pd

print(np.__version__) # 2.0.2

print(pd.__version__) # 2.2.3

data = pd.Series([333, 555])

# accessing scalar via __getitem__ returns <class 'numpy.int64'>

print(type(data[0]))

# accessing scalar via iteration returns <class 'int'>

print(type(next(iter(data))))

```

### Issue Description

numpy 2.0 recently changed its [representation of scalars](https://numpy.org/devdocs/release/2.0.0-notes.html#representation-of-numpy-scalars-changed) to include type information. However, pandas produces inconsistent return types when one is accessing scalars with `__getitem__` vs iterating over items, as demonstrated in the example code snippet.