repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

gee-community/geemap | streamlit | 2,191 | Bug in linked_maps for 3x2 layouts | Dear team,

I am trying to set up a 3x2 grid of linked_maps, but I get an error saying that the list index is out of range. In contrast, the code works fine if I set the number of rows to 2 without changing the length of the ee_objects, vis_params, and labels lists. According to the documentation, I would expect that call to throw an error, since the length of the three lists then does not correspond to rows*cols. See my code example below.
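A possible explanation, offered as an assumption rather than something confirmed from geemap's source here: the symptoms match a flat list index computed with the wrong stride (`i * rows + j` instead of `i * cols + j`). The sketch below uses hypothetical helper names (`flat_index`, `check_grid_inputs`) to show why a 3x2 grid would overrun a six-item list, and how a length check could raise the error the documentation suggests.

```python
def flat_index(i, j, cols):
    """Row-major position of grid cell (i, j) when each row has `cols` cells."""
    return i * cols + j


def check_grid_inputs(rows, cols, *lists):
    """Hypothetical validation: each non-empty list needs rows * cols items."""
    expected = rows * cols
    for items in lists:
        if items and len(items) != expected:
            raise ValueError(
                f"expected {expected} items for a {rows}x{cols} grid, got {len(items)}"
            )


rows, cols = 3, 2
cells = [(i, j) for i in range(rows) for j in range(cols)]

correct = [flat_index(i, j, cols) for i, j in cells]
wrong_stride = [i * rows + j for i, j in cells]  # assumed faulty variant

print(correct)       # stays within a six-item list
print(wrong_stride)  # reaches index 7, past the end of a six-item list
```

With `rows == cols` (for example 2x2) the two formulas give the same indices, which would be consistent with the 2-row call not failing.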

This is the setup I am using: <table style='border: 1.5px solid;'>

<tr>

<td style='text-align: center; font-weight: bold; font-size: 1.2em; border: 1px solid;' colspan='6'>Fri Dec 13 13:55:58 2024 UTC</td>

</tr>

<tr>

<td style='text-align: right; border: 1px solid;'>OS</td>

<td style='text-align: left; border: 1px solid;'>Linux (Ubuntu 24.04)</td>

<td style='text-align: right; border: 1px solid;'>CPU(s)</td>

<td style='text-align: left; border: 1px solid;'>48</td>

<td style='text-align: right; border: 1px solid;'>Machine</td>

<td style='text-align: left; border: 1px solid;'>x86_64</td>

</tr>

<tr>

<td style='text-align: right; border: 1px solid;'>Architecture</td>

<td style='text-align: left; border: 1px solid;'>64bit</td>

<td style='text-align: right; border: 1px solid;'>RAM</td>

<td style='text-align: left; border: 1px solid;'>251.7 GiB</td>

<td style='text-align: right; border: 1px solid;'>Environment</td>

<td style='text-align: left; border: 1px solid;'>Jupyter</td>

</tr>

<tr>

<td style='text-align: right; border: 1px solid;'>File system</td>

<td style='text-align: left; border: 1px solid;'>ext4</td>

</tr>

<tr>

<td style='text-align: center; border: 1px solid;' colspan='6'>Python 3.12.3 (main, Nov 6 2024, 18:32:19) [GCC 13.2.0]</td>

</tr>

<tr>

<td style='text-align: right; border: 1px solid;'>geemap</td>

<td style='text-align: left; border: 1px solid;'>0.35.1</td>

<td style='text-align: right; border: 1px solid;'>ee</td>

<td style='text-align: left; border: 1px solid;'>1.1.0</td>

<td style='text-align: right; border: 1px solid;'>ipyleaflet</td>

<td style='text-align: left; border: 1px solid;'>0.19.2</td>

</tr>

<tr>

<td style='text-align: right; border: 1px solid;'>folium</td>

<td style='text-align: left; border: 1px solid;'>0.17.0</td>

<td style='text-align: right; border: 1px solid;'>jupyterlab</td>

<td style='text-align: left; border: 1px solid;'>Module not found</td>

<td style='text-align: right; border: 1px solid;'>notebook</td>

<td style='text-align: left; border: 1px solid;'>Module not found</td>

</tr>

<tr>

<td style='text-align: right; border: 1px solid;'>ipyevents</td>

<td style='text-align: left; border: 1px solid;'>2.0.2</td>

<td style='text-align: right; border: 1px solid;'>geopandas</td>

<td style='text-align: left; border: 1px solid;'>1.0.1</td>

<td style='border: 1px solid;'></td>

<td style='border: 1px solid;'></td>

</tr>

</table>

```python
import ee
import geemap

# Initialize the Earth Engine API
ee.Initialize()

# Define a region of interest
lat, lon = 37.7749, -122.4194  # San Francisco
roi = ee.Geometry.Point([lon, lat])

# Load sample datasets
image1 = ee.ImageCollection('MODIS/006/MOD13Q1').select('NDVI').filterDate('2021-01-01', '2021-12-31').mean()
image2 = ee.ImageCollection('MODIS/006/MOD13Q1').select('NDVI').filterDate('2020-01-01', '2020-12-31').mean()
image3 = ee.ImageCollection('MODIS/006/MOD13Q1').select('NDVI').filterDate('2019-01-01', '2019-12-31').mean()

# Create differences for visualization
image_diff1 = image1.subtract(image2)
image_diff2 = image2.subtract(image3)

# Define visualization parameters
ndvi_vis = {
    'min': 0,
    'max': 9000,
    'palette': ['blue', 'white', 'green'],
}

ndvi_diff_vis = {
    'min': -500,
    'max': 500,
    'palette': ['red', 'white', 'blue'],
}

# Visualization parameter list
vis_params = [ndvi_vis, ndvi_vis, ndvi_vis, ndvi_diff_vis, ndvi_diff_vis, ndvi_diff_vis]

# Create linked maps
geemap.linked_maps(
    rows=3,
    cols=2,
    height="400px",
    center=[lat, lon],
    zoom=5,
    ee_objects=[image1, image2, image3, image_diff1, image_diff2, image_diff1],
    vis_params=vis_params,
    labels=[
        "NDVI 2021", "NDVI 2020", "NDVI 2019",
        "NDVI Diff 2021-2020", "NDVI Diff 2020-2019", "NDVI Diff 2021-2020 (Duplicate)"
    ],
    label_position="topright",
)

```

I get the following error message:

```

---------------------------------------------------------------------------

IndexError Traceback (most recent call last)

Cell In[16], line 37

34 vis_params = [ndvi_vis, ndvi_vis, ndvi_vis, ndvi_diff_vis, ndvi_diff_vis, ndvi_diff_vis]

36 # Create linked maps

---> 37 geemap.linked_maps(

38 rows=3,

39 cols=2,

40 height="400px",

41 center=[lat, lon],

42 zoom=5,

43 ee_objects=[image1, image2, image3, image_diff1, image_diff2, image_diff1],

44 vis_params=vis_params,

45 labels=[

46 "NDVI 2021", "NDVI 2020", "NDVI 2019",

47 "NDVI Diff 2021-2020", "NDVI Diff 2020-2019", "NDVI Diff 2021-2020 (Duplicate)"

48 ],

49 label_position="topright",

50 )

File ~/24_09_clean.venv/lib/python3.12/site-packages/geemap/geemap.py:5067, in linked_maps(rows, cols, height, ee_objects, vis_params, labels, label_position, **kwargs)

5058 m = Map(

5059 height=height,

5060 lite_mode=True,

(...)

5063 **kwargs,

5064 )

5066 if len(ee_objects) > 0:

-> 5067 m.addLayer(ee_objects[index], vis_params[index], labels[index])

5069 if len(labels) > 0:

5070 label = widgets.Label(

5071 labels[index], layout=widgets.Layout(padding="0px 5px 0px 5px")

5072 )

IndexError: list index out of range

``` | closed | 2024-12-13T13:58:49Z | 2024-12-14T16:43:50Z | https://github.com/gee-community/geemap/issues/2191 | [

"bug"

] | nielja | 0 |

litestar-org/litestar | api | 3,451 | Bug: Litestar instance or factory not found | Hi, every time I try to run litestar from vscode I get a "Could not find Litestar instance or factory" | closed | 2024-04-29T17:34:09Z | 2025-03-20T15:54:39Z | https://github.com/litestar-org/litestar/issues/3451 | [

"Bug :bug:",

"Needs MCVE"

] | glickums | 1 |

erdewit/ib_insync | asyncio | 290 | No contract information in barUpdateEvent | Hi,

Is there any way to know the contract for `reqRealTimeBars`? `barUpdateEvent` does not seem to provide it.

Versions:

```

python: 3.6.8

ib_insync: 0.9.61

IB: GateWay 978.2e

System: Centos 8

```

To produce:

```

from ib_insync import IB,util,Forex,Stock,ContFuture,Order,Index,LimitOrder

contracts = [Forex(pair) for pair in ('EURUSD', 'AUDUSD')]
ib = IB()
ib.connect('127.0.0.1', 6665, clientId=14)
ib.qualifyContracts(*contracts)
for contract in contracts:
    ib.reqRealTimeBars(contract, 5, 'MIDPOINT', True)

def real5bar2q(bars,hasNewBar):
    print(bars[-1])

ib.barUpdateEvent+=real5bar2q
ib.sleep(20)
ib.disconnect()
exit()

```

Output:

```

Python 3.6.8 (default, Apr 16 2020, 01:36:27)

[GCC 8.3.1 20191121 (Red Hat 8.3.1-5)] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> from ib_insync import IB,util,Forex,Stock,ContFuture,Order,Index,LimitOrder

>>> contracts = [Forex(pair) for pair in ('EURUSD', 'AUDUSD')]

>>> ib = IB()

>>> ib.connect('127.0.0.1', 6665, clientId=14)

<IB connected to 127.0.0.1:6665 clientId=14>

>>> ib.qualifyContracts(*contracts)

[Forex('EURUSD', conId=12087792, exchange='IDEALPRO', localSymbol='EUR.USD', tradingClass='EUR.USD'), Forex('AUDUSD', conId=14433401, exchange='IDEALPRO', localSymbol='AUD.USD', tradingClass='AUD.USD')]

>>> for contract in contracts:

... ib.reqRealTimeBars(contract, 5, 'MIDPOINT', True)

...

[]

[]

>>> def real5bar2q(bars,hasNewBar):

... print(bars[-1])

...

>>> ib.barUpdateEvent+=real5bar2q

>>> ib.sleep(20)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 26, 45, tzinfo=datetime.timezone.utc), endTime=-1, open_=1.187295, high=1.187295, low=1.187265, close=1.187275, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 26, 45, tzinfo=datetime.timezone.utc), endTime=-1, open_=0.72167, high=0.72167, low=0.72167, close=0.72167, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 26, 50, tzinfo=datetime.timezone.utc), endTime=-1, open_=1.187275, high=1.187275, low=1.187235, close=1.187255, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 26, 50, tzinfo=datetime.timezone.utc), endTime=-1, open_=0.72167, high=0.72169, low=0.721665, close=0.72169, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 26, 55, tzinfo=datetime.timezone.utc), endTime=-1, open_=0.72169, high=0.72173, low=0.72169, close=0.72172, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 26, 55, tzinfo=datetime.timezone.utc), endTime=-1, open_=1.187255, high=1.187335, low=1.187255, close=1.187295, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 27, tzinfo=datetime.timezone.utc), endTime=-1, open_=1.187295, high=1.187295, low=1.18729, close=1.187295, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 27, tzinfo=datetime.timezone.utc), endTime=-1, open_=0.72172, high=0.72172, low=0.72171, close=0.721715, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 27, 5, tzinfo=datetime.timezone.utc), endTime=-1, open_=1.187295, high=1.187295, low=1.18729, close=1.187295, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 27, 5, tzinfo=datetime.timezone.utc), endTime=-1, open_=0.721715, high=0.721715, low=0.721715, close=0.721715, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 27, 10, tzinfo=datetime.timezone.utc), endTime=-1, open_=1.187295, high=1.187355, low=1.18729, close=1.187345, volume=-1, wap=-1.0, count=-1)

RealTimeBar(time=datetime.datetime(2020, 8, 17, 14, 27, 10, tzinfo=datetime.timezone.utc), endTime=-1, open_=0.721715, high=0.72172, low=0.72168, close=0.72172, volume=-1, wap=-1.0, count=-1)

True

>>> ib.disconnect()

>>> exit()

```

We see there's no specific information to tell which `RealTimeBar` is for `EURUSD` or `AUDUSD`, unless we look at the price value, which is however not a very systematic way.
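As far as I know, the `bars` argument passed to the handler is the same list object that `reqRealTimeBars` returns, and in ib_insync that list (`RealTimeBarList`) carries a `contract` attribute. Treat that as an assumption to verify against the installed version. The sketch uses stand-in objects (`FakeBarList` and a `SimpleNamespace` contract are hypothetical, for illustration only) so it runs without a gateway connection.

```python
from types import SimpleNamespace


class FakeBarList(list):
    """Stand-in for the bar list handed to the handler; it carries `contract`."""

    def __init__(self, contract, bars=()):
        super().__init__(bars)
        self.contract = contract


def describe_update(bars, hasNewBar):
    """Label the latest bar with the contract it belongs to."""
    contract = getattr(bars, "contract", None)
    symbol = getattr(contract, "localSymbol", "?")
    return f"{symbol}: {bars[-1]}"


eur = FakeBarList(SimpleNamespace(localSymbol="EUR.USD"), ["bar-1", "bar-2"])
print(describe_update(eur, True))
```

In the reproduction script, the same idea would amount to reading `bars.contract` inside `real5bar2q`.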

Thanks a lot !

Xiangpeng | closed | 2020-08-17T14:37:20Z | 2020-08-18T14:11:09Z | https://github.com/erdewit/ib_insync/issues/290 | [] | xiangpeng2008 | 1 |

google-research/bert | nlp | 1,116 | Dealing with ellipses in BERT tokenization | I have a speech transcript dataset in which ellipses (...) have been used to indicate the speaker pause. I am using BERT embeddings for text classification. It is very important for me that the BERT model properly recognizes these ellipses (...). Currently, it tokenizes them as 3 separate full stops, so I am not sure if it is capturing the context of the essential "speaker pause" in the speech transcripts. What can I do in such cases? Should I replace the ellipses (...) with another symbol like a hash or dash? or let them remain as it is? | open | 2020-06-30T10:04:19Z | 2020-10-30T17:40:58Z | https://github.com/google-research/bert/issues/1116 | [] | fliptrail | 2 |
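One option raised in the question, replacing the ellipsis with a dedicated symbol, could be sketched as a preprocessing step. The marker `[PAUSE]` and the helper `mark_pauses` are assumptions for illustration. The marker only helps if the tokenizer keeps it as a single token, which may require a vocabulary entry and a tokenizer that does not split it on punctuation; that part is not shown here.

```python
import re

PAUSE_TOKEN = "[PAUSE]"  # hypothetical marker, not part of any released vocab

# Three or more dots, or the single-character ellipsis, with surrounding spaces.
_ELLIPSIS = re.compile(r"\s*(?:\.{3,}|\u2026)\s*")


def mark_pauses(text):
    """Replace each ellipsis with one pause marker surrounded by spaces."""
    return _ELLIPSIS.sub(f" {PAUSE_TOKEN} ", text).strip()


print(mark_pauses("I think... maybe not"))
```

Whether an explicit marker works better than leaving the three full stops in place is an empirical question for the classification task.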

tflearn/tflearn | tensorflow | 944 | Request a new feature : weight normalization | Can you implement [weight norm](https://arxiv.org/pdf/1602.07868.pdf)?

I want to use it as follows.

```python

x = tf.layers.conv2d(x, filters=32, kernel_size=[3,3], strides=2)

x = weight_norm(x)

```
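For reference, the linked paper defines weight normalization as a reparameterization of each weight vector, `w = g * v / ||v||`, so it acts on a layer's weights rather than on its output tensor. A minimal pure-Python sketch of that formula follows; the function name `weight_norm` mirrors the request above and is not an existing tflearn API.

```python
import math


def weight_norm(v, g):
    """Rescale direction vector `v` so the result has Euclidean norm `g`."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("v must be non-zero")
    return [g * x / norm for x in v]


w = weight_norm([3.0, 4.0], g=2.0)
print(w)
```

In a layer, `v` and `g` would be the trainable parameters and `w` the weights used in the convolution.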

Is it possible? | open | 2017-10-29T13:10:48Z | 2017-11-08T09:13:32Z | https://github.com/tflearn/tflearn/issues/944 | [] | taki0112 | 1 |

localstack/localstack | python | 11,678 | bug: `_custom_key_material_` does not seem to work for SECP256k1 | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

While running the following script in a docker-compose context the logs succeed when creating the actual key but the key created is not using the provided custom key material (it works for the `keyId` aka `CUSTOM_ID` though):

```

CUSTOM_KEY_MATERIAL="base64 secp256k1 private key"

CUSTOM_ID="custom id"

awslocal kms create-key --tags "[{\"TagKey\":\"_custom_key_material_\",\"TagValue\":\"$CUSTOM_KEY_MATERIAL\"},{\"TagKey\":\"_custom_id_\",\"TagValue\":\"$CUSTOM_ID\"}]" --key-usage SIGN_VERIFY --key-spec ECC_SECG_P256K1

```

When running the tests, both sign and get-public-key return something, but those are definitely not the values I am expecting. Every time I restart it, new key material is created for the provided ID (I am generating an address from the public key in my test).

### Expected Behavior

The key is created with the custom material.

### How are you starting LocalStack?

With a docker-compose file

### Steps To Reproduce

#### How are you starting localstack (e.g., `bin/localstack` command, arguments, or `docker-compose.yml`)

docker-compose -f docker-compose.localstack.yml up

#### Client commands (e.g., AWS SDK code snippet, or sequence of "awslocal" commands)

The sequence above

### Environment

```markdown

- OS: MacOS Ventura 13.0.1

- LocalStack:

LocalStack version: 3.3.0

LocalStack Docker image sha: sha256:b5c082a6d78d49fc4a102841648a8adeab4895f7d9a4ad042d7d485aed2da10d

```

### Anything else?

This is the configuration within the docker-compose file:

```

services:
  localstack:
    container_name: localstack
    hostname: localstack
    image: localstack/localstack:3.3.0
    restart: always
    ports:
      - 4599:4599
    environment:
      - SERVICES=kms
      - HOSTNAME_EXTERNAL=localstack
      - DOCKER_HOST=unix:///var/run/docker.sock
      - GATEWAY_LISTEN=0.0.0.0:4599
      - AWS_ENDPOINT_URL=http://localstack:4599
      - AWS_DEFAULT_REGION=eu-west-1
    volumes:
      - ./localstack/init:/etc/localstack/init/ready.d
      - /var/run/docker.sock:/var/run/docker.sock
    entrypoint:
      [
        "/bin/sh",
        "-c",
        "apt-get update && apt-get -y install jq && docker-entrypoint.sh"
      ]
    healthcheck:
      test:
        - CMD
        - bash
        - -c
        - $$(awslocal kms list-keys | jq '.Keys | length | . == 1') || exit 1; # There is 1 key at the moment
      interval: 5s
      timeout: 20s
      start_period: 2s

``` | open | 2024-10-11T16:53:54Z | 2024-12-09T01:42:31Z | https://github.com/localstack/localstack/issues/11678 | [

"type: bug",

"aws:kms",

"status: backlog"

] | freemanzMrojo | 5 |

biolab/orange3 | numpy | 6,414 | Edit Domain resets when reopening orange | ### Discussed in https://github.com/biolab/orange3/discussions/6412

<div type='discussions-op-text'>

<sup>Originally posted by **zikook** April 14, 2023</sup>

Hi,

When I close and reopen Orange, changes I've made in Edit Domain, for example naming a variable, sometimes disappear.

This happens when I use the widget 'Create Table' from the Educational tab to send it a table.

I can upload a workflow if it helps.

Anyone experienced this? Is there a workaround?

Thanks,

-Aaron</div> | closed | 2023-04-14T22:04:07Z | 2023-11-30T14:01:56Z | https://github.com/biolab/orange3/issues/6414 | [] | janezd | 6 |

PaddlePaddle/PaddleHub | nlp | 2,242 | Gradio App support raises an error | - Version and environment information

1) PaddleHub 2.3.1, PaddlePaddle 2.4.2

2) System environment: Linux, Python 3.7.3

- Reproduction information: for an error, please give the environment and steps to reproduce

Opening a Gradio link such as http://127.0.0.1:8866/gradio/ch_pp-ocrv3

reports `"GET /theme.css HTTP/1.1" 404 -`, and the page cannot be displayed. | closed | 2023-04-18T08:20:28Z | 2023-04-18T08:32:36Z | https://github.com/PaddlePaddle/PaddleHub/issues/2242 | [] | metaire | 1 |

modin-project/modin | data-science | 7,447 | BUG: modin.pandas.concat wrangles types; differs from pandas.concat | ### Modin version checks

- [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the latest released version of Modin.

- [ ] I have confirmed this bug exists on the main branch of Modin. (In order to do this you can follow [this guide](https://modin.readthedocs.io/en/stable/getting_started/installation.html#installing-from-the-github-main-branch).)

### Reproducible Example

```python

import pandas as pd

import modin.pandas as mpd

import numpy as np

a = pd.DataFrame({'a': [1,2,3], 'b': [False, True, False]})

b = pd.DataFrame({'a': [4,5,6]})

print("pandas:\n")

print(pd.concat([a,b]))

c = mpd.DataFrame({'a': [1,2,3], 'b': [False, True, False]})

d = mpd.DataFrame({'a': [4,5,6]})

print("\nmodin.pandas:\n")

print(mpd.concat([c,d]))

```

### Issue Description

Related to issue 5964, but more severe as this mangles the types of existing entries. Boolean entries are overwritten to floats. Code results:

```

pandas:

   a      b
0  1  False
1  2   True
2  3  False
0  4    NaN
1  5    NaN
2  6    NaN

modin.pandas:

   a    b
0  1  0.0
1  2  1.0
2  3  0.0
0  4  NaN
1  5  NaN
2  6  NaN

```

### Expected Behavior

Identical behaviour to Pandas.


| open | 2025-02-26T13:47:11Z | 2025-03-03T10:19:33Z | https://github.com/modin-project/modin/issues/7447 | [

"bug 🦗",

"Triage 🩹"

] | Sander-B | 3 |

explosion/spaCy | deep-learning | 12,556 | documentation for German dependency label inventory | The documentation for the dependency labels used by the German parser lists the labels, and `spacy.explain` shows an expanded form of each label.

But I didn't manage to find a reference to annotation guidelines or links to papers or treebanks that can be consulted on what the labels are supposed to mean.

As far as I can tell the current inventory of 42 labels is not identical with either Tiger or NEGRA. For instance, spacy's labels for German include `avc` which is in NEGRA but not in Tiger. The label `ag` is used by spacy. It is not in NEGRA but it is in Tiger. spacy's inventory also includes `dep` which is neither in NEGRA nor in Tiger.

Could you steer me towards documentation of the label set that spaCy uses?

## Which page or section is this issue related to?

https://spacy.io/models/de

| closed | 2023-04-20T12:19:04Z | 2023-04-21T08:03:46Z | https://github.com/explosion/spaCy/issues/12556 | [

"lang / de",

"models"

] | josefkr | 2 |

falconry/falcon | api | 2,009 | Method decorator in ASGI causes "Method Not Allowed" error | Defining a decorator on top of an async endpoint will result in "Method Not Allowed" error.

You will get `405 Method Not Allowed` when running:

```

import falcon.asgi
import logging

logging.basicConfig()
logging.getLogger().setLevel(logging.DEBUG)

def test():
    async def wrap1(func, *args):
        async def limit_wrap(cls, req, resp, *args, **kwargs):
            await func(cls, req, resp, *args, **kwargs)
        return limit_wrap
    return wrap1

class ThingsResource:
    @test()
    async def on_get(self, req, resp):
        resp.body = 'Hello world!'

app = falcon.asgi.App()
things = ThingsResource()
app.add_route('/things', things)

```

That error pops up because the `responder` is not a callable; see https://github.com/falconry/falcon/blob/818698b7ca63f239642cf6d705109d720e8c6782/falcon/routing/util.py#L137

Even if that line (falcon/routing/util.py#L137) gets taken out, I still get the next error:

```

ERROR:falcon:[FALCON] Unhandled exception in ASGI app

Traceback (most recent call last):

File "/home/user/.local/share/virtualenvs/falcon-limiter-test-app-async-eh6amfJm/lib/python3.8/site-packages/falcon/asgi/app.py", line 408, in __call__

await responder(req, resp, **params)

TypeError: 'coroutine' object is not callable

```

The same thing works with WSGI (no async):

```

import falcon

def test():
    def wrap1(func, *args):
        def limit_wrap(cls, req, resp, *args, **kwargs):
            func(cls, req, resp, *args, **kwargs)
        return limit_wrap
    return wrap1

class ThingsResource:
    @test()
    def on_get(self, req, resp):
        resp.body = 'Hello world!'

app = falcon.API()
things = ThingsResource()
app.add_route('/things', things)

```

How can decorators be added to the async view functions?
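One thing that stands out in the ASGI snippet is that `wrap1` itself is declared with `async def`, so applying it yields a coroutine object rather than a function, which would be consistent with both errors shown. A minimal sketch, assuming only the innermost wrapper needs to be a coroutine function (the name `passthrough` and the dict used as `resp` are stand-ins so the example runs without Falcon):

```python
import asyncio
import functools
import inspect


def passthrough():
    """Decorator factory; only the innermost wrapper is a coroutine function."""

    def decorator(func):  # plain `def`: must return a function, not a coroutine
        @functools.wraps(func)
        async def wrapper(self, req, resp, *args, **kwargs):
            await func(self, req, resp, *args, **kwargs)

        return wrapper

    return decorator


class ThingsResource:
    @passthrough()
    async def on_get(self, req, resp):
        resp["body"] = "Hello world!"


resp = {}
asyncio.run(ThingsResource().on_get(None, resp))
print(resp["body"])
```

After decoration, `on_get` is still a coroutine function, so a router that checks for callables or coroutine functions should accept it.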

Thanks!

Note: The reason for asking about this is that I am trying to release a new version of the `Falcon-limiter` package to support Falcon ASGI. | closed | 2022-01-22T21:45:59Z | 2022-01-23T10:03:34Z | https://github.com/falconry/falcon/issues/2009 | [

"question"

] | zoltan-fedor | 3 |

jonaswinkler/paperless-ng | django | 125 | document_retagger -T -f removes inbox tag from all documents | I'm not sure if this is intentional, but if document_retagger -T with the -f force option is run, it removes the inbox tag from all documents.

I can understand why it's done this, as with no other matching criteria specified by the user the -f option would remove the tag, but I'm logging this as it might be "safer" to have the -f option exclude inbox tags from removal when running it as it might be unexpected behaviour for users (or at least make it clear in the docs). | closed | 2020-12-11T14:10:58Z | 2020-12-12T00:23:29Z | https://github.com/jonaswinkler/paperless-ng/issues/125 | [] | rknightion | 2 |

deepset-ai/haystack | pytorch | 9,020 | Add run_async for `OpenAIDocumentEmbedder` | open | 2025-03-11T11:10:02Z | 2025-03-23T07:09:26Z | https://github.com/deepset-ai/haystack/issues/9020 | [

"Contributions wanted!",

"P2"

] | sjrl | 1 | |

ludwig-ai/ludwig | computer-vision | 3,465 | Missing documentation for Ludwig Explainer | **Is your feature request related to a problem? Please describe.**

There seems to be no documentation at all about the [explainer class](https://github.com/ludwig-ai/ludwig/blob/master/ludwig/explain/explainer.py) on the [official homepage](https://ludwig.ai/latest/).

Without the documentation it is difficult to understand how to use the explainer in the correct and intended way.

I got it to work, however, the explained attributes of the model do not make any sense at all and are even the same for two different models.

I cannot use shap to get feature importances/attributes, because the Ludwig predict function returns a tuple which is not accepted by shap, so I have to rely on the explainer provided by Ludwig.

**Describe the use case**

The documentation should describe how to use the built-in explainer of Ludwig, provide use cases and examples.

**Describe the solution you'd like**

A dedicated page describing the explainer on the official homepage.

| closed | 2023-07-13T20:07:36Z | 2024-10-18T16:55:20Z | https://github.com/ludwig-ai/ludwig/issues/3465 | [] | Exitare | 5 |

yzhao062/pyod | data-science | 210 | Combination models strange different results | Hi, nice work with this pyod module.

I have a question regarding the combination of methods. For example I want to combine multiple KNN models, but when I compare the results of the ensemble detection and a single KNN model there is a big difference that I can't understand.

For example here in the code below I obtain the following output when comparing single and combined methods:

Number outliers: 50 - % outliers: 5.0 %

Number outliers: 572 - % outliers: 57.2 %

If I use the "average" combination method there is no such difference and the results are comparable. Maybe there is a hint of an explanation on the underlying implementation of the "aom" method and the "n_buckets" parameter?
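One thing worth checking, based only on reading the script in this report and not a confirmed diagnosis: the ensemble path labels a point as an outlier whenever its combined standardized score is not below zero, instead of using the contamination rate. Because `aom` takes maxima within buckets, it would be expected to push more combined scores above zero. The pure-Python sketch below contrasts the two rules on toy scores; `labels_from_scores` and `labels_from_zero_cut` are hypothetical helpers, not pyod functions.

```python
def labels_from_scores(scores, contamination):
    """Return 1 for roughly the top `contamination` fraction of scores, else 0."""
    n_outliers = int(round(len(scores) * contamination))
    if n_outliers == 0:
        return [0] * len(scores)
    # Score of the n-th highest point; ties at the threshold are all flagged.
    threshold = sorted(scores, reverse=True)[n_outliers - 1]
    return [1 if s >= threshold else 0 for s in scores]


def labels_from_zero_cut(scores):
    """The rule used in the script: anything not below zero is an outlier."""
    return [0 if s < 0 else 1 for s in scores]


# Toy standardized scores: 20 points from -1.0 to 0.9.
scores = [x / 10.0 for x in range(-10, 10)]

zero_cut_count = sum(labels_from_zero_cut(scores))
rate_count = sum(labels_from_scores(scores, 0.05))
print(zero_cut_count, rate_count)
```

On these toy scores the zero cut flags half of the points while the rate-based cut flags a single point, which is the kind of gap reported above.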

Here is my code:

```

# -*- coding: utf-8 -*-
import numpy as np
from pyod.models.knn import KNN
from pyod.utils.data import generate_data
from pyod.models.combination import aom, moa, average, maximization, majority_vote
from pyod.models.lscp import LSCP
from pyod.utils.utility import standardizer


def ensemble(data, outliers_fraction=0.1, combi_method='avg'):
    n_clf = 20
    k_list = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100, 110, 120, 130, 140, 150, 160, 170, 180, 190, 200]
    data_norm = standardizer(data)
    scores = np.zeros([data_norm.shape[0], n_clf])
    clf_list = []
    clf_list_clean = []
    for i in range(n_clf):
        k = k_list[i]
        clf = KNN(n_neighbors=k, method='largest', contamination=outliers_fraction)
        clf_list_clean.append(clf)
        clf.fit(data_norm)
        # Store the results in each column:
        scores[:, i] = clf.decision_scores_
        # Store the list of classifiers:
        clf_list.append(clf)
    # Decision scores have to be normalized before combination
    scores_norm = standardizer(scores)
    if combi_method == 'avg':
        y_by_method = average(scores_norm)
    elif combi_method == 'max':
        y_by_method = maximization(scores_norm)
    elif combi_method == 'aom':
        y_by_method = aom(scores_norm)
    elif combi_method == 'moa':
        y_by_method = moa(scores_norm)
    elif combi_method == 'maj':
        y_by_method = majority_vote(scores_norm)
    elif combi_method == 'lscp':
        clf_list = LSCP(clf_list, contamination=outliers_fraction)
        clf_list.fit(data_norm)
        y_by_method = clf_list.labels_
    else:
        raise ValueError("Combination method option not valid")
    if combi_method == 'lscp':
        out_idx = y_by_method
    else:
        out_idx = np.where(y_by_method<0, 0, 1)
    return out_idx


if __name__ == '__main__':
    outliers_fraction = 0.05
    # generate random data with ten features
    X_train, X_test, y_train, y_test = generate_data(n_features=10, behaviour="new", contamination=outliers_fraction)
    # train the KNN detector
    clf = KNN(contamination=outliers_fraction)
    clf.fit(X_train)
    # get outlier labels
    out_idx = clf.labels_
    print("Number outliers: "+str(np.sum(out_idx))+" - % outliers: "+str(np.sum(out_idx)*100/len(X_train))+" %")
    out_idx_ens = ensemble(X_train, outliers_fraction=outliers_fraction, combi_method='aom')
    print("Number outliers: "+str(np.sum(out_idx_ens))+" - % outliers: "+str(np.sum(out_idx_ens)*100/len(X_train))+" %")

``` | open | 2020-07-17T08:40:28Z | 2020-07-17T11:40:35Z | https://github.com/yzhao062/pyod/issues/210 | [] | davidusb-geek | 0 |

explosion/spaCy | deep-learning | 13,001 | Entity linking with workable examples | ### Discussed in https://github.com/explosion/spaCy/discussions/12989

<div type='discussions-op-text'>

<sup>Originally posted by **igormorgado** September 19, 2023</sup>

I'm reading the spaCy documentation and I'm having issues with the entity linking example shown in the

[official documentation](https://spacy.io/usage/linguistic-features#entity-linking): the code does not run, simply because there is no "my_custom_el_pipeline". This seems to be an issue in the documentation, since the examples do not work.

Here I'm asking two things.

First, how does this work? How can I write simple code that shows entity linking?

Second, is it possible to fix the documentation with runnable examples?

</div>

---

We should add a sentence explaining that `my_custom_el_pipeline` is a user-provided model. | closed | 2023-09-22T10:26:03Z | 2023-12-01T00:02:26Z | https://github.com/explosion/spaCy/issues/13001 | [

"usage",

"docs",

"feat / nel"

] | danieldk | 1 |

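JoeanAmier/TikTokDownloader | api | 384 | Cannot read cookie | Whether I obtain the cookie through the browser or copy it from developer tools into the program, it reports that the cookie is not set, and downloading a video reports that the response is not valid JSON | open | 2025-01-22T04:29:55Z | 2025-01-22T10:37:47Z | https://github.com/JoeanAmier/TikTokDownloader/issues/384 | [] | Kannkinnu | 1 |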

miguelgrinberg/python-socketio | asyncio | 514 | Bug when try to reconnect from another event loop | Hi,

I have some trouble with the async client.

```

INFO: Engine.IO connection dropped

Websocket disconnected

INFO: Exiting read loop task

INFO: Connection failed, new attempt in 0.98 seconds

Exception in thread Thread-1:

Traceback (most recent call last):

File "F:\Anaconda3\envs\myProject\lib\threading.py", line 917, in _bootstrap_inner

self.run()

File "F:\Anaconda3\envs\myProject\lib\threading.py", line 865, in run

self._target(*self._args, **self._kwargs)

File "application\main.py", line 62, in websocket_connection

client_loop.run_until_complete(launch_websocket(dispatcher))

File "F:\Anaconda3\envs\myProject\lib\asyncio\base_events.py", line 568, in run_until_complete

return future.result()

File "application\main.py", line 83, in launch_websocket

await dispatcher.wait()

File "application\..\application\core\socketio\client_socketio.py", line 34, in wait

await self._client.wait()

File "F:\Anaconda3\envs\myProject\lib\site-packages\socketio\asyncio_client.py", line 132, in wait

await self._reconnect_task

File "F:\Anaconda3\envs\myProject\lib\site-packages\socketio\asyncio_client.py", line 417, in _handle_reconnect

await asyncio.wait_for(self._reconnect_abort.wait(), delay)

File "F:\Anaconda3\envs\myProject\lib\asyncio\tasks.py", line 412, in wait_for

return fut.result()

File "F:\Anaconda3\envs\myProject\lib\asyncio\locks.py", line 293, in wait

await fut

RuntimeError: Task <Task pending coro=<Event.wait() running at F:\Anaconda3\envs\myProject\lib\asyncio\locks.py:293> cb=[_release_waiter(<Future pendi...CCA173EE8>()]>)() at F:\Anaconda3\envs\myProject\lib\asyncio\tasks.py:362]> got Future <Future pending> attached to a different loop

# I have my client loop define like that:

client_loop = asyncio.new_event_loop()

#And I launch the client like that:

def websocket_connection(dispatcher):
    client_loop.run_until_complete(launch_websocket(dispatcher))

async def launch_websocket(dispatcher):
    await dispatcher.connect(url)
    await dispatcher.wait()

# Start Websocket client thread

ws_client = socketio.AsyncClient()

t = Thread(target=websocket_connection, args=(ws_client,))

t.start()

```

I think that, to handle multi-threading, developers need to be able to pass a `loop` argument wherever socketio and engineio use asyncio.

In my case I run a client and a server simultaneously in the same program, and it is `await dispatcher.wait()` that is problematic. I think this bug could be resolved by passing `client_loop` in File "F:\Anaconda3\envs\myProject\lib\site-packages\socketio\asyncio_client.py", line 417, in _handle_reconnect

await asyncio.wait_for(self._reconnect_abort.wait(), delay /** loop=client_loop **/)
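An alternative to threading a `loop` argument through the library, sketched under the assumption that the client creates its asyncio primitives when it is constructed (on older Python versions these bind to the loop that is current at that moment): construct every asyncio object inside the coroutine that runs on the worker thread's own loop. The sketch uses a bare `asyncio.Event` as a stand-in for the client so it runs without socketio.

```python
import asyncio
import threading

results = []


async def worker():
    # Created while this thread's loop is running, so it belongs to that loop.
    event = asyncio.Event()
    asyncio.get_running_loop().call_later(0.01, event.set)
    await asyncio.wait_for(event.wait(), timeout=1)
    return "done"


def run_in_thread():
    # asyncio.run creates and closes a fresh event loop for this thread.
    results.append(asyncio.run(worker()))


t = threading.Thread(target=run_in_thread)
t.start()
t.join()
print(results)
```

Applied to the script above, that would mean creating the `AsyncClient` inside `launch_websocket` rather than in the main thread; whether that resolves the reconnect error is not verified here.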

| closed | 2020-07-06T16:28:13Z | 2020-07-07T17:11:33Z | https://github.com/miguelgrinberg/python-socketio/issues/514 | [

"question"

] | PhpEssential | 6 |

python-gitlab/python-gitlab | api | 2,876 | Redirected `head()` requests raise `RedirectError` | ## Description

When downloading artifacts from a GitLab job I'd like to implement a progress bar. For that I'd need to know the file size in advance. The file size can be inferred by issuing a HEAD request and is found in the `content-length` response header.

```python

job = project.jobs.get(job.id)

endpoint = f"{job.manager.path}/artifacts/{job.ref}/download"

response: dict = job.manager.gitlab.http_head(endpoint, query_data={"job": job.name})

file_size = int(response.get('content-length', 0))

cd: str = response.get("content-disposition", "filename=artifacts.zip")

file_name = cd.partition("filename=")[-1].strip('"')

```

## Expected Behavior

I'd expect that head redirects are allowed, judging by the comments in the `client.py`

```python

@staticmethod
def _check_redirects(result: requests.Response) -> None:
    # Check the requests history to detect 301/302 redirections.
    # If the initial verb is POST or PUT, the redirected request will use a
    # GET request, leading to unwanted behaviour.
    # If we detect a redirection with a POST or a PUT request, we
    # raise an exception with a useful error message.
    if not result.history:
        return
    for item in result.history:
        if item.status_code not in (301, 302):
            continue
        # GET methods can be redirected without issue
        if item.request.method == "GET":  # should be if item.request.method in ("GET", "HEAD"):
            continue
        target = item.headers.get("location")
        raise gitlab.exceptions.RedirectError(
            REDIRECT_MSG.format(
                status_code=item.status_code,
                reason=item.reason,
                source=item.url,
                target=target,
            )
        )

```
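The change suggested in the inline comment can be tried in isolation. The following is a minimal sketch, not python-gitlab's actual code: `FakeResponse`, `SAFE_METHODS`, `check_redirects`, and the local `RedirectError` are hypothetical stand-ins so that the example runs without `requests` or a GitLab server.

```python
from dataclasses import dataclass, field
from typing import Dict, List


class RedirectError(Exception):
    """Local stand-in for gitlab.exceptions.RedirectError (assumption)."""


@dataclass
class FakeResponse:
    """Hypothetical, simplified replacement for requests.Response."""
    status_code: int
    method: str
    url: str = ""
    headers: Dict[str, str] = field(default_factory=dict)
    history: List["FakeResponse"] = field(default_factory=list)


# Methods whose verb and semantics are preserved when a 301/302 is followed.
SAFE_METHODS = ("GET", "HEAD")


def check_redirects(result: FakeResponse) -> None:
    """Raise RedirectError only for redirected requests with unsafe methods."""
    for item in result.history:
        if item.status_code not in (301, 302):
            continue
        if item.method in SAFE_METHODS:
            continue
        target = item.headers.get("location")
        raise RedirectError(
            f"{item.status_code} redirection from {item.url!r} to {target!r}"
        )
```

With this variant, a `HEAD` request that was redirected with a 302 passes the check, while a redirected `POST` still raises.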

## Actual Behavior

This snippet of code results in:

```

File "/Users/secret/Workspace/repo/env/lib/python3.10/site-packages/gitlab/client.py", line 632, in _check_redirects

raise gitlab.exceptions.RedirectError(

gitlab.exceptions.RedirectError: python-gitlab detected a 302 ('Found') redirection. You must update your GitLab URL to the correct URL to avoid issues. The redirection was from: 'https://gitlab.com/api/v4/projects/1234567/jobs/artifacts/develop/download?job=mac%3Abuild' to 'https://cdn.artifacts.gitlab-static.net/secret'

```

## Specifications

- python-gitlab version: 4.5.0

- API version: v4

- gitlab.com as SaaS

| closed | 2024-05-21T13:59:31Z | 2024-05-21T15:35:28Z | https://github.com/python-gitlab/python-gitlab/issues/2876 | [

"bug"

] | EugenSusurrus | 3 |

OpenBB-finance/OpenBB | machine-learning | 6,991 | [FR] Add a parameter to disable the startup prompt | **What's the problem of not having this feature?**

I installed openbb-platform following the [docs (Docker section)](https://docs.openbb.co/platform/installation#docker).

Then I start it in interactive mode using the command from the docs:

```

docker run -it --rm -p 6900:6900 -v <my_path_to_settings>:/root/.openbb_platform openbb-platform:latest

```

(my_path_to_settings exists and I confirm that it contains the right files, which are correctly mapped)

After a few seconds I see this screen, which expects me to press Enter.

When I run the same container in a detached mode, it fails due to lack of an interactive session.

However, once I make sure the configuration file works as expected, I'd love to be able to start the container in a detached mode.

**Describe the solution you would like**

Two options:

* provide an ENV_VAR that disables the prompt (e.g. `DISABLE_PAT_PROMPT` with default value `0` in order to provide backward compatibility).

* automatically disable the prompt I presented in the screenshot if a user provided a valid configuration (this is however a change modifying the current behaviour, so some might consider it breaking).
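The first option could look roughly like the sketch below. It only illustrates the proposal: `DISABLE_PAT_PROMPT` is the variable name suggested in this issue, not an existing OpenBB setting, and `should_show_prompt` and `TRUTHY` are hypothetical names.

```python
import os
from typing import Mapping, Optional

# Values treated as "prompt disabled"; the exact set is an assumption.
TRUTHY = {"1", "true", "yes", "on"}


def should_show_prompt(env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True unless DISABLE_PAT_PROMPT is set to a truthy value.

    The default of "0" keeps the current behaviour (prompt shown), which is
    what makes the proposed variable backward compatible.
    """
    env = os.environ if env is None else env
    value = env.get("DISABLE_PAT_PROMPT", "0").strip().lower()
    return value not in TRUTHY
```

A detached container would then be started with something like `-e DISABLE_PAT_PROMPT=1` added to the `docker run` command, assuming the startup code consulted such a check.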

**Describe alternatives you've considered**

I'd like to deploy `openbb-platform` in a Docker container to one of my virtual machines and use the REST API from there. Without being able to disable the prompt, I need to ssh to my machine and start it manually, and then potentially keep the tty session up all the time. Not impossible, but inconvenient.

**Additional information**

I've started using openBB-platform recently so it might happen that I'm missing something fundamental here.

| closed | 2024-12-29T21:14:11Z | 2024-12-30T21:30:22Z | https://github.com/OpenBB-finance/OpenBB/issues/6991 | [] | bratfizyk | 3 |

AutoGPTQ/AutoGPTQ | nlp | 261 | [DOC]: Recommendations for serving in production | Hi,

are there recommendations on how to serve AutoGPTQ models in production? I am currently thinking about looking into either

* Torch Serve

* Nvidia Triton Inference Server

I have seen that AutoGPTQ has partial support for Triton "acceleration". What is meant by that? Does it mean that Triton can be used for serving and its improvements can be leveraged, or are there some Triton-specific optimizations that are part of AutoGPTQ? | closed | 2023-08-15T13:25:19Z | 2023-09-30T12:45:15Z | https://github.com/AutoGPTQ/AutoGPTQ/issues/261 | [] | mapa17 | 4 |

horovod/horovod | tensorflow | 3,762 | mpirun failed with exit status 1 while running spark submit with master yarn on horovod | **Environment:**

1. Framework: (Keras)

2. Framework version: 2.6.0

3. Horovod version: 0.25.0

4. MPI version: 3

5. CUDA version: 11.7

6. NCCL version: NA

7. Python version: 3.8

8. Spark / PySpark version: 3.1.1

9. Ray version: NA

10. OS and version: ubuntu

11. GCC version:

12. CMake version:

8881 [0] NCCL INFO comm 0x7fd37c402d10 rank 1 nranks 2 cudaDev 0 busId 2000 - Init COMPLETE

Thu Nov 10 07:45:12 2022[1,0]<stderr>:/usr/local/lib/python3.8/dist-packages/petastorm/fs_utils.py:88: FutureWarning: pyarrow.localfs is deprecated as of 2.0.0, please use pyarrow.fs.LocalFileSystem instead.

Thu Nov 10 07:45:12 2022[1,0]<stdout>:Shared lib path is pointing to: <CDLL '/usr/local/lib/python3.8/dist-packages/horovod/tensorflow/mpi_lib.cpython-38-x86_64-linux-gnu.so', handle 53613d0 at 0x7fea411798b0>

Thu Nov 10 07:45:12 2022[1,0]<stdout>:Found TensorBoard callback, updating log_dir to /tmp/tmp_kuatqj9/logs

Thu Nov 10 07:45:12 2022[1,0]<stdout>:Training parameters: Epochs: 12, Scaled lr: 2.0, Shuffle size: 17444, random_seed: 1

Thu Nov 10 07:45:12 2022[1,0]<stderr>: self._filesystem = pyarrow.localfs

Thu Nov 10 07:45:12 2022[1,0]<stdout>:Train rows: 34888, Train batch size: 128, Train_steps_per_epoch: 137

Thu Nov 10 07:45:12 2022[1,0]<stdout>:Val rows: 3464, Val batch size: 128, Val_steps_per_epoch: None

Thu Nov 10 07:45:12 2022[1,0]<stdout>:Checkpoint file: file:///tmp/runs/keras_1668066293, Logs dir: file:///tmp/runs/keras_1668066293/logs

Thu Nov 10 07:45:12 2022[1,0]<stdout>:

Thu Nov 10 07:45:12 2022[1,0]<stderr>:Traceback (most recent call last):

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/lib/python3.8/runpy.py", line 194, in _run_module_as_main

Thu Nov 10 07:45:12 2022[1,0]<stderr>: return _run_code(code, main_globals, None,

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/lib/python3.8/runpy.py", line 87, in _run_code

Thu Nov 10 07:45:12 2022[1,0]<stderr>: exec(code, run_globals)

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/task/mpirun_exec_fn.py", line 52, in <module>

Thu Nov 10 07:45:12 2022[1,0]<stderr>: main(codec.loads_base64(sys.argv[1]), codec.loads_base64(sys.argv[2]))

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/task/mpirun_exec_fn.py", line 45, in main

Thu Nov 10 07:45:12 2022[1,0]<stderr>: task_exec(driver_addresses, settings, 'OMPI_COMM_WORLD_RANK', 'OMPI_COMM_WORLD_LOCAL_RANK')

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/task/__init__.py", line 61, in task_exec

Thu Nov 10 07:45:12 2022[1,0]<stderr>: result = fn(*args, **kwargs)

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/keras/remote.py", line 258, in train

Thu Nov 10 07:45:12 2022[1,0]<stderr>: with reader_factory(remote_store.train_data_path,

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/reader.py", line 317, in make_batch_reader

Thu Nov 10 07:45:12 2022[1,0]<stderr>: return Reader(filesystem, dataset_path_or_paths,

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/reader.py", line 448, in __init__

Thu Nov 10 07:45:12 2022[1,0]<stderr>: filtered_row_group_indexes, worker_predicate = self._filter_row_groups(self.dataset, row_groups, predicate,

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/reader.py", line 529, in _filter_row_groups

Thu Nov 10 07:45:12 2022[1,0]<stderr>: filtered_row_group_indexes = self._partition_row_groups(dataset, row_groups, shard_count,

Thu Nov 10 07:45:12 2022[1,0]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/reader.py", line 550, in _partition_row_groups

Thu Nov 10 07:45:12 2022[1,0]<stderr>: raise NoDataAvailableError('Number of row-groups in the dataset must be greater or equal to the number of '

Thu Nov 10 07:45:12 2022[1,0]<stderr>:petastorm.errors.NoDataAvailableError: Number of row-groups in the dataset must be greater or equal to the number of requested shards. Otherwise, some of the shards will end up being empty.

Thu Nov 10 07:45:12 2022[1,1]<stderr>:/usr/local/lib/python3.8/dist-packages/petastorm/fs_utils.py:88: FutureWarning: pyarrow.localfs is deprecated as of 2.0.0, please use pyarrow.fs.LocalFileSystem instead.

Thu Nov 10 07:45:12 2022[1,1]<stderr>: self._filesystem = pyarrow.localfs

Thu Nov 10 07:45:12 2022[1,1]<stderr>:Traceback (most recent call last):

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/etl/dataset_metadata.py", line 414, in infer_or_load_unischema

Thu Nov 10 07:45:12 2022[1,1]<stderr>: return get_schema(dataset)

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/etl/dataset_metadata.py", line 363, in get_schema

Thu Nov 10 07:45:12 2022[1,1]<stderr>: raise PetastormMetadataError(

Thu Nov 10 07:45:12 2022[1,1]<stderr>:petastorm.etl.dataset_metadata.PetastormMetadataError: Could not find _common_metadata file. Use materialize_dataset(..) in petastorm.etl.dataset_metadata.py to generate this file in your ETL code. You can generate it on an existing dataset using petastorm-generate-metadata.py

Thu Nov 10 07:45:12 2022[1,1]<stderr>:

Thu Nov 10 07:45:12 2022[1,1]<stderr>:During handling of the above exception, another exception occurred:

Thu Nov 10 07:45:12 2022[1,1]<stderr>:

Thu Nov 10 07:45:12 2022[1,1]<stderr>:Traceback (most recent call last):

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/lib/python3.8/runpy.py", line 194, in _run_module_as_main

Thu Nov 10 07:45:12 2022[1,1]<stderr>: return _run_code(code, main_globals, None,

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/lib/python3.8/runpy.py", line 87, in _run_code

Thu Nov 10 07:45:12 2022[1,1]<stderr>: exec(code, run_globals)

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/task/mpirun_exec_fn.py", line 52, in <module>

Thu Nov 10 07:45:12 2022[1,1]<stderr>: main(codec.loads_base64(sys.argv[1]), codec.loads_base64(sys.argv[2]))

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/task/mpirun_exec_fn.py", line 45, in main

Thu Nov 10 07:45:12 2022[1,1]<stderr>: task_exec(driver_addresses, settings, 'OMPI_COMM_WORLD_RANK', 'OMPI_COMM_WORLD_LOCAL_RANK')

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/task/__init__.py", line 61, in task_exec

Thu Nov 10 07:45:12 2022[1,1]<stderr>: result = fn(*args, **kwargs)

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/horovod/spark/keras/remote.py", line 258, in train

Thu Nov 10 07:45:12 2022[1,1]<stderr>: with reader_factory(remote_store.train_data_path,

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/reader.py", line 317, in make_batch_reader

Thu Nov 10 07:45:12 2022[1,1]<stderr>: return Reader(filesystem, dataset_path_or_paths,

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/reader.py", line 409, in __init__

Thu Nov 10 07:45:12 2022[1,1]<stderr>: stored_schema = infer_or_load_unischema(self.dataset)

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/etl/dataset_metadata.py", line 418, in infer_or_load_unischema

Thu Nov 10 07:45:12 2022[1,1]<stderr>: return Unischema.from_arrow_schema(dataset)

Thu Nov 10 07:45:12 2022[1,1]<stderr>: File "/usr/local/lib/python3.8/dist-packages/petastorm/unischema.py", line 317, in from_arrow_schema

Thu Nov 10 07:45:12 2022[1,1]<stderr>: meta = parquet_dataset.pieces[0].get_metadata()

Thu Nov 10 07:45:12 2022[1,1]<stderr>:IndexError: list index out of range

-------------------------------------------------------

Primary job terminated normally, but 1 process returned

a non-zero exit code. Per user-direction, the job has been aborted.

-------------------------------------------------------

--------------------------------------------------------------------------

mpirun detected that one or more processes exited with non-zero status, thus causing

the job to be terminated. The first process to do so was:

Process name: [[56569,1],0]

Exit code: 1

--------------------------------------------------------------------------

Traceback (most recent call last):

File "/horovod/examples/spark/keras/keras_spark_mnist.py", line 122, in <module>

keras_model = keras_estimator.fit(train_df).setOutputCols(['label_prob'])

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/common/estimator.py", line 35, in fit

return super(HorovodEstimator, self).fit(df, params)

File "/spark/python/lib/pyspark.zip/pyspark/ml/base.py", line 161, in fit

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/common/estimator.py", line 80, in _fit

return self._fit_on_prepared_data(

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/keras/estimator.py", line 275, in _fit_on_prepared_data

handle = backend.run(trainer,

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/common/backend.py", line 83, in run

return horovod.spark.run(fn, args=args, kwargs=kwargs,

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/runner.py", line 290, in run

_launch_job(use_mpi, use_gloo, settings, driver, env, stdout, stderr, executable)

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/runner.py", line 155, in _launch_job

run_controller(use_gloo, lambda: gloo_run(executable, settings, nics, driver, env, stdout, stderr),

File "/usr/local/lib/python3.8/dist-packages/horovod/runner/launch.py", line 761, in run_controller

mpi_run()

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/runner.py", line 156, in <lambda>

use_mpi, lambda: mpi_run(executable, settings, nics, driver, env, stdout, stderr),

File "/usr/local/lib/python3.8/dist-packages/horovod/spark/mpi_run.py", line 56, in mpi_run

hr_mpi_run(settings, nics, env, command, stdout=stdout, stderr=stderr)

File "/usr/local/lib/python3.8/dist-packages/horovod/runner/mpi_run.py", line 252, in mpi_run

raise RuntimeError("mpirun failed with exit code {exit_code}".format(exit_code=exit_code))

RuntimeError: mpirun failed with exit code 1

**Checklist:**

1. Did you search issues to find if somebody asked this question before?

2. If your question is about hang, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/running.rst)?

3. If your question is about docker, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/docker.rst)?

4. Did you check if your question is answered in the [troubleshooting guide](https://github.com/horovod/horovod/blob/master/docs/troubleshooting.rst)?

**Bug report:**

Please describe erroneous behavior you're observing and steps to reproduce it.

| open | 2022-11-10T07:48:21Z | 2024-05-28T13:42:24Z | https://github.com/horovod/horovod/issues/3762 | [

"bug",

"spark"

] | mtsol | 3 |

jordaneremieff/djantic | pydantic | 31 | Error on django ImageField | An error occurs if I add the _avatar_ field to the schema.

**Model:**

```

def get_avatar_upload_path(instance, filename):
    return os.path.join(
        "customer_avatar", str(instance.id), filename)


class Customer(models.Model):
    """
    params: user* avatar shop* balance permissions deleted
    prefetch: -
    """
    id = models.AutoField(primary_key=True)
    user = models.ForeignKey(User, on_delete=models.CASCADE, related_name='customers', verbose_name='Пользователь')
    avatar = models.ImageField(upload_to=get_avatar_upload_path, verbose_name='Аватарка', blank=True, null=True)
    retail = models.ForeignKey('retail.Retail', on_delete=models.CASCADE, related_name='customers', verbose_name='Розница')
    balance = models.DecimalField(max_digits=9, decimal_places=2, verbose_name='Баланс', default=0)
    permissions = models.ManyToManyField('retail.Permission', verbose_name='Права доступа', related_name='customers', blank=True)
    deleted = models.BooleanField(default=False, verbose_name='Удален')
    created = models.DateTimeField(auto_now_add=True, verbose_name='Дата создания')

```

**Schema:**

```

class CustomerSchema(ModelSchema):
    user: UserSchema

    class Config:
        model = Customer
        include = ['id', 'user', 'avatar', 'retail', 'balance', 'permissions']

```

**Error:**

```

fastapi_1 | Process SpawnProcess-36:

fastapi_1 | Traceback (most recent call last):

fastapi_1 | File "/usr/local/lib/python3.8/multiprocessing/process.py", line 315, in _bootstrap

fastapi_1 | self.run()

fastapi_1 | File "/usr/local/lib/python3.8/multiprocessing/process.py", line 108, in run

fastapi_1 | self._target(*self._args, **self._kwargs)

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/uvicorn/subprocess.py", line 62, in subprocess_started

fastapi_1 | target(sockets=sockets)

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/uvicorn/main.py", line 390, in run

fastapi_1 | loop.run_until_complete(self.serve(sockets=sockets))

fastapi_1 | File "uvloop/loop.pyx", line 1494, in uvloop.loop.Loop.run_until_complete

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/uvicorn/main.py", line 397, in serve

fastapi_1 | config.load()

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/uvicorn/config.py", line 278, in load

fastapi_1 | self.loaded_app = import_from_string(self.app)

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/uvicorn/importer.py", line 20, in import_from_string

fastapi_1 | module = importlib.import_module(module_str)

fastapi_1 | File "/usr/local/lib/python3.8/importlib/__init__.py", line 127, in import_module

fastapi_1 | return _bootstrap._gcd_import(name[level:], package, level)

fastapi_1 | File "<frozen importlib._bootstrap>", line 1014, in _gcd_import

fastapi_1 | File "<frozen importlib._bootstrap>", line 991, in _find_and_load

fastapi_1 | File "<frozen importlib._bootstrap>", line 975, in _find_and_load_unlocked

fastapi_1 | File "<frozen importlib._bootstrap>", line 671, in _load_unlocked

fastapi_1 | File "<frozen importlib._bootstrap_external>", line 783, in exec_module

fastapi_1 | File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

fastapi_1 | File "/opt/project/config/asgi.py", line 18, in <module>

fastapi_1 | from backend.retail.fastapp import app

fastapi_1 | File "/opt/project/backend/retail/fastapp.py", line 9, in <module>

fastapi_1 | from backend.retail.routers import router

fastapi_1 | File "/opt/project/backend/retail/routers.py", line 3, in <module>

fastapi_1 | from backend.retail.api import auth, postitem, ping, customer

fastapi_1 | File "/opt/project/backend/retail/api/customer.py", line 8, in <module>

fastapi_1 | from backend.retail.schemas.customer import CustomerSchema

fastapi_1 | File "/opt/project/backend/retail/schemas/customer.py", line 7, in <module>

fastapi_1 | class CustomerSchema(ModelSchema):

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/djantic/main.py", line 97, in __new__

fastapi_1 | python_type, pydantic_field = ModelSchemaField(field)

fastapi_1 | File "/usr/local/lib/python3.8/site-packages/djantic/fields.py", line 135, in ModelSchemaField

fastapi_1 | python_type,

fastapi_1 | UnboundLocalError: local variable 'python_type' referenced before assignment

```

| closed | 2021-06-29T06:39:24Z | 2021-07-02T14:47:54Z | https://github.com/jordaneremieff/djantic/issues/31 | [] | 50Bytes-dev | 4 |

davidsandberg/facenet | computer-vision | 528 | In center loss, the way of centers update | Hi, I don't understand your demo code for center loss. Your code updates the centers as follows:

diff = (1 - alfa) * (centers_batch - features)

centers = tf.scatter_sub(centers, label, diff)

But in the paper, the centers should be updated as follows:

diff = centers_batch - features

unique_label, unique_idx, unique_count = tf.unique_with_counts(labels)

appear_times = tf.gather(unique_count, unique_idx)

appear_times = tf.reshape(appear_times, [-1, 1])

diff = diff / tf.cast((1 + appear_times), tf.float32)

diff = alpha * diff

# update centers

centers = tf.scatter_sub(centers, labels, diff) | closed | 2017-11-14T12:29:49Z | 2018-04-04T14:29:51Z | https://github.com/davidsandberg/facenet/issues/528 | [] | biubug6 | 1 |

allenai/allennlp | pytorch | 5,519 | [XAI for transformer custom model using AllenNLP] | I have been solving the NER problem for a Vietnamese dataset with 15 tags in IO format. I have been using the AllenNLP Interpret Toolkit for my model, but I can not configure it completely.

I use the pre-trained language model "xlm-roberta-base" from HuggingFace. I concatenate the last 4 BERT layers and pass the result through a linear layer. The model architecture is shown in the attachment below.

What steps do I have to take to integrate this model to AllenNLP Interpret?

Could you please help me with this problem? | closed | 2021-12-18T09:36:58Z | 2022-01-04T15:25:49Z | https://github.com/allenai/allennlp/issues/5519 | [

"question",

"stale"

] | lengocloi1805 | 7 |

sammchardy/python-binance | api | 1,295 | Async websocket queue suddenly increases and overflow | **Describe the bug**

Running a very simple async routine that connects to the trade socket (or any other socket) and monitors the receive queue length works correctly for a while, with the queue length at 0. Then the queue length suddenly increases and overflows (at 100), which leads to the socket being closed.

**To Reproduce**

```

import sys
from PyQt5.QtWidgets import QApplication, QMainWindow, QLabel
from PyQt5.QtCore import pyqtSignal, QObject
import asyncio
import asyncqt
from binance import AsyncClient, BinanceSocketManager


class BinanceWebSocket(QObject):
    new_data = pyqtSignal(dict)

    async def connect_to_binance_websocket(self, symbol):
        client = await AsyncClient.create()
        bsm = BinanceSocketManager(client)
        socket = bsm.trade_socket(symbol)
        async with socket as trade_stream:
            while True:
                res = await trade_stream.recv()
                print('queue lenghth :', trade_stream._queue.qsize())
                self.new_data.emit(res)


class MainWindow(QMainWindow):
    def __init__(self):
        super().__init__()
        self.label = QLabel(self)
        self.label.setGeometry(50, 50, 500, 30)
        self.binance_websocket = BinanceWebSocket()
        self.binance_websocket.new_data.connect(self.handle_new_data)
        loop = asyncio.get_event_loop()
        loop.create_task(self.binance_websocket.connect_to_binance_websocket('btcusdt'))

    def handle_new_data(self, data):
        self.label.setText(str(data))
        if ('e' in data):
            if (data['m'] == 'Queue overflow. Message not filled'):
                print("Socket queue full. Resetting connection.")
                # self.reset_socket()
                return
            else:
                if data['m'] is not True and data['m'] is not False:
                    print(f"Stream error: {data['m']}")
                    # exit(1)


if __name__ == '__main__':
    app = QApplication(sys.argv)
    loop = asyncqt.QEventLoop(app)
    asyncio.set_event_loop(loop)
    mainWindow = MainWindow()
    mainWindow.show()
    with loop:
        loop.run_forever()
    sys.exit(app.exec_())

```

**Expected behavior**

I expect the overflow not to happen, and/or some form of flow control that lets the client ask the API to stop sending until the client is ready.
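A growing queue that ends in an overflow is what a bounded receive buffer does whenever the consumer falls behind the producer. The toy model below uses only `asyncio` and is not python-binance code: `simulate`, its parameters, and the buffer sizes are illustrative assumptions, and it says nothing about what actually stalls the consumer in the program above.

```python
import asyncio


async def simulate(max_size: int, messages: int, consume_delay: float) -> int:
    """Return how many messages were dropped because the buffer was full."""
    queue: asyncio.Queue = asyncio.Queue(maxsize=max_size)
    dropped = 0

    async def consumer() -> None:
        while True:
            await queue.get()
            # Stand-in for slow per-message handling on the same event loop.
            await asyncio.sleep(consume_delay)

    task = asyncio.create_task(consumer())
    for i in range(messages):
        try:
            queue.put_nowait(i)
        except asyncio.QueueFull:
            # A real client might close the socket here instead of dropping.
            dropped += 1
        # Yield to the event loop so the consumer gets a chance to run.
        await asyncio.sleep(0)

    task.cancel()
    await asyncio.gather(task, return_exceptions=True)
    return dropped
```

Running `asyncio.run(simulate(5, 50, 0.0))` should report no drops, while a nonzero `consume_delay` should report some, because the producer keeps filling the buffer while the consumer sleeps.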

**Environment (please complete the following information):**

- Pycharm Community

- Python 3.9.0

- Virtual Env: virtualenv

- Windows 10

- python-binance version 1.0.17

**Logs or Additional context**

Add any other context about the problem here.

| open | 2023-02-27T15:08:10Z | 2024-03-05T20:14:14Z | https://github.com/sammchardy/python-binance/issues/1295 | [] | stephanerey | 1 |

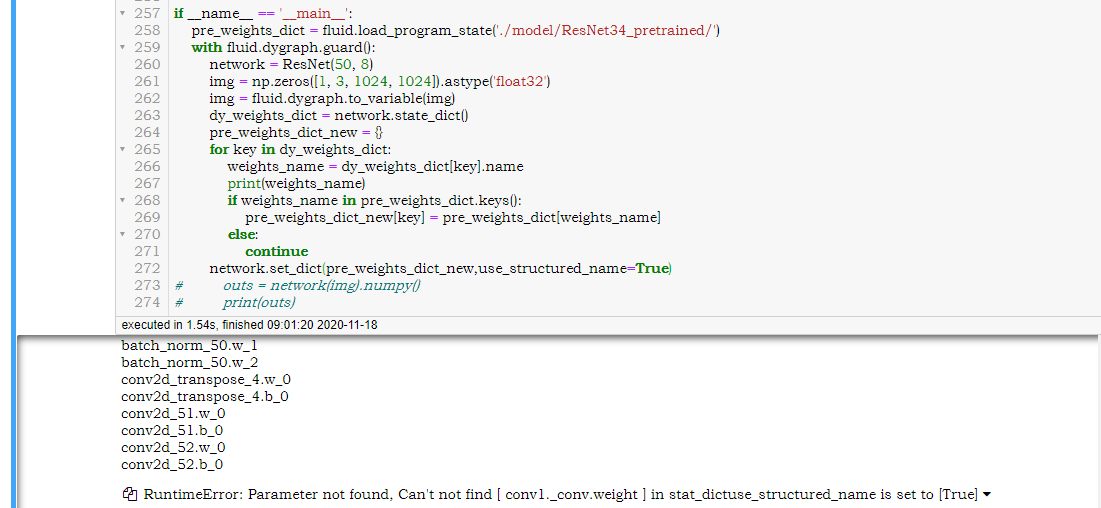

PaddlePaddle/models | computer-vision | 4,959 | Error when loading a static-graph model in dynamic-graph mode |

This [conv1._conv.weight] is not used in name_space; is it a built-in parameter that Paddle requires? | open | 2020-11-18T01:03:41Z | 2024-02-26T05:09:52Z | https://github.com/PaddlePaddle/models/issues/4959 | [] | knightning | 2 |

healthchecks/healthchecks | django | 204 | Pushover: support different priorities for the "down" and "up" alerts | closed | 2018-11-28T15:30:47Z | 2018-11-28T19:41:07Z | https://github.com/healthchecks/healthchecks/issues/204 | [] | cuu508 | 0 | |

mckinsey/vizro | pydantic | 707 | Contribute `Diverging bar` to Vizro visual vocabulary | ## Thank you for contributing to our visual-vocabulary! 🎨

Our visual-vocabulary is a dashboard that serves as a comprehensive guide for selecting and creating various types of charts. It helps you decide when to use each chart type, and offers sample Python code using [Plotly](https://plotly.com/python/) and instructions for embedding these charts into a [Vizro](https://github.com/mckinsey/vizro) dashboard.

Take a look at the dashboard here: https://huggingface.co/spaces/vizro/demo-visual-vocabulary

The source code for the dashboard is here: https://github.com/mckinsey/vizro/tree/main/vizro-core/examples/visual-vocabulary

## Instructions

0. Get familiar with the dev set-up (this should be done already as part of the initial intro sessions)

1. Read through the [README](https://github.com/mckinsey/vizro/tree/main/vizro-core/examples/visual-vocabulary) of the visual vocabulary

2. Follow the steps to contribute a chart. Take a look at other examples. This [commit](https://github.com/mckinsey/vizro/pull/634/commits/417efffded2285e6cfcafac5d780834e0bdcc625) might be helpful as a reference to see which changes are required to add a chart.

3. Ensure the app is running without any issues via `hatch run example visual-vocabulary`

4. List out the resources you've used in the [README](https://github.com/mckinsey/vizro/tree/main/vizro-core/examples/visual-vocabulary)

5. Raise a PR

**Useful resources:**

- Data chart mastery: https://www.atlassian.com/data/charts/how-to-choose-data-visualization | closed | 2024-09-17T12:17:28Z | 2024-10-11T08:14:24Z | https://github.com/mckinsey/vizro/issues/707 | [

"Good first issue :baby_chick:",

"GHC: chart/dashboard track"

] | huong-li-nguyen | 3 |

gee-community/geemap | streamlit | 275 | Request a new feature to use GEE for the fusion of Sentinel2 and Landsat ( 10 m) in jupyter notebook | closed | 2021-01-26T00:48:23Z | 2021-02-13T02:33:22Z | https://github.com/gee-community/geemap/issues/275 | [

"Feature Request"

] | gaowudao | 2 | |

Nemo2011/bilibili-api | api | 727 | [Proxy IP] After adding settings.proxy = "http://your-proxy.com", requests keep reporting CHUNK_FAILED | {'name': 'PREUPLOAD', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>},)}

{'name': 'PRE_PAGE', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 0, 'chunk_number': 0, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 10485760, 'chunk_number': 1, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'CHUNK_FAILED', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5, 'info': ''},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 20971520, 'chunk_number': 2, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 31457280, 'chunk_number': 3, 'total_chunk_count': 5},)}

{'name': 'PRE_CHUNK', 'data': ({'page': <bilibili_api.video_uploader.VideoUploaderPage object at 0x10fa7d9d0>, 'offset': 41943040, 'chunk_number': 4, 'total_chunk_count': 5},)}

...... | closed | 2024-03-24T07:19:22Z | 2024-03-31T08:12:37Z | https://github.com/Nemo2011/bilibili-api/issues/727 | [

"bug"

] | smilemilk1992 | 2 |

dynaconf/dynaconf | django | 579 | Can I use layered environments for `.env`? | I want to set the logging level in [`loguru`](https://github.com/Delgan/loguru/) according to my layered environment. Can I do that? | closed | 2021-05-05T21:05:49Z | 2021-08-19T13:48:27Z | https://github.com/dynaconf/dynaconf/issues/579 | [

"question"

] | deknowny | 4 |