| repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |
|---|---|---|---|---|---|---|---|---|---|---|---|

paperless-ngx/paperless-ngx | django | 9,480 | [BUG] Consumer losing connection to redis behind HA proxy | ### Description

Hello, I have a redis cluster behind haproxy in kubernetes. The consumer seems to regularly lose connection to redis and recovers connection immediately after the following error message. The cycle continues indefinitely spamming the log file. It doesn't seem to cause other disruptions and the app seems to be otherwise working ok.

Thanks for looking into this.

```

[2025-03-24 08:12:59,761] [INFO] [celery.worker.consumer.connection] Connected to redis://:**@redis-prod-redis-ha-haproxy.database.svc.cluster.local:6379//

[2025-03-24 08:13:29,888] [WARNING] [celery.worker.consumer.consumer] consumer: Connection to broker lost. Trying to re-establish the connection...

Traceback (most recent call last):

File "/usr/local/lib/python3.12/site-packages/celery/worker/consumer/consumer.py", line 340, in start

blueprint.start(self)

File "/usr/local/lib/python3.12/site-packages/celery/bootsteps.py", line 116, in start

step.start(parent)

File "/usr/local/lib/python3.12/site-packages/celery/worker/consumer/consumer.py", line 746, in start

c.loop(*c.loop_args())

File "/usr/local/lib/python3.12/site-packages/celery/worker/loops.py", line 97, in asynloop

next(loop)

File "/usr/local/lib/python3.12/site-packages/kombu/asynchronous/hub.py", line 373, in create_loop

cb(*cbargs)

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 1352, in on_readable

self.cycle.on_readable(fileno)

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 569, in on_readable

chan.handlers[type]()

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 918, in _receive

ret.append(self._receive_one(c))

^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 928, in _receive_one

response = c.parse_response()

^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 865, in parse_response

response = self._execute(conn, try_read)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 841, in _execute

return conn.retry.call_with_retry(

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/retry.py", line 65, in call_with_retry

fail(error)

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 843, in <lambda>

lambda error: self._disconnect_raise_connect(conn, error),

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 830, in _disconnect_raise_connect

raise error

File "/usr/local/lib/python3.12/site-packages/redis/retry.py", line 62, in call_with_retry

return do()

^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 842, in <lambda>

lambda: command(*args, **kwargs),

^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 863, in try_read

return conn.read_response(disconnect_on_error=False, push_request=True)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/connection.py", line 592, in read_response

response = self._parser.read_response(disable_decoding=disable_decoding)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/_parsers/hiredis.py", line 128, in read_response

self.read_from_socket()

File "/usr/local/lib/python3.12/site-packages/redis/_parsers/hiredis.py", line 90, in read_from_socket

raise ConnectionError(SERVER_CLOSED_CONNECTION_ERROR)

redis.exceptions.ConnectionError: Connection closed by server.

[2025-03-24 08:13:32,175] [WARNING] [py.warnings] /usr/local/lib/python3.12/site-packages/celery/worker/consumer/consumer.py:391: CPendingDeprecationWarning:

In Celery 5.1 we introduced an optional breaking change which

on connection loss cancels all currently executed tasks with late acknowledgement enabled.

These tasks cannot be acknowledged as the connection is gone, and the tasks are automatically redelivered

back to the queue. You can enable this behavior using the worker_cancel_long_running_tasks_on_connection_loss

setting. In Celery 5.1 it is set to False by default. The setting will be set to True by default in Celery 6.0.

warnings.warn(CANCEL_TASKS_BY_DEFAULT, CPendingDeprecationWarning)

[2025-03-24 08:13:32,175] [INFO] [celery.worker.consumer.consumer] Temporarily reducing the prefetch count to 4 to avoid over-fetching since 8 tasks are currently being processed.

The prefetch count will be gradually restored to 16 as the tasks complete processing.

```
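The timestamps in the log above give a rough idea of how long each connection survives. The following is a minimal sketch in plain standard-library Python (not part of Paperless-ngx, Celery, or HAProxy) that computes the gap between the `Connected to redis` line and the `Connection to broker lost` line. Reading the result as an idle timeout somewhere between the worker and Redis is only an assumption, since the report does not include the proxy configuration.

```python
from datetime import datetime

# Timestamp layout used by the log lines above, e.g. "2025-03-24 08:12:59,761".
LOG_TIME_FORMAT = "%Y-%m-%d %H:%M:%S,%f"


def seconds_between(start: str, end: str) -> float:
    """Return the number of seconds between two log timestamps."""
    started = datetime.strptime(start, LOG_TIME_FORMAT)
    ended = datetime.strptime(end, LOG_TIME_FORMAT)
    return (ended - started).total_seconds()


# Values copied from the "Connected to redis" and "Connection to broker lost" lines.
connected_at = "2025-03-24 08:12:59,761"
lost_at = "2025-03-24 08:13:29,888"

gap = seconds_between(connected_at, lost_at)
print(f"connection lasted {gap:.3f} s")  # prints: connection lasted 30.127 s
```

A gap this close to a round 30 seconds may be worth comparing against any client, server, or idle timeouts configured on the proxy in front of Redis.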

### Steps to reproduce

1. Set up paperless to connect to redis cluster behind haproxy

2. Check logs

### Webserver logs

```bash

[2025-03-24 08:12:59,687] [INFO] [celery.worker.consumer.consumer] Temporarily reducing the prefetch count to 4 to avoid over-fetching since 8 tasks are currently being processed.

The prefetch count will be gradually restored to 16 as the tasks complete processing.

[2025-03-24 08:12:59,761] [INFO] [celery.worker.consumer.connection] Connected to redis://:**@redis-prod-redis-ha-haproxy.database.svc.cluster.local:6379//

[2025-03-24 08:13:29,888] [WARNING] [celery.worker.consumer.consumer] consumer: Connection to broker lost. Trying to re-establish the connection...

Traceback (most recent call last):

File "/usr/local/lib/python3.12/site-packages/celery/worker/consumer/consumer.py", line 340, in start

blueprint.start(self)

File "/usr/local/lib/python3.12/site-packages/celery/bootsteps.py", line 116, in start

step.start(parent)

File "/usr/local/lib/python3.12/site-packages/celery/worker/consumer/consumer.py", line 746, in start

c.loop(*c.loop_args())

File "/usr/local/lib/python3.12/site-packages/celery/worker/loops.py", line 97, in asynloop

next(loop)

File "/usr/local/lib/python3.12/site-packages/kombu/asynchronous/hub.py", line 373, in create_loop

cb(*cbargs)

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 1352, in on_readable

self.cycle.on_readable(fileno)

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 569, in on_readable

chan.handlers[type]()

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 918, in _receive

ret.append(self._receive_one(c))

^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/kombu/transport/redis.py", line 928, in _receive_one

response = c.parse_response()

^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 865, in parse_response

response = self._execute(conn, try_read)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 841, in _execute

return conn.retry.call_with_retry(

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/retry.py", line 65, in call_with_retry

fail(error)

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 843, in <lambda>

lambda error: self._disconnect_raise_connect(conn, error),

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 830, in _disconnect_raise_connect

raise error

File "/usr/local/lib/python3.12/site-packages/redis/retry.py", line 62, in call_with_retry

return do()

^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 842, in <lambda>

lambda: command(*args, **kwargs),

^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/client.py", line 863, in try_read

return conn.read_response(disconnect_on_error=False, push_request=True)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/connection.py", line 592, in read_response

response = self._parser.read_response(disable_decoding=disable_decoding)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.12/site-packages/redis/_parsers/hiredis.py", line 128, in read_response

self.read_from_socket()

File "/usr/local/lib/python3.12/site-packages/redis/_parsers/hiredis.py", line 90, in read_from_socket

raise ConnectionError(SERVER_CLOSED_CONNECTION_ERROR)

redis.exceptions.ConnectionError: Connection closed by server.

[2025-03-24 08:13:32,175] [WARNING] [py.warnings] /usr/local/lib/python3.12/site-packages/celery/worker/consumer/consumer.py:391: CPendingDeprecationWarning:

In Celery 5.1 we introduced an optional breaking change which

on connection loss cancels all currently executed tasks with late acknowledgement enabled.

These tasks cannot be acknowledged as the connection is gone, and the tasks are automatically redelivered

back to the queue. You can enable this behavior using the worker_cancel_long_running_tasks_on_connection_loss

setting. In Celery 5.1 it is set to False by default. The setting will be set to True by default in Celery 6.0.

warnings.warn(CANCEL_TASKS_BY_DEFAULT, CPendingDeprecationWarning)

[2025-03-24 08:13:32,175] [INFO] [celery.worker.consumer.consumer] Temporarily reducing the prefetch count to 4 to avoid over-fetching since 8 tasks are currently being processed.

The prefetch count will be gradually restored to 16 as the tasks complete processing.

[2025-03-24 08:13:32,259] [INFO] [celery.worker.consumer.connection] Connected to redis://:**@redis-prod-redis-ha-haproxy.database.svc.cluster.local:6379//

```

### Browser logs

```bash

```

### Paperless-ngx version

2.14.7

### Host OS

docker official image

### Installation method

Docker - official image

### System status

```json

```

### Browser

_No response_

### Configuration changes

_No response_

### Please confirm the following

- [x] I believe this issue is a bug that affects all users of Paperless-ngx, not something specific to my installation.

- [x] This issue is not about the OCR or archive creation of a specific file(s). Otherwise, please see above regarding OCR tools.

- [x] I have already searched for relevant existing issues and discussions before opening this report.

- [x] I have updated the title field above with a concise description. | closed | 2025-03-24T15:18:49Z | 2025-03-24T15:32:16Z | https://github.com/paperless-ngx/paperless-ngx/issues/9480 | [

"not a bug"

] | fcarucci | 2 |

InstaPy/InstaPy | automation | 6,747 | Candra | closed | 2023-08-22T07:18:10Z | 2023-08-22T07:18:34Z | https://github.com/InstaPy/InstaPy/issues/6747 | [] | Persia48 | 0 | |

voila-dashboards/voila | jupyter | 865 | New release with PR [#841]? | Hi everyone. I would like to use the multi_kernel_manager_class configuration, in combination with the [hotpot_km](https://github.com/voila-dashboards/hotpot_km) library in order to warm up kernels. Unfortunately, I've found that the latest release (0.2.7) available from PyPI doesn't contain it. Would it be possible to do a new release of voilà including PR [#841](https://github.com/voila-dashboards/voila/pull/841/files)? | closed | 2021-03-30T07:48:35Z | 2021-04-12T14:55:46Z | https://github.com/voila-dashboards/voila/issues/865 | [] | mcornudella | 6 |

remsky/Kokoro-FastAPI | fastapi | 124 | [Solved] Docker container crashes on generation | **Describe the bug**

When attempting to generate any output, the Docker container crashes without any error message.

Doesn't make a difference if you use the integrated Web UI or the Gradio UI.

**Screenshots or console output**

```

==========

== CUDA ==

==========

CUDA Version 12.4.1

Container image Copyright (c) 2016-2023, NVIDIA CORPORATION & AFFILIATES. All rights reserved.

This container image and its contents are governed by the NVIDIA Deep Learning Container License.

By pulling and using the container, you accept the terms and conditions of this license:

https://developer.nvidia.com/ngc/nvidia-deep-learning-container-license

A copy of this license is made available in this container at /NGC-DL-CONTAINER-LICENSE for your convenience.

11:18:54 AM | INFO | Loading TTS model and voice packs...

11:18:54 AM | INFO | Initializing Kokoro V1 on cuda

11:18:54 AM | DEBUG | Searching for model in path: /app/api/src/models

11:18:54 AM | INFO | Loading Kokoro model on cuda

11:18:54 AM | INFO | Config path: /app/api/src/models/v1_0/config.json

11:18:54 AM | INFO | Model path: /app/api/src/models/v1_0/kokoro-v1_0.pth

11:18:56 AM | DEBUG | Scanning for voices in path: /app/api/src/voices/v1_0

11:18:56 AM | DEBUG | Searching for voice in path: /app/api/src/voices/v1_0

11:18:56 AM | DEBUG | Generating audio for text: 'Warmup text for initialization....'

11:18:56 AM | DEBUG | Got audio chunk with shape: torch.Size([57600])

11:18:56 AM | INFO | Warmup completed in 1993ms

11:18:56 AM | INFO |

░░░░░░░░░░░░░░░░░░░░░░░░

╔═╗┌─┐┌─┐┌┬┐

╠╣ ├─┤└─┐ │

╚ ┴ ┴└─┘ ┴

╦╔═┌─┐┬┌─┌─┐

╠╩╗│ │├┴┐│ │

╩ ╩└─┘┴ ┴└─┘

░░░░░░░░░░░░░░░░░░░░░░░░

Model warmed up on cuda: kokoro_v1CUDA: True

67 voice packs loaded

Beta Web Player: http://0.0.0.0:8880/web/

░░░░░░░░░░░░░░░░░░░░░░░░

11:19:04 AM | INFO | Created global TTSService instance

11:19:04 AM | DEBUG | Scanning for voices in path: /app/api/src/voices/v1_0

INFO: xxx:51832 - "GET /v1/audio/voices HTTP/1.1" 200 OK

11:19:17 AM | DEBUG | Scanning for voices in path: /app/api/src/voices/v1_0

INFO: xxx:46778 - "GET /v1/audio/voices HTTP/1.1" 200 OK

11:19:17 AM | DEBUG | Scanning for voices in path: /app/api/src/voices/v1_0

INFO: xxx:46792 - "POST /v1/audio/speech HTTP/1.1" 200 OK

11:19:17 AM | DEBUG | Scanning for voices in path: /app/api/src/voices/v1_0

11:19:17 AM | DEBUG | Searching for voice in path: /app/api/src/voices/v1_0

11:19:17 AM | DEBUG | Using single voice path: /app/api/src/voices/v1_0/af_heart.pt

11:19:17 AM | DEBUG | Using voice path: /app/api/src/voices/v1_0/af_heart.pt

11:19:17 AM | INFO | Starting smart split for 4 chars

** Press ANY KEY to close this window **

```

**Branch / Deployment used**

Docker container for `kokoro-fastapi-gpu:v0.2.0`

**Operating System**

Linux w/ Nvidia GPU | closed | 2025-02-07T11:30:55Z | 2025-02-08T05:20:58Z | https://github.com/remsky/Kokoro-FastAPI/issues/124 | [] | 0pac1ty | 2 |

lux-org/lux | pandas | 107 | Widget can not show in jupyter notebook | I used the following commands to install the lux widget:

pip install git+https://github.com/lux-org/lux-widget

jupyter nbextension install --py luxWidget

jupyter nbextension enable --py luxWidget

The installation appears to succeed, but the widget does not appear in Jupyter Notebook. My environment is conda 4.8.5 with Python 3.7.8. | closed | 2020-10-06T09:12:27Z | 2020-10-15T12:34:40Z | https://github.com/lux-org/lux/issues/107 | [] | Jack-ee | 11 |

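apachecn/ailearning | python | 647 | Chapter 11: Apriori algorithm (第11章_Apriori算法) | # Using the Apriori algorithm

Typo: ”燃尽后对生下来的集合进行组合以声场包含两个元素的项集。“

Should be: ”然后对剩下来的集合进行组合以生成包含两个元素的项集。“ ("Then the remaining sets are combined to generate itemsets containing two elements.")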

| closed | 2023-12-15T16:54:46Z | 2024-01-03T01:51:23Z | https://github.com/apachecn/ailearning/issues/647 | [] | SydCS | 2 |

python-restx/flask-restx | api | 381 | Swagger Topbar Missing from Swagger UI | Swagger UI has a top bar that is generally used as a search bar for all the APIs in the API documentation. Is there any plan on the roadmap to include that top bar in the Restx Swagger UI? | open | 2021-10-25T08:42:27Z | 2022-07-21T13:39:03Z | https://github.com/python-restx/flask-restx/issues/381 | [

"enhancement"

] | anandtripathi5 | 1 |

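JoeanAmier/TikTokDownloader | api | 425 | [Malfunction] TikTok connection timed out, unable to download videos | **Problem description**

Even after setting a proxy address, I still cannot download TikTok videos.

I don't know whether I configured it incorrectly.

I only turned on my VPN software and then started the program; I'm not sure whether I missed a step.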

| open | 2025-03-10T08:25:08Z | 2025-03-10T11:25:44Z | https://github.com/JoeanAmier/TikTokDownloader/issues/425 | [] | oyoy131 | 2 |

TheKevJames/coveralls-python | pytest | 20 | Nothing gets reported to coveralls.io | I think I have set up everything correctly. As you can see in Travis-CI's logs coveralls gets called and it returns 200:

https://travis-ci.org/rubik/radon

However, on coveralls.io dashboard the coverage percentage does not get displayed!

https://coveralls.io/r/rubik/radon

How is that possible?

Thanks,

rubik

| closed | 2013-05-31T15:27:44Z | 2013-06-11T13:12:01Z | https://github.com/TheKevJames/coveralls-python/issues/20 | [] | rubik | 7 |

ARM-DOE/pyart | data-visualization | 1,408 | BUG: read_odim_hdf5 - TypeError: 'numpy.float32' object cannot be interpreted as an integer | * Py-ART version: 1.14.6

* Python version: 3.11 (same with 3.10)

* Operating System: Linux / Ubuntu

### Description

An error is raised when using `pyart.aux_io.odim_hdf5.read_odim_hdf5`:

`TypeError: 'numpy.float32' object cannot be interpreted as an integer`

The line to blame is

https://github.com/ARM-DOE/pyart/blob/75cb88466a74b48680c0cdf1400e3ed8a92d35f1/pyart/aux_io/odim_h5.py#L333

The `datetime.datetime.utcfromtimestamp` function doesn't seem to like `numpy.float32` dtype anymore.

A fix (that worked for me) is to specify "float64" in the dtype of `t_data`, instead of "float32":

https://github.com/ARM-DOE/pyart/blob/75cb88466a74b48680c0cdf1400e3ed8a92d35f1/pyart/aux_io/odim_h5.py#L327
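The workaround described above amounts to handing the `datetime` module a wider or built-in float instead of a `numpy.float32` scalar. A minimal sketch of the same idea, assuming the value is epoch seconds; the helper name `to_utc_datetime` is hypothetical and is not part of Py-ART:

```python
from datetime import datetime, timezone


def to_utc_datetime(epoch_seconds) -> datetime:
    """Convert epoch seconds (any float-like scalar) to a timezone-aware UTC datetime."""
    # float() turns a float-like scalar (for example a NumPy scalar) into a
    # built-in Python float before it reaches the datetime module.
    # fromtimestamp(..., tz=timezone.utc) returns an aware datetime, whereas
    # utcfromtimestamp() returns a naive one.
    return datetime.fromtimestamp(float(epoch_seconds), tz=timezone.utc)


print(to_utc_datetime(0).isoformat())  # prints: 1970-01-01T00:00:00+00:00
```

Keeping the time array as "float64" also avoids the coarse resolution that 32-bit floats have at present-day epoch values, so the dtype change proposed in the report is probably the better fix at the source.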

| closed | 2023-03-29T17:07:27Z | 2023-05-03T18:47:35Z | https://github.com/ARM-DOE/pyart/issues/1408 | [

"Bug"

] | Vforcell | 7 |

ExpDev07/coronavirus-tracker-api | rest-api | 251 | Add docker compatibility | Hello everyone!!!

To get the benefit of running the API with one command (and in isolation), how about adding a Dockerfile and a docker-compose file to this project?

Do you see any problem with this? | closed | 2020-04-01T21:06:26Z | 2020-04-07T17:33:18Z | https://github.com/ExpDev07/coronavirus-tracker-api/issues/251 | [

"enhancement",

"dev"

] | GabrielDS | 8 |

kubeflow/katib | scikit-learn | 2,175 | Error "Objective metric accuracy is not found in training logs, unavailable value is reported. metric:<name:"accuracy" value:"unavailable" | /kind bug

**What steps did you take and what happened:**

I have been trying to create a simple Katib experiment with sklearn iris dataset but am facing an error "Objective metric accuracy is not found in training logs, unavailable value is reported. metric:<name:"accuracy" value:"unavailable"

**Below is my code:**

import argparse
import os
import hypertune
import logging
import pandas as pd

# YOUR IMPORTS HERE
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier


def main():
    parser = argparse.ArgumentParser()
    parser.add_argument('--neighbors', type=int, default=3,
                        help='value of k')
    parser.add_argument("--log-path", type=str, default="",
                        help="Path to save logs. Print to StdOut if log-path is not set")
    parser.add_argument("--logger", type=str, choices=["standard", "hypertune"],
                        help="Logger", default="standard")
    args = parser.parse_args()

    if args.log_path == "" or args.logger == "hypertune":
        logging.basicConfig(
            format="%(asctime)s %(levelname)-8s %(message)s",
            datefmt="%Y-%m-%dT%H:%M:%SZ",
            level=logging.DEBUG)
    else:
        logging.basicConfig(
            format="%(asctime)s %(levelname)-8s %(message)s",
            datefmt="%Y-%m-%dT%H:%M:%SZ",
            level=logging.DEBUG,
            filename=args.log_path)

    if args.logger == "hypertune" and args.log_path != "":
        os.environ['CLOUD_ML_HP_METRIC_FILE'] = args.log_path

    # For JSON logging
    hpt = hypertune.HyperTune()

    # LOAD DATA HERE
    iris_data = load_iris()
    iris_df = pd.DataFrame(data=iris_data['data'], columns=iris_data['feature_names'])
    iris_df['Iris type'] = iris_data['target']
    iris_df['Iris name'] = iris_df['Iris type'].apply(
        lambda x: 'setosa' if x == 0 else ('versicolor' if x == 1 else 'virginica'))

    def f(x):
        if x == 0:
            val = 'setosa'
        elif x == 1:
            val = 'versicolor'
        else:
            val = 'virginica'
        return val

    iris_df['test'] = iris_df['Iris type'].apply(f)
    iris_df.drop(['test'], axis=1, inplace=True)

    X = iris_df[['sepal length (cm)', 'sepal width (cm)', 'petal length (cm)', 'petal width (cm)']]
    y = iris_df['Iris name']

    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

    knn = KNeighborsClassifier(n_neighbors=args.neighbors)
    knn.fit(X_train, y_train)

    accuracy = knn.score(X_test, y_test)
    logging.info("{{metricName: accuracy, metricValue: {:.4f}}}\n".format(accuracy))

    if args.logger == "hypertune":
        hpt.report_hyperparameter_tuning_metric(
            hyperparameter_metric_tag='accuracy',
            metric_value=accuracy)


if __name__ == '__main__':
    main()

**Below is my yaml file:**

---
apiVersion: kubeflow.org/v1beta1
kind: Experiment
metadata:
  namespace: kubeflow
  name: iris-1
spec:
  parallelTrialCount: 1
  maxTrialCount: 2
  maxFailedTrialCount: 3
  objective:
    type: maximize
    goal: 0.99
    objectiveMetricName: accuracy
  metricsCollectorSpec:
    collector:
      kind: StdOut
  algorithm:
    algorithmName: random
  parameters:
    - name: neighbors
      parameterType: int
      feasibleSpace:
        min: "3"
        max: "5"
  trialTemplate:
    primaryContainerName: training-container
    trialParameters:
      - name: neighbors
        description: KNN neighbors
        reference: neighbors
    trialSpec:
      apiVersion: batch/v1
      kind: Job
      spec:
        template:
          metadata:
            annotations:
              sidecar.istio.io/inject: "false"
          spec:
            containers:
              - name: training-container
                image: e-dpiac-docker-local.docker.lowes.com/katib-sklearn:v3
                command:
                  - "python3"
                  - "/app/iris.py"
                  - "--neighbors=${trialParameters.neighbors}"
                  - "--logger=hypertune"
                resources:
                  requests:
                    memory: "6Gi"
                    cpu: "2"
                  limits:
                    memory: "10Gi"
                    cpu: "4"
            restartPolicy: Never

**What did you expect to happen:** The metrics should have been collected and the trials should have succeeded..
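One thing worth checking in a report like this is whether the line the training script prints has the shape the metrics collector parses. The sketch below assumes a collector that looks for `name=value` pairs on stdout. The pattern `NAME_VALUE_PATTERN` and the helper `extract_metrics` are illustrative assumptions, not copied from Katib's source, so the actual default filter for the Katib version in use should be confirmed in its documentation.

```python
import re

# Hypothetical filter: a metric name, "=", then a decimal number.
NAME_VALUE_PATTERN = re.compile(r"([\w-]+)\s*=\s*([+-]?\d+(?:\.\d+)?)")


def extract_metrics(line: str) -> dict:
    """Return every name=value pair found in one log line."""
    return {name: float(value) for name, value in NAME_VALUE_PATTERN.findall(line)}


accuracy = 0.9737  # made-up value, for illustration only

# The format string used by the script above.
logged_by_script = "{{metricName: accuracy, metricValue: {:.4f}}}".format(accuracy)
# A name=value alternative.
name_value_line = "accuracy={:.4f}".format(accuracy)

print(extract_metrics(logged_by_script))  # prints: {}
print(extract_metrics(name_value_line))   # prints: {'accuracy': 0.9737}
```

If the collector in use does expect a different line format than the one the script emits, either the log line or the collector's filter would need to change. Which of the two applies here is not established by the report.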

**Anything else you would like to add:**

[Miscellaneous information that will assist in solving the issue.]

**Environment:**

- Katib version (check the Katib controller image version): katib-controller:v0.12.0

- Kubernetes version: (`kubectl version`):

- OS (`uname -a`):

---

<!-- Don't delete this message to encourage users to support your issue! -->

Impacted by this bug? Give it a 👍 We prioritize the issues with the most 👍

| closed | 2023-07-19T16:45:32Z | 2023-07-24T20:34:25Z | https://github.com/kubeflow/katib/issues/2175 | [

"kind/bug"

] | mChowdhury-91 | 23 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 1,593 | poor quality for testing with a custom dataset | Hello,

I want to train pix2pix on Cityscapes and test on segmentation masks from another dataset.

This is my train command:

`python train.py --name label2city_1024p --label_nc 0 --no_instance`

And my test command:

`python test.py --name label2city_1024p --label_nc 0 --no_instance`

These are the results:

Thank you for helping | open | 2023-07-31T10:08:28Z | 2023-07-31T10:08:28Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1593 | [] | At-Walid | 0 |

jupyterhub/repo2docker | jupyter | 1,272 | Packagemanager.rstudio.com migration broke R buildpack | <!-- Thank you for contributing. These HTML commments will not render in the issue, but you can delete them once you've read them if you prefer! -->

### Bug description

Per https://status.posit.co/ Rstudio-now-Posit PackageManager server was migrated from https://packagemanager.rstudio.com to https://packagemanager.posit.co/. `RBuildPack.get_rspm_snapshot_url` doesn't handle the redirect properly.

<!-- Use this section to clearly and concisely describe the bug. -->

#### Expected behaviour

r2d outputs Dockerfile to stdout

<!-- Tell us what you thought would happen. -->

#### Actual behaviour

```

[Repo2Docker] Looking for repo2docker_config in /tmp/a

Picked Local content provider.

Using local repo ..

Traceback (most recent call last):

File "/home/xarth/codes/jupyterhub/repo2docker/venv/lib/python3.10/site-packages/requests/models.py", line 971, in json

return complexjson.loads(self.text, **kwargs)

File "/usr/lib/python3.10/json/__init__.py", line 346, in loads

return _default_decoder.decode(s)

File "/usr/lib/python3.10/json/decoder.py", line 340, in decode

raise JSONDecodeError("Extra data", s, end)

json.decoder.JSONDecodeError: Extra data: line 1 column 5 (char 4)

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/xarth/codes/jupyterhub/repo2docker/venv/bin/jupyter-repo2docker", line 33, in <module>

sys.exit(load_entry_point('jupyter-repo2docker', 'console_scripts', 'jupyter-repo2docker')())

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/__main__.py", line 469, in main

r2d.start()

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/app.py", line 850, in start

self.build()

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/app.py", line 799, in build

print(picked_buildpack.render(build_args))

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/buildpacks/base.py", line 464, in render

for user, script in self.get_build_scripts():

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/buildpacks/r.py", line 278, in get_build_scripts

cran_mirror_url = self.get_cran_mirror_url(self.checkpoint_date)

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/buildpacks/r.py", line 241, in get_cran_mirror_url

return self.get_rspm_snapshot_url(snapshot_date)

File "/home/xarth/codes/jupyterhub/repo2docker/repo2docker/buildpacks/r.py", line 209, in get_rspm_snapshot_url

).json()

File "/home/xarth/codes/jupyterhub/repo2docker/venv/lib/python3.10/site-packages/requests/models.py", line 975, in json

raise RequestsJSONDecodeError(e.msg, e.doc, e.pos)

requests.exceptions.JSONDecodeError: Extra data: line 1 column 5 (char 4)

```

<!-- Tell us what you actually happens. -->

### How to reproduce

<!-- Use this section to describe the steps that a user would take to experience this bug. -->

1. `mkdir /tmp/foo`

2. `echo "r-2022-05-05" > /tmp/foo/runtime.txt`

3. `jupyter-repo2docker --no-build --debug /tmp/foo`

4. See error

### Your personal set up

<!-- Tell us a little about the system you're using. You can see the guidelines for setting up and reporting this information at https://repo2docker.readthedocs.io/en/latest/contributing/contributing.html#setting-up-for-local-development. -->

- OS: Linux

- Docker version: 24.0.0

- repo2docker version: 2022.10.0+69.g364bf2e.dirty (dirty cause I already tested a patch)

| closed | 2023-05-22T17:51:50Z | 2023-05-30T14:29:03Z | https://github.com/jupyterhub/repo2docker/issues/1272 | [] | Xarthisius | 1 |

svc-develop-team/so-vits-svc | pytorch | 59 | Not saving checkpoints | I am running the Colab 4.0 notebook and everything works very well, but when I ran the actual training step I noticed that it is not saving any checkpoints. It saves one at the beginning, but none after that. I've run it for over 80 epochs now with no new checkpoints at all. | closed | 2023-03-19T20:59:54Z | 2023-03-23T08:39:19Z | https://github.com/svc-develop-team/so-vits-svc/issues/59 | [] | Wacky817 | 1 |

yihong0618/running_page | data-visualization | 195 | TODO List | @geekplux @ben-29 @shaonianche what we can do.

- [x] Make the dashed lines on the map optional

- [ ] Consider switching to next.js

- [x] fix all alert

- [ ] python code refactor

- [ ] pnpm consider?

- [ ] Performance improvements

- [x] doc improve

- [ ] geojson support

- [ ] rss3 maybe

- [ ] #122

- [ ] more status char

- [ ] iOS app new --> GitHubPoster

- [ ] oppo

- [x] #187

- [x] dockerfile | open | 2022-01-07T05:25:52Z | 2023-11-11T14:54:57Z | https://github.com/yihong0618/running_page/issues/195 | [

"help wanted"

] | yihong0618 | 0 |

dsdanielpark/Bard-API | api | 250 | How do I use cookies with ChatBard | I can only use Bard with the full cookie dict. | closed | 2023-12-12T22:02:54Z | 2023-12-23T12:08:09Z | https://github.com/dsdanielpark/Bard-API/issues/250 | [] | tinker1234 | 2 |

lepture/authlib | flask | 409 | in v0.15.5, flask_client do not contain UserInfo in `authorize_access_token()` method | I used pipenv to install authlib; the latest version is `v0.15.5`.

However, when I read the documentation, it said:

```

when .authorize_access_token, the provider will include a id_token in the response. This id_token contains the UserInfo we need so that we don’t have to fetch userinfo endpoint again.

```

But when I look into the source code of authlib, I don't think the result contains the UserInfo:

https://github.com/lepture/authlib/blob/d8e428c9350c792fc3d25dbaaffa3bfefaabd8e3/authlib/integrations/flask_client/remote_app.py#L67

Will the source code in the master branch be tagged in a future release? | closed | 2021-12-07T09:25:37Z | 2022-01-14T06:53:59Z | https://github.com/lepture/authlib/issues/409 | [] | tan-i-ham | 1 |

JaidedAI/EasyOCR | machine-learning | 614 | ModuleNotFoundError: No module named 'easyocr' | Hey,

I tried every method to install easyocr. I installed PyTorch without GPU

`pip3 install torch torchvision torchaudio`

Then I tried `pip install easyocr`, but I still got an error. Afterwards, following one of the solved issues, I tried

`pip uninstall easyocr`

`pip install git+git://github.com/jaidedai/easyocr.git` but still unable to import easyocr.

Also, when I first ran the command `pip uninstall easyocr`, it showed me **WARNING: Skipping easyocr as it is not installed.**
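A warning that the package "is not installed" right after a `pip install` can mean that `pip` and the Python interpreter running the import belong to different environments. That is only one possible cause and the report does not confirm it. A minimal standard-library sketch to check which interpreter is in use and whether it can see the package; the helper name `is_importable` is hypothetical:

```python
import importlib.util
import sys


def is_importable(module_name: str) -> bool:
    """Return True if the current interpreter can locate the named top-level module."""
    return importlib.util.find_spec(module_name) is not None


# The interpreter printed here is the one that needs the package installed.
print("interpreter:", sys.executable)
print("easyocr importable:", is_importable("easyocr"))
```

If the second line prints `False`, running `python -m pip install easyocr` with that same interpreter keeps the install and the import in one environment.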

@rkcosmos please help me with this problem | closed | 2021-12-09T06:57:13Z | 2022-08-07T05:01:26Z | https://github.com/JaidedAI/EasyOCR/issues/614 | [] | harshitkd | 4 |

pyeve/eve | flask | 1,097 | Application does not work when I upload photos to an eve dict schema properties | Hi:

I have an eve schema set up as such:

``` python

'schema': {
    'email': {
        'type': 'string',
        'unique': True,
    },
    'isEmailVerified': {
        'type': 'boolean',
        'default': False,
        'readonly': True,
    },
    'profile': {
        'type': 'dict',
        'schema': {
            'avatar': {
                'type': 'media',
            },
            'bio': {
                'type': 'string',
                'minlength': 1,
                'maxlength': 30,
            },
            'location': {
                'type': 'dict',
                'schema': {
                    'state': {
                        'type': 'string',
                    },
                    'city': {
                        'type': 'string',
                    },
                },
            },
            'birth': {
                'type': 'datetime',
            },
            'gender': {
                'type': 'string',
                'allowed': ["male", "female"],
            }
        },
    },
}

```

And I'm using cURL to make a PATCH request:

```cURL

curl -X PATCH "http://127.0.0.1:5000/api/v1/accounts/5a461646b2fcb841401ea437" -H 'If-Match: a1944717f279751875f6f6794147b258df7e2af1' -F "profile.avatar=@WechatIMG21.jpeg"

```

The application stops responding and blocks other requests. How can I upload the photo? | closed | 2017-12-29T12:17:22Z | 2018-07-04T14:01:13Z | https://github.com/pyeve/eve/issues/1097 | [

"stale"

] | zzzhouuu | 1 |

nltk/nltk | nlp | 2,666 | Encountered an old issue #1387 with the latest version | I've got the same error after doing similar stuff. (See #1387.)

```

---------------------------------------------------------------------------

FileNotFoundError Traceback (most recent call last)

G:\Anaconda3\lib\site-packages\IPython\core\formatters.py in __call__(self, obj)

343 method = get_real_method(obj, self.print_method)

344 if method is not None:

--> 345 return method()

346 return None

347 else:

G:\Anaconda3\lib\site-packages\nltk\tree.py in _repr_png_(self)

817 raise LookupError

818

--> 819 with open(out_path, "rb") as sr:

820 res = sr.read()

821 os.remove(in_path)

FileNotFoundError: [Errno 2] No such file or directory: 'G:\\Users\\01\\AppData\\Local\\Temp\\tmpkyszdc9g.png'

```

(Python)

```

Error: /syntaxerror in (binary token, type=155)

Operand stack:

--nostringval-- 寰蒋?Execution stack:

%interp_exit .runexec2 --nostringval-- --nostringval-- --nostringval-

- 2 %stopped_push --nostringval-- --nostringval-- --nostringval-- fa

lse 1 %stopped_push 1926 1 3 %oparray_pop 1925 1 3 %oparray_

pop --nostringval-- 1909 1 3 %oparray_pop 1803 1 3 %oparray_po

p --nostringval-- %errorexec_pop .runexec2 --nostringval-- --nostringv

al-- --nostringval-- 2 %stopped_push

Dictionary stack:

--dict:1173/1684(ro)(G)-- --dict:0/20(G)-- --dict:82/200(L)-- --dict:23

/50(L)--

Current allocation mode is local

Last OS error: No such file or directory

GPL Ghostscript 9.05: Unrecoverable error, exit code 1

```

(command line)

It seems the problem is that Ghostscript failed, which is strange.

It is reproducible as described in https://github.com/nltk/nltk/issues/1387#issuecomment-216426399:

(in ipython notebook)

```

import nltk

nltk.tree.Tree.fromstring("(test (this tree))")

```

This old issue is closed and referenced by some PRs, so I thought it was fixed. Or is this a problem with Jupyter Notebook? | open | 2021-02-08T14:02:44Z | 2021-10-27T20:06:50Z | https://github.com/nltk/nltk/issues/2666 | [] | yanhuihang | 1 |

scikit-optimize/scikit-optimize | scikit-learn | 1,127 | Bug with using n_calls, n_initial_points and (x0, y0) | This concerns the `gp_minimize` function from skopt. The problem appears when using the `n_calls` and `n_initial_points` parameters while `(x0, y0)` is already provided.

From the documentation I find,

`The total number of evaluations, n_calls, are performed like the following. If x0 is provided but not y0, then the elements of x0 are first evaluated, followed by n_initial_points evaluations. Finally, n_calls - len(x0) - n_initial_points evaluations are made guided by the surrogate model. If x0 and y0 are both provided then n_initial_points evaluations are first made then n_calls - n_initial_points subsequent evaluations are made guided by the surrogate model.`

This works well when `(x0, y0)` is not provided. However, when we provide `(x0, y0)` along with `n_calls` and `n_initial_points`, it does not behave as documented.

**Scenario 1**

(x0, y0) are provided.

len(x0)=10

n_initial_points=5

n_calls=20

Expected: According to the documentation above, 10 provided points are evaluated --> 5 initial random points are evaluated --> (20-5)=15 optimization steps are taken guided by the surrogate model.

Observed: 10 provided points are evaluated --> (len(x0) + n_initial_points) = 10+5 = 15 initial random points are evaluated --> (20-15) = 5 optimization steps are evaluated. If I provide n_calls < (len(x0) + n_initial_points), e.g. n_calls=10, it complains that `n_calls` should be higher than `len(x0)` + `n_initial_points`.

**Scenario 2 (hack)**

(x0, y0) are provided.

len(x0)=10

n_initial_points= -len(x0) + 5 = -10 + 5 = -5

n_calls=20

Expected: 10 provided points are evaluated --> -5 initial random points are evaluated (which basically means no new points are evaluated) --> (20-(-5)) = 25 optimization steps are taken guided by the surrogate model.

Observed: 10 provided points are evaluated --> (-len(x0) + 5) = -10+5 = -5 initial random points are requested, which should mean that nothing is evaluated, but -5 is treated as 5 (absolute value) and 5 random points are evaluated --> (20 - 10 - (-5)) = 15 optimization steps are evaluated.
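
The two budget splits in Scenario 1 can be written out as plain arithmetic. This is only a sketch of the counting described above, not skopt code: `documented_split` and `observed_split` are hypothetical helper names, and the formulas are taken from the quoted documentation and from the behaviour observed in this report.

```python
def documented_split(n_calls, n_initial_points, n_x0, y0_given):
    """Return (random_evals, model_guided_evals) per the quoted documentation."""
    if y0_given:
        # x0 already has objective values, so it costs no evaluations.
        return n_initial_points, n_calls - n_initial_points
    # x0 must be evaluated first and counts against n_calls.
    return n_initial_points, n_calls - n_x0 - n_initial_points


def observed_split(n_calls, n_initial_points, n_x0):
    """Return (random_evals, model_guided_evals) as observed in this report."""
    random_evals = n_x0 + n_initial_points
    return random_evals, n_calls - random_evals


# Scenario 1: len(x0)=10, n_initial_points=5, n_calls=20, y0 provided.
print(documented_split(20, 5, 10, y0_given=True))  # (5, 15)
print(observed_split(20, 5, 10))                   # (15, 5)
```

The gap between the two tuples is the discrepancy being reported: the documented behaviour leaves 15 model-guided steps, the observed behaviour only 5.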

It would be great if you could fix the numbers or explain what behaviour is actually intended. | open | 2022-10-04T12:00:53Z | 2024-01-19T15:34:33Z | https://github.com/scikit-optimize/scikit-optimize/issues/1127 | [] | skshahidur | 2 |

SALib/SALib | numpy | 131 | Add new method - New Sampling Strategy for Method of Morris | [Khare et al. (2015)](http://www.sciencedirect.com/science/article/pii/S1364815214003399) describe an improved sampling strategy for the Method of Morris. | open | 2017-01-20T11:03:46Z | 2019-05-22T17:09:09Z | https://github.com/SALib/SALib/issues/131 | [

"enhancement",

"add_method"

] | willu47 | 2 |

graphql-python/graphene-sqlalchemy | sqlalchemy | 320 | How to convert a json response to graphql SQLAlchemyObjectType | I am trying to build an application with graphene_sqlalchemy to manage quite a few micro-services. I have the data models (SQLAlchemyObjectType) of each micro-service, and want to reuse them so that I don't need to duplicate data models. But the challenge is how to convert json responses from micro-services to graphql SQLAlchemyObjectType. Is there any reference? Or do I have to redefine data models for graphql? Thank you! | open | 2021-10-25T13:40:07Z | 2022-04-27T19:18:42Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/320 | [

"question"

] | zhaoxwei | 0 |

apify/crawlee-python | automation | 662 | Memory usage average periodically printed from `AutoscaledPool` seems wrong | This issue was observed on the Apify platform - see for example https://api.apify.com/v2/logs/g7piQaNbAdteXnkFC. The problem is that the average memory usage starts at 0 and suddenly hops to 1.0. I can testify that the increase was way more gradual :slightly_smiling_face:

```

2024-11-06T15:11:50.471Z [crawlee._autoscaling.autoscaled_pool] INFO current_concurrency = 1; desired_concurrency = 1; cpu = 0.581; mem = 0.0; event_loop = 0.227; client_info = 0.0

2024-11-06T15:12:50.474Z [crawlee._autoscaling.autoscaled_pool] INFO current_concurrency = 0; desired_concurrency = 1; cpu = 0.491; mem = 0.0; event_loop = 0.1; client_info = 0.0

2024-11-06T15:13:50.487Z [crawlee._autoscaling.autoscaled_pool] INFO current_concurrency = 0; desired_concurrency = 1; cpu = 0.671; mem = 0.0; event_loop = 0.104; client_info = 0.0

2024-11-06T15:14:50.496Z [crawlee._autoscaling.autoscaled_pool] INFO current_concurrency = 0; desired_concurrency = 3; cpu = 0.451; mem = 0.206; event_loop = 0.039; client_info = 0.0

2024-11-06T15:15:50.491Z [crawlee._autoscaling.autoscaled_pool] INFO current_concurrency = 0; desired_concurrency = 1; cpu = 0.242; mem = 1.0; event_loop = 0.0; client_info = 0.0

``` | closed | 2024-11-06T16:37:13Z | 2024-11-15T16:23:43Z | https://github.com/apify/crawlee-python/issues/662 | [

"bug",

"t-tooling"

] | janbuchar | 15 |

sigmavirus24/github3.py | rest-api | 792 | Latest PyPI wheel not installing all dependencies | Is it possible that the recently released version 1.0.0 does not install all required dependencies? On Linux and Windows environments (Python 3.6.3, 3.6.4) we get an error like this during our unit test execution, which involves calling into the package API:

```

2018-03-14 10:39:11.684617 | node | ImportError while importing test module '/workspace/test/test_github.py'.

2018-03-14 10:39:11.684809 | node | Hint: make sure your test modules/packages have valid Python names.

2018-03-14 10:39:11.684862 | node | Traceback:

2018-03-14 10:39:11.684974 | node | test/test_github.py:1: in <module>

2018-03-14 10:39:11.685046 | node | import github3

2018-03-14 10:39:11.685267 | node | /usr/local/lib/python3.6/site-packages/github3/__init__.py:18: in <module>

2018-03-14 10:39:11.685349 | node | from .api import (

2018-03-14 10:39:11.686490 | node | /usr/local/lib/python3.6/site-packages/github3/api.py:11: in <module>

2018-03-14 10:39:11.686651 | node | from .github import GitHub, GitHubEnterprise

2018-03-14 10:39:11.686855 | node | /usr/local/lib/python3.6/site-packages/github3/github.py:10: in <module>

2018-03-14 10:39:11.686939 | node | from . import auths

2018-03-14 10:39:11.687134 | node | /usr/local/lib/python3.6/site-packages/github3/auths.py:6: in <module>

2018-03-14 10:39:11.687243 | node | from .models import GitHubCore

2018-03-14 10:39:11.687441 | node | /usr/local/lib/python3.6/site-packages/github3/models.py:8: in <module>

2018-03-14 10:39:11.687557 | node | import dateutil.parser

2018-03-14 10:39:11.687711 | node | E ModuleNotFoundError: No module named 'dateutil'

```

On the ``develop`` branch (is this where you released from?) ``setup.py`` does contain the required dependency ``python-dateutil``; however, it is not installed on either Windows or Linux. I am not sure, but I noticed that ``setup.cfg`` does _not_ mention the new package in ``requires-dist``. Maybe this overrides the settings? | closed | 2018-03-14T12:05:50Z | 2018-03-14T17:24:49Z | https://github.com/sigmavirus24/github3.py/issues/792 | [] | moltob | 1 |

iperov/DeepFaceLab | deep-learning | 932 | Error extracting frames from data_src in deepfacelab colab | I was trying to run DFL_Colab. I imported the workspace folder successfully, but got this error when I ran the extract frames cell:

"/content

/!\ input file not found.

Done. "

Any help with this, please? | open | 2020-10-30T00:34:27Z | 2023-06-08T21:51:12Z | https://github.com/iperov/DeepFaceLab/issues/932 | [] | jamesbright | 2 |

hpcaitech/ColossalAI | deep-learning | 6,091 | [BUG]: Got nan during backward with zero2 | ### Is there an existing issue for this bug?

- [X] I have searched the existing issues

### 🐛 Describe the bug

My code is based on Open-Sora and runs without any issue on 32 GPUs using zero2.

However, when using 64 GPUs, NaN appears in the tensor gradients after the second backward step.

As a workaround, I patched [`colossalai/zero/low_level/low_level_optim.py`](https://github.com/hpcaitech/ColossalAI/blob/89a9a600bc4802c912b0ed48d48f70bbcdd8142b/colossalai/zero/low_level/low_level_optim.py#L315) with

```python

# line 313, in _run_reduction
flat_grads = bucket_store.get_flatten_grad()
flat_grads /= bucket_store.world_size
if torch.isnan(flat_grads).any():  # here
    raise RuntimeError(f"rank {dist.get_rank()} got nan on flat_grads")  # here
...
if received_grad.dtype != grad_dtype:
    received_grad = received_grad.to(grad_dtype)
if torch.isnan(received_grad).any():  # here
    raise RuntimeError(f"rank {dist.get_rank()} got nan on received_grad")  # here
...

```

With the patch above, my code runs normally and the loss seems fine.

I think it may be related to unsynchronized state between CUDA streams. I do not know the exact reason, and I do not think my workaround really solves the issue.

Any ideas from the team?

### Environment

Nvidia H20

ColossalAI version: 0.4.3

cuda 12.4

pytorch 2.4 | open | 2024-10-16T06:39:10Z | 2024-10-29T01:40:58Z | https://github.com/hpcaitech/ColossalAI/issues/6091 | [

"bug"

] | flymin | 9 |

huggingface/datasets | nlp | 7,297 | wrong return type for `IterableDataset.shard()` | ### Describe the bug

`IterableDataset.shard()` has the wrong typing for its return as `"Dataset"`. It should be `"IterableDataset"`. Makes my IDE unhappy.

### Steps to reproduce the bug

look at [the source code](https://github.com/huggingface/datasets/blob/main/src/datasets/iterable_dataset.py#L2668)?

### Expected behavior

Correct return type as `"IterableDataset"`

### Environment info

datasets==3.1.0 | closed | 2024-11-22T17:25:46Z | 2024-12-03T14:27:27Z | https://github.com/huggingface/datasets/issues/7297 | [] | ysngshn | 1 |

jina-ai/serve | machine-learning | 5,898 | Streaming for HTTP for Deployment | closed | 2023-05-30T07:13:50Z | 2023-06-19T08:23:38Z | https://github.com/jina-ai/serve/issues/5898 | [] | alaeddine-13 | 0 | |

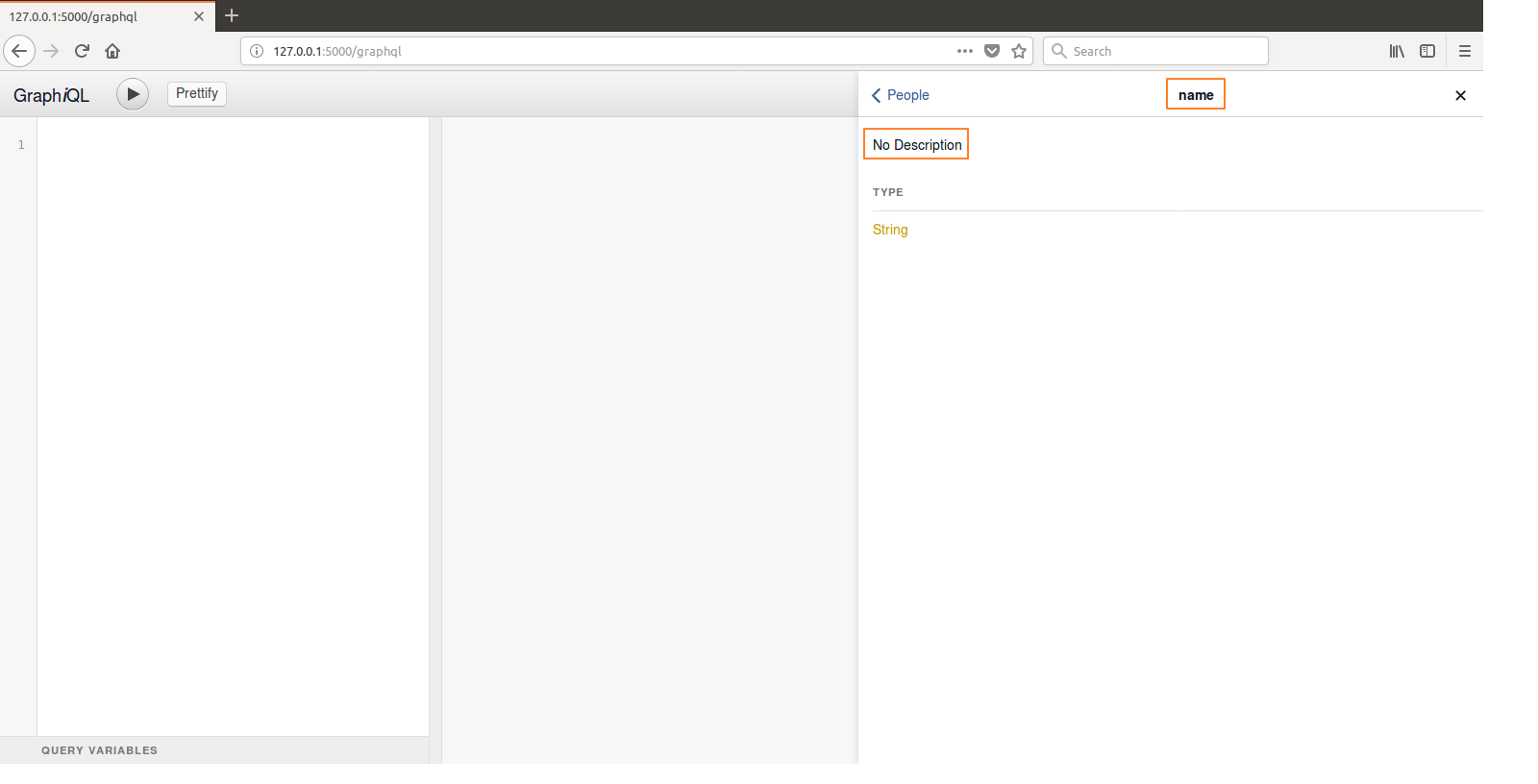

graphql-python/graphene-sqlalchemy | sqlalchemy | 111 | How to generate GraphiQL documentation? | Hello,

I'm trying to figure out how to provide descriptions for the attributes of the SQLAlchemy class used to define my Graphene `ObjectType`:

```python

from graphene_sqlalchemy import SQLAlchemyObjectType
from database.model_people import ModelPeople
import graphene


class People(SQLAlchemyObjectType):
    """People node."""

    class Meta:
        model = ModelPeople
        interfaces = (graphene.relay.Node,)

```

My "People node." docstring gets displayed properly in GraphiQL, but I'm not able to get a description for the attributes of my node, which come from the ModelPeople class defined by SQLAlchemy.

Any idea?

Thank you

| closed | 2018-02-09T05:27:15Z | 2023-02-25T06:58:45Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/111 | [] | alexisrolland | 3 |

waditu/tushare | pandas | 1,033 | Read Time Out exception has kept occurring over the last 2 days | #Calling tushare's pro.query fails: HTTPConnectionPool(host='api.tushare.pro', port=80): Read timed out. (read timeout=10)

I run queries with pro.query, and I have hit this error almost constantly over the last 2 days. | closed | 2019-05-07T22:55:49Z | 2019-05-08T04:28:23Z | https://github.com/waditu/tushare/issues/1033 | [] | yyxochen | 1 |

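Johnserf-Seed/TikTokDownload | api | 374 | Do you also often get "Failed to fetch post data, retrying"? | The message "Failed to fetch post data, retrying" appears frequently.

Then, after a few attempts, the data is fetched successfully.

In other words, it works, but this message keeps showing up.

Does this happen to you too? | open | 2023-03-28T04:52:20Z | 2023-03-28T04:52:21Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/374 | [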

"故障(bug)",

"额外求助(help wanted)",

"无效(invalid)"

] | zhengjinzhj | 0 |

sczhou/CodeFormer | pytorch | 273 | scripts/crop_align_face.py broken | Hello. This file uses some deprecated functions (like ANTIALIAS), which can be fixed. The other big problem is that if -o is set to anything other than ".", the whole thing breaks: it combines the input and output paths and then uses the result.

Also, why save only the biggest picture? Is there any option to save everything? | open | 2023-07-22T06:18:49Z | 2023-07-22T06:18:49Z | https://github.com/sczhou/CodeFormer/issues/273 | [] | Astra060 | 0 |

plotly/dash | jupyter | 2,975 | add new persistence_type: history_state | When an app is built around internal links that add entries to the browser history, it could be useful to have a persistence type that stores the values in the history state entries (`history.pushState(state, ...)`).

I know that _ideally_ most/all such state would be part of the URL, as this has more advantages beyond preserving the state on back/forward. But sometimes this can be a bit tricky: using URL parameters for state requires a certain app architecture, so I think it could be useful to easily add some state to the history entry "under the hood".

I think this could be reasonably easy to implement. Do a `replaceState({...history.state, ...historyProps}, ...)` each time a "history-state persisted" property is changed, then restore these in a "popstate" event handler.

(I could not find any previous discussion on this topic) | open | 2024-09-03T18:23:57Z | 2024-09-04T13:22:42Z | https://github.com/plotly/dash/issues/2975 | [

"feature",

"P3"

] | olejorgenb | 0 |

keras-team/keras | data-science | 20,857 | Several Problems when using Keras Image Augmentation Layers to Augment Images and Masks for a Semantic Segmentation Dataset | In the following, I will try to tell you a bit of my “story” about how I read through the various layers and (partly outdated) tutorials on image augmentation for semantic segmentation, what problems and bugs I encountered and how I was able to solve them, at least for my use case. I hope that I am reporting these issue(s) in the right place and that I can help the developers responsible with my “story”.

I am currently using the latest versions of Keras (3.8) and Tensorflow (2.18.0) on an Ubuntu 23.10 machine, but have also observed the issues with other setups.

My goal was to use some of the Image Augmentation Layers from Keras to augment the images AND masks in my semantic segmentation dataset. To do this I followed the [segmentation tutorial from TensorFlow](https://www.tensorflow.org/tutorials/images/segmentation) where a custom `Augment` class is used for augmentation.

This code works fine because the `Augment` class uses only a single augmentation layer. But, for my custom code I wanted to use more augmentations. Therefore, I "combined" several of the augmentation layers trying both the normal [Pipeline layer](https://keras.io/api/layers/preprocessing_layers/image_augmentation/pipeline/) but also my preferred [RandomAugmentationPipeline layer from keras_cv](https://github.com/keras-team/keras-cv/blob/master/keras_cv/src/layers/preprocessing/random_augmentation_pipeline.py).

I provide an example implementation in [this gist](https://colab.research.google.com/gist/RabJon/9f27d600301dcf6a734e8b663c802c7f/modification_of_segmentation.ipynb). The gist is a modification of the aforementioned [image segmentation tutorial from Tensorflow](https://www.tensorflow.org/tutorials/images/segmentation) where I intended to only change the `Augment` class slightly to fit my use case and to remove all the unnecessary parts. Unfortunately, I also had to touch the code that loads the dataset, because the dataset version used in the notebook was no longer supported. But, this is another issue.

Anyway, at the bottom of the notebook you can see some example images and masks from the data pipeline, and if you inspect them in more detail you can see that for some examples the images and masks do not match because they were augmented differently, which is obviously not the expected behaviour. You can also see that these mismatches already happen from the first epoch on. On my system with my custom use case (where I was using the RandomAugmentationPipeline layer) it happened only from the second epoch onward, which made debugging much more difficult.

I first assumed that one specific augmentation layer caused the problems, but after trying them one by one I found that it is the combination of layers that causes the problems. So, I started to think about possible solutions, and I also tried to use the Sequential model from Keras to combine the layers instead of the Pipeline layer, but the result remained the same.

I found that the random seed is the only parameter which can be used to "control and sync" the augmentation of the images and masks, but I already had ensured that I always used the same seed. So, I started digging into the source code of the different augmentation layers and I found that most of the ones that I was using implement a `transform_segmentation_masks()` method which is however not used if the layers are called as described in the tutorial. This is because, in order to enforce the call of this method, images and masks must be passed to the same augmentation layer object as a dictionary with the keys “images” and “segmentation_masks” instead of using two different augmentation layer objects for images and masks. However, I had not seen this type of call using a dictionary in any tutorial, neither for Tensorflow nor for Keras. Nevertheless, I decided to change my code to make use of the `transform_segmentation_masks()` method, as I hoped that if such a method already existed, it would also process the images and masks correctly and thus avoid the mismatches.

Unfortunately, this was not directly the case, because some of the augmentation layers changed the masks to float32 data types although the input was uint8. This happened even with layers such as “RandomFlip”, which should not change or interpolate the data at all, but only shift it. So, I had to wrap all layers again in a custom layer, which casts the data back to the input data type before returning it:

```python

class ApplyAndCastAugmenter(BaseImageAugmentationLayer):
    def __init__(self, other_augmenter, **kwargs):
        super().__init__(**kwargs)
        self.other_augmenter = other_augmenter

    def call(self, inputs):
        output_dtypes = {}
        is_dict = isinstance(inputs, dict)
        if is_dict:
            for key, value in inputs.items():
                output_dtypes[key] = value.dtype
        else:
            output_dtypes = inputs.dtype
        outputs = self.other_augmenter(inputs)
        if is_dict:
            for key in outputs.keys():
                outputs[key] = keras.ops.cast(outputs[key], output_dtypes[key])
        else:
            outputs = keras.ops.cast(outputs, output_dtypes)
        return outputs

```

With this (admittedly unpleasant) work-around, I was able to fix all the remaining problems. Images and masks finally matched perfectly after augmentation and this was immediately noticeable in a significant performance improvement in the training of my segmentation model.

For the future I would now like to see that wrapping with my custom `ApplyAndCastAugmenter` is no longer necessary but is handled directly by the `transform_segmentation_masks()` method and it would also be good to have a proper tutorial on image augmentation for semantic segmentation or to update the old tutorials. | open | 2025-02-04T11:08:42Z | 2025-03-12T08:32:24Z | https://github.com/keras-team/keras/issues/20857 | [

"type:Bug"

] | RabJon | 3 |

jschneier/django-storages | django | 567 | The root folder is unsupported | I run the following code:

```

from django.core.management import call_command


def create_db_backup():
    call_command('dbbackup', compress=True, clean=True)

```

The backup is created and uploaded to my Dropbox account, but at the end I get the following error:

```

[2018-08-25 17:18:13,416: INFO/ForkPoolWorker-2] Writing file to default-2018-08-25-17-17-50.psql.gz

[2018-08-25 17:18:13,417: INFO/ForkPoolWorker-2] Request to files/get_metadata

[2018-08-25 17:18:13,951: INFO/ForkPoolWorker-2] Request to files/upload_session/start

[2018-08-25 17:18:32,623: INFO/ForkPoolWorker-2] Request to files/upload_session/append_v2

[2018-08-25 17:18:50,764: INFO/ForkPoolWorker-2] Request to files/upload_session/append_v2

[2018-08-25 17:21:52,031: INFO/ForkPoolWorker-2] Request to files/upload_session/finish

[2018-08-25 17:21:54,395: INFO/ForkPoolWorker-2] Request to files/get_metadata

BadInputError: BadInputError('d8e906319f920d75ffc769f3fe86fba4', 'Error in call to API function "files/get_metadata": request body: path: The root folder is unsupported.')

```

If I set any directory in root_path of DBBACKUP_STORAGE_OPTIONS, e.g.:

```

DBBACKUP_STORAGE_OPTIONS = {
    'oauth2_access_token': 'token',
    'root_path': '/dir/'
}

```

then the error changes to: TypeError: 'FolderMetadata' object is not subscriptable. The same as in the issue: https://github.com/jschneier/django-storages/issues/396

Do you have any idea what may be wrong? Below I enclose information about my configuration.

Requirements:

```

dropbox==9.0.0

django-dbbackup==3.2.0

django-storages==1.6.6

```

Settings:

```

DBBACKUP_STORAGE = 'storages.backends.dropbox.DropBoxStorage'

DBBACKUP_STORAGE_OPTIONS = {
    'oauth2_access_token': 'token'
}

``` | closed | 2018-08-25T17:45:17Z | 2019-09-08T04:49:31Z | https://github.com/jschneier/django-storages/issues/567 | [

"dropbox"

] | pwierzgala | 3 |

davidsandberg/facenet | tensorflow | 459 | Is SVM a good choice for large amount class classification? | I am trying to use an SVM to classify unknown images. In my use case, I have almost 1400 classes of people to classify. But when I apply an SVM in my pipeline, the performance is so bad that I doubt whether an SVM is the best choice.

My pipeline is as follows.

1. Collect images in each class

The number of face images per person is not fixed; it ranges from about 20 to about 600. The angle of the face also varies.

2. Embed by facenet & remove outlier

After collecting the images for all classes, I feed them to facenet class by class. I remove outliers in each class using [Local Outlier Factor](http://scikit-learn.org/stable/modules/generated/sklearn.neighbors.LocalOutlierFactor.html) with n_neighbors=10.

3. Apply SVM as final classifier

This is the most difficult stage. After searching the internet, I think the reason may be that facenet outputs a 128-dimensional vector, which is much smaller than the number of classes.

Apart from the reason I mentioned (the difference between the embedding dimension and the number of classes), I think other factors may also cause this problem:

- the bounding box may be too small or the resolution too low

- the algorithm I chose, or some parameter that could be tuned

I hope I have described my problem well enough for everyone to understand. If there is more information I need to provide, please let me know. Thank you.

| closed | 2017-09-17T09:53:56Z | 2017-11-07T02:49:00Z | https://github.com/davidsandberg/facenet/issues/459 | [] | posutsai | 4 |

google-research/bert | nlp | 609 | Order of classes in test_results.tsv | When using BERT to make a prediction, I can see the prediction probabilities in the output file test_results.tsv, but I am not sure which is the order of the classes. How can I determine that? | open | 2019-04-28T20:03:57Z | 2019-07-25T18:47:59Z | https://github.com/google-research/bert/issues/609 | [] | Crista23 | 1 |

dpgaspar/Flask-AppBuilder | flask | 1,645 | How can a flask Addon overwrite a template? | Sorry to post here but no result in other places like Stack Overflow or the mailing list.

I created a flask addon using "flask fab create-addon".

I would like to change the template appbuilder/general/security/login_oauth.html of a third-party app so I have in my addon:

```

templates
    appbuilder
        general
            security
                login_oauth.html

```

But when I load the third-party application my version of login_oauth.html is not loaded. I tried registering a blueprint as in [this post](https://stackoverflow.com/questions/32415245/how-to-load-templates-from-flask-extension) with the following code:

```

from flask import Blueprint

bp = Blueprint('fab_addon_fslogin', __name__, template_folder='templates')


class MyAddOnManager(BaseManager):
    def __init__(self, appbuilder):
        """
        Use the constructor to setup any config keys specific for your app.
        """
        super(MyAddOnManager, self).__init__(appbuilder)
        self.appbuilder.get_app.config.setdefault("MYADDON_KEY", "SOME VALUE")
        self.appbuilder.register_blueprint(bp)

    def register_views(self):
        """
        This method is called by AppBuilder when initializing, use it to add you views
        """
        pass

    def pre_process(self):
        pass

    def post_process(self):
        pass

```

But `register_blueprint(bp)` raises:

```

File "/home/cquiros/data/projects2017/personal/software/superset/addons/fab_addon_fslogin/fab_addon_fslogin/manager.py", line 24, in __init__

self.appbuilder.register_blueprint(bp)

File "/home/cquiros/data/projects2017/personal/software/superset/env_superset/lib/python3.8/site-packages/Flask_AppBuilder-3.3.0-py3.8.egg/flask_appbuilder/base.py", line 643, in register_blueprint

baseview.create_blueprint(

AttributeError: 'Blueprint' object has no attribute 'create_blueprint'

``` | closed | 2021-05-25T11:14:46Z | 2021-09-06T20:42:00Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/1645 | [

"stale"

] | qlands | 2 |

deeppavlov/DeepPavlov | nlp | 896 | Bert_Squad context length longer than 512 tokens sequences | Hi,

Currently I have a long context, and the answer cannot be extracted when the context exceeds a certain length. Is there any Python code for dealing with long contexts?

P.S. I am using the BERT-based model for context question answering. | closed | 2019-06-23T14:37:55Z | 2020-05-13T11:41:45Z | https://github.com/deeppavlov/DeepPavlov/issues/896 | [] | Chunglwc | 2 |
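
One common way to handle a context longer than the model limit is to split it into overlapping windows, run the model on each window, and keep the best-scoring answer. The sketch below shows only the splitting step on an already tokenized context. `split_into_windows`, `max_len`, and `overlap` are hypothetical names rather than part of DeepPavlov, and in practice `max_len` has to leave room for the question and the special tokens.

```python
def split_into_windows(tokens, max_len=512, overlap=128):
    """Split a token list into windows of at most max_len tokens.

    Consecutive windows share `overlap` tokens, so an answer that
    straddles a boundary is still fully contained in one window.
    """
    if not 0 <= overlap < max_len:
        raise ValueError("overlap must satisfy 0 <= overlap < max_len")
    step = max_len - overlap
    windows = []
    start = 0
    while True:
        windows.append(tokens[start:start + max_len])
        if start + max_len >= len(tokens):
            break  # the last window reached the end of the context
        start += step
    return windows


tokens = list(range(1000))  # stand-in for real token ids
windows = split_into_windows(tokens)
print([(w[0], w[-1]) for w in windows])  # [(0, 511), (384, 895), (768, 999)]
```

A larger overlap costs more model calls but lowers the chance that an answer span is cut at a window boundary.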

python-arq/arq | asyncio | 220 | New release? | Hello,

It seems arq hasn't been updated since October. There are also some good PRs waiting for review.

@samuelcolvin do you plan any updates to the project? | closed | 2020-12-21T21:51:04Z | 2021-04-26T11:55:53Z | https://github.com/python-arq/arq/issues/220 | [] | erakli | 7 |

modelscope/modelscope | nlp | 581 | AttributeError: SiameseUiePipeline: 'torch.device' object has no attribute 'lower' | In ModelScope, when I use the 'damo/nlp_structbert_siamese-uninlu_chinese-base' model and test it with the fine-tuning code from the model's example, I get the error: AttributeError: SiameseUiePipeline: 'torch.device' object has no attribute 'lower' | closed | 2023-10-10T06:22:25Z | 2023-12-20T13:18:23Z | https://github.com/modelscope/modelscope/issues/581 | [] | CourseAI2015 | 5 |

blacklanternsecurity/bbot | automation | 1,533 | HTTP_RESPONSE header_dict cant handle multiple headers with the same value | This is most impactful with set-cookie headers, where only the last set-cookie cookie will end up in the dict, and multiple headers are often expected | closed | 2024-07-07T18:32:19Z | 2024-07-08T00:52:57Z | https://github.com/blacklanternsecurity/bbot/issues/1533 | [

"bug"

] | liquidsec | 1 |
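
A minimal sketch of the behaviour described in the issue above, assuming the headers arrive as (name, value) pairs. `headers_to_dict` is a hypothetical helper, not bbot's actual code. A plain dict keeps only the last value per header name, while collecting values in a list preserves every `Set-Cookie`.

```python
from collections import defaultdict


def headers_to_dict(raw_headers):
    """Group header values by lower-cased name, keeping duplicates in order."""
    grouped = defaultdict(list)
    for name, value in raw_headers:
        grouped[name.lower()].append(value)
    return dict(grouped)


raw = [
    ("Set-Cookie", "session=abc"),
    ("Content-Type", "text/html"),
    ("Set-Cookie", "theme=dark"),
]

# A plain dict overwrites earlier values for the same name.
lossy = {name.lower(): value for name, value in raw}
print(lossy["set-cookie"])                 # theme=dark

# The grouped form keeps both cookies.
print(headers_to_dict(raw)["set-cookie"])  # ['session=abc', 'theme=dark']
```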

pytest-dev/pytest-cov | pytest | 425 | pytest and local pytest plugin running in two separate processes, talking over http | This is a first, general description. I can go into details, but that would take some time since I need to filter out some sensitive data/code. I will summarise as best I can for the moment.

Essentially, we have a local pytest plugin api in `tests/fix_api.py` (snippet):

```python

"""

Pytest plugin to interact with the api at http level

"""

import json

from urllib.parse import urljoin

import pytest

import requests

def pytest_addoption(parser):

@pytest.fixture(scope="session")

def api_url(request):

@pytest.fixture(scope="function")

def api(api_url):

# Users readily available in the test suite

users = {

"demo": "demo123",

}

class Api:

def __init__(self, url=None):

def get(self, user, url, data=None, headers=None):

return self._request("get", user, url, data=data, headers=headers)

def put(self, user, url, data=None, headers=None):

def post(self, user, url, data=None, headers=None):

def patch(self, user, url, data=None, headers=None):

def delete(self, user, url, data=None, headers=None):

def _request(self, method_name, user, url, data=None, headers=None):

def login(self, user, password=None):

def logout(self, user):

```

And our `conftest.py`:

```python

import pytest

pytest_plugins = ("tests.fix_api",)

```

Then, a test example `test_auth.py`:

```python

def test_login_logout(api):
    resp = api.get(None, "/is_logged_in")
    assert resp.status_code == 401
    assert resp.json() == {"error": "Unauthenticated"}

    resp = api.post(None, "/login", {"user": "demo", "password": "demo1234"})
    assert resp.status_code == 401
    assert resp.json() == {"error": "Invalid Credentials. Please try again."}

    resp = api.get(None, "/is_logged_in")
    assert resp.status_code == 401

    resp = api.post(None, "/login", {"user": "demo", "password": "demo123"})
    assert resp.status_code == 200
    assert resp.json() == {"success": "Authenticated", "username": "demo"}

    resp = api.get(None, "/is_logged_in")
    assert resp.status_code == 200
    assert resp.json() == {"username": "demo"}

    resp = api.post(None, "/logout")
    assert resp.status_code == 200
    assert resp.json() == {"success": "logged out"}

    resp = api.get(None, "/is_logged_in")
    assert resp.status_code == 401

```

Running `pytest tests/test_auth.py --cov --cov-report term-missing`, I get:

```

views/auth.py 45 26 42% 20-24, 30-39, 48-55, 63-65, 71

```

Basically, none of the functions `def login()`, `def logout()` and `def is_logged_in()` is being reported as covered.

Since pytest and the local pytest plugin are running in two separate processes and talking over HTTP, I'm wondering how I could get coverage to work here, if that is possible.

| closed | 2020-08-17T20:29:23Z | 2020-09-06T07:03:52Z | https://github.com/pytest-dev/pytest-cov/issues/425 | [] | alanwilter | 13 |

robotframework/robotframework | automation | 4,523 | Unit test `test_parse_time_with_now_and_utc` fails around DST change | Very minor, but I am reporting it because it gave me headaches when I ran the tests exactly _today_.

It seems to me that the `test_parse_time_with_now_and_utc` test will fail if run on the day of a DST change (i.e. **today**) or the day before, as it does comparisons with +/- 1 day and a hardcoded difference in seconds, which is incorrect if the DST change happens between the two times compared.

Specifically, today (DST changed here last night) I see these cases failing:

```

('now - 1 day 100 seconds',-86500),

('NOW - 1D 10H 1MIN 10S', -122470)]:

```

Probably yesterday (so just before the DST change) the failing cases would have been the ones with `+`.

As written above, I'm not sure this is worth investigating and fixing, but I wanted to report it because it worried me while rebuilding and retesting the new version of Robot Framework. I will just rerun the tests tomorrow ;-)
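
The effect can be illustrated with the standard library alone. This is a sketch under assumptions, not Robot Framework code: the zone `Europe/Rome` and the helper name `wall_clock_day_in_seconds` are chosen only for illustration, and it needs Python 3.9+ with time zone data available. It shows that "one day earlier" on the wall clock is not 86400 seconds when a DST change falls in between.

```python
from datetime import datetime, timedelta
from zoneinfo import ZoneInfo


def wall_clock_day_in_seconds(now):
    """Seconds that really elapse between `now` and the same wall-clock time a day earlier."""
    # Subtracting a timedelta from an aware datetime shifts the wall clock;
    # the UTC offset is then re-evaluated for the new date.
    yesterday = now - timedelta(days=1)
    return now.timestamp() - yesterday.timestamp()


tz = ZoneInfo("Europe/Rome")  # assumed zone; DST ended there on 2022-10-30

print(wall_clock_day_in_seconds(datetime(2022, 10, 30, 12, 0, tzinfo=tz)))  # 90000.0
print(wall_clock_day_in_seconds(datetime(2022, 10, 28, 12, 0, tzinfo=tz)))  # 86400.0
```

A hardcoded expectation such as -86500 for "now - 1 day 100 seconds" is therefore off by an hour on such a day.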

**Version Information:**

Tried with both 6.0 and 5.0.1

**Steps to reproduce:**

Run the unit tests, specifically on the day immediately before or after DST change.

**Error message and traceback:**

```

======================================================================

FAIL: test_parse_time_with_now_and_utc (test_robottime.TestTime)

----------------------------------------------------------------------

Traceback (most recent call last):

File "/home/foo/rpmbuild/BUILD/robotframework-6.0/utest/utils/test_robottime.py", line 341, in test_parse_time_with_now_and_utc

assert_true(expected <= parsed <= expected + 1),

File "/home/foo/rpmbuild/BUILD/robotframework-6.0/utest/../src/robot/utils/asserts.py", line 115, in assert_true

_report_failure(msg)

File "/home/foo/rpmbuild/BUILD/robotframework-6.0/utest/../src/robot/utils/asserts.py", line 218, in _report_failure

raise AssertionError()

AssertionError

----------------------------------------------------------------------

```

| closed | 2022-10-30T11:37:08Z | 2022-10-30T18:36:13Z | https://github.com/robotframework/robotframework/issues/4523 | [

"bug",

"priority: low"

] | fedepell | 1 |

polarsource/polar | fastapi | 4,782 | Subscription (Webhook): Renewal event | ### Description

Currently, you need to listen to `order.created` with `billing_reason=subscription_cycle` to get notified about subscription renewals. Trigger a `subscription.renewed` event to offer a more intuitive and self-explanatory webhook event. | open | 2025-01-03T15:25:39Z | 2025-01-03T15:25:43Z | https://github.com/polarsource/polar/issues/4782 | [

"feature",

"changelog",

"dx"

] | birkjernstrom | 0 |

tflearn/tflearn | data-science | 928 | IOError: [Errno socket error] [Errno 110] Connection timed out | Hello, when I run `python convnet_mnist.py`, it still shows this error:

```

File "/home/xxx/local/anaconda2/lib/python2.7/socket.py", line 575, in create_connection

raise err

IOError: [Errno socket error] [Errno 110] Connection timed out

```

Is the URL bad?

| open | 2017-10-10T04:07:16Z | 2017-10-10T04:07:16Z | https://github.com/tflearn/tflearn/issues/928 | [] | PapaMadeleine2022 | 0 |

aimhubio/aim | data-visualization | 3,286 | Remove or prune artifacts along with the run | ## 🚀 Feature

When deleting a run, artifacts should be removed too.

If that is not possible, maybe `aim storage prune` could remove dangling artifacts?

### Motivation

Not filling up my hard drive with checkpoints.

### Additional context

https://github.com/aimhubio/aim/pull/3164 https://github.com/aimhubio/aim/issues/3234 | open | 2025-02-07T15:57:52Z | 2025-02-22T21:48:00Z | https://github.com/aimhubio/aim/issues/3286 | [

"type / enhancement"

] | nlgranger | 2 |

PaddlePaddle/models | computer-vision | 5,696 | Please add a table of contents to this page; otherwise scrolling from top to bottom is very inconvenient | https://github.com/PaddlePaddle/models/blob/release/2.4/docs/official/README.md

Best wishes~ | open | 2023-01-03T06:37:34Z | 2024-02-26T05:07:48Z | https://github.com/PaddlePaddle/models/issues/5696 | [] | we-enjoy-today | 0 |

numba/numba | numpy | 9,598 | NumPy 2.0 incompatibility - A use of `np.complex_` still remains | When using `np.corrcoef`, the following error is produced with NumPy 2.0:

```

AttributeError: `np.complex_` was removed in the NumPy 2.0 release. Use `np.complex128` instead.

```

This is because its implementation still uses `np.complex_`: https://github.com/numba/numba/blob/556545c5b2b162574c600490a855ba8856255154/numba/np/arraymath.py#L2904 | closed | 2024-05-31T12:04:16Z | 2024-06-10T11:33:03Z | https://github.com/numba/numba/issues/9598 | [

"bug - failure to compile",

"NumPy 2.0"

] | gmarkall | 0 |

python-security/pyt | flask | 46 | Trim the "Reassigned in:" nodes to the ones that are relevant | So if we have the following code:

```python
@app.route('/menu', methods=['POST'])
def menu():
    param = request.form['suggestion']
    command = 'echo ' + param + ' >> ' + 'menu.txt'
    hey = 'echo ' + param + ' >> ' + 'menu.txt'
    yo = 'echo ' + hey + ' >> ' + 'menu.txt'

    subprocess.call(command, shell=True)

    with open('menu.txt','r') as f:
        menu = f.read()

    return render_template('command_injection.html', menu=menu)
```

We show the vulnerability output as:

```

1 vulnerability found:

Vulnerability 1:

File: example/vulnerable_code/command_injection.py

> User input at line 15, trigger word "form[":

param = request.form['suggestion']

Reassigned in:

File: example/vulnerable_code/command_injection.py

> Line 16: command = 'echo ' + param + ' >> ' + 'menu.txt'

File: example/vulnerable_code/command_injection.py

> Line 17: hey = 'echo ' + param + ' >> ' + 'menu.txt'

File: example/vulnerable_code/command_injection.py

> Line 18: yo = 'echo ' + hey + ' >> ' + 'menu.txt'

File: example/vulnerable_code/command_injection.py

> reaches line 20, trigger word "subprocess.call(":

subprocess.call(command,shell=True)

```

We don't really care about lines 17 and 18 in the output, right?
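
One way to think about the trimming is as a backward walk from the sink that keeps only assignments the sink's arguments depend on. The sketch below is a rough illustration under that assumption, not pyt's actual implementation; the function name `relevant_reassignments` and the `(line, target, sources)` tuples are hypothetical, and it only handles straight-line code.

```python
def relevant_reassignments(assignments, sink_vars):
    """Return the lines of assignments that the sink depends on.

    assignments: list of (line, target, sources) in program order
                 (hypothetical representation, straight-line code only).
    sink_vars:   names of the variables passed to the sink.
    """
    needed = set(sink_vars)
    kept = []
    # Walk backwards so that only the latest definition of a name is kept.
    for line, target, sources in reversed(assignments):
        if target in needed:
            kept.append(line)
            needed.discard(target)
            needed.update(sources)
    return sorted(kept)


# The example from this issue: only `command` reaches subprocess.call.
assignments = [
    (15, "param", {"request"}),
    (16, "command", {"param"}),
    (17, "hey", {"param"}),
    (18, "yo", {"hey"}),
]
print(relevant_reassignments(assignments, {"command"}))  # [15, 16]
```

Line 15 is the source itself, so in this sketch only line 16 would remain under "Reassigned in:".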

I ran into this while doing https://github.com/python-security/pyt/issues/45, once I fix this then I can make the PR fixing both of them. | closed | 2017-05-22T23:36:39Z | 2017-06-05T17:36:28Z | https://github.com/python-security/pyt/issues/46 | [] | KevinHock | 5 |

awtkns/fastapi-crudrouter | fastapi | 184 | Overriding GET route does not properly remove existing route | Just stumbled upon this (and admit I haven't actually tested it yet, apologies for that) but it _looks_ like `CRUDGenerator.get` would not properly remove its existing GET route when overriding it:

https://github.com/awtkns/fastapi-crudrouter/blob/9b829865d85113a3f16f94c029502a9a584d47bb/fastapi_crudrouter/core/_base.py#L149

Looks like a typo to me - shouldn't it rather be `"GET"` instead of `"Get"` here?

The corresponding removal code compares to `route.methods` and IIRC FastAPI does uppercase all the methods:

https://github.com/awtkns/fastapi-crudrouter/blob/26702027aa0b823c105ee1b96e1b2b3f46a3b742/fastapi_crudrouter/core/_base.py#L170-L178
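
To illustrate the suspicion, here is a small self-contained sketch of a removal that compares method sets exactly. The `Route` class and `remove_route` function are hypothetical stand-ins written for this example, not the fastapi-crudrouter code, and they assume the stored methods are upper-case.

```python
from dataclasses import dataclass


@dataclass
class Route:
    path: str
    methods: set  # assumed to hold upper-case names such as {"GET"}


def remove_route(routes, path, methods):
    """Return the routes with any exact path and method-set match removed."""
    wanted = set(methods)
    return [r for r in routes if not (r.path == path and r.methods == wanted)]


routes = [Route("/items", {"GET"}), Route("/items", {"POST"})]

after_typo = remove_route(routes, "/items", ["Get"])  # case mismatch, nothing removed
after_fix = remove_route(routes, "/items", ["GET"])   # exact match, GET route removed
print(len(after_typo), len(after_fix))  # 2 1
```

If the real comparison behaves like this, passing `"Get"` would leave the original GET route in place.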

Best regards,

Holger

| open | 2023-03-29T14:19:07Z | 2023-03-29T14:19:07Z | https://github.com/awtkns/fastapi-crudrouter/issues/184 | [] | hjoukl | 0 |

keras-team/autokeras | tensorflow | 1,466 | How to furnish AutoKeras in Anaconda on M1-chip MacBook Air? | First, sorry for my basic question.

This is my first time using macOS 11.1, and I wonder how to install AutoKeras and set up a compatible virtual environment.

Could you kindly tell me how to do it?

During the "usual" imports below, the kernel dies :<

I'm using Jupyter Notebook in Anaconda, and the environment is a virtual one.

---

import pandas as pd

import gc

import random

import numpy as np

from datetime import datetime, date, timedelta

import math

import re

import sys

import codecs

import pickle

import autokeras as ak

| closed | 2020-12-18T11:12:49Z | 2021-09-28T22:06:35Z | https://github.com/keras-team/autokeras/issues/1466 | [

"wontfix"

] | jisho-iemoto | 9 |

huggingface/transformers | pytorch | 36,045 | Optimization -OO crashes docstring handling | ### System Info

transformers = 4.48.1

Python = 3.12

### Who can help?

_No response_

### Information

- [ ] The official example scripts

- [x] My own modified scripts

### Tasks

- [ ] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My own task or dataset (give details below)

### Reproduction

Use the `pipeline_flux.calculate_shift` function with `python -OO`

Code runs fine with no Python interpreter optimization but crashes with `-OO` with:

```

File "/home/me/Documents/repo/train.py", line 25, in <module>

from diffusers.pipelines.flux.pipeline_flux import calculate_shift

File "/home/me/Documents/repo/.venv/lib/python3.12/site-packages/diffusers/pipelines/flux/pipeline_flux.py", line 20, in <module>

from transformers import (

File "<frozen importlib._bootstrap>", line 1412, in _handle_fromlist

File "/home/me/Documents/repo/.venv/lib/python3.12/site-packages/transformers/utils/import_utils.py", line 1806, in __getattr__

value = getattr(module, name)

^^^^^^^^^^^^^^^^^^^^^

File "/home/me/Documents/repo/.venv/lib/python3.12/site-packages/transformers/utils/import_utils.py", line 1805, in __getattr__

module = self._get_module(self._class_to_module[name])

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/home/me/Documents/repo/.venv/lib/python3.12/site-packages/transformers/utils/import_utils.py", line 1819, in _get_module

raise RuntimeError(

RuntimeError: Failed to import transformers.models.clip.modeling_clip because of the following error (look up to see its traceback):

'NoneType' object has no attribute 'split'

```

This is related to the removal of docstrings with `-OO`.
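
To illustrate: under `-OO`, Python strips docstrings, so `__doc__` is `None` and calling `.split` on it fails. The sketch below uses a hypothetical helper, `doc_lines`, which is not a transformers function, and simulates the stripped case with a function that has no docstring.

```python
def doc_lines(func):
    """Return the docstring lines of func, or an empty list if there is none."""
    func_doc = func.__doc__  # None when docstrings are stripped or absent
    return func_doc.split("\n") if func_doc else []


def documented():
    """Single line."""


def undocumented():
    pass


print(doc_lines(undocumented))  # []
# Without -OO this is ["Single line."]; with -OO it would also be [].
print(doc_lines(documented))
```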

### Expected behavior

I'd expect no crash.

I assume

`lines = func_doc.split("\n")`

could be replaced with:

`lines = func_doc.split("\n") if func_doc else []` | closed | 2025-02-05T11:31:43Z | 2025-02-06T15:31:24Z | https://github.com/huggingface/transformers/issues/36045 | [

"bug"

] | donthomasitos | 3 |

ultralytics/ultralytics | deep-learning | 18,769 | Can yolo11 count objects in Classification? | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hi! I want to know if a classification model can count objects of a class. For example, there are multiple bottles in a picture and classification only returns "bottle". Can the classification model be used to count how many bottles are present? Thanks.

### Additional

_No response_ | open | 2025-01-20T06:05:16Z | 2025-01-20T08:40:11Z | https://github.com/ultralytics/ultralytics/issues/18769 | [

"question",

"classify"

] | Taimoor505 | 6 |

sczhou/CodeFormer | pytorch | 384 | Macos Video Enhancement Issue | Environment:

MacOS Monterey 12.2.1

Command:

sudo python inference_codeformer.py --bg_upsampler realesrgan --face_upsample -w 0.1 --input_path /Users/justinhan/Desktop/test/7.mp4

Error info:

[vost#0:0/rawvideo @ 0x7fb4824118c0] Error submitting a packet to the muxer: Broken pipe

Last message repeated 1 times

[out#0/rawvideo @ 0x7fb48240e840] Error muxing a packet

[out#0/rawvideo @ 0x7fb48240e840] Task finished with error code: -32 (Broken pipe)

[out#0/rawvideo @ 0x7fb48240e840] Terminating thread with return code -32 (Broken pipe)

zsh: killed sudo python inference_codeformer.py --bg_upsampler realesrgan --face_upsample

[out#0/rawvideo @ 0x7fb48240e840] Error writing trailer: Broken pipe

[out#0/rawvideo @ 0x7fb48240e840] Error closing file: Broken pipe | open | 2024-07-01T07:39:46Z | 2024-07-01T07:39:46Z | https://github.com/sczhou/CodeFormer/issues/384 | [] | qunge5211314 | 0 |