repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

aio-libs/aiomysql | sqlalchemy | 63 | Echo option for default cursor | This line:

https://github.com/aio-libs/aiomysql/blob/master/aiomysql/connection.py#L365

``` python

cur = cursor(self, self._echo) if cursor else self.cursorclass(self)

```

Shouldn't we pass the `echo` param to `cursorclass` too?
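A minimal, synchronous sketch of the proposed change, using hypothetical stand-in `Connection` and `Cursor` classes rather than aiomysql's real (coroutine-based) ones: whichever cursor class ends up being used, it receives the connection's echo flag.

```python
class Cursor:
    """Hypothetical stand-in for a cursor class; only the echo flag is modeled."""

    def __init__(self, connection, echo=False):
        self.connection = connection
        self.echo = echo


class Connection:
    """Hypothetical stand-in for a connection with a default cursorclass."""

    def __init__(self, echo=False, cursorclass=Cursor):
        self._echo = echo
        self.cursorclass = cursorclass

    def cursor(self, cursor=None):
        # Use the explicit cursor class if given, else the default one,
        # and pass echo in both cases (the quoted line drops it for the default).
        cls = cursor if cursor else self.cursorclass
        return cls(self, self._echo)
```

With this shape, `Connection(echo=True).cursor().echo` is `True` whether or not a cursor class is passed explicitly.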

| closed | 2016-02-16T14:07:02Z | 2017-04-17T10:28:51Z | https://github.com/aio-libs/aiomysql/issues/63 | [] | tvoinarovskyi | 2 |

mwaskom/seaborn | pandas | 3,263 | Color not changing | Hi! I'm trying to change colors in seaborn, but it has no effect.

<img width="706" alt="Screenshot 2023-02-15 at 21 53 02" src="https://user-images.githubusercontent.com/119420090/219230518-91d40820-51b1-4d11-b724-408e0b5525e1.png">

| closed | 2023-02-16T00:58:04Z | 2023-02-16T02:23:52Z | https://github.com/mwaskom/seaborn/issues/3263 | [] | pablotucu | 1 |

coqui-ai/TTS | deep-learning | 2,775 | [Bug] training a new model stops with "Decoder stopped with `max_decoder_steps` 10000" | ### Describe the bug

Hello!

I am running TTS 0.15.6 and trying to train a new voice based on 22,000 WAV files. The training process seems to work but stops after a while, sometimes after 30 minutes and sometimes after 2 hours. Please see my log here:

```

[...]

--> STEP: 14

| > decoder_loss: 16.613059997558594 (17.25351878574916)

| > postnet_loss: 17.12180519104004 (21.706623349870956)

| > stopnet_loss: 0.6667962074279785 (0.6582207466874804)

| > decoder_coarse_loss: 15.918059349060059 (16.576676845550537)

| > decoder_ddc_loss: 0.0008170512155629694 (0.002927447099604511)

| > ga_loss: 0.0023095402866601944 (0.006352203565516642)

| > decoder_diff_spec_loss: 1.2021437883377075 (1.1472604700497218)

| > postnet_diff_spec_loss: 0.7060739398002625 (0.7125482303755624)

| > decoder_ssim_loss: 0.8515534400939941 (0.8743813676493508)

| > postnet_ssim_loss: 0.7912083864212036 (0.8292492159775325)

| > loss: 13.979524612426758 (15.465778078351702)

| > align_error: 0.9930884041823447 (0.9810643588259284)

warning: audio amplitude out of range, auto clipped.

--> EVAL PERFORMANCE

| > avg_loader_time: 0.00717801707131522 (+0.003781165395464216)

| > avg_decoder_loss: 17.25351878574916 (-1.5459022521972656)

| > avg_postnet_loss: 21.706623349870956 (-0.7187363760811927)

| > avg_stopnet_loss: 0.6582207466874804 (-0.1437307809080396)

| > avg_decoder_coarse_loss: 16.576676845550537 (-1.278356620243617)

| > avg_decoder_ddc_loss: 0.002927447099604511 (+0.00029547616473532155)

| > avg_ga_loss: 0.006352203565516642 (-2.3055106534489687e-05)

| > avg_decoder_diff_spec_loss: 1.1472604700497218 (+0.06803790586335312)

| > avg_postnet_diff_spec_loss: 0.7125482303755624 (+0.019743638379233208)

| > avg_decoder_ssim_loss: 0.8743813676493508 (-0.000993796757289389)

| > avg_postnet_ssim_loss: 0.8292492159775325 (-0.00942203402519226)

| > avg_loss: 15.465778078351702 (-1.0101798602512932)

| > avg_align_error: 0.9810643588259284 (-0.0001967931166291237)

| > Synthesizing test sentences.

> Decoder stopped with `max_decoder_steps` 10000

> Decoder stopped with `max_decoder_steps` 10000

> Decoder stopped with `max_decoder_steps` 10000

> Decoder stopped with `max_decoder_steps` 10000

> Decoder stopped with `max_decoder_steps` 10000

```

This issue was reported before, but the thread was closed without the issue being fixed. Are there any updates?

### To Reproduce

```

import os

import sklearn

from TTS.config.shared_configs import BaseAudioConfig

from trainer import Trainer, TrainerArgs

from TTS.tts.configs.shared_configs import BaseDatasetConfig, CharactersConfig

from TTS.tts.configs.tacotron2_config import Tacotron2Config

from TTS.tts.datasets import load_tts_samples

from TTS.tts.models.tacotron2 import Tacotron2

from TTS.utils.audio import AudioProcessor

from TTS.tts.utils.text.tokenizer import TTSTokenizer

output_path = os.path.dirname(os.path.abspath(__file__))

dataset_config = BaseDatasetConfig(formatter="thorsten", meta_file_train="metadata.csv", path="/home/marc/Desktop/AI/Voice_Cloning3/")

character_config = CharactersConfig(

characters="ABCDEFGHIJKLMNOPQRSTUVWXYZ!',-.:;?abcdefghijklmnopqrstuvwxyzßäéöü̈‒–—‘’“„ ",

punctuations="!'(),-.:;? \u2012\u2013\u2014\u2018\u2019",

pad="_",

eos="~",

bos="^",

phonemes=" a b d e f h i j k l m n o p r s t u v w y z ç ð ø œ ɑ ɒ ɔ ɛ ɡ ɪ ɹ ʃ ʊ ʌ ʏ!!!!!!!?,....:;??!abdefhijklmnoprstuvwxyzçøŋœɐɑɒɔəɛɜɡɪɹɾʃʊʌʏʒː̩̃"

)

audio_config = BaseAudioConfig(

stats_path="/home/marc/Desktop/AI/Voice_Cloning3/stats-thorsten-dec2021-22k.npy",

sample_rate=22050,

do_trim_silence=True,

trim_db=60.0,

signal_norm=False,

mel_fmin=50,

spec_gain=1.0,

log_func="np.log",

ref_level_db=20,

preemphasis=0.0,

)

config = Tacotron2Config( # This is the config that is saved for the future use

audio=audio_config,

batch_size=40, # BS of 40 and max length of 10s will use about 20GB of GPU memory

eval_batch_size=16,

num_loader_workers=4,

num_eval_loader_workers=4,

run_eval=True,

test_delay_epochs=-1,

r=6,

gradual_training=[[0, 6, 64], [10000, 4, 32], [50000, 3, 32], [100000, 2, 32]],

double_decoder_consistency=True,

epochs=1000,

text_cleaner="phoneme_cleaners",

use_phonemes=True,

phoneme_language="de",

phoneme_cache_path=os.path.join(output_path, "phoneme_cache"),

precompute_num_workers=8,

print_step=25,

print_eval=True,

mixed_precision=False,

test_sentences=[

"Es hat mich viel Zeit gekostet ein Stimme zu entwickeln, jetzt wo ich sie habe werde ich nicht mehr schweigen.",

"Sei eine Stimme, kein Echo.",

"Es tut mir Leid David. Das kann ich leider nicht machen.",

"Dieser Kuchen ist großartig. Er ist so lecker und feucht.",

"Vor dem 22. November 1963.",

],

# max audio length of 10 seconds, feel free to increase if you got more than 20GB GPU memory

max_audio_len=22050 * 10,

output_path=output_path,

datasets=[dataset_config],

)

ap = AudioProcessor(**config.audio.to_dict())

ap = AudioProcessor.init_from_config(config)

tokenizer, config = TTSTokenizer.init_from_config(config)

train_samples, eval_samples = load_tts_samples(

dataset_config,

eval_split=True,

eval_split_max_size=config.eval_split_max_size,

eval_split_size=config.eval_split_size,

)

model = Tacotron2(config, ap, tokenizer, speaker_manager=None)

trainer = Trainer(

TrainerArgs(), config, output_path, model=model, train_samples=train_samples, eval_samples=eval_samples

)

trainer.fit()

```

### Expected behavior

_No response_

### Logs

_No response_

### Environment

```shell

TTS 0.15.6

Python 3.9.17

Ubuntu 23.04

cuda/cudnn originally 11.8 but TTS installed into the conda environment

nvidia-cublas-cu11 11.10.3.66

nvidia-cuda-cupti-cu11 11.7.101

nvidia-cuda-nvrtc-cu11 11.7.99

nvidia-cuda-runtime-cu11 11.7.99

nvidia-cudnn-cu11 8.5.0.96

nvidia-cufft-cu11 10.9.0.58

nvidia-curand-cu11 10.2.10.91

nvidia-cusolver-cu11 11.4.0.1

nvidia-cusparse-cu11 11.7.4.91

nvidia-nccl-cu11 2.14.3

nvidia-nvtx-cu11 11.7.91

Hardware AMD 5900X, RTX 4090

```

### Additional context

_No response_ | closed | 2023-07-17T02:47:52Z | 2023-07-20T12:58:24Z | https://github.com/coqui-ai/TTS/issues/2775 | [

"bug"

] | Marcophono2 | 5 |

bloomberg/pytest-memray | pytest | 53 | incompatible with flaky (as used in urllib3) | ## Bug Report

**Current Behavior** If flaky re-runs a test, it fails with `FAILED test/test_demo.py::test_demo - RuntimeError: No more than one Tracker instance can be active at the same time`

**Input Code**

```python
import itertools

import pytest

count = itertools.count().__next__


@pytest.mark.flaky
def test_demo():
    # Fails on the first run only, so flaky re-runs it
    if count() <= 0:
        assert False
```

**Expected behavior/code**

**Environment**

- Python(s): all versions (tested on 3.11)

```

pytest-memray==1.3.0

flaky==3.7.0

```

**Possible Solution**


**Additional context/Screenshots**

| closed | 2022-11-23T13:30:25Z | 2022-11-23T13:48:27Z | https://github.com/bloomberg/pytest-memray/issues/53 | [] | graingert | 3 |

pandas-dev/pandas | python | 60,517 | DOC: Convert v to conv_val in function for pytables.py | ### Pandas version checks

- [X] I have checked that the issue still exists on the latest versions of the docs on `main` [here](https://pandas.pydata.org/docs/dev/)

### Location of the documentation

pandas\pandas\core\computation\pytables.py

### Documentation problem

This function uses the bare name `v` in many places; a more descriptive name would make it clearer throughout.

### Suggested fix for documentation

Rename `v` to `conv_val`. | closed | 2024-12-07T07:58:29Z | 2024-12-09T18:32:32Z | https://github.com/pandas-dev/pandas/issues/60517 | [

"Clean",

"Dependencies"

] | migelogali | 1 |

pbugnion/gmaps | jupyter | 22 | Build a viable pipeline for writing documentation. | This package desperately needs documentation. Unfortunately, the obvious solution of writing IPython notebooks and exporting them to HTML doesn't work. JavaScript widgets are not included in the exported HTML, so you can't actually see the maps (see [this](http://nbviewer.ipython.org/github/pbugnion/gmaps/blob/master/examples/ipy3/heatmap_demo.ipynb), for instance).

I think that the solution will be to write a custom 'nbexport' script, but that sounds like a real pain.

| closed | 2014-12-02T17:15:36Z | 2016-06-25T08:37:08Z | https://github.com/pbugnion/gmaps/issues/22 | [] | pbugnion | 2 |

jpadilla/django-rest-framework-jwt | django | 472 | [feature] allow using a custom header instead of `Authorization` | Allow using a header other than `Authorization` to retrieve the token.

**Current and suggested behavior**

Current: this module only allows the standard `Authorization` header.

Suggested: allow users to define a custom header name.

**Why would the enhancement be useful to most users**

For example:

I have a Django API which uses this module to manage JWT authentication. I run this API in two environments, stage and production, both behind an nginx proxy.

* In production, it works perfectly: my client uses the `Authorization` header to send the JWT to the API.

* On stage, I want to protect the environment with a password, without any change to the API code.

  * I configure HTTP basic auth (`auth_basic`) in nginx, which uses the `Authorization` header.

  * As in production, my API uses the `Authorization` header.

  * I have a header conflict...

In this case, I want to use a custom header (e.g. `X-Authorization`) to avoid the conflict and provide both headers: one for nginx and one for API authentication.

Example from stackoverflow: https://stackoverflow.com/questions/22229996/basic-http-and-bearer-token-authentication
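A minimal sketch of how a configurable header name could be read, written as a hypothetical helper (not part of django-rest-framework-jwt) over a Django-style `request.META` dict. Django exposes a request header such as `X-Authorization` as `META['HTTP_X_AUTHORIZATION']`, and the `JWT` prefix mirrors the library's default `JWT_AUTH_HEADER_PREFIX`.

```python
def get_jwt_from_meta(meta, header_name="Authorization", prefix="JWT"):
    """Hypothetical helper: extract a JWT from a Django-style META dict.

    Django stores request headers in request.META as HTTP_<NAME>,
    upper-cased with dashes replaced by underscores.
    """
    key = "HTTP_" + header_name.upper().replace("-", "_")
    parts = meta.get(key, "").split()
    # Expect exactly "<prefix> <token>"; compare the prefix case-insensitively
    if len(parts) != 2 or parts[0].lower() != prefix.lower():
        return None
    return parts[1]


meta = {
    "HTTP_AUTHORIZATION": "Basic dXNlcjpwYXNz",  # consumed by nginx basic auth
    "HTTP_X_AUTHORIZATION": "JWT abc.def.ghi",   # consumed by the API
}
token = get_jwt_from_meta(meta, header_name="X-Authorization")
```

In the library itself, this logic would presumably live in an override of the authentication class's `get_jwt_value(request)`, with the header name taken from a new setting, so the nginx `Authorization` header and the API token no longer collide.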

| open | 2019-03-26T14:01:07Z | 2019-03-26T14:12:08Z | https://github.com/jpadilla/django-rest-framework-jwt/issues/472 | [] | thomasboni | 0 |

liangliangyy/DjangoBlog | django | 686 | How do I customize the backend pages? I looked through all the files in 1.0.0.7 but could not find any HTML files related to the backend pages | <!--

If you don't check the items below carefully, I may close your issue directly.

Before asking, we recommend reading https://github.com/ruby-china/How-To-Ask-Questions-The-Smart-Way

-->

**I confirm that I have read** (change `[ ]` to `[x]`)

- [X] [DjangoBlog readme](https://github.com/liangliangyy/DjangoBlog/blob/master/README.md)

- [X] [Configuration guide](https://github.com/liangliangyy/DjangoBlog/blob/master/bin/config.md)

- [X] [Other issues](https://github.com/liangliangyy/DjangoBlog/issues)

----

**I am requesting** (change `[ ]` to `[x]`)

- [ ] Bug report

- [ ] A new feature or functionality

- [X] Technical support

| closed | 2023-10-24T10:00:56Z | 2023-11-06T06:15:20Z | https://github.com/liangliangyy/DjangoBlog/issues/686 | [] | qychui | 1 |

python-restx/flask-restx | api | 451 | Custom Array for Json argument parser | I'm trying to create a custom array type for parsing arguments in the JSON body, so I wrote a function and attached a schema to it:

```

type_services.__schema__ = {'type':'array','items':{'type':'string'}}

```

and registered the argument with:

```

testparser.add_argument(name='services',type=type_services,location='json',required=True)

```
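For reference, a minimal sketch of what such a type function might look like. The body of `type_services` is an assumption, since the issue only shows its `__schema__`. The parser calls the `type` callable with the incoming value and keeps the return value, and raising `ValueError` should surface as a validation error.

```python
def type_services(value):
    """Hypothetical reconstruction of the custom type:
    accept a JSON list of strings and return it unchanged."""
    if not isinstance(value, list) or not all(isinstance(v, str) for v in value):
        raise ValueError("services must be a list of strings")
    return value


# Swagger schema fragment attached to the type, as in the snippet above
type_services.__schema__ = {"type": "array", "items": {"type": "string"}}
```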

The type seems to work correctly: when I parse the arguments, I get a list of strings. However, it doesn't show up as a field of the JSON body in the Swagger documentation. When it is the only parameter, the JSON body is shown as '{}'.

When I check swagger.json, the `items` type seems to be missing. I added a string parameter, "firstName", for comparison, and this is what I get in swagger.json:

```

"/customer/test": {

"parameters": [

{

"name": "payload",

"required": true,

"in": "body",

"schema": {

"type": "object",

"properties": {

"firstName": {

"type": "string"

},

"services": {

"type": "array"

}

}

}

}

],

"post": {

"responses": {

"200": {

"description": "Success"

}

},

"operationId": "post_create",

"tags": [

"customer"

]

}

}

},

```

The `"items": {"type": "string"}` portion is missing from the `services` schema. Any suggestions? | open | 2022-06-30T16:25:14Z | 2022-06-30T16:25:14Z | https://github.com/python-restx/flask-restx/issues/451 | [] | Bxmnx | 0 |

jupyterlab/jupyter-ai | jupyter | 668 | Add support for Claude V3 models on AWS Bedrock | ### Problem

Claude V3 was recently announced and AWS Bedrock already provides Claude V3 Sonnet (model id `anthropic.claude-3-sonnet-20240229-v1:0`).
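Claude 3 models on Bedrock use Anthropic's Messages API request format rather than the older text-completion prompt format, so supporting them likely means building a different request body. A minimal sketch of that body; the helper name `build_claude3_request` is hypothetical and not part of jupyter-ai.

```python
import json


def build_claude3_request(prompt, max_tokens=512):
    """Build an InvokeModel request body for Claude 3 on Bedrock (Messages API format)."""
    body = {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    }
    return json.dumps(body)


# Sending it requires boto3 and AWS credentials (not run here):
# import boto3
# client = boto3.client("bedrock-runtime")
# response = client.invoke_model(
#     modelId="anthropic.claude-3-sonnet-20240229-v1:0",
#     body=build_claude3_request("Hello"),
# )
# print(json.loads(response["body"].read())["content"][0]["text"])
```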

| closed | 2024-03-04T21:06:34Z | 2024-03-07T19:38:37Z | https://github.com/jupyterlab/jupyter-ai/issues/668 | [

"enhancement"

] | DzmitrySudnik | 1 |

labmlai/annotated_deep_learning_paper_implementations | machine-learning | 190 | Want to use the CelebA dataset, but there is an issue | PLZ | closed | 2023-06-07T13:57:10Z | 2024-06-19T10:47:29Z | https://github.com/labmlai/annotated_deep_learning_paper_implementations/issues/190 | [] | Z0Victor | 2 |

noirbizarre/flask-restplus | flask | 367 | Marshalling a long list of nested objects seems to scale badly | To support my testing, I hacked the following lines into `model.py`:

```python
    def __deepcopy__(self, memo):
        # Added for testing: count every deep copy of a model
        DEEP_COPY_CALL_COUNT[0] += 1
        obj = self.__class__(self.name,
                             [(key, copy.deepcopy(value, memo)) for key, value in self.items()],
                             mask=self.__mask__)
        obj.__parents__ = self.__parents__
        return obj

DEEP_COPY_CALL_COUNT = [0]
```

I then wrote the following tests:

```python
from flask_restplus import Namespace, fields, marshal
from flask_restplus.model import DEEP_COPY_CALL_COUNT  # counter added by the hack above
from time import time
from collections import OrderedDict

ns = Namespace('')

thing = ns.model('Thing', {'a': fields.String,
                           'b': fields.String,
                           'c': fields.String,
                           'd': fields.String,
                           'e': fields.String})
element = ns.model('Element', {'value': fields.Nested(thing)})
single_nested_model = ns.model('Single', {'data': fields.List(fields.Nested(thing))})
double_nested_model = ns.model('Double', {'data': fields.List(fields.Nested(element))})


class Thing(object):
    def __init__(self):
        self.a = 1
        self.b = 1
        self.c = 1
        self.d = 1
        self.e = 1


class Element(object):
    def __init__(self):
        self.value = Thing()


single_things = OrderedDict({'data': [Thing() for _ in range(100000)]})
double_things = OrderedDict({'data': [Element() for _ in range(100000)]})

# Singly-nested: time the marshal call and count deep copies
print(DEEP_COPY_CALL_COUNT)
start = time()
marshal(single_things, single_nested_model)
print(time() - start)
print(DEEP_COPY_CALL_COUNT)

# Reset the counter, then repeat for the doubly-nested structure
DEEP_COPY_CALL_COUNT[0] = 0
print(DEEP_COPY_CALL_COUNT)
start = time()
marshal(double_things, double_nested_model)
print(time() - start)
print(DEEP_COPY_CALL_COUNT)
```

On my machine, the single-nested structure takes around 18 seconds and the doubly-nested one takes about 47. This seems to be a fairly long time. For the singly-nested structure, this resulted in 200,002 calls to `__deepcopy__`, followed by 600,003 for the doubly-nested structure.

Given that I've only increased the nesting level from 2 to 3, I'm not sure why the number of clones would treble here. I'm actually not sure why there's any need to copy the models so frequently at all. Indeed, replacing the `__deepcopy__` method with a simple `return self` seems only to break `ModelTest.test_model_deepcopy`, which just asserts that deepcopy works.
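The counting technique can be reproduced without flask-restplus. A self-contained sketch with a hypothetical `CountingModel` class (not flask-restplus's real `Model`) shows that one deep copy of a model also deep-copies every model nested inside it, so copying once per list item costs roughly items × models-per-item calls. It does not explain the exact ratio measured above (about 2 calls per item vs. about 6), which depends on how `marshal` copies models internally.

```python
import copy
from collections import OrderedDict

DEEP_COPY_CALL_COUNT = [0]


class CountingModel(OrderedDict):
    """Hypothetical stand-in for a model: an ordered mapping of
    field name -> field whose __deepcopy__ increments a global counter."""

    def __init__(self, name, *args, **kwargs):
        super().__init__(*args, **kwargs)
        self.name = name

    def __deepcopy__(self, memo):
        DEEP_COPY_CALL_COUNT[0] += 1
        return self.__class__(
            self.name, [(k, copy.deepcopy(v, memo)) for k, v in self.items()]
        )


thing = CountingModel("Thing", [("a", "String"), ("b", "String")])
element = CountingModel("Element", [("value", thing)])

# Copying `element` once also copies the nested `thing`: 2 calls.
copy.deepcopy(element)
print(DEEP_COPY_CALL_COUNT[0])  # 2

# Copying it once per list item scales with items x nesting depth.
DEEP_COPY_CALL_COUNT[0] = 0
for _ in range(1000):
    copy.deepcopy(element)
print(DEEP_COPY_CALL_COUNT[0])  # 2000
```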

Why does deepcopy get called this many times? Can the marshalling code be reworked into something that's a bit more efficient? | closed | 2017-12-19T16:09:11Z | 2018-01-06T01:24:43Z | https://github.com/noirbizarre/flask-restplus/issues/367 | [] | Ymbirtt | 2 |

horovod/horovod | pytorch | 3,315 | recipe for target 'horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir/all' failed | **Environment:**

1. Framework: (TensorFlow, Keras, PyTorch, MXNet):PyTorch

2. Framework version: 1.5.1

3. Horovod version:0.23.0

4. MPI version:

5. CUDA version:10.2

6. NCCL version:2

7. Python version:3.7

8. Spark / PySpark version:

9. Ray version:

10. OS and version:

11. GCC version: 7.5.0

12. CMake version: 3.10.2

**Checklist:**

1. Did you search issues to find if somebody asked this question before?

2. If your question is about hang, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/running.rst)?

3. If your question is about docker, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/docker.rst)?

4. Did you check if your question is answered in the [troubleshooting guide](https://github.com/horovod/horovod/blob/master/docs/troubleshooting.rst)?

**Bug report:**

Please describe erroneous behavior you're observing and steps to reproduce it.

running build_ext

-- Could not find CCache. Consider installing CCache to speed up compilation.

-- The CXX compiler identification is GNU 7.3.0

-- Check for working CXX compiler: /home/xcc/anaconda3/envs/lanegcn/bin/x86_64-conda_cos6-linux-gnu-c++

-- Check for working CXX compiler: /home/xcc/anaconda3/envs/lanegcn/bin/x86_64-conda_cos6-linux-gnu-c++ -- works

-- Detecting CXX compiler ABI info

-- Detecting CXX compiler ABI info - done

-- Detecting CXX compile features

-- Detecting CXX compile features - done

-- Build architecture flags: -mf16c -mavx -mfma

-- Using command /home/xcc/anaconda3/envs/lanegcn/bin/python

-- Found CUDA: /usr/local/cuda-10.2 (found version "10.2")

-- Linking against static NCCL library

-- Found NCCL: /usr/include

-- Determining NCCL version from the header file: /usr/include/nccl.h

-- NCCL_MAJOR_VERSION: 2

-- Found NCCL (include: /usr/include, library: /usr/lib/x86_64-linux-gnu/libnccl_static.a)

-- Found NVTX: /usr/local/cuda-10.2/include

-- Found NVTX (include: /usr/local/cuda-10.2/include, library: dl)

-- Found Pytorch: 1.5.1 (found suitable version "1.5.1", minimum required is "1.2.0")

-- HVD_NVCC_COMPILE_FLAGS = --std=c++11 -O3 -Xcompiler -fPIC -gencode arch=compute_30,code=sm_30 -gencode arch=compute_32,code=sm_32 -gencode arch=compute_35,code=sm_35 -gencode arch=compute_37,code=sm_37 -gencode arch=compute_50,code=sm_50 -gencode arch=compute_52,code=sm_52 -gencode arch=compute_53,code=sm_53 -gencode arch=compute_60,code=sm_60 -gencode arch=compute_61,code=sm_61 -gencode arch=compute_62,code=sm_62 -gencode arch=compute_70,code=sm_70 -gencode arch=compute_72,code=sm_72 -gencode arch=compute_75,code=sm_75 -gencode arch=compute_75,code=compute_75

-- Configuring done

CMake Warning at horovod/torch/CMakeLists.txt:81 (add_library):

Cannot generate a safe runtime search path for target pytorch because files

in some directories may conflict with libraries in implicit directories:

runtime library [libcudart.so.10.2] in /home/xcc/anaconda3/envs/lanegcn/lib may be hidden by files in:

/usr/local/cuda-10.2/lib64

Some of these libraries may not be found correctly.

In file included from /usr/local/cuda-10.2/include/driver_types.h:77:0,

from /usr/local/cuda-10.2/include/builtin_types.h:59,

from /usr/local/cuda-10.2/include/cuda_runtime.h:91,

from <command-line>:0:

/usr/include/limits.h:26:10: fatal error: bits/libc-header-start.h: No such file or directory

#include <bits/libc-header-start.h>

^~~~~~~~~~~~~~~~~~~~~~~~~~

compilation terminated.

CMake Error at compatible_horovod_cuda_kernels_generated_cuda_kernels.cu.o.RelWithDebInfo.cmake:219 (message):

Error generating

/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/build/temp.linux-x86_64-3.7/RelWithDebInfo/horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir//./compatible_horovod_cuda_kernels_generated_cuda_kernels.cu.o

horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir/build.make:70: recipe for target 'horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir/compatible_horovod_cuda_kernels_generated_cuda_kernels.cu.o' failed

make[2]: *** [horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir/compatible_horovod_cuda_kernels_generated_cuda_kernels.cu.o] Error 1

make[2]: Leaving directory '/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/build/temp.linux-x86_64-3.7/RelWithDebInfo'

CMakeFiles/Makefile2:215: recipe for target 'horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir/all' failed

make[1]: *** [horovod/common/ops/cuda/CMakeFiles/compatible_horovod_cuda_kernels.dir/all] Error 2

make[1]: Leaving directory '/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/build/temp.linux-x86_64-3.7/RelWithDebInfo'

Makefile:83: recipe for target 'all' failed

make: *** [all] Error 2

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/setup.py", line 211, in <module>

'horovodrun = horovod.runner.launch:run_commandline'

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/site-packages/setuptools/__init__.py", line 153, in setup

return distutils.core.setup(**attrs)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/core.py", line 148, in setup

dist.run_commands()

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/dist.py", line 966, in run_commands

self.run_command(cmd)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/site-packages/setuptools/command/install.py", line 61, in run

return orig.install.run(self)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/command/install.py", line 545, in run

self.run_command('build')

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/command/build.py", line 135, in run

self.run_command(cmd_name)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/site-packages/setuptools/command/build_ext.py", line 79, in run

_build_ext.run(self)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/distutils/command/build_ext.py", line 340, in run

self.build_extensions()

File "/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/setup.py", line 101, in build_extensions

cwd=cmake_build_dir)

File "/home/xcc/anaconda3/envs/lanegcn/lib/python3.7/subprocess.py", line 363, in check_call

raise CalledProcessError(retcode, cmd)

subprocess.CalledProcessError: Command '['cmake', '--build', '.', '--config', 'RelWithDebInfo', '--', 'VERBOSE=1']' returned non-zero exit status 2.

----------------------------------------

ERROR: Command errored out with exit status 1: /home/xcc/anaconda3/envs/lanegcn/bin/python -u -c 'import io, os, sys, setuptools, tokenize; sys.argv[0] = '"'"'/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/setup.py'"'"'; __file__='"'"'/tmp/pip-install-skjf0ukf/horovod_598e328bc68b407fb152042944d44da9/setup.py'"'"';f = getattr(tokenize, '"'"'open'"'"', open)(__file__) if os.path.exists(__file__) else io.StringIO('"'"'from setuptools import setup; setup()'"'"');code = f.read().replace('"'"'\r\n'"'"', '"'"'\n'"'"');f.close();exec(compile(code, __file__, '"'"'exec'"'"'))' install --record /tmp/pip-record-h8bnj0_b/install-record.txt --single-version-externally-managed --compile --install-headers /home/xcc/anaconda3/envs/lanegcn/include/python3.7m/horovod Check the logs for full command output.

Steps to reproduce:

HOROVOD_NCCL_INCLUDE=/usr/include HOROVOD_NCCL_LIB=/usr/lib/x86_64-linux-gnu HOROVOD_CUDA_HOME=/usr/local/cuda-10.2 HOROVOD_CUDA_INCLUDE=/usr/local/cuda-10.2/include HOROVOD_GPU_OPERATIONS=NCCL HOROVOD_WITHOUT_GLOO=1 HOROVOD_WITHOUT_MPI=1 HOROVOD_WITHOUT_TENSORFLOW=1 HOROVOD_WITHOUT_MXNET=1 pip install horovod

BTW, the CMake version is 3.10.2, and I ran `pip install mpi4py` (in the conda env).

| open | 2021-12-13T13:25:36Z | 2021-12-13T14:06:00Z | https://github.com/horovod/horovod/issues/3315 | [

"bug"

] | coco-99-coco | 0 |

ultralytics/ultralytics | python | 19,270 | expected str, bytes or os.PathLike object, not NoneType | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

_No response_

### Bug

I imported the dataset directly from Roboflow, so it should not have any problems.

This is the code I run:

from ultralytics import YOLO, checks, hub

checks()

hub.login('hidden')

model = YOLO('https://hub.ultralytics.com/models/DDnZzdKNetoATXL0SY0Q')

results = model.train()

Traceback (most recent call last):

File "C:\Program Files\Python310\lib\site-packages\ultralytics\engine\trainer.py", line 558, in get_dataset

elif self.args.data.split(".")[-1] in {"yaml", "yml"} or self.args.task in {

AttributeError: 'NoneType' object has no attribute 'split'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "C:\Users\a\Desktop\Weight1\Train.py", line 7, in <module>

results = model.train()

File "C:\Program Files\Python310\lib\site-packages\ultralytics\engine\model.py", line 803, in train

self.trainer = (trainer or self._smart_load("trainer"))(overrides=args, _callbacks=self.callbacks)

File "C:\Program Files\Python310\lib\site-packages\ultralytics\engine\trainer.py", line 134, in __init__

self.trainset, self.testset = self.get_dataset()

File "C:\Program Files\Python310\lib\site-packages\ultralytics\engine\trainer.py", line 568, in get_dataset

raise RuntimeError(emojis(f"Dataset '{clean_url(self.args.data)}' error ❌ {e}")) from e

File "C:\Program Files\Python310\lib\site-packages\ultralytics\utils\__init__.py", line 1301, in clean_url

url = Path(url).as_posix().replace(":/", "://") # Pathlib turns :// -> :/, as_posix() for Windows

File "C:\Program Files\Python310\lib\pathlib.py", line 960, in __new__

self = cls._from_parts(args)

File "C:\Program Files\Python310\lib\pathlib.py", line 594, in _from_parts

drv, root, parts = self._parse_args(args)

File "C:\Program Files\Python310\lib\pathlib.py", line 578, in _parse_args

a = os.fspath(a)

TypeError: expected str, bytes or os.PathLike object, not NoneType

### Environment

Ultralytics 8.3.75 🚀 Python-3.10.11 torch-2.5.1+cu118 CUDA:0 (NVIDIA GeForce RTX 2080 Ti, 11264MiB)

Setup complete ✅ (16 CPUs, 15.9 GB RAM, 306.5/446.5 GB disk)

OS Windows-10-10.0.19045-SP0

Environment Windows

Python 3.10.11

Install pip

RAM 15.93 GB

Disk 306.5/446.5 GB

CPU AMD Ryzen 7 5700X 8-Core Processor

CPU count 16

GPU NVIDIA GeForce RTX 2080 Ti, 11264MiB

GPU count 1

CUDA 11.8

numpy ✅ 1.26.4<=2.1.1,>=1.23.0

matplotlib ✅ 3.10.0>=3.3.0

opencv-python ✅ 4.10.0.84>=4.6.0

pillow ✅ 10.4.0>=7.1.2

pyyaml ✅ 6.0.2>=5.3.1

requests ✅ 2.32.3>=2.23.0

scipy ✅ 1.15.1>=1.4.1

torch ✅ 2.5.1+cu118>=1.8.0

torch ✅ 2.5.1+cu118!=2.4.0,>=1.8.0; sys_platform == "win32"

torchvision ✅ 0.20.1+cu118>=0.9.0

tqdm ✅ 4.67.1>=4.64.0

psutil ✅ 6.1.1

py-cpuinfo ✅ 9.0.0

pandas ✅ 2.2.3>=1.1.4

seaborn ✅ 0.13.2>=0.11.0

ultralytics-thop ✅ 2.0.14>=2.0.0

### Minimal Reproducible Example

from ultralytics import YOLO, checks, hub

checks()

hub.login('hidden')

model = YOLO('https://hub.ultralytics.com/models/DDnZzdKNetoATXL0SY0Q')

results = model.train()

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | open | 2025-02-16T22:55:49Z | 2025-02-16T23:15:55Z | https://github.com/ultralytics/ultralytics/issues/19270 | [

"question"

] | felixho789 | 2 |

koaning/scikit-lego | scikit-learn | 366 | [DOCS] Add reference to pyod | It's a related project with *many* outlier detection models. It would help folks if we add a reference in our outlier docs.

https://github.com/yzhao062/pyod

| closed | 2020-06-02T08:22:47Z | 2020-07-08T20:50:16Z | https://github.com/koaning/scikit-lego/issues/366 | [

"good first issue",

"documentation"

] | koaning | 2 |

robinhood/faust | asyncio | 152 | problem with python3.6 | Hello there,

I am trying to use Faust for one of my learning projects, which uses GDAL (compatible only up to Python 3.6), and I am having trouble running Faust 1.0.30 in a Python 3.6 virtual environment. Here is the error I get:

```

File "/home/santosh/project/pinp/env3.6/lib/python3.6/site-packages/faust/streams.py", line 58, in <module>

from contextvars import ContextVar

ModuleNotFoundError: No module named 'contextvars'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "hello_world.py", line 2, in <module>

app = faust.App(

File "/home/santosh/project/pinp/env3.6/lib/python3.6/site-packages/faust/__init__.py", line 229, in __getattr__

object_origins[name], None, None, [name])

File "/home/santosh/project/pinp/env3.6/lib/python3.6/site-packages/faust/app/__init__.py", line 1, in <module>

from .base import App

File "/home/santosh/project/pinp/env3.6/lib/python3.6/site-packages/faust/app/base.py", line 51, in <module>

from faust.channels import Channel, ChannelT

File "/home/santosh/project/pinp/env3.6/lib/python3.6/site-packages/faust/channels.py", line 27, in <module>

from .streams import current_event

File "/home/santosh/project/pinp/env3.6/lib/python3.6/site-packages/faust/streams.py", line 63, in <module>

from aiocontextvars import ContextVar, Context

ModuleNotFoundError: No module named 'aiocontextvars'

```

Could you please let me know how to fix this issue. Thanks in advance!
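The traceback shows `faust/streams.py` first trying the standard-library `contextvars` module, which only exists from Python 3.7, and then falling back to the third-party `aiocontextvars` backport, which is not installed in this environment. A minimal sketch of that import pattern (the `current_event` name mirrors the traceback; `get_current_event` is illustrative):

```python
try:
    # Python 3.7+: ContextVar is in the standard library
    from contextvars import ContextVar
except ImportError:
    # Python 3.6: fall back to the third-party backport
    # (installed separately, e.g. `pip install aiocontextvars`)
    from aiocontextvars import ContextVar

# Context-local "current event", defaulting to None when nothing is set
current_event = ContextVar("current_event", default=None)


def get_current_event():
    return current_event.get()
```

On Python 3.6, installing the `aiocontextvars` package is presumably what this fallback expects; whether a given Faust release then works fully on 3.6 is not confirmed here.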

| open | 2018-08-28T10:09:34Z | 2018-11-09T16:32:05Z | https://github.com/robinhood/faust/issues/152 | [

"Category: Deployment",

"Category: Packaging and Release Management",

"Status: Need Verification"

] | skumarsah | 10 |

microsoft/MMdnn | tensorflow | 16 | Need to update pip installation | When I tried to update MMdnn with `pip install -U https://github.com/Microsoft/MMdnn/releases/download/0.1.1/mmdnn-0.1.1-py2.py3-none-any.whl`, it did not update to the version currently on master.

I also tried running `setup.py` directly, but it looks like I have to pass a command to it:

```

usage: setup.py [global_opts] cmd1 [cmd1_opts] [cmd2 [cmd2_opts] ...]

or: setup.py --help [cmd1 cmd2 ...]

or: setup.py --help-commands

or: setup.py cmd --help

error: no commands supplied

```

And if I use `pip install git+https://github.com/Microsoft/MMdnn.git@master`, there will be an encoding error:

```

Collecting git+https://github.com/Microsoft/MMdnn.git@master

Cloning https://github.com/Microsoft/MMdnn.git (to master) to /tmp/pip-UM6BrV-build

Complete output from command python setup.py egg_info:

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/tmp/pip-UM6BrV-build/setup.py", line 5, in <module>

with open('README.md', encoding='utf-8') as f:

TypeError: 'encoding' is an invalid keyword argument for this function

```
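The `TypeError` comes from Python 2: its built-in `open()` has no `encoding` parameter, so `pip` was presumably running `setup.py` under Python 2. A common portable pattern is `io.open`, which accepts `encoding=` on both Python 2.7 and 3.x. The sketch below shows how such a fix could look. It is not MMdnn's actual change. `read_text` is a hypothetical helper, and a temporary file stands in for `README.md`.

```python
import io
import os
import tempfile


def read_text(path):
    """Read a UTF-8 text file; works on Python 2.7 and 3.x."""
    # io.open accepts encoding= on both versions; Python 2's built-in
    # open() does not, which is what raises the TypeError above.
    with io.open(path, encoding="utf-8") as f:
        return f.read()


# Demo with a temporary file; in setup.py this would be read_text("README.md").
fd, demo_path = tempfile.mkstemp(suffix=".md")
os.close(fd)
with io.open(demo_path, "w", encoding="utf-8") as f:
    f.write(u"MMdnn \u2013 model conversion\n")
long_description = read_text(demo_path)
os.remove(demo_path)
```

On Python 3, `io.open` is the same object as the built-in `open`, so the change costs nothing there.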

Can you please explain the usage or update it?

Thanks.

| closed | 2017-12-01T16:46:15Z | 2017-12-05T14:57:30Z | https://github.com/microsoft/MMdnn/issues/16 | [] | seanchung2 | 1 |

roboflow/supervision | machine-learning | 1,182 | HaloAnnotator does not work | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

Can you give me a complete code example? When I use HaloAnnotator, nothing changes in the image, and `detections.mask` is None.

```python
import cv2
import supervision as sv
from ultralytics import YOLO

filename = '../src/static/dog.png'
image = cv2.imread(filename)
model = YOLO('../models/yolov8x.pt')
results = model(image)[0]
detections = sv.Detections.from_ultralytics(results)

annotated_image = sv.HaloAnnotator().annotate(
    scene=image.copy(), detections=detections)
sv.plot_image(annotated_image)
```

### Additional

_No response_ | closed | 2024-05-09T07:33:38Z | 2024-05-09T08:11:32Z | https://github.com/roboflow/supervision/issues/1182 | [

"question"

] | wilsonlv | 1 |

saulpw/visidata | pandas | 2,404 | Scientific notation shown for column with large number even when type is string | **Small description**

**Expected result**

Seeing the original string that is in the csv

**Actual result with screenshot**

If you get an unexpected error, please include the full stack trace that you get with `Ctrl-E`.

**Steps to reproduce with sample data and a .vd**

First try reproducing without any user configuration by using the flag `-N`.

e.g. `echo "abc" | vd -f txt -N`

Please attach the commandlog (saved with `Ctrl-D`) to show the steps that led to the issue.

See [here](http://visidata.org/docs/save-restore/) for more details.

**Additional context**

Please include the version of VisiData and Python.

| closed | 2024-05-12T18:58:40Z | 2024-05-12T20:10:53Z | https://github.com/saulpw/visidata/issues/2404 | [

"bug",

"By Design"

] | jay-babu | 2 |

chezou/tabula-py | pandas | 93 | CalledProcessError | # Summary of your issue

Hello. I want to use tabula-py to extract multiple tables from a PDF file (https://drive.google.com/open?id=10Z5203McD66puNAfy2Or85NpeVUZqbL3). However, I get a `CalledProcessError`. I read several issues in this repository, and it seems to be a Java problem, but the error persists after reinstalling Java. I have already added the environment paths, and my OS is Windows 10. Thank you!

# Environment

Write and check your environment. Please paste outputs of specific commands if required.

- [ ] Paste the output of `import tabula; tabula.environment_info()` on Python REPL:

```py

tabula.environment_info()

Python version:

3.5.2 |Anaconda 4.2.0 (64-bit)| (default, Jul 5 2016, 11:41:13) [MSC v.1900 64 bit (AMD64)]

Java version:

java version "1.8.0_171"

Java(TM) SE Runtime Environment (build 1.8.0_171-b11)

Java HotSpot(TM) 64-Bit Server VM (build 25.171-b11, mixed mode)

tabula-py version: 1.1.1

platform: Windows-10-10.0.16299-SP0

uname:

uname_result(system='Windows', node='DESKTOP-8ATR4KH', release='10', version='10.0.16299', machine='AMD64', processor='Intel64 Family 6 Model 142 Stepping 9, GenuineIntel')

linux_distribution: ('', '', '')

mac_ver: ('', ('', '', ''), '')

```

If not possible to execute `tabula.environment_info()`, please answer following questions manually.

- [ ] Paste the output of `python --version` command on your terminal: ?

- [ ] Paste the output of `java -version` command on your terminal: ?

- [ ] Does `java -h` command work well?; Ensure your java command is included in `PATH`

- [ ] Write your OS and its version: ?

Providing PDF would be really helpful to resolve the issue.

- [ ] (Optional, but really helpful) Your PDF URL: ?

# What did you do when you faced the problem?

Provide your information to reproduce the issue.

## Code:

```

import tabula

df = tabula.read_pdf("D:/Research Assistant/Task/2011.pdf", pages=2, multiple_tables=True)

```

## Expected behavior:

The df contains the tables.

## Actual behavior:

```

CalledProcessError: Command '['java', '-jar', 'C:\\Users\\yipin\\Anaconda3\\lib\\site-packages\\tabula\\tabula-1.0.1-jar-with-dependencies.jar', '--pages', '2', '--guess', 'D:/Research Assistant/Task/2011.pdf']' returned non-zero exit status 1

```

## Related Issues:

| closed | 2018-05-22T00:25:25Z | 2021-07-15T06:32:27Z | https://github.com/chezou/tabula-py/issues/93 | [] | yipinlyu | 6 |

PokemonGoF/PokemonGo-Bot | automation | 5,655 | Conditions to enable Sniper | ### Short Description

Add a set of conditions to Sniper, similar to those in MoveToMapPokemon, like:

"max_sniping_distance": 10000,

"max_walking_distance": 500,

"min_time": 60,

"min_ball": 50,

"prioritize_vips": true,

Also, add a minimum great ball and minimum ultra ball condition to both sniping tasks.

### How it would help others

Sniping with only a few pokeballs and no greatballs to catch a dragonite would end up with the player having no balls if the pokemon doesn't vanish in time.

| closed | 2016-09-24T12:07:23Z | 2016-09-24T23:44:04Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5655 | [] | abhinavagrawal1995 | 5 |

horovod/horovod | tensorflow | 3,143 | [Elastic Horovod] Indices of processed samples in hvd.elastic.state are lost when some nodes drop | **Environment:**

1. Framework: PyTorch

2. Framework version: 1.7.0+cu101

3. Horovod version: 0.22.1

**Checklist:**

1. Did you search issues to find if somebody asked this question before?

yes

2. If your question is about hang, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/running.rst)?

yes

3. If your question is about docker, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/docker.rst)?

yes

4. Did you check if your question is answered in the [troubleshooting guide](https://github.com/horovod/horovod/blob/master/docs/troubleshooting.rst)?

yes

**Bug report:**

horovod/torch/elastic/state.py

```python
class SamplerStateHandler(StateHandler):
    def __init__(self, sampler):
        super().__init__(sampler)
        self._saved_sampler_state = copy.deepcopy(self.value.state_dict())

    def save(self):
        self._saved_sampler_state = copy.deepcopy(self.value.state_dict())

    def restore(self):
        self.value.load_state_dict(self._saved_sampler_state)

    def sync(self):
        # Get the set of processed indices from all workers
        world_processed_indices = _union(allgather_object(self.value.processed_indices))

        # Replace local processed indices with global indices
        state_dict = self.value.state_dict()
        state_dict['processed_indices'] = world_processed_indices

        # Broadcast and load the state to make sure we're all in sync
        self.value.load_state_dict(broadcast_object(state_dict))
```

When `state.commit()` is called, the `save()` function above only saves each worker's local state of the `ElasticSampler`. If a node drops out for any reason, the indices of the samples processed on that node are lost. After the restart and `sync()`, those samples will be processed again, which is not what we want.
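The failure mode can be modelled without Horovod. This simplified sketch uses hypothetical index sets: three workers, no shuffling, and a strided partition of the dataset (an assumption). Each worker's `commit()` keeps only its own processed indices. After one worker drops, the survivors' `sync()` can only union what they themselves saved:

```python
# Hypothetical local states saved by commit() on three workers (no shuffling,
# strided partition): each worker only records the indices it processed
# itself, which mirrors what SamplerStateHandler.save() stores.
saved_local = {
    0: {0, 3, 6, 9},
    1: {1, 4, 7, 10},
    2: {2, 5, 8, 11},
}


def sync_after_failure(saved, surviving_ranks):
    """Union of the saved local states of the workers that are still alive."""
    world = set()
    for rank in surviving_ranks:
        world |= saved[rank]
    return world


# Worker 2 drops; workers 0 and 1 restore their own saved state and sync.
world = sync_after_failure(saved_local, surviving_ranks=[0, 1])
all_processed = set().union(*saved_local.values())
lost = all_processed - world
print(sorted(lost))  # [2, 5, 8, 11]: these samples will be processed again
```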

**Steps to reproduce**

1. Add some logging to `SamplerStateHandler.sync()`:

```python
class SamplerStateHandler(StateHandler):
    ......

    def sync(self):
        # Get the set of processed indices from all workers
        world_processed_indices = _union(allgather_object(self.value.processed_indices))
        print(f"world_processed_indices: {world_processed_indices}")
        ......
```

2. Use the code below to reproduce the issue. Note that shuffling is disabled to make the output easier to follow.

```python
#! /usr/bin/env python3
# -*- coding: utf-8 -*-
import time

import torch
import horovod.torch as hvd

BATCH_SIZE_PER_GPU = 2


class MyDataset(torch.utils.data.Dataset):
    def __init__(self, n):
        self.n = n

    def __getitem__(self, index):
        index = index % self.n
        return index

    def __len__(self):
        return self.n


@hvd.elastic.run
def train(state, data_loader, a):
    rank = hvd.rank()
    print(f"train rank={rank}")
    total_epoch = 100
    for epoch in range(state.epoch, total_epoch):
        print(f"epoch={epoch}")
        print("Epoch {} / {}, Start training".format(epoch, total_epoch))
        print(f"train... rank={rank}")
        print(f"start enumerate train_loader... rank={rank}")
        batch_offset = state.batch
        for i, d in enumerate(data_loader):
            state.batch = batch_idx = batch_offset + i
            if state.batch % 5 == 0:
                t1 = time.time()
                state.commit()
                print(f"time: {time.time() - t1}")
            state.check_host_updates()
            b = hvd.allreduce(a)
            print(f"b: {b}")
            state.train_sampler.record_batch(i, BATCH_SIZE_PER_GPU)
            # if rank == 0:
            msg = 'Epoch: [{0}][{1}/{2}]\t'.format(
                state.epoch, state.batch, len(data_loader))
            print(msg)
            time.sleep(0.5)
        state.epoch += 1
        state.batch = 0
        data_loader.sampler.set_epoch(epoch)
        state.commit()


def main():
    hvd.init()
    torch.manual_seed(219)
    torch.cuda.set_device(hvd.local_rank())
    dataset = MyDataset(2000)
    sampler = hvd.elastic.ElasticSampler(dataset, shuffle=False)
    data_loader = torch.utils.data.DataLoader(
        dataset,
        batch_size=BATCH_SIZE_PER_GPU,
        shuffle=False,
        num_workers=2,
        sampler=sampler,
        worker_init_fn=None,
        drop_last=True,
    )
    a = torch.Tensor([1, 2, 3, 4])
    state = hvd.elastic.TorchState(epoch=0,
                                   train_sampler=sampler,
                                   batch=0)
    train(state, data_loader, a)


if __name__ == "__main__":
    main()
```

3. Use elastic horovod to run the above code on some nodes, for example, on 3 nodes.

4. Kill the processes on one node after a while.

5. Observe the log we added to `SamplerStateHandler.sync()`.

**Solutions**

1. Solution 1

Save the global state. To get the global state of the `ElasticSampler` on every node, `save()` should call `sync()` first.

```python
class SamplerStateHandler(StateHandler):
    ......

    def save(self):
        self.sync()
        self._saved_sampler_state = copy.deepcopy(self.value.state_dict())

    ......
```

But this will make `state.commit()` take much longer, since every commit then performs the allgather and broadcast done in `sync()`.

2. Solution 2

Alternatively, we could save the number of processed samples instead of all the processed indices. The number of processed samples can be computed locally from `batch_size` and `num_replicas`.

| closed | 2021-09-01T12:33:21Z | 2021-10-21T20:44:14Z | https://github.com/horovod/horovod/issues/3143 | [

"bug"

] | hgx1991 | 0 |

DistrictDataLabs/yellowbrick | scikit-learn | 425 | Improving Quick Start Documentation | **Quick Start Documentation**

In the walkthrough section of the documentation, the correlation between the actual temperature and the "feels like" temperature is analysed. The author says it is intuitive to prioritize the 'feels like' feature over 'temp'. However, it is a bit hard for the reader to see, from the plot, the reasoning behind this feature-selection decision.

Expectation:

Add more detail on why 'feels like' is preferable to 'temp' when training machine learning models.
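The explanation the issue asks for would likely rest on a standard point. When two features are almost perfectly correlated (Pearson r close to ±1), they carry nearly the same information, so keeping only one of them removes redundancy without losing much signal. Below is a minimal pure-Python Pearson correlation. The `temp` and `feels_like` values are made-up toy numbers, not the Quick Start data, and the snippet does not show which feature Yellowbrick's authors chose or why:

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Made-up toy values, not the data used in the Quick Start.
temp = [0.24, 0.22, 0.30, 0.46, 0.52]
feels_like = [0.29, 0.27, 0.33, 0.45, 0.50]
print(round(pearson_r(temp, feels_like), 3))
```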

### Background

https://www.datascience.com/blog/introduction-to-correlation-learn-data-science-tutorials

https://newonlinecourses.science.psu.edu/stat501/node/346/

| closed | 2018-05-15T17:52:14Z | 2018-06-08T11:09:06Z | https://github.com/DistrictDataLabs/yellowbrick/issues/425 | [

"type: question",

"level: intermediate",

"type: documentation"

] | muthu-tech | 4 |

pyg-team/pytorch_geometric | deep-learning | 9,862 | Bug in the implementation of sagpool | ### 🐛 Describe the bug

Hi, this is an old bug described in pull request #8562.

As mentioned there, the `select` function in [sagpool](https://github.com/pyg-team/pytorch_geometric/blob/master/torch_geometric/nn/pool/sag_pool.py) reuses the select class of TopKPooling, which introduces an extra learnable weight. As a consequence, the select function learns to take the opposite of the scores if the weight is negative. This is consistent with neither the paper nor the authors' [code](https://github.com/inyeoplee77/SAGPool/blob/master/layers.py). In my observation, this change significantly affects the effectiveness of sagpool in some situations.
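The simplified pure-Python model below shows why a negative weight matters. It is an assumption about the mechanism, not PyG's actual code. With one attention score per node, scaling by a learnable weight `w` and normalising by `|w|` reduces to multiplying by `sign(w)`. A negative `w` therefore makes top-k keep the nodes with the *lowest* attention scores, whereas SAGPool as described in the paper keeps the highest:

```python
import math


def topk(scores, k):
    """Indices of the k largest scores (ties broken by lower index)."""
    return sorted(range(len(scores)), key=lambda i: (-scores[i], i))[:k]


def select_with_weight(scores, w, k):
    # Simplified model (assumption): for 1-D attention scores, a TopK-style
    # selector computes tanh(score * w / |w|) = tanh(sign(w) * score).
    # tanh is monotonic, so only the sign of w changes the ranking.
    sign = 1.0 if w >= 0 else -1.0
    transformed = [math.tanh(sign * s) for s in scores]
    return topk(transformed, k)


scores = [0.9, -0.4, 0.1, 0.7, -0.8]  # made-up attention scores
print(select_with_weight(scores, w=0.5, k=2))   # [0, 3]: highest scores kept
print(select_with_weight(scores, w=-0.5, k=2))  # [4, 1]: lowest scores kept
```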

Since future work may use the sagpool layer in PyG for evaluation, would you mind fixing this bug so that it does not affect their results and conclusions?

### Versions

I can't run the collect_env tool; the connection was refused.

| open | 2024-12-14T18:15:00Z | 2024-12-14T18:15:00Z | https://github.com/pyg-team/pytorch_geometric/issues/9862 | [

"bug"

] | ChenYizhu97 | 0 |

plotly/dash-table | plotly | 830 | Cell with dropdown does not allow for backspace | When editing the value of a cell with a dropdown after double clicking, the value can only be appended with more characters. If a typo was made when filtering the dropdown, pressing the backspace key doesn't do anything and you must click outside the cell to clear the input. However, if you double click on a cell without a dropdown to enable cell editing and then double click on a cell with a dropdown, the backspace key works as expected. | open | 2020-09-23T18:45:01Z | 2020-09-23T18:45:01Z | https://github.com/plotly/dash-table/issues/830 | [] | blozano824 | 0 |

PrefectHQ/prefect | automation | 17,280 | Loading a GCPSecret block generates a warning | ### Bug summary

Loading a GcpSecret block seems to generate a warning, which appears to come from the GCP library. The block still loads and everything executes, but the warning does not appear until the flow run completes, which causes some confusion.

```python
from prefect import flow
from prefect_gcp.secret_manager import GcpSecret


@flow(log_prints=True)
def gcp_secret_flow():
    gcpsecret_block = GcpSecret.load("mm2-test-secret")
    gcpsecret_block.read_secret()
```

### Version info

```Text

Version: 3.2.2

API version: 0.8.4

Python version: 3.12.4

Git commit: d982c69a

Built: Thu, Feb 13, 2025 10:53 AM

OS/Arch: darwin/arm64

Profile: masonsandbox

Server type: cloud

Pydantic version: 2.10.6

Integrations:

prefect-dask: 0.3.2

prefect-snowflake: 0.28.0

prefect-slack: 0.3.0

prefect-gcp: 0.6.2

prefect-aws: 0.5.0

prefect-gitlab: 0.3.1

prefect-dbt: 0.6.4

prefect-docker: 0.6.1

prefect-sqlalchemy: 0.5.1

prefect-shell: 0.3.1

```

### Additional context

Log output

```

13:09:08.327 | INFO | Flow run 'spry-donkey' - Beginning flow run 'spry-donkey' for flow 'env-vars-flow'

13:09:08.331 | INFO | Flow run 'spry-donkey' - View at

13:09:09.698 | INFO | Flow run 'spry-donkey' - The secret 'NOTREAL' data was successfully read.

13:09:09.918 | INFO | Flow run 'spry-donkey' - Finished in state Completed()

13:09:10.086 | WARNING | EventsWorker - Still processing items: 1 items remaining...

WARNING: All log messages before absl::InitializeLog() is called are written to STDERR

E0000 00:00:1740514154.823139 19166321 init.cc:232] grpc_wait_for_shutdown_with_timeout() timed out.

``` | closed | 2025-02-25T20:42:35Z | 2025-02-25T20:51:50Z | https://github.com/PrefectHQ/prefect/issues/17280 | [

"upstream dependency"

] | masonmenges | 1 |

keras-team/keras | data-science | 20,350 | argmax returns incorrect result for input containing -0.0 (Keras using TensorFlow backend) | Description:

When using keras.backend.argmax with an input array containing -0.0, the result is incorrect. Specifically, the function returns 1 (the index of -0.0) as the position of the maximum value, while the actual maximum value is 1.401298464324817e-45 at index 2.

This issue is reproducible in TensorFlow and JAX as well, as they share similar backend logic for the argmax function. However, PyTorch correctly returns the expected index 2 for the maximum value.

Expected Behavior:

keras.backend.argmax should return 2, as the value at index 2 (1.401298464324817e-45) is greater than both -1.0 and -0.0.
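One plausible explanation is denormal flushing, though this is an assumption and is not confirmed in this issue. 1.401298464324817e-45 is the smallest positive float32 subnormal. If a backend's CPU kernels run with flush-to-zero / denormals-are-zero enabled, that value is treated as 0.0, which compares equal to -0.0. argmax then returns the first of the tied maxima, index 1. The NumPy sketch below reproduces both outcomes, simulating the flushing with `np.where`:

```python
import numpy as np

x = np.array([-1.0, -0.0, 1.401298464324817e-45], dtype=np.float32)

# x[2] is positive but below the smallest *normal* float32, i.e. subnormal.
tiny = np.finfo(np.float32).tiny
print(0 < x[2] < tiny)  # True

# Without denormal flushing, the subnormal is the maximum.
print(np.argmax(x))  # 2

# Simulated flush-to-zero (assumption about what an FTZ/DAZ mode would do):
flushed = np.where(np.abs(x) < tiny, np.float32(0.0), x)
# -0.0 and 0.0 now tie, and argmax returns the first of the tied maxima.
print(np.argmax(flushed))  # 1
```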

```python
import numpy as np
import torch
import tensorflow as tf
import jax.numpy as jnp
from tensorflow import keras


def test_argmax():
    # Input data
    input_data = np.array([-1.0, -0.0, 1.401298464324817e-45], dtype=np.float32)

    # PyTorch argmax
    pytorch_result = torch.argmax(torch.tensor(input_data, dtype=torch.float32)).item()
    print(f"PyTorch argmax result: {pytorch_result}")

    # TensorFlow argmax
    tensorflow_result = tf.math.argmax(input_data).numpy()
    print(f"TensorFlow argmax result: {tensorflow_result}")

    # Keras argmax (Keras internally uses TensorFlow, so should be the same)
    keras_result = keras.backend.argmax(input_data).numpy()
    print(f"Keras argmax result: {keras_result}")

    # JAX argmax
    jax_result = jnp.argmax(input_data)
    print(f"JAX argmax result: {jax_result}")


if __name__ == "__main__":
    test_argmax()
```

```

PyTorch argmax result: 2

TensorFlow argmax result: 1

Keras argmax result: 1

JAX argmax result: 1

``` | closed | 2024-10-14T10:15:25Z | 2025-01-25T06:13:46Z | https://github.com/keras-team/keras/issues/20350 | [

"stat:awaiting keras-eng",

"type:Bug"

] | LilyDong0127 | 1 |

viewflow/viewflow | django | 290 | Adding setUp method | Hello, is it possible to add a `setUp()` method to the Flow class that gets called when a workflow instance is started? The problem is that I cannot put this logic inside `__init__`, because that method gets called when the code is loaded, e.g.

```python
class MyFlow(Flow):
    def __init__(self, *args, **kwargs):
        super().__init__(*args, **kwargs)
        # this code gets executed when the code is being loaded
        # by the FlowMetaClass
        team = Team.objects.get(id=1)
```

Proposing

```python
class MyFlow(Flow):
    def setUp(self, *args, **kwargs):
        team = Team.objects.get(id=1)
```

Normally this works fine. But if I need to add a field to the Team model, it prevents me from making the migration: the metaclass instantiates `MyFlow`, and the query then fails because the new field is missing.

While I could create a custom Start node to achieve this, I think it is more convenient to do it inside a `setUp` method, so that several similar flows can simply extend the same base flow class.

The workaround I am currently using is a descriptor class that lazy-loads the team:

```python
class LazyTeamLoader():
    """
    Mainly to get around this problem:
    https://github.com/viewflow/viewflow/issues/290
    """

    def __init__(self, team_slug: str):
        self.team_slug = team_slug
        self.team_instance = None

    def __get__(self, *args, **kwargs):
        # Query on first attribute access (not at import time) and cache it.
        if self.team_instance is None:
            self.team_instance = Team.objects.get(slug=self.team_slug)
        return self.team_instance


class MyFlow(Flow):
    iaas_team = LazyTeamLoader('iaas')
```

Let me know what you think? | closed | 2020-09-16T23:01:31Z | 2020-12-11T10:16:04Z | https://github.com/viewflow/viewflow/issues/290 | [

"request/question"

] | variable | 1 |

akfamily/akshare | data-science | 5,405 | AKShare 接口问题报告 | 1. 请先详细阅读文档对应接口的使用方式:https://akshare.akfamily.xyz

2. 操作系统版本,目前只支持 64 位操作系统

3. Python 版本,目前只支持 3.8 以上的版本 【 3.11.8】

4. AKShare 版本,请升级到最新版【1.14.57】

5. 接口的名称和相应的调用代码

```python
import akshare as ak

# Query dividend information for individual stocks
news_trade_notify_dividend_baidu_df = ak.news_trade_notify_dividend_baidu(date="20241107")
print(news_trade_notify_dividend_baidu_df)
```

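8. Screenshot or description of the API error

1. I tried `date` values from 20141202 to 20141205, as well as 20241107 from the example. All of them return an empty DataFrame, although there should be data.

2. The returned DataFrame is empty and has no header.

10. Expected: the data is returned correctly, and when there is no data, the DataFrame still includes its header.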

| closed | 2024-12-05T12:00:29Z | 2024-12-06T06:19:08Z | https://github.com/akfamily/akshare/issues/5405 | [

"bug"

] | a932455223 | 1 |

voxel51/fiftyone | computer-vision | 4,880 | [INSTALL] Cannot launch QuickStart | ### System information

- **OS Platform and Distribution** (e.g., Linux Ubuntu 16.04): macOS 15.0 (ARM)

- **Python version** (`python --version`): 3.9-3.11, installed via miniconda

- **FiftyOne version** (`fiftyone --version`): 1.0.0

- **FiftyOne installed from** (pip or source): pip

### Commands to reproduce

As thoroughly as possible, please provide the Python and/or shell commands used to encounter the issue.

```

fiftyone quickstart

```

### Describe the problem

Stack trace:

```

Traceback (most recent call last):

File "/Users/USER/miniforge3/envs/fo/bin/fiftyone", line 8, in <module>

sys.exit(main())

File "/Users/USER/miniforge3/envs/fo/lib/python3.9/site-packages/fiftyone/core/cli.py", line 4636, in main

args.execute(args)

File "/Users/USER/miniforge3/envs/fo/lib/python3.9/site-packages/fiftyone/core/cli.py", line 4619, in <lambda>

parser.set_defaults(execute=lambda args: command.execute(parser, args))

File "/Users/USER/miniforge3/envs/fo/lib/python3.9/site-packages/fiftyone/core/cli.py", line 174, in execute

_, session = fouq.quickstart(

File "/Users/USER/miniforge3/envs/fo/lib/python3.9/site-packages/fiftyone/utils/quickstart.py", line 44, in quickstart

return _quickstart(port, address, remote, desktop)

File "/Users/USER/miniforge3/envs/fo/lib/python3.9/site-packages/fiftyone/utils/quickstart.py", line 50, in _quickstart

return _launch_app(dataset, port, address, remote, desktop)

File "/Users/USER/miniforge3/envs/fo/lib/python3.9/site-packages/fiftyone/utils/quickstart.py", line 60, in _launch_app

session = fos.launch_app(

TypeError: launch_app() got an unexpected keyword argument 'desktop'

```

### Other info/logs

I originally tried to load my own dataset (a small YOLO one) via Python code, but I always just get a browser window with an unspecified "TypeError: Load failed". So I tried the demo (`fiftyone quickstart`), and it looks like my installation is wrong somehow? I looked into the code a bit, and the session's `launch_app()` does not seem to accept a `desktop` argument at all. If I remove that argument from quickstart's `launch_app()` call, the error goes away. But then I am back to my original issue with my own dataset and get a "TypeError: Load failed".

I've tried a few different python versions but both errors are persistent. I'd appreciate any help, or even some help in enabling some more verbose output/pointing me to where logs are stored. Thanks!

PS: Considering that no one else has encountered this issue so far I've put this as an installation issue, hope that works.

| closed | 2024-10-03T08:46:33Z | 2024-10-04T14:34:19Z | https://github.com/voxel51/fiftyone/issues/4880 | [

"bug",

"installation"

] | tfaehse | 1 |

FactoryBoy/factory_boy | sqlalchemy | 831 | Post-generated attribute of RelatedFactoryList isn't generated | #### Description

In order to automatically chain Factories whose underlying models aren't explicitly related all the way down the chain, I use a `post_generation` hook on a child `SubFactory` that's implicitly related through my code's business logic. When generating a child alone, the `post_generation` hook succeeds. However, when trying to generate a child via an explicitly related parent, the `post_generated` attributes aren't generated.

#### To Reproduce

Example: while the Shoe object can exist independently of a Child, in practice a child will always have a left and right shoe. However, without explicit relations such as FK, the relation can't be mocked using RelatedFactory or SubFactory.

```python
from factory import Factory, RelatedFactoryList, Faker, post_generation
from sqlalchemy.ext.declarative import declarative_base
from sqlalchemy import Column, Integer, Unicode, create_engine, ForeignKey
from factory.alchemy import SQLAlchemyModelFactory
from sqlalchemy.orm import scoped_session, sessionmaker, relationship

Base = declarative_base()
engine = create_engine('sqlite://')
session = scoped_session(sessionmaker(bind=engine))


class Parent(Base):
    __tablename__ = 'parent'
    id = Column(Integer, primary_key=True)
    children = relationship("Child", back_populates="parent")


class Child(Base):
    __tablename__ = 'child'
    id = Column(Integer, primary_key=True)
    parent_id = Column(Integer, ForeignKey('parent.id'))
    name = Column(Unicode())
    parent = relationship("Parent", back_populates="children")


class Shoe(Base):
    __tablename__ = 'toy'
    id = Column(Integer, primary_key=True)
    foot = Column(Unicode())


class ShoeFactory(SQLAlchemyModelFactory):
    class Meta:
        model = Shoe
        sqlalchemy_session = session

    foot = Faker('random_element', elements=["right", "left"])


class ChildFactory(SQLAlchemyModelFactory):
    # Child has to have at least 1 right shoe and one left shoe.
    class Meta:
        model = Child
        sqlalchemy_session = session

    name = Faker('word')

    @post_generation
    def post_generated_attribute(self, create, extracted, **kwargs):
        self.right_shoe = ShoeFactory(
            foot="right"
        )
        self.left_shoe = ShoeFactory(
            foot="left"
        )


class ParentFactory(SQLAlchemyModelFactory):
    class Meta:
        model = Parent
        sqlalchemy_session = session

    children = RelatedFactoryList(ChildFactory, 'parent', size=1,)
```

##### The issue

When generating a Child with ChildFactory alone, the `post_generation` hook succeeds. However, when generating a Parent, the related `children` somehow do not get the shoe attributes set by the post-generation hook.

```python
# Generating child with no parents.
orphan = ChildFactory()
try:
    orphan_right_shoe = orphan.right_shoe
except:
    print("Orphan has no right shoe")
else:
    print("Orphan's right shoe is on")
try:
    orphan_left_shoe = orphan.left_shoe  # shoes are there
except:
    print("Orphan has no left shoe")
else:
    print("Orphan's left shoe is on")

# Generating child through parents
parent = ParentFactory()
child = parent.children
try:
    child_right_shoe = parent.children.right_shoe
except:
    print("Child has no right shoe")
else:
    print("Child's right shoe is on")
try:
    child_left_shoe = parent.children.left_shoe
except:
    print("Child has no left shoe")
else:
    print("Child's left shoe is on")

>>>
Orphan's right shoe is on
Orphan's left shoe is on
Child has no right shoe
Child has no left shoe
```

The error on the Child reads:

```shell

Traceback (most recent call last):

File "scratch.py", line 58, in <module>

child_toy = parent.children.toy

AttributeError: 'InstrumentedList' object has no attribute 'toy'

```

#### Notes

This also seems to happen on `RelatedFactory` (which would make sense since the `List` is an extension).

Any ideas why this would be happening? Otherwise, any recommendations on how to do the desired above (i.e. generate a parent, with children, with shoes)? Thanks!

| closed | 2021-01-08T23:23:39Z | 2021-04-16T00:25:33Z | https://github.com/FactoryBoy/factory_boy/issues/831 | [

"Q&A",

"SQLAlchemy",

"Fixed"

] | kabdallah-galileo | 2 |

MagicStack/asyncpg | asyncio | 316 | asyncpg.exceptions.DataError: invalid input for query argument $1 (value out of int32 range) | <!--

Thank you for reporting an issue/feature request.

If this is a feature request, please disregard this template. If this is

a bug report, please answer to the questions below.

It will be much easier for us to fix the issue if a test case that reproduces

the problem is provided, with clear instructions on how to run it.

Thank you!

-->

* **asyncpg version**: 0.16.0

* **PostgreSQL version**: PostgreSQL 9.5.10 on x86_64-pc-linux-gnu, compiled by gcc (GCC) 4.8.2 20140120 (Red Hat 4.8.2-16), 64-bit (AWS RDS version)

* **Do you use a PostgreSQL SaaS? If so, which? Can you reproduce

the issue with a local PostgreSQL install?**: Yes, AWS RDS

* **Python version**: 3.6.4

* **Platform**: Red Hat 4.8.5-16

* **Do you use pgbouncer?**: no

* **Did you install asyncpg with pip?**: yes

* **If you built asyncpg locally, which version of Cython did you use?**: n/a

* **Can the issue be reproduced under both asyncio and

[uvloop](https://github.com/magicstack/uvloop)?**: n/a

<!-- Enter your issue details below this comment. -->

File "/usr/lib64/python3.6/site-packages/asyncpg/connection.py", line 372, in prepare

return await self._prepare(query, timeout=timeout, use_cache=False)

File "/usr/lib64/python3.6/site-packages/asyncpg/connection.py", line 377, in _prepare

use_cache=use_cache)

File "/usr/lib64/python3.6/site-packages/asyncpg/connection.py", line 308, in _get_statement

types_with_missing_codecs, timeout)

File "/usr/lib64/python3.6/site-packages/asyncpg/connection.py", line 348, in _introspect_types

self._intro_query, (list(typeoids),), 0, timeout)

File "/usr/lib64/python3.6/site-packages/asyncpg/connection.py", line 1363, in __execute

return await self._do_execute(query, executor, timeout)

File "/usr/lib64/python3.6/site-packages/asyncpg/connection.py", line 1385, in _do_execute

result = await executor(stmt, None)

File "asyncpg/protocol/protocol.pyx", line 190, in bind_execute

File "asyncpg/protocol/prepared_stmt.pyx", line 160, in asyncpg.protocol.protocol.PreparedStatementState._encode_bind_msg

asyncpg.exceptions.DataError: invalid input for query argument $1: [4144356976] (value out of int32 range)
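PostgreSQL's `oid` type is an unsigned 32-bit integer. A type OID such as 4144356976 is therefore valid in the catalog, but it does not fit a signed `int32`, which is what the introspection query's argument appears to have been encoded as. The stdlib `struct` sketch below only illustrates the range problem. It is not asyncpg's encoder:

```python
import struct

oid = 4144356976  # type OID from the traceback above
print(oid > 2**31 - 1)  # True: too large for a signed 32-bit integer

try:
    struct.pack("!i", oid)  # signed int32, i.e. PostgreSQL "int4"
except struct.error as exc:
    print("int32 encoding fails:", exc)

packed = struct.pack("!I", oid)  # unsigned 32-bit, the range of the "oid" type
print(struct.unpack("!I", packed)[0])  # 4144356976

# The same four bytes read back as a signed int32 give a negative number.
print(struct.unpack("!i", packed)[0])  # -150610320
```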

I'm encountering another issue similar to https://github.com/MagicStack/asyncpg/issues/279. When I use a custom data type defined in Postgres and attempt to reference it in a query, if the OID of the datatype is greater than 2^31 I get the above error. I'm not sure what the fix is, but I imagine it again involves treating OIDs as uint32 rather than int32 values. | closed | 2018-06-13T19:27:37Z | 2018-06-14T04:32:10Z | https://github.com/MagicStack/asyncpg/issues/316 | [] | eheien | 1 |

sqlalchemy/sqlalchemy | sqlalchemy | 11,917 | the ORM does not expire server_onupdate columns during a bulk UPDATE (new style) | This was discussed in #11911

Columns with `server_onupdate` are not expired by the ORM following a bulk UPDATE, so `populate_existing` is required to fetch the new values. This mainly impacts computed columns.

Reproducer:

```py
from sqlalchemy import orm
import sqlalchemy as sa


class Base(orm.DeclarativeBase):
    pass


class T(Base):
    __tablename__ = "t"
    id: orm.Mapped[int] = orm.mapped_column(primary_key=True)
    value: orm.Mapped[int]
    cc: orm.Mapped[int] = orm.mapped_column(sa.Computed("value + 42"))


e = sa.create_engine("sqlite:///", echo=True)
Base.metadata.create_all(e)

with orm.Session(e) as s:
    s.add(T(value=10))
    s.flush()
    s.commit()

with orm.Session(e) as s:
    assert (v := s.get_one(T, 1)).cc == 52
    s.execute(sa.update(T).values(id=1, value=2))
    assert (v := s.get_one(T, 1)).cc != 44  # should be 44

with orm.Session(e) as s:
    assert (v := s.get_one(T, 1)).cc == 52
    r = s.execute(sa.update(T).values(value=2).returning(T))
    assert (v := r.scalar_one()).cc != 44  # should be 44
    assert (v := s.get_one(T, 1)).cc != 44  # should be 44

with orm.Session(e) as s:
    r = s.execute(sa.insert(T).values(value=9).returning(T))
    assert (v := r.scalar_one()).cc == 51
    r = s.execute(sa.update(T).values(value=2).filter_by(id=2).returning(T))
    assert (v := r.scalar_one()).cc != 44  # should be 44
    r = s.execute(sa.select(T).filter_by(id=2))
    assert (v := r.scalar_one()).cc != 44  # should be 44
``` | closed | 2024-09-23T18:21:09Z | 2024-11-24T21:29:36Z | https://github.com/sqlalchemy/sqlalchemy/issues/11917 | [

"bug",

"orm",

"great mcve"

] | CaselIT | 5 |

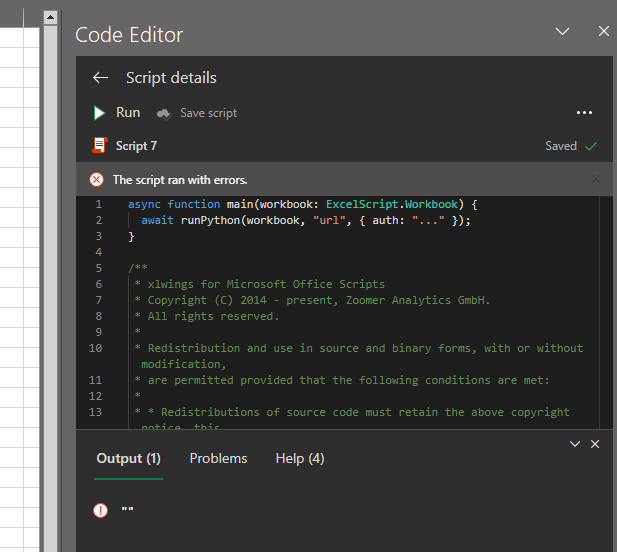

xlwings/xlwings | automation | 2,156 | xlwings Server via Office Scripts not responsive | #### Windows 10

#### 0.28.6, Office 365, Python 3.10

Hello there, and first of all, what a phenomenal project! Using it on a daily basis!

I am currently trying to get the xlwings server working (trying it out on my local machine), as the add-in option is blocked by the network's firewall (and no, the network admin does not want to whitelist it... sigh). So I got the local server running with the demo folder and the included main.py and went the Office Scripts route. However, once I click **Run** in the Office Script after pasting the output of _xlwings copy os_... nothing happens, except for the small pop-up "The script ran with errors."

Is there anything I have to adjust in the xlwings copy os output, maybe in order to direct the script to my local server?

I am stuck on this for a while now and really appreciate any guidance / help here!

Many many thanks and best wishes

| closed | 2023-02-03T16:51:49Z | 2023-02-03T18:37:48Z | https://github.com/xlwings/xlwings/issues/2156 | [] | alexanderburkhard | 3 |

tensorly/tensorly | numpy | 414 | robust_pca does not work on GPU with PyTorch as a backend | #### Describe the bug

`robust_pca` does not work on the GPU with PyTorch as the backend (tensorly 0.7.0, PyTorch 1.11.0+cu113).

I also tried tensorly 0.7.0 with PyTorch 1.7+cu102, which has the same issue.

#### Steps or Code to Reproduce

```python
# pip install tensorly
import torch
import tensorly as tl

print(torch.__version__)

tl.set_backend('pytorch')
cuda = torch.device('cuda')
fake_data = torch.randn(2500, 9000, device=cuda)
low_rank_part, sparse_part = tl.decomposition.robust_pca(fake_data, reg_E=0.04, learning_rate=1.2, n_iter_max=20)
```

#### Expected behavior

`robust_pca` runs on the GPU without errors.

#### Actual result

```

/usr/local/lib/python3.7/dist-packages/tensorly/backend/core.py:1106: UserWarning: In partial_svd: converting to NumPy. Check SVD_FUNS for available alternatives if you want to avoid this.
warnings.warn('In partial_svd: converting to NumPy.
---------------------------------------------------------------------------
TypeError Traceback (most recent call last)
[<ipython-input-7-e31773fb0320>](https://localhost:8080/#) in <module>()
1 cuda = torch.device('cuda')
2 fake_data = torch.randn(2500, 9000, device=cuda)
----> 3 low_rank_part, sparse_part = tl.decomposition.robust_pca(fake_data, reg_E=0.04, learning_rate=1.2, n_iter_max=20)

3 frames
<__array_function__ internals> in amax(*args, **kwargs)
[/usr/local/lib/python3.7/dist-packages/torch/_tensor.py](https://localhost:8080/#) in __array__(self, dtype)
730 return handle_torch_function(Tensor.__array__, (self,), self, dtype=dtype)
731 if dtype is None:
--> 732 return self.numpy()
733 else:
734 return self.numpy().astype(dtype, copy=False)

TypeError: can't convert cuda:0 device type tensor to numpy. Use Tensor.cpu() to copy the tensor to host memory first.

```

#### Versions

Linux-5.4.188+-x86_64-with-Ubuntu-18.04-bionic

Python 3.7.13 (default, Apr 24 2022, 01:04:09)

[GCC 7.5.0]

NumPy 1.21.6

SciPy 1.4.1

TensorLy 0.7.0

Pytorch 1.11.0+cu113

| closed | 2022-06-23T03:29:30Z | 2022-06-24T16:38:06Z | https://github.com/tensorly/tensorly/issues/414 | [] | Mahmood-Hussain | 2 |

pyg-team/pytorch_geometric | pytorch | 10,119 | pytorch_geometric is using a compromised tj-actions/changed-files GitHub action | pytorch_geometric uses a compromised version of tj-actions/changed-files. The compromised action appears to leak secrets the runner has in memory.

The action is included in:

- https://github.com/pyg-team/pytorch_geometric/blob/d2bb939a1bfba3b7a6f7d7b102a2771471657319/.github/workflows/documentation.yml

Output of an affected run:

- https://github.com/pyg-team/pytorch_geometric/actions/runs/13864242315/job/38799668099#step:3:63

Please review.

Learn about the compromise on [StepSecurity](https://www.stepsecurity.io/blog/harden-runner-detection-tj-actions-changed-files-action-is-compromised) or [Semgrep](https://semgrep.dev/blog/2025/popular-github-action-tj-actionschanged-files-is-compromised/). | open | 2025-03-15T21:31:58Z | 2025-03-15T21:32:06Z | https://github.com/pyg-team/pytorch_geometric/issues/10119 | [

"bug"

] | eslerm | 0 |

dbfixtures/pytest-postgresql | pytest | 225 | TypeError: '>=' not supported between instances of 'float' and 'Version' | I tried upgrading to 2.0.0 and hit the same problem.

```

    @request.addfinalizer
    def drop_database():
>       drop_postgresql_database(pg_user, pg_host, pg_port, pg_db, 11.2)

qc/test/conftest.py:46:
_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

user = None, host = None, port = None, db_name = 'qc_test', version = 11.2

    def drop_postgresql_database(user, host, port, db_name, version):
        """
        Drop databse in postgresql.

        :param str user: postgresql username
        :param str host: postgresql host
        :param str port: postgresql port
        :param str db_name: database name
        :param packaging.version.Version version: postgresql version number
        """
        conn = psycopg2.connect(user=user, host=host, port=port)
        conn.set_isolation_level(psycopg2.extensions.ISOLATION_LEVEL_AUTOCOMMIT)
        cur = conn.cursor()
        # We cannot drop the database while there are connections to it, so we
        # terminate all connections first while not allowing new connections.
>       if version >= parse_version('9.2'):
E       TypeError: '>=' not supported between instances of 'float' and 'Version'

../../../../.virtualenvs/qc-backend-qjdNaO4n/lib/python3.7/site-packages/pytest_postgresql/factories.py:84: TypeError

``` | closed | 2019-08-08T13:47:22Z | 2019-08-09T08:03:06Z | https://github.com/dbfixtures/pytest-postgresql/issues/225 | [

"question"

] | revmischa | 6 |
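The traceback above shows `drop_postgresql_database` comparing its `version` argument against `parse_version('9.2')`, and its docstring documents that argument as a `packaging.version.Version`, while the fixture in `conftest.py` passes the bare float `11.2`. A minimal sketch of the mismatch, assuming the `packaging` library is installed; `is_at_least_9_2` is a hypothetical helper that only mirrors the comparison line, not the library's code:

```python
from packaging.version import parse as parse_version


def is_at_least_9_2(version):
    """Hypothetical helper mirroring the `version >= parse_version('9.2')` check."""
    return version >= parse_version('9.2')


# A bare float, as passed in conftest.py, cannot be compared with a Version.
float_error = None
try:
    is_at_least_9_2(11.2)
except TypeError as exc:
    float_error = str(exc)

# Parsing the version string first makes the comparison work.
parsed_ok = is_at_least_9_2(parse_version('11.2'))

print(float_error)
print(parsed_ok)
```

Under that reading, the likely fix on the caller's side is to pass `parse_version('11.2')` instead of `11.2`. Parsing matters more than just "using a string": a plain string comparison would put `'11.2'` before `'9.2'` lexicographically, whereas `Version` objects compare numerically.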

python-gino/gino | asyncio | 40 | GINO query methods should accept raw SQL | So that users can get model objects from raw SQL. For example:

```python

users = await db.text('SELECT * FROM users WHERE id > :num').gino.model(User).return_model(True).all(num=28, bind=db.bind)

``` | closed | 2017-08-30T02:28:27Z | 2017-08-30T03:16:49Z | https://github.com/python-gino/gino/issues/40 | [

"help wanted",

"task"

] | fantix | 1 |

ray-project/ray | tensorflow | 51,423 | Ray on kubernetes with custom image_uri is broken | ### What happened + What you expected to happen

Hi, I am trying to use a custom image on a Kubernetes cluster. I am using this cluster: `https://github.com/ray-project/kuberay/blob/master/ray-operator/config/samples/ray-cluster.autoscaler.yaml`.

Unfortunately, Ray seems to use podman to launch custom images (`https://github.com/ray-project/ray/blame/master/python/ray/_private/runtime_env/image_uri.py#L16C10-L18C15`) (by @zcin), but podman does not appear to be installed in the official Ray image, so I get errors saying that podman is not installed.

I have tried installing podman manually, but then I get the error `WARN[0000] "/" is not a shared mount, this could cause issues or missing mounts with rootless containers`.

In my opinion, the best solution for this would be to completely remove this podman dependency, as it seems to be causing many issues.

Is there a workaround for this right now? I'm completely blocked as things stand.

### Versions / Dependencies

latest

### Reproduction script

```python

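import argparse

from ray.job_submission import JobSubmissionClient

# Assumed CLI: the script takes the Ray job server address as --address.
parser = argparse.ArgumentParser()
parser.add_argument("--address", required=True)
args = parser.parse_args()

client = JobSubmissionClient(args.address)
job_id = client.submit_job(
    entrypoint=""" cat /etc/hostname; echo "import ray; print(ray.__version__); print('hello'); import time; time.sleep(100); print('done');" > main.py; python main.py """,
    runtime_env={
        "image_uri": "<choose an image here>",
    },
)
print(job_id)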

```

### Issue Severity

High: It blocks me from completing my task. | open | 2025-03-17T15:07:30Z | 2025-03-21T23:06:12Z | https://github.com/ray-project/ray/issues/51423 | [

"bug",

"triage",

"core"

] | CowKeyMan | 5 |

davidteather/TikTok-Api | api | 879 | RuntimeError: This event loop is already running[BUG] - Your Error Here | Fill Out the template :)

**I have installed all the required libraries and am still getting a runtime error**

A clear and concise description of what the bug is.

```py
from TikTokApi import TikTokApi

api = TikTokApi()
n_videos = 100
username = 'nastyblaq'
user_videos = api.byUsername(username, count=n_videos)
```

Please add any relevant code that is giving you unexpected results.

Preferably the smallest amount of code to reproduce the issue.

**SET LOGGING LEVEL TO INFO BEFORE POSTING CODE OUTPUT**

```py
import logging

TikTokApi(logging_level=logging.INFO) # SETS LOGGING_LEVEL TO INFO
# Hopefully the info level will help you debug or at least someone else on the issue
```

**Expected behavior**

A clear and concise description of what you expected to happen.

**Error Trace (if any)**

Put the error trace below if there's any error thrown.

```

---------------------------------------------------------------------------
RuntimeError Traceback (most recent call last)
[<ipython-input-3-55c3fab06e06>](https://localhost:8080/#) in <module>()
1 from TikTokApi import TikTokApi
----> 2 api = TikTokApi()
3 n_videos = 100
4 username = 'nastyblaq'
5 user_videos = api.byUsername(username, count=n_videos)

```

**Desktop (please complete the following information):**

- OS: [e.g. Windows 10]

- TikTokApi Version [e.g. 5.0.0] - if out of date upgrade before posting an issue

**Additional context**

Add any other context about the problem here.

| closed | 2022-05-04T13:07:30Z | 2023-08-08T22:14:34Z | https://github.com/davidteather/TikTok-Api/issues/879 | [

"bug"

] | kareemrasheed89 | 1 |
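The traceback shows an `ipython-input` cell, i.e. a Jupyter/Colab-style environment whose asyncio event loop is already running. One common way the message "This event loop is already running" arises there is synchronous code calling `run_until_complete` on that running loop. The sketch below reproduces the message with only the standard library (Python 3.8+); it is not TikTokApi's code, and `fetch_videos` is a hypothetical stand-in for a library coroutine:

```python
import asyncio


async def fetch_videos():
    """Hypothetical stand-in for a library coroutine."""
    await asyncio.sleep(0)
    return ["video-1", "video-2"]


async def main():
    loop = asyncio.get_running_loop()
    error = None
    coro = fetch_videos()
    try:
        # A synchronous wrapper that drives the loop itself fails when the
        # loop is already running (as it is inside a notebook cell).
        loop.run_until_complete(coro)
    except RuntimeError as exc:
        error = str(exc)
        coro.close()  # the coroutine never started; close it to avoid a warning
    # Inside a running loop, await the coroutine directly instead.
    videos = await fetch_videos()
    return error, videos


error, videos = asyncio.run(main())
print(error)
print(videos)
```

If this is the cause, running the same script as a plain `python` file (where no loop is running yet) behaves differently from running it in a notebook cell, which is a quick way to confirm the diagnosis.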

inventree/InvenTree | django | 8,618 | Cannot delete Attachment | ### Please verify that this bug has NOT been raised before.

- [x] I checked and didn't find a similar issue

### Describe the bug*

When I try to delete an attachment that I uploaded by accident, I get the error "Action Prohibited - Delete operation not allowed".

### Steps to Reproduce

Upload an attachment to a part, then try to delete it.