repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

pydantic/logfire | pydantic | 581 | Document `instrument_httpx(client)` | ### Description

Document the feature introduced in https://github.com/pydantic/logfire/pull/575. | closed | 2024-11-12T11:47:59Z | 2024-11-13T10:27:06Z | https://github.com/pydantic/logfire/issues/581 | [

"Feature Request"

] | Kludex | 0 |

n0kovo/fb_friend_list_scraper | web-scraping | 10 | Your Firefox profile cannot be loaded. It may be missing or inaccessible. | Hi. I wanted to try your script. I installed it via pip, but when I try to scrape something, the moment I enter my password I get the following error: "Your Firefox profile cannot be loaded. It may be missing or inaccessible".

A quick Google search suggests that the root cause is that Firefox on Ubuntu is installed as a snap package (instead of a .deb package), and the snap does not use the default profile path. In my case, I use Kubuntu 20.04 LTS.
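A small diagnostic sketch can show which of the two profile locations exists on a given machine. The paths are assumptions based on common Firefox packaging: `~/.mozilla/firefox` for the .deb package and `~/snap/firefox/common/.mozilla/firefox` for the snap. They are not taken from fb_friend_list_scraper's code, and the helper name is hypothetical.

```python
from pathlib import Path
from typing import List, Optional

# Assumed Firefox profile roots, relative to the home directory:
# the classic .deb location first, then the snap location.
CANDIDATE_ROOTS = [
    Path(".mozilla") / "firefox",
    Path("snap") / "firefox" / "common" / ".mozilla" / "firefox",
]


def find_firefox_profile_roots(home: Optional[Path] = None) -> List[Path]:
    """Return the candidate profile roots that actually exist under `home`."""
    home = home if home is not None else Path.home()
    return [home / root for root in CANDIDATE_ROOTS if (home / root).is_dir()]


if __name__ == "__main__":
    for root in find_firefox_profile_roots():
        print(root)
```

If only the snap path shows up, the scraper is probably looking in the wrong place for the profile.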

Is there any workaround? Thanks in advance. | open | 2024-03-02T22:30:01Z | 2024-03-02T22:30:01Z | https://github.com/n0kovo/fb_friend_list_scraper/issues/10 | [] | wonx | 0 |

modoboa/modoboa | django | 3,230 | 'Calendars' tab from webmail fails with "Error reading /srv/modoboa/...../modoboa_radicale/webpack-stats.json. Are you sure webpack has generated the file and the path is correct?" | # Impacted versions

* OS Type: Debian

* OS Version: 12 Bookworm

* Database Type: MySQL

* Database version: MariaDB 10.11.6

* Modoboa: 2.2.4

* installer used: Yes

* Webserver: Nginx

# Steps to reproduce

- Fresh Debian 12 VM

- Run installer as root: `./run.py mytest.mydomain.com`

- add MX and A record as prompted by installer

- have a coffee while it installs, as prompted by installer

- enable *DEBUG = True* in `/srv/modoboa/instance/instance/settings.py` and restart uwsgi service

- Log into the admin (admin:password) and set up a test domain with defaults

- create a test Simple User in the test domain

- in a new browser session, log into the new test user.

- Click the Calendars link at the top and the error occurs.

# Current behavior

An error is thrown: `Error reading /srv/modoboa/env/lib/python3.11/site-packages/modoboa_radicale/static/modoboa_radicale/webpack-stats.json. Are you sure webpack has generated the file and the path is correct?`

There is no static folder to be found at all under `/srv/modoboa/env/lib/python3.11/site-packages/modoboa_radicale`:

```

root@mailtest03:/srv/modoboa# ls -l /srv/modoboa/env/lib/python3.11/site-packages/modoboa_radicale

total 124

-rw-r--r-- 1 modoboa modoboa 362 Apr 9 01:24 __init__.py

drwxr-xr-x 2 modoboa modoboa 4096 Apr 9 01:24 __pycache__

-rw-r--r-- 1 modoboa modoboa 260 Apr 9 01:24 apps.py

drwxr-xr-x 3 modoboa modoboa 4096 Apr 9 01:24 backends

-rw-r--r-- 1 modoboa modoboa 848 Apr 9 01:24 factories.py

-rw-r--r-- 1 modoboa modoboa 1780 Apr 9 01:24 forms.py

-rw-r--r-- 1 modoboa modoboa 2384 Apr 9 01:24 handlers.py

drwxr-xr-x 16 modoboa modoboa 4096 Apr 9 01:24 locale

drwxr-xr-x 4 modoboa modoboa 4096 Apr 9 01:24 management

drwxr-xr-x 3 modoboa modoboa 4096 Apr 9 01:24 migrations

-rw-r--r-- 1 modoboa modoboa 1841 Apr 9 01:24 mocks.py

-rw-r--r-- 1 modoboa modoboa 4683 Apr 9 01:24 models.py

-rw-r--r-- 1 modoboa modoboa 863 Apr 9 01:24 modo_extension.py

-rw-r--r-- 1 modoboa modoboa 7385 Apr 9 01:24 serializers.py

-rw-r--r-- 1 modoboa modoboa 783 Apr 9 01:24 settings.py

drwxr-xr-x 3 modoboa modoboa 4096 Apr 9 01:24 templates

drwxr-xr-x 2 modoboa modoboa 4096 Apr 9 01:24 test_data

-rw-r--r-- 1 modoboa modoboa 23653 Apr 9 01:24 tests.py

-rw-r--r-- 1 modoboa modoboa 210 Apr 9 01:24 urls.py

-rw-r--r-- 1 modoboa modoboa 1067 Apr 9 01:24 urls_api.py

-rw-r--r-- 1 modoboa modoboa 1340 Apr 9 01:24 views.py

-rw-r--r-- 1 modoboa modoboa 9588 Apr 9 01:24 viewsets.py

```
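The `ls` check above can also be scripted, for example to confirm whether a reinstall restored the file. This is a minimal sketch. The `static/<package>/webpack-stats.json` layout is taken from the error message, and both helper names are hypothetical, not part of Modoboa.

```python
import importlib.util
from pathlib import Path
from typing import Optional


def webpack_stats_path(package_dir: Path) -> Path:
    """Path where the error message expects the webpack stats file."""
    return package_dir / "static" / package_dir.name / "webpack-stats.json"


def find_package_dir(package: str) -> Optional[Path]:
    """Locate an installed top-level package's directory without importing it."""
    spec = importlib.util.find_spec(package)
    if spec is None or spec.origin is None:
        return None
    # For a regular package, spec.origin points at its __init__.py.
    return Path(spec.origin).parent


if __name__ == "__main__":
    pkg_dir = find_package_dir("modoboa_radicale")
    if pkg_dir is None:
        print("modoboa_radicale is not installed in this environment")
    else:
        stats = webpack_stats_path(pkg_dir)
        print(stats, "exists" if stats.is_file() else "is missing")
```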

I also had this same problem on an upgraded 2.2.4 instance.

# Expected behavior

The Calendars tab should open and show the calendar data from Radicale.

| closed | 2024-04-09T02:06:15Z | 2024-04-09T16:52:57Z | https://github.com/modoboa/modoboa/issues/3230 | [] | cantrust-hosting-cooperative | 3 |

BeanieODM/beanie | pydantic | 402 | [BUG] AWS DocumentDB does not work with 1.14.0 - Not found for _id: ... | **Describe the bug**

I noticed that since I updated to beanie 1.14.0, my program no longer works with AWS DocumentDB.

This was not a problem before, and the same code works perfectly with 1.13.1.

Additionally, the code works perfectly fine with 1.14.0 against a local MongoDB 5.0.10 test database.

The error message is not very helpful: the requested documents are simply reported as not found (although they exist).

```

NotFound '<some_OID>' for '<class 'mongodb.model.user.odm.User'>'

not found in database 'User' with id '<some_OID>' not found in database

```

To verify that the document exists, I use a tool like NoSQLBooster or Robo3T:

```

db.user.find( {"_id" : ObjectId("<some_OID>")} )

.projection({})

.sort({_id:-1})

.limit(100)

```

**To Reproduce**

```python

# Nothing special, just a simple find command

result = await model.find_one(model.id == oid)

```

**Expected behavior**

I expected beanie 1.14.0 to work with AWS DocumentDB the same way as 1.13.1.

**Additional context**

I am glad to provide further information, or to run some tests against DocumentDB if someone can give me hints on what to try.

| closed | 2022-11-05T03:19:39Z | 2022-11-06T16:47:31Z | https://github.com/BeanieODM/beanie/issues/402 | [] | mickdewald | 7 |

iperov/DeepFaceLab | machine-learning | 966 | nadagit/lbfs linux port not utilizing GPU | I've been trying for days to get lbfs/DeepFaceLab_Linux to work. Most of the problems I ran into were user errors on my part. Now, when I run `./4_data_src_extract_faces_S3FD.sh`, it only uses the CPU, and nvidia-smi shows my GPU (Tesla M40 24GB) sitting idle. Can someone tell me what I am doing wrong?

anaconda3 (deepfacelab) meta:

nvidia-driver-455

python=3.6.8

cudnn=7.6.5

cudatoolkit=10.0.130

requirements-cuda.txt

-------------------------

tqdm

numpy==1.19.3

h5py

opencv-python==4.1.0.25

ffmpeg-python==0.1.17

scikit-image==0.14.2

colorama==0.4.4

tensorflow-gpu==2.4.0rc1

pyqt5==5.15.2

The list above is based on requirements-cuda.txt in https://libraries.io/github/iperov/DeepFaceLab

I have also tried nagadit/DeepFaceLab_Linux with python==3.7 and cudatoolkit==10.1.243, but to no avail.

Update 12/7/20: installed nvidia-cuda-toolkit; no change in GPU utilization. | open | 2020-12-07T17:14:19Z | 2023-06-08T21:44:25Z | https://github.com/iperov/DeepFaceLab/issues/966 | [] | TheGermanEngie | 5 |

prkumar/uplink | rest-api | 171 | Isn't `asyncio.coroutine` deprecated since Python 3.5? | **Describe the bug**

```

File "/home/alexv/.local/share/virtualenvs/boken-WCZqebO_/lib/python3.7/site-packages/uplink/clients/io/asyncio_strategy.py", line 14, in AsyncioStrategy

@asyncio.coroutine

AttributeError: module 'asyncio' has no attribute 'coroutine'

```

**To Reproduce**

Use `uplink.AiohttpClient()` with Python 3.7.

**Expected behavior**

It should work no matter which currently supported version of Python we are using; see https://devguide.python.org/#status-of-python-branches.

So what about adopting the `async`/`await` syntax in https://github.com/prkumar/uplink/blob/master/uplink/clients/io/asyncio_strategy.py instead?
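For reference, here is a minimal sketch of the general migration. It replaces a generator-based coroutine (`@asyncio.coroutine` with `yield from`, removed from the standard library in Python 3.11) with a native `async def` coroutine. The function names are hypothetical; this is not uplink's code.

```python
import asyncio

# Old style, which fails once asyncio.coroutine is unavailable:
#
#     @asyncio.coroutine
#     def send(request):
#         response = yield from fetch(request)
#         return response


async def fetch(request: str) -> str:
    # Hypothetical stand-in for an awaitable I/O call.
    await asyncio.sleep(0)
    return f"response to {request}"


async def send(request: str) -> str:
    # Native coroutine: `await` replaces `yield from`.
    response = await fetch(request)
    return response


if __name__ == "__main__":
    print(asyncio.run(send("GET /users")))
```

Native coroutines work on Python 3.5 and later, so a change like this would not require dropping any Python version that already supports `async def`.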

| closed | 2019-08-21T09:24:25Z | 2019-08-21T09:44:22Z | https://github.com/prkumar/uplink/issues/171 | [] | asmodehn | 1 |

dask/dask | scikit-learn | 11,229 | Removal of Sphinx context injection at build time | From Read The Docs

> We are announcing the deprecation of Sphinx context injection at build time for all the projects. The deprecation date is set on Monday, October 7th, 2024. After this date, Read the Docs won't install the readthedocs-sphinx-ext extension and won't manipulate the project's conf.py file.

>

> This will get us closer to our goal of having all projects build on Read the Docs be the exact same as on other build environments, making understanding of documentation builds much easier to understand.

>

> You can read our [blog post on the deprecation](https://about.readthedocs.com/blog/2024/07/addons-by-default/) for all the information about possible impacts of this change, in particular the READTHEDOCS and other variables in the Sphinx context are no longer set automatically. | open | 2024-07-16T18:24:11Z | 2024-07-24T00:44:32Z | https://github.com/dask/dask/issues/11229 | [

"needs triage"

] | aterrel | 1 |
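After the Read the Docs change quoted in the dask issue above, `conf.py` can no longer rely on `READTHEDOCS` being injected into the Sphinx context. The following minimal sketch restores it from the build environment. It assumes Read the Docs still exports `READTHEDOCS=True` and `READTHEDOCS_CANONICAL_URL` as environment variables during builds. The helper name is hypothetical, and this is not dask's actual configuration.

```python
import os
from typing import Dict, Mapping


def readthedocs_html_context(environ: Mapping[str, str]) -> Dict[str, bool]:
    """Return the html_context entries that Read the Docs used to inject."""
    context: Dict[str, bool] = {}
    # Read the Docs sets READTHEDOCS="True" in its build environment.
    if environ.get("READTHEDOCS", "") == "True":
        context["READTHEDOCS"] = True
    return context


# In conf.py: merge into whatever html_context the file already defines.
html_context: dict = {}
html_context.update(readthedocs_html_context(os.environ))
# Canonical URL of the hosted docs (empty string when building locally).
html_baseurl = os.environ.get("READTHEDOCS_CANONICAL_URL", "")
```

Templates that previously checked `READTHEDOCS` would then see the same value on Read the Docs builds and nothing on local builds.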

NVIDIA/pix2pixHD | computer-vision | 226 | Can we get access to the interactive editing tool depicted in the original paper? | open | 2020-10-06T02:15:23Z | 2022-02-08T09:02:56Z | https://github.com/NVIDIA/pix2pixHD/issues/226 | [] | ghost | 2 | |

ydataai/ydata-profiling | data-science | 981 | TypeError From ProfileReport in Google Colab | ### Current Behaviour

In Google Colab, calling the `.to_notebook_iframe()` method on a `ProfileReport` raises an error:

```Python

TypeError: concat() got an unexpected keyword argument 'join_axes'

```

This error has been reported in other contexts as well; see this Stack Overflow question: https://stackoverflow.com/questions/61362942/concat-got-an-unexpected-keyword-argument-join-axes
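`join_axes` was deprecated in pandas 0.25 and removed from `pd.concat` in pandas 1.0. That is consistent with this error, since pandas-profiling 1.4.1 predates the change and this environment has pandas 1.3.5. The pandas deprecation notice recommends reindexing the concatenated result instead. Here is a minimal sketch with made-up frames `left` and `right`:

```python
import pandas as pd

left = pd.DataFrame({"a": [1, 2, 3]}, index=[0, 1, 2])
right = pd.DataFrame({"b": [10, 20, 30]}, index=[1, 2, 3])

# Old (pandas < 1.0): pd.concat([left, right], axis=1, join_axes=[left.index])
# Replacement: concatenate on the union of indexes, then keep only left's rows.
combined = pd.concat([left, right], axis=1).reindex(left.index)
print(combined)
```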

### Expected Behaviour

Not applicable: this report is about a bug that raises an error.

### Data Description

You can reproduce the error with this data:

```Python

https://projects.fivethirtyeight.com/polls/data/favorability_polls.csv

```

### Code that reproduces the bug

```Python

import pandas as pd

from pandas_profiling import ProfileReport

df = pd.read_csv('https://projects.fivethirtyeight.com/polls/data/favorability_polls.csv')

profile = ProfileReport(df)

profile.to_notebook_iframe()  # to_notebook_iframe is a method, so it must be called

```

### pandas-profiling version

Version 1.4.1

### Dependencies

```Text

absl-py==1.0.0

alabaster==0.7.12

albumentations==0.1.12

altair==4.2.0

appdirs==1.4.4

argon2-cffi==21.3.0

argon2-cffi-bindings==21.2.0

arviz==0.12.0

astor==0.8.1

astropy==4.3.1

astunparse==1.6.3

atari-py==0.2.9

atomicwrites==1.4.0

attrs==21.4.0

audioread==2.1.9

autograd==1.4

Babel==2.10.1

backcall==0.2.0

beautifulsoup4==4.6.3

bleach==5.0.0

blis==0.4.1

bokeh==2.3.3

Bottleneck==1.3.4

branca==0.5.0

bs4==0.0.1

CacheControl==0.12.11

cached-property==1.5.2

cachetools==4.2.4

catalogue==1.0.0

certifi==2021.10.8

cffi==1.15.0

cftime==1.6.0

chardet==3.0.4

charset-normalizer==2.0.12

click==7.1.2

cloudpickle==1.3.0

cmake==3.22.4

cmdstanpy==0.9.5

colorcet==3.0.0

colorlover==0.3.0

community==1.0.0b1

contextlib2==0.5.5

convertdate==2.4.0

coverage==3.7.1

coveralls==0.5

crcmod==1.7

cufflinks==0.17.3

cvxopt==1.2.7

cvxpy==1.0.31

cycler==0.11.0

cymem==2.0.6

Cython==0.29.28

daft==0.0.4

dask==2.12.0

datascience==0.10.6

debugpy==1.0.0

decorator==4.4.2

defusedxml==0.7.1

descartes==1.1.0

dill==0.3.4

distributed==1.25.3

dlib @ file:///dlib-19.18.0-cp37-cp37m-linux_x86_64.whl

dm-tree==0.1.7

docopt==0.6.2

docutils==0.17.1

dopamine-rl==1.0.5

earthengine-api==0.1.307

easydict==1.9

ecos==2.0.10

editdistance==0.5.3

en-core-web-sm @ https://github.com/explosion/spacy-models/releases/download/en_core_web_sm-2.2.5/en_core_web_sm-2.2.5.tar.gz

entrypoints==0.4

ephem==4.1.3

et-xmlfile==1.1.0

fa2==0.3.5

fastai==1.0.61

fastdtw==0.3.4

fastjsonschema==2.15.3

fastprogress==1.0.2

fastrlock==0.8

fbprophet==0.7.1

feather-format==0.4.1

filelock==3.6.0

firebase-admin==4.4.0

fix-yahoo-finance==0.0.22

Flask==1.1.4

flatbuffers==2.0

folium==0.8.3

future==0.16.0

gast==0.5.3

GDAL==2.2.2

gdown==4.4.0

gensim==3.6.0

geographiclib==1.52

geopy==1.17.0

gin-config==0.5.0

glob2==0.7

google==2.0.3

google-api-core==1.31.5

google-api-python-client==1.12.11

google-auth==1.35.0

google-auth-httplib2==0.0.4

google-auth-oauthlib==0.4.6

google-cloud-bigquery==1.21.0

google-cloud-bigquery-storage==1.1.1

google-cloud-core==1.0.3

google-cloud-datastore==1.8.0

google-cloud-firestore==1.7.0

google-cloud-language==1.2.0

google-cloud-storage==1.18.1

google-cloud-translate==1.5.0

google-colab @ file:///colabtools/dist/google-colab-1.0.0.tar.gz

google-pasta==0.2.0

google-resumable-media==0.4.1

googleapis-common-protos==1.56.0

googledrivedownloader==0.4

graphviz==0.10.1

greenlet==1.1.2

grpcio==1.44.0

gspread==3.4.2

gspread-dataframe==3.0.8

gym==0.17.3

h5py==3.1.0

HeapDict==1.0.1

hijri-converter==2.2.3

holidays==0.10.5.2

holoviews==1.14.8

html5lib==1.0.1

httpimport==0.5.18

httplib2==0.17.4

httplib2shim==0.0.3

humanize==0.5.1

hyperopt==0.1.2

ideep4py==2.0.0.post3

idna==2.10

imageio==2.4.1

imagesize==1.3.0

imbalanced-learn==0.8.1

imblearn==0.0

imgaug==0.2.9

importlib-metadata==4.11.3

importlib-resources==5.7.1

imutils==0.5.4

inflect==2.1.0

iniconfig==1.1.1

intel-openmp==2022.1.0

intervaltree==2.1.0

ipykernel==4.10.1

ipython==5.5.0

ipython-genutils==0.2.0

ipython-sql==0.3.9

ipywidgets==7.7.0

itsdangerous==1.1.0

jax==0.3.8

jaxlib @ https://storage.googleapis.com/jax-releases/cuda11/jaxlib-0.3.7+cuda11.cudnn805-cp37-none-manylinux2014_x86_64.whl

jedi==0.18.1

jieba==0.42.1

Jinja2==2.11.3

joblib==1.1.0

jpeg4py==0.1.4

jsonschema==4.3.3

jupyter==1.0.0

jupyter-client==5.3.5

jupyter-console==5.2.0

jupyter-core==4.10.0

jupyterlab-pygments==0.2.2

jupyterlab-widgets==1.1.0

kaggle==1.5.12

kapre==0.3.7

keras==2.8.0

Keras-Preprocessing==1.1.2

keras-vis==0.4.1

kiwisolver==1.4.2

korean-lunar-calendar==0.2.1

libclang==14.0.1

librosa==0.8.1

lightgbm==2.2.3

llvmlite==0.34.0

lmdb==0.99

LunarCalendar==0.0.9

lxml==4.2.6

Markdown==3.3.6

MarkupSafe==2.0.1

matplotlib==3.2.2

matplotlib-inline==0.1.3

matplotlib-venn==0.11.7

missingno==0.5.1

mistune==0.8.4

mizani==0.6.0

mkl==2019.0

mlxtend==0.14.0

more-itertools==8.12.0

moviepy==0.2.3.5

mpmath==1.2.1

msgpack==1.0.3

multiprocess==0.70.12.2

multitasking==0.0.10

murmurhash==1.0.7

music21==5.5.0

natsort==5.5.0

nbclient==0.6.2

nbconvert==5.6.1

nbformat==5.3.0

nest-asyncio==1.5.5

netCDF4==1.5.8

networkx==2.6.3

nibabel==3.0.2

nltk==3.2.5

notebook==5.3.1

numba==0.51.2

numexpr==2.8.1

numpy==1.21.6

nvidia-ml-py3==7.352.0

oauth2client==4.1.3

oauthlib==3.2.0

okgrade==0.4.3

opencv-contrib-python==4.1.2.30

opencv-python==4.1.2.30

openpyxl==3.0.9

opt-einsum==3.3.0

osqp==0.6.2.post0

packaging==21.3

palettable==3.3.0

pandas==1.3.5

pandas-datareader==0.9.0

pandas-gbq==0.13.3

pandas-profiling==1.4.1

pandocfilters==1.5.0

panel==0.12.1

param==1.12.1

parso==0.8.3

pathlib==1.0.1

patsy==0.5.2

pep517==0.12.0

pexpect==4.8.0

pickleshare==0.7.5

Pillow==7.1.2

pip-tools==6.2.0

plac==1.1.3

plotly==5.5.0

plotnine==0.6.0

pluggy==0.7.1

pooch==1.6.0

portpicker==1.3.9

prefetch-generator==1.0.1

preshed==3.0.6

prettytable==3.2.0

progressbar2==3.38.0

prometheus-client==0.14.1

promise==2.3

prompt-toolkit==1.0.18

protobuf==3.17.3

psutil==5.4.8

psycopg2==2.7.6.1

ptyprocess==0.7.0

py==1.11.0

pyarrow==6.0.1

pyasn1==0.4.8

pyasn1-modules==0.2.8

pycocotools==2.0.4

pycparser==2.21

pyct==0.4.8

pydata-google-auth==1.4.0

pydot==1.3.0

pydot-ng==2.0.0

pydotplus==2.0.2

PyDrive==1.3.1

pyemd==0.5.1

pyerfa==2.0.0.1

pyglet==1.5.0

Pygments==2.6.1

pygobject==3.26.1

pymc3==3.11.4

PyMeeus==0.5.11

pymongo==4.1.1

pymystem3==0.2.0

PyOpenGL==3.1.6

pyparsing==3.0.8

pyrsistent==0.18.1

pysndfile==1.3.8

PySocks==1.7.1

pystan==2.19.1.1

pytest==3.6.4

python-apt==0.0.0

python-chess==0.23.11

python-dateutil==2.8.2

python-louvain==0.16

python-slugify==6.1.2

python-utils==3.1.0

pytz==2022.1

pyviz-comms==2.2.0

PyWavelets==1.3.0

PyYAML==3.13

pyzmq==22.3.0

qdldl==0.1.5.post2

qtconsole==5.3.0

QtPy==2.1.0

regex==2019.12.20

requests==2.23.0

requests-oauthlib==1.3.1

resampy==0.2.2

rpy2==3.4.5

rsa==4.8

scikit-image==0.18.3

scikit-learn==1.0.2

scipy==1.4.1

screen-resolution-extra==0.0.0

scs==3.2.0

seaborn==0.11.2

semver==2.13.0

Send2Trash==1.8.0

setuptools-git==1.2

Shapely==1.8.1.post1

simplegeneric==0.8.1

six==1.15.0

sklearn==0.0

sklearn-pandas==1.8.0

smart-open==6.0.0

snowballstemmer==2.2.0

sortedcontainers==2.4.0

SoundFile==0.10.3.post1

soupsieve==2.3.2.post1

spacy==2.2.4

Sphinx==1.8.6

sphinxcontrib-serializinghtml==1.1.5

sphinxcontrib-websupport==1.2.4

SQLAlchemy==1.4.36

sqlparse==0.4.2

srsly==1.0.5

statsmodels==0.10.2

sympy==1.7.1

tables==3.7.0

tabulate==0.8.9

tblib==1.7.0

tenacity==8.0.1

tensorboard==2.8.0

tensorboard-data-server==0.6.1

tensorboard-plugin-wit==1.8.1

tensorflow @ file:///tensorflow-2.8.0-cp37-cp37m-linux_x86_64.whl

tensorflow-datasets==4.0.1

tensorflow-estimator==2.8.0

tensorflow-gcs-config==2.8.0

tensorflow-hub==0.12.0

tensorflow-io-gcs-filesystem==0.25.0

tensorflow-metadata==1.7.0

tensorflow-probability==0.16.0

termcolor==1.1.0

terminado==0.13.3

testpath==0.6.0

text-unidecode==1.3

textblob==0.15.3

Theano-PyMC==1.1.2

thinc==7.4.0

threadpoolctl==3.1.0

tifffile==2021.11.2

tinycss2==1.1.1

tomli==2.0.1

toolz==0.11.2

torch @ https://download.pytorch.org/whl/cu113/torch-1.11.0%2Bcu113-cp37-cp37m-linux_x86_64.whl

torchaudio @ https://download.pytorch.org/whl/cu113/torchaudio-0.11.0%2Bcu113-cp37-cp37m-linux_x86_64.whl

torchsummary==1.5.1

torchtext==0.12.0

torchvision @ https://download.pytorch.org/whl/cu113/torchvision-0.12.0%2Bcu113-cp37-cp37m-linux_x86_64.whl

tornado==5.1.1

tqdm==4.64.0

traitlets==5.1.1

tweepy==3.10.0

typeguard==2.7.1

typing-extensions==4.2.0

tzlocal==1.5.1

uritemplate==3.0.1

urllib3==1.24.3

vega-datasets==0.9.0

wasabi==0.9.1

wcwidth==0.2.5

webencodings==0.5.1

Werkzeug==1.0.1

widgetsnbextension==3.6.0

wordcloud==1.5.0

wrapt==1.14.0

xarray==0.18.2

xgboost==0.90

xkit==0.0.0

xlrd==1.1.0

xlwt==1.3.0

yellowbrick==1.4

zict==2.2.0

zipp==3.8.0

```


### OS

Google Colab

### Checklist

- [X] There is not yet another bug report for this issue in the [issue tracker](https://github.com/ydataai/pandas-profiling/issues)

- [X] The problem is reproducible from this bug report. [This guide](http://matthewrocklin.com/blog/work/2018/02/28/minimal-bug-reports) can help to craft a minimal bug report.

- [X] The issue has not been resolved by the entries listed under [Frequent Issues](https://pandas-profiling.ydata.ai/docs/master/rtd/pages/support.html#frequent-issues). | closed | 2022-05-13T19:00:16Z | 2022-05-16T18:06:54Z | https://github.com/ydataai/ydata-profiling/issues/981 | [

"documentation 📖"

] | adamrossnelson | 3 |

tox-dev/tox | automation | 2,422 | UnicodeDecodeError on line 100 of execute/stream.py | I'm currently using version 4.0.0b2 on Windows and getting an error because tox decodes all output as "utf-8" only, even though the output may use another valid encoding.

This is the code that fails for me on Windows (line 100 onwards of `execute/stream.py`):

```python
@property
def text(self) -> str:
    with self._content_lock:
        return self._content.decode("utf-8")
```

Setting a `breakpoint()` there shows that it raises a `UnicodeDecodeError` for one of the `self._content` values, which the chardet library detects as "Windows-1252".

My own patch modifies the function as shown below, but I hope someone can put in a more elegant fix:

```python
import chardet

...

...

@property
def text(self) -> str:
    with self._content_lock:
        encoding_detected: str | None = chardet.detect(self._content).get("encoding")
        if encoding_detected:
            return self._content.decode(encoding_detected)
        return self._content.decode("utf-8")
``` | closed | 2022-05-19T12:53:15Z | 2023-06-17T01:18:12Z | https://github.com/tox-dev/tox/issues/2422 | [

"bug:normal",

"help:wanted"

] | julzt0244 | 13 |
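A dependency-free alternative to the chardet-based patch in the tox report above is to try UTF-8 first and fall back to a second encoding only when decoding fails. This is a minimal sketch, not tox's actual fix. The function name `decode_output` and the `cp1252` default (chosen because chardet reported Windows-1252 here) are assumptions.

```python
def decode_output(content: bytes, fallback: str = "cp1252") -> str:
    """Decode captured subprocess output, preferring UTF-8."""
    try:
        # Most tools emit UTF-8, so try it first.
        return content.decode("utf-8")
    except UnicodeDecodeError:
        # Fall back to a legacy code page; errors="replace" stops bytes that
        # are undefined in that code page from raising a second error.
        return content.decode(fallback, errors="replace")


print(decode_output("café".encode("utf-8")))   # café
print(decode_output("café".encode("cp1252")))  # café
```

On Windows, `locale.getpreferredencoding(False)` could be passed as `fallback` instead of hard-coding `cp1252`.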

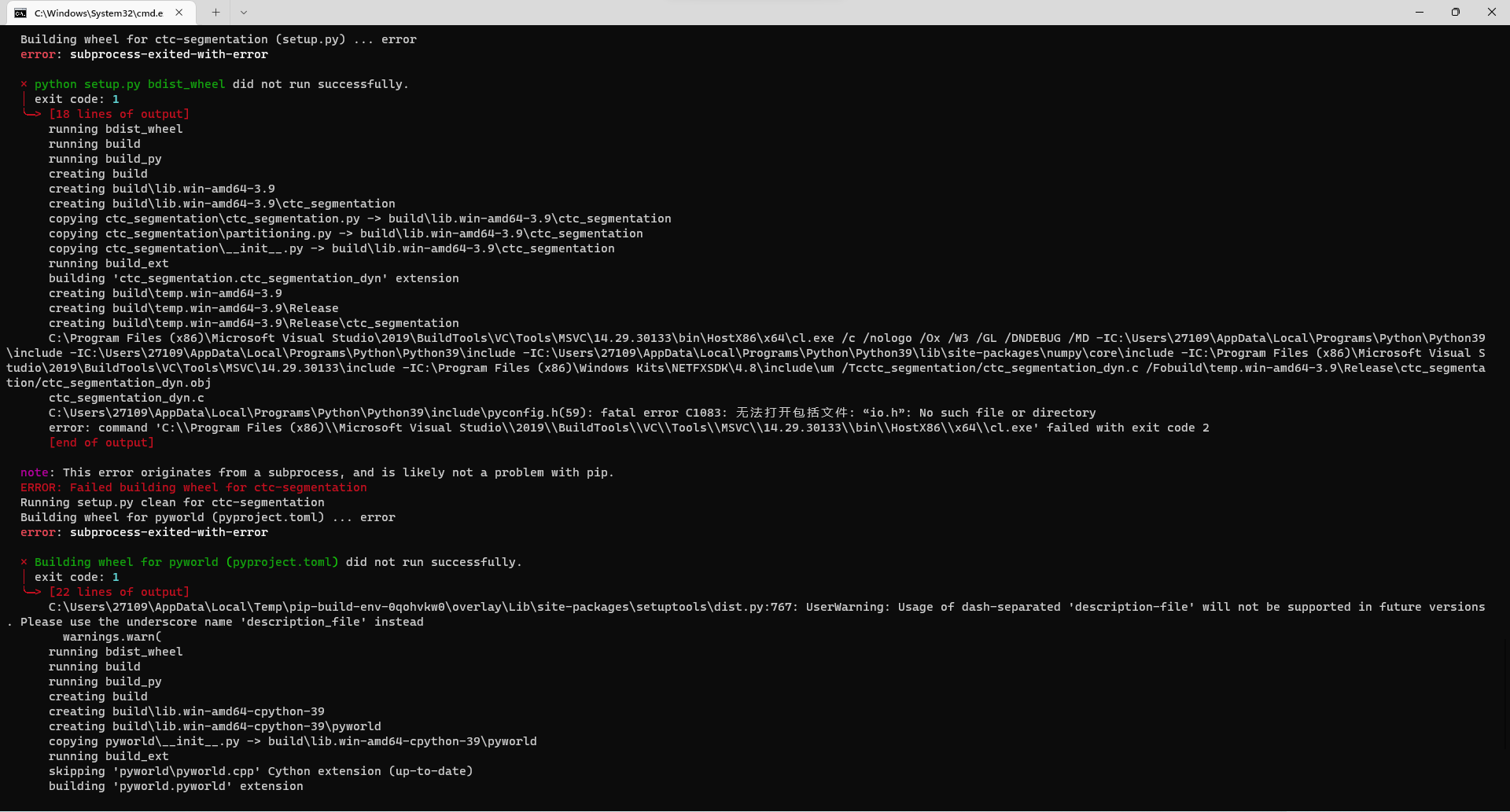

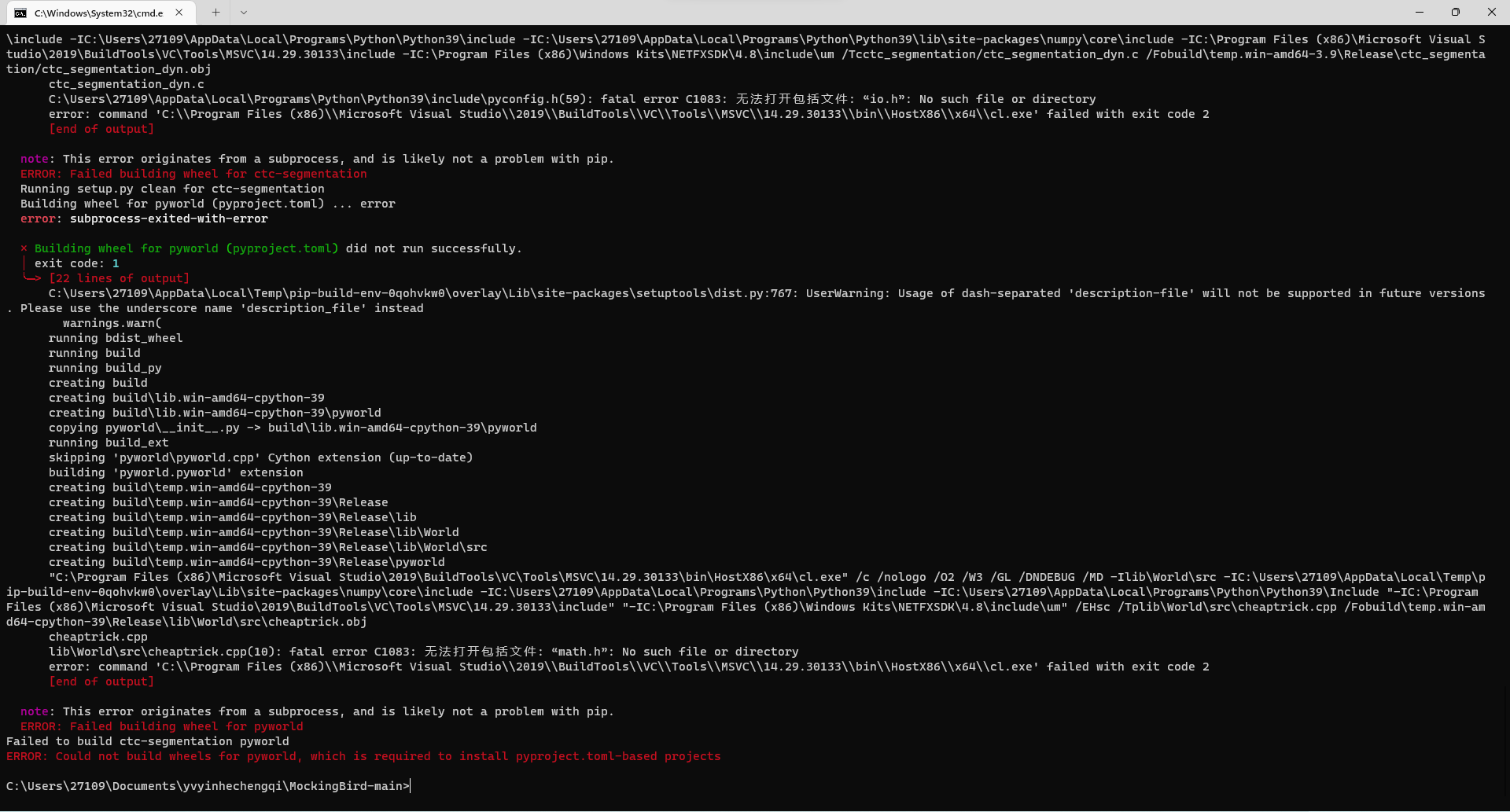

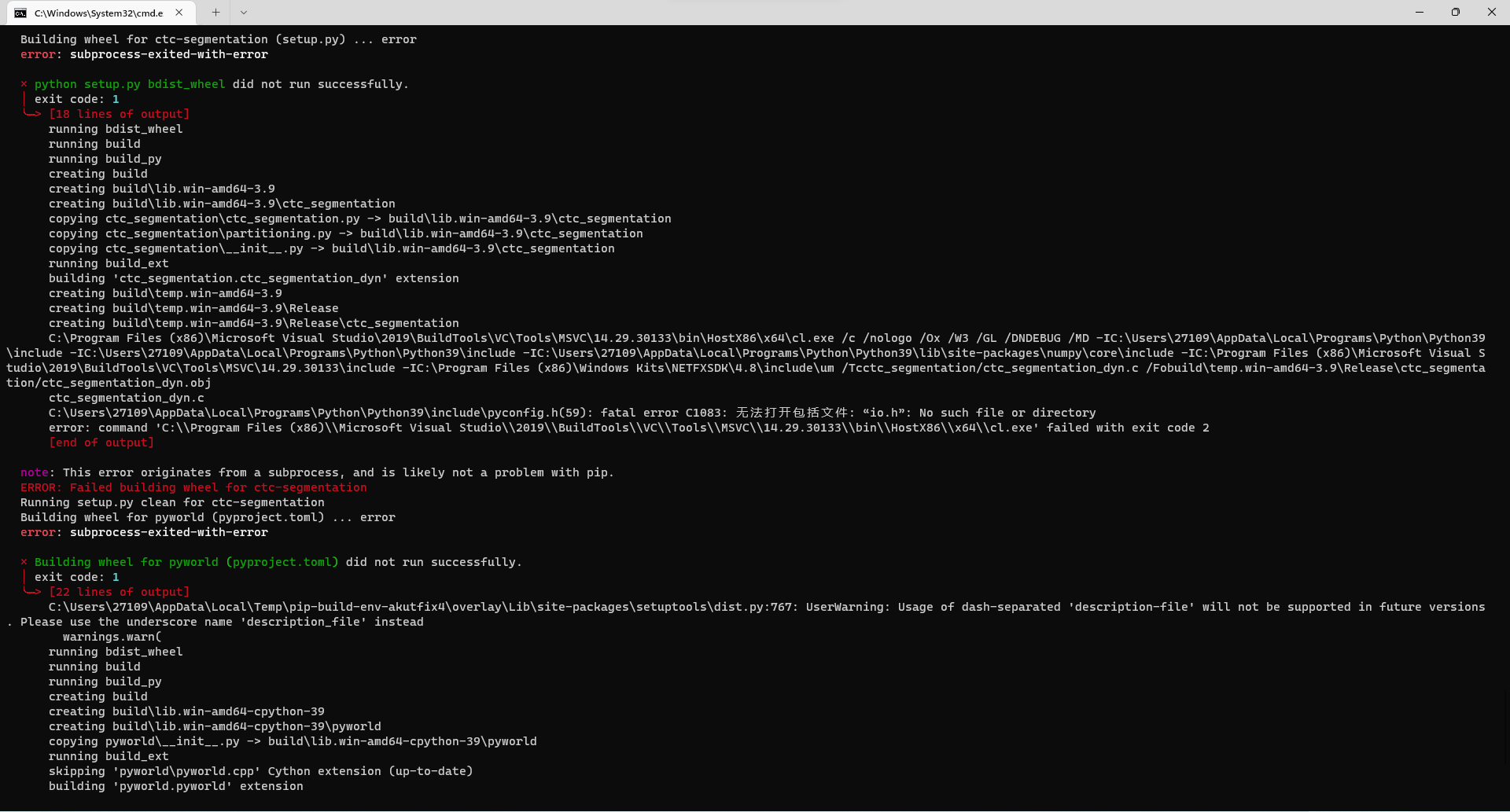

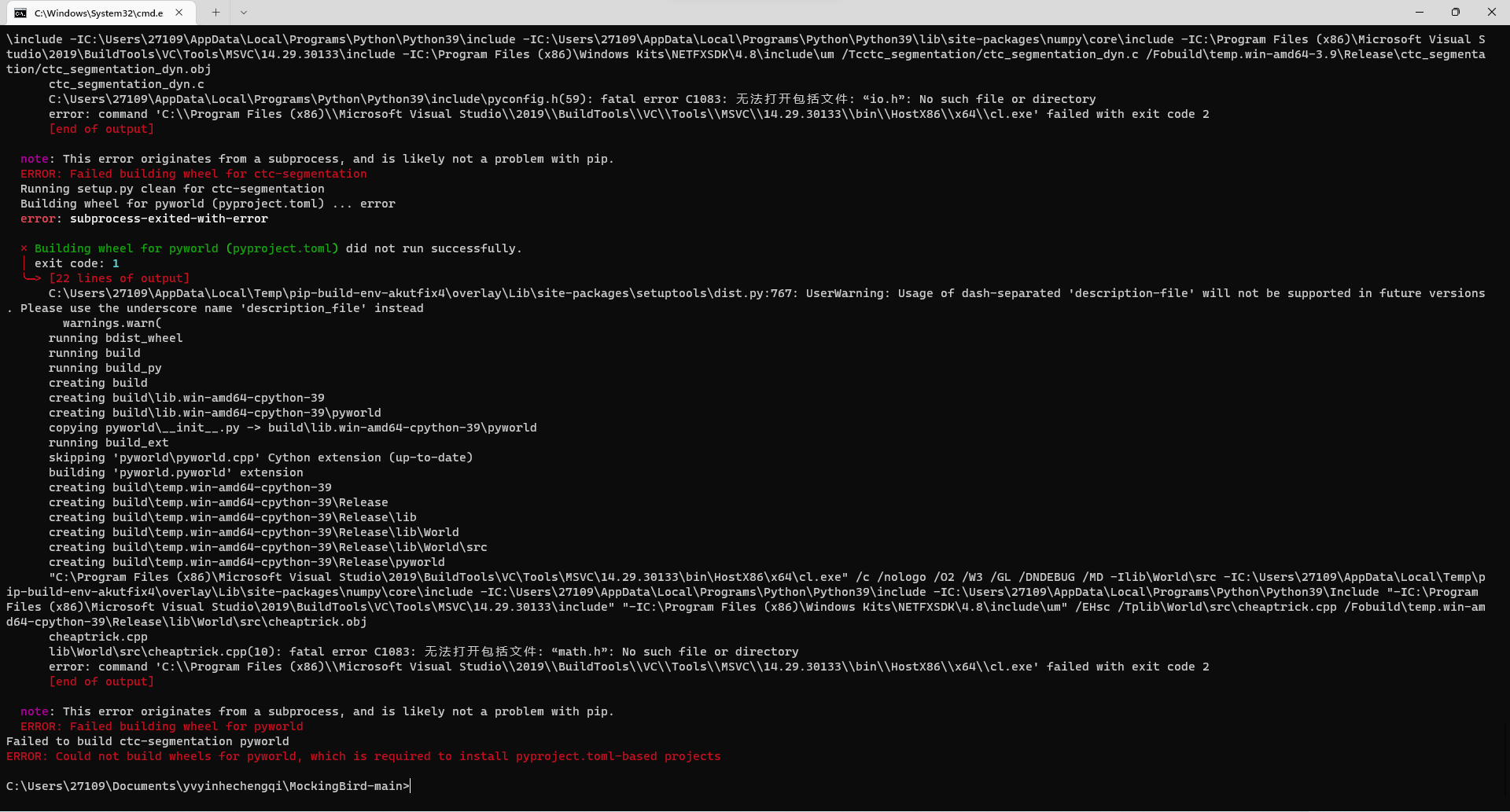

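babysor/MockingBird | pytorch | 553 | Why are .h files missing? Two are missing and I don't know what's going on. Please help | **Summary (one sentence)**

Why are .h files missing? Two are missing and I don't know what's going on. Please help.

**Env & To Reproduce**

py3.9, PyTorch11.0, CUDA 11.6; no model involved

**Screenshots (if any)**

Screenshot of the error from running pip install -r requirements.txt

Screenshot of the error from running pip install espnet

That's all. The VC toolkit always fails to run. Did I fail to install some component, or am I missing some files?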

| open | 2022-05-15T09:08:09Z | 2022-05-15T12:05:01Z | https://github.com/babysor/MockingBird/issues/553 | [] | NONAME-2121237 | 1 |

tensorpack/tensorpack | tensorflow | 1,561 | nr_tower | If you're asking about an unexpected problem which you do not know the root cause,

use this template. __PLEASE DO NOT DELETE THIS TEMPLATE, FILL IT__:

If you already know the root cause to your problem,

feel free to delete everything in this template.

### 1. What you did:

```python
import argparse
import os

import tensorflow as tf
from tensorflow.contrib.layers import variance_scaling_initializer
from tensorpack import *
from tensorpack.dataflow import dataset
from tensorpack.tfutils.summary import *
from tensorpack.tfutils.symbolic_functions import *
from tensorpack.utils.gpu import get_nr_gpu

max_epoch=400,
nr_tower=max(get_nr_gpu(), 1),
session_init=SaverRestore(args.load) if args.load else None

launch_train_with_config(config,SyncMultiGPUTrainerParameterServer(nr_tower))
```

(1) **If you're using examples, what's the command you run:**

python /home/liuxp/LQ-Nets-master/cifar10-vgg-small.py --gpu 0 --qw 1 --qa 2

(2) **If you're using examples, have you made any changes to the examples? Paste `git status; git diff` here:**

(3) **If not using examples, help us reproduce your issue:**

It's always better to copy-paste what you did than to describe them.

Please try to provide enough information to let others __reproduce__ your issues.

Without reproducing the issue, we may not be able to investigate it.

### 2. What you observed:

(1) **Include the ENTIRE logs here:**

```
Traceback (most recent call last):
  File "/home/liuxp/LQ-Nets-master/cifar10-vgg-small.py", line 157, in <module>
    launch_train_with_config(config, SyncMultiGPUTrainerParameterServer(nr_tower))
NameError: name 'nr_tower' is not defined
```

Tensorpack typically saves stdout to its training log.

If stderr is relevant, you can run a command with `my_command 2>&1 | tee logs.txt`

to save both stdout and stderr to one file.

(2) **Other observations, if any:**

For example, CPU/GPU utilization, output images, tensorboard curves, if relevant to your issue.

### 3. What you expected, if not obvious.

I want to debug this.
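The traceback is consistent with `nr_tower=...` appearing only as a keyword argument inside another call (the pasted lines end in commas). A keyword argument never creates a module-level variable named `nr_tower`. Below is a minimal pure-Python illustration of the fix, which binds the name once before it is used. `get_nr_gpu` and `get_config` here are hypothetical stand-ins, not tensorpack's functions.

```python
def get_nr_gpu() -> int:
    """Hypothetical stand-in for tensorpack.utils.gpu.get_nr_gpu(); pretends no GPU is visible."""
    return 0


def get_config(nr_tower: int) -> dict:
    """Hypothetical stand-in for building a TrainConfig from keyword arguments."""
    # Passing nr_tower=... into a call like this does NOT define a variable
    # named nr_tower in the calling scope.
    return {"max_epoch": 400, "nr_tower": nr_tower}


# Bind the name once at module level, before anything refers to it.
nr_tower = max(get_nr_gpu(), 1)
config = get_config(nr_tower)

# With tensorpack, the failing call would then see a defined name:
# launch_train_with_config(config, SyncMultiGPUTrainerParameterServer(nr_tower))
print(config)  # {'max_epoch': 400, 'nr_tower': 1}
```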

### 4. Your environment:

Paste the output of this command: `python -m tensorpack.tfutils.collect_env`

If this command failed, also tell us your version of Python/TF/tensorpack.

python 3.6.13 h12debd9_1

python-dateutil 2.8.2

python-prctl 1.8.1

tensorflow 1.10.0 gpu_py36h8dbd23f_0

tensorflow-base 1.10.0 gpu_py36h3435052_0

tensorflow-gpu 1.10.0

tensorpack 0.9.9

Note that:

+ You can install tensorpack master by `pip install -U git+https://github.com/tensorpack/tensorpack.git`

and see if your issue is already solved.

+ If you're not using tensorpack under a normal command line shell (e.g.,

using an IDE or jupyter notebook), please retry under a normal command line shell.

You may often want to provide extra information related to your issue, but

at the minimum please try to provide the above information __accurately__ to save effort in the investigation.

| open | 2024-01-11T09:15:18Z | 2024-01-11T13:29:17Z | https://github.com/tensorpack/tensorpack/issues/1561 | [] | bon1996 | 0 |

roboflow/supervision | computer-vision | 1,554 | Allow TIFF (and more) image formats in `load_yolo_annotations` | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Description

* Currently, `load_yolo_annotations` only allows the `png`, `jpg`, and `jpeg` file formats. It is called internally by `sv.DetectionDataset.from_yolo`.

https://github.com/roboflow/supervision/blob/1860fdb0a4e21edc5fa03d973e9f31c055bdcf4f/supervision/dataset/formats/yolo.py#L156

* Ultralytics supports a wide variety of image formats. The following table is copied from [their website](https://docs.ultralytics.com/modes/predict/#image-and-video-formats:~:text=The%20below%20table%20contains%20valid%20Ultralytics%20image%20formats.).

| Image Suffix | Example Predict Command | Reference |
|---------------|-----------------------------------|---------------------------------|
| .bmp | yolo predict source=image.bmp | Microsoft BMP File Format |
| .dng | yolo predict source=image.dng | Adobe DNG |
| .jpeg | yolo predict source=image.jpeg | JPEG |
| .jpg | yolo predict source=image.jpg | JPEG |
| .mpo | yolo predict source=image.mpo | Multi Picture Object |
| .png | yolo predict source=image.png | Portable Network Graphics |
| .tif | yolo predict source=image.tif | Tag Image File Format |
| .tiff | yolo predict source=image.tiff | Tag Image File Format |
| .webp | yolo predict source=image.webp | WebP |
| .pfm | yolo predict source=image.pfm | Portable FloatMap |

* TIFF files are common in satellite imagery such as Sentinel-2. One may prefer to keep the TIFF format rather than convert it to PNG/JPG, because TIFF can store georeferencing information.

* `load_yolo_annotations` uses `cv2.imread` to read the image files, and [OpenCV seems to support](https://docs.opencv.org/4.x/d4/da8/group__imgcodecs.html#gacbaa02cffc4ec2422dfa2e24412a99e2) many of the Ultralytics-supported formats.

https://github.com/roboflow/supervision/blob/1860fdb0a4e21edc5fa03d973e9f31c055bdcf4f/supervision/dataset/formats/yolo.py#L170

### Proposals

* P1: We can expand the hardcoded list of allowed formats.

* P2: We may choose not to restrict the image format and let it fail later.
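Proposal P1 could look roughly like the following sketch, which filters file names against the suffixes from the Ultralytics table. `IMAGE_EXTENSIONS` and `list_image_paths` are hypothetical names, not supervision's current code. Whether `cv2.imread` can actually decode every listed format depends on the OpenCV build, so P2's "fail later" path would still matter.

```python
from pathlib import Path
from typing import Iterable, List

# Hypothetical allow-list for P1: the suffixes from the Ultralytics table.
IMAGE_EXTENSIONS = {
    ".bmp", ".dng", ".jpeg", ".jpg", ".mpo",
    ".png", ".tif", ".tiff", ".webp", ".pfm",
}


def list_image_paths(paths: Iterable[str]) -> List[str]:
    """Keep only paths whose suffix (case-insensitive) is an allowed image format."""
    return sorted(p for p in paths if Path(p).suffix.lower() in IMAGE_EXTENSIONS)


print(list_image_paths(["a.jpg", "b.TIF", "c.txt", "d.tiff"]))
# ['a.jpg', 'b.TIF', 'd.tiff']
```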

### Use case

* Anyone who wants to use formats other than `png`, `jpg`, and `jpeg` will be able to use this extension.

### Additional

_No response_

### Are you willing to submit a PR?

- [X] Yes I'd like to help by submitting a PR! | closed | 2024-09-28T15:04:57Z | 2025-01-19T13:02:38Z | https://github.com/roboflow/supervision/issues/1554 | [

"enhancement",

"hacktoberfest"

] | patel-zeel | 11 |

nl8590687/ASRT_SpeechRecognition | tensorflow | 254 | file_dict.py doesn't need to define two functions, does it? They seem to do the same thing, so wouldn't keeping only GetSymbolList_trash2() be cleaner? | closed | 2021-08-24T11:55:44Z | 2021-09-02T14:14:02Z | https://github.com/nl8590687/ASRT_SpeechRecognition/issues/254 | [] | DQ2020scut | 2 | |

seleniumbase/SeleniumBase | web-scraping | 2,251 | UC mode detected on PixelTest (Custom Script and Test Scripts) | Hello from YouTube. I've been working on a scraping bot that uses SeleniumBase, and I need a little assistance with it. When I check with pixelscan and other tools, my bot is detected. Even a simple script seems to get detected. Here is the Frankenstein script I mentioned. I have replaced the actual site with blahblahblah.com, but for reference, the basic concept is this:

**A Python script that collects card information for a trading card game, extracts that info into a DataFrame, holds it, then searches Google with that information. Once the Google search is complete, the bot navigates to a website to get price data for that card relative to the card's condition. Finally, it exports all of the information to an organized CSV file.**

You will probably find this code hilarious. I used many things that I now realize are built into SeleniumBase, so I probably didn't need some of them, such as `time` and maybe even BeautifulSoup (still learning a ton).

Anyway, here is the script. You can modify it to stay open at the end and navigate to pixelscan if you want to test, but you might know right away what is going on. It might be better to troubleshoot with a simple script, but I thought you might find this one interesting, and I would love feedback.

```python
import random
import time
from urllib.parse import quote_plus

import pandas as pd
from bs4 import BeautifulSoup
from seleniumbase import SB


# Function to extract data from a given URL
def extract_data_from_url(url, data_frame):
    with SB(uc=True, incognito=True) as sb:
        sb.get(url)
        time.sleep(random.uniform(5, 8))  # Random delay after opening the URL
        page_source = sb.get_page_source()

    soup = BeautifulSoup(page_source, "html.parser")
    data = {}
    label_selectors = {
        "Cert #": "div.certlookup-intro dl:nth-child(2) dd",
        "Card Name": "div.certlookup-intro dl:nth-child(3) dd",
        "Year": "div.certlookup-intro div:nth-child(4) dl:nth-child(2) dd",
        "Language": "div.certlookup-intro div:nth-child(4) dl:nth-child(3) dd",
        "Card Set": "div.certlookup-intro div:nth-child(5) dl:nth-child(1) dd",
        "Card Number": "div.certlookup-intro div:nth-child(5) dl:nth-child(2) dd",
        "Grade": "div.related-info.grades dl dd",
    }
    for label, selector in label_selectors.items():
        element = soup.select_one(selector)
        data[label] = element.text.strip() if element else "Data not found"
    data["Price"] = None  # Filled in later from blahblahblah.com

    # DataFrame._append is private pandas API; pd.concat is the public equivalent
    return pd.concat([data_frame, pd.DataFrame([data])], ignore_index=True)


# Function to get the price for a specific grade from the page source of blahblahblah123.com
def get_price_for_grade(page_source, grade):
    soup = BeautifulSoup(page_source, "html.parser")
    price_rows = soup.select("#full-prices table tr")
    for row in price_rows:
        cells = row.find_all("td")
        if len(cells) >= 2:  # Skip header rows and rows without a price cell
            grade_cell = cells[0].get_text(strip=True)
            price_cell = cells[1].get_text(strip=True)
            # Check for the special case of grade 10
            if grade == "10" and "PSA 10" in grade_cell:
                return price_cell
            elif grade_cell == f"Grade {grade}":
                return price_cell
    return "Price not found"


# Read a list of URLs from a file (e.g., "urls.txt")
with open("urls.txt", "r") as url_file:
    urls = url_file.read().splitlines()

# Create an empty DataFrame to store the data
data_frame = pd.DataFrame(columns=["Cert #", "Year", "Language", "Card Set", "Card Name", "Card Number", "Grade", "Price"])

# Fields used to build the Google query, in order
google_fields = ["Card Name", "Year", "Language", "Card Set", "Card Number"]

# Iterate through the list of URLs and extract data from each
for url in urls:
    print(f"Extracting data from URL: {url}")
    data_frame = extract_data_from_url(url, data_frame)

    # Construct the Google URL; quote_plus escapes spaces and special characters
    query = " ".join(str(data_frame.iloc[-1][field]) for field in google_fields)
    google_url = "https://www.google.com/search?q=" + quote_plus(query)

    # Open the Google URL and click on the first hyperlink that goes to www.blahblahblah.com
    with SB(uc=True, incognito=True) as sb:
        sb.get(google_url)
        time.sleep(random.uniform(5, 8))  # Random delay before searching
        sb.wait_for_element('a[href*="www.blahblahblah.com"]')
        first_blahblahblah_link = sb.find_element('a[href*="www.blahblahblah.com"]')
        first_blahblahblah_link.click()
        time.sleep(random.uniform(5, 8))  # Random delay after clicking
        sb.wait_for_ready_state_complete()
        blahblahblah_page_source = sb.get_page_source()

    # Get the price for the grade from the page source of blahblahblah.com
    grade = data_frame.iloc[-1]["Grade"]
    # Special handling for grade 10
    grade = "10" if "Gem Mint 10" in grade else grade
    price = get_price_for_grade(blahblahblah_page_source, grade)
    data_frame.at[data_frame.index[-1], "Price"] = price

    time.sleep(random.uniform(5, 8))  # Random delay after processing each URL

# Export the DataFrame to a CSV file
data_frame.to_csv("collected_data.csv", index=False)

# To keep the browser open for inspection, call sb.sleep(999) inside one of
# the `with SB(...)` blocks above; sb is already closed at this point.
```

So I tried a simpler script, and it still cannot pass the detection checks:

```python
from seleniumbase import SB


# Create a function to navigate to the specified URL and keep the browser open
def navigate_to_url_and_keep_open(url):
    with SB(uc=True, incognito=True) as sb:
        # Open the specified URL
        sb.open(url)
        sb.sleep(999)


# Specify the URL you want to navigate to (including the scheme)
url = "https://www.pixelscan.net"

# Navigate to the specified URL and keep the browser open
navigate_to_url_and_keep_open(url)
```

I also tried `sb.get` in the script above, with no luck. I have confirmed I am using the latest SeleniumBase.

Any ideas, thoughts, and recommendations are very welcome. I'm very new to Python and scripting in general, but I have been spending a lot of my spare time digging in.

Appreciate your hard work and dedication! | closed | 2023-11-08T04:43:17Z | 2023-11-09T18:00:25Z | https://github.com/seleniumbase/SeleniumBase/issues/2251 | [

"question",

"UC Mode / CDP Mode"

] | anthonyg45157 | 2 |

pytest-dev/pytest-mock | pytest | 61 | Fix tests for pytest 3 | Hey!

Many tests are failing when running with pytest 3.0.1:

```

_____________________________ TestMockerStub.test_failure_message_with_no_name _____________________________

self = <test_pytest_mock.TestMockerStub instance at 0x7f0b7f55c758>

mocker = <pytest_mock.MockFixture object at 0x7f0b7f51add0>

def test_failure_message_with_no_name(self, mocker):

> self.__test_failure_message(mocker)

test_pytest_mock.py:182:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

self = <test_pytest_mock.TestMockerStub instance at 0x7f0b7f55c758>

mocker = <pytest_mock.MockFixture object at 0x7f0b7f51add0>, kwargs = {}, expected_name = 'mock'

expected_message = 'Expected call: mock()\nNot called'

stub = <MagicMock spec='function' id='139687357426384'>, exc_info = <ExceptionInfo AssertionError tblen=3>

@py_assert1 = AssertionError('Expected call: mock()\nNot called',)

def __test_failure_message(self, mocker, **kwargs):

expected_name = kwargs.get('name') or 'mock'

expected_message = 'Expected call: {0}()\nNot called'.format(expected_name)

stub = mocker.stub(**kwargs)

with pytest.raises(AssertionError) as exc_info:

stub.assert_called_with()

> assert exc_info.value.msg == expected_message

E AttributeError: 'exceptions.AssertionError' object has no attribute 'msg'

test_pytest_mock.py:179: AttributeError

```
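The `AttributeError` comes from the test code rather than from the mock: the builtin `AssertionError` has no `msg` attribute, so the message has to be read with `str(exc_info.value)` (or `exc_info.value.args[0]`). A stdlib-only sketch of the same check with `unittest.mock`; the helper name is hypothetical. The message wording differs between mock/Python versions (newer ones say `expected call not found.` and `Actual: not called.`), so the sketch matches loosely:

```python
from unittest import mock


def never_called_message(stub) -> str:
    """Return the text of the AssertionError raised for a stub that was never called."""
    try:
        stub.assert_called_with()
    except AssertionError as exc:
        # The builtin AssertionError has no `.msg`; the text is in str(exc) / exc.args[0].
        return str(exc)
    raise RuntimeError("expected assert_called_with() to fail")


stub = mock.MagicMock(name="mock")
message = never_called_message(stub)
# Wording varies across versions, so match loosely instead of comparing equality.
assert "mock()" in message and "not called" in message.lower()
```

Inside the pytest test the equivalent line would be `assert "not called" in str(exc_info.value).lower()`.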

| closed | 2016-09-03T10:42:23Z | 2016-09-15T01:48:48Z | https://github.com/pytest-dev/pytest-mock/issues/61 | [

"bug"

] | vincentbernat | 3 |

iperov/DeepFaceLab | deep-learning | 5,405 | Not able to detect GPU - Build_10_09_2021 | ## Expected behavior

DeepFaceLab_NVIDIA_RTX3000_series_build_10_09_2021 should detect the installed GPU.

## Actual behavior

DeepFaceLab_NVIDIA_RTX3000_series_build_10_09_2021 does not detect the GPU, **while the older build (build_09_06_2021) does detect it.**

## Steps to reproduce

GPU: 3060 RTX 12 GB

Windows 10 Home

Nvidia Studio Driver

| closed | 2021-10-10T07:49:45Z | 2021-10-11T14:44:54Z | https://github.com/iperov/DeepFaceLab/issues/5405 | [] | exploreTech32 | 6 |

microsoft/nni | data-science | 5,653 | Error: /lib64/libstdc++.so.6: version `CXXABI_1.3.8' not found | **Describe the issue**:

Hello, I encountered the following error while using NNI 2.10.1:

```

[2023-08-01 14:07:41] Creating experiment, Experiment ID: aj7wd2ey

[2023-08-01 14:07:41] Starting web server...

node:internal/modules/cjs/loader:1187

return process.dlopen(module, path.toNamespacedPath(filename));

^

Error: /lib64/libstdc++.so.6: version `CXXABI_1.3.8' not found (required by /home/zzdx/.local/lib/python3.10/site-packages/nni_node/node_modules/sqlite3/lib/binding/napi-v6-linux-glibc-x64/node_sqlite3.node)

at Object.Module._extensions..node (node:internal/modules/cjs/loader:1187:18)

at Module.load (node:internal/modules/cjs/loader:981:32)

at Function.Module._load (node:internal/modules/cjs/loader:822:12)

at Module.require (node:internal/modules/cjs/loader:1005:19)

at require (node:internal/modules/cjs/helpers:102:18)

at Object.<anonymous> (/home/zzdx/.local/lib/python3.10/site-packages/nni_node/node_modules/sqlite3/lib/sqlite3-binding.js:4:17)

at Module._compile (node:internal/modules/cjs/loader:1103:14)

at Object.Module._extensions..js (node:internal/modules/cjs/loader:1157:10)

at Module.load (node:internal/modules/cjs/loader:981:32)

at Function.Module._load (node:internal/modules/cjs/loader:822:12) {

code: 'ERR_DLOPEN_FAILED'

}

Thrown at:

at Module._extensions..node (node:internal/modules/cjs/loader:1187:18)

at Module.load (node:internal/modules/cjs/loader:981:32)

at Module._load (node:internal/modules/cjs/loader:822:12)

at Module.require (node:internal/modules/cjs/loader:1005:19)

at require (node:internal/modules/cjs/helpers:102:18)

at /home/zzdx/.local/lib/python3.10/site-packages/nni_node/node_modules/sqlite3/lib/sqlite3-binding.js:4:17

at Module._compile (node:internal/modules/cjs/loader:1103:14)

at Module._extensions..js (node:internal/modules/cjs/loader:1157:10)

at Module.load (node:internal/modules/cjs/loader:981:32)

at Module._load (node:internal/modules/cjs/loader:822:12)

[2023-08-01 14:07:42] WARNING: Timeout, retry...

[2023-08-01 14:07:43] WARNING: Timeout, retry...

[2023-08-01 14:07:44] ERROR: Create experiment failed

Traceback (most recent call last):

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connection.py", line 203, in _new_conn

sock = connection.create_connection(

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/util/connection.py", line 85, in create_connection

raise err

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/util/connection.py", line 73, in create_connection

sock.connect(sa)

ConnectionRefusedError: [Errno 111] Connection refused

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 790, in urlopen

response = self._make_request(

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 496, in _make_request

conn.request(

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connection.py", line 395, in request

self.endheaders()

File "/usr/local/python3/lib/python3.10/http/client.py", line 1278, in endheaders

self._send_output(message_body, encode_chunked=encode_chunked)

File "/usr/local/python3/lib/python3.10/http/client.py", line 1038, in _send_output

self.send(msg)

File "/usr/local/python3/lib/python3.10/http/client.py", line 976, in send

self.connect()

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connection.py", line 243, in connect

self.sock = self._new_conn()

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connection.py", line 218, in _new_conn

raise NewConnectionError(

urllib3.exceptions.NewConnectionError: <urllib3.connection.HTTPConnection object at 0x7f2e65bc04c0>: Failed to establish a new connection: [Errno 111] Connection refused

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/home/zzdx/.local/lib/python3.10/site-packages/requests/adapters.py", line 486, in send

resp = conn.urlopen(

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/connectionpool.py", line 844, in urlopen

retries = retries.increment(

File "/home/zzdx/.local/lib/python3.10/site-packages/urllib3/util/retry.py", line 515, in increment

raise MaxRetryError(_pool, url, reason) from reason # type: ignore[arg-type]

urllib3.exceptions.MaxRetryError: HTTPConnectionPool(host='localhost', port=8080): Max retries exceeded with url: /api/v1/nni/check-status (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x7f2e65bc04c0>: Failed to establish a new connection: [Errno 111] Connection refused'))

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/home/zzdx/search_api/area_api/3_5_1126_1623_711_715-30min_20230801140738/main.py", line 51, in <module>

experiment.run(8080)

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/experiment.py", line 180, in run

self.start(port, debug)

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/experiment.py", line 135, in start

self._start_impl(port, debug, run_mode, None, [])

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/experiment.py", line 103, in _start_impl

self._proc = launcher.start_experiment(self._action, self.id, config, port, debug, run_mode,

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/launcher.py", line 148, in start_experiment

raise e

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/launcher.py", line 126, in start_experiment

_check_rest_server(port, url_prefix=url_prefix)

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/launcher.py", line 196, in _check_rest_server

rest.get(port, '/check-status', url_prefix)

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/rest.py", line 43, in get

return request('get', port, api, prefix=prefix)

File "/home/zzdx/.local/lib/python3.10/site-packages/nni/experiment/rest.py", line 31, in request

resp = requests.request(method, url, timeout=timeout)

File "/home/zzdx/.local/lib/python3.10/site-packages/requests/api.py", line 59, in request

return session.request(method=method, url=url, **kwargs)

File "/home/zzdx/.local/lib/python3.10/site-packages/requests/sessions.py", line 589, in request

resp = self.send(prep, **send_kwargs)

File "/home/zzdx/.local/lib/python3.10/site-packages/requests/sessions.py", line 703, in send

r = adapter.send(request, **kwargs)

File "/home/zzdx/.local/lib/python3.10/site-packages/requests/adapters.py", line 519, in send

raise ConnectionError(e, request=request)

requests.exceptions.ConnectionError: HTTPConnectionPool(host='localhost', port=8080): Max retries exceeded with url: /api/v1/nni/check-status (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x7f2e65bc04c0>: Failed to establish a new connection: [Errno 111] Connection refused'))

[2023-08-01 14:07:44] Stopping experiment, please wait...

[2023-08-01 14:07:44] Experiment stopped

```

**Environment**:

- NNI version: 2.10.1

- Training service (local|remote|pai|aml|etc): remote

- Client OS: Windows 11

- Server OS (for remote mode only): Centos 7.9

- Python version: 3.10

- PyTorch/TensorFlow version: None

- Is conda/virtualenv/venv used?: no

- Is running in Docker?: no

**Log message**:

- nnimanager.log:

```

[2023-08-01 14:38:48] INFO (nni.experiment) Creating experiment, Experiment ID: s1dz03t2

[2023-08-01 14:38:48] INFO (nni.experiment) Starting web server...

[2023-08-01 14:38:49] WARNING (nni.experiment) Timeout, retry...

[2023-08-01 14:38:50] WARNING (nni.experiment) Timeout, retry...

[2023-08-01 14:38:51] ERROR (nni.experiment) Create experiment failed

[2023-08-01 14:38:51] INFO (nni.experiment) Stopping experiment, please wait...

```

<!--

Where can you find the log files:

LOG: https://github.com/microsoft/nni/blob/master/docs/en_US/Tutorial/HowToDebug.md#experiment-root-director

STDOUT/STDERR: https://nni.readthedocs.io/en/stable/reference/nnictl.html#nnictl-log-stdout

-->
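The first error is the root cause: the web server never starts because the prebuilt `node_sqlite3.node` bundled in `nni_node` needs the `CXXABI_1.3.8` symbol version, which `/lib64/libstdc++.so.6` on this server does not provide; the later `Connection refused` errors follow from that. To my knowledge, CentOS 7 ships the libstdc++ from GCC 4.8, which stops at `CXXABI_1.3.7`. The usual check is `strings /lib64/libstdc++.so.6 | grep CXXABI`; below is a stdlib-only Python equivalent (the helper names are hypothetical):

```python
import re

# Matches version tags such as CXXABI_1.3 or CXXABI_1.3.7 (but not CXXABI_TM_1).
CXXABI_TAG = re.compile(rb"CXXABI_(\d+(?:\.\d+)+)")


def cxxabi_versions(library_bytes: bytes) -> list:
    """Return the sorted CXXABI version tags (as int tuples) found in a libstdc++ binary."""
    tags = {m.group(1).decode() for m in CXXABI_TAG.finditer(library_bytes)}
    return sorted(tuple(int(part) for part in tag.split(".")) for tag in tags)


def provides(library_bytes: bytes, required: str = "1.3.8") -> bool:
    """True if the library exports the exact CXXABI version tag `required`."""
    wanted = tuple(int(part) for part in required.split("."))
    return wanted in cxxabi_versions(library_bytes)
```

On the server this would be used as `data = open("/lib64/libstdc++.so.6", "rb").read()` followed by `provides(data)`. If 1.3.8 is missing, a newer libstdc++ (for example from a newer GCC toolchain) has to be made visible to Node, e.g. via `LD_LIBRARY_PATH`.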

| closed | 2023-08-01T06:42:16Z | 2023-08-01T09:07:31Z | https://github.com/microsoft/nni/issues/5653 | [] | yifan-dadada | 0 |

zappa/Zappa | flask | 1,064 | Lambda client read timeout should match maximum Lambda execution time | <!--- Provide a general summary of the issue in the Title above -->

## Context

The boto3 Lambda client used by the Zappa CLI has its read timeout set to [5 minutes](https://github.com/zappa/Zappa/blob/master/zappa/core.py#L340), while the maximum Lambda function execution time is now [15 minutes](https://aws.amazon.com/about-aws/whats-new/2018/10/aws-lambda-supports-functions-that-can-run-up-to-15-minutes). This means that `zappa invoke` or `zappa manage` could time out before the actual function execution completes. I encountered this recently with a long-running Django management command, using Zappa 0.54 on Python 3.8.

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

<!--- Also, please make sure that you are running Zappa _from a virtual environment_ and are using Python 3.6/3.7/3.8 -->

## Expected Behavior

<!--- Tell us what should happen -->

The Lambda client used by the CLI should not time out before the execution completes.

## Actual Behavior

<!--- Tell us what happens instead -->

The client times out after 5 minutes regardless of the configured execution time, which can be up to 15 minutes.

## Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the bug -->

The timeout should be updated to the maximum Lambda function execution time.

## Steps to Reproduce

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug include code to reproduce, if relevant -->

1. Set up a Lambda function with a timeout greater than 5 minutes.

2. Deploy some code that takes more than 5 minutes to run.

3. Invoke the long-running code using `zappa invoke` or `zappa manage`.

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Zappa version used: 0.54

* Operating System and Python version: macOS 11.6.1, Python 3.8.11

* The output of `pip freeze`: `zappa==0.54.0`

| closed | 2021-11-03T21:54:29Z | 2021-11-05T17:40:25Z | https://github.com/zappa/Zappa/issues/1064 | [] | rolandcrosby-check | 0 |

koxudaxi/fastapi-code-generator | pydantic | 323 | Specs with vendor extensions might lead to invalid Python code | Users can add additional information with `x-` fields (vendor extensions), but Python does not accept dashes in identifiers.

Here are some common vendor extensions documented: https://github.com/Redocly/redoc/blob/main/docs/redoc-vendor-extensions.md#x-logo

Here is an example spec:

```yaml

openapi: 3.0.0

info:

title: Example

version: 1.0.0

x-audience: company-internal

x-logo:

url: https://www.example.com/company-logo.png

```

The generated code looks like this:

```python

app = FastAPI(

title='Example',

version='1.0.0',

x - audience='company-internal',

x - logo={'url': 'https://www.example.com/company-logo.png'},

)

```

I fixed it this way:

```patch

--- fastapi_code_generator/template/main.jinja2.orig 2023-02-13 16:11:00.677867572 +0100

+++ fastapi_code_generator/template/main.jinja2 2023-02-13 16:37:28.944897684 +0100

@@ -8,7 +8,9 @@

{% if info %}

{% for key,value in info.items() %}

{% set info_value= value.__repr__() %}

+ {%- if not key.startswith("x-") -%}

{{ key }} = {{info_value}},

+ {%- endif -%}

{% endfor %}

{% endif %}

)

```
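The same filtering can be expressed in plain Python. This sketch (the function names are hypothetical, not part of fastapi-code-generator) keeps only `info` keys that are valid Python identifiers and not keywords, which covers `x-` extensions as well as any other dashed key, and checks the rendered call with `compile()`:

```python
import keyword


def constructor_kwargs(info: dict) -> dict:
    """Keep only `info` entries whose keys can be written as Python keyword arguments."""
    return {
        key: value
        for key, value in info.items()
        if key.isidentifier() and not keyword.iskeyword(key)
    }


def render_app(info: dict) -> str:
    """Render the `app = FastAPI(...)` call that the template would produce."""
    kwargs = constructor_kwargs(info)
    if not kwargs:
        return "app = FastAPI()\n"
    lines = "".join(f"    {key}={value!r},\n" for key, value in kwargs.items())
    return f"app = FastAPI(\n{lines})\n"


info = {
    "title": "Example",
    "version": "1.0.0",
    "x-audience": "company-internal",
    "x-logo": {"url": "https://www.example.com/company-logo.png"},
}
source = render_app(info)
compile(source, "<generated main.py>", "exec")  # raises SyntaxError if the output is invalid
print(source)
```

Using `isidentifier()` instead of a prefix test means a future dashed key outside the `x-` namespace is dropped too, rather than producing broken code.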

First I tried to add all fields as a dict passed as kwargs:

```python

app = FastAPI(**{

'title': 'Example',

'version': '1.0.0',

'x-audience': 'company-internal',

'x-logo': {'url': 'https://www.example.com/company-logo.png'},

})

```

But ignoring these fields seems sufficient because FastAPI ignores them anyway. | open | 2023-02-13T15:51:05Z | 2023-02-13T15:51:05Z | https://github.com/koxudaxi/fastapi-code-generator/issues/323 | [] | hajs | 0 |

keras-team/keras | data-science | 20,335 | Floating point exception (core dumped) with onednn opt on tensorflow backend | As shown in this [colab](https://colab.research.google.com/drive/1XjoAtDP4SC2qyLWslW8qWzqQusn9eDOu?usp=sharing), the whole kernel (not just the program) crashes if the oneDNN optimization (OneDNN OPT) is enabled and the output tensor shape contains a zero dimension.

As discussed in tensorflow/tensorflow#77131, and as also shown in the colab above, the error disappeared after downgrading from Keras 3.0 to Keras 2.0.

Therefore, I think the bug was introduced in the update from Keras 2.0 to Keras 3.0.

| closed | 2024-10-09T08:41:45Z | 2024-11-14T02:01:52Z | https://github.com/keras-team/keras/issues/20335 | [

"type:bug/performance",

"stat:awaiting response from contributor",

"stale",

"backend:tensorflow"

] | Shuo-Sun20 | 4 |

flasgger/flasgger | flask | 54 | cannot access the website using my host ip | Hi, flasgger made creating documentation for my API much easier, but I cannot access the documentation unless the Flask API is served on port 5000. I also cannot access the documentation through my IP (http://10.0.5.40:5000/apidocs/index.html); it only works through localhost. Any suggestions? | closed | 2017-03-23T12:39:21Z | 2017-03-23T19:00:46Z | https://github.com/flasgger/flasgger/issues/54 | [] | coolguy456 | 2 |

lux-org/lux | pandas | 144 | Speed up test by using shared global variable | Use a pytest fixture as a shared global variable so that dataframes are shared across tests, instead of loading the test dataset again in every test. For example:
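A minimal sketch of the idea, assuming pandas and pytest: each CSV is parsed once per test process through a cached loader, and every test receives a copy, so in-place edits in one test cannot leak into another. The dataset path and fixture name are hypothetical; a `scope="session"` fixture returning the frame directly would also work if no test mutates it.

```python
import functools

import pandas as pd
import pytest

# Hypothetical location of a test dataset; adjust to the repository layout.
CAR_CSV = "lux/data/car.csv"


@functools.lru_cache(maxsize=None)
def load_dataset(path: str) -> pd.DataFrame:
    """Parse each CSV only once per test process; later calls return the cached frame."""
    return pd.read_csv(path)


@pytest.fixture
def car_df() -> pd.DataFrame:
    """Hand every test its own copy of the cached frame."""
    return load_dataset(CAR_CSV).copy()


# Usage (e.g. with the code above placed in conftest.py):
# def test_something(car_df):
#     assert not car_df.empty
```

Copying a frame in memory is much cheaper than re-parsing the CSV, so this keeps most of the speed-up while preserving test isolation.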

This might also help resolve #97. | closed | 2020-11-17T14:41:00Z | 2020-11-19T12:36:00Z | https://github.com/lux-org/lux/issues/144 | [

"easy",

"test"

] | dorisjlee | 1 |

milesmcc/shynet | django | 263 | [Discussion] Support Docker Secrets | I recently discovered that passing secrets to Docker containers through environment variables is discouraged, which is why Docker does not support mounting secrets into environment variables out of the box:

> Developers often rely on environment variables to store sensitive data, which is okay for some scenarios but not recommended for Docker containers. Environment variables are even less secure than files. They are vulnerable in more ways, such as:

> * [Linked containers](https://docs.docker.com/network/links/)

> * The [docker inspect](https://stackoverflow.com/questions/30342796/how-to-get-env-variable-when-doing-docker-inspect) command

> * [Child processes](https://devblogs.microsoft.com/oldnewthing/20150915-00/?p=91591)

> * Event log files

(https://snyk.io/blog/keeping-docker-secrets-secure/)

For a while I've been using a utility I wrote for my Django projects that reads Docker secrets with a fallback to environment variables, and even supports custom environ objects:

https://gist.github.com/sergioisidoro/7972229bb5826c25f12e7a406f11e7cd
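For illustration, here is a minimal sketch of such a helper. It is hypothetical, not the code from the gist above, and assumes secrets are mounted at Docker's default `/run/secrets/<name>` location:

```python
import os
from pathlib import Path
from typing import Mapping, Optional

# Docker mounts each secret as a file under this directory by default.
DEFAULT_SECRETS_DIR = Path("/run/secrets")


def get_secret(
    name: str,
    default: Optional[str] = None,
    environ: Mapping[str, str] = os.environ,
    secrets_dir: Path = DEFAULT_SECRETS_DIR,
) -> Optional[str]:
    """Return the Docker secret `name` if mounted, else the env variable `name`, else `default`."""
    secret_file = Path(secrets_dir) / name
    if secret_file.is_file():
        # Secret files often end with a newline; drop it but keep other whitespace.
        return secret_file.read_text().rstrip("\n")
    return environ.get(name, default)


# Example usage in a Django settings module (setting names are illustrative):
# SECRET_KEY = get_secret("DJANGO_SECRET_KEY")
# DATABASES["default"]["PASSWORD"] = get_secret("DB_PASSWORD", default="")
```

Because the environment fallback is kept, existing `.env`-based deployments would keep working unchanged.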

Would you be willing to accept a PR that uses this wrapper for the most sensitive settings (Django secret key, DB password, etc.)? | open | 2023-04-03T17:02:39Z | 2023-04-04T07:39:09Z | https://github.com/milesmcc/shynet/issues/263 | [] | sergioisidoro | 2 |

horovod/horovod | deep-learning | 3,115 | [MacOS] Race condition makes parallel tests hang on macOS | Sometimes our tests in `test/parallel` hang on macOS, e.g. [here](https://github.com/horovod/horovod/runs/3338155166?check_suite_focus=true):

```
[1,0]<stderr>:Missing ranks:
[1,0]<stderr>:0: [allgather.duplicate_name, allgather.noname.1362]
[1,0]<stderr>:1: [allgather.noname.1361]
[1,0]<stderr>:[2021-08-16 13:29:51.206828: W /private/var/folders/24/8k48jl6d249_n_qfxwsl6xvm0000gn/T/pip-req-build-e3uh2f38/horovod/common/stall_inspector.cc:107] One or more tensors were submitted to be reduced, gathered or broadcasted by subset of ranks and are waiting for remainder of ranks for more than 60 seconds. This may indicate that different ranks are trying to submit different tensors or that only subset of ranks is submitting tensors, which will cause deadlock.
```

This has been mitigated by retrying these tests but should be investigated, understood and fixed systematically. | open | 2021-08-17T14:01:50Z | 2021-08-17T14:03:28Z | https://github.com/horovod/horovod/issues/3115 | [

"bug"

] | EnricoMi | 0 |

marimo-team/marimo | data-visualization | 3,402 | Don't notify on save | When pressing the save button, a notification pops up.

Unless saving fails, I don't see a need for a notification, especially since it blocks the "run all stale cells" button.

| closed | 2025-01-12T09:24:05Z | 2025-01-14T04:54:39Z | https://github.com/marimo-team/marimo/issues/3402 | [] | Hofer-Julian | 1 |

ymcui/Chinese-LLaMA-Alpaca | nlp | 413 | Trained LoRA model cannot be loaded | ```

HFValidationError: Repo id must be in the form 'repo_name' or 'namespace/repo_name': './out/lora/checkpoint-70000'. Use `repo_type` argument if needed.

```
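A possible explanation, hedged because it depends on the PEFT version: `PeftModel.from_pretrained` treats the argument as a local adapter only if the directory contains `adapter_config.json`. Otherwise it falls back to the Hugging Face Hub, and a string like `./out/lora/checkpoint-70000` fails repo-id validation, which produces exactly this `HFValidationError`. Trainer checkpoint folders do not always contain the adapter files, and relative paths depend on the working directory. A stdlib-only check; the function name and the weight file names are assumptions:

```python
from pathlib import Path

# File names PEFT is assumed to look for in an adapter directory.
ADAPTER_CONFIG = "adapter_config.json"
ADAPTER_WEIGHTS = ("adapter_model.bin", "adapter_model.safetensors")


def check_lora_dir(path: str) -> list:
    """Return problems that would stop `path` from loading as a local LoRA adapter."""
    directory = Path(path)
    if not directory.is_dir():
        return [f"{directory} is not a directory (relative paths resolve against {Path.cwd()})"]
    problems = []
    if not (directory / ADAPTER_CONFIG).is_file():
        problems.append(f"missing {ADAPTER_CONFIG}")
    if not any((directory / name).is_file() for name in ADAPTER_WEIGHTS):
        problems.append("missing adapter weights (" + " or ".join(ADAPTER_WEIGHTS) + ")")
    return problems
```

An empty list means the directory at least has the layout a local adapter load expects.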

I set a custom output_dir and trained with the training script that ships with the repo. After saving, the LoRA cannot be loaded again. | closed | 2023-05-23T08:29:03Z | 2023-06-05T22:02:19Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/413 | [

"stale"

] | lucasjinreal | 5 |

pydata/xarray | pandas | 9,582 | Disable methods implemented by map_over_subtree and inheritance from Dataset | ### What is your issue?

Today we discussed how there are a bunch of methods for DataTree that are implemented using the somewhat sketchy approach of taking the equivalent method from `Dataset`, then applying it using `map_over_subtree`. Because there are so many of these methods, and they are ultimately just syntactic sugar that saves the user about 3 lines of code each, these were originally implemented in `xarray-contrib/datatree` without tests.

We decided that it would be safer to disable these methods for now, and then add them back in as we double-check that they actually work and write unit tests for them. The reasoning is that forcing users to write out their loops over the tree in early versions would be less painful than having our new inheritance model subtly break a method without anyone realizing.

We will try to get some very important methods into the first release (particularly `isel`/`sel` and arithmetic).

The code with the lists of methods to be disabled is here

https://github.com/pydata/xarray/blob/main/xarray/core/datatree_ops.py

cc @shoyer, @flamingbear, @kmuehlbauer, @owenlittlejohns , @castelao, @keewis | closed | 2024-10-04T21:53:19Z | 2024-10-07T11:42:01Z | https://github.com/pydata/xarray/issues/9582 | [

"topic-DataTree"

] | TomNicholas | 0 |

FujiwaraChoki/MoneyPrinterV2 | automation | 100 | about to crashout over this tweet button , cant get the PR fix to work either . |

ℹ️ => Fetching songs...

============ OPTIONS ============

1. YouTube Shorts Automation

2. Twitter Bot

3. Affiliate Marketing

4. Outreach

5. Quit

=================================

Select an option: 2

ℹ️ Starting Twitter Bot...

+----+--------------------------------------+----------+-------------------+

| ID | UUID | Nickname | Account Topic |

+----+--------------------------------------+----------+-------------------+

| 1 | 4568db8e-8f05-4238-96d1-f0af9ebaff61 | rtts | music pop culture |

+----+--------------------------------------+----------+-------------------+

❓ Select an account to start: 1

============ OPTIONS ============

1. Post something

2. Reply to something

3. Show all Posts

4. Setup CRON Job

5. Quit

=================================

❓ Select an option: 1

ℹ️ Generating a post...

ℹ️ Length of post: 269

ℹ️ Generating a post...

ℹ️ Length of post: 225

=> Posting to Twitter: 🎶✨ The resurgence of 90s pop i...

Traceback (most recent call last):

File "/Users/acetwotimes/MoneyPrinterV2/src/classes/Twitter.py", line 83, in post

bot.find_element(By.XPATH, "//a[@data-testid='SideNav_NewTweet_Button']").click()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/anaconda3/lib/python3.11/site-packages/selenium/webdriver/remote/webdriver.py", line 888, in find_element

return self.execute(Command.FIND_ELEMENT, {"using": by, "value": value})["value"]

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/anaconda3/lib/python3.11/site-packages/selenium/webdriver/remote/webdriver.py", line 429, in execute

self.error_handler.check_response(response)

File "/usr/local/anaconda3/lib/python3.11/site-packages/selenium/webdriver/remote/errorhandler.py", line 232, in check_response

raise exception_class(message, screen, stacktrace)

selenium.common.exceptions.NoSuchElementException: Message: Unable to locate element: //a[@data-testid='SideNav_NewTweet_Button']; For documentation on this error, please visit: https://www.selenium.dev/documentation/webdriver/troubleshooting/errors#no-such-element-exception

Stacktrace:

RemoteError@chrome://remote/content/shared/RemoteError.sys.mjs:8:8

WebDriverError@chrome://remote/content/shared/webdriver/Errors.sys.mjs:197:5

NoSuchElementError@chrome://remote/content/shared/webdriver/Errors.sys.mjs:527:5

dom.find/</<@chrome://remote/content/shared/DOM.sys.mjs:136:16

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/acetwotimes/MoneyPrinterV2/src/main.py", line 438, in <module>

main()

File "/Users/acetwotimes/MoneyPrinterV2/src/main.py", line 277, in main

twitter.post()

File "/Users/acetwotimes/MoneyPrinterV2/src/classes/Twitter.py", line 86, in post

bot.find_element(By.XPATH, "//a[@data-testid='SideNav_NewTweet_Button']").click()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/anaconda3/lib/python3.11/site-packages/selenium/webdriver/remote/webdriver.py", line 888, in find_element

return self.execute(Command.FIND_ELEMENT, {"using": by, "value": value})["value"]

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/anaconda3/lib/python3.11/site-packages/selenium/webdriver/remote/webdriver.py", line 429, in execute

self.error_handler.check_response(response)

File "/usr/local/anaconda3/lib/python3.11/site-packages/selenium/webdriver/remote/errorhandler.py", line 232, in check_response

raise exception_class(message, screen, stacktrace)

selenium.common.exceptions.NoSuchElementException: Message: Unable to locate element: //a[@data-testid='SideNav_NewTweet_Button']; For documentation on this error, please visit: https://www.selenium.dev/documentation/webdriver/troubleshooting/errors#no-such-element-exception

Stacktrace:

RemoteError@chrome://remote/content/shared/RemoteError.sys.mjs:8:8

WebDriverError@chrome://remote/content/shared/webdriver/Errors.sys.mjs:197:5

NoSuchElementError@chrome://remote/content/shared/webdriver/Errors.sys.mjs:527:5

dom.find/</<@chrome://remote/content/shared/DOM.sys.mjs:136:16

##########################################################################

❓ Select an option: 3

+----+----------------------+-------------------------------------------------------------------+

| ID | Date | Content |

+----+----------------------+-------------------------------------------------------------------+

| 1 | 02/16/2025, 03:04:45 | 🎶✨ The resurgence of 90s pop icons in today's music scene is... |

+----+----------------------+-------------------------------------------------------------------+

============ OPTIONS ============

1. Post something

2. Reply to something

3. Show all Posts

4. Setup CRON Job

5. Quit

=================================

❓ Select an option: 1

ℹ️ Generating a post...

ℹ️ Length of post: 208

=> Preparing to post on Twitter: 🎶✨ The resurgence of 90s pop i...

Failed to find the text box element.

Tweet content (printed to terminal):

🎶✨ The resurgence of 90s pop icons in today's music scene is a nostalgic treat for fans! From remixes to surprise collaborations, it's a reminder that great music never truly fades away. #90sPop #MusicRevival

x#########################################################################

So I can generate posts, but the bot can't type into the text box or find the buttons. I even tried reordering the code so it types first, but that didn't work either. Any suggestions? I've been stuck on this part for a while, and I've tried every fix I've found so far, including using CSS selectors. I get nothing.

twitter.py code

import re

import g4f

import sys

import os

import json

from cache import *

from config import *

from status import *

from constants import *

from typing import List

from datetime import datetime

from termcolor import colored

from selenium_firefox import *

from selenium import webdriver

from selenium.common import exceptions

from selenium.webdriver.common import keys

from selenium.webdriver.common.by import By

from selenium.webdriver.firefox.service import Service

from selenium.webdriver.firefox.options import Options

from webdriver_manager.firefox import GeckoDriverManager

from selenium.webdriver.support.ui import WebDriverWait

from selenium.webdriver.support import expected_conditions as EC

# Import joblib for parallel processing

from joblib import Parallel, delayed

class Twitter:

"""

Class for the Bot that grows a Twitter account.

"""

def __init__(self, account_uuid: str, account_nickname: str, fp_profile_path: str, topic: str) -> None:

"""

Initializes the Twitter Bot.

Args:

account_uuid (str): The account UUID

account_nickname (str): The account nickname

fp_profile_path (str): The path to the Firefox profile

topic (str): The topic to generate posts about

Returns:

None

"""

self.account_uuid: str = account_uuid

self.account_nickname: str = account_nickname

self.fp_profile_path: str = fp_profile_path

self.topic: str = topic

# Initialize the Firefox options

self.options: Options = Options()

# Set headless state of browser if enabled

if get_headless():

self.options.add_argument("--headless")

# Set the Firefox profile path

self.options.set_preference("profile", fp_profile_path)

# Initialize the Firefox service

self.service: Service = Service(GeckoDriverManager().install())

# Initialize the browser

self.browser: webdriver.Firefox = webdriver.Firefox(service=self.service, options=self.options)

# Initialize the wait instance

self.wait: WebDriverWait = WebDriverWait(self.browser, 40)

def post(self, text: str = None) -> None:

"""

Starts the Twitter Bot.

Args:

text (str): The text to post

Returns:

None

"""

bot: webdriver.Firefox = self.browser

verbose: bool = get_verbose()

bot.get("https://x.com/compose/post")

post_content: str = self.generate_post()

now: datetime = datetime.now()

# Show a preview of the tweet content

print(colored(" => Preparing to post on Twitter:", "blue"), post_content[:30] + "...")

# Determine the tweet content (either generated or provided)

body = post_content if text is None else text

# Try to find the text box and type the content

text_box = None

selectors = [

(By.CSS_SELECTOR, "div.notranslate.public-DraftEditor-content[role='textbox']"),

(By.XPATH, "//div[@data-testid='tweetTextarea_0']//div[@role='textbox']")

]

for selector in selectors:

try:

text_box = self.wait.until(EC.element_to_be_clickable(selector))

text_box.click()

text_box.send_keys(body)

break

except exceptions.TimeoutException:

continue

# If the text box wasn't found, print error, cache the post, and exit gracefully

if text_box is None:

print(colored("Failed to find the text box element.", "red"))

print(colored("Tweet content (printed to terminal):", "yellow"))

print(body)

self.add_post({

"content": post_content,

"date": now.strftime("%m/%d/%Y, %H:%M:%S")

})

return

# Try to find the "Post" button and click it

tweet_button = None

selectors = [

(By.XPATH, "//span[contains(@class, 'css-1jxf684') and text()='Post']"),

(By.XPATH, "//*[text()='Post']")

]

for selector in selectors:

try:

tweet_button = self.wait.until(EC.element_to_be_clickable(selector))

tweet_button.click()

break

except exceptions.TimeoutException:

continue

# If the tweet button wasn't found, print error, cache the post, and exit gracefully

if tweet_button is None:

print(colored("Failed to find the tweet button element.", "red"))

print(colored("Tweet content (printed to terminal):", "yellow"))

print(body)

self.add_post({

"content": post_content,

"date": now.strftime("%m/%d/%Y, %H:%M:%S")

})

return

if verbose:

print(colored(" => Pressed [ENTER] Button on Twitter..", "blue"))

# Wait for confirmation that the tweet has been posted

self.wait.until(EC.presence_of_element_located((By.XPATH, "//div[@data-testid='tweetButton']")))

# Add the post to the cache

self.add_post({

"content": post_content,

"date": now.strftime("%m/%d/%Y, %H:%M:%S")

})

success("Posted to Twitter successfully!")

    def get_posts(self) -> List[dict]:
        """
        Gets the posts from the cache.

        Returns:
            posts (List[dict]): The posts
        """
        if not os.path.exists(get_twitter_cache_path()):
            # Create the cache file if it doesn't exist
            with open(get_twitter_cache_path(), 'w') as file:
                json.dump({"posts": []}, file, indent=4)

        with open(get_twitter_cache_path(), 'r') as file:
            parsed = json.load(file)

        # Find our account and its posts
        accounts = parsed.get("accounts", [])
        for account in accounts:
            if account["id"] == self.account_uuid:
                posts = account.get("posts", [])
                return posts

        return []

    def add_post(self, post: dict) -> None:
        """
        Adds a post to the cache.

        Args:
            post (dict): The post to add

        Returns:
            None
        """
        posts = self.get_posts()
        posts.append(post)

        with open(get_twitter_cache_path(), 'r') as file:
            previous_json = json.load(file)

        # Find our account and append the new post
        accounts = previous_json.get("accounts", [])
        for account in accounts:
            if account["id"] == self.account_uuid:
                account["posts"].append(post)

        # Commit changes to the cache
        with open(get_twitter_cache_path(), "w") as f:
            json.dump(previous_json, f, indent=4)

    def generate_post(self) -> str:
        """
        Generates a post for the Twitter account based on the topic.

        Returns:
            post (str): The post
        """
        if get_verbose():
            info("Generating a post...")

        completion = g4f.ChatCompletion.create(
            model=parse_model(get_model()),
            messages=[
                {
                    "role": "user",
                    "content": f"Generate a Twitter post about: {self.topic} in {get_twitter_language()}. "
                               "The limit is 2 sentences. Choose a specific sub-topic of the provided topic."
                }
            ]
        )

        if completion is None:
            error("Failed to generate a post. Please try again.")
            sys.exit(1)

        # Remove asterisks and quotes from the generated content
        completion = re.sub(r"\*", "", completion).replace("\"", "")

        if get_verbose():
            info(f"Length of post: {len(completion)}")

        # Instead of recursively generating a new post, trim it if it's too long.
        max_length = 260
        if len(completion) > max_length:
            # Cut at the last space before the limit so the post doesn't end mid-word.
            trimmed = completion[:max_length].rsplit(" ", 1)[0] + "..."
            if get_verbose():
                info(f"Trimmed post to {len(trimmed)} characters.")
            return trimmed

        return completion
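A side note on the cache helpers above: when the cache file does not exist yet, `get_posts` creates it as `{"posts": []}`, while both `get_posts` and `add_post` read posts from `accounts[*].posts`. If the account entry is missing (for example on a fresh cache file), `add_post` writes the file back unchanged and the post is silently lost. Below is a minimal, self-contained sketch of an account-keyed cache that keeps the creation and lookup keys consistent. The module-level functions, the `path`/`account_id` parameters, and the choice to create a missing account entry are hypothetical, not MoneyPrinterV2's API.

```python
import json
import os
import tempfile
from typing import List


def load_cache(path: str) -> dict:
    """Load the cache, creating it with the same top-level key that is read later."""
    if not os.path.exists(path):
        with open(path, "w") as f:
            json.dump({"accounts": []}, f, indent=4)
    with open(path) as f:
        return json.load(f)


def get_posts(path: str, account_id: str) -> List[dict]:
    """Return the posts stored for one account, or [] if the account is unknown."""
    for account in load_cache(path).get("accounts", []):
        if account["id"] == account_id:
            return account.get("posts", [])
    return []


def add_post(path: str, account_id: str, post: dict) -> None:
    """Append a post to an account, creating the account entry if it is missing."""
    data = load_cache(path)
    accounts = data.setdefault("accounts", [])
    for account in accounts:
        if account["id"] == account_id:
            account.setdefault("posts", []).append(post)
            break
    else:
        # Hypothetical design choice: create the account instead of dropping the post.
        accounts.append({"id": account_id, "posts": [post]})
    with open(path, "w") as f:
        json.dump(data, f, indent=4)


if __name__ == "__main__":
    cache = os.path.join(tempfile.mkdtemp(), "twitter.json")
    add_post(cache, "acc-1", {"content": "hello", "date": "01/01/2025, 00:00:00"})
    print(get_posts(cache, "acc-1"))
```

The `for ... else` branch runs only when no account matched, which is exactly the case where the original `add_post` would lose the post.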

| open | 2025-02-16T08:15:55Z | 2025-02-18T20:37:48Z | https://github.com/FujiwaraChoki/MoneyPrinterV2/issues/100 | [] | ragnorcap | 3 |

roboflow/supervision | machine-learning | 1,543 | [InferenceSlicer] Contradictory documentation regarding overlap_ratio_wh | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar bug report.

### Bug

The release notes for the latest update contain contradictory information about the `InferenceSlicer` object. Specifically, the `overlap_ratio_wh` parameter is described as a new feature in one section and as deprecated in another.

In the "Changed" section, it states:

> InferenceSlicer now features an `overlap_ratio_wh` parameter, making it easier to compute slice sizes when handling overlapping slices. [#1434](https://github.com/supervisely/supervisely/issues/1434)

However, in the "Deprecated" section, it mentions:

> `overlap_ratio_wh` in InferenceSlicer.__init__ is deprecated and will be removed in supervision-0.27.0. Use `overlap_wh` instead.

This seems contradictory: on one hand, `overlap_ratio_wh` is presented as a new feature, while on the other hand, it is marked for deprecation and removal in favor of `overlap_wh`.

Could you clarify whether `overlap_ratio_wh` should be used, or if we should transition directly to `overlap_wh`? Additionally, updating the documentation to make this distinction clear would help avoid confusion for future users.
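For context, assuming (as the API reference appears to describe) that `overlap_ratio_wh` is a fraction of `slice_wh` while `overlap_wh` is an absolute overlap in pixels, migrating amounts to multiplying each ratio by the slice size. A minimal sketch follows; the helper names are hypothetical, and `slice_starts` only illustrates overlapping windows along one axis. It is not supervision's slicing code.

```python
from typing import List, Tuple


def ratio_to_pixel_overlap(
    slice_wh: Tuple[int, int], overlap_ratio_wh: Tuple[float, float]
) -> Tuple[int, int]:
    """Convert a fractional overlap (old style) into a pixel overlap (new style)."""
    (w, h), (rw, rh) = slice_wh, overlap_ratio_wh
    return round(w * rw), round(h * rh)


def slice_starts(image_len: int, slice_len: int, overlap: int) -> List[int]:
    """Start offsets of overlapping windows along one axis (illustrative only)."""
    stride = slice_len - overlap
    if stride <= 0:
        raise ValueError("overlap must be smaller than the slice size")
    # Stop once a window starting here would already reach the end of the image.
    return list(range(0, max(image_len - overlap, 1), stride))


print(ratio_to_pixel_overlap((640, 640), (0.2, 0.2)))  # (128, 128)
print(slice_starts(1000, 400, 100))                    # [0, 300, 600]
```

With a 400-pixel slice and 100 pixels of overlap, consecutive windows start 300 pixels apart, and the last one (600 to 1000) reaches the image edge.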

Thank you!

### Environment

- Supervision 0.23.0

- Python 3.11

### Minimal Reproducible Example

_No response_

### Additional

_No response_

### Are you willing to submit a PR?

- [x] Yes I'd like to help by submitting a PR! | closed | 2024-09-25T12:32:13Z | 2024-10-01T12:18:14Z | https://github.com/roboflow/supervision/issues/1543 | [

"bug"

] | tibeoh | 5 |

sktime/pytorch-forecasting | pandas | 1,032 | Can you please help in intepreting the output of plot_prediction_actual_by_variable()? | PyTorch-Forecasting version: 0.10.2

Torch: 1.10.1

Python version: 3.8

Operating System: Windows

I would like some help interpreting the plot produced by `plot_prediction_actual_by_variable()`.

I take two plots as examples below (one for a continuous variable and one for a categorical variable).
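One hedged reading of this kind of plot, based on the companion method `calculate_prediction_actual_by_variable` rather than on a confirmed answer: it does not show forecasts of the covariates. Instead, the variable's values are grouped into bins (x-axis), and for each bin the average actual target is compared with the average predicted target (y-axis), alongside a histogram of how many samples fall into each bin. A minimal pure-Python sketch of that computation is below; the function name and the equal-width binning are assumptions, and the library's own binning may differ. For a categorical variable such as month, each category plays the role of a bin.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def actual_vs_predicted_by_bin(
    values: List[float],
    actuals: List[float],
    predictions: List[float],
    n_bins: int = 4,
) -> Dict[int, Tuple[int, float, float]]:
    """Bin a covariate into equal-width bins and average the target per bin.

    Returns {bin_index: (count, mean_actual, mean_prediction)}.
    """
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0  # avoid division by zero for a constant covariate
    sums = defaultdict(lambda: [0, 0.0, 0.0])
    for v, a, p in zip(values, actuals, predictions):
        b = min(int((v - lo) / width), n_bins - 1)  # the maximum value goes into the last bin
        sums[b][0] += 1
        sums[b][1] += a
        sums[b][2] += p
    return {b: (n, sa / n, sp / n) for b, (n, sa, sp) in sorted(sums.items())}


print(actual_vs_predicted_by_bin(
    [0, 1, 2, 3, 4, 5, 6, 7], [1, 1, 2, 2, 3, 3, 4, 4], [1, 2, 2, 2, 3, 4, 4, 4]
))
```

Under this reading, the gap between the two averages in a bin shows how far, on average, the target predictions miss the actual target for samples whose covariate falls in that bin; it is not an error in predicting the covariate itself.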

Questions:

- Are these forecasts of the X variables? If so, are they produced by the LSTM decoder (and then all fed into the self-attention layer)?

- Does the plot represent the validation set of the Xs, compared against the actual (real) data? (The model should split each X into X_train and X_val.)

- How should it be interpreted? What do the axes (x, y, z) mean? Since it is a prediction, I would expect the x-axis to be the time index, which I think would correspond to the decoder length.

- What does the average mean? As I understand it, in every training batch the model should forecast the Xs before forecasting y. But the forecast of the Xs probably differs from batch to batch, so I would end up with several estimates of X at t+1 (one per batch). Is the average simply the arithmetic mean of these estimates?

- Regarding the categorical variable (months), what does "prediction" mean here, and what does the distance between the blue and the red dots mean? These are time-dependent variables that are known into the future, so why is the model predicting them? | open | 2022-06-15T15:06:13Z | 2022-06-15T15:06:13Z | https://github.com/sktime/pytorch-forecasting/issues/1032 | [] | LuigiDarkSimeone | 0 |

axnsan12/drf-yasg | django | 369 | readOnly on SchemaRef | Let's say I have a nested serializer like this:

```python
from rest_framework.serializers import CharField, Serializer


class AccountSerializer(Serializer):
    name = CharField()


class PostSerializer(Serializer):
    title = CharField()
    account = AccountSerializer(read_only=True)
```

The schema generated for the `account` field in `Post` is `SchemaRef([('$ref', '#/definitions/Account')])`, but `read_only=True` is lost.

How can I keep the `readOnly` attribute on a nested serializer? | closed | 2019-05-17T15:21:01Z | 2019-06-12T23:50:31Z | https://github.com/axnsan12/drf-yasg/issues/369 | [] | luxcem | 1 |

autogluon/autogluon | scikit-learn | 4,756 | How to Use Focal Loss Function in Classification Problems with Autogluon? | First of all, thanks for such an excellent open-source project.

Currently, I'm using Autogluon for small-sample classification tasks and I've found that the Focal loss function is very helpful for learning with imbalanced samples.
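For context, focal loss scales the cross-entropy of each example by (1 - p_t)^γ, where p_t is the predicted probability of the true class, so well-classified examples contribute less; an optional α_t weights the classes. With γ = 0 and α_t = 1 it reduces to plain cross-entropy. A minimal pure-Python sketch of the binary form (a generic illustration, not an AutoGluon API; the default α and γ values are only common choices):

```python
import math


def binary_focal_loss(
    p: float, y: int, alpha: float = 0.25, gamma: float = 2.0, eps: float = 1e-12
) -> float:
    """Focal loss for one binary example; p is the predicted probability of class 1."""
    p_t = p if y == 1 else 1.0 - p              # probability assigned to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class weight for the true class
    p_t = min(max(p_t, eps), 1.0 - eps)         # keep log() finite
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


# A confident correct prediction is down-weighted far more than a wrong one.
print(binary_focal_loss(0.9, 1), binary_focal_loss(0.1, 1))
```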

So, how can I use the Focal loss function in classification problems with Autogluon? | closed | 2024-12-27T06:10:11Z | 2025-01-13T23:33:14Z | https://github.com/autogluon/autogluon/issues/4756 | [] | lovechang1986 | 1 |

Farama-Foundation/Gymnasium | api | 385 | [Question] Interpretation of ground contact forces in Ant-v4 | ### Question

I am trying to detect whether any leg of the Ant-v4 agent is in contact with the ground, and had the idea to monitor the contact forces (via `use_contact_forces=True`). However, the documentation does not make clear to me which force corresponds to which body. My first guess was the "ground link"; however, these forces are always zero in my experiments.

Could you please clarify which forces are applicable to my problem?
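Assuming the layout described in the Ant-v4 documentation, where with `use_contact_forces=True` the last 84 observation entries hold 6 external-force values for each of 14 bodies, a per-body contact check could look like the sketch below. The body count, the body ordering, and the threshold are assumptions to verify against the environment's MuJoCo model; note also that the contact forces are clipped to `contact_force_range`.

```python
import math
from typing import List, Sequence

N_BODIES = 14        # assumption: ground/world body + torso + 3 bodies per leg
VALUES_PER_BODY = 6  # assumption: 6 force/torque components per body


def bodies_in_contact(obs: Sequence[float], threshold: float = 1e-6) -> List[int]:
    """Return indices of bodies whose contact-force vector has a non-negligible norm."""
    contact = obs[-N_BODIES * VALUES_PER_BODY:]
    touching = []
    for i in range(N_BODIES):
        chunk = contact[i * VALUES_PER_BODY:(i + 1) * VALUES_PER_BODY]
        if math.sqrt(sum(c * c for c in chunk)) > threshold:
            touching.append(i)
    return touching
```

Logging `bodies_in_contact(obs)` over an episode should show which indices light up when the feet touch the ground, which is a quick way to map indices to legs empirically.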

Thank you very much in advance. | closed | 2023-03-14T10:45:48Z | 2023-03-15T09:52:51Z | https://github.com/Farama-Foundation/Gymnasium/issues/385 | [

"question"

] | BeFranke | 5 |

seanharr11/etlalchemy | sqlalchemy | 28 | UnicodeDecodeError with Postgres -> MSSQL migration | I tried to use etlalchemy@1.1.1 to copy some data from Postgres (via psycopg2@2.7.3.1) to MSSQL (via pyodbc@4.0.17) using Python 2.7 (since, per #14, Python 3 doesn't seem to be supported). (This is on Windows, which I expected to matter only later, when running external commands.)

It failed with:

```
  File "...\sqlalchemy\sql\compiler.py", line 1895, in visit_insert
    for crud_param_set in crud_params
UnicodeDecodeError: 'ascii' codec can't decode byte 0xd0 in position 101: ordinal not in range(128)
```

while trying to generate the `INSERT`s for the non-ASCII data to dump into the intermediate file.
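The byte in the error, `0xd0`, is a UTF-8 lead byte (used, for example, by Cyrillic letters), which is consistent with Python 2 implicitly decoding UTF-8-encoded `str` data with the default `ascii` codec while building the `INSERT`. A minimal Python 3 illustration of the difference (the sample value is made up):

```python
raw = "Привет".encode("utf-8")  # sample non-ASCII value as UTF-8 bytes
assert raw[0] == 0xD0           # same leading byte as in the traceback

try:
    raw.decode("ascii")         # what an implicit ASCII decode does
except UnicodeDecodeError as exc:
    print(exc)                  # 'ascii' codec can't decode byte 0xd0 in position 0: ordinal not in range(128)

print(raw.decode("utf-8"))      # decoding with the actual encoding works
```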

While poking around I realized I don't understand how non-Unicode data is supposed to be handled.

The source database uses UTF8 encoding and has this table definition:

CREATE TABLE public.TABLE_NAME

(

id numeric(18,0) NOT NULL,

name character varying(255) COLLATE pg_catalog."default",

...

)