repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |
|---|---|---|---|---|---|---|---|---|---|---|---|

horovod/horovod | deep-learning | 3,228 | Support for ElasticRayExecutor on ray.tune | I'm looking for best practices when implementing the Horovod `ElasticRayExecutor` (0.23.0) on `ray.tune` (1.7.0)

The ray examples folder contains [code](https://github.com/ray-project/ray/blob/master/python/ray/tune/examples/horovod_simple.py#L46) for the non-elastic RayExecutor w/ ray.tune.

And the Horovod [docs](https://horovod.readthedocs.io/en/stable/ray_include.html#elastic-ray-executor) contain some helpful context for running Elastic Ray+Horovod w/o ray.tune.

But there's not yet an elastic ray.tune implementation. It appears that the [`_HorovodTrainable`](https://github.com/ray-project/ray/blob/master/python/ray/tune/integration/horovod.py#L113) trainable uses the non-elastic RayExecutor so one would have to write a new `DistributedTrainable` class to make this compatible.

How to do this is not immediately clear to me, though, given that the [`RayExecutor`](https://github.com/horovod/horovod/blob/c4306ec45ab823f71b999bdc30a5995d3f8193fe/horovod/ray/runner.py#L129) API differs from the [`ElasticRayExecutor`](https://github.com/horovod/horovod/blob/master/horovod/ray/elastic.py#L149)...

Any tips would be greatly appreciated! | open | 2021-10-16T23:47:06Z | 2021-10-24T17:34:41Z | https://github.com/horovod/horovod/issues/3228 | [

"enhancement"

] | nmatare | 3 |

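Evil0ctal/Douyin_TikTok_Download_API | api | 115 | [BUG] The value of official_api in the API response needs to be changed | ***On which platform did the error occur?***

e.g., Douyin/TikTok

***On which endpoint did the error occur?***

e.g., API-V1/API-V2/Web APP

***What input value was submitted?***

e.g., a short-video link

***Did you try again?***

e.g., Yes, the error still persisted X amount of time after it first occurred.

***Have you read this project's README or API documentation?***

e.g., Yes, and I am quite sure the problem is caused by the program.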

| closed | 2022-12-02T11:25:21Z | 2022-12-02T23:01:45Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/115 | [

"BUG",

"Fixed"

] | Evil0ctal | 1 |

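ymcui/Chinese-LLaMA-Alpaca | nlp | 775 | Error after entering a question in langchain retrieval QA; the machine has an A100 GPU | ### Items that must be checked before submitting

- [X] Make sure you are using the latest code from the repository (git pull); some issues have already been resolved and fixed.

- [X] Because the related dependencies are updated frequently, make sure to follow the relevant steps in the [Wiki](https://github.com/ymcui/Chinese-LLaMA-Alpaca/wiki)

- [X] I have read the [FAQ section](https://github.com/ymcui/Chinese-LLaMA-Alpaca/wiki/常见问题) and searched the issues for this problem, without finding a similar problem or solution

- [X] Third-party plugin issues: for example [llama.cpp](https://github.com/ggerganov/llama.cpp), [text-generation-webui](https://github.com/oobabooga/text-generation-webui), [LlamaChat](https://github.com/alexrozanski/LlamaChat), etc.; it is also recommended to look for solutions in the corresponding project

- [X] Model correctness check: be sure to check the model against [SHA256.md](https://github.com/ymcui/Chinese-LLaMA-Alpaca/blob/main/SHA256.md); if the model is wrong, results and normal operation cannot be guaranteed

### Issue type

Model inference

### Base model

LLaMA-13B

### Operating system

Linux

### Detailed description of the problem

```
python3 langchain_sum.py \
    --model_path /root/7_17_alpaca \
    --file_path doc.txt \
    --chain_type refine
```

### Dependencies (must be provided for code-related issues)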

accelerate 0.20.3

aiohttp 3.8.4

aiosignal 1.3.1

anyio 3.7.0

argilla 1.12.1

async-timeout 4.0.2

attrs 21.2.0

Automat 20.2.0

backoff 2.2.1

bcrypt 3.2.0

beautifulsoup4 4.12.2

blinker 1.4

certifi 2023.5.7

cffi 1.15.1

chardet 4.0.0

charset-normalizer 3.1.0

chromadb 0.3.23

click 8.0.3

clickhouse-connect 0.6.8

cloud-init 19.1.21

cmake 3.27.0

colorama 0.4.4

colorclass 2.2.2

command-not-found 0.3

commonmark 0.9.1

compressed-rtf 1.0.6

configobj 5.0.8

constantly 15.1.0

cryptography 41.0.2

dataclasses-json 0.5.12

datasets 2.13.1

dbus-python 1.2.18

decorator 4.4.2

deepspeed 0.9.5

Deprecated 1.2.14

dill 0.3.6

diskcache 5.6.1

distro 1.7.0

distro-info 1.1build1

duckdb 0.8.1

easygui 0.98.3

ebcdic 1.1.1

et-xmlfile 1.1.0

exceptiongroup 1.1.2

extract-msg 0.41.1

faiss-cpu 1.7.4

fastapi 0.99.1

filelock 3.12.2

frozenlist 1.3.3

fsspec 2023.6.0

gpt4all 0.3.4

greenlet 2.0.2

h11 0.14.0

hjson 3.1.0

hnswlib 0.7.0

httpcore 0.16.3

httplib2 0.20.2

httptools 0.6.0

httpx 0.23.3

huggingface-hub 0.15.1

hyperlink 21.0.0

idna 3.3

IMAPClient 2.3.1

importlib-metadata 4.6.4

incremental 21.3.0

iniconfig 2.0.0

jeepney 0.7.1

Jinja2 3.1.2

joblib 1.3.1

jsonpatch 1.32

jsonpointer 2.3

jsonschema 4.17.3

keyring 23.5.0

langchain 0.0.197

langchainplus-sdk 0.0.20

langsmith 0.0.11

lark-parser 0.12.0

launchpadlib 1.10.16

lazr.restfulclient 0.14.4

lazr.uri 1.0.6

lit 16.0.6

llama-cpp-python 0.1.50

lxml 4.9.3

lz4 4.3.2

Markdown 3.4.3

MarkupSafe 2.1.2

marshmallow 3.19.0

monotonic 1.6

more-itertools 8.10.0

mpmath 1.3.0

msg-parser 1.2.0

msoffcrypto-tool 5.1.1

multidict 6.0.4

multiprocess 0.70.14

mypy-extensions 1.0.0

netifaces 0.11.0

networkx 3.1

ninja 1.11.1

nltk 3.8.1

numexpr 2.8.4

numpy 1.23.5

nvidia-cublas-cu11 11.10.3.66

nvidia-cuda-cupti-cu11 11.7.101

nvidia-cuda-nvrtc-cu11 11.7.99

nvidia-cuda-runtime-cu11 11.7.99

nvidia-cudnn-cu11 8.5.0.96

nvidia-cufft-cu11 10.9.0.58

nvidia-curand-cu11 10.2.10.91

nvidia-cusolver-cu11 11.4.0.1

nvidia-cusparse-cu11 11.7.4.91

nvidia-nccl-cu11 2.14.3

nvidia-nvtx-cu11 11.7.91

oauthlib 3.2.2

olefile 0.46

oletools 0.60.1

openapi-schema-pydantic 1.2.4

openpyxl 3.1.2

packaging 23.1

pandas 1.5.3

pandoc 2.3

pcodedmp 1.2.6

pdfminer.six 20221105

peft 0.3.0.dev0

pexpect 4.8.0

Pillow 10.0.0

pip 22.0.2

pluggy 1.2.0

plumbum 1.8.2

ply 3.11

posthog 3.0.1

protobuf 4.23.4

psutil 5.9.5

ptyprocess 0.7.0

py-cpuinfo 9.0.0

pyarrow 12.0.1

pyasn1 0.4.8

pyasn1-modules 0.2.1

pycparser 2.21

pydantic 1.10.10

Pygments 2.15.1

PyGObject 3.42.1

PyHamcrest 2.0.2

PyJWT 2.3.0

PyMuPDF 1.22.3

pyOpenSSL 21.0.0

pypandoc 1.11

pyparsing 2.4.7

pyre-extensions 0.0.29

pyrsistent 0.19.3

pyserial 3.5

pytest 7.4.0

python-apt 2.4.0+ubuntu1

python-dateutil 2.8.2

python-debian 0.1.43ubuntu1

python-docx 0.8.11

python-dotenv 1.0.0

python-linux-procfs 0.6.3

python-magic 0.4.24

python-pptx 0.6.21

pytz 2023.3

pytz-deprecation-shim 0.1.0.post0

pyudev 0.22.0

PyYAML 6.0

red-black-tree-mod 1.20

regex 2023.6.3

requests 2.30.0

rfc3986 1.5.0

rich 13.0.1

RTFDE 0.0.2

safetensors 0.3.1

scikit-learn 1.3.0

scipy 1.11.1

screen-resolution-extra 0.0.0

SecretStorage 3.3.1

sentence-transformers 2.2.2

sentencepiece 0.1.97

service-identity 18.1.0

setuptools 59.6.0

shortuuid 1.0.11

six 1.16.0

sniffio 1.3.0

sos 4.4

soupsieve 2.4.1

SQLAlchemy 2.0.19

ssh-import-id 5.11

starlette 0.27.0

sympy 1.12

systemd-python 234

tabulate 0.9.0

tenacity 8.2.2

threadpoolctl 3.1.0

tokenizers 0.13.3

tomli 2.0.1

torch 2.0.1

torchvision 0.15.2

tqdm 4.65.0

transformers 4.30.0

triton 2.0.0

Twisted 22.1.0

typer 0.7.0

typing_extensions 4.7.1

typing-inspect 0.9.0

tzdata 2023.3

tzlocal 4.2

ubuntu-advantage-tools 8001

ubuntu-drivers-common 0.0.0

ufw 0.36.1

unattended-upgrades 0.1

unstructured 0.6.6

urllib3 2.0.2

uvicorn 0.22.0

uvloop 0.17.0

wadllib 1.3.6

watchfiles 0.19.0

websockets 11.0.3

wheel 0.37.1

wrapt 1.14.1

xformers 0.0.20

xkit 0.0.0

XlsxWriter 3.1.2

xxhash 3.2.0

yarl 1.9.2

zipp 1.0.0

zope.interface 5.4.0

zstandard 0.21.0

### Run log or screenshots

Loading the embedding model...

No sentence-transformers model found with name /root/text2vec-large-chinese. Creating a new one with MEAN pooling.

[2023-07-20 16:44:17,426] [INFO] [real_accelerator.py:110:get_accelerator] Setting ds_accelerator to cuda (auto detect)

loading LLM...

The model weights are not tied. Please use the `tie_weights` method before using the `infer_auto_device` function.

Loading checkpoint shards: 100%|██████████████████████████████████████████████████████████████████████████████████████████████████████████| 3/3 [00:10<00:00, 3.63s/it]

请输入问题:你好

/usr/local/lib/python3.10/dist-packages/transformers/generation/utils.py:1259: UserWarning: You have modified the pretrained model configuration to control generation. This is a deprecated strategy to control generation and will be removed soon, in a future version. Please use a generation configuration file (see https://huggingface.co/docs/transformers/main_classes/text_generation)

warnings.warn(

/usr/local/lib/python3.10/dist-packages/transformers/generation/utils.py:1353: UserWarning: Using `max_length`'s default (1000) to control the generation length. This behaviour is deprecated and will be removed from the config in v5 of Transformers -- we recommend using `max_new_tokens` to control the maximum length of the generation.

warnings.warn(

Traceback (most recent call last):

File "/root/Chinese-LLaMA-Alpaca/scripts/langchain/langchain_qa.py", line 115, in <module>

print(qa.run(query))

File "/root/langchain/langchain/chains/base.py", line 440, in run

return self(args[0], callbacks=callbacks, tags=tags, metadata=metadata)[

File "/root/langchain/langchain/chains/base.py", line 243, in __call__

raise e

File "/root/langchain/langchain/chains/base.py", line 237, in __call__

self._call(inputs, run_manager=run_manager)

File "/root/langchain/langchain/chains/retrieval_qa/base.py", line 133, in _call

answer = self.combine_documents_chain.run(

File "/root/langchain/langchain/chains/base.py", line 445, in run

return self(kwargs, callbacks=callbacks, tags=tags, metadata=metadata)[

File "/root/langchain/langchain/chains/base.py", line 243, in __call__

raise e

File "/root/langchain/langchain/chains/base.py", line 237, in __call__

self._call(inputs, run_manager=run_manager)

File "/root/langchain/langchain/chains/combine_documents/base.py", line 106, in _call

output, extra_return_dict = self.combine_docs(

File "/root/langchain/langchain/chains/combine_documents/refine.py", line 152, in combine_docs

res = self.initial_llm_chain.predict(callbacks=callbacks, **inputs)

File "/root/langchain/langchain/chains/llm.py", line 252, in predict

return self(kwargs, callbacks=callbacks)[self.output_key]

File "/root/langchain/langchain/chains/base.py", line 243, in __call__

raise e

File "/root/langchain/langchain/chains/base.py", line 237, in __call__

self._call(inputs, run_manager=run_manager)

File "/root/langchain/langchain/chains/llm.py", line 92, in _call

response = self.generate([inputs], run_manager=run_manager)

File "/root/langchain/langchain/chains/llm.py", line 102, in generate

return self.llm.generate_prompt(

File "/root/langchain/langchain/llms/base.py", line 186, in generate_prompt

return self.generate(prompt_strings, stop=stop, callbacks=callbacks, **kwargs)

File "/root/langchain/langchain/llms/base.py", line 279, in generate

output = self._generate_helper(

File "/root/langchain/langchain/llms/base.py", line 223, in _generate_helper

raise e

File "/root/langchain/langchain/llms/base.py", line 210, in _generate_helper

self._generate(

File "/root/langchain/langchain/llms/base.py", line 602, in _generate

self._call(prompt, stop=stop, run_manager=run_manager, **kwargs)

File "/root/langchain/langchain/llms/huggingface_pipeline.py", line 169, in _call

response = self.pipeline(prompt)

File "/usr/local/lib/python3.10/dist-packages/transformers/pipelines/text_generation.py", line 201, in __call__

return super().__call__(text_inputs, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/transformers/pipelines/base.py", line 1120, in __call__

return self.run_single(inputs, preprocess_params, forward_params, postprocess_params)

File "/usr/local/lib/python3.10/dist-packages/transformers/pipelines/base.py", line 1127, in run_single

model_outputs = self.forward(model_inputs, **forward_params)

File "/usr/local/lib/python3.10/dist-packages/transformers/pipelines/base.py", line 1026, in forward

model_outputs = self._forward(model_inputs, **forward_params)

File "/usr/local/lib/python3.10/dist-packages/transformers/pipelines/text_generation.py", line 263, in _forward

generated_sequence = self.model.generate(input_ids=input_ids, attention_mask=attention_mask, **generate_kwargs)

File "/usr/local/lib/python3.10/dist-packages/torch/utils/_contextlib.py", line 115, in decorate_context

return func(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/transformers/generation/utils.py", line 1522, in generate

return self.greedy_search(

File "/usr/local/lib/python3.10/dist-packages/transformers/generation/utils.py", line 2339, in greedy_search

outputs = self(

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/accelerate/hooks.py", line 165, in new_forward

output = old_forward(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/transformers/models/llama/modeling_llama.py", line 688, in forward

outputs = self.model(

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/accelerate/hooks.py", line 165, in new_forward

output = old_forward(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/transformers/models/llama/modeling_llama.py", line 578, in forward

layer_outputs = decoder_layer(

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/accelerate/hooks.py", line 165, in new_forward

output = old_forward(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/transformers/models/llama/modeling_llama.py", line 292, in forward

hidden_states, self_attn_weights, present_key_value = self.self_attn(

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/accelerate/hooks.py", line 165, in new_forward

output = old_forward(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/transformers/models/llama/modeling_llama.py", line 194, in forward

query_states = self.q_proj(hidden_states).view(bsz, q_len, self.num_heads, self.head_dim).transpose(1, 2)

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1501, in _call_impl

return forward_call(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/accelerate/hooks.py", line 165, in new_forward

output = old_forward(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/linear.py", line 114, in forward

return F.linear(input, self.weight, self.bias)

RuntimeError: "addmm_impl_cpu_" not implemented for 'Half' | closed | 2023-07-20T09:04:23Z | 2023-07-20T12:43:38Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/775 | [] | ai499 | 3 |

ufoym/deepo | jupyter | 4 | Can you tell me where is the caffe install folder? | Your docker image is amazing and I have tested all deeplearning tools like caffe,tensorflow etc can work. But I want to find the location of the build folder of caffe.

Thanks. | closed | 2017-10-30T12:03:15Z | 2020-07-19T01:04:02Z | https://github.com/ufoym/deepo/issues/4 | [] | supernihui | 2 |

Yorko/mlcourse.ai | pandas | 330 | Yandex & MIPT, Coursera, Final project - User identification | Hello! Could you please clarify the expected answer format for the week 2 assignment, question 2:

"Is the number of unique sites in a session normally distributed?". The form gives no clear instructions on how to phrase the answer; the options "Нет", "No", the value of the statistic, and the p-value of the Shapiro-Wilk test are all rejected...

Maybe I calculated it wrong, but there is no way to tell :) | closed | 2018-04-28T16:12:15Z | 2018-08-04T16:07:50Z | https://github.com/Yorko/mlcourse.ai/issues/330 | [

"invalid"

] | levbed | 1 |

chezou/tabula-py | pandas | 327 | Allow columns parameter to use relative area | **Is your feature request related to a problem? Please describe.**

<!--- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

Currently, the `columns` parameter accepts a list of floats, which map to the horizontal location in points even when setting `relative_area` to `True`. The list of floats cannot represent relative position on the page.

**Describe the solution you'd like**

<!--- A clear and concise description of what you want to happen. -->

I would like the `columns` parameter to use relative position of the page width just like the `area` parameter does when `relative_area` is set to `True`.

**Describe alternatives you've considered**

<!--- A clear and concise description of any alternative solutions or features you've considered. -->

This can already be achieved by setting the `options` parameter. For example `['--columns %25,50,80.6']`.
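Another workaround is to convert the relative positions to points before passing them to `columns`. The sketch below is only an illustration of that arithmetic: `relative_columns_to_points` is a hypothetical helper, not part of tabula-py, and the 612 pt page width (US Letter) is an assumption that has to be replaced with the real width of the page being parsed.

```python
from typing import List, Sequence


def relative_columns_to_points(
    percentages: Sequence[float], page_width_pt: float
) -> List[float]:
    """Convert column boundaries given as percentages of the page width into points.

    Hypothetical helper for illustration; not part of tabula-py.
    """
    for pct in percentages:
        if not 0 <= pct <= 100:
            raise ValueError(f"percentage out of range: {pct}")
    # Round to avoid long floating-point tails in the resulting coordinates.
    return [round(page_width_pt * pct / 100, 3) for pct in percentages]


# Assumption: a US Letter page, which is 612 pt wide.
columns = relative_columns_to_points([25, 50, 80.6], page_width_pt=612)
```

The resulting list of floats has the same shape as what the `columns` parameter accepts today, so it could be passed through unchanged.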

**Additional context**

<!--- Add any other context or screenshots about the feature request here. -->

I think it would be nice to have this feature directly available instead of using `options`. | closed | 2022-11-23T21:32:40Z | 2022-12-01T15:52:05Z | https://github.com/chezou/tabula-py/issues/327 | [] | tdpetrou | 5 |

ckan/ckan | api | 8,605 | Solution: Automated data enrichment with metadata, tagging, annotation | **Problem description**

Data scientists and ML engineers spend unnecessary time manually searching through datasets to understand their characteristics, because metadata is insufficient or inconsistent. This wastes effort, since the same basic dataset characteristics are analyzed repeatedly.

**Problem discovery**

By interviewing an ML Engineer (it'll be a video interview next month), it was clearly said that: "...metadata helps me as an engineer to swiftly navigate by data, quickly get data statistics and pool slices of data with required properties."

Extrapolating this statement we get into more specifics:

- ML Engineers need quick access to data statistics and properties

- Datasets navigation is often cumbersome without proper tagging

- Manual annotation is time-consuming and prone to inconsistencies

- Data users require efficient ways to filter datasets

- Manual and ad-hoc approaches are common but pricy and time consuming

**Solution hypothesis**

ML powered automated data enrichment based on dataset content analysis; summarization of characteristics inside the dataset; names, locations, dates extraction. Tag consistency should be preserved.

- Amount of missing values and data consistency may be detected

- Created as a plugin

- Ability to tune enrichment

Additional functionality:

- Integration with existing search functionality

- API endpoints for automated tagging

**Success metrics**

1. Reduction in time spent on data discovery

2. Accuracy of automated annotations

**Questions to consider:**

Is this change going to break current installations?

- No breaking changes to core CKAN functionality. Implemented as optional, additive features.

Can we provide a backwards compatibility?

- New features implemented as optional extensions

How easy is it going to be for current implementations to migrate to this new release?

- No downtime required for core functionality

Do current versions of CKAN have the adequate resources/support to migrate to this new version?

- Optional GPU support?

Are we going to change the database schema?

- New table for generated metadata and tags

Are we going to change the API?

- New endpoints added

Are we going to deprecate Interfaces?

- No we wouldn't | open | 2025-01-04T19:17:06Z | 2025-01-07T13:18:05Z | https://github.com/ckan/ckan/issues/8605 | [] | thegostev | 0 |

ansible/awx | automation | 15,007 | Show status for host in job, not status of job in list of recent jobs for host | ### Please confirm the following

- [X] I agree to follow this project's [code of conduct](https://docs.ansible.com/ansible/latest/community/code_of_conduct.html).

- [X] I have checked the [current issues](https://github.com/ansible/awx/issues) for duplicates.

- [X] I understand that AWX is open source software provided for free and that I might not receive a timely response.

### Feature type

Enhancement to Existing Feature

### Feature Summary

Currently the exit code from ansible-playbook is being used to determine the status of the recent jobs in the activity log for a host.

Finding out what status the actual host had in a particular job is quite tedious.

I am aware that it would be at least as tedious to do that in AWX, but it would make AWX a lot more user-friendly.

### Select the relevant components

- [X] UI

- [ ] API

- [ ] Docs

- [ ] Collection

- [ ] CLI

- [X] Other

### Steps to reproduce

Finding out what status the actual host had in a particular job is quite tedious:

- check job output

- check status of that host in that job

### Current results

Currently the activity log for a host shows the status of the recent _jobs_, the host was in - not the status of the _host_ in these _jobs_ (which is, what the user would expect, looking at the recent activity of the host)

### Suggested feature result

The activity log of a host should show the status of the host in each of its recent jobs.

### Additional information

_No response_ | open | 2024-03-18T08:30:46Z | 2024-03-18T08:31:16Z | https://github.com/ansible/awx/issues/15007 | [

"type:enhancement",

"component:ui",

"needs_triage",

"community"

] | leitwerk-ag | 0 |

huggingface/datasets | pandas | 7,077 | column_names ignored by load_dataset() when loading CSV file | ### Describe the bug

load_dataset() ignores the column_names kwarg when loading a CSV file. Instead, it uses whatever values are on the first line of the file.

### Steps to reproduce the bug

Call `load_dataset` to load data from a CSV file and specify `column_names` kwarg.

### Expected behavior

The resulting dataset should have the specified column names **and** the first line of the file should be considered as data values.
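To make the expected semantics concrete, here is a small sketch using only the Python standard library, not `datasets`. It assumes a header-less two-column file; when `fieldnames` is supplied to `csv.DictReader`, the first line is kept as a data row, which is the behavior requested above for `column_names`.

```python
import csv
import io

# Assumed sample file content: the first line is data, not a header.
raw = io.StringIO("1,alice\n2,bob\n")

# Supplying fieldnames tells the reader not to consume the first line as a header.
reader = csv.DictReader(raw, fieldnames=["id", "name"])
rows = list(reader)
```

Both lines of the file end up in `rows`, keyed by the supplied names.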

### Environment info

- `datasets` version: 2.20.0

- Platform: Linux-5.10.0-30-cloud-amd64-x86_64-with-glibc2.31

- Python version: 3.9.2

- `huggingface_hub` version: 0.24.2

- PyArrow version: 17.0.0

- Pandas version: 2.2.2

- `fsspec` version: 2024.5.0 | open | 2024-07-26T14:18:04Z | 2024-07-30T07:52:26Z | https://github.com/huggingface/datasets/issues/7077 | [] | luismsgomes | 1 |

ultralytics/yolov5 | pytorch | 12,798 | How to load Custom Models in VsCode on windows | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I already read this one https://docs.ultralytics.com/yolov5/tutorials/pytorch_hub_model_loading/#custom-models

How to fix it?

Thank you

### Additional

_No response_ | closed | 2024-03-07T16:47:26Z | 2024-04-18T00:20:36Z | https://github.com/ultralytics/yolov5/issues/12798 | [

"question",

"Stale"

] | Waariss | 2 |

zappa/Zappa | flask | 1,361 | The role defined for the function cannot be assumed by Lambda. | <!--- Provide a general summary of the issue in the Title above -->

## Context

I was deploying a simple, admin-only Django install to test Zappa. `zappa init` was fine, but on my first run of `zappa deploy dev` I got the error in the title.

<!--- Provide a more detailed introduction to the issue itself, and why you consider it to be a bug -->

<!--- Also, please make sure that you are running Zappa _from a virtual environment_ and are using Python 3.8/3.9/3.10/3.11/3.12 -->

Python 3.12

## Expected Behavior

<!--- Tell us what should happen -->

I expected to get through to Deployment complete

## Actual Behavior

<!--- Tell us what happens instead -->

An error occurred (InvalidParameterValueException) when calling the CreateFunction operation: The role defined for the function cannot be assumed by Lambda.

## Possible Fix

<!--- Not obligatory, but suggest a fix or reason for the bug -->

https://stackoverflow.com/a/37438525/2434654 this link suggested sleeping a few seconds so I tried it again and it got to Deployment complete.

**Perhaps introduce a pause in the deployment after the upload to wait for the role to be ready if others have this issue.**
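The pause suggested above could take the form of a small retry loop around the step that fails. This is a generic sketch, not Zappa's actual code: `retry_with_pause` and its parameters are hypothetical names, and the exception types worth retrying on would need to be narrowed to the real error raised by the AWS client.

```python
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry_with_pause(
    action: Callable[[], T],
    attempts: int = 5,
    pause_seconds: float = 2.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `action`, pausing and retrying when it raises one of `retry_on`.

    Hypothetical helper for illustration; not part of Zappa.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return action()
        except retry_on:
            # Give up after the last attempt and let the error propagate.
            if attempt == attempts:
                raise
            sleep(pause_seconds)
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable only so the helper can be exercised without real delays; in a deployment it would stay at `time.sleep`.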

## Steps to Reproduce

<!--- Provide a link to a live example, or an unambiguous set of steps to -->

<!--- reproduce this bug include code to reproduce, if relevant -->

1. Set up a super basic Django project

2. Set up the database (possibly not required)

3. Set up an AWS account to deploy (I used SSO)

4. zappa init

5. zappa deploy dev

## Your Environment

<!--- Include as many relevant details about the environment you experienced the bug in -->

* Zappa version used: `0.59.0`

* Operating System and Python version: MacOS 15.2

* The output of `pip freeze`:

```

argcomplete==3.5.3

asgiref==3.8.1

boto3==1.35.95

botocore==1.35.95

certifi==2024.12.14

cfn-flip==1.3.0

charset-normalizer==3.4.1

click==8.1.8

django==5.1.4

durationpy==0.9

hjson==3.1.0

idna==3.10

jmespath==1.0.1

kappa==0.6.0

markupsafe==3.0.2

pip==24.3.1

placebo==0.9.0

psycopg==3.2.3

psycopg-binary==3.2.3

python-dateutil==2.9.0.post0

python-slugify==8.0.4

pyyaml==6.0.2

requests==2.32.3

s3transfer==0.10.4

setuptools==75.8.0

six==1.17.0

sqlparse==0.5.3

text-unidecode==1.3

toml==0.10.2

tqdm==4.67.1

troposphere==4.8.3

typing-extensions==4.12.2

urllib3==2.3.0

werkzeug==3.1.3

wheel==0.45.1

zappa==0.59.0

```

* Link to your project (optional):

* Your `zappa_settings.json`:

```
{
    "dev": {
        "aws_region": "ap-southeast-2",
        "django_settings": "project.settings",
        "exclude": [
            "boto3",
            "dateutil",
            "botocore",
            "s3transfer",
            "concurrent"
        ],
        "profile_name": "default",
        "project_name": "unity",
        "runtime": "python3.12",
        "s3_bucket": "random-name"
    }
}
```

| open | 2025-01-08T22:04:39Z | 2025-02-14T05:41:53Z | https://github.com/zappa/Zappa/issues/1361 | [] | nigeljames-tess | 1 |

Gozargah/Marzban | api | 694 | Ability to edit the username in the next update | I wish it were possible to edit a username without the subscription link changing.

Could you arrange it in the next update so that the subscription link is generated from the user's own dedicated numeric ID in the database, so that it does not change when the username changes? | closed | 2023-12-12T15:34:59Z | 2023-12-12T16:30:36Z | https://github.com/Gozargah/Marzban/issues/694 | [

"Feature"

] | hayousef68 | 1 |

ansible/awx | automation | 14,931 | When job is running, selected EE is not visible in the UI, it appears only after it finishes | ### Please confirm the following

- [X] I agree to follow this project's [code of conduct](https://docs.ansible.com/ansible/latest/community/code_of_conduct.html).

- [X] I have checked the [current issues](https://github.com/ansible/awx/issues) for duplicates.

- [X] I understand that AWX is open source software provided for free and that I might not receive a timely response.

### Feature type

New Feature

### Feature Summary

Right now EE that was selected is invisible to user, see here

A specific version of EE was used for this template, but it's not visible anywhere in the job history (it was changed between runs) and therefore we can't verify if that specific version was really used or not and which version of EE the job was started with

### Select the relevant components

- [X] UI

- [ ] API

- [ ] Docs

- [ ] Collection

- [ ] CLI

- [ ] Other

### Steps to reproduce

Create a template, pick a custom EE, start a job, then edit template, pick another EE, start a new job. You won't be able to tell which job was started with which EE.

### Current results

See above

### Suggested feature result

Make it so that chosen EE version is visible just like other fields, job template, project etc.

### Additional information

_No response_ | open | 2024-02-27T09:01:41Z | 2024-03-06T16:17:47Z | https://github.com/ansible/awx/issues/14931 | [

"type:enhancement",

"component:ui",

"needs_triage",

"community"

] | benapetr | 8 |

Lightning-AI/pytorch-lightning | data-science | 20,384 | Custom TQDMProgressBar changes not reflected | ### Bug description

I wrote a custom TQDMProgressBar class with some changes. When I run `train.fit()` in JupyterLab the default progress bar is still used, however.

### What version are you seeing the problem on?

v2.4

### How to reproduce the bug

```python
import lightning as L
from lightning.pytorch.callbacks import TQDMProgressBar


class CustomProgBar(TQDMProgressBar):
    def __init__(self, ncols: int = 100):
        super().__init__(leave=True)
        self.ncols = ncols

    def init_sanity_tqdm(self):
        bar = super().init_sanity_tqdm()
        bar.ncols = self.ncols
        return bar

    def init_train_tqdm(self):
        bar = super().init_train_tqdm()
        bar.ncols = self.ncols
        return bar

    def init_validation_tqdm(self):
        bar = super().init_validation_tqdm()
        bar.ncols = self.ncols
        return bar


trainer = L.Trainer(accelerator="cpu", max_epochs=5, callbacks=[CustomProgBar(),], log_every_n_steps=1)

# `model` and `data` are LightningModule and LightningDataModule instances, respectively.
# I can include the code for this if you think it's needed for debugging this.
trainer.fit(model, datamodule=data)
```

### Error messages and logs

Printout without `callbacks` argument passed:

```

| Name | Type | Params | Mode

------------------------------------------

0 | model | UNet | 3.0 M | eval

1 | loss | DiceLoss | 0 | eval

------------------------------------------

3.0 M Trainable params

0 Non-trainable params

3.0 M Total params

11.893 Total estimated model params size (MB)

0 Modules in train mode

112 Modules in eval mode

Sanity Checking: | | 0/? [00:00<…

Training: | | 0/? [00:00<…

```

Printout with just the `CustomProgBar` callback:

```

| Name | Type | Params | Mode

------------------------------------------

0 | model | UNet | 3.0 M | eval

1 | loss | DiceLoss | 0 | eval

------------------------------------------

3.0 M Trainable params

0 Non-trainable params

3.0 M Total params

11.893 Total estimated model params size (MB)

0 Modules in train mode

112 Modules in eval mode

Sanity Checking: | | 0/? [00:00<…

Training: | | 0/? [00:00<…

```

### Environment

<details>

<summary>Current environment</summary>

```

#- PyTorch Lightning Version (e.g., 2.4.0): 2.4.0

#- PyTorch Version (e.g., 2.4): 2.4.0

#- Python version (e.g., 3.12): 3.12.3

#- OS (e.g., Linux): Windows 10

#- CUDA/cuDNN version: n/a

#- GPU models and configuration: none, CPU only

#- How you installed Lightning(`conda`, `pip`, source): pip

#- TQDM version: 4.66.6

```

</details>

### More info

_No response_ | open | 2024-11-01T18:56:25Z | 2024-11-20T20:10:38Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20384 | [

"bug",

"needs triage",

"ver: 2.4.x"

] | oseymour | 0 |

zappa/Zappa | django | 401 | [Migrated] from zappa.concurrent.futures import LambdaPoolExecutor? | Originally from: https://github.com/Miserlou/Zappa/issues/1024 by [olirice](https://github.com/olirice)

**Feature Proposal**

Implement `LambdaPoolExecutor` with a similar api to

`ThreadPoolExecutor` and `ProcessPoolExecutor`?

i.e.

``` python
from concurrent.futures import as_completed  # pip install futures on py2.7
from zappa.concurrent.futures import LambdaPoolExecutor

# Pushes current working directory to Lambda
executor = LambdaPoolExecutor(max_workers=15)

# Function to execute in Lambda function
def do_stuff(x: int) -> int:
    return 1

futures = []
for val in range(100):
    # Submit work to Lambda function
    # Store python future (non-blocking)
    future = executor.submit(do_stuff, val)
    futures.append(future)

# As each task completes
for output in as_completed(futures):
    # Collect result from futures object
    result = output.result()
    # do more stuff with result
```

Thoughts:

1. Initialization of the executor would package up the project and ship it to Lambda (if not exists).

2. Submitting work to the executor pickles the variable `x`, copies it up to S3 and notifies a handler in Lambda

3. The handler downloads, unpickles, and passes the variables to the function

4. The client (`LambdaPoolExecutor`) repeatedly checks S3 for a response to see if the work is done

5. When the work is done, copy the pickled response from S3, unpickle, and set the result to the futures object
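
The steps above can be sketched locally, without any AWS calls. This is only an illustration of the pickle round trip, not a proposed implementation: `LocalPickleExecutor` is a hypothetical name, a plain dict stands in for the S3 bucket, a thread pool stands in for Lambda, and a done-callback replaces the polling in step 4.

```python
import pickle
from concurrent.futures import Future, ThreadPoolExecutor, as_completed


class LocalPickleExecutor:
    """Hypothetical local stand-in for the proposed LambdaPoolExecutor."""

    def __init__(self, max_workers=4):
        self._bucket = {}  # plays the role of the S3 bucket
        self._workers = ThreadPoolExecutor(max_workers=max_workers)  # plays the role of Lambda
        self._count = 0

    def _handler(self, fn, key):
        # Step 3: fetch and unpickle the arguments, then call the function.
        args, kwargs = pickle.loads(self._bucket.pop(key))
        # The response travels back pickled as well.
        return pickle.dumps(fn(*args, **kwargs))

    def submit(self, fn, *args, **kwargs):
        # Step 2: pickle the arguments and store them under a task key.
        key = "task-%d" % self._count
        self._count += 1
        self._bucket[key] = pickle.dumps((args, kwargs))
        result_future = Future()

        def _deliver(done):
            # Step 5: unpickle the response and set it on the caller's future.
            try:
                result_future.set_result(pickle.loads(done.result()))
            except Exception as exc:
                result_future.set_exception(exc)

        self._workers.submit(self._handler, fn, key).add_done_callback(_deliver)
        return result_future

    def shutdown(self):
        self._workers.shutdown()


def square(x):
    return x * x


executor = LocalPickleExecutor(max_workers=4)
futures = [executor.submit(square, val) for val in range(5)]
results = sorted(f.result() for f in as_completed(futures))
executor.shutdown()
```

Only the arguments and the return value are pickled here; shipping the function itself to a remote worker is the harder part and is left out of this sketch.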

**But why?**

Zappa for cluster computing!

- Drop in replacement for python concurrency primitives

- Map reduce

- ETL

- Distributed web scraping

- Generally take advantage of AWS's CPU or internet connection resources with little effort

**Possible hang ups**

- 5 minute max execution time

All thoughts welcome

If the response is positive, I'll take a swing at implementing it. | closed | 2021-02-20T08:27:58Z | 2022-08-19T07:28:47Z | https://github.com/zappa/Zappa/issues/401 | [] | jneves | 1 |

aio-libs/aiomysql | asyncio | 214 | get a problem in cursor | Hello everyone,

I use a pool to execute SQL. The following example works without any problem:

```python
async with _pool.acquire() as conn:
    async with conn.cursor(aiomysql.DictCursor) as _cur:
        await _cur.execute(sql, kwargs or args)
        rs = await _cur.fetchall()
```

and the `ab` result is good:

```

Time taken for tests: 39.560 seconds

Complete requests: 10000

Failed requests: 0

Total transferred: 3450000 bytes

HTML transferred: 2460000 bytes

Requests per second: 252.78 [#/sec] (mean)

```

**But** I want to do it like this in a class:

```python
async def _cursor(self):
    async with self._pool.acquire() as conn:
        _cur = await conn.cursor(aiomysql.DictCursor)
        return _cur
```

```python
async def query(self, sql, *args, **kwargs):
    _cur = await self._cursor()
    try:
        await _cur.execute(sql, kwargs or args)
        rs = await _cur.fetchall()
        return rs
    finally:
        await _cur.close()
```

With this version the `ab` result is much worse:

```

Complete requests: 10000

Failed requests: 9919

(Connect: 0, Receive: 0, Length: 9919, Exceptions: 0)

Non-2xx responses: 83

Total transferred: 3443379 bytes

HTML transferred: 2451814 bytes

Requests per second: 163.92 [#/sec] (mean)

```

I know there is a problem in my code, but I don't know what the difference is.

Maybe the connection isn't closed correctly? Or is it closed too late?
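
One possible explanation, offered as a sketch and not a confirmed diagnosis: in the class version, `async with self._pool.acquire()` exits as soon as `_cursor` returns, so the connection goes back to the pool while the cursor is still in use. Keeping the connection checked out for the whole query could look like the following. The `Database` class and its `cursor_class` parameter are assumptions for illustration; `pool` is expected to behave like an aiomysql pool, and `cursor_class` would be something like `aiomysql.DictCursor`.

```python
from contextlib import asynccontextmanager


class Database:
    """Hypothetical wrapper; `pool` is assumed to behave like an aiomysql pool."""

    def __init__(self, pool, cursor_class):
        self._pool = pool
        self._cursor_class = cursor_class  # e.g. aiomysql.DictCursor

    @asynccontextmanager
    async def _cursor(self):
        # The connection stays checked out until the caller leaves the
        # `async with self._cursor()` block, not just until this function yields.
        async with self._pool.acquire() as conn:
            async with conn.cursor(self._cursor_class) as cur:
                yield cur

    async def query(self, sql, *args, **kwargs):
        async with self._cursor() as cur:
            await cur.execute(sql, kwargs or args)
            return await cur.fetchall()
```

With this shape the cursor is closed and the connection released only after `fetchall()` has finished, which matches the lifetime in the working example.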

| closed | 2017-10-10T12:30:25Z | 2017-10-15T16:34:40Z | https://github.com/aio-libs/aiomysql/issues/214 | [] | shownb | 3 |

vimalloc/flask-jwt-extended | flask | 478 | make `current_user` available in jinja templates | Can Jinja templates access the `current_user` variable without it being passed explicitly, as `Flask-Login` allows? It's quite convenient. | closed | 2022-05-22T19:05:51Z | 2022-07-23T21:34:35Z | https://github.com/vimalloc/flask-jwt-extended/issues/478 | [] | ghost | 2 |

MagicStack/asyncpg | asyncio | 383 | Compiling the docs leads to missing sections | ## Steps to reproduce

```

git clone https://github.com/MagicStack/asyncpg

cd asyncpg/docs

git checkout v0.18.1

python3 -m venv .venv

source .venv/bin/activate

pip install -r requirements.txt

make html

```

## Expected

The connection pools section, located at _build/html/api/index.html#connection-pools, appears the same as https://magicstack.github.io/asyncpg/current/api/index.html#connection-pools.

## Actual

The section is empty.

## Build log

```

python -m sphinx -b html -d _build/doctrees . _build/html

Running Sphinx v1.8.1

loading pickled environment... done

building [mo]: targets for 0 po files that are out of date

building [html]: targets for 1 source files that are out of date

updating environment: 31 added, 0 changed, 0 removed

reading sources... [100%] usage

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/base.rst:3: WARNING: Error in "currentmodule" directive:

maximum 1 argument(s) allowed, 3 supplied.

.. currentmodule:: {{ module }}

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/class.rst:3: WARNING: Error in "currentmodule" directive:

maximum 1 argument(s) allowed, 3 supplied.

.. currentmodule:: {{ module }}

WARNING: invalid signature for autoclass ('{{ objname }}')

WARNING: don't know which module to import for autodocumenting '{{ objname }}' (try placing a "module" or "currentmodule" directive in the document, or giving an explicit module name)

WARNING: invalid signature for automodule ('{{ fullname }}')

WARNING: don't know which module to import for autodocumenting '{{ fullname }}' (try placing a "module" or "currentmodule" directive in the document, or giving an explicit module name)

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/base.rst:3: WARNING: Error in "currentmodule" directive:

maximum 1 argument(s) allowed, 3 supplied.

.. currentmodule:: {{ module }}

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/class.rst:3: WARNING: Error in "currentmodule" directive:

maximum 1 argument(s) allowed, 3 supplied.

.. currentmodule:: {{ module }}

WARNING: invalid signature for autoclass ('{{ objname }}')

WARNING: don't know which module to import for autodocumenting '{{ objname }}' (try placing a "module" or "currentmodule" directive in the document, or giving an explicit module name)

WARNING: invalid signature for automodule ('{{ fullname }}')

WARNING: don't know which module to import for autodocumenting '{{ fullname }}' (try placing a "module" or "currentmodule" directive in the document, or giving an explicit module name)

WARNING: autodoc: failed to import function 'connection.connect' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import class 'connection.Connection' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import class 'prepared_stmt.PreparedStatement' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import class 'transaction.Transaction' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import class 'cursor.CursorFactory' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import class 'cursor.Cursor' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import function 'pool.create_pool' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import class 'pool.Pool' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

WARNING: autodoc: failed to import module 'types' from module 'asyncpg'; the following exception was raised:

cannot import name 'Protocol' from 'asyncpg.protocol.protocol' (unknown location)

looking for now-outdated files... none found

pickling environment... done

checking consistency... /home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/Jinja2-2.10.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/Pygments-2.2.0.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/alabaster-0.7.12.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/certifi-2018.10.15.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/chardet-3.0.4.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/docutils-0.14.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/pytz-2018.7.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/requests-2.20.0.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/six-1.11.0.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/snowballstemmer-1.2.1.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/base.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/class.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/module.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/Jinja2-2.10.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/Pygments-2.2.0.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/alabaster-0.7.12.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/certifi-2018.10.15.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/chardet-3.0.4.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/docutils-0.14.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/pytz-2018.7.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/requests-2.20.0.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/six-1.11.0.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/snowballstemmer-1.2.1.dist-info/DESCRIPTION.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/base.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/class.rst: WARNING: document isn't included in any toctree

/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib64/python3.7/site-packages/sphinx/ext/autosummary/templates/autosummary/module.rst: WARNING: document isn't included in any toctree

done

preparing documents... done

writing output... [100%] usage

generating indices... genindex py-modindex

writing additional pages... search

copying static files... done

copying extra files... done

dumping search index in English (code: en) ... done

dumping object inventory... done

build succeeded, 47 warnings.

The HTML pages are in _build/html.

Build finished. The HTML pages are in _build/html.

```

## Attempted workaround

Building asyncpg from source first in the same venv also does not help:

```

cd ../

pip install -e .

```

```

Obtaining file:///home/benjamin/code/vcs/git/com/github/%40/MagicStack/asyncpg

Complete output from command python setup.py egg_info:

running egg_info

writing asyncpg.egg-info/PKG-INFO

writing dependency_links to asyncpg.egg-info/dependency_links.txt

writing requirements to asyncpg.egg-info/requires.txt

writing top-level names to asyncpg.egg-info/top_level.txt

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/setup.py", line 294, in <module>

setup_requires=setup_requires,

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/setuptools/__init__.py", line 140, in setup

return distutils.core.setup(**attrs)

File "/usr/lib/python3.7/distutils/core.py", line 148, in setup

dist.run_commands()

File "/usr/lib/python3.7/distutils/dist.py", line 966, in run_commands

self.run_command(cmd)

File "/usr/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/setuptools/command/egg_info.py", line 296, in run

self.find_sources()

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/setuptools/command/egg_info.py", line 303, in find_sources

mm.run()

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/setuptools/command/egg_info.py", line 534, in run

self.add_defaults()

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/setuptools/command/egg_info.py", line 570, in add_defaults

sdist.add_defaults(self)

File "/usr/lib/python3.7/distutils/command/sdist.py", line 228, in add_defaults

self._add_defaults_ext()

File "/usr/lib/python3.7/distutils/command/sdist.py", line 311, in _add_defaults_ext

build_ext = self.get_finalized_command('build_ext')

File "/usr/lib/python3.7/distutils/cmd.py", line 299, in get_finalized_command

cmd_obj.ensure_finalized()

File "/usr/lib/python3.7/distutils/cmd.py", line 107, in ensure_finalized

self.finalize_options()

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/setup.py", line 233, in finalize_options

annotate=self.cython_annotate)

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/Cython/Build/Dependencies.py", line 956, in cythonize

aliases=aliases)

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/Cython/Build/Dependencies.py", line 801, in create_extension_list

for file in nonempty(sorted(extended_iglob(filepattern)), "'%s' doesn't match any files" % filepattern):

File "/home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/docs/.venv/lib/python3.7/site-packages/Cython/Build/Dependencies.py", line 111, in nonempty

raise ValueError(error_msg)

ValueError: 'asyncpg/pgproto/pgproto.pyx' doesn't match any files

----------------------------------------

Command "python setup.py egg_info" failed with error code 1 in /home/benjamin/code/vcs/git/com/github/@/MagicStack/asyncpg/

``` | closed | 2018-11-04T18:10:50Z | 2018-11-04T18:24:32Z | https://github.com/MagicStack/asyncpg/issues/383 | [] | ioistired | 1 |

mars-project/mars | scikit-learn | 2,659 | Support `merge_small_files` for `md.read_parquet` etc | <!--

Thank you for your contribution!

Please review https://github.com/mars-project/mars/blob/master/CONTRIBUTING.rst before opening an issue.

-->

**Is your feature request related to a problem? Please describe.**

For data-reading ops like `md.read_parquet` and `md.read_csv`, if too many small files exist, a lot of chunks are created and the subsequent computation can be extremely slow. I therefore suggest adding a `merge_small_files` argument to these functions to enable automatic merging of small files.

**Describe the solution you'd like**

Sample a few input chunks (e.g. 10) and compute `k = 128M / {size of the largest chunk}`. If `k` is greater than 2, merge the small chunks in groups of `k`.
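
The heuristic can be sketched in a few lines. This is an illustration under assumptions, not Mars code: `merge_factor` and `group_chunks` are hypothetical names, "128M" is read as 128 MiB, and chunk sizes are taken to be byte counts from the sampled chunks.

```python
TARGET_BYTES = 128 * 1024 ** 2  # the "128M" from the proposal, read here as 128 MiB


def merge_factor(sampled_sizes, target_bytes=TARGET_BYTES):
    """Return k = target size // size of the largest sampled chunk."""
    largest = max(max(sampled_sizes), 1)  # guard against empty (0-byte) chunks
    return target_bytes // largest


def group_chunks(chunks, k):
    """Group every k consecutive chunks; leave them unmerged unless k > 2."""
    if k <= 2:
        return [[c] for c in chunks]
    return [chunks[i:i + k] for i in range(0, len(chunks), k)]


k = merge_factor([16 * 1024 ** 2, 8 * 1024 ** 2])
groups = group_chunks(list(range(10)), k)
```

Using the largest sampled chunk keeps the estimate conservative, so a merged group should rarely exceed the target size.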

| closed | 2022-01-27T10:38:11Z | 2022-01-30T02:12:27Z | https://github.com/mars-project/mars/issues/2659 | [

"type: enhancement",

"mod: dataframe",

"task: medium"

] | qinxuye | 0 |

marshmallow-code/flask-smorest | rest-api | 413 | Proper way to use schemas with alt_response? | I have a route handler that looks like this:

```py

@bp.arguments(Args)

@bp.response(200, GoodResponse)

@bp.alt_response(400, schema=ErrorResponse)

def put(self, args):

...

if errors:

abort(400, errors=errors)

else:

return results

```

I've tried a few different variations in the error case to get things returning properly and validated according to the schema but can't get it working. Is it correct that the schema specified in `alt_response` will be used to validate and serialize the object returned in a `400` case here? I've experimented with putting invalid data into the `errors` object here, and it doesn't seem to make a difference.

My goal here is to return a 400 response with a JSON body that has the shape of `ErrorResponse`. Thanks in advance for any help! | closed | 2022-10-25T22:52:43Z | 2024-05-24T20:16:28Z | https://github.com/marshmallow-code/flask-smorest/issues/413 | [

"question"

] | GSGerritsen | 7 |

frappe/frappe | rest-api | 31,527 | Edit button misalignment |  | open | 2025-03-05T09:11:20Z | 2025-03-05T09:11:20Z | https://github.com/frappe/frappe/issues/31527 | [

"bug"

] | maasanto | 0 |

microsoft/nni | pytorch | 5,558 | Need shape format support for predefined one shot search space | Describe the issue:

When I add the profiler as the tutorial instructs:

```python

dummy_input = torch.randn(1, 3, 32, 32)

profiler = NumParamsProfiler(model_space, dummy_input)

penalty = ExpectationProfilerPenalty(profiler, 500e3)

strategy = DartsStrategy(gradient_clip_val=5.0, penalty=penalty)

```

This error appeared:

```bash

[2023-05-12 22:02:18] WARNING: Shape information is not explicitly propagated when executing aten.avg_pool2d.default, and and a recent module that needs shape information has no shape inference formula. Module calling stack:

- '' (type: nni.nas.hub.pytorch.nasnet.DARTS, NO shape formula)

- 'stages.0' (type: nni.nas.hub.pytorch.nasnet.NDSStage, NO shape formula)

- 'stages.0.blocks.0' (type: nni.nas.nn.pytorch.cell.Cell, NO shape formula)

- 'stages.0.blocks.0.ops.0.0' (type: nni.nas.nn.pytorch.choice.LayerChoice, HAS shape formula)

- 'stages.0.blocks.0.ops.0.0.avg_pool_3x3' (type: torch.nn.modules.pooling.AvgPool2d, NO shape formula)

Traceback (most recent call last):

File "/home/dzhang/Documents/ml-experimental/TinyNAS/nas_tutorial/darts.py", line 235, in <module>

profiler = NumParamsProfiler(model_space, dummy_input)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/flops.py", line 197, in __init__

self.profiler = FlopsParamsProfiler(model_space, args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/flops.py", line 171, in __init__

shapes = submodule_input_output_shapes(model_space, *args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 466, in submodule_input_output_shapes

model(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1212, in _call_impl

result = forward_call(*input, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/hub/pytorch/nasnet.py", line 613, in forward

s0, s1 = stage([s0, s1])

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1212, in _call_impl

result = forward_call(*input, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/nn/pytorch/repeat.py", line 158, in forward

x = block(x)

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1212, in _call_impl

result = forward_call(*input, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/nn/pytorch/cell.py", line 385, in forward

current_state.append(op(inp(states)))

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1215, in _call_impl

hook_result = hook(self, input, result)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 514, in module_shape_inference_hook

result = _module_shape_inference_impl(module, output, *input, is_leaf=is_leaf)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 628, in _module_shape_inference_impl

output_shape = formula(module, *input_args, **formula_kwargs, **input_kwargs)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape_formula.py", line 230, in layer_choice_formula

expressions[val] = extract_shape_info(shape_inference(module[val], *args, is_leaf=is_leaf, **kwargs))

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 498, in shape_inference

outputs = module(*args, **kwargs)

File "/usr/local/lib/python3.10/dist-packages/torch/nn/modules/module.py", line 1215, in _call_impl

hook_result = hook(self, input, result)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 514, in module_shape_inference_hook

result = _module_shape_inference_impl(module, output, *input, is_leaf=is_leaf)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 617, in _module_shape_inference_impl

tree_map(_ensure_shape, outputs)

File "/usr/local/lib/python3.10/dist-packages/torch/utils/_pytree.py", line 192, in tree_map

return tree_unflatten([fn(i) for i in flat_args], spec)

File "/usr/local/lib/python3.10/dist-packages/torch/utils/_pytree.py", line 192, in <listcomp>

return tree_unflatten([fn(i) for i in flat_args], spec)

File "/usr/local/lib/python3.10/dist-packages/nni/nas/profiler/pytorch/utils/shape.py", line 609, in _ensure_shape

raise RuntimeError(

RuntimeError: Shape inference failed because no shape inference formula is found for AvgPool2d(kernel_size=3, stride=1, padding=1) of type AvgPool2d. Meanwhile the nested modules and functions inside failed to propagate the shape information. Please provide a `_shape_forward` member

function or register a formula using `register_shape_inference_formula`.

```

According to the previous issue https://github.com/microsoft/nni/issues/5538, I think the cause is that AvgPool2d has no shape inference formula. Since this is a predefined search space and not a customized one, could you add the support on your side?

Environment:

NNI version: latest(Build from source and use Dockerfile in master branch)

Training service (local|remote|pai|aml|etc): local

Client OS: Ubuntu

Python version: 3.8

PyTorch/TensorFlow version: 1.10.2

Is conda/virtualenv/venv used?: No

Is running in Docker?: Yes

Configuration:

Experiment config (remember to remove secrets!): Same as Latest Version in Darts example

Search space: Darts | open | 2023-05-12T22:16:56Z | 2023-05-25T11:28:20Z | https://github.com/microsoft/nni/issues/5558 | [] | dzk9528 | 9 |

nvbn/thefuck | python | 807 | Not Running in Fish Shell | <!-- If you have any issue with The Fuck, sorry about that, but we will do what we

can to fix that. Actually, maybe we already have, so first thing to do is to

update The Fuck and see if the bug is still there. -->

<!-- If it is (sorry again), check if the problem has not already been reported and

if not, just open an issue on [GitHub](https://github.com/nvbn/thefuck) with

the following basic information: -->

The output of `thefuck --version` (something like `The Fuck 3.1 using Python 3.5.0`):

**The Fuck 3.26 using Python 3.6.5**

Your shell and its version (`bash`, `zsh`, *Windows PowerShell*, etc.):

**Fish v2.7.1 (works fine in Bash)**

Your system (Debian 7, ArchLinux, Windows, etc.):

**macOS 10.13.5 Beta (17F45c)**

How to reproduce the bug:

**Run 'fuck' command after entering any incorrect command in Fish shell.**

The output of The Fuck with `THEFUCK_DEBUG=true` exported (typically execute `export THEFUCK_DEBUG=true` in your shell before The Fuck):

```

DEBUG: Run with settings: {'alter_history': True,

'debug': True,

'env': {'GIT_TRACE': '1', 'LANG': 'C', 'LC_ALL': 'C'},

'exclude_rules': [],

'history_limit': None,

'instant_mode': False,

'no_colors': False,

'priority': {},

'repeat': False,

'require_confirmation': True,

'rules': [<const: All rules enabled>],

'slow_commands': ['lein', 'react-native', 'gradle', './gradlew', 'vagrant'],

'user_dir': PosixPath('/Users/user/.config/thefuck'),

'wait_command': 3,

'wait_slow_command': 15}

DEBUG: Total took: 0:00:00.296931

Traceback (most recent call last):

File "/usr/local/bin/thefuck", line 12, in <module>

sys.exit(main())

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/entrypoints/main.py", line 25, in main

fix_command(known_args)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/entrypoints/fix_command.py", line 36, in fix_command

command = types.Command.from_raw_script(raw_command)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/types.py", line 81, in from_raw_script

expanded = shell.from_shell(script)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/shells/generic.py", line 30, in from_shell

return self._expand_aliases(command_script)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/shells/fish.py", line 65, in _expand_aliases

aliases = self.get_aliases()

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/shells/fish.py", line 60, in get_aliases

raw_aliases = _get_aliases(overridden)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/utils.py", line 33, in wrapper

memo[key] = fn(*args, **kwargs)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/utils.py", line 267, in wrapper

return _cache.get_value(fn, depends_on, args, kwargs)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/utils.py", line 243, in get_value

value = fn(*args, **kwargs)

File "/usr/local/Cellar/thefuck/3.26/libexec/lib/python3.6/site-packages/thefuck/shells/fish.py", line 25, in _get_aliases

name, value = alias.replace('alias ', '', 1).split(' ', 1)

ValueError: not enough values to unpack (expected 2, got 1)

```
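
The last frame of the traceback unpacks the result of `split(' ', 1)` into two names, which fails when an alias line has nothing after its name. A minimal sketch of a more defensive parse follows; `parse_alias` is a hypothetical helper for illustration, not thefuck's actual code or fix.

```python
def parse_alias(line):
    """Split an 'alias NAME VALUE' line; return None when VALUE is missing."""
    parts = line.replace('alias ', '', 1).split(' ', 1)
    if len(parts) != 2:
        # A line such as "alias lonely" has no value, so skip it
        # and avoid the unpacking ValueError.
        return None
    name, value = parts
    return name, value
```

Lines that do not yield a name and a value are simply skipped by the caller in this sketch.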

If the bug only appears with a specific application, the output of that application and its version:

N/A

Anything else you think is relevant:

N/A

<!-- It's only with enough information that we can do something to fix the problem. -->

| closed | 2018-04-27T00:58:57Z | 2018-05-22T17:32:16Z | https://github.com/nvbn/thefuck/issues/807 | [

"bug",

"fish"

] | grokdesigns | 14 |

trevismd/statannotations | seaborn | 152 | Feature request: permutation test | Can the built-in scipy.stats permutation_test function be an option for statistical tests?

Many Thanks! | open | 2024-05-24T07:52:46Z | 2024-11-30T08:54:21Z | https://github.com/trevismd/statannotations/issues/152 | [] | naureeng | 2 |

qubvel-org/segmentation_models.pytorch | computer-vision | 849 | Is this library still maintained? | It's been almost a year since the last release, and most commits since then have been limited to auto-generated dependabot PRs. The outdated version of timm required to use smp now makes it incompatible with the latest release of lightly: https://github.com/microsoft/torchgeo/issues/1824. PRs to update the version of timm have been ignored (https://github.com/qubvel/segmentation_models.pytorch/pull/839), and requests to unpin the timm dependency have been rejected (https://github.com/qubvel/segmentation_models.pytorch/issues/620). Our own contributions have been closed, and requests to reopen them have been ignored as well (https://github.com/qubvel/segmentation_models.pytorch/pull/776).

Which begs the question: is this library still maintained?

If yes, then it would be incredibly helpful to unpin the timm dependency.

If no, then would you be willing to pass the torch (heh) on to someone else so this incredibly useful library does not become abandoned? Alternatively, does anyone know any alternatives that offer the same functionality and compatibility with modern timm releases as smp? | closed | 2024-01-25T09:51:18Z | 2024-09-27T10:13:45Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/849 | [] | adamjstewart | 18 |

ranaroussi/yfinance | pandas | 1,727 | Module 'yfinance' has no attribute 'Ticker' | ### Describe bug

I try to run the following code:

import yfinance as yf

import pandas_datareader as pdr

import pandas as pd

from datetime import datetime

import yfinance as yf

msft = yf.Ticker('MSFT')

and get a response of AttributeError: module 'yfinance' has no attribute 'Ticker'

I'm using Miniconda. Tried to use this also with Anaconda but it gets the same response. I've installed all the needed packages with conda and pip and when I try to install them again I get a response of Requirement already satisfied.

I have no idea what is wrong as everything should be working.

### Simple code that reproduces your problem

import yfinance as yf

import pandas_datareader as pdr

import pandas as pd

from datetime import datetime

import yfinance as yf

msft = yf.Ticker('MSFT')

[4](file:///d%3A/Ville/Anaconda/yfinance.py?line=3) from datetime import datetime

[6](file:///d%3A/Ville/Anaconda/yfinance.py?line=5) import yfinance as yf

----> [8](file:///d%3A/Ville/Anaconda/yfinance.py?line=7) msft = yf.Ticker('MSFT')

[10](file:///d%3A/Ville/Anaconda/yfinance.py?line=9) msft.info

AttributeError: module 'yfinance' has no attribute 'Ticker'

### Debug log

Exception has occurred: AttributeError

partially initialized module 'yfinance' has no attribute 'Ticker' (most likely due to a circular import)

File "D:\Ville\Anaconda\yfinance.py", line 8, in <module>

msft = yf.Ticker('MSFT')

^^^^^^^^^

File "D:\Ville\Anaconda\yfinance.py", line 1, in <module>

import yfinance as yf

AttributeError: partially initialized module 'yfinance' has no attribute 'Ticker' (most likely due to a circular import)
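
The paths in this log suggest the script itself is saved as `yfinance.py`, so `import yfinance` would resolve to the script and not to the installed package, which fits the "partially initialized module" message. A small check for that situation, sketched with a hypothetical helper name:

```python
from pathlib import PureWindowsPath


def shadows_package(script_path, package_name):
    """Return True when a script's file name would shadow the given package."""
    # PureWindowsPath accepts both backslash and forward-slash separators.
    return PureWindowsPath(script_path).stem == package_name
```

If the check returns True, renaming the script to something other than the package name is the usual way out.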

### Bad data proof

_No response_

### `yfinance` version

0.2.31

### Python version

_No response_

### Operating system

_No response_ | closed | 2023-10-18T16:48:03Z | 2023-10-18T17:51:24Z | https://github.com/ranaroussi/yfinance/issues/1727 | [] | Vakke | 3 |

autogluon/autogluon | computer-vision | 4,180 | Accessing probabilities of bagged models | Hi, I was wondering if there's any way of accessing the probabilities of each of the bagged models which are averaged to get the output of ```predict_proba()``` for the L1 models? This would be helpful to be able to calculate uncertainties for each of the models as well as uncertainty for the entire weighted ensemble

Thanks! | open | 2024-05-08T00:44:01Z | 2024-11-25T22:56:48Z | https://github.com/autogluon/autogluon/issues/4180 | [

"enhancement",

"module: tabular"

] | amanmalali | 3 |

jupyter/nbviewer | jupyter | 423 | Test using tornado.testing | In our current testing setup, we're doing functional tests with no way to do mocks against GitHub itself. This ends up causing a lot of bad builds when things are actually fine. Since things can time out between requests and nbviewer, I think there's a mismatch.

Using [`tornado.testing`](http://tornado.readthedocs.org/en/latest/testing.html) should help do tests properly for nbviewer.

| open | 2015-03-12T22:07:10Z | 2015-09-01T01:15:48Z | https://github.com/jupyter/nbviewer/issues/423 | [

"type:Maintenance"

] | rgbkrk | 3 |

mirumee/ariadne | api | 439 | Could you write it in more detail | I can integrate Ariadne with Django by following the instructions in the Ariadne documentation; however, I don't understand the django-channels integration section. I think the sample code is a little too short and tricky for newcomers to follow. Moreover, Django now supports ASGI servers out of the box. So can I add the Subscription feature to my app without installing channels? | closed | 2020-11-05T07:28:24Z | 2020-11-05T10:08:20Z | https://github.com/mirumee/ariadne/issues/439 | [] | iamleson98 | 1 |

apache/airflow | machine-learning | 47,905 | Fix mypy-boto3-appflow version | ### Body

We set a TODO to handle the version limitation:

https://github.com/apache/airflow/blob/9811f1d6d0fe557ab204b20ad5cdf7423926bd22/providers/src/airflow/providers/amazon/provider.yaml#L146-L148

I'm opening this issue for visibility, as it is small in scope and a good task for new contributors.

### Committer

- [x] I acknowledge that I am a maintainer/committer of the Apache Airflow project. | closed | 2025-03-18T11:28:58Z | 2025-03-19T13:33:43Z | https://github.com/apache/airflow/issues/47905 | [

"provider:amazon",

"area:providers",

"good first issue",

"kind:task"

] | eladkal | 2 |

tiangolo/uwsgi-nginx-flask-docker | flask | 196 | UWSGI with Python 3.7 in Dockerfile | Hi, Tiangolo

Great work, and I hope you're doing well. I have an issue when running a Dockerfile that contains Python 3.7, uWSGI, Postgres, and Nginx.

The build is run by Jenkins and everything starts, but `docker logs -f app` shows this:

**"2020-07-16 00:34:26,763 INFO spawned: 'uwsgi' with pid 480

[uWSGI] getting INI configuration from /home/app/payouts_portal/uwsgi.ini

2020-07-16 00:34:27,774 INFO success: uwsgi entered RUNNING state, process has stayed up for > than 1 seconds (startsecs)"** | closed | 2020-07-16T00:42:46Z | 2020-12-17T00:27:59Z | https://github.com/tiangolo/uwsgi-nginx-flask-docker/issues/196 | [

"answered"

] | abobakrahmed | 2 |

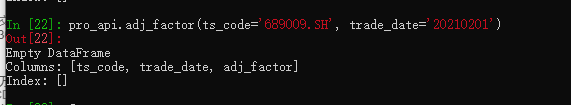

waditu/tushare | pandas | 1,505 | Adjusted-price data API error (九号公司 / Ninebot is missing adjusted-price data for 20210201) |

(九号公司 / Ninebot is missing adjusted-price data for 20210201) | open | 2021-02-01T12:33:23Z | 2021-02-01T12:33:54Z | https://github.com/waditu/tushare/issues/1505 | [] | winstonzhong | 1 |

dgtlmoon/changedetection.io | web-scraping | 2,415 | [feature] Compare numeric values before notification? | **Version and OS**

Current on Docker

**Is your feature request related to a problem? Please describe.**

Hi, I'm pretty new to CD, though I got through the first steps successfully. What a nice tool!

But I am struggling to monitor a product's price and get a notification only if the price is above or below a certain value. Currently I still get a notification on every poll, which is pretty annoying, and it is stressful to check the value by hand every time.

(Sorry, but there seems to be no option in the web UI, and no tutorial seems to address suppressing notifications that don't pass a filter.)

**Describe the solution you'd like**

The ability for a check, or its notification settings, to suppress the notification if a numeric filter doesn't match, e.g. x > 1000.0.
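
As an illustration of the requested behaviour, here is a standalone sketch of a numeric threshold check. It is not an existing changedetection.io feature or API; `parse_number` and `should_notify` are hypothetical names, and the parser assumes commas as thousands separators and a dot as the decimal mark.

```python
import re


def parse_number(text):
    """Extract the first decimal number from text such as 'EUR 1,299.00'.

    Assumes ',' separates thousands and '.' is the decimal mark.
    """
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        return None
    return float(match.group(0).replace(",", ""))


def should_notify(text, threshold, direction="above"):
    """Return True only when the extracted value passes the threshold."""
    value = parse_number(text)
    if value is None:
        # No number found: suppress the notification in this sketch.
        return False
    if direction == "above":
        return value > threshold
    return value < threshold
```

With this shape, a poll whose extracted value does not pass the comparison would simply skip the notification step.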

**Describe the use-case and give concrete real-world examples**

Notify only if prices, quantities, metrics, ... rise above a certain level. | closed | 2024-06-14T18:14:36Z | 2024-07-12T15:09:45Z | https://github.com/dgtlmoon/changedetection.io/issues/2415 | [

"enhancement"

] | Matthias84 | 1 |

fastapi-users/fastapi-users | asyncio | 5 | Improve test coverage | Current coverage: [Codecov report](https://codecov.io/gh/frankie567/fastapi-users)

| closed | 2019-10-13T11:48:13Z | 2019-10-15T05:55:10Z | https://github.com/fastapi-users/fastapi-users/issues/5 | [

"enhancement"

] | frankie567 | 0 |

hbldh/bleak | asyncio | 1,065 | Advertisements only seldom received/displayed | * bleak version: 0.18.1

* Python version: Python 3.9.2

* Operating System: Linux test 5.15.61-v7l+ #1579 SMP Fri Aug 26 11:13:03 BST 2022 armv7l GNU/Linux

* BlueZ version (`bluetoothctl -v`) in case of Linux: 5.55

### Description

I have a device that sends a lot of advertisements. I configured it to advertise at maximum speed.

test@test:~/bleak $ sudo hcitool lescan --duplicate

LE Scan ...

E4:5F:01:BA:05:2D (unknown)

E4:5F:01:BA:05:2D

E4:5F:01:BA:05:2D (unknown)

E4:5F:01:BA:05:2D

E4:5F:01:BA:05:2D (unknown)

E4:5F:01:BA:05:2D

I can see it advertising at a high speed (around 40 advertisements per second).

Then I use (from example):

```

test@test:~/bleak $ cat detection.py

```

```python
"""
Detection callback w/ scanner
--------------

Example showing what is returned using the callback upon detection functionality

Updated on 2020-10-11 by bernstern <bernie@allthenticate.net>
"""

import asyncio
import logging
import sys

from bleak import BleakScanner
from bleak.backends.device import BLEDevice
from bleak.backends.scanner import AdvertisementData

logger = logging.getLogger(__name__)


def simple_callback(device: BLEDevice, advertisement_data: AdvertisementData):
    logger.info(f"{device.address}: {advertisement_data}")


async def main(service_uuids):
    scanner = BleakScanner(simple_callback, service_uuids)

    while True:
        print("(re)starting scanner")
        await scanner.start()
        await asyncio.sleep(5.0)
        await scanner.stop()


if __name__ == "__main__":
    logging.basicConfig(
        level=logging.INFO,
        format="%(asctime)-15s %(name)-8s %(levelname)s: %(message)s",
    )
    service_uuids = sys.argv[1:]
    asyncio.run(main(service_uuids))
```

It gives the following output:

```

$ python3 detection.py

(re)starting scanner

2022-10-05 13:16:40,784 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\x98\xc3\x01'})

2022-10-05 13:16:45,775 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\x98\xc3\x01'})

(re)starting scanner

2022-10-05 13:16:45,798 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\xb1\xc3\x01'})

2022-10-05 13:16:50,799 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\xb1\xc3\x01'})

(re)starting scanner

2022-10-05 13:16:50,819 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\xca\xc3\x01'})

2022-10-05 13:16:55,823 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\xca\xc3\x01'})

(re)starting scanner

2022-10-05 13:16:55,838 __main__ INFO: E4:5F:01:BA:05:2D: AdvertisementData(manufacturer_data={65535: b'\xbe\xac\x13\xb7\xcbV\x81ZH\xec\xa0\x1f0!\xabI\xa1U\x00\x00:\xe3\xc3\x01'})

```

It reports data only rarely, nowhere near 40 per second.

I assume the checked fields stay the same, but there should at least be a counter inside that increases every 200 ms.

So I assume I should see output at least every 200 ms?

Can I tell Bleak to report every received advertisement?

Why is the stop/restart needed? Just so that duplicates are reported again?

Or do I somehow need to tell BlueZ, underneath Bleak, to use something like lescan with --duplicate?

Or could the problem be on the sender side? Is the advertisement data incorrect, or only sometimes correct? | open | 2022-10-05T13:22:57Z | 2022-10-06T17:34:51Z | https://github.com/hbldh/bleak/issues/1065 | [

"3rd party issue",

"Backend: BlueZ"

] | capiman | 21 |

ultralytics/ultralytics | deep-learning | 19,292 | Colab default setup cannot convert to tflite | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

_No response_

### Bug

A few weeks ago I could convert yolo11n.pt to tflite, but now the default Colab Python version is 3.11.11.

With this version, onnx2tf cannot convert the model to tflite.

How can I fix it?

```

from ultralytics import YOLO

model = YOLO('yolo11n.pt')

model.export(format='tflite', imgsz=192, int8=True)

model = YOLO('yolo11n_saved_model/yolo11n_full_integer_quant.tflite')

res = model.predict(imgsz=192)

res[0].plot(show=True)

```

```

Downloading https://ultralytics.com/assets/Arial.ttf to '/root/.config/Ultralytics/Arial.ttf'...

100%|██████████| 755k/755k [00:00<00:00, 116MB/s]