repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

nonebot/nonebot2 | fastapi | 2,943 | Plugin: nonebot-plugin-githubmodels | ### PyPI project name

nonebot-plugin-githubmodels

### Plugin import package name

githubmodels

### Tags

[]

### Plugin configuration

```dotenv

GITHUB_TOKEN="hxjxnfkdmzjs"

```

| closed | 2024-09-12T15:43:52Z | 2024-09-12T15:51:08Z | https://github.com/nonebot/nonebot2/issues/2943 | [

"Plugin"

] | lyqgzbl | 2 |

CorentinJ/Real-Time-Voice-Cloning | deep-learning | 1,115 | I can't make sense of this error... can somebody please help me | I made it to step 5 of the installation without problems, and even received "all test passed" when running `python demo_cli.py`.

However, when I get to launching the toolbox with `python demo_toolbox.py -d <datasets_root>` (where datasets_root points to train-clean-100, downloaded per step 4), I receive this error:

```

Traceback (most recent call last):

File "/media/user/drive2/Documents/Real-Time-Voice-Cloning/demo_toolbox.py", line 5, in <module>

from toolbox import Toolbox

File "/media/user/drive2/Documents/Real-Time-Voice-Cloning/toolbox/__init__.py", line 11, in <module>

from toolbox.ui import UI

File "/media/user/drive2/Documents/Real-Time-Voice-Cloning/toolbox/ui.py", line 11, in <module>

import umap

File "/home/user/anaconda3/lib/python3.9/site-packages/umap/__init__.py", line 2, in <module>

from .umap_ import UMAP

File "/home/user/anaconda3/lib/python3.9/site-packages/umap/umap_.py", line 47, in <module>

from pynndescent import NNDescent

File "/home/user/anaconda3/lib/python3.9/site-packages/pynndescent/__init__.py", line 3, in <module>

from .pynndescent_ import NNDescent, PyNNDescentTransformer

File "/home/user/anaconda3/lib/python3.9/site-packages/pynndescent/pynndescent_.py", line 16, in <module>

import pynndescent.sparse as sparse

File "/home/user/anaconda3/lib/python3.9/site-packages/pynndescent/sparse.py", line 229, in <module>

def sparse_mul(ind1, data1, ind2, data2):

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/decorators.py", line 219, in wrapper

disp.compile(sig)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/dispatcher.py", line 965, in compile

cres = self._compiler.compile(args, return_type)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/dispatcher.py", line 129, in compile

raise retval

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/dispatcher.py", line 139, in _compile_cached

retval = self._compile_core(args, return_type)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/dispatcher.py", line 152, in _compile_core

cres = compiler.compile_extra(self.targetdescr.typing_context,

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler.py", line 693, in compile_extra

return pipeline.compile_extra(func)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler.py", line 429, in compile_extra

return self._compile_bytecode()

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler.py", line 497, in _compile_bytecode

return self._compile_core()

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler.py", line 476, in _compile_core

raise e

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler.py", line 463, in _compile_core

pm.run(self.state)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler_machinery.py", line 353, in run

raise patched_exception

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler_machinery.py", line 341, in run

self._runPass(idx, pass_inst, state)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler_lock.py", line 35, in _acquire_compile_lock

return func(*args, **kwargs)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler_machinery.py", line 296, in _runPass

mutated |= check(pss.run_pass, internal_state)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/compiler_machinery.py", line 269, in check

mangled = func(compiler_state)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/typed_passes.py", line 105, in run_pass

typemap, return_type, calltypes, errs = type_inference_stage(

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/typed_passes.py", line 83, in type_inference_stage

errs = infer.propagate(raise_errors=raise_errors)

File "/home/user/anaconda3/lib/python3.9/site-packages/numba/core/typeinfer.py", line 1086, in propagate

raise errors[0]

numba.core.errors.TypingError: Failed in nopython mode pipeline (step: nopython frontend)

- Resolution failure for literal arguments:

No implementation of function Function(<function impl_append at 0x7f87bea19670>) found for signature:

>>> impl_append(ListType[int32], int32)

There are 2 candidate implementations:

- Of which 2 did not match due to:

Overload in function 'impl_append': File: numba/typed/listobject.py: Line 592.

With argument(s): '(ListType[int32], int32)':

Rejected as the implementation raised a specific error:

TypingError: Failed in nopython mode pipeline (step: nopython frontend)

Untyped global name 'ListStatus': Cannot determine Numba type of <class 'shibokensupport.enum_310.EnumMeta'>

File "../../../../../home/user/anaconda3/lib/python3.9/site-packages/numba/typed/listobject.py", line 602:

def impl(l, item):

<source elided>

status = _list_append(l, casteditem)

if status == ListStatus.LIST_OK:

^

raised from /home/user/anaconda3/lib/python3.9/site-packages/numba/core/typeinfer.py:1480

- Resolution failure for non-literal arguments:

None

During: resolving callee type: BoundFunction((<class 'numba.core.types.containers.ListType'>, 'append') for ListType[int32])

During: typing of call at /home/user/anaconda3/lib/python3.9/site-packages/pynndescent/sparse.py (244)

File "../../../../../home/user/anaconda3/lib/python3.9/site-packages/pynndescent/sparse.py", line 244:

def sparse_mul(ind1, data1, ind2, data2):

<source elided>

if val != 0:

result_ind.append(j1)

^

```

Can somebody please help me make sense of this issue? Did I go wrong somewhere in the installation? | closed | 2022-09-22T07:52:13Z | 2023-01-08T08:55:12Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1115 | [] | ikesaber | 0 |

ultralytics/yolov5 | pytorch | 12,897 | Running Hyperparameter Evolution raises ValueError | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar bug report.

### YOLOv5 Component

Training, Evolution

### Bug

I was trying to train my custom model locally on an `Nvidia RTX 3050`, but training raises a ValueError. The same error occurs with the coco128 dataset.

This is the dump:

```

(.venv-cuda121) PS C:\workspace\adis\yolov5> python train.py --img 640 --batch 4 --epochs 3 --data coco128.yaml --weights yolov5s.pt --cache --evolve

train: weights=yolov5s.pt, cfg=, data=coco128.yaml, hyp=data\hyps\hyp.scratch-low.yaml, epochs=3, batch_size=4, imgsz=640, rect=False, resume=False, nosave=False, noval=False, noautoanchor=False, noplots=False, evolve=300, evolve_population=data\hyps, resume_evolve=None, bucket=, cache=ram, image_weights=False, device=, multi_scale=False, single_cls=False, optimizer=SGD, sync_bn=False, workers=8, project=runs\train, name=exp, exist_ok=False, quad=False, cos_lr=False, label_smoothing=0.0, patience=100, freeze=[0], save_period=-1, seed=0, local_rank=-1, entity=None, upload_dataset=False, bbox_interval=-1, artifact_alias=latest, ndjson_console=False, ndjson_file=False

github: up to date with https://github.com/ultralytics/yolov5

YOLOv5 v7.0-296-gae4ef3b2 Python-3.11.1 torch-2.2.2+cu121 CUDA:0 (NVIDIA GeForce RTX 3050 Laptop GPU, 4096MiB)

hyperparameters: lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.5, cls_pw=1.0, obj=1.0, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.01041, hsv_s=0.54703, hsv_v=0.27739, degrees=0.0, translate=0.04591, scale=0.75544, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=0.85834, mixup=0.04266, copy_paste=0.0, anchors=3

Comet: run 'pip install comet_ml' to automatically track and visualize YOLOv5 runs in Comet

Overriding model.yaml anchors with anchors=3

from n params module arguments

0 -1 1 3520 models.common.Conv [3, 32, 6, 2, 2]

1 -1 1 18560 models.common.Conv [32, 64, 3, 2]

2 -1 1 18816 models.common.C3 [64, 64, 1]

3 -1 1 73984 models.common.Conv [64, 128, 3, 2]

4 -1 2 115712 models.common.C3 [128, 128, 2]

5 -1 1 295424 models.common.Conv [128, 256, 3, 2]

6 -1 3 625152 models.common.C3 [256, 256, 3]

7 -1 1 1180672 models.common.Conv [256, 512, 3, 2]

8 -1 1 1182720 models.common.C3 [512, 512, 1]

9 -1 1 656896 models.common.SPPF [512, 512, 5]

10 -1 1 131584 models.common.Conv [512, 256, 1, 1]

11 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

12 [-1, 6] 1 0 models.common.Concat [1]

13 -1 1 361984 models.common.C3 [512, 256, 1, False]

14 -1 1 33024 models.common.Conv [256, 128, 1, 1]

15 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

16 [-1, 4] 1 0 models.common.Concat [1]

17 -1 1 90880 models.common.C3 [256, 128, 1, False]

18 -1 1 147712 models.common.Conv [128, 128, 3, 2]

19 [-1, 14] 1 0 models.common.Concat [1]

20 -1 1 296448 models.common.C3 [256, 256, 1, False]

21 -1 1 590336 models.common.Conv [256, 256, 3, 2]

22 [-1, 10] 1 0 models.common.Concat [1]

23 -1 1 1182720 models.common.C3 [512, 512, 1, False]

24 [17, 20, 23] 1 229245 models.yolo.Detect [80, [[0, 1, 2, 3, 4, 5], [0, 1, 2, 3, 4, 5], [0, 1, 2, 3, 4, 5]], [128, 256, 512]]

Model summary: 214 layers, 7235389 parameters, 7235389 gradients, 16.6 GFLOPs

Transferred 348/349 items from yolov5s.pt

AMP: checks passed

optimizer: SGD(lr=0.01) with parameter groups 57 weight(decay=0.0), 60 weight(decay=0.0005), 60 bias

train: Scanning C:\workspace\adis\datasets\coco128\labels\train2017.cache... 126 images, 2 backgrounds, 0 corrupt: 100%|██████████| 128/128 [00:00<?, ?it/s

train: Caching images (0.1GB ram): 100%|██████████| 128/128 [00:00<00:00, 1829.21it/s]

val: Scanning C:\workspace\adis\datasets\coco128\labels\train2017.cache... 126 images, 2 backgrounds, 0 corrupt: 100%|██████████| 128/128 [00:00<?, ?it/s]

AutoAnchor: 0.36 anchors/target, 0.097 Best Possible Recall (BPR). Anchors are a poor fit to dataset , attempting to improve...

AutoAnchor: WARNING Extremely small objects found: 3 of 929 labels are <3 pixels in size

AutoAnchor: Running kmeans for 9 anchors on 928 points...

AutoAnchor: Evolving anchors with Genetic Algorithm: fitness = 0.6715: 100%|██████████| 1000/1000 [00:00<00:00, 2909.40it/s]

AutoAnchor: thr=0.25: 0.9925 best possible recall, 3.71 anchors past thr

AutoAnchor: n=9, img_size=640, metric_all=0.261/0.672-mean/best, past_thr=0.478-mean: 11,11, 20,27, 51,57, 125,86, 92,175, 140,287, 280,226, 378,368, 549,444

AutoAnchor: Done (optional: update model *.yaml to use these anchors in the future)

Plotting labels to runs\evolve\exp4\labels.jpg...

Image sizes 640 train, 640 val

Using 4 dataloader workers

Logging results to runs\evolve\exp4

Starting training for 3 epochs...

Epoch GPU_mem box_loss obj_loss cls_loss Instances Size

0%| | 0/32 [00:00<?, ?it/s]

Traceback (most recent call last):

File "C:\workspace\adis\yolov5\train.py", line 848, in <module>

main(opt)

File "C:\workspace\adis\yolov5\train.py", line 754, in main

results = train(hyp.copy(), opt, device, callbacks)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\workspace\adis\yolov5\train.py", line 356, in train

for i, (imgs, targets, paths, _) in pbar: # batch -------------------------------------------------------------

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\tqdm\std.py", line 1181, in __iter__

for obj in iterable:

File "C:\workspace\adis\yolov5\utils\dataloaders.py", line 239, in __iter__

yield next(self.iterator)

^^^^^^^^^^^^^^^^^^^

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\utils\data\dataloader.py", line 631, in __next__

data = self._next_data()

^^^^^^^^^^^^^^^^^

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\utils\data\dataloader.py", line 1346, in _next_data

return self._process_data(data)

^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\utils\data\dataloader.py", line 1372, in _process_data

data.reraise()

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\_utils.py", line 722, in reraise

raise exception

ValueError: Caught ValueError in DataLoader worker process 0.

Original Traceback (most recent call last):

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\utils\data\_utils\worker.py", line 308, in _worker_loop

data = fetcher.fetch(index)

^^^^^^^^^^^^^^^^^^^^

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\utils\data\_utils\fetch.py", line 51, in fetch

data = [self.dataset[idx] for idx in possibly_batched_index]

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\workspace\adis\yolov5\.venv-cuda121\Lib\site-packages\torch\utils\data\_utils\fetch.py", line 51, in <listcomp>

data = [self.dataset[idx] for idx in possibly_batched_index]

~~~~~~~~~~~~^^^^^

File "C:\workspace\adis\yolov5\utils\dataloaders.py", line 777, in __getitem__

img, labels = mixup(img, labels, *self.load_mosaic(random.choice(self.indices)))

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "C:\Program Files\Python311\Lib\random.py", line 369, in choice

if not seq:

ValueError: The truth value of an array with more than one element is ambiguous. Use a.any() or a.all()

```
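The final frames of the traceback above point at `random.choice(self.indices)`: on the reported Python 3.11.1, `random.choice` evaluates `if not seq`, which is ambiguous when the sequence is a NumPy array. Below is a minimal sketch of a possible workaround, assuming `self.indices` behaves like an array. `FakeArray` and `choose_index` are hypothetical names, used only to reproduce the behaviour without NumPy; they are not part of YOLOv5.

```python
import random


class FakeArray:
    """Hypothetical stand-in for a NumPy array: indexable, but truth-testing raises."""

    def __init__(self, values):
        self._values = list(values)

    def __len__(self):
        return len(self._values)

    def __getitem__(self, i):
        return self._values[i]

    def __bool__(self):
        # Mirrors the message shown in the traceback.
        raise ValueError("The truth value of an array with more than one element is ambiguous.")


def choose_index(seq):
    """Pick a random element using only len() and indexing, never bool(seq)."""
    if len(seq) == 0:
        raise IndexError("cannot choose from an empty sequence")
    return seq[random.randrange(len(seq))]


indices = FakeArray(range(128))
picked = choose_index(indices)
```

Converting the array to a list before calling `random.choice` would presumably also avoid the truth test, at the cost of a copy on every call.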

If this is an Nvidia related bug, then this is my info from nvidia-smi

```

Mon Apr 8 20:41:10 2024

+---------------------------------------------------------------------------------------+

| NVIDIA-SMI 546.21 Driver Version: 546.21 CUDA Version: 12.3 |

|-----------------------------------------+----------------------+----------------------+

| GPU Name TCC/WDDM | Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap | Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|=========================================+======================+======================|

| 0 NVIDIA GeForce RTX 3050 ... WDDM | 00000000:01:00.0 Off | N/A |

| N/A 36C P0 7W / 40W | 0MiB / 4096MiB | 0% Default |

| | | N/A |

+-----------------------------------------+----------------------+----------------------+

+---------------------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=======================================================================================|

| No running processes found |

+---------------------------------------------------------------------------------------+

```

### Environment

YOLOv5 v7.0-296-gae4ef3b2 Python-3.11.1 torch-2.2.2+cu121 CUDA:0 (NVIDIA GeForce RTX 3050 Laptop GPU, 4096MiB)

### Minimal Reproducible Example

_No response_

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | closed | 2024-04-08T15:12:26Z | 2024-10-20T19:43:15Z | https://github.com/ultralytics/yolov5/issues/12897 | [

"bug",

"Stale"

] | RAHUL01-09 | 3 |

microsoft/nni | deep-learning | 5,031 | How to set useActivateGpu=true in remote mode? | **Describe the issue**:

I can use NNI normally in remote mode on the CPU, although it is very slow.

When I try to run the program on the GPU in remote mode, the trial stays in the waiting state and never runs.

One possible reason is that all of the GPUs on the remote machine are partly occupied, and NNI won't use a GPU until it is completely free.

In local mode, NNI allows me to set useActiveGpu=true to use a GPU that is already busy.

However, in remote mode, it throws the error **"AttributeError: RemoteConfig does not have field(s) useactivegpu"**

So I want to know how to configure NNI to use an active GPU in remote mode.

**Environment**:

- NNI version: 2.7

- Training service (local|remote|pai|aml|etc): remote

- Client OS: Ubuntu 20.04

- Server OS (for remote mode only): Ubuntu 20.04

- Python version: 3.8.5

- PyTorch/TensorFlow version: PyTorch v1.8.1

- Is conda/virtualenv/venv used?: use conda environment

- Is running in Docker?: No

**Configuration**:

- Experiment config (remember to remove secrets!):

```

maxTrialNumber: 20

trialCommand: python main.py

trialCodeDirectory: .

trialGpuNumber: 2

trialConcurrency: 4

tuner:

name: TPE

classArgs:

optimize_mode: maximize

trainingService:

useActiveGpu: true

platform: remote

reuseMode: true

# gpuIndices: '0'

machineList:

- host: xxx.xxx.xx.xx

user: xxx

ssh_key_file: ~/.ssh/id_rsa

```

- Search space:

```

{

"lr": {"_type": "choice", "_value": [0.002, 0.001, 0.0005]},

"l2": {"_type": "choice", "_value": [1e-5, 2e-5, 5e-5]}

}

```

**Log message**:

- nnimanager.log:

- dispatcher.log:

- nnictl stdout and stderr:

```

Traceback (most recent call last):

File "/home/xxx/.miniconda3/envs/torch/bin/nnictl", line 8, in <module>

sys.exit(parse_args())

File "/home/xxx/.miniconda3/envs/torch/lib/python3.8/site-packages/nni/tools/nnictl/nnictl.py", line 497, in parse_args

args.func(args)

File "/home/xxx/.miniconda3/envs/torch/lib/python3.8/site-packages/nni/tools/nnictl/launcher.py", line 77, in create_experiment

config = ExperimentConfig.load(config_file)

File "/home/xxx/.miniconda3/envs/torch/lib/python3.8/site-packages/nni/experiment/config/base.py", line 140, in load

config = cls(**data)

File "/home/xxx/.miniconda3/envs/torch/lib/python3.8/site-packages/nni/experiment/config/experiment_config.py", line 104, in __init__

self.training_service = utils.load_training_service_config(self.training_service)

File "/home/xxx/.miniconda3/envs/torch/lib/python3.8/site-packages/nni/experiment/config/utils/internal.py", line 157, in load_training_service_config

return cls(**config)

File "/home/xxx/.miniconda3/envs/torch/lib/python3.8/site-packages/nni/experiment/config/base.py", line 93, in __init__

raise AttributeError(f'{class_name} does not have field(s) {fields}')

AttributeError: RemoteConfig does not have field(s) useactivegpu

```
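The error says that `RemoteConfig` itself has no `useActiveGpu` field. As far as I recall from the NNI 2.x configuration reference, the option is set per machine, inside each `machineList` entry, but treat that as an assumption to verify against the documentation for your version. The sketch below moves the key in an already-parsed config dictionary; `move_use_active_gpu` is a hypothetical helper, not part of NNI.

```python
import copy


def move_use_active_gpu(config):
    """Return a copy of config with useActiveGpu moved into each machineList entry."""
    fixed = copy.deepcopy(config)
    service = fixed["trainingService"]
    # Remove the key from the service level, where RemoteConfig rejects it.
    flag = service.pop("useActiveGpu", None)
    if flag is not None:
        for machine in service.get("machineList", []):
            # Assumption: the per-machine entry accepts useActiveGpu.
            machine.setdefault("useActiveGpu", flag)
    return fixed


config = {
    "trainingService": {
        "useActiveGpu": True,
        "platform": "remote",
        "reuseMode": True,
        "machineList": [
            {"host": "xxx.xxx.xx.xx", "user": "xxx", "ssh_key_file": "~/.ssh/id_rsa"},
        ],
    },
}
fixed = move_use_active_gpu(config)
```

In the YAML file this would correspond to indenting `useActiveGpu: true` under the `- host:` entry rather than directly under `trainingService`.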

<!--

Where can you find the log files:

LOG: https://github.com/microsoft/nni/blob/master/docs/en_US/Tutorial/HowToDebug.md#experiment-root-director

STDOUT/STDERR: https://nni.readthedocs.io/en/stable/reference/nnictl.html#nnictl-log-stdout

-->

**How to reproduce it?**: | closed | 2022-07-30T06:43:34Z | 2022-09-07T10:42:16Z | https://github.com/microsoft/nni/issues/5031 | [

"user raised",

"support",

"remote"

] | unikcc | 1 |

absent1706/sqlalchemy-mixins | sqlalchemy | 32 | Does BaseModel.set_session(session) only run once? | Or BaseModel.set_session(session) needs to run for every request? | closed | 2020-01-11T07:11:46Z | 2020-03-31T16:21:00Z | https://github.com/absent1706/sqlalchemy-mixins/issues/32 | [] | scil | 1 |

pytorch/vision | machine-learning | 8,389 | Compiling resize_image: function interpolate not_implemented | ### 🐛 Describe the bug

I am compiling a method (mode=default, fullgraph=True) that calls torchvision.transforms.v2.functional.resize_image. However, I receive an error indicating that the interpolate function is not implemented. I am using PyTorch Lightning and, oddly, this only happens during validation; it works fine during training.

```

Failed running call_function <function interpolate at 0x7f60c39593a0>(*(FakeTensor(..., device='cuda:0', size=(75, 3, 256, 256)),), **{'size': [224, 224], 'mode': 'bicubic', 'align_corners': False, 'antialias': True}):

Multiple dispatch failed for 'torch.ops.aten.size'; all __torch_dispatch__ handlers returned NotImplemented:

- tensor subclass <class 'torch._subclasses.fake_tensor.FakeTensor'>

For more information, try re-running with TORCH_LOGS=not_implemented

from user code:

File ...

File "/some/python/file.py", line 54, in encode

x = tv_func.resize_image(

File ".../miniconda3/envs/deepmotion3/lib/python3.11/site-packages/torchvision/transforms/v2/functional/_geometry.py", line 260, in resize_image

image = interpolate(

Set TORCH_LOGS="+dynamo" and TORCHDYNAMO_VERBOSE=1 for more information

You can suppress this exception and fall back to eager by setting:

import torch._dynamo

torch._dynamo.config.suppress_errors = True

TypeError: Multiple dispatch failed for 'torch.ops.aten.size'; all __torch_dispatch__ handlers returned NotImplemented:

- tensor subclass <class 'torch._subclasses.fake_tensor.FakeTensor'>

For more information, try re-running with TORCH_LOGS=not_implemented

The above exception was the direct cause of the following exception:

RuntimeError: Failed running call_function <function interpolate at 0x7f60c39593a0>(*(FakeTensor(..., device='cuda:0', size=(75, 3, 256, 256)),), **{'size': [224, 224], 'mode': 'bicubic', 'align_corners': False, 'antialias': True}):

Multiple dispatch failed for 'torch.ops.aten.size'; all __torch_dispatch__ handlers returned NotImplemented:

- tensor subclass <class 'torch._subclasses.fake_tensor.FakeTensor'>

For more information, try re-running with TORCH_LOGS=not_implemented

During handling of the above exception, another exception occurred:

File "/some/python/file.py", line 299, in validation_step

loss = self.shared_step(step_batch, train=False)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/some/python/file.py", line 586, in run

trainer.fit(model, datamodule, ckpt_path=opt.resume_from_checkpoint)

File "/some/python/file.py", line 702, in <module>

run(opt, trainer, datamodule, model)

torch._dynamo.exc.TorchRuntimeError: Failed running call_function <function interpolate at 0x7f60c39593a0>(*(FakeTensor(..., device='cuda:0', size=(75, 3, 256, 256)),), **{'size': [224, 224], 'mode': 'bicubic', 'align_corners': False, 'antialias': True}):

Multiple dispatch failed for 'torch.ops.aten.size'; all __torch_dispatch__ handlers returned NotImplemented:

- tensor subclass <class 'torch._subclasses.fake_tensor.FakeTensor'>

For more information, try re-running with TORCH_LOGS=not_implemented

from user code:

File ...

File "/some/python/file.py", line 54, in encode

x = tv_func.resize_image(

File ".../miniconda3/envs/deepmotion3/lib/python3.11/site-packages/torchvision/transforms/v2/functional/_geometry.py", line 260, in resize_image

image = interpolate(

Set TORCH_LOGS="+dynamo" and TORCHDYNAMO_VERBOSE=1 for more information

You can suppress this exception and fall back to eager by setting:

import torch._dynamo

torch._dynamo.config.suppress_errors = True

```

### Versions

Collecting environment information...

PyTorch version: 2.2.1

Is debug build: False

CUDA used to build PyTorch: 12.1

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.4 LTS (x86_64)

GCC version: (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0

Clang version: Could not collect

CMake version: version 3.22.1

Libc version: glibc-2.35

Python version: 3.11.8 | packaged by conda-forge | (main, Feb 16 2024, 20:53:32) [GCC 12.3.0] (64-bit runtime)

Python platform: Linux-5.15.0-102-generic-x86_64-with-glibc2.35

Is CUDA available: True

CUDA runtime version: Could not collect

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration:

GPU 0: NVIDIA A100-PCIE-40GB

GPU 1: NVIDIA A100-PCIE-40GB

GPU 2: NVIDIA A100-PCIE-40GB

GPU 3: NVIDIA A100-PCIE-40GB

GPU 4: NVIDIA A100-PCIE-40GB

GPU 5: NVIDIA A100-PCIE-40GB

GPU 6: NVIDIA A100-PCIE-40GB

GPU 7: NVIDIA A100-PCIE-40GB

Nvidia driver version: 535.171.04

cuDNN version: Probably one of the following:

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn.so.8.1.0

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn_adv_infer.so.8.1.0

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn_adv_train.so.8.1.0

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn_cnn_infer.so.8.1.0

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn_cnn_train.so.8.1.0

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn_ops_infer.so.8.1.0

/usr/local/cuda-11.1/targets/x86_64-linux/lib/libcudnn_ops_train.so.8.1.0

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 43 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 64

On-line CPU(s) list: 0-63

Vendor ID: AuthenticAMD

Model name: AMD EPYC 7452 32-Core Processor

CPU family: 23

Model: 49

Thread(s) per core: 1

Core(s) per socket: 32

Socket(s): 2

Stepping: 0

Frequency boost: enabled

CPU max MHz: 2350.0000

CPU min MHz: 1500.0000

BogoMIPS: 4700.09

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx mmxext fxsr_opt pdpe1gb rdtscp lm constant_tsc rep_good nopl nonstop_tsc cpuid extd_apicid aperfmperf rapl pni pclmulqdq monitor ssse3 fma cx16 sse4_1 sse4_2 movbe popcnt aes xsave avx f16c rdrand lahf_lm cmp_legacy svm extapic cr8_legacy abm sse4a misalignsse 3dnowprefetch osvw ibs skinit wdt tce topoext perfctr_core perfctr_nb bpext perfctr_llc mwaitx cpb cat_l3 cdp_l3 hw_pstate ssbd mba ibrs ibpb stibp vmmcall fsgsbase bmi1 avx2 smep bmi2 cqm rdt_a rdseed adx smap clflushopt clwb sha_ni xsaveopt xsavec xgetbv1 cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local clzero irperf xsaveerptr rdpru wbnoinvd amd_ppin arat npt lbrv svm_lock nrip_save tsc_scale vmcb_clean flushbyasid decodeassists pausefilter pfthreshold avic v_vmsave_vmload vgif v_spec_ctrl umip rdpid overflow_recov succor smca sme sev sev_es

Virtualization: AMD-V

L1d cache: 2 MiB (64 instances)

L1i cache: 2 MiB (64 instances)

L2 cache: 32 MiB (64 instances)

L3 cache: 256 MiB (16 instances)

NUMA node(s): 2

NUMA node0 CPU(s): 0-31

NUMA node1 CPU(s): 32-63

Vulnerability Gather data sampling: Not affected

Vulnerability Itlb multihit: Not affected

Vulnerability L1tf: Not affected

Vulnerability Mds: Not affected

Vulnerability Meltdown: Not affected

Vulnerability Mmio stale data: Not affected

Vulnerability Retbleed: Mitigation; untrained return thunk; SMT disabled

Vulnerability Spec rstack overflow: Mitigation; SMT disabled

Vulnerability Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl and seccomp

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; Retpolines, IBPB conditional, STIBP disabled, RSB filling, PBRSB-eIBRS Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Not affected

Versions of relevant libraries:

[pip3] numpy==1.26.4

[pip3] pytorch-lightning==2.1.3

[pip3] torch==2.2.1

[pip3] torch-fidelity==0.3.0

[pip3] torchaudio==2.2.1

[pip3] torchdiffeq==0.2.3

[pip3] torchmetrics==1.3.2

[pip3] torchvision==0.17.1

[pip3] triton==2.2.0

[conda] blas 1.0 mkl

[conda] libjpeg-turbo 2.0.0 h9bf148f_0 pytorch

[conda] mkl 2023.1.0 h213fc3f_46344

[conda] mkl-service 2.4.0 py311h5eee18b_1

[conda] mkl_fft 1.3.8 py311h5eee18b_0

[conda] mkl_random 1.2.4 py311hdb19cb5_0

[conda] numpy 1.26.4 py311h08b1b3b_0

[conda] numpy-base 1.26.4 py311hf175353_0

[conda] pytorch 2.2.1 py3.11_cuda12.1_cudnn8.9.2_0 pytorch

[conda] pytorch-cuda 12.1 ha16c6d3_5 pytorch

[conda] pytorch-lightning 2.1.3 pyhd8ed1ab_0 conda-forge

[conda] pytorch-mutex 1.0 cuda pytorch

[conda] torch-fidelity 0.3.0 pypi_0 pypi

[conda] torchaudio 2.2.1 py311_cu121 pytorch

[conda] torchdiffeq 0.2.3 pypi_0 pypi

[conda] torchmetrics 1.3.2 pyhd8ed1ab_0 conda-forge

[conda] torchtriton 2.2.0 py311 pytorch

[conda] torchvision 0.17.1 py311_cu121 pytorch | open | 2024-04-19T18:04:08Z | 2024-04-29T13:10:51Z | https://github.com/pytorch/vision/issues/8389 | [] | treasan | 1 |

JaidedAI/EasyOCR | deep-learning | 629 | Why are there some pictures with errors? | With the same code, why do some pictures produce errors?

Here is the error message:

```

CUDA not available - defaulting to CPU. Note: This module is much faster with a GPU.

Traceback (most recent call last):

File "D:/code/python/base/test1.py", line 6, in <module>

result = reader.readtext('invoice.png')

File "D:\python\base\lib\site-packages\easyocr\easyocr.py", line 397, in readtext

filter_ths, y_ths, x_ths, False, output_format)

File "D:\python\base\lib\site-packages\easyocr\easyocr.py", line 325, in recognize

image_list, max_width = get_image_list(h_list, f_list, img_cv_grey, model_height = imgH)

File "D:\python\base\lib\site-packages\easyocr\utils.py", line 540, in get_image_list

maximum_y,maximum_x = img.shape

AttributeError: 'NoneType' object has no attribute 'shape'

Process finished with exit code 1

```

**This is the code that fails**

```python

import easyocr

import time

start = time.time()

reader = easyocr.Reader(['ch_sim','en'])

result = reader.readtext('发票.png')

for word in result:

print(word[1])

end = time.time()

print(end - start)

```

**When I change it to this, it works**

```python

import easyocr

import time

start = time.time()

reader = easyocr.Reader(['ch_sim','en'])

img = open('invoice.png','rb')

source = img.read()

result = reader.readtext(source)

for word in result:

print(word[1])

end = time.time()

print(end - start)

```
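The working variant above passes raw bytes to `readtext` instead of a path. One possible explanation, not confirmed in this report, is that the image loader behind `readtext` cannot open a non-ASCII path such as `发票.png` on Windows and returns `None`. Here is a minimal sketch of the byte-reading step, with `read_image_bytes` as a hypothetical helper; unlike the snippet above, it also closes the file.

```python
from pathlib import Path


def read_image_bytes(path):
    """Read an image file into memory so the OCR call never has to open the path."""
    data = Path(path).read_bytes()
    if not data:
        raise ValueError(f"{path} is empty")
    return data


# Usage, following the working snippet in the report:
#   reader = easyocr.Reader(['ch_sim', 'en'])
#   result = reader.readtext(read_image_bytes('发票.png'))
```

Renaming the file to an ASCII-only name would be another way to test the same hypothesis.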

| closed | 2021-12-24T10:22:22Z | 2022-08-07T05:01:56Z | https://github.com/JaidedAI/EasyOCR/issues/629 | [] | jinhuiDing | 0 |

pykaldi/pykaldi | numpy | 90 | why do i get different result for computing fbank-feature between pykaldi and kaldi | I installed pykaldi from source,

then compared the fbank features computed by pykaldi with those from compute-fbank-feats in Kaldi, using the same test.wav.

1. code (using pykaldi)

sf_fbank=16000.0

m3 = SubVector(mean(s3, axis=0))

f3=fbank.compute_features(m3,sf_fbank,1.00)

feature:

13.5067 10.8341 10.0170 ... 13.6531 13.5450 13.8087

6.6261 5.5527 8.3816 ... 10.7848 10.2027 8.7894

7.0736 8.8376 10.0984 ... 10.7526 9.8521 8.4278

... ⋱ ...

9.5450 10.1321 10.1961 ... 9.6800 9.4741 9.2639

8.4494 7.7848 7.4247 ... 10.3842 10.7975 9.4761

4.6003 4.6669 8.1002 ... 10.0861 9.9920 9.1505

2. kaldi

set sample_frq=16000.0 in fbank.conf

compute-fbank-feats --verbose=0 --config=conf/fbank.conf scp,p:testfile.scp ark,t:- |less

feature:

13.50692 10.83265 10.02174 ...13.64816 13.54409 13.81221

6.617604 5.457501 8.411386 ...10.66659 10.40139 8.803407

7.078373 8.840377 10.10209 ...10.81204 9.477433 8.874421

......
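To judge whether the two outputs differ only by rounding or by something larger, the printed rows can be compared directly. The sketch below uses only values visible above: the leading three columns of the first row and the trailing three columns of the third row, assuming the elided columns line up in both printouts. `max_abs_diff` is a hypothetical helper.

```python
def max_abs_diff(a, b):
    """Largest element-wise absolute difference between two equal-length rows."""
    if len(a) != len(b):
        raise ValueError("rows must have the same length")
    return max(abs(x - y) for x, y in zip(a, b))


# First row, leading columns: pykaldi vs. compute-fbank-feats.
row1_diff = max_abs_diff([13.5067, 10.8341, 10.0170], [13.50692, 10.83265, 10.02174])
# Third row, trailing columns.
row3_diff = max_abs_diff([10.7526, 9.8521, 8.4278], [10.81204, 9.477433, 8.874421])
```

The first row agrees to within about 0.005, while the third differs by several tenths. A gap of that size in some frames but not others might point to a random component such as dithering, though the report does not show the contents of `fbank.conf`, so that is only a guess.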

| closed | 2019-03-15T09:43:38Z | 2019-03-19T05:53:05Z | https://github.com/pykaldi/pykaldi/issues/90 | [] | liuchenbaidu | 6 |

jupyter/docker-stacks | jupyter | 2,083 | After starting the container with NB_USER=root, NB_UID=0, and NB_GID=0, $HOME environment variable is still /home/jovyan | ### What docker image(s) are you using?

datascience-notebook

### Host OS system

Ubuntu 22.04

### Host architecture

x86_64

### What Docker command are you running?

docker run -d --rm --user root -p 8888:8888 -e JUPYTER_TOKEN=123 -e NB_UID=0 -e NB_GID=0 -e NB_USER=root -e

NOTEBOOK_ARGS="--allow-root" quay.io/jupyter/datascience-notebook:2024-01-16

### How to Reproduce the problem?

1. Start the container using the command above

```

ubuntu$ docker run -d --rm --user root -p 8888:8888 -e JUPYTER_TOKEN=123 -e NB_UID=0 -e NB_GID=0 -e NB_USER=root -e

NOTEBOOK_ARGS="--allow-root" quay.io/jupyter/datascience-notebook:2024-01-16

025ef42b70c01de329f8b6371feb008525a0393284b3d873df7642d396f2ff2d

```

2. Exec into the container

```

ubuntu$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

025ef42b70c0 quay.io/jupyter/datascience-notebook:2024-01-16 "tini -g -- start-no…" 8 seconds ago Up 7 seconds (healthy) 0.0.0.0:8888->8888/tcp, :::8888->8888/tcp happy_sutherland

70058a13a501 resero-svc-studies:latest "/bin/bash" 27 hours ago Up 27 hours resero-devcontainer

ubuntu$ docker exec -u root happy_sutherland bash

```

3. Check the user

```

ubuntu$ whoami

ubuntu

```

4. Check $HOME

```

(base) root@025ef42b70c0:~# echo $HOME

/home/jovyan

```

NOTE: /etc/passwd is fine

```

(base) root@025ef42b70c0:~# cat /etc/passwd

root:x:0:0:root:/home/root:/bin/bash

daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin

bin:x:2:2:bin:/bin:/usr/sbin/nologin

sys:x:3:3:sys:/dev:/usr/sbin/nologin

sync:x:4:65534:sync:/bin:/bin/sync

games:x:5:60:games:/usr/games:/usr/sbin/nologin

man:x:6:12:man:/var/cache/man:/usr/sbin/nologin

lp:x:7:7:lp:/var/spool/lpd:/usr/sbin/nologin

mail:x:8:8:mail:/var/mail:/usr/sbin/nologin

news:x:9:9:news:/var/spool/news:/usr/sbin/nologin

uucp:x:10:10:uucp:/var/spool/uucp:/usr/sbin/nologin

proxy:x:13:13:proxy:/bin:/usr/sbin/nologin

www-data:x:33:33:www-data:/var/www:/usr/sbin/nologin

backup:x:34:34:backup:/var/backups:/usr/sbin/nologin

list:x:38:38:Mailing List Manager:/var/list:/usr/sbin/nologin

irc:x:39:39:ircd:/run/ircd:/usr/sbin/nologin

gnats:x:41:41:Gnats Bug-Reporting System (admin):/var/lib/gnats:/usr/sbin/nologin

nobody:x:65534:65534:nobody:/nonexistent:/usr/sbin/nologin

_apt:x:100:65534::/nonexistent:/usr/sbin/nologin

jovyan:x:1000:100::/home/jovyan:/bin/bash

```

### Command output

_No response_

### Expected behavior

`echo $HOME` should be `/home/root`

### Actual behavior

`echo $HOME` is `/home/jovyan`
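The report contrasts two sources for the home directory: the `HOME` environment variable and the entry in `/etc/passwd`. A minimal sketch, assuming a Unix system with Python available inside the container, that reads both so they can be compared side by side (`home_sources` is a hypothetical helper name):

```python
import os
import pwd


def home_sources():
    """Return (HOME from the environment, home directory from the passwd database)."""
    env_home = os.environ.get("HOME")
    try:
        passwd_home = pwd.getpwuid(os.getuid()).pw_dir
    except KeyError:
        # The current UID has no passwd entry at all.
        passwd_home = None
    return env_home, passwd_home


env_home, passwd_home = home_sources()
if env_home != passwd_home:
    print(f"HOME={env_home!r} differs from passwd entry {passwd_home!r}")
else:
    print(f"HOME and passwd agree: {env_home!r}")
```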

### Anything else?

_No response_

### Latest Docker version

- [X] I've updated my Docker version to the latest available, and the issue persists | closed | 2024-01-17T12:12:14Z | 2024-01-17T12:31:08Z | https://github.com/jupyter/docker-stacks/issues/2083 | [

"type:Bug"

] | anil-resero | 3 |

Zeyi-Lin/HivisionIDPhotos | machine-learning | 158 | A question for the experts here: is there a Node.js library that can do ID-photo image processing? | closed | 2024-09-21T11:45:49Z | 2024-10-04T12:00:12Z | https://github.com/Zeyi-Lin/HivisionIDPhotos/issues/158 | [] | Chandie-Zhang | 3 | |

deepfakes/faceswap | deep-learning | 1,022 | Windows installer broken | **Crash reports MUST be included when reporting bugs.**

(check) CPU Supports SSE4 Instructions

(check) Completed check for installed applications

(check) Setting up for: cpu

Downloading Miniconda3...

Installing Miniconda3. This will take a few minutes...

Miniconda3 installed.

Initializing Conda...

Creating Conda Virtual Environment...

Error Creating Conda Virtual Environment

Install Aborted

**Describe the bug**

Installer is broken

**To Reproduce**

Steps to reproduce the behavior:

1. Download installer (Windows) from github

2. Click "Install"

3. See error

**Expected behavior**

Just install

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Desktop (please complete the following information):**

- OS: Windows 8.1

- Python Version 3.8.3rc1

- Conda Version: this is the first time I have heard of it; I never installed it.

- Commit ID What ?


**Additional context**

_--===+ Hardware +===--_

Intel Pentium (R) n3530

Intel HD Graphics (Bay Trail)

4GB (SODIMM) [DDR3]

HDD 500GB

UEFI

**Crash Report**

Faceswap does not install, so there is no directory containing a crash report | closed | 2020-05-11T07:01:49Z | 2020-05-13T11:32:29Z | https://github.com/deepfakes/faceswap/issues/1022 | [] | BlueONn | 1 |

tflearn/tflearn | data-science | 640 | a little error in the doc page | On the doc page [get_started](http://tflearn.org/getting_started/), in the first example under the 'Layers' topic:

```

with tf.name_scope('conv1'):

W = tf.Variable(tf.random_normal([5, 5, 1, 32]), dtype=tf.float32, name='Weights')

b = tf.Variable(tf.random_normal([32]), dtype=tf.float32, name='biases')

x = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

x = tf.add_bias(W, b)

x = tf.nn.relu(x)

```

The fifth line should be `x = tf.add_bias(x, b)`. | closed | 2017-02-28T16:04:25Z | 2017-03-16T22:47:36Z | https://github.com/tflearn/tflearn/issues/640 | [] | cooljacket | 0 |

microsoft/nni | pytorch | 5,338 | Add a parameter in generate_scenario for the file 'scenario.txt' instead of hardcoding | <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

It would be great in the function https://github.com/microsoft/nni/blob/e85f029bd4e4b1bdf3e679893fb6447e4d6b2c79/nni/algorithms/hpo/smac_tuner/convert_ss_to_scenario.py#L192 to add a parameter for `scenario.txt` instead of hardcoding it.

**Why is this needed**:

I'm using SMAC tuner in a Databricks compute where I can't write to `.`, I can only write to `/tmp/`. If I could parametrize the file, then I would be able to use SMAC.

**Without this feature, how does current nni work**:

SMAC won't work in Databricks and probably on Synapse (I haven't tried, but it is my guess)

**Components that may involve changes**:

**Brief description of your proposal if any**:

Difficult fix:

Change the signature of [generate_scenario](https://github.com/microsoft/nni/blob/e85f029bd4e4b1bdf3e679893fb6447e4d6b2c79/nni/algorithms/hpo/smac_tuner/convert_ss_to_scenario.py#L114), and then also the SMAC arguments.
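As a rough illustration of what a parametrized output path could look like, here is a minimal sketch. `write_scenario` and its arguments are hypothetical and are not nni's actual `generate_scenario` signature; the default falls back to the system temp directory, which is an assumption based on the `/tmp/` constraint described above.

```python
import os
import tempfile


def write_scenario(lines, scenario_path=None):
    """Write scenario lines to scenario_path, defaulting to the system temp dir."""
    if scenario_path is None:
        # Hypothetical default: a writable location instead of the working directory.
        scenario_path = os.path.join(tempfile.gettempdir(), "scenario.txt")
    with open(scenario_path, "w", encoding="utf-8") as handle:
        handle.write("\n".join(lines) + "\n")
    return scenario_path
```

A caller on a read-only working directory could then pass any writable path, while existing callers that omit the argument keep working.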

Easy fix:

Instead of hardcoding `scenario.txt` hardcode `/tmp/scenario.txt`. I'll send this PR in case you want it | closed | 2023-02-07T12:28:14Z | 2023-02-27T16:05:23Z | https://github.com/microsoft/nni/issues/5338 | [] | miguelgfierro | 0 |

ageitgey/face_recognition | python | 721 | Issue with dLib installation with Cuda and AVX support | * face_recognition version: Latest

* Python version: 3.7

* Operating System: Linux with GPU

### Description-- Please help - cmake version 2.8.12

Trying to install dLib with AVX and Cuda support

Command tried-

Paste the command(s) you ran and the output.

python3 setup.py install --yes USE_AVX_INSTRUCTIONS --yes DLIB_USE_CUDA

File "/usr/local/lib/python3.7/distutils/command/install_lib.py", line 107, in build

self.run_command('build_ext')

File "/usr/local/lib/python3.7/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/usr/local/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "setup.py", line 119, in run

self.build_extension(ext)

File "setup.py", line 153, in build_extension

subprocess.check_call(cmake_setup, cwd=build_folder)

File "/usr/local/lib/python3.7/subprocess.py", line 328, in check_call

raise CalledProcessError(retcode, cmd)

subprocess.CalledProcessError: Command '['cmake', '/root/dlib-19.9/tools/python', '-DCMAKE_LIBRARY_OUTPUT_DIRECTORY=/root/dlib-19.9/build/lib.linux-x86_64-3.7', '-DPYTHON_EXECUTABLE=/usr/local/bin/python3', '-DUSE_AVX_INSTRUCTIONS=yes', '-DDLIB_USE_CUDA=yes', '-DCMAKE_BUILD_TYPE=Release']' returned non-zero exit status 1.

After that I tried building dlib directly, in case that would work:

command -

cd dlib

mkdir build; cd build; cmake ..;

-- The CXX compiler identification is unknown

CMake Error: your CXX compiler: "CMAKE_CXX_COMPILER-NOTFOUND" was not found. Please set CMAKE_CXX_COMPILER to a valid compiler path or name.

CMake Error: your CXX compiler: "CMAKE_CXX_COMPILER-NOTFOUND" was not found. Please set CMAKE_CXX_COMPILER to a valid compiler path or name.

-- Using CMake version: 2.8.12.2

-- Compiling dlib version: 19.16.99

CMake Error at dlib/cmake_utils/set_compiler_specific_options.cmake:130 (if):

if given arguments:

"Clang" "MATCHES"

Unknown arguments specified

Call Stack (most recent call first):

dlib/CMakeLists.txt:27 (include)

-- Configuring incomplete, errors occurred!

See also "/root/dlib/build/CMakeFiles/CMakeOutput.log".

See also "/root/dlib/build/CMakeFiles/CMakeError.log".
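The `CMAKE_CXX_COMPILER-NOTFOUND` message in the log above points at CMake not locating a C++ compiler. A small pre-flight check, sketched under the assumption that the compiler would be one of the usual names on `PATH` (`find_cxx_compiler` is a hypothetical helper, not part of dlib or CMake):

```python
import shutil


def find_cxx_compiler(candidates=("c++", "g++", "clang++")):
    """Return the path of the first C++ compiler found on PATH, or None."""
    for name in candidates:
        path = shutil.which(name)
        if path:
            return path
    return None


compiler = find_cxx_compiler()
print(compiler or "No C++ compiler found on PATH")
```

If this prints no path, installing a compiler or setting `CMAKE_CXX_COMPILER` explicitly, as the CMake message itself suggests, would be the next thing to try.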

| open | 2019-01-22T10:02:03Z | 2019-01-22T10:02:03Z | https://github.com/ageitgey/face_recognition/issues/721 | [] | 74981 | 0 |

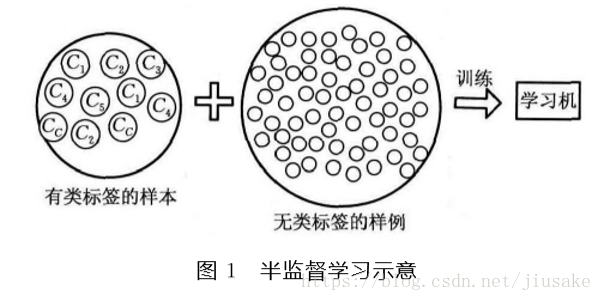

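apachecn/ailearning | python | 484 | Unsupervised algorithms [needs improvement and additions] | Semi-supervised learning (SSL) is a branch of machine learning (ML).

## 1. ML has two basic types of learning task:

> 1. Supervised learning (SL)

Given input-output sample pairs L = {(x_1, y_1), ..., (x_l, y_l)}, SL learns a mapping f: X -> Y from inputs to outputs in order to predict the output values of test examples. SL covers two kinds of task, classification and regression. In classification, the examples satisfy x_i ∈ R^m (the input space) and the class labels satisfy y_i ∈ {c_1, c_2, ..., c_c}, c_j ∈ N; in regression, the inputs satisfy x_i ∈ R^m and the outputs satisfy y_i ∈ R (the output space).

> 2. Unsupervised learning (UL)

UL uses the information contained in unlabeled examples U = {x_1, ..., x_n} to learn their corresponding class labels Y_u = [y_1 ... y_n]^T, and uses the learned labels to partition the examples into clusters or to find the low-dimensional structure of high-dimensional input data. UL covers two kinds of task, clustering and dimensionality reduction.

## 2. Semi-supervised learning (SSL)

In many practical ML applications it is easy to find huge numbers of unlabeled examples, but obtaining labeled samples requires manual labeling with special equipment or through expensive and very time-consuming experiments. The result is a very small number of labeled samples and a surplus of unlabeled examples. People therefore tried adding the large pool of unlabeled examples to the limited labeled samples and training on both, hoping to improve learning performance; this gave rise to SSL, as shown in Figure 1. SSL avoids wasting data and resources, and addresses both the weak generalization of SL models and the imprecision of UL models.

> 1. Assumptions that semi-supervised learning relies on

SSL only works under model assumptions: when the assumptions are correct, unlabeled examples can help improve learning performance. SSL relies on the following three assumptions:

(1) Smoothness assumption

Two examples that are close together in a dense data region have similar class labels. In other words, when two examples are connected by an edge within a dense region, they have the same class label with high probability; conversely, when two examples are separated by a sparse region, their class labels tend to differ.

(2) Cluster assumption

When two examples lie in the same cluster, they have the same class label with high probability. An equivalent formulation is the low-density separation assumption: the classification decision boundary should pass through sparse data regions and avoid splitting the examples of a dense region across its two sides.

(3) Manifold assumption

High-dimensional data is embedded in a low-dimensional manifold; when two examples lie within a small local neighborhood on that manifold, they have similar class labels. Many experimental studies show that when SSL does not satisfy these assumptions, or the model assumption is wrong, unlabeled examples not only fail to improve learning performance but actually make it worse. Other experiments show that in some special cases unlabeled examples can hurt learning performance even when the model assumption is correct.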

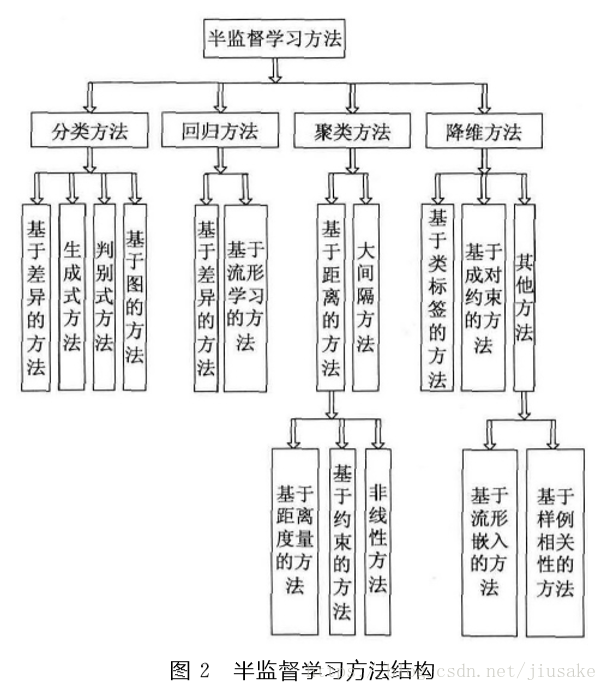

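> 2. Categories of semi-supervised learning

From the standpoint of statistical learning theory, SSL comes in two modes: transductive SSL and inductive SSL. Transductive SSL handles only the training data given within the sample space: it trains on the labeled samples and unlabeled examples in the training data and predicts the class labels of those unlabeled training examples. Inductive SSL handles all given and unknown examples in the whole sample space: it trains on the labeled samples and unlabeled examples in the training data together with the unknown test examples, and it predicts not only the labels of the unlabeled training examples but, more importantly, the labels of the unknown test examples. By learning scenario, SSL can be divided into four broad categories:

(1) Semi-supervised classification

Train on the labeled samples with the help of unlabeled examples to obtain a classifier that outperforms one trained on the labeled samples alone, compensating for the shortage of labeled samples. Here the class label y_i takes finitely many discrete values, y_i ∈ {c_1, c_2, ..., c_c}, c_j ∈ N.

(2) Semi-supervised regression

Train on inputs that have outputs with the help of inputs that lack outputs, to obtain a regressor that outperforms one trained only on the inputs with outputs. Here the output y_i takes continuous values, y_i ∈ R.

(3) Semi-supervised clustering

Use the information in labeled samples to obtain better clusters than unlabeled examples alone would give, improving the accuracy of the clustering method.

(4) Semi-supervised dimensionality reduction

Use the information in labeled samples to find the low-dimensional structure of high-dimensional input data while preserving the structure of the original high-dimensional data and of the pairwise constraints: examples that satisfy a must-link constraint in the high-dimensional space end up close together in the low-dimensional space, and examples that satisfy a cannot-link constraint end up far apart.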
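To make semi-supervised classification concrete, here is a minimal sketch of self-training with a nearest-centroid classifier on 1-D data. Self-training is a technique chosen here for illustration and is not described in the text above; the function names and the toy data are hypothetical.

```python
def centroids(points, labels):
    """Mean of the points assigned to each class."""
    sums, counts = {}, {}
    for x, y in zip(points, labels):
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def predict(cents, x):
    """Class whose centroid is closest to x."""
    return min(cents, key=lambda y: abs(x - cents[y]))


def self_train(labeled, unlabeled, rounds=5):
    """Refit centroids on labeled data plus pseudo-labeled unlabeled data."""
    points = [x for x, _ in labeled]
    labels = [y for _, y in labeled]
    cents = centroids(points, labels)  # supervised starting point
    for _ in range(rounds):
        pseudo = [predict(cents, x) for x in unlabeled]
        cents = centroids(points + list(unlabeled), labels + pseudo)
    return cents


# Two labeled samples and four unlabeled examples (toy data).
labeled = [(0.0, "a"), (10.0, "b")]
unlabeled = [1.0, 2.0, 8.0, 9.0]
model = self_train(labeled, unlabeled)
print(model, predict(model, 4.0))
```

The unlabeled examples pull each centroid toward the dense region around its class, which is the cluster assumption at work in a very small setting.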

---

Original article: https://blog.csdn.net/jiusake/article/details/80016171 | closed | 2019-02-25T08:38:34Z | 2021-09-07T17:45:35Z | https://github.com/apachecn/ailearning/issues/484 | [] | jiangzhonglian | 0 |

fbdesignpro/sweetviz | data-visualization | 45 | Add box plots | version: 1.0.3

date: Jul 22, 2020

Currently "sweetviz" only has bar charts for visualizations. For medium-size datasets (such as Titanic or Boston housing), showing box plots alongside the bar plots is not very costly. For larger datasets, this could be made optional in the `config.ini` file, or enabled or disabled automatically based on file size.

For example:

```

if file size < 50MB:

show boxplots and bar plots

else:

show only bar plots

``` | open | 2020-07-22T16:04:55Z | 2020-07-23T14:56:45Z | https://github.com/fbdesignpro/sweetviz/issues/45 | [

"feature request"

] | bhishanpdl | 0 |

graphistry/pygraphistry | jupyter | 440 | publish cucat | it should be clear how to get a versioned cucat

ideas:

- [x] pypi / pip

For now, instead, git tag so versioned pip install github ... tag <--- for now, use semver: https://semver.org/ | open | 2023-02-20T23:32:51Z | 2023-12-07T06:13:24Z | https://github.com/graphistry/pygraphistry/issues/440 | [

"enhancement",

"p4",

"infra"

] | lmeyerov | 1 |

microsoft/unilm | nlp | 1,573 | Unable to use finetuned LayoutLMV3 for object detection task model for testing | **Describe**

Model I am using (LayoutLMV3):

I have successfully fine-tuned a LayoutLMv3 model on a custom dataset similar to PubLayNet for an object detection task. It saves a .pth model, but when I try to use it for evaluation with this script:

python train_net.py --config-file cascade_layoutlmv3.yaml --eval-only --num-gpus 8 \

MODEL.WEIGHTS /path/to/layoutlmv3-base-finetuned-publaynet/model_final.pth \

OUTPUT_DIR /path/to/layoutlmv3-base-finetuned-publaynet

I get this error:

[06/30 11:16:16 detectron2]: Full config saved to /content/output_dir/config.yaml

file /content/output_dir/config.json not found

Traceback (most recent call last):

File "/usr/local/lib/python3.7/dist-packages/transformers/configuration_utils.py", line 558, in get_config_dict

user_agent=user_agent,

File "/usr/local/lib/python3.7/dist-packages/transformers/file_utils.py", line 1506, in cached_path

raise EnvironmentError(f"file {url_or_filename} not found")

OSError: file /content/output_dir/config.json not found

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/content/unilm/layoutlmv3/examples/object_detection/train_net.py", line 122, in

args=(args,),

File "/usr/local/lib/python3.7/dist-packages/detectron2/engine/launch.py", line 82, in launch

main_func(*args)

File "/content/unilm/layoutlmv3/examples/object_detection/train_net.py", line 90, in main

model = MyTrainer.build_model(cfg)

File "/content/unilm/layoutlmv3/examples/object_detection/ditod/mytrainer.py", line 553, in build_model

model = build_model(cfg)

File "/usr/local/lib/python3.7/dist-packages/detectron2/modeling/meta_arch/build.py", line 22, in build_model

model = META_ARCH_REGISTRY.get(meta_arch)(cfg)

File "/usr/local/lib/python3.7/dist-packages/detectron2/config/config.py", line 189, in wrapped

explicit_args = _get_args_from_config(from_config_func, *args, **kwargs)

File "/usr/local/lib/python3.7/dist-packages/detectron2/config/config.py", line 245, in _get_args_from_config

ret = from_config_func(*args, **kwargs)

File "/usr/local/lib/python3.7/dist-packages/detectron2/modeling/meta_arch/rcnn.py", line 72, in from_config

backbone = build_backbone(cfg)

File "/usr/local/lib/python3.7/dist-packages/detectron2/modeling/backbone/build.py", line 31, in build_backbone

backbone = BACKBONE_REGISTRY.get(backbone_name)(cfg, input_shape)

File "/content/unilm/layoutlmv3/examples/object_detection/ditod/backbone.py", line 168, in build_vit_fpn_backbone

bottom_up = build_VIT_backbone(cfg)

File "/content/unilm/layoutlmv3/examples/object_detection/ditod/backbone.py", line 154, in build_VIT_backbone

config_path=config_path, image_only=cfg.MODEL.IMAGE_ONLY, cfg=cfg)

File "/content/unilm/layoutlmv3/examples/object_detection/ditod/backbone.py", line 84, in init

config = AutoConfig.from_pretrained(config_path)

File "/usr/local/lib/python3.7/dist-packages/transformers/models/auto/configuration_auto.py", line 558, in from_pretrained

config_dict, _ = PretrainedConfig.get_config_dict(pretrained_model_name_or_path, **kwargs)

File "/usr/local/lib/python3.7/dist-packages/transformers/configuration_utils.py", line 575, in get_config_dict

raise EnvironmentError(msg)

OSError: Can't load config for '/content/output_dir/'. Make sure that:

'/content/output_dir/' is a correct model identifier listed on 'https://huggingface.co/models'

(make sure '/content/output_dir/' is not a path to a local directory with something else, in that case)

or '/content/output_dir/' is the correct path to a directory containing a config.json file
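The traceback suggests the loader looks for a `config.json` in the same directory as the weights file. A minimal pre-flight check, sketched under that assumption (the helper names are hypothetical and not part of the unilm code base):

```python
from pathlib import Path


def expected_config_path(weights_path):
    """Path of the config.json that would sit next to the given weights file."""
    return Path(weights_path).parent / "config.json"


def has_config(weights_path):
    """True if a config.json exists in the weights file's directory."""
    return expected_config_path(weights_path).is_file()


weights = "/content/output_dir/model_final.pth"
print(expected_config_path(weights).as_posix(), has_config(weights))
```

Running this before `train_net.py --eval-only` would show whether the directory passed in `MODEL.WEIGHTS` actually contains the file the error message asks for.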

| open | 2024-06-12T10:47:55Z | 2024-10-16T02:36:49Z | https://github.com/microsoft/unilm/issues/1573 | [] | maniyarsuyash | 1 |

roboflow/supervision | deep-learning | 1,376 | mAP for small, medium and large objects | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Description

I saw an issue online where a user asked for MeanAveragePrecision broken down by small, medium and large objects for HBB and OBB detection models. I think it would be a good idea to add this to the supervision library.

We can discuss this and a potential approach to implementing it.
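As a starting point for that discussion, here is a sketch of how boxes could be bucketed by size before computing a per-bucket mAP. The thresholds follow the COCO convention (32² and 96² pixels of area), which is an assumption since the request above does not name thresholds; the boundary handling and the `size_bucket` helper are illustrative and not part of supervision.

```python
SMALL_MAX_AREA = 32 ** 2   # COCO convention: below this area is "small"
MEDIUM_MAX_AREA = 96 ** 2  # COCO convention: below this area is "medium"


def size_bucket(xyxy):
    """Classify an axis-aligned box (x_min, y_min, x_max, y_max) by pixel area."""
    x_min, y_min, x_max, y_max = xyxy
    area = max(0.0, x_max - x_min) * max(0.0, y_max - y_min)
    if area < SMALL_MAX_AREA:
        return "small"
    if area < MEDIUM_MAX_AREA:
        return "medium"
    return "large"


boxes = [(0, 0, 10, 10), (0, 0, 50, 50), (0, 0, 100, 100)]
print([size_bucket(box) for box in boxes])
```

For OBB models the area would need to come from the rotated box rather than from min and max corners, which this sketch does not cover.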

### Use case

_No response_

### Additional

_No response_

### Are you willing to submit a PR?

- [X] Yes I'd like to help by submitting a PR! | closed | 2024-07-17T15:15:41Z | 2024-07-17T16:16:32Z | https://github.com/roboflow/supervision/issues/1376 | [

"enhancement"

] | Bhavay-2001 | 2 |

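aidlearning/AidLearning-FrameWork | jupyter | 74 | Could the latest WPS Office for Linux ARM be preinstalled? I would like office capability on large-screen Android tablets | Could the latest WPS Office for Linux ARM be preinstalled? I would like office capability on large-screen Android devices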

| closed | 2020-01-18T14:07:06Z | 2020-02-11T17:38:37Z | https://github.com/aidlearning/AidLearning-FrameWork/issues/74 | [] | zihaoxingstudy1 | 3 |

LAION-AI/Open-Assistant | machine-learning | 3,109 | Support user-level OAuth plugin authentication | Will support plugins for which `ai-plugin.json` contains:

```

"auth": {

"type": "oauth"

},

```

- [x] Plugin has client ID and secret, securely store encrypted version of client secret, store client ID

- [x] Mechanism for plugin author receiving verification token

- [x] Redirect user to plugin auth URL

- [x] When user is redirected back to OA, exchange received code for an access token
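The last step in the checklist, exchanging the received code for an access token, follows the standard OAuth 2.0 authorization-code grant. A minimal sketch of building that request body; the function name and all argument values are hypothetical, and how the payload is encoded and sent depends on the plugin's token endpoint:

```python
import json


def build_token_request(code, client_id, client_secret, redirect_uri):
    """Payload for an OAuth 2.0 authorization-code token exchange."""
    return {
        "grant_type": "authorization_code",
        "code": code,
        "client_id": client_id,
        "client_secret": client_secret,
        "redirect_uri": redirect_uri,
    }


payload = build_token_request(
    code="example-code",                      # value received on the redirect
    client_id="example-client-id",            # stored when the plugin was registered
    client_secret="example-client-secret",    # decrypted only for this request
    redirect_uri="https://example.invalid/callback",
)
print(json.dumps(payload, indent=2))
```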

See OpenAI spec for [OAuth plugin authentication](https://platform.openai.com/docs/plugins/authentication/oauth).

Once backend support is merged, a new issue can be opened for the frontend to support it | open | 2023-05-09T19:48:03Z | 2023-06-06T15:12:53Z | https://github.com/LAION-AI/Open-Assistant/issues/3109 | [

"inference"

] | olliestanley | 0 |

dynaconf/dynaconf | django | 1,055 | Multiple cast validators get discarded | Hi there, thanks for this great project,

**Describe the bug**

After defining a dynaconf `cast` validator, subsequent `cast` validators defined on the same variable are discarded, although they should also be applied

**To Reproduce**

1. Having the following a.toml file:

**a.toml**

```toml

var = "1"

```

2. And the following sample code:

**a.py**

```python

from dynaconf import Dynaconf, Validator

settings = Dynaconf(settings_file="a.toml")

def a(v):

print("called a")

return v

def b(v):

print("called b")

return v

validators = [

Validator("var", cast=a),

Validator("var", cast=b),

]

settings.validators.register(*validators)

settings.validators.validate_all()

```

3. Executing `a.py` under a virtualenv with `dynaconf` `3.2.4` installed:

```bash

$ python3 a.py

called a

```

I would expect to see `called a` and then an additional line `called b` after that.

**Additional context**

This bug is because of `Validator("var", cast=a)` and `Validator("var", cast=b)` comparing equal with the current definition of [`__eq__`](https://github.com/dynaconf/dynaconf/blob/0390393c27a7ef27104bbda2426b3382dcc7fb9f/dynaconf/validator.py#L155-L169):

```python

def __eq__(self, other: object) -> bool:

if self is other:

return True

if type(self).__name__ != type(other).__name__:

return False

identical_attrs = (

getattr(self, attr) == getattr(other, attr)

for attr in EQUALITY_ATTRS

)

if all(identical_attrs):

return True

return False

```

and with

```python

EQUALITY_ATTRS = (

"names",

"must_exist",

"when",

"condition",

"operations",

"envs",

)

```

Since `cast` is not in the `EQUALITY_ATTRS`, validators on the same name with just a cast defined are considered equal and the second validator is skipped altogether (see [this part of the code](https://github.com/dynaconf/dynaconf/blob/0390393c27a7ef27104bbda2426b3382dcc7fb9f/dynaconf/validator.py#L463))
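The effect of comparing only a subset of attributes can be reproduced with a small stand-in. `MiniValidator` and `register` below are hypothetical simplifications and are not dynaconf's classes; they only mimic equality over a chosen attribute list and a registry that skips anything equal to an existing entry.

```python
class MiniValidator:
    """Stand-in validator whose equality looks only at equality_attrs."""

    def __init__(self, name, cast, equality_attrs):
        self.names = (name,)
        self.cast = cast
        self.equality_attrs = equality_attrs

    def __eq__(self, other):
        return all(
            getattr(self, attr) == getattr(other, attr)
            for attr in self.equality_attrs
        )


def register(validators):
    """Keep a validator only if nothing equal to it is already registered."""
    registered = []
    for validator in validators:
        if validator not in registered:
            registered.append(validator)
    return registered


without_cast = ("names",)
with_cast = ("names", "cast")

print(len(register([MiniValidator("var", int, without_cast),
                    MiniValidator("var", str, without_cast)])))
print(len(register([MiniValidator("var", int, with_cast),
                    MiniValidator("var", str, with_cast)])))
```

With `cast` left out of the comparison the second validator is dropped; once `cast` is compared too, both are kept.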

I think a one-line fix for this would be to add the `cast` attribute to the EQUALITY_ATTRS. | closed | 2024-02-20T12:57:19Z | 2024-03-25T17:08:52Z | https://github.com/dynaconf/dynaconf/issues/1055 | [

"bug"

] | nikoskoukis-slamcore | 0 |

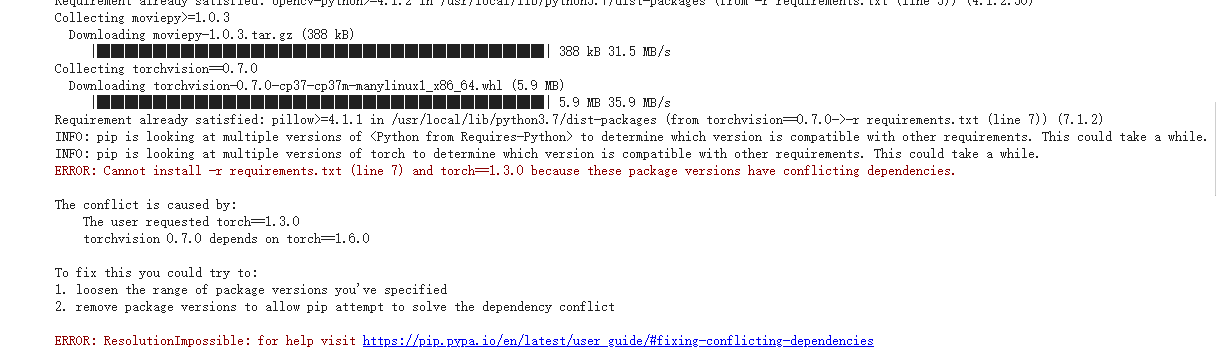

hzwer/ECCV2022-RIFE | computer-vision | 203 | Problem when running on Colab |

```

INFO: pip is looking at multiple versions of <Python from Requires-Python> to determine which version is compatible with other requirements. This could take a while.

INFO: pip is looking at multiple versions of torch to determine which version is compatible with other requirements. This could take a while.

ERROR: Cannot install -r requirements.txt (line 7) and torch==1.3.0 because these package versions have conflicting dependencies.

The conflict is caused by:

The user requested torch==1.3.0

torchvision 0.7.0 depends on torch==1.6.0

To fix this you could try to:

1. loosen the range of package versions you've specified

2. remove package versions to allow pip attempt to solve the dependency conflict

ERROR: ResolutionImpossible: for help visit https://pip.pypa.io/en/latest/user_guide/#fixing-conflicting-dependencies

``` | closed | 2021-10-07T12:16:57Z | 2021-10-08T05:13:11Z | https://github.com/hzwer/ECCV2022-RIFE/issues/203 | [] | Neycrol | 1 |

globaleaks/globaleaks-whistleblowing-software | sqlalchemy | 3,216 | Regression on Export/Download of Files introduced in 4.9.1 | **Describe the bug**

On selecting single/multiple reports and clicking "Export", I receive a popup message which says "Error!". When I look at the network tab, I can see that I'm getting an error "Method Not Implemented". Likewise, when I attempt to download an attachment on the report, it opens a new tab, which notifies me that I'm "Not Authenticated" with an error code of 10.

**To Reproduce**

Steps to reproduce the behavior:

1. Log in as recipient

2. Click on reports tab

3. Select one or multiple records

4. Click export

5. Error appears

6. Click on single report

7. Navigate to the attached files

8. Click Download

9. New tab opens with error.

**Log Details**

```

- - [15/Apr/2022:21:03:22 +0000] "POST /api/token HTTP/1.1" 201 127 0ms - "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/100.0.4896.79 Safari/537.36"

- -[15/Apr/2022:21:03:22 +0000] "GET /api/rfile/cdb5480d-2122-4ada-8eff-9ed2f0d6f303?token=9b56570fc8fa7bb929d862a75cc94fc3829cf8dd36671af083bcea9b0f962c58 HTTP/1.1" 412 82 0ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET / HTTP/1.1" 200 1804 1ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /css/styles.min.css HTTP/1.1" 200 53361 26ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /js/scripts.min.js HTTP/1.1" 200 259617 90ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /api/public HTTP/1.1" 200 2886 1ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /data/favicon.ico HTTP/1.1" 200 5703 6ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /lib/js/locale/angular-locale_en.js HTTP/1.1" 200 955 1ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /l10n/en HTTP/1.1" 200 9513 4ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /webfonts/metropolis-all-700-normal.woff2 HTTP/1.1" 200 26456 1ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /webfonts/metropolis-all-400-normal.woff2 HTTP/1.1" 200 24180 2ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /data/logo.png HTTP/1.1" 200 6869 2ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:05:51 +0000] "GET /webfonts/fa-solid-900.woff2 HTTP/1.1" 200 154296 26ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:06:00 +0000] "POST /api/token HTTP/1.1" 201 127 0ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:06:05 +0000] "POST /api/authentication HTTP/1.1" 201 196 4254ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:06:05 +0000] "GET /data/favicon.ico HTTP/1.1" 200 5703 4ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:06:05 +0000] "GET /api/preferences HTTP/1.1" 200 508 13ms - "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/100.0.4896.79 Safari/537.36"

- - [15/Apr/2022:21:06:07 +0000] "GET /data/favicon.ico HTTP/1.1" 200 5703 11ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:06:07 +0000] "GET /api/preferences HTTP/1.1" 200 508 27ms - "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/100.0.4896.79 Safari/537.36"

- - [15/Apr/2022:21:06:07 +0000] "GET /api/rtips HTTP/1.1" 200 873 41ms - "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/100.0.4896.79 Safari/537.36"

- - [15/Apr/2022:21:06:10 +0000] "POST /api/token HTTP/1.1" 201 126 0ms - "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/100.0.4896.79 Safari/537.36"

- - [15/Apr/2022:21:06:10 +0000] "GET /api/rtips/133b72f7-b854-4db2-8dcc-4c7b966cc1f8/export?token=1f402e0a739e1065d61c98b640fb87a02c1ac3f81aea3f6e3d81d5d34c637779 HTTP/1.1" 412 82 0ms - "[REMOVED_USER_AGENT]"

- - [15/Apr/2022:21:06:15 +0000] "POST /api/rtips/133b72f7-b854-4db2-8dcc-4c7b966cc1f8/export HTTP/1.1" 501 85 -1ms - "[REMOVED_USER_AGENT]"

```

**Expected behavior**

Successful export of record or download of file.

**Desktop (please complete the following information):**

- Ubuntu 20.08, accessing the recipient dashboard from Macbook iOS 12.0.1

| closed | 2022-04-15T20:32:32Z | 2022-04-16T10:02:06Z | https://github.com/globaleaks/globaleaks-whistleblowing-software/issues/3216 | [

"T: Bug",

"C: Client",

"C: Backend"

] | jennycalendar | 3 |

Farama-Foundation/PettingZoo | api | 1,150 | Passing the Parallel API tests in PettingZoo for custom multi-agent environment? | ### Question

```

from pettingzoo.test import (

parallel_api_test,

parallel_seed_test,

max_cycles_test,

performance_benchmark,

)

```

I have a custom multiagent environment that extends **ParallelEnv**, and since I passed the *parallel_api_test*,

I plan to pass the other ones as well before starting training:

1. **parallel_seed_test**

```

...

File "D:\anaconda3\Lib\site-packages\pettingzoo\test\seed_test.py", line 139, in parallel_seed_test

check_environment_deterministic_parallel(env1, env2, num_cycles)

File "D:\anaconda3\Lib\site-packages\pettingzoo\test\seed_test.py", line 108, in check_environment_deterministic_parallel

assert data_equivalence(actions1, actions2), "Incorrect action seeding"

```

I have no idea how to pass this one. I tried adding `np.random.seed()` statements in my observation_space and action_space functions, but I don't know how to get deterministic actions. Please advise. Are there any steps I can follow to pass the seed test and make my environment results reproducible?
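One common source of non-reproducible sampling is returning a brand-new space object on every `action_space(agent)` call, so a seed applied to one object never reaches the next. The sketch below uses stand-in classes (`ToySpace` and `ToyEnv`, both hypothetical and not PettingZoo or Gymnasium types) to show caching the space per agent with `functools.lru_cache`; whether this is the cause in the environment above is an assumption.

```python
import functools
import random


class ToySpace:
    """Stand-in for a discrete action space with its own seeded RNG."""

    def __init__(self, n):
        self.n = n
        self._rng = random.Random()

    def seed(self, seed):
        self._rng = random.Random(seed)

    def sample(self):
        return self._rng.randrange(self.n)


class ToyEnv:
    possible_agents = ["car_0", "car_1"]

    @functools.lru_cache(maxsize=None)
    def action_space(self, agent):
        # Cached: the same ToySpace object is returned for the same agent,
        # so a seed set once keeps governing later sample() calls.
        return ToySpace(5)


def sample_actions(env, seed, steps=10):
    """Seed every agent's space, then draw `steps` joint actions."""
    for agent in env.possible_agents:
        env.action_space(agent).seed(seed)
    return [
        {agent: env.action_space(agent).sample() for agent in env.possible_agents}
        for _ in range(steps)
    ]


print(sample_actions(ToyEnv(), 42) == sample_actions(ToyEnv(), 42))
```

Removing the `lru_cache` decorator makes each call build a fresh, unseeded space, which is the failure mode this sketch is meant to show.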

2. **max_cycles_test**

```

...

File "D:\anaconda3\Lib\site-packages\pettingzoo\test\max_cycles_test.py", line 6, in max_cycles_test

parallel_env = mod.parallel_env(max_cycles=max_cycles)

^^^^^^^^^^^^^^^^

AttributeError: 'MultiAgentHighway' object has no attribute 'parallel_env'

```

I'm not sure how to use this? I have `end_of_sim` as the maximum number of steps in the simulation before which the simulation is closed forcefully?

3. **performance_benchmark**: Had to convert my `ParallelEnv` to `AEC` with `parallel_to_aec()` to use this.

```

2466.7955100048803 turns per second

123.33977550024402 cycles per second

Finished performance benchmark

```

How do I evaluate these numbers? Please advise.

Thank you in advance :) | closed | 2023-12-25T01:31:37Z | 2024-01-09T15:11:35Z | https://github.com/Farama-Foundation/PettingZoo/issues/1150 | [

"question"

] | hridayns | 3 |

DistrictDataLabs/yellowbrick | scikit-learn | 1,088 | AttributeError: 'KMeans' object has no attribute 'show' | **Describe the bug**

I am getting this AttributeError: 'KMeans' object has no attribute 'show' when implementing Elbow Method for KMeans clustering. The elbow graph is plotted after showing the error.

**To Reproduce**

```python

from sklearn.cluster import KMeans

from sklearn.datasets import make_blobs

from yellowbrick.cluster import KElbowVisualizer

# Generate synthetic dataset with 8 random clusters

X, y = make_blobs(n_samples=1000, n_features=12, centers=8, random_state=42)

# Instantiate the clustering model and visualizer

model = KMeans()

visualizer = KElbowVisualizer(model, k=(4,12))

visualizer.fit(X) # Fit the data to the visualizer

visualizer.show() # Finalize and render the figure

```

**Traceback**

```

<ipython-input-69-f375a0067df6> in <module>()

14

15 visualizer.fit(X) # Fit the data to the visualizer

---> 16 visualizer.show() # Finalize and render the figure

/usr/local/lib/python3.6/dist-packages/yellowbrick/utils/wrapper.py in __getattr__(self, attr)

40 def __getattr__(self, attr):

41 # proxy to the wrapped object

---> 42 return getattr(self._wrapped, attr)

AttributeError: 'KMeans' object has no attribute 'show'

```
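The snippet calls `show()` while the reported Yellowbrick version is 0.9.1; as far as I know, older releases named the render method `poof()`. A small compatibility sketch, using hypothetical stand-in classes rather than real Yellowbrick visualizers, that calls whichever method exists:

```python
def render(visualizer):
    """Call show() when the object has it, otherwise fall back to poof()."""
    method = getattr(visualizer, "show", None)
    if method is None:
        method = getattr(visualizer, "poof")
    return method()


class OldStyleVisualizer:
    """Hypothetical stand-in exposing only poof()."""

    def poof(self):
        return "rendered with poof"


class NewStyleVisualizer:
    """Hypothetical stand-in exposing only show()."""

    def show(self):
        return "rendered with show"


print(render(OldStyleVisualizer()))
print(render(NewStyleVisualizer()))
```

Upgrading the package so that `show()` is available would avoid the need for a fallback like this.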

**Desktop (please complete the following information):**

- OS: Windows 10

- Python Version 3.6, google colab

- Yellowbrick Version 0.9.1

**Additional context**

Nope.

| closed | 2020-07-28T13:38:10Z | 2020-07-28T16:26:30Z | https://github.com/DistrictDataLabs/yellowbrick/issues/1088 | [] | Gdkmak | 1 |

ultralytics/ultralytics | deep-learning | 19,316 | Inquiry Regarding Licensing for Commercial Use of YOLO with Custom Training Tool | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

Hello,

I have developed a user-friendly tool (UI) that enables code-free training of YOLO models on custom datasets. I am planning to commercialize this tool and would like to clarify the licensing requirements.

Specifically, I would like to know:

Do I need to obtain a commercial license to use YOLO within my tool, which will be marketed to customers for training custom models?

If my customers use the tool to train models and implement them in their production systems, will they also require a separate license?

Your guidance on the licensing implications for both the tool provider (myself) and the end users (my customers) would be highly appreciated.

Thank you in advance for your assistance. I look forward to your response.

Dorra

### Additional

_No response_ | open | 2025-02-19T16:45:49Z | 2025-02-24T07:08:11Z | https://github.com/ultralytics/ultralytics/issues/19316 | [

"question",

"enterprise"

] | DoBacc | 2 |

xinntao/Real-ESRGAN | pytorch | 340 | raise ValueError("Number of processes must be at least 1") | ### Can anyone help me, why is this?

┌─[Michael@code-me] - [~/Real-ESRGAN] - [Thu May 26, 00:40]

└─[$]> python3 inference_realesrgan_video.py -i /Users/Michael/Downloads/aa.mp4 -n realesr-animevideov3 -s 2 --suffix outx2

Traceback (most recent call last):

File "/Users/Michael/Real-ESRGAN/inference_realesrgan_video.py", line 362, in <module>

main()

File "/Users/Michael/Real-ESRGAN/inference_realesrgan_video.py", line 354, in main

run(args)

File "/Users/Michael/Real-ESRGAN/inference_realesrgan_video.py", line 272, in run

pool = ctx.Pool(num_process)

File "/Library/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/context.py", line 119, in Pool

return Pool(processes, initializer, initargs, maxtasksperchild,

File "/Library/Frameworks/Python.framework/Versions/3.9/lib/python3.9/multiprocessing/pool.py", line 205, in __init__

raise ValueError("Number of processes must be at least 1")

ValueError: Number of processes must be at least 1 | open | 2022-05-25T16:55:29Z | 2022-09-07T14:42:14Z | https://github.com/xinntao/Real-ESRGAN/issues/340 | [] | smoosex | 3 |

lucidrains/vit-pytorch | computer-vision | 230 | Attention maps for PiT | @lucidrains maybe it's only a typo, but the PiT example says that the attention maps are also output, when in fact we only get the predictions. Could you help me get those attention maps?

Thanks in advance | open | 2022-08-09T10:08:08Z | 2022-08-09T10:08:08Z | https://github.com/lucidrains/vit-pytorch/issues/230 | [] | Maxlanglet | 0 |

geex-arts/django-jet | django | 396 | Persian calendar needed | in Iran and many other countries | open | 2019-06-03T19:57:42Z | 2019-06-03T19:57:42Z | https://github.com/geex-arts/django-jet/issues/396 | [] | shahriardn | 0 |

Yorko/mlcourse.ai | scikit-learn | 22 | The solution to question 5.11 is not stable | Even with the random_state parameters set, the best_score of the best model differs from the answer options.

Confirmed by runs from several participants.

Specific package versions may be affecting the calculations.

I can attach an ipynb that reproduces this. | closed | 2017-04-03T08:43:37Z | 2017-04-03T08:52:22Z | https://github.com/Yorko/mlcourse.ai/issues/22 | [] | coodix | 2 |

FlareSolverr/FlareSolverr | api | 988 | [yggtorrent] (testing) Exception (yggtorrent): FlareSolverr was unable to process the request, please check FlareSolverr logs | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I have read the Discussions

### Environment

```markdown

- FlareSolverr version: 3.3.10

- Last working FlareSolverr version: 3.3.10

- Operating system: OMV

- Are you using Docker: [yes/no] yes

- FlareSolverr User-Agent (see log traces or / endpoint): Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36

- Are you using a VPN: [yes/no] no

- Are you using a Proxy: [yes/no] no

- Are you using Captcha Solver: [yes/no] no

- If using captcha solver, which one:

- URL to test this issue:

```

### Description

In Jackett, I run the test on yggtorrent and get this error.

### Logged Error Messages

```text

Exception (yggtorrent): FlareSolverr was unable to process the request, please check FlareSolverr logs. Message: Error: Error solving the challenge. Message: unknown error: net::ERR_CONNECTION_REFUSED\n (Session info: chrome=119.0.6045.123)\nStacktrace:\n#0 0x555fc69c5d23 <unknown>\n#1 0x555fc6693a3e <unknown>\n#2 0x555fc668c5a6 <unknown>\n#3 0x555fc667e345 <unknown>\n#4 0x555fc667fd20 <unknown>\n#5 0x555fc667e8b4 <unknown>\n#6 0x555fc667d717 <unknown>\n#7 0x555fc667d5ac <unknown>\n#8 0x555fc667c097 <unknown>\n#9 0x555fc667c4e4 <unknown>\n#10 0x555fc6696ff2 <unknown>\n#11 0x555fc671e8a6 <unknown>\n#12 0x555fc6705c92 <unknown>\n#13 0x555fc671e288 <unknown>\n#14 0x555fc6705a43 <unknown>\n#15 0x555fc66cfbc6 <unknown>\n#16 0x555fc66d0fb2 <unknown>\n#17 0x555fc699ada7 <unknown>\n#18 0x555fc699de8d <unknown>\n#19 0x555fc699d938 <unknown>\n#20 0x555fc699e3a5 <unknown>\n#21 0x555fc698d1df <unknown>\n#22 0x555fc699e732 <unknown>\n#23 0x555fc6977666 <unknown>\n#24 0x555fc69b6e95 <unknown>\n#25 0x555fc69b707b <unknown>\n#26 0x555fc69c52af <unknown>\n#27 0x7f4b8c43dea7 start_thread\n: FlareSolverr was unable to process the request, please check FlareSolverr logs. Message: Error: Error solving the challenge. Message: unknown error: net::ERR_CONNECTION_REFUSED\n (Session info: chrome=119.0.6045.123)\nStacktrace:\n#0 0x555fc69c5d23 <unknown>\n#1 0x555fc6693a3e <unknown>\n#2 0x555fc668c5a6 <unknown>\n#3 0x555fc667e345 <unknown>\n#4 0x555fc667fd20 <unknown>\n#5 0x555fc667e8b4 <unknown>\n#6 0x555fc667d717 <unknown>\n#7 0x555fc667d5ac <unknown>\n#8 0x555fc667c097 <unknown>\n#9 0x555fc667c4e4 <unknown>\n#10 0x555fc6696ff2 <unknown>\n#11 0x555fc671e8a6 <unknown>\n#12 0x555fc6705c92 <unknown>\n#13 0x555fc671e288 <unknown>\n#14 0x555fc6705a43 <unknown>\n#15 0x555fc66cfbc6 <unknown>\n#16 0x555fc66d0fb2 <unknown>\n#17 0x555fc699ada7 <unknown>\n#18 0x555fc699de8d <unknown>\n#19 0x555

```

### Screenshots

| closed | 2023-11-28T00:42:44Z | 2023-11-28T15:46:21Z | https://github.com/FlareSolverr/FlareSolverr/issues/988 | [

"more information needed"

] | tifo71 | 8 |

tflearn/tflearn | data-science | 1,094 | KeyError: "The name 'Momentum' refers to an Operation not in the graph | When I load a pretrained tflearn model, a KeyError is raised.

File "C:\Anaconda3\lib\site-packages\tensorflow\python\training\saver.py", line 1810, in import_meta_graph

**kwargs)

File "C:\Anaconda3\lib\site-packages\tensorflow\python\framework\meta_graph.py", line 696, in import_scoped_meta_graph

ops.prepend_name_scope(value, scope_to_prepend_to_names))

File "C:\Anaconda3\lib\site-packages\tensorflow\python\framework\ops.py", line 3035, in as_graph_element

return self._as_graph_element_locked(obj, allow_tensor, allow_operation)

File "C:\Anaconda3\lib\site-packages\tensorflow\python\framework\ops.py", line 3095, in _as_graph_element_locked

"graph." % repr(name))

KeyError: "The name 'Momentum' refers to an Operation not in the graph." | open | 2018-10-20T05:46:43Z | 2019-03-21T17:26:20Z | https://github.com/tflearn/tflearn/issues/1094 | [] | hongminli | 2 |

public-apis/public-apis | api | 3,843 | NASA API website | I just looked at the NASA API website and there it asks me to register with an API key.

Maybe I am getting something wrong here, but if not I would love to fix this. I haven't really done much open source work, but I would love to change that.

Here is the link: https://api.nasa.gov/

Someone who wants to join and help can click it.

| closed | 2024-04-28T02:57:55Z | 2024-04-28T03:39:27Z | https://github.com/public-apis/public-apis/issues/3843 | [] | Fooooooooool | 3 |

svc-develop-team/so-vits-svc | pytorch | 89 | [Help]: 4.0 not working. Introducing unwanted distortion in converted audio. Source pitch not properly converted. | ### Please check the checkboxes below.

- [X] I have read *[README.md](https://github.com/svc-develop-team/so-vits-svc/blob/4.0/README.md)* and *[Quick solution in wiki](https://github.com/svc-develop-team/so-vits-svc/wiki/Quick-solution)* carefully.

- [X] I have been troubleshooting issues through various search engines. The questions I want to ask are not common.

- [X] I am NOT using one click package / environment package.

### OS version

Linux e2e-99-151 4.15.0-206-generic #217-Ubuntu SMP Fri Feb 3 19:10:13 UTC 2023 x86_64 x86_64 x86_64 GNU/Linux

### GPU

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 525.60.13 Driver Version: 525.60.13 CUDA Version: 12.0 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|===============================+======================+======================|

| 0 NVIDIA A100 80G... Off | 00000000:01:01.0 Off | On |

| N/A 36C P0 93W / 300W | 10627MiB / 81920MiB | N/A Default |

| | | Enabled |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| MIG devices: |

+------------------+----------------------+-----------+-----------------------+

| GPU GI CI MIG | Memory-Usage | Vol| Shared |

| ID ID Dev | BAR1-Usage | SM Unc| CE ENC DEC OFA JPG|

| | | ECC| |

|==================+======================+===========+=======================|

| 0 3 0 0 | 8324MiB / 19968MiB | 14 0 | 1 0 1 0 0 |

| | 2MiB / 32767MiB | | |

+------------------+----------------------+-----------+-----------------------+

| 0 4 0 1 | 6MiB / 19968MiB | 14 0 | 1 0 1 0 0 |

| | 0MiB / 32767MiB | | |

+------------------+----------------------+-----------+-----------------------+

| 0 5 0 2 | 6MiB / 19968MiB | 14 0 | 1 0 1 0 0 |

| | 0MiB / 32767MiB | | |

+------------------+----------------------+-----------+-----------------------+

| 0 6 0 3 | 2290MiB / 19968MiB | 14 0 | 1 0 1 0 0 |

| | 2MiB / 32767MiB | | |

+------------------+----------------------+-----------+-----------------------+

+-----------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=============================================================================|

| 0 3 0 16814 C /root/anaconda3/bin/python 8312MiB |

| 0 6 0 5768 C python3 2276MiB |

+-----------------------------------------------------------------------------+

### Python version

Python 3.8.16

### PyTorch version

Name: torch Version: 1.13.1 Summary: Tensors and Dynamic neural networks in Python with strong GPU acceleration Home-page: https://pytorch.org/ Author: PyTorch Team Author-email: packages@pytorch.org License: BSD-3 Location: /root/anaconda3/envs/SOVITS/lib/python3.8/site-packages Requires: nvidia-cublas-cu11, nvidia-cuda-nvrtc-cu11, nvidia-cuda-runtime-cu11, nvidia-cudnn-cu11, typing-extensions Required-by: fairseq, torchaudio, torchvision, triton

### Branch of sovits

4.0(Default)

### Dataset source (Used to judge the dataset quality)

Recorded in recording studio

### Where the problem occurs or what command you executed

python inference_main.py -m "logs/44k/G_200000.pth" -c "configs/config.json" -n "source.wav" -t 0 -s "aki" -a -cr 0.5

### Problem description

The converted audio contains unwanted distortion, and the source pitch is not converted properly.

Version 4.0 is not working properly. Please help me.

### Log

```python

python inference_main.py -m "logs/44k/G_200000.pth" -c "configs/config.json" -n "source.wav" -t 0 -s "aki" -a -cr 0.5

load model(s) from hubert/checkpoint_best_legacy_500.pt

INFO:fairseq.tasks.text_to_speech:Please install tensorboardX: pip install tensorboardX

INFO:fairseq.tasks.hubert_pretraining:current directory is /root/Experiments/NewExperiments/so-vits-svc-4.0-mean-spk-emb

INFO:fairseq.tasks.hubert_pretraining:HubertPretrainingTask Config {'_name': 'hubert_pretraining', 'data': 'metadata', '

fine_tuning': False, 'labels': ['km'], 'label_dir': 'label', 'label_rate': 50.0, 'sample_rate': 16000, 'normalize': Fals

e, 'enable_padding': False, 'max_keep_size': None, 'max_sample_size': 250000, 'min_sample_size': 32000, 'single_target':

False, 'random_crop': True, 'pad_audio': False}

INFO:fairseq.models.hubert.hubert:HubertModel Config: {'_name': 'hubert', 'label_rate': 50.0, 'extractor_mode': default,