repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

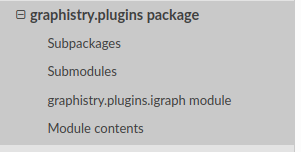

graphistry/pygraphistry | jupyter | 456 | [BUG] Plugins docs nav missing packages and methods | **Describe the bug**

2 nav issues

- [ ] `graphistry.plugins.cugraph` is missing from the nav

- [ ] methods are not listed in the nav for `graphistry.plugins.cugraph` and `graphistry.plugins.igraph`

| open | 2023-04-03T22:12:56Z | 2023-04-03T22:29:53Z | https://github.com/graphistry/pygraphistry/issues/456 | [

"bug",

"p2",

"docs"

] | lmeyerov | 0 |

gee-community/geemap | jupyter | 845 | Add Ocean Color timelapse ( sea surface temperature, chlorophyll a concentrations) | References:

- https://github.com/giswqs/streamlit-geospatial/issues/21

- https://developers.google.com/earth-engine/datasets/catalog/NASA_OCEANDATA_MODIS-Aqua_L3SMI

- https://developers.google.com/earth-engine/datasets/catalog/NASA_OCEANDATA_MODIS-Terra_L3SMI

- https://developers.google.com/earth-engine/datasets/catalog/COPERNICUS_S3_OLCI | closed | 2021-12-31T03:27:24Z | 2021-12-31T03:40:11Z | https://github.com/gee-community/geemap/issues/845 | [

"Feature Request"

] | giswqs | 1 |

chatopera/Synonyms | nlp | 61 | out of vocabulary | # description

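Word segmentation tags "多少钱" with part of speech n, but looking up synonyms with synonyms.display("多少钱") returns out of vocabulary. The file vocab.txt contains the entry 多少钱 3 nr. What is going wrong here?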

## current

## expected

# solution

# environment

* version:

The commit hash (`git rev-parse HEAD`)

| closed | 2018-05-01T15:49:30Z | 2018-05-05T13:14:19Z | https://github.com/chatopera/Synonyms/issues/61 | [] | GitOad | 1 |

autokey/autokey | automation | 624 | Substitution erases characters before it | ## Classification:

Bug

## Reproducibility:

Sometimes

## Version

AutoKey version: 0.95.10

Used GUI (Gtk, Qt, or both): Gtk

Installed via: pip 21.1.3

Linux Distribution: Ubuntu 20.04

## Summary

When abbreviations of phrases are typed out, the front of the substitution overwrites characters in the text before it.

## Steps to Reproduce (if applicable)

- Create a phrase.

- Select Paste using 'Keyboard'.

- Add an abbreviation for the phrase.

- Use the option Trigger on all 'non-word'.

- Check the option Remove Typed Abbreviation

- The error occurs regardless of whether 'Ignore case of typed abbreviation' and 'Trigger immediately' are checked.

- Type the abbreviation, possibly elsewhere, ahead of some text. Leave a space between the text and the abbreviation.

Let's take this example: the abbreviation is 'cr', the expansion is 'Chorus', and you are typing 'cr' in front of 'lorem ipsum'

## Expected Results

`lorem ipsum Chorus`

## Actual Results

`lorem ipsChorus `

Edit: If I use Select Paste using 'Ctrl+V' instead, the results vary; `lorem ipsChorus Chorus` or `lorem ipsumChoruChorus` or something else.

Running `autokey-gtk --verbose` gives me `ModuleNotFoundError: No module named 'dbus'` and installing dbus using pip/conda isn't working out.

## Notes

EDIT: Only occurs while using the Vivaldi browser. I'm using version 4.1. | open | 2021-11-09T06:16:08Z | 2021-11-11T12:18:22Z | https://github.com/autokey/autokey/issues/624 | [

"bug",

"phrase expansion",

"low-priority"

] | arunkumaraqm | 5 |

ultralytics/yolov5 | deep-learning | 13,539 | Issue with Tellu Organoid Classifier using Yolov5 | ### Search before asking

- [x] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I am having this issue with Tellu Intestinal Organoid Classifier https://colab.research.google.com/drive/1j-jutA52LlPuB4WGBDaoAhimqXC3xguX?usp=sharing

It has worked perfectly before, but now I cannot get this code to run. Any help would be appreciated, thanks.

detect: weights=['/content/yolov5/TelluWeights.pt'], source=/content/drive/MyDrive/BlatchleyLab/Projects/Role_of_integrins_in_symmetry_breaking/241217_DJT_SC_Rac1i_p30/D4, data=data/coco128.yaml, imgsz=[640, 640], conf_thres=0.35, iou_thres=0.45, max_det=1000, device=, view_img=False, save_txt=True, save_conf=True, save_crop=False, nosave=False, classes=None, agnostic_nms=False, augment=False, visualize=False, update=False, project=runs/detect, name=D4, exist_ok=False, line_thickness=3, hide_labels=True, hide_conf=False, half=False, dnn=False, vid_stride=1

YOLOv5 🚀 v7.0-0-g915bbf29 Python-3.11.11 torch-2.6.0+cu124 CPU

Traceback (most recent call last):

File "/content/yolov5/detect.py", line 259, in <module>

main(opt)

File "/content/yolov5/detect.py", line 254, in main

run(**vars(opt))

File "/usr/local/lib/python3.11/dist-packages/torch/utils/_contextlib.py", line 116, in decorate_context

return func(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^

File "/content/yolov5/detect.py", line 96, in run

model = DetectMultiBackend(weights, device=device, dnn=dnn, data=data, fp16=half)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/content/yolov5/models/common.py", line 345, in __init__

model = attempt_load(weights if isinstance(weights, list) else w, device=device, inplace=True, fuse=fuse)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/content/yolov5/models/experimental.py", line 79, in attempt_load

ckpt = torch.load(attempt_download(w), map_location='cpu') # load

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/usr/local/lib/python3.11/dist-packages/torch/serialization.py", line 1470, in load

raise pickle.UnpicklingError(_get_wo_message(str(e))) from None

_pickle.UnpicklingError: Weights only load failed. This file can still be loaded, to do so you have two options, do those steps only if you trust the source of the checkpoint.

(1) In PyTorch 2.6, we changed the default value of the `weights_only` argument in `torch.load` from `False` to `True`. Re-running `torch.load` with `weights_only` set to `False` will likely succeed, but it can result in arbitrary code execution. Do it only if you got the file from a trusted source.

(2) Alternatively, to load with `weights_only=True` please check the recommended steps in the following error message.

WeightsUnpickler error: Unsupported global: GLOBAL models.yolo.Model was not an allowed global by default. Please use `torch.serialization.add_safe_globals([Model])` or the `torch.serialization.safe_globals([Model])` context manager to allowlist this global if you trust this class/function.

Check the documentation of torch.load to learn more about types accepted by default with weights_only https://pytorch.org/docs/stable/generated/torch.load.html.

The script also accepts other parameters. See https://github.com/ultralytics/yolov5/blob/master/detect.py for all the options.

--max-detect 1000. Default is 1000; if you require more, add the parameter to the line of code above with a different value.

--hide-labels Adding this parameter will hide the name of the organoid class detected in the result images. Convenient in dense cultures and for publication purposes

Example:

!python detect.py --weights $tellu --conf 0.35 --source $dir --save-txt --save-conf --name "results" --hide-labels --max-detect 2000
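
The `Unsupported global` message in the traceback comes from an allowlist check applied while unpickling. As a rough analogy only (this is not PyTorch's implementation), the same idea can be sketched with the standard library's `pickle.Unpickler.find_class` hook. `AllowlistUnpickler` and `safe_loads` are hypothetical names, and `OrderedDict` merely stands in for a class such as `models.yolo.Model`:

```python
import io
import pickle
from collections import OrderedDict


class AllowlistUnpickler(pickle.Unpickler):
    """Unpickler that refuses any global that is not explicitly allowlisted."""

    def __init__(self, file, allowed):
        super().__init__(file)
        self.allowed = allowed  # set of (module, name) pairs

    def find_class(self, module, name):
        # Called whenever the pickle stream references a class or function.
        if (module, name) not in self.allowed:
            raise pickle.UnpicklingError(f"Unsupported global: {module}.{name}")
        return super().find_class(module, name)


def safe_loads(data, allowed=frozenset()):
    return AllowlistUnpickler(io.BytesIO(data), allowed).load()


payload = pickle.dumps(OrderedDict(a=1))

try:
    safe_loads(payload)  # nothing is allowlisted, so the load is rejected
    rejected = False
except pickle.UnpicklingError:
    rejected = True

# Allowlisting the class (only for a file you trust) lets the load succeed.
restored = safe_loads(payload, allowed={("collections", "OrderedDict")})
```

In PyTorch itself, the two routes named in the error message are `torch.serialization.add_safe_globals([Model])` or re-running `torch.load` with `weights_only=False`; as the message says, either should be used only for a checkpoint from a trusted source.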

### Additional

_No response_ | open | 2025-03-21T18:02:05Z | 2025-03-22T04:50:41Z | https://github.com/ultralytics/yolov5/issues/13539 | [

"question",

"detect"

] | djtigani | 2 |

microsoft/qlib | deep-learning | 1,796 | Question about the signal kwarg under strategy in port_analysis_config | In the benchmark folder, the YAML config files for the models generally use signal: <PRED>

port_analysis_config: &port_analysis_config

strategy:

class: TopkDropoutStrategy

module_path: qlib.contrib.strategy

kwargs:

signal:<PRED>

topk: 50

n_drop: 5

In workflow_by_code.py, "signal": (model, dataset) is used instead.

port_analysis_config = {.....

"strategy": {

"class": "TopkDropoutStrategy",

"module_path": "qlib.contrib.strategy.signal_strategy",

"kwargs": {

"signal": (model, dataset),

"topk": 50,

"n_drop": 5,

},

},

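My question: how is pred in <PRED> defined? I could not find any documentation on it. Also, what is the difference between these two approaches? Thanks, everyone. | closed | 2024-05-24T01:14:06Z | 2024-05-24T01:15:13Z | https://github.com/microsoft/qlib/issues/1796 | [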

"question"

] | semiparametric | 0 |

deeppavlov/DeepPavlov | tensorflow | 910 | Error while running in Alexa mode | I'm running in Alexa mode and getting the error:

```

ubuntu@ip-172-31-26-238:~/alexa_test$ python -m deeppavlov alexa server_config.json -d

Traceback (most recent call last):

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/__main__.py", line 3, in <module>

main()

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/deep.py", line 116, in main

default_skill_wrap=not args.no_default_skill)

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/utils/alexa/server.py", line 81, in run_alexa_default_agent

ssl_cert=ssl_cert)

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/utils/alexa/server.py", line 137, in run_alexa_server

bot = Bot(agent_generator, alexa_server_params, input_q, output_q)

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/utils/alexa/bot.py", line 68, in __init__

self.agent = self._init_agent()

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/utils/alexa/bot.py", line 95, in _init_agent

agent = self.agent_generator()

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/utils/alexa/server.py", line 70, in get_default_agent

model = build_model(model_config)

File "/home/ubuntu/.pyenv/versions/3.6.8/lib/python3.6/site-packages/deeppavlov/core/commands/infer.py", line 44, in build_model

model_config = config['chainer']

KeyError: 'chainer'

ubuntu@ip-172-31-26-

```

My server_config.json:

```

{

"common_defaults": {

"host": "0.0.0.0",

"port": 5000,

"model_endpoint": "/model",

"model_args_names": ["context"],

"https": false,

"https_cert_path": "",

"https_key_path": "",

"stateful": false,

"multi_instance": false

},

"telegram_defaults": {

"token": ""

},

"ms_bot_framework_defaults": {

"auth_polling_interval": 3500,

"conversation_lifetime": 3600,

"auth_app_id": "",

"auth_app_secret": ""

},

"alexa_defaults": {

"intent_name": "AskDeepPavlov",

"slot_name": "raw_input",

"start_message": "Welcome to DeepPavlov Alexa wrapper!",

"unsupported_message": "Sorry, DeepPavlov can't understand it.",

"conversation_lifetime": 3600

},

"model_defaults": {

"ODQA": {

"host": "",

"port": "",

"model_endpoint": "/odqa",

"model_args_names": ""

}

}

}

```

Why is the error happening?

Seems that everything should be right. I followed the documentation guide. Just deleted other skills from `model_defaults` section. | closed | 2019-06-29T11:49:10Z | 2019-07-02T20:25:54Z | https://github.com/deeppavlov/DeepPavlov/issues/910 | [] | sld | 3 |
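
For the DeepPavlov report above: the traceback suggests that the file given on the command line is read as a model config, which `build_model` expects to contain a top-level `chainer` key, and the `server_config.json` shown has none. A small pre-flight check using only the standard library might look like this; `looks_like_model_config` is a hypothetical helper, and the two JSON strings are shortened stand-ins:

```python
import json


def looks_like_model_config(text):
    """Return True if the JSON document has a top-level "chainer" key."""
    config = json.loads(text)
    return isinstance(config, dict) and "chainer" in config


# Shortened stand-in for the server settings file from the report.
server_config = '{"common_defaults": {"port": 5000}, "alexa_defaults": {}}'

# Minimal stand-in for a model config with a "chainer" section.
model_config = '{"chainer": {"in": ["x"], "pipe": [], "out": ["y"]}}'

server_ok = looks_like_model_config(server_config)
model_ok = looks_like_model_config(model_config)
```

Running such a check before launching would flag a server settings file passed where a model config is expected.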

MilesCranmer/PySR | scikit-learn | 390 | Warning for `^` | The `^` operator is super inefficient if the user is not using `nested_constraints`, so I think a warning should be raised when it is used without `nested_constraints` set. | closed | 2023-07-24T20:29:16Z | 2023-08-05T22:33:13Z | https://github.com/MilesCranmer/PySR/issues/390 | [] | MilesCranmer | 2 |
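
The check proposed in the PySR issue above could be sketched as follows. This is not PySR's actual code: `warn_if_unconstrained_pow` is a hypothetical helper, and it assumes only that operators arrive as a list of strings and that `nested_constraints` is a dict or `None`.

```python
import warnings


def warn_if_unconstrained_pow(binary_operators, nested_constraints=None):
    """Warn when `^` is requested while nested_constraints is unset or empty."""
    uses_pow = "^" in binary_operators
    if uses_pow and not nested_constraints:
        warnings.warn(
            "`^` is used without `nested_constraints`; the search may be slow.",
            UserWarning,
            stacklevel=2,
        )
        return True
    return False
```

Returning a boolean in addition to warning keeps the helper easy to unit-test; a real implementation would more likely live inside parameter validation.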

dpgaspar/Flask-AppBuilder | flask | 1,412 | Large number of Mongo Role/Permission queries by exist_permission_on_roles on page load | If you'd like to report a bug in Flask-Appbuilder, fill out the template below. Provide

any extra information that may be useful

### Environment

apispec==1.3.3

attrs==19.3.0

Babel==2.8.0

blinker==1.4

click==7.1.2

colorama==0.4.3

defusedxml==0.6.0

dnspython==1.16.0

email-validator==1.1.1

Flask==1.1.2

Flask-AppBuilder==2.3.4

Flask-Babel==1.0.0

Flask-DebugToolbar==0.11.0

Flask-JWT-Extended==3.24.1

Flask-Login==0.4.1

flask-mongoengine==0.9.5

Flask-OpenID==1.2.5

Flask-SQLAlchemy==2.4.3

Flask-WTF==0.14.3

idna==2.9

importlib-metadata==1.6.1

itsdangerous==1.1.0

Jinja2==2.11.2

jsonschema==3.2.0

MarkupSafe==1.1.1

marshmallow==2.21.0

marshmallow-enum==1.5.1

marshmallow-sqlalchemy==0.23.1

mongoengine==0.20.0

prison==0.1.3

PyJWT==1.7.1

pymongo==3.5.1

pyrsistent==0.16.0

python-dateutil==2.8.1

python3-openid==3.1.0

pytz==2020.1

PyYAML==5.3.1

six==1.15.0

SQLAlchemy==1.3.17

SQLAlchemy-Utils==0.36.6

Werkzeug==1.0.1

WTForms==2.3.1

zipp==3.1.0

### Describe the expected results

I used the Contacts example application from the repo, with Flask Debug Toolbar enabled to monitor mongo query times, to replicate the issue. The updated app/__init__.py and the additions to config.py are shown below. When a basic page is loaded, in this case the "List Groups" page, I would expect a small number of mongo queries related to permissions and then a small number related to loading and presenting the data.

```python

import logging

from flask import Flask

from flask_appbuilder import AppBuilder

from flask_appbuilder.security.mongoengine.manager import SecurityManager

from flask_mongoengine import MongoEngine

from flask_debugtoolbar import DebugToolbarExtension

logging.basicConfig(format="%(asctime)s:%(levelname)s:%(name)s:%(message)s")

logging.getLogger().setLevel(logging.DEBUG)

app = Flask(__name__)

app.config.from_object("config")

dbmongo = MongoEngine(app)

app.debug = True

toolbar = DebugToolbarExtension(app)

appbuilder = AppBuilder(app, security_manager_class=SecurityManager)

from . import models, views # noqa

```

```python

DEBUG_TB_PANELS = [

'flask_debugtoolbar.panels.versions.VersionDebugPanel',

'flask_debugtoolbar.panels.timer.TimerDebugPanel',

'flask_debugtoolbar.panels.headers.HeaderDebugPanel',

'flask_debugtoolbar.panels.request_vars.RequestVarsDebugPanel',

'flask_debugtoolbar.panels.template.TemplateDebugPanel',

'flask_debugtoolbar.panels.logger.LoggingPanel',

'flask_debugtoolbar.panels.profiler.ProfilerDebugPanel',

'flask_mongoengine.panels.MongoDebugPanel',

]

```

### Describe the actual results

When a page is loaded, there are 700+ mongo queries made.

When the flask application is communicating with a remote mongo instance and network latency increases this results in huge page load times.

### Steps to reproduce

This behaviour exists in the mongoengine example app within the repository and code above will recreate.

On investigation using flask_debugtoolbar, I was able to narrow this down to the exist_permission_on_roles function within the mongoengine/security/manager.py:SecurityManager. Due to the document structure (i.e. reference fields), a lot of queries are made on each individual call. A single call to this function in our case took 2-3 seconds, and it is actually called multiple times. By immediately returning true from this function, mongo queries were reduced to < 5 and page loads were as expected, but obviously this renders the Roles/Permissions functionality redundant.

It looks like the data model needs to be changed significantly when using mongo. At the moment I've overridden the SecurityManager:exist_permission_on_roles with a version which caches the permissions and roles in memory and so works around this. Will continue to investigate options around data model.
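
The workaround described above (caching roles and permissions in memory) could look roughly like the following sketch. It is not the author's actual override: `PermissionCache`, the `loader` callable, and the TTL value are all hypothetical, and the real method signature in Flask-AppBuilder should be checked before adapting it.

```python
import time


class PermissionCache:
    """Tiny in-memory cache of (view, permission) pairs per role id."""

    def __init__(self, loader, ttl_seconds=60.0, clock=time.monotonic):
        self._loader = loader    # hypothetical: role_id -> iterable of (view, perm)
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}       # role_id -> (expires_at, frozenset of pairs)

    def _permissions_for(self, role_id):
        now = self._clock()
        entry = self._entries.get(role_id)
        if entry is None or entry[0] <= now:
            # At most one backend lookup per role per TTL window.
            perms = frozenset(self._loader(role_id))
            entry = (now + self._ttl, perms)
            self._entries[role_id] = entry
        return entry[1]

    def exists_on_roles(self, view_name, permission_name, role_ids):
        return any(
            (view_name, permission_name) in self._permissions_for(role_id)
            for role_id in role_ids
        )


calls = []


def fake_loader(role_id):
    # Stand-in for the expensive Mongo queries; records each backend hit.
    calls.append(role_id)
    return {1: [("ContactModelView", "can_list")], 2: []}[role_id]


cache = PermissionCache(fake_loader)
first = cache.exists_on_roles("ContactModelView", "can_list", [2, 1])
second = cache.exists_on_roles("ContactModelView", "can_list", [2, 1])
```

The trade-off is staleness: a permission change is not seen until the cached entry expires, so the TTL (or an explicit invalidation hook) has to match how often roles are edited.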

| closed | 2020-06-23T14:53:49Z | 2022-07-08T14:31:03Z | https://github.com/dpgaspar/Flask-AppBuilder/issues/1412 | [

"stale"

] | colinpattison | 4 |

ranaroussi/yfinance | pandas | 1,361 | fast_info failing for 2.7 on timezone | basic_info still worked for me while fast_info fails on timezone

For what it is worth, I wrote code to bring all the values back together into a single dictionary, i.e. like the old Ticker info

There is a penalty of course in time but I put my data into redis to reduce calls to yfinance

'''

sym = sym # from above

sinfo_d = {}

removed_d = {"regularMarketPrice": "last_price", "dayHigh": "day_high", "dayLow": "day_low", 'fiftyTwoWeekHigh': 'year_high',

'fiftyTwoWeekLow': "year_low", 'volume': 'last_volume', 'averageVolume': 'three_month_average_volume',

'regularMarketVolume': 'ten_day_average_volume', 'marketCap': 'market_cap', 'currency': 'currency',

'regularMarketDayHigh': "day_high", 'regularMarketDayLow': 'day_low', 'averageVolume10days': "ten_day_average_volume",

'open': "open", "toCurrency": "currency", 'fiftyDayAverage': "fifty_day_average",

'twoHundredDayAverage': "two_hundred_day_average", 'averageDailyVolume10Day': "ten_day_average_volume",

'regularMarketPreviousClose': 'previous_close', 'previousClose': "previous_close", 'regularMarketOpen': 'open', 'regularMarketClose': 'close',

'exchangeTimezoneName': 'timezone', 'exchangeTimezoneShortName': 'timezone', 'exchange': 'exchange', 'symbol': 'symbol', 'currentPrice': 'last_price'

}

for k in sinfo:

# dbug(f"chkg k: {k}")

if k in removed_d:

dbug(f"Grabbing basic_info for k: {k}")

if k == 'symbol':

sinfo_d[k] = sym

else:

sinfo_d[k] = sbinfo_d[removed_d[k]]

dbug(sinfo_d[k])

continue

# dbug(f"k: {k} {sinfo[k]}")

sinfo_d[k] = sinfo[k]

gtable(sinfo_d, 'hdr', 'prnt', title="debugging", footer=dbug('here'), rnd=2, human=True) # displays table of info dictionary

'''' | closed | 2023-01-26T20:13:30Z | 2023-03-27T15:41:14Z | https://github.com/ranaroussi/yfinance/issues/1361 | [] | geoffmcnamara | 3 |

sunscrapers/djoser | rest-api | 351 | Exposure of user pk, small security issue | Thank you for making a good app. I found a small security issue.

For example, when you create an account at `/users/create/`, you will receive this response.

```

{

"email": "my_name@example.com",

"id": 1000,

"username": "my_name"

}

```

For some developers, this becomes a security issue: [from security.stackexchange](https://security.stackexchange.com/questions/56357/should-i-obscure-database-primary-keys-ids-in-application-front-end#comment89550_56358)

> You would also reveal the number of IDs ..... and the current rate of creation (someone creates one, then a set time later finds the new maximum). This might be of interest in the case of user accounts, as eg. it gives clues as to the financial viability of your application to a competitor. – Julia Hayward

Can I remove the user id via djoser settings?

If there is no setting for this, I will write views (overriding the djoser views) that return no user id, for private use.

Does anyone else need this improvement?

If the djoser maintainers think this is useful and can merge it,

I will write the code and send a pull request.

In that case, I will add a Djoser setting as below (in `settings.py`)

```

DJOSER = {

'RETURN_USER_ID': False, # Default is True for compatibility

}

```

What do you think? Please tell me your opinion! | closed | 2019-02-09T00:44:16Z | 2019-02-21T13:28:02Z | https://github.com/sunscrapers/djoser/issues/351 | [] | ghost | 2 |

Significant-Gravitas/AutoGPT | python | 8,721 | Condition Block tries to convert everything to a float | You can replicate this by applying this diff for a new test (that you should add)

```diff

diff --git a/autogpt_platform/backend/backend/blocks/branching.py b/autogpt_platform/backend/backend/blocks/branching.py

index 65a01c977..1d3fbe18e 100644

--- a/autogpt_platform/backend/backend/blocks/branching.py

+++ b/autogpt_platform/backend/backend/blocks/branching.py

@@ -57,16 +57,27 @@ class ConditionBlock(Block):

output_schema=ConditionBlock.Output,

description="Handles conditional logic based on comparison operators",

categories={BlockCategory.LOGIC},

- test_input={

- "value1": 10,

- "operator": ComparisonOperator.GREATER_THAN.value,

- "value2": 5,

- "yes_value": "Greater",

- "no_value": "Not greater",

- },

+ test_input=[

+ {

+ "value1": 10,

+ "operator": ComparisonOperator.GREATER_THAN.value,

+ "value2": 5,

+ "yes_value": "Greater",

+ "no_value": "Not greater",

+ },

+ {

+ "value1": "hello",

+ "operator": ComparisonOperator.EQUAL.value,

+ "value2": "hello",

+ "yes_value": "Equal",

+ "no_value": "Not equal",

+ },

+ ],

test_output=[

("result", True),

("yes_output", "Greater"),

+ ("result", True),

+ ("yes_output", "Equal"),

],

)

``` | closed | 2024-11-19T17:22:01Z | 2024-12-05T19:51:22Z | https://github.com/Significant-Gravitas/AutoGPT/issues/8721 | [

"bug"

] | ntindle | 0 |
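
For the Condition Block report above, one way to avoid forcing every operand through `float` is to attempt numeric conversion only when both sides parse as numbers, and otherwise compare the raw values. This is a sketch of that idea, not the AutoGPT implementation; `compare` and `_as_number` are hypothetical helpers, and the operator strings are assumptions:

```python
import operator

_OPS = {
    "==": operator.eq,
    "!=": operator.ne,
    ">": operator.gt,
    "<": operator.lt,
    ">=": operator.ge,
    "<=": operator.le,
}


def _as_number(value):
    """Return value as a float if it looks numeric, else None."""
    if isinstance(value, bool):
        return None  # treat booleans as non-numeric on purpose
    try:
        return float(value)
    except (TypeError, ValueError):
        return None


def compare(value1, op, value2):
    a, b = _as_number(value1), _as_number(value2)
    if a is not None and b is not None:
        return _OPS[op](a, b)        # both numeric: compare as numbers
    return _OPS[op](value1, value2)  # otherwise compare the original values
```

With this shape, the two test inputs from the diff (`10 > 5` and `"hello" == "hello"`) both evaluate to true, while numeric strings such as `"10"` and `"9"` are still compared as numbers.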

Lightning-AI/pytorch-lightning | data-science | 20,462 | Type Error in configure_optimizers | ### Bug description

As suggested in the [docs](https://lightning.ai/docs/pytorch/stable/api/lightning.pytorch.core.LightningModule.html#lightning.pytorch.core.LightningModule.configure_optimizers) my configure_optimizers looks roughly like this:

```

def configure_optimizers(self) -> OptimizerLRSchedulerConfig:

...

return {'optimizer': optimizer, 'lr_scheduler': scheduler}

```

Note that if I want to specify a return type I have to choose `OptimizerLRSchedulerConfig` (or `OptimizerLRScheduler`) since that is the only sub type of `OptimizerLRScheduler` (the return type of `configure_optimizers`) that is a dict.

When I run that I get

```

lightning_fabric.utilities.exceptions.MisconfigurationException: `configure_optimizers` must include a monitor when a `ReduceLROnPlateau` scheduler is used. For example: {"optimizer": optimizer, "lr_scheduler": scheduler, "monitor": "metric_to_track"}

```

But I cannot add `'monitor': 'metric_to_track'` to my returned dict since then mypy complains

```

error: Extra key "monitor" for TypedDict "OptimizerLRSchedulerConfig" [typeddict-unknown-key]

```

## Suggested fix

I suggest to replace

```

class OptimizerLRSchedulerConfig(TypedDict):

optimizer: Optimizer

lr_scheduler: NotRequired[Union[LRSchedulerTypeUnion, LRSchedulerConfigType]]

```

from utilities/types.py with

```

class OptimizerConfigDict(TypedDict):

optimizer: Optimizer

class OptimizerLRSchedulerConfigDict(TypedDict):

optimizer: Optimizer

lr_scheduler: Union[LRSchedulerTypeUnion, LRSchedulerConfigType]

monitor: str

```

and

```

OptimizerLRScheduler = Optional[

Union[

Optimizer,

Sequence[Optimizer],

Tuple[Sequence[Optimizer], Sequence[Union[LRSchedulerTypeUnion, LRSchedulerConfig]]],

OptimizerLRSchedulerConfig,

Sequence[OptimizerLRSchedulerConfig],

]

]

```

with

```

OptimizerLRScheduler = Optional[

Union[

Optimizer,

Sequence[Optimizer],

Tuple[Sequence[Optimizer], Sequence[Union[LRSchedulerTypeUnion, LRSchedulerConfig]]],

OptimizerConfigDict,

OptimizerLRSchedulerConfigDict,

Sequence[OptimizerConfigDict],

Sequence[OptimizerLRSchedulerConfigDict],

]

]

```
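
A variant of the suggestion above that keeps a single dict type is to make `monitor` an optional key. The sketch below uses only the standard `typing` module; `SchedulerConfigSketch` is a hypothetical name, and `object` stands in for Lightning's real optimizer and scheduler types:

```python
from typing import TypedDict


class _OptimizerRequired(TypedDict):
    optimizer: object  # stand-in for torch.optim.Optimizer


class SchedulerConfigSketch(_OptimizerRequired, total=False):
    lr_scheduler: object  # stand-in for the scheduler or scheduler-config type
    monitor: str          # only needed for ReduceLROnPlateau-style schedulers


# A type checker accepts this dict with or without the optional keys.
config: SchedulerConfigSketch = {
    "optimizer": "opt",
    "lr_scheduler": "sched",
    "monitor": "val_loss",
}
```

Compared with two separate TypedDicts, this does not let the type checker enforce that `monitor` is present whenever a plateau scheduler is used; that would still be a runtime check.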

### What version are you seeing the problem on?

v2.4

### How to reproduce the bug

_No response_

### Error messages and logs

```

# Error messages and logs here please

```

### Environment

<details>

<summary>Current environment</summary>

```

#- PyTorch Lightning Version (e.g., 2.4.0):

#- PyTorch Version (e.g., 2.4):

#- Python version (e.g., 3.12):

#- OS (e.g., Linux):

#- CUDA/cuDNN version:

#- GPU models and configuration:

#- How you installed Lightning(`conda`, `pip`, source):

```

</details>

### More info

_No response_ | closed | 2024-12-03T16:14:32Z | 2024-12-10T09:22:14Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20462 | [

"bug",

"ver: 2.4.x"

] | LukasSalchow | 2 |

ageitgey/face_recognition | machine-learning | 719 | Windows Chinese gibberish | The Chinese characters displayed at the position of the faces are rendered as gibberish. How can I configure this in a Windows environment to support Chinese characters? The result looks like this:

| open | 2019-01-17T03:51:45Z | 2019-03-25T14:00:57Z | https://github.com/ageitgey/face_recognition/issues/719 | [] | bingws | 1 |

scikit-hep/awkward | numpy | 3,413 | `ctypes` abstraction is not scoped to NumPy only | It seems like we rely on `array.ctypes` somewhere internally. We should try to abstract that into the nplike / kernels. | open | 2025-03-07T14:17:48Z | 2025-03-07T14:18:08Z | https://github.com/scikit-hep/awkward/issues/3413 | [

"cleanup"

] | agoose77 | 0 |

iterative/dvc | machine-learning | 9,814 | dvc get: throws error when file is in subfolder | # Bug Report

dvc get: throws error when file is in subfolder

## Description

I tracked a file `$SUBDIR/filedata.txt` with DVC. When trying to retrieve this file with `dvc get`, I get an error

```

ERROR: unexpected error - 'NoneType' object has no attribute 'fs'

```

if `$SUBDIR` is not set to the root folder. In other words, the following script works as expected for `SUBDIR=.` but throws the error for `SUBDIR=sub`.

### Reproduce

Please specify some absolute path in line 2. The script works as expected if `SUBDIR=.` is used in line 1, i.e., it obtains the file `datafile.txt` and saves it as `datafile_get.txt`. However, if a subdirectory name (here: `sub`) is specified, the script crashes.

```

SUBDIR=sub

MY_PATH=<SOME_ABSOLUTE_PATH>

mkdir $MY_PATH

cd $MY_PATH

REMOTE_PATH=$MY_PATH/remote/git_storage.git

LOCAL_PATH=$MY_PATH/local

STORE_PATH=$MY_PATH/store

CACHE_PATH=$MY_PATH/cache

git init --bare -q $REMOTE_PATH

git clone $REMOTE_PATH $LOCAL_PATH

cd $LOCAL_PATH

dvc init -q

cat <<EOT >> .dvc/config

[core]

remote = store

[cache]

dir = $CACHE_PATH

type = reflink,symlink

['remote "store"']

url = $STORE_PATH

EOT

# First commit

mkdir $SUBDIR

cd $SUBDIR

echo "Hello World!" >> datafile.txt

dvc add datafile.txt

git add .

git commit -q -m "initial"

dvc push

git push

# Retrieve file

PREVIOUS_COMMIT=$(git log -n 1 --pretty=format:"%H")

dvc get $REMOTE_PATH $SUBDIR/datafile.txt -o datafile_get.txt --rev $PREVIOUS_COMMIT

```

### Expected

I expect that no error is thrown, the file `sub/datafile.txt` can be successfully retrieved, and will be saved as `sub/datafile_get.txt`.

### Environment information

<!--

This is required to ensure that we can reproduce the bug.

-->

**Output of `dvc doctor`:**

```

$ dvc doctor

DVC version: 3.12.0 (pip)

-------------------------

Platform: Python 3.10.8 on Linux-3.10.0-1127.8.2.el7.x86_64-x86_64-with-glibc2.17

Subprojects:

dvc_data = 2.11.0

dvc_objects = 0.24.1

dvc_render = 0.5.3

dvc_task = 0.3.0

scmrepo = 1.1.0

Supports:

http (aiohttp = 3.8.4, aiohttp-retry = 2.8.3),

https (aiohttp = 3.8.4, aiohttp-retry = 2.8.3),

s3 (s3fs = 2023.6.0, boto3 = 1.26.76)

Config:

Global: /home/kpetersen/.config/dvc

System: /etc/xdg/dvc

Cache types: hardlink, symlink

Cache directory: xfs on /dev/sda1

Caches: local

Remotes: local

Workspace directory: xfs on /dev/sda1

Repo: dvc, git

Repo.site_cache_dir: /var/tmp/dvc/repo/0f7f5db67dbdcb6608d56609eb9b05eb

```

**Additional Information (if any):**

<!--

Please check https://github.com/iterative/dvc/wiki/Debugging-DVC on ways to gather more information regarding the issue.

If applicable, please also provide a `--verbose` output of the command, eg: `dvc add --verbose`.

If the issue is regarding the performance, please attach the profiling information and the benchmark comparisons.

-->

| closed | 2023-08-07T06:50:15Z | 2023-08-08T17:39:01Z | https://github.com/iterative/dvc/issues/9814 | [

"awaiting response"

] | kpetersen-hf | 2 |

deepfakes/faceswap | deep-learning | 907 | Return Code: 3221225477 when train the model again after Termination | **Crash reports MUST be included when reporting bugs.**

When I create a model, training works very well. But when I terminate the training process (the model is saved) and then start training again, I get `Status: Failed - train.py. Return Code: 3221225477` in the status bar at the bottom left.

No error is displayed in the main output text field.

**To Reproduce**

Steps to reproduce the behavior:

Create a Google virtual machine with 6 cores, a Tesla V100, and 10 GB RAM

Download the Tesla driver and install Faceswap

Create a new model.

After 100 iterations, press Terminate and wait until the process stops.

Press Train again; after the message "Enabled TensorBoardLogging" the error appears.

**Expected behavior**

I expect the model to train again.

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Desktop (please complete the following information):**

- OS: Windows Server Datacenter 2019

- Python Version: the one downloaded by the Windows installer today

- Conda Version: the one downloaded by the Windows installer today

- Commit ID: the one downloaded by the Windows installer today

**Crash Report**

No crash report is generated | closed | 2019-10-25T19:08:00Z | 2020-09-27T00:04:34Z | https://github.com/deepfakes/faceswap/issues/907 | [] | Maxinger15 | 0 |

sktime/pytorch-forecasting | pandas | 1,321 | [TFT] NaN forecasts for series of length 1 with GroupNormalizer | - PyTorch-Forecasting version: 1.0.0

### Expected behavior

I have series of all sizes in my dataset, including several that are smaller than my `max_prediction_length`. However, the minimum length is 1 (I haven't yet tried the "pure" cold-start experience).

To ensure that TFT is able to provide forecasts regardless of the length of the series, I set `min_encoder_length` to 0. With these configurations, I should be able to produce forecasts for all series.

### Actual behavior

For some reason I don't understand, I have no problem when I don't normalize the target by group, but when I do, the forecasts for **all series of length 1** are **NaNs** ==> **no problem for lengths 2 or more**!

I've already tested and checked a lot of things, and I've dived into the source code to try to understand. It seems that the problem arises at `predict` time; I haven't seen anything unusual before that, for example in the construction of the datasets.

Have I misunderstood something? Or is it a side-effect bug in the GroupNormalizer during inference?

### Code to reproduce the problem

```

train_ds = TimeSeriesDataSet(

data=data,

time_idx="time_idx",

target="qty",

group_ids=["ts_id"],

min_encoder_length=1,

max_encoder_length=2*prediction_length,

min_prediction_length=prediction_length,

max_prediction_length=prediction_length,

target_normalizer=GroupNormalizer(groups=["model_id"])

)

pred_ds = TimeSeriesDataSet.from_dataset(train_ds, data, predict=True)

batch_size = 512

train_dataloader = train_ds.to_dataloader(train=True, batch_size=batch_size)

pred_dataloader = pred_ds.to_dataloader(train=False, batch_size=batch_size)

trainer = pl.Trainer(

max_epochs=5, # tmp for debug

limit_train_batches=5, # tmp for debug

gradient_clip_val=100.0,

accelerator="auto",

logger=None,

)

estimator = TemporalFusionTransformer.from_dataset(

train_ds,

learning_rate=0.001,

hidden_size=16,

attention_head_size=1,

dropout=0.1,

hidden_continuous_size=8,

output_size=7,

loss=QuantileLoss(),

log_interval=1,

reduce_on_plateau_patience=5,

)

trainer.fit(

estimator,

train_dataloaders=train_dataloader

)

predictions = estimator.predict(pred_dataloader, mode="prediction", return_index=True)

``` | open | 2023-06-02T15:28:35Z | 2023-10-03T08:23:18Z | https://github.com/sktime/pytorch-forecasting/issues/1321 | [] | Antoine-Schwartz | 4 |

sktime/sktime | scikit-learn | 7,763 | [BUG] Conversion of 1-column pd.DataFrame to pd.Series loses the column name. Conversion happens for forecasters with scitype:y = pd.Series. | Suppose XYZ is a forecaster with y scitype of pd.Series.

If you call XYZ.fit(y) with y a pd.DataFrame with a single column, sktime is "robust" and will convert the single column DataFrame to a pd.Series. This is done in routine 'convert_MvS_to_UvS_as_Series' in datatypes/_series/_convert.py.

The problem is that in doing this, the column name from the DataFrame has been lost. It should be retained as the attr name in the new series.

This can be reproduced by calling the fit method with a 1-column DataFrame on any forecaster that has y scitype of pd.Series.
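
The behaviour described above can be illustrated in plain pandas. This is only a minimal sketch, not sktime's actual conversion routine: the helper name `to_series_keep_name` is hypothetical, and it assumes that a one-column DataFrame should become a Series whose `name` is the original column label.

```python
import pandas as pd


def to_series_keep_name(df: pd.DataFrame) -> pd.Series:
    """Convert a one-column DataFrame to a Series, keeping the column name.

    Hypothetical helper for illustration; not part of sktime.
    """
    if df.shape[1] != 1:
        raise ValueError("expected a DataFrame with exactly one column")
    # Selecting the column positionally keeps both the index and the
    # column label (stored as the Series' `name` attribute).
    return df.iloc[:, 0]


y = pd.DataFrame({"sales": [1.0, 2.0, 3.0]})

# Rebuilding the Series from the raw values drops the column label.
lossy = pd.Series(y.to_numpy().ravel(), index=y.index)

# Positional column selection retains it.
kept = to_series_keep_name(y)
```

With this sketch, `kept.name` is the original column label while `lossy.name` is `None`, which is the difference the report is about.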

| closed | 2025-02-05T08:17:57Z | 2025-02-18T07:36:42Z | https://github.com/sktime/sktime/issues/7763 | [

"bug",

"module:datatypes"

] | ericjb | 1 |

wagtail/wagtail | django | 12,530 | Allow interactions with locked pages | ### Is your proposal related to a problem?

When a page is locked due to a workflow or otherwise, the locking implementation makes it much harder / impossible to do any interaction with the page. See demo: [Welcome to the Wagtail bakery](https://static-wagtail-v6-1.netlify.app/admin-editor/pages/60/edit/). Here are interactions that are harder than necessary, even though they’re entirely safe:

- Copying content from the locked page to another.

- Using collapsible blocks to read the content more easily

- Navigating to a linked snippet / image / page etc

### Describe the solution you'd like

Change the implementation so that instead of the `content-locked` class, we only disable the specific page elements that are actually problematic. Ideally we would support all of those interactions with the content I mentioned above, while still communicating to page users that the page is un-editable.

### Describe alternatives you've considered

There are quite a few - for example using the "copy" feature to make a new page, or cancelling the workflow / getting the page unlocked temporarily. However they don’t seem particularly commensurate with the problem at hand.

### Additional context

None

### Working on this

<!--

Do you have thoughts on skills needed?

Are you keen to work on this yourself once the issue has been accepted?

Please let us know here.

-->

Anyone can contribute to this. View our [contributing guidelines](https://docs.wagtail.org/en/latest/contributing/index.html), add a comment to the issue once you’re ready to start.

| open | 2024-11-02T00:41:58Z | 2024-12-26T04:51:01Z | https://github.com/wagtail/wagtail/issues/12530 | [

"type:Enhancement",

"Accessibility",

"component:Workflow",

"component:Locking",

"Sprint topic"

] | thibaudcolas | 1 |

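akfamily/akshare | data-science | 5,738 | stock_bid_ask_em order book data has no time attribute | The order book data returned by the stock_bid_ask_em interface has no timestamp, so there is no way to tell which trading day the data belongs to.

Alternatively, is there an interface that reports whether today is an A-share trading day? That would also give enough information to work it out.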

| closed | 2025-02-26T16:25:45Z | 2025-02-26T16:50:04Z | https://github.com/akfamily/akshare/issues/5738 | [] | Falicitas | 2 |

joerick/pyinstrument | django | 39 | How can I use it on Windows? | closed | 2018-05-15T17:30:29Z | 2018-05-20T12:33:58Z | https://github.com/joerick/pyinstrument/issues/39 | [] | owandywang | 3 | |

amdegroot/ssd.pytorch | computer-vision | 114 | Implicit dimension choice for softmax has been deprecated. Change the call to include dim=X as an argument. | I'm getting this UserWarning:

> Implicit dimension choice for softmax has been deprecated. Change the call to include dim=X as an argument.

> self.softmax(conf.view(-1, self.num_classes)), # conf preds | open | 2018-02-23T12:44:30Z | 2018-02-26T21:48:02Z | https://github.com/amdegroot/ssd.pytorch/issues/114 | [] | santhoshdc1590 | 1 |

alteryx/featuretools | scikit-learn | 1,793 | Add documentation page on Time Series with Featuretools | As a user of Featuretools, I would like to view documentation on how to use Featuretools with a time series problem.

I want to understand how Featuretools can help with feature engineering in a time series problem.

I want to see the helpful primitives that Featuretools has for time series problems.

| closed | 2021-11-22T17:30:39Z | 2022-02-18T19:20:46Z | https://github.com/alteryx/featuretools/issues/1793 | [] | gsheni | 0 |

deepset-ai/haystack | nlp | 8,747 | Extend TransformersTextRouter to use other model providers | **Is your feature request related to a problem? Please describe.**

Users would like to use vLLM or other model providers for text routing but currently the only supported option is to load a model from huggingface and run it.

**Describe the solution you'd like**

Similar to the vLLM integration for chat generators, it would be great if there was a text router that could be used with `api_base_url` or a similar parameter.

**Describe alternatives you've considered**

Write a custom component.

**Additional context**

TransformersZeroShotTextRouter could be extended in a similar way.

| open | 2025-01-19T11:57:28Z | 2025-01-24T05:27:20Z | https://github.com/deepset-ai/haystack/issues/8747 | [

"type:feature",

"P3"

] | julian-risch | 1 |

PokemonGoF/PokemonGo-Bot | automation | 5,768 | New security measures | FYI, the app may now ask for a captcha on logging in. I got a warning on one of my accounts that 3rd party software had been detected. The bot software no longer runs after it logs in and gets the profile info. I worry that trying to use the software without dealing with the captcha might put an extra flag on the account.

Thank you for the good times and the help, I must admit that I play the game more in real life because I had the bot, than if I didn't.

| open | 2016-10-05T23:46:54Z | 2016-10-10T06:07:30Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5768 | [] | ajhalls | 23 |

horovod/horovod | tensorflow | 3,256 | Error on KerasEstimator for Spark | @tgaddair

**Environment:**

1. Framework: (TensorFlow, Keras, PyTorch, MXNet): TensorFlow

2. Framework version: 2.6.0

3. Horovod version: 0.22.1

7. Python version: 3.8.10

8. Spark / PySpark version: 3.2.0

**Bug report:**

I have a Spark DataFrame with two columns: `content`, the binary content of images loaded with Databricks Spark Autoloader for Cloud Files, and `class`, a column of long type holding the class value.

I defined the `KerasEstimator` to use a `transformation_fn`. This function loads the binary data in `content` and returns it as a NumPy array of shape (256,256,3); the class value is returned as is.

When I trigger the fit method of the model I get this error:

```

[1,0]<stderr>:

[1,0]<stderr>:Function call stack:

[1,0]<stderr>:train_function

[1,0]<stderr>:

--------------------------------------------------------------------------

Primary job terminated normally, but 1 process returned

a non-zero exit code. Per user-direction, the job has been aborted.

--------------------------------------------------------------------------

[1,2]<stderr>:2021-11-02 08:50:51.469193: W tensorflow/core/framework/op_kernel.cc:1669] OP_REQUIRES failed at cast_op.cc:121 : Unimplemented: Cast string to float is not supported

[1,2]<stderr>:Traceback (most recent call last):

[1,2]<stderr>: File "/usr/lib/python3.8/runpy.py", line 194, in _run_module_as_main

[1,2]<stderr>: return _run_code(code, main_globals, None,

[1,2]<stderr>: File "/usr/lib/python3.8/runpy.py", line 87, in _run_code

[1,2]<stderr>: exec(code, run_globals)

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/horovod/spark/task/mpirun_exec_fn.py", line 52, in <module>

[1,2]<stderr>: main(codec.loads_base64(sys.argv[1]), codec.loads_base64(sys.argv[2]))

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/horovod/spark/task/mpirun_exec_fn.py", line 45, in main

[1,2]<stderr>: task_exec(driver_addresses, settings, 'OMPI_COMM_WORLD_RANK', 'OMPI_COMM_WORLD_LOCAL_RANK')

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/horovod/spark/task/__init__.py", line 61, in task_exec

[1,2]<stderr>: result = fn(*args, **kwargs)

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/horovod/spark/keras/remote.py", line 242, in train

[1,2]<stderr>: history = fit(model, train_data, val_data, steps_per_epoch,

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/horovod/spark/keras/util.py", line 40, in fn

[1,2]<stderr>: return model.fit(

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/keras/engine/training.py", line 1184, in fit

[1,2]<stderr>: tmp_logs = self.train_function(iterator)

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/tensorflow/python/eager/def_function.py", line 885, in __call__

[1,2]<stderr>: result = self._call(*args, **kwds)

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/tensorflow/python/eager/def_function.py", line 950, in _call

[1,2]<stderr>: return self._stateless_fn(*args, **kwds)

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/tensorflow/python/eager/function.py", line 3039, in __call__

[1,2]<stderr>: return graph_function._call_flat(

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/tensorflow/python/eager/function.py", line 1963, in _call_flat

[1,2]<stderr>: return self._build_call_outputs(self._inference_function.call(

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/tensorflow/python/eager/function.py", line 591, in call

[1,2]<stderr>: outputs = execute.execute(

[1,2]<stderr>: File "/databricks/python/lib/python3.8/site-packages/tensorflow/python/eager/execute.py", line 59, in quick_execute

[1,2]<stderr>: tensors = pywrap_tfe.TFE_Py_Execute(ctx._handle, device_name, op_name,

[1,2]<stderr>:tensorflow.python.framework.errors_impl.UnimplementedError: Cast string to float is not supported

[1,2]<stderr>: [[node model/Cast (defined at databricks/python/lib/python3.8/site-packages/horovod/spark/keras/util.py:40) ]] [Op:__inference_train_function_11503]

```

| open | 2021-11-02T09:05:06Z | 2021-11-04T19:30:16Z | https://github.com/horovod/horovod/issues/3256 | [

"bug"

] | WaterKnight1998 | 2 |

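STVIR/pysot | computer-vision | 604 | Has anyone successfully trained SiamMask? | I ran into the following error while training SiamMask. I have read through the past issues and the problem is still unresolved, so I am asking for help!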

Traceback (most recent call last):

File "../../tools/train.py", line 317, in <module>

main()

File "../../tools/train.py", line 312, in main

train(train_loader, dist_model, optimizer, lr_scheduler, tb_writer)

File "../../tools/train.py", line 210, in train

outputs = model(data)

File "/home/work/anaconda3/envs/pysot/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1130, in _call_impl

return forward_call(*input, **kwargs)

File "/home/work/dingqishuai/pysot/pysot/utils/distributed.py", line 43, in forward

return self.module(*args, **kwargs)

File "/home/work/anaconda3/envs/pysot/lib/python3.8/site-packages/torch/nn/modules/module.py", line 1130, in _call_impl

return forward_call(*input, **kwargs)

File "/home/work/dingqishuai/pysot/pysot/models/model_builder.py", line 115, in forward

outputs['total_loss'] += cfg.TRAIN.MASK_WEIGHT * mask_loss

TypeError: unsupported operand type(s) for *: 'float' and 'NoneType'
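
The last line of the traceback suggests that `mask_loss` is `None` at the point where it is multiplied by the float `cfg.TRAIN.MASK_WEIGHT`. The following is a minimal sketch in plain Python that reproduces the same error message and shows one way to fail with a clearer message. The helper `add_weighted_loss` is hypothetical and not part of PySOT, and the numbers are arbitrary.

```python
def add_weighted_loss(total_loss, weight, loss):
    """Add weight * loss to total_loss, failing loudly if loss is missing.

    Hypothetical helper for illustration; not part of PySOT.
    """
    if loss is None:
        raise ValueError("loss is None: the loss term was never computed")
    return total_loss + weight * loss


# Reproduce the error from the traceback with plain Python values.
try:
    0.4 * None
except TypeError as exc:
    message = str(exc)

# With a real loss value the weighted sum works as expected.
total = add_weighted_loss(1.0, 0.5, 2.0)
```

A guard like this does not make training work; it only makes it explicit that the mask loss is missing rather than failing inside the arithmetic.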

Does PySOT only support inference and evaluation for SiamMask, and not training? Will PySOT continue to be maintained and updated? | open | 2023-12-19T07:21:11Z | 2023-12-19T07:21:11Z | https://github.com/STVIR/pysot/issues/604 | [] | dqs932 | 0 |

widgetti/solara | jupyter | 444 | ipycanvas doesn't work with solara | I want to develop an app that uses solara and [ipycanvas](https://github.com/jupyter-widgets-contrib/ipycanvas), but it seems like ipycanvas isn't supported.

App code:

```python
import solara
from ipycanvas import Canvas


@solara.component
def Page():
    canvas = Canvas(width=200, height=200)
    return canvas
```

Then, I start the app:

```sh

solara run app.py

```

And I get this error:

```pytb

Traceback (most recent call last):

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/reacton/core.py", line 1675, in _render

root_element = el.component.f(*el.args, **el.kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/eduardo/Desktop/jupyai-demos/app.py", line 58, in Page

canvas = RoughCanvas(width=width, height=height)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/ipycanvas/canvas.py", line 620, in __init__

super(Canvas, self).__init__(*args, **kwargs)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/ipywidgets/widgets/widget.py", line 506, in __init__

self.open()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/ipywidgets/widgets/widget.py", line 525, in open

state, buffer_paths, buffers = _remove_buffers(self.get_state())

^^^^^^^^^^^^^^^^

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/ipywidgets/widgets/widget.py", line 615, in get_state

value = to_json(getattr(self, k), self)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/ipywidgets/widgets/widget.py", line 54, in _widget_to_json

return "IPY_MODEL_" + x.model_id

^^^^^^^^^^

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/server/patch.py", line 367, in model_id_debug

raise RuntimeError(f"Widget has been closed, the stacktrace when the widget was closed is:\n{closed_stack[id(self)]}")

RuntimeError: Widget has been closed, the stacktrace when the widget was closed is:

File "/Users/eduardo/miniconda3/envs/tmp/bin/solara", line 8, in <module>

sys.exit(main())

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/__main__.py", line 706, in main

cli()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/click/core.py", line 1157, in __call__

return self.main(*args, **kwargs)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/rich_click/rich_command.py", line 126, in main

rv = self.invoke(ctx)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/click/core.py", line 1688, in invoke

return _process_result(sub_ctx.command.invoke(sub_ctx))

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/click/core.py", line 1434, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/click/core.py", line 783, in invoke

return __callback(*args, **kwargs)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/__main__.py", line 428, in run

start_server()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/__main__.py", line 400, in start_server

server.run()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/uvicorn/server.py", line 61, in run

return asyncio.run(self.serve(sockets=sockets))

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/asyncio/runners.py", line 190, in run

return runner.run(main)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/asyncio/runners.py", line 118, in run

return self._loop.run_until_complete(task)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/asyncio/base_events.py", line 640, in run_until_complete

self.run_forever()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/asyncio/base_events.py", line 607, in run_forever

self._run_once()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/asyncio/base_events.py", line 1922, in _run_once

handle._run()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/asyncio/events.py", line 80, in _run

self._context.run(self._callback, *self._args)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/uvicorn/server.py", line 68, in serve

config.load()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/uvicorn/config.py", line 467, in load

self.loaded_app = import_from_string(self.app)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/uvicorn/importer.py", line 21, in import_from_string

module = importlib.import_module(module_str)

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/importlib/__init__.py", line 126, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1204, in _gcd_import

File "<frozen importlib._bootstrap>", line 1176, in _find_and_load

File "<frozen importlib._bootstrap>", line 1147, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 690, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 940, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/server/starlette.py", line 47, in <module>

from . import app as appmod

File "<frozen importlib._bootstrap>", line 1232, in _handle_fromlist

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "<frozen importlib._bootstrap>", line 1176, in _find_and_load

File "<frozen importlib._bootstrap>", line 1147, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 690, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 940, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/server/app.py", line 407, in <module>

apps["__default__"] = AppScript(os.environ.get("SOLARA_APP", "solara.website.pages:Page"))

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/server/app.py", line 79, in __init__

dummy_kernel_context.close()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/server/kernel_context.py", line 92, in close

widgets.Widget.close_all()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/ipywidgets/widgets/widget.py", line 351, in close_all

widget.close()

File "/Users/eduardo/miniconda3/envs/tmp/lib/python3.11/site-packages/solara/server/patch.py", line 387, in close_widget_debug

stacktrace = "".join(traceback.format_stack())

```

Is this expected? Are there any workarounds?

| open | 2024-01-04T22:11:45Z | 2024-01-17T15:25:21Z | https://github.com/widgetti/solara/issues/444 | [] | edublancas | 2 |

oegedijk/explainerdashboard | plotly | 43 | logins with one username and one password | Checking https://explainerdashboard.readthedocs.io/en/latest/deployment.html?highlight=auth#setting-logins-and-password

I was testing with one login and one password.

`logins=["U", "P"]` doesn't work (see below) but `logins=[["U", "P"]]` does.

I don't suppose there is a `login` kwarg, or that it could handle a list of length 2? The error seems to come from dash_auth, so I could raise this upstream there.

```

File "src/dashboard_cel.py", line 24, in <module>

logins=["Celebrity", "Beyond"],

File "C:\Users\131416\AppData\Local\Continuum\anaconda3\envs\e\lib\site-packages\explainerdashboard\dashboards.py", line 369, in __init__

self.auth = dash_auth.BasicAuth(self.app, logins)

File "C:\Users\131416\AppData\Local\Continuum\anaconda3\envs\e\lib\site-packages\dash_auth\basic_auth.py", line 11, in __init__

else {k: v for k, v in username_password_list}

File "C:\Users\131416\AppData\Local\Continuum\anaconda3\envs\e\lib\site-packages\dash_auth\basic_auth.py", line 11, in <dictcomp>

else {k: v for k, v in username_password_list}

ValueError: too many values to unpack (expected 2)

```
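
The traceback above comes from a dict comprehension that unpacks every item of the list into a `(username, password)` pair. The following is a minimal sketch of how a flat pair could be accepted as well. The helper `normalize_logins` is hypothetical and is not part of explainerdashboard or dash_auth. Treating any two-string list as a single login is an assumption, and it is ambiguous for inputs such as `["ab", "cd"]`.

```python
def normalize_logins(logins):
    """Return a {username: password} dict from several accepted shapes.

    Hypothetical helper for illustration; not part of explainerdashboard
    or dash_auth.
    """
    if isinstance(logins, dict):
        return dict(logins)
    # A flat pair such as ["U", "P"] is treated as a single login.
    if len(logins) == 2 and all(isinstance(item, str) for item in logins):
        return {logins[0]: logins[1]}
    # Otherwise expect a list of [username, password] pairs.
    return {username: password for username, password in logins}


flat = normalize_logins(["Celebrity", "Beyond"])
nested = normalize_logins([["Celebrity", "Beyond"]])

# The bare dict comprehension fails on the flat form, because it tries to
# unpack the nine characters of "Celebrity" into two names.
try:
    {k: v for k, v in ["Celebrity", "Beyond"]}
except ValueError as exc:
    error = str(exc)
```

Both calls to `normalize_logins` produce the same single-entry mapping, while the unguarded comprehension raises the `ValueError` seen in the traceback.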

| closed | 2020-12-08T22:17:21Z | 2020-12-10T10:57:47Z | https://github.com/oegedijk/explainerdashboard/issues/43 | [] | raybellwaves | 3 |

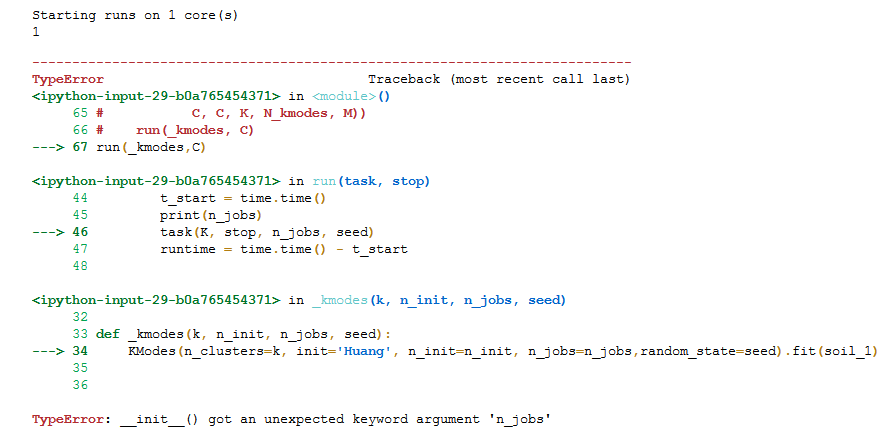

tflearn/tflearn | data-science | 278 | Loss is NaN when image size is more than about 220 pixels during ImageNet training | As I mentioned in this thread https://github.com/tflearn/tflearn/issues/262, when the image size is more than about 220 pixels, the loss is NaN, but if I decrease the image size, the loss and training accuracy are normal. How can I solve this so that I can use a larger image size?

| open | 2016-08-12T15:53:20Z | 2016-08-12T21:09:42Z | https://github.com/tflearn/tflearn/issues/278 | [] | lfwin | 1 |

netbox-community/netbox | django | 18,808 | Clean up nonexistent squashed migrations | ### Proposed Changes

The `sqlmigrate` command fails with errors such as: `django.db.migrations.exceptions.NodeNotFoundError: Migration extras.0002_squashed_0059 dependencies reference nonexistent parent node ('dcim', '0002_auto_20160622_1821')`

This results from migration squashing where the `dependencies` list was not updated to point to the new squashed migrations.

Proposed change is to fix all `dependencies` pointers in existing migrations to ensure they point to the existing migrations containing the original (missing) parent nodes.
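
As a rough illustration of the kind of check involved, the sketch below scans a toy migration graph for dependencies that point at nodes which no longer exist, then looks up the squashed migration that replaces each missing node. The data model is hypothetical: the dict layout, the helper names and the `dcim` migration name `0002_squashed` are assumptions for illustration. This is not how Django or NetBox represent migrations internally.

```python
def find_dangling_dependencies(migrations):
    """Return (migration, missing_parent) pairs for unresolved dependencies.

    `migrations` maps (app, name) to a dict with "dependencies" and
    "replaces" lists. Hypothetical data model for illustration only.
    """
    known = set(migrations)
    dangling = []
    for key, info in sorted(migrations.items()):
        for parent in info["dependencies"]:
            if parent not in known:
                dangling.append((key, parent))
    return dangling


def squashed_replacement(migrations, missing):
    """Find the squashed migration whose `replaces` list covers `missing`."""
    for key, info in migrations.items():
        if missing in info["replaces"]:
            return key
    return None


migrations = {
    # Hypothetical squashed migration that replaced the missing node.
    ("dcim", "0002_squashed"): {
        "dependencies": [],
        "replaces": [("dcim", "0002_auto_20160622_1821")],
    },
    # Migration that still depends on the pre-squash name.
    ("extras", "0002_squashed_0059"): {
        "dependencies": [("dcim", "0002_auto_20160622_1821")],
        "replaces": [],
    },
}

dangling = find_dangling_dependencies(migrations)
fix = squashed_replacement(migrations, dangling[0][1])
```

In this toy graph the dangling pointer is found on `extras.0002_squashed_0059`, and `fix` names the squashed migration that the dependency would be repointed to.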

### Justification

Though `sqlmigrate` is rarely used (it produces the native SQL operations to be applied during migrations in case they need to be run manually), it is useful as a diagnostic tool and ought to work properly. (Note: `migrate` itself does not seem to have this issue.)

| closed | 2025-03-05T01:05:54Z | 2025-03-10T16:57:46Z | https://github.com/netbox-community/netbox/issues/18808 | [

"status: accepted",

"type: housekeeping"

] | bctiemann | 1 |

graphql-python/graphene-django | django | 971 | DjangoObjectType duplicate models breaks Relay node resolution | I have exactly the same issue as #107.

The proposed solution no longer works

How can this be done in the current version? | open | 2020-05-24T22:06:06Z | 2022-01-25T14:48:05Z | https://github.com/graphql-python/graphene-django/issues/971 | [

"🐛bug"

] | boolangery | 8 |

microsoft/JARVIS | pytorch | 65 | Can't run JARVIS in local full mode. | I downloaded all the models (28 models in 431GB) on my PC.

Hybrid/minimal mode runs successfully.

But I can't get JARVIS running in local/full mode. (I do have 128GB RAM.)

I get an error message saying that it can't load a file that actually exists. I checked the file's read permission for the user running the process and found no problem.

| closed | 2023-04-06T09:24:20Z | 2023-04-07T10:31:34Z | https://github.com/microsoft/JARVIS/issues/65 | [] | meeeo | 2 |

inducer/pudb | pytest | 448 | RecursionError is not caught by the debugger | With something like

```py
def test():
    raise ValueError

import pudb
pudb.set_trace()
test()
```

If you step over `test()`, it tells you that an exception has been raised. But with

```py
def test():
    test()

import pudb
pudb.set_trace()
test()
```

The whole program exits with a traceback. In the case where you instead run `python -m pudb file.py`, the RecursionError is caught, but as an "uncaught exception" (post mortem).

I browsed the pudb and bdb code and it isn't clear to me why this is happening. | open | 2021-05-03T23:03:24Z | 2021-05-03T23:29:10Z | https://github.com/inducer/pudb/issues/448 | [] | asmeurer | 2 |

ydataai/ydata-profiling | data-science | 883 | n_rows and n_columns must be positive integer. (Same as #853 and #836) | **Describe the bug**

This is similar to #853 and #836, but posting anyway just as another example with version info and a screenshot in Jupyter Notebook. Feel free to mark as a duplicate if desired.

When attempting the basic profile.to_widgets() example in the README, I encounter the attached error that n-rows and n-columns must be positive integers.

<img width="832" alt="pandas_error" src="https://user-images.githubusercontent.com/42592742/141694005-1c5d9f1e-dc3e-4c9b-8d16-2278f8c11e2d.PNG">

**To Reproduce**

follow the example in picture above

**Version information:**

Windows 10

Python: 3.8.5

Jupyter Notebook: 6.1.4

pandas-profiling: 3.1.0

**Additional context**

Just wanted to throw this out there since I've never used the profiling report and it seems the basic example is broken - I could be doing something obviously wrong though.

Happy to attempt a bug fix and PR if this is an actual issue. Let me know what else you all might need. | closed | 2021-11-14T18:43:49Z | 2023-01-27T19:16:13Z | https://github.com/ydataai/ydata-profiling/issues/883 | [

"bug 🐛"

] | WillTirone | 8 |

falconry/falcon | api | 1,422 | fix: Custom serializers not called for errors that inherit from NoRepresentation, OptionalRepresentation | This is a breakout issue from https://github.com/falconry/falcon/issues/452 - It could be potentially breaking in the case that a custom error serializer is not prepared to deal with these additional error types. | closed | 2019-01-31T21:20:47Z | 2019-02-14T22:18:04Z | https://github.com/falconry/falcon/issues/1422 | [

"bug",

"breaking-change"

] | kgriffs | 0 |

joouha/euporie | jupyter | 129 | Config Location resolve order | I was wondering if we could set an order to the config location? Perhaps it is just me, but on OSX I always have a `${HOME}/.config` directory to make my [dotfiles](https://github.com/stevenwalton/.dotfiles/tree/master/configs) much more portable, since this is where `$XDG_CONFIG_HOME` usually points to.

Request: first check `${HOME}/.config/euporie` and then `${HOME}/Library/Application Support/euporie/`
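
The requested lookup order could be sketched as follows. This is only an illustration of "first existing directory wins": `resolve_config_dir` is a hypothetical helper and not euporie's actual lookup code. Falling back to the last candidate when nothing exists is an assumption.

```python
from pathlib import Path


def resolve_config_dir(candidates):
    """Return the first candidate directory that exists, else the last one.

    Hypothetical helper for illustration; not euporie's actual lookup.
    """
    candidates = [Path(c) for c in candidates]
    for path in candidates:
        if path.is_dir():
            return path
    # Nothing exists yet: fall back to the platform default (last entry).
    return candidates[-1]


home = Path.home()
search_order = [
    home / ".config" / "euporie",
    home / "Library" / "Application Support" / "euporie",
]
config_dir = resolve_config_dir(search_order)
```

With this ordering, an existing `${HOME}/.config/euporie` takes precedence, and users without one keep the current location.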

It may just be me that does this and if so then it's probably not worth bothering with | open | 2025-02-07T01:15:50Z | 2025-02-07T01:15:50Z | https://github.com/joouha/euporie/issues/129 | [] | stevenwalton | 0 |

Ehco1996/django-sspanel | django | 127 | Problem running the backend, please take a look. | [root@server1 shadowsocksr]# python server.py

IPv6 support

Traceback (most recent call last):

File "server.py", line 74, in <module>

main()

File "server.py", line 54, in main

if get_config().API_INTERFACE == 'mudbjson':

AttributeError: 'NoneType' object has no attribute 'API_INTERFACE' | closed | 2018-05-28T05:26:30Z | 2018-07-30T03:19:32Z | https://github.com/Ehco1996/django-sspanel/issues/127 | [] | vggh66 | 2 |

onnx/onnx | tensorflow | 5,818 | TensorRT engine built from an ONNX model gives a different result. Why? | The results of the .pth model and the ONNX model are the same, but when I build the TensorRT engine from the ONNX model, the result is different. Why?

PyTorch 2, TensorRT 8.6, Linux, 4090 GPU. | closed | 2023-12-21T02:26:34Z | 2023-12-21T08:42:12Z | https://github.com/onnx/onnx/issues/5818 | [

"question"

] | henbucuoshanghai | 2 |

piskvorky/gensim | data-science | 3,168 | Error with older wiki dumps | <!--

**IMPORTANT**:

- Use the [Gensim mailing list](https://groups.google.com/forum/#!forum/gensim) to ask general or usage questions. Github issues are only for bug reports.

- Check [Recipes&FAQ](https://github.com/RaRe-Technologies/gensim/wiki/Recipes-&-FAQ) first for common answers.

Github bug reports that do not include relevant information and context will be closed without an answer. Thanks!

-->

#### Problem description

I am trying to load wiki dump and extract articles for word2vec training. This works well for more recent dumps. But for older dumps (e.g., 2010 dump), it fails.

#### Steps/code/corpus to reproduce

Include full tracebacks, logs and datasets if necessary. Please keep the examples minimal ("minimal reproducible example").

If your problem is with a specific Gensim model (word2vec, lsimodel, doc2vec, fasttext, ldamodel etc), include the following:

```python
import multiprocessing
from gensim.corpora.wikicorpus import WikiCorpus
from gensim.models.word2vec import Word2Vec
import logging

logging.basicConfig(format='%(asctime)s: %(levelname)s: %(message)s')
logging.root.setLevel(level=logging.INFO)

wiki_dump = './enwiki-20100312-pages-articles.xml.bz2'

wiki = WikiCorpus(
    fname=wiki_dump,
    lower=False,
    lemmatize=False,
    dictionary={},  # not needed for word2vec (https://groups.google.com/u/1/g/gensim/c/aI7vbNCxhb8)
    processes=max(1, multiprocessing.cpu_count() - 1),
    token_min_len=1,
    token_max_len=50,
)

txt_file = './enwiki_20100312_extracted_articles_v1.txt'

with open(txt_file, 'w') as f:
    for i, text in enumerate(wiki.get_texts()):
        f.write(" ".join(text) + "\n")
        if i % 50000 == 0:
            logging.info("Saved %d articles" % i)

logging.info("Finished extract wiki, Saved in %s" % txt_file)
```

`Process InputQueue-24:

Traceback (most recent call last):

File "/anaconda/envs/spacy_v3/lib/python3.8/multiprocessing/process.py", line 315, in _bootstrap

self.run()

File "/home/user-sas01/.local/lib/python3.8/site-packages/gensim-4.0.0b0-py3.8-linux-x86_64.egg/gensim/utils.py", line 1215, in run

wrapped_chunk = [list(chunk)]

File "/home/user-sas01/.local/lib/python3.8/site-packages/gensim-4.0.0b0-py3.8-linux-x86_64.egg/gensim/corpora/wikicorpus.py", line 679, in <genexpr>

texts = (

File "/home/user-sas01/.local/lib/python3.8/site-packages/gensim-4.0.0b0-py3.8-linux-x86_64.egg/gensim/corpora/wikicorpus.py", line 430, in extract_pages

ns = elem.find(ns_path).text

AttributeError: 'NoneType' object has no attribute 'text'

`
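
The `AttributeError` above arises because `elem.find(...)` returns `None` when the child element is absent, and `.text` is then read from `None`. The following is a minimal sketch using only the standard library, assuming that pages in the 2010 dump have no `<ns>` child, which the traceback suggests but which has not been checked against the dump itself. The helper `page_namespace`, the sample page and the namespace URI are hypothetical, and defaulting to `"0"` (the main namespace) is an assumption as well.

```python
import xml.etree.ElementTree as ET

# Namespace URI chosen for illustration; real dumps declare their own
# export schema version.
NS = "{http://www.mediawiki.org/xml/export-0.3/}"

OLD_STYLE_PAGE = (
    '<page xmlns="http://www.mediawiki.org/xml/export-0.3/">'
    "<title>Anarchism</title>"
    "<revision><text>Some article text</text></revision>"
    "</page>"
)


def page_namespace(elem, default="0"):
    """Return the text of the page's <ns> child, or `default` if absent.

    Hypothetical helper for illustration; not gensim's actual code.
    """
    ns_elem = elem.find(NS + "ns")
    # `find` returns None when the child is missing, so `.text` must not
    # be accessed unconditionally.
    if ns_elem is None:
        return default
    return ns_elem.text


page = ET.fromstring(OLD_STYLE_PAGE)
namespace = page_namespace(page)
title = page.find(NS + "title").text
```

A real fix would probably need to derive the namespace from the title prefix for such dumps. The sketch only shows the `None` check that avoids the crash.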

#### Versions

Please provide the output of:

```python

Linux-5.4.0-1047-azure-x86_64-with-glibc2.10

Python 3.8.5 (default, Sep 4 2020, 07:30:14)

[GCC 7.3.0]

Bits 64

NumPy 1.19.2

SciPy 1.6.0

gensim 4.0.0beta

FAST_VERSION 1

```

| open | 2021-06-09T06:00:23Z | 2021-06-09T08:33:17Z | https://github.com/piskvorky/gensim/issues/3168 | [] | santoshbs | 3 |

nerfstudio-project/nerfstudio | computer-vision | 2,805 | Got an unexpected keyword argument 'point_shape' | I was trying to train the splatfacto model with the command:

` ns-train splatfacto --pipeline.model.cull_alpha_thresh=0.005 --pipeline.model.continue_cull_post_densification=False --data ../../data/skoda_ultra_wide/ --output-dir ../../skoda_ultra_wide`

I had this error:

`File "/User/.local/bin/ns-train", line 8, in <module>

sys.exit(entrypoint())

File "/User3D/nerfstudio/nerfstudio/scripts/train.py", line 262, in entrypoint

main(

File "/User/3D/nerfstudio/nerfstudio/scripts/train.py", line 247, in main

launch(

File "/User/3D/nerfstudio/nerfstudio/scripts/train.py", line 189, in launch

main_func(local_rank=0, world_size=world_size, config=config)

File "/User/3D/nerfstudio/nerfstudio/scripts/train.py", line 99, in train_loop

trainer.setup()

File "/User/3D/nerfstudio/nerfstudio/engine/trainer.py", line 178, in setup

self.viewer_state = ViewerState(

File "/User/3D/nerfstudio/nerfstudio/viewer/viewer.py", line 263, in __init__

self.viser_server.add_point_cloud(

TypeError: MessageApi.add_point_cloud() got an unexpected keyword argument 'point_shape' `

I simply ran the command on a data folder produced by an `ns-process` command. It asked me this:

`load_3D_points is true, but the dataset was processed with an outdated ns-process-data that didn't convert colmap points to .ply! Update the colmap dataset automatically? [y/n]:`

I answered y, and since then it gives me that error. | closed | 2024-01-22T13:21:19Z | 2024-01-22T14:46:00Z | https://github.com/nerfstudio-project/nerfstudio/issues/2805 | [] | SalvoPisciotta | 0 |

JoeanAmier/XHS-Downloader | api | 207 | When will passing a Cookie in API mode be available in the Docker image? | When will passing a Cookie in API mode be available in the Docker image? | open | 2024-12-21T13:43:38Z | 2025-01-17T11:51:38Z | https://github.com/JoeanAmier/XHS-Downloader/issues/207 | [] | kiko923 | 3 |

deepinsight/insightface | pytorch | 2,107 | Why did you remove the last ReLU of the ResNet block? | Hi,

In standard ResNet block, there is a relu at the end of the block (https://github.com/open-mmlab/mmclassification/blob/master/mmcls/models/backbones/resnet.py#L131), but you don't have that (https://github.com/deepinsight/insightface/blob/master/recognition/arcface_torch/backbones/iresnet.py#L57). I tried to add relu but got a very bad result on my own dataset.

Any reason you made the change? | closed | 2022-09-16T09:53:00Z | 2022-09-24T01:35:48Z | https://github.com/deepinsight/insightface/issues/2107 | [] | twmht | 0 |

python-gino/gino | asyncio | 710 | How to turn off SQL printing | Setting `echo` does not work. How can I turn it off?

```
db = Gino(
    dsn=db_dsn,
    pool_min_size=config.DB_POOL_MIN_SIZE,
    pool_max_size=config.DB_POOL_MAX_SIZE,
    echo=config.DB_ECHO,
    ssl=config.DB_SSL,
    use_connection_for_request=config.DB_USE_CONNECTION_FOR_REQUEST,
    retry_limit=config.DB_RETRY_LIMIT,
    retry_interval=config.DB_RETRY_INTERVAL,
)
```

| closed | 2020-07-21T13:06:01Z | 2022-11-19T01:10:42Z | https://github.com/python-gino/gino/issues/710 | [

"bug"

] | oleeks | 5 |

Asabeneh/30-Days-Of-Python | pandas | 535 | Close useless issues and merge pull requests. | I was looking through the issues and pull requests tabs and I noticed a lot of proper pull requests that would improve the 30 DoP repo. I would be willing to, but otherwise somebody should clean up those tabs. | open | 2024-06-29T17:44:16Z | 2024-06-29T17:44:16Z | https://github.com/Asabeneh/30-Days-Of-Python/issues/535 | [] | SlothScript | 0 |

Miserlou/Zappa | django | 1,884 | How does Zappa actually work and best practices? | I'm trying to figure out how Zappa actually works and what's going on under-the-hood, along with best practices for deploying into production.

I've been playing around with it, and even though Zappa actually links to "guides", none actually focus on "here are the solid foundations and the `n` best practices for deployment". Instead, they all just kinda have the same 3-4 commands showing how to deploy. No meat, just bones.

As an example, I'm testing a Falcon API with Zappa. So far I've only been able to make it work if Zappa is a requirement for pip. Is that needed? I have some code like this:

```python
import falcon


class TestResource(object):
    def on_get(self, req, resp):
        resp.status = falcon.HTTP_200
        resp.body = "Hello"


app = falcon.API()
test = TestResource()
app.add_route("/hello", test)
```

I've seen example projects, Falcon specific, using custom handlers for WSGI and lambda handling. Again, is this needed? Why? Do I need an empty `__init__.py` in the project source too?
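
For context on the WSGI question: a Lambda adapter of this kind essentially turns the incoming event into a WSGI `environ`, calls the app, and packs the result into a response dict. The sketch below illustrates only that idea, using the standard library. `hello_app`, `invoke`, and the event keys `httpMethod` and `path` are illustrative assumptions, not Zappa's actual handler code.

```python
import io


def hello_app(environ, start_response):
    """A tiny WSGI app standing in for the Falcon app (hypothetical)."""
    body = b"Hello"
    headers = [("Content-Type", "text/plain"), ("Content-Length", str(len(body)))]
    start_response("200 OK", headers)
    return [body]


def invoke(app, event):
    """Call a WSGI app with an environ built from a Lambda-style event dict."""
    # Minimal environ; the event keys used here are assumptions for illustration.
    environ = {
        "REQUEST_METHOD": event.get("httpMethod", "GET"),
        "PATH_INFO": event.get("path", "/"),
        "QUERY_STRING": "",
        "SERVER_NAME": "lambda",
        "SERVER_PORT": "443",
        "SERVER_PROTOCOL": "HTTP/1.1",
        "wsgi.version": (1, 0),
        "wsgi.url_scheme": "https",
        "wsgi.input": io.BytesIO(b""),
        "wsgi.errors": io.StringIO(),
        "wsgi.multithread": False,
        "wsgi.multiprocess": False,
        "wsgi.run_once": False,
    }
    captured = {}

    def start_response(status, headers, exc_info=None):
        # Record what the app reports so it can be returned to the caller.
        captured["status"] = status
        captured["headers"] = headers

    body = b"".join(app(environ, start_response))
    return {
        "statusCode": int(captured["status"].split()[0]),
        "headers": dict(captured["headers"]),
        "body": body.decode("utf-8"),
    }
```

Calling `invoke(hello_app, {"httpMethod": "GET", "path": "/hello"})` returns a dict with the status code, headers, and body. If the adapter already does this translation, a custom handler would presumably only be needed for events that are not HTTP requests.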

The documentation seems to have a lot of concrete examples for specific use case only. But nothing telling me *why*. I also can't be bothered to start digging around in the source code for answers right now. | closed | 2019-06-11T13:46:24Z | 2019-06-17T09:26:35Z | https://github.com/Miserlou/Zappa/issues/1884 | [] | ghost | 0 |

dynaconf/dynaconf | django | 858 | Suggestions on how to `reverse` in my Django settings.yaml | I currently define settings like `LOGIN_URL` as `reverse_lazy("account_login")`. Is there a way to replicate this type of configuration into something I can use in my Dynaconf settings.yaml?

If possible, I'd prefer to keep all settings in my Dynaconf settings.yaml and keep my Django settings.py file as empty as possible. | closed | 2023-02-04T15:34:20Z | 2023-03-30T19:30:37Z | https://github.com/dynaconf/dynaconf/issues/858 | [

"question",

"Docs"

] | wgordon17 | 6 |

junyanz/pytorch-CycleGAN-and-pix2pix | pytorch | 1,357 | Some problems encountered during testing | After I trained the cyclegan model, why did this result appear in the model test? I trained it twice. | closed | 2021-12-27T01:10:45Z | 2021-12-27T12:50:04Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1357 | [] | supersarr | 2 |

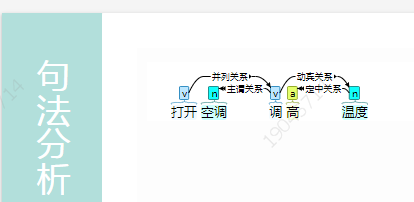

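hankcs/HanLP | nlp | 1,344 | The online demo and the code produce different dependency parsing results. | <!--

The checklist and version number are required; otherwise there will be no reply. If you want a quick reply, please fill in the template carefully. Thank you for your cooperation.

-->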

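## Checklist

Please confirm the following:

* I have carefully read the following documents and did not find an answer:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have searched for my problem with [Google](https://www.google.com/#newwindow=1&q=HanLP) and the [issue search](https://github.com/hankcs/HanLP/issues) and did not find an answer either.

* I understand that the open-source community is a free community brought together by shared interest and bears no responsibility or obligation. I will be polite and thank everyone who helps me.

* [x] I enter an x in these brackets to confirm the items above.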

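## Version

<!-- For a release, give the jar file name without its extension; for the GitHub repository, state whether it is the master or the portable branch -->

The latest version is:

The version I am using is:

<!-- The items above are required; the rest is free-form -->

The problem occurs in both hanlp-1.7.5 and master.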

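## My question

The online demo "http://www.hanlp.com/?sentence=打开空调调高温度"

gives a different result from the source code.

<!-- Describe the problem in detail; the more detail, the more likely it is to be solved -->

I compared the dependency parsing results of the online demo with those of DemoDependencyParser in master and hanlp-1.7.5 for the sentence "打开空调调高温度" ("turn on the air conditioner and raise the temperature"), and the results differ. Is a different model being used?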

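## Reproducing the problem

<!-- What did you do that caused the problem? For example, did you modify the code, the dictionary, or the model? -->

Nothing was modified.

Result from master and the jar package: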

1 打开 打开 v v _ 0 核心关系 _ _

2 空调 空调 n n _ 1 动宾关系 _ _

3 调高 调高 v v _ 4 定中关系 _ _

4 温度 温度 n n _ 1 动宾关系 _ _

Result from the online demo:

| closed | 2019-12-07T07:02:21Z | 2019-12-07T07:26:31Z | https://github.com/hankcs/HanLP/issues/1344 | [

"duplicated"

] | mfxss | 1 |

clovaai/donut | nlp | 173 | OSError: Unable to load vocabulary when using with Windows | I'm trying to train a new language with `train.py`, using a corpus I generated with SynthDoG, by running `python train.py --config config/base.yaml --exp_version "base"` on up-to-date Windows 11 inside a conda virtual environment. Developer Mode is enabled in Windows, I launched Anaconda as admin, and cmd also shows "Administrator:" at the top.

The error is as follows:

```
Traceback (most recent call last):
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\transformers\tokenization_utils_base.py", line 1958, in _from_pretrained
    tokenizer = cls(*init_inputs, **init_kwargs)
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\transformers\models\xlm_roberta\tokenization_xlm_roberta.py", line 168, in __init__
    self.sp_model.Load(str(vocab_file))
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\sentencepiece\__init__.py", line 905, in Load
    return self.LoadFromFile(model_file)
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\sentencepiece\__init__.py", line 310, in LoadFromFile
    return _sentencepiece.SentencePieceProcessor_LoadFromFile(self, arg)
OSError: Not found: "C:\Users\Csanád\.cache\models--naver-clova-ix--donut-base\snapshots\a959cf33c20e09215873e338299c900f57047c61\sentencepiece.bpe.model": No such file or directory Error #2

During handling of the above exception, another exception occurred:

Traceback (most recent call last):
  File "C:\Users\Csanád\Documents\Kontron\donut-master\train.py", line 149, in <module>
    train(config)
  File "C:\Users\Csanád\Documents\Kontron\donut-master\train.py", line 57, in train
    model_module = DonutModelPLModule(config)
  File "C:\Users\Csanád\Documents\Kontron\donut-master\lightning_module.py", line 30, in __init__
    self.model = DonutModel.from_pretrained(
  File "C:\Users\Csanád\Documents\Kontron\donut-master\donut\model.py", line 594, in from_pretrained
    model = super(DonutModel, cls).from_pretrained(pretrained_model_name_or_path, revision="official", *model_args, **kwargs)
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\transformers\modeling_utils.py", line 2498, in from_pretrained
    model = cls(config, *model_args, **model_kwargs)
  File "C:\Users\Csanád\Documents\Kontron\donut-master\donut\model.py", line 390, in __init__
    self.decoder = BARTDecoder(
  File "C:\Users\Csanád\Documents\Kontron\donut-master\donut\model.py", line 159, in __init__
    self.tokenizer = XLMRobertaTokenizer.from_pretrained(
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\transformers\tokenization_utils_base.py", line 1804, in from_pretrained
    return cls._from_pretrained(
  File "C:\ProgramData\Anaconda3\envs\Donut\lib\site-packages\transformers\tokenization_utils_base.py", line 1960, in _from_pretrained
    raise OSError(
OSError: Unable to load vocabulary from file. Please check that the provided vocabulary is accessible and not corrupted.
```

By default the cache path is generated incorrectly, as something like "C:\User\me/.cache\etc.". I changed the cache location manually, but after downloading about a GB of model files the folder still contains only symlink files that are 0 KB. Even when I manually download all the files and add them in, the path isn't recognized. Yet when I copy the apparently erroneous file path from the cmd stack trace into a Python `with open()` call, it opens just fine. I have no idea what's wrong, but I'd really like some help, I'm going crazy. It says the files aren't there, but they are. | open | 2023-03-30T20:37:32Z | 2023-03-30T20:57:07Z | https://github.com/clovaai/donut/issues/173 | [] | csanadpoda | 0 |

biolab/orange3 | scikit-learn | 6,180 | Widget save data shuffle the table columns | Hi

I've just started using Orange and noticed that the "Save Data" widget shuffles the columns when writing the Excel file.

This is how Save Data sees the input table (just the first columns):

This is how it ends up in the file:

<!--

Thanks for taking the time to report a bug!

If you're raising an issue about an add-on (i.e., installed via Options > Add-ons), raise an issue in the relevant add-on's issue tracker instead. See: https://github.com/biolab?q=orange3

To fix the bug, we need to be able to reproduce it. Please answer the following questions to the best of your ability.

-->

**What's wrong?**

<!-- Be specific, clear, and concise. Include screenshots if relevant. -->

<!-- If you're getting an error message, copy it, and enclose it with three backticks (```). -->

**How can we reproduce the problem?**

<!-- Upload a zip with the .ows file and data. -->

<!-- Describe the steps (open this widget, click there, then add this...) -->

**What's your environment?**

<!-- To find your Orange version, see "Help → About → Version" or `Orange.version.full_version` in code -->

- Operating system:

- Orange version:

- How you installed Orange:

| closed | 2022-10-21T13:12:10Z | 2023-01-20T10:07:02Z | https://github.com/biolab/orange3/issues/6180 | [

"wish",

"meal"

] | EGalloni | 3 |

MycroftAI/mycroft-core | nlp | 3,145 | Unsupported locale setting with debug | **Describe the bug**

On my Raspberry Pi 2B, when I run `./start-mycroft.sh debug`, I get the following error:

`pi@raspi2b:~/mycroft-core $ ./start-mycroft.sh debug

Already up to date.

Starting all mycroft-core services

Initializing...

Starting background service bus

CAUTION: The Mycroft bus is an open websocket with no built-in security

measures. You are responsible for protecting the local port

8181 with a firewall as appropriate.

Starting background service skills

Starting background service audio

Starting background service voice

Starting background service enclosure

Starting cli

Traceback (most recent call last):

File "/usr/lib/python3.7/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/usr/lib/python3.7/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/home/pi/mycroft-core/mycroft/client/text/__main__.py", line 21, in <module>

from .text_client import (

File "/home/pi/mycroft-core/mycroft/client/text/text_client.py", line 40, in <module>

locale.setlocale(locale.LC_ALL, "") # Set LC_ALL to user default

File "/usr/lib/python3.7/locale.py", line 604, in setlocale

return _setlocale(category, locale)

locale.Error: unsupported locale setting`
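
The failing call in the traceback is `locale.setlocale(locale.LC_ALL, "")`, which raises `locale.Error` when the environment names a locale that is not available on the system. A minimal sketch of a defensive variant follows, assuming that falling back to the "C" locale is acceptable. `set_default_locale` is a hypothetical helper for illustration and is not part of mycroft-core.

```python
import locale


def set_default_locale(fallback="C"):
    """Try the user's default locale; use `fallback` if it is unsupported."""
    try:
        # "" asks for the locale named by the environment (LANG, LC_* variables).
        return locale.setlocale(locale.LC_ALL, "")
    except locale.Error:
        # The requested locale is not installed, so use a known-good one instead.
        return locale.setlocale(locale.LC_ALL, fallback)
```

The function returns the name of the locale that was actually set, which may help when checking whether the fallback was taken.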