repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

psf/black | python | 4,495 | string-processing f-string debug expressions quotes changed when using conversion | **Describe the bug**

When using unstable, f-string quotes get incorrectly changed if the expression contains a conversion. This changes program behavior. As an example, `"" f'{""=!r}'` currently formats to `f"{''=!r}"`.

```pycon
>>> print("" f'{""=!r}')
""=''
>>> print(f"{''=!r}")
''=''
```

**To Reproduce**

Format this code

```py
"" f'{""=!r}'
```

[Playground link](https://black.vercel.app/?version=stable&state=_Td6WFoAAATm1rRGAgAhARYAAAB0L-Wj4ACXAFtdAD2IimZxl1N_Wk7-Dfg-d5s6AnMmL7B_WZZRlN_6aBCr8bFyFffmq3_Lm33Nvx2BWLIsbCFGc_IwSfk4cIkXOuIJOKWEI_BYc9LTyr-qC_3OnwK3-YSdkaLJQAAAALNG27MBBhCJAAF3mAEAAAAQ4riMscRn-wIAAAAABFla)

**Expected behavior**

The program behavior should be unchanged.

**Additional context**

I found this while investigating how to fix #4493/#4494. The issue comes in two parts:

- String concatenation allows f-string quotes to be changed.
  - This is the same issue that leads to both #4493 and #4494.
  - Both still have the same issue even if the first string isn't an f-string.
- The regex used to detect quotes in debug f-string expressions is flawed.
  - Here's the current regex: `.*[\'\"].*(?<![!:=])={1}(?!=)(?![^\s:])`
  - While looking at the [f-string lexical analysis section](https://docs.python.org/3/reference/lexical_analysis.html#f-strings), I noticed it is missing logic for the conversions (`!s`, `!r`, `!a`).

The second part is fairly easy to fix: the regex just needs to be updated.
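The conversion gap can be checked directly with Python's `re` module. This is a minimal sketch that assumes the pattern is applied with `re.search` to the text of a single replacement field (for example `""=!r`); exactly how Black applies it is not shown in this issue. `PROPOSED_PATTERN` is a hypothetical fix that only adds `!` to the characters allowed right after `=`; it is not Black's actual patch.

```python
import re

# Pattern quoted in this issue for detecting quotes in debug f-string expressions.
CURRENT_PATTERN = r".*[\'\"].*(?<![!:=])={1}(?!=)(?![^\s:])"
# Hypothetical fix: also accept a conversion ("=!r", "=!s", "=!a") right after the "=".
PROPOSED_PATTERN = r".*[\'\"].*(?<![!:=])={1}(?!=)(?![^\s:!])"


def is_debug_expr_with_quotes(fexpr: str, pattern: str) -> bool:
    """Return True if `pattern` flags `fexpr` as a debug expression containing quotes."""
    return re.search(pattern, fexpr) is not None


# The debug text keeps the original quotes, so changing them changes the output
# (the same behavior as the pycon example in this report, written as assertions).
assert "" f'{""=!r}' == "\"\"=''"
assert f"{''=!r}" == "''=''"

print(is_debug_expr_with_quotes('""=', CURRENT_PATTERN))        # True
print(is_debug_expr_with_quotes('""=!r', CURRENT_PATTERN))      # False: the conversion is missed
print(is_debug_expr_with_quotes('""=!r', PROPOSED_PATTERN))     # True
print(is_debug_expr_with_quotes('"a" != b', PROPOSED_PATTERN))  # False: "!=" is not a debug "="
```

The lookbehind `(?<![!:=])` still rejects comparison operators, so widening only the trailing lookahead keeps `!=` and `==` from being treated as debug specifiers.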

The first part is what's giving me trouble. Based on the fact that [all the f-string formatting code is commented out](https://github.com/psf/black/blob/f54f34799b52dbe48c5f9d5f31ad0f9bf82d0fa5/src/black/linegen.py#L524-L555), I assume there has been some sort of mismatch in intent, since part of that is `normalize_fstring_quotes`. To me, this looks like a source of code duplication, since `normalize_fstring_quotes` will need to handle all these same edge cases. That leads to the easiest way to solve this, which is to disallow merges that change an f-string's quote. As for anything past that, I'm not sure what the best way is to prevent the code in merging and normalizing from desyncing.

| open | 2024-10-22T20:20:02Z | 2024-10-25T20:24:37Z | https://github.com/psf/black/issues/4495 | [

"T: bug",

"F: strings"

] | MeGaGiGaGon | 2 |

developmentseed/lonboard | data-visualization | 352 | Feature request: export to Protomaps? | Lonboard is amazing for exploratory data analysis - thank you!

However, I'm not sure how best to publish the results of an analysis.

Would it be feasible to export a lonboard map to the protomaps pmtiles format? (E.g. for embedding interactively on a static website) | closed | 2024-02-09T13:56:24Z | 2024-02-29T20:30:26Z | https://github.com/developmentseed/lonboard/issues/352 | [] | jaanli | 3 |

tfranzel/drf-spectacular | rest-api | 1,006 | Auto schema generation doesn't support pydantic schema | **Describe the bug**

I currently use `SQLModel` and `Pydantic` to query my database and serialize the results to JSON. Pydantic provides a handy `schema` feature on every `BaseModel` that generates either a JSON or a dict schema.

When I use a single model with no nested fields and pass the model's dict schema to the `@extend_schema` decorator as one of the response params, e.g. `responses={200: my_model.schema()}`, this works fine. However, if a model has another model as one of its fields (see [here](https://docs.pydantic.dev/1.10/usage/schema/#schema-customization) for an example), the schema generated from it isn't interpreted correctly by `drf-spectacular`.

Specifically, the problem seems to be that `Pydantic` generates the documentation for nested models inside a `definitions` key. I'm wondering if support could be added to `drf-spectacular` to move anything declared under the `definitions` keyword to the `components/schemas` section of the API docs. `Pydantic` also supports customizing the `$ref` location/prefix for referenced models, so the references themselves wouldn't be an issue.
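A minimal sketch of the requested transformation, independent of drf-spectacular: it pops the `definitions` key from a Pydantic v1 `.schema()` dict and rewrites `#/definitions/...` references to `#/components/schemas/...`. The helper names are hypothetical, and how the resulting component schemas would be registered with drf-spectacular is not shown; Pydantic's own `ref_template` argument to `.schema()` may make the reference rewrite unnecessary.

```python
from typing import Any, Dict, Tuple

PYDANTIC_PREFIX = "#/definitions/"
OPENAPI_PREFIX = "#/components/schemas/"


def _rewrite_refs(node: Any) -> Any:
    """Recursively point every Pydantic-style `$ref` at the OpenAPI components section."""
    if isinstance(node, dict):
        out = {}
        for key, value in node.items():
            if key == "$ref" and isinstance(value, str) and value.startswith(PYDANTIC_PREFIX):
                out[key] = OPENAPI_PREFIX + value[len(PYDANTIC_PREFIX):]
            else:
                out[key] = _rewrite_refs(value)
        return out
    if isinstance(node, list):
        return [_rewrite_refs(item) for item in node]
    return node


def split_pydantic_schema(schema: Dict[str, Any]) -> Tuple[Dict[str, Any], Dict[str, Any]]:
    """Return (top-level schema, component schemas) without mutating the input."""
    top = dict(schema)  # shallow copy so pop() does not touch the caller's dict
    definitions = top.pop("definitions", {})
    components = {name: _rewrite_refs(body) for name, body in definitions.items()}
    return _rewrite_refs(top), components


# Shape of a nested-model schema as produced by Pydantic v1 (hand-written here).
outer_schema = {
    "title": "Outer",
    "type": "object",
    "properties": {"inner": {"$ref": "#/definitions/Inner"}},
    "definitions": {
        "Inner": {"title": "Inner", "type": "object", "properties": {"x": {"type": "integer"}}},
    },
}
response_schema, component_schemas = split_pydantic_schema(outer_schema)
```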

| closed | 2023-06-18T12:28:07Z | 2023-07-17T11:27:52Z | https://github.com/tfranzel/drf-spectacular/issues/1006 | [] | sydney-runkle | 29 |

autogluon/autogluon | data-science | 4,427 | Fix Colab and Kaggle Import on Source Install | (Help Wanted) If anyone knows how to fix the issue below so that we no longer need to restart the runtime after a source install, please let us know.

Currently on Kaggle and Colab, the following fails:

```
!git clone https://github.com/autogluon/autogluon
!cd autogluon && pip install -e common/
from autogluon.common import FeatureMetadata
```

Exception:

```
ImportError: cannot import name 'FeatureMetadata' from 'autogluon.common' (unknown location)
```

```
import sys
print(sys.path)
# --> autogluon.common is missing in sys.path
```

On Kaggle, this works:

```
!git clone https://github.com/autogluon/autogluon
!cd autogluon && pip install -e common/
# Run -> Restart & Clear Cell Outputs -> Continue to import statement
from autogluon.common import FeatureMetadata
```

On Colab, this works:

```
!git clone https://github.com/autogluon/autogluon
!cd autogluon && pip install -e common/
# Runtime -> Restart session -> Continue to import statement
from autogluon.common import FeatureMetadata
```

Note: You can replace `pip install -e common/` with `./full_install.sh`, which produces the same end result.

### Potential Solutions

#### Remove `-e` (editable install flag)

The following works:

```
!git clone https://github.com/autogluon/autogluon
!cd autogluon && pip install common/
from autogluon.common import FeatureMetadata
```

This removes the `-e` (editable install) flag from the `common` install. I'm unsure what drawbacks this has.
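One possible explanation, which is an assumption not confirmed in this issue: with recent setuptools, `pip install -e` registers the package through a `.pth` file in site-packages, and `.pth` files are normally processed only when the interpreter starts. If that is the cause, re-processing them in the running kernel might avoid the restart. A minimal sketch using only the standard `site` and `importlib` modules, untested on Colab or Kaggle; `reprocess_site_packages` is a hypothetical helper, and re-running `.pth` files can have side effects.

```python
import importlib
import site
from typing import List


def reprocess_site_packages() -> List[str]:
    """Re-run the .pth hooks in this interpreter's site-packages directories.

    Assumption: the editable install was registered through a .pth file,
    which Python normally processes only once, at interpreter startup.
    """
    processed = []
    for sitedir in site.getsitepackages():
        # addsitedir() appends the directory to sys.path if it is missing and
        # then processes every .pth file in it (executing their "import ..." lines).
        site.addsitedir(sitedir)
        processed.append(sitedir)
    # Let the import system notice files and finders created after startup.
    importlib.invalidate_caches()
    return processed


# Usage in a notebook cell right after the editable install:
# reprocess_site_packages()
# from autogluon.common import FeatureMetadata
# If an import of autogluon already failed in this session, the cached
# "autogluon" entries in sys.modules may also need to be removed first.
```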

### Related Links

- https://stackoverflow.com/questions/57838013/modulenotfounderror-after-successful-pip-install-in-google-colaboratory | open | 2024-08-24T04:21:00Z | 2025-03-07T20:36:25Z | https://github.com/autogluon/autogluon/issues/4427 | [

"bug",

"help wanted",

"env: kaggle",

"install",

"env: colab",

"priority: 0"

] | Innixma | 1 |

polakowo/vectorbt | data-visualization | 683 | Exits do not trigger consistently when using limit orders with from_signals | To replicate, let's say I have a 5-minute DataFrame for AAPL whose index includes the ETH session (04:00 to 19:55 US/Eastern), and a column 'vwap' holding the anchored VWAP, which is `np.nan` outside the RTH session.

Generate an entry at 9:30, and an exit at 15:30. Without limit orders, this works fine:

```python
pf = vbt.Portfolio.from_signals(
    df['close'],
    entries = df.index.time == dt.time(9,30),
    exits = df.index.time >= dt.time(15,30),
    freq='5min'
)
pf.trades.plot()
```

You can see that on each day, each entry is paired with a 15:30 exit.

With a limit order, exits are inconsistently enforced:

```python
pf = vbt.Portfolio.from_signals(
    df['close'],
    entries = df.index.time == dt.time(9,30),
    exits = df.index.time >= dt.time(15,30),
    price=df['vwap'],
    order_type="limit",
    freq='5min'
)
pf.trades.plot()
```

The 1st and 5th entries exited on the first bar of the next day. The last entry exited at 9:30 on the next day. Some days don't have entries since VWAP isn't hit, which is correct.

I thought the issue might be related to open limit orders, so I tried different combinations of `limit_tif`, `limit_expiry`, and `time_delta_format` to make the orders expire as soon as possible, but none of them worked.

For reference, my VWAP column looks like this for a single day:
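The report does not show how `vwap` is computed. A minimal pandas sketch of one common construction of a session-anchored VWAP that is NaN outside RTH; it assumes `high`, `low`, `close`, and `volume` columns, a 09:30 to 16:00 session in exchange-local time, and the typical-price definition, none of which are confirmed by the reporter. `anchored_vwap` is a hypothetical helper, not a vectorbt function.

```python
import datetime as dt

import pandas as pd


def anchored_vwap(
    bars: pd.DataFrame,
    session_start: dt.time = dt.time(9, 30),
    session_end: dt.time = dt.time(16, 0),
) -> pd.Series:
    """Session-anchored VWAP per calendar day; NaN outside [session_start, session_end).

    Assumes a DatetimeIndex in exchange-local time and 'high', 'low', 'close',
    'volume' columns; the VWAP uses the typical price (high + low + close) / 3.
    """
    times = bars.index.time
    in_session = (times >= session_start) & (times < session_end)
    typical_price = (bars["high"] + bars["low"] + bars["close"]) / 3
    # Zero out pre/post-market bars so they do not contribute to the running sums.
    price_volume = (typical_price * bars["volume"]).where(in_session, 0.0)
    volume = bars["volume"].where(in_session, 0.0)
    day = bars.index.normalize()  # restart the cumulative sums every day
    vwap = price_volume.groupby(day).cumsum() / volume.groupby(day).cumsum()
    return vwap.where(in_session)  # NaN outside the regular session
```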

| closed | 2024-01-17T22:01:55Z | 2024-01-18T14:13:33Z | https://github.com/polakowo/vectorbt/issues/683 | [] | rxhh | 2 |

browser-use/browser-use | python | 832 | buildDomTree.js may not use getEventListeners | ### Bug Description

I've found elements like this, `<div data-v-555aab2b="">I Accept</div>`, that lack obvious interactive features but do have click listeners. When I try to get the listeners through the Chrome DevTools API from JavaScript, it returns an empty value.

This should be a fairly common situation. The listeners can be retrieved in the DevTools console (F12), but `getEventListeners` is not available inside Playwright's `page.evaluate`. This underlying issue could be resolved through the CDP (Chrome DevTools Protocol) or other means.

```javascript
// Helper function to safely get event listeners
function getEventListeners(el) {
  try {
    // Try to get listeners using Chrome DevTools API
    return window.getEventListeners?.(el) || {};
  } catch (e) {
    // ... (rest of the function not included in this report)
  }
}
```

<img width="544" alt="Image" src="https://github.com/user-attachments/assets/1e341f9a-305d-40a5-9d47-ba86e5542c93" />
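The CDP route mentioned above can be sketched with Playwright's `BrowserContext.new_cdp_session()` and the CDP methods `Runtime.evaluate` and `DOMDebugger.getEventListeners`. A minimal sketch, assuming the Playwright sync API and a Chromium browser (CDP sessions are Chromium-only); `get_event_listener_types` is a hypothetical helper, not part of browser-use, and wiring it into `buildDomTree.js` is not shown.

```python
import json
from typing import Any, List


def get_event_listener_types(page: Any, selector: str) -> List[str]:
    """Return the event types registered directly on the first element matching `selector`.

    `page` is assumed to be a Playwright sync-API Page running in Chromium.
    """
    cdp = page.context.new_cdp_session(page)
    try:
        # Evaluate in the page to obtain a remote object handle for the element.
        result = cdp.send(
            "Runtime.evaluate",
            {"expression": f"document.querySelector({json.dumps(selector)})"},
        )
        object_id = result["result"].get("objectId")
        if object_id is None:  # querySelector returned null
            return []
        listeners = cdp.send("DOMDebugger.getEventListeners", {"objectId": object_id})
        return [listener["type"] for listener in listeners["listeners"]]
    finally:
        cdp.detach()


# Usage (requires `pip install playwright` and `playwright install chromium`):
# from playwright.sync_api import sync_playwright
# with sync_playwright() as p:
#     browser = p.chromium.launch()
#     page = browser.new_page()
#     page.goto("file:///test.html")
#     print(get_event_listener_types(page, "div[data-v-555aab2b]"))
#     browser.close()
```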

### Reproduction Steps

Use the following HTML to test the function:

```html
<!DOCTYPE html>
<html>
<head>
  <title>Clickable Div Example</title>
  <style>
    div[data-v-555aab2b] {
      border: 1px solid #ccc;
      padding: 10px;
      cursor: pointer;
    }
  </style>
</head>
<body>
  <div data-v-555aab2b="">I Accept</div>
  <script>
    const divElement = document.querySelector('div[data-v-555aab2b]');
    divElement.addEventListener('click', () => {
      console.log('Div with data-v-555aab2b clicked!');
      alert('Div with data-v-555aab2b clicked!');
    });
  </script>
</body>
</html>
```

### Code Sample

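```python
import asyncio

from browser_use import Agent

url = "file:///test.html"
initial_actions = [
    {'go_to_url': {'url': url}},
]
agent = Agent(
    task="test",
    llm=llm,  # the Gemini 1.5 Pro chat model used in this report; its setup is not shown
    initial_actions=initial_actions,
)
# Agent.run() is a coroutine, so it has to be driven by an event loop
asyncio.run(agent.run())
```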

### Version

pip 0.1.37

### LLM Model

Gemini 1.5 Pro

### Operating System

macOS 13

### Relevant Log Output

```shell

``` | closed | 2025-02-23T10:42:48Z | 2025-03-11T02:22:17Z | https://github.com/browser-use/browser-use/issues/832 | [

"bug"

] | awes61 | 3 |

FujiwaraChoki/MoneyPrinterV2 | automation | 15 | Error when running on Mac |

Hi. I get an error when running on Mac.

What should I do? | closed | 2024-02-19T05:28:59Z | 2024-12-31T08:26:23Z | https://github.com/FujiwaraChoki/MoneyPrinterV2/issues/15 | [] | s4-hub | 4 |

biolab/orange3 | scikit-learn | 6,129 | Orange installed from conda/pip does not have an icon (on Mac) | ### Discussed in https://github.com/biolab/orange3/discussions/6122

<div type='discussions-op-text'>

<sup>Originally posted by **DylanZDD** September 4, 2022</sup>

<img width="144" alt="Screen Shot 2022-09-04 at 12 26 04" src="https://user-images.githubusercontent.com/44270787/188297386-c463907c-9e7f-45ea-b46f-b0ad9b6f8f23.png">

<img width="1431" alt="Screen Shot 2022-09-04 at 12 26 13" src="https://user-images.githubusercontent.com/44270787/188297398-7584db2e-be45-4b6b-839a-f20de5185e50.png">

</div>

A quick search led me here:

https://stackoverflow.com/questions/33134594/set-tkinter-python-application-icon-in-mac-os-x

I think other platforms have similar problems. | closed | 2022-09-05T11:49:28Z | 2023-01-20T08:39:42Z | https://github.com/biolab/orange3/issues/6129 | [

"bug",

"snack"

] | markotoplak | 0 |

collerek/ormar | fastapi | 1,031 | How to query a string in all table columns? | ### Discussed in https://github.com/collerek/ormar/discussions/911

<div type='discussions-op-text'>

<sup>Originally posted by **lucashahnndev** October 29, 2022</sup>

I apologize if anything is unclear; I'm Brazilian and I'm using a translator.

I searched the documentation and didn't find anything similar (or at least I didn't understand it). I would like to run a query without knowing which column to search, i.e. search across all columns of the table.</div> | open | 2023-03-15T20:15:17Z | 2023-03-15T20:15:17Z | https://github.com/collerek/ormar/issues/1031 | [] | lucashahnndev | 0 |

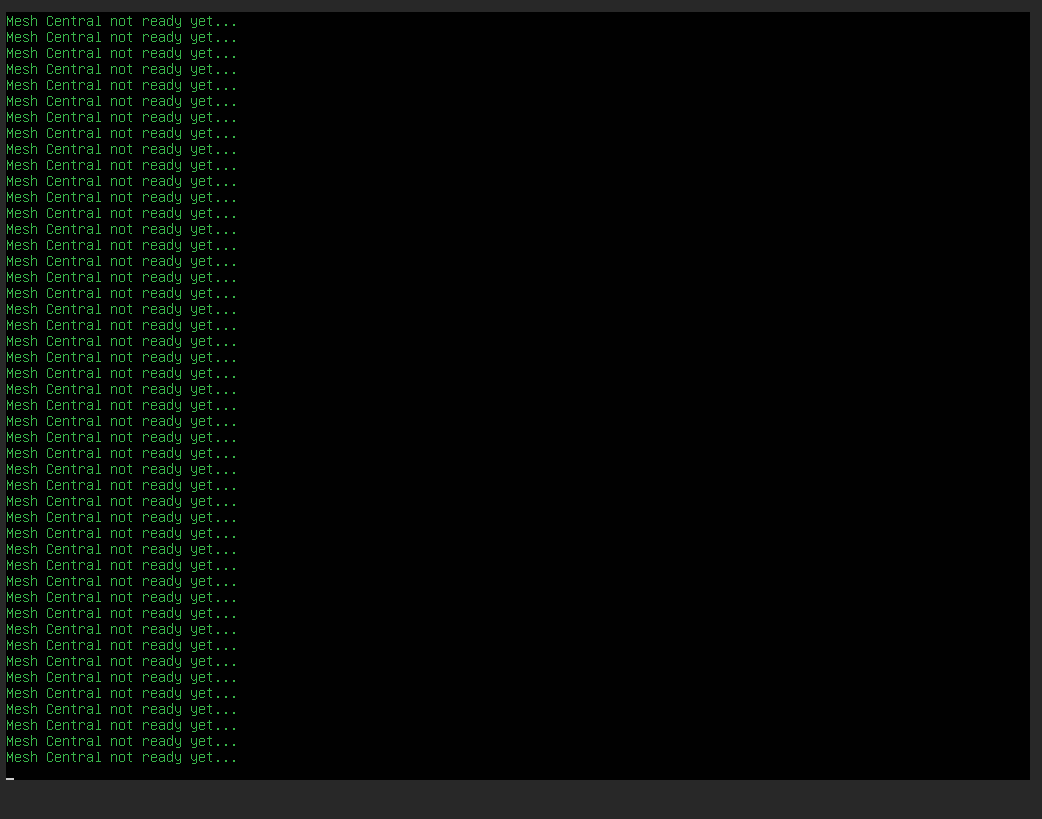

amidaware/tacticalrmm | django | 1,182 | Stuck at Mesh Central not ready yet | **Server Info (please complete the following information):**

- OS: Ubuntu 20.04

- Browser: Chrome

- RMM Version (as shown in top left of web UI): Fresh install (Latest Version)

**Installation Method:**

- Standard

**Describe the bug**

A fresh install is stuck at "Mesh Central not ready yet".

**Screenshots**

**Additional context**

I searched, and in most cases people said it was caused by insufficient RAM. My server has 16 GB of RAM and 500 GB of storage.

Any idea what I could try?

| closed | 2022-06-22T17:39:22Z | 2022-06-22T18:17:38Z | https://github.com/amidaware/tacticalrmm/issues/1182 | [] | Aghiad90 | 3 |

pennersr/django-allauth | django | 4,068 | WebAuthn Login POST API - Handling X-Session-Token for Initial Login Request (App Usage) | I'm encountering an issue with the WebAuthn login process using the app usage of the API in Django-Allauth. According to the documentation, the X-Session-Token header is required when using the app client for API calls.

While I can successfully add and delete WebAuthn tokens for a user account, I'm running into a problem when trying to log in with a passkey. Specifically, the POST request to the WebAuthn login endpoint returns a 400 error with the message `Incorrect code.`

Error Details:

```json
{
  "url": "/api/_allauth/app/v1/auth/webauthn/login",
  "statusCode": 400,
  "statusMessage": "Bad Request",
  "data": {
    "status": 400,
    "errors": [
      {
        "message": "Incorrect code.",
        "code": "incorrect_code",
        "param": "credential"
      }
    ]
  }
}
```

Debugging Information

After extensive debugging, I've determined that the issue likely relates to the X-Session-Token header. Here's what I observed:

1. Login with password flow:

- Logging in with a password and being redirected to /authenticate/webauthn allows successful login using the WebAuthn passkey.

- In this scenario, I receive a 401 response from the login attempt, which includes a session_token in the error response. I then set this session_token and proceed to log in successfully.

2. Direct WebAuthn login flow:

- When attempting to log in directly using WebAuthn (without a password first), the login GET method does not return a session_token, and hence I cannot include the X-Session-Token in the initial POST request.

- This is the GET response from `webauthn/login`

```
{
  status: 200,
  data: {
    request_options: {
      publicKey: {
        challenge: "QTc7Vf1NF2iI-LlK7A1ZlaIH4GwdcjPVvgrVxV3gbtY",
        rpId: "webside.gr",
        allowCredentials: [],
        userVerification: "preferred"
      }
    }
  }
}
```

3. Workaround:

- By logging in using a password first, then proceeding with WebAuthn without refreshing the browser (SPA), I can successfully log in using the passkey.

Main Question

How should the X-Session-Token be handled in the initial WebAuthn login API call? Since this is the first API call I make, there's no session token available to include in the request headers. Is there a specific flow or additional API call I should make to obtain this token, or is this a potential bug in the library?

Code Example

Here is the relevant code snippet:

```ts
async function onSubmit() {
  try {
    loading.value = true
    const optResp = await getWebAuthnRequestOptionsForLogin()
    const jsonOptions = optResp?.data.request_options
    if (!jsonOptions) {
      throw new Error('No creation options')
    }
    const options = parseRequestOptionsFromJSON(jsonOptions)
    const credential = await get(options)
    session.value = await loginUsingWebAuthn({
      credential,
    })
    await performPostLoginActions()
  }
  catch (error) {
    console.error('=== Error ===', error)
    toast.add({
      title: t('common.error.default'),
      color: 'red',
    })
  }
  finally {
    await finalizeLogin()
  }
}
``` | closed | 2024-08-23T15:31:03Z | 2024-08-23T22:48:17Z | https://github.com/pennersr/django-allauth/issues/4068 | [

"Unconfirmed"

] | vasilistotskas | 3 |

jmcnamara/XlsxWriter | pandas | 266 | Issues hyperlinking | Hello,

I am using XlsxWriter to export some information to an XLSX file.

I am using the latest version of XlsxWriter and Python 2.7.6.

The issue occurs when I write the following text into a cell of the Excel file:

_http://www.test.com/…/test-my-stuff-well…/ blablabla blablabla_

As you can see, it contains a URL followed by text. When XlsxWriter tries to turn the URL into a hyperlink, an error occurs that either makes the file creation fail or forces Excel to repair the file when opening it.

My solution was to disable hyperlinking using:

`workbook = xlsxwriter.Workbook(output, {'strings_to_urls': False})`

Is there a way to handle this situation without disabling the hyperlinking?
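One option that keeps `strings_to_urls` enabled is to write cells like this one with `worksheet.write_string()`, which stores the value as a plain string without URL detection, while `worksheet.write()` continues to auto-link genuine URLs elsewhere. A minimal sketch; the cell text is a made-up stand-in for the partly elided string above, and deciding which cells to route through `write_string()` is left to the caller.

```python
import io

import xlsxwriter

buffer = io.BytesIO()
# strings_to_urls is left at its default (True), so write() still links real URLs.
workbook = xlsxwriter.Workbook(buffer, {"in_memory": True})
worksheet = workbook.add_worksheet()

# Hypothetical cell text: a URL followed by free text, similar to the one in this report.
mixed_text = "http://www.example.com/some/path/ blablabla blablabla"

worksheet.write(0, 0, "http://www.example.com/")  # plain URL: written as a hyperlink
worksheet.write_string(1, 0, mixed_text)          # forced to a plain string, no URL detection

workbook.close()
```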

| closed | 2015-06-12T15:45:13Z | 2015-06-15T14:17:49Z | https://github.com/jmcnamara/XlsxWriter/issues/266 | [

"question"

] | clopezcapo | 7 |

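microsoft/JARVIS | deep-learning | 215 | How do I obtain p0_models.jsonl, the metadata file for Hugging Face LLMs in the data directory? | How do I obtain p0_models.jsonl, the metadata file for the Hugging Face models in the data directory? Many more models have been added to Hugging Face, but this file, after filtering, contains only 673. Could you explain how to export the latest version of this data? | open | 2023-06-27T08:23:01Z | 2023-06-27T08:23:01Z | https://github.com/microsoft/JARVIS/issues/215 | [] | elven2016 | 0 |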

mirumee/ariadne | api | 294 | Error importing module (circular import) when the local script is called graphql.py | **Description**: When importing the ariadne module (either as `import ariadne` or as `from ariadne import ...`) while your own module file is named `graphql.py`, the following error occurs:

```

Exception has occurred: ImportError

cannot import name 'gql' from partially initialized module 'ariadne' (most likely due to a circular import) (/usr/local/lib/python3.8/site-packages/ariadne/__init__.py)

File "/workspaces/project/graphql.py", line 1, in <module>

from ariadne import gql

File "/workspaces/project/graphql.py", line 1, in <module>

from ariadne import gql

```

**Workaround**: Renaming your file from `graphql.py` to anything else resolves this error. | closed | 2020-01-15T15:39:08Z | 2020-01-15T15:58:10Z | https://github.com/mirumee/ariadne/issues/294 | [] | v1b1 | 3 |

vaexio/vaex | data-science | 1,275 | [QUESTION] How to handle dates in filters or how to use boolean arrays in select? | **How to handle dates in filters or how to use boolean arrays in select?**

I'd like to filter by a date column, given either a date range or a boolean array. With pandas I can do either, but with vaex I get back either a `NoneType` (with boolean arrays) or an error message when I directly compare vaex's date column with a date.

For me, being able to just pass in a boolean array would be more useful, but I'm also wondering whether I can compare dates directly in vaex.

**Example**

Given a Dataframe

```python
import numpy as np
import pandas as pd

np.random.seed(0)
arrays = np.concatenate(np.array([range(10), range(10)]))
df = pd.DataFrame(
    pd.date_range("20200101", freq="D", periods=arrays.shape[0]),
    index=arrays,
    columns=["date"],
)
df.index.names = ["id"]
df.head(3)
```

returns

```
         date
id
1  2020-01-01
2  2020-01-02
3  2020-01-03
```

I try to select a date range from this data. Using pandas, I can do:

```python
pdsel = df.date < pd.Timestamp("20200103")
df.loc[pdsel]
```

returns

```
         date
id
1  2020-01-01
2  2020-01-02
```

Now moving on to vaex:

```python
import vaex

vaex_df = vaex.from_pandas(df, copy_index=True)
```

First, just using the boolean output from `pdsel` returns `None`:

```

type(vaex_df[pdsel])

```

returns

```

NoneType

```

And finally, using vaex itself results in a lengthy error message:

```

vaex_df[vaex_df.date < pd.Timestamp("20200103")]

```

returns (just a tiny bit of the whole error message)

```

[...]

File "<string>", line 1

(date < 2020-01-03 00:00:00)

^

SyntaxError: leading zeros in decimal integer literals are not permitted; use an 0o prefix for octal integers

File "<string>", line 1

(date < 2020-01-03 00:00:00)

^

SyntaxError: leading zeros in decimal integer literals are not permitted; use an 0o prefix for octal integers

[...]

``` | closed | 2021-03-20T22:52:24Z | 2021-03-21T14:18:23Z | https://github.com/vaexio/vaex/issues/1275 | [] | Eisbrenner | 4 |

zama-ai/concrete-ml | scikit-learn | 875 | Significant Accuracy Decrease After FHE Execution | ## Summary


## Description

We've observed significant accuracy discrepancies when running our model with different FHE settings. The original PyTorch model achieves 63% accuracy. With FHE disabled, the accuracy drops to 50%, and with FHE execution enabled, it decreases further to 32%. The compilation uses a dummy input of shape (1, 100) with random values (`numpy.random.randn(1, 100).astype(numpy.float32)`). Since the accuracy with FHE disabled matches the quantized model's accuracy, the loss from 63% to 50% is likely due to quantization. However, the substantial drop to 32% when enabling FHE execution indicates a potential issue with the FHE implementation or configuration that requires further investigation.

- versions affected: concrete-ml 1.6.1

- python version: 3.10


Step by step procedure someone should follow to trigger the bug:

<details><summary>minimal POC to trigger the bug</summary>

<p>

```python
print("Minimal POC to reproduce the bug")
```

The screenshots above show my compilation process and the process of running the compiled model on the encrypted data.

I suspect the issue is related to FHE execution.

I wrote this file based on this open-source example: https://github.com/zama-ai/concrete-ml/blob/main/docs/advanced_examples/ClientServer.ipynb

</p>

</details>

| open | 2024-09-19T12:50:45Z | 2025-03-04T15:02:35Z | https://github.com/zama-ai/concrete-ml/issues/875 | [

"bug"

] | Sarahfbb | 7 |

pywinauto/pywinauto | automation | 1,201 | How to use Pre-supplied Tests? | I tried the tests like this:

```python
from pywinauto.tests import missalignment, truncation

truncation.TruncationTest(app.windows(visible_only=True))
missalignment.MissalignmentTest(app.windows(visible_only=True))
```

But they don't seem to work as expected: I do have some truncated controls, but nothing is reported.

I also tried your `examples/notepad_fast.py` (where I found the code for these tests):

`bugs = app.PageSetupDlg.run_tests('Truncation')`

It doesn't seem to run as expected either: the code under the `if win.ref:` condition never runs.

And what is **win.ref** used for? | open | 2022-04-06T07:37:11Z | 2022-05-12T14:04:49Z | https://github.com/pywinauto/pywinauto/issues/1201 | [

"question"

] | saimadao | 2 |

mlfoundations/open_clip | computer-vision | 171 | Fp16 or Fp32 in the inference phase? | Hello~

Thanks for the great work!

I'd like to know the impact of using fp16 instead of fp32 at inference time (e.g., for ViT-H-14). I noticed that all the models in OpenAI's CLIP are converted to fp16 automatically. Does it matter here?

Thank you~

Zhiliang. | closed | 2022-09-21T17:42:04Z | 2022-09-21T18:23:56Z | https://github.com/mlfoundations/open_clip/issues/171 | [] | pengzhiliang | 2 |

microsoft/JARVIS | pytorch | 72 | I seem to have deployed the server successfully, but the web API returns a 404 error | Dear Jarvis Team,

I'm new to posting issues on GitHub, so please excuse any unclear descriptions.

I followed the server guidance as follows:

```bash
# setup env
cd server
conda create -n jarvis python=3.8
conda activate jarvis
conda install pytorch torchvision torchaudio pytorch-cuda=11.7 -c pytorch -c nvidia
pip install -r requirements.txt

# download models
cd models
sh download.sh # required when `inference_mode` is `local` or `hybrid`

# run server
cd ..
python models_server.py --config config.yaml # required when `inference_mode` is `local` or `hybrid`
python awesome_chat.py --config config.yaml --mode server # for text-davinci-003
```

I believe I downloaded the models successfully, and then I ran `python models_server.py --config config.yaml`.

I got the following output:

I changed the config.yaml file as follows (mainly from localhost to 0.0.0.0, because I run it on a server machine):

But when I try to test the model according to the guidance, I get a 404 error. The guidance shows that I can send a request to the port to "communicate" with the model deployed on the server, as follows:

```
# request
curl --location 'http://localhost:8004/tasks' \
--header 'Content-Type: application/json' \
--data '{
    "messages": [
        {
            "role": "user",
            "content": "based on pose of /examples/d.jpg and content of /examples/e.jpg, please show me a new image"
        }
    ]
}'

# response
[{"args":{"image":"/examples/d.jpg"},"dep":[-1],"id":0,"task":"openpose-control"},{"args":{"image":"/examples/e.jpg"},"dep":[-1],"id":1,"task":"image-to-text"},{"args":{"image":"<GENERATED>-0","text":"<GENERATED>-1"},"dep":[1,0],"id":2,"task":"openpose-text-to-image"}]
```

So I wrote a small Python script:

```python
import requests
import json

url = 'http://localhost:8005/hugginggpt'
headers = {'Content-Type': 'application/json'}
data = {
    "messages": [
        {
            "role": "user",
            "content": "please generate a video based on \"Spiderman is surfing\""
        }
    ]
}

response = requests.post(url, headers=headers, data=json.dumps(data))
if response.status_code == 200:
    result = json.loads(response.content)
    print(result)
else:
    print('Request failed with status code: %d' % response.status_code)
```

And I got the following error:

```
Request failed with status code: 404
```

Similarly, I get the same message when I use my browser to access port 8005 on the IP of the server where I deployed JARVIS:

Is there anything I forgot to do for a successful deployment?

Many thanks!

| closed | 2023-04-06T13:17:07Z | 2023-04-06T23:07:23Z | https://github.com/microsoft/JARVIS/issues/72 | [] | Daeda1used | 13 |

collerek/ormar | fastapi | 789 | Joins result in an error with tables that have a period in their name | **Describe the bug**

https://github.com/collerek/ormar/blob/a27d7673a5354429bef4158297d76a58522c1579/ormar/queryset/join.py#L112

Because `SqlJoin._on_clause` expects a `from_clause` string in the format "table_name.column_name", a table name that contains a period causes the string to be split into more than two parts, and unpacking it into two variables fails.

**To Reproduce**

Steps to reproduce the behavior:

1. Create a model with a foreign key and a tablename with a period in it

2. Attempt to filter data over the foreign key relation, which should result in a join and then in an error

`ValueError: too many values to unpack (expected 2)`

**Expected behavior**

Ormar handles the period without issue.


**Versions (please complete the following information):**

- Database backend used: MySQL and SQLite

- Python version: 3.10

- `ormar` version: 0.11.2

- `pydantic` version: 1.9.1

**Solution**

One solution I would propose, since users generally don't use periods in column names, is to reserve the last chunk for the column name, with something like this:

```python
parts = from_clause.split(".")
table = ".".join(parts[:-1])
column = parts[-1]
```
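The same idea can be expressed with `str.rsplit` and `maxsplit=1`, which splits only on the last period. A minimal sketch; `split_table_column` is a hypothetical helper name, not part of ormar.

```python
from typing import Tuple


def split_table_column(from_clause: str) -> Tuple[str, str]:
    """Split "table.column" on the last period only, so dotted table names survive.

    Like the original two-value unpacking, this still raises ValueError if the
    string contains no period at all.
    """
    table, column = from_clause.rsplit(".", 1)
    return table, column
```

Because the split happens once from the right, a table name such as `my.dotted.table` stays intact while the column name is still separated cleanly.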

However, a more robust solution would be to keep the table name and column name as separate strings until they are passed through `quotter()`.

Let me know if there is a preferred method to solve this and I'll open a pull request. | closed | 2022-08-19T19:53:51Z | 2023-09-06T20:13:04Z | https://github.com/collerek/ormar/issues/789 | [

"bug"

] | pmdevita | 2 |

LAION-AI/Open-Assistant | python | 3,749 | Potential Information Leakage | In the source code, sensitive information such as `api_key` is written to the log. This is a potential security issue, as described in [CWE-532](https://cwe.mitre.org/data/definitions/532.html). The `api_key` could be redacted.
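A minimal sketch of the suggested redaction, assuming it is acceptable to log only the last few characters of a key (pass `visible=0` to mask it completely); `redact`, `log_key_usage`, and the logger name are hypothetical and not taken from the Open-Assistant code.

```python
import logging


def redact(secret: str, visible: int = 4) -> str:
    """Mask a secret, keeping at most its last `visible` characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    tail = secret[-visible:] if visible > 0 else ""
    return "*" * (len(secret) - len(tail)) + tail


logger = logging.getLogger("oasst.example")  # hypothetical logger name


def log_key_usage(api_key: str) -> None:
    """Log that a key was used without writing the key itself to the log."""
    logger.info("Request authenticated with api_key=%s", redact(api_key))
```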

The leakage could happen in

[1](https://github.com/LAION-AI/Open-Assistant/blob/f1e6ed9526f5817531f3ab85441a40b3671ddccb/backend/oasst_backend/api/v1/admin.py#L44)

[2](https://github.com/LAION-AI/Open-Assistant/blob/f1e6ed9526f5817531f3ab85441a40b3671ddccb/inference/server/main.py#L119)

[3](https://github.com/LAION-AI/Open-Assistant/blob/f1e6ed9526f5817531f3ab85441a40b3671ddccb/inference/server/oasst_inference_server/worker_utils.py#L58) | open | 2024-03-11T14:52:20Z | 2024-03-11T14:52:20Z | https://github.com/LAION-AI/Open-Assistant/issues/3749 | [] | nevercodecorrect | 0 |

ansible/ansible | python | 84,495 | "creates" is ignored when multiple files are specified, and the files are outside current directory | ### Summary

The "creates" constraint in `shell` behaves differently depending on where the listed files are located.

When a single file is given, it appears to behave as expected, i.e., if the file already exists then the task is not performed.

When multiple files are given, and they are in the task path, behavior is as above.

When multiple files are given, and they are not in the task path, the task is run even though the files already exist.

See playbook in section "Steps to reproduce". Run that playbook twice to reproduce.

### Issue Type

Bug Report

### Component Name

shell

### Ansible Version

```console

$ ansible --version

ansible [core 2.16.3]

config file = None

configured module search path = ['/home/ubuntu/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python3/dist-packages/ansible

ansible collection location = /home/ubuntu/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/bin/ansible

python version = 3.12.3 (main, Nov 6 2024, 18:32:19) [GCC 13.2.0] (/usr/bin/python3)

jinja version = 3.1.2

libyaml = True

```

### Configuration

```console

# if using a version older than ansible-core 2.12 you should omit the '-t all'

$ ansible-config dump --only-changed -t all

CONFIG_FILE() = None

```

### OS / Environment

Ubuntu 24.04

### Steps to Reproduce


```yaml
- hosts:
    - '127.0.0.1'
  tasks:
    - name: success - Create single file in ~
      args:
        creates: /home/ubuntu/a
      ansible.builtin.shell: |
        touch /home/ubuntu/a

    - name: success - Create multiple files in current directory
      args:
        creates:
          - a
          - b
      ansible.builtin.shell: |
        touch a b

    - name: failure - Create multiple files in ~
      args:
        creates:
          - /home/ubuntu/a
          - /home/ubuntu/b
      ansible.builtin.shell: |
        touch /home/ubuntu/a /home/ubuntu/b
```
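The `creates` option of `ansible.builtin.shell` is documented as a single filename or glob pattern, not a list. One possible explanation, an assumption not confirmed in this report, is that the list is converted to its string form and then used as a glob: `['a', 'b']` then becomes a character class that happens to match the one-character files `a` and `b` in the working directory, while the list of absolute paths matches nothing. The sketch below reproduces only that glob behavior with Python's standard library; it does not run Ansible, and `glob_like_stringified_list` is a hypothetical helper.

```python
import glob
import os
import tempfile
from typing import List, Sequence


def glob_like_stringified_list(paths: Sequence[str], cwd: str) -> List[str]:
    """Glob `str(paths)` from `cwd`, mimicking a list that was turned into one string.

    This only illustrates Python's glob semantics; it does not call Ansible.
    """
    pattern = str(list(paths))  # e.g. "['a', 'b']", which glob reads as a character class
    previous = os.getcwd()
    os.chdir(cwd)
    try:
        return sorted(glob.glob(pattern))
    finally:
        os.chdir(previous)


with tempfile.TemporaryDirectory() as workdir:
    for name in ("a", "b"):
        open(os.path.join(workdir, name), "w").close()
    relative = glob_like_stringified_list(["a", "b"], workdir)
    absolute = glob_like_stringified_list(
        [os.path.join(workdir, "a"), os.path.join(workdir, "b")], workdir
    )

print(relative)  # ['a', 'b']: the single-character files happen to match
print(absolute)  # []: nothing matches, so the task would run again
```

If this explanation holds, the "success" case in the current directory only works because the file names are single characters; a task with a single `creates` path per item would avoid relying on it.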

### Expected Results

See the section "Summary".

### Actual Results

```console

[WARNING]: No inventory was parsed, only implicit localhost is available

[WARNING]: provided hosts list is empty, only localhost is available. Note that

the implicit localhost does not match 'all'

ansible-playbook [core 2.16.3]

config file = None

configured module search path = ['/home/ubuntu/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python3/dist-packages/ansible

ansible collection location = /home/ubuntu/.ansible/collections:/usr/share/ansible/collections

executable location = /usr/bin/ansible-playbook

python version = 3.12.3 (main, Nov 6 2024, 18:32:19) [GCC 13.2.0] (/usr/bin/python3)

jinja version = 3.1.2

libyaml = True

No config file found; using defaults

host_list declined parsing /etc/ansible/hosts as it did not pass its verify_file() method

Skipping due to inventory source not existing or not being readable by the current user

script declined parsing /etc/ansible/hosts as it did not pass its verify_file() method

auto declined parsing /etc/ansible/hosts as it did not pass its verify_file() method

Skipping due to inventory source not existing or not being readable by the current user

yaml declined parsing /etc/ansible/hosts as it did not pass its verify_file() method

Skipping due to inventory source not existing or not being readable by the current user

ini declined parsing /etc/ansible/hosts as it did not pass its verify_file() method

Skipping due to inventory source not existing or not being readable by the current user

toml declined parsing /etc/ansible/hosts as it did not pass its verify_file() method

Skipping callback 'default', as we already have a stdout callback.

Skipping callback 'minimal', as we already have a stdout callback.

Skipping callback 'oneline', as we already have a stdout callback.

PLAYBOOK: main.yml *************************************************************

1 plays in ansible/main.yml

PLAY [127.0.0.1] ***************************************************************

TASK [Gathering Facts] *********************************************************

task path: /home/ubuntu/.dotfiles/ansible/main.yml:1

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: ubuntu

<127.0.0.1> EXEC /bin/sh -c 'echo ~ubuntu && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /home/ubuntu/.ansible/tmp `"&& mkdir "` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575 `" && echo ansible-tmp-1735297572.0545905-2753-218625252217575="` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575 `" ) && sleep 0'

Using module file /usr/lib/python3/dist-packages/ansible/modules/setup.py

<127.0.0.1> PUT /home/ubuntu/.ansible/tmp/ansible-local-27508expt46y/tmpn0vwkjic TO /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575/AnsiballZ_setup.py

<127.0.0.1> EXEC /bin/sh -c 'chmod u+x /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575/ /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575/AnsiballZ_setup.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '/usr/bin/python3 /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575/AnsiballZ_setup.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c 'rm -f -r /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.0545905-2753-218625252217575/ > /dev/null 2>&1 && sleep 0'

ok: [127.0.0.1]

TASK [success - Create single file in ~] ***************************************

task path: /home/ubuntu/.dotfiles/ansible/main.yml:5

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: ubuntu

<127.0.0.1> EXEC /bin/sh -c 'echo ~ubuntu && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /home/ubuntu/.ansible/tmp `"&& mkdir "` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247 `" && echo ansible-tmp-1735297572.6977189-2832-40327463316247="` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247 `" ) && sleep 0'

Using module file /usr/lib/python3/dist-packages/ansible/modules/command.py

<127.0.0.1> PUT /home/ubuntu/.ansible/tmp/ansible-local-27508expt46y/tmp3s5a50wb TO /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247/AnsiballZ_command.py

<127.0.0.1> EXEC /bin/sh -c 'chmod u+x /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247/ /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247/AnsiballZ_command.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '/usr/bin/python3 /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247/AnsiballZ_command.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c 'rm -f -r /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.6977189-2832-40327463316247/ > /dev/null 2>&1 && sleep 0'

ok: [127.0.0.1] => {

"changed": false,

"cmd": "touch /home/ubuntu/a\n",

"delta": null,

"end": null,

"invocation": {

"module_args": {

"_raw_params": "touch /home/ubuntu/a\n",

"_uses_shell": true,

"argv": null,

"chdir": null,

"creates": "/home/ubuntu/a",

"executable": null,

"expand_argument_vars": true,

"removes": null,

"stdin": null,

"stdin_add_newline": true,

"strip_empty_ends": true

}

},

"msg": "Did not run command since '/home/ubuntu/a' exists",

"rc": 0,

"start": null,

"stderr": "",

"stderr_lines": [],

"stdout": "skipped, since /home/ubuntu/a exists",

"stdout_lines": [

"skipped, since /home/ubuntu/a exists"

]

}

TASK [success - Create multiple files in current directory] ********************

task path: /home/ubuntu/.dotfiles/ansible/main.yml:11

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: ubuntu

<127.0.0.1> EXEC /bin/sh -c 'echo ~ubuntu && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /home/ubuntu/.ansible/tmp `"&& mkdir "` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105 `" && echo ansible-tmp-1735297572.8988683-2857-167062530684105="` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105 `" ) && sleep 0'

Using module file /usr/lib/python3/dist-packages/ansible/modules/command.py

<127.0.0.1> PUT /home/ubuntu/.ansible/tmp/ansible-local-27508expt46y/tmp65ji5wt4 TO /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105/AnsiballZ_command.py

<127.0.0.1> EXEC /bin/sh -c 'chmod u+x /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105/ /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105/AnsiballZ_command.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '/usr/bin/python3 /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105/AnsiballZ_command.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c 'rm -f -r /home/ubuntu/.ansible/tmp/ansible-tmp-1735297572.8988683-2857-167062530684105/ > /dev/null 2>&1 && sleep 0'

ok: [127.0.0.1] => {

"changed": false,

"cmd": "touch a b\n",

"delta": null,

"end": null,

"invocation": {

"module_args": {

"_raw_params": "touch a b\n",

"_uses_shell": true,

"argv": null,

"chdir": null,

"creates": "['a', 'b']",

"executable": null,

"expand_argument_vars": true,

"removes": null,

"stdin": null,

"stdin_add_newline": true,

"strip_empty_ends": true

}

},

"msg": "Did not run command since '['a', 'b']' exists",

"rc": 0,

"start": null,

"stderr": "",

"stderr_lines": [],

"stdout": "skipped, since ['a', 'b'] exists",

"stdout_lines": [

"skipped, since ['a', 'b'] exists"

]

}

TASK [failure - Create multiple files in ~] ************************************

task path: /home/ubuntu/.dotfiles/ansible/main.yml:19

<127.0.0.1> ESTABLISH LOCAL CONNECTION FOR USER: ubuntu

<127.0.0.1> EXEC /bin/sh -c 'echo ~ubuntu && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '( umask 77 && mkdir -p "` echo /home/ubuntu/.ansible/tmp `"&& mkdir "` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637 `" && echo ansible-tmp-1735297573.0390477-2882-220125337962637="` echo /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637 `" ) && sleep 0'

Using module file /usr/lib/python3/dist-packages/ansible/modules/command.py

<127.0.0.1> PUT /home/ubuntu/.ansible/tmp/ansible-local-27508expt46y/tmpa3nihfbn TO /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637/AnsiballZ_command.py

<127.0.0.1> EXEC /bin/sh -c 'chmod u+x /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637/ /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637/AnsiballZ_command.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c '/usr/bin/python3 /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637/AnsiballZ_command.py && sleep 0'

<127.0.0.1> EXEC /bin/sh -c 'rm -f -r /home/ubuntu/.ansible/tmp/ansible-tmp-1735297573.0390477-2882-220125337962637/ > /dev/null 2>&1 && sleep 0'

changed: [127.0.0.1] => {

"changed": true,

"cmd": "touch /home/ubuntu/a /home/ubuntu/b\n",

"delta": "0:00:00.003488",

"end": "2024-12-27 11:06:13.155383",

"invocation": {

"module_args": {

"_raw_params": "touch /home/ubuntu/a /home/ubuntu/b\n",

"_uses_shell": true,

"argv": null,

"chdir": null,

"creates": "['/home/ubuntu/a', '/home/ubuntu/b']",

"executable": null,

"expand_argument_vars": true,

"removes": null,

"stdin": null,

"stdin_add_newline": true,

"strip_empty_ends": true

}

},

"msg": "",

"rc": 0,

"start": "2024-12-27 11:06:13.151895",

"stderr": "",

"stderr_lines": [],

"stdout": "",

"stdout_lines": []

}

PLAY RECAP *********************************************************************

127.0.0.1 : ok=4 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

```
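The log hints at what is going on. `creates` is treated as a single path, so the list is turned into a string (`"creates": "['a', 'b']"`). Assume the module then tests that string with Python's `glob.glob`; the module documentation describes `creates` as a filename or glob pattern. Under that assumption, the following standard-library-only sketch reproduces both outcomes. `creates_matches` is a hypothetical helper, not Ansible code:

```python
import glob
import os


def creates_matches(creates: list, cwd: str) -> bool:
    """Mimic a `creates:` check on a list value that was coerced to a string."""
    pattern = str(creates)  # ["a", "b"] -> "['a', 'b']"
    previous = os.getcwd()
    os.chdir(cwd)  # relative patterns are resolved against the working directory
    try:
        return bool(glob.glob(pattern))
    finally:
        os.chdir(previous)


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as work:
        for name in ("a", "b"):
            open(os.path.join(work, name), "w").close()
        # "['a', 'b']" is a one-character bracket class, so the file "a" or "b" matches.
        print(creates_matches(["a", "b"], work))  # True -> task skipped
        # With "/" inside the brackets, glob splits the pattern on "/" and looks
        # for directories such as "['" that do not exist, so nothing matches.
        print(creates_matches([os.path.join(work, "a"), os.path.join(work, "b")], work))  # False -> command runs
```

The sketch shows why the current-directory task appears to succeed. Any single-character file name drawn from the bracket class counts as a match, so the task is skipped. With absolute paths, the pattern never matches, so the command always runs.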

### Code of Conduct

- [X] I agree to follow the Ansible Code of Conduct | open | 2024-12-27T11:19:47Z | 2025-01-14T15:48:48Z | https://github.com/ansible/ansible/issues/84495 | [

"module",

"bug",

"affects_2.16",

"data_tagging"

] | erikv85 | 7 |

Sanster/IOPaint | pytorch | 180 | I have two suggestions | First, thank you for sharing the project. It's very nice.

Details:

1) lama.py

When I already have a model file named big-lama.pt, I shouldn't have to download it from the remote server. I suggest letting `LAMA_MODEL_URL` also accept a local path, like this:

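```python
import os

url_or_path = LAMA_MODEL_URL
if os.path.exists(url_or_path):
    # A local checkpoint (e.g. big-lama.pt) was given: use it as-is.
    model_path = url_or_path
else:
    # Otherwise treat the value as a URL and download the model.
    model_path = download_model(url_or_path)
```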

2) server.py

It may be a bug: when I run the code, the debug console says `cache_timeout=0` is not supported. After I deleted `cache_timeout=0`, everything worked. | open | 2023-01-12T03:05:28Z | 2023-01-12T03:05:28Z | https://github.com/Sanster/IOPaint/issues/180 | [] | aqie13 | 0 |

sqlalchemy/alembic | sqlalchemy | 514 | shell script doesn't work for python paths with spaces | **Migrated issue, originally created by Nonprofit Metrics**

After installing Alembic into a Python virtualenv whose path contains a space, the `alembic` shell command fails with the message "bad interpreter: No such file or directory". I'm using Alembic 1.0.1, freshly installed from pip.

The error appears to come from the shebang line in the script (...bin/alembic), which for me reads:

```

#!"/Users/me/Directory With Spaces/myvirtualenv/bin/python2.7"

```

None of the ways I tried to escape the path or the spaces worked. I got it working by replacing the shebang line with:

```
#!/bin/sh
'''exec' "/Users/me/Directory With Spaces/myvirtualenv/bin/python2.7" "$0" "$@"
' '''
```

This is the way celery and others solve the problem.
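The header works as a shell/Python polyglot. `/bin/sh` reads the second line as `''` followed by `'exec'`, which runs `exec "<python>" "$0" "$@"` and replaces the shell, so the third line is never reached. Python reads the same two lines as a single triple-quoted string literal that does nothing. The following sketch generates such a header. It assumes a POSIX `/bin/sh` and an interpreter path without double quotes, `$`, backticks or backslashes. `make_launcher_header` is a hypothetical helper, not part of Alembic or setuptools:

```python
def make_launcher_header(interpreter: str) -> str:
    """Return a script header that still works if `interpreter` contains spaces."""
    if " " not in interpreter:
        # A plain shebang is enough when the path has no spaces.
        return f"#!{interpreter}\n"
    return (
        "#!/bin/sh\n"
        # sh: '' + 'exec' -> exec "<interpreter>" "$0" "$@"
        # Python: opens a triple-quoted string literal
        f"'''exec' \"{interpreter}\" \"$0\" \"$@\"\n"
        # Python: closes the string literal; sh never reaches this line
        "' '''\n"
    )


if __name__ == "__main__":
    header = make_launcher_header("/Users/me/Directory With Spaces/myvirtualenv/bin/python2.7")
    print(header)
    # The header must remain valid Python so the rest of the script runs unchanged.
    compile(header + "print('hello')\n", "<launcher>", "exec")
```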

I couldn't figure out where setup.py generated the shell "bin/alembic" file, or else I would've submitted a pull request.

Thanks!

| closed | 2018-10-24T00:34:53Z | 2018-10-24T01:15:02Z | https://github.com/sqlalchemy/alembic/issues/514 | [

"bug",

"low priority",

"command interface"

] | sqlalchemy-bot | 7 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 700 | NotADirectoryError occurs when using combine_A_and_B.py | I am preparing my own dataset and **I want to combine my own sketch and photo images into single images for training the pix2pix model**. I read the ["Prepare your own datasets for pix2pix" instructions](https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/blob/master/docs/tips.md#prepare-your-own-datasets-for-cyclegan) carefully and searched for similar issues, but found nothing that helped, so I am opening a new issue.

### My folder **directories** are as follows:

The **sketch images** are in

**/pytorch-CycleGAN-and-pix2pix/datasets/combine/A/train**

and there are 72 images, named like frame_0028.jpg.

The **photo images** are in

**/pytorch-CycleGAN-and-pix2pix/datasets/combine/B/train**

and there are 72 corresponding object photos with the same names as in A.

The **output folder** is:

**/pytorch-CycleGAN-and-pix2pix/datasets/combine/AB**

### Commands I tried

My first command was:

**python datasets/combine_A_and_B.py --fold_A ./datasets/combine/A --fold_B ./datasets/combine/B --fold_AB ./datasets/combine/AB**

but the terminal says:

[fold_A] = ./datasets/combine/A

[fold_B] = ./datasets/combine/B

[fold_AB] = ./datasets/combine/AB

[num_imgs] = 1000000

[use_AB] = False

Traceback (most recent call last):

File "datasets/combine_A_and_B.py", line 22, in <module>

img_list = os.listdir(img_fold_A)

**NotADirectoryError: [Errno 20] Not a directory: './datasets/combine/A/.DS_Store'**

I thought maybe I should **specify the train folder**, so my second command was:

**python datasets/combine_A_and_B.py --fold_A ./datasets/combine/A/train --fold_B ./datasets/combine/B/train --fold_AB ./datasets/combine/AB**

The terminal is still unhappy:

[fold_A] = ./datasets/combine/A/train

[fold_B] = ./datasets/combine/B/train

[fold_AB] = ./datasets/combine/AB

[num_imgs] = 1000000

[use_AB] = False

Traceback (most recent call last):

File "datasets/combine_A_and_B.py", line 22, in <module>

img_list = os.listdir(img_fold_A)

**NotADirectoryError: [Errno 20] Not a directory: './datasets/combine/A/train/frame_0028.jpg'**
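Both tracebacks suggest the following, although this is an assumption and not verified against the script: every entry directly under `--fold_A` is treated as a split folder and passed to `os.listdir`. With the first command, the hidden macOS file `.DS_Store` is the entry that breaks. With the second, the image files are. Deleting `.DS_Store` from `A` (and `B`) and rerunning the first command would then likely work. The following sketch shows a listing that skips such files. `list_split_dirs` is a hypothetical helper, not part of the repository:

```python
import os


def list_split_dirs(fold: str) -> list:
    """Return the split subfolders (e.g. train/val/test) directly under `fold`.

    Hidden entries such as macOS's .DS_Store and any plain files are skipped,
    so they are never passed to os.listdir() as if they were image folders.
    """
    return sorted(
        name
        for name in os.listdir(fold)
        if not name.startswith(".") and os.path.isdir(os.path.join(fold, name))
    )
```

For the layout above, `list_split_dirs("./datasets/combine/A")` would return `['train']`.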

Could anyone give me some advice? Thanks a lot! | closed | 2019-07-13T03:31:52Z | 2023-07-11T09:17:54Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/700 | [] | diaosiji | 4 |

streamlit/streamlit | python | 10,678 | Clickable Container | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [x] I added a descriptive title and summary to this issue.

### Summary

Hi,

It would be very nice if I could make an `st.container` clickable (with an `on_click` event), so I could build clickable boxes with awesome content. :-)

Best Regards

### Why?

I have already tried to build a tile with Streamlit, and it is very difficult. This feature would make it much easier.

### How?

_No response_

### Additional Context

_No response_ | open | 2025-03-07T12:00:23Z | 2025-03-07T12:56:13Z | https://github.com/streamlit/streamlit/issues/10678 | [

"type:enhancement",

"feature:st.container",

"area:events"

] | alex-bork | 1 |

nltk/nltk | nlp | 2,876 | TreebankWordDetokenizer is not always an inverse of TreebankWordTokenizer | For example, for the following script:

```python

import nltk

import nltk.tokenize

tokens = nltk.tokenize.TreebankWordTokenizer().tokenize("I wanna watch something")

print(tokens)

sentence = nltk.tokenize.treebank.TreebankWordDetokenizer().detokenize(tokens)

print(sentence)

```

The result is:

```

['I', 'wan', 'na', 'watch', 'something']

I wannawatch something

```
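The following `re`-only illustration shows one way this exact output can arise. It assumes, without checking NLTK's source, that the detokenizer rejoins the contraction with a pattern that also consumes the whitespace after `na` and never puts it back. Both functions are hypothetical, not NLTK APIs:

```python
import re


def undo_contraction_buggy(text: str) -> str:
    # The trailing \s is consumed by the match and dropped by the replacement.
    return re.sub(r"(?i)\b(wan)\s(na)\s", r"\1\2", text)


def undo_contraction_fixed(text: str) -> str:
    # The lookahead requires a following space (or end of text) without consuming it.
    return re.sub(r"(?i)\b(wan)\s(na)(?=\s|$)", r"\1\2", text)


joined = " ".join(["I", "wan", "na", "watch", "something"])
print(undo_contraction_buggy(joined))  # I wannawatch something
print(undo_contraction_fixed(joined))  # I wanna watch something
```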

The detokenized sentence should be: `I wanna watch something` | closed | 2021-11-04T12:38:16Z | 2021-11-06T23:34:16Z | https://github.com/nltk/nltk/issues/2876 | [

"bug",

"tokenizer"

] | kazet | 1 |

mwaskom/seaborn | data-visualization | 3,707 | Issue with facet grid and legends | Here is an MRE:

```python

import pandas as pd
import numpy as np
import seaborn as sns
import matplotlib.pyplot as plt

np.random.seed(1)
n_samples = 1000
data = {
    'metric': np.random.rand(n_samples),
    'method': np.random.choice(['Method A', 'Method B', 'Method C'], n_samples),
    'criterion': np.random.choice(['Criterion 1', 'Criterion 2'], n_samples),
    'category': np.random.choice(['Category X', 'Category Y'], n_samples)
}
generic_df = pd.DataFrame(data)

# Plot using Seaborn's FacetGrid
g = sns.FacetGrid(generic_df, col='criterion', row='category',
                  margin_titles=True, height=3, aspect=1.2)
g.map_dataframe(sns.histplot, x='metric', hue='method', bins=30, multiple='stack')
g.set_axis_labels('Metric', 'Frequency')
g.set_titles(col_template='{col_name}', row_template='{row_name}')
g.fig.subplots_adjust(top=0.89)
g.fig.suptitle('Histogram of Metric Grouped by Criterion and Category')
g.add_legend()

# Display the plot
plt.show()

```

With this code, `g.add_legend()` shows no legend. If I move `hue='method'` to `sns.FacetGrid`, the legend displays, but the bars are then no longer properly stacked. | closed | 2024-06-05T21:27:21Z | 2024-06-06T18:31:58Z | https://github.com/mwaskom/seaborn/issues/3707 | [] | vsbuffalo | 2 |

TracecatHQ/tracecat | fastapi | 886 | Broken link in user interface | **Describe the bug**

The "Find playbook" button in the web UI has a broken link to GitHub.

The button leads to https://github.com/TracecatHQ/tracecat/tree/main/playbooks , but this folder doesn't exist in the `main` branch.

**To reproduce**

1. Open the Tracecat web UI and go to "Workflows".

2. Click the "Find playbook" button.

**Expected behavior**

The button links to an existing resource.

**Screenshots**

<img width="950" alt="Image" src="https://github.com/user-attachments/assets/bcaaa370-a436-40d0-be65-a2b3835a854f" />

**Environment (please complete the following information):**

- Tracecat version 0.26.1 (commit `d652ab70e15773289058dab588fdddac636eb8a7`)

- Cloned the repo and deployed with Docker Compose

| closed | 2025-02-22T15:23:33Z | 2025-02-23T14:19:54Z | https://github.com/TracecatHQ/tracecat/issues/886 | [

"frontend",

"triage"

] | szymon-romanko | 1 |

vllm-project/vllm | pytorch | 14,443 | [Bug]: External Launcher producing NaN outputs on Large Models when Collocating with Model Training | ### Your current environment

<details>

<summary>The output of `python collect_env.py`</summary>

```text

PyTorch version: 2.5.1+cu124

Is debug build: False

CUDA used to build PyTorch: 12.4

ROCM used to build PyTorch: N/A

OS: CentOS Stream 9 (x86_64)

GCC version: (GCC) 11.5.0 20240719 (Red Hat 11.5.0-2)

Clang version: Could not collect

CMake version: Could not collect

Libc version: glibc-2.34

Python version: 3.10.16 (main, Feb 5 2025, 05:53:54) [GCC 11.5.0 20240719 (Red Hat 11.5.0-2)] (64-bit runtime)

Python platform: Linux-5.14.0-284.73.1.el9_2.x86_64-x86_64-with-glibc2.34

Is CUDA available: True

CUDA runtime version: 12.4.131

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration:

GPU 0: NVIDIA A100-SXM4-80GB

GPU 1: NVIDIA A100-SXM4-80GB

GPU 2: NVIDIA A100-SXM4-80GB

GPU 3: NVIDIA A100-SXM4-80GB

Nvidia driver version: 550.54.14

Versions of relevant libraries:

[pip3] numpy==1.26.4

[pip3] nvidia-cublas-cu12==12.4.5.8

[pip3] nvidia-cuda-cupti-cu12==12.4.127

[pip3] nvidia-cuda-nvrtc-cu12==12.4.127

[pip3] nvidia-cuda-runtime-cu12==12.4.127

[pip3] nvidia-cudnn-cu12==9.1.0.70

[pip3] nvidia-cufft-cu12==11.2.1.3

[pip3] nvidia-curand-cu12==10.3.5.147

[pip3] nvidia-cusolver-cu12==11.6.1.9

[pip3] nvidia-cusparse-cu12==12.3.1.170

[pip3] nvidia-cusparselt-cu12==0.6.2

[pip3] nvidia-nccl-cu12==2.21.5

[pip3] nvidia-nvjitlink-cu12==12.4.127

[pip3] nvidia-nvtx-cu12==12.4.127

[pip3] pyzmq==26.2.1

[pip3] torch==2.5.1

[pip3] torchaudio==2.5.1

[pip3] torchvision==0.20.1

[pip3] transformers==4.49.0

[pip3] triton==3.1.0

```

</details>

### 🐛 Describe the bug

We observe the following when collocating a relatively large model (e.g., 72B), run with the external launcher, with a model in training that is sharded by a framework such as FSDP.

Motivation: for RLHF, collocating is desirable, but when the model is large, both the vLLM model and the model in training must be sharded so that everything fits on the GPUs.

- for vLLM, this means TP (or PP)

- for the model in training, this means a framework such as FSDP or DeepSpeed

Bug: we observe NaNs coming from the tensor-parallel vLLM model once the model becomes large. We have a reproduction script in this [gist](https://gist.github.com/fabianlim/66dba9138d399f4c6c70674d92503444) which demonstrates the following behavior:

- The script covers two scenarios: i) the model in training is sharded with FSDP; ii) it is unsharded. These are selected by setting `shard_train_model=True` and `False`, respectively.

- For demonstration purposes, the model in training is a very small OPT model. In real use cases it is usually the same as the vLLM model. The small model is chosen deliberately so that the script runs on 4 x 80GB GPUs, and to show that the problem does not depend on the size of the model in training.

With `shard_train_model=False`, the outputs are correct:

```

# text (0): We can visualize the spheres with radii 11, 13, and 19

# text (1): First, let's denote $c = \log_{2^b}(2^{100

# text (2): First, we need to find the dimensions of the rhombus. A key property of the rh

# text (3): First, I’ll start by simplifying the given equation. We are given the equation: $\sqrt

```

With `shard_train_model=True`, the outputs become garbled because NaNs creep into the tensors:

```

# - the strange characters caused by NaN's coming from TP reduce

# text (0): We can visualize the spheres with radii 11, 13, and 19

# text (1): !!!!!!!!!!!!!!!!!!!!

# text (2): !!!!!!!!!!!!!!!!!!!!

# text (3): !!!!!!!!!!!!!!!!!!!!

```

Reproduction code found in this [gist](https://gist.github.com/fabianlim/66dba9138d399f4c6c70674d92503444).

**Work-around**

In the actual use case, turning off FSDP for the model in training is out of the question. Another workaround I found is to set `max_num_seqs=1`: it slows down inference but still lets all models fit on the GPUs. This is not ideal, because it effectively disables continuous batching.

### Before submitting a new issue...

- [x] Make sure you already searched for relevant issues, and asked the chatbot living at the bottom right corner of the [documentation page](https://docs.vllm.ai/en/latest/), which can answer lots of frequently asked questions. | open | 2025-03-07T15:16:32Z | 2025-03-07T15:22:24Z | https://github.com/vllm-project/vllm/issues/14443 | [

"bug"

] | fabianlim | 0 |

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 508 | Have probles to get it working on a Devuan distribution. | Hello, I am getting this output on Devuan (it didn't work at all on my old Linux Mint :C) when running `python3 demo_cli.py`:

2020-08-24 22:00:26.726664: W tensorflow/stream_executor/platform/default/dso_loader.cc:59] Could not load dynamic library 'libcudart.so.10.1'; dlerror: libcudart.so.10.1: cannot open shared object file: No such file or directory

2020-08-24 22:00:26.726717: I tensorflow/stream_executor/cuda/cudart_stub.cc:29] Ignore above cudart dlerror if you do not have a GPU set up on your machine.

Traceback (most recent call last):

File "demo_cli.py", line 4, in <module>

from synthesizer.inference import Synthesizer

File "/home/src/voiceclone/Real-Time-Voice-Cloning-master/synthesizer/inference.py", line 1, in <module>

from synthesizer.tacotron2 import Tacotron2

File "/home/src/voiceclone/Real-Time-Voice-Cloning-master/synthesizer/tacotron2.py", line 3, in <module>

from synthesizer.models import create_model

File "/home/src/voiceclone/Real-Time-Voice-Cloning-master/synthesizer/models/__init__.py", line 1, in <module>

from .tacotron import Tacotron

File "/home/src/voiceclone/Real-Time-Voice-Cloning-master/synthesizer/models/tacotron.py", line 4, in <module>

from synthesizer.models.helpers import TacoTrainingHelper, TacoTestHelper

File "/home/src/voiceclone/Real-Time-Voice-Cloning-master/synthesizer/models/helpers.py", line 3, in <module>

from tensorflow.contrib.seq2seq import Helper

ModuleNotFoundError: No module named 'tensorflow.contrib'
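`tensorflow.contrib` was removed in TensorFlow 2.0, so this import can only succeed with a TensorFlow 1.x install. Installing the latest release gives a 2.x version. TensorFlow 1.x wheels were also only published for older Python versions, which may be why the pinned version failed to install. The following stdlib-only check shows which major version is installed. It assumes the package is installed under the distribution name `tensorflow`; a `tensorflow-gpu` install would need that name instead. `tf_contrib_available` is a hypothetical helper:

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def tf_contrib_available(tf_version: Optional[str] = None) -> bool:
    """Return True if the given (or installed) TensorFlow version still ships tf.contrib.

    tf.contrib was removed in TensorFlow 2.0, so only 1.x versions qualify.
    """
    if tf_version is None:
        try:
            tf_version = version("tensorflow")
        except PackageNotFoundError:
            return False  # TensorFlow is not installed under this name
    major = int(tf_version.split(".")[0])
    return major < 2


if __name__ == "__main__":
    print(tf_contrib_available())
```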

It seems to be something with the TensorFlow version? Installing the exact version pinned in requirements.txt gave me an error, so I just installed the latest one. :c | closed | 2020-08-25T04:19:52Z | 2020-08-26T02:02:50Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/508 | [] | afantasialiberal | 2 |

kornia/kornia | computer-vision | 2,158 | Augmentation Sampling Speed Improvement | In general, I am thinking of replacing the per-parameter uniform samplers with a single shared sampler. However, this loses the ability to customize the sampler for each operation. For example, change this:

```python

class ColorJiggleGenerator(RandomGeneratorBase):
    ...

    def make_samplers(self, device: torch.device, dtype: torch.dtype) -> None:
        brightness = _range_bound(self.brightness, 'brightness', center=1.0, bounds=(0, 2), device=device, dtype=dtype)
        contrast: Tensor = _range_bound(self.contrast, 'contrast', center=1.0, device=device, dtype=dtype)
        saturation: Tensor = _range_bound(self.saturation, 'saturation', center=1.0, device=device, dtype=dtype)
        hue: Tensor = _range_bound(self.hue, 'hue', bounds=(-0.5, 0.5), device=device, dtype=dtype)
        _joint_range_check(brightness, "brightness", (0, 2))
        _joint_range_check(contrast, "contrast", (0, float('inf')))
        _joint_range_check(hue, "hue", (-0.5, 0.5))
        _joint_range_check(saturation, "saturation", (0, float('inf')))
        self.brightness_sampler = Uniform(brightness[0], brightness[1], validate_args=False)
        self.contrast_sampler = Uniform(contrast[0], contrast[1], validate_args=False)
        self.hue_sampler = Uniform(hue[0], hue[1], validate_args=False)
        self.saturation_sampler = Uniform(saturation[0], saturation[1], validate_args=False)
        self.randperm = partial(torch.randperm, device=device, dtype=dtype)

    def forward(self, batch_shape: torch.Size, same_on_batch: bool = False) -> Dict[str, Tensor]:
        batch_size = batch_shape[0]
        _common_param_check(batch_size, same_on_batch)
        _device, _dtype = _extract_device_dtype([self.brightness, self.contrast, self.hue, self.saturation])
        brightness_factor = _adapted_rsampling((batch_size,), self.brightness_sampler, same_on_batch)
        contrast_factor = _adapted_rsampling((batch_size,), self.contrast_sampler, same_on_batch)
        hue_factor = _adapted_rsampling((batch_size,), self.hue_sampler, same_on_batch)
        saturation_factor = _adapted_rsampling((batch_size,), self.saturation_sampler, same_on_batch)
        return dict(
            brightness_factor=brightness_factor.to(device=_device, dtype=_dtype),
            contrast_factor=contrast_factor.to(device=_device, dtype=_dtype),
            hue_factor=hue_factor.to(device=_device, dtype=_dtype),
            saturation_factor=saturation_factor.to(device=_device, dtype=_dtype),
            order=self.randperm(4).to(device=_device, dtype=_dtype).long(),
        )

```


To something like:

```python

class ColorJiggleGenerator(RandomGeneratorBase):
    def make_samplers(self, device: torch.device, dtype: torch.dtype) -> None:
        self._brightness = _range_bound(self.brightness, 'brightness', center=1.0, bounds=(0, 2), device=device, dtype=dtype)
        self._contrast: Tensor = _range_bound(self.contrast, 'contrast', center=1.0, device=device, dtype=dtype)
        self._saturation: Tensor = _range_bound(self.saturation, 'saturation', center=1.0, device=device, dtype=dtype)
        self._hue: Tensor = _range_bound(self.hue, 'hue', bounds=(-0.5, 0.5), device=device, dtype=dtype)
        _joint_range_check(self._brightness, "brightness", (0, 2))
        _joint_range_check(self._contrast, "contrast", (0, float('inf')))
        _joint_range_check(self._hue, "hue", (-0.5, 0.5))
        _joint_range_check(self._saturation, "saturation", (0, float('inf')))
        self.randperm = partial(torch.randperm, device=device, dtype=dtype)
        # One shared U(0, 1) sampler replaces the four per-parameter samplers.
        self.generic_sampler = Uniform(0, 1)

    def forward(self, batch_shape: torch.Size, same_on_batch: bool = False) -> Dict[str, Tensor]:
        batch_size = batch_shape[0]
        _common_param_check(batch_size, same_on_batch)
        _device, _dtype = _extract_device_dtype([self.brightness, self.contrast, self.hue, self.saturation])
        # Draw all four factors in one call: one chunk of `batch_size` values per parameter.
        generic_factors = _adapted_rsampling((batch_size * 4,), self.generic_sampler, same_on_batch).to(device=_device, dtype=_dtype)
        # Map u in [0, 1) onto [low, high) as low + u * (high - low).
        brightness_factor = self._brightness[0] + generic_factors[:batch_size] * (self._brightness[1] - self._brightness[0])
        return dict(
            brightness_factor=brightness_factor,
            contrast_factor=...,
            hue_factor=...,
            saturation_factor=...,
            order=self.randperm(4).to(device=_device, dtype=_dtype).long(),
        )

```
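This proposal rests on the affine property of the uniform distribution. A single standard draw $u$ can be mapped onto each parameter's own range $[a, b)$. For brightness, for example, $a$ is `self._brightness[0]` and $b$ is `self._brightness[1]`:

```math
u \sim \mathcal{U}(0, 1) \quad\Longrightarrow\quad a + (b - a)\,u \sim \mathcal{U}(a, b)
```

The inverse expression $(u - a)/(b - a)$ goes the other way. It normalizes a value already in $[a, b)$ back to $[0, 1)$, so it does not draw a factor from $[a, b)$.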

I think this might improve sampling speed a bit. | closed | 2023-01-19T12:48:05Z | 2023-01-26T11:55:05Z | https://github.com/kornia/kornia/issues/2158 | [

"help wanted"

] | shijianjian | 1 |

vllm-project/vllm | pytorch | 14,659 | [Bug]: `subprocess.CalledProcessError` when building docker image from source on AMD MI210 | ### Your current environment

I am currently unable to build the Docker image.

### 🐛 Describe the bug

The raised error:

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/_distutils/cmd.py", line 339, in run_command

> 1913.1 self.distribution.run_command(command)

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/dist.py", line 999, in run_command

> 1913.1 super().run_command(command)

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/_distutils/dist.py", line 1002, in run_command

> 1913.1 cmd_obj.run()

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/_distutils/command/build.py", line 136, in run

> 1913.1 self.run_command(cmd_name)

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/_distutils/cmd.py", line 339, in run_command

> 1913.1 self.distribution.run_command(command)

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/dist.py", line 999, in run_command

> 1913.1 super().run_command(command)

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/_distutils/dist.py", line 1002, in run_command

> 1913.1 cmd_obj.run()

> 1913.1 File "/app/vllm/setup.py", line 267, in run

> 1913.1 super().run()

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/command/build_ext.py", line 99, in run

> 1913.1 _build_ext.run(self)

> 1913.1 File "/usr/local/lib/python3.12/dist-packages/setuptools/_distutils/command/build_ext.py", line 365, in run

> 1913.1 self.build_extensions()

> 1913.1 File "/app/vllm/setup.py", line 238, in build_extensions

> 1913.1 subprocess.check_call(["cmake", *build_args], cwd=self.build_temp)

> 1913.1 File "/usr/lib/python3.12/subprocess.py", line 415, in check_call

> 1913.1 raise CalledProcessError(retcode, cmd)

> 1913.1 subprocess.CalledProcessError: Command '['cmake', '--build', '.', '-j=192', '--target=_moe_C', '--target=_rocm_C', '--target=_C']' returned non-zero exit status 1.

> ------

> Dockerfile.rocm:40

> --------------------

> 39 | # Build vLLM

> 40 | >>> RUN cd vllm \

> 41 | >>> && python3 -m pip install -r requirements/rocm.txt \

> 42 | >>> && python3 setup.py clean --all \

> 43 | >>> && if [ ${USE_CYTHON} -eq "1" ]; then python3 setup_cython.py build_ext --inplace; fi \

> 44 | >>> && python3 setup.py bdist_wheel --dist-dir=dist

> 45 | FROM scratch AS export_vllm

> --------------------

> ERROR: failed to solve: process "/bin/sh -c cd vllm && python3 -m pip install -r requirements/rocm.txt && python3 setup.py clean --all && if [ ${USE_CYTHON} -eq \"1\" ]; then python3 setup_cython.py build_ext --inplace; fi && python3 setup.py bdist_wheel --dist-dir=dist" did not complete successfully: exit code: 1

It seems that the `subprocess.CalledProcessError` means the `cmake --build` command run inside the Dockerfile failed.

**Are the arguments passed to `cmake` correct?**

### Before submitting a new issue...

- [x] Make sure you already searched for relevant issues, and asked the chatbot living at the bottom right corner of the [documentation page](https://docs.vllm.ai/en/latest/), which can answer lots of frequently asked questions. | closed | 2025-03-12T07:12:41Z | 2025-03-20T06:37:09Z | https://github.com/vllm-project/vllm/issues/14659 | [

"bug"

] | luciaganlulu | 1 |

Evil0ctal/Douyin_TikTok_Download_API | api | 313 | [BUG] Can't download video from douyin | I used the sample Python code, and it returned the following error when downloading the video:

URL: https://www.douyin.com/video/6914948781100338440

ERROR

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/Users/jame/Code/home/video/download.py", line 12, in <module>

asyncio.run(hybrid_parsing(url=input("Paste Douyin/TikTok/Bilibili share URL here: ")))

File "/opt/homebrew/Cellar/python@3.11/3.11.5/Frameworks/Python.framework/Versions/3.11/lib/python3.11/asyncio/runners.py", line 190, in run

return runner.run(main)

^^^^^^^^^^^^^^^^

File "/opt/homebrew/Cellar/python@3.11/3.11.5/Frameworks/Python.framework/Versions/3.11/lib/python3.11/asyncio/runners.py", line 118, in run

return self._loop.run_until_complete(task)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/opt/homebrew/Cellar/python@3.11/3.11.5/Frameworks/Python.framework/Versions/3.11/lib/python3.11/asyncio/base_events.py", line 653, in run_until_complete

return future.result()

^^^^^^^^^^^^^^^

File "/Users/jame/Code/home/video/download.py", line 8, in hybrid_parsing

result = await api.hybrid_parsing(url)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/jame/.local/share/virtualenvs/video-hF2q1l9e/lib/python3.11/site-packages/douyin_tiktok_scraper/scraper.py", line 467, in hybrid_parsing

data = await self.get_douyin_video_data(video_id) if url_platform == 'douyin' \

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/jame/.local/share/virtualenvs/video-hF2q1l9e/lib/python3.11/site-packages/tenacity/_asyncio.py", line 88, in async_wrapped

return await fn(*args, **kwargs)

^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/jame/.local/share/virtualenvs/video-hF2q1l9e/lib/python3.11/site-packages/tenacity/_asyncio.py", line 47, in __call__

do = self.iter(retry_state=retry_state)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/jame/.local/share/virtualenvs/video-hF2q1l9e/lib/python3.11/site-packages/tenacity/__init__.py", line 326, in iter

raise retry_exc from fut.exception()

tenacity.RetryError: RetryError[<Future at 0x103763b90 state=finished raised ValueError>] | closed | 2023-11-02T08:57:37Z | 2024-02-07T03:45:27Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/313 | [

"BUG",

"enhancement"

] | nhannguyentrong | 6 |

WZMIAOMIAO/deep-learning-for-image-processing | deep-learning | 72 | predict issue | After training the model, the following error occurs when running predict:

Traceback (most recent call last):

File "/Users/mengxiangyu/Desktop/faster_rcnn/predict.py", line 47, in <module>

model.load_state_dict(torch.load(train_weights)["model"])

File "/Users/mengxiangyu/anaconda3/envs/th1.6/lib/python3.7/site-packages/torch/serialization.py", line 577, in load

with _open_zipfile_reader(opened_file) as opened_zipfile:

File "/Users/mengxiangyu/anaconda3/envs/th1.6/lib/python3.7/site-packages/torch/serialization.py", line 241, in __init__

super(_open_zipfile_reader, self).__init__(torch._C.PyTorchFileReader(name_or_buffer))

RuntimeError: [enforce fail at inline_container.cc:144] . PytorchStreamReader failed reading zip archive: failed finding central directory

What is the cause? Is there a problem with the model being loaded? | closed | 2020-10-27T03:19:56Z | 2020-11-07T03:35:35Z | https://github.com/WZMIAOMIAO/deep-learning-for-image-processing/issues/72 | [] | menggerSherry | 1 |

sammchardy/python-binance | api | 717 | Client.get_all_coins_info() method missing? | **Describe the bug**

The `Client.get_all_coins_info()` method is missing: it does not appear in autocomplete, and calling it raises `AttributeError: 'Client' object has no attribute 'get_all_coins_info'`.

**To Reproduce**

```python

import binance.client as binance

client = binance.Client()

x = client.get_all_coins_info()

```

**Expected behavior**

Expect to see the response as specified in the docs: [link](https://python-binance.readthedocs.io/en/latest/binance.html?highlight=get_all_coins_info#binance.client.Client.get_all_coins_info)

**Environment (please complete the following information):**

- Python version: 3.9.1

- Virtual Env: conda

- OS: Windows 10

- python-binance version: 0.7.5

**Logs or Additional context**

N/A

| open | 2021-03-02T22:27:08Z | 2021-04-07T21:56:17Z | https://github.com/sammchardy/python-binance/issues/717 | [] | BGriffy78 | 4 |

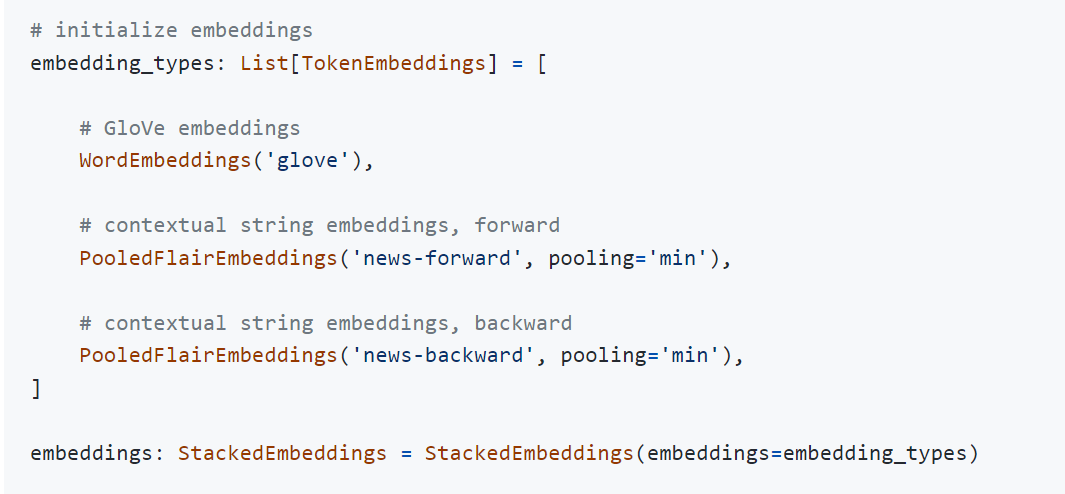

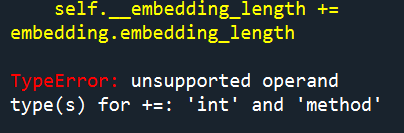

flairNLP/flair | pytorch | 2,742 | StackedEmbeddings with PooledFlairEmbeddings returning TypeError | **Describe the bug**

When running the code given for the best CoNLL-03 English NER configuration on the following page: [link](https://github.com/flairNLP/flair/blob/b3797096742699997e77d12a66c82310361990a4/resources/docs/EXPERIMENTS.md), I get a TypeError (see screenshot). I have tested several embeddings (Word and Flair), and the TypeError only occurs when running with PooledFlairEmbeddings. I have checked the source code where the error is raised (token.py) but could not come up with a solution.

**Screenshots**

**Environment:**

- Windows

- Python 3.10

- flair 0.11

| closed | 2022-04-25T13:36:46Z | 2022-05-04T11:45:41Z | https://github.com/flairNLP/flair/issues/2742 | [

"bug"

] | Nuveyla | 2 |

Lightning-AI/LitServe | rest-api | 286 | Example in documentation on how to set up an OpenAI-spec API with LlamaIndex RAG | ## 🚀 Feature

A new documentation page explaining how to expose a LlamaIndex RAG app through an OpenAI-compatible API.

### Motivation

It took me a good 6 hours to combine these two tutorials: [LlamaIndex RAG API](https://lightning.ai/lightning-ai/studios/deploy-a-private-llama-3-1-rag-api) and [OpenAI spec](https://lightning.ai/docs/litserve/features/open-ai-spec) in order to expose my LlamaIndex app through an OpenAI-spec API. Maybe I'm a bit stupid, but I think this is a fairly common use case, so I wanted to write a new page for LitServe's documentation. I couldn't find the docs source code in this repository, so I'm opening an issue instead.

## Code

### server.py

```python
from simple_llm import SimpleLLM

import litserve as ls


class LlamaIndexAPI(ls.LitAPI):
    def setup(self, device):
        # Build the RAG chat engine once per worker
        self.llm = SimpleLLM()

    def predict(self, messages):
        # Stream tokens back to the client as they are generated
        for token in self.llm.stream(messages):
            yield token


if __name__ == "__main__":
    api = LlamaIndexAPI()
    server = ls.LitServer(api, spec=ls.OpenAISpec(), stream=True)
    server.run(port=8000)
```

### simple_llm.py

```python
from llama_index.llms.openai import OpenAI
from llama_index.core import SimpleDirectoryReader, VectorStoreIndex
from llama_index.core.llms import ChatMessage


class SimpleLLM(object):
    def __init__(self):
        # Index every document found in ./data
        reader = SimpleDirectoryReader(input_dir="data")
        docs = reader.load_data()
        index = VectorStoreIndex.from_documents(docs, show_progress=True)
        llm = OpenAI(model="gpt-4o-mini", temperature=0)
        self.engine = index.as_chat_engine(streaming=True, similarity_top_k=2, llm=llm)

    def stream(self, messages_dict):
        # Convert OpenAI-style message dicts into LlamaIndex ChatMessages
        messages = [
            ChatMessage(
                role=message["role"],
                content=message["content"],
            )
            for message in messages_dict
        ]
        # The last message is the new query; earlier ones are the chat history
        return self.engine.stream_chat(
            messages[-1].content, chat_history=messages[:-1]
        ).response_gen
```

### test.py

```python
from openai import OpenAI

endpoint = "http://localhost:8000/v1"
client = OpenAI(base_url=endpoint, api_key="lit")

response = client.chat.completions.create(
    model="lit",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Give me your favourite colour"},
        {"role": "assistant", "content": "I quite like green."},
        {"role": "user", "content": "Why is it so?."},
    ],
    stream=True,
)

for chunk in response:
    print(chunk.choices[0].delta.content)

``` | closed | 2024-09-22T16:11:01Z | 2024-10-16T09:59:16Z | https://github.com/Lightning-AI/LitServe/issues/286 | [

"enhancement",

"help wanted"

] | PierreMesure | 4 |

lexiforest/curl_cffi | web-scraping | 143 | What does the acurl parameter do, and what does it affect? | What does the acurl parameter do, and what does it affect? | closed | 2023-10-17T20:40:07Z | 2023-10-20T11:21:14Z | https://github.com/lexiforest/curl_cffi/issues/143 | [] | r00t-Taurus | 1 |

kynan/nbstripout | jupyter | 159 | `--dry-run` should exit non-0 if files would be updated | I'm using `nbstripout` as a pre-commit hook with the `--dry-run` option, so that verification fails with a list of files that need to be updated, **without** actually updating them. However, `--dry-run` always exits with `0` (success), so the hook never fails when run with `--dry-run`.