repo_name (string, length 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, length 1-976) | body (string, length 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38-105) | labels (list, length 0-9) | user_login (string, length 1-39) | comments_count (int64, 0-452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

plotly/plotly.py | plotly | 4,395 | plotly express showing Unicode characters with to_json() | I took some code from the plotly examples on the website and ran it in a notebook.

```py

import plotly.express as px

df = px.data.tips()

fig = px.histogram(df, x="total_bill")

fig.show()

(fig.to_json())[:150]

```

The hover text in the image that shows up in the notebook is fine, but when I export the JSON and use it on my website there are Unicode escape sequences present in the JSON:

```

'{"data":[{"alignmentgroup":"True","bingroup":"x","hovertemplate":"total_bill=%{x}\\u003cbr\\u003ecount=%{y}\\u003cextra\\u003e\\u003c\\u002fextra\\u003e","le'

```
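As a minimal sketch of what those escapes mean (the string below is a shortened stand-in for the export, not the full figure JSON): `\u003c`, `\u003e` and `\u002f` are standard JSON escapes for `<`, `>` and `/`, so any JSON parser should turn them back into the original hover template.

```python
import json

# Shortened stand-in for the exported string; same kind of escapes as above.
raw = '{"hovertemplate": "total_bill=%{x}\\u003cbr\\u003ecount=%{y}\\u003cextra\\u003e\\u003c\\u002fextra\\u003e"}'

# Parsing the JSON resolves the \uXXXX escapes into ordinary characters.
decoded = json.loads(raw)
print(decoded["hovertemplate"])
```

If the website parses the string as JSON before handing it to the plotting code, the escapes should not be visible to the end user.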

| closed | 2023-10-25T18:22:38Z | 2024-07-11T17:18:52Z | https://github.com/plotly/plotly.py/issues/4395 | [] | AbdealiLoKo | 1 |

sqlalchemy/sqlalchemy | sqlalchemy | 10,675 | syntax error with mysql bulk update via INSERT ... SELECT ... ON DUPLICATE KEY UPDATE | ### Discussed in https://github.com/sqlalchemy/sqlalchemy/discussions/10514

<div type='discussions-op-text'>

<sup>Originally posted by **anentropic** October 20, 2023</sup>

I am trying to bulk update via an insert on duplicate, but using `from_select` (instead of list of values as seen here https://github.com/sqlalchemy/sqlalchemy/discussions/9328)

I am getting error from mysql like:

```sql

(MySQLdb.ProgrammingError) (1064, "You have an error in your SQL syntax; check the manual that corresponds to

your MySQL server version for the right syntax to use near

'AS new ON DUPLICATE KEY UPDATE dealer_dealer_name = new.dealer_dealer_name, cust' at line 3")

[SQL: INSERT INTO mytable (...) SELECT dealer_dealer_names.value AS dealer_dealer_name, ...

FROM mytable

LEFT OUTER JOIN etl_anonymised_company_name AS dealer_dealer_names ON md5(mytable.dealer_dealer_name) = dealer_dealer_names.`key`

...<more similar joins>...

WHERE mytable.last_modified_date >= %s AND mytable.last_modified_date < %s

ORDER BY mytable.last_modified_date

AS new

ON DUPLICATE KEY UPDATE dealer_dealer_name = new.dealer_dealer_name, ...]

```

my sqlalchemy looks like:

```python

insert_clause = insert(MyTable).from_select(

[col.name for col in insert_columns],

make_select_query(insert_columns),

)

update_query = (

insert_clause.on_duplicate_key_update({

col_name: getattr(insert_clause.inserted, col_name)

for col_name in update_columns

})

)

with engine.begin() as conn:

result = conn.execute(update_query)

```

mysql error seems to imply it doesn't like how sqlalchemy has aliased the select query `AS new` (it's not something I have done explicitly in my `select` instance)

if I print `str(update_query)` it looks different, there's no select alias and the field updates look like `ON DUPLICATE KEY UPDATE dealer_dealer_name = VALUES(dealer_dealer_name)`

I realise this isn't a minimal reproducible case at the moment, just wondered if there's something obvious I should be doing differently</div> | open | 2023-11-22T19:55:10Z | 2023-11-22T19:55:10Z | https://github.com/sqlalchemy/sqlalchemy/issues/10675 | [

"bug",

"mysql",

"PRs (with tests!) welcome",

"dml"

] | CaselIT | 0 |

deepinsight/insightface | pytorch | 2,188 | [ONNXRuntimeError] : 1 : FAIL : Non-zero status code returned while running BatchNormalization node. | I got this error while testing my new model in http://iccv21-mfr.com/ server. I don't know the root of the problem. Is the problem caused by the model to onnx converter or the version of onnxruntime that is on the server? | open | 2022-12-07T01:06:31Z | 2022-12-08T05:33:38Z | https://github.com/deepinsight/insightface/issues/2188 | [] | Sengli11 | 1 |

Kanaries/pygwalker | pandas | 389 | Pygwalker in Streamlit Python 3.9 | Hi,

Has anyone tested pygwalker in streamlit with python 3.9 in amazon redshift?

Locally, the code runs well, but when deployed into the cloud, we get the below error.

All ideas appreciated, thanks!

ModuleNotFoundError: No Module named '_sqlite3'

| closed | 2024-01-10T13:03:50Z | 2024-01-18T00:54:14Z | https://github.com/Kanaries/pygwalker/issues/389 | [

"fixed but needs feedback"

] | ghost | 1 |

errbotio/errbot | automation | 1,216 | Error in the docs reported by Google search index. | http://errbot.io/en/4.2/_modules/errbot/backends/test.html is probably referenced somewhere but points to nothing. | closed | 2018-05-17T11:48:00Z | 2020-01-19T04:30:42Z | https://github.com/errbotio/errbot/issues/1216 | [

"type: documentation"

] | gbin | 1 |

NullArray/AutoSploit | automation | 963 | Divided by zero exception339 | Error: Attempted to divide by zero.339 | closed | 2019-04-19T16:03:52Z | 2019-04-19T16:35:38Z | https://github.com/NullArray/AutoSploit/issues/963 | [] | AutosploitReporter | 0 |

supabase/supabase-py | flask | 395 | Incorrect padding when setting session from URL encoded access_token | **Describe the bug**

I'm trying to handle the redirects for verify-user and password reset. I have grabbed the access_token and refresh_token from the URL, passed it to the function supabase.auth.set_session(access_token, refresh_token) and I immediately get an 'incorrect padding' error.

I can decode the tokens on jwt.io no problem so was confused on the issue. Anyway, GPT4 came up with a custom solution that actually worked:

error:

```

access_token = 'foo'

refresh_token = 'bar'

session = supabase.auth.set_session(access_token, refresh_token)

response = supabase.auth.update_user({"password": new_password})

```

Potential solution:

```

import base64

import json

def custom_decode_jwt_payload(self, token: str):

_, payload, _ = token.split(".")

payload += "=" * (-len(payload) % 4)

payload = base64.urlsafe_b64decode(payload)

return json.loads(payload)

SyncGoTrueClient._decode_jwt = custom_decode_jwt_payload

session = supabase.auth.set_session(access_token, refresh_token)

response = supabase.auth.update_user({"password": new_password})

```
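To illustrate why the padding line matters, here is a standalone sketch that does not use supabase at all. JWT segments are base64url encoded with the trailing `=` stripped, while Python's `base64.urlsafe_b64decode` expects the padding to be present. The helper name `decode_segment` and the sample payload are made up for this example.

```python
import base64
import binascii
import json


def decode_segment(segment: str) -> dict:
    """Decode one unpadded base64url JWT segment into a dict."""
    # Restore the '=' padding that the JWT encoding strips.
    padded = segment + "=" * (-len(segment) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))


# Build an unpadded segment the way a JWT payload is encoded.
payload = {"sub": "user-123", "role": "authenticated"}
segment = base64.urlsafe_b64encode(json.dumps(payload).encode()).decode().rstrip("=")

try:
    base64.urlsafe_b64decode(segment)  # no padding restored
    unpadded_failed = False
except binascii.Error:
    unpadded_failed = True

print(unpadded_failed, decode_segment(segment))
```

The expression `-len(segment) % 4` gives the number of `=` characters needed to reach a multiple of four, which is the same arithmetic as in the workaround above.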

**To Reproduce**

Steps to reproduce the behavior:

```

access_token = 'foo'

refresh_token = 'bar'

session = supabase.auth.set_session(access_token, refresh_token)

response = supabase.auth.update_user({"password": new_password})

```

**Expected behavior**

Expecting a session to be created, but it's erroring with 'incorrect padding'.


| closed | 2023-03-16T12:49:32Z | 2023-09-17T15:12:05Z | https://github.com/supabase/supabase-py/issues/395 | [] | philmade | 2 |

tensorpack/tensorpack | tensorflow | 605 | SyncMultiGPUTrainerReplicated-shared GPUs hang | In short: two tensorpack processes, both using `SyncMultiGPUTrainerReplicated` and two same GPUs, hang.

To reproduce:

1. Choose an example using `SyncMultiGPUTrainerReplicated`, e.g. `tensorpack/examples/ResNet/imagenet-resnet.py`.

To make GPUs sharable, prevent one process from consuming all memory by appending the following snippet at the top of the script:

```python

import tensorflow as tf

tmp_config = tf.ConfigProto()

tmp_config.gpu_options.allow_growth = True

tmp_session = tf.Session(config=tmp_config)

```

2. Run the script twice.

2.a. Run once:

```bash

gpu=0,1; CUDA_VISIBLE_DEVICES=${gpu} python imagenet-resnet.py --gpu ${gpu} --fake

```

2.b. Wait until the first process completes a few epochs, then run the same line again.

As soon as the second process starts its training, both processes hang, making it impossible to kill any of them or free the GPUs' memory and util, without any error report. The only solution is to restart the server.

Don't know if `SyncMultiGPUTrainerReplicated` or the `allow_growth` snippet is misused in the case or it's a bug.

Thanks for any help! | closed | 2018-01-23T15:39:17Z | 2018-06-15T08:05:20Z | https://github.com/tensorpack/tensorpack/issues/605 | [

"upstream issue"

] | arrowrowe | 3 |

adbar/trafilatura | web-scraping | 24 | Only one author extracted, even when there are multiple | Example article: https://www.nytimes.com/2020/10/19/us/politics/trump-ads-biden-election.html

This is authored by _Maggie Haberman, Shane Goldmacher and Michael Crowley_, but trafilatura will only show the first one. They are all in the JSON-LD so I think they should all be extracted, and author should be an array. | closed | 2020-10-22T18:04:34Z | 2020-11-06T15:20:48Z | https://github.com/adbar/trafilatura/issues/24 | [] | atestu | 4 |

man-group/arctic | pandas | 71 | Benchmarking | Hello,

It would be nice to provide some benchmark files.

nose-timer can help: https://github.com/mahmoudimus/nose-timer

Here is an example which can be extended to Arctic:

``` python

import time

import numpy as np

import numpy.ma as ma

import pandas as pd

pd.set_option('max_rows', 10)

pd.set_option('expand_frame_repr', False)

pd.set_option('max_columns', 12)

import pymongo

import monary

import xray

from odo import odo  # needed by Test04OdoPandasDataFrame below

URI_DEFAULT = 'mongodb://127.0.0.1:27017'

N_DEFAULT = 50000

def ticks(N):

idx = pd.date_range('20150101',freq='ms',periods=N)

bids = np.random.uniform(0.8, 1.0, N)

spread = np.random.uniform(0, 0.0001, N)

asks = bids + spread

df_ticks = pd.DataFrame({'Bid': bids, 'Ask': asks}, index=idx)

df_ticks['Symbol'] = 'CUR1/CUR2'

df_ticks = df_ticks.reset_index()

return df_ticks

class Test00Pandas:

@classmethod

def setupClass(cls):

N = N_DEFAULT

cls.df = ticks(N)

def test_01_to_dict_01_records(self):

d = self.df.to_dict('records')

def test_01_to_dict_02_split(self):

d = self.df.to_dict('split')

class Test01PyMongoPandasDataFrame:

"""

PyMongo and Pandas DataFrame

"""

@classmethod

def setupClass(cls):

N = N_DEFAULT

URI = URI_DEFAULT

cls.db_name = 'benchdb_pymongo'

cls.collection_name = 'ticks'

cls.df = ticks(N)

cls.columns = ['Bid', 'Ask']

cls.df = cls.df[cls.columns]

cls.client = pymongo.MongoClient(URI)

cls.client.drop_database(cls.db_name)

cls.collection = cls.client[cls.db_name][cls.collection_name]

def setUp(self):

pass

def tearDown(self):

pass

def test_01_store(self):

print(self.df)

self.collection.insert_many(self.df.to_dict('records'))

#time.sleep(2)

def test_02_retrieve(self):

df_retrieved = pd.DataFrame(list(self.client[self.db_name][self.collection_name].find()))

print(df_retrieved)

class Test02MonaryPandasDataFrame:

"""

Monary and Pandas DataFrame

"""

@classmethod

def setupClass(cls):

N = N_DEFAULT

URI = URI_DEFAULT

cls.db_name = 'benchdb_monary'

cls.collection_name = 'ticks'

cls.df = ticks(N)

cls.columns = ['Bid', 'Ask']

cls._client = pymongo.MongoClient(URI)

cls._client.drop_database(cls.db_name)

cls.m = monary.Monary(URI)

def test_01_store(self):

#ma.masked_array(self.df['Symbol'].values, self.df['Symbol'].isnull()),

mparams = monary.MonaryParam.from_lists([

ma.masked_array(self.df['Bid'].values, self.df['Bid'].isnull()),

ma.masked_array(self.df['Ask'].values, self.df['Ask'].isnull())],

self.columns)

self.m.insert(self.db_name, self.collection_name, mparams)

def test_02_retrieve(self):

arrays = self.m.query(self.db_name, self.collection_name, {}, self.columns, ['float64', 'float64'])

print(arrays)

df_retrieved = pd.DataFrame(arrays)

print(df_retrieved)

class Test03MonaryXrayDataset:

"""

Monary and xray

https://bitbucket.org/djcbeach/monary/issues/21/use-xraydataset-with-monary

"""

@classmethod

def setupClass(cls):

N = N_DEFAULT

URI = URI_DEFAULT

cls.db_name = 'benchdb_monary_xray'

cls.collection_name = 'ticks'

cls._df = ticks(N)

cls.ds = xray.Dataset.from_dataframe(cls._df)

cls.columns = ['Bid', 'Ask']

cls.ds = cls.ds[cls.columns]

cls._client = pymongo.MongoClient(URI)

cls._client.drop_database(cls.db_name)

cls.m = monary.Monary(URI)

def test_01_store(self):

lst_cols = list(map(lambda col: self.ds[col].to_masked_array(), self.ds.data_vars))

mparams = monary.MonaryParam.from_lists(lst_cols, list(self.ds.data_vars), ['float64', 'float64'])

self.m.insert(self.db_name, self.collection_name, mparams)

class Test04OdoPandasDataFrame:

"""

Pandas DataFrame and odo

"""

@classmethod

def setupClass(cls):

N = N_DEFAULT

URI = URI_DEFAULT

cls.db_name = 'benchdb_odo'

cls.collection_name = 'ticks'

cls.df = ticks(N)

cls.columns = ['Bid', 'Ask']

cls.df = cls.df[cls.columns]

cls.client = pymongo.MongoClient(URI)

cls.client.drop_database(cls.db_name)

cls.collection = cls.client[cls.db_name][cls.collection_name]

def test_01_store(self):

odo(self.df, self.collection)

def test_02_retrieve(self):

df_retrieved = odo(self.collection, pd.DataFrame)

```

it shows:

```

test_mongodb.Test02MonaryPandasDataFrame.test_02_retrieve: 3.7676s

test_mongodb.Test01PyMongoPandasDataFrameToDictRecords.test_01_store: 3.1900s

test_mongodb.Test04OdoPandasDataFrame.test_01_store: 3.0213s

test_mongodb.Test00Pandas.test_01_to_dict_01_records: 1.6180s

test_mongodb.Test02MonaryPandasDataFrame.test_01_store: 1.3025s

test_mongodb.Test03MonaryXrayDataset.test_01_store: 1.2680s

test_mongodb.Test00Pandas.test_01_to_dict_02_split: 1.2489s

test_mongodb.Test01PyMongoPandasDataFrameToDictRecords.test_02_retrieve: 0.5064s

test_mongodb.Test04OdoPandasDataFrame.test_02_retrieve: 0.4867s

```

Pandas uses vbench https://github.com/pydata/vbench

| closed | 2015-12-27T11:03:30Z | 2016-04-29T12:08:58Z | https://github.com/man-group/arctic/issues/71 | [

"enhancement"

] | femtotrader | 3 |

microsoft/unilm | nlp | 1,526 | How to perform inference on a single image using fine-tuned LayoutLMv3 model? | I have fine-tuned a LayoutLMv3 model and now I want to utilize it for layout analysis and information extraction on a single image. I have successfully trained this model, but I'm facing some difficulties during the inference phase.

| open | 2024-04-19T09:00:11Z | 2024-07-26T06:09:08Z | https://github.com/microsoft/unilm/issues/1526 | [] | laminggg | 1 |

reiinakano/scikit-plot | scikit-learn | 102 | Add numerical digit precision parameter | Hi there,

I was wondering if there is a way of defining the digit numerical precision of values such as roc_auc.

To see what I mean, let me point you to `sklearn` API such as for [Classification Report](https://scikit-learn.org/stable/modules/generated/sklearn.metrics.classification_report.html), where the parameter `digits` defines to what precision the values are presented.

This is especially important, for example, when one is training classifiers that are already in the top, say, +99.5% of accuracy/precision/recall/auc and we want to study differences amongst classifiers that are competing at the 0.1% level.

Namely, I noticed that digit precision is not consistent throughout `scikit-plot`: `roc_auc` presents three-digit precision, while `precision_recall` presents four-digit precision.

As you can imagine, for scientific publication purposes it's a bit *inelegant* to present bound metrics with different precision.
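A rough sketch of what such a parameter could look like is below. The function `format_metric` and its `digits` argument are hypothetical and only mirror the naming used by `classification_report`; they are not part of `scikit-plot`.

```python
def format_metric(name: str, value: float, digits: int = 3) -> str:
    """Format a metric label with a caller-chosen number of decimals."""
    # The nested format spec lets `digits` control the precision at call time.
    return f"{name} = {value:.{digits}f}"


print(format_metric("ROC AUC", 0.99512))
print(format_metric("ROC AUC", 0.99512, digits=4))
```

With one shared `digits` value passed to every plotting function, all legends would show the same precision.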

Thanks! | open | 2019-05-31T07:51:25Z | 2019-07-08T10:02:12Z | https://github.com/reiinakano/scikit-plot/issues/102 | [

"enhancement",

"help wanted"

] | romanovzky | 1 |

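AirtestProject/Airtest | automation | 1,206 | Airtest iOS connection API: connect_device cannot connect to multiple iOS devices | When an Airtest script calls the connect_device API for an iOS device, it seems only 127.0.0.1:8100 can be used. Is there no way to connect to multiple iOS devices? After starting WDA on several iOS devices with `tidevice -u uuid wdaproxy`, each device's WDA port is mapped to a different port on the macOS machine. Passing 127.0.0.1 with each of those ports to connect_device still connects to the device at 127.0.0.1:8100 every time, so multiple devices cannot be connected. | open | 2024-04-16T10:09:16Z | 2024-04-26T07:02:17Z | https://github.com/AirtestProject/Airtest/issues/1206 | [] | csushiye | 4 |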

daleroberts/itermplot | matplotlib | 28 | Pandas | I was trying to follow along with the pandas plot tutorial, but none of the examples work.

Perhaps Pandas is not supported?

[pandas visualization tutorial](https://pandas.pydata.org/pandas-docs/version/0.18.1/visualization.html) | closed | 2017-11-25T15:17:12Z | 2021-09-07T13:27:42Z | https://github.com/daleroberts/itermplot/issues/28 | [] | michaelfresco | 3 |

csurfer/pyheat | matplotlib | 7 | Integration with Jupyter Notebooks | It would be really cool to integrate this within Jupyter notebooks through a magic command:

| closed | 2017-02-17T13:17:58Z | 2017-08-19T02:21:12Z | https://github.com/csurfer/pyheat/issues/7 | [

"enhancement"

] | ozroc | 2 |

mirumee/ariadne | api | 165 | Propose a pub/sub contract for resolvers and subscriptions | I propose that we propose a contract/interface that could be implemented over different transports to aid application authors with using pub/sub and observing changes.

I imagine the common pattern would be similar to the one below:

```python

from graphql.pyutils import EventEmitter, EventEmitterAsyncIterator

class PubSub:

def __init__(self):

self.emitter = EventEmitter()

def subscribe(self, event_type):

return EventEmitterAsyncIterator(self.emitter, event_type)

async def publish(self, event_type, message):

raise NotImplementedError()

```

A dummy implementation (useful for local development) could hook the `publish` method right into the emitter:

```python

class DummyPubSub(PubSub):

async def publish(self, event_type, message):

self.emitter.emit(event_type, message)

```
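For comparison, the same contract can be sketched with only the standard library, which may be handy for trying the idea without `graphql.pyutils`. The class name `QueuePubSub` and its internals are assumptions made for this illustration, not an existing Ariadne API.

```python
import asyncio
from collections import defaultdict


class QueuePubSub:
    """Hypothetical in-process pub/sub built on asyncio.Queue."""

    def __init__(self):
        # Maps each event type to the queues of its active subscribers.
        self._subscribers = defaultdict(set)

    def subscribe(self, event_type):
        # Register eagerly so nothing published after this call is missed.
        queue = asyncio.Queue()
        self._subscribers[event_type].add(queue)
        return self._iterate(event_type, queue)

    async def _iterate(self, event_type, queue):
        try:
            while True:
                yield await queue.get()
        finally:
            # Runs when the async generator is closed.
            self._subscribers[event_type].discard(queue)

    async def publish(self, event_type, message):
        for queue in list(self._subscribers[event_type]):
            queue.put_nowait(message)


async def demo():
    pubsub = QueuePubSub()
    stream = pubsub.subscribe("product_updated")
    await pubsub.publish("product_updated", 1)
    await pubsub.publish("product_updated", 2)
    received = [await stream.__anext__(), await stream.__anext__()]
    await stream.aclose()
    return received
```

A subscription resolver could return `pubsub.subscribe(...)` directly, since the result is an async iterator.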

Another implementation could hook it to a Redis server:

```python

import asyncio

import aioredis

async def start_listening(redis, channel, emitter):

listener = await redis.subscribe(channel)

while (await listener.wait_message()):

data = await listener.get_json()

event_type, message = data

emitter.emit(event_type, message)

async def stop_listening(redis, channel):

await redis.unsubscribe(channel)

class RedisPubSub(PubSub):

def __init__(self, redis, channel):

super().__init__()

self.redis = redis

self.channel = channel

asyncio.ensure_future(start_listening(self.redis, self.channel, self.emitter))

def __del__(self):

asyncio.ensure_future(stop_listening(self.redis, self.channel))

async def publish(self, event_type, message):

await self.redis.publish_json(self.channel, [event_type, message])

```

Similar implementations could be written for AWS SNS+SQS, Google Cloud Pub/Sub, etc.

The tricky part is how to help with passing the object between resolvers and subscriptions. I think the most natural way would be to add it to the context. If we can come up with a standard name then it's easy to write a decorator that automatically unpacks it into a keyword argument:

```python

@mutation.field("updateProduct")

@with_pubsub

def resolve_update_product(parent, info, pubsub):

...

pubsub.publish("product_updated", product.id)

``` | closed | 2019-05-08T16:03:09Z | 2024-01-23T17:43:34Z | https://github.com/mirumee/ariadne/issues/165 | [

"enhancement",

"decision needed"

] | patrys | 3 |

deeppavlov/DeepPavlov | tensorflow | 857 | Download weights from command line | Hi,

Is there a way to download the weights from command line?

For example, when I do `python -m deeppavlov install squad_bert`, it only downloads the code, not the weights.

Lucas | closed | 2019-05-29T09:35:30Z | 2019-05-29T09:47:08Z | https://github.com/deeppavlov/DeepPavlov/issues/857 | [] | lcswillems | 5 |

sqlalchemy/alembic | sqlalchemy | 1,096 | Bug in docs example throws Error InvalidSchemaName even if the schema name is valid and exists. | **Describe the bug**

Alembic tenant is not case sensitive and, if a schema name has uppercase chars, it throws an error (schema not found).

I have used this [alembic tutorial (cookbook) on using support for multiple schemas in Postgres](https://alembic.sqlalchemy.org/en/latest/cookbook.html#rudimental-schema-level-multi-tenancy-for-postgresql-databases).

I use Postgres and my schema name is `'John'`.

If I run `alembic -x tenant=John upgrade head` I get an error:

`sqlalchemy.exc.ProgrammingError: (psycopg2.errors.InvalidSchemaName) no schema has been selected to create in...`

It works if my schema name is lowercase `'john'` and I run `alembic -x tenant=john upgrade head`.

**How to fix it:**

In this line of the cookbook example:

`connection.execute("set search_path to %s" % current_tenant)`

wrap the `%s` into quotes or double quotes like this:

`connection.execute("set search_path to '%s'" % current_tenant)`

or this

`connection.execute('set search_path to "%s"' % current_tenant)`

Solution hint:

This [answer](https://dba.stackexchange.com/a/195560/200208) on stackexchange.
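A slightly more defensive variant of the double-quote fix is sketched below. PostgreSQL folds unquoted identifiers to lowercase, so the schema name has to be double-quoted to keep its case, and any double quote inside the name has to be doubled. The helper names `quote_ident` and `search_path_statement` are made up for this example and are not part of Alembic or SQLAlchemy.

```python
def quote_ident(name: str) -> str:
    """Double-quote a PostgreSQL identifier so its case is preserved."""
    # Embedded double quotes are escaped by doubling them.
    return '"' + name.replace('"', '""') + '"'


def search_path_statement(tenant: str) -> str:
    """Build the statement that selects the tenant schema."""
    return "SET search_path TO " + quote_ident(tenant)


print(search_path_statement("John"))
```

The resulting string would be passed to `connection.execute(...)` in place of the unquoted `"set search_path to %s" % current_tenant`.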

**Expected behavior**

The tenant (schema name) should be case sensitive.

**To Reproduce**

Use this [alembic tutorial (cookbook) on using support for multiple schemas in Postgres](https://alembic.sqlalchemy.org/en/latest/cookbook.html#rudimental-schema-level-multi-tenancy-for-postgresql-databases).

To get an error:

Create a Postgres schema named `'John'`.

Run `alembic -x tenant=John upgrade head`

To succeed:

Create a Postgres schema named `'john'`.

Run `alembic -x tenant=john upgrade head`

**Error**

```

(venv) PS C:\Users\Asus\Desktop\kerp_dev\backend\apps> alembic -x tenant=John upgrade head

INFO [alembic.runtime.migration] Context impl PostgresqlImpl.

INFO [alembic.runtime.migration] Will assume transactional DDL.

Traceback (most recent call last):

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 1802, in _execute_context

self.dialect.do_execute(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\default.py", line 732, in do_execute

cursor.execute(statement, parameters)

psycopg2.errors.InvalidSchemaName: no schema has been selected to create in

LINE 2: CREATE TABLE alembic_version (

^

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "C:\Users\Asus\AppData\Local\Programs\Python\Python310\lib\runpy.py", line 196, in _run_module_as_main

return _run_code(code, main_globals, None,

File "C:\Users\Asus\AppData\Local\Programs\Python\Python310\lib\runpy.py", line 86, in _run_code

exec(code, run_globals)

File "C:\Users\Asus\Desktop\kerp_dev\venv\Scripts\alembic.exe\__main__.py", line 7, in <module>

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\config.py", line 590, in main

CommandLine(prog=prog).main(argv=argv)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\config.py", line 584, in main

self.run_cmd(cfg, options)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\config.py", line 561, in run_cmd

fn(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\command.py", line 322, in upgrade

script.run_env()

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\script\base.py", line 569, in run_env

util.load_python_file(self.dir, "env.py")

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\util\pyfiles.py", line 94, in load_python_file

module = load_module_py(module_id, path)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\util\pyfiles.py", line 110, in load_module_py

spec.loader.exec_module(module) # type: ignore

File "<frozen importlib._bootstrap_external>", line 883, in exec_module

File "<frozen importlib._bootstrap>", line 241, in _call_with_frames_removed

File "C:\Users\Asus\Desktop\kerp_dev\backend\apps\alembic\env.py", line 99, in <module>

run_migrations_online()

File "C:\Users\Asus\Desktop\kerp_dev\backend\apps\alembic\env.py", line 93, in run_migrations_online

context.run_migrations()

File "<string>", line 8, in run_migrations

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\runtime\environment.py", line 853, in run_migrations

self.get_context().run_migrations(**kw)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\runtime\migration.py", line 606, in run_migrations

self._ensure_version_table()

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\alembic\runtime\migration.py", line 542, in _ensure_version_table

self._version.create(self.connection, checkfirst=True)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\sql\schema.py", line 950, in create

bind._run_ddl_visitor(ddl.SchemaGenerator, self, checkfirst=checkfirst)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 2113, in _run_ddl_visitor

visitorcallable(self.dialect, self, **kwargs).traverse_single(element)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\sql\visitors.py", line 524, in traverse_single

return meth(obj, **kw)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\sql\ddl.py", line 893, in visit_table

self.connection.execute(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 1289, in execute

return meth(self, multiparams, params, _EMPTY_EXECUTION_OPTS)

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\sql\ddl.py", line 80, in _execute_on_connection

return connection._execute_ddl(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 1381, in _execute_ddl

ret = self._execute_context(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 1845, in _execute_context

self._handle_dbapi_exception(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 2026, in _handle_dbapi_exception

util.raise_(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\util\compat.py", line 207, in raise_

raise exception

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\base.py", line 1802, in _execute_context

self.dialect.do_execute(

File "C:\Users\Asus\Desktop\kerp_dev\venv\lib\site-packages\sqlalchemy\engine\default.py", line 732, in do_execute

cursor.execute(statement, parameters)

sqlalchemy.exc.ProgrammingError: (psycopg2.errors.InvalidSchemaName) no schema has been selected to create in

LINE 2: CREATE TABLE alembic_version (

^

[SQL:

CREATE TABLE alembic_version (

version_num VARCHAR(32) NOT NULL,

CONSTRAINT alembic_version_pkc PRIMARY KEY (version_num)

)

]

(Background on this error at: https://sqlalche.me/e/14/f405)

```

**Versions.**

- OS: Windows 11 (build 22000.1042)

- Python: 3.10.7

- Alembic: 1.8.1

- SQLAlchemy: 1.4.29

- Database: Postgres 14

- DBAPI: psycopg2

| closed | 2022-10-09T10:58:11Z | 2022-10-17T12:53:35Z | https://github.com/sqlalchemy/alembic/issues/1096 | [

"bug",

"documentation"

] | alispa | 3 |

seleniumbase/SeleniumBase | pytest | 2,724 | SSL Errors on MacOS when downloading chromedriver | Running Sonoma 14.4.1, reset to factory defaults, with python 3.12.2

The error:

`ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1000)`

Running `certifi.where()` yields the cacert.pem

`<redacted for length>/lib/python3.12/site-packages/certifi/cacert.pem`

which (seems to) have valid certs upon visual inspection.
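For reference, a minimal sketch of how the certifi bundle can be handed to `urllib` explicitly is shown below. It only illustrates the standard-library side and is not how SeleniumBase itself downloads the driver; the function names `make_context` and `fetch` are made up, and certifi is assumed to be installed.

```python
import ssl
import urllib.request

try:
    import certifi  # third-party; assumed installed, as in the report above

    CA_FILE = certifi.where()
except ImportError:
    CA_FILE = None  # fall back to the system trust store


def make_context() -> ssl.SSLContext:
    """Create a verifying SSL context that trusts the certifi CA bundle."""
    return ssl.create_default_context(cafile=CA_FILE)


def fetch(url: str, timeout: float = 10) -> bytes:
    """Download a URL while verifying certificates against CA_FILE."""
    with urllib.request.urlopen(url, timeout=timeout, context=make_context()) as response:
        return response.read()
```

If `fetch` succeeds for a URL where a plain `urlopen` fails, the interpreter is probably not finding a CA bundle on its own.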

When I build the app bundle with Py2app, and then run the executable inside

<details>

<summary>I get this traceback</summary>

```python

Warning: uc_driver not found. Getting it now:

*** chromedriver to download = 124.0.6367.91 (Latest Stable)

Traceback (most recent call last):

File "urllib/request.pyc", line 1344, in do_open

File "http/client.pyc", line 1331, in request

File "http/client.pyc", line 1377, in _send_request

File "http/client.pyc", line 1326, in endheaders

File "http/client.pyc", line 1085, in _send_output

File "http/client.pyc", line 1029, in send

File "http/client.pyc", line 1472, in connect

File "ssl.pyc", line 455, in wrap_socket

File "ssl.pyc", line 1042, in _create

File "ssl.pyc", line 1320, in do_handshake

ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1000)

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "seleniumbase/core/browser_launcher.pyc", line 3540, in get_local_driver

File "seleniumbase/undetected/__init__.pyc", line 130, in __init__

File "seleniumbase/undetected/patcher.pyc", line 108, in auto

File "seleniumbase/undetected/patcher.pyc", line 126, in fetch_release_number

File "urllib/request.pyc", line 215, in urlopen

File "urllib/request.pyc", line 515, in open

File "urllib/request.pyc", line 532, in _open

File "urllib/request.pyc", line 492, in _call_chain

File "urllib/request.pyc", line 1392, in https_open

File "urllib/request.pyc", line 1347, in do_open

urllib.error.URLError: <urlopen error [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1000)>

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/dist/mac_shytest.app/Contents/Resources/__boot__.py", line 161, in <module>

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/dist/mac_shytest.app/Contents/Resources/__boot__.py", line 84, in _run

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/dist/mac_shytest.app/Contents/Resources/mac_shytest.py", line 5, in <module>

File "seleniumbase/plugins/driver_manager.pyc", line 516, in Driver

File "seleniumbase/core/browser_launcher.pyc", line 1632, in get_driver

File "seleniumbase/core/browser_launcher.pyc", line 3552, in get_local_driver

TypeError: argument of type 'SSLCertVerificationError' is not iterable

```

</details>

I noticed it error'd after running `undetected/patcher`, so I created a patcher object and ran:

```Python

firsttry = Patcher(version_main=0, force=False, executable_path=None)

firsttry.auto()

```

<details>

<summary>which yields this traceback</summary>

```python

Traceback (most recent call last):

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 1344, in do_open

h.request(req.get_method(), req.selector, req.data, headers,

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/http/client.py", line 1331, in request

self._send_request(method, url, body, headers, encode_chunked)

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/http/client.py", line 1377, in _send_request

self.endheaders(body, encode_chunked=encode_chunked)

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/http/client.py", line 1326, in endheaders

self._send_output(message_body, encode_chunked=encode_chunked)

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/http/client.py", line 1085, in _send_output

self.send(msg)

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/http/client.py", line 1029, in send

self.connect()

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/http/client.py", line 1472, in connect

self.sock = self._context.wrap_socket(self.sock,

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/ssl.py", line 455, in wrap_socket

return self.sslsocket_class._create(

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/ssl.py", line 1042, in _create

self.do_handshake()

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/ssl.py", line 1320, in do_handshake

self._sslobj.do_handshake()

ssl.SSLCertVerificationError: [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1000)

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/test1.py", line 306, in <module>

firsttry.auto()

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/test1.py", line 108, in auto

release = self.fetch_release_number()

^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/test1.py", line 126, in fetch_release_number

return urlopen(self.url_repo + path).read().decode()

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 215, in urlopen

return opener.open(url, data, timeout)

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 515, in open

response = self._open(req, data)

^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 532, in _open

result = self._call_chain(self.handle_open, protocol, protocol +

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 492, in _call_chain

result = func(*args)

^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 1392, in https_open

return self.do_open(http.client.HTTPSConnection, req,

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

File "/Library/Frameworks/Python.framework/Versions/3.12/lib/python3.12/urllib/request.py", line 1347, in do_open

raise URLError(err)

urllib.error.URLError: <urlopen error [SSL: CERTIFICATE_VERIFY_FAILED] certificate verify failed: unable to get local issuer certificate (_ssl.c:1000)>

Exception ignored in: <function Patcher.__del__ at 0x1027c8b80>

Traceback (most recent call last):

File "/Users/dylan/PycharmProjects/shy_drivers/apptest/test1.py", line 283, in __del__

AttributeError: 'NoneType' object has no attribute 'monotonic'

```

</details>
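
The traceback ends in `CERTIFICATE_VERIFY_FAILED ... unable to get local issuer certificate`, raised from a plain `urlopen(...)` call, which may mean this Python installation cannot find a CA bundle. The following is a minimal sketch of passing an explicit, verified SSL context to `urlopen`; it is not SeleniumBase's or the patcher's actual code. The names `make_verified_context` and `fetch_text` are hypothetical, and `cafile` is an assumed path to a CA bundle supplied by the caller.

```python
import ssl
from urllib.request import urlopen


def make_verified_context(cafile=None):
    """Build an SSL context that still verifies certificates.

    cafile is an optional path to a CA bundle (an assumption for this
    sketch); with None, the system default trust store is used.
    """
    return ssl.create_default_context(cafile=cafile)


def fetch_text(url, cafile=None, timeout=10):
    """Fetch a URL as text using the verified context (hypothetical helper)."""
    context = make_verified_context(cafile)
    with urlopen(url, context=context, timeout=timeout) as response:
        return response.read().decode()
```

Verification stays enabled in this sketch; only the source of trusted certificates changes.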

| closed | 2024-04-28T21:09:59Z | 2024-04-29T00:49:28Z | https://github.com/seleniumbase/SeleniumBase/issues/2724 | [

"external",

"can't reproduce",

"UC Mode / CDP Mode"

] | Dylgod | 1 |

timkpaine/lantern | plotly | 172 | add "superstore" like random data | closed | 2018-09-19T16:37:43Z | 2018-09-19T21:03:14Z | https://github.com/timkpaine/lantern/issues/172 | [

"feature",

"datasets"

] | timkpaine | 0 | |

pyqtgraph/pyqtgraph | numpy | 3,267 | ParameterTree drop-down list shows up blank | <!-- In the following, please describe your issue in detail! -->

<!-- If some sections do not apply, just remove them. -->

### Short description

<!-- This should summarize the issue. -->

If I configure a ParameterTree parameter as a drop-down menu with a pre-defined list and a default value, the drop-down shows up empty.

### Code to reproduce

<!-- Please provide a minimal working example that reproduces the issue in the code block below.

Ideally, this should be a full example someone else could run without additional setup. -->

```python
import sys

from PyQt5.QtWidgets import QApplication, QMainWindow, QVBoxLayout, QWidget
import pyqtgraph as pg
from pyqtgraph.parametertree import Parameter, ParameterTree


class ParameterTreeApp(QMainWindow):
    def __init__(self):
        super().__init__()

        # Set up the main window
        self.setGeometry(100, 100, 400, 300)

        # Create a central widget and layout
        central_widget = QWidget()
        layout = QVBoxLayout()
        central_widget.setLayout(layout)
        self.setCentralWidget(central_widget)

        # Define parameters with a drop-down menu
        params = [
            {'name': 'Select Item', 'type': 'list', 'values': ['Option 1', 'Option 2', 'Option 3'], 'value': 'Option 1'},
        ]

        # Create a Parameter object
        self.parameter = Parameter.create(name='params', type='group', children=params)

        # Create a ParameterTree and set the parameters
        self.parameter_tree = ParameterTree()
        self.parameter_tree.setParameters(self.parameter, showTop=False)

        # Add the ParameterTree to the layout
        layout.addWidget(self.parameter_tree)


if __name__ == '__main__':
    app = QApplication(sys.argv)
    window = ParameterTreeApp()
    window.show()
    sys.exit(app.exec_())
```

### Expected behavior

The drop-down should default to "Option 1" and list the options "Option 1", "Option 2", and "Option 3".

### Real behavior

The drop-down is empty, with no default value set.

<img width="398" alt="Image" src="https://github.com/user-attachments/assets/82ea6bc2-8022-49cd-a4fd-05276e1348cb" />

### Tested environment(s)

* PyQtGraph version: 0.13.7

* PyQt: 5.15.9

* Python version: 3.12.9

* Operating system: Red Hat 8

* Installation method: conda
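
One possible explanation, offered as an assumption rather than a confirmed diagnosis: recent pyqtgraph releases appear to read the choices of a `list` parameter from the `limits` key, so a definition that only sets `values` may end up with no choices. The sketch below shows the parameter definition under that assumption, plus a small hypothetical helper (`default_is_selectable`) that checks the default is among the choices before the tree is built.

```python
# Assumption: the 'list' parameter type takes its choices from 'limits'.
OPTIONS = ['Option 1', 'Option 2', 'Option 3']

params = [
    {'name': 'Select Item', 'type': 'list', 'limits': OPTIONS, 'value': OPTIONS[0]},
]


def default_is_selectable(param):
    """Return True if the default value is one of the declared choices."""
    return param['value'] in param.get('limits', [])
```

If this assumption holds, passing `params` to `Parameter.create(name='params', type='group', children=params)` as in the reproduction above would be the only other change needed.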

| closed | 2025-02-27T16:57:39Z | 2025-02-27T19:09:40Z | https://github.com/pyqtgraph/pyqtgraph/issues/3267 | [] | echandler-anl | 2 |

sigmavirus24/github3.py | rest-api | 892 | branch.protect returns 404 instead of bool value | **Issue type**: bug

------

**Versions**

- Python 2.7

- pip 18.1

- github3.py 1.2.0

- requests 2.19.1

- uritemplate 0.3.0,

- python-dateutil 2.7.3

------

**Traceback**:

```

Traceback (most recent call last):

....

status_checks=['required_pull_request_reviews'])

File "/home/vozniak/projects/github/virtualenv/local/lib/python2.7/site-packages/github3/decorators.py", line 30, in auth_wrapper

return func(self, *args, **kwargs)

File "/home/vozniak/projects/github/virtualenv/local/lib/python2.7/site-packages/github3/repos/branch.py", line 116, in protect

json = self._json(resp, 200)

File "/home/vozniak/projects/github/virtualenv/local/lib/python2.7/site-packages/github3/models.py", line 156, in _json

raise exceptions.error_for(response)

github3.exceptions.NotFoundError: 404 Not Found

```

------

**Description**:

When trying to protect a branch, I get a 404 error on this line:

https://github.com/sigmavirus24/github3.py/blob/master/src/github3/repos/branch.py#L116

reproducible example:

```python

master_branch.protect(enforcement='off', status_checks=['required_pull_request_reviews'])

```

------

*Generated with github3.py using the report_issue script*

| closed | 2018-10-08T13:05:13Z | 2021-11-01T01:08:44Z | https://github.com/sigmavirus24/github3.py/issues/892 | [] | VolVoz | 7 |

arnaudmiribel/streamlit-extras | streamlit | 21 | Add mentions | As in https://playground.streamlitapp.com/?q=github-mention

Worth trying with other pages, other social networks too | closed | 2022-09-22T08:17:31Z | 2022-09-22T19:37:30Z | https://github.com/arnaudmiribel/streamlit-extras/issues/21 | [

"enhancement"

] | arnaudmiribel | 0 |

OFA-Sys/Chinese-CLIP | computer-vision | 278 | Error when running the sh script: RuntimeError: NCCL error in: ../torch/lib/c10d/ProcessGroupNCCL.cpp:911, unhandled system error, NCCL version 2.7.8 | (CLIP) fumon@LAPTOP-2S5HFEN5:~/Chinese-CLIP-master/Chinese-CLIP-master$ bash run_scripts/muge_finetune_vit-b-16_rbt-base.sh DATAPATH

/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/launch.py:178: FutureWarning: The module torch.distributed.launch is deprecated

and will be removed in future. Use torch.distributed.run.

Note that --use_env is set by default in torch.distributed.run.

If your script expects `--local_rank` argument to be set, please

change it to read from `os.environ['LOCAL_RANK']` instead. See

https://pytorch.org/docs/stable/distributed.html#launch-utility for

further instructions

warnings.warn(

Loading vision model config from cn_clip/clip/model_configs/RN50.json

Loading text model config from cn_clip/clip/model_configs/RBT3-chinese.json

Traceback (most recent call last):

File "cn_clip/training/main.py", line 350, in <module>

main()

File "cn_clip/training/main.py", line 135, in main

model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_device_rank], find_unused_parameters=find_unused_parameters)

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/nn/parallel/distributed.py", line 496, in __init__

dist._verify_model_across_ranks(self.process_group, parameters)

RuntimeError: NCCL error in: ../torch/lib/c10d/ProcessGroupNCCL.cpp:911, unhandled system error, NCCL version 2.7.8

ncclSystemError: System call (socket, malloc, munmap, etc) failed.

ERROR:torch.distributed.elastic.multiprocessing.api:failed (exitcode: 1) local_rank: 0 (pid: 28405) of binary: /home/fumon/anaconda3/envs/CLIP/bin/python

Traceback (most recent call last):

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/runpy.py", line 192, in _run_module_as_main

return _run_code(code, main_globals, None,

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/launch.py", line 193, in <module>

main()

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/launch.py", line 189, in main

launch(args)

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/launch.py", line 174, in launch

run(args)

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/run.py", line 689, in run

elastic_launch(

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/launcher/api.py", line 116, in __call__

return launch_agent(self._config, self._entrypoint, list(args))

File "/home/fumon/anaconda3/envs/CLIP/lib/python3.8/site-packages/torch/distributed/launcher/api.py", line 244, in launch_agent

raise ChildFailedError(

torch.distributed.elastic.multiprocessing.errors.ChildFailedError:

cn_clip/training/main.py FAILED

Root Cause:

[0]:

time: 2024-03-26_21:46:34

rank: 0 (local_rank: 0)

exitcode: 1 (pid: 28405)

error_file: <N/A>

msg: "Process failed with exitcode 1"

Other Failures:

<NO_OTHER_FAILURES>

| open | 2024-03-25T14:24:00Z | 2024-03-26T16:25:44Z | https://github.com/OFA-Sys/Chinese-CLIP/issues/278 | [] | Fumon554 | 0 |

kennethreitz/responder | graphql | 242 | Ability to modify swagger strings | The built-in openapi support is great! Kudos to that. However, it would be nice if there were ways to modify more swagger strings such as page title, `default`, `description` etc. Not a very important feature but would be nice to have to make swagger docs more customizable.

I am thinking of passing common variables to `responder.API`, alongside the already existing "title" and "version"? | closed | 2018-11-20T09:42:18Z | 2019-03-13T00:22:21Z | https://github.com/kennethreitz/responder/issues/242 | [

"good first issue"

] | here0to0learn | 3 |

deeppavlov/DeepPavlov | tensorflow | 812 | Dialogue Bot for goal-oriented task issue. | from deeppavlov import build_model, configs

bot1 = build_model(configs.go_bot.gobot_dstc2, download=True)

----------------------------------------------------------------------

2019-04-22 06:18:37.365 INFO in 'deeppavlov.core.data.utils'['utils'] at line 63: Downloading from http://files.deeppavlov.ai/datasets/dstc2_v2.tar.gz to /root/.deeppavlov/downloads/dstc2_v2.tar.gz

100%|██████████| 506k/506k [00:00<00:00, 743kB/s]

2019-04-22 06:18:38.53 INFO in 'deeppavlov.core.data.utils'['utils'] at line 201: Extracting /root/.deeppavlov/downloads/dstc2_v2.tar.gz archive into /root/.deeppavlov/downloads/dstc2

2019-04-22 06:18:38.681 INFO in 'deeppavlov.core.data.utils'['utils'] at line 63: Downloading from http://files.deeppavlov.ai/embeddings/glove.6B.100d.txt to /root/.deeppavlov/downloads/embeddings/glove.6B.100d.txt

347MB [00:20, 17.1MB/s]

2019-04-22 06:18:59.505 INFO in 'deeppavlov.core.data.utils'['utils'] at line 63: Downloading from http://files.deeppavlov.ai/deeppavlov_data/slotfill_dstc2.tar.gz to /root/.deeppavlov/slotfill_dstc2.tar.gz

100%|██████████| 641k/641k [00:00<00:00, 951kB/s]

2019-04-22 06:19:00.185 INFO in 'deeppavlov.core.data.utils'['utils'] at line 201: Extracting /root/.deeppavlov/slotfill_dstc2.tar.gz archive into /root/.deeppavlov/models

2019-04-22 06:19:00.769 INFO in 'deeppavlov.core.data.utils'['utils'] at line 63: Downloading from http://files.deeppavlov.ai/deeppavlov_data/gobot_dstc2_v7.tar.gz to /root/.deeppavlov/gobot_dstc2_v7.tar.gz

100%|██████████| 969k/969k [00:01<00:00, 543kB/s]

2019-04-22 06:19:01.853 INFO in 'deeppavlov.core.data.utils'['utils'] at line 201: Extracting /root/.deeppavlov/gobot_dstc2_v7.tar.gz archive into /root/.deeppavlov/models

2019-04-22 06:19:01.874 INFO in 'deeppavlov.core.data.simple_vocab'['simple_vocab'] at line 103: [loading vocabulary from /root/.deeppavlov/models/gobot_dstc2/word.dict]

2019-04-22 06:19:01.878 WARNING in 'deeppavlov.core.models.serializable'['serializable'] at line 47: No load path is set for Sqlite3Database in 'infer' mode. Using save path instead

2019-04-22 06:19:01.880 INFO in 'deeppavlov.core.data.sqlite_database'['sqlite_database'] at line 63: Loading database from /root/.deeppavlov/downloads/dstc2/resto.sqlite.

2019-04-22 06:19:04.804 INFO in 'deeppavlov.core.data.simple_vocab'['simple_vocab'] at line 103: [loading vocabulary from /root/.deeppavlov/models/slotfill_dstc2/word.dict]

2019-04-22 06:19:04.814 INFO in 'deeppavlov.core.data.simple_vocab'['simple_vocab'] at line 103: [loading vocabulary from /root/.deeppavlov/models/slotfill_dstc2/tag.dict]

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/op_def_library.py:263: colocate_with (from tensorflow.python.framework.ops) is deprecated and will be removed in a future version.

Instructions for updating:

Colocations handled automatically by placer.

Using TensorFlow backend.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/deeppavlov/core/layers/tf_layers.py:948: calling dropout (from tensorflow.python.ops.nn_ops) with keep_prob is deprecated and will be removed in a future version.

Instructions for updating:

Please use `rate` instead of `keep_prob`. Rate should be set to `rate = 1 - keep_prob`.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/deeppavlov/core/layers/tf_layers.py:66: conv1d (from tensorflow.python.layers.convolutional) is deprecated and will be removed in a future version.

Instructions for updating:

Use keras.layers.conv1d instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/deeppavlov/core/layers/tf_layers.py:69: batch_normalization (from tensorflow.python.layers.normalization) is deprecated and will be removed in a future version.

Instructions for updating:

Use keras.layers.batch_normalization instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/deeppavlov/models/ner/network.py:248: dense (from tensorflow.python.layers.core) is deprecated and will be removed in a future version.

Instructions for updating:

Use keras.layers.dense instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/deeppavlov/models/ner/network.py:259: softmax_cross_entropy_with_logits (from tensorflow.python.ops.nn_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Future major versions of TensorFlow will allow gradients to flow

into the labels input on backprop by default.

See `tf.nn.softmax_cross_entropy_with_logits_v2`.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/tensorflow/python/ops/math_ops.py:3066: to_int32 (from tensorflow.python.ops.math_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.cast instead.

WARNING:tensorflow:From /usr/local/lib/python3.6/dist-packages/deeppavlov/core/models/tf_model.py:49: checkpoint_exists (from tensorflow.python.training.checkpoint_management) is deprecated and will be removed in a future version.

Instructions for updating:

Use standard file APIs to check for files with this prefix.

2019-04-22 06:19:06.539 INFO in 'deeppavlov.core.models.tf_model'['tf_model'] at line 50: [loading model from /root/.deeppavlov/models/slotfill_dstc2/model]

INFO:tensorflow:Restoring parameters from /root/.deeppavlov/models/slotfill_dstc2/model

/usr/local/lib/python3.6/dist-packages/fuzzywuzzy/fuzz.py:35: UserWarning: Using slow pure-python SequenceMatcher. Install python-Levenshtein to remove this warning

warnings.warn('Using slow pure-python SequenceMatcher. Install python-Levenshtein to remove this warning')

paramiko missing, opening SSH/SCP/SFTP paths will be disabled. `pip install paramiko` to suppress

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-7-7d0b0559004d> in <module>()

1 from deeppavlov import build_model, configs

2

----> 3 bot1 = build_model(configs.go_bot.gobot_dstc2, download=True)

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/commands/infer.py in build_model(config, mode, load_trained, download, serialized)

59 component_serialized = None

60

---> 61 component = from_params(component_config, mode=mode, serialized=component_serialized)

62

63 if 'in' in component_config:

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/common/params.py in from_params(params, mode, serialized, **kwargs)

95

96 # find the submodels params recursively

---> 97 config_params = {k: _init_param(v, mode) for k, v in config_params.items()}

98

99 try:

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/common/params.py in <dictcomp>(.0)

95

96 # find the submodels params recursively

---> 97 config_params = {k: _init_param(v, mode) for k, v in config_params.items()}

98

99 try:

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/common/params.py in _init_param(param, mode)

49 elif isinstance(param, dict):

50 if {'ref', 'class_name', 'config_path'}.intersection(param.keys()):

---> 51 param = from_params(param, mode=mode)

52 else:

53 param = {k: _init_param(v, mode) for k, v in param.items()}

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/common/params.py in from_params(params, mode, serialized, **kwargs)

92 log.exception(e)

93 raise e

---> 94 cls = get_model(cls_name)

95

96 # find the submodels params recursively

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/common/registry.py in get_model(name)

69 raise ConfigError("Model {} is not registered.".format(name))

70 return cls_from_str(name)

---> 71 return cls_from_str(_REGISTRY[name])

72

73

/usr/local/lib/python3.6/dist-packages/deeppavlov/core/common/registry.py in cls_from_str(name)

38 .format(name))

39

---> 40 return getattr(importlib.import_module(module_name), cls_name)

41

42

/usr/lib/python3.6/importlib/__init__.py in import_module(name, package)

124 break

125 level += 1

--> 126 return _bootstrap._gcd_import(name[level:], package, level)

127

128

/usr/lib/python3.6/importlib/_bootstrap.py in _gcd_import(name, package, level)

/usr/lib/python3.6/importlib/_bootstrap.py in _find_and_load(name, import_)

/usr/lib/python3.6/importlib/_bootstrap.py in _find_and_load_unlocked(name, import_)

/usr/lib/python3.6/importlib/_bootstrap.py in _load_unlocked(spec)

/usr/lib/python3.6/importlib/_bootstrap_external.py in exec_module(self, module)

/usr/lib/python3.6/importlib/_bootstrap.py in _call_with_frames_removed(f, *args, **kwds)

/usr/local/lib/python3.6/dist-packages/deeppavlov/models/embedders/glove_embedder.py in <module>()

17

18 import numpy as np

---> 19 from gensim.models import KeyedVectors

20 from overrides import overrides

21

/usr/local/lib/python3.6/dist-packages/gensim/__init__.py in <module>()

3 """

4

----> 5 from gensim import parsing, corpora, matutils, interfaces, models, similarities, summarization, utils # noqa:F401

6 import logging

7

/usr/local/lib/python3.6/dist-packages/gensim/models/__init__.py in <module>()

5

6 # bring model classes directly into package namespace, to save some typing

----> 7 from .coherencemodel import CoherenceModel # noqa:F401

8 from .hdpmodel import HdpModel # noqa:F401

9 from .ldamodel import LdaModel # noqa:F401

/usr/local/lib/python3.6/dist-packages/gensim/models/coherencemodel.py in <module>()

34 from gensim import interfaces, matutils

35 from gensim import utils

---> 36 from gensim.topic_coherence import (segmentation, probability_estimation,

37 direct_confirmation_measure, indirect_confirmation_measure,

38 aggregation)

/usr/local/lib/python3.6/dist-packages/gensim/topic_coherence/probability_estimation.py in <module>()

10 import logging

11

---> 12 from gensim.topic_coherence.text_analysis import (

13 CorpusAccumulator, WordOccurrenceAccumulator, ParallelWordOccurrenceAccumulator,

14 WordVectorsAccumulator)

/usr/local/lib/python3.6/dist-packages/gensim/topic_coherence/text_analysis.py in <module>()

19

20 from gensim import utils

---> 21 from gensim.models.word2vec import Word2Vec

22

23 logger = logging.getLogger(__name__)

/usr/local/lib/python3.6/dist-packages/gensim/models/word2vec.py in <module>()

119

120 from gensim.utils import keep_vocab_item, call_on_class_only

--> 121 from gensim.models.keyedvectors import Vocab, Word2VecKeyedVectors

122 from gensim.models.base_any2vec import BaseWordEmbeddingsModel

123

/usr/local/lib/python3.6/dist-packages/gensim/models/keyedvectors.py in <module>()

160 # If pyemd is attempted to be used, but isn't installed, ImportError will be raised in wmdistance

161 try:

--> 162 from pyemd import emd

163 PYEMD_EXT = True

164 except ImportError:

/usr/local/lib/python3.6/dist-packages/pyemd/__init__.py in <module>()

73

74 from .__about__ import *

---> 75 from .emd import emd, emd_with_flow, emd_samples

__init__.pxd in init pyemd.emd()

ValueError: numpy.ufunc size changed, may indicate binary incompatibility. Expected 216 from C header, got 192 from PyObject | closed | 2019-04-22T06:23:36Z | 2019-04-23T08:33:43Z | https://github.com/deeppavlov/DeepPavlov/issues/812 | [] | Pem14604 | 2 |

vllm-project/vllm | pytorch | 15,380 | [Usage][UT]:Why the answer is ' 0, 1' | ### Your current environment

INFO 03-24 14:31:22 [__init__.py:256] Automatically detected platform cuda.

Collecting environment information...

PyTorch version: 2.6.0+cu124

Is debug build: False

CUDA used to build PyTorch: 12.4

ROCM used to build PyTorch: N/A

OS: Ubuntu 22.04.4 LTS (x86_64)

GCC version: (Ubuntu 11.4.0-1ubuntu1~22.04) 11.4.0

Clang version: Could not collect

CMake version: version 3.22.1

Libc version: glibc-2.35

Python version: 3.12.9 | packaged by Anaconda, Inc. | (main, Feb 6 2025, 18:56:27) [GCC 11.2.0] (64-bit runtime)

Python platform: Linux-5.15.0-86-generic-x86_64-with-glibc2.35

Is CUDA available: True

CUDA runtime version: 12.4.131

CUDA_MODULE_LOADING set to: LAZY

GPU models and configuration: GPU 0: NVIDIA A100-PCIE-40GB

Nvidia driver version: 550.107.02

cuDNN version: Probably one of the following:

/usr/lib/x86_64-linux-gnu/libcudnn.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_adv.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_cnn.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_engines_precompiled.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_engines_runtime_compiled.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_graph.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_heuristic.so.9.1.0

/usr/lib/x86_64-linux-gnu/libcudnn_ops.so.9.1.0

HIP runtime version: N/A

MIOpen runtime version: N/A

Is XNNPACK available: True

CPU:

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 46 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 80

On-line CPU(s) list: 0-79

Vendor ID: GenuineIntel

Model name: Intel Xeon Processor (Cascadelake)

CPU family: 6

Model: 85

Thread(s) per core: 2

Core(s) per socket: 20

Socket(s): 2

Stepping: 6

BogoMIPS: 5999.76

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush mmx fxsr sse sse2 ht syscall nx pdpe1gb rdtscp lm constant_tsc rep_good nopl xtopology cpuid pni pclmulqdq ssse3 fma cx16 pcid sse4_1 sse4_2 x2apic movbe popcnt tsc_deadline_timer aes xsave avx f16c rdrand hypervisor lahf_lm abm 3dnowprefetch cpuid_fault invpcid_single ssbd ibrs ibpb fsgsbase bmi1 hle avx2 smep bmi2 erms invpcid rtm avx512f avx512dq rdseed adx smap clflushopt clwb avx512cd avx512bw avx512vl xsaveopt xsavec xgetbv1 arat pku ospke avx512_vnni

L1d cache: 2.5 MiB (80 instances)

L1i cache: 2.5 MiB (80 instances)

L2 cache: 160 MiB (40 instances)

L3 cache: 32 MiB (2 instances)

NUMA node(s): 2

NUMA node0 CPU(s): 0-39

NUMA node1 CPU(s): 40-79

Vulnerability Gather data sampling: Unknown: Dependent on hypervisor status

Vulnerability Itlb multihit: KVM: Mitigation: VMX unsupported

Vulnerability L1tf: Mitigation; PTE Inversion

Vulnerability Mds: Vulnerable: Clear CPU buffers attempted, no microcode; SMT Host state unknown

Vulnerability Meltdown: Vulnerable

Vulnerability Mmio stale data: Vulnerable: Clear CPU buffers attempted, no microcode; SMT Host state unknown

Vulnerability Retbleed: Mitigation; IBRS

Vulnerability Spec rstack overflow: Not affected

Vulnerability Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl and seccomp

Vulnerability Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Vulnerability Spectre v2: Mitigation; IBRS, IBPB conditional, STIBP disabled, RSB filling, PBRSB-eIBRS Not affected

Vulnerability Srbds: Not affected

Vulnerability Tsx async abort: Vulnerable: Clear CPU buffers attempted, no microcode; SMT Host state unknown

Versions of relevant libraries:

[pip3] numpy==1.26.4

[pip3] nvidia-cublas-cu12==12.4.5.8

[pip3] nvidia-cuda-cupti-cu12==12.4.127

[pip3] nvidia-cuda-nvrtc-cu12==12.4.127

[pip3] nvidia-cuda-runtime-cu12==12.4.127

[pip3] nvidia-cudnn-cu12==9.1.0.70

[pip3] nvidia-cufft-cu12==11.2.1.3

[pip3] nvidia-curand-cu12==10.3.5.147

[pip3] nvidia-cusolver-cu12==11.6.1.9

[pip3] nvidia-cusparse-cu12==12.3.1.170

[pip3] nvidia-cusparselt-cu12==0.6.2

[pip3] nvidia-nccl-cu12==2.21.5

[pip3] nvidia-nvjitlink-cu12==12.4.127

[pip3] nvidia-nvtx-cu12==12.4.127

[pip3] pyzmq==26.3.0

[pip3] torch==2.6.0

[pip3] torchaudio==2.6.0

[pip3] torchvision==0.21.0

[pip3] transformers==4.49.0

[pip3] triton==3.2.0

[conda] numpy 1.26.4 pypi_0 pypi

[conda] nvidia-cublas-cu12 12.4.5.8 pypi_0 pypi

[conda] nvidia-cuda-cupti-cu12 12.4.127 pypi_0 pypi

[conda] nvidia-cuda-nvrtc-cu12 12.4.127 pypi_0 pypi

[conda] nvidia-cuda-runtime-cu12 12.4.127 pypi_0 pypi

[conda] nvidia-cudnn-cu12 9.1.0.70 pypi_0 pypi

[conda] nvidia-cufft-cu12 11.2.1.3 pypi_0 pypi

[conda] nvidia-curand-cu12 10.3.5.147 pypi_0 pypi

[conda] nvidia-cusolver-cu12 11.6.1.9 pypi_0 pypi

[conda] nvidia-cusparse-cu12 12.3.1.170 pypi_0 pypi

[conda] nvidia-cusparselt-cu12 0.6.2 pypi_0 pypi

[conda] nvidia-nccl-cu12 2.21.5 pypi_0 pypi

[conda] nvidia-nvjitlink-cu12 12.4.127 pypi_0 pypi

[conda] nvidia-nvtx-cu12 12.4.127 pypi_0 pypi

[conda] pyzmq 26.3.0 pypi_0 pypi

[conda] torch 2.6.0 pypi_0 pypi

[conda] torchaudio 2.6.0 pypi_0 pypi

[conda] torchvision 0.21.0 pypi_0 pypi

[conda] transformers 4.49.0 pypi_0 pypi

[conda] triton 3.2.0 pypi_0 pypi

ROCM Version: Could not collect

Neuron SDK Version: N/A

vLLM Version: 0.8.0rc3.dev66+gffcfb77c7

vLLM Build Flags:

CUDA Archs: Not Set; ROCm: Disabled; Neuron: Disabled

GPU Topology:

GPU0 CPU Affinity NUMA Affinity GPU NUMA ID

GPU0 X 0-79 0-1 N/A

Legend:

X = Self

SYS = Connection traversing PCIe as well as the SMP interconnect between NUMA nodes (e.g., QPI/UPI)

NODE = Connection traversing PCIe as well as the interconnect between PCIe Host Bridges within a NUMA node

PHB = Connection traversing PCIe as well as a PCIe Host Bridge (typically the CPU)

PXB = Connection traversing multiple PCIe bridges (without traversing the PCIe Host Bridge)

PIX = Connection traversing at most a single PCIe bridge

NV# = Connection traversing a bonded set of # NVLinks

OMP_NUM_THREADS=10

MKL_NUM_THREADS=10

LD_LIBRARY_PATH=/root/autodl-tmp/miniconda/envs/vllm_wl/lib/python3.12/site-packages/cv2/../../lib64:

NCCL_CUMEM_ENABLE=0

TORCHINDUCTOR_COMPILE_THREADS=1

CUDA_MODULE_LOADING=LAZY

### How would you like to use vllm

When comparing the short outputs of HF and vLLM (greedy sampling) using the test [script](https://github.com/vllm-project/vllm/blob/v0.8.1/tests/basic_correctness/test_basic_correctness.py), I got an unexpected answer, which I reproduced as follows:

```
from vllm import LLM, SamplingParams

prompt = "The following numbers of the sequence " + ", ".join(
    str(i) for i in range(1024)) + " are:"

sampling_params = SamplingParams(temperature=0, max_tokens=5)

# Create an LLM.
llm = LLM(model="Qwen/Qwen2.5-7B-Instruct")
outputs = llm.generate(prompt, sampling_params)

# Print the outputs.
for output in outputs:
    prompt = output.prompt
    generated_text = output.outputs[0].text
    print(f"Prompt: {prompt!r}, Generated text: {generated_text!r}")
```

and I got `Generated text: ' 0, 1'`. Why is this the answer?
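
To see what the model is being asked to continue, the prompt can be rebuilt and inspected without loading any model. This is only a sketch for inspection; `build_prompt` is a hypothetical helper that mirrors the expression in the script above.

```python
def build_prompt(n=1024):
    """Rebuild the test prompt: the numbers 0..n-1 followed by ' are:'."""
    return "The following numbers of the sequence " + ", ".join(
        str(i) for i in range(n)) + " are:"


prompt = build_prompt()
print(prompt[:60])   # start of the prompt
print(prompt[-20:])  # end of the prompt
```

The prompt ends with `1022, 1023 are:`, so a continuation that starts listing the sequence again from the beginning is at least plausible, and `max_tokens=5` cuts that continuation off after a few tokens. This is an interpretation, not a confirmed explanation of the model's behavior.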

### Before submitting a new issue...

- [x] Make sure you already searched for relevant issues, and asked the chatbot living at the bottom right corner of the [documentation page](https://docs.vllm.ai/en/latest/), which can answer lots of frequently asked questions. | open | 2025-03-24T06:35:05Z | 2025-03-24T06:36:17Z | https://github.com/vllm-project/vllm/issues/15380 | [

"usage"

] | Potabk | 0 |

airtai/faststream | asyncio | 1,899 | Feat: add warning for NATS subscriber factory if user sets useless options | **Describe the bug**

The `extra_options` parameter is not used when subscribing with `pull_subscribe` in NATS.

Below are the function signatures from nats-py:

``` python
async def subscribe(
    self,
    subject: str,
    queue: Optional[str] = None,
    cb: Optional[Callback] = None,
    durable: Optional[str] = None,
    stream: Optional[str] = None,
    config: Optional[api.ConsumerConfig] = None,
    manual_ack: bool = False,
    ordered_consumer: bool = False,
    idle_heartbeat: Optional[float] = None,
    flow_control: bool = False,
    pending_msgs_limit: int = DEFAULT_JS_SUB_PENDING_MSGS_LIMIT,
    pending_bytes_limit: int = DEFAULT_JS_SUB_PENDING_BYTES_LIMIT,
    deliver_policy: Optional[api.DeliverPolicy] = None,
    headers_only: Optional[bool] = None,
    inactive_threshold: Optional[float] = None,
) -> PushSubscription:
    ...  # body omitted

async def pull_subscribe(
    self,
    subject: str,
    durable: Optional[str] = None,
    stream: Optional[str] = None,
    config: Optional[api.ConsumerConfig] = None,
    pending_msgs_limit: int = DEFAULT_JS_SUB_PENDING_MSGS_LIMIT,
    pending_bytes_limit: int = DEFAULT_JS_SUB_PENDING_BYTES_LIMIT,
    inbox_prefix: bytes = api.INBOX_PREFIX,
) -> JetStreamContext.PullSubscription:
    ...  # body omitted
```

**How to reproduce**

```python
import asyncio

from faststream import FastStream
from faststream.nats import PullSub, NatsBroker
from nats.js.api import DeliverPolicy

broker = NatsBroker()
app = FastStream(broker)


@broker.subscriber(subject="test", deliver_policy=DeliverPolicy.LAST, stream="test", pull_sub=PullSub())
async def handle_msg(msg: str): ...


if __name__ == "__main__":
    asyncio.run(app.run())
```
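
The requested warning could look roughly like the sketch below. It is not FastStream's implementation: `PULL_SUBSCRIBE_OPTIONS`, `find_unused_pull_options`, and `warn_unused_pull_options` are hypothetical names, and the set of accepted options is simply copied from the `pull_subscribe` signature quoted above.

```python
import warnings

# Keyword arguments accepted by pull_subscribe, per the signature quoted above.
PULL_SUBSCRIBE_OPTIONS = frozenset({
    "subject",
    "durable",
    "stream",
    "config",
    "pending_msgs_limit",
    "pending_bytes_limit",
    "inbox_prefix",
})


def find_unused_pull_options(extra_options):
    """Return the option names that pull_subscribe would not receive."""
    return sorted(name for name in extra_options if name not in PULL_SUBSCRIBE_OPTIONS)


def warn_unused_pull_options(extra_options):
    """Emit one warning listing the options that have no effect on a pull subscriber."""
    unused = find_unused_pull_options(extra_options)
    if unused:
        warnings.warn(
            "These options have no effect on a pull subscriber: " + ", ".join(unused),
            RuntimeWarning,
            stacklevel=2,
        )
    return unused
```

With the reproduction above, `deliver_policy` would be reported, since it appears in the `subscribe` signature but not in `pull_subscribe`.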

| closed | 2024-11-07T10:05:47Z | 2024-11-11T05:58:02Z | https://github.com/airtai/faststream/issues/1899 | [

"enhancement",

"good first issue",

"help wanted"

] | HHongSeungWoo | 4 |

sinaptik-ai/pandas-ai | data-visualization | 871 | index 0 is out of bounds for axis 0 with size 0 | ### System Info

pandasai - 1.5.15

Python - 3.9.13

### 🐛 Describe the bug

smart_df = SmartDataframe(

df,

config={"llm": llm, "custom_head": df.head(2)})

ques = 'Which companies are doing better than American Express in Waste category?'

ans = smart_df.chat(ques, output_type = "dataframe")

print(ans)

'Unfortunately, I was not able to answer your question, because of the following error:\n\nindex 0 is out of bounds for axis 0 with size 0\n' | closed | 2024-01-12T08:23:36Z | 2024-06-01T00:21:02Z | https://github.com/sinaptik-ai/pandas-ai/issues/871 | [] | Devicharith | 1 |

facebookresearch/fairseq | pytorch | 5,004 | What is the license of the TTS models? | #### What is your question?

I have been testing your TTS system for both English and Spanish. For the latter, I'm using facebook/tts_transformer-es-css10.

Fairseq is MIT licensed, but I can't find anything about the model itself.

Where can I find information about the license under which this model is released?

Much grateful. | open | 2023-03-03T15:45:31Z | 2023-03-03T15:45:31Z | https://github.com/facebookresearch/fairseq/issues/5004 | [

"question",

"needs triage"

] | ADD-eNavarro | 0 |

labmlai/annotated_deep_learning_paper_implementations | machine-learning | 118 | bracket balance | No opening bracket after mu in ddpm page

https://nn.labml.ai/diffusion/ddpm/index.html

| closed | 2022-04-23T16:15:12Z | 2022-07-02T10:02:49Z | https://github.com/labmlai/annotated_deep_learning_paper_implementations/issues/118 | [

"documentation"

] | maloyan | 1 |

marcomusy/vedo | numpy | 658 | Normalized diverging colormap for Volume object | I want to plot a volume object with a diverging colormap of unequal positive and negative fraction. In the case of a 2D plot with python matplotlib I would create the colormap with the "LinearSegmentedColormap" function from "matplotlib.colors", e.g.:

```python

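import numpy as np

import matplotlib.pyplot as plt

from matplotlib.colors import LinearSegmentedColormap

data = np.random.random([100, 100]) * 100 - 70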

minimum = data.min()

maximum = data.max()

absmax = np.abs(data).max()

absmin = np.abs(data).min()

val = -minimum/(maximum - minimum)

m = LinearSegmentedColormap.from_list(

"mycolormap",

colors = [

(0.0, [0.0,0.0,1.0]),

(val, [1.0,1.0,1.0]),

(1.0, [1.0,0.0,0.0])

]

)

plt.figure(figsize=(7, 6))

plt.pcolormesh(data, cmap=m)

plt.colorbar()

plt.show()

```

However, if I create the same colormap "m" for a 3D-Volume "vol" object and use it with "vol.cmap(m)" there is just a black-white coloring of the Volume and the colorbar is black, so fully transparent I would guess.

My questions are:

1. How can I create the diverging colormap for the Volume object?

2. How can I set the transparency of the colorbar to zero (or equally alpha to 1)?

3. How can I fix the colormap at a specific position of the screen (so it does not move with the 3D-view)?

| closed | 2022-06-08T07:15:58Z | 2022-07-16T16:42:10Z | https://github.com/marcomusy/vedo/issues/658 | [] | MesoBolt | 3 |

shaikhsajid1111/facebook_page_scraper | web-scraping | 115 | no post_url, skipping | Hello

When running the scraper, I repeatedly get the following error: "no post_url, skipping".

No posts are scraped.

I am using "firefox" browser.

Is there a solution? | open | 2024-06-01T20:10:14Z | 2024-07-14T12:49:14Z | https://github.com/shaikhsajid1111/facebook_page_scraper/issues/115 | [] | saqtam66 | 9 |

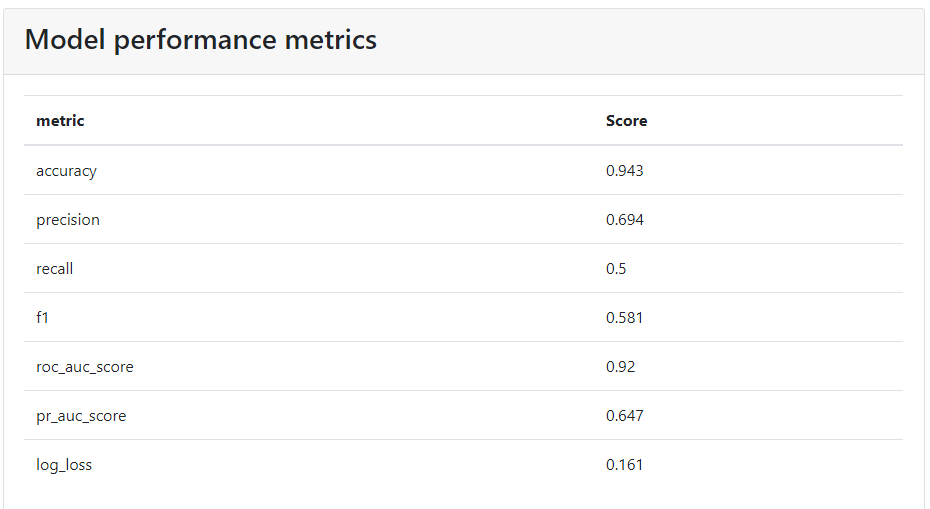

oegedijk/explainerdashboard | dash | 80 | hide metrics table Model Performance Metric | Hi @oegedijk,

Is there some way to hide some metrics in the model summary's Model Performance Metrics table? I read the documentation but could not find this functionality. In the source code the metrics are produced by the class ClassifierModelSummaryComponent, but that class doesn't have any parameter to hide them. E.g., the parameter hide_prauc only hides the PR AUC plot, not the metric pr_auc_score. Below is a snapshot of the table.

| closed | 2021-02-04T02:01:07Z | 2021-02-25T19:55:43Z | https://github.com/oegedijk/explainerdashboard/issues/80 | [] | mvpalheta | 8 |

sunscrapers/djoser | rest-api | 111 | Guidance to setup email sending | Is there guidance on setting up the email function for password reset and activation? Currently my implementation only saves an email to the media folder and is unable to send it out as an email. It would be great to provide some tips in the documentation.

Much appreciated!

| closed | 2016-01-18T02:12:55Z | 2016-01-21T01:48:59Z | https://github.com/sunscrapers/djoser/issues/111 | [] | junhua | 0 |

Ehco1996/django-sspanel | django | 571 | Directly control relay nodes | closed | 2021-09-01T00:52:23Z | 2021-12-28T00:36:55Z | https://github.com/Ehco1996/django-sspanel/issues/571 | [] | Ehco1996 | 0 | |

onnx/onnxmltools | scikit-learn | 375 | lgb BUG |

| open | 2020-03-16T02:13:13Z | 2020-04-15T10:54:47Z | https://github.com/onnx/onnxmltools/issues/375 | [] | yuanjie-ai | 1 |

scanapi/scanapi | rest-api | 503 | --browser option does not work on MacOS | ## Bug report

### Environment

- Operating System: MacOS

- Python version: 3.9.0

- ScanAPI version: main, unreleased

### Description of the bug

<!-- A clear and concise description of what the bug is. -->

`--browser` CLI flag does not open the browser automatically.

https://github.com/scanapi/scanapi/pull/496/

### Expected behavior?

<!-- A clear and concise description of what you expected to happen. -->

Open the report automatically in the browser

### How to reproduce the bug?

<!-- Steps to reproduce the issue. -->

Run `scan run -b` using a MacOS.

### Anything else we need to know?

<!-- Add any other additional details about the issue. -->

Probably it is missing two things:

- absolute path

- `file://` prefix

https://stackoverflow.com/a/22004572/8298081

https://stackoverflow.com/a/33426646/8298081

Testing manually, this works:

```shell

>>> import webbrowser

>>> webbrowser.open("file:///Users/camilamaia/workspace/scanapi-org/examples/demo-api/scanapi-report.html")

```

The following don't work:

```shell

>>> webbrowser.open("file://scanapi-report.html")

>>> webbrowser.open("/Users/camilamaia/workspace/scanapi-org/examples/demo-api/scanapi-report.html")

```
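The two missing pieces noted above (an absolute path and the `file://` prefix) can both be obtained from `pathlib`. This is only a minimal sketch, not ScanAPI's actual fix; the helper name `report_uri` is hypothetical and the report file name is taken from the example above.

```python
from pathlib import Path


def report_uri(report_path: str) -> str:
    """Turn a possibly relative report path into an absolute file:// URI.

    `report_uri` is a hypothetical helper name used for illustration only.
    """
    # resolve() makes the path absolute; as_uri() adds the file:// scheme.
    return Path(report_path).resolve().as_uri()


# Usage (not executed here): pass the URI to the standard-library browser opener.
# import webbrowser
# webbrowser.open(report_uri("scanapi-report.html"))
```

Building the URI in one place keeps the browser call independent of the current working directory.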

| closed | 2021-08-25T20:54:34Z | 2021-08-27T14:50:06Z | https://github.com/scanapi/scanapi/issues/503 | [

"Bug",

"CLI"

] | camilamaia | 4 |

zappa/Zappa | django | 549 | [Migrated] Unable to access json event data | Originally from: https://github.com/Miserlou/Zappa/issues/1458 by [joshlsullivan](https://github.com/joshlsullivan)

Hi there, when I deploy Zappa, I'm unable to access json data from the Lambda event. If I print the event data, this is what I get:

`[DEBUG] 2018-03-24T14:40:37.991Z 517bfc13-2f71-11e8-9ff3-ed7722cf9e11 Zappa Event: {'eventVersion': '1.0', 'eventName': 'edit_client_event', 'eventArgs': {'jobUUID': 'a5aa3a03-b290-4469-b7ce-711045a57dfb'}, 'auth': {'accountUUID': 'ce9fee13-3327-4bf2-9eb9-89930316690b', 'staffUUID': 'd5b495e7-e3ec-45ff-8ca6-214bfacd13cb'}}`

Here's how I was able to access the json data before deploying Zappa:

`def lambda_handler(event, context):

print(event)

job = event['eventArgs']['jobUUID']`

Any ideas? | closed | 2021-02-20T12:22:36Z | 2024-04-13T16:37:17Z | https://github.com/zappa/Zappa/issues/549 | [

"no-activity",

"auto-closed"

] | jneves | 2 |

seleniumbase/SeleniumBase | web-scraping | 2,402 | Could not connect to the CAPTCHA service. Please try again. | Hello, I'm using seleniumbase with uc=True.

The problem is that I still get detected on a site where I want a bot to check out. The message "Could not connect to the CAPTCHA service. Please try again." pops up and I'm not redirected to the checkout page. Is there a workaround, or some settings I have to add? | closed | 2023-12-31T13:42:45Z | 2023-12-31T15:05:50Z | https://github.com/seleniumbase/SeleniumBase/issues/2402 | [

"question",

"UC Mode / CDP Mode"

] | JakobReal-rgb | 1 |

LAION-AI/Open-Assistant | python | 2,849 | Admin interface: Change display name | Currently the display name field of a user in the admin interface is read-only.

Extend the functionality of the [admin/manage_user](https://github.com/LAION-AI/Open-Assistant/blob/main/website/src/pages/admin/manage_user/%5Bid%5D.tsx) page and allow editing of the display name. | closed | 2023-04-23T08:23:28Z | 2023-04-27T11:04:45Z | https://github.com/LAION-AI/Open-Assistant/issues/2849 | [

"website",

"good first issue"

] | andreaskoepf | 1 |

huggingface/datasets | nlp | 6,867 | Improve performance of JSON loader | As reported by @natolambert, loading regular JSON files with `datasets` shows poor performance.

The cause is that we use the `json` Python standard library instead of other faster libraries. See my old comment: https://github.com/huggingface/datasets/pull/2638#pullrequestreview-706983714

> There are benchmarks that compare different JSON packages, with the Standard Library one among the worst performant:

> - https://github.com/ultrajson/ultrajson#benchmarks

> - https://github.com/ijl/orjson#performance

I remember having a discussion about this and it was decided that it was better not to include an additional dependency on a 3rd-party library.

However:

- We already depend on `pandas` and `pandas` depends on `ujson`: so we have an indirect dependency on `ujson`

- Even if the above were not the case, we always could include `ujson` as an optional extra dependency, and check at runtime if it is installed to decide which library to use, either json or ujson | closed | 2024-05-04T15:04:16Z | 2024-05-17T16:22:28Z | https://github.com/huggingface/datasets/issues/6867 | [

"enhancement"

] | albertvillanova | 5 |
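For the huggingface/datasets JSON loader issue above, the "optional extra dependency, checked at runtime" idea could look roughly like the following. This is a sketch under assumptions, not the change that was made in `datasets`; the names `fast_json` and `loads_json` are hypothetical.

```python
import json

try:
    # ujson is treated as an optional extra; use it when it is installed.
    import ujson as fast_json
except ImportError:
    # Otherwise fall back to the standard-library implementation.
    fast_json = json


def loads_json(text: str):
    """Parse a JSON string with whichever backend is available."""
    return fast_json.loads(text)
```

Callers only see `loads_json`, so the choice of backend stays an implementation detail.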

Esri/arcgis-python-api | jupyter | 1,506 | clone_items() operation with copy_data=False still copies data | **Describe the bug**

We have run into cases where the `clone_items()` operation with `copy_data = False` still copies data from the source Portal to the target Portal.

**To Reproduce**

This happened for services published as dynamic map services from ArcMap that had feature access enabled, so that the same Map Service also has a Feature Service endpoint. We wanted to copy a reference to the source feature service using `clone_items()`, but the result was a hosted feature service in the target portal with the data copied, even though the `copy_data` parameter was set to `False`.

**Expected behavior**