repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

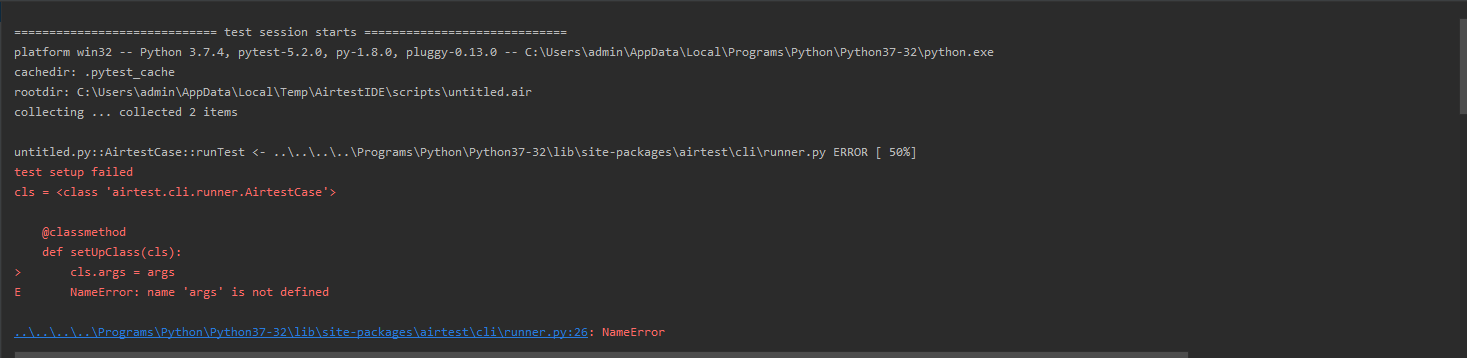

AirtestProject/Airtest | automation | 690 | name 'args' is not defined |

I followed http://airtest.netease.com/docs/en/1_online_help/advanced_features.html?highlight=setup and got this error. Is my setup wrong? | closed | 2020-02-10T01:29:52Z | 2020-02-13T08:48:04Z | https://github.com/AirtestProject/Airtest/issues/690 | [] | giangnb-dev | 3 |

MilesCranmer/PySR | scikit-learn | 566 | Windows Julia Install - could not load library "libpcre2-8" The specified module could not be found. | ### What happened?

After installing PySR on windows, on the first import of the module, the Julia install starts, but fails with this error message:

> ...

> fatal: error thrown and no exception handler available.

> InitError(mod=:Sys, error=ErrorException("could not load library "libpcre2-8"

> The specified module could not be found. "))

> ijl_errorf at C:/workdir/src\rtutils.c:77

> ...

I located libpcre2-8 in the virtual environment folder: `...\venv\julia_env\pyjuliapkg\install\bin\libpcre2-8.dll`

I found [this](https://github.com/JuliaLang/julia/issues/52205) issue on the julia repository, but it has no solution given.

Does anyone know of a workaround?

Would installing julia separately (outside of venv) help?

### Version

0.17.2

### Operating System

Windows

### Package Manager

pip

### Interface

Script (i.e., `python my_script.py`)

### Relevant log output

_No response_

### Extra Info

_No response_ | closed | 2024-03-13T12:06:21Z | 2024-06-17T18:09:56Z | https://github.com/MilesCranmer/PySR/issues/566 | [

"bug"

] | tbuckworth | 21 |

vimalloc/flask-jwt-extended | flask | 287 | Incomplete docs | Hey guys :),

I love the work you have done with this package. Thank You!

Unfortunately the doc-page https://flask-jwt-extended.readthedocs.io/en/stable/blacklist_and_token_revoking.html is not available.

Could you have a look into this issue?

Best regards from Berlin,

Luca | closed | 2019-11-01T09:20:44Z | 2019-11-01T13:42:55Z | https://github.com/vimalloc/flask-jwt-extended/issues/287 | [] | LucaTabone | 3 |

pytorch/pytorch | deep-learning | 148,891 | Upgrading FlashAttention to V3 | # Summary

We are currently building and utilizing FlashAttention2 for torch.nn.functional.scaled_dot_product_attention

Up until recently the files we build and our integration was very manual. We recently changed this and made FA a third_party/submodule: https://github.com/pytorch/pytorch/pull/146372

This makes it easier to pull in new files (including those for FAv3) however due to the fact that third_party extensions do not have a mechanism to be re-integrated into ATen the build system + flash_api is still manual.

### Plan

At a very high level we have a few options. For the sake of argument, though, I will not include the runtime dependency option. So for now let's assume we need to build and ship the kernels in libtorchcuda.so

1. Replace entirely FAv2 w/ FAv3:

Until recently this seemed like a non-ideal option, since we would lose FA support for A100 + machines. This has changed in: https://github.com/Dao-AILab/flash-attention/commit/7bc3f031a40ffc7b198930b69cf21a4588b4d2f9 and therefore this seems like a much more viable option, and the one with the least impact on binary size. I think the main difference is that FAv3 doesn't support Dropout. TBD if this is a large enough blocker.

2. Add FAv3 along w/ FAv2

This would require adding another backend to SDPA for FAv3. This would naively have a large impact to binary size, however we could choose to only build these kernels on H100 machines.

I am personally in favor of 1 since it is easier to maintain and will provide increased perf on A100 machines for the hot path (no dropout).

For both paths, updates to internal build system will be needed. | open | 2025-03-10T16:54:15Z | 2025-03-14T19:43:47Z | https://github.com/pytorch/pytorch/issues/148891 | [

"triaged",

"module: sdpa"

] | drisspg | 2 |

graphql-python/graphene | graphql | 1,468 | Is there any way to transform variables before resolving fields? | **Is your feature request related to a problem? Please describe.**

Our queries take variables. One variable is used in many of them, and it needs to be transformed every time a query is called.

**Describe the solution you'd like**

A hook such as `transform_before_xvariablenamex()`, or some other way to transform a variable before it is used in `resolve_xxx()`.

**Describe alternatives you've considered**

I do not want to write some ugly function and import it into the resolvers of every model field that receives that variable.

**Additional context**

Something like marshmallow, which transforms data before it is used in functions.

| closed | 2022-10-19T16:11:15Z | 2022-12-10T11:35:40Z | https://github.com/graphql-python/graphene/issues/1468 | [

"question"

] | SomeAkk | 3 |
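As a rough illustration of the graphene request above, one way to avoid repeating the transformation inside every resolver is a small decorator that rewrites a named keyword argument before the resolver runs. This is a sketch in plain Python, not a graphene feature: `transform_argument`, `resolve_user`, and the `username` argument are hypothetical names chosen for the example, and the `(root, info, **kwargs)` resolver signature is assumed.

```python
import functools


def transform_argument(name, transform):
    """Return a decorator that applies `transform` to the keyword
    argument `name` (if present) before calling the resolver."""
    def decorator(resolver):
        @functools.wraps(resolver)
        def wrapper(root, info, **kwargs):
            if name in kwargs:
                kwargs[name] = transform(kwargs[name])
            return resolver(root, info, **kwargs)
        return wrapper
    return decorator


# Hypothetical resolver: the shared variable is normalised once,
# in the decorator, instead of inside every resolver body.
@transform_argument("username", str.lower)
def resolve_user(root, info, username=None):
    return f"user:{username}"
```

The same wrapper can be reused on any resolver that receives the shared variable. Graphene's middleware mechanism may be another place to do this kind of pre-processing, but that is not shown or verified here.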

mitmproxy/mitmproxy | python | 6,590 | Binary doesn't run on Mac M1 (silicon) | #### Problem Description

I apologize if this is somehow an expected outcome, as I can see that there's only an `x86_64` dmg file provided for macOS. I'm using macOS 14.2.1 on an M1 Max machine, and I cannot get mitmproxy to work. It's complaining about the CPU type.

I do not have Rosetta installed on my system.

#### Steps to reproduce the behavior:

1. Install using Homebrew `brew install --cask mitmproxy`

2. Try `mitmproxy --version`

3. See the error

```

==> Installing Cask mitmproxy

==> Linking Binary 'mitmproxy' to '/opt/homebrew/bin/mitmproxy'

==> Linking Binary 'mitmdump' to '/opt/homebrew/bin/mitmdump'

==> Linking Binary 'mitmweb' to '/opt/homebrew/bin/mitmweb'

🍺 mitmproxy was successfully installed!

~ » mitmproxy --version

zsh: bad CPU type in executable: mitmproxy

```

#### System Information

* macOS version: `14.2.1`, no Rosetta

| closed | 2024-01-08T21:45:24Z | 2024-09-30T12:00:25Z | https://github.com/mitmproxy/mitmproxy/issues/6590 | [

"kind/triage"

] | ngocphamm | 4 |

matterport/Mask_RCNN | tensorflow | 2,625 | WARNING:root:You are using the default load_mask(), maybe you need to define your own one. | WARNING:root:You are using the default load_mask(), maybe you need to define your own one.

Epoch 1/10

WARNING:root:You are using the default load_mask(), maybe you need to define your own one.

I get this warning when trying to train a custom Mask R-CNN on my own dataset.

Has anyone faced the same issue and found a solution? | open | 2021-07-06T21:32:55Z | 2024-03-31T20:49:39Z | https://github.com/matterport/Mask_RCNN/issues/2625 | [] | AidaSilva | 12 |

ARM-DOE/pyart | data-visualization | 1,720 | LIDAR ppi conversion to gridded format. | I have LiDAR data that I'd like to convert to radar format (because I want to use the pyart package) and then grid it. However, after gridding, I noticed that the ds.rws values are NaN. Could you please advise on how to retrieve valid values for ds.rws?

import pyart

import numpy as np

from datetime import datetime

from netCDF4 import Dataset

import warnings

warnings.filterwarnings('ignore')

# Load data lidar data

file_path = "D:/lidar/ncfiles/WCS000248_2023-09-23_09-50-36_ppi_40_100m.nc"

data = Dataset(file_path)

sweep_group = data.groups["Sweep_860123"]

time = sweep_group.variables["time"][:]

latitude = data.variables["latitude"][:]

longitude = data.variables["longitude"][:]

altitude = data.variables["altitude"][:]

azimuth = sweep_group.variables["azimuth"][:]

elevation = sweep_group.variables["elevation"][:]

range_ = sweep_group.variables["range"][:]

radial_wind_speed = sweep_group.variables["radial_wind_speed"][:]

rsw = np.array(radial_wind_speed)

# Create radar object using pyart

radar = pyart.testing.make_empty_ppi_radar(rsw.shape[1], len(azimuth), 1)

radar.latitude['data'] = np.array([latitude])

radar.longitude['data'] = np.array([longitude])

radar.altitude['data'] = np.array([altitude])

#radar.time['data'] = np.array([(t - time_converted[0]).total_seconds() for t in time_converted])

radar.time = {

'standard_name': 'time',

'long_name': 'time in seconds since volume start',

'calendar': 'gregorian',

'units': 'seconds since 2023-09-23T04:35:05Z',

'comment': 'times are relative to the volume start_time',

'data': np.array([(t - time_converted[0]).total_seconds() for t in time_converted]),

'_FillValue':1e+20

}

radar.azimuth['data'] = azimuth

radar.elevation['data'] = elevation

radar.range['data'] = range_

radial_wind_speed_dict = {

'long_name': 'radial_wind_speed',

'standard_name': 'radial_wind_speed_of_scatterers_away_from_instrument',

'units': 'm/s',

'sampling_ratio': 1.0,

'_FillValue': -9999 ,

'grid_mapping': 'grid_mapping',

'coordinates': 'time range',

'data': np.ma.masked_invalid(rsw) # Mask invalid data

}

radar.fields = { 'rws': radial_wind_speed_dict}

#success plot this:

import matplotlib.pyplot as plt

from pyart.graph import RadarDisplay

display = RadarDisplay(radar)

fig, ax = plt.subplots(figsize=(10, 8))

display.plot_ppi("rws", sweep=0, ax=ax, cmap="coolwarm")

plt.show()

## Now grid

grid_limits = ((10., 4000.), (-4500., 4500.), (-4500., 4500.))

grid_shape = (20, 50, 50)

grid = pyart.map.grid_from_radars([radar], grid_limits=grid_limits, grid_shape=grid_shape)

ds = grid.to_xarray()

print(ds)

print(ds)

<xarray.Dataset> Size: 641kB

Dimensions: (time: 1, z: 20, y: 50, x: 50, nradar: 1)

Coordinates: (12/16)

* time (time) object 8B 2023-09-23 04:35:05

* z (z) float64 160B 10.0 220.0 ... 3.79e+03 4e+03

lat (y, x) float64 20kB 45.02 45.02 ... 45.1 45.1

lon (y, x) float64 20kB 7.603 7.605 ... 7.715 7.717

* y (y) float64 400B -4.5e+03 -4.316e+03 ... 4.5e+03

* x (x) float64 400B -4.5e+03 -4.316e+03 ... 4.5e+03

...

origin_altitude (time) float64 8B nan

radar_altitude (nradar) float64 8B nan

radar_latitude (nradar) float64 8B 45.06

radar_longitude (nradar) float64 8B 7.66

radar_time (nradar) int64 8B 0

radar_name (nradar) <U10 40B 'fake_radar'

Dimensions without coordinates: nradar

Data variables:

rws (time, z, y, x) float64 400kB nan nan ... nan

ROI (time, z, y, x) float32 200kB 500.0 ... 500.0

Attributes:

radar_name: fake_radar

nradar: 1

instrument_name: fake_radar

np.nanmax(ds.rws)

Out[18]: nan

np.nanmin(ds.rws)

Out[19]: nan

| open | 2025-01-18T18:09:02Z | 2025-01-22T16:59:16Z | https://github.com/ARM-DOE/pyart/issues/1720 | [] | priya1809 | 13 |

gradio-app/gradio | data-science | 9,967 | MultimodalTextbox interactive=False doesn't work with the submit button | ### Describe the bug

When setting interactive=False with MultimodalTextbox, it doesn't disable the submit button.

The text entry and image upload button are disabled so the content cannot be changed, but the submission can still take place.

### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### Reproduction

```python
import gradio as gr

def greet(a, c):
    return gr.MultimodalTextbox(interactive=False), a, c+1

with gr.Blocks() as demo:
    a = gr.MultimodalTextbox(interactive=True, show_label=False)
    b = gr.Textbox(interactive=False, show_label=False)
    c = gr.Number(interactive=False, show_label=False)
    a.submit(fn=greet, inputs=[a, c], outputs=[a, b, c])

if __name__ == "__main__":
    demo.launch()
```

### Screenshot

I can still click the submit button multiple times after it is set to be non-interactive.

### Logs

_No response_

### System Info

```shell

Gradio Playground

```

### Severity

I can work around it | open | 2024-11-16T03:21:18Z | 2024-11-16T03:29:07Z | https://github.com/gradio-app/gradio/issues/9967 | [

"bug"

] | sthemeow | 0 |

skforecast/skforecast | scikit-learn | 427 | MLPRegressor Bayesian search | Hi, can I search hidden_layer_sizes for MLPRegressor using Bayesian search?

If yes, how can I write search_space code for it?

E.g., for grid search I use `param_grid = {'hidden_layer_sizes': [[50,50], [70,50], [100,50], [100,100], [100], [100,50,30], [256,256], [256,256,128]]}`.

I would like to write something like this for Bayesian search_space | closed | 2023-05-11T11:13:24Z | 2023-05-17T06:34:02Z | https://github.com/skforecast/skforecast/issues/427 | [

"question"

] | AVPokrovsky | 3 |
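For the skforecast question above, a possible shape for such a search space is sketched below. It assumes the Bayesian search passes an Optuna-style `trial` object to a user-supplied `search_space(trial)` function and uses the returned dictionary as regressor parameters; that contract is an assumption here. Optuna-style categorical suggestions are most reliable with plain `str`/`int`/`float` choices, so each architecture gets a string label that is decoded to a tuple. `HIDDEN_LAYER_CHOICES` and its labels are hypothetical names for this example.

```python
# Hypothetical mapping from a string label to the layer sizes taken
# from the grid-search example in the question.
HIDDEN_LAYER_CHOICES = {
    "50-50": (50, 50),
    "70-50": (70, 50),
    "100-50": (100, 50),
    "100-100": (100, 100),
    "100": (100,),
    "100-50-30": (100, 50, 30),
    "256-256": (256, 256),
    "256-256-128": (256, 256, 128),
}


def search_space(trial):
    """Suggest a label, then decode it into the tuple the regressor expects."""
    label = trial.suggest_categorical(
        "hidden_layer_sizes", list(HIDDEN_LAYER_CHOICES)
    )
    return {"hidden_layer_sizes": HIDDEN_LAYER_CHOICES[label]}
```

Whether the library's results table then reports the decoded tuple or the string label is not something this sketch verifies, so the best trial may need to be decoded by hand through the same mapping.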

ivy-llc/ivy | numpy | 28,847 | Add Numpy Frontend Support to Ivy Transpiler | **Description**:

The current implementation of `ivy.transpile` supports `"torch"` as the sole `source` argument. This allows transpiling PyTorch functions or classes to target frameworks like TensorFlow, JAX, or NumPy. This task aims to extend the functionality by adding Numpy as a valid `source`, enabling transpilation of Numpy code to other frameworks via Ivy's intermediate representation.

For example, after completing this task, we should be able to transpile Numpy code using:

```python

ivy.transpile(func, source="numpy", target="jax")

```

### Goals:

The main objective is to implement the first two stages of the transpilation pipeline for Numpy:

1. **Lower Numpy code to Ivy’s Numpy Frontend IR.**

2. **Transform the Numpy Frontend IR to Ivy’s core representation.**

Once these stages are complete, the rest of the pipeline can be reused to target other frameworks like JAX, PyTorch, or TensorFlow. The steps would look as follows:

```text

source='numpy' → target='numpy_frontend'

source='numpy_frontend' → target='ivy'

source='ivy' → target='jax'/'torch'/etc.

```

This mirrors the existing pipeline for PyTorch:

```text

source='torch' → target='torch_frontend'

source='torch_frontend' → target='ivy'

source='ivy' → target='jax'/'numpy'/etc.

```

### Key Tasks:

1. **Add Native Framework-Specific Implementations for Core Transformation Passes:**

- For example, implement the `native_numpy_recursive_transformer.py` for traversing and transforming Numpy native source code.

- Use `native_torch_recursive_transformer.py` as a reference ([example here](https://github.com/ivy-llc/ivy/blob/open-source/ivy/transpiler/transformations/transformers/recursive_transformer/native_torch_recursive_transformer.py#L18))

2. **Define the Transformation Pipeline for Numpy to Numpy Frontend IR:**

- Create a new pipeline in `source_to_frontend_translator_config.py` to handle the stage `source='numpy', target='numpy_frontend'` ([example here](https://github.com/ivy-llc/ivy/blob/open-source/ivy/transpiler/translations/configurations/source_to_frontend_translator_config.py#L88)).

3. **Define the Transformation Pipeline for Numpy Frontend IR to Ivy:**

- Add another pipeline in `frontend_to_ivy_translator_config.py` to handle the stage `source='numpy_frontend', target='ivy'` ([example here](https://github.com/ivy-llc/ivy/blob/open-source/ivy/transpiler/translations/configurations/frontend_to_ivy_translator_config.py#L92)).

4. **Add Stateful Classes for Numpy**

- **NOTE:** Numpy does not natively support any stateful classes so this step can be skipped.

5. **Understand and Leverage Reusability:**

- Explore reusable components in the existing PyTorch pipeline, especially for AST transformers and configuration management.

### Testing:

- Familiarize yourself with the transpilation flow by exploring [transpiler tests](https://github.com/ivy-llc/ivy/tree/open-source/ivy_tests/test_transpiler)

- Add appropriate tests to validate Numpy source transpilation at each stage of the pipeline.

### Additional Notes:

- Keep in mind the modular and extensible design of the transpiler, ensuring that the new implementation integrates smoothly into the existing architecture.

| open | 2024-12-17T09:59:44Z | 2025-03-18T14:48:26Z | https://github.com/ivy-llc/ivy/issues/28847 | [

"NumPy Frontend",

"ToDo",

"Transpiler"

] | YushaArif99 | 1 |

aleju/imgaug | machine-learning | 617 | Augment bounding boxes is broken when only _augment_keypoints is defined | I have an augmenter that implements `_augment_keypoints` and `_augment_images`, which should be sufficient for augmenting both vector and raster representations of data. However, I noticed when I updated imgaug, my boxes were no longer being augmented.

It looks like 0.4.0 broke the behavior where `augment_bounding_boxes` used to work as long as `_augment_keypoints` was defined.

The current implementation in Augmenter is:

```python
def _augment_bounding_boxes(self, bounding_boxes_on_images, random_state,
                            parents, hooks):
    return bounding_boxes_on_images

def _augment_polygons(self, polygons_on_images, random_state, parents,
                      hooks):
    return polygons_on_images
```

However, if only the two aforementioned functions are implemented then the augmenter will change the image, but the boxes will be unchanged. At the very least this should cause an Exception, but I think a better fix would simply be to use the _augment_keypoints function if it exists:

```python
def _augment_bounding_boxes(self, bounding_boxes_on_images, random_state,
                            parents, hooks):
    return self._augment_bounding_boxes_as_keypoints(
        bounding_boxes_on_images, random_state, parents, hooks)

def _augment_polygons(self, polygons_on_images, random_state, parents,
                      hooks):
    return self._augment_polygons_as_keypoints(
        polygons_on_images, random_state, parents, hooks)
```

| closed | 2020-02-16T23:40:51Z | 2020-02-17T20:08:51Z | https://github.com/aleju/imgaug/issues/617 | [] | Erotemic | 1 |

miguelgrinberg/python-socketio | asyncio | 923 | client doesn't receive if *args is in the event function parameters | Hello Miguel, first of all, a ton of thanks to you for creating such an amazing product and making it available to the community; I really appreciate your efforts. I'm a newbie in the Python world, so please bear with me. I'm trying to provide as much info as I can to help you help me solve this issue.

I'm getting live data from a broker's websocket, wrapped inside a Python class that is stored in another file named "ws" and imported into this file. The broker provides two functions, `socket` and `custom_message`; their code is as follows.

socket

```
def socket(access_token):
    data_type = "symbolData"
    symbol =["NSE:SBIN-EQ"]
    fs = ws.FyersSocket(access_token=access_token,run_background=False,log_path="/home/Downloads/")
    fs.websocket_data = custom_message
    fs.subscribe(symbol=symbol,data_type=data_type)
    fs.keep_running()
```

custom_message

```
def custom_message(msg):
    print (f"Custom:{msg}")
```

As you can see, the data from the broker's data socket is delivered to the ```custom_message``` function, but I want to stream this data to a charting library, so I needed a websocket that could work with the above Python functions, and thanks to you I had python-socketio. So I created an ASGI websocket server after watching your videos.

The ```msg``` received by ```custom_message``` is a Python list containing a dictionary. As both functions, ```socket``` and ```custom_message```, are synchronous and ASGI requires async functions, I needed a bridge that could connect these sync functions to an async event function. So I made the ```custom_message``` function receive the message and forward it to an async function. I implemented it as follows:

```
import socketio, asyncio, config
from fyers_api.Websocket import ws
from Access_Token import access_token

sio = socketio.AsyncServer(async_mode='asgi',logger=True, engineio_logger=True,cors_allowed_origins=('*'))
app=socketio.ASGIApp(sio,static_files={
    '/':'./public/'
})

access_token = config.client_id+":"+access_token

@sio.event
async def connect(sid, environ):
    print(sid,"connected")

@sio.event
async def disconnect(sid):
    print(sid,"[disconnected]")

@sio.event
async def sum(sid,data):
    result = data['numbers'][0] + data['numbers'][1]
    await sio.emit('sum_result',{'result':result}, to=sid)
    await sio.emit('reconnect',{'result':1}, to=sid)
    print(sid,result)

def socket(sid,fysymbol):
    symbol =["NSE:SBIN-EQ"]
    data_type = "symbolData"
    fs = ws.FyersSocket(sid,fysymbol,access_token=access_token,run_background=False,log_path="/home/akshay/Documents/pytrade/log/")
    fs.websocket_data = custom_message
    fs.subscribe(symbol=symbol,data_type=data_type)
    fs.keep_running()

def custom_message(sid,data,*args):
    asyncio.run(SubAdd(sid,data,*args))

@sio.event
async def SubAdd(sid,data,*args): ########This is what is causing issue
    await sio.emit('reconnect',{'result':1}, to=sid)
    if args:
        for arg in args:
            emit_data = str(str(0)+"~"+str(arg['symbol'].replace("-","~").replace(":","~"))+"~"+str(arg['timestamp'])+"~"+str(arg['min_open_price'])+"~"+str(arg['min_high_price'])+"~"+str(arg['min_low_price'])+"~"+str(arg['min_close_price'])+"~"+str(arg['min_volume']))
            print(f"emit_data={emit_data}")
            await sio.emit("m",emit_data, to=sid)
    sid = sid
    fysymbol = data['fysymbol']
    print("SubAdd isConnected")
    #socket(sid,fysymbol)

@sio.event
async def SubRemove(sid,data):
    fysymbol,resolution = data['fysymbol'],data['resolution']
    fyersSocket.unsubscribe(symbol=symbol)
    symbol.remove(fysymbol)
    await sio.emit('UnSubscribed',data['fysymbol'], to=sid)
    print(sid,"[UnSubscribed]",data['fysymbol'])
```

Which is working fine if we look at the server's logs in the terminal e.g.

```
4dacEB52_RzDB83fAAAA: Received packet MESSAGE data 2["sum",{"numbers":[1,2]}]
received event "sum" from GOGFG4v_gCrobHcFAAAB [/]
emitting event "sum_result" to GOGFG4v_gCrobHcFAAAB [/]
4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["sum_result",{"result":3}]
```

and

```
emitting event "m" to GOGFG4v_gCrobHcFAAAB [/]
4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["m","0~NSE~SBIN~EQ~1652259912~471.1~471.15~470.85~471.15~9400"]
```

Terminal Logs

```

uvicorn app:app --port 9999 --reload

INFO: Will watch for changes in these directories: ['/home/akshay/Documents/pytrade/pytrade']

INFO: Uvicorn running on http://127.0.0.1:9999 (Press CTRL+C to quit)

INFO: Started reloader process [12498] using watchgod

Server initialized for asgi.

INFO: Started server process [12509]

INFO: Waiting for application startup.

INFO: Application startup complete.

4dacEB52_RzDB83fAAAA: Sending packet OPEN data {'sid': '4dacEB52_RzDB83fAAAA', 'upgrades': [], 'pingTimeout': 20000, 'pingInterval': 25000}

4dacEB52_RzDB83fAAAA: Received request to upgrade to websocket

INFO: ('127.0.0.1', 40320) - "WebSocket /socket.io/" [accepted]

4dacEB52_RzDB83fAAAA: Upgrade to websocket successful

INFO: connection open

4dacEB52_RzDB83fAAAA: Received packet MESSAGE data 0

GOGFG4v_gCrobHcFAAAB connected

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 0{"sid":"GOGFG4v_gCrobHcFAAAB"}

4dacEB52_RzDB83fAAAA: Received packet MESSAGE data 2["sum",{"numbers":[1,2]}]

received event "sum" from GOGFG4v_gCrobHcFAAAB [/]

emitting event "sum_result" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["sum_result",{"result":3}]

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

GOGFG4v_gCrobHcFAAAB 3

4dacEB52_RzDB83fAAAA: Received packet MESSAGE data 2["SubAdd",{"fysymbol":"NSE:SBIN-EQ","resolution":"1"}]

received event "SubAdd" from GOGFG4v_gCrobHcFAAAB [/]

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

SubAdd isConnected

sid,fysymbol= ('GOGFG4v_gCrobHcFAAAB', 'NSE:SBIN-EQ')

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

emit_data=0~NSE~SBIN~EQ~1652259910~471.1~471.15~470.85~471.1~6627

emitting event "m" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["m","0~NSE~SBIN~EQ~1652259910~471.1~471.15~470.85~471.1~6627"]

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

emit_data=0~NSE~SBIN~EQ~1652259911~471.1~471.15~470.85~471.15~6757

emitting event "m" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["m","0~NSE~SBIN~EQ~1652259911~471.1~471.15~470.85~471.15~6757"]

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

emit_data=0~NSE~SBIN~EQ~1652259912~471.1~471.15~470.85~471.15~9400

emitting event "m" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["m","0~NSE~SBIN~EQ~1652259912~471.1~471.15~470.85~471.15~9400"]

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

emit_data=0~NSE~SBIN~EQ~1652259913~471.1~471.15~470.85~471.15~9450

emitting event "m" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["m","0~NSE~SBIN~EQ~1652259913~471.1~471.15~470.85~471.15~9450"]

emitting event "reconnect" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["reconnect",{"result":1}]

emit_data=0~NSE~SBIN~EQ~1652259914~471.1~471.15~470.85~471.15~9520

emitting event "m" to GOGFG4v_gCrobHcFAAAB [/]

4dacEB52_RzDB83fAAAA: Sending packet MESSAGE data 2["m","0~NSE~SBIN~EQ~1652259914~471.1~471.15~470.85~471.15~9520"]

```

The client receives the ```sum_result``` and ```reconnect``` message from ```sum``` event. However, it isn't receiving the (second) ```reconnect``` and ```m``` message in the ```SubAdd``` event which is evident from message logs in devtools.

Logs of devtools

So what should I change, and where, so that the client receives all messages from all events?

Thank You!!

client.js

```
const socket = io('http://localhost:9999', {
  transports: ['websocket', 'polling', 'flashsocket']
});

const channelToSubscription = new Map();
console.log('ChannelToSubscription=',channelToSubscription );

socket.on('connect', () => {
  console.log('[socket] Connected');
  socket.emit('sum',{numbers:[1,2]});
});

socket.on('sum_result', (data) =>{
  console.log(data.result);
});

socket.on('reconnect', (data) =>{
  console.log(data.result);
});

socket.on('disconnect', (reason) => {
  console.log('[socket] Disconnected:', reason);
});

socket.on('error', (error) => {
  console.log('[socket] Error:', error);
});

socket.on('m', data => {
  console.log('[socket] Message:', data);
  const [
    eventTypeStr,
    exchange,
    fromSymbol,
    toSymbol,
    tradeTimeStr,
    openPrice,
    highPrice,
    lowPrice,
    closePrice,
    volumeStr,
  ] = data.split('~');
  //console.log(lowPrice);
  //if (parseInt(eventTypeStr) !== 0) {
  //  // skip all non-TRADE events
  //  return;
  //}
  const tradePrice = parseFloat(openPrice);
  const tradeTime = parseInt(tradeTimeStr)*1000;
  const openStr = parseFloat(openPrice);
  const highStr = parseFloat(highPrice);
  const lowStr = parseFloat(lowPrice);
  const closeStr = parseFloat(closePrice);
  const volume_Str = parseFloat(volumeStr);
  //console.log(volume_Str);
  const channelString = `${exchange}:${fromSymbol}-${toSymbol}`;
  const subscriptionItem = channelToSubscription.get(channelString);
  if (subscriptionItem === undefined) {
    return;
  }

``` | closed | 2022-05-11T09:51:11Z | 2022-05-11T10:11:24Z | https://github.com/miguelgrinberg/python-socketio/issues/923 | [] | akshay7892 | 1 |

amidaware/tacticalrmm | django | 1,847 | allow variables to be used in alert templates recipient | **Is your feature request related to a problem? Please describe.**

Currently, sending email alerts to different recipients requires manual work, and since there can be as many as one recipient per device/site, the number of alert templates can quickly get out of control.

**Describe the solution you'd like**

Allow any of the available variables (global/client/site/agent) to be used as the recipient of an email alert template.

**Describe alternatives you've considered**

A huge number of different templates.

**Additional context**

| open | 2024-04-17T09:19:32Z | 2024-04-17T09:19:32Z | https://github.com/amidaware/tacticalrmm/issues/1847 | [] | P6g9YHK6 | 0 |

datadvance/DjangoChannelsGraphqlWs | graphql | 95 | how to fix it | WebSocket client does not request for the subprotocol graphql-ws! | closed | 2022-09-30T06:55:12Z | 2022-10-14T07:29:31Z | https://github.com/datadvance/DjangoChannelsGraphqlWs/issues/95 | [] | George191 | 0 |

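HIT-SCIR/ltp | nlp | 541 | What are the differences between pyltp and the new version of ltp? | I have recently been working on an event extraction task and found that many projects are written with pyltp. When rewriting their methods with ltp4, I cannot find the equivalents of pyltp's outputs. Could you provide a tutorial? | closed | 2021-10-29T03:09:20Z | 2022-09-12T06:49:20Z | https://github.com/HIT-SCIR/ltp/issues/541 | [] | jwc19890114 | 1 |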

bloomberg/pytest-memray | pytest | 10 | Ability to persist the binary dump post test run | ## Feature Request

I'd like to allow the user to explicitly request persisting the binary files via a `--memray-persist-bin` flag that can take a folder as an argument. This would change where the binary files are stored from a temporary directory to the folder passed in. To help identify which binary belongs to which test, I'd also propose adding the test name (`pyfuncitem.nodeid`, which might need to be normalized for characters not allowed in a path) as a suffix (after the uuid).

This would allow users to do further analysis of the binary files after the run (such as generating a flamegraph). This could also be used for #7. I imagine it would most often be used like this:

```

pytest -k test_memory_usage --memray --memray-persist-bin ./memray-bins

memray flamegraph ./memray-bins/21321asad213421.test_memory_usage.bin

```
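The file naming proposed above could be sketched roughly as follows. This is only an illustration under assumptions: `safe_test_name` and `persisted_bin_path` are hypothetical helpers, not part of pytest-memray, and the set of characters treated as path-safe is a guess.

```python
import re
import uuid
from pathlib import Path


def safe_test_name(nodeid: str) -> str:
    """Replace characters that are awkward in file names with underscores."""
    # Keep letters, digits, dot, dash and underscore; collapse everything else.
    return re.sub(r"[^A-Za-z0-9._-]+", "_", nodeid).strip("_")


def persisted_bin_path(folder: Path, nodeid: str) -> Path:
    """Build <folder>/<uuid>.<normalized test name>.bin (assumed layout)."""
    return folder / f"{uuid.uuid4().hex}.{safe_test_name(nodeid)}.bin"


path = persisted_bin_path(
    Path("memray-bins"), "tests/test_mem.py::test_memory_usage[param-1]"
)
print(path.name)
```

Keeping the uuid as the prefix preserves uniqueness across parametrized or repeated runs, while the suffix makes the file easy to match to its test.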

**Describe alternatives you've considered**

Users could rewrite their test as a python module invocation and use memray directly. The downside is that making fixtures work as function calls can be complicated.

| closed | 2022-05-10T20:51:13Z | 2022-05-17T16:36:50Z | https://github.com/bloomberg/pytest-memray/issues/10 | [] | gaborbernat | 0 |

matplotlib/matplotlib | data-science | 29,204 | [Bug]: twiny in log scale can't set `tick_params(top=False)` | ### Bug summary

If `ax2 = ax.twiny()` uses a linear scale, `ax2.tick_params(top=False)` works fine, but it has no effect once `ax2.set_xscale('log')` is set.

### Code for reproduction

```Python

import numpy as np

import matplotlib.pyplot as plt

fig, ax = plt.subplots(1, 1)

x = np.arange(0.1, 100, 0.1)

y = np.sin(x)

xlim = [1e-1, 1e2]

ax.plot(x, y)

ax.set_xlim(xlim)

ax.set_xscale('log')

ax2 = ax.twiny()

ax2.set_xscale('log')

ax2.set_xlim(xlim)

ax2.tick_params(top=False, labeltop=False) # top=False has no effect

```

### Actual outcome

Ticks on top still exist.

### Expected outcome

When I comment out `ax2.set_xscale('log')`, the ticks on top disappear as expected.

### Additional information

+ How did I trigger this bug?

I want to plot two lines on a log scale with the same x-limits but different x-ticks and x-ticklabels, so that two rows of x-ticklabels share the same x-axis.

### Operating system

Ubuntu

### Matplotlib Version

3.9.2

### Matplotlib Backend

module://matplotlib_inline.backend_inline

### Python version

Python 3.10.14

### Jupyter version

4.2.5

### Installation

pip | closed | 2024-11-29T02:54:11Z | 2024-11-29T16:27:08Z | https://github.com/matplotlib/matplotlib/issues/29204 | [

"Community support"

] | Dengda98 | 1 |

davidsandberg/facenet | computer-vision | 756 | ImportError: No module named src.generative.models.dfc_vae | When I execute this command on Ubuntu:

python src/generative/train_vae.py src.generative.models.dfc_vae ~/datasets/clf_mtcnnpy_128 src/models/inception_resnet_v1 ~/models/export/20170512-110547/model-20170512-110547.ckpt-250000 --models_base_dir ~/vae/ --reconstruction_loss_type PERCEPTUAL --loss_features 'Conv2d_1a_3x3,Conv2d_2a_3x3,Conv2d_2b_3x3' --max_nrof_steps 50000 --batch_size 128 --latent_var_size 100 --initial_learning_rate 0.0002 --alfa 1.0 --beta 0.5

The following error occurs:

**ImportError: No module named src.generative.models.dfc_vae** | open | 2018-05-23T08:27:37Z | 2018-05-24T16:26:33Z | https://github.com/davidsandberg/facenet/issues/756 | [] | praveenkumarchandaliya | 1 |

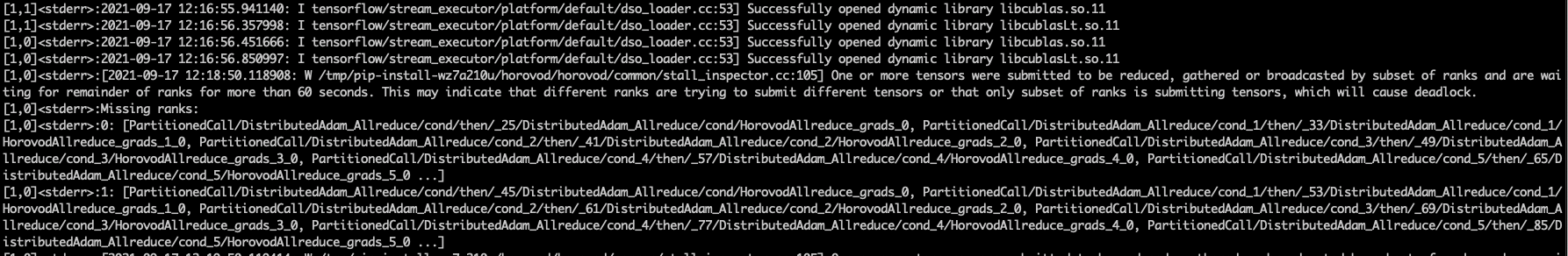

horovod/horovod | machine-learning | 3,170 | Stall ranks with tf.keras.callbacks.TensorBoard | **Environment:**

1. Framework: TensorFlow

2. Framework version: 2.5.0

3. Horovod version: 0.22.1

4. MPI version: 3.0.0

5. CUDA version: 11.2

6. NCCL version: 2.8.4-1+cuda10.2

7. Python version: 3.6.9

8. Spark / PySpark version:

9. Ray version:

10. OS and version: 18.04

11. GCC version: 7.5.0

12. CMake version: 3.10.2

**Checklist:**

1. Did you search issues to find if somebody asked this question before?

2. If your question is about hang, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/running.rst)?

3. If your question is about docker, did you read [this doc](https://github.com/horovod/horovod/blob/master/docs/docker.rst)?

4. Did you check if you question is answered in the [troubleshooting guide](https://github.com/horovod/horovod/blob/master/docs/troubleshooting.rst)?

**Bug report:**

Please describe erroneous behavior you're observing and steps to reproduce it.

Horovod reports stalled ranks after enabling the TensorBoard callback in [tensorflow2_keras_mnist.py](https://github.com/horovod/horovod/blob/master/examples/tensorflow2/tensorflow2_keras_mnist.py).

To reproduce it, update the TensorBoard parameters as follows:

```

callbacks.append(tf.keras.callbacks.TensorBoard('/tmp/log', update_freq=1000))

```

| open | 2021-09-17T12:33:32Z | 2022-07-01T02:52:25Z | https://github.com/horovod/horovod/issues/3170 | [

"bug"

] | acmore | 10 |

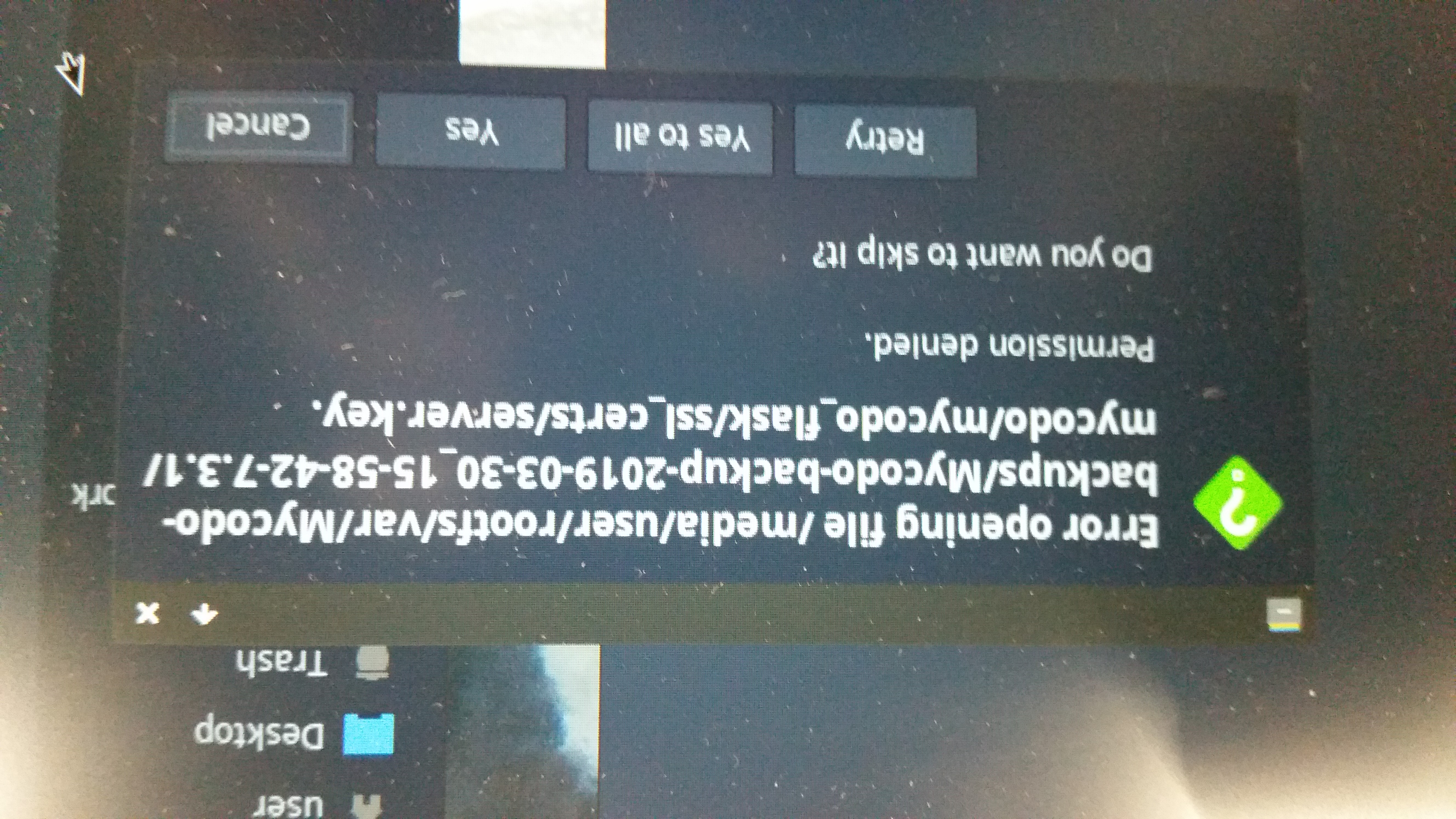

kizniche/Mycodo | automation | 644 | Backup transfer to another SD Card | How can I transfer a backup from SD card A to another SD card B and restore it there?

I tried to copy the backup, but one file can't be copied.

| closed | 2019-03-31T00:11:01Z | 2019-04-02T21:18:01Z | https://github.com/kizniche/Mycodo/issues/644 | [] | RynFlutsch | 5 |

ymcui/Chinese-LLaMA-Alpaca-2 | nlp | 442 | Forgetting English During Chinese LLM Training | ### Check before submitting issues

- [X] Make sure to pull the latest code, as some issues and bugs have been fixed.

- [X] I have read the [Wiki](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki) and [FAQ section](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/FAQ) AND searched for similar issues and did not find a similar problem or solution

- [X] Third-party plugin issues - e.g., [llama.cpp](https://github.com/ggerganov/llama.cpp), [LangChain](https://github.com/hwchase17/langchain), [text-generation-webui](https://github.com/oobabooga/text-generation-webui), we recommend checking the corresponding project for solutions

### Type of Issue

Performance issue

### Base Model

Chinese-LLaMA-2 (7B/13B)

### Operating System

Linux

### Describe your issue in detail

First of all, I would like to express my gratitude for the amazing work you and your team have done in developing LLMs.

I have been using Llama2 to train models in a different language, and I have noticed that the model no longer works well in English after training: it cannot produce English answers. I also checked the Chinese model, and it didn't answer in English either, even when I asked my question in English.

I was wondering whether you have evaluated this forgetting of English and published the results, and whether the forgetting was intentional.

I would also like to know whether there is anything we can do to avoid forgetting. I would appreciate any insights or suggestions you may have on this matter.

Thank you again for your hard work and dedication to advancing the field of language modeling.

Best regards,

### Dependencies (must be provided for code-related issues)

```

# Please copy-and-paste your dependencies here.

```

### Execution logs or screenshots

```

torchrun --nnodes 1 --nproc_per_node 4 run_clm_pt_with_peft.py \

--deepspeed ds_zero2_no_offload.json \

--model_name_or_path /home/hadoop/.cache/huggingface/hub/models--meta-llama--Llama-2-7b-hf/snapshots/6fdf2e60f86ff2481f2241aaee459f85b5b0bbb9/ \

--tokenizer_name_or_path /home/hadoop/abolfazl/Chinese-LLaMA-Alpaca-2/scripts/tokenizer/merged_tokenizer_hf \

--dataset_dir /home/hadoop/abolfazl/parvin2 \

--data_cache_dir /home/hadoop/abolfazl/Chinese-LLaMA-Alpaca-2/scripts/training/cache \

--validation_split_percentage 0.001 \

--per_device_train_batch_size 8 \

--do_train \

--seed $RANDOM \

--fp16 \

--num_train_epochs 1 \

--lr_scheduler_type cosine \

--learning_rate 2e-4 \

--warmup_ratio 0.001 \

--weight_decay 0.001 \

--logging_strategy steps \

--logging_steps 10 \

--save_strategy steps \

--save_total_limit 3 \

--save_steps 1000 \

--gradient_accumulation_steps 1 \

--preprocessing_num_workers 8 \

--block_size 128 \

--output_dir /home/hadoop/abolfazl/Chinese-LLaMA-Alpaca-2/out_pt_secondtry \

--overwrite_output_dir \

--ddp_timeout 30000 \

--logging_first_step True \

--lora_rank 64 \

--lora_alpha 16 \

--trainable "q_proj,v_proj,k_proj,o_proj,gate_proj,down_proj,up_proj" \

--lora_dropout 0.05 \

--modules_to_save "embed_tokens,lm_head" \

--torch_dtype float16 \

--load_in_kbits 4 \

--gradient_checkpointing \

--ddp_find_unused_parameters False

``` | closed | 2023-12-05T13:01:06Z | 2024-01-14T06:47:58Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/issues/442 | [

"stale"

] | Abolfazl-kr | 11 |

sqlalchemy/alembic | sqlalchemy | 868 | ENUM with metadata is not translated to sql CREATE TYPE in autogenerate | Hi,

I know that ENUM autogeneration is not entirely complete and polished, but I think I have run into something that is not merely unsupported but works incorrectly :)

**Describe the bug**

1. Autogenerate for a PostgreSQL ENUM with `metadata=...` generates a migration containing `MetaData(bind=None)` without importing `MetaData`, resulting in a NameError when the migration runs.

2. The enum type is not created in sql if specified with `metadata=...`, even though it seems like a well-documented use-case in [sqlalchemy docs](https://docs.sqlalchemy.org/en/13/dialects/postgresql.html#sqlalchemy.dialects.postgresql.ENUM).

**Expected behavior**

- `from sqlalchemy import MetaData`

- Create the enum type in the database

**To Reproduce**

```py

from enum import Enum

from sqlalchemy import BigInteger, Column, MetaData, Table

from sqlalchemy.dialects.postgresql import ENUM

metadata = MetaData(schema="myschema")

class SomeEnum(str, Enum):

    OPEN = "OPEN"

    CLOSED = "CLOSED"

some_enum = ENUM(

SomeEnum,

metadata=metadata,

schema=metadata.schema,

)

some_table = Table(

"some_table",

metadata,

Column("id", BigInteger, primary_key=True),

Column("some_enum_value", some_enum),

)

```

Resulting migration file:

```py

"""initial

Revision ID: 653c623403f4

Revises:

Create Date: 2021-06-24 18:15:52.750889

"""

from alembic import op

import sqlalchemy as sa

from sqlalchemy.dialects import postgresql

# revision identifiers, used by Alembic.

revision = '653c623403f4'

down_revision = None

branch_labels = None

depends_on = None

def upgrade():

    # ### commands auto generated by Alembic - please adjust! ###

    op.create_table('some_table',

    sa.Column('id', sa.BigInteger(), nullable=False),

    sa.Column('some_enum_value', postgresql.ENUM('OPEN', 'CLOSED', name='someenum', schema='myschema', metadata=MetaData(bind=None)), nullable=True),

    sa.PrimaryKeyConstraint('id'),

    schema='myschema'

    )

    # ### end Alembic commands ###

def downgrade():

    # ### commands auto generated by Alembic - please adjust! ###

    op.drop_table('some_table', schema='myschema')

    # ### end Alembic commands ###

```

**Error**

```

18:15 $ alembic upgrade head --sql

INFO [alembic.runtime.migration] Context impl PostgresqlImpl.

INFO [alembic.runtime.migration] Generating static SQL

INFO [alembic.runtime.migration] Will assume transactional DDL.

BEGIN;

CREATE TABLE myschema.alembic_version (

version_num VARCHAR(32) NOT NULL,

CONSTRAINT alembic_version_pkc PRIMARY KEY (version_num)

);

INFO [alembic.runtime.migration] Running upgrade -> 653c623403f4, initial

-- Running upgrade -> 653c623403f4

Traceback (most recent call last):

File "/home/.../bin/alembic", line 8, in <module>

sys.exit(main())

File "/home/.../lib/python3.8/site-packages/alembic/config.py", line 559, in main

CommandLine(prog=prog).main(argv=argv)

File "/home/.../lib/python3.8/site-packages/alembic/config.py", line 553, in main

self.run_cmd(cfg, options)

File "/home/.../lib/python3.8/site-packages/alembic/config.py", line 530, in run_cmd

fn(

File "/home/.../lib/python3.8/site-packages/alembic/command.py", line 293, in upgrade

script.run_env()

File "/home/.../lib/python3.8/site-packages/alembic/script/base.py", line 490, in run_env

util.load_python_file(self.dir, "env.py")

File "/home/.../lib/python3.8/site-packages/alembic/util/pyfiles.py", line 97, in load_python_file

module = load_module_py(module_id, path)

File "/home/.../lib/python3.8/site-packages/alembic/util/compat.py", line 184, in load_module_py

spec.loader.exec_module(module)

File "<frozen importlib._bootstrap_external>", line 783, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "migrations/env.py", line 90, in <module>

run_migrations_offline()

File "migrations/env.py", line 60, in run_migrations_offline

context.run_migrations()

File "<string>", line 8, in run_migrations

File "/home/.../lib/python3.8/site-packages/alembic/runtime/environment.py", line 813, in run_migrations

self.get_context().run_migrations(**kw)

File "/home/.../lib/python3.8/site-packages/alembic/runtime/migration.py", line 561, in run_migrations

step.migration_fn(**kw)

File "/home/.../migrations/versions/2021_06_24_653c623403f4_initial.py", line 23, in upgrade

sa.Column('some_enum_value', postgresql.ENUM('OPEN', 'CLOSED', name='someenum', schema='myschema', metadata=MetaData(bind=None)), nullable=True),

NameError: name 'MetaData' is not defined

```

Note that adding the missing import (`from sqlalchemy import MetaData`) results in a proper sql, but without the `CREATE TYPE`:

```

alembic upgrade head --sql

INFO [alembic.runtime.migration] Context impl PostgresqlImpl.

INFO [alembic.runtime.migration] Generating static SQL

INFO [alembic.runtime.migration] Will assume transactional DDL.

BEGIN;

CREATE TABLE myschema.alembic_version (

version_num VARCHAR(32) NOT NULL,

CONSTRAINT alembic_version_pkc PRIMARY KEY (version_num)

);

INFO [alembic.runtime.migration] Running upgrade -> 653c623403f4, initial

-- Running upgrade -> 653c623403f4

CREATE TABLE myschema.some_table (

id BIGSERIAL NOT NULL,

some_enum_value myschema.someenum,

PRIMARY KEY (id)

);

INSERT INTO myschema.alembic_version (version_num) VALUES ('653c623403f4');

COMMIT;

```

**Versions.**

- OS: Ubuntu 20.04.2

- Python: 3.8.5

- Alembic: 1.6.5

- SQLAlchemy: 1.3.24 (I can't upgrade to 1.4 yet)

- Database: PostgreSQL 12.7

- DBAPI: psycopg2-binary 2.8.6

**Additional context**

Note that removing the `metadata` argument makes the issue disappear:

```py

some_enum = ENUM(

SomeEnum,

# metadata=metadata,

schema=metadata.schema,

)

```

gives no `metadata=MetaData(bind=None)`, so no NameError is raised:

```py

sa.Column('some_enum_value', postgresql.ENUM('OPEN', 'CLOSED', name='someenum', schema='myschema'), nullable=True),

```

Also, the resulting SQL is as expected:

```sql

-- Running upgrade -> c2d662ec840a

CREATE TYPE myschema.someenum AS ENUM ('OPEN', 'CLOSED');

CREATE TABLE myschema.some_table (

id BIGSERIAL NOT NULL,

some_enum_value myschema.someenum,

PRIMARY KEY (id)

);

```

According to the [sqlalchemy docs](https://docs.sqlalchemy.org/en/13/dialects/postgresql.html#sqlalchemy.dialects.postgresql.ENUM):

>To use a common enumerated type between multiple tables, the best practice is to declare the Enum or ENUM independently, and associate it with the MetaData object itself:

>...

>If we specify checkfirst=True, the individual table-level create operation will check for the ENUM and create if not exists:

So I guess that in the scenario where I provide the `metadata` argument, it *is expected* that the enum will not be created by the sqlalchemy **table create** unless asked to with `checkfirst=True`.

However, I think that alembic should either produce the same SQL statements in both cases (with or without the `metadata` argument on the enum), or at least document how to get the checkfirst-like behaviour.

| open | 2021-06-24T19:33:12Z | 2021-06-25T08:44:41Z | https://github.com/sqlalchemy/alembic/issues/868 | [

"duplicate",

"question",

"autogenerate for enums"

] | bluefish6 | 1 |

smiley/steamapi | rest-api | 43 | Adding playerstats attribute to a game entity. | Currently, a lot of information from the GetUserStatsForGame response is dropped; only achievements are used.

But for some games there is more useful information in 'playerstats'.

I was trying to create a pull request with that tweak :) | closed | 2017-02-19T03:16:31Z | 2019-04-11T02:05:03Z | https://github.com/smiley/steamapi/issues/43 | [

"question",

"steamworks"

] | theSimplex | 4 |

deepspeedai/DeepSpeed | machine-learning | 5,776 | [BUG] Universal checkpoint conversion - "Cannot find layer_01* files in there" | I am trying to use the universal checkpoint conversion script, `python ds_to_universal.py`, but I get an error saying it cannot find the files for a layer. I'm not sure why, but the files for layers 01 and 16 are missing: my code just skips creating them when saving the checkpoint. The DeepSpeed checkpoint conversion expects them and therefore fails. Does that sound familiar to anyone? Thanks in advance!

I am using the GPT-NeoX codebase and have DeepSpeed 0.14.4 installed.

Error:

```

.../global_step4 seems a bogus DeepSpeed checkpoint folder: Cannot find layer_01* files in there.

```
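A quick way to confirm which layer files are absent from a checkpoint folder is sketched below. It is not part of DeepSpeed: `missing_layer_indices` is a hypothetical helper, the file-name pattern is assumed from the listing in this issue, and the sample list is built to mirror that listing. It only reports gaps and says nothing about why the files were not written.

```python
import re

# Assumed naming scheme, taken from the directory listing in this issue.
LAYER_FILE = re.compile(r"^layer_(\d+)-model_\d+-model_states\.pt$")


def missing_layer_indices(filenames):
    """Return layer indices absent between 0 and the highest index present."""
    present = {
        int(m.group(1))
        for name in filenames
        if (m := LAYER_FILE.match(name))
    }
    if not present:
        return []
    return [i for i in range(max(present) + 1) if i not in present]


# Sample names mirroring the listing: layers 00-17 with 01 and 16 absent.
names = [
    f"layer_{i:02d}-model_00-model_states.pt"
    for i in range(18)
    if i not in (1, 16)
]
names += [
    "mp_rank_00_model_states.pt",
    "bf16_zero_pp_rank_0_mp_rank_00_optim_states.pt",
]
print(missing_layer_indices(names))
```

For a real folder, the same function can be fed the output of `os.listdir(...)` on the `global_step` directory.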

Here are the files in my save directory:

```

bf16_zero_pp_rank_0_mp_rank_00_optim_states.pt layer_04-model_00-model_states.pt layer_09-model_00-model_states.pt layer_14-model_00-model_states.pt

configs layer_05-model_00-model_states.pt layer_10-model_00-model_states.pt layer_15-model_00-model_states.pt

layer_00-model_00-model_states.pt layer_06-model_00-model_states.pt layer_11-model_00-model_states.pt layer_17-model_00-model_states.pt

layer_02-model_00-model_states.pt layer_07-model_00-model_states.pt layer_12-model_00-model_states.pt mp_rank_00_model_states.pt

layer_03-model_00-model_states.pt layer_08-model_00-model_states.pt layer_13-model_00-model_states.pt

``` | open | 2024-07-17T07:06:08Z | 2024-09-09T12:09:31Z | https://github.com/deepspeedai/DeepSpeed/issues/5776 | [

"bug",

"training"

] | exnx | 3 |

dask/dask | scikit-learn | 11,343 | Bug: Can't perform a (meaningful) "outer" concatenation with dask-expr on `axis=1` | **Describe the issue**: I *think* this is a bug, because the behaviour is inconsistent between dask-expr and "original-dask". But it is for a bit of an edge case that I can't see coming up a whole lot.

Concatenating two dataframes of different length throws an "assertion error" rather than following the previous behaviour of discarding non-matching indexes or null-filling those indexes depending on the "join" keyword. (I don't feel like that's a very clear way of phrasing it, sorry! The example below should help)

**Minimal Complete Verifiable Example**:

```python

import dask.dataframe as dd

one = dd.from_dict({"a": [1, 2, 3], "b": [1, 2, 3]}, npartitions=1)

two = dd.from_dict({"c": [1, 2]}, npartitions=1)

dd.concat([one, two], axis=1, join="outer")

# previous result from old versions of dask (i.e. 2024.2)

# a b c

# 0 1 1 1.0

# 1 2 2 2.0

# 2 3 3 NaN

# Now throws an AssertionError

dd.concat([one, two], axis=1, join="inner")

# previous result from old versions of dask (i.e. 2024.2)

# a b c

# 0 1 1 1.0

# 1 2 2 2.0

# now also throws an AssertionError

```

**Anything else we need to know?**:

The stack trace gives a good hint about where and why this happens: `dask_expr/_expr.py` has this line:

`assert arg.divisions == dependencies[0].divisions`

For the two concats above, I guess that *isn't* the case: either one frame needs to be null-filled or the other needs to be shortened, or else the divisions won't match up.

**Environment**:

- Dask version: 2024.8.0

- Python version: 3.10.12

- Operating System: Ubuntu (Jammy Jellyfish)

- Install method (conda, pip, source): pip

| closed | 2024-08-23T13:33:03Z | 2024-08-26T15:36:10Z | https://github.com/dask/dask/issues/11343 | [

"dask-expr"

] | benrutter | 1 |

huggingface/text-generation-inference | nlp | 2,853 | Entire system crashes when get to warm up model | ### System Info

```

model=meta-llama/Llama-3.3-70B-Instruct

# share a volume with the Docker container to avoid downloading weights every run

volume=/srv/ai/data/tgi

docker run --gpus "1,2,3,4" --shm-size 1g -e HF_TOKEN=[TOKEN] -p 8080:80 -v $volume:/data \

ghcr.io/huggingface/text-generation-inference:3.0.0 \

--model-id $model \

--quantize eetq \

--cuda-memory-fraction 0.95

```

4x RTX 3090 Ti, EPYC CPU, 256 GB RAM

### Information

- [X] Docker

- [ ] The CLI directly

### Tasks

- [ ] An officially supported command

- [ ] My own modifications

### Reproduction

Run docker command above

```

2024-12-17T17:23:53.961980Z INFO text_generation_launcher: Using prefill chunking = True

2024-12-17T17:23:54.547663Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T17:23:54.547663Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T17:23:54.558361Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T17:23:54.572348Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T17:23:54.821433Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-1

2024-12-17T17:23:54.821492Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-3

2024-12-17T17:23:54.821530Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-2

2024-12-17T17:23:54.847944Z INFO shard-manager: text_generation_launcher: Shard ready in 150.41845764s rank=3

2024-12-17T17:23:54.858639Z INFO shard-manager: text_generation_launcher: Shard ready in 150.432820265s rank=1

2024-12-17T17:23:54.872643Z INFO shard-manager: text_generation_launcher: Shard ready in 150.439607673s rank=2

2024-12-17T17:23:55.047221Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-0

2024-12-17T17:23:55.048286Z INFO shard-manager: text_generation_launcher: Shard ready in 150.622573521s rank=0

2024-12-17T17:23:55.115403Z INFO text_generation_launcher: Starting Webserver

2024-12-17T17:23:55.210971Z INFO text_generation_router_v3: backends/v3/src/lib.rs:125: Warming up model

2024-12-17T17:23:55.231460Z INFO text_generation_launcher: Using optimized Triton indexing kernels.

```

After this, the server dies and I have to power cycle it manually.

Full logs from trying a smaller model with CUDA graphs disabled:

```

2024-12-17T18:03:27.087401Z INFO text_generation_launcher: Args {

model_id: "Qwen/Qwen2.5-32B-Instruct",

revision: None,

validation_workers: 2,

sharded: None,

num_shard: None,

quantize: Some(

Eetq,

),

speculate: None,

dtype: None,

kv_cache_dtype: None,

trust_remote_code: false,

max_concurrent_requests: 128,

max_best_of: 2,

max_stop_sequences: 4,

max_top_n_tokens: 5,

max_input_tokens: None,

max_input_length: None,

max_total_tokens: None,

waiting_served_ratio: 0.3,

max_batch_prefill_tokens: None,

max_batch_total_tokens: None,

max_waiting_tokens: 20,

max_batch_size: None,

cuda_graphs: Some(

[

0,

],

),

hostname: "4eee9dca0df9",

port: 80,

shard_uds_path: "/tmp/text-generation-server",

master_addr: "localhost",

master_port: 29500,

huggingface_hub_cache: None,

weights_cache_override: None,

disable_custom_kernels: false,

cuda_memory_fraction: 0.95,

rope_scaling: None,

rope_factor: None,

json_output: false,

otlp_endpoint: None,

otlp_service_name: "text-generation-inference.router",

cors_allow_origin: [],

api_key: None,

watermark_gamma: None,

watermark_delta: None,

ngrok: false,

ngrok_authtoken: None,

ngrok_edge: None,

tokenizer_config_path: None,

disable_grammar_support: false,

env: false,

max_client_batch_size: 4,

lora_adapters: None,

usage_stats: On,

payload_limit: 2000000,

enable_prefill_logprobs: false,

}

2024-12-17T18:03:27.088023Z INFO hf_hub: Token file not found "/data/token"

2024-12-17T18:03:28.994330Z INFO text_generation_launcher: Using attention flashinfer - Prefix caching true

2024-12-17T18:03:28.994349Z INFO text_generation_launcher: Sharding model on 4 processes

2024-12-17T18:03:29.030950Z WARN text_generation_launcher: Unkown compute for card nvidia-geforce-rtx-3090-ti

2024-12-17T18:03:29.064926Z INFO text_generation_launcher: Default `max_batch_prefill_tokens` to 4096

2024-12-17T18:03:29.065078Z INFO download: text_generation_launcher: Starting check and download process for Qwen/Qwen2.5-32B-Instruct

2024-12-17T18:03:32.104130Z INFO text_generation_launcher: Files are already present on the host. Skipping download.

2024-12-17T18:03:32.680081Z INFO download: text_generation_launcher: Successfully downloaded weights for Qwen/Qwen2.5-32B-Instruct

2024-12-17T18:03:32.680348Z INFO shard-manager: text_generation_launcher: Starting shard rank=1

2024-12-17T18:03:32.680364Z INFO shard-manager: text_generation_launcher: Starting shard rank=0

2024-12-17T18:03:32.680439Z INFO shard-manager: text_generation_launcher: Starting shard rank=3

2024-12-17T18:03:32.686107Z INFO shard-manager: text_generation_launcher: Starting shard rank=2

2024-12-17T18:03:35.215815Z INFO text_generation_launcher: Using prefix caching = True

2024-12-17T18:03:35.215842Z INFO text_generation_launcher: Using Attention = flashinfer

2024-12-17T18:03:42.713034Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:03:42.714007Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:03:42.714678Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:03:42.721143Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:03:52.722256Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:03:52.723416Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:03:52.723960Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:03:52.730231Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:04:02.731685Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:04:02.733008Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:04:02.733511Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:04:02.739340Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:04:12.740983Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:04:12.742778Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:04:12.743260Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:04:12.748509Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:04:22.750201Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:04:22.752482Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:04:22.753057Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:04:22.757785Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:04:32.759340Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:04:32.762067Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:04:32.762852Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:04:32.767034Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:04:42.768492Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:04:42.771758Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:04:42.772535Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:04:42.776268Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:04:52.777706Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:04:52.781362Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:04:52.782289Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:04:52.785605Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:02.786995Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:05:02.790997Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:05:02.792054Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:05:02.794933Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:12.796209Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:05:12.800615Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:05:12.802012Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:05:12.804257Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:22.805536Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:05:22.810307Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:05:22.811833Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:05:22.813416Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:32.814759Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:05:32.819792Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:05:32.821590Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:05:32.821834Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:42.824027Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:05:42.829566Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:05:42.830560Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:42.831422Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:05:52.833387Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=3

2024-12-17T18:05:52.839573Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=0

2024-12-17T18:05:52.840175Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=2

2024-12-17T18:05:52.841278Z INFO shard-manager: text_generation_launcher: Waiting for shard to be ready... rank=1

2024-12-17T18:06:01.763800Z INFO text_generation_launcher: Using prefill chunking = True

2024-12-17T18:06:02.627022Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-1

2024-12-17T18:06:02.627076Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-3

2024-12-17T18:06:02.627110Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-0

2024-12-17T18:06:02.642621Z INFO shard-manager: text_generation_launcher: Shard ready in 149.940971364s rank=3

2024-12-17T18:06:02.649583Z INFO shard-manager: text_generation_launcher: Shard ready in 149.948711278s rank=0

2024-12-17T18:06:02.650706Z INFO shard-manager: text_generation_launcher: Shard ready in 149.949875248s rank=1

2024-12-17T18:06:02.848613Z INFO text_generation_launcher: Server started at unix:///tmp/text-generation-server-2

2024-12-17T18:06:02.849891Z INFO shard-manager: text_generation_launcher: Shard ready in 150.143446295s rank=2

2024-12-17T18:06:02.909856Z INFO text_generation_launcher: Starting Webserver

2024-12-17T18:06:03.001599Z INFO text_generation_router_v3: backends/v3/src/lib.rs:125: Warming up model

2024-12-17T18:06:03.023245Z INFO text_generation_launcher: Using optimized Triton indexing kernels.

```

### Expected behavior

No system crash | open | 2024-12-17T18:02:57Z | 2024-12-18T04:46:58Z | https://github.com/huggingface/text-generation-inference/issues/2853 | [] | ad-astra-video | 1 |

jumpserver/jumpserver | django | 14,951 | [Bug] jms_core sleep 365 days | ### Product Version

v4.7.0-ce

### Product Edition

- [x] Community Edition

- [ ] Enterprise Edition

- [ ] Enterprise Trial Edition

### Installation Method

- [ ] Online Installation (One-click command installation)

- [x] Offline Package Installation

- [ ] All-in-One

- [ ] 1Panel

- [ ] Kubernetes

- [ ] Source Code

### Environment Information

os: centos 7

kernel: 5.4.278-1.el7.elrepo.x86_64

### 🐛 Bug Description

The install gets stuck because of `sleep 365 days`.

### Recurrence Steps

1. Edit config-example.txt.

2. Run `./jmsctl.sh install`.

3. It creates the jms_core container and then gets stuck.

4. `docker logs -f jms_core` shows `sleep 365 days`.

5. Tried both scripts/images and the Docker Hub image jumpserver/core:v4.7.0-ce.

### Expected Behavior

_No response_

### Additional Information

_No response_

### Attempted Solutions

_No response_ | closed | 2025-02-28T06:57:11Z | 2025-03-04T06:27:12Z | https://github.com/jumpserver/jumpserver/issues/14951 | [

"🐛 Bug"

] | spiritman1990 | 8 |

horovod/horovod | tensorflow | 2,976 | The speed of multi-node is slower than single-node | I used PyTorch and Horovod with two 1080 Ti GPUs on one machine.

With a single node, each epoch step takes 3 s, but with two nodes it takes 7 s.

Is it normal for the data exchange between nodes to take so long? | closed | 2021-06-11T09:42:32Z | 2024-01-31T03:54:50Z | https://github.com/horovod/horovod/issues/2976 | [] | walt676 | 1 |

ludwig-ai/ludwig | data-science | 3,430 | Throws 'IndexError: Dimension specified as 0 but tensor has no dimensions' during training. | **Describe the bug**

I am trying to run the LLM few-shot example (https://github.com/ludwig-ai/ludwig/blob/master/examples/llm_few_shot_learning/simple_model_training.py) on Google Colab and get the following error during the training stage.

==== Log ====

```

INFO:ludwig.models.llm:Done.

INFO:ludwig.utils.tokenizers:Loaded HuggingFace implementation of facebook/opt-350m tokenizer

INFO:ludwig.trainers.trainer:Tuning batch size...

INFO:ludwig.utils.batch_size_tuner:Tuning batch size...

INFO:ludwig.utils.batch_size_tuner:Exploring batch_size=1

INFO:ludwig.utils.checkpoint_utils:Successfully loaded model weights from /tmp/tmpnqj9shge/latest.ckpt.

---------------------------------------------------------------------------

IndexError Traceback (most recent call last)

[<ipython-input-15-cbbfc30da30b>](https://localhost:8080/#) in <cell line: 6>()

4 preprocessed_data, # tuple Ludwig Dataset objects of pre-processed training data

5 output_directory, # location of training results stored on disk

----> 6 ) = model.train(

7 dataset=df,experiment_name="simple_experiment", model_name="simple_model", skip_save_processed_input=False)

8

10 frames

[/usr/local/lib/python3.10/dist-packages/ludwig/models/llm.py](https://localhost:8080/#) in _remove_left_padding(self, input_ids_sample)

629 else:

630 pad_idx = 0

--> 631 input_ids_sample_no_padding = input_ids_sample[pad_idx + 1 :]

632

633 # Start from the first BOS token

IndexError: Dimension specified as 0 but tensor has no dimensions

```

====End of Log ====

**Config is as follows**

```

config = yaml.unsafe_load(
    """
model_type: llm
model_name: facebook/opt-350m
generation:
    temperature: 0.1
    top_p: 0.75
    top_k: 40
    num_beams: 4
    max_new_tokens: 64
prompt:
    task: "Classify the sample input as either negative, neutral, or positive."
    retrieval:
        type: semantic
        k: 3
        model_name: paraphrase-MiniLM-L3-v2
input_features:
-
    name: review
    type: text
output_features:
-
    name: label
    type: category
    preprocessing:
        fallback_label: "neutral"
    decoder:
        type: category_extractor
        match:
            "negative":
                type: contains
                value: "positive"
            "neural":
                type: contains
                value: "neutral"
            "positive":
                type: contains
                value: "positive"
preprocessing:
    split:
        type: fixed
trainer:
    type: finetune
    epochs: 2
"""
)

```

**Environment (please complete the following information):**

OS: Colab

Python version 3.10

Ludwig version 0.8

**Additional context**

| closed | 2023-06-05T09:51:38Z | 2024-10-18T13:36:14Z | https://github.com/ludwig-ai/ludwig/issues/3430 | [] | chayanray | 6 |

Miksus/rocketry | automation | 227 | Conditions: cron "0/20 * * * *" is not same as "*/20 * * * *" | **Describe the bug**

The "0/20 * * * *" cron pattern launches my tasks hourly, while "*/20 * * * *" launches them every 20 minutes.

Here is a screenshot of my log stats from Heroku for the "0/20 * * * *" pattern:

And here is one for "*/20 * * * *":

**Expected behavior**

I expect these patterns to behave in the same way
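To make the difference concrete, here is a minimal sketch of how a cron minute field could be expanded under two different readings of a bare start value followed by a step. This is not Rocketry's parser; `expand_minutes` and the `bare_start_is_range` switch are hypothetical names used only for illustration. It only shows that treating `0/20` as the single value `0` would produce the hourly behaviour described above, while treating it as `0-59/20` would match `*/20`.

```python
def expand_minutes(field: str, bare_start_is_range: bool) -> list[int]:
    """Expand a cron minute field such as '*/20', '0/20', '10-50/20' or '5'.

    bare_start_is_range is a hypothetical switch:
      True  -> 'a/step' is read as 'a-59/step'
      False -> 'a/step' is read as the single value 'a' (the step has no effect)
    """
    base, _, step_text = field.partition("/")
    step = int(step_text) if step_text else 1
    if base == "*":
        start, end = 0, 59
    elif "-" in base:
        low, high = base.split("-")
        start, end = int(low), int(high)
    else:
        start = int(base)
        # A bare number with a step is where the two readings diverge.
        end = 59 if (step_text and bare_start_is_range) else start
    return list(range(start, end + 1, step))


print(expand_minutes("*/20", True))   # [0, 20, 40]
print(expand_minutes("0/20", True))   # [0, 20, 40]
print(expand_minutes("0/20", False))  # [0]
```

Under the second reading the task fires only at minute 0, i.e. once per hour, which is consistent with the logs reported for "0/20 * * * *".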

**Desktop (please complete the following information):**

- OS: Windows 10 Professional

- Python version: 3.11.6

requirements.txt:

pydantic==1.10.10

fastapi

rocketry

requests

boto3

uvicorn[standard]

| closed | 2023-11-15T13:57:31Z | 2023-12-14T09:32:03Z | https://github.com/Miksus/rocketry/issues/227 | [

"bug"

] | nikitazavadsky | 2 |

yezz123/authx | pydantic | 560 | ♻️ refactor error handling | closed | 2024-03-30T23:49:05Z | 2024-04-04T02:17:15Z | https://github.com/yezz123/authx/issues/560 | [

"enhancement",

"v1"

] | yezz123 | 0 | |

gradio-app/gradio | deep-learning | 10,665 | Gradio Microphone recording not working | ### Describe the bug

1. Go to https://www.gradio.app/guides/real-time-speech-recognition

2. Record yourself under https://www.gradio.app/guides/real-time-speech-recognition#2-create-a-full-context-asr-demo-with-transformers

3. Hit submit after the website hangs.

4. The waveform does not match what was recorded, and the output text is not what you said.

Issues

- Performance issue with the Gradio Microphone: the website becomes unresponsive.

- The waveform is not what I recorded.

Reporting this for gradio 5.17.1. There are reports for other versions of gradio as well.

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```python

import gradio as gr

from openai import OpenAI

OPEN_AI_API_KEY = "use your own"

def transcribe(audio):

    text = ""

    client = OpenAI(api_key=OPEN_AI_API_KEY)

    try:

        with open(audio, "rb") as audio_file:

            text = client.audio.transcriptions.create(

                file=audio_file,

                response_format="text",

                model="whisper-1",

                language="en",

            )